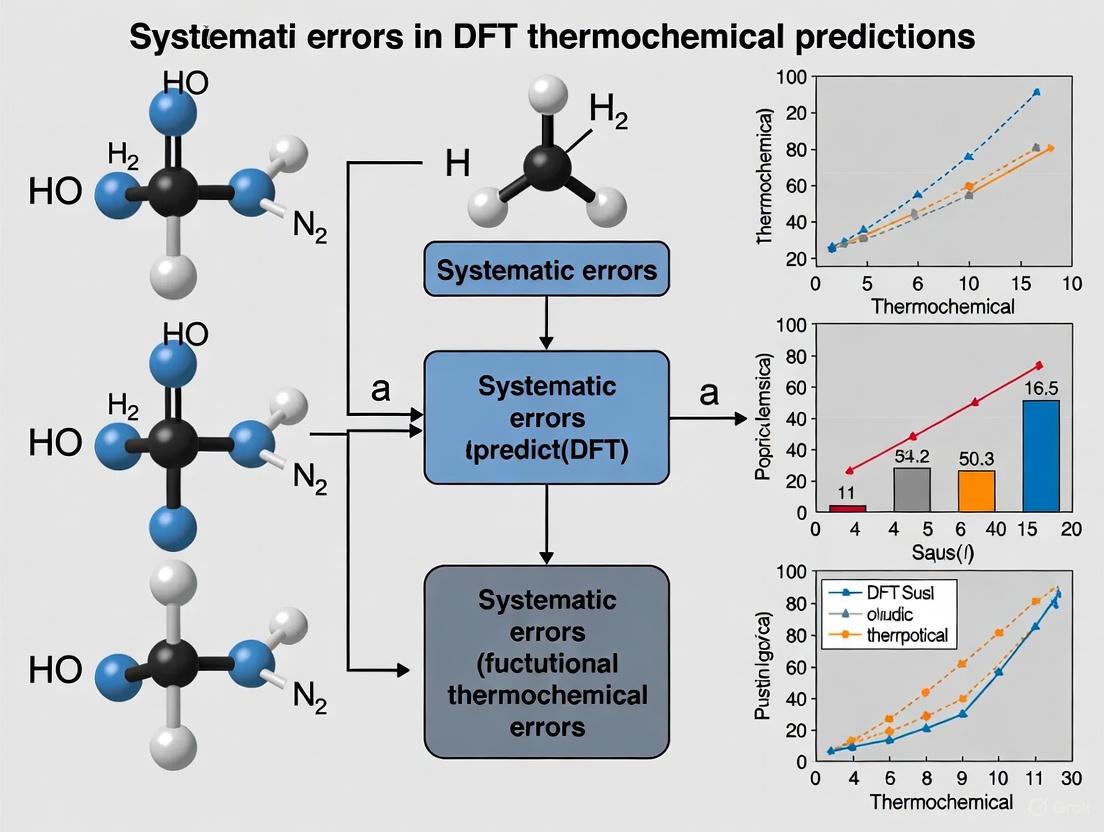

Addressing Systematic Errors in DFT Thermochemical Predictions: From Fundamental Challenges to Practical Solutions for Drug Development


Abstract

Density Functional Theory (DFT) is indispensable in drug development for predicting molecular properties, but its accuracy is limited by systematic errors in thermochemical predictions. This article explores the fundamental origins of these errors, particularly in exchange-correlation functional approximations, and their critical impact on biochemical applications like binding affinity calculations. We review recent methodological advances, including non-empirical functionals, error correction schemes, and machine learning potentials that achieve DFT-level accuracy with enhanced efficiency. Practical strategies for functional selection, error quantification, and validation are presented, alongside comparative analyses of different approaches. This comprehensive guide empowers researchers to improve prediction reliability in drug discovery and biomolecular simulations.

Understanding the Roots of DFT Thermochemical Errors in Molecular Systems

Frequently Asked Questions (FAQs)

FAQ 1: What is the single most significant source of error in my DFT calculation? The most significant source of error is typically the approximation made for the exchange-correlation (XC) functional [1] [2]. DFT is in principle an exact theory, but in practice, the true functional for describing the many-electron interactions is unknown. The choice of approximate functional (e.g., LDA, GGA, hybrid) introduces systematic errors, as each has its own strengths and weaknesses for different properties and materials systems [3] [2]. This is often referred to as the "exchange-correlation problem."

FAQ 2: My calculation ran without errors, but the results are physically unreasonable. What went wrong? Your calculation may have converged to an incorrect electronic state or be suffering from numerical errors. Common issues include:

- Insufficient Integration Grid: The numerical grid used to integrate the XC energy can be too coarse, especially for modern functionals (e.g., meta-GGAs like M06 or SCAN) or for calculating sensitive properties like free energies. This can cause energies to change by several kcal/mol depending on the molecule's orientation [4].

- Self-Interaction Error (SIE): Approximate functionals do not fully cancel each electron's interaction with itself, which over-delocalizes the electron density. This degrades the description of bond dissociation, charge-transfer systems, and reaction barriers [1] [5].

- Incorrect Electronic State: For open-shell systems (e.g., transition metals), multiple low-energy electronic states may exist. The calculation may have converged to a metastable state rather than the true ground state [6].

FAQ 3: Why does my calculation fail to converge during the self-consistent field (SCF) procedure? SCF convergence failures are often due to difficulties in finding a stable electron density, particularly for systems with metallic character, open shells, or near-degeneracies [4]. This can be mitigated by:

- Using robust convergence algorithms like a hybrid DIIS/ADIIS strategy.

- Applying a small level shift (e.g., 0.1 Hartree) to virtual orbitals.

- Tightening the tolerance for calculating two-electron integrals [4].

FAQ 4: For which types of systems is DFT known to perform poorly? DFT, with standard functionals, has known limitations for several classes of systems, many linked to the XC problem [1] [2]:

- Strongly Correlated Systems: Materials with localized d- or f-electrons (e.g., many transition metal oxides).

- Dispersion (van der Waals) Interactions: Standard LDA and GGA functionals do not capture these long-range interactions, requiring empirical corrections or non-local vdW functionals [3] [2].

- Charge-Transfer Excitations: In Time-Dependent DFT (TDDFT), charge-transfer excitation energies are severely underestimated by local functionals.

- Anions and Radicals: These are poorly described due to SIE, which makes it artificially easy to add an electron [1].

Troubleshooting Common Functional Failures

Lattice Parameter and Bulk Modulus Inaccuracies

Different XC functionals systematically over- or under-bind, leading to errors in predicted lattice constants and bulk moduli. The following table summarizes the performance of several common functionals for binary and ternary oxides [3].

Table 1: Systematic Errors in Lattice Constants for Various XC Functionals

| XC Functional | Mean Absolute Relative Error (MARE) | Tendency | Recommended for |
|---|---|---|---|
| LDA | 2.21% | Systematic underestimation (overbinding) | – |
| PBE | 1.61% | Systematic overestimation (underbinding) | – |
| PBEsol | 0.79% | Near zero average error | Solids, oxides |
| vdW-DF-C09 | 0.97% | Near zero average error | Sparse and dense structures |

Protocol: To diagnose and address these errors:

- Calculate the equilibrium lattice parameters and bulk modulus for your material of interest using two or more different functionals (e.g., PBE and PBEsol).

- Compare the results against experimental data or high-accuracy benchmarks if available.

- Select the functional whose systematic error best aligns with your required accuracy. For high-throughput screening without prior experimental data, PBEsol or vdW-DF-C09 are often better starting points for solids than PBE or LDA [3].
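The comparison in this protocol can be sketched in a few lines of Python. This is a minimal illustration, not the procedure used in [3]: the material names and lattice constants below are hypothetical placeholders, and the helper names (`relative_errors`, `summarize`) are introduced here only for the example. The signed mean relative error (MRE) shows the direction of the bias, while the MARE shows its size.

```python
from statistics import mean

# Hypothetical reference lattice constants (Å); placeholders, not measured data.
experiment = {"oxide_A": 4.21, "oxide_B": 4.81, "oxide_C": 5.16}

# Hypothetical computed lattice constants (Å) for two functionals.
computed = {
    "PBE":    {"oxide_A": 4.26, "oxide_B": 4.84, "oxide_C": 5.20},
    "PBEsol": {"oxide_A": 4.22, "oxide_B": 4.78, "oxide_C": 5.14},
}

def relative_errors(calc, ref):
    """Signed relative error (%) for every material present in both dicts."""
    return {m: 100.0 * (calc[m] - ref[m]) / ref[m] for m in ref if m in calc}

def summarize(calc, ref):
    """Return the mean absolute (MARE) and mean signed (MRE) relative error."""
    errs = list(relative_errors(calc, ref).values())
    return {"MARE": mean(abs(e) for e in errs), "MRE": mean(errs)}

summary = {name: summarize(vals, experiment) for name, vals in computed.items()}

# Pick the functional with the smallest MARE on this (toy) benchmark.
best = min(summary, key=lambda name: summary[name]["MARE"])

for name, stats in summary.items():
    print(f"{name}: MARE = {stats['MARE']:.2f}%, MRE = {stats['MRE']:+.2f}%")
print("Smallest MARE:", best)
```

A positive MRE indicates overestimated lattice constants (underbinding) and a negative MRE the opposite, which is often more informative than the MARE alone when deciding whether an error is systematic.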

DFT+U Convergence and Occupancy Issues

DFT+U is used to correct for strong on-site correlation in localized electron shells, but it introduces its own set of challenges [6].

Table 2: Common DFT+U Problems and Solutions

| Problem | Potential Cause | Solution |
|---|---|---|
| "Pseudopotential not yet inserted" | Element not recognized for Hubbard correction, or incorrect ordering in input. | Check pseudopotential header; ensure Hubbard_U(n) corresponds to the correct species in the ATOMIC_SPECIES list [6]. |
| Unphysical Occupation Matrix | Non-normalized occupations from the pseudopotential's atomic orbital basis. | Change U_projection_type to 'norm_atomic' (note: this may disable forces/stresses) [6]. |
| Over-elongated Bonds | Using an arbitrarily large, non-system-specific U value. | Use a structurally consistent U procedure: compute U for the DFT geometry, relax with DFT+U, recompute U, and iterate until U and structure are consistent [6]. |
| Total energies at different U values are incomparable | The +U term acts as a potential shift, making total energies dependent on U. | Compare energies of different structures at the same, averaged U value. Significant variations in U with geometry can lead to errors [6]. |

Managing Numerical Errors in Forces and Datasets

Forces are critical for geometry optimization and molecular dynamics, and their accuracy is paramount for training reliable machine learning interatomic potentials. Suboptimal DFT settings can introduce significant numerical noise [7].

Protocol: Ensuring Well-Converged Forces

- Check Net Forces: A clear indicator of numerical errors is a non-zero net force on a system in the absence of external fields. For reliable forces, the net force per atom should be well below 1 meV/Å [7].

- Avoid Approximations: In some quantum chemistry codes (e.g., ORCA), the RIJCOSX approximation for evaluating integrals, while speeding up calculations, can be a primary source of force errors. Disabling it can significantly improve force accuracy [7].

- Use Tight Settings: Employ tightly converged parameters for the SCF procedure and the integration grid, especially when generating data for benchmarks or machine learning [4] [7].
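The net-force check in the first step can be automated with a short script. The sketch below assumes that "net force per atom" means the magnitude of the summed force vector divided by the number of atoms; that definition, the function names, and the force values are assumptions made for illustration. The 1 meV/Å threshold is taken from the text and should be read as an upper bound, since reliable forces should fall well below it.

```python
import math

def net_force_per_atom(forces):
    """Return |sum of all force vectors| / N, in the units of the input forces."""
    n_atoms = len(forces)
    total = [sum(f[axis] for f in forces) for axis in range(3)]
    return math.sqrt(sum(c * c for c in total)) / n_atoms

def passes_net_force_check(forces_ev_per_ang, threshold_mev_per_ang=1.0):
    """True if the net force per atom (converted to meV/Å) is below the threshold."""
    return 1000.0 * net_force_per_atom(forces_ev_per_ang) < threshold_mev_per_ang

# Hypothetical forces (eV/Å) on a three-atom molecule.
clean = [(0.010, 0.000, 0.000), (-0.006, 0.002, 0.000), (-0.004, -0.002, 0.000)]
# Same molecule with a spurious 6 meV/Å added to one component.
noisy = [(0.016, 0.000, 0.000), (-0.006, 0.002, 0.000), (-0.004, -0.002, 0.000)]

print("clean passes:", passes_net_force_check(clean))
print("noisy passes:", passes_net_force_check(noisy))
```

Running this kind of check over every frame of a dataset before training a machine learning potential is a cheap way to spot calculations that need tighter settings.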

Advanced Diagnostics: Decomposing DFT Errors

For cases where standard troubleshooting fails, or when a deeper understanding of functional failure is needed, you can decompose the total DFT error into two components [5]:

- Functional Error (\(\Delta E_{func}\)): The error inherent to the functional, even with the exact electron density.

- Density-Driven Error (\(\Delta E_{dens}\)): The error arising because the self-consistent DFT density is inaccurate.

The total error is: \(\Delta E = E_{DFT}[\rho_{DFT}] - E[\rho] = \Delta E_{dens} + \Delta E_{func}\) [5]

Experimental Protocol for Error Decomposition:

- Compute the Standard DFT Energy: Perform a self-consistent field calculation to obtain \(E_{DFT}[\rho_{DFT}]\).

- Compute a HF-DFT Single-Point Energy: Perform a Hartree-Fock calculation to obtain an SIE-free density, \(\rho_{HF}\). Use this density, without reconverging, in a single-point DFT energy evaluation to get \(E_{DFT}[\rho_{HF}]\).

- Calculate Error Components:

- The difference \(E_{DFT}[\rho_{DFT}] - E_{DFT}[\rho_{HF}]\) (the density sensitivity) is a practical measure of the density-driven error \(\Delta E_{dens}\).

- The remaining error, when compared to a gold-standard reference like CCSD(T), is the functional error \(\Delta E_{func}\) [5].

This decomposition helps determine if a functional's poor performance is due to a bad density (suggesting a hybrid functional or HF-DFT might help) or a fundamentally flawed functional form [5].
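As a rough numerical illustration of the decomposition, the sketch below splits the total error of a relative energy (for example, a barrier height) into its two parts. It assumes the HF density is an adequate stand-in for the exact density, so that the functional error is approximated by the HF-DFT energy minus the reference. The function name and the three input values are hypothetical.

```python
def decompose_error(e_dft_scf, e_dft_on_hf, e_ref):
    """Split the total DFT error into density-driven and functional parts.

    All inputs are the same relative energy (e.g. a barrier height) in kcal/mol:
      e_dft_scf   -- functional evaluated on its own self-consistent density
      e_dft_on_hf -- same functional evaluated on the HF density (HF-DFT)
      e_ref       -- high-level reference, e.g. CCSD(T)
    """
    total = e_dft_scf - e_ref
    density_driven = e_dft_scf - e_dft_on_hf   # Delta E_dens
    functional = total - density_driven        # Delta E_func = e_dft_on_hf - e_ref
    return {"total": total, "density_driven": density_driven, "functional": functional}

# Hypothetical barrier heights in kcal/mol.
result = decompose_error(e_dft_scf=8.0, e_dft_on_hf=11.5, e_ref=12.0)
for name, value in result.items():
    print(f"{name:>15s}: {value:+.1f} kcal/mol")
```

In this made-up case most of the 4 kcal/mol underestimate comes from the density, which is the situation in which HF-DFT or a hybrid functional is expected to help.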

Diagram: Workflow for Decomposing DFT Errors into Functional and Density-Driven Components

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Diagnosing XC Problems

| Tool / 'Reagent' | Function | Role in Diagnosing XC Problems |
|---|---|---|
| PBEsol Functional [3] | A GGA functional optimized for solids. | Serves as a benchmark for lattice parameter predictions; helps identify if PBE's underbinding or LDA's overbinding is the source of error. |
| Hybrid Functionals (e.g., B3LYP, PBE0, ωB97X) [1] [2] | Mix a portion of exact (Hartree-Fock) exchange with DFT exchange. | Used to assess and reduce delocalization error (SIE) in anions, charge-transfer systems, and reaction barriers. |
| DFT+U/V [6] | Adds a Hubbard model correction to standard DFT for localized states. | The primary "reagent" for correcting strongly correlated systems. A structurally consistent U value is critical. |
| Hartree-Fock Density [5] | Provides an SIE-free electron density. | The key ingredient in the HF-DFT protocol to isolate and quantify density-driven errors. |
| Tight Integration Grid (≥99,590) [4] | A dense numerical grid for XC integration. | Eliminates numerical noise in energies and forces, especially for meta-GGA and double-hybrid functionals. |
| Local CCSD(T) Methods [5] | Provides gold-standard reference energies near chemical accuracy (~1 kcal/mol). | The ultimate benchmark for quantifying total DFT error and validating error decomposition analyses. |

Systematic Error Patterns in Biomolecular Interactions and Charged Systems

Troubleshooting Guides

Guide 1: Diagnosing and Correcting Basis Set Superposition Error (BSSE)

Problem: Interaction energies between molecular fragments are artificially overestimated, leading to inaccurate binding affinities or conformational energies.

Explanation: BSSE is an artifact of using incomplete quantum chemical basis sets. A fragment in the system can artificially lower its energy by using the basis functions of a nearby fragment, making interactions appear stronger than they are [8].

Diagnosis Checklist:

- Check 1: Are you using a small or medium-sized basis set (e.g., 6-31G*) in quantum chemistry calculations?

- Check 2: Is the error more pronounced for non-covalent interactions like hydrogen bonds or van der Waals contacts?

- Check 3: Does the error increase as the number of fragment interactions in the system grows?

Solution: Apply a BSSE correction. The standard method is the counterpoise correction, which involves recalculating the energy of each fragment using the full basis set of the entire complex [8]. For large systems like proteins, a fast estimation method using a geometry-dependent model can be used instead of the computationally expensive full counterpoise [8].

Experimental Protocol: Counterpoise Correction for a Dimer (A-B)

- Calculate the energy of the full complex A-B in the full basis set: E(A-B)

- Calculate the energy of fragment A in the full basis set of A-B (i.e., with "ghost" basis functions of B present): E(A')

- Calculate the energy of fragment B in the full basis set of A-B (with "ghost" basis functions of A present): E(B')

- The BSSE is then: ΔE_BSSE = [E(A') - E(A)] + [E(B') - E(B)], where E(A) and E(B) are the energies of the isolated fragments.

- The corrected interaction energy is: ΔE_corrected = E(A-B) - E(A) - E(B) - ΔE_BSSE, which is equivalent to E(A-B) - E(A') - E(B') [8].
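The arithmetic of the counterpoise protocol is easy to get wrong by a sign, so a small helper can be useful. The sketch below follows the formulas in the steps above; the function name and the five energies (in Hartree) are hypothetical values chosen only to show the bookkeeping.

```python
def counterpoise(e_ab, e_a, e_b, e_a_ghost, e_b_ghost):
    """Return (BSSE, uncorrected, corrected) interaction energies.

    e_a, e_b             -- fragments in their own basis sets
    e_a_ghost, e_b_ghost -- fragments in the full dimer basis (ghost functions present)
    """
    bsse = (e_a_ghost - e_a) + (e_b_ghost - e_b)   # negative: ghost functions lower the energy
    uncorrected = e_ab - e_a - e_b
    corrected = uncorrected - bsse                 # same as e_ab - e_a_ghost - e_b_ghost
    return bsse, uncorrected, corrected

# Hypothetical energies in Hartree.
e_ab = -152.5400
e_a, e_b = -76.2650, -76.2650
e_a_ghost, e_b_ghost = -76.2660, -76.2655

bsse, uncorrected, corrected = counterpoise(e_ab, e_a, e_b, e_a_ghost, e_b_ghost)
print(f"BSSE        = {bsse:+.4f} Eh")
print(f"uncorrected = {uncorrected:+.4f} Eh")
print(f"corrected   = {corrected:+.4f} Eh")
```

Because the BSSE term is negative, subtracting it makes the corrected interaction energy less attractive than the uncorrected one, which matches the overbinding described in the problem statement.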

Guide 2: Managing Systematic Errors in Density Functional Theory (DFT) Functional Performance

Problem: DFT predictions for reaction energies, lattice parameters, or binding affinities show consistent, predictable deviations from experimental or high-level benchmark data.

Explanation: The approximation for the exchange-correlation (XC) functional is a primary source of systematic error in DFT. Different functionals have known biases, such as overbinding or underbinding, and their performance is highly dependent on the chemical system and property being calculated [9] [10].

Diagnosis Checklist:

- Check 1: Does your calculated property (e.g., lattice constant, reaction free energy) consistently deviate from experimental values in one direction (e.g., always too high or too low)?

- Check 2: Is the error correlated with specific chemical elements or types of bonding (e.g., worse for transition metals or van der Waals interactions)?

- Check 3: Does the error persist even with increased basis set size and rigorous convergence, indicating it is not a basis set artifact?

Solution: Select an appropriate functional based on established benchmarks for your chemical system. Alternatively, use a multi-functional approach or apply empirical corrections.

Experimental Protocol: Functional Selection for Rhodium-Catalyzed Reactions

- Benchmarking: If possible, test several functionals on a small, related system with known experimental data.

- Selection: Refer to benchmarking studies. For Rh-mediated reactions, PBE0-D3 and MPWB1K-D3 have shown low mean unsigned errors across multiple reaction types [10].

- Validation: For high-throughput screening, use materials informatics to predict the expected error of a functional based on the system's electron density and composition [9].

Table 1: Common DFT Functional Error Patterns

| Functional Class | Typical Systematic Error Patterns | Recommended for Systems/Properties |
|---|---|---|
| LDA | Overbinding; underestimates lattice constants and bond lengths [9] | Not generally recommended for molecular systems. |
| GGA (e.g., PBE) | Underbinding; overestimates lattice constants [9] | General purpose; often a starting point. |
| Meta-GGA (e.g., SCAN) | Improved accuracy over GGA, but computationally more expensive [9] | Solid-state and molecular systems. |
| Hybrid (e.g., PBE0, B3LYP) | Mixes exact exchange, often improving reaction energies and band gaps [9] [10] | Molecular thermochemistry, reaction barriers. |
| Double-Hybrid | High accuracy for thermochemistry, but very high computational cost [10] | Small molecule benchmark-quality calculations. |

Guide 3: Addressing Electrostatic Truncation Errors in Molecular Dynamics

Problem: Potentials of mean force (PMF) for ion pairing or charged side-chain interactions are inaccurate and depend on the size of the simulation box.

Explanation: In molecular dynamics, using a group-based cutoff for electrostatic interactions (e.g., truncating by molecule center) in a reaction-field (RF) method introduces significant systematic errors in mixed media like salt solutions. This error arises from an incorrect treatment of the inhomogeneous dielectric environment around ions [11].

Diagnosis Checklist:

- Check 1: Are you using a reaction-field or simple cutoff method for electrostatics?

- Check 2: Is the truncation scheme based on charge groups rather than individual atoms?

- Check 3: Does the association energy of ions change when you vary the simulation box size?

Solution: Switch to an atom-based truncation scheme for the reaction field or, preferably, use a lattice-sum method like Particle-Mesh Ewald (PME) for accurate electrostatic treatment in heterogeneous systems [11].

Experimental Protocol: Switching to Atom-Based Reaction Field

- In your simulation parameters, identify the setting for electrostatic truncation.

- Change from a "group-based" or "molecule-based" cutoff to an "atom-based" cutoff.

- Ensure the cutoff distance is appropriate (typically 1.0-1.2 nm).

- Re-run the simulation and compare the results (e.g., PMF of ion pairing) with the previous group-based results. The atom-based method should show better agreement with PME and reduced box-size dependence [11].

Frequently Asked Questions (FAQs)

FAQ 1: How do I know if the error in my calculation is systematic or random?

- A: Systematic errors are consistent and reproducible biases in one direction. For example, if a functional always predicts longer bond lengths than experiment, that is a systematic error. Random errors are unpredictable fluctuations around the true value. In energy functions, systematic error (bias, μ) is corrected by subtracting it, while random error (imprecision, σ) is reported as an uncertainty [12] [13].

FAQ 2: Why do errors become larger in bigger systems like proteins?

- A: The total error in a biomolecular system can be approximated as the sum of errors from individual fragment interactions (systematic error) and the square root of the sum of their variances (random error). As the system size grows and the number of interactions (N) increases, the total systematic error grows with N, and the random error grows with √N [13]. Even small per-interaction errors can accumulate to very large overall errors.
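The scaling described in this answer can be made concrete with a short calculation. The sketch assumes every fragment interaction carries the same bias μ and the same independent random error σ, which is a simplification of the fragment-based model in [13]; the numerical values are hypothetical.

```python
import math

def accumulated_errors(n_interactions, mu, sigma):
    """Total systematic and random error for N identical, independent interactions."""
    systematic = n_interactions * mu               # biases add linearly with N
    random_err = math.sqrt(n_interactions) * sigma # variances add, so sigma grows as sqrt(N)
    return systematic, random_err

# Hypothetical per-interaction bias and imprecision in kcal/mol.
mu, sigma = 0.25, 0.5

for n in (100, 10_000):
    systematic, random_err = accumulated_errors(n, mu, sigma)
    print(f"N = {n:>6d}: systematic = {systematic:.1f}, random = {random_err:.1f} kcal/mol")
```

Going from 100 to 10,000 interactions multiplies the systematic term by 100 but the random term only by 10, which is why removing the bias matters most for large systems.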

FAQ 3: How can I reduce random errors in my computed free energies?

- A: Move away from single "end-point" calculations. Instead, use methods that incorporate sampling of multiple microstates. The random error in a free energy estimate is the Pythagorean sum of the weighted random errors from each sampled microstate. Sampling multiple conformations naturally reduces this random error, unlike an end-point method which retains the full random error of a single structure [12].
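A small example shows why sampling helps. The sketch below assumes the weights are Boltzmann probabilities of the microstates and that the per-microstate errors are independent, so that first-order error propagation gives the Pythagorean sum of weight times error. The function names, the value of kT, and the energies are assumptions for illustration and are not taken from [12].

```python
import math

KT_KCAL = 0.593  # approximate kT at room temperature, in kcal/mol

def boltzmann_weights(energies, kt=KT_KCAL):
    """Normalized Boltzmann probabilities for a list of microstate energies."""
    e_min = min(energies)  # shift by the minimum for numerical stability
    factors = [math.exp(-(e - e_min) / kt) for e in energies]
    z = sum(factors)
    return [f / z for f in factors]

def free_energy_random_error(energies, sigmas, kt=KT_KCAL):
    """Pythagorean sum of the weighted per-microstate random errors."""
    weights = boltzmann_weights(energies, kt)
    return math.sqrt(sum((w * s) ** 2 for w, s in zip(weights, sigmas)))

# One structure (end-point style) versus four equally populated microstates,
# each with a hypothetical random error of 1.0 kcal/mol.
single = free_energy_random_error([0.0], [1.0])
sampled = free_energy_random_error([0.0, 0.0, 0.0, 0.0], [1.0] * 4)
print(f"single structure: {single:.2f} kcal/mol")
print(f"four microstates: {sampled:.2f} kcal/mol")
```

With four equally weighted microstates the random error is halved, while a single structure keeps its full error, as stated in the answer above.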

FAQ 4: What is the best way to handle errors for high-throughput screening?

- A: For high-throughput DFT studies, it is impractical to test every functional. Instead, use materials informatics and machine learning to predict the expected error ("error bar") for a given functional on a specific class of materials. This allows you to choose the functional with the smallest predicted error for your screening project [9].

Research Reagent Solutions

Table 2: Key Computational Tools and Methods for Error Management

| Reagent / Method | Function / Purpose | Application Context |
|---|---|---|
| Counterpoise Correction | Corrects for Basis Set Superposition Error (BSSE) in quantum chemistry [8]. | Intermolecular interaction energy calculations. |
| Fast BSSE Estimation Model | Quickly estimates BSSE magnitude without additional QM calculations using a geometry-based descriptor [8]. | Large biomolecular systems where full counterpoise is too expensive. |
| Best Linear Unbiased Estimator (BLUE) | Combines results from multiple DFT functionals to exploit error correlations and improve accuracy [14]. | Improving thermochemical predictions (e.g., as in the PF3 method). |
| Particle-Mesh Ewald (PME) | A lattice-sum method for treating long-range electrostatics accurately in molecular dynamics. | Reference method for simulating ionic solutions and biomolecules [11]. |
| Atom-Based Reaction Field | An improved cutoff scheme that reduces systematic errors in electrostatic potentials of mean force [11]. | Alternative to PME when using reaction-field electrostatics. |
| Fragment-Based Error Estimation | A statistical method to estimate and correct systematic and random errors in potential energy functions for large systems [13]. | Proteins and protein-ligand complexes modeled with force fields or QM. |

Workflow and Relationship Diagrams

Error Diagnosis and Management Workflow

The following diagram outlines a logical pathway for diagnosing and addressing systematic errors in computational experiments.

Fragment-Based Error Propagation Logic

This diagram visualizes the core concept of how small errors in modeling fragment interactions propagate to create large errors in a full biomolecular system.

Impact of Functional Choice on Non-Covalent Interaction Energy Predictions

Non-covalent interactions (NCIs) are fundamental in numerous chemical and biological processes, governing molecular recognition, protein folding, supramolecular assembly, and drug binding. Within the framework of Density Functional Theory (DFT), the accurate prediction of NCI energies remains a significant challenge due to the heavy reliance on the approximate exchange-correlation functional. This guide addresses the systematic errors introduced by functional choice in DFT thermochemical predictions for NCIs, providing troubleshooting and methodological guidance for researchers.

Troubleshooting Common DFT-NCI Problems

FAQ 1: Why are my calculated binding energies for a supramolecular complex significantly underestimated compared to experimental values?

This is a classic symptom of missing dispersion interactions. Many popular functionals, especially those developed without any dispersion correction, do not adequately capture these long-range, weak correlation forces [15].

- Problem: The functional (e.g., B3LYP without a dispersion correction) fails to describe the attractive London dispersion forces, leading to underbound complexes and unrealistically large intermolecular distances [16] [15].

- Solution: Employ a functional that includes a treatment of dispersion.

- Add an Empirical Correction: Use a method with an added dispersion correction, such as B3LYP-D3, PBE-D3, or ωB97M-D3 [17] [18].

- Use a vdW Functional: Select a van der Waals density functional like vdW-DF-C09, which can provide high accuracy for lattice parameters and binding [3].

- Choose a Parameterized Functional: Functionals like M06-2X and ωB97X-D have parameters optimized for NCIs and include medium-range correlation effects [17].

FAQ 2: My DFT calculations for a stack of nucleobases show large errors. How can I diagnose and fix this?

Stacking interactions in π-systems are dominated by dispersion, which is poorly described by standard functionals. A recent study showed that the HF-SCAN functional, while excellent for pure water, systematically underbinds stacked cytosine dimers by about 2.5 kcal/mol due to missing dispersion [18].

- Problem: The chosen functional lacks an accurate description of dispersion, a critical component of π-π stacking.

- Diagnosis: Compare your results with higher-level methods or benchmark data. Calculate the interaction energy using a method like HF-r2SCAN-DC4, which was specifically designed to recover dispersion interactions while maintaining accuracy for hydrogen-bonded systems like water [18].

- Solution: Switch to a dispersion-corrected functional validated for biochemical NCIs, such as HF-r2SCAN-DC4 or B3LYP-D3(BJ) [18].

FAQ 3: Why do I get inconsistent reaction barrier heights with different hybrid functionals?

This discrepancy can arise from density-driven errors, where the self-consistent DFT density is flawed. This error is separate from the inherent error of the functional itself [5] [1].

- Problem: The self-interaction error (SIE) in the functional can cause excessive delocalization of the electron density, which disproportionately affects transition states and reaction barriers [5].

- Diagnosis & Solution: Perform a density-functional error decomposition [5].

- Calculate the total energy with the self-consistent density: E_DFT[ρ_DFT].

- Calculate the single-point energy using a more reliable density (e.g., from Hartree-Fock): E_DFT[ρ_HF].

- The difference, ΔE_dens = E_DFT[ρ_DFT] - E_DFT[ρ_HF], is the density-driven error. A large value indicates this error is significant.

- If the density-driven error is large, using the HF-DFT approach (evaluating your functional on the HF density) can significantly improve results [5] [18].

FAQ 4: How can I quickly improve the accuracy of NCI energies from a standard DFT calculation without recomputing everything?

Machine learning (ML) corrections offer a powerful post-processing solution.

- Solution: Apply a machine learning correction to your DFT-computed NCI energy [17].

- The corrected energy is: E_nci^(DFT-ML) = E_nci^(DFT) + E_nci^(Corr)

- The correction term (E_nci^(Corr)) is predicted by a model trained on benchmark datasets (e.g., S22, S66, X40). This approach can reduce the root-mean-square error of DFT calculations by at least 70%, achieving accuracy near high-level ab initio methods at a fraction of the cost [17].

Quantitative Comparison of Functional Performance

The table below summarizes the performance of various functionals for key properties, highlighting the impact of functional choice.

Table 1: Performance of Select Density Functionals for Various Properties

| Functional | Type | Non-Covalent Interactions | Thermochemistry | Reaction Barriers | Lattice Constants (MARE%) |
|---|---|---|---|---|---|
| B3LYP | Hybrid GGA | Poor without dispersion correction [16] [15] | Good for small covalent systems [16] | Poor [16] | - |
| B3LYP-D3 | Empirical Dispersion-Corrected | Good [17] | - | - | - |
| M06-2X | Parameterized Meta-Hybrid | Good [17] | - | - | - |
| ωB97M-D3(BJ) | Empirical Dispersion-Corrected | Good [7] | - | - | - |
| PBE | GGA | Poor for dispersion [15] | - | - | 1.61% [3] |
| PBEsol | GGA | - | - | - | 0.79% [3] |
| LDA | LDA | Poor for dispersion [15] | - | - | 2.21% [3] |
| vdW-DF-C09 | vdW-DF | Good [3] | - | - | 0.97% [3] |
| XYG3 | Doubly Hybrid | Accurate for nonbond interactions [16] | Remarkably accurate [16] | Accurate [16] | - |
| HF-SCAN | Meta-GGA (HF density) | Poor for dispersion-dominated NCIs [18] | - | - | - |
| HF-r2SCAN-DC4 | DC-DFT with Dispersion | Good for diverse NCIs and water [18] | - | - | - |

Table 2: Mean Absolute Relative Error (MARE) of Lattice Constants for Oxides [3]

| Functional | MARE (%) | Standard Deviation (%) |
|---|---|---|
| PBEsol | 0.79 | 1.35 |
| vdW-DF-C09 | 0.97 | 1.57 |
| PBE | 1.61 | 1.70 |
| LDA | 2.21 | 1.69 |

Experimental Protocols for Reliable NCI Predictions

Protocol 1: A Step-by-Step Workflow for Validating Functional Choice in NCI Studies

This workflow helps you systematically select and validate a functional for your specific system.

Workflow for Functional Selection

- Define System and Interactions: Clearly identify the types of NCIs (H-bonding, π-π stacking, dispersion, etc.) present in your system.

- Literature Review: Investigate which functionals have been successfully applied to similar molecules or problems.

- Benchmark on a Model System:

- Create a Smaller Model: Extract a smaller, computationally manageable fragment that contains the essential NCI motifs.

- Generate High-Level Reference: Compute highly accurate interaction energies for the model system using a gold-standard method like LNO-CCSD(T)/CBS, which can provide chemical accuracy (~1 kcal/mol) [5].

- Test Candidate Functionals: Calculate the interaction energies for the model system using a range of candidate functionals (e.g., B3LYP-D3, ωB97M-V, M06-2X, HF-r2SCAN-DC4).

- Statistical Comparison: Compute the Mean Absolute Error (MAE) and Root-Mean-Square Error (RMSE) of the candidate functionals against the reference data.

- Select and Apply Functional: Choose the functional that provides the best agreement with the benchmark data for your production calculations on the full system.
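The statistical comparison and selection steps can be sketched as follows. The reference and candidate interaction energies are hypothetical numbers, and the labels `functional_A` and `functional_B` are placeholders rather than results for any real functional; ranking by RMSE is one reasonable choice, not the only one.

```python
import math

def mae(predicted, reference):
    """Mean absolute error between two equally long sequences."""
    return sum(abs(p - r) for p, r in zip(predicted, reference)) / len(reference)

def rmse(predicted, reference):
    """Root-mean-square error between two equally long sequences."""
    return math.sqrt(sum((p - r) ** 2 for p, r in zip(predicted, reference)) / len(reference))

# Hypothetical reference interaction energies (kcal/mol) for three model dimers.
reference = [-5.0, -3.2, -7.1]

# Hypothetical results from two candidate functionals on the same dimers.
candidates = {
    "functional_A": [-4.6, -3.0, -6.5],
    "functional_B": [-5.1, -3.1, -7.3],
}

stats = {name: {"MAE": mae(vals, reference), "RMSE": rmse(vals, reference)}
         for name, vals in candidates.items()}

# Rank candidates from best to worst by RMSE.
ranking = sorted(stats, key=lambda name: stats[name]["RMSE"])

for name in ranking:
    print(f"{name}: MAE = {stats[name]['MAE']:.2f}, RMSE = {stats[name]['RMSE']:.2f} kcal/mol")
```

With only a handful of model systems the ranking can be fragile, so it is worth checking that the preferred functional also wins on each interaction type separately.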

Protocol 2: Diagnosing Density-Driven Errors with HF-DFT

This protocol helps determine if your calculation suffers from significant density-driven errors [5] [18].

- Perform a Standard Self-Consistent DFT Calculation: Optimize the geometry and compute the single-point energy (E_SCF) for your system (reactant, transition state, product).

- Perform a Hartree-Fock Calculation: Using the same geometry, run a Hartree-Fock calculation to generate a density.

- Perform a Single-Point Energy Calculation: Using the functional from Step 1, perform a single-point energy calculation on the Hartree-Fock density. This yields E_DFT[ρ_HF].

- Calculate the Density-Driven Error: ΔE_dens = E_SCF - E_DFT[ρ_HF]

- Interpret the Results:

- A large ΔE_dens (e.g., > 1-3 kcal/mol) indicates a significant density-driven error.

- In such cases, E_DFT[ρ_HF] (the HF-DFT energy) is often a more reliable prediction than the self-consistent E_SCF.
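For a reaction barrier, the interpretation step is usually applied to the energy difference between the transition state and the reactant rather than to absolute energies. The sketch below does that under stated assumptions: the total energies (in Hartree) are hypothetical, the 2 kcal/mol cutoff is one arbitrary choice inside the 1-3 kcal/mol range quoted above, and the function names are introduced only for this example.

```python
HARTREE_TO_KCAL = 627.5095  # conversion factor, Hartree to kcal/mol

def density_driven_error(e_scf, e_dft_on_hf):
    """Delta E_dens = E_SCF - E_DFT[rho_HF], converted from Hartree to kcal/mol."""
    return (e_scf - e_dft_on_hf) * HARTREE_TO_KCAL

def is_density_sensitive(delta_kcal, threshold_kcal=2.0):
    """Flag a result whose density-driven error exceeds the chosen cutoff in magnitude."""
    return abs(delta_kcal) > threshold_kcal

# Hypothetical total energies in Hartree: (E_SCF, E_DFT[rho_HF]) per species.
energies = {
    "reactant":         (-100.0000, -99.9990),
    "transition_state": (-99.9800, -99.9730),
}

# Barrier height from each set of energies.
barrier_scf = energies["transition_state"][0] - energies["reactant"][0]
barrier_hf_dft = energies["transition_state"][1] - energies["reactant"][1]

delta_barrier = density_driven_error(barrier_scf, barrier_hf_dft)
print(f"SCF barrier    = {barrier_scf * HARTREE_TO_KCAL:.2f} kcal/mol")
print(f"HF-DFT barrier = {barrier_hf_dft * HARTREE_TO_KCAL:.2f} kcal/mol")
print(f"Delta E_dens   = {delta_barrier:+.2f} kcal/mol, "
      f"density-sensitive: {is_density_sensitive(delta_barrier)}")
```

In this made-up case the self-consistent barrier is lower than the HF-DFT barrier by almost 4 kcal/mol, the pattern expected when delocalization error over-stabilizes the transition state.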

The Scientist's Toolkit: Key Research Reagents and Computational Solutions

Table 3: Essential Tools for DFT-NCI Research

| Tool / "Reagent" | Function / Purpose |
|---|---|
| Dispersion Corrections (D3, D4) [17] [18] | Empirical add-ons to account for missing dispersion interactions in standard functionals. |
| Fifth-Rung Doubly Hybrid Functionals (XYG3) [16] | Include information from unoccupied orbitals via second-order perturbation theory, improving descriptions of thermochemistry, barriers, and NCIs. |
| vdW Density Functionals (vdW-DF-C09) [3] | A class of non-empirical functionals designed to include non-local correlation, capturing dispersion without empirical parameters. |
| Density-Corrected DFT (DC-DFT) [5] [18] | A framework that separates functional and density errors. HF-DFT is a common implementation that uses the HF density to eliminate density-driven errors. |
| Benchmark Databases (S22, S66, X40) [17] | Curated sets of molecules with highly accurate reference interaction energies for validating and training computational methods. |
| Machine Learning Corrections [17] | Post-processing models that map DFT-calculated NCI energies to near-CCSD(T) accuracy, dramatically reducing errors with low computational overhead. |
| Local Correlation Methods (LNO-CCSD(T)) [5] | Efficient implementations of coupled-cluster theory that enable gold-standard reference calculations for larger systems than previously possible. |

Advanced Visualization: The Error Decomposition Framework

The following diagram illustrates the process of decomposing the total DFT error into its functional and density-driven components, a key concept for advanced troubleshooting [5].

DFT Error Decomposition

Troubleshooting Guide: Common DFT Errors and Solutions

This section addresses frequent challenges in calculating the thermochemical properties of ruthenium oxides (RuO\(_x\)) using Density Functional Theory (DFT) and provides practical solutions.

Table: Troubleshooting Common DFT Errors for RuO\(_x\) Systems

| Problem | Underlying Cause | Solution | Preventative Measures |
|---|---|---|---|
| Systematic underestimation of formation energies, error scales with number of O atoms [19] | Repulsive interactions among Ru–O bonds not adequately described by standard functionals [19] | Apply a simple, system-specific correction scheme that scales linearly with oxygen content [19] | Test multiple functionals (GGAs, meta-GGAs, hybrids) to understand error range [19] |
| Inaccurate Pourbaix diagrams, leading to incorrect electrochemical stability predictions [19] | Errors in formation energies of RuO\(_x\) species propagate into the thermodynamic models [19] | Use corrected formation energies to construct Pourbaix diagrams; this aligns computational results with experimental data [19] | Always validate computed Pourbaix diagrams against known experimental stability windows [19] |
| Difficulty identifying active ArM variants | Case-specific engineering strategies and low-throughput screening methods [20] | Adopt a systematic, reaction-independent platform using a pre-defined, sequence-verified Sav mutant library [20] | Use a periplasmic compartmentalization strategy in E. coli for consistent, high-throughput assays [20] |
| ArM activity results conflated with expression levels | Low-expressing Sav variants falsely appear less active if cofactor concentration is too high [20] | Set cofactor concentration (e.g., ≤10 μM) below the biotin-binding site concentration for >90% of library variants [20] | Quantify expression levels of all protein mutants using a quenching assay with biotinylated fluorescent probes [20] |

Frequently Asked Questions (FAQs)

Q1: Why do my DFT calculations for RuO₂ formation energy show significant deviations from experimental values, and how can I fix this?

The inaccuracies are systematic. Many standard exchange-correlation functionals (including GGAs, meta-GGAs, and hybrids) fail to describe the repulsive interactions among multiple Ru–O bonds in a compound, and the resulting error grows predictably with the number of oxygen atoms (x) in RuOₓ [19]. Solution: Implement a systematic correction scheme. Research indicates that the total error for a compound RuOₓ can be mitigated by applying a functional-specific correction that scales linearly with x. This approach has been shown to bring computational predictions, such as Pourbaix diagrams, much closer to experimental results [19].
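
The linear-in-x correction described above can be sketched as a one-parameter least-squares fit. This is a minimal illustration, not the scheme from [19]: the function names, the choice of a fit through the origin, and the example energies are all assumptions made for demonstration.

```python
import numpy as np

def fit_oxygen_correction(x_oxygen, e_dft, e_exp):
    """Least-squares slope c for the model (E_DFT - E_exp) = c * x, forced through the origin."""
    x = np.asarray(x_oxygen, dtype=float)
    err = np.asarray(e_dft, dtype=float) - np.asarray(e_exp, dtype=float)
    return float(np.dot(x, err) / np.dot(x, x))

def corrected_formation_energy(e_dft, x_oxygen, c):
    """Remove the fitted error, which is assumed to grow linearly with oxygen count."""
    return e_dft - c * x_oxygen

# Hypothetical formation energies (eV per formula unit), NOT data from [19].
x = [2, 3, 4]
e_exp = [-3.0, -4.0, -5.0]
e_dft = [-2.6, -3.4, -4.2]

c = fit_oxygen_correction(x, e_dft, e_exp)
print(f"fitted correction: {c:.3f} eV per O atom")
print(corrected_formation_energy(e_dft[0], x[0], c))
```

Because the correction is functional-specific, the slope would need to be refitted for each functional against trusted reference data.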

Q2: What is a key consideration when setting up a high-throughput screening for artificial metalloenzymes (ArMs) to ensure results reflect true activity?

A critical factor is decoupling the protein's expression level from the measured activity. If the cofactor concentration exceeds the number of available biotin-binding sites for a given mutant, low-expressing variants may be misclassified as inactive. Solution: Determine the expression level of your entire protein library first. Then, set the cofactor concentration for the assay below the concentration of biotin-binding sites for the majority of your variants (e.g., 10 μM). This ensures the measured activity is due to the specific catalytic efficiency of the mutant and not its expression level [20].

Q3: In metrology, what is a more nuanced way to think about systematic error (bias) in my measurements?

Traditional models often treat systematic error as a constant offset. A more advanced view distinguishes between two components: a Constant Component of Systematic Error (CCSE), which is correctable through calibration, and a Variable Component of Systematic Error (VCSE(t)), which behaves as a time-dependent function and cannot be efficiently corrected. This model recognizes that long-term quality control data are not normally distributed and that the standard deviation from such data includes both random error and the variable bias component [21].

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Components for Systematic ArM Engineering and RuOₓ Studies

| Reagent/Material | Function/Description | Application Context |

|---|---|---|

| Streptavidin (Sav) 112X 121X Library | A full-factorial, sequence-defined library of 400 Sav mutants, diversifying two key amino acid positions near the catalytic metal site [20]. | Systematic engineering of ArMs; serves as a universal starting point for optimizing activity for various reactions [20]. |

| Biotinylated Metal Cofactors | Organometallic catalysts (e.g., (Biot-NHC)Ru, (Biot-HQ)CpRu) linked to biotin for anchoring within Sav [20]. | Imparting new-to-nature reactivity into the protein scaffold for catalysis (e.g., ring-closing metathesis, deallylation) [20]. |

| OmpA Signal Peptide | An N-terminal fusion tag that directs the mature Sav protein to the periplasm of E. coli [20]. | Enables periplasmic compartmentalization for whole-cell ArM assembly and screening, improving cofactor access and reaction compatibility [20]. |

| Exchange-Correlation Functionals (PBE, SCAN, HSE06) | Approximations to the unknown exchange-correlation energy in DFT. Their accuracy varies between systems [19]. | Computational study of RuOₓ thermochemistry. Comparing results across multiple functionals (GGA, meta-GGA, hybrid) helps identify and quantify systematic errors [19]. |

Experimental & Computational Workflows

Workflow for Systematic ArM Engineering and Screening

Workflow for Assessing and Correcting RuOₓ Stability

Electron Density and Hybridization Effects on Prediction Accuracy

Troubleshooting Guides

Guide 1: Addressing Incorrect Lattice Parameters and Over/Under-binding

- Problem: Optimized crystal structures show lattice parameters that are consistently too long or too short compared to experimental values.

- Underlying Cause: This is a classic sign of systematic errors in the exchange-correlation (XC) functional, often linked to its inaccurate treatment of electron density and orbital hybridization, leading to over-binding (shorter lattices) or under-binding (longer lattices) [3].

- Diagnosis & Resolution:

- Quantify the Error: Calculate the percentage error in your lattice parameters against reliable experimental data.

- Consult Error Tables: Compare your error against typical errors for common functionals, as shown in Table 1. This helps identify if your chosen functional is inappropriate for your material class [3].

- Switch Functionals: If errors are large, switch to a functional with better performance for solid-state materials, such as PBEsol or vdW-DF-C09 [3].
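
The "Quantify the Error" step can be written down in a few lines. The sketch below is an assumed, minimal formulation: the function names are illustrative and the lattice constants are hypothetical values, not benchmark data.

```python
def relative_error_percent(a_calc, a_exp):
    """Signed percentage error: negative means over-binding (lattice too short)."""
    return 100.0 * (a_calc - a_exp) / a_exp

def mare_percent(calc, exp):
    """Mean absolute relative error over a set of lattice parameters, in percent."""
    errors = [abs(relative_error_percent(c, e)) for c, e in zip(calc, exp)]
    return sum(errors) / len(errors)

# Hypothetical lattice constants in Å (computed vs. experimental).
calc = [4.04, 5.35, 3.96]
exp = [4.00, 5.40, 4.00]

for c, e in zip(calc, exp):
    print(f"{relative_error_percent(c, e):+.2f} %")
print(f"MARE = {mare_percent(calc, exp):.2f} %")
```

Keeping the sign of each individual error is useful because a consistent sign across materials points to systematic over- or under-binding rather than scatter.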

Guide 2: Correcting Force Errors in Molecular Dynamics and MLIP Training

- Problem: Forces on atoms are inaccurate, leading to faulty geometries in optimizations, unphysical dynamics, or high errors when training Machine Learning Interatomic Potentials (MLIPs) [7].

- Underlying Cause: Numerical errors from unconverged electron densities or approximations in the DFT setup. A clear indicator is a significant non-zero net force on a molecule, which should be zero in the absence of external fields [7].

- Diagnosis & Resolution:

- Check Net Forces: Always check the magnitude of the net force on your system. A net force above 1 meV/Å per atom indicates significant force component errors [7].

- Tighten Numerical Settings:

  - Disable RIJCOSX: In ORCA, disabling the RIJCOSX approximation for Coulomb and exact exchange integrals can eliminate large net forces [7].

  - Use Dense Integration Grids: For modern functionals (e.g., ωB97M, SCAN), use dense grids like (99,590). Small grids can cause large, orientation-dependent errors in energies and forces [4].

- Recompute References: For critical datasets, recompute forces on a subset of configurations with tighter, more reliable DFT settings to quantify and correct the errors [7].
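
The net-force check can be automated once forces are available as an array. This is a minimal sketch under two assumptions: forces are given in meV/Å with shape (n_atoms, 3), and "per atom" is read as the magnitude of the summed force vector divided by the atom count. The function names are illustrative.

```python
import numpy as np

def net_force_per_atom(forces):
    """Magnitude of the total force vector divided by the number of atoms (meV/Å)."""
    f = np.asarray(forces, dtype=float)  # shape (n_atoms, 3)
    return float(np.linalg.norm(f.sum(axis=0)) / len(f))

def has_suspicious_net_force(forces, threshold=1.0):
    """Flag a configuration whose net force per atom exceeds the threshold (meV/Å)."""
    return net_force_per_atom(forces) > threshold

# Hypothetical forces for a three-atom molecule (meV/Å).
balanced = [[12.0, 0.0, 0.0], [-7.0, 3.0, 0.0], [-5.0, -3.0, 0.0]]
unbalanced = [[12.0, 0.0, 0.0], [-2.0, 3.0, 0.0], [-1.0, -3.0, 0.0]]

print(net_force_per_atom(balanced), has_suspicious_net_force(balanced))
print(net_force_per_atom(unbalanced), has_suspicious_net_force(unbalanced))
```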

Guide 3: Improving Band Gap and Density of States Predictions

- Problem: DFT-predicted band gaps are severely underestimated, or the Density of States (DOS) does not align with experimental photoemission spectra.

- Underlying Cause: Semilocal functionals (LDA, GGA) suffer from self-interaction error and are poor at describing the localization and delocalization of electrons. This directly impacts band gaps and the position of energy levels in the DOS [22].

- Diagnosis & Resolution:

- Analyze Projected DOS (PDOS): Check for incorrect orbital hybridization in the PDOS. Errors are often concentrated in chemically meaningful bonding regions [23].

- Employ Hybrid Functionals: Use hybrid functionals like PBE0 or HSE06, which mix in a portion of exact Hartree-Fock exchange. These provide a more accurate description of electron localization and significantly improve band gap predictions [22].

- Consider DFT+U: For strongly correlated systems (e.g., transition metal oxides), use the DFT+U method to apply a corrective potential and better localize electrons in specific orbitals [22].

Guide 4: Managing Low-Frequency Modes in Thermochemical Predictions

- Problem: Spurious low-frequency vibrational modes produce disproportionately large, inaccurate entropic contributions that corrupt free energy predictions [4].

- Underlying Cause: These modes may arise from an incomplete geometry optimization or may be inherent quasi-rotational/translational modes. When treated as genuine vibrations, they inflate the entropy [4].

- Diagnosis & Resolution:

- Inspect Frequencies: Always check for vibrational modes below 100 cm⁻¹.

- Apply the Cramer-Truhlar Correction: Raise all non-transition-state frequencies below 100 cm⁻¹ to 100 cm⁻¹ for the purpose of computing entropic corrections. This prevents spurious modes from dominating the free energy [4].
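
To see why the frequency floor matters, the sketch below evaluates the standard harmonic-oscillator vibrational entropy, S = R[x/(eˣ − 1) − ln(1 − e⁻ˣ)] with x = hcν̃/(k_B T), with and without raising low modes to 100 cm⁻¹. The function name and the convention of skipping non-positive (imaginary) frequencies are assumptions for illustration; the frequency list is hypothetical.

```python
import math

R = 8.314462618       # gas constant, J/(mol K)
H = 6.62607015e-34    # Planck constant, J s
C_CM = 2.99792458e10  # speed of light, cm/s
KB = 1.380649e-23     # Boltzmann constant, J/K

def vib_entropy(freqs_cm, temperature=298.15, floor_cm=100.0):
    """Harmonic vibrational entropy in J/(mol K), with low modes raised to floor_cm."""
    total = 0.0
    for nu in freqs_cm:
        if nu <= 0.0:
            continue  # imaginary modes (often printed as negative) are not included
        nu = max(nu, floor_cm)
        x = H * C_CM * nu / (KB * temperature)
        total += R * (x / math.expm1(x) - math.log1p(-math.exp(-x)))
    return total

# Hypothetical frequencies in cm^-1, including two soft modes.
freqs = [15.0, 42.0, 310.0, 1650.0, 3050.0]
print("uncorrected:", vib_entropy(freqs, floor_cm=0.0))
print("with 100 cm^-1 floor:", vib_entropy(freqs))
```

The entropy of a harmonic mode diverges as its frequency approaches zero, which is why a single spurious soft mode can dominate the −TS term.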

Frequently Asked Questions (FAQs)

Q1: How do I know if the errors in my DFT calculation are due to the electron density or the functional itself? You can use real-space analysis tools like Quantum Chemical Topology (QCT). "Well-built" functionals tend to show electron density errors that are localized to chemically meaningful regions (e.g., bonding areas), making them predictable and understandable. In contrast, heavily parametrized functionals can show isotropic, non-chemical error distributions that are harder to trace and correct [23].

Q2: My system is a multi-component material with interfaces. What electron density-related errors should I watch for? A key parameter is the Work Function (WF) difference between different phases or surfaces. A large WF difference at a heterointerface drives interfacial polarization, but standard GGA functionals can misestimate the WF and the degree of electron localization at the interface. Use hybrid functionals or GW methods for more accurate WF predictions, which are critical for understanding interfacial properties in composites [22].

Q3: Why do my calculated forces change when I re-orient the molecule in the unit cell, even with the same functional and basis set? This is a classic sign of an insufficient integration grid. The DFT grid is not perfectly rotationally invariant. For modern meta-GGA and hybrid functionals, a dense grid (e.g., (99,590)) is essential. Using a small grid can lead to energy and force errors that depend on the molecule's orientation, sometimes varying by several kcal/mol [4].

Q4: How can I systematically estimate the "error bar" for my DFT-predicted lattice constants? Recent approaches use materials informatics and machine learning. You can train models on datasets where DFT calculations are compared to experimental measurements for a wide range of materials. These models learn to predict the expected error based on material features like electron density and elemental composition, effectively providing a functional- and material-specific error bar [3].

Data Tables

Table 1: Lattice Parameter Errors of Common XC Functionals [3]

| XC Functional | Type | Mean Absolute Relative Error (MARE) | Standard Deviation | Best For |

|---|---|---|---|---|

| LDA | LDA | 2.21% | 1.69% | --- |

| PBE | GGA | 1.61% | 1.70% | --- |

| PBEsol | GGA | 0.79% | 1.35% | Solids, oxides |

| vdW-DF-C09 | vdW-DF | 0.97% | 1.57% | Layered, sparse materials |

Table 2: Average Force Errors in Molecular Datasets Used for MLIP Training [7]

| Dataset | Level of Theory | Average Force Error vs. Reference (meV/Å) | Notes |

|---|---|---|---|

| ANI-1x (large basis) | ωB97x/def2-TZVPP | 33.2 | Severe errors; RIJCOSX suspected |

| Transition1x | ωB97x/6-31G(d) | 13.6 | Significant errors |

| AIMNet2 | ωB97M-D3(BJ)/def2-TZVPP | 5.3 | Moderate errors |

| SPICE | ωB97M-D3(BJ)/def2-TZVPPD | 1.7 | Relatively good |

Experimental Protocols

Protocol 1: Benchmarking Force Accuracy in a Molecular Dataset

Purpose: To quantify and correct systematic force errors in a dataset intended for MLIP training [7]. Steps:

- Subsample: Randomly select a representative subset (e.g., 1000 configurations) from your dataset.

- Recompute with High-Fidelity Settings: Run single-point force calculations on these configurations using the same functional and basis set, but with more robust numerical settings:

  - Disable the RIJCOSX approximation (in ORCA) or similar integral approximations.

  - Use a dense integration grid (e.g., (99,590) or its equivalent in your code).

  - Tighten SCF convergence and energy thresholds.

- Calculate Error: For each configuration, compute the root-mean-square error (RMSE) between the original forces and the recomputed high-fidelity forces.

- Analyze and Filter: Report the average force error across the subset (see Table 2 for examples). Consider filtering out configurations with errors above a chosen threshold (e.g., > 1 meV/Å) before MLIP training.
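
The error calculation and filtering steps of this protocol might look like the following sketch. It assumes forces are stored per configuration as (n_atoms, 3) arrays in meV/Å and that the RMSE is taken over all Cartesian components; the function names and sample numbers are illustrative.

```python
import numpy as np

def force_rmse(f_original, f_reference):
    """RMSE over all Cartesian force components of one configuration (meV/Å)."""
    diff = np.asarray(f_original, dtype=float) - np.asarray(f_reference, dtype=float)
    return float(np.sqrt(np.mean(diff ** 2)))

def benchmark_forces(originals, references, threshold=1.0):
    """Return per-configuration errors, their mean, and a boolean keep-mask."""
    errors = np.array([force_rmse(a, b) for a, b in zip(originals, references)])
    keep = errors <= threshold
    return errors, float(errors.mean()), keep

# Two hypothetical configurations: original dataset forces vs. recomputed forces.
originals = [[[10.2, 0.0, 0.0], [-10.0, 0.1, 0.0]], [[5.0, 4.0, 0.0], [-9.0, 0.0, 0.0]]]
references = [[[10.0, 0.0, 0.0], [-10.0, 0.0, 0.0]], [[8.0, 0.0, 0.0], [-8.0, 0.0, 0.0]]]

errors, mean_error, keep = benchmark_forces(originals, references)
print(errors, mean_error, keep)
```

The threshold is a free choice; reporting the full error distribution alongside the mean helps show whether a few outliers or a uniform offset drive the result.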

Protocol 2: Real-Space Analysis of Electron Density Errors

Purpose: To localize and understand the source of electron density inaccuracies in different density functional approximations [23]. Steps:

- Calculate Reference Density: For a small model system (e.g., N₂, CO), compute a highly accurate electron density using a high-level wavefunction method (e.g., CCSD(T)) with a large basis set.

- Calculate DFT Densities: Compute the electron density for the same system using various DFT functionals (e.g., a GGA, a meta-GGA, and a hybrid).

- Perform Topological Analysis: Use a quantum chemical topology program (e.g., Multiwfn) to analyze the densities. Calculate the Electron Localization Function (ELF) or perform an Atoms in Molecules (AIM) analysis.

- Plot Error Distributions: Create real-space maps of the density difference (DFT density minus reference density). Analyze how errors are distributed: whether they are concentrated in bonding, non-bonding, or core regions, and if the pattern is chemically meaningful [23].

Diagrams

Diagram Title: DFT Error Diagnosis and Resolution Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Experiment |

|---|---|

| Hybrid Functionals (e.g., HSE06, PBE0) | Mix a portion of exact exchange to reduce self-interaction error, improving band gaps and electron localization descriptions [22]. |

| Dense Integration Grid (e.g., (99,590)) | Ensures numerical accuracy in evaluating XC energy and potentials, preventing orientation-dependent errors, especially for modern functionals [4]. |

| Quantum Chemical Topology (QCT) Software | Provides real-space descriptors (ELF, AIM) to visualize and localize electron density errors in bonding regions, avoiding error compensation in integrated metrics [23]. |

| Bayesian Error Estimation | Uses an ensemble of XC functionals to represent calculated quantities as a distribution; the spread provides an uncertainty quantification for the prediction [3]. |

| DFT+U / DFT+DMFT | Adds a corrective potential (Hubbard U) to treat strong electron correlation in localized d or f orbitals, correcting hybridization and localization errors [22]. |
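
The ensemble idea behind Bayesian error estimation in the table above can be illustrated with a toy calculation. This is a simplified stand-in, not an actual Bayesian ensemble: it treats a handful of results from different functionals as samples and reports their mean and sample standard deviation. The values are hypothetical.

```python
import statistics

def ensemble_estimate(values):
    """Mean and sample standard deviation of one property across an ensemble."""
    return statistics.mean(values), statistics.stdev(values)

# Hypothetical lattice constants (Å) for one material from several functionals.
lattice_constants = {"LDA": 3.98, "PBE": 4.05, "PBEsol": 4.01, "vdW-DF-C09": 4.02}

mean, spread = ensemble_estimate(list(lattice_constants.values()))
print(f"a = {mean:.3f} ± {spread:.3f} Å")
```

A spread obtained this way reflects disagreement among the chosen functionals only; it does not capture errors that all of them share.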

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: Why does my molecular docking simulation yield incorrect binding poses, even when the binding affinity score seems reasonable? Incorrect binding poses often result from inadequate sampling of ligand conformations, particularly the failure to properly model rotatable bonds and ligand flexibility. The sampling algorithm may not explore the full conformational space, leading to poses that are physically unrealistic. Furthermore, limitations in the scoring function can fail to penalize these incorrect poses adequately, especially for ligands with high molecular weight or a large number of rotatable bonds [24]. Standard docking programs can struggle with torsion sampling, which is a known source of error [24].

- Troubleshooting Protocol:

- Visual Inspection: Always visually inspect the top-ranked docking poses in a molecular visualization tool (e.g., UCSF Chimera, PyMOL). Check for unrealistic bond angles, torsions, or clashes with the protein.

- Torsion Analysis: Use a tool like TorsionChecker to compare the torsional angles of your docked ligand against known distributions from structural databases like the Cambridge Structural Database (CSD) or Protein Data Bank (PDB) [24].

- Pose Validation: If experimental data is available (e.g., a co-crystal structure), calculate the Root-Mean-Square Deviation (RMSD) of your predicted pose against the experimental one. An RMSD of <2.0 Å is generally considered successful [25].

- Rescoring: Submit your top poses to a different scoring function or a more rigorous method, such as free energy perturbation (FEP), to see if the pose ranking changes.
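
The pose validation step reduces to a coordinate RMSD. The sketch below assumes that both poses are expressed in the same receptor frame, list the same heavy atoms in the same order, and need no superposition; it does not handle symmetry-equivalent atoms. The function names are illustrative.

```python
import numpy as np

def pose_rmsd(predicted, reference):
    """Heavy-atom RMSD (Å) between two poses with identical atom ordering, no alignment."""
    p = np.asarray(predicted, dtype=float)
    r = np.asarray(reference, dtype=float)
    if p.shape != r.shape:
        raise ValueError("poses must contain the same atoms in the same order")
    return float(np.sqrt(np.mean(np.sum((p - r) ** 2, axis=1))))

def is_successful_pose(predicted, reference, cutoff=2.0):
    """Apply the common < 2.0 Å success criterion."""
    return pose_rmsd(predicted, reference) < cutoff

# Hypothetical three-atom ligand: crystal pose vs. docked pose (Å).
crystal = [[0.0, 0.0, 0.0], [1.5, 0.0, 0.0], [1.5, 1.4, 0.0]]
docked = [[0.3, 0.1, 0.0], [1.7, 0.2, 0.1], [1.4, 1.8, 0.2]]

print(pose_rmsd(docked, crystal), is_successful_pose(docked, crystal))
```

For ligands with symmetric groups, a symmetry-aware RMSD from a cheminformatics toolkit would be needed to avoid overestimating the deviation.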

Q2: My virtual screening successfully identified hits with excellent docking scores, but they showed no activity in experimental assays. What are the potential causes? This common discrepancy can be attributed to several systematic errors. The scoring function may have a bias toward compounds with higher molecular weight, rewarding size over genuine complementary interactions [24]. More fundamentally, the force field used in the docking may inaccurately describe key physicochemical interactions, such as van der Waals forces, hydrogen bonding, or hydrophobic effects, leading to systematic errors in affinity prediction [26] [27]. Finally, the simulation may have neglected critical environmental factors, such as the presence of water molecules, cofactors, or specific buffer ions, which can modulate binding affinity by 1-2 kcal/mol or more [26].

- Troubleshooting Protocol:

- Control Docking: Re-dock a known active ligand (positive control) to verify your docking protocol is functioning correctly for that specific target.

- Property Analysis: Calculate the physicochemical properties (e.g., molecular weight, logP, number of rotatable bonds) of your top hits. Check if their excellent scores are correlated with unusually high molecular weight, which may indicate a scoring function bias [24].

- Consider the Environment: Re-run docking or more advanced molecular dynamics (MD) simulations that explicitly include crystallographic water molecules and physiological ion concentrations [26].

- Address Protonation States: Ensure the protonation states of key protein residues and the ligand are correct for the simulated pH conditions, as this can drastically alter electrostatics [26].

Q3: How can I assess and improve the generalizability of my deep learning model for binding affinity prediction? A major pitfall in affinity prediction is train-test data leakage, where models perform well on benchmarks but fail in real-world applications. This occurs when the training data (e.g., from PDBbind) and test data (e.g., from the CASF benchmark) contain structurally similar protein-ligand complexes. Consequently, the model memorizes patterns instead of learning the underlying physics of binding, leading to poor generalization [28].

- Troubleshooting Protocol:

- Data Audit: Implement a structure-based filtering algorithm to analyze your training and test sets. This should assess protein similarity (using TM-score), ligand similarity (Tanimoto score), and binding conformation similarity (pocket-aligned ligand RMSD) [28].

- Strict Splitting: Create a "clean" dataset split, like PDBbind CleanSplit, that removes all training complexes that are structurally similar to any complex in your test set. This ensures a genuinely independent test [28].

- Ablation Studies: Perform an ablation test where you omit protein information from the model input. If the model's performance remains high, it is likely relying on ligand memorization rather than learning protein-ligand interactions [28].

- Architecture Choice: Utilize model architectures, such as certain graph neural networks (GNNs), that are explicitly designed to model protein-ligand interactions and can be combined with transfer learning to improve generalization on limited, non-redundant data [28].

Q4: What are the primary sources of systematic error in calculating formation enthalpies with DFT, and how do they impact virtual screening of drug-like molecules? Systematic errors in Density Functional Theory (DFT) calculations primarily stem from the approximation of the exchange-correlation (XC) functional. For drug discovery, this is critical when calculating the enthalpies of formation for metal-containing compounds or ligands with specific anions (e.g., O, N, S). Functionals like LDA tend to overbind and underestimate lattice parameters, while GGA functionals like PBE often overestimate them [9]. These errors can propagate to incorrect predictions of a compound's stability or reactivity. For instance, an error of several hundred meV/atom can misclassify an unstable polymorph as stable [29].

- Troubleshooting Protocol:

- Functional Selection: For systems involving transition metals and anions, use a functional known for better accuracy, such as PBEsol or vdW-DF-C09, which have shown lower errors in lattice parameter predictions [9].

- Apply Corrections: Implement empirical correction schemes (e.g., FERE, CCE) that apply element-specific or coordination-specific energy corrections to DFT-computed energies to align them more closely with experimental formation enthalpies [29].

- Quantify Uncertainty: Use materials informatics and statistical analysis to predict the expected error for your specific compound based on its chemistry. This provides an "error bar" for your DFT-predicted values, helping to contextualize the reliability of your screening results [9] [29].

Troubleshooting Guides

Guide 1: Resolving Docking Pose Failures and Sampling Errors

Problem: The docking algorithm produces ligand binding poses that are geometrically unrealistic or significantly different from experimentally validated structures.

Solution Workflow: The following diagram outlines a systematic workflow for diagnosing and resolving docking pose failures.

Detailed Steps:

- Verify with Experimental Data: If a crystal structure of the protein-ligand complex exists, calculate the RMSD between your top predicted pose and the experimental ligand conformation. An RMSD greater than 2.0 Å indicates a significant failure [25].

- Analyze Ligand Torsions: Use a tool like TorsionChecker to compare the dihedral angles of rotatable bonds in your docked pose against statistical distributions derived from high-resolution crystal structures. This identifies conformations that are sterically strained or otherwise unlikely [24].

- Increase Sampling: If torsions are incorrect, reconfigure your docking software to increase the exhaustiveness of the search. For systematic search algorithms (like in DOCK), this may involve increasing the number of initial orientations. For stochastic methods (like in AutoDock Vina), increase the "exhaustiveness" parameter [24].

- Rescore and Re-rank: Extract the generated poses and score them using a different scoring function or a more advanced, potentially machine-learning-based affinity prediction model. This can help identify the correct pose that was generated but poorly ranked by the original function [28].

Guide 2: Mitigating Data Bias and Overfitting in Affinity Prediction Models

Problem: A machine learning model for binding affinity prediction performs excellently on validation benchmarks but fails to predict accurately for novel, unrelated protein-ligand complexes.

Solution Workflow: This workflow provides a strategy to identify and mitigate data bias in training datasets for affinity prediction.

Detailed Steps:

- Audit for Data Leakage: Systematically compare your training and test sets using multi-modal similarity measures. Calculate protein similarity (TM-score), ligand similarity (Tanimoto coefficient), and binding site alignment (pocket RMSD). Remove any training complex that exceeds similarity thresholds (e.g., TM-score > 0.8, Tanimoto > 0.9, RMSD < 2.0 Å) with any complex in your test set [28].

- Reduce Redundancy: Within the training set itself, identify and remove redundant complexes that form tight similarity clusters. Training on a highly redundant dataset encourages memorization rather than generalizable learning. Using a non-redundant dataset forces the model to learn fundamental interactions [28].

- Retrain on a Clean Split: Use a rigorously filtered dataset like PDBbind CleanSplit for training and validation. This ensures that the model's performance on standard benchmarks like CASF reflects its true ability to generalize [28].

- Validate with Ablation Tests: To confirm your model is learning protein-ligand interactions and not just ligand properties, retrain it with the protein node information removed. A significant drop in performance indicates that the original model's predictions were genuinely based on complex interactions [28].
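
The leakage audit in the first step can be sketched as a filter over precomputed similarities. Several things here are assumptions: TM-scores and pocket-aligned RMSDs are taken as already computed by external tools, fingerprints are represented as sets of on-bit indices, and a pair counts as leaked only when all three example thresholds are met at once. The filtering algorithm in [28] may combine the criteria differently.

```python
def tanimoto(bits_a, bits_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    a, b = set(bits_a), set(bits_b)
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def is_leak(tm_score, ligand_tanimoto, pocket_rmsd,
            tm_cut=0.8, tanimoto_cut=0.9, rmsd_cut=2.0):
    """True if a train/test pair is too similar in protein, ligand, and binding pose."""
    return tm_score > tm_cut and ligand_tanimoto > tanimoto_cut and pocket_rmsd < rmsd_cut

def clean_training_set(train_ids, test_ids, similarity):
    """Drop training complexes that leak against any test complex.

    similarity(train_id, test_id) must return (tm_score, tanimoto, pocket_rmsd).
    """
    return [t for t in train_ids
            if not any(is_leak(*similarity(t, s)) for s in test_ids)]

# Hypothetical precomputed similarities between two training and one test complex.
table = {("trainA", "test1"): (0.95, 0.97, 0.8), ("trainB", "test1"): (0.40, 0.20, 6.5)}
print(clean_training_set(["trainA", "trainB"], ["test1"], lambda t, s: table[(t, s)]))
```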

Table 1: Comparison of Docking Program Performance on the DUD-E Dataset [24]

| Docking Program | Search Algorithm Type | Scoring Function Type | Early Enrichment (EF1) | Computational Efficiency |

|---|---|---|---|---|

| UCSF DOCK 3.7 | Systematic search | Physics-based (vdW, electrostatics, desolvation) | Superior | Superior |

| AutoDock Vina | Stochastic search | Empirical (trained on PDBbind) | Comparable | Good |

Table 2: Systematic Errors and Performance of Select DFT Exchange-Correlation Functionals for Oxides [9]

| XC Functional | Error Trend in Lattice Parameters | Mean Absolute Relative Error (MARE) | Remarks |

|---|---|---|---|

| LDA | Systematic underestimation | 2.21% | Tends to overbind |

| PBE (GGA) | Systematic overestimation | 1.61% | Common choice, but less accurate for solids |

| PBEsol (GGA) | Centered around experimental value | 0.79% | Recommended for solid-state materials |

| vdW-DF-C09 | Centered around experimental value | 0.97% | Includes long-range van der Waals interactions |

Table 3: Example DFT Energy Corrections and Associated Uncertainties [29]

| Element/Species | Correction (eV/atom) | Uncertainty (meV/atom) | Applicable Compound Category |

|---|---|---|---|

| O (oxide) | -1.41 | 2 | All compounds with O in oxide environment |

| N | -0.62 | 8 | Any compound with N as an anion |

| H | -0.32 | 10 | Any compound with H as an anion (e.g., LiH) |

| Fe | -2.21 | 25 | Transition metal oxides/fluorides (GGA+U) |
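
As an illustration of how such element-specific corrections might be applied, the sketch below sums the per-atom corrections from Table 3 over a formula unit and propagates their uncertainties in quadrature. The additive sign convention, the assumption of independent uncertainties, and the function names are illustrative choices. The caller is responsible for checking that each correction is applicable to the compound category in question.

```python
import math

# Values copied from Table 3: element -> (correction in eV/atom, uncertainty in eV/atom).
CORRECTIONS = {
    "O": (-1.41, 0.002),
    "N": (-0.62, 0.008),
    "H": (-0.32, 0.010),
    "Fe": (-2.21, 0.025),
}

def total_correction(composition):
    """Correction and uncertainty (eV per formula unit) for {element: atom count}."""
    correction = 0.0
    variance = 0.0
    for element, count in composition.items():
        if element in CORRECTIONS:
            value, sigma = CORRECTIONS[element]
            correction += count * value
            variance += (count * sigma) ** 2  # assumes independent uncertainties
    return correction, math.sqrt(variance)

def corrected_energy_per_atom(e_dft_total, composition):
    """Add the correction to a DFT total energy and normalize per atom."""
    correction, sigma = total_correction(composition)
    n_atoms = sum(composition.values())
    return (e_dft_total + correction) / n_atoms, sigma / n_atoms

# Hypothetical DFT total energy (eV per formula unit) for an Fe2O3-like composition.
print(corrected_energy_per_atom(-35.0, {"Fe": 2, "O": 3}))
```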

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Software and Databases for Molecular Docking and Affinity Prediction

| Tool Name | Type | Primary Function | Key Consideration |

|---|---|---|---|

| AutoDock Vina [25] [24] | Docking Software | Predicts ligand binding modes and affinities using a stochastic search algorithm and empirical scoring. | Fast and user-friendly; scoring function shows bias toward higher molecular weight [24]. |

| UCSF DOCK [25] [24] | Docking Software | Performs docking using systematic search algorithms and physics-based scoring. | Often shows better early enrichment and computational efficiency than Vina in large-scale screens [24]. |

| PDBbind [28] | Database | A comprehensive database of protein-ligand complex structures and their experimental binding affinities. | The standard training set for affinity prediction models, but contains redundancies and data leakage with common benchmarks [28]. |

| CASF Benchmark [28] | Benchmarking Set | A curated set used to evaluate the performance of scoring functions. | Common benchmark performance is inflated due to structural similarities with PDBbind; requires careful usage [28]. |

| TorsionChecker [24] | Analysis Tool | Validates the torsional angles of docked ligands against statistical distributions from structural databases. | Critical for diagnosing failures in conformational sampling during docking [24]. |

Advanced Methods and Correction Schemes for Improved Thermochemical Accuracy

Frequently Asked Questions (FAQs)

Q1: What is the primary advantage of (r2SCAN+MBD)@HF over standard DFT methods for charged systems?

A1: The (r2SCAN+MBD)@HF method significantly improves accuracy for non-covalent interactions (NCIs) involving charged systems, which previously exhibited systematic errors of up to tens of kcal/mol in standard dispersion-enhanced DFT. This approach provides a balanced treatment of short- and long-range correlation by combining the r2SCAN functional with many-body dispersion (MBD), both evaluated on Hartree-Fock densities, without empirically fitted parameters [30].

Q2: When should I consider using (r2SCAN+MBD)@HF in my drug design research?

A2: You should prioritize this method when studying systems where accurate thermodynamic profiling is critical, particularly for:

- Protein-ligand interactions involving charged species

- Metal ion-protein complexes

- Systems where polarization effects are significant

- Generating high-quality training data for machine-learning force fields

This approach is especially valuable in early-stage drug development, where it helps speed the process toward an optimal energetic interaction profile [30] [31].

Q3: My calculations show unexpected thermodynamic profiles despite good structural data. How can (r2SCAN+MBD)@HF help?

A3: This addresses a fundamental limitation of structure-only approaches. Isostructural complexes with similar binding affinities can have disparate binding thermodynamics, providing only a partial picture. (r2SCAN+MBD)@HF delivers a more complete energetic picture by accurately capturing the interplay between electrostatics, polarization, and dispersion, which is crucial for understanding entropy-enthalpy compensation effects common in drug development [31].

Q4: What are the key differences between SCAN, rSCAN, and r2SCAN functionals?

A4: The original SCAN functional satisfied all 17 known constraints for meta-GGAs but suffered from numerical instability. rSCAN improved numerical stability but broke some constraints, reducing transferability. r2SCAN successfully combines the transferability of SCAN with the numerical stability of rSCAN, restoring all constraints fulfilled by the original SCAN while maintaining computational efficiency [32].

Q5: How does the HF-DFT approach in (r2SCAN+MBD)@HF address density-driven errors?

A5: By using converged Hartree-Fock densities instead of self-consistent ones for the final evaluation of the exchange-correlation functional, this approach separates errors into functional imperfections and density-driven errors. For systems prone to self-interaction errors (such as those with stretched bonds, halogen, and chalcogen binding), this significantly improves performance compared to self-consistent application of the same functionals [32].

Troubleshooting Guides

Issue 1: Grid Dependence and Numerical Instability

Problem: Inconsistent results with different integration grids or numerical instability in calculations.

Solution:

- Use the r2SCAN functional instead of SCAN for much milder grid dependence [32]

- Employ the DEFGRID3 integration grid in ORCA [32]

- For critical calculations, verify convergence with an unpruned 590-point Lebedev angular grid and a 150-point Euler-Maclaurin radial grid [32]

Prevention: Always specify dense integration grids in computational setups, particularly for systems prone to density-driven errors like reaction barrier heights and halogen/chalcogen binding [32].

Issue 2: Inaccurate Treatment of Charged Systems

Problem: Systematic errors of tens of kcal/mol for non-covalent interactions involving charged molecules.

Solution:

- Implement the full (r2SCAN+MBD)@HF protocol rather than individual components

- Ensure proper evaluation of many-body dispersion on Hartree-Fock densities

- Validate against standard benchmarks for charged systems before applying to novel systems

Verification: Test method performance on the Metal Ion Protein Clusters dataset or similar charged system benchmarks [30].

Issue 3: Poor Performance for Transition Metal Compounds

Problem: Inaccurate description of electronic properties in transition-metal compounds with open-shell d-electrons.

Solution:

- Leverage r2SCAN's demonstrated capability for correlated systems without requiring Hubbard U corrections in many cases [33]

- For particularly challenging systems, consider hybrid approaches like r2SCAN0-D4 with 25% HF exchange, which shows 35% mean improvement for organometallic reaction energies and barriers [34]

Validation: Compare results for known transition-metal compound benchmarks to establish method reliability for your specific system type [33].

Issue 4: Entropy-Enthalpy Compensation Masking Design Improvements

Problem: Compound modifications yield the desired effect on ΔH but with concomitant undesired effects on ΔS, showing little net ΔG improvement.

Solution:

- Use (r2SCAN+MBD)@HF to obtain accurate separation of ΔG into component ΔH and ΔS terms

- Apply thermodynamic optimization plots and enthalpic efficiency index for analysis [31]

- Focus on enthalpic optimization through precise atomic interactions rather than defaulting to hydrophobic decoration [31]

Quantitative Performance Data

Table 1: Comparison of DFT Approaches for Non-Covalent Interactions

| Method | Key Features | Performance for Charged NCIs | Recommended Application |
|---|---|---|---|
| (r2SCAN+MBD)@HF | No empirical parameters; HF densities; many-body dispersion | Significantly improved accuracy | Charged biomolecular systems; training ML force fields [30] |
| r2SCAN0-D4 | 25% HF exchange; D4 dispersion correction | Robust for organometallic systems | General use for main-group and organometallic thermochemistry [34] |
| Standard DFT-D | A posteriori dispersion corrections | Systematic errors up to tens of kcal/mol for charged systems | Neutral systems with minimal charge separation [30] |
| M05-2X | Specialized functional for NCIs | MAD: 0.41-0.49 kcal/mol (aug-cc-pVDZ) | Hydrogen-bonded complexes with robust triple-ζ basis [35] |

Table 2: Thermodynamic Parameters for Molecular Interactions in Drug Design

| Parameter | Equation | Drug Design Significance |
|---|---|---|
| ΔG (Free Energy) | ΔG = ΔG° + RT ln Q | Determines spontaneity of binding; negative values favor interaction [31] |
| ΔH (Enthalpy) | ΔHᵥH = -R ∂ln Kₐ/∂(1/T) | Net bond formation/breakage; negative values indicate favorable interactions [31] |
| ΔS (Entropy) | ΔG = ΔH - TΔS | System disorder changes; positive values often from water release [31] |
| ΔCₚ (Heat Capacity) | ΔCₚ = (∂ΔH/∂T)ₚ | Indicates hydrophobic interactions & conformational changes [31] |
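As a worked illustration of the relations in Table 2, the sketch below estimates a van't Hoff enthalpy from association constants measured at several temperatures and then recovers ΔS from ΔG = ΔH - TΔS. It assumes ΔH is constant over the temperature range (that is, ΔCₚ ≈ 0). The function names and the synthetic data are hypothetical.

```python
import math

R = 1.987204e-3  # gas constant in kcal/(mol K)


def vant_hoff_enthalpy(temperatures_k, association_constants):
    """Least-squares slope of ln(Ka) versus 1/T, converted to an enthalpy."""
    x = [1.0 / t for t in temperatures_k]
    y = [math.log(k) for k in association_constants]
    x_mean = sum(x) / len(x)
    y_mean = sum(y) / len(y)
    slope = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y)) / sum(
        (xi - x_mean) ** 2 for xi in x
    )
    return -R * slope  # dH_vH = -R * d ln(Ka) / d(1/T)


def entropy_from_profile(delta_g, delta_h, temperature_k):
    """Rearranged dG = dH - T*dS; returns dS in kcal/(mol K)."""
    return (delta_h - delta_g) / temperature_k


# Synthetic data generated from dH = -10 kcal/mol, dS = -0.01 kcal/(mol K).
temps = [280.0, 290.0, 300.0, 310.0]
kas = [math.exp(10.0 / (R * t) - 0.01 / R) for t in temps]
delta_h = vant_hoff_enthalpy(temps, kas)
delta_s = entropy_from_profile(-7.0185, delta_h, 298.15)
print(round(delta_h, 3), round(delta_s, 4))
```

If the plot of ln Kₐ against 1/T is visibly curved, the constant-ΔH assumption fails and a ΔCₚ term is needed.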

Experimental Protocols

Protocol 1: Implementing (r2SCAN+MBD)@HF for Protein-Ligand Systems

Purpose: To accurately characterize non-covalent interactions in charged drug-target systems.

Methodology:

- System Preparation
  - Obtain protein-ligand complex structures from crystallography or homology modeling
  - Process structures to ensure proper protonation states at physiological pH

- Computational Setup

- Calculation Execution
  - Perform geometry optimization with tight convergence criteria
  - Conduct single-point energy calculations with dense integration grids
  - Calculate interaction energies with counterpoise correction for BSSE

- Data Analysis
  - Extract binding energies and decompose into contributions
  - Compare to reference data from the Metal Ion Protein Clusters dataset [30]
  - Generate thermodynamic profiles (ΔG, ΔH, ΔS) for binding events

Validation: Verify method performance against the GMTKN55 database subsets relevant to your system [32].
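The counterpoise step in the protocol reduces to simple arithmetic once the three energies are in hand. The sketch below uses the standard Boys-Bernardi form, in which each monomer is computed at its geometry in the complex with the partner's basis functions present as ghosts. The function names and energies are hypothetical and the code is not tied to any particular program's output format.

```python
HARTREE_TO_KCAL_MOL = 627.509474


def counterpoise_interaction_energy(e_complex, e_a_ghost, e_b_ghost):
    """Counterpoise-corrected interaction energy in kcal/mol.

    e_complex : energy of the complex AB in the full AB basis (Hartree)
    e_a_ghost : energy of monomer A in the AB basis, B as ghost atoms
    e_b_ghost : energy of monomer B in the AB basis, A as ghost atoms
    """
    return (e_complex - e_a_ghost - e_b_ghost) * HARTREE_TO_KCAL_MOL


def bsse_estimate(e_a_own, e_b_own, e_a_ghost, e_b_ghost):
    """Size of the basis set superposition error in kcal/mol (>= 0).

    e_a_own / e_b_own are monomer energies in their own basis sets,
    at the same geometries used for the ghost calculations.
    """
    return ((e_a_own - e_a_ghost) + (e_b_own - e_b_ghost)) * HARTREE_TO_KCAL_MOL


# Hypothetical energies in Hartree.
e_int = counterpoise_interaction_energy(-100.020, -60.005, -40.003)
bsse = bsse_estimate(-60.004, -40.0025, -60.005, -40.003)
print(round(e_int, 2), round(bsse, 2))
```

A BSSE that is a large fraction of the interaction energy is a signal to move to a larger basis set rather than rely on the correction alone.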

Protocol 2: Thermodynamic Optimization in Drug Design

Purpose: To implement thermodynamically-driven drug design using accurate DFT methods.

Methodology:

- Initial Characterization
  - Determine complete thermodynamic profile (ΔG, ΔH, ΔS) for lead compound
  - Identify entropy-enthalpy compensation patterns [31]

- Structure-Thermodynamics Correlation
  - Relate thermodynamic parameters to structural features
  - Identify opportunities for enthalpic optimization through precise atomic interactions

- Iterative Optimization
  - Design compound modifications targeting improved enthalpy
  - Calculate thermodynamic parameters for derivatives using (r2SCAN+MBD)@HF
  - Apply thermodynamic optimization plots and enthalpic efficiency index [31]
  - Balance enthalpy optimization with entropy considerations

- Selectivity Assessment
  - Evaluate binding thermodynamics against off-target proteins
  - Ensure favorable selectivity profile beyond mere affinity
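The iterative optimization step can be tracked with a small comparison routine. The sketch below flags entropy-enthalpy compensation between a lead and a derivative and computes an enthalpic efficiency, taken here as ΔH divided by the number of non-hydrogen atoms. That definition is a common one but is an assumption in this context and should be checked against [31]. All names and values are hypothetical.

```python
def enthalpic_efficiency(delta_h, heavy_atoms):
    """Binding enthalpy per non-hydrogen atom (assumed definition)."""
    return delta_h / heavy_atoms


def compare_to_lead(lead, derivative, temperature_k=298.15):
    """Changes in dH, -T*dS and dG on going from lead to derivative.

    Each compound is a dict with "dH" in kcal/mol and "dS" in kcal/(mol K).
    """
    ddh = derivative["dH"] - lead["dH"]
    minus_t_dds = -temperature_k * (derivative["dS"] - lead["dS"])
    return {
        "ddH": ddh,
        "-TddS": minus_t_dds,
        "ddG": ddh + minus_t_dds,
        # Enthalpy improved but the entropic term got worse.
        "compensated": ddh < 0 and minus_t_dds > 0,
    }


# Hypothetical binding profiles.
lead = {"dH": -8.0, "dS": 0.005}
derivative = {"dH": -11.0, "dS": -0.004}
report = compare_to_lead(lead, derivative)
print(report, enthalpic_efficiency(derivative["dH"], 22))
```

In this made-up case a 3 kcal/mol enthalpy gain is almost entirely offset by the entropic penalty, which is the pattern described under Issue 4.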

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Resources

| Resource | Function | Application Notes |
|---|---|---|
| r2SCAN Functional | Meta-GGA exchange-correlation functional | Preferred over SCAN for better numerical stability; maintains constraint satisfaction [32] |
| Many-Body Dispersion (MBD) | Accounts for long-range correlation | Critical for charged systems; evaluated on HF densities in this implementation [30] |
| Hartree-Fock Density | Reference electron density | Reduces density-driven errors in final functional evaluation [32] |
| GMTKN55 Database | Benchmark suite for validation | 55 subsets covering diverse chemistry; use WTMAD2 as primary metric [32] |
| def2 Basis Sets | Gaussian-type basis functions | def2-QZVPP for general use; def2-QZVPPD for anionic systems [32] |
| D4 Dispersion Correction | Semi-classical dispersion correction | Can be parameterized for specific functionals; improves thermochemical accuracy [34] |
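Since Table 3 recommends WTMAD2 as the primary GMTKN55 metric, a short sketch of how it is assembled may be useful. It follows the usual definition, in which each subset's mean absolute deviation is scaled by the ratio of a global average energy (56.84 kcal/mol, quoted here from memory of the GMTKN55 definition and worth verifying against [32]) to that subset's mean absolute reference energy, and then averaged with weights equal to the subset sizes. The two subsets below are invented for illustration; a real evaluation sums over all 55.

```python
GMTKN55_MEAN_ABS_ENERGY = 56.84  # kcal/mol; assumed constant, verify before use


def wtmad2(subsets):
    """Weighted total mean absolute deviation, scheme 2 (kcal/mol).

    Each subset is a dict with:
      "n"            : number of data points in the subset
      "mean_abs_ref" : mean absolute reference energy of the subset
      "mad"          : mean absolute deviation of the tested method
    """
    total_points = sum(s["n"] for s in subsets)
    weighted_sum = sum(
        s["n"] * (GMTKN55_MEAN_ABS_ENERGY / s["mean_abs_ref"]) * s["mad"]
        for s in subsets
    )
    return weighted_sum / total_points


# Two hypothetical subsets: large reaction energies and weak interactions.
subsets = [
    {"n": 10, "mean_abs_ref": 56.84, "mad": 1.0},
    {"n": 30, "mean_abs_ref": 5.684, "mad": 0.2},
]
print(wtmad2(subsets))
```

The scaling is what makes small absolute errors on weakly bound complexes count as much as larger errors on strongly exothermic reactions.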

Methodological Workflow

Key Technical Insights

The (r2SCAN+MBD)@HF protocol targets the systematic errors in DFT thermochemical predictions that matter most for the charged systems ubiquitous in pharmaceutical applications. It combines a constraint-satisfying meta-GGA, many-body dispersion, and Hartree-Fock densities, which gives the balanced treatment of short- and long-range correlation that standard DFT methods fail to provide for charged non-covalent interactions. This allows researchers to obtain the accurate thermodynamic profiles that rational drug design depends on, and ultimately supports more efficient development of therapeutics with optimized interaction profiles.

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental purpose of applying a Hubbard U correction in DFT calculations?

The Hubbard U correction is primarily applied to mitigate self-interaction error (SIE) in Density Functional Theory (DFT) calculations. SIE is particularly problematic for systems with strongly correlated electrons, such as transition-metal and rare-earth compounds containing partially filled d and f orbitals. Standard approximate functionals like LDA and GGA tend to over-delocalize these electrons, leading to inaccurate predictions of electronic and magnetic properties. The DFT+U method introduces an on-site Coulomb repulsion term to better describe electron localization, offering a good compromise between computational cost and accuracy [36] [37]. Historically, DFT+U was thought to treat strong correlation, but it is now understood primarily to correct self-interaction error and to restore the flat-plane condition of the functional [36].

FAQ 2: How do I determine an appropriate U value for my system?

There is no single universally correct U value, as it depends on the specific chemical system and the property of interest. Common first-principles approaches include:

- Linear-response theory: This is a widely used ab initio method for computing U [36].

- Constrained random phase approximation (cRPA): Another first-principles technique [36].

- Benchmarking against experiments or higher-level theories: U parameters can be calibrated by matching selected properties (such as band gaps, lattice constants, or formation energies) to reliable experimental data or to results from more accurate but computationally expensive methods like hybrid functionals (e.g., HSE06) [38]. For instance, in CrI₃ monolayers, optimal U values were found by aligning the DFT+U density of states with HSE06 results [38].

It is critical to note that U values are not transferable and should be determined for each unique material system. The choice of method can lead to variations in U values, which is an active area of research [36].
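The benchmarking route can be sketched as a scan-and-interpolate step: run DFT+U at a few U values, record the property of interest, and interpolate to the U that reproduces the reference. The example below uses the band gap and piecewise-linear interpolation. The scan data, the target gap, and the function name are all hypothetical, and a real calibration should also check other properties at the chosen U.

```python
def calibrate_u(u_values, gaps, target_gap):
    """Return the U (eV) at which the interpolated gap equals target_gap.

    Uses linear interpolation between neighbouring scan points and
    returns None if the target lies outside the scanned range.
    """
    pairs = sorted(zip(u_values, gaps))
    for (u_lo, g_lo), (u_hi, g_hi) in zip(pairs, pairs[1:]):
        if g_lo != g_hi and min(g_lo, g_hi) <= target_gap <= max(g_lo, g_hi):
            fraction = (target_gap - g_lo) / (g_hi - g_lo)
            return u_lo + fraction * (u_hi - u_lo)
    return None


# Hypothetical DFT+U scan (U in eV, band gap in eV) and reference gap.
u_scan = [0.0, 2.0, 4.0, 6.0]
gap_scan = [0.3, 0.9, 1.5, 2.1]
u_best = calibrate_u(u_scan, gap_scan, target_gap=1.2)
print(u_best)
```

Returning None instead of extrapolating is deliberate: a target outside the scanned range calls for a wider scan, not a guess.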

FAQ 3: My DFT+U calculation yields improved band gaps but inaccurate magnetic exchange couplings or formation energies. Why does this happen?

This is a known challenge. The Hubbard U correction, while improving electron localization and band gaps, can sometimes over-correct or incorrectly describe other properties. For example, in iron oxides, U correction weakens ferromagnetic interactions and lowers calculated Curie temperatures, bringing them closer to experiment, but the effect varies across different compounds [39]. This highlights that U is a semi-empirical correction that shifts energies in a specific way, and it may not simultaneously correct all property predictions with a single parameter. Advanced strategies involve applying different U parameters to different atomic orbitals (e.g., both metal d and ligand p orbitals) or using more complex functionals, but these require careful validation [38].

FAQ 4: Are there systematic error trends beyond the Hubbard U that I should consider?

Yes, systematic errors are pervasive in DFT. A significant source of error is the inaccurate description of anionic species and diatomic molecules, most notably the O₂ molecule [29] [19]. The repulsive interactions among M–O bonds in oxides can lead to errors that grow linearly with the number of oxygen atoms [19]. These errors systematically affect the calculated formation energies of oxides and, consequently, predictions of phase stability and electrochemical properties (e.g., Pourbaix diagrams) [19]. Empirical energy correction schemes have been developed to address these specific errors [29].

FAQ 5: How can I quantify the uncertainty in my DFT-corrected formation energies?

Uncertainty can be quantified by considering the errors introduced during the fitting of empirical corrections. This uncertainty arises from both the underlying experimental errors in the reference data and the sensitivity of the corrections to the dataset used for fitting. One framework involves fitting corrections simultaneously for multiple species using a weighted least-squares approach, from which standard deviations for each correction can be extracted. These uncertainties can then be propagated to estimate the probability that a computed compound is thermodynamically stable [29].
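A stripped-down version of this idea is shown below. It fits a single per-atom correction by weighted least squares, reports its standard deviation, and converts an energy above the convex hull plus an uncertainty into a stability probability under a Gaussian assumption. The framework in [29] fits several species simultaneously; this one-parameter form, the function names, and the data are simplifications made for illustration.

```python
import math


def fit_correction(samples):
    """Weighted least-squares fit of error = c * n_anion.

    samples: iterable of (n_anion, error, sigma), where error is the
    DFT-minus-experiment formation energy and sigma its uncertainty.
    Returns (c, sigma_c).
    """
    numerator = sum(n * err / sig**2 for n, err, sig in samples)
    denominator = sum(n * n / sig**2 for n, err, sig in samples)
    return numerator / denominator, math.sqrt(1.0 / denominator)


def stability_probability(e_above_hull, sigma):
    """P(true energy above hull <= 0), assuming a Gaussian error."""
    return 0.5 * (1.0 + math.erf(-e_above_hull / (sigma * math.sqrt(2.0))))


# Hypothetical oxides: (O atoms per formula unit, error in eV, sigma in eV).
data = [(1, -0.70, 0.05), (2, -1.38, 0.05), (3, -2.07, 0.05)]
correction, correction_sigma = fit_correction(data)
p_stable = stability_probability(0.02, 0.02)
print(round(correction, 4), round(correction_sigma, 4), round(p_stable, 4))
```

A compound sitting one standard deviation above the hull still has roughly a 16% chance of being stable, which is why hard energy cutoffs can discard viable candidates.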

Troubleshooting Guides

Guide 1: Diagnosing and Addressing Common DFT+U Issues

| Symptom | Potential Cause | Recommended Solution |
|---|---|---|
| Metallic prediction for a known semiconductor/insulator (e.g., FeO predicted as metallic) | Severe electron over-delocalization due to self-interaction error in localized d/f electrons. | Apply Hubbard U correction to the relevant transition metal or rare-earth cation. Recompute electronic structure [39]. |
| Underestimated band gap | Insufficient correction of electron localization. | Increase the U value systematically and monitor the band gap. Calibrate against experimental gap or hybrid functional benchmark [39] [38]. |
| Overestimated band gap | The U value is too large, leading to excessive electron localization. | Reduce the U value. Validate against other properties like structural parameters or magnetic moments [38]. |
| Inaccurate formation energies or phase stability | Systematic error from inaccurate description of O₂ molecule and other references. | Apply empirical energy corrections tailored to the anion (e.g., O) and its bonding environment in addition to, or instead of, U [29] [19]. |
| Inconsistent magnetic moments | Improper treatment of electron correlations affecting spin polarization. | Apply U and possibly Hund's J parameter. Ensure the magnetic state is consistent with experimental knowledge [39]. |

Guide 2: A Step-by-Step Protocol for Linear-Response Hubbard U Calculation

This protocol outlines the methodology for calculating the Hubbard U parameter using linear-response theory, as implemented in many modern DFT codes [36].

Objective: To compute the effective Hubbard U parameter for a transition metal cation in a solid from first principles.

Workflow Overview: The diagram below illustrates the logical sequence for determining the Hubbard U parameter using linear-response theory.

Required Reagent Solutions (Computational Tools):

| Item | Function |
|---|---|
| DFT Code (e.g., Quantum ESPRESSO) | Performs the ground-state and perturbed calculations. |
| Pseudopotentials | Represents ion-electron interactions (e.g., from SSSP library). |
| Post-Processing Tool | Analyzes the response to perturbations (e.g., hp.x in Quantum ESPRESSO). |

Detailed Procedure:

Initial Ground-State Calculation: Begin with a well-converged, standard DFT calculation of your system to obtain the equilibrium electronic ground state. Use a symmetric supercell that is large enough to avoid interactions between periodic images of the perturbed site.

Apply Perturbations: The code applies a series of small, finite perturbations to the potential acting on the localized manifold (e.g., 3d orbitals) of the atom of interest. This is typically done by constraining the orbital occupations.