Adiabatic Potential Energy Surfaces: From Theoretical Foundations to Biomedical Applications


Abstract

This comprehensive review explores adiabatic potential energy surfaces (PES) as fundamental frameworks for understanding molecular quantum dynamics. Covering both foundational theory and cutting-edge computational methodologies, we examine how accurate PES construction enables precise prediction of chemical reaction pathways, nonadiabatic transitions at conical intersections, and quantum dynamics in complex systems. Special emphasis is placed on comparative analyses between adiabatic and diabatic representations, emerging machine learning approaches for surface generation, and the implications of these advances for drug discovery and biomedical research, particularly in understanding reaction mechanisms and molecular interactions relevant to biological systems.

Understanding Adiabatic Potential Energy Surfaces: Quantum Foundations and Molecular Interactions

The Born–Oppenheimer (BO) approximation represents a foundational pillar in quantum chemistry and molecular physics, enabling the practical computation of molecular wavefunctions and properties. This approximation introduces an adiabatic separation between nuclear and electronic motions, based on the significant mass disparity between nuclei and electrons. Since nuclei are thousands of times heavier than electrons, they move considerably slower, allowing electrons to respond almost instantaneously to nuclear displacements [1] [2].

The approximation was first proposed in 1927 by J. Robert Oppenheimer, then a 23-year-old graduate student working with Max Born, during a period of intense development in quantum mechanics [1]. This adiabatic separation forms the theoretical justification for the concept of molecular structure itself—the modeling of molecules with well-defined geometries—and provides the framework for understanding potential energy surfaces that govern chemical reactivity, spectroscopy, and molecular dynamics [1] [3].

Theoretical Foundation

Mathematical Formalism

The complete molecular Hamiltonian for a system containing both electrons and nuclei is given by:

$\hat{H} = \hat{T}_e + \hat{T}_N + \hat{V}_{ee} + \hat{V}_{NN} + \hat{V}_{eN}$

where $\hat{T}_e$ and $\hat{T}_N$ represent the kinetic energy operators for electrons and nuclei respectively, while $\hat{V}_{ee}$, $\hat{V}_{NN}$, and $\hat{V}_{eN}$ denote the potential energy operators for electron-electron repulsion, nucleus-nucleus repulsion, and electron-nucleus attraction [3].

In atomic units (with the masses kept as explicit symbols), this expands to:

$\hat{H} = -\sum_{i=1}^{n} \frac{1}{2 m_e} \nabla_i^2 - \sum_{\alpha=1}^{\nu} \frac{1}{2 M_\alpha} \nabla_\alpha^2 - \sum_{\alpha=1}^{\nu} \sum_{i=1}^{n} \frac{Z_\alpha}{r_{\alpha i}} + \sum_{\alpha=1}^{\nu} \sum_{\beta > \alpha} \frac{Z_\alpha Z_\beta}{r_{\alpha \beta}} + \sum_{i=1}^{n} \sum_{j > i} \frac{1}{r_{ij}}$

Here, Latin indices ($i$, $j$) run over the $n$ electrons and Greek indices ($\alpha$, $\beta$) over the $\nu$ nuclei; $Z_\alpha$ is the atomic number and $M_\alpha$ the mass of nucleus $\alpha$, and $m_e$ is the electron mass (equal to 1 in atomic units) [4].

The Born-Oppenheimer Approximation

The BO approximation consists of two sequential steps:

First Step - Electronic Structure: The nuclear kinetic energy term $\hat{T}_N$ is neglected (clamped-nuclei approximation), and the electronic Schrödinger equation is solved for fixed nuclear positions $\mathbf{R}$:

$\hat{H}_{el} \chi_k(\mathbf{r}, \mathbf{R}) = E_k(\mathbf{R}) \chi_k(\mathbf{r}, \mathbf{R})$

where the electronic Hamiltonian is $\hat{H}_{el} = \hat{T}_e + \hat{V}_{ee} + \hat{V}_{eN}$, $\chi_k$ represents the electronic wavefunction for the $k$-th state, and $E_k(\mathbf{R})$ is the corresponding electronic energy [1] [3]. The nuclear repulsion $\hat{V}_{NN}$ is a constant at fixed $\mathbf{R}$; it is taken to be included in $E_k(\mathbf{R})$ whenever that energy is used as a potential energy surface.

Second Step - Nuclear Dynamics: The nuclear Schrödinger equation is solved using the electronic energy $E_k(\mathbf{R})$ as a potential energy surface:

$[\hat{T}_N + E_k(\mathbf{R})] \phi_k(\mathbf{R}) = E \phi_k(\mathbf{R})$

where $\phi_k(\mathbf{R})$ describes the nuclear motion (vibrational, rotational, translational) [1].

Table 1: Energy Component Separation in Molecular Spectroscopy

| Energy Component | Mathematical Representation | Physical Origin |
|---|---|---|
| Electronic Energy | $E_{\text{electronic}}$ | Electron distribution in fixed nuclear framework |
| Vibrational Energy | $E_{\text{vibrational}}$ | Nuclear oscillations within potential well |
| Rotational Energy | $E_{\text{rotational}}$ | Molecular rotation as a rigid rotor |
| Nuclear Spin Energy | $E_{\text{nuclear spin}}$ | Nuclear spin interactions |

Within this separation, the total molecular energy can be approximated as a sum of independent terms [1]:

$E_{\text{total}} = E_{\text{electronic}} + E_{\text{vibrational}} + E_{\text{rotational}} + E_{\text{nuclear spin}}$

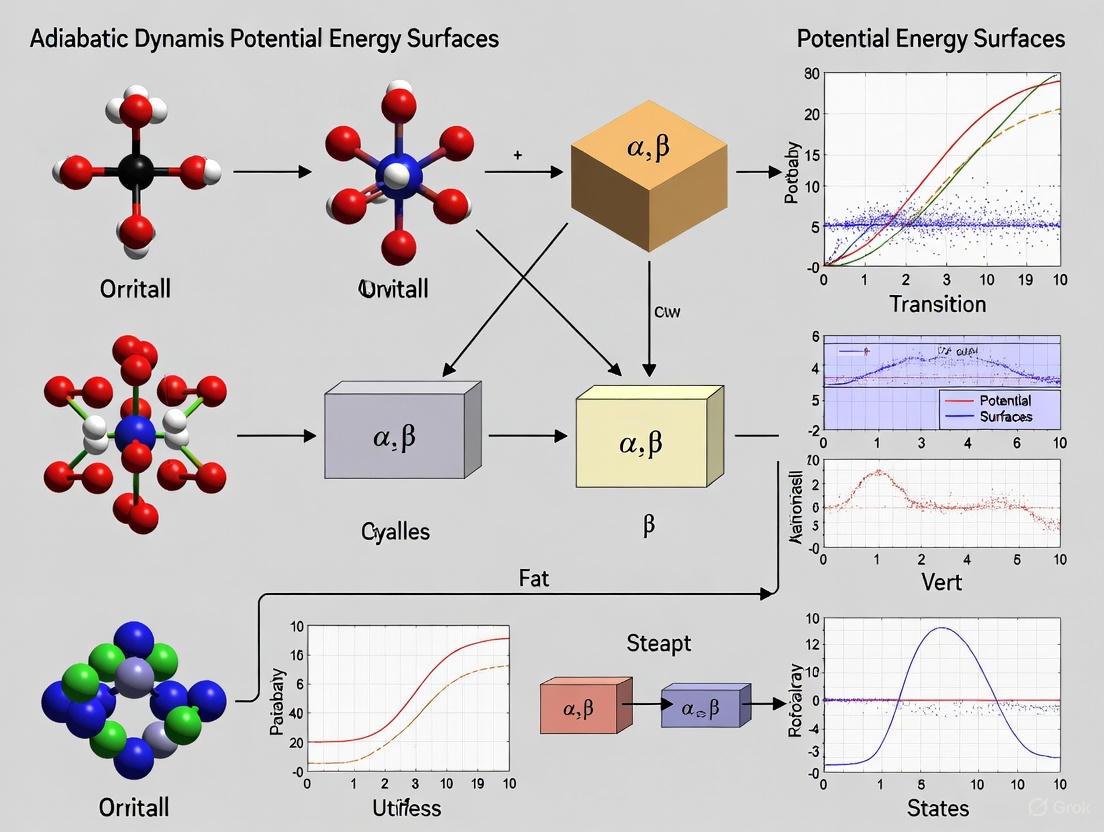

Conceptual Workflow

The following diagram illustrates the sequential decision process and computational workflow underlying the Born-Oppenheimer approximation:

Computational Protocols

Electronic Structure Calculations

Protocol 1: Electronic Energy Computation for Fixed Nuclear Geometry

System Preparation

- Define molecular geometry with nuclear coordinates $\mathbf{R} = \{\mathbf{R}_1, \mathbf{R}_2, \ldots, \mathbf{R}_N\}$

- Specify basis set for electronic wavefunction expansion

- Select electronic structure method (Hartree-Fock, DFT, CI, CCSD, etc.)

Electronic Hamiltonian Construction

- Compute one-electron integrals (kinetic energy and electron-nuclear attraction)

- Compute two-electron integrals (electron-electron repulsion)

- Construct Fock matrix or equivalent operator representation

Wavefunction Solution

- Solve electronic eigenvalue problem: $\hat{H}_{el} \chi_k(\mathbf{r}, \mathbf{R}) = E_k(\mathbf{R}) \chi_k(\mathbf{r}, \mathbf{R})$

- Employ self-consistent field procedure if necessary

- Obtain electronic energy $E_k(\mathbf{R})$ and wavefunction $\chi_k(\mathbf{r}, \mathbf{R})$

Iteration Over Geometries

- Repeat calculations for varied nuclear configurations

- Map complete potential energy surface $E_k(\mathbf{R})$

Protocol 2: Non-Adiabatic Molecular Dynamics with Surface Hopping

For systems where the BO approximation breaks down, implement the following protocol based on the ANT 2025 software package [5] [6]:

Initialization

- Prepare initial nuclear coordinates and momenta

- Select initial electronic state

- Choose electronic structure method for on-the-fly dynamics

Multi-Surface Propagation

- Propagate nuclear coordinates using classical equations of motion

- Compute electronic wavefunction coefficients: $i\hbar \frac{\partial \psi}{\partial t} = \hat{H}_{el} \psi$

- Calculate non-adiabatic coupling vectors: $\mathbf{d}_{ij} = \langle \chi_i | \nabla_R \chi_j \rangle$

Surface Hopping Decision

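- Evaluate hopping probabilities out of the current state $i$: $P_{i \to j} = \max\left(0,\ \frac{-2\,\mathrm{Re}(C_j^* C_i\, \mathbf{v} \cdot \mathbf{d}_{ji})}{|C_i|^2}\, \Delta t\right)$, where $\mathbf{v}$ is the nuclear velocity, $\mathbf{d}_{ji} = \langle \chi_j | \nabla_R \chi_i \rangle = -\mathbf{d}_{ij}$ for real electronic wavefunctions, and negative values are set to zero

- Generate a uniform random number $\xi \in [0,1]$

- Execute hop to state $j$ if $\sum_{k=1}^{j-1} P_{i \to k} < \xi \leq \sum_{k=1}^{j} P_{i \to k}$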

Momentum Adjustment

- Rescale nuclear momenta along non-adiabatic coupling direction

- Ensure total energy conservation

- Apply decoherence corrections if using decoherence-enabled methods
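The surface hopping decision in Protocol 2 can be sketched in a few lines. This is a minimal illustration, not the ANT implementation: the function names, the two-state amplitudes, and the value of $\mathbf{v} \cdot \mathbf{d}$ are all hypothetical, and the sign convention assumes $\mathbf{d}_{ji} = \langle \chi_j | \nabla_R \chi_i \rangle$ with real electronic wavefunctions.

```python
import random


def hop_probabilities(c, v_dot_d, active, dt):
    """Fewest-switches hopping probabilities out of the state `active`.

    c        : list of complex electronic amplitudes C_k
    v_dot_d  : v_dot_d[j][i] holds v . d_ji (real, antisymmetric in j and i)
    """
    population = abs(c[active]) ** 2
    probs = []
    for j in range(len(c)):
        if j == active:
            probs.append(0.0)
            continue
        # Population flux from `active` into j; negative flux means no hop.
        flux = -2.0 * (c[j].conjugate() * c[active] * v_dot_d[j][active]).real
        probs.append(max(0.0, flux * dt / population))
    return probs


def select_state(probs, active, xi):
    """Hop to j when xi falls in the j-th cumulative interval, else stay."""
    cumulative = 0.0
    for j, p in enumerate(probs):
        if cumulative < xi <= cumulative + p:
            return j
        cumulative += p
    return active


# Hypothetical two-state snapshot: 90% of the population on state 0.
amps = [complex(0.9 ** 0.5), complex(0.1 ** 0.5)]
coupling = [[0.0, 0.5], [-0.5, 0.0]]  # assumed values of v . d_ji
probs = hop_probabilities(amps, coupling, active=0, dt=0.1)
new_state = select_state(probs, active=0, xi=random.random())
```

Momentum rescaling and decoherence corrections are deliberately left out; they would follow the accepted hop in a full implementation.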

Table 2: Computational Scaling of Molecular Quantum Calculations

| Aspect | Overview | BO Approximation | Full Quantum Treatment |
|---|---|---|---|
| Example: benzene (C₆H₆) | 12 nuclei + 42 electrons = 54 particles, i.e. 162 Cartesian coordinates | Solve 126 electronic and 36 nuclear coordinates separately | Single calculation with 162 coupled coordinates |
| Computational Complexity | Cost assumed to grow at least as $O(n^2)$ in the number of coordinates $n$ | Significantly reduced via separation | Prohibitively expensive for large systems |
| Practical Implementation | Divide-and-conquer approach | Parallelizable over nuclear configurations | Limited to very small molecules |
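The benzene row of Table 2 can be checked with a short calculation. The sketch below assumes, purely for illustration, that the cost of an eigenvalue problem grows as the square of the number of coordinates; the `cost` function and its exponent are assumptions, not a measured scaling law.

```python
def cartesian_coordinates(n_particles):
    """Three Cartesian coordinates per particle."""
    return 3 * n_particles


def cost(n_coordinates, power=2):
    """Assumed cost model: effort grows as n**power (illustrative only)."""
    return n_coordinates ** power


n_nuclei, n_electrons = 12, 42                   # benzene, C6H6
n_nuc = cartesian_coordinates(n_nuclei)          # nuclear coordinates
n_el = cartesian_coordinates(n_electrons)        # electronic coordinates
n_total = n_nuc + n_el

coupled_cost = cost(n_total)                     # one fully coupled problem
separated_cost = cost(n_el) + cost(n_nuc)        # electronic + nuclear steps
ratio = coupled_cost / separated_cost

print(n_total, coupled_cost, separated_cost, round(ratio, 2))
```

Under this assumption the separated treatment is cheaper per solve, although in practice the electronic problem has to be repeated at many nuclear geometries, so the real saving depends on how many grid points are needed.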

Potential Energy Surface Construction

Protocol 3: Grid-Based PES Mapping

Coordinate Selection

- Identify relevant internal coordinates (bond lengths, angles, dihedrals)

- Define coordinate ranges and grid spacing

- Select appropriate symmetry-adapted coordinates if applicable

Parallel Electronic Structure Calculations

- Distribute grid points across computational resources

- Perform electronic energy calculations at each grid point

- Store energies, wavefunctions, and property derivatives

Surface Fitting and Interpolation

- Employ spline functions or neural networks for surface representation

- Ensure smoothness and differentiability of fitted surface

- Validate surface quality through derivative continuity checks
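As a one-dimensional illustration of Protocol 3, the sketch below scans a bond length on a uniform grid, locates the minimum by a local parabolic fit, and converts the curvature into a harmonic frequency. The function `model_energy` is a hypothetical stand-in for an electronic structure call (a Morse curve with roughly H₂-like parameters in atomic units), and the three-point parabola is a simplification of the spline or neural network fits mentioned above.

```python
import math


def model_energy(r, d_e=0.1745, a=1.028, r_e=1.40):
    """Stand-in for an electronic-structure call: Morse curve (hartree, bohr)."""
    return d_e * (1.0 - math.exp(-a * (r - r_e))) ** 2 - d_e


def scan(r_min, r_max, n_points):
    """Evaluate the energy on a uniform grid of bond lengths."""
    step = (r_max - r_min) / (n_points - 1)
    grid = [r_min + i * step for i in range(n_points)]
    return grid, [model_energy(r) for r in grid]


def parabolic_minimum(grid, energies):
    """Fit a parabola through the lowest interior point and its neighbours."""
    i = min(range(1, len(grid) - 1), key=lambda k: energies[k])
    h = grid[1] - grid[0]
    e_m, e_0, e_p = energies[i - 1], energies[i], energies[i + 1]
    curvature = (e_p - 2.0 * e_0 + e_m) / h ** 2   # finite-difference E''
    slope = (e_p - e_m) / (2.0 * h)                # finite-difference E'
    r_min = grid[i] - slope / curvature
    e_min = e_0 - 0.5 * slope ** 2 / curvature
    return r_min, e_min, curvature


grid, energies = scan(0.8, 4.0, 65)
r_eq, e_eq, force_constant = parabolic_minimum(grid, energies)

mu = 918.076  # assumed reduced mass in electron masses (about half a proton)
omega = math.sqrt(force_constant / mu)  # harmonic frequency in hartree
print(r_eq, e_eq, omega)
```

In a production workflow each call to `model_energy` would be an independent electronic structure job, which is what makes the grid evaluation easy to distribute.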

Applications in Drug Development

Molecular Docking and Binding Affinity

The BO approximation enables the computational prediction of drug-receptor interactions through:

Protein-Ligand Binding Energy Calculations:

- Electronic structure calculations provide accurate interaction energies

- Potential energy surfaces guide conformational sampling of flexible ligands

- Vibrational frequency calculations yield entropy contributions to binding free energy

Protocol 4: Drug-Receptor Binding Affinity Protocol

Receptor Preparation

- Obtain protein crystal structure or homology model

- Add hydrogen atoms and assign protonation states

- Define active site and potential binding regions

Ligand Docking

- Generate ligand conformers and orientations

- Evaluate interaction energy using molecular mechanics or QM/MM methods

- Rank binding poses by electronic interaction energy

Binding Free Energy Calculation

- Perform geometry optimization of bound and unbound states

- Compute vibrational frequencies for zero-point energy and thermal corrections

- Calculate binding free energy: $\Delta G_{\text{bind}} = \Delta E_{\text{electronic}} + \Delta E_{\text{vibrational}} - T\Delta S$

Reaction Pathway Analysis for Drug Metabolism

The BO approximation facilitates the study of enzymatic reaction mechanisms relevant to drug metabolism:

Protocol 5: Enzymatic Reaction Pathway Mapping

Reactive Complex Modeling

- Construct enzyme-substrate complex model using QM/MM methods

- Identify reactive regions for high-level quantum treatment

- Define reaction coordinates

Transition State Search

- Locate saddle points on the potential energy surface

- Verify transition state with single imaginary frequency

- Calculate intrinsic reaction coordinate (IRC) pathways

Kinetic Parameter Estimation

- Compute activation energies from electronic energy differences

- Apply transition state theory: $k = \frac{k_B T}{h} e^{-\Delta G^\ddagger / RT}$

- Incorporate tunneling corrections for hydrogen transfer reactions
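The kinetic step of Protocol 5 amounts to a few lines of arithmetic. The sketch below evaluates the transition state theory expression and, as one possible tunneling correction, the Wigner factor $1 + \frac{1}{24}(h c \tilde{\nu} / k_B T)^2$; the choice of the Wigner form, the 75 kJ/mol barrier, and the 1200 cm⁻¹ imaginary frequency are illustrative assumptions, not values from the protocol.

```python
import math

K_B = 1.380649e-23     # Boltzmann constant, J/K
H = 6.62607015e-34     # Planck constant, J s
R = 8.314462618        # gas constant, J/(mol K)
C_CM = 2.99792458e10   # speed of light, cm/s


def eyring_rate(delta_g_act, temperature=298.15):
    """k = (k_B T / h) exp(-dG / RT); dG in J/mol, result in 1/s."""
    prefactor = K_B * temperature / H
    return prefactor * math.exp(-delta_g_act / (R * temperature))


def wigner_correction(imag_freq_cm, temperature=298.15):
    """Wigner tunneling factor from the magnitude of the imaginary frequency."""
    u = H * C_CM * imag_freq_cm / (K_B * temperature)
    return 1.0 + u * u / 24.0


rate = eyring_rate(75.0e3)                         # assumed 75 kJ/mol barrier
rate_with_tunneling = wigner_correction(1200.0) * rate
print(rate, rate_with_tunneling)
```

The Wigner factor is only a first-order estimate; for hydrogen transfer with a narrow barrier a more elaborate tunneling model may be needed.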

Limitations and Breakdowns

When the Approximation Fails

The BO approximation breaks down under several important circumstances:

Metallic Systems and Graphene: In graphene, which behaves as a zero-bandgap semiconductor that becomes metallic under gate voltage, the BO approximation fails to describe phonon-induced renormalization of electronic properties, particularly the stiffening of the Raman G peak [7].

Conical Intersections and Avoided Crossings: When potential energy surfaces approach degeneracy, non-adiabatic coupling terms become significant, invalidating the adiabatic separation [1] [4].

Non-Adiabatic Transitions: Electronic state changes during molecular dynamics, such as photochemical reactions, excited state relaxation, and charge transfer processes, require treatment beyond the standard BO approximation [5] [6].

Table 3: Systems Where Born-Oppenheimer Approximation Breaks Down

| System Type | Failure Mechanism | Alternative Approaches |
|---|---|---|
| Metallic Systems | Zero electronic bandgap enables efficient electron-phonon coupling | Explicit non-adiabatic dynamics, Eliashberg theory |
| Conical Intersections | Degenerate electronic states create singular non-adiabatic couplings | Diabatic representation, multi-configurational methods |
| Charge Transfer States | Non-local electronic correlations break local potential assumption | State-averaged methods, fragment orbital approaches |
| Light Element Systems | Reduced mass disparity decreases time scale separation | Non-adiabatic molecular dynamics, exact factorization |

Advanced Treatment: Effective Field Theory Framework

Recent work has reformulated the BO approximation within an effective field theory (EFT) framework, particularly for systems containing both heavy and light degrees of freedom [8] [9]. This approach:

- Sequentially integrates out high-energy degrees of freedom

- Provides systematic improvement through order-by-order expansion

- Enables high-precision calculations up to $\mathcal{O}(m\alpha^5)$ for molecular systems

- Facilitates application to QCD states containing heavy quarks with light quark or gluonic excitations

The EFT formulation demonstrates that the BO approximation emerges naturally as the leading-order term when separating energy scales, with non-adiabatic couplings appearing as higher-order corrections [9].

The Scientist's Toolkit

Table 4: Essential Computational Tools for Born-Oppenheimer Calculations

| Tool/Software | Function | Application Context |
|---|---|---|
| ANT 2025 | Adiabatic and nonadiabatic trajectory simulations | Molecular dynamics with electronic state changes [5] [6] |
| Quantum Chemistry Packages (e.g., Gaussian, Q-Chem, ORCA) | Electronic structure calculations | Potential energy surface mapping, transition state location |
| Diabatic Surface Construction Tools | Analytic fits for diabatic potentials | Non-adiabatic dynamics in the diabatic representation [6] |
| Direct Dynamics Interfaces | On-the-fly force calculation during dynamics | Ab initio molecular dynamics without pre-computed surfaces |

Research Reagent Solutions

Table 5: Key Methodological Approaches for Advanced Applications

| Method/Approach | Function | Theoretical Basis |
|---|---|---|
| Surface Hopping with Decoherence | Models non-adiabatic transitions between states | Stochastic algorithm with quantum-classical coupling [5] |
| Semiclassical Ehrenfest Dynamics | Mean-field trajectory on multiple surfaces | Wavefunction propagation with classical nuclei [6] |
| Coherent Switching with Decay of Mixing (CSDM) | Nonadiabatic dynamics with improved accuracy | Decay of mixing model with curvature-driven approximation [6] |
| Fast-Quasiadiabatic (fast-QUAD) Protocols | Optimal control for adiabatic operations | Quantum metric tensor framework for geodesic paths [10] |

Visualization of Non-Adiabatic Coupling

The diagram below illustrates the regions where non-adiabatic effects become significant and the breakdown of the Born-Oppenheimer approximation must be addressed:

This framework enables researchers to identify when the standard Born-Oppenheimer treatment becomes inadequate and select appropriate advanced methodologies for systems exhibiting significant non-adiabatic behavior.

The calculation of potential energy surfaces (PESs) forms the cornerstone of theoretical chemistry, providing the foundational framework for understanding molecular structure, reactivity, and dynamics. Within this domain, two principal representations—adiabatic and diabatic—offer complementary perspectives for conceptualizing and solving the molecular Schrödinger equation. The adiabatic representation, derived directly from the Born-Oppenheimer approximation, provides electronic states that diagonalize the electronic Hamiltonian, while the diabatic representation offers an alternative basis set where the nuclear kinetic energy operator remains diagonal. These representations are not merely mathematical curiosities; they represent fundamentally different approaches to describing quantum phenomena in molecular systems, particularly in regions where potential energy surfaces approach one another. The choice between adiabatic and diabatic frameworks carries significant implications for computational efficiency, conceptual clarity, and physical interpretation in quantum chemistry and dynamics simulations, especially in complex systems relevant to drug development and materials science.

Theoretical Foundations

The Adiabatic Representation

The adiabatic representation emerges naturally from the Born-Oppenheimer approximation, which exploits the significant mass disparity between electrons and nuclei. In this framework, the electronic Schrödinger equation is solved with the nuclear coordinates treated as fixed parameters:

$\hat{H}_{el}\psi(r;R) = E(R)\psi(r;R)$

where $\hat{H}_{el}$ represents the electronic Hamiltonian, $\psi(r;R)$ denotes the electronic wavefunction dependent on electronic coordinates (r) and parametrically on nuclear coordinates (R), and E(R) constitutes the resulting adiabatic potential energy surface [11]. The adiabatic states diagonalize the electronic Hamiltonian, yielding potential energy surfaces that correspond physically to the electronic energy as a function of molecular geometry. The utility of this representation stems from the fact that when nuclear motion is sufficiently slow, the system tends to remain on a single adiabatic surface, which greatly simplifies molecular dynamics problems.

The nonadiabatic coupling terms that emerge between adiabatic states are proportional to $\langle \chi_k | \nabla_R \chi_m \rangle$, where $\chi$ represents electronic states [12]. These terms are typically small except in regions where adiabatic potential energy surfaces approach one another closely, such as avoided crossings or conical intersections. In these regions, the Born-Oppenheimer approximation deteriorates, and the adiabatic representation becomes computationally challenging due to the sharply peaked nature of these coupling elements.

The Diabatic Representation

The diabatic representation constitutes a transformed basis designed to address the limitations of the adiabatic framework in regions of strong nonadiabatic coupling. In this representation, the focus shifts to diagonalizing the nuclear kinetic energy operator while allowing the electronic Hamiltonian to contain off-diagonal elements [12]. Mathematically, this transformation from adiabatic ($\chi$) to diabatic ($\varphi$) states for a two-state system takes the form of a unitary rotation:

$\begin{pmatrix} \varphi_1(r;R) \\ \varphi_2(r;R) \end{pmatrix} = \begin{pmatrix} \cos\gamma(R) & \sin\gamma(R) \\ -\sin\gamma(R) & \cos\gamma(R) \end{pmatrix} \begin{pmatrix} \chi_1(r;R) \\ \chi_2(r;R) \end{pmatrix}$

where $\gamma(R)$ represents the diabatic mixing angle [12]. The primary objective of this transformation is to minimize or eliminate the problematic derivative couplings that plague the adiabatic representation, replacing them with potential couplings that typically exhibit smoother spatial variation. Although strictly diabatic states do not generally exist for polyatomic systems, carefully constructed pseudo-diabatic states provide tremendous practical utility in quantum dynamics calculations [12].
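The relationship between the two representations can be made concrete with a two-state model. The sketch below is a minimal illustration under stated assumptions: the linear-crossing diabatic model (`slope`, `coupling`) is hypothetical, $\chi_1$ is taken to be the lower adiabatic state, and the mixing angle follows the rotation written above. It computes the adiabatic energies, the mixing angle $\gamma(R)$, and the derivative coupling $d\gamma/dR$, which peaks where the gap is smallest.

```python
import math


def diabatic_matrix(r, slope=0.02, coupling=0.005):
    """Hypothetical linear-crossing model: returns (W11, W22, W12) in hartree."""
    return slope * r, -slope * r, coupling


def adiabatic_energies(w11, w22, w12):
    """Eigenvalues of the 2x2 diabatic matrix, lower state first."""
    mean = 0.5 * (w11 + w22)
    half_gap = math.hypot(0.5 * (w11 - w22), w12)
    return mean - half_gap, mean + half_gap


def mixing_angle(w11, w22, w12):
    """Angle gamma with phi1 = cos(gamma) chi1 + sin(gamma) chi2."""
    return 0.5 * math.atan2(2.0 * w12, w22 - w11)


def derivative_coupling(r, h=1e-4):
    """d(gamma)/dR by central differences; equals <chi1|d/dR chi2> here."""
    g_plus = mixing_angle(*diabatic_matrix(r + h))
    g_minus = mixing_angle(*diabatic_matrix(r - h))
    return (g_plus - g_minus) / (2.0 * h)


def rotate_to_diabatic(e1, e2, gamma):
    """Rebuild (W11, W22, W12) from adiabatic energies and the mixing angle."""
    c, s = math.cos(gamma), math.sin(gamma)
    return c * c * e1 + s * s * e2, s * s * e1 + c * c * e2, s * c * (e2 - e1)


for r in (-1.0, 0.0, 1.0):
    w = diabatic_matrix(r)
    e1, e2 = adiabatic_energies(*w)
    print(r, e1, e2, mixing_angle(*w), derivative_coupling(r))
```

In this model the diabatic coupling is a constant, whereas the derivative coupling is a narrow peak centred on the crossing point, which is the behaviour the diabatic transformation is designed to remove.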

Diabatic states frequently correspond to chemically intuitive configurations, such as ionic versus covalent states in bond dissociation or charge-transfer versus locally-excited states in molecular complexes. For example, in the NaCl system, the diabatic states correspond to distinct physical configurations: the ionic state ($Na^+Cl^-$) exhibits a deep potential well due to Coulombic attraction, while the neutral state ($Na^0 + Cl^0$) displays predominantly repulsive character [13]. This chemical interpretability represents a significant advantage when analyzing reaction mechanisms and electronic structure changes along reaction pathways.

Table 1: Fundamental Characteristics of Adiabatic and Diabatic Representations

| Feature | Adiabatic Representation | Diabatic Representation |
|---|---|---|
| Hamiltonian Diagonalization | Electronic Hamiltonian is diagonal | Nuclear kinetic energy operator is diagonal |
| Basis Set Origin | Derived from Born-Oppenheimer approximation | Unitary transformation of adiabatic states |
| Coupling Elements | Derivative couplings ($\langle \chi_k \vert \nabla_R \chi_m \rangle$) | Potential energy couplings ($V_{km}$) |
| Wavefunction Dependence | Parametric dependence on nuclear coordinates (R) | Reduced dependence on R |
| Physical Interpretation | Electronic eigenstates | Chemically intuitive states |
| Computational Behavior | Couplings diverge at surface intersections | Smooth, well-behaved couplings |
| Region of Optimal Use | Away from surface intersections | Near avoided crossings and conical intersections |

Comparative Analysis: Key Similarities and Differences

Fundamental Similarities

Despite their distinctive characteristics, adiabatic and diabatic representations share several fundamental commonalities. Both frameworks provide complete basis sets for expanding the total molecular wavefunction, meaning they can describe identical physical systems and observables when implemented correctly [11]. The transformation between representations is unitary, preserving the physical content while redistributing it between the potential and kinetic energy terms of the molecular Hamiltonian. Both representations must yield identical experimental predictions for measurable properties, ensuring their physical equivalence despite mathematical dissimilarities.

Additionally, both representations face challenges in regions where potential energy surfaces become degenerate or nearly degenerate. In the adiabatic picture, these challenges manifest as singular derivative couplings, while in the diabatic picture, they appear as strong potential couplings between states. The fundamental physics of nonadiabatic transitions remains invariant, though the computational handling differs significantly between representations. This shared difficulty underscores the intrinsic complexity of nonadiabatic dynamics regardless of representation choice.

Critical Differences

The distinctions between adiabatic and diabatic representations manifest most prominently in their mathematical structure, physical interpretation, and computational behavior. The following table summarizes these key differentiating factors:

Table 2: Comparative Analysis of Adiabatic and Diabatic Representations

| Aspect | Adiabatic Representation | Diabatic Representation |
|---|---|---|
| Mathematical Foundation | Born-Oppenheimer approximation | Unitary transformation |
| Hamiltonian Structure | Diagonal potential, off-diagonal kinetic coupling | Off-diagonal potential, diagonal kinetic |
| Coupling Nature | Derivative couplings (kinetic) | Potential couplings (scalar) |
| Computational Tractability | Challenging near intersections | Smoother couplings, better numerical stability |
| Chemical Interpretability | Less intuitive, particularly at crossings | More intuitive (ionic/covalent, etc.) |
| Surface Crossing Behavior | Avoided crossings typical | True crossings possible |
| Region of Preference | Single-surface dynamics | Nonadiabatic quantum dynamics (e.g., wavepacket propagation) |
| Experimental Connection | Spectroscopic states | Valence bond-like states |

The adiabatic representation provides the most natural connection to spectroscopic observations and electronic structure calculations, as these typically probe stationary states of the electronic Hamiltonian. In contrast, the diabatic representation often aligns more closely with chemical intuition, particularly for processes involving electron transfer or bond rearrangement, as it maintains the character of specific electronic configurations across nuclear geometries [13].

The behavior at surface intersections represents another critical distinction. Adiabatic surfaces of the same symmetry typically exhibit avoided crossings due to the noncrossing rule, while diabatic surfaces can cross freely. At these avoided crossings, the adiabatic coupling elements become large and sharply peaked, creating numerical challenges for quantum dynamics simulations. The diabatic representation transforms these problematic derivative couplings into smoother potential couplings, offering significant computational advantages.

Diagram 1: Selection workflow for adiabatic versus diabatic representations in quantum dynamics calculations.

Computational Protocols and Methodologies

Protocol for Adiabatic State Calculations

The computation of adiabatic potential energy surfaces represents the standard approach in electronic structure theory, with the following established protocol:

System Preparation and Electronic Structure Setup

- Define molecular geometry and nuclear coordinates

- Select appropriate basis set and electronic structure method (e.g., CASSCF, MRCI, DFT)

- Specify electronic state manifold of interest

Adiabatic Surface Calculation

- Solve electronic Schrödinger equation at each nuclear configuration: $\hat{H}_{el}(\mathbf{r};\mathbf{R})\psi_k(\mathbf{r};\mathbf{R}) = E_k(\mathbf{R})\psi_k(\mathbf{r};\mathbf{R})$

- Compute adiabatic energies $E_k(\mathbf{R})$ across relevant coordinate space

- Calculate nonadiabatic coupling elements: $\mathbf{d}_{kj}(\mathbf{R}) = \langle \psi_k | \nabla_R \psi_j \rangle$

Surface Analysis and Characterization

- Identify regions of minimal energy gap between surfaces

- Locate and characterize conical intersections or avoided crossings

- Validate convergence with respect to basis set and active space

This protocol generates the fundamental data required for subsequent quantum dynamics simulations or spectroscopic predictions. The computational cost scales with the number of nuclear degrees of freedom and the complexity of the electronic structure method.

Protocol for Diabatic State Construction

The construction of diabatic states follows a more nuanced procedure, as true diabatic states do not generally exist for polyatomic systems. The following protocol outlines the construction of pseudo-diabatic states:

Reference Adiabatic Calculation

- Compute adiabatic states and energies as in the protocol for adiabatic state calculations above

- Calculate nonadiabatic coupling elements throughout configuration space

Diabatization Strategy Selection

- Choose diabatization method:

  - Property-based: maximize uniformity of electronic properties

  - Energy-based: maximize smoothness of potential couplings

  - Block-diagonalization: preserve the character of specific electronic states

Diabatic Transformation

- Construct transformation matrix $\mathbf{U}(\mathbf{R})$ that minimizes derivative couplings

- Apply transformation to obtain diabatic potentials and couplings: $\mathbf{W}(\mathbf{R}) = \mathbf{U}^\dagger(\mathbf{R})\mathbf{E}(\mathbf{R})\mathbf{U}(\mathbf{R})$, where $\mathbf{E}(\mathbf{R})$ is the diagonal matrix of adiabatic energies

- Verify smoothness of resulting diabatic potential matrix elements

Validation and Refinement

- Check physical reasonableness of diabatic states

- Ensure transformation maintains accuracy of observable predictions

- Refine diabatization through comparison with experimental data when available

This protocol produces diabatic states that maintain their character across nuclear configurations, with smooth potential couplings that facilitate quantum dynamics calculations.
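For two states and one nuclear coordinate, the diabatic transformation step reduces to integrating the derivative coupling to obtain the mixing angle, $\gamma(R) = \int d_{12}\, dR$, and then rotating the adiabatic energies. The sketch below assumes hypothetical adiabatic input (`adiabatic_data`, generated from a linear-crossing model), fixes the arbitrary constant by setting $\gamma = 0$ at the first grid point, and uses the two-state rotation with $\chi_1$ as the lower state, for which the matrix elements follow from $\mathbf{U}\mathbf{E}\mathbf{U}^T$ (conventions that define $\mathbf{U}$ as the transpose write the same result as $\mathbf{U}^\dagger\mathbf{E}\mathbf{U}$).

```python
import math


def adiabatic_data(r, slope=0.02, coupling=0.005):
    """Hypothetical adiabatic input: (E1, E2, d12) with d12 = <chi1|d/dR chi2>."""
    half_gap = math.hypot(slope * r, coupling)
    d12 = 0.5 * slope * coupling / (slope ** 2 * r ** 2 + coupling ** 2)
    return -half_gap, half_gap, d12


def integrate_mixing_angle(grid):
    """gamma(R) by the trapezoid rule, with gamma = 0 at the first grid point."""
    gamma = [0.0]
    for left, right in zip(grid, grid[1:]):
        d_left = adiabatic_data(left)[2]
        d_right = adiabatic_data(right)[2]
        gamma.append(gamma[-1] + 0.5 * (d_left + d_right) * (right - left))
    return gamma


def diabatic_potentials(e1, e2, gamma):
    """Rotate adiabatic energies into (W11, W22, W12)."""
    c, s = math.cos(gamma), math.sin(gamma)
    return c * c * e1 + s * s * e2, s * s * e1 + c * c * e2, s * c * (e2 - e1)


grid = [-50.0 + 0.01 * i for i in range(10001)]   # R from -50 to 50 bohr
gamma = integrate_mixing_angle(grid)

mid = len(grid) // 2                               # grid point at R = 0
e1_mid, e2_mid, _ = adiabatic_data(grid[mid])
w11, w22, w12 = diabatic_potentials(e1_mid, e2_mid, gamma[mid])
print(w11, w22, w12, gamma[-1])
```

The recovered diabatic coupling is nearly constant across the crossing while the diabatic potentials pass smoothly through each other, which is the smoothness check called for in the protocol. In more than one dimension the line integral is generally path dependent, which is why only pseudo-diabatic states are available for polyatomic systems.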

Protocol for Nonadiabatic Dynamics Simulations

The practical application of these representations emerges most clearly in nonadiabatic dynamics simulations, where the following integrated protocol applies:

Representation Selection

- Assess nature of nonadiabatic coupling in system

- For weak, localized couplings: employ adiabatic representation

- For strong, extended couplings: employ diabatic representation

Hamiltonian Construction

- For adiabatic dynamics: use derivative coupling elements directly

- For diabatic dynamics: use transformed potential coupling terms

- Implement appropriate quantum propagation method (wavepacket, surface hopping, etc.)

Dynamics Propagation

- Initialize system on appropriate initial state

- Propagate according to time-dependent Schrödinger equation: $i\hbar\frac{\partial}{\partial t}\Psi = \hat{H}\Psi$

- Monitor population transfer between states

- Track quantum phases for interference effects

Analysis and Interpretation

- Calculate transition probabilities and reaction yields

- Compare with experimental observables (spectra, cross-sections)

- Extract mechanistic insights into nonadiabatic processes

Diagram 2: Computational workflow for nonadiabatic dynamics simulations incorporating both adiabatic and diabatic approaches.

Successful implementation of adiabatic and diabatic representation methods requires specialized computational tools and theoretical resources. The following table outlines key components of the researcher's toolkit for effective work in this domain:

Table 3: Research Reagent Solutions for Adiabatic/Diabatic Calculations

| Tool Category | Specific Examples | Function/Purpose |
|---|---|---|
| Electronic Structure Codes | MOLPRO, MOLCAS, COLUMBUS, GAUSSIAN | Compute adiabatic potential energy surfaces and wavefunctions |
| Nonadiabatic Coupling Calculators | BO_DRIVER, NEWTON-X, SHARC | Evaluate derivative couplings between adiabatic states |
| Diabatization Methods | Boys localization, ER, DQ, 4-fold way | Construct diabatic representations from adiabatic data |
| Quantum Dynamics Packages | MCTDH, MESS, Tully's surface hopping | Propagate nuclear motion on coupled potential surfaces |
| Analysis and Visualization | VMD, Molden, Jmol | Interpret and visualize complex multidimensional data |
| Reference Systems | NaCl, butatriene, pyrazine | Benchmark systems for method development and validation |

The computational tools listed in Table 3 represent essential resources for researchers investigating nonadiabatic phenomena. Electronic structure codes provide the fundamental adiabatic data, while specialized algorithms facilitate the transformation to diabatic representations when needed. Quantum dynamics packages then leverage these representations to simulate the time evolution of molecular systems, with specific codes often optimized for particular representations. Benchmark systems like the NaCl molecule, with its characteristic avoided crossing between ionic and covalent states, provide critical validation for methodological developments [13].

Applications in Chemical Research and Drug Development

The practical implications of adiabatic versus diabatic representations extend across numerous domains of chemical research, with particularly significant applications in photochemistry and drug development. In photodynamic therapy, for instance, the intersystem crossing processes that enable generation of cytotoxic singlet oxygen depend critically on nonadiabatic transitions between electronic states, optimally described in a diabatic representation. Similarly, the design of photovoltaic materials benefits from understanding charge transfer dynamics through conical intersections where diabatic states provide clearer mechanistic insights.

In drug discovery, the accurate prediction of metabolic pathways requires understanding electron transfer reactions in cytochrome P450 enzymes, where diabatic representations clarify the electronic reorganization during oxidation processes. The burgeoning field of photoactivated chemotherapy likewise depends on understanding nonradiative relaxation pathways in transition metal complexes, where the choice of representation significantly impacts the accuracy of predicted quantum yields.

Recent methodological advances continue to enhance the applicability of both representations to biologically relevant systems. Multiscale quantum mechanics/molecular mechanics (QM/MM) approaches now enable the embedding of high-level quantum treatments within complex biological environments, while machine learning techniques accelerate the construction of potential energy surfaces and coupling elements. These developments promise to further bridge the gap between formal theoretical representations and practical applications in pharmaceutical research.

In the realm of quantum chemistry and molecular dynamics, potential energy surfaces (PESs) form the foundational landscape upon which nuclear motion occurs. Within these multidimensional surfaces, specific critical regions—conical intersections and avoided crossings—govern the outcomes of fundamental photochemical and non-adiabatic processes. These features represent points where the Born-Oppenheimer approximation breaks down, enabling rapid transitions between electronic states that underpin phenomena from vision and photosynthesis to DNA photostability and drug activity [14].

Conical intersections represent actual degeneracies between electronic states where potential energy surfaces intersect conically, forming funnels through which wave packets can efficiently transfer between electronic states. In contrast, avoided crossings occur when two electronic states of the same symmetry approach but do not cross due to electronic coupling, creating a region of close proximity where non-adiabatic transitions remain probable though less efficient than at conical intersections. The distinction between these phenomena has profound implications for understanding and controlling molecular photochemistry and reaction dynamics in biological systems.

Theoretical Foundations

Conical Intersections: Molecular Degeneracy Points

Conical intersections are defined as molecular geometry points where two or more adiabatic potential energy surfaces become degenerate (intersect) and the non-adiabatic couplings between these states are non-vanishing [14]. These intersections form what are often described as "diabolical points" or "molecular funnels" that enable rapid non-radiative transitions between electronic states. The significance of conical intersections extends across photochemistry, where they serve as paradigms for understanding reaction mechanisms in excited states, analogous to the role of transition states in thermal chemistry [14].

The mathematical description of conical intersections involves the eigenvalues of the electronic Hamiltonian. In the vicinity of the intersection point, the potential energy surfaces form a double cone when plotted over the two branching-space coordinates that lift the degeneracy. The remaining 3N-8 internal dimensions (where N is the number of atoms) constitute the "seam space" where the degeneracy is maintained. For a triatomic system, 3N-8 = 1, which gives a one-dimensional seam line along which degeneracy persists [14].
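The double-cone shape can be sketched numerically. The example below is a minimal illustration, assuming a linear two-state model in two branching coordinates `x` and `y`; the model matrix, the slope parameters `k` and `lam`, and the function names are assumptions made for illustration and are not taken from [14].

```python
import math


def adiabatic_energies(x, y, k=1.0, lam=1.0):
    """Eigenvalues of the model diabatic matrix [[k*x, lam*y], [lam*y, -k*x]].

    x and y are displacements along the two branching-space directions;
    k and lam are hypothetical slope parameters.
    """
    radius = math.hypot(k * x, lam * y)
    return -radius, radius  # lower and upper sheets of the double cone


def seam_dimension(n_atoms):
    """Seam-space dimension 3N - 8 for a nonlinear molecule with N atoms."""
    return 3 * n_atoms - 8


if __name__ == "__main__":
    for x, y in [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (0.2, 0.0)]:
        lower, upper = adiabatic_energies(x, y)
        print(f"x={x:.1f} y={y:.1f} gap={upper - lower:.3f}")
    print("seam dimension for a triatomic:", seam_dimension(3))
```

In this model the gap vanishes only at the origin and grows linearly with distance from it, which is the conical topography described above.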

Avoided Crossings: Near-Degeneracies with Critical Implications

Avoided crossings represent regions where two potential energy surfaces approach closely but do not intersect due to electronic coupling between the states. Unlike conical intersections, which represent true degeneracies, avoided crossings maintain an energy gap between the surfaces, with the minimum gap defining the region of strongest non-adiabatic coupling. The distinction is particularly crucial in diatomic molecules, where conical intersections cannot exist due to insufficient vibrational degrees of freedom, and potential energy curves instead exhibit avoided crossings when they share the same point group symmetry [14].
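A one-dimensional two-state model makes the minimum gap and the coupling peak concrete. This is a sketch under stated assumptions: two linear diabatic curves with slopes of opposite sign and a constant electronic coupling. The parameter values and function names are hypothetical.

```python
import math


def adiabatic_gap(x, slope=1.0, coupling=0.05):
    """Gap between the eigenvalues of [[slope*x, c], [c, -slope*x]]."""
    return 2.0 * math.hypot(slope * x, coupling)


def derivative_coupling(x, slope=1.0, coupling=0.05):
    """Magnitude |d(theta)/dx| of the derivative coupling.

    theta is the adiabatic-to-diabatic mixing angle of the model,
    defined by tan(2*theta) = coupling / (slope*x).
    """
    return 0.5 * slope * coupling / ((slope * x) ** 2 + coupling ** 2)


if __name__ == "__main__":
    for x in (-0.2, -0.1, 0.0, 0.1, 0.2):
        print(f"x={x:+.1f} gap={adiabatic_gap(x):.3f} "
              f"coupling={derivative_coupling(x):.3f}")
```

In this model the gap never closes (its minimum is twice the electronic coupling), and the derivative coupling is a finite Lorentzian-shaped peak centred at the minimum gap.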

Characterization and Energetic Profiles

The fundamental differences between conical intersections and avoided crossings can be quantified through specific energetic and coupling parameters. The following table summarizes the key distinguishing characteristics:

Table 1: Characteristic Signatures of Conical Intersections and Avoided Crossings

| Feature | Conical Intersection | Avoided Crossing |
|---|---|---|
| Energetic Separation | Zero energy gap (degeneracy) | Non-zero minimum energy gap |
| Branching Space Dimension | Two-dimensional (g & h vectors) | Typically one-dimensional |
| Non-adiabatic Coupling | Divergent at intersection point | Finite, peaks at minimum gap |
| Seam Space Dimension | 3N-8 dimensional seam | No seam space |
| Topography | Double cone structure | Repulsive "hump" or "neck" |
| Electronic Wavefunction | Geometric phase effect | No geometric phase |

The branching space vectors that lift the degeneracy at conical intersections include the difference gradient vector (g) and the non-adiabatic coupling vector (h). These vectors span the two-dimensional space in which the degeneracy is lifted linearly, creating the characteristic conical topography [14]. For avoided crossings, the primary direction of significance is typically along the reaction coordinate where the avoided crossing occurs, with the energy gap determined by the electronic coupling between the states.
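The geometric phase entry in Table 1 can be illustrated with the same kind of two-state model. The sketch below assumes the real symmetric model matrix [[x, y], [y, -x]] with its degeneracy at the origin; the function names and loop parameters are hypothetical. It carries the lower-state eigenvector around a closed loop, keeping its sign continuous, and compares the final vector with the initial one.

```python
import math


def lower_state(x, y):
    """Real lower-energy eigenvector of [[x, y], [y, -x]] (sign is arbitrary)."""
    theta = 0.5 * math.atan2(y, x)
    return (-math.sin(theta), math.cos(theta))


def transport_overlap(center, radius, steps=400):
    """Overlap of the final and initial eigenvector after one closed loop.

    The arbitrary sign of the eigenvector is fixed at every step by
    requiring a positive overlap with the previous step.
    """
    cx, cy = center
    first = lower_state(cx + radius, cy)
    prev = first
    for i in range(1, steps + 1):
        phi = 2.0 * math.pi * i / steps
        vec = lower_state(cx + radius * math.cos(phi),
                          cy + radius * math.sin(phi))
        if vec[0] * prev[0] + vec[1] * prev[1] < 0.0:
            vec = (-vec[0], -vec[1])
        prev = vec
    return prev[0] * first[0] + prev[1] * first[1]


if __name__ == "__main__":
    print("loop enclosing the intersection:", transport_overlap((0.0, 0.0), 1.0))
    print("loop not enclosing it:", transport_overlap((3.0, 0.0), 1.0))
```

A loop that encircles the degeneracy returns the eigenvector with its sign reversed, while a loop that does not encircle it returns the eigenvector unchanged.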

Table 2: Computational Approaches for Characterizing Critical Regions

| Method | Conical Intersections | Avoided Crossings | Key Applications |
|---|---|---|---|
| MCSCF/MRCI | Direct optimization of intersection points | Tracking state energies along coordinates | High-accuracy mapping of PESs [15] [16] |
| Vibronic Coupling Models | Simulate Jahn-Teller effects and spectroscopic signatures | Model transition probabilities | Optical absorption spectra [17] |
| Diabatic Representation | Construct diabatic surfaces intersecting conically | Model coupling terms between diabatic states | Reaction dynamics [15] |
| Quantum Computing Algorithms | VQE-SA-CASSCF for intersection points [18] | Energy gap estimation | Small molecular systems [18] |

Spectroscopic and Dynamical Manifestations

Spectroscopic Signatures of Conical Intersections

The presence of conical intersections creates distinctive spectroscopic effects that deviate markedly from standard vibronic patterns. As demonstrated in a seminal vibronic coupling model involving two electronic states and two vibrational modes, when the conical intersection occurs within the Franck-Condon zone, the resulting optical absorption spectrum exhibits extremely complex vibronic structure [17]. This complexity arises from the strong non-adiabatic couplings that mix the electronic states, rendering traditional approximations like the adiabatic and Franck-Condon approximations inadequate for interpreting these spectra.

The breakdown of these approximations is significantly more pronounced than in one-dimensional vibronic coupling scenarios without conical intersections. Even when the intersection point lies outside the immediate Franck-Condon region, the upper adiabatic electronic state remains strongly affected by non-adiabatic coupling, leading to anomalous intensity distributions and band broadening in absorption spectra [17]. These spectroscopic anomalies serve as experimental indicators of conical intersections in molecular systems.

Ultrafast Dynamics Through Conical Intersections

The primary dynamical significance of conical intersections lies in their role as efficient funnels for non-radiative relaxation between electronic states. When a molecule is excited to an upper electronic state, the wave packet evolves on the potential energy surface and may encounter a conical intersection that facilitates rapid transition to a lower electronic state. This mechanism is fundamental to numerous biological photoprotection processes, such as DNA's resilience to UV damage [14].

The efficiency of this funneling effect depends critically on the location and topography of the conical intersection relative to the Franck-Condon region. Molecular systems can be engineered or naturally evolve to position conical intersections strategically to either facilitate or impede specific photochemical pathways, with profound implications for photostability and quantum yield in molecular photochemistry.

Computational Methodologies

Protocol 1: Ab Initio Mapping of Conical Intersections

The accurate determination of conical intersections requires high-level electronic structure methods capable of describing degenerate or nearly-degenerate electronic states:

Electronic Structure Method Selection: Employ multi-configurational self-consistent field (MCSCF) followed by multi-reference configuration interaction (MRCI) calculations to properly describe static correlation effects essential for degeneracy regions [15] [16]. The MCSCF active space must encompass sufficient orbitals to describe all relevant electronic states.

Basis Set Requirements: Use correlation-consistent basis sets with diffuse functions (e.g., aug-cc-pV5Z) for accurate description of potential energy surfaces in interaction regions [15]. Larger basis sets are particularly crucial for weak interaction regions and van der Waals complexes.

Coordinate System Definition: For triatomic systems, employ Jacobi coordinates (r, R, θ) for comprehensive sampling of configuration space, where r represents bond length, R the distance between atom and center of mass, and θ the angle between them [15].

Extensive Configuration Sampling: Calculate thousands of adiabatic potential energy points across relevant geometric ranges to ensure adequate sampling of the seam space and branching plane. For the He + H₂ system, this required 34,848 points for reliable surface construction [15].

Surface Fitting Procedure: Utilize fitting methods such as B-spline representation to generate continuous potential energy surfaces from discrete ab initio points, enabling dynamical calculations [15].
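The Jacobi coordinates used in the scan can be converted to interatomic distances, which is often the form needed for checking geometries or feeding a fitted surface. The following is a minimal sketch for an A + BC arrangement; the function name and the default equal masses for B and C (appropriate for H₂) are assumptions made for illustration.

```python
import math


def jacobi_to_distances(r, R, theta_deg, m_b=1.0, m_c=1.0):
    """Interatomic distances for A + BC from Jacobi coordinates.

    r: B-C bond length; R: distance from A to the B-C center of mass;
    theta_deg: angle between the two Jacobi vectors, in degrees.
    Returns (r_AB, r_AC, r_BC) in the same length unit as r and R.
    """
    theta = math.radians(theta_deg)
    # Put the B-C center of mass at the origin with B-C along the x axis.
    x_b = -m_c / (m_b + m_c) * r
    x_c = m_b / (m_b + m_c) * r
    a_x, a_y = R * math.cos(theta), R * math.sin(theta)
    r_ab = math.hypot(a_x - x_b, a_y)
    r_ac = math.hypot(a_x - x_c, a_y)
    return r_ab, r_ac, r


if __name__ == "__main__":
    for angle in (0.0, 45.0, 90.0):
        print(angle, jacobi_to_distances(1.4, 3.0, angle))
```

For the collinear case (θ = 0°) with equal masses the two A-atom distances are R ± r/2, and for the perpendicular case (θ = 90°) they are equal.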

Protocol 2: Diabatic Representation Construction

The diabatic representation provides a more convenient framework for dynamical calculations near conical intersections:

Mixing Angle Calculation: Determine the transformation angle α that relates adiabatic and diabatic wavefunctions through a rotation matrix [15]:

Ψ₁ᵈ = cos(α)φ₁ᵃ + sin(α)φ₂ᵃ

Ψ₂ᵈ = -sin(α)φ₁ᵃ + cos(α)φ₂ᵃ

Diabatic State Optimization: Construct diabatic states that maximize smoothness with nuclear coordinates while maintaining electronic character, typically by minimizing the derivative couplings between states.

Potential Energy Matrix Elements: Fit the diagonal and off-diagonal elements of the diabatic potential matrix to reproduce the adiabatic energy surfaces and non-adiabatic couplings in the region of interest.

Conical Intersection Optimization: Locate points of exact degeneracy in the diabatic representation, where the energy difference vanishes and the coupling element produces the conical topology.
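For two states, the steps above reduce to rotating the diagonal adiabatic energy matrix by the mixing angle. The sketch below assumes the rotation convention written in the mixing-angle step; the function names and the energy values in the usage example are hypothetical.

```python
import math


def diabatic_matrix(e1, e2, alpha):
    """Diabatic matrix elements (H11, H22, H12) from adiabatic energies.

    Uses Psi_d = R(alpha) phi_a with R = [[cos, sin], [-sin, cos]],
    so that H_d = R diag(e1, e2) R^T. alpha is in radians.
    """
    c, s = math.cos(alpha), math.sin(alpha)
    h11 = c * c * e1 + s * s * e2
    h22 = s * s * e1 + c * c * e2
    h12 = (e2 - e1) * s * c
    return h11, h22, h12


def adiabatic_from_diabatic(h11, h22, h12):
    """Eigenvalues (lower, upper) of the symmetric 2x2 diabatic matrix."""
    mean = 0.5 * (h11 + h22)
    half_gap = math.hypot(0.5 * (h11 - h22), h12)
    return mean - half_gap, mean + half_gap


if __name__ == "__main__":
    h11, h22, h12 = diabatic_matrix(-1.0, -0.8, 0.3)
    print("diabatic elements:", h11, h22, h12)
    print("recovered adiabatic energies:", adiabatic_from_diabatic(h11, h22, h12))
```

Diagonalizing the diabatic matrix returns the original adiabatic energies, and at a mixing angle of 45° the two diabatic diagonal elements cross while the off-diagonal element equals half the adiabatic gap.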

Protocol 3: Quantum Computing Approaches

Emerging quantum algorithms offer new pathways for studying conical intersections:

Hybrid Quantum-Classical Framework: Implement the variational quantum eigensolver (VQE) for state-average complete active space self-consistent field (SA-CASSCF) calculations on superconducting quantum processors [18].

Active Space Representation: Map molecular active spaces to qubit registers, employing unitary coupled-cluster or hardware-efficient ansatzes for parameter optimization.

State-Averaging Procedure: Simultaneously optimize multiple electronic states to ensure balanced description of degeneracy regions, crucial for conical intersection characterization.

Noise Mitigation Strategies: Employ error suppression and mitigation techniques to enhance result fidelity on current noisy intermediate-scale quantum devices.

This approach has been successfully demonstrated for prototypical systems like ethylene and triatomic hydrogen, laying groundwork for application to more complex systems [18].

Experimental Probes and Detection Methods

While conical intersections involve ultrafast femtosecond dynamics that challenge direct observation, advanced spectroscopic techniques provide signatures of their involvement:

Ultrafast X-ray Transient Absorption Spectroscopy: Proposed as a way to directly track electronic structure changes during passage through a conical intersection, using element-specific core-level transitions [14].

Two-Dimensional Electronic Spectroscopy: Detects vibrational frequency modulations of coupling modes that signal conical intersection involvement through characteristic peak oscillations and lineshapes [14].

Quantum Simulation in Trapped-Ion Systems: In a 2023 experiment, a trapped-ion quantum computer was used to slow the interference pattern of a single atom caused by a conical intersection by a factor of 100 billion, enabling direct observation of the geometric phase effect [14].

Time-Resolved Photoelectron Spectroscopy: Maps temporal evolution through conical intersections by measuring kinetic energy and angular distributions of ejected electrons following pump-probe excitation sequences.

Research Reagent Solutions

Table 3: Essential Computational Tools for Conical Intersection Research

| Research Tool | Function | Application Example |
|---|---|---|
| MOLPRO Software Package | High-level ab initio calculations | MCSCF/MRCI computations for adiabatic PES points [15] |
| aug-cc-pV5Z Basis Sets | Atomic orbital basis functions | Accurate description of electron correlation in weak interactions [15] |
| B-spline Fitting Method | Analytical representation of PES | Continuous surface fitting from discrete ab initio points [15] |
| Vibronic Coupling Model | Model Hamiltonian for dynamics | Simulation of optical absorption spectra [17] |
| VQE-SA-CASSCF Algorithm | Quantum computing electronic structure | Conical intersection computation on quantum processors [18] |
| Jacobi Coordinates | Molecular coordinate system | Triatomic system potential energy surface scans [15] |

Biological and Medical Implications

The functional role of conical intersections extends to crucial biological processes and potential therapeutic applications:

DNA Photostability: The remarkable resilience of DNA to ultraviolet radiation damage stems from conical intersections that facilitate ultrafast non-radiative relaxation from excited electronic states back to the ground state, preventing harmful photochemical reactions [14].

Visual Phototransduction: The initial step in vision involves photoisomerization of retinal in rhodopsin, a process guided by conical intersections that direct the stereoselective reaction pathway on ultrafast timescales.

Photosynthetic Efficiency: Light harvesting in photosynthetic complexes may exploit quantum effects mediated by conical intersections to achieve remarkable energy transfer efficiency.

Photodynamic Therapy Optimization: Understanding conical intersections in photosensitizer molecules could enable rational design of more efficient therapeutic agents for cancer treatment.

Drug Photostability Assessment: Evaluation of pharmaceutical compound stability under light exposure requires understanding of non-radiative relaxation pathways through conical intersections.

Visualization of Critical Concepts

Conical intersections and avoided crossings represent critical topological features that govern non-adiabatic processes across chemistry and biology. While both facilitate transitions between electronic states, conical intersections provide more efficient funnels due to their true degeneracy and multidimensional branching space. The computational methodology for characterizing these features has advanced significantly, from traditional high-level ab initio approaches to emerging quantum computing algorithms. Experimental techniques continue to evolve toward direct observation and characterization of these ultrafast phenomena. Understanding these critical regions in potential energy landscapes provides fundamental insights into molecular photochemistry with broad implications for biological function and therapeutic development.

Accurate description of molecular electronic structure is fundamental to predicting chemical behavior, particularly in complex scenarios involving bond breaking, excited states, and degenerate electronic configurations where single-reference quantum chemical methods fail. Multi-configurational self-consistent field (MCSCF) and multi-reference configuration interaction (MRCI) represent two cornerstone approaches in high-level ab initio quantum chemistry for treating such systems. These methods are particularly crucial for constructing accurate potential energy surfaces (PESs) in adiabatic dynamics research, where mapping the electronic energy as a function of nuclear coordinates provides the foundation for understanding reaction pathways, spectroscopic properties, and nonadiabatic processes [15] [19].

The MCSCF method generates qualitatively correct reference states for molecules where Hartree-Fock and density functional theory are inadequate, such as molecular ground states quasi-degenerate with low-lying excited states or bond-breaking situations [20]. It uses a linear combination of configuration state functions to approximate the exact electronic wavefunction, varying both the CSF coefficients and the basis function coefficients in the molecular orbitals to obtain the total electronic wavefunction with the lowest possible energy [20]. MRCI then builds upon MCSCF reference wavefunctions to incorporate dynamic electron correlation, dramatically improving quantitative accuracy for energy predictions and property calculations [15] [19].

Theoretical Foundations

Multi-Configurational Self-Consistent Field (MCSCF)

The MCSCF wavefunction extends beyond the single-determinant approximation of Hartree-Fock theory by employing a linear combination of configuration state functions (CSFs) or configuration determinants:

\[ \Psi_{\text{MC}} = \sum_{I} C_I \Phi_I \]

where \(\Phi_I\) represents different electronic configurations and \(C_I\) are their coefficients [20]. This approach is particularly valuable for systems with static correlation effects, where multiple electronic configurations contribute significantly to the wavefunction. In the H₂ molecule dissociation example, Hartree-Fock fails dramatically at large bond distances because it inappropriately maintains equal contributions of ionic and covalent terms, whereas MCSCF properly describes dissociation into neutral hydrogen atoms by including configurations with anti-bonding orbitals [20].
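The H₂ example can be reduced to a two-configuration expansion, with one configuration doubly occupying the bonding orbital and one doubly occupying the anti-bonding orbital. The sketch below solves that 2×2 problem in closed form. The configuration energies and coupling are hypothetical model numbers chosen only to show the trend, not computed integrals, and the function name is an assumption.

```python
import math


def ground_state_coefficients(e_bonding, e_antibonding, coupling):
    """Ground-state CI coefficients (C1, C2) of [[e_b, k], [k, e_a]].

    C1 multiplies the bonding-squared configuration, C2 the
    anti-bonding-squared configuration.
    """
    a, b, k = e_bonding, e_antibonding, coupling
    if k == 0.0:
        return (1.0, 0.0) if a <= b else (0.0, 1.0)
    lowest = 0.5 * (a + b) - math.hypot(0.5 * (a - b), k)
    c1, c2 = k, lowest - a  # unnormalized eigenvector for the lowest root
    norm = math.hypot(c1, c2)
    return c1 / norm, c2 / norm


if __name__ == "__main__":
    # Hypothetical gaps: large near equilibrium, zero at dissociation.
    for gap in (1.0, 0.3, 0.0):
        c1, c2 = ground_state_coefficients(0.0, gap, 0.1)
        print(f"gap={gap:.1f} weights: {c1 ** 2:.3f}, {c2 ** 2:.3f}")
```

When the two configurations are well separated in energy, the first dominates and a single determinant is a reasonable reference. As they become degenerate, the weights approach one half each, which is the situation a single-determinant method cannot represent.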

The complete active space SCF (CASSCF) method represents a particularly important MCSCF approach where the linear combination of CSFs includes all possible distributions of a specific number of electrons among a designated set of active orbitals [20]. This "fully optimized reaction space" (FORS) approach ensures balanced treatment of all relevant configurations within the active space, though computational cost grows rapidly with active space size. The restricted active space SCF (RASSCF) method provides a more computationally tractable alternative by constraining the electron excitations within subdivided active orbital spaces [20].
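The growth in cost with active space size can be quantified by counting the expansion length. The sketch below counts Slater determinants combinatorially and spin-adapted CSFs with the Weyl dimension formula, ignoring spatial symmetry; the function names are illustrative.

```python
from math import comb


def n_determinants(n_elec, n_orb, two_s=0):
    """Slater determinants with Sz = S for n_elec electrons in n_orb orbitals."""
    n_alpha = (n_elec + two_s) // 2
    n_beta = n_elec - n_alpha
    return comb(n_orb, n_alpha) * comb(n_orb, n_beta)


def n_csfs(n_elec, n_orb, two_s=0):
    """Spin-adapted CSFs with total spin S = two_s / 2 (Weyl formula).

    (2S+1)/(m+1) * C(m+1, n/2 - S) * C(m+1, n/2 + S + 1)
    n_elec and two_s must have the same parity.
    """
    low = (n_elec - two_s) // 2
    high = (n_elec + two_s) // 2 + 1
    return (two_s + 1) * comb(n_orb + 1, low) * comb(n_orb + 1, high) // (n_orb + 1)


if __name__ == "__main__":
    for n in (2, 6, 10, 14):
        print(f"CAS({n},{n}): {n_csfs(n, n)} singlet CSFs, "
              f"{n_determinants(n, n)} determinants")
```

For singlets, CAS(2,2) has 3 CSFs and CAS(6,6) has 175, while CAS(14,14) already has 2,760,615, which is why active spaces are kept as small as the chemistry allows.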

Multi-Reference Configuration Interaction (MRCI)

MRCI extends MCSCF wavefunctions by including excited configurations from the reference space, effectively capturing dynamic electron correlation. The MRCI wavefunction can be expressed as:

\[ \Psi_{\text{MRCI}} = \sum_{I} C_I \Phi_I^{\text{ref}} + \sum_{S} C_S \Phi_S^{\text{single}} + \sum_{D} C_D \Phi_D^{\text{double}} + \cdots \]

where the expansions include single, double, and sometimes higher excitations from the reference configurations [21]. Traditional MRCI methods individually consider excitations from every configuration state function in the reference wavefunction, leading to rapid computational cost increases with reference space size [21]. Internally-contracted MRCI approaches offer improved computational efficiency by defining excitations with respect to the entire reference wavefunction rather than individual CSFs [21].

MRCI implementations must address several methodological challenges, including size consistency issues where the energy of non-interacting fragments does not equal the sum of individual fragment energies [21]. Approximate corrections such as ACPF (averaged coupled-pair functional) and AQCC (averaged quadratic coupled cluster) help mitigate these problems [21]. Selection of reference spaces remains the most critical step in MRCI calculations, requiring careful consideration of which orbitals and electrons to include in the active space based on chemical intuition and preliminary calculations [22] [21].
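The size-consistency problem can be seen in a textbook-style toy model: two identical, non-interacting two-level fragments treated by CI truncated at double excitations. The sketch below is a model illustration under those assumptions, not an MRCI calculation; the excitation energy `delta`, the coupling `k`, and the function names are hypothetical.

```python
import math


def monomer_cid(delta, k):
    """Correlation energy of one fragment: lowest root of [[0, k], [k, delta]]."""
    return 0.5 * delta - math.sqrt(0.25 * delta ** 2 + k ** 2)


def dimer_cid(delta, k):
    """Correlation energy of two non-interacting fragments, doubles only.

    Basis: reference, double on A, double on B. The simultaneous double on
    both fragments is a quadruple excitation and is excluded. This is the
    lowest root of [[0, k, k], [k, delta, 0], [k, 0, delta]].
    """
    return 0.5 * delta - math.sqrt(0.25 * delta ** 2 + 2.0 * k ** 2)


if __name__ == "__main__":
    delta, k = 1.0, 0.1  # hypothetical model parameters in Eh
    error = dimer_cid(delta, k) - 2.0 * monomer_cid(delta, k)
    print(f"size-consistency error: {error:.6f} Eh")
```

The truncated dimer energy lies above twice the monomer energy because the missing quadruple excitation is exactly what would describe both fragments being correlated at once. Corrections such as those named above aim to recover this missing contribution approximately.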

Computational Protocols and Methodologies

Active Space Selection Strategies

Selecting appropriate active spaces represents the most critical step in MCSCF calculations, requiring both chemical insight and systematic validation. The active space is defined by the number of active electrons and orbitals, denoted CAS(n,m) for n electrons in m orbitals. PySCF offers several strategies for active space selection [22]:

Table 1: Active Space Selection Strategies in MCSCF Calculations

| Strategy | Description | Applicability | Implementation Considerations |
|---|---|---|---|
| Default Selection | Automatically selects orbitals around the Fermi level matching specified electron and orbital counts | Limited utility; often poor for systems with significant static correlation | Implemented when no additional orbital specification provided |
| Manual Orbital Selection | User specifies molecular orbital indices based on visual analysis | General applicability, particularly for small systems | Use sort_mo function with 1-based orbital indices after orbital visualization |
| Symmetry-Based Selection | Specifies orbital counts per irreducible representation | Systems with high symmetry | Employ sort_mo_by_irrep with dictionary specifying ncas per irrep |
| Automated Strategies (AVAS/DMET_CAS) | Algorithmic selection based on atomic orbital targets | Complex systems where manual selection is challenging | AVAS and DMET_CAS modules identify active spaces based on target AOs |

Visualization of chosen active orbitals before expensive calculations is strongly recommended, typically accomplished by writing MO coefficients to a molden file and examining with visualization software [22]. For transition metal complexes and other challenging systems, initial calculations at lower levels of theory (MP2 or CISD natural orbitals) can help identify suitable active spaces through examination of orbital occupation numbers [22].
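Screening by natural orbital occupation numbers can be sketched in a few lines. The thresholds of 0.02 and 1.98, the function name, and the occupation numbers in the usage example are all assumptions chosen for illustration; they are a commonly used heuristic rather than a rule stated in [22], and any resulting active space should still be inspected visually.

```python
def select_active_orbitals(occupations, lower=0.02, upper=1.98):
    """Pick orbitals whose occupation is neither nearly 0 nor nearly 2.

    occupations: natural orbital occupation numbers from a cheaper
    correlated calculation. Returns (0-based orbital indices,
    number of active electrons rounded to the nearest integer).
    """
    active = [i for i, occ in enumerate(occupations) if lower < occ < upper]
    n_electrons = round(sum(occupations[i] for i in active))
    return active, n_electrons


if __name__ == "__main__":
    # Hypothetical occupation numbers, ordered from core to virtual.
    occupations = [2.0, 1.999, 1.95, 1.90, 0.10, 0.05, 0.001, 0.0]
    orbitals, n_elec = select_active_orbitals(occupations)
    print(f"suggested CAS({n_elec},{len(orbitals)}) using orbitals {orbitals}")
```

Note that the indices returned here are 0-based, so they would need to be shifted by one before being passed to an interface that expects 1-based orbital indices.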

Workflow for Potential Energy Surface Construction

The construction of accurate adiabatic and diabatic potential energy surfaces follows a systematic computational workflow:

Figure 1: Computational workflow for constructing accurate potential energy surfaces using MCSCF/MRCI methods.

For the He + H₂ system, researchers employed Jacobi coordinates (r, R, θ) to describe the triatomic system, with r representing the H-H bond length, R the distance from He to the H₂ center of mass, and θ the angle between them [15]. Comprehensive grid scans covered 34,848 adiabatic potential energy points calculated at MCSCF/MRCI level with aug-cc-pV5Z basis sets [15]. The B-spline fitting method provided accurate interpolation between calculated points to generate continuous surfaces [15].

For nonadiabatic dynamics, diabatization becomes essential. The mixing angle approach transforms adiabatic surfaces to diabatic representations using:

\[ \begin{pmatrix} \Psi_1^d \\ \Psi_2^d \end{pmatrix} = \begin{pmatrix} \cos\alpha & \sin\alpha \\ -\sin\alpha & \cos\alpha \end{pmatrix} \begin{pmatrix} \phi_1^a \\ \phi_2^a \end{pmatrix} \]

where α represents the mixing angle determined through relation to additional electronic states or property matrix elements [15] [19].

Protocol for MCSCF/MRCI Calculation of Li₂H System

The Li₂H system protocol demonstrates a complete implementation [19]:

System Preparation: Define the molecular geometry in Cs point group symmetry. Of the 7 electrons, 4 occupy the 2 closed-shell core orbitals and 3 remain in the valence space.

Electronic Structure Calculation:

- Method: MCSCF/MRCI with aV5Z basis set

- Active Space: 13 active orbitals (10A′ + 3A″) with 319 external orbitals

- Reference Space: 570 determinants in MCSCF, 705,202 contracted configurations in MRCI

- Core Treatment: 2 orbitals in core with 3 electrons in valence space

Coordinate System and Scanning:

- Jacobi coordinates for both reactant (H + Li₂) and product (LiH + Li) channels

- Extensive grid scanning: 74,070 adiabatic potential energy points

- Radial grids: r (0.6-3.0 Å, Δ=0.1 Å; 3.0-5.0 Å, Δ=0.2 Å)

- Angular grids: θ from 0° to 180° with 10° increments

Surface Construction: Three-dimensional B-spline fitting to interpolate potential energy surfaces

Diabatic Transformation: Calculate mixing angles using the third adiabatic state as intermediary

This protocol successfully constructed global accurate diabatic potential surfaces enabling nonadiabatic dynamics studies of the H + Li₂ reaction [19].
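The size of the MCSCF expansion quoted in the protocol can be checked by counting determinants. The sketch below assumes 3 active electrons with two alpha and one beta spin (a doublet with Sz = 1/2) and a total wavefunction of A′ symmetry, which requires an even number of electrons in A″ orbitals. These spin and symmetry assignments and the function name are assumptions, not statements from [19].

```python
from math import comb


def count_determinants(n_a1, n_a2, n_alpha, n_beta, even_a2=True):
    """Count determinants in Cs symmetry for a given A''-electron parity.

    n_a1, n_a2: numbers of active A' and A'' orbitals.
    n_alpha, n_beta: numbers of alpha and beta active electrons.
    even_a2=True keeps determinants with an even number of electrons in
    A'' orbitals (total symmetry A'); False keeps the odd ones (A'').
    """
    total = 0
    for a2_alpha in range(min(n_alpha, n_a2) + 1):
        for a2_beta in range(min(n_beta, n_a2) + 1):
            if ((a2_alpha + a2_beta) % 2 == 0) != even_a2:
                continue
            total += (comb(n_a1, n_alpha - a2_alpha) * comb(n_a2, a2_alpha)
                      * comb(n_a1, n_beta - a2_beta) * comb(n_a2, a2_beta))
    return total


if __name__ == "__main__":
    print("A' determinants:", count_determinants(10, 3, 2, 1, even_a2=True))
    print("A'' determinants:", count_determinants(10, 3, 2, 1, even_a2=False))
```

Under these assumptions the A′ count is 570, which matches the number of MCSCF determinants listed in the protocol.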

Research Reagent Solutions: Computational Components

Table 2: Essential Computational Components for MCSCF/MRCI Calculations

| Component | Representative Examples | Function | Selection Criteria |
|---|---|---|---|
| Basis Sets | aug-cc-pV5Z, aV5Z, cc-pVTZ | Describe spatial distribution of molecular orbitals | Accuracy requirements, system size, computational resources |
| Electronic Structure Packages | MOLPRO, PySCF, ORCA | Implement MCSCF/MRCI algorithms | Features, performance, availability, compatibility with research needs |
| Active Space Schemes | CAS(n,m), RAS, RAS-SF | Define reference space for multiconfigurational treatment | System complexity, electronic structure characteristics, computational constraints |
| Integral Evaluation | Conventional, Density Fitting (RI) | Compute electron repulsion integrals | Accuracy requirements, system size, available memory |
| Solvers | CASCI, CASSCF, DMRG, FCIQMC | Solve electronic Schrödinger equation | Active space size, computational resources, accuracy requirements |
| Analysis Tools | Molden, Jmol | Visualize orbitals and electron density | Interpretation needs, compatibility with calculation outputs |

The aug-cc-pV5Z and similar correlation-consistent basis sets provide high accuracy for MRCI calculations, with the augmented functions critically important for describing electron correlation and diffuse electrons [15] [19]. Software packages like MOLPRO 2012 and PySCF provide implementations of MCSCF/MRCI methods, with PySCF particularly emphasizing the CASSCF approach where the wavefunction includes all possible electron configurations within a designated active space [15] [22].

Applications in Potential Energy Surface Construction

Triatomic Systems: He + H₂ and H + Li₂

High-level MCSCF/MRCI calculations have successfully constructed accurate adiabatic and diabatic potential energy surfaces for fundamental triatomic systems. For He + H₂, researchers calculated 34,848 adiabatic potential energy points at MCSCF/MRCI/aug-cc-pV5Z level, followed by B-spline fitting to produce continuous surfaces [15]. The study carefully examined avoided crossing regions and optimized conical intersections, enabling construction of diabatic surfaces essential for nonadiabatic dynamics [15]. Similarly, for H + Li₂, 74,070 energy points computed at MCSCF/MRCI/aV5Z level enabled mapping of global adiabatic PESs for the lowest three states, with subsequent diabatization providing surfaces for nonadiabatic reaction dynamics studies [19].

These surfaces enabled accurate determination of vibrational states and corresponding energies for the diatomic reactants and products, with the one-dimensional Schrödinger equation:

\[ \left[-\frac{\hbar^2}{2\mu}\frac{d^2}{dr^2} + V_j(r) - E_{v,j}\right]\psi_{v,j}(r) = 0 \]

where μ is the reduced mass, \(V_j(r)\) contains the rotationless potential and centrifugal term, and \(E_{v,j}\) represents the rovibrational energy levels [19].
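The effective potential is not written out above. Assuming the standard form for a rotating diatomic, with \(V(r)\) denoting the rotationless potential curve (a symbol introduced here for illustration), it reads:

\[ V_j(r) = V(r) + \frac{\hbar^2\, j(j+1)}{2\mu r^2} \]

For j = 0 the centrifugal term vanishes and the equation reduces to the purely vibrational problem on \(V(r)\).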

Near-Dissociation States and Spectroscopy

For H₂⁺–He complexes, MRCI and full configuration interaction (FCI) potential energy surfaces enabled determination of all bound rovibrational states for the ground electronic state [23]. Comparison of transition frequencies for near-dissociation states allowed assignment of the 15.2 GHz line to a J = 2 e/f parity doublet of ortho-H₂⁺–He, while the 21.8 GHz line corresponded to a (J = 0) → (J = 1) e/e transition in para-H₂⁺–He [23]. Such precise spectroscopic predictions demonstrate the quantitative accuracy achievable with high-level MRCI methods.
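To put these microwave lines on the same scale as the well depths quoted in wavenumbers elsewhere in this review, the frequencies can be converted with ν̃ = ν/c. The short sketch below does this conversion; the function name is illustrative.

```python
SPEED_OF_LIGHT_CM_PER_S = 2.99792458e10  # defined SI value, expressed in cm/s


def ghz_to_wavenumber(freq_ghz):
    """Convert a frequency in GHz to a wavenumber in cm^-1."""
    return freq_ghz * 1.0e9 / SPEED_OF_LIGHT_CM_PER_S


if __name__ == "__main__":
    for freq in (15.2, 21.8):
        print(f"{freq} GHz = {ghz_to_wavenumber(freq):.4f} cm^-1")
```

Both lines correspond to well under 1 cm⁻¹, so assigning them requires a surface whose near-dissociation level spacings are reliable on that scale.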

Advanced Methodological Considerations

Orbital Optimization and Convergence

MCSCF calculations require careful attention to orbital optimization and convergence behavior. The PySCF implementation distinguishes between CASCI (fixed orbitals) and CASSCF (orbital optimization) approaches [22]. In CASSCF, orbitals are relaxed to minimize the CASCI energy through multiple CASCI steps followed by orbital updates, analogous to Hartree-Fock iterative algorithms [22]. Convergence difficulties may arise when using Hartree-Fock orbitals for systems with significant static correlation, making density functional theory orbitals sometimes preferable starting points [22].

Frozen orbital techniques can reduce computational effort in CASSCF calculations by fixing specific orbitals throughout optimization. Users may specify either the number of lowest orbitals to freeze or a list of orbital indices (0-based) encompassing occupied, virtual, or active orbitals [22].

Spin State Control

Multiconfigurational wavefunctions in PySCF are typically spin-adapted, but direct control of spin multiplicity (S² value) is not always available [22]. Nevertheless, spin projection Sz can be defined by adjusting the number of alpha and beta electrons in the active space, which often indirectly controls spin multiplicity [22]. For transition metal systems with open d shells, common protocols begin with single-reference maximum-Sz states before switching to complicated low-spin states in CASSCF [22].

Performance and Approximation Considerations

MRCI calculations face significant computational challenges, with several approximation strategies available to manage costs:

- RI approximation: Uses resolution of the identity to construct electron repulsion integrals, reducing memory requirements but introducing small errors (~1 mEh) [21]

- Individual selection: Includes only CSFs interacting more strongly than threshold T_sel with zeroth-order approximations to target states [21]

- Reference space reduction: Eliminates initial references contributing less than threshold T_pre to zeroth-order states [21]

- Singles handling: Including all single excitations improves accuracy but significantly increases computational cost [21]

The ORCA documentation notes that individually selecting MRCI calculations with T_sel = 10⁻⁶ Eh typically retain about 15% of the possible double excitations and give energies within 2 kcal/mol of full MRCI results [21]. Such approximations enable application to larger systems while maintaining acceptable accuracy for many chemical applications.

MCSCF and MRCI methods provide powerful approaches for accurate electronic structure calculations, particularly for constructing potential energy surfaces in adiabatic dynamics research. The success of these methods depends critically on appropriate active space selection, balanced treatment of static and dynamic correlation, and careful validation of results. As computational resources advance and method implementations improve, these high-level ab initio approaches continue to expand their applicability to increasingly complex chemical systems, providing fundamental insights into molecular structure, spectroscopy, and reaction dynamics.

This document provides detailed application notes and protocols for investigating reactive molecular complexes, in the context of research on adiabatic potential energy surface (PES) calculations. Accurate mapping of the PES is fundamental to understanding and predicting chemical reaction pathways, kinetics, and dynamics. These surfaces define the potential energy of a molecular system as a function of nuclear coordinates, serving as the cornerstone for simulating how chemical reactions proceed. The case studies herein, which focus on He+H₂, NaH₂, and SiH₂ complexes, illustrate the application of advanced experimental and computational techniques to characterize such systems, with a particular emphasis on the highly benchmarked H₂He⁺ ion.

Case Study 1: The H₂He⁺ Molecular Ion

The H₂He⁺ molecular ion is a fundamental system composed of two protons, one alpha particle, and three electrons. It represents a crucial intermediate in reactions such as H₂⁺ + He and HeH⁺ + H, making it astronomically relevant since it involves the most abundant elements in the primordial universe [24]. Its first detection dates back to 1925 via mass spectrometry [24].

Potential Energy Surface and Structure

The ground-state PES of H₂He⁺ features a linear H–H–He equilibrium geometry with C∞v point-group symmetry.

- The global minimum has an equilibrium dissociation energy of De = 2735 cm⁻¹ [24].

- The ground-state PES is characterized by a relatively deep well, allowing the ion to support numerous vibrational and rovibrational states.

- The system can be viewed as a semi-rigid linear triatomic molecule or as a floppy van der Waals complex with a hindered rotation of He around the H₂⁺ core [24].

Table 1: Energetics and Structure of H₂He⁺ and D₂He⁺

| Property | H₂He⁺ | D₂He⁺ | Notes |
|---|---|---|---|
| Dissociation Energy (D₀) | 1794 cm⁻¹ (para), 1852 cm⁻¹ (ortho) | Information Missing | Relative to H₂⁺(v=0) + He [24] |
| H-H/D-D Stretch Fundamental | 1840 cm⁻¹ (±0.5%) | 1309 cm⁻¹ (±0.5%) | Bright IR-active mode [24] |
| H₂⁺/D₂⁺–He Bend Fundamental | 632 cm⁻¹ | 473 cm⁻¹ | [24] |
| H₂⁺/D₂⁺–He Stretch Fundamental | 732 cm⁻¹ | 641 cm⁻¹ | [24] |
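As a quick consistency check on the tabulated fundamentals, the H/D frequency ratios can be compared with a simple harmonic estimate. The sketch below assumes that the H-H/D-D stretch behaves like an isolated homonuclear diatomic with an isotope-independent force constant, so that the ratio is roughly √(m_D/m_H). The atomic masses and the function name are assumptions introduced for illustration and do not come from [24].

```python
import math

# Fundamentals from Table 1, in cm^-1: (H2He+, D2He+)
fundamentals = {
    "H-H/D-D stretch": (1840.0, 1309.0),
    "bend": (632.0, 473.0),
    "He stretch": (732.0, 641.0),
}

# Standard atomic masses in u (assumed here; not given in the text)
M_H, M_D = 1.007825, 2.014102


def harmonic_diatomic_ratio(m_light, m_heavy):
    """nu(light)/nu(heavy) for a homonuclear diatomic with an unchanged force constant."""
    # The reduced mass of X2 is m_X / 2, so the ratio reduces to sqrt(m_heavy / m_light).
    return math.sqrt(m_heavy / m_light)


observed = {mode: h / d for mode, (h, d) in fundamentals.items()}
estimate = harmonic_diatomic_ratio(M_H, M_D)

for mode, ratio in observed.items():
    print(f"{mode:>16s}: H/D ratio = {ratio:.3f}")
print(f"harmonic diatomic estimate = {estimate:.3f}")
```

The stretch ratio falls close to the diatomic estimate, while the two intermolecular modes show smaller ratios. A harmonic one-mode picture of this kind is only a rough guide for a floppy complex.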

Experimental Protocol: Vibrational Spectroscopy via FELION

The following protocol details the methodology for obtaining the vibrational spectrum of H₂He⁺ [24].

1. Ion Generation and Selection:

- Precursor Gas: Use n-H₂ (normal hydrogen) with an ortho:para ratio of 3:1; this ratio carries over to the H₂He⁺ ions formed from it.

- Ion Source: Generate molecular ions in an external storage ion source via electron impact ionization (< 20 eV) of a precursor gas mixture.

- Mass Selection: Pass the generated ions through a linear quadrupole mass filter to select a specific mass-to-charge ratio (m/z), isolating the H₂He⁺ ions.

2. Cryogenic Trapping and Cooling:

- Apparatus: Inject the mass-selected ions into the 22-pole ion trap machine, FELION, maintained at ~4 K using a closed-cycle helium cryostat.

- Buffer Gas: The trap is filled with a dense, cold helium gas (~0.1 mm pressure).

- Cooling: The ions undergo ~10⁵ collisions with the cold buffer gas, which collisionally cools their translational and internal degrees of freedom to the trap temperature.

3. Irradiation and Photodissociation:

- Light Source: Expose the trapped, cold ions to infrared radiation from the Free-Electron Laser FELIX.

- Induced Process: When the laser frequency is resonant with a vibrational transition of H₂He⁺, the complex undergoes multi-photon absorption, leading to photodissociation into H₂⁺ and He fragments.

- Wavenumber Scanning: Tune the FELIX laser across a range of wavenumbers (e.g., 500–2000 cm⁻¹) to probe different vibrational modes.

4. Fragment Detection and Analysis:

- Mass Spectrometry: After irradiation, eject all ions from the trap and analyze them with a quadrupole mass spectrometer.

- Signal Normalization: The photodissociation signal is determined by the decrease in the parent H₂He⁺ ion count and/or the increase in the H₂⁺ fragment count.

- Data Presentation: The final spectrum is a plot of the normalized photodissociation yield as a function of the laser wavenumber, revealing the vibrational fingerprint of the ion.
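The signal-normalization step can be sketched in a few lines. This is a minimal illustration, assuming the yield is defined as the relative depletion of the parent ion count with the laser on versus off, optionally divided by the laser pulse energy. The function name, this exact normalization, and the ion counts are hypothetical and are not taken from [24].

```python
import numpy as np


def depletion_yield(n_on, n_off, pulse_energy=None):
    """Relative depletion of parent ions, optionally per unit laser pulse energy."""
    n_on = np.asarray(n_on, dtype=float)
    n_off = np.asarray(n_off, dtype=float)
    depletion = 1.0 - n_on / n_off  # fraction of parent ions lost on irradiation
    if pulse_energy is not None:
        depletion = depletion / np.asarray(pulse_energy, dtype=float)
    return depletion


# Made-up parent ion counts at three laser wavenumbers (cm^-1), for illustration only
wavenumber = np.array([700.0, 732.0, 760.0])
counts_laser_off = np.array([1000.0, 1000.0, 1000.0])
counts_laser_on = np.array([990.0, 600.0, 980.0])

spectrum = depletion_yield(counts_laser_on, counts_laser_off)
peak_position = wavenumber[np.argmax(spectrum)]
```

Plotting `spectrum` against `wavenumber` gives the kind of photodissociation spectrum described in the data presentation step.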

The workflow for this protocol is summarized in the diagram below:

Computational Protocol: Rovibrational State Calculation

The interpretation of experimental spectra is aided by accurate calculations of rovibrational energy levels.

1. Potential Energy Surface (PES):

- Utilize a high-quality, pre-calculated three-dimensional PES. For H₂He⁺, the FCI PES from Koner et al. (2019) is recommended [24].

2. Selection of Computational Method:

- Variational Calculations (High Accuracy): For states across the entire energy range, especially those close to dissociation where wavefunctions become delocalized, use accurate variational methods. The DVR3D code and the Coupled-Channels Variational Method (CCVM) have both been successfully applied to H₂He⁺ [24].

- Vibrational Perturbation Theory (VPT2) (Lower Cost): For energies significantly below the dissociation limit (e.g., below ~1300 cm⁻¹ for H₂He⁺), second-order Vibrational Perturbation Theory based on a linear model can provide good agreement with variational results at a lower computational cost [24].

3. Execution and Assignment:

- Perform the chosen calculation to obtain a list of rovibrational energy levels and their wavefunctions.

- Compute theoretical transition frequencies and intensities.

- Assign the calculated states by analyzing the wavefunctions, identifying the dominant motions (e.g., H-H stretch, H₂⁺-He bend). These assignments are then used to interpret the experimental photodissociation spectrum [24].
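The variational step can be illustrated on a much smaller problem. The sketch below is not DVR3D or CCVM and does not use the H₂He⁺ surface. It assumes a one-dimensional Morse oscillator in reduced units (ħ = 1) with arbitrary parameters and diagonalizes the Hamiltonian in a Colbert–Miller sinc discrete variable representation, then compares the lowest levels with the analytic Morse energies. All names and parameter values are illustrative.

```python
import numpy as np


def dvr_energies(x, mass, potential, n_levels=4):
    """Lowest eigenvalues of H = T + V on a uniform grid (Colbert-Miller sinc DVR, hbar = 1)."""
    n = x.size
    dx = x[1] - x[0]
    idx = np.arange(n)
    diff = idx[:, None] - idx[None, :]
    sign = np.where(diff % 2 == 0, 1.0, -1.0)  # (-1)^(i-j)
    denom = diff.astype(float) ** 2
    np.fill_diagonal(denom, 1.0)               # placeholder to avoid division by zero
    kinetic = 2.0 * sign / denom               # off-diagonal elements: 2 (-1)^(i-j) / (i-j)^2
    np.fill_diagonal(kinetic, np.pi**2 / 3.0)  # diagonal elements: pi^2 / 3
    kinetic /= 2.0 * mass * dx**2
    hamiltonian = kinetic + np.diag(potential(x))
    return np.linalg.eigvalsh(hamiltonian)[:n_levels]


# Assumed Morse parameters in reduced units (well depth, range parameter, mass)
WELL_DEPTH, RANGE_A, MASS = 10.0, 1.0, 1.0


def morse(x):
    return WELL_DEPTH * (1.0 - np.exp(-RANGE_A * x)) ** 2


grid = np.linspace(-2.0, 15.0, 300)
numerical = dvr_energies(grid, MASS, morse, n_levels=3)

# Analytic Morse levels: E_n = w (n + 1/2) - [w (n + 1/2)]^2 / (4 D)
omega = RANGE_A * np.sqrt(2.0 * WELL_DEPTH / MASS)
n_quanta = np.arange(3) + 0.5
analytic = omega * n_quanta - (omega * n_quanta) ** 2 / (4.0 * WELL_DEPTH)
```

States near the dissociation limit extend far into the asymptotic region, so the grid must reach well beyond the outer turning point. This is the same delocalization issue that motivates full variational treatments for the weakly bound states of H₂He⁺.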

The Scientist's Toolkit: Key Research Reagents and Materials

Table 2: Essential Materials for H₂He⁺ Spectroscopy

| Item | Function / Description |
|---|---|
| Precursor Gas (n-H₂) | Source of H₂⁺ and H₂He⁺ ions upon electron impact ionization. The ortho:para ratio influences the trapped ion population. |
| Helium Buffer Gas | High-purity helium used for cryogenic cooling of trapped ions through collisional energy transfer. |
| 22-Pole Ion Trap (FELION) | Cryogenic ion trap apparatus for storing, thermalizing, and preparing cold ions for spectroscopy. |
| Free-Electron Laser (FELIX) | A widely tunable, high-intensity infrared laser source used to vibrationally excite the trapped ions. |
| Quadrupole Mass Spectrometer | Used for mass selection of parent ions before trapping and for detection of parent and fragment ions after irradiation. |
| Ab Initio PES | A pre-computed, high-accuracy potential energy surface (e.g., the FCI PES) essential for theoretical calculations and spectral assignment. |

Case Study 2: The NaH₂ Reactive Complex

The NaH₂ system serves as a benchmark for studying non-adiabatic dynamics in atom-diatom reactions, particularly those involving alkali metals. Its relevance extends to combustion and atmospheric chemistry. The interaction often involves multiple electronic PESs that come very close in energy or cross, making the Born-Oppenheimer approximation break down. Dynamics on multiple coupled PESs are crucial for accurately modeling the reaction outcome.

Computational Protocol for Non-Adiabatic Dynamics

1. Multi-Surface PES Construction:

- Perform high-level ab initio calculations (e.g., MRCI, CASPT2) for multiple low-lying electronic states (e.g., 1²A', 2²A') over a wide range of geometries.

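- Calculate and fit the diabatic couplings between these states. Non-adiabatic transitions occur predominantly where the adiabatic surfaces approach or cross, and in those regions the diabatic representation replaces the sharply peaked derivative couplings with smooth potential couplings that are easier to fit and to propagate.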

2. Quantum Dynamics Simulation:

- Method: Use the Time-Dependent Wave Packet (TDWP) method extended to multiple electronic states.

- Hamiltonian: The Hamiltonian for the triatomic reaction in the reactant's Jacobi coordinates must be expanded to include multiple electronic states and their couplings.

- Propagation: The wave packet is propagated on the coupled PESs using a split-operator scheme. The non-adiabatic transitions are inherently included through the coupling terms.

3. Data Analysis:

- Analyze the flux of the wave packet passing through a surface in the product channel to calculate state-to-state reaction probabilities.

- Integrate probabilities over collision energy and initial states to obtain total reaction cross-sections and thermal rate constants.
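The propagation step can be sketched for a one-dimensional, two-state toy problem. The example below is not an NaH₂ calculation. It assumes a simple avoided-crossing model of the Tully type in atomic units, with illustrative parameters, and propagates a Gaussian wave packet on two coupled diabatic states with a symmetric split-operator scheme. Final diabatic populations stand in for the flux analysis described above. All function names, parameters, and grid settings are assumptions.

```python
import numpy as np

# Toy two-state model in atomic units (assumed parameters, not an NaH2 surface)
A, B, C, D = 0.01, 1.6, 0.005, 1.0
MASS = 2000.0


def diabatic_matrix(x):
    """Return V11, V22, V12 of the model diabatic potential matrix on the grid x."""
    v11 = np.sign(x) * A * (1.0 - np.exp(-B * np.abs(x)))
    v12 = C * np.exp(-D * x**2)
    return v11, -v11, v12


def propagate(n_grid=1024, x_max=40.0, x0=-10.0, k0=10.0, sigma=1.0,
              dt=1.0, n_steps=4000):
    """Split-operator propagation; returns the final diabatic populations."""
    x = np.linspace(-x_max, x_max, n_grid, endpoint=False)
    dx = x[1] - x[0]
    k = 2.0 * np.pi * np.fft.fftfreq(n_grid, d=dx)

    # Gaussian wave packet on diabatic state 1, moving toward the crossing at x = 0
    psi = np.zeros((2, n_grid), dtype=complex)
    psi[0] = np.exp(-(x - x0) ** 2 / (4.0 * sigma**2) + 1j * k0 * x)
    psi /= np.sqrt(np.sum(np.abs(psi) ** 2) * dx)

    # Half-step potential propagator exp(-i V dt/2), exponentiated analytically
    # for the 2x2 matrix at every grid point
    v11, v22, v12 = diabatic_matrix(x)
    mean, delta = 0.5 * (v11 + v22), 0.5 * (v11 - v22)
    omega = np.sqrt(delta**2 + v12**2)
    tau = 0.5 * dt
    phase = np.exp(-1j * mean * tau)
    cos_t, sinc_t = np.cos(omega * tau), np.sin(omega * tau) / omega
    u11 = phase * (cos_t - 1j * sinc_t * delta)
    u22 = phase * (cos_t + 1j * sinc_t * delta)
    u12 = phase * (-1j * sinc_t * v12)

    # Full-step kinetic propagator, diagonal in momentum space
    kinetic = np.exp(-1j * k**2 * dt / (2.0 * MASS))

    def potential_half_step(p):
        return np.array([u11 * p[0] + u12 * p[1], u12 * p[0] + u22 * p[1]])

    for _ in range(n_steps):
        psi = potential_half_step(psi)
        psi = np.fft.ifft(kinetic * np.fft.fft(psi, axis=1), axis=1)
        psi = potential_half_step(psi)

    return np.sum(np.abs(psi) ** 2, axis=1) * dx


populations = propagate()
print("diabatic populations:", populations)
```

Because the off-diagonal element of the potential matrix enters the propagator directly, population transfer between the states appears without any separate surface-hopping step. A production calculation would add the remaining Jacobi degrees of freedom, absorbing boundaries, and a flux analysis in the product channel.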

Case Study 3: The SiH₂ Reactive Complex

The SiH₂ system is a prototype for understanding the insertion dynamics of atomic carbene analogs into H-H bonds. The singlet S(¹D) + H₂ → SH + H reaction, which proceeds via the H₂Si complex, is a barrierless insertion reaction characterized by a deep well on the PES, leading to the formation of a long-lived collision complex [25]. Understanding its PES and dynamics is critical for modeling chemical processes in semiconductor fabrication and astrochemistry.

Protocol for PES Construction and Statistical Dynamics

1. Building a Global PES with Neural Networks:

- Ab Initio Data Generation: Calculate a large grid of ~20,000 accurate ab initio energy points (e.g., using MRCI+Q/aug-cc-pVQZ) over a large configuration space [25].

- Fitting: Employ the Neural Network (NN) method to fit the PES to the ab initio data. This method can achieve very low fitting errors (e.g., RMS error of 1.68 meV) and offers a flexible and powerful way to construct a global PES [25].

2. Statistical Quantum Dynamics on a New PES:

- PES Validation: Verify the new PES by comparing its equilibrium geometry and reaction exothermicity with experimental data [25].

- Dynamics Calculation: Perform Time-Dependent Wave Packet (TDWP) calculations on the newly constructed PES.

- Mechanism Analysis: For a barrierless reaction with a deep well, expect the following:

The relationships between PES characteristics, computational methods, and observed dynamics for the SiH₂ system are visualized below:

These case studies demonstrate the integrated application of sophisticated experimental and theoretical protocols in modern chemical physics. The study of H₂He⁺ showcases the power of combining cryogenic ion trapping with tunable IR lasers and variational quantum calculations to extract precise vibrational information from a fundamental molecular ion. The protocols for NaH₂ and SiH₂ highlight the critical importance of accurately constructed potential energy surfaces, whether for modeling non-adiabatic transitions or statistical insertion dynamics. The methodologies outlined herein—from the FELION apparatus to neural network PES fitting and wave packet dynamics—constitute an essential toolkit for researchers aiming to elucidate reaction mechanisms and energetics at the most detailed level, a prerequisite for advances in fields ranging from drug development to astrochemistry.

In the study of molecular dynamics, particularly in the calculation of adiabatic dynamics potential energy surfaces (PES), the choice of coordinate system is fundamentally important. Potential energy surfaces describe the energy of a system, especially a collection of atoms, in terms of certain parameters, normally the positions of the atoms [26]. The surface might define the energy as a function of one or more coordinates; if there is only one coordinate, it is called a potential energy curve [27]. For theoretical treatments of molecular systems, two coordinate frameworks are particularly significant: Jacobi coordinates and molecular internal coordinates (also known as geometric coordinates). These systems provide distinct approaches to representing molecular configurations and are selected based on the specific requirements of the chemical system and research objectives.

Jacobi coordinates are extensively employed in the theory of many-particle systems to simplify the mathematical formulation, finding particular utility in treating polyatomic molecules, chemical reactions, and celestial mechanics [28]. In contrast, molecular internal coordinates describe molecular structure through chemically intuitive parameters such as bond lengths, bond angles, and dihedral angles, which correspond directly to structural features recognizable to chemists [29]. The selection between these coordinate systems carries significant implications for the computational efficiency, conceptual clarity, and practical application of subsequent dynamics simulations within broader thesis research on adiabatic dynamics PES calculations.

Theoretical Foundation of Jacobi Coordinates

Mathematical Definition and Algorithm

In the context of the N-body problem, Jacobi coordinates are defined through a specific recursive algorithm that systematically reduces the many-body problem to a more tractable form. For an N-body system, the Jacobi coordinates are constructed as follows [28]:

Relative position vectors: for \( j \in \{1, 2, \dots, N-1\} \),

\[ \boldsymbol{r}_j = \frac{1}{m_{0j}} \sum_{k=1}^{j} m_k \boldsymbol{x}_k - \boldsymbol{x}_{j+1}, \qquad \text{where } m_{0j} = \sum_{k=1}^{j} m_k \]

Center-of-mass vector:

\[ \boldsymbol{r}_N = \frac{1}{m_{0N}} \sum_{k=1}^{N} m_k \boldsymbol{x}_k \]

This construction process can be conceptually understood as a binary tree algorithm: we choose two bodies with position coordinates \( \boldsymbol{x}_j \) and \( \boldsymbol{x}_k \), replacing them with one virtual body at their center of mass, while defining the relative position coordinate \( \boldsymbol{r}_{jk} = \boldsymbol{x}_j - \boldsymbol{x}_k \). This process repeats with the N-1 bodies (the remaining N-2 plus the new virtual body) until after N-1 steps we obtain Jacobi coordinates consisting of relative positions and one coordinate for the final center of mass position [28].

The result is a system of N-1 translationally invariant coordinates \( \boldsymbol{r}_1, \dots, \boldsymbol{r}_{N-1} \) and one center-of-mass coordinate \( \boldsymbol{r}_N \), with an associated Jacobian equal to 1, significantly simplifying the mathematical treatment of many-body systems [28].
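The recursive definition maps directly onto cumulative sums. The following is a minimal sketch, assuming positions are supplied as an (N, dim) array in a fixed body ordering. The function name and the example masses and positions are illustrative.

```python
import numpy as np


def jacobi_coordinates(masses, positions):
    """Jacobi vectors r_1..r_N for bodies given as an (N, dim) array of positions.

    r_j (j < N) is the center of mass of bodies 1..j minus the position of
    body j+1; r_N is the center of mass of the whole system.
    """
    m = np.asarray(masses, dtype=float)
    x = np.asarray(positions, dtype=float)
    cumulative_mass = np.cumsum(m)  # m_{0j}
    partial_com = np.cumsum(m[:, None] * x, axis=0) / cumulative_mass[:, None]
    r = np.empty_like(x)
    r[:-1] = partial_com[:-1] - x[1:]  # relative Jacobi vectors
    r[-1] = partial_com[-1]            # total center of mass
    return r


# Illustrative three-body example (arbitrary units)
masses = [1.0, 1.0, 4.0]
positions = np.array([[-0.5, 0.0, 0.0],
                      [0.5, 0.0, 0.0],
                      [0.0, 2.0, 0.0]])
r = jacobi_coordinates(masses, positions)

# Translating every body by the same vector changes only the last coordinate
shift = np.array([3.0, -1.0, 2.0])
r_shifted = jacobi_coordinates(masses, positions + shift)
```

The ordering of the bodies determines which fragments are grouped first, so different orderings give different, equally valid Jacobi sets.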

Application in Triatomic Systems

For triatomic systems (A + BC), Jacobi coordinates are typically described by three parameters: the bond length within the diatomic molecule (r), the distance from the atom to the center of mass of the diatom (R), and the angle between these two vectors (θ) [15]. This coordinate system is particularly advantageous for scattering problems and reaction dynamics as it naturally describes the approach and separation of fragments.
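For an A + BC arrangement, the three scalar Jacobi parameters follow from the Cartesian positions of the atoms. The sketch below assumes that r points from B to C and R points from the BC center of mass to A. Sign and direction conventions vary between codes, and the function name and example geometry are illustrative.

```python
import numpy as np


def triatomic_jacobi(m_b, m_c, x_a, x_b, x_c):
    """Return (r, R, theta) for A + BC; theta is in radians."""
    x_a, x_b, x_c = (np.asarray(v, dtype=float) for v in (x_a, x_b, x_c))
    r_vec = x_c - x_b                              # diatomic bond vector B -> C
    com_bc = (m_b * x_b + m_c * x_c) / (m_b + m_c)
    big_r_vec = x_a - com_bc                       # BC center of mass -> A
    r = np.linalg.norm(r_vec)
    big_r = np.linalg.norm(big_r_vec)
    cos_theta = np.dot(r_vec, big_r_vec) / (r * big_r)
    theta = np.arccos(np.clip(cos_theta, -1.0, 1.0))  # clip guards against round-off
    return r, big_r, theta


# T-shaped example: A sits on the perpendicular bisector of a homonuclear BC
r, big_r, theta = triatomic_jacobi(
    1.0, 1.0,
    x_a=[0.0, 2.0, 0.0],
    x_b=[-0.5, 0.0, 0.0],
    x_c=[0.5, 0.0, 0.0],
)
```

In this convention θ = 0 and θ = π correspond to the two collinear arrangements and θ = π/2 to the T-shaped geometry.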

Table 1: Jacobi Coordinate Parameters for Triatomic Systems