AI-Driven Molecular Property Prediction: Accelerating Drug Discovery with Advanced Machine Learning

Abstract

This article provides a comprehensive overview of the transformative role of artificial intelligence in predicting molecular properties for pharmaceutical compounds. It explores the evolution from traditional expert-crafted features to modern deep learning approaches, including graph neural networks, pretrained foundation models, and innovative multimodal strategies. The content examines critical methodological advancements in molecular representation learning, addresses practical implementation challenges such as data heterogeneity and model interpretability, and presents rigorous validation frameworks for assessing model performance. Designed for researchers, scientists, and drug development professionals, this resource synthesizes current state-of-the-art techniques while highlighting emerging trends that are reshaping early-stage drug discovery and development pipelines.

The Essential Foundation: Understanding Molecular Property Prediction in Modern Drug Discovery

The Critical Role of Molecular Property Prediction in Reducing Drug Development Costs and Timelines

Molecular property prediction has emerged as a cornerstone of modern drug discovery, using machine learning (ML) to forecast the absorption, distribution, metabolism, excretion, and toxicity (ADMET) profiles of small molecules. This capability can substantially reduce the time and cost of bringing new therapeutics to market. By prioritizing compounds with a higher probability of success before synthesis and experimental testing, AI-driven platforms have compressed traditional discovery timelines from 5-6 years to as little as 18-24 months for some candidates [1]. This shift replaces labor-intensive, human-driven workflows with AI-powered discovery engines capable of exploring vast chemical and biological search spaces, changing the speed and scale of modern pharmacology [1].

The economic implications are substantial. Exscientia, for example, reports in silico design cycles approximately 70% faster than traditional methods, requiring 10x fewer synthesized compounds to identify viable clinical candidates [1]. The number of AI-derived drug candidates has also grown rapidly, with over 75 molecules reaching clinical stages by the end of 2024, compared to essentially none at the start of 2020 [1]. Molecular property prediction sits at the core of this change in how pharmaceutical research and development is conducted.

Quantitative Impact of AI-Driven Prediction on Drug Development

The integration of molecular property prediction into pharmaceutical R&D pipelines has yielded measurable improvements across key performance indicators. The following table summarizes comparative metrics between traditional and AI-enhanced approaches for early-stage discovery.

Table 1: Comparative Performance of AI-Enhanced vs. Traditional Drug Discovery

| Metric | Traditional Approach | AI-Enhanced Approach | Source/Example |
|---|---|---|---|
| Early-stage timeline | ~5 years | 18-24 months (reported cases) | Insilico Medicine's IPF drug [1] |
| Design cycle efficiency | Baseline | ~70% faster | Exscientia platform report [1] |
| Compounds synthesized | Baseline | 10x fewer | Exscientia industry analysis [1] |
| Clinical candidates (by end of 2024) | N/A | >75 AI-derived molecules | Industry-wide analysis [1] |
| Data regime for effective prediction | Large, homogeneous datasets | As few as 29 labeled samples | ACS method validation [2] |

These gains can translate into direct cost savings by reducing late-stage attrition, particularly through improved prediction of ADMET properties, which account for approximately 60% of drug failures. Platforms demonstrating these capabilities include Exscientia's generative chemistry approach, Schrödinger's physics-enabled design strategy (with a TYK2 inhibitor advancing to Phase III trials), and Insilico Medicine's generative-AI-designed idiopathic pulmonary fibrosis drug, which progressed from target discovery to Phase I in 18 months [1].

Key Methodologies and Experimental Protocols

Data Consistency Assessment (DCA) Prior to Modeling

Purpose: To identify and mitigate dataset misalignments arising from differences in experimental protocols, feature shifts, and applicability domains that can introduce noise and degrade model performance [3].

Principles: Data heterogeneity and distributional misalignments pose critical challenges for ML models, often compromising predictive accuracy. These issues are particularly acute in preclinical safety modeling where limited data and experimental constraints exacerbate integration problems [3].

Procedure:

- Dataset Collection: Gather molecular property data from multiple public and proprietary sources (e.g., Obach et al., Lombardo et al., TDC, ChEMBL) [3].

- Statistical Summary: Generate descriptive statistics for each dataset, including number of molecules, endpoint statistics (mean, standard deviation, quartiles for regression; class counts for classification), and feature similarity metrics [3].

- Distribution Analysis: Perform pairwise two-sample Kolmogorov-Smirnov tests for regression tasks or Chi-square tests for classification tasks to identify significant endpoint distribution differences [3].

- Chemical Space Visualization: Use UMAP (Uniform Manifold Approximation and Projection) to project datasets into a lower-dimensional space and assess coverage and potential applicability domains [3].

- Inconsistency Detection: Apply tools like AssayInspector to identify outliers, batch effects, conflicting annotations for shared molecules, and datasets with significantly different value ranges [3].

- Informed Integration: Based on diagnostic reports, decide whether to aggregate, transform, or exclude specific datasets to ensure consistency before model training.
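The distribution-analysis and inconsistency-detection steps above can be prototyped without extra dependencies. The sketch below is a minimal illustration under stated assumptions, not AssayInspector's API: the helper names are hypothetical, the p-value uses the standard asymptotic Kolmogorov series (in practice `scipy.stats.ks_2samp` computes this, and `scipy.stats.chi2_contingency` covers the chi-square case for classification endpoints, which is not shown), and molecules are assumed to share an identifier across sources, such as a standardized InChIKey. The half-life values are invented.

```python
import math
from itertools import combinations
from typing import Dict, List, Sequence, Tuple


def ks_statistic(a: Sequence[float], b: Sequence[float]) -> float:
    """Two-sample Kolmogorov-Smirnov statistic D = max |F_a(x) - F_b(x)|."""
    a, b = sorted(a), sorted(b)
    n, m = len(a), len(b)
    i = j = 0
    d = 0.0
    while i < n and j < m:
        x = min(a[i], b[j])
        # Step both empirical CDFs past every value equal to x (handles ties).
        while i < n and a[i] == x:
            i += 1
        while j < m and b[j] == x:
            j += 1
        d = max(d, abs(i / n - j / m))
    return d


def ks_pvalue(d: float, n: int, m: int) -> float:
    """Asymptotic p-value from the Kolmogorov series (adequate for moderate n, m)."""
    en = math.sqrt(n * m / (n + m))
    lam = (en + 0.12 + 0.11 / en) * d
    if lam < 0.2:  # the series converges slowly here and the p-value is ~1 anyway
        return 1.0
    q = sum(2 * (-1) ** (k - 1) * math.exp(-2 * k * k * lam * lam) for k in range(1, 101))
    return min(max(q, 0.0), 1.0)


def flag_distribution_shifts(
    datasets: Dict[str, List[float]], alpha: float = 0.05
) -> List[Tuple[str, str, float, float]]:
    """Return (source_a, source_b, D, p) for every source pair with p < alpha."""
    flagged = []
    for (name_a, a), (name_b, b) in combinations(datasets.items(), 2):
        d = ks_statistic(a, b)
        p = ks_pvalue(d, len(a), len(b))
        if p < alpha:
            flagged.append((name_a, name_b, d, p))
    return flagged


def conflicting_annotations(
    sources: Dict[str, Dict[str, float]], tol: float
) -> Dict[str, List[Tuple[str, float]]]:
    """Molecules annotated in several sources whose values spread by more than tol."""
    by_molecule: Dict[str, List[Tuple[str, float]]] = {}
    for source, records in sources.items():
        for mol_id, value in records.items():
            by_molecule.setdefault(mol_id, []).append((source, value))
    return {
        mol_id: vals
        for mol_id, vals in by_molecule.items()
        if len(vals) > 1 and max(v for _, v in vals) - min(v for _, v in vals) > tol
    }


# Hypothetical half-life values (hours) from two sources.
half_life = {
    "source_A": [1.2, 1.5, 1.9, 2.3, 2.8, 3.1, 3.6, 4.0],
    "source_B": [5.1, 5.5, 6.0, 6.2, 6.9, 7.4, 7.8, 8.5],
}
for a, b, d, p in flag_distribution_shifts(half_life):
    print(f"{a} vs {b}: D={d:.2f}, p={p:.1e}")
```

A flagged pair is an input to the informed-integration decision (aggregate, transform, or exclude); a significant test alone does not say which source is correct.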

Applications: Critical for integrating public ADME datasets for properties like half-life and clearance, where significant misalignments between benchmark and gold-standard sources have been documented [3].

Multi-Task Learning with Adaptive Checkpointing and Specialization (ACS)

Purpose: To mitigate negative transfer (NT) in multi-task learning while preserving the benefits of inductive transfer, especially in ultra-low data regimes and imbalanced training datasets [2].

Principles: Multi-task learning leverages correlations among related molecular properties to alleviate data bottlenecks, but is often undermined when updates from one task detrimentally affect another. The ACS training scheme combines task-agnostic and task-specific components to balance shared learning with task-specific protection [2].

Procedure:

- Architecture Setup: Implement a shared graph neural network (GNN) backbone based on message passing to learn general-purpose molecular representations. Connect this to task-specific multi-layer perceptron (MLP) heads for each property prediction task [2].

- Training with Loss Masking: Train the model on all available tasks simultaneously, using loss masking for missing labels to maximize data utilization [2].

- Validation Monitoring: Continuously monitor the validation loss for every task throughout the training process [2].

- Adaptive Checkpointing: For each task, checkpoint the model parameters (both backbone and specific head) whenever that task's validation loss reaches a new minimum [2].

- Specialization: Upon completion of training, each task retains its best-performing specialized backbone-head pair, effectively protecting it from detrimental parameter updates from other tasks [2].
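The loss-masking and per-task checkpointing steps can be sketched without a deep-learning framework. The names below are hypothetical; plain dicts stand in for backbone and head weights (with PyTorch one would typically store copies of each module's `state_dict()`), and the validation curves are invented for illustration rather than taken from [2].

```python
import copy
import math
from typing import Dict, List, Optional, Sequence, Tuple


def masked_mse(preds: Sequence[float], targets: Sequence[Optional[float]]) -> float:
    """MSE over labeled entries only; None marks a missing label (loss masking)."""
    pairs = [(p, t) for p, t in zip(preds, targets) if t is not None]
    if not pairs:
        return 0.0  # no labeled entries: this task contributes zero loss
    return sum((p - t) ** 2 for p, t in pairs) / len(pairs)


class AdaptiveCheckpointer:
    """Per task, keep the backbone + head snapshot from that task's best validation epoch."""

    def __init__(self, tasks: List[str]):
        self.best_loss = {t: math.inf for t in tasks}
        self.best_epoch: Dict[str, Optional[int]] = {t: None for t in tasks}
        self.snapshots: Dict[str, Tuple[dict, dict]] = {}

    def update(self, epoch: int, val_losses: Dict[str, float], backbone: dict, heads: Dict[str, dict]) -> None:
        for task, loss in val_losses.items():
            if loss < self.best_loss[task]:
                self.best_loss[task] = loss
                self.best_epoch[task] = epoch
                # Deep copies, so later shared-backbone updates cannot alter the snapshot.
                self.snapshots[task] = (copy.deepcopy(backbone), copy.deepcopy(heads[task]))

    def specialized_model(self, task: str) -> Tuple[dict, dict]:
        return self.snapshots[task]


# Toy run: the shared "training step" just increments a backbone weight.
tasks = ["tox_a", "tox_b"]
ckpt = AdaptiveCheckpointer(tasks)
backbone = {"w": 0.0}
heads = {t: {"w": 0.0} for t in tasks}
# Hypothetical validation losses: tox_b starts degrading after epoch 1 (negative transfer).
curves = {"tox_a": [0.9, 0.7, 0.5, 0.4], "tox_b": [0.8, 0.6, 0.65, 0.9]}
for epoch in range(4):
    backbone["w"] += 1.0
    ckpt.update(epoch, {t: curves[t][epoch] for t in tasks}, backbone, heads)
print(ckpt.best_epoch)  # {'tox_a': 3, 'tox_b': 1}
```

Task `tox_b` keeps the backbone state from epoch 1, before the later shared updates that hurt it, while `tox_a` keeps the final state.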

Applications: Validated on MoleculeNet benchmarks (ClinTox, SIDER, Tox21) and real-world scenarios like predicting sustainable aviation fuel properties with as few as 29 labeled samples. ACS consistently surpassed or matched state-of-the-art supervised methods, showing particular strength in imbalanced data conditions [2].

Data Augmentation via Multi-Task Learning for Sparse Datasets

Purpose: To enhance prediction quality for data-scarce molecular properties by augmenting training with additional, even potentially sparse or weakly related, molecular data [4].

Principles: The effectiveness of ML for molecular property prediction is often limited by scarce and incomplete experimental datasets. Multi-task learning facilitates training in these low-data regimes by sharing representations across tasks [4].

Procedure:

- Primary Task Identification: Define the primary molecular property task for which data is scarce (e.g., fuel ignition properties).

- Auxiliary Task Selection: Identify and gather data for auxiliary molecular properties, which can be larger in scale but potentially related (e.g., other physicochemical properties from QM9 dataset) [4].

- Model Architecture Design: Implement a multi-task graph neural network architecture capable of handling multiple prediction outputs.

- Controlled Training: Train the model on progressively larger subsets of the auxiliary data alongside the primary, sparse dataset.

- Performance Evaluation: Systematically evaluate the conditions under which multi-task learning outperforms single-task models for the primary target.

- Recommendation Formulation: Establish guidelines for selecting and integrating auxiliary data to maximize predictive accuracy for the primary, data-constrained task.
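The controlled-training step can be organized so that each larger auxiliary subset contains the smaller ones, which keeps comparisons between subset sizes from being confounded by which molecules happened to be sampled. A minimal sketch, assuming a hypothetical `train_and_evaluate` callback that trains the multi-task GNN on the primary data plus a given auxiliary subset and returns the primary-task validation error; nothing here reproduces the setup of [4].

```python
import random
from typing import Callable, Dict, List, Sequence, Tuple


def nested_auxiliary_subsets(aux_ids: Sequence, fractions: Sequence[float], seed: int = 0) -> Dict[float, List]:
    """Nested subsets: each larger fraction contains every smaller one (same shuffled prefix)."""
    shuffled = list(aux_ids)
    random.Random(seed).shuffle(shuffled)
    return {f: shuffled[: round(f * len(shuffled))] for f in sorted(fractions)}


def run_controlled_study(
    primary_ids: Sequence,
    aux_ids: Sequence,
    fractions: Sequence[float],
    train_and_evaluate: Callable[[Sequence, Sequence], float],
    seed: int = 0,
) -> Tuple[float, Dict[float, Dict[str, float]]]:
    """train_and_evaluate(primary, auxiliary) -> primary-task validation error (hypothetical)."""
    baseline = train_and_evaluate(primary_ids, [])  # single-task reference model
    report = {}
    for frac, subset in nested_auxiliary_subsets(aux_ids, fractions, seed).items():
        err = train_and_evaluate(primary_ids, subset)
        # Negative relative change means the auxiliary data helped the primary task.
        report[frac] = {"error": err, "relative_change": (err - baseline) / baseline}
    return baseline, report
```

Repeating the study over several seeds shows whether an apparent multi-task gain at a given subset size is stable or an artifact of one draw.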

Applications: Systematically investigated using QM9 datasets and extended to practical real-world datasets of fuel ignition properties that are small and inherently sparse [4].

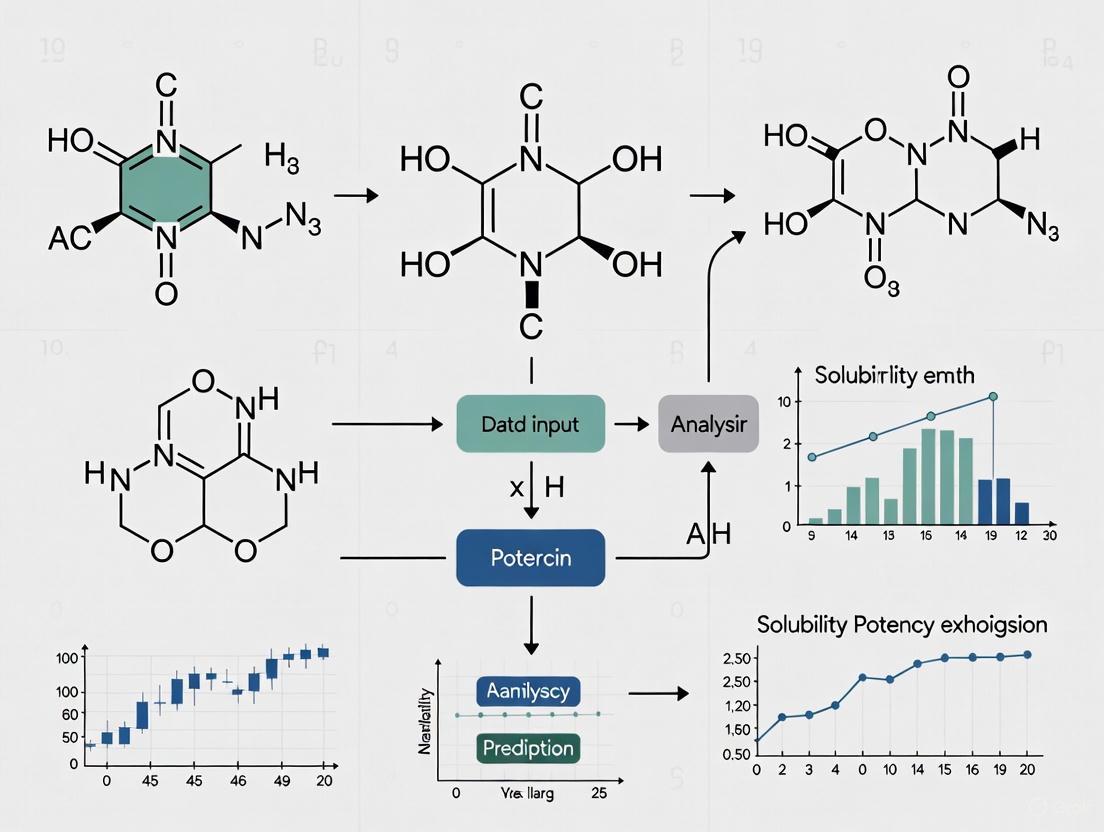

Visualization of Key Workflows

Data Consistency Assessment (DCA) Workflow

Diagram 1: DCA workflow for reliable data integration.

ACS Multi-Task Training Scheme

Diagram 2: ACS training process mitigating negative transfer.

Table 2: Key Resources for Molecular Property Prediction Research

| Resource Name | Type | Primary Function | Application Context |
|---|---|---|---|
| AssayInspector | Software Package (Python) | Data consistency assessment prior to modeling; identifies outliers, batch effects, and dataset discrepancies [3]. | Preprocessing and integration of heterogeneous ADME datasets. |
| ACS Training Scheme | Algorithm/Method | Multi-task learning with adaptive checkpointing to mitigate negative transfer in low-data regimes [2]. | Training robust models when labeled data is scarce or imbalanced. |
| Therapeutic Data Commons (TDC) | Data Repository | Provides standardized benchmarks and curated molecular property data for predictive modeling [3]. | Accessing pre-processed ADME and toxicity datasets for model training. |
| RDKit | Software Library | Calculates chemical descriptors (ECFP4 fingerprints, 1D/2D descriptors) for molecular representation [3]. | Featurization of chemical structures for machine learning input. |
| Graph Neural Network (GNN) | Model Architecture | Learns directly from molecular graph structures, capturing complex structure-property relationships [2]. | End-to-end molecular property prediction from structure. |
| Multi-Task GNN | Model Architecture | Leverages correlations between related properties to improve data efficiency and generalization [4]. | Simultaneous prediction of multiple ADMET endpoints. |

The successful development of a pharmaceutical compound is predicated on a comprehensive understanding of its key molecular properties across multiple domains. These properties encompass not only a compound's Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) but also its fundamental drug-likeness and potential environmental fate upon release. Accurately predicting these characteristics early in the drug discovery pipeline is essential for selecting candidates with optimal pharmacokinetics, minimal toxicity, and reduced ecological impact [5] [6]. Failures in clinical stages are often attributable to suboptimal pharmacokinetic profiles and unforeseen toxicity, underscoring the urgent need for robust predictive methodologies [5]. This application note details the core concepts, experimental protocols, and computational frameworks for evaluating these critical molecular properties, providing researchers with practical tools for integrated compound assessment.

Core Molecular Property Domains

ADMET Properties

ADMET evaluation is fundamental to determining a drug candidate's clinical success. These properties govern pharmacokinetics (PK) and safety, directly influencing bioavailability, therapeutic efficacy, and the likelihood of regulatory approval [5].

- Absorption: Determines the rate and extent of drug entry into systemic circulation. Key parameters include permeability (e.g., from Caco-2 assays), solubility, and interactions with efflux transporters like P-glycoprotein (P-gp) [5].

- Distribution: Reflects drug dissemination across tissues and organs, affecting therapeutic targeting and off-target effects. A key parameter is blood-brain barrier (BBB) penetration, commonly expressed as logBB, the logarithm of the brain-to-blood concentration ratio [6].

- Metabolism: Describes biotransformation processes, primarily by hepatic enzymes, which influence drug half-life and bioactivity. Cytochrome P450 (CYP) enzyme interactions are critically assessed [5].

- Excretion: Facilitates drug and metabolite clearance, impacting duration of action and potential accumulation [5].

- Toxicity: A pivotal consideration for evaluating adverse effects and overall human safety, encompassing endpoints like mutagenicity (Ames test) and hepatotoxicity [5] [6].

Table 1: Key ADMET Properties and Experimental Assays

| ADMET Property | Key Parameters | Common Experimental Assays |
|---|---|---|
| Absorption | Permeability, Solubility, P-gp substrate | Caco-2 cell lines, PAMPA, Solubility assays |
| Distribution | Blood-Brain Barrier (BBB) Penetration | LogBB measurement, MDR1-MDCKII assay [6] |
| Metabolism | Metabolic Stability, CYP Inhibition/Induction | Human/Mouse Liver Microsomal Clearance [6] [7] |
| Excretion | Clearance, Half-life | In vivo PK studies, Biliary excretion models |
| Toxicity | Mutagenicity, Hepatotoxicity | Ames test, Liver microsome toxicity assays |

Drug-Likeness

Drug-likeness is a qualitative concept describing how likely a compound is to become an oral drug, based on its physicochemical properties [8]. A common way to assess it is to apply a set of rules, the best known being Lipinski's Rule of Five [9]. This rule states that a compound is more likely to have poor absorption or permeability if it violates more than one of the following criteria:

- Molecular weight ≤ 500 Da

- Number of hydrogen bond donors ≤ 5

- Number of hydrogen bond acceptors ≤ 10

- Calculated log P (CLogP) ≤ 5 [9]
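The rule can be applied as a simple counter over precomputed descriptors. This sketch uses Lipinski's original limits (MW ≤ 500 Da, ≤ 5 donors, ≤ 10 acceptors, CLogP ≤ 5) and a hypothetical `MoleculeProperties` container with invented example values. In practice the inputs often come from RDKit (`Descriptors.MolWt`, `Lipinski.NumHDonors`, `Lipinski.NumHAcceptors`, `Crippen.MolLogP`), bearing in mind that Crippen logP is a different estimator from CLogP.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class MoleculeProperties:
    mol_weight: float  # Da
    h_donors: int
    h_acceptors: int
    clogp: float


def lipinski_violations(p: MoleculeProperties) -> List[str]:
    """List which Rule-of-Five limits the molecule exceeds."""
    violations = []
    if p.mol_weight > 500:
        violations.append("MW > 500 Da")
    if p.h_donors > 5:
        violations.append("H-bond donors > 5")
    if p.h_acceptors > 10:
        violations.append("H-bond acceptors > 10")
    if p.clogp > 5:
        violations.append("CLogP > 5")
    return violations


def passes_rule_of_five(p: MoleculeProperties) -> bool:
    # Poor absorption/permeability is flagged only when MORE than one limit is exceeded.
    return len(lipinski_violations(p)) <= 1


print(passes_rule_of_five(MoleculeProperties(520.0, 3, 6, 2.1)))  # True (1 violation)
print(passes_rule_of_five(MoleculeProperties(650.0, 6, 8, 4.0)))  # False (2 violations)
```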

An alternative approach to quantifying drug-likeness is the Quantitative Estimate of Drug-likeness (QED), which combines desirability scores for multiple physicochemical properties into a single weighted score between 0 and 1 [10]. It is important to remember that a positive drug-likeness score indicates the presence of structural fragments common in drugs but does not guarantee balanced properties, such as acceptable lipophilicity [8].
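One common formulation computes QED as a weighted geometric mean of per-property desirability scores d_i in (0, 1]. The sketch below shows only that aggregation step; the property-specific desirability functions and published weights are not reproduced, so the inputs are hypothetical. RDKit ships a full implementation as `rdkit.Chem.QED.qed`.

```python
import math
from typing import Sequence


def weighted_geometric_mean(desirabilities: Sequence[float], weights: Sequence[float]) -> float:
    """QED-style aggregation: exp(sum(w_i * ln d_i) / sum(w_i)), with each d_i in (0, 1]."""
    if len(desirabilities) != len(weights):
        raise ValueError("one weight per desirability is required")
    if any(not 0.0 < d <= 1.0 for d in desirabilities):
        raise ValueError("desirabilities must lie in (0, 1]")
    total_w = sum(weights)
    return math.exp(sum(w * math.log(d) for d, w in zip(desirabilities, weights)) / total_w)


# Hypothetical desirabilities for three properties; equal weights give the plain geometric mean.
print(round(weighted_geometric_mean([0.25, 1.0, 1.0], [1.0, 1.0, 1.0]), 3))  # 0.63
```

Because the mean is geometric, one very poor property pulls the score down more than it would under an arithmetic average (here 0.63 rather than 0.75).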

Environmental Fate

Environmental fate describes the journey and transformation of a chemical substance after its release into the environment [11]. For pharmaceutical compounds, this is critical for understanding ecological risks. The primary processes involved are:

- Transport: The movement of substances through environmental compartments like air, water, and soil via processes like advection, runoff, and leaching [11].

- Transformation: The change in a chemical's structure through biodegradation (by microorganisms), hydrolysis (reaction with water), photolysis (degradation by sunlight), and other reactions [12] [11].

- Accumulation: The buildup of substances in specific environmental compartments (e.g., sediments) or within living organisms, potentially leading to biomagnification up the food chain [11].

Emerging contaminants (ECs), a category that includes many pharmaceuticals, are of particular concern due to their persistence and potential biological effects even at trace concentrations [12].
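Persistence is often summarized by a degradation half-life. Assuming pseudo-first-order kinetics for the combined transformation processes (a simplification, since real rates vary with temperature, pH, light, and microbial activity), the remaining fraction and the time to reach a target level follow directly from the half-life. The 40-day value below is hypothetical.

```python
import math


def rate_constant(half_life_days: float) -> float:
    """First-order rate constant k = ln 2 / t_half (per day)."""
    return math.log(2) / half_life_days


def fraction_remaining(t_days: float, half_life_days: float) -> float:
    """C(t) / C0 = exp(-k t) under (pseudo-)first-order degradation."""
    return math.exp(-rate_constant(half_life_days) * t_days)


def time_to_fraction(target: float, half_life_days: float) -> float:
    """Days until only `target` (0 < target < 1) of the initial amount remains."""
    return -math.log(target) / rate_constant(half_life_days)


# Hypothetical compound with a 40-day half-life in surface water.
print(round(fraction_remaining(80, 40), 3))  # 0.25
print(round(time_to_fraction(0.01, 40), 1))  # 265.8
```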

Experimental Protocols & Computational Workflows

Protocol: Drug-Likeness Prediction Using ADMETlab2.0

This protocol provides a step-by-step guide for predicting the drug-like properties of compounds using the ADMETlab2.0 platform [9].

1. Purpose: To rapidly evaluate the drug-likeness of candidate compounds based on key pharmaceutical rules and properties, including Lipinski's Rule of Five, mutagenicity, and carcinogenicity.

2. Research Reagent Solutions & Materials

Table 2: Essential Research Reagents and Tools for Drug-Likeness Screening

| Item Name | Function/Description | Example/Source |
|---|---|---|
| Compound Libraries | Collections of molecules in standardized chemical file formats (e.g., SDF, SMILES) for screening. | In-house database, ZINC, PubChem |
| ADMETlab2.0 Server | A web-based platform for the computational prediction of ADMET and drug-like properties. | https://admetmesh.scbdd.com/ |
| pkCSM Server | An online tool used as an orthogonal validator for specific toxicity endpoints, such as liver toxicity. | http://biosig.unimelb.edu.au/pkcsm/ |

3. Procedure

- Compound Input: Prepare and upload the chemical structures of all candidate compounds to the ADMETlab2.0 server. Acceptable input formats include SMILES strings or common structural files (e.g., SDF, MOL).

- Property Selection: In the tool's interface, select the relevant drug-likeness parameters for prediction. These typically include:

- Lipinski's rule violations (Molecular Weight, H-bond donors/acceptors, Log P)

- Mutagenicity (Ames test)

- Carcinogenicity

- Other relevant physicochemical properties

- Job Submission and Analysis: Run the prediction. Upon completion, download and analyze the results.

- Data Filtering:

- For a compound to pass Lipinski's rule, it should exhibit no more than one violation.

- For mutagenicity and carcinogenicity, the probability value should typically be < 0.5 to be considered of low concern [9].

- Select compounds with the greatest number of favorable drug-like properties for further studies.

- Orthogonal Validation: Use the pkCSM server or similar tools to cross-validate specific toxicity predictions, such as liver toxicity [9].
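The data-filtering step reduces to a few comparisons once predictions are exported. A minimal sketch, assuming the results have been mapped to hypothetical keys (`lipinski_violations`, `ames_prob`, `carcinogenicity_prob`); ADMETlab2.0's actual output column names should be checked against the downloaded file, and the example values are invented.

```python
from typing import Dict, List, Tuple

Prediction = Dict[str, float]


def filter_checks(pred: Prediction, max_violations: int = 1, prob_cutoff: float = 0.5) -> Dict[str, bool]:
    """Apply the filtering rules from the procedure to one compound's predictions."""
    return {
        "lipinski": pred["lipinski_violations"] <= max_violations,
        "ames": pred["ames_prob"] < prob_cutoff,
        "carcinogenicity": pred["carcinogenicity_prob"] < prob_cutoff,
    }


def rank_compounds(predictions: Dict[str, Prediction]) -> List[Tuple[str, int, Dict[str, bool]]]:
    """Sort compounds by number of passed checks (most favorable first)."""
    rows = []
    for cid, pred in predictions.items():
        checks = filter_checks(pred)
        rows.append((cid, sum(checks.values()), checks))
    rows.sort(key=lambda row: (-row[1], row[0]))  # ties broken by ID for reproducibility
    return rows


# Hypothetical predictions after mapping the server's output columns to these keys.
preds = {
    "cmpd_1": {"lipinski_violations": 2, "ames_prob": 0.71, "carcinogenicity_prob": 0.30},
    "cmpd_2": {"lipinski_violations": 0, "ames_prob": 0.12, "carcinogenicity_prob": 0.08},
}
for cid, n_passed, checks in rank_compounds(preds):
    print(cid, n_passed, checks)
```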

4. Expected Output: A structured table of results for each compound, indicating pass/fail status for selected rules and quantitative or qualitative predictions for other ADMET endpoints.

Workflow: Machine Learning for ADMET Prediction

Machine learning (ML) is transforming ADMET prediction by modeling complex structure-property relationships, providing scalable, efficient alternatives to resource-intensive experimental methods [5]. The following workflow outlines a robust ML pipeline for building predictive ADMET models, incorporating best practices from recent research.

ML Workflow for ADMET Prediction

1. Data Curation and Standardization

- Source Data: Gather large-scale experimental ADMET data from public databases like ChEMBL, PubChem, and BindingDB [6].

- Key Challenge: Experimental results for the same compound can vary significantly due to different conditions (e.g., buffer, pH). A multi-agent Large Language Model (LLM) system can be employed to automatically extract and standardize experimental conditions from unstructured assay descriptions, which is crucial for creating high-quality benchmarks like PharmaBench [6].

- Data Splitting: Split the dataset using scaffold-based methods to ensure the model generalizes to novel chemical structures, not just those similar to the training set [7].
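A scaffold split keeps every molecule sharing a Bemis-Murcko scaffold in the same partition. The sketch below assumes scaffold strings have already been computed (for example with RDKit's `MurckoScaffold.MurckoScaffoldSmiles`) and uses one common deterministic heuristic: fill the training set with the largest scaffold groups, so that rarer scaffolds land in validation and test. Other variants shuffle the groups instead. The scaffold list is hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple


def scaffold_split(
    scaffolds: Sequence[str], frac_train: float = 0.8, frac_valid: float = 0.1
) -> Tuple[List[int], List[int], List[int]]:
    """Split molecule indices so that no scaffold appears in more than one partition."""
    groups: Dict[str, List[int]] = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)
    # Largest groups first; ties broken by first index so the split is deterministic.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    n = len(scaffolds)
    train: List[int] = []
    valid: List[int] = []
    test: List[int] = []
    for group in ordered:
        if len(train) + len(group) <= frac_train * n:
            train.extend(group)
        elif len(valid) + len(group) <= frac_valid * n:
            valid.extend(group)
        else:
            test.extend(group)
    return train, valid, test


# Hypothetical precomputed scaffold SMILES for ten molecules.
scaffolds = ["c1ccccc1"] * 5 + ["c1ccncc1"] * 3 + ["C1CCCCC1", "c1ccc2ccccc2c1"]
train, valid, test = scaffold_split(scaffolds)
print(len(train), len(valid), len(test))  # 8 1 1
```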

2. Molecular Featurization (Representation): Convert molecular structures into numerical representations that ML models can process. State-of-the-art methods include:

- Graph Neural Networks (GNNs): Natively represent a molecule as a graph of atoms (nodes) and bonds (edges), effectively capturing structural information [5].

- Pharmacophore-Based Features: Encode the spatial arrangement of key chemical features (e.g., hydrogen bond donors, hydrophobic regions) critical for biological activity [10].

- Fingerprints: Use vectors encoding the presence or absence of specific substructures (e.g., binary MACCS keys) or counts of pharmacophore-feature pairs at given topological distances (e.g., CATS descriptors) [13].

3. Model Training and Selection

- Algorithm Choice: Employ multitask deep neural networks, ensemble learning, or graph neural networks, which have demonstrated strong performance; multitask models in particular benefit from learning shared representations across related ADMET tasks [5] [7].

- Federated Learning: For organizations with proprietary data, federated learning enables collaborative training of models across distributed datasets without sharing confidential data, significantly expanding chemical space coverage and improving model robustness [7].
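At its core, federated training repeatedly aggregates model parameters that were trained locally at each site. A minimal sketch of federated averaging (FedAvg), one standard aggregation rule, assuming parameters are plain lists of floats; it is not necessarily the scheme used in [7], and production systems add secure aggregation and many rounds of local training. The partner names and values are hypothetical.

```python
from typing import Dict, List, Tuple

Params = Dict[str, List[float]]


def federated_average(client_updates: List[Tuple[Params, int]]) -> Params:
    """Average each parameter across clients, weighted by each client's training-sample count."""
    total = sum(n for _, n in client_updates)
    if total == 0:
        raise ValueError("at least one client must report training samples")
    reference = client_updates[0][0]
    return {
        name: [
            sum(params[name][i] * n for params, n in client_updates) / total
            for i in range(len(values))
        ]
        for name, values in reference.items()
    }


# Two hypothetical partners; only parameters and sample counts leave each site.
pharma_a = ({"w": [1.0, 2.0], "b": [0.0]}, 1000)
pharma_b = ({"w": [3.0, 4.0], "b": [1.0]}, 3000)
print(federated_average([pharma_a, pharma_b]))  # {'w': [2.5, 3.5], 'b': [0.75]}
```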

4. Model Validation and Evaluation

- Rigorous Benchmarking: Evaluate models against transparent benchmarks like the Polaris ADMET Challenge. Use multiple random seeds and scaffold-based cross-validation so that differences between models can be tested for statistical significance [7].

- Performance Metrics: Assess models based on predictive accuracy, applicability domain, and generalization to unseen chemical scaffolds.
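With several seeds, the model comparison should be paired: each seed and scaffold fold is run for both models, and the per-run differences are tested. A minimal sketch with hypothetical ROC-AUC values; `scipy.stats.ttest_rel` returns the corresponding p-value directly. Three runs give a test with very little power, so more seeds or folds are preferable.

```python
import math
import statistics
from typing import Dict, Sequence, Tuple


def summarize(scores_by_model: Dict[str, Sequence[float]]) -> Dict[str, Tuple[float, float]]:
    """Mean and sample standard deviation of a metric across seeds/folds."""
    return {m: (statistics.mean(s), statistics.stdev(s)) for m, s in scores_by_model.items()}


def paired_t_statistic(a: Sequence[float], b: Sequence[float]) -> float:
    """Paired t statistic for matched runs (same seed and scaffold fold for both models)."""
    if len(a) != len(b) or len(a) < 2:
        raise ValueError("need at least two matched runs")
    diffs = [x - y for x, y in zip(a, b)]
    sd = statistics.stdev(diffs)
    if sd == 0:
        raise ValueError("identical differences; t statistic undefined")
    return statistics.mean(diffs) / (sd / math.sqrt(len(diffs)))


# Hypothetical ROC-AUC values from three seeds, each using the same scaffold split for both models.
gnn = [0.80, 0.82, 0.81]
baseline = [0.78, 0.80, 0.80]
print(summarize({"gnn": gnn, "baseline": baseline}))
print(round(paired_t_statistic(gnn, baseline), 2))  # 5.0, compared against t with n - 1 = 2 df
```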

Framework: Pharmacophore-Guided Molecular Generation

A promising application of AI in early drug discovery is the de novo generation of novel drug-like molecules. The generative framework outlined below uses pharmacophore similarity to create bioactive compounds with high structural novelty [10] [13].

Pharmacophore-Guided Generative Design

1. Input and Pharmacophore Definition

- Reference Set: Provide a custom set of known active compounds, such as FDA-approved drugs or clinical candidates [13].

- Pharmacophore Model: Define the essential spatial arrangement of chemical features (e.g., hydrogen bond donors/acceptors, hydrophobic spots) required for biological activity. This can be derived from the reference set or a protein target's structure [10].

2. Molecular Generation and Optimization

- Generative Model: Models like PGMG (Pharmacophore-Guided Molecule Generation) use a graph neural network to encode the pharmacophore and a transformer decoder to generate molecules in an autoregressive manner [10]. Alternatively, Reinforcement Learning (RL) frameworks like FREED++ can be used.

- Reward Function: The key to success is a carefully designed reward function that balances multiple objectives [13]:

- Maximize Pharmacophore Similarity: Uses metrics like cosine similarity on pharmacophore descriptors (e.g., CATS).

- Minimize Structural Similarity: Uses Tanimoto similarity on structural fingerprints (e.g., MACCS keys or MAP4) to ensure novelty.

- Optimize Drug-Likeness: Incorporates scores like QED (Quantitative Estimate of Drug-likeness) and Synthetic Accessibility (SA).
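The reward terms above can be combined as a weighted sum. This is a hypothetical linear reward, not the exact function used by PGMG or FREED++: fingerprints are represented as sets of on-bit indices, pharmacophore descriptors as numeric vectors, and QED and SA scores are assumed to be precomputed (SA on its usual 1-to-10 scale, lower meaning easier to synthesize). The weights and example inputs are placeholders.

```python
import math
from typing import Sequence, Set, Tuple


def tanimoto(bits_a: Set[int], bits_b: Set[int]) -> float:
    """Tanimoto (Jaccard) similarity of binary fingerprints given as sets of on-bit indices."""
    union = bits_a | bits_b
    return len(bits_a & bits_b) / len(union) if union else 0.0


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity of two pharmacophore descriptor vectors (e.g., CATS-like counts)."""
    dot = sum(x * y for x, y in zip(u, v))
    nu = math.sqrt(sum(x * x for x in u))
    nv = math.sqrt(sum(y * y for y in v))
    return dot / (nu * nv) if nu and nv else 0.0


def reward(
    cand_pharm: Sequence[float],
    ref_pharm: Sequence[float],
    cand_bits: Set[int],
    ref_bits: Set[int],
    qed: float,
    sa_score: float,
    weights: Tuple[float, float, float, float] = (1.0, 1.0, 1.0, 1.0),
) -> float:
    """Reward pharmacophore match, penalize structural similarity, reward drug-likeness."""
    w_pharm, w_struct, w_qed, w_sa = weights
    sa_term = (10.0 - sa_score) / 9.0  # map SA 1 (easy) .. 10 (hard) onto 1 .. 0
    return (
        w_pharm * cosine(cand_pharm, ref_pharm)
        - w_struct * tanimoto(cand_bits, ref_bits)
        + w_qed * qed
        + w_sa * sa_term
    )


print(round(reward([2.0, 1.0, 0.0], [2.0, 1.0, 1.0], {1, 5, 9}, {1, 7, 9, 12}, qed=0.7, sa_score=3.2), 3))
```

The sign on the structural term is what pushes generation toward scaffold hops: a candidate scores highest when it matches the reference pharmacophore while sharing few substructure bits with it.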

3. Output and Validation

- The output is a set of novel molecules that retain the pharmacophoric features of active compounds but are structurally distinct, enhancing potential for patentability and functional innovation [13].

- Generated molecules should be validated using orthogonal methods, including docking studies and checks for synthetic accessibility [13].

The future of molecular property prediction lies in the integration of advanced computational techniques across the ADMET, drug-likeness, and environmental fate domains. The convergence of large-scale benchmarking data (PharmaBench), sophisticated ML models (GNNs, Multitask Learning), and collaborative training paradigms (Federated Learning) is helping to address the historical limitations of data scarcity and poor generalizability [6] [7]. Furthermore, generative AI approaches are shifting the paradigm from passive prediction to active design, creating novel, optimized molecular entities from the outset [10] [13].

Simultaneously, the regulatory and ecological landscape is evolving to consider the complete lifecycle of a pharmaceutical compound. Understanding a molecule's environmental fate—its transport, transformation, and potential for accumulation in aquatic and terrestrial ecosystems—is becoming an integral part of a comprehensive risk assessment [12] [11]. By adopting these integrated and forward-looking strategies, researchers and drug development professionals can significantly de-risk the discovery pipeline, accelerate the development of safer therapeutics, and fulfill their role as responsible stewards of both human and environmental health.

Molecular representation learning has catalyzed a paradigm shift in computational chemistry and pharmaceutical research, transitioning from reliance on manually engineered descriptors to the automated extraction of features using deep learning. This evolution enables more accurate predictions of molecular properties, which is crucial for accelerating drug discovery and development processes. In the pharmaceutical industry, where bringing a new drug to market traditionally costs between $161 million and over $4.5 billion and takes up to 15 years, advances in molecular representation learning offer promising, efficient alternatives for preclinical screening of drug-like molecules. These approaches are particularly valuable for early evaluation of absorption, distribution, metabolism, excretion, toxicity, and physicochemical (ADMET-P) properties, an evaluation that can significantly reduce research and development costs while mitigating the risk of side effects and toxicities.

The global molecular modeling market, valued at $8.25 billion in 2024 and projected to reach $9.44 billion in 2025, reflects the growing importance of these computational approaches in pharmaceutical research and development. This review comprehensively examines the evolution of molecular representations, from traditional expert-crafted features to modern learned embeddings, with specific applications in pharmaceutical compound research.

Historical Perspective: Expert-Crafted Molecular Representations

Traditional Molecular Descriptors and Fingerprints

Before the advent of learned representations, molecular representation relied heavily on expert-crafted features designed by cheminformatics specialists. These traditional representations can be broadly categorized into molecular descriptors and molecular fingerprints, both of which translate chemical structures into computationally tractable formats while emphasizing different aspects of molecular information.

Molecular descriptors provide detailed physicochemical information through numerical computation, including:

- Physicochemical descriptors: Quantify properties like molecular weight, logP, and molar refractivity

- Topological descriptors: Encode structural patterns using graph-theoretical indices

- Quantum chemical descriptors: Capture electronic properties derived from quantum mechanical calculations

Molecular fingerprints employ a more structured encoding method, generating binary or hashed codes by identifying structural fragments, functional groups, or substructures within molecules. Common fingerprint approaches include:

- Extended-Connectivity Fingerprints (ECFP): Capture molecular features based on atom connectivity

- MACCS keys: Encode specific chemical substructures using a predefined dictionary

- Pharmacophore descriptors: Contain information about the spatial orientation and interactions of a molecule

Table 1: Performance Comparison of Molecular Fingerprints Across Task Types

| Fingerprint Type | Classification Tasks (Avg. AUC, higher is better) | Regression Tasks (Avg. RMSE, lower is better) | Key Characteristics |
|---|---|---|---|
| ECFP | 0.830 | - | Excellent for local structure and atomic environment |
| RDKit | 0.830 | - | Structural pattern recognition |
| MACCS | - | 0.587 | Effective for continuous property prediction |
| EState | 0.783 | - | Electronic state and atomic environment focus |
| ECFP+RDKit (Combination) | 0.843 | - | Complementary features for classification |
| MACCS+EState (Combination) | - | 0.464 | Comprehensive description for regression |

Limitations of Traditional Approaches

While traditional molecular representations enabled significant advances in quantitative structure-activity relationship (QSAR) modeling, they present several limitations:

- Information loss: Structural fingerprints and descriptors discard some molecular structural information and heavily rely on prior knowledge

- Fixed nature: Cannot easily adapt to represent dynamic behaviors of molecules in different environments

- Task dependency: Performance varies significantly across different prediction tasks

- Limited generalization: Struggle to capture complex, non-linear relationships in molecular data

These limitations motivated the development of more sophisticated, data-driven representation learning approaches that could automatically extract relevant features from molecular data.

Modern Approaches: Learned Molecular Representations

Graph-Based Representations

Graph-based representations have introduced a transformative dimension to molecular encoding by explicitly representing atoms as nodes and bonds as edges in a graph structure. This approach naturally aligns with molecular topology and enables more nuanced structural depiction.

Graph Neural Networks (GNNs) have emerged as particularly effective architectures for learning from molecular graphs. Variants include:

- Graph Convolutional Networks (GCNs): Aggregate features through convolution operations on graph structures

- Graph Attention Networks (GATs): Assign different importance weights to neighbors of each node

- Directed Message Passing Neural Networks (D-MPNN): Extract molecular features through directed message passing
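The neighborhood aggregation shared by these variants can be shown on a three-atom graph. This is a simplified sketch, not any specific library's layer: it mean-aggregates each atom's neighbors plus itself and applies a linear map and ReLU, whereas the original GCN uses symmetric degree normalization, GATs learn per-neighbor attention weights, and D-MPNNs pass messages along directed bonds. In a real model the weight matrix is learned; here it is the identity for readability.

```python
from typing import List, Sequence, Tuple


def message_passing_step(
    features: List[List[float]],
    bonds: Sequence[Tuple[int, int]],
    weight: List[List[float]],
) -> List[List[float]]:
    """One round: mean over each atom's neighbors (plus itself), then linear map + ReLU."""
    n_atoms, in_dim, out_dim = len(features), len(weight), len(weight[0])
    neighbors = {i: [i] for i in range(n_atoms)}  # self-loop keeps the atom's own state
    for a, b in bonds:  # bonds are undirected edges
        neighbors[a].append(b)
        neighbors[b].append(a)
    updated = []
    for i in range(n_atoms):
        nbrs = neighbors[i]
        agg = [sum(features[j][k] for j in nbrs) / len(nbrs) for k in range(in_dim)]
        updated.append([max(0.0, sum(agg[k] * weight[k][c] for k in range(in_dim))) for c in range(out_dim)])
    return updated


def sum_readout(node_states: List[List[float]]) -> List[float]:
    """Permutation-invariant pooling of atom states into one molecule vector."""
    return [sum(column) for column in zip(*node_states)]


# Ethanol heavy atoms C-C-O, one-hot features [is_carbon, is_oxygen], identity weights.
atoms = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
bonds = [(0, 1), (1, 2)]
identity = [[1.0, 0.0], [0.0, 1.0]]
h = message_passing_step(atoms, bonds, identity)
print(sum_readout(h))
```

After one step, the central carbon's state mixes in oxygen information (1/3 of its second component), which is how stacked layers let each atom see progressively larger neighborhoods.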

The MoleculeFormer architecture exemplifies modern graph-based approaches: it is a multi-scale feature integration model built on a Graph Convolutional Network (GCN)-Transformer architecture. It uses independent GCN and Transformer modules to extract features from atom and bond graphs while incorporating rotational equivariance constraints and prior molecular fingerprints, capturing both local and global features with invariance to rotation and translation.

Advanced Architectures for Imperfectly Annotated Data

Real-world pharmaceutical datasets often face challenges of imperfect annotation, where properties are labeled in a scarce, partial, and imbalanced manner due to the prohibitive cost of experimental evaluation. Novel architectures have emerged to address these limitations:

OmniMol represents a unified and explainable multi-task molecular representation learning framework that formulates molecules and corresponding properties as a hypergraph. This approach extracts three key relationships: among properties, molecule-to-property, and among molecules. Key innovations include:

- Task-routed mixture of experts (t-MoE) backbone: Captures correlations among properties and produces task-adaptive outputs

- SE(3)-encoder: Enables chirality awareness from molecular conformations without expert-crafted features

- Equilibrium conformation supervision: Applies recursive geometry updates and scale-invariant message passing

This architecture addresses imperfect annotation issues, avoids synchronization difficulties associated with multiple-head models, and maintains O(1) complexity independent of the number of tasks.
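Task-routed gating can be illustrated with a toy mixture of experts. This is a generic sketch under stated assumptions, not OmniMol's implementation: gate logits are a linear function of a task embedding, all experts are dense and shared, and outputs are mixed with softmax weights. In this formulation, adding a task adds an embedding vector rather than a new output head. The experts and embeddings are hypothetical.

```python
import math
from typing import Callable, List, Sequence, Tuple

Expert = Callable[[Sequence[float]], List[float]]


def softmax(logits: Sequence[float]) -> List[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def task_routed_moe(
    x: Sequence[float],
    task_embedding: Sequence[float],
    gate_weights: Sequence[Sequence[float]],
    experts: Sequence[Expert],
) -> Tuple[List[float], List[float]]:
    """Mix expert outputs with gate probabilities computed from the task embedding alone."""
    logits = [sum(t * w for t, w in zip(task_embedding, row)) for row in gate_weights]
    gates = softmax(logits)
    outputs = [expert(x) for expert in experts]
    mixed = [sum(g * out[d] for g, out in zip(gates, outputs)) for d in range(len(outputs[0]))]
    return mixed, gates


# Two toy experts shared by all tasks; tasks differ only in their embeddings.
experts = [lambda v: [2.0 * a for a in v], lambda v: [a + 1.0 for a in v]]
gate_weights = [[1.0, 0.0], [0.0, 1.0]]  # one row per expert
mixed, gates = task_routed_moe([1.0, 2.0], [0.0, 0.0], gate_weights, experts)
print(mixed, gates)  # [2.0, 3.5] [0.5, 0.5]
```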

Table 2: Performance Comparison of Molecular Representation Learning Models

| Model | Architecture Type | Key Innovations | Reported Performance |
|---|---|---|---|
| OmniMol | Hypergraph-based Multi-task | Task-routed MoE, SE(3)-encoder, equilibrium conformation supervision | State-of-the-art in 47/52 ADMET-P tasks |
| MoleculeFormer | GCN-Transformer Hybrid | Multi-scale feature integration, rotational equivariance, 3D structure incorporation | Robust performance across 28 datasets |
| HRGCN+ | Modified GNN | Combines molecular graphs and descriptors as input | Simple but highly efficient modeling |
| FP-GNN | Graph Attention Network | Integrates three types of molecular fingerprints with GAT | Enhanced performance and interpretability |
| KPGT | Graph Transformer | Knowledge-guided pre-training strategy | Robust representations for drug discovery |

Experimental Protocols and Methodologies

Protocol 1: Implementing Multi-Task Learning with OmniMol for ADMET-P Prediction

Purpose: To predict multiple ADMET-P properties simultaneously from imperfectly annotated data using hypergraph-based representation learning.

Materials and Reagents:

- Computational Resources: High-performance computing cluster with GPU acceleration (minimum 16GB VRAM)

- Software Dependencies: Python 3.8+, PyTorch 1.12+, RDKit 2022.09+, OmniMol framework

- Data Sources: ADMETLab 2.0 dataset (approximately 250k molecule-property pairs covering 40 classification and 12 regression tasks)

Procedure:

Data Preprocessing:

- Convert SMILES representations to molecular graphs with atom and bond features

- Normalize experimental property values using robust scaling

- Construct hypergraph structure linking molecules to their annotated properties
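The preprocessing steps above can be sketched in NumPy. This is a generic illustration, not the OmniMol codebase: the function names are hypothetical, missing labels are assumed to be stored as NaN, and "robust scaling" is taken to mean centering each task on its median and dividing by its interquartile range (IQR), computed over measured values only. The binary molecule-by-property incidence matrix is one simple way to encode the hypergraph, with each property acting as a hyperedge over the molecules annotated for it.

```python
import numpy as np

def robust_scale_per_task(y: np.ndarray):
    """Scale each task column by its median and IQR, ignoring missing labels (NaN).
    Returns the scaled labels plus the statistics needed to invert the transform."""
    median = np.nanmedian(y, axis=0)
    q75, q25 = np.nanpercentile(y, [75, 25], axis=0)
    iqr = q75 - q25
    iqr[iqr == 0] = 1.0  # guard against constant task columns
    return (y - median) / iqr, median, iqr

def incidence_matrix(y: np.ndarray) -> np.ndarray:
    """Molecule-by-property incidence matrix (hypothetical encoding): entry (i, t)
    is 1 when molecule i has a measured label for property t."""
    return (~np.isnan(y)).astype(np.int8)

# Toy data: 4 molecules x 2 properties, partially annotated (NaN = not measured)
y = np.array([[1.0, np.nan],
              [2.0, 10.0],
              [3.0, np.nan],
              [4.0, 30.0]])
y_scaled, med, iqr = robust_scale_per_task(y)
H = incidence_matrix(y)  # rows: molecules, columns: property hyperedges
```

Missing entries stay NaN after scaling, so a loss function can mask them using `H`.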

Model Initialization:

- Initialize task embeddings using task-related meta-information encoder

- Configure task-routed mixture of experts (t-MoE) backbone with 8 expert networks

- Set up SE(3)-encoder for physical symmetry with equilibrium conformation supervision
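To make the routing idea concrete, the sketch below shows one plausible form of a task-routed mixture of experts: a router scores the 8 experts from a task embedding, keeps the top two, and mixes their outputs. The class name, the top-k routing rule, and the single-matrix tanh experts are assumptions for illustration; OmniMol's actual t-MoE may route and combine experts differently. In this sketch the router's size depends on the task-embedding dimension rather than on the number of tasks.

```python
import numpy as np

rng = np.random.default_rng(0)

def softmax(z: np.ndarray) -> np.ndarray:
    z = z - z.max()  # numerical stability
    e = np.exp(z)
    return e / e.sum()

class TaskRoutedMoE:
    """Hypothetical minimal task-routed mixture of experts."""

    def __init__(self, d_in, d_out, d_task, n_experts=8, top_k=2):
        self.experts = [rng.normal(scale=0.1, size=(d_in, d_out)) for _ in range(n_experts)]
        self.router = rng.normal(scale=0.1, size=(d_task, n_experts))
        self.top_k = top_k

    def gate(self, task_emb):
        logits = task_emb @ self.router           # one score per expert
        top = np.argsort(logits)[-self.top_k:]    # indices of the k highest scores
        weights = np.zeros_like(logits)
        weights[top] = softmax(logits[top])       # renormalize over selected experts
        return weights

    def __call__(self, h_mol, task_emb):
        w = self.gate(task_emb)
        # Task-adaptive output: weighted sum over the selected experts only.
        return sum(w[e] * np.tanh(h_mol @ self.experts[e]) for e in np.flatnonzero(w))

moe = TaskRoutedMoE(d_in=16, d_out=4, d_task=6)
h = rng.normal(size=16)       # molecule representation from the encoder (toy)
task_a = rng.normal(size=6)   # embedding of one ADMET-P task (toy)
out = moe(h, task_a)
```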

Training Protocol:

- Implement multi-task optimization with uncertainty-weighted loss function

- Train for 500 epochs with batch size of 64 using AdamW optimizer

- Apply recursive geometry updates every 50 epochs

- Utilize learning rate scheduling with warmup and cosine decay
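The two training ingredients named above can be written in a few lines. The loss below uses a common simplified form of homoscedastic uncertainty weighting, in which each task t has a learnable log-variance s_t; in a real model these would be trainable parameters updated by AdamW alongside the network weights. The warmup-plus-cosine schedule is a generic version, and the function names and defaults are illustrative assumptions rather than OmniMol settings.

```python
import math

def uncertainty_weighted_loss(task_losses, log_vars):
    """Combine per-task losses as sum_t exp(-s_t) * L_t + s_t.
    Tasks with larger estimated noise (larger s_t) are down-weighted;
    the +s_t term keeps s_t from growing without bound."""
    return sum(math.exp(-s) * loss + s for loss, s in zip(task_losses, log_vars))

def warmup_cosine_lr(step, total_steps, warmup_steps, base_lr, min_lr=0.0):
    """Linear warmup to base_lr, then cosine decay to min_lr at total_steps."""
    if step < warmup_steps:
        return base_lr * (step + 1) / warmup_steps
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return min_lr + 0.5 * (base_lr - min_lr) * (1.0 + math.cos(math.pi * progress))
```

With 500 epochs and batch size 64, `total_steps` would be 500 times the number of batches per epoch.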

Evaluation:

- Assess performance on hold-out test set across all tasks

- Generate explainability maps for molecule-property relationships

- Compare against single-task and multi-head baselines

Troubleshooting:

- For unstable training, increase the number of expert networks in the t-MoE module

- If conformer generation fails, implement fallback to distance geometry

- Address class imbalance using focal loss for classification tasks
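For the class-imbalance fix, a focal loss for one binary classification task might look like the NumPy sketch below. It follows the standard formulation with a focusing parameter gamma and a class weight alpha; the defaults (gamma = 2, alpha = 0.25) are common choices, not values specified by the protocol. With partially annotated data, the per-sample terms would additionally be masked to labeled molecule-task pairs.

```python
import numpy as np

def binary_focal_loss(p, y, gamma=2.0, alpha=0.25, eps=1e-7):
    """Mean binary focal loss.
    p: predicted probabilities of the positive class; y: 0/1 labels.
    The (1 - p_t) ** gamma factor shrinks the loss of easy, well-classified
    examples, so the abundant majority class dominates the gradient less."""
    p = np.clip(p, eps, 1.0 - eps)
    p_t = np.where(y == 1, p, 1.0 - p)              # probability of the true class
    alpha_t = np.where(y == 1, alpha, 1.0 - alpha)  # class weighting
    return float(np.mean(-alpha_t * (1.0 - p_t) ** gamma * np.log(p_t)))
```

Setting gamma = 0 and alpha = 0.5 recovers half the ordinary binary cross-entropy, which is a convenient sanity check.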

Protocol 2: Evaluating Representation Quality with Topological Data Analysis

Purpose: To systematically evaluate and select molecular representations based on topological characteristics of feature spaces.

Materials and Reagents:

- Software: TopoLearn framework, scikit-learn 1.2+, Persim 0.3+, Gudhi 3.7.0+

- Datasets: 12 benchmark molecular datasets with diverse property landscapes

- Representations: 25 molecular representations (including fingerprints, descriptors, and learned embeddings)

Procedure:

Feature Space Construction:

- Generate molecular representations using each encoding method

- Compute pairwise distances using Tanimoto (for fingerprints) and Euclidean (for continuous) metrics

- Apply dimensionality reduction for visualization (UMAP or t-SNE)
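The two distance metrics in this step can be computed for a whole dataset at once. The sketch below assumes fingerprints are already available as a 0/1 NumPy array (for RDKit bit vectors, `DataStructs.BulkTanimotoSimilarity` computes the same similarity); function names are illustrative, and treating two all-zero fingerprints as identical is a convention chosen here.

```python
import numpy as np

def tanimoto_distance_matrix(fps: np.ndarray) -> np.ndarray:
    """Pairwise Tanimoto (Jaccard) distance for binary fingerprints.
    fps: (n_molecules, n_bits) array of 0/1. Distance = 1 - |A & B| / |A | B|."""
    X = fps.astype(np.float64)
    inter = X @ X.T                                   # |A & B| for every pair
    counts = X.sum(axis=1)
    union = counts[:, None] + counts[None, :] - inter
    sim = np.divide(inter, union, out=np.ones_like(inter), where=union > 0)
    return 1.0 - sim

def euclidean_distance_matrix(X: np.ndarray) -> np.ndarray:
    """Pairwise Euclidean distance for continuous descriptors or embeddings."""
    sq = (X ** 2).sum(axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * X @ X.T
    return np.sqrt(np.clip(d2, 0.0, None))            # clip round-off negatives
```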

Topological Descriptor Calculation:

- Compute persistent homology descriptors using Vietoris-Rips complex construction

- Calculate QSAR landscape indices (SALI, SARI, MODI, ROGI)

- Extract topological features including Betti curves and persistence images
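Persistent homology in dimension 0 gives an intuitive view of the Vietoris-Rips construction: as the distance threshold grows, connected components merge, and the merge distances are exactly the death times of H0 features (the same heights as single-linkage clustering). The sketch below computes these pairs and the Betti-0 curve from any precomputed distance matrix. It is a hypothetical helper for intuition only; loops, voids, and persistence images require a dedicated library such as Gudhi, listed in the materials.

```python
import numpy as np

def h0_persistence(D: np.ndarray):
    """0-dimensional (birth, death) pairs of the Vietoris-Rips filtration on D.
    Every point is born at 0; when an edge of length d first joins two
    components, one of them dies at d (Kruskal's algorithm with union-find)."""
    n = D.shape[0]
    parent = list(range(n))

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving
            i = parent[i]
        return i

    iu, ju = np.triu_indices(n, k=1)
    order = np.argsort(D[iu, ju], kind="stable")  # edges by increasing length
    pairs = []
    for idx in order:
        a, b = find(iu[idx]), find(ju[idx])
        if a != b:
            parent[b] = a
            pairs.append((0.0, float(D[iu[idx], ju[idx]])))
    pairs.append((0.0, float("inf")))  # the final component never dies
    return pairs

def betti0_curve(pairs, eps_grid):
    """Number of connected components alive at each threshold epsilon."""
    deaths = np.array([d for _, d in pairs])
    return np.array([(deaths > e).sum() for e in eps_grid])
```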

Modelability Assessment:

- Train machine learning models (Random Forest, GNN, Transformer) on each representation

- Evaluate generalization error using nested cross-validation

- Correlate topological descriptors with model performance metrics

Representation Selection:

- Apply TopoLearn predictive model to estimate generalization error

- Select optimal representation based on topological characteristics

- Validate selection against empirical performance

Troubleshooting:

- For computational efficiency, subsample large datasets before persistent homology calculation

- If topological descriptors show weak correlation, experiment with alternative distance metrics

- Address representation dimensionality effects using ROGI-XD instead of ROGI

Visualization Framework

Workflow Diagram: Molecular Representation Learning Pipeline

Architecture Diagram: OmniMol Hypergraph Framework

Table 3: Essential Computational Tools for Molecular Representation Learning

| Tool/Resource | Type | Function | Application Context |
|---|---|---|---|
| RDKit | Cheminformatics Library | Molecular descriptor calculation, fingerprint generation, and graph construction | Fundamental toolkit for all molecular representation tasks |
| PyTorch Geometric | Deep Learning Library | Graph neural network implementations and molecular graph processing | GNN-based representation learning |
| OmniMol Framework | Specialized Architecture | Multi-task learning with hypergraph representations | ADMET-P prediction with imperfect annotation |
| TopoLearn | Analysis Framework | Topological data analysis for representation evaluation | Representation selection and quality assessment |
| ADMETLab 2.0 Dataset | Benchmark Data | Curated molecular properties for ADMET-P prediction | Model training and validation |
| Open Catalyst 2020 | Large-Scale Dataset | Quantum mechanical calculations for catalyst properties | Pre-training and transfer learning |
| Flare V7 | Molecular Modeling Platform | Combines ligand-based and structure-based drug design | Molecular dynamics and docking studies |

The evolution of molecular representations from expert-crafted features to learned embeddings represents a fundamental transformation in computational drug discovery. Modern approaches, particularly graph-based representations and specialized architectures like OmniMol, have demonstrated remarkable capabilities in addressing real-world challenges such as imperfectly annotated data and complex property landscapes.

The integration of physical principles through SE(3)-equivariant networks and conformational supervision bridges the gap between data-driven approaches and fundamental chemical knowledge. Furthermore, topological data analysis provides systematic frameworks for evaluating representation quality beyond empirical benchmarking.

As the field advances, key future directions include:

- Development of foundation models for chemistry through self-supervised learning on large-scale molecular datasets

- Improved integration of quantum mechanical properties and 3D structural information

- Cross-modal fusion strategies that combine graphs, sequences, and quantum descriptors

- Enhanced explainability frameworks for translating model insights to chemical intuition

These advances in molecular representation learning are poised to significantly accelerate drug discovery pipelines, reduce development costs, and enable more precise targeting of therapeutic interventions, ultimately contributing to the development of novel treatments for diseases with significant unmet needs.

In the pursuit of novel pharmaceutical compounds, the accurate prediction of molecular properties is a cornerstone of efficient drug discovery. However, this field is perpetually challenged by three fundamental issues: the scarcity of high-quality experimental data, the inherent variability of biological experiments, and the perplexing phenomenon of activity cliffs, where minute structural changes cause drastic differences in biological potency. This Application Note delineates these interconnected challenges and provides structured data, validated protocols, and visual workflows to aid researchers in navigating this complex landscape. Framed within the context of molecular property prediction, the content herein is designed to equip scientists with strategies to enhance the reliability and predictive power of their computational models.

Data Scarcity in Molecular Property Prediction

Data scarcity remains a major obstacle to effective machine learning in molecular property prediction, affecting diverse domains from pharmaceuticals to energy carriers [2]. The development of robust predictive models is constrained by the limited availability of reliable, high-quality labels for many properties of interest.

Strategies to Overcome Data Scarcity

Several machine learning strategies have been developed to mitigate the impact of limited data:

- Multi-Task Learning (MTL): MTL leverages correlations among related molecular properties to improve predictive performance by sharing learned representations across tasks. However, its efficacy can be degraded by negative transfer in imbalanced datasets [2].

- Adaptive Checkpointing with Specialization (ACS): An advanced training scheme for multi-task graph neural networks that mitigates detrimental inter-task interference while preserving the benefits of MTL. It combines a shared, task-agnostic backbone with task-specific heads, checkpointing the best model parameters when a task's validation loss reaches a new minimum [2]. On benchmarks like ClinTox, SIDER, and Tox21, ACS consistently matched or surpassed the performance of recent supervised methods and demonstrated the ability to learn accurate models with as few as 29 labeled samples for sustainable aviation fuel properties [2].

- One-Shot Learning (OSL): A technique for developing a model on a training set consisting of one or a few instances through the transfer of information contained in other models [14] [15].

- Federated Learning (FL): An emerging technology that enables collaborative model training across multiple organizations without sharing the underlying data, thus overcoming data privacy concerns and silos [14].

- Leveraging Patent Data: Patent data can provide a rich, commercially relevant source of information that is often absent from public academic databases, helping to fill critical gaps about failed experiments and strategic compound design [16].
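The per-task checkpointing rule in ACS can be sketched independently of any deep learning framework. In the hypothetical class below, the caller passes per-task validation losses together with the current shared backbone and task heads after each epoch; whenever a task reaches a new validation minimum, a copy of that backbone-head pair is stored for the task. The class name and the dictionaries standing in for model parameters are assumptions; in PyTorch one would store copies of each module's `state_dict()` instead.

```python
import copy

class PerTaskCheckpointer:
    """Hypothetical sketch of ACS-style specialization: keep, for every task,
    the (shared backbone, task head) pair from its best validation epoch, so
    later updates that help other tasks cannot degrade the stored model."""

    def __init__(self):
        self.best = {}

    def update(self, epoch, val_losses, backbone, heads):
        for task, loss in val_losses.items():
            if task not in self.best or loss < self.best[task]["loss"]:
                self.best[task] = {
                    "loss": loss,
                    "epoch": epoch,
                    "backbone": copy.deepcopy(backbone),   # snapshot shared weights
                    "head": copy.deepcopy(heads[task]),    # snapshot task-specific head
                }

# Toy run: "tox" peaks at epoch 1, "sol" keeps improving until epoch 2.
ckpt = PerTaskCheckpointer()
history = [{"tox": 0.9, "sol": 0.8}, {"tox": 0.7, "sol": 0.6}, {"tox": 0.75, "sol": 0.5}]
for epoch, losses in enumerate(history):
    backbone = {"w": epoch}                                  # stand-in parameters
    heads = {"tox": {"w": 10 + epoch}, "sol": {"w": 20 + epoch}}
    ckpt.update(epoch, losses, backbone, heads)
```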

Table 1: Strategies for Mitigating Data Scarcity in AI-Driven Drug Discovery

| Strategy | Core Principle | Reported Advantage | Considerations |
|---|---|---|---|
| Multi-Task Learning (MTL) [2] [14] | Learns multiple related tasks simultaneously to share inductive bias. | Improves generalization by leveraging commonalities between tasks. | Prone to negative transfer with low task relatedness or imbalanced data. |
| Adaptive Checkpointing with Specialization (ACS) [2] | An MTL variant that uses task-specific early stopping and model checkpointing. | Mitigates negative transfer; demonstrated accurate predictions with as few as 29 samples. | Requires careful monitoring of per-task validation loss during training. |
| Transfer Learning (TL) [14] | Transfers knowledge from a data-rich source task to a data-poor target task. | Reduces the amount of target task data needed for effective learning. | Performance depends on the relatedness between source and target domains. |
| One-Shot Learning (OSL) [14] [15] | Models are built to learn from one or a very small number of examples. | Enables model development in extremely low-data regimes. | Often relies on prior knowledge or meta-learning across many tasks. |
| Data Augmentation (DA) [14] | Artificially expands the training set by creating modified versions of existing data. | Increases effective dataset size and can improve model robustness. | Chemically valid transformations are non-trivial compared to image rotation. |

Diagram 1: ACS workflow for multi-task learning, showing shared backbone and task-specific heads with checkpointing.

Experimental Variability: A Pervasive Hurdle

Experimental variability introduces significant noise into training data for predictive models, undermining model accuracy and generalizability. This variability is an inherent feature of biological systems and measurement techniques.

Case Studies in Experimental Variability

- Chronic Toxicity Data (LOAEL): A study comparing (Q)SAR predictions with experimental variability of chronic lowest-observed-adverse-effect levels (LOAELs) from in vivo rat studies found that predictions within the model's applicability domain had variability comparable to the experimental training data itself [17]. This highlights that even optimal models are constrained by the noise present in their training data.

- In Vitro Plasma Protein Binding (PPB): A rigorous statistical analysis of PPB measurements, a critical parameter in pharmacokinetics, identified multiple sources of variability. These included well position in assay plates, day-to-day reproducibility, and, most significantly, site-to-site (inter-laboratory) differences. The loss of physical integrity of the equilibrium dialysis membrane due to pipetting errors was a major contributor [18].

Table 2: Sources and Mitigation Strategies for Experimental Variability

| Assay Type | Key Sources of Variability | Impact on Data Quality | Recommended Mitigation Strategies |
|---|---|---|---|
| Chronic Toxicity (LOAEL) [17] | Inter-study differences, animal model heterogeneity, subjective endpoint assessment. | Reduces reliability of data used for model training and validation. | Use of automated read-across ((Q)SAR) models with strict applicability domains; transparent data reporting. |
| Plasma Protein Binding [18] | Pipetting errors damaging dialysis membranes, lack of pH control, volume shift, laboratory-specific protocols. | Leads to inaccurate fraction unbound (fu) values, misinforming PK/PD models. | Standardization of protocols, use of in-well controls, Design of Experiments (DOE) for parameter optimization. |
| Genetic Variability [19] [20] | Naturally occurring missense variants in drug target genes across populations. | Affects pocket geometry & drug binding, leading to inter-individual efficacy differences. | Integration of genomic data and structural information to guide personalized drug selection. |

Protocol: Robust Plasma Protein Binding Assay

This protocol is adapted from methodologies that employed Six Sigma and Design of Experiments (DOE) to minimize variability [18].

1. Principle: Equilibrium dialysis is used to separate protein-bound from unbound drug across a semi-permeable membrane at a constant temperature and pH, allowing calculation of the fraction unbound (fu).

2. Key Reagents and Materials:

- Equilibrium Dialysis Device: 96-well format.

- Dialysis Membrane: Physico-chemically stable under assay conditions.

- Test Compound(s)

- Control Plasma: Human plasma from a certified supplier.

- Buffer: Phosphate-buffered saline (PBS), isotonic.

- In-Well Control Compound: A reference compound with well-established binding characteristics.

3. Procedure:

   1. Preparation: Pre-condition the dialysis membrane according to manufacturer's instructions. Fill the buffer chambers with PBS.
   2. Dosing: Add the test and control compounds to the plasma chamber. The in-well control must be included in every run.
   3. Equilibration: Seal the device and incubate with gentle shaking at 37°C under controlled CO₂ levels (if bicarbonate buffer is used) for a predetermined time (e.g., 4-24 hours). Time-to-equilibrium must be validated for challenging compounds.
   4. Termination & Sampling: After equilibration, sample from both the plasma and buffer chambers.
   5. Analysis: Quantify drug concentrations in both chambers using a highly specific method (e.g., LC-MS/MS).

4. Data Analysis:

   - Fraction unbound (fu) = Concentration in buffer chamber / Concentration in plasma chamber.
   - Acceptance Criteria: The measured fu for the in-well control must fall within a pre-defined, statistically derived range for the entire experiment to be accepted.
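The data-analysis step reduces to a ratio and a range check. In the sketch below the fu formula is the one given above; the acceptance range is an assumption, taken as the mean plus or minus three standard deviations of historical in-well control fu values, because the protocol only requires a "pre-defined, statistically derived range". Function names are hypothetical.

```python
import statistics

def fraction_unbound(c_buffer: float, c_plasma: float) -> float:
    """fu = concentration in buffer chamber / concentration in plasma chamber."""
    if c_plasma <= 0:
        raise ValueError("plasma-chamber concentration must be positive")
    return c_buffer / c_plasma

def acceptance_range(historical_fu, k=3.0):
    """Assumed rule: mean +/- k sample standard deviations of past control fu values."""
    mu = statistics.mean(historical_fu)
    sd = statistics.stdev(historical_fu)
    return mu - k * sd, mu + k * sd

def run_accepted(control_fu, historical_fu, k=3.0):
    """Accept the whole plate only if the in-well control falls inside the range."""
    lo, hi = acceptance_range(historical_fu, k)
    return lo <= control_fu <= hi
```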

The Activity Cliff Problem

Activity cliffs (ACs) are pairs of structurally similar compounds that exhibit a large, unexpected difference in their binding affinity for a given target [21]. They represent a significant challenge for Quantitative Structure-Activity Relationship (QSAR) modeling, as they directly defy the foundational similarity principle in chemoinformatics.

Predicting and Interpreting Activity Cliffs

- QSAR Model Performance: Studies demonstrate that QSAR models frequently fail to predict ACs. The sensitivity for detecting ACs is low when the activities of both compounds are unknown, but improves substantially if the actual activity of one compound in the pair is provided [21]. Graph isomorphism networks (GINs) have shown competitive or superior performance to classical molecular representations for AC-classification tasks [21].

- Structure-Based Predictions: Advanced structure-based methods, including ensemble docking against multiple receptor conformations, can successfully predict and rationalize ACs. The 3D interpretation suggests that small structural modifications can alter key interactions with the target (e.g., H-bonds, lipophilic contacts) or disrupt the receptor's ability to adopt a favorable conformation, leading to drastic potency changes [22].
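A minimal ligand-based screen for activity cliffs pairs a similarity threshold with a potency-difference threshold and scores each flagged pair with the Structure-Activity Landscape Index, SALI = |P_i - P_j| / (1 - sim(i, j)), one of the landscape indices listed in Protocol 2. The thresholds below (Tanimoto similarity of at least 0.85 and a difference of at least 2 log units, i.e., 100-fold) are illustrative assumptions; published AC definitions vary, and MMP-based definitions replace the similarity cutoff with a matched-pair criterion. Potencies are assumed to be on a log scale such as pKi.

```python
import numpy as np

def tanimoto_similarity(a: np.ndarray, b: np.ndarray) -> float:
    inter = np.logical_and(a, b).sum()
    union = np.logical_or(a, b).sum()
    return 1.0 if union == 0 else float(inter / union)

def sali(p_i, p_j, sim):
    """|potency difference| / (1 - similarity); infinite for identical fingerprints."""
    return float("inf") if sim >= 1.0 else abs(p_i - p_j) / (1.0 - sim)

def find_activity_cliffs(fps, potencies, sim_cut=0.85, delta_cut=2.0):
    """Return (i, j, similarity, SALI) for pairs passing both (assumed) thresholds."""
    cliffs = []
    n = len(potencies)
    for i in range(n):
        for j in range(i + 1, n):
            s = tanimoto_similarity(fps[i], fps[j])
            if s >= sim_cut and abs(potencies[i] - potencies[j]) >= delta_cut:
                cliffs.append((i, j, s, sali(potencies[i], potencies[j], s)))
    return cliffs

fps = np.array([[1] * 9 + [0], [1] * 10, [0] * 5 + [1] * 5])  # toy 10-bit fingerprints
pki = [9.0, 6.5, 6.4]
cliffs = find_activity_cliffs(fps, pki)
```

Here molecules 0 and 1 share 9 of 10 bits yet differ by 2.5 log units, so they form the only cliff, with SALI = 2.5 / 0.1.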

Table 3: Analysis of Activity Cliff (AC) Prediction Methods

| Method Category | Molecular Representation | Reported Performance & Challenges |
|---|---|---|
| Ligand-Based QSAR [21] | Extended-Connectivity Fingerprints (ECFPs), Graph Isomorphism Networks (GINs), Physicochemical-Descriptor Vectors (PDVs). | Low AC-sensitivity when predicting both compounds' activity; superior general QSAR performance from ECFPs. |
| Structure-Based Methods [22] | High-resolution crystal structures of drug-target complexes; ensemble docking. | Achieves significant accuracy in predicting ACs by analyzing differences in 3D binding modes and interactions. |
| Matched Molecular Pairs (MMPs) [22] | Focuses on small, defined structural transformations between two compounds. | Provides a consistent and context-aware definition for identifying ACs across large datasets. |

Diagram 2: A workflow for the identification and rationalization of activity cliffs to improve predictive models.

Protocol: Structure-Based Rationalization of an Activity Cliff

This protocol outlines steps to analyze a confirmed activity cliff using structural information [22].

1. Objective: To understand the structural and energetic basis for a large potency difference between two highly similar compounds.

2. Prerequisites:

- A pair of compounds confirmed to be an activity cliff (high similarity, large potency difference).

- A high-resolution crystal structure of the target protein in complex with one of the cliff partners (the more active compound is preferable).

3. Procedure:

   1. Structure Preparation: Prepare the protein structure by adding hydrogen atoms, assigning protonation states, and optimizing side-chain orientations for unresolved residues, if necessary.
   2. Ligand Docking:
      - Dock the more active and less active cliff partners into the binding site using a robust docking program.
      - Critical Step: Employ ensemble docking if multiple receptor conformations are available, as the cliff may be due to a receptor conformational change [22].
   3. Interaction Analysis: Meticulously compare the predicted binding modes of the two compounds. Focus on:
      - Loss or gain of key hydrogen bonds or salt bridges.
      - Changes in hydrophobic contact surfaces.
      - Steric clashes introduced by the small structural change.
      - The role of explicit water molecules in mediating interactions.
   4. Energetic Analysis (Optional but Recommended): For a more quantitative estimate, use advanced methods like Free Energy Perturbation (FEP) or MM-PB/GB-SA to calculate the relative binding free energy difference between the cliff partners [22].

4. Output: A structural rationale explaining the potency difference, which can be used to guide further medicinal chemistry efforts and improve predictive models.
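Before running FEP or MM-PB/GB-SA in the energetic-analysis step, it helps to know the free-energy gap the calculation must reproduce. For two dissociation constants (Ki or Kd, in the same units), the relative binding free energy is ddG = RT ln(K_less active / K_more active). The helper below assumes true equilibrium constants rather than IC50 values, which additionally depend on assay conditions; the function name is illustrative.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)

def ddg_from_ratio(k_less_active: float, k_more_active: float, temp_k: float = 298.15) -> float:
    """Relative binding free energy (kcal/mol) implied by two dissociation constants.
    Positive when the less active partner binds more weakly, as expected."""
    return R_KCAL * temp_k * math.log(k_less_active / k_more_active)
```

For example, a 100-fold cliff (1 nM versus 100 nM) at 298.15 K corresponds to roughly 2.7 kcal/mol, which gives a concrete target for judging whether a computed ddG explains the cliff.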

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Research Reagent Solutions for Featured Experiments

| Reagent / Material | Function / Application | Experimental Context |
|---|---|---|
| Graph Neural Network (GNN) [2] | A deep learning architecture that operates directly on graph representations of molecules, learning features from atom and bond arrangements. | Core model architecture for molecular property prediction in low-data regimes (e.g., ACS). |
| MolPrint2D Fingerprints [17] | A dynamic fingerprint using atom environments as molecular representation, capturing functional groups without a predefined list. | Similarity search and neighbor identification for read-across and (Q)SAR predictions. |
| 96-Well Equilibrium Dialysis Device [18] | A high-throughput format for conducting plasma protein binding assays, enabling robotic automation. | Critical hardware for standardizing and scaling protein binding measurements. |
| In-Well Control Compound [18] | A reference compound with well-characterized plasma protein binding, run concurrently with test compounds. | Monitors assay performance and validates the acceptability of each experimental run. |
| Matched Molecular Pair (MMP) [22] | A defined transformation representing the structural difference between two closely related compounds. | Systematic identification and analysis of activity cliffs across large chemical datasets. |
| Crystal Structure of Drug-Target Complex [19] [22] | A high-resolution 3D snapshot of a drug molecule bound to its protein target. | Enables structure-based analysis of activity cliffs and genetic variant effects on drug binding. |

Advanced Methodologies: Cutting-Edge AI Techniques for Accurate Property Prediction

In pharmaceutical compound research, accurately predicting molecular properties is a critical yet challenging task. Traditional machine learning methods often rely on hand-crafted molecular descriptors or fingerprints, which can overlook intricate topological and chemical structures [23]. Graph Neural Networks (GNNs) have emerged as transformative tools by natively representing molecules as graphs, where atoms constitute nodes and bonds form edges [24]. This representation allows GNNs to directly learn from molecular structures without manual feature engineering, enabling them to capture complex structural relationships essential for predicting bioactivity, toxicity, and other pharmacologically relevant properties [23]. The integration of GNNs throughout the drug discovery pipeline is revolutionizing the field by improving predictive accuracy, reducing development costs, and decreasing late-stage failures [24].
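The molecule-as-graph idea can be shown with a hand-built example. The sketch below encodes ethanol (heavy atoms only) as one-hot atom features plus a symmetric adjacency matrix, applies one sum-aggregation message-passing step, and pools atoms into a single graph vector. In practice atoms and bonds would come from a parser such as RDKit (`Chem.MolFromSmiles`, then `GetAtoms()` and `GetBonds()`) and the weights would be learned; here the weights are random and the three-element atom vocabulary is an assumption.

```python
import numpy as np

# Ethanol (CCO), heavy atoms only: nodes are atoms, edges are bonds.
atom_types = ["C", "C", "O"]
bonds = [(0, 1), (1, 2)]

vocab = {"C": 0, "N": 1, "O": 2}             # toy atom-type vocabulary
X = np.zeros((len(atom_types), len(vocab)))
for i, a in enumerate(atom_types):
    X[i, vocab[a]] = 1.0                     # one-hot atom features

A = np.zeros((len(atom_types), len(atom_types)))
for i, j in bonds:
    A[i, j] = A[j, i] = 1.0                  # undirected bond -> symmetric adjacency

def message_passing_step(H, A, W):
    """One sum-aggregation update (GIN-like, epsilon = 0): each atom adds its
    neighbours' features to its own, then applies a linear map and ReLU."""
    return np.maximum(0.0, (H + A @ H) @ W)

rng = np.random.default_rng(0)
W1 = rng.normal(size=(3, 8))
H1 = message_passing_step(X, A, W1)
graph_embedding = H1.sum(axis=0)             # permutation-invariant readout
```

Because both the update and the sum readout ignore atom ordering, renumbering the atoms leaves `graph_embedding` unchanged, which is the property that lets GNNs learn directly from structure.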

Performance Benchmarks of GNN Architectures

Extensive benchmarking of GNN architectures across standardized molecular datasets provides crucial insights for model selection in pharmaceutical applications. The performance of a model is highly dependent on its architectural alignment with specific molecular property traits [23].

Table 1: Performance Comparison of GNN Architectures on Molecular Property Prediction Tasks

| Model Architecture | log Kow (MAE) | log Kaw (MAE) | log K_d (MAE) | MolHIV (ROC-AUC) | Key Strengths |
|---|---|---|---|---|---|
| Graphormer | 0.18 | 0.29 | 0.27 | 0.807 | Global attention mechanisms, excellent for complex bioactivity classification [23] |
| EGNN | 0.21 | 0.25 | 0.22 | 0.781 | E(n)-equivariance, superior for 3D geometry-sensitive properties [23] |
| GIN | 0.24 | 0.31 | 0.29 | 0.763 | Strong local substructure capture, effective baseline for 2D topology [23] |
| KA-GNN | 0.15* | 0.23* | 0.20* | 0.82* | Fourier-based KAN modules, enhanced expressivity & interpretability [25] |

Note: KA-GNN values marked with an asterisk (*) are estimates derived from the consistent improvements over conventional GNNs reported in [25]; they are not directly measured results on these benchmarks.

For environmental fate prediction involving partition coefficients, EGNN, with its E(n)-equivariant updates and 3D coordinate integration, achieves the lowest mean absolute error among the three architectures benchmarked in [23] on geometry-sensitive properties such as log Kaw (0.25) and log K_d (0.22). Among the same three models, Graphormer achieves the best performance on log Kow (MAE = 0.18) and MolHIV classification (ROC-AUC = 0.807), leveraging its attention-based global reasoning capabilities [23].

Advanced GNN Architectures for Molecular Representation

Kolmogorov-Arnold Graph Neural Networks (KA-GNNs)

KA-GNNs represent a recent advancement that integrates Kolmogorov-Arnold network (KAN) modules into the three fundamental components of GNNs: node embedding, message passing, and readout [25]. Unlike conventional GNNs, whose multilayer perceptrons apply fixed activation functions at their neurons, KA-GNNs place learnable univariate functions on the edges of the neural network (the connections between neurons, not the bonds of the molecular graph), offering improved expressivity, parameter efficiency, and interpretability [25]. The framework implements Fourier-series-based univariate functions within KAN layers to capture both low-frequency and high-frequency structural patterns in molecular graphs [25].

Two architectural variants have been developed: KA-Graph Convolutional Networks (KA-GCN) and KA-Augmented Graph Attention Networks (KA-GAT) [25]. In KA-GCN, each node's initial embedding is computed by passing the concatenation of its atomic features and the average of its neighboring bond features through a KAN layer, encoding both atomic identity and local chemical context via data-dependent trigonometric transformations [25]. Experimental results across seven molecular benchmarks show that KA-GNNs consistently outperform conventional GNNs in both prediction accuracy and computational efficiency while providing improved interpretability by highlighting chemically meaningful substructures [25].
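The Fourier-based KAN layer described above can be sketched in NumPy. Each input-output connection carries its own truncated Fourier series, phi(x) = sum over k = 0..K of a_k cos(k w0 x) + b_k sin(k w0 x), and each output sums these functions over all inputs. The class name, initialization scale, and default K are assumptions, and in a real model the coefficients would be learned by gradient descent; the final lines show a KA-GCN-style initial node embedding on toy random features.

```python
import numpy as np

class FourierKANLayer:
    """Sketch of a Fourier-series KAN layer: connection (i -> j) has its own
    learnable univariate function phi_ij, and y_j = sum_i phi_ij(x_i).
    No fixed activation function is applied."""

    def __init__(self, d_in, d_out, n_harmonics=4, w0=1.0, seed=0):
        rng = np.random.default_rng(seed)
        scale = 1.0 / np.sqrt(d_in * (n_harmonics + 1))
        self.a = rng.normal(scale=scale, size=(d_in, d_out, n_harmonics + 1))  # cosine coefficients
        self.b = rng.normal(scale=scale, size=(d_in, d_out, n_harmonics + 1))  # sine coefficients
        self.k = np.arange(n_harmonics + 1)  # harmonics k = 0..K
        self.w0 = w0

    def __call__(self, x):
        angles = x[..., None] * self.k * self.w0          # (batch, d_in, K+1)
        cos, sin = np.cos(angles), np.sin(angles)
        # Sum over inputs i and harmonics k for every output j.
        return np.einsum("bik,iok->bo", cos, self.a) + np.einsum("bik,iok->bo", sin, self.b)

# KA-GCN-style initial node embedding: concatenate each atom's features with
# the mean of its neighbouring bond features, then apply a KAN layer (toy data).
atom_feats = np.random.default_rng(1).normal(size=(3, 4))
mean_bond_feats = np.random.default_rng(2).normal(size=(3, 2))
embed = FourierKANLayer(d_in=6, d_out=8)
h0 = embed(np.concatenate([atom_feats, mean_bond_feats], axis=1))  # (3 atoms, 8)
```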

Multi-task Graph Prompt Learning (MGPT)

For few-shot learning scenarios common in drug development, Multi-task Graph Prompt (MGPT) learning provides a unified framework for few-shot drug association prediction [26]. MGPT constructs a heterogeneous graph network where nodes represent entity pairs (e.g., drug-protein, drug-disease) and utilizes self-supervised contrastive learning in pre-training [26]. For downstream tasks, MGPT employs learnable functional prompts embedded with task-specific knowledge to enable robust performance across multiple tasks with limited data [26].

MGPT demonstrates exceptional capability in seamless task switching and outperforms competitive approaches in few-shot scenarios, surpassing the strongest baseline, GraphControl, by over 8% in average accuracy [26]. This approach is particularly valuable in pharmaceutical research where obtaining large-scale annotated data is both expensive and time-consuming [26].

Adaptive Checkpointing with Specialization (ACS)

Data scarcity remains a major obstacle to effective machine learning in molecular property prediction [2]. Adaptive Checkpointing with Specialization (ACS) is a training scheme for multi-task GNNs that mitigates detrimental inter-task interference while preserving the benefits of multi-task learning [2]. ACS integrates a shared, task-agnostic backbone with task-specific trainable heads, adaptively checkpointing model parameters when negative transfer signals are detected [2].

This approach dramatically reduces the amount of training data required for satisfactory performance, achieving accurate predictions with as few as 29 labeled samples—capabilities unattainable with single-task learning or conventional MTL [2]. ACS has been validated on multiple molecular property benchmarks, where it consistently surpasses or matches the performance of recent supervised methods [2].

Experimental Protocols & Methodologies

Protocol: Implementing KA-GNN for Molecular Property Prediction

Objective: Implement and train a KA-GNN model for predicting molecular properties using the Fourier-based KAN framework.

Materials:

- Molecular datasets (QM9, ZINC, OGB-MolHIV)

- Deep learning framework (PyTorch or TensorFlow)

- KA-GNN model implementation

- Hardware: GPU-enabled computing environment

Procedure:

Data Preprocessing:

- Represent molecules as graphs with atoms as nodes and bonds as edges

- Normalize node features (atom types) to a range of 0-1

- For 3D geometric models, include spatial coordinates

- Split dataset into training (80%) and testing (20%) sets using Murcko-scaffold splitting to ensure generalization [2]
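Murcko-scaffold splitting can be implemented as a group split. The sketch assumes scaffold SMILES have already been computed for each molecule (for example with RDKit's `MurckoScaffold.MurckoScaffoldSmiles`), so the function itself needs only the standard library. Assigning the largest scaffold groups to training first is one common convention and not necessarily the one used in [2]; the function name is illustrative.

```python
from collections import defaultdict

def scaffold_split(scaffolds, train_frac=0.8):
    """Assign whole scaffold groups to train or test so that no scaffold
    appears in both; scaffolds[i] is the scaffold SMILES of molecule i."""
    groups = defaultdict(list)
    for idx, scaf in enumerate(scaffolds):
        groups[scaf].append(idx)
    # Largest groups first; ties broken by first occurrence for determinism.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    n_train_target = train_frac * len(scaffolds)
    train, test = [], []
    for group in ordered:
        if len(train) + len(group) <= n_train_target:
            train.extend(group)
        else:
            test.extend(group)
    return train, test

scaffolds = ["c1ccccc1"] * 5 + ["C1CCCCC1"] * 3 + ["c1ccncc1"] * 2
train_idx, test_idx = scaffold_split(scaffolds)
```

Because whole scaffold families are held out, test performance reflects generalization to unseen chemotypes rather than to near-duplicates of training molecules.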

Model Configuration:

- Implement Fourier-based KAN layers using the following mathematical formulation:

- For a function $f(x)$, the Fourier-KAN layer approximates it as: $f(x) \approx \sum_{k=0}^{K} \left( a_k \cos(k\omega_0 x) + b_k \sin(k\omega_0 x) \right)$ [25]

- Set the number of harmonics $K$ based on the complexity of the target function

- Initialize Fourier coefficients $a_k$ and $b_k$ randomly


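Architecture Integration:

- Insert KAN layers into the three core GNN components: node embedding, message passing, and readout [25]

- For the KA-GCN variant, compute each node's initial embedding by passing the concatenation of its atomic features and the average of its neighboring bond features through a KAN layer [25]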

Training Protocol:

- Use Adam optimizer with learning rate 0.001

- Employ mean squared error loss for regression tasks, cross-entropy for classification

- Implement early stopping with patience of 50 epochs

- Train for maximum 1000 epochs with batch size 32
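A patience-based early-stopping rule, as specified in this training protocol, needs only a few lines of state. The class below is a hypothetical helper; pairing it with a saved copy of the best-epoch weights (not shown) would let training restore the best model after stopping.

```python
class EarlyStopping:
    """Stop when the validation loss has not improved for `patience`
    consecutive epochs (patience=50 in the protocol above)."""

    def __init__(self, patience=50, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.best_epoch = -1
        self.bad_epochs = 0

    def step(self, epoch, val_loss):
        """Record one epoch; return True when training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best, self.best_epoch, self.bad_epochs = val_loss, epoch, 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience
```

In the training loop, `step` would be called once per epoch inside a loop capped at 1000 epochs, breaking out as soon as it returns `True`.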

Interpretation & Analysis:

- Visualize learned KAN functions to identify important molecular substructures

- Analyze frequency components to understand captured patterns

- Validate identified substructures against known chemical motifs

Protocol: Few-Shot Learning with MGPT Framework

Objective: Utilize MGPT for drug association predictions in low-data scenarios.

Procedure:

Heterogeneous Graph Construction:

- Create nodes as entity pairs (drug-protein, drug-disease, etc.)

- Establish edges based on known associations and similarities

Pre-training Phase:

- Apply self-supervised contrastive learning to graph nodes

- Use sub-graph sampling strategies for efficient training [26]
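MGPT's pre-training objective is described here only as self-supervised contrastive learning. A common concrete choice is an InfoNCE-style loss, sketched below under that assumption: two augmented views of the same node form a positive pair, and every other node in the batch acts as a negative. The temperature value and the one-directional form are illustrative (many implementations symmetrize the loss), and MGPT's exact objective may differ.

```python
import numpy as np

def info_nce_loss(z1, z2, temperature=0.1):
    """InfoNCE loss between two views: z1[i] and z2[i] embed the same node
    (positive pair); all other rows of z2 are negatives for z1[i]."""
    z1 = z1 / np.linalg.norm(z1, axis=1, keepdims=True)
    z2 = z2 / np.linalg.norm(z2, axis=1, keepdims=True)
    logits = z1 @ z2.T / temperature                 # cosine similarities / tau
    logits -= logits.max(axis=1, keepdims=True)      # numerical stability
    log_prob = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return float(-np.mean(np.diag(log_prob)))        # positives on the diagonal
```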

Prompt Tuning:

- Introduce learnable task-specific prompt vectors

- Fine-tune prompts with limited labeled data (few-shot settings)

- Utilize cosine similarity to measure task relatedness [26]
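The prompt-tuning step can be illustrated with two small pieces: adding a learnable task prompt to frozen pre-trained node embeddings, and ranking existing tasks by the cosine similarity of their prompts. The additive prompt is one common graph-prompt design assumed here for illustration; the source does not specify MGPT's exact prompt form, and the function names and task labels are hypothetical.

```python
import numpy as np

def cosine_similarity(u: np.ndarray, v: np.ndarray) -> float:
    return float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))

def apply_prompt(node_emb: np.ndarray, prompt: np.ndarray) -> np.ndarray:
    """Add a task-specific prompt vector to every frozen node embedding;
    during prompt tuning only `prompt` (and usually a small head) is trained."""
    return node_emb + prompt[None, :]

def most_related_task(new_prompt, task_prompts):
    """Rank existing tasks by cosine similarity between learned prompt vectors."""
    scores = {t: cosine_similarity(new_prompt, p) for t, p in task_prompts.items()}
    return max(scores, key=scores.get), scores

prompts = {"drug-target": np.array([1.0, 0.0, 0.0]),
           "drug-side-effect": np.array([0.0, 1.0, 0.0])}
best, scores = most_related_task(np.array([0.9, 0.1, 0.0]), prompts)
```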

Evaluation:

- Test on downstream tasks: drug-target interactions, drug-side effects, drug-disease relationships

- Compare against supervised baselines (GCN, GAT, GraphSAGE) and unsupervised methods (DGI) [26]

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Computational Tools for GNN-based Molecular Property Prediction

| Research Reagent | Type | Function | Example Applications |
|---|---|---|---|
| Benchmark Datasets | Data | Model training & evaluation | QM9 (quantum chemistry), ZINC (drug-like molecules), OGB-MolHIV (bioactivity) [23] |
| OMC25 Dataset | Data | Molecular crystal property prediction | Contains over 27 million molecular crystal structures with DFT relaxation trajectories [27] |
| FGBench | Data | Functional group-level reasoning | 625K molecular property reasoning problems with annotated functional groups [28] |
| Graph Neural Network Frameworks | Software | Model implementation | PyTorch Geometric, Deep Graph Library (DGL), TensorFlow Graph Neural Networks |
| Kolmogorov-Arnold Networks | Algorithm | Learnable activation functions | Replace fixed MLP transformations in GNN components [25] |
| Multi-task Graph Prompt | Framework | Few-shot drug association prediction | Learns generalizable representations for multiple tasks with limited data [26] |
| Adaptive Checkpointing | Training scheme | Mitigates negative transfer | Enables effective multi-task learning with imbalanced datasets [2] |

GNNs represent a paradigm shift in molecular property prediction for pharmaceutical research by natively capturing structural relationships through graph-based representations. Advanced architectures including KA-GNNs, MGPT, and ACS-enhanced models are addressing critical challenges in expressivity, few-shot learning, and data efficiency. The integration of these approaches throughout the drug discovery pipeline—from lead optimization to toxicity assessment—is accelerating the development of novel therapeutics while reducing costs and late-stage failures. As these technologies continue to evolve, they promise to further enhance our ability to navigate the complex chemical space and design targeted molecular interventions with precision.

The discovery and development of new pharmaceuticals remains constrained by a multidimensional challenge that requires balancing many drug properties at once [29]. Approximately 90% of drug candidates fail during clinical phases, owing to inadequate biomedical properties, and experimental trials are costly; together these create substantial inefficiencies for the pharmaceutical industry [29]. Traditional experimental approaches are infeasible for proteome-wide evaluation of molecular targets, creating an urgent need for computational solutions that can reduce cost and time throughout the drug discovery pipeline [30] [31].

Artificial intelligence-based methods have emerged as promising solutions, with self-supervised pretraining frameworks representing a paradigm shift in molecular property prediction [29] [30]. These frameworks leverage massive unlabeled molecular datasets to learn generalized representations, which can then be fine-tuned for specific downstream tasks with limited labeled data. This approach is particularly valuable in drug discovery, where obtaining annotated experimental data is expensive and time-consuming, while unlabeled molecular data is abundantly available [32].

This application note examines three advanced self-supervised pretraining frameworks—SCAGE, ImageMol, and Uni-Mol—that utilize different molecular representations and pretraining strategies to advance molecular property prediction. We provide detailed experimental protocols, performance comparisons, and practical implementation guidelines to enable researchers to leverage these frameworks in pharmaceutical compound research.

The landscape of self-supervised molecular representation learning has evolved beyond traditional sequence-based and fingerprint-based methods to incorporate more sophisticated structural information. SCAGE, ImageMol, and Uni-Mol represent distinct approaches to this challenge, each with unique advantages for molecular property prediction in drug discovery contexts.

Table 1: Comparative Overview of Self-Supervised Pretraining Frameworks

| Framework | Molecular Representation | Pretraining Data Scale | Key Architectural Innovations | Primary Applications |
|---|---|---|---|---|
| SCAGE | 2D graph + 3D conformational data | ~5 million drug-like compounds [29] | Multitask pretraining (M4), Multi-scale Conformational Learning (MCL) [29] | Molecular property prediction, structure-activity cliff identification [29] [33] |
| ImageMol | Molecular images | 10 million drug-like compounds [30] [34] | Multi-granularity chemical clusters classification, molecular rationality discrimination [30] | Drug target prediction, toxicity assessment, metabolic property prediction [30] [31] |
| Uni-Mol | 3D molecular structures | 209 million molecular conformations [35] | SE(3)-equivariant transformer architecture [35] | 3D spatial tasks, binding pose prediction, conformation generation [35] |

SCAGE employs a self-conformation-aware graph transformer that integrates both 2D and 3D structural information through its innovative Multi-scale Conformational Learning (MCL) module [29] [33]. The framework utilizes a multitask pretraining paradigm called M4, which incorporates four supervised and unsupervised tasks: molecular fingerprint prediction, functional group prediction using chemical prior information, 2D atomic distance prediction, and 3D bond angle prediction [29]. This comprehensive approach enables learning of conformation-aware prior knowledge, enhancing generalization across various molecular property tasks.
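To make two of the M4 targets concrete, the sketch below derives a 2D atomic distance label (shortest path counted in bonds) from a bond list and a 3D bond angle label from atomic coordinates. It is a minimal illustration under our own assumptions, not SCAGE's code: the function names are hypothetical, and SCAGE may bin, normalize, or sample these targets differently.

```python
import math
from collections import deque


def topological_distances(num_atoms, bonds):
    """All-pairs shortest-path distances (in bonds) via BFS; -1 marks unreachable pairs."""
    adjacency = [[] for _ in range(num_atoms)]
    for i, j in bonds:
        adjacency[i].append(j)
        adjacency[j].append(i)
    dist = [[-1] * num_atoms for _ in range(num_atoms)]
    for src in range(num_atoms):
        dist[src][src] = 0
        queue = deque([src])
        while queue:
            u = queue.popleft()
            for v in adjacency[u]:
                if dist[src][v] == -1:
                    dist[src][v] = dist[src][u] + 1
                    queue.append(v)
    return dist


def angle_triplets(bonds):
    """Every (i, j, k) where bonds i-j and j-k share the vertex atom j."""
    neighbors = {}
    for i, j in bonds:
        neighbors.setdefault(i, []).append(j)
        neighbors.setdefault(j, []).append(i)
    triplets = []
    for j, nbrs in sorted(neighbors.items()):
        for a in range(len(nbrs)):
            for b in range(a + 1, len(nbrs)):
                triplets.append((nbrs[a], j, nbrs[b]))
    return triplets


def bond_angle(coords, i, j, k):
    """Angle i-j-k in degrees, with atom j at the vertex."""
    u = [coords[i][d] - coords[j][d] for d in range(3)]
    v = [coords[k][d] - coords[j][d] for d in range(3)]
    dot = sum(a * b for a, b in zip(u, v))
    norms = math.hypot(*u) * math.hypot(*v)
    cos_theta = max(-1.0, min(1.0, dot / norms))  # clamp floating-point rounding error
    return math.degrees(math.acos(cos_theta))
```

For a three-atom chain with bonds (0, 1) and (1, 2), the distance matrix is [[0, 1, 2], [1, 0, 1], [2, 1, 0]] and the only angle triplet is (0, 1, 2); its angle label then comes from whichever conformation was selected during preprocessing.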

ImageMol takes a unique approach by representing molecules as images and applying computer vision techniques to molecular property prediction [30] [34]. The framework employs five pretraining strategies to extract biologically relevant structural information from molecular images, including multi-granularity chemical clusters classification and molecular rationality discrimination tasks [30] [31]. This image-based representation allows the model to capture both local and global structural characteristics of molecules directly from pixels.

Uni-Mol utilizes a universal 3D molecular representation learning framework based on an SE(3) Transformer architecture, pretrained on an extensive dataset of 209 million molecular conformations [35]. Unlike approaches that treat molecules as 1D token sequences or 2D topology graphs, Uni-Mol incorporates 3D spatial information directly, which broadens both its representational capacity and its range of downstream applications, particularly tasks involving 3D geometry prediction and generation [35].
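The property that lets a 3D model respect SE(3) symmetry is easy to check numerically: inter-atomic distances do not change when a molecule is rotated or translated. The sketch below computes a distance matrix, verifies that invariance, and expands a distance into Gaussian basis features, one common way of feeding distances into a pair representation. The Gaussian expansion, its centers and width, and all function names are assumptions for illustration, not Uni-Mol's exact encoding.

```python
import math


def pairwise_distances(coords):
    """Euclidean distance matrix; unchanged by rotation and translation."""
    return [[math.dist(p, q) for q in coords] for p in coords]


def gaussian_features(distance, centers, width):
    """Expand one distance into Gaussian basis features (illustrative choice)."""
    return [math.exp(-((distance - mu) ** 2) / (2 * width ** 2)) for mu in centers]


def rotate_z(coords, theta):
    """Rotate points about the z-axis by theta radians."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y, s * x + c * y, z) for x, y, z in coords]


def translate(coords, shift):
    """Shift every point by the same vector."""
    return [tuple(p + t for p, t in zip(point, shift)) for point in coords]
```

Applying pairwise_distances before and after translate(rotate_z(coords, theta), shift) gives the same matrix up to floating-point error, which is why distance-based features need no data augmentation over orientations.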

Table 2: Performance Comparison on Molecular Property Prediction Benchmarks

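| Framework | BBBP (ROC-AUC) | Tox21 (ROC-AUC) | ClinTox (ROC-AUC) | BACE (ROC-AUC) | HIV (ROC-AUC) | FreeSolv (RMSE, kcal/mol) | ESOL (RMSE, log mol/L) |
|---|---|---|---|---|---|---|---|
| SCAGE | Significant improvements reported [29] | Significant improvements reported [29] | - | - | - | - | - |
| ImageMol | 0.952 [30] | 0.847 [30] | 0.975 [30] | 0.939 [30] | 0.814 [30] | 1.149 [30] | 0.690 [30] |
| Uni-Mol | State-of-the-art in 14/15 tasks [35] | State-of-the-art in 14/15 tasks [35] | - | - | - | - | - |

A dash means no value is given in the cited sources. The SCAGE and Uni-Mol entries summarize aggregate claims from [29] and [35] rather than per-dataset scores, so they cannot be compared numerically with the ImageMol values.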

Experimental Protocols

SCAGE Implementation Protocol

Data Preparation and Preprocessing

- Molecular Input: Begin with molecular structures in SMILES (Simplified Molecular-Input Line-Entry System) format.

- Graph Conversion: Convert SMILES strings to molecular graph representations where atoms are represented as nodes and chemical bonds as edges.

- Conformation Generation: Generate candidate conformations, optimize them with the Merck Molecular Force Field (MMFF), and select the lowest-energy conformation, since it represents the most stable state under the given conditions [29].

- Data Partitioning: For downstream tasks, employ scaffold splitting to divide datasets according to molecular substructures, ensuring disjoint substructures between training, validation, and test sets to evaluate model generalizability [29].
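The scaffold split described in the data-preparation steps above can be sketched as follows. The function assumes each molecule already has a scaffold key (in practice often a Bemis-Murcko scaffold SMILES, for example from RDKit's MurckoScaffold.MurckoScaffoldSmiles); every molecule sharing a key goes to the same partition, so train, validation, and test sets have disjoint scaffolds. The 80/10/10 fractions and the largest-group-first ordering are assumptions that follow a convention found in common open-source scaffold splitters, not settings confirmed for SCAGE.

```python
from collections import defaultdict


def scaffold_split(scaffolds, frac_train=0.8, frac_valid=0.1):
    """Deterministic scaffold split; scaffolds[i] is the scaffold key of molecule i.

    Whole scaffold groups are assigned to a single partition. Larger groups are
    placed first, so rare scaffolds tend to end up in validation or test.
    """
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)
    # Largest groups first; ties broken by first index for a reproducible order.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    n = len(scaffolds)
    train_cut = frac_train * n
    valid_cut = (frac_train + frac_valid) * n
    train, valid, test = [], [], []
    for group in ordered:
        if len(train) + len(group) <= train_cut:
            train.extend(group)
        elif len(train) + len(valid) + len(group) <= valid_cut:
            valid.extend(group)
        else:
            test.extend(group)
    return train, valid, test
```

Because the assignment is deterministic, the same split can be regenerated exactly when comparing pretrained models.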

Pretraining Procedure

- Model Architecture: Implement the self-conformation-aware graph transformer with Multi-scale Conformational Learning (MCL) module [29] [33].

- Multitask Pretraining: Apply the M4 framework with four pretraining tasks:
  - Molecular fingerprint prediction
  - Functional group prediction using chemical prior information
  - 2D atomic distance prediction
  - 3D bond angle prediction [29]

- Training Configuration:

Fine-tuning for Downstream Tasks

- Task-specific Adaptation: Modify the output layer of the pretrained SCAGE model to match the target property prediction task.

- Transfer Learning: Initialize weights with pretrained SCAGE model and fine-tune on specific molecular property datasets.

- Evaluation: Assess performance on molecular property prediction benchmarks and structure-activity cliff identification tasks [29] [33].

ImageMol Implementation Protocol

Molecular Image Generation

- SMILES Preprocessing: Convert each input to canonical SMILES and apply the preprocessing used in the official ImageMol code [34].

- Image Conversion: Transform SMILES strings to molecular images using the Smiles2Img function with recommended image size of 224x224 pixels [34].

- Data Augmentation: Apply standard image augmentation techniques including rotation, scaling, and color jittering to improve model robustness.

Pretraining Strategy

- Encoder Architecture: Implement a convolutional neural network (CNN) encoder to extract latent features from molecular images [30] [31].

- Multi-task Pretraining: Employ five pretraining tasks simultaneously:
  - Multi-granularity chemical clusters classification
  - Molecular image reconstruction
  - Image mask contrastive learning
  - Molecular rationality discrimination
  - Jigsaw puzzle prediction [30]

- Training Specifications:
  - Pretrain on 10 million unlabeled drug-like, bioactive molecules from PubChem [30]
  - Use the Adam optimizer with a learning rate of 1e-4
  - Train with a batch size of 256 for approximately 500 epochs
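Of the five ImageMol pretraining tasks listed above, jigsaw puzzle prediction is the simplest to illustrate without a deep-learning framework. The sketch below builds one training example for a generic jigsaw pretext task: the image is cut into a 3 x 3 grid of tiles, the tiles are reordered by a permutation drawn from a fixed set, and the index of that permutation is the classification label the encoder must predict. The grid size, the randomly sampled permutation set, and the function names are assumptions, not ImageMol's code; real implementations usually choose permutations that differ from each other as much as possible.

```python
import random


def split_tiles(image, grid=3):
    """Cut a 2D pixel grid (list of rows) into grid x grid tiles, row-major."""
    tile_h, tile_w = len(image) // grid, len(image[0]) // grid
    tiles = []
    for r in range(grid):
        for c in range(grid):
            rows = image[r * tile_h:(r + 1) * tile_h]
            tiles.append([row[c * tile_w:(c + 1) * tile_w] for row in rows])
    return tiles


def sample_permutations(n_tiles, k, rng):
    """Draw k distinct tile orderings; position in the returned list is the class label."""
    perms = set()
    while len(perms) < k:
        order = list(range(n_tiles))
        rng.shuffle(order)
        perms.add(tuple(order))
    return sorted(perms)


def make_jigsaw_example(image, permutations, rng, grid=3):
    """Shuffle tiles by a randomly chosen permutation; return (shuffled_tiles, label)."""
    label = rng.randrange(len(permutations))
    tiles = split_tiles(image, grid)
    shuffled = [tiles[i] for i in permutations[label]]
    return shuffled, label
```

The permutation set is fixed once before pretraining, so the label space stays constant across all molecules.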

Fine-tuning for Specific Applications

- Target-specific Datasets: Curate benchmark datasets for specific molecular properties (e.g., toxicity, metabolic stability, target binding) [30] [31].

- Transfer Learning: Replace the final classification layer while maintaining the pretrained encoder weights.

- Evaluation: Assess performance on various drug discovery tasks including blood-brain barrier penetration (BBBP), Tox21 toxicity screening, and cytochrome P450 inhibition prediction [30].

Uni-Mol Implementation Protocol

3D Structure Preparation

- Conformation Generation: Generate multiple conformations for each molecule using tools like RDKit or OMEGA.

- Structure Optimization: Apply energy minimization to obtain stable conformations.

- Data Formatting: Represent molecules as 3D structures with atomic coordinates and element information.
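One simple way to hold the element and coordinate information from the preparation steps above is a small record per conformation. The sketch below assumes conformations are exported in the plain XYZ format (atom count, comment line, then one "element x y z" row per atom, coordinates in angstrom) and maps element symbols to integer tokens with a caller-supplied vocabulary. The record type, function names, and vocabulary are hypothetical; Uni-Mol's own data pipeline uses its own storage format.

```python
from dataclasses import dataclass, field


@dataclass
class Molecule3D:
    elements: list = field(default_factory=list)  # element symbols, e.g. "C"
    coords: list = field(default_factory=list)    # (x, y, z) tuples in angstrom


def parse_xyz(text):
    """Parse one XYZ block: atom count, comment line, then 'El x y z' rows."""
    lines = text.strip("\n").splitlines()
    n_atoms = int(lines[0])
    mol = Molecule3D()
    for line in lines[2:2 + n_atoms]:
        symbol, x, y, z = line.split()[:4]
        mol.elements.append(symbol)
        mol.coords.append((float(x), float(y), float(z)))
    if len(mol.elements) != n_atoms:
        raise ValueError(f"expected {n_atoms} atoms, found {len(mol.elements)}")
    return mol


def element_tokens(mol, vocab):
    """Map element symbols to integer token ids using a caller-supplied vocabulary."""
    return [vocab[symbol] for symbol in mol.elements]
```

Keeping each conformation as its own record makes it straightforward to store the multiple conformations generated per molecule side by side.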

Pretraining Methodology

- Architecture: Implement SE(3) Transformer architecture that respects rotational and translational symmetry [35].

- Pretraining Tasks:
  - Masked atom prediction
  - 3D position denoising (after adding noise to molecular coordinates) [35]

- Training Setup:
  - Pretrain on 209 million molecular conformations [35]
  - Use the AdamW optimizer with a learning rate of 1e-4
  - Apply a linear warmup followed by a cosine-decay learning-rate schedule
  - Train with a batch size of 512 for approximately 500,000 steps
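Both pretraining tasks listed above can be fed by a single corruption step: hide some atom types, perturb coordinates, and keep the originals as targets. The sketch below is a simplified version under stated assumptions: the 15% masking ratio, uniform noise of up to 1 angstrom, adding noise only to masked atoms, and the "[MASK]" token name are our choices for illustration, not confirmed Uni-Mol settings.

```python
import random

MASK_TOKEN = "[MASK]"  # hypothetical placeholder token


def corrupt_for_pretraining(elements, coords, rng, mask_ratio=0.15, noise=1.0):
    """Build inputs and targets for masked-atom prediction and coordinate denoising."""
    n = len(elements)
    n_mask = max(1, round(mask_ratio * n))
    masked = sorted(rng.sample(range(n), n_mask))
    masked_set = set(masked)
    inputs = [MASK_TOKEN if i in masked_set else e for i, e in enumerate(elements)]
    # Perturb coordinates of masked atoms only; other atoms keep exact positions.
    noisy = [
        tuple(c + rng.uniform(-noise, noise) for c in xyz) if i in masked_set else tuple(xyz)
        for i, xyz in enumerate(coords)
    ]
    targets = {
        "atom_types": {i: elements[i] for i in masked},  # labels for masked atoms
        "clean_coords": [tuple(xyz) for xyz in coords],  # denoising targets
    }
    return inputs, noisy, targets
```

Drawing a fresh corruption each time a conformation is sampled gives the model a different masking pattern on every pass through the data.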

Downstream Application

- Property Prediction: Fine-tune pretrained model on molecular property prediction tasks by adding task-specific output layers.

- 3D Spatial Tasks: Utilize the model for protein-ligand binding pose prediction and molecular conformation generation without significant architectural changes [35].

- Evaluation: Assess performance on molecular property benchmarks and 3D spatial tasks, comparing against state-of-the-art methods [35].

Table 3: Essential Resources for Self-Supervised Molecular Representation Learning

| Resource | Type | Function | Availability |
|---|---|---|---|
| PubChem | Database | Provides access to millions of drug-like compounds for pretraining [30] [36] | https://pubchem.ncbi.nlm.nih.gov |
| ChEMBL | Database | Curated bioactive molecules with drug-like properties [31] | https://www.ebi.ac.uk/chembl |
| ZINC | Database | Commercially available compounds for virtual screening [31] | http://zinc.docking.org |
| RDKit | Software | Cheminformatics and machine learning tools for molecular processing | https://www.rdkit.org |
| GNPS | Mass Spectrometry Database | Repository of mass spectrometry data for molecular representation learning [37] | https://gnps.ucsd.edu |
| SCAGE Code | Framework Implementation | Official implementation of SCAGE framework [33] | https://github.com/KazeDog/SCAGE |
| ImageMol Code | Framework Implementation | Official implementation of ImageMol framework [34] | https://github.com/HongxinXiang/ImageMol |
| Uni-Mol Code | Framework Implementation | Official implementation of Uni-Mol framework [35] | https://github.com/dptech-corp/Uni-Mol |

Self-supervised pretraining frameworks represent a transformative approach to molecular property prediction in pharmaceutical research. SCAGE, ImageMol, and Uni-Mol offer complementary strengths: SCAGE integrates 2D and 3D structural information through its multitask pretraining; ImageMol uses an image-based representation that captures both local and global molecular characteristics; and Uni-Mol, pretrained on a large collection of molecular conformations, performs strongly on 3D spatial tasks [29] [30] [35].

The implementation protocols in this application note enable researchers to apply these frameworks to their own drug discovery projects. As the field evolves, these self-supervised approaches are likely to play an increasingly important role in reducing drug development costs and improving success rates, by providing more accurate molecular property predictions and deeper insight into quantitative structure-activity relationships.

By adopting these frameworks, pharmaceutical researchers can accelerate the identification of promising drug candidates, better understand structure-activity relationships, and ultimately contribute to more efficient and effective drug development pipelines.

The accurate prediction of molecular properties is a critical challenge in pharmaceutical research, directly impacting the efficiency and success of drug discovery. Traditional computational methods, which often rely on a single type of molecular representation, such as structural or sequential data, provide a fragmented view and struggle with the complexity of biological systems [38] [39]. This limitation has catalyzed a shift towards multimodal integration, an approach that synergistically combines diverse data types to build a more holistic and predictive model of molecular behavior [40] [41].

In the context of molecular property prediction (MPP), multimodality primarily involves the fusion of three key representations:

- Structural representations (e.g., 2D/3D molecular graphs) that define atomic connectivity and spatial configuration.

- Sequential representations (e.g., SMILES strings) that provide a linear, text-based description of the molecule.

- Knowledge-based representations derived from scientific literature and domain expertise, often extracted using Large Language Models (LLMs) [42] [43].
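A simple baseline for combining the three representations listed above is late fusion by concatenation: each modality is encoded separately, each embedding is L2-normalized so that no modality dominates through scale alone, and the results are concatenated into one vector for a downstream predictor. This is a minimal sketch of that one strategy, assuming precomputed embeddings; the modality names and functions are hypothetical, and many multimodal models instead learn the fusion, for example with attention.

```python
import math


def l2_normalize(vec):
    """Scale a vector to unit length; zero vectors are returned unchanged."""
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm > 0 else list(vec)


def fuse_by_concatenation(embeddings, order=("structure", "sequence", "knowledge")):
    """Late fusion: L2-normalize each modality's embedding, then concatenate in a fixed order."""
    fused = []
    for name in order:
        fused.extend(l2_normalize(embeddings[name]))
    return fused
```

Fixing the concatenation order matters because the downstream predictor learns position-specific weights over the fused vector.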