Basis Set Selection for Accurate Molecular Property Prediction: A Practical Guide for Drug Development

Abstract

Accurately predicting molecular properties is crucial for accelerating drug discovery and materials design. This article provides a comprehensive guide for researchers and development professionals on selecting and applying quantum chemical basis sets to achieve predictive accuracy. We cover foundational concepts, practical selection protocols for properties like solvation and protein-ligand interactions, strategies for troubleshooting common errors, and robust validation techniques using benchmarking and multi-level approaches. By balancing computational cost with accuracy, this guide empowers scientists to make informed methodological choices that enhance the reliability of computational models in biomedical research.

Understanding Basis Sets: The Foundation of Quantum Chemical Accuracy

What is a Basis Set? Defining the Building Blocks of Molecular Orbitals

FAQs: Core Concepts

What is a basis set in computational chemistry? A basis set is a set of mathematical functions, termed basis functions, used to represent the electronic wave function of a molecule. By expressing complex molecular orbitals as a linear combination of these simpler basis functions, the partial differential equations of quantum chemical models are transformed into algebraic equations that can be solved efficiently on a computer [1]. This approach is fundamental to most electronic structure calculations.

Why are Gaussian-type orbitals (GTOs) the most common choice? While Slater-type orbitals (STOs) are physically better motivated, as they mimic the exponential decay of atomic orbitals far from the nucleus and satisfy the "cusp condition" at the nucleus, the evaluation of two-electron integrals with STOs is computationally expensive [1] [2]. Frank Boys realized that STOs could be approximated as linear combinations of Gaussian-type orbitals [1]. The key advantage is that the product of two GTOs can be written as a linear combination of other GTOs, which allows the necessary integrals to be computed with high efficiency, leading to massive computational savings [1] [3].
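The product property mentioned above can be checked numerically. The following is a minimal sketch for the simplest case, two unnormalized one-dimensional s-type Gaussians, where the product collapses to a single Gaussian centered between the two originals; functions with higher angular momentum give a finite sum instead. The function names and the exponents and centers used are illustrative assumptions, not values taken from the cited sources.

```python
import math

def gaussian(x, alpha, center):
    """Unnormalized 1D Gaussian exp(-alpha * (x - center)**2)."""
    return math.exp(-alpha * (x - center) ** 2)

def product_gaussian(alpha_a, center_a, alpha_b, center_b):
    """Return (prefactor, exponent, center) of the single Gaussian
    that equals the product of the two input Gaussians."""
    p = alpha_a + alpha_b
    center_p = (alpha_a * center_a + alpha_b * center_b) / p
    prefactor = math.exp(-alpha_a * alpha_b / p * (center_a - center_b) ** 2)
    return prefactor, p, center_p

# Illustrative exponents and centers (arbitrary values).
k, p, center_p = product_gaussian(0.8, 0.0, 1.3, 1.4)

# Compare the explicit product with the closed form on a grid of points.
grid = [i * 0.1 - 3.0 for i in range(80)]
max_deviation = max(
    abs(gaussian(x, 0.8, 0.0) * gaussian(x, 1.3, 1.4) - k * gaussian(x, p, center_p))
    for x in grid
)
print(f"largest deviation on the grid: {max_deviation:.2e}")
```

Because every product of basis functions reduces to Gaussians again, the multi-center integrals over them have closed-form expressions, which is where the savings come from.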

What do the terms "minimal," "polarization," and "diffuse" functions mean?

- Minimal Basis Sets: The smallest possible sets, using a single basis function for each orbital in a Hartree-Fock calculation on the free atom. For example, an atom in the second period (Li to Ne) would be described by five functions (two s-type and three p-type) [1]. They are fast but offer low accuracy.

- Polarization Functions: These are functions with higher angular momentum than the valence orbitals (e.g., d-functions on a carbon atom, or p-functions on a hydrogen atom). They add flexibility to the basis set, allowing the electron density to change its shape and better describe the polarization of atoms within a molecule, which is crucial for modeling chemical bonding [1].

- Diffuse Functions: These are Gaussian functions with very small exponents, meaning they are spatially extended. They provide flexibility to the "tail" of the electron density, far from the nucleus. They are essential for accurately describing anions, systems with weak intermolecular interactions, dipole moments, and excited states [1].

What is the difference between Pople and Dunning basis sets?

- Pople Basis Sets (e.g., 6-31G, 6-311G): These are split-valence basis sets, meaning the core orbitals are represented with a fixed number of basis functions, while the valence orbitals are split into multiple functions to allow for flexibility in bonding [1]. They are very efficient for Hartree-Fock and Density Functional Theory (DFT) calculations [1].

- Dunning Basis Sets (e.g., cc-pVDZ, cc-pVTZ): Known as correlation-consistent basis sets, they are designed to systematically converge the results of post-Hartree-Fock (correlated) calculations, such as coupled-cluster theory, to the complete basis set (CBS) limit [1]. They are typically the preferred choice for high-accuracy wavefunction-based methods.

Troubleshooting Guides

Issue: Unexpected Basis Set Output or Performance

Problem: A calculation completes, but the reported basis set size or the results are different from expectations. A specific example, as noted in a forum, involved a Krypton atom calculation with the 6-31G keyword where the output basis functions did not match the expected 6-31G structure [4].

Solution:

- Check Element Compatibility: Be aware that for some elements, particularly heavier atoms (e.g., Gallium to Krypton), the `6-31G` keyword in some software packages like Gaussian may not use a standard 6-31G style basis set. For historical and performance reasons, it may default to a different, non-standard basis set [4].

- Consult Software Documentation: Always check your electronic structure program's manual to understand its specific implementation of basis sets for all elements in your system.

- Use External Repositories: Rely on trusted sources like the Basis Set Exchange (BSE) to obtain consistent and well-documented basis set definitions that can be directly input into your calculations [4] [5].

Diagnosis Flowchart

Issue: Selecting an Appropriate Basis Set

Problem: A researcher is unsure how to choose a basis set for their project on molecular property prediction and is frequently questioned about their choice at conferences [6].

Solution:

- Define Your Target Accuracy and Resources: The selection is almost always a trade-off between accuracy and computational cost [3]. Larger basis sets yield more accurate results but make calculations run significantly longer [3]. Establish what is feasible for your system size and available computational resources.

- Follow a Systematic Hierarchy: Use basis sets in a hierarchical manner. You can start with a double-zeta polarized set (e.g., 6-31G(d) or cc-pVDZ) for initial geometry optimizations and move to triple-zeta (e.g., cc-pVTZ) or larger sets for final energy and property calculations [1] [6]. This provides a controlled path to more accurate results.

- Consider the Chemical System and Property:

- Leverage the Literature: The most robust justifications are a previous benchmark study for your specific system or property, or the use of a basis set that is well-established and commonly used in published research for similar molecules [6].

Basis Set Selection Workflow

The Scientist's Toolkit: Basis Set Reference

Table 1: Common Basis Set Types and Their Characteristics

| Basis Set Type | Key Features | Common Examples | Typical Use Case |

|---|---|---|---|

| Minimal | One basis function per atomic orbital; fast but inaccurate. | STO-3G [1] | Very large systems; initial scanning. |

| Split-Valence | Valence orbitals described by multiple functions; good balance. | 6-31G, 6-311G [1] | Standard for HF/DFT geometry optimization. |

| Polarized | Adds higher angular momentum functions (d, f). | 6-31G(d), cc-pVDZ [1] | Accurate bonding, vibrational frequencies. |

| Diffuse | Adds spatially extended functions for "electron tail". | aug-cc-pVDZ, 6-31+G [1] | Anions, excited states, weak interactions. |

| Correlation-Consistent | Designed for systematic convergence to CBS limit. | cc-pVXZ (X=D,T,Q,5,...) [1] | High-accuracy correlated (e.g., CCSD(T)) calculations. |

Table 2: Basis Set Selection Guide for Different Scenarios

| Research Goal | Recommended Starting Point | Justification |

|---|---|---|

| Initial Geometry Optimization | 6-31G(d) or cc-pVDZ | Provides double-zeta quality with polarization for reasonable bond lengths and angles [6]. |

| Final Single-Point Energy | cc-pVTZ or larger | Higher-level triple-zeta basis improves energy accuracy [6]. |

| Non-Covalent Interactions | aug-cc-pVDZ or better | Diffuse functions are mandatory to model the long-range electron density [1] [6]. |

| Transition Metal Chemistry | Specialized sets (e.g., def2-SVP, cc-pVDZ-PP) | Requires relativistic effective core potentials (ECPs) and careful treatment of valence space [7]. |

Frequently Asked Questions

Q: What is the single most important factor in choosing a basis set for geometry optimizations of organic systems?

- A: The DZP (Double Zeta plus Polarization) basis set is recommended as it provides a reasonably good balance for geometry optimizations of organic systems [8]. It is computationally efficient enough for these calculations while offering improved accuracy over minimal basis sets.

Q: Why are my calculated band gaps inaccurate even with a DZ basis set?

- A: The DZ (Double Zeta) basis set lacks polarization functions, which leads to a very poor description of the virtual orbital space. This makes properties like band gaps rather inaccurate. For accurate band gaps, a basis set with polarization functions, such as TZP (Triple Zeta plus Polarization) or better, is required [8].

Q: Which basis set offers the best overall balance between performance and accuracy for general use?

- A: The TZP (Triple Zeta plus Polarization) basis set is generally recommended as it offers the best balance between performance and accuracy for a wide range of properties [8].

Q: When should I use an all-electron calculation instead of the frozen core approximation?

- A: You should use an all-electron calculation (`Core None`) in several specific cases [8]:

- When using Hybrid or Meta-GGA density functionals.

- When calculating properties that depend on the core electron density, such as Properties at Nuclei.

- When performing optimizations under pressure.

Q: My calculation is too slow. What is the fastest basis set I can use for a preliminary test?

- A: The SZ (Single Zeta) basis set is the smallest and least accurate but is computationally the most efficient. It can be useful for running a very quick test calculation to check for setup errors or to get a rough idea of the results [8].

Basis Set Performance and Hierarchy

The hierarchy of standard basis sets, from the smallest and least accurate to the largest and most accurate, is as follows [8]: SZ < DZ < DZP < TZP < TZ2P < QZ4P

The table below summarizes the accuracy and computational cost of these basis sets for a (24,24) carbon nanotube, using the QZ4P results as a reference [8].

| Basis Set | Energy Error (eV/atom) | CPU Time Ratio (Relative to SZ) |

|---|---|---|

| SZ | 1.8 | 1.0 |

| DZ | 0.46 | 1.5 |

| DZP | 0.16 | 2.5 |

| TZP | 0.048 | 3.8 |

| TZ2P | 0.016 | 6.1 |

| QZ4P | reference | 14.3 |
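As a small illustration of how the table can be used, the sketch below picks the cheapest basis set whose energy error stays within a chosen tolerance. The numbers are copied from the table above; the function name `cheapest_within` and the tolerance values are assumptions made for this example, and QZ4P is assigned an error of zero only because it is the reference.

```python
# (basis set, energy error in eV/atom relative to QZ4P, CPU time relative to SZ)
# Values copied from the carbon nanotube table; QZ4P is the reference (error 0).
BENCHMARK = [
    ("SZ", 1.8, 1.0),
    ("DZ", 0.46, 1.5),
    ("DZP", 0.16, 2.5),
    ("TZP", 0.048, 3.8),
    ("TZ2P", 0.016, 6.1),
    ("QZ4P", 0.0, 14.3),
]

def cheapest_within(tolerance_ev_per_atom, benchmark=BENCHMARK):
    """Return the basis set with the lowest CPU ratio whose error
    does not exceed the requested tolerance."""
    candidates = [row for row in benchmark if row[1] <= tolerance_ev_per_atom]
    return min(candidates, key=lambda row: row[2])[0]

for tolerance in (0.2, 0.05, 0.02):
    print(f"tolerance {tolerance} eV/atom -> {cheapest_within(tolerance)}")
```

These figures describe one system and one reference, so the same selection on another system may come out differently.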

The Researcher's Toolkit: Essential Basis Set Types

| Basis Set | Full Name | Key Features & Recommended Use |

|---|---|---|

| SZ | Single Zeta | Minimal basis; fast tests and pre-checks [8]. |

| DZ | Double Zeta | Pre-optimization of structures; no polarization [8]. |

| DZP | Double Zeta + Polarization | Good for geometry optimizations in organic systems [8]. |

| TZP | Triple Zeta + Polarization | Best performance/accuracy balance; general recommendation [8]. |

| TZ2P | Triple Zeta + Double Polarization | Accurate; use when a good description of virtual orbitals is needed [8]. |

| QZ4P | Quadruple Zeta + Quadruple Polarization | Largest available set; for benchmarking and high-accuracy studies [8]. |

Experimental Protocol: Basis Set Convergence Study

Objective: To determine the optimal basis set for calculating the formation energy of a (24,24) carbon nanotube by evaluating the trade-off between accuracy and computational cost.

Methodology:

- System Preparation: Obtain or generate the atomic coordinates for a (24,24) carbon nanotube.

- Single-Point Energy Calculations: Perform a series of single-point energy calculations on the same structure using the SZ, DZ, DZP, TZP, TZ2P, and QZ4P basis sets. Keep all other computational parameters (density functional, convergence criteria, etc.) identical.

- Data Analysis:

- Calculate the formation energy per atom for each basis set.

- Using the QZ4P result as the reference value, compute the absolute error in the formation energy per atom for each of the other basis sets.

- Record the CPU time for each calculation and compute the ratio relative to the SZ basis set time.

- Visualization: Plot the energy error and CPU time ratio against the basis set to visualize the convergence and cost relationship [8].

Interpretation: This protocol allows researchers to identify the point of diminishing returns, where using a larger basis set yields negligible accuracy improvement for a significant increase in computational cost. It is crucial to note that errors in absolute energies are often systematic and can partially cancel out when calculating energy differences (e.g., reaction barriers or binding energies) [8].
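The data analysis step of this protocol can be sketched as a short helper. This is a minimal illustration, assuming the per-atom formation energies and CPU times have already been collected into a dictionary; the function name `analyze_convergence` and the sample numbers are hypothetical placeholders, not results from [8].

```python
def analyze_convergence(results, reference="QZ4P", baseline="SZ"):
    """Compute absolute errors and CPU time ratios for a convergence study.

    results maps a basis set name to (formation energy per atom, CPU seconds).
    Errors are taken relative to `reference`, timings relative to `baseline`.
    """
    reference_energy = results[reference][0]
    baseline_time = results[baseline][1]
    return {
        basis: {
            "abs_error": abs(energy - reference_energy),
            "cpu_ratio": cpu_seconds / baseline_time,
        }
        for basis, (energy, cpu_seconds) in results.items()
    }

# Made-up placeholder values, used only to show the call pattern.
example = {
    "SZ": (-7.0, 10.0),
    "DZP": (-8.5, 25.0),
    "QZ4P": (-8.7, 143.0),
}
summary = analyze_convergence(example)
for basis, row in summary.items():
    print(f"{basis}: error {row['abs_error']:.3f}, CPU ratio {row['cpu_ratio']:.1f}")
```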

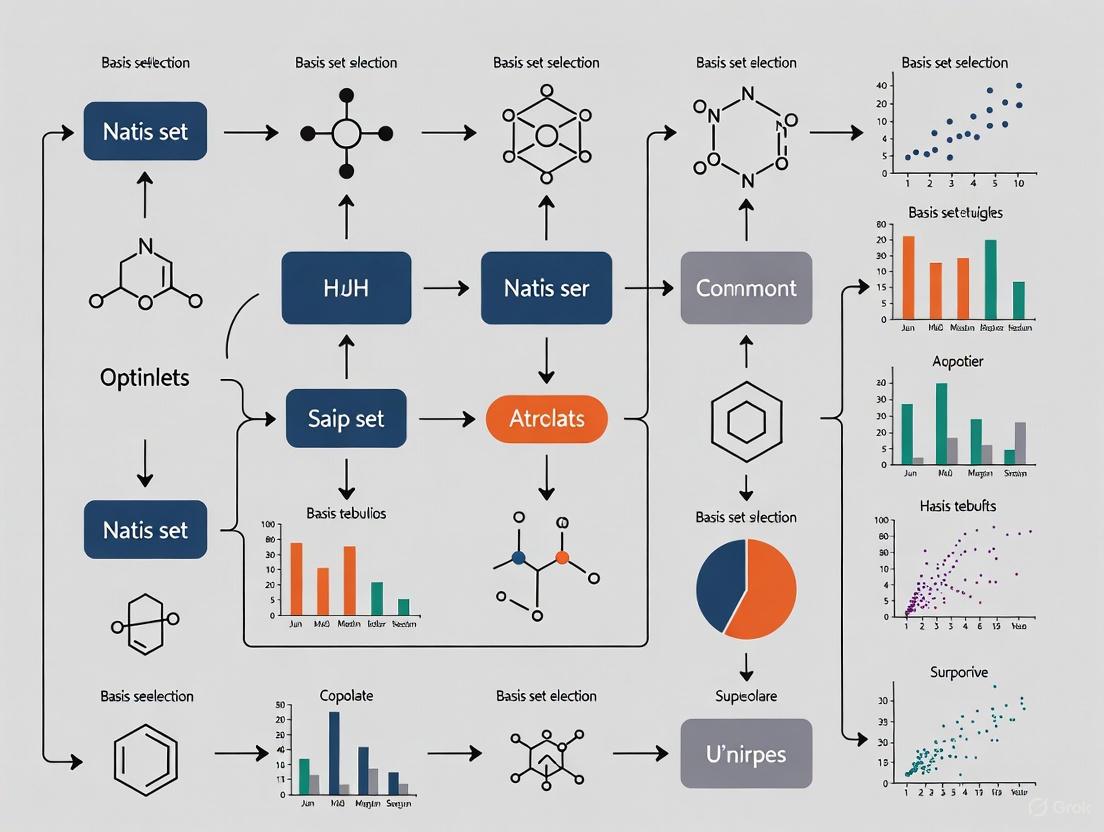

Basis Set Selection Workflow

The following diagram outlines a logical workflow for selecting an appropriate basis set based on the calculation objective and available resources.

In computational chemistry, a basis set is a set of functions (called basis functions) that represents electronic wave functions, turning complex partial differential equations into algebraic equations suitable for computers [1]. The choice of basis set is a critical step in quantum chemical calculations, as it directly impacts the accuracy of molecular property predictions, from bond energies to electronic excitations. This technical support center provides troubleshooting guidance and FAQs to help researchers navigate basis set selection for accurate molecular property prediction research.

Basis Set Types and Hierarchies

Gaussian-Type Orbitals and Historical Development

Modern computational chemistry primarily uses Gaussian-type orbitals (GTOs) rather than the physically motivated but computationally challenging Slater-type orbitals (STOs) [1]. Frank Boys realized that STOs could be approximated as linear combinations of GTOs, and because the product of two GTOs can be written as a linear combination of GTOs, this leads to huge computational savings [1].

The most common minimal basis set is STO-nG, where 'n' represents the number of Gaussian primitive functions used to represent each Slater-type orbital [1]. These minimal basis sets (e.g., STO-3G, STO-4G, STO-6G) typically give rough results that are insufficient for research-quality publication but are computationally inexpensive [1].

Pople Basis Sets

The Pople-style basis sets, developed by John Pople and coworkers, use a notation typically formatted as X-YZg [1]. In this notation:

- X represents the number of primitive Gaussians comprising each core atomic orbital basis function

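- Y and Z indicate that the valence orbitals are composed of two basis functions each: the first is a contraction of Y primitive Gaussians, the second a contraction of Z primitive Gaussians [1]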

These are split-valence basis sets, recognizing that valence electrons principally take part in molecular bonding [1]. Common Pople basis sets include:

Table: Common Pople Basis Sets and Their Characteristics

| Basis Set | Type | Polarization | Diffuse Functions | Typical Use Cases |

|---|---|---|---|---|

| 3-21G | Double-zeta | None | None | Preliminary calculations |

| 6-31G | Double-zeta | None | None | Standard DFT calculations |

| 6-31G(d) | Double-zeta | d-functions on heavy atoms | None | Standard geometry optimization |

| 6-31G(d,p) | Double-zeta | d-functions on heavy atoms, p-functions on H | None | Improved H atom description |

| 6-31+G(d) | Double-zeta | d-functions on heavy atoms | On heavy atoms | Anions, weak interactions |

| 6-311+G(d,p) | Triple-zeta | d-functions on heavy atoms, p-functions on H | On heavy atoms | Higher accuracy calculations |

Polarization functions are denoted by "*" for heavy atoms only or "**" for all atoms, though modern notation explicitly specifies the functions in parentheses as (d,p), where 'd' indicates polarization on heavy atoms and 'p' on hydrogen [1]. Diffuse functions are indicated by adding "+" (heavy atoms only) or "++" (all atoms) before the 'G' [1].
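The naming rules in this section are regular enough to decode mechanically. The sketch below is a hypothetical helper written for illustration only: `parse_pople` and its output keys are not part of any quantum chemistry package, and the pattern covers only the common names discussed here.

```python
import re

# Matches names such as 3-21G, 6-31G*, 6-31+G(d), 6-311++G(d,p).
POPLE_NAME = re.compile(
    r"^(?P<core>\d)-(?P<valence>\d{2,3})(?P<diffuse>\+{0,2})G"
    r"(?P<stars>\*{0,2})(?:\((?P<pol>[^)]+)\))?$"
)

def parse_pople(name):
    """Decode a Pople-style basis set name into its components."""
    match = POPLE_NAME.match(name)
    if match is None:
        raise ValueError(f"not a recognized Pople-style name: {name!r}")
    valence = [int(digit) for digit in match["valence"]]
    if match["pol"]:
        # Explicit notation: first entry is for heavy atoms, second for hydrogen.
        parts = [part.strip() for part in match["pol"].split(",")]
        heavy = parts[0]
        hydrogen = parts[1] if len(parts) > 1 else None
    else:
        # Star notation: * adds d on heavy atoms, ** also adds p on hydrogen.
        stars = match["stars"]
        heavy = "d" if stars else None
        hydrogen = "p" if stars == "**" else None
    diffuse = {"": "none", "+": "heavy atoms", "++": "all atoms"}[match["diffuse"]]
    return {
        "core_primitives": int(match["core"]),
        "valence_primitives": valence,
        "valence_zeta": len(valence),
        "diffuse": diffuse,
        "polarization_heavy": heavy,
        "polarization_hydrogen": hydrogen,
    }

print(parse_pople("6-311+G(d,p)"))
```

For 6-311+G(d,p) this reports six core primitives, a valence split of 3, 1, and 1 primitives (triple-zeta valence), diffuse functions on heavy atoms, and d/p polarization.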

Dunning Correlation-Consistent Basis Sets

Dunning's correlation-consistent basis sets are designed for systematically converging post-Hartree-Fock calculations to the complete basis set (CBS) limit [1]. These basis sets follow the pattern cc-pVXZ where:

- 'cc-p' stands for 'correlation-consistent polarized'

- 'V' indicates they are valence basis sets

- X = D, T, Q, 5, 6... (D for double-zeta, T for triple-zeta, etc.) [1]

These basis sets include polarization functions by definition and are systematically organized by angular momentum [9]:

Table: Dunning Correlation-Consistent Basis Set Composition

| Atoms | cc-pVDZ | cc-pVTZ | cc-pVQZ | cc-pV5Z |

|---|---|---|---|---|

| H, He | 2s,1p | 3s,2p,1d | 4s,3p,2d,1f | 5s,4p,3d,2f,1g |

| Li-Be | 3s,2p,1d | 4s,3p,2d,1f | 5s,4p,3d,2f,1g | 6s,5p,4d,3f,2g,1h |

| B-Ne | 3s,2p,1d | 4s,3p,2d,1f | 5s,4p,3d,2f,1g | 6s,5p,4d,3f,2g,1h |

| Na-Ar | 4s,3p,1d | 5s,4p,2d,1f | 6s,5p,3d,2f,1g | 7s,6p,4d,3f,2g,1h |
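To see how quickly these sets grow, the shell compositions in the table can be converted into function counts. This is a minimal sketch that assumes spherical (pure) angular functions, so each shell contributes 2l+1 functions; the helper name `count_basis_functions` is made up for this example.

```python
import re

# Number of spherical functions per shell type (2l + 1).
FUNCTIONS_PER_SHELL = {"s": 1, "p": 3, "d": 5, "f": 7, "g": 9, "h": 11}

def count_basis_functions(composition):
    """Count contracted basis functions for a string such as '3s,2p,1d'."""
    total = 0
    for count, shell in re.findall(r"(\d+)([spdfgh])", composition):
        total += int(count) * FUNCTIONS_PER_SHELL[shell]
    return total

# Compositions for B-Ne taken from the table above.
for name, composition in [
    ("cc-pVDZ", "3s,2p,1d"),
    ("cc-pVTZ", "4s,3p,2d,1f"),
    ("cc-pVQZ", "5s,4p,3d,2f,1g"),
    ("cc-pV5Z", "6s,5p,4d,3f,2g,1h"),
]:
    print(f"{name}: {count_basis_functions(composition)} functions per atom")

# Water in cc-pVDZ: one oxygen (3s,2p,1d) plus two hydrogens (2s,1p).
water_dz = count_basis_functions("3s,2p,1d") + 2 * count_basis_functions("2s,1p")
```

Each step up in cardinal number roughly doubles the number of functions per second-period atom, which is the main driver of the cost increase.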

The correlation-consistent basis sets can be augmented with diffuse functions by adding the "aug-" prefix, which is particularly important for describing anions, excited states, and noncovalent interactions [10]. "Calendar" basis sets (jul-, jun-, and may-) provide intermediate options with fewer diffuse functions to mitigate linear dependency issues [10].

Other Specialized Basis Sets

Several other basis set families have been developed for specific applications:

- Jensen's polarization-consistent (pcseg-n) basis sets: Optimized for DFT methods, offering lower basis set errors than Pople basis sets of formal equivalent quality [11]

- Karlsruhe basis sets (def2-series): Including Def2SVP, Def2TZVP, and Def2QZVP, widely used in DFT calculations [9]

- Effective Core Potential (ECP) basis sets: Such as LANL2DZ and SDD, which replace core electrons with pseudopotentials for heavier elements [9]

- Specialized property basis sets: For example, EPR-II and EPR-III basis sets optimized for hyperfine coupling constant calculations [9]

Troubleshooting Guides & FAQs

Basis Set Selection

Q: How do I choose an appropriate basis set for my system?

A: Basis set selection involves multiple considerations [6]:

- System size: For large systems (>50 atoms), double-zeta basis sets (6-31G, cc-pVDZ) are often necessary for computational feasibility

- Property of interest:

- Ground-state energies: Standard polarized double- or triple-zeta basis sets

- Reaction energies: Use at least triple-zeta quality

- Weak interactions: Include diffuse functions (e.g., aug-cc-pVDZ, 6-31+G(d))

- Electronic excitations: Diffuse functions are essential

- NMR properties: Specialized property basis sets

- Method:

- HF/DFT: Pople or Jensen basis sets are efficient

- Correlated methods: Dunning cc-pVXZ sets with extrapolation to CBS limit

- Elements present: Heavier elements may require ECP basis sets

Q: What is the practical difference between Pople and Dunning basis sets?

A: While formally similar in zeta-level, there are important practical differences [11]:

- Pople basis sets (6-31G, 6-311G) were designed decades ago for HF and MP2 calculations, with constraints on exponents for computational efficiency

- Dunning cc-pVXZ sets are systematically constructed with balanced errors and better performance for correlation energy

- Jensen's pcseg-n sets are optimized specifically for DFT methods

- In practice, pcseg-1 gives roughly 3x lower basis set error than 6-31G(d,p), and pcseg-2 gives ~5x lower error than 6-311G(2df,2pd) [11]

Computational Issues

Q: My calculation fails with "linear dependence" errors. How can I resolve this?

A: Linear dependence occurs when basis functions become numerically redundant, often with large basis sets containing diffuse functions [10]:

- Use "calendar" basis sets (jun-cc-pVXZ) that remove the highest angular momentum diffuse functions

- For Pople basis sets, avoid using "++" diffuse functions on all atoms unless necessary

- Increase the integration grid or precision settings in your computational package

- Consider using a slightly smaller basis set or removing the highest angular momentum functions
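Why diffuse functions trigger this problem can be illustrated with two normalized s-type Gaussians on neighboring atoms. The sketch below uses the closed-form overlap of such functions and the fact that a 2x2 overlap matrix with unit diagonal has smallest eigenvalue 1 - |S|; the exponents and the 2.5 bohr separation are arbitrary illustrative values, and real programs apply their own thresholds to the full overlap matrix.

```python
import math

def s_overlap(alpha, beta, distance):
    """Overlap of two normalized s-type Gaussians with exponents alpha and
    beta whose centers are `distance` apart (atomic units)."""
    p = alpha + beta
    prefactor = (2.0 * math.sqrt(alpha * beta) / p) ** 1.5
    return prefactor * math.exp(-alpha * beta / p * distance ** 2)

def smallest_overlap_eigenvalue(alpha, beta, distance):
    """Smallest eigenvalue of the overlap matrix [[1, S], [S, 1]]."""
    return 1.0 - abs(s_overlap(alpha, beta, distance))

# Tight functions on neighboring atoms barely overlap ...
tight = smallest_overlap_eigenvalue(1.0, 1.0, 2.5)
# ... while very diffuse ones are almost identical, so the eigenvalue nears zero.
diffuse = smallest_overlap_eigenvalue(0.02, 0.02, 2.5)
print(f"tight: {tight:.3f}, diffuse: {diffuse:.3f}")
```

An eigenvalue close to zero means one function is nearly a linear combination of the others, which is the redundancy the remedies above try to remove.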

Q: How do I handle basis set selection for metals and heavy elements?

A: For elements beyond the third period (Z>18):

- Use effective core potential (ECP) basis sets (LANL2DZ, SDD, cc-pVXZ-PP) to reduce computational cost

- Ensure consistent treatment of all heavy elements in your system

- Validate with all-electron basis sets for lighter elements where feasible

- Consider relativistic effects for very heavy elements (Z>50)

Performance and Accuracy

Q: How can I estimate the computational cost of different basis sets?

A: Computational cost scales approximately with the fourth power of the number of basis functions [6]. Use these guidelines:

- Minimal basis sets (STO-3G): ~1x cost (reference)

- Double-zeta (6-31G): ~5-10x cost

- Double-zeta polarized (6-31G(d)): ~10-20x cost

- Triple-zeta polarized (6-311G(d,p)): ~50-100x cost

- Quadruple-zeta (cc-pVQZ): ~500-1000x cost
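The fourth-power rule can be turned into a quick estimate. This is a rough sketch of the formal scaling only: integral screening and other optimizations in real programs usually make the observed growth gentler, so the guideline ranges above need not match it. The function name `relative_cost` is an assumption; the function counts for water (7 in STO-3G, 13 in 6-31G) are the standard values for those basis sets.

```python
def relative_cost(n_small, n_large, exponent=4.0):
    """Formal cost ratio when the number of basis functions grows from
    n_small to n_large, assuming cost ~ N**exponent."""
    return (n_large / n_small) ** exponent

# Water: 7 basis functions in STO-3G, 13 in 6-31G.
water_ratio = relative_cost(7, 13)
print(f"STO-3G -> 6-31G for water: about {water_ratio:.0f}x formal cost")
```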

Q: My molecular properties are not converging with basis set size. What should I check?

A:

- Ensure you're using a systematically convergent series (e.g., cc-pVDZ → cc-pVTZ → cc-pVQZ)

- Verify whether the property needs higher angular momentum functions than your current basis set provides

- Check for possible intramolecular basis set superposition error (BSSE) using counterpoise correction

- Confirm that your electronic structure method is appropriate for the property of interest

Basis Set Selection Workflow

The following diagram illustrates the systematic decision process for selecting an appropriate basis set:

Research Reagent Solutions: Essential Computational Materials

Table: Key Basis Set Families and Their Applications in Molecular Property Prediction

| Basis Set Family | Key Members | Optimized For | Performance Characteristics | Limitations |

|---|---|---|---|---|

| Pople | 3-21G, 6-31G, 6-311G | HF/DFT methods | Computationally efficient with spd combined shells | Not optimal for correlated methods; unbalanced for high accuracy [11] |

| Dunning cc-pVXZ | cc-pVDZ, cc-pVTZ, cc-pVQZ | Correlated wavefunction methods | Systematic convergence to CBS limit; excellent for extrapolation | Higher computational cost than Pople sets for same formal zeta-level [1] |

| Jensen pcseg-n | pcseg-0, pcseg-1, pcseg-2 | DFT methods | Lower basis set error than Pople sets; segmented for efficiency | Less common in literature; may require manual input in some codes [11] |

| Karlsruhe def2- | def2-SVP, def2-TZVP, def2-QZVP | General purpose DFT | Good balance of cost and accuracy; widely validated | Primarily for main-group elements; ECPs needed for heavy elements [9] |

| ECP Basis Sets | LANL2DZ, SDD, cc-pVXZ-PP | Heavy elements | Reduced computational cost for elements >Kr | Core electrons not explicitly treated; potential accuracy loss [9] |

Experimental Protocols for Basis Set Benchmarking

Protocol 1: Systematic Convergence Study

Purpose: To determine the basis set requirement for a target molecular property.

Methodology:

- Select a systematically improvable basis set series (e.g., cc-pVDZ → cc-pVTZ → cc-pVQZ or pcseg-0 → pcseg-1 → pcseg-2)

- Calculate the target property at each basis set level

- Plot the property against the basis set cardinal number X (for correlation energies, plot against X^{-3}, the leading term of their convergence)

- Extrapolate to the complete basis set (CBS) limit using established formulas [12]

- Determine the smallest basis set that provides results within acceptable error of the CBS limit

Expected Results: Exponential or power-law convergence of the property with increasing basis set size [12].
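The extrapolation step can be sketched with a two-point formula. The protocol does not say which formula [12] uses, so this example assumes the widely used model E(X) = E_CBS + A·X^{-3} for the correlation energy, which gives E_CBS = (X³E_X − Y³E_Y)/(X³ − Y³) for two cardinal numbers X > Y. The function name and the synthetic test energies are illustrative only.

```python
def extrapolate_correlation_cbs(e_small, x_small, e_large, x_large):
    """Two-point X**-3 extrapolation of correlation energies.

    Assumes E(X) = E_CBS + A * X**-3 and solves for E_CBS from the
    energies at cardinal numbers x_small < x_large.
    """
    small_cubed = x_small ** 3
    large_cubed = x_large ** 3
    return (large_cubed * e_large - small_cubed * e_small) / (large_cubed - small_cubed)

# Synthetic energies generated from the model itself (E_CBS = -0.3, A = 0.5),
# used only to check that the formula recovers the limit.
e_tz = -0.3 + 0.5 / 3 ** 3
e_qz = -0.3 + 0.5 / 4 ** 3
cbs_estimate = extrapolate_correlation_cbs(e_tz, 3, e_qz, 4)
print(f"extrapolated limit: {cbs_estimate:.6f}")
```

The Hartree-Fock component converges differently and is normally extrapolated separately or taken from the largest basis set.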

Protocol 2: Cost-Benefit Analysis for Large Systems

Purpose: To identify the optimal basis set balancing accuracy and computational cost for large molecular systems.

Methodology:

- Select representative fragments of your large system

- Calculate target properties with a range of basis sets from minimal to triple-zeta

- Record computational time and resources for each calculation

- Plot accuracy gain versus computational cost

- Identify the "knee in the curve" where additional basis functions provide diminishing returns

Expected Results: A basis set recommendation that maximizes accuracy within practical computational constraints.

Frequently Asked Questions

1. What are polarization functions, and why are they critical for calculations? Polarization functions are auxiliary basis functions with one additional node added to a basis set to provide the flexibility needed for describing the distortion of electron density in molecular environments [13]. They allow atomic orbitals to shift from their spherical or symmetrical shapes, which is crucial for accurately modeling chemical bonds [14] [1]. For example, adding p-type functions to hydrogen atoms or d-type functions to first-row atoms (like carbon) enables a more asymmetric distribution of electron density around the nucleus, which is essential for correctly predicting molecular geometries and reaction barriers [1] [13]. Without them, the description of bonding is often poor.

2. When must I include diffuse functions in my basis set? Diffuse functions are Gaussian functions with very small exponents, designed to better represent the "tail" portion of electron density far from the atomic nucleus [1] [13]. They are essential for:

- Anions and weakly bound systems: Accurate calculations for species like F⁻ or OH⁻ require diffuse functions to describe the more spread-out electron density [15].

- Non-covalent interactions: The accurate computation of weak intermolecular forces (e.g., van der Waals, hydrogen bonds) in supramolecular systems often necessitates diffuse functions to span the interaction region [16].

- Molecular properties: Properties such as polarizabilities, hyperpolarizabilities, and high-lying excitation energies are sensitive to the outer reaches of the electron cloud and require diffuse functions [15]. Be aware that adding diffuse functions can increase computational cost and sometimes lead to linear dependency issues, which may require technical interventions in the software [15].

3. What is the practical difference between the * and ** notations in Pople basis sets?

In Pople-style basis sets (e.g., `6-31G*`), the asterisk notation indicates the addition of polarization functions [1] [13].

- A single asterisk (e.g., `6-31G*`) signifies that polarization functions have been added to heavy atoms (all atoms except hydrogen and helium). For carbon, this means adding a set of d-type orbitals.

- A double asterisk (e.g., `6-31G**`) indicates that polarization functions are added to both heavy atoms and light atoms (H, He). For hydrogen, this means adding a set of p-type orbitals [1] [13]. The `**` notation is synonymous with the more explicit notation `(d,p)`.

4. My geometry optimization of a large molecule is slow. Can I use a smaller basis set?

Yes, but you must choose carefully. For large systems, double-zeta polarized basis sets, such as DZP in ADF or def2-SVP in other packages, often provide an excellent compromise between speed and accuracy for geometry optimizations [17] [15]. Minimal basis sets (e.g., STO-3G) and unpolarized double-zeta basis sets are strongly discouraged for production research, as they suffer from severe inherent errors and describe bonding poorly [18] [17] [1]. Modern composite methods also use specially optimized double-zeta basis sets like vDZP to achieve high accuracy at low cost [17].

5. What are the consequences of Basis Set Superposition Error (BSSE), and how can I mitigate it? BSSE is an error that causes an artificial overestimation of interaction energies (e.g., in complexes or transition states) because fragments can "borrow" basis functions from their neighbors [17] [16]. This error is most pronounced with small basis sets and can dramatically impact predictions of thermochemistry and barrier heights [18] [16]. Standard mitigation strategies include:

- The Counterpoise (CP) correction: A common but computationally more expensive method that accounts for the BSSE by calculating the energy of each fragment using the entire basis set of the complex [16].

- Using larger basis sets: BSSE diminishes with increasing basis set size and becomes negligible with quadruple-zeta basis sets [16].

- Basis set extrapolation: Schemes exist to extrapolate results to the complete basis set (CBS) limit, which can serve as an alternative to CP correction [16].
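The counterpoise correction described above reduces to simple arithmetic once the five energies are available. The sketch below assumes a two-fragment complex with both fragments kept at their geometry in the complex (deformation energies are ignored); the function name and the energies in the example are invented placeholders in hartree, not computed results.

```python
def interaction_energies(e_complex, e_a_own, e_b_own, e_a_ghost, e_b_ghost):
    """Return (uncorrected, counterpoise-corrected, BSSE) interaction energies.

    e_a_own / e_b_own: fragment energies in their own basis sets.
    e_a_ghost / e_b_ghost: fragment energies in the full basis of the complex,
    i.e. with the partner's basis functions present as ghost functions.
    """
    uncorrected = e_complex - e_a_own - e_b_own
    corrected = e_complex - e_a_ghost - e_b_ghost
    bsse = corrected - uncorrected  # positive when ghost functions lower fragment energies
    return uncorrected, corrected, bsse

# Placeholder energies in hartree, chosen only to illustrate the bookkeeping.
uncorrected, corrected, bsse = interaction_energies(
    e_complex=-152.070,
    e_a_own=-76.030,
    e_b_own=-76.030,
    e_a_ghost=-76.032,
    e_b_ghost=-76.031,
)
print(f"uncorrected {uncorrected:.3f}, CP-corrected {corrected:.3f}, BSSE {bsse:.3f}")
```

The corrected value is less negative than the uncorrected one, which reflects the artificial overbinding that BSSE introduces.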

Troubleshooting Guides

Problem: Unrealistically Low Binding Energy in Complexes

Description: Calculation of intermolecular interaction energy (e.g., for a host-guest system or protein-ligand docked pose) yields a value that is significantly less negative (weaker) than expected from experimental data or higher-level benchmarks.

Diagnosis and Solution Flow

Step-by-Step Resolution

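- Apply Counterpoise Correction: BSSE is a common source of error in interaction energies. Because BSSE artificially stabilizes the complex, an uncorrected result can hide part of the underbinding through error cancellation. Recalculate the interaction energy using the CP method to account for the artificial stabilization and expose the remaining error [16]. For double- and triple-zeta basis sets, this correction is often mandatory for reliable results [16].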

- Verify Dispersion Corrections: Many modern density functionals do not adequately describe long-range dispersion forces. Ensure your functional includes an empirical dispersion correction, such as Grimme's D3 or D4 [18] [16]. This is crucial for weak interactions.

- Check for Diffuse Functions: Weak interactions occur in regions far from atomic nuclei. If your basis set lacks diffuse functions (e.g., you are using `cc-pVDZ` instead of `aug-cc-pVDZ`, or `6-31G*` instead of `6-31+G*`), the interaction energy will be poorly described [16] [13]. Switch to an augmented or "+" version of your basis set.

- Upgrade Basis Set Quality: If the above steps do not resolve the issue, the basis set itself may be too small. Residual BSSE and basis set incompleteness error (BSIE) can be substantial with double-zeta basis sets [17]. Moving to a triple-zeta basis set (e.g., from `def2-SVP` to `def2-TZVP`) is recommended for higher accuracy [18] [17].

Problem: Poor Convergence in Anion or Excited State Calculations

Description: The Self-Consistent Field (SCF) procedure fails to converge when calculating an anionic system, or the computed excitation energies for Rydberg states are inaccurate.

Diagnosis and Solution Flow

Step-by-Step Resolution

- Add Diffuse Functions: The electron density in anions and Rydberg excited states is much more diffuse than in neutral ground states, so standard basis sets are not sufficient. You must use a basis set that includes diffuse functions, such as `aug-cc-pVDZ` for Dunning sets or `6-31+G*` for Pople sets [15] [13].

- Manage Linear Dependency: The addition of many diffuse functions can make the basis set numerically linearly dependent. If the SCF still fails to converge after adding diffuse functions, use your software's built-in tools to handle this. For example, in ADF, the `DEPENDENCY` keyword with a threshold (e.g., `bas=1d-4`) can remove linear combinations [15].

- Review Functional Choice: The choice of functional is as important as the basis set. For challenging electronic structures like some excited states, standard hybrid functionals may perform poorly. Consider using range-separated hybrid functionals or functionals specifically designed for the property of interest [18] [15].

Table 1: A guide to the key modifiers in basis sets and their impact on calculations.

| Basis Set Component | Symbol (Pople) | Prefix/Notation (Dunning) | Primary Function | Critical For |

|---|---|---|---|---|

| Polarization Functions | * (heavy atoms), ** (all atoms) | Included in name (e.g., -pVDZ) | Allows orbital distortion to accurately model bonding and molecular geometry [14] [13]. | Bond energies, reaction barrier heights, molecular structures [18]. |

| Diffuse Functions | + (heavy atoms), ++ (all atoms) | aug- (e.g., aug-cc-pVDZ) | Describes the "tail" of electron density far from the nucleus [13]. | Anions, weak intermolecular interactions, excited states, polarizabilities [16] [15]. |

The Scientist's Toolkit: Essential Research Reagents

Table 2: A selection of common basis set families and their typical use cases in computational research.

| Basis Set Family | Examples | Key Characteristics | Recommended Use Cases |

|---|---|---|---|

| Pople | 6-31G, 6-311+G* | Split-valence; efficient for HF and DFT; intuitive naming [9] [14] [1]. | Routine DFT calculations on medium-sized molecules; initial geometry scans [14] [6]. |

| Dunning correlation-consistent | cc-pVXZ, aug-cc-pVXZ (X=D,T,Q,...) | Systematic hierarchy; designed to converge to CBS limit [9] [1]. | High-accuracy energy calculations; post-HF methods (e.g., CCSD(T)); benchmark studies [14] [6]. |

| Ahlrichs (Karlsruhe) | def2-SVP, def2-TZVP, def2-TZVPP | Segmented contraction; good coverage of periodic table; efficient for DFT [9] [14]. | General-purpose DFT calculations across a wide range of elements [9] [14]. |

| Jensen (Polarization-consistent) | pcseg-1, pcseg-2, aug-pcseg-1 | Optimized for DFT; segmented for computational efficiency [14]. | High-quality DFT property calculations [14] [6]. |

| Specialized/Composite | vDZP | Designed to minimize BSSE; often used with effective core potentials [17]. | Low-cost composite DFT methods (e.g., ωB97X-3c); efficient calculations on large systems [17]. |

Connecting Basis Sets to Molecular Property Prediction Goals

Frequently Asked Questions

1. What is the most important factor when choosing a basis set for molecular property prediction? The most critical factor is balancing computational cost against the required accuracy for your specific application. Larger basis sets (triple-zeta, quadruple-zeta) provide higher accuracy but dramatically increase computational cost. For density functional theory (DFT) calculations, triple-zeta basis sets generally offer the best tradeoff between cost and accuracy, while for post-Hartree-Fock methods like coupled-cluster, larger basis sets with diffuse functions are often necessary [19].

2. Can I use small basis sets without sacrificing accuracy? Recent research indicates that specially optimized double-zeta basis sets can approach triple-zeta accuracy for certain applications. The vDZP basis set, developed for the ωB97X-3c composite method, has shown effectiveness across multiple density functionals with minimal reparameterization. In benchmarks, vDZP maintained reasonable accuracy while reducing computational cost by approximately 5-fold compared to standard triple-zeta basis sets [17].

3. How does basis set selection affect different molecular properties? Basis set requirements vary significantly by property type. Basic molecular properties and isomerization energies can be reasonably predicted with smaller basis sets, while barrier heights and non-covalent interactions typically require more sophisticated basis sets with diffuse functions to minimize basis set superposition error (BSSE) and basis set incompleteness error (BSIE) [17].

4. What are the limitations of small basis sets? Small basis sets typically suffer from several pathologies: poor electron density description (basis-set incompleteness error), overestimated interaction energies due to fragments "borrowing" adjacent basis functions (basis-set superposition error), and potentially incorrect predictions of thermochemistry, geometries, and barrier heights [17].

5. Are there new approaches to basis set selection? Machine learning approaches are emerging that generate adaptive basis sets tailored to local chemical environments. These methods construct polarized atomic orbitals (PAOs) as linear combinations of traditional basis functions, optimizing them for each molecular geometry to maintain accuracy with minimal basis set size [20].

Basis Set Performance Comparison

Table 1: Weighted Total Mean Absolute Deviations (WTMAD2) for Various Functionals and Basis Sets on the GMTKN55 Main-Group Thermochemistry Benchmark

| Functional | def2-QZVP | vDZP | 6-31G(d) | def2-SVP | pcseg-1 |

|---|---|---|---|---|---|

| B97-D3BJ | 8.42 | 9.56 | - | - | - |

| r2SCAN-D4 | 7.45 | 8.34 | - | - | - |

| B3LYP-D4 | 6.42 | 7.87 | - | - | - |

| M06-2X | 5.68 | 7.13 | - | - | - |

| ωB97X-D4 | 3.73 | 5.57 | - | - | - |

Note: Lower values indicate better performance. Data compiled from benchmark studies [17].
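The accuracy cost of moving from def2-QZVP to vDZP can be read directly off the table. The short sketch below only re-uses the ten WTMAD2 values listed above; the variable names are illustrative.

```python
# WTMAD2 values copied from the table above: (def2-QZVP, vDZP)
wtmad2 = {
    "B97-D3BJ": (8.42, 9.56),
    "r2SCAN-D4": (7.45, 8.34),
    "B3LYP-D4": (6.42, 7.87),
    "M06-2X": (5.68, 7.13),
    "ωB97X-D4": (3.73, 5.57),
}

# Penalty = how much WTMAD2 grows when the small basis replaces the large one.
penalties = {name: round(vdzp - qz, 2) for name, (qz, vdzp) in wtmad2.items()}
mean_penalty = sum(penalties.values()) / len(penalties)
largest = max(penalties, key=penalties.get)

for name, delta in penalties.items():
    print(f"{name:10s} vDZP - def2-QZVP = {delta:.2f}")
print(f"mean penalty = {mean_penalty:.2f}; largest for {largest}")
```

Listing the per-functional differences makes it easier to judge whether the penalty is acceptable for a given functional rather than relying on a single average.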

Table 2: Recommended Basis Sets for Different Computational Scenarios

| Application | Recommended Basis Sets | Key Considerations |

|---|---|---|

| Routine DFT for drug discovery | def2-TZVP, vDZP | Triple-zeta recommended for accuracy; vDZP offers speed advantage |

| Post-Hartree-Fock methods | aug-cc-pVTZ, aug-cc-pVQZ | Diffuse functions critical for correlation energy |

| Large system screening | def2-SVP, vDZP | Balance of speed and reasonable accuracy |

| Non-covalent interactions | aug-cc-pVTZ, aug-pcseg-2 | Diffuse functions essential for weak interactions |

| Transition metal systems | def2-TZVP with ECPs | Effective core potentials reduce cost for heavy elements |

Experimental Protocols

Protocol 1: Benchmarking Basis Set Performance for Molecular Property Prediction

Objective: Systematically evaluate basis set performance for predicting specific molecular properties.

Materials and Computational Setup:

- Software: Psi4 1.9.1 or later [17]

- Reference Data: Experimental or high-level computational data for target properties

- Benchmark Set: GMTKN55 database for main-group thermochemistry [17]

- Integration Grid: (99,590) with "robust" pruning [17]

- Density Fitting: Employ for all calculations to accelerate computation [17]

- SCF Convergence: Apply level shift of 0.10 Hartree [17]
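As a rough sketch, the setup above could be collected into one options dictionary for Psi4's Python interface. The keyword names below are assumed to correspond to Psi4's SCF and DFT options and should be checked against the manual for the installed version; the helper `apply_options` is a hypothetical convenience wrapper, not part of Psi4.

```python
# Assumed Psi4 keyword names for the settings listed above; verify before use.
benchmark_options = {
    "dft_radial_points": 99,         # radial part of the (99,590) grid
    "dft_spherical_points": 590,     # angular part of the (99,590) grid
    "dft_pruning_scheme": "robust",  # "robust" grid pruning
    "scf_type": "df",                # density fitting
    "level_shift": 0.10,             # level shift in Hartree
}

def apply_options(options: dict) -> bool:
    """Hand the settings to Psi4 if it is importable; return False otherwise."""
    try:
        import psi4  # optional dependency, only needed for real calculations
    except ImportError:
        return False
    psi4.set_options(options)
    return True

for key, value in benchmark_options.items():
    print(f"{key} = {value}")
```

Keeping the settings in a single dictionary means every basis set in the benchmark is run with identical grid and convergence options, so differences in the results can be attributed to the basis set alone.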

Methodology:

- Select target molecular properties and appropriate benchmark molecules

- Perform geometry optimization with consistent method across all basis sets

- Calculate target properties with each basis set under evaluation

- Compare results to reference data using statistical metrics (MAE, RMSE, WTMAD2)

- Analyze computational cost (CPU time, memory requirements) for each basis set
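The comparison step can be sketched with two of the metrics named above, MAE and RMSE. All numbers below are hypothetical placeholders standing in for computed and reference values; they are not benchmark results.

```python
import math

def mae(predicted, reference):
    """Mean absolute error between predictions and reference values."""
    if len(predicted) != len(reference):
        raise ValueError("predicted and reference must have the same length")
    return sum(abs(p - r) for p, r in zip(predicted, reference)) / len(reference)

def rmse(predicted, reference):
    """Root-mean-square error between predictions and reference values."""
    if len(predicted) != len(reference):
        raise ValueError("predicted and reference must have the same length")
    return math.sqrt(sum((p - r) ** 2 for p, r in zip(predicted, reference)) / len(reference))

# Hypothetical reference energies and per-basis-set predictions (same units).
reference = [10.0, 20.0, 30.0, 40.0]
results = {
    "small basis": [12.0, 18.0, 33.0, 39.0],
    "large basis": [10.5, 19.5, 30.5, 39.5],
}

summary = {name: (mae(vals, reference), rmse(vals, reference))
           for name, vals in results.items()}
for name, (m, r) in summary.items():
    print(f"{name}: MAE = {m:.3f}, RMSE = {r:.3f}")
```

Reporting both metrics is useful because RMSE penalizes outliers more strongly than MAE, so a large gap between the two hints at a few badly described systems.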

Protocol 2: Adaptive Basis Set Generation Using Machine Learning

Objective: Generate and validate machine-learned adaptive basis sets for specific chemical environments.

Materials:

- Primary Basis Set: Traditional atom-centered basis set as starting point [20]

- Training Set: Diverse molecular geometries representing chemical space of interest [20]

- ML Framework: Rotationally invariant potential parametrization [20]

Methodology:

- Define local chemical environment descriptor capturing key atomic interactions [20]

- Construct auxiliary atomic Hamiltonian with polarization potential terms [20]

- Train machine learning model to predict optimal polarized atomic orbitals (PAOs) [20]

- Validate performance on test set of molecular structures [20]

- Compare accuracy and computational efficiency against traditional basis sets [20]

Workflow Diagrams

Basis Set Selection Workflow

The Scientist's Toolkit

Table 3: Essential Computational Resources for Basis Set Selection Research

| Resource | Type | Function | Availability |

|---|---|---|---|

| GMTKN55 Database | Benchmark Data | Comprehensive main-group thermochemistry for method validation | Publicly available |

| vDZP Basis Set | Specialized Basis | Optimized double-zeta basis with minimal BSSE for efficient calculations | Custom implementation required [17] |

| def2 Family | Standard Basis Sets | Consistent quality across periodic table with ECPs for heavy elements | Standard in quantum chemistry packages [19] |

| Psi4 | Software Platform | Open-source quantum chemistry package with comprehensive basis set library | Free download [17] |

| Polarized Atomic Orbitals (PAOs) | Adaptive Basis | Machine-learning derived basis functions adapted to local chemical environment | Research implementation [20] |

Troubleshooting Guides

Problem: Calculations are too slow for large molecular systems

- Potential Cause: Basis set too large for system size

- Solution: Switch to optimized double-zeta basis sets (vDZP, def2-SVP) or explore machine learning adaptive basis sets that reduce basis function count while maintaining accuracy [20] [17]

Problem: Inaccurate prediction of non-covalent interaction energies

- Potential Cause: Lack of diffuse functions in basis set

- Solution: Use augmented basis sets (aug-cc-pVXZ, aug-pcseg-X) with diffuse functions to properly describe weak interactions and electron density tails [6] [19]

Problem: Significant basis set superposition error (BSSE)

- Potential Cause: Small basis set leading to "basis borrowing" between fragments

- Solution: Apply counterpoise correction or use larger basis sets with better coverage. The vDZP basis set is specifically designed to minimize BSSE [17]

Problem: Inconsistent performance across different molecular properties

- Potential Cause: Basis set not balanced for diverse property types

- Solution: Conduct benchmark across multiple property types (geometries, energies, frequencies) and select basis set that provides best overall performance [17]

Problem: Poor convergence with basis set size for high-accuracy methods

- Potential Cause: Insufficient basis functions for correlation energy recovery

- Solution: For wavefunction methods, use correlation-consistent basis sets (cc-pVXZ) specifically designed for systematic convergence to complete basis set limit [19]
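Systematic convergence is what makes extrapolation to the complete basis set (CBS) limit possible. The sketch below uses one widely used two-point scheme that assumes the correlation energy approaches the limit as 1/X^3 in the cardinal number X; this particular formula is an assumption of the example rather than something prescribed by the guide, and the energies are hypothetical.

```python
def cbs_two_point(e_small: float, e_large: float, x_small: int, x_large: int) -> float:
    """Two-point extrapolation assuming E(X) = E_CBS + A / X**3.

    e_small, e_large: correlation energies with cardinal numbers x_small < x_large.
    """
    if x_large <= x_small:
        raise ValueError("x_large must be greater than x_small")
    xs3, xl3 = x_small ** 3, x_large ** 3
    return (xl3 * e_large - xs3 * e_small) / (xl3 - xs3)

# Hypothetical correlation energies (Hartree) for cc-pVTZ (X=3) and cc-pVQZ (X=4).
e_tz, e_qz = -0.300, -0.310
e_cbs = cbs_two_point(e_tz, e_qz, 3, 4)
print(f"Estimated CBS correlation energy: {e_cbs:.6f} Eh")
```

The extrapolated value lies below the quadruple-zeta energy, reflecting the correlation energy that the finite basis has not yet recovered.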

Practical Protocols: Selecting the Right Basis Set for Your Molecular System

Best-Practice Decision Trees for Systematic Method Selection

In molecular property prediction research, selecting the appropriate computational methods and basis sets is a complex, multi-faceted decision. Decision tree analysis provides a structured, visual framework to map out these choices, their required inputs, potential outcomes, and uncertainties [21]. This systematic approach turns complex decision-making into a more manageable process, helping researchers and drug development professionals identify the strategy most likely to achieve accurate and reliable results [22]. By breaking down broad categories into finer levels of detail, decision trees move thinking step by step from generalities to specifics, which is crucial when evaluating competing computational approaches for a given research objective [23].

Core Components of a Decision Tree

A decision tree uses a standardized set of symbols to represent different elements of the decision-making process. Understanding these components is essential for both creating and interpreting trees for method selection [24] [21].

- Decision Nodes: Represented by squares, these indicate points where you must make a conscious choice between alternative actions (e.g., "Select basis set type") [21] [22].

- Chance Nodes: Represented by circles, these signify uncertain outcomes or events outside your direct control (e.g., "Expected accuracy on a benchmark set") [21] [22]. The branches emanating from a chance node should reflect all possible outcomes and are typically assigned probabilities.

- End Nodes: Also known as terminal nodes and represented by triangles, these mark the final outcome of a path through the tree (e.g., "Recommended: DFT-D3 with def2-TZVP basis set") [21] [22].

- Branches: Lines that connect nodes, representing the pathways from decisions to chance events and finally to outcomes.

Table 1: Decision Tree Components and Their Functions

| Symbol | Name | Function in Method Selection |

|---|---|---|

| □ | Decision Node | A choice under the researcher's control (e.g., which software to use). |

| ○ | Chance Node | An uncertain outcome (e.g., computational cost exceeding budget). |

| △ | End Node | The final result or recommendation of a path. |

| ---→ | Branch | Connects elements, showing the flow from decision to outcome. |

Step-by-Step Protocol for Constructing a Decision Tree

The following structured protocol, adapted from general decision tree analysis, provides a detailed methodology for building a systematic method selection framework [21].

Step 1: Define the Core Decision

Formulate the primary, actionable question that needs an answer. The question should be specific and have clear, mutually exclusive alternatives.

- Example Question: "Which level of theory and basis set should be selected for predicting the solvation free energy of a new drug candidate?"

- Methodology: Frame the decision in concrete terms. Avoid vague questions like "How to improve accuracy?" Instead, use a decision-making template to clarify each option. Ensure all alternatives are realistic and feasible within computational constraints.

Step 2: Identify and Map All Possible Outcomes

Brainstorm all potential consequences, both positive and negative, for each initial choice. This requires thorough scenario planning.

- Methodology: Gather input from different knowledge domains (e.g., quantum chemistry, statistics, HPC resources). Use techniques like affinity diagrams to group similar outcomes, aiming for comprehensive coverage without overwhelming detail [23]. Document key performance indicators (KPIs) like accuracy, speed, and resource requirements for each outcome.

Step 3: Structure the Tree with Decision and Chance Nodes

Create the visual structure of the decision tree, beginning with the main decision on the left and branching out to the right.

- Methodology: Use consistent spacing and descriptive labels. Follow a logical left-to-right flow based on process analysis best practices. Clearly distinguish between paths that lead to new decisions and those that represent final endpoints. Visual collaboration tools can be effective for building and refining these structures as a team [21].

Step 4: Assign Probabilities and Quantitative Values

Transform the qualitative map into a quantitative analysis tool by estimating the likelihood and impact of each uncertain outcome.

- Probability Estimation:

- Data Sources: Use historical benchmarking data, results from published literature, meta-analyses, and expert judgment from experienced computational chemists when data is limited.

- Protocol: For each chance node, ensure the probabilities of all emanating branches sum to 1.0 (100%).

- Value Assignment:

- Metrics: Assign quantitative values to endpoints using consistent measurements relevant to the research goal (e.g., Mean Absolute Error (MAE) for accuracy, CPU hours for cost, a composite score).

- Documentation: Record all assumptions and data sources transparently to ensure the reasoning is clear and the model can be easily updated.

Step 5: Calculate Expected Values and Analyze Optimal Paths

Work backward through the tree to calculate the expected value of each decision path, then analyze the results beyond pure numerical output.

- Expected Value Calculation:

- Formula: For each chance node, the Expected Value (EV) is the sum of the value of each outcome multiplied by its probability: EV = Σ (Probability_of_Outcome_i × Value_of_Outcome_i).

- Protocol: Calculate the EV for each chance node, then use these values to determine the EV for the decision nodes at the preceding step. The path with the highest EV (or the lowest, when the value is an error or cost metric) often represents the optimal choice based on the data [21].

- Path Analysis:

- Factors to Consider:

- Risk Profile: The variability in potential outcomes (e.g., a method with a high best-case but also a low worst-case accuracy).

- Resource Requirements: The computational budget, software licensing costs, and expertise needed.

- Strategic Alignment: How well the path supports broader project goals (e.g., speed for high-throughput screening vs. high accuracy for final validation).

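The expected-value step in Steps 4 and 5 can be sketched as follows. The two options, their probabilities, and the composite scores (higher is better) are hypothetical placeholders, not benchmark data.

```python
from dataclasses import dataclass

@dataclass
class Outcome:
    label: str
    probability: float
    value: float  # composite score; higher is better in this example

def expected_value(outcomes):
    """EV of a chance node; branch probabilities must sum to 1.0."""
    total = sum(o.probability for o in outcomes)
    if abs(total - 1.0) > 1e-9:
        raise ValueError(f"probabilities sum to {total}, expected 1.0")
    return sum(o.probability * o.value for o in outcomes)

# Hypothetical decision: which basis set to use for a screening campaign.
options = {
    "def2-SVP": [Outcome("accuracy acceptable", 0.5, 8.0),
                 Outcome("accuracy unacceptable", 0.5, 2.0)],
    "def2-TZVP": [Outcome("accuracy acceptable", 0.9, 6.0),
                  Outcome("accuracy unacceptable", 0.1, 1.0)],
}

ev = {name: expected_value(branches) for name, branches in options.items()}
best = max(ev, key=ev.get)
for name, value in ev.items():
    print(f"{name}: EV = {value:.2f}")
print(f"Highest expected value: {best}")
```

The probability check enforces the sum-to-one rule from Step 4, so an inconsistent tree fails loudly instead of producing a misleading ranking.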

Step 6: Implement, Document, and Monitor

Document the entire decision-making process and set up a system to monitor the real-world performance of the selected method against predictions.

- Documentation: Record the final decision tree, all assumptions, data sources, probability assignments, and the final recommendation in a decision log or electronic lab notebook.

- Implementation Plan: Create an action plan that breaks the chosen path into specific tasks with owners and deadlines.

- Monitoring: Compare the actual performance of the selected computational method (e.g., its achieved accuracy and cost) against the model's predictions. This feedback loop is critical for refining future decision trees and building institutional knowledge.

Visualizing the Decision Process: A Workflow for Basis Set Selection

The following diagram, generated using Graphviz DOT language, illustrates a simplified decision workflow for basis set selection, incorporating the core components and logic described in the protocol.

Diagram 1: A simplified decision workflow for selecting a basis set based on project requirements.

Troubleshooting Guide and FAQs

Q1: Our decision tree has become too large and complex to be practical. How can we simplify it? A1: Prune the tree by focusing on the most critical decisions and outcomes. Group similar outcomes into broader categories (e.g., "Acceptable Accuracy" vs. "Unacceptable Accuracy") and eliminate paths with very low probability or negligible impact on the final goal. The principle of a "necessary-and-sufficient" check can help—ask if all items at one level are truly necessary for the level above, and if they are sufficient to define it [23].

Q2: How do we handle situations where there is very little historical data to assign probabilities? A2: In the absence of robust data, use structured expert judgment. Elicit estimates from multiple domain experts and use techniques like the Delphi method to reach a consensus. Document these assumptions explicitly as "Expert-Estimated Probabilities" so they can be updated easily when new data becomes available [21].

Q3: The calculated expected value suggests one path, but our team is leaning towards another due to unquantifiable factors. How should we proceed? A3: The expected value is a guide, not an absolute rule. Decision trees help inform the decision-making process but should not replace strategic judgment. Factors like strategic alignment with long-term research goals, implementation complexity, or the potential for future methodological developments are valid reasons to choose a path with a slightly lower expected value [21]. Use the tree as a discussion tool to make these trade-offs explicit.

Q4: What are the main limitations of using decision trees for this purpose? A4: Decision trees can be unstable, meaning small changes in probability or value estimates can lead to large changes in the recommended path [22]. They can also become computationally complex with many linked outcomes. To mitigate this, use sensitivity analysis to test how changes in key assumptions affect the final recommendation, ensuring the model's robustness.
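A minimal sensitivity analysis, assuming a tree with two options and two outcomes each, might look like the sketch below. It sweeps the most uncertain probability and reports where the recommendation flips; all values are hypothetical composite scores (higher is better).

```python
def ev_two_outcomes(p_success: float, value_success: float, value_failure: float) -> float:
    """Expected value of a chance node with a success and a failure branch."""
    return p_success * value_success + (1.0 - p_success) * value_failure

# Hypothetical scores: the large basis is reliable, the small basis is cheap but risky.
EV_LARGE = ev_two_outcomes(0.9, 6.0, 1.0)
SMALL_SUCCESS, SMALL_FAILURE = 8.0, 2.0

def recommended(p_small_success: float) -> str:
    """Recommendation as a function of the uncertain success probability."""
    ev_small = ev_two_outcomes(p_small_success, SMALL_SUCCESS, SMALL_FAILURE)
    return "small basis" if ev_small > EV_LARGE else "large basis"

# Probability at which both options have the same expected value.
break_even = (EV_LARGE - SMALL_FAILURE) / (SMALL_SUCCESS - SMALL_FAILURE)

for i in range(0, 11):
    p = i / 10
    print(f"p(small basis acceptable) = {p:.1f} -> {recommended(p)}")
print(f"Recommendation flips near p = {break_even:.3f}")
```

If the estimated probability sits close to the break-even point, the recommendation is fragile and the estimate deserves more benchmarking effort before the team commits to a path.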

The Scientist's Toolkit: Research Reagent Solutions

In the context of computational experiments, "research reagents" refer to the essential software, data, and hardware resources required to conduct the research. The following table details key components of the computational toolkit for molecular property prediction.

Table 2: Essential Computational Tools and Resources for Method Selection

| Tool/Resource | Type | Function in Method Selection |

|---|---|---|

| Quantum Chemistry Software | Software | Provides the algorithms and computational engines (e.g., for DFT, MP2, CCSD(T)) to evaluate methods and basis sets. |

| Benchmark Datasets | Data | Curated collections of molecular systems with high-quality reference data (e.g., S66, GMTKN55) used to validate and assign accuracy values. |

| High-Performance Computing (HPC) | Hardware | Provides the necessary processing power to run computationally intensive calculations for method benchmarking. |

| Systematic Review Literature | Knowledge | Peer-reviewed frameworks and comparisons of modelling approaches provide foundational data for building the decision tree [25]. |

| Data Visualization Tools | Software | Aids in creating and interpreting the decision tree itself, making complex relationships easier to understand and communicate [24]. |

Basis Set and Functional Pairing Recommendations for Common Properties

Frequently Asked Questions

FAQ 1: What is the best general-purpose functional and basis set to replace the outdated B3LYP/6-31G* combination? The B3LYP/6-31G* combination suffers from known weaknesses, including missing London dispersion effects and a significant basis set superposition error (BSSE) [18]. Modern, more robust and accurate alternatives are recommended. For general-purpose calculations on organic and main-group molecules, use a range-separated hybrid or meta-GGA functional like ωB97X-D or SCAN, paired with a polarized triple-zeta basis set such as def2-TZVP [18] [26]. For an excellent balance of cost and accuracy, composite methods like r²SCAN-3c or B97M-V/def2-SVPD are highly recommended as they systematically correct for the shortcomings of older methods without a significant computational cost increase [18].

FAQ 2: My geometry optimization is slow with a large basis set. What is a more efficient strategy? A dual-level approach is highly efficient. First, perform a geometry optimization using a smaller, faster basis set like def2-SVP or 6-31G* [26]. Subsequently, perform a more accurate single-point energy calculation on the optimized geometry using a larger target basis set (e.g., def2-TZVP or cc-pVTZ). For the most accurate and efficient results, ensure the smaller basis is a proper subset of the larger target basis (e.g., using rcc-pVTZ with cc-pVTZ) [27].

FAQ 3: My calculation for an anion failed to converge or gives an unrealistic energy. What is wrong? Anions and other systems with diffuse electron densities require basis sets with diffuse functions. Standard basis sets lack the flexibility to describe these systems accurately [15]. Use basis sets explicitly designed with diffuse functions, such as the aug-cc-pVXZ series, or the minimally augmented def2 basis sets (e.g., ma-def2-SVP, ma-def2-TZVP) for a more cost-effective solution [26]. This is critical for accurate electron affinities and anionic systems [26].

FAQ 4: How do I choose a basis set for a molecule containing heavy atoms (e.g., transition metals)? For elements heavier than krypton, relativistic effects become important [26]. You should use either:

- Effective Core Potentials (ECPs): Basis sets like LANL2DZ or the def2 series paired with appropriate ECPs replace core electrons, making the calculation more efficient [28] [26].

- Relativistic Hamiltonians: Methods like ZORA (Zero-Order Regular Approximation) with specially optimized all-electron basis sets (e.g., ZORA-def2-TZVP) provide a more accurate treatment for core-dependent properties [15] [26].

FAQ 5: What special considerations are needed for calculating molecular spectroscopy properties? Properties that depend on the electron density near the nucleus (e.g., chemical shifts, spin-spin couplings, electric field gradients) usually require specialized, uncontracted basis sets for high accuracy [26]. For properties like polarizabilities, hyperpolarizabilities, and high-lying excitation energies, basis sets with diffuse functions are essential, especially for smaller molecules [15].

The table below lists key resources required for running quantum chemical calculations, from software components to hardware infrastructure.

| Item Name | Type | Function / Purpose |

|---|---|---|

| Density Functional (e.g., ωB97X-D, B97M-V) | Software/Model | Defines the exchange-correlation energy approximation; the functional choice is the primary determinant of accuracy for many chemical properties [18]. |

| Atomic Orbital Basis Set (e.g., def2-TZVP, cc-pVTZ) | Software/Model | A set of functions representing atomic orbitals; describes the spatial distribution of electrons and determines the basis set error [26]. |

| Auxiliary Basis Set (e.g., def2/J, def2-TZVP/C) | Software/Model | Used in the Resolution-of-the-Identity (RI) approximation to accelerate the computation of two-electron integrals, significantly speeding up calculations [26]. |

| High-Performance Computing (HPC) Cluster | Hardware/Infrastructure | Provides the intensive computational resources (many CPU cores, high-speed interconnect, large memory) needed for routine calculations on medium-to-large systems [29]. |

| GPU Accelerators (e.g., NVIDIA RTX 6000 Ada) | Hardware/Infrastructure | Graphics Processing Units (GPUs) offload computationally intensive tasks from CPUs, drastically accelerating molecular dynamics and quantum chemistry simulations [30]. |

| Leadership-Class Supercomputers (e.g., Frontier) | Hardware/Infrastructure | World's most powerful supercomputers for open science; enable massive simulations (e.g., millions of atoms) that are impossible on standard HPC clusters [31]. |

Basis Set and Functional Pairing Recommendations

The following tables provide specific recommendations for functional and basis set combinations tailored to different molecular properties and system sizes.

Table 1: Recommended Basis Sets by Tier and Type

| Basis Set | Tier | Key Characteristics | Recommended Use Cases |

|---|---|---|---|

| def2-SVP | Double-Zeta | Balanced speed and accuracy for its size [26]. | Initial geometry optimizations; large systems (>100 atoms). |

| 6-31G* | Double-Zeta | Historically popular; known for BSSE issues; being superseded [18]. | Initial geometry scans (if necessary). |

| def2-TZVP | Triple-Zeta | A robust, modern choice for production calculations [26]. | Default for final energies/geometries (DFT); single-point energies; frequency calculations. |

| cc-pVTZ | Triple-Zeta | Correlation-consistent; designed for post-HF methods [28]. | High-accuracy DFT and wavefunction theory (e.g., MP2, CCSD(T)). |

| ma-def2-TZVP | Triple-Zeta (Diffuse) | Minimally augmented; cost-effective diffuse functions [26]. | Anions, excited states, weak interactions, polarizabilities. |

| aug-cc-pVTZ | Triple-Zeta (Diffuse) | Includes standard diffuse functions [26]. | High-accuracy work on anions and Rydberg states (wavefunction methods). |

| def2-QZVP | Quadruple-Zeta | Approaches the basis set limit [15]. | Ultimate accuracy for DFT energies and properties. |

| ZORA/QZ4P | Quadruple-Zeta | Relativistic, all-electron basis for high accuracy [15]. | Near basis-set limit calculations with ZORA relativistic method. |

Table 2: Functional and Basis Set Pairings for Molecular Properties

| Target Property | Recommended Functional(s) | Recommended Basis Set(s) | Protocol & Special Considerations |

|---|---|---|---|

| Ground-State Geometry & Energy | ωB97X-D, r²SCAN-3c, B97M-V | def2-TZVP, cc-pVTZ | Protocol: Optimize geometry and calculate frequencies for thermal corrections. Use a larger basis (e.g., def2-QZVP) for a final single-point energy on the optimized structure for higher accuracy. Considerations: Composite methods like r²SCAN-3c are excellent for this purpose [18]. |

| Reaction Barrier Heights | ωB97X-D, M06-2X | def2-TZVP, ma-def2-TZVP | Protocol: Locate transition state (TS) and reactants/products. Calculate frequency to confirm one imaginary mode for TS. Perform intrinsic reaction coordinate (IRC) calculation. Considerations: Use a functional with good kinetics performance. Diffuse functions can be important for TS structures [18]. |

| Non-Covalent Interactions | ωB97X-D, B97M-V | ma-def2-TZVP, aug-cc-pVTZ | Protocol: Optimize complex and monomers using a basis set with diffuse functions. Apply an empirical dispersion correction (D3, D4) if not included in the functional. Considerations: Essential to correct for Basis Set Superposition Error (BSSE) via counterpoise correction [18]. |

| Electronic Spectra (UV-Vis) | ωB97X-D, CAM-B3LYP | ma-def2-TZVP, aug-cc-pVTZ | Protocol: Perform a TD-DFT calculation on the ground-state optimized geometry. Considerations: Range-separated hybrids are crucial for accurate charge-transfer states. Diffuse functions are necessary for Rydberg excitations [15]. |

| Core-Dependent Properties (NMR) | PBE0, WP04 | specialized core-property basis sets (e.g., pcSseg-2) | Protocol: Perform a single-point calculation on an optimized geometry using a specialized, uncontracted basis set. Considerations: Standard basis sets are inadequate. All-electron relativistic methods (e.g., ZORA) are required for heavy elements [26]. |

Workflow for Basis Set and Functional Selection

The diagram below outlines a systematic decision-making process for selecting an appropriate computational protocol, adapted from general considerations in computational chemistry [18].

Detailed Methodologies for Key Computational Experiments

Protocol 1: Accurate Calculation of Non-Covalent Interaction Energies

- Objective: To determine the binding energy between two molecules (e.g., a drug and its receptor pocket) with minimal error from BSSE.

- System Preparation:

- Optimize the geometry of the isolated monomers (A and B) and the complex (A·B) using a functional like ωB97X-D and a medium-sized basis set (e.g., def2-SVP).

- Perform a frequency calculation to confirm all structures are minima (no imaginary frequencies).

- High-Level Single-Point Energy Calculation:

- Perform a single-point energy calculation for the complex and each monomer using a larger target basis set with diffuse functions (e.g., ma-def2-TZVP or aug-cc-pVTZ) on the optimized geometries.

- BSSE Correction (Counterpoise Method):

- To calculate the BSSE-corrected interaction energy, the energy of monomer A is recalculated in the presence of the ghost orbitals of monomer B (i.e., using the entire basis set of the complex but with the nuclei and electrons of B removed). This is repeated for monomer B with the ghost orbitals of A [18].

- The interaction energy is calculated as: ΔE = E(A·B) − [E(A with ghost B) + E(B with ghost A)].

- Analysis: The counterpoise-corrected energy provides a more reliable estimate of the true interaction energy, crucial for studying supramolecular chemistry and drug binding.
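The counterpoise arithmetic from the protocol above can be sketched in a few lines. The energies are hypothetical placeholders in Hartree, and the conversion factor of 627.5095 kcal/mol per Hartree is the commonly quoted value.

```python
HARTREE_TO_KCAL = 627.5095  # kcal/mol per Hartree

def interaction_energy(e_complex: float, e_a: float, e_b: float) -> float:
    """Delta E = E(A.B) - [E(A) + E(B)], all in Hartree.

    Passing ghost-basis monomer energies gives the counterpoise-corrected value."""
    return e_complex - (e_a + e_b)

# Hypothetical single-point energies (Hartree).
e_complex = -152.5400
e_a, e_b = -76.2650, -76.2650              # monomers in their own basis
e_a_ghost, e_b_ghost = -76.2660, -76.2655  # monomers in the full complex basis

de_raw = interaction_energy(e_complex, e_a, e_b)
de_cp = interaction_energy(e_complex, e_a_ghost, e_b_ghost)
bsse = de_raw - de_cp  # negative: artificial extra binding in the uncorrected value

print(f"Uncorrected:  {de_raw * HARTREE_TO_KCAL:.2f} kcal/mol")
print(f"CP-corrected: {de_cp * HARTREE_TO_KCAL:.2f} kcal/mol")
print(f"BSSE:         {bsse * HARTREE_TO_KCAL:.2f} kcal/mol")
```

Because the ghost functions can only lower each monomer energy, the corrected interaction energy is less negative than the uncorrected one, and the difference is the BSSE estimate.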

Protocol 2: Dual-Level Geometry Optimization and Energy Refinement

- Objective: To efficiently obtain a highly accurate energy for a molecular system using a robust geometry.

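- Initial Geometry Optimization:

- Optimize the geometry using a smaller, faster basis set such as def2-SVP or 6-31G* (or rcc-pVTZ when the target basis is cc-pVTZ) [26] [27].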

- High-Accuracy Single-Point Energy Calculation:

- Using the optimized geometry from the previous step, perform a new single-point energy calculation.

- For this step, use a larger, more complete basis set of which the initial basis is a subset, such as def2-TZVP or cc-pVTZ (whose reduced subset is rcc-pVTZ) [27]. This strategy leverages integral screening for efficiency.

- This final energy is your best estimate of the electronic energy.

- Analysis: This protocol ensures that computational resources are allocated efficiently, with the expensive large basis set used only for the final energy evaluation on a geometry that is already very good.

Frequently Asked Questions (FAQs)

Q1: What are the key computational approaches for predicting drug-target interactions (DTIs), and what are their limitations? Several computational methods exist for DTI prediction [32]:

- Structure-based approaches (e.g., molecular docking, molecular dynamics simulations) provide insights but require 3D protein structures and are computationally expensive.

- Ligand-based approaches (e.g., QSAR) depend on known ligands for a target, limiting their predictive power for novel targets.

- Machine learning-based methods learn from known drug and target data to predict interactions but often struggle with the "cold start" problem for new drugs or targets with limited data.

Q2: Why is distinguishing the mechanism of action (MoA) in DTIs important, and how can it be addressed? Predicting whether a drug activates or inhibits its target is critical for clinical application [32]. For example, dopamine receptor activators treat Parkinson's disease, while inhibitors treat psychosis. Advanced frameworks like DTIAM use self-supervised learning on molecular graphs and protein sequences to predict DTIs, binding affinities, and MoA, showing substantial improvement in cold-start scenarios [32].

Q3: What methods are available for predicting solvation free energies, and which are suitable for drug-like molecules? Multiple techniques are used [33]:

- Knowledge-based empirical approaches (e.g., XlogP, ALOGP) are cost-effective.

- Implicit solvation models (e.g., COSMO, SMD) combine QM calculations with parametric models.

- Molecular Dynamics (MD) simulations with explicit solvent are computationally demanding but can capture microsolvation effects. The ABCG2 protocol, a fixed-charge parametrization method, has shown remarkable accuracy for water-octanol transfer free energies (LogP) of drug-like molecules, benefiting from systematic error cancellation [33].
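The relationship between the two solvation free energies and LogP can be illustrated with a minimal sketch. It assumes the standard thermodynamic relation logP = (ΔG_water − ΔG_octanol) / (RT ln 10). The function name, the default temperature of 298.15 K, and the example energies are illustrative assumptions and are not taken from [33].

```python
import math

R_KCAL = 0.0019872041  # gas constant in kcal/(mol*K)

def log_p_from_solvation(dg_water, dg_octanol, temperature=298.15):
    """Convert solvation free energies (kcal/mol) into a water-octanol logP.

    The transfer free energy is taken as water -> octanol, so a solute that
    is better solvated in octanol (more negative dg_octanol) has logP > 0.
    """
    dg_transfer = dg_octanol - dg_water
    return -dg_transfer / (R_KCAL * temperature * math.log(10))

# Illustrative (made-up) solvation free energies in kcal/mol
example_log_p = log_p_from_solvation(dg_water=-5.0, dg_octanol=-7.0)
print(f"logP = {example_log_p:.2f}")
```

Because LogP depends only on the difference between the two solvation free energies, an error that shifts both by the same amount cancels, which is the kind of systematic error cancellation the text refers to.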

Q4: How does dataset quality and size impact molecular property prediction? The performance of AI models, particularly representation learning models, is highly dependent on dataset size and quality [34]. Real-world drug discovery often faces data scarcity. Studies show that representation learning models can exhibit limited performance without sufficient data, and the presence of "activity cliffs" (large property changes from small structural changes) can significantly impact prediction accuracy [34]. Meta-learning, which learns from multiple related tasks, is one approach to improve performance in low-data regimes [35].

Q5: How can multi-task learning improve molecular property prediction? Multi-task learning allows a model to learn shared representations across multiple related prediction tasks [36]. This is particularly promising when experimental data for a primary property is scarce. By augmenting the primary dataset with auxiliary data from other properties—even if sparse or weakly related—multi-task learning can enhance predictive accuracy and model robustness compared to single-task models [36].

Troubleshooting Guides

Table 1: Troubleshooting Drug-Target Interaction Prediction

| Problem | Possible Cause | Solution |
|---|---|---|
| Poor generalization to new drugs/targets (Cold Start) | Insufficient labeled data for novel entities. | Use self-supervised pre-training frameworks (e.g., DTIAM) on large, unlabeled molecular graph and protein sequence data to learn robust representations [32]. |
| Inability to distinguish Activation vs. Inhibition | Models are only trained for binary interaction or affinity prediction. | Employ unified frameworks that specifically include MoA as a prediction task, leveraging attention mechanisms to interpret key binding sites [32]. |
| Low predictive accuracy on benchmark datasets | Over-reliance on a single data split or evaluation metric; inherent dataset variability. | Perform rigorous statistical analysis with multiple data splits and seeds. Ensure evaluation metrics (e.g., true positive rate) are relevant to the practical application [34]. |
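The advice to evaluate over multiple data splits and seeds can be sketched as follows. This is a minimal illustration using only the Python standard library. The helper `repeated_split_scores` and the placeholder `toy_evaluate` are hypothetical names introduced here; in practice `evaluate` would train a model on the training indices and return the chosen metric on the test indices.

```python
import random
import statistics

def repeated_split_scores(n_samples, evaluate, seeds, test_fraction=0.2):
    """Score a model on one random train/test split per seed.

    Returns the mean and sample standard deviation of the metric.
    """
    scores = []
    n_test = max(1, int(n_samples * test_fraction))
    for seed in seeds:
        rng = random.Random(seed)          # independent, reproducible shuffle
        indices = list(range(n_samples))
        rng.shuffle(indices)
        test_idx, train_idx = indices[:n_test], indices[n_test:]
        scores.append(evaluate(train_idx, test_idx))
    return statistics.mean(scores), statistics.stdev(scores)

def toy_evaluate(train_idx, test_idx):
    # Placeholder metric (assumption): fraction of even indices in the test split.
    return sum(i % 2 == 0 for i in test_idx) / len(test_idx)

mean_score, std_score = repeated_split_scores(100, toy_evaluate, seeds=range(5))
print(f"metric = {mean_score:.3f} +/- {std_score:.3f} over 5 seeds")
```

Reporting the spread alongside the mean makes it easier to judge whether a difference between two models exceeds split-to-split variability. Random splits are used here only for brevity; cold-start evaluation would instead require splits that hold out entire drugs or targets.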

Table 2: Troubleshooting Solvation Free Energy Calculations

| Problem | Possible Cause | Solution |
|---|---|---|
| High error in solvation free energies for drug-like molecules | Inaccurate electrostatic parametrization (fixed atomic charges); poor handling of conformational landscapes. | Use the updated ABCG2 charge model, which has been shown to outperform its predecessor AM1/BCC and HF/6-31G* for complex, polyfunctional molecules [33]. |
| Inaccurate LogP (water-octanol transfer free energy) predictions | Inadequate force field parameters; lack of error cancellation. | Implement MD-based alchemical approaches (e.g., nonequilibrium fast-growth) with the ABCG2 protocol, which benefits from systematic error cancellation between solvents [33]. |
| Poor performance for heterocyclic compounds | Known limitations of force fields (e.g., GAFF2) for atoms with lone pairs (N, S). | Consider using charges derived from more expensive QM/MM simulations for these specific chemical types to improve accuracy [33]. |

Table 3: Comparison of Drug-Target Interaction Prediction Frameworks

| Method / Framework | Input Representation | Key Capabilities | Performance Notes |
|---|---|---|---|
| DTIAM | Molecular Graphs & Protein Sequences | DTI, Binding Affinity (DTA), Mechanism of Action (MoA) | Substantial improvement over baselines, especially in cold-start scenarios. Robust generalization. |
| DeepDTA | SMILES Strings & Protein Sequences | Binding Affinity (DTA) | Uses CNN to learn representations; limited interpretability. |
| MONN | Complex Structure Data | Binding Affinity & Non-covalent interactions | Uses additional supervision to capture key binding sites; increased interpretability. |

Table 4: Reported Performance of Charge Derivation Protocols for Solvation and Transfer Free Energies

| Charge Derivation Protocol | Water Solvation Free Energy (kcal/mol) | 1-Octanol Solvation Free Energy (kcal/mol) | Water-Octanol Transfer Free Energy (LogP, kcal/mol) |
|---|---|---|---|
| AM1/BCC | Reported as unsatisfactory for polyfunctional molecules | Reported as unsatisfactory for polyfunctional molecules | Higher error than ABCG2 |
| HF/6-31G* | Reported as unsatisfactory for polyfunctional molecules | Reported as unsatisfactory for polyfunctional molecules | Higher error than ABCG2 |
| QM/MM | High accuracy | High accuracy | ~0.9 (High accuracy) |
| ABCG2 | Reported as unsatisfactory for polyfunctional molecules | Reported as unsatisfactory for polyfunctional molecules | ~0.9 (Excellent accuracy, benefits from error cancellation) |

Experimental Protocols

Protocol 1: Predicting Drug-Target Interactions and Mechanism of Action with DTIAM

The DTIAM framework provides a unified approach for predicting drug-target interactions (DTI), binding affinities (DTA), and mechanisms of action (MoA) [32].

Methodology:

- Drug Representation Pre-training:
  - Input: Represent the drug molecule as a molecular graph.
  - Segmentation: The graph is segmented into molecular substructures.
  - Self-Supervised Learning: The model is pre-trained on large, unlabeled molecular data using three tasks:
    - Masked Language Modeling
    - Molecular Descriptor Prediction
    - Molecular Functional Group Prediction
  - Output: A contextualized embedding matrix representing the drug and its substructures.

- Target Representation Pre-training:
  - Input: The primary amino acid sequence of the target protein.
  - Learning: A Transformer model uses unsupervised language modeling on large protein sequence databases to learn representations and contact maps.
  - Output: Feature representations for individual amino acid residues.

- Drug-Target Prediction:
  - Integration: The learned drug and target representations are combined.
  - Prediction Module: An automated machine learning model (using multi-layer stacking and bagging) learns the complex relationships between the paired representations to make final predictions for DTI, DTA, and MoA.

Protocol 2: Calculating Solvation Free Energies Using Alchemical Fast-Growth MD

This protocol details the use of nonequilibrium alchemical fast-growth Molecular Dynamics (MD) for calculating solvation free energies in water and 1-octanol [33].

Methodology:

- System Setup:
  - Molecule Preparation: Obtain the 3D structure of the drug-like molecule.
  - Force Field Parametrization: Assign parameters. The study recommends using the ABCG2 protocol for deriving fixed atomic charges.
  - Solvation: Place the solute in a simulation box filled with explicit solvent molecules (e.g., ~512 SPC/E water molecules). Add counter-ions to neutralize the system.

- Equilibration:
  - Run classical NPT MD simulations (e.g., at T=300 K and p=1 atm) to equilibrate the system.

- Nonequilibrium Fast-Growth Simulation:
  - Alchemical Transformation: The solute is annihilated (or decoupled) from the solvent over a series of short, independent simulation trajectories.
  - Work Measurement: The work required for this transformation is calculated for each trajectory.
  - Free Energy Calculation: The solvation free energy is derived from the distribution of these work values using the Crooks fluctuation theorem or Jarzynski's equality.
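The final step of the protocol can be illustrated with a minimal sketch of the Jarzynski estimator, ΔG = −k_B T ln⟨exp(−W / k_B T)⟩, where the average runs over the independent fast-growth trajectories. The function name, the work values, and the sign convention (the work is assumed to describe decoupling, so the solvation free energy is its negative) are illustrative assumptions. Standard-state and finite-size corrections that a production workflow may require are not included.

```python
import math

K_B = 0.0019872041  # Boltzmann constant in kcal/(mol*K)

def jarzynski_free_energy(work_values, temperature=300.0):
    """Estimate dG = -kT * ln(mean(exp(-W/kT))) from nonequilibrium work values.

    A log-sum-exp shift keeps the exponentials numerically stable.
    """
    kt = K_B * temperature
    exponents = [-w / kt for w in work_values]
    shift = max(exponents)
    mean_exp = sum(math.exp(x - shift) for x in exponents) / len(exponents)
    return -kt * (shift + math.log(mean_exp))

# Illustrative (made-up) decoupling work values in kcal/mol, one per trajectory
decoupling_work = [6.1, 5.4, 7.0, 5.9, 6.6]
dg_decoupling = jarzynski_free_energy(decoupling_work)
dg_solvation = -dg_decoupling  # solvation is the reverse of decoupling
print(f"dG_solv = {dg_solvation:.2f} kcal/mol")
```

The exponential average is dominated by the lowest work values, so the estimate always lies at or below the mean work. With few trajectories or a broad work distribution it converges slowly, which is one reason bidirectional estimators based on the Crooks theorem are often preferred when both forward and reverse work distributions are available.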

Workflow and Pathway Diagrams

[Figure: DTIAM Prediction Workflow]

[Figure: Solvation Free Energy via MD]

The Scientist's Toolkit: Research Reagent Solutions

Table 5: Essential Computational Tools for Biomolecular Modeling

| Item / Resource | Function / Description | Relevance to Field |
|---|---|---|
| BIOVIA Discovery Studio | A software suite for simulation and modeling that includes the molecular dynamics programs CHARMm and NAMD [37]. | Enables explicit solvent MD simulations, protein solvation, and free energy calculations for studying drug-target interactions and solvation. |
| RDKit | An open-source cheminformatics toolkit that can compute 200+ 2D molecular descriptors and generate fingerprints (e.g., Morgan/ECFP) [34]. | Used for generating fixed molecular representations (fingerprints, descriptors) that serve as input for traditional machine learning models. |
| AMBER/AmberTools | A package of molecular simulation programs with the ABCG2 (AM1-BCC-GAFF2) force field protocol [33]. | Provides the updated ABCG2 model for accurate parametrization of small molecules for solvation free energy and LogP calculations. |
| QM9 & MoleculeNet Datasets | Public benchmark datasets for molecular property prediction [34] [35]. | Standard benchmarks for training and evaluating models; however, their direct relevance to real-world drug discovery should be critically assessed [34]. |
| Graph Neural Networks (GNNs) | A class of deep learning models designed to work on graph-structured data, such as molecular graphs [34]. | The primary architecture for modern representation learning models in molecular property prediction and DTI forecasting. |

Frequently Asked Questions (FAQs)

1. What is a multi-level computational approach? A multi-level approach, often referred to as a quantum embedding method, partitions a molecular system into different regions that are treated with varying levels of computational theory. Typically, a small, chemically active region is described with a high-level theory (e.g., a large basis set), while the surrounding environment is treated with a lower-level, more efficient method (e.g., a smaller basis set). This strategy balances accuracy and computational cost [38].

2. Why should I consider using a multi-level approach? Multi-level approaches are essential when studying large systems like solvated molecules, biological matrices, or material interfaces, where a full high-level calculation is computationally prohibitive. They allow you to focus computational resources on the part of the system most critical to the property you are investigating, leading to significant time savings without a major sacrifice in accuracy [38].

3. My multi-level calculation converged to an unexpected energy value. What could be wrong? Inconsistent energies can stem from Basis Set Superposition Error (BSSE). This error occurs when basis functions centered on one fragment artificially improve the description of a neighboring fragment, which overstabilizes the complex. To correct for it, apply the counterpoise (CP) correction to the energies and, where the geometry also matters, perform the geometry optimization on the CP-corrected potential energy surface. This is particularly crucial for properties sensitive to non-covalent interactions, like hydrogen bonding energies [39].
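As a minimal sketch of how the counterpoise correction is assembled, assuming the usual Boys-Bernardi scheme for a two-fragment complex: each monomer energy is evaluated twice at its geometry in the complex, once in its own basis and once in the full dimer basis (with ghost functions on the partner). The function name and all energies below are made-up illustrations, not results from [39].

```python
HARTREE_TO_KCAL = 627.5095

def counterpoise_interaction(e_ab, e_a_dimer_basis, e_b_dimer_basis,
                             e_a_own_basis, e_b_own_basis):
    """Return (CP-corrected, uncorrected, BSSE) interaction energies in kcal/mol.

    All inputs are total energies in hartree at the complex geometry.
    """
    cp_corrected = e_ab - e_a_dimer_basis - e_b_dimer_basis
    uncorrected = e_ab - e_a_own_basis - e_b_own_basis
    # BSSE: artificial stabilization each monomer gains from the partner's functions
    bsse = (e_a_own_basis - e_a_dimer_basis) + (e_b_own_basis - e_b_dimer_basis)
    return (cp_corrected * HARTREE_TO_KCAL,
            uncorrected * HARTREE_TO_KCAL,
            bsse * HARTREE_TO_KCAL)

# Illustrative (made-up) energies for a hydrogen-bonded dimer, in hartree
e_cp, e_raw, bsse = counterpoise_interaction(
    e_ab=-152.0700,
    e_a_dimer_basis=-76.0310, e_b_dimer_basis=-76.0305,
    e_a_own_basis=-76.0300, e_b_own_basis=-76.0300,
)
print(f"uncorrected {e_raw:.2f}, CP-corrected {e_cp:.2f}, BSSE {bsse:.2f} kcal/mol")
```

The CP-corrected interaction energy is less negative than the uncorrected one by exactly the BSSE. The gap shrinks as the basis set approaches completeness, which is why small basis sets are the most affected.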

4. How do I choose an appropriate basis set combination? Your choice depends on the target property and available resources. For general main-group thermochemistry, the vDZP basis set has been shown to work effectively with a variety of density functionals like B97-D3BJ and r2SCAN-D4, offering a good speed-accuracy trade-off [17]. For response properties like polarizability, doubly-augmented basis sets (e.g., d-aug-cc-pVnZ) are often necessary to describe the electron density tail accurately [40]. The table below provides a performance comparison.

Table 1: Performance of Select Basis Sets with Different Density Functionals on the GMTKN55 Thermochemistry Benchmark [17]

| Functional | Basis Set | WTMAD2 (weighted total mean absolute deviation, kcal/mol; lower is better) |
|---|---|---|
| B97-D3BJ | def2-QZVP (Large Quadruple-Zeta) | 8.42 |
| B97-D3BJ | vDZP (Double-Zeta) | 9.56 |
| r2SCAN-D4 | def2-QZVP | 7.45 |
| r2SCAN-D4 | vDZP | 8.34 |
| M06-2X | def2-QZVP | 5.68 |
| M06-2X | vDZP | 7.13 |
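To make the speed-accuracy trade-off in the table easier to read, the short sketch below computes how much WTMAD2 increases when moving from def2-QZVP to vDZP for each functional. The numbers are copied from the table; the dictionary layout and function name are illustrative choices.

```python
# WTMAD2 values (kcal/mol) taken from the table above [17]
wtmad2 = {
    "B97-D3BJ": {"def2-QZVP": 8.42, "vDZP": 9.56},
    "r2SCAN-D4": {"def2-QZVP": 7.45, "vDZP": 8.34},
    "M06-2X": {"def2-QZVP": 5.68, "vDZP": 7.13},
}

def basis_set_penalty(entry):
    """Return the absolute and relative (%) WTMAD2 increase of vDZP over def2-QZVP."""
    absolute = entry["vDZP"] - entry["def2-QZVP"]
    relative = 100.0 * absolute / entry["def2-QZVP"]
    return absolute, relative

penalties = {name: basis_set_penalty(entry) for name, entry in wtmad2.items()}
for name, (absolute, relative) in penalties.items():
    print(f"{name}: +{absolute:.2f} kcal/mol ({relative:.1f}% higher than def2-QZVP)")
```

On these numbers the penalty for using the double-zeta basis stays between roughly 0.9 and 1.5 kcal/mol, with M06-2X the most sensitive of the three functionals to the smaller basis.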

5. The geometry of my hydrogen-bonded system seems incorrect. How can I fix this? Geometric distortions are a known issue with smaller basis sets, especially on flat potential energy surfaces such as that of the water dimer. As with the energy issue, optimizing the geometry on a CP-corrected surface (CP-OPT) can yield structures much closer to those obtained with large, high-quality basis sets [39].

Troubleshooting Guides

Issue 1: Slow Convergence in Multilevel DFT (MLDFT) Calculations

Problem: The Self-Consistent Field (SCF) procedure in your MLDFT calculation is converging slowly or failing to converge.

Solution: