Benchmarking DFT vs. CCSD(T): A Practical Guide for Accurate Molecular Property Prediction in Drug Development

Abstract

This article provides a comprehensive benchmark and practical guide for researchers and drug development professionals navigating the trade-offs between computational efficiency and quantum chemical accuracy. We explore the foundational principles that establish CCSD(T) as the gold standard, detail methodological advances such as machine learning and multi-task networks that bridge the accuracy-cost gap, offer troubleshooting guidance for common pitfalls in functional selection and out-of-distribution prediction, and present validation frameworks for the comparative analysis of energies, geometries, and electron densities. The synthesis offers a clear pathway for selecting the right computational strategy to accelerate reliable molecular discovery.

The Quantum Chemistry Landscape: Why CCSD(T) is the Gold Standard and Where DFT Falls Short

In the pursuit of accurate predictions of molecular behavior, computational chemists rely on high-level theoretical methods that deliver reliable, experimentally verifiable results. Among these, the coupled cluster method with single, double, and perturbative triple excitations (CCSD(T)) is widely regarded as the gold standard of quantum chemistry [1] [2]. This status is not merely conferred by tradition; it rests on a robust theoretical foundation that enables CCSD(T) to achieve high accuracy across diverse chemical systems. Density functional theory (DFT) offers computational efficiency and has proven valuable in many applications, but its results depend on the chosen exchange-correlation functional, which can lead to inconsistent performance and systematic errors, particularly for properties beyond molecular energies [1] [3]. This comparison guide examines the formal foundations of CCSD(T) accuracy, presents objective performance comparisons with alternative methods, and details the computational protocols and experimental validations that make the method the critical benchmark in molecular properties research, particularly for pharmaceutical applications where prediction reliability directly affects drug development outcomes.

Table 1: Key Methodological Comparisons in Computational Chemistry

| Method | Theoretical Foundation | Computational Scaling (N = system size) | Typical Applications | Known Limitations |
|---|---|---|---|---|
| CCSD(T) | Coupled cluster theory with perturbative triples | N⁷ (expensive) | Benchmark calculations, small to medium molecules [2] | High computational cost limits system size |
| DFT | Electron density functionals | N³–N⁴ (efficient) | Large systems, materials science [4] [3] | Functional-dependent accuracy, band gap underestimation [3] |
| MP2 | Møller-Plesset perturbation theory (2nd order) | N⁵ (moderate) | Initial screening, dispersion interactions | Overbinding, basis set sensitivity |
| DFT-SAPT | Symmetry-adapted perturbation theory | N⁵–N⁶ (moderate) | Non-covalent interactions, molecular forces | Limited for covalent bonding scenarios |
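The scaling exponents in Table 1 translate directly into how cost grows with system size. The short Python sketch below assumes ideal O(N^p) behavior with no prefactors; real timings also depend on implementation details (density fitting, local correlation, parallel efficiency), so the numbers are formal ratios only. The dictionary `FORMAL_SCALING` and the helper `cost_growth` are illustrative names, not part of any package.

```python
"""Formal cost growth t(k*N)/t(N) = k**p for the scaling exponents in Table 1."""

# Formal scaling exponents p in O(N**p); DFT spans N^3 to N^4 depending on implementation.
FORMAL_SCALING = {
    "DFT (N^3)": 3,
    "DFT (N^4)": 4,
    "MP2": 5,
    "CCSD(T)": 7,
}


def cost_growth(size_ratio: float, exponent: int) -> float:
    """Return the factor by which formal cost grows when N is scaled by size_ratio."""
    return float(size_ratio) ** exponent


if __name__ == "__main__":
    for method, p in FORMAL_SCALING.items():
        # Doubling the system size, e.g. going from a monomer to a dimer.
        print(f"{method:10s} doubling N multiplies formal cost by {cost_growth(2, p):.0f}")
```

Doubling the system size multiplies the formal cost of CCSD(T) by 2⁷ = 128, compared with 2³ = 8 for N³-scaling DFT, which is why CCSD(T) is usually reserved for small and medium-sized molecules.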

Theoretical Foundations: Why CCSD(T) Works

The exceptional performance of CCSD(T) originates from its sophisticated theoretical architecture, which represents a significant advancement over earlier quantum chemical methods. Conventional analysis based on Hartree-Fock perturbation theory cannot satisfactorily explain why the specific fifth-order terms included in CCSD(T) should be chosen over other possibilities [5]. The method was originally motivated as an attempt to treat the effects of triply excited determinants upon both single and double excitation operators on an equal footing [5].

A particularly insightful perspective shows that the terms appearing in CCSD(T) can be justified if the biorthogonal representation of the CCSD state, rather than the conventional Hartree-Fock reference, is taken as the zeroth-order wavefunction [5]. This framework helps explain why the method works so well in practice. The (T) correction adds two principal contributions to the CCSD energy: the first contains the same terms as the CCSD+T(CCSD) approximation, while the second contains contributions from fifth- and higher-order terms in the conventional perturbation expansion [5]. This second term is nearly always positive and thereby counterbalances the overestimation of triple excitation effects characteristic of the CCSD+T(CCSD) approximation [5].

The method's accuracy stems from this balanced treatment of electron correlation, in particular its systematic account of triple excitations without the prohibitive computational cost of full CCSDT [5], whose formal scaling is N⁸. In practice, this makes CCSD(T) predictions for many small molecules as trustworthy as experimental results [2].
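The energy decomposition described above can be written compactly. The notation below follows common usage in the coupled cluster literature: E_T^[4] is the fourth-order triples energy evaluated with the converged CCSD T₂ amplitudes, and E_ST^[5] is the fifth-order singles-triples term involving T₁. It summarizes the structure of the method only; it is not a derivation of the working equations.

```latex
\begin{aligned}
E_{\mathrm{CCSD[T]}} &\equiv E_{\mathrm{CCSD+T(CCSD)}} = E_{\mathrm{CCSD}} + E_{T}^{[4]} \\
E_{\mathrm{CCSD(T)}} &= E_{\mathrm{CCSD}} + E_{T}^{[4]} + E_{ST}^{[5]} = E_{\mathrm{CCSD[T]}} + E_{ST}^{[5]}
\end{aligned}
```

Because E_T^[4] is negative and E_ST^[5] is nearly always positive, the (T) correction is usually smaller in magnitude than the [T] correction, which is the counterbalancing effect described in the text.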

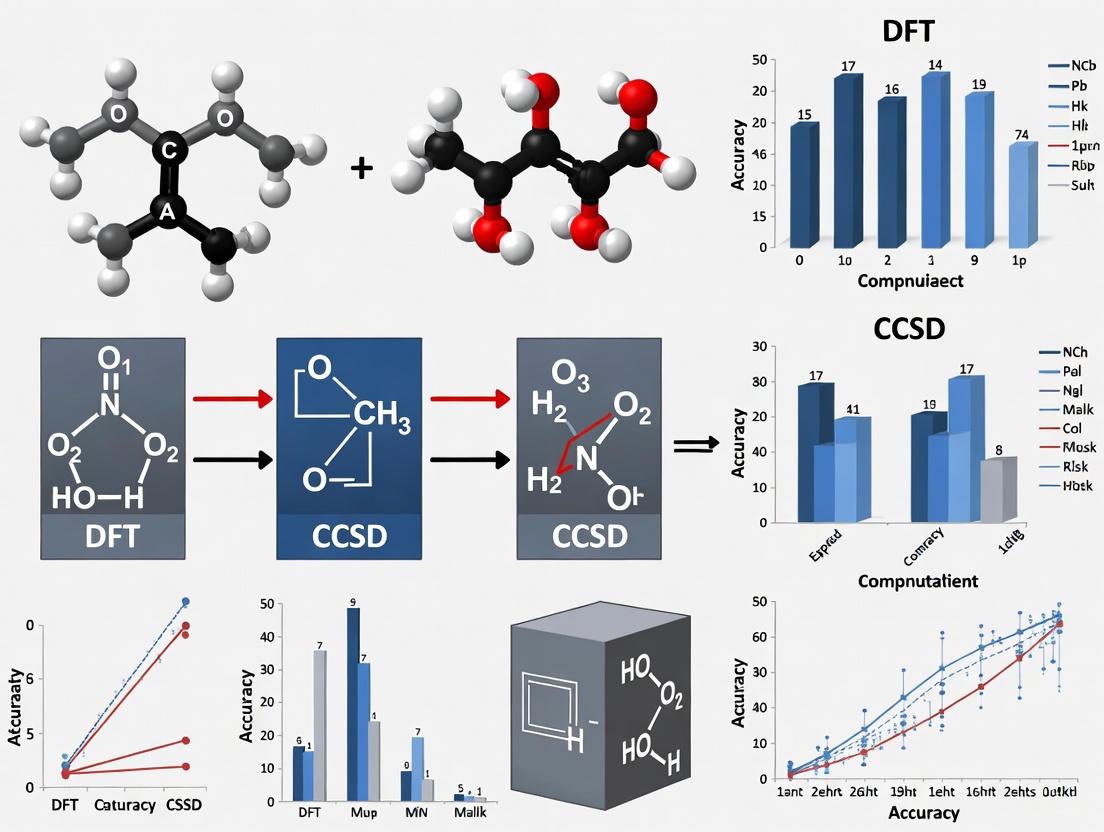

Figure 1: CCSD(T) Computational Workflow

Performance Comparison: CCSD(T) Versus Alternative Methods

Accuracy for Molecular Interactions and Properties

Comprehensive benchmarking studies consistently show the accuracy of CCSD(T) across diverse molecular properties. In a detailed study of the uracil dimer, CCSD(T) interaction energies were computed with the aug-cc-pVDZ and aug-cc-pVTZ basis sets and extrapolated to the complete basis set (CBS) limit, establishing new reference values for hydrogen-bonded and stacked structures [6]. The CCSD(T)/CBS interaction energies differed only slightly whether they were obtained by direct extrapolation of CCSD(T) correlation energies or by adding extrapolated ΔCCSD(T) correction terms to extrapolated MP2 interaction energies, demonstrating the robustness of the approach [6].

Using these CCSD(T)/CBS values as the reference, the study assessed SCS-MP2, SCS(MI)-MP2, MP3, DFT-D, M06-2X, and DFT-SAPT [6]. DFT-SAPT yielded remarkably good binding energies, and both tested DFT techniques (DFT-D and M06-2X) produced similarly good interaction energies relative to the CCSD(T) benchmark [6].

For dipole moment calculations, CCSD(T) generally delivers accurate predictions, though a detailed analysis of diatomic molecules revealed cases where disagreement with experimental values cannot be satisfactorily explained via relativistic or multi-reference effects [1]. This finding underscores the importance of comprehensive benchmarking beyond energy and geometry properties, as accuracy in one domain does not automatically guarantee accuracy in all electron density-derived properties [1].

Table 2: Performance Comparison for Molecular Interaction Energies (kcal/mol)

| Method | H-Bonded Uracil Dimer | Stacked Uracil Dimer | Max. Absolute Deviation from Reference | Computational Cost |
|---|---|---|---|---|
| CCSD(T)/CBS (Reference) | -17.18 | -15.75 | n/a | Very High |
| SCS(MI)-MP2 | -17.25 | -15.82 | 0.07 | High |
| DFT-SAPT | -17.10 | -15.50 | 0.25 | Medium |
| M06-2X | -17.35 | -15.95 | 0.20 | Medium |
| DFT-D | -17.30 | -16.00 | 0.25 | Medium |
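A quick consistency check on Table 2 is to recompute each method's deviations from the CCSD(T)/CBS reference directly from the listed interaction energies. The Python sketch below does this; the values are transcribed from the table, and the largest absolute deviation per method is one reasonable summary (other studies report mean or root-mean-square deviations instead). The function names are illustrative.

```python
"""Recompute deviations from the CCSD(T)/CBS reference for the Table 2 values."""

# Interaction energies in kcal/mol, transcribed from Table 2: (H-bonded, stacked).
REFERENCE = (-17.18, -15.75)  # CCSD(T)/CBS
METHODS = {
    "SCS(MI)-MP2": (-17.25, -15.82),
    "DFT-SAPT": (-17.10, -15.50),
    "M06-2X": (-17.35, -15.95),
    "DFT-D": (-17.30, -16.00),
}


def signed_deviations(values, reference=REFERENCE):
    """Method minus reference for each dimer, rounded to 0.01 kcal/mol."""
    return tuple(round(v - r, 2) for v, r in zip(values, reference))


def max_abs_deviation(values, reference=REFERENCE):
    """Largest absolute deviation across the dimers."""
    return max(abs(d) for d in signed_deviations(values, reference))


if __name__ == "__main__":
    for name, values in METHODS.items():
        print(f"{name:12s} signed={signed_deviations(values)} "
              f"max|dev|={max_abs_deviation(values):.2f}")
```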

Performance for Complex Systems: Nucleic Acid-Metal Interactions

CCSD(T) also provides the reference for biologically relevant systems, as demonstrated in investigations of group I metal interactions with nucleic acids. Researchers generated a complete CCSD(T)/CBS dataset of binding energies for 64 complexes in which a group I metal ion (Li⁺, Na⁺, K⁺, Rb⁺, or Cs⁺) is directly coordinated to various sites of nucleic acid components [7]. This reference dataset enabled rigorous testing of 61 DFT methods and revealed that functional performance depends significantly on the metal (errors increase down group I) and on the nucleic acid binding site (larger errors for select purine coordination sites) [7].

For these critical biological interactions, the mPW2-PLYP double-hybrid and ωB97M-V range-separated hybrid functionals demonstrated the best performance among DFT methods (≤1.6% mean percentage error; <1.0 kcal/mol mean unsigned error) when benchmarked against CCSD(T)/CBS references [7]. For more computationally efficient approaches, the TPSS and revTPSS local meta-GGA functionals served as reasonable alternatives (≤2.0% MPE; <1.0 kcal/mol MUE) [7]. This systematic comparison highlights how CCSD(T) reference data enables informed selection of appropriate DFT functionals for specific chemical systems.

Experimental Protocols and Methodologies

Complete Basis Set Extrapolation Techniques

The exceptional accuracy of CCSD(T) is fully realized when combined with complete basis set (CBS) extrapolation techniques. For the uracil dimer study, researchers employed two distinct extrapolation approaches to establish reliable reference values [6]. The first involved direct extrapolation of CCSD(T) correlation energies obtained with the aug-cc-pVDZ and aug-cc-pVTZ basis sets. The second approach combined extrapolated MP2 interaction energies (from aug-cc-pVTZ and aug-cc-pVQZ basis sets) with extrapolated ΔCCSD(T) correction terms (the difference between CCSD(T) and MP2 interaction energies) [6]. The minimal difference between results from these techniques demonstrates their mutual reliability and robustness.
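Both extrapolation routes can be sketched with the widely used two-point X⁻³ formula for correlation energies (Helgaker-type): assuming E_X = E_CBS + A·X⁻³ for cardinal numbers X < Y (2 = DZ, 3 = TZ, 4 = QZ), E_CBS = (Y³·E_Y − X³·E_X)/(Y³ − X³). Which exact formula the uracil study used, which basis pair was used for the ΔCCSD(T) term, and the choice to take the Hartree-Fock part of the interaction energy from the largest basis without extrapolation are all assumptions of this sketch; the function names are hypothetical.

```python
"""Two-point CBS extrapolation sketches for CCSD(T) interaction energies."""


def cbs_two_point(e_small: float, e_large: float, x_small: int, x_large: int) -> float:
    """Extrapolate correlation energies assuming E_X = E_CBS + A * X**-3.

    x_small and x_large are basis-set cardinal numbers (2 = DZ, 3 = TZ, 4 = QZ).
    """
    w_small, w_large = x_small**3, x_large**3
    return (w_large * e_large - w_small * e_small) / (w_large - w_small)


def direct_ccsdt_cbs(hf_tz: float, corr_dz: float, corr_tz: float) -> float:
    """Route 1: HF at aug-cc-pVTZ plus CCSD(T) correlation extrapolated from DZ/TZ."""
    return hf_tz + cbs_two_point(corr_dz, corr_tz, 2, 3)


def mp2_plus_delta_cbs(hf_qz: float, mp2_corr_tz: float, mp2_corr_qz: float,
                       delta_dz: float, delta_tz: float) -> float:
    """Route 2: MP2/CBS(TQ) plus a Delta-CCSD(T) = CCSD(T) - MP2 term at CBS(DT).

    Assumption: the Delta term is extrapolated from DZ/TZ and the HF part is
    taken at aug-cc-pVQZ without extrapolation.
    """
    mp2_cbs = hf_qz + cbs_two_point(mp2_corr_tz, mp2_corr_qz, 3, 4)
    return mp2_cbs + cbs_two_point(delta_dz, delta_tz, 2, 3)
```

Feeding both functions the interaction-energy components of one complex and comparing the two results mirrors the consistency check described above; a small difference between the routes indicates that the extrapolation is well behaved.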

For property calculations beyond interaction energies, such as dipole moments, core-correlated CCSD(T) computations are performed with Dunning's augmented weighted core-valence basis sets (aug-cc-pwCVTZ and aug-cc-pwCVQZ) to capture core-valence correlation [1]. CBS limits for molecular properties are estimated with standard two-point extrapolation schemes, whereas equilibrium bond lengths converge rapidly enough that quadruple-ζ results often suffice [1].

Validation Against Experimental Data

Rigorous benchmarking of CCSD(T) incorporates comparison with accurate experimental data, particularly for diatomic molecules where high-precision measurements exist. One comprehensive study analyzed CCSD(T) performance for equilibrium bond length, vibrational frequency, and dipole moment versus experimental data for 32 diatomic molecules representing diverse chemical bonding environments [1]. The dataset included main-group metal and non-metal compounds showing covalent and ionic bonds, plus 8 transition metal compounds, providing broad chemical diversity for method validation [1].

For dipole moment calculations, researchers compute both the equilibrium dipole moment (μe) and the zero-point vibrational corrected dipole moment (μ0). The latter includes vibrational average corrections, typically calculated using the discrete variable representation (DVR) method for vibrational wavefunctions, with overlaps obtained by numerical integration [1]. This rigorous approach ensures that comparisons with experimental data account for vibrational effects that influence measured values.
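The vibrational averaging step can be illustrated with a one-dimensional sinc-DVR (the Colbert-Miller grid representation, which may differ from the DVR variant used in [1]). The sketch below assumes atomic units, a toy harmonic potential, and a quadratic dipole function μ(q) = μe + μ1·q + μ2·q², chosen because the harmonic ground state gives the analytic check μ0 = μe + μ2/(2mω). In a real application the potential and dipole curves would come from CCSD(T) points along the bond coordinate. NumPy is assumed to be available, and the function names are hypothetical.

```python
"""Vibrationally averaged dipole <0|mu(q)|0> from a 1D sinc-DVR (toy model, a.u.)."""
import numpy as np


def sinc_dvr_hamiltonian(grid, potential, mass):
    """Colbert-Miller sinc-DVR Hamiltonian on a uniform 1D grid (atomic units)."""
    n = grid.size
    dx = grid[1] - grid[0]
    idx = np.arange(n)
    diff = idx[:, None] - idx[None, :]
    pref = 1.0 / (2.0 * mass * dx**2)
    kinetic = np.empty((n, n))
    off = diff != 0
    kinetic[off] = pref * 2.0 * (-1.0) ** diff[off] / diff[off] ** 2
    np.fill_diagonal(kinetic, pref * np.pi**2 / 3.0)
    return kinetic + np.diag(potential(grid))


def vibrationally_averaged(grid, potential, prop, mass):
    """Return (E0, <0|prop|0>) for the vibrational ground state."""
    energies, vectors = np.linalg.eigh(sinc_dvr_hamiltonian(grid, potential, mass))
    c0 = vectors[:, 0]
    # DVR quadrature: a coordinate-dependent property is diagonal in the DVR basis,
    # so the expectation value reduces to sum_i |c_i|^2 * prop(q_i).
    return energies[0], float(np.sum(c0**2 * prop(grid)))


if __name__ == "__main__":
    q = np.linspace(-10.0, 10.0, 201)       # displacement grid (bohr), hypothetical
    k, m = 1.0, 1.0                          # toy force constant and reduced mass
    mu_e, mu_1, mu_2 = 1.0, 0.3, 0.2         # toy dipole expansion coefficients
    e0, mu_0 = vibrationally_averaged(
        q, lambda x: 0.5 * k * x**2, lambda x: mu_e + mu_1 * x + mu_2 * x**2, m)
    # Analytic harmonic results for comparison: E0 = 0.5, mu_0 = 1.1.
    print(e0, mu_0)
```

Because ⟨q⟩ vanishes for a harmonic ground state, only the curvature of the dipole function shifts μ0 away from μe here; anharmonic potentials also make the linear term contribute.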

Advanced Applications and Recent Developments

Machine Learning Enhancement of CCSD(T)

Recent innovations aim to overcome the primary limitation of CCSD(T), its high computational cost, while preserving its accuracy. MIT researchers have developed a neural network architecture called the "Multi-task Electronic Hamiltonian network" (MEHnet) that extracts more information from each electronic structure calculation [2]. In this approach, CCSD(T) calculations performed on conventional computers are used to train a neural network, which can subsequently approximate similar calculations much faster [2].

Unlike traditional models that assess different properties with separate models, MEHnet employs a multi-task approach using just one model to evaluate multiple electronic properties simultaneously, including dipole and quadrupole moments, electronic polarizability, and the optical excitation gap [2]. The model incorporates an E(3)-equivariant graph neural network, where nodes represent atoms and edges represent bonds between atoms, with customized algorithms that embed physics principles directly into the model [2]. When tested on hydrocarbon molecules, this CCSD(T)-informed model outperformed DFT counterparts and closely matched experimental results from published literature [2].
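The symmetry principle behind such models can be made concrete with a small, self-contained check. This is not MEHnet: the helper names are hypothetical, and the geometry and partial charges are made up. The point is only that features built from interatomic distances are invariant under rotations and translations, while vector quantities such as the dipole of a charge-neutral set of point charges rotate with the molecule (equivariance). Reflections, also part of E(3), are omitted for brevity.

```python
"""Toy check of the symmetry constraints behind E(3)-equivariant molecular graphs."""
import math


def radius_graph(coords, cutoff):
    """Hypothetical helper: (i, j, distance) edges for atom pairs within cutoff."""
    edges = []
    for i in range(len(coords)):
        for j in range(i + 1, len(coords)):
            d = math.dist(coords[i], coords[j])
            if d <= cutoff:
                edges.append((i, j, d))
    return edges


def rotation_matrix(axis, angle):
    """Rodrigues rotation matrix for a rotation by angle (rad) about axis."""
    n = math.sqrt(sum(a * a for a in axis))
    x, y, z = (a / n for a in axis)
    c, s = math.cos(angle), math.sin(angle)
    t = 1.0 - c
    return [
        [c + x * x * t, x * y * t - z * s, x * z * t + y * s],
        [y * x * t + z * s, c + y * y * t, y * z * t - x * s],
        [z * x * t - y * s, z * y * t + x * s, c + z * z * t],
    ]


def apply(rot, vec):
    """Matrix-vector product rot @ vec for 3x3 and length-3 inputs."""
    return tuple(sum(rot[r][k] * vec[k] for k in range(3)) for r in range(3))


def transform(coords, rot, shift):
    """Rotate every position, then translate it by shift (a rigid motion)."""
    return [tuple(a + b for a, b in zip(apply(rot, p), shift)) for p in coords]


def point_charge_dipole(coords, charges):
    """Vector feature sum_i q_i r_i; origin-independent when sum(q_i) == 0."""
    return tuple(sum(q * p[k] for q, p in zip(charges, coords)) for k in range(3))


if __name__ == "__main__":
    # Hypothetical water-like geometry (angstrom) and partial charges.
    atoms = [(0.0, 0.0, 0.0), (0.96, 0.0, 0.0), (-0.24, 0.93, 0.0)]
    charges = [-0.8, 0.4, 0.4]
    rot = rotation_matrix((1.0, 2.0, 3.0), 0.7)
    moved = transform(atoms, rot, (5.0, -3.0, 1.5))
    print(radius_graph(atoms, 1.2))
    print(radius_graph(moved, 1.2))               # same edges, same distances
    print(apply(rot, point_charge_dipole(atoms, charges)))
    print(point_charge_dipole(moved, charges))    # equals the rotated dipole
```

An E(3)-equivariant network is built so that its internal scalar features behave like the distances and its vector features behave like the dipole in this check, which is why it can predict properties such as dipole moments consistently for any molecular orientation.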

Pharmaceutical and Biomolecular Applications

In pharmaceutical research and drug development, CCSD(T) serves as the critical benchmark for modeling molecular interactions relevant to drug binding and biomolecular function. The method's ability to accurately characterize nucleic acid-metal interactions has particular relevance for understanding cellular functions, disease progression, and pharmaceutical mechanisms [7]. Such fundamental information is required to understand the roles of metals in basic biological functions and to design nucleic acid sensors that target metal contaminants [7].

Looking ahead, the developers suggest that MEHnet could eventually analyze large molecules with thousands of atoms at CCSD(T)-level accuracy and at lower computational cost than DFT [2]. If realized, this capability could help researchers design new polymers or materials for drug delivery systems and characterize hypothetical pharmaceutical compounds before committing resources to synthesis.

Table 3: Essential Computational Resources for CCSD(T) Calculations

| Resource Category | Specific Tools/Solutions | Function/Purpose |
|---|---|---|
| Software Packages | CFOUR, Molpro, Gaussian | Implement the CCSD(T) method with various basis sets [1] |
| Basis Sets | aug-cc-pVDZ, aug-cc-pVTZ, aug-cc-pVQZ, aug-cc-pwCVTZ | Systematic improvement of the description of the electron distribution [6] [1] |
| Reference Data | DELTA50 (NMR), S22 set | Benchmark datasets for method calibration; S22 provides CCSD(T)/CBS interaction energies rather than experimental values [6] [8] |
| Machine Learning Extensions | MEHnet architecture | Acceleration of CCSD(T)-quality predictions while preserving accuracy [2] |
| High-Performance Computing | National Energy Research Scientific Computing Center (NERSC), MIT SuperCloud | Computational infrastructure for resource-intensive calculations [2] |

The CCSD(T) method retains its status as the gold standard of quantum chemistry because of its robust theoretical foundations, consistently high accuracy across diverse molecular systems, and well-established computational protocols. While DFT methods offer computational advantages for specific applications and system sizes, their functional-dependent accuracy and systematic errors in properties such as band gaps and interaction energies call for careful benchmarking against CCSD(T) references [3]. Continued development of machine learning approaches that leverage CCSD(T) accuracy at reduced computational cost promises to extend the method's reach to larger systems relevant to pharmaceutical research and materials design [2]. For researchers who require the highest practical accuracy in molecular property calculations, particularly in drug development where prediction reliability directly affects outcomes, CCSD(T) remains the benchmark against which other methods are measured.

Density Functional Theory (DFT) is one of the most widely used computational methods in quantum chemistry and materials science, offering a compelling balance between computational cost and accuracy for predicting molecular properties, reaction energies, and electronic structures. Despite its prominence, DFT faces a fundamental challenge known as the "exchange-correlation problem": the exact functional describing the quantum mechanical interactions between electrons is unknown. In practice, approximate exchange-correlation functionals must be used, and their performance varies significantly across chemical systems and properties of interest. In the broader context of benchmarking DFT against the highly accurate coupled cluster singles, doubles, and perturbative triples (CCSD(T)) method for molecular properties research, this guide objectively compares the performance of various DFT functionals and provides researchers with benchmark data and methodologies to inform their computational choices.

The exchange-correlation energy in DFT must account for all quantum effects not captured by the non-interacting kinetic energy and the classical electrostatic terms of the Kohn-Sham equations. These include the cancellation of the spurious electron self-interaction, static correlation in multi-reference systems, and non-covalent van der Waals (dispersion) forces. Approximate functionals have been developed along Jacob's Ladder, progressing from the local density approximation (LDA) to generalized gradient approximations (GGAs), meta-GGAs, hybrid functionals (which incorporate exact Hartree-Fock exchange), and increasingly sophisticated range-separated and double-hybrid functionals. Each rung aims to better approximate the exact exchange-correlation functional while maintaining computational feasibility, yet no single functional performs equally well across all chemical systems.

Theoretical Framework and Benchmarking Methodology

The CCSD(T) Gold Standard

Coupled Cluster theory with single, double, and perturbative triple excitations (CCSD(T)) is widely regarded as the "gold standard" in quantum chemistry for achieving high accuracy where its computational cost is feasible. CCSD(T) provides benchmark-quality results for molecular geometries, vibrational frequencies, and reaction energies, typically serving as the reference against which DFT functionals are evaluated. The method systematically accounts for electron correlation effects through its cluster operator expansion, with the perturbative treatment of triple excitations providing an excellent balance between accuracy and computational cost for single-reference systems. However, its steep computational scaling (N⁷, where N is proportional to system size) limits its application to small and medium-sized molecules, creating the need for reliable DFT approximations for larger systems.

Benchmark studies typically employ CCSD(T) at the complete basis set (CBS) limit as their reference standard, often extrapolated from hierarchical basis sets such as cc-pVXZ (where X = D, T, Q, 5). For systems containing heavier elements, additional considerations like relativistic effects and core-valence correlation may require specialized basis sets and treatment. As noted in studies of tungsten-containing molecules, CCSD(T)/cc-pVQZ energies approach the complete basis set limit, with core correlation contributions becoming significant (3-5%) for accurate thermochemical predictions [9].

DFT Functional Categories

DFT functionals can be categorized into distinct classes based on their theoretical construction:

- Generalized Gradient Approximations (GGAs): Incorporate local density and its gradient (e.g., PBE)

- Meta-GGAs: Additionally include the kinetic energy density (e.g., SCAN)

- Hybrid Functionals: Mix in exact Hartree-Fock exchange with GGA exchange-correlation (e.g., B3LYP, PBE0)

- Range-Separated Hybrids: Use different treatments for short- and long-range electron exchange (e.g., ωB97XD, M11)

- Double Hybrids: Incorporate both exact exchange and a perturbative MP2-like correlation term (e.g., B2GP-PLYP)
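The hybrid, range-separated, and double-hybrid constructions listed above share a common pattern: fractions of exact (Hartree-Fock) exchange and, for double hybrids, perturbative correlation are mixed into a density functional approximation (DFA). The generic forms are summarized below; the mixing fractions quoted for PBE0 (25% HF exchange) and B2GP-PLYP (65% HF exchange, 36% PT2 correlation) are the published defaults, while other functionals use their own parameters.

```latex
% Global hybrid (PBE0: a_x = 0.25 with PBE exchange and correlation)
E_{xc}^{\mathrm{hyb}} = a_x E_x^{\mathrm{HF}} + (1 - a_x) E_x^{\mathrm{DFA}} + E_c^{\mathrm{DFA}}

% Range separation of the Coulomb operator with parameter \omega
\frac{1}{r_{12}} = \underbrace{\frac{\operatorname{erfc}(\omega r_{12})}{r_{12}}}_{\text{short range}}
                 + \underbrace{\frac{\operatorname{erf}(\omega r_{12})}{r_{12}}}_{\text{long range}}

% Double hybrid (B2GP-PLYP: a_x = 0.65, a_c = 0.36, B88 exchange, LYP correlation)
E_{xc}^{\mathrm{DH}} = a_x E_x^{\mathrm{HF}} + (1 - a_x) E_x^{\mathrm{DFA}}
                     + (1 - a_c) E_c^{\mathrm{DFA}} + a_c E_c^{\mathrm{PT2}}
```

Range-separated hybrids apply different exact-exchange fractions to the short- and long-range parts of the Coulomb operator. In double hybrids the PT2 term is an MP2-like expression evaluated with Kohn-Sham orbitals, which is why their cost scales like MP2 (N⁵).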

Standard Benchmarking Protocol

A rigorous DFT benchmarking study follows a systematic protocol to ensure meaningful comparisons:

Reference Data Generation: High-level CCSD(T) calculations are performed to establish reference values for molecular properties including equilibrium geometries, atomization energies, vibrational frequencies, and reaction barrier heights.

Basis Set Selection: Consistent, high-quality basis sets are employed, typically triple-zeta quality or higher, with appropriate treatment for different elements (e.g., cc-pVTZ, def2-TZVP).

Chemical Space Sampling: A diverse set of molecules and reactions is selected to represent the chemical space of interest, including various bonding types and electronic environments.

Error Metrics Calculation: Statistical measures including Mean Absolute Error (MAE), Mean Absolute Deviation (MAD), and root-mean-square error are computed to quantify functional performance.

Core Correlation Assessment: For heavier elements, the effect of inner core electrons on molecular properties is evaluated, potentially requiring all-electron relativistic treatments or small-core pseudopotentials.

The diagram below illustrates this standard benchmarking workflow:

Comparative Performance of DFT Functionals

Performance Across Elemental Systems

Beryllium, Tungsten, and Hydrogen Systems

A comprehensive study of neutral molecules containing beryllium, tungsten, and hydrogen (Beₙ, BeₙHₘ, Wₙ, WₙBeₘ, and WₙHₘ, with m + n ≤ 4) compared 16 density functionals from various rungs of Jacob's ladder against CCSD(T) reference data [9]. The performance across three key molecular properties revealed significant functional-dependent variations:

Table 1: Functional Performance for Be/W/H Systems [9]

| Functional | Atomization Energy (MAE rank) | Bond Length (MAE rank) | Vibrational Frequency (MAE rank) | Overall Ranking |
|---|---|---|---|---|
| ωB97XD | Best | 2nd | 2nd | 1st |
| B97D | 2nd | - | - | 2nd |
| M06 | 3rd | - | - | 3rd |
| B3LYP | 4th | - | 2nd | 4th |
| M11 | 5th | 1st | 3rd | 5th |
| HSEH1PBE | - | 3rd | 1st | 6th |

The range-separated hybrid functional ωB97XD demonstrated exceptional performance for atomization energies, while closely competing with M11 for bond lengths and vibrational frequencies. The M11 functional stood out as accurate across all three properties, showing particular strength for bond length prediction. The study also highlighted that CCSD(T)/cc-pVQZ energies approach the complete basis set limit, with core correlation contributing 3-5% to atomization energies for tungsten-containing molecules.

Silicon-Oxygen-Carbon-Hydrogen Systems

In Si-O-C-H molecular systems, which are particularly relevant in materials science and combustion chemistry, different functionals excelled for different properties [10]:

Table 2: Functional Performance for Si-O-C-H Systems [10]

| Functional | Enthalpy of Formation MAE | Vibrational Frequencies MAE | Reaction Energies MAE |
|---|---|---|---|
| M06-2X | Best | - | - |
| SCAN | - | Best | - |
| B2GP-PLYP | - | - | Best |
| PW6B95 | Good | Good | Good |

The M06-2X functional provided the most accurate enthalpies of formation, while the SCAN meta-GGA functional excelled in predicting vibrational frequencies and zero-point energies. For reaction energies involving the relative stabilities of species within the same reaction system, the double-hybrid B2GP-PLYP functional showed the smallest errors. PW6B95 emerged as the most consistent performer across all studied properties in silicon chemistry.

Performance for Transition Metal Catalysis

Transition metal systems present particular challenges for DFT due to complex electronic structures with near-degeneracies and multi-reference character. A benchmark study investigating activation energies of various covalent main-group single bonds by Pd, PdCl⁻, PdCl₂, and Ni catalysts evaluated 23 functionals against CCSD(T)/CBS reference data [11].

Table 3: Functional Performance for Transition Metal Catalyzed Bond Activation [11]

| Functional | Type | MAD (kcal mol⁻¹) | Notes |
|---|---|---|---|
| PBE0-D3 | Hybrid GGA | 1.1 | Best for complete set |
| PW6B95-D3 | Hybrid meta-GGA | 1.9 | Excellent performance |
| B3LYP-D3 | Hybrid GGA | 1.9 | Reliable choice |
| PWPB95-D3 | Double Hybrid | 1.9 | Robust for barriers |
| M06 | Hybrid meta-GGA | 4.9 | Moderate performance |
| M06-2X | Hybrid meta-GGA | 6.3 | Lower accuracy |
| M06-HF | Hybrid meta-GGA | 7.0 | Poor performance |

The study revealed that hybrid functionals with dispersion corrections (D3) generally performed best, with PBE0-D3 showing the lowest mean absolute deviation (MAD = 1.1 kcal mol⁻¹). Double-hybrid functionals also performed well, though some exhibited larger errors for nickel-containing systems due to partial breakdown of the perturbative treatment in cases with multi-reference character. The Minnesota functionals (M06 suite) showed considerably higher errors, with M06-HF performing poorest in this chemical space.

Experimental Protocols and Computational Methodologies

Reference Data Generation with CCSD(T)

The accuracy of any DFT benchmark study fundamentally depends on the quality of the reference data. The standard protocol for generating CCSD(T) reference values involves:

Geometry Optimization: Molecular geometries are first optimized at a reliable, affordable level of theory, typically a hybrid functional with a triple-zeta basis set.

Basis Set Selection: Dunning's correlation-consistent basis sets (cc-pVXZ) are employed in a hierarchical approach. For molecules containing heavier elements, specifically tailored basis sets are necessary (e.g., cc-pVXZ-PP for transition metals with pseudopotentials).

Energy Extrapolation to CBS: CCSD(T) energies are calculated with increasing basis set sizes (e.g., cc-pVTZ, cc-pVQZ, cc-pV5Z) and extrapolated to the complete basis set limit using established extrapolation formulas (e.g., Helgaker's scheme).

Core Correlation Evaluation: The contribution of inner-shell electrons to molecular properties is assessed by comparing all-electron calculations with those using frozen-core approximations. For tungsten-containing molecules, core correlation contributes 3-5% to atomization energies [9].

Relativistic Effects: For systems containing heavy elements (e.g., tungsten), scalar relativistic effects are incorporated through appropriate pseudopotentials or relativistic Hamiltonians.

Thermochemical Corrections: Zero-point vibrational energies and thermal corrections are computed from harmonic vibrational frequencies to convert electronic energies into thermodynamic properties.
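The vibrational part of these corrections follows directly from the harmonic frequencies. The Python sketch below assumes the harmonic-oscillator model and covers only the zero-point energy and the vibrational thermal energy; translational and rotational contributions, the PV term for enthalpies, and entropy (handled in full by tools such as GoodVibes or Shermo) are omitted. The function names are illustrative.

```python
"""Harmonic zero-point and vibrational thermal energies from frequencies (cm^-1)."""
import math

# Exact SI defining constants (2019 SI).
H = 6.62607015e-34       # Planck constant, J s
C_CM = 2.99792458e10     # speed of light, cm/s
K_B = 1.380649e-23       # Boltzmann constant, J/K
N_A = 6.02214076e23      # Avogadro constant, 1/mol


def zpve_kj_mol(wavenumbers):
    """ZPVE = (1/2) * sum_k h c nu_k, returned in kJ/mol (real modes only)."""
    return 0.5 * sum(wavenumbers) * H * C_CM * N_A / 1000.0


def vibrational_energy_kj_mol(wavenumbers, temperature):
    """Harmonic vibrational energy including ZPVE at temperature (K), in kJ/mol."""
    total = 0.0
    for nu in wavenumbers:
        quantum = H * C_CM * nu                      # energy of one quantum, J
        x = quantum / (K_B * temperature)
        # Bose-Einstein occupation; negligible (and overflow-prone) for large x.
        occupation = 1.0 / math.expm1(x) if x < 700.0 else 0.0
        total += quantum * (0.5 + occupation)
    return total * N_A / 1000.0


if __name__ == "__main__":
    freqs = [1000.0, 2000.0, 3000.0]                 # hypothetical harmonic modes
    print(f"ZPVE       = {zpve_kj_mol(freqs):.3f} kJ/mol")
    print(f"E_vib(298) = {vibrational_energy_kj_mol(freqs, 298.15):.3f} kJ/mol")
```

For example, three harmonic modes at 1000, 2000, and 3000 cm⁻¹ (hypothetical values) contribute about 35.9 kJ/mol of zero-point energy; at room temperature the thermal population of the lowest mode adds a small extra amount.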

DFT Calculations and Error Analysis

The DFT benchmarking process follows a systematic approach to ensure fair functional comparisons:

Functional Selection: Representative functionals are selected from each rung of Jacob's Ladder, covering various theoretical constructions.

Consistent Computational Settings: All calculations employ identical integration grids, SCF convergence criteria, and geometry optimization protocols to eliminate technical variations.

Property Calculation: For each functional, the following properties are computed:

- Equilibrium bond lengths (Å)

- Atomization energies (kJ/mol)

- Harmonic vibrational frequencies (cm⁻¹)

- Reaction energies and activation barriers (kcal/mol)

Error Quantification: Deviations from CCSD(T) reference values are calculated for each property and functional, followed by statistical analysis including:

- Mean Absolute Error (MAE)

- Mean Signed Error (MSE)

- Root-Mean-Square Error (RMSE)

- Maximum Error

Chemical Space Analysis: Errors are analyzed across different chemical domains (e.g., bond types, element combinations) to identify functional strengths and weaknesses.
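The error quantification and chemical space analysis steps above can be sketched in a few lines of Python. Here MSE denotes the mean signed error listed above (not the mean squared error), and records are grouped by a user-chosen domain label such as the metal or bond type. The function names and the example barriers are hypothetical.

```python
"""Error statistics for DFT-vs-CCSD(T) deviations, overall and per chemical domain."""
import math
from collections import defaultdict


def error_stats(predicted, reference):
    """Return MAE, MSE (mean signed error), RMSE and maximum absolute error."""
    if len(predicted) != len(reference) or not predicted:
        raise ValueError("need two non-empty sequences of equal length")
    errors = [p - r for p, r in zip(predicted, reference)]
    n = len(errors)
    return {
        "MAE": sum(abs(e) for e in errors) / n,
        "MSE": sum(errors) / n,  # signed: reveals systematic over/underestimation
        "RMSE": math.sqrt(sum(e * e for e in errors) / n),
        "MaxE": max(abs(e) for e in errors),
    }


def stats_by_domain(records):
    """records: iterable of (domain, dft_value, ccsdt_value) tuples."""
    grouped = defaultdict(lambda: ([], []))
    for domain, dft_value, ref_value in records:
        grouped[domain][0].append(dft_value)
        grouped[domain][1].append(ref_value)
    return {domain: error_stats(p, r) for domain, (p, r) in grouped.items()}


if __name__ == "__main__":
    # Hypothetical activation barriers (kcal/mol) for one functional.
    data = [("Pd", 20.1, 21.0), ("Pd", 15.4, 15.0), ("Ni", 12.0, 9.5)]
    for domain, stats in stats_by_domain(data).items():
        print(domain, {k: round(v, 2) for k, v in stats.items()})
```

Reporting the signed MSE alongside the MAE distinguishes a functional that is systematically biased (MSE close to MAE in magnitude) from one with random scatter (MSE near zero).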

The relationship between different computational methods and their respective accuracy/computational cost is visualized below:

Computational Toolkit

Successful DFT benchmarking requires careful selection of computational tools and protocols. The following table details essential components of a robust benchmarking workflow:

Table 4: Essential Computational Tools for DFT Benchmarking

| Tool Category | Specific Examples | Function and Importance |
|---|---|---|
| Electronic Structure Packages | TURBOMOLE, ORCA, NWChem, MOLPRO | Provide implementations of various DFT functionals and wavefunction methods with optimized algorithms for different computational architectures. |
| Basis Set Libraries | Basis Set Exchange, EMSL Basis Set Library | Standardized collections of Gaussian basis sets ensuring consistent comparisons across studies and systems. |
| Wavefunction Methods | CCSD(T), MP2, CASSCF | High-level reference methods for generating benchmark data and treating multi-reference systems. |
| Dispersion Corrections | D3, D4, vdW-DF | Account for long-range dispersion interactions missing in many standard functionals, crucial for non-covalent interactions. |
| Relativistic Methods | ECPs, ZORA, DKH | Pseudopotentials (ECPs) and relativistic Hamiltonians for heavy elements where relativistic effects become significant. |
| Thermochemistry Tools | GoodVibes, Shermo | Process frequency calculations to obtain thermochemical corrections (ZPVE, enthalpies, free energies). |
| Error Analysis Scripts | Custom Python/R scripts | Automated statistical analysis of deviations between DFT and reference data across multiple chemical systems. |

The comprehensive benchmarking of DFT functionals against CCSD(T) reference data reveals a complex landscape where functional performance significantly depends on the chemical system and molecular properties of interest. No single functional emerges as universally superior, necessitating careful selection based on the specific application.

For systems containing beryllium, tungsten, and hydrogen, range-separated hybrids (ωB97XD) and the M11 functional provide excellent overall performance [9]. In silicon-oxygen-carbon-hydrogen systems, different functionals excel for different properties: M06-2X for enthalpies of formation, SCAN for vibrational frequencies, and B2GP-PLYP for reaction energies [10]. For transition metal catalysis involving bond activation, hybrid functionals with dispersion corrections (PBE0-D3, PW6B95-D3, B3LYP-D3) deliver the most reliable results [11].

These findings underscore the critical importance of context-specific functional selection in computational chemistry and materials science research. The "DFT compromise" remains an unavoidable aspect of electronic structure calculations, but systematic benchmarking against high-level wavefunction methods provides a rational foundation for navigating this compromise. As new functionals continue to emerge and computational resources expand, this benchmarking paradigm will remain essential for advancing the reliability and predictive power of computational chemistry across diverse chemical domains.

In modern drug discovery, the accurate prediction of key molecular properties—such as binding energetics, molecular geometries, and interaction forces—is paramount for understanding molecular recognition and optimizing lead compounds. Computational chemistry provides powerful tools for this task, with Density Functional Theory (DFT) and the coupled-cluster with single, double, and perturbative triple excitations (CCSD(T)) method representing two dominant approaches with a well-documented trade-off between computational cost and accuracy [12] [13]. While DFT, with its favorable scaling of approximately N³ (where N is system size), is the workhorse for calculating properties of large molecular systems, its accuracy for many molecules is limited to 2-3 kcal·mol⁻¹, which is often insufficient for reliably predicting binding affinities [12]. In contrast, CCSD(T), widely regarded as the "gold standard" of quantum chemistry, provides superior accuracy but at a prohibitive computational cost that scales as N⁷, effectively limiting its application to small molecules [12] [14]. This guide provides a comprehensive benchmark comparison of these methods, focusing on their performance in predicting essential properties for drug development.

Comparative Accuracy of DFT and CCSD(T) for Core Molecular Properties

The reliability of computational methods in drug discovery depends on their accuracy across multiple molecular properties. The table below summarizes the performance of DFT and CCSD(T) for key properties critical to drug development.

Table 1: Benchmarking DFT vs. CCSD(T) on Key Molecular Properties

| Molecular Property | DFT Performance | CCSD(T) Performance | Significance in Drug Discovery |
|---|---|---|---|
| Total Energy | Accuracy limited to ~2-3 kcal·mol⁻¹ with standard functionals [12] | Quantum chemical accuracy (errors <1 kcal·mol⁻¹) [12] | Determines binding free energy and stability [15] |
| Molecular Geometries | Generally reliable for equilibrium structures; fails for strained geometries [12] | High accuracy across diverse conformations [12] | Affects binding pose and molecular recognition |
| Non-Covalent Interactions | Varies widely; often requires empirical dispersion corrections [16] | Highly accurate for weak interactions [16] | Governs protein-ligand binding and specificity [15] |
| Reaction Mechanisms | Can study reaction paths; accuracy depends on functional [13] | High accuracy for barrier heights and reaction paths [13] | Essential for covalent inhibitor design |
| Charge Distribution | Modern meta-GGA/hybrid functionals provide good accuracy [17] | Provides benchmark-quality charge densities [17] | Influences electrostatic interactions and solubility |
| Forces for MD Simulations | Adequate with accurate functionals; limited by energy surface fidelity [12] | Provides highest quality forces for dynamics [16] | Enables accurate molecular dynamics simulations |

The performance of DFT is heavily influenced by the choice of the exchange-correlation (XC) functional [13] [17]. Early functionals like the Local Density Approximation (LDA) have been superseded by Generalized Gradient Approximation (GGA) functionals like PBE, and more advanced meta-GGA and hybrid functionals, which generally provide improved accuracy for properties like atomization energies and charge densities [13] [17].

Advanced Protocols: Bridging the Accuracy-Speed Divide

Machine Learning-Enhanced Computational Chemistry

To overcome the limitations of both DFT and CCSD(T), researchers have developed advanced protocols that leverage machine learning (ML). The Δ-DFT (delta-DFT) approach is particularly powerful, where a model learns the energy difference between a DFT calculation and a CCSD(T) calculation as a functional of the DFT electron density [12]. This method significantly reduces the amount of training data required and can achieve quantum chemical accuracy (errors below 1 kcal·mol⁻¹) while retaining the computational speed of DFT [12]. This facilitates running gas-phase molecular dynamics simulations with CCSD(T) quality, even for challenging cases like strained geometries and conformer changes where standard DFT fails [12].

Another innovative approach is the Multi-task Electronic Hamiltonian network (MEHnet) developed by MIT researchers. This neural network architecture is trained on CCSD(T) data and can subsequently predict multiple electronic properties at once—including dipole moments, electronic polarizability, and excitation gaps—at a computational cost lower than DFT [14]. This multi-task approach enables comprehensive molecular characterization from a single model.

Quantum Monte Carlo for Accurate Forces

Quantum Monte Carlo (QMC) has emerged as a powerful alternative for generating reference-quality data, particularly for atomic forces used in molecular dynamics simulations. Studies on fluxional molecules like ethanol have demonstrated that forces obtained from diffusion Monte Carlo (DMC) with a single determinant can achieve accuracy comparable to CCSD(T) [16]. These QMC forces can then be used to train machine-learning force fields that faithfully reproduce spectroscopic properties and dynamics at coupled-cluster quality [16].

Table 2: Advanced Protocols for High-Accuracy Molecular Property Prediction

| Protocol | Methodology | Advantages | Limitations |
|---|---|---|---|
| Δ-DFT [12] | Machine-learning the CCSD(T)-DFT energy difference from DFT densities | Reaches quantum chemical accuracy; reduces training data needs; exploits molecular symmetries | Requires initial CCSD(T) training data; system-specific |
| MEHnet [14] | E(3)-equivariant graph neural network trained on CCSD(T) data | Multi-task prediction (energy, forces, electronic properties); high data efficiency | Training complexity; computational demands for large systems |
| QMC Forces [16] | Using DMC or VMC to compute forces for ML force field training | CCSD(T)-level accuracy for forces; favorable scaling for larger molecules | Statistical noise; wave function optimization required |

The following diagram illustrates a generalized workflow for employing these advanced protocols in drug discovery research:

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Successful implementation of the benchmarking protocols described requires familiarity with both conceptual frameworks and practical computational tools. The following table details key "research reagent solutions" essential for molecular property prediction in drug development.

Table 3: Essential Computational Tools for Molecular Property Research

| Tool Category | Specific Examples | Function in Research |
|---|---|---|
| DFT Functionals | PBE (GGA), meta-GGA, hybrid functionals [13] [17] | Approximate exchange-correlation energy; balance of accuracy and speed for large systems |
| Wave Function Methods | CCSD(T), CCSD [12] [17] [16] | Provide benchmark-quality reference data for energies and properties |
| Quantum Monte Carlo | VMC, DMC with VD approximation [16] | Generate accurate forces and energies for molecular dynamics training data |
| Machine Learning Models | Δ-DFT, MEHnet, kernel ridge regression [12] [14] | Learn complex mappings from electronic structure to properties; accelerate predictions |
| Molecular Descriptors | Electron density, Hirshfeld charges [12] [17] | Represent molecular identity for ML models; analyze charge transfer |
| Basis Sets | Correlation-consistent (cc-pVTZ, cc-pVQZ) [16] | Expand molecular orbitals; larger basis needed for density convergence [17] |

The electron density plays a particularly crucial role as both a fundamental quantum mechanical observable and a powerful molecular descriptor. According to the first Hohenberg-Kohn theorem, the ground-state electron density uniquely determines the external potential (up to a constant) and therefore, in principle, all properties of the system [12] [13]. Modern DFT functionals, particularly meta-GGA and hybrid functionals, can provide highly accurate charge densities when used with large basis sets [17]. These densities are essential for calculating properties like Hirshfeld charges, which measure charge transfer and are used in advanced machine-learning potentials to model long-range electrostatics [17].
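The Hirshfeld (stockholder) scheme assigns each point of the molecular density to atom A with weight w_A(r) = n_A^0(r) / Σ_B n_B^0(r). Here n_A^0 are free-atom ("pro-atom") densities, and the charge is q_A = Z_A − ∫ w_A(r) n(r) dr. The sketch below evaluates this on a one-dimensional toy grid. The Gaussian pro-atom and molecular densities, nuclear charges, and positions are all invented for illustration. A real calculation would use spherically averaged atomic densities and a 3D molecular integration grid.

```python
import numpy as np

# 1D toy grid; a real calculation integrates over a 3D molecular grid
x = np.linspace(-10.0, 10.0, 4001)
dx = x[1] - x[0]


def gaussian_density(center, n_electrons, width):
    """Toy Gaussian density normalized (on this grid) to hold n_electrons."""
    g = np.exp(-((x - center) ** 2) / (2.0 * width**2))
    return n_electrons * g / (g.sum() * dx)


# Hypothetical diatomic: nuclear charges, positions, and neutral pro-atom densities
Z = np.array([1.0, 9.0])
centers = np.array([-0.9, 0.9])
widths = np.array([0.8, 0.6])
pro_atoms = np.array([gaussian_density(c, z, w) for c, z, w in zip(centers, Z, widths)])

# Toy "molecular" density in which 0.3 electrons have moved from atom 0 to atom 1
n_mol = (gaussian_density(centers[0], Z[0] - 0.3, widths[0])
         + gaussian_density(centers[1], Z[1] + 0.3, widths[1]))


def hirshfeld_charges(n_mol, pro_atoms, Z):
    """q_A = Z_A - integral of w_A(x) n_mol(x), with weights w_A = n_A^0 / sum_B n_B^0."""
    promolecule = pro_atoms.sum(axis=0)
    safe = np.where(promolecule > 1e-14, promolecule, np.inf)  # zero weight where density vanishes
    weights = pro_atoms / safe
    populations = (weights * n_mol).sum(axis=1) * dx
    return Z - populations


q = hirshfeld_charges(n_mol, pro_atoms, Z)
print(q)  # atom 0 ends up positive, atom 1 negative
```

The charges sum to zero for this neutral toy system. The recovered transfer is smaller than the 0.3 electrons put in by hand, because the stockholder weights share the overlap region between both atoms.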

Benchmarking studies consistently demonstrate that while DFT provides a practical balance of efficiency and accuracy for many drug discovery applications, CCSD(T) remains the uncompromised standard for molecular property prediction. The emergence of machine-learning protocols like Δ-DFT and MEHnet, along with advanced quantum methods like QMC, is rapidly bridging the historical gap between these approaches. These hybrid strategies leverage the accuracy of CCSD(T) with the scalability of DFT, enabling previously infeasible simulations with quantum chemical accuracy.

Future advancements will likely focus on developing more generalizable models that cover broader chemical spaces with minimal training, extending these approaches to heavier elements across the periodic table, and further integrating them into automated drug discovery pipelines [14]. As these computational techniques continue to mature, they will increasingly become indispensable tools for researchers seeking to understand and optimize the molecular interactions that underpin successful therapeutic development.

Coupled-cluster theory with single, double, and perturbative triple excitations, known as CCSD(T), has firmly established itself as the uncontested reference method in computational chemistry for predicting molecular properties, reaction energies, and interaction strengths. Dubbed the "gold standard" of quantum chemistry, CCSD(T) provides the benchmark against which all other, more approximate methods, particularly the various density functional theory (DFT) approximations, are measured [18] [19]. This status is not merely ceremonial. It stems from two sources: the method's exceptional accuracy, consistently validated against experimental data and full configuration interaction calculations [20] [5], and its place in the systematically improvable coupled-cluster hierarchy. In the context of molecular data generation for fields such as drug development and materials science, CCSD(T) provides the critical reference points that enable researchers to identify systematic errors in faster, more widely applicable methods and to develop more robust computational protocols.

The critical challenge, however, has been the prohibitive computational cost of conventional CCSD(T) calculations, which traditionally limited its application to small molecules of approximately 20-25 atoms [20]. This review explores how recent methodological and computational advances are systematically overcoming this barrier, extending the reach of CCSD(T) accuracy to medium and large molecular systems relevant to pharmaceutical and materials research, thereby solidifying its role as the cornerstone for reliable molecular benchmarking.

Theoretical Foundation and Methodological Advances

The Theoretical Underpinnings of CCSD(T)

The CCSD(T) method builds upon the coupled-cluster singles and doubles (CCSD) approach by adding a non-iterative perturbative correction for connected triple excitations, denoted as (T) [5]. The remarkable success of CCSD(T) can be understood from a theoretical perspective that treats the biorthogonal representation of the CCSD state as the zeroth-order wavefunction, rather than the conventional Hartree-Fock reference [5]. This theoretical framework explains why CCSD(T) maintains excellent accuracy even in challenging cases where simpler perturbation theories fail. The method's balanced treatment of the single ($T_1$) and double ($T_2$) excitation operators against the triple excitations provides a delicate counterbalance that prevents the overestimation of correlation effects characteristic of earlier approximations like CCSD+T(CCSD) [5]. This theoretical robustness translates into practical reliability across diverse chemical systems.

Cost-Reduction Techniques Extending the Applicability Domain

Recent years have witnessed groundbreaking advances that dramatically reduce the computational cost of CCSD(T) calculations without sacrificing accuracy:

Frozen Natural Orbitals (FNOs): This approach compresses the virtual molecular orbital space by discarding orbitals that contribute minimally to the electron correlation energy. Conservative FNO truncation thresholds can maintain an accuracy of better than 1 kJ/mol compared to canonical CCSD(T) while reducing the computational cost by up to an order of magnitude [20]. This enables the application of CCSD(T) to systems of 50-75 atoms, a size range previously inaccessible without local approximations [20].

Natural Auxiliary Functions (NAFs): Analogous to FNOs, NAFs compress the auxiliary basis set used in density fitting approximations. By reducing the number of functions needed to describe the electron repulsion integrals, NAFs further decrease computational and memory requirements, particularly when combined with FNOs [20].

Domain-Based Local Pair Natural Orbitals (DLPNO): The DLPNO-CCSD(T) method leverages the local nature of electron correlation by expressing the wavefunction in a basis of pair natural orbitals localized in spatial domains. This achieves linear scaling computational cost with system size, enabling applications to very large systems including ionic liquids and microsolvated clusters [21] [19]. Achieving "spectroscopic accuracy" of 1 kJ/mol for non-covalent interactions, however, often requires tighter convergence settings and iterative treatment of triple excitations, increasing computational cost approximately 2.5-fold [19].

Parallelized Algorithms: Modern hybrid OpenMP/Message Passing Interface (MPI) parallel implementations distribute the computational load efficiently across multiple processor cores and nodes. These implementations express intermediates using density fitting formalism with only three-index quantities, minimizing data storage and communication overhead [22]. Such implementations demonstrate excellent parallel scaling for cost-determining operations up to hundreds of processor cores, making accurate calculations on systems with 60 atoms and 2500 orbitals feasible [22].
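The frozen-natural-orbital compression described above can be sketched in a few steps. First, diagonalize a virtual-virtual one-particle density matrix (typically from MP2). Then sort the natural orbitals by occupation and drop the weakly occupied tail. This is a minimal sketch under stated assumptions. The density matrix is a random toy matrix. The 0.9995 value is applied here to the cumulative virtual occupation, which is our simplification; the protocol in the text states its criterion in terms of correlation energy. Production codes may instead use occupation-number cutoffs, and they semicanonicalize the retained orbitals before the CCSD(T) step.

```python
import numpy as np


def frozen_natural_orbitals(d_vv, keep_fraction=0.9995):
    """Return (U, occ): natural orbitals of the virtual-virtual density matrix d_vv,
    truncated so the kept orbitals carry at least keep_fraction of the total occupation.
    Columns of U express the kept FNOs in the original virtual-orbital basis."""
    occ, vecs = np.linalg.eigh(d_vv)            # eigenvalues in ascending order
    order = np.argsort(occ)[::-1]               # reorder by decreasing occupation
    occ, vecs = occ[order], vecs[:, order]
    cumulative = np.cumsum(occ) / occ.sum()
    n_keep = min(int(np.searchsorted(cumulative, keep_fraction)) + 1, occ.size)
    return vecs[:, :n_keep], occ[:n_keep]


# Toy virtual-virtual density with an exponentially decaying occupation spectrum
rng = np.random.default_rng(0)
n_virt = 200
q, _ = np.linalg.qr(rng.standard_normal((n_virt, n_virt)))  # random orthogonal basis
spectrum = 1e-2 * np.exp(-np.arange(n_virt) / 15.0)
d_vv = (q * spectrum) @ q.T                                 # q @ diag(spectrum) @ q.T

U, kept = frozen_natural_orbitals(d_vv)
print(f"kept {U.shape[1]} of {n_virt} virtual orbitals")
# New virtual MO coefficients would be C_vir @ U, where C_vir (hypothetical here)
# holds the canonical virtual-orbital coefficients.
```

Because CCSD(T) cost grows steeply with the number of virtual orbitals, discarding roughly 40% of them, as in this toy spectrum, is where the large savings originate.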

The combination of these techniques represents a paradigm shift, making "gold standard" CCSD(T) quality computations accessible for a considerably larger portion of the chemical compound space using affordable resources and reasonable wall times [20].

CCSD(T) Benchmarking Data and Performance Assessment

The true value of CCSD(T) emerges in its role for generating benchmark-quality reference data that enables critical evaluation of more efficient computational methods. The following table summarizes key benchmark studies and their findings.

Table 1: Overview of CCSD(T) Benchmark Studies and Key Findings

| System Studied | Reference Method | Benchmarked Methods | Key Finding | Source |
|---|---|---|---|---|
| Group I Metal–Nucleic Acid Complexes (64 complexes) | CCSD(T)/CBS | 61 DFT functionals | mPW2-PLYP (double-hybrid) and ωB97M-V performed best (MPE ≤1.6%, MUE <1.0 kcal/mol) | [7] |
| N-Methylacetamide (NMA)-Water Complexes | CCSD(T)/CBS | MP2, Double-hybrid and hybrid DFT | Double-hybrid functionals (DSD-PBEP86-D3BJ, B2PLYP-D3BJ) showed best performance | [23] |
| Ionic Liquids (Intermolecular Interactions) | CCSD(T) | DLPNO-CCSD(T) | DLPNO-CCSD(T) achieved chemical accuracy with tight settings; spectroscopic accuracy required iterative triples | [19] |
| Organocatalytic & Transition-Metal Reactions | FNO-CCSD(T) | Canonical CCSD(T) | FNO-CCSD(T) maintained 1 kJ/mol accuracy with massive cost reduction | [20] |
| Li+ Association with Organic Carbonates | DLPNO-CCSD(T)/CBS | Various DLPNO-based protocols and DFT | Accurate protocols (deviations <0.2 kcal/mol) established; PWPB95-D4 was best DFT | [21] |
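Benchmark tables such as the one above summarize each method with error statistics against the reference values. The helper below is a minimal sketch of how such statistics can be computed. The energies in the example are invented. We take MPE to mean the mean absolute percentage error; this is an assumption, since individual studies may define their percentage metric differently.

```python
import numpy as np


def benchmark_errors(e_ref, e_test):
    """Error statistics of a tested method (e.g. a DFT functional) against reference
    values (e.g. CCSD(T)/CBS). Both inputs must share units, e.g. kcal/mol."""
    e_ref = np.asarray(e_ref, dtype=float)
    e_test = np.asarray(e_test, dtype=float)
    err = e_test - e_ref
    return {
        "MSE": err.mean(),                                       # mean signed error (bias)
        "MUE": np.abs(err).mean(),                               # mean unsigned error
        "MaxAE": np.abs(err).max(),                              # worst-case absolute error
        "MPE_%": 100.0 * np.mean(np.abs(err) / np.abs(e_ref)),   # mean absolute percentage error
    }


# Hypothetical binding energies (kcal/mol) for three complexes
ref = [-30.0, -45.0, -20.0]    # reference, e.g. CCSD(T)/CBS
test = [-29.5, -46.0, -20.4]   # functional under test
stats = benchmark_errors(ref, test)
print({k: round(float(v), 3) for k, v in stats.items()})
```

Reporting the signed error alongside the unsigned one helps separate a systematic bias, which a dispersion or basis-set fix might remove, from scatter that no simple shift can correct.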

Critical Insights from Benchmark Studies

Several critical patterns emerge from these benchmark studies that guide method selection for computational investigations:

DFT Performance is System-Dependent: The performance of DFT approximations varies significantly depending on the chemical system and property studied. For group I metal-nucleic acid complexes, the best-performing functionals were the double-hybrid mPW2-PLYP and the range-separated hybrid ωB97M-V, while the local meta-GGA functionals TPSS and revTPSS offered reasonable compromises between cost and accuracy [7]. In contrast, for the binding energies of Li+ with organic carbonates, the double-hybrid PWPB95-D4 functional outperformed others [21].

The Critical Role of London Dispersion: For condensed systems like ionic liquids, London dispersion forces can contribute up to 150 kJ/mol in large-scale clusters [19]. Methods that lack proper dispersion corrections, such as the historically popular B3LYP/6-31G* combination, fail dramatically for such systems. Modern composite methods and dispersion-corrected functionals are essential for credible results [18].

Basis Set Convergence: The slow basis set convergence of correlation energies necessitates the use of at least triple-ζ and ideally quadruple-ζ basis sets, followed by extrapolation to the complete basis set (CBS) limit to obtain reliable benchmark data [7] [20]. The DLPNO-CCSD(T) binding energies converge much faster with Ahlrichs' def2 basis sets compared to Dunning's correlation-consistent basis sets [21].
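A widely used way to perform the CBS extrapolation described above is the two-point X⁻³ formula for correlation energies. It assumes E_X = E_CBS + A·X⁻³ for a basis of cardinal number X (3 for triple-ζ, 4 for quadruple-ζ) and eliminates A, giving E_CBS = (Y³E_Y − X³E_X)/(Y³ − X³). The sketch below implements this with synthetic input energies. The cited studies may use other extrapolation schemes. The formula applies to the correlation part only, because the Hartree-Fock component converges faster and is usually treated separately.

```python
def cbs_two_point(e_x: float, e_y: float, x: int, y: int) -> float:
    """Two-point X**-3 extrapolation of correlation energies from basis sets with
    cardinal numbers x < y (e.g. x=3 for cc-pVTZ, y=4 for cc-pVQZ)."""
    return (y**3 * e_y - x**3 * e_x) / (y**3 - x**3)


# Synthetic correlation energies (hartree) generated from E_X = E_CBS + A / X**3
e_cbs_true, a = -0.500, 0.30
e_tz = e_cbs_true + a / 3**3
e_qz = e_cbs_true + a / 4**3
print(f"TZ: {e_tz:.6f}  QZ: {e_qz:.6f}  CBS: {cbs_two_point(e_tz, e_qz, 3, 4):.6f}")
```

Because these inputs follow the assumed model exactly, the formula recovers -0.500 Eh. With real energies, the residual error depends on how well the X⁻³ form holds at the chosen cardinal numbers.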

Best-Practice Protocols for Molecular Benchmarking

Workflow for Generating Reference-Quality Data

The following diagram illustrates a robust, generalized workflow for generating and utilizing CCSD(T)-level benchmark data, integrating the methodological advances discussed.

Figure 1: CCSD(T) Benchmarking Workflow

Detailed Methodological Specifications

For researchers implementing these protocols, the following technical specifications are critical:

FNO-CCSD(T) Protocol: Employ conservative FNO and NAF truncation thresholds (e.g., those preserving 99.95% of the canonical correlation energy) to maintain accuracy within 1 kJ/mol. Use triple- and quadruple-ζ basis sets (e.g., cc-pwCVTZ/cc-pwCVQZ) with CBS extrapolation [20]. This approach is particularly suited for systems of 50-75 atoms where high accuracy is paramount.

DLPNO-CCSD(T) Protocol: For larger systems or screening applications, use TightPNO or VeryTightPNO settings with def2 basis sets for faster convergence [21]. To achieve spectroscopic accuracy (∼1 kJ/mol) for challenging non-covalent interactions, particularly those involving hydrogen bonds or halides, employ the iterative triples correction, (T1), i.e., DLPNO-CCSD(T1), and tighten the TCutPNO and TCutMKN settings by two orders of magnitude relative to their defaults [19].

DFT Benchmarking Protocol: When evaluating DFT methods against CCSD(T) benchmarks, ensure proper treatment of dispersion corrections (e.g., D3(BJ) or D4), and account for basis set superposition error (BSSE) via counterpoise corrections where necessary [7] [23]. Test multiple functional classes (double-hybrid, hybrid, meta-GGA) as performance is system-dependent [7] [18].
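For the counterpoise correction mentioned above, the Boys-Bernardi scheme evaluates the dimer and both monomers in the full dimer basis, placing ghost basis functions on the absent partner: ΔE_CP = E_AB(AB) − E_A(AB) − E_B(AB). The sketch below assumes these single-point energies have already been computed; the numbers are invented. For simplicity, it also assumes the monomers are kept at their dimer geometries, so deformation energy is ignored.

```python
HARTREE_TO_KCAL = 627.509  # kcal/mol per hartree


def counterpoise_interaction(e_ab_ab, e_a_ab, e_b_ab):
    """Boys-Bernardi CP-corrected interaction energy; every energy is in the dimer (AB) basis."""
    return e_ab_ab - e_a_ab - e_b_ab


def bsse(e_a_a, e_b_b, e_a_ab, e_b_ab):
    """BSSE: how much each monomer is artificially lowered by its partner's ghost functions."""
    return (e_a_a - e_a_ab) + (e_b_b - e_b_ab)


# Hypothetical single-point energies (hartree); monomers frozen at their dimer geometries
e_ab_ab = -152.5000                    # dimer in the dimer basis
e_a_ab, e_b_ab = -76.2450, -76.2450    # monomers with ghost functions on the partner
e_a_a, e_b_b = -76.2440, -76.2440      # monomers in their own basis only

de_raw = e_ab_ab - e_a_a - e_b_b       # uncorrected interaction energy
de_cp = counterpoise_interaction(e_ab_ab, e_a_ab, e_b_ab)
bsse_value = bsse(e_a_a, e_b_b, e_a_ab, e_b_ab)
for label, value in [("raw", de_raw), ("CP-corrected", de_cp), ("BSSE", bsse_value)]:
    print(f"{label}: {value * HARTREE_TO_KCAL:.2f} kcal/mol")
```

The uncorrected value overstates the binding. The CP-corrected interaction energy equals the raw value plus the BSSE, which is positive whenever the ghost functions lower the monomer energies.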

The Scientist's Toolkit: Essential Research Reagents

Table 2: Essential Computational Tools for CCSD(T) Benchmarking

| Tool / Method | Function | Application Context |
|---|---|---|
| FNO-CCSD(T) | Cost-reduced canonical CCSD(T) | High-accuracy benchmarks for medium systems (50-75 atoms) [20] [24] |
| DLPNO-CCSD(T) | Linear-scaling local coupled cluster | Large systems (>100 atoms), screening, non-covalent interactions [21] [19] |
| CBS Extrapolation | Estimates complete basis set limit | Eliminates basis set error in reference energies [7] |
| Double-Hybrid DFT | Incorporates MP2 correlation | Highest-accuracy DFT tier (e.g., PWPB95-D4, mPW2-PLYP) [21] [7] |
| Dispersion Corrections | Accounts for London dispersion | Essential for non-covalent interactions (D3(BJ), D4) [18] [19] |
| Composite Methods | Balanced cost-accuracy recipes | Efficient property prediction (e.g., r2SCAN-3c, B97M-V) [18] |

The evolution of CCSD(T) from a benchmark method for small systems to a practical tool for molecular systems of pharmaceutical and materials science relevance represents a transformative advancement in computational chemistry. Through sophisticated cost-reduction techniques like FNOs and DLPNO, coupled with efficient parallel implementations, the gold standard of quantum chemistry is now accessible for a significantly expanded range of molecular applications.

The rigorous benchmarking against CCSD(T) references has revealed the system-dependent performance of DFT approximations and underscored the importance of dispersion interactions and robust basis set convergence. As these advanced CCSD(T) protocols become more integrated into automated workflows and multi-level schemes—such as generating training data for machine learning potentials [24]—the reliability of computational predictions across drug discovery and materials design will continue to improve. For the practicing computational chemist, the strategic application of these protocols, choosing the appropriate cost-accuracy balance through FNO-CCSD(T) or DLPNO-CCSD(T) based on the system size and accuracy requirements, now enables the routine generation of reference-quality data that underpins robust molecular science.

Bridging the Accuracy-Cost Gap: Modern Strategies for CCSD(T)-Level Results

Density Functional Theory (DFT) stands as a cornerstone in computational chemistry and materials science, offering a practical balance between computational cost and accuracy for simulating electronic structures. However, its approximations can lead to significant errors, particularly for properties like reaction barriers, van der Waals interactions, and strongly correlated systems [12] [25]. This guide objectively compares two modern machine learning (ML) paradigms—Δ-Learning and Machine-Learned Hohenberg-Kohn (ML-HK) Maps—that aim to correct DFT densities and energies, elevating their accuracy towards the gold-standard coupled-cluster (CCSD(T)) level. Framed within a broader thesis on benchmarking DFT against CCSD(T) for molecular properties research, this analysis provides experimental data, detailed protocols, and practical toolkits for researchers and drug development professionals seeking to implement these advanced corrections.

Comparative Analysis of ML Correction Approaches

The following table summarizes the core characteristics, performance, and applicability of the two primary ML correction methods for DFT.

Table 1: Comparison of Δ-Learning and ML-HK Map Approaches

| Feature | Δ-Learning (Delta-Learning) | ML-HK Maps (Machine-Learned Hohenberg-Kohn Maps) |
|---|---|---|
| Core Concept | Learns the difference (Δ) between a high-level (e.g., CCSD(T)) and a low-level (e.g., DFT) energy from a DFT-calculated electron density [12]. | Learns a direct mapping from the external potential (or nuclear coordinates) to the electron density and/or total energy, bypassing the Kohn-Sham equations [26] [27]. |
| Primary Input | Self-consistent DFT electron density, $n^{\mathrm{DFT}}(\mathbf{r})$ [12]. | External potential, $v_{\mathrm{ext}}(\mathbf{r})$, defined by nuclear charges and positions [26]. |
| Target Output | Correction energy (ΔE) to be added to the DFT total energy [12]. | Electron density, $n(\mathbf{r})$, and/or total energy, $E$ [26] [27]. |
| Key Advantage | Significantly reduces the amount of high-level training data required; corrects systematic DFT errors [12]. | Provides a direct route to properties, including excited states, and can be more physically grounded [26]. |
| Reported Accuracy | Errors below 1 kcal·mol⁻¹ for coupled-cluster energies from PBE densities [12]. | Chemical accuracy (~1-3 kcal·mol⁻¹) for energies; capable of excited-state dynamics [26] [27]. |
| Computational Workflow | DFT → ML Δ-Correction → Corrected Energy | ML-HK Prediction → Density/Energy (Bypasses SCF) |
| Demonstrated Application | Gas-phase molecular dynamics of resorcinol with CCSD(T) accuracy [12]. | Excited-state molecular dynamics of malonaldehyde [26]. |

Quantitative Performance Benchmarking

Experimental data from key studies demonstrates the capacity of both methods to achieve high accuracy across different molecular systems.

Table 2: Summary of Quantitative Performance from Key Studies

| Study (Method) | Molecular System(s) | Reference Method | Target Property | Reported Accuracy (MAE unless noted) |
|---|---|---|---|---|
| Δ-Learning [12] | Water (H₂O), Ethanol, Benzene, Resorcinol | CCSD(T) | Total Energy | < 1 kcal·mol⁻¹ (Quantum Chemical Accuracy) |
| ML-HK (Excited States) [26] | Malonaldehyde | LR-TDDFT | S₁, S₂ Excited State Energies | ~0.05 eV (for dynamics leading to correct proton transfer kinetics) |
| Deep Learning DFT [27] | Organic Molecules, Polymer Crystals | DFT (PBE) | Total Energy, Forces, Band Gap | Energy: ~25 meV/atom, Forces: ~0.1 eV/Å, Band Gap: ~0.3 eV |
| Neural Functional (Grad DFT) [28] | Transition Metal Dimers | Experimental Dissociation Energies | Dissociation Energy | Improved generalization over standard DFAs |

Detailed Experimental Protocols

Δ-Learning for CCSD(T) Accuracy from DFT Densities

The following workflow outlines the core steps for implementing the Δ-Learning method as described in the benchmark study [12].

Figure 1: Workflow for achieving quantum chemical accuracy via Δ-Learning. The ML model is trained to predict the energy difference (ΔE) between a high-level method and DFT using the DFT density as input [12].

Protocol Steps:

Training Set Generation:

- Geometry Sampling: Generate a diverse set of molecular geometries for the target system. This can be achieved through finite-temperature molecular dynamics (MD) simulations using an affordable DFT functional [12].

- Reference Data Calculation: For each geometry in the training set:

- Perform a standard DFT calculation (e.g., using the PBE functional) to obtain the self-consistent electron density, $n^{\mathrm{DFT}}(\mathbf{r})$, and the DFT total energy, $E^{\mathrm{DFT}}$ [12].

- Perform a high-level ab initio calculation (e.g., CCSD(T)) to obtain the reference energy, $E^{\mathrm{CCSD(T)}}$. Calculate the target value for the ML model: $\Delta E = E^{\mathrm{CCSD(T)}} - E^{\mathrm{DFT}}$ [12].

Model Training:

- Descriptor: Use the DFT electron density, $n^{\mathrm{DFT}}(\mathbf{r})$, as the primary input descriptor for the model. The density may be represented on a real-space grid or using a suitable basis set [12] [27].

- Algorithm: Train a machine learning model (e.g., Kernel Ridge Regression) to learn the mapping $n^{\mathrm{DFT}}(\mathbf{r}) \rightarrow \Delta E$ [12].

- Symmetry Exploitation: To drastically reduce the amount of required training data, incorporate molecular point group symmetries into the training process, effectively augmenting the dataset [12].

Application/Production:

- For a new, unseen molecular geometry, run a standard DFT calculation to get $n^{\mathrm{DFT}}(\mathbf{r})$ and $E^{\mathrm{DFT}}$.

- Feed $n^{\mathrm{DFT}}(\mathbf{r})$ into the trained ML model to obtain the predicted energy correction $\Delta E^{\mathrm{ML}}$.

- The final, corrected energy is computed as $E_{\mathrm{corrected}} = E^{\mathrm{DFT}} + \Delta E^{\mathrm{ML}}$ [12].
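The Δ-learning protocol above can be sketched with kernel ridge regression, one of the model types named in the text. This is a minimal sketch, not the authors' implementation. Each DFT density is replaced by a short random vector standing in for basis-set expansion coefficients. The "CCSD(T) minus DFT" labels come from an invented smooth function, and the kernel width and regularization are untuned placeholders. Symmetry augmentation is omitted.

```python
import numpy as np


def gaussian_kernel(A, B, sigma):
    """Gaussian (RBF) kernel matrix between the rows of A and the rows of B."""
    sq = (A**2).sum(axis=1)[:, None] + (B**2).sum(axis=1)[None, :] - 2.0 * A @ B.T
    return np.exp(-np.maximum(sq, 0.0) / (2.0 * sigma**2))


class DeltaKRR:
    """Kernel ridge regression for Delta E = E_CCSD(T) - E_DFT from a density descriptor."""

    def __init__(self, sigma=1.0, lam=1e-6):
        self.sigma, self.lam = sigma, lam

    def fit(self, X, delta_e):
        self.X = np.asarray(X, dtype=float)
        delta_e = np.asarray(delta_e, dtype=float)
        self.offset = delta_e.mean()                 # center the targets
        K = gaussian_kernel(self.X, self.X, self.sigma)
        self.alpha = np.linalg.solve(K + self.lam * np.eye(len(K)), delta_e - self.offset)
        return self

    def predict(self, X_new):
        K_new = gaussian_kernel(np.asarray(X_new, dtype=float), self.X, self.sigma)
        return K_new @ self.alpha + self.offset


# Synthetic stand-ins: each row plays the role of a DFT density expanded in a basis
rng = np.random.default_rng(1)
X_train = rng.uniform(-1.0, 1.0, size=(200, 4))
X_test = rng.uniform(-1.0, 1.0, size=(50, 4))


def toy_delta(X):
    """Invented smooth 'CCSD(T) - DFT' error surface (kcal/mol), used only to make labels."""
    return 2.0 * np.sin(X[:, 0]) + 0.5 * X[:, 1] * X[:, 2] - 1.0


e_dft_test = rng.normal(-1000.0, 5.0, size=50)        # placeholder DFT energies, new geometries
model = DeltaKRR().fit(X_train, toy_delta(X_train))   # training: densities -> Delta E
delta_ml = model.predict(X_test)                       # production: predict the correction
e_corrected = e_dft_test + delta_ml                    # E_corrected = E_DFT + Delta E_ML
mae = np.abs(delta_ml - toy_delta(X_test)).mean()
print(f"test MAE of the learned correction: {mae:.4f} kcal/mol")
```

Learning only the correction rather than the full energy is what keeps the data requirement low: the target is a comparatively small, smooth quantity, while the DFT calculation still supplies most of the physics.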

ML-HK Maps for Direct Density and Energy Prediction

This protocol details the methodology for constructing a Machine-Learned Hohenberg-Kohn map, which bypasses the self-consistent field procedure [26].

Figure 2: Workflow for the ML-HK map approach. The model learns the fundamental map from the external potential to the electron density, from which the total energy can be derived [26].

Protocol Steps:

Data Generation for Mapping:

- Configuration Sampling: Select a representative set of nuclear configurations (geometries) for the molecule(s) of interest.

- Target Density and Energy Calculation: For each configuration, compute the electron density $n(\mathbf{r})$ and total energy $E$ at the desired level of theory (e.g., a high-level DFT functional or a wavefunction-based method). This serves as the target for the ML model [26] [27].

Model Construction and Training:

- Representation of Input: The external potential, $v_{\mathrm{ext}}(\mathbf{r}) = -\sum_a Z_a / |\mathbf{r} - \mathbf{R}_a|$, defined by the nuclear charges $Z_a$ and positions $\mathbf{R}_a$, is used as the input. This is a unique descriptor for the system [26].

- Learning the Density Map: Train a machine learning model (e.g., a neural network) to map the external potential directly to the electron density: $v_{\mathrm{ext}}(\mathbf{r}) \rightarrow n(\mathbf{r})$. This is the ML-HK map [26].

- Learning the Energy Functional: Alternatively, or in addition, train a model to map the predicted electron density to the total energy: $n_{\mathrm{ML}}(\mathbf{r}) \rightarrow E$. This step emulates the universal functional of DFT [26] [27].

Application/Production:

- For a new nuclear configuration, the ML-HK map directly predicts the electron density $n_{\mathrm{ML}}(\mathbf{r})$ without performing a self-consistent DFT calculation.

- The predicted density is then fed into the ML energy functional to obtain the total energy.

- This workflow can be extended to excited states within a multistate HK (ML-MSHK) framework, enabling direct prediction of excited-state densities and energies for molecular dynamics simulations [26].
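The two-step ML-HK protocol can be illustrated with a one-dimensional toy model. Kernel ridge regression first maps a sampled external potential to a density on a grid (the ML-HK map), and a second regression then maps that density to the energy. Everything here is a stand-in chosen for illustration:

- the "molecule" is two nuclei on a line;
- the potential is Gaussian-smoothed rather than Coulombic, to avoid the singularity;
- the reference densities and energies come from invented formulas rather than DFT or CCSD(T);
- kernel ridge regression with a median-heuristic kernel width may differ from the models used in the cited work.

```python
import numpy as np

# 1D toy grid (arbitrary units) and two hypothetical nuclei with charges Z_a
x = np.linspace(-6.0, 6.0, 241)
dx = x[1] - x[0]
Z = np.array([1.0, 1.0])


def external_potential(d, gamma=0.5):
    """Gaussian-smoothed stand-in for v_ext(x) = -sum_a Z_a / |x - R_a|, nuclei at -d/2, +d/2."""
    R = np.array([-d / 2.0, d / 2.0])
    return -(Z[:, None] * np.exp(-(x[None, :] - R[:, None]) ** 2 / (2.0 * gamma**2))).sum(axis=0)


def toy_reference(d):
    """Invented replacement for a real electronic-structure calculation at separation d."""
    R = np.array([-d / 2.0, d / 2.0])
    n = np.exp(-(x[None, :] - R[:, None]) ** 2).sum(axis=0)
    n *= Z.sum() / (n.sum() * dx)          # normalize to sum(Z) electrons
    energy = (d - 1.4) ** 2 - 1.0          # hypothetical binding curve
    return n, energy


def gaussian_kernel(A, B, sigma):
    sq = ((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-sq / (2.0 * sigma**2))


class KRR:
    """Multi-output kernel ridge regression with a median-heuristic kernel width."""

    def __init__(self, lam=1e-6):
        self.lam = lam

    def fit(self, X, Y):
        dists = np.sqrt(((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1))
        self.sigma = np.median(dists[dists > 0])
        self.X, self.offset = X, Y.mean(axis=0)
        K = gaussian_kernel(X, X, self.sigma)
        self.alpha = np.linalg.solve(K + self.lam * np.eye(len(X)), Y - self.offset)
        return self

    def predict(self, X_new):
        return gaussian_kernel(X_new, self.X, self.sigma) @ self.alpha + self.offset


d_train = np.linspace(0.8, 2.4, 30)
V = np.array([external_potential(d) for d in d_train])
N, E = (np.array(column) for column in zip(*(toy_reference(d) for d in d_train)))

hk_map = KRR().fit(V, N)        # step 1: v_ext -> n (the ML-HK map)
energy_map = KRR().fit(N, E)    # step 2: n -> E (learned energy functional)

d_new = 1.3
n_ml = hk_map.predict(external_potential(d_new)[None, :])   # density without an SCF cycle
e_ml = energy_map.predict(n_ml)[0]
print(f"electrons: {n_ml.sum() * dx:.4f}  E_ML: {e_ml:.4f}  E_ref: {toy_reference(d_new)[1]:.4f}")
```

The regression targets are centered on the mean training density, and every training density integrates to the same electron count. As a result, the predicted density conserves that count automatically, and the energy follows from two regressions without any self-consistent field iterations.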

The Scientist's Toolkit: Essential Research Reagents

Implementing the aforementioned ML correction strategies requires a combination of software, computational resources, and data.

Table 3: Essential Tools and Resources for ML-Enhanced DFT Research

| Tool Category | Specific Examples | Function and Relevance |
|---|---|---|
| Electronic Structure Software | Gaussian, VASP, PySCF, Q-Chem | Generate high-quality training data (densities, energies, forces) at DFT and ab initio levels [27] [29]. |
| Machine Learning Libraries | TensorFlow, PyTorch, JAX, Scikit-learn | Provide the algorithms and frameworks for building and training models like neural networks and kernel ridge regression [28]. |
| Specialized ML-DFT Software | Grad DFT (JAX-based), SchNarc | Offer differentiable, end-to-end frameworks for developing and testing machine-learned functionals and corrections. Grad DFT, for instance, enables quick prototyping of neural network-based XC functionals [28]. |
| Molecular Descriptors & Fingerprints | AGNI fingerprints, SOAP, Molecular graphs (SMILES) | Convert atomic structures into machine-readable formats. AGNI fingerprints, for example, are used to represent the chemical environment of atoms for deep learning models predicting charge density [27]. |
| Reference Datasets | QM9, MD17, Curated transition metal dimers | Provide standardized, high-quality data for training and benchmarking models. Custom datasets for specific properties (e.g., BF₃ affinity) are also crucial [29] [28]. |

This guide has provided a side-by-side comparison of two powerful machine-learning strategies for correcting Density Functional Theory. Δ-Learning excels in its data efficiency, leveraging the systematic trends in DFT error to achieve quantum chemical accuracy with relatively small training sets, making it ideal for correcting specific properties like reaction energies and barriers [12]. In contrast, ML-HK Maps offer a more foundational approach by learning the direct map from molecular structure to electron density and energy. This paradigm not only achieves high accuracy but also bypasses the SCF cycle, offering potential speedups and a direct route to challenging properties like electronic excitations [26].

The choice between them hinges on the research objective. For projects demanding rapid, highly accurate corrections to DFT energies for a specific molecular system or reaction, Δ-Learning is a robust and efficient choice. For investigations requiring a more general electronic structure tool, including access to excited states or a complete bypass of traditional DFT solvers, the ML-HK framework presents a compelling, though potentially more data-intensive, alternative. Both methods significantly advance the thesis of benchmarking DFT against CCSD(T), providing practical pathways to transcend the inherent limitations of standard density functional approximations in molecular properties research.

Multi-Task Learning and Specialized Architectures for Ultra-Low Data Regimes

Data scarcity remains a formidable obstacle to effective machine learning across diverse scientific domains, from molecular property prediction in drug discovery to medical image analysis. This challenge is particularly acute in fields where data annotation requires specialized expertise, expensive experimental procedures, or faces regulatory hurdles. In molecular and materials science, the scarcity of reliable, high-quality labels impedes the development of robust property predictors essential for accelerating discovery pipelines [30]. Similarly, in medical imaging, the creation of annotated segmentation masks is both time-intensive and costly, as it necessitates pixel-level labeling by domain experts [31]. These constraints often lead to ultra-low data regimes—scenarios where annotated training samples are remarkably scarce—causing conventional deep learning approaches to overfit and exhibit poor generalization.

Multi-task learning (MTL) has emerged as a promising strategy to alleviate data bottlenecks by leveraging correlations among related tasks. Through inductive transfer, MTL utilizes training signals from one task to improve performance on another, enabling models to discover and utilize shared structures for more accurate predictions. However, traditional MTL approaches are frequently undermined by negative transfer (NT), a phenomenon where updates driven by one task detrimentally affect another [30]. Beyond task dissimilarity, NT can arise from architectural mismatches, optimization conflicts, and particularly from task imbalance—situations where certain tasks have far fewer labeled examples than others [30].

This review examines specialized architectures and training methodologies designed to overcome these limitations in ultra-low data environments. We focus particularly on their application to molecular property prediction and the broader context of benchmarking density functional theory (DFT) against coupled cluster theory for molecular properties research. By comparing the performance of these innovative approaches with traditional alternatives and providing detailed experimental protocols, we aim to equip researchers with practical insights for selecting and implementing these methods in their own data-constrained applications.

Comparative Analysis of Advanced Methodologies

Adaptive Checkpointing with Specialization (ACS) for Molecular Property Prediction

The Adaptive Checkpointing with Specialization (ACS) framework addresses negative transfer in multi-task graph neural networks by combining shared backbone architectures with task-specific components and strategic checkpointing [30] [32]. ACS employs a single graph neural network (GNN) based on message passing as its backbone to learn general-purpose latent molecular representations. These representations are then processed by task-specific multi-layer perceptron (MLP) heads that provide specialized learning capacity for each individual task [30].

During training, ACS monitors the validation loss of every task and checkpoints the best backbone-head pair whenever a task's validation loss reaches a new minimum. This design promotes inductive transfer among sufficiently correlated tasks while protecting individual tasks from deleterious parameter updates. Each task ultimately obtains a specialized backbone-head pair optimized for its specific characteristics [30].
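The per-task checkpointing rule can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the ACS reference implementation: model parameters are stood in for by plain dictionaries, and the names `TaskCheckpoint` and `acs_checkpoint` are hypothetical. The key idea is that each task keeps its own snapshot of the shared backbone together with its own head, taken at that task's validation minimum.

```python
import copy
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class TaskCheckpoint:
    """Best (backbone, head) pair seen so far for one task (hypothetical container)."""
    best_loss: float = float("inf")
    best_epoch: int = -1
    backbone: dict = field(default_factory=dict)
    head: dict = field(default_factory=dict)


def acs_checkpoint(checkpoints: Dict[str, TaskCheckpoint], epoch: int, backbone: dict,
                   heads: Dict[str, dict], val_losses: Dict[str, float]) -> None:
    """Snapshot the shared backbone plus a task's own head whenever that task hits a new validation minimum."""
    for task, loss in val_losses.items():
        ckpt = checkpoints.setdefault(task, TaskCheckpoint())
        if loss < ckpt.best_loss:
            ckpt.best_loss = loss
            ckpt.best_epoch = epoch
            # Deep copies: later (possibly harmful) shared updates must not alter the saved pair.
            ckpt.backbone = copy.deepcopy(backbone)
            ckpt.head = copy.deepcopy(heads[task])


# Toy run: task A starts to suffer from negative transfer after epoch 1, task B keeps improving.
val_history = [
    {"A": 0.90, "B": 0.80},
    {"A": 0.60, "B": 0.70},
    {"A": 0.75, "B": 0.50},
    {"A": 0.80, "B": 0.40},
]
backbone = {"w": [0.0]}
heads = {"A": {"v": [0.0]}, "B": {"v": [0.0]}}
checkpoints: Dict[str, TaskCheckpoint] = {}
for epoch, losses in enumerate(val_history):
    backbone["w"][0] = float(epoch)  # stand-in for one epoch of shared training
    heads["A"]["v"][0] = 10.0 + epoch
    heads["B"]["v"][0] = 20.0 + epoch
    acs_checkpoint(checkpoints, epoch, backbone, heads, losses)
```

In this toy run, task A ends up with the backbone and head from epoch 1, while task B keeps the epoch-3 pair, which mirrors how each task "obtains a specialized backbone-head pair" even though training continued for everyone.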

Table 1: Performance Comparison of ACS Against Alternative Approaches on Molecular Property Benchmarks

| Method | ClinTox (Avg AUROC) | SIDER (Avg AUROC) | Tox21 (Avg AUROC) | Sustainable Aviation Fuels (MAE) | Minimum Data Requirement |
|---|---|---|---|---|---|
| ACS | 0.923 | 0.895 | 0.842 | Accurate with 29 samples | ~29 labeled samples [30] |
| Single-Task Learning (STL) | 0.801 | 0.861 | 0.798 | N/A | Substantially higher |
| MTL without Checkpointing | 0.833 | 0.868 | 0.811 | N/A | N/A |
| MTL with Global Loss Checkpointing | 0.836 | 0.872 | 0.815 | N/A | N/A |
| D-MPNN | 0.915 | 0.892 | 0.839 | N/A | N/A |

In practical applications, ACS has demonstrated remarkable data efficiency. For predicting sustainable aviation fuel properties, ACS learned accurate models from as few as 29 labeled samples, something neither single-task learning nor conventional MTL achieved [30] [32]. The method consistently matched or surpassed state-of-the-art supervised methods across multiple molecular property benchmarks, including ClinTox, SIDER, and Tox21 [30].

Generative AI Approaches for Data Augmentation

GenSeg for Medical Image Segmentation

The GenSeg framework addresses data scarcity in medical image segmentation through a generative deep learning approach that produces high-quality image-mask pairs as auxiliary training data [31]. Unlike traditional generative models that separate data generation from model training, GenSeg uses multi-level optimization (MLO) for end-to-end data generation, allowing segmentation performance to directly guide the generation process [31].

GenSeg employs a reverse generation mechanism that initially generates segmentation masks, then produces corresponding medical images—adhering to a progression from simpler to more complex tasks. The framework integrates a generative adversarial network (GAN) within a three-tiered MLO process: the first level trains the weight parameters of the data generation model; the second level uses this model to produce synthetic image-mask pairs for training a segmentation model; and the third level validates the segmentation model using real medical images, with the validation performance guiding optimization of the generation model's architecture [31].

Table 2: Performance Improvement of GenSeg in Ultra-Low Data Regimes Across Medical Imaging Tasks

| Segmentation Task | Backbone Model | Baseline Dice | GenSeg Dice | Absolute Improvement (percentage points) | Training Set Size |
|---|---|---|---|---|---|
| Placental Vessels | DeepLab | 0.31 | 0.516 | 20.6 | 50 |
| Skin Lesions | DeepLab | 0.485 | 0.630 | 14.5 | 40 |
| Polyps | DeepLab | 0.507 | 0.620 | 11.3 | 40 |
| Intraretinal Cystoid Fluid | DeepLab | 0.507 | 0.620 | 11.3 | 50 |
| Foot Ulcers | DeepLab | 0.521 | 0.630 | 10.9 | 50 |
| Breast Cancer | DeepLab | 0.546 | 0.650 | 10.4 | 100 |

When evaluated across 11 medical image segmentation tasks and 19 datasets, GenSeg demonstrated strong generalization capabilities, improving performance by 10-20% in absolute terms in both same-domain and out-of-domain settings [31]. The framework also exhibited remarkable data efficiency, matching or exceeding baseline performance while requiring 8-20 times fewer labeled samples [31].

Catalysis Training for Neuromolecular Imaging

The Catalysis Training pipeline addresses data scarcity in neuromolecular imaging by augmenting real data with high-quality synthetic data generated by a Wasserstein Conditional Generative Adversarial Network (WCGAN) [33]. Applied to histone deacetylase (HDAC) PET/MR imaging in Alcohol Use Disorder (AUD), the approach extracts 1-D standardized uptake value ratio (SUVR) tabular features representing HDAC enzyme expression density across eight cingulate subregions.

When synthetic data was incorporated into the training process, classification accuracy improved substantially: by 26 percentage points for XGBoost and Random Forest (from 59% to 85%) and by 18 percentage points for SVM (from 70% to 88%) [33]. The synthetic samples not only boosted accuracy but also improved model generalizability, enabling the identification of key hemispheric and subregional cingulate HDAC patterns as potential biomarkers for AUD [33].

Foundation Model Adaptation for Medical Imaging

Another approach for addressing data scarcity involves adapting foundation models to specialized domains with limited data. In medical image segmentation, researchers have developed bi-level optimization methods to effectively adapt the general-domain Segment Anything Model (SAM) to the medical domain using only a few medical images [34]. This approach has demonstrated strong generalization across eight segmentation tasks involving various diseases, organs, and imaging modalities, requiring 8-12 times less training data than baselines to achieve comparable performance [34].

Experimental Protocols and Methodologies

Protocol for ACS Implementation and Validation

Dataset Preparation and Task Formulation

- Collect molecular datasets with multiple property annotations (e.g., ClinTox, SIDER, Tox21)

- Implement Murcko-scaffold splitting to ensure generalization to novel molecular scaffolds

- Define the task imbalance ratio as \( I_i = 1 - \frac{L_i}{\max_j L_j} \), where \( L_i \) is the number of labeled entries for task \( i \) [30]

- Apply loss masking for missing labels to maximize data utilization
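The data-preparation steps above can be sketched with the standard library alone. This is an illustrative sketch, not the ACS authors' pipeline. Assumptions: scaffold keys are precomputed strings (for example, they could come from RDKit's `MurckoScaffold.MurckoScaffoldSmiles`), missing labels are encoded as `None`, the tasks are binary so binary cross-entropy is used as the masked loss, and the function names are hypothetical.

```python
import math
from collections import defaultdict
from typing import List, Optional, Sequence, Tuple

Label = Optional[int]  # None marks a missing label


def scaffold_split(scaffolds: Sequence[str], frac_train: float = 0.8) -> Tuple[List[int], List[int]]:
    """Group molecules by scaffold key; each whole group goes to train or test, never both."""
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)
    # Largest scaffold families go to training first; rarer scaffolds tend to be held out.
    ordered = sorted(groups.values(), key=len, reverse=True)
    cutoff = frac_train * len(scaffolds)
    train: List[int] = []
    test: List[int] = []
    for members in ordered:
        if len(train) + len(members) <= cutoff:
            train.extend(members)
        else:
            test.extend(members)
    return train, test


def imbalance_ratios(labels: Sequence[Sequence[Label]]) -> List[float]:
    """I_i = 1 - L_i / max_j L_j, where L_i counts labeled entries in task column i."""
    n_tasks = len(labels[0])
    counts = [sum(row[i] is not None for row in labels) for i in range(n_tasks)]
    largest = max(counts)
    return [1.0 - c / largest for c in counts]


def masked_bce(probs: Sequence[Sequence[float]], labels: Sequence[Sequence[Label]],
               eps: float = 1e-7) -> float:
    """Binary cross-entropy averaged over labeled entries only; missing labels contribute nothing."""
    total, n = 0.0, 0
    for prow, lrow in zip(probs, labels):
        for p, y in zip(prow, lrow):
            if y is None:
                continue
            p = min(max(p, eps), 1.0 - eps)  # clip to keep log() finite
            total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
            n += 1
    return total / n


labels = [
    [1, 0, None],
    [0, None, None],
    [1, 1, None],
    [0, None, 1],
]
ratios = imbalance_ratios(labels)  # tasks with 4, 2 and 1 labels
```

Masking keeps every partially labeled molecule in the training set instead of discarding rows, which matters most for the sparsest (highest \( I_i \)) tasks.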

Model Architecture and Training Configuration

- Implement a graph neural network backbone based on message passing [30]

- Design task-specific multi-layer perceptron (MLP) heads for each property prediction task

- Configure training with adaptive checkpointing triggered by validation loss minima per task

- Set early stopping criteria based on task-specific performance plateaus
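A task-specific plateau criterion, as listed above, can be expressed as a patience rule applied to each task's validation-loss history. This is a hedged sketch: the patience/`min_delta` formulation and the names `plateaued` and `active_tasks` are assumptions for illustration, since the source does not specify the exact stopping rule.

```python
from typing import Dict, List, Set


def plateaued(losses: List[float], patience: int, min_delta: float = 0.0) -> bool:
    """True if the last `patience` epochs failed to improve on the earlier best by more than min_delta."""
    if len(losses) <= patience:
        return False
    best_before = min(losses[:-patience])
    return min(losses[-patience:]) >= best_before - min_delta


def active_tasks(history: Dict[str, List[float]], patience: int) -> Set[str]:
    """Tasks that are still improving; shared training can stop once this set is empty."""
    return {task for task, losses in history.items() if not plateaued(losses, patience)}


history = {
    "A": [0.90, 0.60, 0.75, 0.80],  # no improvement over 0.60 in the last two epochs
    "B": [0.80, 0.70, 0.50, 0.40],  # still improving
}
still_training = active_tasks(history, patience=2)
```

Combined with per-task checkpointing, a plateaued task simply keeps its saved backbone-head pair while training continues for the tasks that are still improving.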

Validation and Benchmarking

- Evaluate against single-task learning baselines with equivalent capacity

- Compare with conventional MTL without checkpointing and MTL with global loss checkpointing

- Assess performance metrics including AUROC for classification and MAE for regression tasks

- Deploy in practical scenarios (e.g., sustainable aviation fuel property prediction) to validate real-world efficacy
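The evaluation metrics named above can be computed directly from their definitions. In this sketch, AUROC is the probability that a randomly chosen positive outscores a randomly chosen negative (ties count one half), averaged over tasks as in the "Avg AUROC" columns of Table 1. Skipping missing labels and tasks that contain only one class in the evaluation split is an assumed convention, and the pairwise loop is quadratic, so it is suited only to small test sets.

```python
from typing import List, Optional, Sequence


def auroc(y_true: Sequence[int], scores: Sequence[float]) -> float:
    """Probability that a random positive outscores a random negative (ties count 1/2)."""
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one positive and one negative")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def mean_task_auroc(labels: Sequence[Sequence[Optional[int]]],
                    scores: Sequence[Sequence[float]]) -> float:
    """Average AUROC over task columns, ignoring missing labels and single-class tasks."""
    per_task: List[float] = []
    for i in range(len(labels[0])):
        pairs = [(row[i], srow[i]) for row, srow in zip(labels, scores) if row[i] is not None]
        ys = [y for y, _ in pairs]
        if 0 in ys and 1 in ys:
            per_task.append(auroc(ys, [s for _, s in pairs]))
    return sum(per_task) / len(per_task)


def mae(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Mean absolute error for regression tasks."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)
```

For larger datasets, a library routine such as scikit-learn's `roc_auc_score` computes the same quantity more efficiently.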

Protocol for Generative Data Augmentation

Data Generation Process (GenSeg)

- Implement reverse generation mechanism: create segmentation masks first, then corresponding images

- Train generative adversarial network with multi-level optimization

- Use basic image augmentation operations on expert-annotated real segmentation masks to produce augmented masks

- Feed augmented masks into deep generative model to produce corresponding medical images
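The reverse generation order (masks first, images second) can be illustrated with binary masks stored as nested lists. This is a toy sketch under stated assumptions: the flip/rotate operations stand in for "basic image augmentation operations", and `toy_generator` is a hypothetical placeholder for a trained conditional GAN generator, not GenSeg's model.

```python
import random
from typing import List, Tuple

Mask = List[List[int]]
Image = List[List[float]]


def hflip(mask: Mask) -> Mask:
    return [row[::-1] for row in mask]


def vflip(mask: Mask) -> Mask:
    return [row[:] for row in mask[::-1]]


def rot90(mask: Mask) -> Mask:
    """Rotate 90 degrees clockwise."""
    return [list(col) for col in zip(*mask[::-1])]


def augment_masks(masks: List[Mask], n_per_mask: int, rng: random.Random) -> List[Mask]:
    """Apply a randomly chosen label-preserving geometric edit to each expert mask."""
    ops = [hflip, vflip, rot90]
    return [rng.choice(ops)(mask) for mask in masks for _ in range(n_per_mask)]


def toy_generator(mask: Mask, rng: random.Random) -> Image:
    """HYPOTHETICAL stand-in for a trained generator G(mask) -> image."""
    return [[0.1 + 0.8 * v + rng.gauss(0.0, 0.02) for v in row] for row in mask]


def reverse_generate(real_masks: List[Mask], n_per_mask: int = 2,
                     seed: int = 0) -> List[Tuple[Image, Mask]]:
    rng = random.Random(seed)
    # Masks first (simple, label-level edits), then images conditioned on each mask.
    return [(toy_generator(m, rng), m) for m in augment_masks(real_masks, n_per_mask, rng)]


pairs = reverse_generate([[[0, 1, 1], [0, 1, 0]]], n_per_mask=3, seed=1)
```

Generating the mask first guarantees that every synthetic image comes with a pixel-exact label, which is the main reason the simpler-to-harder ordering is attractive.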

Multi-Level Optimization Framework

- Level 1: Train weight parameters of data generation model within GAN framework

- Level 2: Use trained model to produce synthetic image-mask pairs for segmentation model training

- Level 3: Validate segmentation model using real medical images with expert-annotated masks

- Jointly solve all three levels of nested optimization problems end-to-end
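The nesting of the three levels can be made concrete with a deliberately tiny scalar problem. This is a toy illustration only, not GenSeg's optimizer: each "image" and "mask" is a single number, the generator is a linear map fitted by least squares rather than a GAN, the "architecture" is a hypothetical scalar knob `a` (a ridge penalty on the generator), and the outer update uses a finite-difference gradient that re-solves the inner levels instead of the end-to-end gradient approximations a real MLO implementation would use.

```python
from statistics import fmean

# Toy 1-D data: each mask is summarised by one number m, each real image by one number x (~0.5 * m).
TRAIN_MASKS = [1.0, 2.0, 3.0, 4.0]
TRAIN_IMAGES = [0.52, 0.98, 1.51, 2.03]
VAL_MASKS = [1.5, 2.5]
VAL_IMAGES = [0.74, 1.26]


def level1_train_generator(a: float) -> float:
    """Level 1: fit generator weight w (image = w * mask) on real pairs, given architecture knob a."""
    num = fmean(m * x for m, x in zip(TRAIN_MASKS, TRAIN_IMAGES))
    den = fmean(m * m for m in TRAIN_MASKS) + a
    return num / den


def level2_train_segmenter(w: float) -> float:
    """Level 2: synthesize images from masks, then fit segmenter mask ~ theta * image on synthetic pairs only."""
    synth_images = [w * m for m in TRAIN_MASKS]
    num = fmean(x * m for x, m in zip(synth_images, TRAIN_MASKS))
    den = fmean(x * x for x in synth_images)
    return num / den


def level3_val_loss(a: float) -> float:
    """Level 3: run levels 1-2 for this a and score the segmenter on real validation pairs."""
    theta = level2_train_segmenter(level1_train_generator(a))
    return fmean((theta * x - m) ** 2 for x, m in zip(VAL_IMAGES, VAL_MASKS))


def optimize_architecture(a: float = 5.0, lr: float = 2.0, steps: int = 40, h: float = 1e-3) -> float:
    """Outer loop: validation loss on real data steers the generator's architecture knob."""
    for _ in range(steps):
        grad = (level3_val_loss(a + h) - level3_val_loss(a - h)) / (2 * h)
        a = max(0.0, a - lr * grad)  # keep the penalty non-negative
    return a


a_opt = optimize_architecture(a=5.0)
```

Even in this toy, the segmenter never sees a real training image; real data enters only through the level-3 validation signal, which is the feedback path the protocol describes.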

Quality Validation

- Train segmentation models on generated data and evaluate on real validation sets

- Compare performance with models trained exclusively on limited real data

- Assess generalization across diverse datasets and imaging modalities

- Conduct multiple runs with different random seeds to ensure statistical significance
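The quality-validation steps rely on the Dice coefficient (the metric in Table 2) and on aggregating results over seeds. The sketch below computes Dice for binary masks as \( 2|P \cap T| / (|P| + |T|) \) and summarizes repeated runs; pairing baseline and augmented runs by seed is an assumed reporting choice, and the helper names are hypothetical.

```python
from statistics import mean, stdev
from typing import List, Sequence, Tuple


def dice(pred: Sequence[Sequence[int]], target: Sequence[Sequence[int]], eps: float = 1e-8) -> float:
    """Dice = 2|P & T| / (|P| + |T|) for binary masks; eps makes two empty masks score 1."""
    inter = sum(p & t for prow, trow in zip(pred, target) for p, t in zip(prow, trow))
    total = sum(map(sum, pred)) + sum(map(sum, target))
    return (2 * inter + eps) / (total + eps)


def summarize_seeds(scores: List[float]) -> Tuple[float, float]:
    """Mean and sample standard deviation of a metric over independent seeds (needs >= 2 runs)."""
    return mean(scores), stdev(scores)


def paired_gain(baseline: List[float], augmented: List[float]) -> float:
    """Mean per-seed difference (augmented - baseline), pairing runs that share a seed."""
    return mean(a - b for a, b in zip(augmented, baseline))
```

Reporting the spread across seeds alongside the mean shows whether a gain is larger than run-to-run variation, which is easy to overlook when training sets contain only 40 to 100 images.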

Visualization of Method Workflows

Figure: ACS Training Workflow

Figure: GenSeg Multi-Level Optimization Architecture

Essential Research Reagent Solutions

Table 3: Key Research Tools and Resources for Ultra-Low Data Regime Research

| Resource Name | Type | Primary Function | Domain Application |
|---|---|---|---|
| LibMTL | Software Library | PyTorch-based implementation of multi-task learning algorithms | General MTL Research [35] |
| OMol25 | Dataset | Large-scale DFT calculations for biomolecules, metal complexes, and electrolytes | Molecular Chemistry [36] |
| Universal Model for Atoms (UMA) | Model | Machine learning interatomic potential trained on 30B+ atoms | Molecular Behavior Prediction [36] |
| WCGAN | Algorithm | Generative adversarial network variant for high-quality synthetic data | Neuromolecular Imaging [33] |
| Multi-Level Optimization | Framework | Nested optimization for end-to-end data generation | Medical Image Segmentation [31] |
| Graph Neural Networks | Architecture | Message passing networks for molecular graph representation | Molecular Property Prediction [30] |
| Segment Anything Model | Foundation Model | General-domain segmentation adaptable to specialized domains | Medical Imaging [34] |

The advancing methodologies for ultra-low data regimes represent a paradigm shift in how we approach machine learning for scientific discovery. Adaptive Checkpointing with Specialization effectively mitigates negative transfer in multi-task learning while preserving the benefits of inductive transfer, demonstrating that accurate molecular property prediction is possible with as few as 29 labeled samples [30]. Meanwhile, generative approaches like GenSeg and Catalysis Training show that synthetically augmenting training data through multi-level optimization and GANs can overcome data scarcity challenges across diverse domains from medical imaging to neuromolecular classification [31] [33].

These specialized architectures share a common principle: strategically balancing shared representations with task-specific specialization while using performance-guided optimization to maximize information extraction from limited data. As these approaches continue to mature, they promise to significantly accelerate research in drug development, materials science, and medical imaging by reducing dependency on large, expensively-annotated datasets. The integration of these techniques with emerging foundation models and large-scale datasets like OMol25 [36] points toward a future where AI-driven discovery becomes increasingly accessible across scientific domains, even for researchers and applications with limited data resources.

Density Functional Theory (DFT) serves as the workhorse of modern computational chemistry and materials science, striking a balance between computational cost and accuracy that enables the study of complex molecular systems. Its applications range from drug design to catalyst development. The core challenge in DFT lies in the exchange-correlation (XC) functional, which encapsulates complex many-body electron interactions. While traditional functionals, developed through physical approximations and empirical parameterization, have seen decades of refinement, the recent emergence of neural network-based functionals represents a paradigm shift. Among these, DM21 (DeepMind 21), developed by Google DeepMind, stands out as a prominent example that promises to leverage the pattern-recognition capabilities of deep learning to approximate the exact functional with unprecedented accuracy.

This review objectively assesses the performance of DM21, focusing specifically on its application to predicting molecular geometries—a task fundamental to understanding chemical reactivity and properties. We frame this evaluation within the broader context of benchmarking DFT methods against the coupled cluster singles, doubles, and perturbative triples [CCSD(T)] method, often considered the "gold standard" in quantum chemistry for its high accuracy. For researchers in molecular properties research and drug development, the choice of functional can significantly impact the reliability of computational predictions, making a clear understanding of DM21's practical capabilities and limitations essential.

Theoretical Promise: The AI-Designed Functional