Benchmarking Quantum Accuracy: How Quantum Monte Carlo and Coupled Cluster Theory Are Revolutionizing Drug Design

Accurate prediction of molecular interactions is paramount in drug design, where errors of just 1 kcal/mol can lead to erroneous conclusions about a compound's efficacy.

Abstract

Accurate prediction of molecular interactions is paramount in drug design, where errors of just 1 kcal/mol can lead to erroneous conclusions about a compound's efficacy. This article explores the critical benchmark roles of Quantum Monte Carlo (QMC) and Coupled Cluster (CC) theories in providing this necessary accuracy. We first establish the foundational principles of these high-level quantum-mechanical methods, then delve into their methodological applications for simulating biological systems like ligand-pocket interactions. The discussion covers troubleshooting computational challenges and optimizing these methods for practical use, and concludes with a rigorous validation of their performance against other computational approaches. By synthesizing findings from recent landmark studies, this review provides researchers and drug development professionals with a clear understanding of how these 'gold standard' methods are setting new benchmarks for accuracy in computational chemistry and biophysics.

The Quest for Quantum Accuracy: Understanding QMC and CC Fundamentals

The Critical Need for Sub-kcal/mol Accuracy in Drug Binding Affinity Predictions

In the field of computer-aided drug design (CADD), the accuracy of binding affinity predictions is a critical determinant of success. The ability to predict how strongly a small molecule (ligand) binds to its target protein is fundamental to guiding the optimization of lead compounds. Achieving sub-kcal/mol accuracy—meaning prediction errors of less than 1 kilocalorie per mole—has emerged as a crucial requirement for effective structure-based drug design. This level of precision enables researchers to reliably rank congeneric ligands and make informed decisions during lead optimization, significantly increasing the probability of successful drug development.

The necessity for such high accuracy stems from the exponential relationship between binding free energy and binding affinity. At room temperature (about 298 K), a difference of 1.36 kcal/mol in binding free energy translates to an order of magnitude (10-fold) difference in binding affinity, and hence typically in potency. Consequently, prediction errors exceeding this threshold can misdirect optimization efforts, wasting valuable resources and time. This article provides a comparative analysis of contemporary computational methods, evaluating their performance against the sub-kcal/mol benchmark and detailing the experimental protocols that underpin their validation.
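The 1.36 kcal/mol figure follows from the standard relation ΔΔG = RT ln(ratio of dissociation constants). The sketch below reproduces that arithmetic, assuming a temperature of 298.15 K; the function names are illustrative and not taken from any of the cited studies.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)

def fold_change(ddg_kcal, temperature=298.15):
    """Ratio of dissociation constants implied by a free-energy difference."""
    return math.exp(ddg_kcal / (R_KCAL * temperature))

def ddg_for_fold(fold, temperature=298.15):
    """Free-energy difference (kcal/mol) corresponding to a given affinity ratio."""
    return R_KCAL * temperature * math.log(fold)

print(f"10-fold affinity change: {ddg_for_fold(10):.2f} kcal/mol")
print(f"1 kcal/mol error: {fold_change(1.0):.1f}-fold change in affinity")
```

Under these assumptions, a prediction error of 1 kcal/mol already corresponds to roughly a five-fold error in predicted affinity, which is why the sub-kcal/mol target matters for ranking close analogues.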

Methodologies for High-Accuracy Affinity Prediction

Computational approaches for predicting protein-ligand binding affinities span a wide spectrum, balancing computational cost with predictive accuracy. These methods can be broadly categorized into three groups: high-accuracy/high-cost simulation methods, empirical and knowledge-based scoring functions, and emerging machine learning and artificial intelligence approaches that aim to bridge the gap between these extremes.

- Physics-Based Simulation Methods: These include rigorous approaches such as Free Energy Perturbation (FEP), Thermodynamic Integration (TI), and Molecular Mechanics with Poisson-Boltzmann or Generalized Born Surface Area (MM/PBSA and MM/GBSA). These methods leverage physical principles and force fields to model molecular interactions, often providing high accuracy at the cost of substantial computational resources.

- Machine Learning and AI Approaches: Recent advances include deep learning models such as Graph Neural Networks (GNNs), Convolutional Neural Networks (CNNs), and specialized architectures like Siamese Networks that learn from known protein-ligand complex structures and their experimentally determined binding affinities.

- Hybrid Methods: These approaches combine elements of physics-based and machine learning methods, integrating physical energy functions with neural networks to enhance both accuracy and interpretability.

Table 1: Overview of Binding Affinity Prediction Method Categories

| Method Category | Representative Examples | Typical Compute Time | Key Strengths |
|---|---|---|---|
| Alchemical Free Energy | FEP+, FEP | Days to weeks (GPU) | High accuracy, rigorous physical basis |
| End-Point Methods | MM/GBSA, MM/PBSA | Hours to days (CPU/GPU) | Better sampling than docking, lower cost than FEP |
| Machine Learning Scoring | PBCNet, AK-Score2, HPDAF | Minutes to hours (GPU) | High throughput, good accuracy |
| Traditional Docking | AutoDock Vina, Glide SP | Minutes to hours (CPU) | Very high throughput, easy to use |

Performance Benchmarking of Leading Methods

Quantitative Accuracy Comparison

Rigorous benchmarking against experimental data is essential for evaluating the performance of affinity prediction methods. The following table summarizes the performance metrics of several state-of-the-art methods on established benchmark datasets.

Table 2: Performance Metrics of High-Accuracy Prediction Methods

| Method | Type | Test Set | Pearson's R | RMSE (kcal/mol) | MAE (kcal/mol) | Key Requirement |
|---|---|---|---|---|---|---|
| FEP+ [1] | Alchemical | Diverse targets | ~0.80 | ~1.00 | - | Extensive sampling, expert setup |
| SILCS [2] | Co-solvent MD | 8 targets, 407 ligands | - | - | 0.899 (MUE) | Pre-computed FragMaps |
| PBCNet [1] | Graph Neural Network | FEP1 set | ~0.80 | 1.11 | - | Congeneric ligands, docking poses |
| AK-Score2 [3] | Hybrid ML/Physics | CASF-2016 | >0.80 | - | - | Diverse decoy sets for training |
| HPDAF [4] | Multimodal Deep Learning | CASF-2016 | 0.858 | 1.24 | 0.98 | Protein sequence, structure, ligands |
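For readers who want to reproduce the metrics reported in this table on their own predictions, the sketch below computes Pearson's R, RMSE, and MAE with the standard definitions. The affinity values are made-up placeholders, not data from any of the cited benchmarks.

```python
import math

def pearson_r(pred, ref):
    """Pearson correlation coefficient between predictions and reference values."""
    n = len(pred)
    mean_p, mean_r = sum(pred) / n, sum(ref) / n
    cov = sum((p - mean_p) * (r - mean_r) for p, r in zip(pred, ref))
    var_p = sum((p - mean_p) ** 2 for p in pred)
    var_r = sum((r - mean_r) ** 2 for r in ref)
    return cov / math.sqrt(var_p * var_r)

def rmse(pred, ref):
    """Root-mean-square error."""
    return math.sqrt(sum((p - r) ** 2 for p, r in zip(pred, ref)) / len(pred))

def mae(pred, ref):
    """Mean absolute error (also reported as mean unsigned error, MUE)."""
    return sum(abs(p - r) for p, r in zip(pred, ref)) / len(pred)

# Hypothetical binding free energies in kcal/mol (illustration only).
predicted = [-9.1, -7.8, -10.4, -6.5]
experimental = [-8.6, -8.1, -9.9, -7.4]

print(f"R = {pearson_r(predicted, experimental):.3f}")
print(f"RMSE = {rmse(predicted, experimental):.3f} kcal/mol")
print(f"MAE = {mae(predicted, experimental):.3f} kcal/mol")
```

Correlation and error metrics answer different questions: a method can rank ligands well (high R) while carrying a systematic offset (high RMSE), so both are usually reported together.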

Critical Analysis of Benchmarking Practices

Recent research has revealed significant challenges in the validation of binding affinity prediction methods. A critical issue is data leakage between training and test sets, which can substantially inflate performance metrics. Studies have shown that nearly half of the complexes in commonly used benchmarks like CASF share exceptionally high similarity with complexes in the training data, enabling models to achieve high scores through memorization rather than genuine predictive capability [5].

To address this concern, the field is moving toward more rigorous validation protocols such as the PDBbind CleanSplit dataset, which applies strict structure-based filtering to eliminate redundancies and ensure proper separation between training and test complexes. When top-performing models are retrained on this cleaned dataset, their performance typically drops substantially, indicating that previously reported metrics may have been overly optimistic [5].
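The idea behind leakage filtering can be sketched in a few lines. The example below is a simplified stand-in, not the CleanSplit procedure itself: it assumes each complex has already been reduced to a set of features (for example, fingerprint bits) and uses Tanimoto similarity with an arbitrary threshold of 0.9, whereas the cited work applies structure-based filtering. All identifiers are hypothetical.

```python
def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity between two feature collections."""
    a, b = set(a), set(b)
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)

def filter_training_set(train, test, threshold=0.9):
    """Keep only training entries whose similarity to every test entry is below threshold.

    train, test: dicts mapping a complex ID to an iterable of features.
    """
    kept = {}
    for complex_id, features in train.items():
        if all(tanimoto(features, t) < threshold for t in test.values()):
            kept[complex_id] = features
    return kept

# Hypothetical feature sets: "train_a" duplicates the test complex and is dropped.
train = {"train_a": {1, 2, 3, 4}, "train_b": {7, 8, 9}}
test = {"test_x": {1, 2, 3, 4}}
print(sorted(filter_training_set(train, test)))
```

Retraining on the filtered set and comparing metrics against the unfiltered run gives a direct estimate of how much reported performance depended on near-duplicates.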

Experimental Protocols for Method Validation

SILCS Methodology

The Site Identification by Ligand Competitive Saturation (SILCS) technology employs a combined grand canonical Monte Carlo (GCMC)-molecular dynamics (MD) approach to map functional group affinity patterns. The protocol involves:

- Simulation Setup: Running multiple individual 100 ns GCMC/MD simulations with different co-solvents representing various functional groups against the target protein in aqueous solution.

- FragMap Generation: Converting functional group probability distributions from the simulations into free energy maps (FragMaps).

- Ligand Scoring: Using the precomputed FragMaps to estimate binding affinities for candidate ligands through Monte Carlo docking calculations, with each ligand taking approximately 8.5 minutes on a single processor core [2].

The method achieves an average accuracy of up to 77-82% for rank-ordering ligand affinities across eight protein targets, with the ability to process hundreds to thousands of ligands in a single day once FragMaps are generated [2].
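The throughput claim can be checked with simple arithmetic. The sketch below uses the 8.5 minutes per ligand quoted above and assumes that ligands are scored independently, so the work scales linearly with the number of cores; the core counts are illustrative.

```python
def ligands_per_day(minutes_per_ligand=8.5, cores=1):
    """Whole ligands scored in 24 hours, assuming perfect parallel scaling."""
    return int(cores * 24 * 60 / minutes_per_ligand)

for cores in (1, 16):
    print(f"{cores} core(s): {ligands_per_day(cores=cores)} ligands per day")
```

A single core therefore handles on the order of a couple of hundred ligands per day, and a modest multi-core node reaches the thousands, consistent with the range stated in the text.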

PBCNet Framework

The Pairwise Binding Comparison Network (PBCNet) employs a physics-informed graph attention mechanism specifically designed for ranking relative binding affinity among congeneric ligands. The experimental protocol includes:

- Input Preparation: A pair of pocket-ligand complexes with structurally similar ligands and identical protein pockets.

- Message Passing Phase:

  - Apply Graph Convolutional Network (GCN) to update atom representations of the protein pocket.

  - Combine updated protein pocket with two ligands by building edges between atoms within 5.0 Å.

  - Use message-passing network to transmit information across molecular graphs.

- Readout Phase: Obtain molecular representations of ligands using Attentive FP readout operation, then compute molecular-pair representations.

- Prediction Phase: Optimize losses for two tasks: predicting affinity differences and probabilities that one ligand has greater affinity than another [1].

[Figure: PBCNet workflow architecture]
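The edge-building step in the message passing phase can be illustrated with a distance cutoff. This is a minimal sketch of the general technique, assuming Cartesian coordinates in Å and a brute-force pairwise search; it is not the PBCNet implementation, and the function name and toy coordinates are hypothetical.

```python
import math

def build_cross_edges(pocket_coords, ligand_coords, cutoff=5.0):
    """Return (pocket_index, ligand_index, distance) for atom pairs within the cutoff."""
    edges = []
    for i, pocket_atom in enumerate(pocket_coords):
        for j, ligand_atom in enumerate(ligand_coords):
            distance = math.dist(pocket_atom, ligand_atom)
            if distance <= cutoff:
                edges.append((i, j, distance))
    return edges

# Toy coordinates (Å): only the first pocket atom is close to the first ligand atom.
pocket = [(0.0, 0.0, 0.0), (10.0, 0.0, 0.0)]
ligand = [(3.0, 0.0, 0.0), (20.0, 0.0, 0.0)]
print(build_cross_edges(pocket, ligand))
```

For realistic pocket sizes a spatial index such as a cell list or k-d tree would replace the double loop, but the resulting edge set is the same.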

AK-Score2 Development

AK-Score2 employs a triple-network architecture trained on carefully constructed datasets:

Dataset Creation:

- Native set of crystal structures

- Conformational decoy set generated by redocking native ligands

- Cross-docked decoy set using randomly selected ligands from other complexes

- Random decoy set with unrelated ligands

Model Architecture: Three independent sub-networks:

- Classification model for binary prediction of binding

- Regression model for binding affinity prediction

- Regression model for pose quality (RMSD) prediction

Training Strategy: Incorporates both binding affinity errors and pose prediction uncertainties into the loss function, using extensive decoy sets to improve robustness [3].

Research Reagent Solutions Toolkit

Table 3: Essential Computational Tools for Binding Affinity Prediction

| Tool/Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| PDBbind [3] [4] | Database | Curated collection of protein-ligand complexes with binding data | Training and benchmarking affinity prediction methods |
| AutoDock-GPU [3] | Docking Software | Generation of ligand binding poses and conformations | Pose generation for machine learning training |
| PLANTS [6] | Docking Software | Protein-ligand docking with scoring functions | Structure-based virtual screening |
| @TOME Server [6] | Web Platform | Integrated workflow for docking and affinity prediction | Virtual screening and pose analysis |
| RDKit [3] | Cheminformatics | Molecular descriptor calculation and manipulation | Ligand preparation and feature generation |

The pursuit of sub-kcal/mol accuracy in binding affinity prediction continues to drive methodological innovations in computational drug discovery. While classical simulation methods like FEP offer high accuracy for specific systems, their computational cost and requirement for expert intervention limit broad application. Emerging machine learning approaches, particularly those incorporating physical principles and rigorous benchmarking protocols, show promising potential to deliver the combination of accuracy, speed, and robustness needed to accelerate drug discovery.

The critical challenges moving forward include ensuring proper dataset curation to prevent train-test leakage, improving model generalizability across diverse protein families, and enhancing interpretability to provide medicinal chemists with actionable insights. As these methods continue to mature, integration with experimental validation through platforms like Cellular Thermal Shift Assay (CETSA) will be essential for building confidence in predictions and ultimately reducing attrition in later stages of drug development [7].

Coupled Cluster (CC) theory, particularly the method of coupled-cluster singles and doubles with perturbative triples (CCSD(T)), has long been regarded as the "gold standard" in quantum chemistry for predicting molecular energies and interactions. However, its supremacy is being challenged by emerging quantum Monte Carlo (QMC) methods and other approximations, which promise comparable or superior accuracy at a lower computational cost, especially for large systems like those found in drug development. This guide objectively compares the performance of CCSD(T) against these modern alternatives, supported by recent experimental data and benchmarking studies.

The Reign of a Gold Standard and Emerging Challenges

For decades, CCSD(T) has been the cornerstone for high-accuracy quantum chemical calculations, providing reliable reference data for the development of more approximate methods. Its status is summarized by the fact that it is often the benchmark against which other methods are measured [8]. However, this reign is facing two significant challenges.

First, for large, polarizable molecules, CCSD(T) has been found to over-correlate electrons, leading to an overestimation of interaction energies. Recent investigations attribute this to the truncation of the perturbative triples expansion, an issue comparable to the infrared divergence problem in metallic systems [9]. For instance, in the parallel-displaced coronene dimer, a system modeling π-π stacking interactions, CCSD(T) yields an interaction energy of -21.1 ± 0.5 kcal/mol, while Diffusion Monte Carlo (DMC) results are significantly less attractive at approximately -17.8 ± 1.1 kcal/mol [9]. A discrepancy of about 3 kcal/mol is large enough to cause qualitative errors in predicted binding and material properties, a critical concern for drug design.

Second, the computational cost of CCSD(T), which scales as O(N⁷) with system size, makes it prohibitively expensive for large systems like protein-ligand complexes [10] [11]. This has driven the search for more efficient methods that can deliver "gold standard" or better accuracy without the crippling computational overhead.
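To see what seventh-power scaling means in practice, the sketch below compares how the formal cost grows when the system size is multiplied by a given factor. It considers only the leading exponent and ignores prefactors, memory limits, and (for stochastic methods) the cost of reducing statistical error, so it is an order-of-magnitude illustration rather than a timing prediction.

```python
def relative_cost(size_factor, exponent):
    """Formal cost multiplier when the system size grows by size_factor."""
    return size_factor ** exponent

for factor in (2, 4, 10):
    n7 = relative_cost(factor, 7)
    n6 = relative_cost(factor, 6)
    print(f"size x{factor}: O(N^7) cost x{n7}, O(N^6) cost x{n6}")
```

Doubling the system size multiplies an O(N⁷) calculation by 128, which is why moving from a small model dimer to a realistic ligand-pocket fragment quickly exhausts available resources.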

Quantitative Performance Comparison of Quantum Chemical Methods

The table below summarizes the performance of CCSD(T) and its competitors across various chemical systems, as reported in recent benchmark studies.

Table 1: Accuracy and Computational Scaling of High-End Quantum Chemical Methods

| Method | Computational Scaling | Key Systems Tested | Reported Accuracy (Mean Absolute Error) | Key Advantage |
|---|---|---|---|---|
| CCSD(T) | O(N⁷) [10] [11] | Main group molecules, non-covalent interactions [8] | Traditional "Gold Standard" | Well-established, high reliability for small systems |
| Auxiliary Field QMC (AFQMC) | O(N⁶) [10] [11] | Challenging main group and transition metal molecules [10] [11] | More accurate than CCSD(T) [10] [11] | Higher accuracy at lower computational cost |
| GW Approximation (G0W0@PBE0) | Lower than CCSD(T) [12] [13] | Open-shell 3d transition-metal atoms and molecules [12] [14] [13] | 0.30 - 0.60 eV for IPs/EA vs. ΔCCSD(T) [12] [14] | Compelling alternative for extended transition-metal systems |
| Equation-of-Motion CCSD (EOM-CCSD) | Similar to CCSD(T) | Open-shell 3d transition-metal atoms and molecules [12] [14] | 0.19 - 0.41 eV for IPs/EA vs. ΔCCSD(T) [12] [14] | High accuracy for ionization potentials and electron affinities |

Table 2: Specific Benchmarking Results for Non-Covalent Interactions (A24 Dataset)

| Method | Description | Performance vs. CCSDT(Q) |
|---|---|---|
| CCSD(T) | Traditional "Gold Standard" | Used as a reference but shows known discrepancies for large systems [9] |
| DC-CCSDT | Distinguishable Cluster CCSDT | Outperforms CCSD(T) and CCSDT, closer to CCSDT(Q) results [8] |
| SVD-DC-CCSDT+/-* | Singular Value Decomposed DC-CCSDT with corrections | Excellent, low-cost tool for achieving near-CCSDT(Q) accuracy [8] |

Detailed Methodologies of Key Benchmarking Experiments

Benchmarking GW and CC for Transition Metals

A comprehensive 2025 study directly benchmarked the GW approximation and EOM-CCSD against ΔCCSD(T) for open-shell 3d transition metals [12] [14] [13].

- System Preparation: The benchmark set included 10 atoms and 44 molecules. Molecular geometries were optimized, and electronic structures were prepared for subsequent single-point energy calculations [12] [13].

- Reference Method: ΔCCSD(T) was used as the reference. This approach calculates the ionization potential (IP) or electron affinity (EA) as the difference between the total energies of the neutral and ionized systems at the CCSD(T) level of theory [12].

- Methods Tested: The performance of EOM-CCSD and several variants of the GW approximation (G0W0, evGW, qpGW) was assessed. The G0W0 method was initiated from the PBE0 density functional [12] [14].

- Accuracy Assessment: The mean absolute errors (MAE) of the IPs and EAs relative to ΔCCSD(T) were computed. EOM-CCSD achieved an MAE of 0.19-0.33 eV, while G0W0@PBE0 achieved an MAE of 0.30-0.47 eV, demonstrating comparable accuracy with higher computational efficiency [12].
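The Δ-approach and the error metric used in this protocol reduce to a few lines of arithmetic. The sketch below assumes total energies in Hartree and converts differences to eV; the function names are illustrative and the example numbers are placeholders, not results from the cited study.

```python
HARTREE_TO_EV = 27.211386  # conversion factor, eV per Hartree

def ionization_potential(e_neutral, e_cation):
    """IP in eV as the total-energy difference E(cation) - E(neutral)."""
    return (e_cation - e_neutral) * HARTREE_TO_EV

def electron_affinity(e_neutral, e_anion):
    """EA in eV as the total-energy difference E(neutral) - E(anion)."""
    return (e_neutral - e_anion) * HARTREE_TO_EV

def mean_absolute_error(values, references):
    """MAE of a tested method against reference values (same units)."""
    return sum(abs(v - r) for v, r in zip(values, references)) / len(values)

# Placeholder total energies in Hartree (illustration only).
print(f"IP = {ionization_potential(-1.0, -0.5):.3f} eV")
print(f"EA = {electron_affinity(-1.0, -1.1):.3f} eV")
print(f"MAE = {mean_absolute_error([7.9, 1.2], [7.6, 1.0]):.2f} eV")
```

Because each IP or EA is a small difference between two large total energies, both calculations must use the same basis set and geometry conventions for the subtraction to be meaningful.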

Establishing a "Platinum Standard" for Ligand-Pocket Interactions

To resolve discrepancies between CC and QMC, the 2025 "QUID" study established a higher benchmark for biological systems [15].

- Dataset Creation: The "QUID" framework was created, containing 170 molecular dimers (42 equilibrium, 128 non-equilibrium) of up to 64 atoms. These systems model diverse ligand-pocket interaction motifs, including aliphatic-aromatic, H-bonding, and π-stacking [15].

- Reference Energy Calculation: Instead of relying on a single method, a "platinum standard" was defined by achieving tight agreement (within 0.5 kcal/mol) between two fundamentally different high-level methods: LNO-CCSD(T) and Fixed-Node Diffusion Monte Carlo (FN-DMC) [15].

- Performance Analysis: The robust interaction energies from this platinum standard were then used to assess the performance of various density functional approximations, semi-empirical methods, and force fields [15].
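The agreement criterion described above can be expressed as a simple check. The sketch below assumes interaction energies in kcal/mol, the supermolecular definition of the interaction energy, and a plain absolute-difference test against the 0.5 kcal/mol tolerance; the study's actual acceptance procedure (for example, its treatment of statistical error bars) may differ, and the function names are hypothetical.

```python
def interaction_energy(e_dimer, e_monomer_a, e_monomer_b):
    """Supermolecular interaction energy: E(dimer) - E(A) - E(B)."""
    return e_dimer - e_monomer_a - e_monomer_b

def methods_agree(e_int_cc, e_int_dmc, tolerance=0.5):
    """True if two interaction energies (kcal/mol) agree within the tolerance."""
    return abs(e_int_cc - e_int_dmc) <= tolerance

# Coronene dimer values quoted earlier in this article (kcal/mol): no agreement.
print(methods_agree(-21.1, -17.8))
# Hypothetical pair that would satisfy the criterion.
print(methods_agree(-5.2, -5.0))
```

Requiring agreement between a deterministic and a stochastic method guards against errors that are specific to either family, since the two rely on different approximations.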

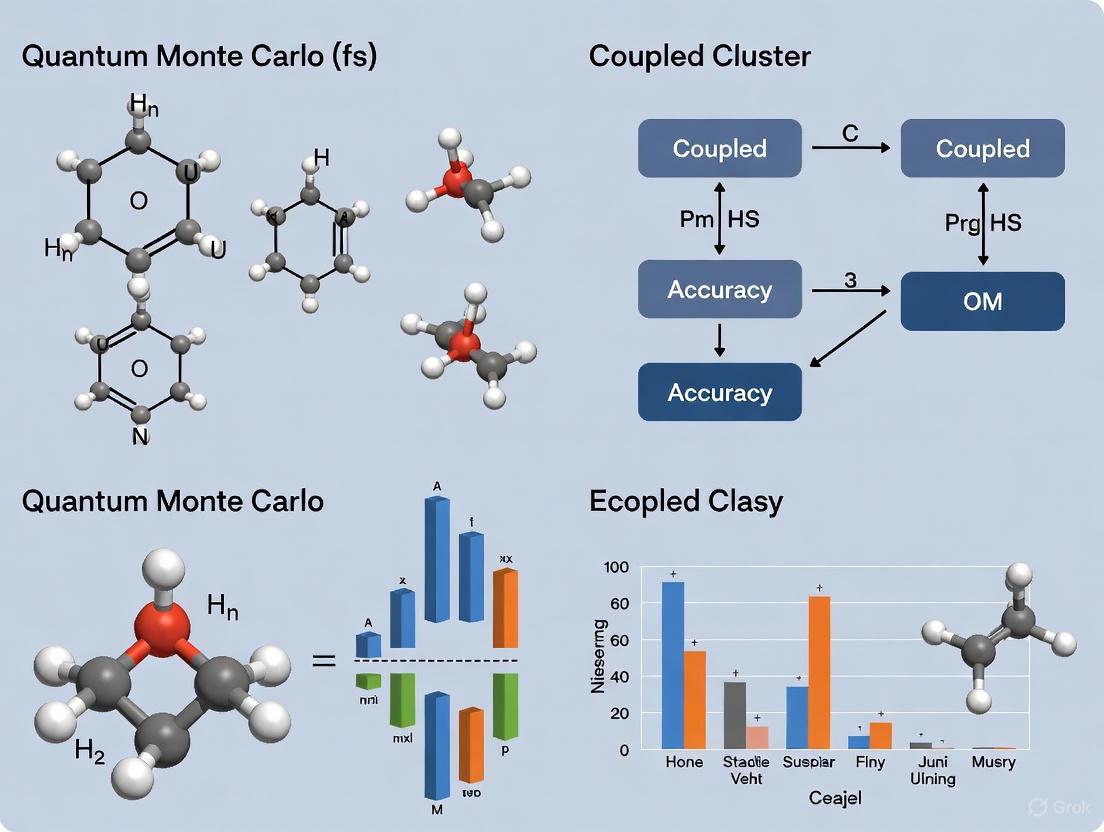

Visualizing the Benchmarking Workflow

The following diagram illustrates the logical workflow for benchmarking quantum chemical methods, as employed in the studies discussed.

The Scientist's Toolkit: Key Computational Research Reagents

Table 3: Essential Computational Tools and Methods

| Tool/Method | Function in Research |
|---|---|
| CCSD(T) | Serves as the traditional benchmark for generating reference data for smaller molecular systems. |
| Auxiliary Field QMC (AFQMC) | Provides highly accurate energy estimates, potentially surpassing CCSD(T) accuracy for transition metal complexes and large systems at a lower computational scaling [10] [11]. |
| GW Approximation | Provides a computationally efficient route for calculating ionization potentials and electron affinities in large systems, such as extended transition-metal complexes, with accuracy rivaling CC methods [12] [13]. |
| Plane Wave Basis Sets | Used in conjunction with CC methods to avoid potential errors from atom-centered Gaussian basis sets, ensuring more reliable results for large, densely-packed structures [9]. |
| Benchmark Datasets (A24, QUID, S66) | Collections of molecular systems with high-level reference interaction energies. They are essential for uniformly testing, validating, and developing new quantum chemical methods [15] [8]. |
| Local Correlation Approximations (e.g., DLPNO) | Techniques that reduce the computational cost of CC calculations by leveraging the locality of electron correlation, making larger systems tractable, though requiring careful error management [9]. |

Quantum Monte Carlo (QMC) represents a family of stochastic computational methods that provide an alternative pathway to high-accuracy quantum chemistry simulations. Unlike deterministic approaches such as density functional theory (DFT) or coupled cluster theory, QMC methods use random sampling to solve the many-body Schrödinger equation, enabling them to capture electron correlation effects with potentially superior accuracy and favorable scaling. These characteristics make QMC particularly valuable for challenging systems where traditional methods struggle, including strongly correlated materials, van der Waals heterostructures, and complex molecular systems relevant to drug development and materials science [16].

The fundamental advantage of QMC methods lies in their ability to provide benchmark-level accuracy for many-body calculations across a wide spectrum of systems, from weakly bound molecules to strongly correlated solids [16]. This accuracy comes from their direct treatment of electron correlation without the systematic biases that can affect more approximate methods. For pharmaceutical researchers and materials scientists, this translates to more reliable predictions of molecular properties, reaction mechanisms, and material behaviors that are crucial for rational drug design and development.

Methodological Landscape: QMC Approaches and Their Computational Characteristics

Quantum Monte Carlo encompasses several distinct techniques, each with unique strengths and application domains. The table below summarizes the key QMC methods, their fundamental characteristics, and computational profiles.

Table 1: Comparison of Major Quantum Monte Carlo Methodologies

| Method | Key Principle | Computational Scaling | Key Applications | Notable Advantages |
|---|---|---|---|---|
| Auxiliary-Field QMC (AFQMC) | Uses auxiliary fields to decouple electron-electron interactions | O(N⁶) [10] | Transition metal complexes, main group molecules [10] | Lower scaling than CCSD(T); black-box implementation |
| Variational QMC (VQMC) | Minimizes energy expectation value using trial wavefunctions | Varies with wavefunction complexity | Spin systems, long-range interactions [17] | Flexible ansätze; combined with machine learning |
| Quantum-Classical AFQMC (QC-AFQMC) | Hybrid quantum-classical algorithm for force calculations | Problem-dependent | Carbon capture materials, molecular dynamics [18] | Accurate atomic force computation; industrial applications |

The methodological diversity within QMC allows researchers to select the most appropriate technique based on their specific system characteristics and accuracy requirements. Auxiliary-Field QMC (AFQMC) has recently demonstrated particular promise, achieving accuracy beyond the traditional "gold standard" of quantum chemistry, coupled cluster singles and doubles with perturbative triples (CCSD(T)), while maintaining a lower computational scaling of O(N⁶) compared to the O(N⁷) scaling of CCSD(T) [10]. This improved scaling enables applications to larger molecular systems that were previously computationally prohibitive at this level of accuracy.

Accuracy Benchmarks: QMC Versus Established Quantum Chemistry Methods

Quantitative comparisons between QMC and traditional quantum chemistry methods reveal a consistent pattern: QMC methods can match or exceed the accuracy of established high-accuracy methods while often being applicable to systems where those methods face challenges. The following table summarizes key benchmark results from recent studies.

Table 2: Accuracy Comparison Between QMC and Traditional Quantum Chemistry Methods

| Method | Computational Scaling | Accuracy Relative to CCSD(T) | System Types Demonstrated | Notable Performance |
|---|---|---|---|---|
| AFQMC with CISD Trials | O(N⁶) [10] | Exceeds CCSD(T) accuracy [10] | Main group and transition metal molecules [10] | Consistent outperformance of CCSD(T) |
| CCSD(T) | O(N⁷) [10] | Reference "gold standard" | Single-reference dominated systems | Accuracy decreases for strong correlation |
| Hybrid QMC-Classical | Problem-dependent | More accurate than classical alone [18] | Complex chemical systems, carbon capture [18] | Superior atomic force precision |

Recent innovations in quantum-classical hybrid approaches have further expanded QMC's applicability. For instance, IonQ's implementation of the quantum-classical auxiliary-field quantum Monte Carlo (QC-AFQMC) algorithm has demonstrated more accurate computation of atomic-level forces compared to purely classical methods [18]. This capability is particularly valuable for modeling materials that absorb carbon more efficiently and for tracing reaction pathways – essential applications in both environmental science and pharmaceutical development.

Experimental Protocols and Workflows

Protocol: AFQMC for Molecular Energy Calculation

The application of AFQMC for high-accuracy molecular energy calculations follows a systematic protocol to ensure reliability:

- System Preparation: Select molecular geometry and active space, defining the Hamiltonian in second quantized form. For the BODIPY molecule study, this involved preparing active spaces ranging from 4e4o (8 qubits) to 14e14o (28 qubits) [19].

- Trial Wavefunction Selection: Employ configuration interaction singles and doubles (CISD) trial states to guide the AFQMC simulation. This selection critically impacts the accuracy and statistical efficiency of the method [10].

- Auxiliary Field Integration: Implement the imaginary-time propagation using Hubbard-Stratonovich transformations to decouple electron-electron interactions, introducing auxiliary fields that are sampled stochastically.

- Constraint Application: Apply the phaseless approximation to control the fermionic sign problem, ensuring computational stability while maintaining high accuracy.

- Statistical Accumulation: Collect samples of the energy estimator during the propagation, typically requiring 10⁵-10⁷ samples to achieve chemical precision (1.6×10⁻³ Hartree) depending on system size and complexity [19].

- Error Analysis: Employ block averaging or bootstrapping techniques to quantify statistical uncertainties, ensuring reported energies include reliable error estimates.

This protocol has demonstrated capability to consistently provide more accurate energy estimates than CCSD(T), particularly for challenging molecular systems containing transition metals or exhibiting strong correlation effects [10].
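The error analysis step of the protocol can be illustrated with block averaging. The sketch below is a generic implementation of the technique, not code from any QMC package: it groups consecutive samples into blocks and estimates the standard error from the scatter of the block means. The AR(1) series is a toy stand-in for serially correlated energy samples, and the block size of 100 is an arbitrary choice.

```python
import math
import random

def block_average(samples, block_size):
    """Return (mean, standard error) estimated from the means of consecutive blocks.

    Trailing samples that do not fill a complete block are ignored.
    """
    n_blocks = len(samples) // block_size
    if n_blocks < 2:
        raise ValueError("need at least two complete blocks")
    block_means = [
        sum(samples[i * block_size:(i + 1) * block_size]) / block_size
        for i in range(n_blocks)
    ]
    mean = sum(block_means) / n_blocks
    variance = sum((b - mean) ** 2 for b in block_means) / (n_blocks - 1)
    return mean, math.sqrt(variance / n_blocks)

def correlated_series(n, phi=0.9, seed=0):
    """Toy AR(1) series standing in for serially correlated energy samples."""
    rng = random.Random(seed)
    value, series = 0.0, []
    for _ in range(n):
        value = phi * value + rng.gauss(0.0, 1.0)
        series.append(value)
    return series

energies = correlated_series(10_000)
_, naive_err = block_average(energies, 1)      # treats samples as independent
_, blocked_err = block_average(energies, 100)  # accounts for serial correlation
print(f"naive error: {naive_err:.4f}, blocked error: {blocked_err:.4f}")
```

In practice the block size is increased until the estimated error plateaus; an error bar computed without blocking understates the uncertainty of correlated samples.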

Protocol: Quantum-Enhanced Force Calculations for Molecular Dynamics

The calculation of atomic forces enables QMC methods to inform molecular dynamics simulations and geometry optimizations:

- Wavefunction Preparation: Initialize the system wavefunction, which can be a Hartree-Fock state or more sophisticated correlated state.

- Nuclear Perturbation: Apply infinitesimal displacements to nuclear coordinates to compute numerical forces or implement analytical force estimators.

- QC-AFQMC Execution: Implement the quantum-classical auxiliary-field algorithm to compute forces at critical configuration points where significant changes occur [18].

- Classical Integration: Feed the calculated forces into classical computational chemistry workflows to trace reaction pathways and improve estimated rates of change within chemical systems.

- Material Property Prediction: Utilize the force information to aid in the design of more efficient materials, such as carbon capture substrates or catalytic systems.

This approach has demonstrated practical utility in industrial settings, with IonQ reporting successful implementation in collaboration with a top Global 1000 automotive manufacturer to simulate complex chemical systems with relevance to climate change mitigation [18].
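The nuclear perturbation step of this protocol amounts to a finite-difference derivative of the energy. The sketch below shows the central-difference form F = -dE/dx on a toy harmonic diatomic; the harmonic energy function is a hypothetical stand-in for an energy evaluation by QC-AFQMC or any other method, and the step size is an arbitrary choice.

```python
def numerical_force(energy_fn, coords, index, step=1e-3):
    """Central-difference force component F = -dE/dx for one coordinate."""
    plus, minus = list(coords), list(coords)
    plus[index] += step
    minus[index] -= step
    return -(energy_fn(plus) - energy_fn(minus)) / (2 * step)

def harmonic_energy(coords, k=1.0, r0=1.0):
    """Toy 1D diatomic: E = 0.5 * k * (r - r0)^2 with r = |x1 - x0|."""
    r = abs(coords[1] - coords[0])
    return 0.5 * k * (r - r0) ** 2

# Stretched bond (r = 1.5 > r0): the forces pull the two atoms back together.
geometry = [0.0, 1.5]
print(numerical_force(harmonic_energy, geometry, 0))
print(numerical_force(harmonic_energy, geometry, 1))
```

With stochastic energies the two displaced evaluations each carry statistical noise, which is one reason analytical force estimators or correlated sampling are often preferred over naive finite differences.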

[Figure: QMC molecular energy calculation workflow]

Integration with Machine Learning and High-Performance Computing

The integration of machine learning with QMC methods has created powerful synergies that address traditional computational bottlenecks. Set-equivariant architectures and transfer learning approaches have demonstrated remarkable effectiveness in accelerating QMC calculations, particularly for quantum spin systems with long-range interactions [17].

Machine learning ansätze have greatly expanded the accuracy and reach of variational QMC calculations by providing more flexible functional forms for regression and classification tasks within the QMC framework [17]. The set-transformer architecture represents a particularly significant advancement, enabling permutation-invariant processing of QMC samples that achieves a computational speedup of three to four orders of magnitude for expectation value calculations compared to direct approaches [17].

The implementation of transfer learning further enhances computational efficiency by allowing knowledge obtained from smaller systems to be transferred to larger ones. For example, after pre-training for a chain of 50 spins, extending the model to 100 and 150 spins requires only approximately 70% additional computational effort rather than starting from scratch [17].

For pharmaceutical researchers, these advances translate to practical capabilities for simulating larger molecular systems, including protein-ligand complexes and drug candidate molecules, with high accuracy in reasonable timeframes. The availability of massive datasets such as Meta's Open Molecules 2025 (OMol25), containing over 100 million quantum chemical calculations, further empowers these approaches by providing extensive training data for machine-learning-enhanced QMC simulations [20].

Successful implementation of Quantum Monte Carlo methods requires familiarity with both computational tools and theoretical frameworks. The following table outlines key resources that form the essential toolkit for researchers embarking on QMC calculations.

Table 3: Essential Research Reagent Solutions for Quantum Monte Carlo

| Tool/Resource | Type | Function/Purpose | Accessibility |
|---|---|---|---|
| QMCPACK [16] | Software Package | Open-source QMC code for molecular and solid-state systems | Publicly available |
| Set-Transformer Architecture [17] | ML Algorithm | Accelerates expectation value calculations in variational QMC | Research implementation |
| QC-AFQMC Algorithm [18] | Hybrid Algorithm | Enables accurate force calculations for molecular dynamics | Commercial implementation |
| OMol25 Dataset [20] | Training Data | Provides reference quantum chemical calculations for ML-QMC | Publicly available |
| Quantum Detector Tomography [19] | Error Mitigation | Reduces readout errors in quantum computing enhancements | Experimental implementation |

Contemporary QMC research benefits tremendously from educational resources such as the QMC Summer School, which provides introductory materials and hands-on examples for both molecular and solid-state systems [16]. These resources lower the barrier to entry for researchers seeking to apply QMC methods to pharmaceutical and materials science challenges.

For drug development professionals, the practical implication of these advancements is the ability to predict molecular properties and interactions with benchmark accuracy, potentially reducing the experimental screening burden in early-stage drug discovery. The application of QMC to complex biomolecular systems, including protein-ligand interactions, represents an emerging frontier with significant promise for the pharmaceutical industry.

Quantum Monte Carlo methods occupy a unique and increasingly important position in the computational chemistry landscape. Their ability to deliver high-accuracy results for challenging molecular systems, combined with favorable computational scaling compared to traditional high-accuracy methods, makes them particularly valuable for pharmaceutical research and drug development.

The continuing evolution of QMC – through integration with machine learning, development of quantum-classical hybrids, and enhancement of computational efficiency – suggests an expanding role for these methods in addressing the complex molecular challenges facing modern chemistry and biology. For researchers seeking the highest possible accuracy for systems beyond the practical reach of conventional methods, Quantum Monte Carlo represents a powerful and increasingly accessible approach.

In the field of computational chemistry and physics, predicting the electronic structure of molecules with high accuracy is fundamental to advancing scientific discovery, particularly in areas like drug design where binding affinity errors of just 1 kcal/mol can lead to erroneous conclusions [15]. Two sophisticated quantum-mechanical methods have emerged as leading approaches for achieving benchmark accuracy: the deterministic Coupled Cluster (CC) family of methods and stochastic Quantum Monte Carlo (QMC) techniques. While both aim to solve the electronic Schrödinger equation accurately, they diverge fundamentally in their theoretical foundations, computational strategies, and practical application. This guide provides a comprehensive comparison of these methodologies, framed within the context of research comparing their accuracy, with particular attention to the needs of researchers and drug development professionals who require the most reliable predictions for complex molecular systems.

Deterministic Coupled Cluster methods, particularly CCSD(T), which is often regarded as the "gold standard" in quantum chemistry, provide reproducible solutions through well-defined mathematical operations [15]. In contrast, Quantum Monte Carlo methods employ stochastic processes, specifically random walks in configuration space, to approximate solutions through statistical sampling [10] [21]. The ongoing effort to establish a "platinum standard" for benchmark accuracy increasingly relies on achieving tight agreement between these two fundamentally different approaches [15]. Understanding their key theoretical differences is essential for selecting the appropriate method for specific scientific applications, especially when working with biologically relevant ligand-pocket interactions where multiple types of non-covalent interactions simultaneously come into play [15].

Theoretical Foundations and Algorithmic Approaches

Deterministic Coupled Cluster Theory

The Coupled Cluster method is a deterministic, wavefunction-based approach for solving the electronic Schrödinger equation. Its determinism stems from the fact that for a given basis set and system, the method will always produce the identical result when repeated, as it follows a precise mathematical pathway without random elements. The fundamental ansatz of CC theory expresses the wavefunction as an exponential expansion: Ψ = e^{T}Φ_0, where Φ_0 is a reference wavefunction (typically Hartree-Fock) and T is the cluster operator that generates excited determinants [21]. The cluster operator is usually truncated at a certain excitation level, T = T_1 + T_2 + T_3 + ... + T_n, where n represents the highest order of excitations considered.
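As a reference for the notation, the exponential ansatz can be written out as a power series. The second-quantized forms of T_1 and T_2 below are the standard textbook definitions; the index convention (i, j for occupied orbitals and a, b for virtual orbitals) is an assumption introduced here, not something stated in the source.

```latex
\begin{aligned}
|\Psi\rangle &= e^{T}|\Phi_0\rangle
  = \Bigl(1 + T + \tfrac{1}{2!}T^{2} + \tfrac{1}{3!}T^{3} + \cdots\Bigr)|\Phi_0\rangle, \\
T_1 &= \sum_{i,a} t_{i}^{a}\, a_a^{\dagger} a_i, \qquad
T_2 = \tfrac{1}{4}\sum_{i,j,a,b} t_{ij}^{ab}\, a_a^{\dagger} a_b^{\dagger} a_j a_i .
\end{aligned}
```

Because of the product terms, even a truncated operator such as T = T_1 + T_2 generates higher excitations (for example, the quadruples contained in T_2^2/2), which is one reason truncated CC recovers more correlation than a configuration interaction expansion truncated at the same level.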

The different flavors of CC theory are characterized by their truncation level: CCSD (singles and doubles), CCSDT (singles, doubles, and triples), and CCSDTQ (singles, doubles, triples, and quadruples). With each increasing excitation level, the computational cost grows significantly, with CCSD(T)—CCSD with perturbative triples—often serving as the best compromise between accuracy and computational feasibility for many applications [10]. The determinism in CC methods arises from the explicit algebraic equations that must be solved iteratively until convergence is reached, producing the same result every time for the same molecular system and basis set.

Stochastic Quantum Monte Carlo

Quantum Monte Carlo methods encompass a family of stochastic approaches that use random sampling to solve the electronic Schrödinger equation. Unlike deterministic methods, QMC incorporates inherent randomness—the same set of parameters and initial conditions will lead to an ensemble of different outputs, though these outputs cluster around the true solution with statistical uncertainty [22]. The stochastic nature of QMC comes from its use of random walks to explore the configuration space of electrons, making it fundamentally probabilistic in character.

Auxiliary Field Quantum Monte Carlo (AFQMC) is one prominent variant that operates by projecting the ground state wavefunction through imaginary time propagation [10]. Another approach, Full Configuration Interaction Quantum Monte Carlo (FCIQMC), solves the electronic structure problem through a stochastic simulation of the CI equations [21]. These methods employ statistical sampling rather than deterministic computation, which allows them to handle large systems while maintaining high accuracy, often at a lower asymptotic computational cost than high-level CC methods [10]. The random walks in QMC collectively build up a representation of the wavefunction, with the accuracy improving as more samples are collected, reducing the statistical error bars.
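The way statistical error bars shrink with more samples can be illustrated with a toy calculation. The sketch below is not AFQMC or FCIQMC; it is a minimal variational Monte Carlo run for a one-dimensional harmonic oscillator, assuming a Gaussian trial wavefunction ψ(x) = exp(-a x²) in atomic units. The function names, the parameter a = 0.4, and the step size are illustrative assumptions, and the naive standard error ignores autocorrelation between successive samples, which production codes handle with blocking analysis.

```python
import math
import random

def local_energy(x, a):
    # E_L = (H psi)/psi for H = -1/2 d^2/dx^2 + 1/2 x^2 and psi = exp(-a x^2)
    return a + x * x * (0.5 - 2.0 * a * a)

def vmc_energy(n_steps, a=0.4, step=1.0, seed=0):
    """Metropolis random walk sampling |psi|^2; returns (mean energy, naive standard error)."""
    rng = random.Random(seed)
    x = 0.0
    energies = []
    for _ in range(n_steps):
        x_new = x + rng.uniform(-step, step)
        # Accept with probability min(1, |psi(x_new)|^2 / |psi(x)|^2)
        if rng.random() < math.exp(-2.0 * a * (x_new * x_new - x * x)):
            x = x_new
        energies.append(local_energy(x, a))
    mean = sum(energies) / n_steps
    var = sum((e - mean) ** 2 for e in energies) / (n_steps - 1)
    return mean, math.sqrt(var / n_steps)

# Analytic expectation value for this trial function: a/2 + 1/(8a)
exact = 0.4 / 2 + 1 / (8 * 0.4)
for n in (1_000, 40_000):
    mean, err = vmc_energy(n)
    print(f"{n:>6d} steps: E = {mean:.4f} +/- {err:.4f} (analytic {exact:.4f})")
```

The longer walk gives a visibly smaller error bar, roughly following the 1/√N behaviour expected for statistical sampling.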

Table: Core Theoretical Foundations of Deterministic CC and Stochastic QMC Methods

| Feature | Deterministic Coupled Cluster | Stochastic Quantum Monte Carlo |
|---|---|---|
| Theoretical Basis | Wavefunction exponential ansatz Ψ = e^{T}Φ_0 [21] | Statistical sampling of wavefunction through random walks [10] |
| Nature of Solution | Reproducible solution for a given basis set and truncation level | Statistical distribution around true solution |
| Key Approximation | Truncation of cluster operator (e.g., CCSD, CCSDT) [21] | Controlled statistical error, trial wavefunction quality [10] |
| Primary Output | Specific energy value | Energy distribution with confidence intervals |
| Wavefunction Representation | Explicit algebraic expansion | Ensemble of electronic configurations |

Computational Characteristics and Performance

Computational Scaling and Resource Requirements

The computational cost of electronic structure methods is typically characterized by their scaling with system size, which becomes a decisive factor when studying larger molecules relevant to biological systems. Deterministic CC methods exhibit steep polynomial scaling: CCSD(T) scales as O(N⁷), where N represents the system size [10]. This severe scaling arises from the treatment of connected triple excitations and limits practical applications to systems with approximately 50-100 atoms, depending on the basis set and available computational resources.

In contrast, stochastic QMC methods like AFQMC can achieve high accuracy at a lower asymptotic computational cost, scaling as O(N⁶) when using configuration interaction singles and doubles (CISD) trial states [10]. This reduced scaling enables applications to larger systems that would be prohibitively expensive for high-level CC methods. However, this theoretical advantage must be balanced against the statistical nature of QMC results, which require multiple independent runs to quantify uncertainties and may need extensive sampling to achieve small error bars for energy differences such as binding affinities.
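To make the difference between the two exponents concrete, the following sketch computes how much the asymptotic cost grows when the system size is multiplied by a given factor. It deliberately ignores prefactors, which are an important caveat: the sampling cost of QMC can make its prefactor large, so the numbers describe growth rates only, not absolute run times. The function name is illustrative.

```python
def relative_cost(size_ratio, exponent):
    """Factor by which an O(N^exponent) method gets more expensive when N grows by size_ratio."""
    return size_ratio ** exponent

for label, exponent in (("CCSD(T), O(N^7)", 7), ("AFQMC with CISD trial, O(N^6)", 6)):
    print(f"{label}: doubling N multiplies the cost by {relative_cost(2, exponent)}")
```

Doubling the system size therefore costs a factor of 128 for a seventh-power method and 64 for a sixth-power method, and the gap widens by a further factor of 2 with every subsequent doubling.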

Treatment of Electron Correlation

A fundamental distinction between CC and QMC methods lies in their approach to electron correlation, which is crucial for accurate predictions of molecular properties and interactions. Deterministic CC methods systematically recover electron correlation through the exponential cluster operator, with the truncation level determining what fraction of correlation energy is captured. CCSD(T) typically recovers over 99% of the correlation energy for many systems, explaining its designation as the "gold standard" in quantum chemistry [15].

Stochastic QMC methods, particularly diffusion Monte Carlo (DMC) and AFQMC, can in principle recover the exact correlation energy. In practice they are limited by the constraint imposed to control the fermion sign (or phase) problem: the fixed-node approximation in DMC and the analogous phaseless constraint in AFQMC, which is the primary source of error in these methods [10] [15]. This error arises from the use of trial wavefunctions whose nodes (or phases) constrain the solution. Recent advances using CISD trial wavefunctions in AFQMC have demonstrated exceptional accuracy, consistently providing more accurate energy estimates than CCSD(T) for challenging main group and transition metal-containing molecules [10]. This suggests that for systems with strong electron correlation effects, QMC methods may surpass CC in accuracy while maintaining favorable computational scaling.

Table: Performance Comparison for Molecular Systems

| Characteristic | Deterministic CC | Stochastic QMC |
|---|---|---|
| Computational Scaling | O(N⁷) for CCSD(T) [10] | O(N⁶) for AFQMC with CISD trial [10] |
| Typical System Size Limit | ~50-100 atoms (dependent on basis set) | Larger systems feasible due to better scaling |
| Statistical Uncertainty | None (deterministic result) | Inherent statistical error (~0.1-0.5 kcal/mol) [15] |
| Memory Requirements | High (storage of amplitude tensors) | Moderate (depends on walker population) |
| Parallelization Efficiency | Moderate (algebraic operations) | High (embarrassingly parallel sampling) |

Accuracy Comparison in Chemical Applications

Benchmark Studies and Validation

The assessment of methodological accuracy requires careful benchmarking against reliable reference data, which presents challenges given the absence of exact solutions for most chemically relevant systems. Recent research has addressed this by establishing a "platinum standard" through agreement between completely different "gold standard" methods, specifically comparing CC and QMC results [15]. In the QUID (QUantum Interacting Dimer) benchmark framework containing 170 non-covalent systems modeling ligand-pocket interactions, robust binding energies were obtained using complementary CC and QMC methods, achieving remarkable agreement of 0.5 kcal/mol [15]. This tight agreement between fundamentally different methodologies substantially reduces the uncertainty in highest-level quantum mechanical calculations and provides reliable benchmark data for evaluating more approximate methods.

For equilibrium molecular geometries where dynamic correlation dominates, CCSD(T) near the complete basis set limit has traditionally been considered the most reliable reference. However, for systems with significant static correlation or multireference character—such as transition metal complexes, biradicals, or dissociating bonds—the limitations of single-reference CC methods become apparent, and QMC approaches may provide superior accuracy [10] [21]. Studies on the automerization of cyclobutadiene and double dissociation of water molecules have demonstrated the ability of semi-stochastic CC methods to recover CCSDT and CCSDTQ energies even when electronic quasi-degeneracies and triply and quadruply excited clusters become substantial [21].

Performance in Drug-Related Applications

Accurate prediction of protein-ligand binding affinities is crucial for rational drug design, where even small errors can mislead development efforts. The QUID benchmark study revealed that both CC and QMC methods provide the necessary accuracy for modeling non-covalent interactions (NCIs) in ligand-pocket systems, which include aliphatic-aromatic interactions, H-bonding, and π-stacking frequently found in protein-ligand complexes [15]. These interactions dominate binding affinity determinations and require precise treatment of electron correlation effects.

While several dispersion-inclusive density functional approximations were found to provide accurate energy predictions when benchmarked against high-level CC and QMC references, their atomic van der Waals forces differed significantly in magnitude and orientation [15]. This suggests that force fields parameterized against high-level reference data from either CC or QMC calculations could improve the reliability of molecular dynamics simulations for drug discovery. Semiempirical methods and empirical force fields were found to require improvements in capturing NCIs for out-of-equilibrium geometries, highlighting the continued need for high-accuracy benchmarks from both CC and QMC methodologies [15].

Table: Key Research Reagent Solutions for Electronic Structure Calculations

| Tool/Resource | Function/Purpose | Application Context |
|---|---|---|
| LNO-CCSD(T) | Local natural orbital coupled cluster implementation for larger systems | High-accuracy single-point energies for systems up to ~100 atoms [15] |
| AFQMC with CISD Trials | Auxiliary Field QMC using configuration interaction wavefunctions | Challenging systems with strong correlation beyond CCSD(T) capability [10] |
| CC(P;Q) Methodology | Moment expansions combining CC and stochastic approaches | Recovering CCSDT/CCSDTQ energies from stochastic propagations [21] |
| QUID Dataset | 170 non-covalent dimers modeling ligand-pocket motifs | Benchmarking NCIs in biologically relevant systems [15] |
| PBE0+MBD Functional | Density functional with many-body dispersion correction | Initial geometry optimizations for subsequent high-level calculations [15] |

Methodological Workflows and Implementation

The practical application of these advanced electronic structure methods follows distinct workflows that reflect their theoretical differences. The deterministic CC pathway begins with defining molecular geometry and basis set, followed by a Hartree-Fock calculation to generate the reference wavefunction. The CC calculation then proceeds through iterative solution of the amplitude equations, gradually converging to a self-consistent solution. Finally, property analysis and energy decomposition provide chemical insights from the converged wavefunction.

The stochastic QMC workflow shares the initial steps of geometry and basis set definition, but then diverges by requiring selection and preparation of a trial wavefunction, which critically influences the accuracy through the fixed-node approximation. The core QMC calculation involves propagating walkers through configuration space via random walks, statistically sampling the wavefunction. Results from multiple independent runs must be aggregated and analyzed to determine the final energy with statistical confidence intervals, before proceeding to property analysis.
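The aggregation of independent runs mentioned above can be sketched as an inverse-variance weighted average, a standard way to combine independent estimates that each carry their own standard error. This is an illustration under the assumption that the runs are statistically independent and unbiased relative to one another; the function name and the numbers are hypothetical and are not taken from any cited study.

```python
import math

def combine_runs(runs):
    """Combine independent (energy, standard_error) pairs by inverse-variance weighting."""
    weights = [1.0 / (err * err) for _, err in runs]
    total = sum(weights)
    mean = sum(w * energy for w, (energy, _) in zip(weights, runs)) / total
    return mean, math.sqrt(1.0 / total)

# Hypothetical interaction energies in kcal/mol from three independent runs
runs = [(-10.1, 0.30), (-10.4, 0.20), (-10.2, 0.25)]
energy, error = combine_runs(runs)
print(f"combined: {energy:.2f} +/- {error:.2f} kcal/mol")
```

Runs with smaller error bars receive more weight, and the combined error is smaller than that of any single run.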

Diagram: Computational Pathways: CC vs. QMC Workflows. This diagram illustrates the key methodological pathways for both approaches.

The comparison between deterministic Coupled Cluster and stochastic Quantum Monte Carlo methodologies reveals a nuanced landscape where method selection depends critically on the specific scientific problem, system characteristics, and available computational resources. Deterministic CC methods, particularly CCSD(T), provide well-established, reproducible results without statistical uncertainty and remain the gold standard for systems where they are computationally feasible. Their systematic improvability through increased excitation levels and well-developed analytic properties make them ideal for studying equilibrium molecular properties and non-covalent interactions in small to medium-sized systems.

Stochastic QMC approaches offer compelling advantages for larger systems, strongly correlated electrons, and situations where their favorable O(N⁶) scaling provides access to chemical problems intractable for high-level CC methods. The ability of modern AFQMC with sophisticated trial wavefunctions to consistently surpass CCSD(T) accuracy for challenging molecules suggests a shifting paradigm in quantum chemistry benchmarking [10]. For drug discovery professionals working with ligand-pocket interactions, the emergence of benchmark datasets like QUID that leverage agreement between CC and QMC methods provides unprecedented reliability for validating more approximate methods used in high-throughput virtual screening [15].

The most promising future direction may lie in hybrid approaches that combine strengths from both methodologies, such as the semi-stochastic CC(P;Q) framework that uses stochastic information to recover high-level CC energetics [21]. As both methodological strands continue to advance, their convergence on benchmark results with tight agreement establishes a new "platinum standard" that substantially reduces uncertainties in predictive quantum chemistry, ultimately accelerating scientific discovery across chemistry, materials science, and pharmaceutical development.

The Emergence of a 'Platinum Standard' from QMC-CC Consensus

In the high-stakes fields of drug discovery and materials science, computational predictions of molecular behavior guide billion-dollar investment decisions. The reliability of these predictions hinges on the accuracy of the underlying quantum-mechanical methods used to simulate molecular interactions. For decades, the "gold standard" in quantum chemistry has been the Coupled Cluster with Single, Double, and perturbative Triple excitations (CCSD(T)) method, renowned for its high accuracy for various molecular properties [23]. However, its application to large, complex systems like drug-protein interactions remains computationally challenging. Similarly, Quantum Monte Carlo (QMC) methods have emerged as powerful alternative ab initio techniques capable of handling electron correlation effectively [24].

Recently, a transformative development has occurred: the emergence of a "platinum standard" through consensus between these two powerful computational approaches. This paradigm shift establishes unprecedented reliability for benchmarking molecular interactions, particularly for biologically relevant systems where accurate binding affinity predictions are crucial [15]. When CC and QMC—two fundamentally different approaches to solving the Schrödinger equation—converge on nearly identical results, the scientific community gains a level of confidence previously unattainable with either method alone. This consensus is redefining benchmark quality in computational chemistry and opening new frontiers for predictive molecular modeling in pharmaceutical research.

Establishing the Platinum Standard: The QUID Framework

The Quantum Interacting Dimer (QUID) Benchmark

The groundbreaking development of the "platinum standard" originates from the QUID (QUantum Interacting Dimer) benchmark framework, introduced in a landmark 2025 Nature Communications study [15]. This innovative framework addresses a critical gap in computational chemistry: the scarcity of robust quantum-mechanical benchmarks for complex ligand-pocket systems that model drug-protein interactions.

The QUID dataset comprises 170 chemically diverse molecular dimers (42 equilibrium and 128 non-equilibrium structures) containing up to 64 atoms and incorporating the H, N, C, O, F, P, S, and Cl elements most relevant to pharmaceutical compounds [15]. These systems model the three most frequent interaction types found in protein-ligand surfaces: aliphatic-aromatic interactions, H-bonding, and π-stacking. The dataset was constructed by exhaustively exploring different binding sites of nine drug-like molecules from the Aquamarine dataset, systematically probed with benzene or imidazole as representative ligand motifs [15].

The Consensus Protocol

The "platinum standard" is established through a rigorous validation protocol that brings together two fundamentally different computational approaches:

- Coupled Cluster Methodology: Specifically, the local natural orbital CCSD(T) (LNO-CCSD(T)) method, which reduces computational cost while maintaining high accuracy.

- Quantum Monte Carlo Technique: Specifically, the fixed-node diffusion Monte Carlo (FN-DMC) method, which uses stochastic sampling to solve the Schrödinger equation.

The key breakthrough reported in the QUID study is the remarkable agreement of 0.5 kcal/mol between these two independent "gold standard" methods [15]. This tight convergence between completely different theoretical frameworks dramatically reduces uncertainty in highest-level quantum mechanical calculations and establishes a new reference point for benchmarking approximate methods.
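A consensus check of this kind can be sketched in a few lines. The example assumes the standard supermolecular definition of the interaction energy (dimer energy minus the two monomer energies) and treats the QMC statistical errors as independent, so that they add in quadrature. The function names, the 0.5 kcal/mol default threshold, and all numbers are illustrative; the source does not describe the exact comparison procedure used in the QUID study.

```python
import math

def interaction_energy(e_dimer, e_host, e_probe):
    """Supermolecular interaction energy: E_int = E(dimer) - E(host) - E(probe)."""
    return e_dimer - e_host - e_probe

def combined_error(*errors):
    """Independent statistical errors add in quadrature."""
    return math.sqrt(sum(e * e for e in errors))

def consensus(e_int_cc, e_int_qmc, qmc_error, threshold=0.5):
    """Compare CC and QMC interaction energies (all values in kcal/mol)."""
    diff = e_int_cc - e_int_qmc
    return {
        "difference": diff,
        "within_threshold": abs(diff) <= threshold,
        "sigma": abs(diff) / qmc_error,  # deviation in units of the QMC error bar
    }

# Hypothetical total energies (kcal/mol), for illustration only
e_cc = interaction_energy(-1250.0, -1000.0, -240.0)
e_qmc = interaction_energy(-1250.3, -1000.1, -239.9)
err_qmc = combined_error(0.10, 0.10, 0.05)
print(consensus(e_cc, e_qmc, err_qmc))
```

Reporting the difference both in absolute terms and in units of the stochastic error bar helps distinguish a genuine methodological disagreement from statistical noise.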

Table 1: Key Features of the QUID Benchmark Framework

| Feature | Description | Significance |
|---|---|---|
| System Size | Up to 64 atoms | Represents biologically relevant molecular complexes |
| Chemical Diversity | H, N, C, O, F, P, S, Cl elements | Covers most atom types in drug discovery |
| Interaction Types | Aliphatic-aromatic, H-bonding, π-stacking | Models most frequent protein-ligand interactions |
| Structural Variety | 42 equilibrium + 128 non-equilibrium geometries | Samples binding pathways and out-of-equilibrium states |
| Reference Accuracy | 0.5 kcal/mol agreement between CC and QMC | Establishes "platinum standard" reliability |

Comparative Performance of Computational Methods

Assessment Against the Platinum Standard

The establishment of the QUID "platinum standard" enables rigorous evaluation of more computationally efficient methods that are practical for drug discovery applications. The benchmark analysis reveals a varied landscape of methodological performance:

Density Functional Theory (DFT): Several dispersion-inclusive density functional approximations provide accurate energy predictions close to the platinum standard, despite their atomic van der Waals forces differing in magnitude and orientation from the reference methods [15]. Double-hybrid functionals such as PWPB95-D3(BJ) and B2PLYP-D3(BJ) have demonstrated particular promise, achieving mean absolute errors below 3 kcal/mol for transition metal spin-state energetics [25].

Semiempirical Methods and Force Fields: These approaches require significant improvements in capturing non-covalent interactions, especially for out-of-equilibrium geometries [15]. Their performance limitations highlight the value of the platinum standard in identifying areas for methodological development.

Coupled Cluster Standalone Performance: In benchmark studies against experimental data for transition metal complexes, CCSD(T) alone achieved a mean absolute error of 1.5 kcal/mol with a maximum error of -3.5 kcal/mol, outperforming all tested multireference methods [25].

Quantitative Performance Comparison

Table 2: Method Performance Against Platinum and Experimental Standards

| Method | Mean Absolute Error (kcal/mol) | Maximum Error (kcal/mol) | Key Applications |
|---|---|---|---|
| CCSD(T) | 1.5 (expt) [25] | -3.5 (expt) [25] | Transition metal spin-states [25] |
| QMC-CC Consensus | 0.5 (between methods) [15] | N/A | Ligand-pocket interactions [15] |
| Double-Hybrid DFT | <3 (expt) [25] | <6 (expt) [25] | Transition metal complexes [25] |
| Common DFT (B3LYP*, TPSSh) | 5-7 (expt) [25] | >10 (expt) [25] | General quantum chemistry [25] |
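The two statistics reported in the table can be computed as shown in this sketch. It assumes the common convention that an error is the predicted value minus the reference value and that the maximum error is reported with its sign, which would explain why a negative value such as -3.5 can appear in that column. The data are hypothetical and the function name is illustrative.

```python
def error_stats(predicted, reference):
    """Return (mean absolute error, signed error of largest magnitude)."""
    errors = [p - r for p, r in zip(predicted, reference)]
    mae = sum(abs(e) for e in errors) / len(errors)
    max_error = max(errors, key=abs)  # keeps the sign of the worst deviation
    return mae, max_error

# Hypothetical spin-state energies in kcal/mol
predicted = [1.0, -2.0, 3.0]
reference = [0.5, 1.5, 3.0]
mae, max_error = error_stats(predicted, reference)
print(f"MAE = {mae:.2f} kcal/mol, maximum error = {max_error:+.1f} kcal/mol")
```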

Experimental Protocols and Methodologies

Platinum Standard Generation Protocol

The experimental workflow for establishing the platinum standard consensus follows a meticulous multi-stage process:

System Selection: Nine chemically diverse, flexible, chain-like drug molecules (≈50 atoms) are selected from the Aquamarine dataset [15].

Dimer Construction: Each large molecule is paired with small monomer probes (benzene or imidazole) representing common ligand motifs, with initial aromatic ring alignment at 3.55±0.05 Å distance [15].

Geometry Optimization: Dimers are optimized at the PBE0+MBD level of theory, resulting in 42 equilibrium structures categorized by folding degree: Linear, Semi-Folded, and Fully-Folded [15].

Non-Equilibrium Sampling: A subset of 16 dimers is used to generate 128 non-equilibrium conformations along dissociation pathways using a dimensionless factor q (0.90 to 2.00), where q=1.00 represents the equilibrium dimer [15].

Consensus Calculation: Interaction energies for all systems are computed independently using both LNO-CCSD(T) and FN-DMC methodologies, with agreement verified within the 0.5 kcal/mol threshold [15].
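The non-equilibrium sampling step can be sketched as a rigid translation of the probe molecule away from the host. The source gives only the range of the dimensionless factor q (0.90 to 2.00) and the totals, which imply 128 / 16 = 8 conformations per dimer; the specific q values below and the choice of the centroid-to-centroid vector as the dissociation coordinate are assumptions made for illustration, not the documented QUID procedure.

```python
# Hypothetical grid of 8 scaling factors within the stated 0.90-2.00 range
Q_VALUES = (0.90, 0.95, 1.05, 1.10, 1.25, 1.50, 1.75, 2.00)

def centroid(coords):
    """Geometric center of a list of (x, y, z) tuples."""
    n = len(coords)
    return tuple(sum(atom[i] for atom in coords) / n for i in range(3))

def scale_separation(host, probe, q):
    """Rigidly translate the probe so the host-probe centroid distance is multiplied by q."""
    c_host, c_probe = centroid(host), centroid(probe)
    shift = tuple((q - 1.0) * (c_probe[i] - c_host[i]) for i in range(3))
    return [tuple(atom[i] + shift[i] for i in range(3)) for atom in probe]

# Toy coordinates in angstrom: a one-atom "host" and a two-atom "probe"
host = [(0.0, 0.0, 0.0)]
probe = [(0.0, 0.0, 3.5), (0.0, 0.0, 3.6)]
for q in Q_VALUES:
    z = centroid(scale_separation(host, probe, q))[2]
    print(f"q = {q:.2f}: probe centroid at z = {z:.3f} angstrom")
```

With q = 1.00 the probe is left unchanged, which corresponds to the equilibrium dimer described above.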

Method-Specific Technical Details

Coupled Cluster Protocol:

- The CCSD(T) calculations employ the local natural orbital (LNO) approximation to enhance computational efficiency for larger systems [15].

- For charged excitations in periodic systems, the Equation-of-Motion Coupled Cluster (EOM-CC) framework is utilized, based on the similarity-transformed Hamiltonian H̄=e^{-T̂}Ĥe^{T̂}, where T̂ is the cluster operator of ground-state CC theory [26].
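For reference, the similarity-transformed Hamiltonian quoted above can be expanded with the Baker-Campbell-Hausdorff formula. For an electronic Hamiltonian containing at most two-body interactions, the series terminates exactly after the fourfold nested commutator, which is a standard result of CC theory rather than something specific to the cited work.

```latex
\bar{H} = e^{-\hat{T}} \hat{H} e^{\hat{T}}
        = \hat{H} + [\hat{H},\hat{T}]
        + \tfrac{1}{2!}\,[[\hat{H},\hat{T}],\hat{T}]
        + \tfrac{1}{3!}\,[[[\hat{H},\hat{T}],\hat{T}],\hat{T}]
        + \tfrac{1}{4!}\,[[[[\hat{H},\hat{T}],\hat{T}],\hat{T}],\hat{T}]
```

This finite expansion is what makes the CC amplitude equations closed algebraic expressions that can be solved iteratively.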

Quantum Monte Carlo Protocol:

- The fixed-node diffusion Monte Carlo (FN-DMC) approach is implemented, which requires trial wavefunctions to fix the node location to control the fermion sign problem [24].

- Trial wavefunctions are typically obtained from Hartree-Fock, MCSCF, or CI calculations multiplied by a Jastrow factor to explicitly include electron-electron correlations [24].

- The DMC method demonstrates favorable scaling of n³ with system size, compared to the n⁷ scaling of CCSD(T), offering potential computational advantages for larger systems [24].

Diagram 1: Platinum Standard Generation Workflow. This diagram illustrates the multi-stage protocol for establishing consensus reference data, showing the parallel application of CC and QMC methodologies that converge to create the platinum standard.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Computational Tools for Platinum Standard Research

| Tool Category | Specific Examples | Function & Application |
|---|---|---|
| High-Level Electronic Structure Codes | LNO-CCSD(T) implementations, FN-DMC packages | Generate reference data with quantum mechanical accuracy [15] |
| Dispersion-Corrected Density Functionals | PBE0+MBD, PWPB95-D3(BJ), B2PLYP-D3(BJ) | Provide more computationally efficient alternatives with good accuracy [15] [25] |
| Semiempirical Quantum Methods | GFN2-xTB | Enable rapid screening and geometry optimization for large systems [23] |
| Hybrid Tensor Network & Neural Network States | BDG-RNN, RBM-inspired correlators | Address strongly correlated systems with recent innovations [27] |
| Benchmark Datasets | QUID, SSE17, Aquamarine | Provide standardized systems for method validation [15] [25] |

Implications for Drug Discovery and Materials Science

The emergence of the platinum standard arrives at a critical juncture for computational chemistry in pharmaceutical research. The field faces dual challenges: an expanding chemical space containing billions of synthesizable molecules, and declining R&D productivity with high failure rates in drug development [28] [29]. In this context, the enhanced predictive accuracy offered by the QMC-CC consensus has transformative potential:

Binding Affinity Prediction: Even errors of 1 kcal/mol can lead to erroneous conclusions about relative binding affinities [15]. The platinum standard provides reliable benchmarks for developing more accurate scoring functions in molecular docking.

Transition Metal Systems: For transition metal complexes—ubiquitous in catalysis and bioinorganic chemistry—CCSD(T) has demonstrated remarkable accuracy with mean absolute errors of 1.5 kcal/mol against experimental spin-state energetics [25].

Force Field Validation: The consensus data enables rigorous testing and refinement of molecular mechanics force fields, particularly for non-covalent interactions that are crucial for biomolecular simulations [15] [23].

Machine Learning Potentials: The high-quality training data generated through platinum standard calculations can fuel the development of more accurate machine learning interatomic potentials [23] [27].
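The sensitivity to 1 kcal/mol noted under Binding Affinity Prediction follows from the exponential relation between binding free energy and the binding constant, ΔG = -RT ln K. The sketch below converts a free-energy error into the corresponding factor in K; the temperature of 298.15 K is an assumption, and the function name is illustrative.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)

def affinity_ratio(ddg_kcal, temperature=298.15):
    """Factor by which the binding constant changes for a free-energy shift of ddg_kcal."""
    return math.exp(ddg_kcal / (R_KCAL * temperature))

for ddg in (0.5, 1.0, 2.0):
    print(f"error of {ddg:.1f} kcal/mol -> factor of {affinity_ratio(ddg):.1f} in binding constant")
```

At room temperature an error of 1 kcal/mol corresponds to a factor of roughly 5 in the predicted binding constant, which is enough to reorder closely related ligands.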

As quantum computing advances, the platinum standard also provides essential validation for emerging quantum algorithms targeting electronic structure problems, creating a foundation for future methodologies that may eventually surpass classical computational capabilities [30] [29].

The emergence of the 'platinum standard' from QMC-CC consensus represents a paradigm shift in computational chemistry benchmarks. By demonstrating remarkable agreement between two fundamentally different high-level quantum methods, this approach provides unprecedented reliability for assessing molecular interactions relevant to drug discovery and materials design.

The implications extend across multiple domains: it establishes rigorous validation protocols for more approximate methods, guides the development of next-generation force fields and machine learning potentials, and creates foundational reference data for emerging quantum computing algorithms. As the field progresses toward increasingly complex and biologically relevant systems, the platinum standard framework offers a pathway to maintain methodological rigor while expanding application boundaries.

For researchers in pharmaceutical and materials science, this development signals an opportunity to increase confidence in computational predictions, potentially reducing costly experimental failures. The convergence of multiple theoretical approaches toward consensus answers embodies the scientific method at its most powerful, providing the computational chemistry community with a new standard of excellence for years to come.

From Theory to Practice: Applying QMC and CC to Biological Systems

Accurately predicting the binding affinity of ligands to protein pockets is a cornerstone of modern drug design, yet achieving quantum-mechanical (QM) accuracy for these complex biological systems has remained a formidable challenge [31]. The flexibility of ligand-pocket motifs involves an intricate balance of attractive and repulsive electronic interactions during binding, which classical computational methods often struggle to capture with sufficient reliability [15]. Furthermore, a puzzling disagreement between established "gold standard" methods like Coupled Cluster (CC) and Quantum Monte Carlo (QMC) has cast doubt on the validity of existing benchmarks for larger non-covalent systems [32].

To address this critical gap, researchers have introduced the QUantum Interacting Dimer (QUID) framework, a novel benchmark designed to redefine the state-of-the-art for QM benchmarks of ligand-protein systems [31] [15]. QUID moves beyond traditional benchmarks by establishing a "platinum standard" through tight agreement (within 0.3 to 0.5 kcal/mol) between two fundamentally different high-level QM methods: LNO-CCSD(T) and FN-DMC [31] [32]. This robust validation provides an unprecedented level of confidence in the benchmark data, enabling a critical evaluation of the approximate methods used in real-world drug discovery applications.

QUID Framework Design and Methodology

Systematic Construction of a Representative Dataset

The QUID framework is built upon a carefully curated set of 170 molecular dimers that model chemically and structurally diverse ligand-pocket motifs [15]. Its design encompasses both equilibrium and non-equilibrium geometries, providing a comprehensive testbed for computational methods. The dataset construction followed a rigorous protocol:

- Source Molecules: Nine large, flexible, chain-like drug molecules were selected from the Aquamarine dataset, incorporating H, N, C, O, F, P, S, and Cl atoms, thus covering most atom types relevant to drug discovery [15].

- Ligand Probes: Two small monomers were chosen to represent common ligand interactions: benzene (an archetypal aromatic ring) and imidazole (a reactive motif present in histidine) [15].

- Structural Diversity: The resulting 42 optimized equilibrium dimers were categorized into 'Linear', 'Semi-Folded', and 'Folded' geometries, modeling everything from open surface pockets to crowded binding sites [15]. This classification mimics the variety of packing densities found in real protein pockets.

- Non-Equilibrium Sampling: To model the binding process, 128 non-equilibrium conformations were generated for a selection of 16 dimers by sampling along the non-covalent bond dissociation pathway [15]. This is crucial for testing how methods perform away from the idealized equilibrium geometry.

The 'Platinum Standard': Achieving Consensus Between CC and QMC

A key innovation of the QUID framework is its resolution of the methodological discrepancy between high-level QM methods. It establishes a robust benchmark by obtaining mutual agreement between two entirely different "gold standard" approaches:

- Local Natural Orbital Coupled Cluster (LNO-CCSD(T)): A highly accurate wavefunction-based method that scales more efficiently to larger systems.

- Fixed-Node Diffusion Monte Carlo (FN-DMC): A stochastic QMC method that directly solves the Schrödinger equation.

The QUID benchmark achieved a remarkable agreement of 0.3 to 0.5 kcal/mol between these methods, largely reducing the uncertainty in highest-level QM calculations and setting a new "platinum standard" for interaction energies in complex molecular systems [31] [32].

Diagram: QUID Benchmark Workflow. This diagram illustrates the comprehensive workflow for establishing the QUID benchmark, from dataset creation to the final validation of methods.

Performance Comparison of Computational Methods

The true value of the QUID benchmark lies in its ability to objectively evaluate the performance of the computational methods used in practical drug discovery. The analysis reveals a varied landscape of accuracy and reliability.

Comparative Performance Across Method Types

Table 1: Performance summary of computational methods against the QUID benchmark

| Method Category | Representative Examples | Performance on Equilibrium Geometries | Performance on Non-Equilibrium Geometries | Key Limitations Identified |
|---|---|---|---|---|
| Dispersion-Inclusive Density Functional Theory (DFT) | PBE0+MBD, others | Accurate energy predictions [31] | Good performance | Discrepancies in atomic van der Waals forces (magnitude/orientation) [31] [32] |
| Semiempirical Methods | Not specified | Require improvements [31] | Poor performance | Inadequate capture of non-covalent interactions (NCIs) [15] |
| Empirical Force Fields | Not specified | Require improvements [31] | Poor performance | Inadequate capture of NCIs for out-of-equilibrium geometries [31] [32] |
| Quantum Algorithms | Extended Grover search [33] | Effective for docking site identification (proof-of-concept) [33] | Not assessed in source | Scalable but limited by current quantum hardware qubit counts [33] |

Detailed Analysis of Method Performance

Dispersion-Inclusive DFT Methods: Several density functional approximations that include dispersion corrections predicted interaction energies accurately, making them strong candidates for studying ligand-pocket systems where cost prohibits the use of CC or QMC methods [31]. However, the benchmark revealed a critical shortcoming: these methods exhibited significant discrepancies in the magnitude and orientation of the atomic van der Waals forces [31] [32]. This implies that while such functionals can reproduce how strongly a ligand interacts with its pocket, they may be unreliable for simulating the dynamics of the binding process or for structure optimization, where forces are critical.
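To make "magnitude and orientation" concrete, the sketch below compares two force vectors on the same atom by their length ratio and the angle between them. The function name and the example vectors are hypothetical; this is one simple way to quantify such a discrepancy, not necessarily the analysis used in [31] [32].

```python
import math
from typing import Sequence, Tuple


def force_discrepancy(f_ref: Sequence[float], f_test: Sequence[float]) -> Tuple[float, float]:
    """Return (magnitude ratio |f_test| / |f_ref|, angle between the vectors in degrees)."""
    mag_ref = math.sqrt(sum(x * x for x in f_ref))
    mag_test = math.sqrt(sum(x * x for x in f_test))
    if mag_ref == 0.0 or mag_test == 0.0:
        raise ValueError("angle is undefined for a zero-length force vector")
    cos_angle = sum(a * b for a, b in zip(f_ref, f_test)) / (mag_ref * mag_test)
    cos_angle = max(-1.0, min(1.0, cos_angle))  # guard against rounding outside [-1, 1]
    return mag_test / mag_ref, math.degrees(math.acos(cos_angle))


# Hypothetical van der Waals force on one atom from two methods (arbitrary units).
ratio, angle = force_discrepancy((1.0, 0.0, 0.0), (0.0, 2.0, 0.0))
```

Two methods can agree on the interaction energy at a given geometry while still disagreeing on either of these quantities, which is why forces need a separate check.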

Semiempirical Methods and Force Fields: These computationally inexpensive methods, widely used for molecular dynamics and docking, were found to require substantial improvements [31]. They performed particularly poorly for non-equilibrium geometries sampled along the dissociation path, indicating a fundamental challenge in accurately capturing the physics of non-covalent interactions across different structural configurations [15] [32]. This limitation could lead to inaccurate predictions of binding pathways and kinetics.
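A comparison like this usually comes down to an error statistic computed separately for each geometry class. The following minimal sketch computes a mean absolute error per group; the record layout and all numbers are invented for illustration and are not QUID data.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

Record = Tuple[str, float, float]  # (geometry class, reference energy, predicted energy)


def mae_by_group(records: Iterable[Record]) -> Dict[str, float]:
    """Mean absolute error of predicted vs. reference energies, per geometry class."""
    abs_err_sum = defaultdict(float)
    counts = defaultdict(int)
    for group, reference, predicted in records:
        abs_err_sum[group] += abs(predicted - reference)
        counts[group] += 1
    return {group: abs_err_sum[group] / counts[group] for group in abs_err_sum}


# Hypothetical interaction energies in kcal/mol for a cheap method.
records = [
    ("equilibrium", -10.0, -9.5),
    ("equilibrium", -8.0, -8.5),
    ("non-equilibrium", -4.0, -2.0),
]
errors = mae_by_group(records)
```

Reporting the two classes separately keeps a good equilibrium score from masking poor behavior along the dissociation path.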

Emerging Quantum Algorithms: A separate but related study presents a quantum algorithm for protein-ligand docking site identification based on an extended Grover search [33]. Tested on both a quantum simulator and a real quantum computer, it serves as a proof of concept that quantum hardware can identify docking sites. The algorithm is designed to scale with qubit count, so its practical reach is currently limited by available hardware; it is a promising long-term alternative rather than a present-day replacement [33].
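For readers unfamiliar with Grover search, the sketch below simulates the textbook algorithm classically on a small state vector. It is not the extended variant of [33]; the search-space size and the "marked" index standing in for a docking site are hypothetical.

```python
import math
from typing import List


def grover_probabilities(n_items: int, marked: int) -> List[float]:
    """Simulate standard Grover search and return the final measurement probabilities."""
    # Start in the uniform superposition over all candidate indices.
    amplitudes = [1.0 / math.sqrt(n_items)] * n_items
    # Near-optimal number of iterations for a single marked item.
    n_iterations = math.floor(math.pi / 4.0 * math.sqrt(n_items))
    for _ in range(n_iterations):
        # Oracle: flip the sign of the marked amplitude.
        amplitudes[marked] = -amplitudes[marked]
        # Diffusion: reflect every amplitude about the mean amplitude.
        mean = sum(amplitudes) / n_items
        amplitudes = [2.0 * mean - a for a in amplitudes]
    return [a * a for a in amplitudes]


# 16 hypothetical candidate sites (4 qubits), with site 5 as the target.
probabilities = grover_probabilities(16, marked=5)
```

The marked index dominates the output distribution after roughly sqrt(N) iterations, compared with the order-N checks a classical exhaustive search would need.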

Experimental Protocols & Research Toolkit

Key Experimental and Computational Methodologies

To ensure reproducibility and provide clarity on how the benchmark was established, the key methodologies employed in the QUID study are detailed below.

Geometry Optimization and Initial Energetics: The initial generation and optimization of all 170 QUID dimer structures were performed at the PBE0+MBD level of theory [15]. This dispersion-inclusive DFT method was used to prepare the equilibrium and non-equilibrium structures before their interaction energies were recalculated at higher levels of theory.

Benchmark Interaction Energy Calculation: Robust binding energies for the benchmark were obtained using two complementary, high-level quantum mechanical methods:

- LNO-CCSD(T): The Local Natural Orbital Coupled Cluster Singles, Doubles, and perturbative Triples method, which provides near-exact energies for non-covalent interactions with controlled approximations to make the calculation feasible for larger systems [31] [15].

- FN-DMC: The Fixed-Node Diffusion Monte Carlo method, a stochastic approach that projects out the ground state energy of the system, serving as a completely independent check on the CC results [31] [32]. The agreement between these methods defines the "platinum standard."
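Both methods ultimately deliver total energies, from which an interaction energy is formed. The sketch below assumes the common supermolecular definition, E_int = E_AB - E_A - E_B, with the monomers evaluated in their dimer geometries; whether QUID applies additional corrections is not stated here. The total energies are hypothetical.

```python
HARTREE_TO_KCAL_MOL = 627.5095  # standard conversion factor


def interaction_energy_kcal(e_dimer: float, e_mono_a: float, e_mono_b: float) -> float:
    """Supermolecular interaction energy in kcal/mol from total energies in hartree."""
    return (e_dimer - e_mono_a - e_mono_b) * HARTREE_TO_KCAL_MOL


# Hypothetical total energies in hartree.
e_int = interaction_energy_kcal(e_dimer=-100.020, e_mono_a=-60.000, e_mono_b=-40.000)
```

Since 1 kcal/mol is only about 0.0016 hartree, sub-kcal/mol agreement requires total energies converged to well below a millihartree.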

Interaction Energy Decomposition: To understand the physical nature of the interactions in the dimers, Symmetry-Adapted Perturbation Theory (SAPT) was used. This decomposes the total interaction energy into fundamental physical components: electrostatics, exchange-repulsion, induction, and dispersion [31] [15]. This analysis confirmed that the QUID dataset broadly covers diverse non-covalent binding motifs.
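Written out, the decomposition named above takes the following schematic form. The symbols are introduced here for illustration, and the truncation order of SAPT used in the QUID study is not specified in this text.

```latex
E_{\mathrm{int}}^{\mathrm{SAPT}}
  = E_{\mathrm{elst}} + E_{\mathrm{exch}} + E_{\mathrm{ind}} + E_{\mathrm{disp}}
```

The electrostatic, induction, and dispersion terms are typically attractive for bound complexes, while exchange-repulsion is positive, so the relative size of the terms indicates which physical effect dominates a given binding motif.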

The Researcher's Toolkit for Ligand-Pocket Benchmarking

Table 2: Essential research reagents and computational resources for ligand-pocket interaction studies

| Item / Resource | Type | Function / Purpose | Example from QUID Context |
|---|---|---|---|
| QUID Dataset | Benchmark Data | Provides 170 reference systems with "platinum standard" interaction energies for method validation and training. | 42 equilibrium + 128 non-equilibrium dimers [15] |
| LNO-CCSD(T) Code | Software | Calculates highly accurate wavefunction-based quantum chemical energies for benchmark creation. | e.g., MRCC (LNO-CCSD(T)); ORCA provides the related DLPNO-CCSD(T) local approach |
| QMC Package | Software | Provides an independent stochastic quantum method for benchmark validation. | e.g., QMCPACK |
| SAPT Code | Software | Decomposes interaction energies into physical components to understand binding nature. | e.g., Psi4, SAPT2020 |
| Dispersion-Inclusive DFT | Software | Offers a practical balance of accuracy and cost for structure optimization and property prediction. | PBE0+MBD [15] |
| Molecular Force Fields | Software/Parameters | Enables high-throughput screening and molecular dynamics simulations of large systems. | OPLS, CHARMM, AMBER (noting limitations per QUID) [31] |
| Quantum Algorithm Simulator | Software | Allows development and testing of quantum computing algorithms for drug discovery. | Qiskit (used for testing docking algorithm) [33] |

The relationship between the computational methods, their accuracy, and their computational cost is a fundamental consideration for researchers. The following diagram maps this landscape, helping to guide the selection of an appropriate method for a given study.

The QUID framework represents a significant leap forward in the rigorous benchmarking of computational methods for drug discovery. By establishing a "platinum standard" of accuracy through the consensus of CC and QMC methods, it provides an unambiguous reference for evaluating and improving the faster, approximate methods used in practical applications. Its key findings reveal that while dispersion-inclusive DFT performs well for energy predictions, the cheaper semiempirical methods and empirical force fields require substantial improvement, particularly for modeling non-equilibrium interactions.

The insights from QUID are already guiding the development of next-generation computational tools. The benchmark data is invaluable for training machine learning potentials and for validating new quantum algorithms [33] [34], which are emerging as promising future pathways. As these technologies mature, the availability of robust, chemically diverse benchmarks like QUID will be essential for ensuring they deliver on their promise to accelerate the discovery of new therapeutics.

Non-covalent interactions are fundamental forces governing molecular recognition, self-assembly, and materials properties in chemical and biological systems. Understanding the precise nature and relative strength of hydrogen bonding (H-bonding), π-stacking, and halogen bonding is crucial for advancing fields ranging from drug design to materials science. This guide provides a comparative analysis of these interactions based on recent experimental and computational studies, with particular emphasis on methodological approaches for their accurate characterization and quantification.

Comparative Energetics and Properties

Table 1: Key Characteristics of Major Non-Covalent Interactions

| Interaction Type | Typical Energy Range (kJ/mol) | Dominant Components | Directionality | Role in Molecular Systems |
|---|---|---|---|---|
| Hydrogen Bonding | 4-60 (single) [35]; Up to 120 (multiple networks) [35] | Electrostatic > Orbital > Dispersion [36] | High | Protein-carbohydrate recognition [37], polymer mechanical properties [35] |
| π-Stacking | ≈ -50 (computed for the π-stacked IDNB-TMPD complex) [36] | Dispersion > Electrostatic ≈ Orbital [36] | Moderate | Charge-transfer complexes [36], cocrystal stabilization [38] |
| Halogen Bonding | ≈ -43 (computed for the halogen-bonded IDNB-TMPD complex) [36]; sensitive to DFT functional [39] [40] | Electrostatic > Orbital [36] | High | Cocrystal engineering [41] [36], materials design [39] |
| CH-π Interactions | Favorable; Tryptophan > Tyrosine > Phenylalanine [42] | Dispersion, Electrostatic [42] | Broad orientational landscape [42] | Protein-carbohydrate binding [42] [37] |

Table 2: Quantitative Interaction Energies from Selected Systems

| System | Interaction Type | Experimental/Computational Method | Energy/Strength |
|---|---|---|---|
| PVA/HCPA polymer [35] | Multiple H-bonds | Tensile testing | 48% increase in strength, 370% increase in toughness |
| IDNB-TMPD complex [36] | π-stacked | DFT calculations | -50.2 kJ/mol |
| IDNB-TMPD complex [36] | Halogen-bonded | DFT calculations | -43.3 kJ/mol |
| Tryptophan-galactose [42] | CH-π | QM calculations | More favorable than tyrosine or phenylalanine |
| Heavy pnictogen complexes [39] [40] | Halogen bonding | Charge density analysis, DFT benchmarking | Varies significantly with DFT functional |
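Because binding-affinity work is usually discussed in kcal/mol while the values above are in kJ/mol, a quick conversion helps put them on a common scale. The sketch uses the thermochemical definition 1 kcal = 4.184 kJ; the variable names are illustrative.

```python
KJ_PER_KCAL = 4.184  # thermochemical calorie definition


def kj_to_kcal(energy_kj_mol: float) -> float:
    """Convert an energy from kJ/mol to kcal/mol."""
    return energy_kj_mol / KJ_PER_KCAL


# IDNB-TMPD values from the table above [36].
pi_stacked = kj_to_kcal(-50.2)       # about -12.0 kcal/mol
halogen_bonded = kj_to_kcal(-43.3)   # about -10.3 kcal/mol
preference = pi_stacked - halogen_bonded  # about -1.6 kcal/mol in favor of π-stacking
```

The computed preference for the π-stacked arrangement is therefore on the order of 1 to 2 kcal/mol, small enough that the choice of electronic-structure method matters.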

Experimental and Computational Methodologies

Quantum Mechanical Approaches

Quantum mechanical calculations provide fundamental insights into interaction energies and electronic properties. For CH-π interactions in protein-carbohydrate systems, quantum mechanical calculations combined with random forest models successfully predict interaction strengths, revealing that tryptophan-containing interactions are more favorable than those with tyrosine or phenylalanine [42]. The orientational flexibility of these interactions can be explored using well-tempered metadynamics simulations to obtain binding free energy landscapes [37].

For halogen bonding, density functional theory (DFT) requires careful functional selection. Experimental charge density analysis of halogen-bonded cocrystals containing I···P, I···As, and I···Sb interactions serves as a crucial benchmark for theoretical predictions [39] [40]. Different DFT functionals produce widely varying interaction geometries, energies, and charge density characteristics [39].

Multicomponent Nuclear-Electronic Orbital DFT (NEO-DFT) enables simultaneous quantum treatment of protonic and electronic wave functions, providing accurate predictions of anharmonic OH vibrational shifts in hydrogen-bonded systems with root mean square deviation values below 10 cm⁻¹ [43].

Spectroscopic Characterization Techniques

Solid-state NMR spectroscopy, particularly relaxation dispersion experiments using the Carr-Purcell-Meiboom-Gill (CPMG) pulse sequence, enables investigation of hydrogen bond dynamics in bulk polymers by monitoring end-group dissociation events directly and independently of polymer segment relaxation [44].

For halogen bonding, NMR contact shifts in paramagnetic cocrystals provide evidence of supramolecular covalency through analysis of hyperfine interactions governed by the Fermi-contact mechanism, demonstrating electron sharing between halogen-bonded molecules [41].

Infrared spectroscopy tracks hydrogen bonding through characteristic blue shifts of hydroxyl groups upon cross-linker addition in polymer systems [35]. The expanded HyDRA database of hydrogen-bonded monohydrates serves as a benchmark for computational spectroscopy methods [43].

Crystallographic and Materials Analysis

X-ray diffraction analysis reveals distinct binding preferences in cocrystal formation. Iodo-2,5-dinitrobenzene (IDNB) forms π-stacks of alternating donors and acceptors with tetramethyl-p-phenylenediamine (TMPD), while 1,4-diiodotetrafluorobenzene (DITFB) forms halogen-bonded associations with the same compound [36].

Statistical analysis of the Cambridge Structural Database indicates that stacking and T-type interactions contribute as much as hydrogen bonds to stabilizing cocrystal lattices, challenging the traditional hydrogen bond-dominated paradigm of crystal engineering [38].

Small-angle X-ray scattering (SAXS) investigates toughening mechanisms in hydrogen-bonded polymers, revealing how nanodomains deform under stress by breaking and rapidly reconstructing H-bonds [35].

Research Workflow and Method Validation

The following diagram illustrates the integrated experimental and computational approach for characterizing non-covalent interactions:

Table 3: Key Reagents and Materials for Non-Covalent Interaction Studies

| Resource | Function/Application | Example Use |

|---|---|---|

| Hydrogen-Bond Cross-linkers (e.g., HCPA) [35] | Form multiple H-bonded networks in polymers | Enhance strength and toughness in polyvinyl alcohol films |

| Halogen Bond Donors (e.g., DITFB, IPFB) [36] | Form directional halogen bonds in cocrystals | Create specific supramolecular architectures with nitrogen acceptors |