Benchmarking Quantum Chemical Methods: Statistical Analysis of Accuracy for Drug Discovery Applications

This article provides a comprehensive statistical analysis of the accuracy of quantum chemical methods, crucial for researchers and professionals in drug development.

Abstract

This article provides a comprehensive statistical analysis of the accuracy of quantum chemical methods, crucial for researchers and professionals in drug development. It explores the foundational principles of quantum chemistry, examines current methodological approaches and their real-world applications in simulating drug-target interactions, addresses key challenges and optimization strategies for improving computational efficiency and accuracy, and presents rigorous validation frameworks and comparative performance of different methods. By synthesizing the latest advances, including novel density functionals, quantum computing, and AI-enhanced simulations, this review serves as a critical guide for selecting and implementing quantum chemical methods to achieve predictive accuracy in biomedical research.

The Quantum Chemical Landscape: Principles, Promises, and the Critical Need for Accuracy

The pursuit of new therapeutics is fundamentally a molecular-level endeavor, where success hinges on precisely predicting how potential drug candidates interact with biological targets. Classical computational methods such as molecular mechanics force fields have long served as valuable tools, but they often rely on approximations that struggle with the quantum mechanical effects governing covalent bonding, electron transfer, and reaction pathways. These limitations contribute to high failure rates in drug development. The emergence of quantum computing and advanced quantum mechanical (QM) methods marks a paradigm shift, offering a path to chemically accurate simulations (conventionally, errors within about 1 kcal/mol) that are becoming non-negotiable for tackling the most persistent challenges in modern drug design, from covalent inhibitor development to prodrug activation strategies.

The Accuracy Imperative: Quantum vs. Classical Methods in Drug Design

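Accurate simulation is crucial because small errors in calculated molecular interaction energies are amplified exponentially in predicted binding affinities. At room temperature, an error of about 1.4 kcal/mol in the binding free energy corresponds to a tenfold error in the predicted dissociation constant, which can be enough to misjudge a drug's efficacy or toxicity.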
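
The sensitivity follows from the thermodynamic relation ΔG = RT ln K_d: an error δ in the computed binding free energy multiplies the predicted dissociation constant by exp(δ/RT). The Python sketch below is a generic illustration (not taken from the cited studies) that evaluates this factor at an assumed temperature of 298.15 K.

```python
import math

R_KCAL = 1.987204e-3  # gas constant, kcal/(mol*K)


def affinity_error_factor(delta_g_error_kcal: float, temperature_k: float = 298.15) -> float:
    """Fold change in predicted K_d caused by an error in the binding free energy.

    From dG = RT ln K_d, an error delta shifts K_d by exp(delta / RT).
    """
    return math.exp(delta_g_error_kcal / (R_KCAL * temperature_k))


def error_for_fold_change(fold: float, temperature_k: float = 298.15) -> float:
    """Free-energy error (kcal/mol) that produces a given fold change in K_d."""
    return R_KCAL * temperature_k * math.log(fold)


for err in (0.5, 1.0, 2.0):
    print(f"{err:.1f} kcal/mol error -> K_d off by a factor of {affinity_error_factor(err):.1f}")
print(f"tenfold K_d error <-> {error_for_fold_change(10):.2f} kcal/mol")
```

Under these assumptions, a 1 kcal/mol error already shifts K_d by roughly a factor of five, consistent with the emphasis on ~1 kcal/mol accuracy later in this article.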

Table 1: Comparison of Computational Methods in Drug Design Challenges

| Computational Method | Key Strengths | Primary Limitations | Representative Application in Drug Design |
|---|---|---|---|
| Molecular Mechanics (MM) | Computational efficiency for large systems (e.g., proteins) [1]. | Does not explicitly model electrons; inadequate for reaction processes and covalent bonding [1]. | Initial screening and molecular dynamics of large biomolecular systems. |
| Density Functional Theory (DFT) | Good balance of accuracy and cost; widely used for molecular properties [2]. | Struggles with systems featuring strong electron correlation (e.g., transition metal complexes) [2]. | Studying reaction mechanisms and predicting spectroscopic properties. |
| Multiconfiguration Pair-Density Functional Theory (MC-PDFT) | High accuracy for complex systems at lower computational cost than advanced wave-function methods [2]. | Functional form and parameters require careful optimization for different systems [2]. | Modeling bond-breaking processes and excited states in photochemistry. |
| Quantum Computing (e.g., VQE) | Potential to compute exact solutions; superior accuracy for electron correlation; scalable system modeling [3]. | Limited by qubit coherence, noise, and measurement shot budget on near-term devices [3]. | Precise Gibbs free energy profiling for covalent bond cleavage in prodrugs [3]. |

Benchmarking Accuracy: Quantum Computing in Real-World Case Studies

Moving beyond theoretical potential, recent research demonstrates the application of hybrid quantum-classical pipelines to genuine drug discovery problems.

Case Study 1: Prodrug Activation via C–C Bond Cleavage

A hybrid quantum computing pipeline was developed to study a carbon-carbon (C–C) bond cleavage prodrug strategy for β-lapachone, an anticancer agent [3].

- Experimental Protocol: The reactive subsystem was reduced, via an active-space approximation, to two electrons in two orbitals. The fermionic Hamiltonian was mapped to a qubit Hamiltonian using the parity transformation. The researchers used the Variational Quantum Eigensolver (VQE) framework with a hardware-efficient R_y ansatz, a single-layer parameterized quantum circuit run on a 2-qubit device, and applied standard readout error mitigation. Single-point energy calculations used the 6-311G(d,p) basis set and included solvation effects (water) through the ddCOSMO model. Thermal Gibbs corrections were calculated at the HF level [3].

- Result: The quantum computation successfully calculated the energy barrier for C–C bond cleavage, a critical determinant of whether the reaction proceeds spontaneously under physiological conditions. The results were consistent with classical Complete Active Space Configuration Interaction (CASCI) calculations and, crucially, with wet-lab experimental validation [3].
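
The VQE loop in this protocol can be illustrated with a classical statevector simulation. The sketch below is a minimal illustration, not the TenCirChem workflow used in [3]: it assumes a hypothetical two-qubit Hamiltonian with illustrative Pauli coefficients (not the β-lapachone Hamiltonian), uses a single-layer R_y ansatz (R_y rotations, one CNOT, R_y rotations), and ignores hardware noise, shot noise, and readout mitigation. Exact diagonalization of the same two-qubit Hamiltonian plays the role that the CASCI reference plays in the study.

```python
import numpy as np
from scipy.optimize import minimize

# Single-qubit building blocks
I2 = np.eye(2)
X = np.array([[0.0, 1.0], [1.0, 0.0]])
Z = np.array([[1.0, 0.0], [0.0, -1.0]])
PAULI = {"I": I2, "X": X, "Z": Z}

# Hypothetical Pauli coefficients (hartree); illustrative only, NOT the Hamiltonian from [3].
COEFFS = {"II": -1.05, "ZI": 0.39, "IZ": -0.39, "ZZ": -0.01, "XX": 0.18}

CNOT = np.array([[1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 0, 1], [0, 0, 1, 0]], dtype=float)


def qubit_hamiltonian(coeffs):
    """Build the 4x4 matrix from Pauli strings; the first label acts on qubit 0."""
    h = np.zeros((4, 4))
    for label, c in coeffs.items():
        h += c * np.kron(PAULI[label[0]], PAULI[label[1]])
    return h


def ry(theta):
    """Single-qubit R_y rotation (real-valued)."""
    c, s = np.cos(theta / 2), np.sin(theta / 2)
    return np.array([[c, -s], [s, c]])


def ansatz_state(params):
    """Single-layer hardware-efficient ansatz on |00>: R_y x R_y, CNOT, R_y x R_y."""
    psi = np.array([1.0, 0.0, 0.0, 0.0])
    psi = np.kron(ry(params[0]), ry(params[1])) @ psi
    psi = CNOT @ psi
    psi = np.kron(ry(params[2]), ry(params[3])) @ psi
    return psi


def energy(params, h):
    """Expectation value <psi|H|psi> from the exact statevector (no shot noise)."""
    psi = ansatz_state(params)
    return float(psi @ h @ psi)


def run_vqe(h, n_starts=5, seed=0):
    """Classical outer loop: minimize the energy from several random starting angles."""
    rng = np.random.default_rng(seed)
    best = None
    for _ in range(n_starts):
        res = minimize(energy, rng.uniform(-np.pi, np.pi, size=4), args=(h,), method="BFGS")
        if best is None or res.fun < best.fun:
            best = res
    return best.fun, best.x


H = qubit_hamiltonian(COEFFS)
e_vqe, angles = run_vqe(H)
e_exact = np.linalg.eigvalsh(H)[0]  # exact diagonalization, the analogue of the CASCI check
print(f"VQE energy:   {e_vqe:.6f} Ha")
print(f"Exact energy: {e_exact:.6f} Ha")
```

Because all gates here are real rotations plus a CNOT, this particular ansatz can represent any real two-qubit state, so for a real Hamiltonian the optimized energy should match exact diagonalization; on hardware, noise and finite shots would add error on top of this.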

Case Study 2: Covalent Inhibition of the KRAS G12C Protein

The KRAS G12C mutation is a prevalent oncogenic driver. Inhibitors such as sotorasib (AMG 510) act by forming a covalent bond with the mutant cysteine 12, a process whose simulation demands high accuracy [3].

- Experimental Protocol: A hybrid quantum computing workflow was implemented to calculate molecular forces for QM/MM (Quantum Mechanics/Molecular Mechanics) simulations [3]. This approach treats the reactive site (where covalent bond formation occurs) with quantum mechanics for accuracy, while modeling the surrounding protein environment with molecular mechanics for efficiency.

- Result: The authors present this methodology as a tool for detailed examination of drug-target interactions that can deepen the understanding of covalent inhibitors and aid the design of next-generation therapies [3].
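
QM/MM schemes of this kind are commonly written as an additive energy partition, E_total = E_QM(reactive site) + E_MM(environment) + E_QM/MM(coupling). The Python sketch below illustrates that partition with a mechanical-embedding-style coupling, in which QM-region atoms carry fixed point charges that interact classically with MM charges. All coordinates, charges, and the QM and MM energies are hypothetical placeholders, not values from [3]; real workflows also handle covalent boundaries (e.g., link atoms) and van der Waals coupling, and electrostatic embedding, where MM charges enter the QM Hamiltonian, is widely used instead.

```python
import numpy as np

COULOMB_KCAL_ANG = 332.0637  # Coulomb constant in kcal*Å/(mol*e^2)


def coulomb_coupling(qm_xyz, qm_charges, mm_xyz, mm_charges):
    """Classical electrostatic energy (kcal/mol) between QM-region and MM point charges."""
    r = np.linalg.norm(qm_xyz[:, None, :] - mm_xyz[None, :, :], axis=-1)  # pairwise distances, Å
    return COULOMB_KCAL_ANG * float(np.sum(np.outer(qm_charges, mm_charges) / r))


def additive_qmmm_energy(e_qm, e_mm, e_coupling):
    """Additive partition: E_total = E_QM + E_MM + E_QM/MM coupling."""
    return e_qm + e_mm + e_coupling


# Hypothetical toy system: two QM-region charges and three MM charges (Å, e).
qm_xyz = np.array([[0.0, 0.0, 0.0], [1.8, 0.0, 0.0]])
qm_q = np.array([-0.4, 0.1])
mm_xyz = np.array([[4.0, 0.0, 0.0], [0.0, 5.0, 0.0], [-3.5, 1.0, 0.0]])
mm_q = np.array([0.5, -0.3, 0.2])

e_couple = coulomb_coupling(qm_xyz, qm_q, mm_xyz, mm_q)
# Placeholder QM and MM energies in kcal/mol, purely illustrative.
e_total = additive_qmmm_energy(e_qm=-250.0, e_mm=-1200.0, e_coupling=e_couple)
print(f"coupling: {e_couple:.3f} kcal/mol, total: {e_total:.3f} kcal/mol")
```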

Case Study 3: Large-Scale Simulation on a Quantum-Centric Supercomputer

A recent hybrid approach used an IBM quantum device (with a Heron processor) and the RIKEN Fugaku supercomputer to study a complex [4Fe-4S] molecular cluster, a biologically crucial system found in enzymes like nitrogenase [4].

- Experimental Protocol: The team used the quantum computer (utilizing up to 77 qubits) to identify, by sampling, the electronic configurations that contribute most to the ground state, thereby selecting the most important part of the enormous Hamiltonian matrix, a task for which classical algorithms must rely on approximations. The Hamiltonian projected onto this smaller subspace was then diagonalized on the classical supercomputer, yielding the wave function within that subspace [4].

- Result: This "quantum-centric supercomputing" demonstration provides a scalable blueprint for solving complex quantum chemistry problems that are beyond the reach of purely classical methods [4].
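
The division of labor in this demonstration, a quantum device selecting configurations and a classical computer diagonalizing the Hamiltonian projected onto them, can be mimicked classically on a toy problem. In the sketch below, a random symmetric matrix stands in for the many-electron Hamiltonian, and configurations are drawn from the exact ground-state probabilities rather than from quantum hardware; both are assumptions made for illustration only. Because the projected problem is variational (a principal submatrix cannot have a lower eigenvalue than the full matrix), the subspace energy is an upper bound that tightens as more configurations are kept.

```python
import numpy as np


def toy_hamiltonian(dim, seed=0):
    """Hypothetical stand-in for a CI Hamiltonian: sorted diagonal plus weak random couplings."""
    rng = np.random.default_rng(seed)
    off = rng.normal(scale=0.1, size=(dim, dim))
    h = (off + off.T) / 2.0
    h[np.diag_indices(dim)] = np.sort(rng.uniform(-2.0, 2.0, size=dim))
    return h


def sample_configurations(probs, n_samples, seed=1):
    """Draw basis-state indices; a stand-in for bitstrings measured on a quantum device."""
    rng = np.random.default_rng(seed)
    return np.unique(rng.choice(len(probs), size=n_samples, p=probs))


def subspace_ground_energy(h, idx):
    """Lowest eigenvalue of H projected onto the selected configurations (classical step)."""
    return float(np.linalg.eigvalsh(h[np.ix_(idx, idx)])[0])


H = toy_hamiltonian(200)
evals, evecs = np.linalg.eigh(H)
e_exact = float(evals[0])
probs = evecs[:, 0] ** 2
probs /= probs.sum()  # guard against round-off before sampling

for n in (20, 100, 1000):
    idx = sample_configurations(probs, n)
    e_sub = subspace_ground_energy(H, idx)
    print(f"{n:5d} samples -> {idx.size:3d} configurations: E = {e_sub:.6f} (exact {e_exact:.6f})")
```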

Essential Research Reagents and Computational Tools

The experimental protocols rely on a suite of specialized software, algorithms, and hardware.

Table 2: Research Reagent Solutions for Quantum Simulation

| Item Name | Type | Function in Research |
|---|---|---|
| Variational Quantum Eigensolver (VQE) | Algorithm | A hybrid quantum-classical algorithm used on near-term quantum devices to find the ground-state energy of a molecular system [3]. |
| TenCirChem | Software Package | A Python-based quantum computational chemistry package used to implement entire quantum simulation workflows, including VQE and solvation models [3]. |
| Polarizable Continuum Model (PCM) | Solvation Model | A continuum method for modeling the effect of a solvent (e.g., water under physiological conditions) on a molecule within a quantum computation; the ddCOSMO model used in Case Study 1 belongs to this family of continuum solvation models [3]. |
| Quantum-Centric Supercomputing | Computing Architecture | Integrates quantum processors with classical supercomputers to solve large-scale quantum chemistry problems [4]. |
| Multiconfiguration Pair-DFT (MC-PDFT) | Classical QM Method | A method that combines a multiconfigurational wave function with an on-top pair-density functional, providing high accuracy for systems with strong electron correlation at a manageable computational cost [2]. |

The Path Forward: Integration and Future Outlook

The integration of quantum simulations into drug discovery is accelerating. Industry estimates suggest quantum computing could create $200–500 billion in value for the life sciences industry by 2035, primarily by enabling predictive in silico research and reducing reliance on lengthy wet-lab experiments [5]. Major pharmaceutical companies, including AstraZeneca, Boehringer Ingelheim, and Amgen, are actively collaborating with quantum technology firms to explore applications ranging from protein folding and electronic structure simulation to clinical trial optimization [5].

The convergence of quantum computing, advanced classical QM algorithms like MC-PDFT, and quantum-informed machine learning is creating a powerful new toolkit. This will allow researchers to navigate the vast chemical space of billions of synthesizable molecules with unprecedented accuracy [6]. As these technologies mature, high-accuracy quantum simulations will transition from a specialized advantage to a non-negotiable component of efficient and successful drug design pipelines, ultimately accelerating the delivery of novel therapeutics to patients.

Core Quantum Mechanical Principles Governing Molecular Behavior

The accurate computational prediction of molecular behavior is a cornerstone of modern scientific research, with profound implications for drug discovery, materials science, and catalytic reaction modeling. At its foundation lie core quantum mechanical principles that govern electron interactions, molecular structure, and energy landscapes. The central challenge in applied quantum chemistry involves selecting computational methods that best approximate the Schrödinger equation with sufficient accuracy for large, complex systems. This guide provides an objective comparison of leading quantum chemical methods, benchmarking their performance against experimental data and detailing the protocols that yield the most reliable results for molecular systems.

A diverse ecosystem of software packages implements these quantum chemical methods, each with unique capabilities, basis set preferences, and performance characteristics [7]. The choice of method involves critical trade-offs between computational cost and predictive accuracy, making evidence-based comparisons essential for research planning and resource allocation.

Core Quantum Mechanical Principles and Methodologies

Foundational Theoretical Frameworks

Quantum chemistry methods approximate solutions to the Schrödinger equation through different theoretical frameworks, each with distinct approaches to modeling electron correlation and interactions:

Wave Function Theory (WFT): Methods based on directly solving for the electronic wave function, including Hartree-Fock (HF) as a starting point, with post-Hartree-Fock approaches like Møller-Plesset perturbation theory (MP2, MP4), Coupled Cluster (CCSD, CCSD(T)), and Configuration Interaction (CI) adding increasingly accurate electron correlation treatments [7].

Density Functional Theory (DFT): A practical alternative that determines molecular properties through electron density rather than wave functions, using exchange-correlation functionals of varying sophistication (LDA, GGA, meta-GGA, hybrid, double-hybrid) [8].

Quantum Monte Carlo (QMC): A stochastic approach that uses random sampling to solve the Schrödinger equation, providing high accuracy but with substantial computational demands [9].
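
The stochastic idea behind QMC can be seen in its simplest variant, variational Monte Carlo (VMC). The sketch below is a textbook toy rather than a production QMC method: it samples |ψ|² for the hydrogen atom with the trial function ψ = exp(−αr) using a Metropolis random walk and averages the local energy E_L = −α²/2 + (α − 1)/r (atomic units). For α = 1 the trial function is exact, so every sample gives −0.5 hartree with zero variance; for other α the estimate scatters statistically around the analytic value α²/2 − α.

```python
import numpy as np


def local_energy(r, alpha):
    """Local energy H psi / psi (hartree) for psi = exp(-alpha r) in the hydrogen atom."""
    return -0.5 * alpha**2 + (alpha - 1.0) / r


def vmc_hydrogen(alpha, n_steps=50_000, step=0.5, n_burn=2_000, seed=0):
    """Metropolis sampling of |psi|^2 = exp(-2 alpha r).

    Returns the mean local energy and a naive standard error (ignores autocorrelation).
    """
    rng = np.random.default_rng(seed)
    pos = np.array([0.5, 0.5, 0.5])
    r = float(np.linalg.norm(pos))
    samples = []
    for i in range(n_steps):
        trial = pos + rng.uniform(-step, step, size=3)
        r_trial = float(np.linalg.norm(trial))
        # Accept with probability min(1, |psi(trial)|^2 / |psi(pos)|^2)
        if rng.random() < np.exp(-2.0 * alpha * (r_trial - r)):
            pos, r = trial, r_trial
        if i >= n_burn:
            samples.append(local_energy(r, alpha))
    samples = np.asarray(samples)
    return float(samples.mean()), float(samples.std(ddof=1) / np.sqrt(samples.size))


for alpha in (0.8, 1.0, 1.2):
    e, err = vmc_hydrogen(alpha)
    print(f"alpha = {alpha:.1f}: E = {e:.4f} +/- {err:.4f} Ha (analytic {0.5 * alpha**2 - alpha:.4f})")
```

The zero-variance result at α = 1 illustrates why better trial wave functions make QMC both more accurate and cheaper; production methods such as diffusion Monte Carlo build on the same sampling machinery.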

Key Principles Governing Molecular Behavior

Several quantum principles fundamentally dictate molecular structure and reactivity:

The Quantum Many-Body Problem: Describes how electrons interact within molecular systems, governing chemical bonding, reactivity, and electrical properties [8].

Electron Density and Exchange-Correlation: In DFT, the exchange-correlation functional approximates quantum mechanical interactions between electrons, with the universal functional remaining unknown but crucial for accurate predictions [8].

Spin-State Energetics: Particularly important for transition metal complexes, where accurate prediction of energy differences between spin states is essential for modeling catalytic mechanisms and materials properties [10].

Superposition and Entanglement: Quantum systems can exist in multiple states simultaneously (superposition), while entangled particles maintain correlated states even when separated, principles increasingly relevant for quantum-inspired statistical approaches [11].

Performance Benchmarking of Quantum Chemical Methods

Benchmarking Against Experimental Spin-State Energetics

Recent research has established credible reference data for benchmarking quantum chemistry methods, notably the SSE17 dataset containing experimental spin-state energetics for 17 transition metal complexes with diverse ligands [10]. This benchmark enables a rigorous assessment of method performance for open-shell transition metal systems.

Table 1: Performance of Quantum Chemistry Methods for Spin-State Energetics (SSE17 Benchmark)

| Method Category | Specific Methods | Mean Absolute Error (kcal mol⁻¹) | Maximum Error (kcal mol⁻¹) | Computational Cost |
|---|---|---|---|---|
| Coupled Cluster | CCSD(T) | 1.5 | -3.5 | Very High |
| Double-Hybrid DFT | PWPB95-D3(BJ), B2PLYP-D3(BJ) | <3.0 | <6.0 | High |
| Multireference Methods | CASPT2, MRCI+Q, CASPT2/CC, CASPT2+δMRCI | Variable, outperformed by CCSD(T) | Variable | Very High |
| Standard Recommended DFT | B3LYP*-D3(BJ), TPSSh-D3(BJ) | 5-7 | >10 | Medium |
| Machine Learning Force Fields | FeNNix-Bio1 (Foundation Model) | Approaches QMC accuracy | Not specified | Low (after training) |

Accuracy in Reproducing Solid-State Structures

Beyond energy predictions, reproducing experimental molecular structures is crucial for pharmaceutical applications. Benchmarking against high-quality X-ray structures determined below 30 K provides a rigorous assessment of structural prediction accuracy [12].

Table 2: Performance of Computational Methods for Solid-State Structure Reproduction

| Method | Basis Set/Functional Considerations | Accuracy vs. Experiment | Computational Efficiency | Best Applications |
|---|---|---|---|---|
| Molecule-in-Cluster (MIC) DFT-D | In QM:MM framework | High, matches full-periodic computations | High for large systems | Pharmaceutical solid-state optimization |
| Full-Periodic (FP) Solid-State | Plane wave basis sets | High | Computationally demanding | Ideal periodic systems |
| Machine Learning Foundation Models | FeNNix-Bio1 trained on multi-level quantum data | Approaches QMC accuracy, handles bond breaking/formation | Efficient for large systems after training | Biomolecular systems, reactive MD |

Experimental Protocols for Method Validation

Benchmarking Spin-State Energetics (SSE17 Protocol)

The SSE17 benchmark methodology provides a rigorous approach for validating quantum chemical methods [10]:

Reference Data Collection: Obtain experimental data from spin crossover enthalpies or energies of spin-forbidden absorption bands for 17 transition metal complexes containing Fe(II), Fe(III), Co(II), Co(III), Mn(II), and Ni(II) with chemically diverse ligands.

Data Correction: Apply suitable back-correction for vibrational and environmental effects to obtain reference values for adiabatic or vertical spin-state splittings.

Method Testing: Compute spin-state energetics using various quantum chemistry methods, including DFT with different functionals, wave function methods (CCSD(T), CASPT2, MRCI+Q), and multireference approaches.

Error Calculation: Calculate mean absolute errors and maximum errors relative to experimental reference values to quantify method performance.

Statistical Analysis: Rank methods by accuracy, identifying best-performing functionals and theoretical approaches for transition metal systems.
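
The error-calculation and ranking steps reduce to a few lines of code. The sketch below uses hypothetical placeholder spin-state splittings for three complexes and two illustrative methods (not SSE17 data) and reports the mean absolute error together with the signed error of largest magnitude, which is how a maximum error such as −3.5 kcal mol⁻¹ can carry a sign.

```python
import numpy as np

# Hypothetical reference splittings (kcal/mol) for three placeholder complexes; NOT SSE17 values.
reference = np.array([10.0, -4.0, 22.0])

# Hypothetical computed splittings for two illustrative methods.
computed = {
    "method_A": np.array([11.0, -5.5, 20.0]),
    "method_B": np.array([15.0, 1.0, 30.0]),
}


def error_statistics(calc, ref):
    """Return (MAE, signed error with the largest magnitude) for calc - ref."""
    errors = calc - ref
    mae = float(np.mean(np.abs(errors)))
    max_err = float(errors[np.argmax(np.abs(errors))])
    return mae, max_err


# Rank methods by MAE (lowest first).
ranking = sorted(
    ((name, *error_statistics(vals, reference)) for name, vals in computed.items()),
    key=lambda row: row[1],
)
for name, mae, max_err in ranking:
    print(f"{name}: MAE = {mae:.2f}, max error = {max_err:+.2f} kcal/mol")
```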

Structure Validation Protocol

For validating computational methods against crystallographic data [12]:

Test Set Curation: Select 22 high-quality, high-resolution (typically around d = 0.5 Å) organic small-molecule crystal structures determined at very low temperature (below 30 K) to minimize thermal motion effects.

Structure Optimization: Perform computations using various methods (MIC DFT-D in QM:MM framework, full-periodic computations, semiempirical methods).

Restraint Generation: Enforce computed structure-specific restraints in crystallographic least-squares refinements.

Accuracy Assessment: Evaluate methods based on:

- Crystallographic R1(F) factor differences

- Root mean square Cartesian displacements (RMSCD) between computed and experimental structures

- Bond distance and angle comparisons, particularly for non-hydrogen atoms

Efficiency Evaluation: Compare computational resource requirements and scalability for larger systems.
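
The displacement and bond-length metrics can be computed directly from matched atomic coordinates. The sketch below assumes both structures are already expressed in the same Cartesian frame with identical atom ordering (no superposition step is performed), and the three-atom coordinates are hypothetical; RMSCD is taken here as the root mean square of the per-atom displacement magnitudes.

```python
import numpy as np


def rmscd(xyz_a, xyz_b):
    """Root-mean-square Cartesian displacement between matched atoms (input units)."""
    disp = np.linalg.norm(xyz_a - xyz_b, axis=1)
    return float(np.sqrt(np.mean(disp**2)))


def bond_length_deviations(xyz_a, xyz_b, bonds):
    """Bond-length differences (a - b) for the listed atom-index pairs."""
    return np.array([
        np.linalg.norm(xyz_a[i] - xyz_a[j]) - np.linalg.norm(xyz_b[i] - xyz_b[j])
        for i, j in bonds
    ])


# Hypothetical three-atom fragment (Å): experimental vs computed positions.
experimental = np.array([[0.000, 0.000, 0.000], [1.530, 0.000, 0.000], [2.040, 1.420, 0.000]])
computed = np.array([[0.010, 0.000, 0.000], [1.525, 0.005, 0.000], [2.050, 1.415, 0.010]])
bonds = [(0, 1), (1, 2)]

print(f"RMSCD = {rmscd(computed, experimental):.4f} Å")
print("bond deviations (Å):", np.round(bond_length_deviations(computed, experimental, bonds), 4))
```

RMSCD is sensitive to rigid shifts, whereas bond-length deviations are not, which is one reason to report both.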

Machine Learning Force Field Training Protocol

The development of quantum-accurate neural network potentials follows an advanced multi-level protocol [9]:

Multi-Level Data Generation:

- Generate broad molecular configurations using Density Functional Theory (DFT)

- Compute selected cases using high-accuracy Quantum Monte Carlo (QMC)

- Apply multi-determinant configuration interaction (CI) methods for extra precision

Foundation Model Training:

- Initial training on large DFT dataset to learn general molecular interaction landscape

- Transfer learning using smaller QMC dataset to refine accuracy

- Train on the "delta" (difference between QMC and DFT predictions) to propagate high-fidelity knowledge

Model Validation:

- Test on bond breaking/forming reactions (e.g., proton transfer)

- Validate against experimental hydration free energies

- Assess stability in nanosecond-scale molecular dynamics simulations

- Benchmark on large systems (up to a million atoms)
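
The delta-learning idea can be illustrated on a one-dimensional toy problem. Below, two analytic functions stand in for a cheap "DFT" surface that can be evaluated anywhere and an expensive "QMC" surface available at only 12 points; both functions are hypothetical, and a polynomial stands in for the neural network. This is a generic Δ-learning sketch, not the FeNNix-Bio1 training code (which also learns the DFT surface itself before refinement). Fitting only the smooth difference works far better than fitting the high-level surface directly from the same scarce data.

```python
import numpy as np


def low_level(x):
    """Hypothetical cheap surface (stand-in for DFT), evaluable everywhere."""
    return (x - 1.0) ** 2 + 0.5 * np.cos(3.0 * x)


def high_level(x):
    """Hypothetical expensive surface (stand-in for QMC): low level plus a smooth correction."""
    return low_level(x) + 0.2 * np.sin(x) + 0.05 * x


x_train = np.linspace(-2.0, 4.0, 12)   # the few points with high-level data
x_test = np.linspace(-2.0, 4.0, 500)

# Delta learning: fit only the (high - low) difference, then add it back to the low level.
delta_coef = np.polyfit(x_train, high_level(x_train) - low_level(x_train), deg=5)
delta_pred = low_level(x_test) + np.polyval(delta_coef, x_test)

# Comparison: fit the high-level surface directly from the same 12 points.
direct_coef = np.polyfit(x_train, high_level(x_train), deg=5)
direct_pred = np.polyval(direct_coef, x_test)


def rms(pred):
    """RMS error against the high-level surface on the dense test grid."""
    return float(np.sqrt(np.mean((pred - high_level(x_test)) ** 2)))


print(f"direct fit RMS error:     {rms(direct_pred):.4f}")
print(f"delta-learning RMS error: {rms(delta_pred):.4f}")
```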

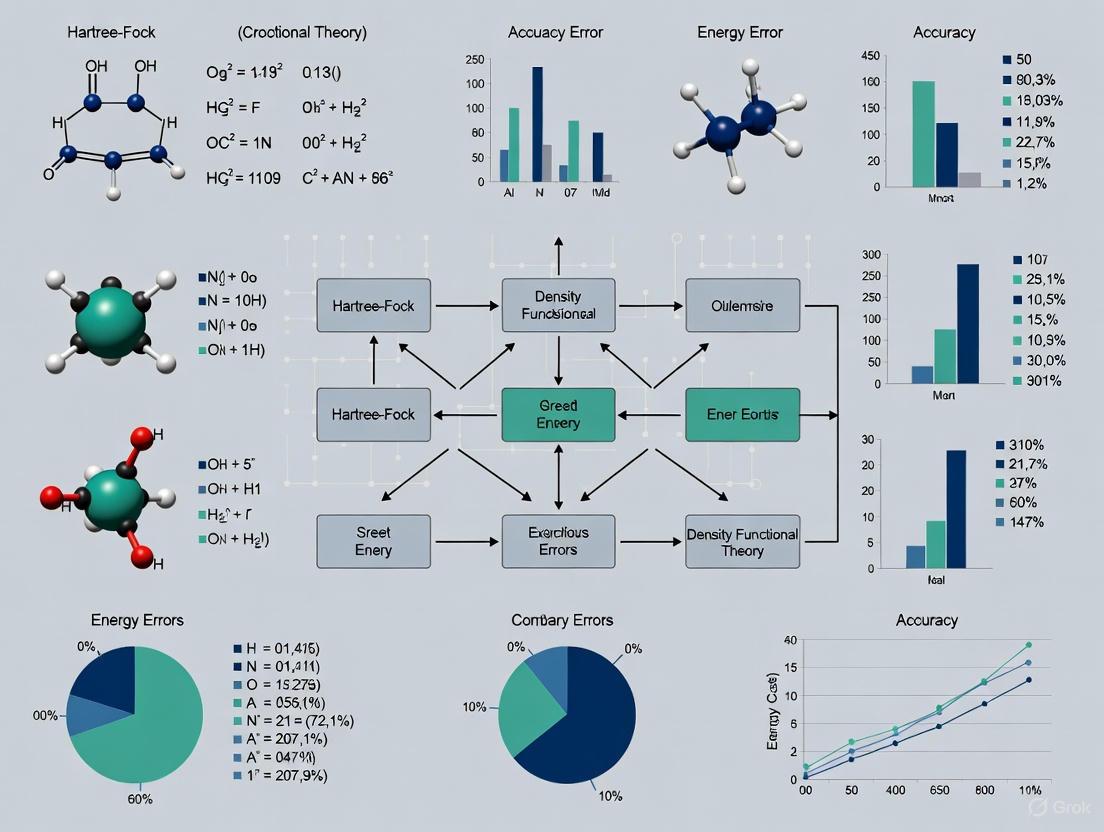

Quantum Chemistry Validation Workflow: This diagram illustrates the integrated workflow for validating quantum chemical methods, showing the relationship between experimental data, computation, validation, and machine learning approaches.

Quantum Chemistry Software Solutions

The quantum chemistry software landscape includes both open-source and commercial packages with varying capabilities, basis set implementations, and performance characteristics [7].

Table 3: Essential Quantum Chemistry Software and Capabilities

| Software Package | License | Key Methods Supported | Basis Sets | Parallelization | Special Features |
|---|---|---|---|---|---|
| Gaussian | Commercial | HF, MP, CC, DFT, TDDFT | GTO | Limited | User-friendly, comprehensive methods |
| Q-Chem | Academic, Commercial | HF, CC, DFT, TDDFT, EOM-CC | GTO | MPI, OpenMP, GPU plugins | Advanced electron correlation |
| ORCA | Academic, Commercial | HF, MP, CC, DFT, MRCI | GTO | MPI | Excellent for transition metals |
| CP2K | Free, GPL | DFT, DFTB, HF, MP2, RPA | Hybrid GTO, PW | MPI, OpenMP, GPU | Excellent for periodic systems |
| Quantum ESPRESSO | Free, GPL | DFT, HF, GW | PW | MPI, OpenMP, GPU | Solid-state physics focus |
| PySCF | Free, Apache 2.0 | HF, DFT, MP, CC, CASSCF | GTO | MPI, OpenMP, GPU plugins | Python-based, customizable |
| NWChem | Free, ECL v2 | HF, DFT, MP, CC, CASSCF | GTO | MPI, OpenMP, GPU | Comprehensive, good scalability |

Emerging Methodologies and Tools

Machine Learning Foundation Models: FeNNix-Bio1 represents a new class of neural network potentials trained on multi-level quantum chemistry data, enabling quantum-accurate simulations of million-atom systems with capability for bond breaking/formation [9].

Quantum Computing Platforms: Amazon Braket, IBM Quantum Experience, and Rigetti Forest provide access to emerging quantum computing resources for quantum chemistry applications [13] [14].

Quantum-Inspired Statistical Frameworks: New approaches incorporating quantum principles like superposition and entanglement into statistical analysis for capturing complex, multimodal data patterns in fields like finance and healthcare [11].

The benchmarking data presented enables evidence-based selection of quantum chemical methods tailored to specific research requirements. For the highest accuracy in spin-state energetics, CCSD(T) remains the gold standard, while double-hybrid DFT functionals (PWPB95-D3(BJ), B2PLYP-D3(BJ)) offer the best compromise between accuracy and computational cost for transition metal systems. For solid-state structure prediction and pharmaceutical applications, molecule-in-cluster DFT-D computations in a QM:MM framework provide accuracy matching full-periodic computations with superior efficiency.

Emerging machine learning approaches trained on multi-level quantum chemistry data represent a paradigm shift, offering quantum-level accuracy for large biomolecular systems while dramatically reducing computational costs. As quantum chemistry continues evolving, these validated benchmarking protocols and performance comparisons provide essential guidance for researchers navigating the complex landscape of computational methods to accurately model molecular behavior.

Computational quantum chemistry provides powerful tools for predicting the properties and behaviors of molecules and materials, forming a critical component of modern research in drug development and materials science. At the heart of this field lies a fundamental tradeoff: the balance between computational accuracy and resource expenditure. Researchers must constantly navigate this spectrum, choosing between highly accurate ab initio (first-principles) methods that come with significant computational costs and more efficient Density Functional Theory (DFT) approaches that rely on approximations of the exact exchange-correlation functional. This balancing act is particularly crucial in pharmaceutical applications, where even errors of 1 kcal/mol can lead to erroneous conclusions about relative binding affinities, potentially derailing drug discovery pipelines [15].

Within DFT, the hierarchy of exchange-correlation approximations is often described as "Jacob's Ladder," with each rung adding ingredients that increase complexity and potential accuracy at the expense of greater computational demand [16] [17]. This guide provides a comprehensive comparison of these methods, focusing on their accuracy-cost characteristics across various chemical systems, with special attention to applications relevant to drug development professionals and research scientists.

Methodological Landscape: From First Principles to Density Approximations

The Ab Initio (Wavefunction-Based) Hierarchy

Ab initio methods, including Coupled Cluster (CC) and Quantum Monte Carlo (QMC), strive to solve the Schrödinger equation with minimal approximations, providing systematically improvable results often considered the "gold standard" for quantum chemical calculations. The Coupled Cluster Singles, Doubles, and perturbative Triples (CCSD(T)) method is particularly renowned for its excellent accuracy across diverse chemical systems [15]. NEVPT2 (N-Electron Valence State Perturbation Theory) represents another high-accuracy approach, especially valuable for systems with multireference character, such as the verdazyl radicals studied in organic electronic materials [18]. Symmetry-Adapted Perturbation Theory (SAPT) provides detailed decompositions of non-covalent interaction energies, offering valuable physical insights into binding phenomena [15].

Despite their accuracy, these methods face severe computational limitations. The computational cost of CCSD(T) scales with the seventh power of system size (O(N⁷)), while QMC, though potentially more scalable, introduces statistical uncertainty and requires careful control of approximations [15]. These constraints render pure ab initio calculations prohibitively expensive for the large molecular systems typical in drug discovery, where ligands and protein pockets can encompass hundreds of atoms.
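
The practical meaning of these scaling exponents is easiest to see by asking how cost grows when a system is enlarged. The snippet below uses only the formal exponents quoted in this article; prefactors, integral screening, and local-correlation approximations (such as those behind LNO-CCSD(T)) are ignored, so the ratios are rough estimates, not timings.

```python
def cost_ratio(size_factor: float, exponent: int) -> float:
    """Relative cost when system size grows by size_factor for an O(N^exponent) method."""
    return size_factor ** exponent


scalings = {"GGA DFT, O(N^3)": 3, "hybrid DFT, O(N^4)": 4, "SCS-MP2, O(N^5)": 5, "CCSD(T), O(N^7)": 7}
for name, p in scalings.items():
    print(f"{name}: doubling N -> x{cost_ratio(2, p):.0f}, tenfold N -> x{cost_ratio(10, p):.0e}")
```

Doubling the system size multiplies the formal CCSD(T) cost by 2⁷ = 128, compared with 8 to 16 for GGA and hybrid DFT.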

Density Functional Theory and its Approximations

Density Functional Theory bypasses the complexity of the many-electron wavefunction by focusing on the electron density, significantly reducing computational cost while maintaining reasonable accuracy for many applications. The Kohn-Sham DFT energy functional is expressed as:

\[ E[\rho] = T_\text{s}[\rho] + V_\text{ext}[\rho] + J[\rho] + E_\text{xc}[\rho] \]

where \(T_\text{s}\) is the kinetic energy of non-interacting electrons, \(V_\text{ext}\) is the energy of interaction with the external potential (the nuclei), \(J\) is the classical Coulomb energy, and \(E_\text{xc}\) is the exchange-correlation energy, which captures all remaining quantum many-body effects, including the difference between the true and non-interacting kinetic energies [16]. The accuracy of DFT hinges entirely on the approximation used for \(E_\text{xc}\), as its exact form remains unknown.
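
A small worked example shows why so much hinges on E_xc. For the hydrogen atom, with exact density ρ(r) = e^(−2r)/π in atomic units, the Coulomb term J[ρ] equals 5/16 hartree, and because there is only one electron the exact exchange energy must cancel it (E_x = −5/16 hartree), leaving no spurious self-interaction. The sketch below evaluates the fully spin-polarized local (Dirac/Slater) exchange approximation, E_x ≈ −2^(1/3) C_x ∫ρ^(4/3) d³r with C_x = (3/4)(3/π)^(1/3), on a radial grid; it is a textbook illustration chosen for this article, not a calculation from the cited sources.

```python
import numpy as np

C_X = 0.75 * (3.0 / np.pi) ** (1.0 / 3.0)  # Dirac/Slater exchange constant (unpolarized)
HARTREE_TO_KCAL = 627.5095


def radial_integral(f, r):
    """Trapezoidal integral of 4*pi*r^2*f(r) over a radial grid (spherical symmetry)."""
    g = 4.0 * np.pi * r**2 * f
    return float(np.sum((g[1:] + g[:-1]) * np.diff(r)) / 2.0)


r = np.linspace(0.0, 40.0, 200_001)   # bohr
rho = np.exp(-2.0 * r) / np.pi        # exact hydrogen 1s density (one electron)

n_electrons = radial_integral(rho, r)
# Fully spin-polarized local exchange: -2^(1/3) * C_x * integral of rho^(4/3)
ex_lsda = -(2.0 ** (1.0 / 3.0)) * C_X * radial_integral(rho ** (4.0 / 3.0), r)
ex_exact = -5.0 / 16.0                # equals -J for a one-electron system

print(f"N = {n_electrons:.6f}")
print(f"local exchange: {ex_lsda:.4f} Ha, exact: {ex_exact:.4f} Ha")
print(f"self-interaction error: {(ex_lsda - ex_exact) * HARTREE_TO_KCAL:.1f} kcal/mol")
```

The local approximation recovers only about 86% of the exact exchange, leaving roughly 0.044 hartree (about 28 kcal/mol) of self-interaction error in a one-electron system, the kind of error that admixing exact exchange is designed to reduce.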

DFT functionals are systematically improved by increasing their "non-locality" and incorporating exact Hartree-Fock exchange:

- Generalized Gradient Approximation (GGA): Functionals like PBE and BLYP include the gradient of the electron density (∇ρ) to account for inhomogeneities, improving molecular properties over the local density approximation (LDA) [16].

- meta-GGA: Functionals like TPSS and M06-L also incorporate the kinetic energy density τ(r), providing significantly more accurate energetics than GGAs at only slightly higher cost [18] [16].

- Hybrid Functionals: Global hybrids like B3LYP and PBE0 mix in a fraction of exact Hartree-Fock exchange to reduce self-interaction error, substantially improving accuracy at a higher computational cost because the exact-exchange matrix must be constructed [19] [16].

- Range-Separated Hybrids (RSH): Functionals like CAM-B3LYP, ωB97X, and M11 employ a distance-dependent mixing of HF and DFT exchange, offering superior performance for charge-transfer species, stretched bonds, and excited states [18] [16].
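
The two hybrid ingredients can be written compactly. A global hybrid replaces a fixed fraction a of semilocal exchange with exact exchange, E_xc = a·E_x^HF + (1 − a)·E_x^DFA + E_c^DFA, with a = 0.25 in PBE0 (B3LYP uses a related three-parameter form with 20% exact exchange). Range-separated hybrids instead split the Coulomb operator with the error function, 1/r = erfc(ωr)/r + erf(ωr)/r, and treat the short- and long-range parts with different amounts of exact exchange. The Python sketch below evaluates both; the component energies and the value of ω are hypothetical inputs, not parameters of any specific functional.

```python
import math


def global_hybrid_xc(ex_hf, ex_dfa, ec_dfa, a):
    """Global hybrid: a*E_x^HF + (1-a)*E_x^DFA + E_c^DFA (energies in hartree)."""
    return a * ex_hf + (1.0 - a) * ex_dfa + ec_dfa


def coulomb_split(r, omega):
    """Split 1/r into short-range erfc(omega r)/r and long-range erf(omega r)/r parts."""
    return math.erfc(omega * r) / r, math.erf(omega * r) / r


# Hypothetical component energies (hartree), purely illustrative.
ex_hf, ex_dfa, ec_dfa = -8.90, -8.80, -0.35
print(f"PBE0-style mix (a = 0.25): {global_hybrid_xc(ex_hf, ex_dfa, ec_dfa, 0.25):.4f} Ha")

omega = 0.3  # hypothetical range-separation parameter, bohr^-1
for r in (0.5, 2.0, 10.0):
    sr, lr = coulomb_split(r, omega)
    print(f"r = {r:4.1f} bohr: short {sr:.4f} + long {lr:.4f} = {sr + lr:.4f} (1/r = {1 / r:.4f})")
```

At short distances the erfc part dominates, and at large distances the erf part carries almost all of 1/r, which is why the amount of exact exchange in the long-range part controls the asymptotic behavior important for charge-transfer states.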

Quantitative Comparison of Methods

Table 1: Accuracy-Cost Characteristics of Quantum Chemical Methods

| Method | Computational Scaling | Typical Application | Key Strengths | Key Limitations |
|---|---|---|---|---|
| Coupled Cluster (CCSD(T)) | O(N⁷) | Benchmark calculations (<50 atoms) [15] | "Gold standard" accuracy [15] | Prohibitive cost for large systems |
| Quantum Monte Carlo (QMC) | O(N³)-O(N⁴) | Benchmark calculations [15] | High accuracy for large systems; favorable scaling | Statistical uncertainty; fixed-node error |
| SCS-MP2 | O(N⁵) | Enzyme reaction modeling [19] | Good agreement with CC; more robust than DFT for certain mechanisms [19] | Higher cost than DFT |
| NEVPT2 | O(N⁵)-O(N⁶) | Multireference systems (e.g., radicals) [18] | High accuracy for challenging electronic structures | Large active space required; high cost |
| Range-Separated Hybrid (M11, ωB97M-V) | O(N⁴) | Multireference systems, charge-transfer, excited states [18] [17] | Excellent for radicals; correct asymptotic behavior [18] | High computational cost vs pure DFT |
| Hybrid Meta-GGA (M06, TPSSh) | O(N⁴) | General purpose; transition metals [18] | Good balance for energetics and geometries | Sensitive to grid size; higher cost |
| Meta-GGA (M06-L, r²SCAN) | O(N³)-O(N⁴) | General purpose; large systems [18] | Improved energetics over GGA; no HF exchange cost | Can underestimate dispersion |
| GGA (PBE, BLYP) | O(N³) | Geometry optimization; large systems [16] | Computationally efficient; reasonable structures | Poor energetics; self-interaction error [16] |

Table 2: Performance of Select Methods Against High-Accuracy Benchmarks

| Method | Functional Type | Performance on Verdazyl Radical Dimers [18] | Performance on QUID Ligand-Pocket Benchmark [15] | Performance on Chorismate Synthase Reaction [19] |
|---|---|---|---|---|
| M11 | Range-Separated Hybrid Meta-GGA | Top performer (with MN12-L, M06, M06-L) [18] | Not reported | Not reported |
| MN12-L | Meta-Nonseparable Gradient Approximation | Top performer (with M11, M06, M06-L) [18] | Not reported | Not reported |
| M06 | Hybrid Meta-GGA | Top performer (with M11, MN12-L, M06-L) [18] | Not reported | Not reported |
| B3LYP | Global Hybrid GGA | Not reported | Not reported | Qualitatively wrong reaction energetics and mechanistic predictions [19] |
| SCS-MP2 | Ab Initio (Wavefunction) | Not reported | Not reported | Accurate results agreeing with coupled cluster and experiment [19] |
| PBE0+MBD | Hybrid GGA + Dispersion Correction | Not reported | Used for geometry optimization of benchmark set [15] | Not reported |

Case Studies in Accuracy Assessment

Case Study 1: Multireference Radical Systems (Verdazyl Radicals)

Experimental Context: Verdazyl radicals are organic compounds with unpaired electrons, making them promising candidates for new electronic and magnetic materials. Their electronic structure often exhibits multireference character, where a single-determinant description is insufficient, presenting a significant challenge for computational methods [18].

Methodology and Protocols: A 2025 benchmark study evaluated the performance of various DFT functionals and ab initio methods for calculating interaction energies in verdazyl radical dimers. Reference energies were established using the high-level NEVPT2 method with a (14,8) active space, comprising the verdazyl π orbitals. This reference was used to assess the accuracy of multiple density functionals from different families [18].

Key Findings:

- The range-separated hybrid meta-GGA functional M11, the meta-nonseparable gradient approximation functional MN12-L, and the hybrid meta-GGA M06 and its pure DFT counterpart M06-L emerged as the top-performing functionals for these challenging systems [18].

- This study demonstrates that members of the Minnesota functional family, particularly those incorporating meta-GGA components and range separation, can achieve accuracy approaching high-level ab initio methods for multireference systems while maintaining substantially lower computational costs [18].
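
Active-space notation (n, m) means n electrons distributed over m orbitals, and the size of the resulting CI problem grows combinatorially with the active space. The sketch below counts Slater determinants with equal numbers of α and β electrons (M_S = 0) for the active spaces mentioned in this article, CAS(2,2) in the β-lapachone case study and CAS(14,8) here, plus two larger spaces for comparison; it ignores spatial symmetry and spin adaptation, which reduce the counts somewhat.

```python
from math import comb


def n_determinants(n_electrons: int, n_orbitals: int) -> int:
    """Number of M_S = 0 Slater determinants for CAS(n_electrons, n_orbitals)."""
    if n_electrons % 2:
        raise ValueError("this sketch assumes an even number of active electrons (M_S = 0)")
    n_alpha = n_beta = n_electrons // 2
    return comb(n_orbitals, n_alpha) * comb(n_orbitals, n_beta)


for n_el, n_orb in [(2, 2), (14, 8), (16, 16), (18, 18)]:
    print(f"CAS({n_el},{n_orb}): {n_determinants(n_el, n_orb):,} determinants")
```

A nearly filled space such as CAS(14,8) contains only 64 determinants, whereas half-filled spaces of 16 to 18 orbitals already reach hundreds of millions to billions, which is why active spaces must be chosen carefully.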

Case Study 2: Enzyme Reaction Energetics (Chorismate Synthase)

Experimental Context: Modeling reaction mechanisms in enzymes is crucial for understanding biological catalysis and designing inhibitors. The conversion of 5-enolpyruvylshikimate-3-phosphate (EPSP) to chorismate in chorismate synthase represents a complex biological transformation where accurate energetics are essential [19].

Methodology and Protocols: Researchers employed QM/MM (Quantum Mechanics/Molecular Mechanics) methods, with the enzyme environment treated with molecular mechanics (CHARMM27 force field). The quantum region was studied using both B3LYP (a DFT functional) and SCS-MP2 (an ab initio wavefunction method), with final energies refined using the local coupled cluster method LCCSD(T) [19].

Key Findings:

- The widely used B3LYP functional predicted reaction energetics that were "qualitatively wrong," potentially leading to incorrect mechanistic conclusions [19].

- In contrast, the SCS-MP2 method provided results in good agreement with both coupled cluster benchmarks and experimental data, correctly identifying the reaction pathway in which phosphate elimination precedes proton transfer [19].

- This case highlights a critical failure mode of certain DFT approximations and underscores the need for careful method validation against ab initio benchmarks or experimental data before drawing mechanistic conclusions.

Case Study 3: Non-Covalent Interactions in Drug-Relevant Systems (QUID Benchmark)

Experimental Context: Non-covalent interactions (NCIs) dominate ligand-protein binding, making their accurate description paramount in drug design. The "QUID" (QUantum Interacting Dimer) benchmark framework was developed to address this need, containing 170 chemically diverse molecular dimers modeling ligand-pocket motifs [15].

Methodology and Protocols: The QUID benchmark establishes a "platinum standard" by obtaining tight agreement (within 0.5 kcal/mol) between two fundamentally different high-level methods: LNO-CCSD(T) (a localized orbital variant of Coupled Cluster) and FN-DMC (Fixed-Node Diffusion Monte Carlo) [15]. This robust reference enables unbiased evaluation of more approximate methods.

Key Findings:

- Several dispersion-inclusive density functional approximations provided accurate energy predictions for these complex NCIs, confirming their utility in drug discovery applications [15].

- However, these same DFT methods showed significant variation in their predictions of atomic van der Waals forces (both magnitude and orientation), suggesting potential limitations for molecular dynamics simulations where forces drive nuclear motion [15].

- Semi-empirical methods and empirical force fields generally required improvements for accurately capturing NCIs, particularly for "out-of-equilibrium" geometries encountered during binding processes [15].

Emerging Paradigms: Machine Learning and Hybrid Approaches

Machine-Learned Density Functionals

Traditional functional development follows a physically motivated path up "Jacob's Ladder." A new paradigm uses supervised machine learning to create functionals like NeuralXC, which are trained on high-fidelity ab initio data to correct the deficiencies of baseline functionals (e.g., PBE) [17]. These ML functionals learn a meaningful representation of physical information, making them transferable across similar systems. For example, a NeuralXC functional optimized for water outperformed other methods in characterizing bond breaking and agreed well with experimental results [17].

Another approach trains ML models on both the exact energies and the potentials obtained from quantum many-body calculations, rather than on energies alone. Potentials expose small differences more clearly than total energies, so models trained on them capture subtle changes more effectively. Models trained this way have shown striking accuracy, even on systems beyond their training data, while keeping computational costs manageable [20].

Machine-Learned Interatomic Potentials (MLIPs)

MLIPs are transforming materials simulation by offering near-quantum accuracy at a computational cost close to that of classical force fields. A key challenge lies in balancing their accuracy against the computational cost of both training and evaluation [21].

Research shows that this trade-off can be optimized by jointly considering:

- Training Set Precision: Using reduced-precision DFT training sets can be sufficient if energy and force contributions are appropriately weighted during training [21].

- Training Set Size: Systematic sub-sampling techniques can identify the most informative atomic configurations, drastically reducing the required training set size [21].

- Model Complexity: Selecting the right model complexity (e.g., linear SNAP vs. complex graph neural networks) based on application needs (simulation size, timescale) is crucial. For many applications, simpler, optimized MLIPs offer a better accuracy/cost balance than complex "universal" models that require fine-tuning and retain high evaluation costs [21].
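Systematic sub-sampling can take many forms. One generic option is farthest-point sampling in a descriptor space, which greedily adds the configuration that is most dissimilar from everything already selected. The sketch below assumes each atomic configuration has already been encoded as a fixed-length descriptor vector (how those descriptors are computed is outside the sketch). It is an illustration of the idea, not necessarily the sub-sampling scheme used in [21], and the function name is hypothetical.

```python
import numpy as np


def farthest_point_sampling(descriptors, n_select, start=0):
    """Greedy farthest-point selection of n_select rows from a descriptor matrix.

    descriptors: array of shape (n_configs, n_features), one row per configuration.
    Returns the selected row indices in selection order.
    """
    X = np.asarray(descriptors, dtype=float)
    if X.ndim == 1:
        X = X[:, None]  # treat a 1D input as one feature per configuration
    n = X.shape[0]
    if not 0 < n_select <= n:
        raise ValueError("n_select must be between 1 and the number of configurations")
    selected = [start]
    # Distance from every configuration to its nearest already-selected configuration.
    min_dist = np.linalg.norm(X - X[start], axis=1)
    for _ in range(n_select - 1):
        nxt = int(np.argmax(min_dist))  # the most "novel" configuration so far
        selected.append(nxt)
        min_dist = np.minimum(min_dist, np.linalg.norm(X - X[nxt], axis=1))
    return selected


# Toy 1D "descriptors": a dense cluster near 0 plus two outliers.
print(farthest_point_sampling([0.0, 0.1, 0.2, 5.0, 10.0], n_select=3))  # -> [0, 4, 3]
```

The redundant near-duplicates (0.1 and 0.2) are skipped in favor of the outliers, which is the behavior that lets a small subset cover a large, redundant training pool.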

Diagram 1: Decision workflow for selecting quantum chemical methods based on system size, electronic complexity, and research goals.

Essential Research Reagent Solutions

Table 3: Key Computational Tools and Resources

| Tool / Resource | Type | Primary Function | Relevance to Accuracy-Cost Tradeoff |
|---|---|---|---|
| LNO-CCSD(T) [15] | Ab Initio Method | High-accuracy energy calculations for large systems | Extends the reach of "gold standard" coupled cluster to larger molecules relevant to drug design. |
| NEVPT2 with tailored active spaces [18] | Ab Initio Method | Accurate treatment of multireference systems | Provides benchmark references for challenging open-shell systems like radicals. |
| Minnesota Functionals (M11, M06, MN12-L) [18] | DFT Functional Family | Broad applicability across various chemical systems | Offers top-tier DFT performance for specific challenges like multireference character at reasonable cost. |
| SAPT [15] | Energy Decomposition Method | Detailed analysis of non-covalent interactions | Provides physical insights into binding components (electrostatics, dispersion, induction) for rational design. |
| NeuralXC [17] | Machine-Learned Functional | Lifts baseline DFT accuracy toward coupled-cluster level | A promising path to bypass functional development limitations; specialized for specific system types. |
| MLIPs (e.g., SNAP, qSNAP) [21] | Machine-Learned Potential | Large-scale molecular dynamics with near-DFT accuracy | Dramatically reduces cost of accurate dynamics simulations after initial training investment. |
| QUID Dataset [15] | Benchmark Database | 170 non-covalent dimers modeling ligand-pocket motifs | Provides a robust "platinum standard" for validating methods on pharmaceutically relevant systems. |

The accuracy-cost tradeoff between ab initio methods and DFT remains a central consideration in computational chemistry and materials science. While high-level ab initio methods provide essential benchmarks, carefully selected DFT functionals—particularly modern meta-GGAs, hybrids, and range-separated hybrids—can provide an excellent balance for many applications, including drug design [18] [15].

Emerging approaches, particularly machine-learned functionals and interatomic potentials, are poised to reshape this landscape. By leveraging accurate quantum data, these methods create a new Pareto front, offering enhanced accuracy without the traditional computational cost increase [20] [21] [17]. For the practicing researcher, the optimal strategy involves: (1) understanding the specific electronic structure challenges of their system (multireference character, charge transfer, strong correlation), (2) selecting methods validated for similar problems, and (3) leveraging machine-learning accelerators where appropriate. As these computational tools continue evolving, they will further empower scientists to make accurate predictions of molecular properties and behaviors, accelerating the discovery of new materials and therapeutic agents.

In the field of computational drug discovery, the prediction of protein-ligand binding affinity represents a fundamental challenge with direct implications for therapeutic development. The concept of "benchmark accuracy" is anchored by the sub-1 kcal/mol threshold, a target often termed "chemical accuracy" due to its alignment with the experimental uncertainty of isothermal titration calorimetry (ITC) measurements [22] [23]. Achieving this level of predictive precision is critical because, since ΔG = RT ln Kd, an error of just 1 kcal/mol translates into roughly a 5-fold error in the binding constant Kd (about 5.4-fold at 298 K), potentially leading to erroneous conclusions about relative binding affinities and derailing drug optimization efforts [24]. This guide compares contemporary methods for binding affinity prediction, evaluating their performance against this benchmark standard through structured experimental data and methodological analysis.
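The link between a free-energy error and a fold error in Kd follows directly from ΔG = RT ln Kd: an error δ in ΔG multiplies Kd by exp(δ/RT). The short sketch below makes that arithmetic explicit; the function names and the two temperatures are illustrative choices, not part of any cited benchmark.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)


def fold_error_in_kd(ddg_kcal: float, temperature_k: float = 298.15) -> float:
    """Multiplicative error in Kd implied by an error of ddg_kcal in binding free energy."""
    return math.exp(abs(ddg_kcal) / (R_KCAL * temperature_k))


def ddg_for_fold_error(fold: float, temperature_k: float = 298.15) -> float:
    """Free-energy error (kcal/mol) that corresponds to a given fold error in Kd."""
    return R_KCAL * temperature_k * math.log(fold)


for t in (298.15, 310.0):
    print(f"T = {t:.2f} K: 1 kcal/mol -> {fold_error_in_kd(1.0, t):.2f}-fold in Kd; "
          f"a 10-fold Kd error -> {ddg_for_fold_error(10.0, t):.2f} kcal/mol")
```

At 298 K a 1 kcal/mol error corresponds to about a 5.4-fold change in Kd, and a full order of magnitude in Kd corresponds to about 1.36 kcal/mol.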

Methodological Approaches and Their Accuracy Benchmarks

Computational methods for predicting binding affinity span multiple theoretical frameworks, each with distinct trade-offs between accuracy, computational cost, and applicability. The performance of these methods is quantitatively assessed through metrics comparing predicted values against experimentally determined binding affinities, most commonly reported as Root Mean Square Error (RMSE) in kcal/mol.
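RMSE is usually reported alongside complementary statistics such as mean absolute error, mean signed error (systematic bias), and a correlation coefficient. A minimal NumPy sketch of these metrics for paired predicted and experimental ΔG values follows; the function name and the example values are hypothetical.

```python
import numpy as np


def error_metrics(pred, expt):
    """RMSE, MAE, mean signed error (all in kcal/mol) and Pearson r for paired values."""
    pred = np.asarray(pred, dtype=float)
    expt = np.asarray(expt, dtype=float)
    if pred.shape != expt.shape or pred.size < 2:
        raise ValueError("need two equal-length arrays with at least two entries")
    err = pred - expt
    return {
        "rmse": float(np.sqrt(np.mean(err ** 2))),
        "mae": float(np.mean(np.abs(err))),
        "mean_signed_error": float(np.mean(err)),  # > 0 means predictions are too weak
        "pearson_r": float(np.corrcoef(pred, expt)[0, 1]),
    }


# Hypothetical predicted vs. experimental binding free energies (kcal/mol).
print(error_metrics([-8.0, -9.0, -10.0], [-8.5, -9.0, -11.0]))
```

Reporting the signed error next to RMSE helps separate a constant offset, which matters less for ranking congeneric ligands, from scatter, which matters more.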

Table 1: Comparative Performance of Binding Affinity Prediction Methods

| Method Category | Representative Methods | Reported RMSE (kcal/mol) | Key Applications | Computational Cost |
|---|---|---|---|---|
| Quantum Mechanical | LNO-CCSD(T), FN-DMC | 0.5 (benchmark) | Benchmarking, Small Systems | Extremely High (Days-Weeks) |
| Absolute FEP | AB-FEP (FEP+) | ~1.1 | Lead Optimization | High (Hours-Days) |
| Relative FEP | RBFE (OPLS4) | 1.39 (Nucleic Acids) | Congeneric Series | High (Hours per Perturbation) |
| Machine Learning | DualBind (ToxBench) | ~1.75 | Virtual Screening | Low (Minutes) |
| Semi-Empirical QM | g-xTB (PLA15) | N/A (Interaction Energy) | Interaction Energy Estimation | Medium (Hours) |
| Docking | Various | 2-4 | High-Throughput Screening | Very Low (Seconds-Minutes) |

Quantum Mechanical Methods: The Platinum Standard

Quantum mechanical approaches represent the highest accuracy tier for binding affinity prediction, with recent advances establishing a "platinum standard" through agreement between complementary methodologies.

The QUID Benchmark Framework: The "QUantum Interacting Dimer" (QUID) framework contains 170 non-covalent systems modeling chemically and structurally diverse ligand-pocket motifs. This benchmark employs symmetry-adapted perturbation theory to ensure broad coverage of non-covalent binding motifs and energetic contributions [24].

Achieving Platinum Standard Accuracy: By obtaining tight agreement (0.5 kcal/mol) between two fundamentally different "gold standard" methods—LNO-CCSD(T) and FN-DMC—QUID establishes a robust reference point for assessing more approximate methods. This agreement significantly reduces the uncertainty inherent in highest-level QM calculations [24].

Performance of Density Functional Approximations: Analysis within the QUID framework reveals that several dispersion-inclusive density functional approximations provide accurate energy predictions, though their atomic van der Waals forces differ substantially in magnitude and orientation. Conversely, semiempirical methods and empirical force fields require significant improvements in capturing non-covalent interactions for out-of-equilibrium geometries [24].

Free Energy Perturbation Methods: The Industry Standard

Free energy perturbation (FEP) methods bridge the accuracy-scalability gap, offering sufficiently high accuracy for practical drug discovery applications.

Absolute Binding FEP (AB-FEP): AB-FEP calculations via molecular dynamics simulations in explicit solvent achieve accuracy comparable to experimental assays, with the Schrödinger FEP+ implementation reporting RMSE of approximately 1.1 kcal/mol against experimental affinities in validation studies [22]. The ToxBench dataset provides 8,770 ERα-ligand complex structures with binding free energies computed via AB-FEP, with a subset validated against experimental affinities at 1.75 kcal/mol RMSE [22].

Relative Binding FEP (RBFE): For congeneric series, RBFE calculations demonstrate strong performance in lead optimization contexts. Recent assessments of nucleic acid targeting ligands report average pairwise RMSE of 1.39 kcal/mol across more than 100 ligands with diverse binding modes, demonstrating FEP's applicability beyond traditional protein targets [25].

Methodological Limitations: Despite these successes, FEP calculations struggle with large conformational changes, changes in binding mode, and certain chemical modifications. Large-scale applications in industrial drug discovery projects reveal specific failure cases, particularly scaffold modifications, ring expansions, and water displacement [23].

Machine Learning Approaches: Emerging Capabilities

Machine learning methods offer rapid predictions by learning patterns from existing data, though their accuracy depends heavily on training data quality and volume.

DualBind Model: The DualBind model employs a dual-loss framework combining supervised mean squared error (MSE) loss with unsupervised denoising score matching (DSM) loss to effectively learn the binding energy function. When trained on the ToxBench dataset, this approach demonstrates potential to approximate AB-FEP accuracy at a fraction of the computational cost [22].
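To make the idea of a dual-loss objective concrete, the sketch below combines a supervised squared error on an energy label with a denoising score matching term on noised coordinates. It assumes the model is an energy function whose negative coordinate gradient serves as the score, and it replaces the neural network with a hypothetical quadratic toy energy so the gradient is analytic. It illustrates the generic technique only; it is not DualBind's code, architecture, or weighting scheme.

```python
import numpy as np


def toy_energy(x, params):
    """Hypothetical stand-in for a learned binding-energy model: E(x) = a*||x - c||^2 + b."""
    a, b, c = params
    return float(a * np.sum((x - c) ** 2) + b)


def toy_energy_grad(x, params):
    """Analytic gradient dE/dx of the toy energy with respect to coordinates x."""
    a, _, c = params
    return 2.0 * a * (x - c)


def dual_loss(x, y, params, sigma=0.1, lam=1.0, seed=0):
    """Supervised MSE on the energy label plus a denoising score matching (DSM) term.

    The model score is taken as -dE/dx. For Gaussian noise x_noisy = x + sigma*eps,
    the DSM regression target for the score is -eps / sigma.
    """
    rng = np.random.default_rng(seed)
    mse = (toy_energy(x, params) - y) ** 2
    eps = rng.standard_normal(x.shape)
    x_noisy = x + sigma * eps
    model_score = -toy_energy_grad(x_noisy, params)
    target_score = -eps / sigma
    dsm = float(np.mean((model_score - target_score) ** 2))
    return mse + lam * dsm, mse, dsm


# Hypothetical example: 5 ligand atoms in 3D and an energy label in kcal/mol.
coords = np.random.default_rng(1).normal(size=(5, 3))
params = (0.5, -9.0, np.zeros(3))
total, mse, dsm = dual_loss(coords, y=-8.2, params=params)
print(f"total={total:.3f}  mse={mse:.3f}  dsm={dsm:.3f}")
```

The DSM term needs no energy labels, only structures, which is why such a term can add an unsupervised signal on top of the supervised one; the balance between the two is controlled here by the hypothetical weight `lam`.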

Data Quality Challenges: ML models face significant challenges due to data quality issues and potential data leakage. The PDBBind dataset, a common training resource, has demonstrated limitations where models learn dataset-specific biases rather than underlying protein-ligand interactions [22]. Proper data partitioning strategies, such as UniProt-based splitting, are essential for accurate performance assessment, though they often reveal lower real-world accuracy compared to random splitting [26].
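A protein-grouped split keeps every complex of a given target on the same side of the train/test boundary, so a model cannot score well simply by recognizing the protein. The sketch below is a minimal, assumed implementation keyed on a UniProt-style field; the record layout, the `group_split` name, and the placeholder IDs are hypothetical, not taken from any specific benchmark.

```python
import random
from collections import defaultdict


def group_split(records, key="uniprot", test_fraction=0.2, seed=0):
    """Assign whole groups (e.g. all complexes of one protein) to either train or test."""
    groups = defaultdict(list)
    for rec in records:
        groups[rec[key]].append(rec)
    group_ids = sorted(groups)
    random.Random(seed).shuffle(group_ids)
    n_test_target = test_fraction * len(records)
    train, test = [], []
    for gid in group_ids:
        # Fill the test set group by group until it reaches the target size.
        if len(test) < n_test_target:
            test.extend(groups[gid])
        else:
            train.extend(groups[gid])
    return train, test


# Hypothetical records; "PROT_i" labels are placeholders, not real UniProt accessions.
records = [{"uniprot": f"PROT_{i % 5}", "ligand": f"lig{i}"} for i in range(20)]
train, test = group_split(records, test_fraction=0.2)
overlap = {r["uniprot"] for r in train} & {r["uniprot"] for r in test}
print(len(train), len(test), overlap)
```

Because whole groups are moved, the realized test fraction only approximates `test_fraction`; that imprecision is the price of eliminating protein-level leakage.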

Semi-Empirical Quantum and Neural Network Potentials

Lower-cost quantum methods and neural network potentials offer intermediate options between force fields and full quantum calculations.

PLA15 Benchmark Performance: Assessment of various semi-empirical methods and neural network potentials (NNPs) on the PLA15 benchmark set reveals g-xTB as a top performer with 6.1% mean absolute percent error for protein-ligand interaction energies. Notably, models trained on the OMol25 dataset (eSEN-s, UMA-s, UMA-m) achieve approximately 11% error, while other NNPs demonstrate significantly higher errors [27].
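Mean absolute percent error normalizes each interaction-energy error by the magnitude of its reference value, which makes complexes with very different binding strengths comparable. The sketch below assumes the standard MAPE definition; PLA15's exact protocol may differ, and the example energies are invented.

```python
import numpy as np


def mean_absolute_percent_error(pred, ref):
    """MAPE (%) of predicted vs. reference interaction energies."""
    pred = np.asarray(pred, dtype=float)
    ref = np.asarray(ref, dtype=float)
    if np.any(ref == 0.0):
        raise ValueError("reference energies must be non-zero for a percent error")
    return float(100.0 * np.mean(np.abs((pred - ref) / ref)))


# Hypothetical interaction energies (kcal/mol) for two protein-ligand complexes.
print(mean_absolute_percent_error([-45.0, -110.0], [-50.0, -100.0]))
```

A percent metric becomes unstable for weakly bound systems whose reference energy is close to zero, which is one reason it is best read alongside absolute errors.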

Charge Handling Limitations: A critical finding from PLA15 benchmarking is that the worst-performing NNPs are those that don't explicitly take total molecular charge as input. Since every complex in PLA15 contains either a charged ligand or charged protein, proper charge handling emerges as an essential requirement for accurate interaction energy prediction [27].

Experimental Protocols and Benchmarking Standards

Best Practices for Benchmark Construction

Robust benchmarking requires careful attention to experimental data curation, system preparation, and statistical analysis to ensure meaningful results.

Data Curation Standards: High-quality benchmarks require experimental data with well-understood potential pitfalls and complications. The protein-ligand-benchmark initiative provides a curated, versioned, open, standardized set adherent to these standards, emphasizing the importance of reliable structural and bioactivity data [23].

Domain of Applicability: Benchmarks should realistically represent the intended application domain. For binding affinity prediction, this means including systems with challenging conformational sampling requirements rather than only simplified systems selected for methodological tractability [23].

Statistical Power Considerations: Meaningful benchmarks require enough systems to resolve practically relevant differences between methods. Underpowered datasets leave wide uncertainty on error metrics and may fail to provide realistic accuracy estimates, leading to overconfidence in method performance [23].
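One simple way to see whether a benchmark is large enough is to attach a bootstrap confidence interval to its RMSE: resample the systems with replacement, recompute the RMSE each time, and read off percentiles. The sketch below uses synthetic data with a fixed 1.2 kcal/mol noise level (an arbitrary assumption) to contrast a 20-system and a 200-system benchmark.

```python
import numpy as np


def bootstrap_rmse_ci(pred, expt, n_boot=2000, alpha=0.05, seed=0):
    """Point RMSE plus a percentile bootstrap (1 - alpha) confidence interval."""
    pred = np.asarray(pred, dtype=float)
    expt = np.asarray(expt, dtype=float)
    rng = np.random.default_rng(seed)
    n = pred.size
    idx = rng.integers(0, n, size=(n_boot, n))  # resample systems with replacement
    err = pred[idx] - expt[idx]
    rmse_samples = np.sqrt(np.mean(err ** 2, axis=1))
    lo, hi = np.quantile(rmse_samples, [alpha / 2, 1 - alpha / 2])
    point = float(np.sqrt(np.mean((pred - expt) ** 2)))
    return point, float(lo), float(hi)


rng = np.random.default_rng(42)
for n in (20, 200):
    expt = rng.uniform(-12.0, -6.0, size=n)  # synthetic "experimental" dG values
    pred = expt + rng.normal(0.0, 1.2, size=n)  # synthetic predictions, 1.2 kcal/mol noise
    point, lo, hi = bootstrap_rmse_ci(pred, expt)
    print(f"n={n:4d}: RMSE={point:.2f} kcal/mol, 95% CI [{lo:.2f}, {hi:.2f}]")
```

With only 20 systems the interval is wide enough that two methods differing by a few tenths of a kcal/mol cannot be told apart, which is the practical face of an underpowered benchmark.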

The ToxBench Dataset Protocol

The ToxBench dataset establishes a standardized protocol for AB-FEP benchmarking focused on the pharmaceutically critical Human Estrogen Receptor Alpha (ERα) target.

Dataset Composition: ToxBench contains 8,770 ERα-ligand complex structures with binding free energies computed via AB-FEP. The dataset incorporates non-overlapping ligand splits to assess model generalizability, closely aligning with real-world structure-based virtual screening scenarios where extensive ligand libraries are screened against a single target [22].

Experimental Validation: A subset of the AB-FEP calculations is validated against experimental affinities, achieving 1.75 kcal/mol RMSE. This validation provides crucial experimental grounding for the computational results [22].

Accessibility: The dataset is publicly available via Hugging Face datasets, while the DualBind implementation is accessible through GitHub, promoting transparency and community adoption [22].

The QUID Framework Methodology

The QUID framework implements rigorous protocols for establishing quantum mechanical benchmark accuracy.

System Selection: QUID includes 42 equilibrium and 128 non-equilibrium dimers of up to 64 atoms, incorporating H, N, C, O, F, P, S, and Cl elements. The selection exhaustively explores different binding sites of nine large flexible chain-like drug molecules probed with benzene or imidazole [24].

Non-Equilibrium Sampling: For a representative selection of 16 dimers, non-equilibrium conformations are generated at eight points along the dissociation pathway (16 × 8 = 128 non-equilibrium dimers), modeling snapshots of ligand binding. These conformations are characterized by a dimensionless factor q ranging from 0.90 to 2.00, where q = 1.00 corresponds to the equilibrium dimer [24].
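A rigid dissociation scan of this kind can be generated by translating one monomer along the intermolecular axis. The sketch below assumes, hypothetically, that q scales the center-of-mass separation between the two monomers with both geometries frozen; QUID may define q through a different intermolecular distance, and the q values in the loop are illustrative rather than the benchmark's eight-point grid.

```python
import numpy as np


def scale_dimer_separation(coords_a, coords_b, masses_a, masses_b, q):
    """Rigidly shift monomer B so its center-of-mass distance from A is q times the original.

    Assumes (hypothetically) that q scales the center-of-mass separation; monomer
    geometries stay frozen, as in a rigid dissociation scan.
    """
    coords_a = np.asarray(coords_a, dtype=float)
    coords_b = np.asarray(coords_b, dtype=float)
    com_a = np.average(coords_a, axis=0, weights=masses_a)
    com_b = np.average(coords_b, axis=0, weights=masses_b)
    shift = (q - 1.0) * (com_b - com_a)
    return coords_a.copy(), coords_b + shift


# Toy dimer: a two-atom "ligand" A and a single probe atom B (coordinates in angstrom).
a = [[0.0, 0.0, 0.0], [1.0, 0.0, 0.0]]
b = [[0.5, 3.5, 0.0]]
for q in (0.90, 1.00, 1.50, 2.00):  # illustrative q values, not the QUID grid
    _, b_q = scale_dimer_separation(a, b, [12.0, 12.0], [12.0], q)
    print(q, b_q[0])
```

Each scaled geometry would then be passed to the reference and approximate methods to build interaction-energy curves, so short-range (q < 1) and long-range (q > 1) errors can be assessed separately.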

Reference Method Agreement: The "platinum standard" is established through complementary CC and QMC methods, achieving 0.5 kcal/mol agreement. This tight convergence between fundamentally different theoretical approaches significantly reduces uncertainty in reference values [24].

QM Benchmark Workflow

Essential Research Reagent Solutions

The experimental and computational protocols described require specific methodological tools and resources to implement effectively.

Table 2: Essential Research Reagents and Computational Tools

| Resource Category | Specific Tools/Datasets | Primary Function | Access Method |
|---|---|---|---|
| Benchmark Datasets | ToxBench, QUID, PLA15 | Method Validation & Training | Hugging Face, Academic Repositories |
| Force Fields | OPLS4, AMBER, CHARMM | Molecular Mechanics Potentials | Commercial & Academic Software |
| Simulation & Free Energy Software | Schrödinger FEP+, OpenMM, GROMACS | Binding Affinity Calculation | Commercial, Open Source |
| Machine Learning Models | DualBind, ATOMICA, NNPs | Rapid Affinity Prediction | GitHub, Research Publications |
| Statistical Analysis Tools | Arsenic, Custom Scripts | Benchmark Performance Assessment | Open Source, Custom Development |
| Visualization & Analysis | TensorBoard, Encord, FiftyOne | Model Interpretation & Data QC | Commercial, Open Source |

The pursuit of sub-1 kcal/mol accuracy in binding affinity prediction continues to drive methodological innovations across computational chemistry. While quantum mechanical methods establish the fundamental accuracy ceiling with their 0.5 kcal/mol "platinum standard," practical drug discovery increasingly relies on FEP methods achieving approximately 1.1 kcal/mol RMSE for well-behaved systems. Machine learning approaches show promising acceleration potential but face data quality and generalizability challenges that must be addressed through improved benchmarking practices. As these methods evolve, standardized benchmarks like ToxBench, QUID, and PLA15 provide critical validation frameworks to ensure reported accuracies reflect real-world predictive performance rather than dataset-specific artifacts. The field moves toward increasingly reliable binding affinity predictions that can genuinely impact drug discovery pipelines while maintaining transparency about current limitations and domains of applicability.

The Role of Quantum Statistical Mechanics in Modeling Biomolecular Systems

Quantum Statistical Mechanics (QSM) provides the fundamental theoretical framework for connecting the microscopic world of molecular interactions to the macroscopic observable properties of biomolecular systems. In computational chemistry and drug discovery, this connection is crucial for predicting how proteins, ligands, and other biological molecules behave in complex, dynamic environments. The field is currently undergoing a transformative shift as traditional quantum mechanical approaches converge with advanced statistical sampling techniques and machine learning (ML) to overcome longstanding limitations in accuracy and computational feasibility. This evolution is particularly evident in the development of more accurate density functional theory (DFT) methods and the creation of neural network potentials that approach quantum-level accuracy at a fraction of the computational cost [28] [29].

The integration of these methodologies enables researchers to tackle fundamental challenges in biomolecular modeling, including predicting ligand-binding affinities, understanding conformational dynamics, and characterizing reaction mechanisms in physiological environments. By framing these advances within the context of accuracy statistical analysis, this guide objectively compares the performance of emerging computational tools against established alternatives, providing researchers with evidence-based insights for selecting appropriate methodologies for their specific biomolecular applications.

Comparative Analysis of Computational Methodologies

Table 1: Comparative Analysis of Quantum Chemical and ML Methods for Biomolecular Systems

| Methodology | Theoretical Basis | Computational Scaling | Key Accuracy Limitations | Typical System Size | Representative Platforms/Tools |
|---|---|---|---|---|---|
| Density Functional Theory (DFT) | Electron density functional [29] | O(N³) [28] | Exchange-correlation functional approximation; Strong correlation systems [29] | Hundreds of atoms [28] | Gaussian 16, Psi4, DMol3 [30] [31] |
| Post-Hartree-Fock (CCSD(T)) | Wavefunction theory [29] | O(N⁷) (canonical) | Computational intractability for large systems [29] | Small molecules (<20 atoms) [29] | Psi4 [30] |
| Quantum Mechanics/Molecular Mechanics (QM/MM) | Hybrid quantum/classical mechanics [29] | Depends on QM region size | QM/MM boundary artifacts; Polarization across boundary [29] | Entire proteins with quantum active sites [29] | CHARMm, NAMD [31] |
| Neural Network Potentials (NNPs) | Machine learning on quantum data [32] [29] | Near classical MD | Training data dependency; Transferability [32] | 100,000+ atoms [32] | Egret-1, AIMNet2, OMol25 eSEN [32] |
| Enhanced Sampling MD (GaMD) | Statistical mechanics with boosted potential [31] | Comparable to classical MD | Reweighting challenges; Potential distortion [31] | Full biomolecular complexes [31] | BIOVIA Discovery Studio [31] |

The performance metrics in Table 1 reveal critical trade-offs between computational feasibility and physical accuracy that researchers must navigate. DFT strikes a practical balance for many biomolecular applications but faces fundamental accuracy limitations due to the exchange-correlation functional approximation, an active research area where machine learning approaches are showing significant promise [28] [29]. Recent breakthroughs include ML-based approaches that achieve third-rung DFT accuracy at second-rung computational cost by inverting the quantum many-body problem, potentially moving closer to the elusive universal functional [28].
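Formal scaling exponents translate directly into how cost grows with system size. The sketch below compares DFT's formal O(N³) with the O(N⁷) scaling of canonical CCSD(T); it ignores prefactors, basis-set effects, and local or linear-scaling approximations, so it only illustrates the trend, and the helper name is hypothetical.

```python
def relative_cost(size_new: float, size_ref: float, exponent: float) -> float:
    """Cost multiplier when system size grows from size_ref to size_new for an O(N**exponent) method."""
    return (size_new / size_ref) ** exponent


for label, p in [("DFT, formal O(N^3)", 3), ("canonical CCSD(T), O(N^7)", 7)]:
    print(f"{label}: doubling N multiplies cost by {relative_cost(2.0, 1.0, p):.0f}")
```

Doubling the system size costs roughly 8 times more with DFT but about 128 times more with canonical CCSD(T), which is why the latter is reserved for small reference systems or replaced by local variants such as LNO-CCSD(T).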

For large-scale biomolecular simulations, NNPs represent a paradigm shift, enabling quantum-level accuracy for systems comprising hundreds of thousands of atoms, which was previously computationally prohibitive [32]. These data-driven potentials are trained on high-quality quantum mechanical data and can capture complex electronic effects while maintaining the computational efficiency of classical force fields, effectively bridging the quantum-statistical divide in biomolecular modeling.

Accuracy Benchmarking Across Methodologies

Table 2: Accuracy Benchmarking for Biomolecular Properties and Interactions

| Target Property | High-Accuracy Reference | DFT Performance | NNP Performance | Traditional MM Performance | Validation Approach |
|---|---|---|---|---|---|
| Binding Free Energy | Experimental IC₅₀/Kd values | ~2-3 kcal/mol error with hybrid functionals [29] | ~1-2 kcal/mol error vs. quantum reference [32] | ~3-5 kcal/mol error with correction [31] | Free Energy Perturbation (FEP) [31] |
| Reaction Barriers | CCSD(T) [29] | ~3-5 kcal/mol error for transition metals [29] | <1 kcal/mol error for trained systems [32] | N/A (requires QM) | Experimental kinetics [29] |
| Protein-Ligand Pose Prediction | X-ray crystallography | N/A (geometry optimization) | N/A (scoring) | ~1-2 Å RMSD with flexible docking [31] | Cross-docking studies [33] |
| pKa Prediction | Experimental titration | ~0.5-1.0 pKa units with implicit solvation [29] | ~0.3-0.6 pKa units (Starling model) [32] | ~1.0-2.0 pKa units with empirical correction | Potentiometric titration [32] |
| Conformational Dynamics | NMR/MD ensembles | Limited to small systems due to cost | Quantitative agreement with long MD [32] | Qualitative agreement, force field dependent | Hydrogen-deuterium exchange [31] |

The accuracy benchmarking data in Table 2 highlight how hybrid methodologies are advancing the field. For binding free energies, NNPs reach errors of roughly 1-2 kcal/mol against the quantum reference, approaching chemical accuracy (~1 kcal/mol) and making them increasingly valuable for drug discovery applications where predicting small affinity differences is critical [32]. ML-corrected DFT approaches show particular promise for reaction barriers, potentially offering CCSD(T)-level accuracy for complex biochemical reactions such as enzymatic catalysis [28] [29].

For pKa prediction, physics-informed ML models like Starling achieve significantly higher accuracy than traditional methods, enabling more reliable prediction of protonation states in drug discovery [32]. This demonstrates the power of integrating quantum statistical principles with data-driven approaches to overcome limitations of purely physical or purely empirical models.

Experimental Protocols and Workflows

Protocol for Enhanced Sampling with Gaussian accelerated Molecular Dynamics (GaMD)

The GaMD protocol implemented in platforms such as BIOVIA Discovery Studio provides a robust methodology for enhancing conformational sampling in biomolecular systems while maintaining the ability to recover original thermodynamic properties [31]. The detailed workflow consists of the following steps:

System Preparation: Construct the solvated biomolecular system using explicit solvent molecules (TIP3P water model) and counterions to achieve physiological ionic strength. For membrane proteins, embed the system in an appropriate lipid bilayer using membrane solvation tools [31].

Conventional MD Equilibration: Perform energy minimization followed by gradual heating to the target temperature (typically 310 K for biological systems) and equilibration under constant pressure (NPT ensemble) for sufficient time to stabilize system density and potential energy (typically 10-50 ns).

GaMD Parameterization: From the conventional MD trajectory, calculate the maximum, minimum, average, and standard deviation of the system potential energy. Choose an upper limit σ₀ for the standard deviation of the boost potential, then derive the threshold energy E and the force-constant scaling factor k₀ so that the boost potential stays narrowly distributed (close to Gaussian), which keeps the later reweighting accurate [31].

GaMD Production Run: Perform multiple independent GaMD simulations (typically 3-5 replicas of 100-500 ns each) with the parameterized boost potential to ensure adequate sampling of conformational states. The boost potential reduces energy barriers, enabling more efficient transitions between low-energy states.

Reweighting and Free Energy Calculation: Apply the cumulant expansion to the second order to reweight the GaMD trajectory and recover the original free energy landscape. Project the free energy onto relevant collective variables (e.g., root-mean-square deviation, dihedral angles, or distance metrics) to identify metastable states and transition pathways [31].

This protocol enables simultaneous unconstrained enhanced sampling and free energy calculations, providing significant advantages over traditional accelerated MD methods for studying complex biomolecular processes such as ligand binding, protein folding, and conformational changes [31].
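The parameterization and reweighting steps above can be sketched in NumPy. The sketch assumes only the lower-bound threshold option (E = Vmax) of the original GaMD formulation and a single boost on the total potential. The function names, the σ₀ = 6 kcal/mol default, and the synthetic Gaussian "trajectory" data are illustrative assumptions; this is not the BIOVIA Discovery Studio implementation, and production analyses usually also apply a minimum-population cutoff per bin, which this sketch omits.

```python
import numpy as np

KB_KCAL = 0.0019872041  # Boltzmann constant in kcal/(mol*K)


def gamd_lower_bound_params(potential, sigma0=6.0):
    """Threshold E and force constant k for the lower-bound choice E = Vmax.

    potential: potential energies (kcal/mol) from the conventional MD run.
    sigma0: user-chosen upper limit on the std. dev. of the boost potential (kcal/mol).
    """
    v = np.asarray(potential, dtype=float)
    vmax, vmin, vavg, vstd = v.max(), v.min(), v.mean(), v.std()
    k0 = min(1.0, (sigma0 / vstd) * (vmax - vmin) / (vmax - vavg))
    return vmax, k0 / (vmax - vmin)


def boost_potential(potential, e_threshold, k):
    """Harmonic boost dV = 0.5*k*(E - V)^2, applied only where V < E."""
    v = np.asarray(potential, dtype=float)
    return np.where(v < e_threshold, 0.5 * k * (e_threshold - v) ** 2, 0.0)


def reweighted_pmf(cv, dv, bins=20, temperature=310.0):
    """1D PMF (kcal/mol) recovered with a second-order cumulant expansion.

    F_j = -kT * [ln p*_j + beta*<dV>_j + (beta**2 / 2) * var(dV)_j], shifted to a minimum of 0.
    """
    cv = np.asarray(cv, dtype=float)
    dv = np.asarray(dv, dtype=float)
    beta = 1.0 / (KB_KCAL * temperature)
    edges = np.histogram_bin_edges(cv, bins=bins)
    bin_of = np.clip(np.digitize(cv, edges) - 1, 0, bins - 1)
    pmf = np.full(bins, np.nan)  # empty bins stay NaN
    for j in range(bins):
        dv_j = dv[bin_of == j]
        if dv_j.size == 0:
            continue
        ln_p_star = np.log(dv_j.size / dv.size)  # biased (boosted) population of bin j
        ln_weight = beta * dv_j.mean() + 0.5 * beta**2 * dv_j.var()
        pmf[j] = -(ln_p_star + ln_weight) / beta
    return edges, pmf - np.nanmin(pmf)


# Synthetic stand-ins for simulation output.
rng = np.random.default_rng(0)
v_cmd = rng.normal(-5000.0, 25.0, size=5000)   # "conventional MD" potential energies
e_thr, k = gamd_lower_bound_params(v_cmd)
v_gamd = rng.normal(-5000.0, 25.0, size=5000)  # unboosted potentials of "GaMD" frames
dv = boost_potential(v_gamd, e_thr, k)
cv = rng.normal(0.0, 1.0, size=5000)           # e.g. an RMSD-like collective variable
edges, pmf = reweighted_pmf(cv, dv)
print(f"E = {e_thr:.1f} kcal/mol, k = {k:.5f}, mean boost = {dv.mean():.2f} kcal/mol")
```

Choosing E = Vmax guarantees the boosted surface keeps the same ordering of minima as the original, and the cumulant expansion stays reliable only while the boost distribution remains narrow, which is what σ₀ controls.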

Workflow for Neural Network Potential Training and Validation

The development of accurate NNPs for biomolecular systems follows a rigorous workflow to ensure transferability and physical consistency:

Reference Data Generation: Perform high-level quantum mechanical calculations (CCSD(T), or DFT with an appropriate functional) on diverse molecular configurations, including variations in bond lengths, angles, dihedral angles, and non-covalent interactions. For biomolecular systems, include representative fragments of proteins, nucleic acids, and small molecules [32] [29].

Active Learning and Configuration Sampling: Employ iterative active learning cycles where the NNP is used to run short MD simulations, and configurations where the model is uncertain are selected for additional quantum mechanical calculations to expand the training set efficiently [32].

Network Architecture Selection: Implement a suitable neural network architecture such as AIMNet2 or Egret-1 that incorporates physical constraints such as rotational and translational invariance, long-range interactions, and appropriate asymptotic behavior [32].

Model Training and Regularization: Train the network using the reference quantum data with appropriate loss functions for energy and forces. Apply regularization techniques to prevent overfitting and ensure smooth potential energy surfaces. Typically, 80% of data is used for training, 10% for validation, and 10% for testing [32].

Validation Against Benchmark Systems: Evaluate the trained NNP on benchmark systems not included in the training set, comparing against both quantum mechanical results and experimental data where available. Key validation metrics include energy errors (<1 kcal/mol), force errors (<1 kcal/mol/Å), and vibrational frequency accuracy [32].

This workflow produces NNPs that can accurately capture quantum mechanical effects while enabling nanosecond to microsecond timescale simulations of large biomolecular systems, effectively bridging the gap between accuracy and scalability in biomolecular modeling [32].
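Three of the steps above (uncertainty-driven selection for active learning, the 80/10/10 split, and a combined energy/force loss) can be sketched with NumPy. Query-by-committee, where disagreement among an ensemble of models flags uncertain configurations, is one common uncertainty estimate and is an assumption here; the loss weights `w_e` and `w_f`, the threshold, and all function names are hypothetical, and actual AIMNet2 or Egret-1 training code differs.

```python
import numpy as np


def committee_spread(energy_predictions):
    """Per-configuration std. dev. of energies predicted by an ensemble.

    energy_predictions: array of shape (n_models, n_configs).
    """
    return np.std(np.asarray(energy_predictions, dtype=float), axis=0)


def select_uncertain(energy_predictions, threshold):
    """Indices of configurations whose committee spread exceeds threshold (send to QM)."""
    return np.flatnonzero(committee_spread(energy_predictions) > threshold)


def split_indices(n, fractions=(0.8, 0.1, 0.1), seed=0):
    """Random train/validation/test index split."""
    perm = np.random.default_rng(seed).permutation(n)
    n_train = int(round(fractions[0] * n))
    n_val = int(round(fractions[1] * n))
    return perm[:n_train], perm[n_train:n_train + n_val], perm[n_train + n_val:]


def energy_force_loss(e_pred, e_ref, n_atoms, f_pred, f_ref, w_e=1.0, w_f=10.0):
    """Weighted sum of per-atom energy MSE and force-component MSE."""
    e_err = (np.asarray(e_pred, float) - np.asarray(e_ref, float)) / np.asarray(n_atoms, float)
    f_err = np.asarray(f_pred, float) - np.asarray(f_ref, float)
    return w_e * np.mean(e_err ** 2) + w_f * np.mean(f_err ** 2)


# Hypothetical committee of 3 models evaluated on 4 configurations (kcal/mol).
preds = [[1.0, 2.0, 3.0, 4.0],
         [1.1, 2.0, 3.9, 4.0],
         [0.9, 2.0, 2.1, 4.0]]
print(select_uncertain(preds, threshold=0.5))  # -> [2]
train, val, test = split_indices(100)
print(len(train), len(val), len(test))  # -> 80 10 10
```

Weighting forces more heavily than energies is a common choice because each configuration supplies 3N force components but only one energy, and forces drive the dynamics that the potential will ultimately be used for.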

Conceptual Workflow for Biomolecular System Modeling

Biomolecular Modeling Workflow

The workflow diagram illustrates the integrated computational approaches for biomolecular system modeling, highlighting critical decision points where accuracy considerations dictate methodological choices. The accuracy versus cost decision represents the fundamental trade-off that researchers must navigate, with different paths leading to methodologies with distinct precision and computational demand characteristics [28] [29].

Essential Research Reagent Solutions

Table 3: Essential Computational Tools for Biomolecular Quantum Simulations

| Tool Category | Specific Solutions | Key Functionality | Applicable Systems | Licensing/Accessibility |
|---|---|---|---|---|
| Quantum Chemistry Packages | Gaussian 16, Psi4, DMol3 [30] [31] | Electronic structure calculation, Geometry optimization, Frequency analysis [30] | Small molecules, Enzyme active sites, Reaction centers [29] | Commercial, Academic licensing [30] |
| Molecular Dynamics Engines | NAMD, CHARMm, OpenMM [31] | Classical MD simulation, Enhanced sampling, Free energy calculations [31] | Full proteins, Solvated complexes, Membrane systems [31] | Academic, Commercial [31] |
| Neural Network Potentials | Egret-1, AIMNet2, OMol25 eSEN [32] | High-accuracy force evaluation, Quantum-level MD, Property prediction [32] | Large biomolecules, Molecular crystals, Materials [32] | Open-source, Platform-based [32] |
| Hybrid QM/MM Platforms | BIOVIA, CHARMm/DMol3 [31] | Multi-scale modeling, Reaction mechanism study, Spectroscopic property calculation [31] [29] | Enzyme reactions, Catalytic sites, Photobiological systems [29] | Commercial [31] |
| Free Energy Tools | FEP, MM/GBSA, MSLD [31] | Relative binding affinity, Solvation free energy, Ligand efficiency [31] | Protein-ligand complexes, Host-guest systems [33] | Commercial suite [31] |

The computational tools summarized in Table 3 represent the essential "reagent solutions" for modern biomolecular simulation research. These platforms enable the implementation of quantum statistical mechanical principles across various system sizes and complexity levels, from electronic structure calculations of active sites to statistical sampling of entire biomolecular assemblies [32] [31] [29].

For researchers focusing on drug discovery applications, integrated platforms such as Rowan and Schrödinger provide streamlined workflows that combine multiple methodological approaches, offering specialized tools for property prediction including pKa, logD, blood-brain barrier permeability, and binding affinity [32] [33]. These platforms increasingly incorporate machine learning techniques to enhance the accuracy of physical models while maintaining computational efficiency essential for high-throughput virtual screening campaigns [32] [33].

The integration of quantum statistical mechanics with biomolecular modeling has entered a transformative phase, driven by methodological innovations that address the traditional trade-off between computational accuracy and feasibility. Machine learning-corrected DFT approaches achieve higher accuracy at lower computational cost, advancing the quest for the universal exchange-correlation functional [28]. Neural network potentials trained on quantum mechanical data enable quantum-accurate simulations of systems comprising hundreds of thousands of atoms, bridging traditional methodological divides [32] [29].
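Machine learning corrections to DFT come in several flavors, and the work cited here may use a different one (for example, learning the exchange-correlation functional itself). As a minimal illustration of the general idea only, the sketch below uses Δ-learning: a model is fitted to the difference between a high-level and a low-level energy and is then added to new low-level results. The one-feature linear model, the descriptor, and every number are hypothetical placeholders chosen to show the mechanics, not data from any study.

```python
# Minimal Delta-learning sketch: learn (E_high - E_low) from a descriptor,
# then correct new low-level energies. All values are synthetic placeholders.

def fit_line(xs, ys):
    """Ordinary least squares for y = a*x + b (closed form)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    a = sxy / sxx
    return a, mean_y - a * mean_x


def delta_corrected(e_low, descriptor, model):
    """Low-level energy plus the learned correction."""
    a, b = model
    return e_low + a * descriptor + b


# Hypothetical training set: one descriptor per molecule, low- and high-level energies
descriptors = [1.0, 2.0, 3.0, 4.0]
e_low = [-10.0, -20.0, -30.0, -40.0]
e_high = [-10.5, -21.0, -31.5, -42.0]

model = fit_line(descriptors, [h - l for h, l in zip(e_high, e_low)])
print(delta_corrected(-50.0, 5.0, model))  # corrected estimate for a new system
```

The appeal of this design is that the expensive method is only needed for the training set; real applications replace the single descriptor and linear fit with richer features and a neural network or kernel model.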

These advances are particularly significant for the pharmaceutical and biotechnology sectors, where predicting molecular interactions with quantitative accuracy directly impacts drug discovery efficiency. The continuing evolution of multi-scale modeling frameworks that seamlessly integrate quantum, classical, and machine learning components promises to further expand the accessible time- and length-scales for biomolecular simulation while maintaining physical rigor [29]. As these computational methodologies mature, they establish a more robust foundation for rational biomolecular design, potentially reducing reliance on empirical screening approaches and accelerating the development of novel therapeutic agents and biomaterials.

For researchers navigating this rapidly evolving landscape, the optimal choice of method depends critically on the specific biological question, the required accuracy, and the available computational resources. The comparative data presented in this guide provide an evidence-based framework for these decisions, helping researchers select computational approaches that balance physical rigor against practical constraints in biomolecular research.

Modern Computational Approaches: From Advanced DFT to Quantum Computing and AI

A long-standing goal of the computational chemistry community is the ability to accurately and efficiently model molecular systems, particularly those with strong electron correlation that pose challenges for conventional methods [34]. Understanding molecular behavior at the quantum level is crucial for designing better materials, creating new medicines, and solving environmental challenges [2]. Traditional Kohn-Sham Density Functional Theory (KS-DFT) revolutionized quantum simulations by balancing accuracy and computational efficiency, but faces significant challenges with systems where electron interactions are complex and cannot be accurately described by a single-determinant wave function [2]. These limitations are particularly pronounced in transition metal complexes, bond-breaking processes, molecules with near-degenerate electronic states, and magnetic systems—precisely the areas where advances could yield breakthroughs in catalysis, photochemistry, and materials science [2].

Multiconfiguration Pair-Density Functional Theory (MC-PDFT) represents a fundamental advance in addressing these challenges. Developed over the past decade by Prof. Laura Gagliardi and Prof. Don Truhlar, MC-PDFT combines the advantages of wave function theory and density functional theory to better treat strongly correlated systems [2] [35]. The recent introduction of the MC23 functional marks a significant milestone in this field, offering high accuracy without the steep computational cost of other advanced methods [2]. This review provides a comprehensive comparison of MC-PDFT's performance against established quantum chemical methods, with particular focus on the innovative MC23 functional and its potential to transform computational chemistry research.

Theoretical Foundations: From MC-PDFT to MC23

The MC-PDFT Framework

Multiconfiguration Pair-Density Functional Theory is a generalization of Kohn-Sham DFT that addresses its fundamental limitations for strongly correlated systems [35]. Whereas KS-DFT computes the electronic energy with a single Slater determinant as the reference wave function, MC-PDFT employs a multiconfigurational reference wave function, typically generated with methods such as Complete Active Space Self-Consistent Field (CASSCF) theory [35]. The key innovation lies in how MC-PDFT computes the total energy: it splits the energy into classical components (kinetic energy, nuclear attraction, and Coulomb energy), obtained directly from the multiconfigurational wave function, and the nonclassical exchange-correlation energy, which is approximated by a density functional of both the electron density and the on-top pair density [2].
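Written out, this energy partition takes the standard MC-PDFT form below. Here V_nn is the nuclear repulsion energy, h_pq are one-electron integrals (kinetic energy plus nuclear attraction), g_pqrs are two-electron repulsion integrals, D_pq is the one-particle density matrix of the multiconfigurational wave function, and E_ot is the on-top functional; the symbol names are chosen for illustration. The third term is the classical Coulomb energy, and everything except E_ot comes directly from the reference wave function.

```latex
E_{\mathrm{MC\text{-}PDFT}} = V_{\mathrm{nn}}
  + \sum_{pq} h_{pq} D_{pq}
  + \frac{1}{2} \sum_{pqrs} g_{pqrs} D_{pq} D_{rs}
  + E_{\mathrm{ot}}[\rho, \Pi]
```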

The on-top pair density is the crucial element that distinguishes MC-PDFT from conventional DFT: it gives the probability density of finding two electrons at the same point in space [2]. By incorporating this additional information about electron correlation, MC-PDFT can more accurately describe systems with significant static correlation, in which multiple electronic configurations contribute substantially to the ground or excited states [2]. This hybrid approach makes MC-PDFT particularly valuable for chemical phenomena that have proven challenging for traditional computational methods, including bond dissociation, transition metal chemistry, and electronically excited states [35].
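To make the extra information in Π concrete, the sketch below evaluates ρ and Π at a single point for the simplest multiconfigurational case: a two-electron singlet built from two real, orthonormal orbitals g and u, with Ψ = c_g|g ḡ| + c_u|u ū|. The orbital values are hypothetical, and Π is normalized so that a closed-shell single determinant gives Π = ρ²/4. With the relative sign typical of a stretched H₂-like ground state (c_u < 0) and φ_g = φ_u at the chosen point, ρ stays the same as for the single determinant while Π drops below ρ²/4, which is correlation information a density-only functional cannot see.

```python
import math


def density_and_on_top(c_g, c_u, phi_g, phi_u):
    """Density and on-top pair density at one point for the two-electron
    singlet Psi = c_g |g g_bar| + c_u |u u_bar| built from two real,
    orthonormal orbitals g and u (phi_g, phi_u are their values there).
    Normalization: a closed-shell determinant gives Pi = rho**2 / 4."""
    rho = 2.0 * (c_g**2 * phi_g**2 + c_u**2 * phi_u**2)
    on_top = (c_g * phi_g**2 + c_u * phi_u**2) ** 2
    return rho, on_top


# Single determinant (c_u = 0): Pi equals rho^2 / 4
rho, on_top = density_and_on_top(1.0, 0.0, 0.5, 0.3)
print(rho, on_top, rho**2 / 4)

# Two configurations with hypothetical orbital values at the point
c_g = 0.9
c_u = -math.sqrt(1.0 - c_g**2)
rho, on_top = density_and_on_top(c_g, c_u, 0.5, 0.5)
print(rho, on_top, rho**2 / 4)  # same rho, but Pi is below rho^2 / 4
```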

The MC23 Functional Advance

The MC23 functional represents the latest evolution in MC-PDFT methodology, addressing a fundamental limitation of earlier approaches. Previous MC-PDFT implementations relied primarily on translated generalized gradient approximation (GGA) functionals from KS-DFT that were not specifically optimized for pair-density functional theory [36]. MC23 introduces a critical innovation by incorporating kinetic energy density into the functional, enabling a more accurate description of electron correlation [2].
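Translated functionals reuse a KS-DFT form by converting (ρ, Π) into effective spin densities. The sketch below follows the translation rule as it is commonly stated for the original translated functionals such as tPBE: R = 4Π/ρ², an effective spin polarization ζ_t = (1 − R)^{1/2} when R < 1 and 0 otherwise, and ρ_α,β = ρ(1 ± ζ_t)/2. Only the density step is shown; the treatment of density gradients and the "fully translated" variants are omitted, and the zero-density guard is an assumption added for robustness.

```python
import math


def translate(rho, on_top):
    """Map (rho, Pi) to effective spin densities for a translated functional.

    R = 4*Pi/rho**2; zeta_t = sqrt(1 - R) if R < 1 else 0;
    rho_alpha/beta = rho/2 * (1 +/- zeta_t).
    The spin densities can then be passed to an ordinary KS-DFT
    exchange-correlation functional such as PBE."""
    if rho <= 0.0:  # guard against empty regions (assumption)
        return 0.0, 0.0, 0.0
    ratio = 4.0 * on_top / rho**2
    zeta_t = math.sqrt(1.0 - ratio) if ratio < 1.0 else 0.0
    rho_alpha = 0.5 * rho * (1.0 + zeta_t)
    rho_beta = 0.5 * rho * (1.0 - zeta_t)
    return rho_alpha, rho_beta, zeta_t


print(translate(1.0, 0.25))  # closed-shell-like point: no effective polarization
print(translate(1.0, 0.0))   # no on-top pairs: fully polarized
```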

This "hybrid meta" on-top functional was parameterized specifically for MC-PDFT through extensive training on a database spanning a wide variety of systems with diverse chemical character [36]. The result is a versatile functional that performs better than KS-DFT functionals for both strongly and weakly correlated systems [36]. Because the parameters were tuned across this broad training set, the functional maintains high accuracy across a wide range of chemical complexity and performs particularly well for spin splittings, bond energies, and multiconfigurational systems, areas where previous functionals showed limitations [2].
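In MC-PDFT, "hybrid" is usually taken to mean that a fraction λ of the MCSCF (for example CASSCF) energy is mixed with (1 − λ) of the pure MC-PDFT energy; tPBE0, for example, uses λ = 0.25. The fraction and other parameters fitted for MC23 are not given in this section, so this minimal sketch leaves λ as an input, and the energies in the usage line are placeholders.

```python
def hybrid_mc_pdft_energy(e_mcscf, e_mc_pdft, lam):
    """Hybrid MC-PDFT energy: lam * E_MCSCF + (1 - lam) * E_MC-PDFT."""
    if not 0.0 <= lam <= 1.0:
        raise ValueError("mixing fraction must lie in [0, 1]")
    return lam * e_mcscf + (1.0 - lam) * e_mc_pdft


# tPBE0-style mixing (lam = 0.25) with placeholder energies in hartree
print(hybrid_mc_pdft_energy(-1.0, -2.0, 0.25))
```

Setting λ = 0 recovers the non-hybrid functional, which makes the mixing fraction easy to scan when comparing against reference data.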

Table: Evolution of MC-PDFT Functionals

| Functional Type | Key Ingredients | Notes | Representative Examples |
|---|---|---|---|
| Translated LDA/GGA | Electron density (ρ), density gradient (∇ρ), on-top pair density (Π) | Not optimized for MC-PDFT; limited accuracy for complex correlation | tPBE, tPBE0 |
| Translated meta-GGA | ρ, ∇ρ, Π, kinetic energy density (τ) | Improved accuracy but not specifically parameterized for MC-PDFT | Translated meta-GGAs |
| Hybrid meta (MC23) | ρ, ∇ρ, Π, τ with optimized parameters | Specifically trained for MC-PDFT across diverse systems | MC23 |

Methodological Approaches: Computational Protocols for Accuracy Assessment

Benchmarking Databases and Training Sets

The development and validation of the MC23 functional followed rigorous computational protocols centered around comprehensive training databases. Unlike earlier functionals that were adapted from KS-DFT, MC23 was specifically optimized for MC-PDFT using a database "developed as part of the present work that contains a wide variety of systems with diverse characters" [36]. This systematic approach to functional parameterization represents a significant methodological advancement, as it ensures the functional performs reliably across different types of chemical systems and properties, from simple molecules to highly complex ones [2].

For excited-state properties, the QUEST database has emerged as a particularly valuable benchmark tool. This extensive dataset includes 441 vertical excitation energies across diverse molecular systems and excitation types [34]. Researchers have utilized QUEST to benchmark both MC-PDFT and Linearized PDFT (L-PDFT) calculations using various meta-GGA on-top functionals, providing robust statistical assessment of methodological accuracy [34]. The comprehensive nature of this database allows for meaningful comparisons between methods and identification of systematic strengths and weaknesses.
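Benchmark comparisons of this kind are usually summarized by a handful of error statistics over the reference set. A minimal sketch of the common ones (mean signed, mean absolute, root-mean-square, and maximum absolute error) follows; the three input values are placeholders, not QUEST entries, and the sign convention (computed minus reference) is an assumption that should match whichever study is being compared.

```python
import math


def error_statistics(computed, reference):
    """Error statistics in the units of the inputs (e.g. eV for excitation
    energies). Errors are defined as computed - reference."""
    if len(computed) != len(reference) or not computed:
        raise ValueError("need two non-empty lists of equal length")
    errors = [c - r for c, r in zip(computed, reference)]
    n = len(errors)
    return {
        "MSE": sum(errors) / n,                  # mean signed error (bias)
        "MAE": sum(abs(e) for e in errors) / n,  # mean absolute error
        "RMSE": math.sqrt(sum(e * e for e in errors) / n),
        "MaxAE": max(abs(e) for e in errors),    # worst single case
    }


# Placeholder excitation energies in eV (NOT QUEST data)
stats = error_statistics([4.10, 5.30, 6.05], [4.00, 5.50, 6.00])
print(stats)
```

Reporting MSE alongside MAE matters because a method with a small MAE can still carry a systematic bias, and MaxAE exposes the outliers that averages hide.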

Implementation Advances: Nuclear Gradients and Beyond

Recent theoretical work has significantly expanded the practical utility of MC-PDFT with meta-GGA functionals through the derivation and implementation of analytic nuclear gradients [34]. This development enables efficient geometry optimizations and dynamics simulations for both ground and excited states using the new class of functionals. The implementation encompasses state-specific MC-PDFT (SS-MC-PDFT) and state-averaged MC-PDFT (SA-MC-PDFT), with and without density fitting [34].
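The practical value of analytic gradients is easiest to see on a toy problem. The sketch below uses a one-dimensional Morse potential with made-up parameters, not an MC-PDFT energy. The analytic derivative costs a single evaluation and agrees with a central finite difference, which needs two energy calls per coordinate (6N calls for N atoms in Cartesian coordinates). A simple steepest-descent loop then uses the analytic gradient to locate the minimum.

```python
import math

# Hypothetical Morse parameters in atomic units (illustration only)
D_E, A, R_E = 0.17, 1.0, 1.4


def energy(r):
    return D_E * (1.0 - math.exp(-A * (r - R_E))) ** 2


def analytic_gradient(r):
    # dE/dr = 2 D a (1 - x) x with x = exp(-a (r - r_e))
    x = math.exp(-A * (r - R_E))
    return 2.0 * D_E * A * (1.0 - x) * x


def numerical_gradient(r, h=1e-5):
    # Central difference: two extra energy evaluations per coordinate
    return (energy(r + h) - energy(r - h)) / (2.0 * h)


def optimize(r, step=2.0, tol=1e-8, max_iter=200):
    """Steepest descent driven by the analytic gradient."""
    for _ in range(max_iter):
        g = analytic_gradient(r)
        if abs(g) < tol:
            break
        r -= step * g
    return r


print(analytic_gradient(1.7), numerical_gradient(1.7))
print(optimize(2.0))  # approaches R_E
```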

The availability of analytic gradients is more than a technical improvement: it substantially expands the range of chemical problems that can be studied with high accuracy. Researchers can now efficiently optimize molecular geometries, map potential energy surfaces, and study photochemical reactions using MC23 at computational costs significantly lower than those of traditional wave function methods [34]. The implementation has been validated through benchmark studies on systems such as s-trans-butadiene and benzophenone, demonstrating its robustness for both ground-state and excited-state geometry optimization [34].

Figure: MC-PDFT Computational Workflow with MC23

Performance Comparison: MC23 Against Competing Methods

Ground-State Properties and Strong Correlation

The performance of MC23 for ground-state properties demonstrates significant improvements over both conventional KS-DFT and earlier MC-PDFT functionals. For strongly correlated systems where KS-DFT typically struggles, MC23 maintains high accuracy while requiring fewer computational resources than advanced wave function methods [2]. This balance makes it particularly valuable for studying transition metal complexes, bond dissociation processes, and systems with near-degenerate electronic states, all areas where accurate treatment of electron correlation is essential [2].

In comprehensive assessments of ground-state geometries, MC23 shows comparable accuracy to established functionals like tPBE0 and high-level wave function methods such as NEVPT2 (N-electron valence state second-order perturbation theory) [34]. The method's ability to handle multireference character while incorporating dynamic correlation through the density functional component makes it particularly robust for systems where static and dynamic correlation effects are both important. This represents a substantive advance over either pure wave function methods or conventional DFT alone.

Excited-State Properties

Perhaps the most rigorous assessment of MC23 comes from benchmark studies on excited-state properties, particularly vertical excitation energies. In comprehensive evaluations using the QUEST database of 441 vertical excitations, MC23 emerges as the best performer among nine meta and hybrid meta functionals tested [34]. The functional achieves accuracy comparable to the high-level NEVPT2 multireference wave function method while being computationally less demanding [34].

When compared directly with time-dependent DFT (TD-DFT) results, MC-PDFT with the MC23 functional consistently outperforms even the best-performing Kohn-Sham density functionals [34]. This advantage is particularly pronounced for challenging excited states with significant multireference, charge-transfer, or Rydberg character, for which conventional TD-DFT often fails systematically. The robust performance across diverse excitation types highlights the fundamental advantages of the MC-PDFT approach for excited-state modeling.

Table: Performance Comparison for Vertical Excitation Energies (QUEST Database)

| Method | Functional Type | Mean Absolute Error (eV) | Computational Cost | Key Strengths |
|---|---|---|---|---|
| MC23 | Hybrid meta MC-PDFT | Lowest among tested MC-PDFT functionals | Moderate | Excellent across all excitation types |
| tPBE0 | Hybrid translated MC-PDFT | Low | Moderate | Good general performance |
| NEVPT2 | Wave function theory | Comparable to MC23 | High | High accuracy, theoretical rigor |
| CASPT2 | Wave function theory | Low | Very high | Established benchmark method |
| TD-DFT (Best) | Kohn-Sham DFT | Higher than MC-PDFT | Low to Moderate | Computational efficiency |

Computational Efficiency and Scalability

A critical advantage of MC-PDFT with the MC23 functional is its favorable computational scaling compared to traditional wave function methods. While methods like CASPT2 and NEVPT2 provide high accuracy, their computational cost often limits application to small or medium-sized molecules [35]. MC-PDFT, in contrast, adds negligible additional cost beyond the reference wave function calculation, making it feasible for larger systems that would be prohibitively expensive with pure wave function methods [2] [35].
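Because MC-PDFT adds little beyond the reference calculation, the reference wave function usually sets the cost, and for CASSCF the configuration space grows combinatorially with the active space. The minimal sketch below counts Slater determinants in a CAS(n, m) space with the standard formula C(m, n_α) × C(m, n_β); it measures only the size of the CI space, not the full cost of a calculation, and the active-space sizes in the loop are arbitrary examples.

```python
from math import comb


def cas_determinants(n_electrons, n_orbitals, spin_multiplicity=1):
    """Number of Slater determinants in CAS(n, m) at M_S = S,
    with 2S + 1 = spin_multiplicity: C(m, n_alpha) * C(m, n_beta)."""
    n_unpaired = spin_multiplicity - 1
    if n_unpaired < 0 or (n_electrons - n_unpaired) % 2:
        raise ValueError("electron count and multiplicity are inconsistent")
    n_beta = (n_electrons - n_unpaired) // 2
    n_alpha = n_beta + n_unpaired
    return comb(n_orbitals, n_alpha) * comb(n_orbitals, n_beta)


for n in (2, 6, 10, 14, 18):
    print(f"CAS({n},{n}): {cas_determinants(n, n):,} determinants")
```

The count grows by roughly an order of magnitude every time two electrons and two orbitals are added, which is why the active-space choice, rather than the on-top functional, typically bounds the systems MC-PDFT can reach.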