Benchmarking Quantum Chemistry: A Practical Guide to Validating Conformational Energies with DFT and Post-HF Methods in Drug Discovery

Abstract

Accurate calculation of conformational energies is a cornerstone of reliable computational chemistry in drug design, impacting everything from docking poses to property prediction. This article provides a comprehensive guide for researchers and development professionals on validating these critical energies. We first explore the fundamental importance of conformational analysis and establish DLPNO-CCSD(T) as the modern reference 'gold standard.' The guide then details best-practice protocols for applying DFT and post-Hartree-Fock methods, highlighting common pitfalls and optimization strategies for robust workflows. Finally, we present a systematic framework for the comparative validation of different computational methods against high-level benchmarks, empowering scientists to select and trust the right tool for their specific drug discovery project.

The Critical Role of Conformational Energies in Drug Design: From Fundamentals to Gold Standards

Why Conformational Energy Accuracy Matters for Bioactive Pose Prediction and Property Calculation

In rational drug design, the biological activity and physicochemical properties of a molecule are not determined by a single static structure, but by an ensemble of accessible three-dimensional conformations. The accurate prediction of this conformational landscape and its associated energetics is a cornerstone of computational chemistry, directly impacting the success of structure-based and ligand-based drug discovery campaigns. The energy differences between conformers are often subtle, yet they govern the population of specific states, including the bioactive conformation that a molecule adopts when bound to its protein target. Inaccuracies in calculating these conformational energies can lead to failures in predicting binding poses, estimating binding affinities, and optimizing lead compounds. This guide examines the critical importance of conformational energy accuracy, comparing the performance of various computational methods and providing a framework for their practical application in drug discovery.

Performance Comparison of Conformational Sampling Methods

Force Fields and Solvation Models in Bioactive Conformation Retrieval

The ability of a computational method to generate a conformational ensemble that includes a structure closely resembling the experimentally observed bioactive pose is a fundamental benchmark. A study evaluating drug-like ligands from protein-ligand complexes in the Protein Data Bank (PDB) investigated the performance of various force fields and solvation settings for this task.

Table 1: Impact of Force Field and Solvation on Bioactive Conformation Retrieval (Root Mean Square Deviation < 1.0 Å)

| Method Category | Specific Method | Performance in Bioactive Conformation Retrieval | Key Findings |
|---|---|---|---|
| Force Fields | Various (e.g., OPLS_2005) | Only small differences in likelihood | Modern force fields show comparable performance in sampling ability. [1] |
| Solvation Models | Implicit Water (GB/SA) | High likelihood | Crucial for achieving low RMSD (<1.0 Å) to crystal pose. [1] |
| Solvation Models | Vacuum / Other Solvents | Lower likelihood | Less effective for achieving high geometric accuracy. [1] |
| Search Parameters | Duplicate Removal (RMSD ~0.6 Å) | Large impact | Stringent duplicate removal is critical for success. [2] |
| Search Parameters | High Energy Cut-off (e.g., 5 kcal/mol) | High impact | A generous energy window increases retrieval rate. [2] |

The findings indicate that while the choice of modern force field introduces only minor variations, the inclusion of an appropriate solvation model, particularly implicit water, is critically important. Furthermore, the parameters controlling the conformational search itself, such as the thresholds for considering two conformers as duplicates and the energy window for saving conformers, have a substantial impact on the success rate. [1] [2]
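To make the two search parameters concrete, the following sketch applies an energy window and an RMSD duplicate threshold to a precomputed ensemble. It is a minimal illustration, not any program's actual algorithm. It assumes heavy-atom coordinates that are already superimposed, so no alignment step is performed. The function names `rmsd` and `filter_ensemble` are hypothetical, and the defaults of 5 kcal/mol and 0.6 Å simply echo the example values in Table 1.

```python
import math
from typing import List, Sequence, Tuple

Coords = Sequence[Tuple[float, float, float]]


def rmsd(a: Coords, b: Coords) -> float:
    """Plain RMSD between two pre-aligned coordinate sets (no superposition)."""
    if len(a) != len(b) or not a:
        raise ValueError("coordinate sets must be non-empty and equally sized")
    sq = sum((xa - xb) ** 2 + (ya - yb) ** 2 + (za - zb) ** 2
             for (xa, ya, za), (xb, yb, zb) in zip(a, b))
    return math.sqrt(sq / len(a))


def filter_ensemble(conformers: List[Coords], energies: List[float],
                    e_window: float = 5.0, rmsd_cut: float = 0.6) -> List[int]:
    """Return indices of conformers kept after energy-window and duplicate filtering.

    Conformers more than `e_window` kcal/mol above the lowest energy are dropped.
    The rest are visited from lowest to highest energy; each is kept only if its
    RMSD to every already-kept conformer exceeds `rmsd_cut` (Å).
    """
    if len(conformers) != len(energies):
        raise ValueError("one energy per conformer is required")
    e_min = min(energies)
    order = sorted(range(len(conformers)), key=lambda i: energies[i])
    kept: List[int] = []
    for i in order:
        if energies[i] - e_min > e_window:
            break  # sorted by energy, so every remaining conformer is also outside
        if all(rmsd(conformers[i], conformers[j]) > rmsd_cut for j in kept):
            kept.append(i)
    return kept
```

Because conformers are visited in order of increasing energy, the lowest-energy member of each group of near-duplicates is the one retained. Tightening `rmsd_cut` or widening `e_window` enlarges the saved ensemble.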

Quantum Mechanical Methods for High-Accuracy Conformational Energies

For higher accuracy, especially when dealing with non-covalent interactions or electronic properties, quantum mechanical (QM) methods are essential. However, a direct trade-off exists between computational cost and accuracy.

Table 2: Performance and Cost of Quantum Mechanical Workflows for Conformational Energies

| Method / Workflow | Typical Application | Relative Cost | Key Advantages & Limitations |
|---|---|---|---|
| Semi-Empirical (GFN2-xTB) | Initial conformational sampling | Low | Fast; good for initial ensemble generation, but energy rankings may differ from DFT. [3] [4] |
| Composite "3c" Methods (B97-3c) | Ensemble re-ranking and pre-optimization | Medium | Good balance of speed and accuracy; reduces spurious minima from GFN2-xTB. [4] |
| Density Functional Theory (ωB97X-D) | High-quality final energies & geometries | High | High accuracy for energies and geometries; considered a benchmark for many applications. [4] |
| Workflow (CREST → B97-3c → DFT) | Full DFT-quality ensemble generation | High | Efficiently produces reliable ensembles; avoids coalescence of spurious conformers. [4] |

The performance of DFT functionals themselves can be benchmarked for specific properties. For instance, assessing core-electron binding energies (CEBEs), which are sensitive to the electronic environment, reveals functional-dependent accuracy.

Table 3: DFT Functional Performance for Core-Electron Binding Energy (CEBE) Prediction

| DFT Functional | Reported Error for C1s CEBEs (metric) | Key Insight |
|---|---|---|
| PW86x-PW91c | 0.1735 eV (RMSD) | Good general performance. [5] |
| mPW1PW | ~0.132 eV (AAD) | Improved accuracy for polar C-X bonds (X = O, F). [5] |
| PBE50 | ~0.132 eV (AAD) | Improved accuracy for polar C-X bonds; highlights the role of HF exchange. [5] |

Experimental Protocols for Conformational Benchmarking

Protocol 1: Evaluating Bioactive Conformation Retrieval

This protocol is adapted from studies that benchmark conformational search tools against high-resolution crystal structures of protein-ligand complexes. [1] [2]

- Ligand Curation: Select a diverse set of drug-like ligands from the PDB. Key criteria include:
  - High-resolution complexes (e.g., ≤ 2.0 Å).
  - No covalent bonding to the protein.
  - Drug-like properties (MW 150-650, ≤ 15 rotatable bonds).
  - Exclusion of common cofactors and crystallization agents. [1]

- Ligand Preparation: Add hydrogen atoms using a curated library, predict the most probable protonation state at the crystallization pH, and assign formal charges. [1]

- Conformational Search: Perform a two-step conformational search for each ligand.
  - Step 1 (Debiasing): Use a force field like OPLS_2005 with a GB/SA water model and a mixed MCMM/Low-Mode algorithm. Generate a large pool of conformers (e.g., up to 1000) with a high energy cut-off (e.g., 50.0 kcal/mol from the global minimum). [1]
  - Step 2 (Production): Use the generated conformers as input for the method being tested (e.g., OMEGA, CREST, MacroModel), varying key parameters like the RMSD duplicate cut-off and maximum number of output conformers. [2]

- Analysis: For each ligand's conformer pool, calculate the heavy-atom Root Mean Square Deviation (RMSD) of every generated conformer to the experimental bioactive conformation. The performance metric is the best achievable RMSD and the success rate in retrieving a conformation below a defined threshold (e.g., 1.0 Å or 0.5 Å). [1] [2]
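The analysis step reduces to a short calculation once per-conformer RMSDs are available. This sketch assumes the heavy-atom RMSDs to the crystal pose have already been computed (including any alignment and symmetry handling) by the conformer tool or a cheminformatics toolkit. `retrieval_summary` is a hypothetical helper, and counting a ligand with no output conformers as a failure is an assumed convention.

```python
import math
from typing import Dict, Sequence, Tuple


def retrieval_summary(
    rmsds_per_ligand: Dict[str, Sequence[float]],
    thresholds: Sequence[float] = (1.0, 0.5),
) -> Tuple[Dict[str, float], Dict[float, float]]:
    """Best achievable RMSD per ligand and success rate at each threshold (Å)."""
    if not rmsds_per_ligand:
        raise ValueError("no ligands supplied")
    # Best conformer per ligand; an empty ensemble is treated as a failure (inf).
    best = {lig: (min(vals) if len(vals) > 0 else math.inf)
            for lig, vals in rmsds_per_ligand.items()}
    n = len(best)
    # Fraction of ligands whose best conformer lies strictly below each threshold.
    success = {t: sum(1 for r in best.values() if r < t) / n for t in thresholds}
    return best, success
```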

Protocol 2: Generating DFT-Quality Conformational Ensembles

This modern workflow leverages efficient semi-empirical sampling followed by higher-level theory refinement to produce accurate conformational ensembles for property prediction. [4]

- Initial Ensemble Generation: Use the CREST program with the GFN2-xTB method to generate a broad, initial set of conformers. CREST uses RMSD-biased metadynamics to thoroughly explore the conformational space, typically returning all conformers within a ~6 kcal/mol energy window. [3] [4]

- Intermediate Re-optimization and Re-ranking: Re-optimize the entire CREST-generated ensemble using a cost-effective composite DFT method (e.g., B97-3c). This step significantly improves geometries and energy rankings over the GFN2-xTB level while remaining computationally feasible for large ensembles. [4]

- Duplicate Removal: Identify and discard duplicate conformers from the re-optimized set based on an RMSD threshold.

- High-Level DFT Refinement: Re-optimize the remaining unique, low-energy conformers using a higher-level DFT method (e.g., ωB97X-D/def2-SVP). This provides final, high-quality geometries. [4]

- Final Energetics Calculation: Perform a final single-point energy calculation on the refined geometries with a larger basis set, here paired with the related ωB97X-V functional (e.g., ωB97X-V/def2-QZVPP), to obtain accurate relative energies. Vibrational frequency calculations can be used to derive thermodynamic corrections and free energies. [4]
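Once final energies are available, relative energies and Boltzmann populations follow directly. The sketch below assumes absolute energies in hartree from the final single-point (or free energies, if thermodynamic corrections were added) and a default temperature of 298.15 K. The helper names are hypothetical, and the conversion constants are rounded standard values.

```python
import math
from typing import List, Sequence

HARTREE_TO_KCAL = 627.5095   # kcal/mol per hartree
R_KCAL = 1.987204e-3         # gas constant, kcal/(mol K)


def relative_energies(e_hartree: Sequence[float]) -> List[float]:
    """Convert absolute energies (hartree) to kcal/mol relative to the lowest."""
    e_min = min(e_hartree)
    return [(e - e_min) * HARTREE_TO_KCAL for e in e_hartree]


def boltzmann_weights(rel_kcal: Sequence[float], temperature: float = 298.15) -> List[float]:
    """Normalized Boltzmann populations from (free) energies in kcal/mol."""
    kt = R_KCAL * temperature
    e_min = min(rel_kcal)  # shift so the largest factor is exp(0) = 1, avoiding underflow
    factors = [math.exp(-(e - e_min) / kt) for e in rel_kcal]
    z = sum(factors)
    return [f / z for f in factors]


def ensemble_average(prop: Sequence[float], weights: Sequence[float]) -> float:
    """Boltzmann-weighted average of a per-conformer property."""
    return sum(p * w for p, w in zip(prop, weights))
```

With electronic energies alone, the weights are only approximate populations. Adding the frequency-derived corrections to obtain free energies before weighting is the more consistent choice.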

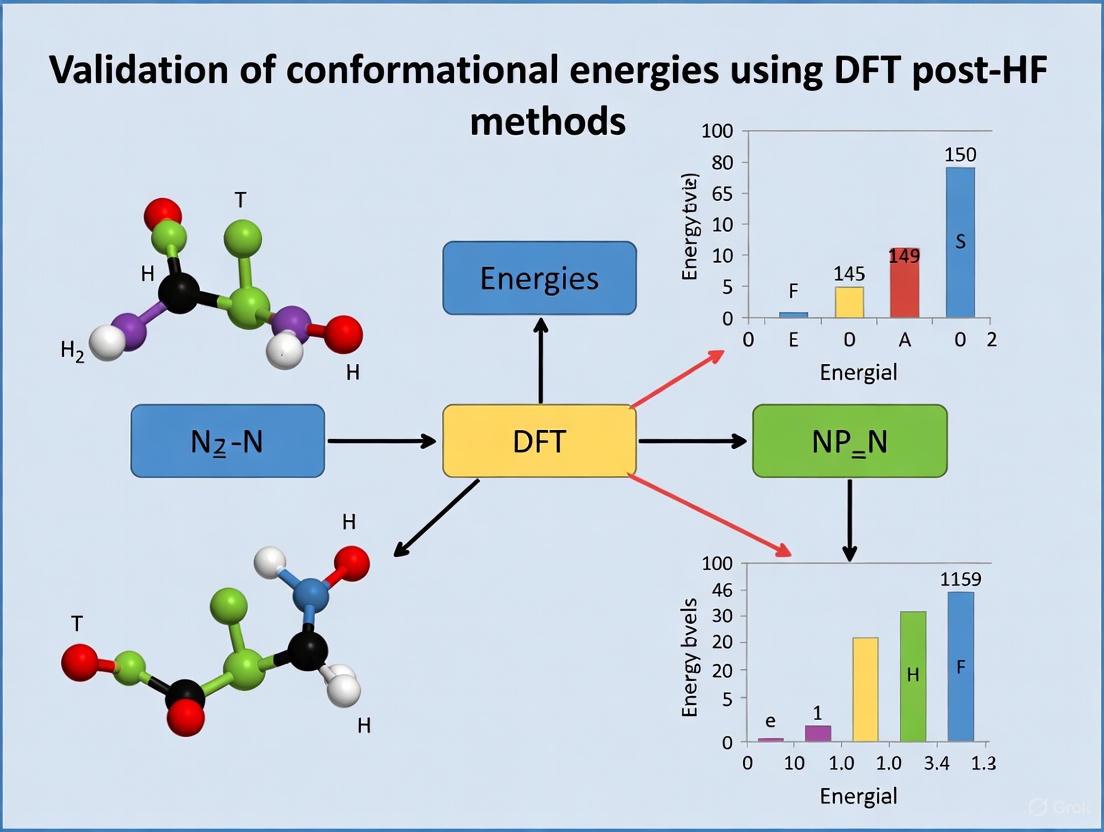

Diagram 1: Workflow for generating DFT-quality conformational ensembles, balancing thorough sampling with high accuracy.

The Scientist's Toolkit: Essential Research Reagents and Software

Table 4: Key Software and Computational Tools for Conformational Analysis

| Tool Name | Category | Primary Function | Relevance to Conformational Energy |
|---|---|---|---|
| MacroModel | Software Suite | Conformational Search & Minimization | Implements various force fields and search algorithms (e.g., MCMM) for generating conformers with implicit solvation. [1] |
| OMEGA | Software | Rule-based Conformer Generation | Rapidly generates large conformational ensembles; performance depends on parameters like the duplicate RMSD cut-off. [2] |
| CREST | Software | Conformer-Rotamer Ensemble Sampling | Uses GFN2-xTB and metadynamics for extensive sampling, forming the basis for high-level workflows. [3] [4] |
| GEOM Dataset | Database | Energy-Annotated Molecular Conformations | Provides a large benchmark of conformers with semi-empirical and DFT energies for method development. [3] |
| FlexiSol | Database | Solvation Benchmark with Conformer Ensembles | Enables testing of solvation models on flexible molecules using conformational ensembles. [6] |
| ORCA | Software | Quantum Chemistry | Performs high-level DFT and composite method calculations for final geometry and energy refinement. [4] |

Accurate conformational energies are not merely an academic exercise but a practical necessity in modern computational drug discovery. The choice of computational method, from classical force fields to advanced DFT workflows, directly influences the reliability of bioactive pose prediction and the calculation of conformationally dependent molecular properties. As benchmarks against experimental structures show, method performance varies significantly, with the key factors being the treatment of solvation, the parameters of the conformational search, and the level of theory used for energy evaluation. The integration of robust experimental protocols, modern software tools, and curated benchmark datasets, as detailed in this guide, provides a pathway for researchers to critically evaluate and apply these methods. Applied consistently, these practices help ensure that the role of molecular conformation is captured accurately, de-risking the drug design process and improving the likelihood of bringing new therapeutics to patients.

In computational chemistry and computer-aided drug design, accurately predicting the energies and three-dimensional structures of molecules is fundamental. The "gold standard" for such calculations has long been coupled-cluster theory with single, double, and perturbative triple excitations (CCSD(T)), celebrated for its excellent agreement with experimental data [7]. However, its computational cost, which formally scales as the seventh power of system size (O(N⁷)), makes it prohibitive for the large, drug-like molecules of practical interest [7] [8].

The breakthrough came with the development of local correlation approximations. Among these, the Domain-based Local Pair Natural Orbital (DLPNO) approach has made it feasible to perform CCSD(T) calculations on molecules containing hundreds of atoms [9] [8]. The DLPNO-CCSD(T) method achieves this by restricting electron correlation to local domains and using compressed sets of virtual orbitals for each electron pair, dramatically reducing computational cost while striving to retain the accuracy of its canonical predecessor [7] [10]. This article explores how DLPNO-CCSD(T) has established itself as a modern benchmark for calculating conformational energies and non-covalent interactions, providing a reliable standard for validating force fields, density functional theory (DFT), and other quantum mechanical methods in drug discovery research [9].

Performance Benchmarks: How DLPNO-CCSD(T) Compares

Extensive benchmarking studies have systematically evaluated the performance of DLPNO-CCSD(T) against canonical CCSD(T) and other computational methods across various chemical systems.

Accuracy Against Canonical CCSD(T)

The primary validation for any approximate method is its agreement with the established gold standard. Research consistently shows that with appropriate settings, DLPNO-CCSD(T) delivers exceptional accuracy:

- For hydrogen atom transfer (HAT) reactions in closed-shell systems, the standard deviation in calculated barrier heights between DLPNO-CCSD(T) and canonical CCSD(T) was found to be less than 0.2 kcal mol⁻¹ across multiple basis sets [7].

- For water clusters (H₂O)ₙ with n = 3–7, DLPNO-CCSD(T) relative energies compared to explicitly correlated CCSD(T)-F12b benchmarks showed mean absolute differences (MADs) of ≤ 0.13 kcal/mol when triple-zeta and larger basis sets were employed [11].

- For atmospheric molecular clusters, DLPNO-CCSD(T0)-F12 with a tightPNO criterion demonstrated remarkable precision, with a mean deviation of 0.10 kcal/mol and a maximum deviation of 0.20 kcal/mol from the CCSD(F12*)(T)/CBS reference [10].

Performance as a Reference for Conformational Energies

A comprehensive 2023 study evaluated the accuracy of various force fields and quantum mechanics methods for calculating the conformational energies of 145 reference organic molecules, using DLPNO-CCSD(T) as the reference [9]. The results underscore its role as a definitive benchmark. The table below summarizes the performance of different method classes against the DLPNO-CCSD(T) reference.

Table 1: Mean Error in Conformational Energies of Organic Molecules vs. DLPNO-CCSD(T) Reference [9]

| Method Class | Specific Method | Mean Error (kcal mol⁻¹) |
|---|---|---|
| Wavefunction Methods | MP2 | 0.35 |
| Density Functional Theory | B3LYP | 0.69 |
| Wavefunction Methods | HF | 0.81 – 1.00 |
| Advanced Force Fields | MMFF94 | 1.30 |
| Advanced Force Fields | MM3-00 | 1.28 |
| Advanced Force Fields | MM3-96 | 1.40 |
| Traditional Force Fields | MMX | 1.77 |
| Traditional Force Fields | MM+ | 2.01 |
| Traditional Force Fields | MM4 | 2.05 |
| Generic Force Fields | DREIDING | 3.63 |
| Generic Force Fields | UFF | 3.77 |

This study highlights that while certain ab initio methods like MP2 show excellent agreement, commonly used force fields can introduce significant errors. The DLPNO-CCSD(T) method provides the high-accuracy reference data needed to identify these discrepancies and guide the parameterization of improved force fields [9].

Experimental Protocols: Implementing DLPNO-CCSD(T) Calculations

To achieve high accuracy with DLPNO-CCSD(T), researchers must follow careful computational protocols. The workflow below outlines the key stages of a typical study using DLPNO-CCSD(T) to benchmark conformational energies.

Diagram 1: DLPNO-CCSD(T) Benchmarking Workflow

Detailed Methodology

System Preparation and Geometry Optimization: The process begins with generating accurate molecular structures.

- Initial Conformer Generation: For drug-like molecules, diverse low-energy conformers are typically generated using tools like OMEGA or RDKit, often employing force fields like MMFF94 for initial screening [9].

- Geometry Optimization: These initial structures are then optimized at a higher level of theory, typically with a dispersion-corrected DFT functional such as ωB97X-D, to locate true energy minima [11].

Reference Energy Calculation with DLPNO-CCSD(T): Single-point energy calculations at the optimized geometries provide the benchmark energies.

- Method and Keywords: The DLPNO-CCSD(T) method is used, often with the TightPNO setting to enhance accuracy for sensitive properties like conformational energies [10] [12]. For open-shell systems or those with potential multireference character, adjusting the TCutPNO parameter may be necessary [7].

- Basis Sets: Large basis sets from Dunning's correlation-consistent series (e.g., cc-pVTZ, cc-pVQZ) are standard. Diffuse functions are frequently added, either on all atoms (e.g., aug-cc-pVTZ) or on heavy atoms only (the haTZ convention), to better capture non-covalent interactions [11].

- Explicit Correlation (F12): Using the explicitly correlated F12 variant (e.g., DLPNO-CCSD(T)-F12) significantly accelerates basis set convergence, allowing the use of smaller basis sets to achieve near-complete-basis-set (CBS) limit accuracy [10].

- Extrapolation to CBS Limit: For the highest accuracy, energies are computed with a series of basis sets of increasing size (e.g., triple-, quadruple-, and quintuple-zeta). These results are then extrapolated to the CBS limit using established functions to reduce residual basis set error [11].
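The extrapolation step can be illustrated with one widely used form, the two-point inverse-cubic formula usually applied to the correlation energy, E(X) = E_CBS + A·X⁻³, where X is the basis-set cardinal number (3 for triple-zeta, 4 for quadruple-zeta). This is an assumption for illustration: the text does not state which function the cited study used, and the Hartree-Fock component is normally extrapolated separately or taken from the largest basis. `cbs_two_point` is a hypothetical helper.

```python
def cbs_two_point(e_x: float, x: int, e_y: float, y: int, power: float = 3.0) -> float:
    """Two-point inverse-power extrapolation assuming E(X) = E_CBS + A * X**(-power).

    e_x, e_y: energies (e.g. correlation energies, hartree) with cardinal numbers x, y.
    Solving the two equations for E_CBS eliminates the unknown constant A.
    """
    if x == y:
        raise ValueError("cardinal numbers must differ")
    wx, wy = x ** power, y ** power
    return (wx * e_x - wy * e_y) / (wx - wy)
```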

Benchmarking Other Methods: The DLPNO-CCSD(T)/CBS energies serve as the reference to evaluate the performance of other methods. For each conformer, the energy difference relative to the global minimum is calculated using both the reference method and the method being tested. Statistical measures like Mean Absolute Deviation (MAD) and Root-Mean-Square Deviation (RMSD) are then computed to quantify performance [9].
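The statistics in this step can be computed as follows. This is a minimal sketch under two assumptions: both methods' energies (kcal/mol) are listed for the same conformers in the same order, and relative energies are taken with respect to the conformer that is lowest at the reference level, so both methods are compared on identical conformer pairs. `conformer_errors` is a hypothetical helper.

```python
import math
from typing import Dict, Sequence


def conformer_errors(ref: Sequence[float], test: Sequence[float]) -> Dict[str, float]:
    """MAD, RMSD, and maximum absolute error of relative conformational energies."""
    if len(ref) != len(test) or len(ref) < 2:
        raise ValueError("need matching energy lists with at least two conformers")
    # Anchor both methods on the reference method's global minimum.
    i0 = min(range(len(ref)), key=lambda i: ref[i])
    dev = [(t - test[i0]) - (r - ref[i0]) for r, t in zip(ref, test)]
    # The anchor's deviation is zero by construction, so it is excluded here.
    dev = [d for k, d in enumerate(dev) if k != i0]
    mad = sum(abs(d) for d in dev) / len(dev)
    rmsd = math.sqrt(sum(d * d for d in dev) / len(dev))
    return {"MAD": mad, "RMSD": rmsd, "MaxAE": max(abs(d) for d in dev)}
```

Excluding the anchor conformer is a convention choice; including it lowers MAD and RMSD slightly, so reported statistics should state which was used.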

The Scientist's Toolkit: Essential Research Reagents and Solutions

The following table details key computational "reagents" and resources essential for conducting research with DLPNO-CCSD(T).

Table 2: Essential Research Reagents and Computational Tools

| Item/Software | Function in Research | Key Considerations |
|---|---|---|
| ORCA | A widely used software package for ab initio calculations, with a highly efficient, widely cited implementation of the DLPNO-CCSD(T) method. | The main platform for DLPNO calculations; developed by the Neese group [7]. |
| Molpro | A quantum chemistry package offering local coupled-cluster methods such as PNO-LCCSD(T)-F12, used for high-accuracy reference calculations. | Often used for canonical CCSD(T) and explicitly correlated methods [8]. |
| Basis Sets (e.g., cc-pVNZ, aug-cc-pVNZ) | Sets of mathematical functions used to represent molecular orbitals; larger sets (higher N) increase accuracy but also computational cost. | Augmented (aug-) sets are critical for non-covalent interactions [11]. The haNZ convention (e.g., haTZ) uses aug-cc-pVNZ on heavy atoms and cc-pVNZ on hydrogen [11]. |
| PNO Settings (TightPNO, NormalPNO) | Truncation thresholds that control how many pair natural orbitals are retained, trading accuracy against speed. | TightPNO or vTightPNO settings are recommended for benchmarking to minimize errors in energy differences [10] [12]. |
| DFT Functionals (e.g., ωB97X-D) | Used for efficient geometry optimization of molecular structures prior to high-level DLPNO-CCSD(T) single-point energy calculations. | Functionals with dispersion corrections are crucial for obtaining correct geometries of organic molecules and non-covalent clusters [11]. |
| Force Fields (e.g., MMFF94, MM3) | Classical potentials used for initial conformational sampling and rapid energy estimation; their parameters are often refined against DLPNO-CCSD(T) data. | Performance varies significantly; DLPNO-CCSD(T) benchmarks identify which force fields are suitable for a given class of molecules [9]. |
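As a concrete illustration of the ORCA and PNO-setting rows, the following sketch assembles a plain-text ORCA input for a DLPNO-CCSD(T) single point. The keywords shown (`DLPNO-CCSD(T)`, `TightPNO`, `TightSCF`, the `/C` auxiliary basis, `%maxcore`, `%pal`) reflect common ORCA usage but should be checked against the manual for the installed ORCA version. The function name and the default core count and memory are hypothetical choices.

```python
from typing import Sequence, Tuple

Atom = Tuple[str, float, float, float]  # element symbol, x, y, z in Å


def dlpno_single_point_input(atoms: Sequence[Atom], charge: int = 0, multiplicity: int = 1,
                             basis: str = "cc-pVTZ", pno: str = "TightPNO",
                             nprocs: int = 8, maxcore_mb: int = 4000) -> str:
    """Return ORCA input text for a DLPNO-CCSD(T) single point.

    The RI-correlation auxiliary basis is assumed to be the matching '<basis>/C' set.
    """
    lines = [
        f"! DLPNO-CCSD(T) {basis} {basis}/C {pno} TightSCF",
        f"%maxcore {maxcore_mb}",          # memory per core, in MB
        f"%pal nprocs {nprocs} end",       # parallel processes
        f"* xyz {charge} {multiplicity}",  # Cartesian coordinate block
    ]
    lines += [f"{el:<2s} {x:12.6f} {y:12.6f} {z:12.6f}" for el, x, y, z in atoms]
    lines.append("*")
    return "\n".join(lines) + "\n"
```

For augmented Dunning basis sets, the `{basis}/C` pattern selects the matching auxiliary set (for example `aug-cc-pVTZ/C`); other basis families may need the auxiliary basis set explicitly.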

DLPNO-CCSD(T) has emerged as a modern gold standard in computational chemistry, particularly for generating benchmark-quality data on conformational energies and non-covalent interactions relevant to drug discovery. Because it reproduces canonical CCSD(T) to within a few tenths of a kcal/mol when tight PNO settings are used, as in the benchmarks summarized above, at a fraction of the cost, it enables rigorous validation and improvement of more approximate methods such as density functional theory and molecular mechanics force fields [9] [11]. As computational efforts increasingly focus on large, pharmaceutically relevant molecules, DLPNO-CCSD(T) serves as a critical benchmark, helping ensure that the predictive models used in computer-aided drug design rest on a foundation of chemical accuracy.

Fragment-based drug discovery (FBDD) has established itself as a powerful and complementary approach to high-throughput screening (HTS) for identifying novel drug leads [13]. This methodology involves screening low molecular weight compounds (typically ≤ 20 heavy atoms) against biological targets, followed by structure-guided optimization of these weakly binding hits into potent drug candidates [14]. The fundamental advantage of FBDD lies in its efficient sampling of chemical space; a small library of 1,000-2,000 fragments can provide proportionately greater coverage than much larger HTS libraries comprising larger, more complex molecules [13]. This approach has already yielded significant clinical successes, including six marketed drugs such as vemurafenib, venetoclax, and sotorasib; the last of these highlights FBDD's utility against targets such as KRAS G12C that were long considered "undruggable" [13] [14].

The selection of appropriate organic fragments is paramount for constructing reliable validation sets to benchmark computational methods in drug discovery. These fragments serve as critical test cases for evaluating the performance of quantum chemical methods, including Density Functional Theory (DFT) and post-Hartree-Fock (post-HF) approaches, in predicting conformational energies, binding affinities, and other physicochemical properties essential for drug development [15] [16]. A well-designed fragment validation set must encompass diverse chemical space while maintaining pharmaceutical relevance, enabling robust assessment of computational protocols against experimental data.

Defining Key Organic Fragments and Their Properties

Characteristics of Optimal Fragments

In FBDD, fragments are defined as small organic molecules with simple chemical structures. While historically guided by the "Rule of Three" (molecular weight ≤ 300 Da, H-bond donors ≤ 3, H-bond acceptors ≤ 3, cLogP ≤ 3), modern fragment libraries often include compounds that strategically violate these criteria to access novel chemical space [13]. Successful fragments typically exhibit high ligand efficiency (binding energy per heavy atom), enabling efficient optimization during later stages of drug development. Despite their small size, fragments form high-quality, "atom-efficient" interactions with protein targets, making them ideal starting points for medicinal chemistry campaigns [13].
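Both selection criteria in this paragraph are simple to compute once a fragment's descriptors and affinity are known. In the sketch below, ligand efficiency is computed as LE = −ΔG/N_heavy with ΔG = RT ln K_d (kcal/mol, K_d in molar), and the Rule of Three thresholds are those quoted above. The helper names are hypothetical, and MW, H-bond counts, and cLogP are assumed to come from a cheminformatics toolkit.

```python
import math
from typing import List

R_KCAL = 1.987204e-3  # gas constant, kcal/(mol K)


def ligand_efficiency(kd_molar: float, heavy_atoms: int, temperature: float = 298.15) -> float:
    """Binding free energy per heavy atom, LE = -dG / N_heavy, in kcal/mol."""
    if kd_molar <= 0 or heavy_atoms <= 0:
        raise ValueError("Kd and heavy-atom count must be positive")
    delta_g = R_KCAL * temperature * math.log(kd_molar)  # negative for Kd < 1 M
    return -delta_g / heavy_atoms


def rule_of_three_violations(mw: float, hbd: int, hba: int, clogp: float) -> List[str]:
    """Names of the 'Rule of Three' criteria a fragment violates (empty if compliant)."""
    checks = {"MW > 300": mw > 300, "HBD > 3": hbd > 3,
              "HBA > 3": hba > 3, "cLogP > 3": clogp > 3}
    return [name for name, failed in checks.items() if failed]
```

For example, a 100 μM fragment with 13 heavy atoms has an LE of roughly 0.42 kcal/mol per heavy atom at 298 K.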

The concept of fragment sociability has emerged as a crucial consideration in fragment selection. Sociable fragments contain well-established synthetic growth vectors and have numerous commercially available analogues, facilitating rapid structure-activity relationship (SAR) exploration during optimization [17]. Conversely, unsociable fragments lack robust synthetic methodology or accessible analogues, often impeding hit-to-lead progression despite promising initial binding [17].

Advantages Over Traditional Screening Approaches

Compared to conventional HTS, FBDD offers several distinct advantages beyond efficient chemical space sampling. Fragment hits typically display lower molecular complexity, reducing the probability of suboptimal interactions or steric clashes with target proteins [13]. Additionally, fragment screening provides valuable insights into target "druggability" and can identify binding hotspots at protein-protein interfaces or allosteric sites that are often challenging to address with larger compounds [13]. The weak affinities of initial fragment hits (typically in the μM-mM range) necessitate highly sensitive detection methods, including surface plasmon resonance (SPR), nuclear magnetic resonance (NMR), and X-ray crystallography, with orthogonal techniques frequently employed for hit validation [13] [18].

Methodologies for Building Representative Validation Sets

Experimental Protocols for Fragment Screening and Validation

Building a comprehensive fragment validation set requires integration of multiple experimental techniques to thoroughly characterize fragment-target interactions. The following workflow outlines a robust protocol for experimental fragment validation:

Experimental Workflow for Fragment Validation Set Construction

Primary Screening Using Biophysical Methods

Initial fragment screening employs sensitive biophysical techniques capable of detecting weak interactions (Kd values in the μM-mM range). Surface plasmon resonance (SPR) provides real-time kinetic data on fragment binding, including association and dissociation rates [18]. Protein-observed NMR, particularly ¹⁹F NMR, offers a rapid method for fragment screening and can detect binding even for challenging targets like GPCRs [18]. These primary screens typically yield hit rates of 0.5-5%, significantly higher than HTS campaigns [13].

Hit Validation with Orthogonal Techniques

Hits from primary screening then undergo validation with orthogonal biophysical methods to eliminate false positives and pan-assay interference compounds (PAINS). Differential scanning fluorimetry (DSF) and microscale thermophoresis (MST) provide additional confirmation of binding, each with distinct sensitivity profiles and potential biases [18]. This multi-technique approach ensures robust hit identification; studies reveal that different detection methods often yield surprisingly low overlap in hit lists, highlighting the importance of orthogonal validation [18].
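The low overlap between detection methods can be quantified directly from hit lists. This sketch computes pairwise Jaccard overlap and a consensus list of fragments detected by at least two techniques. The fragment IDs, method labels, and the two-method consensus rule are illustrative assumptions rather than a published protocol, and `overlap_report` is a hypothetical helper.

```python
from collections import Counter
from itertools import combinations
from typing import Dict, List, Set, Tuple


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Jaccard overlap |A ∩ B| / |A ∪ B|; 0.0 when both sets are empty."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def overlap_report(hits: Dict[str, Set[str]],
                   min_methods: int = 2) -> Tuple[Dict[Tuple[str, str], float], List[str]]:
    """Pairwise overlaps between methods and fragments seen by >= min_methods methods."""
    pairwise = {(m1, m2): jaccard(hits[m1], hits[m2])
                for m1, m2 in combinations(sorted(hits), 2)}
    counts = Counter(frag for frags in hits.values() for frag in frags)
    consensus = sorted(frag for frag, n in counts.items() if n >= min_methods)
    return pairwise, consensus
```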

Structural Characterization via X-ray Crystallography

High-resolution X-ray crystallography represents the gold standard in FBDD, providing atomic-level details of fragment binding modes [17]. This structural information is crucial for identifying optimal growth vectors—specific positions on the fragment scaffold where chemical modifications can enhance potency and properties [17]. For example, in a urokinase-type plasminogen activator (uPA) program, X-ray structures guided the selection of fragments with accessible growth vectors toward the catalytic triad, enabling significant affinity improvements [17].

Structure-Activity Relationship (SAR) Expansion

Sociable fragments with commercially available analogues enable rapid SAR exploration through purchase and testing of related compounds [17]. For instance, the fragment hit mexiletine in the uPA program had over 100 commercially available analogues, facilitating comprehensive mapping of the binding pocket and resulting in a potent lead compound (IC50 = 0.07 μM) [17]. This empirical SAR data provides invaluable validation for computational predictions of binding energies and molecular properties.

Computational Approaches for Fragment Validation

Computational methods play an increasingly important role in fragment validation, complementing experimental techniques. The integration of machine learning (ML) and quantum mechanical calculations enables prediction of fragment properties, binding modes, and synthetic accessibility.

Table 1: Computational Methods for Fragment Validation

| Method Category | Specific Approaches | Application in Fragment Validation | Key Considerations |
|---|---|---|---|
| Quantum Chemical Methods | DFT (ωB97M-D3(BJ), B3LYP-D3, ωB97X-D), MP2 | Conformational energy ranking, tautomer stability, protonation state prediction | Functional/basis set selection crucial; dispersion corrections essential for accuracy [16] [19] |
| Machine Learning Potentials | Neural network potentials, ANI models, QDπ dataset | Rapid energy evaluation, conformational sampling, property prediction | Training data quality determines transferability; active learning reduces redundancy [15] |
| Descriptor Analysis | DompeKeys (DK), Molecular Anatomy, ECFP | Chemical space mapping, functional group identification, toxicity alert detection | Hierarchical descriptors capture different complexity levels [20] |
| Conformer Generation | CREST, GEOM dataset, RDKit stochastic methods | Ensemble property prediction, Boltzmann-weighted averages | Coverage of thermally accessible conformers critical for accuracy [3] |

The QDπ dataset represents a significant advance for computational validation, incorporating 1.6 million molecular structures with energies and forces calculated at the ωB97M-D3(BJ)/def2-TZVPPD level of theory [15]. This dataset employs active learning strategies to maximize chemical diversity while minimizing redundant information, making it particularly valuable for developing universal machine learning potentials applicable to drug-like molecules [15].

The GEOM dataset provides another essential resource, containing 37 million molecular conformations for over 450,000 molecules, with specific annotation for 1,511 species with BACE-1 inhibition data [3]. This dataset enables benchmarking of models that predict properties from conformer ensembles, crucial for accurate representation of flexible fragments under physiological conditions.

Commercial Fragment Libraries and Their Characteristics

Several commercial fragment libraries are available, each with distinct properties and coverage of chemical space. While these provide excellent starting points, they often require curation and filtering to optimize diversity and pharmaceutical relevance.

Table 2: Comparison of Fragment Libraries and Validation Resources

| Library/Resource | Size (Compounds) | Key Characteristics | Applications in Validation | Notable Features |
|---|---|---|---|---|
| Commercial Fragment Libraries | 1,000-5,000 | MW ≤ 300, Ro3 compliance, varying sp3 character | Primary screening, hit identification | Often include "3D-shaped" fragments with enhanced sp3 character [13] |
| QDπ Dataset [15] | 1.6 million structures | ωB97M-D3(BJ)/def2-TZVPPD level, 13 elements | ML potential training, conformational energy validation | Active learning strategy minimizes redundancy while maximizing diversity |
| GEOM Dataset [3] | 37 million conformers (450k molecules) | CREST/GFN2-xTB sampling, experimental bioactivity data | Conformer ensemble prediction, Boltzmann-weighted properties | Includes implicit-solvent DFT data for BACE inhibitors |
| DompeKeys (DK) [20] | 1,064 curated SMARTS | Hierarchical substructure definition (5 complexity levels) | Chemical space mapping, functional group analysis | Specifically designed for pharmaceutical applications |

Best Practices for DFT Protocol Selection in Fragment Validation

Accurate computational validation of fragments requires careful selection of quantum chemical methods. Benchmark studies demonstrate that method choice significantly impacts prediction accuracy for conformational energies and other key properties.

Computational Protocol for Fragment Conformational Analysis

Estimating A-values (the axial-equatorial free-energy difference of monosubstituted cyclohexanes) provides valuable benchmarking data for quantum chemical methods [19]. Studies reveal that B3LYP without a dispersion correction significantly overestimates A-values, highlighting the importance of properly treating London dispersion forces [19]. Functionals such as ωB97X-D and M06-2X generally perform better, though systematic errors may occur for specific substituents such as tert-butyl groups [19]. Solvation effects, incorporated via implicit models such as PCM, prove particularly important for polar substituents, reducing A-values by approximately 0.15 kcal mol⁻¹ on average [19].
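To put A-value errors in perspective, an A-value can be converted to an equatorial population through K = exp(A/RT). The sketch assumes the A-value is the free-energy difference G(axial) − G(equatorial) in kcal/mol, with a default temperature of 298.15 K; `equatorial_fraction` is a hypothetical helper.

```python
import math

R_KCAL = 1.987204e-3  # gas constant, kcal/(mol K)


def equatorial_fraction(a_value: float, temperature: float = 298.15) -> float:
    """Equatorial population of a monosubstituted cyclohexane.

    With A = G(axial) - G(equatorial), K = [eq]/[ax] = exp(A / RT),
    and the equatorial fraction is K / (1 + K).
    """
    k = math.exp(a_value / (R_KCAL * temperature))
    return k / (1.0 + k)
```

An A-value of 1.74 kcal/mol, close to the commonly quoted value for a methyl group, gives about 95% equatorial conformer; a 0.15 kcal/mol shift of the size attributed to solvation moves this by about 1.4 percentage points.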

Table 3: Essential Research Reagent Solutions for Fragment-Based Discovery

| Resource Category | Specific Tools/Solutions | Function in Fragment Research | Key Providers/Platforms |
|---|---|---|---|
| Biophysical Screening | SPR (Biacore platforms), NMR spectrometers, MST | Detection of weak fragment binding, kinetic parameter determination | GE Healthcare, Bruker, NanoTemper |
| Structural Biology | X-ray crystallography platforms, Cryo-EM | High-resolution structure determination of fragment-protein complexes | Rigaku, Thermo Fisher Scientific |
| Chemical Databases | ZINC, eMolecules, CAS | Source of commercially available fragments and analogues | Multiple commercial suppliers |
| Computational Chemistry | Gaussian, ORCA, CREST, RDKit | Quantum chemical calculations, conformer generation, property prediction | Academic and commercial software |
| Specialized Libraries | Covalent fragment libraries, 3D-shaped fragments, Natural product-derived fragments | Access to underrepresented chemical space for challenging targets | Enamine, Life Chemicals, WuXi AppTec |

The construction of representative validation sets for organic fragments in drug-like molecules requires integrated experimental and computational approaches. Key considerations include fragment sociability for efficient optimization, comprehensive conformational sampling to account for molecular flexibility, and robust quantum chemical methods with proper treatment of non-covalent interactions. Publicly available resources like the QDπ and GEOM datasets provide essential benchmarking data for method development and validation.

Emerging trends in fragment-based discovery include increased incorporation of covalent fragments, expanded use of machine learning for hit identification and optimization, and growing emphasis on 3D-shaped fragments with enhanced sp3 character for challenging targets [13] [14]. As these methodologies continue to evolve, well-validated fragment sets will remain indispensable for advancing computational drug discovery and tackling previously "undruggable" targets.

Molecular mechanics force fields provide the foundational framework for simulating the structures, motions, and interactions of biological macromolecules and drug-like compounds in full atomic detail. The accuracy of these simulations, particularly in computer-aided drug design (CADD), is critically dependent on the force field chosen. Force fields are computational models that use mathematical functions and parameter sets to calculate the potential energy of a system based on atomic coordinates. Their functional form typically includes terms for bonded interactions (bonds, angles, dihedrals) and non-bonded interactions (electrostatics, van der Waals forces). Despite their widespread use and continual refinement, traditional force fields possess inherent limitations that impact their predictive power for crucial properties such as conformational energies and interaction strengths. This review establishes a comprehensive baseline of common force fields and their limitations, creating an essential reference point for comparing and validating more computationally intensive quantum chemical methods, including density-functional theory (DFT) and post-Hartree-Fock approaches, in conformational energy research.

Force Field Fundamentals and Evolution

Historical Development and Basic Principles

The conceptual foundation for modern force fields was established with the Consistent Force Field (CFF) in 1968, which introduced a methodology for deriving and validating parameters to describe a wide range of compounds and physical observables. Early protein-specific force fields like ECEPP (Empirical Conformational Energy Program for Peptides) emerged in 1975, relying on crystal data and semi-empirical quantum mechanical (QM) calculations for parameterization. The Allinger force fields (MM1-MM4), developed between 1976 and 1996, represented another significant advancement, targeting data from electron diffraction, vibrational spectra, heats of formation, and crystal structures.

The basic functional form of potential energy in most molecular mechanics force fields can be expressed as:

\[ E_{\text{total}} = E_{\text{bonded}} + E_{\text{nonbonded}} \]

where:

\[ E_{\text{bonded}} = E_{\text{bond}} + E_{\text{angle}} + E_{\text{dihedral}} \]

and:

\[ E_{\text{nonbonded}} = E_{\text{electrostatic}} + E_{\text{van der Waals}} \]

This additive approach utilizes simple harmonic potentials for bond and angle deformations, cosine series for dihedral angles, and a combination of Lennard-Jones potentials with Coulomb's law for non-bonded interactions.
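
The terms just described can be written out explicitly. The following is a minimal sketch assuming an AMBER-style convention (harmonic terms without a factor of 1/2, a V_n/2 cosine series for dihedrals, a 12-6 Lennard-Jones potential, and Coulomb's law in kcal/mol with charges in e and distances in Å). Other force fields use different conventions, and production codes add exclusion lists, 1-4 scaling, and cutoffs that are omitted here.

```python
import math

# Coulomb constant in kcal*Angstrom/(mol*e^2); the exact value differs slightly between packages.
COULOMB_KCAL = 332.0637


def harmonic_energy(x: float, k: float, x0: float) -> float:
    """Bond or angle term k * (x - x0)**2 (no factor of 1/2 in this convention)."""
    return k * (x - x0) ** 2


def dihedral_energy(phi: float, terms: list[tuple[float, int, float]]) -> float:
    """Cosine series: sum over (V_n, n, gamma) of V_n/2 * [1 + cos(n*phi - gamma)], angles in radians."""
    return sum(0.5 * v_n * (1.0 + math.cos(n * phi - gamma)) for v_n, n, gamma in terms)


def lennard_jones(r: float, epsilon: float, sigma: float) -> float:
    """12-6 Lennard-Jones: 4*eps*[(sigma/r)**12 - (sigma/r)**6]."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 * sr6 - sr6)


def coulomb(r: float, q_i: float, q_j: float, dielectric: float = 1.0) -> float:
    """Coulomb energy in kcal/mol for point charges in e separated by r in Angstrom."""
    return COULOMB_KCAL * q_i * q_j / (dielectric * r)
```

The Lennard-Jones minimum lies at r = 2^(1/6)σ with a well depth of -ε, which is a quick sanity check on any parameter set. Every term depends only on fixed parameters and atomic coordinates, which is exactly why this form cannot respond to a changing electronic environment.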

The United-Atom to All-Atom Transition

A significant evolution in force field design was the development of united-atom models, which represented nonpolar carbons and their bonded hydrogens as a single particle to enhance computational efficiency. This approach, pioneered with UNICEPP in 1978, could significantly reduce system size since approximately half the atoms in biological macromolecules are hydrogens. While adequate for representing molecular vibrations and bulk properties of small molecules, united-atom force fields demonstrated limitations in accurately treating hydrogen bonds, π-stacking in aromatic systems, and dipole moments when hydrogens were combined with polar heavy atoms. These shortcomings led to a widespread return to all-atom models in force fields like CHARMM22, AMBER ff99, OPLS-AA, and OPLS-AA/L to increase accuracy.

Table 1: Major Biomolecular Force Fields and Their Characteristics

| Force Field | Class | Target Systems | Parameterization Philosophy | Key Strengths |
|---|---|---|---|---|
| AMBER | All-atom, fixed-charge | Proteins, nucleic acids | RESP charges fitted to QM electrostatic potential; focus on structures and non-bonded energies | Accurate description of biomolecular structures |
| CHARMM | All-atom, fixed-charge | Proteins, nucleic acids, lipids | Overestimation of gas-phase dipole moments to implicitly include polarization | Balanced treatment of various biomolecular systems |
| OPLS | All-atom, fixed-charge | Organic molecules, proteins | Optimized for liquid-state properties and thermodynamic observables | Accurate thermodynamic properties |
| GROMOS | United-atom | Biomolecular systems | Parameterized for condensed phase simulations | Computational efficiency for large systems |
| CGenFF | All-atom, fixed-charge | Drug-like molecules | Transferable parameters compatible with CHARMM | Broad coverage of chemical space relevant to drug design |

Current Major Force Fields and Their Limitations

Additive Force Fields in Biomolecular Simulations

The workhorses of contemporary biomolecular simulations are fixed-charge force fields such as AMBER, CHARMM, and OPLS (all-atom) and GROMOS (united-atom). These force fields share a common limitation: fixed atomic charges are used to model electrostatic interactions, so the many-body polarization effects that vary with chemical and physical environment are not captured. Consequently, non-polarizable force fields cannot represent the conformational and environmental dependence of electrostatic properties, which is crucial for accurately modeling phenomena such as ligand binding and membrane permeation.

The AMBER force fields (including ff99) employ Restrained Electrostatic Potential (RESP) charges fitted to quantum mechanical electrostatic potentials without further empirical adjustment. The underlying assumption is that such QM-derived charges describe intramolecular electrostatics well enough that fewer torsional correction terms are needed than in models with empirically derived charges. The CHARMM force fields take a different route: their charges are tuned so that gas-phase dipole moments are typically overestimated by approximately 20% or more, implicitly accounting for polarization in condensed phases, where molecular dipole moments are generally larger than in the gas phase.

The CHARMM General Force Field (CGenFF) and General AMBER Force Field (GAFF) are extensions designed for drug-like molecules. These transferable force fields face the particular challenge of covering a vast chemical space with a limited set of parameters, which requires treating drug-like molecules as assemblies of chemical groups whose properties are assumed to remain largely consistent across different molecular environments.

Fundamental Limitations and Systematic Errors

Despite extensive refinement efforts, traditional force fields exhibit several fundamental limitations that constrain their predictive accuracy:

Fixed-Charge Approximation: The atom-centered point charge model cannot describe the anisotropy of the charge distribution or account for charge penetration effects (the deviation of the electrostatic interaction from the Coulomb form caused by reduced electron shielding when atomic electron clouds overlap). These effects strongly influence the equilibrium geometries and interaction energies of molecular complexes. The limitation becomes particularly problematic when molecules move between environments of different polarity, such as during ligand binding to proteins or small-molecule permeation through membranes.

Lack of Polarization: Electronic polarizability, the adjustment of electron density in response to local electric fields, is treated only implicitly, as a mean-field average, in additive force fields. This simplification cannot capture how the charge distribution changes across different local environments, which can lead to inaccurate representations of intermolecular interactions.

Functional Form Limitations: The simple functional forms of potential energy functions in traditional force fields have been recognized as a major source of inaccuracy. As statistical errors in simulations have decreased with increasing computing power, deficiencies in force fields have become more apparent, manifesting as large deviations in various observables and an inability to accurately predict conformations of proteins and peptides.

Transferability Challenges: Parameters derived from small model compounds may not accurately represent the behavior of larger, more complex systems, particularly in conjugated systems where the properties of chemical groups can vary significantly based on neighboring moieties.

Quantitative Comparison and Experimental Validation

Systematic Force Field Validation Studies

A systematic validation of eight protein force fields against experimental data revealed that while force fields have improved over time, they still exhibit varying performance in describing protein structure and fluctuations. The study, which compared simulation data with experimental NMR measurements and quantified biases toward different secondary structure types, found that recent force field versions provide reasonable descriptions of many structural and dynamical properties but remain imperfect. The research highlighted that deficiencies in force fields became more detectable as statistical errors caused by insufficient sampling were reduced through longer simulation timescales.

Benchmarking with Small Molecule Conformational Analysis

Quantum chemistry calculations of A-values (the free energy difference between the axial and equatorial conformers of a monosubstituted cyclohexane) provide a valuable benchmark for evaluating computational methods. Studies comparing calculated A-values with experimental data show that methods neglecting electron correlation entirely (HF) or lacking an explicit dispersion treatment (standard B3LYP) systematically overestimate A-values, highlighting the importance of properly accounting for these interactions. The benchmark is particularly informative because A-values depend not only on steric repulsion but also on Baeyer (angle) strain, torsional strain, electrostatic interactions, and dispersion forces, all of which must be modeled correctly for accurate conformational analysis.

Table 2: Performance of Computational Methods for A-Value Prediction

| Computational Method | Treatment of Dispersion | Typical Accuracy for A-Values | Computational Cost | Key Limitations |
|---|---|---|---|---|
| HF | Neglected | Poor; systematic overestimation | Low | No dispersion or electron correlation |
| B3LYP | Indirect, incomplete | Moderate overestimation | Medium-low | Incomplete dispersion treatment |
| B3LYP-D3 | Empirical correction | Good for most substituents | Medium | Empirical parameters |
| M06-2X | Parametrized | Good except for bulky substituents | Medium-high | Overestimation for t-Bu |
| ωB97X-D | Empirical correction | Good overall | Medium-high | Slight overestimation for F |
| MP2 | From wavefunction | Good with adequate basis sets | High | Basis set sensitivity |
| PBE0+MBD | Many-body dispersion | Excellent | High | Computational cost |

Emerging Solutions: Polarizable Force Fields and Advanced Functional Forms

Addressing the Polarization Challenge

To overcome the limitations of fixed-charge models, significant efforts have been directed toward developing polarizable force fields that explicitly treat electronic polarization. These include models based on classical Drude oscillators, fluctuating charges, or induced point dipoles. The Drude polarizable force field, for instance, represents electronic polarization by attaching an auxiliary charged particle (the Drude particle) to each polarizable non-hydrogen atom through a harmonic spring; together, the atom and its Drude particle form a Drude oscillator. Because the Drude particle can be displaced by the local electric field, the charge distribution around each atom responds to its environment, providing a more physical representation of intermolecular interactions.
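
The Drude picture reduces to a simple force balance. Assuming a static, uniform field E along one axis and a spring constant k_D, the spring force k_D·d balances the field force q_D·E, so the displacement is d = q_D·E/k_D and the induced dipole is μ = q_D·d = (q_D²/k_D)·E, which corresponds to an isotropic polarizability α = q_D²/k_D. The sketch below leaves units abstract and uses a hypothetical function name; in an actual simulation the field depends on all other Drude positions and is solved self-consistently or propagated with an extended Lagrangian.

```python
def drude_response(q_drude: float, k_spring: float, e_field: float) -> tuple[float, float, float]:
    """Return (displacement, induced dipole, polarizability) for one Drude pair in a static field.

    Force balance on the Drude particle: k * d = q_D * E  ->  d = q_D * E / k.
    The induced dipole is mu = q_D * d = (q_D**2 / k) * E, so alpha = q_D**2 / k.
    Any consistent unit system can be used.
    """
    if k_spring <= 0:
        raise ValueError("spring constant must be positive")
    displacement = q_drude * e_field / k_spring
    induced_dipole = q_drude * displacement
    alpha = q_drude ** 2 / k_spring
    return displacement, induced_dipole, alpha
```

Note that the polarizability depends on q_D² and not on the sign of the Drude charge, so a force field is free to choose a negative Drude charge (mimicking electrons) without changing the induced dipole.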

Simulations of biological systems using polarizable force fields have demonstrated improvements over additive models in multiple contexts, including ion distribution near water-air interfaces, ion permeation through channel proteins, water-lipid bilayer interactions, protein folding, and protein-ligand binding. The explicit treatment of polarization enables more accurate modeling of molecular systems in environments with different polar characters, addressing a fundamental limitation of additive force fields.

Advanced Functional Forms for Improved Physical Representation

Beyond incorporating polarization, next-generation force fields are addressing other limitations in traditional functional forms:

Geometry-Dependent Charge Flux (GDCF): Models that let atomic charges vary with molecular geometry, implemented in advanced force fields such as AMOEBA+(CF), relax the assumption that partial charges are independent of the local molecular geometry.

Many-Body Dispersion (MBD): Traditional pairwise additive Lennard-Jones potentials fail to capture many-body dispersion effects, which can be significant in larger systems. Incorporation of MBD treatments improves the description of van der Waals interactions.

Sigma-Hole Potentials: Simple solutions to model anisotropic charge distribution in atoms like halogens include attaching off-centered positive charges to represent regions of lower electron density (σ-holes), providing more accurate descriptions of halogen bonding.
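
A sketch of how such an off-center site can be positioned, assuming it lies on the C-X bond axis at a fixed distance beyond the halogen (on the side opposite the carbon, where the σ-hole lies). The distance and the charge assigned to the site are force-field-specific parameters, and the function name is hypothetical.

```python
import math

Vec3 = tuple[float, float, float]


def sigma_hole_site(carbon: Vec3, halogen: Vec3, distance: float) -> Vec3:
    """Place an off-center charge site on the C->X axis, `distance` beyond the halogen."""
    axis = tuple(h - c for c, h in zip(carbon, halogen))
    norm = math.sqrt(sum(a * a for a in axis))
    if norm == 0.0:
        raise ValueError("carbon and halogen positions coincide")
    unit = tuple(a / norm for a in axis)  # unit vector pointing from C to X
    return tuple(h + distance * u for h, u in zip(halogen, unit))
```

In practice the site's positive charge is offset by making the halogen's own charge more negative, so the total molecular charge is unchanged; the site is rebuilt from the C and X positions at every step, so it carries no independent degrees of freedom.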

Experimental Protocols for Force Field Validation

Conformational Energy Benchmarking

Protocol for A-value comparison of computational methods:

System Selection: Monosubstituted cyclohexanes with diverse substituents (e.g., methyl, tert-butyl, halogen, polar groups) for which experimental A-values are available.

Geometry Optimization: Conformers (equatorial and axial) are optimized using target theoretical methods (e.g., HF, B3LYP, B3LYP-D3, M06-2X, ωB97X-D, MP2) with basis sets such as 6-31G* or LANL2DZ for heavier atoms.

Frequency Calculations: Verification of stationary points as minima (no imaginary frequencies) and calculation of thermal corrections to free energy.

Energy Evaluation: Single-point energy calculations at the optimized geometries with larger basis sets (e.g., def2-TZVPD or 6-311+G(2df,2p)), with solvent corrections from the Polarizable Continuum Model (PCM), which matter most for polar substituents.

A-value Calculation: Free energy difference between the axial and equatorial conformers, ΔG = G(axial) − G(equatorial); a positive value indicates that the equatorial conformer is preferred.

Statistical Analysis: Comparison with experimental values using correlation coefficients, mean absolute errors, and systematic deviation analysis.

Protein Force Field Validation Protocol

Systematic validation of protein force fields against experimental data:

Folded Protein Simulations: Extensive MD simulations of structured proteins reaching microsecond timescales.

NMR Comparison: Calculation of NMR observables (chemical shifts, J-couplings, residual dipolar couplings) from simulations and comparison with experimental data.

Secondary Structure Propensity: Evaluation of biases toward different secondary structure types by comparing simulation data with experimental data for small peptides that preferentially populate either helical or sheet-like structures.

Folding Simulations: Testing force fields' abilities to fold small proteins (both α-helical and β-sheet structures) to native states.

Error Quantification: Statistical analysis of deviations from experimental data across multiple force fields and systems.
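
For the NMR comparison step, backbone ³J(HN,Hα) couplings are commonly back-calculated from the φ dihedral with a Karplus relation. This sketch assumes the Vuister and Bax (1993) parameterization (one of several in use), averages the coupling over trajectory frames rather than computing the coupling of the mean angle, and reports an RMSD against experiment; the residue labels and helper names are illustrative.

```python
import math
from statistics import fmean

# Assumed Karplus parameters (Hz) for 3J(HN,HA); other published sets give different values.
KARPLUS_A, KARPLUS_B, KARPLUS_C = 6.51, -1.76, 1.60


def j_hn_ha(phi_deg: float) -> float:
    """3J(HN,HA) in Hz from the backbone dihedral phi in degrees, with theta = phi - 60."""
    theta = math.radians(phi_deg - 60.0)
    return KARPLUS_A * math.cos(theta) ** 2 + KARPLUS_B * math.cos(theta) + KARPLUS_C


def ensemble_j(phi_trajectory_deg: list[float]) -> float:
    """Average J over MD frames (the average of J, not J of the average angle)."""
    return fmean(j_hn_ha(phi) for phi in phi_trajectory_deg)


def rmsd_vs_experiment(calc: dict[str, float], expt: dict[str, float]) -> float:
    """RMSD in Hz over the residues present in both dictionaries."""
    common = sorted(calc.keys() & expt.keys())
    if not common:
        raise ValueError("no residues in common")
    return math.sqrt(fmean((calc[k] - expt[k]) ** 2 for k in common))
```

With these parameters an α-helical φ of −60° gives about 4.1 Hz and a β-strand φ of −120° about 9.9 Hz, which is why J-couplings are sensitive reporters of the secondary-structure biases discussed above.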

Validation Workflow: Diagram illustrating the systematic protocol for validating force fields against experimental data.

Table 3: Key Computational Resources for Force Field Development and Validation

| Resource Name | Type | Primary Function | Relevance to Force Field Research |
|---|---|---|---|
| Gaussian 09W/16 | Quantum Chemistry Software | Electronic structure calculations | Reference quantum chemical calculations for parameterization and validation |
| CHARMM | Molecular Simulation Package | Biomolecular simulations | Development and application of CHARMM force fields |
| AMBER | Molecular Simulation Package | Biomolecular simulations | Application of AMBER force fields and MD simulation |
| CGenFF Program | Parameterization Tool | Automatic parameter generation | Transferable force field parameter assignment for drug-like molecules |
| Antechamber | Parameterization Tool | Automatic parameter generation | GAFF and AMBER topology generation |
| CREST | Conformer Sampling Tool | Conformational ensemble generation | Exploration of conformational landscapes for validation |
| Aquamarine (AQM) Dataset | Quantum Chemical Database | Benchmark structures and properties | Validation dataset for large drug-like molecules with solvent effects |
| WebAIM Contrast Checker | Accessibility Tool | Color contrast verification | Ensuring diagram accessibility in publications |

Traditional molecular mechanics force fields provide computationally efficient tools for simulating biomolecular systems but face fundamental limitations in their ability to accurately predict conformational energies and environment-dependent interactions. The fixed-charge approximation, lack of explicit polarization, and simplified functional forms constrain their quantitative accuracy, particularly for properties sensitive to electronic effects. These limitations establish a clear baseline against which quantum mechanical methods must demonstrate superior performance to justify their increased computational cost. As force field development continues to address these challenges through polarizable models and more sophisticated functional forms, the validation benchmarks and protocols outlined here provide critical frameworks for assessing progress. The ongoing refinement of force fields, coupled with emerging quantum chemical approaches and machine learning techniques, promises enhanced accuracy in modeling molecular systems for drug discovery and materials design.

Selecting and Applying Computational Methods: Best-Practice Protocols for DFT and Post-HF

Density Functional Theory (DFT) stands as a cornerstone computational method across chemistry, materials science, and drug development, enabling the prediction of molecular properties from first principles. The accuracy of these predictions, however, is critically dependent on the selection of the exchange-correlation functional [21]. For decades, the B3LYP functional with the 6-31G* basis set has served as a widely used default, particularly in organic chemistry and drug discovery [22]. Yet, as computational chemistry expands into more complex chemical spaces—including transition metal catalysts, surface adsorption, and supramolecular systems—researchers increasingly recognize that no single functional performs universally well across all chemical domains [21] [23]. This reality necessitates a careful, system-specific approach to functional selection grounded in comprehensive validation studies and a deep understanding of functional limitations.

The challenge stems from the fundamental approximations inherent in DFT. While the Hohenberg-Kohn theorem establishes that ground-state properties are uniquely determined by the electron density, the exact form of the exchange-correlation functional remains unknown [24]. Accordingly, hundreds of functionals have been developed, each with distinct parameterizations and trade-offs between accuracy, computational cost, and applicability. As reaction networks in heterogeneous catalysis and sophisticated drug design place increasing demands on predictive accuracy, the scientific community requires robust guidelines for functional selection that move beyond historical precedent toward evidence-based decision making [21] [24]. This guide provides a comprehensive comparison of DFT functional performance across diverse chemical systems, empowering researchers to make informed choices supported by experimental and high-level computational validation.

Methodological Framework: Benchmarking Approaches and Metrics

Validation Databases and Reference Methods

Assessing functional performance requires comparison against reliable reference data, typically derived from two sources: high-level wavefunction theory and experimental measurements. Coupled Cluster theory with singles, doubles, and perturbative triples (CCSD(T)) is often considered the "gold standard" for molecular energetics, providing reference values for atomization energies, reaction energies, and conformational differences [25] [26]. For transition metal systems with significant static correlation, multireference methods like CASPT2 provide crucial benchmarks, particularly for spin state energies and reaction barriers [23]. Experimental validation includes data from single-crystal adsorption microcalorimetry for surface adsorption enthalpies [21], crystallographic measurements for geometric parameters [27], and spectroscopic data for vibrational frequencies.

Large-scale benchmark studies employ diverse datasets to evaluate functional performance across multiple properties. The Por21 database, for instance, assesses spin state energies and binding properties for iron, manganese, and cobalt porphyrins [23]. Validation sets for organometallic catalysis include transition metal compounds with known crystal structures and thermochemical properties [27]. For surface science, adsorption energies of small molecules on transition metal surfaces provide critical testing grounds [21]. These comprehensive datasets enable statistical evaluation of functional performance across chemical space, moving beyond anecdotal evidence toward quantitative assessment.

Performance Metrics and Statistical Evaluation

Rigorous functional validation employs statistical metrics to quantify performance, including mean unsigned error (MUE), root mean square deviation (RMSD), and linear correlation coefficients (R²) [21] [25]. The "chemical accuracy" target of 1.0 kcal/mol represents the ideal, though this is rarely achieved across diverse systems [23]. Benchmark studies typically report performance against these metrics for multiple properties simultaneously—geometries, energies, and frequencies—as a functional may excel in one area while failing in another [26].
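
These metrics are straightforward to compute. The sketch below is one possible implementation, assuming paired predicted and reference values in the same units; it also reports the mean signed error (a direct measure of systematic bias), the maximum absolute error, and the fraction of points within a chosen threshold, here the 1.0 kcal/mol chemical-accuracy target. R² is computed as the squared Pearson correlation, and the function name is illustrative.

```python
import math
from statistics import fmean


def error_metrics(predicted: list[float], reference: list[float],
                  threshold: float = 1.0) -> dict[str, float]:
    """Benchmark statistics for predicted vs reference values (same units, e.g. kcal/mol)."""
    if len(predicted) != len(reference) or len(predicted) < 2:
        raise ValueError("need at least two paired values")
    errors = [p - r for p, r in zip(predicted, reference)]
    mue = fmean(abs(e) for e in errors)           # mean unsigned error
    mse = fmean(errors)                            # mean signed error (systematic bias)
    rmsd = math.sqrt(fmean(e * e for e in errors))
    # Squared Pearson correlation between predicted and reference values
    mp, mr = fmean(predicted), fmean(reference)
    cov = sum((p - mp) * (r - mr) for p, r in zip(predicted, reference))
    var_p = sum((p - mp) ** 2 for p in predicted)
    var_r = sum((r - mr) ** 2 for r in reference)
    if var_p == 0.0 or var_r == 0.0:
        r2 = float("nan")  # correlation is undefined for constant data
    else:
        r2 = cov * cov / (var_p * var_r)
    within = sum(abs(e) <= threshold for e in errors) / len(errors)
    return {"MUE": mue, "MSE": mse, "RMSD": rmsd, "R2": r2,
            "MaxAbs": max(abs(e) for e in errors), "frac_within": within}
```

A constant offset illustrates why several metrics are needed: predictions shifted uniformly by +1.0 kcal/mol from the reference give R² = 1 but MUE = MSE = 1.0 kcal/mol, so correlation alone would hide the systematic error.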

Statistical analysis reveals whether functional errors are systematic or random, informing correction strategies. Some studies employ percentile ranking or grading systems to facilitate functional selection [23]. For instance, a grade "A" functional might rank in the top 20% of methods on a specific dataset, while grade "F" functionals perform unacceptably poorly. This categorical approach helps researchers quickly identify promising candidates for their specific systems while avoiding known pitfalls.
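
A percentile-based grading scheme can be sketched as follows. The quintile cutoffs and letter bands here are an assumption for illustration, not the scheme used in any particular benchmark; methods are ranked by MUE (lower is better), with ties broken by input order, and the function name is hypothetical.

```python
def percentile_grades(mue_by_method: dict[str, float],
                      cutoffs: tuple[float, ...] = (0.2, 0.4, 0.6, 0.8),
                      letters: str = "ABCDF") -> dict[str, str]:
    """Assign letter grades by percentile rank of MUE.

    With the default cutoffs the best 20% of methods get "A", the next 20% "B",
    and so on, with the worst 20% graded "F".
    """
    if len(letters) != len(cutoffs) + 1:
        raise ValueError("need exactly one more letter than cutoffs")
    ranked = sorted(mue_by_method, key=mue_by_method.get)  # best (lowest MUE) first
    n = len(ranked)
    grades = {}
    for i, method in enumerate(ranked):
        frac = (i + 1) / n                      # fraction of methods at or above this rank
        band = sum(frac > c for c in cutoffs)   # number of cutoffs exceeded
        grades[method] = letters[band]
    return grades
```

Because the grades are relative, a method can earn an "A" on a dataset where every method is far from chemical accuracy, as in the porphyrin benchmark discussed below, so the absolute MUE should always be reported alongside the grade.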

Comparative Performance Across Chemical Systems

Surface Science and Heterogeneous Catalysis

The adsorption of atoms and small molecules on transition metal surfaces represents a critical test for functionals in heterogeneous catalysis. A 2020 benchmark study evaluated multiple functionals for the molecular adsorption of CH₃I, the adsorption of CH₃, I, and H, and the dissociative adsorption of CH₄ on Ni(111), comparing the results with single-crystal adsorption microcalorimetry experiments [21]. The results demonstrated significant functional dependence, with no single functional achieving quantitative accuracy (within experimental error) for all adsorption processes studied. However, PBE-D3 and RPBE-D3 emerged as the most consistently accurate when allowing for a ±20 kJ/mol error margin [21].

Table 1: Performance of Select Functionals for Adsorption on Ni(111)

| Functional | CH₃I Molecular Adsorption | CH₃ Adsorption | I Adsorption | H Adsorption | CH₄ Dissociative Adsorption |
|---|---|---|---|---|---|
| PBE-D3 | Accurate within ±20 kJ/mol | Accurate | Accurate | Accurate | Accurate |
| RPBE-D3 | Accurate within ±20 kJ/mol | Accurate | Accurate | Accurate | Accurate |
| optB88-vdW | Most accurate | Less accurate | Less accurate | Less accurate | Less accurate |
| BEEF-vdW | Less accurate | Most accurate | Less accurate | Less accurate | Less accurate |
| SCAN | Less accurate | Less accurate | Most accurate | Less accurate | Less accurate |
| SCAN-RVV10 | Less accurate | Less accurate | Less accurate | Most accurate | Less accurate |

The study highlights that quantitative agreement between DFT and experiment is both system- and functional-dependent, emphasizing the need for continued experimental benchmarking [21]. For surface chemistry applications, dispersion-corrected GGA functionals like PBE-D3 and RPBE-D3 provide the most consistent starting point, though system-specific validation remains essential.

Transition Metal Chemistry and Organometallic Catalysis

Transition metal systems present particular challenges due to complex electronic structures, multireference character, and delicate spin state balances. A comprehensive 2023 benchmark evaluated 250 electronic structure methods for spin states and binding properties of iron, manganese, and cobalt porphyrins [23]. The results revealed that current approximations fail to achieve chemical accuracy by a significant margin, with the best-performing methods achieving MUEs of ~15 kcal/mol—far from the 1 kcal/mol target.

Table 2: Top-Performing Functionals for Transition Metal Porphyrins (Por21 Database)

| Functional | Grade | Type | MUE (kcal/mol) | Spin State Performance | Binding Energy Performance |
|---|---|---|---|---|---|
| GAM | A | GGA | <15.0 | Excellent | Excellent |
| revM06-L | A | meta-GGA | <15.0 | Excellent | Excellent |
| M06-L | A | meta-GGA | <15.0 | Excellent | Excellent |
| r2SCAN | A | meta-GGA | <15.0 | Excellent | Excellent |
| r2SCANh | A | Hybrid meta-GGA | <15.0 | Excellent | Excellent |
| HCTH | A | GGA | <15.0 | Excellent | Excellent |
| B3LYP | C | Hybrid GGA | ~20-23 | Moderate | Moderate |
| B2PLYP | F | Double-hybrid | >30 | Poor | Poor |

Notably, local functionals (GGAs and meta-GGAs) generally outperformed hybrid and double-hybrid functionals for these challenging transition metal systems [23]. Functionals with high percentages of exact exchange, including range-separated and double-hybrid functionals, often exhibited catastrophic failures for spin state energetics. This runs counter to the trend, established largely for main-group chemistry, that adding exact exchange improves accuracy, and it is consistent with the known tendency of exact exchange to overstabilize high-spin states in systems with significant static correlation, highlighting the specialized challenges of transition metal applications.

For geometric properties of transition metal compounds, a 2012 validation study of catalysts for olefin metathesis and other homogeneous reactions found that functionals accounting for dispersion, namely B97D and ωB97XD (with empirical dispersion corrections) and M06 (through its parameterization), provided the most accurate structures [27]. The study emphasized that dispersion corrections are essential for accurate geometries, particularly for the weakly interacting systems prevalent in organometallic chemistry.

Main-Group Molecules and Non-Covalent Interactions

For main-group molecules and non-covalent interactions, modern range-separated and dispersion-corrected functionals typically deliver superior performance. A study on beryllium, tungsten, and hydrogen-containing molecules identified ωB97XD as the top performer for atomization energies, closely followed by B97D, M06, B3LYP, and M11 [26]. For vibrational frequencies, HSEH1PBE, B3LYP, and M11 showed the best accuracy, while M11, ωB97XD, and HSEH1PBE excelled for bond lengths [26].

The importance of dispersion corrections emerges consistently across studies. For example, in the r2SCAN functional series, the dispersion-corrected r2SCAN-D4 variant significantly outperformed its uncorrected counterpart for non-covalent interactions [23]. Similarly, in crystal structure prediction—where dispersion forces dominate packing arrangements—the r2SCAN-D3 functional enables highly accurate lattice energy rankings [28].

Specialized Applications: From Drug Development to Energy Materials

Pharmaceutical Development and Biomolecular Systems

In pharmaceutical development, DFT guides drug design through molecular electrostatic potential maps, Fukui functions, and reaction site identification [24]. The B3LYP functional with 6-31G(d,p) basis set remains widely employed for these applications due to its balanced performance and computational efficiency [22]. For drug formulation design, DFT elucidates API-excipient interactions, co-crystal formation, and nanocarrier optimization [24]. Recent advances integrate DFT with molecular mechanics in multiscale frameworks like ONIOM, enabling accurate modeling of drug-receptor interactions while managing computational cost [24].

For protein energy landscapes, which are relevant to aggregation propensity and misfolding diseases, DFT provides insights into conformational dynamics, though classical force fields typically handle larger-scale simulations [29] [30]. Experimental studies using nanoaperture optical tweezers have directly resolved the energy landscapes of single proteins such as bovine serum albumin, revealing temperature-dependent dynamics among three conformational states [30]. These experimental benchmarks could inform future DFT development for biomolecular systems.

Information-Theoretic Approach for Correlation Energies

Beyond traditional DFT, the information-theoretic approach (ITA) offers promising alternatives for predicting electron correlation energies. Using descriptors derived from Hartree-Fock electron density—including Shannon entropy, Fisher information, and relative Rényi entropy—linear regression models can predict post-Hartree-Fock correlation energies at significantly reduced computational cost [25]. This approach has demonstrated chemical accuracy for diverse systems including octane isomers, polymeric structures, and molecular clusters [25].

The LR(ITA) protocol achieves RMS deviations of <2.0 mH for octane isomers and ~1.5-4.0 mH for linear polymers, rivaling the accuracy of more expensive embedded fragmentation methods like the generalized energy-based fragmentation (GEBF) approach [25]. While not replacing conventional DFT, ITA quantities provide computationally efficient descriptors for correlation energy prediction, particularly in large systems where post-Hartree-Fock calculations become prohibitive.
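
To make the ITA descriptors concrete, the sketch below evaluates two of them, the Shannon entropy S = −∫ρ ln ρ dr and the Fisher information I = ∫|∇ρ|²/ρ dr, for a spherically symmetric density on a radial grid, and fits a single-descriptor linear model. It is not the LR(ITA) implementation of [25]: it assumes atomic units, uses the exact hydrogen 1s density ρ = e^(−2r)/π as a check case (for which S = 3 + ln π and I = 4 analytically), and the function names are hypothetical. Molecular densities would require three-dimensional integration grids.

```python
import numpy as np


def radial_integral(r: np.ndarray, f: np.ndarray) -> float:
    """4*pi * integral of f(r) r^2 dr for a spherically symmetric function (trapezoid rule)."""
    g = 4.0 * np.pi * r**2 * f
    return float(np.sum(0.5 * (g[1:] + g[:-1]) * np.diff(r)))


def shannon_entropy(r: np.ndarray, rho: np.ndarray) -> float:
    """S = -int rho ln(rho) d^3r; rho must be strictly positive on the grid."""
    return -radial_integral(r, rho * np.log(rho))


def fisher_information(r: np.ndarray, rho: np.ndarray) -> float:
    """I = int |grad rho|^2 / rho d^3r; for a spherical density only d(rho)/dr contributes."""
    drho = np.gradient(rho, r)
    return radial_integral(r, drho**2 / rho)


def fit_linear(descriptor: np.ndarray, e_corr: np.ndarray) -> tuple[float, float]:
    """Least-squares fit e_corr ~ slope * descriptor + intercept."""
    slope, intercept = np.polyfit(descriptor, e_corr, 1)
    return float(slope), float(intercept)


# Radial grid (bohr) and hydrogen 1s density in atomic units.
r = np.linspace(1e-6, 40.0, 400_001)
rho_h = np.exp(-2.0 * r) / np.pi

print(radial_integral(r, rho_h))                      # normalization, close to 1
print(shannon_entropy(r, rho_h), 3.0 + np.log(np.pi))
print(fisher_information(r, rho_h), 4.0)
```

In an LR(ITA)-style workflow, `fit_linear` would be trained on descriptor values and reference post-HF correlation energies for a set of related systems, then used to predict correlation energies for larger members of the same family.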

Functional Selection Workflow and Recommendations

Based on comprehensive benchmarking data, we propose a systematic workflow for functional selection (illustrated above) with the following specific recommendations:

For transition metal systems (particularly spin states and binding energies): Prefer local functionals like GAM, revM06-L, M06-L, or r2SCAN, which outperform hybrids for these challenging applications [23]. Avoid double-hybrids and high-exact-exchange functionals.

For non-covalent interactions and molecular clusters: Select dispersion-corrected functionals like ωB97XD, B97D, or r2SCAN-D3 [25] [26]. Dispersion corrections are essential for accurate binding energies and geometries.

For surface science and adsorption phenomena: Use PBE-D3 or RPBE-D3, which provide the most consistent performance across various adsorption systems [21].

For main-group thermochemistry and organic molecules: B3LYP remains reasonable, though ωB97XD, M11, and HSEH1PBE often provide superior accuracy [26].

For geometric properties: M11, ωB97XD, and HSEH1PBE deliver excellent bond lengths, while dispersion corrections are critical for the geometries of non-covalently bound systems [26] [27].

Regardless of the initial selection, system-specific validation against experimental data or high-level reference calculations remains essential [21]. When possible, test multiple functionals from different classes to quantify uncertainty in predictions.
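The recommendations and the advice to compare several functionals can be condensed into a small helper. This sketch is hypothetical: the category keys are labels invented here, the lists only restate the recommendations in this section, and the "spread" (maximum minus minimum prediction across functionals) is one crude uncertainty measure among many.

```python
# Hypothetical lookup restating the recommendations in this section.
RECOMMENDED_FUNCTIONALS = {
    "transition_metal": ["GAM", "revM06-L", "M06-L", "r2SCAN"],
    "noncovalent": ["ωB97XD", "B97D", "r2SCAN-D3"],
    "surface_adsorption": ["PBE-D3", "RPBE-D3"],
    "main_group_thermochemistry": ["ωB97XD", "M11", "HSEH1PBE", "B3LYP"],
    "geometry": ["M11", "ωB97XD", "HSEH1PBE"],
}


def candidate_functionals(system_class: str) -> list[str]:
    """Return the functionals to test first for a (hypothetical) system class."""
    if system_class not in RECOMMENDED_FUNCTIONALS:
        raise ValueError(f"unknown system class: {system_class!r}")
    return list(RECOMMENDED_FUNCTIONALS[system_class])


def functional_spread(predictions: dict[str, float]) -> tuple[float, float]:
    """Mean and max-min spread of one property predicted by several functionals."""
    values = list(predictions.values())
    return sum(values) / len(values), max(values) - min(values)


# Hypothetical conformational energies (kcal/mol) from three functionals.
predictions = {"ωB97XD": 1.8, "B97D": 1.2, "r2SCAN-D3": 1.5}
mean, spread = functional_spread(predictions)
print(candidate_functionals("noncovalent"))
print(f"mean = {mean:.2f} kcal/mol, spread = {spread:.2f} kcal/mol")
```

A spread comparable to the energy difference of interest signals that the prediction should be checked against a higher-level reference.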

Experimental Protocols for Functional Validation

Adsorption Calorimetry Benchmarking

Single-crystal adsorption microcalorimetry provides experimental benchmarks for surface science applications [21]. The protocol involves:

- Preparing well-defined single crystal surfaces under ultra-high vacuum

- Measuring heats of adsorption via microcalorimetry at controlled temperatures (e.g., 160 K)

- Comparing DFT-calculated adsorption enthalpies with experimental values

- Evaluating functional performance using statistical metrics (MUE, RMSD)

This approach revealed that although each adsorption process studied on Ni(111) was described accurately by at least one functional, no single functional performed quantitatively well across all systems [21].
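The statistical comparison in the last step of the protocol can be sketched as follows. The enthalpies are invented and the functional names are placeholders. Note that calorimetry usually reports heats of adsorption as positive quantities (q ≈ -ΔH_ads), so signs must be aligned with the DFT adsorption enthalpies before errors are computed. The example also shows why MUE and RMSD are reported together: a functional with one large outlier can win on MUE yet lose on RMSD.

```python
import math


def _check(calc: list[float], ref: list[float]) -> None:
    if len(calc) != len(ref) or not ref:
        raise ValueError("need equal-length, non-empty lists")


def mue(calc: list[float], ref: list[float]) -> float:
    """Mean unsigned error."""
    _check(calc, ref)
    return sum(abs(c - r) for c, r in zip(calc, ref)) / len(ref)


def rmsd(calc: list[float], ref: list[float]) -> float:
    """Root-mean-square deviation; penalizes outliers more strongly than MUE."""
    _check(calc, ref)
    return math.sqrt(sum((c - r) ** 2 for c, r in zip(calc, ref)) / len(ref))


# Hypothetical adsorption enthalpies (kJ/mol) for three adsorbate/surface systems.
experiment = [-100.0, -150.0, -60.0]
calculated = {
    "functional_A": [-110.0, -145.0, -70.0],  # uniformly moderate errors
    "functional_B": [-98.0, -170.0, -58.0],   # accurate except for one outlier
}

ranking = sorted(
    ((name, mue(vals, experiment), rmsd(vals, experiment)) for name, vals in calculated.items()),
    key=lambda row: row[1],  # rank by MUE
)
for name, m, r in ranking:
    print(f"{name}: MUE = {m:.1f} kJ/mol, RMSD = {r:.1f} kJ/mol")
```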

High-Level Wavefunction Theory Benchmarks

For molecular systems, CCSD(T) with complete basis set extrapolation provides reference energies [26]. The standard protocol includes:

- Geometry optimization at the CCSD(T)/cc-pVQZ level

- Energy evaluation with cc-pVQZ/cc-pV5Z extrapolation to the CBS limit

- Accounting for core correlation effects (3-5% for heavy elements)

- Comparing DFT results against these reference values
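The extrapolation step can be illustrated with the widely used two-point inverse-cubic formula for the correlation energy, E_corr(X) = E_corr(CBS) + A·X⁻³, where X is the basis set cardinal number (4 for cc-pVQZ, 5 for cc-pV5Z). Whether [26] uses exactly this form is an assumption, as is taking the Hartree-Fock energy from the larger basis instead of extrapolating it separately; the energies below are hypothetical and constructed to follow X⁻³ exactly.

```python
def cbs_correlation_two_point(e_corr_x: float, x: int, e_corr_y: float, y: int) -> float:
    """Two-point X**-3 extrapolation of the correlation energy:
    E_CBS = (X**3 * E_X - Y**3 * E_Y) / (X**3 - Y**3)."""
    if x == y:
        raise ValueError("the two cardinal numbers must differ")
    x3, y3 = x ** 3, y ** 3
    return (x3 * e_corr_x - y3 * e_corr_y) / (x3 - y3)


def cbs_total_energy(e_hf_large: float, e_corr_qz: float, e_corr_5z: float) -> float:
    """HF energy from the largest basis plus the extrapolated correlation energy
    (a simplifying assumption; the HF part can also be extrapolated)."""
    return e_hf_large + cbs_correlation_two_point(e_corr_qz, 4, e_corr_5z, 5)


# Hypothetical CCSD(T) correlation energies (hartree) following X**-3 exactly.
e_corr_qz = -0.5 + 0.1 / 4 ** 3
e_corr_5z = -0.5 + 0.1 / 5 ** 3
print(cbs_correlation_two_point(e_corr_qz, 4, e_corr_5z, 5))  # recovers -0.5 up to rounding
print(cbs_total_energy(-76.0, e_corr_qz, e_corr_5z))
```

For conformational energies, the extrapolation is applied to each conformer separately before the energy difference is taken.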

For transition metals with potential multireference character, CASPT2 references provide crucial validation, though reference quality must be critically assessed [23].

Research Reagent Solutions: Computational Tools

Table 3: Essential Computational Tools for DFT Validation Studies

| Tool Category | Specific Examples | Primary Function | Application Context |
|---|---|---|---|
| Quantum Chemistry Software | VASP [21], Gaussian, ORCA | DFT calculations with various functionals | Production calculations across chemical systems |
| Wavefunction Theory Codes | MOLPRO, CFOUR, MRCC | High-level reference calculations (CCSD(T), CASPT2) | Generating benchmark data for validation |
| Analysis Tools | Multiwfn, IGMH, NCIPLOT | Electron density analysis, non-covalent interaction visualization | Information-theoretic approach, bonding analysis |
| Database Resources | Por21 database [23], GMTKN55, NIST CCCBDB | Curated benchmark datasets | Functional testing and validation |
| Crystal Structure Prediction | r2SCAN-D3 method [28] | Polymorph prediction and ranking | Pharmaceutical development, materials design |

The functional landscape beyond B3LYP/6-31G* is rich and varied, with specialized performers emerging for different chemical domains. No single functional currently achieves universal chemical accuracy, necessitating careful, system-aware selection. Key trends include the superiority of local functionals for challenging transition metal systems, the critical importance of dispersion corrections for non-covalent interactions, and the consistent performance of PBE-D3/RPBE-D3 for surface science.

Future developments will likely focus on machine-learning-augmented functionals, systematic uncertainty quantification, and efficient implementations of high-performing functionals like r2SCAN [24]. The information-theoretic approach offers promising alternatives for correlation energy prediction [25], while multiscale frameworks integrate DFT with molecular mechanics for complex systems [24]. As these methods mature, functional selection may become more systematic and less empirical. Until then, the guidelines presented here—grounded in comprehensive benchmark studies—provide researchers with evidence-based strategies for navigating the complex functional landscape, ultimately enhancing the reliability of computational predictions across chemistry, materials science, and drug development.

Basis Set Selection and the Role of Composite Methods for Efficiency and Accuracy

Computational chemistry, like all attempts to simulate reality, is defined by trade-offs. Reality is far too complex to simulate exactly, so scientists have developed a plethora of approximations, each of which reduces both the cost and the accuracy of the simulation [31]. The researcher's responsibility is to choose the method that best balances speed and accuracy for the task at hand. This balance can be framed in terms of Pareto optimality: the optimal speed/accuracy combinations form a "frontier", and any method off that frontier is dominated, because another method is at least as fast and at least as accurate, and strictly better in one of the two [31]. The choice of basis set, the set of mathematical functions used to represent molecular orbitals, is one of the most critical approximations in this balance, strongly influencing the accuracy, CPU time, and memory usage of a calculation [32]. In parallel, composite methods offer a strategic route to high accuracy without prohibitive computational cost by combining results from several calculations at different levels of theory and with different basis sets [33]. This guide compares basis set selection strategies and composite methods in the context of validating conformational energies and other properties with Density Functional Theory (DFT) and post-Hartree-Fock (post-HF) methods.
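The Pareto idea can be made concrete in a few lines: a method lies on the frontier if no other method is at least as cheap and at least as accurate while being strictly better in one of the two. The method names and cost/error values below are hypothetical.

```python
def pareto_frontier(methods: list[tuple[str, float, float]]) -> list[str]:
    """Return the names of non-dominated methods.

    Each entry is (name, cost, error); lower is better for both. A method is dominated
    if another method is no worse in cost and error and strictly better in at least one.
    A method never dominates itself because of the strict condition.
    """
    frontier = []
    for name, cost, error in methods:
        dominated = any(
            c <= cost and e <= error and (c < cost or e < error)
            for _, c, e in methods
        )
        if not dominated:
            frontier.append(name)
    return frontier


# Hypothetical (name, relative cost, error in kcal/mol) triples.
methods = [
    ("method_A", 1.0, 5.0),
    ("method_B", 2.0, 2.0),
    ("method_C", 3.0, 4.0),   # slower and less accurate than method_B
    ("method_D", 10.0, 0.5),
]
print(pareto_frontier(methods))  # ['method_A', 'method_B', 'method_D']
```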

Basis Set Fundamentals and Hierarchy

A basis set in quantum chemistry consists of a set of basis functions (atomic orbitals) used to build molecular orbitals. The fundamental trade-off is that a larger, more complete basis set typically yields a more accurate result but at a significantly higher computational cost [32].

Standard Basis Set Types and Nomenclature

Basis sets are systematically improved by increasing both the number of basis functions per atom and their flexibility. The standard hierarchy, from smallest/least accurate to largest/most accurate, is summarized in the table below [32].

Table 1: Standard Basis Set Hierarchy and Typical Performance Characteristics

| Basis Set | Description | Typical Use Case | Computational Cost (Relative to SZ) | Energy Error per Atom vs. QZ4P [eV] (Example) |
|---|---|---|---|---|
| SZ | Single Zeta | Minimal basis; quick test calculations [32] | 1 (Reference) | 1.8 [32] |
| DZ | Double Zeta | Pre-optimization of structures [32] | 1.5 | 0.46 [32] |
| DZP | Double Zeta + Polarization | Geometry optimizations of organic systems [32] | 2.5 | 0.16 [32] |
| TZP | Triple Zeta + Polarization | Recommended default for best performance/accuracy balance [32] | 3.8 | 0.048 [32] |
| TZ2P | Triple Zeta + Double Polarization | Accurate results; good description of virtual space [32] | 6.1 | 0.016 [32] |
| QZ4P | Quadruple Zeta + Quadruple Polarization | Benchmarking [32] | 14.3 | Reference [32] |

Polarization functions are crucial for describing the deformation of electron clouds when bonds form, while diffuse functions (often denoted with "++" or "aug-") are important for anions and weak interactions like hydrogen bonding [34] [33].

Quantitative Performance of Basis Sets

The impact of basis set selection on accuracy and cost can be quantified. In a study of a carbon nanotube, for instance, the energy error per atom (relative to QZ4P) decreased from 1.8 eV with SZ to 0.016 eV with TZ2P, while the computational cost of QZ4P was about 14 times that of SZ [32]. Errors in absolute energies are, however, often systematic and partially cancel when energy differences such as conformational energies, reaction barriers, or binding energies are calculated. A smaller basis such as DZP can therefore yield excellent energy differences even though its absolute energy error is relatively large [32].
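The effect of systematic error cancellation can be illustrated with simple arithmetic. The total energies below are invented: the smaller basis misses about 0.05 hartree (roughly 31 kcal/mol) of the absolute energy of each conformer, yet the conformational energy changes by only about 0.13 kcal/mol. Real cancellation is rarely this clean and should be verified for each system.

```python
HARTREE_TO_KCAL = 627.5095

# Hypothetical total energies (hartree) of two conformers, A and B.
large_basis = {"A": -500.000000, "B": -499.997000}
small_basis = {"A": -499.950000, "B": -499.946800}


def conformational_energy(energies: dict[str, float]) -> float:
    """E(B) - E(A) in kcal/mol."""
    return (energies["B"] - energies["A"]) * HARTREE_TO_KCAL


abs_error_a = (small_basis["A"] - large_basis["A"]) * HARTREE_TO_KCAL
delta_error = conformational_energy(small_basis) - conformational_energy(large_basis)
print(f"absolute error for conformer A: {abs_error_a:.1f} kcal/mol")
print(f"error in E(B) - E(A): {delta_error:.2f} kcal/mol")
```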

Composite Methods: Strategic Combinations for High Accuracy

Composite methods aim for high accuracy, often to within 1 kcal/mol of experimental values (chemical accuracy), by combining the results of several calculations. They strategically use high-level theory with small basis sets and lower-level theory with large basis sets, relying on error cancellation [33].
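The central additivity assumption can be written in one line: the difference between a high and a low level of theory, computed in a small basis, is added to a low-level calculation in a large basis. The sketch below is a generic illustration with hypothetical energies and function names, not the recipe of any specific named method; real schemes chain several such increments (diffuse, polarization, core-valence, relativistic) in the same way.

```python
def additive_estimate(e_low_large: float, e_high_small: float, e_low_small: float) -> float:
    """Estimate E(high, large basis) ~ E(low, large) + [E(high, small) - E(low, small)],
    assuming the high-level correction converges quickly with basis set size."""
    return e_low_large + (e_high_small - e_low_small)


def composite_energy(base: float, increments: dict[str, float]) -> float:
    """Sum a base energy and named additive corrections (all in hartree)."""
    return base + sum(increments.values())


# Hypothetical energies (hartree): MP2 as the low level, CCSD(T) as the high level.
e_est = additive_estimate(e_low_large=-100.300, e_high_small=-100.210, e_low_small=-100.200)
e_total = composite_energy(e_est, {"core_valence": -0.050, "relativistic": -0.002})
print(f"{e_est:.3f} {e_total:.3f}")  # -100.310 -100.362
```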

Gaussian-n Theories

The Gaussian-n theories (G1, G2, G3, G4) were the first systematic composite models with broad applicability [33].

- G2 Theory: This method uses a multi-step procedure. The geometry is optimized at the MP2/6-31G(d) level. Subsequently, single-point energy calculations are performed at higher levels, including QCISD(T)/6-311G(d) and MP4 with larger basis sets to account for polarization and diffuse functions. The final energy includes an empirical "Higher Level Correction" (HLC) and a scaled zero-point vibrational energy [33].

- G3/G4 Theories: These are evolutions of G2. G3 uses smaller basis sets (6-31G) for some steps and a larger one (G3large) for the final MP2 calculation, also incorporating a spin-orbit correction. G4 introduces further refinements, such as extrapolation to the Hartree-Fock basis set limit, using CCSD(T) instead of QCISD(T) for the highest-level calculation, and employing B3LYP for geometries and thermochemical corrections [33].
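The final assembly step described for G2 can be sketched as below. The HLC has the form -A·n_β - B·n_α, with n_α ≥ n_β the numbers of α and β valence electrons. The default parameters (A = 4.81 mEh, B = 0.19 mEh, and a 0.8929 scale factor for HF/6-31G(d) zero-point energies) are the values commonly quoted for G2 and should be checked against the primary reference before use. The inputs are hypothetical, and `e_corrected` stands for the single-point energy with all basis set corrections already added.

```python
def g2_style_hlc(n_alpha: int, n_beta: int, a: float = 4.81e-3, b: float = 0.19e-3) -> float:
    """Empirical higher-level correction HLC = -a * n_beta - b * n_alpha (hartree)."""
    if n_beta > n_alpha:
        raise ValueError("convention requires n_alpha >= n_beta")
    return -a * n_beta - b * n_alpha


def g2_style_e0(e_corrected: float, zpe_unscaled: float, n_alpha: int, n_beta: int,
                zpe_scale: float = 0.8929) -> float:
    """E0 = corrected electronic energy + scaled zero-point energy + HLC (hartree)."""
    return e_corrected + zpe_scale * zpe_unscaled + g2_style_hlc(n_alpha, n_beta)


# Hypothetical closed-shell molecule with 8 valence electrons (n_alpha = n_beta = 4).
print(g2_style_hlc(4, 4))                 # about -0.020 hartree
print(g2_style_e0(-76.300, 0.023, 4, 4))  # hypothetical inputs
```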

Table 2: Comparison of Selected Composite Methods

| Method | Core Philosophy | Key Components | Target Accuracy | Applicability |
|---|---|---|---|---|
| Gaussian-4 (G4) | Fixed recipe with empirical correction [33] | B3LYP geometries, CCSD(T) energy, large MP2 basis set, HLC [33] | ~1 kcal/mol for thermochemistry [33] | Main group elements, transition metals [33] |
| ccCA | Non-empirical, uses Dunning's basis sets [33] | MP2/CBS reference, ΔCCSD(T) correction, core-valence, relativistic, ZPVE corrections [33] | High accuracy without fitted parameters [33] | Main group elements [33] |
| Feller-Peterson-Dixon (FPD) | Flexible, system-dependent sequence [33] | CCSD(T) with very large basis sets (up to aug-cc-pV8Z), extrapolation to CBS, core/valence & relativistic corrections [33] | ~0.3 kcal/mol RMS error (benchmarked) [33] | Small systems (≤10 first/second row atoms) [33] |
| B97-3c | Simplified DFT composite [31] | B97 functional, modified def2-TZVP basis (mTZVP), D3 dispersion, SRB correction [31] | Benchmark-accuracy for key properties [31] | Large systems, general purpose [31] |
| r²SCAN-3c | Modern meta-GGA composite [31] | r²SCAN functional, modified TZVP basis, D4 dispersion, gCP correction [31] | High accuracy and robustness [31] | Large-scale screenings [31] |

Grimme's "3c" Methods for Efficiency

A distinct class of composite methods, pioneered by Grimme, is designed for high efficiency on large systems. These methods use a reduced-cost base method (e.g., HF or DFT with a small basis set) and layer on physically motivated corrections to recover accuracy [31].

- HF-3c: Uses Hartree-Fock with a minimal basis set (MINIS), corrected with D3 dispersion, a geometric counterpoise (gCP) correction for basis set incompleteness error, and a short-range basis (SRB) correction. It is surprisingly good for geometry optimization and non-covalent interactions [31].

- PBEh-3c: A DFT-based composite method using a modified polarized double-zeta basis set (def2-mSVP), the D3 and gCP corrections, and a reparameterized PBEh functional [31].

- B97-3c and r²SCAN-3c: These represent more advanced DFT composites. B97-3c uses a modified triple-zeta basis (mTZVP) and the D3 and SRB corrections, often outperforming standard DFT with much larger basis sets. The r²SCAN-3c method is noted for its robustness and benchmark accuracy, drastically shifting the balance between efficiency and accuracy for large systems [31].
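The idea of layering a physically motivated correction on a cheap base method can be illustrated with a pairwise damped dispersion term. The sketch uses the simpler functional form of Grimme's earlier D2 correction, not the D3 or D4 models that the 3c methods actually use (D3 adds coordination-number-dependent C6 coefficients and higher-order terms, and D4 additionally depends on atomic partial charges). The element label, C6 coefficients, radii, and units are hypothetical placeholders, not fitted D2 parameters.

```python
import math
from itertools import combinations


def d2_style_dispersion(atoms, c6: dict[str, float], r0: dict[str, float],
                        s6: float = 1.0, d: float = 20.0) -> float:
    """Pairwise damped dispersion energy in the form of Grimme's D2 correction:
    E = -s6 * sum_{i<j} C6_ij / r_ij**6 * f_damp(r_ij), with
    C6_ij = sqrt(C6_i * C6_j), R0_ij = R0_i + R0_j and
    f_damp = 1 / (1 + exp(-d * (r_ij / R0_ij - 1))).
    `atoms` is a list of (element, (x, y, z)) tuples with distinct positions."""
    energy = 0.0
    for (el_i, pos_i), (el_j, pos_j) in combinations(atoms, 2):
        r = math.dist(pos_i, pos_j)
        c6_ij = math.sqrt(c6[el_i] * c6[el_j])
        r0_ij = r0[el_i] + r0[el_j]
        f_damp = 1.0 / (1.0 + math.exp(-d * (r / r0_ij - 1.0)))  # switches off short range
        energy -= s6 * c6_ij / r ** 6 * f_damp
    return energy


# Hypothetical parameters for a single placeholder element "X" (arbitrary units).
c6 = {"X": 1.0}
r0 = {"X": 1.0}
energies = {}
for sep in (3.0, 4.0, 6.0):
    dimer = [("X", (0.0, 0.0, 0.0)), ("X", (sep, 0.0, 0.0))]
    energies[sep] = d2_style_dispersion(dimer, c6, r0)
    print(f"r = {sep}: E_disp = {energies[sep]:.3e}")
```

In an actual 3c method this term is simply added to the base HF or DFT energy, alongside the gCP and, where used, SRB corrections.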

Experimental Protocols for Validation

Validating the performance of basis sets and composite methods requires comparison against reliable benchmark data, which can be experimental results or highly accurate theoretical values.

Protocol for Energetic Properties

A robust protocol for validating conformational energies, atomization energies, or interaction energies involves:

- Reference Data Selection: Use a well-established benchmark set, such as the GMTKN55 database for general main-group thermochemistry, kinetics, and non-covalent interactions, or the S66 set for non-covalent interactions [31].

- Geometry Optimization: Optimize all molecular structures for each benchmark system using a consistent, reasonably accurate method. Many composite methods like G4 and ccCA use B3LYP/6-31G(2df,p) or similar for this step [33].

- Single-Point Energy Calculations: Calculate the single-point energies for the optimized structures using the method/basis set combination being tested. For composite methods, this involves the specific sequence of calculations defined by the method [33].