Benchmarking Quantum Chemistry: A Practical Guide to Wave Function Theory vs. Density Functional Theory

Abstract

This article provides a comprehensive analysis of benchmark studies comparing Wave Function Theory and Density Functional Theory for researchers and drug development professionals. It covers foundational concepts, methodological applications across chemistry and materials science, common pitfalls and optimization strategies, and rigorous validation protocols. The guide synthesizes the most current benchmark data to empower scientists in selecting the most accurate and efficient computational methods for predicting molecular properties, binding affinities, and material characteristics, with specific implications for accelerating drug discovery and materials design.

The Quantum Accuracy Frontier: Understanding WFT and DFT Fundamentals

The Grand Challenge of Predictive Power in Computational Chemistry

Computational chemistry stands as a cornerstone of modern molecular science, bridging theoretical frameworks with experimental observations to provide detailed insights into the structural, electronic, and reactive properties of molecules and materials [1]. The grand challenge in this field lies in achieving predictive power—the ability for computational methods to accurately forecast molecular behavior and properties before experimental verification. This predictive capability is particularly crucial in applications such as drug discovery, catalysis, and materials engineering, where reliable computational predictions can significantly accelerate development cycles and reduce costs [1].

The foundation of predictive modeling in computational chemistry rests on three methodological pillars: wave function-based quantum chemistry (QC), density functional theory (DFT), and emerging approaches such as machine learning interatomic potentials (MLIPs) [1]. Each approach presents distinct trade-offs between computational cost and accuracy, making benchmark studies essential for guiding method selection based on the specific chemical system and properties of interest. This review examines recent benchmarking efforts that evaluate the performance of these computational approaches across diverse chemical systems, with a particular focus on insights derived from wave function theory and density functional theory benchmarks [1] [2].

Theoretical Frameworks and Computational Approaches

The Methodological Spectrum

Computational chemistry employs a hierarchy of methods that span different levels of theory, from highly accurate wave function-based approaches to efficient machine learning potentials. Understanding this spectrum is essential for selecting appropriate methods for specific predictive tasks.

Table 1: Computational Methods in Modern Chemistry

| Method Category | Representative Methods | Theoretical Basis | Strengths | Limitations |
|---|---|---|---|---|
| Wave Function Theory | CCSD(T), CASPT2, MRCI+Q | Electron correlation via wave function expansion | High accuracy, considered the "gold standard" | Computationally expensive, limited to small systems |
| Density Functional Theory | B97M-V, PWPB95-D3(BJ), B2PLYP-D3(BJ) | Electron density with exchange-correlation functionals | Favorable cost-accuracy balance | Functional-dependent performance |
| Machine Learning Potentials | PFP, eSEN-OAM, MACE | Data-driven potential energy surfaces | High speed for large systems | Training data dependent, transferability concerns |
| Hybrid QM/MM | ONIOM, FMO, EFP | Quantum mechanics embedded in molecular mechanics | Balances accuracy and scope for large systems | Boundary region artifacts |

Wave function theory methods, particularly coupled cluster theory with single, double, and perturbative triple excitations (CCSD(T)), serve as the gold standard for accuracy in quantum chemistry, providing benchmark-quality reference data for evaluating other methods [1] [2]. These methods systematically approximate the electronic wave function but suffer from steep computational scaling that limits their application to small and medium-sized molecules [1].

Density functional theory offers a more computationally efficient alternative that has become the workhorse of computational chemistry, striking a balance between accuracy and computational cost that enables the study of larger systems [3]. The performance of DFT, however, strongly depends on the selection of exchange-correlation functionals, which has motivated extensive benchmarking efforts to guide functional selection for specific applications [3] [4] [2].

The emerging paradigm of machine learning interatomic potentials represents a transformative development, enabling nearly quantum-accurate molecular simulations at significantly reduced computational cost [1] [5]. These data-driven approaches learn potential energy surfaces from reference quantum mechanical calculations and can achieve high accuracy while being several orders of magnitude faster than direct quantum chemical computations [5].

Experimental Validation Workflows

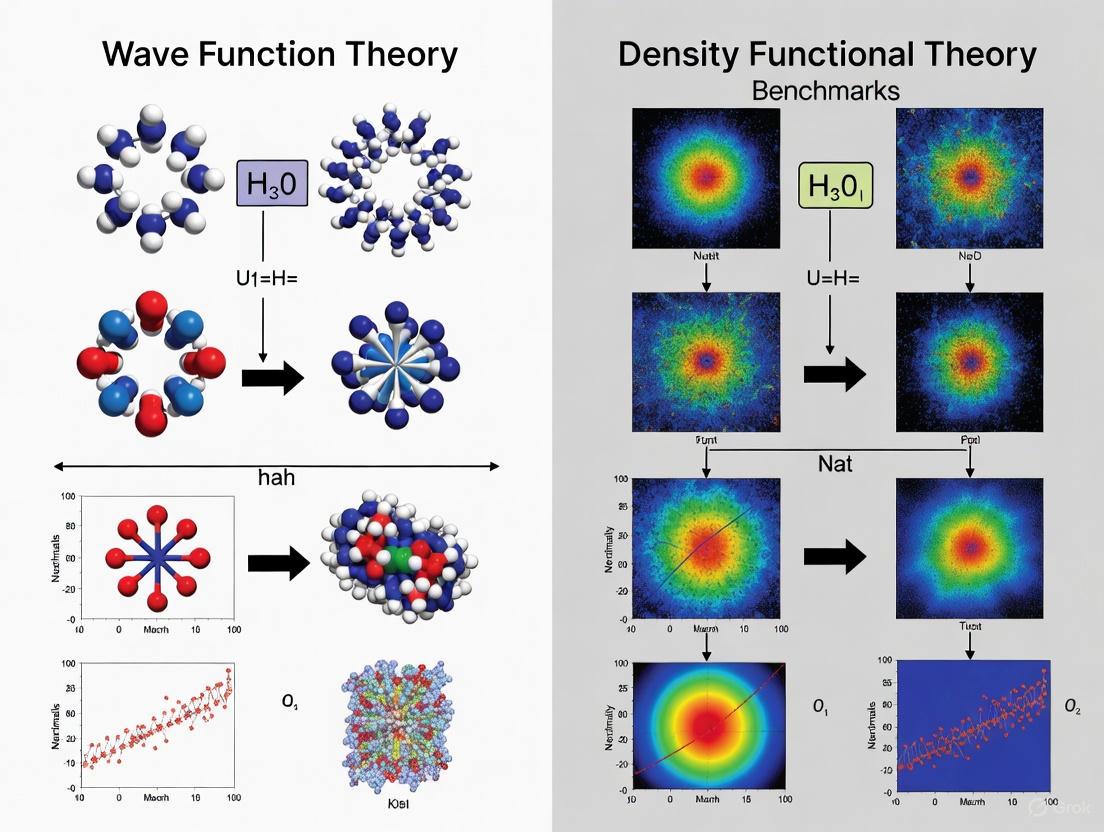

Benchmarking computational methods requires robust validation frameworks that compare theoretical predictions with reliable reference data. The following diagram illustrates the generalized workflow for establishing and validating computational benchmarks across different chemical systems:

This validation framework demonstrates how different sources of reference data—whether from high-level quantum chemical calculations, experimental measurements, or curated databases—inform the assessment of computational method performance across diverse chemical systems [3] [5] [2].

Benchmarking Studies: Quantitative Comparisons

Performance for Non-covalent Interactions

Non-covalent interactions, particularly hydrogen bonding, play crucial roles in molecular self-organization and supramolecular chemistry. A comprehensive 2025 benchmark study evaluated 152 density functional approximations for their accuracy in predicting interaction energies in 14 quadruply hydrogen-bonded dimers, using coupled-cluster reference values extrapolated to the complete basis set limit [3].

Table 2: DFT Performance for Hydrogen Bonding Energies (Top 10 Functionals)

| Rank | Functional | Category | Key Characteristics | Performance Notes |
|---|---|---|---|---|
| 1 | B97M-V | Berkeley Family | With D3BJ dispersion correction | Best overall performance |
| 2 | ωB97M-V | Berkeley Family | Range-separated with non-local correlation | Excellent for non-covalent interactions |
| 3 | B97M-D3BJ | Berkeley Family | Empirical dispersion correction | Consistent accuracy |
| 4 | ωB97X-V | Berkeley Family | Range-separated hybrid | Strong performance |
| 5 | MN15 | Minnesota 2011 | Meta-NGA with non-separable form | Top non-Berkeley functional |
| 6 | B97M-rV | Berkeley Family | Modified non-local correlation | Robust performance |
| 7 | ωB97X-D3BJ | Berkeley Family | Range-separated with dispersion | Reliable for diverse systems |
| 8 | B97K-D3BJ | Berkeley Family | Designed for kinetics | Good all-around performance |
| 9 | MN15-D3BJ | Minnesota 2011 | With empirical dispersion | Enhanced with dispersion |
| 10 | ωB97M-D3BJ | Berkeley Family | Range-separated meta-GGA | Excellent with dispersion |

The benchmark revealed that eight variants of the Berkeley functionals, particularly those from the B97 family, dominated the top performers, consistently demonstrating superior accuracy for these challenging non-covalent interactions [3]. The study highlighted the importance of empirical dispersion corrections, with the D3(BJ) correction significantly improving performance across multiple functional families [3].
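The reference values in this benchmark are interaction energies extrapolated to the complete basis set (CBS) limit. The source does not state which extrapolation scheme was used, so the sketch below assumes a widely used two-point inverse-cubic form for the correlation energy, E(X) = E_CBS + A/X³, where X is the basis-set cardinal number. The function names and all numbers are hypothetical and serve only to illustrate the arithmetic.

```python
def interaction_energy(e_dimer, e_monomer_a, e_monomer_b):
    """Supermolecular interaction energy: E(AB) - E(A) - E(B)."""
    return e_dimer - e_monomer_a - e_monomer_b


def cbs_two_point(e_small, x_small, e_large, x_large):
    """Two-point inverse-cubic extrapolation of a correlation energy.

    Assumes E(X) = E_CBS + A / X**3, where X is the basis-set cardinal
    number (3 for triple-zeta, 4 for quadruple-zeta, and so on).
    """
    if x_large <= x_small:
        raise ValueError("x_large must exceed x_small")
    numerator = x_large**3 * e_large - x_small**3 * e_small
    return numerator / (x_large**3 - x_small**3)


# Hypothetical correlation contributions to an interaction energy (kcal/mol);
# these are placeholder values, not data from the cited benchmark.
e_tz, e_qz = -40.0, -41.0
e_cbs = cbs_two_point(e_tz, 3, e_qz, 4)
print(f"Extrapolated value: {e_cbs:.2f} kcal/mol")
```

The extrapolated value lies beyond the larger-basis result because the inverse-cubic model assumes the remaining basis-set error keeps shrinking in the same direction.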

Accuracy for Transition Metal Spin-State Energetics

Predicting spin-state energetics in transition metal complexes is one of the most challenging problems in computational chemistry, with direct implications for modeling catalytic mechanisms and materials discovery. A 2024 study introduced the SSE17 benchmark set, derived from experimental data for 17 transition metal complexes containing Fe(II), Fe(III), Co(II), Co(III), Mn(II), and Ni(II) with chemically diverse ligands [2].

Table 3: Performance for Transition Metal Spin-State Energetics (SSE17 Benchmark)

| Method Category | Specific Methods | Mean Absolute Error (kcal mol⁻¹) | Maximum Error (kcal mol⁻¹) | Performance Assessment |
|---|---|---|---|---|
| Wave Function Theory | CCSD(T) | 1.5 | -3.5 | Gold standard accuracy |
| Double-Hybrid DFT | PWPB95-D3(BJ) | <3.0 | <6.0 | Top-tier DFT performance |
| Double-Hybrid DFT | B2PLYP-D3(BJ) | <3.0 | <6.0 | Excellent for spin states |
| Commonly Recommended DFT | B3LYP*-D3(BJ) | 5-7 | >10.0 | Moderate performance |
| Commonly Recommended DFT | TPSSh-D3(BJ) | 5-7 | >10.0 | Moderate performance |
| Multireference WFT | CASPT2 | Variable | Variable | Inconsistent performance |
| Multireference WFT | MRCI+Q | Variable | Variable | Inconsistent performance |

The SSE17 benchmark demonstrated that the CCSD(T) method achieved remarkable accuracy with a mean absolute error of just 1.5 kcal mol⁻¹, establishing it as the most reliable approach for spin-state energetics [2]. Among DFT approaches, double-hybrid functionals including PWPB95-D3(BJ) and B2PLYP-D3(BJ) delivered the best performance with mean absolute errors below 3 kcal mol⁻¹, significantly outperforming the commonly recommended functionals like B3LYP*-D3(BJ) and TPSSh-D3(BJ), which exhibited substantially larger errors [2].
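To put these error magnitudes in context, the sketch below estimates how much a two-state Boltzmann population ratio shifts when the computed energy gap is off by a given amount. It is a simplified illustration that ignores degeneracy and entropic contributions; the function name is hypothetical, and the error values are simply the thresholds quoted in Table 3.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal mol^-1 K^-1


def population_ratio_factor(energy_error_kcal, temperature_k=298.15):
    """Factor by which a two-state Boltzmann population ratio changes
    when the energy gap is in error by energy_error_kcal."""
    return math.exp(abs(energy_error_kcal) / (R_KCAL * temperature_k))


# Error magnitudes taken from the MAE and maximum-error columns of Table 3.
for err in (1.5, 3.0, 6.0):
    factor = population_ratio_factor(err)
    print(f"{err:4.1f} kcal/mol -> population ratio off by a factor of {factor:,.0f}")
```

Because the dependence is exponential, halving the energy error improves the predicted population ratio by far more than a factor of two, which is why differences of a few kcal mol⁻¹ between methods matter.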

Thermodynamic Properties for Combustion Reactions

The accurate prediction of thermodynamic properties is essential for modeling chemical processes such as combustion. A 2025 benchmarking study evaluated DFT methods for calculating enthalpy, Gibbs free energy, and entropy of alkane combustion reactions, comparing results across alkanes with 1-10 carbon atoms [4].

The study revealed a linear relationship between the number of carbon atoms and reaction parameters, with deviations arising from method-dependent approximations [4]. The LSDA functional and dispersion-corrected methods demonstrated closer agreement with experimental values when paired with correlation-consistent basis sets, while higher-rung functionals like PBE and TPSS exhibited significant errors, particularly with split-valence basis sets [4]. Notably, convergence issues were observed for n-hexane with PBE and TPSS, attributed to near-degenerate states and SCF instability, highlighting the importance of careful functional selection [4].
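The linear trend with carbon count can be illustrated with a short least-squares fit. The sketch below uses approximate textbook combustion enthalpies for methane through n-hexane, which are not data from the cited study [4], together with the balanced stoichiometry for a general alkane CnH2n+2. Function names are hypothetical.

```python
from fractions import Fraction


def combustion_stoichiometry(n_carbon):
    """Coefficients for CnH(2n+2) + a O2 -> b CO2 + c H2O."""
    return {
        "O2": Fraction(3 * n_carbon + 1, 2),
        "CO2": n_carbon,
        "H2O": n_carbon + 1,
    }


def linear_fit(xs, ys):
    """Ordinary least-squares slope and intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Approximate textbook combustion enthalpies (kJ/mol), methane to n-hexane.
# These are illustrative values, not results from the benchmark in [4].
n_carbons = [1, 2, 3, 4, 5, 6]
delta_h = [-890, -1560, -2220, -2878, -3509, -4163]

slope, intercept = linear_fit(n_carbons, delta_h)
print(f"Increment per CH2 group: {slope:.0f} kJ/mol")
print(f"O2 coefficient for n-hexane: {combustion_stoichiometry(6)['O2']}")
```

In a benchmark setting, the same fit applied to computed values would expose method-dependent deviations as changes in the slope or as scatter around the line.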

Machine Learning Potentials for Materials Science

The performance of machine learning interatomic potentials (MLIPs) has been systematically evaluated through benchmarks such as MOFSimBench, which assesses predictive capabilities for metal-organic frameworks (MOFs)—complex materials with applications in catalysis and CO₂ capture [5].

Table 4: Machine Learning Potential Performance on MOFSimBench Tasks

| MLIP Model | Structure Optimization (Success Rate) | Molecular Dynamics Stability (Success Rate) | Bulk Modulus MAE | Heat Capacity MAE | Overall Ranking |
|---|---|---|---|---|---|
| PFP | 92/100 structures | Top performer | Second best | Excellent accuracy | 1st |
| eSEN-OAM | High performance | Top performer | Best accuracy | Good accuracy | 2nd |
| orb-v3-omat+D3 | Excellent performance | Top performer | Moderate | Excellent accuracy | 3rd |
| uma-s-1p1 | Excellent performance | Not evaluated | Good accuracy | Excellent accuracy | 4th |
| MACE | Moderate performance | Moderate | Moderate | Moderate | Mid-tier |
| SevenNet | Lower performance | Lower | Higher errors | Higher errors | Lower tier |

The benchmark demonstrated that PFP and eSEN-OAM delivered consistently superior performance across all tasks, including structure optimization, molecular dynamics stability, bulk modulus prediction, and heat capacity calculation [5]. While eSEN-OAM achieved slightly better accuracy for bulk modulus prediction, PFP excelled in structure optimization and demonstrated superior computational speed, being approximately 3.75 times faster than the MatterSim-v1-5M model for systems with 1000 atoms [5].

Modern computational chemistry relies on a sophisticated toolkit of software, hardware, and methodological resources that enable predictive simulations across diverse chemical systems.

Table 5: Essential Computational Resources for Predictive Chemistry

| Resource Category | Specific Tools/Methods | Primary Function | Performance Considerations |
|---|---|---|---|
| Quantum Chemistry Software | FHI-aims, Gaussian, ORCA | Electronic structure calculations | Performance varies by processor (GRACE, AMD EPYC outperform A64FX) |
| Machine Learning Platforms | Matlantis (PFP), UMA, MACE | Fast, accurate property prediction | PFP offers 3.75× speedup over MatterSim for 1000-atom systems |
| Wave Function Methods | CCSD(T), CASPT2, MRCI+Q | High-accuracy reference data | Computational cost limits system size but provides benchmark quality |
| Density Functional Approximations | B97M-V, ωB97M-V, PWPB95-D3(BJ) | Balanced accuracy-efficiency | Top performers for non-covalent interactions and spin-state energetics |
| Benchmark Databases | SSE17, MOFSimBench, QMOF | Method validation and comparison | Provide curated reference data for specific chemical challenges |

The performance of computational resources exhibits significant hardware dependence, as demonstrated by benchmarks of the FHI-aims DFT code across different processors [6]. The study revealed that AMD, GRACE, and Intel processors perform similarly, while the A64FX processor was in some cases an order of magnitude slower for generalized gradient approximation and hybrid functional calculations [6]. These hardware considerations are essential for planning computational research projects and allocating resources efficiently.

Integrated Workflows for Predictive Modeling

The integration of multiple computational approaches has emerged as a powerful strategy for addressing the grand challenge of predictive power in computational chemistry. The following diagram illustrates a comprehensive workflow that leverages the complementary strengths of wave function theory, density functional theory, and machine learning approaches:

This integrated approach enables researchers to leverage the gold-standard accuracy of wave function methods like CCSD(T) for benchmarking and small systems, the balanced performance of density functional theory for medium-sized systems, and the exceptional speed of machine learning potentials for high-throughput screening and large-scale simulations [1] [5] [2]. Such synergistic workflows are narrowing the gap between computational predictions and experimental observations, advancing the field toward truly predictive computational chemistry [1].

The grand challenge of predictive power in computational chemistry is being addressed through rigorous benchmarking studies that evaluate method performance across diverse chemical systems. Several key insights emerge from current research:

For non-covalent interactions, particularly challenging systems like quadruple hydrogen bonds, Berkeley-family functionals such as B97M-V with D3BJ dispersion corrections deliver top-tier performance [3]. For transition metal spin-state energetics, the CCSD(T) method remains the gold standard, while double-hybrid functionals like PWPB95-D3(BJ) offer the best DFT-based accuracy [2]. In materials science applications, machine learning potentials such as PFP and eSEN-OAM demonstrate remarkable accuracy and efficiency for predicting structural, mechanical, and thermal properties of complex materials like metal-organic frameworks [5].

The integration of quantum chemistry, molecular mechanics, and machine learning into cohesive modeling strategies represents the future of predictive computational chemistry [1]. As benchmark studies continue to refine our understanding of method performance across chemical space, and as computational hardware and algorithms advance, the field moves progressively closer to achieving truly predictive power across all domains of molecular science.

In the field of computational chemistry and materials science, accurately solving the electronic Schrödinger equation is the cornerstone of predicting molecular structure, properties, and reactivity. Two dominant paradigms have emerged for this task: the highly accurate but computationally expensive Wave Function Theory (WFT) and the more efficient but approximate Density Functional Theory (DFT). WFT methods, which treat the many-electron wavefunction explicitly, are traditionally considered the gold standard for quantum chemical simulations, providing benchmark-quality results that guide the development of more efficient methods [7]. This guide provides a comparative analysis of the performance between sophisticated WFT and DFT approaches, focusing on their application in drug development and materials science where reliable predictions are critical.

The fundamental challenge stems from the many-body problem in quantum mechanics, where the computational resources required to obtain exact solutions grow exponentially with the number of electrons. This review synthesizes recent advances that seek to navigate the trade-offs between computational cost and predictive accuracy, providing scientists with a framework for selecting appropriate methodologies for specific research applications, from catalyst design to pharmaceutical development.

The Theoretical Divide: WFT and DFT

Wave Function Theory (WFT): Pursuing the Exact Solution

Wave Function Theory approaches seek to directly approximate the full many-electron wavefunction, a complex mathematical object that contains all information about a quantum system. The accuracy of these methods is systematically improvable by expanding the wavefunction in terms of Slater determinants (configurations).

- Traditional WFT Methods: These include coupled cluster (CCSD, CCSD(T)), configuration interaction (CI), and quantum Monte Carlo (QMC) techniques. Their principal strength is providing reliable benchmark results for small to medium-sized molecular systems [8] [9].

- Neural Network Wavefunctions: A recent breakthrough uses deep neural networks as wavefunction ansatze in variational Monte Carlo (DL-VMC). This approach offers high expressivity with favorable $\mathcal{O}(n_{\mathrm{el}}^{3\text{-}4})$ scaling, challenging traditional approximations [10].

Density Functional Theory (DFT): Computational Efficiency

Density Functional Theory bypasses the complex many-electron wavefunction, instead using the electron density as the fundamental variable. While computationally efficient with $\mathcal{O}(n_{\mathrm{el}}^{3})$ scaling, its accuracy hinges entirely on the approximate exchange-correlation (XC) functional [7].

Kohn-Sham DFT (KS-DFT) revolutionized quantum simulations by balancing accuracy and efficiency, enabling studies of large systems like proteins and nanomaterials [11]. However, it faces significant challenges with strongly correlated systems where multiple electronic configurations contribute substantially, such as transition metal complexes, bond-breaking processes, and magnetic systems [11].
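The practical meaning of these scaling exponents is easiest to see by asking how the cost changes when the system doubles in size. The sketch below assumes cost proportional to a power of the electron count with a constant prefactor, which is an idealization; real timings also depend on prefactors, basis sets, and implementation. The exponents for CCSD and CCSD(T) are the standard formal values, and the function name is hypothetical.

```python
def cost_growth(size_factor, exponent):
    """Relative cost increase when the electron count grows by size_factor,
    assuming cost ~ n_el**exponent with a constant prefactor."""
    return size_factor ** exponent


# Formal exponents: 3 for KS-DFT, 3 to 4 for DL-VMC, 6 for CCSD, 7 for CCSD(T).
exponents = {"KS-DFT": 3, "DL-VMC (upper)": 4, "CCSD": 6, "CCSD(T)": 7}

for method, power in exponents.items():
    print(f"{method:>15}: doubling n_el multiplies cost by {cost_growth(2, power)}")
```

Doubling the system size is thus roughly an order of magnitude more expensive for KS-DFT but two orders of magnitude more expensive for CCSD(T), which is the core of the cost argument made throughout this guide.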

Quantitative Benchmarks: Accuracy vs. Cost

The trade-off between computational expense and accuracy defines the choice between WFT and DFT methods. The following data synthesizes performance comparisons from recent studies.

Table 1: Benchmark Comparison of Quantum Chemistry Methods

| Method | Computational Scaling | Key Strengths | Key Limitations | Representative Accuracy |
|---|---|---|---|---|
| DL-VMC (Wavefunction) | $\mathcal{O}(n_{\mathrm{el}}^{3\text{-}4})$ [10] | High accuracy for strongly correlated systems; increasingly applicable to solids | High cost of optimizing neural network weights for each system [10] | Near-exact for small molecules; slightly lower energies than other methods for H-chains [10] |
| Coupled Cluster (e.g., CCSD) | $\mathcal{O}(n_{\mathrm{el}}^{6})$ | "Gold standard" for molecular systems; high accuracy for ground and excited states | Prohibitive cost for large systems; difficult to apply to solids | ~10% relative error for excited-state dipoles [8] |
| MC-PDFT (Hybrid) | Lower than advanced WFT [11] | Accuracy for multiconfigurational systems at reduced cost | Still relies on approximate functionals | Improved performance for spin splitting and bond energies vs KS-DFT [11] |
| ΔSCF-DFT | $\mathcal{O}(n_{\mathrm{el}}^{3})$ | Access to double excitations; ground-state technology for excited properties [8] | Broken-symmetry solutions; overdelocalization error for charge-transfer states [8] | Reasonable for doubly excited states; suffers for charge-transfer states [8] |
| TDDFT | $\mathcal{O}(n_{\mathrm{el}}^{3})$ | Efficient for excited states of large systems | Fails for double excitations; charge-transfer inaccuracies | ~28-60% relative error for excited-state dipoles with common functionals [8] |

Table 2: Performance in Specific Chemical Applications

| System Type | High-Accuracy WFT Results | Typical DFT Performance | Recommended Methods |
|---|---|---|---|
| Strongly Correlated Materials | Accurate treatment of electron correlation (DL-VMC) [10] | Often qualitatively wrong with local/semilocal functionals [10] | DL-VMC, MC-PDFT, DMC |
| Excited States (Singlet) | Spin-pure solutions with high accuracy | ~28-60% error in dipole moments with common functionals [8] | EOM-CCSD, ADC(2), spin-purified ΔSCF |
| Charge-Transfer States | Correct description of charge separation | Severe overdelocalization error in ΔSCF; better in TDDFT [8] | CAM-B3LYP, ωB97X-D, LC functionals |
| Double Excitations | Naturally described by WFT | Inaccessible to conventional TDDFT [8] | ΔSCF, MRCI, CC methods |
| Laser-Driven Dynamics | TD-CIS provides correct population inversion [9] | RT-TDDFT fails for population inversion [9] | TD-CIS, MCTDH, high-level wavepacket |

Case Study: Hydrogen Chains

One-dimensional hydrogen chains serve as a benchmark for strongly correlated systems. Recent transferable neural wavefunction approaches achieved energies of -565.24(2) mHa per atom in the thermodynamic limit, slightly outperforming previous DeepSolid results at approximately 1/50 of the computational cost [10]. This demonstrates how advanced WFT methods, when optimized for transferability, can dramatically reduce the expense of high-accuracy simulations.

Excited-State Dipole Moments

The accuracy of excited-state properties reveals significant methodological divides. CCSD produces excited-state dipole moments with approximately 10% average relative error, while common DFT functionals like PBE0 and B3LYP show errors around 60%, typically overestimating dipole magnitudes [8]. The ΔSCF approach offers advantages for certain doubly-excited states but suffers from DFT's inherent limitations, particularly for charge-transfer states [8].

Emerging Hybrid and Transferable Approaches

Multiconfiguration Pair-Density Functional Theory (MC-PDFT)

MC-PDFT represents a hybrid approach that combines the multiconfigurational wavefunction of WFT with the efficiency of density functional theory. By using a multiconfigurational wavefunction to capture static correlation and a density functional to account for dynamic correlation, MC-PDFT achieves high accuracy without the steep computational cost of advanced WFT methods [11].

The recently developed MC23 functional incorporates kinetic energy density, enabling more accurate description of electron correlation. This advancement improves performance for spin splitting, bond energies, and multiconfigurational systems compared to previous MC-PDFT and KS-DFT functionals, making it particularly valuable for transition metal complexes and catalytic processes relevant to pharmaceutical development [11].
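For reference, the MC-PDFT energy is commonly written in the literature in the form below. The symbols are not defined in this guide and are introduced here as assumptions: $V_{nn}$ is the nuclear repulsion, $h_{pq}$ and $g_{pqrs}$ are one- and two-electron integrals, $D_{pq}$ is the one-body density matrix of the multiconfigurational wavefunction, and $E_{\text{ot}}$ is the on-top functional of the density $\rho$ and on-top pair density $\Pi$. According to the description above, MC23 additionally makes the functional depend on the kinetic energy density.

```latex
E_{\text{MC-PDFT}} = V_{nn}
  + \sum_{pq} h_{pq} D_{pq}
  + \frac{1}{2} \sum_{pqrs} g_{pqrs} D_{pq} D_{rs}
  + E_{\text{ot}}[\rho, \Pi]
```

The first three terms come from the multiconfigurational wavefunction and carry the static correlation, while the final term supplies the remaining correlation through a density-type functional.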

Transferable Neural Wavefunctions

A groundbreaking development in WFT is the creation of transferable neural network wavefunctions that can be optimized across multiple systems. Traditional DL-VMC requires optimizing a new neural network for each system, making studies of solids—which require numerous calculations across different geometries, boundary conditions, and supercell sizes—prohibitively expensive [10].

By training a single ansatz to represent wavefunctions for multiple system variations, researchers demonstrated optimization steps can be reduced by a factor of 50 when transferring from 32-electron to 108-electron supercells of LiH [10]. This transferability approach enables:

- Accurate extrapolation to the thermodynamic limit

- Denser twist averaging to reduce finite-size effects

- Rapid fine-tuning for new systems or larger supercells

Figure: Transferable Neural Wavefunction Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Quantum Simulations

| Tool/Resource | Function | Application Context |
|---|---|---|
| Quantum Many-Body Theories | Provide benchmark results by solving Schrödinger equation directly | Training data for machine-learned functionals; small system benchmarks [7] |
| Density Functional Approximations | Describe electron interactions with varying accuracy | Balancing computational cost and accuracy for large systems [11] [7] |
| Neural Network Wavefunctions | Flexible ansatze for representing complex quantum states | High-accuracy calculations for molecules and solids [10] |
| Quantum Computers | Test quantum foundations; potentially exponential speedup for quantum chemistry | Testing quantumness via PBR tests; future applications in drug discovery [12] [13] |
| Supercomputing Resources | Provide computational power for demanding quantum simulations | National labs allocate ~1/3 of time to materials and chemical reactions [7] |

Figure: Computational Method Selection Guide

Future Directions and Implications for Drug Development

The UN's declaration of 2025 as the International Year of Quantum Science and Technology highlights the growing importance of these fields in addressing global challenges [11] [13]. For pharmaceutical researchers, several developments are particularly promising:

Machine Learning-Augmented Simulations: Researchers at the University of Michigan developed a machine learning approach to infer the exchange-correlation functional by inverting the DFT problem. Their method achieved third-rung DFT accuracy at second-rung computational cost, potentially offering significant speedups for drug discovery simulations [7].

Quantum Computer Validation: Recent tests on IBM quantum computers used the PBR theorem to verify the "quantumness" of small qubit systems [12]. While currently limited by noise, such validation techniques could ensure the reliability of future quantum simulations for molecular systems, potentially revolutionizing in silico drug design.

Methodological Cross-Fertilization: The convergence of WFT and DFT approaches through methods like MC-PDFT and transferable neural wavefunctions suggests a future where researchers can select from a continuum of methods tailored to their specific accuracy requirements and computational resources.

For drug development professionals, these advances translate to more reliable predictions of drug-receptor interactions, more accurate modeling of metabolic pathways, and accelerated screening of candidate compounds through increasingly trustworthy computational prescreening.

Density Functional Theory (DFT) stands as a cornerstone computational method in quantum chemistry and materials science, enabling the investigation of electronic structures in atoms, molecules, and condensed phases. Its popularity stems from a favorable balance of computational cost and accuracy, positioning it between highly accurate but expensive wave function-based methods and faster but less reliable classical force fields. This guide provides a comparative analysis of DFT's performance against alternative computational methods, detailing its inherent trade-offs through structured experimental data and protocols relevant to researchers and drug development professionals.

Density Functional Theory is a computational quantum mechanical modelling method used to investigate the electronic structure of many-body systems, such as atoms, molecules, and condensed phases. Its fundamental principle, derived from the Hohenberg-Kohn theorems, is that the ground-state properties of a multi-electron system are determined by its electron density, a function of just three spatial coordinates, rather than by the far more complex many-body wave function [14]. This simplification is the source of both its efficiency and its limitations. In the context of wave function theory benchmarks, DFT serves as a pragmatic workhorse, often providing satisfactory accuracy for a wide range of applications at a fraction of the computational cost of more sophisticated ab initio methods. Its applications span from solid-state physics to drug design, where it helps elucidate molecular interactions, reaction mechanisms, and material properties [15] [16]. However, the accuracy of any given DFT calculation depends critically on the approximation used for the exchange-correlation functional, the term that encapsulates all non-classical electron-electron interactions. This dependency creates a landscape of accuracy trade-offs, which this guide explores in detail.

Theoretical Foundations and Methodological Comparison

Core Principles of DFT

DFT operates on the foundation laid by the Kohn-Sham equations, which reformulate the intractable many-body problem of interacting electrons into a tractable problem of non-interacting electrons moving in an effective potential [14]. This potential includes the external potential and the effects of Coulomb interactions between electrons: the classical (Hartree) repulsion plus exchange and correlation. The accuracy of a DFT calculation is almost entirely governed by how well the exchange-correlation functional is approximated. The self-consistent field (SCF) method is typically employed, iteratively updating the Kohn-Sham orbitals and the density until convergence is achieved, yielding ground-state electronic structure parameters [15].
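In atomic units, the Kohn-Sham scheme described above is usually written as follows. The notation is standard but is introduced here rather than taken from this guide: $\phi_i$ are the Kohn-Sham orbitals, $\varepsilon_i$ their energies, $\rho$ the electron density, and $E_{\text{xc}}$ the exchange-correlation functional.

```latex
\left[ -\tfrac{1}{2}\nabla^{2} + v_{\text{eff}}(\mathbf{r}) \right] \phi_i(\mathbf{r})
  = \varepsilon_i\, \phi_i(\mathbf{r}),
\qquad
\rho(\mathbf{r}) = \sum_{i}^{\text{occ}} \left| \phi_i(\mathbf{r}) \right|^{2}

v_{\text{eff}}(\mathbf{r}) = v_{\text{ext}}(\mathbf{r})
  + \int \frac{\rho(\mathbf{r}')}{|\mathbf{r} - \mathbf{r}'|}\, d\mathbf{r}'
  + \frac{\delta E_{\text{xc}}[\rho]}{\delta \rho(\mathbf{r})}
```

Because $v_{\text{eff}}$ depends on $\rho$, which in turn depends on the orbitals, the equations must be solved iteratively; this is the self-consistency that the SCF procedure enforces.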

Comparative Analysis of Computational Methods

The table below benchmarks DFT against other prominent quantum chemical and computational methods, highlighting its position in the accuracy-efficiency spectrum.

| Computational Method | Theoretical Scaling | Key Strengths | Key Limitations | Typical System Size |
|---|---|---|---|---|
| Density Functional Theory (DFT) | O(N³) | Good balance of speed/accuracy; Solid-state properties; Reaction pathways [14] | Exchange-correlation error; Van der Waals forces; Band gaps [14] | Hundreds to thousands of atoms [17] |
| Hartree-Fock (HF) | O(N⁴) | Simple wave function; No self-interaction error | Lacks electron correlation; Poor thermochemistry | Dozens of atoms |
| Post-Hartree-Fock Methods (e.g., CCSD(T)) | O(N⁷) or higher | "Gold standard" for small molecules; High accuracy [14] | Extremely high computational cost | A few dozen atoms |
| Neural Network Potentials (NNPs) | ~O(N) | Near-DFT accuracy; High efficiency for MD [18] | Requires large training datasets; Transferability issues [18] | Millions of atoms [18] |
| Classical Force Fields | O(N²) | Very fast; Largest system sizes | No electronic structure; Poor for reactions [18] | Millions of atoms |

This comparison illustrates DFT's role as a versatile and powerful method for systems where chemical bonding and electronic structure are important, but where system size precludes the use of more accurate wave function-based methods.
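As a rough illustration of how the "Typical System Size" column might inform a first-pass method choice, the sketch below maps an atom count onto the method classes in the table. The numeric cutoffs are illustrative readings of phrases such as "a few dozen" and "thousands"; they are assumptions, not thresholds from the cited benchmarks, and the function name is hypothetical.

```python
def suggest_method(n_atoms, needs_electronic_structure=True):
    """Map a system size onto the method classes in the comparison table.

    The numeric cutoffs are illustrative readings of "a few dozen" and
    "thousands"; they are not values from the cited benchmarks.
    """
    if n_atoms <= 0:
        raise ValueError("n_atoms must be positive")
    if not needs_electronic_structure:
        return "Classical force field"
    if n_atoms <= 30:
        return "CCSD(T)"
    if n_atoms <= 5000:
        return "DFT"
    return "Neural network potential"


for size in (12, 400, 250_000):
    print(size, "atoms ->", suggest_method(size))
```

In practice the choice also depends on the property of interest and on the known failure modes listed in the table, so a size-based rule like this is only a starting point.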

Accuracy Trade-offs: The Jacob's Ladder of DFT

The pursuit of more accurate DFT functionals is often described as climbing "Jacob's Ladder," where each rung represents a higher tier of functional complexity and, ideally, accuracy. The following table details the performance of different rungs on key chemical properties, with errors benchmarked against high-level wave function theory or experimental data.

| Functional Type | Representative Examples | Atomization Energy Error (eV) | Band Gap Error (eV) | Reaction Barrier Error (eV) | Recommended Use Cases |
|---|---|---|---|---|---|
| Local Density Approximation (LDA) | SVWN | 0.5 - 1.0 [14] | ~50% Underestimation [14] | High | Simple metals, crystal structures [15] |
| Generalized Gradient Approximation (GGA) | PBE, BLYP | 0.2 - 0.5 | ~40% Underestimation | Moderate | Molecular properties, hydrogen bonding [15] |
| Meta-GGA | SCAN | 0.1 - 0.3 | ~30% Underestimation | Moderate | Atomization energies, chemical bonds [15] |
| Hybrid GGA | B3LYP, PBE0 | 0.1 - 0.2 | ~30% Underestimation | Lower | Reaction mechanisms, molecular spectroscopy [15] [19] |
| Machine Learning Functional | Skala (Microsoft) | ~0.1 (for small molecules) [20] | Not Fully Validated | Not Fully Validated | Small molecule energies [20] |

Experimental Protocol for Functional Benchmarking: The quantitative errors listed are typically determined through a standard protocol. A set of molecules or solids with well-established experimental or high-level ab initio (e.g., CCSD(T)) data is selected. For each functional, properties like atomization energies (from total energy calculations), band gaps (from the difference between the lowest unoccupied and highest occupied Kohn-Sham eigenvalues, i.e., the conduction-band minimum and valence-band maximum in solids), and reaction barrier heights (from transition state optimizations) are computed. The mean absolute error (MAE) across the benchmark set is then reported, providing a quantitative measure of the functional's performance for that property [20].
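The final step of this protocol can be sketched in a few lines. The example below is illustrative only: the function names are hypothetical and the numbers are made-up placeholders, not benchmark data. It also reports the mean signed error, which is not part of the protocol above but is often useful because it shows whether a functional errs systematically in one direction.

```python
from statistics import fmean


def mean_absolute_error(computed, reference):
    """MAE between computed and reference values (same units, same order)."""
    if len(computed) != len(reference) or not computed:
        raise ValueError("inputs must be non-empty and of equal length")
    return fmean([abs(c - r) for c, r in zip(computed, reference)])


def mean_signed_error(computed, reference):
    """Mean of (computed - reference); a non-zero value indicates a systematic bias."""
    if len(computed) != len(reference) or not computed:
        raise ValueError("inputs must be non-empty and of equal length")
    return fmean([c - r for c, r in zip(computed, reference)])


if __name__ == "__main__":
    # Placeholder atomization energies in eV (hypothetical values).
    computed = [10.2, 5.1, 7.9]
    reference = [10.0, 5.4, 8.0]
    print("MAE:", mean_absolute_error(computed, reference))
    print("MSE:", mean_signed_error(computed, reference))
```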

Experimental Protocols and Workflows

A Standard DFT Workflow in Pharmaceutical Research

The application of DFT in drug formulation design follows a systematic workflow to ensure reliability and relevance to physiological conditions [15].

Detailed Methodology:

- System Definition and Geometry Optimization: The molecular structure of the drug molecule, excipient, or complex is built and subjected to a geometry optimization calculation. This process minimizes the total energy of the system with respect to the nuclear coordinates, resulting in a stable equilibrium structure. In pharmaceutical studies, this is often performed with the hybrid functional B3LYP and the 6-31G(d,p) basis set [19].

- Frequency Calculation: A vibrational frequency analysis is conducted on the optimized geometry. This serves two critical purposes: confirming that a true minimum (no imaginary frequencies) has been found, and providing thermodynamic properties like zero-point vibrational energy, entropy, and heat capacity [19].

- Electronic Property Analysis: Single-point energy calculations are performed on the optimized structure to extract electronic properties. Key descriptors include the energies of the Highest Occupied and Lowest Unoccupied Molecular Orbitals (HOMO-LUMO), which gauge chemical reactivity, Molecular Electrostatic Potential (MEP) maps for identifying electrophilic and nucleophilic sites, and Fukui functions for predicting reaction sites [15].

- Solvation Modeling: To simulate physiological conditions, solvation effects are incorporated using implicit solvation models like COSMO or SMD. These models treat the solvent as a continuous dielectric medium, providing critical corrections to energies and properties for processes in solution [15].

- QSPR Model Development: The DFT-derived descriptors (e.g., HOMO-LUMO gap, dipole moment) are correlated with experimental biological activities or physicochemical properties using Quantitative Structure-Property Relationship (QSPR) models, such as curvilinear regression, to predict the behavior of new drug candidates [19].
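As an illustration of how frontier-orbital energies become QSPR descriptors, the sketch below derives three commonly used quantities from HOMO and LUMO energies. It assumes the frontier-orbital approximation of conceptual DFT and the convention in which hardness is half the gap; other authors omit the factor of one half. The class and function names are hypothetical, and the cited workflow does not prescribe these particular descriptors.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FrontierDescriptors:
    gap: float                 # E_LUMO - E_HOMO
    chemical_potential: float  # (E_HOMO + E_LUMO) / 2
    hardness: float            # (E_LUMO - E_HOMO) / 2 (convention-dependent)


def frontier_descriptors(e_homo: float, e_lumo: float) -> FrontierDescriptors:
    """Descriptors from orbital energies given in the same unit (e.g. eV)."""
    if e_lumo < e_homo:
        raise ValueError("LUMO energy must not lie below HOMO energy")
    gap = e_lumo - e_homo
    return FrontierDescriptors(
        gap=gap,
        chemical_potential=0.5 * (e_homo + e_lumo),
        hardness=0.5 * gap,
    )


if __name__ == "__main__":
    # Hypothetical orbital energies in eV, for illustration only.
    print(frontier_descriptors(e_homo=-6.0, e_lumo=-2.0))
```

Descriptors of this kind would then enter the regression step as independent variables alongside quantities such as the dipole moment.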

Workflow for Hybrid Functional Calculations on Large Systems

A cutting-edge protocol combines machine learning with DFT to overcome the high computational cost of hybrid functionals for large systems, enabling calculations on over ten thousand atoms [17].

Detailed Methodology:

- Input Structure: The atomic coordinates of the large system (e.g., a twisted van der Waals material like bilayer graphene or MoS₂) are defined.

- Machine Learning Hamiltonian: The DeepH method is applied. This model learns a mapping from the local atomic environment to the Hamiltonian of the system from a limited set of training data. Once trained, it can predict the Hamiltonian for new structures, bypassing the need for the most expensive part of the DFT calculation.

- DFT Software Integration: The machine-learned Hamiltonian is fed into a DFT software package like HONPAS.

- Hybrid Functional Calculation: The HSE06 hybrid functional calculation is performed. Because the Hamiltonian is provided by the ML model, the self-consistent field (SCF) iterations are significantly accelerated or bypassed, drastically reducing computation time.

- Output: The result is a highly accurate electronic structure, including properties like band gaps, which are traditionally poorly described by standard DFT but are crucial for predicting material behavior [17].

The Scientist's Toolkit: Essential Research Reagents and Materials

In computational chemistry, the "research reagents" are the software, functionals, and basis sets used to conduct experiments in silico. The table below details key components of a modern DFT toolkit for researchers in drug development and materials science.

| Tool Category | Specific Tool / Functional | Primary Function | Key Considerations |
|---|---|---|---|
| Exchange-Correlation Functional | B3LYP | Hybrid functional for general organic molecules and reaction mechanisms [19]. | Often the default; good for organic chemistry but can struggle with dispersion forces. |
| Exchange-Correlation Functional | HSE06 | Hybrid functional for solid-state materials; provides improved band gaps [17]. | More computationally expensive than GGA functionals. |
| Exchange-Correlation Functional | Skala (ML) | Machine-learned functional for high accuracy on small molecule energies [20]. | Currently limited to small molecules; performance on metals/solids is uncertain [20]. |
| Basis Set | 6-31G(d,p) | A double-zeta basis set with polarization functions on all atoms [19]. | A common, reliable choice for geometry optimizations of drug-like molecules. |
| Basis Set | def2-TZVP | A larger triple-zeta basis set for higher-accuracy single-point energy calculations. | More accurate but computationally demanding. |
| Software Package | HONPAS | DFT software specialized in linear-scaling and hybrid functional calculations [17]. | Effective for large systems when combined with ML methods like DeepH [17]. |
| Software Package | BIOVIA Materials Studio | Integrated modeling environment with a DMol³ module for DFT [19]. | User-friendly GUI; widely used in industry and academia for drug and material design. |
| Solvation Model | COSMO | Implicit solvation model to simulate the effect of a solvent environment [15]. | Critical for modeling drug behavior in physiological conditions. |

Density Functional Theory maintains its status as an indispensable computational method by navigating a careful balance between accuracy and computational feasibility. Its performance, while not universally superior to all alternatives, provides the broadest utility across chemistry, materials science, and drug discovery. The ongoing integration of machine learning, as seen in the development of new functionals like Skala and workflows like DeepH-HONPAS, is pushing the boundaries of this trade-off, enabling higher accuracy for larger systems than ever before. For the researcher, the critical task remains the informed selection of functional, basis set, and methodology that is most appropriate for the specific scientific question at hand, leveraging DFT's strengths while consciously mitigating its known weaknesses.

Key Physical Properties and Chemical Systems for Benchmarking

Benchmarking quantum chemical methods is essential for validating their accuracy and establishing their applicability across diverse chemical systems. By comparing theoretical predictions to reliable experimental or high-level theoretical reference data, researchers can identify methodological limitations and guide future development. This guide provides a structured overview of key physical properties and representative chemical systems crucial for comprehensive benchmarking studies, with a specific focus on wave function theory (WFT) and density functional theory (DFT) methodologies. The comparative data and protocols presented herein serve as a foundation for selecting appropriate computational methods across various research domains, from materials science to drug development.

Benchmarking Chemical Systems and Properties

Core Chemical Systems for Benchmarking

Table 1: Essential Chemical Systems for Method Benchmarking

| Chemical System | Key Benchmarking Properties | Physical Significance | Recommended Methods |
|---|---|---|---|
| Transition Metal Complexes [2] | Spin-state energetics, electronic spectra, binding energies | Strong electron correlation, multi-reference character, catalytic activity | CCSD(T), CASPT2, MRCI, Double-hybrid DFT |
| Hydrogen-Bonded Assemblies [3] | Binding energies, equilibrium geometries, interaction energies | Non-covalent interactions, molecular self-organization, supramolecular chemistry | CCSD(T)/CBS, B97M-D3(BJ), Range-separated hybrids |
| Strongly Correlated Materials [21] | Ground state energy, band gap, magnetic order | Strong electron correlation, Mott insulation, superconductivity | VQE, DFA 1-RDMFT, Hybrid DFT |
| Metal-Organic Frameworks [22] | Lattice parameters, pore descriptors, elastic moduli | Porosity, gas storage, separation, chemical diversity | PBE-D2, PBE-D3, vdW-DF2 |
| Organic Molecules & Chromophores [8] [23] | Excited-state dipole moments, oscillator strengths, absorption energies | Charge transfer, optical properties, photochemistry | ΔSCF, TDDFT (CAM-B3LYP), CC2, CCSD |

Key Physical Properties for Assessment

Table 2: Critical Physical Properties for Benchmarking Studies

| Property Category | Specific Properties | Experimental Reference | High-Level Theory Reference |
|---|---|---|---|
| Energetics [2] [3] | Spin-state energy splitting ($\Delta E_{\mathrm{HL}}$), Reaction enthalpies, Hydrogen bond energies | Spin crossover enthalpies [2], Combustion calorimetry [4] | CCSD(T)/CBS [2] [3] |
| Electronic Structure [21] [8] [23] | Excited-state energies/dipoles, Oscillator strengths, Charge distributions | Electronic spectroscopy [2] [23] | CC3, QR-CCSD, EOM-CCSD [23] |
| Structural Parameters [22] | Lattice constants, Bond lengths, Pore diameter, Unit cell volume | X-ray crystallography [22] | DFT with dispersion corrections [22] |
| Wavefunction Quality [21] | State fidelity, Correlation energy recovery, Multi-reference character | N/A | Full Configuration Interaction (FCI) |

Detailed Benchmarking Protocols

Protocol for Spin-State Energetics in Transition Metal Complexes

The accurate prediction of spin-state energetics is critical for modeling catalytic processes and inorganic systems.

- Reference Data Source: The SSE17 benchmark set provides experimental reference data for 17 first-row transition metal complexes (FeII/III, CoII/III, MnII, NiII). Values are derived from spin-crossover enthalpies or energies of spin-forbidden absorption bands, back-corrected for vibrational and environmental effects [2].

- Target Property: Adiabatic or vertical energy splitting between high-spin and low-spin states.

- Computational Workflow:

- Geometry Optimization: Optimize the molecular structure of each spin state using a robust method (e.g., B3LYP-D3(BJ)/def2-SVP).

- Single-Point Energy Calculation: Perform high-level energy calculations on the optimized geometries.

- Energy Difference Calculation: Compute $\Delta E_{\mathrm{HL}} = E_{\text{high-spin}} - E_{\text{low-spin}}$.

- Methodology Comparison:

- Gold Standard: Coupled-cluster CCSD(T) demonstrates exceptional accuracy, with a mean absolute error (MAE) of 1.5 kcal mol⁻¹ and a maximum error of −3.5 kcal mol⁻¹ against the SSE17 set [2].

- Double-Hybrid DFT: Functionals like PWPB95-D3(BJ) and B2PLYP-D3(BJ) perform well, with MAEs < 3 kcal mol⁻¹.

- Standard Hybrid DFT: Popular functionals for spin states such as B3LYP*-D3(BJ) and TPSSh-D3(BJ) show significantly larger errors, with MAEs of 5–7 kcal mol⁻¹ and maximum errors exceeding 10 kcal mol⁻¹ [2].
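The energy-difference step and the signed maximum error quoted above can be sketched as follows. This is a minimal illustration that assumes total energies are available in Hartree. The function names are hypothetical and the energies in the example are placeholders, not SSE17 data.

```python
HARTREE_TO_KCAL_MOL = 627.5095  # standard conversion factor, kcal/mol per Hartree


def spin_state_splitting(e_high_spin: float, e_low_spin: float) -> float:
    """Return E(high-spin) - E(low-spin) in kcal/mol from energies in Hartree.

    With this sign convention a positive value means the low-spin state
    is lower in energy.
    """
    return (e_high_spin - e_low_spin) * HARTREE_TO_KCAL_MOL


def largest_signed_error(errors):
    """Return the error of largest magnitude, keeping its sign."""
    if not errors:
        raise ValueError("errors must be non-empty")
    return max(errors, key=abs)


if __name__ == "__main__":
    # Placeholder total energies in Hartree (hypothetical values).
    print(spin_state_splitting(e_high_spin=-100.00, e_low_spin=-100.01))
    # Placeholder signed errors in kcal/mol (computed minus reference).
    print(largest_signed_error([1.0, -3.5, 2.0]))
```

Reporting the maximum error with its sign, as the SSE17 study does, shows whether the worst case over- or under-stabilizes the high-spin state.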

Protocol for Non-Covalent Interactions in Hydrogen-Bonded Dimers

Accurate description of hydrogen bonding is vital for understanding biological systems and supramolecular assembly.

- Reference Data Source: A benchmark set of 14 quadruply hydrogen-bonded dimers with coupled-cluster bonding energies extrapolated to the complete basis set (CBS) limit, with electron correlation contributions extrapolated using a continued-fraction approach [3].

- Target Property: Hydrogen bond energy (binding energy).

- Computational Workflow:

- Monomer Preparation: Optimize the geometry of each isolated monomer.

- Dimer Optimization: Optimize the geometry of the hydrogen-bonded dimer.

- Binding Energy Calculation: Compute the binding energy, $E_{\text{bind}} = E_{\text{dimer}} - E_{\text{monomer A}} - E_{\text{monomer B}}$.

- Basis Set Superposition Error (BSSE): Apply the Boys-Bernardi counterpoise correction.

- Methodology Comparison:

- Gold Standard: CCSD(T)/CBS.

- Top-Performing DFAs: The Berkeley family of functionals (especially B97M-V with empirical D3(BJ) dispersion correction) and some Minnesota 2011 functionals with added dispersion corrections show the best performance [3].

- General Trend: Density functional approximations (DFAs) that lack an explicit dispersion correction typically perform poorly for hydrogen bonding.
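The counterpoise step in the workflow above can be sketched as follows. The example assumes the usual Boys-Bernardi scheme, in which each monomer is recomputed at its geometry within the dimer using the full dimer basis set (ghost functions on the partner). Function and argument names are hypothetical, and the energies are placeholders. Monomer relaxation (deformation) energies are not included in this sketch.

```python
def counterpoise_interaction_energy(e_dimer: float,
                                    e_a_dimer_basis: float,
                                    e_b_dimer_basis: float) -> float:
    """Counterpoise-corrected interaction energy.

    All three energies refer to the dimer geometry and the full dimer basis.
    """
    return e_dimer - e_a_dimer_basis - e_b_dimer_basis


def bsse_estimate(e_a_own_basis: float, e_b_own_basis: float,
                  e_a_dimer_basis: float, e_b_dimer_basis: float) -> float:
    """Estimated basis set superposition error.

    This is the artificial lowering of the monomer energies when the
    partner's basis functions are available (same geometries throughout).
    """
    return (e_a_own_basis - e_a_dimer_basis) + (e_b_own_basis - e_b_dimer_basis)


if __name__ == "__main__":
    # Placeholder energies in Hartree (hypothetical values).
    e_dimer = -200.050
    e_a_own, e_b_own = -100.010, -100.020
    e_a_db, e_b_db = -100.012, -100.021

    uncorrected = e_dimer - e_a_own - e_b_own
    corrected = counterpoise_interaction_energy(e_dimer, e_a_db, e_b_db)
    bsse = bsse_estimate(e_a_own, e_b_own, e_a_db, e_b_db)
    print(uncorrected, corrected, bsse)
```

By construction, the corrected interaction energy equals the uncorrected one plus the BSSE estimate, so the correction typically makes the dimer appear less strongly bound.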

Protocol for Excited-State Properties of Organic Chromophores

Benchmarking excited-state properties is key for designing materials for optoelectronics, sensors, and phototherapy.

- Reference Data Source:

- For dipole moments, reference data can be sourced from high-level wave function theory (CCSD, ADC(2)) or experimental measurements [8].

- For excited-state absorption, the quadratic-response CC3 (QR-CC3) method provides reference oscillator strengths for 53 transitions between 71 excited states in 23 molecules [23].

- Target Properties: Excited-state dipole moment, vertical transition energy, oscillator strength for excited-state absorption (ESA).

- Computational Workflow:

- Ground-State Optimization: Optimize the ground-state geometry.

- Excited-State Calculation: Employ the target method (e.g., ΔSCF, TDDFT, CC2) to calculate excited-state properties.

- For ΔSCF Dipole Moments: Use the electron density from the converged non-Aufbau determinant to compute the property [8].

- For ESA: Calculate the transition energy and oscillator strength between two excited states ($S_i \to S_j$) [23].

- Methodology Comparison:

- Excited-State Dipole Moments:

- ΔSCF: Offers reasonable accuracy for doubly excited states but suffers from DFT overdelocalization error for charge-transfer states [8].

- TDDFT: CAM-B3LYP yields an average relative error of ~28%, while PBE0 and B3LYP show larger errors (~60%) and tend to overestimate dipole magnitudes [8].

- Wave Function Methods: CCSD provides the most accurate results, with average relative errors around 10% [8].

- Excited-State Absorption:


The Scientist's Toolkit

Table 3: Essential Computational Methods and Resources for Benchmarking

| Tool Category | Specific Examples | Primary Function | Applicability Notes |
|---|---|---|---|
| High-Accuracy WFT [2] [3] [23] | CCSD(T), CC3, CASPT2, MRCI+Q | Provides reference-level energies and properties | High computational cost; applicable to small/medium systems |
| Robust Density Functionals [2] [3] [22] | B97M-V/D3(BJ), PWPB95-D3(BJ), PBE-D3, vdW-DF2 | Balanced accuracy/cost for diverse properties and systems | Performance is system-dependent; careful selection required |
| Wave Function Analysis | Multi-reference diagnostics, Natural Bond Orbital (NBO) analysis | Quantifies strong correlation and characterizes chemical bonding | Guides method selection (e.g., single- vs multi-reference) |
| Reference Datasets [2] [23] | SSE17, QUEST | Curated experimental or theoretical data for validation | Critical for objective method evaluation |
| Specialized Algorithms [21] [8] | Variational Quantum Eigensolver (VQE), ΔSCF (MOM, IMOM) | Targets specific problems like strong correlation or excited states | Can access states challenging for conventional methods |

This guide synthesizes key benchmarking practices for wave function theory and density functional theory. The data demonstrates that method performance is highly system-dependent. For transition metal spin states, CCSD(T) and double-hybrid functionals are superior, while for non-covalent interactions, dispersion-corrected functionals like B97M-V are essential. For excited-state properties, the choice between ΔSCF and TDDFT involves trade-offs, with CAM-B3LYP often representing a robust TDDFT choice. The growing availability of well-curated experimental and theoretical benchmark sets, such as SSE17 and those derived from QUEST, provides a critical resource for the continued development and validation of more accurate and broadly applicable quantum chemical methods.

The pursuit of high-accuracy reference data for quantum chemical methods is a cornerstone of computational chemistry and materials science. Such benchmarks are indispensable for validating existing electronic structure methods and guiding the development of new ones. Among the available computational approaches, Coupled Cluster (CC) and Quantum Monte Carlo (QMC) methods have emerged as leading contenders for generating reference-quality data, particularly for systems where traditional density functional theory (DFT) exhibits significant limitations. This guide provides a comprehensive, objective comparison of these two advanced families of methods, framing them within the broader context of wave function theory and density functional theory benchmark research. The performance of CC and QMC is critically evaluated across key chemical properties, with supporting experimental data summarized for direct comparison. Detailed methodologies are provided to empower researchers to implement these protocols, and essential research tools are catalogued to facilitate adoption within the scientific community. As the demand for reliable predictions in complex chemical systems, such as drug candidate interactions and catalytic materials, continues to grow, understanding the respective strengths and limitations of these "platinum standard" methods becomes increasingly crucial.

The CC and QMC approaches offer distinct pathways to solving the many-electron Schrödinger equation, each with its unique theoretical foundations and practical considerations.

Coupled Cluster (CC) Theory, particularly the CCSD(T) variant which includes single, double, and perturbative triple excitations, is often dubbed the "gold standard" of quantum chemistry for single-reference systems. Its reputation stems from exceptional accuracy for typical main-group molecular systems at equilibrium geometries. The computational cost of CC methods, however, scales steeply with system size (often as N⁷ for CCSD(T)), which can limit practical application to larger molecules relevant in drug development [24].

Quantum Monte Carlo (QMC) encompasses a suite of stochastic methods, with Variational Monte Carlo (VMC) and Diffusion Monte Carlo (DMC) being most prominent for electronic structure calculations. Unlike CC, QMC scales more favorably with system size (typically as N³ to N⁴), making it potentially suitable for larger systems. A significant challenge in QMC is the fixed-node error, which arises from the approximate nodal surface of the trial wave function. The development of multi-determinant wave functions has demonstrated impressive performance in systematically reducing this error, achieving chemical accuracy for first-row dimers and the G1 test set [25]. In fact, when compared to traditional quantum chemistry methods like MP2, CCSD(T), and various DFT approximations, QMC shows marked improvement, with only explicitly-correlated CCSD(T) with large basis sets producing more accurate results [25].

Table 1: Fundamental Comparison of CC and QMC Methodologies

| Feature | Coupled Cluster (CC) | Quantum Monte Carlo (QMC) |
|---|---|---|
| Theoretical Basis | Deterministic, wave-function expansion | Stochastic, random sampling of wave function |
| Computational Scaling | High (e.g., N⁷ for CCSD(T)) | Moderate (N³ to N⁴) |
| Key Strength | High accuracy for single-reference systems | Ability to handle strong correlation and larger systems |
| Primary Limitation | Cost prohibitive for large systems; struggles with strong static correlation | Fixed-node error; more complex implementation |
| System Size Suitability | Small to medium molecules | Medium to large systems |

Performance Comparison: Accuracy Across Chemical Properties

Benchmarking studies reveal nuanced performance differences between CC and QMC methods across various chemical properties. The assessment of 240 density functional approximations against high-level CASPT2 reference data for metalloporphyrins highlights the critical need for reliable benchmarks in transition metal chemistry, where many DFT functionals fail to achieve chemical accuracy by a significant margin [26].

For main-group chemistry and equilibrium properties, CCSD(T) with large basis sets often provides exceptional accuracy. However, QMC with multi-determinant expansions has demonstrated the potential to match or even surpass this accuracy. In systematic applications to the G1 test set and first-row dimers, large-scale multi-determinant QMC achieved chemical accuracy, outperforming not only standard DFT approximations but also conventional CC calculations without explicit correlation [25].

In systems with significant strong correlation or multi-reference character—such as transition metal complexes, bond breaking, and excited states—the limitations of standard CC methods become more apparent. In these regimes, QMC exhibits a distinct advantage due to its ability to accurately capture strong electron correlation effects without the prohibitive computational scaling of multi-reference CC methods. QMC has been successfully applied as a benchmarking tool for density functional theory in strongly inhomogeneous electron gases, providing insights that go beyond the local density approximation (LDA) and generalized gradient approximation (GGA) [27].

Table 2: Performance Comparison for Key Chemical Properties

| Chemical Property | Coupled Cluster (CC) Performance | Quantum Monte Carlo (QMC) Performance | Supporting Evidence |
|---|---|---|---|
| Atomization Energies | Excellent with large basis sets & perturbative triples | Excellent with multi-determinant expansions; can surpass CC | Near chemical accuracy for G1 set [25] |
| Molecular Geometries | Highly accurate | Accurate, with slightly larger deviations than CC | |
| Transition Metal Spin States | Challenging for single-reference variants | More robust handling of near-degeneracy | Outperforms most DFT functionals [26] |
| Excitation Energies | Requires EOM-CC variants; good accuracy | Promising for direct excitation calculation | |
| Binding Energies | Good for non-covalent with corrections | Accurate for various binding types | Used to benchmark DFT for porphyrin binding [26] |

Experimental Protocols: Implementation and Benchmarking Guidelines

Rigorous benchmarking requires careful experimental design and implementation. The following protocols provide guidelines for conducting reliable comparisons between CC, QMC, and other electronic structure methods.

Benchmarking Design Principles

Essential guidelines for computational method benchmarking emphasize the importance of defining clear purpose and scope, appropriate method selection, and careful dataset curation [28]. For neutral benchmarks aimed at comprehensive method comparison, inclusion of all relevant methods is ideal, though practical constraints may necessitate defining justified inclusion criteria. The selection of reference datasets should include both real data representing actual chemical systems and simulated data with known ground truth to enable quantitative error assessment. It is crucial to demonstrate that simulations accurately reflect relevant properties of real data through empirical summaries [28].

CC Method Implementation Protocol

- System Preparation: Generate molecular geometries using reliable experimental or theoretical structures.

- Baseline Calculation: Perform Hartree-Fock calculation with appropriate basis set.

- CC Calculation: Execute CCSD calculation, followed by perturbative triples (T) correction for CCSD(T).

- Basis Set Selection: Employ correlation-consistent basis sets (cc-pVDZ, cc-pVTZ, cc-pVQZ) and perform extrapolation to complete basis set limit.

- Core Consideration: Apply frozen-core approximation for heavy elements, but include core correlation for highest accuracy.

- Relativistic Effects: Incorporate scalar relativistic corrections for systems containing heavy elements.
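The basis-set extrapolation step can be illustrated with a two-point formula. The protocol above does not name a specific scheme. The sketch below assumes the widely used inverse-cubic form for the correlation energy, E_X = E_CBS + A / X³, where X is the cardinal number of the cc-pVXZ basis (D=2, T=3, Q=4). The Hartree-Fock energy converges differently and is usually extrapolated separately, or taken from the largest basis set. The function name is hypothetical.

```python
def cbs_two_point(e_corr_small: float, e_corr_large: float,
                  x_small: int, x_large: int) -> float:
    """Two-point inverse-cubic extrapolation of correlation energies.

    Assumes E_X = E_CBS + A / X**3 and eliminates A using two cardinal numbers.
    """
    if x_small >= x_large:
        raise ValueError("x_small must be smaller than x_large")
    xs3, xl3 = x_small ** 3, x_large ** 3
    return (xl3 * e_corr_large - xs3 * e_corr_small) / (xl3 - xs3)


if __name__ == "__main__":
    # Synthetic correlation energies (Hartree) generated from the model itself,
    # with E_CBS = -0.400 and A = 0.05, purely to show the arithmetic.
    e_tz = -0.400 + 0.05 / 3 ** 3
    e_qz = -0.400 + 0.05 / 4 ** 3
    print(cbs_two_point(e_tz, e_qz, 3, 4))
```

Since the synthetic inputs obey the assumed model exactly, the extrapolation recovers the chosen limit. Real data only approximate this behavior, which is why the largest affordable pair of basis sets is preferred.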

QMC Method Implementation Protocol

- Trial Wave Function Preparation: Generate multi-determinant wave functions from preliminary DFT or Hartree-Fock calculations. The quality of the trial wave function is critical for controlling fixed-node error [25].

- VMC Optimization: Optimize trial wave function parameters using variational Monte Carlo to minimize energy.

- DMC Calculation: Perform diffusion Monte Carlo calculations with fixed-node approximation.

- Time Step Testing: Conduct careful time step extrapolation to eliminate time step bias.

- Population Control: Implement walker population controls to manage statistical uncertainties.

- Analysis: Perform statistical analysis of energies and properties with proper error estimation.
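The time step extrapolation in the protocol above can be sketched as a straight-line fit of the DMC energy against the time step, evaluated at zero. This minimal example assumes that the chosen time steps lie in the linear regime and ignores the statistical error bars of the individual points. A production analysis would normally weight each point by its uncertainty. The function name is hypothetical and the data are synthetic.

```python
def extrapolate_to_zero_time_step(time_steps, energies):
    """Unweighted least-squares fit E(tau) = e0 + slope * tau.

    Returns (e0, slope), where e0 is the estimate at tau -> 0.
    """
    n = len(time_steps)
    if n < 2 or n != len(energies):
        raise ValueError("need at least two (tau, E) pairs of equal length")
    mean_t = sum(time_steps) / n
    mean_e = sum(energies) / n
    sxx = sum((t - mean_t) ** 2 for t in time_steps)
    if sxx == 0:
        raise ValueError("time steps must not all be identical")
    sxy = sum((t - mean_t) * (e - mean_e) for t, e in zip(time_steps, energies))
    slope = sxy / sxx
    return mean_e - slope * mean_t, slope


if __name__ == "__main__":
    # Synthetic, exactly linear data (Hartree) used only to show the procedure.
    taus = [0.005, 0.01, 0.02]
    energies = [-1.0 + 0.5 * t for t in taus]
    e0, slope = extrapolate_to_zero_time_step(taus, energies)
    print(e0, slope)
```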

Diagram 1: Benchmarking workflow for CC and QMC methods

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Successful implementation of CC and QMC methodologies requires both specialized software tools and conceptual understanding of key components. The following table catalogs essential "research reagent solutions" for electronic structure benchmarking.

Table 3: Essential Research Reagent Solutions for CC and QMC Benchmarking

| Research Reagent | Function/Purpose | Implementation Examples |
|---|---|---|
| Multi-Determinant Expansions | Reduces fixed-node error in QMC; improves accuracy for multi-reference systems | CIPSI (Configuration Interaction using a Perturbative Selection made Iteratively) selections; CAS-type wave functions [25] |
| Correlation-Consistent Basis Sets | Systematic improvement towards complete basis set limit in CC calculations | cc-pVXZ (X=D,T,Q,5) series; aug-cc-pVXZ for diffuse functions |
| Pseudopotentials | Enables QMC studies of systems with heavy elements by replacing core electrons | Burkatzki-Filippi-Dolg (BFD) pseudopotentials; correlation-consistent pseudopotentials |
| Jastrow Factors | Describes electron correlation effects in QMC trial wave functions | Three-body electron-electron-nucleus correlation functions [25] |
| Perturbative Triples Corrections | Adds connected triple excitations to CC methods at reduced computational cost | (T) correction in CCSD(T); ΛCCSD(T) for properties |
| Stochastic Optimization Methods | Optimizes many parameters in QMC trial wave functions | Linear method; stochastic reconfiguration |

The synergy between CC and QMC methods is particularly powerful. While CC provides highly accurate references for systems within its capabilities, QMC offers a complementary approach that remains feasible for larger systems and those with stronger correlation. As benchmarking practices in quantum chemistry continue to evolve, the principles of rigorous comparison—including comprehensive method selection, diverse dataset curation, and multiple evaluation metrics—will ensure that these methods fulfill their potential as emerging platinum standards [28]. For drug development professionals and researchers, this combined approach offers a robust framework for validating computational models against reliable reference data, ultimately enhancing the predictive power of computational chemistry in pharmaceutical applications.

From Theory to Practice: Method Selection for Real-World Applications

Benchmarking Thermochemical Accuracy for Organic Molecules

Accurate prediction of thermochemical properties is a cornerstone of computational chemistry, with direct implications for drug design, reaction engineering, and materials science. For organic molecules, even marginal errors in quantities like formation enthalpies or atomization energies can significantly impact the reliability of virtual screening and mechanistic studies [29] [30]. Within the broader context of wave function theory (WFT) and density functional theory (DFT) benchmarks research, this guide objectively compares the performance of contemporary quantum chemical methods for organic thermochemistry. We synthesize findings from recent high-profile benchmarking studies to provide researchers with evidence-based recommendations for method selection.

Performance Comparison of Quantum Chemical Methods

Total Atomization Energy Benchmarks

Total Atomization Energy (TAE) represents the energy required to separate a molecule into its constituent atoms, serving as a rigorous stress test for quantum chemical methods due to the complete absence of error cancellation [30]. The GDB9-W1-F12 database, comprising 3,366 molecules with up to eight non-hydrogen atoms at the CCSD(T)/CBS level, provides a robust benchmark for assessing functional performance [30].

Table 1: Performance of Select DFT Functionals for Total Atomization Energies (GDB9-W1-F12 Database)

| Functional | Jacob's Ladder Rung | Mean Absolute Deviation (kcal mol⁻¹) |
|---|---|---|
| B97-D | Pure GGA | 10.0 |
| B97M-V | meta-GGA | 2.9 |
| CAM-B3LYP-D4 | Hybrid GGA | 4.0 |
| M06-2X | Hybrid meta-GGA | 1.8 |

As shown in Table 1, hybrid meta-GGA functionals like M06-2X deliver the highest accuracy for TAEs, with a mean absolute deviation (MAD) of 1.8 kcal mol⁻¹ [30]. The meta-GGA B97M-V also shows strong performance (MAD 2.9 kcal mol⁻¹), establishing it as an excellent lower-cost alternative [30].
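For clarity, the two quantities behind Table 1 can be written out explicitly. The notation is conventional rather than taken from the benchmark paper: E(A) is the energy of free atom A, the sum runs over all atoms of the molecule, and N is the number of molecules in the database. Whether zero-point vibrational energy is included in the TAE depends on the benchmark definition and is not specified here.

```latex
\mathrm{TAE} = \sum_{A \,\in\, \text{atoms}} E(A) \;-\; E(\text{molecule}),
\qquad
\mathrm{MAD} = \frac{1}{N} \sum_{k=1}^{N} \left| \mathrm{TAE}_k^{\mathrm{DFT}} - \mathrm{TAE}_k^{\mathrm{ref}} \right|
```

Because the atoms and the molecule have very different electronic environments, errors in the two terms of the TAE have little opportunity to cancel, which is what makes this property such a demanding test.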

Enthalpy of Formation Benchmarks

Standard enthalpies of formation (ΔHf°) are critical for predicting reaction energies and stability. A comprehensive benchmark of 284 model chemistries, including semiempirical methods, DFT, and composite WFT approaches, provides extensive performance data [31].

Table 2: Performance of Selected Methods for Enthalpy of Formation Calculations

| Method | Class | Reported Accuracy (MAD, kcal mol⁻¹) | Key Characteristics |
|---|---|---|---|
| Recommended Composite Methods | | | |
| CBS-QB3 | Composite WFT | ~1.5 (est.) | High-accuracy benchmark |
| G4(MP2) | Composite WFT | ~1.5 (est.) | Balanced cost/accuracy |
| Recommended DFT Functionals | | | |
| B97M-V | meta-GGA | < 2.0 | Top performer for diverse properties |
| M06-2X | Hybrid meta-GGA | < 2.0 | Excellent for main-group thermochemistry |
| ωB97X-V | Range-separated hybrid GGA | < 2.0 | Good all-around performance |
| Semiempirical Methods | | | |
| GFN2-xTB | Semiempirical TB | ~3-5 | Very fast, reasonable accuracy |
| PM7 | Semiempirical | ~4-6 | Fast, parametrized for organics |

The benchmark indicates that composite WFT methods (e.g., CBS-QB3, G4(MP2)) achieve the highest accuracy, with MADs typically around 1.5 kcal mol⁻¹ [31]. Among DFT functionals, the top performers for ΔHf° calculations include B97M-V, M06-2X, and ωB97X-V, all achieving average errors below 2 kcal mol⁻¹ [30] [31]. These functionals successfully balance the treatment of dynamic and static correlation.

Performance for Non-Covalent and Specialized Interactions

Non-covalent interactions (NCIs) profoundly influence molecular recognition in drug binding. The QUID (QUantum Interacting Dimer) benchmark assesses methods on 170 complex dimer systems modeling ligand-pocket interactions [29].

For NCIs, robust "platinum standard" energies are established by achieving tight agreement (0.5 kcal/mol) between two fundamentally different high-level methods: local natural orbital coupled cluster (LNO-CCSD(T)) and fixed-node diffusion Monte Carlo (FN-DMC) [29]. Several dispersion-inclusive DFT approximations (e.g., B97M-V, PBE0+MBD) provide accurate NCI energy predictions, though their atomic van der Waals forces can show significant directional deviations [29]. Semiempirical methods and force fields often struggle with out-of-equilibrium geometries common in binding processes [29].
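The agreement criterion can be expressed as a simple acceptance test. The sketch below is only an illustration of the idea: the function name is hypothetical, the input energies are invented, and averaging the two values into a single reference is an assumption of this example rather than a procedure stated in [29].

```python
def consensus_reference(e_cc, e_dmc, tolerance=0.5):
    """Return a consensus energy if two methods agree within `tolerance`.

    Energies are in kcal/mol. Returns None when the methods disagree.
    Taking the mean of the two values is an assumption of this sketch.
    """
    if abs(e_cc - e_dmc) <= tolerance:
        return 0.5 * (e_cc + e_dmc)
    return None


# Hypothetical interaction energies in kcal/mol (not QUID data).
accepted = consensus_reference(-12.3, -12.0)   # differ by 0.3: accepted
rejected = consensus_reference(-20.0, -18.5)   # differ by 1.5: rejected

print(accepted, rejected)
```

Requiring two methods with unrelated sources of error to agree makes it unlikely that a shared systematic bias goes unnoticed in the reference value.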

For specific interactions like hydrogen bonding, specialized benchmarks are essential. A 2025 study of 14 quadruply hydrogen-bonded dimers evaluated 152 DFAs and identified B97M-V with the D3(BJ) dispersion correction as the top performer [3].

Experimental Protocols for Benchmarking

The W1-F12 Composite Method Protocol

The W1-F12 protocol provides CCSD(T)/CBS reference data with sub-chemical accuracy (<1 kcal/mol) for benchmarking [30].

Workflow Description:

- Geometry Optimization and Validation: Molecular structures are first optimized at the B3LYP-D3(BJ)/def2-TZVP level, followed by harmonic frequency calculations to confirm that all frequencies are real and the structure is therefore a true minimum [30].

- Hartree-Fock (HF) Energy Extrapolation: The HF energy component is extrapolated to the complete basis set (CBS) limit using VDZ-F12 and VTZ-F12 basis sets [30].

- CCSD-F12b Correlation Extrapolation: The CCSD-F12b correlation energy is similarly extrapolated to the CBS limit using VDZ-F12 and VTZ-F12 basis sets [30].

- (T) Correlation Extrapolation: The perturbative triples (T) contribution is extrapolated using the aug'-cc-pVDZ and aug'-cc-pVTZ basis sets [30].

- Core-Valence (CV) Correction: A core-valence correction is computed at the CCSD(T)/cc-pCVTZ level to account for inner-shell electron effects [30].

- Scalar Relativistic (SR) Correction: A scalar relativistic correction is included via the Douglas-Kroll-Hess Hamiltonian [30].

- TAE Calculation: The total atomization energy is derived from the final composite energy and the energies of the constituent atoms [30].
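The arithmetic behind these steps can be sketched in a few lines. The example below assumes the common two-point extrapolation model E(L) = E_CBS + A / L^α, where L is the basis-set cardinal number. The exponent α differs for each energy component in the actual protocol and is left as a parameter here; the function names, the synthetic energies, and the rounded conversion factor are assumptions of this sketch, not values taken from [30].

```python
HARTREE_TO_KCAL = 627.5095  # approximate hartree -> kcal/mol conversion


def cbs_two_point(e_small, e_large, l_small, l_large, alpha):
    """Two-point CBS extrapolation assuming E(L) = E_CBS + A / L**alpha."""
    ratio = (l_large / l_small) ** alpha
    return e_large + (e_large - e_small) / (ratio - 1.0)


def composite_energy(hf_cbs, ccsd_cbs, t_cbs, core_valence, scalar_rel):
    """Sum the additive components of a composite energy (hartree)."""
    return hf_cbs + ccsd_cbs + t_cbs + core_valence + scalar_rel


def total_atomization_energy(e_molecule, atom_energies):
    """TAE in kcal/mol from molecular and atomic energies in hartree."""
    return (sum(atom_energies) - e_molecule) * HARTREE_TO_KCAL


# Synthetic check: energies generated from E(L) = -1.0 + 0.5 / L**3,
# so the extrapolation should recover the limit of -1.0 hartree.
e_dz = -1.0 + 0.5 / 2**3
e_tz = -1.0 + 0.5 / 3**3
e_cbs = cbs_two_point(e_dz, e_tz, l_small=2, l_large=3, alpha=3.0)

# Hypothetical diatomic: molecule at -1.1 hartree, each atom at -0.5 hartree.
tae = total_atomization_energy(-1.1, [-0.5, -0.5])

print(f"E_CBS = {e_cbs:.6f} hartree, TAE = {tae:.2f} kcal/mol")
```

In a real calculation each component (HF, CCSD-F12b, (T)) would be extrapolated separately with its own basis-set pair and exponent before being passed to `composite_energy`.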

High-Throughput DFT Benchmarking Workflow

Automated frameworks like DREAMS (DFT-based Research Engine for Agentic Materials Screening) enable systematic benchmarking with minimal human intervention [32].

Workflow Description:

- Task Definition: The system receives the benchmarking task, including the set of molecules and target properties (e.g., formation enthalpies) [32].

- Plan Generation: A planner LLM agent devises an execution strategy, including method selection and workflow steps [32].

- Structure Preparation: A specialized agent generates physically reasonable initial 3D molecular geometries [32].

- Parameter Convergence: A convergence agent systematically determines optimal DFT parameters (cutoff energy, k-point mesh) through iterative testing [32].

- HPC Execution: Calculations are submitted to high-performance computing clusters with appropriate resource allocation [32].

- Error Handling: A dedicated agent manages computational errors (SCF convergence, geometry optimization failures) through predefined recovery protocols [32].

- Data Analysis: Results are automatically extracted from output files and analyzed against reference data to determine method accuracy [32].
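The parameter-convergence step is the easiest one to illustrate in isolation. The sketch below shows a generic convergence loop of the kind such an agent might run; it is not the DREAMS implementation, whose internals are not described here. The function names are hypothetical and `toy_energy` is a stand-in for a real DFT single-point calculation.

```python
def converge_parameter(energy_fn, values, threshold):
    """Return the first (value, energy) whose energy changes by less than
    `threshold` relative to the previous tested value."""
    previous = None
    for value in values:
        energy = energy_fn(value)
        if previous is not None and abs(energy - previous) < threshold:
            return value, energy
        previous = energy
    raise RuntimeError("parameter did not converge over the tested values")


def toy_energy(cutoff_ev):
    """Stand-in for a DFT call: energy approaches -10.0 as the cutoff grows."""
    return -10.0 + 50.0 / cutoff_ev**2


cutoff, energy = converge_parameter(
    toy_energy, values=[200, 300, 400, 500, 600], threshold=1e-4
)
print(f"Converged at cutoff = {cutoff} eV, E = {energy:.6f}")
```

Raising an error when no tested value converges, rather than silently returning the last one, gives a downstream error-handling step something explicit to act on.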

Table 3: Key Computational Resources for Thermochemical Benchmarking

| Resource Name | Type | Primary Function in Research |
|---|---|---|
| Reference Datasets | | |
| GDB9-W1-F12 Database [30] | Reference Data | Provides 3,366 highly accurate CCSD(T)/CBS total atomization energies for benchmarking. |
| QUID Framework [29] | Reference Data | Offers 170 dimer interaction energies for validating non-covalent interactions. |
| Software & Tools | | |
| DREAMS Framework [32] | Automation Tool | Enables autonomous DFT calculations and benchmarking with error handling. |
| qmbench [33] | Benchmarking Portal | Provides challenges and datasets for testing quantum chemical methods. |
| Method Implementations | | |
| W1-F12 Theory [30] | Composite Method | Delivers near-exact reference energies for small organic molecules. |
| LNO-CCSD(T) [29] | Wave Function Method | Provides "gold standard" coupled cluster accuracy for larger systems. |
| FN-DMC [29] | Quantum Monte Carlo | Offers an alternative high-level benchmark method for validation. |

This comparison guide synthesizes current evidence on thermochemical accuracy for organic molecules. Composite WFT methods (W1-F12, CBS-QB3) and select double-hybrid DFT functionals provide the most reliable benchmarks for property prediction. For general applications, the hybrid meta-GGA M06-2X and the meta-GGA B97M-V offer an excellent balance of accuracy and computational feasibility, while recent neural network potentials show promise but require further validation for charge-dependent properties. Robust benchmarking requires careful attention to reference data quality, and emerging automated frameworks like DREAMS may lower the expertise barrier while maintaining high fidelity.

Accurate Modeling of Non-Covalent Interactions in Drug-like Systems

Non-covalent interactions (NCIs) are fundamental forces that govern the assembly of complex molecular architectures, including drug-like systems, without forming permanent chemical bonds. [34] Accurately modeling these interactions is a central challenge in computational chemistry and drug design. The field is characterized by a trade-off between the high accuracy of wave function theory (WFT) methods and the computational efficiency of density functional theory (DFT). For researchers and drug development professionals, selecting the appropriate computational method is critical for reliable predictions of binding affinities, molecular stability, and reaction mechanisms. This guide provides a comparative analysis of current methodologies, software, and best practices for modeling NCIs in pharmaceutical contexts, within the broader context of WFT and DFT benchmarking.

Theoretical Foundations and Methodological Comparisons

The accurate computational prediction of molecular properties begins with solving the electronic Schrödinger equation. For systems with N particles, the wave function depends on 3N variables, making direct solutions impossible for more than a few particles. [35] This fundamental challenge has led to the development of two primary computational approaches:

- Wave Function Theory (WFT) Methods: These methods, such as coupled cluster (CC) and diffusion Monte Carlo (DMC), approximate the many-electron wave function itself. They are generally highly accurate but computationally demanding. Coupled-cluster theory with single, double, and perturbative triple particle-hole excitation operators (CCSD(T)) is often called the ‘gold standard’ of molecular quantum chemistry for weakly correlated systems. [36]

- Density Functional Theory (DFT): In 1964, Hohenberg and Kohn proved that the exact energy of a ground state of an electronic system can be predicted knowing only its electron density, an object dependent on just three variables for any system. [35] This is a much simpler approach than dealing with the full wave function. However, while an exact density functional exists, it is unknown, so one must use density functional approximations (DFAs). The work of John Perdew and others in designing robust DFAs has made DFT the main predictive computational tool in physics and materials science. [35]
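The difference in dimensionality between the two approaches can be written out explicitly. The relations below are the standard textbook definitions, with spin coordinates omitted for brevity; they are included as an illustration and are not reproduced from [35].

```latex
\begin{align}
  \Psi &= \Psi(\mathbf{r}_1, \mathbf{r}_2, \dots, \mathbf{r}_N)
    && \text{($3N$ spatial variables)} \\
  n(\mathbf{r}) &= N \int \lvert \Psi(\mathbf{r}, \mathbf{r}_2, \dots, \mathbf{r}_N) \rvert^{2}
    \, \mathrm{d}\mathbf{r}_2 \cdots \mathrm{d}\mathbf{r}_N
    && \text{(3 spatial variables)} \\
  E_0 &= \min_{n} E[n]
    && \text{(Hohenberg-Kohn variational principle)}
\end{align}
```

The density is obtained by integrating out all but one electron coordinate, so its dimensionality stays fixed as N grows. The cost of this simplification is that the functional E[n] is not known in closed form and must be approximated.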

The central dilemma in modern computational drug design is balancing the accuracy of WFT methods with the speed and scalability of DFT. Recent research highlights alarming discrepancies between the interaction energies predicted for large molecules by two of the most widely trusted WFT methods: DMC and CCSD(T). [36] These discrepancies are large enough to cause qualitative differences in calculated material properties, with significant implications for drug design and functional materials discovery.

Performance Benchmarking of Computational Methods

Accuracy of Density Functional Approximations for Hydrogen Bonding

Hydrogen bonding is a critical non-covalent interaction in molecular self-organization and supramolecular structures. A 2025 benchmark study evaluated 152 different DFAs on their ability to reproduce highly accurate coupled-cluster hydrogen bonding energies for 14 quadruply hydrogen-bonded dimers. [3]

Table 1: Top-Performing Density Functional Approximations for Hydrogen Bonding Energies (2025 Benchmark)

| Density Functional Approximation (DFA) | Type / Family | Dispersion Correction | Reported Performance |
|---|---|---|---|
| B97M-V | Berkeley Functional | D3BJ | Best overall performance [3] |
| Other Berkeley Variants | Berkeley Functional | Various (D3BJ, etc.) | 8 variants in top 10 [3] |
| Minnesota 2011 Functionals | Minnesota Functional | Additional D3 | 2 functionals in top 10 [3] |

The study concluded that the B97M-V functional, with its non-local correlation functional replaced by an empirical D3BJ dispersion correction, was the best-performing DFA for these systems. [3] The dominance of Berkeley functionals and the critical role of empirical dispersion corrections highlight key trends in modern functional development aimed at improving accuracy for NCIs.

Comparative Performance of Wave Function Theory and DFT-Based Methods

The "gold standard" status of CCSD(T) has recently been scrutinized, particularly for large, polarizable molecules. A 2025 investigation revealed that CCSD(T) can overestimate noncovalent interaction energies in such systems, a phenomenon linked to the way the (T) approximation truncates the triple particle-hole excitation operator. [36] In systems with very high polarizability, such as metals, this truncation leads to an "infrared catastrophe" in which the (T) energy contribution diverges.

Table 2: Method Performance for Non-Covalent Interaction Energies in Large Molecules

| Method | Theoretical Class | Key Findings | Computational Cost |
|---|---|---|---|
| CCSD(T) | WFT (Coupled-Cluster) | Overestimates interactions for large, polarizable molecules; "gold standard" status questioned for these systems. [36] | Very High |
| CCSD(cT) | WFT (Coupled-Cluster) | Includes higher-order terms to screen the (T) contribution; excellent agreement with DMC; averts infrared catastrophe. [36] | High |
| DFT (Top DFAs, e.g., B97M-V) | DFT | Offers a good balance of accuracy and speed for many systems; performance highly dependent on the chosen functional and dispersion correction. [3] | Medium |
| Diffusion Monte Carlo (DMC) | WFT (Stochastic) | Considered a highly reliable benchmark method; used to validate other approaches. [36] | Very High |
| Hybrid QM/MM Docking | Mixed Quantum/Classical | Outperforms classical docking for metalloproteins; comparable for covalent complexes; slightly lower success for standard non-covalent complexes. [37] | Medium to High |

The study found that using a modified approach, CCSD(cT), which includes selected higher-order terms, restored excellent agreement with DMC findings. [36] For the coronene dimer, the CCSD(cT) binding energy was nearly 2 kcal/mol closer to the DMC estimate than CCSD(T), achieving chemical accuracy (1 kcal/mol). This demonstrates that for large molecules, higher-order correlations beyond standard CCSD(T) are crucial for accuracy.
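A comparison of this kind reduces to two small calculations: forming the interaction energy from dimer and monomer energies, and checking the deviation from the reference against the chemical-accuracy threshold. The sketch below uses the standard supermolecular definition and ignores basis-set superposition corrections; the function names and all numerical values are hypothetical and do not reproduce the coronene dimer results in [36].

```python
CHEMICAL_ACCURACY = 1.0  # kcal/mol


def interaction_energy(e_dimer, e_monomer_a, e_monomer_b):
    """Supermolecular interaction energy; negative values indicate binding."""
    return e_dimer - e_monomer_a - e_monomer_b


def within_chemical_accuracy(e_method, e_reference, tolerance=CHEMICAL_ACCURACY):
    """True if a method's energy lies within `tolerance` of the reference."""
    return abs(e_method - e_reference) <= tolerance


# Hypothetical energies in kcal/mol (illustrative only).
e_int = interaction_energy(e_dimer=-1020.0, e_monomer_a=-500.0, e_monomer_b=-500.0)
reference = -19.2  # stand-in for a DMC estimate

print(e_int, within_chemical_accuracy(e_int, reference))
```

For large dimers the interaction energy is a small difference between large total energies, so errors that are negligible for each fragment can still exceed the 1 kcal/mol threshold.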

Software and Tools for Drug Discovery

The theoretical methods are implemented in a variety of software platforms that are essential for practical drug discovery applications.

Table 3: Key Software Tools for Computational Drug Discovery (2025 Landscape)

| Software / Platform | Primary Methodology | Key Features & Applications | Noted Considerations |
|---|---|---|---|
| Schrödinger | Physics-based simulations, ML, FEP [38] [39] | Comprehensive platform (Maestro); molecular dynamics, quantum mechanics, virtual screening. [38] | Higher licensing costs; complexity for beginners. [38] |
| OpenEye Cadence | Molecular modeling, toolkits [38] | Scalability for high-throughput screening; flexible, customizable toolkits. [38] | Steeper learning curve; can be resource-intensive. [38] |
| Cresset's Flare | QM/MM, FEP, MM/GBSA [39] | Protein-ligand modeling, free energy calculations, handling of different ligand charges. [39] | - |
| Attracting Cavities (AC) | Hybrid QM/MM Docking [37] | Models covalent binding, metal coordination, polarization; outperforms classical docking for metalloproteins. [37] | - |
| PLIP | Interaction Profiling [40] | Web server & tool for analyzing non-covalent interactions in protein structures; useful for docking prioritization. [40] | Free, open-source tool. [40] |
| Chemical Computing Group (MOE) | Molecular modeling, QSAR [39] | All-in-one platform for drug discovery; structure-based design, cheminformatics. [39] | - |
| deepmirror | Generative AI [39] | Augments hit-to-lead optimization; predicts protein-drug binding. [39] | - |