Beyond E=hf: Applying Planck's Constant in Modern Quantum Chemistry Calculations for Drug Discovery


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the practical application of Planck's constant (h) in quantum chemistry calculations. Moving beyond foundational theory, it explores how h serves as the fundamental quantum of action in computational methods like Density Functional Theory (DFT) and QM/MM, enabling precise modeling of electronic structures, binding affinities, and reaction mechanisms. The scope covers methodological implementation, troubleshooting of common challenges like computational cost and electron correlation, and validation techniques to achieve chemical accuracy, with a specific focus on applications in kinase inhibitor design, covalent drug discovery, and biomolecular simulations.

The Quantum Bedrock: Understanding Planck's Constant as the Action Quantum in Chemistry

The year 2025 marks the centenary of Werner Heisenberg's formulation of matrix mechanics, a cornerstone of modern quantum mechanics, and has been declared the International Year of Quantum Science and Technology [1]. The celebration commemorates a century in which quantum mechanics evolved from a series of revolutionary postulates into an indispensable framework for physical chemistry. The story began with Max Planck's 1900 solution to the ultraviolet catastrophe, which introduced the radical idea that energy is emitted in discrete packets, or "quanta" [1]. Albert Einstein's explanation of the photoelectric effect and Niels Bohr's quantum model of the atom extended this idea, and the line of development it started led eventually to the computational quantum chemistry methods that researchers now use to predict molecular behavior from first principles.

At the heart of this quantum revolution lies Planck's constant (h = 6.626 × 10⁻³⁴ J·s), a fundamental constant that relates the energy of a quantum to its frequency [2] [1]. The relation ΔE = hν, which connects energy differences to electromagnetic frequency, underlies the interpretation of molecular spectra, chemical bonding, and reaction dynamics. A century of development has turned this basic relationship into computational protocols that now reach the accuracy of coupled-cluster theory with singles, doubles, and perturbative triples [CCSD(T)], considered the gold standard of quantum chemistry, for systems of increasing size and complexity, accelerating the discovery of novel molecules and materials for drug development and beyond [3].

Historical Foundations: From Theoretical Postulates to Chemical Applications

The development of quantum mechanics represented a fundamental shift from deterministic classical mechanics to a probabilistic description of matter at atomic and molecular scales [2]. The pivotal period of 1925-1926 witnessed the formulation of two equivalent but mathematically distinct frameworks: Heisenberg's matrix mechanics and Schrödinger's wave mechanics [1]. While Heisenberg's approach represented physical quantities as matrices with non-commutative properties, Schrödinger's wave equation, ĤΨ = EΨ, provided a powerful mathematical foundation for predicting atomic and molecular properties through wave functions and their associated probability distributions [2] [1].

The quantum perspective fundamentally altered chemical thinking by revealing that electrons exist in probability clouds defined by wave functions rather than following precise trajectories [2]. This understanding explained atomic stability, chemical bond formation, and molecular spectral lines through the quantization of energy levels, spin states, and orbital angular momentum. The 1927 Heisenberg Uncertainty Principle further cemented the probabilistic nature of quantum systems by establishing fundamental limits on simultaneously knowing complementary properties like position and momentum [1].

Table 1: Key Historical Milestones in Quantum Science Development

| Year | Scientist | Breakthrough | Significance in Quantum Chemistry |
|---|---|---|---|
| 1900 | Max Planck | Energy Quanta | Introduced discrete energy packets, solving ultraviolet catastrophe |
| 1905 | Albert Einstein | Photoelectric Effect | Demonstrated wave-particle duality of light |
| 1913 | Niels Bohr | Quantum Atom Model | Explained atomic spectra and stability through quantized energy levels |
| 1925-1926 | Heisenberg/Schrödinger | Quantum Mechanics | Provided mathematical framework for predicting atomic/molecular behavior |
| 1927 | Werner Heisenberg | Uncertainty Principle | Established fundamental limits of simultaneous measurement |
| 1928 | Paul Dirac | Dirac Equation | Combined quantum mechanics with special relativity, predicted antimatter |
| 1964 | John Bell | Bell's Theorem | Showed no local hidden variable theories can reproduce quantum predictions |
| 1998 | Walter Kohn | Density Functional Theory | Nobel Prize for DFT development enabling practical electronic structure calculations |

The subsequent development of quantum electrodynamics (QED) by Feynman, Schwinger, and Tomonaga in the late 1940s, and of density functional theory (DFT), for which Walter Kohn shared the 1998 Nobel Prize in Chemistry, provided increasingly sophisticated mathematical tools for describing electron interactions in molecular systems [3]. These theoretical advances laid the foundation for the computational quantum chemistry methods that emerged in the latter half of the 20th century and continue to evolve today.

Modern Computational Quantum Chemistry: Methodologies and Protocols

Fundamental Quantum Principles in Chemical Calculations

Modern computational quantum chemistry rests on several foundational principles that directly enable the prediction of molecular properties and reactivities. The Schrödinger equation, ĤΨ = EΨ, serves as the cornerstone, where the Hamiltonian operator (Ĥ) represents the total energy of the system, Ψ is the wave function containing complete information about the system's quantum state, and E represents the energy eigenvalues corresponding to observable energy levels [2].

The Born-Oppenheimer approximation separates electronic and nuclear motion, assuming that electrons, being more than 1,800 times lighter than any nucleus, adjust instantaneously to nuclear positions (Ψ_total ≈ Ψ_electronic × Ψ_nuclear). This separation makes it practical to compute molecular wave functions and potential energy surfaces [2]. Combined with the variational principle, which guarantees that the energy of any trial wave function is an upper bound to the exact ground-state energy, it forms the theoretical basis for most electronic structure methods.
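As a worked illustration of the variational principle, the sketch below minimizes the energy of a single Gaussian trial function, ψ(r) ∝ exp(−αr²), for the hydrogen atom in atomic units. The closed-form expression E(α) = 3α/2 − 2√(2α/π) is a standard textbook result; the golden-section search is just one convenient minimizer chosen for this example. The optimized energy, −4/(3π) ≈ −0.424 Hartree, lies above the exact ground-state energy of −0.5 Hartree, as the variational principle requires.

```python
import math

def gaussian_trial_energy(alpha: float) -> float:
    """Energy (Hartree) of psi ~ exp(-alpha r^2) for hydrogen, in atomic units."""
    kinetic = 1.5 * alpha                                  # <T> = 3*alpha/2
    potential = -2.0 * math.sqrt(2.0 * alpha / math.pi)    # <V> = -2*sqrt(2*alpha/pi)
    return kinetic + potential

def golden_section_min(f, lo: float, hi: float, tol: float = 1e-10) -> float:
    """Locate the minimizer of a unimodal function f on [lo, hi]."""
    inv_phi = (math.sqrt(5.0) - 1.0) / 2.0
    a, b = lo, hi
    c = b - inv_phi * (b - a)
    d = a + inv_phi * (b - a)
    while abs(b - a) > tol:
        if f(c) < f(d):
            b = d          # minimum lies in [a, d]
        else:
            a = c          # minimum lies in [c, b]
        c = b - inv_phi * (b - a)
        d = a + inv_phi * (b - a)
    return 0.5 * (a + b)

alpha_opt = golden_section_min(gaussian_trial_energy, 1e-3, 2.0)
e_var = gaussian_trial_energy(alpha_opt)
print(f"alpha = {alpha_opt:.4f}, E_var = {e_var:.5f} Ha (exact: -0.50000 Ha)")
```

Adding more Gaussians to the trial function lowers the energy toward −0.5 Hartree, which is the same logic that makes larger basis sets systematically improve variational calculations.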

Quantum chemistry further incorporates several phenomena with profound implications for chemical reactivity. Zero-point energy, E_ZPE = (1/2)ħω for a quantum harmonic oscillator, means that molecular systems retain vibrational energy even at absolute zero, a consequence of the Heisenberg uncertainty principle [2]. This energy affects bond lengths, vibrational frequencies, and reaction rates, particularly for reactions involving light atoms such as hydrogen. It is the origin of primary kinetic isotope effects, which in the simplest semiclassical model (stretching ZPE fully lost at the transition state) take the form k_H/k_D ≈ exp[(E_ZPE,H − E_ZPE,D)/RT], with molar zero-point energies; because E_ZPE,H > E_ZPE,D, this gives k_H/k_D > 1 [2]. Quantum tunneling, with probability P ∝ exp(−2κa), allows particles to penetrate classically insurmountable energy barriers, significantly accelerating certain reactions, especially proton transfers [2].
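A back-of-the-envelope version of the zero-point-energy argument for kinetic isotope effects is sketched below, using k_H/k_D = exp[(E_ZPE,H − E_ZPE,D)/(k_BT)] with per-molecule energies (equivalent to the molar form with RT). Assumptions, all illustrative: the C–H/C–D stretch is harmonic with frequency proportional to 1/√μ, its ZPE is fully lost at the transition state, ν̃(C–H) = 2900 cm⁻¹ is a representative input, and tunneling is ignored.

```python
import math

H = 6.62607015e-34      # Planck constant, J s (exact SI value)
C = 2.99792458e10       # speed of light in cm/s, so wavenumbers stay in cm^-1
KB = 1.380649e-23       # Boltzmann constant, J/K (exact SI value)

def reduced_mass(m1: float, m2: float) -> float:
    return m1 * m2 / (m1 + m2)

def zpe_joule(wavenumber_cm: float) -> float:
    """Harmonic zero-point energy E_ZPE = (1/2) h c nu_tilde, per molecule."""
    return 0.5 * H * C * wavenumber_cm

def semiclassical_kie(nu_ch_cm: float, temperature: float = 298.15) -> float:
    """k_H/k_D assuming the stretching ZPE is lost at the transition state."""
    mu_ch = reduced_mass(12.000, 1.00783)   # masses in u; only the ratio matters
    mu_cd = reduced_mass(12.000, 2.01410)
    nu_cd_cm = nu_ch_cm * math.sqrt(mu_ch / mu_cd)   # harmonic frequency ~ 1/sqrt(mu)
    delta_zpe = zpe_joule(nu_ch_cm) - zpe_joule(nu_cd_cm)
    return math.exp(delta_zpe / (KB * temperature))

kie = semiclassical_kie(2900.0)
print(f"Estimated k_H/k_D at 298 K: {kie:.1f}")
```

The estimate lands in the range of roughly 6 to 7 often quoted as the semiclassical ceiling for primary C–H/C–D isotope effects; observed values well above this are commonly taken as a sign of tunneling.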

High-Accuracy Electronic Structure Methods

Coupled-Cluster Theory and Neural Network Enhancement

Coupled-cluster theory, particularly CCSD(T), represents the "gold standard" of quantum chemistry, providing highly accurate results that closely match experimental data [3]. Traditional implementations, however, face severe computational scaling limitations—doubling the number of electrons increases computation cost 100-fold—restricting applications to small molecules of approximately 10 atoms [3]. Recent breakthroughs have addressed this limitation through innovative machine learning approaches.
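The cost argument can be made concrete with idealized power laws. This sketch assumes cost ∝ N^p with p = 7 for CCSD(T) and, for comparison, p = 3 as a commonly quoted figure for conventional Kohn-Sham DFT; prefactors, which matter a great deal in practice, are ignored. Under the N⁷ law, doubling the system size multiplies the cost by 2⁷ = 128, consistent with the roughly 100-fold figure cited in the text.

```python
def relative_cost(size_ratio: float, exponent: int) -> float:
    """Cost multiplier when the system grows by size_ratio under cost ~ N**exponent."""
    return size_ratio ** exponent

# Idealized scaling exponents (assumptions for illustration only).
SCALING = {"DFT (assumed ~N^3)": 3, "CCSD(T) (~N^7)": 7}

for method, p in SCALING.items():
    for ratio in (2, 10):
        print(f"{method}: {ratio}x larger system -> {relative_cost(ratio, p):,.0f}x cost")
```

The tenfold case (10⁷ versus 10³) shows why conventional CCSD(T) stalls at tens of atoms while DFT reaches hundreds.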

The Multi-task Electronic Hamiltonian network (MEHnet) represents a significant advance: an E(3)-equivariant graph neural network in which nodes represent atoms and edges represent the chemical bonds between them [3]. This physics-informed network is trained on CCSD(T) calculations and can then reproduce their results at a small fraction of the computational cost, while extracting multiple electronic properties from a single model [3]. Its developers report that, although it is trained on small molecules, it can be applied with CCSD(T)-level accuracy to systems containing thousands of atoms, far beyond the reach of conventional CCSD(T) [3].
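MEHnet's own code is not reproduced here. As a hedged illustration of the kind of input representation described above, the sketch turns Cartesian coordinates into a graph whose nodes are atoms and whose edges carry interatomic distances. Two assumptions: bonds are approximated by a simple distance cutoff (1.2 Å, an arbitrary choice suited to the water example), and only invariant distance features are built, whereas an E(3)-equivariant network would also propagate direction-dependent (vector or tensor) features. The helper names are hypothetical.

```python
import math

Coord = tuple[float, float, float]

def build_graph(symbols: list[str], coords: list[Coord], cutoff: float = 1.2):
    """Return (nodes, edges); edges are (i, j, distance_angstrom) for pairs within cutoff."""
    nodes = list(enumerate(symbols))
    edges = []
    for i in range(len(coords)):
        for j in range(i + 1, len(coords)):
            d = math.dist(coords[i], coords[j])
            if d < cutoff:                       # crude stand-in for a bond
                edges.append((i, j, d))
    return nodes, edges

def rotate_z_and_shift(coords: list[Coord], theta: float, shift: Coord) -> list[Coord]:
    """Apply a rotation about z followed by a translation (an E(3) operation)."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + shift[0], s * x + c * y + shift[1], z + shift[2])
            for x, y, z in coords]

# Approximate water geometry in angstrom (illustrative values).
symbols = ["O", "H", "H"]
water = [(0.0, 0.0, 0.0), (0.7572, 0.5865, 0.0), (-0.7572, 0.5865, 0.0)]

_, edges = build_graph(symbols, water)
_, edges_moved = build_graph(symbols, rotate_z_and_shift(water, 0.7, (1.0, -2.0, 3.0)))
print(edges)
print(edges_moved)
```

Both printouts contain the same two O–H edges with the same lengths, which is why interatomic distances are a natural starting point for models that must respect rotational and translational symmetry.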

Table 2: Computational Quantum Chemistry Methods Comparison

| Method | Theoretical Basis | Accuracy Level | Computational Scaling | Typical System Size |
|---|---|---|---|---|
| Density Functional Theory (DFT) | Electron density distribution | Moderate | Favorable | Hundreds of atoms |
| Coupled-Cluster CCSD(T) | Electron correlation via cluster operators | High (Gold Standard) | Very expensive (~N⁷) | Tens of atoms (traditional) |
| MEHnet (CCSD(T)-NN) | CCSD(T) + Equivariant Graph Neural Network | High (CCSD(T)-level) | Favorable after training | Thousands of atoms |
| pUNN (Hybrid Quantum-Neural) | pUCCD quantum circuit + Neural network | Near-chemical accuracy | Moderate (O(N³) for NN) | Medium-sized molecules |

Key properties calculable with these advanced methods include dipole and quadrupole moments, electronic polarizability, optical excitation gaps (energy needed to promote electrons from ground to excited states), infrared absorption spectra related to molecular vibrations, and properties of both ground and excited states [3]. When tested on hydrocarbon molecules, the MEHnet model outperformed DFT counterparts and closely matched experimental results from published literature [3].

Hybrid Quantum-Neural Wavefunction Protocol

The pUNN (paired unitary coupled-cluster with neural networks) algorithm represents a cutting-edge hybrid approach that combines quantum circuits with neural networks to represent molecular wavefunctions [4]. This method employs a linear-depth paired Unitary Coupled-Cluster with double excitations (pUCCD) circuit to learn molecular wavefunctions in the seniority-zero subspace, complemented by a neural network that accounts for contributions from unpaired configurations [4].

Experimental Protocol: pUNN Implementation

- Circuit Initialization: Prepare the pUCCD ansatz state |ψ⟩ using a parameterized quantum circuit on N qubits, representing the seniority-zero subspace where electrons are paired.

- Hilbert Space Expansion: Add N ancilla qubits and apply an entanglement circuit Ê consisting of N parallel CNOT gates to create correlations between original and ancilla qubits, producing the expanded state |Φ⟩ = Ê(|ψ⟩ ⊗ |0⟩).

- Perturbation Application: Apply a low-depth perturbation circuit to the ancilla qubits using single-qubit rotation gates (R_y with angle 0.2) to divert the state from the seniority-zero subspace.

- Neural Network Processing: Process the combined quantum state through a neural network with binary input representation of bitstrings |k⟩ ⊗ |j⟩, L dense layers with ReLU activation, and hidden layer size of 2KN (typically K=2).

- Particle Number Conservation: Apply a mask m(k,j) = δ(N_α(k) + N_α(j), N_α) δ(N_β(k) + N_β(j), N_β), where N_α and N_β are the numbers of α- and β-spin electrons, to eliminate configurations that do not conserve particle number.

- Measurement and Energy Calculation: Compute expectation values ⟨Ψ|Ĥ|Ψ⟩ and ⟨Ψ|Ψ⟩ using an efficient measurement protocol that avoids quantum state tomography, then calculate energy as E = ⟨Ψ|Ĥ|Ψ⟩/⟨Ψ|Ψ⟩.
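Two pieces of the protocol above are simple enough to sketch classically: the particle-number mask and the final Rayleigh quotient E = ⟨Ψ|Ĥ|Ψ⟩/⟨Ψ|Ψ⟩ for an unnormalized state. This is not the pUNN implementation. It assumes a hypothetical bit layout in which the first half of each bitstring holds α-spin occupations and the second half β-spin occupations, and it uses a small, made-up Hermitian matrix in place of a molecular Hamiltonian.

```python
from itertools import product

def spin_counts(bits: tuple[int, ...]) -> tuple[int, int]:
    """Hypothetical layout: first half of the bits = alpha, second half = beta."""
    half = len(bits) // 2
    return sum(bits[:half]), sum(bits[half:])

def particle_mask(k, j, n_alpha: int, n_beta: int) -> int:
    """m(k,j) = delta(N_a(k)+N_a(j), N_a) * delta(N_b(k)+N_b(j), N_b)."""
    ka, kb = spin_counts(k)
    ja, jb = spin_counts(j)
    return int(ka + ja == n_alpha and kb + jb == n_beta)

def rayleigh_quotient(h, psi) -> float:
    """E = <psi|H|psi> / <psi|psi> for an unnormalized amplitude vector psi."""
    n = len(psi)
    num = sum(psi[a].conjugate() * h[a][b] * psi[b] for a in range(n) for b in range(n))
    den = sum(abs(x) ** 2 for x in psi)
    return (num / den).real

# Enumerate all (k, j) pairs for 2 original + 2 ancilla qubits; keep the allowed ones.
configs = [(k, j) for k in product((0, 1), repeat=2) for j in product((0, 1), repeat=2)]
allowed = [(k, j) for k, j in configs if particle_mask(k, j, n_alpha=1, n_beta=1)]
print(f"{len(allowed)} of {len(configs)} configurations conserve N_alpha = N_beta = 1")

# Toy 2x2 Hermitian "Hamiltonian" and an unnormalized trial state (made-up numbers).
h_toy = [[1.0, 0.0], [0.0, 3.0]]
print(rayleigh_quotient(h_toy, [1.0, 1.0]))  # equal weights: average of 1 and 3
```

Dividing by ⟨Ψ|Ψ⟩ is what lets the neural network output unnormalized amplitudes; the mask simply zeroes the amplitudes of forbidden configurations before either expectation value is formed.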

This hybrid approach retains the low qubit count and shallow circuit depth of pUCCD while achieving accuracy comparable to high-level methods like UCCSD and CCSD(T) [4]. The method has demonstrated particular effectiveness for challenging multi-reference systems like the isomerization reaction of cyclobutadiene and maintains high accuracy and noise resilience on superconducting quantum processors [4].

Quantum Error Correction for Chemical Computation

Quantum error correction is essential for maintaining computational accuracy as quantum chemistry simulations move beyond noisy intermediate-scale quantum (NISQ) devices toward fault-tolerant hardware. The color code is a significant alternative to the more established surface code: it supports more efficient logical operations, at the price of more complex stabilizer measurements and decoding techniques [5] [6].

Experimental Protocol: Color Code Implementation

- Qubit Organization: Arrange physical qubits in a trivalent (three-way) lattice structure where each vertex connects to three differently colored regions (red, green, blue).

- Stabilizer Measurement: Implement higher-weight stabilizer measurements across the color code lattice to detect errors, requiring optimized circuits on superconducting processors.

- Error Decoding: Apply advanced decoding algorithms to interpret stabilizer measurement outcomes and identify physical qubit errors.

- Logical Operation Execution: Perform fault-tolerant logical operations including:

  - Transversal Clifford gates (applying operations to each physical qubit separately)

  - Magic state injection with post-selection

  - Lattice surgery for multi-qubit operations

- Performance Validation: Use logical randomized benchmarking to assess logical gate errors and teleport logical states between color codes to verify operation fidelity.

Recent implementations scaling the code distance from three to five have demonstrated a 1.56-fold reduction in logical error rates, with transversal Clifford gates adding only 0.0027 error per operation [5] [6]. Magic state injection, a critical process for universal quantum computation, has achieved fidelities exceeding 99% with 75% data retention rates, while lattice surgery techniques have enabled logical state teleportation with fidelities between 86.5% and 90.7% [5] [6]. These error correction advances provide the foundation for increasingly accurate quantum computational chemistry on emerging hardware platforms.
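The 1.56-fold figure is an error-suppression factor, Λ = ε_d/ε_(d+2), the ratio of logical error rates at consecutive code distances. The sketch below shows how such a factor would compound if it stayed constant as distance grows, which is an idealization and not a result reported in the cited work; the starting error rate at d = 3 is a hypothetical placeholder.

```python
def projected_logical_error(eps_d3: float, lam: float, distance: int) -> float:
    """Logical error per cycle at odd distance d, assuming a constant suppression factor lam."""
    if distance < 3 or distance % 2 == 0:
        raise ValueError("distance must be an odd integer >= 3")
    steps = (distance - 3) // 2          # each step increases d by 2
    return eps_d3 / lam ** steps

EPS_D3 = 1e-2   # hypothetical logical error rate at d = 3
LAMBDA = 1.56   # suppression factor quoted for the d = 3 -> 5 color code

for d in (3, 5, 7, 9, 11):
    print(d, f"{projected_logical_error(EPS_D3, LAMBDA, d):.2e}")
```

With Λ only modestly above 1, each added distance step buys little, which is why improving physical qubit quality (and hence Λ) matters as much as scaling up the code.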

The Scientist's Toolkit: Research Reagents and Computational Materials

Table 3: Essential Research Reagents and Computational Materials for Quantum Chemistry

| Tool/Reagent | Function/Application | Specifications/Protocol Notes |
|---|---|---|
| MEHnet Architecture | Multi-property prediction with CCSD(T) accuracy | E(3)-equivariant graph neural network; requires initial CCSD(T) training data |
| pUNN Framework | Hybrid quantum-neural wavefunction representation | Combines pUCCD quantum circuit with classical neural network (K=2, L=N-3) |
| Color Code QEC | Fault-tolerant quantum computation | Trivalent lattice structure; requires high-weight stabilizer measurements |
| Logical Randomized Benchmarking | Validation of logical gate performance | Applies random gate sequences to measure average error rates |
| Magic State Injection | Enables non-Clifford gates for universality | Requires post-selection; typical 75% data retention rate for 99% fidelity |
| Lattice Surgery | Fault-tolerant multi-qubit operations | Enables logical state teleportation between code patches |
| Variational Quantum Eigensolver (VQE) | Near-term quantum computational chemistry | Parameterized quantum circuits; balance needed between depth and accuracy |

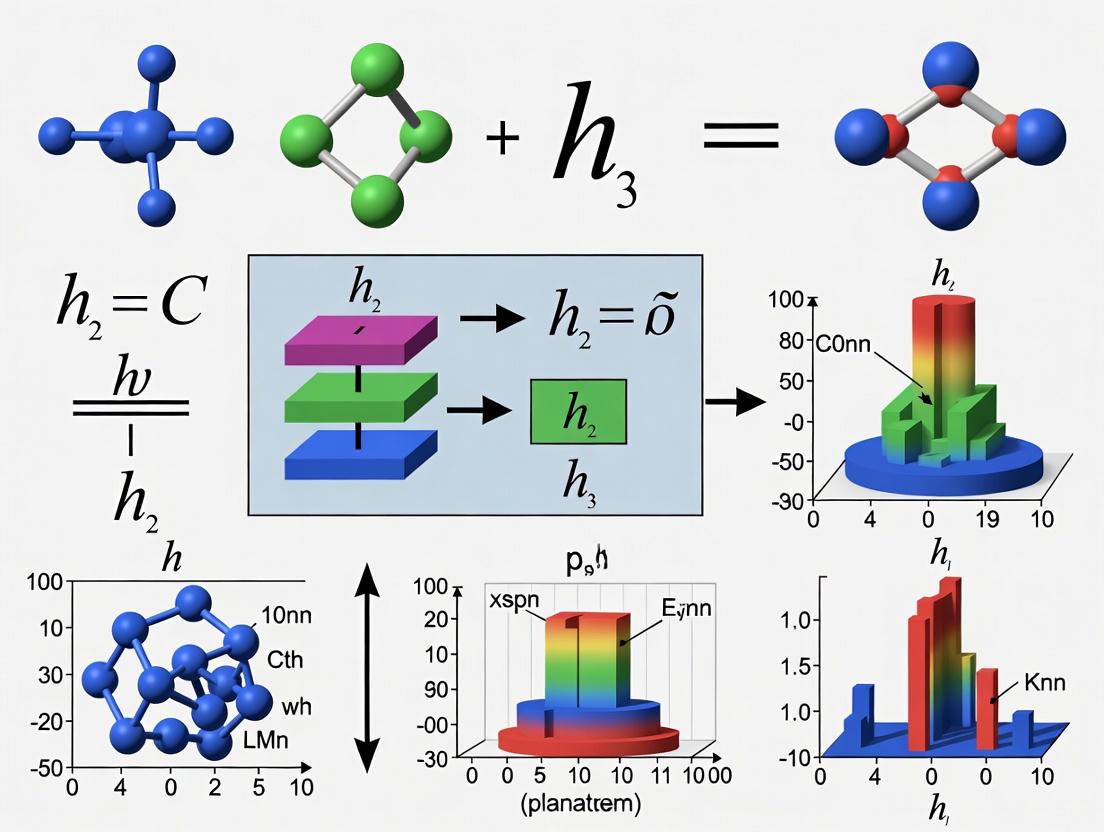

Workflow and System Architecture Visualization

Diagram 1: Quantum Chemistry Computational Evolution

Diagram 2: Color Code Quantum Error Correction Architecture

A century after the foundational developments in quantum mechanics, quantum chemistry stands at the threshold of a new era defined by the integration of classical machine learning, quantum computing, and advanced error correction. The work that began with Planck's quanta has evolved into tools such as the MEHnet architecture, reported to reach CCSD(T)-level accuracy for systems of thousands of atoms; hybrid quantum-neural wavefunction methods that maintain accuracy on noisy quantum hardware; and color code error correction that enables fault-tolerant logical operations [3] [4] [5]. Together, these advances let researchers explore chemical spaces and molecular interactions with unprecedented precision.

Looking forward, the ambition to "cover the whole periodic table with CCSD(T)-level accuracy at lower computational cost than DFT" represents a guiding vision for the next decade of quantum chemistry development [3]. Realizing this goal will require continued advancement across multiple domains: improving physical qubit performance to support more complex quantum simulations, developing more efficient decoding algorithms for real-time error correction, and creating hybrid approaches that leverage the complementary strengths of different computational strategies [6]. As these technical challenges are addressed, quantum chemistry promises to accelerate the discovery of novel pharmaceuticals, advanced materials, and efficient energy solutions, ultimately demonstrating the profound practical impact of a century of quantum theoretical development.

Planck's constant (h), a fundamental constant of nature, is best known from the Planck-Einstein relation, E = hf, which states that the energy of a photon is proportional to its frequency [7] [8]. Its role as the "quantum of action", however, extends far beyond this equation, forming the foundation of quantum theory and its application in modern computational chemistry and drug discovery [2]. Action is a physical quantity with dimensions of energy × time, the same dimensions as h [8]. In the old quantum theory of Bohr and Sommerfeld, the action integral over a closed orbit, ∮p dq, was restricted to integer multiples of h; more generally, h sets the scale of action at which quantum effects dominate and classical mechanics breaks down.

In 2025, declared the International Year of Quantum Science and Technology, we mark a century since the development of the formal mathematical frameworks of quantum mechanics [1]. This year has already seen revolutionary breakthroughs, including the discovery of fractional excitons and quantum simulations achieving chemical accuracy with 0.15 milli-Hartree precision, surpassing classical computational methods [2]. These advances underscore the growing importance of a deep understanding of Planck's constant for researchers pushing the boundaries of molecular design and pharmaceutical development.

Fundamental Principles: The Nature of Planck's Constant

Historical Context and Definition

Planck's constant was first introduced by Max Planck in 1900 as a theoretical postulate to resolve the "ultraviolet catastrophe" in blackbody radiation theory [7] [9] [10]. Classical physics predicted that a blackbody would emit infinite energy at short wavelengths, a prediction clearly at odds with experimental observations [9]. Planck's revolutionary solution was to postulate that electromagnetic energy is emitted and absorbed not continuously, but in discrete packets of energy called "quanta" [9] [10]. The energy of each quantum is given by E = hf, where f is the frequency of the radiation and h is the fundamental constant that now bears his name [7] [9].
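The ultraviolet catastrophe is easy to see numerically. The sketch compares Planck's law for spectral radiance, B_λ = (2hc²/λ⁵)/(exp(hc/λk_BT) − 1), with the classical Rayleigh-Jeans result, 2ck_BT/λ⁴, at 5000 K (a temperature chosen only for illustration). The two agree at long wavelengths, where hc/λ ≪ k_BT, and diverge at short ones, where the classical expression grows without bound.

```python
import math

H = 6.62607015e-34   # Planck constant, J s
C = 299792458.0      # speed of light, m/s
KB = 1.380649e-23    # Boltzmann constant, J/K

def planck_radiance(wavelength_m: float, temperature_k: float) -> float:
    """Spectral radiance B_lambda (W sr^-1 m^-3) from Planck's law."""
    x = H * C / (wavelength_m * KB * temperature_k)
    return 2.0 * H * C**2 / wavelength_m**5 / math.expm1(x)   # expm1 = exp(x) - 1, accurate for small x

def rayleigh_jeans_radiance(wavelength_m: float, temperature_k: float) -> float:
    """Classical Rayleigh-Jeans radiance, which diverges as wavelength -> 0."""
    return 2.0 * C * KB * temperature_k / wavelength_m**4

T = 5000.0
for lam_nm in (100, 500, 10_000, 100_000):
    lam = lam_nm * 1e-9
    ratio = rayleigh_jeans_radiance(lam, T) / planck_radiance(lam, T)
    print(f"{lam_nm:>7} nm: classical/quantum = {ratio:.3g}")
```

The ratio is close to 1 in the far infrared and enormous in the ultraviolet; the only ingredient that tames the short-wavelength behavior is the factor containing h.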

Since the 2019 revision of the International System of Units (SI), h has had an exact defined value, 6.62607015 × 10⁻³⁴ J·s, which now serves as the basis for the definition of the kilogram and has profound implications for metrology [7] [10].

Table 1: Fundamental Values of Planck's Constant

| Constant | Symbol | Value | Units | Significance |
|---|---|---|---|---|
| Planck Constant | h | 6.62607015 × 10⁻³⁴ | J·s | Elementary quantum of action [7] [10] |
| Reduced Planck Constant | ħ (h-bar) | 1.054571817... × 10⁻³⁴ | J·s | ħ = h/2π; quantization of angular momentum [7] |

The Reduced Planck Constant and the Quantization of Angular Momentum

A closely related quantity, the reduced Planck constant (ħ = h/2π), appears more naturally in most mathematical formulations of quantum mechanics [7]. While h governs the relationship between energy and frequency, ħ is the natural unit of angular momentum [11]. For an electron bound to an atomic nucleus, for instance, the projection of its orbital angular momentum onto any chosen axis can take only integer multiples of ħ (m_l ħ) [8]. This quantization is not just a theoretical concept; it has observable consequences, explaining the stability of atoms and the discrete nature of atomic spectra [11].
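For an orbital angular momentum quantum number l, quantum mechanics gives a magnitude |L| = √(l(l+1)) ħ and allowed projections L_z = m_l ħ with m_l = −l, …, +l. The short sketch below tabulates these for s, p, and d orbitals; it covers orbital angular momentum only (electron spin, with half-integer projections, is not included).

```python
import math

HBAR = 1.054571817e-34  # reduced Planck constant, J s

def orbital_angular_momentum(l: int):
    """Return (|L|, [L_z values]) in J s for orbital quantum number l >= 0."""
    if l < 0:
        raise ValueError("l must be a non-negative integer")
    magnitude = math.sqrt(l * (l + 1)) * HBAR
    projections = [m * HBAR for m in range(-l, l + 1)]   # m_l = -l, ..., +l
    return magnitude, projections

for l, label in [(0, "s"), (1, "p"), (2, "d")]:
    mag, lz = orbital_angular_momentum(l)
    print(f"{label}: |L| = {mag / HBAR:.3f} hbar, L_z in hbar = {[round(v / HBAR) for v in lz]}")
```

Note that the magnitude is never an integer multiple of ħ for l > 0; only the projections are, which is the precise sense in which angular momentum is "quantized in units of ħ".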

Planck's Constant in Quantum Chemistry Formalism

The Schrödinger Equation and the Hamiltonian

The time-independent Schrödinger equation, ĤΨ = EΨ, is the cornerstone of quantum chemistry [2]. Here, Ĥ is the Hamiltonian operator, which represents the total energy of the system, Ψ is the wave function containing all information about the system's state, and E is the energy eigenvalue [2]. The reduced Planck constant (ħ) appears explicitly within the Hamiltonian operator. For a single particle, the Hamiltonian is given by:

Ĥ = - ( ħ² / 2m ) ∇² + V(r)

In this formulation, the first term represents the kinetic energy operator, where m is the particle's mass and ∇² is the Laplacian operator. The presence of ħ in the kinetic energy term is a direct manifestation of the wave-like nature of matter and is essential for accurate predictions of molecular properties [2].
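To see how ħ in the kinetic-energy term sets molecular energy scales, consider the simplest case with an analytic solution: a particle in a one-dimensional box of length L with V = 0 inside and infinitely high walls. There the Hamiltonian reduces to −(ħ²/2m)d²/dx², giving E_n = n²π²ħ²/(2mL²). The sketch evaluates this for an electron in a 1 nm box, a crude model sometimes used for π electrons in conjugated chains; the box length is an illustrative assumption.

```python
import math

HBAR = 1.054571817e-34       # reduced Planck constant, J s
M_E = 9.1093837015e-31       # electron mass, kg
EV = 1.602176634e-19         # J per eV (exact)

def box_energy(n: int, length_m: float, mass_kg: float = M_E) -> float:
    """E_n = n^2 pi^2 hbar^2 / (2 m L^2) for an infinite 1D square well, in joules."""
    return (n * math.pi * HBAR) ** 2 / (2.0 * mass_kg * length_m ** 2)

L = 1e-9  # 1 nm box (illustrative)
e1, e2 = box_energy(1, L), box_energy(2, L)
print(f"E1 = {e1 / EV:.3f} eV, E2 = {e2 / EV:.3f} eV, E2 - E1 = {(e2 - e1) / EV:.3f} eV")
```

Because E_n scales as ħ²/(mL²), lengthening the box (for example, a longer conjugated chain) lowers the level spacing and shifts absorption to longer wavelengths.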

The Heisenberg Uncertainty Principle

Planck's constant sets a fundamental limit on what is knowable at the quantum scale, formalized by Werner Heisenberg's uncertainty principle [7] [1]. For position (x) and momentum (p), the principle states:

Δx Δp ≥ ħ/2

This inequality means that it is impossible to simultaneously know both the exact position and exact momentum of a particle [7]. The more precisely one is determined, the less precisely the other can be known. This is not a limitation of our measuring instruments but a fundamental property of the universe, with ħ setting the scale of this indeterminacy [11]. This principle has direct implications for molecular simulations, as it defines the inherent "fuzziness" of electron distributions and atomic vibrations [2].
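A quick order-of-magnitude use of the inequality: the minimum momentum spread for a particle localized to Δx is ħ/(2Δx), so the corresponding velocity spread scales inversely with mass. The sketch compares an electron and a proton each localized to 1 Å, roughly a bond length (an illustrative choice).

```python
HBAR = 1.054571817e-34     # reduced Planck constant, J s
M_E = 9.1093837015e-31     # electron mass, kg
M_P = 1.67262192369e-27    # proton mass, kg

def min_velocity_spread(delta_x_m: float, mass_kg: float) -> float:
    """Smallest velocity uncertainty allowed by dx * dp >= hbar/2."""
    delta_p = HBAR / (2.0 * delta_x_m)
    return delta_p / mass_kg

dx = 1e-10  # 1 angstrom
dv_e = min_velocity_spread(dx, M_E)
dv_p = min_velocity_spread(dx, M_P)
print(f"electron: {dv_e:.2e} m/s, proton: {dv_p:.2e} m/s")
```

The electron's irreducible velocity spread is about 1,800 times larger than the proton's, one way of seeing why electrons must be treated quantum mechanically while nuclei are often approximated classically.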

Figure 1: The conceptual relationship between Planck's constant and core quantum mechanical principles.

Key Applications in Chemical Research

Spectroscopy and Energy Transitions

Spectroscopy is a direct experimental window into the quantized energy levels of atoms and molecules, and Planck's constant is the key that unlocks this window [2]. The energy difference (ΔE) between two quantum states is directly related to the frequency (ν) of light absorbed or emitted during a transition:

ΔE = hν

This relationship allows researchers to use spectroscopic data to determine energy level spacings in molecules, a crucial parameter for understanding chemical reactivity and stability [2]. Different spectroscopic techniques probe different types of energy levels, all governed by this fundamental relationship.
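Converting between a measured wavelength or wavenumber and an energy is the most common everyday use of ΔE = hν (with ν = c/λ). The hypothetical helpers below report the photon energy in joules, electronvolts, and kJ/mol; the 280 nm (aromatic-residue absorbance) and 1700 cm⁻¹ (carbonyl stretch region) inputs are illustrative.

```python
H = 6.62607015e-34      # Planck constant, J s (exact)
C = 299792458.0         # speed of light, m/s (exact)
EV = 1.602176634e-19    # J per eV (exact)
NA = 6.02214076e23      # Avogadro constant, 1/mol (exact)

def photon_energy_from_wavelength(wavelength_nm: float) -> dict[str, float]:
    """Delta E = h c / lambda, reported per photon and per mole."""
    e_joule = H * C / (wavelength_nm * 1e-9)
    return {"J": e_joule, "eV": e_joule / EV, "kJ/mol": e_joule * NA / 1000.0}

def photon_energy_from_wavenumber(wavenumber_cm: float) -> dict[str, float]:
    """Delta E = h c nu_tilde; a wavenumber in cm^-1 corresponds to a wavelength of 1e7/nu_tilde nm."""
    return photon_energy_from_wavelength(1e7 / wavenumber_cm)

print(photon_energy_from_wavelength(280.0))    # UV absorbance of aromatic residues
print(photon_energy_from_wavenumber(1700.0))   # carbonyl stretch region
```

The roughly twentyfold gap between the two results (a few eV versus a few tenths of an eV) is the energy-scale separation between electronic and vibrational spectroscopy summarized in the table below.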

Table 2: Planck's Constant in Spectroscopic Transitions

| Spectroscopy Type | Energy Transition | Governing Equation with h | Application in Drug Development |
|---|---|---|---|
| UV-Vis | Electronic | ΔE_elec = hc/λ | Probing chromophores, protein folding, ligand binding [2] |
| Infrared (IR) | Vibrational | E_v = ħω(v + 1/2) | Identifying functional groups, monitoring reaction progress [2] |
| Rotational (Microwave) | Rotational | E_J = B J(J+1), where B = ħ²/(2I) and I is the moment of inertia | Determining molecular structure and bond lengths [2] |
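The rotational row can be made quantitative. For a rigid diatomic, B = ħ²/(2I) with moment of inertia I = μr², and the J → J+1 absorption lines fall at 2B(J+1). The sketch computes B in the customary cm⁻¹ from a bond length; inverting the same relation is how bond lengths are extracted from microwave spectra. The CO bond length of 1.128 Å is an approximate value used for illustration, and centrifugal distortion and vibration-rotation coupling are ignored.

```python
HBAR = 1.054571817e-34     # reduced Planck constant, J s
H = 6.62607015e-34         # Planck constant, J s
C_CM = 2.99792458e10       # speed of light, cm/s
AMU = 1.66053906660e-27    # kg per atomic mass unit

def rotational_constant_cm(m1_amu: float, m2_amu: float, bond_length_angstrom: float) -> float:
    """Rigid-rotor B = hbar^2 / (2 I), converted from joules to cm^-1 by dividing by h c."""
    mu = m1_amu * m2_amu / (m1_amu + m2_amu) * AMU
    inertia = mu * (bond_length_angstrom * 1e-10) ** 2
    b_joule = HBAR ** 2 / (2.0 * inertia)
    return b_joule / (H * C_CM)

def line_positions_cm(b_cm: float, j_max: int) -> list[float]:
    """E_J = B J(J+1), so the J -> J+1 absorption lines lie at 2B(J+1)."""
    return [2.0 * b_cm * (j + 1) for j in range(j_max + 1)]

b_co = rotational_constant_cm(12.000, 15.995, 1.128)   # 12C16O
print(f"B(CO) ~ {b_co:.3f} cm^-1; first lines: {line_positions_cm(b_co, 3)}")
```

The result, about 1.93 cm⁻¹, is close to the tabulated rotational constant of carbon monoxide, and the evenly spaced lines (spacing 2B) are the signature that microwave spectroscopists fit.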

Zero-Point Energy and Quantum Tunneling

Even at absolute zero, quantum systems possess a minimum, irreducible energy known as zero-point energy (ZPE) [2]. For a quantum harmonic oscillator, which models molecular vibrations, this energy is E_ZPE = (1/2)ħω [2]. This phenomenon is a direct consequence of the Heisenberg uncertainty principle: a system with exactly zero energy would have a perfectly defined position and momentum, which is forbidden [2].

ZPE has significant chemical consequences, particularly for reaction rates involving light atoms such as hydrogen. It is the origin of kinetic isotope effects: because deuterium is heavier, a C–D bond vibrates at a lower frequency and has a lower ZPE than a C–H bond, so more energy is needed to break it. The resulting difference in reaction rates can be used to probe reaction mechanisms [2].

Closely related is quantum tunneling, in which a particle traverses an energy barrier that would be insurmountable according to classical physics [2] [1]. For a thick barrier, the tunneling probability is approximately proportional to exp(−2κa), where a is the barrier width and κ = √(2m(V₀ − E))/ħ depends on the particle's mass m and on how far its energy E lies below the barrier height V₀ [2]. This effect, which arises from the wave-like nature of particles described by the Schrödinger equation, is significant in proton-transfer reactions and enzymatic catalysis, explaining why certain biochemical reactions proceed at unexpectedly high rates [2] [1].
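Because κ = √(2m(V₀ − E))/ħ grows with the square root of the mass, isotope substitution changes tunneling probabilities dramatically. The sketch evaluates the approximate expression P ≈ exp(−2κa), valid only for thick, opaque barriers (κa ≫ 1), for a proton and a deuteron facing a hypothetical barrier 0.5 eV above the particle's energy and 0.5 Å wide.

```python
import math

HBAR = 1.054571817e-34        # reduced Planck constant, J s
EV = 1.602176634e-19          # J per eV
M_P = 1.67262192369e-27       # proton mass, kg
M_D = 3.3435837724e-27        # deuteron mass, kg

def tunneling_probability(mass_kg: float, barrier_ev: float, width_m: float) -> float:
    """Opaque-barrier estimate P ~ exp(-2 kappa a), kappa = sqrt(2 m (V0 - E)) / hbar.

    barrier_ev is the height of the barrier above the particle's energy, V0 - E.
    """
    kappa = math.sqrt(2.0 * mass_kg * barrier_ev * EV) / HBAR
    return math.exp(-2.0 * kappa * width_m)

p_h = tunneling_probability(M_P, 0.5, 0.5e-10)
p_d = tunneling_probability(M_D, 0.5, 0.5e-10)
print(f"P(H) = {p_h:.2e}, P(D) = {p_d:.2e}, ratio = {p_h / p_d:.0f}")
```

Under these assumed barrier parameters the proton tunnels several hundred times more readily than the deuteron, which is why anomalously large kinetic isotope effects are read as evidence of tunneling.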

Computational Chemistry and the Electronic Structure Problem

Solving the Schrödinger equation for multi-electron molecules is the central challenge of electronic structure theory. Planck's constant is embedded in the core of all modern computational methods used by pharmaceutical researchers.

- Density Functional Theory (DFT): Modern DFT calculations rely on the Kohn-Sham equations, which, like the Schrödinger equation, include ħ in the kinetic energy term. These calculations are workhorses for predicting molecular geometry, binding affinities, and electronic properties of drug candidates [2].

- Ab Initio Calculations: Hartree-Fock and post-Hartree-Fock methods solve the electronic Schrödinger equation at successively higher levels of approximation. Their accuracy in predicting interaction energies is crucial for rational drug design, and advances reported in 2025 include quantum computations achieving chemical accuracy (0.15 milli-Hartree precision) in these simulations [2].
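Energies from electronic-structure codes are usually reported in Hartree, the atomic unit of energy E_h = ħ²/(m_e a₀²), so ħ enters even the unit system. The sketch below recovers E_h from CODATA values of ħ, the electron mass m_e, and the Bohr radius a₀, then sets the 0.15 milli-Hartree figure alongside the conventional 1 kcal/mol definition of chemical accuracy.

```python
HBAR = 1.054571817e-34          # reduced Planck constant, J s
M_E = 9.1093837015e-31          # electron mass, kg
A0 = 5.29177210903e-11          # Bohr radius, m
NA = 6.02214076e23              # Avogadro constant, 1/mol
J_PER_KCAL = 4184.0             # thermochemical calorie

hartree_j = HBAR ** 2 / (M_E * A0 ** 2)          # atomic unit of energy
hartree_kcal_mol = hartree_j * NA / J_PER_KCAL

def mhartree_to_kcal_mol(mha: float) -> float:
    """Convert milli-Hartree to kcal/mol."""
    return mha * 1e-3 * hartree_kcal_mol

print(f"E_h = {hartree_j:.6e} J = {hartree_kcal_mol:.2f} kcal/mol")
print(f"0.15 mHa = {mhartree_to_kcal_mol(0.15):.3f} kcal/mol")
print(f"1 kcal/mol = {1e3 / hartree_kcal_mol:.3f} mHa")
```

Since 1 kcal/mol is about 1.6 mHa, a 0.15 mHa error is roughly a tenth of the usual chemical-accuracy threshold.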

Experimental Protocols and Metrology

The Kibble Balance and the SI Kilogram

In a landmark decision that took effect in 2019, the international scientific community redefined the kilogram based on a fixed, exact value of Planck's constant [10]. This redefinition moved the standard of mass from a physical artifact to an invariant of nature. The key instrument enabling this change is the Kibble balance (formerly known as the watt balance) [10].

Protocol: Principle of the Kibble Balance

- Balancing Mass with Electromagnetic Force: A test mass m is placed on a pan connected to a coil suspended in a magnetic field. The gravitational force (F = mg) is balanced by the electromagnetic Lorentz force produced by passing a current I through the coil. This force is F = BLI, where B is the magnetic flux density and L is the length of the coil wire.

- Weighing Mode: This establishes the equivalence mg = BLI.

- Moving the Coil: In a second, separate step, the coil is moved vertically through the same magnetic field at a known velocity v. This induces a voltage V across the coil given by V = BLv.

- Velocity Mode and Combination: The velocity-mode measurement determines the product BL = V/v. Eliminating the hard-to-measure BL between the two modes gives mgv = VI, so the mass can be expressed in terms of electrical measurements, the velocity, and the local gravitational acceleration.

- Link to Planck's Constant: Using quantum electrical standards, namely the Josephson effect (which relates voltage to frequency via the Josephson constant K_J = 2e/h, where e is the elementary charge) and the quantum Hall effect (which quantizes resistance in units of the von Klitzing constant R_K = h/e²), the electrical power VI can be expressed in terms of h and measured frequencies alone; the elementary charge cancels because K_J²R_K = 4/h. Thus, the Kibble balance measures mass in terms of Planck's constant [10].

This protocol demonstrates that even a macroscopic mass can be measured, and since 2019 is defined, in terms of h [10].
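The two-mode logic reduces to a few lines of arithmetic. In this sketch the readings (coil velocity, induced voltage, current) are hypothetical round numbers chosen to be mutually consistent for a 1 kg mass, not data from a real instrument, and standard gravity stands in for a measured local g. The final line checks the identity K_J²R_K = 4/h, which is why the product V·I, measured against Josephson and quantum Hall standards, depends on h but not on e.

```python
H = 6.62607015e-34          # Planck constant, J s (exact since 2019)
E_CHARGE = 1.602176634e-19  # elementary charge, C (exact since 2019)
G = 9.80665                 # standard gravity, m/s^2 (a real balance uses the measured local g)

def kibble_mass(voltage_v: float, current_a: float, g: float, velocity_m_s: float) -> float:
    """Combine weighing (m g = B L I) and velocity (V = B L v) modes: m = V I / (g v)."""
    return voltage_v * current_a / (g * velocity_m_s)

# Hypothetical, self-consistent readings for a coil with B*L = 500 T m.
BL = 500.0
v = 0.002                   # coil velocity in velocity mode, m/s
V = BL * v                  # induced voltage, V
I = 1.0 * G / BL            # current needed to hold 1 kg in weighing mode, A

mass = kibble_mass(V, I, G, v)
print(f"mass = {mass:.6f} kg")

K_J = 2 * E_CHARGE / H      # Josephson constant, Hz/V
R_K = H / E_CHARGE ** 2     # von Klitzing constant, ohm
print(f"K_J^2 * R_K * h = {K_J**2 * R_K * H:.12f}")   # the elementary charge cancels
```

The product BL never needs to be known independently; it only has to be the same in both modes, which is the key design idea of the instrument.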

Protocol for Demonstrating the Quantization of Angular Momentum

While direct measurement of h requires sophisticated metrology, its effects can be demonstrated through the quantization of angular momentum.

Indirect Experimental Verification via Atomic Spectra

- Objective: To observe the discrete nature of atomic energy levels, which in the Bohr model is a direct consequence of the quantization of angular momentum in units of ħ.

- Materials:

  - Hydrogen gas discharge tube

  - Power supply

  - Spectrometer or diffraction grating with a detector

  - Wavelength calibration source (e.g., mercury lamp)

- Procedure:

  - Energize the hydrogen lamp using the power supply.

  - Use the spectrometer to observe the emitted line spectrum.

  - Precisely measure the wavelengths of the distinct spectral lines in the visible range (e.g., the Balmer series).

  - Convert the measured wavelengths to energy using ΔE = hc/λ.

- Data Analysis:

  - The energies of the observed lines should match, to within the resolution of the spectrometer, the differences between the quantized energy levels of the hydrogen atom, E_n = −hcR_H/n². Here R_H = R_∞/(1 + m_e/m_p) is the Rydberg constant corrected for the finite mass of the proton, and R_∞ is the Rydberg constant for an infinitely heavy nucleus.

  - The success of this model, which derives from the quantization of angular momentum, is a powerful validation of the role of ħ in governing atomic structure [7] [2].
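The data-analysis step can be rehearsed before any measurement by predicting the Balmer lines. The sketch uses the Rydberg formula with the hydrogen-specific constant R_H = R_∞/(1 + m_e/m_p), which includes the finite-nuclear-mass correction, and converts each line to a photon energy with ΔE = hc/λ. The predicted values are vacuum wavelengths, which are slightly longer (by roughly 0.1 to 0.2 nm in the visible) than wavelengths measured in air; fine structure is ignored.

```python
H = 6.62607015e-34          # Planck constant, J s
C = 299792458.0             # speed of light, m/s
EV = 1.602176634e-19        # J per eV
R_INF = 10973731.568160     # Rydberg constant, 1/m
ME_OVER_MP = 1 / 1836.15267343

R_H = R_INF / (1 + ME_OVER_MP)   # reduced-mass-corrected Rydberg constant for hydrogen

def balmer_line(n_upper: int) -> tuple[float, float]:
    """Return (vacuum wavelength in nm, photon energy in eV) for the n_upper -> 2 transition."""
    if n_upper <= 2:
        raise ValueError("Balmer lines require n_upper >= 3")
    inv_lambda = R_H * (1 / 2**2 - 1 / n_upper**2)
    wavelength_m = 1 / inv_lambda
    return wavelength_m * 1e9, H * C / wavelength_m / EV

for n, name in [(3, "H-alpha"), (4, "H-beta"), (5, "H-gamma"), (6, "H-delta")]:
    lam_nm, e_ev = balmer_line(n)
    print(f"{name}: {lam_nm:.2f} nm, {e_ev:.3f} eV")
```

Comparing these predictions with the calibrated measurements, line by line, is the quantitative check described in the Data Analysis step.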

Figure 2: The operational workflow of a Kibble balance, which defines mass in terms of Planck's constant.

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for Quantum Chemistry Investigations

| Item / Reagent | Function / Role | Application Context |
|---|---|---|
| Kibble Balance | A precision instrument that measures mass by balancing gravitational force against electromagnetic force, directly linking mass to Planck's constant [10]. | Redefinition of the SI kilogram; fundamental metrology. |
| Ultra-Pure Silicon-28 Spheres | Used in the Avogadro project to count the number of atoms in a crystal lattice, providing an independent method to determine the Avogadro constant and Planck's constant [10]. | Fundamental constant determination; independent cross-check of the Kibble balance method. |
| Josephson Junction Arrays | Devices that exhibit the AC Josephson effect, where a voltage is related to a frequency via K_J = 2e/h. Used as a primary standard for voltage [10]. | Realizing electrical standards traceable to h; used in Kibble balance experiments. |
| Quantum Hall Resistors | Devices that exhibit the quantum Hall effect, where resistance is quantized in units of R_K = h/e². Used as a primary standard for resistance [10]. | Realizing electrical standards traceable to h; used in Kibble balance experiments. |
| Computational Chemistry Software (e.g., for DFT, Ab Initio) | Software that implements quantum mechanical equations (Schrödinger equation) containing ħ to compute molecular properties from first principles [2]. | Drug design; materials science; prediction of molecular properties and reaction pathways. |

Planck's constant, born from the problem of blackbody radiation, has evolved into a cornerstone of modern science and technology. Its role extends far beyond the simple E = hf relationship, underpinning the very framework of quantum chemistry. From defining the SI kilogram via the Kibble balance to enabling the prediction of drug-receptor interactions through advanced computational models, the "quantum of action" is deeply embedded in the tools and theories driving innovation in chemical research.

As we progress through the International Year of Quantum Science and Technology in 2025, the continued exploration of quantum phenomena like fractional excitons and the integration of AI with quantum calculations promise to further revolutionize the field [2]. For researchers in drug development and molecular sciences, a firm grasp of Planck's constant and its multifaceted applications is not merely academic—it is an essential component of the toolkit needed to design the next generation of therapeutics and materials.

The Schrödinger equation forms the foundational pillar of quantum mechanics, providing a wave-like description of particles that supersedes classical Newtonian mechanics at atomic and subatomic scales [12]. At the heart of this revolutionary equation lies Planck's constant (h, or its reduced form ħ = h/2π), a fundamental parameter of nature that quantizes the relationship between energy and frequency [13] [14]. This mathematical framework enables researchers to calculate probability distributions for particle positions and momenta rather than deterministic predictions, reflecting the inherent uncertainty in quantum systems [12].

In computational quantum chemistry, Planck's constant serves as the critical bridge between microscopic quantum phenomena and macroscopic observable properties. The constant appears intrinsically in the Hamiltonian operator (Ĥ), which governs the total energy of quantum systems, and dictates the spatial and temporal evolution of the wave function Ψ [15] [16]. For drug development professionals and research scientists, understanding where and how Planck's constant enters computational frameworks is essential for interpreting results from quantum chemical calculations, particularly when modeling molecular interactions, reaction mechanisms, and electronic properties of potential pharmaceutical compounds.

Mathematical Foundation: Planck's Constant in the Equation Framework

The Schrödinger equation exists in two primary forms, both intrinsically dependent on Planck's constant. The time-dependent Schrödinger equation governs the evolution of quantum systems over time:

Time-Dependent Formulation

iħ ∂Ψ(x,t)/∂t = ĤΨ(x,t) [15] [16]

where:

- i is the imaginary unit

- ħ = h/2π is the reduced Planck's constant

- Ψ(x,t) is the wave function of the system

- Ĥ is the Hamiltonian operator

For systems where potential energy does not depend explicitly on time, we utilize the time-independent Schrödinger equation to find stationary states:

Time-Independent Formulation

ĤΨ = EΨ [2] [16]

In this eigenvalue equation, E represents the energy eigenvalue corresponding to the stationary state described by wave function Ψ. The Hamiltonian operator Ĥ itself contains Planck's constant in its kinetic energy term:

Ĥ = -ħ²/2m ∇² + V(r) [2]

where the first term represents the kinetic energy operator with ∇² as the Laplacian operator, m is particle mass, and V(r) is the potential energy function.
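To make the role of ħ in the kinetic energy operator concrete, the sketch below discretizes Ĥ = -ħ²/2m ∇² on a one-dimensional grid for a particle in a box (V = 0 inside, hard walls) and diagonalizes it. It is a minimal illustration under stated assumptions: atomic units (ħ = m = 1), a three-point finite-difference Laplacian, and a box of unit length; the function name `box_eigenvalues` is hypothetical, not part of any library.

```python
import numpy as np


def box_eigenvalues(n_points: int = 500, length: float = 1.0, n_states: int = 3) -> np.ndarray:
    """Lowest eigenvalues of H = -(hbar^2 / 2m) d^2/dx^2 for a particle in a 1D box.

    Atomic units are assumed (hbar = m = 1). Only interior grid points are kept,
    which imposes psi = 0 at the walls x = 0 and x = length.
    """
    hbar, mass = 1.0, 1.0
    dx = length / (n_points + 1)  # spacing between interior grid points
    # Three-point central-difference approximation of d^2/dx^2
    laplacian = (
        np.diag(-2.0 * np.ones(n_points))
        + np.diag(np.ones(n_points - 1), 1)
        + np.diag(np.ones(n_points - 1), -1)
    ) / dx**2
    # hbar enters only through the kinetic-energy prefactor
    hamiltonian = -(hbar**2) / (2.0 * mass) * laplacian
    return np.linalg.eigvalsh(hamiltonian)[:n_states]


numeric = box_eigenvalues()
n = np.arange(1, 4)
analytic = (n * np.pi) ** 2 / 2.0  # E_n = n^2 pi^2 hbar^2 / (2 m L^2) with hbar = m = L = 1
print(numeric)
print(analytic)
```

Because every eigenvalue carries the prefactor ħ²/2m, the levels scale as ħ²; the analytic form n²π²ħ²/(2mL²) is the same as n²h²/(8mL²).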

Table 1: Physical Constants in the Schrödinger Equation

| Constant | Symbol | Value | Role in Schrödinger Equation |
|---|---|---|---|
| Planck's Constant | h | 6.62607015 × 10⁻³⁴ J·s (exact) | Fundamental quantum of action |
| Reduced Planck's Constant | ħ | 1.054571817... × 10⁻³⁴ J·s | Appears directly in the differential form |
| Electron Mass | mₑ | 9.1093837015 × 10⁻³¹ kg (CODATA 2018) | Mass in the kinetic energy term |

Computational Protocols: Numerical Implementation with Planck's Constant

Semiclassical Regime and Computational Challenges

In the semiclassical regime, where the scaled (dimensionless) Planck constant ε satisfies ε ≪ 1, the Schrödinger equation generates highly oscillatory solutions whose oscillations have wavelength O(ε) in both space and time [17]. Resolving these oscillations presents significant computational challenges that require specialized numerical methods. The Crank-Nicolson (CN) discretization scheme, combined with the Constraint Energy Minimization Generalized Multiscale Finite Element Method (CEM-GMsFEM), has emerged as an effective approach for handling these oscillations in systems with high-contrast potentials [17].

The convergence requirements for numerical solutions explicitly depend on Planck's constant through the relationships:

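- For ε ≤ δ: $H/\sqrt{\Lambda} = O(\varepsilon^{5/4})$ and $\Delta t = O(\varepsilon^{5/4})$

- For δ < ε: $H/\sqrt{\Lambda} = O(\varepsilon^{1/4}\delta)$ and $\Delta t = O(\delta^{2}/\varepsilon^{3/4})$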

where H represents the maximum diameter of coarse elements, Λ is the minimal eigenvalue associated with eigenvectors not included in the auxiliary space, Δt is the time step, and δ describes the multiscale structure of the potential [17].

Protocol: Crank-Nicolson CEM-GMsFEM Implementation

Objective: Solve the time-dependent Schrödinger equation for systems with high-contrast multiscale potentials.

Materials and Software Requirements:

- High-performance computing system with sufficient memory

- Scientific computing environment (Python, MATLAB, or C++)

- Mesh generation software

- Linear algebra solvers (e.g., LAPACK, PETSc)

Procedure:

Problem Setup:

- Define the computational domain Ω

- Specify the potential function V(r) with multiscale characteristics

- Set the initial wave function Ψ(r,0)

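- Determine the dimensionless semiclassical parameter ε = ħ/(L√(2mE₀)), i.e., ħ/√(2m) expressed in units of a characteristic length L and energy E₀ of the problem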

Spatial Discretization:

- Generate coarse mesh with maximum diameter H satisfying H/√Λ = O(ε⁵/⁴)

- Construct oversampling domains with size dependent on ε

- Solve local spectral problems to form auxiliary multiscale space

Basis Function Construction:

- Apply constraint energy minimization to obtain multiscale basis functions

- Ensure basis functions capture ε-scaled oscillations

- Form the finite-dimensional subspace Vₕ

Temporal Discretization:

- Set time step Δt according to convergence requirements

- Apply Crank-Nicolson scheme for time integration:

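$$\left(\mathbf{M} + \frac{i\Delta t}{2\hbar}\mathbf{H}\right)\Psi^{n+1} = \left(\mathbf{M} - \frac{i\Delta t}{2\hbar}\mathbf{H}\right)\Psi^{n}$$

where M is the mass (overlap) matrix of the multiscale basis, which reduces to the identity I only for an orthonormal basis, and H is the Hamiltonian matrix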

Matrix Assembly and Solution:

- Assemble the Hamiltonian matrix (not to be confused with the coarse-mesh diameter H) and the mass matrix in the multiscale basis

- Solve the resulting linear system using iterative methods

- Update wave function at each time step

Postprocessing:

- Compute physical observables from wave function

- Analyze probability densities and energy eigenvalues

Validation:

- Verify convergence with respect to ε

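- Check conservation of total probability (the norm of Ψ), which the Crank-Nicolson scheme preserves for a Hermitian Hamiltonian up to solver tolerance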

- Compare with analytical solutions for simplified potentials
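The time-stepping and validation steps above can be sketched in a few lines. This is a simplified stand-in, not CEM-GMsFEM: it assumes a plain finite-difference grid (so the mass matrix is the identity), the scaled equation iε∂Ψ/∂t = -(ε²/2)∂²Ψ/∂x² + V(x)Ψ, a hypothetical harmonic potential V = x²/2, walls at x = ±1, and a Gaussian initial state with O(ε) phase oscillations. The function name `run_crank_nicolson` and all parameter values are illustrative assumptions.

```python
import numpy as np
import scipy.sparse as sp
import scipy.sparse.linalg as spla


def run_crank_nicolson(eps=0.05, n_points=800, dt=1e-3, n_steps=200):
    """Propagate i*eps*dpsi/dt = H psi with H = -(eps^2/2) d^2/dx^2 + V(x)."""
    x = np.linspace(-1.0, 1.0, n_points)
    dx = x[1] - x[0]
    # Sparse three-point Laplacian; psi = 0 is implied outside the grid
    laplacian = sp.diags([1.0, -2.0, 1.0], [-1, 0, 1], shape=(n_points, n_points)) / dx**2
    potential = 0.5 * x**2  # hypothetical smooth potential
    hamiltonian = -0.5 * eps**2 * laplacian + sp.diags(potential)

    # Crank-Nicolson: (I + i dt/(2 eps) H) psi^{n+1} = (I - i dt/(2 eps) H) psi^n
    identity = sp.identity(n_points, format="csc")
    lhs = (identity + 0.5j * dt / eps * hamiltonian).tocsc()
    rhs = (identity - 0.5j * dt / eps * hamiltonian).tocsc()
    lu = spla.splu(lhs)  # factorize once, reuse at every step

    # Gaussian envelope with a phase that oscillates on the O(eps) scale
    x0, p0, sigma = -0.3, 0.5, 0.1
    psi = np.exp(-((x - x0) ** 2) / (2 * sigma**2) + 1j * p0 * x / eps)
    psi /= np.sqrt(np.sum(np.abs(psi) ** 2) * dx)

    for _ in range(n_steps):
        psi = lu.solve(rhs @ psi)
    return x, psi, dx


x, psi, dx = run_crank_nicolson()
norm = np.sum(np.abs(psi) ** 2) * dx  # validation: total probability should stay at 1
print(f"norm after propagation: {norm:.12f}")
```

Because (I + iaH)⁻¹(I - iaH) is unitary for a real symmetric H, the norm check passes to round-off; with a non-orthonormal basis the same statement holds in the M-weighted norm.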

Table 2: Computational Parameters and Their Dependence on Planck's Constant

| Parameter | Symbol | Dependence on Planck's Constant | Computational Implication |
|---|---|---|---|
| Spatial Mesh Size | Δx | $O(\varepsilon)$ | Finer grids required for small ε |
| Time Step | Δt | $O(\varepsilon^{5/4})$ for ε ≤ δ | Smaller time steps required for accuracy |
| Oversampling Size | - | $O(\varepsilon^{-1/4}\log(1/\varepsilon))$ | Larger oversampling regions for small ε |
| Basis Function Count | N_basis | $O(\varepsilon^{-d})$ in dimension d | Grows polynomially in 1/ε but exponentially with dimension d |

Experimental Determination of Planck's Constant for Computational Validation

Protocol: Determining Planck's Constant via Photoelectric Effect

Objective: Experimental determination of Planck's constant for validation in quantum chemistry computations.

Materials:

- Photoelectric effect apparatus with mercury light source

- Set of optical filters for wavelength selection

- Sb-Cs photocell with spectral response from UV to visible light

- Voltage source and precision voltmeter

- Current amplifier for photocurrent measurement

- Data acquisition system [13]

Procedure:

- Apparatus Setup:

- Assemble photoelectric experiment with mercury lamp as light source

- Install selected wavelength filter between source and photocell

- Connect photocell to variable voltage source in reverse bias configuration

- Connect sensitive ammeter in series to measure photocurrent

Data Collection:

- For each wavelength filter (λ), measure photocurrent (I) versus applied voltage (V)

- Determine stopping voltage Vₕ for each wavelength by identifying voltage where photocurrent reaches zero

- Record at least five measurements for each wavelength to estimate the random measurement uncertainty

Data Analysis:

- Convert wavelengths to frequencies using f = c/λ

- Plot stopping voltage Vₕ versus frequency f

- Perform linear regression: Vₕ = (h/e)f - W₀/e

- Extract Planck's constant from slope: h = slope × e

- Calculate work function from intercept: W₀ = -intercept × e
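The regression in the data-analysis steps can be sketched as follows. The stopping voltages here are synthetic, generated from the photoelectric equation itself with a hypothetical work function W₀ = 2.0 eV and 5 mV of simulated noise; they are not measurements. The wavelengths are approximate values of common mercury lines, and `np.polyfit` performs the linear fit.

```python
import numpy as np
from scipy.constants import c, e, h

# Approximate wavelengths (nm) of common mercury lines selected by the filters
wavelengths_nm = np.array([365.0, 404.7, 435.8, 546.1, 577.0])
freqs_hz = c / (wavelengths_nm * 1e-9)  # f = c / lambda

# SYNTHETIC stopping voltages: hypothetical W0 = 2.0 eV plus 5 mV Gaussian noise
w0_true_ev = 2.0
rng = np.random.default_rng(seed=0)
stopping_v = (h * freqs_hz - w0_true_ev * e) / e + rng.normal(0.0, 0.005, freqs_hz.size)

# Linear fit V_h = (h/e) f - W0/e
slope, intercept = np.polyfit(freqs_hz, stopping_v, 1)
h_estimate = slope * e        # h = slope * e
w0_estimate_ev = -intercept   # W0 = -intercept * e, expressed here in eV
print(f"h ~ {h_estimate:.3e} J s, W0 ~ {w0_estimate_ev:.2f} eV")
```

With real data, the scatter of repeated stopping-voltage readings at each wavelength would replace the simulated noise term.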

Calculations:

Using the photoelectric equation derived from Einstein's explanation:

eVₕ = hf - W₀ [13]

where e is electron charge, Vₕ is stopping voltage, f is photon frequency, and W₀ is work function of the photocathode material.

Expected Results:

- Linear relationship between Vₕ and f with slope h/e

- Planck's constant value: h = (6.626 ± 0.032) × 10⁻³⁴ J·s [13]

- Threshold frequency fₚ = W₀/h where Vₕ = 0

Alternative Experimental Methods

Table 3: Experimental Methods for Determining Planck's Constant

| Method | Physical Principle | Key Measurements | Accuracy Considerations |
|---|---|---|---|
| Photoelectric Effect [13] | Electron emission from metal surface | Stopping voltage vs. light frequency | Sensitive to surface contamination |
| Blackbody Radiation [13] | Stefan-Boltzmann law | I-V characteristics of incandescent filament | Requires precise filament area measurement |
| LED Characteristics [13] | Semiconductor band gap | Threshold voltage vs. emission wavelength | Affected by non-monochromatic emission |
| Hydrogen Spectrum [13] | Atomic energy level transitions | Wavelengths of spectral lines | Relies on precise wavelength calibration |

Quantum Chemistry Applications: Planck's Constant in Electronic Structure Methods

Ab Initio Quantum Chemistry Framework

In computational quantum chemistry, Planck's constant enters through the kinetic energy terms of the molecular Hamiltonian:

Ĥ = -∑ᵢ(ħ²/2mₑ)∇ᵢ² - ∑_A(ħ²/2M_A)∇_A² + V(rₑ, Rₙ) [18]

where rₑ represents electronic coordinates, Rₙ represents nuclear coordinates, and M_A is the mass of nucleus A. Within the Born-Oppenheimer approximation, the nuclei are held fixed, the nuclear kinetic energy term is dropped, and one solves the time-independent electronic Schrödinger equation:

Ĥ_el(rₑ; Rₙ)Ψ(rₑ; Rₙ) = E(Rₙ)Ψ(rₑ; Rₙ) [18]

in which ħ remains in the electronic kinetic energy operator -∑ᵢ(ħ²/2mₑ)∇ᵢ², and the nuclear coordinates enter only as parameters.

The Hartree-Fock method, foundational to modern quantum chemistry, replaces this many-electron problem with a set of coupled one-electron equations; expanding the orbitals in a finite basis turns these into the Roothaan-Hall matrix equations:

FC = SCε [18]

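where F is the Fock matrix, C is the matrix of orbital expansion coefficients, S is the overlap matrix of the basis functions, and ε is the diagonal matrix of orbital energies. ħ enters F through the kinetic energy integrals. In practice, quantum chemistry programs work in atomic units (ħ = mₑ = e = 4πε₀ = 1), so ħ does not appear explicitly in the code; it is absorbed into the atomic units of energy (the hartree) and length (the bohr).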
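Numerically, FC = SCε is a generalized symmetric eigenvalue problem. The sketch below solves one with `scipy.linalg.eigh(a, b)`, which handles the overlap matrix directly. The 2×2 Fock and overlap matrices are hypothetical numbers in atomic units, not taken from any real molecule; in an actual SCF calculation F depends on C through the electron density, so this diagonalization is repeated until self-consistency.

```python
import numpy as np
from scipy.linalg import eigh

# Hypothetical 2x2 Fock and overlap matrices in atomic units (hartree); illustrative only
fock = np.array([[-1.0, -0.6],
                 [-0.6, -0.5]])
overlap = np.array([[1.0, 0.4],
                    [0.4, 1.0]])

# Generalized symmetric eigenproblem F C = S C eps; eigh returns eps in ascending order
# and normalizes the columns of C so that C^T S C = I
orbital_energies, coeffs = eigh(fock, overlap)
print("orbital energies (hartree):", orbital_energies)
```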

The Scientist's Toolkit: Essential Computational Methods

Table 4: Quantum Chemistry Methods and Their Formal Scaling with System Size (N = number of basis functions)

| Method | Computational Scaling | Role of Planck's Constant | Typical Applications |
|---|---|---|---|
| Hartree-Fock (HF) [18] | O(N⁴) | Kinetic energy operator: -ħ²/2m ∇² | Initial wavefunction, molecular orbitals |
| Density Functional Theory (DFT) [14] | O(N³) | Embedded in Kohn-Sham equations | Ground-state properties, band structures |
| Møller-Plesset Perturbation (MP2) [18] | O(N⁵) | Enters through the Hamiltonian used in the perturbation expansion | Electron correlation corrections |
| Coupled Cluster (CCSD) [18] | O(N⁶) | In the Hamiltonian acted on by the cluster operator | High-accuracy energy calculations |
| Full Configuration Interaction (FCI) [18] | Exponential | Fundamental in many-body Hamiltonian | Exact solutions (within the chosen basis set) for small systems |
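A quick way to read the scaling column is to ask how much more a calculation costs when the system grows. The sketch below applies the formal exponents from Table 4 to a doubling of N; it deliberately ignores prefactors, integral screening, and density fitting, which change real timings considerably. The names `FORMAL_EXPONENTS` and `cost_ratio` are illustrative.

```python
# Formal scaling exponents from Table 4 (N = system size); prefactors are ignored
FORMAL_EXPONENTS = {"HF": 4, "DFT": 3, "MP2": 5, "CCSD": 6}


def cost_ratio(size_ratio: float, exponent: int) -> float:
    """Relative cost when the system size grows by size_ratio under O(N^exponent) scaling."""
    return size_ratio ** exponent


for method, p in FORMAL_EXPONENTS.items():
    print(f"{method}: doubling N multiplies the cost by about {cost_ratio(2.0, p):.0f}x")
```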

Advanced Computational Frameworks: Real-Valued Formulations and Multiscale Methods

Schrödinger's Fourth-Order Matter-Wave Equation

Recent work has revisited an alternative formulation of quantum mechanics based on Schrödinger's fourth-order, real-valued matter-wave equation. This approach reproduces the eigenvalues of the conventional second-order, complex-valued equation while introducing an equal number of negative mirror eigenvalues [19]. The fourth-order formulation:

- Eliminates complex numbers from the fundamental equation

- Incorporates spatial derivatives of the potential V(r)

- Generates identical positive eigenvalues plus mirror negative energy levels

- Provides a complete real-valued description of non-relativistic quantum mechanics [19]

The cited work suggests that this formulation may offer computational advantages for certain classes of problems while preserving the physical predictions of standard quantum mechanics.

Protocol: Constraint Energy Minimization GMsFEM for High-Contrast Systems

Objective: Efficient solution of Schrödinger equations with high-contrast potentials using multiscale finite element methods.

Computational Procedure:

Coarse-Scale Discretization:

- Generate coarse grid with parameter H satisfying convergence conditions

- Define oversampling regions for each coarse element

Multiscale Basis Construction:

- Solve local spectral problems in oversampling domains

- Apply constraint energy minimization to construct localized basis functions

- Ensure basis functions capture ε-scaled oscillations

Global System Solution:

- Project Hamiltonian onto multiscale space

- Solve reduced-dimensionality system

- Reconstruct fine-scale solution [17]

Advantages:

- Contrast-independent convergence rates

- Exponential decay of error with oversampling size

- Efficient handling of multiscale potential features

Planck's constant serves as the fundamental link between theoretical quantum mechanics and practical computational chemistry, appearing at every level of the Schrödinger equation framework. From the fundamental differential operators to advanced numerical implementations, this universal constant dictates the scale and behavior of quantum phenomena in computational models. For researchers in drug development and materials science, understanding the role of ħ in these computational frameworks enables more accurate interpretation of quantum chemical calculations, particularly when modeling molecular interactions, reaction pathways, and electronic properties relevant to pharmaceutical design. The continued development of efficient numerical methods that properly account for the scale set by Planck's constant remains essential for advancing quantum computational capabilities across chemical and biomedical research.

The 2019 revision of the International System of Units (SI) represents a paradigm shift in metrology, transforming the definitions of base units from physical artifacts to fundamental constants of nature. This revision redefined the kilogram, ampere, kelvin, and mole by fixing the exact numerical values of the Planck constant ($h$), the elementary electric charge ($e$), the Boltzmann constant ($k_B$), and the Avogadro constant ($N_A$), respectively [20] [21]. This foundational change assures the long-term stability of the measurement system and enables the development of new technologies, including quantum technologies, to implement the definitions [22]. For researchers in quantum chemistry and drug development, this revision provides an unwavering foundation for computational and experimental work, creating a direct, traceable chain from quantum mechanical calculations to real-world measurable quantities.

The 2019 SI Redefinition: From Artifacts to Invariants

The Defining Constants

The SI is now defined by a set of seven defining constants, which include fundamental constants of physics and nature [22]. The system's stability is now derived from the presumed invariability of these constants.

Table 1: The Seven Defining Constants of the Revised SI (effective from 20 May 2019)

| Constant | Symbol | Exact Value | Unit |
|---|---|---|---|
| Hyperfine transition frequency of Cs-133 | $\Delta\nu_{\text{Cs}}$ | 9 192 631 770 | Hz |
| Speed of light in vacuum | $c$ | 299 792 458 | m/s |
| Planck constant | $h$ | 6.626 070 15 × 10⁻³⁴ | J s |
| Elementary charge | $e$ | 1.602 176 634 × 10⁻¹⁹ | C |
| Boltzmann constant | $k_B$ | 1.380 649 × 10⁻²³ | J/K |
| Avogadro constant | $N_A$ | 6.022 140 76 × 10²³ | mol⁻¹ |
| Luminous efficacy | $K_{\text{cd}}$ | 683 | lm/W |

Impact on Base Units

The revision fundamentally changed the definition of four base units, linking them directly to invariants of nature.

Table 2: Changes in SI Base Unit Definitions from the 2019 Revision

| Unit | Pre-2019 Definition Basis | Post-2019 Definition Basis |
|---|---|---|
| Kilogram (kg) | International Prototype of the Kilogram (a physical artifact) | Fixed numerical value of the Planck constant $h$ [20] [21] |
| Ampere (A) | Force per unit length between two parallel current-carrying conductors | Fixed numerical value of the elementary charge $e$ [20] |
| Kelvin (K) | Thermodynamic temperature of the triple point of water | Fixed numerical value of the Boltzmann constant $k_B$ [20] |
| Mole (mol) | Number of atoms in 0.012 kg of carbon-12 | Fixed numerical value of the Avogadro constant $N_A$ [20] |
| Second (s) | Hyperfine transition frequency of caesium-133 | Unchanged in substance |
| Metre (m) | Speed of light | Unchanged in substance |
| Candela (cd) | Luminous efficacy | Unchanged in substance |

The motivation for this change was profound. Physical artifacts such as the former international prototype of the kilogram are subject to drift and degradation; periodic comparisons showed the masses of national prototypes and official copies diverging from the international standard by tens of micrograms over roughly a century [20]. The new definitions, based on universal constants, are inherently stable and reproducible anywhere in the universe, independent of human-made objects.

The Central Role of the Planck Constant

Historical and Theoretical Significance

The Planck constant ($h$), originally postulated by Max Planck in 1900, is a fundamental quantity in quantum mechanics that relates the energy of a photon to its frequency via the Planck-Einstein relation, $E = hf$ [7]. Its reduced form, $\hbar = h/2\pi$, appears throughout quantum theory, from the Schrödinger equation to the canonical commutation relations between position and momentum operators [7]. The Planck constant essentially sets the scale at which quantum mechanical effects become significant.

The Planck Constant and the Kilogram

In the revised SI, the kilogram is defined by fixing the numerical value of the Planck constant: $h$ is exactly $6.626\,070\,15 \times 10^{-34}$ J s, where J s = kg m² s⁻¹ and the metre and second are themselves defined in terms of $c$ and $\Delta\nu_{\text{Cs}}$ [22]. Solving for the kilogram gives [22]:

$$1\ \text{kg} = \frac{(299\,792\,458)^2}{(6.626\,070\,15 \times 10^{-34})(9\,192\,631\,770)}\,\frac{h\,\Delta\nu_{\text{Cs}}}{c^2} \approx 1.475\,521\,4 \times 10^{40}\,\frac{h\,\Delta\nu_{\text{Cs}}}{c^2}$$

This definition is realized in practice with instruments such as the Kibble (watt) balance, which compares mechanical power with electrical power, with $h$ providing the crucial link [20].
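The numerical factor relating the kilogram to h·Δν_Cs/c² follows purely from the three exact defining values, so it can be checked with exact rational arithmetic. The sketch below uses Python's `fractions.Fraction` to avoid any floating-point rounding until the final print; the constant names are illustrative.

```python
from fractions import Fraction

# Exact defining constants of the 2019 SI (numerical values only)
H_NUM = Fraction("6.62607015e-34")  # h in J s = kg m^2 s^-1
DELTA_NU_CS = Fraction(9192631770)   # caesium hyperfine frequency in Hz
C_NUM = Fraction(299792458)          # speed of light in m/s

# 1 kg = c_num^2 / (h_num * dnu_num) * (h * dnu_Cs / c^2)
kg_factor = C_NUM**2 / (H_NUM * DELTA_NU_CS)
print(f"1 kg = {float(kg_factor):.7e} h*dnu_Cs/c^2")
```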

Quantum Chemistry Calculations: A Metrological Foundation

The Schrödinger Equation and Fundamental Constants

Quantum chemistry relies on solving the electronic Schrödinger equation for molecular systems:

$$\hat{H}\Psi = E\Psi$$

where $\hat{H}$ is the Hamiltonian operator representing the total energy, $\Psi$ is the wave function, and $E$ is the energy eigenvalue [2]. The Hamiltonian itself is built from fundamental constants. For a hydrogen atom, it takes the form:

$$\hat{H} = -\frac{\hbar^2}{2m}\nabla^2 - \frac{ke^2}{r}$$

where $\hbar = h/2\pi$ is the reduced Planck constant, $e$ is the elementary charge, $m$ is the electron mass, $k = 1/(4\pi\varepsilon_0)$ is Coulomb's constant, and $r$ is the electron-nucleus distance [2]. The 2019 redefinition fixed the numerical values of $h$ and $e$ exactly, stabilizing two of the constants on which computational quantum chemistry rests; the electron mass and the vacuum permittivity $\varepsilon_0$ remain experimentally determined quantities.
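Quantum chemistry programs usually report results in atomic units, and the link back to SI runs through exactly the constants in this Hamiltonian. The sketch below assembles the hartree energy E_h = mₑe⁴/((4πε₀)²ħ²) and the bohr radius a₀ = 4πε₀ħ²/(mₑe²) from `scipy.constants` (the CODATA set shipped with SciPy) and converts E_h to eV and kJ/mol; the variable names are illustrative.

```python
from scipy.constants import N_A, e, epsilon_0, hbar, m_e, physical_constants, pi

four_pi_eps0 = 4 * pi * epsilon_0
# Hartree energy and Bohr radius built from hbar, m_e, e and eps0
hartree_joule = m_e * e**4 / (four_pi_eps0**2 * hbar**2)
bohr_metre = four_pi_eps0 * hbar**2 / (m_e * e**2)

hartree_ev = hartree_joule / e
hartree_kj_per_mol = hartree_joule * N_A / 1000.0

print(f"E_h = {hartree_joule:.6e} J = {hartree_ev:.4f} eV = {hartree_kj_per_mol:.2f} kJ/mol")
print(f"a_0 = {bohr_metre:.6e} m")
```

Comparing these derived values with SciPy's tabulated "Hartree energy" and "Bohr radius" entries is a simple consistency check on the constants a workflow uses.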

Energy Calculations and the Planck Constant

The quantized energy levels of molecular systems are expressed directly through relationships involving fundamental constants. For a particle of mass $m$ in a one-dimensional box of length $L$, the energy levels are:

$$E_n = \frac{n^2 h^2}{8mL^2}$$

where $n = 1, 2, 3, \ldots$ is the quantum number [2]. For the quantum harmonic oscillator model used in vibrational spectroscopy, the energy levels are:

$$E_v = \hbar\omega\left(v + \tfrac{1}{2}\right)$$

where $v$ is the vibrational quantum number and $\omega$ is the angular frequency [2]. The Planck constant is an indispensable component in calculating these energies, which are crucial for predicting molecular structure, stability, and reactivity.
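A short worked example shows the magnitudes these formulas produce. The sketch assumes an electron in a hypothetical 1 nm box and a hypothetical vibrational mode at 3000 cm⁻¹; it computes the ground-state box energy, the photon wavelength for the n = 1 → 2 transition, and the oscillator zero-point energy ħω/2 per mole. Constants come from `scipy.constants`; `BOX_LENGTH_M` and `box_energy` are illustrative names.

```python
from scipy.constants import N_A, c, e, h, hbar, m_e, pi

BOX_LENGTH_M = 1.0e-9  # hypothetical 1 nm box


def box_energy(n: int, mass: float = m_e, length: float = BOX_LENGTH_M) -> float:
    """E_n = n^2 h^2 / (8 m L^2) in joules."""
    return n**2 * h**2 / (8 * mass * length**2)


e1_ev = box_energy(1) / e
gap = box_energy(2) - box_energy(1)   # n = 1 -> 2 transition energy (J)
wavelength_nm = h * c / gap * 1e9      # photon wavelength for that gap

# Harmonic oscillator zero-point energy for a hypothetical 3000 cm^-1 mode
wavenumber_per_cm = 3000.0
omega = 2 * pi * c * wavenumber_per_cm * 100.0  # rad/s (cm^-1 -> m^-1)
zpe_kj_per_mol = 0.5 * hbar * omega * N_A / 1000.0

print(f"E_1 = {e1_ev:.3f} eV; 1->2 absorption near {wavelength_nm:.0f} nm")
print(f"ZPE = {zpe_kj_per_mol:.2f} kJ/mol")
```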

Experimental Protocol: Utilizing the Revised SI in Quantum Chemistry Workflows

This protocol outlines the methodology for employing the revised SI definitions in quantum chemical calculations, ensuring metrological traceability from fundamental constants to predicted chemical properties.

Protocol: First-Principles Calculation of Molecular Properties with SI-Traceable Constants

Purpose: To compute molecular properties using density functional theory (DFT) with input parameters traceable to the defined SI constants.

Materials and Reagents:

Table 3: Research Reagent Solutions for Quantum Chemistry Calculations

| Item | Function | Relevance to SI Redefinition |
|---|---|---|
| High-Performance Computing Cluster | Performs the computationally intensive solution of the electronic Schrödinger equation. | Calculations use fundamental constants: $h$ and $e$ have exact defined values, while $m_e$ is a measured (CODATA) value. |
| Quantum Chemistry Software (e.g., Gaussian, ORCA, PySCF) | Implements algorithms for solving quantum chemical equations. | Check which CODATA release the software's internal physical constants follow; CODATA 2018 and later releases incorporate the exact 2019 SI values. |
| Reference Datasets (e.g., QCML) | Provides training/validation data from ab initio calculations [23]. | Ensures consistency; datasets such as QCML contain 33.5 million DFT calculations based on fundamental constants [23]. |
| Molecular Structure Files | Define the nuclear coordinates and atomic numbers of the system. | Atomic masses are usually given in daltons; their conversion to kilograms is traceable to the kilogram, now defined via $h$. |

Procedure:

System Definition:

- Input the molecular geometry (Cartesian coordinates or internal coordinates) of the target system.

- Specify the atomic numbers, which determine the nuclear charge via the elementary charge $e$.

- Metrological Link: The atomic masses used are now traceable to the kilogram defined through the Planck constant $h$.

Basis Set Selection:

- Choose an appropriate Gaussian or plane-wave basis set to represent the electronic wavefunctions.

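- Basis-set parameters, such as Gaussian exponents, are usually tabulated in atomic units (bohr⁻²), so geometries given in ångströms are converted to bohr; the SI value of the bohr depends on $\hbar$, $m_e$, $e$, and $\varepsilon_0$.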

Method Selection and Parameterization:

- Select a computational method (e.g., DFT) and an exchange-correlation functional (e.g., B3LYP).

- Metrological Link: The Hamiltonian operator is constructed using the fixed values of $\hbar$ and $e$. The electron mass, a key input, is expressed in kilograms, a unit now defined through $h$.

Self-Consistent Field (SCF) Calculation:

- Run the SCF procedure to solve the Kohn-Sham equations and converge the electron density.

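- The total energy is normally reported in hartree ($E_h$); its conversion to joules, $E_h = 2hcR_\infty$, is traceable to the defined values of $h$ and $c$ together with the measured Rydberg constant $R_\infty$.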

Property Calculation:

- Calculate derived properties such as:

- Forces: Negative derivatives of the total energy with respect to nuclear positions.

- Dipole Moments: Electric multipole moments depend directly on the value of the elementary charge $e$.

- Vibrational Frequencies: Obtained from the mass-weighted second derivatives of the energy (the Hessian); each harmonic mode is quantized in units of $\hbar\omega$.


Validation:

- Compare computed properties (e.g., bond lengths, reaction energies) against high-quality reference data from datasets like QCML [23], which are also built upon the same consistent foundation of constants.
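The vibrational-frequency step of this procedure can be illustrated for the simplest possible case. The sketch builds the one-dimensional Cartesian Hessian of a diatomic held together by a spring, mass-weights it, and converts the nonzero eigenvalue (ω²) to a wavenumber. The force constant (516 N/m) and the masses (1.008 and 35.0 Da) are hypothetical inputs, and everything is kept in SI units; a real program would obtain the Hessian in hartree/bohr² from the electronic-structure calculation and convert afterwards.

```python
import numpy as np
from scipy.constants import atomic_mass, c, pi

force_constant = 516.0                               # N/m, hypothetical
masses_kg = np.array([1.008, 35.0]) * atomic_mass    # hypothetical diatomic, in kg

# Cartesian Hessian for two atoms joined by a spring along one axis (J/m^2)
hessian = force_constant * np.array([[1.0, -1.0],
                                     [-1.0, 1.0]])
# Mass-weight: H_ij / sqrt(m_i m_j); its eigenvalues are omega^2
mass_weighted = hessian / np.sqrt(np.outer(masses_kg, masses_kg))
omega_squared = np.linalg.eigvalsh(mass_weighted)

omega = np.sqrt(omega_squared[-1])                 # the ~0 eigenvalue is rigid translation
wavenumber_per_cm = omega / (2 * pi * c) / 100.0   # m^-1 -> cm^-1
print(f"harmonic wavenumber ~ {wavenumber_per_cm:.0f} cm^-1")
```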

Diagram: Traceability from Constants to Chemical Prediction

Applications in Drug Development and Materials Discovery

The stability provided by the revised SI directly benefits research fields that rely heavily on computational predictions.

Machine-Learned Force Fields

Large-scale quantum chemical datasets, such as the QCML (Quantum Chemistry Machine Learning) dataset, are instrumental in training machine-learned force fields (MLFFs) [23]. The QCML dataset contains properties calculated for both equilibrium and off-equilibrium molecular structures, including energies and forces from 33.5 million DFT and 14.7 billion semi-empirical calculations [23]. The accuracy of these training data, and hence the reliability of the resulting MLFFs, is fundamentally tied to the constants used in the underlying quantum chemistry calculations. This enables accurate molecular dynamics simulations of large systems, such as proteins in solution, which would be prohibitively expensive with direct ab initio methods [23].

Molecular Design and Discovery

The exploration of chemical space for new drug candidates or materials is increasingly guided by computational predictions. The redefined SI ensures that properties like binding energies, reaction barriers, and spectroscopic characteristics predicted by quantum chemistry are expressed against a stable, universal standard. The dominant errors in such predictions still come from the approximations in the electronic-structure method, but fixed constants remove one source of inconsistency when transitioning from computational predictions to experimental synthesis and testing in the lab.

The 2019 redefinition of the SI marks a historic achievement, anchoring the global measurement system to the immutable fabric of the universe. For quantum chemists and drug development professionals, this provides an unshakable foundation. The Planck constant and other defined constants are not merely abstract concepts; they are the bedrock parameters in the equations that predict molecular behavior. This creates a seamless, traceable chain from the definition of the kilogram to the prediction of a drug candidate's binding affinity, enhancing the reliability and reproducibility of computational science and accelerating the discovery of new molecules and materials.

Planck's constant, $h$ (and its reduced form $\hbar = h/2\pi$), is a fundamental physical constant that defines the scale of quantum effects. With a value of exactly $6.62607015 \times 10^{-34}$ J·s (joule-seconds) as defined in the SI system, it serves as the bridge between the macroscopic and quantum realms [7]. In quantum chemistry and drug development research, understanding the principles governed by $h$ is crucial for accurately modeling molecular behavior, electron transfer processes, and quantum effects in biological systems.

Fundamental Constants & Quantitative Relationships

Values of Planck's Constant

| Constant | Symbol | Value | Units |
|---|---|---|---|
| Planck Constant | $h$ | 6.62607015 × 10⁻³⁴ | J·s |
| Reduced Planck Constant | $\hbar$ | 1.054571817... × 10⁻³⁴ | J·s |
| Planck Constant | $h$ | 4.135667696... × 10⁻¹⁵ | eV·Hz⁻¹ |
| Reduced Planck Constant | $\hbar$ | 6.582119569... × 10⁻¹⁶ | eV·s |

Core Equations Governed by ( h )

| Principle | Equation | Key Variables |
|---|---|---|
| Planck-Einstein Relation | $E = hf$ | $E$: Energy, $f$: Frequency [7] [24] |
| de Broglie Relation | $\lambda = \frac{h}{p}$ | $\lambda$: Wavelength, $p$: Momentum [7] |
| Heisenberg Uncertainty Principle | $\Delta x\,\Delta p \geq \frac{\hbar}{2}$ | $\Delta x$: Position uncertainty, $\Delta p$: Momentum uncertainty [25] [26] |
| Energy-Time Uncertainty | $\Delta t\,\Delta E \geq \frac{\hbar}{2}$ | $\Delta E$: Energy uncertainty, $\Delta t$: Time uncertainty [26] |
| Tunneling Probability (Approx.) | $P \approx \exp\left(\frac{-2a\sqrt{2m(V-E)}}{\hbar}\right)$ | $P$: Tunneling probability, $a$: Barrier width, $V$: Barrier height, $m$: Particle mass, $E$: Particle energy [27] |
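As a quick check on the de Broglie relation, the sketch below computes the non-relativistic wavelength λ = h/p, with p = √(2mE), for an electron of a given kinetic energy. The function name `electron_wavelength_nm` is illustrative; constants come from `scipy.constants`, and relativistic corrections (about 0.1% at 100 eV) are neglected.

```python
import math

from scipy.constants import e, h, m_e


def electron_wavelength_nm(kinetic_energy_ev: float) -> float:
    """Non-relativistic de Broglie wavelength, lambda = h / p with p = sqrt(2 m E)."""
    momentum = math.sqrt(2.0 * m_e * kinetic_energy_ev * e)
    return h / momentum * 1e9


print(f"{electron_wavelength_nm(100.0):.4f} nm")
```

A 100 eV electron has a wavelength of about 0.12 nm, comparable to interatomic distances, which is why slow electrons diffract from crystal surfaces.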

Quantum Tunneling: Theory & Applications

Theoretical Framework

Quantum tunneling is a direct consequence of the wave-like nature of quantum particles. When a particle with energy ( E ) encounters a potential barrier of height ( V > E ), its wavefunction does not terminate abruptly but decays exponentially within the barrier. This finite probability density at the far side of the barrier enables the particle to "tunnel" through a classically forbidden region [28] [29].

The probability of tunneling is highly sensitive to three key parameters [30]:

- Particle mass: Lighter particles (electrons, protons) tunnel more readily than heavier ones.

- Barrier width: Probability decreases exponentially with increasing barrier thickness.

- Energy difference: Probability decreases with increasing ( (V-E) ).

For a rectangular barrier, the transmission coefficient can be derived from the time-independent Schrödinger equation; for an opaque barrier ($\kappa a \gg 1$) it is approximately $T \approx e^{-2\kappa a}$, where $\kappa = \sqrt{\frac{2m(V-E)}{\hbar^2}}$ and $a$ is the barrier width [29].
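The mass dependence listed above is easy to quantify with T ≈ e^(-2κa). The sketch compares an electron and a proton facing the same hypothetical barrier (V - E = 1 eV, a = 0.3 nm). It keeps only the exponential factor and omits the prefactor of the exact rectangular-barrier result, so the numbers are order-of-magnitude estimates; the function name `transmission` is illustrative.

```python
import math

from scipy.constants import e, hbar, m_e, m_p


def transmission(mass_kg: float, barrier_minus_energy_ev: float, width_m: float) -> float:
    """Opaque-barrier estimate T ~ exp(-2 kappa a), kappa = sqrt(2 m (V - E)) / hbar."""
    kappa = math.sqrt(2.0 * mass_kg * barrier_minus_energy_ev * e) / hbar
    return math.exp(-2.0 * kappa * width_m)


# Hypothetical barrier: V - E = 1 eV, width a = 0.3 nm
t_electron = transmission(m_e, 1.0, 0.3e-9)
t_proton = transmission(m_p, 1.0, 0.3e-9)
print(f"electron: {t_electron:.3e}, proton: {t_proton:.3e}")
```

Because κ grows as √m, the proton's exponent is about 43 times larger than the electron's, which is why electron tunneling dominates at these distances while proton and hydrogen-atom tunneling require much narrower barriers.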

Tunneling Experimental Protocol: Scanning Tunneling Microscopy (STM)

Purpose: To achieve atomic-scale resolution imaging of conductive surfaces by measuring electron tunneling current [28] [30].

Materials & Equipment:

- Conductive sample (metals, semiconductors)

- Sharp metallic tip (Pt-Ir or tungsten)

- Piezoelectric positioners for sub-Ångstrom control

- Vibration isolation system

- Current amplifier

- Computer control and data acquisition system

Procedure:

- Tip Preparation: Electrochemically etch a wire to create an atomically sharp tip.

- Sample Mounting: Secure the conductive sample on the STM stage.

- Approach: Use coarse positioners to bring the tip within ~1 μm of the surface, then engage piezoelectric fine control.

- Tunneling Current Establishment: Apply a bias voltage (1 mV - 2 V) between tip and sample. As the tip approaches within nanometers, electrons tunnel across the gap, creating a measurable current.

- Imaging (Two Modes):

- Constant Current Mode: Adjust tip height to maintain constant tunneling current while raster scanning. The height variation maps surface topography.

- Constant Height Mode: Maintain constant tip height while measuring current variations during scanning.

Data Analysis:

- Convert tip height or current variations into a topographic image.

- Atomic resolution is achieved when individual atoms appear as periodic corrugations in the image.

Key Parameters:

- Typical tunneling currents: 0.1-10 nA

- Typical tip-sample distances: 0.5-1.0 nm

- Bias voltage polarity determines electron flow direction
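The extreme height sensitivity that makes atomic resolution possible follows from the same exponential. The sketch assumes an effective tunneling barrier of 4.5 eV, a hypothetical value of the order of typical metal work functions, and estimates how much the tunneling current I ∝ e^(-2κd) changes when the tip-sample gap d changes by 1 Å.

```python
import math

from scipy.constants import angstrom, e, hbar, m_e

barrier_ev = 4.5  # hypothetical effective barrier height (order of metal work functions)
kappa = math.sqrt(2.0 * m_e * barrier_ev * e) / hbar  # decay constant (1/m)

# Tunneling current I ~ exp(-2 kappa d): ratio for a 1 Angstrom change in the gap
current_ratio = math.exp(2.0 * kappa * 1.0 * angstrom)
print(f"kappa = {kappa * angstrom:.2f} per Angstrom; current changes ~{current_ratio:.1f}x per Angstrom")
```

Under these assumptions the current changes by roughly an order of magnitude per ångström, which is why the feedback loop in constant-current mode can resolve sub-ångström height variations.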

Research Reagent Solutions for Tunneling Studies

| Reagent/Material | Function/Application |
|---|---|
| Pt-Ir Alloy Wire | Fabrication of stable, sharp STM tips with good conductivity [30] |
| HOPG (Highly Oriented Pyrolytic Graphite) | Atomically flat calibration standard for STM |
| Gold Single Crystals | Well-defined substrates for molecular adsorption studies |
| Tungsten Wire | Alternative tip material that can be electrochemically sharpened |
| Piezoelectric Ceramics | Provide precise tip positioning with sub-Ångstrom resolution |

Quantization: Theory & Applications

Theoretical Framework

Quantization refers to the phenomenon where physical quantities take on only discrete, rather than continuous, values. This principle originated with Max Planck's solution to the blackbody radiation problem, which required the assumption that energy is exchanged in discrete packets or "quanta" of size $E = hf$ [31].

In quantum chemistry, the most significant manifestation is the quantization of electron energies in atoms and molecules. The Bohr model of the hydrogen atom gives energy levels:

$$E_n = -\frac{m_e e^4}{8\varepsilon_0^2 h^2} \cdot \frac{1}{n^2}$$

where $m_e$ is the electron mass, $\varepsilon_0$ is the vacuum permittivity, and $n = 1, 2, 3, \ldots$ is the principal quantum number [24]. In modern quantum chemistry, this is generalized through solutions of the Schrödinger equation for molecular systems, where wavefunctions and their corresponding energies are quantized.
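Evaluating the Bohr formula with SI constants recovers the familiar hydrogen level energies. The sketch below uses the electron mass (an infinitely heavy nucleus); using the electron-proton reduced mass instead would shift each level by about 0.05%. The function name `bohr_level_ev` is illustrative.

```python
from scipy.constants import e, epsilon_0, h, m_e


def bohr_level_ev(n: int) -> float:
    """E_n = -m_e e^4 / (8 eps0^2 h^2 n^2), converted from joules to eV."""
    return -m_e * e**4 / (8.0 * epsilon_0**2 * h**2 * n**2) / e


for n in range(1, 4):
    print(n, round(bohr_level_ev(n), 4))
```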

Quantization Experimental Protocol: Measuring Atomic Emission Spectra

Purpose: To verify energy level quantization in atoms through observation of discrete emission spectra [31].

Materials & Equipment:

- Gas discharge tubes (H, He, Ne)

- High-voltage power supply

- Spectrometer or diffraction grating with photodetector

- Wavelength calibration source (e.g., mercury lamp)

- Data acquisition system

Procedure:

- Setup: Place the gas discharge tube in front of the spectrometer slit. Ensure proper alignment.

- Excitation: Apply high voltage to the discharge tube to excite gas atoms.

- Calibration: Use a mercury vapor lamp with known spectral lines to calibrate the wavelength scale of the spectrometer.

- Measurement: Record the intensity of light as a function of wavelength for the sample gas.

- Data Collection: Measure the wavelengths of all observable emission lines.

Data Analysis:

- For hydrogen, use the Rydberg formula to verify quantization:

$$\frac{1}{\lambda} = R\left(\frac{1}{n_1^2} - \frac{1}{n_2^2}\right), \qquad n_2 > n_1$$

where $R$ is the Rydberg constant. For an infinitely heavy nucleus it is related to Planck's constant by $R_\infty = \frac{m_e e^4}{8\varepsilon_0^2 h^3 c}$; for hydrogen, $m_e$ is replaced by the electron-proton reduced mass, giving $R_H = R_\infty/(1 + m_e/m_p)$.

- Compare observed wavelengths with theoretical predictions.

- Calculate energy differences between levels using $\Delta E = \frac{hc}{\lambda}$.
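The theoretical predictions for the comparison step can be generated directly from the constants. The sketch computes R∞ from mₑ, e, ε₀, h, and c, applies the reduced-mass correction for hydrogen, and evaluates the first Balmer lines (n₁ = 2). The results are vacuum wavelengths; wavelengths measured in air are shorter by the refractive index of air (H-α is commonly quoted as 656.28 nm in air). The name `balmer_wavelength_nm` is illustrative.

```python
from scipy.constants import Rydberg, c, e, epsilon_0, h, m_e, m_p

# Rydberg constant for an infinitely heavy nucleus, from the constants in the text (m^-1)
r_inf = m_e * e**4 / (8.0 * epsilon_0**2 * h**3 * c)
# Hydrogen: replace m_e by the electron-proton reduced mass
r_h = r_inf / (1.0 + m_e / m_p)


def balmer_wavelength_nm(n_upper: int, rydberg: float = r_h) -> float:
    """Vacuum wavelength of the n_upper -> 2 transition, 1/lambda = R (1/2^2 - 1/n^2)."""
    inverse_wavelength = rydberg * (1.0 / 2**2 - 1.0 / n_upper**2)
    return 1e9 / inverse_wavelength


for n in (3, 4, 5):
    print(n, f"{balmer_wavelength_nm(n):.2f} nm")
```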

Uncertainty Principle: Theory & Applications

Theoretical Framework

The Heisenberg Uncertainty Principle states fundamental limits to the precision with which certain pairs of physical properties can be simultaneously known [25] [26]. The most familiar form relates position and momentum:

$$\Delta x\,\Delta p \geq \frac{\hbar}{2}$$

This is not a limitation of measurement instruments but rather a fundamental property of quantum systems arising from the wave-like nature of particles. When a particle is described by a well-localized wavefunction (small $\Delta x$), its momentum-space wavefunction becomes spread out (large $\Delta p$), and vice versa [25].
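The position-momentum trade-off can be checked numerically. A Gaussian wave packet is the minimum-uncertainty state, so its spreads should satisfy the bound with equality. The sketch samples a Gaussian on a grid in atomic units (ħ = 1), obtains the momentum-space amplitude with a fast Fourier transform (p = ħk), and computes Δx and Δp from the two probability distributions; the grid size and width are arbitrary illustrative choices.

```python
import numpy as np

hbar = 1.0  # atomic units
n_points, box = 2048, 40.0
x = np.linspace(-box / 2, box / 2, n_points, endpoint=False)
dx = x[1] - x[0]

sigma = 1.0  # position spread of the Gaussian
psi = np.exp(-(x**2) / (4 * sigma**2)).astype(complex)
psi /= np.sqrt(np.sum(np.abs(psi) ** 2) * dx)

# Position spread from |psi(x)|^2
prob_x = np.abs(psi) ** 2 * dx
delta_x = np.sqrt(np.sum(x**2 * prob_x) - np.sum(x * prob_x) ** 2)

# Momentum-space amplitude via FFT; p = hbar * k with k = 2*pi*(spatial frequency)
phi = np.fft.fft(psi)
p = 2 * np.pi * hbar * np.fft.fftfreq(n_points, d=dx)
prob_p = np.abs(phi) ** 2 / np.sum(np.abs(phi) ** 2)
delta_p = np.sqrt(np.sum(p**2 * prob_p) - np.sum(p * prob_p) ** 2)

print(f"dx*dp = {delta_x * delta_p:.6f} (bound hbar/2 = {hbar / 2})")
```

Narrowing `sigma` shrinks Δx and widens Δp in exact proportion, so the product stays at ħ/2.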

Uncertainty Principle Experimental Protocol: Spectral Linewidth Measurements

Purpose: To demonstrate the energy-time uncertainty principle through measurement of natural linewidths in atomic spectra [26].

Materials & Equipment:

- High-resolution spectrometer (e.g., Fabry-Pérot interferometer)

- Atomic emission source with well-characterized transitions

- Photodetector with fast response

- Data acquisition system

Procedure:

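- Source Preparation: Select an atomic transition and suppress competing broadening mechanisms, since Doppler and pressure broadening typically exceed the natural linewidth. Operate at low pressure and use a Doppler-reduced geometry (e.g., a collimated atomic beam viewed transversely).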

- High-Resolution Measurement: Use the high-resolution spectrometer to measure the intensity profile of a spectral line.

- Data Collection: Precisely measure the full width at half maximum (FWHM) of the spectral line.

- Lifetime Measurement: Independently measure the excited state lifetime using time-resolved spectroscopy.

Data Analysis:

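- The energy uncertainty \( \Delta E \) is the half-width of the line, \( \Delta E = \frac{\hbar \Gamma}{2} \), where \( \Gamma = 2\pi \, \Delta\nu_{\mathrm{FWHM}} \) is the natural linewidth (FWHM) expressed as an angular frequency.

- The time uncertainty \( \Delta t \) corresponds to the measured excited-state lifetime \( \tau \).

- For a purely lifetime-broadened (Lorentzian) line, \( \Gamma = 1/\tau \), so verify that \( \Delta E \, \Delta t \approx \frac{\hbar}{2} \) within experimental uncertainty.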
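The linewidth analysis reduces to a few lines of arithmetic. In the sketch below, the function names are hypothetical and SI units are assumed. The measured FWHM is taken in hertz and converted to Γ = 2πΔν. The code then reports the ratio ΔE·τ/(ℏ/2) with an uncertainty obtained by combining the relative errors of the FWHM and the lifetime in quadrature, which assumes the two measurements are independent.

```python
import math

H = 6.62607015e-34        # Planck constant, J s (exact SI value)
HBAR = H / (2 * math.pi)  # reduced Planck constant, J s


def energy_half_width(fwhm_hz: float) -> float:
    """Delta E = hbar * Gamma / 2, where Gamma = 2*pi*FWHM is the angular-frequency FWHM (J)."""
    gamma = 2 * math.pi * fwhm_hz
    return HBAR * gamma / 2


def uncertainty_ratio(fwhm_hz: float, lifetime_s: float) -> float:
    """(Delta E * tau) / (hbar / 2); equals Gamma * tau, so ~1 for a lifetime-limited line."""
    return energy_half_width(fwhm_hz) * lifetime_s / (HBAR / 2)


def ratio_with_error(fwhm_hz: float, fwhm_err_hz: float,
                     lifetime_s: float, lifetime_err_s: float) -> tuple[float, float]:
    """Ratio and its 1-sigma uncertainty, combining independent relative errors in quadrature."""
    ratio = uncertainty_ratio(fwhm_hz, lifetime_s)
    rel = math.hypot(fwhm_err_hz / fwhm_hz, lifetime_err_s / lifetime_s)
    return ratio, ratio * rel


if __name__ == "__main__":
    # Hypothetical measurement values, for illustration only
    ratio, err = ratio_with_error(10.0e6, 0.3e6, 16.0e-9, 0.2e-9)
    print(f"Delta E * tau / (hbar/2) = {ratio:.3f} +/- {err:.3f}")
```

A ratio consistent with 1 indicates a lifetime-limited line. A ratio well above 1 points to residual Doppler or pressure broadening.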

Quantum Principles Workflow Diagram

STM Operational Diagram

The three fundamental quantum principles governed by Planck's constant (quantization, uncertainty, and tunneling) provide the theoretical foundation for modern quantum chemistry and materials research. The experimental protocols and applications described in these notes let researchers probe and manipulate matter at the atomic scale. For drug development professionals, these principles are particularly relevant to molecular interactions, enzyme mechanisms, and electron-transfer processes in biological systems, where quantum effects can play significant roles.

From Theory to Practice: Implementing Planck's Constant in Computational Drug Design

The application of quantum mechanics to chemistry rests on the principle first proposed by Max Planck: energy is exchanged in discrete quanta [7]. The Planck constant, h, and its reduced form, ℏ = h/2π, are central here. Through the Planck-Einstein relation, E = hf, h links the energy of a quantum to the frequency of the associated electromagnetic wave [7]. This relationship is not merely a historical footnote. It runs through the framework on which modern computational chemistry is built, from the quantized atomic energy levels of the Bohr model to the Heisenberg uncertainty principle, which limits how precisely conjugate quantities can be known simultaneously [7] [18].

The challenge famously articulated by Paul A. M. Dirac in 1929 persists. The physical laws underlying chemistry are completely known, but applying them exactly leads to equations far too complex to solve for all but the simplest systems [32] [18]. The core difficulty is that the size of the wavefunction grows exponentially with the number of particles, so exact simulation on classical computers quickly becomes intractable for molecules of practical interest [32] [18]. This application note provides a structured guide to the landscape of computational quantum chemistry methods, focusing on their scaling properties and their practical use in research and drug development.

The pursuit of solutions to the electronic Schrödinger equation has spawned a hierarchy of computational methods. These methods represent a trade-off between computational cost, often expressed as how the resource requirements scale with system size (e.g., the number of basis functions, M), and the accuracy of the result. Table 1 summarizes key methods, their scaling behavior, and primary use cases, providing a critical reference for project selection.

Table 1: Scaling and Characteristics of Computational Chemistry Methods

| Method | Theoretical Scaling (M = number of basis functions) | Key Principle | Primary Use Case |
|---|---|---|---|
| Hartree-Fock (HF) [18] | O(M⁴) | Approximates the wavefunction as a single Slater determinant; does not include electron correlation. | Baseline calculation; starting point for more accurate methods. |
| Density Functional Theory (DFT) | O(M³) to O(M⁴) | Computes the energy from the electron density rather than the wavefunction; includes correlation through an approximate exchange-correlation functional. | Workhorse for ground-state properties of medium-to-large molecules. |
| Coupled Cluster with Singles and Doubles (CCSD) and perturbative Triples (CCSD(T)) [18] | O(M⁶) for CCSD; O(M⁷) for CCSD(T) | Accounts for electron correlation through excitations of electrons from occupied to virtual orbitals. | High-accuracy "gold standard" for small-to-medium molecules. |
| Full Configuration Interaction (FCI) [32] [18] | Exponential | Exact solution within a given basis set; includes all possible electron excitations. | Benchmarking for small systems (<10 electrons); numerically exact within the basis. |
| Variational Quantum Eigensolver (VQE) [32] [33] | Circuit depth depends on the ansatz; O(M⁶/ϵ²) measurements for the naive approach [32]. | Hybrid quantum-classical algorithm that prepares and measures trial states with a parameterized quantum circuit. | Near-term quantum hardware; ground-state energies on devices with limited coherence. |
| Quantum Phase Estimation (QPE) [32] | Coherent runtime O(2^(ω+1)) for ω ancilla qubits [32]. | Quantum algorithm that reads out the energy eigenvalue of a prepared state directly. | Fault-tolerant quantum computing; energy estimation to a precision set by ω (requires deep circuits). |

The scaling problem is particularly acute for post-Hartree-Fock wavefunction methods. As illustrated in Figure 1, the computational cost increases steeply with the desired accuracy, with the Full Configuration Interaction (FCI) method being combinatorially expensive and thus restricted to very small systems [18].
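A quick calculation shows why FCI is restricted to small systems while the polynomial methods in Table 1 are not. The sketch below counts Slater determinants as C(M, N_α)·C(M, N_β), ignoring spatial symmetry and frozen cores. It then compares that growth with the M^p scaling of HF, CCSD, and CCSD(T). The function names are hypothetical. The water example assumes 10 electrons in 24 spatial orbitals, which is the size of the cc-pVDZ basis with spherical d functions.

```python
from math import comb


def fci_dimension(n_orbitals: int, n_alpha: int, n_beta: int) -> int:
    """Number of Slater determinants in an FCI expansion (no spatial symmetry, no frozen core)."""
    return comb(n_orbitals, n_alpha) * comb(n_orbitals, n_beta)


def polynomial_cost_ratio(exponent: int, size_factor: float) -> float:
    """Relative cost increase of an O(M^p) method when M grows by size_factor."""
    return size_factor ** exponent


if __name__ == "__main__":
    # Water: 10 electrons (5 alpha, 5 beta) in 24 spatial orbitals (cc-pVDZ size).
    small = fci_dimension(24, 5, 5)
    doubled = fci_dimension(48, 5, 5)
    print(f"FCI determinants, M=24: {small:,}")
    print(f"FCI determinants, M=48: {doubled:,} (x{doubled / small:.0f})")
    for name, p in [("HF", 4), ("CCSD", 6), ("CCSD(T)", 7)]:
        print(f"{name}: cost grows x{polynomial_cost_ratio(p, 2):.0f} when M doubles")
```

For water, this gives 1,806,590,016 determinants (about 1.8 × 10⁹) at M = 24. Doubling the basis multiplies that count by roughly 1,600, compared with a factor of 128 for an O(M⁷) method.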

Figure 1: A simplified hierarchy of quantum chemistry methods, showing the path from the least accurate (Hartree-Fock) to the most accurate (FCI) and the approximate incorporation of electron correlation energy. Dashed lines indicate a different theoretical approach.

Quantum Computational Chemistry: Protocols and Applications

The limitations of classical scaling have spurred the development of quantum computational chemistry, which uses quantum computers to simulate chemical systems more efficiently [32]. These algorithms are anticipated to have run-times that scale polynomially with system size and desired accuracy [32]. Below are detailed protocols for two leading quantum algorithms.

Protocol 1: Variational Quantum Eigensolver (VQE) for Ground-State Energy

The VQE is a hybrid quantum-classical algorithm designed for near-term quantum hardware [32] [33]. Its objective is to find the ground-state energy of a molecular Hamiltonian.

Experimental Protocol:

- Problem Mapping: Map the electronic structure problem of the target molecule onto a qubit Hamiltonian. This involves: a. selecting a basis set (e.g., Gaussian-type orbitals, plane waves) [32] and computing the one- and two-electron integrals that define the second-quantized Hamiltonian; b. applying a fermion-to-qubit mapping such as the Jordan-Wigner transformation [32]. This mapping encodes fermionic creation (a†) and annihilation (a) operators as Pauli operators (X, Y, Z) acting on qubits, preserving their anticommutation relations and hence the antisymmetry of the wavefunction.

- Ansatz Initialization: Choose a parameterized quantum circuit (ansatz) to prepare trial wavefunctions. The Unitary Coupled Cluster (UCC) ansatz is a common, chemistry-inspired choice [33].

- Quantum Execution: On the quantum processor, prepare the state |ψ(θ)⟩ using the ansatz with initial parameters θ.

- Measurement: Measure the expectation value ⟨ψ(θ)|H|ψ(θ)⟩. This typically involves measuring the individual Pauli terms of the Hamiltonian. Mutually commuting terms can be grouped and measured together to reduce the number of measurements [32].

- Classical Optimization: The classical computer uses the energy measurement from the quantum processor as the objective function for an optimization routine (e.g., gradient descent) to find the parameters θ that minimize the energy.

- Iteration: The quantum execution, measurement, and classical optimization steps are repeated until the energy converges. By the variational principle, the converged value is an upper bound on the true ground-state energy within the chosen basis, up to sampling and hardware noise [32].
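The hybrid loop can be illustrated with a small statevector simulation. The sketch below is a toy, not a chemistry workflow:

- The 2-qubit Hamiltonian coefficients are hypothetical rather than derived from a molecule.
- A hardware-efficient ansatz stands in for UCC: an Ry rotation, a CNOT, then Ry rotations on both qubits.
- Expectation values are computed exactly rather than estimated from shots.

This particular ansatz can represent any real 2-qubit state, so for a real Hamiltonian like this one the optimizer can in principle reach the exact ground state. Gradients come from the parameter-shift rule, and plain gradient descent plays the role of the classical optimizer. All function names are illustrative.

```python
import numpy as np

# Hypothetical 2-qubit Hamiltonian H = sum_k c_k P_k (illustrative coefficients only).
PAULI = {
    "I": np.eye(2),
    "X": np.array([[0.0, 1.0], [1.0, 0.0]]),
    "Z": np.array([[1.0, 0.0], [0.0, -1.0]]),
}
TERMS = [(-0.8, "ZI"), (0.3, "IZ"), (0.5, "ZZ"), (0.2, "XX")]
CNOT = np.array([[1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 0, 1], [0, 0, 1, 0]], dtype=float)


def pauli_string(label: str) -> np.ndarray:
    """Tensor product of single-qubit Paulis; label[0] acts on qubit 0."""
    return np.kron(PAULI[label[0]], PAULI[label[1]])


def ry(theta: float) -> np.ndarray:
    """Single-qubit rotation exp(-i * theta * Y / 2), which is real-valued."""
    c, s = np.cos(theta / 2), np.sin(theta / 2)
    return np.array([[c, -s], [s, c]])


def ansatz_state(theta: np.ndarray) -> np.ndarray:
    """|psi(theta)>: Ry on qubit 0, CNOT(0 -> 1), then Ry on both qubits, starting from |00>."""
    psi = np.array([1.0, 0.0, 0.0, 0.0])
    psi = np.kron(ry(theta[0]), np.eye(2)) @ psi
    psi = CNOT @ psi
    return np.kron(ry(theta[1]), ry(theta[2])) @ psi


def energy(theta: np.ndarray) -> float:
    """<psi|H|psi> accumulated term by term, as a device would estimate each Pauli term."""
    psi = ansatz_state(theta)
    return float(sum(c * psi @ pauli_string(p) @ psi for c, p in TERMS))


def parameter_shift_gradient(theta: np.ndarray) -> np.ndarray:
    """Exact gradient for Ry gates: dE/dt_k = [E(t_k + pi/2) - E(t_k - pi/2)] / 2."""
    grad = np.zeros_like(theta)
    for k in range(len(theta)):
        shift = np.zeros_like(theta)
        shift[k] = np.pi / 2
        grad[k] = (energy(theta + shift) - energy(theta - shift)) / 2
    return grad


def run_vqe(theta0, learning_rate=0.2, max_steps=2000, tol=1e-12):
    """Classical outer loop: update parameters until the energy stops changing."""
    theta = np.array(theta0, dtype=float)
    e_old = energy(theta)
    for _ in range(max_steps):
        theta = theta - learning_rate * parameter_shift_gradient(theta)
        e_new = energy(theta)
        if abs(e_old - e_new) < tol:
            return e_new, theta
        e_old = e_new
    return e_old, theta


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    exact = np.linalg.eigvalsh(sum(c * pauli_string(p) for c, p in TERMS))[0]
    best = min(run_vqe(rng.uniform(0, 2 * np.pi, 3))[0] for _ in range(5))
    print(f"VQE energy {best:.8f}  exact {exact:.8f}")
```

Running it prints the best energy from five random starts next to the exact value, which for these coefficients is −(1 + √5)/2 ≈ −1.618. On hardware, each `energy` call would instead be a shot-based estimate, which adds statistical noise that the optimizer must tolerate.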

Recent Application: A 2022 experimental study demonstrated a scaled-up implementation of VQE with an optimized UCC ansatz on 12 qubits. The researchers achieved chemical accuracy for H₂ at all bond distances and for LiH at small bond distances by employing significant error suppression techniques, pushing the boundaries of experimental quantum computational chemistry [33].

Protocol 2: Quantum Phase Estimation (QPE) for Exact Energy Eigenvalues

QPE is a quantum algorithm that, in principle, can determine an energy eigenvalue of a Hamiltonian to a precision set by the number of ancilla qubits. However, it requires more robust, fault-tolerant quantum hardware [32].

Experimental Protocol:

- Initialization: The qubit register is initialized in a starting state |ψ⟩ that has a non-zero overlap with the target eigenstate (e.g., the ground state |E₀⟩). This state is a superposition of energy eigenstates: |ψ⟩ = Σᵢ cᵢ |Eᵢ⟩ [32].

- Ancilla Preparation: A register of ω ancilla qubits is prepared, each placed into a superposition state by applying a Hadamard gate [32].

- Controlled Unitary Evolution: A series of controlled-unitary operations is applied. The unitary is the time-evolution operator U = e^(-iHt), and ancilla qubit j controls U^(2^j). Each controlled operation kicks the phase of the evolution back onto its ancilla, so the energy information is encoded in the ancilla register [32].

- Inverse Quantum Fourier Transform (QFT†): An inverse QFT is applied to the ancilla qubits. This converts the phase information into a readable binary output on the ancilla register [32].

- Measurement: The ancilla qubits are measured in the computational basis. The output bitstring m gives the phase as a binary fraction, φ ≈ m/2^ω. Because U = e^(-iHt) multiplies |Eᵢ⟩ by e^(-iEᵢt) = e^(2πiφ), the energy follows as E = −2πφ/t. This is unambiguous for energies in the window (−2π/t, 0]; many texts use e^(+iHt) instead, which gives E = 2πφ/t. The main register collapses into the corresponding energy eigenstate |Eᵢ⟩ with probability |cᵢ|² [32].

Key Considerations: Effective state preparation is critical, because a randomly chosen initial state would have an exponentially small probability of collapsing to the desired ground state after measurement [32]. Furthermore, the number of ancilla qubits ω sets both the precision (about 2^(-ω) in φ) and the required coherent evolution time, which grows as 2^ω. This makes the algorithm demanding for current hardware [32].
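The ideal output statistics of QPE can be reproduced classically for a known spectrum, which helps when choosing t and ω. The sketch below has these assumptions and limitations:

- Gates are perfect, and U = e^(−iHt) as in the protocol.
- The eigenvalues and overlaps |cᵢ|² are supplied as inputs rather than computed for a specific molecule. The spectrum in the example is hypothetical, and the function names are illustrative.
- The inverse QFT is implemented with NumPy's normalized FFT, whose sign convention matches |k⟩ → (1/√N) Σₘ e^(−2πikm/N)|m⟩.

```python
import numpy as np


def qpe_outcome_distribution(phi: float, n_ancilla: int) -> np.ndarray:
    """Ideal readout probabilities for an eigenstate whose eigenvalue under U is exp(2*pi*i*phi)."""
    n_states = 2 ** n_ancilla
    k = np.arange(n_states)
    # After Hadamards and controlled-U^(2^j) gates the ancillas hold
    # (1/sqrt(N)) * sum_k exp(2*pi*i*k*phi) |k>.
    register = np.exp(2j * np.pi * k * phi) / np.sqrt(n_states)
    # Inverse QFT: |k> -> (1/sqrt(N)) sum_m exp(-2*pi*i*k*m/N) |m>, i.e. a normalized forward DFT.
    amplitudes = np.fft.fft(register, norm="ortho")
    return np.abs(amplitudes) ** 2


def qpe_probabilities(energies, weights, t: float, n_ancilla: int) -> np.ndarray:
    """Mix eigenstate outcomes with weights |c_i|^2; with U = exp(-iHt), phi_i = -E_i t / (2 pi) mod 1."""
    phases = np.mod(-np.asarray(energies, dtype=float) * t / (2 * np.pi), 1.0)
    probs = sum(w * qpe_outcome_distribution(ph, n_ancilla) for w, ph in zip(weights, phases))
    return probs / probs.sum()


def decode_energy(m: int, t: float, n_ancilla: int) -> float:
    """Map bitstring m to E = -2*pi*phi/t with phi = m / 2^n; valid for energies in (-2*pi/t, 0]."""
    phi = m / 2 ** n_ancilla
    return -2 * np.pi * phi / t


if __name__ == "__main__":
    # Hypothetical spectrum: E0 = -1.25 with |c0|^2 = 0.8, E1 = -0.5 with |c1|^2 = 0.2.
    t, n_anc = np.pi / 2, 4
    probs = qpe_probabilities([-1.25, -0.5], [0.8, 0.2], t, n_anc)
    rng = np.random.default_rng(7)
    shots = rng.choice(len(probs), size=1000, p=probs)
    counts = np.bincount(shots, minlength=len(probs))
    for m in np.flatnonzero(counts):
        print(f"m={m:2d}  E={decode_energy(m, t, n_anc):+.4f}  count={counts[m]}")
```

Here both phases are exact 4-bit fractions, so the samples land only on m = 5 (E = −1.25) and m = 2 (E = −0.5). The counts come out in roughly an 80:20 ratio that mirrors |c₀|² and |c₁|². When φ·2^ω is not an integer, the outcomes spread over neighboring bitstrings, but the closest ω-bit estimate still occurs with probability at least 4/π².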

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful computational chemistry research, particularly on emerging quantum hardware, relies on a suite of theoretical, algorithmic, and software "reagents." Table 2 details these essential components.

Table 2: Key Research Reagent Solutions in Quantum Computational Chemistry

| Item / Solution | Function / Purpose | Example / Note |
|---|---|---|
| Gaussian Basis Sets [32] | Functions modeled on atomic orbitals, used to expand molecular orbitals in calculations. | Standard in molecular electronic structure calculations on classical computers. |
| Plane Wave Basis Sets [32] | Periodic functions suited to periodic systems such as crystals and surfaces. | Common in materials science simulations; advances have improved algorithm efficiency for these sets [32]. |
| Jordan-Wigner Encoding [32] | Maps fermionic operators (electrons) to qubit operators (Pauli matrices). | Preserves fermionic anticommutation, and hence wavefunction antisymmetry; introduces non-local strings of Z operators. |
| Fermionic SWAP (FSWAP) Network [32] | A network of quantum gates that reorders fermionic modes on the qubits. | Mitigates the inefficiency of non-local interactions in mappings like Jordan-Wigner, reducing gate complexity. |
| Error Mitigation Techniques [33] | Software and algorithmic methods that reduce the impact of noise on results from current quantum processors. | Crucial for high-precision results on today's noisy hardware, as demonstrated in recent experiments [33]. |
| Unitary Coupled Cluster (UCC) Ansatz [33] | A chemistry-inspired, parameterized quantum circuit used as the trial wavefunction in VQE. | Unitary, so it maps directly onto quantum gates, and variational, unlike conventional coupled cluster; used in state-of-the-art experiments [33]. |
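The Jordan-Wigner and FSWAP entries can be checked numerically on a few modes. The sketch below assumes that an occupied spin-orbital corresponds to a qubit in |1⟩ and that qubit 0 is the leftmost tensor factor; other orderings and sign conventions are common, and the function names are hypothetical. It builds the Jordan-Wigner image of each annihilation operator: a string of Z operators followed by |0⟩⟨1|. It then verifies the canonical anticommutation relations. Finally, it confirms that conjugating by an FSWAP gate, a qubit SWAP with a −1 phase on |11⟩, exchanges two adjacent fermionic modes.

```python
from functools import reduce

import numpy as np

I2 = np.eye(2)
Z = np.diag([1.0, -1.0])
LOWER = np.array([[0.0, 1.0], [0.0, 0.0]])  # |0><1| = (X + iY)/2: empties an occupied qubit

# Fermionic SWAP on two adjacent modes: a qubit SWAP plus a -1 phase on |11>.
FSWAP = np.array([[1, 0, 0, 0], [0, 0, 1, 0], [0, 1, 0, 0], [0, 0, 0, -1]], dtype=float)


def jw_annihilation(j: int, n_modes: int) -> np.ndarray:
    """Jordan-Wigner image of a_j: Z on qubits 0..j-1, |0><1| on qubit j, identity after."""
    factors = [Z] * j + [LOWER] + [I2] * (n_modes - j - 1)
    return reduce(np.kron, factors)


def anticommutator(a: np.ndarray, b: np.ndarray) -> np.ndarray:
    return a @ b + b @ a


if __name__ == "__main__":
    n = 3
    dim = 2 ** n
    for i in range(n):
        for j in range(n):
            a_i, a_j = jw_annihilation(i, n), jw_annihilation(j, n)
            # {a_i, a_j^dagger} = delta_ij and {a_i, a_j} = 0 (matrices are real, so .T is the adjoint)
            assert np.allclose(anticommutator(a_i, a_j.T), np.eye(dim) * (i == j))
            assert np.allclose(anticommutator(a_i, a_j), 0)
    # Conjugating by FSWAP exchanges the two modes, including the Jordan-Wigner sign string.
    assert np.allclose(FSWAP @ jw_annihilation(0, 2) @ FSWAP, jw_annihilation(1, 2))
    print("anticommutation relations hold; FSWAP maps a_0 to a_1")
```

The Z string on qubits 0 to j−1 is the non-local part of the encoding. FSWAP networks reorder modes so that interacting orbitals become adjacent, which keeps those strings short.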

The workflow integrating these components is shown in Figure 2.

Figure 2: A generalized workflow for a quantum computational chemistry experiment, from problem definition to result, highlighting the role of key tools and reagents.