Beyond Hartree-Fock: A Practical Guide to Post-Hartree-Fock Methods for Accurate Molecular Calculations in Drug Discovery

Abstract

This article provides a comprehensive overview of post-Hartree-Fock (post-HF) methods, essential for achieving high-accuracy quantum chemical predictions in molecular calculations. Tailored for researchers and drug development professionals, it covers the foundational theory behind electron correlation, details key methodological approaches from MP2 to CCSD(T), and addresses practical challenges and optimization strategies for their application. The scope extends to troubleshooting computational bottlenecks, validating results against benchmarks, and exploring emerging trends, including the integration of machine learning and the prospective role of quantum computing, offering a vital resource for leveraging these powerful tools in biomedical research.

The Quest for Accuracy: Understanding Electron Correlation and the Limits of Hartree-Fock

The Hartree-Fock (HF) method serves as the foundational approximation in quantum chemistry for solving the electronic structure of molecules. However, its mean-field approach, where each electron experiences only the average electrostatic field of all other electrons, inherently neglects the instantaneous repulsive interactions between electrons [1] [2]. This neglected component of the electron-electron interaction is what defines the electron correlation problem. The correlation energy is formally defined as the difference between the exact, non-relativistic energy of a system and its Hartree-Fock energy: \( E_{\text{corr}} = E_{\text{exact}} - E_{\text{HF}} \) [3] [2] [4]. Although this energy difference typically constitutes a small fraction (around 1%) of the total electronic energy, its contribution is crucial for achieving chemical accuracy in computational predictions, as it directly influences molecular properties, reaction energetics, and the description of chemical bonding [3] [2].

The limitations of the HF method become particularly evident in specific chemical scenarios. For instance, the dissociation of the H₂ molecule is poorly described by restricted Hartree-Fock (RHF), which fails to correctly separate the molecule into two hydrogen atoms [5]. Similarly, the HF approximation often fails to predict the stability of anions where the binding mechanism relies on electron correlation effects, such as in the case of the C₂⁻ anion [5]. These qualitative and quantitative failures underscore the necessity for post-Hartree-Fock methods, which are designed to recover a significant portion of the missing correlation energy [1] [6].

Defining Electron Correlation

Physical Interpretation and the Coulomb Hole

Electron correlation describes the interaction between electrons in a quantum system, specifically how the motion of one electron is influenced by the instantaneous positions of all others [4]. In the HF approximation, the probability of finding two electrons at a given separation is effectively overestimated at small distances and underestimated at large distances because the model does not account for their mutual Coulombic repulsion [4]. The concept of the Coulomb hole visually represents this deficiency. It is defined as the difference in the intracule density distribution (the probability distribution of interelectronic distances) between a correlated wavefunction and the Hartree-Fock wavefunction [3]. This hole illustrates how correlated electrons "avoid" each other more effectively than the HF model predicts.

Dynamic and Static Correlation

Electron correlation is broadly categorized into two types, each with distinct physical origins and requiring different theoretical treatments [2] [4].

- Dynamic Correlation: This arises from the short-range, instantaneous repulsive interactions between electrons as they move. It is a ubiquitous effect present in all electronic systems and is characterized by rapid fluctuations in electron positions. Methods like Møller-Plesset Perturbation Theory and Coupled-Cluster theory are particularly effective at capturing dynamic correlation [6] [2].

- Static (Non-Dynamical) Correlation: This occurs in systems where the ground electronic state cannot be accurately described by a single Slater determinant. This is common in situations with (near-)degenerate electronic configurations, such as in molecules with stretched or broken bonds (e.g., during dissociation), diradicals, and many transition metal complexes [2] [5] [4]. Static correlation is a qualitative failure of the single-determinant picture and requires a multi-reference description from the outset, as provided by Multi-Configurational Self-Consistent Field (MCSCF) methods [6] [4].

Table 1: Key Characteristics of Electron Correlation Types

| Feature | Dynamic Correlation | Static Correlation |
|---|---|---|
| Physical Origin | Instantaneous electron-electron repulsion [2] | Near-degeneracy of electronic configurations [2] [4] |
| Primary Methods | MP2, CCSD(T), CISD [6] [2] | CASSCF, MCSCF [6] [4] |
| Typical Systems | Closed-shell molecules near equilibrium geometry [2] | Dissociating bonds, diradicals, transition metal complexes [2] [5] |

Post-Hartree-Fock methods comprise a suite of computational approaches developed to address the electron correlation problem. They can be broadly classified into several families based on their underlying theoretical principles.

Wavefunction-Based Correlation Methods

These methods build upon the HF wavefunction by introducing excitations into virtual orbitals.

- Configuration Interaction (CI): The CI method expands the total wavefunction as a linear combination of the HF reference determinant and excited-state determinants (e.g., single (S), double (D), etc., excitations) [6] [7]. The coefficients of this expansion are determined variationally. Full CI, which includes all possible excitations, provides the exact solution for a given basis set but is computationally intractable for all but the smallest systems [3] [2]. Truncated methods like CISD are more practical but lack size-consistency, meaning their accuracy deteriorates with increasing system size [6] [7].

- Coupled-Cluster (CC) Theory: This method uses an exponential ansatz for the wavefunction (e.g., \( \Psi_{\text{CC}} = e^{\hat{T}} \Phi_0 \)) to ensure size-consistency [7]. The cluster operator \( \hat{T} \) generates single, double, triple, etc., excitations. The CCSD method includes singles and doubles, while the CCSD(T) method, often called the "gold standard" of quantum chemistry, adds a perturbative treatment of triples. This method offers excellent accuracy for dynamic correlation but at a high computational cost [6] [7].

- Møller-Plesset Perturbation Theory (MPn): This is a class of non-variational methods that take the sum of Fock operators as the zeroth-order Hamiltonian and treat the difference between the exact electron-electron repulsion and its HF mean-field average as the perturbation [6]. The second-order correction, MP2, is one of the most widely used post-HF methods due to its favorable balance of cost and accuracy, capturing a significant portion of dynamic correlation [6] [2].

- Multi-Configurational Methods: For systems with strong static correlation, methods like the Multi-Configurational Self-Consistent Field (MCSCF) and its variant Complete Active Space SCF (CASSCF) are employed [2] [4]. These methods optimize both the orbital coefficients and the configuration expansion coefficients within a carefully selected active space of orbitals, providing a qualitatively correct zero-order wavefunction. Dynamical correlation can then be added on top via methods like CASPT2 (CAS with second-order perturbation theory) [6].

Table 2: Comparison of Key Post-Hartree-Fock Methods

| Method | Theoretical Principle | Handles Correlation | Key Advantage | Key Limitation |
|---|---|---|---|---|
| MP2 [6] | Perturbation Theory (2nd order) | Dynamic | Low cost, good scaling [6] | Fails for static correlation, not variational [6] |
| CISD [6] [7] | Variational (Single + Double excitations) | Primarily Dynamic | Simple concept, variational [7] | Not size-consistent [7] |
| CCSD(T) [6] [7] | Exponential cluster operator | Dynamic | High accuracy, size-consistent [7] | Very high computational cost [7] |
| CASSCF [2] [4] | Variational (Multi-reference) | Static | Corrects for near-degeneracy [4] | Choice of active space is non-trivial [2] |

Practical Protocols for Post-HF Calculations

Protocol 1: Assessing Dynamic Correlation with MP2

The MP2 method provides a cost-effective first assessment of electron correlation effects.

- Aim: To obtain an initial estimate of the dynamical correlation energy for a molecular system near its equilibrium geometry.

- Prerequisites: A converged Hartree-Fock calculation.

- Workflow:

- Geometry Optimization: Perform a geometry optimization at the HF level to find a stable molecular structure.

- HF Energy Calculation: Execute a single-point energy calculation at the HF level to obtain the reference energy, \( E_{\text{HF}} \).

- MP2 Correlation Energy Calculation: Using the HF orbitals and orbital energies, compute the second-order Møller-Plesset energy correction, \( E^{(2)} \).

- Total Energy Evaluation: The total MP2 energy is given by \( E_{\text{MP2}} = E_{\text{HF}} + E^{(2)} \).

- Interpretation: For a closed-shell RHF reference with a positive HOMO-LUMO gap, every term in \( E^{(2)} \) is non-positive, so the MP2 energy lies below the HF energy. The MP2 estimate of the correlation energy is \( E_{\text{corr}}(\text{MP2}) = E^{(2)} \). This protocol is suitable for systems where static correlation is not significant.

Protocol 2: Handling Strong Correlation with CASSCF

For molecules with known multi-reference character (e.g., diradicals, dissociating bonds), a CASSCF calculation is the appropriate starting point.

- Aim: To generate a qualitatively correct reference wavefunction that accounts for static correlation.

- Prerequisites: A foundational understanding of the system's electronic structure to define the active space.

- Workflow:

- Active Space Selection: This is the most critical step. Select a set of active electrons and active orbitals (e.g., 2 electrons in 2 orbitals for a stretched H₂ molecule, or the π-system in butadiene). This is denoted as (ne, no), e.g., (2,2).

- State Specification: Define the number of states and spin symmetry (e.g., singlet, triplet) to be averaged.

- Orbital Optimization: Perform the CASSCF calculation to self-consistently optimize both the molecular orbitals and the configuration coefficients within the active space.

- Analysis: Inspect the resulting natural orbitals and their occupation numbers. Natural occupation numbers far from 2 or 0 (i.e., clearly fractional values) indicate strong static correlation.

- Interpretation: The CASSCF energy includes static but not dynamic correlation. For quantitative results, a subsequent dynamic correlation method like CASPT2 or MRCI must be applied.
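The occupation-number check in the Analysis step reduces to diagonalizing the active-space one-particle density matrix. The sketch below assumes that matrix is already available as a NumPy array in an orthonormal active-orbital basis; the function name and the 0.02/1.98 cutoffs are illustrative assumptions, not values prescribed by any particular program.

```python
import numpy as np

def natural_occupations(dm_active, tol=0.02):
    """Diagonalize an active-space 1-RDM and flag fractionally occupied orbitals.

    dm_active: symmetric (n, n) spin-summed density matrix in an orthonormal
               active-orbital basis (occupations between 0 and 2).
    tol:       hypothetical cutoff; occupations within tol of 0 or 2 are
               treated as effectively empty or doubly occupied.
    """
    dm = np.asarray(dm_active, dtype=float)
    # eigh returns eigenvalues in ascending order; reverse so the most
    # strongly occupied natural orbitals come first
    occ, vecs = np.linalg.eigh(dm)
    occ, vecs = occ[::-1], vecs[:, ::-1]
    # Fractional occupations are the signature of static correlation
    strongly_correlated = (occ > tol) & (occ < 2.0 - tol)
    return occ, vecs, strongly_correlated
```

Orbitals flagged this way belong in the active space; orbitals with occupations near 2 or 0 are candidates for moving to the inactive or virtual space.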

The Scientist's Toolkit: Essential Computational Reagents

Table 3: Key Reagents and Parameters for Post-HF Calculations

| Item | Function/Description | Considerations for Selection |
|---|---|---|
| Basis Set | A set of mathematical functions (e.g., Gaussian-type orbitals) used to represent molecular orbitals [7]. | Larger basis sets (e.g., triple-ζ, quadruple-ζ) improve accuracy but drastically increase cost. Correlation-consistent basis sets (e.g., cc-pVXZ) are designed for post-HF methods [7]. |
| Active Space (for CASSCF) | The set of chemically relevant molecular orbitals and electrons included in the full CI expansion [2]. | Selection requires chemical insight. It should include orbitals involved in bond breaking/forming, frontier orbitals, and unpaired electrons. |
| Reference Wavefunction | The initial guess wavefunction, typically from a converged HF calculation. | For open-shell systems, an Unrestricted HF (UHF) reference can be used, but may suffer from spin contamination. |
| Integral Grid | Numerical quadrature grid used mainly for exchange-correlation integrals in Density Functional Theory (DFT). Conventional post-HF methods evaluate Gaussian integrals analytically, although some approximations (e.g., seminumerical exchange) also use grids. | A finer grid is needed for higher accuracy, at the cost of increased computation time. |

The Hartree-Fock method, while foundational, is fundamentally insufficient for quantitative quantum chemistry due to its neglect of electron correlation. This limitation manifests in erroneous predictions for bond dissociation energies, properties of anions, and systems with degenerate or near-degenerate electronic states. The development of post-Hartree-Fock methods—including Configuration Interaction, Coupled-Cluster theory, Møller-Plesset Perturbation Theory, and Multi-Configurational approaches—provides a systematic pathway for recovering the missing correlation energy. The choice of an appropriate post-HF method depends critically on the nature of the system and the type of correlation (dynamic or static) that dominates. While these advanced methods come with increased computational cost, they are indispensable tools for achieving the accuracy required for modern molecular design, including applications in drug development and materials science.

In quantum chemistry, the Hartree-Fock (HF) method provides a foundational wave function-based approach for computing molecular electronic structures. It approximates the many-electron wave function as a single Slater determinant, where each electron moves in the average field created by all other electrons [8]. However, this mean-field approximation neglects electron correlation, which refers to the instantaneous, repulsive interactions between electrons. The energy discrepancy arising from this simplification is termed the correlation energy, defined as the difference between the exact non-relativistic energy of a system and its Hartree-Fock energy: \( E_{\text{corr}} = E_{\text{exact}} - E_{\text{HF}} \) [2]. While this correlation energy is typically a small fraction of the total electronic energy, recovering it is crucial for achieving chemical accuracy in predicting molecular properties, reaction energies, and binding affinities [2].

Electron correlation is conventionally divided into two primary types: dynamic correlation and static correlation. Accurately describing both forms is essential for modeling complex chemical systems, particularly in drug discovery where precise energy calculations underpin the design of novel therapeutics [8]. Post-Hartree-Fock methods comprise a suite of computational strategies developed specifically to address these electron correlation effects, going beyond the limitations of the basic HF approximation [1].

Defining Dynamic and Static Correlation

Dynamic Correlation

Dynamic correlation arises from the instantaneous Coulombic repulsion between electrons, which causes them to avoid each other as they move through space [2]. It reflects the rapid fluctuations in electron positions and represents the cumulative effect of numerous, small electron-electron repulsions that are not captured by the HF mean-field potential. This type of correlation is pervasive in all molecular systems and is particularly significant in systems with weakly interacting electrons [2].

From a computational perspective, dynamic correlation is often described as "short-range" and can be efficiently treated using perturbation theory or coupled cluster methods [2]. For example, in the reaction coordinate of a simple bond formation or cleavage, dynamic correlation accounts for the energy correction needed to properly describe the electron repulsion in the vicinity of the equilibrium bond length.

Static Correlation

Static correlation, also known as non-dynamic or near-degeneracy correlation, occurs when multiple electronic configurations possess similar energies [2]. This phenomenon is prominent in systems with near-degenerate orbitals, such as molecules with stretched or breaking bonds, diradicals, and many transition metal complexes [2]. In these cases, a single Slater determinant (as used in HF theory) provides a qualitatively incorrect description of the electronic structure.

Static correlation is considered a "long-range" effect and requires multi-reference methods for its accurate treatment [2]. For instance, in the dissociation of a H₂ molecule, the HF method fails dramatically as the bond is stretched, whereas a multi-configurational approach that includes both covalent and ionic configurations can correctly describe the dissociation limit.
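The minimal-basis picture makes the H₂ case concrete. Writing the bonding and antibonding orbitals in terms of the atomic 1s functions \( a \) and \( b \), neglecting their overlap (valid at large separation), and keeping only the spatial part of the two-electron wavefunction:

```latex
\begin{aligned}
\sigma_g &= \tfrac{1}{\sqrt{2}}\,(a + b), \qquad \sigma_u = \tfrac{1}{\sqrt{2}}\,(a - b) \\
\sigma_g(1)\,\sigma_g(2) &= \tfrac{1}{2}\Big[\underbrace{a(1)b(2) + b(1)a(2)}_{\text{covalent: H + H}}
  + \underbrace{a(1)a(2) + b(1)b(2)}_{\text{ionic: H}^- + \text{H}^+}\Big] \\
\sigma_g(1)\,\sigma_g(2) - \sigma_u(1)\,\sigma_u(2) &= a(1)b(2) + b(1)a(2)
\end{aligned}
```

Because the RHF determinant fixes the covalent and ionic coefficients to be equal at every bond length, it dissociates to an unphysical mixture of H + H and H⁺ + H⁻. The two-configuration wavefunction \( c_g\,\sigma_g^2 - c_u\,\sigma_u^2 \), which is the form a CAS(2,2) ground-state calculation takes for H₂, can reach \( c_g = c_u \) at large separation, where the ionic terms cancel and only the covalent H + H limit remains.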

Table 1: Comparative Analysis of Dynamic and Static Correlation

| Feature | Dynamic Correlation | Static Correlation |
|---|---|---|
| Physical Origin | Instantaneous Coulomb repulsion between electrons [2] | Near-degeneracy of multiple electronic configurations [2] |
| Character | Short-range, local electron avoidance [2] | Long-range, qualitative electronic structure effect [2] |
| Dominant In | Systems with weakly interacting electrons near equilibrium geometry [2] | Stretched bonds, diradicals, transition metal complexes [2] |
| Primary Treatment Methods | Møller-Plesset Perturbation Theory (MP2, MP4), Coupled Cluster (CCSD, CCSD(T)) [9] [1] | Multi-Configurational SCF (MCSCF), Complete Active Space SCF (CASSCF) [2] |
| Impact on Wavefunction | Small corrections to a single-reference wavefunction | Requires wavefunction with multiple dominant configurations |

Methodological Approaches and Protocols

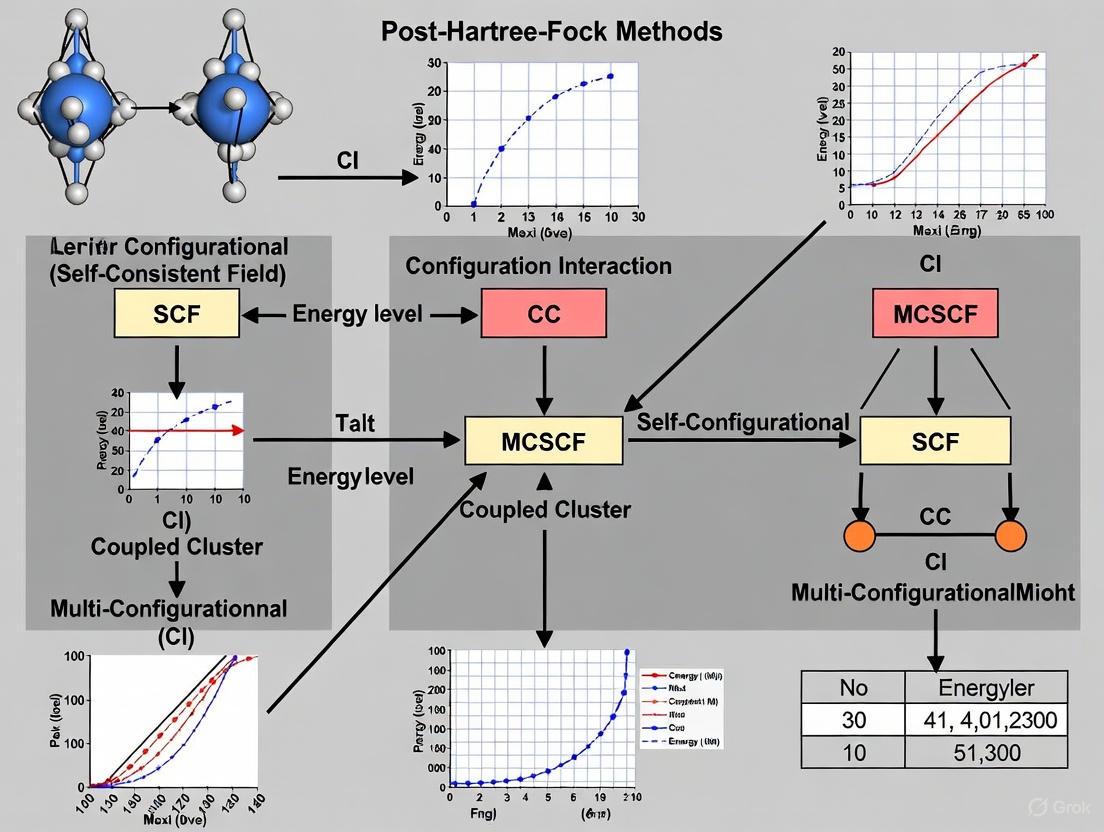

The development of post-Hartree-Fock methods is largely driven by the need to treat dynamic and static correlation with varying degrees of accuracy and computational efficiency. The relationship between these methods can be visualized as a strategic decision tree.

Diagram Title: Method Selection Workflow for Electron Correlation

Protocol 1: Single-Reference Methods for Dynamic Correlation

This protocol details the application of single-reference methods, which are appropriate when dynamic correlation dominates and a single HF configuration provides a qualitatively correct description of the system [2].

A. Møller-Plesset Perturbation Theory (MP2)

Principle: MP2 is a second-order perturbation theory that treats the electron correlation as a small perturbation to the HF Hamiltonian. It is one of the most computationally efficient post-HF methods for capturing dynamic correlation [10] [1].

Procedure:

- Converge a Restricted HF (RHF) calculation: Perform a standard HF calculation to obtain a set of canonical molecular orbitals and orbital energies. Check that the converged solution is stable, i.e., a true minimum rather than a saddle point with respect to orbital rotations.

- Transform two-electron integrals: Transform the atomic orbital basis two-electron integrals (\( g_{pqrs} \)) into the molecular orbital basis. This step is often the computational bottleneck.

- Compute the MP2 correlation energy: Calculate the second-order energy correction using the standard spin-orbital expression \[ E^{(2)} = \frac{1}{4} \sum_{ijab} \frac{ |\langle ij \| ab \rangle|^2 }{ \epsilon_i + \epsilon_j - \epsilon_a - \epsilon_b } \] where \( i,j \) denote occupied spin orbitals, \( a,b \) denote virtual spin orbitals, \( \langle ij \| ab \rangle = \langle ij | ab \rangle - \langle ij | ba \rangle \) are antisymmetrized two-electron integrals in the molecular orbital basis, and \( \epsilon \) are the HF orbital energies [1].

- Calculate total energy: The total MP2 energy is given by \( E_{\text{MP2}} = E_{\text{HF}} + E^{(2)} \).
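The spin-orbital MP2 expression translates almost directly into array code. The sketch below assumes the antisymmetrized integrals \( \langle ij \| ab \rangle \) have already been transformed to the MO basis and stored as a NumPy array indexed `[i, j, a, b]`; the function name and array layout are illustrative choices, not the interface of any specific package.

```python
import numpy as np

def mp2_correlation_energy(eps_occ, eps_vir, oovv):
    """Spin-orbital MP2 correlation energy E^(2).

    eps_occ: (n_occ,) occupied spin-orbital energies
    eps_vir: (n_vir,) virtual spin-orbital energies
    oovv:    (n_occ, n_occ, n_vir, n_vir) antisymmetrized integrals <ij||ab>
    """
    eps_occ = np.asarray(eps_occ, dtype=float)
    eps_vir = np.asarray(eps_vir, dtype=float)
    # D_ijab = e_i + e_j - e_a - e_b, built by broadcasting over four indices
    denom = (eps_occ[:, None, None, None] + eps_occ[None, :, None, None]
             - eps_vir[None, None, :, None] - eps_vir[None, None, None, :])
    # Each term is |<ij||ab>|^2 / D_ijab; the 1/4 removes the double counting
    # from the unrestricted sums over the pairs (i, j) and (a, b)
    return 0.25 * np.sum(np.abs(oovv) ** 2 / denom)
```

Because every denominator is negative for a reference with a positive HOMO-LUMO gap and every numerator is non-negative, the returned value is never positive, consistent with the MP2 energy lying below the HF energy.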

B. Coupled Cluster Singles and Doubles (CCSD)

Principle: The Coupled Cluster method uses an exponential wavefunction ansatz \( e^{\hat{T}} | \Phi_{\text{HF}} \rangle \) to model electron correlation. The cluster operator \( \hat{T} = \hat{T}_1 + \hat{T}_2 + \ldots \) generates all possible excitations from the reference HF determinant. CCSD includes all single (\( \hat{T}_1 \)) and double (\( \hat{T}_2 \)) excitations [9] [1].

Procedure:

- Converge a Restricted HF calculation: As with MP2, start with a well-converged RHF wavefunction.

- Set up the coupled cluster equations: The equations are derived by projecting the Schrödinger equation with the exponential ansatz onto the reference and all singly and doubly excited determinants.

- Solve amplitude equations iteratively: Solve the following non-linear equations for the \( t \) amplitudes (excitation strengths) iteratively until self-consistency is achieved: \[ \langle \Phi_{i}^{a} | \bar{H} | \Phi_{\text{HF}} \rangle = 0 \quad \text{and} \quad \langle \Phi_{ij}^{ab} | \bar{H} | \Phi_{\text{HF}} \rangle = 0 \] where \( \bar{H} = e^{-\hat{T}} H e^{\hat{T}} \) is the similarity-transformed Hamiltonian.

- Compute the CCSD energy: The correlation energy is calculated as \( E_{\text{corr}} = \langle \Phi_{\text{HF}} | \bar{H} | \Phi_{\text{HF}} \rangle - E_{\text{HF}} \).
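For a canonical HF reference the occupied-virtual Fock block vanishes, and the energy expression above reduces in spin orbitals to \( E_{\text{corr}} = \tfrac{1}{4}\sum_{ijab} \langle ij \| ab \rangle t_{ij}^{ab} + \tfrac{1}{2}\sum_{ijab} \langle ij \| ab \rangle t_i^a t_j^b \). The sketch below evaluates that expression for given amplitudes and builds the first-order (MP2) amplitudes commonly used as the starting guess for the iterations. It does not solve the amplitude equations themselves; the function names and array layouts are hypothetical.

```python
import numpy as np

def ccsd_correlation_energy(oovv, t1, t2):
    """CC correlation energy from amplitudes (spin orbitals, canonical HF reference).

    oovv: (o, o, v, v) antisymmetrized integrals <ij||ab>
    t1:   (o, v) single-excitation amplitudes t_i^a
    t2:   (o, o, v, v) double-excitation amplitudes t_ij^ab
    """
    e_doubles = 0.25 * np.einsum("ijab,ijab->", oovv, t2)
    e_singles = 0.5 * np.einsum("ijab,ia,jb->", oovv, t1, t1)
    return e_doubles + e_singles

def mp2_amplitudes(eps_occ, eps_vir, oovv):
    """First-order guess: t1 = 0 and t2_ijab = <ij||ab> / (e_i + e_j - e_a - e_b)."""
    eps_occ = np.asarray(eps_occ, dtype=float)
    eps_vir = np.asarray(eps_vir, dtype=float)
    denom = (eps_occ[:, None, None, None] + eps_occ[None, :, None, None]
             - eps_vir[None, None, :, None] - eps_vir[None, None, None, :])
    t1 = np.zeros((eps_occ.size, eps_vir.size))
    t2 = oovv / denom
    return t1, t2
```

With the first-order guess, the energy expression returns exactly the MP2 correlation energy, which is one reason MP2 amplitudes are a natural first iterate; converged CCSD amplitudes then refine both the singles and doubles contributions.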

Protocol 2: Multi-Reference Methods for Static Correlation

This protocol is selected when significant static correlation is present, such as in bond-breaking reactions or systems with open-shell transition metals [2].

A. Complete Active Space Self-Consistent Field (CASSCF)

Principle: CASSCF is a specific type of Multi-Configurational SCF (MCSCF) method. It divides molecular orbitals into an inactive space (doubly occupied), an active space (with variable occupancy), and a virtual space (unoccupied). A Full Configuration Interaction (FCI) calculation is performed within the active space, while the orbitals are optimized simultaneously [2].

Procedure:

- Define the Active Space: This is the most critical step. Select \( N \) electrons in \( M \) orbitals to form the active space, denoted as CAS(\( N, M \)). This space must include all orbitals essential for the chemical process (e.g., bonding/antibonding orbital pairs for bond breaking, d-orbitals in transition metals).

- Perform an initial guess: Obtain initial orbitals, often from an HF calculation.

- Solve the CI problem: Within the active space, construct and diagonalize the CI matrix to obtain the configuration interaction coefficients and the energy for the current set of orbitals.

- Optimize the orbitals: Update the molecular orbitals to minimize the total energy. This step combines aspects of HF orbital optimization with the multi-configurational wavefunction.

- Iterate to convergence: Repeat the CI and orbital-optimization steps until both the CI coefficients and the orbitals are self-consistent, yielding the final CASSCF energy and wavefunction.
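The cost of the CI step is governed by the number of determinants in the active-space full CI, which grows factorially with the active space. A minimal sketch of that count, assuming determinants with a fixed \( M_S = (n_\alpha - n_\beta)/2 \); the function name is hypothetical, and spin-adapted (CSF) or symmetry-restricted expansions used by real programs are smaller.

```python
from math import comb

def cas_determinant_count(n_electrons, n_orbitals, spin_multiplicity=1):
    """Number of Slater determinants in a CAS(N, M) full-CI expansion.

    Distributes n_alpha and n_beta electrons independently over n_orbitals
    active orbitals, with n_alpha - n_beta = spin_multiplicity - 1.
    """
    n_unpaired = spin_multiplicity - 1
    if n_unpaired < 0 or n_unpaired > n_electrons or (n_electrons - n_unpaired) % 2:
        raise ValueError("electron count and multiplicity are inconsistent")
    n_alpha = (n_electrons + n_unpaired) // 2
    n_beta = (n_electrons - n_unpaired) // 2
    if n_alpha > n_orbitals:
        raise ValueError("too many electrons for the active orbitals")
    return comb(n_orbitals, n_alpha) * comb(n_orbitals, n_beta)
```

For a singlet, CAS(2,2) gives 4 determinants, CAS(14,14) gives 11,778,624, and CAS(16,16) gives 165,636,900, which illustrates why conventional CASSCF is limited to modest active spaces.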

B. Multi-Reference Perturbation Theory (e.g., CASPT2)

Principle: A CASSCF calculation correctly treats static correlation but often lacks dynamic correlation. CASPT2 adds a second-order perturbation theory correction on top of the CASSCF reference wavefunction to account for dynamic correlation [2].

Procedure:

- Perform a CASSCF calculation: Follow Protocol 2A to obtain a converged multi-reference wavefunction.

- Use the CASSCF wavefunction as the reference: The zeroth-order Hamiltonian is built using the CASSCF orbitals and energies.

- Compute the second-order energy correction: The first-order wavefunction and second-order energy are calculated by considering excitations out of the entire CASSCF reference space. This step is computationally demanding but captures the bulk of the dynamic correlation missing in CASSCF.

- Calculate the total energy: The final total energy is \( E_{\text{CASPT2}} = E_{\text{CASSCF}} + E^{(2)} \).

Table 2: Key Reagent Solutions for Post-Hartree-Fock Calculations

| Research Reagent / Resource | Function and Description |
|---|---|
| Gaussian Basis Sets (e.g., cc-pVDZ, cc-pVTZ) | Pre-optimized sets of atom-centered Gaussian functions used to expand molecular orbitals. Larger "triple-zeta" basis sets offer better resolution but increase cost [10]. |
| Active Space (for CASSCF) | A carefully selected set of molecular orbitals and electrons in which a full configuration interaction is performed. It is the central "reagent" for treating static correlation [2]. |
| Pseudopotentials / Effective Core Potentials (ECPs) | Replace the core electrons of heavy atoms with an effective potential, reducing computational cost while maintaining accuracy for valence electron effects. |
| Two-Electron Integrals (\( g_{pqrs} \)) | The computational representation of electron-electron repulsion, calculated over quartets of basis functions. They are fundamental inputs for all correlated methods [9] [10]. |
| Quantum Chemistry Software (e.g., Gaussian, Q-Chem, ORCA, Molpro) | Software packages that implement the complex algorithms for SCF, integral transformation, and post-HF solvers, providing user-friendly interfaces [8]. |

Application in Drug Discovery

The accurate treatment of electron correlation is not merely an academic exercise but has profound implications in drug discovery, where predicting molecular properties and interactions with high fidelity is essential [8].

QM/MM Simulations: In enzyme catalysis, the reactive site (e.g., a covalent inhibitor forming a bond with a catalytic residue) often exhibits strong static correlation, necessitating a multi-reference QM method (like CASSCF) for the active site. This QM region is embedded within a larger protein environment treated with molecular mechanics (MM), balancing accuracy and computational feasibility [8].

Binding Affinity Prediction: The strength of non-covalent interactions between a drug candidate and its protein target, such as hydrogen bonding, π-π stacking, and dispersion forces, is heavily influenced by dynamic correlation. Methods like MP2 or CCSD(T) within a QM/MM framework can provide superior accuracy compared to HF or pure MM force fields, especially for challenging ("undruggable") targets such as metalloenzymes or binding sites with unusual bonding [8].

Reaction Mechanism Elucidation: For covalent inhibitors, the process of bond formation and breaking along the reaction pathway involves a transition from static to dynamic correlation dominance. Multi-reference methods are critical for correctly modeling the transition state and reaction energy barrier, guiding the rational design of more effective and selective inhibitors [8].

Post-Hartree-Fock (post-HF) methods encompass a suite of computational quantum chemistry approaches designed to overcome the central limitation of the Hartree-Fock (HF) approximation: the neglect of electron correlation. The HF method, while providing a qualitative description of electronic structure, treats electron-electron interactions only in an average sense and fails to capture the correlated motion of electrons. This missing electron correlation energy is crucial for quantitative predictions of molecular properties, reaction energies, and spectroscopic phenomena [11] [6]. In practical terms, HF typically recovers about 99% of the exact total energy, but the missing correlation component, though small in relative terms, is chemically significant [7]. Post-HF methods systematically recover this correlation energy, bridging the gap between mean-field approximations and the exact solution of the Schrödinger equation within a given basis set.

The importance of these methods extends across computational chemistry, physics, and materials science, with growing applications in drug development for accurately modeling molecular interactions, excitation energies, and properties of excited states. This overview details the theoretical foundations, methodological categories, practical protocols, and performance characteristics of mainstream post-HF approaches, providing researchers with a framework for selecting and implementing these methods.

Theoretical Foundations of Electron Correlation

Electron correlation is conventionally separated into two distinct types: static (non-dynamical) and dynamic correlation. Static correlation arises in systems with (near-)degenerate electronic configurations, such as those encountered during bond dissociation or in transition metal complexes. A single Slater determinant provides a qualitatively inadequate description in these cases. Dynamic correlation, conversely, accounts for the instantaneous, short-range repulsion between electrons that is averaged in the HF picture. This separation, while somewhat artificial, informs the development and application of different post-HF strategies [6].

The electron correlation energy is formally defined as the difference between the exact, non-relativistic energy of a system and its HF energy calculated with a complete basis set [11]. Accurately capturing this energy presents a significant challenge because its magnitude is small relative to the total energy, yet its contribution to chemically relevant energy differences is substantial.

Categories of Post-Hartree-Fock Methods

Post-HF methods can be broadly classified into several categories based on their theoretical approach, each with distinct strengths and limitations suited to particular chemical problems. Table 1 provides a comparative summary of these methods.

Table 1: Overview of Major Post-Hartree-Fock Methods

| Method | Theoretical Approach | Handled Correlation Type | Key Strength | Key Limitation | Computational Scaling |
|---|---|---|---|---|---|
| MPn | Many-Body Perturbation Theory | Primarily Dynamic | Size-consistent; systematic improvement | Divergence in strongly correlated systems; not variational | MP2: O(N⁵), MP3: O(N⁶), MP4: O(N⁷) |
| CI | Configuration Interaction | Static & Dynamic (depending on truncation) | Variational; conceptually simple | Not size-extensive if truncated; exponential cost | CISD: O(N⁶), FCI: Factorial |
| CC | Coupled-Cluster | Primarily Dynamic (single-reference CC degrades for strong static correlation) | Size-extensive; gold standard for small molecules | Non-variational; high computational cost | CCSD: O(N⁶), CCSD(T): O(N⁷) |
| CASSCF | Multi-Reference Wavefunction | Primarily Static | Handles strong correlation; chemically intuitive active space | Depends on active space choice; misses dynamic correlation | Factorial with active space size |
| CASPT2/NEVPT2 | Multi-Reference Perturbation Theory | Static & Dynamic | Adds dynamic correlation to CASSCF | Costly; depends on CASSCF reference | High (typically O(N⁵) or worse) |
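The formal scaling exponents in the last column translate directly into cost multipliers: for an \( O(N^p) \) method, enlarging the system by a factor \( r \) multiplies the formal cost by \( r^p \). The short sketch below (the helper name is illustrative) makes the comparison explicit; it ignores prefactors, integral screening, and local-correlation approximations, all of which change real timings considerably.

```python
def relative_cost(scaling_exponent, size_ratio):
    """Formal cost multiplier for an O(N^p) method when N grows by size_ratio.

    Only the leading power law is modeled; prefactors and screening are ignored.
    """
    return size_ratio ** scaling_exponent

# Formal exponents from the table above
formal_scaling = {"MP2": 5, "CCSD": 6, "CCSD(T)": 7}
for method, p in formal_scaling.items():
    print(f"{method}: doubling N multiplies formal cost by {relative_cost(p, 2.0):.0f}")
```

Doubling \( N \) thus raises the formal cost 32-fold for MP2, 64-fold for CCSD, and 128-fold for CCSD(T).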

Perturbation Theory: Møller-Plesset (MP) Methods

Møller-Plesset perturbation theory is a cornerstone of post-HF methods, treating electron correlation as a perturbation to the HF Hamiltonian. The second-order correction, MP2, is the most widely used due to its favorable balance of cost and accuracy. MP2 captures a substantial portion of the dynamical correlation energy and is size-consistent. However, MP methods can exhibit divergent behavior for systems with significant static correlation, such as open-shell transition metal complexes, and are not variational [6]. Because of its relatively low computational cost, MP2 is also often used as the test case when developing more efficient protocols [11].

Wavefunction Expansion: Configuration Interaction (CI)

The Configuration Interaction (CI) method constructs the many-electron wavefunction as a linear combination of Slater determinants, generated by exciting electrons from occupied HF orbitals to virtual orbitals. Full CI (FCI), which includes all possible excitations, provides the exact solution within the chosen basis set but is computationally feasible only for the smallest systems [6] [7]. Truncated CI methods, such as CISD (including single and double excitations), offer a practical compromise. A major drawback of truncated CI is its lack of size-extensivity, meaning the energy does not scale correctly with system size, leading to non-cancellation of errors in energy difference calculations [7].
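A classic toy model makes the size-extensivity failure concrete: \( n \) identical, non-interacting two-electron fragments (for example, widely separated H₂ molecules in a minimal basis), each with a single doubly excited determinant. CID couples the reference only to the \( n \) determinants with one fragment excited, because simultaneous excitations on two fragments are quadruples and are truncated away. The sketch below compares the lowest CID eigenvalue with the exact, additive result; `delta` (energy of a doubly excited determinant relative to HF) and `coupling` (its Hamiltonian matrix element with the reference) are hypothetical model parameters, not values for a real molecule.

```python
import numpy as np

def cid_energy(n_fragments, delta, coupling):
    """Lowest CID eigenvalue (relative to E_HF) for n identical, non-interacting fragments."""
    n = n_fragments
    h = np.zeros((n + 1, n + 1))
    # Reference couples to each single-fragment double excitation
    h[0, 1:] = coupling
    h[1:, 0] = coupling
    # Doubles on different fragments differ by four spin orbitals, so they do not couple
    idx = np.arange(1, n + 1)
    h[idx, idx] = delta
    return np.linalg.eigvalsh(h)[0]

def exact_energy(n_fragments, delta, coupling):
    """Full-CI correlation energy, which is additive over non-interacting fragments."""
    e_one = 0.5 * (delta - np.sqrt(delta ** 2 + 4.0 * coupling ** 2))
    return n_fragments * e_one
```

Solving the \( (n+1) \)-dimensional problem analytically gives \( E_{\text{CID}} = \tfrac{1}{2}\big(\Delta - \sqrt{\Delta^2 + 4nK^2}\big) \) with \( \Delta \) = `delta` and \( K \) = `coupling`. This grows like \( \sqrt{n} \) for large \( n \), whereas the exact correlation energy grows linearly in \( n \); the two agree only for \( n = 1 \).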

Exponential Ansatz: Coupled-Cluster (CC) Methods

Coupled-Cluster theory employs an exponential wavefunction ansatz (e.g., \( \Psi_{\text{CC}} = e^{\hat{T}} \Phi_0 \)) and is widely regarded as the most accurate general-purpose method for single-reference systems. The CCSD method (including single and double excitations) is size-extensive. The inclusion of a perturbative treatment of triple excitations, CCSD(T), often referred to as the "gold standard," delivers exceptional accuracy for thermochemical properties [11] [7]. The primary limitation of CC methods is their high computational cost, which restricts their application to systems of modest size.

Multi-Reference Methods: CASSCF and Beyond

For systems with strong static correlation, multi-reference methods are essential. The Complete Active Space Self-Consistent Field (CASSCF) method performs a full CI within a carefully selected set of active orbitals, which typically include the orbitals directly involved in the chemical process of interest. CASSCF provides a qualitatively correct wavefunction but recovers only a small fraction of the dynamic correlation energy [12] [6]. To address this, multi-reference perturbation theories like CASPT2 and NEVPT2 are used to add dynamic correlation on top of the CASSCF reference. These methods are powerful but require significant expertise in selecting an appropriate active space [12] [6].
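The full CI inside the active space grows combinatorially with its size, which is one reason active-space selection demands care. The short sketch below counts determinants for a CAS($n$e, $m$o) space with $N_\alpha$ and $N_\beta$ electrons, using $\binom{m}{N_\alpha}\binom{m}{N_\beta}$. It ignores spin and point-group symmetry, which real programs exploit to shrink the space.

```python
from math import comb
from typing import Optional


def cas_determinants(n_elec: int, n_orb: int, two_ms: Optional[int] = None) -> int:
    """Number of Slater determinants in a CAS(n_elec, n_orb) space.

    two_ms = N_alpha - N_beta; defaults to the lowest possible value (0 or 1).
    Spin and point-group symmetry are not exploited.
    """
    if two_ms is None:
        two_ms = n_elec % 2
    if (n_elec + two_ms) % 2:
        raise ValueError("n_elec and two_ms must have the same parity")
    n_alpha = (n_elec + two_ms) // 2
    n_beta = n_elec - n_alpha
    return comb(n_orb, n_alpha) * comb(n_orb, n_beta)


print("CAS(6e,4o), M_S = 0:", cas_determinants(6, 4))  # the NV- active space discussed in this article
for n in (8, 12, 16, 18):
    print(f"CAS({n}e,{n}o): {cas_determinants(n, n):,} determinants")
```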

Quantitative Performance of Post-HF Methods

The performance of post-HF methods can be quantified by their accuracy in predicting electron correlation energies. Recent research has shown that quantities from the information-theoretic approach (ITA), derived from the electron density, can predict post-HF correlation energies through linear regression [LR(ITA)]. This reaches chemical accuracy for many systems at roughly the cost of an HF calculation. Table 2 summarizes the performance of LR(ITA) for various system types.

Table 2: Accuracy of LR(ITA) in Predicting MP2 Electron Correlation Energies for Various Systems [11]

| System Type | Example Systems | Best-Performing ITA Quantities | Linear Correlation (R²) | Root Mean Square Deviation (RMSD, mH = millihartree) |
|---|---|---|---|---|
| Isomers | 24 octane isomers | Fisher information (I_F) | > 0.990 | < 2.0 mH |
| Linear polymers | Polyyne, polyene | Shannon entropy (S_S), Fisher information (I_F) | ~1.000 | ~1.5-4.0 mH |
| Molecular clusters | (H₂O)ₙ, (CO₂)ₙ | Onicescu information energy (E_2, E_3) | 1.000 | 2.1-9.3 mH |
| Metallic/covalent clusters | Beₙ, Mgₙ, Sₙ | Multiple ITA quantities | > 0.990 | ~17-42 mH |
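Chemical accuracy is usually quoted as 1 kcal/mol, so it helps to convert the millihartree RMSDs in the table above. The sketch below is simple unit arithmetic, using 1 hartree ≈ 627.5095 kcal/mol (so 1 kcal/mol ≈ 1.59 mH).

```python
HARTREE_TO_KCAL_PER_MOL = 627.5095  # 1 hartree in kcal/mol


def mh_to_kcal(millihartree: float) -> float:
    """Convert millihartree to kcal/mol."""
    return millihartree * 1e-3 * HARTREE_TO_KCAL_PER_MOL


# RMSD values from the table above (ranges split into their end points)
for system, rmsd_mh in [("isomers (upper bound)", 2.0),
                        ("linear polymers", 1.5), ("linear polymers", 4.0),
                        ("molecular clusters", 2.1), ("molecular clusters", 9.3),
                        ("metallic/covalent clusters", 17.0),
                        ("metallic/covalent clusters", 42.0)]:
    kcal = mh_to_kcal(rmsd_mh)
    flag = "within" if kcal <= 1.0 else "above"
    print(f"{system:28s} {rmsd_mh:5.1f} mH = {kcal:6.2f} kcal/mol ({flag} 1 kcal/mol)")
```

On this absolute measure, only the lowest polymer RMSD (1.5 mH ≈ 0.94 kcal/mol) falls below 1 kcal/mol.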

Experimental Protocols

Protocol 1: Accurate Modeling of a Solid-State Color Center (NV⁻ in Diamond)

This protocol outlines a WFT-based approach for studying point defects with strong multi-determinant character, as demonstrated for the NV⁻ center in diamond [12].

Research Reagent Solutions

- Software: Quantum chemistry package capable of CASSCF/NEVPT2 (e.g., MOLPRO, ORCA, MOLFDIR).

- Cluster Model: A finite diamond lattice cluster, passivated by hydrogen atoms. The size must be determined via convergence tests.

- Active Space: For NV⁻, a CASSCF(6e,4o) is used, comprising four defect orbitals (a₁, a₁*, eₓ, e_y) originating from the dangling bonds of the three carbon atoms and the nitrogen atom adjacent to the vacancy.

- Basis Set: A standard Gaussian-type orbital basis set (e.g., cc-pVDZ, 6-311++G(d,p)).

Step-by-Step Workflow

Cluster Construction and Partial Optimization:

- Generate a hydrogen-terminated nanodiamond cluster of increasing size.

- Optimize atomic positions only in the immediate vicinity of the vacancy while keeping the outer shells fixed at the perfect diamond geometry to reflect the stiffness of the crystal. This is critical for convergence.

State-Specific CASSCF Geometry Optimization:

- For each electronic state of interest (e.g., the ground triplet state $^3A_2$), perform a state-specific (SS) CASSCF geometry optimization. This optimizes the molecular orbitals and CI coefficients for a single state, yielding an accurate equilibrium geometry for that specific state.

State-Averaged CASSCF Single-Point Calculation:

- At the optimized geometry, perform a state-averaged (SA) CASSCF calculation. This involves requesting multiple roots (e.g., five triplet and eight singlet roots for NV⁻) with equal weights to obtain a consistent set of orbitals for describing multiple electronic states.

Dynamic Correlation Correction with NEVPT2:

- Using the SA-CASSCF wavefunction as a reference, perform a NEVPT2 calculation. This step recovers the dynamic correlation from excitations involving orbitals outside the active space (inactive and virtual orbitals), which CASSCF omits and which is crucial for quantitative accuracy.

Property Calculation:

- Calculate the properties of interest, such as excitation energies, zero-phonon lines (ZPLs), and fine structure, from the final CASSCF-NEVPT2 energies and wavefunctions.
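The bookkeeping in the last two steps is simple arithmetic. The sketch below builds equal state-averaging weights for the 5 triplet + 8 singlet roots and converts root energies to vertical excitation energies, using 1 hartree ≈ 27.211386 eV. The root energies are purely hypothetical placeholders, not NV⁻ results. A zero-phonon line would instead be an adiabatic difference between energies evaluated at each state's own SS-CASSCF geometry.

```python
from typing import List

HARTREE_TO_EV = 27.211386  # 1 hartree in eV


def sa_weights(n_roots: int) -> List[float]:
    """Equal weights for a state-averaged CASSCF over n_roots states."""
    return [1.0 / n_roots] * n_roots


def excitation_energies_ev(root_energies: List[float]) -> List[float]:
    """Vertical excitation energies (eV) relative to the lowest root."""
    e_min = min(root_energies)
    return [(e - e_min) * HARTREE_TO_EV for e in root_energies]


weights = sa_weights(5 + 8)  # five triplet and eight singlet roots
print(f"{len(weights)} roots, weight {weights[0]:.4f} each, sum {sum(weights):.6f}")

# Hypothetical NEVPT2 total energies (hartree) for three roots: placeholders only
roots = [-1000.000, -999.930, -999.958]
print([round(x, 3) for x in excitation_energies_ev(roots)])
```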

The following workflow diagram illustrates this multi-step protocol:

Protocol 2: Predicting Correlation Energy via Information-Theoretic Approach (ITA)

This protocol describes the use of density-derived ITA quantities to predict post-HF correlation energies, so that expensive post-HF computations are needed only for the training set [11].

Research Reagent Solutions

- Software: A quantum chemistry program to perform HF calculations (e.g., NWChem, Gaussian).

- ITA Scripts: Code to compute ITA quantities (Shannon entropy, Fisher information, etc.) from the HF electron density.

- Reference Data: A small set of high-level (e.g., MP2, CCSD(T)) calculations on a training set of molecules to establish the linear regression model.

Step-by-Step Workflow

Training Set Calculation:

- For a set of representative molecules (e.g., octane isomers, water clusters), perform a standard HF calculation.

- For the same set, perform a high-level post-HF calculation (e.g., MP2) to obtain the reference electron correlation energy.

ITA Quantity Computation:

- From the HF electron density of each molecule, compute a set of 11 ITA quantities, such as Shannon entropy ($S_S$), Fisher information ($I_F$), and Onicescu information energy ($E_2$, $E_3$).

Linear Regression Model Fitting (LR(ITA)):

- For each ITA quantity, perform a linear regression against the reference post-HF correlation energies to obtain a scaling equation (slope and intercept) for that quantity.

Prediction for New Systems:

- For a new, unknown system, perform only an HF calculation.

- Compute the ITA quantities from the HF density.

- Use the pre-established linear regression equations to predict the post-HF correlation energy.
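The fitting and prediction steps reduce to a one-variable least-squares fit per ITA quantity. Below is a minimal pure-Python sketch. The training values are hypothetical placeholders, not data from [11], and the name `fit_line` is illustrative.

```python
from typing import Sequence, Tuple


def fit_line(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical training set: one ITA quantity computed from HF densities (a.u.)
# and reference post-HF correlation energies (hartree). Placeholder numbers.
ita_train = [100.0, 150.0, 200.0, 250.0]
ecorr_train = [-1.02, -1.52, -2.03, -2.51]

slope, intercept = fit_line(ita_train, ecorr_train)

# Prediction for a new system: only its HF-derived ITA value is needed
ita_new = 300.0
ecorr_pred = slope * ita_new + intercept
print(f"slope={slope:.5f}  intercept={intercept:.4f}  predicted E_corr={ecorr_pred:.4f} Eh")
```

In practice the fit would be repeated for each ITA quantity, keeping the one(s) with the best validation statistics.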

Current Research and Future Outlook

The field of post-HF methods is actively evolving to address the dual challenges of computational cost and application scope. One promising direction is the information-theoretic approach (ITA), which uses physically inspired density-based descriptors to predict correlation energies. The LR(ITA) protocol has shown success for diverse systems, including polymers and molecular clusters, achieving chemical accuracy at the cost of an HF calculation [11]. This represents a potential paradigm shift towards machine learning-inspired, descriptor-based prediction of quantum chemical properties.

For large-scale systems, fragmentation methods like the generalized energy-based fragmentation (GEBF) method are crucial. These methods decompose a large system into smaller, tractable fragments, and the total energy is assembled from fragment calculations. The accuracy of LR(ITA) has been shown to be comparable to GEBF for large benzene clusters, highlighting its potential for massive systems [11].
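GEBF constructs and embeds its subsystems in its own way, which is not reproduced here. As a simpler stand-in for the same assemble-from-fragments idea, the sketch below implements a two-body many-body expansion (MBE). `energy` is a hypothetical callable standing in for an electronic-structure calculation on a set of fragments, and `toy_energy` is a made-up model.

```python
from itertools import combinations
from typing import Callable, Hashable, Sequence, Tuple


def mbe2_energy(fragments: Sequence[Hashable],
                energy: Callable[[Tuple[Hashable, ...]], float]) -> float:
    """Two-body many-body expansion:
    E ~ sum_i E(i) + sum_{i<j} [E(ij) - E(i) - E(j)].
    """
    e1 = {f: energy((f,)) for f in fragments}
    total = sum(e1.values())
    for a, b in combinations(fragments, 2):
        total += energy((a, b)) - e1[a] - e1[b]  # pairwise interaction correction
    return total


def toy_energy(frags: Tuple[Hashable, ...]) -> float:
    """Hypothetical, strictly pairwise-additive model: -1.0 Eh per fragment,
    -0.01 Eh per fragment pair."""
    n = len(frags)
    return -1.0 * n - 0.01 * n * (n - 1) / 2


print(mbe2_energy(range(4), toy_energy), toy_energy(tuple(range(4))))
```

Because the toy model is strictly pairwise additive, the two-body expansion reproduces it exactly. Real clusters have non-additive three-body and higher terms that a two-body truncation misses.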

Another critical frontier is the development of more efficient computational kernels. Research into approximate Fock exchange operators using low-rank decomposition and two-level nested self-consistent field iterations aims to drastically reduce the memory and computational bottlenecks associated with the nonlocal exchange operator, which is fundamental to both HF and hybrid DFT [13]. These advances are essential for extending the reach of high-accuracy electronic structure theory to biologically relevant systems and functional materials.

Post-Hartree-Fock methods form an essential hierarchy in the computational chemist's toolkit, enabling the systematic and controlled recovery of electron correlation energy. From the cost-effective MP2 to the highly accurate CCSD(T) and the robust multi-reference CASSCF/NEVPT2, each method offers a unique balance of accuracy, computational cost, and applicability. The choice of method depends critically on the chemical problem at hand: single-reference closed-shell molecules versus multi-reference systems like reaction transition states or open-shell transition metal complexes.

Emerging trends, including the information-theoretic approach and advanced computational approximations, are pushing the boundaries of system size and complexity that can be treated with high accuracy. As these methods continue to mature and integrate with high-performance computing and machine learning, their role in drug discovery and materials design is poised to expand significantly, providing researchers with ever more powerful tools to probe and predict molecular behavior.

In the field of computational chemistry, the pursuit of accurate predictions of molecular structure, energetics, and properties is fundamentally governed by the trade-off between computational cost and accuracy. This balance is particularly pronounced in post-Hartree-Fock methods, which were developed specifically to improve upon the limitations of the Hartree-Fock (HF) approximation by adding electron correlation effects [1]. The Hartree-Fock method itself provides a mean-field approximation that neglects the instantaneous repulsions between electrons, modeling them as interacting only with an average field [14] [15]. While HF establishes the theoretical foundation for modern electronic structure theory, its neglect of electron correlation limits its accuracy for many chemical problems, including molecular dissociation, excited states, and non-covalent interactions [16] [1].

Post-Hartree-Fock methods address this limitation by systematically accounting for electron correlation, but at a significantly increased computational cost. Because these methods are central to advanced molecular calculations, understanding their specific cost-accuracy profiles is essential for selecting appropriate methodologies in research areas such as drug discovery and materials design [16] [17]. This article provides a structured analysis of this fundamental trade-off, presenting quantitative data, detailed protocols, and practical guidance to inform methodological choices in scientific research.

Quantitative Analysis of Methodological Trade-Offs

The computational cost of quantum chemistry methods typically scales with system size, often expressed as a power of the number of basis functions (M) used to represent electron orbitals. The following table summarizes the characteristic scaling and accuracy of prominent methods.

Table 1: Characteristic Scaling and Accuracy of Quantum Chemistry Methods

| Method | Computational Scaling | Key Description | Typical Applications |
|---|---|---|---|
| Hartree-Fock (HF) | O(M⁴) [14] | Mean-field approximation; neglects electron correlation [1]. | Suitable for initial geometry optimizations; provides reference orbitals for post-HF methods [15]. |
| Density Functional Theory (DFT) | O(M³) to O(M⁴) [16] | Incorporates electron correlation via exchange-correlation functionals; favorable cost-accuracy balance [16]. | Workhorse for ground-state properties of medium/large systems (e.g., reaction mechanisms, material properties) [16] [18]. |
| Møller-Plesset Perturbation Theory (MP2) | O(M⁵) [16] | Adds electron correlation via 2nd-order perturbation theory [1]. | Accurate for non-covalent interactions and thermochemistry; often used for system pre-screening [16] [11]. |
| Coupled-Cluster Singles, Doubles & Perturbative Triples (CCSD(T)) | O(M⁷) [16] | "Gold standard" for single-reference systems; high accuracy but very high cost [16]. | Benchmark calculations for smaller systems (<50 atoms) [16] [11]. |
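Assume pure power-law scaling, ignoring prefactors, integral screening and sparsity. Under that idealization, the exponents in Table 1 translate directly into cost multipliers as the number of basis functions grows:

```python
# Formal scaling exponents from Table 1 (upper end of the DFT range)
SCALING_EXPONENT = {"HF": 4, "DFT": 4, "MP2": 5, "CCSD(T)": 7}


def cost_ratio(method: str, size_factor: float) -> float:
    """Relative cost when the number of basis functions M grows by size_factor."""
    return size_factor ** SCALING_EXPONENT[method]


for method in SCALING_EXPONENT:
    print(f"{method:8s} 2x M -> {cost_ratio(method, 2):6.0f}x cost, "
          f"3x M -> {cost_ratio(method, 3):6.0f}x cost")
```

Doubling the basis makes an MP2 calculation about 32 times and a CCSD(T) calculation about 128 times more expensive. This is why CCSD(T) is reserved for small benchmark systems.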

The practical implications of this scaling are profound. For instance, a recent benchmark study on predicting molecular hyperpolarizability compared Hartree-Fock with various Density Functional Theory (DFT) functionals [19]. For simple push-pull chromophores, HF with a modest 3-21G basis set gave a 45.5% Mean Absolute Percentage Error (MAPE) but required only 7.4 minutes per molecule. Crucially, it also ranked every pair of molecules correctly [19]. This makes it a candidate for an efficient fitness function in evolutionary design algorithms, where relative ordering matters more than absolute accuracy. In contrast, more sophisticated functionals such as CAM-B3LYP and M06-2X with the same basis set were no more accurate for this property (MAPE of 47.8% and 48.4%). They also took roughly four to five times as long per molecule (28.1 and 35.0 minutes) [19].

Table 2: Illustrative Benchmarking Data for Hyperpolarizability Calculations [19]

| Method | Basis Set | Mean Absolute Percentage Error (MAPE) | Computational Time per Molecule (minutes) | Pairwise Rank Agreement |
|---|---|---|---|---|
| HF | STO-3G | 60.5% | 2.7 | Perfect (10/10 pairs) |
| HF | 3-21G | 45.5% | 7.4 | Perfect (10/10 pairs) |
| B3LYP | 3-21G | 50.1% | 14.9 | Perfect (10/10 pairs) |
| CAM-B3LYP | 3-21G | 47.8% | 28.1 | Perfect (10/10 pairs) |
| M06-2X | 3-21G | 48.4% | 35.0 | Perfect (10/10 pairs) |
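The two metrics in Table 2 are easy to reproduce. This sketch assumes that "pairwise rank agreement" means counting molecule pairs whose order is the same in the computed and reference values, so five molecules give 10 pairs. Reference [19] may define it differently. All numbers below are hypothetical.

```python
from itertools import combinations
from typing import Sequence, Tuple


def mape(predicted: Sequence[float], reference: Sequence[float]) -> float:
    """Mean absolute percentage error, in percent."""
    return 100.0 * sum(abs(p - r) / abs(r) for p, r in zip(predicted, reference)) / len(reference)


def pairwise_rank_agreement(predicted: Sequence[float],
                            reference: Sequence[float]) -> Tuple[int, int]:
    """(concordant pairs, total pairs); ties count as disagreements."""
    pairs = list(combinations(range(len(reference)), 2))
    concordant = sum(
        1 for i, j in pairs
        if (predicted[i] - predicted[j]) * (reference[i] - reference[j]) > 0
    )
    return concordant, len(pairs)


reference = [10.0, 25.0, 40.0, 80.0, 150.0]  # hypothetical reference hyperpolarizabilities
predicted = [6.0, 14.0, 22.0, 45.0, 90.0]    # hypothetical computed values: ~40% low, same order
print(f"MAPE = {mape(predicted, reference):.1f}%, "
      f"rank agreement = {pairwise_rank_agreement(predicted, reference)}")
```

A method can thus have a large MAPE yet a perfect ranking, which is the situation the HF/3-21G result exploits.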

Beyond the choice of electronic structure method, the selection of a basis set is a critical factor in defining the cost-accuracy balance. The basis set size directly controls the number of basis functions (M), which in turn dictates the cost of the calculation. Moving from a minimal basis set (e.g., STO-3G) to a split-valence basis set (e.g., 3-21G) provides the most significant gain in accuracy per unit of computation [19]. Further expansions, such as adding polarization and diffuse functions (e.g., 6-311++G(d,p)), yield diminishing returns and can dramatically increase the number of two-electron integrals that need to be computed, a process that scales with O(M⁴) [11] [14].
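The O(M⁴) growth of the two-electron integrals is easy to quantify. For real basis functions, the integrals $(ij|kl)$ have 8-fold permutational symmetry. The number of unique values is therefore $P(P+1)/2$ with $P = M(M+1)/2$. The sketch below ignores the screening of negligible integrals that production codes rely on.

```python
def n_unique_eri(m: int) -> int:
    """Number of symmetry-unique two-electron integrals (ij|kl) for m real basis functions."""
    n_pairs = m * (m + 1) // 2  # unique (ij) index pairs
    return n_pairs * (n_pairs + 1) // 2


for m in (100, 200, 400):
    n = n_unique_eri(m)
    print(f"M={m:4d}: {n:,} unique integrals, {n * 8 / 1e9:.2f} GB in double precision")
```

Each doubling of M multiplies the count by roughly 16.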

Protocol for Selecting a Computational Methodology

Workflow for Method Selection

The following diagram outlines a systematic workflow for selecting an appropriate computational method based on system size, property of interest, and available resources.

Detailed Protocol 1: High-Throughput Screening for Drug Discovery

Objective: To rapidly and accurately screen a large library of drug candidates for binding affinity.

System Preparation:

- Obtain 3D molecular structures of ligands from databases or generate them using molecular building tools.

- Perform initial geometry optimization using molecular mechanics (MM) or semiempirical quantum methods (e.g., GFN2-xTB) to eliminate steric clashes [16].

Initial Quantum Chemical Pre-optimization:

- Employ a cost-effective quantum method for further refinement: HF or a hybrid DFT functional (e.g., B3LYP) with a moderate basis set (e.g., 6-31G) is often sufficient [19] [18].

- Conduct geometry optimization and frequency calculations to confirm the presence of a true minimum on the potential energy surface.

Electronic Property Calculation:

- Calculate electronic properties (e.g., electrostatic potential, HOMO-LUMO energies) from the pre-optimized structures using the same method as in the quantum chemical pre-optimization step. These properties can serve as descriptors for machine learning models [17].

Binding Affinity Prediction:

- Option A (ML-Augmented): Input the quantum-derived descriptors into a pre-trained machine learning model (e.g., a graph neural network or random forest) to predict binding affinities [17]. This approach bypasses expensive explicit binding site calculations.

- Option B (QM/MM): For a more detailed but costlier analysis, embed the ligand in the protein active site using a hybrid Quantum Mechanics/Molecular Mechanics (QM/MM) scheme, where the ligand is treated with DFT and the protein with a molecular mechanics force field [16].

Validation:

- Validate the screening protocol by comparing predictions against experimental binding data or high-level CCSD(T) calculations for a small subset of representative molecules [16].
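One possible way to combine Options A and B is a tiered funnel: score the whole library with the cheap model, then send only a shortlist to the expensive one. A minimal sketch follows. `cheap_score` and `expensive_score` are hypothetical callables, and lower scores are assumed to mean stronger predicted binding. The 10% cut-off is an arbitrary assumption.

```python
from typing import Callable, List, Sequence, TypeVar

T = TypeVar("T")


def tiered_screen(ligands: Sequence[T],
                  cheap_score: Callable[[T], float],
                  expensive_score: Callable[[T], float],
                  keep_fraction: float = 0.1) -> List[T]:
    """Rank every ligand with a cheap model, then re-rank only the best
    keep_fraction of them with an expensive model (lower score = stronger binding)."""
    ranked = sorted(ligands, key=cheap_score)
    n_keep = max(1, int(len(ranked) * keep_fraction))
    return sorted(ranked[:n_keep], key=expensive_score)


# Hypothetical demo: made-up scores for 50 ligands
cheap = {f"L{i}": float((i * 37) % 50) for i in range(50)}
expensive = {name: -score for name, score in cheap.items()}
print(tiered_screen(list(cheap), lambda name: cheap[name], lambda name: expensive[name]))
```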

Detailed Protocol 2: Achieving Benchmark Accuracy with LR(ITA)

Objective: To accurately predict post-Hartree-Fock correlation energies for molecular clusters or polymers at a fraction of the computational cost.

Reference Data Generation (Training Set):

- Select a representative set of molecular structures (e.g., 24 octane isomers, protonated water clusters H⁺(H₂O)ₙ, or acenes of varying length) [11].

- For these structures, perform a single-point energy calculation at the Hartree-Fock (HF) level with a standard basis set (e.g., 6-311++G(d,p)) [11].

- For the same structures, perform a more expensive post-HF calculation (e.g., MP2, CCSD, or CCSD(T)) with the same basis set to obtain the reference electron correlation energy [11].

Information-Theoretic Descriptor Calculation:

- From the HF electron density obtained in the previous step, compute a set of information-theoretic approach (ITA) quantities. These include:

- Shannon Entropy (Sₛ): Characterizes the global delocalization of electron density.

- Fisher Information (I_F): Quantifies the local sharpness and inhomogeneity of the density.

- Onicescu Information Energy (E₂, E₃): Measures the concentration of the density distribution.

- Relative Rényi Entropy (R₂ᵣ, R₃ᵣ): Quantifies the difference between two density distributions [11].


Model Training:

- Construct a linear regression model (LR(ITA)) for the training set, where the post-HF correlation energy is the dependent variable and one or more of the ITA quantities are the independent variables [11].

- Validate the model's accuracy by examining the coefficient of determination (R²) and the root-mean-squared deviation (RMSD) between predicted and calculated correlation energies.

Prediction for New Systems:

- For a new system of interest, perform only an HF calculation.

- Compute the same ITA quantities from the HF density.

- Use the pre-trained linear regression model to predict the post-HF correlation energy. When the reference data are at the CCSD(T) level and the fit is good, this approaches CCSD(T)-level accuracy at near-HF cost [11].
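The validation statistics named in the model-training step are R² and the RMSD between predicted and reference correlation energies. They can be computed as follows. This is a pure-Python sketch, where R² is the coefficient of determination $1 - SS_\text{res}/SS_\text{tot}$ and the energies are hypothetical.

```python
import math
from typing import Sequence


def r_squared(predicted: Sequence[float], reference: Sequence[float]) -> float:
    """Coefficient of determination of predictions against reference values."""
    mean_ref = sum(reference) / len(reference)
    ss_res = sum((r - p) ** 2 for p, r in zip(predicted, reference))
    ss_tot = sum((r - mean_ref) ** 2 for r in reference)
    return 1.0 - ss_res / ss_tot


def rmsd(predicted: Sequence[float], reference: Sequence[float]) -> float:
    """Root-mean-square deviation, in the units of the inputs."""
    return math.sqrt(sum((p - r) ** 2 for p, r in zip(predicted, reference)) / len(reference))


# Hypothetical reference and LR(ITA)-predicted correlation energies (hartree)
ref = [-1.020, -1.520, -2.030, -2.510]
pred = [-1.023, -1.521, -2.019, -2.517]
print(f"R^2 = {r_squared(pred, ref):.5f}, RMSD = {1000 * rmsd(pred, ref):.2f} mH")
```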

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Software and Computational "Reagents" for Post-Hartree-Fock Research

| Tool / Resource | Category | Primary Function | Relevance to Cost-Accuracy Trade-off |
|---|---|---|---|
| GPU-Accelerated Fock Matrix Builders [14] | HPC Software | Dramatically speeds up the integral evaluation and Fock matrix construction in HF/DFT calculations. | Reduces the cost of the baseline SCF calculation, making subsequent post-HF steps more accessible. |
| Linear Regression (LR) & ITA Quantities [11] | Algorithmic Approach | Predicts high-level correlation energies using low-level density descriptors. | Directly addresses the cost-accuracy problem by providing benchmark-quality energies at low cost. |
| Hybrid DFT Functionals (e.g., B3LYP, CAM-B3LYP, PBE0) [16] [19] | Theoretical Method | Provides a balanced description of electron correlation for diverse molecular properties. | Offers a practical compromise, being more accurate than HF and less costly than MP2 or CCSD(T). |
| Polarized Basis Sets (e.g., 6-31G(d), 6-311++G(d,p)) [11] [19] | Basis Set | Improves the description of electron distribution by adding angular flexibility and diffuse character. | Allows for systematic improvement of accuracy at a known computational cost increase, enabling controlled trade-offs. |
| Fragment-Based Methods (e.g., GEBF) [11] | Scalability Method | Enables quantum chemical calculations on large systems by decomposing them into smaller fragments. | Extends the application of accurate post-HF methods to large molecular clusters that would otherwise be intractable. |

The fundamental trade-off between computational cost and accuracy is an inescapable and defining aspect of computational chemistry, particularly in the realm of post-Hartree-Fock methods. Navigating this trade-off effectively is not about finding a single "best" method, but rather about making strategic choices informed by the specific research question, system size, and available computational resources. As demonstrated, strategies range from the pragmatic selection of method and basis set combinations to the adoption of innovative approaches like machine learning augmentation and information-theoretic descriptors. The ongoing integration of high-performance computing and artificial intelligence with foundational quantum chemical principles promises to further push the boundaries of this trade-off, enabling researchers to tackle increasingly complex problems in molecular science with greater confidence and efficiency.

A Deep Dive into Key Post-HF Methods and Their Applications in Drug Discovery

Non-covalent interactions (NCIs) are fundamental weak forces that govern molecular recognition, protein folding, supramolecular assembly, and drug-receptor binding. Accurate quantum mechanical treatment of these interactions requires sophisticated electron correlation methods beyond the mean-field approximation. Møller-Plesset perturbation theory, particularly at second order (MP2) and beyond, provides a balanced approach for modeling NCIs with reasonable computational cost [20] [21]. This application note examines the performance, limitations, and practical implementation of MP2 and higher-order methods for NCI prediction in chemical and pharmaceutical research contexts.

The critical importance of electron correlation for NCIs stems from the dominant role of dispersion forces in many molecular complexes. While Hartree-Fock (HF) theory completely misses dispersion, and density functional theory (DFT) requires empirical corrections, MP2 naturally incorporates these effects through its perturbative treatment of electron-electron correlations [21]. However, standard MP2 exhibits systematic overestimation of certain NCIs, necessitating methodological refinements including regularization, orbital optimization, and higher-order corrections [20] [22] [21].

Theoretical Foundation

MP2 Theory and Formulation

The MP2 correlation energy expression derives from Rayleigh-Schrödinger perturbation theory, with the sum of Fock operators from the HF solution as the zeroth-order Hamiltonian:

Figure 1: Computational workflow for conventional MP2 theory. The method builds upon Hartree-Fock solutions through a well-defined perturbative procedure.

The MP2 correlation energy is expressed as:

$$ E_{\text{MP2}} = -\frac{1}{4} \sum_{ijab} \frac{|\langle ij || ab \rangle|^2}{\Delta_{ij}^{ab}} $$

Here $i,j$ denote occupied spin orbitals, $a,b$ virtual spin orbitals, $\langle ij || ab \rangle$ the antisymmetrized two-electron integrals, and $\Delta_{ij}^{ab} = \epsilon_a + \epsilon_b - \epsilon_i - \epsilon_j$ the orbital energy gap [20]. This formulation treats electron correlation as pairwise additive contributions from double excitations, providing a natural description of dispersion interactions missing in HF theory.
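Below is a direct, unoptimized transcription of the MP2 expression. It assumes real spin orbitals and a user-supplied function `g(i, j, a, b)` that returns $\langle ij || ab \rangle$. This is a hypothetical interface: real programs work with spatial orbitals and precomputed integral arrays. The check uses a made-up two-electron, four-spin-orbital model in which the only nonzero integral magnitude is 0.1 Eh.

```python
from itertools import product
from typing import Callable, Sequence


def mp2_energy(eps_occ: Sequence[float], eps_vir: Sequence[float],
               g: Callable[[int, int, int, int], float]) -> float:
    """Spin-orbital MP2 correlation energy:
    -1/4 * sum_{ijab} |<ij||ab>|^2 / (e_a + e_b - e_i - e_j).
    """
    total = 0.0
    for i, j in product(range(len(eps_occ)), repeat=2):
        for a, b in product(range(len(eps_vir)), repeat=2):
            denom = eps_vir[a] + eps_vir[b] - eps_occ[i] - eps_occ[j]
            total += abs(g(i, j, a, b)) ** 2 / denom
    return -0.25 * total


def toy_g(i: int, j: int, a: int, b: int) -> float:
    """Hypothetical antisymmetrized integrals: <01||01> = 0.1, with the signs
    required by <ij||ab> = -<ji||ab> = -<ij||ba>; zero when i == j or a == b."""
    if i == j or a == b:
        return 0.0
    sign = (1 if i < j else -1) * (1 if a < b else -1)
    return 0.1 * sign


e2 = mp2_energy([-0.5, -0.5], [0.5, 0.5], toy_g)
print(f"E_MP2 = {e2:.6f} Eh")  # four nonzero terms, each 0.01/2.0, times -1/4
```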

Limitations of Conventional MP2

Despite its utility, MP2 exhibits systematic deficiencies for certain NCI types:

- π-stacked complexes: MP2 notoriously overestimates interaction energies in conjugated systems like slipped benzene dimers and DNA base pairs, with errors exceeding 100% for large aromatics like the coronene dimer [20].

- Transition metal complexes: Dative bonds in organometallic compounds show overestimated binding energies due to non-additive correlation effects [20].

- Large-gap dependence: The method becomes unreliable as frontier orbital energy gaps decrease, manifesting divergent behavior for small-gap systems [20] [22].

These limitations stem from MP2's treatment of correlation as purely pairwise additive, neglecting higher-order collective effects that become important in delocalized systems with small energy denominators [20].

Methodological Advances

Regularized MP2 Methods

κ-regularization addresses MP2's divergence issues by damping contributions from small energy denominators:

$$ E_{\kappa\text{-MP2}}(\kappa) = -\frac{1}{4} \sum_{ijab} \frac{|\langle ij || ab \rangle|^2}{\Delta_{ij}^{ab}} \left(1 - e^{-\kappa \Delta_{ij}^{ab}}\right)^2 $$

The regularization parameter $\kappa$ (typically 1.1-1.45 Eh⁻¹) attenuates terms with small denominators while preserving the standard MP2 expression for large gaps [20] [21]. This approach significantly improves performance for NCIs, reducing errors by approximately 50% across benchmark sets [21] [23].
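The effect of the damping factor $(1 - e^{-\kappa \Delta})^2$ can be checked term by term. This sketch evaluates one contribution $-|\langle ij||ab\rangle|^2/\Delta$ with and without the factor. It uses a hypothetical integral magnitude $|\langle ij||ab\rangle|^2 = 0.01$ Eh² and κ = 1.45 Eh⁻¹, the upper end of the quoted range. For small Δ the factor behaves like $(\kappa\Delta)^2$, so the regularized term vanishes instead of diverging. For large Δ it tends to 1.

```python
import math
from typing import Optional


def kappa_damping(delta: float, kappa: float) -> float:
    """Regularization factor (1 - exp(-kappa * delta))**2 from the kappa-MP2 expression."""
    return (1.0 - math.exp(-kappa * delta)) ** 2


def pair_term(g_abs2: float, delta: float, kappa: Optional[float] = None) -> float:
    """One -|<ij||ab>|^2 / delta contribution (before the 1/4 prefactor and the sum),
    optionally multiplied by the kappa damping factor."""
    term = -g_abs2 / delta
    return term if kappa is None else term * kappa_damping(delta, kappa)


kappa = 1.45  # Eh^-1
for delta in (10.0, 2.0, 0.5, 0.1, 0.01):  # hypothetical denominators, Eh
    print(f"delta={delta:6.2f}  MP2 term={pair_term(0.01, delta):10.5f}  "
          f"kappa-MP2 term={pair_term(0.01, delta, kappa):10.5f}")
```

Even a fairly large denominator of 2 Eh is damped by about 11% at κ = 1.45 Eh⁻¹, so the regularization is not confined to near-degenerate terms.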

Orbital-Optimized Variants

Orbital-optimized MP2 (OOMP2) determines orbitals self-consistently in the presence of the MP2 correlation potential, reducing spin contamination and improving descriptions of symmetry-breaking problems [21]. Combining OOMP2 with κ-regularization (κ-OOMP2) further enhances performance, particularly for systems where HF references exhibit artifactual symmetry-breaking [21].

Higher-Order Perturbation Theory

Third-order MP (MP3) and scaled variants like MP2.5 (which averages MP2 and MP3 energies) provide improved treatment of non-additive correlations:

$$ E_{\text{MP2.5}} = \frac{1}{2}\left(E_{\text{MP2}} + E_{\text{MP3}}\right) $$

These methods demonstrate enhanced accuracy for NCIs, particularly when combined with improved reference orbitals from κ-OOMP2 or density functional theory [21] [23].
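The MP3 correlation energy already contains the second-order term. The MP2.5 average is therefore the same as adding half of the third-order correction $E^{(3)}$ to MP2:

$$ E_{\text{MP3}} = E_{\text{MP2}} + E^{(3)} \quad\Longrightarrow\quad E_{\text{MP2.5}} = \frac{1}{2}\left(E_{\text{MP2}} + E_{\text{MP3}}\right) = E_{\text{MP2}} + \frac{1}{2}E^{(3)} = E_{\text{MP2}} + \frac{1}{2}\left(E_{\text{MP3}} - E_{\text{MP2}}\right) $$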

Performance Benchmarking

Quantitative Assessment Across NCI Types

Table 1: Performance comparison of MP-based methods for non-covalent interactions (mean absolute errors in kcal/mol) [21] [23]

| Method | Hydrogen Bonding | Dispersion | Halogen Bonding | Mixed | Overall |
|---|---|---|---|---|---|
| MP2 | 0.24 | 0.89 | 0.31 | 0.52 | 0.67 |
| κ-MP2 | 0.18 | 0.41 | 0.25 | 0.31 | 0.32 |
| OOMP2 | 0.26 | 0.78 | 0.28 | 0.48 | 0.59 |
| κ-OOMP2 | 0.19 | 0.35 | 0.22 | 0.26 | 0.29 |
| MP2.5 | 0.12 | 0.28 | 0.15 | 0.19 | 0.21 |
| MP2.5:κ-OOMP2 | 0.07 | 0.12 | 0.09 | 0.08 | 0.10 |

Data compiled from testing across 19 benchmark sets (A24, S22, S66, X40, etc.) covering diverse NCI types [21] [23]. Results demonstrate the significant improvement achieved through regularization and reference orbital optimization.

Comparison with Higher-Level Methods

Table 2: Method scalability and accuracy trade-offs for NCI prediction [20] [22] [21]

| Method | Computational Scaling | Typical System Size | NCI Accuracy | Key Limitations / Notes |
|---|---|---|---|---|
| HF | O(N⁴) | ~100 atoms | Poor (no dispersion) | Neglects electron correlation |
| MP2 | O(N⁵) | ~50-100 atoms | Moderate | Overestimates dispersion |
| κ-MP2 | O(N⁵) | ~50-100 atoms | Good | Parameter dependence |
| MP2.5:κ-OOMP2 | O(N⁶) | ~30-80 atoms | Excellent | Increased computational cost |
| CCSD(T) | O(N⁷) | ~20-30 atoms | Excellent (gold standard) | Prohibitive for large systems |
| CCSD(cT) | O(N⁷) | ~20-30 atoms | Superior for large systems | Addresses CCSD(T) overcorrelation |

Recent developments like CCSD(cT) address the overcorrelation issues in CCSD(T) for large, polarizable systems by including additional diagrammatic terms that screen the bare Coulomb interaction [22]. For the coronene dimer, CCSD(cT) reduces binding energy errors by nearly 2 kcal/mol compared to CCSD(T), achieving chemical accuracy against diffusion Monte Carlo benchmarks [22].

Experimental Protocols

Standard Protocol for κ-Regularized MP2 Calculations

Objective: Compute accurate NCI energies for molecular complexes using κ-MP2.

Required Resources:

- Quantum chemistry software (e.g., Psi4, Q-Chem, ORCA)

- aug-cc-pVTZ basis set (recommended)

- Molecular geometries (optimized at appropriate level)

Procedure:

- Geometry Preparation: Obtain optimized structures of the monomers and the complex using DFT with a dispersion treatment (e.g., ωB97M-V) or HF/MP2 with an appropriate basis set.

- Single-Point Energy Calculation:

  - Perform an HF calculation to obtain reference orbitals and orbital energies

  - Compute the MP2 correlation energy with κ-regularization (κ = 1.1-1.45 Eh⁻¹)

  - For optimal results, use κ-OOMP2 with κ = 1.45 Eh⁻¹ [21]

- Binding Energy Calculation:

  - Compute total energies for the complex ($E_{\text{complex}}$) and the monomers ($E_A$, $E_B$)

  - Calculate the interaction energy: $\Delta E = E_{\text{complex}} - (E_A + E_B)$

  - Apply the counterpoise correction for basis set superposition error (BSSE), evaluating the monomer energies in the full dimer basis

- Validation: Compare against benchmark values for representative systems (e.g., an S66 subset)

Expected Results: κ-MP2 typically reduces MP2 overbinding by 30-60% for dispersion-dominated complexes while maintaining accuracy for hydrogen-bonded systems [20] [21].
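The binding-energy step can be sketched as a small helper. It assumes the Boys-Bernardi counterpoise scheme, in which every monomer energy is computed in the full dimer basis, with ghost functions on the partner. The energies are hypothetical placeholders, and 1 hartree ≈ 627.5095 kcal/mol.

```python
HARTREE_TO_KCAL_PER_MOL = 627.5095


def cp_interaction_energy(e_complex: float, e_a_dimer_basis: float,
                          e_b_dimer_basis: float) -> float:
    """Counterpoise-corrected interaction energy (hartree):
    dE = E_AB(AB basis) - E_A(AB basis) - E_B(AB basis)."""
    return e_complex - e_a_dimer_basis - e_b_dimer_basis


# Hypothetical kappa-MP2 total energies (hartree)
de = cp_interaction_energy(-152.7000, -76.3460, -76.3465)
print(f"dE_CP = {de:.4f} Eh = {de * HARTREE_TO_KCAL_PER_MOL:.2f} kcal/mol")
```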

Advanced Protocol: MP2.5 with Optimized Reference Orbitals

Objective: Achieve CCSD(T)-level accuracy for NCIs at reduced computational cost.

Procedure:

- Reference Orbital Generation:

  - Perform a κ-OOMP2 calculation (e.g., κ = 1.45 Eh⁻¹, as above) to obtain optimized reference orbitals

- MP3 Energy Calculation:

  - Compute the MP3 correlation energy using the optimized reference orbitals

  - Scale the third-order contribution by 0.5: $E_{\text{MP2.5}} = E_{\text{MP2}} + 0.5\,(E_{\text{MP3}} - E_{\text{MP2}})$

- Binding Energy Analysis:

  - Calculate interaction energies as in the standard κ-MP2 protocol above

  - For large systems, consider local correlation approximations (DLPNO-CCSD(T)) for validation [22]

Performance: MP2.5:κ-OOMP2 achieves RMSD of 0.10 kcal/mol for S66 dataset, rivaling CCSD(T) accuracy at O(N⁶) cost [21] [23].

The Scientist's Toolkit

Table 3: Essential computational resources for MP-based NCI studies

| Resource Type | Specific Tools | Application Purpose | Key Considerations |
|---|---|---|---|
| Software Packages | Psi4, Q-Chem, ORCA, Gaussian | Quantum chemistry calculations | Psi4 offers excellent MP2.5 implementation; Q-Chem supports κ-OOMP2 |
| Basis Sets | aug-cc-pVTZ, aug-cc-pVDZ | Electron wavefunction expansion | Augmented correlation-consistent basis sets essential for NCIs |
| Benchmark Sets | S22, S66, A24, HSG | Method validation and parameterization | Provide diverse NCI types for balanced assessment |
| Analysis Tools | NCIplot, QTAIM, SAPT | Interaction decomposition and visualization | Reveal nature and strength of specific non-covalent contacts |
| Reference Data | CCSD(T)/CBS, DMC | High-accuracy benchmarks | Essential for method validation where experimental data is scarce |

Application in Drug Discovery

MP2-based methods provide critical insights for structure-based drug design, particularly for targeting challenging binding sites:

- Fragment-based screening: MP2.5 methods accurately rank fragment binding affinities by capturing dispersion contributions to binding [8] [24]

- Halogen bonding: κ-MP2 correctly models σ-hole interactions important in drug-receptor recognition [21] [25]

- Dispersion-dominated binding: For targets with hydrophobic pockets, MP2.5 provides superior affinity predictions compared to standard DFT [8] [24]

Case studies demonstrate successful application in optimizing kinase inhibitors and targeting metalloenzymes where accurate NCI treatment is essential for binding affinity predictions [8].

MP2 and its advanced variants represent a sweet spot in the accuracy-cost trade-off for NCI prediction in drug discovery applications. The methodological developments in regularization, orbital optimization, and higher-order corrections have addressed many of conventional MP2's limitations while maintaining computational feasibility for pharmaceutical-sized systems.

Future directions include:

- Integration with machine learning for rapid screening of NCI energies across chemical space [24]

- Quantum computing implementations of post-Hartree-Fock methods for potentially exponential speedup [26]

- Advanced local correlation methods enabling MP2.5/CCSD(T) accuracy for entire drug-receptor complexes [22]

As these technologies mature, MP-based methods will continue bridging the gap between computational efficiency and chemical accuracy for non-covalent interactions in pharmaceutical research.

Coupled-Cluster with Singles, Doubles, and perturbative Triples (CCSD(T)) is widely regarded as the "gold standard" in quantum chemistry for computing molecular energies and properties. This status rests on its ability to deliver high accuracy for molecules with predominantly single-reference electronic character. Results are often within 1 kcal/mol of experimental values, and in favorable cases within about 1 kJ/mol. The method achieves this by systematically treating electron correlation through a wavefunction ansatz that includes all single and double excitations from a reference determinant (usually Hartree-Fock). It adds a non-iterative perturbative correction for connected triple excitations.

Despite its accuracy, CCSD(T) has historically been limited by its steep computational scaling. The iterative CCSD part scales as O(N⁶), and the (T) correction formally scales as O(N⁷), where N represents the system size. This has traditionally restricted conventional implementations to systems of approximately 20-25 atoms. However, recent methodological and computational advances have significantly extended the reach of CCSD(T). They enable applications to molecules with 50-75 atoms and beyond while maintaining its gold-standard accuracy. These developments are making CCSD(T) an increasingly powerful tool for researchers and drug development professionals tackling complex chemical problems.
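The sketch below makes the scaling argument concrete. It estimates how the formal O(N⁷) cost of the (T) step grows with system size. Atom count is used as a crude stand-in for N, which is an illustrative assumption, and `relative_cost` is a hypothetical helper. Real timings also depend on the basis set, prefactors, and hardware.

```python
def relative_cost(n_small: float, n_large: float, exponent: int = 7) -> float:
    """Ratio of formal costs for a method that scales as O(N**exponent).

    N is approximated by the atom count (a crude, illustrative assumption);
    prefactors, basis-set size, and hardware effects are ignored.
    """
    return (n_large / n_small) ** exponent


# (T) step, O(N^7): a 25-atom molecule versus a 75-atom molecule
print(relative_cost(25, 75))               # 2187.0, i.e. 3**7
# Iterative CCSD, O(N^6): doubling the system size
print(relative_cost(10, 20, exponent=6))   # 64.0
```

Tripling the molecule raises the formal cost roughly 2000-fold. This helps explain why conventional implementations stalled in the 20-25 atom range. It is also why the techniques below attack the prefactor and the size of the orbital and auxiliary spaces rather than the formal exponent.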

Recent Methodological Advances Extending the Reach of CCSD(T)

The prohibitive computational cost of conventional CCSD(T) implementations has motivated the development of several cost-reduction strategies that dramatically extend its applicability while preserving high accuracy.

Key Approximation Techniques

Frozen Natural Orbitals (FNOs) compress the virtual molecular orbital space by discarding orbitals with low occupation numbers, as determined from a lower-level wavefunction (typically MP2). This significantly reduces computational cost. With o occupied and v virtual orbitals, the CCSD iterations cost roughly o²v⁴ operations and the (T) step roughly o³v⁴, so both grow with the fourth power of v. The error introduced by this truncation can be systematically controlled and corrected. [27] [28]
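The selection step can be sketched in a few lines of NumPy. The density matrix here is synthetic, and the occupation cutoff of 1e-5 is an illustrative assumption rather than a recommended setting. The function names are hypothetical. In practice, the virtual-virtual MP2 density comes from the quantum chemistry package, and the cutoff is tied to an energy criterion. The speedup helper assumes the v⁴ dependence discussed above.

```python
import numpy as np


def frozen_natural_orbitals(d_vv: np.ndarray, occ_threshold: float = 1e-5):
    """Select frozen natural orbitals (FNOs) from a virtual-virtual density block.

    d_vv: symmetric (n_virt, n_virt) MP2 one-particle density, virtual block.
    Returns (occupations, kept) where `occupations` are all natural occupation
    numbers in descending order and `kept` holds, as columns, the natural
    orbitals (expressed in the canonical virtual basis) above the threshold.
    """
    occ, vecs = np.linalg.eigh(d_vv)        # eigh returns ascending eigenvalues
    order = np.argsort(occ)[::-1]           # reorder to descending occupation
    occ, vecs = occ[order], vecs[:, order]
    keep = occ > occ_threshold
    return occ, vecs[:, keep]


def virtual_speedup(n_virt_full: int, n_virt_kept: int) -> float:
    """Idealized speedup of a step whose cost grows as v**4 (CCSD, (T))."""
    return (n_virt_full / n_virt_kept) ** 4


# Synthetic stand-in for an MP2 density: rapidly decaying occupations
# rotated by a random orthogonal matrix (no real molecule involved).
rng = np.random.default_rng(0)
n_virt = 12
true_occ = 10.0 ** (-np.linspace(2.0, 8.0, n_virt))
q, _ = np.linalg.qr(rng.standard_normal((n_virt, n_virt)))
d_vv = q @ np.diag(true_occ) @ q.T

occ, kept = frozen_natural_orbitals(d_vv, occ_threshold=1e-5)
print(kept.shape[1], "of", n_virt, "virtuals kept")   # 6 of 12 virtuals kept
print(virtual_speedup(n_virt, kept.shape[1]))          # 16.0
```

The v⁴ estimate ignores the cost of the MP2 step and any fixed overhead, so realized speedups are smaller than this idealized ratio.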

Density Fitting (DF) or Resolution-of-the-Identity (RI) approximates the four-center two-electron repulsion integrals using expansions in an auxiliary basis set. This reduces the memory, storage, and computational burdens associated with handling these integrals. [27]
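A minimal sketch of the idea starts from the three-center integrals (ij|P) and the Coulomb metric J~PQ~ = (P|Q). The four-center integrals are then approximated as (ij|kl) ≈ Σ~PQ~ (ij|P)[J⁻¹]~PQ~(Q|kl). The NumPy code below uses a Cholesky factorization of the metric, which is one common choice, and random synthetic arrays in place of real integrals. The function names are hypothetical. The storage ratio is a rough count that ignores permutational symmetry.

```python
import numpy as np


def df_eri(three_index: np.ndarray, metric: np.ndarray) -> np.ndarray:
    """Approximate (ij|kl) as sum_PQ (ij|P) [J^-1]_PQ (Q|kl).

    three_index: (n_pair, n_aux) array of three-center integrals (ij|P).
    metric:      (n_aux, n_aux) Coulomb metric J_PQ = (P|Q), positive definite.
    Returns an (n_pair, n_pair) matrix of approximate four-center integrals.
    """
    # Factor J = L L^T and form B = (ij|P) L^-T, so that B B^T = (ij|P) J^-1 (P|kl).
    chol = np.linalg.cholesky(metric)
    b = np.linalg.solve(chol, three_index.T).T
    return b @ b.T


def storage_ratio(n_basis: int, n_aux: int) -> float:
    """Rough storage ratio: n^4 four-center vs n^2 * n_aux three-center integrals."""
    return n_basis**4 / (n_basis**2 * n_aux)


# Synthetic inputs stand in for real integrals.
rng = np.random.default_rng(1)
n_pair, n_aux = 10, 6
t = rng.standard_normal((n_pair, n_aux))
a = rng.standard_normal((n_aux, n_aux))
j = a @ a.T + n_aux * np.eye(n_aux)     # symmetric positive definite metric
eri = df_eri(t, j)
print(storage_ratio(500, 1500))          # ~166.7x less storage in this example
```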

Natural Auxiliary Functions (NAFs) further compress the auxiliary basis set used in DF, providing additional speedups without sacrificing accuracy. This is particularly effective when combined with FNOs, as the reduced orbital space requires less auxiliary description. [27] [28]
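One way to realize this compression is to diagonalize the auxiliary-space matrix W = BᵀB built from the fitted factors B and keep only its dominant eigenvectors. The sketch below is a minimal version that assumes this construction. The synthetic factors, the absolute cutoff `eps`, and the function name are illustrative assumptions, not settings taken from any program.

```python
import numpy as np


def naf_compress(b: np.ndarray, eps: float = 1e-3) -> np.ndarray:
    """Project DF factors B (n_pair, n_aux) onto natural auxiliary functions.

    Forms W = B^T B, keeps eigenvectors whose eigenvalues exceed eps, and
    returns B U_kept with shape (n_pair, n_naf). The neglected part of
    B B^T has a spectral norm set by the largest discarded eigenvalue.
    """
    w = b.T @ b
    evals, evecs = np.linalg.eigh(w)
    keep = evals > eps
    return b @ evecs[:, keep]


# Synthetic DF factors with an (almost) rank-3 auxiliary space plus noise.
rng = np.random.default_rng(2)
n_pair, n_aux, rank = 20, 8, 3
b = rng.standard_normal((n_pair, rank)) @ rng.standard_normal((rank, n_aux))
b += 1e-4 * rng.standard_normal((n_pair, n_aux))

b_naf = naf_compress(b, eps=1e-3)
err = np.abs(b @ b.T - b_naf @ b_naf.T).max()
print(b_naf.shape, err)   # 8 auxiliary functions reduced to 3; tiny error
```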

Explicitly Correlated (F12) Methods improve the slow convergence of correlation energy with basis set size by introducing terms in the wavefunction that depend explicitly on the interelectronic distance (r~12~). This allows for the use of smaller basis sets to achieve near-complete-basis-set-limit accuracy, offering substantial computational savings. [28]
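The key ingredient is a correlation factor that varies linearly with r~12~ near electron coalescence. This mimics the electron-electron cusp, which products of Gaussian orbitals reproduce only slowly. A common choice in F12 methods is the Slater-type factor f(r~12~) = -exp(-γ r~12~)/γ. The sketch below uses illustrative γ values and hypothetical function names. It checks numerically that the slope of this factor at r~12~ = 0 is 1 for any γ.

```python
import math


def slater_geminal(r12: float, gamma: float = 1.0) -> float:
    """Slater-type correlation factor f(r12) = -exp(-gamma * r12) / gamma.

    r12 in bohr; gamma in inverse bohr (1.0 is an illustrative value; real
    codes tie it to the orbital basis set).
    """
    return -math.exp(-gamma * r12) / gamma


def slope_at_coalescence(gamma: float, h: float = 1e-6) -> float:
    """Forward-difference estimate of df/dr12 at r12 = 0."""
    return (slater_geminal(h, gamma) - slater_geminal(0.0, gamma)) / h


for g in (0.8, 1.0, 1.4):
    print(g, slope_at_coalescence(g))   # close to 1.0 for every gamma
```

In actual F12 implementations, this factor multiplies pair functions whose coefficients are chosen so that the wavefunction satisfies the cusp condition. The sketch only illustrates the linear short-range behavior that makes this possible.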

Table 1: Summary of Key Cost-Reduction Techniques for CCSD(T)

| Technique | Underlying Principle | Primary Benefit | Reported Speedup |
|---|---|---|---|
| Frozen Natural Orbitals (FNO) | Truncation of the virtual orbital space based on natural occupation numbers. | Reduces scaling with the number of virtual orbitals. | Up to an order of magnitude [27] |
| Density Fitting (DF) | Approximation of two-electron integrals using an auxiliary basis. | Reduces storage, I/O, and pre-factor costs. | ~5-10x for integral processing |
| Natural Auxiliary Functions (NAF) | Compression of the DF auxiliary basis set. | Further reduces cost of DF integral assembly. | 1.5-3x on top of DF [28] |
| Explicitly Correlated F12 | Explicit inclusion of the interelectronic distance in the wavefunction. | Drastically improves basis set convergence. | Enables use of smaller basis sets for CBS-quality results [28] |

The combination of FNO and NAF approximations with efficient, parallelized algorithms has been shown to deliver overall speedups of 5 to 10 times for triple-ζ basis sets, making CCSD(T) calculations on systems with 50-75 atoms feasible on affordable computing resources within a few days. [27] [28] A very recent preprint (2025) confirms that these hybrid parallel approaches enable calculations on systems of up to 60 atoms and 2500 orbitals, which were previously beyond reach without local approximations. [29]

Application Protocols for Specific Chemical Systems

The accurate application of CCSD(T) requires carefully designed protocols tailored to different types of chemical systems and properties of interest.

Protocol for Thermochemistry and Reaction Energies

This protocol is designed for computing accurate reaction energies, barrier heights, and atomization energies.

- Geometry Optimization: Optimize the molecular geometries of all reactants and products (and, for barrier heights, transition states) using a robust, lower-cost method. Density Functional Theory (DFT) with a functional like ωB97M-V and a triple-ζ basis set (e.g., def2-TZVP) is a suitable choice. [30]

- Reference Energy Calculation: Perform a single-point Hartree-Fock calculation with a large correlation-consistent basis set (e.g., aug-cc-pVTZ) to obtain the reference energy and orbital set.

- FNO-CCSD(T) Energy Calculation:

  - Perform an MP2 calculation to generate the virtual-virtual block of the one-particle density matrix.

  - Diagonalize this density matrix to obtain Natural Orbitals (NOs) and their occupation numbers.

  - Retain the subset of NOs with occupation numbers above a conservative threshold (e.g., corresponding to an energy error of <1 µE~h~). This defines the FNO space. [27]

  - Execute the CCSD(T) calculation within the truncated FNO space.

  - (Optional but recommended) Compute an MP2-level correction, ΔMP2, defined as the difference between the MP2 energy in the full virtual space and in the FNO-truncated space. Add this correction to the final FNO-CCSD(T) energy to improve accuracy. [28]

- Basis Set Extrapolation (For Highest Accuracy): Repeat the FNO-CCSD(T) energy calculation with a series of basis sets (e.g., aug-cc-pVTZ and aug-cc-pVQZ). Then extrapolate the correlation energy to the complete basis set (CBS) limit using established formulas, such as the two-point X⁻³ extrapolation in the basis-set cardinal number X. The F12 approach can serve as an alternative to this extrapolation. [28]
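The energy bookkeeping in the last two steps can be sketched as two small functions with hypothetical names. The sketch assumes the widely used form E~corr~(X) = E~CBS~ + A·X⁻³ for the correlation energy. It also assumes one reasonable ordering: ΔMP2 is applied at each basis set before the correlation energy is extrapolated. The Hartree-Fock component converges differently and is normally not extrapolated with the same X⁻³ formula.

```python
def fno_with_delta_mp2(e_fno_ccsd_t: float, e_mp2_full: float, e_mp2_fno: float) -> float:
    """FNO-CCSD(T) energy plus Delta-MP2 = E_MP2(full) - E_MP2(FNO space)."""
    return e_fno_ccsd_t + (e_mp2_full - e_mp2_fno)


def cbs_two_point(e_corr_x: float, x: int, e_corr_y: float, y: int) -> float:
    """Two-point X^-3 extrapolation of the correlation energy.

    Assumes E_corr(X) = E_CBS + A * X**-3 for cardinal numbers x < y,
    e.g. x = 3 (aug-cc-pVTZ) and y = 4 (aug-cc-pVQZ).
    """
    return (y**3 * e_corr_y - x**3 * e_corr_x) / (y**3 - x**3)


# Self-check with model energies generated from the assumed form
# (E_CBS = -0.500 E_h, A = 0.300 E_h); these are not real data.
e_tz = -0.500 + 0.300 / 3**3
e_qz = -0.500 + 0.300 / 4**3
print(cbs_two_point(e_tz, 3, e_qz, 4))   # recovers about -0.500
```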

Protocol for Non-Covalent Interactions (NCIs)

NCIs, such as π-stacking and hydrogen bonding, are crucial in drug binding and materials science. Their accurate description is notoriously challenging.

- Geometry and Counterpoise Correction: Use the highest-quality geometries available. CCSD(T)-optimized structures are ideal, but in practice geometries often come from high-level DFT. To account for Basis Set Superposition Error (BSSE), the counterpoise correction is essential. [30]

- High-Level Single-Point Calculations:

  - For the dimer and each monomer, perform an FNO-CCSD(T) calculation using a large, diffuse-function-augmented basis set (e.g., aug-cc-pVTZ). The monomer calculations must be performed in the full dimer basis (counterpoise correction).

  - The interaction energy is calculated as $E_{\text{int}} = E_{\text{AB}}^{\text{AB}} - E_{\text{A}}^{\text{AB}} - E_{\text{B}}^{\text{AB}}$, where subscripts label the system and superscripts the basis set (here the full dimer basis AB).

- Accuracy Assessment for Large Systems: For very large π-stacked systems (e.g., acene dimers), be aware that post-CCSD(T) contributions (e.g., full triples, quadruple excitations) might become non-negligible. The linear evolution of the correlation energy with monomer size can be used as a probe to assess the behavior of CCSD(T) for large systems. [30]
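The bookkeeping in this protocol is simple enough to sketch directly. The energies are assumed to be total energies in hartree from whatever program is used, and the function names are hypothetical. The straight-line fit shows one possible way to apply the "linear evolution with monomer size" check described in [30]. The correlation or interaction energies are fitted against monomer size, and the residuals are inspected.

```python
HARTREE_TO_KCAL_PER_MOL = 627.5095  # 1 E_h in kcal/mol (rounded)


def cp_interaction_energy(e_dimer: float, e_a_in_dimer_basis: float,
                          e_b_in_dimer_basis: float) -> float:
    """Counterpoise-corrected interaction energy in kcal/mol (inputs in E_h).

    All three energies must be computed in the full dimer basis.
    """
    return HARTREE_TO_KCAL_PER_MOL * (e_dimer - e_a_in_dimer_basis - e_b_in_dimer_basis)


def least_squares_slope(sizes, values):
    """Slope of a straight-line fit of values (e.g., correlation energies)
    against monomer size (e.g., number of rings in an acene)."""
    n = len(sizes)
    mean_x = sum(sizes) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in sizes)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(sizes, values))
    return sxy / sxx


def linear_fit_residuals(sizes, values):
    """Deviations of each point from the least-squares straight line."""
    slope = least_squares_slope(sizes, values)
    intercept = sum(values) / len(values) - slope * sum(sizes) / len(sizes)
    return [y - (intercept + slope * x) for x, y in zip(sizes, values)]
```

A residual that grows steadily for the largest monomers might be one warning sign that the series is leaving the regime in which CCSD(T) behaves smoothly.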

Table 2: Recommended Computational Protocols for Different Chemical Problems

| Application | Recommended Method | Recommended Basis Set | Key Considerations | Target Accuracy |
|---|---|---|---|---|
| General Thermochemistry | FNO-CCSD(T) + ΔMP2 correction | aug-cc-pVTZ / aug-cc-pVQZ | Use tight FNO truncation thresholds; CBS extrapolation is beneficial. | ~1 kJ/mol [27] |
| Non-Covalent Interactions | FNO-CCSD(T) with counterpoise correction | aug-cc-pVTZ or cc-pVTZ-F12 | Essential to use basis sets with diffuse functions; monitor performance via interaction energy slopes. | ~0.1-0.5 kcal/mol |
| Transition Metal Reactions | FNO-CCSD(T) / PNO-LCCSD(T) | cc-pVTZ / cc-pVQZ for non-metals; aug-cc-pwCVTZ for metals | Open-shell systems require specific implementations; static correlation may be a concern. | ~2-4 kJ/mol |
| Extended Polymers & Clusters | FNO-CCSD(T) or Linear Regression (ITA) | 6-311++G(d,p) or larger | For very large systems, information-theoretic approach (ITA) can predict correlation from HF. [11] | Chemical accuracy possible [11] |


Successful application of advanced coupled-cluster methods requires a suite of well-defined "research reagents" in the form of software, basis sets, and computational protocols.

Table 3: Essential Software and Basis Sets for CCSD(T) Calculations

| Tool / Reagent | Type | Primary Function | Key Features / Use Case |
|---|---|---|---|
| MRCC | Software Suite | Performs canonical and local correlation CC calculations. | Capable of high-level methods like CCSDT(Q); used for benchmarking. [30] |
| CFOUR | Software Suite | High-level quantum chemical calculations. | Used for demanding canonical post-CCSD(T) calculations. [30] |
| ORCA | Software Suite | Versatile quantum chemistry package. | Performs DLPNO-CCSD(T) and DFT geometry optimizations. [30] |
| cc-pVXZ Family | Basis Set | Correlation-consistent basis sets for systematic convergence toward the CBS limit. | The cornerstone for CCSD(T) studies (X = D, T, Q, 5...). [31] [30] |
| aug-cc-pVXZ | Basis Set | Correlation-consistent sets with diffuse functions. | Essential for anions, excited states, and non-covalent interactions. [30] |
| def2 Series | Basis Set | Efficient, generally contracted basis sets. | Good balance of cost and accuracy; popular for molecular systems. [31] |
| FNO-CCSD(T) | Method/Protocol | Reduced-cost CCSD(T) via virtual space truncation. | Default choice for systems of ~30-75 atoms. [27] [28] |
| CCSD(F12*) | Method/Protocol | Explicitly correlated CCSD with efficient triples. | Preferred for achieving CBS limits with smaller basis sets. [28] |
| DLPNO-CCSD(T) | Method/Protocol | Local correlation approximation for large systems. | Enables calculations on systems with hundreds of atoms. [30] |

The accurate prediction of drug-receptor binding affinities and reaction mechanisms is a central challenge in computational drug discovery. While classical molecular mechanics (MM) methods offer speed, they lack the quantum mechanical (QM) precision required to model electronic phenomena such as charge transfer, bond breaking/formation, and polarization [8] [10]. Ab initio quantum chemistry methods, particularly post-Hartree-Fock (post-HF) methods, address this need by providing a more physically realistic description of electron correlation, which is neglected in the foundational Hartree-Fock (HF) approximation [6] [1]. The HF method's mean-field approach, where each electron interacts with the average field of the others, leads to significant errors in the calculation of interaction energies, a critical shortcoming for predicting ligand binding [8] [7]. Post-HF methods systematically improve upon HF by accounting for the instantaneous, correlated motion of electrons, thereby offering a pathway to high-accuracy predictions of binding affinities and reaction pathways that are crucial for rational drug design [6] [32].

The applicability of conventional post-HF methods to large biological systems has historically been limited by their formidable computational cost and poor scaling with system size [6] [7]. However, recent methodological advances are bridging this gap. The development of fragmentation approaches, such as the Molecules-in-Molecules (MIM) method, combined with efficient approximations like Domain-based Local Pair Natural Orbital (DLPNO) techniques, now enables post-HF quality calculations on systems as large as protein-ligand complexes [32] [33]. This article details the application of these advanced post-HF protocols, providing researchers with a framework for achieving benchmark accuracy in modeling drug-receptor interactions.

Quantitative Performance of Post-HF Methods

The selection of an appropriate electronic structure method requires a careful balance between accuracy and computational feasibility. The performance of various methods for key properties relevant to drug discovery is summarized in Table 1.

Table 1: Performance Benchmarking of Quantum Chemical Methods for Drug Discovery Applications

| Method | Electron Correlation Treatment | Typical Binding Energy Error | Computational Scaling | Best for Applications Involving |
|---|---|---|---|---|
| Hartree-Fock (HF) | None (Mean-Field) | High (Systematic Underestimation) [8] | O(N⁴) [8] | Initial geometries, charge distributions [8] |
| Møller-Plesset 2nd Order (MP2) | Perturbation Theory [6] | Moderate (~a few kcal/mol) [6] | O(N⁵) | Dynamical correlation; relatively small systems [6] |
| Coupled-Cluster Singles, Doubles & Perturbative Triples (CCSD(T)) | Exponential Cluster Operator [7] | Very Low (< 1 kcal/mol) - "Gold Standard" [33] | O(N⁷) | High-fidelity benchmark calculations for small models [7] |
| DLPNO-CCSD(T) | Local Approximation to CCSD(T) [33] | Low (~1 kcal/mol) [33] | ~O(N⁴) to O(N⁵) [33] | Accurate single-point energies for large systems [32] [33] |
| Configuration Interaction Singles & Doubles (CISD) | Variational (Limited Excitations) [6] | Moderate (Lacks Size-Extensivity) [7] | O(N⁶) | Small system wavefunctions; historical use [6] |
| Complete Active Space SCF (CASSCF) | Variational (Active Space) [6] | High for Dynamical Correlation | Exponential with active space | Static (Non-Dynamical) Correlation; bond breaking, excited states [6] |
| Density Functional Theory (DFT) | Approximate Functional [8] | Functional-Dependent (Low with Hybrids) [8] | O(N³) [8] | General-purpose ground-state properties, reaction mechanisms [8] |