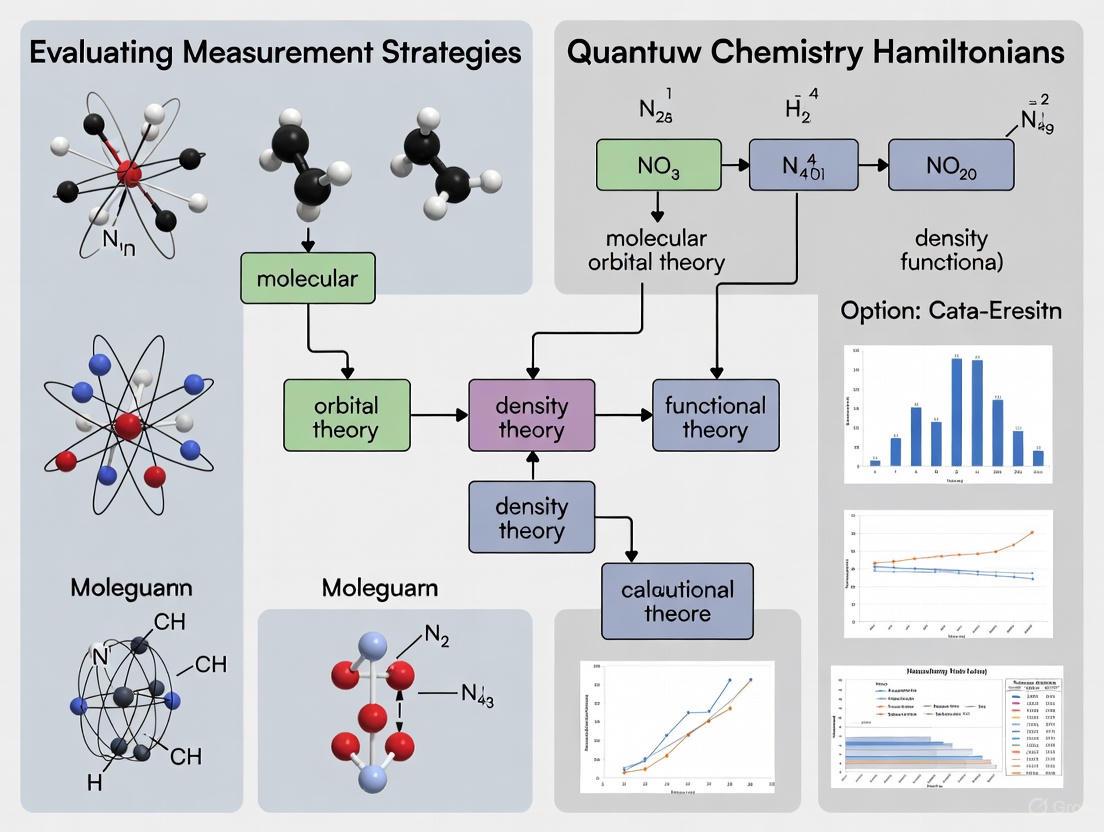

Beyond the Hype: Evaluating Quantum Measurement Strategies for Chemical Hamiltonian Accuracy in Drug Discovery

This article provides a comprehensive evaluation of measurement strategies for quantum chemistry Hamiltonians, a critical bottleneck in applying quantum computing to drug and materials discovery.

Abstract

This article provides a comprehensive evaluation of measurement strategies for quantum chemistry Hamiltonians, a critical bottleneck in applying quantum computing to drug and materials discovery. We explore the foundational principles of quantum simulations in chemical environments, detail cutting-edge methodological advances tested on real hardware, and present practical troubleshooting techniques for achieving chemical accuracy on noisy devices. Through comparative analysis of industry platforms and validation case studies, this resource offers researchers and development professionals a grounded framework for selecting and optimizing quantum measurement approaches to accelerate R&D pipelines.

The Quantum Chemistry Hamiltonian Challenge: From Theory to Real-World Complexity

Bridging the Quantum-Classical Divide in Molecular Simulation

The simulation of complex molecules is a fundamental challenge in chemistry, materials science, and drug discovery. While classical computers struggle with the exponential scaling of quantum mechanical calculations, current quantum processors are not yet fault-tolerant. This has led to the emergence of hybrid quantum-classical algorithms that strategically divide the computational workload. This guide objectively compares several prominent strategies—DMET-SQD, Joint Measurement, and Co-optimization frameworks—evaluating their performance, resource requirements, and suitability for near-term quantum hardware. The focus is on their efficacy in handling the critical task of measuring quantum chemistry Hamiltonians.

Comparative Analysis of Hybrid Strategies

The following table summarizes the core performance metrics and characteristics of three leading hybrid quantum-classical approaches for molecular simulation.

Table 1: Comparison of Hybrid Quantum-Classical Simulation Strategies

| Strategy | Core Methodology | Reported Accuracy & Performance | Quantum Resource Requirements | Key Advantages |
|---|---|---|---|---|
| DMET-SQD [1] [2] | Fragments molecule via Density Matrix Embedding Theory (DMET); uses Sample-Based Quantum Diagonalization (SQD) on fragments. | Energy differences within 1 kcal/mol of classical benchmarks for cyclohexane conformers [1]. | 27-32 qubits for molecules like cyclohexane and hydrogen rings [1]. | Reduces full-molecule problem to tractable fragments; demonstrated on real healthcare-focused hardware (IBM ibm_cleveland). |
| Joint Measurement [3] | Uses a constant-size set of fermionic Gaussian unitaries and occupation number measurement to jointly estimate non-commuting observables. | Sample complexity of $\mathcal{O}(N^2 \log(N)/\epsilon^2)$ for quartic Majorana monomials; performance comparable to fermionic classical shadows [3]. | Circuit depth of $\mathcal{O}(N^{1/2})$ with $\mathcal{O}(N^{3/2})$ two-qubit gates on a 2D lattice [3]. | Reduces circuit depth and gate count compared to classical shadows; mitigates sampling bottleneck for Hamiltonians. |
| DMET-VQE Co-optimization [2] | Integrates DMET with a Variational Quantum Eigensolver (VQE) in a single optimization loop for geometry optimization. | Accurately determined equilibrium geometry of glycolic acid (C₂H₄O₃), a molecule previously intractable for quantum methods [2]. | Significantly reduces qubit count for large molecules; avoids nested optimization loops, lowering computational cost [2]. | Enables study of larger, chemically relevant molecules; more efficient than conventional nested optimization. |

Experimental Protocols and Workflows

To ensure reproducibility and provide a clear understanding of how these strategies are implemented, this section details their core experimental protocols.

Protocol for DMET-SQD Implementation

The DMET-SQD protocol, as used to simulate cyclohexane conformers and hydrogen rings, involves the following steps [1]:

- System Fragmentation: The target molecule is partitioned into smaller, manageable fragments.

- Embedding: Each fragment is embedded into an approximate electronic environment described by the rest of the molecule.

- Quantum Processing: The embedded fragment Hamiltonian is processed on the quantum computer using the SQD algorithm. SQD relies on sampling quantum circuits and projecting results into a subspace to solve the Schrödinger equation.

- Error Mitigation: Techniques such as gate twirling and dynamical decoupling are applied to mitigate errors on the noisy quantum hardware.

- Classical Post-processing: The results from the quantum computer are combined with classical computations to reconstruct the properties of the full molecule. This process is iterated to self-consistency.
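The subspace projection at the heart of the Quantum Processing step can be sketched in a few lines. This is a toy illustration under stated assumptions, not the DMET-SQD implementation from [1]: the Hamiltonian matrix `H`, the sampled bitstrings, and the helper `subspace_ground_energy` are all hypothetical, and the real method works with fermionic configurations and includes additional recovery steps that are omitted here.

```python
import numpy as np

def subspace_ground_energy(hamiltonian, sampled_bitstrings):
    """Project H onto the span of the sampled basis states and diagonalize."""
    # Unique computational-basis indices that appeared in the samples
    indices = sorted({int(bits, 2) for bits in sampled_bitstrings})
    # Sub-block of H restricted to the sampled configurations
    h_sub = hamiltonian[np.ix_(indices, indices)]
    # Lowest eigenvalue of the projected Hamiltonian
    return np.linalg.eigvalsh(h_sub)[0], indices

# Toy 2-qubit Hamiltonian with made-up numbers (real symmetric)
H = np.array([
    [-1.0, 0.0, 0.0, 0.3],
    [0.0, -0.2, 0.1, 0.0],
    [0.0, 0.1, 0.4, 0.0],
    [0.3, 0.0, 0.0, 0.9],
])

# Hypothetical measurement record: only "00" and "11" were observed
samples = ["00", "11", "00", "00", "11"]

e_sub, kept = subspace_ground_energy(H, samples)
e_exact = np.linalg.eigvalsh(H)[0]
print(f"subspace energy {e_sub:.6f}, exact energy {e_exact:.6f}, kept {kept}")
```

Because the projected problem is a Rayleigh-Ritz estimate, the subspace energy can never fall below the exact ground-state energy; its quality depends on whether the samples cover the configurations that carry most of the ground-state weight.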

Protocol for Joint Measurement of Fermionic Observables

The joint measurement strategy for estimating quadratic and quartic fermionic observables (Majorana monomials) proceeds as follows [3]:

- Unitary Randomization: A unitary is sampled at random from a constant-size set of specially chosen fermionic Gaussian unitaries. For quantum chemistry Hamiltonians, four specific unitaries are sufficient.

- Occupation Number Measurement: The rotated quantum state is measured in the fermionic occupation number basis.

- Classical Post-processing: The single-qubit measurement outcomes are processed to obtain estimates for the expectation values of all desired Majorana pairs and quadruples. This strategy effectively measures a jointly measurable observable from which the noisy versions of the target operators can be inferred.

Workflow for Multiscale Quantum-Classical Simulation

Advanced applications, such as simulating chemical systems in solvent environments, require integrating quantum computations into a broader multiscale framework [4]. The following diagram illustrates this nested workflow.

Diagram 1: Multiscale simulation workflow for embedding quantum computational capabilities within a surrounding classical molecular dynamics environment [4].

The Scientist's Toolkit: Essential Research Reagents

Successful execution of hybrid quantum-classical simulations relies on a suite of specialized tools, libraries, and hardware. The table below lists key components of the research ecosystem.

Table 2: Key Resources for Hybrid Quantum-Classical Molecular Simulation

| Category / Resource | Specific Examples | Function & Application |
|---|---|---|
| Quantum Software Libraries | Qiskit, Tangelo | Provides implementations of quantum algorithms (e.g., SQD), error mitigation techniques, and interfaces for embedding methods like DMET [1]. |
| Quantum Hardware Platforms | IBM Quantum (e.g., ibm_cleveland), Quantinuum H-Series, IonQ Forte | Offer cloud-accessible QPUs with varying qubit counts, architectures, and fidelities for executing quantum circuits [1] [5] [6]. |
| Classical Embedding Theories | Density Matrix Embedding Theory (DMET), Projection-Based Embedding (PBE) | Enable the partitioning of large molecular systems into smaller, tractable fragments for quantum simulation while preserving entanglement [1] [4] [2]. |
| Measurement Strategies | Joint Measurement, Classical Shadows, Grouped Measurement | Techniques to reduce the number of quantum measurements required to estimate the energy of a molecular Hamiltonian, a major performance bottleneck [3]. |
| Error Mitigation Techniques | Gate Twirling, Dynamical Decoupling, Zero-Noise Extrapolation | A set of software and hardware techniques to mitigate the impact of noise on current-generation quantum processors without the overhead of full quantum error correction [1]. |

The bridge between quantum and classical computing for molecular simulation is actively being built. DMET-SQD shows that energy differences within chemical accuracy of classical benchmarks can be obtained on today's quantum hardware through careful algorithmic design, while Joint Measurement offers provable reductions in circuit depth and gate count for Hamiltonian estimation. The DMET-VQE co-optimization framework further extends this capability to geometrically complex molecules previously beyond reach. The choice of strategy depends on the specific research goal: DMET-SQD has been experimentally validated on real hardware for energy calculations, Joint Measurement offers a theoretically efficient path for Hamiltonian estimation, and the co-optimization framework is promising for full geometry optimization. As quantum hardware continues to improve in scale and fidelity, these hybrid approaches provide a practical and evolving pathway toward quantum utility in molecular science.

Why Measurement Strategy is the Critical Bottleneck for Chemical Accuracy

For researchers in quantum chemistry, achieving chemical accuracy—typically defined as an error of 1.6 × 10⁻³ Hartree in energy calculations—is a paramount goal for obtaining reliable results in areas like drug development and materials design [7]. However, the path to this level of precision is fraught with challenges, and the choice of measurement strategy often emerges as the most significant bottleneck. This guide objectively compares the performance of contemporary measurement strategies used for estimating quantum chemistry Hamiltonians, providing a detailed analysis of their protocols, overheads, and suitability for near-term quantum hardware.

The Measurement Challenge in Quantum Chemistry

In quantum computations, the energy of a molecule is determined by measuring the expectation values of its Hamiltonian, a complex mathematical object composed of many non-commuting observable terms [3] [7]. The computational effort required does not stem from preparing the quantum state itself, but from the vast number of precise measurements needed to estimate the Hamiltonian's energy to within the narrow margin of chemical accuracy [7]. This challenge is compounded on near-term quantum devices by inherent noise, limited circuit depth, and finite sampling resources, making an efficient measurement strategy not just beneficial, but critical [8] [7].
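To give a sense of scale, the measurement cost can be bounded with a standard back-of-the-envelope estimate. The sketch below assumes the simplest possible scheme, which is not one of the strategies compared in this article: every Pauli term is measured separately, shots are allocated in proportion to the magnitude of each coefficient, and each term's single-shot variance is bounded by 1. Under those assumptions the shot count is at most the squared ratio of the coefficient 1-norm to the target precision. The coefficients are hypothetical.

```python
import math

CHEMICAL_ACCURACY_HA = 1.6e-3  # threshold quoted in the text, in Hartree

def shots_upper_bound(coefficients, epsilon=CHEMICAL_ACCURACY_HA):
    """Upper bound on shots for standard error `epsilon` with term-by-term
    measurement, shots proportional to |c_i|, and per-term variance <= 1."""
    l1_norm = sum(abs(c) for c in coefficients)
    return math.ceil((l1_norm / epsilon) ** 2)

# Hypothetical Pauli coefficients (in Hartree) of a very small Hamiltonian
coeffs = [0.5, -0.3, 0.2]
n_shots = shots_upper_bound(coeffs)
print(f"shots needed (upper bound): {n_shots:.3e}")
```

Even this three-term toy example needs several hundred thousand shots, and the bound grows quadratically with the coefficient norm, which is why grouping, joint measurement, and shadow-based schemes matter for realistic molecules.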

Comparative Analysis of Measurement Strategies

The following table compares three advanced measurement strategies, highlighting their core principles, resource demands, and performance on key metrics.

| Strategy | Core Principle | Circuit Depth & Gate Count | Key Performance Metrics | Compatible Error Mitigation |
|---|---|---|---|---|
| Joint Measurement [3] | Measures noisy versions of multiple fermionic observables simultaneously using tailored fermionic Gaussian unitaries. | Depth: $\mathcal{O}(N^{1/2})$; two-qubit gates: $\mathcal{O}(N^{3/2})$ (on a 2D lattice) [3] | Sample complexity for $\epsilon$ precision: quadratic terms $\mathcal{O}(N \log(N)/\epsilon^{2})$; quartic terms $\mathcal{O}(N^2 \log(N)/\epsilon^{2})$ [3] | Randomized error mitigation (potential, as estimates use ≤2 qubits) [3] |
| Classical Shadows (Fermionic) [3] | Builds a classical approximation of the quantum state via randomized measurements for estimating non-commuting observables. | Depth: $\mathcal{O}(N)$; two-qubit gates: $\mathcal{O}(N^{2})$ (on a 2D lattice) [3] | Matches sample complexity of Joint Measurement strategy [3]. Performance is comparable in benchmarks [3]. | Standard techniques applicable, though not specifically highlighted. |
| IC Measurements with Advanced Post-Processing [7] | Uses informationally complete (IC) measurements to enable Quantum Detector Tomography (QDT) and shot-efficient estimators like locally biased sampling. | Dependent on the specific IC measurement implemented. | Achieved 0.16% measurement error for BODIPY molecule energy estimation on IBM hardware, a >6x reduction from baseline (1-5%) [7]. | QDT and blended scheduling are integral for mitigating readout errors and time-dependent noise [7]. |

Detailed Experimental Protocols and Data

The Joint Measurement strategy [3] is designed for Hamiltonians decomposed into products of Majorana operators (pairs and quadruples).

- State Preparation: Prepare the target quantum state (e.g., Hartree-Fock state) on the quantum processor.

- Randomized Unitary Evolution:

  - Sample a unitary from a predefined set that realizes products of Majorana operators.

  - Further sample a fermionic Gaussian unitary from a small, constant-sized set (e.g., 2 for pairs, 9 for quadruples) designed to rotate disjoint blocks of Majorana operators.

- Measurement: Perform a measurement of the fermionic occupation numbers in the new basis.

- Post-Processing: Classically process the single-qubit measurement outcomes to compute unbiased estimates for the expectation values of all targeted Majorana monomials.

Supporting Data: This strategy was benchmarked on exemplary molecular Hamiltonians, showing performance comparable to fermionic classical shadows but with reduced circuit depth, making it more suitable for devices with limited connectivity [3].

The IC measurement protocol with Quantum Detector Tomography [7] focuses on maximizing information extraction and mitigating readout errors on noisy hardware.

- State Preparation: Prepare the target state (e.g., Hartree-Fock state for BODIPY molecule).

- Informationally Complete Measurement: Execute a series of different measurement settings (circuits) on the quantum computer. The settings can be chosen at random or biased based on the Hamiltonian.

- Parallel Quantum Detector Tomography (QDT): Interleave circuits dedicated to QDT with the main experiment. This builds a model of the device's noisy readout effects.

- Blended Scheduling: Execute all circuits (for the Hamiltonian and for QDT) in an interleaved, or "blended," manner to average out time-dependent noise.

- Classical Post-Processing:

  - Use the QDT model to create an unbiased estimator of the energy.

  - Employ locally biased random measurements to allocate more shots to measurement settings that have a larger impact on the final energy estimate, reducing shot overhead [7].

Supporting Data: In an experiment estimating the energy of the BODIPY molecule on an IBM Eagle r3 processor, this protocol reduced the measurement error from 1-5% to 0.16%, bringing it close to the threshold of chemical accuracy [7].
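The idea of using a calibrated readout model to debias an estimate can be illustrated with a much simpler stand-in for QDT: inverting a single-qubit confusion matrix. This is an assumption-laden sketch, not the protocol of [7]. Full detector tomography reconstructs the measurement operators themselves, whereas the example below only models classical bit-flip errors at readout, and the calibration numbers are made up.

```python
import numpy as np

def mitigate_readout(noisy_probs, confusion):
    """Undo readout noise modeled as noisy = confusion @ ideal."""
    return np.linalg.solve(confusion, noisy_probs)

# confusion[i, j] = P(read i | prepared j); hypothetical calibration values
A = np.array([
    [0.97, 0.05],
    [0.03, 0.95],
])

p_true = np.array([0.8, 0.2])   # ideal outcome distribution (unknown in practice)
p_noisy = A @ p_true            # what the noisy detector would report
p_mitigated = mitigate_readout(p_noisy, A)

# Expectation value of Z = P(0) - P(1), before and after mitigation
z_noisy = p_noisy[0] - p_noisy[1]
z_mitigated = p_mitigated[0] - p_mitigated[1]
print(f"<Z> noisy = {z_noisy:.3f}, mitigated = {z_mitigated:.3f}")
```

In a real experiment the calibration itself is estimated from finite shots and drifts over time, which is the motivation for interleaving the calibration circuits with the main experiment as described in the blended scheduling step.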

The sampling error reduction approach for quantum Krylov subspace diagonalization (QKSD) [8] optimizes the measurement of matrix elements, where sampling error can distort the generalized eigenvalue problem.

- Matrix Element Measurement: Measure the matrix elements $\langle \psi_i | H | \psi_j \rangle$ for the Krylov basis states using either the linear combination of unitaries (LCU) or diagonalizable fragments Hamiltonian decomposition.

- Apply Error Reduction Strategies:

  - Shifting Technique: Identify and remove redundant Hamiltonian components that annihilate either the bra or ket state, eliminating their contribution to sampling variance.

  - Coefficient Splitting: Optimize the measurement of Hamiltonian terms that are common across different matrix elements $\langle \psi_i | H | \psi_j \rangle$ to avoid repeated measurements.

- Solve Generalized Eigenvalue Problem (GEVP): Use the resulting estimates of the matrix pairs to solve the GEVP and approximate the ground state energy.

Supporting Data: Numerical experiments on small molecules demonstrated that these strategies can reduce sampling costs by a factor of 20 to 500 within a fixed quantum circuit repetition budget [8].
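The final GEVP step can be sketched classically. The example below is a minimal illustration under several assumptions that do not come from [8]: it uses a power-method Krylov basis on a small made-up matrix instead of time-evolved states on hardware, it computes the matrix elements exactly instead of sampling them, and it regularizes the overlap matrix by discarding small eigenvalues, which is a common but not universal choice. The function name `solve_gevp` and the threshold value are hypothetical.

```python
import numpy as np

def solve_gevp(h_mat, s_mat, rel_threshold=1e-8):
    """Lowest eigenvalue of H c = E S c, discarding near-null directions of S."""
    s_vals, s_vecs = np.linalg.eigh(s_mat)
    keep = s_vals > rel_threshold * s_vals.max()
    # Canonical orthogonalization: X^dagger S X is the identity on the kept subspace
    x = s_vecs[:, keep] / np.sqrt(s_vals[keep])
    h_orth = x.conj().T @ h_mat @ x
    return np.linalg.eigvalsh(h_orth)[0]

# Toy Hamiltonian with made-up entries (real symmetric, tridiagonal)
H = np.array([
    [-1.0, 0.2, 0.0, 0.0],
    [0.2, -0.5, 0.3, 0.0],
    [0.0, 0.3, 0.1, 0.1],
    [0.0, 0.0, 0.1, 0.8],
])

# Three-vector Krylov basis {v, Hv, H^2 v}, each normalized
v = np.ones(4) / 2.0
basis = [v]
for _ in range(2):
    w = H @ basis[-1]
    basis.append(w / np.linalg.norm(w))
B = np.column_stack(basis)

h_mat = B.T @ H @ B   # matrix elements <psi_i|H|psi_j>
s_mat = B.T @ B       # overlaps <psi_i|psi_j>

e_krylov = solve_gevp(h_mat, s_mat)
e_exact = np.linalg.eigvalsh(H)[0]
print(f"Krylov estimate {e_krylov:.6f}, exact {e_exact:.6f}")
```

With sampled matrix elements, noise in the overlap matrix is amplified by its small eigenvalues, so the threshold trades bias against stability; this sensitivity is why reducing sampling variance in the matrix elements pays off.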

Workflow Diagram: Joint Measurement Strategy

The diagram below illustrates the core workflow for the Joint Measurement strategy [3].

Successful implementation of high-precision measurement strategies requires both specialized computational tools and certified physical materials.

| Resource/Solution | Function & Purpose | Example Use-Case |
|---|---|---|
| Certified Reference Materials (CRMs) [9] | Calibrate instruments and verify analytical method accuracy via samples with known, certified analyte concentrations. | Ensuring quantitative analytical methods for phytochemicals in botanicals are accurate, precise, and reproducible [10] [9]. |
| High-Resolution Accurate Mass (HRAM) Orbitrap | Provides ultra-high mass measurement accuracy (<1 part-per-million) for large biomolecules like glycans [11]. | Enables confident identification and study of glycans in biological samples via precise mass determination [11]. |
| Standard Reference Materials (SRMs) [9] | Physically embodied standards issued by NIST for instrument calibration and method validation [9]. | Transferring NIST's precision and accuracy capabilities to a user's laboratory for legally defensible measurements [9]. |
| Coupled-Cluster Theory [CCSD(T)] Data | Provides "gold standard" reference data for training machine learning models in computational chemistry [12]. | Training the MEHnet model to predict molecular properties with CCSD(T)-level accuracy at a lower computational cost [12]. |
| Quantum Detector Tomography (QDT) [7] | Characterizes a quantum computer's noisy readout effects to build an unbiased estimator for observables. | Mitigating readout errors during precise energy estimation on near-term quantum hardware [7]. |

Key Takeaways for Researchers

The pursuit of chemical accuracy is fundamentally constrained by measurement strategy. Joint Measurement [3] and IC Measurements with QDT [7] represent the current state-of-the-art, offering complementary advantages. The former provides provable efficiency gains and lower circuit depths for fermionic systems, while the latter demonstrates a proven, integrated system for error suppression on today's noisy hardware. For researchers focusing on quantum algorithms like QKSD, integrating sampling error reduction techniques [8] is essential. The choice of strategy is not one-size-fits-all; it must be tailored to the specific Hamiltonian, available quantum hardware, and the precision requirements of the research problem.

Expanding from Gas-Phase to Solvated Molecular Simulations

The transition from gas-phase to solvated molecular simulations represents a critical evolution in computational chemistry, particularly for applications in drug discovery and materials science where environmental effects fundamentally alter molecular properties and behaviors. While gas-phase calculations offer computational simplicity and form the foundation of many quantum chemical methods, they often fail to predict experimentally observed phenomena that emerge only in solution. Continuum solvation models, such as the Conductor-like Polarizable Continuum Model (CPCM), provide a computationally efficient framework for incorporating solvent effects by treating the solvent as a polarizable dielectric continuum rather than explicit molecules. This guide objectively evaluates the performance, computational requirements, and application scope of various methodologies for simulating solvated systems, with particular emphasis on their effectiveness in predicting experimentally relevant properties.

Theoretical and computational studies consistently demonstrate that appropriate inclusion of solvation in quantum chemical calculations is critical for correct prediction of molecular coordination, thermochemistry, and reactivity in aqueous environments. For instance, while gas-phase calculations may predict that five-coordinated silicate anions are more stable than their four-coordinated counterparts, experimental evidence from in-situ NMR studies shows that deprotonated silicic acid in alkaline aqueous solutions exists predominantly in four-coordinated configurations. This discrepancy highlights how solvation energetics generally favors formation of smaller, more compact anions in high dielectric constant solvents like water, necessitating computational approaches that explicitly account for these environmental effects [13].

Theoretical Foundations: From Gas-Phase to Solvated Environments

Fundamental Challenges in Solvation Modeling

The theoretical framework for understanding solvation effects begins with recognizing that molecular species experience significant electronic and structural reorganization when transferred from vacuum to solution. Multiply charged anions of silicic acid, for instance, are found to be electronically unstable in the gas phase but become stabilized sufficiently in high dielectric constant solvents to enable direct computation of their thermodynamic quantities. This stabilization occurs because the polar solvent molecules effectively screen the electrostatic repulsion between negatively charged atoms, allowing species to exist in solution that would be thermodynamically unfavorable in isolation [13].

When employing density functional theory (DFT) calculations, researchers must carefully consider how solvation affects predicted molecular properties. Gas-phase DFT calculations typically predict that the five-coordinated anion H₅SiO₅⁻ would be the most stable singly charged anion of silicic acid in the presence of hydroxide ligands. However, when solvation effects are properly incorporated through continuum models or explicit solvent molecules, the calculations correctly predict the experimental observation that H₃SiO₄⁻ represents the most stable configuration in aqueous alkaline solutions. The energy difference between these configurations is approximately 5 kcal/mol in Gibbs free energy, which shows how strongly the solvent environment shapes the thermodynamics [13].

Electronic Structure Considerations

The electronic structure of molecules undergoes significant modification in solution compared to gas phase. Solvent effects can alter molecular geometries, charge distributions, orbital energies, and reaction pathways. For charged species particularly, the solvent environment stabilizes localized charges through polarization effects, which can fundamentally change the potential energy surfaces governing molecular conformation and reactivity. These electronic structure modifications necessitate computational approaches that either implicitly or explicitly account for solute-solvent interactions to achieve predictive accuracy for experimentally measurable properties [13].

Methodological Approaches: A Comparative Analysis

Density Functional Theory with Implicit Solvation

Density functional theory augmented with implicit solvation models represents a balanced approach that maintains reasonable computational cost while incorporating essential solvent effects. The key advantage of this methodology lies in its ability to capture the bulk electrostatic effects of the solvent environment without the steep increase in computational cost associated with explicit solvent molecules. Implementation typically involves solving the quantum mechanical equations for the solute molecule embedded in a cavity within a dielectric continuum characterized by the solvent's dielectric constant [13].

The performance of various DFT functionals for predicting reduction potentials and electron affinities has been systematically evaluated against experimental data. As shown in Table 1, the B97-3c functional demonstrates particularly strong performance for main-group species, with a mean absolute error (MAE) of 0.260 V for reduction potential prediction. The computational protocol for these calculations typically involves geometry optimization of both reduced and oxidized species followed by single-point energy calculations incorporating solvation effects through continuum models such as CPCM or COSMO [14].

Table 1: Performance of Computational Methods for Reduction Potential Prediction

| Method | System Type | MAE (V) | RMSE (V) | R² |
|---|---|---|---|---|
| B97-3c | Main-group (OROP) | 0.260 | 0.366 | 0.943 |
| B97-3c | Organometallic (OMROP) | 0.414 | 0.520 | 0.800 |
| GFN2-xTB | Main-group (OROP) | 0.303 | 0.407 | 0.940 |
| GFN2-xTB | Organometallic (OMROP) | 0.733 | 0.938 | 0.528 |
| eSEN-S | Main-group (OROP) | 0.505 | 1.488 | 0.477 |
| eSEN-S | Organometallic (OMROP) | 0.312 | 0.446 | 0.845 |
| UMA-S | Main-group (OROP) | 0.261 | 0.596 | 0.878 |
| UMA-S | Organometallic (OMROP) | 0.262 | 0.375 | 0.896 |
| UMA-M | Main-group (OROP) | 0.407 | 1.216 | 0.596 |
| UMA-M | Organometallic (OMROP) | 0.365 | 0.560 | 0.775 |

Neural Network Potentials with OMol25 Training

The recent development of neural network potentials (NNPs) trained on the Open Molecules 2025 (OMol25) dataset represents a promising alternative to traditional quantum chemical methods. Surprisingly, these NNPs demonstrate competitive performance despite not explicitly considering charge-based Coulombic interactions in their architecture. The UMA Small (UMA-S) model, in particular, shows remarkable accuracy for predicting reduction potentials of organometallic species, achieving an MAE of 0.262 V and R² of 0.896, outperforming both GFN2-xTB and the more complex UMA Medium model for this specific application [14].

The standard protocol for employing OMol25-trained NNPs involves geometry optimization of both reduced and oxidized structures using the neural network potential, followed by computation of solvent-corrected electronic energies using continuum solvation models such as the Extended Conductor-like Polarizable Continuum Model (CPCM-X). The reduction potential is then calculated as the difference between the electronic energy of the reduced structure and that of the non-reduced structure, converted to volts. This approach demonstrates that NNPs can effectively capture complex electronic structure effects despite their lack of explicit physical modeling of charge interactions [14].
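The last step of this protocol, turning two solvent-corrected electronic energies into a potential, is simple arithmetic. The sketch below assumes energies in Hartree, a one-electron reduction by default, and the usual thermodynamic sign convention in which a more stable reduced species gives a more positive potential. It yields an absolute value with no reference-electrode offset and no thermal corrections; the function name and the example energies are hypothetical, and the exact convention used in [14] may differ.

```python
HARTREE_TO_EV = 27.211386245988  # CODATA 2018 conversion factor

def reduction_potential_volts(e_oxidized_ha, e_reduced_ha, n_electrons=1):
    """Absolute reduction potential from solvent-corrected electronic energies.

    Assumed convention: E = -(E_reduced - E_oxidized) / n, with the energy
    difference expressed in eV so that the result is numerically in volts.
    """
    delta_ev = (e_reduced_ha - e_oxidized_ha) * HARTREE_TO_EV
    return -delta_ev / n_electrons

# Hypothetical energies: the reduced species is 0.1 Hartree more stable
potential = reduction_potential_volts(e_oxidized_ha=-100.0, e_reduced_ha=-100.1)
print(f"predicted reduction potential: {potential:.3f} V")
```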

Semiempirical Quantum Mechanical Methods

Semiempirical quantum mechanical (SQM) methods such as GFN2-xTB offer a computationally efficient alternative for high-throughput screening applications. While these methods generally show good performance for main-group compounds, their accuracy decreases significantly for organometallic systems, as evidenced by the substantial increase in MAE from 0.303 V for main-group species to 0.733 V for organometallic species in reduction potential prediction. This performance discrepancy highlights the challenges in parameterization for diverse chemical environments and the complex electronic effects present in transition metal systems [14].

The standard implementation of SQM methods for solvation studies involves geometry optimization in the gas phase or with implicit solvation, followed by single-point energy calculations with solvation corrections. For GFN2-xTB, an empirical correction of 4.846 eV is typically applied to energy differences to account for self-interaction energy inherent in the method. Thermostatistical corrections, including zero-point vibrational energy corrections, are often incorporated to improve accuracy, particularly for comparison with experimental thermodynamic data [14].

Quantum Computing Approaches for Hamiltonian Measurements

Emerging quantum computing approaches offer novel strategies for measuring electronic Hamiltonians of solvated systems, with potential advantages for strongly correlated systems where classical methods struggle. The variational quantum eigensolver (VQE) algorithm has shown particular promise for estimating molecular energies, though efficient measurement strategies remain challenging. Techniques such as fermionic classical shadows and fluid fermionic fragments have been developed to reduce measurement requirements while maintaining accuracy [15].

Recent advances include joint measurement strategies that enable estimation of all quadratic and quartic Majorana monomials with favorable scaling. For an $N$-mode fermionic system, these approaches can estimate expectation values to precision $\epsilon$ using $\mathcal{O}(N \log(N)/\epsilon^{2})$ and $\mathcal{O}(N^2 \log(N)/\epsilon^{2})$ measurement rounds for quadratic and quartic terms, respectively. When implemented on quantum processors with rectangular lattice qubit layouts, these strategies can achieve circuit depths of $\mathcal{O}(N^{1/2})$ with $\mathcal{O}(N^{3/2})$ two-qubit gates, offering significant improvements over previous approaches that required depth $\mathcal{O}(N)$ and $\mathcal{O}(N^{2})$ two-qubit gates [3].

Experimental Protocols and Benchmarking

Reduction Potential Calculations

The accurate prediction of reduction potentials represents a stringent test for computational methods transitioning from gas-phase to solvated environments. The standard experimental protocol involves obtaining reduction potential data from curated datasets such as those compiled by Neugebauer et al., which include 193 main-group species and 120 organometallic species with corresponding experimental values, solvent identities, and optimized geometries for both reduced and oxidized forms [14].

For computational benchmarking, researchers typically perform geometry optimization of both redox states using the method under evaluation, followed by computation of solvent-corrected electronic energies using appropriate continuum solvation models. The reduction potential is calculated as the energy difference between the reduced and oxidized species, with careful attention to thermodynamic cycles and standard states. Statistical metrics including mean absolute error (MAE), root mean square error (RMSE), and coefficient of determination (R²) provide quantitative measures of method performance against experimental data [14].
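The three statistics named above can be computed as follows. This is a minimal sketch with made-up numbers; it takes R² to be the coefficient of determination relative to the experimental mean, as the text describes, and that choice is an assumption because some benchmarks report the squared Pearson correlation instead.

```python
import numpy as np

def benchmark_metrics(predicted, experimental):
    """MAE, RMSE, and coefficient of determination against experiment."""
    predicted = np.asarray(predicted, dtype=float)
    experimental = np.asarray(experimental, dtype=float)
    residuals = predicted - experimental
    mae = np.mean(np.abs(residuals))
    rmse = np.sqrt(np.mean(residuals ** 2))
    ss_res = np.sum(residuals ** 2)
    ss_tot = np.sum((experimental - experimental.mean()) ** 2)
    r2 = 1.0 - ss_res / ss_tot
    return {"MAE": mae, "RMSE": rmse, "R2": r2}

# Hypothetical predicted and experimental reduction potentials in volts
metrics = benchmark_metrics(predicted=[1.0, 2.0, 3.0], experimental=[1.0, 2.0, 4.0])
print(metrics)
```

RMSE penalizes large outliers more heavily than MAE, which explains why a method can show a moderate MAE alongside a much larger RMSE when a few predictions fail badly.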

Electron Affinity Calculations

Electron affinity calculations provide a complementary benchmark that focuses on gas-phase properties but remains relevant for understanding fundamental electronic structure features that persist in solution. The standard protocol involves comparing computed electron affinities against experimental gas-phase values for well-characterized main-group and organometallic species. Methodologies that perform well for both reduction potentials and electron affinities demonstrate robust parameterization across different chemical environments and electronic structure regimes [14].

For electron affinity calculations, the computational approach typically involves geometry optimization of both the neutral and anionic species, followed by single-point energy calculations. Special care must be taken to ensure proper convergence of the self-consistent field procedure for anionic systems, which can be challenging due to diffuse electron distributions. Some methods may require second-order self-consistent field approaches or level-shifting techniques to achieve convergence, particularly for functional groups with large electron affinities [14].

Table 2: Performance Comparison for Electron Affinity Prediction

| Method | System Type | MAE (eV) | Key Strengths |
|---|---|---|---|
| r2SCAN-3c | Main-group | 0.032 | Excellent across diverse systems |
| ωB97X-3c | Main-group | 0.035 | Strong for organic molecules |
| g-xTB | Main-group | 0.041 | Computational efficiency |
| GFN2-xTB | Main-group | 0.048 | Balance of speed and accuracy |
| UMA-S | Main-group | 0.038 | Competitive with DFT |
| UMA-S | Organometallic | 0.025 | Superior to DFT for complexes |

Quantum Measurement Strategies for Electronic Hamiltonians

Classical Shadows and Locally-Biased Variants

The estimation of quantum observables for electronic Hamiltonians represents a significant bottleneck in variational quantum algorithms. Classical shadows using random Pauli measurements provide a framework for efficiently estimating expectation values of complex observables without increasing quantum circuit depth. This approach randomly selects Pauli bases for measurement across qubits, then provides non-zero estimates for all Pauli operators that qubit-wise commute with the measurement bases [16].

The locally-biased classical shadows technique enhances this approach by optimizing the measurement basis probability distribution on each qubit based on knowledge of the target Hamiltonian and a classical approximation of the quantum state. This optimization reduces the variance of the estimator without increasing circuit depth, making it particularly valuable for near-term quantum devices with limited gate counts and coherence times. The variance reduction achieved through local biasing can be substantial for molecular Hamiltonians, where prior knowledge from Hartree-Fock solutions or multi-reference perturbation theory provides effective reference states [16].
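Both estimators can be written as one short function, since the uniform random-Pauli scheme is the special case in which every basis is chosen with probability 1/3. The sketch below is a toy under stated assumptions: the measured state is fixed to the all-zero product state so that outcomes can be simulated without a quantum backend, the biased distribution is chosen by hand instead of being optimized against a Hamiltonian, and all function names are hypothetical.

```python
import numpy as np

def pauli_estimate(observable, bases, outcomes, beta=None):
    """Estimate <P> for a Pauli string given as {qubit: 'X' | 'Y' | 'Z'}.

    bases[s][q] is the basis measured on qubit q in shot s, outcomes[s][q]
    is the +1/-1 result. beta[q][basis] is the sampling probability of that
    basis on qubit q; beta=None means uniform (1/3 each).
    """
    total = 0.0
    for shot_bases, shot_outcomes in zip(bases, outcomes):
        value = 1.0
        for q, pauli in observable.items():
            if shot_bases[q] != pauli:
                value = 0.0  # shot is incompatible with this observable
                break
            prob = 1.0 / 3.0 if beta is None else beta[q][pauli]
            value *= shot_outcomes[q] / prob
        total += value
    return total / len(bases)

def sample_all_zero_state(n_qubits, shots, probs, rng):
    """Simulate random-basis measurements of |0...0>: Z gives +1, X and Y give a coin flip."""
    labels = np.array(["X", "Y", "Z"])
    bases = rng.choice(labels, size=(shots, n_qubits), p=probs)
    coin = rng.choice([-1, 1], size=(shots, n_qubits))
    outcomes = np.where(bases == "Z", 1, coin)
    return bases, outcomes

rng = np.random.default_rng(0)
zz = {0: "Z", 1: "Z"}  # observable Z0 Z1, whose exact value on |00> is 1

# Uniform random Pauli bases
bases_u, outcomes_u = sample_all_zero_state(2, 20000, [1 / 3, 1 / 3, 1 / 3], rng)
est_uniform = pauli_estimate(zz, bases_u, outcomes_u)

# Hand-picked bias toward Z on both qubits
biased = {"X": 0.1, "Y": 0.1, "Z": 0.8}
bases_b, outcomes_b = sample_all_zero_state(2, 20000, [0.1, 0.1, 0.8], rng)
est_biased = pauli_estimate(zz, bases_b, outcomes_b, beta={0: biased, 1: biased})

print(f"uniform: {est_uniform:.3f}, biased: {est_biased:.3f}")
```

Both estimates are unbiased, but the biased distribution wastes far fewer shots on bases that contribute nothing to this observable, so its statistical spread is much smaller for the same shot count.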

Fluid Fermionic Fragments

The fluid fermionic fragments approach represents another strategy for optimizing quantum measurements of electronic Hamiltonians in the variational quantum eigensolver framework. This methodology exploits flexibility in the form of fermionic fragments to lower measurement variances by repartitioning components between one-electron and two-electron fragments. Due to idempotency of occupation number operators, certain parts of two-electron fragments can be converted into one-electron fragments, which are then collected in a purely one-electron fragment [15].

This repartitioning does not affect the expectation value of the Hamiltonian but provides non-vanishing contributions to the variance of each fragment. The optimal repartitioning is determined using variances estimated through classically efficient proxies for the quantum wavefunction. Numerical tests on several molecular systems demonstrate that this repartitioning of one-electron terms can reduce the number of required measurements by more than an order of magnitude, providing significant efficiency improvements for quantum computational chemistry [15].
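
The idempotency argument can be written out explicitly. The following is a sketch, assuming a two-electron fragment that an orbital rotation $\hat{U}$ brings to a form diagonal in the occupation number operators $\hat{n}_p$; the symbols $\lambda_{pq}$ and $c_p$ are illustrative and are not notation taken from [15].

$$
\hat{n}_p^2 = \hat{n}_p
\quad\Longrightarrow\quad
\hat{U}^\dagger \Big( \sum_{p,q} \lambda_{pq}\, \hat{n}_p \hat{n}_q \Big) \hat{U}
= \hat{U}^\dagger \Big( \sum_{p,q} \big( \lambda_{pq} - c_p \delta_{pq} \big)\, \hat{n}_p \hat{n}_q \Big) \hat{U}
+ \hat{U}^\dagger \Big( \sum_{p} c_p\, \hat{n}_p \Big) \hat{U}
$$

The last term is a one-electron operator for any choice of the coefficients $c_p$, so it can be moved into the purely one-electron fragment. The sum of all fragments is unchanged, but the variance of each fragment depends on $c_p$. If fragment $\alpha$ has variance $\sigma_\alpha^2$ and shots are allocated optimally across independently measured fragments, the total number of measurements needed for precision $\epsilon$ scales as

$$
M(\epsilon) = \frac{1}{\epsilon^2} \Big( \sum_\alpha \sigma_\alpha \Big)^2 ,
$$

which is the quantity the repartitioning aims to reduce.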

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational Tools for Solvated Molecular Simulations

| Tool/Resource | Type | Primary Function | Application Context |

|---|---|---|---|

| OMol25 Dataset | Training Data | Provides reference calculations for NNP development | Benchmarking and training machine learning potentials |

| CPCM-X | Solvation Model | Implicit solvation corrections | Electronic energy calculations in solution |

| geomeTRIC 1.0.2 | Software | Geometry optimization | Molecular structure refinement |

| Psi4 1.9.1 | Software Suite | Quantum chemical calculations | DFT, coupled-cluster, and SCF computations |

| Fermionic Classical Shadows | Algorithm | Efficient observable estimation | Quantum computational chemistry |

| Fluid Fermionic Fragments | Algorithm | Hamiltonian measurement optimization | Variational quantum eigensolver |

| Locally-Biased Classical Shadows | Algorithm | Variance reduction in measurements | Near-term quantum device applications |

The expansion from gas-phase to solvated molecular simulations represents an essential evolution in computational chemistry methodology, with profound implications for drug discovery, materials science, and fundamental chemical research. Our comparative analysis demonstrates that no single approach universally outperforms others across all chemical domains and target properties. Instead, researchers must carefully select methodologies based on the specific chemical systems, target properties, and computational resources available.

Neural network potentials trained on comprehensive datasets such as OMol25 show remarkable promise, achieving accuracy competitive with traditional DFT methods despite their lack of explicit physical modeling of charge interactions. Meanwhile, quantum computing approaches offer exciting long-term potential, particularly for strongly correlated systems where classical methods face fundamental limitations. As computational hardware and algorithms continue to advance, the integration of multiple methodological approaches—combining the strengths of classical simulations with emerging quantum and machine learning techniques—will likely provide the most productive path forward for predictive simulations of solvated molecular systems across the chemical sciences.

The pursuit of more effective drugs and advanced materials is being transformed by key industry drivers, particularly the adoption of artificial intelligence (AI) and quantum computing technologies. These tools are accelerating discovery, enhancing precision, and reducing the high costs and failure rates traditionally associated with research and development. This guide compares emerging strategies within the critical context of evaluating measurement strategies for quantum chemistry Hamiltonians, providing researchers and drug development professionals with a data-driven comparison of current methodologies.

The Pharmaceutical R&D Evolution: AI and Regulatory Modernization

The pharmaceutical industry is leveraging new technologies to overhaul a historically lengthy and high-attrition development process.

AI-Driven Discovery and Development: Artificial intelligence has evolved from a disruptive concept to a foundational platform in modern R&D. Its applications are multifaceted, moving beyond early-stage discovery into clinical development [17].

- Target and Compound Prioritization: Machine learning models now routinely inform target prediction, compound prioritization, and pharmacokinetic property estimation. For instance, integrating pharmacophoric features with protein-ligand interaction data has been shown to boost hit enrichment rates by more than 50-fold compared to traditional methods [17].

- Hit-to-Lead Acceleration: The hit-to-lead (H2L) phase is being compressed through AI-guided retrosynthesis and high-throughput experimentation (HTE). One 2025 study used deep graph networks to generate over 26,000 virtual analogs, resulting in sub-nanomolar inhibitors with a 4,500-fold potency improvement over initial hits [17].

- Biology-First AI in Clinical Trials: A significant shift is occurring from "black box" AI models to "biology-first Bayesian causal AI" [18]. These models use mechanistic priors grounded in biology to infer causality, not just correlation. This allows for smarter trial designs, including real-time adaptive trials where investigators can adjust dosing or modify inclusion criteria based on emerging data, thereby increasing precision and success rates [18].

Regulatory Modernization as a Driver: Regulatory bodies are actively enabling innovation. The FDA's Drug Development Tool (DDT) Qualification Program, established under the 21st Century Cures Act, creates a framework for qualifying biomarkers, clinical outcome assessments, and other tools [19]. Once qualified, these DDTs can be used across multiple drug development programs without needing re-evaluation, streamlining regulatory review. Furthermore, the FDA has announced plans to issue guidance on using Bayesian methods in clinical trials by September 2025, signaling strong support for more efficient and adaptive trial designs [18].

The Critical Role of Target Engagement: Mechanistic uncertainty remains a major cause of clinical failure. Technologies that provide direct, in-situ evidence of drug-target interaction are becoming strategic assets. The Cellular Thermal Shift Assay (CETSA), for example, is used to validate direct binding of a drug to its target in intact cells and tissues, closing the gap between biochemical potency and cellular efficacy [17].

Materials Science: Engineering Solids for Performance

In materials and pharmaceutical solids science, the core driver is the precise engineering of material properties to control performance and manufacturability.

Crystal Engineering: This field involves the understanding and manipulation of intermolecular interactions in crystal packing to design solids with desired physical and chemical properties [20]. For Active Pharmaceutical Ingredients (APIs), the chosen crystal form (e.g., polymorph, salt, cocrystal) profoundly affects stability, bioavailability, and ease of manufacture [20]. Crystal engineering principles are applied to solve formulation, processing, and product performance problems.

Amorphous Solid Dispersions: Packing disorder can be exploited to enhance the bioavailability of poorly soluble drugs. Designing less crystalline or amorphous materials, such as solid dispersions, can improve bioavailability where it is limited by dissolution, as seen in products like griseofulvin [20].

Particle Engineering: Particulate properties are often manipulated post-crystallization. Technologies like milling, controlled crystallization, and spherical crystallization are used to improve bioavailability, optimize compaction behavior for tableting, and enhance content uniformity in final formulations [20].

Quantum Computing: A New Paradigm for Molecular Simulation

Quantum computing represents a frontier driver for both pharmaceuticals and materials science, offering a potential path to simulate molecular systems with unparalleled accuracy.

The Promise in Quantum Chemistry: Quantum computers are positioned to tackle problems in quantum chemistry and simulation that are currently intractable for classical computers, with fermionic systems being of particular interest [3]. The ability to determine low-energy states of molecular Hamiltonians is crucial for understanding chemical reactions and properties [3].

Progress Toward Practical Application: Research is now bridging the gap between theoretical simulation and real-world application. A landmark 2025 study successfully simulated solvated molecules on IBM quantum hardware by integrating a classical solvent model (IEF-PCM) with a quantum algorithm (Sample-based Quantum Diagonalization) [21]. This approach achieved solvation free energies that matched classical benchmarks within chemical accuracy (e.g., less than 0.2 kcal/mol difference for methanol), demonstrating that quantum computers can now begin to address chemically relevant problems in realistic environments [21].

Comparing Measurement Strategies for Quantum Hamiltonians

A critical bottleneck in quantum simulation is the efficient estimation of the Hamiltonian, which requires measuring the expectation values of many non-commuting observables. The following section objectively compares several state-of-the-art measurement strategies.

Experimental Protocols & Methodologies

Protocol 1: Joint Measurement Strategy for Fermionic Observables. This strategy is designed to estimate fermionic observables and Hamiltonians relevant to quantum chemistry [3].

- State Preparation: Prepare the quantum state of the N-mode fermionic system.

- Randomized Unitary Evolution: Apply a unitary sampled at random from a constant-size set of specially chosen fermionic Gaussian unitaries.

- Measurement: Perform a measurement of the fermionic occupation numbers in the new basis.

- Post-Processing: Classically process the measurement outcomes to reconstruct estimates for the expectation values of all quadratic and quartic Majorana monomials [3].

Protocol 2: Fermionic Classical Shadows. This is an established randomized measurement strategy for learning properties of quantum states [3].

- State Preparation: Prepare the quantum state of interest.

- Randomized Unitary Evolution: Apply a unitary drawn randomly from the entire set of fermionic Gaussian unitaries (matchgate ensemble).

- Measurement: Measure the occupation numbers.

- Classical Snapshot: Use the measurement outcome and knowledge of the unitary to construct a "classical shadow" of the quantum state. This shadow can then be used to estimate expectation values of various observables [19] [3].

Protocol 3: Quantum Krylov Subspace Diagonalization (QKSD) with Error Mitigation. This algorithm performs approximate Hamiltonian diagonalization via projection onto a quantum Krylov subspace and is suited to early fault-tolerant quantum computers (EFTQC) [8].

- State Preparation: Prepare a set of quantum reference states.

- Circuit Repetition and Measurement: Execute quantum circuits to measure matrix elements of the projected Hamiltonian. This can be done via Hamiltonian decomposition methods (e.g., Linear Combination of Unitaries or diagonalizable fragments).

- Error Mitigation: Apply strategies like the "shifting technique" (to eliminate redundant Hamiltonian components) and "coefficient splitting" (to optimize measurement of common terms) to reduce finite sampling error.

- Classical Diagonalization: Solve a generalized eigenvalue problem (GEVP) classically using the measured matrix to find ground and excited state energies [8].

Performance Data Comparison

The table below summarizes the performance of these strategies based on recent research, providing a direct comparison for researchers.

Table 1: Comparative Performance of Quantum Hamiltonian Measurement Strategies

| Strategy | Key Measured Observables | Sample Complexity (ϵ precision) | Key Performance Metrics | Implementation Considerations |

|---|---|---|---|---|

| Joint Measurement Strategy [3] | Quadratic & quartic Majorana monomials | $\mathcal{O}(N \log(N)/\epsilon^2)$ (quadratic), $\mathcal{O}(N^2 \log(N)/\epsilon^2)$ (quartic) | Matches fermionic classical shadows performance; Potential for easier error mitigation as estimates depend on ≤2 qubits. | Circuit depth: $\mathcal{O}(N^{1/2})$ (2D lattice). Two-qubit gates: $\mathcal{O}(N^{3/2})$ (2D lattice). |

| Fermionic Classical Shadows [3] | Non-commuting fermionic observables | $\mathcal{O}(N \log(N)/\epsilon^2)$ (quadratic), $\mathcal{O}(N^2 \log(N)/\epsilon^2)$ (quartic) | Provides state-of-the-art sample complexity scalings. | Circuit depth: $\mathcal{O}(N)$ (2D lattice). Two-qubit gates: $\mathcal{O}(N^2)$ (2D lattice). Requires randomization over a large unitary set. |

| QKSD with Error Mitigation [8] | Projected Hamiltonian matrix elements | N/A (Reduces sampling cost by a factor of 20–500 in small molecules) | Reduces the dominant sampling error in the GEVP; Demonstrates effectiveness on small molecule electronic structures. | Designed for the EFTQC regime; Strategies are agnostic to the specific Hamiltonian decomposition method used. |

Workflow Visualization

The diagram below illustrates the logical flow and key differentiators of the Joint Measurement Strategy [3].

The Scientist's Toolkit: Research Reagent Solutions

The following table details key materials, tools, and software essential for conducting research in this interdisciplinary field.

Table 2: Essential Research Tools and Materials

| Item / Solution | Function / Application | Example Use-Case |

|---|---|---|

| CETSA (Cellular Thermal Shift Assay) [17] | Validates direct drug-target engagement in intact cells and native tissues. | Quantifying dose-dependent stabilization of a target protein (e.g., DPP9) in rat tissue, confirming cellular efficacy [17]. |

| Crystal Engineering Platforms [20] | Designs and manipulates solid-state properties (polymorphs, salts, cocrystals) of APIs. | Improving the solubility, stability, and tableting performance of a new drug candidate through cocrystal formation [20]. |

| AI/ML Modeling Software [17] [22] | Accelerates target ID, compound prioritization, and ADMET prediction via in-silico screening. | Using tools like AutoDock and SwissADME to filter virtual compound libraries for binding potential and drug-likeness before synthesis [17]. |

| Quantum Chemistry Datasets [22] | Provides high-quality data for training and benchmarking machine learning models. | Using the OMol25 dataset (~83M unique molecular systems) to develop next-generation ML force fields with quantum chemical accuracy [22]. |

| Fermionic Quantum Simulators [3] | Implements measurement strategies (e.g., joint measurement, classical shadows) for Hamiltonian estimation. | Estimating the energy of a molecular Hamiltonian on a quantum device with a rectangular qubit lattice using the joint measurement strategy [3]. |

The quest to accurately and efficiently simulate chemical systems drives innovation across both classical and quantum computational paradigms. For classical computing, this manifests in the development of sophisticated AI agents that automate complex research workflows. For quantum computing, the focus is on preparing for utility-scale advantage by benchmarking performance on foundational problems like estimating molecular energies. Defining success in both fields requires a clear set of metrics: chemical accuracy (typically 1 kcal/mol or ~1.6 mHa error in energy calculations), computational efficiency (time and resource requirements), and scalability to larger, more complex molecules. This guide objectively compares the current performance of leading approaches, from AI-driven autonomous labs to nascent quantum hardware, providing researchers with a clear landscape of the tools available for tackling computational chemistry challenges.
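
The chemical accuracy criterion is a simple unit conversion and comparison. The helper names below are hypothetical, and the example energies are illustrative values rather than results from any cited study.

```python
KCAL_PER_MOL_PER_HARTREE = 627.5094740631  # 1 Hartree expressed in kcal/mol


def kcal_mol_to_millihartree(value_kcal_mol: float) -> float:
    """Convert an energy from kcal/mol to millihartree."""
    return value_kcal_mol / KCAL_PER_MOL_PER_HARTREE * 1000.0


def within_chemical_accuracy(e_computed_ha: float, e_reference_ha: float,
                             threshold_kcal_mol: float = 1.0) -> bool:
    """Return True if |E_computed - E_reference| is at most the threshold (default 1 kcal/mol)."""
    error_kcal_mol = abs(e_computed_ha - e_reference_ha) * KCAL_PER_MOL_PER_HARTREE
    return error_kcal_mol <= threshold_kcal_mol


print(f"1 kcal/mol = {kcal_mol_to_millihartree(1.0):.3f} mHa")
print(within_chemical_accuracy(-1.1370, -1.1373))  # 0.3 mHa error
print(within_chemical_accuracy(-1.1350, -1.1373))  # 2.3 mHa error
```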

Classical Benchmark: AI and Autonomous Agents for Chemical Synthesis

The LLM-RDF Framework and Performance

A significant advancement in classical computational chemistry is the development of the Large Language Model-based Reaction Development Framework (LLM-RDF). This system employs six specialized AI agents (Literature Scouter, Experiment Designer, Hardware Executor, Spectrum Analyzer, Separation Instructor, and Result Interpreter) to guide an end-to-end synthesis process, from literature search to product purification [23]. Demonstrated on the copper/TEMPO-catalyzed aerobic alcohol oxidation reaction, this framework successfully automates the entire development cycle, significantly reducing the need for manual intervention and coding expertise [23].

Table: Capabilities of LLM-RDF Agents in Chemical Synthesis

| LLM-RDF Agent | Primary Function | Key Performance Outcome |

|---|---|---|

| Literature Scouter | Automated literature search and data extraction from databases like Semantic Scholar | Identified and recommended the Cu/TEMPO catalytic system based on sustainability and selectivity metrics [23] |

| Experiment Designer | Designs high-throughput screening (HTS) experiments | Plans substrate scope and condition screening experiments to overcome reproducibility challenges [23] |

| Hardware Executor | Interfaces with automated lab equipment | Executes designed HTS experiments, lowering the barrier for chemists without coding experience [23] |

| Spectrum Analyzer | Analyzes spectral data (e.g., Gas Chromatography) | Automates the analysis of large volumes of HTS results [23] |

| Result Interpreter | Interprets experimental outcomes and suggests next steps | Analyzes HTS results to guide subsequent optimization cycles [23] |

Experimental Protocol for AI-Driven Synthesis

The experimental workflow for the LLM-RDF, as applied to aerobic alcohol oxidation, follows a structured, iterative protocol [23]:

- Literature Synthesis: The Literature Scouter agent is prompted with a natural language request (e.g., "Searching for synthetic methods that can use air to oxidize alcohols into aldehydes") to query the Semantic Scholar database. It extracts and summarizes detailed experimental procedures for the recommended method.

- HTS Experiment Design: The Experiment Designer agent formulates a plan for investigating substrate scope, specifying reactants, conditions, and controls. It addresses challenges such as solvent volatility and catalyst stability.

- Automated Execution: The Hardware Executor translates the designed experiments into commands for automated high-throughput laboratory platforms, executing the reactions in open-cap vials.

- Automated Analysis: The Spectrum Analyzer agent processes the raw GC data from the HTS, converting it into quantitative yield information.

- Interpretation and Optimization: The Result Interpreter analyzes the yield data, identifies trends, and suggests subsequent experiments for kinetic studies or condition optimization, closing the design-make-test-analyze loop.

Quantum Benchmark: Algorithms for Hamiltonian Simulation

Key Quantum Algorithms and Strategies

On quantum hardware, the focus shifts to estimating the ground-state energy of molecular Hamiltonians—a core task in quantum chemistry. Current research benchmarks several algorithms and measurement strategies for the Noisy Intermediate-Scale Quantum (NISQ) and Early Fault-Tolerant Quantum Computing (EFTQC) eras.

Table: Comparison of Quantum Algorithms for Chemistry Hamiltonians

| Algorithm / Strategy | Computational Paradigm | Reported Performance and Metrics |

|---|---|---|

| Variational Quantum Eigensolver (VQE) | NISQ (Hybrid quantum-classical) | Benchmarked on small Al clusters (Al⁻, Al₂, Al₃⁻); percent errors vs. classical data (CCCBDB) consistently below 0.2% [24]. Performance highly dependent on optimizer and circuit choice [24]. |

| Quantum Krylov Subspace Diagonalization (QKSD) | EFTQC | Projected Hamiltonian solved via generalized eigenvalue problem. Exponential convergence possible but sensitive to sampling errors [25]. |

| Quantum-Classical AFQMC (IonQ) | Quantum-enhanced classical | Demonstrated accurate computation of atomic-level forces for carbon capture modeling; claimed more accurate than classical methods alone [6]. |

| Fermionic Joint Measurement | EFTQC | Estimates quadratic/quartic Majorana observables for N-mode system with O(N log N / ε²) to O(N² log N / ε²) rounds. Offers lower circuit depth (O(N^(1/2))) vs. classical shadows (O(N)) on 2D qubit lattices [3]. |

| Sampling Error Mitigation (for QKSD) | EFTQC | "Shifting technique" and "coefficient splitting" reduce sampling cost by a factor of 20–500 for small molecule electronic structure problems [25]. |

Experimental Protocol for Quantum Chemistry Benchmarks

A typical benchmarking protocol for a quantum algorithm like VQE or QKSD involves several standardized steps [24] [25]:

- Problem Definition: Select a target molecular Hamiltonian (e.g., for Al clusters or small molecules like H₂ or LiH).

- Qubit Mapping: Map the fermionic Hamiltonian to qubits using a transformation such as the Jordan-Wigner or Bravyi-Kitaev encoding.

- Algorithm Execution:

  - For VQE: Prepare a parameterized trial wavefunction (ansatz) on the quantum processor. Measure the expectation value of the Hamiltonian through repeated circuit executions. Use a classical optimizer to vary the parameters and minimize the energy [24].

  - For QKSD: Prepare a reference state |ϕ₀⟩. Apply a series of real-time evolution operators (e.g., e^{-iĤkΔt}, approximated via Trotterization) to create a basis for the Krylov subspace. Measure the matrix elements Hₖₗ = ⟨ϕₖ|Ĥ|ϕₗ⟩ and Sₖₗ = ⟨ϕₖ|ϕₗ⟩ [25].

- Classical Post-Processing: Solve the resulting generalized eigenvalue problem (for QKSD) or identify the minimal energy (for VQE) on a classical computer.

- Error Analysis and Mitigation: Compare the computed ground-state energy to classically exact results (e.g., from Full Configuration Interaction). Apply error mitigation strategies, such as the shifting technique for QKSD, which removes redundant Hamiltonian components to reduce sampling error [25].
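
The QKSD branch of this protocol can be emulated classically on a toy problem. The sketch below assumes exact time evolution (no Trotter or sampling error) and uses a hypothetical 4×4 Hamiltonian; the function names and the overlap-matrix threshold are illustrative choices, not the procedure of [25]. The generalized eigenvalue problem is solved by discarding near-null directions of the overlap matrix, a common way to regularize an ill-conditioned Krylov basis.

```python
import numpy as np


def krylov_matrices(hamiltonian, phi0, dim, dt):
    """Build H_kl = <phi_k|H|phi_l> and S_kl = <phi_k|phi_l>
    for |phi_k> = exp(-i H k dt)|phi_0>, using exact diagonalization."""
    evals, evecs = np.linalg.eigh(hamiltonian)
    coeffs = evecs.conj().T @ phi0
    basis = np.column_stack(
        [evecs @ (np.exp(-1j * evals * k * dt) * coeffs) for k in range(dim)]
    )
    return basis.conj().T @ hamiltonian @ basis, basis.conj().T @ basis


def solve_gevp(h_proj, s_proj, rel_threshold=1e-8):
    """Solve H c = E S c after removing overlap eigenvalues below the threshold."""
    s_vals, s_vecs = np.linalg.eigh(s_proj)
    keep = s_vals > rel_threshold * s_vals.max()
    x = s_vecs[:, keep] / np.sqrt(s_vals[keep])  # canonical orthogonalization
    return np.linalg.eigvalsh(x.conj().T @ h_proj @ x)


# Hypothetical toy Hamiltonian; the reference state is the first basis vector.
hamiltonian = np.array([
    [-1.0, 0.2, 0.0, 0.0],
    [0.2, -0.5, 0.1, 0.0],
    [0.0, 0.1, 0.3, 0.2],
    [0.0, 0.0, 0.2, 1.0],
])
phi0 = np.array([1.0, 0.0, 0.0, 0.0], dtype=complex)

h_proj, s_proj = krylov_matrices(hamiltonian, phi0, dim=3, dt=1.0)
e_krylov = solve_gevp(h_proj, s_proj)[0]
e_exact = np.linalg.eigvalsh(hamiltonian)[0]
print(f"Krylov estimate: {e_krylov:.6f}, exact: {e_exact:.6f}")
```

On hardware, the entries of `h_proj` and `s_proj` carry sampling noise, and the thresholding step is where that noise is amplified. This is why the sampling error mitigation strategies discussed above target these matrix elements.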

Table: Key Resources for Quantum Chemistry Experiments

| Resource Solution | Function / Description | Application Context |

|---|---|---|

| Classical AI Agent Framework (LLM-RDF) | An integrated suite of AI agents to autonomously plan, execute, and analyze chemical synthesis experiments [23]. | Automated reaction discovery and optimization in synthetic chemistry. |

| Fermionic Classical Shadows | A randomized measurement technique to efficiently estimate multiple non-commuting fermionic observables simultaneously [3]. | Quantum simulation of molecular systems, reducing total measurement rounds. |

| Quantum Krylov Subspace Diagonalization (QKSD) | A quantum algorithm that projects the Hamiltonian into a subspace built from time-evolved states, solved classically for eigenvalues [25]. | Ground and excited state energy calculation on early fault-tolerant quantum computers. |

| Sampling Error Mitigation Strategies | Techniques like "coefficient splitting" and the "shifting technique" to minimize statistical noise in quantum measurements within a fixed budget [25]. | Essential for all quantum algorithms (VQE, QKSD) where finite sampling is a dominant error source. |

| Hardware Benchmarking Suite (e.g., SVB) | Scalable benchmarks like Subcircuit Volumetric Benchmarking (SVB) that test processor performance on subroutines from utility-scale algorithms [26]. | Objectively comparing QPU performance and tracking progress towards utility-scale quantum chemistry. |

The landscape for computational chemistry is diversifying rapidly. Classical AI approaches, exemplified by autonomous agent frameworks like LLM-RDF, are already demonstrating tangible utility by automating complex, end-to-end experimental workflows in the laboratory [23]. In the quantum domain, while hardware is still advancing, clear success metrics and sophisticated algorithms like QKSD and efficient fermionic measurement strategies are being rigorously defined and tested on current hardware [3] [25]. The path to utility-scale quantum advantage in chemistry is being paved by these precise benchmarks and the continuous co-design of algorithms and hardware, moving the field beyond isolated demonstrations toward integrated, practical tools for research and industry.

Advanced Measurement Protocols: From Theory to Hardware Implementation

Informationally Complete (IC) Measurements for Multi-Observable Estimation

Accurately estimating the expectation values of multiple observables is a fundamental challenge in quantum chemistry and simulation. For near-term quantum devices, strategies based on Informationally Complete (IC) measurements have emerged as a powerful framework for reducing the resource overhead associated with this task. Unlike traditional methods that require separate measurement circuits for each non-commuting observable, IC measurements allow for the estimation of many different observables from a single set of quantum measurements, leveraging classical post-processing to reconstruct the desired quantities [7] [27].

This guide provides an objective comparison of the performance of several leading IC and joint measurement strategies, focusing on their application to fermionic Hamiltonians in quantum chemistry. We summarize experimental data and theoretical performance, detail key experimental protocols, and provide resources to help researchers select the optimal strategy for their specific application, such as drug development projects involving molecular energy calculations.

Comparative Analysis of IC Measurement Strategies

The table below compares the performance and characteristics of four prominent measurement strategies for quantum chemistry applications.

Table 1: Performance Comparison of Multi-Observable Estimation Strategies

| Strategy | Key Principle | Reported Performance / Variance | Circuit Depth | Key Applications Demonstrated |

|---|---|---|---|---|

| IC-POVMs [7] [27] | Measures a single, overcomplete set of POVMs to reconstruct many observables. | Reduced measurement errors to 0.16% (from 1-5%) for BODIPY molecule energy estimation [7]. | Not Specified | Molecular energy estimation (BODIPY), quantum Equation of Motion (qEOM) for thermal averages [27]. |

| Fermionic Joint Measurement [3] | Uses randomization over fermionic Gaussian unitaries to jointly measure Majorana operators. | Estimates all 2- and 4-body Majorana terms to precision ε with $\mathcal{O}(N^2 \log N / \epsilon^2)$ rounds, matching fermionic classical shadows [3]. | $\mathcal{O}(N^{1/2})$ (2D lattice) | Estimating electronic structure Hamiltonians; benchmarked on various molecules. |

| Locally Biased Classical Shadows [7] | A variant of classical shadows that biases measurements towards important observables (e.g., the Hamiltonian). | Enabled high-precision measurement of complex Hamiltonians on up to 28-qubit systems [7]. | Not Specified | Reducing shot overhead for molecular energy estimation on near-term hardware. |

| Adaptive Quantum Gradient Estimation (QGE) [28] | Uses an adaptive, entangled probe system to collectively encode multiple observables. | Achieved a 100x reduction in query complexity for FeMoco and 500x for Fermi-Hubbard models vs. prior QGE algorithms [28]. | Not Specified | Estimating fermionic 2-RDMs (reduced density matrices) for large correlated systems. |

Experimental Protocols and Workflows

Protocol 1: IC-POVMs for Molecular Energy Estimation

This protocol, used to achieve high-precision energy estimation for the BODIPY molecule, combines IC-POVMs with advanced error mitigation and scheduling techniques [7].

- Step 1: State Preparation – Prepare the target quantum state. In the demonstrated experiment, the Hartree-Fock state of the BODIPY molecule in active spaces of up to 28 qubits was used, as it is separable and requires no two-qubit gates, thereby isolating measurement errors [7].

- Step 2: Informationally Complete Measurement – Instead of measuring in commuting Pauli groups, perform a set of informationally complete positive operator-valued measure (IC-POVM) measurements on the prepared state. This single set of measurements is informationally complete, meaning the collected data can be used to compute the expectation values of a vast number of non-commuting observables that constitute the molecular Hamiltonian [7] [27].

- Step 3: Parallel Quantum Detector Tomography (QDT) – In parallel with the main experiment, repeatedly run circuits dedicated to QDT. This step characterizes the actual, noisy measurement effects of the device, which are used to build an unbiased estimator for the molecular energy and significantly reduce systematic errors [7].

- Step 4: Blended Scheduling – Execute all circuits (state preparations and QDT circuits) in a blended, interleaved manner rather than in large sequential blocks. This technique averages out the impact of time-dependent noise (e.g., drift) across the entire experiment, ensuring that all energy estimations are affected homogeneously [7].

- Step 5: Shot Allocation and Post-Processing – Use the collected measurement data from the IC-POVMs and the calibrated noise model from QDT to classically compute the expectation value of the Hamiltonian. Techniques like locally biased random measurements can be employed to optimize shot allocation, reducing the number of measurements required to achieve the target precision [7].
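
The role of the calibration data in Steps 3 and 5 can be illustrated with a deliberately simplified stand-in. Full QDT characterizes the noisy POVM effects of the device; the sketch below instead assumes a single qubit whose readout is described by a 2×2 confusion matrix, and inverts it to correct an estimate of ⟨Z⟩. The function name and the numbers are hypothetical.

```python
import numpy as np


def mitigated_z_expectation(counts, confusion):
    """Correct <Z> for readout error on one qubit.

    counts: observed [n_0, n_1] outcome counts.
    confusion[i, j]: probability of reading i when the true outcome is j,
    as estimated from calibration circuits.
    """
    p_noisy = np.asarray(counts, dtype=float) / np.sum(counts)
    p_true = np.linalg.solve(confusion, p_noisy)  # invert the noisy readout map
    return p_true[0] - p_true[1]


# Hypothetical calibration: 3% of true 0s read as 1, 5% of true 1s read as 0.
confusion = np.array([[0.97, 0.05],
                      [0.03, 0.95]])
counts = [878, 122]

raw = (counts[0] - counts[1]) / sum(counts)
mitigated = mitigated_z_expectation(counts, confusion)
print(f"raw <Z> = {raw:.3f}, mitigated <Z> = {mitigated:.3f}")
# raw <Z> = 0.756, mitigated <Z> = 0.800
```

Because the calibration itself drifts over time, interleaving the calibration circuits with the main circuits (Step 4) keeps the correction matched to the conditions under which the data were taken.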

Protocol 2: Fermionic Joint Measurement for Majorana Observables

This strategy provides a simplified and hardware-efficient approach for jointly measuring fermionic observables like those found in molecular Hamiltonians [3].

- Step 1: Prepare Fermionic State – Initialize the quantum system in the desired fermionic state, encoded onto qubits via a transformation like Jordan-Wigner.

- Step 2: Apply Random Fermionic Gaussian Unitary – Apply a unitary operation $U$, randomly selected from a small, pre-defined set of fermionic Gaussian unitaries (e.g., a set of 4 for electronic structure Hamiltonians). These unitaries rotate the underlying fermionic modes and are key to making non-commuting operators jointly measurable [3].

- Step 3: Measure in Occupation Number Basis – Perform a projective measurement in the computational (occupation number) basis. This yields a binary string corresponding to the occupation of each fermionic mode [3].

- Step 4: Classical Post-Processing – For each measurement outcome, classically compute the expectation values of the noisy versions of the target Majorana operators (e.g., all pairs and quadruples). The entire set of these noisy operators forms a single joint measurement. Averaging over many runs and different random unitaries provides unbiased estimates for the original, non-commuting observables of the Hamiltonian [3].
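
Two ingredients of the steps above can be checked numerically: the Jordan-Wigner encoding of Majorana operators (Step 1) and the fact that an occupation-number measurement (Step 3) directly yields the eigenvalue of each "diagonal" Majorana pair. The sketch below is not the joint measurement scheme of [3]; it only builds dense matrices for a few modes under one common sign convention (bit 1 means occupied), with hypothetical function names.

```python
import numpy as np

I2 = np.eye(2, dtype=complex)
X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)


def kron_all(ops):
    """Tensor product of a list of single-qubit operators, leftmost factor first."""
    out = np.array([[1.0 + 0j]])
    for op in ops:
        out = np.kron(out, op)
    return out


def majorana_operators(n_modes):
    """Return the 2N Jordan-Wigner Majorana operators as dense matrices.

    gamma_{2j}   = Z_0 ... Z_{j-1} X_j
    gamma_{2j+1} = Z_0 ... Z_{j-1} Y_j
    """
    gammas = []
    for j in range(n_modes):
        prefix = [Z] * j
        suffix = [I2] * (n_modes - j - 1)
        gammas.append(kron_all(prefix + [X] + suffix))
        gammas.append(kron_all(prefix + [Y] + suffix))
    return gammas


def pair_value_from_bits(bits, j):
    """Eigenvalue of i*gamma_{2j}*gamma_{2j+1} = 2*n_j - 1 for one measured bitstring."""
    return 2 * bits[j] - 1


n_modes = 3
gammas = majorana_operators(n_modes)
identity = np.eye(2 ** n_modes)

# Majorana operators anticommute: {gamma_a, gamma_b} = 2 * delta_ab.
for a, ga in enumerate(gammas):
    for b, gb in enumerate(gammas):
        expected = 2 * identity if a == b else 0 * identity
        assert np.allclose(ga @ gb + gb @ ga, expected)

print(pair_value_from_bits([1, 0, 1], j=1))  # mode 1 is empty, so the pair value is -1
```

A fermionic Gaussian unitary maps each Majorana operator to a linear combination of Majorana operators, so after applying it the same occupation-number readout gives access to pairs and quadruples that are not diagonal in the original basis.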

The Scientist's Toolkit: Key Research Reagents

Table 2: Essential Materials and Tools for IC Experiments

| Item / Technique | Function in Experiment | Example Use Case |
|---|---|---|
| Quantum Detector Tomography (QDT) | Characterizes the real, noisy measurement process of the quantum device, enabling the creation of an unbiased estimator. | Mitigating readout errors in molecular energy estimation [7]. |
| Fermionic Gaussian Unitaries | A specific family of unitaries that map fermionic creation/annihilation operators to linear combinations of themselves. | Core component for implementing fermionic joint measurements [3]. |
| Hartree-Fock State | A simple, separable initial state often used as a reference point in quantum chemistry calculations. | Used as the prepared state to isolate and study measurement errors [7]. |
| Locally Biased Random Measurements | A shot-frugal technique that prioritizes measurement settings with a larger impact on the final observable. | Reducing the total number of shots required for accurate energy estimation [7]. |
| Blended Scheduling | An execution method that interleaves different circuit types to average out time-dependent noise. | Ensuring homogeneous noise impact across all measurements in an experiment [7]. |
| Classical Shadows Post-Processing | An efficient classical algorithm that uses measurement outcomes to predict many observables. | Reconstructing expectation values from randomized measurements [3]. |
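To make the readout-mitigation row concrete, here is a deliberately simplified sketch. It is a stand-in for QDT rather than QDT itself: instead of reconstructing the full measurement operators of the device, it assumes a single-qubit readout channel described by a 2x2 confusion matrix with hypothetical error rates, and inverts that matrix to recover unbiased outcome probabilities. All names and numbers are assumptions chosen for illustration.

```python
import numpy as np


def confusion_matrix(p01, p10):
    """Column-stochastic single-qubit readout model.

    p01: probability of reading 1 when 0 was prepared.
    p10: probability of reading 0 when 1 was prepared.
    """
    return np.array([[1 - p01, p10],
                     [p01, 1 - p10]])


def mitigate(noisy_probs, A):
    """Solve A @ p_true = p_noisy for the underlying outcome probabilities."""
    return np.linalg.solve(A, noisy_probs)


def expectation_z(probs):
    """<Z> from the probabilities of outcomes 0 and 1."""
    return probs[0] - probs[1]


A = confusion_matrix(p01=0.02, p10=0.05)       # hypothetical calibration data
true_probs = np.array([0.8, 0.2])              # hypothetical ideal distribution
noisy_probs = A @ true_probs                   # what the noisy device would report
recovered = mitigate(noisy_probs, A)

print("noisy <Z>    :", expectation_z(noisy_probs))
print("mitigated <Z>:", expectation_z(recovered))
```

With finite shot counts the inversion can return slightly negative probabilities and it amplifies statistical noise, so practical schemes pair the calibration with an estimator designed to stay unbiased under sampling.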

Sample-Based Quantum Diagonalization (SQD) for Solvated Molecules

Simulating complex molecular systems such as solvated molecules is a central challenge in computational chemistry and drug development: the solvent environment significantly alters the electronic structure, and the computational cost of exact methods becomes prohibitive. Sample-Based Quantum Diagonalization (SQD) has emerged as a hybrid quantum-classical algorithm designed to address this challenge by using quantum processors as sampling engines while offloading the expensive diagonalization task to classical computers [29]. This guide evaluates SQD's performance against other near-term quantum algorithms within the broader research goal of developing efficient measurement strategies for quantum chemistry Hamiltonians. SQD is particularly promising for concentrated wave functions (those supported on a small subset of the full Hilbert space), a property often exhibited by ground states of many chemical systems [30].

Comparative Analysis of Quantum Algorithms for Ground-State Energy

This section objectively compares SQD's methodology and performance against other prominent quantum algorithms for finding molecular ground-state energies: the Variational Quantum Eigensolver (VQE) and Quantum Phase Estimation (QPE).

Table 1: Key Algorithm Characteristics and Hardware Requirements

| Algorithm | Key Methodology | Circuit Depth | Measurement/Classical Cost | Theoretical Guarantees |
|---|---|---|---|---|
| Sample-Based Quantum Diagonalization (SQD) | Quantum computer samples bitstrings to form a subspace; Hamiltonian is diagonalized classically within this subspace [29] [30]. | Moderate (varies with ansatz, e.g., LUCJ) [29]. | High classical diagonalization cost, but avoids variational measurement bottleneck [30]. | Convergence proven for concentrated wave functions [30]. |
| Variational Quantum Eigensolver (VQE) | Parameterized quantum circuit is optimized via classical minimization of the measured energy expectation value [30]. | Low to Moderate | Extremely high measurement overhead for energy estimation; classical optimization can get stuck in local minima [30]. | Heuristic; no general convergence guarantees. |
| Quantum Phase Estimation (QPE) | Uses quantum coherence and phase kickback to read the energy eigenvalue directly from a phase [30]. | Very High | Minimal classical processing. | Proven convergence to true ground state [30]. |
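A rough back-of-the-envelope estimate shows where the VQE measurement overhead in the table comes from. The sketch below uses a standard bound rather than anything specific to [30]: if a Hamiltonian H = Σ_j h_j P_j is measured Pauli term by Pauli term with shots allocated in proportion to |h_j|, and each term's variance is bounded by 1, then reaching standard error ε needs at most (Σ_j |h_j|)² / ε² shots per energy evaluation. The coefficients are hypothetical, and 1.6 mHartree is used as the conventional chemical-accuracy target.

```python
import math


def shots_upper_bound(coeffs, epsilon):
    """Upper bound on shots for term-by-term Pauli measurement of H = sum_j h_j P_j.

    Assumes Var[P_j] <= 1 and shots allocated in proportion to |h_j|,
    which gives N <= (sum_j |h_j|)^2 / epsilon^2.
    """
    one_norm = sum(abs(h) for h in coeffs)
    return math.ceil((one_norm / epsilon) ** 2)


# Hypothetical Pauli coefficients in Hartree; a real molecular Hamiltonian
# has many more terms and a much larger one-norm.
coeffs = [0.5, -0.3, 0.2, 0.1]
chemical_accuracy = 1.6e-3

n_shots = shots_upper_bound(coeffs, chemical_accuracy)
print(f"shots per energy evaluation (upper bound): {n_shots}")
```

The quadratic dependence on both the coefficient one-norm and 1/ε is the key point: this cost is paid at every step of the classical optimization loop, whereas SQD only needs bitstring samples.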

Performance Benchmarking on Real Molecules

The following table summarizes published experimental results for SQD and compares its performance with other methods on specific molecular systems, demonstrating its utility-scale capabilities.

Table 2: Experimental Performance on Molecular Systems

| Molecular System | Algorithm | Qubits | Key Performance Metric | Comparison to Classical Methods |
|---|---|---|---|---|
| N₂ Molecule (Dissociation) | SQD (LUCJ ansatz) [29] | 58 | Accurately captures bond dissociation curve, overcoming limitations of classical CCSD [29]. | Outperforms CCSD in strong correlation regime [29]. |
| [2Fe-2S] Cluster | SQD (LUCJ ansatz) [29] | 45 | Achieves accurate ground-state energy in a system biologically relevant to drug development [29]. | Comparable to high-accuracy HCI [29]. |
| [4Fe-4S] Cluster | SQD (LUCJ ansatz) [29] | 77 | Successfully computes ground-state properties on Heron processor, beyond exact diagonalization scale [29]. | Surpasses the scale of classical Full CI [29]. |
| Polycyclic Aromatic Hydrocarbons | SqDRIFT (Randomized SQD) [31] [30] | Up to 48 | Calculates electronic ground-state energy for systems like coronene on current quantum processors [30]. | Reaches system sizes beyond exact diagonalization [31] [30]. |

Experimental Protocols for SQD Implementation

The general SQD workflow involves specific, replicable steps for generating and processing quantum information. The following diagram illustrates this workflow and the synergistic quantum-classical interaction.

Protocol 1: Standard SQD with LUCJ Ansatz

This protocol, used for simulating molecules like the [4Fe-4S] cluster, details the steps for leveraging the Local Unitary Cluster Jastrow (LUCJ) ansatz [29].

- Problem Formulation: The second-quantized electronic structure Hamiltonian is mapped to a qubit operator using a transformation such as Jordan-Wigner or parity encoding.

- Initial State Preparation: Prepare a reference state, often the Hartree-Fock (HF) state, on the quantum processor.

- Ansatz Circuit Execution: Execute the LUCJ quantum circuit. The LUCJ ansatz is designed with local approximations to maintain a manageable circuit depth while accurately capturing electron correlation effects. It is compiled into native single- and two-qubit gates for the target processor (e.g., a Heron superconducting processor) [29].

- Quantum Sampling: The circuit is executed many times, and each run yields one bitstring (measurement outcome) sampled in the computational basis from the output state of the LUCJ circuit. No expectation values need to be estimated at this stage.

- Classical Post-Processing:

  - The sampled bitstrings define a subspace of the full Hilbert space.

  - The molecular Hamiltonian is projected into this subspace.

  - The projected Hamiltonian is diagonalized using classical algorithms (e.g., the Davidson method within the PySCF library), yielding an approximation to the ground-state energy and wave function [29].

- Noise Mitigation: Techniques like "reset mitigation" and symmetry restoration (e.g., for spin operators Ŝz and Ŝ²) are applied to improve the quality of results from noisy hardware [29].
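The classical post-processing stage of this protocol can be sketched in a few lines. This is a toy illustration under stated assumptions, not the pipeline of [29]: the Hamiltonian is a small random symmetric matrix standing in for a molecular Hamiltonian, the bitstrings are hard-coded rather than sampled from a LUCJ circuit, and dense `numpy.linalg.eigh` replaces the Davidson solver that a production workflow would use. The function names are hypothetical.

```python
import numpy as np


def random_symmetric(dim, rng):
    """Random real symmetric matrix used as a stand-in Hamiltonian."""
    m = rng.normal(size=(dim, dim))
    return (m + m.T) / 2


def bitstrings_to_indices(bitstrings):
    """Deduplicate sampled bitstrings and map them to basis-state indices."""
    return sorted({int(b, 2) for b in bitstrings})


def sqd_energy(hamiltonian, bitstrings):
    """Project H onto the span of the sampled basis states and diagonalize."""
    idx = bitstrings_to_indices(bitstrings)
    h_sub = hamiltonian[np.ix_(idx, idx)]      # projected Hamiltonian
    evals, evecs = np.linalg.eigh(h_sub)
    return evals[0], idx, evecs[:, 0]


rng = np.random.default_rng(0)
n_qubits = 4
H = random_symmetric(2 ** n_qubits, rng)

# Hypothetical samples; repeated outcomes are harmless because only the
# set of distinct bitstrings defines the subspace.
samples = ["0011", "0101", "0110", "0011", "1001", "1010", "1100"]

e_sub, idx, ground_vec = sqd_energy(H, samples)
e_exact = np.linalg.eigvalsh(H)[0]
print(f"subspace dimension: {len(idx)} of {2 ** n_qubits}")
print(f"subspace energy {e_sub:.4f} >= exact energy {e_exact:.4f}")
```

The subspace energy is always an upper bound on the exact ground-state energy, and it tightens as the sampled bitstrings cover more of the ground state's support. This is why the method suits concentrated wave functions.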

Protocol 2: SqDRIFT for Randomized Krylov Subspace

For systems where Trotter-based time evolution is too deep, the SqDRIFT protocol provides a randomized, more near-term friendly alternative [31] [30].

- Problem Formulation: Same as Protocol 1.

- Initial State Preparation: Prepare an initial state with non-zero overlap with the true ground state (e.g., HF state).

- Randomized Time Evolution: Instead of a deterministic Trotter step, use the qDRIFT protocol to compile the Hamiltonian time-evolution operator e^(-iHt). qDRIFT randomly selects Hamiltonian terms with probabilities proportional to the magnitudes of their coefficients, generating an ensemble of shallower, randomized quantum circuits [30].

- Ensemble Sampling: Execute the ensemble of qDRIFT circuits and collect bitstring samples from all of them.

- Classical Diagonalization: The collected samples from the randomized ensemble are aggregated to form the diagonalization subspace. The Hamiltonian is then classically diagonalized within this subspace. This approach preserves convergence guarantees for concentrated wave functions while using shallower circuits [30].
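The randomized compilation step can be sketched as follows. This is a minimal illustration of the generic qDRIFT sampling rule, not the SqDRIFT implementation of [30] or [31]: for H = Σ_j h_j P_j with λ = Σ_j |h_j|, each of N steps picks term j with probability |h_j| / λ and applies exp(-i · sign(h_j) · (λt/N) · P_j). The one-qubit Hamiltonian, coefficients, and function names are assumptions for the example.

```python
import numpy as np

X = np.array([[0, 1], [1, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)


def qdrift_sequence(coeffs, t, n_steps, rng):
    """Sample one qDRIFT circuit as a list of (term index, rotation angle)."""
    coeffs = np.asarray(coeffs, dtype=float)
    lam = np.abs(coeffs).sum()
    probs = np.abs(coeffs) / lam               # importance sampling by |h_j|
    indices = rng.choice(len(coeffs), size=n_steps, p=probs)
    tau = lam * t / n_steps                    # same step size for every term
    return [(int(j), float(np.sign(coeffs[j]) * tau)) for j in indices]


def pauli_rotation(pauli, angle):
    """exp(-i * angle * P) for a Pauli matrix P, using P @ P = I."""
    return np.cos(angle) * np.eye(2) - 1j * np.sin(angle) * pauli


def qdrift_unitary(paulis, sequence):
    """Multiply the sampled rotations in order of application."""
    U = np.eye(2, dtype=complex)
    for j, angle in sequence:
        U = pauli_rotation(paulis[j], angle) @ U
    return U


rng = np.random.default_rng(1)
coeffs, paulis = [0.8, -0.3], [X, Z]           # hypothetical H = 0.8 X - 0.3 Z
sequence = qdrift_sequence(coeffs, t=0.5, n_steps=20, rng=rng)
U = qdrift_unitary(paulis, sequence)
print(sequence[:5])
```

Each call to `qdrift_sequence` gives one member of the ensemble. In the protocol above, bitstrings sampled from many such circuits are pooled before the classical diagonalization.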

The Scientist's Toolkit: Essential Research Reagents

This section details the key computational tools and methods required to implement SQD experiments, framing them as essential "research reagents" for scientists in the field.

Table 3: Key Research Reagents for SQD Experiments

| Reagent / Solution | Function in the SQD Protocol | Example Implementation |
|---|---|---|
| Local Unitary Cluster Jastrow (LUCJ) Ansatz | A parameterized quantum circuit ansatz that balances accuracy with circuit depth for chemical systems, enabling simulation of large clusters [29]. | Truncated version used for N₂, [2Fe-2S], and [4Fe-4S] simulations on Heron processor [29]. |
| qDRIFT Randomized Compiler | A compilation technique that approximates time evolution with random, shallow circuits, making Krylov-based methods (SqDRIFT) feasible on near-term devices [31] [32]. | Used in SqDRIFT algorithm to simulate polycyclic aromatic hydrocarbons like coronene [30]. |
| Classical Diagonalization Engine | A high-performance classical algorithm that solves the eigenvalue problem within the quantum-generated subspace. | Davidson method in PySCF library; distributed computing with DICE on Fugaku supercomputer [29]. |
| Symmetry Restoration Routines | Classical post-processing algorithms that project the sampled wave function onto sectors with correct quantum numbers (e.g., spin Ŝ²), mitigating noise errors [29]. | Applied to recover spin symmetries in chemical simulations, improving agreement with theoretical expectations [29]. |
| Utility-Scale Quantum Processor | A quantum processing unit with sufficient qubit count, connectivity, and gate fidelity to execute the sampling circuits. | 77-qubit simulation of [4Fe-4S] cluster on IBM's Heron processor [29]. |

This guide has provided a comparative evaluation of Sample-Based Quantum Diagonalization (SQD) as a strategic approach for tackling quantum chemistry Hamiltonians, with a focus on its applicability to complex systems like solvated molecules. The experimental data show that SQD, particularly when enhanced with randomized methods like SqDRIFT, can already run on current quantum hardware at system sizes beyond the reach of classical exact diagonalization. Challenges remain, such as the classical cost of diagonalizing very large subspaces, but SQD's division of labor between quantum and classical resources makes it a leading candidate for achieving practical quantum utility in computational chemistry and drug development. Future research will focus on optimizing subspace generation and integrating error correction to push these methods toward even larger and more complex solvated systems.

The accurate modeling of solvent effects is a critical challenge in computational chemistry, particularly for applications in drug design and biomolecular simulation where most processes occur in liquid environments [33]. Implicit solvation models, which represent the solvent as a continuous medium rather than with explicit molecules, provide a powerful compromise between computational cost and physical rigor [34] [35]. Among these models, the Integral Equation Formalism Polarizable Continuum Model (IEF-PCM) has emerged as a particularly important method for incorporating solvation effects into quantum-chemical calculations [36].

The integration of IEF-PCM with emerging quantum computing approaches represents a significant advancement toward practical quantum chemistry applications. Traditional quantum simulations typically model molecules in isolation (gas phase), ignoring crucial environmental effects that dramatically influence molecular structure, reactivity, and function [21]. By embedding quantum calculations within an implicit solvent continuum, researchers can now simulate chemically relevant systems in realistic environments, bridging a critical gap that has long hindered the application of quantum computers to biological and industrial problems [21].

This guide provides a comprehensive comparison of current quantum-classical hybrid workflows implementing IEF-PCM, evaluating their performance against classical alternatives and other quantum approaches. We examine experimental data, computational requirements, and implementation strategies to assist researchers in selecting appropriate methodologies for their specific applications in quantum chemistry Hamiltonian research.

Theoretical Foundation of Implicit Solvent Models

Continuum Solvation Theory

Implicit solvation models, sometimes called continuum solvation models, replace explicit solvent molecules with a continuous medium characterized by macroscopic properties such as dielectric constant [35]. The fundamental approximation is that the averaged behavior of numerous highly dynamic solvent molecules can be represented using a potential of mean force [35]. This approach significantly reduces computational cost compared to explicit solvent simulations while still capturing essential solvation effects.

In the context of quantum chemistry, implicit solvent models are implemented as self-consistent reaction field (SCRF) methods, where the continuum solvent establishes a "reaction field" represented as additional terms in the solute Hamiltonian [36]. This reaction field depends on the solute electron density and must be updated self-consistently during iterative convergence of the wavefunction [36]. The IEF-PCM method belongs to the class of apparent surface charge models that use a molecule-shaped cavity and the full molecular electrostatic potential [36].
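The self-consistency requirement can be seen in a toy model. The sketch below is not IEF-PCM: it uses the much simpler Onsager picture of a polarizable point dipole in a spherical cavity, where the reaction field is R = g·μ with g = 2(ε − 1) / ((2ε + 1)a³), and the solute dipole responds as μ = μ₀ + αR. The loop alternates between the two until the dipole stops changing. All parameter values are hypothetical and assumed to be in atomic units.

```python
def onsager_factor(eps, radius):
    """Reaction-field factor g for a point dipole in a spherical cavity."""
    return 2.0 * (eps - 1.0) / ((2.0 * eps + 1.0) * radius ** 3)


def scrf_dipole(mu0, alpha, eps, radius, tol=1e-12, max_iter=500):
    """Iterate solute dipole and reaction field to self-consistency.

    mu0: gas-phase dipole, alpha: solute polarizability,
    eps: solvent dielectric constant, radius: cavity radius.
    Returns the converged dipole and the number of iterations used.
    """
    g = onsager_factor(eps, radius)
    mu = mu0
    for iteration in range(1, max_iter + 1):
        field = g * mu                  # solvent responds to the current dipole
        mu_new = mu0 + alpha * field    # solute responds to the reaction field
        if abs(mu_new - mu) < tol:
            return mu_new, iteration
        mu = mu_new
    raise RuntimeError("SCRF loop did not converge")


# Hypothetical solute in a water-like dielectric.
mu, n_iter = scrf_dipole(mu0=1.0, alpha=10.0, eps=78.4, radius=4.0)
closed_form = 1.0 / (1.0 - 10.0 * onsager_factor(78.4, 4.0))
print(f"converged dipole {mu:.6f} after {n_iter} iterations")
print(f"closed-form value {closed_form:.6f}")
```

In an actual SCRF calculation the scalar dipole is replaced by the full electron density and the single factor g by the apparent surface charges on the cavity, but the structure of the loop is the same: update the solvent response, re-solve the solute, repeat until converged.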

IEF-PCM Formulation and Implementation

The Integral Equation Formalism Polarizable Continuum Model (IEF-PCM) is a refined version of the polarizable continuum model that provides a rigorous mathematical framework for solving the electrostatic problem at the solute-solvent interface [36]. Also known as the "surface and simulation of volume polarization for electrostatics" [SS(V)PE] model, IEF-PCM employs an integral equation approach to determine the apparent surface charges that represent the solvent polarization [36].