Beyond the Mean Field: Understanding and Overcoming the Limitations of the Hartree-Fock Method in Modern Chemistry and Drug Discovery

Abstract

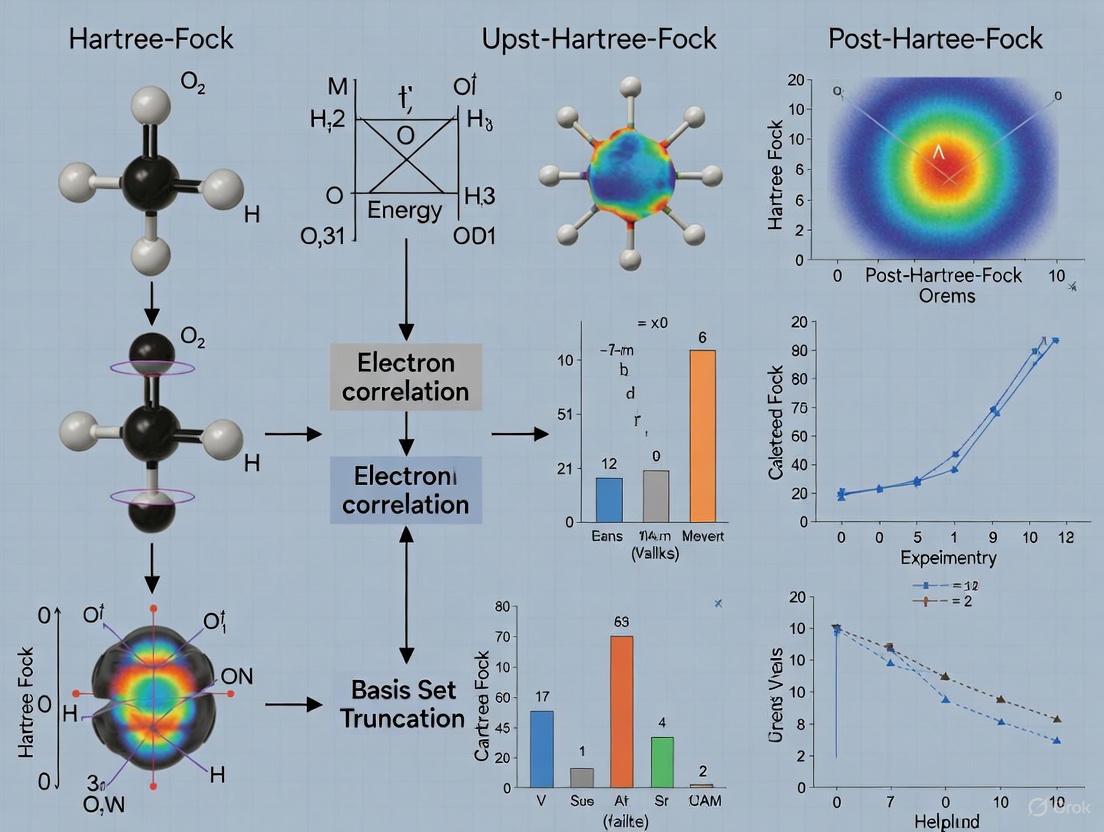

The Hartree-Fock (HF) method is a foundational pillar of computational quantum chemistry, providing the conceptual starting point for most ab initio electronic structure calculations. However, its inherent approximations introduce significant limitations that impact predictive accuracy in critical applications like drug design. This article provides a comprehensive analysis of these limitations for an audience of researchers and drug development professionals. We first explore the foundational theory of HF, including its mean-field approximation and neglect of electron correlation. We then detail the methodological consequences of these limitations in practical applications, from inaccurate binding energies to the failure to model dispersion forces. The article further surveys established and emerging troubleshooting strategies, including post-Hartree-Fock methods and hybrid quantum-classical algorithms. Finally, we present a rigorous validation and comparative framework, benchmarking HF against more advanced methods like Density Functional Theory to guide method selection for specific challenges in biomedical research.

The Core Theory: Deconstructing the Fundamental Approximations in Hartree-Fock

In quantum mechanics, the single Slater determinant provides a foundational approach for approximating the wave function of a multi-fermionic system [1]. This mathematical construct ensures that the wave function adheres to the Pauli exclusion principle by changing sign upon the exchange of any two electrons [1]. The determinant is named after John C. Slater, who formally introduced it in 1929, although the concept had appeared three years earlier in the works of Heisenberg and Dirac [1]. In the context of the Hartree-Fock method, the Slater determinant serves as the starting point for a mean-field theory description of many-electron systems [2]. While only a small subset of all possible fermionic wave functions can be represented by a single determinant, this subset forms an important and computationally tractable foundation for more advanced quantum chemical methods [1].

Mathematical Foundation

The Antisymmetry Requirement

The fundamental requirement for any multi-electron wave function is that it must be antisymmetric under the exchange of any two electrons [1]. For a two-electron system, this means that:

Ψ(x₁, x₂) = −Ψ(x₂, x₁)

where x represents both spatial and spin coordinates of an electron [1]. A simple product wave function of the form χ₁(x₁)χ₂(x₂) does not satisfy this requirement, necessitating a more sophisticated construction [1].

Definition and Formalism

For an N-electron system, the Slater determinant is defined as [1]:

$$ \Psi(\mathbf{x}_1, \mathbf{x}_2, \ldots, \mathbf{x}_N) = \frac{1}{\sqrt{N!}} \begin{vmatrix} \chi_1(\mathbf{x}_1) & \chi_2(\mathbf{x}_1) & \cdots & \chi_N(\mathbf{x}_1) \\ \chi_1(\mathbf{x}_2) & \chi_2(\mathbf{x}_2) & \cdots & \chi_N(\mathbf{x}_2) \\ \vdots & \vdots & \ddots & \vdots \\ \chi_1(\mathbf{x}_N) & \chi_2(\mathbf{x}_N) & \cdots & \chi_N(\mathbf{x}_N) \end{vmatrix} $$

The factor $1/\sqrt{N!}$ ensures proper normalization, while the determinant structure automatically guarantees the antisymmetry property [1]. In shorthand notation, this is often written as |χ₁, χ₂, ⋯, χₙ⟩ or |1, 2, …, N⟩ [1].

Table: Key Properties of the Slater Determinant

| Property | Mathematical Expression | Physical Significance |
|---|---|---|
| Antisymmetry | Sign change under particle exchange | Satisfies Pauli exclusion principle |
| Normalization | $1/\sqrt{N!}$ prefactor | Ensures total probability equals 1 |
| Pauli principle | Determinant vanishes if two orbitals identical | No two fermions can occupy same quantum state |
| Invariance | Unitary transformation of orbitals changes only phase | Many different orbital sets can represent same physical state [3] |

Two-Electron Case Example

For a two-electron system, the Slater determinant takes the particularly simple form:

$$ \Psi(\mathbf{x}_1, \mathbf{x}_2) = \frac{1}{\sqrt{2}} \left[ \chi_1(\mathbf{x}_1)\chi_2(\mathbf{x}_2) - \chi_1(\mathbf{x}_2)\chi_2(\mathbf{x}_1) \right] $$

This antisymmetrized combination clearly demonstrates the required sign change when electrons 1 and 2 are exchanged [1].
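The antisymmetry and Pauli properties can also be checked numerically. The following is a minimal sketch, assuming NumPy is available and using three hypothetical one-dimensional orbitals (`chi_1`, `chi_2`, `chi_3`) chosen only for illustration; spin is omitted, so each coordinate is a single real number, and the helper name `slater_determinant` is not taken from any library.

```python
import math
import numpy as np


def slater_determinant(orbitals, coords):
    """Value of the normalized Slater determinant at the given electron coordinates.

    orbitals: list of N callables chi_j(x); coords: list of N coordinates x_i.
    """
    n = len(orbitals)
    # Matrix element (i, j) is orbital chi_j evaluated at the coordinate of electron i.
    matrix = np.array([[chi(x) for chi in orbitals] for x in coords], dtype=float)
    return np.linalg.det(matrix) / math.sqrt(math.factorial(n))


# Hypothetical 1D orbitals, used only to exercise the determinant.
chi_1 = lambda x: math.exp(-x**2)
chi_2 = lambda x: x * math.exp(-x**2)
chi_3 = lambda x: (2 * x**2 - 1) * math.exp(-x**2)
orbitals = [chi_1, chi_2, chi_3]

psi = slater_determinant(orbitals, [0.3, -0.7, 1.1])
# Exchanging electrons 1 and 2 swaps two rows, which flips the sign.
psi_swapped = slater_determinant(orbitals, [-0.7, 0.3, 1.1])
# Occupying the same orbital twice gives two identical columns: the determinant vanishes.
psi_repeated = slater_determinant([chi_1, chi_1, chi_3], [0.3, -0.7, 1.1])
# Two electrons at the same coordinate give two identical rows: it vanishes as well.
psi_coincident = slater_determinant(orbitals, [0.3, 0.3, 1.1])

print(psi, psi_swapped, psi_repeated, psi_coincident)
```

Evaluating the determinant directly costs a matrix factorization per point, which is adequate for a demonstration but is not how production codes handle determinantal wave functions.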

Connection to the Hartree-Fock Method

The Hartree-Fock Approximation

The Hartree-Fock method makes several key approximations to solve the many-electron Schrödinger equation [2]:

- The Born-Oppenheimer approximation is inherently assumed

- Relativistic effects are completely neglected

- The wave function is approximated by a single Slater determinant

- The mean-field approximation is employed

- Effects of electron correlation (beyond exchange) are neglected

The method applies the variational principle to find the best possible single-determinant wave function, which provides an upper bound to the true ground-state energy [2].

The Fock Operator and SCF Procedure

Within the Hartree-Fock framework, the optimal orbitals are determined by solving the canonical Hartree-Fock equations [4]:

$$ \hat{F} \phi_i = \epsilon_i \phi_i $$

where the Fock operator $\hat{F}$ is defined as [4]:

$$ \hat{F} \phi_i = h \phi_i + \sum_{j}^{\text{occupied}} \left[ \hat{J}_j - \hat{K}_j \right] \phi_i $$

Here, $h$ represents the one-electron operator (kinetic energy and nuclear attraction), while $\hat{J}_j$ and $\hat{K}_j$ are the Coulomb and exchange operators, respectively [4]:

$$ \hat{J}_j \phi_i = \int \phi_j^*(\mathbf{r}')\phi_j(\mathbf{r}') \frac{1}{|\mathbf{r}-\mathbf{r}'|} d\tau' \phi_i(\mathbf{r}) $$

$$ \hat{K}_j \phi_i = \int \phi_j^*(\mathbf{r}')\phi_i(\mathbf{r}') \frac{1}{|\mathbf{r}-\mathbf{r}'|} d\tau' \phi_j(\mathbf{r}) $$

These equations are solved using a self-consistent field (SCF) procedure, where the Fock operator depends on its own solutions, requiring an iterative approach until convergence [2].
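In practice the orbitals are expanded in a finite basis, which turns the operator equations into a matrix problem. As a sketch, assuming a closed-shell system with real basis functions $\varphi_\mu$ and $N/2$ doubly occupied spatial orbitals (the symbols $\mathbf{C}$, $\mathbf{S}$, $\mathbf{P}$, and $H^{\text{core}}$ are notation introduced here for illustration), the standard Roothaan form reads:

$$ \begin{aligned} \phi_i &= \sum_\mu C_{\mu i}\,\varphi_\mu, \qquad \mathbf{F}\mathbf{C} = \mathbf{S}\mathbf{C}\boldsymbol{\epsilon}, \\ P_{\lambda\sigma} &= 2\sum_{a=1}^{N/2} C_{\lambda a} C_{\sigma a}, \\ F_{\mu\nu} &= H^{\text{core}}_{\mu\nu} + \sum_{\lambda\sigma} P_{\lambda\sigma}\left[ (\mu\nu|\lambda\sigma) - \tfrac{1}{2}(\mu\lambda|\nu\sigma) \right]. \end{aligned} $$

The first bracketed term is the matrix form of the Coulomb operator and the second that of the exchange operator. Because $\mathbf{F}$ depends on $\mathbf{P}$, which in turn depends on the eigenvectors $\mathbf{C}$ of $\mathbf{F}$, the problem has to be iterated to self-consistency.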

Figure: Hartree-Fock Self-Consistent Field Algorithm

Limitations of the Single Determinant Approach

Fundamental Limitations in Hartree-Fock Theory

Despite its utility, the single Slater determinant approximation in Hartree-Fock theory has several significant limitations that restrict its accuracy [2]:

Electron Correlation Neglect: The method neglects Coulomb correlation, treating electron interactions only in an average manner through the mean-field approximation [2].

Inability to Describe Bond Dissociation: As demonstrated in the hydrogen molecule example, restricted Hartree-Fock fails to properly describe bond dissociation, predicting incorrect energies at large nuclear separations [5].

Systematic Biases: The single determinant approach introduces biases, particularly in open-shell systems, where unrestricted Hartree-Fock may produce spin-contaminated solutions without well-defined spin states [5].

Quantitative Limitations of the Method

Table: Energy Component Treatment in Hartree-Fock Theory

| Energy Component | Treatment in HF | Limitation |
|---|---|---|
| Kinetic Energy | Exact | No inherent limitation |
| Nuclear-Electron Attraction | Exact | No inherent limitation |
| Electron-Electron Coulomb | Mean-field approximation | Lacks instantaneous correlation |
| Exchange Energy | Exact for single determinant | Correctly accounts for Fermi correlation |
| Dispersion Forces | Not captured | HF cannot describe London dispersion [2] |

Specific Failure Cases

The hydrogen molecule dissociation problem provides a clear example of the single determinant limitation [5]. In restricted Hartree-Fock, both electrons occupy the same bonding orbital $\sigma_g \propto 1s_A + 1s_B$, so at large nuclear separations the wave function of the form:

$$ \Psi_\text{RHF} = \frac{1}{\sqrt{2}} \begin{vmatrix} \sigma_g(\mathbf{r}_1)\alpha(1) & \sigma_g(\mathbf{r}_1)\beta(1) \\ \sigma_g(\mathbf{r}_2)\alpha(2) & \sigma_g(\mathbf{r}_2)\beta(2) \end{vmatrix} $$

fails to properly describe the system, which should dissociate into two neutral hydrogen atoms [5]. Expanding the product $\sigma_g(\mathbf{r}_1)\sigma_g(\mathbf{r}_2)$ shows why: the ionic terms $1s_A 1s_A$ and $1s_B 1s_B$ (both electrons on one atom) carry the same weight as the covalent terms at every separation. While unrestricted Hartree-Fock can correctly describe the dissociation energy, it produces spin-contaminated wave functions that are not proper eigenfunctions of the total spin operator $\hat{S}^2$ [5].

Computational Methodology

Hartree-Fock Algorithm Protocol

The standard computational procedure for solving the Hartree-Fock equations involves the following steps [2]:

Initialization: Choose an initial guess for the molecular orbitals, typically as a linear combination of atomic orbitals (LCAO).

Iteration Cycle:

- Construct the Fock matrix using the current orbitals

- Solve the Hartree-Fock equations $\hat{F}\phi_i = \epsilon_i\phi_i$

- Check for convergence of both orbitals and total energy

- If not converged, update orbitals and repeat

Termination: The procedure concludes when the change in electronic energy and orbital coefficients falls below a predefined threshold [2].
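The initialization, iteration, and termination steps above can be sketched for the smallest nontrivial case. The following is a minimal illustration rather than a production implementation: it assumes a closed-shell H₂ molecule in a two-function STO-3G basis at R = 1.4 bohr, with integral values hard-coded to four decimals as tabulated in the textbook treatment by Szabo and Ostlund. The function names `build_eri` and `run_rhf` are hypothetical, and symmetric orthogonalization with a zero-density starting guess is only one of several possible choices.

```python
import numpy as np

# Assumed inputs: STO-3G integrals for H2 at R = 1.4 bohr (atomic units),
# rounded to four decimals as tabulated in Szabo & Ostlund's textbook.
S = np.array([[1.0, 0.6593], [0.6593, 1.0]])                 # overlap matrix
H_CORE = np.array([[-1.1204, -0.9584], [-0.9584, -1.1204]])  # kinetic + nuclear attraction
E_NUC = 1.0 / 1.4                                            # nuclear repulsion energy


def build_eri():
    """Fill the (ij|kl) tensor for two equivalent 1s functions by symmetry class."""
    eri = np.zeros((2, 2, 2, 2))
    for i, j, k, l in np.ndindex(2, 2, 2, 2):
        if i == j and k == l:
            eri[i, j, k, l] = 0.7746 if i == k else 0.5697   # (11|11) or (11|22)
        elif i != j and k != l:
            eri[i, j, k, l] = 0.2970                         # (12|12)
        else:
            eri[i, j, k, l] = 0.4441                         # (11|12)
    return eri


def run_rhf(S, h_core, eri, n_electrons, e_nuc, tol=1e-10, max_iter=50):
    """Closed-shell SCF loop; returns (total energy, orbital energies, iterations)."""
    n_occ = n_electrons // 2
    # Symmetric orthogonalization: X = S^(-1/2)
    s_val, s_vec = np.linalg.eigh(S)
    X = s_vec @ np.diag(s_val ** -0.5) @ s_vec.T

    P = np.zeros_like(S)  # zero density, so the first Fock matrix is the core Hamiltonian
    e_old = None
    for iteration in range(1, max_iter + 1):
        # Construct the Fock matrix from the current density matrix
        J = np.einsum("mnls,ls->mn", eri, P)  # Coulomb: sum_ls P_ls (mn|ls)
        K = np.einsum("mlns,ls->mn", eri, P)  # exchange: sum_ls P_ls (ml|ns)
        F = h_core + J - 0.5 * K
        e_elec = 0.5 * np.sum(P * (h_core + F))

        # Solve the generalized eigenproblem in the orthogonalized basis
        eps, C_prime = np.linalg.eigh(X.T @ F @ X)
        C = X @ C_prime
        C_occ = C[:, :n_occ]
        P_new = 2.0 * C_occ @ C_occ.T

        # Check convergence of both the energy and the density matrix
        delta_p = np.max(np.abs(P_new - P))
        if e_old is not None and abs(e_elec - e_old) < tol and delta_p < tol:
            return e_elec + e_nuc, eps, iteration
        P, e_old = P_new, e_elec
    raise RuntimeError("SCF did not converge")


e_total, orbital_energies, n_iter = run_rhf(S, H_CORE, build_eri(), 2, E_NUC)
print(f"E(RHF) = {e_total:.4f} hartree after {n_iter} iterations")
```

With these rounded integrals the total energy should come out at about −1.117 hartree, in line with the textbook value. Because the molecule is symmetric, the first diagonalization already yields the converged bonding orbital; larger systems need many more cycles and usually an accelerator such as DIIS.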

Research Reagent Solutions

Table: Essential Computational Components in Hartree-Fock Calculations

| Component | Function/Role | Implementation Details |
|---|---|---|
| Basis Sets | Set of functions to expand molecular orbitals | Gaussian-type orbitals (GTOs) or Slater-type orbitals (STOs); completeness affects accuracy |
| Initial Guess Algorithms | Starting point for SCF procedure | Extrapolated, core Hamiltonian, or fragment-based approaches to improve convergence |
| DIIS Extrapolation | Accelerates SCF convergence | Reduces number of iterations by extrapolating Fock matrices [2] |
| Integral Evaluation | Computes molecular integrals | Efficient algorithms for one- and two-electron integrals; most computationally intensive step |
| Density Matrix | Constructs electron density from occupied orbitals | Updated each iteration; used to build new Fock matrix |

Figure: Mathematical Structure of the Slater Determinant

Advanced Considerations

Restricted vs. Unrestricted Formulations

The Hartree-Fock method can be implemented in different variants with distinct characteristics [5]:

Restricted Hartree-Fock (RHF): Forces doubly occupied spatial orbitals for closed-shell systems, maintaining proper spin eigenfunctions but potentially introducing systematic errors [5].

Unrestricted Hartree-Fock (UHF): Allows different spatial orbitals for α and β spins, providing more flexibility but potentially producing spin-contaminated solutions [5].

Restricted Open-Shell Hartree-Fock (ROHF): Maintains restricted formalism for open-shell systems while attempting to preserve spin purity [5].

Post-Hartree-Fock Methods

To address the limitations of the single determinant approach, numerous post-Hartree-Fock methods have been developed [2]:

Configuration Interaction (CI): Expands the wave function as a linear combination of multiple Slater determinants, systematically improving correlation treatment.

Coupled Cluster (CC): Uses an exponential ansatz to include excitations to higher order, typically providing highly accurate results.

Møller-Plesset Perturbation Theory: Applies Rayleigh-Schrödinger perturbation theory to include electron correlation effects.

Table: Comparative Accuracy of Quantum Chemical Methods

| Method | Slater Determinants | Electron Correlation | Computational Cost |
|---|---|---|---|
| Hartree-Fock | Single | None (except exchange) | Low (N³-N⁴) |
| MP2 | Single reference | 2nd order perturbation | Medium (N⁵) |
| CCSD(T) | Single reference + excitations | High (near chemical accuracy) | High (N⁷) |
| Full CI | All possible in basis set | Exact (for basis) | Prohibitive (factorial) |
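To make the cost column concrete, the short sketch below estimates how the formal cost grows when the system size doubles. It assumes the upper-bound exponents from the table and ignores prefactors, integral screening, and other implementation details, so the numbers indicate trends only; `relative_cost` is a hypothetical helper.

```python
# Upper-bound exponents taken from the cost column of the table above.
SCALING_EXPONENTS = {"Hartree-Fock": 4, "MP2": 5, "CCSD(T)": 7}


def relative_cost(size_factor, exponent):
    """Factor by which the formal cost grows when the system size grows by size_factor."""
    return size_factor ** exponent


for method, exponent in SCALING_EXPONENTS.items():
    print(f"{method}: doubling the system costs {relative_cost(2, exponent)}x more")
```

Doubling the system multiplies the formal cost by 16 for Hartree-Fock but by 128 for CCSD(T), which is why the most accurate methods are restricted to small molecules.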

The Single Slater Determinant provides a mathematically elegant and computationally efficient framework for approximating many-electron wave functions, serving as the cornerstone of the Hartree-Fock method in quantum chemistry. Its inherent antisymmetry automatically satisfies the Pauli exclusion principle, while its determinantal structure enables practical computation of molecular properties. However, the limitation to a single determinant necessitates the neglect of electron correlation effects, leading to systematic errors in bond dissociation energies, reaction barriers, and dispersion interactions. Despite these limitations, the Slater determinant remains fundamental to quantum chemistry, both as a starting point for more accurate correlated methods and as a conceptual framework for understanding electronic structure. For drug development professionals and researchers, recognizing both the power and limitations of this approach is essential for selecting appropriate computational methods and interpreting their results in the context of molecular design and property prediction.

The Mean-Field Approximation and its Physical Interpretation

Mean-field theory (MFT), also known as self-consistent field theory, represents a foundational approach for analyzing high-dimensional random models by approximating complex many-body interactions through a simpler effective model. The core premise involves replacing all interactions acting on any one component with an average or effective interaction, thereby reducing an intractable many-body problem to a more manageable effective one-body problem [6]. This simplification provides significant computational advantages, enabling researchers to obtain physical insight into system behavior at a substantially lower computational cost than exact solutions [6].

In the context of quantum chemistry, the mean-field approximation manifests most prominently in the Hartree-Fock method, which forms the cornerstone of modern electronic structure theory. Within this framework, each electron is modeled as moving within an average field generated by all other electrons, effectively neglecting specific instantaneous electron-electron correlations [7]. This approximation transforms the complex many-electron Schrödinger equation into a self-consistent field (SCF) problem where the electronic configuration and the resulting mean field are iteratively refined until convergence [7]. While this approach enables practical computation of molecular wavefunctions, it introduces systematic limitations that impact predictive accuracy, particularly for systems where electron correlation effects play a significant role.

Theoretical Foundations and Mathematical Formulation

Formal Mean-Field Framework

The formal basis for mean-field theory rests on the Bogoliubov inequality, which establishes an upper bound for the free energy of a system. For a Hamiltonian $\mathcal{H} = \mathcal{H}_0 + \Delta \mathcal{H}$, the free energy satisfies $F \leq F_0 \triangleq \langle \mathcal{H} \rangle_0 - T S_0$, where $S_0$ represents the entropy of the simplified trial system [6]. The optimal mean-field approximation is obtained by minimizing this upper bound with respect to the parameters of the trial Hamiltonian $\mathcal{H}_0$.

In the specific context of the Ising model, a paradigmatic example in statistical mechanics, the mean-field approximation leads to a self-consistency equation for the average magnetization $m$:

$$ m = \tanh(zJ\beta m + h) $$

where $z$ represents the coordination number, $J$ denotes the coupling constant, $\beta = 1/k_B T$, and $h$ signifies the external field [6]. This equation illustrates how the effective field experienced by individual spins depends self-consistently on the average magnetization of the surrounding system.
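The self-consistency condition can be solved numerically by fixed-point iteration. The following is a minimal sketch, assuming the dimensionless combination $zJ\beta$ is passed as a single parameter and that plain iteration is adequate away from the critical point $zJ\beta = 1$, where convergence becomes very slow; the function name is hypothetical.

```python
import math


def mean_field_magnetization(z_j_beta, h=0.0, m0=0.5, tol=1e-12, max_iter=10_000):
    """Iterate m <- tanh(zJ*beta*m + h) until successive values agree within tol."""
    m = m0
    for _ in range(max_iter):
        m_new = math.tanh(z_j_beta * m + h)
        if abs(m_new - m) < tol:
            return m_new
        m = m_new
    raise RuntimeError("fixed-point iteration did not converge")


m_hot = mean_field_magnetization(0.5)   # weak coupling or high temperature
m_cold = mean_field_magnetization(2.0)  # strong coupling or low temperature
print(f"zJ*beta = 0.5 -> m = {m_hot:.4f}")
print(f"zJ*beta = 2.0 -> m = {m_cold:.4f}")
```

For $zJ\beta < 1$ the iteration collapses to $m = 0$, while for $zJ\beta > 1$ a nonzero magnetization survives. The same pattern of guessing a field, solving in that field, and updating until nothing changes underlies the Hartree-Fock SCF procedure.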

Hartree-Fock Method as a Mean-Field Approach

In quantum chemistry, the Hartree-Fock method implements the mean-field approximation for many-electron systems. The approach neglects specific electron-electron interactions, instead modeling each electron as interacting with the mean field exerted by the other electrons [7]. Since this mean field itself depends on the electronic configuration, the method requires an iterative self-consistent field procedure:

- Begin with an initial guess for the electron density

- Compute the effective mean-field potential

- Solve for the electronic wavefunctions in this potential

- Update the electron density based on these wavefunctions

- Repeat until convergence is achieved [7]

For most molecular systems, this SCF approach converges within 10-30 cycles, providing a practical computational pathway [7].

Table 1: Key Mathematical Formulations of Mean-Field Theory Across Disciplines

| Domain | Key Equation/Formalism | Primary Approximation |
|---|---|---|
| Statistical Mechanics | $m = \tanh(zJ\beta m + h)$ | Replaces neighbor interactions with effective field |
| Quantum Chemistry (HF) | $\hat{F}\psi_i = \epsilon_i\psi_i$ | Models electron-electron repulsion as average field |
| Image Segmentation | $\theta^{(k+1)} = \theta^{(k)} - \lambda\cdot\frac{\partial}{\partial\theta}D(Q \Vert P)$ | Approximates posterior via factorized distribution [8] |
| Dynamical Mean-Field | $G_{\text{loc}}(i\omega_n) = \sum_k [i\omega_n + \mu - H_0(k) - \Sigma(i\omega_n)]^{-1}$ | Assumes locality of self-energy [9] |

Physical Interpretation of the Mean-Field Concept

The physical interpretation of the mean-field approximation centers on the notion of molecular fields and effective interactions. In magnetic systems, this corresponds to each spin experiencing a uniform effective field proportional to the average magnetization of its neighbors, rather than fluctuating local fields from individual spins [6]. This averaging suppresses local fluctuations and replaces disordered interactions with an ordered effective field.

In quantum chemistry, the Hartree-Fock method embodies a similar physical picture: each electron experiences the average electrostatic repulsion from the total electron density of all other electrons, rather than instantaneous correlations with individual electrons [7]. This mean-field potential includes both the classical Coulomb term and a non-local exchange term that arises from the antisymmetry requirement of the wavefunction. The exchange term, while not truly representing electron correlation, accounts for Fermi statistics and provides a limited description of electron-electron interactions.

The mathematical structure of mean-field theories typically involves decoupling approximations that factorize complex many-body interactions into products of simpler single-body terms. For pairwise interactions $V_{i,j}(\xi_i, \xi_j)$, the mean-field approach approximates the contribution as $\text{Tr}_{i,j}\, V_{i,j}(\xi_i, \xi_j) P_0^{(i)}(\xi_i) P_0^{(j)}(\xi_j)$, where $P_0^{(i)}$ represents the single-particle probability distribution [6]. This factorization dramatically reduces the computational complexity but eliminates specific correlations between components.

Figure 1: Physical Interpretation of Mean-Field Approximation as a Complexity Reduction from Many-Body to Effective One-Body Problem

Limitations of the Hartree-Fock Method in Quantum Chemistry

Neglect of Electron Correlation

The most significant limitation of the Hartree-Fock method stems from its neglect of electron correlation, which leads to systematic errors in predicted molecular properties [7]. While the method includes electron exchange through the antisymmetry of the wavefunction, it completely ignores the correlated motion of electrons that arises from their Coulomb repulsion. This omission manifests in several quantifiable deficiencies:

- Underestimation of bond lengths: near the basis-set limit, HF calculations typically predict equilibrium bonds that are too short and too stiff compared to experimental values

- Inaccurate dissociation energies: The method fails to correctly describe bond breaking processes, particularly at dissociation limits

- Poor description of dispersion forces: Van der Waals interactions are essentially absent from Hartree-Fock predictions

- Systematic errors in reaction barriers: Activation energies are often significantly misrepresented

These limitations arise because electrons in the Hartree-Fock framework respond only to the average charge distribution of other electrons, not to their instantaneous positions. In reality, electrons avoid each other more effectively than predicted by this average potential, leading to correlation energies that can constitute 0.5-1% of the total energy—a substantial quantity in chemical terms [7].
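A small fraction of a large number is still large on the chemical scale. As a rough illustration, the sketch below assumes a total electronic energy of about −76.4 hartree for a water molecule (an approximate value used here only to set the scale) together with the conversion 1 hartree ≈ 627.5 kcal/mol; the helper name is hypothetical.

```python
HARTREE_TO_KCAL_PER_MOL = 627.509  # standard conversion factor
CHEMICAL_ACCURACY_KCAL = 1.0       # conventional target of about 1 kcal/mol


def correlation_energy_kcal(total_energy_hartree, fraction):
    """Correlation energy in kcal/mol for a given fraction of the total energy."""
    return abs(total_energy_hartree) * fraction * HARTREE_TO_KCAL_PER_MOL


E_TOTAL_WATER = -76.4  # hartree; approximate value assumed for illustration

low = correlation_energy_kcal(E_TOTAL_WATER, 0.005)
high = correlation_energy_kcal(E_TOTAL_WATER, 0.01)
print(f"0.5% of |E| = {low:.0f} kcal/mol, 1% of |E| = {high:.0f} kcal/mol")
print(f"multiples of chemical accuracy: {low / CHEMICAL_ACCURACY_KCAL:.0f} to "
      f"{high / CHEMICAL_ACCURACY_KCAL:.0f}")
```

Even the lower estimate is hundreds of times larger than the 1 kcal/mol target. Reaction energies depend only on differences in correlation energy between structures, so practical errors are much smaller than these totals, but they are rarely negligible.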

Computational Scaling and Basis Set Dependence

The Hartree-Fock method exhibits formal scaling behavior of approximately $O(N^4)$ with system size, primarily due to the computation of electron repulsion integrals (ERIs) [7]. For a molecule with 1000 basis functions, this necessitates evaluation of approximately $10^{12}$ ERIs, creating practical computational barriers for large systems. This unfavorable scaling arises from the need to compute integrals over quartets of basis functions:

$$ (ij|kl) = \iint \phi_i^*(1)\phi_j(1)\frac{1}{r_{12}}\phi_k^*(2)\phi_l(2)\, d\mathbf{r}_1 d\mathbf{r}_2 $$

where $\phi_i$ represent basis functions.
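The integral count can be made explicit. The sketch below compares the naive $N^4$ count with the number of symmetry-unique integrals, assuming real basis functions so that the usual eightfold permutational symmetry applies, and assuming 8 bytes per stored value for the storage estimate; the function names are hypothetical.

```python
def n_eri_naive(n_basis):
    """Number of (ij|kl) index quartets without using any symmetry."""
    return n_basis ** 4


def n_eri_unique(n_basis):
    """Symmetry-unique integrals for real basis functions (eightfold symmetry)."""
    n_pairs = n_basis * (n_basis + 1) // 2   # unique index pairs with i >= j
    return n_pairs * (n_pairs + 1) // 2      # unique pairs of pairs


for n in (2, 100, 1000):
    naive, unique = n_eri_naive(n), n_eri_unique(n)
    terabytes = unique * 8 / 1e12            # assuming 8-byte floating-point storage
    print(f"N = {n:5d}: naive = {naive:.2e}, unique = {unique:.2e}, ~{terabytes:.3g} TB")
```

Symmetry reduces the count by roughly a factor of eight, but the growth remains quartic, so for 1000 basis functions storing the unique integrals would still take on the order of a terabyte. This is one reason integrals are often recomputed on the fly rather than stored.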

Furthermore, Hartree-Fock results exhibit significant basis set dependence, as the molecular orbitals are constructed from linear combinations of atom-centered Gaussian functions [7]. The accuracy of the calculation depends critically on the choice of basis set, with minimal "single zeta" basis sets providing qualitatively incorrect results and larger "triple zeta" or "quadruple zeta" basis sets required for quantitative accuracy. This creates a tension between computational feasibility and accuracy that limits applications to large molecular systems relevant to drug discovery.

Table 2: Quantitative Comparison of Quantum Chemical Methods and Their Limitations

| Method | Formal Scaling | Electron Correlation | Typical Error in Bond Lengths | Typical Error in Reaction Energies |
|---|---|---|---|---|
| Hartree-Fock | $O(N^4)$ | None (Mean-field only) | 0.01-0.02 Å | 20-40 kcal/mol |
| Density Functional Theory | $O(N^3)$ | Approximate (Functional-dependent) | 0.005-0.015 Å | 3-10 kcal/mol |
| MP2 | $O(N^5)$ | Perturbative treatment | 0.005-0.01 Å | 2-5 kcal/mol |
| Coupled Cluster (CCSD(T)) | $O(N^7)$ | High-level treatment | 0.001-0.005 Å | 0.5-1 kcal/mol |

Quantitative Assessment of Limitations

The practical impact of these theoretical limitations is vividly illustrated by the speed-accuracy tradeoff in computational chemistry [7]. Hartree-Fock methods occupy an intermediate position in this landscape—significantly more accurate than molecular mechanics methods but substantially less accurate than post-Hartree-Fock wavefunction methods or well-parameterized density functional approaches. For conformational energy predictions, Hartree-Fock achieves moderate accuracy (Pearson correlation coefficient ~0.7-0.9) but requires minutes to hours of computation time for typical drug-sized molecules [7].

The systematic nature of Hartree-Fock errors has been extensively documented through benchmark studies. The method consistently overestimates band gaps in solids, underestimates cohesive energies, and provides poor descriptions of transition states and diradicals. These limitations directly impact applications in drug discovery, where accurate prediction of binding energies, conformational preferences, and reaction barriers is essential for reliable molecular design.

Advanced Mean-Field Methodologies Beyond Hartree-Fock

Dynamical Mean-Field Theory (DMFT)

Dynamical Mean-Field Theory represents a significant extension of the mean-field concept that addresses some limitations of static approaches like Hartree-Fock [9]. Unlike traditional mean-field theories that employ time-independent effective potentials, DMFT incorporates frequency-dependent (dynamical) self-energies, enabling better description of strong electron correlations. This approach has proven particularly valuable for studying strongly correlated materials where Hartree-Fock and standard density functional theory fail qualitatively.

The DMFT framework maps a lattice model onto an effective impurity model embedded in a self-consistent, time-dependent mean field [9]. This mapping preserves local temporal fluctuations while neglecting spatial correlations between different sites. For real materials, DMFT is often combined with density functional theory in the DFT+DMFT approach, which uses DFT for the non-interacting Hamiltonian and DMFT for treating correlated subspaces [9]. This hybrid approach has successfully described electronic structures of various strongly correlated systems, including superconducting cuprates, nickelates, and other perovskite-type materials.

Mean-Field Methods in Image Processing and Nuclear Physics

The mean-field approximation has found applications beyond quantum chemistry, with specialized implementations developed for diverse scientific domains. In medical image segmentation, an Active Mean Fields (AMF) approach combines conventional likelihood models with curve length priors on boundaries [8]. This method approximates posterior probabilities of tissue labels via a mean-field approach optimized through gradient descent, resulting in a level-set style algorithm.

In nuclear physics, advanced mean-field techniques have demonstrated surprising capability in describing light nuclear systems. Recent research shows that the deuteron, the simplest bound nuclear system, can be accurately described within a mean-field-based framework using a low-dimensional linear combination of non-orthogonal Bogoliubov states [10]. This approach reproduces ground-state binding energy, magnetic dipole moment, electric quadrupole moment, and root-mean-square proton radius with sub-percent accuracy, achieving this with a computational cost scaling as $n_{\text{dim}}^4$, where $n_{\text{dim}}$ represents the dimension of the one-body Hilbert space basis [10].

Figure 2: Generalized Workflow for Advanced Mean-Field Methods like Dynamical Mean-Field Theory (DMFT)

Experimental Protocols and Computational Methodologies

Quantum Chemistry Workflow for Hartree-Fock Calculations

A standardized protocol for performing and validating Hartree-Fock calculations includes the following methodological steps:

System Preparation

- Obtain molecular coordinates from experimental data or preliminary calculations

- Define molecular charge and spin multiplicity appropriate to the electronic state

- Select an appropriate basis set based on accuracy requirements and computational resources

Initial Guess Generation

- Construct initial molecular orbitals using extended Hückel theory or superposition of atomic densities

- For complex systems, employ fragment-based approaches or results from lower-level calculations

Self-Consistent Field Iteration

- Compute one-electron integrals (overlap, kinetic energy, nuclear attraction)

- Calculate two-electron repulsion integrals over atomic orbitals

- Construct the orthogonalization matrix from the overlap matrix so that the Fock matrix can be diagonalized

- Build the Fock matrix using the current density matrix

- Diagonalize the Fock matrix to obtain new molecular orbitals and energies

- Update the density matrix using the new molecular orbitals

- Check for convergence in energy and density matrix (typical thresholds: $10^{-8}$ Hartree for energy, $10^{-6}$ for density)

Property Evaluation

- Calculate molecular properties from converged wavefunction (dipole moments, population analysis)

- Evaluate vibrational frequencies through second derivatives of energy

- Compute analytical or numerical gradients for geometry optimization

Validation and Assessment

- Compare predicted geometries with experimental data where available

- Assess correlation energy importance through perturbation theory estimates

- Evaluate systematic errors for specific chemical properties

Research Reagent Solutions for Computational Studies

Table 3: Essential Computational Tools for Mean-Field Studies in Quantum Chemistry

| Tool Category | Specific Examples | Primary Function | Application Context |
|---|---|---|---|
| Electronic Structure Packages | Gaussian, GAMESS, PSI4, PySCF | Implement SCF algorithms and integral evaluation | General quantum chemistry calculations |
| Basis Set Libraries | Basis Set Exchange, EMSL Basis Set Library | Provide standardized atomic basis sets | Ensuring transferability and reproducibility |
| Quantum Dynamics Extensions | Time-dependent SCF, MCTDH | Extend mean-field to time domain | Quantum dynamics of many-atom systems [11] |
| Embedding Schemes | DFT+DMFT workflows | Combine mean-field with many-body methods | Strongly correlated electron systems [9] |
| Wavefunction Analysis Tools | Multiwfn, Molden | Visualize and analyze electronic structure | Interpretation of computational results |

The mean-field approximation represents both a powerful conceptual framework and a practical computational tool with broad applicability across scientific disciplines. Its physical interpretation as replacing fluctuating many-body interactions with effective average fields enables tractable solutions to otherwise intractable problems. In quantum chemistry, the Hartree-Fock method embodies this approach and provides the foundation for more sophisticated electronic structure theories.

However, the limitations of the Hartree-Fock method—particularly its neglect of electron correlation and systematic errors in predicting molecular properties—highlight the fundamental compromises inherent in the mean-field approximation. These limitations have driven the development of advanced methodologies like dynamical mean-field theory and various post-Hartree-Fock approaches that incorporate correlations while maintaining computational feasibility.

The continued evolution of mean-field-based methods, including recent innovations in quantum computing implementations of DMFT [9] and surprising applications in nuclear physics [10], demonstrates the enduring value of this conceptual framework. As computational resources expand and methodological innovations continue, mean-field approximations will likely remain essential components of the theoretical toolkit for tackling complex many-body problems across physics, chemistry, and materials science.

The Hartree-Fock (HF) method serves as a cornerstone in computational quantum chemistry, providing the fundamental wavefunction ansatz from which most advanced electronic structure theories are built. This method approximates the complex many-electron wavefunction by a single Slater determinant, a mathematical construct that ensures the antisymmetry of the wavefunction as required by the Pauli exclusion principle for fermions [2]. By invoking the variational principle, the HF method derives a set of coupled equations that describe electrons moving in an average, or mean-field, potential created by all other electrons in the system [2] [12]. This formulation captures exchange (Fermi) correlation, which prevents electrons with parallel spins from occupying the same region of space, giving rise to the concept of an "exchange hole" around each electron [13] [12].

Despite its theoretical importance, the HF method has a critical shortcoming: its treatment of electron-electron interactions remains incomplete. The method fundamentally neglects Coulomb correlation, which accounts for the instantaneous Coulombic repulsion between electrons and their correlated motion in space [13]. This neglect occurs because each electron in the HF framework experiences only the average electrostatic field of the other electrons, rather than their instantaneous positions [12]. Consequently, the HF energy always lies above the exact energy of the non-relativistic Schrödinger equation within the Born-Oppenheimer approximation, with the difference termed the correlation energy [13]. This missing energy component, though typically a small fraction (∼1%) of the total electronic energy, is chemically significant, often exceeding chemical accuracy thresholds, and its absence leads to qualitatively incorrect predictions in numerous chemically important scenarios [14] [15].

Theoretical Foundation: Defining Electron Correlation

The Mathematical Formalism of Electron Correlation

Electron correlation emerges from the inherent limitations of the single-determinant approximation in describing the true N-electron wavefunction. Mathematically, for a fully correlated two-electron system, the joint probability density of finding electron a at position r_a and electron b at position r_b deviates significantly from the product of their individual probability densities:

ρ(r_a, r_b) ≠ ρ(r_a)ρ(r_b) [13]

In the Hartree-Fock approximation, the pair density derived from a single Slater determinant does not reproduce this behavior: for electrons of opposite spin it reduces to the uncorrelated product of one-electron densities. Compared with the exact pair density, this uncorrelated form is too large at small interelectronic distances and too small at large distances, indicating that electrons "avoid" each other more effectively than the mean-field picture suggests [13]. This deficiency manifests as an inadequate description of the Coulomb hole, the small region around each electron that other electrons avoid due to their mutual repulsion.
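As a worked illustration, consider a closed-shell two-electron system described by a single determinant with one doubly occupied spatial orbital. The notation here is an assumption made for this sketch: \(\varphi\) is the spatial orbital, \(\rho\) the one-electron density, and \(\rho_2\) the spin-summed pair density normalized to \(N(N-1)\); the constant prefactor depends on that normalization choice.

```latex
\begin{align}
\Psi_{\mathrm{HF}}(1,2) &= \varphi(\mathbf{r}_1)\,\varphi(\mathbf{r}_2)\,
  \tfrac{1}{\sqrt{2}}\bigl[\alpha(1)\beta(2) - \beta(1)\alpha(2)\bigr], \\
\rho(\mathbf{r}) &= 2\,|\varphi(\mathbf{r})|^{2}, \\
\rho_2^{\mathrm{HF}}(\mathbf{r}_a, \mathbf{r}_b)
  &= 2\,|\varphi(\mathbf{r}_a)|^{2}\,|\varphi(\mathbf{r}_b)|^{2}
   = \tfrac{1}{2}\,\rho(\mathbf{r}_a)\,\rho(\mathbf{r}_b).
\end{align}
```

The Hartree-Fock pair density in this case is a pure product with no dependence on the distance \(|\mathbf{r}_a - \mathbf{r}_b|\), so it contains no Coulomb hole for the opposite-spin pair.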

Dynamical vs. Static Correlation

Electron correlation phenomena are generally categorized into two distinct types, each requiring different theoretical approaches for their accurate capture:

Dynamical Correlation: This type of correlation arises from the correlated motion of electrons due to their instantaneous Coulomb repulsion and is typically associated with short-range interactions [13]. Dynamical correlation is ubiquitous in all molecular systems and can be systematically incorporated by including excited configurations (e.g., through Configuration Interaction or Coupled-Cluster methods) that account for the instantaneous avoidance of electrons [16] [13].

Static (Non-Dynamical) Correlation: This occurs when the ground state wavefunction of a system cannot be qualitatively described by a single determinant, typically due to (near) degeneracy of multiple electronic configurations [13]. Such situations arise in molecular bond dissociation, diradicals, and systems with degenerate frontier orbitals. The Restricted Hartree-Fock (RHF) method fails dramatically in these cases, often predicting qualitatively incorrect dissociation limits or electronic structures [14].

Table 1: Characteristics of Electron Correlation Types

| Correlation Type | Physical Origin | Typical Systems | Primary Theoretical Treatment |
|---|---|---|---|
| Dynamical Correlation | Instantaneous Coulombic repulsion between electrons | All molecular systems, particularly important for accurate thermochemistry | Configuration Interaction, Coupled Cluster, Møller-Plesset Perturbation Theory |
| Static Correlation | Near-degeneracy of multiple electronic configurations | Bond dissociation, diradicals, transition metal complexes, aromatic systems | Multi-Configurational Self-Consistent Field (MCSCF), Complete Active Space (CAS) methods |

Quantitative Impact: Methodological Comparisons and Numerical Evidence

Performance Across Molecular Properties

The neglect of electron correlation in the Hartree-Fock method leads to systematic errors in the prediction of key molecular properties. Advanced computational studies comparing HF with post-Hartree-Fock methods reveal consistent patterns of deviation from experimental values:

Table 2: Comparative Performance of Quantum Chemical Methods for Molecular Properties

| Method | Treatment of Correlation | Bond Length Accuracy | Vibrational Frequency Accuracy | Reaction Energy Accuracy | Computational Cost |
|---|---|---|---|---|---|
| Hartree-Fock (HF) | Neglects Coulomb correlation | Consistently underestimates (too short) | Overestimates by ~10-15% [15] | Large errors (>20 kcal/mol) [15] | Low |
| Configuration Interaction (CI) | Includes via determinant expansion | Good with large active spaces | Good improvement over HF | Systematic improvement | Very High (Full CI) |
| Coupled Cluster (CCSD(T)) | Extensive via exponential ansatz | Excellent (near experimental) | Excellent (near experimental) | High accuracy (~1 kcal/mol) | Very High |
| Density Functional Theory (DFT) | Approximate via functionals | Generally good | Generally good | Variable (depends on functional) | Medium |

Experimental Protocols for Assessing Correlation Effects

Protocol 1: Potential Energy Surface Mapping

Objective: To quantify the impact of electron correlation on bond dissociation and reaction pathways by computing potential energy surfaces (PES) using multiple theoretical methods.

Methodology:

- System Selection: Choose a molecular system exhibiting significant correlation effects (e.g., H₂, N₂, F₂, or NdO) [14] [17].

- Geometry Sampling: Calculate total energies at multiple nuclear geometries along the bond dissociation coordinate.

- Multi-Method Computation: Perform electronic structure calculations using:

  - Restricted Hartree-Fock (RHF)

  - Unrestricted Hartree-Fock (UHF)

  - Configuration Interaction (e.g., CISD)

  - Coupled Cluster (e.g., CCSD(T))

  - Density Functional Theory (with appropriate functionals)

- Energy Comparison: Compute dissociation energies and compare with experimental values.

- Wavefunction Analysis: Examine determinant coefficients in multi-reference methods to assess static correlation weight.

Key Deliverables: Bond dissociation curves, quantitative comparison of dissociation energies, assessment of RHF vs. UHF performance at dissociation limits [14].

Protocol 2: Molecular Property Benchmarking

Objective: To evaluate the effect of electron correlation on equilibrium molecular properties across diverse chemical systems.

Methodology:

- Benchmark Set Construction: Select a diverse set of molecules including:

  - Simple closed-shell systems (H₂O)

  - Aromatic compounds (C₆H₆)

  - Transition metal complexes ([Fe(CO)₅], [Cu(NH₃)₄]²⁺)

  - Radical species (•OH) [15]

- Geometry Optimization: Determine equilibrium structures at each level of theory.

- Property Calculation: Compute:

  - Bond lengths

  - Vibrational frequencies

  - Reaction energies (e.g., atomization energies)

- Error Statistical Analysis: Calculate mean absolute deviations (MAD) and maximum errors relative to experimental data.

- Correlation Energy Contribution: Partition correlation effects using perturbation theory.

Key Deliverables: Quantitative accuracy assessment for each method, identification of system-specific correlation effects, error trends across chemical space [15].
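The error-statistics step of Protocol 2 can be sketched in a few lines. This is a minimal illustration, not a prescribed implementation: the function name is hypothetical and the bond lengths are invented placeholder values, not benchmark data.

```python
from statistics import mean


def error_statistics(computed, reference):
    """Return (mean absolute deviation, maximum absolute error) between two sequences."""
    if len(computed) != len(reference) or not computed:
        raise ValueError("need two non-empty sequences of equal length")
    abs_errors = [abs(c - r) for c, r in zip(computed, reference)]
    return mean(abs_errors), max(abs_errors)


# Placeholder bond lengths in angstrom (invented for illustration only)
reference_lengths = [0.960, 1.100, 1.400]
computed_lengths = [0.940, 1.085, 1.385]

mad, max_error = error_statistics(computed_lengths, reference_lengths)
print(f"MAD = {mad:.4f} A, max error = {max_error:.4f} A")
```

Running the same function once per method (HF, MP2, CCSD(T), DFT) on a shared reference set gives directly comparable MAD and maximum-error figures.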

Manifestations of HF Failures in Chemical Systems

Bond Dissociation and Diradical Systems

The Hartree-Fock method fails catastrophically in describing bond dissociation processes. For example, when the H-H bond in the H₂ molecule is stretched, the RHF wavefunction retains a spurious ionic (H⁺ and H⁻) component instead of describing two neutral hydrogen atoms, leading to dramatically wrong dissociation energies and potential energy curves [14]. This failure occurs because the single-determinant RHF wavefunction forces both electrons into the same spatial orbital and therefore cannot describe them becoming unpaired as the bond breaks. Similar problems occur for singlet O₂, which is poorly described by RHF, and for F₂ dissociation, where even Unrestricted HF (UHF) produces an unbound potential [14]. These failures stem from static correlation effects that become dominant when near-degeneracy occurs between multiple electronic configurations.
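The origin of the ionic contamination in stretched H₂ can be checked with a small minimal-basis sketch. The assumptions are stated loudly: one atomic orbital per atom (A and B), zero overlap between them (the dissociation limit), bonding and antibonding orbitals σ_g = (A + B)/√2 and σ_u = (A − B)/√2, and a hypothetical helper function written for this illustration.

```python
import math


def ionic_covalent_weights(c_g: float, c_u: float):
    """Weights of ionic (AA, BB) and covalent (AB, BA) terms in the spatial function
    Psi = c_g * sigma_g(1)sigma_g(2) + c_u * sigma_u(1)sigma_u(2),
    assuming zero overlap between atomic orbitals A and B."""
    norm = math.hypot(c_g, c_u)
    c_g, c_u = c_g / norm, c_u / norm
    ionic_amplitude = (c_g + c_u) / 2      # coefficient of A(1)A(2) and of B(1)B(2)
    covalent_amplitude = (c_g - c_u) / 2   # coefficient of A(1)B(2) and of B(1)A(2)
    return 2 * ionic_amplitude**2, 2 * covalent_amplitude**2


# RHF: only the sigma_g^2 configuration is present
rhf_ionic, rhf_covalent = ionic_covalent_weights(1.0, 0.0)
# Two-configuration wavefunction with equal and opposite coefficients
ci_ionic, ci_covalent = ionic_covalent_weights(1.0, -1.0)

print(f"RHF:              ionic={rhf_ionic:.2f}, covalent={rhf_covalent:.2f}")
print(f"two-configuration: ionic={ci_ionic:.2f}, covalent={ci_covalent:.2f}")
```

In this model the single determinant is locked into an equal ionic and covalent mixture at every bond length, whereas mixing in the σ_u² configuration with opposite sign removes the ionic terms entirely. That second configuration is exactly what a single-determinant method cannot supply.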

Dispersion Interactions and van der Waals Complexes

London dispersion forces, which arise from correlated electron motion in different parts of a molecule or between different molecules, are completely absent in standard Hartree-Fock calculations [2] [13]. This failure occurs because dispersion interactions are fundamentally correlation effects resulting from instantaneous dipole-induced dipole interactions that cannot be captured by a mean-field approach. Consequently, HF cannot describe van der Waals complexes, π-π stacking in aromatic systems, or other weak interactions crucial in supramolecular chemistry and biological systems, consistently underestimating binding energies in these complexes.

Transition Metal Complexes and Heavy Elements

Systems containing transition metals pose particular challenges for HF due to the presence of nearly degenerate d-orbitals and significant electron correlation effects [15]. The method fails to accurately describe the electronic structure of complexes such as [Fe(CO)₅] and [Cu(NH₃)₄]²⁺, where strong correlation effects dominate the relative energies of different spin states and geometric configurations [15]. For heavy elements, relativistic effects further enhance correlation contributions, making HF particularly inadequate for quantitative predictions in lanthanide and actinide chemistry [14].

Anion Stability and Charge-Transfer Processes

The Hartree-Fock method often fails to predict the stability of anions, particularly when the primary binding mechanism involves correlation effects rather than simple electrostatic interactions [14]. For example, C₂⁻ is not predicted to be bound at the HF level because the correlation between the excess electron and the electron cloud of the neutral core is not properly captured [14]. This failure has significant implications for predicting electron attachment processes, charge-transfer states, and redox properties in chemical systems.

Diagram 1: HF Failure Manifestations. This diagram categorizes the fundamental failures of the Hartree-Fock method into static and dynamical correlation deficiencies, showing their specific chemical manifestations.

Beyond Hartree-Fock: Addressing Electron Correlation

Theoretical Framework for Post-Hartree-Fock Methods

Advanced computational methods that address the electron correlation problem beyond HF can be systematically organized based on their theoretical approach:

Diagram 2: Post-HF Method Classification. This diagram illustrates the systematic organization of electronic structure methods that address electron correlation beyond the Hartree-Fock approximation.

The Scientist's Toolkit: Essential Computational Approaches

Table 3: Research Reagent Solutions for Electron Correlation Challenges

| Method Category | Specific Methods | Key Function | Applicable Systems |
|---|---|---|---|
| Multi-Reference Methods | MCSCF, CASSCF | Handles static correlation via multiple determinants in active space | Bond dissociation, diradicals, transition metals [13] |
| Wavefunction Expansion | Configuration Interaction (CI), Full CI | Accounts for correlation via linear combination of excited determinants | Small molecules, benchmark calculations [16] |
| Exponential Ansatz | Coupled Cluster (CCSD, CCSD(T)) | Gold standard for dynamical correlation; systematic inclusion of excitations | Accurate thermochemistry, spectroscopy [15] |
| Perturbation Theory | Møller-Plesset (MP2, MP4) | Cost-effective correlation treatment via Rayleigh-Schrödinger perturbation theory | Medium-sized molecules, initial correlation estimates [13] |
| Density-Based Methods | DFT with advanced functionals | Computational efficiency via exchange-correlation functionals | Large systems, drug design, materials science [15] |
| Hybrid Approaches | CASPT2, MRCI, SORCI | Combines multi-reference with perturbation theory or CI | Strongly correlated systems, excitation energies [13] |

The critical oversight of electron correlation in the Hartree-Fock method represents both a fundamental limitation and a driving force for theoretical development in quantum chemistry. While HF provides the essential conceptual framework for understanding electronic structure, its neglect of electron correlation effects renders it quantitatively inadequate for most chemical applications and qualitatively wrong for important classes of chemical systems. The development of post-Hartree-Fock methodologies—from multi-reference methods that address static correlation to coupled cluster theories that systematically recover dynamical correlation—has dramatically expanded our ability to model complex chemical phenomena with quantitative accuracy.

Current research continues to address the challenges of treating electron correlation in increasingly large and complex systems, with recent perspectives highlighting methods for "describing dynamic electron correlation beyond a large active space" as particularly important for realistic strongly correlated systems [17]. The integration of wavefunction methods with density functional approaches, development of efficient scaling algorithms, and creation of more sophisticated exchange-correlation functionals represent active frontiers in the field. For researchers in drug development and materials science, the careful selection of electronic structure methods that appropriately balance computational cost with the required treatment of electron correlation remains essential for generating reliable predictions of molecular properties, reaction mechanisms, and spectroscopic behavior.

Distinguishing Dynamical vs. Static Correlation and the Impact on Accuracy

The Hartree-Fock (HF) method serves as the cornerstone for most electronic structure calculations in quantum chemistry, providing a mean-field approximation where each electron experiences the average field of all others [13] [2]. Despite its theoretical importance, HF's fundamental limitation is its neglect of instantaneous electron-electron repulsion, known as electron correlation [18] [13]. The energy discrepancy between the HF solution and the exact, non-relativistic solution of the Schrödinger equation is defined as the correlation energy [13]. This missing correlation energy is not merely a small quantitative correction; in many chemically relevant situations, such as bond breaking or systems with near-degenerate electronic states, its absence leads to qualitatively incorrect predictions, severely limiting the method's utility in fields like drug development where accurate prediction of molecular properties and reactivity is paramount [19] [20].

To navigate the challenges of electron correlation, quantum chemists classify it into two primary types: dynamic correlation and static correlation [18] [13]. Dynamic correlation arises from the instantaneous, short-range repulsive interactions between electrons as they avoid each other in space [18]. Static correlation, conversely, occurs when a single Slater determinant is insufficient to describe the ground state wavefunction, necessitating a multi-configurational approach even for a qualitatively correct description [13] [19]. Understanding this distinction is not academic; it directly determines the choice of computational methodology and the reliability of the resulting predictions for molecular structure, energy, and reactivity. This guide provides an in-depth technical examination of these correlation types, their physical origins, and the computational strategies employed to overcome the limitations of the Hartree-Fock method.

Theoretical Foundations: Defining Dynamical and Static Correlation

Physical Origins and Conceptual Distinctions

The two forms of electron correlation originate from distinct physical phenomena and exhibit characteristically different impacts on the electronic wavefunction.

Dynamic Correlation: This is the correlation of the localized, short-range movement of electrons [18]. Physically, it stems from the Coulomb repulsion that causes electrons to avoid close proximity to one another, an effect described by the "Coulomb hole" around each electron [13]. The HF mean-field approach fails to capture this correlated motion because each electron interacts with a static charge distribution rather than with the instantaneous positions of the other electrons [18]. Dynamic correlation is a universal feature of many-electron systems and is essential for achieving quantitative accuracy in computed energies and properties [19]. Its effects can often be recovered by adding a large number of small contributions from excited Slater determinants [18].

Static (Non-Dynamical) Correlation: This type of correlation arises when the multi-configurational character of the true wavefunction cannot be ignored [13]. It is prominent in situations where several electronic configurations are (near-)degenerate in energy. Classic examples include the dissociation of chemical bonds (e.g., the H₂ molecule at large bond distances) and molecules with significant diradical character, such as oxygen [20]. In these cases, the single-determinant HF wavefunction is qualitatively incorrect, and a proper description requires a linear combination of two or more Slater determinants with significant weights from the outset [18] [19]. Static correlation is therefore not about the fine details of electron motion but about selecting the correct zeroth-order description of the system.

The following conceptual diagram illustrates the relationship between these concepts and the Hartree-Fock starting point.

(Caption: Conceptual relationship between Hartree-Fock, dynamic, and static correlation, showing their distinct origins and treatment methods.)

Formal Definitions and Energetic Considerations

From a formal perspective, the correlation energy \(E_{\text{corr}}\) is defined as the difference between the exact energy \(E_{\text{exact}}\) within the Born-Oppenheimer approximation and the Hartree-Fock energy calculated with a complete basis set \(E_{\text{HF}}\): \(E_{\text{corr}} = E_{\text{exact}} - E_{\text{HF}}\) [18] [13]. While this definition is clear, formally partitioning this total energy into static and dynamic components remains an active area of research [21]. Operationally, the static correlation energy is often identified with the energy recovered by a multi-configurational self-consistent field (MCSCF) calculation in a full valence active space, while the dynamic correlation is the remaining energy obtained by subsequent higher-level methods [19]. Recent approaches propose using the occupancy of the highest occupied natural spin-orbital as a metric to quantify static correlation [21].

Table 1: Characteristic Signatures of Dynamic and Static Correlation

| Feature | Dynamic Correlation | Static Correlation |
|---|---|---|
| Physical Origin | Instantaneous Coulomb repulsion ("Coulomb hole") [13] | Near-degeneracy of electronic configurations [13] |
| Wavefunction | Single reference determinant with many small corrections [18] | Linear combination of multiple determinants with large weights [18] |
| Impact on HF | Quantitative energy error [19] | Qualitative failure (e.g., wrong dissociation limit) [20] |
| Dominant in | Closed-shell molecules near equilibrium geometry [20] | Bond breaking, diradicals, transition metal complexes [22] [19] |
| Key Recovered Effects | Binding energies, London dispersion forces [13] | Correct dissociation limits, spin-state energetics [19] |
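The "Wavefunction" row of the table can be illustrated with a two-configuration model: a reference configuration at energy zero, one excited configuration higher by `gap`, and a coupling matrix element `coupling` between them. This is a toy sketch under those assumptions, with hypothetical parameter values in arbitrary energy units, not a calculation on a real molecule.

```python
import math


def two_configuration_ci(gap: float, coupling: float):
    """Lowest root of H = [[0, K], [K, gap]] in the basis {reference, excited}.

    Returns (energy lowering relative to the reference,
             weight of the excited configuration in the ground state)."""
    if coupling == 0:
        raise ValueError("coupling must be non-zero for the configurations to mix")
    half_gap = gap / 2
    e_low = half_gap - math.hypot(half_gap, coupling)   # lowest eigenvalue
    # Unnormalized eigenvector of the lowest root: (K, e_low)
    weight_excited = e_low**2 / (coupling**2 + e_low**2)
    return e_low, weight_excited


# Large gap: the excited configuration enters only as a small correction
e_dyn, w_dyn = two_configuration_ci(gap=2.0, coupling=0.1)
# Degenerate configurations: both carry equal weight
e_stat, w_stat = two_configuration_ci(gap=0.0, coupling=0.1)

print(f"large gap : lowering={e_dyn:.4f}, excited weight={w_dyn:.4f}")
print(f"degenerate: lowering={e_stat:.4f}, excited weight={w_stat:.4f}")
```

With a large gap the excited configuration contributes well under 1% of the wavefunction, which mirrors the dynamic-correlation picture of many small corrections. As the gap closes its weight rises to one half, and no single determinant is an adequate reference.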

Computational Methodologies for Capturing Correlation

A wide array of post-Hartree-Fock methods has been developed to address electron correlation, each with specific strengths tailored toward dynamic, static, or a balanced treatment of both.

Methods for Dynamic Correlation

Methods that build upon a single, dominant reference determinant are generally effective for capturing dynamic correlation.

- Møller-Plesset Perturbation Theory (MPn): This approach treats the correlation potential as a perturbation to the HF Hamiltonian. The second-order correction (MP2) is widely used for its favorable balance of cost and accuracy, though it is not variational [13].

- Coupled-Cluster (CC) Theory: Coupled-cluster methods, particularly CCSD(T) (the "gold standard"), use an exponential ansatz of excitation operators \(e^{\hat{T}}\) acting on the reference wavefunction. They are size-extensive and highly accurate for dynamic correlation but become computationally expensive [23].

- Configuration Interaction (CI): The CI method expands the wavefunction as a linear combination of the HF determinant and excited determinants (e.g., CISD, CISDTQ). While variational, it is not size-extensive unless all excitations are included (Full CI), which is only feasible for very small systems [23].

- Explicitly Correlated Methods (F12): These methods incorporate the interelectronic distance \(r_{12}\) directly into the wavefunction ansatz, dramatically improving the convergence of correlation energy with respect to basis set size [13].
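For orientation, the single-reference ansätze listed above can be written side by side in their standard textbook forms. The notation is an assumption of this sketch: \(|\Phi_0\rangle\) is the HF determinant, \(i, j\) label occupied and \(a, b\) virtual spin orbitals, \(\varepsilon\) are orbital energies, and \(\langle ij \| ab \rangle\) are antisymmetrized two-electron integrals.

```latex
\begin{align}
|\Psi_{\mathrm{CI}}\rangle &= c_0\,|\Phi_0\rangle
  + \sum_{i,a} c_i^a\,|\Phi_i^a\rangle
  + \sum_{i<j,\;a<b} c_{ij}^{ab}\,|\Phi_{ij}^{ab}\rangle + \dots \\
|\Psi_{\mathrm{CC}}\rangle &= e^{\hat{T}}\,|\Phi_0\rangle,
  \qquad \hat{T} = \hat{T}_1 + \hat{T}_2 + \dots \\
E^{(2)}_{\mathrm{MP2}} &= -\sum_{i<j,\;a<b}
  \frac{\bigl|\langle ij \| ab \rangle\bigr|^{2}}
       {\varepsilon_a + \varepsilon_b - \varepsilon_i - \varepsilon_j}
\end{align}
```

The linear CI expansion truncated at doubles loses size-extensivity, while the exponential form generates products of lower excitations automatically. The MP2 denominator also shows why perturbation theory degrades when occupied and virtual orbital energies approach each other.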

Methods for Static Correlation

When static correlation is dominant, a multi-reference starting point is essential.

- Multi-Configurational Self-Consistent Field (MCSCF): MCSCF methods simultaneously optimize both the CI coefficients of multiple determinants and the molecular orbitals themselves. This allows the wavefunction to describe near-degenerate situations accurately [18] [13].

- Complete Active Space SCF (CASSCF): CASSCF is a specific type of MCSCF where a full CI is performed within a user-defined active space of electrons and orbitals. This provides a robust description of static correlation but suffers from exponential scaling, limiting active space size [22] [19].

- Valence Orbital Optimized Coupled-Cluster Doubles (VOD): VOD is an active-space coupled-cluster method that approximates full valence CASSCF with lower \(O(N^6)\) computational cost, making it more applicable to larger systems [19].
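The exponential scaling of CASSCF can be made tangible by counting the Slater determinants in the active-space full CI. The sketch below assumes a determinant basis with fixed numbers of alpha and beta electrons and ignores spatial symmetry; the function name is hypothetical.

```python
from math import comb


def cas_determinant_count(n_electrons: int, n_orbitals: int, spin_multiplicity: int = 1) -> int:
    """Number of Slater determinants with M_S = S in CAS(n_electrons, n_orbitals)."""
    unpaired = spin_multiplicity - 1
    if unpaired < 0 or n_electrons < unpaired or (n_electrons - unpaired) % 2:
        raise ValueError("electron count and spin multiplicity are inconsistent")
    n_alpha = (n_electrons + unpaired) // 2
    n_beta = n_electrons - n_alpha
    if n_alpha > n_orbitals:
        raise ValueError("too many electrons for this number of orbitals")
    # Choose which orbitals hold the alpha electrons and, independently, the beta electrons
    return comb(n_orbitals, n_alpha) * comb(n_orbitals, n_beta)


for n in (2, 6, 10, 14, 18):
    print(f"CAS({n},{n}): {cas_determinant_count(n, n):,} determinants")
```

Going from CAS(10,10) to CAS(18,18) raises the count from 63,504 to roughly 2.4 billion determinants, which is why active spaces must be chosen sparingly.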

Hybrid and Perturbative Multi-Reference Methods

For chemical accuracy, both static and dynamic correlation must be addressed. This is often achieved through hybrid multi-reference schemes.

- Multi-Reference Perturbation Theory: Methods like CASPT2 (Complete Active Space Perturbation Theory) and NEVPT2 (N-Electron Valence Perturbation Theory) use a multi-configurational wavefunction (e.g., from CASSCF) as the reference and apply second-order perturbation theory to account for dynamic correlation outside the active space [13] [22]. These are among the most reliable methods for treating strongly correlated systems. The workflow for such a calculation is methodical, as shown below.

(Caption: A standard computational workflow for treating both static and dynamic electron correlation, typical of methods like CASPT2 and NEVPT2.)

Table 2: Computational Characteristics of Key Post-Hartree-Fock Methods

| Method | Correlation Type Addressed | Key Principle | Strengths | Limitations |
|---|---|---|---|---|
| MP2 [13] | Dynamic | 2nd-order perturbation theory | Low cost, size-extensive | Not variational, poor for static correlation |
| CCSD(T) [23] | Dynamic | Exponential cluster operator | High accuracy, size-extensive | High computational cost (\(O(N^7)\)) |
| CISD [23] | Primarily Dynamic | Linear combination of excited determinants | Variational, simple concept | Not size-extensive |
| CASSCF [13] [19] | Static | Full CI in an active space, orbital optimization | Qualitatively correct for multi-ref systems | Exponential cost, active space choice is critical |
| NEVPT2 [22] | Both | Perturbation on a CASSCF reference | Balanced treatment, size-extensive | Costly; requires 3- & 4-body RDMs |
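To get a feel for what the formal scaling in the table means in practice, the following sketch estimates how the cost grows when the system size is multiplied by a given factor. The \(O(N^7)\) exponent for CCSD(T) comes from the table; the exponents for HF, MP2, and CCSD are the usual textbook formal scalings and are included here as assumptions. Prefactors and algorithmic tricks such as density fitting or local correlation are ignored.

```python
# Formal scaling exponents with system size N (conventional values, prefactors ignored)
SCALING_EXPONENT = {"HF": 4, "MP2": 5, "CCSD": 6, "CCSD(T)": 7}


def cost_growth(method: str, size_factor: float) -> float:
    """Factor by which the formal cost grows when the system size grows by size_factor."""
    return size_factor ** SCALING_EXPONENT[method]


for method in SCALING_EXPONENT:
    print(f"{method:8s} doubling N -> {cost_growth(method, 2):.0f}x cost")
```

Doubling the system size multiplies the formal CCSD(T) cost by 128, compared with 32 for MP2, which is the practical reason high-accuracy methods are restricted to small and medium-sized molecules.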

The Scientist's Toolkit: Essential Reagents and Computational Protocols

Research Reagent Solutions for Electronic Structure Calculations

Table 3: Key Computational "Reagents" for Electron Correlation Studies

| Item | Function in Computational Experiment |
|---|---|
| Gaussian-type Basis Sets | A set of mathematical functions (e.g., cc-pVDZ, cc-pVTZ) centered on atomic nuclei to represent molecular orbitals. Larger basis sets are required to accurately capture correlation effects [23]. |
| Active Space (e.g., CAS(n,m)) | A selection of n electrons in m orbitals for multi-reference calculations. Defining this space is critical for methods like CASSCF and NEVPT2 [22] [19]. |
| Quantum Chemistry Software | Platforms (e.g., Q-Chem, ORCA, PySCF, FermiONs++) that implement the algorithms for HF, MP2, CC, CASSCF, NEVPT2, etc. [22] [19]. |
| Three- and Four-Body Reduced Density Matrices (RDMs) | Mathematical objects containing information about the correlated distribution of three and four electrons. Essential for computing correlation energies in methods like NEVPT2 [22]. |

Detailed Protocol: A Multi-Reference NEVPT2 Calculation

The following protocol outlines the steps for a Strongly-Contracted N-Electron Valence Perturbation Theory (SC-NEVPT2) calculation, a robust method for systems requiring a balanced treatment of static and dynamic correlation [22].

System Preparation and Mean-Field Calculation:

- Input: Provide the molecular geometry (in Cartesian or internal coordinates) and specify an atomic orbital basis set.

- Procedure: Perform a restricted Hartree-Fock (RHF) calculation. For open-shell systems, unrestricted Hartree-Fock (UHF) may be used. This step generates the initial set of canonical molecular orbitals.

Active Space Selection (CAS):

- Objective: Define the Complete Active Space (CAS) with n electrons in m orbitals (denoted CAS(n,m)).

- Protocol: This is a critical, chemically informed step. The active space should include all orbitals actively involved in the bonding or correlation effects of interest (e.g., bonding/antibonding pairs in bonds being broken, d-orbitals in transition metals, frontier orbitals in diradicals). Automated tools like the ORCA automator or heuristic approaches based on atomic valence orbital counts can assist [22] [19].

MCSCF/CASSCF Wavefunction Optimization:

- Objective: Obtain the multi-configurational reference wavefunction that captures static correlation.

- Procedure:

  a. Orbital Localization: Transform the HF orbitals to localize core, active, and virtual spaces.

  b. Variational Optimization: Use the MCSCF algorithm to optimize both the configuration interaction (CI) coefficients of the active space and the molecular orbitals simultaneously. This yields the CASSCF energy and wavefunction, which is the reference for the perturbation step.

  c. Convergence Check: Ensure the energy and wavefunction are converged with respect to the orbital rotations and CI coefficients.

Perturbative Treatment of Dynamic Correlation (SC-NEVPT2):

- Objective: Compute the dynamic correlation energy from electrons outside the active space.

- Procedure:

  a. RDM Construction: Compute the two-, three-, and four-body Reduced Density Matrices (RDMs) from the optimized CASSCF wavefunction [22].

  b. Perturbation Theory: Construct the first-order interacting space (FOIS) by considering all possible single and double excitations from the inactive (core) and active orbitals to the active and virtual (secondary) orbitals. The SC-NEVPT2 method uses the RDMs to compute the matrix elements of the perturbation Hamiltonian within this space.

  c. Energy Correction: Solve the perturbation equations to obtain the second-order energy correction \(E^{(2)}\).

  d. Total Energy: The final correlated energy is \(E_{\text{total}} = E_{\text{CASSCF}} + E^{(2)}\).

Analysis and Validation:

- Analysis: Examine the natural orbital occupancies from the CASSCF wavefunction. Occupancies deviating significantly from 2 or 0 indicate strong static correlation [21].

- Validation: Compare results with experimental data (if available) or with higher-level benchmarks (e.g., Full CI in small basis sets). Test the sensitivity of the result to the choice of active space.
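The occupancy analysis in the final step lends itself to a simple automated check. The sketch below is illustrative only: the function name, the 0.1 tolerance, and the example occupancies are all hypothetical choices, and a suitable threshold in practice depends on the system and the question being asked.

```python
def flag_static_correlation(occupancies, tolerance=0.1):
    """Return (index, occupancy) pairs for natural orbitals whose occupancy
    lies further than `tolerance` from both 0 and 2."""
    flagged = []
    for index, occupancy in enumerate(occupancies):
        if not 0.0 <= occupancy <= 2.0:
            raise ValueError(f"occupancy {occupancy} is outside the range [0, 2]")
        # Distance to the nearest "ideal" single-determinant value (0 or 2)
        if min(occupancy, 2.0 - occupancy) > tolerance:
            flagged.append((index, occupancy))
    return flagged


# Hypothetical natural orbital occupancies for a CAS(4,4) wavefunction
example_occupancies = [1.98, 1.55, 0.45, 0.02]
print(flag_static_correlation(example_occupancies))
```

In this made-up example the two middle orbitals are flagged, the pattern one would expect for a stretched bond or a diradical, while the outer two behave like ordinary doubly occupied and empty orbitals.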

Impact on Predictive Accuracy in Chemical Research

The correct application of correlation methods directly dictates the accuracy of predictions in computational chemistry, with significant implications for drug discovery and materials science.

Energetics and Thermodynamics: Dynamic correlation methods like CCSD(T) are crucial for accurate computation of reaction energies, barrier heights, and interaction energies (e.g., drug-receptor binding affinity) [23]. Static correlation is essential for predicting the energy profile of bond dissociation reactions, where HF and standard DFT fail catastrophically [20].

Molecular Properties and Spectroscopy: Properties such as spin densities, electronic excitation energies, and vibrational frequencies are highly sensitive to electron correlation. For example, the ground state of the oxygen molecule is a triplet, and its low-lying singlet states require a multi-determinant treatment of static correlation for even a qualitatively correct description. Dynamic correlation is necessary to accurately position the energies of excited states in UV-Vis spectroscopy [13].

Strongly Correlated Systems: In transition metal chemistry, catalysts and enzymes often involve metal centers with open d-shells. These systems are frequently strongly correlated, exhibiting both significant static and dynamic correlation effects. Methods like CASPT2 and NEVPT2 are often the only ones capable of providing a reliable description of their reaction mechanisms and spin-state energetics [22].

The advent of quantum computing offers a promising pathway for overcoming the exponential scaling of classical methods like Full CI. Recent algorithmic advances, such as resource-efficient implementations of NEVPT2 that leverage quantum simulations for the active space problem and classical computing for the perturbative correction, aim to extend high-accuracy quantum chemistry to larger, more chemically relevant systems [22]. This hybrid quantum-classical paradigm represents the frontier of research into solving the electron correlation problem.

The development of quantum mechanics in the 1920s presented both a profound revelation and a formidable challenge to theoretical physicists and chemists: how to accurately describe and compute the behavior of systems with multiple interacting electrons. The Schrödinger equation, while exact in principle, proved analytically unsolvable for any atom more complex than hydrogen, much less for molecules or extended systems. This theoretical impasse prompted the development of approximate computational methods that could bridge the gap between fundamental theory and practical calculation. The Hartree-Fock method emerged from this crucible of scientific necessity, evolving through two crucial stages: Douglas Hartree's initial self-consistent field concept in 1927-1928, which provided a workable numerical framework but overlooked quantum mechanical exchange effects, and Vladimir Fock's subsequent incorporation of wavefunction antisymmetry in 1930, which established the modern theoretical foundation for the method [2] [24]. This historical progression from Hartree's distinguishable electron model to Fock's properly antisymmetrized wavefunction represents not merely technical refinement but a fundamental advancement in understanding quantum many-body systems, even as the resulting method carries inherent limitations that continue to motivate quantum chemistry research nearly a century later.

Theoretical Foundations: From the Many-Body Problem to Mean-Field Approximation

The Fundamental Challenge in Quantum Chemistry

The core challenge addressed by both Hartree and Fock lies in the mathematical structure of the many-electron Schrödinger equation. For a system with N electrons and M nuclei, the time-independent Schrödinger equation takes the form:

ĤΨ({rᵢ}, {Rₐ}) = EΨ({rᵢ}, {Rₐ})

where the Hamiltonian Ĥ contains terms for electron kinetic energy, nuclear kinetic energy, and all Coulomb interactions between electrons and nuclei [25]. The complexity arises primarily from the electron-electron repulsion terms, V_ee = ∑ᵢ<ⱼ e²/(4πε₀|rᵢ - rⱼ|), which couple the coordinates of all electrons together and rule out an exact analytical solution for systems with more than one electron. This coupling means the wavefunction cannot be separated into independent one-electron functions, a mathematical property known as non-separability. Semi-empirical approaches of the era, such as the Bohr model with empirical quantum defects and Erich Hückel's molecular orbital theory for conjugated π systems, lacked ab initio predictive power [24].

Hartree's Self-Consistent Field Breakthrough

In 1927-1928, Douglas Hartree introduced a revolutionary approach to this problem that came to be known as the self-consistent field (SCF) method [2] [24]. Hartree's key insight was to approximate the complex many-electron wavefunction as a simple product of one-electron orbitals:

Ψ_Hartree(r₁, r₂, ..., r_N) = φ₁(r₁)φ₂(r₂)...φ_N(r_N)

where each φᵢ represents the wavefunction for an individual electron [26]. This ansatz effectively decouples the electron motions, allowing each electron to be described as moving in an average potential field created by the nucleus and the charge distribution of all other electrons. Hartree implemented this concept through an iterative numerical procedure:

- Initial Guess: Begin with approximate one-electron orbitals (e.g., hydrogen-like orbitals)

- Potential Calculation: Compute the average electrostatic potential from the current electron density

- Orbital Update: Solve the one-electron Schrödinger equation in this average potential

- Iteration: Repeat the potential calculation and orbital update until the orbitals and potential stop changing (self-consistency) [24]

The central equation for the i-th orbital in Hartree's method was:

[-∇²/2 - Z/r + ∫ρ(r')/|r - r'| dr'] φᵢ(r) = εᵢ φᵢ(r)

where ρ(r) = ∑ⱼ|φⱼ(r)|² represents the total electron density [24]. Hartree employed finite difference methods on radial grids to numerically solve these equations, achieving remarkable agreement with atomic spectra for elements from sodium to zinc and revealing electronic shell structure [24]. However, his product wavefunction violated the Pauli exclusion principle for fermions by treating electrons as distinguishable and failed to account for quantum exchange effects arising from electron indistinguishability.
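The iterative procedure described above can be sketched for the simplest many-electron atom, helium. The code below is a minimal illustration under stated assumptions, not a reconstruction of Hartree's original numerical scheme: it uses a uniform radial grid with a three-point finite-difference Laplacian, lets each electron feel the Coulomb potential of the other electron only, and damps the potential update with a mixing factor. The function name and all parameters (`n_grid`, `r_max`, `mix`, `tol`) are hypothetical choices made for this example.

```python
import numpy as np

def hartree_scf_helium(n_grid=600, r_max=15.0, mix=0.5, tol=1e-7, max_iter=200):
    """Hartree SCF for the helium ground state on a radial grid (atomic units)."""
    Z = 2.0
    h = r_max / n_grid
    r = h * np.arange(1, n_grid + 1)      # grid omits r = 0, where u(0) = 0

    # Finite-difference matrix for -1/2 d^2/dr^2 acting on u(r) = r * R(r)
    off = np.full(n_grid - 1, -0.5 / h**2)
    kinetic = np.diag(np.full(n_grid, 1.0 / h**2)) + np.diag(off, 1) + np.diag(off, -1)
    h_core = kinetic + np.diag(-Z / r)

    v_h = np.zeros(n_grid)                # initial guess: unscreened (He+) orbital
    eps_old = 0.0
    for iteration in range(1, max_iter + 1):
        # Orbital update: lowest eigenstate in the current average potential
        evals, evecs = np.linalg.eigh(h_core + np.diag(v_h))
        eps = evals[0]
        u = evecs[:, 0] / np.sqrt(h)      # normalise so that sum(u**2) * h = 1

        # Potential calculation: Coulomb potential of the other electron
        weight = u**2 * h
        shell = weight / r
        inner = np.cumsum(weight) / r                   # charge inside radius r
        outer = np.cumsum(shell[::-1])[::-1] - shell    # charge outside radius r
        v_new = inner + outer

        # Self-consistency check on the orbital energy
        if abs(eps - eps_old) < tol:
            break
        eps_old = eps
        v_h = mix * v_new + (1.0 - mix) * v_h           # damped update

    one_electron = h * (u @ h_core @ u)                 # <phi|h|phi>
    repulsion = h * np.sum(u**2 * v_new)                # electron-electron term
    return eps, 2.0 * one_electron + repulsion, iteration

orbital_energy, total_energy, n_iter = hartree_scf_helium()
print(f"orbital energy = {orbital_energy:.4f} Ha, "
      f"total energy = {total_energy:.4f} Ha, iterations = {n_iter}")
```

For a two-electron closed-shell atom the Hartree and Hartree-Fock results coincide, so the total energy should land close to the helium Hartree-Fock limit of roughly -2.86 Ha, up to the discretization error of the grid.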

Fock's Extension: Incorporating Antisymmetry and Exchange

Theoretical Advancements (1930)

In 1930, Vladimir Fock, building on independent work by John C. Slater, fundamentally advanced Hartree's method by properly accounting for the antisymmetry requirement of fermionic wavefunctions [2] [24]. Fock recognized that a simple product wavefunction fails to satisfy the quantum mechanical principle that identical fermions must be described by a wavefunction that changes sign upon exchange of any two particles:

Ψ(..., rᵢ, ..., rⱼ, ...) = -Ψ(..., rⱼ, ..., rᵢ, ...)

To enforce this requirement, Fock proposed representing the many-electron wavefunction as a Slater determinant:

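$$
\Psi_{\mathrm{Fock}}(\mathbf{r}_1, \mathbf{r}_2, \ldots, \mathbf{r}_N) = \frac{1}{\sqrt{N!}}
\begin{vmatrix}
\phi_1(\mathbf{r}_1) & \phi_1(\mathbf{r}_2) & \cdots & \phi_1(\mathbf{r}_N) \\
\phi_2(\mathbf{r}_1) & \phi_2(\mathbf{r}_2) & \cdots & \phi_2(\mathbf{r}_N) \\
\vdots & \vdots & \ddots & \vdots \\
\phi_N(\mathbf{r}_1) & \phi_N(\mathbf{r}_2) & \cdots & \phi_N(\mathbf{r}_N)
\end{vmatrix}
$$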

This determinant form automatically ensures antisymmetry while maintaining the orbital picture [25]. When the variational principle is applied to this antisymmetrized wavefunction, the resulting equations contain an additional non-local exchange term absent in Hartree's original formulation.
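The antisymmetry property can be checked numerically. The sketch below builds a three-particle determinant from three arbitrary one-dimensional model orbitals (chosen purely for illustration, not taken from any cited calculation) and evaluates it at a set of coordinates, at the same coordinates with two particles exchanged, and at coordinates where two particles coincide.

```python
import math
import numpy as np

def slater_determinant(orbitals, coords):
    """Value of the normalised Slater determinant at the given coordinates."""
    n = len(orbitals)
    # Row i holds orbital i evaluated at every particle coordinate
    matrix = np.array([[phi(x) for x in coords] for phi in orbitals])
    return np.linalg.det(matrix) / math.sqrt(math.factorial(n))

# Hypothetical 1D model orbitals (Gaussian times a polynomial)
orbitals = [
    lambda x: np.exp(-x**2),
    lambda x: x * np.exp(-x**2),
    lambda x: (2 * x**2 - 1) * np.exp(-x**2),
]

psi = slater_determinant(orbitals, [0.3, -0.7, 1.1])
psi_swapped = slater_determinant(orbitals, [-0.7, 0.3, 1.1])     # particles 1 and 2 exchanged
psi_coincident = slater_determinant(orbitals, [0.3, 0.3, 1.1])   # two particles at one point

print(psi, psi_swapped, psi_coincident)
```

Exchanging two coordinates swaps two columns of the matrix, which flips the sign of the determinant, and two identical columns make it vanish. The second property is the Pauli exclusion principle expressed in the wavefunction itself.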

The Fock Operator and Exchange Interaction

The Fock operator that emerges from the variational treatment of the Slater determinant takes the form:

f̂ = -∇²/2 - Z/r + ∑ₐ [2Jₐ(r) - Kₐ(r)]

where Jₐ is the Coulomb operator representing the average electrostatic repulsion from electrons in orbital a, and Kₐ is the exchange operator arising from antisymmetry [26] [25]. The exchange operator is defined by its action on orbitals:

Kₐ φᵢ = [∫φₐ*(r')φᵢ(r')/|r - r'| dr'] φₐ(r)

This non-local exchange term has no classical analog and represents a purely quantum mechanical effect due to fermion antisymmetry [24] [25]. The Fock equations are thus integro-differential equations that must be solved self-consistently:

f̂ φᵢ = εᵢ φᵢ

Hartree later incorporated these exchange effects into his numerical SCF calculations, notably in his 1935 study of beryllium, unifying the method into what we now call the Hartree-Fock method [24].

Table 1: Key Differences Between Hartree and Hartree-Fock Methods

| Feature | Hartree Method | Hartree-Fock Method |
|---|---|---|
| Wavefunction Form | Simple product of orbitals | Single Slater determinant |
| Antisymmetry | Not enforced | Properly enforced |
| Exchange Effects | Neglected | Included via non-local operator |
| Pauli Principle | Violated | Satisfied |
| Theoretical Basis | Semi-classical | Fully quantum mechanical |
| Computational Complexity | Lower (local potential) | Higher (non-local potential) |

Mathematical Framework and Computational Implementation

The Roothaan-Hall Equations for Molecules

While the Hartree-Fock method proved successful for atoms, its application to molecules required further development. The breakthrough came in 1951 with Clemens Roothaan's formulation of the Hartree-Fock equations in a finite basis set of atomic orbitals [24]. This transformed the integro-differential Fock equations into a matrix equation amenable to numerical computation:

FC = SCε

where F is the Fock matrix, C is the matrix of molecular orbital coefficients, S is the overlap matrix between basis functions, and ε is the diagonal matrix of orbital energies [27]. The Fock matrix elements are given by:

F_μν = H_μν^core + J_μν - K_μν

where H_μν^core contains kinetic energy and nuclear attraction terms, while J_μν and K_μν represent Coulomb and exchange contributions, respectively [27]. For closed-shell systems, the restricted Hartree-Fock (RHF) method assumes doubly occupied spatial orbitals, while open-shell systems require either restricted open-shell Hartree-Fock (ROHF) or unrestricted Hartree-Fock (UHF) formulations with different spatial orbitals for α and β spins [27].
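The step from the operator equation to the matrix equation can be made explicit. The derivation below is a sketch for the closed-shell case with real basis functions χ_μ; the density matrix P and the factor of 1/2 absorbed into K are one common convention, assumed here so that the matrix form agrees with the closed-shell operator ∑ₐ [2Jₐ - Kₐ] given earlier.

```latex
\begin{aligned}
\phi_i(\mathbf{r}) &= \sum_{\nu} C_{\nu i}\,\chi_\nu(\mathbf{r})
  \quad\Longrightarrow\quad
  \sum_{\nu} F_{\mu\nu} C_{\nu i} = \varepsilon_i \sum_{\nu} S_{\mu\nu} C_{\nu i}, \\
F_{\mu\nu} &= \int \chi_\mu(\mathbf{r})\,\hat{f}\,\chi_\nu(\mathbf{r})\,d\mathbf{r},
  \qquad
  S_{\mu\nu} = \int \chi_\mu(\mathbf{r})\,\chi_\nu(\mathbf{r})\,d\mathbf{r}, \\
P_{\lambda\sigma} &= 2\sum_{a}^{\mathrm{occ}} C_{\lambda a} C_{\sigma a},
  \qquad
  J_{\mu\nu} = \sum_{\lambda\sigma} P_{\lambda\sigma}\,(\mu\nu|\lambda\sigma),
  \qquad
  K_{\mu\nu} = \frac{1}{2}\sum_{\lambda\sigma} P_{\lambda\sigma}\,(\mu\lambda|\nu\sigma)
\end{aligned}
```

The first line follows from inserting the expansion into f̂ φᵢ = εᵢ φᵢ, multiplying from the left by χ_μ, and integrating. Because F depends on C through P, the matrix equation still has to be solved iteratively.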

Computational Algorithm and Workflow

The Hartree-Fock method is implemented as an iterative self-consistent field procedure with the following computational workflow:

Diagram 1: Hartree-Fock Self-Consistent Field Computational Workflow

The computational cost of the Hartree-Fock method formally scales as O(N⁴), where N is the number of basis functions, primarily due to the calculation and processing of two-electron integrals (μν|λσ) [28]. However, integral screening techniques that exploit the Schwarz inequality can reduce the effective scaling to roughly O(N^2.2) to O(N^2.3) for large systems [28].
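The self-consistent field workflow can be sketched in a few lines for the smallest molecular example, H₂ in a minimal basis at a bond length of 1.4 bohr. This is an illustrative sketch, not a production implementation: the integral values are the rounded minimal-basis (STO-3G) numbers commonly quoted in textbooks such as Szabo and Ostlund, hard-coded here as assumed inputs, and the closed-shell convention F = H_core + J - K/2 with the density matrix P = 2·C_occ·C_occᵀ is assumed. All function and variable names are hypothetical.

```python
import numpy as np

# Assumed textbook inputs for H2 / STO-3G at R = 1.4 bohr (atomic units)
S = np.array([[1.0, 0.6593], [0.6593, 1.0]])                 # overlap matrix
H_CORE = np.array([[-1.1204, -0.9584], [-0.9584, -1.1204]])  # kinetic + nuclear attraction
E_NUC = 1.0 / 1.4                                            # nuclear repulsion

def two_electron_integral(p, q, r, s):
    """(pq|rs) in chemists' notation for the two-function basis."""
    if p == q and r == s:
        return 0.7746 if p == r else 0.5697
    if p == q or r == s:
        return 0.4441
    return 0.2970

ERI = np.array([[[[two_electron_integral(p, q, r, s) for s in range(2)]
                  for r in range(2)] for q in range(2)] for p in range(2)])

def run_rhf(n_occ=1, tol=1e-10, max_iter=50):
    # Symmetric orthogonalisation X = S^(-1/2) turns FC = SCe into an
    # ordinary symmetric eigenvalue problem
    s_vals, s_vecs = np.linalg.eigh(S)
    X = s_vecs @ np.diag(s_vals**-0.5) @ s_vecs.T

    P = np.zeros_like(S)        # zero density: first Fock matrix equals H_CORE
    energy_old = 0.0
    for iteration in range(1, max_iter + 1):
        # Build the Fock matrix from the current density
        J = np.einsum("pqrs,rs->pq", ERI, P)
        K = np.einsum("prqs,rs->pq", ERI, P)
        F = H_CORE + J - 0.5 * K
        energy = 0.5 * np.sum(P * (H_CORE + F))   # electronic energy
        if iteration > 1 and abs(energy - energy_old) < tol:
            break
        energy_old = energy
        # Solve the Roothaan-Hall equations and form the new density
        eps, C_prime = np.linalg.eigh(X.T @ F @ X)
        C = X @ C_prime
        C_occ = C[:, :n_occ]
        P = 2.0 * C_occ @ C_occ.T
    return energy + E_NUC, eps, iteration

e_total, orbital_energies, n_iter = run_rhf()
print(f"E(RHF) = {e_total:.4f} Ha, orbital energies = {orbital_energies}, iterations = {n_iter}")
```

In this two-function example symmetry fixes the orbitals, so the loop converges almost immediately; larger systems typically need convergence acceleration such as DIIS. The `einsum` contractions over the four-index array `ERI` are where the formal O(N⁴) cost arises.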

Limitations of the Hartree-Fock Method

Electron Correlation Problem

Despite its theoretical elegance, the Hartree-Fock method suffers from a fundamental limitation: the neglect of electron correlation. This limitation manifests in several systematic errors:

Coulomb Correlation Error: The HF method treats electrons as moving in an average field, ignoring their instantaneous Coulomb repulsion and the resulting correlated motion [2] [25].

Systematic Energy Overestimation: The Hartree-Fock energy always exceeds the exact non-relativistic energy (variational theorem), with the difference defining the correlation energy: E_corr = E_exact - E_HF [29].

Inadequate Bond Dissociation Description: HF fails to properly describe bond breaking, producing unrealistic potential energy surfaces and incorrect dissociation limits [30].

Weak Interaction Limitations: The method cannot capture London dispersion forces, which arise entirely from electron correlation effects [2].

Table 2: Quantitative Limitations of Hartree-Fock Method

| Property | HF Performance | Reason for Deficiency |
|---|---|---|
| Total Energy | Systematic overestimation (~0.5-1% error) | Neglect of electron correlation |
| Bond Lengths | Typically overestimated (1-5% error) | Inadequate potential energy curves |
| Dissociation Energies | Typically underestimated (10-30% error) | Incorrect dissociation limits |
| Reaction Barriers | Often overestimated | Lack of correlation stabilization |
| Dispersion Interactions | Completely missing | No dynamic correlation effects |
| Band Gaps (Solids) | Overestimated (Koopmans' theorem) | Lack of screening and relaxation |

Quantitative Assessment of Limitations

The practical impact of these limitations becomes evident in quantitative chemical applications. For molecular systems, Hartree-Fock typically recovers 99% of the total energy but misses the crucial 1% responsible for chemical bonding and reactivity [29]. This error manifests in overestimation of bond lengths by 1-5% and underestimation of dissociation energies by 10-30% compared to experimental values [30]. The method's inability to describe London dispersion forces makes it particularly unsuitable for weakly interacting systems like van der Waals complexes or π-stacked aromatic systems.
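A short calculation shows why a sub-1% error in the total energy matters chemically. The numbers below are approximate, commonly quoted literature values for the water molecule (Hartree-Fock limit and estimated exact non-relativistic energy); they are assumptions introduced for this illustration and do not come from the sources cited in this article.

```python
HARTREE_TO_KCAL_PER_MOL = 627.5095

# Approximate literature values for H2O, in hartree (assumed for illustration)
e_hf_limit = -76.0675
e_exact = -76.438

e_corr = e_exact - e_hf_limit                  # correlation energy (negative)
fraction_missing = abs(e_corr / e_exact)       # share of total energy HF misses
e_corr_kcal = e_corr * HARTREE_TO_KCAL_PER_MOL

print(f"E_corr = {e_corr:.4f} Ha = {e_corr_kcal:.1f} kcal/mol "
      f"({100 * fraction_missing:.2f}% of the total energy)")
```

Under these assumed values the missing fraction is about half a percent, yet it corresponds to a couple of hundred kcal/mol, well above typical covalent bond energies of order 100 kcal/mol. Relative energies benefit from partial error cancellation, but the cancellation is rarely complete.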

For the H₂ molecule at equilibrium geometry, the Hartree-Fock method recovers 94.3% of the binding energy, failing to describe the remaining 5.7% that constitutes correlation energy [29]. As the bond stretches, this deficiency becomes more severe, with HF completely failing to describe the proper dissociation limit into two neutral hydrogen atoms.
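The origin of the wrong dissociation limit can be seen in a short worked example. The sketch below assumes a minimal basis of two unrelaxed hydrogen 1s orbitals, χ_A and χ_B (symbols introduced here), so the final number is illustrative rather than a converged restricted Hartree-Fock result.

```latex
\begin{aligned}
\sigma_g &= \frac{\chi_A + \chi_B}{\sqrt{2(1 + S_{AB})}}
  \;\xrightarrow{\;R\to\infty\;}\; \frac{\chi_A + \chi_B}{\sqrt{2}}, \\
\sigma_g(1)\,\sigma_g(2) &= \frac{1}{2}\Big[
  \underbrace{\chi_A(1)\chi_B(2) + \chi_B(1)\chi_A(2)}_{\text{covalent: } \mathrm{H}\cdot + \mathrm{H}\cdot}
  + \underbrace{\chi_A(1)\chi_A(2) + \chi_B(1)\chi_B(2)}_{\text{ionic: } \mathrm{H}^- + \mathrm{H}^+}
  \Big], \\
E_{\mathrm{RHF}}(R\to\infty) &= 2h_{gg} + J_{gg}
  \;\to\; 2E_{1s} + \tfrac{1}{2}(\chi_A\chi_A|\chi_A\chi_A)
  = -1 + \tfrac{5}{16} = -0.6875\ E_h
\end{aligned}
```

The exact limit is two neutral hydrogen atoms at -1.0 E_h. The doubly occupied σ_g orbital forces the ionic terms to carry half the weight at every bond length, and a single determinant of this form has no freedom to remove them.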

Modern Context: Hartree-Fock in Contemporary Computational Chemistry

Role in Quantum Chemistry and Drug Development

Despite its limitations, the Hartree-Fock method remains foundational in computational chemistry and drug development for several reasons:

Reference for Correlated Methods: HF provides the starting point for post-Hartree-Fock methods like MP2, CCSD(T), and configuration interaction, which add electron correlation on top of the HF reference [30].

Computational Efficiency: For systems with hundreds of atoms, HF remains computationally tractable where more accurate correlated methods are prohibitive [30].

Qualitative Molecular Structure: HF predicts reasonably accurate molecular geometries, particularly for organic molecules near equilibrium structures [27].

Educational Value: The method provides a conceptually clear introduction to electronic structure theory [26].

In pharmaceutical research, HF and density functional theory (DFT) methods provide initial screening of molecular properties, though higher-level methods are typically required for quantitative accuracy in binding affinity prediction [30].

Quantum Computing and Beyond-Hartree-Fock Calculations

Recent advances in quantum computing have opened new possibilities for addressing the electron correlation problem. The Quantum Computed Moments (QCM) approach represents one such innovation, using Hamiltonian moments ⟨Hᵖ⟩ computed with respect to the Hartree-Fock state to estimate correlated ground-state energies [29]. This method has demonstrated precision of about 10 mH (millihartree) for H₆ and as low as 0.1 mH for H₂ over a range of bond lengths, achieving 99.9% of the exact ground-state energy for H₆ [29].

Other hybrid quantum-classical algorithms like the Variational Quantum Eigensolver (VQE) also use the Hartree-Fock state as a reference, attempting to systematically improve upon it with correlated ansätze [29]. While current quantum hardware limitations restrict these applications to small systems, they represent promising directions for overcoming traditional Hartree-Fock limitations.

Table 3: Research Reagent Solutions in Electronic Structure Calculations

| Computational Tool | Function | Role in Hartree-Fock Context |
|---|---|---|
| Gaussian Basis Sets | Mathematical functions to expand molecular orbitals | Determine accuracy and computational cost of HF calculations |
| Pseudopotentials | Replace core electrons with effective potentials | Reduce computational cost for heavy elements |
| Integral Packages | Compute two-electron integrals (μν\|λσ) | Central to SCF performance; handle O(N⁴) scaling |
| Density Fitting | Approximate four-center integrals with three-center ones | Reduce computational scaling and memory requirements |
| DIIS Algorithm | Direct Inversion in Iterative Subspace | Accelerate SCF convergence; prevent oscillations |
| Molecular Geometry | Nuclear coordinates and atomic species | Define the molecular system for HF calculation |

The historical evolution from Hartree's self-consistent field to Fock's antisymmetric solution represents a pivotal chapter in theoretical chemistry, establishing both the power and limitations of the mean-field approach to the many-electron problem. While Hartree provided the crucial computational framework of iterative self-consistency, Fock's incorporation of antisymmetry through Slater determinants transformed the method into a properly quantum mechanical theory. Despite its systematic neglect of electron correlation, the Hartree-Fock method endures as the foundational reference point for modern electronic structure theory, serving as the starting point for both classical correlated methods and emerging quantum algorithms. Its continuing relevance nearly a century after its initial development testifies to the enduring power of its core conceptual insight: that much of electronic structure can be understood through electrons moving independently in an effective average potential, with correlation effects representing crucial but perturbative corrections to this fundamental picture.

Practical Consequences: How HF Limitations Impact Drug Discovery and Molecular Modeling

Systematic Errors in Binding Energy and Affinity Predictions