Computational NMR Methods: A Quantum Chemical Comparison for Drug Discovery and Biomolecular Research

This article provides a comprehensive comparison of quantum chemical methods for calculating NMR parameters, tailored for researchers and professionals in drug development.

Abstract

This article provides a comprehensive comparison of quantum chemical methods for calculating NMR parameters, tailored for researchers and professionals in drug development. It explores the fundamental theories underlying NMR parameter computation, from non-relativistic foundations to modern relativistic corrections. The review evaluates prevalent methodological approaches, including Density Functional Theory (DFT), coupled-cluster techniques, and hybrid models, highlighting their specific applications in metabolomics and protein structure analysis. A practical guide for troubleshooting common accuracy issues and optimizing computational protocols is presented, covering basis set selection, solvent effects, and conformational sampling. Finally, the article offers a rigorous validation framework, benchmarking methodological accuracy against experimental data and introducing advanced machine learning and quantum computing approaches for the future of computational NMR.

The Quantum Foundations of NMR: From Ramsey's Theory to Modern Relativistic Frameworks

The accurate prediction of Nuclear Magnetic Resonance (NMR) parameters is a cornerstone of modern structural chemistry, enabling the elucidation of molecular identity and configuration. The entire edifice of contemporary quantum chemical computation of NMR spectra rests upon a formal foundation laid over 70 years ago: the nonrelativistic perturbation theory developed by Norman Ramsey. His pioneering work established the fundamental quantum mechanical operators that describe how nuclei interact with magnetic fields and with each other, formalizing the concepts of nuclear magnetic shielding and indirect spin-spin coupling ( [1]). While computational methods have evolved dramatically, progressing from manual calculations on small molecules to sophisticated density functional theory (DFT) and machine learning applications on biomolecular systems ( [2]), they remain fundamentally rooted in Ramsey's original formalism. This guide provides a comparative analysis of the computational NMR landscape, tracing the lineage of modern methods from their theoretical origin and benchmarking their performance against experimental data.

Theoretical Foundation: Ramsey's Formalism

The Original Framework

In his seminal 1950-1951 work, Ramsey derived the expressions for NMR shielding and spin-spin coupling constants using second-order Rayleigh–Schrödinger perturbation theory, providing the first rigorous quantum mechanical description of these phenomena ( [1]). The Hamiltonian was extended to include hyperfine interactions, and the total energy of the system was expressed as a power series of the external magnetic field flux density (B) and the nuclear magnetic moments (μ_N). The NMR parameters emerge as second derivatives of this energy ( [1]).

The nuclear shielding tensor \( \sigma_N \), which describes the shielding of a nucleus from the external magnetic field by the surrounding electron cloud, is defined as: \( \sigma_{N;\alpha\beta} = \left. \frac{\partial^2 E(B, \mu)}{\partial B_\alpha \, \partial \mu_{N;\beta}} \right|_{\mu_N = 0,\, B = 0} \) [1]

This tensor can be separated into two distinct physical contributions:

- Diamagnetic (σ_N^dia): Arises from diamagnetic circular electron currents induced in the atomic orbitals and depends only on the ground-state electron distribution around the nucleus (an analogue of Lamb's formula) [1].

- Paramagnetic (σ_N^para): Results from the mixing of ground and excited electronic states by the magnetic field and depends on the presence of electrons with non-zero angular momentum ( [1]).

For comparison with solution-state NMR experiments, the isotropic shielding constant is calculated as one-third of the trace of the shielding tensor: \( \sigma_{N,\mathrm{iso}} = \tfrac{1}{3}\mathrm{Tr}(\sigma_N) \) [1]. The experimentally reported chemical shift (δ) is then a relative quantity calculated using the IUPAC formula: \( \delta \approx \sigma_{\mathrm{ref}} - \sigma_{\mathrm{sample}} \), where \( \sigma_{\mathrm{ref}} \) is the isotropic shielding of a reference compound [1].
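The two relations above can be sketched in a few lines of Python. The tensor and reference shielding below are hypothetical numbers chosen only for illustration, and the function names are not taken from any NMR package. The `exact=True` branch uses the full expression δ = (σ_ref − σ_sample)/(1 − σ_ref), of which the formula above is the usual approximation for shieldings that are small compared with unity.

```python
def isotropic_shielding(tensor):
    """Return one-third of the trace of a 3x3 shielding tensor (ppm)."""
    return sum(tensor[i][i] for i in range(3)) / 3.0


def chemical_shift(sigma_ref, sigma_sample, exact=False):
    """Chemical shift (ppm) from isotropic shieldings given in ppm."""
    diff = sigma_ref - sigma_sample
    if exact:
        # Shieldings are in ppm, so sigma_ref is converted to a pure number
        # before it enters the denominator (1 - sigma_ref).
        return diff / (1.0 - sigma_ref * 1e-6)
    return diff


# Hypothetical shielding tensor (ppm) for a single nucleus.
sigma_tensor = [
    [120.0, 5.0, 0.0],
    [3.0, 150.0, -2.0],
    [0.0, 1.0, 180.0],
]
sigma_ref = 185.0  # hypothetical isotropic shielding of the reference (ppm)

sigma_iso = isotropic_shielding(sigma_tensor)
delta_approx = chemical_shift(sigma_ref, sigma_iso)
delta_exact = chemical_shift(sigma_ref, sigma_iso, exact=True)

print(f"sigma_iso    = {sigma_iso:.3f} ppm")
print(f"delta approx = {delta_approx:.4f} ppm")
print(f"delta exact  = {delta_exact:.4f} ppm")
```

For light nuclei such as ¹H and ¹³C the two forms differ by only a few thousandths of a ppm, which is why the approximate expression is normally sufficient.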

The Gauge Challenge and Its Solutions

A significant theoretical challenge in Ramsey's framework is the gauge invariance problem. The magnetic vector potential describing a uniform magnetic field is not unique, and the computed shielding constants incorrectly depended on the arbitrary choice of coordinate system origin in approximate calculations ( [1]). This problem was solved by moving to local gauge origins, leading to the development of modern methods such as Gauge-Including Atomic Orbitals (GIAO) and Individual Gauge for Localized Orbitals (IGLO), which are now standard in computational NMR software ( [1]).

Table 1: Key Contributions in Ramsey's Nonrelativistic Formalism

| Concept | Mathematical Expression | Physical Significance |
|---|---|---|
| Total Perturbed Energy | \( E(B, \mu) = E_0 + E^{(10)} \cdot B + \sum_N E_N^{(01)} \cdot \mu_N + \sum_N \mu_N^T E_N^{(11)} B + \sum_{M,N} \mu_M^T E_{MN}^{(02)} \mu_N + \ldots \) | Foundation for defining all NMR parameters as energy derivatives [1] |
| Shielding Tensor | \( \sigma_{N;\alpha\beta} = \left. \frac{\partial^2 E(B, \mu)}{\partial B_\alpha \, \partial \mu_{N;\beta}} \right\rvert_{\mu_N = 0,\, B = 0} \) | Describes how the electron cloud shields the nucleus from the external magnetic field [1] |
| Diamagnetic Shielding | \( \sigma_N^{dia} \propto \left\langle \Psi_0 \left\lvert \sum_i \frac{r_{i0}^T r_{iN} I - r_{i0} r_{iN}^T}{r_{iN}^3} \right\rvert \Psi_0 \right\rangle \) | Local property, depends on the ground-state electron distribution around the nucleus [1] |
| Paramagnetic Shielding | \( \sigma_N^{para} \propto \sum_{n \neq 0} (E_n - E_0)^{-1} \langle \Psi_0 \lvert \sum_i \hat{L}_{i0} \rvert \Psi_n \rangle \langle \Psi_n \lvert \sum_j \hat{L}_{jN} r_{jN}^{-3} \rvert \Psi_0 \rangle \) | Non-local property, depends on coupling between ground and excited states [1] |

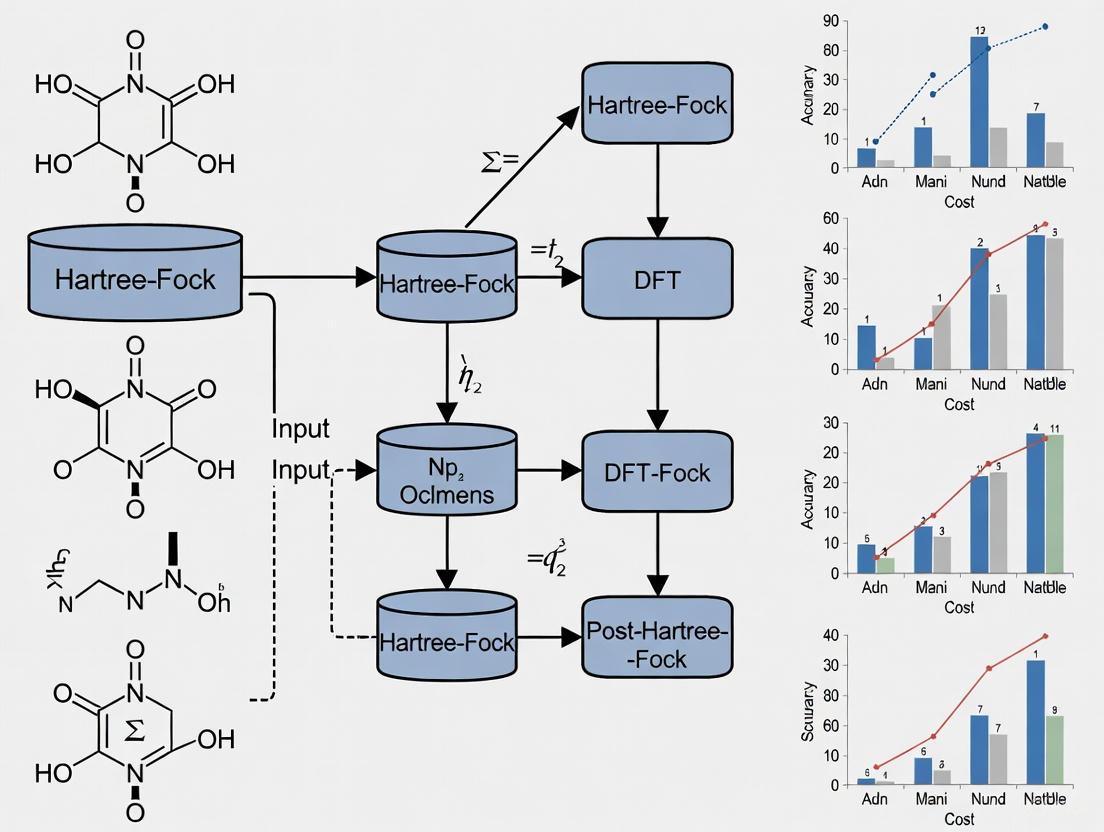

Figure 1: The theoretical evolution of NMR parameter computation, showing how Ramsey's foundational theory spurred subsequent developments to address its limitations and expand its applicability.

The Computational NMR Landscape: Evolving Beyond the Foundation

Density Functional Theory (DFT): The Workhorse Method

DFT has become the predominant method for calculating NMR parameters, offering an optimal balance between computational cost and accuracy for a wide range of chemical systems ( [2]). Modern DFT protocols can predict chemical shifts and coupling constants with remarkable reliability, enabling direct comparison with experimental spectra for structural verification. The mPW1PW91 functional with the 6-311G(d,p) basis set, for instance, has been systematically benchmarked against extensive experimental datasets, demonstrating its utility for 3D structure determination ( [3]).

Relativistic Methods: Extending to Heavy Elements

For molecules containing heavy elements, relativistic effects become significant and can profoundly influence NMR parameters ( [4]). The high nuclear charges in heavy atoms cause electron velocities to approach the speed of light, necessitating a relativistic quantum mechanical treatment. These effects are particularly dramatic for NMR properties of elements like Pt, Hg, Tl, and Pb, where they can far surpass relativistic effects on other molecular properties ( [4]). Modern four-component (4c) relativistic methods and the M-V model (a relativistic generalization of the Ramsey-Flygare relationship) now allow for the accurate determination of absolute NMR shielding scales, even for challenging systems like methyl halides ( [5]).

Machine Learning and Hybrid Approaches

The recent integration of machine learning (ML) with traditional quantum mechanics represents a paradigm shift. ML techniques leverage large datasets to automate spectral assignments, predict chemical shifts, and analyze complex NMR data with enhanced speed ( [2]). These approaches are particularly valuable for high-throughput applications in metabolomics and drug discovery, where they can drastically reduce the need for computationally intensive quantum chemical calculations on every candidate structure ( [2]).

Table 2: Comparison of Quantum Chemical Methods for NMR Parameter Prediction

| Method | Theoretical Basis | Strengths | Limitations | Ideal Use Cases |
|---|---|---|---|---|
| DFT | Density functional theory with various functionals and basis sets | Good balance of accuracy/speed; handles diverse systems [2] | Accuracy depends on functional choice; standard functionals struggle with strong correlation [2] | Organic molecules, drug-like compounds, medium-sized biomolecules [3] |
| 4c-Relativistic DFT | Density functional theory with full 4-component relativistic Hamiltonian | Essential for heavy elements; high accuracy for 5th-6th period nuclei [4] [5] | Very high computational cost; complex implementation [4] | Organometallic complexes, heavy element chemistry, benchmark studies [5] |
| Machine Learning | Algorithms trained on large datasets of experimental/computed NMR parameters | Very fast prediction after training; excels at pattern recognition in complex data [2] | Requires extensive training data; limited transferability to new chemotypes [2] | High-throughput screening, automated structure verification, spectral databases [2] |
| Wavefunction-Based (CCSD) | Coupled-cluster theory with single and double excitations | High accuracy; considered a "gold standard" for small molecules [1] [2] | Extremely high computational cost; limited to small systems [2] | Benchmarking, small molecule precision studies, method development [2] |

Experimental Benchmarking and Validation

Standardized Datasets for Method Assessment

The development of reliable computational methods depends critically on access to high-quality, validated experimental data. Recent work has produced carefully curated datasets containing over 1,000 accurately defined and validated experimental NMR parameters for fourteen complex organic molecules [3]. This dataset includes 775 ⁿJ_CH and 300 ⁿJ_HH scalar coupling constants, alongside assigned ¹H/¹³C chemical shifts and their corresponding 3D structures [3]. Such resources are invaluable for benchmarking the performance of computational methods, as they provide a standardized test set free from common issues like misassignment or low-precision reporting.

Performance Metrics: Accuracy Across Nuclei and Couplings

Systematic benchmarking reveals characteristic performance patterns across computational methods. For the mPW1PW91/6-311G(d,p) level of theory, comparisons against experimental data show generally good agreement, though with systematic deviations that can be corrected through scaling procedures ( [3]). The accuracy of predicting long-range coupling constants (nJ_CH) is particularly valuable for determining molecular conformation and stereochemistry, as these parameters are highly sensitive to three-dimensional structure ( [3]).
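One common form of such a scaling procedure is a linear regression of experimental shifts against computed shieldings. The sketch below assumes that form; the data points and the reference shielding are hypothetical and are not taken from the benchmark dataset [3].

```python
import math


def fit_linear(x, y):
    """Ordinary least-squares fit y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def rmsd(predicted, observed):
    """Root-mean-square deviation between two equally long sequences."""
    n = len(observed)
    return math.sqrt(sum((p - o) ** 2 for p, o in zip(predicted, observed)) / n)


# Hypothetical computed 13C shieldings (ppm) and experimental shifts (ppm).
sigma_calc = [160.0, 120.0, 60.0, 20.0]
delta_exp = [25.0, 65.5, 128.0, 168.5]

# Unscaled prediction: delta = sigma_ref - sigma, with a hypothetical reference.
sigma_ref = 186.0
delta_unscaled = [sigma_ref - s for s in sigma_calc]

# Scaled prediction: delta = slope * sigma + intercept, fitted to experiment.
slope, intercept = fit_linear(sigma_calc, delta_exp)
delta_scaled = [slope * s + intercept for s in sigma_calc]

rmsd_unscaled = rmsd(delta_unscaled, delta_exp)
rmsd_scaled = rmsd(delta_scaled, delta_exp)
print(f"slope = {slope:.4f}, intercept = {intercept:.2f} ppm")
print(f"RMSD unscaled = {rmsd_unscaled:.3f} ppm, scaled = {rmsd_scaled:.3f} ppm")
```

A fitted slope close to -1 indicates that the level of theory reproduces the trend in shielding well; deviations from -1 absorb systematic errors of the functional and basis set. In practice the regression parameters should be derived on one set of molecules and applied to another to avoid overfitting.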

Table 3: Experimental Benchmarking Data for NMR Parameter Validation (Selected from 14-Molecule Dataset) [3]

| NMR Parameter Type | Count in Full Dataset | Count in Rigid Subset | Typical Range | Key Structural Information |
|---|---|---|---|---|
| ¹H Chemical Shifts | 332 (280 sp³, 52 sp²) | 172 (146 sp³, 46 sp²) | 0.4 - 11.1 ppm | Electronic environment, functional groups |
| ¹³C Chemical Shifts | 336 (218 sp³, 118 sp²) | 237 (163 sp³, 74 sp²) | 7.6 - 203.1 ppm | Hybridization, substituent effects |
| ⁿJ_HH (²J, ³J, ⁴J) | 300 (63 ²J, 200 ³J, 28 ⁴J) | 205 (49 ²J, 134 ³J, 16 ⁴J) | 0.8 - 17.5 Hz | Dihedral angles, stereochemistry |
| ⁿJ_CH (²J, ³J, ⁴J) | 775 (241 ²J, 481 ³J, 79 ⁴J) | 570 (187 ²J, 337 ³J, 70 ⁴J) | 0.7 - 11.3 Hz | Conformation, stereochemistry, long-range connectivity |

Table 4: Key Research Reagent Solutions for Computational NMR Studies

| Resource / Tool | Type | Primary Function | Application Context |
|---|---|---|---|
| Validated Experimental Dataset [3] | Data Resource | Benchmarking computational methods against reliable experimental NMR parameters | Method validation, accuracy assessment, force field development |
| GIAO (Gauge-Including Atomic Orbitals) [1] | Computational Method | Solving the gauge invariance problem in NMR shielding calculations | Accurate chemical shift prediction in DFT and ab initio calculations |
| IPAP-HSQMBC [3] | Experimental NMR Technique | Measuring heteronuclear long-range coupling constants (ⁿJ_CH) with high accuracy | Conformational analysis, stereochemical determination, structural validation |
| Relativistic DFT Codes [4] | Software/Methodology | Calculating NMR parameters for heavy element systems | Organometallic chemistry, inorganic complexes, materials science |
| PANACEA Acquisition Sequence [2] | Integrated NMR Protocol | Simultaneous collection of multiple multidimensional NMR experiments | Streamlined structural characterization of small molecules |

Figure 2: A modern computational NMR workflow for 3D structure determination, showing the integration of theoretical calculations with experimental validation. This iterative process refines structural models until agreement is achieved between computed and observed NMR parameters.

Ramsey's nonrelativistic theory established the fundamental language for describing NMR interactions, creating a formalism that has demonstrated remarkable resilience and adaptability. While modern computational methods have dramatically expanded in sophistication, addressing gauge problems, incorporating relativistic effects, and harnessing machine learning, they remain firmly grounded in the physical insights and mathematical framework first articulated over seven decades ago. The continued development of standardized benchmarking datasets and more efficient computational protocols ensures that this synergy between foundational theory and modern computation will continue to drive advancements in structural biology, drug discovery, and materials science. As computational power grows and algorithms are refined, Ramsey's formalism remains the foundational syntax in the language of NMR parameter computation.

Nuclear Magnetic Resonance (NMR) spectroscopy stands as a cornerstone analytical technique in modern chemical and pharmaceutical research, providing unparalleled insights into molecular structure, dynamics, and interactions. The discovery that nuclear resonance frequencies depend on the chemical environment—the chemical shift—represented a fundamental breakthrough that elevated NMR from a physical phenomenon to an essential analytical tool [6]. At the heart of NMR spectroscopy lies the concept of nuclear magnetic shielding, a tensor property that describes how electrons in a molecule modify the local magnetic field experienced by atomic nuclei. This shielding arises from complex electronic interactions that can be conceptually and computationally separated into two primary components: diamagnetic and paramagnetic shielding contributions [7] [8]. Understanding these contributions is not merely of theoretical interest; it enables researchers to interpret NMR spectra with greater accuracy, validate quantum chemical computations, and ultimately advance drug discovery programs through more reliable structural elucidation.

The theoretical framework for understanding magnetic shielding was established by Ramsey in 1950, who recognized that corrections using only Lamb's diamagnetic theory were inadequate for molecules and developed the necessary theoretical foundation to explain what would become known as the chemical shift [6]. This review examines the fundamental principles of diamagnetic and paramagnetic shielding, compares computational methodologies for their prediction, presents experimental validation protocols, and provides practical guidance for researchers seeking to leverage these concepts in structural biology and pharmaceutical development.

Theoretical Foundations of Magnetic Shielding

The Physical Basis of Nuclear Shielding

When a molecule is placed in an external magnetic field (B₀), the electrons surrounding atomic nuclei generate induced magnetic fields that alter the effective field (B_eff) experienced at the nuclear site. This phenomenon is described by the fundamental shielding relationship:

B_eff = (1 - σ)B₀

where σ represents the shielding constant [7] [8]. In diamagnetic molecules, the overall shielding \( \sigma_i \) for a nucleus i can be conceptually decomposed into three components as noted by Saika and Slichter:

\[ \sigma_i = \sigma_i^{d} + \sigma_i^{p} + \sum_{j \neq i} \sigma_j \]

where \( \sigma_i^{d} \) represents the local diamagnetic contribution, \( \sigma_i^{p} \) represents the local paramagnetic contribution, and the final term accounts for modifications arising from both intra- and intermolecular effects [7] [8]. This decomposition provides a powerful framework for understanding how molecular structure and electronic environment influence observed NMR parameters.

Diamagnetic Shielding Contribution

The diamagnetic term (\( \sigma_i^{d} \)) arises from the circulation of electrons in spherical distributions around the nucleus and always produces a positive contribution to shielding, meaning it reduces the resonant frequency [7] [8]. According to the formulation provided by Pople, this term can be expressed as:

\[ \sigma_i^{d} = \frac{\mu_0}{4\pi} \frac{e^2}{3 m_e} \left\langle 0 \left| \sum_n \frac{1}{r_n} \right| 0 \right\rangle \]

where e represents the electron charge, \( m_e \) is the electron mass, \( \mu_0 \) is the permeability of free space, and \( r_n \) is the distance from the nth electron to the chosen origin, conventionally the nucleus of interest [7] [8]. The diamagnetic term dominates in atoms with spherical symmetry and is particularly significant for hydrogen atoms, which lack p, d, or f electrons. In molecules, this term responds primarily to local electron density, increasing with greater s-character in bonding orbitals and decreasing with electronegative substituents that withdraw electron density.
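As a worked check of the diamagnetic expression, the sketch below evaluates it for a ground-state hydrogen atom, for which the expectation value of 1/r is exactly 1/a₀ (a₀ being the Bohr radius). The constants are CODATA 2018 values; the function name is illustrative only.

```python
import math

# CODATA 2018 constants in SI units.
MU_0 = 1.25663706212e-6        # vacuum permeability, N A^-2
E_CHARGE = 1.602176634e-19     # elementary charge, C
M_ELECTRON = 9.1093837015e-31  # electron mass, kg
BOHR_RADIUS = 5.29177210903e-11  # Bohr radius a0, m


def diamagnetic_shielding(inv_r_expectation):
    """Diamagnetic shielding (dimensionless) for a given <sum_n 1/r_n> in 1/m."""
    prefactor = (MU_0 / (4.0 * math.pi)) * E_CHARGE**2 / (3.0 * M_ELECTRON)
    return prefactor * inv_r_expectation


# Hydrogen 1s state: <1/r> = 1/a0 for the single electron.
sigma_h = diamagnetic_shielding(1.0 / BOHR_RADIUS)
sigma_h_ppm = sigma_h * 1e6
print(f"Diamagnetic shielding of the H atom: {sigma_h_ppm:.3f} ppm")
```

The result is about 17.75 ppm, which equals α²/3 (with α the fine-structure constant) and lies close to the 17.733 ppm quoted for the hydrogen atom in the absolute shielding table later in this article.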

Paramagnetic Shielding Contribution

The paramagnetic term (\( \sigma_i^{p} \)) originates from non-spherical electron distributions, particularly those involving p, d, or f electrons, and always produces a negative contribution (deshielding effect) [7] [8]. This term can be expressed as:

\[ \sigma_i^{p} = -\frac{\mu_0}{4\pi} \frac{e^2}{3 m_e^2} \sum_{k \neq 0} \frac{1}{E_k - E_0} \left\langle 0 \left| \sum_n \mathbf{L}_n \right| k \right\rangle \cdot \left\langle k \left| \sum_n \frac{\mathbf{L}_n}{r_n^3} \right| 0 \right\rangle \]

where \( \mathbf{L}_n \) represents the orbital angular momentum of the nth electron, and \( E_0 \) and \( E_k \) are the energies of the ground and excited states, respectively [7] [8]. The paramagnetic term depends critically on the accessibility of excited states (inverse energy dependence) and the matrix elements connecting these states via angular momentum operators. This term dominates for nuclei in unsymmetrical environments or those with accessible excited states, particularly heavy atoms and atoms involved in multiple bonding.

Tensor Nature of Shielding

Magnetic shielding is fundamentally a second-rank tensor property with nine independent components, meaning the screening of the external magnetic field depends on the relative orientation of the field and the molecule [6]. In single crystals, this orientation dependence manifests as changes in resonance frequencies as the crystal is rotated relative to the magnetic field. For disordered solid samples, this results in characteristic powder patterns, while in liquids, rapid molecular tumbling averages the tensor to its isotropic value:

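\[ \sigma_{\mathrm{iso}} = \tfrac{1}{3}(\sigma_{xx} + \sigma_{yy} + \sigma_{zz}) \]

The relationship between the shielding tensor (σ) and the experimentally observed chemical shift tensor (δ) is given by:

\[ \boldsymbol{\delta} = \mathbf{1}\,\sigma_{\mathrm{iso}}^{\mathrm{ref}} - \boldsymbol{\sigma} \]

where \( \mathbf{1} \) is the 3×3 unit matrix and \( \sigma_{\mathrm{iso}}^{\mathrm{ref}} \) represents the isotropic shielding of the reference compound [6]. This tensor nature provides rich structural information in solid-state NMR that is largely lost in solution studies.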
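The tensor relationship can be illustrated with a short sketch that subtracts a shielding tensor from the reference isotropic shielding multiplied by the unit matrix. All numerical values are hypothetical, and the helper names are not part of any established library.

```python
def shift_tensor(sigma, sigma_ref_iso):
    """Chemical shift tensor: unit matrix times sigma_ref_iso, minus sigma."""
    return [
        [(sigma_ref_iso if i == j else 0.0) - sigma[i][j] for j in range(3)]
        for i in range(3)
    ]


def isotropic(tensor):
    """Isotropic value: one-third of the trace of a 3x3 tensor."""
    return sum(tensor[i][i] for i in range(3)) / 3.0


# Hypothetical shielding tensor (ppm) and reference isotropic shielding (ppm).
sigma = [
    [120.0, 5.0, 0.0],
    [3.0, 150.0, -2.0],
    [0.0, 1.0, 180.0],
]
sigma_ref_iso = 185.0

delta = shift_tensor(sigma, sigma_ref_iso)
delta_iso = isotropic(delta)

for row in delta:
    print(row)
print(f"delta_iso = {delta_iso:.1f} ppm")
```

Only the diagonal elements are offset by the reference; off-diagonal elements simply change sign. Taking one-third of the trace of the resulting tensor recovers the familiar scalar relation between the isotropic shift and the isotropic shielding.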

Figure 1: Conceptual diagram illustrating how diamagnetic (blue) and paramagnetic (red) shielding contributions modify the external magnetic field to determine the effective field at the nucleus and resulting NMR frequency.

Computational Methodologies for Shielding Prediction

Density Functional Theory (DFT) Approaches

Density Functional Theory has established itself as a cornerstone method for predicting NMR parameters, offering an optimal balance between computational efficiency and accuracy [2]. Most periodic DFT computations in NMR crystallography rely on functionals from the generalized gradient approximation (GGA) family, particularly the Perdew-Burke-Ernzerhof (PBE) functional, which provides reasonable predictions but doesn't always achieve precise agreement with experimental data [9]. The gauge-including projector augmented wave (GIPAW) method was specifically developed for DFT calculations of magnetic resonance properties using pseudopotentials and plane waves as the basis set for wave-function calculations, and has been successfully applied in numerous NMR crystallography studies [9].

For improved accuracy, hybrid functionals such as PBE0 incorporate exact Hartree-Fock exchange, often yielding superior agreement with experimental data. Recent studies have demonstrated that PBE0-based corrections applied to periodic PBE predictions significantly improve agreement with experimental ¹³C chemical shifts, markedly reducing root-mean-square deviations (RMSD) [9]. This approach maintains computational feasibility while achieving accuracy comparable to more expensive computational methods.

Fragment and Cluster Correction Methods

To address limitations in periodic DFT calculations, fragment-based correction methods have been developed that combine the efficiency of periodic calculations with the accuracy of higher-level methods [9]. In this approach:

- Nuclear shieldings are first calculated in the fully periodic crystal structure at the PBE level

- An isolated molecule or molecular fragment is extracted from the periodic structure

- Shielding is computed for the fragment at both PBE level and a higher computational level (e.g., hybrid DFT functional)

- The difference between these calculations serves as a correction to the periodic PBE results

This method has been successfully extended to compute quadrupolar couplings and has demonstrated particular value for predicting ¹³C chemical shifts in organic solids, with later extensions utilizing larger fragments of the crystal structure to compute corrections at higher computational levels [9]. The approach retains the periodic description of the crystal environment while incorporating higher-level electronic structure corrections.
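The arithmetic of the four steps above reduces to adding, for each nucleus, the difference between the high-level and PBE fragment shieldings to the periodic PBE value. The sketch below assumes the three sets of shieldings have already been computed elsewhere; the numbers are hypothetical placeholders and the function name is illustrative.

```python
def fragment_corrected_shieldings(periodic_pbe, fragment_pbe, fragment_high):
    """Apply the fragment correction nucleus by nucleus.

    corrected = periodic PBE + (fragment high level - fragment PBE)
    """
    if not (len(periodic_pbe) == len(fragment_pbe) == len(fragment_high)):
        raise ValueError("all shielding lists must describe the same nuclei")
    return [
        periodic + (high - low)
        for periodic, low, high in zip(periodic_pbe, fragment_pbe, fragment_high)
    ]


# Hypothetical 13C shieldings (ppm) for two carbon sites.
periodic_pbe = [170.0, 45.5]    # step 1: periodic crystal, PBE
fragment_pbe = [172.0, 47.0]    # step 3: isolated fragment, PBE
fragment_high = [175.5, 48.25]  # step 3: isolated fragment, hybrid functional

corrected = fragment_corrected_shieldings(periodic_pbe, fragment_pbe, fragment_high)
print(corrected)
```

Because the same fragment geometry is used at both levels of theory, errors common to the two fragment calculations cancel in the difference, so the correction isolates the effect of the higher-level functional.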

Emerging Machine Learning Protocols

Recent machine learning approaches have revolutionized shielding predictions by offering dramatic improvements in computational efficiency. Methods like ShiftML2 can accelerate computations by several orders of magnitude while maintaining accuracy comparable to traditional quantum-chemical methods [9]. These models are trained on diverse structures from crystallographic databases, using shieldings computed at the DFT level with the PBE functional.

Machine learning models have proven particularly valuable for integrating molecular dynamics (MD) simulations with shielding predictions, providing insights into the structure of amorphous materials and enabling the analysis of dynamic systems previously inaccessible to computational NMR [9]. However, unlike traditional quantum chemical methods, ML approaches do not explicitly separate diamagnetic and paramagnetic contributions, instead learning the relationship between structure and total shielding directly from training data.

Table 1: Comparison of Computational Methods for NMR Shielding Prediction

| Method | Theoretical Foundation | Accuracy | Computational Cost | Key Applications |
|---|---|---|---|---|
| GGA-DFT (PBE) | Density Functional Theory | Moderate | Moderate | Initial screening, large systems |
| Hybrid-DFT (PBE0) | DFT with Hartree-Fock exchange | High | High | Benchmark calculations, final validation |
| Fragment-Corrected DFT | Combines periodic and molecular calculations | High | Moderate-High | Molecular crystals, pharmaceutical polymorphs |
| Machine Learning (ShiftML2) | Pattern recognition on DFT training data | Moderate-High | Very Low | High-throughput screening, MD simulations |

Experimental Protocols and Validation

Reference Standards and Absolute Shielding Scales

Experimental determination of nuclear magnetic shielding requires careful calibration against reference standards with known absolute shielding values [6]. The relationship between observed chemical shifts (δ) and shielding constants (σ) is defined as:

\[ \delta_i \approx \sigma_{\mathrm{ref}} - \sigma_i \]

where σ_ref represents the shielding of the reference compound [7] [8]. Primary references are established through sophisticated methods involving gas-phase studies, spin-rotation constants, and theoretical calculations, which are then transferred to practical secondary standards for routine laboratory use.

Table 2: Absolute Shielding Scales for Common NMR Nuclei

| Nucleus | Primary Reference | Absolute Shielding (σ_iso) | Common Secondary Reference | Secondary Reference Shielding |
|---|---|---|---|---|
| ¹H | Hydrogen atom | 17.733 ppm | Tetramethylsilane (TMS) | ~31 ppm (derived) |
| ¹³C | Carbon monoxide | 3.20 ppm | TMS | 185.4 ppm |
| ¹⁵N | Ammonia | 264.54 ppm | Nitromethane | -135.0 ppm |
| ¹⁷O | Carbon monoxide | -42.3 ppm | Water | 307.9 ppm |
| ¹⁹F | Hydrogen fluoride | 410.0 ppm | CFCl₃ | 189.9 ppm |
| ³¹P | Phosphine | 597.0 ppm | Phosphoric acid | 356.0 ppm |

Gas-Phase NMR for Isolated Molecule Studies

Gas-phase NMR measurements are crucial for separating intrinsic molecular shielding parameters from intermolecular contributions present in condensed phases [7] [8]. By extrapolating shielding measurements to the zero-density limit, researchers can obtain shielding values (denoted as σ₀) equivalent to isolated molecules [7] [8]. These measurements provide essential benchmarks for quantum chemical calculations, which typically model molecules in isolation without environmental effects.

Experimental protocols for gas-phase NMR require specialized equipment to handle gases at controlled densities and temperatures. Measurements are performed at multiple densities and extrapolated to zero density to eliminate residual intermolecular effects, providing the true isolated molecule shielding [7] [8]. These values allow direct comparison with quantum chemical calculations without the need to model bulk solvent or crystal packing effects.
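The zero-density extrapolation can be sketched as a straight-line fit of shielding against gas density, with the intercept taken as σ₀. This assumes that the density dependence is linear over the measured range, which is a simplification; the densities and shieldings below are hypothetical and given in arbitrary but consistent units.

```python
def extrapolate_to_zero_density(densities, shieldings):
    """Least-squares fit sigma(rho) = sigma_0 + sigma_1 * rho.

    Returns (sigma_0, sigma_1): the isolated-molecule shielding and the
    coefficient describing the linear density dependence.
    """
    n = len(densities)
    mean_rho = sum(densities) / n
    mean_sigma = sum(shieldings) / n
    s_rr = sum((rho - mean_rho) ** 2 for rho in densities)
    s_rs = sum(
        (rho - mean_rho) * (sig - mean_sigma)
        for rho, sig in zip(densities, shieldings)
    )
    sigma_1 = s_rs / s_rr
    sigma_0 = mean_sigma - sigma_1 * mean_rho
    return sigma_0, sigma_1


# Hypothetical measurements at four gas densities.
densities = [1.0, 2.0, 3.0, 4.0]
shieldings = [29.75, 29.5, 29.25, 29.0]  # ppm

sigma_0, sigma_1 = extrapolate_to_zero_density(densities, shieldings)
print(f"sigma_0 = {sigma_0:.3f} ppm, sigma_1 = {sigma_1:.3f} ppm per density unit")
```

The intercept is the quantity to compare with a calculation on an isolated molecule, while the slope summarizes the intermolecular contribution that the extrapolation removes.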

Validation Data Sets for Method Benchmarking

Comprehensive experimental datasets with validated NMR parameters are essential for benchmarking computational methods. A recent study published over 1000 accurately defined and validated experimental parameters, including 775 proton-carbon scalar coupling constants (ⁿJCH), 300 proton-proton scalar coupling constants (ⁿJHH), 332 ¹H chemical shifts, and 336 ¹³C chemical shifts for fourteen complex organic molecules [3].

The validation process involves comparing experimental NMR parameters with DFT-calculated values to identify potential misassignments. A subset of 565 ⁿJCH, 205 ⁿJHH, 172 ¹H chemical shifts, and 202 ¹³C chemical shifts from rigid portions of these molecules has been identified as particularly valuable for benchmarking computational methods for predicting NMR parameters [3]. These datasets provide robust benchmarks for evaluating the performance of different computational protocols in predicting both shielding constants and coupling parameters.

Figure 2: Experimental workflow for NMR parameter determination and computational validation, showing multiple pathways for gas-phase, solution, and solid-state measurements.

Comparative Performance Analysis

Accuracy Across Nuclear Environments

The performance of computational methods varies significantly across different nuclear environments and elements. Recent studies comparing DFT and machine-learning predictions of NMR shieldings reveal that correction schemes originally developed for periodic DFT calculations can significantly improve agreement with experimental ¹³C chemical shifts [9]. The application of PBE0-based corrections to periodic PBE predictions has markedly reduced RMSD values for carbon nuclei in molecular crystals [9].

In contrast, these corrections demonstrate minimal impact on ¹H shieldings, highlighting the differential sensitivity of various nuclei to computational methodologies [9]. This nuclear dependence reflects the varying contributions of diamagnetic and paramagnetic terms across the periodic table, with proton shielding being dominated by local diamagnetic contributions that are well-described by standard DFT functionals, while heavier elements with significant paramagnetic contributions require more sophisticated treatment.

Performance in Pharmaceutical Applications

In pharmaceutical research, where molecular complexity and conformational flexibility present particular challenges, the mPW1PW91/6-311G(d,p) level of theory has emerged as a valuable compromise between accuracy and computational feasibility [3]. This approach has been successfully applied to compute magnetic shielding tensors that are subsequently converted to experimentally relevant chemical shifts through scaling procedures.

The availability of validated experimental datasets has enabled systematic benchmarking of these computational protocols, revealing their strengths and limitations for different molecular classes [3]. For rigid substructures, modern DFT methods can achieve remarkable accuracy, while flexible regions remain challenging due to the need for extensive conformational sampling and the sensitivity of shielding to precise molecular geometry.

Table 3: Key Computational and Experimental Resources for NMR Shielding Research

| Resource Category | Specific Tools/Methods | Primary Function | Key Applications |
|---|---|---|---|
| Quantum Chemical Software | Gaussian, ORCA, CP2K, Quantum ESPRESSO | Shielding tensor calculation | Method development, benchmark calculations |
| Machine Learning Protocols | ShiftML2, IMPRESSION | Fast shielding prediction | High-throughput screening, MD integration |
| Reference Standards | TMS, DSS, Adamantane | Chemical shift referencing | Experimental calibration |
| Specialized NMR Experiments | IPAP-HSQMBC, EXSIDE | Scalar coupling measurement | Stereochemical analysis, conformation determination |
| Validation Datasets | C4X Discovery dataset [3] | Method benchmarking | Computational protocol validation |
| Solid-State NMR Methods | GIPAW DFT, Fragment corrections | Crystal structure refinement | NMR crystallography, polymorph characterization |

The deconstruction of NMR parameters into diamagnetic and paramagnetic shielding contributions provides not only fundamental theoretical insights but also practical advantages for method development and applications in structural science. While DFT remains the workhorse for shielding predictions, the emergence of machine learning protocols promises to dramatically expand the scope and scale of computational NMR applications [9] [2].

The ongoing refinement of fragment-based correction schemes offers a promising pathway to accuracy competitive with high-level quantum chemical methods at substantially reduced computational cost [9]. As validation datasets continue to expand and diversify, particularly for pharmaceutically relevant compounds [3], researchers are better equipped than ever to select appropriate computational strategies for specific applications.

Future developments will likely focus on improving accuracy for challenging nuclei, extending methods to dynamic systems, and enhancing integration with experimental structural biology workflows. The continued synergy between theoretical advances, computational innovations, and experimental validation ensures that NMR shielding analysis will remain an indispensable tool for molecular structure elucidation across chemistry, materials science, and drug discovery.

A fundamental challenge in the theoretical calculation of Nuclear Magnetic Resonance (NMR) parameters is the gauge invariance problem. In quantum chemical calculations, the computed NMR chemical shielding constants should be independent of the chosen coordinate system. However, in practice, when finite basis sets are used, the results can become gauge-dependent, meaning that the calculated NMR chemical shifts artificially vary with the origin chosen for the magnetic vector potential. This problem is particularly pronounced in calculations on large molecules or those involving heavy elements, where gauge errors can lead to significant inaccuracies that compromise the predictive value of the computations.

The gauge invariance problem arises because the presence of an external magnetic field introduces a vector potential into the quantum mechanical Hamiltonian. The exact solution to this Hamiltonian would naturally be gauge-invariant, but the use of localized atomic orbital basis sets breaks this inherent invariance. This creates a critical methodological hurdle that must be overcome to achieve chemically accurate NMR predictions, especially for applications in drug development and materials science where reliable computational predictions can guide expensive synthetic efforts. The development of robust solutions to this problem has been a central focus in computational NMR for decades, leading to several sophisticated theoretical approaches.
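The origin dependence can be made explicit with a short derivation. The following is a sketch in Gaussian-based atomic units with the electron charge taken as -1; the symbols R_O (gauge origin), d (origin shift), and Λ (gauge function) are introduced here for illustration and do not come from the cited sources.

```latex
% Vector potential of a uniform field B with gauge origin R_O
\mathbf{A}_{O}(\mathbf{r}) = \tfrac{1}{2}\,\mathbf{B} \times (\mathbf{r} - \mathbf{R}_O)

% Shifting the origin by d changes A only by the gradient of a scalar function
\mathbf{A}_{O+d}(\mathbf{r}) = \mathbf{A}_{O}(\mathbf{r}) + \nabla \Lambda(\mathbf{r}),
\qquad
\Lambda(\mathbf{r}) = -\tfrac{1}{2}\,(\mathbf{B} \times \mathbf{d}) \cdot \mathbf{r}

% The exact wavefunction compensates with a position-dependent phase
\psi_{O+d}(\mathbf{r}) = \exp\!\left[-\tfrac{i}{c}\,\Lambda(\mathbf{r})\right]\psi_{O}(\mathbf{r})
```

The phase cancels in every expectation value, so exact observables do not depend on the origin. A finite set of field-independent atomic orbitals cannot represent this phase exactly, and the incomplete cancellation is what appears as an origin-dependent shielding.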

The Gauge-Including Atomic Orbital (GIAO) Approach

The Gauge-Including Atomic Orbital (GIAO) method, also known as London Atomic Orbitals, represents the most widely adopted solution to the gauge invariance problem in computational chemistry. The fundamental innovation of the GIAO approach involves constructing basis functions that explicitly include the magnetic field vector potential. In atomic units, a GIAO basis function is defined as χ_μ(B) = exp[(-i/2c)(B × R_μ) ⋅ r] ⋅ χ_μ(0), where χ_μ(0) is the standard field-independent atomic orbital, B is the magnetic field vector, R_μ is the position vector of the basis function's center, r is the electron coordinate vector, and c is the speed of light. This complex phase factor ensures that each atomic orbital transforms correctly under a change of gauge origin, so that the computed NMR shieldings are independent of the origin even in a finite basis.
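The role of the phase factor can be illustrated numerically. The sketch below is a toy model, not code from any quantum chemistry package: it uses unnormalized s-type Gaussians, an exaggerated field strength, and a generalized phase that includes an explicit gauge origin R_O (the formula in the text corresponds to R_O = 0). All function names are hypothetical. It checks that the orbital product χ_μ* χ_ν, from which matrix elements are built, does not change when the gauge origin is moved.

```python
import numpy as np

C_AU = 137.035999  # speed of light in atomic units


def s_gaussian(r, center, alpha=1.0):
    """Field-free, unnormalized s-type Gaussian chi_mu(0) evaluated at point r."""
    d = r - center
    return np.exp(-alpha * np.dot(d, d))


def giao(r, center, B, gauge_origin=None, alpha=1.0):
    """chi_mu(B) = exp[-(i/2c) (B x (R_mu - R_O)) . r] * chi_mu(0)."""
    if gauge_origin is None:
        gauge_origin = np.zeros(3)  # reproduces the formula in the text
    phase_arg = np.dot(np.cross(B, center - gauge_origin), r) / (2.0 * C_AU)
    return np.exp(-1j * phase_arg) * s_gaussian(r, center, alpha)


def overlap_density(r, R_mu, R_nu, B, gauge_origin):
    """Product chi_mu^* chi_nu at point r for a given gauge origin."""
    return np.conj(giao(r, R_mu, B, gauge_origin)) * giao(r, R_nu, B, gauge_origin)


# Illustrative geometry and an exaggerated field so the phase is visible.
r = np.array([0.3, -0.2, 0.5])
R_mu = np.array([0.0, 0.0, 0.0])
R_nu = np.array([1.4, 0.0, 0.0])
B = np.array([0.0, 0.0, 50.0])

rho_a = overlap_density(r, R_mu, R_nu, B, np.zeros(3))
rho_b = overlap_density(r, R_mu, R_nu, B, np.array([10.0, -4.0, 2.0]))

# The origin cancels: only the difference R_mu - R_nu enters the phase.
print("origin-independent product:", np.isclose(rho_a, rho_b))
```

Because the origin drops out of every orbital product, quantities assembled from these products inherit the same independence, which is the property the GIAO construction is designed to provide.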

The GIAO method has been successfully implemented in numerous quantum chemistry packages, including the ADF software platform, where it serves as the foundation for NMR chemical shift calculations [10]. The implementation requires careful handling of both the diamagnetic and paramagnetic contributions to the shielding tensor. For practical computation, the ADF implementation requires both the adf.rkf (TAPE21) result file and a TAPE10 file that contains the SCF potential from an initial ADF calculation [10]. The GIAO method's principal advantage lies in its rapid convergence with basis set size compared to alternative approaches, typically delivering accurate results with relatively compact basis sets.

Table 1: Key Features of the GIAO (Gauge-Including Atomic Orbital) Approach

| Feature | Description | Implication for NMR Calculations |

|---|---|---|

| Basis Set Dependence | Complex basis functions with field-dependent phase factors | Reduces gauge origin error, faster convergence with basis set size |

| Implementation Complexity | Requires modification of Hamiltonian and integral derivatives | Computationally demanding but highly accurate |

| Relativistic Compatibility | Compatible with ZORA and spin-orbit treatments [10] | Suitable for heavy elements and organometallic complexes |

| Systematic Improvement | Accuracy improves with basis set quality and DFT functional | Predictable path to higher accuracy through computational cost |

Alternative Modern Approaches to Gauge Invariance

While GIAO represents the gold standard, several alternative approaches have been developed to address the gauge invariance problem, each with distinct advantages and limitations.

The Continuous Set of Gauge Transformations (CSGT) method represents an important alternative strategy. Rather than using field-dependent basis functions, CSGT calculates the shielding tensor at each point in space using a different gauge origin chosen specifically for that point—typically the point itself. This distributed gauge origin approach effectively eliminates gauge dependence but requires careful numerical integration over molecular space. CSGT implementations often leverage density functional theory and have been shown to produce results comparable to GIAO for many organic molecules, though they may exhibit different performance for metallic systems or molecules with complex electron delocalization.

The Individual Gauge for Localized Orbitals (IGLO) approach constitutes another significant methodology. IGLO uses localized molecular orbitals and assigns each orbital its own gauge origin, typically chosen at the orbital's center. This method can be computationally efficient for small to medium-sized molecules but may face challenges in systems where orbital localization is difficult or ambiguous. The performance of IGLO can be sensitive to the localization procedure employed, potentially introducing methodological dependencies that are less pronounced in the GIAO approach.

Comparative Performance of Modern Methods

The relative performance of different gauge-invariant methods depends critically on the chemical system under investigation, the chosen computational parameters, and the specific NMR parameters of interest. The table below summarizes a qualitative comparison of the most widely used approaches.

Table 2: Comparison of Gauge-Invariant Methods for NMR Chemical Shift Calculations

| Method | Gauge Handling Approach | Computational Cost | Best Application Areas | Key Limitations |

|---|---|---|---|---|

| GIAO | Field-dependent complex atomic orbitals | High (efficient with modern algorithms) | Universal application, heavy elements, aromatic systems [10] | Implementation complexity; requires analytical derivatives |

| CSGT | Distributed gauge origins in real space | Moderate to High | Organic molecules, main-element chemistry | Integration sensitivity for metallic systems |

| IGLO | Individual gauges for localized orbitals | Moderate | Small to medium organic molecules | Performance depends on localization scheme |

When implementing these methods within density functional theory, the choice of exchange-correlation functional introduces another dimension of variability. For instance, the SAOP potential has been shown to yield "isotropic chemical shifts which are substantially improved over both LDA and GGA functionals" according to ADF documentation [10]. However, certain computational restrictions apply, as "Meta-GGA's and meta-hybrids should not be used in combination with NMR chemical shielding calculations" in the ADF implementation due to incorrect inclusion of GIAO terms [10].

Experimental Protocols and Computational Methodologies

Standard Protocol for GIAO-NMR Calculations

A robust workflow for calculating NMR chemical shifts using the GIAO approach involves several critical steps that ensure gauge-invariant, chemically accurate results:

- Molecular Geometry Optimization: Begin with a carefully optimized molecular geometry using an appropriate level of theory (e.g., DFT with a functional such as B3LYP and a basis set like def2-TZVP).

- Single-Point Energy Calculation: Perform a single-point energy calculation on the optimized structure using ADF with the keyword SAVE TAPE10 to store the SCF potential [10].

- NMR Calculation Setup: Execute the NMR property module using the generated adf.rkf (TAPE21) and TAPE10 files as input [10].

- Relativistic Treatment Selection: For heavy elements, incorporate relativistic effects using the ZORA Hamiltonian, ensuring consistent use of either scaled or unscaled approaches throughout the study [10].

- Reference Compound Calculation: Compute the shielding constant for a reference compound (e.g., TMS for 1H and 13C) using identical computational parameters.

- Chemical Shift Derivation: Calculate the final chemical shift δ_i for nucleus i using the formula δ_i = σ_ref - σ_i, where σ_ref and σ_i are the shielding constants of the reference and target nuclei, respectively [10].
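The final referencing step is simple arithmetic and can be sketched as follows. The shielding values are hypothetical placeholders, not results from any calculation, and the function names are chosen for illustration only. In practice, σ_ref and σ_i must come from calculations run with identical functional, basis set, and relativistic settings.

```python
def chemical_shift(sigma_ref, sigma_nucleus):
    """Convert an absolute shielding (ppm) to a chemical shift: delta = sigma_ref - sigma."""
    return sigma_ref - sigma_nucleus


def shifts_for_molecule(shieldings, sigma_ref):
    """Apply the referencing formula to a dict of {atom label: shielding in ppm}."""
    return {label: chemical_shift(sigma_ref, sigma) for label, sigma in shieldings.items()}


# Hypothetical 13C shieldings (ppm), for illustration only.
sigma_tms_13c = 185.0                     # assumed reference shielding of TMS
shieldings = {"C1": 160.0, "C2": 55.5}    # assumed target shieldings

shifts = shifts_for_molecule(shieldings, sigma_tms_13c)
for label, delta in shifts.items():
    print(f"{label}: {delta:.1f} ppm")
```

A less shielded nucleus (smaller σ_i) ends up with a larger δ_i, which matches the experimental convention of downfield shifts being positive.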

Diagram: Standard Protocol for GIAO-NMR Calculations

Special Considerations for Heavy Elements

For systems containing heavy elements, additional theoretical considerations become crucial. The ADF documentation specifically notes that "NMR calculations on systems computed by ADF with Spin Orbit relativistic effects included must have used NOSYM symmetry in the ADF calculation" [10]. Furthermore, an "improved exchange-correlation kernel, as was implemented by J. Autschbach" can be activated using the USE FXC keyword, which is particularly important for spin-orbit coupled calculations [10]. These technical details highlight the sophisticated treatment required for heavy elements, where relativistic effects significantly influence NMR parameters.

Implementing gauge-invariant NMR calculations requires access to specialized software tools and methodological components. The following table details essential "research reagent solutions" for computational chemists working in this domain.

Table 3: Essential Computational Tools for Gauge-Invariant NMR Calculations

| Tool Category | Specific Examples | Function in NMR Research |

|---|---|---|

| Quantum Chemistry Software | ADF [10], Gaussian, ORCA | Provides implementations of GIAO, CSGT, and other gauge-invariant methods |

| Relativistic Methods | ZORA (scaled/unscaled) [10], Spin-Orbit coupling | Accounts for relativistic effects critical for heavy elements |

| Exchange-Correlation Functionals | SAOP [10], GGA, Hybrid Functionals | Determines accuracy of NMR shielding predictions; some functionals have restrictions |

| Basis Sets | Slater-type orbitals, Gaussian-type orbitals | Basis set quality and completeness directly impact gauge invariance and accuracy |

| Analysis Modules | NBO analysis, shielding tensor visualization [10] | Enables interpretation of NMR parameters in terms of chemical structure |

The gauge invariance problem in computational NMR has been largely addressed through sophisticated theoretical approaches, with the Gauge-Including Atomic Orbital (GIAO) method emerging as the most robust and widely adopted solution. Its compatibility with relativistic treatments like ZORA and consistent performance across diverse chemical systems make it particularly valuable for pharmaceutical and materials science applications where predictive accuracy is paramount. While alternative methods like CSGT and IGLO offer valuable insights and occasionally computational advantages for specific systems, GIAO remains the benchmark for comprehensive NMR parameter prediction.

Future methodological developments will likely focus on enhancing the computational efficiency of gauge-invariant calculations for large biomolecular systems, improving the treatment of environmental effects through explicit solvation models, and refining relativistic methodologies for increasingly heavy elements. As quantum chemical methods continue to evolve alongside computational hardware, the integration of gauge-invariant NMR prediction into automated workflow tools will further expand its utility in drug discovery and materials characterization, solidifying its role as an indispensable component of the modern computational chemist's toolkit.

Relativistic quantum chemistry combines the principles of relativistic mechanics with quantum chemistry to accurately calculate the properties and structure of elements, particularly the heavier members of the periodic table [11]. For much of the history of quantum mechanics, relativistic effects were considered negligible for chemical systems, a sentiment famously expressed by Paul Dirac in 1929 [11]. From the 1970s onward, however, it became clear that this assumption fails for heavy elements, where electrons, especially those in s and p orbitals, move at a significant fraction of the speed of light [11] [12]. Relativistic effects are formally defined as the differences between values calculated by models that incorporate relativity and those that do not [11]. These effects are essential for explaining fundamental chemical behaviors, from the color of gold and the liquidity of mercury at room temperature to the voltage of lead-acid batteries [11] [12].

Within the specific context of Nuclear Magnetic Resonance (NMR) parameters, relativistic effects become critically important. The presence of a heavy atom in a molecule can profoundly influence the NMR chemical shifts and spin-spin coupling constants, both for itself and for nearby light atoms [13] [14]. Accurately computing these parameters for systems containing heavy elements necessitates a relativistic quantum mechanical treatment, making this a central focus in modern computational chemistry methodologies for NMR research [14].

When Relativistic Effects Matter: Key Elements and Phenomena

Relativistic effects grow roughly with the square of the atomic number (Z²), becoming substantial for elements in the 6th period and dominant in the 7th period of the periodic table [12]. The following table summarizes key elements and properties where relativistic effects are most pronounced.

Table 1: Elemental Systems and Properties Significantly Influenced by Relativistic Effects

| Element/System | Property Influenced | Non-Relativistic Expectation | Relativistic Reality |

|---|---|---|---|

| Gold (Au) | Color | Silvery, like silver [11] | Yellow/Golden due to blue light absorption [11] |

| Mercury (Hg) | Physical State | Solid at room temperature, like cadmium [11] | Liquid metal (m.p. -39°C) [11] |

| Caesium (Cs) | Color | Silver-white, like other alkali metals [11] | Pale golden yellow [11] |

| Lead-Acid Battery | Voltage | Behaves like tin, low voltage [11] [12] | ~12 V for a six-cell battery, of which about 10 V is attributed to relativistic effects [11] [12] |

| Thallium (Tl), Lead (Pb), Bismuth (Bi) | Oxidation Chemistry | Stable +3, +4, +5 states, respectively [11] | Inert-pair effect: Stable +1, +2, +3 states [11] |

| Lanthanides | Atomic Radius | Gradual decrease (Lanthanide Contraction) | ~10% of the contraction is relativistic in origin [11] |

| Superheavy Elements (Rf-Og) | Chemical Properties | Extrapolated from lighter congeners [12] | Chemistry is "predominantly controlled" by relativity [12] |

The qualitative understanding of these phenomena stems from three relativistic effects: the direct orbital contraction caused by the mass-velocity correction, the indirect orbital expansion that follows from it, and spin-orbit coupling. The mass-velocity correction accounts for the increase in electron mass as its speed approaches the speed of light, given by \(m_{\text{rel}} = m_e / \sqrt{1 - (v_e/c)^2}\) [11]. This leads to a contraction and stabilization of s and p orbitals (direct relativistic effect) [12]. Consequently, orbitals with higher angular momentum (d and f orbitals) are more effectively screened from the nuclear charge and expand (indirect relativistic effect) [12]. Spin-orbit (SO) coupling, the third major relativistic effect, splits orbitals with non-zero angular momentum (e.g., p, d, f) into subsets with different total angular momentum (e.g., p₁/₂ and p₃/₂), further complicating the electronic structure of heavy atoms [12] [15].
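A back-of-the-envelope estimate shows why the mass-velocity correction matters for heavy atoms. The sketch below assumes the hydrogen-like Bohr-model relation v/c ≈ Zα for a 1s electron and takes the orbital radius to scale inversely with the relativistic mass. Both are textbook simplifications rather than results from the cited sources, and the function name is hypothetical.

```python
import math

ALPHA = 1 / 137.035999  # fine-structure constant


def relativistic_1s_estimate(Z):
    """Return (v/c, m_rel/m_e, fractional radius contraction) for a 1s electron.

    Assumes the hydrogen-like Bohr model, where v/c = Z * alpha and the
    orbital radius scales as 1/m_rel.
    """
    beta = Z * ALPHA
    gamma = 1.0 / math.sqrt(1.0 - beta**2)   # m_rel / m_e
    contraction = 1.0 - 1.0 / gamma          # fractional shrinkage of the radius
    return beta, gamma, contraction


for symbol, Z in [("Cu", 29), ("Ag", 47), ("Au", 79), ("Hg", 80)]:
    beta, gamma, contraction = relativistic_1s_estimate(Z)
    print(f"{symbol} (Z={Z}): v/c = {beta:.3f}, "
          f"m_rel/m_e = {gamma:.3f}, 1s contraction = {contraction:.1%}")
```

Under these assumptions the 1s electron of gold moves at well over half the speed of light, with a mass increase of roughly 20%, whereas for copper the effect is only a few percent. Real atoms require a full relativistic treatment, so the numbers indicate magnitude only.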

Diagram: The Primary Mechanisms of Relativistic Effects in Heavy Atoms

Relativistic Effects on NMR Parameters: The HALA and HAHA Phenomena

In NMR spectroscopy, relativistic effects are not just a minor correction but a dominant factor for systems containing heavy atoms. Two key phenomena are observed:

- HALA (Heavy Atom on Light Atom Effect): The presence of a heavy atom can significantly shift the NMR chemical shifts of nearby light nuclei (e.g., ¹H, ¹³C, ¹⁵N, ¹⁹F) [13] [14]. This is primarily due to the spin-orbit coupling term of the heavy atom, which polarizes the electron density and alters the shielding of the light nucleus [14].

- HAHA (Heavy Atom on Heavy Atom Effect): Relativistic effects also dramatically alter the NMR parameters of the heavy atoms themselves, such as ¹⁹⁹Hg, ¹⁹⁵Pt, ²⁰⁷Pb, and halogens [16] [17]. For these nuclei, scalar relativistic effects (mass-velocity and Darwin terms) are often the dominant contributors to their chemical shifts, though spin-orbit coupling can also play a major role [17].

The importance of these effects is starkly illustrated in the hydrogen halide series (HF, HCl, HBr, HI). The experimental ¹H NMR chemical shift changes dramatically down the group, a trend that can only be reproduced computationally by including spin-orbit relativistic corrections [13]. Similarly, for ¹⁹⁹Hg, non-relativistic computational methods fail to reproduce experimental chemical shifts, while relativistic methods like ZORA (Zeroth-Order Regular Approximation) show excellent agreement, enabling the use of ¹⁹⁹Hg NMR as a robust structural descriptor [17].

Comparative Performance of Quantum Chemical Methods for NMR

The accurate calculation of NMR parameters in heavy-element systems requires methods that incorporate relativistic corrections. The table below compares the performance of different computational approaches.

Table 2: Comparison of Quantum Chemical Methods for Relativistic NMR Parameter Calculation

| Computational Method | Relativistic Treatment | Typical Application Scope | Performance & Notes |

|---|---|---|---|

| Zeroth-Order Regular Approximation (ZORA) | Scalar Relativistic (SR) or Spin-Orbit (SO) [13] | Molecules with heavy atoms (e.g., I, At, Hg) [16] [13] [17] | Excellent performance for ¹H shifts in H-X; SR good for structural trends, SO essential for accurate shifts [13]. Efficient and widely used. |

| Dirac–Kohn–Sham (Four-Component) | Full Relativistic [14] | Benchmark calculations; systems with extreme relativistic effects [18] [14] | The most theoretically rigorous approach. High computational cost but serves as a gold standard [18]. |

| Relativistic Effective Core Potentials (RECPs) | Implicit (via pseudopotential) [15] | Large systems where full relativity is prohibitive [15] | Reduces computational cost by replacing core electrons. Accuracy depends on pseudopotential quality [15]. |

| Douglas-Kroll-Hess (DKH) | Scalar Relativistic [15] | Medium-to-large molecules with heavy atoms [15] | High accuracy for scalar properties. More approximate than four-component methods but more efficient [15]. |

| Non-Relativistic Hamiltonian | None | Light elements (Z < ~30) only [16] | Fails qualitatively for NMR parameters of heavy atoms and their light neighbors (e.g., HALA effect) [16] [13]. |

The choice of methodology is critical. For instance, a study on halogen-bonded complexes showed that relativistic corrections are essential for calculating NMR parameters when involving iodine and astatine, with the ZORA Hamiltonian providing the necessary accuracy [16]. Furthermore, a 2025 study on mercury-DOTAM complexes demonstrated that relativistic cluster-based methods (ADF/ReSpect) significantly outperformed non-relativistic approaches for calculating ¹⁹⁹Hg NMR shifts [17].

Diagram: Workflow for Relativistic Computation of NMR Parameters

Experimental Protocols and Research Toolkit

Protocol: Relativistic DFT Calculation of NMR Shifts

This protocol outlines the steps for calculating NMR chemical shifts using relativistic Density Functional Theory (DFT), as demonstrated for the hydrogen halides and mercury complexes [13] [17].

Geometry Optimization: Pre-optimize the molecular structure using a relativistic method. For accurate NMR results, this can be done at the ZORA scalar relativistic level.

- Functional: A hybrid functional like PBE0 is recommended for better accuracy, though GGA functionals like PBE can be used for faster results [13].

- Basis Set: Use an all-electron triple-zeta or quadruple-zeta basis set (e.g., QZ4P, TZ2P) on all atoms, especially those for which NMR parameters are desired [13].

- Relativistic Hamiltonian: Select ZORA (scalar or spin-orbit) [13].

- Numerical Quality: Set to "Good" to ensure accurate integration grids [13].

Single-Point NMR Calculation: Using the optimized geometry, perform a single-point energy calculation with the focus on NMR properties.

- Functional and Basis Set: Consistent with the optimization step. Using QZ4P is recommended for high accuracy [13].

- Relativistic Treatment: For final NMR values, the ZORA spin-orbit Hamiltonian is often necessary to capture the full relativistic effect, especially for the HALA effect and heavy atom shifts [13].

- Property Calculation: Request the calculation of isotropic shielding constants and full shielding tensors for the nuclei of interest.

Chemical Shift Referencing: Convert the calculated absolute shielding constants (σᵢ) to the experimental chemical shift scale (δᵢ) using a reference compound: δᵢ = σref - σᵢ. For example, in the hydrogen halide series, HF is used as the reference (δ(¹H) = 0.0 ppm, σref = 28.72 ppm) [13].

The Scientist's Toolkit for Relativistic NMR

Table 3: Essential Computational Tools and Concepts for Relativistic NMR Studies

| Tool/Concept | Function & Purpose | Example Use-Case |

|---|---|---|

| ZORA Hamiltonian | An efficient method to approximate the solution to the Dirac equation; can be applied in scalar (SR) or spin-orbit (SO) forms [13] [15]. | Calculating the ¹H NMR shift in HI, where SO effects are crucial for accuracy [13]. |

| DFT Functionals for Relativistic Calculations (PBE0, BP86) | The exchange-correlation functionals used in conjunction with relativistic Hamiltonians to describe electron interaction. Hybrid functionals (PBE0) generally offer better accuracy [13]. | Geometry optimization and NMR property calculation for the W@Au₁₂ cluster [18]. |

| All-Electron Basis Sets (QZ4P, TZ2P) | High-quality basis sets that explicitly describe all electrons in the system, necessary for accurate property calculations on heavy atoms [13]. | Achieving quantitative agreement with experimental ¹³C and ¹⁵N shifts in Hg-complexes [17]. |

| Relativistic Effective Core Potentials (RECPs) | Pseudopotentials that replace the core electrons of a heavy atom, incorporating relativistic effects implicitly to reduce computational cost [15]. | Modeling the electronic structure of large gold nanoclusters or actinide complexes [18] [15]. |

| Energy Decomposition Analysis (EDA) | A method to decompose interaction energies into components (electrostatic, Pauli repulsion, orbital interaction) to understand bonding [16]. | Analyzing the nature of halogen bonds in complexes involving heavy halogens like At [16]. |

Relativistic effects are not peripheral concerns but central determinants of the chemical and spectroscopic behavior of heavy elements. For researchers relying on NMR spectroscopy, ignoring these effects leads to qualitatively and quantitatively incorrect results. The development of efficient and accurate relativistic methods like ZORA and DKH within quantum chemical software has moved these tools from specialist domains to essential components of the computational chemist's arsenal. As research pushes further into the chemistry of superheavy elements and complex heavy-atom materials, and as the demand for precise structural elucidation in drug discovery and materials science grows, the role of relativistic quantum chemistry in predicting and interpreting NMR parameters will only become more critical. The continued refinement of these methods ensures that scientists have the necessary tools to explore the fascinating and non-intuitive chemistry governed by Einstein's theory of relativity.

In the field of computational chemistry, the prediction of Nuclear Magnetic Resonance (NMR) parameters relies on sophisticated quantum chemical methods that balance theoretical accuracy with computational feasibility. The virial theorem and the concept of the complete basis set (CBS) limit represent two fundamental approximations that profoundly impact the reliability of these predictions. The virial theorem governs the relationship between kinetic and potential energy in molecular systems, providing a critical check on wavefunction quality, while the CBS limit represents the theoretical target where properties become independent of basis set size. Understanding these approximations is particularly crucial for researchers and drug development professionals who depend on computational NMR for structural elucidation of complex biological molecules, metallopharmaceuticals, and novel materials.

This guide provides a comprehensive comparison of quantum chemical methods for NMR parameters research, examining how different theoretical approaches navigate the trade-offs between accuracy and computational cost. We evaluate performance across multiple methodologies, from Density Functional Theory (DFT) to wavefunction-based approaches, focusing specifically on their application to NMR chemical shift predictions in biologically relevant systems.

Theoretical Framework

The Complete Basis Set Limit in NMR Calculations

The complete basis set limit represents an idealized state where the calculated molecular properties become invariant to further expansion of the basis set. For NMR parameters, particularly chemical shielding tensors, approaching this limit is essential for obtaining results comparable to experimental data. The chemical shift (δ) is a dimensionless parameter representing the relative resonance frequency of nuclei in a sample compared to a reference standard, defined as the ratio of the frequency difference to the spectrometer's operating frequency [2].

Different quantum chemical methods approach the CBS limit at varying rates. Hartree-Fock (HF) methods show poor convergence behavior, with studies demonstrating that "HF values show quite a different tendency to MP2, and even in the CBS limit they are far from experiment for not only the isotropic shielding of carbonyl carbon but also most shielding anisotropies" [19]. In contrast, Møller-Plesset perturbation theory (MP2) demonstrates superior performance, with "MP2 results in the CBS limit show[ing] the best agreement with experiment" for chemical shielding tensors in peptide systems [19].

Interestingly, Density Functional Theory (DFT) exhibits unique behavior in basis set convergence. Research indicates that "small basis-set (double- or triple-zeta) results are often fortuitously in better agreement with the experiment than the CBS ones" due to systematic errors in functionals that partially cancel with basis set incompleteness errors [19]. This phenomenon complicates method selection for NMR parameter prediction.

The Virial Theorem in Electronic Structure Methods

The virial theorem establishes a fundamental relationship between the kinetic (T) and potential (V) energy expectation values of a Coulombic system: 2T + V = 0 for atoms and for molecules at equilibrium geometries. This makes it a useful quality check on a wavefunction: a ratio -V/T that deviates from 2 typically signals basis set incompleteness or a geometry that is not stationary at the chosen level of theory.

The implications of the virial theorem underpin the reliability of all quantum chemical methods for NMR parameter prediction. Methods that better satisfy the virial theorem typically provide more accurate electronic distributions, which directly affects the precision of calculated NMR parameters such as chemical shifts and coupling constants. The theorem is particularly relevant when employing embedded or hybrid methods like ONIOM, where consistency between different theoretical levels is essential for accurate property predictions.
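As a quick diagnostic, the virial ratio -V/T can be computed from the kinetic and potential energy expectation values that an electronic structure calculation provides. The sketch below is a minimal illustration with hypothetical function names. The first example uses the exact ground state of the hydrogen atom (T = 0.5 and V = -1.0 hartree), and the second uses made-up numbers standing in for an incomplete-basis result.

```python
def virial_ratio(kinetic, potential):
    """Return -V/T; the value is 2 when the virial theorem is satisfied."""
    if kinetic <= 0:
        raise ValueError("kinetic energy must be positive")
    return -potential / kinetic


def virial_deviation(kinetic, potential):
    """Absolute deviation of -V/T from the ideal value of 2."""
    return abs(virial_ratio(kinetic, potential) - 2.0)


# Exact hydrogen atom ground state (hartree): satisfies 2T + V = 0.
print(virial_ratio(0.5, -1.0))

# Hypothetical values for a calculation with an incomplete basis set.
print(round(virial_deviation(0.4900, -0.9870), 4))
```

How small a deviation counts as acceptable depends on the method and the system, so any threshold applied to this number is a judgment call rather than a fixed rule.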

Comparative Analysis of Quantum Chemical Methods

Performance Evaluation of Theoretical Methods

Table 1: Performance Comparison of Quantum Chemical Methods for NMR Parameters

| Method | Theoretical Foundation | Basis Set Convergence | NMR Parameter Accuracy | Computational Cost | Ideal Application Scope |

|---|---|---|---|---|---|

| HF | Wavefunction theory | Slow, poor convergence | Poor for shielding anisotropies [19] | Moderate | Small molecules, educational applications |

| DFT | Electron density | Variable, error cancellation with small basis sets [19] | Good with selected functionals [2] [20] | Moderate to High | Medium to large systems, transition metals |

| MP2 | Electron correlation | Excellent, best in CBS limit [19] | Highest for peptides [19] | High | Small to medium biomolecules |

| Coupled-Cluster | High-level correlation | Excellent but expensive [2] | Reference quality [2] | Very High | Benchmark calculations |

Table 2: DFT Functional Performance for ⁴⁹Ti NMR Chemical Shift Prediction

| Functional | Basis Set | Relativistic Treatment | Mean Absolute Deviation (ppm) | R² | Computational Cost |

|---|---|---|---|---|---|

| OLYP [20] | NMR-DKH (newly developed) | DKH2 | 48 | 0.9888 | Moderate |

| 4c-BLYP [20] | dyall.VDZ | 4-component relativistic | 62 | 0.9860 | High |

| cM06L [20] | pcSseg-3 | Not specified | Good but not quantified | Not specified | Very High |

| B3LYP [20] | 6-31G(d) | Non-relativistic | 67-110 | Not specified | Low |

| BPW91 [20] | Not specified | Non-relativistic | 127 | Not specified | Low |

Basis Set Selection Strategies

The choice of basis set significantly impacts the accuracy of NMR parameters. Specialized basis sets have been developed for specific applications:

NMR-DKH basis sets: Specifically designed for NMR calculations with relativistic corrections, these basis sets have shown excellent performance for transition metals including Ti, Pt, Tc, and Co [20]. The recently developed Ti NMR-DKH basis set provides "excellent agreement with experimental data and with lower computational cost" compared to full 4-component relativistic approaches [20].

Mixed basis set approach: This strategy employs different basis sets for different parts of the molecule, offering superior performance to ONIOM methods for chemical shielding calculations. Research shows "the mixed basis set method provides better results than ONIOM, compared to CBS calculations using the nonpartitioned full systems" for peptide fragments [19].

Complete basis set extrapolation: For the highest accuracy, CBS extrapolation techniques can be applied, particularly with MP2 methods which show the best performance in the CBS limit for peptide systems [19].
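One common way to estimate a CBS value is a two-point extrapolation over basis sets of consecutive cardinal number X (for example, X = 3 for triple-zeta and X = 4 for quadruple-zeta). The sketch below assumes the inverse-power form P(X) = P_CBS + A·X⁻³, which is widely used for correlation energies. Whether this form suits shielding constants, and which scheme [19] actually used, is not stated in the text, so treat both the functional form and the function name as assumptions.

```python
def cbs_two_point(p_small, x_small, p_large, x_large, power=3.0):
    """Two-point extrapolation assuming P(X) = P_CBS + A * X**(-power).

    p_small, p_large: property values from the smaller and larger basis set.
    x_small, x_large: cardinal numbers of those basis sets (e.g. 3 and 4).
    """
    if x_large <= x_small:
        raise ValueError("x_large must exceed x_small")
    w_small = x_small**power
    w_large = x_large**power
    return (w_large * p_large - w_small * p_small) / (w_large - w_small)


# Synthetic data generated from P(X) = 100 + 8 / X**3, for illustration only.
p_tz = 100.0 + 8.0 / 3**3
p_qz = 100.0 + 8.0 / 4**3

print(f"extrapolated value: {cbs_two_point(p_tz, 3, p_qz, 4):.6f}")
```

The synthetic data follow the assumed form exactly, so the extrapolation recovers the limit. Real shieldings may converge differently, and comparing the result against a larger basis set is a sensible check.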

Experimental Protocols and Methodologies

Protocol for Transition Metal NMR Parameters

The accurate prediction of NMR parameters for transition metals requires careful method selection and validation. For titanium-49 NMR chemical shifts, the following protocol has demonstrated excellent performance:

Geometry Optimization: Optimize molecular structures at the BLYP/def2-SVP level with implicit solvation using IEF-PCM (UFF) model [20].

Chemical Shift Calculation: Compute NMR chemical shifts at the GIAO-OLYP/NMR-DKH level with the same implicit solvation model [20].

Relativistic Treatment: Apply second-order Douglas-Kroll-Hess (DKH2) relativistic corrections through the specially designed NMR-DKH basis set [20].

Validation: Compare calculated values against experimental data using [TiCl₄] as reference compound, with expected chemical shift range from -1389 to +1325 ppm [20].

This protocol achieves a mean absolute deviation of only 48 ppm with a coefficient of determination (R²) of 0.9888 across 41 Ti(IV) complexes, outperforming more computationally expensive 4-component relativistic approaches [20].
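The two validation statistics quoted above can be computed as in the following sketch. The shift values are hypothetical and only span a range similar to the one mentioned in the protocol; they are not data from [20]. R² is taken here as the squared Pearson correlation between calculated and experimental shifts, which is an assumption, since the text does not say how [20] defines it.

```python
import numpy as np


def mean_absolute_deviation(calc, expt):
    """Mean of |calculated - experimental| in ppm."""
    calc = np.asarray(calc, dtype=float)
    expt = np.asarray(expt, dtype=float)
    return float(np.mean(np.abs(calc - expt)))


def r_squared(calc, expt):
    """Squared Pearson correlation coefficient between the two data sets."""
    r = np.corrcoef(calc, expt)[0, 1]
    return float(r**2)


# Hypothetical chemical shifts (ppm), for illustration only.
calculated = [-1350.0, -700.0, 20.0, 640.0, 1290.0]
experimental = [-1389.0, -750.0, 0.0, 600.0, 1325.0]

print(f"MAD = {mean_absolute_deviation(calculated, experimental):.1f} ppm")
print(f"R^2 = {r_squared(calculated, experimental):.4f}")
```

The two numbers are complementary. A high R² with a large MAD points to a systematic offset or scaling error that a linear correction could remove, while a low R² indicates scatter that no rescaling can fix.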

Protocol for Biomolecular NMR Parameters

For peptide and protein systems, different considerations apply:

Method Selection: MP2 methods in the CBS limit provide the best agreement with experiment for chemical shielding tensors in peptide fragments [19].

Basis Set Strategy: Employ mixed basis set approaches rather than ONIOM methods for more accurate results [19].

Error Awareness: Recognize that DFT with small basis sets may show fortuitous agreement with experiment due to error cancellation, which doesn't persist at the CBS limit [19].

Validation Metrics: Assess both isotropic shielding and shielding anisotropy for comprehensive evaluation of method performance [19].
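To make the two validation metrics concrete, the sketch below derives the isotropic shielding and the shielding anisotropy from the three principal components of a shielding tensor. The source does not say which convention [19] uses. The Haeberlen ordering and the definition Δσ = σ_zz − (σ_xx + σ_yy)/2 are assumptions here, and the function name and input values are hypothetical.

```python
def shielding_summary(p1: float, p2: float, p3: float):
    """Return (isotropic shielding, anisotropy) from principal components.

    Haeberlen convention (assumed): label the components so that
    |s_zz - iso| >= |s_xx - iso| >= |s_yy - iso|, then define
    anisotropy = s_zz - (s_xx + s_yy) / 2.
    """
    iso = (p1 + p2 + p3) / 3.0
    # Sort by distance from the isotropic value: smallest deviation is s_yy,
    # middle is s_xx, largest is s_zz.
    s_yy, s_xx, s_zz = sorted((p1, p2, p3), key=lambda s: abs(s - iso))
    anisotropy = s_zz - 0.5 * (s_xx + s_yy)
    return iso, anisotropy


# Hypothetical principal components in ppm.
iso, aniso = shielding_summary(10.0, 20.0, 60.0)
# iso is 30.0 and aniso is 45.0 for these inputs
```

Two methods can agree on the isotropic value while differing in anisotropy, which is why comparing both gives a stricter test of the computed tensor.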

Research Reagent Solutions

Table 3: Essential Computational Tools for NMR Parameter Prediction

| Tool/Resource | Type | Function | Application Example |
|---|---|---|---|
| NMR-DKH Basis Sets [20] | Specialized basis sets | Provides accurate NMR parameters with relativistic corrections | Transition metal NMR chemical shifts |
| GIAO Method [20] | Computational approach | Gauge-including atomic orbitals for origin-independent chemical shifts | NMR parameters in diverse molecular systems |
| IEF-PCM [20] | Solvation model | Implicit solvation treatment for solution-phase NMR | Biological molecules in aqueous environments |
| SIMPSON [2] | Simulation package | Models pulse sequences and anisotropic interactions | Solid-state NMR of powdered samples |
| Spinach Library [2] | Simulation library | Large-scale Liouville space reductions for efficient NMR simulation | Complex spin systems in solution and solid state |

Workflow and Method Selection Strategy

(NMR Parameter Prediction Workflow)

The accurate prediction of NMR parameters requires careful consideration of key approximations, particularly the complete basis set limit and the implications of the virial theorem. Our comparison reveals that method performance is highly system-dependent:

For transition metal complexes, specialized protocols using DFT with NMR-DKH basis sets provide excellent accuracy at moderate computational cost, significantly outperforming more expensive 4-component relativistic approaches for Ti-49 NMR chemical shifts [20].

For peptide and protein systems, MP2 methods in the complete basis set limit deliver superior performance for chemical shielding tensors, while DFT exhibits unusual behavior where small basis sets sometimes provide fortuitously better agreement due to error cancellation [19].

For drug development applications, where both organic fragments and metallopharmaceuticals are relevant, a multi-strategy approach is essential. The mixed basis set method offers advantages over ONIOM for fragment-based calculations, providing better balance between accuracy and computational efficiency [19].

These findings underscore the importance of selecting appropriate theoretical methods matched to specific chemical systems, rather than seeking a universal approach. The continuing development of specialized basis sets and computational protocols promises further enhancements in the accuracy and efficiency of NMR parameter prediction for pharmaceutical research and structural biology.

Methodologies in Practice: DFT, Wavefunction, and Hybrid Approaches for Biomolecules

Density Functional Theory (DFT) has established itself as the predominant quantum chemical method for predicting Nuclear Magnetic Resonance (NMR) parameters in medium-to-large molecules, occupying a crucial niche between highly accurate but computationally expensive ab initio methods and faster but less reliable empirical approaches. This balance of reasonable computational cost and acceptable accuracy makes DFT particularly valuable for researchers studying molecular structures of chemical and biological relevance. The method's significance stems from its ability to calculate electronic properties that directly influence NMR parameters, connecting molecular geometry to spectroscopic observables through quantum mechanical principles. While DFT is fundamentally an exact theory, its practical application requires approximations in the exchange-correlation functional, making the choice of functional critical for achieving reliable results [21].

The importance of DFT in molecular sciences is evidenced by its penetration across chemistry, physics, and biology, and by the 1998 Nobel Prize in Chemistry awarded to Walter Kohn for its development [21]. For NMR parameter prediction, DFT serves as a pivotal tool that enables researchers to interpret complex spectra, validate molecular structures, and gain insights into electronic environments that experimental data alone cannot provide. This guide examines DFT's performance against alternative methods, providing experimental data and protocols to inform researchers' computational strategies.

Core Theoretical Framework

DFT calculates molecular electronic structure by determining the electron density rather than solving the many-electron wavefunction, significantly reducing computational complexity. For NMR parameters, the method computes nuclear shielding tensors and indirect spin-spin coupling constants, which correlate with experimental chemical shifts and J-couplings. The fundamental workflow involves two sequential calculations: geometry optimization followed by NMR parameter prediction using the gauge-including atomic orbital (GIAO) method, which ensures results are independent of the coordinate system choice [22] [23].

The accuracy of DFT-derived NMR parameters strongly depends on the selected exchange-correlation functional and basis set. Benchmarks across multiple studies reveal that no single functional performs optimally for all nuclei or molecular systems, requiring researchers to match computational methods to their specific applications [24] [22]. Solvation effects must be incorporated through implicit solvent models like the Polarizable Continuum Model (PCM) or Solvation Model based on Density (SMD) to approximate solution-phase conditions [22] [23].

Standard Computational Protocols

Geometry Optimization Protocol:

- Method: B3LYP-D3/6-311G(d,p) level of theory, including the D3 dispersion correction [22]

- Solvation: Polarizable Continuum Model (PCM) with chloroform parameters [22] [23]

- Conformational Sampling: Generate multiple conformers using ETKDG method, optimize with MMFF94 force field, then apply DFT optimization to low-energy structures [23]

- Validation: Frequency calculations to confirm optimized structures represent true minima (no imaginary frequencies)
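The conformer-handling step in the list above can be illustrated with a short sketch that keeps only low-energy conformers and assigns them Boltzmann weights for later averaging of computed shifts. The source only says that DFT optimization is applied to "low-energy structures". The 3 kcal/mol window, the use of Boltzmann weighting, and the function names are assumptions made for illustration.

```python
import math

R_KCAL = 0.0019872041  # gas constant in kcal/(mol*K)


def select_low_energy(energies, window=3.0):
    """Indices of conformers within `window` kcal/mol of the lowest energy."""
    e_min = min(energies)
    return [i for i, e in enumerate(energies) if e - e_min <= window]


def boltzmann_weights(energies, temperature=298.15):
    """Normalized Boltzmann populations from relative energies in kcal/mol."""
    e_min = min(energies)
    factors = [math.exp(-(e - e_min) / (R_KCAL * temperature)) for e in energies]
    total = sum(factors)
    return [f / total for f in factors]


# Hypothetical force-field energies (kcal/mol) for three conformers.
ff_energies = [0.0, 1.0, 5.0]
kept = select_low_energy(ff_energies)  # conformers passed on to DFT
weights = boltzmann_weights([ff_energies[i] for i in kept])
```

In a full workflow the weights would normally be recomputed from the DFT energies of the reoptimized structures, since force-field rankings can change after DFT refinement.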

NMR Parameter Calculation Protocol:

- Method: Gauge-Including Atomic Orbital (GIAO) approach [22] [23]

- Functional: ωB97X-D/def2-SVP for 13C NMR; WP04/6-311++G(2d,p) for 1H NMR [22]

- Solvation: Include solvent effects consistently with optimization step [23]

- Reference: Use linear scaling relative to tetramethylsilane (TMS) calculated at same level of theory [22]
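The referencing step can be sketched as a linear regression of computed shieldings against experimental shifts for a calibration set, followed by conversion of new shieldings to scaled shifts. The regression form σ = slope·δ + intercept and the conversion δ = (σ − intercept)/slope follow common linear-scaling practice and are assumptions here; [22] may define the scaling differently. Function names and calibration numbers are hypothetical.

```python
def fit_scaling(shieldings, exp_shifts):
    """Least-squares fit of sigma_calc = slope * delta_exp + intercept."""
    if len(shieldings) != len(exp_shifts) or len(shieldings) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    n = len(shieldings)
    mean_d = sum(exp_shifts) / n
    mean_s = sum(shieldings) / n
    s_dd = sum((d - mean_d) ** 2 for d in exp_shifts)
    if s_dd == 0.0:
        raise ValueError("experimental shifts must not all be identical")
    s_ds = sum((d - mean_d) * (s - mean_s) for d, s in zip(exp_shifts, shieldings))
    slope = s_ds / s_dd
    intercept = mean_s - slope * mean_d
    return slope, intercept


def scaled_shift(sigma, slope, intercept):
    """Convert a computed shielding to a scaled chemical shift (ppm)."""
    return (sigma - intercept) / slope


# Hypothetical 13C calibration data: experimental shifts and computed shieldings.
exp_shifts = [0.0, 50.0, 100.0]
calc_shieldings = [190.0, 137.5, 85.0]
slope, intercept = fit_scaling(calc_shieldings, exp_shifts)
delta = scaled_shift(137.5, slope, intercept)
```

The slope is negative because shielding decreases as chemical shift increases. A slope close to -1 and an intercept close to the computed TMS shielding indicate that the level of theory has little systematic error to correct.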

(DFT Workflow for NMR Parameter Prediction)

Performance Comparison: DFT vs. Alternative Methods

Quantitative Accuracy Assessment

Table 1: Performance Comparison of NMR Prediction Methods for Organic Molecules

| Method Category | Specific Method | 1H δ MAE (ppm) | 13C δ MAE (ppm) | Computational Cost | System Size Limit |
|---|---|---|---|---|---|
| DFT (Recommended) | ωB97X-D/def2-SVP | 0.07-0.19 | 0.5-2.9 | Hours to days | ~100 atoms |
| DFT (Cs compounds) | rev-vdW-DF2 | N/A | N/A | Similar to above | Similar to above |
| Machine Learning | IMPRESSION-G2 | 0.07 | 0.8 | Milliseconds | ~1000 g/mol |
| Machine Learning | CSTShift | 0.078-0.185 | 0.504-0.944 | Seconds | ~64 atoms |
| Coupled Cluster | CCSD(T) | <0.05 | <0.3 | Days to weeks | ~20 atoms |
| Empirical | HOSE codes | 0.1-0.3 | 1-3 | Milliseconds | No limit |

MAE = Mean Absolute Error compared to experimental values; N/A = Data not available in search results for this specific nucleus [22] [23] [25].

Application-Specific Performance

DFT's performance varies significantly across different nuclear environments and molecular systems. For light atoms (1H, 13C) in organic molecules, well-validated functionals like WP04 and ωB97X-D achieve mean absolute errors relative to experiment of 0.07-0.19 ppm for 1H and 0.5-2.9 ppm for 13C chemical shifts [22]. For heavier nuclei like 133Cs, specialized functionals including rev-vdW-DF2 and PBEsol+D3 provide optimal geometry and chemical shift prediction for nuclear waste immobilization studies [24].

For J-coupling constants, which are more sensitive to three-dimensional geometry, DFT methods can predict 3JHH couplings with accuracy approaching 0.15 Hz when appropriate functionals and basis sets are employed [25]. The method's ability to naturally include electron correlation effects, albeit approximately, makes it superior to Hartree-Fock for properties dependent on subtle electronic distribution changes.

Emerging Alternatives: Machine Learning Challenges

Machine learning (ML) approaches represent the most significant emerging challenge to DFT's dominance in NMR prediction. These methods learn the relationship between molecular structure and NMR parameters from large DFT-computed or experimental datasets, achieving remarkable speed improvements. The IMPRESSION-G2 model predicts approximately 5000 chemical shifts and scalar couplings per molecule in <50 milliseconds – approximately 10^6-times faster than DFT calculations starting from 3D structures [25].

When combined with fast GFN2-xTB geometry optimizations, complete ML workflows for NMR predictions are 10^3-10^4 times faster than wholly DFT-based workflows while maintaining comparable accuracy [25]. Similarly, the CSTShift model, a 3D graph neural network incorporating DFT-calculated shielding tensor descriptors, achieves mean absolute errors of 0.078-0.185 ppm for 1H and 0.504-0.944 ppm for 13C chemical shifts on benchmark datasets [23].

ML methods currently face limitations in generalizability across diverse chemical spaces and require extensive training datasets. However, their rapid advancement suggests an evolving computational landscape where ML may handle routine predictions while DFT focuses on complex cases requiring deeper theoretical analysis [23] [25].

Table 2: Key Research Reagent Solutions for DFT NMR Calculations

| Resource Category | Specific Tools | Function/Purpose | Availability |
|---|---|---|---|
| Quantum Chemistry Software | Gaussian, ORCA, FHI-aims | Perform DFT calculations including geometry optimization and NMR property prediction | Commercial and academic licenses |
| Reference Datasets | DELTA50, NMRShiftDB2, CHESHIRE | Benchmarking and validation of computational methods | Publicly available |
| Solvation Models | PCM, SMD, COSMO | Incorporate solvent effects into calculations | Integrated in major quantum chemistry packages |
| Structure Generation | RDKit, ETKDG | Generate initial 3D molecular structures and conformers | Open source |
| Machine Learning NMR | IMPRESSION-G2, CSTShift | Rapid prediction of NMR parameters using ML models | Research implementations |
| Specialized Functionals | WP04, ωB97X-D, rev-vdW-DF2 | Optimized for specific NMR nuclei and applications | Included in standard packages |

DFT maintains its position as the workhorse for NMR parameter prediction in medium-to-large molecules due to its robust theoretical foundation, extensive validation across chemical spaces, and favorable balance between computational cost and accuracy. While machine learning methods present compelling advantages in speed and are rapidly closing the accuracy gap, DFT continues to provide the fundamental theoretical framework and training data that enable these advanced ML approaches.

The future of computational NMR likely involves integrated workflows where ML handles high-throughput screening and DFT provides definitive analysis for complex cases. Method development continues to address DFT's limitations, particularly for heavy elements requiring relativistic treatments and for weak interactions like dispersion that influence NMR parameters. For researchers requiring reliable NMR predictions for molecular structure elucidation, drug development, or materials characterization, DFT remains an indispensable tool in the computational chemistry arsenal.

In the field of computational nuclear magnetic resonance (NMR), the prediction of parameters such as chemical shifts and coupling constants relies heavily on the accurate description of a molecule's electronic structure. While Density Functional Theory (DFT) offers a practical balance between cost and accuracy for many applications, wavefunction-based methods like Møller-Plesset perturbation theory (MP2) and Coupled-Cluster (CC) provide systematically improvable, high-accuracy benchmarks that are essential for validating more approximate methods and for studying challenging chemical systems. These methods explicitly treat electron correlation, the part of the electron-electron interaction that the mean-field approximation in Hartree-Fock theory neglects, which is crucial for predicting molecular properties, including NMR parameters. Their ability to deliver near-experimental accuracy makes them indispensable in advanced research, particularly in pharmaceutical development where reliable structural information is critical [2] [26] [27].

This guide provides a comparative analysis of MP2 and Coupled-Cluster methods, detailing their theoretical foundations, computational performance, and practical application in predicting NMR parameters. Designed for researchers and drug development professionals, it offers objective performance data and protocols to inform methodological choices in computational spectroscopy.

Theoretical Foundations and Computational Hierarchies

The Electron Correlation Problem

The Hartree-Fock (HF) method provides the foundational wavefunction for post-Hartree-Fock approaches. However, it does not account for the correlated motion of electrons, treating them as moving in an average field. This neglect of electron correlation leads to significant errors in calculated energies and molecular properties. Wavefunction-based correlation methods improve upon the HF solution by adding excitations from occupied to virtual orbitals, offering a more physically realistic model [26].

Method Formulations