Electromagnetic Field Quantization: From Quantum Theory to Biomedical Applications


Abstract

This article provides a comprehensive exploration of electromagnetic field quantization, bridging fundamental quantum theory with practical applications in biomedical research and drug development. It covers foundational concepts from Planck's quantum hypothesis to modern first and second quantization frameworks. The article details methodological advances for complex media like photonic gratings and single-molecule emitters, addresses key challenges in translating quantum optical phenomena into clinical tools, and offers comparative analysis of different quantization approaches. Aimed at researchers and drug development professionals, this guide synthesizes theoretical physics with the practical needs of translational science, highlighting how quantum light control can innovate diagnostics and therapeutic monitoring.

Quantum Foundations: From Light Quanta to Modern Photon Concepts

This whitepaper delineates the historical trajectory and technical foundations of Planck's quantum hypothesis and its profound role in establishing the principle of particle-wave duality. Framed within a broader thesis on understanding electromagnetic radiation quantization research, this document elucidates the fundamental break from classical physics that occurred at the dawn of the 20th century. The subsequent paradigm shift not only redefined our comprehension of light and matter but also laid the essential groundwork for modern technologies, including advanced methods in drug discovery and development where accurate molecular-level modeling is paramount [1] [2]. We provide an in-depth analysis of the core concepts, quantitative frameworks, and pivotal experiments, supplemented with structured data and visual workflows to aid researchers and scientists in navigating this critical field.

Historical Foundation: From Blackbody Radiation to Energy Quanta

The Ultraviolet Catastrophe and Planck's Radical Solution

At the end of the 19th century, physicists were unable to explain the observed spectrum of radiation emitted by a black body—an idealized object that absorbs and emits all radiation frequencies [3]. Classical physics, based on Maxwell's equations and thermodynamics, predicted that a hot object should emit radiation with intensity increasing without bound as the wavelength decreases, leading to the nonsensical prediction of infinite energy in the ultraviolet region of the spectrum. This theoretical failure was termed the "ultraviolet catastrophe" [4].

In 1900, Max Planck heuristically derived a formula that matched the experimental data across all wavelengths [3] [4]. His mathematical solution, however, required a physically radical assumption: that the energy of the electromagnetic oscillators in the blackbody walls could not vary continuously, but could only change in discrete increments, or quanta. The energy \(E\) of each quantum was proportional to the frequency \(f\) of the radiation: \[ E = h f \] where \(h\) is the fundamental constant of nature now known as Planck's constant (\(6.626 \times 10^{-34}\ \text{J·s}\)) [4]. Planck himself initially regarded this quantum hypothesis as a mathematical artifice, but it marked the birth of quantum theory [3].
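As a quick numerical illustration of the relation E = hf, the sketch below converts a wavelength to a photon energy. The function names and the 500 nm example wavelength are illustrative choices, not values taken from the sources cited here.

```python
# Photon energy from E = h*f, with f = c / wavelength.
H = 6.62607015e-34      # Planck constant, J*s (exact SI value)
C = 299_792_458.0       # speed of light in vacuum, m/s (exact SI value)
EV = 1.602176634e-19    # joules per electronvolt (exact SI value)


def photon_energy_joule(wavelength_m: float) -> float:
    """Energy of a single photon with the given vacuum wavelength."""
    return H * C / wavelength_m


def photon_energy_ev(wavelength_m: float) -> float:
    """Same energy expressed in electronvolts."""
    return photon_energy_joule(wavelength_m) / EV


# Illustrative example: a green photon at 500 nm.
print(f"500 nm photon: {photon_energy_ev(500e-9):.2f} eV")
```

A visible photon therefore carries only a few electronvolts, which is why the granularity of light goes unnoticed at everyday intensities.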

Einstein and the Photoelectric Effect: Extending the Quantum Hypothesis

In 1905, Albert Einstein extended Planck's concept far beyond its original context [4]. He proposed that light itself consists of discrete energy packets, later called photons. This bold extension explained the photoelectric effect, where light striking a metal surface ejects electrons. Classical wave theory could not explain why electron energy depended on the light's frequency, not its intensity. Einstein showed that this was a natural consequence if light consisted of quanta with energy \(E = h f\), work for which he received the Nobel Prize in 1921 [4].
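The frequency dependence can be sketched with Einstein's photoelectric relation, K_max = hf − φ, where φ is the work function of the metal. The work function value below is an assumed, illustrative number and does not come from the cited sources; note that light intensity does not appear in the formula at all.

```python
# Einstein's photoelectric relation: K_max = h*f - phi.
H_EV = 4.135667696e-15  # Planck constant in eV*s


def max_kinetic_energy_ev(frequency_hz: float, work_function_ev: float) -> float:
    """Maximum electron kinetic energy in eV; 0.0 if the photon energy is below phi."""
    return max(0.0, H_EV * frequency_hz - work_function_ev)


PHI = 2.3  # illustrative work function in eV (assumed value, not from the article)

for f in (4.0e14, 1.0e15):
    k_max = max_kinetic_energy_ev(f, PHI)
    print(f"f = {f:.1e} Hz -> K_max = {k_max:.2f} eV")
```

Below the threshold frequency φ/h no electrons are ejected, however bright the light; above it, the electron energy grows linearly with frequency.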

The Core Principle: Particle-Wave Duality

The Double-Slit Experiment and Quantum Reality

The concept of duality is most famously demonstrated by the double-slit experiment. First performed by Thomas Young in 1801 to show light's wave nature, it took on a deeper meaning with the advent of quantum mechanics [5]. When a beam of light passes through two slits, it produces an interference pattern on a detection screen, characteristic of waves. Even when the light intensity is reduced so that only one photon is present at a time, the interference pattern gradually emerges, suggesting each photon interferes with itself [6].

Stranger still, any attempt to determine which slit a photon passes through causes the interference pattern to disappear, and the light behaves as a stream of particles [5] [6]. This demonstrates the core tenet of quantum mechanics: physical objects exhibit both particle and wave nature, but these two aspects are complementary; they cannot be observed simultaneously [5]. The act of measurement disturbs the system, collapsing the wave function and determining the state in which the object is observed [6].

An Idealized Modern Test

A 2025 study from MIT performed an idealized version of this experiment using atoms as slits and weak light beams to ensure each atom scattered at most one photon [5]. The researchers confirmed that the more information was obtained about the photon's path (its particle nature), the lower the visibility of the interference pattern (its wave nature). This result, achieved with atomic-level precision, validates the quantum description over classical intuition [5].
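The trade-off between path information and fringe visibility can be illustrated with a generic textbook model, which is not the analysis used in the MIT study. Each path leaves a "marker" system in a pure state; for equally likely paths the fringe visibility is V = |⟨a|b⟩| and the path distinguishability is D = √(1 − V²), so V² + D² = 1. The function names and marker vectors below are hypothetical.

```python
import numpy as np


def visibility_and_distinguishability(marker_a, marker_b):
    """Return (V, D) for pure which-path marker states |a> and |b>."""
    a = np.asarray(marker_a, dtype=complex)
    b = np.asarray(marker_b, dtype=complex)
    a = a / np.linalg.norm(a)
    b = b / np.linalg.norm(b)
    visibility = abs(np.vdot(a, b))  # |<a|b>|
    distinguishability = np.sqrt(max(0.0, 1.0 - visibility**2))
    return visibility, distinguishability


def fringe_visibility(visibility, samples=361):
    """Visibility (Imax - Imin) / (Imax + Imin) of the pattern 1 + V*cos(theta)."""
    theta = np.linspace(0.0, 2.0 * np.pi, samples)
    intensity = 1.0 + visibility * np.cos(theta)
    return (intensity.max() - intensity.min()) / (intensity.max() + intensity.min())


# Rotate marker state |b> away from |a>: more path information, weaker fringes.
for angle in (0.0, np.pi / 6, np.pi / 2):
    v, d = visibility_and_distinguishability([1, 0], [np.cos(angle), np.sin(angle)])
    print(f"angle = {angle:.2f} rad: V = {v:.3f}, D = {d:.3f}, V^2 + D^2 = {v**2 + d**2:.3f}")
```

Identical marker states give full-contrast fringes and no path information; orthogonal marker states give perfect path information and no fringes.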

Quantitative Frameworks and Data Presentation

Planck's Law Formulations

Planck's radiation law describes the spectral density of electromagnetic radiation emitted by a black body in thermal equilibrium. The following table summarizes its various forms [3].

Table 1: Different Formulations of Planck's Law

| Variable | Distribution Form | Primary Domain |

|---|---|---|

| Frequency (ν) | \( B_{\nu}(\nu,T) = \dfrac{2h\nu^3}{c^2} \dfrac{1}{e^{h\nu/(k_{\mathrm{B}}T)} - 1} \) | Experimental |

| Wavelength (λ) | \( B_{\lambda}(\lambda,T) = \dfrac{2hc^2}{\lambda^5} \dfrac{1}{e^{hc/(\lambda k_{\mathrm{B}}T)} - 1} \) | Experimental |

| Angular Frequency (ω) | \( B_{\omega}(\omega,T) = \dfrac{\hbar \omega^3}{4\pi^3 c^2} \dfrac{1}{e^{\hbar \omega/(k_{\mathrm{B}}T)} - 1} \) | Theoretical |

| Wavenumber (ν̃) | \( B_{\tilde{\nu}}(\tilde{\nu},T) = 2hc^2\tilde{\nu}^3 \dfrac{1}{e^{hc\tilde{\nu}/(k_{\mathrm{B}}T)} - 1} \) | Theoretical |

Here \(k_{\mathrm{B}}\) is the Boltzmann constant, \(h\) is the Planck constant, \(\hbar = h/2\pi\) is the reduced Planck constant, and \(c\) is the speed of light.
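The frequency and wavelength forms of Planck's law can be evaluated numerically. The following minimal sketch also includes the classical Rayleigh-Jeans expression, 2ν²k_BT/c², to show where the ultraviolet catastrophe arises. The function names, the 5800 K temperature, and the wavelength grid are illustrative assumptions.

```python
import numpy as np

H = 6.62607015e-34   # Planck constant, J*s
C = 299_792_458.0    # speed of light, m/s
KB = 1.380649e-23    # Boltzmann constant, J/K


def planck_frequency(nu, temperature):
    """Spectral radiance B_nu(nu, T) in W sr^-1 m^-2 Hz^-1."""
    return 2.0 * H * nu**3 / C**2 / np.expm1(H * nu / (KB * temperature))


def planck_wavelength(lam, temperature):
    """Spectral radiance B_lambda(lambda, T) in W sr^-1 m^-3."""
    return 2.0 * H * C**2 / lam**5 / np.expm1(H * C / (lam * KB * temperature))


def rayleigh_jeans_frequency(nu, temperature):
    """Classical prediction, which grows without bound as nu increases."""
    return 2.0 * nu**2 * KB * temperature / C**2


T = 5800.0  # illustrative temperature in kelvin
wavelengths = np.linspace(100e-9, 3000e-9, 29001)  # 0.1 nm grid
peak_wavelength = wavelengths[np.argmax(planck_wavelength(wavelengths, T))]
print(f"Peak of B_lambda at {T:.0f} K: {peak_wavelength * 1e9:.1f} nm")

# Agreement at low frequency, drastic disagreement at high frequency.
for nu in (1e9, 1e16):
    ratio = planck_frequency(nu, T) / rayleigh_jeans_frequency(nu, T)
    print(f"nu = {nu:.0e} Hz: Planck / Rayleigh-Jeans = {ratio:.3e}")
```

The peak position should agree with Wien's displacement law, λ_max·T ≈ 2.898 × 10⁻³ m·K, which serves as a simple consistency check on the wavelength form.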

Key Findings from Double-Slit Experimentation

The following table synthesizes the relationship between path information and interference, a cornerstone of duality, as demonstrated in modern experiments.

Table 2: Summary of Double-Slit Experiment Findings on Wave-Particle Duality

| Experimental Condition | Particle-like Behavior | Wave-like Behavior | Simultaneous Observation |

|---|---|---|---|

| No which-path measurement | Not observed | High-visibility interference pattern | Not possible |

| Which-path measurement active | Observed (photon takes a definite path) | Interference pattern disappears | Not possible |

| Tuned "fuzziness" of slits [5] | Anti-correlated with wave behavior | Anti-correlated with particle behavior | Remains impossible |

Experimental Protocols and Methodologies

Methodology: Modern Double-Slit with Atomic Slits

The following workflow details the methodology used in the 2025 MIT experiment that confirmed quantum principles with high precision [5].

Methodology: Probing Blackbody Radiation

This workflow outlines the core logical process for investigating blackbody radiation that led to Planck's hypothesis.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key conceptual and material components essential for research in quantum mechanics and electromagnetic radiation.

Table 3: Essential Research Components for Quantum Radiation Studies

| Item/Concept | Function/Description | Relevance to Field |

|---|---|---|

| Planck's Constant (h) | Fundamental constant setting the scale of quantum effects. | Quantizes energy, links frequency and energy (E = hf). |

| Blackbody Radiator | An idealized perfect absorber and emitter of radiation. | Standardized source for studying emission spectra. |

| Ultracold Atom Lattice | A crystal-like structure of atoms cooled to near absolute zero. | Serves as precise, quantum-controlled "slits" in modern experiments. |

| Single-Photon Source | A device that emits light one photon at a time. | Enables the study of quantum behavior at the single-particle level. |

| Quantum State Preparation | The ability to initialize a quantum system in a specific state. | Allows control over variables like atomic "fuzziness" to probe duality. |

Implications for Modern Science and Drug Discovery

The principles born from Planck's hypothesis and particle-wave duality directly enable modern computational chemistry and drug discovery. A drug's action often depends on its interaction with a biological target at the quantum level, where electrons and nuclei are governed by the Schrödinger equation [1] [2]. Accurate modeling of these interactions is crucial for predicting drug efficacy and safety.

Classical computational methods face fundamental limitations in simulating large quantum systems, as the required computational resources grow exponentially [2]. This has spurred the integration of quantum computing into the drug development pipeline. Quantum computers, which use qubits to represent superposition and entanglement, natively handle quantum states, offering a potential exponential advantage for tasks like molecular simulation and the prediction of drug-target interactions [2]. This convergence of quantum physics and pharmaceutical science holds the promise of significantly reducing the time and cost of bringing new therapeutics to market [1] [2].

The photon, the fundamental quantum of light and the force carrier for the electromagnetic interaction, has been a cornerstone of physics for over a century. Despite its central role in forming the quantum backbone of technologies from lasers to quantum computers, its exact nature remains surprisingly elusive. Albert Einstein himself admitted that decades of thought had not brought him closer to fully understanding "light quanta" [7]. This whitepaper details the core principles of quantized energy and photons, framing them within contemporary research contexts that are reshaping quantum photonics and communication technologies. The quantization of electromagnetic radiation into discrete energy packets—photons—represents a foundational shift from classical descriptions, enabling the manipulation of light at the single-particle level for advanced scientific and technological applications.

Theoretical Foundations: From Classical Waves to Quantum Particles

The Photon as a Quantum Entity

In quantum optics, the photon is often treated as a single excitation of a field mode, fully delocalized in time. Conversely, in experimental practice, a photon is often considered an energy packet emitted by an atom, molecule, or quantum dot, localized in both time and space [7]. This duality necessitates a robust theoretical framework to connect mathematical formalism with physical reality.

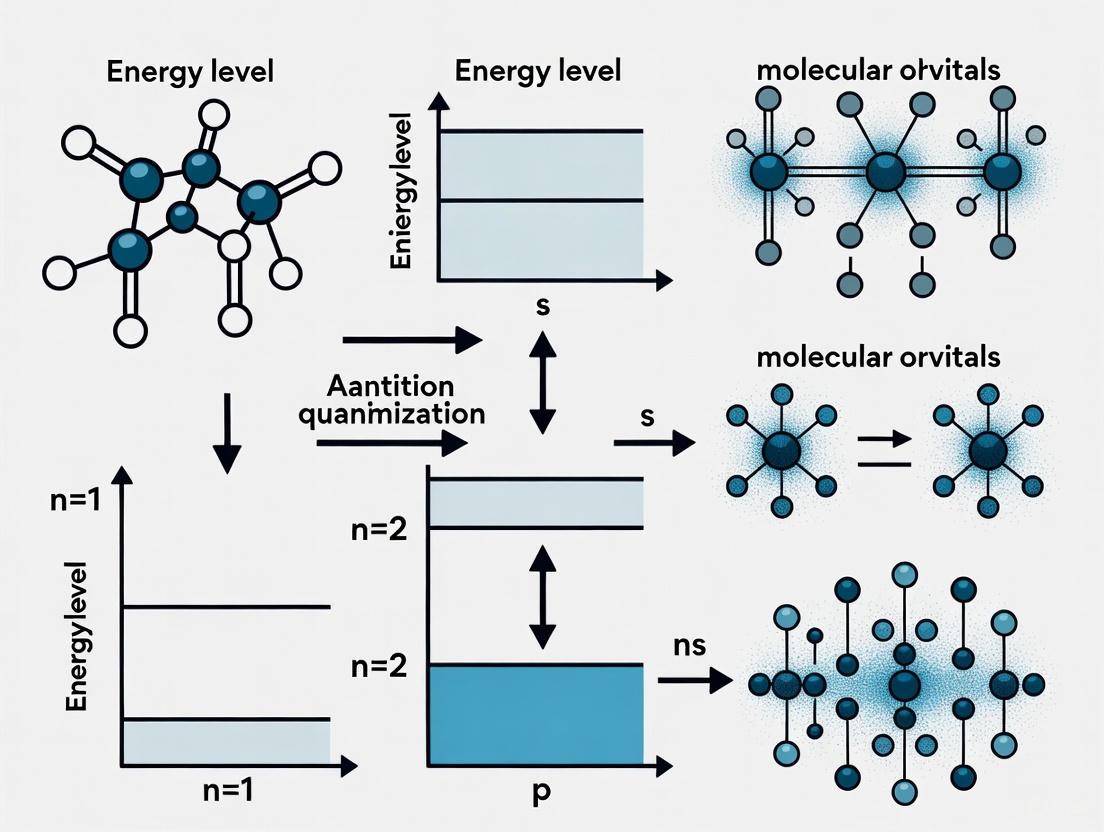

Mathematical Frameworks: First vs. Second Quantization

A significant recent theoretical advance involves applying "first quantization"—a method traditionally used for massive particles like electrons—directly to the photon. Unlike the standard "second quantization" approach, which describes light as quantized fields with varying numbers of particles, first quantization fixes the number of photons and treats them as individual quantum objects [7].

This approach yields Schrödinger-like and Dirac-like equations for photons, providing a direct link between quantum states and familiar classical light forms such as Gaussian beams, Bessel beams, and polarized wavepackets [7]. The framework reveals a direct correspondence between classical electromagnetism and quantum photon behavior, highlighting what has been termed the "quantum diversity" of photons—showing how their many classical optical forms can be understood as specific quantum states [7].

Table 1: Key Theoretical Frameworks for Photon Quantization

| Framework | Core Principle | Mathematical Description | Primary Application |

|---|---|---|---|

| First Quantization | Treats photons as individual quantum objects with fixed numbers | Vector wave functions leading to Maxwell's equations; Schrödinger-like and Dirac-like equations | Connecting quantum states directly to classical light forms (Gaussian beams, Bessel beams) |

| Second Quantization | Describes light as quantized fields with varying particle numbers | Fock space, creation and annihilation operators | Quantum field theory, quantum optics with variable photon numbers |

| Macroscopic Quantization | Quantum phenomena observable in macroscopic electrical circuits | Quantum mechanical tunnelling and energy quantisation in electric circuits | Superconducting qubits, quantum computing processors |
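To make the second-quantization row concrete, the sketch below builds creation and annihilation operators for a single field mode in a truncated Fock basis. The truncation dimension and variable names are illustrative assumptions. The commutator [a, a†] = 1 holds exactly only in the infinite-dimensional space, so the last diagonal entry of the truncated matrix deviates.

```python
import numpy as np


def annihilation(dim):
    """Annihilation operator a in a Fock basis truncated to `dim` levels.

    Matrix elements follow a|n> = sqrt(n)|n-1>.
    """
    return np.diag(np.sqrt(np.arange(1, dim)), k=1)


dim = 6  # illustrative truncation: photon numbers 0..5
a = annihilation(dim)
a_dag = a.conj().T
number = a_dag @ a  # photon-number operator, diagonal 0, 1, ..., dim-1

vacuum = np.zeros(dim)
vacuum[0] = 1.0
one_photon = a_dag @ vacuum                    # a†|0> = |1>
two_photon = a_dag @ one_photon / np.sqrt(2)   # a†|1> = sqrt(2)|2>

commutator = a @ a_dag - a_dag @ a
print("Photon numbers:", np.diag(number))
print("Diagonal of [a, a†]:", np.diag(commutator))
```

In this picture the photon number is not fixed in advance: states with different numbers of excitations live in the same space and are connected by a and a†.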

Contemporary Research Contexts

Macroscopic Quantum Systems

The 2025 Nobel Prize in Physics recognized groundbreaking work on macroscopic quantum mechanical tunnelling and energy quantization in electric circuits, demonstrating that quantum phenomena are not confined to the microscopic realm [8]. This research showed that electrical circuits could exhibit fundamental quantum mechanical phenomena such as tunnelling and quantized energy levels, paving the way for superconducting quantum bits (qubits) that now form the basis of several quantum computing platforms [8].

Quantum Networking and Communication

Recent advances in quantum networking rely critically on manipulating single photons and entangled photon pairs. Quantum networks have the potential to unlock new applications by allowing quantum computers to communicate using qubits rather than classical bits [9]. These qubits often take the form of photonic states that are so closely correlated, or entangled, that measuring the property of one partner automatically determines the property of the other, even at great distances [9] [10].

A critical challenge in preserving entanglement involves maintaining a well-defined, stable phase—the position of peaks within the light wave must remain fixed relative to the peaks of a standard light source. For some entangled states, changing the travel path by a mere 700 nanometers—approximately the wavelength of red light—will destroy the entanglement [10].

Phase Stabilization with Faint Light

The National Institute of Standards and Technology (NIST) has developed an innovative method that stabilizes the phase of light in optical fibers using extremely faint signals. This technique works with fewer than a million photons per second, nearly 10,000 times fainter than standard techniques require [10]. This advance removes a critical obstacle for long-distance quantum communication, where bright reference signals would disturb delicate quantum states.

The method relies on the interplay between a source of highly stable laser light and the faint light traveling through the quantum network. The stable laser acts as a reference to measure the phase of the network light using the principle of interference. If the interference is not completely destructive, the number of photons detected reveals the phase difference, which can then be adjusted to create destructive interference, effectively locking the phase of the photonic states in the network to the phase of the reference laser [10].

Diagram 1: NIST Phase Stabilization Technique

Experimental Protocols and Methodologies

Protocol: Entanglement Generation with Squeezed Light

Researchers at Fermilab and Caltech have demonstrated a protocol using "squeezed light" (a state of light whose noise in one quadrature is reduced below the vacuum, or shot-noise, level at the cost of increased noise in the conjugate quadrature) to dramatically increase the rate at which quantum networks can generate entangled particle pairs over long distances [9].

Methodology:

- Light Preparation: Generate squeezed light at two distant locations (Node A and Node B)

- Beam Transmission: Send the light from both sources to a central site equidistant between them

- Beam Splitting: Route the light through a beam splitter, which separates it into transmitted and reflected beams

- Beam Recombination: Let the beams return to the central location, where they recombine

- Quantum Measurement: Measure the recombined light at the central station

- Entanglement Creation: The measurement destroys the light but leaves multiple pairs of long-distance entangled qubits between the original nodes due to quantum non-locality

The method's effectiveness depends on the strength of the squeezing, with current technology allowing up to 15 decibels of squeezing, potentially producing 3-4 entangled qubit pairs per operation [9].
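Squeezing levels quoted in decibels can be converted into a noise-variance ratio relative to vacuum, 10^(−dB/10), and into the squeeze parameter r through the standard relation variance ratio = e^(−2r). The helper names below are hypothetical; the sketch only performs the unit conversion and makes no claim about how the number of entangled pairs is derived in [9].

```python
import math


def squeezing_db_to_variance_ratio(db: float) -> float:
    """Noise variance of the squeezed quadrature relative to the vacuum level."""
    return 10.0 ** (-db / 10.0)


def squeezing_db_to_r(db: float) -> float:
    """Squeeze parameter r, using variance ratio = exp(-2 r)."""
    return db * math.log(10.0) / 20.0


for db in (3.0, 10.0, 15.0):
    ratio = squeezing_db_to_variance_ratio(db)
    print(f"{db:4.1f} dB -> variance ratio {ratio:.4f}, r = {squeezing_db_to_r(db):.3f}")
```

At 15 dB the squeezed-quadrature noise variance is roughly 3% of the vacuum level.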

Diagram 2: Squeezed Light Entanglement Protocol

Protocol: Phase Stabilization of Quantum Networks

The NIST protocol for phase stabilization of faint light signals enables long-distance quantum communication by maintaining phase coherence across kilometer-scale distances [10].

Methodology:

- Reference Laser: Generate a highly stable laser light source with fixed phase

- Signal Transmission: Send faint light signals (<1 million photons/second) through the optical fiber network

- Interference Setup: Combine the reference laser light with the network light at a beam splitter

- Photon Counting: Use displaced photon counting to measure interference patterns

- Phase Difference Calculation: Calculate phase difference from photon detection statistics

- Feedback Loop: Apply corrective phase adjustments to create destructive interference

- Phase Lock: Maintain locked phase relationship between reference and network light

The technique demonstrated stabilization over 120 kilometers (75 miles) of optical fiber connecting NIST and the University of Maryland, with phase uncertainty comparable to pinpointing the Earth-moon distance to within a human hair's width [10].
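The feedback idea in the steps above can be sketched as a toy simulation. This is not the NIST implementation: it replaces displaced photon counting with a simple dither lock, and the count levels, dither amplitude, and gain are all assumed values. Counts at the monitored port follow N₀·sin²(φ/2) with Poisson shot noise, so the port is dark when the phase difference φ is zero.

```python
import numpy as np


def mean_counts(phase, counts_at_bright_fringe):
    """Expected counts at the monitored port; zero at perfect destructive interference."""
    return counts_at_bright_fringe * np.sin(phase / 2.0) ** 2


def run_phase_lock(initial_phase, steps=200, counts_at_bright_fringe=1000.0,
                   dither=0.3, gain=0.3, seed=0):
    """Toy dither lock: probe at phase +/- dither and step toward the dark fringe."""
    rng = np.random.default_rng(seed)
    phase = initial_phase
    history = []
    for _ in range(steps):
        n_plus = rng.poisson(mean_counts(phase + dither, counts_at_bright_fringe))
        n_minus = rng.poisson(mean_counts(phase - dither, counts_at_bright_fringe))
        # On average, n_plus - n_minus = N0 * sin(phase) * sin(dither),
        # so the normalized difference estimates sin(phase).
        error = (n_plus - n_minus) / (counts_at_bright_fringe * np.sin(dither))
        phase -= gain * error  # corrective phase adjustment
        history.append(phase)
    return np.array(history)


history = run_phase_lock(initial_phase=1.0)
print(f"Residual phase after lock: {history[-1]:+.4f} rad")
print(f"Mean |phase| over last 50 steps: {np.mean(np.abs(history[-50:])):.4f} rad")
```

The difference of the two dithered measurements supplies the sign of the phase error, which a single count measurement cannot provide because the fringe is symmetric about its minimum.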

Table 2: Quantitative Performance Metrics for Quantum Photon Experiments

| Experimental Parameter | NIST Phase Stabilization | Fermilab Squeezed Light Entanglement | Traditional Methods |

|---|---|---|---|

| Photon Flux | <1 million photons/second | Varies with squeezing strength | Roughly 10,000 times higher (on the order of 10¹⁰ photons/second) |

| Distance Demonstrated | 120 km (75 miles) | Metropolitan-scale distances | Laboratory scale only |

| Entanglement Generation Rate | N/A | Significantly increased vs. standard methods | Single pair per operation |

| Phase Stability | Sub-wavelength precision over 120 km | N/A | Limited by environmental noise |

| Squeezing Level | N/A | Up to 15 decibels with current technology | Not applicable |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Quantum Photonics Experiments

| Material/Component | Function | Experimental Relevance |

|---|---|---|

| Superconducting Qubits | Artificial atoms for quantum information processing | Basis for quantum processors; enabled by macroscopic quantum phenomena recognized by 2025 Nobel Prize [8] |

| Josephson Junctions | Nonlinear circuit elements exhibiting quantum effects | Enable superconducting qubits; fundamental to macroscopic quantum tunnelling experiments [8] |

| Squeezed Light Sources | Generate light states with noise below standard quantum limit | Increase entanglement generation rates in quantum networks [9] |

| Single-Photon Detectors | Detect individual photon arrivals with precise timing | Essential for quantum key distribution and measuring faint light signals in phase stabilization [10] |

| High-Coherence Lasers | Provide stable phase reference for interference experiments | Critical for phase stabilization protocols in quantum networks [10] |

| Optical Beam Splitters | Divide and recombine light beams with precise ratios | Enable interference experiments and entanglement generation [9] [10] |

| Low-Loss Optical Fibers | Transmit photon signals over long distances with minimal loss | Infrastructure for quantum networks connecting distant nodes [9] [10] |

| Phase Modulators | Adjust optical phase of light signals with high precision | Implement feedback in phase stabilization systems [10] |

The core principles of quantized energy and photons continue to evolve through both theoretical refinements and experimental advances. The recent development of first quantization approaches for photons provides a more intuitive mathematical foundation connecting classical electromagnetism with quantum behavior [7]. Simultaneously, advances in controlling macroscopic quantum systems [8] and developing practical quantum networking technologies [9] [10] demonstrate the increasingly sophisticated manipulation of quantum light states for next-generation technologies. These interconnected developments across theoretical, experimental, and applied domains highlight the vibrant progress in understanding and utilizing the quantum nature of light, with profound implications for computation, communication, and fundamental physics.

The photon, a cornerstone of modern physics for over a century, remains surprisingly elusive. Despite its fundamental role in quantum science and technology, Albert Einstein himself admitted that decades of thought had not brought him closer to fully understanding "light quanta" [7]. This enigma manifests in the starkly different conceptions of photons across theoretical and experimental domains. In quantum optics, the photon is often treated as a single excitation of a field mode—fully delocalized in time. Conversely, experimental practice defines it as a localized energy packet emitted by atoms, molecules, or quantum dots, possessing specific temporal and spatial boundaries [7] [11]. This conceptual divide presents significant challenges for advancing quantum technologies, particularly in the rapidly developing field of quantum photonics. As 2025 marks the International Year of Quantum Science and Technology, celebrating a century of quantum science, resolving this dichotomy becomes increasingly urgent for harnessing the full potential of photons in applications ranging from quantum computing to deep-space communication [7] [11].

Theoretical Frameworks: From First Principles to Quantum Diversity

The First Quantization Approach

Traditional quantum optics predominantly employs second quantization, which describes light as quantized fields with variable particle numbers. A revolutionary framework proposed by Boris Chichkov applies "first quantization"—a method traditionally reserved for massive particles like electrons—directly to photons [7] [11]. This approach treats photons as individual quantum objects with a fixed number of particles, deriving photon wave and field equations that directly bridge quantum mechanics with classical electromagnetism [7].

The foundation of this method lies in the photon energy-momentum relation in a dielectric medium with refractive index n:

E = cp/n

where E = ℏω represents photon energy and p = ℏk represents photon momentum (Minkowski expression) [11]. By converting this relation to quantum operators (Ê = iℏ∂/∂t for energy and p̂ = -iℏ∇ for momentum), the framework yields Schrödinger-like and Dirac-like equations for photons [11]. This operator-based approach naturally leads to Maxwell's equations, demonstrating that photon electric and magnetic fields obey the same fundamental relationships as classical electromagnetic fields [11].
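The operator substitution can be illustrated with a short worked step. This is a sketch under stated assumptions, not the derivation given in [11]: it assumes a non-dispersive medium (constant n) and takes the energy-momentum relation in the form E = cp/n, which is what the Minkowski momentum p = nℏω/c implies. Squaring the relation and letting the operators act on a wave function ψ gives:

```latex
E^2 = \frac{c^2}{n^2}\,p^2
\quad\xrightarrow{\;\hat{E} = i\hbar\,\partial_t,\;\; \hat{\mathbf{p}} = -i\hbar\nabla\;}\quad
-\hbar^2 \frac{\partial^2 \psi}{\partial t^2}
  = -\frac{c^2 \hbar^2}{n^2}\,\nabla^2 \psi
\quad\Longrightarrow\quad
\nabla^2 \psi - \frac{n^2}{c^2}\,\frac{\partial^2 \psi}{\partial t^2} = 0 .
```

This is the classical wave equation with phase velocity c/n. Inserting a plane wave ψ ∝ exp[i(k·r − ωt)] returns ω = ck/n, consistent with E = ℏω and p = ℏk.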

Table 1: Comparison of Quantization Approaches for Photons

| Aspect | First Quantization | Second Quantization |

|---|---|---|

| Particle Number | Fixed number of photons | Variable number of photons |

| Mathematical Foundation | Wave functions, differential operators | Creation/annihilation operators, Fock states |

| Connection to Classical Theory | Direct derivation of Maxwell's equations | Quantum field operators |

| Photon Description | Individual quantum objects | Field excitations |

| Practical Applications | Single-photon technologies, photon wave packets | Cavity QED, quantum electrodynamics |

Quantum Diversity of Photons

The first quantization formalism reveals what Chichkov terms "quantum diversity" of photons—demonstrating that various classical optical forms represent specific quantum states [7]. Gaussian beams, Bessel beams, polarized wavepackets, and other structured light manifestations emerge as natural solutions to the photon wave equations [7]. This framework elegantly unifies classical optics with quantum mechanics by showing that classical light behaviors are manifestations of underlying quantum states.

The approach extends to complex scenarios including photon propagation in dispersive media, where speed depends on frequency. By incorporating the refractive index into the equations, the theory provides new expressions for photon energy density and intensity that align with established models while offering novel insights for single-photon technologies [7] [11].

Experimental Signatures: From Antibunching to Entanglement

Photon Statistics and Antibunching

Experimental photon physics relies heavily on statistical measurements to distinguish quantum light from classical light. The second-order correlation function, g⁽²⁾(τ), serves as a crucial experimental signature [12]. For classical light sources, g⁽²⁾(0) ≥ 1: coherent laser light gives g⁽²⁾(0) = 1, and values above 1 indicate photon bunching, as in thermal light. In contrast, quantum emitters exhibit antibunching with g⁽²⁾(0) < 1, and an ideal single-photon emitter reaches g⁽²⁾(0) = 0 [12].
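For a single mode, the zero-delay correlation can be computed directly from the photon-number distribution P(n) as g⁽²⁾(0) = ⟨n(n−1)⟩/⟨n⟩². The minimal sketch below evaluates it for coherent (Poissonian), thermal, and single-photon states; the mean photon number and the truncation at n = 100 are illustrative assumptions.

```python
from math import factorial

import numpy as np


def g2_zero(probabilities):
    """g2(0) = <n(n-1)> / <n>^2 for a photon-number distribution P(n), n = 0, 1, ..."""
    p = np.asarray(probabilities, dtype=float)
    n = np.arange(len(p))
    mean_n = np.sum(n * p)
    return np.sum(n * (n - 1) * p) / mean_n**2


mean = 2.0            # illustrative mean photon number
n = np.arange(101)    # truncation of the number basis (assumed sufficient here)

poisson = np.array([np.exp(-mean) * mean**k / factorial(k) for k in n])  # coherent light
thermal = mean**n / (1.0 + mean) ** (n + 1)                              # thermal light
single_photon = np.array([0.0, 1.0])                                     # one-photon Fock state

for name, dist in (("coherent", poisson), ("thermal", thermal), ("single photon", single_photon)):
    print(f"{name:14s} g2(0) = {g2_zero(dist):.3f}")
```

The three cases reproduce the boundaries described above: 1 for coherent light, 2 for single-mode thermal light, and 0 for an ideal single-photon state.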

The pioneering 1977 experiment by Kimble et al. investigating photon statistics of light emitted from single atoms demonstrated this antibunching effect [12]. Immediately after a photon emission event, the atom resides in its ground state, so the probability of emitting another photon drops to nearly zero before gradually recovering to the average emission probability [12]. This antibunching dip is a purely quantum-mechanical phenomenon that cannot be reproduced with classical light sources.

Subsequent experiments with single trapped ions in Paul traps revealed even deeper antibunching dips with g⁽²⁾(0) ≈ 0, approaching the ideal single-photon emitter characteristics [12]. When driven by intense lasers, these systems exhibit Rabi oscillations that appear as modulations in the correlation function, providing additional insight into light-matter interactions at the quantum level [12].
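The shape of such a correlation function can be sketched with the standard textbook result for a resonantly driven two-level emitter, g⁽²⁾(τ) = 1 − e^(−3Γ|τ|/4)[cos(μτ) + (3Γ/4μ) sin(μ|τ|)] with μ = √(Ω² − Γ²/16), valid for Ω > Γ/4. Here Γ is the population decay rate and Ω the Rabi frequency. This idealized model ignores detector response and atomic motion, and the parameter values are illustrative rather than taken from [12].

```python
import numpy as np


def g2_resonance_fluorescence(tau, rabi_frequency, decay_rate):
    """g2(tau) of an ideal two-level emitter driven on resonance.

    Valid for rabi_frequency > decay_rate / 4 (underdamped regime).
    """
    t = np.abs(np.asarray(tau, dtype=float))
    mu = np.sqrt(rabi_frequency**2 - (decay_rate / 4.0) ** 2)
    envelope = np.exp(-3.0 * decay_rate * t / 4.0)
    return 1.0 - envelope * (np.cos(mu * t) + 3.0 * decay_rate / (4.0 * mu) * np.sin(mu * t))


gamma = 1.0          # decay rate; sets the time unit (illustrative)
omega = 5.0 * gamma  # strong driving, so Rabi oscillations are visible
tau = np.linspace(0.0, 10.0 / gamma, 2001)
g2 = g2_resonance_fluorescence(tau, omega, gamma)

print(f"g2(0) = {g2[0]:.3f}")
print(f"max g2 = {g2.max():.3f} at tau = {tau[np.argmax(g2)]:.3f} / gamma")
print(f"g2 at long delay = {g2[-1]:.3f}")
```

The curve starts at zero (antibunching), overshoots above 1 with a period set by the Rabi frequency, and relaxes to 1 at delays long compared with 1/Γ.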

Controlled Photon Emission and Entanglement

To overcome the limitations of continuous excitation—namely, the inability to control exact emission times and successive photon emissions—researchers developed pulsed excitation schemes using three-level atomic systems [12]. For instance, with a π-pulse exciting |e⟩ → |x⟩ and emission occurring from |x⟩ → |g⟩, researchers can precisely control emission timing while preventing additional emissions until the system is actively reset to its initial state |e⟩ [12].

This controlled approach enabled groundbreaking experiments in photon-matter entanglement. Research groups led by Monroe and Weinfurter entangled photon polarization with atomic spin, creating entangled atom-photon states [12].

Projective measurements on pairs of photons emitted from distant atoms facilitated entanglement swapping, enabling quantum state teleportation and establishing matter-light quantum interfaces that combine memory capabilities with quantum communication [12].

Table 2: Key Experimental Techniques in Quantum Photonics

| Experimental Technique | Physical Principle | Key Measurement/Outcome |

|---|---|---|

| Hanbury Brown-Twiss Interferometry | Second-order correlation function g⁽²⁾(τ) | Antibunching (g⁽²⁾(0) < 1) for quantum emitters |

| Pulsed Excitation of Three-Level Systems | Controlled population transfer | Precise emission timing, prevented multiple emissions |

| Photon-Matter Entanglement | Entanglement between photon polarization and atomic spin | Quantum state teleportation, entanglement swapping |

| Stellar Intensity Interferometry | Timing correlations between distant telescopes | High-resolution astronomical imaging |

| Single-Photon Lidar | Direct time-of-flight with single-photon detection | Enhanced range and resolution in 3D imaging |

Advanced Experimental Methodologies

Photon Detection Technologies

Cutting-edge photon detection technologies have dramatically advanced experimental capabilities. Several key detector types have emerged for specific applications:

Single-Photon Avalanche Diodes (SPADs) represent workhorse detectors across numerous applications from quantum key distribution to light detection and ranging (LiDAR) [13]. Recent innovations include perimeter-gated SPADs that mitigate edge breakdown issues and improve noise performance for low-light imaging [13]. Advanced detection strategies based on inter-arrival times of photons enhance long-distance LiDAR capabilities, with simulations showing up to a 46% increase in maximum measurement range under challenging background illumination of approximately 100 kilolux [13].

Superconducting Nanowire Single-Photon Detectors (SNSPDs) offer exceptional performance metrics with high detection efficiency and low timing jitter, making them ideal for quantum key distribution and deep-space optical communication [13]. Recent enhancements through localized helium ion irradiation have boosted the system detection efficiency of NbTiN SNSPDs from below 2% to saturating efficiency levels [13].

Novel Detection Systems continue to emerge, such as high-throughput photon counting systems based on microchannel plate photomultiplier tubes capable of nearly dead-time-free acquisition at rates up to 97 MHz with temporal resolution better than 50 ps FWHM [13]. These systems enable significant advancements in stellar intensity interferometry, allowing observations of dimmer stars with higher significance [13].

Quantum Photonics Experimental Workflow

The experimental workflow in quantum photonics integrates these advanced detection technologies with precise quantum state engineering and control systems.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions in Experimental Quantum Photonics

| Item | Function | Specific Examples & Performance Metrics |

|---|---|---|

| Single-Photon Emitters | Source of quantum light | Single atoms/ions, quantum dots, color centers in diamonds |

| Single-Photon Detectors | Detection of individual photons | SPADs (GeSi SPADs operating at room temperature), SNSPDs (NbTiN with >80% efficiency) [13] |

| High NA Collection Optics | Efficient photon collection from emitters | Aspheric lenses, microscope objectives (up to 25% collection efficiency from atoms) [12] |

| Time-Correlated Counting Electronics | Precise timing of photon arrival events | Time-to-digital converters, timing electronics (<40 ps FWHM resolution at 10 MHz rates) [13] |

| Cryogenic Systems | Temperature control for detectors and emitters | Closed-cycle cryostats, liquid helium systems (for SNSPD operation at 2-4 K) |

| Optical Cavities | Enhancement of light-matter interaction | Fabry-Pérot resonators, photonic crystal cavities (increased collection efficiency into single mode) |

Emerging Applications and Future Directions

Quantum-Enabled Technologies

The reconciliation of theoretical and experimental photon perspectives enables transformative technologies across multiple domains:

Deep Space Optical Communication: NASA's Deep Space Optical Communication (DSOC) experiment employs single-photon detectors at both ground receiver and spacecraft terminals, utilizing pulse-position modulation to maximize efficiency for each signal photon across Earth-Mars separations (~0.5-2.5 AU) [13]. The Photon Counting Camera aboard the Psyche spacecraft represents cutting-edge implementation of these principles [13].
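To make the efficiency argument concrete: in M-ary pulse-position modulation, each symbol places a single pulse in one of M time slots, so one detected pulse conveys log2(M) bits. The sketch below assumes an idealized noiseless, lossless channel; the function names and the choice M = 16 are illustrative and are not DSOC parameters.

```python
import math

def ppm_encode(bits, order):
    """Map a bit string to PPM slot indices; `order` (M) must be a power of two."""
    k = int(math.log2(order))
    if 2 ** k != order:
        raise ValueError("order must be a power of two")
    if len(bits) % k != 0:
        raise ValueError("bit string length must be a multiple of log2(order)")
    # Each group of k bits selects the slot in which the pulse is sent
    return [int(bits[i:i + k], 2) for i in range(0, len(bits), k)]

def ppm_decode(slots, order):
    """Invert ppm_encode: turn observed slot indices back into bits."""
    k = int(math.log2(order))
    return "".join(format(s, f"0{k}b") for s in slots)

message = "1011000111110000"
slots = ppm_encode(message, order=16)    # 4 bits carried per pulse
recovered = ppm_decode(slots, order=16)
```

Larger M carries more bits per detected photon at the cost of more time slots per symbol, which is the trade-off a photon-starved link exploits.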

Quantum Entanglement in Space: The Space Entanglement and Annealing QUantum Experiment (SEAQUE) mission incorporates a four-channel single-photon detector module engineered to autonomously detect entangled photon pairs and register coincident detection events [13]. The compact unit (126 mm × 94 mm × 63 mm, 0.3 kg, <10W power) exemplifies the miniaturization and robustness required for space-based quantum experiments [13].

Advanced Imaging and Sensing: Single-photon LiDAR systems achieve unprecedented range and resolution through time-of-flight measurements with SPAD arrays [13]. Perimeter-gated SPADs specifically improve noise performance for low-light imaging applications in security surveillance, gesture recognition, and automotive sectors [13].
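The time-of-flight relation behind single-photon LiDAR is simple enough to sketch. The example assumes free-space propagation and treats detector timing jitter as the only limit on single-shot depth resolution (it ignores pulse width and averaging over many detections); the function names and the 50 ps figure are illustrative values, not specifications of any particular system.

```python
C_LIGHT = 299_792_458.0  # speed of light in vacuum, m/s

def tof_range(round_trip_s):
    """Target distance from a measured round-trip time."""
    return C_LIGHT * round_trip_s / 2.0

def range_resolution(timing_jitter_s):
    """Single-shot depth uncertainty implied by a given timing jitter."""
    return C_LIGHT * timing_jitter_s / 2.0

distance_1us = tof_range(1e-6)               # about 150 m
resolution_50ps = range_resolution(50e-12)   # about 7.5 mm
```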

Theoretical Frontiers

The first quantization framework continues to evolve, particularly in addressing photon behavior in complex media. The derivation of novel equations for photon propagation in dispersive media provides a more robust foundation for understanding and engineering single-photon wave packets in realistic environments [7] [11]. This theoretical advancement directly supports the development of single-photon technologies for quantum communication and computation.

The conceptual clarification offered by the first quantization approach—demonstrating direct connections between classical electromagnetism and quantum photon behavior—promises to make photon physics more intuitive for students and more effective for researchers modeling next-generation quantum photonic devices [7]. As quantum photonics continues its rapid development, this theoretical coherence between classical and quantum descriptions will be essential for harnessing the full potential of photons as quantum information carriers.

The photon enigma—the longstanding divide between theoretical descriptions and experimental manifestations of light quanta—finds reconciliation through innovative theoretical frameworks like first quantization and sophisticated experimental methodologies leveraging quantum statistics and advanced detection technologies. The "quantum diversity" of photons revealed through these approaches demonstrates that varied classical optical forms represent specific quantum states, bridging the conceptual gap between wave and particle descriptions. As quantum photonics advances into its second century, this unified understanding enables transformative technologies from deep-space communication to quantum networking, fulfilling the potential of photons as versatile carriers of quantum information across increasingly sophisticated applications.

The quantization of electromagnetic radiation is a cornerstone of modern physics, yet the photon's nature continues to present conceptual challenges. Traditional quantum electrodynamics (QED) employs second quantization, describing light as quantized fields with variable particle numbers. However, an alternative approach using first quantization—treating photons as individual quantum objects with fixed particle numbers—has recently gained renewed attention [7]. This framework directly connects the mathematics of quantum mechanics with classical electromagnetic theory, allowing photons to be described using wave functions in analogy with massive particles.

The fundamental challenge in developing Schrödinger-like and Dirac-like equations for photons stems from their massless, spin-1 character. Unlike electrons, which are massive spin-1/2 particles described by the Dirac equation, photons require a mathematical formalism that respects both their gauge invariance and their transverse nature [14] [15]. Recent research by Chichkov (2025) demonstrates that photons can indeed be described using vector wave functions that naturally lead to Maxwell's equations, providing a direct link between quantum states and familiar classical light forms such as Gaussian beams and polarized wavepackets [16] [7].

This technical guide examines the mathematical foundations of Schrödinger-like and Dirac-like equations for photons, their relationship to classical electrodynamics, and their implications for understanding the quantum-classical correspondence of light.

Theoretical Foundations: First Quantization Approach

The First Quantization Framework for Photons

First quantization for photons represents a significant departure from the standard second quantization methods of quantum field theory. While second quantization describes light as quantized fields with varying particle numbers, first quantization fixes the number of photons and treats them as individual quantum objects [7]. This approach enables the description of photon states using wave functions that satisfy modified Schrödinger-like equations, bridging classical electromagnetism and quantum mechanics.

In this framework, photons are described using vector wave functions rather than the scalar wave functions used for massive particles. This vector nature is essential because photons are massless particles intrinsically tied to electromagnetic fields [7]. The resulting formalism yields Schrödinger-like and Dirac-like equations for photons that provide a direct connection between quantum states and classical optical phenomena.

The Photon Wave Function Controversy

The concept of a photon wave function in coordinate representation has been highly controversial in quantum physics. As highlighted in recent research, "The photon wavefunction notion is highly controversial, especially in the coordinate representation, due to the absence of rigorous spatial localization" [15]. This controversy dates back to 1930 with early attempts to introduce a photon wave function in coordinate representation using local electric fields, which resulted in nonlocal functions that failed Lorentz invariance [15].

The core difficulty lies in defining a proper position operator for massless particles—a problem that affects all relativistic quantum particles, even those with mass [15]. This has led to ongoing debates about the validity and interpretation of photon wave functions, though recent advances have provided more consistent mathematical frameworks.

Mathematical Formalisms

Schrödinger-like Equations for Photons

Schrödinger-like equations for photons can be derived through multiple approaches. One method introduces a photon wave function in momentum space based on Einstein's relativistic energy-momentum relationship for a massless particle (E = cp) [15]. This leads to a momentum-space wave function Φ(𝐩) that satisfies:

[i\hbar\partial_t\Phi_{\pm}(\mathbf{p}) = H\Phi_{\pm}(\mathbf{p})]

with a Hermitian Hamiltonian:

[H = \pm ic(\mathbf{p} \times) = \pm c(\mathbf{s} \cdot \mathbf{p})]

where (\mathbf{s} = (s_x, s_y, s_z)) represents the vector of spin-1 matrices, and the ± signs correspond to the two possible photon helicities [15].
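The identity between the cross-product form and the spin form of the Hamiltonian can be checked numerically. This is a minimal sketch assuming the Cartesian representation of the spin-1 matrices, (s_i)_{jk} = -i ε_{ijk}, which is a common choice but is not spelled out in the text; the test vectors are arbitrary.

```python
import numpy as np

# Levi-Civita tensor, used to build spin-1 matrices (s_i)_{jk} = -i * eps_{ijk}
eps = np.zeros((3, 3, 3))
eps[0, 1, 2] = eps[1, 2, 0] = eps[2, 0, 1] = 1.0
eps[0, 2, 1] = eps[2, 1, 0] = eps[1, 0, 2] = -1.0
s = -1j * eps  # s[i] is the 3x3 matrix s_i

def s_dot_p(p):
    """Matrix of the operator s . p for a real momentum vector p."""
    return np.einsum("i,ijk->jk", p, s)

p = np.array([0.3, -1.2, 0.5])
v = np.array([1.0 + 2.0j, -0.5j, 0.7])

lhs = s_dot_p(p) @ v          # (s . p) acting on v
rhs = 1j * np.cross(p, v)     # i (p x v)

# Eigenvalues of (s . p)/|p| are the helicities
helicities = np.linalg.eigvalsh(s_dot_p(p)) / np.linalg.norm(p)
```

Under this assumption `lhs` and `rhs` agree, and the eigenvalues come out as -1, 0, and +1. The zero eigenvalue belongs to the vector parallel to p, which the transversality condition excludes, leaving the two helicity states.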

The coordinate-space wave function can then be defined as a weighted Fourier transform:

[\Psi_{\pm}(\mathbf{r},t) = (2\pi\hbar)^{-3} \int \frac{\exp\left[\frac{i(\mathbf{p} \cdot \mathbf{r} - cpt)}{\hbar}\right]}{\sqrt{cp}} \Phi_{\pm}(\mathbf{p}) d\mathbf{p}]

which satisfies a similar Schrödinger-like equation:

[i\hbar\partial_t\Psi_{\pm}(\mathbf{r},t) = \pm c\left(\mathbf{s} \cdot \frac{\hbar}{i}\nabla\right)\Psi_{\pm}(\mathbf{r},t)]

This formulation maintains consistency with the quantum uncertainty principle and provides a proper foundation for probability densities that satisfy continuity equations [15] [17].

Dirac-like Equations for Photons

Dirac-like equations for photons emerge from the factorization of the second-order wave equations governing electromagnetic propagation. For massive particles, Dirac famously factorized the Klein-Gordon operator to obtain his first-order equation for electrons. Similarly, for photons in confined environments like optical fibers, exact solutions of Laguerre-Gauss and Hermite-Gauss modes reveal a massive energy spectrum where the effective mass depends on confinement and orbital angular momentum [18].

The propagation in such systems is described by a one-dimensional Schrödinger equation equivalent to a 2D space-time Klein-Gordon equation via unitary transformation. The probabilistic interpretation and conservation law require factorizing this Klein-Gordon equation, leading to a 2D Dirac equation with spin [18]. The spin expectation values in this formulation correspond directly to polarization states on the Poincaré sphere, providing a fundamental connection between the Dirac equation formalism and photon polarization phenomena.

Kobe (1999) demonstrated that Maxwell's equations can be formulated as a relativistic Schrödinger-like equation for a single photon of given helicity, with the energy eigenvalue problem revealing both positive and negative energy states [19] [17]. Applying the Feynman concept of antiparticles shows that negative-energy states moving backward in time correspond to antiphoton states with opposite helicity [17].

Table 1: Comparison of Photon Wave Equations in Different Representations

| Formalism | Mathematical Structure | Helicity Treatment | Key Properties |
|---|---|---|---|
| Momentum-space Schrödinger-like | (i\hbar\partial_t\Phi_{\pm}(\mathbf{p}) = \pm ic(\mathbf{p} \times)\Phi_{\pm}(\mathbf{p})) | Separate equations for each helicity | Well-defined probability interpretation in p-space |
| Coordinate-space Schrödinger-like | (i\hbar\partial_t\Psi_{\pm}(\mathbf{r},t) = \pm c(\mathbf{s} \cdot \frac{\hbar}{i}\nabla)\Psi_{\pm}(\mathbf{r},t)) | Separate equations for each helicity | Non-local relationship to EM fields |
| Six-component Dirac-like | Combines both helicities in single equation | Unified treatment of both helicities | Direct connection to Maxwell's equations |

Connection to Maxwell's Equations

The fundamental relationship between these quantum equations and classical electrodynamics is captured through the electromagnetic field associations. The photon wave function directly relates to the Riemann-Silberstein vector (\mathbf{F} = \mathbf{E} + ic\mathbf{B}), which combines the electric and magnetic fields into a single complex quantity [15]. This connection ensures that all knowledge about classical optical fields can be directly transferred to photons, demonstrating their "quantum diversity" [16].
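As a short consistency check, the two Maxwell curl equations can be combined into a single first-order equation for the Riemann-Silberstein vector. The sketch below assumes source-free vacuum fields in SI units and uses the operator identity (\mathbf{s} \cdot \mathbf{p} = i\,\mathbf{p} \times) together with (\mathbf{p} = \frac{\hbar}{i}\nabla); it reproduces the upper-sign coordinate-space equation.

```latex
\begin{align}
\partial_t \mathbf{E} &= c^2\, \nabla \times \mathbf{B}, \qquad
\partial_t \mathbf{B} = -\nabla \times \mathbf{E} \\
\partial_t \mathbf{F} &= \partial_t \mathbf{E} + ic\,\partial_t \mathbf{B}
  = c^2\,\nabla \times \mathbf{B} - ic\,\nabla \times \mathbf{E}
  = -ic\,\nabla \times \left(\mathbf{E} + ic\,\mathbf{B}\right) \\
i\hbar\,\partial_t \mathbf{F} &= c\hbar\,\nabla \times \mathbf{F}
  = c\left(\mathbf{s} \cdot \frac{\hbar}{i}\nabla\right)\mathbf{F}
\end{align}
```

The two divergence equations reduce in the same way to the transversality condition (\nabla \cdot \mathbf{F} = 0).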

Chichkov (2025) has shown that using first quantization techniques allows derivation of the Maxwell equations for photons in magneto-dielectric media, creating a direct bridge between the quantum description of individual photons and the classical behavior of electromagnetic waves [16]. This approach has been extended to describe photon propagation in dispersive media through novel equations that modify the standard expressions for photon energy density and intensity to align with established models [7].

Experimental Validation and Applications

Quantum Oscillations in Insulators

Recent experimental work at the National High Magnetic Field Laboratory has revealed surprising quantum phenomena that challenge traditional distinctions between materials. Researchers discovered quantum oscillations inside insulating materials, overturning long-held assumptions about electronic behavior [20]. These oscillations, which occur when electrons behave like tiny springs vibrating in response to magnetic fields, were found to originate in the material's bulk rather than its surface.

The experiments used ytterbium boride (YbB12) subjected to magnetic fields reaching 35 Tesla—approximately 35 times stronger than a hospital MRI machine [20]. The discovery reveals what researchers term a "new duality" in materials science, where compounds may behave as both metals and insulators, presenting a fascinating puzzle for future research that may connect to fundamental photon behavior.

Quantum Networking with Squeezed Light

Advanced applications of photon quantum states are emerging in quantum networking research. Scientists at Fermilab and Caltech have demonstrated methods using squeezed light—a special state of light with reduced noise and enhanced sensitivity—to dramatically increase the rate at which quantum networks can generate entangled particle pairs over long distances [9].

This protocol uses two optical encoding types whose combination helps overcome individual weaknesses and significantly increases the entangled pair generation rate. The method's effectiveness depends on squeezing strength, with current technology allowing up to 15 decibels of squeezing, potentially producing 3-4 entangled qubit pairs [9]. This research addresses critical bottlenecks in building large-scale quantum networks and demonstrates practical applications of advanced photon states.

Table 2: Experimental Methods in Quantum Photonics Research

| Experimental Method | Key Components | Physical Principle | Application Domain |
|---|---|---|---|
| High-field magnetic oscillation | 35 Tesla magnets, YbB12 samples, cryogenic systems | Quantum oscillations in insulator bulk | Fundamental material studies, new duality exploration |
| Squeezed light entanglement | Beam splitters, single-photon detectors, low-loss fibers | Quantum interference of squeezed states | Quantum networks, entanglement distribution |
| Photon emission mapping | Atomic/molecular emitters, dipole radiation patterns | Transition dipole moments | Quantum memory, single-photon sources |

Diagram: First Quantization Framework for Photons

Research Reagents and Materials

The experimental validation of these theoretical frameworks requires specialized materials and instrumentation. Key research components include:

Ytterbium Boride (YbB12) Crystals: Kondo insulator material showing unexpected quantum oscillations in strong magnetic fields, used to study the new conductor-insulator duality [20].

High-Field Magnet Systems: Capable of generating fields up to 35 Tesla, approximately 35 times stronger than clinical MRI systems, essential for observing quantum oscillations in insulating materials [20].

Quantum Optical Components: Including beam splitters, single-photon detectors, and phase stabilizers for manipulating squeezed light states and generating entangled photon pairs [9].

Low-Loss Optical Fibers: Specialized fiber optic cables with minimal signal attenuation, critical for distributing entanglement over long distances in quantum networks [9].

Single-Photon Emitters: Atomic, molecular, or quantum dot systems that can emit individual photons on demand for studying fundamental photon properties [7] [21].

The development of Schrödinger-like and Dirac-like equations for photons through first quantization represents a significant advancement in our understanding of electromagnetic radiation quantization. By providing a direct connection between classical electromagnetic theory and quantum photon behavior, these frameworks reveal the essential quantum diversity of photons—showing how their many classical optical forms can be understood as specific quantum states [16] [7].

This approach offers potential benefits for making photon physics more intuitive for students and researchers while providing practical tools for modeling in single-photon technologies—a rapidly growing area in quantum science [7]. The experimental discoveries of quantum oscillations in insulators and the development of squeezed light protocols for quantum networking demonstrate the continuing relevance of fundamental photon research to both basic science and emerging technologies.

Future research will likely focus on extending these first quantization methods to more complex media, exploring the implications of the conductor-insulator duality in materials, and developing practical applications in quantum computing and networking. The mathematical frameworks described here provide a solid foundation for these continued investigations into the quantum nature of light.

The conceptual divide between classical descriptions of light and its particle-like behavior as photons has long presented a challenge in quantum optics. This whitepaper introduces a paradigm of "quantum diversity" that systematically connects familiar classical light forms to their corresponding quantum states through the framework of first quantization. By treating photons as individual quantum objects with vector wave functions, we establish a direct mathematical bridge between classical electromagnetism and quantum mechanics, enabling researchers to conceptualize photon behavior through more intuitive classical analogs. We present experimental methodologies for generating and characterizing diverse quantum states, along with visualization tools and essential research resources to support practical implementation in quantum photonics research and development.

The photon, first conceptualized by Albert Einstein in 1905 to explain the photoelectric effect, remains surprisingly elusive more than a century later, with even Einstein himself admitting decades of thought had not brought him closer to fully understanding "light quanta" [7]. In quantum optics, photons are typically treated as single excitations of a field mode, fully delocalized in time, while experimental practice often treats them as energy packets emitted by atoms, molecules, or quantum dots that are localized in both time and space [7]. This dichotomy between theoretical description and experimental operation highlights the need for a more unified framework.

The concept of "quantum diversity" emerges from recognizing that the many classical optical forms of light correspond to specific quantum states when viewed through the proper mathematical framework. A recent study has demonstrated that first quantization—a method traditionally applied to particles like electrons—can bridge classical optics and quantum mechanics when applied to photons [7]. This approach provides a fresh foundation for quantum photonics by yielding Schrödinger-like and Dirac-like equations for photons, establishing a direct link between quantum states and familiar classical light forms such as Gaussian beams, Bessel beams, and polarized wavepackets.

Theoretical Foundation: Quantization Approaches

First vs. Second Quantization

The standard approach to describing photons in quantum electrodynamics employs second quantization, which treats electromagnetic fields as operators and photons as excitations of these fields. In this framework, the vector potential for the electromagnetic field is expressed as:

[ \mathbf{A}(\mathbf{r},t) = \sum_{\mathbf{k}}\sum_{\mu=\pm 1}\left(\mathbf{e}^{(\mu)}(\mathbf{k})a_{\mathbf{k}}^{(\mu)}(t)e^{i\mathbf{k}\cdot\mathbf{r}} + \overline{\mathbf{e}}^{(\mu)}(\mathbf{k})\bar{a}_{\mathbf{k}}^{(\mu)}(t)e^{-i\mathbf{k}\cdot\mathbf{r}}\right) ]

where (a_{\mathbf{k}}^{(\mu)}) and (\bar{a}_{\mathbf{k}}^{(\mu)}) become annihilation and creation operators acting on Fock states [22]. This method quantizes the field itself, leading to particle-like excitations we recognize as photons.

In contrast, first quantization fixes the number of photons and treats them as individual quantum objects described by vector wave functions [7]. This approach naturally connects to Maxwell's equations and allows photons to be described using wave functions analogous to those used for massive particles, making the connection to classical light forms more direct and intuitive.

Mathematical Framework of First Quantization

When applying first quantization to photons, we obtain photon wave equations that directly connect to classical electromagnetic theory. The key insight is that photons, being massless particles with spin 1, require vector wave functions rather than the scalar wave functions used for massive spin-0 particles. These vector wave functions satisfy equations that reduce to Maxwell's equations under appropriate conditions [7].

For a single photon, the state can be described by a wave function (\psi(\mathbf{r}, t)) that obeys a Schrödinger-like equation:

[ i\hbar\frac{\partial}{\partial t}\psi(\mathbf{r}, t) = \hat{H}\psi(\mathbf{r}, t) ]

where the Hamiltonian operator (\hat{H}) for the photon reflects its massless nature and connection to the electromagnetic field. The solutions to these equations directly correspond to familiar classical light forms, demonstrating the "quantum diversity" of photons—how a single quantum entity can manifest in classically distinct forms depending on its state preparation and boundary conditions.

Quantum Diversity: Classical-Quantum Correspondence

The principle of quantum diversity establishes that various forms of classical light represent specific quantum states of photons. The table below summarizes key classical-quantum correspondences:

Table 1: Correspondence Between Classical Light Forms and Quantum States

| Classical Light Form | Quantum State Description | Key Applications | Mathematical Representation |
|---|---|---|---|
| Gaussian beams | Minimum uncertainty wavepackets | Optical trapping, laser optics | (\psi(\mathbf{r}) = \psi_0 e^{-\frac{r^2}{w^2}}e^{i\mathbf{k}\cdot\mathbf{r}}) |
| Bessel beams | Non-diffracting eigenstates | Optical manipulation, microscopy | (J_m(k_r r)e^{im\phi}e^{ik_z z}) |
| Polarized wavepackets | Qubit states | Quantum communication, sensing | (\alpha \lvert R\rangle + \beta \lvert L\rangle) |
| Squeezed light [9] | Non-classical states with reduced noise | Quantum metrology, sensing | (\hat{S}(\zeta) \lvert 0\rangle) where (\hat{S}(\zeta) = \exp\left[\frac{1}{2}(\zeta^*\hat{a}^2 - \zeta\hat{a}^{\dagger 2})\right]) |
| Entangled photon pairs [9] | Bell states | Quantum networking, computing | (\frac{1}{\sqrt{2}}(\lvert H\rangle \lvert V\rangle + \lvert V\rangle \lvert H\rangle)) |

This correspondence enables researchers to leverage their existing knowledge of classical optics when designing quantum experiments and devices. For instance, the well-known propagation characteristics of Bessel beams in classical optics directly inform their quantum behavior as non-diffracting states, while polarization states familiar from Jones calculus map directly to qubit representations for quantum information applications.
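The mapping from Jones vectors to qubit states can be illustrated by computing the normalized Stokes parameters, which are the Bloch (Poincaré) sphere coordinates of the polarization qubit. This is a sketch under one particular sign convention: the Jones vector (1, i)/√2 is mapped to S3 = +1, and whether that state is labelled right- or left-circular depends on the convention in use. The function name is illustrative.

```python
import numpy as np

def stokes_from_jones(jones):
    """Normalized Stokes vector (S1, S2, S3) for a Jones vector (E_H, E_V)."""
    e_h, e_v = np.asarray(jones, dtype=complex)
    norm = abs(e_h) ** 2 + abs(e_v) ** 2
    s1 = (abs(e_h) ** 2 - abs(e_v) ** 2) / norm       # H versus V
    s2 = 2 * np.real(np.conj(e_h) * e_v) / norm       # diagonal versus anti-diagonal
    s3 = 2 * np.imag(np.conj(e_h) * e_v) / norm       # the two circular states
    return np.array([s1, s2, s3])

horizontal = stokes_from_jones([1, 0])
diagonal = stokes_from_jones([1, 1])
circular = stokes_from_jones([1, 1j])
```

Any fully polarized input yields a unit-length Stokes vector, the classical counterpart of a pure qubit state lying on the surface of the Bloch sphere.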

Energy Quantization and Quantum States

The foundation of quantum states lies in energy quantization, first proposed by Max Planck in 1900 to explain blackbody radiation [23]. Planck postulated that energy is quantized in discrete units:

[ E = h\nu ]

where (h = 6.626 \times 10^{-34} \text{J·s}) is Planck's constant and (\nu) is the frequency [23]. This fundamental quantization leads directly to the concept of quantum states—discrete energy levels accessible to a system. In atomic systems, this manifests as distinct electron orbitals; in superconducting circuits, as discrete energy levels in macroscopic quantum systems [24].
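For a sense of scale, Planck's relation can be evaluated for a typical telecom wavelength using (\nu = c/\lambda). The sketch below uses the exact SI values of the constants; the 1550 nm wavelength and 1 pW power level are illustrative choices, and the function names are hypothetical.

```python
H_PLANCK = 6.62607015e-34    # Planck constant, J*s (exact in SI)
C_LIGHT = 299_792_458.0      # speed of light, m/s (exact in SI)
E_CHARGE = 1.602176634e-19   # joules per electronvolt (exact in SI)

def photon_energy_joule(wavelength_m):
    """E = h * nu = h * c / lambda for a single photon."""
    return H_PLANCK * C_LIGHT / wavelength_m

def photons_per_second(power_w, wavelength_m):
    """Mean photon flux carried by a beam of the given optical power."""
    return power_w / photon_energy_joule(wavelength_m)

energy_ev = photon_energy_joule(1550e-9) / E_CHARGE   # roughly 0.8 eV
flux_1pw = photons_per_second(1e-12, 1550e-9)          # several million photons/s
```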

A quantum state represents a mathematical entity that contains all information about a quantum system [25]. Pure quantum states are described by vectors in Hilbert space (|\psi\rangle), while mixed states require density matrix representations. Measurements on quantum states yield probability distributions rather than deterministic outcomes, fundamentally distinguishing quantum behavior from classical physics.
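The distinction between pure and mixed states can be made concrete with the purity Tr(ρ²), which equals 1 for a pure state and is smaller for a mixed one. The following is a minimal sketch for a two-level system; the helper names are illustrative.

```python
import numpy as np

def density_matrix(state):
    """Density matrix |psi><psi| of a (normalized) pure state vector."""
    psi = np.asarray(state, dtype=complex)
    psi = psi / np.linalg.norm(psi)
    return np.outer(psi, psi.conj())

def purity(rho):
    """Tr(rho^2): 1 for pure states, below 1 for mixed states."""
    return float(np.real(np.trace(rho @ rho)))

# Coherent superposition (|0> + |1>)/sqrt(2)
plus = density_matrix([1, 1])
# Incoherent 50/50 mixture of |0> and |1>
mixed = 0.5 * density_matrix([1, 0]) + 0.5 * density_matrix([0, 1])

# Born-rule probabilities in the computational basis
probs_plus = np.real(np.diag(plus))
probs_mixed = np.real(np.diag(mixed))
```

Both states give the same 50/50 measurement statistics in this basis, yet their purities differ, so a single measurement basis cannot tell them apart.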

Experimental Methodologies

Protocol: Quantum Secret Sharing with Qutrits

Quantum secret sharing enables secure distribution of cryptographic keys among multiple parties, with applications in secure multiparty computation and key management [26]. The following protocol demonstrates implementation using three-level quantum systems (qutrits):

Table 2: Experimental Components for Qutrit Secret Sharing

| Component | Specification | Function |
|---|---|---|
| Photon source | Entangled photon pair source | Generates initial quantum states |
| Phase modulators | Three-channel fiber-integrated | Applies unitary operations U and V |
| Single-photon detectors | Superconducting nanowire (SNSPD) | Detects qutrit states with high efficiency |
| Interferometric setup | Stable fiber-based Mach-Zehnder | Maintains phase stability for qutrit manipulation |
| Control system | FPGA-based timing system | Synchronizes operations with nanosecond precision |

Procedure:

State Preparation: Alice prepares the initial qutrit state (|\psi\rangle = \frac{1}{\sqrt{3}}(|0\rangle + |1\rangle + |2\rangle)) using a non-linear optical source.

Encoding Operation: Alice applies the operator (U^{a_0}V^{a_1}) to the state based on her secret input data ((a_0, a_1)), where: [ U = |0\rangle\langle 0| + e^{\frac{2\pi i}{3}}|1\rangle\langle 1| + e^{-\frac{2\pi i}{3}}|2\rangle\langle 2| ] [ V = |0\rangle\langle 0| + e^{\frac{2\pi i}{3}}|1\rangle\langle 1| + e^{\frac{2\pi i}{3}}|2\rangle\langle 2| ]

Sequential Processing:

- Alice sends the qutrit to Bob, who applies (U^{b_0}V^{b_1}) based on his input data ((b_0, b_1)).

- Bob forwards the qutrit to Charlie, who applies (U^{c_0}V^{c_1}) using his data ((c_0, c_1)).

Measurement: Charlie performs measurement in the Fourier basis: [ \left\{ \frac{1}{\sqrt{3}}(1,1,1), \frac{1}{\sqrt{3}}(1,e^{\frac{2\pi i}{3}},e^{-\frac{2\pi i}{3}}), \frac{1}{\sqrt{3}}(1,e^{-\frac{2\pi i}{3}},e^{\frac{2\pi i}{3}}) \right\} ] obtaining trit outcome (m).

Validation: Parties announce (a_1, b_1, c_1). If (a_1 + b_1 + c_1 = 0 \mod 3), the round is valid and the shared secret satisfies (a_0 + b_0 + c_0 = m \mod 3). Otherwise, the round is discarded.

Error Estimation: For a sample of runs, all users publicly announce (a_0, b_0, c_0) to estimate the Quantum Trit Error Rate (QTER) as incorrect outcomes divided by total outcomes [26].

This protocol demonstrates how single-qudit communication enables multiparty quantum protocols with advantages in scalability over entanglement-based approaches, as it requires only a single detection event regardless of the number of parties involved.
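The procedure above can be simulated directly to see how the Fourier-basis outcome relates to the parties' inputs. This is an idealized sketch (no loss, noise, or eavesdropping) that uses the operators U and V and the basis ordering exactly as listed; the function name and the exhaustive loop over all inputs are illustrative choices. It checks that when the announced values sum to 0 mod 3 the outcome m is deterministic and equals the sum of the secret trits mod 3, and that otherwise the outcome is uniformly random.

```python
import itertools
import numpy as np

omega = np.exp(2j * np.pi / 3)
U = np.diag([1, omega, omega ** 2])   # omega**2 equals exp(-2*pi*i/3)
V = np.diag([1, omega, omega])
psi0 = np.ones(3, dtype=complex) / np.sqrt(3)

# Rows are the Fourier-basis vectors in the order given in the protocol
fourier = np.array([[1, 1, 1],
                    [1, omega, omega ** 2],
                    [1, omega ** 2, omega]]) / np.sqrt(3)

def outcome_probabilities(settings):
    """settings: list of (x0, x1) trit pairs, one per party, applied in order."""
    state = psi0.copy()
    for x0, x1 in settings:
        state = np.linalg.matrix_power(U, x0) @ np.linalg.matrix_power(V, x1) @ state
    # Born rule: |<f_k|state>|^2 for each basis vector f_k
    return np.abs(fourier.conj() @ state) ** 2

valid_rounds = 0
for a0, a1, b0, b1, c0, c1 in itertools.product(range(3), repeat=6):
    probs = outcome_probabilities([(a0, a1), (b0, b1), (c0, c1)])
    if (a1 + b1 + c1) % 3 == 0:
        valid_rounds += 1
        m = int(np.argmax(probs))
        assert np.isclose(probs[m], 1.0)      # deterministic outcome
        assert m == (a0 + b0 + c0) % 3        # outcome encodes the sum of secrets
    else:
        assert np.allclose(probs, 1 / 3)      # no information in invalid rounds
```

One third of all input combinations pass the validity check, which sets the ideal sifting efficiency of the protocol in this model.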

Protocol: Entanglement Generation with Squeezed Light

Squeezed light provides a powerful resource for quantum networking applications by enabling generation of multiple entangled pairs per swapping operation [9]. The following methodology details entanglement generation using squeezed light:

Procedure:

Source Preparation: Prepare squeezed light at two distant locations (Node A and Node B) using parametric down-conversion in non-linear crystals.

Beam Combination: Both light sources are sent to a central site equidistant between them and routed through a 50:50 beam splitter that separates them into transmitted and reflected beams.

Interferometric Routing: The light beams return to the central location where they recombine interferometrically.

Measurement: Perform measurement on the recombined light, which destroys the light states but leaves multiple pairs of long-distance entangled qubits between the original nodes.

Verification: Confirm entanglement using quantum state tomography with precise timing synchronization (White Rabbit systems capable of picosecond timestamping) [27].

The effectiveness of this protocol depends on squeezing strength, with current technology allowing up to 15 decibels of squeezing, producing 3-4 entangled qubit pairs per operation [9]. This approach significantly increases the entanglement generation rate compared to conventional methods, addressing a critical bottleneck in building large-scale quantum networks.
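To relate the quoted squeezing level to more familiar quantities, the decibel figure can be converted into a noise-variance ratio and a squeeze parameter. The sketch assumes the common convention that the squeezed-quadrature variance is exp(-2r) times the vacuum variance; the function names are illustrative, and the calculation says nothing about how many entangled pairs a given level yields.

```python
import math

def squeezing_db_to_variance_ratio(db):
    """Squeezed-quadrature variance relative to vacuum noise."""
    return 10 ** (-db / 10)

def squeezing_db_to_r(db):
    """Squeeze parameter r, assuming variance ratio = exp(-2 r)."""
    return db * math.log(10) / 20

ratio_15db = squeezing_db_to_variance_ratio(15)   # about 0.032, i.e. ~3% of vacuum noise
r_15db = squeezing_db_to_r(15)                    # about 1.73
```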

Visualization of Quantum Protocols

The following diagrams illustrate key concepts and workflows in quantum photonics experiments, providing visual representations of the protocols and relationships discussed in this whitepaper.

Diagram 1: Qutrit Secret Sharing Protocol

Diagram 2: Quantum Diversity Framework

Diagram 3: Entanglement Swapping with Squeezed Light

Implementation of quantum photonics experiments requires specialized equipment and materials. The following table details essential research reagent solutions for establishing quantum photonics capabilities:

Table 3: Essential Research Reagents and Equipment for Quantum Photonics

| Category | Specific Solution/Device | Technical Function | Research Application |
|---|---|---|---|
| Quantum Light Sources | Entangled photon pair source | Generates correlated photon pairs | Quantum state preparation, QKD |
| | Single-photon emitters (quantum dots) | Provides on-demand single photons | Quantum networking, sensing |
| | Squeezed light sources [9] | Produces light with reduced quantum noise | Enhanced precision measurements |
| Quantum State Manipulation | Phase/amplitude modulators | Applies unitary operations to qubits | Quantum gate implementation |
| | Optical circulators | Routes optical signals directionally | Quantum network configuration |
| | Polarization controllers | Manipulates photon polarization states | Qubit encoding and measurement |
| Quantum Detection | Superconducting nanowire single-photon detectors (SNSPD) | High-efficiency single-photon detection | Quantum state measurement |
| | Homodyne/heterodyne detection systems | Measures quadrature amplitudes | Continuous-variable quantum information |
| Quantum Networking | White Rabbit timing systems [27] | Provides picosecond timing synchronization | Entanglement verification across nodes |
| | Flex-grid wavelength division multiplexers [27] | Dynamically routes quantum channels | Scalable quantum network architecture |
| | Quantum memory systems | Stores and retrieves quantum states | Quantum repeater functionality |
| Computational Tools | PyTheus digital discovery framework [28] | AI-assisted design of quantum experiments | Automated protocol optimization |
| | Quantum simulation software | Models complex quantum systems | Protocol design and verification |

The framework of quantum diversity, connecting classical light forms to specific quantum states through first quantization, provides a powerful conceptual bridge between classical electromagnetism and quantum photonics. This approach enables researchers to leverage their existing knowledge of classical optics while designing and implementing quantum protocols, accelerating development in quantum technologies.

The experimental methodologies presented—from qutrit-based secret sharing to squeezed light entanglement generation—demonstrate practical implementations of these principles, with direct applications in secure communications, distributed quantum computing, and precision sensing. The essential research tools and visualization approaches provide a foundation for laboratories seeking to establish or expand quantum photonics capabilities.

Future research directions include extending the first quantization approach to more complex quantum states, developing hybrid quantum-classical networking architectures, and refining the quantum diversity framework to encompass a broader range of classical-quantum correspondences. As quantum networking technologies mature [27], the principles of quantum diversity will play an increasingly important role in designing efficient, scalable quantum systems that leverage the full spectrum of photonic quantum states.

Quantization Methods and Biomedical Implementation Strategies

Second quantization, also referred to as occupation number representation, is a fundamental formalism used to describe and analyze quantum many-body systems, with profound implications for understanding electromagnetic radiation quantization. In quantum field theory, this approach is known as canonical quantization, where fields (typically as the wave functions of matter) are treated as field operators, analogous to how physical quantities like position and momentum are treated as operators in first quantization [29]. The key ideas of this method were introduced in 1927 by Paul Dirac and were later developed notably by Pascual Jordan and Vladimir Fock [29]. This framework provides the mathematical foundation for contemporary research in quantum electromagnetism, including recent advances in quantum networking and macroscopic quantum phenomena.

The fundamental limitation of first quantization lies in its redundancy for indistinguishable particles. First quantized wave functions involve complicated symmetrization procedures to describe physically realizable many-body states because the language inherently asks "Which particle is in which state?" – questions that are not physically meaningful for identical particles [29]. Second quantization resolves this by instead asking "How many particles are there in each state?", eliminating redundant information and providing a more powerful formalism for describing quantum fields [29]. This approach becomes particularly crucial when analyzing electromagnetic fields in structured media, where the spatial properties of the medium dictate the modal structure of the quantized electromagnetic field.

Table: Core Concepts in Second Quantization Framework

| Concept | Mathematical Description | Physical Significance |
|---|---|---|
| Fock States | $\lvert n_1, n_2, \ldots, n_\alpha, \ldots \rangle$ | Many-body quantum states specified by occupation numbers |
| Creation/Annihilation Operators | $a_\alpha^\dagger$, $a_\alpha$ | Operators that add/remove particles from single-particle state $\alpha$ |
| Field Operators | $\hat{\psi}(\mathbf{r}) = \sum_\alpha \psi_\alpha(\mathbf{r})\, a_\alpha$ | Field operators defined via single-particle wavefunctions and creation/annihilation operators |
| Antisymmetry Principle (Fermions) | $\Psi_F(\cdots, \mathbf{r}_i, \cdots, \mathbf{r}_j, \cdots) = -\Psi_F(\cdots, \mathbf{r}_j, \cdots, \mathbf{r}_i, \cdots)$ | Pauli exclusion principle, critical for electronic systems |
| Symmetry Principle (Bosons) | $\Psi_B(\cdots, \mathbf{r}_i, \cdots, \mathbf{r}_j, \cdots) = +\Psi_B(\cdots, \mathbf{r}_j, \cdots, \mathbf{r}_i, \cdots)$ | Bose-Einstein statistics, applicable to photons |

Second Quantization Formalism

Fock Space and Occupation Number Representation

The mathematical framework of second quantization is built upon Fock space, which is defined as the direct sum of all n-particle Hilbert spaces, including a one-dimensional zero-particle space ℂ [29]. In this formalism, the many-body state is represented in the occupation number basis, labeled by a set of occupation numbers:

$$\lvert [n_\alpha] \rangle \equiv \lvert n_1, n_2, \cdots, n_\alpha, \cdots \rangle$$

where $n_\alpha$ represents the number of particles in the single-particle state $\lvert \alpha \rangle$ [29]. For fermions, the occupation numbers are restricted to $n_\alpha = 0, 1$ due to the Pauli exclusion principle, while for bosons, $n_\alpha$ can be $0, 1, 2, 3, \ldots$ with no upper bound [29]. The Fock state with all occupation numbers equal to zero is called the vacuum state, denoted $\lvert 0 \rangle \equiv \lvert \cdots, 0_\alpha, \cdots \rangle$ [29].
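The occupation-number rules above can be illustrated by enumerating every Fock state for a small system. This is a minimal sketch; the function name `fock_states` and the choice of three modes and two particles are illustrative assumptions, not part of the cited formalism.

```python
from itertools import product


def fock_states(num_modes, num_particles, statistics="boson"):
    """Return all occupation tuples (n_1, ..., n_M) whose entries sum to N."""
    if statistics not in ("boson", "fermion"):
        raise ValueError("statistics must be 'boson' or 'fermion'")
    # Pauli exclusion caps fermionic occupations at 1; bosonic occupations
    # are limited only by the total particle number.
    max_occupation = 1 if statistics == "fermion" else num_particles
    return [
        occ
        for occ in product(range(max_occupation + 1), repeat=num_modes)
        if sum(occ) == num_particles
    ]


# Illustrative system: 2 particles distributed over 3 single-particle states.
boson_states = fock_states(3, 2, "boson")
fermion_states = fock_states(3, 2, "fermion")
# First-quantized bookkeeping ("which particle is in which state?").
labelled_configurations = 3 ** 2

print(len(boson_states), len(fermion_states), labelled_configurations)
```

For this small case the 9 labelled assignments collapse to 6 distinct bosonic Fock states and 3 fermionic ones, which is the redundancy that the occupation-number description removes.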

The construction of Fock states differs significantly between bosons and fermions. For bosons, the N-particle Fock state is given by:

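$$\lvert [n_\alpha] \rangle_B = \left( \frac{1}{N! \prod_\alpha n_\alpha!} \right)^{1/2} \mathcal{S} \bigotimes_\alpha \psi_\alpha^{\otimes n_\alpha}$$

where $\psi_\alpha$ is the single-particle state $\lvert \alpha \rangle$ and $\mathcal{S}$ represents the symmetrization operator, taken here as the sum over all $N!$ permutations of the particle labels (the convention under which this prefactor normalizes the state). For fermions, the corresponding expression incorporates antisymmetrization:

$$\lvert [n_\alpha] \rangle_F = \frac{1}{\sqrt{N!}}\, \mathcal{A} \bigotimes_\alpha \psi_\alpha^{\otimes n_\alpha}$$

where $\mathcal{A}$ represents the antisymmetrization operator, the same sum with each permutation weighted by its sign [29]. This fundamental mathematical distinction governs the dramatically different behavior of bosonic and fermionic quantum fields in homogeneous and periodic media.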
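The normalization prefactors can be checked numerically for small cases. The sketch below assumes that the symmetrizer and antisymmetrizer are plain (signed) sums over all permutations of the particle labels and that the single-particle states are orthonormal; the helper names are hypothetical.

```python
import math
from itertools import permutations

import numpy as np


def permutation_sign(perm):
    """Sign of a permutation, computed from its number of inversions."""
    inversions = sum(
        1
        for i in range(len(perm))
        for j in range(i + 1, len(perm))
        if perm[i] > perm[j]
    )
    return -1 if inversions % 2 else 1


def permuted_state_norm_sq(occupations, antisymmetric=False):
    """Squared norm of the (anti)symmetrized, unnormalized product state."""
    # One mode label per particle, e.g. occupations (2, 1) -> labels [0, 0, 1].
    labels = [mode for mode, n in enumerate(occupations) for _ in range(n)]
    num_particles = len(labels)
    num_modes = len(occupations)
    # Amplitudes in the product basis built from orthonormal modes.
    state = np.zeros((num_modes,) * num_particles)
    for perm in permutations(range(num_particles)):
        index = tuple(labels[p] for p in perm)
        state[index] += permutation_sign(perm) if antisymmetric else 1
    return float(np.sum(state ** 2))


def boson_norm_sq_formula(occupations):
    """N! * prod(n_alpha!), the inverse square of the bosonic prefactor."""
    n_total = sum(occupations)
    return math.factorial(n_total) * math.prod(
        math.factorial(n) for n in occupations
    )


print(permuted_state_norm_sq((2, 1, 0)), boson_norm_sq_formula((2, 1, 0)))
print(permuted_state_norm_sq((1, 1, 0), antisymmetric=True))
print(permuted_state_norm_sq((2, 0, 0), antisymmetric=True))
```

Under these assumptions the bosonic norm equals $N! \prod_\alpha n_\alpha!$, the fermionic norm equals $N!$, and a doubly occupied fermionic state has zero norm, which is the Pauli exclusion principle appearing directly from antisymmetrization.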

Creation and Annihilation Operators

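The creation ($a_\alpha^\dagger$) and annihilation ($a_\alpha$) operators are the fundamental mathematical tools of second quantization that facilitate the construction and manipulation of Fock states. These operators are defined by their action on the occupation number states:

For bosons:

$$a_\alpha \lvert n_1, \ldots, n_\alpha, \ldots \rangle = \sqrt{n_\alpha}\, \lvert n_1, \ldots, n_\alpha - 1, \ldots \rangle$$

$$a_\alpha^\dagger \lvert n_1, \ldots, n_\alpha, \ldots \rangle = \sqrt{n_\alpha + 1}\, \lvert n_1, \ldots, n_\alpha + 1, \ldots \rangle$$

For fermions:

$$a_\alpha \lvert n_1, \ldots, n_\alpha, \ldots \rangle = (-1)^{\sum_{\beta<\alpha} n_\beta}\, n_\alpha\, \lvert n_1, \ldots, 1 - n_\alpha, \ldots \rangle$$

$$a_\alpha^\dagger \lvert n_1, \ldots, n_\alpha, \ldots \rangle = (-1)^{\sum_{\beta<\alpha} n_\beta}\, (1 - n_\alpha)\, \lvert n_1, \ldots, 1 + n_\alpha, \ldots \rangle$$

The fermionic operators include a phase factor $(-1)^{\sum_{\beta<\alpha} n_\beta}$ that ensures the antisymmetry of the states [29]. These operators satisfy fundamental (anti)commutation relations that distinguish the two particle statistics:

For bosons:

$$[a_\alpha, a_\beta^\dagger] = \delta_{\alpha\beta}, \quad [a_\alpha, a_\beta] = 0, \quad [a_\alpha^\dagger, a_\beta^\dagger] = 0$$

For fermions:

$$\{a_\alpha, a_\beta^\dagger\} = \delta_{\alpha\beta}, \quad \{a_\alpha, a_\beta\} = 0, \quad \{a_\alpha^\dagger, a_\beta^\dagger\} = 0$$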

These algebraic structures provide the mathematical foundation for quantizing fields in both homogeneous and periodic media, with profound implications for the behavior of quantum electromagnetic fields in structured environments.
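These relations can be verified with small matrices. The following sketch assumes a bosonic mode truncated to a finite number basis (so the commutator is exact only below the cutoff) and builds two fermionic modes with the sign string $(-1)^{n_1}$ attached to the second mode; the variable names are illustrative.

```python
import numpy as np


def boson_annihilation(dim):
    """Annihilation operator in the truncated basis |0>, ..., |dim-1>."""
    # Element [n-1, n] equals sqrt(n), i.e. a|n> = sqrt(n)|n-1>.
    return np.diag(np.sqrt(np.arange(1, dim)), k=1)


def commutator(x, y):
    return x @ y - y @ x


def anticommutator(x, y):
    return x @ y + y @ x


# Bosons: [a, a^dagger] = 1 on every level below the truncation cutoff.
a = boson_annihilation(6)
boson_comm = commutator(a, a.T)

# Fermions: single-mode operator c with c|1> = |0>, and the parity (-1)^n.
c = np.array([[0.0, 1.0], [0.0, 0.0]])
parity = np.diag([1.0, -1.0])
identity = np.eye(2)

# Two modes; the second carries the phase (-1)^{n_1} from the ordering rule.
c1 = np.kron(c, identity)
c2 = np.kron(parity, c)

print(np.diag(boson_comm))
print(anticommutator(c1, c2.T))
```

The last diagonal entry of the truncated bosonic commutator deviates from 1, which is an artifact of the finite cutoff rather than a property of the algebra.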

Field Quantization in Homogeneous Media

Electromagnetic Field Quantization

The quantization of the electromagnetic field in homogeneous media begins with the mode expansion of the vector potential in a finite volume V:

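$$\hat{\mathbf{A}}(\mathbf{r}, t) = \sum_{\mathbf{k}} \sum_{\lambda=1,2} \left( \frac{\hbar}{2 \omega_k \varepsilon_0 \varepsilon_r V} \right)^{1/2} \left[ \hat{a}_{\mathbf{k}\lambda}\, \boldsymbol{\epsilon}_{\mathbf{k}\lambda}\, e^{i(\mathbf{k}\cdot\mathbf{r} - \omega_k t)} + \hat{a}_{\mathbf{k}\lambda}^\dagger\, \boldsymbol{\epsilon}_{\mathbf{k}\lambda}^{*}\, e^{-i(\mathbf{k}\cdot\mathbf{r} - \omega_k t)} \right]$$

Here, $\hat{a}_{\mathbf{k}\lambda}$ and $\hat{a}_{\mathbf{k}\lambda}^\dagger$ are the annihilation and creation operators for photons with wavevector $\mathbf{k}$ and polarization $\lambda$, $\boldsymbol{\epsilon}_{\mathbf{k}\lambda}$ represents the polarization vector, $\omega_k$ is the angular frequency, $\varepsilon_0$ is the vacuum permittivity, and $\varepsilon_r$ is the relative permittivity of the homogeneous medium [29]. The dispersion relation in homogeneous media is given by $\omega_k = c\lvert\mathbf{k}\rvert / \sqrt{\varepsilon_r}$, where $c$ is the speed of light in vacuum.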

The Hamiltonian of the free electromagnetic field takes the form:

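$$\hat{H} = \sum_{\mathbf{k}} \sum_{\lambda=1,2} \hbar\omega_k \left( \hat{a}_{\mathbf{k}\lambda}^\dagger \hat{a}_{\mathbf{k}\lambda} + \frac{1}{2} \right)$$

This expression reveals the particle-like interpretation of the electromagnetic field: each mode contributes an energy $\hbar\omega_k$ per photon, counted by the number operator $\hat{a}_{\mathbf{k}\lambda}^\dagger \hat{a}_{\mathbf{k}\lambda}$, plus a zero-point energy $\hbar\omega_k/2$ [29]. The quantization procedure ensures that the fundamental commutation relations are satisfied:

$$[\hat{a}_{\mathbf{k}\lambda}, \hat{a}_{\mathbf{k}'\lambda'}^\dagger] = \delta_{\mathbf{k}\mathbf{k}'}\, \delta_{\lambda\lambda'}, \quad [\hat{a}_{\mathbf{k}\lambda}, \hat{a}_{\mathbf{k}'\lambda'}] = 0, \quad [\hat{a}_{\mathbf{k}\lambda}^\dagger, \hat{a}_{\mathbf{k}'\lambda'}^\dagger] = 0$$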
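As a numerical illustration of the dispersion relation and the mode Hamiltonian, the sketch below evaluates the frequency of a single plane-wave mode and the energy of a few occupied modes. The relative permittivity of 1.77 (roughly water at optical frequencies) and the 600 nm wavelength are illustrative assumptions, and the function names are hypothetical.

```python
import numpy as np

HBAR = 1.054571817e-34  # reduced Planck constant, J s
C = 299_792_458.0       # speed of light in vacuum, m/s


def mode_frequency(wavevector, eps_r=1.0):
    """Angular frequency omega_k = c |k| / sqrt(eps_r) of a plane-wave mode."""
    return C * np.linalg.norm(wavevector) / np.sqrt(eps_r)


def field_energy(frequencies, occupations):
    """Eigenvalue of H = sum hbar*omega*(n + 1/2) for given occupations."""
    frequencies = np.asarray(frequencies, dtype=float)
    occupations = np.asarray(occupations, dtype=float)
    return float(np.sum(HBAR * frequencies * (occupations + 0.5)))


# Assumed values: water-like medium, 600 nm vacuum wavelength.
eps_r = 1.77
vacuum_wavelength = 600e-9
# Inside the medium the wavevector is scaled by the refractive index sqrt(eps_r).
k = np.array([0.0, 0.0, 2 * np.pi * np.sqrt(eps_r) / vacuum_wavelength])
omega = mode_frequency(k, eps_r)

vacuum_energy = field_energy([omega], [0])
one_photon_energy = field_energy([omega], [1])
print(omega, one_photon_energy - vacuum_energy)
```

Adding one photon raises the mode energy by exactly $\hbar\omega_k$ above the zero-point value, independent of the medium once the frequency is fixed.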

Quantum States of Light

The Fock state formalism enables the description of various quantum states of light with distinct statistical properties. Number states $\lvert n \rangle$ represent states with exactly $n$ photons in a given mode, with $\hat{a}^\dagger \hat{a} \lvert n \rangle = n \lvert n \rangle$. Coherent states $\lvert \alpha \rangle$ are eigenstates of the annihilation operator, $\hat{a} \lvert \alpha \rangle = \alpha \lvert \alpha \rangle$, and can be expressed as:

$$\lvert \alpha \rangle = e^{-\lvert\alpha\rvert^2/2} \sum_{n=0}^{\infty} \frac{\alpha^n}{\sqrt{n!}}\, \lvert n \rangle$$

Coherent states minimize the uncertainty relation and exhibit Poissonian photon-number statistics, making them the quantum states that most closely resemble classical electromagnetic waves [9]. Squeezed states, by contrast, are nonclassical states in which the uncertainty in one quadrature is reduced below the standard quantum limit at the expense of increased uncertainty in the conjugate quadrature. Recent research has demonstrated the application of squeezed light to dramatically increase the rate at which quantum networks can generate entangled particle pairs over long distances [9].
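The coherent-state properties quoted above can be checked in a truncated number basis. This is a sketch under the assumption that the cutoff is large enough for the tail of the distribution to be negligible; the amplitude value and the names are illustrative.

```python
import math

import numpy as np


def coherent_state(alpha, dim):
    """Amplitudes <n|alpha> for n = 0, ..., dim-1 (truncated expansion)."""
    n = np.arange(dim)
    sqrt_factorials = np.sqrt(
        np.array([float(math.factorial(k)) for k in range(dim)])
    )
    prefactor = np.exp(-abs(alpha) ** 2 / 2)
    return prefactor * np.power(complex(alpha), n) / sqrt_factorials


dim = 40                 # assumed cutoff, ample for |alpha|^2 = 2.5
alpha = 1.5 + 0.5j       # illustrative complex amplitude
psi = coherent_state(alpha, dim)

# Photon-number distribution and its first two moments.
n = np.arange(dim)
probabilities = np.abs(psi) ** 2
mean_n = float(np.sum(n * probabilities))
var_n = float(np.sum(n ** 2 * probabilities) - mean_n ** 2)

# Eigenvalue check: a|alpha> = alpha|alpha>.
a = np.diag(np.sqrt(np.arange(1, dim)), k=1)
eigen_residual = float(np.max(np.abs(a @ psi - alpha * psi)))

print(mean_n, var_n, eigen_residual)
```

The mean and variance of the photon number both equal $\lvert\alpha\rvert^2$, the signature of Poissonian statistics, and the eigenvalue residual is limited only by the truncation.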

Diagram 1: Field quantization workflow in homogeneous media, showing the sequential process from mode expansion to applications

Field Quantization in Periodic Media

Bloch Mode Expansion

In periodic media, the spatial periodicity fundamentally alters the quantization procedure. The electromagnetic field operator expansion utilizes Bloch modes rather than plane waves:

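$$\hat{\mathbf{A}}(\mathbf{r}, t) = \sum_{n} \int_{\mathrm{BZ}} d\mathbf{k} \left( \frac{\hbar}{2 \omega_{n,\mathbf{k}} \varepsilon_0 \varepsilon_r V} \right)^{1/2} \left[ \hat{a}_{n,\mathbf{k}}\, \mathbf{u}_{n,\mathbf{k}}(\mathbf{r})\, e^{i(\mathbf{k}\cdot\mathbf{r} - \omega_{n,\mathbf{k}} t)} + \hat{a}_{n,\mathbf{k}}^\dagger\, \mathbf{u}_{n,\mathbf{k}}^{*}(\mathbf{r})\, e^{-i(\mathbf{k}\cdot\mathbf{r} - \omega_{n,\mathbf{k}} t)} \right]$$

Here, $n$ is the band index, $\mathbf{k}$ is the wavevector restricted to the first Brillouin zone, $\mathbf{u}_{n,\mathbf{k}}(\mathbf{r})$ are the Bloch functions with the periodicity of the crystal lattice, and $\omega_{n,\mathbf{k}}$ is the dispersion relation for the $n$th photonic band [29]. The periodicity leads to the formation of photonic band gaps, frequency ranges where no propagating states exist, which profoundly influence light-matter interactions and quantum optical phenomena.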

The creation and annihilation operators for Bloch modes satisfy similar commutation relations:

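$$[\hat{a}_{n,\mathbf{k}}, \hat{a}_{n',\mathbf{k}'}^\dagger] = \delta_{nn'}\, \delta(\mathbf{k} - \mathbf{k}'), \quad [\hat{a}_{n,\mathbf{k}}, \hat{a}_{n',\mathbf{k}'}] = 0, \quad [\hat{a}_{n,\mathbf{k}}^\dagger, \hat{a}_{n',\mathbf{k}'}^\dagger] = 0$$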

The Hamiltonian in periodic media takes the form:

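$$\hat{H} = \sum_{n} \int_{\mathrm{BZ}} d\mathbf{k}\, \hbar\omega_{n,\mathbf{k}} \left( \hat{a}_{n,\mathbf{k}}^\dagger \hat{a}_{n,\mathbf{k}} + \frac{1}{2} \right)$$

Because the band dispersion $\omega_{n,\mathbf{k}}$ replaces the linear dispersion of a homogeneous medium in this sum, the photonic density of states is strongly modified in periodic structures, enabling enhanced light-matter interaction strengths and quantum phenomena not accessible in homogeneous media.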
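A simple way to see band gaps emerge is the textbook one-dimensional case: a stack of two alternating dielectric layers at normal incidence, where the Bloch wavenumber $K$ obeys $\cos(K\Lambda) = \cos(k_1 d_1)\cos(k_2 d_2) - \tfrac{1}{2}\left(\tfrac{n_1}{n_2} + \tfrac{n_2}{n_1}\right)\sin(k_1 d_1)\sin(k_2 d_2)$. The sketch below uses this classical relation as a stand-in for the general band structure $\omega_{n,\mathbf{k}}$; the refractive indices, the 600 nm design wavelength, and the function names are illustrative assumptions.

```python
import numpy as np

C = 299_792_458.0  # speed of light in vacuum, m/s


def bloch_cosine(omega, n1, d1, n2, d2):
    """Right-hand side of the 1D two-layer dispersion relation, cos(K * period)."""
    k1 = n1 * omega / C
    k2 = n2 * omega / C
    return np.cos(k1 * d1) * np.cos(k2 * d2) - 0.5 * (n1 / n2 + n2 / n1) * np.sin(
        k1 * d1
    ) * np.sin(k2 * d2)


def in_band_gap(omega, n1, d1, n2, d2):
    """True where no real Bloch wavenumber exists, i.e. |cos(K * period)| > 1."""
    return np.abs(bloch_cosine(omega, n1, d1, n2, d2)) > 1.0


# Assumed quarter-wave stack designed for a 600 nm vacuum wavelength.
design_wavelength = 600e-9
n1, n2 = 1.45, 2.3
d1 = design_wavelength / (4 * n1)
d2 = design_wavelength / (4 * n2)
omega0 = 2 * np.pi * C / design_wavelength

# Scan frequencies and report the fraction that falls inside a gap.
omegas = np.linspace(0.05, 2.0, 2000) * omega0
gap_mask = in_band_gap(omegas, n1, d1, n2, d2)
print(bool(in_band_gap(omega0, n1, d1, n2, d2)), float(gap_mask.mean()))
```

At the design frequency both layers are a quarter wave thick, the right-hand side reduces to $-\tfrac{1}{2}(n_1/n_2 + n_2/n_1)$, and its magnitude exceeds 1 whenever the two indices differ, so that frequency lies in a gap. A full treatment of two- or three-dimensional structures needs a numerical eigenmode solver rather than this closed form.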

Table: Comparative Analysis of Field Quantization Approaches

| Quantization Aspect | Homogeneous Media | Periodic Media |
|---|---|---|
| Mode Functions | Plane waves: $e^{i\mathbf{k}\cdot\mathbf{r}}$ | Bloch functions: $\mathbf{u}_{n,\mathbf{k}}(\mathbf{r})\, e^{i\mathbf{k}\cdot\mathbf{r}}$ |
| Wavevector Space | Unrestricted k-space | First Brillouin zone (reduced zone scheme) |
| Dispersion Relation | $\omega = c\lvert\mathbf{k}\rvert / \sqrt{\varepsilon_r}$ | Band structure $\omega_{n,\mathbf{k}}$ with photonic band gaps |
| Density of States | Parabolic, $\propto \omega^2$ | Modified with Van Hove singularities |
| Symmetry | Continuous translation symmetry | Discrete translation symmetry |
| Quantum State Engineering | Standard approaches | Enhanced by modified density of states |

Quantum Phenomena in Periodic Media

Periodic media enable extraordinary control over quantum optical processes through engineered density of states. The spontaneous emission rate of quantum emitters, given by Fermi's golden rule as γ ∝ |d·E|² ρ(ω), can be enhanced or suppressed by positioning emitters in photonic crystals with high or low local density of states at the emission frequency. Similarly, nonlinear optical processes can be enhanced through phase-matching techniques exploiting the band structure.
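The scaling of the emission rate with the density of states can be made concrete with the orientation-averaged golden-rule expression $\gamma = \pi\omega\lvert d\rvert^2\rho(\omega)/(3\hbar\varepsilon_0)$, which reduces to the standard free-space rate when $\rho(\omega) = \omega^2/(\pi^2 c^3)$. The sketch below assumes an emitter with a dipole moment of 1 debye at 600 nm and a hypothetical tenfold density-of-states enhancement; none of these numbers come from the cited work.

```python
import math

HBAR = 1.054571817e-34   # reduced Planck constant, J s
EPS0 = 8.8541878128e-12  # vacuum permittivity, F/m
C = 299_792_458.0        # speed of light in vacuum, m/s
DEBYE = 3.33564e-30      # 1 debye in C m


def free_space_dos(omega):
    """Photon density of states per unit volume and angular frequency in vacuum."""
    return omega ** 2 / (math.pi ** 2 * C ** 3)


def emission_rate(omega, dipole, dos):
    """Orientation-averaged golden-rule rate for a given density of states."""
    return math.pi * omega * dipole ** 2 * dos / (3 * HBAR * EPS0)


# Assumed emitter: 600 nm transition with a 1 debye dipole moment.
omega = 2 * math.pi * C / 600e-9
dipole = 1.0 * DEBYE

rate_free = emission_rate(omega, dipole, free_space_dos(omega))
# Hypothetical structured environments: enhanced and fully suppressed DOS.
rate_enhanced = emission_rate(omega, dipole, 10.0 * free_space_dos(omega))
rate_in_gap = emission_rate(omega, dipole, 0.0)

print(rate_free, rate_enhanced / rate_free, rate_in_gap)
```

Because the rate is linear in $\rho(\omega)$, the ratio of the structured to the free-space density of states directly gives the enhancement or suppression factor for a fixed dipole.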

Macroscopic quantum phenomena have also been demonstrated in engineered electrical circuits. The 2025 Nobel Prize in Physics recognized groundbreaking work on macroscopic quantum tunneling and energy quantization in electrical circuits, where superconducting circuits with Josephson junctions exhibited both quantum tunneling and discrete energy levels in systems large enough to be held in the hand [24] [30]. These experiments, conducted in the 1980s by John Clarke, Michel H. Devoret, and John M. Martinis, revealed that when the superconducting circuit was illuminated with microwave electromagnetic radiation, it absorbed only well-defined amounts of energy, corresponding to the differences between discrete energy levels accessible to the macroscopic current [24].

Experimental Protocols and Methodologies

Macroscopic Quantum Phenomena Detection

The experimental detection of macroscopic quantum phenomena involves sophisticated measurement techniques. The Nobel-winning experiments employed the following methodology [24] [30]:

Device Fabrication: Creation of superconducting circuits approximately one centimeter in size with Josephson junctions formed by superconducting components separated by a thin, nanometer-wide layer of insulating material.

Circuit Design and Control: Precise adjustment of circuit parameters to control the macroscopic Josephson current, which flows due to the coherent collective behavior of charged particles in the superconductor in the absence of applied voltage.

Quantum Tunneling Measurement: Detection of voltage appearance indicating transition to a new energy state via macroscopic quantum tunneling through the energy barrier.

Energy Quantization Verification: Illumination of the superconducting circuit with microwave electromagnetic radiation and observation of discrete energy absorption corresponding to differences between quantized energy levels.

This methodology gave rise to the prolific field of circuit quantum electrodynamics (circuit QED), now a core part of experimental platforms for manipulating information encoded in quantum systems, including quantum computers based on superconducting qubits [24].
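To give a sense of the energy scales in the tunneling and energy-quantization steps, the sketch below uses the standard textbook model of a current-biased Josephson junction (the tilted washboard potential). This model and the parameter values (a 10 µA critical current and a 5 pF capacitance) are assumptions chosen for illustration and are not taken from the cited experiments.

```python
import math

HBAR = 1.054571817e-34      # reduced Planck constant, J s
E_CHARGE = 1.602176634e-19  # elementary charge, C


def josephson_energy(critical_current):
    """E_J = hbar * I_c / (2e)."""
    return HBAR * critical_current / (2 * E_CHARGE)


def plasma_frequency(critical_current, capacitance, bias_current):
    """Small-oscillation angular frequency in a well of the tilted potential."""
    s = bias_current / critical_current
    omega_p0 = math.sqrt(2 * E_CHARGE * critical_current / (HBAR * capacitance))
    return omega_p0 * (1 - s ** 2) ** 0.25


def barrier_height(critical_current, bias_current):
    """Barrier between adjacent wells: 2 E_J [sqrt(1 - s^2) - s * arccos(s)]."""
    s = bias_current / critical_current
    return 2 * josephson_energy(critical_current) * (
        math.sqrt(1 - s ** 2) - s * math.acos(s)
    )


# Assumed, illustrative junction parameters.
i_c = 10e-6   # critical current, A
cap = 5e-12   # junction capacitance, F

for fraction in (0.0, 0.9, 0.99):
    omega_p = plasma_frequency(i_c, cap, fraction * i_c)
    # In the harmonic approximation the level spacing is about hbar * omega_p.
    print(fraction, omega_p / (2 * math.pi), barrier_height(i_c, fraction * i_c))
```

In this model the level spacing near the bottom of a well is approximately $\hbar\omega_p$, which for parameters of this order corresponds to microwave frequencies, and raising the bias current lowers both the barrier and the spacing.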

Squeezed Light Generation Protocol

Recent advances in quantum networking employ squeezed light to enhance entanglement generation rates. The experimental protocol involves [9]:

Squeezed State Preparation: Generation of special states of light with reduced noise and enhanced sensitivity using nonlinear optical processes.

Dual-Source Configuration: Preparation of light at two distant locations, with the light from both sources sent to a central site equidistant between them.

Beam Splitting and Recombination: Routing of light beams through a beam splitter that separates them into transmitted and reflected components, which subsequently return to the central location for recombination and measurement.

Entangled Pair Generation: Quantum measurement that destroys the light but leaves multiple pairs of long-distance entangled qubits according to quantum mechanical principles.

The effectiveness of this method depends on the strength of the squeezing, with current technology limiting squeezing to approximately 15 decibels, allowing production of up to three or four entangled qubit pairs [9].
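The decibel figure quoted above can be translated into a noise-variance ratio and a squeezing parameter. The sketch assumes an ideal single-mode squeezed vacuum, for which the squeezed-quadrature variance is $e^{-2r}$ times the vacuum value; it does not model how the number of entangled pairs depends on squeezing, and the function names are hypothetical.

```python
import math


def variance_ratio_from_db(squeezing_db):
    """Squeezed-quadrature variance relative to vacuum for a given dB value."""
    return 10 ** (-squeezing_db / 10)


def squeeze_parameter_from_db(squeezing_db):
    """Squeezing parameter r, assuming a variance ratio of exp(-2r)."""
    return squeezing_db * math.log(10) / 20


def squeezed_vacuum_photon_number(r):
    """Mean photon number sinh^2(r) of an ideal squeezed vacuum."""
    return math.sinh(r) ** 2


squeezing_db = 15.0
ratio = variance_ratio_from_db(squeezing_db)
r = squeeze_parameter_from_db(squeezing_db)

print(ratio, r, squeezed_vacuum_photon_number(r))
```

Under this assumption, 15 dB of squeezing corresponds to a noise variance of roughly 3% of the vacuum level in the squeezed quadrature.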

Diagram 2: Squeezed light entanglement generation protocol showing the dual-source configuration for quantum networking

Research Reagent Solutions and Materials

Table: Essential Materials for Quantum Electrodynamics Experiments

| Material/Component | Function | Research Application |
|---|---|---|
| Superconducting Qubits | Macroscopic quantum state implementation | Quantum computing, circuit QED studies [24] |
| Josephson Junctions | Nonlinear circuit element for qubits | Macroscopic quantum tunneling experiments [24] [30] |
| Zeolite Structures | Electromagnetic wave absorption | EM radiation mitigation, radar-absorbing materials [31] |
| Nonlinear Optical Crystals | Squeezed light generation | Quantum networking, entanglement distribution [9] |
| Ion-Exchanged Zeolites | Dielectric property tuning | Frequency-selective EM absorption (500 MHz to 50 GHz) [31] |
| Single-Photon Detectors | Quantum state measurement | Verification of field quantization, quantum state tomography |