Energy Quantization in Chemical Systems: From Quantum Foundations to Revolutionary Drug Design


Abstract

This article provides a comprehensive exploration of energy quantization, a fundamental quantum mechanical principle, and its critical applications in modern computational chemistry and drug discovery. Tailored for researchers and pharmaceutical professionals, it details how discrete molecular energy levels govern interactions from atomic spectra to drug-target binding. The content spans foundational theories, practical computational methods like DFT and QM/MM, current limitations in classical simulations, and the transformative potential of emerging quantum computing technologies. By integrating foundational knowledge with cutting-edge case studies on anticancer drug development and covalent inhibitors, this review serves as a roadmap for leveraging quantum principles to achieve unprecedented accuracy in molecular design and accelerate therapeutic innovation.

Quantum Foundations: Why Energy is Quantized in Atoms and Molecules

Energy quantization represents a foundational pillar of modern physics, marking a radical departure from classical mechanics. This whitepaper traces the conceptual evolution of energy quantization from Planck's seminal solution to the blackbody radiation problem through its formalization in the Schrödinger equation. Framed within chemical systems research, we examine how discrete energy states fundamentally govern molecular behavior and reaction dynamics, with particular implications for drug discovery methodologies. The mathematical formalisms underlying quantization are explicated alongside experimental validations and contemporary research applications, providing researchers with a comprehensive technical reference on quantum principles governing chemical phenomena at molecular scales.

The principle of energy quantization posits that energy exists in discrete, indivisible units known as quanta, rather than as a continuous variable as postulated in classical physics [1]. This paradigm shift, initiated by Max Planck in 1900, fundamentally altered our understanding of atomic and molecular processes, providing the theoretical underpinnings for quantum mechanics [2]. In chemical systems research, energy quantization manifests most profoundly in the discrete electronic, vibrational, and rotational states of atoms and molecules, which directly dictate reaction pathways, spectral signatures, and binding affinities [1]. For drug development professionals, understanding these quantum mechanical principles is essential for rational drug design, as molecular interactions between pharmaceuticals and their biological targets are governed by quantized energy transitions that determine binding specificity and reaction kinetics.

Historical Foundation: Planck's Quantum Hypothesis

The Blackbody Radiation Problem

Planck's revolutionary hypothesis emerged from his investigation into blackbody radiation—the electromagnetic radiation emitted by a perfect absorber and emitter of energy [3] [4]. Classical physics predicted the "ultraviolet catastrophe," where energy emission would increase without bound at shorter wavelengths, contradicting experimental observations showing a characteristic peak in emission intensity that shifted with temperature [3]. Planck resolved this discrepancy by proposing that atomic oscillators could only emit or absorb energy in discrete multiples of a fundamental unit, or quantum [5] [4]. This quantization of energy transfer was represented mathematically as: $$E = nh\nu$$ where:

- $E$ is the energy exchanged by an oscillator, an integer number of quanta of size $h\nu$

- $n$ is an integer ($n = 1, 2, 3, \ldots$)

- $h$ is Planck's constant ($6.626 \times 10^{-34}$ J·s)

- $\nu$ is the frequency of the radiation [5] [1]

Table 1: Key Experimental Evidence for Energy Quantization

| Experiment | Classical Prediction | Quantum Explanation | Significance |
|---|---|---|---|
| Blackbody Radiation | Intensity increases without bound at shorter wavelengths (ultraviolet catastrophe) | Energy emitted in discrete quanta ($E = h\nu$) [3] [4] | Resolved discrepancy between theory and observation [3] |
| Photoelectric Effect | Electron ejection depends on light intensity, not frequency | Electrons ejected only when photon energy ($h\nu$) exceeds work function [1] | Validated particle nature of light [1] |
| Atomic Spectra | Continuous emission spectra expected | Discrete spectral lines correspond to transitions between quantized energy levels [1] | Revealed quantized electronic structure in atoms [1] |

Einstein's Extension: The Photon Concept

In 1905, Albert Einstein expanded Planck's quantum hypothesis beyond energy exchange mechanisms to propose that electromagnetic radiation itself is quantized [5] [2]. Einstein introduced the concept of photons, discrete packets of light energy, to explain the photoelectric effect, where electrons are ejected from metal surfaces upon light irradiation [1]. His formulation established that each photon carries energy proportional to its frequency: $$E = h\nu$$ This relationship explained the observed threshold frequency in the photoelectric effect, where electron ejection occurs only when individual photons possess sufficient energy to overcome the metal's work function ($\phi$), with ejected electrons exhibiting maximum kinetic energy given by: $$K_{max} = h\nu - \phi$$ [1]
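As a minimal numerical sketch of the two relations above, the following Python snippet converts a wavelength to a photon energy and applies $K_{max} = h\nu - \phi$. The function names are illustrative choices, not part of any cited method.

```python
# Physical constants (exact SI values)
H = 6.62607015e-34    # Planck constant, J*s
C = 2.99792458e8      # speed of light, m/s
EV = 1.602176634e-19  # joules per electronvolt


def photon_energy_ev(wavelength_nm):
    """Photon energy E = h*nu = h*c/lambda, returned in eV."""
    return H * C / (wavelength_nm * 1e-9) / EV


def max_kinetic_energy_ev(wavelength_nm, work_function_ev):
    """K_max = h*nu - phi in eV; 0.0 below the threshold frequency."""
    return max(0.0, photon_energy_ev(wavelength_nm) - work_function_ev)
```

With an example work function of 2.3 eV, `max_kinetic_energy_ev(400, 2.3)` gives roughly 0.8 eV, while 700 nm light returns 0.0 regardless of intensity, because each photon carries less energy than the work function.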

Mathematical Formalization of Energy Quantization

The Schrödinger Equation Framework

The time-dependent Schrödinger equation provides the fundamental mathematical framework for describing quantum systems, playing a role analogous to Newton's second law in classical mechanics [6] [7]. This partial differential equation governs the evolution of the wavefunction $\Psi(x,t)$, which contains all information about a quantum system: $$i\hbar\frac{\partial}{\partial t}\Psi(x,t) = \left[-\frac{\hbar^2}{2m}\frac{\partial^2}{\partial x^2} + V(x,t)\right]\Psi(x,t)$$ where:

- $i$ is the imaginary unit

- $\hbar$ is the reduced Planck constant ($\hbar = h/2\pi$)

- $m$ is the particle mass

- $V(x,t)$ represents the potential energy [6] [7]

For stationary states with time-independent potentials, the time-independent Schrödinger equation applies: $$\hat{H}|\Psi\rangle = E|\Psi\rangle$$ where $\hat{H}$ is the Hamiltonian operator representing the total energy of the system, and $E$ is the energy eigenvalue corresponding to a measurable energy level [6].

Quantization in Bound Systems

Solutions to the Schrödinger equation for bound systems, such as electrons in atoms or particles in potential wells, naturally yield discrete energy eigenvalues [6] [1]. These solutions mathematically formalize the concept of energy quantization first proposed phenomenologically by Planck. For the hydrogen atom, the energy eigenvalues are given by: $$E_n = -\frac{13.6 \text{ eV}}{n^2}$$ where $n$ is the principal quantum number ($n = 1, 2, 3, \ldots$) [1]. This quantization emerges as a mathematical consequence of boundary conditions imposed on the wavefunction, requiring it to be single-valued, continuous, and normalizable [6].
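The hydrogen formula above can be turned into spectral line positions with a few lines of Python. This is a sketch under stated assumptions: it uses the rounded 13.6 eV value from the text, ignores reduced-mass and fine-structure corrections, and the function names are hypothetical.

```python
RYDBERG_EV = 13.6       # hydrogen ground-state binding energy used in the text, eV
HC_EV_NM = 1239.841984  # h*c expressed in eV*nm


def hydrogen_level_ev(n):
    """E_n = -13.6 eV / n^2 for principal quantum number n >= 1."""
    if n < 1:
        raise ValueError("n must be a positive integer")
    return -RYDBERG_EV / n**2


def emission_wavelength_nm(n_upper, n_lower):
    """Wavelength of the photon emitted in the transition n_upper -> n_lower."""
    if n_upper <= n_lower:
        raise ValueError("emission requires n_upper > n_lower")
    delta_e = hydrogen_level_ev(n_upper) - hydrogen_level_ev(n_lower)
    return HC_EV_NM / delta_e
```

For example, `emission_wavelength_nm(3, 2)` evaluates to about 656 nm, the red line of the Balmer series; only such discrete wavelengths appear because only discrete values of $n$ are allowed.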

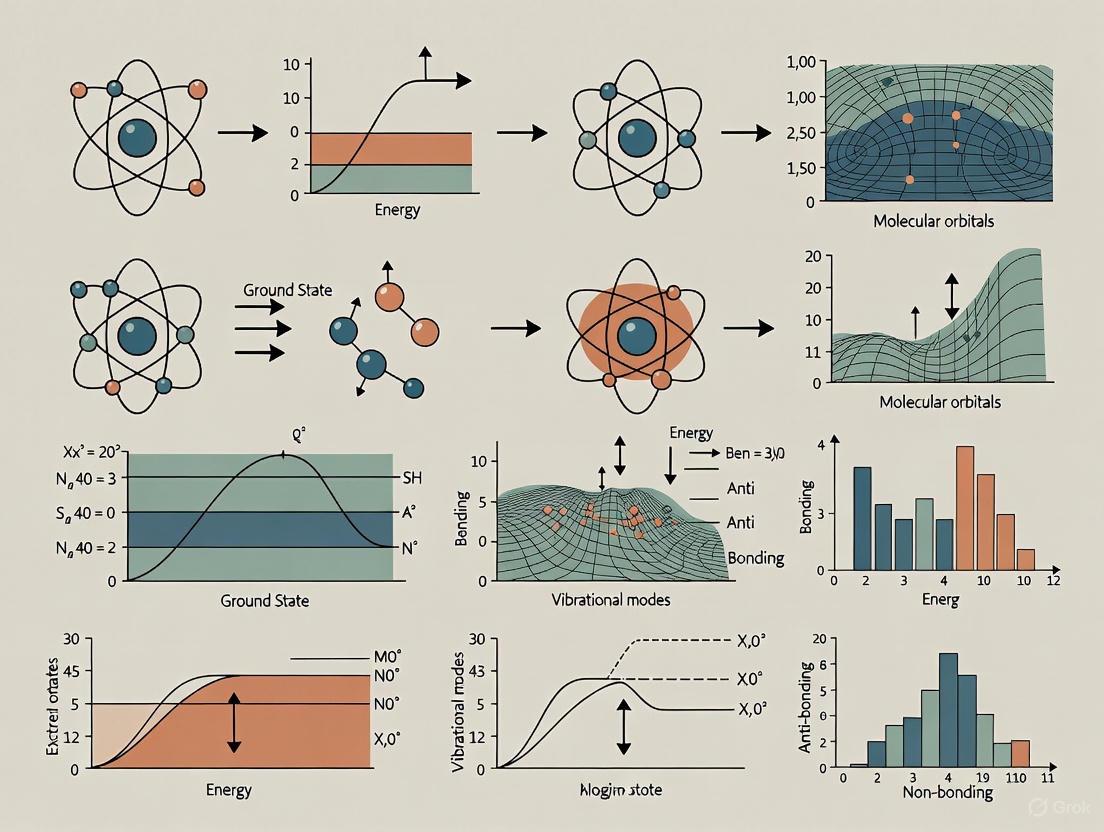

Figure 1: Historical Development of Energy Quantization Concepts

Experimental Methodologies and Validation

Blackbody Radiation Experimental Protocol

Objective: Verify Planck's quantum hypothesis by measuring the spectral distribution of blackbody radiation and comparing it to the classical Rayleigh-Jeans law and Planck's distribution.

Materials and Equipment:

- Cavity radiator with precision temperature control

- Spectrometer with wavelength range 200-2500 nm

- Thermopile detector or calibrated photodiode array

- Data acquisition system with temperature and intensity logging

Procedure:

- Prepare a cavity radiator (e.g., a hollow sphere with small aperture) to approximate an ideal blackbody.

- Heat the radiator to a stable temperature between 1000K and 6000K, using thermocouples for precise monitoring.

- Direct emitted radiation through the spectrometer, scanning across wavelengths from ultraviolet to infrared.

- Measure intensity at each wavelength interval with the thermopile detector.

- Repeat measurements at multiple temperatures to observe the spectral shift.

- Plot intensity versus wavelength for each temperature and compare with theoretical predictions.

Expected Results: The experimental data will show a characteristic peak in intensity that shifts to shorter wavelengths with increasing temperature (Wien's displacement law). The curve will follow Planck's distribution rather than the classical Rayleigh-Jeans law, which diverges at short wavelengths [3].
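The comparison described in the expected results can be sketched numerically. The snippet below evaluates Planck's distribution and the Rayleigh-Jeans law as spectral radiance per unit wavelength and finds the Planck peak by a brute-force scan; the function names, the 1 nm grid, and the scan range are assumptions made for illustration.

```python
import math

H = 6.62607015e-34   # Planck constant, J*s
C = 2.99792458e8     # speed of light, m/s
KB = 1.380649e-23    # Boltzmann constant, J/K


def planck(wavelength_m, temp_k):
    """Planck spectral radiance B_lambda(T) in W sr^-1 m^-3."""
    x = H * C / (wavelength_m * KB * temp_k)
    if x > 700:  # exp(x) would overflow; the radiance is effectively zero
        return 0.0
    return 2 * H * C**2 / wavelength_m**5 / math.expm1(x)


def rayleigh_jeans(wavelength_m, temp_k):
    """Classical Rayleigh-Jeans radiance, which diverges as wavelength -> 0."""
    return 2 * C * KB * temp_k / wavelength_m**4


def peak_wavelength_nm(temp_k, start_nm=100, stop_nm=10000):
    """Locate the Planck maximum on a 1 nm grid (brute-force scan)."""
    return max(range(start_nm, stop_nm + 1),
               key=lambda nm: planck(nm * 1e-9, temp_k))
```

At 5000 K the scan places the peak near 580 nm, consistent with Wien's displacement law. The two laws agree at millimetre wavelengths, while at 200 nm the classical expression exceeds Planck's by several orders of magnitude.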

Photoelectric Effect Experimental Protocol

Objective: Demonstrate the particle nature of light and validate Einstein's photon concept by measuring the kinetic energy of photoelectrons as a function of incident light frequency.

Materials and Equipment:

- Vacuum phototube with photocathode of known work function (e.g., cesium, potassium)

- Monochromatic light source with adjustable frequency (e.g., mercury vapor lamp with filters or tunable laser)

- Variable voltage source and sensitive ammeter

- Retarding potential apparatus for electron energy analysis

Procedure:

- Assemble the photoelectric effect apparatus in a dark environment to eliminate stray light.

- Illuminate the photocathode with monochromatic light of a specific frequency.

- Apply a retarding potential between the cathode and collector electrode while measuring the photocurrent.

- Determine the stopping potential (V₀) where photocurrent reaches zero.

- Repeat measurements across multiple frequencies of incident light.

- Plot stopping potential versus frequency for analysis.

Expected Results: The data will show a linear relationship between stopping potential and frequency, with a threshold frequency below which no photoelectrons are emitted regardless of intensity. The slope of the line will equal $h/e$, confirming Einstein's equation $K_{max} = h\nu - \phi$ [1].
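The analysis step of this protocol amounts to a straight-line fit. The sketch below fits stopping potential against frequency by ordinary least squares and reads Planck's constant from the slope, the work function from the intercept, and the threshold frequency from the zero crossing. The data are synthetic and noise-free, generated from the equation itself with an assumed work function of 2.3 eV, so the example only checks that the analysis is self-consistent; the function names are hypothetical.

```python
E_CHARGE = 1.602176634e-19  # elementary charge, C


def fit_line(xs, ys):
    """Ordinary least-squares slope and intercept for y = slope*x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def analyze_photoelectric(freqs_hz, stopping_v):
    """Return (h in J*s, work function in eV, threshold frequency in Hz)."""
    slope, intercept = fit_line(freqs_hz, stopping_v)
    return slope * E_CHARGE, -intercept, -intercept / slope


# Synthetic data from V0 = (h/e)*nu - phi/e with phi = 2.3 eV.
# These are NOT measurements, only a self-consistency check.
freqs = [6.0e14, 7.0e14, 8.0e14, 9.0e14, 1.0e15]
volts = [6.62607015e-34 / E_CHARGE * f - 2.3 for f in freqs]
h_est, phi_est, nu0_est = analyze_photoelectric(freqs, volts)
```

With real measurements the same fit would carry experimental scatter, so reporting the uncertainty of the slope alongside the estimate of $h$ would be advisable.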

Table 2: Research Reagent Solutions for Quantum Phenomena Investigation

| Reagent/Equipment | Function in Experimental Protocol | Technical Specifications |
|---|---|---|
| Cavity Radiator | Approximates ideal blackbody for radiation studies | High-emissivity interior coating (e.g., carbon black); precise temperature control (±0.1 K) |
| Monochromatic Light Source | Provides precise frequency illumination for photoelectric studies | Wavelength range: 200-800 nm; bandwidth: <5 nm; intensity stability: >95% |
| Photocathode Materials | Electron emission surface for photoelectric measurements | Low work function (e.g., cesium-antimonide: ~1.8 eV; potassium: ~2.3 eV) |
| Spectrometer | Wavelength dispersion and measurement | Resolution: <1 nm; detection range: 200-2500 nm; calibrated wavelength accuracy |
| Retarding Potential Apparatus | Measures kinetic energy of photoelectrons | Voltage range: 0-5 V; resolution: 0.01 V; low noise measurement circuit |

Quantization in Chemical Systems and Drug Discovery

Quantum Principles in Molecular Systems

In chemical systems, energy quantization manifests through discrete molecular orbitals, vibrational states, and rotational levels that govern molecular behavior [1]. The electronic transitions between quantized energy states produce characteristic absorption and emission spectra that serve as fingerprints for chemical identification [1]. In drug discovery research, these principles enable:

- Molecular Modeling: Quantum chemical calculations using the Schrödinger equation to predict molecular properties and reactivity [8]

- Spectroscopic Analysis: Utilizing discrete spectral lines to determine molecular structure and dynamics

- Reaction Kinetics: Understanding reaction pathways through quantized transition states

Advanced Applications in Pharmaceutical Research

The emergence of quantum computing and quantum-inspired algorithms represents the modern evolution of quantization principles in chemical research [9] [8] [10]. Quantum computers leverage quantized states (qubits) to simulate molecular systems with complexity beyond the reach of classical computers [10]. Initiatives like the Quantum Systems Accelerator (QSA) aim to achieve 1,000-fold performance gains in quantum computational power by 2030, with direct applications to drug discovery [10]:

- Virtual Screening: Quantized models process millions of chemical compounds in reduced time, identifying potential drug candidates more efficiently than traditional methods [8].

- Molecular Dynamics Simulations: Quantization of simulation parameters enables study of molecular interactions at reduced computational cost, accelerating drug design [8].

- Predictive Toxicology: Quantized machine learning models predict toxicity of potential drug candidates with high accuracy, reducing risks in clinical trials [8].

Figure 2: Relationship Between Quantum Principles and Drug Discovery Applications

The principle of energy quantization has evolved from Planck's mathematical trick to resolve the ultraviolet catastrophe to a fundamental concept underpinning modern quantum mechanics and its applications in chemical systems research [5] [3]. The mathematical formalization through the Schrödinger equation provides a robust framework for predicting and understanding discrete energy states in atomic and molecular systems [6] [7]. For drug development professionals, these quantum principles enable increasingly sophisticated computational approaches to molecular design and optimization.

Future directions in quantization research include the development of quantum computers that directly harness quantum states for molecular simulations [10], advanced quantization techniques in machine learning for drug discovery [8], and continued refinement of quantum chemical methods to predict molecular behavior with greater accuracy. As these technologies mature, the principles of energy quantization first proposed by Planck over a century ago will continue to drive innovation in chemical research and pharmaceutical development.

The principle of energy quantization is a cornerstone of modern quantum mechanics, fundamentally distinguishing it from classical physics. This concept posits that energy, particularly within atomic and molecular systems, exists in discrete, specific amounts rather than as a continuous spectrum. The experimental verification of this principle emerged not from theoretical postulation alone but from rigorous empirical investigations into two key phenomena: atomic spectra and the photoelectric effect. These experiments provided the first conclusive evidence that energy transitions within chemical systems are quantized, meaning electrons can only occupy specific energy levels and transition between them by absorbing or emitting precise packets of energy. This framework is essential for understanding the behavior of electrons in atoms and molecules, which in turn governs chemical bonding, reactivity, and the spectroscopic properties of materials—all critical considerations in fields ranging from drug development to materials science.

This guide details the experimental methodologies and findings that underpin the concept of energy quantization, providing researchers with a thorough understanding of the historic and technical context.

Atomic Spectra: Direct Evidence of Discrete Energy Levels

Experimental Foundation and Theoretical Basis

Atomic emission spectroscopy is a foundational technique for probing the quantized energy states of atoms. When an atom's electrons are excited to higher energy orbitals—typically by thermal energy from a flame or electrical discharge—they subsequently relax to lower energy states. The energy difference between these states is emitted as a photon [11] [12]. The central quantum mechanical relationship is given by:

$$E_{\text{photon}} = h\nu = E_{\text{initial}} - E_{\text{final}}$$

where $E_{\text{photon}}$ is the energy of the emitted photon, $h$ is Planck's constant, $\nu$ is the frequency of the light, and $E_{\text{initial}}$ and $E_{\text{final}}$ are the energies of the initial and final electron states, respectively [12] [13]. Because these electronic states are quantized, the energy differences, and thus the frequencies of emitted light, are also discrete. This results in an emission spectrum observed as a series of bright, distinct lines at specific wavelengths, rather than a continuous rainbow of light [11] [12]. Each element possesses a unique set of energy levels, yielding a characteristic spectral "fingerprint" used for qualitative chemical analysis [12].

Detailed Experimental Protocol: Flame Emission Spectroscopy

Figure: Workflow of a flame emission spectroscopy experiment for observing atomic spectra [12].

The principle of energy quantization is a cornerstone of modern chemistry, fundamentally distinguishing quantum mechanical systems from classical ones. In chemical systems, energy is not continuous but exists in discrete, quantized levels. This quantization governs the behavior of electrons within atoms and molecules, as well as the vibrational and rotational motions of molecules themselves [14]. The quantum mechanical model reveals that these particles and systems can only occupy specific, allowed energy states, and transitions between these states involve the absorption or emission of precise amounts of energy [15]. This framework is not merely a theoretical construct; it provides the essential foundation for predicting molecular structure, reactivity, and spectroscopic behavior, with critical applications spanning drug discovery, materials science, and catalysis [16].

The observation of discrete spectral lines in atomic and molecular spectroscopy provided the initial experimental evidence for quantization. The failure of classical physics to explain these phenomena, such as the ultraviolet catastrophe, led to the development of quantum theory by Max Planck, who proposed that energy is exchanged in discrete packets, or quanta [15]. The subsequent work of Einstein, Bohr, Schrödinger, and Heisenberg established a robust mathematical framework, demonstrating that quantization is a natural consequence of the wave-like properties of particles confined to bound systems [14] [15].

Theoretical Foundations of Quantization

The Quantum Mechanical Model of the Atom

The quantum mechanical model of the atom replaced earlier planetary models by describing electrons not as particles in fixed orbits, but as wave-like entities occupying three-dimensional regions called atomic orbitals [14]. The probability of finding an electron in a specific region is given by the squared magnitude of its wave function ($\psi$), which is a solution to the fundamental equation of quantum mechanics: the Schrödinger equation [14].

The time-independent Schrödinger equation is expressed as: $$\hat{H}\psi = E\psi$$ where $\hat{H}$ is the Hamiltonian operator (representing the total energy of the system), $\psi$ is the wave function, and $E$ is the energy eigenvalue [14]. Solving this equation for an atom yields discrete energy values ($E$) and the corresponding wave functions, which define the atomic orbitals.

Each electron in an atom is uniquely described by a set of four quantum numbers that define its quantized state [14]. The first three arise from the solution to the Schrödinger equation; the spin quantum number is added to account for the electron's intrinsic angular momentum:

- Principal quantum number ($n$): Defines the main energy level or shell ($n = 1, 2, 3, \ldots$).

- Azimuthal quantum number ($l$): Defines the orbital's shape ($l = 0$ to $n-1$, corresponding to s, p, d, f orbitals).

- Magnetic quantum number ($m_l$): Defines the orbital's orientation in space ($m_l = -l$ to $+l$).

- Spin quantum number ($m_s$): Specifies the electron's spin direction ($+\frac{1}{2}$ or $-\frac{1}{2}$).

A foundational principle that underscores the quantum nature of particles is the Heisenberg Uncertainty Principle, which states that it is impossible to simultaneously know both the exact position and exact momentum of an electron [14]. This inherent uncertainty necessitates a probabilistic model and definitively prohibits the concept of fixed, classical orbits.

Quantization in Bound Systems

A general result in quantum mechanics is that any bound system exhibits quantized energy levels [15]. This applies universally to electrons attracted to a nucleus, atoms bound in a molecule, or a mass connected to a spring. The confinement of a wave to a finite space leads to the formation of standing waves, which only exist for specific, discrete frequencies and their harmonics [17] [15]. This is directly analogous to a guitar string, which can only vibrate at its fundamental frequency or integer multiples thereof. In quantum systems, these standing wave conditions result in a discrete energy spectrum.

Electronic Energy Levels

Atomic and Molecular Orbitals

The solution of the Schrödinger equation for the hydrogen atom produces a set of atomic orbitals (s, p, d, f), each with a characteristic energy and shape [14]. In molecules, the combination of atomic orbitals leads to the formation of molecular orbitals, which are delocalized over the entire molecule and also possess quantized energies. The arrangement of electrons into these molecular orbitals according to the Aufbau principle, Pauli exclusion principle, and Hund's rule determines the electronic configuration and fundamental properties of the molecule [14].

Electronic Spectroscopy

Electronic spectroscopy probes transitions between quantized electronic energy levels. These transitions are typically induced by photons in the visible and ultraviolet regions of the electromagnetic spectrum (approximately 200–700 nm) [18].

In a molecule, the energy change in an electronic transition is not solely electronic. According to the Born-Oppenheimer approximation, the total energy can be expressed as the sum of electronic, vibrational, and rotational contributions: $$E_{\text{total}} = E_{\text{electronic}} + E_{\text{vibrational}} + E_{\text{rotational}}$$ As a result, a single electronic transition is accompanied by a multitude of possible vibrational and rotational transitions, giving rise to a complex vibronic spectrum with characteristic "coarse" vibrational and "fine" rotational structures [18].

Table: Electronic Energy Level Structure in Diatomic Molecules

| Energy Component | Description | Relative Energy Spacing | Spectroscopic Region |
|---|---|---|---|
| Electronic | Energy associated with the configuration of electrons in molecular orbitals. | Largest | Visible & Ultraviolet |
| Vibrational | Energy associated with the oscillation of the atomic nuclei. | Intermediate | Infrared |
| Rotational | Energy associated with the rotation of the entire molecule. | Smallest | Microwave & Far-IR |

Figure: Hierarchy of energy levels in a molecule and the transitions measured in electronic spectroscopy.

Vibrational Energy Levels

The Quantum Harmonic Oscillator

The vibrations within a molecule are quantized. A fundamental model for this is the quantum harmonic oscillator, which approximates the chemical bond as a spring obeying Hooke's law [15]. While the classical harmonic oscillator can possess any continuous energy value, the quantum mechanical solution yields discrete energy levels.

The Schrödinger equation for the quantum harmonic oscillator gives the allowed energy levels as: $$E_v = \hbar \omega \left( v + \frac{1}{2} \right)$$ where $v = 0, 1, 2, \ldots$ is the vibrational quantum number, $\hbar$ is the reduced Planck constant, and $\omega$ is the fundamental angular vibrational frequency, related to the bond force constant $k$ and reduced mass $\mu$ by $\omega = \sqrt{k/\mu}$ [15]. The term $\frac{1}{2} \hbar \omega$ is the zero-point energy, the minimum energy the oscillator possesses even at absolute zero.
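A short sketch shows how the harmonic-oscillator expressions translate into numbers. The force constant of about 1900 N/m and the atomic masses in the usage note are illustrative, roughly CO-like inputs assumed for this example rather than values taken from the cited sources, and the function names are hypothetical.

```python
import math

HBAR = 1.054571817e-34   # reduced Planck constant, J*s
C_CM = 2.99792458e10     # speed of light, cm/s
AMU = 1.66053906660e-27  # atomic mass unit, kg


def reduced_mass_kg(m1_amu, m2_amu):
    """mu = m1*m2 / (m1 + m2), converted from amu to kg."""
    return m1_amu * m2_amu / (m1_amu + m2_amu) * AMU


def angular_frequency(k_n_per_m, mu_kg):
    """omega = sqrt(k / mu) in rad/s."""
    return math.sqrt(k_n_per_m / mu_kg)


def vibrational_energy_j(v, omega):
    """E_v = hbar * omega * (v + 1/2) in joules."""
    if v < 0:
        raise ValueError("v must be a non-negative integer")
    return HBAR * omega * (v + 0.5)


def wavenumber_cm1(omega):
    """Convert an angular frequency to a spectroscopic wavenumber in cm^-1."""
    return omega / (2 * math.pi * C_CM)
```

Calling `angular_frequency(1900.0, reduced_mass_kg(12.0, 15.995))` and passing the result to `wavenumber_cm1` gives a fundamental near 2170 cm⁻¹, in the infrared. `vibrational_energy_j(0, omega)` is the zero-point energy, and successive levels are separated by the constant quantum $\hbar\omega$.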

The Morse Potential and Anharmonicity

A more realistic model for molecular vibrations is the Morse potential, which accounts for bond dissociation at high energies [17]. Like the harmonic oscillator, the Morse potential features discrete vibrational energy levels, denoted by horizontal lines on a potential energy curve [17]. However, the energy levels become closer together as the vibrational quantum number increases, reflecting anharmonicity. At room temperature, molecules typically occupy the lowest vibrational levels [17].

Table: Comparison of Vibrational Models

| Feature | Harmonic Oscillator | Morse Potential |
|---|---|---|
| Energy Level Spacing | Constant ($\Delta E = \hbar \omega$) | Decreases with increasing $v$ |
| Bond Dissociation | Not accounted for (infinite energy required) | Accounted for (finite dissociation energy) |
| Mathematical Form | $E_v = \hbar \omega (v + 1/2)$ | $E_v = \hbar \omega (v + 1/2) - [\hbar \omega (v + 1/2)]^2 / (4D_e)$ |
| Realism | Good approximation for low $v$ | More realistic across all $v$ |
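The closing of the level spacing in the Morse model can be checked directly from the formula in the table. In this sketch the harmonic quantum and well depth are passed in the same energy unit; the numbers in the usage note (2170 and 90000, in cm⁻¹) are illustrative assumptions, not fitted parameters from the cited sources.

```python
def morse_level(v, we, de):
    """Morse level E_v = we*(v + 1/2) - [we*(v + 1/2)]^2 / (4*de).

    we is the harmonic quantum (hbar*omega) and de the well depth D_e,
    both in the same energy unit.
    """
    harmonic = we * (v + 0.5)
    return harmonic - harmonic**2 / (4 * de)


def morse_spacing(v, we, de):
    """Energy gap between levels v + 1 and v."""
    return morse_level(v + 1, we, de) - morse_level(v, we, de)


# Illustrative inputs in cm^-1 (assumed values for demonstration only)
spacings = [morse_spacing(v, 2170.0, 90000.0) for v in range(5)]
```

Each successive gap in `spacings` is smaller than the previous one by the constant amount $(\hbar\omega)^2/(2D_e)$, whereas the harmonic model would return the same gap for every $v$.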

Rotational Energy Levels

The Quantum Rigid Rotor

The rotation of a molecule is also quantized. The simplest model is the quantum rigid rotor, which describes the rotation of a rigid dumbbell molecule [18]. The solution to the Schrödinger equation for this system yields quantized rotational energy levels: $$E_J = hcB\,J(J + 1)$$ where $J = 0, 1, 2, \ldots$ is the rotational quantum number, and $B$ is the rotational constant expressed in wavenumbers, given by $B = \frac{h}{8\pi^2 c I}$. Here, $I$ is the moment of inertia of the molecule [18]; spectroscopic term values are often quoted directly as $E_J/hc = B J(J+1)$. The moment of inertia depends on the bond length and atomic masses, meaning that rotational spectroscopy provides a direct route to determining molecular geometries.
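The route from a rotational constant to a bond length can be sketched as follows for a diatomic molecule, with $B$ in cm⁻¹ and $I = \mu r^2$. The masses and the value $B \approx 1.93$ cm⁻¹ used in the usage note are illustrative, roughly CO-like assumptions, and the function names are hypothetical.

```python
import math

H = 6.62607015e-34       # Planck constant, J*s
C_CM = 2.99792458e10     # speed of light in cm/s, so B comes out in cm^-1
AMU = 1.66053906660e-27  # atomic mass unit, kg


def _reduced_mass_kg(m1_amu, m2_amu):
    return m1_amu * m2_amu / (m1_amu + m2_amu) * AMU


def rotational_constant_cm1(m1_amu, m2_amu, r_m):
    """B = h / (8 pi^2 c I) with I = mu * r^2 for a diatomic molecule."""
    inertia = _reduced_mass_kg(m1_amu, m2_amu) * r_m**2
    return H / (8 * math.pi**2 * C_CM * inertia)


def bond_length_m(m1_amu, m2_amu, b_cm1):
    """Invert B to recover the bond length r = sqrt(I / mu)."""
    inertia = H / (8 * math.pi**2 * C_CM * b_cm1)
    return math.sqrt(inertia / _reduced_mass_kg(m1_amu, m2_amu))


def rotational_term_cm1(j, b_cm1):
    """Rotational term value B*J*(J+1) in cm^-1."""
    return b_cm1 * j * (j + 1)
```

With these inputs, `bond_length_m(12.0, 15.995, 1.93)` returns a bond length of about 1.13 Å. Adjacent term values differ by $2B(J+1)$, which is why pure rotational lines of a rigid rotor are evenly spaced.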

Rotational Fine Structure

Pure rotational transitions occur in the microwave region of the spectrum. In electronic or vibrational spectra, rotational transitions appear as fine structure on each vibronic or vibrational line [18]. For a diatomic molecule, the selection rules lead to the formation of P-branches ($\Delta J = -1$) and R-branches ($\Delta J = +1$), and sometimes a Q-branch ($\Delta J = 0$) [18]. The rotational constant $B'$ in an electronically excited state is often smaller than $B''$ in the ground state, indicating bond lengthening upon electronic excitation [18].

Experimental Methodologies and Protocols

Spectroscopic Techniques

Experimental verification of quantized states is primarily achieved through spectroscopy. The following workflow outlines a generalized protocol for acquiring and interpreting a molecular spectrum to extract quantized energy levels.

Detailed Protocol for Electronic Absorption Spectroscopy (Solution Phase):

- Sample Preparation: Prepare a dilute solution of the analyte in a spectrophotometric-grade solvent contained in a quartz cuvette (for UV-Vis measurements) [18].

- Baseline Correction: Place a matched cuvette containing only the pure solvent in the spectrometer's reference beam to subtract solvent absorption.

- Data Acquisition: Scan the monochromator across the desired wavelength range (e.g., 200–800 nm). Measure the intensity of transmitted light ($I$) relative to the reference beam intensity ($I_0$) to calculate absorbance ($A = -\log_{10}(I/I_0)$).

- Data Analysis:

  - Identify the wavelength ($\lambda_{\text{max}}$) of maximum absorption for each band.

  - Convert wavelength to energy using $E = hc / \lambda$.

  - For vibrational progression within an electronic band, the energy separation between peaks corresponds to vibrational quanta ($\hbar \omega$).
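The data-analysis steps above reduce to a few unit conversions, sketched here in Python. The helper names are hypothetical, and peak positions are assumed to have been picked from the spectrum beforehand.

```python
import math

HC_EV_NM = 1239.841984  # h*c expressed in eV*nm


def absorbance(transmitted, reference):
    """A = -log10(I / I0)."""
    return -math.log10(transmitted / reference)


def energy_ev(wavelength_nm):
    """Transition energy E = h*c / lambda in eV."""
    return HC_EV_NM / wavelength_nm


def wavenumber_cm1(wavelength_nm):
    """Wavenumber in cm^-1, which is proportional to energy."""
    return 1e7 / wavelength_nm


def progression_spacings_cm1(peak_wavelengths_nm):
    """Separations between adjacent vibronic peaks, in cm^-1."""
    nus = sorted(wavenumber_cm1(w) for w in peak_wavelengths_nm)
    return [hi - lo for lo, hi in zip(nus, nus[1:])]
```

Spacings are computed in wavenumbers rather than wavelengths because equal energy gaps are not equally spaced on a wavelength axis. A sample transmitting 10% of the reference intensity, for instance, has an absorbance of 1.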

Computational Chemistry Methods

Computational protocols provide a theoretical route to determining quantized energy levels, crucial for systems where experimental data is scarce.

Protocol for Calculating Reduction Potentials Using Neural Network Potentials (NNPs):

- Geometry Optimization: Optimize the molecular geometries of both the non-reduced and reduced species using a computational method (e.g., a neural network potential like UMA-S or eSEN, or density functional theory like B97-3c) [19]. Use a convergence threshold for the maximum force (e.g., 4.5 × 10⁻⁴ a.u.) [19].

- Energy Calculation: Input the optimized structures into a solvation model (e.g., CPCM-X) to obtain the solvent-corrected electronic energy for each species [19].

- Property Calculation: Calculate the reduction potential ($E^0$) as the difference in electronic energy between the non-reduced and reduced structures: $E^0 = E_{\text{non-reduced}} - E_{\text{reduced}}$ (in volts) [19].

- Benchmarking: Compare the calculated values against experimental data and report statistical accuracy metrics such as Mean Absolute Error (MAE) and Root Mean Squared Error (RMSE) [19].
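The property-calculation and benchmarking steps can be sketched as below. Several points are assumptions of this example rather than details confirmed by [19]: input energies are taken to be in hartree, the reduction is treated as a one-electron process so that an energy difference in eV is numerically a potential in volts, no reference-electrode shift is applied, and R² is computed as the coefficient of determination $1 - SS_{res}/SS_{tot}$.

```python
import math

HARTREE_TO_EV = 27.211386245988  # CODATA hartree-to-eV conversion factor


def reduction_potential_v(e_nonreduced_hartree, e_reduced_hartree):
    """E0 = E_non-reduced - E_reduced, converted to eV (numerically volts
    for an assumed one-electron reduction)."""
    return (e_nonreduced_hartree - e_reduced_hartree) * HARTREE_TO_EV


def mae(pred, ref):
    """Mean absolute error."""
    return sum(abs(p - r) for p, r in zip(pred, ref)) / len(ref)


def rmse(pred, ref):
    """Root mean squared error."""
    return math.sqrt(sum((p - r) ** 2 for p, r in zip(pred, ref)) / len(ref))


def r_squared(pred, ref):
    """Coefficient of determination, 1 - SS_res / SS_tot (assumed definition)."""
    mean_ref = sum(ref) / len(ref)
    ss_res = sum((r - p) ** 2 for p, r in zip(pred, ref))
    ss_tot = sum((r - mean_ref) ** 2 for r in ref)
    return 1 - ss_res / ss_tot
```

RMSE weights large deviations more heavily than MAE, so a method with a few severe outliers can show a similar MAE but a noticeably larger RMSE than a competitor.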

Table: Performance of Computational Methods for Charge-Related Properties

| Method | System Type | Mean Absolute Error (MAE) | Root Mean Squared Error (RMSE) | Coefficient of Determination (R²) |
|---|---|---|---|---|
| B97-3c | Main-Group (OROP) | 0.260 V | 0.366 V | 0.943 |
| B97-3c | Organometallic (OMROP) | 0.414 V | 0.520 V | 0.800 |
| GFN2-xTB | Main-Group (OROP) | 0.303 V | 0.407 V | 0.940 |
| GFN2-xTB | Organometallic (OMROP) | 0.733 V | 0.938 V | 0.528 |
| UMA-S (NNP) | Main-Group (OROP) | 0.261 V | 0.596 V | 0.878 |
| UMA-S (NNP) | Organometallic (OMROP) | 0.262 V | 0.375 V | 0.896 |

The Scientist's Toolkit: Key Research Reagents & Materials

Table: Essential Computational and Experimental Resources

| Tool / Reagent | Type | Primary Function | Example / Vendor |
|---|---|---|---|
| OMol25 NNPs | Computational Model | Predict molecular energies across charge/spin states with high speed and accuracy for drug-relevant molecules [19]. | eSEN-S, UMA-S, UMA-M |
| Density Functional Theory | Computational Method | Calculate electronic structure and properties using approximate functionals; balances cost and accuracy [19]. | B97-3c, r2SCAN-3c, ωB97X-3c |
| Semiempirical Methods | Computational Method | Perform rapid quantum mechanical calculations using empirical parameters; useful for large systems [19]. | GFN2-xTB, g-xTB |
| UV-Vis Spectrophotometer | Instrument | Measure electronic absorption spectra to probe electronic energy levels and transitions [18]. | Agilent, Shimadzu |
| Quartz Cuvette | Labware | Hold liquid samples for UV-Vis spectroscopy; transparent down to ~200 nm [18]. | Hellma, Starna |
| FreeQuantum Pipeline | Computational Framework | A modular pipeline integrating machine learning and quantum chemistry (and eventually quantum computing) for high-accuracy binding energy calculations [16]. | Open-source (GitHub) |

Current Research and Quantum Computing Applications

The pursuit of quantum advantage—using quantum computers to solve problems intractable for classical computers—is a major frontier in computational chemistry. An international team has developed the FreeQuantum pipeline, a blueprint for achieving this in calculating molecular binding energies, a critical task in drug discovery [16].

This framework integrates machine learning, classical simulation, and high-accuracy quantum chemistry in a modular system. Its "quantum core" is designed to eventually be run on fault-tolerant quantum computers, using algorithms like Quantum Phase Estimation (QPE) to solve the electronic Schrödinger equation with certified accuracy for problems that are too complex for classical methods [16]. A benchmark study on a ruthenium-based anticancer drug demonstrated that high-accuracy quantum chemical methods within this pipeline yielded a binding free energy of -11.3 ± 2.9 kJ/mol, substantially different from the -19.1 kJ/mol predicted by classical force fields [16]. A difference of this size, roughly 8 kJ/mol, can be decisive in drug candidate optimization.
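To illustrate why a gap of a few kJ/mol matters, the sketch below converts a difference in binding free energy into a ratio of dissociation constants using the standard thermodynamic relation $\Delta G = RT \ln K_d$. The temperature of 298.15 K is an assumption of this example, the quoted ±2.9 kJ/mol uncertainty is ignored, and the function name is hypothetical.

```python
import math

R = 8.314462618  # molar gas constant, J mol^-1 K^-1


def affinity_ratio(dg1_kj_mol, dg2_kj_mol, temp_k=298.15):
    """Ratio K_d(1) / K_d(2) implied by two binding free energies.

    Uses dG = R*T*ln(K_d), so K_d(1)/K_d(2) = exp((dG1 - dG2) / (R*T)).
    """
    return math.exp((dg1_kj_mol - dg2_kj_mol) * 1000.0 / (R * temp_k))
```

Evaluating `affinity_ratio(-11.3, -19.1)` shows that the two estimates quoted above correspond to predicted dissociation constants differing by roughly a factor of 20, enough to change the ranking of candidate compounds.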

Resource estimates suggest that with around 1,000 logical qubits and sufficient gate fidelities, quantum computers could compute the necessary energy data for such biochemical simulations within practical timeframes, paving the way for quantum computers to become routine tools in molecular science [16].

The particle-in-a-box model stands as a cornerstone in quantum mechanics, providing an analytically solvable framework that illuminates the fundamental principles of energy quantization in confined systems. This model, while conceptually straightforward, offers profound insights into the quantum behavior of particles, forming a foundational concept for understanding more complex chemical systems. Its utility extends far beyond a mere pedagogical exercise; it serves as a critical starting point for conceptualizing quantum confinement effects observed in nanostructured materials, conjugated organic molecules, and biological chromophores. For researchers and drug development professionals, mastering this model is not an academic formality but a practical necessity. It provides the intellectual scaffolding for understanding molecular orbital theory, electronic spectroscopy, and the quantum-mechanical basis for molecular interactions that underpin modern drug design. The model's capacity to yield exact solutions to the Schrödinger equation makes it an indispensable tool for developing intuition about quantum phenomena that would otherwise remain obscured by mathematical complexity in more realistic systems.

Theoretical Framework

Model Definition and Schrödinger Equation

The particle-in-a-box model considers a particle of mass (m) confined to a one-dimensional region of space by impenetrable barriers. The potential energy function defining this system is [20] [21]: [ V(x) = \begin{cases} 0 & \text{for } 0 \leq x \leq L \\ \infty & \text{for } x < 0 \text{ or } x > L \end{cases} ] where (L) represents the length of the box. The infinite potential energy at the boundaries ensures the particle cannot exist outside the box, creating a perfect confinement system. Within the box, where (V(x) = 0), the time-independent Schrödinger equation simplifies to [22] [23]: [ -\dfrac{\hbar^2}{2m} \dfrac{d^2\psi(x)}{dx^2} = E\psi(x) ] where (\hbar) is the reduced Planck constant, (\psi(x)) is the wave function, and (E) is the total energy of the particle. This equation describes the motion of a free particle inside the box, subject to the critical boundary conditions that the wave function must be zero at both ends: (\psi(0) = 0) and (\psi(L) = 0) [20].

Wavefunctions and Quantized Energy Levels

The general solution to the Schrödinger equation for this system is [20] [21]: [ \psi(x) = A\sin(kx) + B\cos(kx) ] where (A) and (B) are constants determined by the boundary conditions, and (k) is the wavevector related to the energy by (k^2 = 2mE/\hbar^2). Applying the boundary condition at (x = 0) ((\psi(0) = 0)) forces (B = 0), simplifying the solution to (\psi(x) = A\sin(kx)). The boundary condition at (x = L) ((\psi(L) = 0)) requires: [ A\sin(kL) = 0 ] Since (A) cannot be zero (which would give the trivial solution of no particle), it must be that (\sin(kL) = 0), which occurs when: [ kL = n\pi \quad \text{for } n = 1, 2, 3, \ldots ] The integer (n) is the quantum number for the system. This leads to discrete allowed values for (k): [ k_n = \frac{n\pi}{L} ] Substituting this relationship into the energy expression yields the quantized energy levels [20] [21] [23]: [ E_n = \frac{\hbar^2 k_n^2}{2m} = \frac{n^2\pi^2\hbar^2}{2mL^2} = \frac{n^2h^2}{8mL^2} ] where (h = 2\pi\hbar) is Planck's constant. The normalization condition (\int_0^L |\psi(x)|^2 dx = 1) determines the constant (A = \sqrt{2/L}), giving the complete normalized wavefunctions: [ \psi_n(x) = \sqrt{\frac{2}{L}} \sin\left(\frac{n\pi x}{L}\right) \quad \text{for } n = 1, 2, 3, \ldots ]
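The two closed-form results above can be evaluated directly. The following is a minimal sketch, assuming an electron in a box of length 1 nm (an illustrative choice, not a value from the text); the function names are hypothetical.

```python
import math

H = 6.62607015e-34      # Planck constant, J s
M_E = 9.1093837015e-31  # electron mass, kg
EV = 1.602176634e-19    # joules per electronvolt


def box_energy(n: int, length: float, mass: float = M_E) -> float:
    """E_n = n^2 h^2 / (8 m L^2), returned in joules."""
    if n < 1:
        raise ValueError("n must be a positive integer")
    return n**2 * H**2 / (8.0 * mass * length**2)


def box_wavefunction(n: int, x: float, length: float) -> float:
    """psi_n(x) = sqrt(2/L) sin(n pi x / L) inside the box, zero outside."""
    if x < 0.0 or x > length:
        return 0.0
    return math.sqrt(2.0 / length) * math.sin(n * math.pi * x / length)


L_BOX = 1.0e-9  # assumed box length: 1 nm
for n in range(1, 5):
    print(n, f"{box_energy(n, L_BOX) / EV:.3f} eV")
```

For this box length the ground-state energy is roughly 0.38 eV, and each higher level is n² times that value.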

Figure 1: Quantum states in a 1D infinite potential well showing wavefunctions and discrete energy levels

Quantitative Data Presentation

Energy Level and Wavefunction Properties

Table 1: Properties of particle-in-a-box wavefunctions and energy levels for quantum numbers n = 1 to 4

| Quantum Number (n) | Energy | Wavefunction ψₙ(x) | Nodes | Symmetry about x = L/2 |

|---|---|---|---|---|

| n = 1 | (E_1 = \dfrac{h^2}{8mL^2}) | (\sqrt{\dfrac{2}{L}}\sin\left(\dfrac{\pi x}{L}\right)) | 0 | Even |

| n = 2 | (E_2 = 4E_1) | (\sqrt{\dfrac{2}{L}}\sin\left(\dfrac{2\pi x}{L}\right)) | 1 | Odd |

| n = 3 | (E_3 = 9E_1) | (\sqrt{\dfrac{2}{L}}\sin\left(\dfrac{3\pi x}{L}\right)) | 2 | Even |

| n = 4 | (E_4 = 16E_1) | (\sqrt{\dfrac{2}{L}}\sin\left(\dfrac{4\pi x}{L}\right)) | 3 | Odd |

Expectation Values and Uncertainties

Table 2: Expectation values and uncertainties for a particle in a one-dimensional box

| Physical Quantity | Mathematical Expression | Value for State n |

|---|---|---|

| Position expectation value | (\langle x \rangle = \int_0^L x \lvert\psi_n(x)\rvert^2 dx) | (\dfrac{L}{2}) (for all n) |

| Position uncertainty | (\sigma_x = \sqrt{\langle x^2 \rangle - \langle x \rangle^2}) | (L\sqrt{\dfrac{1}{12} - \dfrac{1}{2n^2\pi^2}}) |

| Momentum expectation value | (\langle p \rangle = \int_0^L \psi_n^*(x)\left(-i\hbar\dfrac{d}{dx}\right)\psi_n(x) dx) | 0 (for all n) |

| Momentum uncertainty | (\sigma_p = \sqrt{\langle p^2 \rangle - \langle p \rangle^2}) | (\dfrac{n\pi\hbar}{L}) |

| Energy expectation value | (\langle E \rangle = \int_0^L \psi_n^*(x)\hat{H}\psi_n(x) dx) | (\dfrac{n^2h^2}{8mL^2}) |

The probability density (P_n(x) = |\psi_n(x)|^2 = \dfrac{2}{L}\sin^2\left(\dfrac{n\pi x}{L}\right)) reveals where the particle is likely to be found within the box. For the ground state (n=1), the particle is most likely to be found at the center of the box, while for excited states, the probability density exhibits n peaks with (n-1) nodes where the probability is zero [21].
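The tabulated expectation values can be checked by numerical integration. The sketch below works in assumed dimensionless units (L = 1, ħ = 1), uses a hand-written trapezoidal rule, and evaluates ⟨p²⟩ as ħ²∫|dψ/dx|² dx, which is valid here because the wavefunction vanishes at both walls. The helper names are hypothetical.

```python
import math


def trapezoid(values, dx):
    """Composite trapezoidal rule on an evenly spaced grid."""
    return dx * (sum(values) - 0.5 * (values[0] + values[-1]))


def box_uncertainties(n, length=1.0, hbar=1.0, points=4001):
    """Return (<x>, sigma_x, sigma_p) for state n of the 1D box."""
    dx = length / (points - 1)
    k = n * math.pi / length
    amp = math.sqrt(2.0 / length)
    xs = [i * dx for i in range(points)]
    psi = [amp * math.sin(k * x) for x in xs]
    dpsi = [amp * k * math.cos(k * x) for x in xs]  # analytic derivative of psi
    mean_x = trapezoid([x * p * p for x, p in zip(xs, psi)], dx)
    mean_x2 = trapezoid([x * x * p * p for x, p in zip(xs, psi)], dx)
    mean_p2 = hbar**2 * trapezoid([d * d for d in dpsi], dx)
    sigma_x = math.sqrt(mean_x2 - mean_x**2)
    sigma_p = math.sqrt(mean_p2)  # <p> = 0 for these real wavefunctions
    return mean_x, sigma_x, sigma_p


for n in (1, 2, 3):
    mean_x, sigma_x, sigma_p = box_uncertainties(n)
    print(n, round(mean_x, 4), round(sigma_x, 4), round(sigma_p, 4),
          "product / hbar =", round(sigma_x * sigma_p, 4))
```

The product σₓσₚ printed in the last column stays above ħ/2 for every state, as the uncertainty principle requires.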

Experimental and Computational Methodologies

Analytical Solution Protocol

The analytical solution of the particle-in-a-box model follows a systematic mathematical procedure [22] [20]:

Define the potential energy function: Set (V(x) = 0) for (0 \leq x \leq L) and (V(x) = \infty) otherwise.

Write the time-independent Schrödinger equation: [ -\dfrac{\hbar^2}{2m} \dfrac{d^2\psi}{dx^2} = E\psi \quad \text{for } 0 \leq x \leq L ]

Propose a general solution: [ \psi(x) = A\sin(kx) + B\cos(kx) \quad \text{with } k^2 = 2mE/\hbar^2 ]

Apply boundary conditions:

- At x = 0: (\psi(0) = 0) ⇒ B = 0

- At x = L: (\psi(L) = A\sin(kL) = 0) ⇒ (kL = n\pi) for n = 1, 2, 3, ...

Normalize the wavefunction: [ \int_0^L |\psi_n(x)|^2 dx = 1 \Rightarrow A = \sqrt{\frac{2}{L}} ]

Derive the energy eigenvalues: [ E_n = \frac{n^2h^2}{8mL^2} ]

Numerical Solution Using Numerov's Method

For systems where analytical solutions are intractable, numerical methods like Numerov's algorithm provide powerful alternatives [24]:

Discretize the spatial domain: Divide the interval [0, L] into N equally spaced points with spacing (\Delta x = L/(N-1)).

Express the Schrödinger equation in discrete form: [ \frac{d^2\psi}{dx^2} \approx \frac{\psi_{i+1} - 2\psi_i + \psi_{i-1}}{(\Delta x)^2} = -k_i^2\psi_i ] where (k_i^2 = \frac{2m}{\hbar^2}[E - V(x_i)]).

Implement Numerov's recurrence relation: [ \psi_{i+1} = \frac{2\left(1 - \frac{5}{12}(\Delta x)^2 k_i^2\right)\psi_i - \left(1 + \frac{1}{12}(\Delta x)^2 k_{i-1}^2\right)\psi_{i-1}}{1 + \frac{1}{12}(\Delta x)^2 k_{i+1}^2} ]

Apply shooting method: Guess an energy E, integrate the equation numerically, and iteratively adjust E until the boundary condition (\psi(L) = 0) is satisfied.

Normalize the numerical solution using trapezoidal or Simpson's rule integration.
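The steps above can be sketched compactly for the box itself, where the exact answer is known. This is an illustrative implementation, not the solver cited in [24]: it assumes dimensionless units (ħ = m = 1, L = 1), so the exact levels are n²π²/2, and it uses bisection on the energy as the shooting strategy. Normalization is omitted because only the eigenvalue is needed here.

```python
import math


def numerov_end_value(energy, potential, length=1.0, n_points=1001):
    """Integrate psi'' = -k^2 psi from x = 0 to x = L with Numerov's
    recurrence and return psi(L). Units: hbar = m = 1."""
    dx = length / (n_points - 1)

    def k2(i):
        return 2.0 * (energy - potential(i * dx))

    psi_prev, psi = 0.0, 1.0e-5  # psi(0) = 0; the first step sets an arbitrary scale
    for i in range(1, n_points - 1):
        numerator = (2.0 * (1.0 - 5.0 / 12.0 * dx**2 * k2(i)) * psi
                     - (1.0 + dx**2 / 12.0 * k2(i - 1)) * psi_prev)
        psi_prev, psi = psi, numerator / (1.0 + dx**2 / 12.0 * k2(i + 1))
    return psi


def shoot(e_low, e_high, potential, tol=1e-10):
    """Bisect on the energy until psi(L) = 0 is satisfied."""
    f_low = numerov_end_value(e_low, potential)
    if f_low * numerov_end_value(e_high, potential) > 0.0:
        raise ValueError("energy bracket does not enclose an eigenvalue")
    while e_high - e_low > tol:
        e_mid = 0.5 * (e_low + e_high)
        f_mid = numerov_end_value(e_mid, potential)
        if f_low * f_mid <= 0.0:
            e_high = e_mid
        else:
            e_low, f_low = e_mid, f_mid
    return 0.5 * (e_low + e_high)


def flat_potential(x):
    return 0.0  # V = 0 inside the box; the walls enter through psi(0) = psi(L) = 0


e1 = shoot(4.0, 6.0, flat_potential)
e2 = shoot(18.0, 21.0, flat_potential)
print(e1, math.pi**2 / 2.0)
print(e2, 2.0 * math.pi**2)
```

Replacing `flat_potential` with another function of x applies the same procedure to potentials that have no analytical solution, provided a bracketing energy interval is supplied.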

Figure 2: Methodology workflow for solving quantum systems using analytical and numerical approaches

Applications to Molecular Systems

Cyanine Dyes and Conjugated Molecules

The particle-in-a-box model provides remarkable insights into the electronic properties of conjugated organic molecules, particularly cyanine dyes. Kuhn's free-electron model treats the π-electrons in polymethine dyes as particles moving freely along a one-dimensional box representing the conjugated path [25]. The box length (L) is determined by: [ L = (N + 1) \times \beta ] where (N) represents the number of carbon atoms in the conjugated chain, and (\beta) is the average C-C bond length along the chain, intermediate between a single and a double bond (approximately 1.40 Å). For a symmetric carbocyanine dye with N carbon atoms and two nitrogen end groups, the total number of π-electrons is (N + 3). Assuming each energy level accommodates two electrons with opposite spins, the highest occupied molecular orbital (HOMO) corresponds to (n = (N + 3)/2) and the lowest unoccupied molecular orbital (LUMO) to (n = (N + 3)/2 + 1). The HOMO-LUMO transition energy is: [ \Delta E = E_{n+1} - E_n = \frac{h^2}{8m_e L^2}[(n+1)^2 - n^2] = \frac{h^2}{8m_e L^2}(2n+1) ] where (m_e) is the electron mass and (n) is the HOMO quantum number. This energy difference corresponds to the wavelength of maximum absorption: [ \lambda = \frac{hc}{\Delta E} ] where (c) is the speed of light. This simple model successfully predicts the absorption maxima of various cyanine dyes and explains the bathochromic shift (red shift) observed as the conjugated chain length increases [25].
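The free-electron estimate can be scripted directly from the relations in this paragraph. The sketch below uses exactly the box length, electron count, and β = 1.40 Å stated above; the function name and the chain lengths looped over are illustrative assumptions. Absolute wavelengths from this model are sensitive to the box-length convention, so the trend with chain length is the more robust output.

```python
H = 6.62607015e-34      # Planck constant, J s
C = 2.99792458e8        # speed of light, m/s
M_E = 9.1093837015e-31  # electron mass, kg
BETA = 1.40e-10         # average C-C bond length from the text, m


def cyanine_lambda_max(n_carbons: int, beta: float = BETA) -> float:
    """Free-electron estimate of the absorption maximum in metres."""
    n_pi = n_carbons + 3  # pi-electron count stated in the text
    if n_pi % 2:
        raise ValueError("N + 3 must be even so that every level is doubly occupied")
    length = (n_carbons + 1) * beta              # L = (N + 1) * beta
    n_homo = n_pi // 2                           # HOMO quantum number
    delta_e = H**2 * (2 * n_homo + 1) / (8.0 * M_E * length**2)
    return H * C / delta_e


for n_carbons in (3, 5, 7, 9):
    print(n_carbons, f"{cyanine_lambda_max(n_carbons) * 1e9:.0f} nm")
```

Each added vinylene unit (two more carbons) lengthens the box faster than it raises the HOMO quantum number, so the predicted absorption moves to longer wavelength.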

Nanostructures and Quantum Confinement

The particle-in-a-box model elegantly describes quantum confinement effects in nanoscale materials:

Quantum dots: Three-dimensional confinement is modeled using a particle in a 3D box with wavefunctions as products of one-dimensional solutions: (\psi_{n_x,n_y,n_z}(x,y,z) = \psi_{n_x}(x)\psi_{n_y}(y)\psi_{n_z}(z)) and energy levels (E_{n_x,n_y,n_z} = \frac{h^2}{8m}\left(\frac{n_x^2}{L_x^2} + \frac{n_y^2}{L_y^2} + \frac{n_z^2}{L_z^2}\right)). The size-dependent band gap explains the tunable fluorescence emission of semiconductor nanocrystals [25].

Quantum wires: Electrons confined in two dimensions exhibit quantized energy levels described by a 2D box model, influencing their electronic transport properties [25].

Nanoparticles and semiconductor quantum wells: The model provides the conceptual foundation for understanding size effects on electronic and optical properties in nanoscale semiconductor structures used in optoelectronics [25].
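For the quantum dot case, the 3D energy expression reduces to (n_x² + n_y² + n_z²) in units of h²/(8mL²) when the box is cubic. The sketch below enumerates the lowest levels and their degeneracies under that cubic assumption (L_x = L_y = L_z); the function name is hypothetical.

```python
from collections import defaultdict
from itertools import product


def cubic_box_levels(n_max=3):
    """Group states (nx, ny, nz) of a cubic box by energy, expressed in
    units of h^2 / (8 m L^2)."""
    levels = defaultdict(list)
    for state in product(range(1, n_max + 1), repeat=3):
        levels[sum(q * q for q in state)].append(state)
    # States with a quantum number above n_max start at (n_max + 1)^2 + 2,
    # so only levels below that value are guaranteed to be complete.
    cutoff = (n_max + 1) ** 2 + 2
    return {energy: states for energy, states in sorted(levels.items()) if energy < cutoff}


for energy, states in cubic_box_levels().items():
    print(f"E = {energy:2d} units, degeneracy {len(states)}: {states}")
```

Because every level scales as 1/L², shrinking the box widens all level spacings together, which is the qualitative origin of the size-tunable emission mentioned above.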

Drug Development Applications

In pharmaceutical research, the particle-in-a-box model informs several critical areas:

Chromophore design: Predicting absorption and emission wavelengths of fluorescent tags and molecular probes used in bioimaging and drug tracking.

Molecular orbital understanding: Providing the foundational concept for more sophisticated molecular orbital calculations that predict reactivity and interaction sites in drug molecules.

Quantum confinement in drug delivery: Informing the design of nanocarriers where quantum effects influence release mechanisms and interactions with biological systems.

Spectroscopic analysis: Enabling interpretation of UV-Vis absorption spectra of conjugated systems relevant to drug molecules and their metabolites.

Research Reagent Solutions and Computational Tools

Table 3: Essential tools and computational resources for particle-in-a-box based research

| Tool/Resource | Type | Function | Application Context |

|---|---|---|---|

| 1D Schrödinger Solver [24] | Numerical Software | Implements Numerov's method for solving 1D Schrödinger equation | Education, preliminary research, model validation |

| Cminor [26] | Chemical Mechanism Integrator | Fortran-based ODE solver for complex chemical systems | Atmospheric chemistry, multiphase reaction systems |

| PyMOL [27] | Molecular Visualization | Renders molecular structures with customizable coloring | Drug design, structural analysis, publication graphics |

| CPK Coloring Convention [28] | Visualization Standard | Standardized atom coloring for molecular models | Structural communication, educational materials |

| Custom RGB Implementation [27] | Visualization Technique | Enables precise color specification in molecular graphics | Highlighting specific molecular features, publications |

The CPK (Corey-Pauling-Koltun) color convention, while arbitrary in origin, provides crucial standardization for molecular visualization [28]: Carbon (black), Oxygen (red), Nitrogen (blue), Hydrogen (white), Sulfur (yellow), Phosphorus (orange). For research professionals, adherence to these conventions ensures clear communication of molecular structures in publications and presentations.

The concept of energy quantization, foundational to modern chemistry and physics, reveals that energy can only be gained or lost in discrete amounts called quanta [29]. This principle extends far beyond atomic and molecular systems to dramatically influence the behavior of particles and receptors across diverse environmental confinements. This article explores how confining environments—from nanoscale quantum dots to cell membrane domains—reshape energy landscapes, dictating the stability, spatial organization, and function of chemical and biological systems. Understanding these principles provides researchers and drug development professionals with powerful frameworks for manipulating material properties and biological signaling pathways through controlled confinement.

Theoretical Foundations of Energy Landscapes and Quantization

The Principle of Energy Quantization

Energy quantization represents a fundamental departure from classical physics, which assumed energy changes in a smooth, continuous manner. The shift began with Max Planck's 1900 solution to the "ultraviolet catastrophe" in blackbody radiation, proposing that electromagnetic energy could only be emitted or absorbed in discrete packets, or quanta [29]. This quantum hypothesis successfully explained why the intensity of radiation emitted by heated objects drops sharply at shorter wavelengths, contradicting classical predictions that intensity should increase without limit.

A quantum constitutes the smallest unit of energy that can be exchanged at a given frequency: for radiation of frequency ν it equals hν, so energy can only be gained or lost in integer multiples of hν. This principle underpins all modern quantum mechanics and directly informs our understanding of how environmental confinement restricts particle motion to discrete energy states.
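The contrast between the classical and quantized descriptions of blackbody radiation can be shown numerically. The sketch below compares Planck's spectral radiance with the classical Rayleigh-Jeans expression; the temperature of 5000 K and the sampled wavelengths are arbitrary illustrative choices.

```python
import math

H = 6.62607015e-34  # Planck constant, J s
C = 2.99792458e8    # speed of light, m/s
K_B = 1.380649e-23  # Boltzmann constant, J/K


def planck_radiance(wavelength: float, temperature: float) -> float:
    """Planck spectral radiance per unit wavelength, W sr^-1 m^-3."""
    x = H * C / (wavelength * K_B * temperature)
    return 2.0 * H * C**2 / wavelength**5 / math.expm1(x)


def rayleigh_jeans_radiance(wavelength: float, temperature: float) -> float:
    """Classical Rayleigh-Jeans spectral radiance per unit wavelength."""
    return 2.0 * C * K_B * temperature / wavelength**4


T = 5000.0  # assumed temperature in kelvin
for wavelength in (1e-7, 5e-7, 1e-5, 1e-3):
    ratio = planck_radiance(wavelength, T) / rayleigh_jeans_radiance(wavelength, T)
    print(f"{wavelength:.0e} m  Planck / Rayleigh-Jeans = {ratio:.3e}")
```

The two expressions agree at long wavelengths, where hν is small compared with k_BT, and the Planck result falls far below the classical one in the ultraviolet, where a single quantum costs much more than the available thermal energy.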

Energy Landscapes in Confined Systems

In confined systems, the concept of an energy landscape provides a quantitative description of the effective forces acting on particles within a restricted environment. These landscapes determine:

- Allowed energy states available to the system

- Transition probabilities between different states

- Spatial distributions of particles within the confinement

- Dynamic behavior and transport properties

When particles become confined, their degrees of freedom become restricted, leading to discrete energy levels and modified physical properties compared to bulk systems. The specific geometry and potential of the confining environment directly shape the resulting energy landscape [30] [31].

Confinement Modalities and Their Energy Landscapes

Environmental confinement occurs across multiple scales and systems, each exhibiting distinct energy landscape characteristics. The table below summarizes three primary confinement modalities and their quantitative impact on energy landscapes.

Table 1: Quantitative Comparison of Confinement Modalities and Their Energy Landscapes

| Confinement System | Confinement Scale | Energy Landscape Characteristics | Key Quantitative Parameters | Experimental Measurement Techniques |

|---|---|---|---|---|

| Quantum Dots [31] | Nanoscale (2D) | Gaussian confinement potential, Discrete electron states | Confinement depth (V₀), Confinement range (σ), Magnetic field strength (B) | Far-infrared spectroscopy, Capacitance spectroscopy, Photoluminescence |

| Cell Membrane Nanodomains [30] | Nanoscale (10-100nm) | Quadratic potential, Harmonic confinement | Confinement energy depth, Apparent domain size, Effective temperature | Single-nanoparticle tracking, Bayesian inference, Decision tree classification |

| Bulk Material Phase Transitions | Macroscale | Continuous potential wells, Multi-minima landscapes | Activation energy, Phase transition temperature, Free energy barriers | Calorimetry, X-ray diffraction, Spectroscopy |

Nanoscale Confinement in Quantum Dots

Quantum dots (QDs) represent "artificial atoms" where electrons are confined in all three dimensions within semiconductor nanostructures. Unlike the idealized infinite potential wells often used in introductory models, realistic QDs exhibit finite confining potentials that profoundly influence their electronic properties [31].

Recent studies employ Gaussian confinement potentials that behave parabolically near the center but provide more realistic boundaries. This potential form is continuous at its boundaries, features a central minimum that exerts non-zero force on particles, and allows for excitations, ionization, and tunneling processes absent in infinite well models [31].

For two-electron systems in GaAs quantum dots under magnetic fields, the energy landscape is characterized by:

- Confinement potential depth (V₀): Determines the strength of electron trapping

- Confinement range (σ): Defines the spatial extent of the potential

- Magnetic field strength (B): Introduces Zeeman effects and Landau quantization

- Spin-orbit interactions: Rashba and Dresselhaus couplings that modify energy states

The interplay between these parameters controls electron pairing stability, spatial correlations, and magnetic properties, with phase diagrams revealing transitions between singlet and triplet states as magnetic fields increase [31].
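The statement that a Gaussian well is parabolic near its center can be illustrated numerically. The functional form V(r) = -V₀ exp(-r²/2σ²) used below is an assumption, since the text does not spell out the exact expression from [31]; V₀ = 100 meV and σ = 20 nm are illustrative values, and 0.067 mₑ is the commonly quoted GaAs conduction-band effective mass.

```python
import math

HBAR = 1.054571817e-34  # reduced Planck constant, J s
M_E = 9.1093837015e-31  # electron mass, kg
MEV = 1.602176634e-22   # joules per meV


def gaussian_well(r, v0, sigma):
    """Assumed Gaussian confinement: V(r) = -V0 exp(-r^2 / (2 sigma^2))."""
    return -v0 * math.exp(-r**2 / (2.0 * sigma**2))


def harmonic_approx(r, v0, sigma):
    """Second-order expansion about r = 0: V ~ -V0 + V0 r^2 / (2 sigma^2)."""
    return -v0 + v0 * r**2 / (2.0 * sigma**2)


def harmonic_quantum_mev(v0_mev, sigma_nm, m_eff=0.067):
    """hbar*omega of the matching parabola, from (1/2) m* omega^2 = V0 / (2 sigma^2)."""
    omega = math.sqrt(v0_mev * MEV / (m_eff * M_E * (sigma_nm * 1e-9) ** 2))
    return HBAR * omega / MEV


V0, SIGMA = 100.0, 20.0  # meV and nm, illustrative values
for r in (2.0, 10.0, 20.0, 40.0):
    print(f"r = {r:4.0f} nm  Gaussian = {gaussian_well(r, V0, SIGMA):8.2f} meV  "
          f"parabola = {harmonic_approx(r, V0, SIGMA):8.2f} meV")
print(f"hbar*omega near the center: {harmonic_quantum_mev(V0, SIGMA):.2f} meV")
```

Near the center the two curves coincide, while at r of a few σ the parabola keeps rising and the Gaussian flattens toward zero, which is what permits ionization and tunneling in the more realistic model.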

Biological Confinement in Cell Membrane Nanodomains

In biological systems, cell membrane organization creates nanoscale confinement that controls receptor localization and signaling. Single-nanoparticle tracking of membrane receptors has revealed two distinct organization modalities with characteristic energy landscapes [30]:

- Quadratic energy landscapes for receptors like EGFR, CPεTR, and CSαTR confined in cholesterol- and sphingolipid-rich raft nanodomains

- Free diffusion with steric hindrance for transferrin receptors encountering F-actin barriers

The effective energy landscape for raft-confined receptors shows harmonic (quadratic) potential characteristics, with depth modulated by interactions with the domain environment and F-actin cytoskeleton. Bayesian inference methods applied to long single-molecule trajectories have quantified these landscapes, revealing that apparent domain sizes result from Brownian exploration of the energy landscape in a steady-state-like regime at a common effective temperature [30].
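A quadratic landscape U(r) = ½kr² has a simple signature in tracking data: at a fixed effective temperature, the positional variance along each axis equals k_BT/k. The sketch below simulates an overdamped 2D trajectory in such a well and recovers the stiffness from that relation. This equipartition estimate is a deliberately simpler stand-in for the Bayesian inference used in [30]; the diffusion coefficient, stiffness, and trajectory length are illustrative assumptions, and localization noise is ignored.

```python
import math
import random


def simulate_confined_track(stiffness, diffusion, dt, n_steps, kt=1.0, seed=0):
    """Exact Ornstein-Uhlenbeck update for overdamped 2D motion in
    U = 0.5 * k * r^2. Units: micrometres, seconds, energies in kT."""
    rng = random.Random(seed)
    tau = kt / (diffusion * stiffness)  # relaxation time of the well
    decay = math.exp(-dt / tau)
    kick = math.sqrt(kt / stiffness * (1.0 - decay**2))
    x = y = 0.0
    track = []
    for _ in range(n_steps):
        x = x * decay + kick * rng.gauss(0.0, 1.0)
        y = y * decay + kick * rng.gauss(0.0, 1.0)
        track.append((x, y))
    return track


def stiffness_from_track(track, kt=1.0):
    """Equipartition estimate: k = kT / (positional variance per axis)."""
    n = len(track)
    mean_x = sum(p[0] for p in track) / n
    mean_y = sum(p[1] for p in track) / n
    variance = sum((p[0] - mean_x) ** 2 + (p[1] - mean_y) ** 2 for p in track) / (2 * n)
    return kt / variance


K_TRUE = 100.0  # kT per um^2, i.e. an rms excursion of 0.1 um per axis
track = simulate_confined_track(K_TRUE, diffusion=0.1, dt=0.05, n_steps=20000)
k_est = stiffness_from_track(track)
print(f"true stiffness {K_TRUE:.1f}, estimated {k_est:.1f} kT/um^2")
```

The 50 ms time step mirrors the temporal resolution quoted in the protocol later in this section; with much shorter trajectories the estimate becomes noticeably noisier because successive positions are correlated over the relaxation time.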

Table 2: Membrane Receptor Confinement Characteristics

| Receptor Type | Confinement Mechanism | Energy Landscape Type | Molecular Interactions | Functional Role |

|---|---|---|---|---|

| EGFR | Lipid raft + F-actin | Quadratic | Cholesterol/sphingolipid + F-actin binding | Growth factor signaling, Cancer proliferation |

| CPεTR, CSαTR | Lipid raft | Quadratic | Cholesterol/sphingolipid | Toxin receptor, Viral entry |

| Transferrin Receptor | F-actin barriers | Free diffusion in confinement | Steric hindrance | Iron transport |

Experimental Methodologies for Energy Landscape Characterization

Single-Nanoparticle Tracking in Membrane Systems

Objective: To characterize the effective energy landscape experienced by membrane receptors confined in nanodomains [30].

Protocol:

Nanoparticle Preparation:

- Synthesize Y₀.₆Eu₀.₄VO₄ nanoparticles (30nm ellipsoidal)

- Coat with aminopropyltriethoxysilane (APTES)

- Conjugate to target proteins (EGFR, CPεTR, CSαTR) using bis(sulfosuccinimidyl)suberate (BS3) crosslinker

- For EGF and transferrin receptors, use streptavidin-biotin bridging

Cell Preparation and Labeling:

- Culture MDCK (Madin-Darby canine kidney) cells

- Incubate with nanoparticle-conjugated ligands (1-3 hours, 37°C)

- Remove unbound conjugates by high-speed centrifugation (16,000g, 80 minutes)

Data Acquisition:

- Track single nanoparticles with temporal resolution of ~50ms

- Achieve localization accuracy of ~30nm

- Record long trajectories (typically 1000 points) for sufficient spatial sampling

Trajectory Analysis Pipeline:

- Apply Bayesian inference to extract diffusive profiles and effective interaction landscapes

- Implement decision trees and clustering approaches to classify confinement modalities

- Characterize energy landscape parameters (depth, curvature) through Bayesian inference

Key Controls:

- Verify receptor mobility unaffected by nanoparticle labeling due to large difference between membrane and extracellular effective viscosities

- Use appropriate coupling ratios (NP:protein 1:3 to 1:11) to ensure monovalent binding

- Confirm functional activity of conjugated ligands

Variational Approach for Quantum Dot Energy Landscapes

Objective: To compute ground-state properties of two-electron systems confined in Gaussian quantum dots under magnetic fields and spin-orbit interactions [31].

Protocol:

Hamiltonian Formulation:

- Define system Hamiltonian incorporating kinetic energy, Gaussian confinement potential, electron-electron interaction, Zeeman term, and spin-orbit interactions

- Include both Rashba and Dresselhaus spin-orbit couplings

Wavefunction Ansatz:

- Employ Chandrasekhar-type wavefunction with three adjustable parameters

- Incorporate modified Jastrow correlation factor for electron-electron interactions

Variational Calculation:

- Minimize total energy with respect to variational parameters

- Compute key ground-state quantities: interaction energy, magnetic moment, magnetic susceptibility, and chemical potential

Stability Analysis:

- Construct phase diagrams for singlet bound states across parameter space

- Calculate electron pair density function to reveal spatial correlations

- Determine average inter-electronic distance as function of confinement parameters

Key Parameters:

- Confinement potential depth (V₀): 50-300 meV

- Confinement range (σ): 10-100 nm

- Magnetic field strength (B): 0-10 T

- Spin-orbit coupling strengths: experimental values for GaAs
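The variational step of this protocol can be illustrated on a much simpler problem. The sketch below treats a single electron in a 2D Gaussian well with a one-parameter Gaussian trial function ψ ∝ exp(-αr²/2), for which the energy expectation value has the closed form E(α) = (ħ²/2m*)α - V₀α/(α + 1/2σ²). This is not the two-electron Chandrasekhar-Jastrow ansatz of [31]: the potential form, the trial function, the GaAs effective mass of 0.067 mₑ, and the values V₀ = 100 meV and σ = 20 nm are all assumptions chosen for illustration.

```python
import math

HBAR2_OVER_2ME = 38.0998  # hbar^2 / (2 m_e) in meV nm^2
M_EFF = 0.067             # assumed GaAs effective mass, in units of m_e


def trial_energy(alpha, v0, sigma):
    """<H> in meV for psi ~ exp(-alpha r^2 / 2) in V = -V0 exp(-r^2 / (2 sigma^2))."""
    kinetic = HBAR2_OVER_2ME / M_EFF * alpha
    potential = -v0 * alpha / (alpha + 1.0 / (2.0 * sigma**2))
    return kinetic + potential


def golden_section_minimum(func, low, high, tol=1e-10):
    """Locate the minimum of a unimodal function on [low, high]."""
    inv_phi = (math.sqrt(5.0) - 1.0) / 2.0
    x1 = high - inv_phi * (high - low)
    x2 = low + inv_phi * (high - low)
    f1, f2 = func(x1), func(x2)
    while high - low > tol:
        if f1 < f2:  # minimum lies in [low, x2]
            high, x2, f2 = x2, x1, f1
            x1 = high - inv_phi * (high - low)
            f1 = func(x1)
        else:        # minimum lies in [x1, high]
            low, x1, f1 = x1, x2, f2
            x2 = low + inv_phi * (high - low)
            f2 = func(x2)
    return 0.5 * (low + high)


V0, SIGMA = 100.0, 20.0  # meV and nm, within the parameter ranges listed above
alpha_opt = golden_section_minimum(lambda a: trial_energy(a, V0, SIGMA), 1e-6, 1.0)
energy_opt = trial_energy(alpha_opt, V0, SIGMA)
print(f"optimal alpha = {alpha_opt:.5f} nm^-2, variational energy = {energy_opt:.2f} meV")
```

By the variational principle the minimized value is an upper bound on the true one-electron ground-state energy of this model well; richer trial functions with more parameters, as in the protocol above, tighten that bound.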

Research Reagent Solutions and Essential Materials

Table 3: Essential Research Reagents for Confinement Energy Landscape Studies

| Reagent/Material | Function/Application | Specifications | Example Use Cases |

|---|---|---|---|

| Europium-doped Yttrium Vanadate Nanoparticles | Single-molecule tracking probes | 30nm ellipsoidal, photostable, non-blinking | Membrane receptor tracking [30] |

| BS3 Crosslinker (bis(sulfosuccinimidyl)suberate) | Amine-reactive protein-NP conjugation | Water-soluble, NHS ester chemistry | Covalent attachment to membrane receptors [30] |

| GaAs Quantum Dot Substrates | Nanoscale confinement platform | Precise compositional control, tunable size | Two-electron correlation studies [31] |

| APTES (aminopropyltriethoxysilane) | NP surface functionalization | Silane coupling agent, amine termination | Pre-conjugation surface preparation [30] |

| Biotinylated Ligands (EGF, Transferrin) | Streptavidin-bridged receptor labeling | High-affinity binding, minimal perturbation | Specific receptor targeting [30] |

Visualization of Energy Landscape Concepts and Methodologies

Energy Landscape Transformation Under Confinement

Single-Nanoparticle Tracking Workflow

Quantum Dot Confinement Parameter Space

Implications for Chemical Systems Research and Drug Development

The principles of environmental confinement shaping energy landscapes have profound implications across chemical and pharmaceutical research. Understanding these relationships enables researchers to:

Design Targeted Therapeutic Interventions: By mapping the energy landscapes of membrane receptor confinement, drug development professionals can identify strategies to modulate receptor signaling. For instance, the differential confinement of EGFR in raft nanodomains with quadratic energy landscapes suggests potential interventions through cholesterol modulation or cytoskeletal targeting [30].

Optimize Nanomaterial Properties: The precise control of quantum dot confinement parameters enables tuning of optical and electronic properties for applications in sensing, imaging, and quantum information processing. The Gaussian confinement model provides a realistic framework for predicting how structural modifications will impact energy landscapes and resultant material behavior [31].

Advance Predictive Models in Chemical Research: Integration of confinement effects into computational models improves prediction accuracy for reaction rates, molecular recognition, and self-assembly processes. The experimental methodologies outlined provide validation data for refining these computational approaches across multiple scales.

The systematic investigation of how confinement shapes energy landscapes represents a converging frontier across physics, chemistry, and biology, offering unified principles for understanding and manipulating diverse systems from artificial quantum structures to cellular signaling platforms.

Computational Methods: Applying Quantum Principles to Drug Discovery

Quantum chemistry provides the fundamental framework for understanding energy quantization in atoms and molecules, where electrons occupy discrete energy levels rather than a continuous spectrum. This quantized energy landscape governs molecular structure, stability, and reactivity. For researchers and drug development professionals, computational methods that accurately capture these quantum effects are indispensable for predicting molecular behavior without recourse to costly experimentation. This whitepaper examines three cornerstone computational approaches: Hartree-Fock (HF) as the foundational wavefunction method, post-Hartree-Fock (post-HF) techniques that add crucial electron correlation, and Density Functional Theory (DFT) which offers an alternative paradigm through electron density. These methods form the computational backbone for investigating energetic molecules, protein-ligand interactions, and reaction mechanisms in pharmaceutical research [32] [33].

Theoretical Foundations

The Quantum Mechanical Basis

At the heart of quantum chemistry lies the time-independent Schrödinger equation:

ĤΨ = [T̂ + V̂ + Û]Ψ = EΨ

where Ĥ is the Hamiltonian operator, Ψ is the many-electron wavefunction, E is the total energy, T̂ represents kinetic energy operators, V̂ the external potential, and Û the electron-electron interaction potential [34]. Solving this equation exactly for many-electron systems is computationally intractable due to the exponential scaling with system size. Quantum chemical methods thus employ approximations to find solutions, with their accuracy determined by how completely they describe the quantized energy landscape and electron correlation effects [33].

Electron Correlation: A Central Challenge

Electron correlation represents the deficiency of the Hartree-Fock method and is defined as the difference between the exact energy and the Hartree-Fock energy: E_corr = E_exact - E_HF [35]. This correlation energy, though typically a small fraction (<1%) of the total energy, proves crucial for chemical accuracy in predicting molecular properties and reaction energetics [35]. Two primary types of electron correlation exist:

- Dynamic correlation: Arises from the instantaneous Coulomb repulsion between electrons, representing their correlated motion to avoid one another [35].

- Static correlation: Occurs in systems with near-degenerate electronic configurations, particularly important in molecules with stretched bonds, transition states, or transition metal complexes [35].

The treatment of electron correlation represents the fundamental distinction between HF, DFT, and post-HF methods, with significant implications for their computational cost and application domains.

Methodological Approaches

Hartree-Fock (HF) Method

The Hartree-Fock method approximates the many-electron wavefunction as a single Slater determinant, ensuring antisymmetry to satisfy the Pauli exclusion principle [33]. Each electron moves in the average field of all other electrons, simplifying the many-body problem. The HF energy is obtained by minimizing the expectation value of the Hamiltonian:

E_HF = ⟨Ψ_HF|Ĥ|Ψ_HF⟩

where Ψ_HF is the HF wave function [33]. The method is solved iteratively via the self-consistent field (SCF) procedure. In drug discovery, HF provides baseline electronic structures for small molecules and serves as a starting point for more accurate methods [33]. However, its critical limitation is the neglect of electron correlation, leading to underestimated binding energies, particularly for weak non-covalent interactions like hydrogen bonding, π-π stacking, and van der Waals forces [33].

Post-Hartree-Fock Methods

Post-Hartree-Fock methods are designed to improve upon the HF approximation by incorporating electron correlation [36]. These methods expand the wavefunction beyond a single Slater determinant and can be categorized as follows:

Full Configuration Interaction (FCI): Provides the exact solution to the electronic Schrödinger equation within a given basis set by expanding the wavefunction as a linear combination of all possible electron configurations [35]. While serving as a benchmark for assessing other methods, FCI scales exponentially with system size, limiting its practical application [35].

Multi-Configurational Self-Consistent Field (MCSCF): Combines features of configuration interaction and SCF methods, optimizing both orbital coefficients and configuration interaction expansion coefficients [35]. MCSCF is particularly effective for treating static correlation in multi-reference systems and serves as a starting point for more advanced multi-reference methods [35].

Perturbation Theory (MP2, MP4): Adds electron correlation through Rayleigh-Schrödinger perturbation theory. MP2 (Møller-Plesset second-order perturbation theory) is popular for its favorable balance of cost and accuracy [36] [37].

Coupled Cluster (CC) Methods: Includes connected higher-order excitations systematically. CCSD(T) (coupled cluster with single, double, and perturbative triple excitations) is often called the "gold standard" of quantum chemistry for its high accuracy [36] [37].

Density Functional Theory (DFT)

DFT represents a paradigm shift from wavefunction-based methods, using the electron density ρ(r) as the fundamental variable instead of the many-electron wavefunction [34]. The theoretical foundation rests on the Hohenberg-Kohn theorems:

- The ground-state electron density uniquely determines the external potential and therefore all ground-state molecular properties [34] [38].

- The energy functional achieves its minimum value at the true ground-state density [38].

The Kohn-Sham framework introduces a system of non-interacting electrons with the same density as the real system, with the total energy functional expressed as:

E[ρ] = T_s[ρ] + V_ext[ρ] + J[ρ] + E_XC[ρ]

where T_s[ρ] is the kinetic energy of non-interacting electrons, V_ext[ρ] is the external potential energy, J[ρ] is the classical Coulomb energy, and E_XC[ρ] is the exchange-correlation energy that contains all quantum many-body effects [38]. The success of DFT hinges on approximating E_XC[ρ], as its exact form remains unknown [38].

Table 1: Evolution of Density Functional Approximations

| Functional Type | Description | Key Improvements | Example Functionals |

|---|---|---|---|

| LDA/LSDA | Local (Spin) Density Approximation: treats the density at each point as that of a uniform electron gas | Foundation for all DFT methods | SVWN |

| GGA | Generalized Gradient Approximation: includes density gradient | Better for geometry optimizations | BLYP, PBE [39] |

| meta-GGA | Includes kinetic energy density | Improved energetics | TPSS, SCAN [38] [39] |

| Global Hybrids | Mixes DFT exchange with Hartree-Fock exchange | Error cancellation, better accuracy | B3LYP, PBE0 [38] |

| Range-Separated Hybrids | Varying HF/DFT mix with electron-electron distance | Better for charge-transfer, excited states | ωB97X, ωB97M [40] [38] |
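To make the LDA row concrete, the sketch below evaluates the spin-unpolarized LDA exchange energy, E_x = -(3/4)(3/π)^(1/3) ∫ρ^(4/3) d³r, for the exact hydrogen-atom density ρ(r) = e^(-2r)/π in Hartree atomic units. The choice of test system, the radial grid, and the function names are illustrative assumptions. For a one-electron system the exact exchange energy cancels the Hartree self-repulsion, which for this density is -5/16 Eh.

```python
import math


def lda_exchange_energy(density, r_max=30.0, n_points=30001):
    """Spin-unpolarized LDA exchange energy (Hartree) for a spherical
    density, using trapezoidal integration on a radial grid."""
    c_x = 0.75 * (3.0 / math.pi) ** (1.0 / 3.0)
    dr = r_max / (n_points - 1)
    total = 0.0
    for i in range(n_points):
        r = i * dr
        weight = 0.5 if i in (0, n_points - 1) else 1.0
        total += weight * 4.0 * math.pi * r**2 * density(r) ** (4.0 / 3.0)
    return -c_x * total * dr


def hydrogen_density(r):
    """Exact ground-state density of the hydrogen atom in atomic units."""
    return math.exp(-2.0 * r) / math.pi


EXACT_EXCHANGE = -5.0 / 16.0  # cancels the Hartree self-repulsion of the 1s density
e_x_lda = lda_exchange_energy(hydrogen_density)
print(f"LDA exchange: {e_x_lda:.4f} Eh, exact exchange: {EXACT_EXCHANGE:.4f} Eh")
```

The LDA value recovers only about two thirds of the exact exchange energy in this case, a simple illustration of why the higher rows of the table add gradient information and Hartree-Fock exchange.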

Comparative Analysis of Methods

Performance and Applicability

Table 2: Method Comparisons for Drug Discovery Applications

| Method | Strengths | Limitations | Best Applications | Computational Scaling | Typical System Size |
|---|---|---|---|---|---|
| HF | Fast convergence; reliable baseline; well-established theory | No electron correlation; poor for weak interactions | Initial geometries; charge distributions; force field parameterization | O(N⁴) [33] | ~100 atoms [33] |
| DFT | High accuracy for ground states; handles electron correlation; wide applicability | Functional dependence; self-interaction error; dispersion challenges | Binding energies; electronic properties; transition states [33] | O(N³) [33] | ~500 atoms [33] |
| QM/MM | Combines QM accuracy with MM efficiency; handles large biomolecules | Complex boundary definitions; method-dependent accuracy | Enzyme catalysis; protein-ligand interactions [33] | O(N³) for QM region [33] | ~10,000 atoms [33] |
| MP2 | Accounts for electron correlation; more accurate than HF | Fails for metallic systems; no static correlation | Non-covalent interactions; preliminary correlation treatment | O(N⁵) | ~50-100 atoms |
| CCSD(T) | "Gold standard" for accuracy | Extremely computationally expensive | Final accurate energies; small system benchmarks | O(N⁷) | ~20-50 atoms |
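The practical meaning of the scaling column can be sketched with a few lines of arithmetic. The snippet below takes the formal exponents listed in Table 2 and computes how much more expensive a calculation becomes when the system size grows by a given factor. It assumes the cost follows the formal scaling exactly and ignores prefactors, integral screening, and linear-scaling implementations, so the numbers are rough guides only. The dictionary and function names are illustrative.

```python
# Formal scaling exponents as listed in Table 2 (cost ~ N**exponent).
SCALING_EXPONENTS = {"DFT": 3, "HF": 4, "MP2": 5, "CCSD(T)": 7}

def cost_multiplier(method, size_factor):
    """Factor by which the cost grows when the system size grows by size_factor."""
    return size_factor ** SCALING_EXPONENTS[method]

for method in SCALING_EXPONENTS:
    factor = cost_multiplier(method, 2)
    print(f"{method:8s} doubling the system multiplies the cost by {factor}")
```

Under these assumptions, doubling the system size costs a factor of 8 for DFT but 128 for CCSD(T), which is why the typical system sizes in the table shrink so quickly for the correlated methods.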

Quantitative Accuracy Assessment

Table 3: Performance on Molecular Energy Benchmarks

| Method | Bond Length Error (Å) | Binding Energy Error (kcal/mol) | Reaction Barrier Error (kcal/mol) | Relative Cost (vs. HF) |
|---|---|---|---|---|
| HF | 0.01-0.02 | 10-50 (overestimation) [33] | 5-15 | 1x |
| DFT (GGA) | 0.01-0.02 | 3-10 | 3-8 | 5-10x |
| DFT (Hybrid) | 0.005-0.015 | 1-5 | 1-5 | 10-50x |
| MP2 | 0.005-0.015 | 1-5 (tends to overbind) | 2-6 | 50-100x |
| CCSD(T) | ~0.001 | 0.1-1 | 0.1-1 | 1000-10,000x |
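To see why errors of a few kcal/mol matter for drug design, one can translate an energy error into the corresponding error in a predicted binding constant using \( K \propto e^{-\Delta G / RT} \). The sketch below assumes that the energy error carries over directly into the binding free energy and that the temperature is 298.15 K. The function name is illustrative.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol K)

def affinity_error_factor(energy_error_kcal, temperature_k=298.15):
    """Multiplicative error in a binding constant caused by a free-energy error."""
    return math.exp(energy_error_kcal / (R_KCAL * temperature_k))

for error in (0.5, 1.0, 1.4, 5.0):
    print(f"{error:4.1f} kcal/mol -> factor of {affinity_error_factor(error):.1f}")
```

Under these assumptions, an error of about 1.4 kcal/mol already shifts the predicted binding constant by roughly a factor of ten, which is why the sub-kcal/mol accuracy of CCSD(T) is valued for benchmarks.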

Experimental Protocols and Workflows

DFT Convergence Optimization Protocol

Recent advances in DFT efficiency focus on optimizing the self-consistent field (SCF) convergence:

- Initialization: Begin with default charge mixing parameters in codes like VASP [39]

- Bayesian Optimization: Apply data-efficient Bayesian algorithms to optimize charge mixing parameters [39]

- Iteration Monitoring: Track SCF iterations to convergence threshold

- Validation: Compare final energies and forces with reference calculations

This approach has demonstrated a 20-40% reduction in SCF iterations across insulating, semiconducting, and metallic systems, providing significant computational savings without sacrificing accuracy [39].
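The effect of a mixing parameter on iteration count can be illustrated with a toy model. In the sketch below, a one-dimensional fixed-point map (`math.cos`) stands in for the SCF update and a plain grid scan stands in for the Bayesian optimizer. Both are deliberate simplifications and do not reproduce the VASP workflow of [39]. All names and values are illustrative assumptions.

```python
import math

def scf_iterations(mixing, update=math.cos, start=0.0, tol=1e-10, max_iter=500):
    """Count iterations of the linearly mixed fixed-point scheme.

    Each step applies x <- (1 - mixing) * x + mixing * update(x), the same
    form as simple linear density mixing in an SCF cycle.
    """
    x = start
    for iteration in range(1, max_iter + 1):
        x_new = (1.0 - mixing) * x + mixing * update(x)
        if abs(x_new - x) < tol:
            return iteration
        x = x_new
    return max_iter  # not converged within the iteration budget

# Grid scan over candidate mixing parameters (stand-in for Bayesian optimization).
candidates = [round(0.1 * k, 1) for k in range(1, 11)]
counts = {alpha: scf_iterations(alpha) for alpha in candidates}
best_mixing = min(counts, key=counts.get)

print(f"iterations without damping (mixing = 1.0): {counts[1.0]}")
print(f"best mixing on the grid: {best_mixing} ({counts[best_mixing]} iterations)")
```

A Bayesian optimizer pursues the same goal as the grid scan but chooses each new trial parameter from a surrogate model of the iteration count, so it typically needs fewer expensive SCF runs to find a good setting.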

Information-Theoretic Approach for Correlation Energy Prediction

For large systems where post-HF calculations are prohibitive, an information-theoretic approach (ITA) predicts electron correlation energies using linear relationships between ITA quantities computed at the HF level and post-HF correlation energies:

- Descriptor Calculation: Compute ITA quantities (Shannon entropy, Fisher information) from HF electron density [37]

- Linear Regression: Fit linear relationships of the form E_corr = a × ITA + b, where a and b are the regression coefficients [37]

- Prediction: Apply regression equations to predict MP2 or CCSD(T) correlation energies at HF cost [37]

This protocol achieves chemical accuracy (∼1 kcal/mol) for various molecular clusters and polymers, enabling correlation energy estimates for systems with hundreds of atoms [37].
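The regression and prediction steps above can be sketched with an ordinary least-squares fit. The data below are synthetic placeholders generated from an exactly linear relation, not values from [37], and the function and variable names are illustrative. In a real application, the descriptor values would come from HF densities and the reference energies from MP2 or CCSD(T) calculations on small training systems.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Synthetic training set (placeholder numbers): ITA descriptor vs. correlation energy.
ita_values = [10.0, 20.0, 30.0, 40.0]
e_corr_reference = [-0.25, -0.45, -0.65, -0.85]  # hartree, made up for illustration

a, b = fit_line(ita_values, e_corr_reference)

# Predict the correlation energy of a larger system from its HF-level descriptor.
e_corr_predicted = a * 55.0 + b
print(f"a = {a:.4f}, b = {b:.4f}, predicted E_corr = {e_corr_predicted:.4f} Ha")
```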

Neural Network Potentials for Accelerated Discovery

The Open Molecules 2025 (OMol25) initiative demonstrates a protocol for replacing expensive quantum calculations with neural network potentials (NNPs):

- Dataset Generation: Perform high-level DFT (ωB97M-V/def2-TZVPD) on diverse molecular structures [40]

- Model Training: Train NNPs (eSEN, UMA architectures) to reproduce DFT energies and forces [40]

- Validation: Benchmark against standard quantum chemistry datasets [40]

- Application: Perform molecular dynamics and geometry optimizations at quantum accuracy with significantly reduced computational cost [40]

According to user feedback, this approach enables accurate simulations of "huge systems that I previously never even attempted to compute" [40].

The Scientist's Toolkit

Table 4: Essential Computational Resources

| Tool Category | Specific Tools | Function | Application Context |
|---|---|---|---|
| Quantum Chemistry Software | Gaussian, VASP [39], Qiskit [33] | Perform HF, DFT, post-HF calculations | General quantum chemistry, solid-state physics, quantum computing |
| Neural Network Potentials | eSEN, UMA models [40] | Accelerated energy and force calculations | Large biomolecules, molecular dynamics |
| Benchmark Datasets | OMol25 [40], GMTKN55 [40] | Training and validation of computational methods | Method development, machine learning |
| Analysis Tools | Multiwfn, VMD | Wavefunction analysis, visualization | Data interpretation, publication |
| Basis Sets | 6-311++G(d,p) [37], def2-TZVPD [40] | Represent molecular orbitals | All electronic structure calculations |

Current Research Trends and Future Projections

Machine Learning Integration

The integration of machine learning with quantum chemical methods is accelerating discovery across multiple fronts:

- Neural Network Potentials: Models trained on massive datasets (OMol25: 100M+ calculations) achieve DFT accuracy at significantly reduced computational cost, enabling simulations of large biomolecular systems [40].

- Property Prediction: Information-theoretic approaches using linear relationships between electron density descriptors and correlation energies bypass expensive post-HF computations while maintaining chemical accuracy [37].

- Parameter Optimization: Bayesian optimization of DFT computational parameters reduces self-consistent field iterations by 20-40%, improving computational efficiency without sacrificing accuracy [39].

Domain-Specific Advancements

Different application domains are driving specialized methodological developments:

- Drug Discovery: DFT applications now include binding energy calculations, transition state modeling for enzymatic reactions, and ADMET property prediction [33]. QM/MM approaches enable studies of complete enzyme systems [33].

- Energetic Materials: High-accuracy methods are essential for predicting stability, reactivity, and thermodynamic properties of energetic molecules [32].

- Materials Science: Advanced functionals (meta-GGAs, range-separated hybrids) address band gap prediction, magnetic properties, and surface chemistry [38].

Quantum Computing Interfaces

Emerging interfaces between quantum chemistry and quantum computing show promise for tackling currently intractable problems:

- Quantum Algorithms: Development of quantum algorithms for electronic structure problems that may exceed classical computational capabilities [33].

- Hybrid Approaches: Frameworks like El Agente Q demonstrate autonomous quantum chemistry calculations orchestrated by multiple domain-specific agents under LLM supervision [41].

The landscape of core quantum chemical methods continues to evolve, with DFT, HF, and post-HF approaches each maintaining distinct roles in the computational chemist's toolkit. For drug development professionals and researchers, method selection requires careful consideration of the accuracy-efficiency tradeoff, with HF providing initial insights, DFT offering practical accuracy for most applications, and post-HF methods delivering benchmark-quality results for critical investigations. The integration of machine learning approaches promises to further transform this landscape, making high-accuracy quantum chemical computations accessible for increasingly complex systems relevant to pharmaceutical development and materials design. As these methods continue to mature, they will enhance our fundamental understanding of energy quantization in chemical systems and accelerate the discovery of novel therapeutic agents and functional materials.

Hybrid Strategies: Combining Accuracy and Scale with QM/MM

Efficient energy transduction, the conversion of energy among different forms, is a fundamental hallmark of living systems, powering essential processes from cellular motion to ATP synthesis. At the heart of these processes lie chemical reactions—such as ATP hydrolysis, proton-electron transfers, or electronic excitations—that are coupled to large-scale conformational changes in biomolecular machines. Understanding these mechanisms requires computational methods that can simultaneously describe the making and breaking of chemical bonds and the response of the massive protein and solvent environment. This is the central challenge of simulating energy quantization in chemical systems: capturing the discrete energy changes at the reactive site while accounting for the extensive biomolecular scaffold.

Hybrid Quantum Mechanical/Molecular Mechanical (QM/MM) methods have emerged as the indispensable framework for addressing this multi-scale problem [42]. By treating the reactive region with quantum mechanics and the surrounding environment with molecular mechanics, QM/MM strategies aim to combine the accuracy of quantum chemistry with the scale of classical force fields. This guide provides an in-depth technical overview of how modern QM/MM methodologies balance these often-competing demands of accuracy and scale to model complex bioenergy transduction phenomena, from long-range proton transport to photochemical reactions.

Core Methodological Foundations of QM/MM

The foundational principle of QM/MM is an intuitive partitioning of the system [43]. A relatively small region, where the electronic structure changes during a chemical reaction, is treated with a quantum mechanical (QM) method. The much larger remainder of the system, where atomic interactions are well-described by classical potentials, is treated with a molecular mechanical (MM) force field. The total energy of the system is expressed through a Hamiltonian that couples these two descriptions.

Energy Formulation and Coupling Schemes

Two primary schemes exist for calculating the total energy of a QM/MM system: the subtractive and the additive approaches [44].

Additive Scheme: This is the most common scheme in biomolecular applications. The total energy is a direct sum of the energy of the QM region, the energy of the MM region, and the explicit coupling terms between them [44]: \( E_{\text{Tot}} = E_{\text{QM}} + E_{\text{MM}} + E_{\text{QM/MM}} \). The coupling term, \( E_{\text{QM/MM}} \), typically includes electrostatic, van der Waals, and bonded interactions. A key advantage is that no MM parameters are required for the QM atoms, as their energy is computed quantum mechanically [44].

Subtractive Scheme: In this scheme, three independent calculations are performed: one on the QM region at the QM level, one on the entire system at the MM level, and one on the QM region at the MM level. The total energy is then computed as \( E_{\text{Tot}} = E_{\text{QM}} + E_{\text{MM, full}} - E_{\text{MM, QM}} \). The ONIOM method is a prominent example of this scheme [44] [45]. Its main advantages are simplicity and ease of implementation with standard QM and MM codes.
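The two energy expressions can be compared side by side in a short sketch. The numbers are arbitrary placeholders, and the function and variable names are illustrative. The example also assumes that the full-system MM energy splits cleanly into an environment term, a QM-region term, and a coupling term, which holds for pairwise-additive force fields.

```python
def additive_energy(e_qm, e_mm_environment, e_coupling):
    """Additive scheme: E_Tot = E_QM + E_MM + E_QM/MM."""
    return e_qm + e_mm_environment + e_coupling

def subtractive_energy(e_qm, e_mm_full, e_mm_of_qm_region):
    """Subtractive scheme: E_Tot = E_QM + E_MM,full - E_MM,QM."""
    return e_qm + e_mm_full - e_mm_of_qm_region

# Placeholder energies (arbitrary units), chosen only to illustrate the bookkeeping.
e_qm = -150.0            # QM energy of the reactive region
e_mm_environment = -12.0 # MM energy of the environment alone
e_mm_qm_region = -3.0    # MM energy of the reactive region
e_mm_coupling = -0.8     # MM-level interaction between the two regions

e_mm_full = e_mm_environment + e_mm_qm_region + e_mm_coupling

e_sub = subtractive_energy(e_qm, e_mm_full, e_mm_qm_region)
e_add = additive_energy(e_qm, e_mm_environment, e_mm_coupling)
print(e_sub, e_add)
```

Under the stated assumption, the subtractive result equals the additive one with the coupling evaluated entirely at the MM level. This shows both why the subtractive scheme needs MM parameters for the QM atoms and why its coupling is only as good as the force field.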

Electrostatic Embedding

The treatment of electrostatic interactions between the QM and MM regions is critical for accuracy. The most widely used approach is electrostatic embedding.

- Mechanism: The atomic point charges of the MM atoms are incorporated directly into the QM Hamiltonian. This means the QM wavefunction (or electron density) is computed in the presence of the electric field generated by the classical environment [44].

- Effect: The electron density of the QM region is polarized by the MM environment, giving a physically realistic description of how the surroundings shape the reactive site. The polarization is one-way, because the fixed MM point charges do not respond to changes in the QM density. Electrostatic embedding has been shown to achieve accuracy close to a full QM treatment of the entire system for many applications [44].

- Considerations: At short QM-MM distances, point charges from the MM force field can cause overpolarization of the QM electron density. A more physical model to mitigate this is to "blur" the MM charges using spherical Gaussian distributions [42].
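The overpolarization problem and the effect of blurring can be seen by comparing the electrostatic potential of a point charge, \( q/r \), with that of a spherical Gaussian charge distribution, \( q\,\mathrm{erf}(r/w)/r \). The sketch below works in atomic units and assumes a Gaussian of the form \( \exp(-(r/w)^2) \). The width value and function names are illustrative choices, not parameters from [42].

```python
import math

def point_charge_potential(q, r):
    """Coulomb potential of a point charge (diverges as r -> 0)."""
    return q / r

def gaussian_charge_potential(q, r, width):
    """Potential of a normalized spherical Gaussian charge ~ exp(-(r / width)**2)."""
    if r == 0.0:
        # Finite limit of q * erf(r / width) / r as r -> 0.
        return 2.0 * q / (math.sqrt(math.pi) * width)
    return q * math.erf(r / width) / r

width = 0.5  # bohr, hypothetical blurring width
for r in (0.05, 0.5, 2.0, 5.0):
    v_point = point_charge_potential(1.0, r)
    v_gauss = gaussian_charge_potential(1.0, r, width)
    print(f"r = {r:4.2f}  point: {v_point:8.3f}  gaussian: {v_gauss:8.3f}")
```

At distances of several widths the two potentials coincide, so long-range electrostatics are unchanged, while the blurred charge stays finite near the MM site instead of pulling QM electron density toward it.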

While polarized embedding, which uses polarizable force fields for the MM region, represents the most sophisticated model, its use is not yet widespread due to the complexity and computational cost of polarizable force fields [42] [44].

Covalent Boundary Treatment

A crucial technical detail is the treatment of the boundary when the QM/MM partition cuts through one or more covalent bonds, which is common when selecting an active site. Several strategies exist to handle this boundary:

- Link Atoms: The most common approach, where hydrogen atoms (or other capping atoms) are added to the dangling bonds of the QM region to satisfy its valency [42] [45]. Care must be taken to avoid artificial polarization, and partitioning across highly polar covalent bonds is generally discouraged [42].

- Frozen Orbitals: Localized molecular orbitals are frozen at the boundary to satisfy the valency of the QM fragment [42].

- Pseudopotentials: Boundary atoms are replaced with specialized pseudopotentials that represent the frozen core electrons and the core-environment interaction [42].
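For the link-atom approach, one common way to position the capping hydrogen is to place it on the line from the QM boundary atom to the MM boundary atom at a fixed fraction of the original bond length. The sketch below assumes a C-C bond cut with a scale factor taken as the ratio of a typical C-H bond length (1.09 Å) to a typical C-C bond length (1.53 Å). These values and the function name are illustrative, and actual codes may use different conventions.

```python
def place_link_atom(qm_atom, mm_atom, scale=1.09 / 1.53):
    """Return link-atom coordinates on the QM-MM bond vector.

    The link atom sits at qm_atom + scale * (mm_atom - qm_atom), so it moves
    with the boundary atoms and adds no independent degrees of freedom.
    """
    return tuple(q + scale * (m - q) for q, m in zip(qm_atom, mm_atom))

# Hypothetical boundary: QM carbon at the origin, MM carbon 1.53 angstrom along x.
qm_carbon = (0.0, 0.0, 0.0)
mm_carbon = (1.53, 0.0, 0.0)

link_hydrogen = place_link_atom(qm_carbon, mm_carbon)
print(link_hydrogen)
```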

Table 1: Comparison of Primary QM/MM Coupling Schemes

| Feature | Additive Scheme | Subtractive Scheme (e.g., ONIOM) |

|---|---|---|

| Energy Expression | \( E_{\text{QM}} + E_{\text{MM}} + E_{\text{QM/MM}} \) | \( E_{\text{QM}} + E_{\text{MM, full}} - E_{\text{MM, QM}} \) |

| Implementation | Requires specialized, integrated QM/MM code. | Can be set up with separate QM and MM software. |

| MM Parameters for QM atoms | Not required. | Required for the QM region in the MM calculations. |