Exchange-Correlation Functionals: From Theoretical Foundations to Advanced Applications in Drug Discovery


Abstract

This article provides a comprehensive guide to exchange-correlation (XC) functionals, the critical yet approximate component of Density Functional Theory (DFT) that governs its accuracy. Tailored for researchers and drug development professionals, we explore the theoretical foundations of XC functionals, from Local Density Approximation to modern machine-learned models. The scope covers their practical application in targeting key proteins like SARS-CoV-2 Mpro and RdRp, outlines systematic strategies for troubleshooting common failures in complex systems, and provides a framework for validating functional performance against experimental and high-accuracy benchmark data. This resource is designed to empower scientists in selecting and applying XC functionals with greater confidence for predictive molecular modeling.

The Quantum Mechanical Heart of DFT: Unpacking Exchange-Correlation Functionals

Density Functional Theory (DFT) stands as a cornerstone of modern computational chemistry and materials science, providing a powerful framework for understanding the electronic structure of atoms, molecules, and solids. Unlike wavefunction-based methods that struggle with the computational complexity of many-electron systems, DFT dramatically simplifies the problem by using the electron density as its fundamental variable. This approach transforms the intractable many-body Schrödinger equation into a manageable set of equations that can be solved with significantly reduced computational cost. The theoretical foundation of DFT rests squarely on two pivotal developments: the Hohenberg-Kohn theorems, which established the formal validity of using electron density as the basic variable, and the Kohn-Sham equations, which provided a practical computational scheme for implementing the theory [1] [2]. These developments have made DFT an indispensable tool across numerous scientific disciplines, from drug design and materials science to catalysis and nanotechnology, enabling researchers to predict molecular properties, reaction mechanisms, and material behaviors with remarkable accuracy and efficiency.

The significance of DFT continues to grow, particularly in pharmaceutical research where it facilitates drug design by elucidating electronic interactions between potential drug molecules and their biological targets [3] [4]. By solving the Kohn-Sham equations with precision up to 0.1 kcal/mol, DFT enables accurate electronic structure reconstruction, providing theoretical guidance for optimizing drug-excipient composite systems and predicting reactive sites through analysis of Fukui functions [3]. This review examines the formal theoretical basis of DFT, focusing on the Hohenberg-Kohn theorems and Kohn-Sham equations, while framing their development within the ongoing research quest for more accurate and versatile exchange-correlation functionals.

Theoretical Foundations: The Hohenberg-Kohn Theorems

The First Hohenberg-Kohn Theorem

The first Hohenberg-Kohn theorem establishes the fundamental principle that makes DFT possible: the ground-state electron density uniquely determines all properties of a many-electron system, including the external potential and the full many-body wavefunction [2] [5]. Formally stated, for a system of N interacting electrons moving in an external potential Vext(r), this potential is uniquely determined (up to an additive constant) by the ground state electron density n₀(r) [1] [6].

This theorem represents a profound simplification of the quantum mechanical description of matter. Whereas the full many-electron wavefunction depends on 3N spatial variables (plus spins), the electron density depends on only three spatial variables, regardless of the number of electrons. The theorem proves that there exists a unique mapping between the ground state density and the external potential, meaning that distinct external potentials cannot yield the same ground state density [2] [7]. This one-to-one correspondence between density and potential legitimizes the use of electron density as the fundamental variable for describing many-electron systems.

The mathematical proof of this theorem, particularly following Levy's approach, is remarkably straightforward [1]. Consider two external potentials v₁(r) and v₂(r) that differ by more than a constant but produce the same ground-state density n(r). These would correspond to two different Hamiltonians H₁ and H₂ with ground-state wavefunctions ψ₁ and ψ₂. However, since both wavefunctions yield the same density, the Rayleigh-Ritz variational principle leads to a contradiction, proving that the initial assumption must be false [1] [2]. This elegant proof establishes the theoretical foundation for the entire DFT framework.
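The contradiction can be written out in two lines. This is a sketch that assumes non-degenerate ground states (an assumption not stated above) and reuses the symbols v₁, v₂, H₁, H₂, ψ₁, ψ₂ and n(r) from the paragraph; E₁ and E₂ denote the corresponding ground-state energies. Using each wavefunction as a trial state for the other Hamiltonian gives:

$$
\begin{aligned}
E_1 &< \langle \psi_2 | \hat{H}_1 | \psi_2 \rangle = E_2 + \int \left[ v_1(\mathbf{r}) - v_2(\mathbf{r}) \right] n(\mathbf{r})\, d\mathbf{r} \\
E_2 &< \langle \psi_1 | \hat{H}_2 | \psi_1 \rangle = E_1 + \int \left[ v_2(\mathbf{r}) - v_1(\mathbf{r}) \right] n(\mathbf{r})\, d\mathbf{r}
\end{aligned}
$$

Adding the two inequalities cancels the integrals and leaves E₁ + E₂ < E₁ + E₂, which is impossible, so two potentials that differ by more than a constant cannot share a ground-state density.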

The Second Hohenberg-Kohn Theorem

The second Hohenberg-Kohn theorem provides the variational principle that makes DFT computationally practical [2] [5]. It states that for any trial density ñ(r) that satisfies ñ(r) ≥ 0 and ∫ñ(r)dr = N, the energy functional E[ñ] satisfies the inequality:

E₀ ≤ E[ñ]

where E₀ is the true ground-state energy [2]. This theorem guarantees that the correct ground-state density minimizes the total energy functional [5].

The total energy functional can be expressed as:

E[ñ] = F[ñ] + ∫v(r)ñ(r)dr

where F[ñ] is a universal functional of the density (independent of the external potential) that contains the kinetic energy and electron-electron interaction terms [2] [7]. The minimum value of this functional is the exact ground-state energy, achieved when the exact ground-state density is inserted [5].

Formal Refinements and Representability Issues

While the Hohenberg-Kohn theorems provide a solid theoretical foundation, their practical implementation faces challenges related to "representability" problems [2] [6]. The v-representability problem concerns whether a given density can be obtained from the ground state of a Schrödinger equation with some external potential [6]. Similarly, N-representability conditions determine whether a density can be derived from an antisymmetric wavefunction [2].

To address these limitations, the constrained-search formulation developed by Levy provides a more robust framework [2]. This approach defines the universal functional as:

F[n] = min_{Ψ→n} ⟨Ψ|T̂ + Û|Ψ⟩

where the search is constrained over all wavefunctions Ψ that yield the density n(r) [2]. This formulation extends DFT to N-representable densities, which only need to satisfy the conditions: n(r) ≥ 0, ∫n(r)dr = N, and ∫|∇n(r)^{1/2}|²dr < ∞ [2].

Recent mathematical analyses have further refined our understanding of the Hohenberg-Kohn theorems, restructuring them into two components: HK1 states that if two potentials share a common ground-state density, they share a common ground-state wavefunction; HK2 states that if two potentials share any common eigenstate that is nonzero almost everywhere, they must be equal up to a constant [6]. This refined perspective highlights that the Hohenberg-Kohn theorem is actually a consequence of a more comprehensive mathematical framework rather than being the fundamental basis itself [6].

Table 1: Key Concepts in the Hohenberg-Kohn Theorems

| Concept | Mathematical Expression | Physical Significance |
|---|---|---|
| First HK Theorem | Vext(r) ⇌ n₀(r) (bijective mapping) | Electron density uniquely determines all system properties |
| Second HK Theorem | E₀ = min E[ñ] for ñ(r) ∈ N-representable densities | Variational principle for energy minimization |
| Constrained Search Formulation | F[n] = min⟨Ψ\|T̂+Û\|Ψ⟩ for Ψ → n | Extends DFT to N-representable densities |
| Universal Functional | F[n] = T[n] + U[n] | Contains kinetic and electron-electron interaction terms |

The Kohn-Sham Equations: From Theory to Practice

The Kohn-Sham Ansatz

While the Hohenberg-Kohn theorems established the theoretical foundation for DFT, they did not provide a practical computational scheme. This limitation was addressed by Kohn and Sham in 1965 through their ingenious approach now known as the Kohn-Sham equations [8]. The fundamental insight was to replace the original interacting system with an auxiliary non-interacting system that reproduces the same ground-state density [1] [8].

The Kohn-Sham approach partitions the universal functional F[n] into three distinct components:

F[n] = Tₛ[n] + Uₕ[n] + Eₓc[n]

where:

- Tₛ[n] is the kinetic energy of a system of non-interacting electrons with density n

- Uₕ[n] is the classical Hartree electrostatic energy: Uₕ[n] = ½∫∫[n(r)n(r')/|r-r'|]drdr'

- Eₓc[n] is the exchange-correlation energy that captures all remaining many-body effects [8]

This separation is crucial because it isolates the computationally challenging components into Eₓc[n], while allowing accurate calculation of the dominant kinetic energy term Tₛ[n] through a single-particle picture [1] [8].

The Kohn-Sham Equations

Using the above separation, the total energy functional becomes:

E[n] = Tₛ[n] + ∫vₑₓₜ(r)n(r)dr + Uₕ[n] + Eₓc[n]

Applying the variational principle to this energy functional with respect to the density leads to the effective single-particle Kohn-Sham equations:

[ -½∇² + vₑff(r) ] φᵢ(r) = εᵢ φᵢ(r)

where the Kohn-Sham effective potential is:

vₑff(r) = vₑₓₜ(r) + vₕ(r) + vₓc(r)

with:

- vₑₓₜ(r) being the external potential (typically electron-nucleus attraction)

- vₕ(r) = ∫[n(r')/|r-r'|]dr' being the Hartree potential

- vₓc(r) = δEₓc[n]/δn(r) being the exchange-correlation potential [8]

The electron density is constructed from the Kohn-Sham orbitals:

n(r) = Σᵢᵛᵒᶜ |φᵢ(r)|²

These equations must be solved self-consistently since vₑff depends on the density, which in turn depends on the Kohn-Sham orbitals [8] [7].

Table 2: Components of the Kohn-Sham Energy Functional

| Energy Component | Mathematical Expression | Physical Interpretation |
|---|---|---|
| Non-interacting Kinetic Energy | Tₛ[n] = Σ⟨φᵢ\|-½∇²\|φᵢ⟩ | Kinetic energy of reference non-interacting system |
| External Potential Energy | Eₑₓₜ[n] = ∫vₑₓₜ(r)n(r)dr | Electron-nucleus attraction |
| Hartree Energy | Uₕ[n] = ½∫∫[n(r)n(r')/\|r-r'\|]drdr' | Classical electron-electron repulsion |
| Exchange-Correlation Energy | Eₓc[n] = (T[n] - Tₛ[n]) + (U[n] - Uₕ[n]) | Quantum mechanical many-body effects |

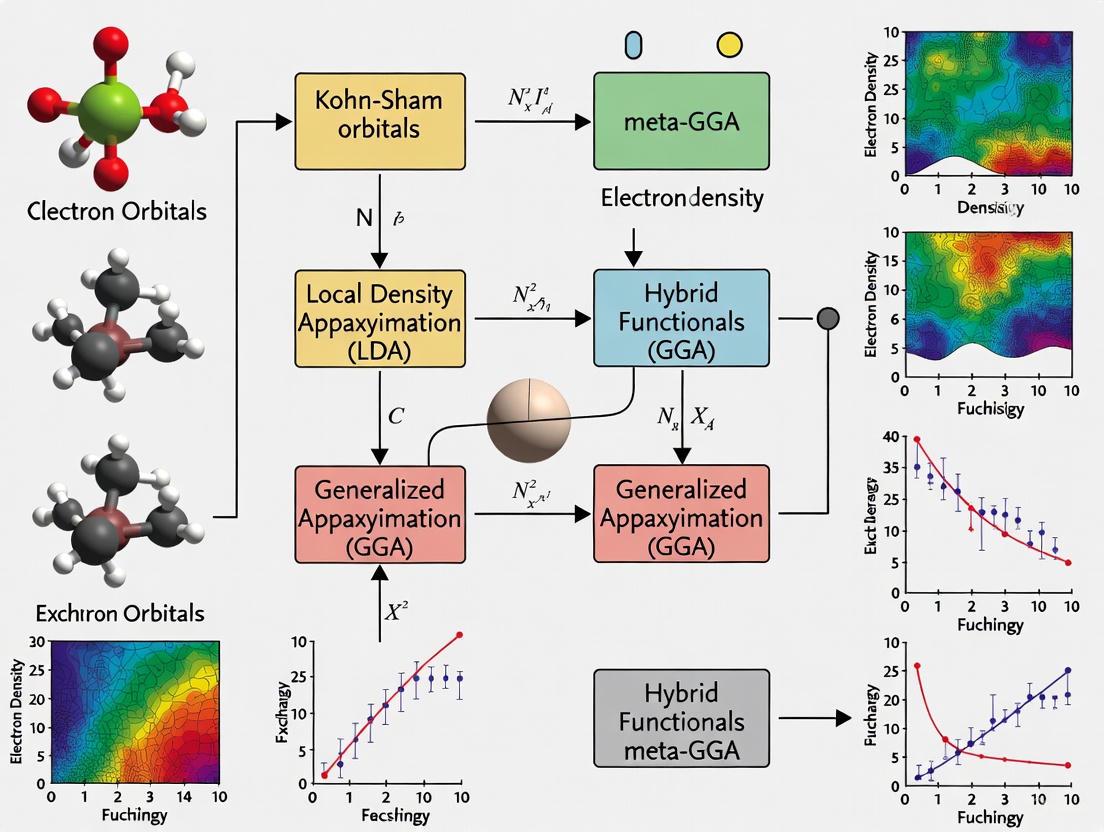

Logical Flow from Hohenberg-Kohn to Kohn-Sham

The logical progression from the Hohenberg-Kohn theorems to the practical Kohn-Sham equations represents one of the most elegant developments in theoretical chemistry and physics. The following diagram illustrates this logical structure and the self-consistent solution method:

Diagram 1: Logical flow from HK theorems to KS equations

Exchange-Correlation Functionals: The Central Challenge

The Local Density Approximation

The Local Density Approximation (LDA) represents the simplest and historically first practical approximation for the exchange-correlation functional [1]. In LDA, the exchange-correlation energy at point r is approximated as that of a homogeneous electron gas with the same density:

Eₓcᴸᴰᴬ[n] = ∫n(r)εₓc(n(r))dr

where εₓc(n) is the exchange-correlation energy per particle of a homogeneous electron gas of density n [1]. LDA works remarkably well for systems with slowly varying electron densities, such as simple metals and certain semiconductors, and often provides better results than Hartree-Fock for bulk properties of solids [1].
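As a concrete instance of the LDA integral, the sketch below evaluates only the exchange part for the hydrogen 1s density, n(r) = e^(-2r)/π, in Hartree atomic units. It assumes the spin-unpolarized Dirac form εₓ(n) = -(3/4)(3/π)^(1/3) n^(1/3); the function names and the radial grid are illustrative choices, not taken from the source.

```python
import numpy as np

def lda_exchange_per_particle(n):
    """Dirac exchange energy per particle of the homogeneous electron gas (Hartree)."""
    c_x = 0.75 * (3.0 / np.pi) ** (1.0 / 3.0)
    return -c_x * np.cbrt(n)

def radial_integral(f, r):
    """Integrate a spherically symmetric f(r) over all space (trapezoid rule)."""
    integrand = 4.0 * np.pi * r**2 * f
    return np.sum(0.5 * (integrand[1:] + integrand[:-1]) * np.diff(r))

# Hydrogen 1s density on a uniform radial grid (distances in bohr).
r = np.linspace(0.0, 30.0, 30001)
n = np.exp(-2.0 * r) / np.pi

n_electrons = radial_integral(n, r)                             # should be 1
e_x_lda = radial_integral(n * lda_exchange_per_particle(n), r)  # about -0.2127 Ha

print(f"N = {n_electrons:.6f}, E_x(LDA) = {e_x_lda:.4f} Ha")
```

The exact exchange energy of the hydrogen atom is -0.3125 Ha, so this unpolarized LDA estimate recovers only about two thirds of it, a simple reminder that the approximation is tuned to slowly varying densities rather than to atoms.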

However, LDA suffers from several significant limitations. It tends to overbind molecules and solids, predicting bond lengths that are too short and dissociation energies that are too large [1]. More critically, LDA fails dramatically for strongly correlated systems where an independent-particle picture breaks down: transition metal oxides such as FeO, MnO, and NiO are Mott insulators, yet LDA incorrectly predicts them to be metals or semiconductors [1]. Additionally, LDA does not account for van der Waals bonding and provides a poor description of hydrogen bonding, both essential for biochemical systems [1].

Generalized Gradient Approximations

The Generalized Gradient Approximation (GGA) represents a significant improvement over LDA by including the gradient of the density:

Eₓcᴳᴳᴬ[n] = ∫n(r)εₓc(n(r),∇n(r))dr

This dependence on the density gradient allows GGA to account for the inhomogeneity of real electron densities [1] [3]. Various forms of GGA have been developed, with the Perdew-Burke-Ernzerhof (PBE) functional being one of the most widely used in materials science [9].

GGA generally improves upon LDA for molecular properties, hydrogen-bonded systems, and surface/interface studies [3]. For example, in studies of the L1₀-MnAl compound, GGA provides greater accuracy in describing the electronic structure and magnetic behavior compared to LDA [9]. However, GGAs do not always represent a systematic improvement over LDA, particularly for certain metallic systems where the cancellation of errors in LDA happens to work well [1].

Advanced Functionals and Hybrid Approaches

Beyond GGA, the development of more sophisticated functionals remains an active area of research. Meta-GGA functionals incorporate the kinetic energy density in addition to the density and its gradient, providing more accurate descriptions of atomization energies, chemical bond properties, and complex molecular systems [3].

Hybrid functionals, such as B3LYP, mix a portion of exact Hartree-Fock exchange with DFT exchange:

Eₓcᴴʸᵇʳⁱᵈ = αEₓᴴᶠ + (1-α)Eₓᴰᶠᵀ + E_cᴰᶠᵀ

where α is the mixing parameter [3] [4]. Hybrid functionals are widely used for studying reaction mechanisms and molecular spectroscopy, offering improved accuracy for many chemical applications [3].
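The mixing formula is simple arithmetic once the component energies are known. The sketch below applies it to the exchange energy of the hydrogen atom, using the exact value (-0.3125 Ha) and an unpolarized LDA estimate (-0.2127 Ha). The choice α = 0.25, the zeroed correlation term, and the function name are assumptions made purely for illustration.

```python
def hybrid_xc_energy(alpha, e_x_hf, e_x_dft, e_c_dft):
    """One-parameter hybrid: alpha*E_x^HF + (1 - alpha)*E_x^DFT + E_c^DFT."""
    return alpha * e_x_hf + (1.0 - alpha) * e_x_dft + e_c_dft

# Hydrogen-atom exchange energies in Hartree; correlation set to zero for clarity.
e_x_exact = -0.3125   # exact (Hartree-Fock) exchange
e_x_lda = -0.2127     # spin-unpolarized LDA exchange
e_hybrid = hybrid_xc_energy(0.25, e_x_exact, e_x_lda, 0.0)

print(f"Hybrid exchange energy: {e_hybrid:.5f} Ha")
```

With 25% exact exchange the result lies a quarter of the way from the LDA value to the exact one, which shows how α interpolates between the two descriptions.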

Recent advancements include double hybrid functionals that incorporate second-order perturbation theory corrections, substantially improving the accuracy of excited-state energies and reaction barrier calculations [3]. Additionally, the integration of DFT with machine learning has emerged as a promising direction, with deep learning models being used to approximate kinetic energy density functionals [3].

Table 3: Classification of Exchange-Correlation Functionals

| Functional Type | Dependence | Strengths | Limitations |
|---|---|---|---|
| LDA | n(r) | Simple metals, bulk semiconductors | Poor for correlated systems, no van der Waals |
| GGA | n(r), ∇n(r) | Molecular properties, hydrogen bonding | Inconsistent improvement over LDA |
| Meta-GGA | n(r), ∇n(r), τ(r) | Atomization energies, chemical bonds | Increased computational cost |
| Hybrid | Mixed exact and DFT exchange | Reaction mechanisms, molecular spectroscopy | Parameter dependence, higher cost |
| Double Hybrid | Includes perturbation theory | Excited states, reaction barriers | Highest computational cost |

Computational Protocols in DFT-Based Research

Standard Computational Workflow

The application of DFT to molecular and materials systems follows a well-established computational workflow. The process begins with system preparation, where the molecular geometry or crystal structure is defined. For drug design applications, this typically involves obtaining structures from crystallographic databases or generating reasonable initial geometries [3] [4].

The core computational procedure involves the self-consistent solution of the Kohn-Sham equations:

1. Initialization: Construct an initial guess for the electron density, often from superposition of atomic densities

2. Potential Construction: Build the effective potential vₑff(r) using the current density

3. Orbital Solution: Solve the Kohn-Sham equations to obtain new orbitals and eigenvalues

4. Density Update: Construct a new density from the occupied Kohn-Sham orbitals

5. Convergence Check: Assess whether the density and energy have converged

6. Iteration: Repeat steps 2-5 until self-consistency is achieved [8] [7]

This self-consistent field (SCF) procedure forms the computational heart of DFT calculations and is implemented in all major quantum chemistry software packages.
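The SCF steps listed above can be sketched on a toy model. The example below is not a production implementation: it assumes a one-dimensional grid, two electrons in a harmonic external potential, a soft-Coulomb interaction, the three-dimensional Dirac exchange potential as a stand-in for vₓc, and simple linear density mixing. The function name `run_scf` and all parameters are hypothetical.

```python
import numpy as np

def run_scf(n_grid=201, box=10.0, n_electrons=2, mix=0.3, tol=1e-6, max_iter=500):
    x = np.linspace(-box / 2, box / 2, n_grid)
    dx = x[1] - x[0]

    # Kinetic operator -1/2 d^2/dx^2 from second-order finite differences.
    off_diag = np.ones(n_grid - 1)
    laplacian = (np.diag(off_diag, -1) - 2.0 * np.eye(n_grid) + np.diag(off_diag, 1)) / dx**2
    kinetic = -0.5 * laplacian

    v_ext = 0.5 * x**2                                          # harmonic well
    soft_coulomb = 1.0 / np.sqrt((x[:, None] - x[None, :]) ** 2 + 1.0)
    n_occ = n_electrons // 2                                    # doubly occupied orbitals

    # Step 1: initial density guess (Gaussian normalized to n_electrons).
    density = np.exp(-x**2)
    density *= n_electrons / (density.sum() * dx)

    for iteration in range(1, max_iter + 1):
        # Step 2: effective potential from the current density.
        v_hartree = soft_coulomb @ density * dx
        v_x = -np.cbrt(3.0 * density / np.pi)                   # Dirac exchange (stand-in)
        v_eff = v_ext + v_hartree + v_x

        # Step 3: solve the Kohn-Sham eigenvalue problem.
        eigvals, eigvecs = np.linalg.eigh(kinetic + np.diag(v_eff))
        orbitals = eigvecs[:, :n_occ] / np.sqrt(dx)             # grid-normalized

        # Step 4: new density from the occupied orbitals.
        new_density = 2.0 * np.sum(orbitals**2, axis=1)

        # Step 5: convergence check on the integrated density change.
        if np.sum(np.abs(new_density - density)) * dx < tol:
            return x, new_density, eigvals[:n_occ], iteration, True

        # Step 6: mix old and new densities, then iterate.
        density = (1.0 - mix) * density + mix * new_density

    return x, density, eigvals[:n_occ], max_iter, False

x, density, eps, n_iter, converged = run_scf()
print(f"converged={converged} after {n_iter} iterations, lowest eigenvalue {eps[0]:.4f} Ha")
```

Linear mixing is the simplest stabilizer for the density update; production codes typically use more elaborate schemes such as DIIS or Broyden mixing.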

Protocol for Drug Design Applications

In pharmaceutical research, DFT calculations follow specific protocols tailored to biological systems. A typical protocol for studying drug-receptor interactions includes:

System Preparation: Isolate the active site of the receptor and prepare the drug molecule, often adding hydrogen atoms and assigning protonation states appropriate for physiological conditions [3] [4]

Geometry Optimization: Fully optimize the molecular geometry using medium-tier functionals (e.g., B3LYP with 6-31G* basis set) to locate minima on the potential energy surface, with convergence criteria typically set to 10⁻⁵ Ha for energy and 0.001 Ha/Å for forces [4]

Single-Point Energy Calculation: Perform higher-level single-point energy calculations using larger basis sets and more sophisticated functionals to obtain accurate energetics [3]

Electronic Analysis: Calculate molecular electrostatic potentials, frontier orbital energies, Fukui functions, and other electronic descriptors to understand reactivity and interaction patterns [3]

Solvation Effects: Incorporate solvent effects using implicit solvation models (e.g., COSMO, PCM) to simulate physiological conditions [3]

For complex systems, multiscale approaches such as the ONIOM method are employed, where DFT is used for high-precision calculations of the drug molecule core regions while molecular mechanics force fields model the protein environment [3].

The following diagram illustrates a typical DFT computational workflow in drug design:

Diagram 2: DFT computational workflow in drug design

Successful DFT investigations require a suite of computational tools and methodologies. The table below outlines key components of the modern computational chemist's toolkit for DFT studies:

Table 4: Essential Computational Resources for DFT Research

| Resource Category | Specific Tools/Components | Function/Role |
|---|---|---|
| Software Packages | VASP, Gaussian, Quantum ESPRESSO, ORCA | Implement DFT algorithms and SCF procedures |
| Exchange-Correlation Functionals | LDA, PBE (GGA), B3LYP (hybrid), ωB97X-D (range-separated) | Approximate quantum many-body effects |
| Basis Sets | Plane waves, Gaussian-type orbitals (6-31G*, def2-TZVP), numerical orbitals | Represent Kohn-Sham orbitals and electron density |
| Solvation Models | COSMO, PCM, SMD | Simulate environmental effects in solution |
| Analysis Tools | Multiwfn, VESTA, ChemCraft | Visualize and analyze electronic structure properties |
| Computational Hardware | High-performance computing clusters, GPUs, cloud computing resources | Provide necessary computational power for large systems |

Applications in Drug Design and Materials Science

DFT in Pharmaceutical Research

DFT has become an indispensable tool in modern drug discovery, enabling researchers to understand and predict molecular interactions at the quantum mechanical level [3] [4]. In pharmaceutical formulation development, DFT helps elucidate the electronic driving forces governing active pharmaceutical ingredient (API)-excipient co-crystallization, allowing researchers to predict reactive sites and guide stability-oriented co-crystal design [3]. For nanodelivery systems, DFT optimizes carrier surface charge distribution through calculations of van der Waals interactions and π-π stacking energies, thereby enhancing targeting efficiency [3].

The COVID-19 pandemic highlighted the utility of DFT in drug discovery, with researchers employing time-dependent DFT (TD-DFT) to study tautomerism in the virus and investigate the anti-coronavirus potential of various compounds, including ferrocene derivatives and tetrazole-based molecules [4]. DFT calculations have also been instrumental in studying organometallic drugs, nucleic acid base derivatives for antileukemic applications, and zinc proteinases for anticancer and cardiovascular therapies [4].

Accuracy and Limitations in Drug Property Prediction

The accuracy of DFT predictions varies significantly depending on the functional employed and the molecular properties being studied. For the widely used B3LYP hybrid functional, estimated accuracies for various molecular drug properties include:

- Transition Barriers: ~1 kcal/mol accuracy

- Molecular Geometry: Bond lengths accurate to ~0.005 Å, bond angles to ~0.2°

- Ionization Energies and Electron Affinities: ~0.2 eV accuracy

- Hydrogen Bonding Energies: 1-2 kcal/mol accuracy

- Metal-Ligand Bonding: 4-5 kcal/mol accuracy [4]

However, DFT struggles with certain properties, particularly atomization energies (inaccurate by ~2.2 kcal/mol) and charge transfer interactions (1-2 kcal/mol errors) [4]. These limitations highlight the importance of functional selection and method validation for specific applications.

Materials Science Applications

Beyond pharmaceutical applications, DFT plays a crucial role in materials science, particularly in the design and characterization of novel materials for energy applications. In the study of magnetic materials, such as the L1₀-MnAl compound, DFT calculations reveal how the choice of exchange-correlation functional significantly influences the predicted electronic structure and magnetic properties [9]. GGA functionals generally provide more accurate descriptions of the electronic structure and magnetic behavior compared to LDA for such systems [9].

DFT has also proven invaluable in studying strongly correlated systems, semiconductor materials, catalytic surfaces, and energy storage materials. Despite its limitations in describing strong correlation effects, DFT remains the primary computational tool for initial screening and characterization of novel materials due to its favorable balance between computational cost and predictive accuracy.

The Hohenberg-Kohn theorems and Kohn-Sham equations together form the rigorous theoretical foundation upon which modern density functional theory is built. The Hohenberg-Kohn theorems established the formal validity of using electron density as the fundamental variable, while the Kohn-Sham equations provided the practical computational framework that makes DFT applications feasible. Despite the remarkable success of DFT across countless scientific domains, the search for more accurate and broadly applicable exchange-correlation functionals remains an active and challenging area of research.

Future developments in DFT will likely focus on several key directions. Machine learning-augmented approaches are showing promise for developing more accurate functionals and accelerating calculations [3]. The integration of DFT with multiscale modeling frameworks will extend the applicability of quantum mechanical methods to larger and more complex biological systems [3]. Additionally, methodological advances in treating excited states, van der Waals interactions, and strongly correlated systems will address current limitations and expand the boundaries of DFT applicability.

As computational power continues to grow and theoretical methods advance, DFT will remain an essential tool in the molecular modeling toolkit, providing fundamental insights into electronic structure and enabling the rational design of novel materials and pharmaceutical compounds. The continued development of exchange-correlation functionals represents not merely a technical challenge but a fundamental scientific endeavor to capture the rich physics of electron correlation within a computationally efficient single-particle framework.

In density functional theory (DFT), the exchange-correlation (XC) functional encapsulates the complex, non-classical interactions between electrons and the correction for quantum kinetic energy. This whitepaper delineates the fundamental nature of the XC functional, its mathematical formulation, and the hierarchy of approximations developed to render it computationally tractable. We further explore the transformative impact of machine learning in constructing next-generation functionals and provide a detailed protocol for their development and benchmarking, offering a critical resource for researchers in computational chemistry and materials science.

Density Functional Theory has established itself as the most widely used electronic structure method for predicting the properties of molecules and materials [10]. Its foundation is the elegant Hohenberg-Kohn theorem, which proves that the ground-state energy of a many-electron system is a unique functional of the electron density. The practical application of DFT was realized through the Kohn-Sham scheme, which introduces a fictitious system of non-interacting electrons that has the same density as the real, interacting system. The total electronic energy in this framework is expressed as:

$$E_\textrm{electronic} = T_\textrm{non-int.} + E_\textrm{estat} + E_\textrm{xc}$$ [11]

Here, $T_\textrm{non-int.}$ is the kinetic energy of the non-interacting electrons, $E_\textrm{estat}$ is the classical electrostatic energy (Hartree energy and electron-nuclear attraction), and $E_\textrm{xc}$ is the exchange-correlation energy, the central unknown of DFT [11]. The XC functional must account for everything not captured by the other terms: the difference between the true kinetic energy and the non-interacting kinetic energy, the exchange energy arising from the Pauli exclusion principle, and the correlation energy from electron-electron Coulomb interactions beyond the mean-field approximation [12].

The following diagram illustrates the central role of the XC functional within the Kohn-Sham DFT self-consistent cycle:

Kohn-Sham DFT Self-Consistent Cycle. The XC functional is the critical, unknown component required to construct the effective potential.

The Mathematical and Physical Basis of the XC Functional

Formal Definition and the XC Hole

Formally, the exchange-correlation energy can be defined in terms of the exchange-correlation hole, $\rho_{xc}(\mathbf{r}, \mathbf{r}')$, a concept that provides a physically intuitive picture. The XC hole represents the reduced probability of finding an electron at position $\mathbf{r}'$ given that there is an electron at position $\mathbf{r}$. It describes the "hole" that an electron digs around itself due to exchange (Pauli repulsion) and correlation (Coulomb repulsion) effects. The XC energy is then the energy resulting from the interaction between an electron at $\mathbf{r}$ and its XC hole:

$$E_{xc} = \frac{1}{2} \int d^3r \int d^3r' \frac{\rho(\mathbf{r}) \rho_{xc}(\mathbf{r}, \mathbf{r}')}{|\mathbf{r} - \mathbf{r}'|}$$ [12]

A key physical constraint is that the XC hole must integrate to exactly one missing electron, a sum rule that robust approximations strive to satisfy [11].
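Written out, the sum rule and its simplest consequence look as follows. The one-electron example is an illustration added here, using the same symbols as the XC energy expression above; for a single electron the hole is exactly the negative of the density.

$$
\int \rho_{xc}(\mathbf{r}, \mathbf{r}')\, d^3r' = -1,
\qquad
\rho_{xc}^{\text{1e}}(\mathbf{r}, \mathbf{r}') = -\rho(\mathbf{r}')
\;\Rightarrow\;
E_{xc}^{\text{1e}} = -\frac{1}{2} \int d^3r \int d^3r' \frac{\rho(\mathbf{r}) \rho(\mathbf{r}')}{|\mathbf{r} - \mathbf{r}'|}
$$

In this limit the XC energy exactly cancels the Hartree self-repulsion (for the hydrogen atom, $-5/16$ Ha against $+5/16$ Ha). Approximate functionals generally do not achieve this cancellation, which is the origin of the one-electron self-interaction error.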

The Functional Derivative: The XC Potential

In the Kohn-Sham equations, the functional derivative of the XC energy with respect to the electron density is required. This is the exchange-correlation potential, $V_{xc}(\mathbf{r})$:

$$V_{xc}(\mathbf{r}) = \frac{\delta E_{xc}[n]}{\delta n(\mathbf{r})}$$ [11]

This potential is a local multiplicative operator, unlike the non-local potential in Hartree-Fock theory. The accuracy of the Kohn-Sham orbitals, and consequently properties like band gaps, depends critically on this potential [13].

A Hierarchy of Approximations

The exact form of $E_{xc}[n]$ is unknown, necessitating approximations. These form a hierarchy, with each level incorporating more complex information about the electron density at the cost of increased computational expense.

The Local Density Approximation (LDA)

The LDA is the simplest approximation. It assumes the XC energy per particle at a point $\mathbf{r}$ in an inhomogeneous system is equal to that of a homogeneous electron gas (HEG) with the same density:

$$E_{xc}^{LDA}[\rho] = \int \rho(\mathbf{r}) \epsilon_{xc}^{hom}(\rho(\mathbf{r})) d\mathbf{r}$$ [14]

Here, $\epsilon_{xc}^{hom}(\rho)$ is the XC energy per particle of the HEG, which is decomposed into exchange and correlation parts, $\epsilon_x^{hom}$ and $\epsilon_c^{hom}$. The exchange part has a simple analytic form, $\epsilon_x^{hom} \propto \rho^{1/3}$, while the correlation part is derived from highly accurate quantum Monte Carlo (QMC) simulations [14] [15]. LDA is surprisingly powerful for calculating structural properties but suffers from systematic errors, such as overbinding and significant band gap underestimation [14] [13].
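For the exchange part, the functional derivative that defines the XC potential can be taken in closed form. The short derivation below assumes the spin-unpolarized Dirac expression in Hartree atomic units, with the constant $C_x$ introduced here for convenience.

$$
\begin{aligned}
\epsilon_x^{hom}(\rho) &= -C_x\, \rho^{1/3}, \qquad C_x = \frac{3}{4} \left( \frac{3}{\pi} \right)^{1/3} \\
E_x^{LDA}[\rho] &= -C_x \int \rho(\mathbf{r})^{4/3}\, d\mathbf{r} \\
V_x^{LDA}(\mathbf{r}) &= \frac{\delta E_x^{LDA}[\rho]}{\delta \rho(\mathbf{r})} = -\frac{4}{3} C_x\, \rho(\mathbf{r})^{1/3} = -\left( \frac{3}{\pi} \right)^{1/3} \rho(\mathbf{r})^{1/3}
\end{aligned}
$$

Because the integrand depends only on the local density, the functional derivative reduces to an ordinary derivative of $\rho\, \epsilon_x^{hom}(\rho)$, and the resulting potential is a local multiplicative function of position.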

Generalized Gradient Approximations (GGA)

GGA improves upon LDA by including the dependence on the gradient of the density, $|\nabla \rho(\mathbf{r})|$, to account for inhomogeneities:

$$E_{xc}^{GGA}[\rho] = \int \rho(\mathbf{r}) \epsilon_{xc}^{hom}(\rho(\mathbf{r})) F_{xc}(\rho(\mathbf{r}), |\nabla \rho(\mathbf{r})|) d\mathbf{r}$$ [11] [12]

The enhancement factor, $F_{xc}$, is a dimensionless function that modulates the LDA energy density based on the reduced density gradient, so that $F_{xc} = 1$ recovers the LDA expression. Different forms of $F_{xc}$ define various GGA functionals (e.g., PBE, PW91). GGA typically improves atomization energies and lattice constants over LDA but often under-predicts band gaps [13] [12].
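To make the enhancement factor concrete, the sketch below evaluates the exchange-only PBE form, $F_x(s) = 1 + \kappa - \kappa / (1 + \mu s^2 / \kappa)$ with $\kappa = 0.804$ and $\mu = 0.21951$, together with the reduced gradient $s = |\nabla\rho| / (2 k_F \rho)$, $k_F = (3\pi^2\rho)^{1/3}$. It covers exchange only (the correlation contribution to $F_{xc}$ is omitted), and the function names are illustrative.

```python
import numpy as np

KAPPA = 0.804    # PBE exchange parameter
MU = 0.21951     # PBE exchange gradient coefficient

def reduced_gradient(rho, grad_rho_norm):
    """Dimensionless reduced density gradient s = |grad rho| / (2 k_F rho)."""
    k_f = np.cbrt(3.0 * np.pi**2 * rho)
    return grad_rho_norm / (2.0 * k_f * rho)

def pbe_exchange_enhancement(s):
    """PBE exchange enhancement factor F_x(s)."""
    return 1.0 + KAPPA - KAPPA / (1.0 + MU * s**2 / KAPPA)

s_values = np.array([0.0, 0.5, 1.0, 2.0, 1.0e6])
f_values = pbe_exchange_enhancement(s_values)
for s, f in zip(s_values, f_values):
    print(f"s = {s:10.1f}  F_x = {f:.4f}")
```

At $s = 0$ the factor equals 1, recovering LDA exchange, and for large $s$ it saturates at $1 + \kappa = 1.804$.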

Meta-GGAs and Hybrid Functionals

Meta-GGAs introduce a further dependency on the kinetic energy density of the Kohn-Sham orbitals,

$$\tau(\mathbf{r}) = \frac{1}{2} \sum_{i}^{occ} |\nabla \phi_i(\mathbf{r})|^2$$ [12]

This allows the functional to detect the local bonding character (e.g., metallic, covalent, or weak bonds) and suppress one-electron self-interaction error [11]. Functionals like SCAN and MCML are examples that offer improved accuracy for diverse systems [11] [13].
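One way to see how $\tau$ flags one-electron regions: for a density built from a single orbital, $\tau$ coincides with the von Weizsäcker kinetic energy density $\tau_W = |\nabla\rho|^2 / (8\rho)$, which depends on the density alone. The sketch below checks this numerically for the hydrogen 1s orbital on a radial grid. The grid and variable names are illustrative assumptions, and the gradients are finite-difference estimates.

```python
import numpy as np

# Radial grid in bohr; the origin is excluded to avoid the cusp.
r = np.linspace(0.05, 10.0, 2000)

# Hydrogen 1s orbital and its one-electron density.
phi = np.exp(-r) / np.sqrt(np.pi)
rho = phi**2

# For an s orbital, |grad f| reduces to |df/dr|.
dphi_dr = np.gradient(phi, r)
drho_dr = np.gradient(rho, r)

tau = 0.5 * dphi_dr**2              # (1/2) * sum over occupied orbitals of |grad phi_i|^2
tau_w = drho_dr**2 / (8.0 * rho)    # von Weizsaecker kinetic energy density

# Skip the end points, where np.gradient uses less accurate one-sided differences.
ratio = tau_w[1:-1] / tau[1:-1]
print(f"tau_W / tau ranges from {ratio.min():.5f} to {ratio.max():.5f}")
```

A ratio of one signals a single-orbital region, where a meta-GGA can switch off spurious self-correlation; values well below one indicate several overlapping orbitals.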

Hybrid functionals mix a fraction of the exact, non-local Hartree-Fock exchange with GGA or meta-GGA exchange and correlation:

$$E_x^{Hybrid} = a E_x^{EXX} + (1-a) E_x^{GGA}$$ [12]

where $E_x^{EXX}$ is the exact exchange energy. Popular functionals like B3LYP use empirically determined mixing parameters and are highly successful in quantum chemistry, though computationally more expensive than semi-local functionals [12].

Table 1: Hierarchy of Common Exchange-Correlation Functional Approximations

| Approximation | Functional Dependence | Key Examples | Strengths | Weaknesses |
|---|---|---|---|---|
| LDA | $\rho(\mathbf{r})$ | SVWN [14] | Simple, robust, good structures | Overbinding, poor band gaps |
| GGA | $\rho(\mathbf{r}), \nabla\rho(\mathbf{r})$ | PBE, PW91 [12] | Better energetics than LDA | Band gap underestimation [13] |
| Meta-GGA | $\rho(\mathbf{r}), \nabla\rho(\mathbf{r}), \tau(\mathbf{r})$ | SCAN, MCML [11] | Detects bond type, better for solids and surfaces | Increased complexity |
| Hybrid | $\rho(\mathbf{r}), \nabla\rho(\mathbf{r}), \{\phi_i\}$ | B3LYP, HSE [13] [12] | Accurate for molecules, improved gaps | High computational cost |

The Vanguard: Machine-Learned XC Functionals

Machine learning (ML) is emerging as a powerful paradigm for developing XC functionals, moving beyond hand-crafted forms to data-driven models.

Neural Network Representations of the XC Functional

Neural networks (NNs) can be trained to represent the XC energy or potential directly. One approach uses a simple feed-forward NN that maps the electron density and its derivatives at a point in space to the XC energy density at that point [15]. For LDA, a point-to-point mapping suffices, while for GGA, a region-to-point mapping (e.g., a 5x5x5 cube of density values) allows the NN to learn the effect of the density gradient implicitly [15]. These NN functionals have been shown to successfully reproduce traditional LDA and GGA results and generalize to systems like bulk silicon [15].

Bayesian Error Estimation and High-Accuracy Functional Fitting

Another ML approach fits the parameters of a semi-local functional form (e.g., for the exchange enhancement factor) against a large database of high-fidelity reference data, such as atomization energies of molecules and solids [16]. Using a Bayesian linear regression framework allows for uncertainty quantification (UQ), where the fitted parameters are treated as random variables. This enables the generation of an ensemble of functionals, providing an error bar on predictions like binding energies or lattice constants, which is invaluable for predictive materials discovery [11] [16].

Large-scale efforts, such as Microsoft's Skala functional, leverage deep learning and an unprecedented volume of high-accuracy reference data generated from wavefunction-based methods. These functionals aim to achieve chemical accuracy (errors below 1 kcal/mol) for properties like atomization energies while retaining the computational cost of semi-local DFT [10].

Table 2: Machine Learning Approaches to XC Functional Development

| ML Approach | Description | Key Features | Example Functionals |

|---|---|---|---|

| Neural Network Interpolation [15] | NN trained to map electron density (grid) to XC potential. | Learns functional form directly; no a priori assumptions. | NN-LDA, NN-GGA |

| Bayesian Fitting [16] | Fits parameters of an analytical functional form using Bayesian regression. | Provides uncertainty estimates on predictions. | mBEEF, BEEF-vdW |

| Deep-Learned Functionals [10] | Uses modern deep learning architectures trained on massive, high-accuracy datasets. | Aims for chemical accuracy across broad chemistry. | Skala |

Experimental Protocols and Research Toolkit

Protocol: Developing and Training a Bayesian XC Functional

This protocol outlines the methodology for creating an XC functional with UQ capabilities, as detailed in [16].

Define the Functional Form: Choose a flexible, linear model for the exchange enhancement factor, (F_x(s)), as a function of the reduced density gradient (s). For example: ( F_x(s) = \sum_{i=1}^{M} \xi_i f_i(s) ) where (f_i(s)) are known basis functions and (\xi_i) are the coefficients to be determined.

Assemble the Training Dataset: Curate a database of highly accurate, experimentally measured or quantum-chemically calculated properties. A common choice is atomization energies (for molecules) and cohesive energies (for solids) from standardized sets like G2/97.

Generate DFT Input Data: Perform DFT calculations for all systems in the training set using a preliminary functional. The goal is to compute the input features (electron density, its gradient, etc.) for the linear model, not the final energies.

Train the Bayesian Model: Employ a Relevance Vector Machine (RVM), a Bayesian sparse kernel technique, to learn the coefficients (\xi_i). The RVM automatically prunes irrelevant basis functions, preventing overfitting. The output is a posterior probability distribution for the coefficients, (P(\xi | \text{Data})).

Validate the Functional: Test the trained functional on a held-out test set of molecules and materials not used in training. Evaluate its performance on both the target properties (e.g., atomization energies) and other key properties like lattice constants and bulk moduli.

Propagate Uncertainty: To estimate the error of a predicted property (e.g., the binding energy of a new molecule), use the ensemble of functionals defined by the distribution of coefficients (P(\xi | \text{Data})). The variance of the predictions across this ensemble provides the uncertainty estimate.
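The fitting and uncertainty-propagation steps can be sketched with ordinary Bayesian linear regression. This is a simplification, not the procedure of [16]: it uses fixed prior and noise precisions (`alpha`, `beta`) instead of an RVM, fits synthetic values of the enhancement factor directly rather than atomization energies, and the basis functions are hypothetical.

```python
import numpy as np

def basis(s, n_basis=4):
    """Hypothetical basis functions f_i(s): powers of t = s^2 / (1 + s^2)."""
    t = s**2 / (1.0 + s**2)
    return np.column_stack([t**i for i in range(n_basis)])

def fit_posterior(Phi, y, alpha=1e-2, beta=1e4):
    """Gaussian posterior P(xi | data) for prior precision alpha and noise precision beta."""
    A = alpha * np.eye(Phi.shape[1]) + beta * Phi.T @ Phi
    cov = np.linalg.inv(A)
    cov = 0.5 * (cov + cov.T)          # symmetrize against round-off
    mean = beta * cov @ Phi.T @ y
    return mean, cov

rng = np.random.default_rng(1)

# Synthetic stand-in for reference data (not real benchmark values).
s_train = rng.uniform(0.0, 3.0, size=50)
xi_true = np.array([1.0, 0.8, -0.3, 0.1])
y_train = basis(s_train) @ xi_true + rng.normal(scale=0.01, size=50)

mean, cov = fit_posterior(basis(s_train), y_train)

# Ensemble of "functionals": coefficient vectors drawn from the posterior.
ensemble = rng.multivariate_normal(mean, cov, size=2000)

# Spread of the ensemble predictions serves as the error bar.
s_new = np.array([0.5, 2.5])
preds = basis(s_new) @ ensemble.T      # shape (len(s_new), n_samples)
print("prediction:", preds.mean(axis=1))
print("uncertainty:", preds.std(axis=1))
```

In a real workflow each ensemble member would be used to evaluate the target property (for example a binding energy), and the standard deviation across members would be reported alongside the prediction.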

The workflow for this protocol, and for the related neural network approach, is summarized below:

Workflow for ML-XC Functional Development. Two primary machine learning paths are shown: Neural Network interpolation and Bayesian fitting, culminating in a validated functional.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational Tools and Datasets for XC Functional Research

| Tool / Reagent | Type | Function in Research | Example Sources/Implementations |

|---|---|---|---|

| Quantum Monte Carlo Data | Reference Data | Provides highly accurate correlation energies for the homogeneous electron gas, serving as the foundation for LDA. [14] [15] | Ceperley-Alder data [14] |

| High-Accuracy Molecular Databases | Training/Test Data | Provides benchmark atomization energies, reaction energies, and molecular structures for training and validating functionals. | G2/97 set [16] |

| Solid-State & Surface Datasets | Training/Test Data | Provides benchmark data for cohesive energies, lattice constants, surface adsorption energies, and band gaps. | Surface science benchmarks [11] [16] |

| Relevance Vector Machine (RVM) | Algorithm | A Bayesian sparse learning algorithm used to fit functional parameters and automatically select the most relevant features. [16] | - |

| DFT Codebase with ML Support | Software Platform | Performs Kohn-Sham calculations and can integrate custom, externally defined XC functionals. | Octopus [15] |

The exchange-correlation functional remains the central, defining unknown in Density Functional Theory. While the hierarchy of approximations from LDA to hybrids has enabled tremendous success, the field is now entering a new era driven by machine learning. ML functionals leverage vast datasets to interpolate and extrapolate beyond traditional functional forms, offering a path toward universal, chemical accuracy. Critical to this journey is the emerging capability to quantify uncertainty, which transforms DFT from a purely predictive tool into a framework for guided, reliable discovery. For researchers in drug development and materials science, these advances promise more accurate predictions of binding affinities, reaction pathways, and material properties, ultimately accelerating the design of novel molecules and advanced materials.

Density-functional theory (DFT) has emerged as the predominant first-principles approach in computational quantum chemistry and materials science, accounting for the overwhelming majority of all quantum chemistry calculations due to its proven chemical accuracy and relatively low computational expense [17]. The theoretical foundation of DFT rests upon the Hohenberg-Kohn theorems, which establish that the ground-state energy of an interacting electron system is uniquely determined by the electron density, ρ(r), and that the functional providing this energy achieves its minimum value for the true ground-state density [18]. In practice, DFT is implemented through the Kohn-Sham formalism, which introduces a system of non-interacting electrons that reproduce the same density as the true interacting system [17] [18].

The total energy functional in Kohn-Sham DFT is expressed as:

[ E[\rho] = T_s[\rho] + V_{\text{ext}}[\rho] + J[\rho] + E_{\text{xc}}[\rho] ]

where (T_s[\rho]) represents the kinetic energy of non-interacting electrons, (V_{\text{ext}}[\rho]) is the external potential energy, (J[\rho]) is the classical Coulomb energy, and (E_{\text{xc}}[\rho]) is the exchange-correlation energy that incorporates all quantum many-body effects [18]. The accuracy of DFT calculations hinges entirely on the approximation used for (E_{\text{xc}}[\rho]), since its exact form remains unknown [17] [18]. The development of increasingly sophisticated and accurate approximations for this functional is conceptually organized through the powerful metaphor of "Jacob's Ladder," introduced by John Perdew [17] [19]. This classification scheme arranges functionals onto five rungs, with each higher rung incorporating more complex ingredients from the electronic structure to achieve better accuracy while maintaining computational feasibility [17].

The Theoretical Foundation of Jacob's Ladder

The Jacob's Ladder framework systematically organizes density functionals by their increasing complexity and accuracy, where each rung corresponds to the inclusion of additional physical ingredients in the exchange-correlation functional [17] [19]. Ascending the ladder generally improves the accuracy of calculated properties but also increases computational cost. The five rungs are:

- Local Spin-Density Approximation (LSDA): Depends only on the electron density ρ [17]

- Generalized Gradient Approximation (GGA): Depends on ρ and its gradient, |∇ρ| [17] [18]

- meta-Generalized Gradient Approximation (meta-GGA): Depends on ρ, |∇ρ|, and the kinetic energy density, τ [17] [18]

- Hybrid Functionals: Incorporate exact Hartree-Fock exchange [17] [18]

- Double Hybrids: Incorporate both exact exchange and virtual orbitals [17]

Table 1: The Five Rungs of Jacob's Ladder in Density-Functional Theory

| Rung | Functional Type | Key Ingredients | Example Functionals | Typical Applications |

|---|---|---|---|---|

| 1 | Local Spin-Density Approximation (LSDA) | Electron density (ρ) | SPW92, SVWN5 [20] | Uniform electron gas, solid-state physics [18] |

| 2 | Generalized Gradient Approximation (GGA) | ρ, density gradient (∇ρ) | BLYP, PBE, revPBE [20] | Molecular geometry optimization [18] |

| 3 | meta-Generalized Gradient Approximation (meta-GGA) | ρ, ∇ρ, kinetic energy density (τ) | TPSS, revTPSS, SCAN, M06-L [17] [20] | Thermochemistry, reaction barriers [17] |

| 4 | Hybrid | ρ, ∇ρ, τ, exact exchange | B3LYP, PBE0, B97-3 [20] | Main-group thermochemistry [18] |

| 5 | Double Hybrid | ρ, ∇ρ, τ, exact exchange, virtual orbitals | — | High-accuracy energetics [17] |

This systematic organization allows researchers to select an appropriate functional based on the desired accuracy and available computational resources, while providing a clear pathway for functional development [17].

Climbing the Rungs: A Systematic Ascent

First Rung: Local Spin-Density Approximation

The Local Spin-Density Approximation (LSDA) constitutes the first and most fundamental rung of Jacob's Ladder. LSDA evaluates the exchange-correlation energy at each point in space as if the electron density were that of a uniform electron gas [18]:

[ E_{\text{XC}}^{\text{LDA}}[\rho] = \int \rho(\textbf{r}) \epsilon_{\text{XC}}^{\text{LDA}}(\rho(\textbf{r})) d\textbf{r}, \qquad E_{\text{X}}^{\text{LDA}}[\rho] = -\frac{3}{4} \left( \frac{3}{\pi} \right)^{1/3} \int \rho(\textbf{r})^{4/3} d\textbf{r} ]

This approach is exact for the infinite uniform electron gas but proves highly inaccurate for molecular properties where electron densities exhibit significant inhomogeneity [17]. LSDA tends to underestimate exchange contributions and overestimate correlation effects, resulting in predicted binding energies that are too large and bond distances that are too short [18]. Despite these limitations, LSDA remains computationally efficient and was historically crucial for early computational work [18]. Common LSDA functionals include SPW92 (Slater LSDA exchange with Perdew-Wang 1992 correlation) and SVWN5 (Slater exchange with Vosko-Wilk-Nusair 1980 correlation) [20].

Second Rung: Generalized Gradient Approximations

The Generalized Gradient Approximation (GGA) significantly improves upon LSDA by incorporating the gradient of the electron density (|∇ρ|) to account for inhomogeneities in real systems [17] [18]. The exchange-correlation energy in GGA functionals takes the form:

[ E_{\text{XC}}^{\text{GGA}}[\rho] = \int \rho(\textbf{r}) \epsilon_{\text{XC}}^{\text{GGA}}(\rho(\textbf{r}), \nabla\rho(\textbf{r})) d\textbf{r} ]

This inclusion of the density gradient allows GGA functionals to better describe molecular properties, particularly for geometry optimizations [18]. Notable GGA functionals include BLYP (Becke 1988 exchange with Lee-Yang-Parr correlation), PBE (Perdew-Burke-Ernzerhof), and revPBE [20]. While GGAs marked a substantial improvement over LSDA, they still exhibit systematic errors for energetics and tend to underestimate reaction barrier heights [18].

Third Rung: meta-Generalized Gradient Approximations

meta-GGA functionals constitute the third rung of Jacob's Ladder by incorporating the kinetic energy density (τ) as an additional ingredient [17] [18]:

[ E_{\text{XC}}^{\text{mGGA}}[\rho] = \int \rho(\textbf{r}) \epsilon_{\text{XC}}^{\text{mGGA}}(\rho(\textbf{r}), \nabla\rho(\textbf{r}), \tau(\textbf{r})) d\textbf{r} ]

where the kinetic energy density is defined as (\tau(\textbf{r}) = \frac{1}{2} \sum_i |\nabla\psi_i(\textbf{r})|^2) [18]. The inclusion of τ provides more flexibility in the functional form and enables better description of different chemical environments. meta-GGAs such as TPSS, revTPSS, SCAN, and M06-L provide significantly more accurate energetics than GGAs, including improved thermochemistry and reaction barrier heights, at only a modest increase in computational cost [17] [20]. However, they can be more sensitive to integration grid size, often requiring larger grids and higher computational cost [18].

Fourth Rung: Hybrid Functionals

Hybrid functionals incorporate a fraction of exact (Hartree-Fock) exchange into the DFT exchange-correlation functional [17] [18]. The general form for global hybrids is:

[ E_{\text{XC}}^{\text{Hybrid}}[\rho] = a E_{\text{X}}^{\text{HF}}[\rho] + (1-a) E_{\text{X}}^{\text{DFT}}[\rho] + E_{\text{C}}^{\text{DFT}}[\rho] ]

where (a) represents the fraction of Hartree-Fock exchange [18]. For example, in the ubiquitous B3LYP functional, (a = 0.20) (20% HF exchange) [18]. The inclusion of exact exchange addresses self-interaction error and improves the asymptotic behavior of the exchange-correlation potential, leading to more accurate description of molecular properties, particularly for main-group thermochemistry [18]. B3LYP, PBE0 (25% HF exchange), and the B97 family (with varying HF exchange percentages) represent prominent examples of hybrid functionals [20]. While hybrid functionals offer significantly improved accuracy, they come at substantially increased computational cost due to the need to construct the exact exchange matrix, which scales poorly with system size [18].
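The one-parameter global hybrid form above amounts to a simple linear mix of precomputed energy components, as the following minimal sketch shows. The function name and the component energies are placeholders invented for illustration, and real functionals such as B3LYP use additional mixing parameters beyond this single fraction.

```python
def hybrid_xc_energy(e_x_hf, e_x_dft, e_c_dft, a):
    """Global hybrid: a * E_X^HF + (1 - a) * E_X^DFT + E_C^DFT (all in Hartree)."""
    if not 0.0 <= a <= 1.0:
        raise ValueError("mixing fraction a must lie in [0, 1]")
    return a * e_x_hf + (1.0 - a) * e_x_dft + e_c_dft

# Placeholder component energies, not results for any real molecule.
e_x_hf, e_x_dft, e_c_dft = -8.90, -8.80, -0.35

for a in (0.0, 0.25, 1.0):  # pure DFT exchange, 25% exact exchange, full exact exchange
    print(f"a = {a:.2f}  E_xc = {hybrid_xc_energy(e_x_hf, e_x_dft, e_c_dft, a):.4f} Ha")
```

The arithmetic is trivial; the cost of a hybrid calculation lies in evaluating the exact-exchange term itself inside every SCF iteration.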

Fifth Rung: Double Hybrids and Beyond

The fifth and highest rung of Jacob's Ladder contains double hybrid functionals, which incorporate not only exact exchange from occupied orbitals but also correlation contributions from virtual orbitals through methods such as MP2 or the random phase approximation (RPA) [17]. The most basic form of a double hybrid functional is:

[ E_{\text{XC}}^{\text{DH}} = a E_{\text{X}}^{\text{HF}} + (1-a) E_{\text{X}}^{\text{DFT}} + (1-b) E_{\text{C}}^{\text{DFT}} + b E_{\text{C}}^{\text{MP2}} ]

where the coefficients (a) and (b) can be theoretically motivated or empirically determined [17]. Double hybrids represent the most expensive class of density functionals but can achieve exceptional accuracy, potentially rivaling high-level wavefunction methods [17]. Recent advances also include range-separated hybrids (RSH), which split the exact exchange contribution into short-range and long-range components using a range-separation parameter, ω [17]. Popular RSH functionals include CAM-B3LYP and ωB97X, which are particularly useful for describing charge-transfer species, excited states, and systems with stretched bonds [18].
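Range separation is commonly implemented by splitting the Coulomb operator with the error function, 1/r = erfc(ωr)/r + erf(ωr)/r. The sketch below assumes that standard split and shows how the long-range part takes over as r grows. The value of ω is illustrative only, and the function name is hypothetical.

```python
import math

def split_coulomb(r, omega):
    """Return (short_range, long_range) pieces of 1/r for range-separation parameter omega."""
    short_range = math.erfc(omega * r) / r
    long_range = math.erf(omega * r) / r
    return short_range, long_range

omega = 0.3  # illustrative value in inverse bohr

for r in (0.5, 2.0, 10.0):
    sr, lr = split_coulomb(r, omega)
    print(f"r = {r:5.1f} bohr  SR = {sr:.4f}  LR = {lr:.4f}  SR + LR = {sr + lr:.4f}")
```

A long-range-corrected functional then applies exact exchange mainly to the long-range piece and a semi-local approximation to the short-range piece, which is why such functionals help with charge-transfer states.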

Computational Methodologies and Protocols

Standard Computational Workflow for DFT Calculations

The typical workflow for DFT calculations involves several standardized steps, regardless of the specific functional employed. The following diagram illustrates this generalized computational protocol:

Diagram 1: Standard DFT computational workflow

The self-consistent field (SCF) procedure forms the computational core of DFT calculations, where initial guesses for molecular orbital coefficients undergo iterative refinement until self-consistency is achieved [17]. During this process, the Kohn-Sham matrix equations are solved, which are directly analogous to the Pople-Nesbet equations in unrestricted Hartree-Fock theory but with modified Fock matrix elements that include exchange-correlation contributions [17]. The density is constructed using a finite basis set, represented as:

[ \rho(\textbf{r}) = \sum_{\mu,\nu} P_{\mu\nu} \phi_{\mu}(\textbf{r}) \phi_{\nu}(\textbf{r}) ]

where (P_{\mu\nu}) represents the elements of the one-electron density matrix [17].
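The density expression can be evaluated directly once the basis functions and density matrix are known. The sketch below uses a toy two-center model with one normalized s-type Gaussian per center. The exponents, geometry, and the doubly occupied bonding orbital are assumptions made for illustration, not the output of an SCF calculation.

```python
import numpy as np

def s_gaussian(r, center, alpha):
    """Normalized s-type Gaussian evaluated at points r with shape (n, 3)."""
    norm = (2.0 * alpha / np.pi) ** 0.75
    d2 = np.sum((r - center) ** 2, axis=1)
    return norm * np.exp(-alpha * d2)

def overlap(center_a, a, center_b, b):
    """Analytic overlap integral of two normalized s-type Gaussians."""
    p = a + b
    d2 = np.sum((center_a - center_b) ** 2)
    return (2.0 * np.sqrt(a * b) / p) ** 1.5 * np.exp(-a * b / p * d2)

centers = np.array([[0.0, 0.0, -0.7], [0.0, 0.0, 0.7]])  # bohr, hypothetical geometry
alphas = np.array([0.4, 0.4])                            # hypothetical exponents

S = np.array([[overlap(centers[i], alphas[i], centers[j], alphas[j])
               for j in range(2)] for i in range(2)])

# Doubly occupied bonding orbital: c = (1, 1) / sqrt(2 + 2 S_12), P = 2 c c^T.
c = np.ones(2) / np.sqrt(2.0 + 2.0 * S[0, 1])
P = 2.0 * np.outer(c, c)

def density(r):
    """rho(r) = sum_{mu,nu} P_{mu nu} phi_mu(r) phi_nu(r) at each point."""
    phi = np.column_stack([s_gaussian(r, centers[k], alphas[k]) for k in range(2)])
    return np.einsum("pm,mn,pn->p", phi, P, phi)

points = np.array([[0.0, 0.0, 0.0], [0.0, 0.0, 0.7]])
print("density at bond midpoint and at a nucleus:", density(points))

# Integrating rho over all space gives sum_{mu,nu} P_{mu nu} S_{mu nu} = number of electrons.
n_electrons = np.sum(P * S)
print("electron count:", n_electrons)
```

The final check, that the density matrix contracted with the overlap matrix returns the electron count, is a quick sanity test for any density built this way.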

Experimental Protocols for Functional Benchmarking

Rigorous benchmarking of exchange-correlation functionals requires standardized protocols and well-curated datasets. For electronic structure properties such as band gaps, comprehensive studies typically employ the following methodology:

- Dataset Selection: Construct a diverse set of materials spanning semiconductors and insulators with small, intermediate, and large band gaps, incorporating elements from across the periodic table [21].

- Computational Parameters: Employ consistent settings across all calculations.

- Functional Comparison: Calculate target properties using multiple functionals from different rungs of Jacob's Ladder [21].

- Error Analysis: Compute statistical errors (MAE, MARE) relative to experimental or high-level theoretical reference data [21].
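The error-analysis step reduces to two short formulas: the mean absolute error (MAE) and the mean absolute relative error (MARE). The sketch below implements both. The band-gap values and functional labels are placeholders invented to exercise the functions, not benchmark results.

```python
import numpy as np

def mae(calc, ref):
    """Mean absolute error between computed and reference values."""
    calc, ref = np.asarray(calc, dtype=float), np.asarray(ref, dtype=float)
    return float(np.mean(np.abs(calc - ref)))

def mare(calc, ref):
    """Mean absolute relative error (fraction of the reference value)."""
    calc, ref = np.asarray(calc, dtype=float), np.asarray(ref, dtype=float)
    return float(np.mean(np.abs(calc - ref) / np.abs(ref)))

# Placeholder band gaps in eV (hypothetical, for illustration only).
reference = [1.0, 2.0, 4.0]
computed = {
    "functional_A": [0.5, 1.0, 3.0],
    "functional_B": [0.9, 2.2, 3.8],
}

for name, gaps in computed.items():
    print(f"{name}: MAE = {mae(gaps, reference):.3f} eV, MARE = {100 * mare(gaps, reference):.1f} %")
```

Reporting both metrics is useful because MAE is dominated by large-gap materials, whereas MARE weights small-gap materials more heavily.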

For magnetic materials studies, such as investigations of the L1₀-MnAl compound, computational details include using the Vienna Ab initio Simulation Package (VASP) with specific functionals like LDA (Ceperley-Alder, as parameterized by Perdew and Zunger) and GGA (Perdew-Burke-Ernzerhof, PBE), with careful comparison of optimized lattice parameters, magnetic moments, and density of states against experimental values [9].

Table 2: Essential Computational Tools and Resources for DFT Research

| Tool Category | Specific Examples | Function/Purpose | Key Applications |

|---|---|---|---|

| Software Packages | Q-Chem [17] [20], VASP [9] | Perform DFT calculations with various functionals | Electronic structure, material properties |

| Local Functionals | SPW92, SVWN5 [20] | LSDA calculations with simple density dependence | Baseline studies, uniform electron gas systems |

| GGA Functionals | PBE [9] [20], BLYP [20], revPBE [20] | Include density gradient for improved accuracy | Molecular geometry optimization [18] |

| meta-GGA Functionals | TPSS [20], SCAN [20], M06-L [20] | Include kinetic energy density for better energetics | Thermochemistry, reaction barrier heights [17] |

| Hybrid Functionals | B3LYP [18] [20], PBE0 [20], HSE06 [21] | Incorporate exact exchange for improved accuracy | Main-group thermochemistry, band gaps [21] |

| Range-Separated Hybrids | CAM-B3LYP [18], ωB97X [18] | Apply exact exchange preferentially at long range | Charge-transfer species, excited states [18] |

Current Research Frontiers and Applications

Band Gap Prediction: A Critical Benchmark

The accurate prediction of electronic band gaps represents one of the most significant challenges for DFT, serving as a critical benchmark for exchange-correlation functionals [21]. The fundamental band gap is defined as:

[ E_{\text{G}} = I - A ]

where (I) is the ionization potential and (A) is the electron affinity, representing a difference between ground-state total energies [21]. In contrast, the Kohn-Sham band gap is computed as:

[ E_{\text{g}}^{\text{KS}} = \varepsilon_{\text{CBM}} - \varepsilon_{\text{VBM}} ]

the difference between the eigenvalues of the conduction band minimum and valence band maximum [21]. The two quantities differ by the derivative discontinuity of the exchange-correlation functional, (\Delta_{\text{xc}}), so that (E_{\text{G}} = E_{\text{g}}^{\text{KS}} + \Delta_{\text{xc}}). For local and semilocal functionals (LDA, GGA), (\Delta_{\text{xc}}) is exactly zero, so the Kohn-Sham gap is all that the calculation delivers and band gaps are systematically underestimated [21]. Modern meta-GGA and hybrid functionals can overcome this limitation through their orbital-dependent nature [21].
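The two gap definitions can be contrasted in a few lines. The sketch below computes the fundamental gap from three total energies, using I = E(N-1) - E(N) and A = E(N) - E(N+1), and the Kohn-Sham gap from two eigenvalues. All numerical values are placeholders and the function names are hypothetical.

```python
def fundamental_gap(e_n_minus_1, e_n, e_n_plus_1):
    """E_G = I - A, with I = E(N-1) - E(N) and A = E(N) - E(N+1)."""
    ionization_potential = e_n_minus_1 - e_n
    electron_affinity = e_n - e_n_plus_1
    return ionization_potential - electron_affinity

def kohn_sham_gap(eps_vbm, eps_cbm):
    """E_g^KS = eps_CBM - eps_VBM."""
    return eps_cbm - eps_vbm

# Placeholder energies in Hartree (not from a real calculation).
e_g = fundamental_gap(e_n_minus_1=-99.0, e_n=-100.0, e_n_plus_1=-100.2)
e_ks = kohn_sham_gap(eps_vbm=-0.30, eps_cbm=-0.10)

print(f"fundamental gap = {e_g:.3f} Ha")
print(f"Kohn-Sham gap   = {e_ks:.3f} Ha")
print(f"difference      = {e_g - e_ks:.3f} Ha")
```

For the exact functional the difference printed on the last line would be the derivative discontinuity; with approximate functionals it also absorbs other errors of the approximation.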

Large-scale benchmarking studies have identified mBJ (modified Becke-Johnson), HLE16, and HSE06 as among the most accurate functionals for band gap calculations [21]. These functionals achieve mean absolute errors significantly lower than traditional LDA and GGA approaches, with HSE06 offering a favorable balance between accuracy and computational cost for many materials systems [21].

Machine Learning Ascends Jacob's Ladder

Recent advances have integrated machine learning (ML) with DFT calculations to enhance predictive accuracy while managing computational costs [19]. Transfer learning approaches enable models initially trained on large datasets of low-level (e.g., GGA) calculations to be refined with smaller sets of high-fidelity data from higher rungs of Jacob's Ladder [19]. For optical property prediction, studies demonstrate that as few as 300 RPA (random phase approximation) calculations suffice to fine-tune a graph attention network initially trained on 10,000 IPA (independent-particle approximation) calculations, with prediction accuracy approaching that of networks directly trained on extensive RPA datasets [19]. This approach effectively allows ML models to climb Jacob's Ladder without the prohibitive computational expense of generating massive high-level datasets [19].

The following diagram illustrates this machine learning framework:

Diagram 2: Machine learning climbing Jacob's Ladder through transfer learning

Specialized Functionals for Targeted Applications

Beyond general-purpose functionals, significant research focuses on developing specialized functionals optimized for specific properties or materials classes. The non-local correlation (NLC) functionals, including VV09, VV10, and rVV10, have been developed to accurately describe dispersion interactions that pose challenges for conventional functionals [17]. These are particularly valuable for modeling non-covalent interactions in biological systems and soft materials [17]. Additionally, functionals like M06-L and M06-2X are parameterized specifically for main-group thermochemistry and kinetics, while KT1, KT2, and KT3 target improved performance for nuclear magnetic resonance shielding constants [20]. This specialization trend represents an important direction in functional development, acknowledging that different applications may benefit from tailored approaches rather than universal functionals [21].

The conceptual framework of Jacob's Ladder continues to provide a powerful paradigm for understanding and organizing the development of exchange-correlation functionals in density-functional theory. The systematic ascent from LSDA to GGA, meta-GGA, hybrid, and double-hybrid functionals represents a journey toward increasingly accurate and universal descriptions of electronic structure, with each rung incorporating more sophisticated ingredients from the electronic wavefunction [17]. This progression has enabled DFT to become an indispensable tool across chemistry, materials science, and drug development, offering a balance between computational efficiency and predictive accuracy [17].

Future developments in DFT will likely focus on several key frontiers. Machine learning approaches will increasingly complement traditional functional development, enabling accurate predictions of high-level properties while managing computational costs through transfer learning and other advanced techniques [19]. The creation of specialized functionals for targeted applications will continue, with optimized parameterizations for specific material classes or physical properties [21]. Additionally, improved treatments of dispersion interactions, strong correlation, and excited states will address remaining limitations in current functional approximations [17]. As these advances unfold, Jacob's Ladder will continue to serve as a guiding framework, reminding researchers that the quest for the exact functional represents a systematic ascent toward increasingly accurate descriptions of the quantum mechanical world.

The Local Density Approximation (LDA) represents a foundational pillar in the framework of density functional theory (DFT), serving as the first and simplest approximation for the elusive exchange-correlation (XC) energy functional [14] [22]. Its conceptual brilliance lies in reducing the complex, non-local quantum many-body problem of electron-electron interactions to a tractable model by leveraging the properties of a homogeneous electron gas (HEG) [14] [23]. Introduced by Walter Kohn and Lu Jeu Sham in 1965, LDA constructs the XC energy for an inhomogeneous system by assuming that the density varies slowly in space, effectively treating the system as a collection of infinitesimal volumes, each possessing the properties of a HEG with a local density equivalent to the system's density at that point [14] [24]. This approach yields a functional that depends solely on the value of the electronic density at each point in space, without explicit dependence on its derivatives or the Kohn-Sham orbitals [14]. Despite its conceptual simplicity, LDA has demonstrated remarkable success in predicting structural and vibrational properties for a wide range of materials, forming the essential reference point from which more sophisticated functionals are developed and against which they are benchmarked [23] [25]. Its derivation from the HEG ensures that any approximate XC functional built upon it reproduces the exact results for this fundamental model, making LDA an indispensable component in the theoretical toolbox for researching exchange-correlation functionals [14].

Theoretical Foundation: The Homogeneous Electron Gas Model

Core Physical and Mathematical Principles

The theoretical underpinning of LDA is the homogeneous electron gas (HEG), also known as the jellium model [14]. This system is constructed by placing N interacting electrons in a volume V, with a uniformly distributed positive background charge that ensures overall electrical neutrality. The thermodynamic limit is approached by taking N and V to infinity while keeping the density ρ = N / V finite [14]. The HEG is a uniquely valuable reference system because its total energy contributions (kinetic, electrostatic, and exchange-correlation) can be accurately computed, and its wavefunction is expressible in terms of plane waves [14]. Within this model, the exchange energy per particle is known to be proportional to ρ^(1/3), so the exchange energy per unit volume scales as ρ^(4/3), a key result that directly informs the LDA functional form [14].

The LDA for the exchange-correlation energy is formally expressed for a spin-unpolarized system as:

[ E_{xc}^{\mathrm{LDA}}[\rho] = \int \rho(\mathbf{r}) \epsilon_{xc}(\rho(\mathbf{r})) d\mathbf{r} ]

Here, ( \epsilon_{xc}(\rho(\mathbf{r})) ) is the exchange-correlation energy per particle of a HEG with charge density ( \rho(\mathbf{r}) ) [14] [22]. This energy is typically decomposed linearly into separate exchange ((E_x)) and correlation ((E_c)) components: ( E_{xc} = E_x + E_c ) [14]. The exchange functional has a simple analytical form derived exactly from the HEG model [14] [22]:

[ E_{x}^{\mathrm{LDA}}[\rho] = -\frac{3}{4} \left( \frac{3}{\pi} \right)^{1/3} \int \rho(\mathbf{r})^{4/3} d\mathbf{r} ]
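As a worked example, the exchange formula can be evaluated numerically for the exact hydrogen-atom 1s density, ρ(r) = e^(-2r)/π in atomic units. The sketch below assumes a simple uniform radial grid with trapezoid weights; the grid parameters and function name are illustrative choices.

```python
import numpy as np

C_X = 0.75 * (3.0 / np.pi) ** (1.0 / 3.0)  # prefactor (3/4)(3/pi)^(1/3)

def lda_exchange_energy(rho, r, weights):
    """-C_x * integral of rho^(4/3) over space for a spherically symmetric density."""
    integrand = 4.0 * np.pi * r**2 * rho ** (4.0 / 3.0)
    return -C_X * np.sum(weights * integrand)

# Uniform radial grid with trapezoid-rule weights (assumed, for illustration).
r = np.linspace(1e-6, 30.0, 200001)
weights = np.full_like(r, r[1] - r[0])
weights[0] *= 0.5
weights[-1] *= 0.5

rho_h = np.exp(-2.0 * r) / np.pi           # exact hydrogen 1s density

n_electrons = np.sum(weights * 4.0 * np.pi * r**2 * rho_h)
e_x = lda_exchange_energy(rho_h, r, weights)

print(f"integrated density  = {n_electrons:.6f}")
print(f"LDA exchange energy = {e_x:.5f} Ha")
```

For this density the integral can also be done by hand, giving about -0.2127 Ha with the spin-unpolarized formula, compared with the exact exchange energy of -0.3125 Ha for the hydrogen atom. The gap between the two is a direct view of the self-interaction error that a local functional leaves behind.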

In contrast, the correlation functional lacks a simple closed-form expression for all densities. Its formulation relies on a combination of known exact limiting expressions and highly accurate numerical data, most notably from Quantum Monte Carlo (QMC) simulations [14] [22] [26]. For the low-density limit (strong correlation), the correlation energy density follows a series expansion of the form ( \epsilon_c = \frac{1}{2} \left( \frac{g_0}{r_s} + \frac{g_1}{r_s^{3/2}} + \dots \right) ), whereas for the high-density limit (weak correlation), it behaves as ( \epsilon_c = A\ln(r_s) + B + r_s(C\ln(r_s) + D) ) [14]. The parameter ( r_s ), the Wigner-Seitz radius, is defined by ( \frac{4}{3}\pi r_s^3 = \frac{1}{\rho a_0^3} ), linking the density to an effective radius [14].
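The Wigner-Seitz definition is easy to invert in either direction. The sketch below works in atomic units, where the Bohr radius equals 1, so the density is in electrons per cubic bohr and the radius is dimensionless; the function names are illustrative.

```python
import math

def wigner_seitz_radius(rho):
    """Dimensionless r_s from (4/3) * pi * r_s^3 = 1 / rho, with rho in electrons / bohr^3."""
    if rho <= 0.0:
        raise ValueError("density must be positive")
    return (3.0 / (4.0 * math.pi * rho)) ** (1.0 / 3.0)

def density_from_rs(r_s):
    """Inverse relation: rho = 3 / (4 * pi * r_s^3)."""
    return 3.0 / (4.0 * math.pi * r_s**3)

for r_s in (1.0, 2.0, 5.0):
    rho = density_from_rs(r_s)
    print(f"r_s = {r_s:.1f}  ->  rho = {rho:.5f} e/bohr^3  ->  r_s = {wigner_seitz_radius(rho):.1f}")
```

Small values of the radius correspond to the high-density, weakly correlated regime, and large values to the low-density, strongly correlated regime.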

Extension to Spin-Polarized Systems: The Local Spin Density Approximation (LSDA)

The LDA formalism is extended to magnetic and open-shell systems through the Local Spin Density Approximation (LSDA) [14] [22]. LSDA employs two spin-densities, ( \rho_\alpha ) and ( \rho_\beta ), with the total density given by ( \rho = \rho_\alpha + \rho_\beta ) [14]. The LSDA exchange-correlation energy functional is written as:

[ E_{xc}^{\mathrm{LSDA}}[\rho_\alpha, \rho_\beta] = \int d\mathbf{r} \rho(\mathbf{r}) \epsilon_{xc}(\rho_\alpha, \rho_\beta) ]

The exact spin-scaling is known for the exchange energy, which can be expressed in terms of the spin-unpolarized functional [14]:

[ E_x[\rho_\alpha, \rho_\beta] = \frac{1}{2} \left( E_x[2\rho_\alpha] + E_x[2\rho_\beta] \right) ]

The spin-dependence of the correlation energy is incorporated by introducing the relative spin-polarization, ( \zeta = (\rho_\alpha - \rho_\beta) / (\rho_\alpha + \rho_\beta) ) [14]. The correlation energy is then parameterized to satisfy the known exact conditions for the unpolarized (( \zeta=0 )) and fully polarized (( \zeta=\pm1 )) cases [14].
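The spin-scaling relation and the polarization variable can be checked numerically on any grid. The sketch below uses a small made-up set of density values and quadrature weights; the function names are illustrative, and only the exchange part is treated.

```python
import numpy as np

C_X = 0.75 * (3.0 / np.pi) ** (1.0 / 3.0)

def ex_unpolarized(rho, weights):
    """Spin-unpolarized LDA exchange: -C_x * sum_i w_i * rho_i^(4/3)."""
    return -C_X * np.sum(weights * rho ** (4.0 / 3.0))

def ex_spin_polarized(rho_a, rho_b, weights):
    """Spin scaling: E_x[rho_a, rho_b] = (E_x[2 rho_a] + E_x[2 rho_b]) / 2."""
    return 0.5 * (ex_unpolarized(2.0 * rho_a, weights) + ex_unpolarized(2.0 * rho_b, weights))

def spin_polarization(rho_a, rho_b):
    """zeta = (rho_a - rho_b) / (rho_a + rho_b); assumes a nonzero total density."""
    return (rho_a - rho_b) / (rho_a + rho_b)

# Made-up grid values (electrons / bohr^3) and quadrature weights (bohr^3).
rho = np.array([0.30, 0.10, 0.02])
weights = np.array([1.0, 2.0, 4.0])

e_unpol = ex_unpolarized(rho, weights)
e_closed = ex_spin_polarized(0.5 * rho, 0.5 * rho, weights)     # zeta = 0
e_full = ex_spin_polarized(rho, np.zeros_like(rho), weights)    # zeta = 1

print(f"unpolarized: {e_unpol:.6f}   zeta = 0: {e_closed:.6f}   zeta = 1: {e_full:.6f}")
print("ratio fully polarized / unpolarized:", e_full / e_unpol)
```

With equal spin densities the scaled expression reproduces the unpolarized result, and for a fully polarized density of the same total the exchange energy is larger in magnitude by a factor of 2^(1/3), which follows directly from the 4/3 power.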

Table 1: Key Quantitative Expressions in the Local Density Approximation

| Functional Component | Mathematical Expression | Parameters / Notes |

|---|---|---|

| Total LDA XC Energy | ( E_{xc}^{\mathrm{LDA}}[\rho] = \int \rho(\mathbf{r}) \epsilon_{xc}(\rho(\mathbf{r})) d\mathbf{r} ) [14] | Foundation of the approximation |

| Exchange Energy | ( E_{x}^{\mathrm{LDA}}[\rho] = -\frac{3}{4} \left( \frac{3}{\pi} \right)^{1/3} \int \rho(\mathbf{r})^{4/3} d\mathbf{r} ) [14] [22] | Known analytically from HEG |

| Correlation Energy (Low-Density Limit) | ( \epsilon_c = \frac{1}{2} \left( \frac{g_0}{r_s} + \frac{g_1}{r_s^{3/2}} + \dots \right) ) [14] | Strong correlation regime |

| Correlation Energy (High-Density Limit) | ( \epsilon_c = A\ln(r_s) + B + r_s(C\ln(r_s) + D) ) [14] | Weak correlation regime |

| Wigner-Seitz Radius | ( \frac{4}{3}\pi r_s^3 = \frac{1}{\rho a_0^3} ) [14] | Defines the density scale |

Fundamental Limitations and Systematic Errors

Despite its widespread success and computational efficiency, LDA suffers from several fundamental limitations that stem directly from its underlying assumptions. These limitations are systematic and can be traced back to the differences between a real, inhomogeneous electronic system and the idealized homogeneous electron gas.

Self-Interaction Error (SIE): In the HEG, the self-interaction error—the spurious interaction of an electron with itself—is canceled out in an average way. However, in finite systems like atoms and molecules, this cancellation is not exact within LDA [24]. This leads to unphysical results, such as the failure of the XC potential to decay correctly as -1/r in the asymptotic region far from a system [14] [24]. A critical consequence of SIE is that atomic anions are often predicted to be unstable, as LDA cannot bind the extra electron [14]. This error also contributes to the significant overestimation of binding energies in molecules and solids [22] [23].

Band Gap Underestimation: LDA (and its generalized gradient approximation cousins) is notorious for underestimating the fundamental band gap in semiconductors and insulators [14] [23]. This "band gap problem" was historically attributed to a failure of the functional. However, a more nuanced understanding has emerged, linking it partly to a misunderstanding of the second theorem of DFT, which states that the exact Kohn-Sham eigenvalues are only Lagrange multipliers for the density constraint and are not direct representations of quasi-particle energies [14]. Nonetheless, this underestimation can lead to false predictions of impurity-mediated conductivity or carrier-mediated magnetism in materials [14].

Incorrect Asymptotic Decay: The LDA exchange-correlation potential decays exponentially in finite systems, which is fundamentally incorrect [14]. The exact potential should decay in a Coulombic manner, as -1/r [14]. This erroneous decay impairs the ability of the potential to bind electrons properly, degrading the description of Rydberg states and leading to inaccurate ionization potentials when estimated via Koopmans' theorem [14].

Poor Performance in Strongly Correlated Systems: LDA typically fails in systems where electron correlations are strong and localized, such as in transition metal oxides or materials near a Mott-insulating phase [26] [25]. In these regimes, the assumption that the system can be treated as a perturbed electron gas breaks down, requiring more advanced methods to capture the correct physics.

Neglect of Density Inhomogeneity: By construction, LDA is a local functional that depends only on the density at a point. It does not account for the inhomogeneity of the electron density, i.e., the changes in the density in the vicinity of that point [22] [23]. This is a significant limitation for real systems where the electron density can vary rapidly, such as near atomic nuclei or in the bonding regions between atoms.

Table 2: Systematic Limitations of the Local Density Approximation

| Limitation | Physical Origin | Manifestation in Calculations |
|---|---|---|
| Self-Interaction Error (SIE) | Incomplete cancellation of an electron's interaction with itself [14] [24] | Overestimation of binding energies; instability of anions; incorrect asymptotic potential [14] [22] |
| Band Gap Underestimation | Kohn-Sham eigenvalues are not quasiparticle energies; derivative discontinuity [14] | Predicted band gaps in semiconductors/insulators are too small [14] [23] |
| Incorrect Asymptotic Decay | Functional form derived from HEG, which has no finite boundaries [14] | XC potential decays exponentially instead of as -1/r; poor description of excited states [14] |
| Strong Correlation | Failure of the HEG model for localized, strongly interacting electrons [26] [25] | Incorrect description of Mott insulators, transition metal oxides, and magnetic phases [26] |
| Neglect of Density Gradients | Purely local functional with no dependence on ∇ρ [22] [23] | Inaccuracies in molecules and surfaces where density varies rapidly [22] |
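The first and third rows of the table can be made concrete with the hydrogen atom, where the exact answer is known: for a single electron the exchange energy must cancel the Hartree energy exactly, and the potential must fall off as -1/r. The following is a minimal sketch, assuming the exact 1s density, an exchange-only local spin-density treatment of a fully spin-polarized electron, and an illustrative radial grid (grid parameters and variable names are hypothetical choices, not taken from the cited sources).

```python
import numpy as np

# Radial grid in atomic units (bohr); resolution is an illustrative assumption.
r = np.linspace(1e-6, 30.0, 300_001)
rho = np.exp(-2.0 * r) / np.pi  # exact hydrogen 1s density


def integrate_sphere(f):
    """Trapezoidal integral of a spherically symmetric f(r) over all space."""
    y = 4.0 * np.pi * r**2 * f
    return float(np.sum(0.5 * (y[1:] + y[:-1]) * np.diff(r)))


# Hartree potential of the 1s density: v_H(r) = (1 - e^{-2r})/r - e^{-2r}
v_hartree = -np.expm1(-2.0 * r) / r - np.exp(-2.0 * r)
e_hartree = 0.5 * integrate_sphere(rho * v_hartree)  # analytic value: 5/16 Ha

# Exchange-only LSDA energy for a fully spin-polarized density:
# E_x = -2^(1/3) * (3/4) * (3/pi)^(1/3) * integral of rho^(4/3)
c_x = 0.75 * (3.0 / np.pi) ** (1.0 / 3.0)
e_x_lsda = -(2.0 ** (1.0 / 3.0)) * c_x * integrate_sphere(rho ** (4.0 / 3.0))

# For one electron the exact exchange energy is -E_H, so this sum would be zero.
sie_residual = e_hartree + e_x_lsda

# LSDA exchange potential of the occupied spin channel versus the exact -1/r tail.
v_x_lsda = -((6.0 * rho / np.pi) ** (1.0 / 3.0))
i10 = int(np.argmin(np.abs(r - 10.0)))

print(f"E_H          = {e_hartree:.4f} Ha")
print(f"E_x (LSDA)   = {e_x_lsda:.4f} Ha")
print(f"residual SIE = {sie_residual:.4f} Ha")
print(f"v_x(r=10): LSDA = {v_x_lsda[i10]:.5f}, exact tail = {-1.0 / r[i10]:.5f}")
```

The residual is positive because the local exchange energy is not negative enough to cancel the electron's Coulomb repulsion with itself, and the potential at r = 10 bohr is already far weaker than the -1/r tail because it inherits the exponential decay of the density.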

Methodologies for Development and Validation

Quantum Monte Carlo and the Parametrization of Correlation

The development of accurate LDA functionals, particularly for the correlation energy, relies heavily on high-precision computational data for the HEG. The most authoritative source for this data is the Diffusion Monte Carlo (DMC) method, a specific flavor of Quantum Monte Carlo (QMC) [26] [24]. DMC provides near-exact ground-state energies for the HEG by simulating the Schrödinger equation using a stochastic approach, effectively treating electron correlation beyond the mean-field level [26]. In a typical DMC study of the HEG, the energy is computed for a finite simulation cell with periodic boundary conditions, containing N electrons at a specific density parameterized by ( r_s ) [26]. The total energy is calculated for both spin-polarized and spin-unpolarized systems, and the corresponding Hartree-Fock energy is subtracted to isolate the correlation energy [26]. These numerical results are then fitted to an analytical form that satisfies the known perturbative limits for high and low densities, leading to parametrizations such as the famous Vosko-Wilk-Nusair (VWN) functional [22]. This methodology is not static; it continues to be applied to new systems, such as the two-dimensional HEG with screened Coulomb interactions relevant for modern 2D materials and moiré systems [26].
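The subtract-then-fit procedure described above can be sketched in a few lines. This is an illustration under stated assumptions, not the VWN construction: it uses the simpler Perdew-Zunger (1981) low-density form, ε_c = γ / (1 + β₁√r_s + β₂ r_s), and the "total energies" are synthetic stand-ins generated from that same form rather than real QMC data. The function names are hypothetical.

```python
import numpy as np


def e_hf_per_electron(rs):
    """Hartree-Fock energy per electron of the unpolarized 3D HEG (Hartree)."""
    kinetic = 0.3 * (9.0 * np.pi / 4.0) ** (2.0 / 3.0) / rs**2
    exchange = -(3.0 / (4.0 * np.pi)) * (9.0 * np.pi / 4.0) ** (1.0 / 3.0) / rs
    return kinetic + exchange


def eps_c_form(rs, gamma, beta1, beta2):
    """Perdew-Zunger 1981 analytic form, intended for r_s >= 1."""
    return gamma / (1.0 + beta1 * np.sqrt(rs) + beta2 * rs)


# Published PZ81 parameters for the unpolarized gas (Hartree units).
PZ81 = (-0.1423, 1.0529, 0.3334)

# SYNTHETIC stand-in for QMC total energies: not real simulation output.
rs = np.array([1.0, 2.0, 5.0, 10.0, 20.0, 50.0, 100.0])
e_total = e_hf_per_electron(rs) + eps_c_form(rs, *PZ81)

# Step 1: isolate the correlation energy by subtracting Hartree-Fock.
eps_c = e_total - e_hf_per_electron(rs)

# Step 2: fit. 1/eps_c is linear in (1, sqrt(rs), rs), so least squares suffices.
design = np.column_stack([np.ones_like(rs), np.sqrt(rs), rs])
coef, *_ = np.linalg.lstsq(design, 1.0 / eps_c, rcond=None)
gamma_fit = 1.0 / coef[0]
beta1_fit = coef[1] * gamma_fit
beta2_fit = coef[2] * gamma_fit

print(f"gamma = {gamma_fit:.4f}, beta1 = {beta1_fit:.4f}, beta2 = {beta2_fit:.4f}")
```

With real data the fit would carry statistical error bars and would be constrained to reproduce the known high- and low-density limits; the linearization trick here only works because this particular functional form allows it.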

Diagram 1: LDA Functional Creation Workflow. This flowchart outlines the primary methodology for developing an LDA correlation functional, from system definition through Quantum Monte Carlo simulation to final analytical parametrization.

Validation and Benchmarking via Metric-Space Analysis

Assessing the performance of LDA and other density functionals has traditionally relied on comparing computed total energies with exact or highly accurate reference data. However, a more robust approach involves using metric-space analysis to quantify the functional's performance beyond total energies [25]. This method rigorously measures the "distance" between the exact and approximated system properties, including the particle density ( D_\rho ), the many-body wave function ( D_\psi ), and the external potential ( D_v ) [25]. The process involves constructing an "interacting-LDA" (i-LDA) system: the unique interacting system that shares the kinetic and interaction terms of the exact Hamiltonian but whose external potential yields a ground-state density equal to the LDA-derived density [25]. The distances between the exact and i-LDA systems are then computed using specific metrics, providing a sensitive and physically meaningful assessment of the approximation's quality and directly probing the regimes where the Hohenberg-Kohn theorem's one-to-one mappings hold [25].
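To give a feel for what such distances look like in practice, here is a minimal sketch for a single particle in one dimension. The metric definitions are assumptions based on forms commonly used in the metric-space literature (an integrated absolute density difference, and a wave-function distance built from norms and the overlap); they are not confirmed against [25]. The two harmonic-oscillator states merely stand in for an "exact" and an "approximate" system.

```python
import numpy as np

# Uniform 1D grid; range and spacing are illustrative assumptions.
x = np.linspace(-12.0, 12.0, 4801)
dx = x[1] - x[0]


def ground_state(omega):
    """1D harmonic-oscillator ground state, normalized to N = 1 particle."""
    return (omega / np.pi) ** 0.25 * np.exp(-0.5 * omega * x**2)


def density_distance(rho_a, rho_b):
    """Assumed form: D_rho = integral of |rho_a - rho_b|, bounded by 2N."""
    return float(np.sum(np.abs(rho_a - rho_b)) * dx)


def wavefunction_distance(psi_a, psi_b):
    """Assumed form: D_psi = sqrt(<a|a> + <b|b> - 2|<a|b>|), bounded by sqrt(2N)."""
    norm_a = np.sum(np.abs(psi_a) ** 2) * dx
    norm_b = np.sum(np.abs(psi_b) ** 2) * dx
    overlap = abs(np.sum(np.conj(psi_a) * psi_b) * dx)
    return float(np.sqrt(max(norm_a + norm_b - 2.0 * overlap, 0.0)))


psi_ref = ground_state(1.0)      # stand-in for the exact system
psi_approx = ground_state(1.5)   # stand-in for the approximate system

d_rho = density_distance(psi_ref**2, psi_approx**2)
d_psi = wavefunction_distance(psi_ref, psi_approx)
print(f"D_rho = {d_rho:.4f} (max 2), D_psi = {d_psi:.4f} (max {np.sqrt(2):.4f})")
```

Because both distances are bounded, they can be rescaled to a common range and plotted against each other, which is how this kind of analysis compares the sensitivity of densities and wave functions to an approximation.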

Table 3: Key Computational Tools and Resources for LDA-Based Research

| Tool / Resource | Type | Primary Function in LDA Research |
|---|---|---|
| Quantum Monte Carlo (DMC) [26] [24] | Computational Method | Provides high-accuracy benchmark energies for the HEG to fit correlation functionals. |
| VWN Functional [22] | Parameterization | A widely used analytical form for the LDA correlation energy based on QMC data. |
| CASTEP, DMol³ [14] | DFT Software Packages | Production codes that implement LDA (and other functionals) for ab initio calculations on solids and molecules. |
| Homogeneous Electron Gas (HEG) [14] | Theoretical Model | The fundamental reference system from which LDA is derived and benchmarked. |
| Metric-Space Analysis [25] | Validation Technique | Quantifies the performance of LDA by measuring distances between exact and approximated densities, wavefunctions, and potentials. |

Current Research Context and Future Directions

LDA is far from a historical relic; it remains an active area of research, particularly as a foundational component for next-generation functionals and in applications to novel quantum systems. Current research directions showcase its enduring relevance.

Functional Development for Low Dimensions and Screening: There is a growing demand for accurate LDA-type functionals tailored to low-dimensional systems and those with screened interactions, driven by the rise of 2D materials [26]. For example, state-of-the-art DMC calculations are being performed on the two-dimensional HEG subjected to symmetric dual-gate screening to construct a new local spin-density approximation (LSDA) functional that accounts for the presence of metallic gates in experimental setups [26]. This allows DFT/LDA calculations to more accurately model the physics of 2D moiré systems, where gate screening can qualitatively alter electronic interactions [26].

Range Separation and Hybrid Schemes: A highly successful strategy to mitigate LDA's self-interaction error is the range-separated hybrid (RSH) scheme [24]. In RSH functionals, the electron-electron interaction is partitioned into short-range (SR) and long-range (LR) components. The SR component is often treated with LDA (or semilocal functionals), while the LR component is handled by exact (Hartree-Fock) exchange [24]. This has spurred research into developing accurate SR LDA functionals for the exchange energy (and the exchange free energy) that are specifically designed for use in these RSH schemes, both at zero temperature and in finite-temperature DFT [24].

Testing Fundamental Theorems and Limits: LDA continues to serve as a testbed for exploring the fundamental limits of DFT itself. Research using metric-based analysis on lattice models has employed LDA to investigate the regimes where the Hohenberg-Kohn theorem's one-to-one mappings are practically applicable and to distinguish between functional-driven and density-driven errors in approximations [25]. These studies reveal that the potential distance can behave differently from density and wave function distances, highlighting the complex nature of functional development [25].
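The short-range/long-range partition mentioned in the range-separation paragraph above is most often implemented with the error function, 1/r = erfc(μr)/r + erf(μr)/r. The sketch below assumes that common choice (the source does not specify the splitting function), and the value of the range-separation parameter μ is a hypothetical illustration.

```python
import math


def coulomb_split(r, mu):
    """Split 1/r into short- and long-range parts using the error function."""
    short_range = math.erfc(mu * r) / r  # dominates at small r, decays quickly
    long_range = math.erf(mu * r) / r    # smooth at r -> 0, tends to 1/r at large r
    return short_range, long_range


MU = 0.4  # bohr^-1, illustrative value only

for r in (0.1, 1.0, 5.0, 20.0):
    sr, lr = coulomb_split(r, MU)
    print(f"r = {r:5.1f}  SR = {sr:9.5f}  LR = {lr:9.5f}  SR + LR = {sr + lr:9.5f}")
```

The long-range part stays finite at the origin (it approaches 2μ/√π), which is why it can be assigned to exact exchange without disturbing the short-range behavior that local functionals describe reasonably well.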

The Local Density Approximation stands as a testament to the power of simple, physically motivated models in theoretical physics. Its derivation from the homogeneous electron gas provides a mathematically tractable and computationally efficient framework that has enabled countless advancements in our understanding of molecules and materials. Its systematic limitations (the self-interaction error, the poor description of strong correlations, and the neglect of density inhomogeneities) are well documented, and they are the very drivers that have spurred the development of more sophisticated functionals like GGAs, meta-GGAs, and hybrids, many of which use LDA as their foundational reference point. Far from being obsolete, LDA remains a critical tool, both as an efficient functional for initial computational scans and as an active research subject in its own right, particularly in the development of functionals for low-dimensional and screened systems and in the ongoing quest to understand and improve the very foundations of density-functional approximations. Its simplicity, utility, and role as a stepping stone ensure that the Local Density Approximation will continue to be a cornerstone of electronic structure theory for the foreseeable future.

Density Functional Theory (DFT) has established itself as the cornerstone of modern computational materials science and quantum chemistry, providing a framework for solving the complex many-body Schrödinger equation by focusing on the manageable electron density, ρ(r), rather than the intractable many-electron wavefunction. Within the Kohn-Sham formulation of DFT, the total electronic energy is expressed as a sum of distinct components: the kinetic energy of a fictitious system of non-interacting electrons, the electrostatic interactions (Hartree potential and electron-nucleus attraction), and the exchange-correlation (XC) energy, ( E_{xc} ) [11]. The XC term encompasses all non-classical electron-electron interactions, including quantum mechanical exchange and correlation effects. The central challenge in DFT is that the exact analytical form of ( E_{xc} ) is unknown, necessitating approximations.

The simplest of these approximations is the Local-Density Approximation (LDA), which assumes that the exchange-correlation energy per particle at a point r in space is equal to that of a homogeneous electron gas (HEG) with the same density [14]. While LDA has been remarkably successful, particularly in predicting structural properties of solids, its inherent assumption of a uniform electron density limits its accuracy for real systems where the density is spatially inhomogeneous [14] [27]. This shortfall manifests in systematic errors, such as the overestimation of bond strengths in molecules and the underestimation of lattice constants [27].

The Generalized Gradient Approximation (GGA) represents the logical and pivotal evolution beyond LDA by introducing an explicit dependence on the gradient of the electron density, ∇ρ(r), in addition to the density itself. This critical advancement allows the functional to account for the non-uniformity of the electron density in real atoms, molecules, and solids [27]. The general form of the GGA exchange-correlation energy is given by:

[ E_{xc}^{GGA}[\rho] = \int \epsilon_{xc}(\rho(\mathbf{r}), \nabla\rho(\mathbf{r})) \, d\mathbf{r} ]

Often, the exchange and correlation components are treated separately, leading to the form:

[ E_{xc}^{GGA}[\rho] = \int \epsilon_{x}^{LDA}(\rho(\mathbf{r})) \, F_{xc}(\rho(\mathbf{r}), \nabla\rho(\mathbf{r})) \, d\mathbf{r} ]

where ( F_{xc} ) is the enhancement factor, which modifies the LDA energy density based on the local density and its gradient [27] [11]. The reduced density gradient, ( s(\mathbf{r}) = |\nabla \rho(\mathbf{r})| / (2 k_F(\mathbf{r}) \rho(\mathbf{r})) ), is a dimensionless quantity that is commonly used to parameterize the enhancement factor, where ( k_F ) is the local Fermi wavevector [11]. By incorporating this extra information, GGA functionals can more accurately describe the nuances of chemical bonding, leading to significant improvements in the prediction of binding energies, bond lengths, and energy barriers compared to LDA [27].
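As an illustration of how the reduced gradient behaves, the sketch below evaluates s(r) for the hydrogen 1s density on a radial grid, using the standard free-electron relation k_F = (3π²ρ)^{1/3}. The grid and the finite-difference gradient are illustrative assumptions; for this density the result can be checked against the closed form s(r) = e^{2r/3} / (3π)^{1/3}.

```python
import numpy as np

# Radial grid in bohr; range and resolution are illustrative assumptions.
r = np.linspace(0.01, 10.0, 2000)
rho = np.exp(-2.0 * r) / np.pi  # hydrogen 1s density

# For a spherically symmetric density, |grad rho| = |d rho / d r|.
grad_rho = np.abs(np.gradient(rho, r, edge_order=2))

k_f = (3.0 * np.pi**2 * rho) ** (1.0 / 3.0)  # local Fermi wavevector
s = grad_rho / (2.0 * k_f * rho)             # dimensionless reduced gradient

# Closed-form result for this particular density, used as a sanity check.
s_exact = np.exp(2.0 * r / 3.0) / (3.0 * np.pi) ** (1.0 / 3.0)

for radius in (0.5, 1.0, 3.0, 6.0):
    i = int(np.argmin(np.abs(r - radius)))
    print(f"r = {r[i]:4.2f} bohr  s = {s[i]:8.3f}  (closed form {s_exact[i]:8.3f})")
```

The reduced gradient is below 1 near the nucleus and grows exponentially in the density tail, which is why the large-s behavior of an enhancement factor matters for atoms and molecules even though it is irrelevant for the uniform gas.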

Mathematical Formalism of GGA

The development of GGA functionals involves constructing specific forms for the exchange and correlation enhancement factors that satisfy known physical constraints while delivering accurate results for a wide range of systems.

GGA Exchange Functionals

The exchange energy component in GGA is typically formulated as a correction to the LDA exchange energy. The exchange energy density of the HEG is known exactly and scales as ( \rho^{4/3} ), and this forms the basis for the LDA expression [14]. In GGA, this is enhanced by a factor that depends on the density gradient:

[ E_x^{GGA}[\rho] = \int \epsilon_x^{LDA}(\rho(\mathbf{r})) \, F_x(s(\mathbf{r})) \, d\mathbf{r} ]

Here, ( \epsilon_x^{LDA}(\rho) = - \frac{3}{4} \left(\frac{3}{\pi}\right)^{1/3} \rho^{4/3} ) (in atomic units) is the LDA exchange energy density, and ( F_x(s) ) is the exchange enhancement factor [14] [11]. Different GGA functionals are characterized by their unique forms of ( F_x(s) ). A key physical constraint that guides the construction of these functionals is the sum rule for the exchange-correlation hole, which must integrate to exactly one missing electron [11]. Prominent GGA exchange functionals include the Perdew-Wang 1991 (PW91) and Perdew-Burke-Ernzerhof (PBE) functionals, which are designed to obey this and other constraints without empirical fitting [27].
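For a concrete instance of an enhancement factor, the PBE exchange form is F_x(s) = 1 + κ − κ / (1 + μs²/κ) with κ = 0.804 and μ = 0.21951. The sketch below evaluates it and compares LDA and PBE exchange energies for the hydrogen 1s density. As a simplifying assumption it applies the spin-unpolarized formula to this one-electron density, so the numbers illustrate the effect of the gradient correction rather than reproduce published spin-polarized values; the grid is likewise an illustrative choice.

```python
import numpy as np

KAPPA, MU = 0.804, 0.21951  # PBE exchange parameters


def f_x_pbe(s):
    """PBE exchange enhancement factor; equals 1 at s = 0 and saturates at 1 + kappa."""
    return 1.0 + KAPPA - KAPPA / (1.0 + MU * s**2 / KAPPA)


# Hydrogen 1s density on a radial grid (bohr); resolution is an assumption.
r = np.linspace(1e-6, 30.0, 300_001)
rho = np.exp(-2.0 * r) / np.pi
grad_rho = 2.0 * rho  # analytic |d rho / d r| for the 1s density

k_f = (3.0 * np.pi**2 * rho) ** (1.0 / 3.0)
s = grad_rho / (2.0 * k_f * rho)

# LDA exchange energy density (per unit volume), spin-unpolarized form.
eps_x_lda = -0.75 * (3.0 / np.pi) ** (1.0 / 3.0) * rho ** (4.0 / 3.0)


def integrate_sphere(f):
    """Trapezoidal integral of a spherically symmetric f(r) over all space."""
    y = 4.0 * np.pi * r**2 * f
    return float(np.sum(0.5 * (y[1:] + y[:-1]) * np.diff(r)))


e_x_lda = integrate_sphere(eps_x_lda)
e_x_pbe = integrate_sphere(eps_x_lda * f_x_pbe(s))

print(f"F_x(0) = {f_x_pbe(0.0):.3f}, F_x(large s) -> {f_x_pbe(1e6):.3f}")
print(f"E_x LDA = {e_x_lda:.4f} Ha, E_x PBE = {e_x_pbe:.4f} Ha")
```

Because F_x(s) ≥ 1 everywhere, the gradient correction always makes the exchange energy more negative than LDA, which moves it toward the exact value for inhomogeneous densities.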

GGA Correlation Functionals

The correlation energy is more challenging to approximate than exchange because it lacks a simple analytic form even for the HEG. Quantum Monte Carlo simulations provide highly accurate correlation energies for the HEG across a range of densities, which serve as the foundation for parametrizing the local part of the functional [14] [11]. In GGA, the correlation energy is expressed in a form that incorporates the density gradient:

[ E_c^{GGA}[\rho] = \int \epsilon_c(\rho, \zeta, s) \, d\mathbf{r} ]

where ( \zeta ) is the relative spin polarization, defined as ( \zeta = (\rho_\alpha - \rho_\beta) / (\rho_\alpha + \rho_\beta) ), which allows the functional to be applied to spin-polarized systems [14]. Well-known GGA correlation functionals include the Lee-Yang-Parr (LYP) functional, the Perdew 1986 (P86) functional, and the correlation part of PW91 [27]. These are often combined with various exchange functionals; for instance, the BLYP functional combines the Becke 1988 (B) exchange with LYP correlation, while BP86 combines Becke exchange with P86 correlation [27].

A Comparative Analysis: LDA, GGA, and Beyond