From Thomas-Fermi to Modern Applications: The Evolving Landscape of Density Functional Theory

Abstract

This article provides a comprehensive overview of the development of Density Functional Theory (DFT) from its origins in the Thomas-Fermi model to its current status as a cornerstone of computational chemistry, physics, and materials science. It explores the foundational theorems that established DFT's theoretical basis, the methodological breakthroughs in exchange-correlation functionals that enabled practical applications, and the ongoing challenges in accuracy and optimization. The content highlights modern validation techniques and comparative analyses with other quantum-chemical methods, concluding with an examination of emerging trends, including machine learning and the specific implications of these advancements for biomedical research and drug discovery.

The Theoretical Bedrock: Tracing DFT from Thomas-Fermi to Hohenberg-Kohn

The Thomas-Fermi (TF) model, independently proposed by Llewellyn Thomas and Enrico Fermi in 1927, represents a seminal breakthrough in the quantum mechanical treatment of many-electron systems [1] [2]. Developed shortly after the introduction of the Schrödinger equation, this statistical model provided the first successful attempt to describe electronic structure using the electron density rather than complex wave functions [1] [3]. The TF model emerged during a period of intense theoretical development in quantum mechanics; Dirac famously observed in 1929 that the fundamental physical laws for chemistry were completely known but led to equations too complicated to solve for many-electron systems [3]. In this context, the Thomas-Fermi approach offered a computationally tractable, albeit approximate, method for describing electrons in atoms by treating them locally as a uniform electron gas whose density varies from point to point within the atom [4].

The core innovation of the TF model lies in its foundational premise: that in each small volume element ΔV within an atom, electrons can be treated as being distributed uniformly, akin to a homogeneous electron gas [1] [5]. This semiclassical approximation ignored the individual motions of electrons and their spin [2], but established the crucial concept that electronic properties could be determined from the electron density alone. The model effectively translates the quantum mechanical problem into a form where the electron density n(r) becomes the fundamental variable determining all ground-state properties [1] [5]. This conceptual leap established the philosophical foundation upon which modern density functional theory would eventually be built, despite the quantitative limitations of the original TF approach [1] [6].

Table 1: Historical Context of the Thomas-Fermi Model

| Year | Development | Key Contributors | Significance |
|---|---|---|---|
| 1926 | Schrödinger Equation | Erwin Schrödinger | Established quantum mechanical foundation for electronic structure |
| 1927 | Thomas-Fermi Model | L. Thomas, E. Fermi | First density-based quantum model for many-electron systems |
| 1927 | Hartree Method | Douglas Hartree | Approximate wavefunction-based approach |
| 1930 | Thomas-Fermi-Dirac Model | Paul Dirac | Added exchange energy to TF model |
| 1930 | Hartree-Fock Method | J. C. Slater, V. Fock | Incorporated Pauli principle into Hartree method |
| 1964 | Hohenberg-Kohn Theorems | P. Hohenberg, W. Kohn | Established theoretical foundation for modern DFT |
| 1965 | Kohn-Sham Equations | W. Kohn, L. J. Sham | Practical computational framework for DFT |

Theoretical Foundations and Mathematical Framework

Fundamental Assumptions and Electron Density Formulation

The Thomas-Fermi model rests upon several key physical assumptions that enable the description of a many-electron system through its electron density alone. First, the model assumes that the effective potential V(r) is spherically symmetric and depends only on the distance from the nucleus [5]. Second, it treats electrons as being uniformly distributed within each small volume element ΔV, while allowing the electron density to vary between different volume elements [1]. Third, the model employs a semiclassical phase-space approach, where pairs of electrons are distributed uniformly within each six-dimensional phase-space volume element h³ [5]. This statistical treatment effectively fills the available momentum states up to the Fermi momentum pF(r) at each point in space, creating a position-dependent Fermi sphere [1].

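The relationship between electron density and Fermi momentum forms the cornerstone of the TF theory. For a small volume element ΔV at position r, the number of electrons ΔN occupying that volume is given by the number of momentum states up to pF(r), that is, the phase-space volume (4/3)πpF³(r)ΔV divided by h³, multiplied by two to account for spin degeneracy [1]:

\[ \Delta N = 2 \cdot \frac{\tfrac{4}{3}\pi\, p_F^3(\mathbf{r})\, \Delta V}{h^3} = \frac{8\pi}{3h^3}\, p_F^3(\mathbf{r})\, \Delta V, \qquad n(\mathbf{r}) = \frac{\Delta N}{\Delta V} = \frac{8\pi}{3h^3}\, p_F^3(\mathbf{r}) \]

This equation can be inverted to express the Fermi momentum in terms of the electron density [5]:

\[ p_F(\mathbf{r}) = h \left( \frac{3\, n(\mathbf{r})}{8\pi} \right)^{1/3} \]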

The local density approximation is inherent in this formulation, as the Fermi momentum and all derived quantities depend solely on the local electron density, without reference to the density at neighboring points or the global wavefunction [6]. This locality approximation, while computationally advantageous, represents a significant physical simplification that limits the accuracy of the TF model for real atomic and molecular systems [1].

Kinetic Energy Functional

The Thomas-Fermi model provides a particularly elegant expression for the kinetic energy of a many-electron system. By considering the classical kinetic energy of electrons filling a Fermi sphere in momentum space up to pF(r), and integrating over all volume elements, one obtains the famous TF kinetic energy functional [1] [6]:

\[ T_{\mathrm{TF}}[n] = C_{\mathrm{kin}} \int [n(\mathbf{r})]^{5/3}\, d^3r \]

where \(C_{\mathrm{kin}}\) is the Fermi constant [1]:

\[ C_{\mathrm{kin}} = \frac{3h^2}{40\, m_e} \left( \frac{3}{\pi} \right)^{2/3} \]

In atomic units (ħ = mₑ = e = 1), this constant simplifies to \(C_{\mathrm{kin}}\) = 3(3π²)²/³/10 ≈ 2.871, often written \(c_F\) [5]. The kinetic energy density t(r) at each point in space is therefore proportional to the 5/3 power of the electron density [1]:

\[ t(\mathbf{r}) = C_{\mathrm{kin}}\, [n(\mathbf{r})]^{5/3} \]

This density-powered functional relationship demonstrates the remarkable economy of the TF approach, expressing a quantum mechanical property (kinetic energy) directly in terms of the electron density without requiring wavefunctions or orbitals [1]. The 5/3 power law derives fundamentally from the geometry of the Fermi sphere in momentum space and represents a universal relationship for a non-interacting electron gas at zero temperature [6].
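As a rough numerical illustration of the 5/3 functional, the sketch below evaluates the TF kinetic energy on the exact hydrogen 1s density, n(r) = e^(-2r)/π in atomic units. The choice of test density, the radial grid, and the helper names (`radial_integral`, `tf_kinetic_energy`) are assumptions made for this example and do not come from the cited sources.

```python
import numpy as np

# Thomas-Fermi constant in atomic units: C_kin = (3/10) * (3*pi^2)^(2/3)
C_KIN = 0.3 * (3.0 * np.pi**2) ** (2.0 / 3.0)


def radial_integral(f, r):
    """Trapezoid-rule estimate of the 3D integral of a spherically symmetric f(r)."""
    integrand = 4.0 * np.pi * r**2 * f
    return float(np.sum(0.5 * (integrand[1:] + integrand[:-1]) * np.diff(r)))


def tf_kinetic_energy(n, r):
    """Thomas-Fermi kinetic energy T_TF[n] = C_kin * integral of n^(5/3)."""
    return C_KIN * radial_integral(n ** (5.0 / 3.0), r)


# Hydrogen 1s density on a uniform radial grid (illustrative grid parameters)
r = np.linspace(0.0, 30.0, 30001)
n_1s = np.exp(-2.0 * r) / np.pi

electron_count = radial_integral(n_1s, r)  # should be close to 1
t_tf = tf_kinetic_energy(n_1s, r)          # compare with the exact 0.5 hartree

print(f"N = {electron_count:.4f}, T_TF = {t_tf:.4f} hartree")
```

For this density the integral can also be done by hand and gives roughly 0.289 hartree, well below the exact kinetic energy of 0.5 hartree, which shows how much a purely local functional can miss for a strongly inhomogeneous one-electron density.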

Total Energy Functional

The total energy in the Thomas-Fermi model incorporates three principal components: kinetic energy, electron-nucleus attraction, and electron-electron repulsion. For an atom with nuclear charge Z, the complete energy functional takes the form [1] [4]:

\[ E_{\mathrm{TF}}[n] = C_{\mathrm{kin}} \int [n(\mathbf{r})]^{5/3}\, d^3r \;-\; Z e^2 \int \frac{n(\mathbf{r})}{r}\, d^3r \;+\; \frac{e^2}{2} \iint \frac{n(\mathbf{r})\, n(\mathbf{r}')}{\lvert \mathbf{r} - \mathbf{r}' \rvert}\, d^3r\, d^3r' \]

In atomic units, e = 1 and the factors of e² drop out. The three terms correspond to [1]:

- Kinetic energy of the electrons (T)

- Electron-nucleus potential energy (\(U_{eN}\)) due to Coulomb attraction

- Electron-electron repulsion energy (\(U_{ee}\)) in the classical Hartree approximation

The energy expression is remarkable for being formulated exclusively in terms of the electron density n(r), without any reference to individual electron wavefunctions [1] [5]. This establishes the TF model as the first true density functional theory, predating the formal theoretical foundation provided by the Hohenberg-Kohn theorems by nearly four decades [3]. The functional must be minimized subject to the constraint that the total integrated electron density equals the number of electrons N [1]:

\[ \int n(\mathbf{r})\, d^3r = N \]

Table 2: Components of the Thomas-Fermi Energy Functional

| Energy Component | Mathematical Expression (atomic units) | Physical Interpretation | Dependence on Density |
|---|---|---|---|
| Kinetic Energy | \( C_{kin} \int [n(\mathbf{r})]^{5/3}\, d^3r \) | Energy of non-interacting electron gas | n⁵/³ |
| Electron-Nucleus Attraction | \( -Z \int \frac{n(\mathbf{r})}{r}\, d^3r \) | Coulomb attraction to nucleus | n¹ |
| Electron-Electron Repulsion | \( \frac{1}{2} \iint \frac{n(\mathbf{r})\, n(\mathbf{r}')}{\lvert \mathbf{r} - \mathbf{r}' \rvert}\, d^3r\, d^3r' \) | Classical Coulomb repulsion (Hartree) | n² |

The Thomas-Fermi Equation and Its Solution

Derivation via Variational Principle

The Thomas-Fermi equation is derived by applying the variational principle to the energy functional under the constraint of fixed total electron number. Introducing a Lagrange multiplier μ to enforce the normalization constraint, one seeks to minimize [1]:

\[ \Omega[n] = E_{\mathrm{TF}}[n] - \mu \left( \int n(\mathbf{r})\, d^3r - N \right) \]

Performing the functional derivative δΩ/δn(r) = 0 yields the fundamental equation of the TF model [1]:

\[ \frac{5}{3}\, C_{\mathrm{kin}}\, [n(\mathbf{r})]^{2/3} + V_N(\mathbf{r}) + e^2 \int \frac{n(\mathbf{r}')}{\lvert \mathbf{r} - \mathbf{r}' \rvert}\, d^3r' = \mu \]

Here, μ represents the electronic chemical potential (constant throughout space), \(V_N\)(r) is the nuclear potential, and the integral term represents the Hartree potential due to electron-electron repulsion [1]. For an atomic system with \(V_N\)(r) = -Ze²/r, the equation can be recast into a dimensionless form through appropriate variable substitutions [6].

The electron density n(r) can be expressed in terms of the total potential V(r) = \(V_N\)(r) + \(V_H\)(r), where \(V_H\)(r) is the Hartree potential [1]:

\[ n(\mathbf{r}) = \begin{cases} \dfrac{8\pi}{3h^3} \left[ 2 m_e \left( \mu - V(\mathbf{r}) \right) \right]^{3/2}, & V(\mathbf{r}) < \mu \\[1ex] 0, & \text{otherwise} \end{cases} \]

This expression highlights the semiclassical nature of the TF model, where electrons occupy regions where their chemical potential exceeds the local potential energy [1].

Dimensionless Form and Numerical Solution

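For the case of a neutral atom, the TF equation can be transformed into a universal dimensionless form through the substitutions [1] [6]:

\[ r = b\,x, \qquad b = \frac{1}{4} \left( \frac{9\pi^2}{2Z} \right)^{1/3} a_0, \qquad \mu - V(r) = \frac{Z e^2}{r}\, \varphi(x) \]

where φ(x) is a dimensionless function characterizing the screening of the nuclear potential by the electron cloud. Substituting these expressions into the TF equation yields the celebrated Thomas-Fermi differential equation [1] [6]:

\[ \frac{d^2\varphi}{dx^2} = \frac{\varphi^{3/2}}{\sqrt{x}} \]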

This dimensionless equation is universal for all neutral atoms, independent of atomic number Z, subject to the boundary conditions φ(0) = 1 and φ(∞) = 0 [1] [6]. The function φ(x) describes how the nuclear potential is screened by the electron cloud, with φ(0) = 1 representing unscreened nuclear potential at the origin, and φ(∞) = 0 representing complete screening at large distances [6].

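The TF equation is nonlinear and cannot be solved analytically in closed form. Bush and Caldwell obtained the first numerical solution in 1931 using the differential analyzer at MIT [6]. Sommerfeld later derived an approximate analytical solution that reproduces the large-x behavior φ(x) → 144/x³ [6]:

\[ \varphi(x) \approx \left[ 1 + \left( \frac{x}{12^{2/3}} \right)^{\lambda} \right]^{-3/\lambda}, \qquad \lambda \approx 0.772 \]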

The electron density for a neutral atom can be approximated as a simple exponential function normalized to the total number of electrons [6]:

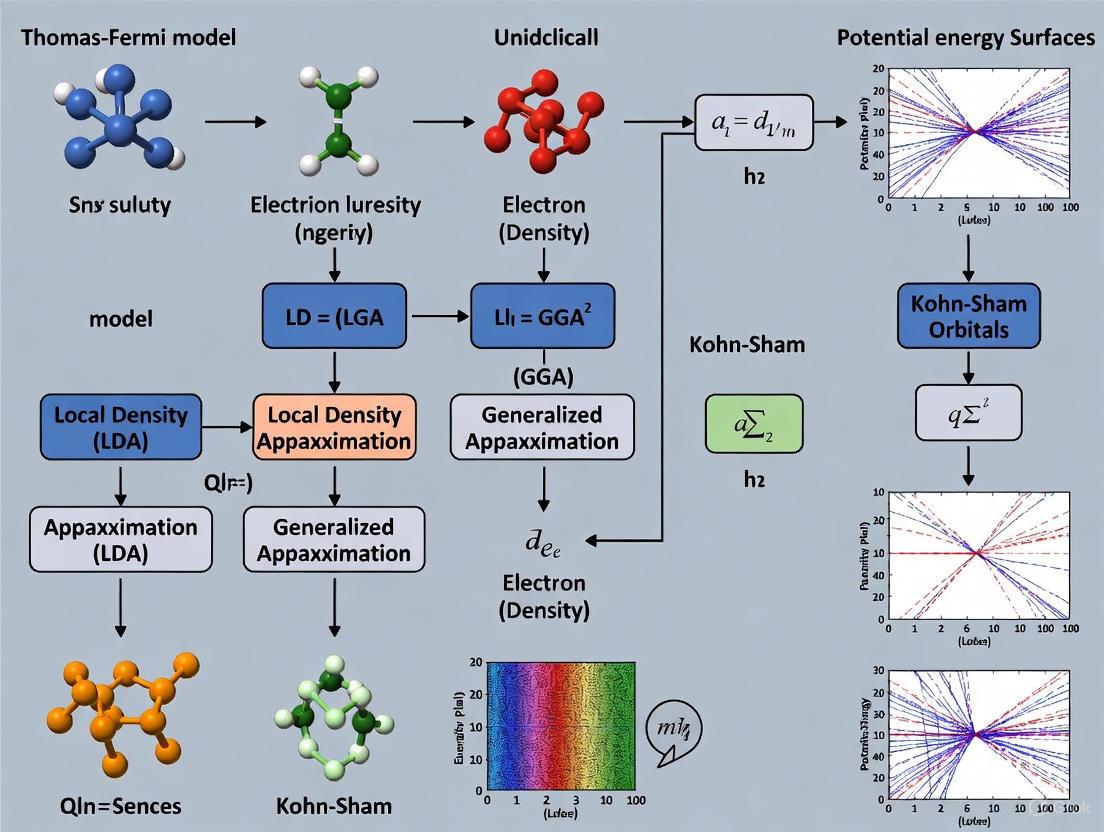

The following diagram illustrates the theoretical relationships and computational workflow of the Thomas-Fermi model:

Computational Protocols and Methodologies

Protocol: Implementing the Thomas-Fermi Atom Calculation

The following step-by-step protocol details the computational procedure for solving the Thomas-Fermi equation for a neutral atom and obtaining the electron density distribution and total energy.

Initialization Phase

- Define atomic parameters: Specify atomic number Z and electron number N (N = Z for neutral atoms).

- Set physical constants: Planck's constant h, electron mass mₑ, electron charge e, or use atomic units (ħ = mₑ = e = 1).

- Calculate derived constants: Compute the Fermi constant \(C_{\mathrm{kin}}\) = 3h²(3/π)²/³/(40mₑ) in appropriate units.

Numerical Solution Phase

- Transform to dimensionless variables:

  - Compute scaling parameter b = (1/4)(9π²/(2Z))¹/³ a₀

  - Define dimensionless distance x = r/b

  - Define dimensionless potential φ(x) through μ - V(r) = (Ze²/r)φ(r/b)

- Implement numerical solver for TF equation:

  - Discretize the dimensionless TF equation d²φ/dx² = φ³/²/√x

  - Apply boundary conditions: φ(0) = 1, φ(∞) = 0

  - Use finite difference method with approximately 1000 grid points

  - Employ Newton-Raphson iteration to a convergence tolerance of 10⁻⁸

Post-Processing Phase

- Transform back to physical variables:

  - Compute electron density n(r) = (8π/3h³)[2mₑ(Ze²/b)φ(x)/x]³/²

  - Calculate kinetic energy \(T_{\mathrm{TF}}\) = \(C_{\mathrm{kin}}\) ∫ n⁵/³(r) d³r

  - Compute potential energy components \(U_{eN}\) and \(U_{ee}\)

  - Obtain total energy \(E_{\mathrm{TF}}\) = \(T_{\mathrm{TF}}\) + \(U_{eN}\) + \(U_{ee}\)

Validation Phase

- Verify numerical accuracy:

  - Check normalization condition ∫ n(r) d³r = N

  - Confirm convergence with respect to grid size

  - Compare with known solutions for limiting cases
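The numerical solution phase of this protocol can be sketched as follows. This is a minimal illustration rather than a reference implementation: it assumes a uniform grid in x, truncates the domain at a finite `x_max` where φ is set to zero (the true boundary condition is at infinity), and uses a dense linear solve for the Newton step. The function name and all default parameters are hypothetical choices for this example.

```python
import numpy as np


def solve_thomas_fermi(x_max=50.0, n_points=1001, tol=1e-8, max_iter=50):
    """Finite-difference Newton-Raphson solve of phi'' = phi^(3/2) / sqrt(x).

    Boundary conditions: phi(0) = 1 and phi(x_max) = 0, where the finite
    x_max stands in for infinity (an approximation of this sketch).
    """
    x = np.linspace(0.0, x_max, n_points)
    h = x[1] - x[0]
    xi = x[1:-1]                      # interior nodes (x = 0 is never evaluated)
    m = xi.size

    # Initial guess satisfying both boundary values
    phi = np.exp(-x)
    phi[0], phi[-1] = 1.0, 0.0

    # Constant second-difference matrix for the interior unknowns
    lap = (np.diag(np.full(m, -2.0))
           + np.diag(np.ones(m - 1), 1)
           + np.diag(np.ones(m - 1), -1)) / h**2

    for iteration in range(1, max_iter + 1):
        u = np.maximum(phi[1:-1], 0.0)          # guard against negative values
        residual = ((phi[:-2] - 2.0 * phi[1:-1] + phi[2:]) / h**2
                    - u**1.5 / np.sqrt(xi))
        jacobian = lap - np.diag(1.5 * np.sqrt(u) / np.sqrt(xi))
        delta = np.linalg.solve(jacobian, -residual)
        phi[1:-1] += delta
        if np.max(np.abs(delta)) < tol:
            break
    return x, phi, iteration


x, phi, n_iter = solve_thomas_fermi()
phi_at_1 = float(np.interp(1.0, x, phi))
print(f"Newton iterations: {n_iter}, phi(1) = {phi_at_1:.3f}")
```

On this grid φ(1) should land near the tabulated value of about 0.424; the accuracy is limited by the 1/√x singularity at the origin and by the finite cutoff. Physical radii follow from r = b·x with b ≈ 0.8853 Z^(-1/3) a₀, which is the scaling parameter defined in the protocol evaluated numerically.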

Protocol: Electron Density Analysis in Materials

This protocol adapts the Thomas-Fermi approach for analyzing electron density distributions in materials, leveraging the local density approximation for computational efficiency.

System Preparation

- Define crystal structure: Specify lattice vectors and atomic positions.

- Construct electron density grid: Create three-dimensional grid spanning the unit cell with a grid spacing of 0.05 Å or finer.

Thomas-Fermi Calculation

- Compute total electron density:

  - Initialize n(r) as sum of isolated atom densities

  - For each grid point, calculate n(r) = (8π/3h³)pF³(r)

- Solve self-consistent field equations:

  - Calculate electrostatic potential from Poisson equation ∇²V(r) = -4πn(r)

  - Update Fermi momentum pF(r) from effective potential

  - Iterate until electron density converges (Δn/n < 10⁻⁵)

Analysis and Visualization

- Calculate derived properties:

  - Kinetic energy density t(r) = \(C_{\mathrm{kin}}\)[n(r)]⁵/³

  - Local electronic pressure P(r) = (2/3)t(r)

- Visualize results:

  - Generate contour plots of electron density

  - Create volumetric maps of kinetic energy density

  - Plot line profiles along crystallographic directions
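The Poisson step of this protocol is commonly handled in reciprocal space for periodic cells. The sketch below shows one way to do that with NumPy's FFT routines under several stated assumptions: a cubic cell with the same number of grid points along each axis, atomic units, and the k = 0 component of the potential set to zero (equivalent to assuming a uniform neutralizing background). The function name `solve_poisson_periodic` and the test density are hypothetical.

```python
import numpy as np


def solve_poisson_periodic(density, box_length):
    """Solve laplacian(V) = -4*pi*n on a periodic cubic grid via FFT.

    In reciprocal space the equation becomes V(k) = 4*pi*n(k) / k^2.
    The k = 0 term is dropped, which assumes a neutralizing background.
    """
    n_grid = density.shape[0]
    k = 2.0 * np.pi * np.fft.fftfreq(n_grid, d=box_length / n_grid)
    kx, ky, kz = np.meshgrid(k, k, k, indexing="ij")
    k2 = kx**2 + ky**2 + kz**2

    n_k = np.fft.fftn(density)
    v_k = np.zeros_like(n_k)
    nonzero = k2 > 0.0
    v_k[nonzero] = 4.0 * np.pi * n_k[nonzero] / k2[nonzero]
    return np.fft.ifftn(v_k).real


# Check against a single plane-wave density, for which V = 4*pi*n / k0^2
box_length, n_grid = 10.0, 16
x = np.arange(n_grid) * box_length / n_grid
k0 = 2.0 * np.pi / box_length
density = np.cos(k0 * x)[:, None, None] * np.ones((n_grid, n_grid, n_grid))

potential = solve_poisson_periodic(density, box_length)
expected = 4.0 * np.pi * density / k0**2
print("max deviation:", np.max(np.abs(potential - expected)))
```

A single cosine is a convenient check because it is an eigenfunction of the Laplacian, so the numerical and analytical potentials should agree to rounding error. Non-cubic cells would need separate wavevector arrays built from the reciprocal lattice vectors.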

Table 3: Research Reagent Solutions for Thomas-Fermi Calculations

| Reagent/Software | Function/Purpose | Implementation Notes |
|---|---|---|
| Thomas-Fermi Solver | Numerical solution of TF equation | Finite difference method with Newton-Raphson iteration |
| Poisson Equation Solver | Electrostatic potential calculation | Fast Fourier Transform (FFT) or multigrid methods |
| Atomic Density Generator | Initial electron density guess | Superposition of isolated atom densities |
| Visualization Suite | Analysis of electron density distributions | ParaView, VESTA, or custom MATLAB/Python scripts |
| Convergence Analyzer | Monitoring SCF convergence | Automated tolerance checking and iteration control |

Extensions and Refinements to the Basic Model

Thomas-Fermi-Dirac Model

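In 1930, Paul Dirac augmented the Thomas-Fermi model by incorporating a quantum mechanical exchange term, creating the Thomas-Fermi-Dirac (TFD) model [1] [3]. Dirac derived a local approximation for the exchange energy based on the homogeneous electron gas [6] [3]:

\[ E_X[n] = -C_X \int [n(\mathbf{r})]^{4/3}\, d^3r \]

where \(C_X\) = (3/4)(3/π)¹/³ in atomic units [6]. This exchange energy functional arises from the Pauli exclusion principle, which prevents electrons with parallel spins from occupying the same spatial location, thereby reducing their Coulomb repulsion [1]. The addition of this term modestly improved the accuracy of the model, particularly for aspects of electronic structure related to spin effects [1]. When incorporated into the total energy functional, the TFD model becomes, in atomic units [6]:

\[ E_{\mathrm{TFD}}[n] = C_{\mathrm{kin}} \int [n(\mathbf{r})]^{5/3}\, d^3r - Z \int \frac{n(\mathbf{r})}{r}\, d^3r + \frac{1}{2} \iint \frac{n(\mathbf{r})\, n(\mathbf{r}')}{\lvert \mathbf{r} - \mathbf{r}' \rvert}\, d^3r\, d^3r' - C_X \int [n(\mathbf{r})]^{4/3}\, d^3r \]

The corresponding Euler-Lagrange equation includes an additional exchange potential term [6]:

\[ \frac{5}{3}\, C_{\mathrm{kin}}\, [n(\mathbf{r})]^{2/3} - \frac{4}{3}\, C_X\, [n(\mathbf{r})]^{1/3} + V_N(\mathbf{r}) + \int \frac{n(\mathbf{r}')}{\lvert \mathbf{r} - \mathbf{r}' \rvert}\, d^3r' = \mu \]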

Despite this improvement, the TFD model remained insufficient for accurate quantitative predictions in chemistry, as it still failed to capture atomic shell structure and molecular bonding [1] [3].
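To give a feel for the size of the Dirac term, the following sketch evaluates the local exchange energy and the associated exchange potential on the hydrogen 1s density in atomic units. The test density, grid, and function names are assumptions made for illustration only; the spin-unpolarized form of the functional is used, as in the TFD model above.

```python
import numpy as np

# Dirac exchange constant in atomic units: C_X = (3/4) * (3/pi)^(1/3)
C_X = 0.75 * (3.0 / np.pi) ** (1.0 / 3.0)


def radial_integral(f, r):
    """Trapezoid-rule estimate of the 3D integral of a spherically symmetric f(r)."""
    integrand = 4.0 * np.pi * r**2 * f
    return float(np.sum(0.5 * (integrand[1:] + integrand[:-1]) * np.diff(r)))


def dirac_exchange_energy(n, r):
    """Local exchange energy E_X[n] = -C_X * integral of n^(4/3)."""
    return -C_X * radial_integral(n ** (4.0 / 3.0), r)


def dirac_exchange_potential(n):
    """Functional derivative of E_X: v_X(r) = -(4/3) * C_X * n^(1/3)."""
    return -(4.0 / 3.0) * C_X * n ** (1.0 / 3.0)


# Hydrogen 1s density on a uniform radial grid (illustrative grid parameters)
r = np.linspace(0.0, 30.0, 30001)
n_1s = np.exp(-2.0 * r) / np.pi

e_x = dirac_exchange_energy(n_1s, r)
v_x_origin = float(dirac_exchange_potential(n_1s)[0])
print(f"E_X = {e_x:.4f} hartree, v_X(0) = {v_x_origin:.4f} hartree")
```

The exchange energy comes out at roughly -0.213 hartree for this density, a correction that is small compared with the kinetic and Coulomb terms, consistent with the modest improvement noted above.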

Correlation Energy and Further Developments

Eugene Wigner addressed another limitation of the TF model in 1934 by proposing an approximate form for the correlation energy, which captures the interaction among electrons with opposite spins [1]. Wigner's local correlation functional took the form [1]:

\[ E_C[n] = \int n(\mathbf{r})\, \varepsilon_C\big(n(\mathbf{r})\big)\, d^3r \]

where \(\varepsilon_C\)(n) is the correlation energy per electron of a homogeneous electron gas with density n. Wigner's expression, while approximate, provided a reasonable estimation of correlation effects for high-density systems [1].

The following diagram illustrates the historical evolution and theoretical relationships between different density-based models:

Applications and Modern Relevance

Contemporary Applications in Materials Science

Despite its quantitative limitations for molecular systems, the Thomas-Fermi model maintains relevance in several specialized domains of modern physics and materials science. The approach offers analytical tractability that enables researchers to extract qualitative trends and scaling relationships that would be obscured in more complex computational frameworks [1]. In materials physics, TF theory provides insights into the behavior of electrons under extreme conditions, such as in high-pressure physics where electron densities become relatively uniform [4]. The model serves as a foundation for developing equations of state for matter under extreme compression, where all materials theoretically approach a Thomas-Fermi-like state [4].

Another significant application lies in orbital-free density functional theory, where the TF kinetic energy functional serves as a component in more sophisticated approximations for the kinetic energy [1]. While the pure TF functional lacks the accuracy needed for most chemical applications, it provides the foundational form that is refined in modern kinetic energy density functionals. These orbital-free approaches offer computational advantages for large systems where traditional Kohn-Sham methods become prohibitively expensive [1] [5].

The TF model also finds application in the construction of interatomic potentials for molecular dynamics simulations. Based on the Thomas-Fermi-Dirac model, Abrahamson developed Born-Mayer-type potentials applicable to combinations of 104 elements at internuclear separations between approximately 0.08 and 0.42 nm [5]. These statistical potentials derived from electron distribution models provide efficient alternatives to quantum mechanical calculations for certain simulation contexts.

Limitations and Critical Assessment

The Thomas-Fermi model, while conceptually groundbreaking, suffers from several fundamental limitations that restrict its quantitative accuracy. Most notably, the model fails to predict chemical bonding - it was proven by Teller that no molecules are stable within the pure TF framework [6]. This catastrophic failure stems from the inability of the model to describe the directional covalent bonds that underlie molecular formation.

The model also lacks atomic shell structure, predicting monotonically decreasing electron densities without the oscillations corresponding to atomic orbitals [1] [5]. Furthermore, it does not exhibit Friedel oscillations in solids, which are characteristic oscillations in electron density around impurities in metals [1] [5]. These deficiencies originate from the local density approximation for kinetic energy, which neglects the quantum mechanical nature of electron motion and the nonlocal effects of the Pauli exclusion principle [1].

The TF model is quantitatively correct only in the limit of infinite nuclear charge, where the electron cloud becomes increasingly homogeneous and the semiclassical approximation becomes exact [1]. For realistic systems with finite nuclear charges, the model provides only rough qualitative trends. The incorrect asymptotic behavior of the electron density (an algebraic r⁻⁶ tail for neutral atoms rather than the exponential decay of the exact density) further limits its accuracy for describing long-range interactions [1] [6].

Table 4: Performance Assessment of Thomas-Fermi Model

| Property | TF Prediction | Exact/Experimental Result | Discrepancy |
|---|---|---|---|
| Molecular Bonding | No stable molecules | Molecules stable | Qualitative failure |
| Atomic Shell Structure | Monotonic density decay | Oscillatory shell structure | Missing feature |
| Kinetic Energy | Overestimated | Exact QM result | 10-50% error |
| Exchange Energy | Completely missing (TF) | Exact value | 100% error (TF) |
| Total Atomic Energy (magnitude) | ~0.76Z⁷/³ hartrees | ~0.59Z⁷/³ hartrees | ~30% overestimate |
| Density Asymptotics | r⁻⁶ decay for neutral atoms | Exponential decay | Incorrect tail behavior |

The Thomas-Fermi model represents a pioneering effort in the development of quantum mechanical methods for many-electron systems, establishing the fundamental principle that electronic properties could be determined from electron density alone [1] [3]. While quantitatively limited, its conceptual framework directly inspired the formal foundation of modern density functional theory established by Hohenberg, Kohn, and Sham [3]. The TF model introduced several key concepts that remain central to computational materials physics and chemistry, including the local density approximation, density-based kinetic energy functionals, and the self-consistent field approach for determining electron distributions [6] [3].

The historical trajectory from the Thomas-Fermi model to modern DFT illustrates how a conceptually rich but quantitatively limited theory can stimulate decades of research that ultimately yields practical computational tools [3]. Contemporary DFT, now enhanced with machine learning approaches as demonstrated by Microsoft Research's Skala functional, continues to evolve beyond the limitations of traditional Jacob's Ladder classifications [3]. Yet this progress builds upon the foundational insight of Thomas and Fermi that electron density provides a sufficient variable for describing quantum mechanical systems. Their 1927 model thus stands as a testament to the power of simple physical ideas to inspire scientific advances far beyond their original limitations, continuing to influence computational approaches to electronic structure nearly a century after its introduction.

The development of density functional theory (DFT) represents a paradigm shift in quantum mechanics, transitioning from wavefunction-based descriptions to a more computationally tractable density-based formalism. Prior to 1964, the dominant approaches for describing many-electron systems included the Thomas-Fermi model (1927) and its extension by Dirac (1930), which represented the first attempts to describe quantum mechanical systems solely through electron density rather than many-body wavefunctions [3] [6]. These early models, while pioneering, suffered from significant limitations in accuracy: the original Thomas-Fermi model could not account for molecular bonding, and its kinetic energy description was quantitatively poor [6]. The Hartree-Fock method (1930) and Slater's Xα method (1951) provided important intermediate developments but still relied either explicitly or implicitly on orbital constructions [3]. It was against this backdrop that Hohenberg and Kohn introduced their landmark theorems in 1964, establishing for the first time a rigorous foundation for density functional theory and demonstrating that a theory based solely on the electron density need not be an approximation but can be, in principle, an exact reformulation of the ground-state many-electron problem [3].

Table 1: Key Historical Developments Preceding the Hohenberg-Kohn Theorems

| Year | Development | Key Innovators | Significance |
|---|---|---|---|
| 1927 | Thomas-Fermi Model | Thomas, Fermi | First statistical model using electron density instead of wavefunctions [3] |
| 1930 | Thomas-Fermi-Dirac Model | Dirac | Added exchange term to Thomas-Fermi model [3] |
| 1930 | Hartree-Fock Method | Slater, Fock | Incorporated Pauli principle but required orbital solutions [3] |
| 1951 | Slater Xα Method | Slater | Replaced Hartree-Fock exchange with density-dependent approximation [3] |

The Fundamental Theorems: A Formal Foundation

The First Hohenberg-Kohn Theorem

The first Hohenberg-Kohn theorem establishes a foundational principle: the ground-state electron density uniquely determines the external potential (and thus the entire Hamiltonian) of a many-electron system [7] [8]. Formally stated, "the ground state of any interacting many-particle system with a given fixed inter-particle interaction is a unique functional of the electron density n(r)" [7]. This represents a profound simplification, as the electron density depends on only three spatial variables (x, y, z), regardless of system size, while the many-body wavefunction depends on 3N variables for an N-electron system.

The mathematical formulation expresses the ground state energy E as a functional of the ground state density n₀(r):

E = E[n₀] = ⟨Ψ[n₀]|T̂ + V̂ + Û|Ψ[n₀]⟩ [7]

where T̂ represents the kinetic energy operator, V̂ the external potential operator, and Û the electron-electron interaction operator. The theorem demonstrates that the ground state wavefunction can be written as a unique functional of the ground state density, Ψ₀ = Ψ[n₀], enabling the calculation of all ground-state properties [7] [8].

The Second Hohenberg-Kohn Theorem

The second Hohenberg-Kohn theorem provides the variational principle essential for practical applications [7]. It states that "the electron density that minimizes the energy of the overall functional is the true electron density corresponding to the full solution of the Schrödinger equation" [7]. This establishes a crucial methodology: if the true functional form is known, one can find the ground state electron density by minimizing the energy functional with respect to the density.

The universal functional F[n] can be decomposed as:

F[n] = T[n] + Vₑₑ[n] [8]

where T[n] is the kinetic energy functional and Vₑₑ[n] is the electron-electron interaction functional. The total energy functional for a specific system with external potential v(r) then becomes:

E[v; n] = F[n] + ∫v(r)n(r)d³r [8]

The variational principle guarantees that the minimum value of this functional, obtained with the correct n(r), gives the exact ground-state energy.

Diagram 1: Logical structure of Hohenberg-Kohn theorems

Technical Implementation: From Theorems to Practical Computation

The v-Representability and N-Representability Challenges

A significant technical challenge emerged from the original Hohenberg-Kohn formulation: the v-representability problem. The theorems initially applied only to densities that could be obtained from some external potential (v-representable densities) [8]. This limitation was addressed through the constrained search approach developed by Levy and Lieb, which expanded the theory to N-representable densities—those obtainable from some antisymmetric wavefunction [8] [9].

The constrained search formulation defines a universal functional as:

F[n] = min⟨Ψ|T̂ + V̂ₑₑ|Ψ⟩ for Ψ → n(r)

where the minimization searches all wavefunctions Ψ that yield the fixed density n [8] [9]. This approach guarantees that F[n] + V[n] ≥ E₀ for N-representable densities, establishing a variational principle applicable to computational methods.

The Kohn-Sham Equations: A Practical Computational Framework

In 1965, Kohn and Sham introduced a practical computational scheme that remains the foundation of modern DFT calculations [3]. The key insight was to replace the original interacting system with an auxiliary non-interacting system that has the same ground-state density [9]. This approach isolates the problematic components of the universal functional into an exchange-correlation term.

The Kohn-Sham energy functional is expressed as:

E_KS[n] = Tₛ[n] + ∫vₑₓₜ(r)n(r)d³r + Eₕ[n] + EₓC[n]

where Tₛ[n] is the kinetic energy of the non-interacting reference system, Eₕ[n] is the classical Hartree electron-electron repulsion energy, and EₓC[n] is the exchange-correlation functional that captures all many-body effects [9].

Table 2: Components of the Kohn-Sham Energy Functional

| Functional Component | Mathematical Form | Physical Significance | Treatment in KS Scheme |
|---|---|---|---|
| Non-interacting Kinetic Energy | Tₛ[n] = Σ⟨φᵢ\|-½∇²\|φᵢ⟩ | Kinetic energy of reference system | Calculated exactly via orbitals |
| External Potential | ∫vₑₓₜ(r)n(r)d³r | Electron-nucleus attraction | Calculated exactly |
| Hartree Energy | Eₕ[n] = ½∫∫[n(r₁)n(r₂)/\|r₁-r₂\|]d³r₁d³r₂ | Classical electron repulsion | Calculated exactly |
| Exchange-Correlation | EₓC[n] | Quantum many-body effects | Approximated (LDA, GGA, hybrids) |

The minimization of the Kohn-Sham energy functional leads to the Kohn-Sham equations:

[-½∇² + vₑₓₜ(r) + vₕ(r) + vₓC(r)]φᵢ(r) = εᵢφᵢ(r)

where vₑₓₜ is the external potential, vₕ is the Hartree potential, and vₓC is the exchange-correlation potential [9]. These single-particle equations are solved self-consistently to obtain the ground-state density and energy.

Research Reagent Solutions: Computational Tools for DFT Implementation

Table 3: Essential Computational Tools and Methods in Modern DFT

| Tool Category | Specific Examples | Function/Purpose | Theoretical Basis |
|---|---|---|---|
| Kinetic Energy Functionals | Thomas-Fermi (TTF), von Weizsäcker (TvW) | Orbital-free DFT calculations [10] | TTF ∼ ∫n⁵⁄³(r)d³r; TvW ∼ ∫\|∇n¹⁄²(r)\|²d³r [10] |
| Exchange-Correlation Functionals | LDA, GGA, Hybrids (B3LYP, PBE0) | Approximate many-body quantum effects [3] | LDA: uniform electron gas; GGA: adds density gradient; Hybrids: mix HF exchange [3] |
| Basis Sets | Plane waves, Localized orbitals, Gaussians | Expand Kohn-Sham orbitals | Balance between completeness and computational efficiency |
| Pseudopotentials | Norm-conserving, Ultrasoft, PAW | Replace core electrons | Reduce computational cost while maintaining accuracy |

Experimental Protocols: Computational Methodology

Protocol 1: Self-Consistent Solution of Kohn-Sham Equations

The standard approach for DFT calculations involves an iterative self-consistent field procedure:

1. Initialization: Generate initial guess for electron density nᵢₙᵢₜᵢₐₗ(r), typically from atomic orbital superposition

2. Potential Construction: Calculate effective potential v_eff(r) = vₑₓₜ(r) + vₕ(r) + vₓC(r)

3. Orbital Solution: Solve Kohn-Sham equations for occupied orbitals {φᵢ(r)}

4. Density Update: Construct new density nₙₑw(r) = Σ|φᵢ(r)|², summed over occupied orbitals

5. Mixing & Convergence: Mix nₙₑw(r) with previous density; check convergence of total energy and density

6. Iteration: Repeat steps 2-5 until self-consistency is achieved (typically 10-50 iterations)

This protocol ensures that the input and output densities are consistent, satisfying the Hohenberg-Kohn condition for the ground state [7] [9].
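The structure of this cycle can be illustrated with a deliberately small toy model. The sketch below is not a production Kohn-Sham code: it assumes a one-dimensional grid, two electrons in a harmonic external potential, a soft-Coulomb Hartree kernel, and an exchange-correlation potential that defaults to zero unless a callable is supplied. All names, parameters, and the model itself are hypothetical choices meant only to show steps 1 to 6 with linear density mixing.

```python
import numpy as np


def run_scf(n_electrons=2, n_grid=161, x_max=8.0, mix=0.3,
            tol=1e-8, max_iter=200, v_xc=None):
    """Toy 1D closed-shell SCF loop with linear density mixing."""
    x = np.linspace(-x_max, x_max, n_grid)
    dx = x[1] - x[0]
    v_ext = 0.5 * x**2                                   # harmonic confinement
    kernel = 1.0 / np.sqrt((x[:, None] - x[None, :])**2 + 1.0)  # soft Coulomb

    # Finite-difference kinetic operator -1/2 d^2/dx^2
    lap = (np.diag(np.full(n_grid, -2.0))
           + np.diag(np.ones(n_grid - 1), 1)
           + np.diag(np.ones(n_grid - 1), -1)) / dx**2
    kinetic = -0.5 * lap
    n_occ = n_electrons // 2                             # doubly occupied orbitals

    # Step 1: initial guess, a Gaussian normalized to the electron count
    density = np.exp(-x**2)
    density *= n_electrons / (np.sum(density) * dx)

    for iteration in range(1, max_iter + 1):
        # Step 2: effective potential from the current density
        v_hartree = kernel @ density * dx
        v_eff = v_ext + v_hartree
        if v_xc is not None:
            v_eff = v_eff + v_xc(density)
        # Step 3: solve the single-particle equations
        eigenvalues, orbitals = np.linalg.eigh(kinetic + np.diag(v_eff))
        occupied = orbitals[:, :n_occ] / np.sqrt(dx)     # grid-normalized
        # Step 4: new density from the occupied orbitals
        new_density = 2.0 * np.sum(occupied**2, axis=1)
        # Step 5: convergence check, then linear mixing
        if np.max(np.abs(new_density - density)) < tol:
            return x, new_density, eigenvalues[:n_occ], iteration, True
        density = (1.0 - mix) * density + mix * new_density
    return x, density, eigenvalues[:n_occ], max_iter, False


x, density, eps_occ, n_iter, converged = run_scf()
print(f"converged={converged} after {n_iter} iterations, eps_0={eps_occ[0]:.4f}")
```

Linear mixing is the simplest stabilizer for the cycle; practical codes usually replace it with Pulay (DIIS) or Broyden schemes, and the `v_xc` hook is where an LDA, GGA, or other approximation would enter.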

Protocol 2: Orbital-Free DFT Implementation

For systems where computational efficiency is paramount, orbital-free DFT provides an alternative:

1. Functional Selection: Choose appropriate kinetic energy functional (e.g., TF, vW, or modern nonlocal functionals)

2. Direct Minimization: Minimize energy functional E[n] = F[n] + ∫vₑₓₜ(r)n(r)d³r with respect to density

3. Density Representation: Represent density on spatial grid or with appropriate basis functions

4. Constrained Optimization: Maintain density normalization ∫n(r)d³r = N during optimization

This approach is computationally more efficient but requires accurate kinetic energy density functionals, which remain an active research area [10] [11].
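The sensitivity to the choice of kinetic energy functional can be seen in a small example. The sketch below evaluates the von Weizsäcker functional, T_vW[n] = (1/8)∫|∇n|²/n d³r, on the hydrogen 1s density in atomic units. The test density, the radial grid, and the helper names are assumptions of this illustration; the gradient is taken numerically with `np.gradient`.

```python
import numpy as np


def radial_integral(f, r):
    """Trapezoid-rule estimate of the 3D integral of a spherically symmetric f(r)."""
    integrand = 4.0 * np.pi * r**2 * f
    return float(np.sum(0.5 * (integrand[1:] + integrand[:-1]) * np.diff(r)))


def von_weizsaecker_kinetic_energy(n, r):
    """T_vW[n] = (1/8) * integral of |grad n|^2 / n for a radial density."""
    dn_dr = np.gradient(n, r)        # numerical radial derivative
    return radial_integral(dn_dr**2 / (8.0 * n), r)


# Hydrogen 1s density on a uniform radial grid (illustrative grid parameters)
r = np.linspace(0.0, 30.0, 30001)
n_1s = np.exp(-2.0 * r) / np.pi

t_vw = von_weizsaecker_kinetic_energy(n_1s, r)
print(f"T_vW = {t_vw:.4f} hartree (exact kinetic energy: 0.5 hartree)")
```

For a density built from a single orbital the von Weizsäcker functional is exact, so the result should be very close to 0.5 hartree. For many-electron systems neither TF nor vW alone is adequate, which is why combinations and nonlocal functionals remain under development.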

Diagram 2: Kohn-Sham self-consistent field cycle

Advanced Applications and Contemporary Developments

Extensions of the Basic Framework

The Hohenberg-Kohn theorems have been extended beyond their original formulation to address various physical scenarios:

- Time-Dependent DFT (TDDFT): For excited states and time-dependent phenomena [12]

- Finite-Temperature DFT: For systems at non-zero temperatures [9]

- Relativistic DFT: For systems containing heavy elements where relativistic effects become important [12]

- Magnetic Fields: For systems in external magnetic fields [9]

These extensions demonstrate the remarkable flexibility of the density functional approach while maintaining the core principles established by Hohenberg and Kohn.

Modern Functional Development: Climbing Jacob's Ladder

The accuracy of practical DFT calculations depends critically on the approximation used for the exchange-correlation functional. Perdew's metaphor of "Jacob's Ladder" classifies functionals in a hierarchy of increasing complexity and accuracy [3]:

- Local Density Approximation (LDA): Uses only local density n(r) [3]

- Generalized Gradient Approximation (GGA): Incorporates density gradient |∇n(r)| [3]

- Meta-GGAs: Add kinetic energy density τ(r)

- Hybrid Functionals: Mix in exact exchange from Hartree-Fock [3]

- Double Hybrids: Include both exact exchange and perturbative correlation

Recent developments include machine-learned functionals that potentially bypass traditional approximations, representing an exciting frontier in DFT development [3].

The 1964 Hohenberg-Kohn theorems established density functional theory as a formally exact theory, providing the rigorous foundation that transformed DFT from approximate models into a powerful computational framework with unprecedented accuracy and efficiency. By demonstrating that all ground-state properties are uniquely determined by the electron density, Hohenberg and Kohn enabled the development of computational methods that have revolutionized materials science, chemistry, and drug discovery [12]. The Kohn-Sham equations, built directly upon this foundation, remain the workhorse of first-principles electronic structure calculations across scientific disciplines. As functional development continues and computational power increases, the principles established in 1964 continue to guide new generations of researchers in pushing the boundaries of quantum mechanical simulation.

Historical Context: From Thomas-Fermi to a Practical Foundation

The development of the Kohn-Sham equations in 1965 represents the pivotal moment when density functional theory (DFT) transitioned from a conceptual framework to a practical computational tool. This breakthrough was rooted in addressing the fundamental limitations of earlier models, beginning with the Thomas-Fermi model developed in 1927 [1] [3]. The Thomas-Fermi model provided the first quantum mechanical theory that used electron density alone to describe many-body systems, employing a statistical approach to approximate electron distribution in atoms [1] [4]. However, this model suffered from critical inaccuracies: it failed to reproduce essential electronic structure features like shell structure in atoms and Friedel oscillations in solids, and most significantly, it lacked an exchange-energy term accounting for the Pauli exclusion principle [1].

In 1930, Paul Dirac added an exchange energy term to the Thomas-Fermi model, creating the Thomas-Fermi-Dirac model, but it remained too inaccurate for practical chemical applications [3]. The field transformed in 1964 with the Hohenberg-Kohn theorems, which provided the rigorous mathematical foundation for DFT by proving that all ground-state properties of a many-electron system are uniquely determined by its electron density [3] [13]. While theoretically profound, this formulation remained practically difficult to implement until 1965, when Walter Kohn and Lu Jeu Sham introduced their revolutionary equations that made DFT computationally feasible [14] [3].

Table 1: Evolution of Key Density-Based Quantum Models

| Model/Theory | Year | Key Proponents | Fundamental Advancement | Primary Limitations |

|---|---|---|---|---|

| Thomas-Fermi Model | 1927 | Thomas, Fermi | First statistical model using electron density instead of wave functions [1] [3] | No exchange energy; inaccurate for molecules; no shell structure [1] |

| Thomas-Fermi-Dirac | 1930 | Dirac | Added exchange energy term [3] | Still insufficient accuracy for chemical applications [3] |

| Hohenberg-Kohn Theorems | 1964 | Hohenberg, Kohn | Rigorous proof that density uniquely determines all properties [3] [13] | Practical implementation difficulties [3] |

| Kohn-Sham Equations | 1965 | Kohn, Sham | Mapping to non-interacting system with exact kinetic energy treatment [14] [3] | Unknown exchange-correlation functional [14] |

Theoretical Foundation: The Kohn-Sham Framework

Core Conceptual Mapping

The Kohn-Sham equations fundamentally reimagined the many-electron problem by introducing an ingenious mapping procedure. Rather than directly solving the intractable problem of interacting electrons, Kohn and Sham proposed a fictitious system of non-interacting particles that exactly reproduces the electron density of the real interacting system [14]. This approach effectively decouples the formidable challenges of electron-electron interactions while maintaining the physically meaningful electron density distribution.

The Hamiltonian for this reference system is constructed such that the electrons experience an effective local potential ( v_{\text{eff}}(\mathbf{r}) ) rather than the complex many-body interactions of the original system [14]. The beauty of this construction lies in its mathematical tractability—the wavefunction for non-interacting electrons can be represented exactly as a single Slater determinant of orbitals, and the kinetic energy can be computed precisely from these orbitals [14] [13].

Mathematical Formulation

The Kohn-Sham equations are derived from the total energy functional for the real interacting system [14]:

[ E[\rho] = T_s[\rho] + \int d\mathbf{r} \, v_{\text{ext}}(\mathbf{r}) \rho(\mathbf{r}) + E_{\text{H}}[\rho] + E_{\text{xc}}[\rho] ]

In this expression:

- ( T_s[\rho] ) represents the kinetic energy of the non-interacting reference system

- ( v_{\text{ext}}(\mathbf{r}) ) is the external potential (typically electron-nuclei interactions)

- ( E_{\text{H}}[\rho] ) is the Hartree (Coulomb) energy representing electron-electron repulsion

- ( E_{\text{xc}}[\rho] ) is the exchange-correlation energy, which captures all remaining electronic interactions

Minimization of this energy functional with respect to the orbitals, subject to orthogonality constraints, leads to the Kohn-Sham eigenvalue equations [14]:

[ \left(-\frac{\hbar^2}{2m}\nabla^2 + v_{\text{eff}}(\mathbf{r})\right)\varphi_i(\mathbf{r}) = \varepsilon_i \varphi_i(\mathbf{r}) ]

where the effective potential is given by:

[ v_{\text{eff}}(\mathbf{r}) = v_{\text{ext}}(\mathbf{r}) + e^2\int \frac{\rho(\mathbf{r}')}{|\mathbf{r} - \mathbf{r}'|} \, d\mathbf{r}' + \frac{\delta E_{\text{xc}}[\rho]}{\delta \rho(\mathbf{r})} ]

The electron density is constructed from the occupied Kohn-Sham orbitals:

[ \rho(\mathbf{r}) = \sum_{i}^{N} |\varphi_i(\mathbf{r})|^2 ]

These equations must be solved self-consistently because the effective potential depends on the density, which in turn depends on the orbitals [15].

Diagram 1: Theoretical foundation of Kohn-Sham DFT

Energy Component Analysis

The Kohn-Sham approach achieves its practical utility by capturing the majority of the total energy in computationally tractable terms, leaving only the exchange-correlation energy as an unknown that requires approximation.

Table 2: Components of the Kohn-Sham Total Energy Functional

| Energy Component | Mathematical Expression | Physical Significance | Treatment in KS Scheme |

|---|---|---|---|

| Non-interacting Kinetic Energy | ( T_s[\rho] = \sum_{i=1}^N \int d\mathbf{r} \, \varphi_i^*(\mathbf{r}) \left(-\frac{\hbar^2}{2m}\nabla^2\right) \varphi_i(\mathbf{r}) ) | Kinetic energy of reference non-interacting system | Exact via orbitals [14] |

| External Potential Energy | ( \int d\mathbf{r} \, v_{\text{ext}}(\mathbf{r}) \rho(\mathbf{r}) ) | Electron-nuclei attractions | Exact [14] |

| Hartree Energy | ( E_{\text{H}}[\rho] = \frac{e^2}{2} \int d\mathbf{r} \int d\mathbf{r}' \, \frac{\rho(\mathbf{r}) \rho(\mathbf{r}')}{\lvert \mathbf{r} - \mathbf{r}' \rvert} ) | Classical electron-electron repulsion | Exact [14] |

| Exchange-Correlation Energy | ( E_{\text{xc}}[\rho] ) | All quantum mechanical electron interactions | Requires approximation [14] |

Computational Protocol: Kohn-Sham SCF Implementation

Self-Consistent Field Procedure

The solution of the Kohn-Sham equations follows an iterative self-consistent field (SCF) approach, which ensures that the input and output densities converge to a consistent solution [15]. The detailed protocol consists of the following steps:

Initialization

- Construct the molecular structure with nuclear coordinates and atomic numbers

- Select an appropriate basis set for expanding the Kohn-Sham orbitals

- Choose an exchange-correlation functional approximation (LDA, GGA, hybrid, etc.)

- Generate an initial guess for the electron density ( \rho^{(0)}(\mathbf{r}) ), typically from superposition of atomic densities

SCF Iteration Cycle (for iteration k = 0, 1, 2, ... until convergence)

Construct the effective potential using the current density: [ v_{\text{eff}}^{(k)}(\mathbf{r}) = v_{\text{ext}}(\mathbf{r}) + e^2\int \frac{\rho^{(k)}(\mathbf{r}')}{|\mathbf{r} - \mathbf{r}'|} \, d\mathbf{r}' + v_{\text{xc}}^{(k)}(\mathbf{r}) ] where ( v_{\text{xc}}^{(k)}(\mathbf{r}) = \frac{\delta E_{\text{xc}}[\rho]}{\delta \rho(\mathbf{r})} \bigg|_{\rho=\rho^{(k)}} ) [14]

Solve the Kohn-Sham eigenvalue problem: [ \left(-\frac{\hbar^2}{2m}\nabla^2 + v_{\text{eff}}^{(k)}(\mathbf{r})\right)\varphi_i^{(k)}(\mathbf{r}) = \varepsilon_i^{(k)} \varphi_i^{(k)}(\mathbf{r}) ] This is typically implemented as a matrix diagonalization in a basis set representation [15]

Calculate the new electron density from the occupied orbitals: [ \rho^{(k+1)}(\mathbf{r}) = \sum_{i}^{\text{occupied}} |\varphi_i^{(k)}(\mathbf{r})|^2 ]

Check for convergence by comparing ( \rho^{(k+1)} ) and ( \rho^{(k)} ) and examining the energy change

- If not converged, prepare the next density using mixing schemes (linear, Kerker, etc.) and continue iterations

Post-SCF Analysis

- Compute the total energy using the converged density and orbitals

- Calculate molecular properties, forces, and other observables

- Perform population analysis, visualize orbitals, and interpret results
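The iteration cycle above can be sketched on a toy problem. The example below is a minimal illustration, assuming a one-dimensional model in Hartree atomic units with a harmonic external potential, a soft-Coulomb electron-electron interaction, and the three-dimensional LDA exchange potential borrowed purely as a placeholder for ( v_{\text{xc}} ). All names, parameters, and the model itself are hypothetical and do not correspond to any production code.

```python
import numpy as np

def kohn_sham_scf_1d(n_electrons=2, n_grid=201, box=20.0,
                     mix=0.3, tol=1e-6, max_iter=300):
    """Toy closed-shell Kohn-Sham SCF loop on a 1D grid (atomic units)."""
    x = np.linspace(-box / 2, box / 2, n_grid)
    h = x[1] - x[0]

    # Kinetic operator -1/2 d^2/dx^2 by second-order finite differences
    off = np.ones(n_grid - 1)
    kinetic = (-0.5 / h**2) * (np.diag(off, -1) - 2.0 * np.eye(n_grid)
                               + np.diag(off, 1))
    v_ext = 0.5 * x**2                                   # model external potential
    kernel = 1.0 / np.sqrt((x[:, None] - x[None, :]) ** 2 + 1.0)  # soft Coulomb

    n_occ = n_electrons // 2                             # doubly occupied orbitals
    rho = np.full(n_grid, n_electrons / (n_grid * h))    # uniform initial guess

    for iteration in range(1, max_iter + 1):
        # Build the effective potential from the current density
        v_hartree = kernel @ rho * h
        v_xc = -np.cbrt(3.0 * rho / np.pi)               # placeholder exchange only
        hamiltonian = kinetic + np.diag(v_ext + v_hartree + v_xc)

        # Solve the eigenvalue problem by dense diagonalization
        eps, phi = np.linalg.eigh(hamiltonian)
        phi_occ = phi[:, :n_occ] / np.sqrt(h)            # grid-normalized orbitals

        # New density from the occupied orbitals
        rho_new = 2.0 * np.sum(phi_occ**2, axis=1)

        # Convergence check on the integrated density change
        change = np.sum(np.abs(rho_new - rho)) * h
        if change < tol:
            return x, rho_new, eps[:n_occ], iteration, True

        # Linear mixing of old and new densities
        rho = (1.0 - mix) * rho + mix * rho_new

    return x, rho, eps[:n_occ], max_iter, False

x, rho, eps_occ, n_iter, converged = kohn_sham_scf_1d()
print(f"converged={converged} after {n_iter} iterations, "
      f"N = {np.sum(rho) * (x[1] - x[0]):.6f}")
```

Linear mixing is used here only because it is the simplest stable choice for a small model; real calculations usually rely on the accelerated schemes discussed in the computational considerations.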

Diagram 2: Kohn-Sham self-consistent field workflow

Key Computational Considerations

The numerical implementation of the Kohn-Sham equations requires careful attention to several computational aspects:

Basis Sets: The choice of basis functions for expanding Kohn-Sham orbitals significantly impacts accuracy and efficiency. Common choices include plane waves for periodic systems, Gaussian-type orbitals for molecular systems, and numerical atomic orbitals [15]

Integration Grids: Accurate numerical integration is essential for evaluating the exchange-correlation potential, particularly for molecular systems. Adaptive grids with higher density near nuclei are typically employed

Convergence Acceleration: Density mixing schemes and advanced algorithms like direct inversion in iterative subspace (DIIS) are crucial for achieving SCF convergence in challenging systems

Parallelization: Modern implementations leverage massive parallelization to distribute the computational load across Kohn-Sham orbital bands, k-points, and real-space grids
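As an illustration of convergence acceleration, the sketch below implements Anderson-type mixing, a close relative of DIIS, in its difference form for a generic fixed-point problem x = g(x). In an SCF setting x would be the density and g the map from input to output density. The function name, parameters, and the linear test map are assumptions chosen for illustration.

```python
import numpy as np

def anderson_mix(g, x0, alpha=0.3, history=5, tol=1e-10, max_iter=200):
    """Solve x = g(x) with Anderson-type mixing over a short residual history."""
    x = np.asarray(x0, dtype=float).copy()
    xs, rs = [], []
    for iteration in range(1, max_iter + 1):
        r = g(x) - x                           # residual of the fixed-point map
        if np.linalg.norm(r) < tol:
            return x, iteration, True
        xs.append(x.copy())
        rs.append(r.copy())
        xs, rs = xs[-(history + 1):], rs[-(history + 1):]
        if len(rs) == 1:
            x = x + alpha * r                  # plain linear mixing on first step
        else:
            # Differences of successive iterates and residuals
            d_x = np.column_stack([xs[i + 1] - xs[i] for i in range(len(xs) - 1)])
            d_r = np.column_stack([rs[i + 1] - rs[i] for i in range(len(rs) - 1)])
            # Least-squares coefficients that best cancel the current residual
            gamma, *_ = np.linalg.lstsq(d_r, r, rcond=None)
            x = x + alpha * r - (d_x + alpha * d_r) @ gamma
    return x, max_iter, False

# Hypothetical test map: g(x) = a * x + b with |a| < 1 (a linear contraction)
a = np.linspace(-0.9, 0.5, 20)
b = np.ones(20)
solution, n_iter, converged = anderson_mix(lambda v: a * v + b, np.zeros(20))
print(f"converged={converged} in {n_iter} iterations")
```

The least-squares step works on residual differences directly rather than on their overlap matrix, which avoids squaring the condition number when the stored residuals become nearly linearly dependent.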

Research Reagent Solutions: Computational Components

The practical application of Kohn-Sham DFT requires several "computational reagents" that together enable accurate simulations of electronic structure problems.

Table 3: Essential Computational Components for Kohn-Sham DFT Simulations

| Component | Function | Common Examples |

|---|---|---|

| Exchange-Correlation Functional | Approximates quantum mechanical electron interactions | LDA, PBE (GGA), B3LYP (hybrid), HSE (screened hybrid) [3] |

| Basis Set | Expands Kohn-Sham orbitals in finite representation | Plane waves, Gaussian-type orbitals, numerical atomic orbitals, augmented waves [15] |

| Pseudopotentials | Represents core electrons and nuclei, reducing computational cost | Norm-conserving, ultrasoft, PAW (Projector Augmented Wave) methods |

| Integration Grids | Enables numerical integration of exchange-correlation potential | Lebedev, Becke, Mura-Knowles grids for molecular systems |

| SCF Convergence Algorithms | Accelerates and stabilizes self-consistent field iterations | DIIS, Kerker mixing, charge density mixing, preconditioners |

Applications in Drug Development and Materials Science

The Kohn-Sham formulation has become the cornerstone of modern computational materials science and drug development due to its favorable balance between accuracy and computational efficiency. Key application areas include:

Pharmaceutical Applications

In drug discovery, Kohn-Sham DFT enables researchers to:

- Calculate binding energies between drug candidates and protein targets

- Predict reaction pathways and activation energies for drug metabolism

- Simulate electronic properties of pharmacophores and their interactions

- Model redox potentials and spectroscopic properties of pharmaceutical compounds

Materials Design

For materials science, the method provides insights into:

- Band structures and electronic density of states for semiconductors

- Catalytic activity and surface reactivity of heterogeneous catalysts

- Mechanical properties and defect formation energies in solids

- Magnetic properties and spin-dependent phenomena

The continued evolution of exchange-correlation functionals, including recent machine-learning approaches, promises to further enhance the predictive power of Kohn-Sham DFT for these applications [3].

Comparative Analysis: Thomas-Fermi vs. Kohn-Sham Formulations

The fundamental advancement of the Kohn-Sham approach becomes evident when comparing its theoretical structure and practical performance against the original Thomas-Fermi model.

Table 4: Comprehensive Comparison: Thomas-Fermi vs. Kohn-Sham Formulations

| Aspect | Thomas-Fermi Model | Kohn-Sham DFT |

|---|---|---|

| Kinetic Energy Treatment | Approximate functional of density only: ( T_{TF}[\rho] = C_F \int \rho^{5/3}(\mathbf{r}) d\mathbf{r} ) [1] | Exact treatment via non-interacting orbitals: ( T_s[\rho] = \sum_{i=1}^N \int d\mathbf{r} \, \varphi_i^*(\mathbf{r}) \left(-\frac{\hbar^2}{2m}\nabla^2\right) \varphi_i(\mathbf{r}) ) [14] |

| Exchange-Correlation | Initially absent; later added as Dirac exchange: ( E_X^{Dirac}[\rho] = C_X \int \rho^{4/3}(\mathbf{r}) d\mathbf{r} ) [1] [3] | Comprehensive ( E_{xc}[\rho] ) with systematic approximations (LDA, GGA, hybrids, etc.) [14] [3] |

| Electron Density Features | Fails to capture shell structure, bond breaking, density oscillations [1] | Correctly reproduces atomic shell structure, chemical bonding, Friedel oscillations [14] |

| Computational Cost | Very low (direct minimization) | Moderate (SCF iteration with matrix diagonalization) |

| Predictive Accuracy | Qualitative trends only; quantitatively poor [1] | Quantitative for many properties; widely used in materials and chemical simulations [3] |

| Molecular Applications | Unsuccessful for molecular bonding [1] [4] | Standard method for molecular structure, bonding, and reactivity [15] [3] |

The critical distinction lies in the kinetic energy treatment. While Thomas-Fermi uses a local density approximation for kinetic energy that fails to capture essential quantum effects, Kohn-Sham computes the kinetic energy exactly for a non-interacting system with the same density as the real system [14] [1]. This fundamental improvement enables the Kohn-Sham approach to describe chemical bonding, molecular structures, and materials properties with quantitative accuracy, making it the foundation for modern computational materials science and drug development.

The development of the Thomas–Fermi (TF) model in 1927 by Llewellyn Thomas and Enrico Fermi marked a revolutionary shift in quantum mechanics, representing the first serious attempt to describe many-electron systems using only the electron density instead of the complex N-electron wavefunction [1] [5]. This semiclassical approach emerged shortly after the introduction of the Schrödinger equation and established the foundational principle that would eventually evolve into modern density functional theory (DFT) [5]. The TF model fundamentally assumed that electrons are distributed uniformly within each small volume element ΔV, enabling the derivation of a kinetic energy functional expressed solely in terms of the electron density n(r) [1].

While philosophically profound, the original Thomas–Fermi model suffered from severe quantitative limitations. It failed to reproduce essential electronic structure features such as atomic shell structure and Friedel oscillations in solids [1]. Most significantly, the model lacked any representation of exchange energy arising from the Pauli exclusion principle and completely neglected electron correlation effects [1] [5]. These critical shortcomings restricted the model's accuracy to the limiting case of infinite nuclear charge, rendering it inadequate for quantitative predictions in realistic molecular systems [1] [5].

The introduction of the Local Density Approximation (LDA) within the formal framework established by the Hohenberg–Kohn theorems provided the crucial bridge between the conceptual foundation of the Thomas–Fermi model and practically useful computational methods. By incorporating knowledge from the homogeneous electron gas (HEG), LDA became the first practical exchange-correlation functional that enabled realistic electronic structure calculations for materials and molecules [5].

Table 1: Historical Evolution from Thomas-Fermi to LDA

| Theoretical Model | Key Approximation | Strengths | Limitations |

|---|---|---|---|

| Thomas-Fermi (1927) | Kinetic energy as functional of density only [1] | First density-based model; conceptual simplicity [1] [5] | No exchange or correlation; incorrect atomic shell structure [1] |

| Thomas-Fermi-Dirac (1930) | Adds local exchange energy [1] | Improved accuracy over TF [1] | Still missing correlation energy; quantitative limitations [1] |

| Hohenberg-Kohn (1964) | Proof that density determines all ground state properties [16] | Formal foundation for DFT [16] | No practical functionals provided |

| Kohn-Sham (1965) | Introduces non-interacting reference system [16] | Exact kinetic energy via orbitals [16] | Requires approximation for exchange-correlation functional |

| LDA (1960s) | Exchange-correlation from homogeneous electron gas [5] | First practical functional; computational efficiency [16] [5] | Underestimates lattice constants; overbinds molecules [17] |

Theoretical Foundation of LDA

The Homogeneous Electron Gas Reference System

The fundamental ansatz of the Local Density Approximation is that the exchange-correlation energy at each point in space can be approximated by the value for a homogeneous electron gas of the same density [5]. Mathematically, this is expressed as:

[ E_{XC}^{LDA}[n] = \int n(\mathbf{r}) \varepsilon_{XC}^{HEG}(n(\mathbf{r})) d\mathbf{r} ]

where (\varepsilon_{XC}^{HEG}(n)) is the exchange-correlation energy per particle of a homogeneous electron gas with density n. This quantity is typically separated into exchange and correlation contributions:

[ \varepsilon_{XC}^{HEG}(n) = \varepsilon_{X}^{HEG}(n) + \varepsilon_{C}^{HEG}(n) ]

For the exchange part, an exact analytical expression exists derived from the Hartree-Fock method for the HEG:

[ \varepsilon_{X}^{HEG}(n) = -\frac{3}{4}\left(\frac{3}{\pi}\right)^{1/3} n^{1/3} ]

The correlation component (\varepsilon_{C}^{HEG}(n)) is considerably more complex and must be determined through highly accurate quantum Monte Carlo simulations of the homogeneous electron gas, with the results parameterized as a function of density for practical computations [5].

Mathematical Formulation and Key Equations

The LDA derives its mathematical structure from the Thomas–Fermi–Dirac model but incorporates it within the Kohn–Sham framework. In the Kohn–Sham DFT approach, the LDA exchange-correlation potential is obtained through the functional derivative:

[ v_{XC}^{LDA}(\mathbf{r}) = \frac{\delta E_{XC}^{LDA}[n]}{\delta n(\mathbf{r})} = \varepsilon_{XC}^{HEG}(n(\mathbf{r})) + n(\mathbf{r})\frac{\partial \varepsilon_{XC}^{HEG}(n)}{\partial n}\bigg|_{n=n(\mathbf{r})} ]

This potential enters the Kohn–Sham equations as an effective one-electron operator:

[ \left[-\frac{1}{2}\nabla^2 + v_{ext}(\mathbf{r}) + v_{Hartree}(\mathbf{r}) + v_{XC}^{LDA}(\mathbf{r})\right] \phi_i(\mathbf{r}) = \epsilon_i \phi_i(\mathbf{r}) ]

where (v_{ext}) is the external potential (typically from nuclei), (v_{Hartree}) is the classical electrostatic Hartree potential, and (\phi_i) are the Kohn–Sham orbitals that reproduce the exact density of the interacting system through (n(\mathbf{r}) = \sum_{i}^{occupied} |\phi_i(\mathbf{r})|^2).
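For the exchange part, the functional derivative can be checked numerically. The sketch below, in Hartree atomic units, codes the HEG exchange energy per particle given earlier and compares the analytic potential, which reduces to (4/3) of the energy per particle, against a central finite difference of the energy density. The correlation part is omitted because it requires a fitted parameterization; the function names and sample densities are illustrative assumptions.

```python
import numpy as np

def eps_x_heg(n):
    """Exchange energy per particle of the homogeneous electron gas (a.u.)."""
    return -0.75 * np.cbrt(3.0 / np.pi) * np.cbrt(n)

def e_x_density(n):
    """LDA exchange energy density, n * eps_x(n)."""
    return n * eps_x_heg(n)

def v_x_lda(n):
    """LDA exchange potential: eps_x + n * d(eps_x)/dn = (4/3) * eps_x."""
    return (4.0 / 3.0) * eps_x_heg(n)

# Sample densities (arbitrary illustrative values, in electrons per bohr^3)
n = np.array([0.01, 0.1, 1.0])
dn = 1e-6 * n

# Central finite-difference derivative of the energy density
v_numeric = (e_x_density(n + dn) - e_x_density(n - dn)) / (2.0 * dn)

for ni, va, vn in zip(n, v_x_lda(n), v_numeric):
    print(f"n = {ni:5.2f}: analytic v_x = {va:.6f}, numerical v_x = {vn:.6f}")
```

Because the LDA integrand depends on the density only pointwise, the functional derivative is an ordinary derivative of the energy density, which is what the finite-difference check exploits.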

Figure 1: LDA Computational Workflow. The diagram illustrates the self-consistent procedure for solving Kohn-Sham equations with the LDA functional, connecting the inhomogeneous real system to the homogeneous electron gas reference.

Computational Protocols and Methodology

Implementation in Materials Science

The application of LDA in computational materials science follows well-established protocols centered around the plane-wave pseudopotential method [16]. This approach leverages periodic boundary conditions to model crystalline systems and requires careful attention to several computational parameters.

Basic Computational Setup:

- Software Packages: Vienna Ab initio Simulation Package (VASP), Quantum ESPRESSO [16] [17]

- Basis Set: Plane-wave basis with kinetic energy cutoff (typically 400-800 eV) [16]

- k-point Sampling: Monkhorst-Pack scheme for Brillouin zone integration [16]

- Convergence Criteria: Force convergence < 0.01 eV/Å, energy convergence < 10^-5 eV [17]

System-Specific Parameters for LDA: For the L1₀-MnAl compound studied in recent research, the following LDA-specific parameters were employed [17]:

- Exchange-Correlation Functional: Ceperley-Alder parameterized by Perdew and Zunger [17]

- k-point Mesh: 13×13×13 for structural optimization

- Plane-Wave Cutoff: 600 eV

- Pseudopotentials: Projector augmented-wave (PAW) method
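The Monkhorst-Pack scheme mentioned above places k-points at fractional coordinates u_r = (2r − q − 1) / (2q) for r = 1, ..., q along each reciprocal lattice vector. A minimal sketch of the unreduced grid follows; the function name is hypothetical, and production codes additionally reduce the grid by crystal symmetry, which is not attempted here.

```python
import itertools
import numpy as np

def monkhorst_pack(q1, q2, q3):
    """Return the full (unreduced) Monkhorst-Pack grid in fractional coordinates."""
    axes = [(2.0 * np.arange(1, q + 1) - q - 1) / (2.0 * q) for q in (q1, q2, q3)]
    return np.array(list(itertools.product(*axes)))

# The 13x13x13 mesh quoted for the structural optimization
kpts = monkhorst_pack(13, 13, 13)
print(kpts.shape)                  # number of k-points before symmetry reduction

# With an odd number of divisions, the grid contains the Gamma point
has_gamma = bool(np.any(np.all(np.abs(kpts) < 1e-12, axis=1)))
print("contains Gamma:", has_gamma)
```

Each point carries equal weight 1/(q1·q2·q3) before symmetry reduction, so Brillouin zone integrals become simple averages over the grid.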

Table 2: LDA Performance in Materials Property Prediction

| Material Property | LDA Performance | Typical Error | Comparison to GGA |

|---|---|---|---|

| Lattice Constants | Systematic underestimation [17] | 1-3% too short [17] | GGA tends to overestimate [17] |

| Cohesive Energy | Overbinding [17] | Overestimates by 10-20% | GGA provides better agreement [17] |

| Bulk Modulus | Overestimation [17] | 5-10% too high | GGA generally more accurate [17] |

| Electronic Band Gap | Severe underestimation [16] | 50-100% error | Comparable error to GGA [16] |

| Magnetic Moments | Reasonable prediction [17] | 5-15% error | GGA often superior [17] |

Step-by-Step LDA Calculation Protocol

Protocol 1: Structural Optimization Using LDA

This protocol outlines the standard procedure for determining the ground-state geometry of a crystalline material using LDA.

Initial Structure Preparation

- Obtain initial crystal structure from experimental data or database

- Define lattice vectors and atomic positions

- Set up periodic boundary conditions

Computational Parameters

- Select LDA functional (e.g., Ceperley-Alder-Perdew-Zunger)

- Choose appropriate pseudopotentials for all elements

- Set plane-wave cutoff energy based on convergence tests

- Determine k-point mesh using Monkhorst-Pack scheme

Self-Consistent Field (SCF) Calculation

- Perform initial SCF calculation to obtain electron density

- Use iterative diagonalization (Davidson, RMM-DIIS)

- Employ density mixing schemes (Pulay, Kerker) for convergence

Ionic Relaxation

- Calculate Hellmann-Feynman forces on all atoms

- Update atomic positions using optimization algorithm (BFGS, conjugate gradient)

- Repeat SCF calculation with new coordinates until forces are below tolerance (typically 0.01 eV/Å)

Property Extraction

- Extract optimized lattice parameters

- Calculate total energy, electronic density of states, band structure

- Determine derived properties: elastic constants, phonon spectra
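The cutoff convergence test in the protocol above can be automated with a small driver. In the sketch below, `total_energy` stands for a call into whatever DFT code is being used; here it is replaced by a hypothetical mock function with an exponentially decaying error so that the example runs on its own. The tolerance and cutoff range are illustrative assumptions.

```python
import math

def converge_cutoff(total_energy, cutoffs, tol=1e-3):
    """Return the first cutoff whose energy differs from the previous one by < tol.

    total_energy: callable mapping a cutoff (eV) to a total energy (eV).
    Returns None if no cutoff in the scanned range meets the tolerance.
    """
    previous = None
    for cutoff in sorted(cutoffs):
        energy = total_energy(cutoff)
        if previous is not None and abs(energy - previous) < tol:
            return cutoff
        previous = energy
    return None

def mock_total_energy(cutoff_ev):
    """Stand-in for a real DFT run: energy approaching -10 eV from above."""
    return -10.0 + 5.0 * math.exp(-cutoff_ev / 60.0)

chosen = converge_cutoff(mock_total_energy, range(300, 801, 50))
print("chosen cutoff (eV):", chosen)
```

The same driver can be reused for k-point meshes by passing mesh sizes instead of cutoffs, provided the wrapped function accepts them.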

Protocol 2: Electronic Structure Analysis with LDA

This protocol describes the procedure for calculating electronic properties once the ground-state structure is determined.

Converged Density Utilization

- Use the converged electron density from Protocol 1

- Ensure SCF convergence to at least 10^-6 eV in total energy

Band Structure Calculation

- Identify high-symmetry points in the Brillouin zone

- Perform non-self-consistent calculation along k-point paths

- Extract eigenvalue spectrum to plot band structure

Density of States (DOS) Analysis

- Use denser k-point mesh for accurate DOS (e.g., 21×21×21)

- Apply Gaussian or tetrahedron smearing for k-point integration

- Project DOS onto atomic orbitals for projected DOS (PDOS)

Post-Processing

- Analyze band gaps at high-symmetry points

- Identify orbital contributions to specific bands

- Calculate Fermi surface for metallic systems
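The Gaussian smearing step of the DOS analysis amounts to replacing each eigenvalue by a normalized Gaussian and summing with k-point weights. The sketch below assumes the eigenvalues are already available as an array of shape (k-points, bands); the two synthetic cosine bands, the smearing width, and the function name are illustrative assumptions rather than output of any real calculation.

```python
import numpy as np

def gaussian_dos(eigenvalues, weights, energies, sigma=0.1):
    """Density of states from Gaussian-broadened eigenvalues.

    eigenvalues: array (n_k, n_bands); weights: array (n_k,) summing to 1;
    energies: 1D energy grid; sigma: Gaussian width in the same energy units.
    """
    eig = np.asarray(eigenvalues, dtype=float)
    w = np.asarray(weights, dtype=float)
    diff = energies[:, None, None] - eig[None, :, :]
    gauss = np.exp(-0.5 * (diff / sigma) ** 2) / (sigma * np.sqrt(2.0 * np.pi))
    return np.einsum("ekb,k->e", gauss, w)

# Synthetic two-band model on a uniform 1D k-mesh (illustrative only)
k = np.linspace(-np.pi, np.pi, 200, endpoint=False)
eig = np.column_stack([-2.0 * np.cos(k), 3.0 + np.cos(k)])
weights = np.full(k.size, 1.0 / k.size)

energies = np.linspace(-4.0, 6.0, 2001)
dos = gaussian_dos(eig, weights, energies)

# Integrating the DOS over energy should recover the number of bands
n_states = float(np.sum(dos) * (energies[1] - energies[0]))
print(f"integrated DOS = {n_states:.4f}")
```

Checking that the integrated DOS equals the number of bands (times two if spin degeneracy is included) is a quick sanity test that the energy window and smearing width are adequate.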

Comparative Performance Analysis

LDA vs GGA in Magnetic Materials

Recent investigations of the L1₀-MnAl compound provide a compelling case study for comparing LDA and GGA performance in predicting materials properties [17]. This rare-earth-free permanent magnet candidate has been extensively studied using both functionals, revealing systematic differences in predictive accuracy.

Structural Properties: For L1₀-MnAl, LDA calculations yield lattice parameters a = 3.784 Å and c = 3.378 Å, representing a typical LDA underestimation compared to experimental values (a = 3.897 Å, c = 3.531 Å) [17]. The GGA approach with the PBE functional provides improved agreement with experiments (a = 3.852 Å, c = 3.451 Å), demonstrating GGA's tendency to correct LDA's overbinding [17].

Electronic and Magnetic Properties: Both LDA and GGA correctly predict the metallic character of L1₀-MnAl, but exhibit significant differences in their description of specific magnetic properties [17]. The magnetic moment per Mn atom calculated with LDA (2.08 μB) shows greater deviation from experimental values (approximately 2.4-2.7 μB) compared to GGA results (2.32 μB) [17]. This performance trend extends to the compound's bulk modulus, where LDA's characteristic overestimation (132 GPa) exceeds GGA's prediction (118 GPa), with both exceeding experimental measurements [17].
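The size of the structural deviations can be read off directly from the lattice parameters quoted above. The short sketch below computes signed percentage errors relative to experiment; the numbers are those given in the text, while the dictionary layout and function name are illustrative.

```python
# Lattice parameters in angstrom, as quoted in the text
experiment = {"a": 3.897, "c": 3.531}
calculated = {
    "LDA": {"a": 3.784, "c": 3.378},
    "GGA-PBE": {"a": 3.852, "c": 3.451},
}

def percent_error(calc, ref):
    """Signed relative deviation from the reference, in percent."""
    return 100.0 * (calc - ref) / ref

errors = {
    functional: {axis: percent_error(value, experiment[axis])
                 for axis, value in params.items()}
    for functional, params in calculated.items()
}

for functional, axes in errors.items():
    summary = ", ".join(f"{axis}: {err:+.2f}%" for axis, err in axes.items())
    print(f"{functional}: {summary}")
```

For these quoted values both functionals fall below experiment on both axes, with the LDA deviations roughly twice as large as the GGA ones.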

Performance Across Material Classes

The reliability of LDA varies significantly across different classes of materials, with systematic trends emerging from decades of computational experiments.

Metallic Systems: LDA generally performs reasonably well for simple metals and their bulk properties due to the similarity of their electron gas to the HEG reference system. The approximation captures the dominant s- and p-electron delocalization in these systems, providing adequate predictions of lattice constants (despite slight underestimation) and cohesive energies.

Semiconductors and Insulators: LDA's performance deteriorates significantly for semiconductors and insulators, most notably through the systematic band gap underestimation problem [16]. For zinc-blende CdS and CdSe compounds, LDA severely underestimates band gaps compared to experimental values, a limitation shared with GGA functionals [16]. This "band gap problem" stems from LDA's incomplete description of the exchange interaction and derivative discontinuities in the exchange-correlation potential.

Molecular Systems: For molecular systems, LDA typically overestimates binding energies and underestimates bond lengths, consistent with its general overbinding tendency. This makes LDA less suitable for predicting reaction energies and molecular geometries compared to GGA or hybrid functionals, though its computational efficiency maintains its utility for large systems where qualitative trends are sufficient.

Figure 2: DFT Functional Evolution. The historical development of exchange-correlation functionals from Thomas-Fermi to modern hybrid approaches, showing the conceptual improvements at each stage.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for LDA Calculations

| Tool Category | Specific Examples | Function | Application Notes |

|---|---|---|---|

| DFT Software Packages | VASP, Quantum ESPRESSO, ABINIT [16] [17] | Solves Kohn-Sham equations with LDA functional | VASP offers excellent pseudopotential libraries; Quantum ESPRESSO is open-source [16] |

| Pseudopotential Libraries | GBRV, PSLibrary, VASP PAW datasets [16] | Replaces core electrons with effective potential | Ultra-soft pseudopotentials reduce computational cost; PAW provides high accuracy [16] |

| Visualization Tools | VESTA, XCrySDen, VMD | Analyzes crystal structures and electron densities | Critical for interpreting computational results and preparing publications |

| Convergence Testing Scripts | AiiDA, ASE, custom Python scripts | Automates parameter testing | Essential for ensuring results are physically meaningful, not numerical artifacts |

| High-Performance Computing | SLURM, PBS workload managers | Enables parallel computation | LDA calculations scale efficiently to thousands of CPU cores |

The Local Density Approximation represents the crucial first practical step in the journey from the conceptual framework of the Thomas–Fermi model to modern, predictive density functional theory. While its quantitative limitations are well-documented—systematic underestimation of lattice constants, overbinding of molecular complexes, and severe band gap underestimation—LDA established the foundational paradigm of mapping the inhomogeneous real system to a local homogeneous electron gas reference [5] [17].

Despite being superseded by more sophisticated functionals like GGA and hybrids for quantitative predictive work, LDA maintains relevance in specific domains where its computational efficiency and reasonable accuracy for metallic systems remain advantageous [17]. Furthermore, the mathematical structure of LDA continues to inform next-generation functional development, serving as the reference point for understanding the impact of gradient corrections and exact exchange mixing.

The historical trajectory from Thomas–Fermi through LDA to contemporary functionals demonstrates the progressive refinement of exchange-correlation approximations, with each generation building upon the physical insights of its predecessors while addressing their most significant limitations. In this context, LDA remains an essential component of the materials modeling toolkit and a critical milestone in the ongoing development of accurate, computationally efficient electronic structure methods.

Density Functional Theory (DFT) represents one of the most successful theoretical frameworks in quantum chemistry and materials science, enabling researchers to predict the electronic structure and properties of molecules and materials through computational methods. The foundational journey of DFT began nearly a century ago with semiclassical approximations and has evolved into a sophisticated computational approach that balances accuracy with computational efficiency. This evolution has been marked by key theoretical breakthroughs and methodological innovations that have progressively enhanced the accuracy and expanded the applicability of DFT across scientific disciplines. The core principle underlying DFT is that the complex many-electron wave function, which depends on 3N spatial coordinates for an N-electron system, can be replaced with the electron density—a function of only three spatial coordinates—as the fundamental variable determining all ground-state properties [13] [18]. This revolutionary concept has transformed computational approaches to electronic structure calculations, making studies of large systems with hundreds of atoms computationally feasible while maintaining reasonable accuracy [18].

The development of DFT has followed a path of continuous refinement, with each milestone building upon previous insights to address limitations and expand capabilities. From its origins in the Thomas-Fermi model to the current era of machine-learning-enhanced functionals, DFT's journey exemplifies how theoretical frameworks evolve through the collaborative efforts of scientists across decades and disciplines [3]. This article traces these key developments through structured timelines, detailed methodological protocols, and practical research tools, providing a comprehensive resource for scientists engaged in electronic structure calculations and their applications in drug discovery and materials science.

Historical Timeline of DFT Development

Foundational Period (1920s-1960s)

Table 1: Key Developments in the Foundational Period of DFT

| Year | Development | Key Contributors | Significance |
|---|---|---|---|
| 1926 | Schrödinger Equation | Erwin Schrödinger | Established quantum mechanical foundation for electronic structure [3] |
| 1927 | Thomas-Fermi Model | Llewellyn Thomas, Enrico Fermi | First statistical model using electron density instead of wavefunction [3] [1] |
| 1930 | Thomas-Fermi-Dirac Model | Paul Dirac | Added exchange term to Thomas-Fermi model [3] |
| 1951 | Slater Xα Method | John C. Slater | Simplified Hartree-Fock exchange using adjustable parameter α [3] |
| 1964 | Hohenberg-Kohn Theorems | Pierre Hohenberg, Walter Kohn | Provided rigorous foundation proving density uniquely determines properties [3] [13] |
| 1965 | Kohn-Sham Equations | Walter Kohn, Lu Jeu Sham | Introduced practical computational framework with non-interacting reference system [3] |

The earliest precursor to modern DFT emerged in 1927 with the Thomas-Fermi model, which approximated the distribution of electrons in an atom using a statistical approach that treated electrons as a non-interacting Fermi gas in a local potential [1] [18]. This model calculated the kinetic energy using a local density approximation derived from the uniform electron gas, combining it with the external potential from the nucleus and the classical Coulomb repulsion between electrons [18]. While pioneering in its use of electron density as the fundamental variable, the Thomas-Fermi model suffered from significant limitations—it failed to capture atomic shell structure, performed poorly for molecules, and could not describe bonding accurately due to its neglect of quantum exchange effects and the crude approximation of kinetic energy [1] [18].
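To make the "crude approximation of kinetic energy" concrete, the sketch below evaluates the Thomas-Fermi kinetic functional, T_TF[ρ] = C_F ∫ ρ^(5/3) d³r with C_F = (3/10)(3π²)^(2/3), on the exact hydrogen 1s density and compares it with the exact kinetic energy of 0.5 Hartree. The hydrogen test case, the radial grid, and the function names are illustrative choices made here, not taken from the cited sources.

```python
import numpy as np

# Thomas-Fermi constant C_F = (3/10) (3 pi^2)^(2/3), Hartree atomic units
C_F = 0.3 * (3.0 * np.pi**2) ** (2.0 / 3.0)

def hydrogen_1s_density(r):
    """Exact ground-state density of the hydrogen atom: rho(r) = exp(-2r) / pi."""
    return np.exp(-2.0 * r) / np.pi

def radial_integral(f, r):
    """Integrate a spherically symmetric function over all space (trapezoidal rule)."""
    g = 4.0 * np.pi * r**2 * f
    return float(np.sum(0.5 * (g[1:] + g[:-1]) * np.diff(r)))

def thomas_fermi_kinetic(rho, r):
    """Local (uniform-gas) estimate of the kinetic energy from the density alone."""
    return C_F * radial_integral(rho ** (5.0 / 3.0), r)

r = np.linspace(0.0, 30.0, 30001)          # radial grid in bohr (assumed resolution)
rho = hydrogen_1s_density(r)
n_electrons = radial_integral(rho, r)      # sanity check: should be close to 1
t_tf = thomas_fermi_kinetic(rho, r)

print(f"electrons integrated: {n_electrons:.4f}")
print(f"T_TF = {t_tf:.4f} Ha, exact T = 0.5000 Ha")
```

For this density the local estimate comes out near 0.29 Hartree, a little over half the exact value, which gives a sense of how much is lost when the kinetic energy is taken from the uniform electron gas point by point.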

Paul Dirac enhanced the model in 1930 by incorporating a density-dependent exchange term, but the approach remained too inaccurate for most chemical applications [3] [1]. The field required a more rigorous theoretical foundation, which arrived in 1964 with the Hohenberg-Kohn theorems. These theorems established that (1) the ground-state electron density uniquely determines all properties of a many-electron system, and (2) the ground-state energy can be obtained by minimizing an energy functional with respect to the density [13] [18]. This provided the formal justification for using density as the fundamental variable. The following year, Kohn and Sham introduced their revolutionary equations, which mapped the interacting many-electron system onto a fictitious system of non-interacting electrons with the same density [3] [13]. This approach decomposed the energy functional into computable components, with all the challenging many-body effects relegated to the exchange-correlation functional, which became the focus of subsequent development efforts [3] [13].
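The two theorems are often summarized in symbols as below. This is a sketch in common textbook notation; the symbol \( F[\rho] \) for the universal (system-independent) part of the functional is an assumption of this summary rather than notation taken from the cited sources.

```latex
% Theorem 1: the ground-state density fixes the external potential up to a constant,
% and therefore the Hamiltonian and all ground-state properties
\rho_0(\mathbf{r}) \;\longmapsto\; V_{\text{ext}}(\mathbf{r}) + \text{const}

% Theorem 2: variational principle over N-electron densities
E_0 = \min_{\rho} \left\{ F[\rho] + \int V_{\text{ext}}(\mathbf{r}) \, \rho(\mathbf{r}) \, d\mathbf{r} \right\},
\qquad \int \rho(\mathbf{r}) \, d\mathbf{r} = N
```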

Modern Computational Era (1980s-Present)

Table 2: Key Developments in the Modern Computational Era of DFT

| Year | Development | Key Contributors | Significance |
|---|---|---|---|
| 1980s | Generalized Gradient Approximations (GGAs) | Axel Becke, John Perdew, Robert Parr, Weitao Yang | Improved accuracy by including density gradient [3] |
| 1993 | Hybrid Functionals | Axel Becke | Mixed Hartree-Fock exchange with GGA functionals [3] |
| 1998 | Nobel Prize in Chemistry | Walter Kohn | Recognized foundational contributions to DFT [3] |
| 2001 | Jacob's Ladder of DFT | John Perdew | Classification scheme for functionals by accuracy/cost [3] |
| 2025 | Deep-Learning-Powered DFT | Microsoft Research | Used neural networks trained on large datasets to improve accuracy [3] |

The 1980s witnessed a crucial advancement with the development of Generalized Gradient Approximations (GGAs), which improved upon the Local Density Approximation (LDA) by including the gradient of the electron density in addition to its value at each point [3]. This allowed the functionals to account for the non-uniformity of electron density in real atoms and molecules, significantly improving accuracy for molecular geometries, bond energies, and reaction barriers [3] [18]. GGAs marked the point where DFT became sufficiently accurate for chemical applications, beginning its rise to prominence as the workhorse method of computational chemistry [3].
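The difference between the two approximations can be written schematically as follows. These are the generic textbook forms; the symbols \( \varepsilon_{\text{xc}}^{\text{unif}} \) (exchange-correlation energy per electron of the uniform gas) and \( f \) (a functional-specific integrand) are notation assumed here.

```latex
% LDA: the integrand depends only on the local value of the density
E_{\text{xc}}^{\text{LDA}}[\rho] = \int \rho(\mathbf{r}) \,
    \varepsilon_{\text{xc}}^{\text{unif}}\big(\rho(\mathbf{r})\big) \, d\mathbf{r}

% GGA: the integrand also depends on the local density gradient
E_{\text{xc}}^{\text{GGA}}[\rho] = \int f\big(\rho(\mathbf{r}), \nabla\rho(\mathbf{r})\big) \, d\mathbf{r}
```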

In 1993, Axel Becke introduced hybrid functionals, which mixed a fraction of exact Hartree-Fock exchange with GGA exchange-correlation functionals [3]. This approach further improved accuracy for many chemical properties, particularly atomization energies, reaction barriers, and electronic excited states, albeit at increased computational cost [3] [19]. The B3LYP functional became particularly influential in quantum chemistry due to its improved accuracy for molecular systems [18]. The growing proliferation of functionals led John Perdew to introduce "Jacob's Ladder of DFT" in 2001, a classification scheme that organized functionals in a hierarchy from least to most sophisticated, with each "rung" adding more complex ingredients to improve accuracy [3].
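The mixing of exact exchange described above can be sketched in its simplest one-parameter form, followed by the three-parameter form commonly quoted for B3LYP. The coefficient values are the widely cited ones and should be checked against the specific implementation in use, since software packages differ in details such as the choice of local correlation functional.

```latex
% One-parameter hybrid: a fraction a of Hartree-Fock exchange replaces GGA exchange
E_{\text{xc}}^{\text{hyb}} = a \, E_{\text{x}}^{\text{HF}} + (1 - a) \, E_{\text{x}}^{\text{GGA}} + E_{\text{c}}^{\text{GGA}}

% B3LYP as commonly quoted, with a_0 = 0.20, a_x = 0.72, a_c = 0.81
E_{\text{xc}}^{\text{B3LYP}} = E_{\text{x}}^{\text{LDA}}
    + a_0 \left( E_{\text{x}}^{\text{HF}} - E_{\text{x}}^{\text{LDA}} \right)
    + a_x \left( E_{\text{x}}^{\text{B88}} - E_{\text{x}}^{\text{LDA}} \right)
    + E_{\text{c}}^{\text{VWN}}
    + a_c \left( E_{\text{c}}^{\text{LYP}} - E_{\text{c}}^{\text{VWN}} \right)
```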

The most recent milestone comes from Microsoft Research, which in 2025 introduced a deep-learning-powered DFT model trained on over 100,000 data points [3]. This approach allows the model to learn which features are relevant for accuracy rather than relying on the physically motivated ingredients of Jacob's ladder, potentially escaping the traditional trade-off between computational cost and accuracy [3]. This development points toward a new era where machine learning techniques enhance or potentially replace traditional functional development.

Theoretical and Computational Framework

The Kohn-Sham Equations Methodology

The Kohn-Sham approach represents the practical implementation of DFT that enabled its widespread adoption. The fundamental concept involves replacing the original interacting many-electron system with an auxiliary system of non-interacting electrons that generates the same electron density [13] [18]. This ingenious mapping transforms an intractable many-body problem into a manageable single-particle problem.

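The Kohn-Sham total energy functional takes the form:

\[ E[\rho] = T_s[\rho] + \int V_{\text{ext}}(\mathbf{r}) \, \rho(\mathbf{r}) \, d\mathbf{r} + \frac{1}{2} \iint \frac{\rho(\mathbf{r}) \, \rho(\mathbf{r}')}{|\mathbf{r} - \mathbf{r}'|} \, d\mathbf{r} \, d\mathbf{r}' + E_{\text{xc}}[\rho] \]

Where:

- \( T_s[\rho] \) is the kinetic energy of the non-interacting reference system

- The second term represents the interaction with the external potential (typically from nuclei)

- The third term is the classical Hartree (Coulomb) repulsion

- \( E_{\text{xc}}[\rho] \) is the exchange-correlation functional that captures all quantum many-body effects [18]

The corresponding Kohn-Sham equations for the auxiliary system are:

\[ \left[ -\frac{1}{2} \nabla^2 + V_{\text{eff}}(\mathbf{r}) \right] \phi_i(\mathbf{r}) = \epsilon_i \, \phi_i(\mathbf{r}) \]

With the effective potential:

\[ V_{\text{eff}}(\mathbf{r}) = V_{\text{ext}}(\mathbf{r}) + V_H(\mathbf{r}) + V_{\text{xc}}(\mathbf{r}), \qquad V_H(\mathbf{r}) = \int \frac{\rho(\mathbf{r}')}{|\mathbf{r} - \mathbf{r}'|} \, d\mathbf{r}' \]

And the exchange-correlation potential:

\[ V_{\text{xc}}(\mathbf{r}) = \frac{\delta E_{\text{xc}}[\rho]}{\delta \rho(\mathbf{r})} \]

The electron density is constructed from the occupied Kohn-Sham orbitals:

\[ \rho(\mathbf{r}) = \sum_{i=1}^{N} f_i \, |\phi_i(\mathbf{r})|^2 \]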

These equations must be solved self-consistently because the effective potential depends on the density, which in turn depends on the orbitals [13] [18].

Computational Implementation Protocol

Protocol 1: Self-Consistent Field Procedure for Kohn-Sham DFT

1. Initialization

- Generate initial guess for electron density \( \rho^{(0)}(\mathbf{r}) \)

- Common approaches: superposition of atomic densities, Hartree-Fock calculation, or previous calculation results

2. Effective Potential Construction

- Compute Hartree potential: \( V_H(\mathbf{r}) = \int \frac{\rho(\mathbf{r}')}{|\mathbf{r} - \mathbf{r}'|} d\mathbf{r}' \)

- Calculate exchange-correlation potential: \( V_{\text{xc}}(\mathbf{r}) = \frac{\delta E_{\text{xc}}[\rho]}{\delta \rho(\mathbf{r})} \)

- Construct total effective potential: \( V_{\text{eff}}(\mathbf{r}) = V_{\text{ext}}(\mathbf{r}) + V_H(\mathbf{r}) + V_{\text{xc}}(\mathbf{r}) \)

3. Kohn-Sham Equation Solution

- Solve \( \left[-\frac{1}{2} \nabla^2 + V_{\text{eff}}(\mathbf{r})\right] \phi_i(\mathbf{r}) = \epsilon_i \phi_i(\mathbf{r}) \)

- Typically implemented using basis set expansion (plane waves, Gaussians) or real-space grids

- Diagonalize Kohn-Sham Hamiltonian to obtain orbitals \( \phi_i \) and eigenvalues \( \epsilon_i \)

4. Density Update

- Construct new electron density from occupied orbitals: \( \rho^{\text{new}}(\mathbf{r}) = \sum_{i=1}^{N} f_i |\phi_i(\mathbf{r})|^2 \)

- \( f_i \) represents orbital occupancy (typically 0, 1, or 2 for restricted calculations)

5. Convergence Check

- Compare new density with previous iteration (\( \rho^{\text{new}} \) vs \( \rho^{\text{old}} \))

- Common convergence criteria: density change, energy change, or potential change below threshold

- If not converged, employ mixing scheme (linear, Pulay, Kerker) to generate new input density and return to step 2

6. Property Calculation
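The self-consistent cycle in Protocol 1 can be sketched on a deliberately small toy problem. Everything specific below is an assumption chosen for illustration: a one-dimensional real-space grid, a harmonic external potential, a soft-Coulomb electron-electron kernel, two electrons in one doubly occupied orbital, an exchange-only potential that borrows the 3D LDA form, and plain linear mixing. It is not how production codes such as those in Table 3 are implemented.

```python
import numpy as np

def scf_1d(n_grid=201, box=16.0, n_elec=2, mix=0.3, tol=1e-8, max_iter=500):
    """Toy 1D Kohn-Sham SCF loop in atomic units (illustrative model only)."""
    x = np.linspace(-box / 2.0, box / 2.0, n_grid)
    h = x[1] - x[0]
    # Kinetic operator -1/2 d^2/dx^2 via second-order finite differences
    off = np.ones(n_grid - 1)
    lap = (np.diag(off, -1) - 2.0 * np.eye(n_grid) + np.diag(off, 1)) / h**2
    kinetic = -0.5 * lap
    v_ext = 0.5 * x**2                                    # assumed external potential
    kernel = 1.0 / np.sqrt((x[:, None] - x[None, :]) ** 2 + 1.0)  # soft Coulomb
    occ = np.full(n_elec // 2, 2.0)                       # doubly occupied orbitals

    # Step 1: initial density guess, a Gaussian normalised to n_elec
    rho = np.exp(-x**2)
    rho *= n_elec / (rho.sum() * h)

    for iteration in range(1, max_iter + 1):
        # Step 2: build the effective potential from the current density
        v_h = (kernel @ rho) * h
        v_xc = -(3.0 * rho / np.pi) ** (1.0 / 3.0)        # toy exchange-only potential
        v_eff = v_ext + v_h + v_xc
        # Step 3: diagonalise the Kohn-Sham Hamiltonian on the grid
        eps, phi = np.linalg.eigh(kinetic + np.diag(v_eff))
        phi = phi / np.sqrt(h)                            # so that sum(|phi|^2) * h = 1
        # Step 4: new density from the occupied orbitals
        rho_new = (phi[:, :len(occ)] ** 2 * occ).sum(axis=1)
        # Step 5: convergence check on the integrated density change
        if np.abs(rho_new - rho).sum() * h < tol:
            return x, rho_new, eps[:len(occ)], iteration
        rho = (1.0 - mix) * rho + mix * rho_new           # linear mixing, back to step 2
    raise RuntimeError("SCF did not converge within max_iter iterations")

x, rho, eps_occ, n_iter = scf_1d()
print(f"converged in {n_iter} iterations, occupied eigenvalue {eps_occ[0]:.4f} Ha")
```

Linear mixing is used here only because it is the simplest of the schemes named in step 5; Pulay or Kerker mixing would typically need fewer iterations on harder systems.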

Research Reagent Solutions: Computational Tools for DFT

Table 3: Essential Computational Tools and Methods for DFT Research

| Tool Category | Specific Examples | Function/Purpose | Key Applications |
|---|---|---|---|
| Exchange-Correlation Functionals | LDA, GGA (PBE), Meta-GGA, Hybrid (B3LYP, HSE), Range-Separated | Approximate quantum many-body effects; Determine accuracy/cost balance [3] [19] | Materials properties, Molecular geometries, Reaction energies |
| Basis Sets | Plane Waves, Gaussian-Type Orbitals, Numerical Orbitals, Slater-Type Orbitals | Represent Kohn-Sham orbitals; Balance between completeness and computational cost [19] | Solid-state calculations (plane waves), Molecular systems (Gaussians) |
| Pseudopotentials/PAW | Norm-Conserving, Ultrasoft, Projector Augmented Wave (PAW) | Replace core electrons; Reduce computational cost; Handle strong potentials [13] | Systems with heavy elements, Transition metals, Periodic systems |
| Software Packages | VASP, Quantum ESPRESSO, Gaussian, NWChem, ABINIT | Implement DFT algorithms; Provide user interfaces; Post-processing tools [19] | Electronic structure calculations, Molecular dynamics, Spectroscopy |
| Solvation Models | PCM, COSMO, SMD | Implicit treatment of solvent effects; Improve accuracy for solution-phase systems [19] | Drug design, Solution chemistry, Electrochemistry |

The "research reagents" of computational DFT consist of the mathematical tools and approximations that enable practical calculations. Exchange-correlation functionals form the most crucial component, as they determine the accuracy and applicability of the method. The Local Density Approximation (LDA), developed alongside the Kohn-Sham equations, assumes the exchange-correlation energy at each point equals that of a uniform electron gas with the same density [3] [13]. While reasonable for simple metals and solid-state physics, LDA proved inadequate for chemistry applications due to its overbinding tendency and poor description of molecular properties [3].

Generalized Gradient Approximations (GGAs) significantly improved upon LDA by including the gradient of the density, better capturing the non-uniformity of electron distribution in real systems [3] [19]. Functionals like PBE (Perdew-Burke-Ernzerhof) became workhorses for materials science, offering improved lattice constants, bond lengths, and surface energies. Hybrid functionals such as B3LYP mix a fraction of exact Hartree-Fock exchange into a GGA functional, dramatically improving performance for molecular systems, particularly for atomization energies, reaction barriers, and electronic properties [3] [18] [19]. The most recent development involves machine-learning-powered functionals that learn the exchange-correlation mapping from large datasets, potentially surpassing the accuracy of traditional physically motivated functionals [3].
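As a concrete illustration of how a GGA uses the density gradient, the sketch below compares LDA exchange with PBE exchange on the hydrogen 1s density. PBE exchange multiplies the uniform-gas exchange energy density by an enhancement factor F_x(s) = 1 + κ − κ / (1 + μ s² / κ), where s = |∇ρ| / (2 k_F ρ) is the reduced gradient, κ = 0.804 and μ = 0.21951. The test density, the spin-unpolarized treatment (the real hydrogen atom is spin-polarized), and the function names are simplifying assumptions made for this example.

```python
import numpy as np

KAPPA = 0.804     # PBE exchange parameter kappa
MU = 0.21951      # PBE exchange parameter mu

def lda_exchange_per_electron(rho):
    """Uniform-gas exchange energy per electron: -(3/4) (3 rho / pi)^(1/3)."""
    return -0.75 * (3.0 * rho / np.pi) ** (1.0 / 3.0)

def reduced_gradient(rho, grad_rho):
    """s = |grad rho| / (2 k_F rho), with Fermi wavevector k_F = (3 pi^2 rho)^(1/3)."""
    k_f = (3.0 * np.pi**2 * rho) ** (1.0 / 3.0)
    return np.abs(grad_rho) / (2.0 * k_f * rho)

def pbe_enhancement(s):
    """PBE exchange enhancement factor; equals 1 at s = 0 and saturates at 1 + kappa."""
    return 1.0 + KAPPA - KAPPA / (1.0 + MU * s**2 / KAPPA)

def radial_integral(f, r):
    """Integrate a spherically symmetric function over all space (trapezoidal rule)."""
    g = 4.0 * np.pi * r**2 * f
    return float(np.sum(0.5 * (g[1:] + g[:-1]) * np.diff(r)))

r = np.linspace(0.0, 30.0, 30001)
rho = np.exp(-2.0 * r) / np.pi        # hydrogen 1s density
grad_rho = -2.0 * rho                 # its analytic radial derivative

s = reduced_gradient(rho, grad_rho)
e_x_lda = radial_integral(rho * lda_exchange_per_electron(rho), r)
e_x_pbe = radial_integral(rho * lda_exchange_per_electron(rho) * pbe_enhancement(s), r)

print(f"LDA exchange: {e_x_lda:.4f} Ha")
print(f"PBE exchange: {e_x_pbe:.4f} Ha")
```

Because F_x(s) is never smaller than 1, the gradient correction can only make the exchange energy more negative than the LDA value, which is the direction needed to correct LDA's underestimate of exchange in atoms.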

Basis sets represent another critical component, with the choice depending on the system type. Plane waves naturally describe periodic systems and are commonly used in materials science, while Gaussian-type orbitals excel for molecular calculations due to their efficient integral evaluation. Pseudopotentials or the Projector Augmented Wave (PAW) method handle the strong potentials of atomic cores, allowing researchers to focus computational resources on the chemically active valence electrons [13].
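The "efficient integral evaluation" of Gaussian-type orbitals follows from the fact that a product of two Gaussians is itself a Gaussian, so many integrals have closed forms. The sketch below checks this for the simplest case, a one-dimensional overlap between two unnormalized Gaussians; a 3D s-type overlap factorizes into three such terms. The exponents and centres are arbitrary values chosen for illustration.

```python
import numpy as np

def gaussian_overlap_1d(a, center_a, b, center_b):
    """Closed-form integral of exp(-a (x - A)^2) * exp(-b (x - B)^2) over all x."""
    p = a + b
    return np.sqrt(np.pi / p) * np.exp(-a * b / p * (center_a - center_b) ** 2)

def numerical_overlap_1d(a, center_a, b, center_b, half_width=20.0, n_points=200001):
    """Brute-force trapezoidal quadrature of the same integral, for comparison."""
    x = np.linspace(-half_width, half_width, n_points)
    f = np.exp(-a * (x - center_a) ** 2) * np.exp(-b * (x - center_b) ** 2)
    return float(np.sum(0.5 * (f[1:] + f[:-1]) * np.diff(x)))

# Arbitrary illustrative exponents (bohr^-2) and centres (bohr)
analytic = gaussian_overlap_1d(0.8, -0.5, 1.3, 0.7)
numeric = numerical_overlap_1d(0.8, -0.5, 1.3, 0.7)
print(f"analytic = {analytic:.10f}, numeric = {numeric:.10f}")
```

The closed form needs a handful of arithmetic operations, whereas the quadrature needs hundreds of thousands of function evaluations to reach comparable precision.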

Advanced Applications and Future Directions

Applications in Complex Systems

DFT has expanded beyond its original scope to address increasingly complex systems and phenomena. In drug discovery and biochemistry, DFT calculations provide insights into protein-ligand interactions, reaction mechanisms in enzymes, and spectroscopic properties of biological molecules [18]. The favorable accuracy-to-cost ratio of DFT enables studies on systems with hundreds of atoms, bridging the gap between small-molecule quantum chemistry and biological complexity.