Navigating the Precision-Cost Frontier: A 2025 Guide to Computational Trade-Offs in Drug Discovery

Abstract

This article provides a comprehensive analysis of the critical trade-offs between computational cost and predictive accuracy in modern drug discovery. Tailored for researchers and development professionals, it explores the foundational theories of computational complexity, showcases cutting-edge methodologies from generative AI to quantum-classical hybrids, and offers practical frameworks for troubleshooting and optimization. Through comparative validation of leading platforms and techniques, this guide delivers actionable insights for making strategic, resource-aware decisions that accelerate the development of novel therapeutics without compromising scientific rigor.

The Inescapable Trade-Off: Understanding Information-Computation Theory in Biomedical Research

Frequently Asked Questions (FAQs)

Q1: What are the primary factors that drive computational complexity in modern virtual screening? Computational cost is driven primarily by the size of the chemical space being screened and by the accuracy of the scoring functions used. Virtual screening libraries have expanded from millions to billions and even trillions of compounds. Screening these "gigascale" or "ultra-large" spaces requires significant computational resources, because evaluating each compound involves predicting its 3D binding pose and affinity against a target protein, which can be computationally intensive [1]. The choice between faster, less accurate methods and slower, physics-based simulations that account for molecular flexibility creates a direct trade-off between speed and precision [1] [2].

Q2: How can researchers strategically balance the trade-off between computational cost and prediction accuracy? A successful strategy involves iterative screening and multi-pronged approaches. Instead of running the most computationally expensive simulations on an entire library, researchers can first use fast machine learning models or simplified scoring functions to filter the library down to a smaller set of promising candidates. This enriched subset can then be analyzed with more rigorous and costly methods, such as molecular dynamics simulations or free energy perturbation calculations [1] [3]. This layered strategy optimizes resource allocation by applying high-cost, high-accuracy methods only where they are most needed.
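The layered strategy can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: `toy_cheap` and `toy_expensive` are hypothetical placeholders for a fast ML model and a slow physics-based method, and lower scores are assumed to be better, as with most docking scores.

```python
from typing import Callable, Sequence


def tiered_screen(
    library: Sequence[str],
    cheap_score: Callable[[str], float],
    expensive_score: Callable[[str], float],
    keep_fraction: float = 0.01,
    final_n: int = 10,
) -> list[tuple[str, float]]:
    """Rank everything cheaply, then rescore only a shortlist expensively.

    Lower scores are treated as better, as with typical docking scores.
    """
    # Stage 1: score the whole library with the cheap method.
    ranked = sorted(library, key=cheap_score)
    # Keep the best-ranked fraction (always at least one compound).
    n_keep = max(1, int(len(ranked) * keep_fraction))
    shortlist = ranked[:n_keep]
    # Stage 2: spend the expensive method only on the shortlist.
    rescored = [(mol, expensive_score(mol)) for mol in shortlist]
    rescored.sort(key=lambda pair: pair[1])
    return rescored[:final_n]


# Toy demonstration: both scoring functions are hypothetical placeholders.
expensive_log: list[str] = []


def toy_cheap(mol: str) -> float:
    return float(len(mol))  # stands in for a fast ML or 2D-similarity score


def toy_expensive(mol: str) -> float:
    expensive_log.append(mol)  # record every costly evaluation
    return -float(mol.count("N"))  # stands in for docking or FEP


library = ["C" * i + "N" * (i % 3) for i in range(1, 1001)]
hits = tiered_screen(library, toy_cheap, toy_expensive, keep_fraction=0.01, final_n=5)
print(len(hits), len(expensive_log))  # 5 10
```

Only 10 of the 1,000 toy compounds reach the expensive function. In a real campaign, `keep_fraction` would be tuned against how many true binders you can afford to lose at the cheap stage.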

Q3: What are the common pitfalls in AI-driven binding affinity predictions, and how can they be mitigated? A major pitfall is the dependency on the quality and breadth of training data. AI models can produce false positives or negatives if the underlying data is biased or incomplete [4] [5]. To mitigate this, it is crucial to use large, experimentally validated datasets and to incorporate physics-based principles where possible. Furthermore, models should be continuously validated with experimental results in a closed-loop design-make-test-analyze (DMTA) cycle to identify and correct for model drift or inaccuracies [6] [2]. Transparency in model architecture and inputs is also key to building trust and understanding limitations [7].

Q4: What computational resources are typically required for different stages of AI-driven drug discovery? Resource requirements vary dramatically by task. Virtual screening of billion-compound libraries is often performed on high-performance computing (HPC) clusters or cloud resources, frequently using GPUs for parallel processing [1] [6]. Generative AI for molecular design likewise requires substantial GPU capacity for training and inference. The most computationally demanding tasks are detailed quantum chemistry calculations and free energy simulations for lead optimization, which can require weeks of computation on specialized HPC systems [4] [6].

Q5: How does the use of experimental data integrate with and improve computational models? Experimental data is the cornerstone of reliable computational models. Data from the Cellular Thermal Shift Assay (CETSA), which confirms target engagement in a physiologically relevant cellular environment, is used to validate and refine computational predictions [3]. In drug metabolism and pharmacokinetics (DMPK), high-quality experimental measurements of properties such as solubility, permeability, and metabolic stability are essential for building accurate machine learning models that predict these properties for new compounds [2]. This close integration of experimental and computational work keeps models grounded in biological reality.

Troubleshooting Guides

Guide 1: Managing Excessive Computational Time in Virtual Screening

Problem: Virtual screening of a large compound library is taking an unacceptably long time, slowing down the research pipeline.

Solution:

- Step 1: Implement a Multi-Stage Filtering Workflow. Do not apply your most computationally expensive method to the entire library. Start with fast, lightweight filters like simple pharmacophore models or 2D similarity searches to quickly remove obvious non-binders [1] [3].

- Step 2: Leverage Pre-computed Chemical Libraries. Use commercially available or publicly accessible pre-filtered libraries (e.g., ZINC20) that contain molecules selected for drug-like properties, reducing the initial pool size [1].

- Step 3: Optimize Hardware Utilization. Ensure your docking software is configured to use GPU acceleration, which can speed up calculations by orders of magnitude compared to using CPUs alone [1] [6].

- Step 4: Utilize Active Learning. Implement machine learning-based active learning, where the model selectively chooses which compounds to evaluate with more expensive methods, focusing resources on the most informative regions of the chemical space [1].
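Step 4 can be illustrated with a deliberately simple active-learning loop. In this sketch, compounds are hypothetical 2D descriptor vectors, `toy_oracle` stands in for an expensive docking run, and a k-nearest-neighbour average serves as the surrogate model. Production workflows typically use stronger surrogates (e.g., random forests or GNNs) and an acquisition function that balances exploration and exploitation; this loop is purely greedy.

```python
import math
import random


def knn_predict(x, labeled, k=3):
    """Surrogate model: mean score of the k nearest already-evaluated compounds."""
    nearest = sorted(labeled, key=lambda item: math.dist(x, item[0]))[:k]
    return sum(score for _, score in nearest) / len(nearest)


def active_learning_screen(pool, oracle, n_init=20, batch=10, rounds=5, seed=0):
    """Greedy active learning: only selected compounds reach the costly oracle."""
    rng = random.Random(seed)
    remaining = list(pool)
    rng.shuffle(remaining)
    # Seed the surrogate with a small random sample scored by the oracle.
    labeled = [(x, oracle(x)) for x in remaining[:n_init]]
    remaining = remaining[n_init:]
    for _ in range(rounds):
        # Rank unevaluated compounds by predicted score (lower is better).
        remaining.sort(key=lambda x: knn_predict(x, labeled))
        picked, remaining = remaining[:batch], remaining[batch:]
        labeled.extend((x, oracle(x)) for x in picked)
    return labeled


# Toy pool of 2,000 compounds; the hypothetical "oracle" has its optimum at (2, -1).
pool_rng = random.Random(1)
pool = [(pool_rng.uniform(-5, 5), pool_rng.uniform(-5, 5)) for _ in range(2000)]


def toy_oracle(p):
    return (p[0] - 2) ** 2 + (p[1] + 1) ** 2


labeled = active_learning_screen(pool, toy_oracle)
best_point, best_score = min(labeled, key=lambda item: item[1])
print(len(labeled), round(best_score, 3))
```

Here the expensive oracle is called 70 times instead of 2,000; whether the compounds it finds are good enough depends on how well the surrogate captures the real scoring landscape.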

Guide 2: Addressing Inaccurate AI/ML Predictions in ADMET Profiling

Problem: Machine learning models for ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) properties are generating predictions that do not align with subsequent experimental results.

Solution:

- Step 1: Audit the Training Data. Investigate the data used to train the model. Ensure it is relevant to your chemical series and is of high quality. Be wary of models trained on small, noisy, or biased datasets [2] [5].

- Step 2: Assess Applicability Domain. Determine if your query molecules fall outside the "applicability domain" of the model—the chemical space on which it was trained. Predictions for molecules that are structurally novel to the model are inherently less reliable [2].

- Step 3: Refine with Experimental Data. Use a small set of in-house experimental results to fine-tune or validate the model. This can help calibrate the model to your specific project needs [2].

- Step 4: Employ Model Ensembles. Instead of relying on a single model, use an ensemble of different models or algorithms. A consensus prediction from multiple models is often more robust and accurate than any single one [3].
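Steps 2 and 4 can be combined into a simple check: flag queries whose nearest training neighbour is dissimilar, and report ensemble disagreement alongside the consensus. This is a sketch under assumptions: fingerprints are represented as sets of on-bit indices, the 0.4 Tanimoto threshold is an illustrative choice rather than a standard, and the "models" are placeholder functions.

```python
from statistics import mean, pstdev


def tanimoto(a: set[int], b: set[int]) -> float:
    """Tanimoto similarity between two fingerprints given as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def in_domain(query: set[int], training_fps: list[set[int]], threshold: float = 0.4):
    """Flag a query as in-domain if its nearest training neighbour is similar enough."""
    nearest = max(tanimoto(query, fp) for fp in training_fps)
    return nearest >= threshold, nearest


def ensemble_predict(query, models):
    """Consensus prediction (mean) plus disagreement (population std) across models."""
    preds = [model(query) for model in models]
    return mean(preds), pstdev(preds)


# Hypothetical training fingerprints and placeholder "models".
training = [{1, 2, 3, 4}, {2, 3, 5, 8}, {10, 11, 12}]
models = [lambda fp: float(len(fp)), lambda fp: 5.0, lambda fp: 6.0]

print(in_domain({1, 2, 3, 5}, training))  # (True, 0.6)
print(in_domain({20, 21, 22}, training))  # (False, 0.0)
print(ensemble_predict({1, 2, 3, 4}, models))
```

A large standard deviation across ensemble members is a useful companion signal to the applicability-domain flag: both suggest the prediction should be confirmed experimentally before it drives decisions.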

Guide 3: Debugging a Failed Experimental Validation of a Computational Hit

Problem: A compound predicted by computational models to be a strong binder shows no activity in a biological assay.

Solution:

- Step 1: Verify Compound Integrity. Confirm the synthesized compound's identity and purity via analytical chemistry (e.g., LC-MS, NMR). Synthesis errors or compound degradation are common reasons for failure [5].

- Step 2: Re-examine the Assay Conditions. Ensure the biochemical or cellular assay is functioning correctly and has the necessary sensitivity. Confirm that the target protein is present and in its native conformation [3].

- Step 3: Re-inspect the Predicted Binding Pose. Analyze the computational model's proposed binding mode. Look for unrealistic molecular geometries, clashes with the protein, or ignored key solvent molecules that might have led to an over-optimistic affinity score [1].

- Step 4: Probe for Off-Target Effects. Use a phenotypic assay or a broad panel screening to see if the compound is active through a different, unpredicted mechanism, which could explain the lack of activity against your intended target [7].

Quantitative Data Comparison

Table 1: Comparison of Computational Methods in Drug Discovery: Scaling of Cost and Accuracy

| Computational Method | Typical Library Size | Relative Computational Cost (Hardware) | Key Accuracy Metrics | Primary Use Case |
|---|---|---|---|---|
| 2D Ligand-Based Similarity Search | Millions to Billions [1] | Low (CPU) | Enrichment Factor (EF) | Rapid hit identification, scaffold hopping |
| Standard Rigid Docking | Millions [1] | Medium (CPU/GPU) | Root-Mean-Square Deviation (RMSD) of pose | Structure-based virtual screening |
| Ultra-Large Library Docking | Billions to Trillions (e.g., 11B+) [1] | High (HPC/GPU Cluster) | Hit Rate, Potency (IC50) | Exploring vast, novel chemical spaces |
| AI-Based Affinity Prediction (e.g., GNNs) | Billions [4] [6] | Medium-High (GPU) | Pearson R vs. experimental data [2] | High-throughput ranking of compounds |
| Molecular Dynamics (MD) Simulations | 10s - 100s [1] | Very High (HPC) | Free Energy of Binding (ΔG), RMSD | Binding mechanism and detailed stability |
| Free Energy Perturbation (FEP) | 10s [6] | Extremely High (HPC) | ΔΔG (kcal/mol) error < 1.0 [6] | Lead optimization, relative affinity |
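To put the FEP accuracy target in perspective, a free-energy error can be translated into an error in affinity using the standard relation between binding free energy and the dissociation constant $K_d$ (with $c^\circ = 1$ M the standard concentration). The worked numbers assume $T = 298.15$ K and are for orientation only, not values from the cited benchmarks.

$$
\Delta G^\circ_{\mathrm{bind}} = RT \ln\frac{K_d}{c^\circ},
\qquad
\Delta\Delta G_{A \to B} = RT \ln\frac{K_d^{B}}{K_d^{A}}
$$

An error $\varepsilon$ in a predicted $\Delta\Delta G$ therefore multiplies the predicted ratio $K_d^{B}/K_d^{A}$ by $e^{\varepsilon/RT}$:

$$
RT \approx 0.593\ \mathrm{kcal/mol}
\;\Rightarrow\;
\varepsilon = 1.0\ \mathrm{kcal/mol} \;\mapsto\; e^{1.0/0.593} \approx 5.4\text{-fold},
\qquad
RT \ln 10 \approx 1.36\ \mathrm{kcal/mol\ per\ 10\text{-}fold}
$$

In other words, a sub-1 kcal/mol error keeps relative affinity predictions within roughly half an order of magnitude, which is why it is treated as useful for lead optimization.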

Table 2: Data Requirements and Infrastructure for AI/ML Model Training

| Model Type | Typical Training Data Volume | Minimum Infrastructure | Impact of Data Quality on Model Performance |
|---|---|---|---|
| QSAR/2D Property Predictors | 100s - 10,000s of data points [2] | Multi-core CPU Server | Very High. Noisy or inconsistent experimental data directly translates to poor prediction accuracy [2]. |
| Graph Neural Networks (GNNs) | 10,000s - Millions of data points [4] [6] | High-RAM GPU Server | Critical. Requires large, diverse, and well-annotated datasets. Data bias leads to limited applicability [4]. |
| Generative AI (VAEs, GANs) | 100,000s+ molecular structures [6] [5] | Multi-GPU Cluster | Fundamental. Defines the chemical space and synthesizability rules for generated molecules [5]. |
| Foundation Models for Protein Structures | Billions of amino acids (e.g., AlphaFold DB) [8] | Specialized Large-Scale GPU Cluster | Defining. Model capability is almost entirely determined by the scale and diversity of the training data. |

Experimental Protocols

Protocol 1: Iterative Workflow for Cost-Effective Ultra-Large Virtual Screening

This protocol describes a multi-stage methodology for efficiently screening gigascale chemical libraries by balancing fast machine learning and more accurate, costly molecular docking [1].

Principle: To maximize the exploration of chemical space while minimizing computational expense by applying high-fidelity methods only to a pre-enriched subset of compounds.

Step-by-Step Methodology:

- Library Preparation: Obtain a make-on-demand virtual compound library (e.g., ZINC20, Enamine REAL). Apply initial filters for drug-likeness (e.g., Lipinski's Rule of Five) and remove undesirable chemical motifs [1].

- Machine Learning-Based Pre-Screening:

- Train a ligand-based machine learning model (e.g., a graph neural network) on known active and inactive compounds for the target.

- Use this model to score and rank the entire ultra-large library. This step rapidly reduces the library size by several orders of magnitude.

- Structure-Based Docking:

- Take the top 1-10 million compounds from the ML pre-screen and subject them to molecular docking against the 3D structure of the target protein.

- Use a standard docking scoring function for a balance of speed and accuracy.

- Iterative Refinement (Optional):

- Final Selection and Experimental Validation: Select a diverse set of a few hundred to a thousand top-ranking compounds for purchase and experimental testing in a primary assay.
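The drug-likeness filter in the library-preparation step can be expressed as a small predicate. This sketch assumes the descriptors (molecular weight, cLogP, hydrogen-bond donors and acceptors) have already been computed by a cheminformatics toolkit; the `Descriptors` class and the compound values are hypothetical. Following Lipinski's original formulation, a compound is rejected only when it violates more than one criterion.

```python
from dataclasses import dataclass


@dataclass
class Descriptors:
    """Precomputed descriptors for one compound (values assumed to come from a toolkit)."""
    name: str
    mol_weight: float  # molecular weight in Da
    clogp: float       # calculated octanol-water partition coefficient
    h_donors: int      # hydrogen-bond donors
    h_acceptors: int   # hydrogen-bond acceptors


def ro5_violations(d: Descriptors) -> int:
    """Count Lipinski Rule-of-Five violations."""
    return sum([
        d.mol_weight > 500,
        d.clogp > 5,
        d.h_donors > 5,
        d.h_acceptors > 10,
    ])


def passes_ro5(d: Descriptors, max_violations: int = 1) -> bool:
    # Lipinski's original formulation flags compounds with more than one violation.
    return ro5_violations(d) <= max_violations


library = [
    Descriptors("cmpd-A", 350.4, 2.1, 2, 5),
    Descriptors("cmpd-B", 620.7, 6.3, 3, 9),
    Descriptors("cmpd-C", 510.0, 4.2, 1, 6),
]
kept = [d.name for d in library if passes_ro5(d)]
print(kept)  # ['cmpd-A', 'cmpd-C']
```

Because this filter costs almost nothing per compound, it belongs at the very start of the funnel, before any ML or docking step.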

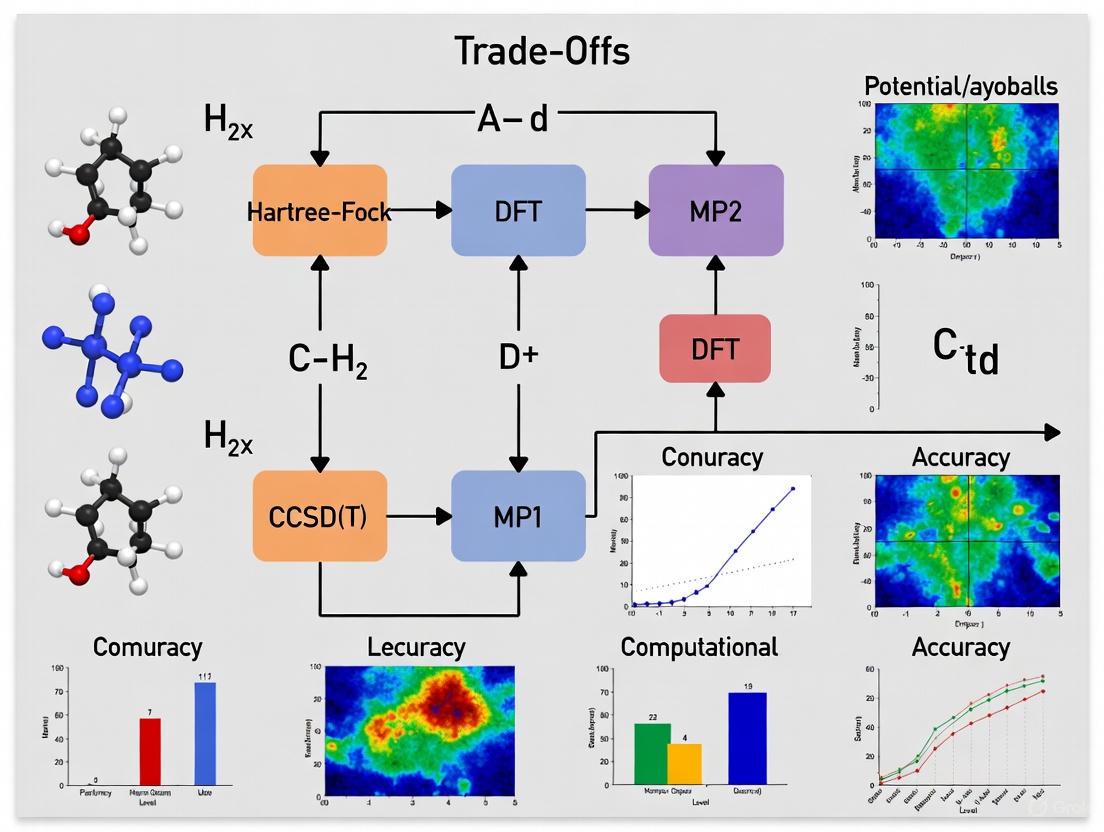

Diagram: Multi-Stage Virtual Screening Workflow

Protocol 2: Validating Computational Predictions with Cellular Target Engagement Assays

This protocol ensures that computationally identified hits demonstrate direct binding to the intended target in a physiologically relevant cellular context, using the Cellular Thermal Shift Assay (CETSA) as a key validation tool [3].

Principle: A compound that engages its protein target can stabilize it against thermally induced denaturation. This shift in thermal stability can be quantified as evidence of direct binding in intact cells.

Step-by-Step Methodology:

- Cell Treatment: Divide a cell culture expressing the target protein into aliquots. Treat one set with the computational hit compound (or a range of concentrations) and another set with a vehicle control (e.g., DMSO). Incubate to allow cellular uptake and binding.

- Heat Challenge: Subject the cell aliquots to a series of precise temperatures (e.g., from 40°C to 65°C) in a thermal cycler. Unbound target protein denatures and aggregates at lower temperatures than ligand-stabilized protein.

- Cell Lysis and Protein Solubilization: Lyse the heated cells and separate the soluble (properly folded) protein from the insoluble (aggregated) protein.

- Protein Quantification: Quantify the amount of soluble target protein remaining at each temperature using a specific detection method, such as Western blot or a targeted proteomics approach like mass spectrometry [3].

- Data Analysis:

- Plot the fraction of soluble protein remaining against the temperature for both the compound-treated and vehicle-control samples.

- Target engagement is indicated by a rightward shift in the melting curve of the treated sample (a positive shift in melting temperature, Tm), meaning the protein is stabilized and denatures at a higher temperature.

- The magnitude of the Tm shift gives only a rough indication of binding affinity, because it also depends on compound concentration and cellular uptake.
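For the data-analysis step, a crude but transparent estimate of Tm is the temperature at which the soluble fraction crosses 0.5, found by linear interpolation between measured points. The data below are hypothetical, and fitting a sigmoidal melting curve is the more common choice in practice; this sketch only shows how the shift is derived from the two curves.

```python
def melting_temperature(temps, fractions, midpoint=0.5):
    """Temperature at which the soluble fraction first falls through `midpoint`.

    Assumes ascending temperatures and fractions normalised to the lowest
    temperature; uses linear interpolation between neighbouring points.
    """
    points = list(zip(temps, fractions))
    for (t0, f0), (t1, f1) in zip(points, points[1:]):
        if f0 >= midpoint >= f1 and f0 != f1:
            return t0 + (f0 - midpoint) * (t1 - t0) / (f0 - f1)
    raise ValueError("curve never crosses the midpoint")


# Hypothetical soluble-fraction data (e.g., normalised Western blot intensities).
temps = [40, 43, 46, 49, 52, 55, 58, 61, 64]
vehicle = [1.00, 0.97, 0.90, 0.70, 0.40, 0.15, 0.05, 0.02, 0.01]
treated = [1.00, 0.99, 0.96, 0.90, 0.75, 0.55, 0.30, 0.10, 0.03]

tm_vehicle = melting_temperature(temps, vehicle)
tm_treated = melting_temperature(temps, treated)
delta_tm = tm_treated - tm_vehicle
print(f"Tm vehicle {tm_vehicle:.1f} C, treated {tm_treated:.1f} C, shift {delta_tm:+.1f} C")
```

Interpolation depends heavily on the two points bracketing the midpoint, so replicate measurements and a finer temperature grid near Tm matter more than the choice of estimator.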

Diagram: CETSA Experimental Workflow for Validation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Computational and Experimental Resources for Validated Discovery

| Tool / Resource Name | Type | Primary Function in Workflow | Key Consideration for Cost-Accuracy Trade-off |
|---|---|---|---|
| ZINC20 / Enamine REAL | Virtual Compound Library | Provides access to billions of commercially available, synthesizable compounds for virtual screening [1]. | Library size directly impacts computational cost; pre-filtered subsets can save resources. |
| AutoDock-GPU, FRED | Docking Software | Performs high-throughput molecular docking to predict protein-ligand binding poses and scores [3]. | GPU acceleration (e.g., AutoDock-GPU) can greatly increase throughput. Scoring function choice balances speed and accuracy. |
| CETSA | Experimental Validation Assay | Confirms direct target engagement of a computational hit in a physiologically relevant cellular environment [3]. | Provides critical data to validate computational predictions, preventing pursuit of false positives. |
| Graph Neural Networks (GNNs) | Machine Learning Model | Learns from molecular graph structures to predict activity, toxicity, or other properties [4] [6]. | Requires significant labeled data for training but allows for rapid prediction once trained. |
| Molecular Dynamics (MD) Software (e.g., GROMACS, AMBER) | Simulation Software | Simulates the physical movements of atoms and molecules over time, providing insights into dynamic binding processes [1]. | Extremely high computational cost limits the number of compounds and simulation time feasible. |
| Schrödinger's FEP+ | Advanced Calculation Module | Uses free energy perturbation theory to calculate relative binding affinities with high accuracy [6]. | One of the most computationally expensive methods, reserved for final lead optimization of a few compounds. |

Troubleshooting Guides

Guide 1: Diagnosing Statistical-Computational Trade-offs in Your Experiments

Problem: My model's performance has plateaued, and increasing model complexity does not yield significant accuracy improvements.

Explanation: You may have reached a statistical-computational gap, where computationally feasible estimators cannot achieve the information-theoretic lower bound on statistical error. Beyond this point, additional computational resources yield diminishing returns [9].

Solution:

- Confirm the gap: Compare your model's error against known minimax lower bounds for your problem. A significant gap suggests a statistical-computational trade-off [9].

- Algorithm weakening: Consider substituting your objective with a convex relaxation, accepting a known statistical penalty for computational tractability [9].

- Hybrid methods: Implement hierarchical methods that interpolate between computationally extreme points, such as stochastic composite likelihoods [9].

Verification: The table below summarizes key indicators and solutions:

Table: Diagnostic Indicators for Statistical-Computational Trade-offs

| Indicator | Observation | Recommended Action |
|---|---|---|
| Error Plateau | Test error stops improving despite increased model parameters | Switch to convex relaxations with known statistical penalties [9] |
| Training Instability | Validation performance fluctuates wildly with small parameter changes | Implement branched residual connections with multiple schedulers [10] |
| Excessive Training Time | Model requires exponentially more time for marginal gains | Apply coreset constructions to compress data to weighted summaries [9] |
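As one concrete coreset construction, the sketch below implements the "lightweight coreset" sampling scheme proposed by Bachem, Lucic and Krause for k-means: points are sampled from a mixture of a uniform distribution and a distribution proportional to squared distance from the data mean, then reweighted so weighted sums remain unbiased. It is a swapped-in example, not necessarily the construction discussed in [9], and the two-cluster data are hypothetical.

```python
import math
import random


def lightweight_coreset(points, m, seed=0):
    """Sample m weighted points using the lightweight-coreset distribution.

    q(x) = 1/(2n) + d(x, mean)^2 / (2 * sum of squared distances);
    each sampled point gets weight 1 / (m * q(x)).
    """
    rng = random.Random(seed)
    n, dim = len(points), len(points[0])
    mu = [sum(p[j] for p in points) / n for j in range(dim)]
    d2 = [math.dist(p, mu) ** 2 for p in points]
    total = sum(d2)
    if total == 0:  # all points identical: fall back to uniform sampling
        q = [1.0 / n] * n
    else:
        q = [0.5 / n + 0.5 * d / total for d in d2]
    idx = rng.choices(range(n), weights=q, k=m)
    return [(points[i], 1.0 / (m * q[i])) for i in idx]


# Hypothetical data: two Gaussian clusters, 20,000 points in 2D.
data_rng = random.Random(3)
points = [(data_rng.gauss(0, 1), data_rng.gauss(0, 1)) for _ in range(10_000)]
points += [(data_rng.gauss(6, 1), data_rng.gauss(6, 1)) for _ in range(10_000)]

coreset = lightweight_coreset(points, m=500)
total_weight = sum(w for _, w in coreset)
print(len(coreset), round(total_weight))  # total weight should be near 20,000
```

A weighted clustering or mixture-model fit on the 500 coreset points then stands in for a fit on all 20,000, which is where the computational saving comes from.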

Guide 2: Resolving Computational Bottlenecks in Large-Scale Data Preprocessing

Problem: Data preprocessing is becoming the computational bottleneck in my research pipeline.

Explanation: As dataset sizes grow, serial preprocessing algorithms cannot scale effectively, creating bottlenecks that delay model training and experimentation [11].

Solution:

- Parallelization: Implement Message Passing Interface (MPI) using MPI4Py to parallelize both data preprocessing and model training stages [11].

- Data parallelism: Distribute training data across multiple processors, with each processor computing updates simultaneously [11].

- Model parallelism: For extremely large models, distribute different model components across computational resources [11].

Implementation Protocol:

- Profile your pipeline to identify specific bottleneck operations

- Implement MPI4Py to parallelize the most expensive preprocessing operations

- Balance load across processors to ensure efficient resource utilization

- For deep learning training, implement data parallelism with synchronous or asynchronous updates [11]

Expected Outcome: Research from COVID-19 data analysis demonstrated that parallelization with MPI4Py significantly reduces computational costs while maintaining model accuracy [11].
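Data parallelism rests on a simple identity: for equal-size shards, the average of per-shard mean gradients equals the full-batch gradient, so synchronous workers that average their gradients follow the same trajectory as serial training. The sketch below simulates the workers serially in plain Python on a hypothetical linear-regression problem; in a real deployment the averaging step would be an MPI all-reduce or the equivalent in a deep-learning framework.

```python
def mse_gradient(w, b, xs, ys):
    """Gradient of mean squared error for the model y = w * x + b on one shard."""
    n = len(xs)
    residuals = [w * x + b - y for x, y in zip(xs, ys)]
    grad_w = sum(2 * r * x for r, x in zip(residuals, xs)) / n
    grad_b = sum(2 * r for r in residuals) / n
    return grad_w, grad_b


def split_into_shards(seq, n_workers):
    """Equal-size contiguous shards (assumes len(seq) is divisible by n_workers)."""
    size = len(seq) // n_workers
    return [seq[i * size:(i + 1) * size] for i in range(n_workers)]


def synchronous_step(w, b, xs, ys, n_workers=4, lr=0.2):
    # Each "worker" computes a gradient on its own shard (simulated serially here).
    grads = [
        mse_gradient(w, b, sx, sy)
        for sx, sy in zip(split_into_shards(xs, n_workers), split_into_shards(ys, n_workers))
    ]
    # All-reduce: average the gradients so every replica applies the same update.
    grad_w = sum(g[0] for g in grads) / n_workers
    grad_b = sum(g[1] for g in grads) / n_workers
    return w - lr * grad_w, b - lr * grad_b


# Hypothetical noiseless data from y = 3x + 1.
xs = [i / 100 for i in range(200)]
ys = [3 * x + 1 for x in xs]

w, b = 0.0, 0.0
for _ in range(1000):
    w, b = synchronous_step(w, b, xs, ys)
print(round(w, 4), round(b, 4))  # 3.0 1.0
```

With unequal shard sizes the plain average is no longer exact; weighting each shard's gradient by its size restores the identity.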

Guide 3: Managing Switching Costs in Iterative Experimentation

Problem: Frequent model adjustments and retraining are creating excessive computational overhead.

Explanation: Switching costs—penalties incurred from frequent operational adjustments—can accumulate significantly in iterative research workflows, particularly when comparing multiple approaches [12].

Solution:

- Longer commitment periods: Instead of frequent model updates, commit to longer evaluation periods when possible; in the cited energy-management study, commitments of 3+ hours outperformed 1-hour updates once switching costs were included [12].

- Stable forecasts: Use probabilistic forecasting with scenario averaging to reduce sensitivity to fluctuations [12].

- Novel metrics: Implement the Scenario Distribution Change (SDC) metric to measure temporal consistency in probabilistic forecasts [12].
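The switching-cost term itself is easy to make concrete. In this toy sketch (hypothetical forecast values, a linear penalty per unit of change, and block-mean decisions are all assumptions), holding one decision per 3-hour block sharply reduces the switching penalty compared with re-deciding every hour. It deliberately omits the cost of acting on stale forecasts, which is what makes very long commitments worse again and produces the U-shaped relationship reported in [12]; it also does not implement the SDC metric.

```python
def switching_cost(schedule, penalty_per_unit=1.0):
    """Penalty proportional to the total size of changes between consecutive decisions."""
    return penalty_per_unit * sum(abs(b - a) for a, b in zip(schedule, schedule[1:]))


def commit_schedule(forecast, period):
    """Hold one decision per block of `period` steps, set to the block's mean forecast."""
    schedule = []
    for start in range(0, len(forecast), period):
        block = forecast[start:start + period]
        schedule.extend([sum(block) / len(block)] * len(block))
    return schedule


# Hypothetical hourly forecast of some controllable quantity.
forecast = [5, 7, 4, 6, 8, 5, 7, 6, 4]

for period in (1, 3):
    cost = switching_cost(commit_schedule(forecast, period))
    print(f"commit every {period} h: switching cost {cost:.2f}")
```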

Workflow Optimization:

Frequently Asked Questions (FAQs)

Q1: What exactly are statistical-computational trade-offs, and why should I care about them in practical research?

Statistical-computational trade-offs describe the inherent tension between achieving the lowest possible statistical error and maintaining computationally feasible procedures. In high-dimensional data analysis, the statistically optimal estimator is often prohibitively expensive to compute, while computationally efficient methods incur a measurable statistical penalty [9]. You should care about these trade-offs because they determine the fundamental limits of what you can achieve with practical resources—understanding them helps you set realistic expectations and choose appropriate methods for your specific accuracy and computational constraints.

Q2: How can I quantitatively estimate the computational cost of achieving a certain level of accuracy in my experiments?

You can use established frameworks to quantify this relationship. The table below summarizes key metrics and approaches:

Table: Frameworks for Analyzing Statistical-Computational Trade-offs

| Framework | Key Metric | Application Scope | Practical Implementation |
|---|---|---|---|
| Oracle (Statistical Query) Model | Number of statistical queries required | Broad class of practical algorithms | Provides lower bounds without unproven hardness conjectures [9] |
| Low-Degree Polynomial Methods | Minimal degree of successful polynomial | Planted clique, sparse PCA, mixture models | Serves as a proxy for computational difficulty; failure of low-degree polynomials is taken as evidence, not proof, that no polynomial-time algorithm can succeed [9] |
| Convex Relaxation | Sample complexity or risk increase | Combinatorially hard estimators (MLE for latent variables) | Tighter relaxations require less data but more computation [9] |
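As a concrete instance of the convex-relaxation row, consider sparse PCA. The derivation below is the standard semidefinite relaxation (in the form popularized by d'Aspremont and colleagues), shown for orientation rather than taken from [9]. Here $\Sigma$ is the $d \times d$ sample covariance and $k$ the target sparsity.

$$
\max_{v \in \mathbb{R}^d} \; v^\top \Sigma v
\quad \text{s.t.} \quad \|v\|_2 = 1,\; \|v\|_0 \le k
$$

Lifting to $X = vv^\top$ gives $v^\top \Sigma v = \operatorname{tr}(\Sigma X)$, $\operatorname{tr}(X) = \|v\|_2^2 = 1$, $X \succeq 0$, $\operatorname{rank}(X) = 1$, and, by Cauchy-Schwarz, $\mathbf{1}^\top |X| \mathbf{1} = \|v\|_1^2 \le k \|v\|_2^2 = k$. Dropping the non-convex rank constraint yields

$$
\max_{X \succeq 0} \; \operatorname{tr}(\Sigma X)
\quad \text{s.t.} \quad \operatorname{tr}(X) = 1,\; \mathbf{1}^\top |X| \mathbf{1} \le k
$$

This program is solvable in polynomial time, but its feasible set also contains matrices that are not rank one, which is the source of the statistical penalty. In the sparse-PCA detection literature, this relaxation succeeds with on the order of $k^2 \log d$ samples, whereas the intractable optimal test needs roughly $k \log d$.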

Q3: Are there scenarios where I can improve both accuracy and computational cost simultaneously?

Yes, though this requires careful architectural design. In materials property prediction, researchers developed iBRNet, a deep regression neural network with branched skip connections and multiple schedulers that simultaneously reduced parameters, improved accuracy, and decreased training time [10]. The key is leveraging specific architectural innovations—branched structures with residual connections and sophisticated training schedulers—rather than simply adding more layers [10]. Similar approaches have succeeded in drug discovery applications where optimized neural network architectures outperformed both traditional machine learning and complex deep learning models [13].

Q4: What practical strategies exist for navigating the accuracy-computation trade-off in drug discovery applications?

In computer-aided drug discovery (CADD), several strategies have proven effective:

- Multi-task learning: Improves predictive performance despite data scarcity, though it requires techniques such as adaptive checkpointing to mitigate negative transfer from imbalanced datasets [13].

- Representation learning: Methods like ChemLM, a transformer language model with self-supervised domain adaptation on chemical molecules, enhance predictive performance for identifying potent pathoblockers [13].

- Hybrid approaches: Frameworks like TRACER integrate molecular property optimization with synthetic pathway generation, generating compounds targeting specific receptors with high reward values [13].

Q5: How do switching costs impact my research workflow, and how can I minimize them?

Switching costs—the penalties from frequent operational adjustments—create a U-shaped relationship between commitment period and performance in optimization tasks [12]. Theoretical analysis reveals that while traditional approaches favor frequent updates (1-hour commitment), incorporating switching costs makes longer commitment periods (3+ hours) optimal when combined with stable forecasts [12]. To minimize them:

- Use stochastic optimization with scenario averaging, which lowers sensitivity to forecast errors; in the cited study it reduced grid costs by up to 2.9% compared with deterministic approaches [12].

- Implement the Scenario Distribution Change (SDC) metric to measure temporal consistency in your probabilistic forecasts [12].

- Balance commitment periods with forecast stability rather than defaulting to frequent updates [12].

Experimental Protocols

Protocol 1: Implementing Parallelized Data Preprocessing with MPI4Py

Purpose: To significantly reduce computational costs in data preprocessing and modeling stages using parallel computing concepts [11].

Materials:

- Computing cluster or multi-processor system

- MPI4Py library installed

- Dataset for preprocessing and modeling

Procedure:

- Initialize MPI environment: Set up the Message Passing Interface with the required number of processors

- Data partitioning: Distribute the dataset across available processors using scatter operations

- Parallel preprocessing: Each processor independently preprocesses its assigned data subset

- Model training implementation:

- For data parallelism: Each processor computes gradients on its data subset

- For model parallelism: Distribute model components across processors

- Synchronization: Use MPI gather operations to combine results

- Performance comparison: Compare execution time and accuracy against serial implementation

Validation: The approach was applied to COVID-19 data from Tennessee, with promising reductions in computational cost [11].
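The scatter, preprocess, and gather steps of this procedure can be sketched with mpi4py's object-based `scatter` and `gather` calls. The records and the `preprocess` step are hypothetical placeholders, and the `_SerialComm` class is an illustrative stand-in (not part of mpi4py) so the script still runs on a single process when mpi4py is not installed.

```python
"""Scatter -> preprocess -> gather with mpi4py (sketch).

Launch with e.g. `mpiexec -n 4 python preprocess_mpi.py`.
"""
import math

try:
    from mpi4py import MPI
    comm = MPI.COMM_WORLD
except ImportError:
    class _SerialComm:
        """Hypothetical stand-in mimicking the few mpi4py calls used below."""

        def Get_rank(self):
            return 0

        def Get_size(self):
            return 1

        def scatter(self, chunks, root=0):
            return chunks[0]

        def gather(self, obj, root=0):
            return [obj]

    comm = _SerialComm()

rank, size = comm.Get_rank(), comm.Get_size()


def split_evenly(data, parts):
    """Contiguous chunks whose sizes differ by at most one (simple load balancing)."""
    k, r = divmod(len(data), parts)
    return [data[i * k + min(i, r):(i + 1) * k + min(i + 1, r)] for i in range(parts)]


def preprocess(chunk):
    """Placeholder step: drop missing values and log-transform counts."""
    return [math.log1p(x) for x in chunk if x is not None]


# Only the root rank holds the raw data (hypothetical daily case counts).
chunks = split_evenly([12, None, 40, 7, 0, 95, None, 3], size) if rank == 0 else None
local_result = preprocess(comm.scatter(chunks, root=0))
gathered = comm.gather(local_result, root=0)  # list on root, None elsewhere

if rank == 0:
    processed = [value for part in gathered for value in part]
    print(f"{size} rank(s) produced {len(processed)} records")
```

Contiguous chunks keep the original record order after gathering; a round-robin split would balance heterogeneous records better but scramble the order.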

Protocol 2: Optimizing Neural Network Architecture under Parametric Constraints

Purpose: To simultaneously improve accuracy and reduce computational cost in materials property prediction tasks using iBRNet architecture [10].

Materials:

- Composition-based numerical vectors representing elemental fractions

- Training datasets (OQMD, AFLOWLIB, Materials Project, or JARVIS)

- Deep learning framework (TensorFlow/PyTorch)

Procedure:

- Data preparation:

- Extract composition-based features (86-dimensional vectors)

- Remove duplicates, keeping most stable structures

- Split data with stratification (81:9:10 ratio for training:validation:test)

- Model architecture construction:

- Implement branched skip connections in initial layers

- Add residual connections after each stack

- Use LeakyReLU activation functions

- Employ multiple callback functions (early stopping, learning rate schedulers)

- Training configuration:

- Use multiple schedulers for better convergence

- Implement adaptive checkpointing with specialization

- Monitor for signs of overfitting and negative transfer

- Evaluation:

- Compare against traditional ML (Random Forest, SVM) and DL models (ElemNet, CGCNN)

- Measure training time, parameter count, and prediction accuracy

Expected Results: iBRNet demonstrated fewer parameters, faster training time with better convergence, and superior accuracy across multiple materials property datasets [10].
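The data-preparation step can be sketched as follows. Two choices are assumptions rather than details from [10]: "most stable" is taken to mean the lowest formation energy per composition, and stratification of a continuous target is done by splitting within quantile bins of that target. The compositions and energies are randomly generated placeholders.

```python
import random
from collections import defaultdict


def deduplicate(entries):
    """Keep, per composition, the entry with the lowest formation energy."""
    best = {}
    for comp, e_form in entries:
        if comp not in best or e_form < best[comp]:
            best[comp] = e_form
    return list(best.items())


def stratified_split(entries, ratios=(0.81, 0.09, 0.10), n_bins=10, seed=0):
    """Split train/val/test within quantile bins of the target value."""
    rng = random.Random(seed)
    ordered = sorted(entries, key=lambda item: item[1])
    bins = defaultdict(list)
    for rank, item in enumerate(ordered):
        bins[rank * n_bins // len(ordered)].append(item)
    train, val, test = [], [], []
    for members in bins.values():
        rng.shuffle(members)
        n_train = round(len(members) * ratios[0])
        n_val = round(len(members) * ratios[1])
        train += members[:n_train]
        val += members[n_train:n_train + n_val]
        test += members[n_train + n_val:]
    return train, val, test


# Hypothetical data: 1,200 entries covering 1,000 distinct compositions.
gen = random.Random(42)
raw = [(f"Comp{i % 1000}", gen.uniform(-3.0, 0.0)) for i in range(1200)]
unique = deduplicate(raw)
train, val, test = stratified_split(unique)
print(len(unique), len(train), len(val), len(test))  # 1000 810 90 100
```

Stratifying on the target keeps rare extreme values represented in all three splits, which matters when the test set is used to compare models across the full property range.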

Research Reagent Solutions

Table: Computational Frameworks for Managing Accuracy-Cost Trade-offs

| Reagent/Framework | Function | Application Context | Key Benefit |
|---|---|---|---|
| MPI4Py | Parallelizes data preprocessing and model training | Large-scale data analysis, COVID-19 modeling [11] | Flexibility in Python data processing libraries with significant speedup |
| iBRNet | Deep regression neural network with branched skip connections | Materials property prediction, drug discovery [10] | Simultaneously improves accuracy while reducing parameters and training time |
| Convex Relaxation | Substitutes combinatorial objectives with tractable convex sets | Sparse PCA, clustering, latent variable models [9] | Provides computationally efficient algorithms with quantifiable statistical penalty |
| Coreset Constructions | Compresses data to small weighted summaries | Clustering, mixture models [9] | Enables near-optimal solutions with reduced computational burden |
| Stochastic Composite Likelihoods | Interpolates between full and pseudo-likelihood | Learning to rank, structured estimation [9] | Provides explicit trade-off between computational efficiency and statistical accuracy |
| Scenario Distribution Change (SDC) Metric | Measures temporal consistency of probabilistic forecasts | Energy management systems with switching costs [12] | Enables better balance between commitment periods and forecast stability |

The integration of artificial intelligence (AI) and complex computational models has begun to redefine preclinical drug discovery. While these tools promise to slash timelines and reduce costs, the explosive growth in computational demand is creating a new set of challenges. The infrastructure, energy, and expertise required to support this new paradigm are straining research budgets and timelines, creating a critical tension between the pursuit of accuracy and the realities of operational efficiency. This technical support center provides actionable guides and FAQs to help researchers navigate these growing pains and optimize their computational workflows.

The State of Computational Demand: Key Data

The tables below summarize the quantitative pressures facing the sector, from market growth to the direct impact on research and development (R&D).

Table 1: Market Growth and Financial Impact of AI in Pharma & Biotech

| Metric | Reported Value (2024/2025) | Projected Value | Key Implication for Preclinical Research |
|---|---|---|---|
| Global AI in Drug Discovery Market [14] | USD 6.3 billion (2024) | USD 16.5 billion by 2034 (CAGR 10.1%) | Rapid market expansion signals increased competition for computational resources and talent. |
| AI Spending in Pharma Industry [15] | - | ~$3 billion by 2025 | Reflects a surge in adoption to reduce the hefty time and costs of drug development. |
| Annual Value from AI for Pharma [15] | - | $350B - $410B annually by 2025 | Highlights the immense potential return, justifying upfront computational investments. |
| Preclinical CRO Market [16] | USD 6.76 billion (2025) | USD 12.21 billion by 2032 (CAGR 8.82%) | Outsourcing to specialized CROs is a growing strategy to manage complex, compute-heavy work. |
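The growth rates in Table 1 follow the usual compound annual growth rate (CAGR) definition, which makes them easy to sanity-check from the table's own endpoints, with $n$ the number of years (10 for 2024 to 2034, 7 for 2025 to 2032):

$$
\mathrm{CAGR} = \left(\frac{V_{\mathrm{end}}}{V_{\mathrm{start}}}\right)^{1/n} - 1,
\qquad
\left(\frac{16.5}{6.3}\right)^{1/10} - 1 \approx 0.101,
\qquad
\left(\frac{12.21}{6.76}\right)^{1/7} - 1 \approx 0.088
$$

Both results agree with the stated 10.1% and 8.82% to within rounding.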

Table 2: Computational Demand's Direct Impact on R&D Timelines and Budgets

| R&D Stage | Traditional Challenge | Promise of AI/Compute | Computational Cost & Risk |
|---|---|---|---|
| Drug Discovery | Takes 14.6 years and ~$2.6B on average to bring a new drug to market [15]. | AI can reduce discovery costs by up to 40% and slash development timelines from 5 years to 12-18 months [15]. | Training models for target ID and molecular design requires massive GPU clusters, creating high infrastructure costs [17]. |
| Preclinical Research | Preclinical phase typically takes 1-2 years [18]. Accounts for part of the ~$43M average out-of-pocket non-clinical costs [18]. | AI-driven in silico toxicology can cut preclinical timelines by up to 30% and reduce animal studies [14]. | High-throughput screening and complex multi-omics data integration require scalable cloud or cluster solutions, straining IT budgets [14] [16]. |
| Overall R&D | Clinical trials alone account for ~68% of total out-of-pocket R&D expenditures [18]. | AI is projected to generate $25B in savings in clinical development alone [15]. | Global AI infrastructure demand is rapidly outpacing supply, stressing power grids and requiring trillions in investment [17]. |

Frequently Asked Questions (FAQs) and Troubleshooting Guides

FAQ 1: Our AI models for molecular design are delivering high accuracy, but the training costs are consuming over half our cloud budget. How can we reduce these costs without completely sacrificing model performance?

This is a classic accuracy-efficiency trade-off. The goal is to find a "sweet spot" where performance remains acceptable for your specific use case while computational demands are drastically reduced.

Methodology: A Tiered Optimization Protocol

- Profile and Baseline: Begin by profiling your current model's resource consumption (GPU hours, memory) and establishing its baseline performance (e.g., AUC, enrichment factor).

- Architecture Simplification: Experiment with simpler, more efficient neural network architectures (e.g., lighter-weight CNNs, graph networks) that are known for their parameter efficiency [19].

- Pre-Trained Models: Leverage transfer learning. Start with a model pre-trained on a large, general biochemical dataset and fine-tune it on your specific, smaller dataset. This requires significantly less compute than training from scratch.

- Precision Reduction: Implement mixed-precision training, which runs most operations in 16-bit floating point (FP16 or BF16) while keeping a 32-bit master copy of the weights. On supported hardware this reduces memory usage and speeds up training, usually with little or no loss in accuracy [20].

- Hardware-Aware Optimization: For deployment, export the trained model with hardware-appropriate quantization (e.g., GPTQ for NVIDIA GPUs, or the GGUF format used by llama.cpp for CPUs). One reported benchmark found that 4-bit quantization reduces VRAM usage by over 40%, although dequantization overhead can slow inference on some older GPUs; for CPU-based deployment, GGUF models showed an 18x speedup in inference throughput [20].
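The arithmetic behind the precision-reduction step can be checked without any ML framework. The sketch below assumes a hypothetical 350-million-parameter model and uses Python's struct module (format code "e", IEEE 754 half precision) as a stand-in for FP16 hardware. It estimates weight memory at different precisions and shows why mixed-precision recipes typically add loss scaling: a gradient of 1e-8 rounds to zero in FP16 unless it is scaled up before the cast.

```python
import struct

def to_fp16(x: float) -> float:
    """Round a Python float (FP64) to IEEE 754 half precision and back."""
    return struct.unpack("<e", struct.pack("<e", x))[0]

def weight_memory_gib(n_params: int, bytes_per_param: float) -> float:
    """Memory for the weights alone (no activations or optimizer state)."""
    return n_params * bytes_per_param / 2**30

N_PARAMS = 350_000_000  # hypothetical model size, for illustration only

for label, nbytes in [("fp32", 4), ("fp16/bf16", 2), ("4-bit", 0.5)]:
    print(f"{label:>9}: {weight_memory_gib(N_PARAMS, nbytes):.2f} GiB")

# Rounding: FP16 keeps only about 3 significant decimal digits.
print(to_fp16(0.1))          # 0.0999755859375

# Underflow: a tiny gradient vanishes when cast to FP16 ...
grad = 1e-8
print(to_fp16(grad))         # 0.0

# ... but survives if multiplied by a loss scale before the cast and
# divided back out in higher precision afterwards.
LOSS_SCALE = 1024.0
print(to_fp16(grad * LOSS_SCALE) / LOSS_SCALE)
```

The 4-bit line covers weight storage only; the dequantization overhead mentioned above is not modeled here.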

FAQ 2: We are overwhelmed by the volume and variety of data (genomics, proteomics, imaging) in our preclinical workflows. What is a robust methodology for integrating these multi-omics data without requiring a supercomputer?

Effective multi-omics integration requires a strategic, step-wise approach to avoid computational bottlenecks.

Methodology: A Staged Multi-Omics Data Integration Pipeline

Data Preprocessing and Feature Selection:

- Tool: Use established data-processing libraries (e.g., scikit-learn in Python).

- Protocol: Independently preprocess each data modality (genomic, proteomic, etc.). This includes normalization, handling missing values, and, most critically, dimensionality reduction (e.g., using Principal Component Analysis - PCA) to extract the most informative features before integration. This step dramatically reduces the computational load for downstream models.

Intermediate Data Integration:

- Tool: Employ multi-omics integration frameworks like MOFA (Multi-Omics Factor Analysis).

- Protocol: Use MOFA to identify the common sources of variation across your different preprocessed data modalities. This model is designed to handle high-dimensional data and provides a lower-dimensional latent representation that captures the essential signal from all data types. This step is more computationally efficient than trying to train a single large model on the raw, combined data.

Downstream Predictive Modeling:

- Tool: Standard machine learning libraries (e.g., XGBoost, simple neural networks).

- Protocol: Use the latent factors from MOFA as the input features for your final predictive model (e.g., for patient stratification or toxicity prediction). Because the input is now a compact, integrated representation, the computational cost of this final step is manageable on standard hardware [14].
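As a dependency-free illustration of the first stage only, the sketch below mean-imputes missing values, keeps the k highest-variance features of each modality, z-scores them, and concatenates the modalities per sample. A simple variance filter stands in for PCA here purely to avoid third-party imports; the function names, toy data, and choice of k are hypothetical. In practice the per-modality output would feed a factor model such as MOFA rather than go straight into a predictor.

```python
from statistics import mean, pstdev

def preprocess_modality(rows, k):
    """Mean-impute, keep the k highest-variance features, then z-score them.

    rows: one list per sample; None marks a missing measurement.
    Returns one list per sample with the k selected, standardized features.
    """
    n_features = len(rows[0])
    columns = []
    for j in range(n_features):
        observed = [r[j] for r in rows if r[j] is not None]
        fill = mean(observed)  # assumes each feature has at least one observed value
        columns.append([fill if r[j] is None else r[j] for r in rows])

    # Rank on raw variance: after z-scoring every feature would have variance 1.
    top = sorted(range(n_features), key=lambda j: pstdev(columns[j]), reverse=True)[:k]

    standardized = []
    for j in top:
        mu, sd = mean(columns[j]), pstdev(columns[j]) or 1.0  # guard constant features
        standardized.append([(v - mu) / sd for v in columns[j]])
    return [list(sample) for sample in zip(*standardized)]

def integrate(modalities, k_per_modality):
    """Preprocess each modality independently, then concatenate features per sample."""
    processed = [preprocess_modality(m, k) for m, k in zip(modalities, k_per_modality)]
    return [sum(parts, []) for parts in zip(*processed)]

# Toy data: 3 samples; genomics has a missing value, proteomics a constant feature.
genomics = [[1.0, 10.0, 0.5], [2.0, None, 0.5], [3.0, 30.0, 0.6]]
proteomics = [[5.0, 0.0], [5.0, 1.0], [5.0, 2.0]]
features = integrate([genomics, proteomics], k_per_modality=[1, 1])
print(features)
```

Because each modality is reduced before concatenation, the integrated matrix grows with the chosen k values rather than with the raw feature counts.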

FAQ 3: How can we realistically incorporate quantum computing into our preclinical computational roadmap, given its early stage?

While fault-tolerant quantum computers are still years away, a practical and forward-looking approach is to explore Quantum-Hybrid Algorithms available through cloud-based Quantum-as-a-Service (QaaS) platforms.

Methodology: Piloting Quantum Computing for Molecular Simulation

- Problem Identification: Select a specific, well-scoped problem that is a known bottleneck because its exact classical solution scales poorly. A prime candidate is calculating the electronic structure of a small molecule or a critical protein-ligand interaction, a task that is exceptionally challenging for classical computers at high accuracy [21].

- Algorithm Selection: Choose a variational hybrid quantum-classical algorithm like the Variational Quantum Eigensolver (VQE). This algorithm splits the workload: the quantum processor prepares and measures parameterized quantum states (using qubits), while a classical computer optimizes the parameters. Because it relies on short quantum circuits, this approach is better suited to today's noisy hardware than algorithms that require long, error-corrected circuits [21].

- Platform and Execution: Use a cloud QaaS platform such as IBM Quantum, Microsoft Azure Quantum, or Amazon Braket. Implement a simplified version of your chosen problem and run it on available quantum processors. The goal of this pilot is not immediate production use but to build internal expertise, benchmark performance against classical methods, and understand the potential scaling advantages for when the hardware matures [21].

- Analysis: Compare the results and computational resource requirements of the quantum-hybrid approach with your current classical methods for the same problem. Document the trade-offs in accuracy, time, and cost.
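To make the division of labor concrete before touching real hardware, the sketch below runs the VQE loop on a hypothetical one-qubit Hamiltonian H = a·Z + b·X. The "quantum" step (the energy of the parameterized state Ry(θ)|0⟩) is simulated exactly in plain Python, the classical step is gradient descent using the parameter-shift rule, and the result is compared with exact diagonalization, mirroring the Analysis step above. On a QaaS platform the energy function would be replaced by circuit executions through the provider's SDK, which is not shown here.

```python
import math

def energy(theta: float, a: float, b: float) -> float:
    """Quantum step (simulated exactly): <psi|H|psi> for H = a*Z + b*X.

    |psi(theta)> = Ry(theta)|0> = [cos(theta/2), sin(theta/2)].
    On real hardware this value would be estimated from repeated measurements.
    """
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    expect_z = c * c - s * s   # <Z> = cos(theta)
    expect_x = 2 * c * s       # <X> = sin(theta)
    return a * expect_z + b * expect_x

def vqe(a: float, b: float, theta: float = 0.1, lr: float = 0.5, steps: int = 200):
    """Classical step: gradient descent on theta using the parameter-shift rule."""
    for _ in range(steps):
        grad = (energy(theta + math.pi / 2, a, b) - energy(theta - math.pi / 2, a, b)) / 2
        theta -= lr * grad
    return theta, energy(theta, a, b)

A, B = 0.5, 0.3                     # hypothetical Hamiltonian coefficients
theta_opt, e_vqe = vqe(A, B)
e_exact = -math.sqrt(A**2 + B**2)   # lowest eigenvalue of [[a, b], [b, -a]]
print(f"VQE: {e_vqe:.6f}  exact: {e_exact:.6f}")
```

For realistic molecules the classical reference is itself expensive, which is exactly the accuracy, time, and cost comparison the pilot is meant to document.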

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Computational and Experimental Reagents for Modern Preclinical Research

| Item | Function in Preclinical Research | Relevance to Cost-Accuracy Trade-offs |
|---|---|---|
| AlphaFold 3 & OpenFold3 [15] [17] | AI models for highly accurate protein structure and protein-DNA interaction prediction. | Reduces the need for expensive, time-consuming experimental methods like crystallography, though running complex predictions requires substantial GPU computation [17]. |
| CETSA (Cellular Thermal Shift Assay) [3] | An experimental method to validate direct drug-target engagement in intact cells, providing physiologically relevant confirmation. | Provides high-quality, mechanistic data early on, de-risking projects and preventing costly late-stage failures due to lack of efficacy. Justifies computational predictions with empirical evidence [3]. |
| In Silico Toxicology Platforms (e.g., DeepTox) [14] | AI-powered tools that predict compound toxicity from chemical structure, using deep neural networks. | Cuts preclinical timelines by up to 30% and reduces reliance on in vivo studies, aligning with the "3Rs" and saving significant resources [14]. |
| Patient-Derived Xenograft (PDX) Models [16] | In vivo models where human tumor tissue is implanted into mice, retaining key characteristics of the original cancer. | Offers high predictive accuracy for oncology drug efficacy, but is expensive and low-throughput. Used strategically to validate the most promising candidates from in silico screens [16]. |
| QLoRA (Quantized Low-Rank Adaptation) [20] | A fine-tuning technique that efficiently adapts large AI models to new tasks with minimal memory overhead. | A key technical solution for managing compute costs. Allows researchers to specialize powerful models for their specific domain without the exorbitant cost of full retraining [20]. |

Workflow and Relationship Visualizations

The following diagrams, generated with Graphviz, illustrate core concepts and workflows discussed in this guide.

Diagram 1: Core Conflict of Computational Growth

Diagram 2: Multi-Omics Data Integration Workflow

Diagram 3: Optimization Pathways for Cost vs. Accuracy

Frequently Asked Questions (FAQs)

What are log-space calculations and why are they used in drug discovery? Log-space calculations involve performing arithmetic operations using the logarithms of values instead of the values themselves. They are essential in computational drug discovery when dealing with extremely small probabilities, such as those found in statistical models and machine learning algorithms. Working in log-space helps prevent numerical underflow, where numbers become smaller than the computer can represent, effectively becoming zero and causing calculations to fail [22].

I keep getting -inf or NaN as results from my model. What is happening?

This is a classic sign of numerical underflow. It occurs when a probability calculation multiplies many small numbers together: the product becomes too small to be represented as a floating-point number and underflows to zero. Taking the logarithm of zero then yields negative infinity (-inf), and -inf turns into NaN (Not a Number) as soon as it meets an operation such as -inf - (-inf) or 0 * -inf, after which the NaN propagates through the rest of the calculation. The solution is to refactor your calculations to work entirely in log-space, using operations like logsumexp for addition [22].

My results are inconsistent when comparing floating-point and log-space calculations. Why?

This is likely due to the inherent approximate nature of standard floating-point datatypes like FLOAT or REAL in SQL, or float in Python/NumPy. These types sacrifice exact precision for a wide range of magnitudes and can introduce small rounding errors [23]. When these tiny errors are compounded through many operations—especially in iterative algorithms—they can lead to significant inaccuracies. Using log-space calculations with high-precision floating-point types (e.g., FLOAT(53)/double precision) or fixed-precision types (e.g., DECIMAL) for critical comparisons can mitigate this [23].
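A minimal illustration of compounding rounding error, using only Python's standard library: adding 0.1 ten times in binary floating point misses 1.0, while math.fsum (which tracks the lost low-order bits) and the decimal module (exact base-10 arithmetic, analogous to SQL DECIMAL) both return exactly 1.

```python
import math
from decimal import Decimal

values = [0.1] * 10

naive = sum(values)                              # accumulates binary rounding error
compensated = math.fsum(values)                  # correctly rounded floating-point sum
exact = sum(Decimal("0.1") for _ in range(10))   # base-10 arithmetic, like SQL DECIMAL

print(naive)        # 0.9999999999999999
print(compensated)  # 1.0
print(exact)        # 1.0
```

The error here is a single unit in the last place, but in iterative algorithms such discrepancies accumulate and can flip comparisons against thresholds.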

Is there a performance cost to using log-space calculations?

Yes, this is a key computational trade-off. Log-space calculations replace simple multiplication with addition (which is fast), but they replace addition with the more computationally expensive logsumexp operation. This trade-off exchanges raw speed for numerical stability and accuracy. The performance impact is generally acceptable given the alternative of failed or incorrect computations, but it should be monitored in performance-critical applications [22].

Troubleshooting Guides

Problem: Numerical Underflow in Probabilistic Models

Symptoms: Your script outputs -inf, NaN, or zero for calculations that should return valid, albeit very small, probabilities.

Diagnosis: You are directly multiplying a long chain of probabilities, each less than 1.0.

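Solution: Transition your entire calculation pipeline to log-space: replace each product of probabilities with a sum of their logarithms, and convert back with exp() only when a linear-space value is actually needed and representable.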

Verification: Re-run your model with a small, known dataset where you can calculate the correct result by hand or using high-precision arithmetic. The log-space result should match the logarithm of the expected probability.
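The diagnosis and verification steps can be reproduced in a few lines. The sketch below assumes 1,000 independent terms, each with a hypothetical probability of 1e-5: the linear-space product underflows to 0.0, the log-space sum stays finite, and Python's decimal module, whose exponent range is far wider than a double's, supplies the high-precision reference.

```python
import math
from decimal import Decimal

probs = [1e-5] * 1000    # hypothetical per-term probabilities

# Linear space: the true product is 1e-5000, far below the smallest double (~4.9e-324).
product = 1.0
for p in probs:
    product *= p
print(product)           # 0.0

# Log space: multiply by adding logarithms; fsum keeps the long sum accurate.
log_product = math.fsum(math.log(p) for p in probs)

# Reference: exact product in Decimal, then its natural log (28 significant digits).
reference = float((Decimal("1e-5") ** 1000).ln())
print(log_product, reference)
```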

Problem: Significant Rounding Errors in Summation

Symptoms: Summing many small numbers in log-space yields a result with low accuracy compared to a reference value.

Diagnosis: You are using the naive method to calculate log(a + b) given log(a) and log(b).

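Solution: Implement the log-sum-exp trick to preserve numerical precision [22].

The trick computes log(a + b) as m + log1p(exp(min(log a, log b) - m)), where m = max(log a, log b). Factoring out the largest exponent keeps every exponentiated term at or below 1.0, which prevents overflow in the exp calculation and stops the sum from underflowing to zero.

Verification: Test the implementation with pairs of numbers that span a large range of magnitudes (e.g., log_a = -1000, log_b = -1200). The stable version should return approximately -1000.0, while the naive method underflows: exp(-1000) evaluates to 0.0 in double precision, so taking its logarithm yields -inf in NumPy (Python's math.log raises a domain error instead).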
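A minimal sketch of both approaches for two terms, using only Python's math module (the function names are hypothetical). The naive version leaves log space by exponentiating; because Python's math.log raises an error on 0.0 rather than returning -inf as NumPy does, it maps that case to -inf explicitly. The stable version factors out the larger exponent and uses log1p for the small remaining correction.

```python
import math

def naive_log_add(log_a: float, log_b: float) -> float:
    """log(a + b) computed by leaving log space; fails when exp() underflows."""
    total = math.exp(log_a) + math.exp(log_b)
    return math.log(total) if total > 0.0 else -math.inf  # mimic NumPy's log(0) = -inf

def stable_log_add(log_a: float, log_b: float) -> float:
    """log(a + b) = m + log1p(exp(smaller - m)), where m = max(log_a, log_b)."""
    hi, lo = max(log_a, log_b), min(log_a, log_b)
    if hi == -math.inf:       # both terms are exactly zero probability
        return -math.inf
    return hi + math.log1p(math.exp(lo - hi))   # exp argument <= 0, so no overflow

print(naive_log_add(-1000.0, -1200.0))    # -inf
print(stable_log_add(-1000.0, -1200.0))   # -1000.0
```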

Quantitative Data Comparison

The table below compares the outcomes of different computational approaches for handling probabilities, highlighting the trade-offs.

Table 1: Comparison of Computational Approaches for Probability Calculations

| Computational Approach | Typical Use Case | Key Advantage | Key Disadvantage (Hidden Cost) | Result for e^-1000 + e^-1200 |
|---|---|---|---|---|
| Linear-Space (Standard) | Simple, well-conditioned problems | Intuitive, direct computation | High risk of numerical underflow/overflow | 0.0 (both terms underflow) |
| Log-Space (Naive) | Multiplicative models (e.g., HMMs) | Prevents underflow in multiplication | Inaccurate for addition operations | -inf (exp underflows before the log; calculation fails) |
| Log-Space (Stable Log-Sum-Exp) | Critical summation in log-space (e.g., log(a+b)) | Prevents underflow and preserves precision | Increased computational overhead | ≈ -1000.0 in log space (correct, stable result) |

Experimental Protocol: Implementing Stable Log-Space Calculations

This protocol provides a step-by-step methodology for integrating stable log-space calculations into a drug discovery pipeline, such as a molecular docking score analysis.

1. Problem Identification and Scope

- Objective: Accurately aggregate the log-likelihood scores of multiple molecular conformations without numerical failure.

- Background: The probability of a binding pose is often a product of thousands of tiny terms, so computing it directly in linear space underflows.

- Input: A list of log-likelihoods or log-scores from a virtual screening tool.

2. Algorithm Selection and Implementation

- Core Operation: For a list of log-values [l1, l2, ..., ln], compute log(exp(l1) + exp(l2) + ... + exp(ln)) stably.

- Recommended Algorithm: Vectorized Log-Sum-Exp.

- Justification: The subtraction of the maximum value (max_log_val) ensures that the largest term exponentiated is 1.0, preventing overflow and improving the precision of the sum of the smaller terms [22].

3. Validation and Benchmarking

- Create a Ground Truth Dataset: Use a small set of numbers that can be summed accurately using high-precision arithmetic (e.g., Python's decimal module).

- Procedure:

- Calculate the true sum and its logarithm using the ground truth method.

- Calculate the result by applying the stable_logsumexp function to the logarithms of the numbers.

- Compare the two results. The absolute difference should be within an acceptable tolerance for your application (e.g., 1e-12).

- Performance Profiling: Measure the execution time of the stable_logsumexp function against a naive linear-space sum for large arrays (e.g., N > 1,000,000) to quantify the computational overhead.
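One way to carry out steps 2 and 3 with the standard library alone is sketched below. The stable_logsumexp name follows the protocol text; its body, the Decimal-based reference, the test numbers, and the timing setup are illustrative assumptions rather than a prescribed implementation. "Vectorized" is approximated here by a single pass over a Python list; for arrays with millions of entries, a NumPy-based routine such as scipy.special.logsumexp is the usual choice.

```python
import math
import time
from decimal import Decimal, localcontext

def stable_logsumexp(log_values):
    """Return log(sum(exp(l) for l in log_values)) without overflow or underflow.

    log_values: a non-empty list of log-space numbers (may contain -inf).
    """
    max_log_val = max(log_values)
    if max_log_val == -math.inf:       # every term has zero probability
        return -math.inf
    # After the shift the largest term is exp(0) = 1.0; fsum limits rounding error.
    return max_log_val + math.log(math.fsum(math.exp(l - max_log_val) for l in log_values))

def reference_logsum(numbers, digits=50):
    """Ground truth: add the numbers exactly in Decimal, then take the natural log."""
    with localcontext() as ctx:
        ctx.prec = digits
        total = sum(Decimal(x) for x in numbers)   # Decimal(float) converts exactly
        return float(total.ln())

# Validation on a small hypothetical dataset spanning several orders of magnitude.
numbers = [3.3e-29, 4e-30, 1e-30, 2.5e-31, 1e-45]
estimate = stable_logsumexp([math.log(x) for x in numbers])
error = abs(estimate - reference_logsum(numbers))
print(f"absolute error: {error:.1e}")   # compare with the chosen tolerance, e.g. 1e-12

# Profiling: stable log-space sum vs. a naive linear-space sum.
N = 1_000_000
logs = [-1.0 - (i % 1000) / 100.0 for i in range(N)]
linear = [math.exp(l) for l in logs]

start = time.perf_counter()
stable_logsumexp(logs)
t_log = time.perf_counter() - start

start = time.perf_counter()
sum(linear)
t_linear = time.perf_counter() - start
print(f"log-space: {t_log:.3f} s  linear-space: {t_linear:.3f} s")
```

The timing ratio, not the absolute numbers, is the useful output: it quantifies the overhead paid for stability on your hardware.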

Experimental Workflow Visualization

The following diagram illustrates the logical workflow for diagnosing and resolving numerical instability in a computational experiment, positioning log-space calculation as a key decision point.

The Scientist's Toolkit: Key Research Reagents & Computational Solutions

This table details essential computational "reagents" for managing the cost-accuracy trade-off in data-intensive research.

Table 2: Essential Computational Tools for Stable Numerical Analysis

| Item / Solution | Function / Purpose | Role in Managing Trade-offs |
|---|---|---|
| Log-Sum-Exp Trick | Stably computes the logarithm of a sum of exponentials. | The primary method for achieving numerical accuracy for addition in log-space, at the cost of increased computation [22]. |
| High-Precision Float (FLOAT(53)/double) | A floating-point datatype that uses 64 bits for storage (versus 32 for single precision). | Reduces rounding errors compared to single-precision floats, providing a middle ground for problems where full log-space calculation is unnecessary [23]. |
| Fixed-Precision Numeric (DECIMAL/NUMERIC) | A datatype that represents numbers with a fixed number of digits before and after the decimal point. | Avoids binary rounding errors in exact decimal arithmetic such as financial calculations, but has a smaller range and can be slower for complex computations [23]. |
| Specialized Math Functions (log1p, expm1) | Accurately compute log(1 + x) and exp(x) - 1 for very small x. | Crucial for maintaining precision in critical steps of stable algorithms (e.g., in the log-sum-exp trick), preventing loss of significant digits [22]. |
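Why log1p and expm1 matter shows up clearly for a value near the limits of double precision: 1 + 1e-17 rounds to exactly 1.0, so the textbook formulas lose the entire result, while the specialized functions in Python's math module keep it.

```python
import math

x = 1e-17

print(math.log(1 + x))    # 0.0   (1 + x rounds to 1.0 before the log is taken)
print(math.log1p(x))      # 1e-17
print(math.exp(x) - 1)    # 0.0   (exp(x) rounds to 1.0)
print(math.expm1(x))      # 1e-17
```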

From Theory to Therapy: Implementing Cost-Effective AI and Quantum Models

Frequently Asked Questions (FAQs)

Q1: What is the core difference between traditional supervised learning and deep learning when dealing with drug development data?

A: The core difference lies in feature engineering and data structure handling. Traditional supervised learning requires researchers to manually identify and extract relevant features (e.g., molecular descriptors) from structured data before the model can learn. In contrast, deep learning uses neural networks with multiple layers to automatically learn hierarchical features directly from raw, unstructured data, such as molecular structures or biological sequences [24].

This makes deep learning particularly powerful for complex tasks in drug development like predicting drug-target interactions from raw genomic data or analyzing medical images, as it eliminates the bottleneck of manual feature engineering. However, this advantage comes at the cost of requiring large datasets and significant computational power [25] [24].

Q2: My project has very limited labeled data for a specific therapeutic area. Which strategy can help me avoid overfitting and build an accurate model?

A: Transfer learning is the most suitable strategy for this common scenario. It allows you to leverage knowledge from a pre-trained model (the "source task")—often trained on a large, general dataset—and adapt it to your specific, data-scarce "target task" [26].

For example, you can take a model pre-trained on a large public chemogenomics database and fine-tune it on your small, proprietary dataset for a specific protein target. This approach significantly reduces the computational cost and data requirements compared to training a model from scratch, while also improving the model's ability to generalize from limited data [26] [27]. A study in the manufacturing sector showed that transfer learning could improve accuracy by up to 88% while reducing computational cost and training time by 56% compared to traditional methods [26].

Q3: How do I decide when the complexity of a deep learning model is justified over a simpler supervised model?

A: The decision should be based on a trade-off between your project's requirements for accuracy, the nature and volume of your data, and the computational resources available. The following table summarizes key decision factors:

| Decision Factor | Prefer Traditional Supervised Learning | Prefer Deep Learning |
|---|---|---|
| Data Type | Structured, tabular data (e.g., assay results, physicochemical properties) [24] | Unstructured data (e.g., molecular graphs, medical images, text) [24] |
| Data Volume | Small to medium-sized datasets [24] | Large-scale datasets (thousands to millions of samples) [25] [24] |
| Computational Resources | Limited resources; standard computers [24] | Access to GPUs/TPUs and significant computing power [25] [24] |
| Need for Interpretability | High (e.g., for regulatory submissions or hypothesis generation) [25] [28] | Lower (can tolerate "black box" models for performance) [25] |

Deep learning is justified when facing highly complex, non-linear problems (e.g., de novo molecular generation) where its superior performance outweighs the costs and interpretability limitations [25] [29].

Q4: What are the specific steps to implement a transfer learning protocol for a biological image classification task?

A: Implementing transfer learning involves a systematic, multi-step process:

- Select a Pre-trained Model: Choose a model trained on a large, diverse dataset relevant to your domain. For image-based tasks in biology, a convolutional neural network (CNN) like ResNet or EfficientNet, pre-trained on a general image corpus (e.g., ImageNet), is a common and effective starting point [26].

- Freeze Layers: Preserve the knowledge of the pre-trained model by freezing the weights of its initial layers. These layers contain general feature detectors (e.g., for edges, textures) that are likely useful for your new task [26].

- Add New Layers: Replace the final, task-specific layers of the pre-trained model (the "head") with new layers tailored to your specific classification problem (e.g., a new classifier with the number of outputs matching your biological classes) [26].

- Fine-tune: Train the model on your target biological image dataset. Two common approaches exist:

- Train only the new head: Keep the base model frozen and only train the newly added layers. This is faster and reduces overfitting risk with very small datasets.

- Fine-tune the entire model: Unfreeze all layers and train the entire model at a low learning rate. This can yield higher performance if your target dataset is sufficiently large [26] [27].
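The framework-specific code for these steps depends on the library, so the sketch below shows only the control flow of the "train only the new head" option, in plain Python so that it runs without a deep learning framework. The frozen_base function is a hypothetical stand-in for a pre-trained network's feature layers (its weights are fixed constants, not learned here), and the head is a logistic-regression layer trained by gradient descent on a tiny hypothetical dataset. In PyTorch the equivalent freeze is typically done by setting requires_grad = False on the base model's parameters and passing only the head's parameters to the optimizer.

```python
import math

# Hypothetical "pre-trained" base: fixed weights that are never updated (frozen).
BASE_WEIGHTS = [[0.8, -0.2], [0.1, 0.9], [-0.5, 0.5]]

def frozen_base(x):
    """Map a raw 2-D input to 3 features with a fixed linear layer + ReLU."""
    return [max(0.0, w0 * x[0] + w1 * x[1]) for w0, w1 in BASE_WEIGHTS]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train_head(samples, labels, lr=0.5, epochs=500):
    """Train only the new classifier head (logistic regression) on frozen features."""
    feats = [frozen_base(x) for x in samples]   # computed once: the base never changes
    w, b = [0.0] * len(feats[0]), 0.0
    for _ in range(epochs):
        for f, y in zip(feats, labels):
            err = sigmoid(sum(wi * fi for wi, fi in zip(w, f)) + b) - y  # dLoss/dlogit
            w = [wi - lr * err * fi for wi, fi in zip(w, f)]
            b -= lr * err
    return w, b

def predict(w, b, x):
    return sigmoid(sum(wi * fi for wi, fi in zip(w, frozen_base(x))) + b)

# Tiny hypothetical target dataset: class 1 when the second coordinate dominates.
X = [[1.0, 0.1], [0.9, 0.2], [0.1, 1.0], [0.2, 0.8]]
Y = [0, 0, 1, 1]
w, b = train_head(X, Y)
print([round(predict(w, b, x)) for x in X])
```

Because the base features are computed once and reused every epoch, head-only training touches a small fraction of the parameters, which is where its speed and lower overfitting risk come from.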

Q5: What is "negative transfer" and how can I avoid it in my experiments?

A: Negative transfer is a critical issue in transfer learning where the knowledge from the source task actually reduces the model's performance on the target task instead of improving it. This typically occurs when the source and target tasks are not sufficiently related or compatible [26].

To avoid negative transfer:

- Assess Task Similarity: Carefully evaluate the relationship between your source and target tasks. The source model should have learned features that are fundamentally useful for the target. For instance, a model trained on natural images might not be a good source for a sonar signal processing task.

- Choose Source Models Wisely: Prioritize pre-trained models developed in a related biological or chemical context over completely general models when possible.

- Validate Early: Conduct small-scale pilot experiments to verify that transfer learning provides a performance boost before committing extensive resources [26].

Troubleshooting Guides

Problem: Model is Overfitting on a Small, Labeled Drug Discovery Dataset

Symptoms: The model achieves near-perfect accuracy on the training data but performs poorly on the validation set or new, unseen data.

Solutions:

- Solution 1: Apply Transfer Learning

- Action: Instead of training a model from scratch, use a pre-trained model and fine-tune it on your small dataset. This leverages generalized features learned from a larger, related dataset.

- Rationale: This is a primary use case for transfer learning, as it directly addresses the problem of limited data by starting from a robust, pre-existing knowledge base [26].

- Solution 2: Intensify Regularization

- Action: Increase the strength of regularization techniques (e.g., L1/L2 regularization, dropout layers) in your model.

- Rationale: These techniques penalize model complexity and prevent the network from memorizing the noise in the small training dataset, thereby encouraging it to learn more generalizable patterns [25].

- Solution 3: Use a Simpler Model

- Action: If you are using a deep neural network, consider switching to a traditional supervised learning algorithm like Random Forest or Support Vector Machine.

- Rationale: Traditional models often have lower capacity and are less prone to overfitting on smaller datasets. Their simplicity can be an advantage when data is scarce [24].

Problem: Exceptionally High Computational Cost and Long Training Times for a Deep Learning Model

Symptoms: Model training takes days or weeks, consumes excessive GPU memory, or is prohibitively expensive.

Solutions:

- Solution 1: Leverage Transfer Learning

- Action: Utilize a pre-trained model and fine-tune it for your specific task.

- Rationale: Fine-tuning an existing model requires far less computational power and time than training a large deep learning model from scratch, as you are not starting the learning process from a random initialization [26].

- Solution 2: Optimize Model Architecture

- Action: Explore more efficient neural network architectures (e.g., MobileNet, EfficientNet) that are designed to provide good performance with fewer parameters and computations.

- Rationale: This reduces the fundamental computational load of the model [25].

- Solution 3: Implement Hardware and Software Optimizations

- Action: Ensure you are using optimized software libraries (e.g., TensorFlow, PyTorch) and leverage hardware accelerators like GPUs or TPUs, which are specifically designed for the matrix operations central to deep learning [24].

Experimental Protocols & Workflows

Protocol 1: A Standard Workflow for Comparative Algorithm Evaluation

This protocol provides a methodology for empirically comparing supervised, deep, and transfer learning approaches on a specific drug discovery task.

1. Objective: To determine the optimal machine learning strategy that balances predictive accuracy and computational cost for a given problem (e.g., compound activity prediction).

2. Research Reagent Solutions (Key Materials):

| Item | Function & Specification |
|---|---|
| Curated Dataset | The target task dataset, split into training, validation, and test sets. Should represent the real-world data distribution. |
| Source Pre-trained Model | For transfer learning. A model like a CNN pre-trained on ImageNet for image data, or a chemical language model pre-trained on PubChem for molecular data [26]. |
| ML Framework | Software environment like Python with Scikit-learn (for traditional ML) and PyTorch/TensorFlow (for DL and TL). |
| Computational Infrastructure | CPUs and, for DL/TL, GPUs (e.g., NVIDIA V100, A100), with monitoring in place to track training time and cost. |

3. Methodology:

- Data Preprocessing: Prepare your target dataset. For traditional ML, perform feature engineering and scaling. For DL and TL, perform data normalization and augmentation if applicable.

- Model Selection & Setup:

- Supervised (SML): Train a suite of models (e.g., Random Forest, SVM, XGBoost) on the engineered features.

- Deep Learning (DL): Design and train a neural network (e.g., Multi-Layer Perceptron, CNN) from scratch on the raw or minimally processed data.

- Transfer Learning (TL): Select a pre-trained model, freeze its base layers, replace the final classification head, and fine-tune on the target dataset [26].

- Training & Evaluation: Train all models on the same training set. Evaluate on the same held-out test set using predefined metrics (e.g., AUC-ROC, Accuracy, F1-score). Crucially, record the computational cost for each model (e.g., total training time, GPU hours, energy consumption).

- Analysis: Compare the models based on the trade-off between their achieved performance on the test set and the associated computational cost.
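For the Analysis step, one simple way to make the trade-off explicit is to keep only the Pareto-optimal models: those for which no other model is both at least as accurate and at least as cheap. The sketch below assumes each run has been summarized as a name, a test AUC, and compute hours; all numbers are placeholders, not benchmark results.

```python
from typing import NamedTuple

class Run(NamedTuple):
    name: str
    auc: float            # higher is better
    compute_hours: float  # lower is better (GPU or CPU hours, as recorded)

def pareto_front(runs):
    """Return runs not dominated by any other run, cheapest first."""
    def dominates(a, b):
        # a is at least as good on both axes and strictly better on at least one.
        return (a.auc >= b.auc and a.compute_hours <= b.compute_hours
                and (a.auc > b.auc or a.compute_hours < b.compute_hours))
    front = [r for r in runs if not any(dominates(o, r) for o in runs)]
    return sorted(front, key=lambda r: r.compute_hours)

# Placeholder values for illustration only.
runs = [
    Run("random_forest", 0.81, 0.5),
    Run("xgboost", 0.83, 0.8),
    Run("mlp_from_scratch", 0.82, 12.0),
    Run("cnn_from_scratch", 0.86, 40.0),
    Run("transfer_finetune", 0.87, 6.0),
]
for r in pareto_front(runs):
    print(f"{r.name:<18} AUC={r.auc:.2f}  compute-h={r.compute_hours}")
```

Models off the front can be discarded outright; choosing among the remaining ones is then an explicit decision about how much extra compute a given accuracy gain is worth.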

The logical relationship and decision flow for selecting a strategy can be visualized as follows:

Protocol 2: Implementing a Transfer Learning Pipeline for Medical Image Analysis

This protocol details the steps for applying transfer learning to a task like classifying histological images, a common application in drug safety assessment.

1. Objective: To develop a high-accuracy image classifier for a specific tissue morphology using a limited set of labeled medical images.

2. Methodology:

- Step 1: Source Model Selection. Choose a pre-trained CNN model (e.g., ResNet-50) that has been trained on a large-scale natural image dataset (e.g., ImageNet). The low-level features it has learned (edges, textures) are often transferable to medical images [26] [27].

- Step 2: Base Model Freezing. Remove the original final classification layer of the pre-trained model. Freeze the weights of all the remaining convolutional layers to preserve the learned feature extractors [26].

- Step 3: Custom Classifier Addition. Add a new, randomly initialized classifier on top of the frozen base. This typically consists of one or more fully connected (Dense) layers, with the final layer having a number of units equal to your specific medical image classes [26].

- Step 4: Classifier Training. Train only the newly added layers on your target medical image dataset. Use a standard optimizer and loss function (e.g., categorical cross-entropy).

- Step 5: Optional Fine-tuning. For potential performance gains, unfreeze some of the higher-level layers of the base model and continue training the entire model at a very low learning rate. This allows the model to subtly adapt its more abstract features to the medical domain [26].

The workflow for this protocol is structured as follows:

Quantitative Comparison of Algorithmic Strategies

The table below synthesizes key quantitative and qualitative factors to guide the selection of an algorithmic strategy, with a focus on the trade-off between computational cost and predictive accuracy.

| Factor | Supervised Learning (Traditional) | Deep Learning | Transfer Learning |
|---|---|---|---|
| Typical Data Volume | Small to Medium [24] | Very Large [25] [24] | Small to Medium (target task) [26] |
| Feature Engineering | Manual (required) [24] | Automatic [25] [24] | Automatic (leveraged from source) [26] |
| Computational Cost | Low [24] | Very High [25] [24] | Moderate (significantly lower than training DL from scratch) [26] |
| Training Time | Fast [24] | Slow (hours to days) [24] | Fast (relative to DL) [26] |
| Interpretability | High [25] [24] | Low ("Black Box") [25] [28] | Low to Moderate (inherits DL traits) [25] |
| Best for Data Type | Structured/Tabular [24] | Unstructured (Images, Text) [24] | Target data is scarce or related to a large source domain [26] |
| Key Advantage | Simplicity, Transparency, Works with small data [24] | State-of-the-art accuracy on complex tasks [25] | Reduces data & computational needs; improves generalization on small datasets [26] |

Hybrid AI architectures represent a transformative approach in computational science, strategically merging the data-driven power of generative models with the robust reliability of physics-based simulations. This integration creates systems capable of navigating the complex trade-offs between computational expense and predictive accuracy, a central challenge in scientific computing. By leveraging the Newtonian paradigm (first-principles physics) alongside the Keplerian paradigm (data-driven discovery), researchers can achieve substantial gains in both speed and reliability in applications ranging from drug discovery to advanced engineering simulations [30].

The fundamental value proposition lies in creating a synergistic relationship where each component compensates for the other's limitations. Generative models can explore vast design spaces efficiently, while physics-based simulations provide grounding in fundamental scientific principles, ensuring generated solutions remain physically plausible and scientifically valid. This technical support center provides essential guidance for researchers implementing these sophisticated architectures in their experimental workflows.

Troubleshooting Common Implementation Challenges

Data Integration & Workflow Issues

Q: Our generative model produces chemically valid molecules, but physics-based simulations reject most for poor binding affinity. How can we improve target engagement?

A: This indicates a disconnect between your generative and evaluation components. Implement a nested active learning framework with iterative refinement:

- Problem Analysis: The generative model lacks sufficient feedback from the physics-based oracle (e.g., molecular docking). It's operating in a vacuum without learning from its failures.

- Solution Protocol:

- Establish two nested active learning cycles as demonstrated in successful drug discovery workflows [31].

- In the inner cycle, use fast chemoinformatic oracles (drug-likeness, synthetic accessibility filters) to pre-screen generated molecules. Fine-tune your generative model (e.g., Variational Autoencoder) with molecules passing these filters.

- In the outer cycle, periodically evaluate accumulated molecules using the slower, high-fidelity physics-based oracle (e.g., molecular dynamics, docking simulations).

- Transfer molecules meeting physics-based thresholds to a permanent set for subsequent generative model fine-tuning.

- Technical Configuration:

- Fine-tune your generative model first on a target-specific training set to establish baseline affinity knowledge.

- Set threshold criteria for both cycles based on your accuracy requirements (e.g., docking score < -9 kcal/mol for the outer cycle).

- This creates a continuous feedback loop where the generative component progressively learns to propose candidates with higher probability of physics-based validation.
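The control flow of the two nested cycles can be sketched as below. Every component is a hypothetical stand-in: generate() samples "molecules" that are just numbers, cheap_filter() plays the chemoinformatic oracle, docking_score() plays the slow physics-based oracle, and fine_tune() merely shifts the generator's sampling distribution toward the given samples. The sketch illustrates the loop structure only; it is not the implementation of the published workflow [31].

```python
import random
from statistics import mean

rng = random.Random(0)  # fixed seed so the run is reproducible

def generate(mu, n=50):
    """Hypothetical generator: each 'molecule' is one number drawn around mu."""
    return [rng.gauss(mu, 2.0) for _ in range(n)]

def cheap_filter(x):
    """Fast chemoinformatic oracle stand-in (e.g., drug-likeness, synthesizability)."""
    return 0.0 <= x <= 10.0

def docking_score(x):
    """Slow physics-based oracle stand-in; more negative is better (kcal/mol-like)."""
    return -x - 2.0

def fine_tune(samples):
    """Stand-in for fine-tuning: move the generator toward the given samples."""
    return mean(samples)

DOCKING_THRESHOLD = -9.0   # outer-cycle acceptance criterion
mu = 5.0                   # generator state after target-specific fine-tuning (assumed)
permanent_set = []

for outer in range(5):                      # outer cycle: expensive oracle
    temporal_set = []
    for inner in range(3):                  # inner cycle: cheap oracle only
        passing = [x for x in generate(mu) if cheap_filter(x)]
        temporal_set.extend(passing)
        if passing:
            mu = fine_tune(passing)
    hits = [x for x in temporal_set if docking_score(x) < DOCKING_THRESHOLD]
    permanent_set.extend(hits)
    if permanent_set:
        mu = fine_tune(permanent_set)
    print(f"outer {outer}: {len(hits)} hits, generator mean {mu:.2f}")
```

The expensive oracle runs once per outer iteration on the accumulated pool rather than on every generated candidate, which is where the cost saving of the nested design comes from.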

Q: Our hybrid search for relevant simulation data returns inconsistent results, sometimes missing critical previous work. How can we improve retrieval accuracy?

A: You're likely experiencing the "weakest link" phenomenon identified in hybrid search architectures [32].

- Problem Analysis: A single weak retrieval path (lexical or semantic) can degrade overall system performance. Simple fusion methods like Reciprocal Rank Fusion (RRF) may combine results from the different paradigms inadequately.

- Solution Protocol:

- First, conduct path-wise quality assessment before fusion. Evaluate the standalone performance of each retrieval method (Full-Text Search, Sparse/Dense Vector Search) on your specific dataset.

- Replace simplistic RRF with more sophisticated Tensor-based Re-ranking Fusion (TRF), which has demonstrated higher efficacy by offering semantic power at reduced computational cost [32].

- For technical document retrieval, ensure you're combining at least one lexical method (e.g., BM25-based Full-Text Search) for keyword precision with one semantic method (e.g., Dense Vector Search) for contextual understanding.

- Implementation Check:

- Verify your document chunking strategy; inappropriate chunk sizes can significantly degrade retrieval quality.

- Ensure your embedding model is domain-appropriate (scientific text vs. general language).

- Balance the weight given to each path in your fusion algorithm based on your initial quality assessment.
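The path-weighting step can be sketched as weighted Reciprocal Rank Fusion. Each path contributes w / (k + rank) per document, with k = 60 as in the original RRF formulation. This is the RRF baseline with per-path weights added. It is not TRF, which is a different, re-ranking-based method and is not reproduced here. The document IDs and the weights are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List

def weighted_rrf(rankings: Dict[str, List[str]], weights: Dict[str, float], k: int = 60) -> List[str]:
    """Weighted Reciprocal Rank Fusion: score(d) = sum over paths of w_path / (k + rank_path(d))."""
    scores: Dict[str, float] = defaultdict(float)
    for path, ranked_ids in rankings.items():
        w = weights.get(path, 1.0)             # plain RRF is the special case w = 1 for every path
        for rank, doc_id in enumerate(ranked_ids, start=1):
            scores[doc_id] += w / (k + rank)
    # Highest fused score first; ties broken alphabetically for reproducibility.
    return sorted(scores, key=lambda d: (-scores[d], d))

# Hypothetical result lists from two retrieval paths for one query.
rankings = {
    "bm25":  ["doc_md_params", "doc_cfd_mesh", "doc_fep_protocol"],
    "dense": ["doc_fep_protocol", "doc_md_params", "doc_docking_review"],
}
# Hypothetical path weights, e.g. proportional to each path's standalone recall@10 on labelled queries.
weights = {"bm25": 0.8, "dense": 0.4}
print(weighted_rrf(rankings, weights))
# ['doc_md_params', 'doc_fep_protocol', 'doc_cfd_mesh', 'doc_docking_review']
```

Deriving the weights from the path-wise quality assessment keeps a weak path from pulling irrelevant documents to the top. Setting every weight to 1 recovers unweighted RRF for comparison.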

Performance & Optimization Problems

Q: Our physics-based simulations remain computationally prohibitive despite AI integration, creating bottlenecks. How can we achieve the promised 1000x speed improvements?

A: Significant speedups require architectural changes, not just incremental optimization.

- Problem Analysis: You may be using AI as a peripheral component rather than deeply integrating it to replace the most expensive computational segments.

- Solution Protocol:

- Replace Core Solvers: Deploy deep learning models that act as surrogates for numerical solvers. For example, in Computational Fluid Dynamics (CFD), platforms like BeyondMath's generative physics are reported to execute full-scale 3D transient models in under 100 seconds, tasks that traditionally require hours or days on supercomputers [33].

- Employ AI-Accelerated Pre- and Post-Processing: Use AI for mesh generation and result interpretation, which can consume up to 70% of engineering time in traditional workflows.

- Leverage Specialized Hardware: Run AI inference on GPU clusters while maintaining physics simulations on HPC-optimized CPUs, using efficient workload orchestration.

- Configuration Settings:

- For aerodynamic simulations, implement a digital wind tunnel architecture that operates without a traditional solver mesh [33].

- Utilize AI models trained on high-fidelity simulation data to predict system behavior without executing full simulations for every design iteration.

- Benchmark against published figures: 500x faster processing in CFD workflows and 1000x shorter simulation times have been reported in industrial applications [33].
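The pre- and post-processing point above is an instance of Amdahl's law, a standard result rather than something taken from [33]. Accelerating only one stage caps the end-to-end gain by the time spent in the stages left untouched. The fractions below are illustrative, not measurements.

```python
def end_to_end_speedup(accelerated_fraction: float, component_speedup: float) -> float:
    """Amdahl's law: overall speedup when only `accelerated_fraction` of wall-clock time gets faster."""
    if not 0.0 <= accelerated_fraction <= 1.0:
        raise ValueError("accelerated_fraction must be in [0, 1]")
    if component_speedup <= 0:
        raise ValueError("component_speedup must be positive")
    return 1.0 / ((1.0 - accelerated_fraction) + accelerated_fraction / component_speedup)

# Illustrative scenario: meshing and post-processing take 70% of the workflow, the solver 30%.
solver_only = end_to_end_speedup(0.30, 1000.0)   # solver made 1000x faster, pre/post unchanged
whole_chain = end_to_end_speedup(0.99, 1000.0)   # 99% of the workflow accelerated 1000x
print(f"{solver_only:.2f}x vs {whole_chain:.0f}x")  # 1.43x vs 91x
```

Even a 1000x faster solver yields well under 2x end to end if the surrounding steps stay manual. Headline speedups therefore require accelerating the whole chain.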

Q: Our hybrid model performs well on training data but generalizes poorly to novel molecular structures. How can we improve out-of-distribution performance?

A: This suggests overfitting and insufficient exploration of the chemical space.

- Problem Analysis: The model is likely exploiting shortcuts in the data rather than learning underlying physical principles. The generative component may be confined to a limited region of chemical space.

- Solution Protocol:

- Enhance Diversity Enforcement: In active learning cycles, explicitly reward dissimilarity from the training set and already-selected molecules. Incorporate a novelty metric into your selection criteria.

- Implement Stochastic Generators: Ensure your generative model (e.g., a VAE) samples broadly from the latent space rather than concentrating on a few high-probability modes (mode collapse).

- Physics-Based Regularization: Add penalty terms to your loss function that enforce physical constraints (e.g., energy conservation, symmetry properties) regardless of the training data distribution.

- Progressive Difficulty: Start with broader, less restrictive filters in early active learning cycles, gradually tightening criteria as the model improves.

- Validation Method:

- Test generalization on held-out datasets with distinctly different molecular scaffolds.

- Verify that the model can generate structures beyond the "training-like" examples while maintaining physical plausibility and target affinity.
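Diversity enforcement can be sketched as greedy selection that trades a predicted score against novelty. Novelty is measured as one minus the maximum Tanimoto similarity to the training set and to molecules already picked. The sketch assumes fingerprints have already been computed (e.g., Morgan/ECFP bits from RDKit) and are stored as sets of on-bit indices. The bit sets, scores, and the 0.5 novelty weight are hypothetical.

```python
from typing import Dict, FrozenSet, List

Fingerprint = FrozenSet[int]   # indices of "on" bits, e.g. from a Morgan/ECFP fingerprint

def tanimoto(a: Fingerprint, b: Fingerprint) -> float:
    union = len(a | b)
    return len(a & b) / union if union else 1.0

def select_diverse(
    candidates: Dict[str, Fingerprint],
    scores: Dict[str, float],              # higher = better (e.g. normalised predicted affinity)
    training: List[Fingerprint],
    n: int,
    novelty_weight: float = 0.5,
) -> List[str]:
    """Greedy pick: utility = score + novelty_weight * (1 - max Tanimoto to training set and earlier picks)."""
    selected: List[str] = []
    remaining = sorted(candidates)         # sorted for deterministic tie-breaking
    while remaining and len(selected) < n:
        reference = training + [candidates[s] for s in selected]

        def utility(name: str) -> float:
            max_sim = max((tanimoto(candidates[name], fp) for fp in reference), default=0.0)
            return scores[name] + novelty_weight * (1.0 - max_sim)

        best = max(remaining, key=utility)
        selected.append(best)
        remaining.remove(best)
    return selected

# Toy example (hypothetical bit sets): A duplicates the training scaffold, B is a close analog, C is new.
training = [frozenset({1, 2, 3, 4})]
candidates = {"A": frozenset({1, 2, 3, 4}), "B": frozenset({1, 2, 3, 5}), "C": frozenset({7, 8, 9, 10})}
scores = {"A": 0.90, "B": 0.85, "C": 0.80}
picks = select_diverse(candidates, scores, training, n=2)
print(picks)  # ['C', 'B'] (ranking by score alone would pick A and B)
```

Setting novelty_weight to 0 recovers pure score ranking, so the weight is the knob for trading exploitation against exploration.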

Frequently Asked Questions (FAQs)

Q: In a resource-constrained environment, which component should we prioritize for accuracy: the generative model or the physics simulator?

A: Prioritize the physics simulator's accuracy. It serves as your ground-truth oracle, so inaccuracies here propagate through the entire learning loop. A simpler generative model paired with an accurate physics simulator will eventually learn correct structure-property relationships, whereas an excellent generative model coupled with a poor simulator will learn incorrect physics. With limited resources, consider multi-fidelity approaches: use a fast, approximate physics model for initial screening and reserve high-fidelity simulation for promising candidates [30].
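The multi-fidelity recommendation comes down to simple cost arithmetic. The per-candidate costs and the pass fraction below are hypothetical placeholders, not benchmarks.

```python
def funnel_cost(n: int, cost_fast: float, cost_high: float, pass_fraction: float) -> float:
    """Two-stage screen: fast model on all n candidates, high-fidelity model on the fraction that passes."""
    if not 0.0 <= pass_fraction <= 1.0:
        raise ValueError("pass_fraction must be in [0, 1]")
    return n * cost_fast + n * pass_fraction * cost_high

# Hypothetical per-candidate costs in GPU-hours; replace with your own measurements.
n = 1_000_000
high_fidelity_only = n * 1.0
two_stage = funnel_cost(n, cost_fast=0.001, cost_high=1.0, pass_fraction=0.01)
print(f"two-stage cost: {two_stage:,.0f} GPU-h, {high_fidelity_only / two_stage:.0f}x cheaper")
# two-stage cost: 11,000 GPU-h, 91x cheaper
```

The funnel only saves compute while cost_fast / cost_high + pass_fraction < 1. Any true hit that the fast stage rejects is never seen by the accurate model, so the fast model's recall matters as much as its cost.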

Q: How do we validate that our hybrid model isn't hallucinating physically impossible solutions?

A: Implement a three-tier validation strategy:

- Internal Consistency Checks: Ensure generated solutions obey fundamental conservation laws (mass, energy, momentum), ideally by encoding them directly in the model architecture or loss function.

- Multi-fidelity Verification: Cross-check AI predictions across different physical resolutions by comparing results from fast approximate models with high-fidelity simulations for a subset of cases.

- Experimental Validation: Whenever possible, conduct physical testing on a representative subset of AI-generated designs. In drug discovery, this means synthesizing and testing top candidates, as demonstrated in the CDK2 case study where 8 of 9 AI-generated molecules showed experimental activity [31].
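The first tier can also be run as an automated post-hoc residual check, even when the constraint is not built into the model. This sketch assumes a hypothetical surrogate that outputs signed mass flow rates through each boundary of a design, with inflow positive and outflow negative. It flags predictions whose net imbalance exceeds a tolerance. The 1% tolerance is an arbitrary placeholder.

```python
from typing import List, Sequence

def mass_imbalance(boundary_fluxes: Sequence[float]) -> float:
    """Net mass flux relative to total inflow; inflow is positive, outflow negative."""
    inflow = sum(f for f in boundary_fluxes if f > 0)
    if inflow == 0:
        raise ValueError("prediction has no inflow; imbalance is undefined")
    return abs(sum(boundary_fluxes)) / inflow

def flag_unphysical(predictions: List[Sequence[float]], tol: float = 0.01) -> List[int]:
    """Indices of predictions whose mass imbalance exceeds tol (1% by default, an arbitrary choice)."""
    return [i for i, fluxes in enumerate(predictions) if mass_imbalance(fluxes) > tol]

# Hypothetical surrogate outputs: signed mass flow rates (kg/s) through each boundary of one design.
predictions = [
    [2.0, -1.2, -0.8],    # balanced: 2.0 kg/s in, 2.0 kg/s out
    [2.0, -1.2, -0.5],    # 0.3 kg/s (15% of inflow) unaccounted for
    [1.0, 0.5, -1.495],   # 0.005 kg/s imbalance (about 0.3%), within tolerance
]
print(flag_unphysical(predictions))   # [1]
```

Flagged cases are natural candidates for the second tier, re-running them at high fidelity before any design decision rests on them.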

Q: What are the most critical metrics for evaluating the trade-off between computational cost and accuracy in hybrid architectures?

A: Track these key performance indicators simultaneously:

Table: Key Performance Indicators for Hybrid AI Architectures

| Metric Category | Specific Metrics | Target Values |
|---|---|---|
| Accuracy | Prediction vs. Ground Truth Error | <5% deviation from high-fidelity simulation |
| | Novelty of Generated Solutions | >30% structurally novel valid solutions |
| Efficiency | Simulation Time Reduction | 100-1000x faster than traditional methods [33] |
| | Number of Design Iterations | Ability to explore 10-100x more design options |
| Resource | Computational Cost per Iteration | Track reduction in CPU/GPU hours |
| | Memory Optimization | 42.76% fewer resources as demonstrated by TEECNet [33] |
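The accuracy, novelty, and efficiency rows can be computed and checked against their targets in a few lines. CPU/GPU-hour and memory tracking usually come from job accounting and are not sketched here. Novelty is measured by exact identifier match, a simplification. A structural-novelty metric would instead use a similarity threshold such as Tanimoto distance. All numbers below are hypothetical.

```python
from typing import Dict, Sequence, Set

def mean_relative_error(predicted: Sequence[float], reference: Sequence[float]) -> float:
    """Mean |pred - ref| / |ref| over cases re-run at high fidelity (reference values must be non-zero)."""
    if len(predicted) != len(reference) or not reference:
        raise ValueError("need equal-length, non-empty sequences")
    return sum(abs(p - r) / abs(r) for p, r in zip(predicted, reference)) / len(reference)

def novel_valid_fraction(generated: Sequence[str], training: Set[str], valid: Set[str]) -> float:
    """Share of generated items that are valid and not in the training set (exact-match novelty only)."""
    return sum(1 for g in generated if g in valid and g not in training) / len(generated)

def kpi_check(rel_error: float, novelty: float, baseline_s: float, hybrid_s: float) -> Dict[str, bool]:
    """Compare measured values with the table's target values."""
    speedup = baseline_s / hybrid_s
    return {
        "accuracy: <5% deviation": rel_error < 0.05,
        "novelty: >30% novel valid": novelty > 0.30,
        "efficiency: 100-1000x faster": 100 <= speedup <= 1000,
    }

# Hypothetical audit numbers, for illustration only.
err = mean_relative_error([10.2, 19.0, 30.9], [10.0, 20.0, 30.0])       # about 3.3%
nov = novel_valid_fraction(["m1", "m2", "m3", "m4"], training={"m1"}, valid={"m1", "m2", "m3"})  # 0.5
print(kpi_check(err, nov, baseline_s=86_400, hybrid_s=90))               # 960x speedup
```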

Q: How do regulatory agencies view AI-generated candidates in validated scientific workflows?

A: Regulatory attitudes are evolving rapidly. In January 2025 the FDA published draft guidance calling for detailed documentation of AI model architecture, inputs, outputs, and validation processes [34]. Key expectations include:

- Transparency and Explainability: AI systems must provide clear explanations for decisions affecting patient safety.

- Bias Mitigation: Rigorous testing to ensure equitable performance across demographic groups.

- Human Oversight: Ultimate human responsibility for critical decisions.

- Continuous Monitoring: Ongoing surveillance to maintain AI system performance.

The European Medicines Agency has similarly established AI offices and frameworks, issuing its first qualification opinion on an AI-based methodology (AIM-NASH) in March 2025 [34].

Experimental Protocols & Workflows

Standardized Protocol for Molecular Design

This protocol implements the nested active learning approach validated in successful hybrid AI drug discovery campaigns [31].

Workflow: Nested Active Learning for Molecular Design

Phase 1: Initialization & Data Preparation

- Data Representation: Convert training molecules to SMILES strings, then tokenize and one-hot encode for model input.

- Model Pre-training: Train your generative model (e.g., VAE) initially on a general molecular dataset to learn fundamental chemical principles.

- Target-Specific Fine-tuning: Further fine-tune the model on target-specific data to establish baseline affinity knowledge.
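The data-representation step of Phase 1 can be sketched as follows. It uses a simplified regex tokenizer of the kind commonly used for SMILES language models, plus padded one-hot encoding. The pattern, the "<pad>" token, and max_len are illustrative assumptions. Canonicalization (e.g., with RDKit) is assumed to happen upstream.

```python
import re
from typing import Dict, List

PAD = "<pad>"
# Simplified SMILES tokenizer: bracket atoms, two-letter organic-subset atoms (Br, Cl),
# two-digit ring closures (%nn), then single-character atoms, bonds, branches and digits.
SMILES_TOKEN = re.compile(r"(\[[^\]]+\]|Br|Cl|%\d{2}|[BCNOPSFIbcnops]|[()=#\-+\\/:~@?>*$.]|\d)")

def tokenize(smiles: str) -> List[str]:
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:          # guard against silently dropped characters
        raise ValueError(f"untokenizable characters in {smiles!r}")
    return tokens

def build_vocab(smiles_list: List[str]) -> Dict[str, int]:
    tokens = sorted({t for s in smiles_list for t in tokenize(s)})
    return {tok: i for i, tok in enumerate([PAD] + tokens)}   # index 0 reserved for padding

def one_hot(smiles: str, vocab: Dict[str, int], max_len: int) -> List[List[int]]:
    tokens = tokenize(smiles)
    if len(tokens) > max_len:
        raise ValueError(f"{smiles!r} has {len(tokens)} tokens > max_len={max_len}")
    tokens += [PAD] * (max_len - len(tokens))
    size = len(vocab)
    # One row per token position; a token missing from the vocabulary raises KeyError.
    return [[1 if vocab[t] == j else 0 for j in range(size)] for t in tokens]

training = ["CCO", "c1ccccc1Cl", "CC(=O)N[C@@H](C)C(=O)O"]
vocab = build_vocab(training)
x = one_hot("CCO", vocab, max_len=24)    # 24 x len(vocab) matrix with exactly one 1 per row
print(tokenize("c1ccccc1Cl"))            # ['c', '1', 'c', 'c', 'c', 'c', 'c', '1', 'Cl']
```

Matching "Br" and "Cl" before single letters keeps chlorine from being split into carbon plus a stray "l". Treating bracket atoms as single tokens keeps stereo and charge annotations intact.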

Phase 2: Nested Active Learning Cycles

- Inner Cycle (Chemical Optimization):

- Sample and generate new molecules from the current model.

- Evaluate generated molecules using fast chemoinformatic oracles (drug-likeness, synthetic accessibility, similarity filters).

- Fine-tune the model on the molecules meeting threshold criteria, which accumulate in the temporal-specific set.

- Repeat for a predetermined number of iterations (e.g., 5-10 cycles).

- Outer Cycle (Physics Validation):

- After the inner cycles complete, evaluate the accumulated molecules using physics-based oracles (molecular docking, MD simulations).

- Transfer molecules meeting physics-based thresholds to the permanent-specific set.

- Fine-tune the model on the permanent-specific set to reinforce successful design patterns.

- Repeat the entire nested process for multiple outer cycles (e.g., 3-5 cycles).

Phase 3: Candidate Selection & Validation

- Apply stringent filtration to select top candidates from the permanent set.

- Conduct intensive molecular modeling (e.g., PELE simulations, Absolute Binding Free Energy calculations).

- Validate through experimental synthesis and bioassays.

Protocol for Hybrid Engineering Simulation

This protocol leverages AI to accelerate traditional physics-based simulations in engineering applications [33].

Workflow: AI-Accelerated Engineering Simulation

Phase 1: Surrogate Model Development

- Data Generation: Run a diverse set of high-fidelity physics simulations (CFD, FEA) to create training data covering the design space of interest.

- Model Selection: Choose an appropriate architecture, such as Physics-Informed Neural Networks (PINNs) for embedding physical laws or surrogate CNNs for image-based simulation data.

- Training & Validation: Train the AI surrogate model to predict simulation outcomes, validating it against held-out high-fidelity simulation data.

Phase 2: AI-Driven Design Exploration

- Rapid Iteration: Use the trained surrogate model to evaluate thousands of design variations in minutes instead of days.

- Multi-objective Optimization: Simultaneously optimize for multiple performance criteria (e.g., aerodynamic efficiency, structural integrity, thermal management).

- Design Space Mapping: Identify promising regions of the design space for focused investigation.

Phase 3: High-Fidelity Validation

- Select top-performing designs from AI exploration for traditional high-fidelity simulation.

- Compare AI predictions with ground truth simulations to validate accuracy.

- If the discrepancy exceeds a predefined threshold, augment the training data and refine the surrogate model.
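The three phases can be sketched end to end on a one-parameter toy problem. Everything here is a hypothetical stand-in: expensive_simulation replaces a CFD/FEA run, a piecewise-linear interpolant replaces the PINN or CNN surrogate, and the 0.05 absolute-error tolerance is arbitrary. The refinement rule spends one new high-fidelity run at the midpoint of the interval where the held-out error is worst. It is one simple choice among many.

```python
import math
from bisect import bisect_left
from typing import Callable, List, Tuple

def expensive_simulation(x: float) -> float:
    """Hypothetical stand-in for a high-fidelity CFD/FEA run mapping one design parameter to a response."""
    return math.sin(3.0 * x) + 0.5 * x

class PiecewiseLinearSurrogate:
    """Deliberately simple surrogate; a PINN or CNN would take its place in practice."""
    def __init__(self, xs: List[float], ys: List[float]) -> None:
        pairs = sorted(zip(xs, ys))
        self.xs = [p[0] for p in pairs]
        self.ys = [p[1] for p in pairs]

    def predict(self, x: float) -> float:
        i = bisect_left(self.xs, x)
        if i == 0:
            return self.ys[0]
        if i == len(self.xs):
            return self.ys[-1]
        x0, x1, y0, y1 = self.xs[i - 1], self.xs[i], self.ys[i - 1], self.ys[i]
        return y0 + (y1 - y0) * (x - x0) / (x1 - x0)

def build_surrogate(sim: Callable[[float], float], lo: float, hi: float, n_init: int = 5,
                    n_val: int = 50, tol: float = 0.05, max_rounds: int = 50
                    ) -> Tuple[PiecewiseLinearSurrogate, int]:
    # Phase 1: initial high-fidelity runs spread evenly over the design space.
    xs = [lo + (hi - lo) * i / (n_init - 1) for i in range(n_init)]
    ys = [sim(x) for x in xs]
    # Held-out high-fidelity runs at interior points, used only for validation.
    val_xs = [lo + (hi - lo) * (i + 0.5) / n_val for i in range(n_val)]
    val_ys = [sim(x) for x in val_xs]
    for _ in range(max_rounds):
        model = PiecewiseLinearSurrogate(xs, ys)
        errors = [abs(model.predict(x) - y) for x, y in zip(val_xs, val_ys)]
        worst = max(range(n_val), key=errors.__getitem__)
        if errors[worst] <= tol:
            return model, len(xs)                # converged: surrogate meets the threshold
        # Phase 3 refinement: one new high-fidelity run mid-way across the worst interval.
        i = bisect_left(model.xs, val_xs[worst])
        x_new = 0.5 * (model.xs[i - 1] + model.xs[i])
        xs.append(x_new)
        ys.append(sim(x_new))
    raise RuntimeError("surrogate did not reach tolerance; add data or change the architecture")

model, n_runs = build_surrogate(expensive_simulation, lo=0.0, hi=2.0)
# Phase 2: cheap exploration of many candidate designs with the surrogate.
designs = [2.0 * k / 10_000 for k in range(10_001)]
best = max(designs, key=model.predict)
print(f"{n_runs} high-fidelity training runs; surrogate optimum near x = {best:.3f}")
```

The exploration step evaluates 10,001 designs using only the training runs plus the validation runs. The best candidate would then go back through Phase 3 for confirmation at full fidelity.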

Table: Essential Resources for Hybrid AI Research

| Resource Category | Specific Tools/Solutions | Function & Application |
|---|---|---|
| Generative Models | Variational Autoencoders (VAE) [31] | Molecular generation with continuous latent space for smooth interpolation |
| | Generative Adversarial Networks (GANs) | High-quality molecular generation (requires careful training to avoid mode collapse) |
| | Transformer-based Models [34] | Sequence-based generation leveraging large chemical language models |
| Physics Simulators | Molecular Dynamics (e.g., GROMACS, AMBER) | High-fidelity simulation of molecular motion and interactions |
| | Docking Software (e.g., AutoDock, Schrödinger) | Prediction of ligand binding poses and affinity |
| | CFD Solvers (e.g., OpenFOAM, ANSYS) [33] | Fluid dynamics simulation for engineering applications |
| Hybrid Frameworks | Active Learning Controllers | Manages iterative feedback between generative and physics components |
| | Tensor-based Re-ranking Fusion (TRF) [32] | Advanced method for combining multiple retrieval paradigms |
| | Physics-Informed Neural Networks (PINNs) [30] | Embeds physical laws directly into neural network loss functions |
| Infrastructure | GPU Clusters (NVIDIA) | Accelerates both AI training and physics simulations |
| | HPC Environments (AWS ParallelCluster) [35] | Managed environment for large-scale parallel computing |
| | Hybrid Search Databases (Infinity) [32] | Supports combined lexical and semantic retrieval for research data |

Visualization Standards for Accessible Scientific Communication

All diagrams and visualizations must comply with WCAG 2.1 AA contrast standards (minimum 4.5:1 for normal text) to ensure accessibility for researchers with visual impairments [36] [37]. The color palette for all diagrams is restricted to: #4285F4 (blue), #EA4335 (red), #FBBC05 (yellow), #34A853 (green), #FFFFFF (white), #F1F3F4 (light gray), #202124 (dark gray), #5F6368 (medium gray).

Implementation Guidelines:

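- For nodes containing text, explicitly set the fontcolor attribute to #202124 against light backgrounds (#F1F3F4, #FFFFFF, #FBBC05) and to #FFFFFF against #5F6368. White text does not reach 4.5:1 on #4285F4 (3.56:1), #EA4335 (3.92:1), or #34A853 (3.06:1). Use #202124 text on #4285F4 and #34A853 instead (4.52:1 and 5.27:1). Reserve #EA4335 for non-text elements or large text, since neither text color reaches 4.5:1 against it (#202124 gives 4.10:1).

- Use WebAIM's Contrast Checker or similar tools to validate all color combinations before publication.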

- Provide alternative text descriptions for all diagrams to support screen reader users.
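Color combinations can also be checked in a build script by computing the WCAG 2.x contrast ratio directly. The formula is (L1 + 0.05) / (L2 + 0.05), where L1 and L2 are the relative luminances of the lighter and darker colors. WCAG 2.1 writes the linearization threshold as 0.03928 rather than the sRGB value 0.04045 used below. For 8-bit channels both thresholds give identical results. Applied to this palette, the script shows, for example, that white text on #4285F4, #EA4335, or #34A853 falls below 4.5:1, while white on #5F6368 and #202124 on the light fills pass.

```python
def _linearize(channel: int) -> float:
    """sRGB 8-bit channel -> linear value, per the WCAG 2.x relative-luminance definition."""
    c = channel / 255
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4

def relative_luminance(hex_color: str) -> float:
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)

def contrast_ratio(fg: str, bg: str) -> float:
    lighter, darker = sorted((relative_luminance(fg), relative_luminance(bg)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)

# Text/background pairings drawn from the diagram palette.
PALETTE_PAIRS = (
    [("#202124", bg) for bg in ("#F1F3F4", "#FFFFFF", "#FBBC05", "#4285F4", "#34A853")]
    + [("#FFFFFF", bg) for bg in ("#4285F4", "#EA4335", "#34A853", "#5F6368")]
)
for fg, bg in PALETTE_PAIRS:
    ratio = contrast_ratio(fg, bg)
    verdict = "pass" if ratio >= 4.5 else "below 4.5:1 (large text or non-text use only if >= 3:1)"
    print(f"{fg} on {bg}: {ratio:.2f}:1 {verdict}")
```

Running a check like this in the diagram build catches palette regressions before publication, complementing a manual check with WebAIM's tool.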

By adhering to these troubleshooting guidelines, experimental protocols, and accessibility standards, research teams can effectively implement hybrid AI architectures that optimally balance computational cost with predictive accuracy across diverse scientific domains.

Frequently Asked Questions (FAQs)