Noise Resilience in Quantum Algorithms: A Comparative Analysis of VQE and Quantum Phase Estimation for Biomedical Applications


Abstract

This article provides a comprehensive comparative analysis of the Variational Quantum Eigensolver (VQE) and Quantum Phase Estimation (QPE) algorithms, focusing on their performance and resilience under the noisy conditions of current NISQ hardware. Targeting researchers and professionals in drug development, we explore the foundational principles, methodological applications in quantum chemistry, and advanced error-mitigation strategies for both algorithms. By synthesizing recent experimental validations and benchmarking studies, particularly for molecular ground-state energy calculations, we delineate the practical trade-offs between accuracy, resource requirements, and noise robustness. The conclusions offer a strategic outlook on selecting and optimizing these algorithms to accelerate computational tasks in biomedical research, such as molecular simulation and drug discovery.

Fundamental Principles and Intrinsic Noise Susceptibility of VQE and QPE

In the pursuit of quantum advantage for computational chemistry, two distinct algorithmic paradigms have emerged: the hybrid quantum-classical approach, exemplified by the Variational Quantum Eigensolver (VQE), and the purely quantum approach, epitomized by Quantum Phase Estimation (QPE). Their operational principles diverge significantly, especially in how they divide work between quantum and classical resources. This guide compares the two strategies using published experimental data, focusing on their performance, resource requirements, and resilience to the noise present on today's quantum hardware.

Core Operational Principles

Variational Quantum Eigensolver (VQE): A Hybrid Feedback Loop

The VQE operates on a hybrid quantum-classical principle where a quantum processor and a classical computer work in tandem through a closed-loop optimization [1] [2] [3].

- Quantum Subroutine: A parameterized quantum circuit (ansatz) is prepared on the quantum processor. This circuit is applied to an initial state (often the Hartree-Fock state) to generate a trial quantum state, \( |\psi(\vec{\theta})\rangle \).

- Measurement: The expectation value of the molecular Hamiltonian, \( \langle H(\vec{\theta})\rangle = \langle\psi(\vec{\theta})|H|\psi(\vec{\theta})\rangle \), is estimated by measuring the output state in various Pauli bases [4].

- Classical Optimization: The measured energy is fed to a classical optimizer. The optimizer proposes new parameters \( \vec{\theta}_{\text{new}} \) with the goal of minimizing the energy. These new parameters are then used in the next quantum circuit evaluation, closing the loop. This process iterates until the energy converges to a minimum [5].

A key feature of VQE is its inherent resilience to certain types of noise. Since the algorithm seeks the parameter set that produces the lowest energy, it can naturally compensate for coherent errors that are equivalent to a parameter shift [1].
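The feedback loop and the parameter-shift compensation described above can be sketched on a toy problem. This is a minimal illustration, not any cited group's implementation: the single-qubit Hamiltonian `H = Z + 0.5 X`, the `RY` ansatz, the `offset` over-rotation model, and the gradient-descent settings are all assumptions chosen for clarity, and expectation values are computed exactly rather than from shots.

```python
import numpy as np

# Pauli matrices and a toy single-qubit Hamiltonian (illustrative, not a molecule).
X = np.array([[0, 1], [1, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)
H = Z + 0.5 * X


def ansatz_state(theta, offset=0.0):
    """Apply RY(theta + offset) to |0>; `offset` models a coherent over-rotation."""
    angle = theta + offset
    return np.array([np.cos(angle / 2), np.sin(angle / 2)], dtype=complex)


def energy(theta, offset=0.0):
    """Exact expectation value <psi|H|psi> (no shot noise in this sketch)."""
    psi = ansatz_state(theta, offset)
    return float(np.real(np.vdot(psi, H @ psi)))


def run_vqe(offset=0.0, steps=200, lr=0.4, theta0=0.1):
    """Classical loop: gradient descent using parameter-shift gradients."""
    theta = theta0
    for _ in range(steps):
        grad = 0.5 * (energy(theta + np.pi / 2, offset)
                      - energy(theta - np.pi / 2, offset))
        theta -= lr * grad
    return theta, energy(theta, offset)


exact_ground = float(np.linalg.eigvalsh(H)[0])
theta_ideal, e_ideal = run_vqe(offset=0.0)
theta_shifted, e_shifted = run_vqe(offset=0.3)

print(f"exact ground energy : {exact_ground:.6f}")
print(f"VQE, no error       : theta={theta_ideal:.4f}, E={e_ideal:.6f}")
print(f"VQE, over-rotation  : theta={theta_shifted:.4f}, E={e_shifted:.6f}")
```

In this sketch the optimizer reaches the same energy with and without the over-rotation; only the optimal parameter moves, by the negative of the offset. Errors that cannot be written as a parameter shift (for example, depolarizing noise) are not absorbed this way.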

Quantum Phase Estimation (QPE): A Purely Quantum Protocol

In contrast, QPE is a purely quantum algorithm designed to provide a precise, direct measurement of an energy eigenvalue [6].

- Principle: Given a unitary operator \( U \) (derived from the system's Hamiltonian) and an approximate eigenstate \( |u\rangle \), QPE estimates the phase \( \phi \) in \( U|u\rangle = e^{2\pi i\phi}|u\rangle \), which is directly related to the energy.

- Execution: The algorithm requires a large register of coherent ancilla qubits. It employs the inverse Quantum Fourier Transform (IQFT) on the ancilla register after applying a series of controlled-\( U^{2^k} \) operations. Measuring the ancilla qubits yields a binary string representing the phase \( \phi \) to high precision [6] [7].

- Resource Intensity: Standard QPE demands deep circuits and long coherence times, making it highly susceptible to noise and largely impractical for current Noisy Intermediate-Scale Quantum (NISQ) devices [6] [7].
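The QPE steps listed above can be simulated classically for the ideal, noiseless case. The sketch below assumes the input is an exact eigenstate, so the system register factors out and only the ancilla register needs to be tracked. The function names are hypothetical, and the NumPy FFT stands in for the inverse QFT circuit.

```python
import numpy as np


def qpe_distribution(phi, n_ancilla):
    """Ideal ancilla measurement probabilities for textbook QPE on an exact eigenstate."""
    dim = 2 ** n_ancilla
    k = np.arange(dim)
    # After the Hadamards and the controlled-U^(2^j) ladder, the ancilla register
    # holds (1/sqrt(dim)) * sum_k exp(2*pi*i*phi*k) |k>.
    register = np.exp(2j * np.pi * phi * k) / np.sqrt(dim)
    # The inverse QFT maps |k> to (1/sqrt(dim)) * sum_j exp(-2*pi*i*j*k/dim) |j>,
    # which matches the sign convention of NumPy's forward FFT.
    amplitudes = np.fft.fft(register) / np.sqrt(dim)
    return np.abs(amplitudes) ** 2


def estimate_phase(phi, n_ancilla):
    """Return the most likely phase estimate and its probability."""
    probs = qpe_distribution(phi, n_ancilla)
    best = int(np.argmax(probs))
    return best / 2 ** n_ancilla, float(probs[best])


exact_estimate, exact_prob = estimate_phase(5 / 16, n_ancilla=4)
rough_estimate, rough_prob = estimate_phase(0.3, n_ancilla=6)
print(exact_estimate, exact_prob)
print(rough_estimate, rough_prob)
```

When the phase is exactly representable with the available ancilla bits, the outcome is deterministic; otherwise the probability spreads over neighboring bit strings, and each additional bit of precision requires doubling the power of the controlled unitary, which is where the circuit depth comes from.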

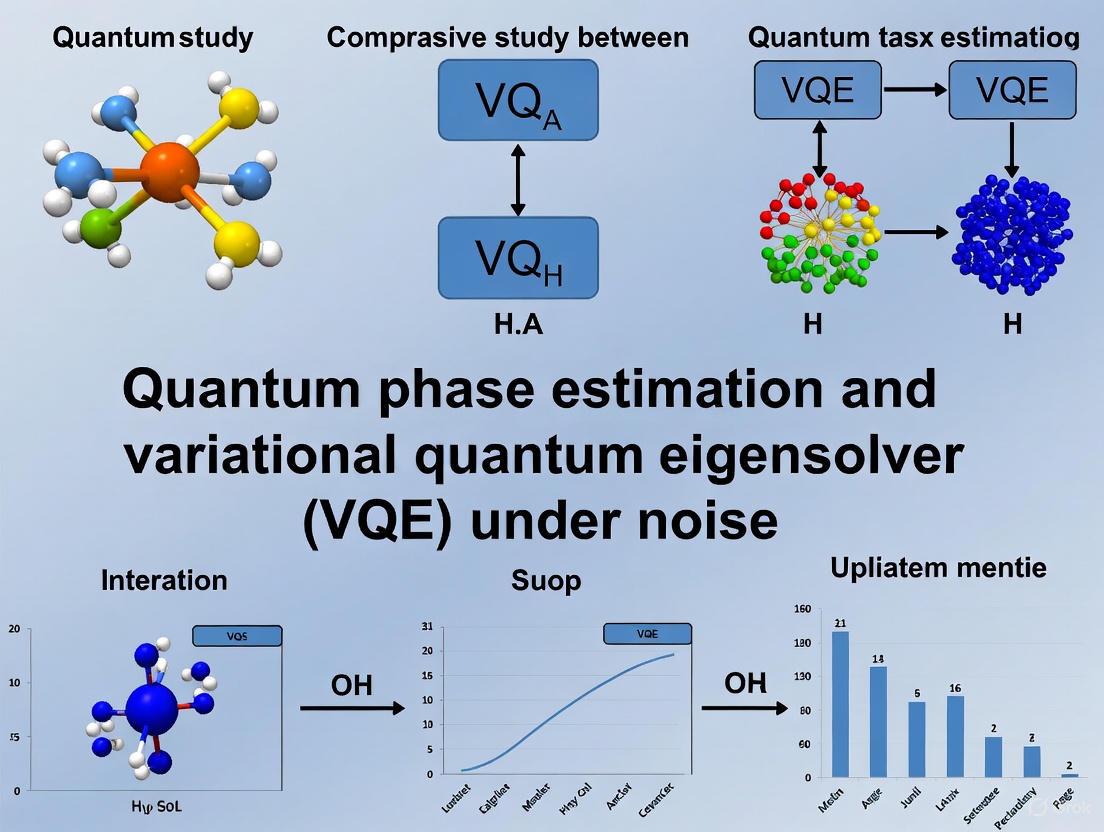

The following diagram illustrates the fundamental operational differences between these two algorithms.

Experimental Protocols & Performance Data

Key Experimental Implementations

Research groups have developed and tested various implementations of these algorithms to overcome NISQ-era limitations.

- Machine Learning-Enhanced VQE: Karim et al. used supervised learning on intermediate VQE optimization data to predict optimal circuit parameters. This approach demonstrated accelerated convergence and noise resilience on IBM quantum devices for H₂, H₃, and HeH⁺ molecules [1].

- Handover Iterative VQE (HiVQE): This algorithm hybridizes VQE with classical Selected Configuration Interaction (SCI). The quantum computer generates important electron configurations, while the classical computer constructs and diagonalizes the subspace Hamiltonian to determine the exact ground state within that subspace. This avoids the direct energy estimation pitfalls of standard VQE [3].

- Adaptive Windowed QPE (AWQPE): To mitigate QPE's resource demands, Shukla and Vedula proposed a modular algorithm. AWQPE uses small, independent blocks of control qubits to estimate multiple phase bits simultaneously within a "window," significantly reducing iterations and ancilla qubit requirements while incorporating robust classical post-processing [6].

- Quantum Phase Difference Estimation (QPDE): In a collaboration between Mitsubishi Chemical Group and Q-CTRL, a tensor-based QPDE algorithm was executed on IBM hardware. This method optimized gate operations, reducing CZ gates from 7,242 to 794 (a 90% reduction) for a 33-qubit demonstration, enabling a 5x increase in computational capacity [7].

Quantitative Performance Comparison

The table below summarizes key performance metrics from recent experimental studies, highlighting the trade-offs between the two approaches.

| Algorithm / Variant | System Tested | Key Metric | Performance Result | Experimental Platform |
|---|---|---|---|---|
| VQE (Standard) | BeH₂ Molecule [2] | Energy accuracy vs. perfect simulation | Unmitigated results on a 156-qubit processor were an order of magnitude less accurate than those from a mitigated 5-qubit device. | IBMQ Belem (5q) & IBM Fez (156q) |
| VQE (with T-REx mitigation) | BeH₂ Molecule [2] | Energy accuracy | T-REx error mitigation dramatically improved accuracy, making a smaller, older device outperform a larger, newer one. | IBMQ Belem (5q) |
| HiVQE | General Molecular Systems [3] | Measurement & Speed | Up to "thousands of times faster" than Pauli-word measurement in standard VQE. | Theory/Simulation |
| QPE (Traditional) | Model Systems [7] | Gate Count (CZ gates) | Required 7,242 CZ gates for a target circuit, making it impractical. | Theory/Simulation |
| QPDE (with Fire Opal) | Model Systems [7] | Gate Count & Capacity | 90% reduction in CZ gates (down to 794); 5x increase in computational capacity. | IBM Hardware |
| AWQPE | Phase Estimation [6] | Qubit & Circuit Depth | Reduced number of ancilla qubits and circuit depth via windowing and parallelization. | Simulation |

Noise Resilience and Scalability

- VQE Resilience: A study on the Quantum Computed Moments (QCM) method, an extension of VQE, demonstrated remarkable noise resilience. On IBM hardware, QCM provided reasonable energy estimates for a 20-qubit quantum magnetism model even with ultra-deep circuits containing over 500 CNOT gates, where standard VQE failed completely [8].

- QPE Scalability: The high gate complexity and qubit coherence requirements of standard QPE make it highly sensitive to noise, limiting its scalability on pre-fault-tolerant hardware. Advanced strategies like QPDE and AWQPE are essential to bridge this gap [6] [7].

The Scientist's Toolkit: Essential Research Reagents

Successful implementation of quantum chemistry algorithms requires a suite of theoretical and computational "reagents." The table below lists essential components and their functions.

| Research Reagent | Function / Purpose | Examples / Notes |
|---|---|---|
| Molecular Hamiltonian | The target physical system to be simulated, defining the energy landscape. | Generated via classical packages (e.g., PySCF [1]) and mapped to qubits via Jordan-Wigner or Bravyi-Kitaev transformations [2]. |
| Ansatz Circuit | A parameterized quantum circuit that prepares trial wavefunctions. | Hardware-Efficient Ansatz (shallow, for NISQ) [2]; UCCSD (chemically inspired) [1] [9]; Adaptive Ansatz (e.g., ADAPT-VQE) [5]. |
| Classical Optimizer | Updates quantum circuit parameters to minimize energy. | COBYLA (gradient-free) [1]; SPSA (noise-resilient) [2]; Gradient-based methods. |
| Error Mitigation Technique | Post-processes noisy results to extract accurate expectation values. | Twirled Readout Error Extinction (T-REx) [2]; Zero-Noise Extrapolation (ZNE); Probabilistic Error Cancellation. |
| Quantum Subspace Method | Constructs a classically tractable Hamiltonian from quantum-sampled configurations. | Used in HiVQE [3] and SQDOpt [4] to improve accuracy and reduce quantum resource demands. |
| Performance Management Software | Automates hardware calibration, pulse-level optimization, and error suppression. | Fire Opal (Q-CTRL) was critical for the QPDE demonstration, enabling deeper circuits [7]. |

The choice between VQE and QPE is not merely algorithmic but strategic, dictated by the constraints of current hardware and the specific requirements of the research problem.

- VQE and its hybrid variants are the algorithms of choice for NISQ-era applications. Their strength lies in moderate quantum resource requirements, inherent resilience to some noise, and flexibility. The integration of machine learning [1] and classical subspace methods [3] is pushing the boundaries of the problems they can solve with high accuracy today.

- QPE and its modern derivatives represent the long-term, fault-tolerant target. When sufficiently advanced hardware arrives, they will provide exact, non-variational results with proven speedups. Current research focuses on reducing their resource overhead [7] and breaking them into manageable, modular pieces [6] to make them viable on evolving hardware.

For researchers in drug development and materials science, the hybrid quantum-classical paradigm of VQE offers a practical, albeit approximate, path to exploring quantum chemistry on existing quantum computers. In contrast, QPE remains a crucial goal for the future, promising exact solutions once fault-tolerant quantum computing is realized.

The current state of quantum computing is defined by the Noisy Intermediate-Scale Quantum (NISQ) era, characterized by quantum processors containing up to 1,000 qubits that operate without full fault-tolerance [10]. These devices are inherently sensitive to environmental interference, leading to various forms of quantum noise including decoherence, gate errors, and measurement inaccuracies that fundamentally impact computational reliability [10] [11]. The term "NISQ," coined by John Preskill, describes this generation of devices where noise accumulation severely limits the depth and complexity of executable quantum circuits [10] [11] [12]. Understanding these noise dynamics is particularly crucial for algorithmic performance, as error rates above 0.1% per gate typically restrict quantum circuits to approximately 1,000 gates before noise overwhelms the computational signal [10].

This analysis examines how these pervasive noise sources impact the foundational principles of quantum algorithms, with particular focus on the comparative resilience of the Variational Quantum Eigensolver (VQE) and Quantum Phase Estimation (QPE) algorithms. As quantum computing moves toward practical applications in fields like drug discovery and materials science, the systematic management of noise becomes paramount for extracting reliable results from current-generation hardware [13] [14].

Algorithmic Foundations and Noise Vulnerabilities

Quantum Phase Estimation (QPE): Theoretical Precision Meets Practical Fragility

Quantum Phase Estimation represents a cornerstone quantum algorithm designed for eigenvalue estimation with theoretical precision [13]. Its foundational role in quantum computing stems from its application to problems in quantum chemistry, materials science, and linear algebra [13]. The algorithm uses the inverse Quantum Fourier Transform (QFT) to extract phase information with high accuracy, making it crucial for calculating energy spectra in molecular systems [13].

However, QPE exhibits significant vulnerability in NISQ environments due to its substantial circuit depth and stringent coherence requirements [14]. The algorithm's dependency on sequentially applied controlled operations and the QFT creates an extended computational pipeline in which errors accumulate with every gate, so overall circuit fidelity decays roughly exponentially with circuit depth [10] [14]. This sensitivity places QPE beyond the practical reach of current NISQ devices, as the algorithm requires sustained quantum coherence and near-perfect gate fidelity that existing hardware cannot provide [14].

Variational Quantum Eigensolver (VQE): Built for the NISQ Reality

The Variational Quantum Eigensolver adopts a fundamentally different approach specifically engineered for noisy hardware [10] [13] [14]. As a hybrid quantum-classical algorithm, VQE combines quantum state preparation and measurement with classical optimization to approximate ground state energies of molecular Hamiltonians [10] [14]. Its operational principle relies on the variational method of quantum mechanics, where a parameterized quantum circuit (ansatz) prepares trial wavefunctions whose energy expectation values are measured and iteratively minimized by a classical optimizer [10] [14].

VQE's architectural design incorporates several noise-resilient properties. Its short-depth circuit requirements and inherent robustness to certain error types make it particularly suitable for NISQ constraints [14]. The algorithm's hybrid nature allows it to offload computational complexity to classical processors, reducing quantum resource demands [10]. Furthermore, VQE employs problem-inspired ansätze and hardware-efficient parameterizations that balance expressivity with practical executability on noisy devices [14].

Table: Comparative Foundation of QPE and VQE Algorithms

| Algorithmic Feature | Quantum Phase Estimation (QPE) | Variational Quantum Eigensolver (VQE) |
|---|---|---|
| Computational Paradigm | Purely quantum | Hybrid quantum-classical |
| Primary Application | Eigenvalue estimation [13] | Ground state energy calculation [10] [14] |
| Key Components | Quantum Fourier Transform, controlled unitaries [13] | Parameterized ansatz, classical optimizer [10] [14] |
| Theoretical Precision | Exact in principle (with sufficient resources) [13] | Approximate (variational principle) [14] |
| Circuit Depth Requirement | High (grows with desired precision) [14] | Low to moderate (ansatz-dependent) [10] [14] |

Quantitative Analysis of Noise Impacts

NISQ devices contend with multiple, simultaneous noise sources that collectively degrade computational fidelity. Decoherence represents a fundamental limitation, where qubits gradually lose their quantum character due to environmental interactions, with typical coherence times permitting only limited operational windows before information loss occurs [10] [11] [12]. Gate errors introduce infidelity in quantum operations, with current hardware achieving 99-99.5% fidelity for single-qubit gates and 95-99% for two-qubit gates [10]. These imperfections accumulate multiplicatively throughout circuit execution. Measurement errors further corrupt results through misclassification of quantum states during readout, with contemporary systems exhibiting error rates that typically range from 1-5% per qubit measurement [12].

The exponential scaling of quantum noise presents the fundamental challenge for NISQ algorithms. With realistic error rates, the maximum circuit depth before noise predominates is approximately 1,000 gates, creating a hard constraint on executable algorithms [10]. This limitation disproportionately affects algorithms with sequential dependency structures like QPE, while variational approaches like VQE can be constructed with shallower circuits that operate within these noise boundaries [10] [14].
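The roughly 1,000-gate budget follows from simple arithmetic if one assumes, as a simplification, that gate errors are independent and that a single failed gate spoils the run. The sketch below uses that assumed model; the function names are illustrative.

```python
import math


def circuit_success_probability(gate_error, n_gates):
    """Probability that no gate fails, assuming independent errors per gate."""
    return (1.0 - gate_error) ** n_gates


def max_gates(gate_error, min_success):
    """Largest gate count that keeps the success probability at or above `min_success`."""
    return math.floor(math.log(min_success) / math.log(1.0 - gate_error))


for error in (0.001, 0.005, 0.01):
    p_1000 = circuit_success_probability(error, 1000)
    budget = max_gates(error, math.exp(-1))
    print(f"gate error {error:.3f}: P(success, 1000 gates) = {p_1000:.5f}, "
          f"gates until P drops to 1/e = {budget}")
```

Under this model a 0.1% error rate leaves about a 1/e chance of an error-free run after 1,000 gates, while a 1% error rate exhausts the same budget after about 100 gates. Real devices have correlated and gate-dependent errors, so these numbers are an order-of-magnitude guide only.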

Comparative Algorithmic Performance Under Noise

The differential impact of noise on QPE and VQE emerges clearly from their operational principles and structural requirements. QPE's performance deteriorates sharply under realistic noise conditions due to its depth-precision tradeoff and sensitivity to phase errors [14]. The algorithm's precision depends directly on circuit depth, creating an irreconcilable conflict in noisy environments where increased depth amplifies error accumulation [14].

In contrast, VQE demonstrates notable error resilience through multiple architectural features. Its hybrid nature enables noise-aware optimization where classical routines can partially compensate for quantum imperfections [14]. The variational principle ensures that the exact energy expectation value of any prepared state, including a noisy mixed state, is an upper bound on the true ground-state energy, so imperfect executions remain mathematically meaningful [14]; individual estimates can still fall below that bound because of finite sampling, readout errors, or error-mitigation post-processing. Furthermore, VQE's modular structure supports error mitigation integration, including techniques like zero-noise extrapolation and symmetry verification that can be directly incorporated into the optimization loop [10] [14].
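The upper-bound property can be checked numerically on a toy model. The sketch below assumes a single-qubit Hamiltonian and a depolarizing noise model, both chosen only for illustration, and works with exact expectation values (the infinite-shot limit), so it does not capture shot-noise fluctuations.

```python
import numpy as np

X = np.array([[0, 1], [1, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)
H = Z + 0.5 * X  # toy Hamiltonian, chosen only for illustration

evals, evecs = np.linalg.eigh(H)
ground_energy = float(evals[0])
ground_state = evecs[:, 0]
rho_ideal = np.outer(ground_state, ground_state.conj())


def depolarize(rho, p):
    """Replace the state by the maximally mixed state with probability p."""
    dim = rho.shape[0]
    return (1.0 - p) * rho + p * np.eye(dim) / dim


def exact_energy(rho):
    """Exact expectation value Tr(rho H), i.e. the infinite-shot limit."""
    return float(np.real(np.trace(rho @ H)))


noise_levels = [0.0, 0.05, 0.2, 0.5]
energies = [exact_energy(depolarize(rho_ideal, p)) for p in noise_levels]
for p, e in zip(noise_levels, energies):
    print(f"p = {p:.2f}: E = {e:.4f} (ground = {ground_energy:.4f})")
```

In this model the noise can only raise the energy toward that of the maximally mixed state, so the optimizer's minimum is biased upward but never spuriously low.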

Table: Quantitative Noise Impact on Algorithmic Performance

| Noise Metric | Impact on QPE | Impact on VQE |
|---|---|---|
| Decoherence Time Limits | Prevents execution of deep circuits required for precision [14] | Shallow circuits remain executable within coherence windows [10] [14] |
| Gate Infidelity Accumulation | Multiplicative error accumulation destroys phase information [10] [14] | Partial error tolerance through variational optimization [14] |
| Measurement Errors | Biases phase estimation outcomes [12] | Correctable via classical post-processing and mitigation [15] [12] |
| Current Practical System Size | Limited to small, proof-of-concept demonstrations [14] | Successful demonstrations with 20+ qubits [15] [14] |
| Achievable Accuracy | Theoretically exact, practically unattainable [14] | Chemical accuracy (1 kcal/mol) demonstrated for small molecules [10] [14] |

Error Mitigation Methodologies

Framework for Reliable NISQ Computation

Quantum error mitigation has emerged as an essential software-based approach to enhance result reliability without the extensive qubit overhead required for full quantum error correction [10] [15] [12]. These techniques operate through post-processing strategies that combine results from multiple circuit executions to estimate and remove noise-induced biases [10] [15]. Unlike fault-tolerant quantum error correction, which prevents errors during computation, error mitigation acknowledges the inevitability of noise while algorithmically correcting its effects on measured outcomes [10] [12].

The experimental workflow for comprehensive error mitigation typically involves executing multiple circuit variants under different noise conditions, collecting extensive measurement data, and applying statistical techniques to infer what the result would have been on an ideal, noiseless device [15] [12]. This approach inevitably introduces a sampling overhead, with measurement requirements typically increasing by factors of 2x to 10x or more depending on error rates and specific methods employed [10] [15].

Key Error Mitigation Techniques

Zero-Noise Extrapolation (ZNE) represents one of the most widely implemented error mitigation strategies [10] [12]. This method deliberately amplifies circuit noise through techniques such as unitary folding or pulse stretching, executes the circuit at multiple noise levels, and extrapolates results back to the zero-noise limit [10] [12]. The technique's effectiveness stems from its noise-agnostic nature, requiring no detailed characterization of underlying error mechanisms [12]. Recent advancements like purity-assisted ZNE have extended its applicability to higher-error regimes [10].
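The extrapolation step of ZNE can be sketched with a polynomial fit. The noise model here (an expectation value that decays exponentially with the noise scale factor) is an assumption used to generate synthetic data; on hardware, the values would come from circuits run at each scale factor.

```python
import numpy as np


def noisy_expectation(scale, ideal=-1.0, base_error=0.05):
    """Toy noise model (an assumption): the signal decays exponentially with noise scale."""
    return ideal * np.exp(-base_error * scale)


def zero_noise_extrapolate(scales, values, degree=2):
    """Fit a polynomial in the noise scale and evaluate it at scale = 0."""
    coeffs = np.polyfit(scales, values, degree)
    return float(np.polyval(coeffs, 0.0))


scales = np.array([1.0, 3.0, 5.0])   # odd factors, as produced by unitary folding
values = noisy_expectation(scales)    # stand-in for measured expectation values
mitigated = zero_noise_extrapolate(scales, values)

print(f"unmitigated (scale 1): {values[0]:.5f}")
print(f"ZNE estimate         : {mitigated:.5f}")
```

With real data, the extrapolated value inherits amplified statistical noise from the fit, which is one source of the sampling overhead mentioned earlier.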

Symmetry verification exploits conserved quantities inherent in many quantum systems, particularly for chemistry applications [10] [12]. By measuring symmetry operators (such as particle number or spin conservation) that would be preserved in ideal computations, this technique identifies and discards results that violate these symmetries due to errors [10]. This approach provides particularly effective error suppression for quantum chemistry problems where such symmetries naturally exist [10].
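Particle-number post-selection is the simplest form of symmetry verification. The sketch below assumes a Jordan-Wigner-style encoding in which each qubit stores the occupation of one spin orbital, so the number of `1` bits equals the electron count. The measurement counts are made-up illustrative data.

```python
def postselect_particle_number(counts, n_electrons):
    """Keep only bitstrings whose Hamming weight matches the expected electron count."""
    kept = {bits: c for bits, c in counts.items() if bits.count("1") == n_electrons}
    total = sum(counts.values())
    kept_total = sum(kept.values())
    fraction = kept_total / total if total else 0.0
    return kept, fraction


# Hypothetical counts for a 4-qubit, 2-electron problem.
counts = {"0011": 450, "0101": 380, "0001": 70, "0111": 60, "1100": 40}
kept, fraction = postselect_particle_number(counts, n_electrons=2)

print(kept)
print(f"fraction of shots kept: {fraction:.2f}")
```

Discarded shots are lost statistics, so the effective sampling cost grows as the error rate, and therefore the rejection rate, increases.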

Measurement error mitigation specifically addresses readout inaccuracies by constructing confusion matrices that characterize misclassification probabilities [12]. Through preparing known basis states and measuring their outcomes, this technique builds a classical model of measurement errors that can then be inverted to correct experimental results [12]. This method has become standard practice for nearly all NISQ algorithm implementations due to its straightforward application and significant improvements to result quality [12].
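For a single qubit, the confusion-matrix approach reduces to solving a 2x2 linear system. The matrix entries below are assumed calibration values for illustration; in practice they are estimated by preparing each basis state and recording the measured outcomes.

```python
import numpy as np

# Column j holds the measured outcome distribution when basis state |j> was prepared.
# Illustrative values: 3% chance of reading 1 for |0>, 5% chance of reading 0 for |1>.
confusion = np.array([[0.97, 0.05],
                      [0.03, 0.95]])


def mitigate_readout(measured_probs, confusion_matrix):
    """Invert the readout model, then clip and renormalize to a valid distribution."""
    corrected = np.linalg.solve(confusion_matrix, measured_probs)
    corrected = np.clip(corrected, 0.0, None)
    return corrected / corrected.sum()


true_probs = np.array([0.8, 0.2])
measured = confusion @ true_probs        # what a noisy readout would report
recovered = mitigate_readout(measured, confusion)

print("measured :", measured)
print("recovered:", recovered)
```

The full confusion matrix grows as 2^n x 2^n for n qubits, so larger experiments usually assume uncorrelated readout errors and build it from per-qubit matrices.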

Probabilistic Error Cancellation (PEC) employs a more aggressive approach that requires detailed noise characterization [12]. By representing ideal quantum operations as linear combinations of implementable noisy operations, PEC theoretically can achieve zero-bias estimation, though at the cost of typically exponential sampling overhead [10] [12].
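The quasi-probability idea behind PEC can be made concrete for the simplest case: an ideal identity gate implemented on hardware whose every operation is followed by single-qubit depolarizing noise. The noise model and its strength `p` are assumptions for illustration, and the sketch evaluates the weighted sum exactly on density matrices rather than by Monte Carlo sampling.

```python
import numpy as np

I2 = np.eye(2, dtype=complex)
X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)
PAULIS = [I2, X, Y, Z]


def depolarize(rho, p):
    """Single-qubit depolarizing channel: X, Y or Z is applied with probability p/3 each."""
    return (1 - p) * rho + (p / 3) * sum(P @ rho @ P for P in PAULIS[1:])


def pec_coefficients(p):
    """Quasi-probabilities q_i with sum_i q_i * (noise after Pauli_i) = identity channel."""
    f = 1 - 4 * p / 3                 # factor by which the noise shrinks <X>, <Y>, <Z>
    q_pauli = (1 - 1 / f) / 4         # negative weight on each of X, Y, Z
    q_identity = 1 - 3 * q_pauli
    return np.array([q_identity, q_pauli, q_pauli, q_pauli])


def pec_identity(rho, p):
    """Weighted sum of noisy operations (Pauli gate followed by depolarizing noise)."""
    q = pec_coefficients(p)
    return sum(qi * depolarize(P @ rho @ P, p) for qi, P in zip(q, PAULIS))


p = 0.03
rho = 0.5 * (I2 + 0.6 * X + 0.3 * Z)  # an arbitrary valid single-qubit state
gamma = float(np.abs(pec_coefficients(p)).sum())

print("noisy state differs from input :", not np.allclose(depolarize(rho, p), rho))
print("PEC-corrected equals input     :", np.allclose(pec_identity(rho, p), rho))
print(f"one-norm gamma per gate        : {gamma:.4f}")
```

In a sampled implementation the variance grows with the square of `gamma` for each mitigated gate, so the cost compounds multiplicatively across a circuit; this is the exponential sampling overhead noted above.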

Machine Learning-enhanced mitigation represents an emerging frontier where neural networks or Gaussian processes learn noise patterns and automatically correct outputs [12] [16]. These data-driven approaches can map noisy results closer to ideal expectations without explicit noise models, making them particularly valuable for complex noise environments [16] [17].

Experimental Protocols and Research Toolkit

Standardized Experimental Methodologies

Rigorous evaluation of algorithmic performance under noise requires standardized experimental protocols that systematically characterize resilience across varying error conditions. For VQE experiments, the established methodology involves preparing parameterized ansätze, executing quantum circuits across multiple optimization iterations, and integrating error mitigation techniques throughout the measurement process [14]. The classical optimization component typically employs gradient-based or gradient-free methods to navigate parameter landscapes potentially complicated by noise-induced barren plateaus [14].

Noise scaling studies represent another critical experimental approach, where algorithms are executed under deliberately amplified noise conditions to establish performance degradation patterns [15]. This protocol typically employs unitary folding techniques to artificially increase circuit depth without altering ideal computation, enabling controlled experiments on noise susceptibility [15]. Through such methodologies, researchers can quantitatively compare the operational thresholds of different algorithms and identify breaking points where error mitigation becomes insufficient.
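Global unitary folding replaces a circuit U with U (U† U)^n, which leaves the ideal computation unchanged while multiplying the gate count by 2n + 1. The sketch below represents a circuit as a plain list of NumPy matrices; that representation and the function names are assumptions for illustration, not a specific framework's API.

```python
import numpy as np


def fold_global(gates, n_folds):
    """Return the gate list for U (U^dagger U)^n_folds, with gates in application order."""
    inverse = [g.conj().T for g in reversed(gates)]
    folded = list(gates)
    for _ in range(n_folds):
        folded += inverse + list(gates)
    return folded


def circuit_unitary(gates):
    """Multiply the gates together; later gates act from the left."""
    total = np.eye(gates[0].shape[0], dtype=complex)
    for g in gates:
        total = g @ total
    return total


hadamard = np.array([[1, 1], [1, -1]], dtype=complex) / np.sqrt(2)
t_gate = np.diag([1, np.exp(1j * np.pi / 4)])
angle = 0.7
rx = np.array([[np.cos(angle / 2), -1j * np.sin(angle / 2)],
               [-1j * np.sin(angle / 2), np.cos(angle / 2)]])

circuit = [hadamard, t_gate, rx]
folded = fold_global(circuit, n_folds=1)

print("gate count:", len(circuit), "->", len(folded))
print("same ideal unitary:", np.allclose(circuit_unitary(folded), circuit_unitary(circuit)))
```

On a noisy device the extra gates add errors without changing the target state, which is what makes the resulting scale factors usable as controlled noise levels.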

Cross-platform validation has emerged as an essential practice given the diverse NISQ hardware landscape [11]. This involves executing identical algorithmic benchmarks across different quantum processing technologies (superconducting, trapped-ion, photonic) to disentangle algorithm-specific noise responses from hardware-specific error characteristics [11]. Such comparative studies reveal how architectural decisions impact practical performance across the NISQ ecosystem.

Essential Research Toolkit

The experimental research workflow in the NISQ era relies on specialized tools and methodologies designed to characterize, mitigate, and adapt to quantum noise.

Table: Research Toolkit for NISQ Algorithm Development

| Tool/Technique | Function | Application Context |
|---|---|---|
| Zero-Noise Extrapolation (ZNE) | Estimates zero-noise value through intentional noise amplification and extrapolation [10] [12] | General-purpose mitigation for expectation value estimation |
| Symmetry Verification | Discards results violating known conservation laws [10] [12] | Quantum chemistry problems with particle number/spin conservation |
| Measurement Error Mitigation | Corrects readout errors using confusion matrix inversion [12] | Standard post-processing step for nearly all NISQ algorithms |
| Variational Ansätze | Parameterized circuit templates balancing expressivity and noise resilience [14] | VQE implementation with hardware-efficient or chemistry-inspired designs |
| Noise-Aware Compilers | Transforms circuits to minimize noise impact using hardware-specific error models [11] [16] | Circuit optimization for specific NISQ devices |
| Quantum Volume Metric | Holistic hardware benchmark capturing combined qubit count and quality [10] [11] | Cross-platform performance comparison |

Comparative Analysis and Research Implications

Strategic Algorithm Selection for NISQ Applications

The comparative analysis between QPE and VQE reveals a fundamental trade-off between theoretical precision and practical executability in the NISQ context. QPE maintains its status as a gold standard for fault-tolerant quantum computing due to its provable optimality and precision guarantees [13] [14]. However, its operational requirements place it firmly beyond the capabilities of current-generation hardware, making it primarily relevant for future fault-tolerant systems [14].

VQE has established itself as the leading algorithm for practical quantum computation on existing devices, particularly for quantum chemistry and optimization problems [10] [13] [14]. Its architectural compatibility with NISQ constraints, combined with robust error mitigation integration, has enabled demonstrations with practical significance across multiple application domains [14]. The algorithm's successful implementation for molecular systems like H₂, LiH, and H₂O, achieving chemical accuracy in some cases, underscores its current utility [10] [14].

The emerging research landscape reflects this dichotomy, with VQE dominating experimental implementations while QPE remains important for algorithmic theory and long-term development [13] [14]. This division of labor likely will persist until hardware advancements substantially reduce error rates and increase coherence times.

Future Research Directions and Hardware Co-Design

The progression beyond the NISQ era will require coordinated advances across multiple research domains. Algorithmic innovation must continue developing noise-resilient approaches that maximize computational power within strict error budgets [10] [14]. Techniques like adaptive ansätze construction, contextual subspace methods, and variational error suppression represent promising directions for extending VQE's capabilities [14]. Simultaneously, error mitigation methodologies must evolve toward greater efficiency and broader applicability, with particular focus on reducing the currently substantial sampling overheads [10] [15].

The emerging paradigm of hardware-software co-design represents perhaps the most transformative direction for NISQ research [11]. This approach involves tailoring algorithmic designs to specific hardware capabilities, exploiting native gate sets, connectivity architectures, and noise characteristics to optimize performance [11]. Frameworks like Qonscious demonstrate early implementations of this principle, enabling dynamic resource-aware execution of quantum programs [11]. As the quantum hardware landscape continues to diversify between superconducting, trapped-ion, photonic, and other qubit technologies, such co-adaptive approaches will become increasingly essential for extracting maximum performance from each platform [11].

The ultimate transition to fault-tolerant quantum computing will not immediately render NISQ research obsolete. Instead, error mitigation techniques developed for NISQ devices will likely continue providing value in early fault-tolerant systems by suppressing residual errors and extending effective computational fidelity [15]. This evolutionary pathway ensures that current investments in understanding and combating quantum noise will yield long-term benefits throughout the development of practical quantum technologies.

In the current Noisy Intermediate-Scale Quantum (NISQ) era, quantum hardware lacks comprehensive error correction, making resilience to inherent noise a paramount consideration in algorithm selection [2] [18]. This analysis objectively evaluates the innate noise robustness of two fundamental quantum algorithms: the Variational Quantum Eigensolver (VQE) and Quantum Phase Estimation (QPE). The core distinction lies in their circuit depth and operational paradigms; VQE employs shallow, parametrized circuits in a hybrid quantum-classical loop, while QPE relies on deeper, sequential quantum circuits to achieve higher precision through quantum Fourier transforms [18]. Understanding their performance under realistic noise conditions is critical for researchers, scientists, and drug development professionals seeking to apply quantum computing to practical problems like molecular ground state energy calculations, which are fundamental to predicting chemical reactions and drug interactions [2] [19]. This guide synthesizes recent experimental data to compare how these algorithms withstand the ubiquitous noise present in contemporary processors, from superconducting devices to trapped-ion systems.

Algorithmic Fundamentals and Noise Susceptibility

Operational Principles and Circuit Depth

The Variational Quantum Eigensolver (VQE) operates on a hybrid quantum-classical principle. A parametrized quantum circuit (ansatz) prepares a trial wavefunction on the quantum processor, whose energy expectation value is measured. A classical optimizer then adjusts the parameters to minimize this energy, iterating until convergence to the ground state [2] [20]. This approach typically utilizes shallow circuits, which is a key feature for noise resilience.

In contrast, Quantum Phase Estimation (QPE) is a predominantly quantum algorithm designed to estimate the phase (and thus an eigenvalue) of a unitary operator. It employs a series of controlled unitary operations followed by an inverse Quantum Fourier Transform (QFT), and it needs deeper circuits because these gates are applied sequentially and the QFT subroutine adds further depth [18].

Theoretical Noise Exposure

The different structural approaches lead to fundamentally different exposures to noise:

- VQE's Resilience Factors: Its shallow circuits reduce the window for decoherence and cumulative gate errors. The classical optimizer can, to some extent, find parameters that compensate for systematic errors, and its iterative nature allows for noise mitigation between cycles [2].

- QPE's Vulnerability Factors: The deeper circuits are more susceptible to decoherence and the accumulation of errors from both single- and two-qubit gates. The precision of the estimated phase is directly compromised by gate infidelities, and the algorithm lacks an inherent feedback mechanism to correct for errors during its execution [18].

Table: Fundamental Operational Differences Influencing Noise Resilience

| Feature | Variational Quantum Eigensolver (VQE) | Quantum Phase Estimation (QPE) |
|---|---|---|
| Algorithm Paradigm | Hybrid quantum-classical | Primarily quantum |
| Typical Circuit Depth | Shallow | Deep |
| Key Computational Steps | Parametrized ansatz, classical optimization | Controlled-unitaries, Inverse QFT |
| Primary Noise Vulnerability | Parameter optimization noise, readout error | Decoherence, cumulative gate error |
| Inherent Error Feedback | Yes, via classical optimizer | No |

Quantitative Performance Analysis Under Noise

Empirical Performance in Chemical Applications

Experimental studies on molecular systems like Beryllium Hydride (BeH₂) reveal the tangible impact of noise on VQE. Without error mitigation, noise can cause significant deviations in the calculated ground state energy. However, the shallow-circuit nature of VQE allows error mitigation techniques to be applied effectively. For instance, applying Twirled Readout Error Extinction (T-REx) to VQE runs on a noisy 5-qubit processor (IBMQ Belem) yielded energy estimations an order of magnitude more accurate than those from a more advanced 156-qubit device (IBM Fez) without such mitigation [2]. This underscores that for VQE, the choice of error mitigation strategy can be more impactful than the raw performance of the quantum hardware itself.

Fidelity Comparisons in Algorithm Subroutines

A comprehensive numerical study comparing the digital (DQC) and digital-analog (DAQC) paradigms for implementing the QFT—a core component of QPE—provides critical insights. Under a wide range of realistic noise sources (decoherence, bit-flip, measurement, and control errors), the fidelity of the final state was analyzed. The results demonstrate that as the number of qubits scales, the fidelity of the purely digital approach (DQC) decreases more rapidly than the digital-analog approach. Since QPE is built upon the QFT, this fidelity loss directly impacts the precision and reliability of the phase estimation [18]. The deeper the circuit required for the QFT, the more pronounced the effect of noise becomes, highlighting a fundamental scalability challenge for deep-circuit algorithms like QPE on NISQ devices.

Table: Experimental Performance Metrics Under Noise

| Algorithm / Benchmark | Experimental Setup | Key Performance Metric | Result Under Noise | With Error Mitigation |

|---|---|---|---|---|

| VQE for BeH₂ [2] | 5-qubit IBMQ Belem vs. 156-qubit IBM Fez | Accuracy of ground-state energy | Significant error without mitigation | T-REx on Belem gave energies an order of magnitude more accurate than unmitigated Fez |

| QFT (QPE subroutine) [18] | Simulation (up to 6 qubits) under superconducting noise model | State fidelity vs. exact solution | Fidelity decreases with qubit count; DQC outperformed by DAQC | Zero-Noise Extrapolation boosted 8-qubit fidelity above 0.95 |

| GGA-VQE (Robust Variant) [19] | 25-qubit trapped-ion (IonQ Aria) | Ground-state fidelity for Ising model | N/A (Algorithm designed for noise) | Achieved >98% fidelity on real hardware |

Methodologies for Experimental Noise Analysis

Protocol for Evaluating VQE Noise Resilience

A standard methodology for assessing VQE's performance under noise involves the following steps, often implemented using platforms like Amazon Braket and PennyLane [20]:

- Problem Definition: Select a test molecule (e.g., H₃⁺ or BeH₂) and compute its electronic Hamiltonian using a classical quantum chemistry package.

- Ansatz Selection: Choose a suitable parametrized quantum circuit, such as a hardware-efficient ansatz or a chemistry-inspired ansatz (e.g., UCCSD).

- Noise Model Construction: Build a noise model using calibration data from real quantum hardware (e.g., IQM's Garnet device via Amazon Braket). This model incorporates predefined noise channels like:

  - AmplitudeDamping: Models energy dissipation.

  - DepolarizingChannel: Models completely random noise.

  - PhaseDamping: Models pure dephasing.

  - TwoQubitDepolarizing: Extends depolarizing noise to two-qubit gates.

- Hybrid Execution: Run the VQE algorithm iteratively. The quantum device evaluates the energy for a given set of parameters, and a classical optimizer (e.g., SPSA) suggests new parameters.

- Error Mitigation Integration: Apply quantum error mitigation (QEM) techniques like Zero-Noise Extrapolation (ZNE) or T-REx during the quantum measurement process.

- Analysis: Compare the converged energy and optimized parameters against noiseless simulations and exact classical results (e.g., Full Configuration Interaction) [2] [20].
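The Zero-Noise Extrapolation step in the protocol above can be sketched in a few lines. This is a generic Richardson-style extrapolation written for illustration, not the API of Mitiq or any other library; the scale factors and energy values are synthetic numbers invented for the example.

```python
def richardson_extrapolate(scale_factors, expectation_values):
    """Evaluate at zero noise the polynomial passing through the
    (noise scale, measured expectation value) points."""
    estimate = 0.0
    for i, (s_i, e_i) in enumerate(zip(scale_factors, expectation_values)):
        weight = 1.0
        for j, s_j in enumerate(scale_factors):
            if j != i:
                weight *= s_j / (s_j - s_i)  # Lagrange basis polynomial at 0
        estimate += weight * e_i
    return estimate

# Synthetic data: energies "measured" with the noise amplified 1x, 2x and 3x,
# generated from E(s) = -1 + 0.1*s + 0.02*s**2 (hypothetical noise response).
scales = [1.0, 2.0, 3.0]
energies = [-0.88, -0.72, -0.52]
mitigated = richardson_extrapolate(scales, energies)
print(f"zero-noise estimate: {mitigated:.4f}")
```

In practice the noise is amplified by techniques such as gate folding, and the extrapolated value inherits statistical uncertainty from every input point, so more shots are needed than for an unmitigated run.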

Protocol for Evaluating QPE Noise Resilience

Evaluating QPE requires a focus on the fidelity of its constituent subroutines, particularly the QFT, under noise:

- Circuit Implementation: Implement the QPE algorithm for a specific unitary operator, which includes the QFT and controlled unitary power operations.

- Paradigm Comparison: Execute the algorithm using both pure digital (DQC) and digital-analog (DAQC) paradigms to isolate the impact of two-qubit gate noise [18].

- Noise Introduction: Introduce a comprehensive noise model mirroring superconducting processor errors, applied to each gate in the circuit. This includes independent analysis of single-qubit gate errors, two-qubit gate errors, and decoherence channels.

- Fidelity Calculation: For a set of initial states, compute the fidelity between the final state produced by the noisy circuit and the ideal, noiseless result.

- Error Mitigation: Apply techniques like Zero-Noise Extrapolation specifically to the DAQC paradigm to mitigate decoherence and intrinsic errors.

- Scalability Analysis: Repeat the experiment for increasing numbers of qubits to track how fidelity degrades with problem size for each paradigm [18].
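The fidelity and scalability steps can be illustrated with a small state-vector calculation. The sketch below is not the noise model of the cited study: it assumes a hypothetical coherent error in which every QFT phase is over-rotated by the same fraction, and it applies the QFT only to a single computational basis state.

```python
import cmath
import math

def qft_of_basis_state(x, n_qubits, phase_error=0.0):
    """Amplitudes of QFT|x>. phase_error is a hypothetical fractional
    over-rotation applied uniformly to every phase."""
    dim = 2 ** n_qubits
    norm = 1.0 / math.sqrt(dim)
    return [
        norm * cmath.exp(2j * math.pi * x * k * (1.0 + phase_error) / dim)
        for k in range(dim)
    ]

def fidelity(ideal, noisy):
    """Pure-state fidelity |<ideal|noisy>|^2."""
    overlap = sum(a.conjugate() * b for a, b in zip(ideal, noisy))
    return abs(overlap) ** 2

# Track how fidelity changes with register size for a fixed 5% over-rotation.
fidelities = {}
for n in range(2, 7):
    x = 2 ** n - 1
    ideal = qft_of_basis_state(x, n)
    noisy = qft_of_basis_state(x, n, phase_error=0.05)
    fidelities[n] = fidelity(ideal, noisy)
    print(n, round(fidelities[n], 4))
```

A full study would average over many input states and use stochastic noise channels, but the same fidelity function applies once the noisy output state is available.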

The Scientist's Toolkit: Essential Research Reagents

For researchers aiming to reproduce or extend these noise resilience studies, the following "research reagents"—software, hardware, and methodological components—are essential.

Table: Essential Reagents for Quantum Noise Resilience Research

| Reagent / Solution | Type | Primary Function in Noise Analysis | Example Use Case |

|---|---|---|---|

| Hybrid Job Managers (Amazon Braket) [20] | Software Platform | Manages iterative quantum-classical workflows with priority QPU access. | Executing the VQE optimization loop across classical and quantum resources. |

| Noise Model Libraries (braket.circuits.noises) [20] | Software Library | Provides pre-defined noise channels (Depolarizing, AmplitudeDamping) to emulate real hardware. | Constructing a realistic noise model based on IQM Garnet device calibration data. |

| Error Mitigation Tools (Mitiq for ZNE, T-REx) [2] [20] | Software Library | Applies post-processing techniques to reduce the impact of noise on measurement results. | Mitigating readout error in VQE energy measurements via T-REx [2]. |

| Digital-Analog Paradigm (DAQC) [18] | Methodological Framework | Replaces digital two-qubit gates with analog blocks to reduce noise sensitivity. | Implementing the QFT with higher fidelity for the QPE algorithm [18]. |

| Greedy Gradient-Free Adaptive VQE (GGA-VQE) [19] | Algorithmic Variant | A noise-resilient VQE variant that builds circuits iteratively with minimal measurements. | Achieving >98% fidelity on a 25-qubit trapped-ion quantum computer [19]. |

Discussion and Synthesis

The accumulated experimental evidence indicates that the shallow-circuit VQE algorithm is more robust to noise than the deep-circuit QPE algorithm within the constraints of the NISQ era. VQE's hybrid nature and shorter circuits limit the accumulation of errors during any single quantum circuit execution [2]. Furthermore, its architecture readily incorporates error mitigation techniques like T-REx and ZNE, which have been shown to improve results on real, noisy hardware to a degree that sometimes surpasses the raw performance of more powerful but unmitigated devices [2] [20].

Conversely, QPE's deep circuits, necessitated by the QFT and controlled operations, make it intrinsically more vulnerable to decoherence and cumulative gate infidelities [18]. Its noise performance depends less on the algorithm itself than on how it is implemented, for example by moving from a purely digital (DQC) to a digital-analog (DAQC) approach, which changes how entangling operations are executed so that they exploit, rather than fight, the processor's native interactions. While error mitigation like ZNE can be applied to DAQC to achieve high fidelities, this is a deeper architectural adaptation than the mitigation typically applied to VQE [18].

For researchers and drug development professionals, the practical implication is that VQE and its adaptive variants (like GGA-VQE) currently represent the most viable path for practical quantum chemistry calculations on today's hardware. Its noise resilience has been demonstrated for small molecules and spin models on devices of up to 25 qubits [19]. QPE, while a cornerstone of fault-tolerant quantum computing and capable of higher precision, likely requires further hardware stability or sophisticated paradigm-level modifications (like DAQC) to become practical for widespread application in noisy environments. The choice between them is not merely algorithmic but strategic, balancing the immediate, noise-resilient results of VQE against the long-term, high-precision potential of QPE.

Accurately determining the ground-state energy of molecular systems is a cornerstone of computational chemistry and a critical enabler of modern drug discovery. This quantum mechanical property provides essential insights into molecular stability, reactivity, and interaction dynamics—factors that directly influence drug candidate efficacy and safety profiles [21] [22]. In the pharmaceutical pipeline, these calculations help researchers predict how potential drug molecules will interact with biological targets, thereby guiding the selection of promising candidates for further development and reducing reliance on costly experimental screening alone [23].

The emergence of quantum computing has introduced two principal algorithmic approaches for tackling these computationally intensive problems: the Variational Quantum Eigensolver (VQE) and Quantum Phase Estimation (QPE). These algorithms represent fundamentally different strategies for leveraging quantum mechanical systems to solve electronic structure problems, each with distinct strengths, limitations, and performance characteristics under realistic operating conditions [24]. As quantum hardware continues to advance through the Noisy Intermediate-Scale Quantum (NISQ) era, understanding the comparative performance of these algorithms becomes increasingly crucial for research scientists and drug development professionals seeking to integrate quantum computational methods into their workflows [25].

This guide provides an objective comparison of VQE and QPE performance characteristics, with particular emphasis on their behavior under noisy conditions relevant to current quantum hardware. By synthesizing recent experimental data and established theoretical frameworks, we aim to equip researchers with the practical knowledge needed to select appropriate algorithms for specific molecular systems and drug discovery applications.

Algorithm Fundamentals: VQE and QPE

Variational Quantum Eigensolver (VQE)

The Variational Quantum Eigensolver operates on a hybrid quantum-classical framework that combines quantum circuit execution with classical optimization techniques. In this approach, a parameterized quantum circuit (ansatz) prepares a trial wavefunction, whose energy expectation value is measured using the quantum processor. A classical optimizer then adjusts the circuit parameters to minimize this energy, iteratively converging toward the ground-state solution [26] [20]. This algorithm has become a cornerstone of NISQ-era quantum chemistry applications due to its relatively modest circuit depth requirements and inherent tolerance to certain forms of noise [25].

The VQE workflow typically involves several key components: (1) preparation of a reference state (often the Hartree-Fock state) on the quantum processor; (2) application of a parameterized quantum circuit that introduces correlations; (3) measurement of the expectation value of the molecular Hamiltonian; (4) classical optimization of the circuit parameters based on the measured energy; and (5) iteration until convergence criteria are met [26]. This hybrid structure makes efficient use of limited quantum resources while leveraging powerful classical optimization routines, creating a practical approach for current hardware limitations.
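Step (3) of this workflow, measuring the Hamiltonian expectation value, reduces to estimating Pauli-string expectation values from measured bitstrings. The sketch below shows that estimate for Z-type terms; the shot counts are hypothetical, and the convention that character q of each bitstring belongs to qubit q is an assumption that differs between software frameworks.

```python
def pauli_z_expectation(counts, qubits):
    """Estimate <Z...Z> on the listed qubits from a dictionary of bitstring counts.
    Each shot contributes +1 for even parity on those qubits and -1 for odd parity."""
    total = sum(counts.values())
    value = 0
    for bitstring, shots in counts.items():
        parity = sum(int(bitstring[q]) for q in qubits) % 2
        value += (-1) ** parity * shots
    return value / total

# Hypothetical measurement record of 1000 shots on two qubits.
counts = {"00": 480, "11": 470, "01": 30, "10": 20}
zz = pauli_z_expectation(counts, qubits=[0, 1])
z0 = pauli_z_expectation(counts, qubits=[0])
print(zz, z0)
```

The energy is then the coefficient-weighted sum of such estimates over all Pauli terms, with terms containing X or Y measured after an appropriate basis rotation.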

Quantum Phase Estimation (QPE)

Quantum Phase Estimation takes a fundamentally different approach, using the quantum Fourier transform to extract energy eigenvalues directly from simulated quantum dynamics. Unlike VQE, QPE is a purely quantum algorithm. Under ideal conditions it can provide an exponential speedup, and it returns the true ground-state energy with a probability set by the overlap between the initial state and the true ground state, provided the circuit is deep enough for the target precision [24].

The QPE algorithm operates by coupling the system register containing the molecular wavefunction to an ancilla register through a series of controlled unitary operations derived from the molecular Hamiltonian. Quantum interference effects in the ancilla register encode the energy eigenvalues in a quantum phase, which can be read out through the inverse quantum Fourier transform. This approach provides Heisenberg-limited scaling in energy precision: the total cost grows as O(1/ε) in the target precision ε, compared with the O(1/ε²) sampling cost of estimating expectation values in variational approaches [24].

Performance Comparison Under Noise

Quantitative Performance Metrics

Table 1: Algorithm Performance Comparison for Molecular Energy Calculations

| Performance Metric | VQE | QPE | Experimental Context |

|---|---|---|---|

| Accuracy | 99.89% for OH+ [25] | Theoretically exact | Real hardware with error mitigation [25] |

| Hardware Requirements | NISQ-compatible (≤100 qubits) | Fault-tolerant (≥1000 qubits) | Current technological landscape [24] |

| Noise Tolerance | Moderate (requires error mitigation) | Low (requires fault-tolerance) | Analysis of decoherence effects [24] |

| Circuit Depth | Shallow (∼10² gates) | Deep (∼10⁴–10⁶ gates) | NISQ hardware constraints [25] |

| Classical Overhead | High (optimization loop) | Minimal (once initialized) | Computational resource assessment [25] [24] |

| System Size Scaling | Polynomial resource growth | Exponential speedup potential | Theoretical scaling analysis [24] |

Noise Sensitivity Analysis

Table 2: Noise Impact and Mitigation Strategies

| Noise Type | Impact on VQE | Impact on QPE | Effective Mitigation Approaches |

|---|---|---|---|

| Depolarizing Noise | Gradual accuracy degradation | Catastrophic failure | Zero-Noise Extrapolation, Pauli twirling [20] |

| Amplitude Damping | Biased energy estimates | Complete algorithm failure | Rescaling techniques, error-aware ansatz [25] |

| Phase Damping | Parameter optimization instability | Phase coherence loss | Dynamical decoupling, robust pulses [20] |

| Measurement Errors | Systematic energy shifts | Incorrect phase readout | Measurement error mitigation [25] |

| Cross-Talk | Correlated parameter errors | Uncorrectable logical errors | Layout optimization, temporal scheduling [25] |

The performance disparity between VQE and QPE under noisy conditions stems from their fundamental operational principles. VQE's variational nature provides inherent resilience to certain error types, as the classical optimization loop can partially compensate for systematic errors, though this comes at the cost of increased measurement overhead and potential convergence to incorrect energy minima [24]. In contrast, QPE's reliance on quantum coherence throughout deep circuit executions makes it highly susceptible to all forms of noise, with even modest error rates typically destroying the phase coherence essential for accurate eigenvalue estimation [24].

Experimental studies demonstrate that VQE can maintain chemical accuracy (∼1 kcal/mol) for small molecules like H₃⁺ and OH+ on current hardware when supplemented with advanced error mitigation techniques. For example, the winning submission in the Quantum Computing for Drug Discovery Challenge achieved 99.89% accuracy for OH+ ground state energy calculation by implementing a comprehensive error mitigation strategy including noise-aware qubit mapping, measurement error mitigation, and Zero-Noise Extrapolation [25]. These techniques effectively reduce the impact of hardware noise without requiring additional qubits for quantum error correction.
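Because chemical accuracy is an absolute threshold of about 1 kcal/mol, checking it requires converting an energy difference from Hartree. The helper below is a minimal sketch of that check; the two energies in the example are hypothetical values, not results from the cited challenge.

```python
HARTREE_TO_KCAL_PER_MOL = 627.509  # standard unit conversion
CHEMICAL_ACCURACY_KCAL = 1.0

def within_chemical_accuracy(computed_ha, reference_ha):
    """Return (is_within, absolute error in kcal/mol) for two energies in Hartree."""
    error_kcal = abs(computed_ha - reference_ha) * HARTREE_TO_KCAL_PER_MOL
    return error_kcal <= CHEMICAL_ACCURACY_KCAL, error_kcal

# Hypothetical energies: a 1.3 mHa deviation from the reference value.
ok, error_kcal = within_chemical_accuracy(-1.1360, -1.1373)
print(ok, round(error_kcal, 3))
```

Note that this test works on absolute errors, so a relative accuracy figure quoted as a percentage needs the reference energy before it can be compared against the 1 kcal/mol threshold.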

Experimental Protocols and Methodologies

VQE Implementation for Molecular Systems

Protocol 1: Standard VQE Implementation with Error Mitigation

Problem Formulation:

- Generate molecular Hamiltonian in second quantized form using classical electronic structure methods (Hartree-Fock)

- Apply qubit transformation (Jordan-Wigner or Bravyi-Kitaev) to express Hamiltonian as Pauli strings

- Select active space appropriate for available quantum resources [26]

Ansatz Design:

- Choose hardware-efficient or chemistry-inspired ansatz architecture

- Implement parameterized quantum circuit with layered structure

- Optimize entangling gate patterns for target hardware connectivity [25]

Error Mitigation Integration:

Classical Optimization:

- Select appropriate optimizer (BFGS, COBYLA, or SPSA) based on noise characteristics

- Define convergence criteria (energy gradient < 10⁻⁶ Ha or maximum iterations)

- Implement shot allocation strategies to minimize statistical noise [25]
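Of the optimizers listed, SPSA is often favoured under noise because it needs only two energy evaluations per iteration regardless of the number of parameters. The following is a minimal sketch of the standard SPSA update with commonly used gain-decay exponents; the gain constants and the quadratic stand-in objective are assumptions chosen for the example, and a real run would call the noisy energy estimator instead.

```python
import random

def spsa_minimize(objective, theta, iterations=300, a=0.2, c=0.1, seed=0):
    """Minimize objective(theta) using simultaneous random perturbations."""
    rng = random.Random(seed)
    theta = list(theta)
    for k in range(1, iterations + 1):
        a_k = a / k ** 0.602          # step-size schedule
        c_k = c / k ** 0.101          # perturbation-size schedule
        delta = [rng.choice((-1.0, 1.0)) for _ in theta]
        plus = [t + c_k * d for t, d in zip(theta, delta)]
        minus = [t - c_k * d for t, d in zip(theta, delta)]
        diff = objective(plus) - objective(minus)   # two evaluations per step
        theta = [t - a_k * diff / (2.0 * c_k * d) for t, d in zip(theta, delta)]
    return theta

def toy_energy(params):
    """Hypothetical stand-in for a measured energy, minimized at (1, 1)."""
    return sum((p - 1.0) ** 2 for p in params)

result = spsa_minimize(toy_energy, [0.0, 0.0])
print([round(p, 4) for p in result])
```

The gain constants usually need tuning per problem, and with shot noise in the objective the final parameters fluctuate around the minimum rather than converging exactly.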

Validation:

- Compare with classical reference methods (Full CI, CCSD(T))

- Calculate energy variance as convergence metric

- Perform statistical analysis of result uncertainty [26]

QPE Implementation Considerations

Protocol 2: QPE Resource Estimation and Feasibility Assessment

Initial State Preparation:

- Prepare high-overlap initial state using classical methods or VQE

- Quantify state overlap using classical simulations

- Assess orthogonality catastrophe effects for system size [24]

Resource Estimation:

- Calculate required qubit count (system + ancilla registers)

- Estimate circuit depth based on Hamiltonian trotterization

- Determine precision requirements for drug discovery applications [24]

Fault-Tolerance Requirements:

- Calculate quantum error correction overhead

- Estimate physical qubit requirements for target logical error rate

- Assess T-gate factories and distillation requirements [24]
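A first-pass version of the resource estimate can be done with simple arithmetic. The sketch assumes textbook QPE, with energies rescaled so that the relevant spectral range fills the phase interval, and it ignores Trotter error, success-probability padding, and error-correction overhead; the spectral range and precision in the example are hypothetical.

```python
import math

def phase_bits_for_precision(energy_range_ha, target_precision_ha):
    """Ancilla (phase) bits needed so that one readout bin is no wider
    than the target energy precision."""
    return math.ceil(math.log2(energy_range_ha / target_precision_ha))

def controlled_unitary_applications(n_bits):
    """Textbook QPE applies controlled-U^(2^j) for j = 0..n_bits-1,
    which amounts to 2^n_bits - 1 applications of U in total."""
    return 2 ** n_bits - 1

# Hypothetical target: resolve 1.6 mHa (about chemical accuracy) over a 10 Ha range.
bits = phase_bits_for_precision(10.0, 1.6e-3)
applications = controlled_unitary_applications(bits)
print(bits, applications)
```

The number of unitary applications doubles with each extra bit of precision, which is the origin of both the favourable 1/ε scaling and the deep circuits that make QPE demanding on noisy hardware.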

Benchmarking Methodology

Protocol 3: Cross-Algorithm Performance Benchmarking

Test Molecule Selection:

Hardware Configuration:

- Characterize noise parameters using gate set tomography

- Implement identical connectivity constraints for both algorithms

- Use consistent measurement and calibration procedures [20]

Performance Metrics:

Noise Impact Quantification:

- Measure algorithm sensitivity to various noise types

- Quantify resource overhead for error mitigation

- Assess performance degradation with increasing system size [24]

Quantum Computing Hardware Landscape

Hardware Performance Characteristics

Table 3: Quantum Hardware Platforms and Algorithm Compatibility

| Hardware Platform | Native Gates | Coherence Times | Qubit Connectivity | Suitable for |

|---|---|---|---|---|

| Superconducting | CNOT, Rz, √X | T₁: 50-150 μs | Nearest-neighbor | VQE with modular ansatz [20] |

| Trapped Ions | MS, Rz | T₁: 1-10 s | All-to-all | VQE with complex entanglers [27] |

| Photonic | Linear optical | T₁: N/A (flying) | Programmable | QPE with linear optics [27] |

Different quantum computing platforms exhibit distinct noise profiles that significantly impact algorithm performance. Superconducting qubits, while offering rapid gate operations and scalable fabrication, typically face challenges with coherence times and nearest-neighbor connectivity constraints [20]. Trapped ion systems provide superior coherence times and all-to-all connectivity but generally feature slower gate operations [27]. Photonic platforms are largely free of the memory decoherence that affects matter-based qubits but face challenges with photon loss and detector inefficiencies [27].

These hardware characteristics directly influence algorithm selection and performance. VQE implementations can be tailored to specific hardware constraints through noise-adaptive ansatz design and qubit mapping optimization [25]. For instance, the second-place team in the QCDDC'23 challenge employed an innovative RY hardware-efficient circuit featuring linear qubit connections and parallel CNOTs to reduce circuit duration and minimize error susceptibility on superconducting hardware [25].

Application to Drug Discovery Problems

Real-World Implementation Case Studies

Case Study 1: Prodrug Activation Energy Profiling

Recent research has demonstrated the application of hybrid quantum-classical pipelines to real-world drug discovery problems, including the calculation of Gibbs free energy profiles for prodrug activation. In one study, researchers investigated a carbon-carbon bond cleavage strategy for β-lapachone prodrug activation using a VQE-based approach with active space approximation [26]. The implementation successfully computed reaction energy barriers critical for predicting activation kinetics under physiological conditions, achieving results consistent with wet lab validation [26].

The quantum computational pipeline involved: (1) conformational optimization of reaction pathway structures; (2) active space selection to reduce problem size; (3) Hamiltonian generation for key molecular configurations; (4) VQE execution with error mitigation; and (5) solvation energy correction using polarizable continuum models [26]. This workflow demonstrates the potential for quantum computing to address practical pharmaceutical challenges, though classical pre- and post-processing steps remain essential components.

Case Study 2: Covalent Inhibitor Binding Analysis

Another significant application involves the simulation of covalent bond formation in drug-target interactions, particularly relevant for covalent inhibitors like Sotorasib targeting the KRAS G12C mutation in cancer [26]. Quantum computing-enhanced QM/MM (Quantum Mechanics/Molecular Mechanics) simulations can provide detailed insights into binding mechanisms and reaction energetics, information crucial for inhibitor optimization and selectivity profiling [26].

The hybrid implementation partitions the system between quantum and classical regions, with the quantum processor handling the electronically complex bond formation region while classical molecular mechanics describes the protein environment. This approach balances computational feasibility with quantum mechanical accuracy, though current hardware limitations restrict the size of the quantum region that can be practically simulated [26].

Research Reagent Solutions

Essential Tools for Experimental Implementation

Table 4: Key Research Resources for Quantum-Enhanced Drug Discovery

| Resource Category | Specific Tools | Function | Application Context |

|---|---|---|---|

| Quantum Software | IBM Qiskit [25], TenCirChem [26] | Algorithm design, circuit compilation | VQE implementation, noise simulation |

| Error Mitigation | Mitiq [20], ResilienQ [25] | Noise characterization, error suppression | Improving accuracy on noisy hardware |

| Classical Integration | PennyLane [20], Amazon Braket [20] | Hybrid algorithm coordination | Classical-quantum workflow management |

| Chemical Modeling | Polarizable Continuum Model [26], QM/MM [26] | Solvation effects, large system handling | Realistic drug discovery simulations |

| Hardware Access | IBM Quantum [25], Amazon Braket [20] | Quantum processing unit execution | Algorithm testing on real devices |

The successful implementation of quantum algorithms for drug discovery requires careful selection and integration of specialized software tools and computational resources. Platforms like IBM Qiskit provide comprehensive environments for quantum circuit design and simulation, while specialized libraries such as TenCirChem offer tailored functionality for quantum chemistry applications [25] [26]. Error mitigation tools like Mitiq implement techniques including Zero-Noise Extrapolation and probabilistic error cancellation, which are essential for obtaining accurate results on current hardware [20].

For drug discovery applications, integration with established chemical modeling approaches is crucial. Solvation models like the polarizable continuum model (PCM) enable the simulation of physiological environments, while QM/MM methodologies facilitate the study of drug-receptor interactions by partitioning the system between quantum and classical treatment regions [26]. These tools collectively form the essential infrastructure for pursuing quantum-computational drug discovery research.

The comparative analysis of VQE and QPE for molecular ground-state energy calculations reveals a clear trade-off between noise resilience and computational precision. VQE currently represents the most practical approach for NISQ-era hardware and has reached chemical accuracy for small molecular systems when supplemented with advanced error mitigation techniques [25]. However, this practicality comes with limitations: convergence to the true ground state is not guaranteed, and the classical optimization loop adds overhead. In contrast, QPE offers in-principle exact solutions with a potential exponential speedup but requires fault-tolerant quantum resources not presently available [24].

For drug discovery professionals, these distinctions inform a strategic algorithm selection framework. VQE enables immediate exploration of quantum computational methods for pharmaceutical problems, particularly for studying reaction mechanisms, covalent inhibition, and prodrug activation where accurate quantum chemical calculations provide valuable insights [26]. As hardware advances toward fault tolerance, the field anticipates a transition to QPE-based approaches that will enable larger simulations with guaranteed precision, potentially transforming early-stage drug discovery through high-accuracy quantum chemical modeling [24].

The ongoing development of noise-adaptive algorithms, improved error mitigation strategies, and hardware-specific optimizations continues to narrow the performance gap between current implementations and theoretical potential. For research scientists operating at the intersection of quantum computing and pharmaceutical development, maintaining awareness of these rapidly evolving capabilities will be essential for leveraging quantum computational methods as they transition from specialized tools to mainstream drug discovery resources.

Algorithm Implementations and Practical Use Cases in Quantum Chemistry

Within the pursuit of practical quantum chemistry simulations on near-term devices, the Variational Quantum Eigensolver (VQE) has emerged as a leading hybrid quantum-classical algorithm [28]. Its performance is critically dependent on the choice of the ansatz, the parameterized quantum circuit that prepares the trial wavefunction [14]. In the context of comparative analysis of quantum algorithms under noise, VQE's shorter circuit depths present a compelling alternative to the resource-intensive Quantum Phase Estimation (QPE) algorithm, which requires deep circuits and fault tolerance [28]. This guide provides a structured comparison of two primary ansatz categories—chemistry-inspired (exemplified by Unitary Coupled Cluster Singles and Doubles, UCCSD) and hardware-efficient ansätze—for calculating molecular ground states, equipping researchers with the data and protocols needed for informed selection.

The selection of an ansatz involves a fundamental trade-off between physical expressivity and hardware practicality, a balance that is crucial for successful implementation on Noisy Intermediate-Scale Quantum (NISQ) devices.

Chemistry-Inspired Ansätze (UCCSD): Ansätze like UCCSD are derived from classical computational chemistry methods [29]. They operate on a Hartree-Fock reference state by applying exponentials of fermionic excitation operators [14]. Their strength lies in their systematic approach to including electron correlation effects, which often leads to high accuracy and a clear, physically motivated circuit structure [28]. However, this comes at the cost of high circuit depth, which scales as O(N⁴) with the number of qubits, making them challenging to run on current hardware without error correction [29].

Hardware-Efficient Ansätze (HEA): Designed for direct implementation on specific quantum processor architectures, HEAs use layers of single-qubit rotations and entangling gates native to the device [14] [29]. Their primary advantage is low circuit depth, making them more resilient to noise. The trade-off is that they are not physically inspired, which can lead to issues like "barren plateaus" in the optimization landscape and a failure to capture certain electron correlations unless specifically designed to preserve symmetries [29] [28]. For example, the Symmetry-Preserving Ansatz (SPA) is a HEA that maintains physical constraints like particle number and can achieve high accuracy by increasing its depth [29].
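The depth contrast between the two families can be made tangible by counting their building blocks. The sketch below counts every spin-orbital single and double excitation for UCCSD without any spin or point-group symmetry filtering, which real implementations usually apply, and it assumes a linear chain of CNOTs per layer for the hardware-efficient ansatz. Both are simplifying assumptions for illustration.

```python
from math import comb

def uccsd_excitation_counts(n_spin_orbitals, n_electrons):
    """Number of single and double excitations from the Hartree-Fock reference,
    counting all occupied-to-virtual spin-orbital combinations."""
    n_occ = n_electrons
    n_virt = n_spin_orbitals - n_electrons
    singles = n_occ * n_virt
    doubles = comb(n_occ, 2) * comb(n_virt, 2)
    return singles, doubles

def hea_cnot_count(n_qubits, layers):
    """CNOTs in a hardware-efficient ansatz with one linear entangling chain per layer."""
    return layers * (n_qubits - 1)

for orbitals, electrons in [(4, 2), (12, 4), (14, 6)]:
    print(orbitals, uccsd_excitation_counts(orbitals, electrons),
          hea_cnot_count(orbitals, layers=4))
```

The double-excitation count grows roughly with the fourth power of system size at fixed filling, and each double excitation compiles to many two-qubit gates, whereas the hardware-efficient CNOT count grows only linearly per layer.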

Table 1: Core Characteristics of Ansatz Paradigms for Molecular Ground States.

| Feature | Chemistry-Inspired (UCCSD) | Hardware-Efficient (HEA) |

|---|---|---|

| Design Principle | Based on fermionic excitation operators from classical chemistry [29]. | Constructed from gates native to a specific quantum processor [29]. |

| Circuit Depth | High, scaling as O(N⁴) [29]. | Low, designed for shallow circuits [29]. |

| Key Strength | High, physically-motivated accuracy [28]. | Noise resilience and feasibility on NISQ devices [29]. |

| Primary Limitation | Deep circuits are prone to noise on current hardware [29]. | Can suffer from barren plateaus and may break physical symmetries [29] [28]. |

| Optimization Landscape | More structured, but parameter optimization remains challenging [30]. | Often complex with many local minima, requiring advanced optimizers [29]. |

| Measurement Overhead | High number of measurements required for energy evaluation [31]. | Lower per-iteration, but overall cost depends on convergence [31]. |

Performance Data from Key Experiments

Benchmarking studies across various molecules provide quantitative insights into how these ansätze perform in practice, measuring success through energy accuracy and quantum resource requirements.

Ground State Energy Accuracy

Quantitative simulations show that both ansatz types can achieve chemical accuracy, but their performance varies with molecular complexity.

Table 2: Achievable Accuracy for Select Molecules with Different Ansätze.

| Molecule | Ansatz Type | Performance Summary |

|---|---|---|

| H₂ | UCCSD | A standard benchmark, consistently achieves chemical accuracy in simulations [32]. |

| LiH | UCCSD | Achieves high accuracy but requires a large number of gates [31]. |

| LiH | SPA (Hardware-Efficient) | Can achieve CCSD-level chemical accuracy by increasing the number of layers [29]. |

| H₂O | SPA (Hardware-Efficient) | Accurate results for ground and low-lying excited states are possible with high-depth circuits [29]. |

| BeH₂ | CEO-ADAPT-VQE (Adaptive) | Outperforms UCCSD, reaching chemical accuracy with drastically fewer CNOT gates [31]. |

| Si Atom | UCCSD & Hardware-Efficient | Systematic study shows performance is highly dependent on optimizer and parameter initialization [30]. |

Quantum Resource Requirements

The resource footprint, particularly the number of CNOT gates and overall circuit depth, is a critical metric for NISQ applications.

Table 3: Quantum Resource Comparison for Different Ansätze and Molecules.

| Molecule (Qubits) | Ansatz | Key Resource Metric | Result |

|---|---|---|---|

| LiH (12 qubits) | Original Fermionic ADAPT-VQE [31] | CNOT Count at Chemical Accuracy | Baseline |

| LiH (12 qubits) | CEO-ADAPT-VQE* [31] | CNOT Count at Chemical Accuracy | Reduced by 88% |

| BeH₂ (14 qubits) | Original Fermionic ADAPT-VQE [31] | CNOT Count at Chemical Accuracy | Baseline |

| BeH₂ (14 qubits) | CEO-ADAPT-VQE* [31] | CNOT Count at Chemical Accuracy | Reduced by 84% |

| H₂O | UCCSD [29] | Circuit Complexity | High, requires many gates |

| H₂O | SPA (High-Depth) [29] | Circuit Complexity | Fewer gates than UCCSD |

Experimental Protocols for Ansatz Benchmarking

To ensure fair and reproducible comparisons between ansätze, researchers follow structured experimental protocols. The core workflow involves problem definition, ansatz execution, and classical optimization, with careful configuration at each stage.

Diagram 1: The VQE algorithm's hybrid workflow for ground state energy calculation.

Problem Definition and Hamiltonian Formulation

The process begins by classically defining the molecular system. This involves selecting a basis set (e.g., STO-3G for H₂) and generating the electronic Hamiltonian in second quantization [32] [28]. The fermionic Hamiltonian is then mapped to a qubit operator using a transformation like Jordan-Wigner or Bravyi-Kitaev, expressing it as a sum of Pauli strings [32] [30]. For the H₂ molecule in a minimal basis, this results in a Hamiltonian acting on four qubits [32].
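As a rough illustration of the mapping step, the sketch below writes the Jordan-Wigner images of a single annihilation operator and a number operator as sums of Pauli strings. The function names `jw_lowering` and `jw_number`, the qubit ordering (qubit 0 leftmost), and the convention that |1> denotes an occupied orbital are assumptions made for this example and are not taken from the cited studies.

```python
def jw_lowering(j, n_qubits):
    """Jordan-Wigner image of the annihilation operator a_j as a Pauli sum.

    Returns a dict mapping Pauli strings (qubit 0 leftmost) to coefficients,
    using a_j = Z_0 ... Z_{j-1} (X_j + i Y_j) / 2 with |1> meaning "occupied".
    """
    if not 0 <= j < n_qubits:
        raise ValueError("mode index out of range")
    prefix = "Z" * j                     # parity string on the lower modes
    suffix = "I" * (n_qubits - j - 1)    # identity on the higher modes
    return {prefix + "X" + suffix: 0.5, prefix + "Y" + suffix: 0.5j}


def jw_number(j, n_qubits):
    """Jordan-Wigner image of the number operator n_j = (I - Z_j) / 2."""
    if not 0 <= j < n_qubits:
        raise ValueError("mode index out of range")
    identity = "I" * n_qubits
    z_j = "I" * j + "Z" + "I" * (n_qubits - j - 1)
    return {identity: 0.5, z_j: -0.5}
```

For four spin orbitals, as in minimal-basis H₂, `jw_lowering(2, 4)` gives the two strings `ZZXI` and `ZZYI`. The growing `Z` prefix is what makes Jordan-Wigner terms non-local, which is one reason alternative mappings such as Bravyi-Kitaev are considered.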

Ansatz-Specific Execution Protocols

The core of the experiment lies in configuring and executing the chosen ansatz.

UCCSD Protocol: The UCCSD ansatz is constructed by approximating the unitary coupled cluster operator with single and double excitations from the Hartree-Fock reference [29]. The quantum circuit is built by Trotterizing the exponential of these operators. Due to the high circuit depth, simulations are often conducted with state vector simulators on High-Performance Computing (HPC) systems to precisely evaluate performance without hardware noise [32] [29].

Hardware-Efficient Ansatz (HEA) Protocol: A specific HEA, like the Symmetry-Preserving Ansatz (SPA), is selected for its ability to maintain physical constraints like particle number [29]. The circuit consists of repeated layers of parameterized single-qubit gates and constrained two-qubit gates (e.g., the A(θ,φ) gate). A critical step is parameter initialization, which often uses random numbers or classical heuristics, as chemical principles cannot guide the initial values [29].
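To make the idea of a constrained two-qubit gate concrete, the following sketch builds one form of the A(θ,φ) gate that appears in the symmetry-preserving ansatz literature and checks that it leaves the number of excitations unchanged. The specific matrix form, the basis ordering |00>, |01>, |10>, |11>, and the helper names are assumptions of this example; the implementation used in [29] may differ.

```python
import cmath
import math


def a_gate(theta, phi):
    """4x4 matrix of an A(theta, phi) gate in the basis |00>, |01>, |10>, |11>.

    Assumed form: identity on |00> and |11>, and a parameterized mixing of
    |01> and |10>, so the number of excitations is conserved.
    """
    c, s = math.cos(theta), math.sin(theta)
    phase = cmath.exp(1j * phi)
    return [
        [1, 0, 0, 0],
        [0, c, phase * s, 0],
        [0, phase.conjugate() * s, -c, 0],
        [0, 0, 0, 1],
    ]


def apply_gate(matrix, state):
    """Multiply a 4x4 matrix into a length-4 state vector."""
    return [sum(matrix[r][k] * state[k] for k in range(4)) for r in range(4)]


def excitation_weights(state):
    """Probabilities of finding 0, 1, or 2 excitations in a two-qubit state."""
    p = [abs(amplitude) ** 2 for amplitude in state]
    return [p[0], p[1] + p[2], p[3]]
```

Applying `a_gate` to the single-excitation state |01> returns a superposition of |01> and |10> only, so a circuit built from such gates stays in the particle-number sector of its initial state.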

Classical Optimization and Analysis

The energy expectation value measured from the quantum circuit is fed to a classical optimizer. Common choices include gradient-based methods like Broyden–Fletcher–Goldfarb–Shanno (BFGS) or gradient-free methods like SPSA [32] [30]. To cope with the many local minima and barren plateaus associated with HEAs, global optimization techniques like basin-hopping are employed for more thorough exploration of the parameter landscape [29]. The final analysis compares the converged VQE energy against classically computed exact values (e.g., from Full Configuration Interaction) and reports the required quantum resources (CNOT count, circuit depth) [30] [31].
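A minimal sketch of the SPSA update may help show why it is attractive when each energy evaluation is expensive: every iteration needs only two evaluations, regardless of the number of parameters. The gain-sequence constants, the function names, and the quadratic `toy_energy` (a hypothetical stand-in for a measured energy) are assumptions chosen for illustration, not settings reported in the cited benchmarks.

```python
import random


def spsa_minimize(loss, theta0, iterations=200, a=0.2, c=0.1,
                  stability=10.0, seed=0):
    """Simultaneous perturbation stochastic approximation (SPSA).

    All parameters are perturbed at once along a random +/-1 direction,
    and the two resulting loss values give a gradient estimate.
    """
    rng = random.Random(seed)
    theta = list(theta0)
    for k in range(iterations):
        a_k = a / (k + 1 + stability) ** 0.602   # decaying step size
        c_k = c / (k + 1) ** 0.101               # decaying perturbation size
        delta = [rng.choice((-1.0, 1.0)) for _ in theta]
        plus = [t + c_k * d for t, d in zip(theta, delta)]
        minus = [t - c_k * d for t, d in zip(theta, delta)]
        diff = (loss(plus) - loss(minus)) / (2.0 * c_k)
        # Gradient estimate for component i is diff / delta_i.
        theta = [t - a_k * diff / d for t, d in zip(theta, delta)]
    return theta


def toy_energy(theta):
    """Hypothetical stand-in for a measured energy, minimized at theta_i = 1."""
    return sum((t - 1.0) ** 2 for t in theta)
```

In a real VQE run, `loss` would wrap circuit execution and measurement, so its output would carry shot noise; SPSA tolerates this because it never relies on an exact gradient.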

The Scientist's Toolkit: Essential Research Reagents & Solutions

Successful VQE experiments rely on a suite of software and methodological "reagents" that form the backbone of the research workflow.

Table 4: Key Tools and Methods for VQE Experimentation.

| Tool Category | Example | Function in Experimentation |

|---|---|---|

| Software Frameworks | Qiskit, Cirq, PennyLane | Provide libraries for constructing ansatz circuits, performing noise simulations, and integrating classical optimizers [32]. |

| Mapping Techniques | Jordan-Wigner, Bravyi-Kitaev | Transform the fermionic Hamiltonian into a qubit operator expressed as a sum of Pauli strings [32] [30]. |

| Classical Optimizers | BFGS, SPSA, ADAM | Iteratively update ansatz parameters to minimize the energy expectation value [32] [30]. |

| Reference States | Hartree-Fock State | Serves as the initial input for chemistry-inspired ansätze like UCCSD [14]. |

| Error Mitigation | Zero-Noise Extrapolation, Readout Error Mitigation | Post-processing techniques applied to noisy hardware data to improve the accuracy of energy estimates. |

| HPC Simulations | State Vector Simulators | Enable noiseless benchmarking of ansatz performance and scalability before hardware deployment [32]. |

The choice between UCCSD and hardware-efficient ansätze is not a matter of declaring a universal winner but of strategic selection based on research goals and hardware constraints. UCCSD offers a physically intuitive, systematically improvable path with high potential accuracy but is currently limited by its circuit depth. In contrast, hardware-efficient ansätze like the SPA provide a pragmatic, NISQ-friendly approach that can achieve high accuracy with fewer gates, though they require careful design to avoid optimization pitfalls.

Emerging adaptive algorithms like ADAPT-VQE and its variants (e.g., CEO-ADAPT-VQE) represent a promising synthesis of these paradigms [5] [31]. By dynamically constructing compact, problem-tailored ansätze, they have demonstrated drastic reductions in CNOT counts and measurement overhead compared to static ansätze like UCCSD, while maintaining high accuracy [31]. This evolution suggests that the future of practical VQE applications may lie in adaptive approaches that intelligently balance the strengths of both chemistry-inspired and hardware-efficient principles. For researchers, this comparative landscape underscores the importance of a flexible, empirically-grounded strategy for ansatz selection in the pursuit of quantum utility in chemistry.

Variational Quantum Eigensolvers (VQE) represent a leading approach for molecular simulation on Noisy Intermediate-Scale Quantum (NISQ) devices, offering a promising path to quantum advantage for electronic structure problems in quantum chemistry and drug discovery [33]. Unlike quantum phase estimation, which requires deep circuits beyond current hardware capabilities, VQE employs a hybrid quantum-classical approach that trades circuit depth for increased measurement counts, making it more suitable for contemporary quantum processors [33]. The algorithm functions by preparing a parameterized wavefunction (ansatz) on a quantum computer and iteratively adjusting parameters using classical optimization to minimize the energy expectation value of the molecular Hamiltonian [31] [33].

Despite their potential, standard VQE approaches with fixed ansätze such as Unitary Coupled Cluster Singles and Doubles (UCCSD) face significant limitations, including limited accuracy for strongly correlated systems and high circuit depths [33]. These challenges have motivated the development of advanced VQE architectures, particularly adaptive and problem-tailored variants. This review provides a comprehensive comparison of Adaptive (ADAPT-VQE) and Contextual Subspace (CS-VQE) methods, focusing on their performance under realistic noise conditions and their applicability to drug discovery challenges.

Algorithmic Fundamentals and Evolution

Core ADAPT-VQE Methodology

The Adaptive Derivative-Assembled Pseudo-Trotter Variational Quantum Eigensolver (ADAPT-VQE) represents a significant advancement over fixed-ansatz VQE approaches. Instead of using a predetermined circuit structure, ADAPT-VQE dynamically constructs an ansatz by iteratively appending parameterized unitary operators selected from a predefined pool [33] [34]. At each iteration ( N ), the algorithm selects the operator ( \hat{A}_i ) that yields the largest energy gradient:

[ \left| \frac{\partial E^{(N)}}{\partial \theta_i} \right|_{\theta_i = 0} = \left| \langle \psi^{(N)} | [\hat{H}, \hat{A}_i] | \psi^{(N)} \rangle \right| ]

where ( |\psi^{(N)}\rangle ) is the current variational state, ( \hat{H} ) is the molecular Hamiltonian, and ( \hat{A}_i ) are anti-Hermitian operators from the pool [5] [34]. The selected operator is appended to the circuit as ( e^{\theta_i \hat{A}_i} ), and all parameters are optimized before proceeding to the next iteration. This systematic, problem-informed approach generates compact, chemically relevant ansätze that often outperform static UCCSD counterparts in both accuracy and circuit efficiency [33].
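The commutator form of the selection criterion follows in a few lines once the candidate operator is appended with a trial parameter. The derivation below is a sketch that assumes the previously optimized parameters are held fixed while ( \theta_i ) is varied, and writes ( E(\theta_i) ) for the energy of the trial state ( e^{\theta_i \hat{A}_i} |\psi^{(N)}\rangle ).

```latex
\begin{aligned}
E(\theta_i)
  &= \langle \psi^{(N)} | \, e^{-\theta_i \hat{A}_i} \, \hat{H} \, e^{\theta_i \hat{A}_i} \, | \psi^{(N)} \rangle
  && \text{since } \hat{A}_i^\dagger = -\hat{A}_i , \\
\frac{\partial E}{\partial \theta_i}
  &= \langle \psi^{(N)} | \, e^{-\theta_i \hat{A}_i} \left( \hat{H}\hat{A}_i - \hat{A}_i\hat{H} \right) e^{\theta_i \hat{A}_i} \, | \psi^{(N)} \rangle , \\
\left. \frac{\partial E}{\partial \theta_i} \right|_{\theta_i = 0}
  &= \langle \psi^{(N)} | \, [\hat{H}, \hat{A}_i] \, | \psi^{(N)} \rangle .
\end{aligned}
```

Because the gradient is evaluated at ( \theta_i = 0 ), it can be estimated on the current state without adding any gates, which is why every pool operator can be screened before the circuit is extended.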

Algorithmic Workflows

ADAPT-VQE Workflow

Recent Algorithmic Innovations

CEO-ADAPT-VQE

The Coupled Exchange Operator (CEO) ADAPT-VQE incorporates a novel operator pool that significantly reduces quantum resource requirements. This approach leverages coupled exchange operators to create more compact ansätze, demonstrating reductions of up to 88% in CNOT count, 96% in CNOT depth, and 99.6% in measurement costs compared to early ADAPT-VQE versions for molecules represented by 12-14 qubits (LiH, H₆, BeH₂) [31]. The CEO pool particularly enhances performance for strongly correlated systems where traditional fermionic operator pools struggle.

GGA-VQE

Greedy Gradient-Free Adaptive VQE (GGA-VQE) modifies the standard ADAPT-VQE approach by eliminating the computationally expensive global optimization step after each operator addition [5] [19]. Instead, it employs a gradient-free strategy that determines the optimal parameter for each candidate operator by fitting a simple trigonometric function to a small number of energy measurements (typically 2-5), then selects the operator that provides the largest immediate energy decrease [19]. This approach dramatically reduces measurement overhead and demonstrates improved noise resilience, enabling the first fully converged adaptive VQE computation on a 25-qubit quantum processor for a 25-spin transverse-field Ising model, achieving over 98% state fidelity despite hardware noise [19].
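The curve-fitting step can be sketched as follows, assuming that the energy along a single candidate parameter is a single-frequency sinusoid, E(θ) = a + b cos θ + c sin θ. That assumption holds only for suitably normalized generators and is made here for illustration; the function names and the three-point sampling scheme are likewise hypothetical and not taken from [19].

```python
import math


def best_angle(energy):
    """Fit E(theta) = a + b*cos(theta) + c*sin(theta) from three evaluations.

    Returns the minimizing angle and the fitted minimum energy.
    """
    e_zero = energy(0.0)               # a + b
    e_plus = energy(math.pi / 2)       # a + c
    e_minus = energy(-math.pi / 2)     # a - c
    a = 0.5 * (e_plus + e_minus)
    b = e_zero - a
    c = 0.5 * (e_plus - e_minus)
    # b*cos(t) + c*sin(t) = R*cos(t - shift), which is minimal at shift + pi.
    theta_star = math.atan2(c, b) + math.pi
    return theta_star, a - math.hypot(b, c)


def greedy_select(candidates):
    """Pick the candidate whose fitted minimum energy is lowest.

    `candidates` maps an operator label to a one-parameter energy function.
    Returns (label, optimal angle, fitted minimum energy).
    """
    best = None
    for label, energy in candidates.items():
        theta, e_min = best_angle(energy)
        if best is None or e_min < best[2]:
            best = (label, theta, e_min)
    return best
```

Each candidate costs three energy evaluations in this sketch, which is consistent with the small per-operator measurement budget described above; the chosen operator is then appended with its fitted angle and not re-optimized globally.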

Measurement-Efficient ADAPT-VQE

Recent innovations focus specifically on reducing the shot overhead of ADAPT-VQE through two complementary strategies: reusing Pauli measurements obtained during VQE parameter optimization for subsequent gradient estimations, and variance-based shot allocation that optimally distributes measurement shots based on term variances [35]. These approaches collectively reduce average shot requirements to approximately 32% of naive measurement schemes while maintaining accuracy [35].
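One simple form of variance-based allocation assigns shots to each Hamiltonian term in proportion to |c_i| σ_i, where c_i is the term's coefficient and σ_i its estimated standard deviation; this choice minimizes the variance of the summed energy estimate for a fixed shot budget when terms are measured independently. The sketch below illustrates that rule with hypothetical function names and is not necessarily the exact scheme used in [35].

```python
import math


def allocate_shots(coefficients, std_devs, total_shots):
    """Distribute shots across terms in proportion to |c_i| * sigma_i.

    Each term receives at least one shot, so the total can differ
    slightly from `total_shots` after rounding down.
    """
    weights = [abs(c) * s for c, s in zip(coefficients, std_devs)]
    total_weight = sum(weights)
    if total_weight == 0:
        # No variance information: fall back to a uniform split.
        return [max(1, total_shots // len(weights))] * len(weights)
    return [max(1, math.floor(total_shots * w / total_weight)) for w in weights]


def estimator_variance(coefficients, std_devs, shots):
    """Variance of sum_i c_i <P_i> when term i is measured shots[i] times."""
    return sum((c * s) ** 2 / n for c, s, n in zip(coefficients, std_devs, shots))
```

Comparing `estimator_variance` for the weighted allocation against a uniform split of the same budget shows how terms with small coefficients or low variance can be measured far less often without degrading the energy estimate.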

Performance Comparison and Benchmarking

Quantitative Performance Metrics

Table 1: Resource Requirements for Molecular Simulations at Chemical Accuracy

| Molecule | Qubits | Algorithm | CNOT Count | CNOT Depth | Measurement Cost | Iterations to Convergence |

|---|---|---|---|---|---|---|

| LiH | 12 | Fermionic ADAPT | Baseline | Baseline | Baseline | ~40 |

| LiH | 12 | CEO-ADAPT* | -88% | -96% | -99.6% | ~30 |

| H₆ | 12 | Fermionic ADAPT | Baseline | Baseline | Baseline | ~45 |

| H₆ | 12 | CEO-ADAPT* | -85% | -94% | -99.4% | ~32 |

| BeH₂ | 14 | Fermionic ADAPT | Baseline | Baseline | Baseline | ~50 |

| BeH₂ | 14 | CEO-ADAPT* | -82% | -92% | -99.2% | ~35 |

| H₂O | 12-14 | GGA-VQE | -30% | -40% | -75% | ~30 |

Table 2: Noise Resilience Comparison (Simulated with Shot Noise)

| Algorithm | H₂O Energy Error (mHa) | LiH Energy Error (mHa) | Hardware Demonstration | Measurement Shots per Iteration |

|---|---|---|---|---|

| Standard ADAPT | >2.0 | >3.0 | Not achieved | >10,000 |

| GGA-VQE | ~1.1 | ~0.6 | 25-qubit Ising model | 2-5 energy evaluations per candidate operator |

| CEO-ADAPT* | ~0.8 | ~0.5 | Not reported | ~100 |

Experimental Protocols and Methodologies

Molecular System Preparation

Benchmarking experiments typically begin with molecular geometry specification, followed by classical electronic structure calculations to generate reference data. The Hartree-Fock method provides the initial reference state, with molecular integrals (( h_{pq} ) and ( h_{pqrs} )) computed using standard quantum chemistry packages [2]. The electronic Hamiltonian is then transformed to qubit representation using parity or Jordan-Wigner transformations, often with qubit tapering to reduce resource requirements [2].

Operator Pool Selection

Different ADAPT-VQE variants employ distinct operator pools:

- Fermionic ADAPT: Uses generalized single and double (GSD) excitations [31]

- Qubit ADAPT: Employs operators native to qubit representation [31]

- CEO-ADAPT: Utilizes coupled exchange operators that efficiently capture strong correlations [31]

- GGA-VQE: Compatible with various pools but optimized for measurement efficiency [19]

Convergence Criteria

Standard convergence thresholds target chemical accuracy (1.6 mHa or 1 kcal/mol in atomization energies). Algorithms typically terminate when energy changes between iterations fall below this threshold or when gradient norms become sufficiently small [31] [33].
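The stopping rule can be written as a short check. In this sketch the conversion factor of roughly 627.509 kcal/mol per Hartree is used to express 1 kcal/mol in Hartree (about 1.59 mHa, commonly rounded to 1.6 mHa); the function name and the gradient tolerance are illustrative assumptions rather than values from the cited studies.

```python
HARTREE_TO_KCAL_PER_MOL = 627.509                      # approximate conversion
CHEMICAL_ACCURACY_HA = 1.0 / HARTREE_TO_KCAL_PER_MOL   # 1 kcal/mol in Hartree


def has_converged(energies, gradient_norm=None,
                  energy_tol=CHEMICAL_ACCURACY_HA, gradient_tol=1e-3):
    """Return True when either stopping criterion is met.

    energies      : energies (in Hartree) from successive iterations
    gradient_norm : optional norm of the current pool gradients
    """
    if gradient_norm is not None and gradient_norm < gradient_tol:
        return True
    if len(energies) >= 2 and abs(energies[-1] - energies[-2]) < energy_tol:
        return True
    return False
```

Note that a small energy change between iterations signals a stalled optimization, not necessarily agreement with the exact energy, so benchmark studies still compare the final value against a classical reference.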

Table 3: Essential Computational Tools for Advanced VQE Research

| Resource Category | Specific Tools/Methods | Function | Application Context |

|---|---|---|---|

| Operator Pools | Fermionic GSD, Qubit Pool, CEO Pool | Define search space for ansatz construction | CEO pool reduces CNOT counts by 85-88% [31] |

| Measurement Strategies | Variance-based allocation, Pauli measurement reuse, Commutativity grouping | Reduce quantum resource requirements | Shot reduction to 32% of naive approach [35] |

| Error Mitigation | T-REx, Readout Error Mitigation, Zero-Noise Extrapolation | Counteract hardware noise | With mitigation, an older 5-qubit processor can outperform an advanced 156-qubit device run without mitigation [2] |

| Classical Optimizers | SPSA, Gradient-based methods, Curve fitting (GGA-VQE) | Parameter optimization | SPSA converges more reliably under noise [2] |

| Problem Decomposition | Active Space Approximation, Quantum Embedding | Reduce effective problem size | Enables 2-qubit simulation of relevant chemical systems [26] |

Applications in Drug Discovery and Development

Advanced VQE architectures show particular promise for pharmaceutical applications, where accurate molecular simulation directly impacts drug design efficacy. Quantum computing pipelines have been successfully applied to two critical drug discovery tasks: