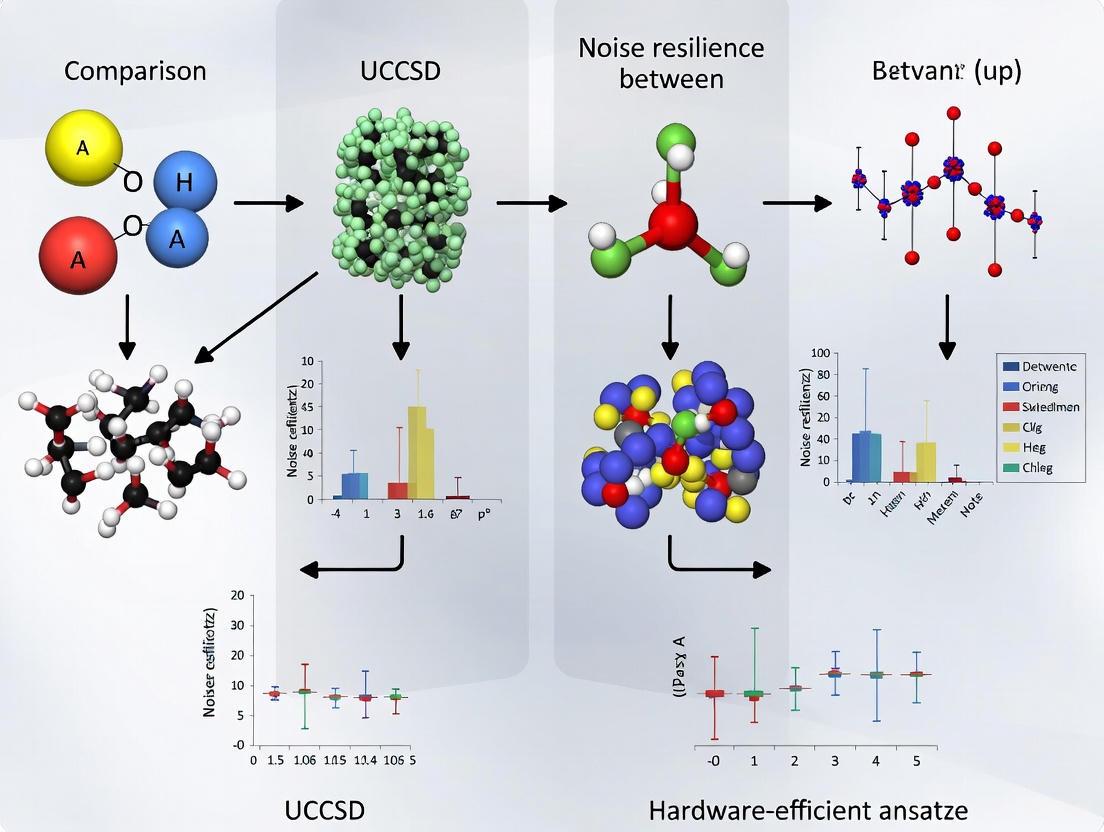

Noise Resilience in Quantum Chemistry: A Comparative Analysis of UCCSD and Hardware-Efficient Ansatze for NISQ-Era Drug Discovery


Abstract

This article provides a comprehensive analysis of the noise resilience of the Unitary Coupled Cluster Singles and Doubles (UCCSD) and hardware-efficient ansatze when implementing the Variational Quantum Eigensolver (VQE) on current noisy quantum devices. Aimed at researchers and drug development professionals, it explores the foundational principles behind these ansatze, their methodological application in simulating molecular systems like H2 and HeH+, and strategies for troubleshooting and optimizing their performance against prevalent quantum noise. Through a validation and comparative lens, it synthesizes recent experimental and simulation-based findings to offer practical guidance on ansatz selection, highlighting the critical trade-offs between chemical accuracy, circuit depth, and inherent noise resilience for quantum simulations in pharmaceutical research.

Understanding Ansatze and Quantum Noise: Foundations for NISQ-Era Simulations

Theoretical Foundations and Ansatz Design Principles

In the Variational Quantum Eigensolver (VQE) algorithm, the ansatz serves as the parameterized quantum circuit that prepares trial wavefunctions for estimating ground-state energies of quantum systems, particularly in quantum chemistry applications [1]. The algorithm operates on the variational principle, where the quantum computer prepares a parameterized state \(|\psi(\vec{\theta})\rangle = U(\vec{\theta})|0\rangle\) and measures the expectation value \(E(\vec{\theta}) = \langle\psi(\vec{\theta})|\hat{H}|\psi(\vec{\theta})\rangle\), while a classical optimizer iteratively adjusts parameters \(\vec{\theta}\) to minimize this energy [1]. This hybrid quantum-classical approach makes VQE particularly suitable for Noisy Intermediate-Scale Quantum (NISQ) devices, as it avoids the need for prohibitively deep circuits required by quantum phase estimation [2] [1].
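The variational loop described above can be sketched classically for a single qubit. This is a minimal illustration, not a molecular calculation: the toy Hamiltonian `H = Z + 0.5 X`, the one-parameter `Ry` ansatz, the learning rate, and all function names are assumptions chosen for the example.

```python
import numpy as np

# Toy one-qubit Hamiltonian (illustrative assumption, not a molecular Hamiltonian).
X = np.array([[0.0, 1.0], [1.0, 0.0]])
Z = np.array([[1.0, 0.0], [0.0, -1.0]])
H = Z + 0.5 * X

def ansatz_state(theta):
    """|psi(theta)> = Ry(theta)|0> = [cos(theta/2), sin(theta/2)]."""
    return np.array([np.cos(theta / 2.0), np.sin(theta / 2.0)])

def energy(theta):
    """E(theta) = <psi(theta)|H|psi(theta)>; the state is real, so no conjugation is needed."""
    psi = ansatz_state(theta)
    return float(psi @ H @ psi)

def minimize_energy(theta0=0.1, lr=0.2, steps=200):
    """Gradient descent; the gradient comes from the parameter-shift rule."""
    theta = theta0
    for _ in range(steps):
        grad = 0.5 * (energy(theta + np.pi / 2) - energy(theta - np.pi / 2))
        theta -= lr * grad
    return theta, energy(theta)

theta_opt, e_vqe = minimize_energy()
e_exact = float(np.linalg.eigvalsh(H)[0])  # exact ground-state energy for comparison
```

On hardware, `energy` would be estimated from repeated measurements rather than computed exactly, which is where the noise effects discussed in this article enter.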

The selection and design of the ansatz represent a critical compromise between physical expressiveness and hardware feasibility. On one hand, the ansatz must be sufficiently expressive to capture the essential quantum correlations of the target system; on the other hand, it must be implementable within the constraints of current quantum hardware, including limited coherence times and significant gate errors [3] [4]. Two dominant philosophical approaches have emerged: the chemically-inspired unitary coupled cluster (UCC) ansätze, particularly UCCSD, which incorporate domain knowledge from quantum chemistry, and hardware-efficient ansätze (HEA), which prioritize implementability on existing hardware through simplified structures and native gate sets [1].

Table: Fundamental Characteristics of UCCSD and Hardware-Efficient Ansätze

| Characteristic | UCCSD Ansatz | Hardware-Efficient Ansatz |
|---|---|---|
| Design Philosophy | Physically motivated, based on coupled-cluster theory | Heuristic, tailored to hardware constraints |
| Theoretical Foundation | Exponential of excitation operators acting on reference state | Layers of single-qubit rotations and entangling gates |
| Initial State | Typically Hartree-Fock reference state | Often simple product state (e.g., \|0⟩) |
| Key Advantage | Systematic improvement, chemical accuracy | Shallow depth, hardware compatibility |
| Primary Limitation | Rapidly growing circuit depth | Potential symmetry breaking, barren plateaus |

UCCSD Ansatz: Chemical Accuracy at a Cost

The Unitary Coupled Cluster Singles and Doubles (UCCSD) ansatz represents a direct adaptation of the classical coupled-cluster method to quantum circuits through exponentiated excitation operators [1]. This approach begins with a Hartree-Fock reference state and applies a unitary transformation generated by single and double excitation operators, mathematically expressed as \(U_{\text{UCCSD}} = e^{T - T^\dagger}\), where \(T = T_1 + T_2\) comprises single (\(T_1\)) and double (\(T_2\)) excitation operators [3]. This formulation ensures that the ansatz naturally preserves physical symmetries and provides a systematic path toward chemical accuracy, defined as 1.6 milliHartrees or approximately 1 kcal/mol of error [4].
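For reference, the excitation operators are commonly written in second quantization as follows. The index convention (\(i, j\) for occupied and \(a, b\) for virtual spin orbitals) and the amplitude symbols \(\theta\) follow the usual textbook form and are assumptions here; they are not taken from the cited sources.

$$ T_1 = \sum_{i \in \text{occ}} \sum_{a \in \text{vir}} \theta_i^{a}\, a_a^\dagger a_i, \qquad T_2 = \sum_{\substack{i<j \\ i,j \in \text{occ}}} \; \sum_{\substack{a<b \\ a,b \in \text{vir}}} \theta_{ij}^{ab}\, a_a^\dagger a_b^\dagger a_j a_i $$

Because \(T - T^\dagger\) is anti-Hermitian, \(e^{T - T^\dagger}\) is unitary, which is what allows it to be implemented as a quantum circuit.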

The primary strength of UCCSD lies in its firm theoretical foundation in electronic structure theory, which enables it to reliably capture electron correlation effects essential for accurate quantum chemistry simulations [1]. This physically motivated design stands in contrast to heuristic approaches, as it incorporates domain knowledge about the structure of molecular wavefunctions. However, this theoretical advantage comes with significant practical drawbacks for NISQ implementation. The circuit depth of UCCSD scales as \(O(N^5)\) with the number of spin orbitals or qubits \(N\), quickly becoming prohibitive for current quantum hardware [3]. For instance, implementing UCCSD for the LiH molecule requires circuit depths that are "way too expensive for current quantum hardware" according to researchers [2].

Recent research has demonstrated promising approaches to retain the advantages of UCCSD while mitigating its computational costs. The quantum information-inspired ansatz (QIIA) represents one such innovation, leveraging von Neumann entropy and quantum mutual information to identify the most significant qubit correlations and strategically place two-qubit entanglers [3]. This approach has demonstrated remarkable efficiency, achieving 99.99% accuracy relative to complete active space configuration interaction values while utilizing up to 99% fewer two-qubit gates than traditional UCCSD for systems of up to 12 qubits [3].

Hardware-Efficient Ansätze: Pragmatism for NISQ Devices

Hardware-efficient ansätze (HEA) adopt a fundamentally different design philosophy focused on maximizing implementability on NISQ devices through simplified structures that align with hardware constraints [1]. These ansätze typically consist of alternating layers of single-qubit rotation gates (to create superpositions) and two-qubit entangling gates (to generate entanglement), arranged in patterns that respect the native connectivity and gate sets of target quantum processors [3]. This hardware-aware construction enables significantly shallower circuit depths compared to UCCSD, making HEAs more immediately realizable on current quantum devices.
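The layered structure described above can be sketched as a two-qubit statevector simulation. The choice of `Ry` rotations, a CNOT entangler, and the function names are assumptions for illustration; real hardware-efficient ansätze use whatever rotations and entanglers are native to the target device.

```python
import numpy as np

def ry(theta):
    """Single-qubit Ry rotation matrix."""
    c, s = np.cos(theta / 2.0), np.sin(theta / 2.0)
    return np.array([[c, -s], [s, c]])

# CNOT with qubit 0 as control and qubit 1 as target.
# Basis order is |q0 q1> = 00, 01, 10, 11.
CNOT = np.array([[1, 0, 0, 0],
                 [0, 1, 0, 0],
                 [0, 0, 0, 1],
                 [0, 0, 1, 0]], dtype=float)

def hea_state(params):
    """Apply alternating rotation and entangling layers to |00>.

    params has shape (layers, 2): one Ry angle per qubit per layer.
    """
    params = np.asarray(params, dtype=float)
    state = np.zeros(4)
    state[0] = 1.0  # start in |00>
    for layer in params:
        rotations = np.kron(ry(layer[0]), ry(layer[1]))
        state = CNOT @ (rotations @ state)
    return state

# One layer with a pi/2 rotation on qubit 0 prepares a Bell state.
bell = hea_state([[np.pi / 2, 0.0]])
```

Circuit depth grows linearly with the number of layers, which is the property that keeps these circuits shallow compared with UCCSD.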

The primary advantage of HEAs lies in their reduced resource requirements, which directly address the limitations of contemporary quantum hardware. By minimizing circuit depth and utilizing native gates, HEAs reduce exposure to decoherence and gate errors that typically plague deeper circuits [3] [5]. However, this pragmatic approach comes with significant theoretical compromises. The heuristic nature of HEA design means these ansätze lack systematic improvability and may fail to adequately capture the relevant physics of target systems [3]. Furthermore, HEAs are particularly susceptible to the barren plateau problem, where gradients vanish exponentially with system size, hindering optimization convergence [1]. The fixed entanglement patterns in HEAs may also be suboptimal for specific molecular systems, potentially resulting in either insufficient or excessive entanglement [3].

Recent innovations have sought to address these limitations while preserving the hardware efficiency of HEAs. The quantum information-inspired ansatz (QIIA) represents one such advancement, systematically constructing ansatz circuits based on quantum information-theoretic quantities like von Neumann entropy and quantum mutual information [3]. This approach replaces the arbitrary arrangement of entangling gates in traditional HEAs with a deterministic placement strategy that targets qubit pairs with maximum quantum correlations [3]. Similarly, adaptive algorithms like Greedy Gradient-free Adaptive VQE (GGA-VQE) and evolutionary approaches dynamically construct circuits based on system-specific characteristics, offering improved noise resilience while maintaining manageable circuit depths [4] [1].

Performance Comparison: Accuracy Versus Efficiency

Direct comparisons between UCCSD and hardware-efficient ansätze reveal fundamental trade-offs between accuracy and efficiency that researchers must navigate based on their specific applications and available hardware. Quantitative evaluations demonstrate that UCCSD typically achieves higher accuracy for molecular systems but requires substantially greater quantum resources. For example, in noiseless simulations, UCCSD can achieve chemical accuracy for small molecules like H₂O and LiH, whereas hardware-efficient ansätze may struggle to reach this precision threshold without careful design [4].

The quantum information-inspired ansatz (QIIA) offers a promising middle ground, demonstrating 99.99% accuracy relative to complete active space configuration interaction methods while utilizing only two circuit blocks containing up to 99% fewer two-qubit gates than UCCSD for atomic systems with up to 12 qubits [3]. This significant reduction in gate count directly translates to improved feasibility on NISQ devices, where two-qubit gates typically dominate error budgets.

Table: Performance Comparison Across Ansatz Types

| Performance Metric | UCCSD | Hardware-Efficient | Quantum Information-Inspired |
|---|---|---|---|
| Typical Accuracy | Chemical accuracy achievable | Variable, system-dependent | 99.99% relative to CAS-CI |
| Circuit Depth Scaling | \(O(N^5)\) [3] | Linear with layers | Significantly reduced vs. UCCSD |
| 2-Qubit Gate Reduction | Baseline | Moderate reduction | Up to 99% vs. UCCSD [3] |
| Measurement Requirements | \(\mathcal{O}(N^4)\) Pauli terms [3] | System-dependent | Similar to HEA |
| Hardware Demonstration | Limited by depth | More frequently implemented | Proof-of-concept on IBM Sherbrooke [3] |

Under noisy conditions, the performance gap between ansatz types becomes more complex. One study comparing a 5-qubit IBMQ Belem processor with error mitigation to a more advanced 156-qubit device without mitigation found that the older, smaller device achieved an order of magnitude better accuracy for BeH₂ ground-state energy calculations when employing the Twirled Readout Error Extinction (T-REx) technique [5]. This finding underscores that for current NISQ devices, error mitigation strategies can outweigh raw hardware capabilities, particularly for hardware-efficient ansätze that benefit more directly from such techniques due to their shallower depths.

Noise Resilience and Error Mitigation Strategies

The performance of different ansätze under realistic noisy conditions represents a critical consideration for practical VQE implementations. Research indicates that hardware-efficient ansätze generally demonstrate superior noise resilience compared to UCCSD due to their shallower circuit depths, which reduce exposure to decoherence and cumulative gate errors [5]. However, this inherent resilience must often be supplemented with active error mitigation techniques to achieve chemically meaningful results.

Readout error mitigation has proven particularly effective for improving VQE performance on noisy hardware. The Twirled Readout Error Extinction (T-REx) technique, despite being computationally inexpensive, substantially enhances both energy estimation accuracy and the quality of optimized variational parameters that characterize molecular ground states [5]. Studies show that T-REx can enable older-generation 5-qubit processors to outperform more advanced 156-qubit devices without error mitigation, highlighting the critical role of mitigation strategies in extending the utility of noisy quantum hardware [5].
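To make the idea of readout correction concrete, the sketch below inverts a single-qubit confusion matrix. This is not T-REx itself, which relies on randomized bit-flip twirling of the measurement; it is only the simplest calibration-and-invert scheme, and the error rates `p01` and `p10` as well as the function name are illustrative assumptions.

```python
import numpy as np

def mitigate_readout(measured_probs, p01, p10):
    """Undo single-qubit readout errors by solving confusion @ true = measured.

    measured_probs: [P(read 0), P(read 1)] observed on the noisy device.
    p01: probability of reading 1 when the true state is 0.
    p10: probability of reading 0 when the true state is 1.
    """
    # Column j holds the readout distribution for true state j.
    confusion = np.array([[1.0 - p01, p10],
                          [p01, 1.0 - p10]])
    return np.linalg.solve(confusion, np.asarray(measured_probs, dtype=float))

# Usage: simulate a noisy readout of a known distribution, then correct it.
true_probs = np.array([0.7, 0.3])
noisy_probs = np.array([[0.98, 0.05], [0.02, 0.95]]) @ true_probs
recovered = mitigate_readout(noisy_probs, p01=0.02, p10=0.05)
```

With finite shot counts the solved vector can fall slightly outside [0, 1], so practical schemes usually add a projection or least-squares step.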

For UCCSD ansätze, which face greater challenges due to their depth, resource reduction techniques applied to both the Hamiltonian and circuit offer promising pathways toward practical implementation. Strategies include qubit pair-wise commutation and joint Bell-basis measurements to reduce the \(\mathcal{O}(N^4)\) measurement overhead typically required for molecular Hamiltonians [3]. Additionally, classical optimizers exhibit different resilience to noise-induced distortions in cost landscapes, with adaptive metaheuristics like CMA-ES and iL-SHADE demonstrating superior performance compared to gradient-based methods in noisy environments [6].

VQE Experimental Protocol for Ansatz Comparison

Optimization under noise presents distinct challenges for different ansatz types. Noise can distort cost landscapes, create false variational minima, and induce statistical bias known as the "winner's curse" [6]. Population-based optimizers that track population means rather than the best individual show promise in correcting this bias, particularly for hardware-efficient ansätze where noise has a more pronounced effect on the optimization trajectory [6]. For UCCSD, the combination of physically motivated initial parameters with error-aware optimizers offers the most promising approach to managing noise sensitivity [6] [1].
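The "winner's curse" can be reproduced with a few lines of NumPy. In this sketch every candidate in a population has the same true energy and only the sampling noise differs; the noise level, population size, and function name are illustrative assumptions.

```python
import numpy as np

def winners_curse_demo(true_energy=-1.0, sigma=0.05, population=20,
                       trials=2000, seed=0):
    """Compare 'report the best individual' with 'report the population mean'."""
    rng = np.random.default_rng(seed)
    # Each row is one optimizer generation; each entry is a noisy energy estimate.
    noisy = true_energy + sigma * rng.standard_normal((trials, population))
    best_of_population = noisy.min(axis=1).mean()  # biased below the true energy
    population_mean = noisy.mean(axis=1).mean()    # approximately unbiased
    return best_of_population, population_mean

best, mean = winners_curse_demo()
```

Selecting the minimum of noisy estimates systematically under-reports the energy, while the population mean stays close to the true value. This is the motivation for tracking population means in noisy optimization.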

Research Toolkit and Methodological Guidelines

Implementing comparative studies of UCCSD and hardware-efficient ansätze requires specific methodological approaches and computational tools. Below are essential components of the research toolkit for conducting such investigations:

Table: Research Reagent Solutions for Ansatz Comparison Studies

| Tool/Component | Function | Example Implementation |
|---|---|---|
| Molecular Systems | Benchmarking ansatz performance | H₂, LiH, H₂O, BeH₂ [5] [4] [1] |
| Error Mitigation | Counteracting hardware noise | T-REx (Twirled Readout Error Extinction) [5] |
| Classical Optimizers | Parameter optimization | SPSA, CMA-ES, iL-SHADE [5] [6] |
| Qubit Mapping | Fermion-to-qubit transformation | Jordan-Wigner, Bravyi-Kitaev, parity [3] [5] |
| Resource Reduction | Decreasing measurement overhead | Qubit tapering, commutation grouping [3] [5] |

For researchers designing experiments to compare ansatz performance, several methodological considerations emerge from recent studies. First, system selection should include both simple benchmark systems (H₂, LiH) and more challenging molecules (H₂O, BeH₂) to evaluate scaling behavior [4] [1]. Second, experimental protocols should incorporate explicit error mitigation strategies, particularly for hardware-efficient ansätze where T-REx has demonstrated significant improvements [5]. Third, performance metrics should extend beyond final energy accuracy to include convergence behavior, parameter sensitivity, and resilience to noise [6].

Based on current evidence, practical guidelines for ansatz selection emerge: UCCSD remains valuable for noiseless simulations or small systems where chemical accuracy is paramount and circuit depth is manageable [3] [2]. Hardware-efficient ansätze, particularly when enhanced with quantum information-inspired design principles, offer the most practical pathway for current NISQ devices, especially when combined with robust error mitigation [3] [5]. Adaptive approaches like ADAPT-VQE and GGA-VQE represent promising future directions but require further development to overcome measurement overhead and optimization challenges on real hardware [4].

The field continues to evolve rapidly, with emerging approaches focusing on ansätze that balance physical motivation with hardware practicality. Quantum information-inspired designs, evolutionary circuit construction, and symmetry-preserving adaptations all represent active research frontiers aimed at overcoming the current limitations of both UCCSD and hardware-efficient ansätze [3] [4] [1].

Noisy Intermediate-Scale Quantum (NISQ) computing defines the current technological frontier of quantum information processing, characterized by processors containing from tens to over a thousand qubits that operate without full quantum error correction [7] [8]. The term "noisy" acknowledges the significant susceptibility of these systems to various error sources that degrade computational fidelity. These noise sources are broadly categorized as coherent (unitary, structured) and incoherent (stochastic, unstructured), each presenting distinct challenges for quantum algorithm performance [7] [9]. Understanding this noise landscape is particularly crucial for variational algorithms like the Variational Quantum Eigensolver (VQE), where ansatz selection—choosing between physically-inspired approaches like Unitary Coupled Cluster (UCCSD) and hardware-efficient designs—directly determines resilience to these competing noise types [1].

The fundamental limitations of NISQ devices stem from physical constraints: qubit coherence times (T₁, T₂) typically range from microseconds to seconds depending on platform, gate fidelities hover around 95-99.9% for two-qubit operations, and readout errors can reach 1-40% [9]. These imperfections collectively enforce a strict depth constraint on quantum circuits, typically limiting execution to O(10²-10³) gates before noise overwhelms the computational signal [7] [9]. For quantum chemistry applications like drug development, where simulating molecular electronic structure is paramount, these constraints necessitate careful algorithmic design and specialized error mitigation strategies to extract meaningful results [5] [10].
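The depth constraint follows from simple arithmetic under the simplifying assumption that gate errors are independent, so that circuit fidelity is roughly the product of the individual gate fidelities. The 50% target fidelity and the function name below are assumptions chosen for illustration.

```python
import math

def max_gates(gate_fidelity, target_circuit_fidelity=0.5):
    """Largest gate count G such that gate_fidelity**G >= target_circuit_fidelity.

    Assumes independent, identical gate errors (a simplifying assumption).
    """
    return math.floor(math.log(target_circuit_fidelity) / math.log(gate_fidelity))

budget_99 = max_gates(0.99)    # 68 gates at 99% gate fidelity
budget_999 = max_gates(0.999)  # 692 gates at 99.9% gate fidelity
```

Even at 99.9% gate fidelity the budget stays below a thousand gates, consistent with the O(10²-10³) range quoted above.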

Coherent Noise Processes

Coherent noise arises from systematic, unitary errors that preserve the purity of quantum states while introducing deterministic deviations from ideal computation. These errors primarily stem from imperfect calibration and control of quantum systems [7]. Common manifestations include:

- Over-rotation/under-rotation errors: Pulses that implement single-qubit (Rx, Ry, Rz) or two-qubit (CNOT, CZ) gates with slightly incorrect angles or durations, effectively applying unintended unitary transformations.

- Crosstalk: Unwanted entanglement or parallel gate operations between adjacent qubits, often occurring when addressing one qubit affects neighboring qubits due to insufficient isolation.

- Parameter drift: Slow, systematic variations in qubit frequencies and coupling strengths between calibration cycles, leading to consistent miscalibration across circuits.

Unlike stochastic noise, coherent errors can interfere constructively and accumulate throughout circuit execution, so the resulting infidelity can grow quadratically with circuit depth rather than linearly [7]. This structured nature makes coherent errors particularly detrimental for deep, structured ansätze like UCCSD, where precise angle implementation is critical for maintaining the physical meaning of the parameterized quantum state.
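The difference in accumulation can be checked numerically with a single-qubit sketch. Here each gate is meant to be the identity but over-rotates about X by a small angle `delta`, and the stochastic comparison uses the probability that at least one independent error occurs. Both models and the function names are simplifying assumptions.

```python
import numpy as np

def rx(theta):
    """Single-qubit rotation about the X axis."""
    c, s = np.cos(theta / 2.0), np.sin(theta / 2.0)
    return np.array([[c, -1j * s], [-1j * s, c]])

def coherent_infidelity(n_gates, delta):
    """Infidelity after n identity-intended gates that each over-rotate by delta."""
    psi = np.linalg.matrix_power(rx(delta), n_gates) @ np.array([1.0, 0.0])
    return 1.0 - abs(psi[0]) ** 2  # equals sin^2(n * delta / 2)

def stochastic_error_probability(n_gates, p):
    """Probability that at least one of n independent errors of rate p occurs."""
    return 1.0 - (1.0 - p) ** n_gates

# Doubling the depth roughly quadruples the coherent infidelity
# but only doubles the stochastic error probability.
coherent_ratio = coherent_infidelity(20, 0.01) / coherent_infidelity(10, 0.01)
stochastic_ratio = (stochastic_error_probability(20, 1e-4)
                    / stochastic_error_probability(10, 1e-4))
```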

Incoherent Noise Processes

Incoherent noise encompasses stochastic, non-unitary processes that introduce mixedness into quantum states, effectively modeling the quantum system's interaction with its environment [7] [11]. These processes include:

- Decoherence: The loss of quantum information through energy relaxation (T₁ processes) and phase damping (T₂ processes), fundamentally limiting the temporal window for quantum computation.

- Depolarizing noise: With probability p, the qubit state is replaced by the completely mixed state I/2, representing maximal classical uncertainty.

- Amplitude damping: Modeling energy dissipation to the environment, preferentially driving |1⟩ to |0⟩ states.

- Readout errors: Classical mismeasurement of quantum states, such as misidentifying |0⟩ as |1⟩ (and vice versa) during state measurement.

These processes are formally described by Lindblad master equations within the Markovian approximation, where the system's evolution follows:

$$ \frac{d\rho}{dt} = -i[H,\rho] + \sum_i \gamma_i \left( L_i \rho L_i^\dagger - \frac{1}{2}\{L_i^\dagger L_i, \rho\} \right) $$

Here, ρ represents the density matrix, H the system Hamiltonian, and {L_i} the Lindblad operators with decay rates γ_i characterizing the environmental coupling [11]. Recent research has identified that such noise can exhibit metastability—the emergence of long-lived intermediate states before final relaxation—which may be strategically leveraged for noise resilience [11].
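Two of the channels listed above can be written as maps on a single-qubit density matrix. The sketch uses the standard Kraus-operator form of amplitude damping and the mixing form of depolarizing noise; the function names and the parameter values in the usage lines are illustrative assumptions.

```python
import numpy as np

I2 = np.eye(2, dtype=complex)

def depolarize(rho, p):
    """With probability p, replace rho by the completely mixed state I/2."""
    return (1.0 - p) * rho + p * np.trace(rho) * I2 / 2.0

def amplitude_damp(rho, gamma):
    """Amplitude damping with decay probability gamma, via two Kraus operators."""
    k0 = np.array([[1, 0], [0, np.sqrt(1.0 - gamma)]], dtype=complex)
    k1 = np.array([[0, np.sqrt(gamma)], [0, 0]], dtype=complex)  # maps |1> to |0>
    return k0 @ rho @ k0.conj().T + k1 @ rho @ k1.conj().T

# Usage: start in the excited state |1><1| and apply each channel.
rho_excited = np.array([[0, 0], [0, 1]], dtype=complex)
rho_ground = np.array([[1, 0], [0, 0]], dtype=complex)
fully_mixed = depolarize(rho_excited, 1.0)
fully_decayed = amplitude_damp(rho_excited, 1.0)
partly_decayed = amplitude_damp(rho_excited, 0.3)
```

For a constant relaxation rate, the decay probability over a time t is 1 - exp(-t/T₁), which links this discrete channel to the continuous master equation.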

The following diagram illustrates the classification and primary characteristics of coherent and incoherent noise in NISQ systems:

Figure: NISQ Noise Classification and Characteristics

Comparative Analysis: UCCSD vs. Hardware-Efficient Ansätze

Structural Properties and Noise Sensitivity

The core distinction between UCCSD and hardware-efficient ansätze lies in their design philosophy and consequent noise resilience profiles:

UCCSD (Unitary Coupled Cluster Singles and Doubles) ansätze are chemistry-inspired, constructed through exponential parameterization of fermionic excitation operators (T - T†) applied to a reference Hartree-Fock state: |ψ(θ)⟩ = e^(T - T†)|ψ_HF⟩ [1]. This approach maintains physical constraints like particle number conservation and N-representability, providing inherent symmetry verification capabilities. However, UCCSD typically requires deep quantum circuits with O(N⁴) gate complexity for N qubits, making them highly susceptible to both coherent error accumulation and decoherence in NISQ devices [1].

Hardware-efficient ansätze employ parameterized quantum circuits constructed specifically from a device's native gate set and connectivity map, prioritizing minimal circuit depth over physical interpretability [1]. While these designs reduce exposure to incoherent noise through shorter execution times, their lack of physical constraints makes them vulnerable to coherent errors like parameter drift and over-rotation, which can drive optimization into unphysical regions of Hilbert space [1].

Experimental Performance Comparison

Recent experimental studies directly compare these ansatz types under realistic NISQ noise conditions:

Table 1: Experimental Performance Comparison for Molecular Ground State Energy Calculation

| Metric | UCCSD Ansatz | Hardware-Efficient Ansatz | Experimental Context |
|---|---|---|---|
| Circuit Depth | O(N⁴) gates [1] | O(10-100) gates [1] | BeH₂ simulation on superconducting processors [5] |
| Energy Accuracy | Chemical accuracy (≤1 kcal/mol) in noise-free simulation [1] | 5×10⁻² Ha error without mitigation [5] | H₄ molecule on noisy simulator [12] |
| Noise Resilience | High sensitivity to incoherent noise due to depth [1] | 1 order of magnitude improvement with T-REx mitigation [5] | IBMQ Belem (5-qubit) vs. IBM Fez (156-qubit) [5] |
| Measurement Overhead | 10³-10⁴ measurements per iteration [10] | 3 orders of magnitude reduction via grouping [10] | Low-rank factorization measurement strategy [10] |
| Optimization Landscape | Physically constrained, smoother [1] | Prone to barren plateaus [1] | Constrained VQE frameworks [1] |

Table 2: Error Mitigation Impact on Ansatz Performance

| Mitigation Technique | Effectiveness for UCCSD | Effectiveness for Hardware-Efficient | Experimental Validation |
|---|---|---|---|
| Zero-Noise Extrapolation (ZNE) | Limited for deep circuits | Constrains errors to O(10⁻²)-O(10⁻¹) [12] | Neural network-enhanced ZNE [12] |
| Symmetry Verification | Natural compatibility | Requires penalty terms [1] | Post-selection on particle number [10] |
| Readout Error Mitigation | Moderate improvement | T-REx enables older hardware to outperform newer devices [5] | Twirled Readout Error Extinction (T-REx) [5] |
| Metastability Exploitation | Theoretical potential | Demonstrated on IBM and D-Wave processors [11] | Noise-aware algorithm design [11] |

Experimental Protocols for Noise Resilience Comparison

Standardized Benchmarking Methodology

Rigorous experimental comparison of ansatz noise resilience follows standardized protocols encompassing device characterization, circuit compilation, and error-aware execution:

Device Calibration and Characterization: Prior to ansatz evaluation, comprehensive device benchmarking establishes baseline noise parameters: single/two-qubit gate fidelities (via randomized benchmarking), T₁/T₂ coherence times, readout error matrices, and spatial noise heterogeneity across the qubit register [9]. This characterization enables intelligent qubit selection to avoid high-error regions of the processor.

Noise-Aware Circuit Compilation: Both UCCSD and hardware-efficient ansätze undergo hardware-specific compilation incorporating dynamical decoupling on idle qubits, gate decomposition to native gate sets (e.g., CZ or ECR entanglers with single-qubit rotations for superconducting devices, Mølmer-Sørensen (MS) gates for trapped ions), and qubit routing optimized for device connectivity graphs [9]. Constraint-based compilers model placement of program qubits onto hardware qubits with objective functions minimizing estimated error accumulation [9].

Error Mitigation Integration: Circuits execute with integrated error mitigation: ZNE with multiple noise scaling factors (1.0x, 1.5x, 2.0x) for extrapolation to the zero-noise limit, symmetry verification via post-selection that discards measurement outcomes violating particle number conservation, and readout error correction using response matrix inversion techniques like T-REx [5] [12].
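The extrapolation step of ZNE can be sketched as a polynomial fit evaluated at zero noise. The energies in the usage example are made-up numbers following a linear trend, and the function name is hypothetical; on hardware the inputs would be measured at each noise scale factor.

```python
import numpy as np

def zero_noise_extrapolate(scale_factors, expectation_values, degree=1):
    """Fit a polynomial in the noise scale factor and evaluate it at zero."""
    coeffs = np.polyfit(scale_factors, expectation_values, deg=degree)
    return float(np.polyval(coeffs, 0.0))

# Illustrative data: energy drifts upward linearly as noise is amplified.
scales = np.array([1.0, 1.5, 2.0])
energies = -1.10 + 0.04 * scales  # synthetic values, not experimental data
e_zne = zero_noise_extrapolate(scales, energies)
```

A linear fit is the most conservative choice. Higher-degree fits can follow curvature in the noise response but amplify statistical error in the extrapolated value.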

The following workflow diagram illustrates this comprehensive experimental methodology:

Figure: Experimental Workflow for Ansatz Noise Resilience Comparison

Key Performance Metrics and Validation

Quantitative evaluation employs multiple performance metrics to comprehensively assess noise resilience:

- Energy Accuracy Deviation: ΔE = |E_computed - E_exact|, measuring deviation from classically computed full configuration interaction (FCI) energies, with chemical accuracy threshold of ≤1.6 mHa (1 kcal/mol).

- Optimization Convergence Rate: Number of VQE iterations until |∇E(θ)| < ε, with ε=10⁻⁵ Ha, quantifying noise-induced optimization instability.

- Parameter Quality Index: F(θ) = |⟨ψ(θ_noisy)|ψ(θ_ideal)⟩|², assessing the overlap between the state prepared with parameters optimized under noise and the state prepared with parameters from ideal simulation [5].

- Measurement Efficiency: Total number of circuit repetitions required to achieve energy precision ε, incorporating both Hamiltonian term grouping and readout error mitigation overheads [10].
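The first and third metrics above translate directly into code. This is a minimal sketch assuming energies in Hartree and statevectors given as arrays; the function names and the example energies are illustrative, not values from the cited studies.

```python
import numpy as np

CHEMICAL_ACCURACY_HA = 1.6e-3  # 1.6 mHa threshold quoted in the text

def energy_error(e_computed, e_exact):
    """Absolute deviation from the reference (e.g., FCI) energy, in Hartree."""
    return abs(e_computed - e_exact)

def within_chemical_accuracy(e_computed, e_exact):
    """True if the deviation is at or below the chemical accuracy threshold."""
    return energy_error(e_computed, e_exact) <= CHEMICAL_ACCURACY_HA

def state_fidelity(psi_noisy, psi_ideal):
    """F = |<psi_noisy|psi_ideal>|^2 for two pure states (normalized internally)."""
    a = np.asarray(psi_noisy, dtype=complex)
    b = np.asarray(psi_ideal, dtype=complex)
    a = a / np.linalg.norm(a)
    b = b / np.linalg.norm(b)
    return float(abs(np.vdot(a, b)) ** 2)

# Usage with illustrative numbers: a 1.3 mHa deviation passes, a 7.3 mHa one fails.
ok = within_chemical_accuracy(-1.1360, -1.1373)
not_ok = within_chemical_accuracy(-1.1300, -1.1373)
half_overlap = state_fidelity([1.0, 0.0], [1.0, 1.0])
```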

Validation employs cross-platform comparison where possible, executing identical molecular systems (e.g., H₂, LiH, BeH₂) across different quantum processor architectures (superconducting, trapped ion) to distinguish ansatz-specific from hardware-specific noise responses [5].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Experimental Resources for NISQ Noise Research

| Resource Category | Specific Solution | Function in Research |
|---|---|---|
| Quantum Processing Units | IBM Falcon/Montreal (27-127 qubits) [9] | NISQ testbed with 99.5-99.7% 2Q gate fidelity |
| | Quantinuum H-Series (20 qubits) [9] | Trapped-ion platform with 99.9% 2Q fidelity and all-to-all connectivity |
| Error Mitigation Tools | Zero-Noise Extrapolation (ZNE) [7] [12] | Infers zero-error expectation values via extrapolation from intentionally noise-amplified circuits |
| | Twirled Readout Error Extinction (T-REx) [5] | Corrects measurement errors using classical post-processing with minimal quantum overhead |
| | Symmetry Verification [7] [10] | Discards measurement outcomes violating physical conservation laws (particle number, spin) |
| Algorithmic Frameworks | Variational Quantum Eigensolver (VQE) [1] | Hybrid quantum-classical ground state energy computation |
| | Quantum Approximate Optimization Algorithm (QAOA) [7] | Combinatorial optimization via alternating cost and mixer unitaries |
| Classical Optimizers | Simultaneous Perturbation Stochastic Approximation (SPSA) [5] | Noise-resilient gradient approximation for parameter updates |
| | Constrained Optimization by Linear Approximation (COBYLA) [1] | Derivative-free optimization for empirical energy landscapes |
| Measurement Strategies | Basis Rotation Grouping [10] | Low-rank factorization reducing measurement overhead by 3 orders of magnitude |
| | Contextual Subspace VQE [1] | Hamiltonian partitioning into classically simulable and quantum-corrected components |

The NISQ landscape presents a complex trade-space for quantum chemists and drug development researchers selecting between ansatz strategies. UCCSD offers physical interpretability and constraint preservation but suffers significant incoherent noise vulnerability from circuit depth, while hardware-efficient ansätze provide immediate noise resilience through shallow circuits but risk unphysical solutions from coherent error accumulation [1].

Emerging research directions promise to transcend this dichotomy: metastability exploitation leverages structured noise behavior for intrinsic resilience [11], hybrid quantum-neural eigensolvers enhance shallow ansätze with classical post-processing [1], and constrained VQE frameworks enforce physicality on hardware-efficient parameterizations [1]. For the drug development professional, current practical implementation favors hardware-efficient ansätze with advanced error mitigation (T-REx, ZNE) on carefully calibrated qubit subsets, delivering sufficient accuracy for molecular screening while respecting NISQ constraints [5] [12].

As quantum hardware continues evolving toward the beyond-NISQ era with demonstrations of early error correction [7], the noise resilience strategies developed today will inform the algorithmic foundation of tomorrow's fault-tolerant quantum computers for pharmaceutical applications.

The Unitary Coupled Cluster Singles and Doubles (UCCSD) ansatz has emerged as a cornerstone of quantum computational chemistry, representing a direct translation of a highly successful classical method into the quantum computing realm. As a chemically inspired ansatz, UCCSD provides a systematic approach to electron correlation through its foundation in excitation operators, maintaining size consistency and extensivity while obeying the variational principle [13]. This makes it particularly valuable for molecular systems where accurate treatment of electron correlation is essential, such as in drug development applications involving non-covalent interactions or transition metal complexes [14].

However, the UCCSD ansatz faces a significant implementation challenge on current Noisy Intermediate-Scale Quantum (NISQ) hardware. The quantum computational resources required scale polynomially with system size, with circuit depth of Trotterized UCCSD scaling as \(O(\tau N_{occ}^{2} N_{vir}^{2} N)\) and two-qubit gate counts as \(O(N^{4}\tau)\), where \(\tau\) represents Trotter steps, and \(N_{occ}\), \(N_{vir}\), and \(N\) correspond to occupied, virtual, and total orbital counts [13]. These substantial resource requirements result in circuits that often exceed the coherence time limitations and error tolerance of contemporary quantum processors, necessitating the development of alternative approaches that balance chemical accuracy with practical implementability.
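The origin of the N_occ² N_vir² factor can be seen by counting excitation operators. The sketch below counts in terms of spin orbitals and ignores spin and point-group symmetry restrictions, so it gives an upper bound on the number of variational amplitudes; the function name and the example sizes are assumptions for illustration.

```python
from math import comb

def uccsd_excitation_counts(n_occ, n_vir):
    """Count single and double excitations between occupied and virtual spin orbitals.

    Symmetry restrictions are ignored, so these are upper bounds.
    """
    singles = n_occ * n_vir                    # pick one occupied, one virtual
    doubles = comb(n_occ, 2) * comb(n_vir, 2)  # pick two occupied, two virtual
    return singles, doubles

# H2 in a minimal basis: 2 occupied and 2 virtual spin orbitals.
h2_counts = uccsd_excitation_counts(2, 2)
# A hypothetical 12-spin-orbital problem with 4 electrons.
larger_counts = uccsd_excitation_counts(4, 8)
```

The doubles count grows roughly as N_occ² N_vir² / 4, and each double excitation must itself be compiled into a sequence of gates whose length grows with the qubit count, which accounts for the additional factor of N in the depth.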

Methodology: Comparative Framework for Ansatz Evaluation

Experimental Protocols for Ansatz Comparison

To quantitatively assess the performance of UCCSD against emerging alternatives, researchers typically employ a standardized computational workflow. The process begins with molecular system selection, focusing on chemically relevant molecules across various geometries, particularly bond dissociation curves that probe electron correlation effects [13] [15]. The electronic structure problem is then mapped to a qubit representation using transformation techniques such as Jordan-Wigner or Bravyi-Kitaev, with active space selection often employed to reduce qubit requirements for larger systems [14].
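The Jordan-Wigner mapping mentioned above can be checked numerically for a small number of modes. The sketch builds each annihilation operator as a string of Z operators followed by a lowering operator and verifies the fermionic anticommutation relations. The ordering convention (mode 0 as the leftmost tensor factor, |1⟩ meaning occupied) and the function names are assumptions.

```python
import numpy as np

I2 = np.eye(2)
Z = np.array([[1.0, 0.0], [0.0, -1.0]])
LOWER = np.array([[0.0, 1.0], [0.0, 0.0]])  # |0><1|: empties an occupied mode

def jw_annihilation(j, n_modes):
    """a_j = Z x ... x Z x |0><1| x I x ... x I, with the Z string on modes 0..j-1."""
    op = np.array([[1.0]])
    for k in range(n_modes):
        factor = Z if k < j else (LOWER if k == j else I2)
        op = np.kron(op, factor)
    return op

def anticommutator(a, b):
    return a @ b + b @ a

a0 = jw_annihilation(0, 2)
a1 = jw_annihilation(1, 2)
```

The Z strings enforce the sign changes required for fermions. Their length grows with the mode index, which is one reason excitation operators become costly to compile for larger systems.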

The core comparison involves implementing different ansätze—UCCSD, hardware-efficient variants, and adaptive approaches—on quantum simulators and hardware. Circuit depth, two-qubit gate counts, and measurement requirements are tracked for resource analysis [13] [15]. Energy calculations are performed across molecular geometries, with performance benchmarking against classical reference methods like CCSD(T) and FCI to establish accuracy deviations [14]. Finally, noise resilience is evaluated through noisy simulations incorporating realistic device error models, quantifying energy error rates under different noise conditions [13].

Quantitative Metrics for Assessment

The evaluation of ansatz performance centers on three critical metrics:

- Accuracy: Deviation from exact ground state energy, typically measured in millihartree or against chemical accuracy (1 kcal/mol ≈ 1.6 millihartree).

- Quantum Resource Requirements: CNOT gate counts, circuit depth, and total number of measurements required for energy estimation.

- Noise Resilience: Increase in energy error under realistic noise models compared to noiseless simulation.
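The three metrics above reduce to simple arithmetic once energies are available. The sketch below assumes the standard conversion 1 Hartree ≈ 627.509 kcal/mol; the function names and the energy values are hypothetical placeholders, not results from the cited studies.

```python
HARTREE_TO_KCAL_PER_MOL = 627.509  # standard conversion factor
CHEMICAL_ACCURACY_MHA = 1000.0 / HARTREE_TO_KCAL_PER_MOL  # 1 kcal/mol in millihartree

def energy_error_mha(e_computed, e_exact):
    """Absolute deviation from the reference energy, in millihartree."""
    return abs(e_computed - e_exact) * 1000.0

def is_chemically_accurate(e_computed, e_exact):
    """True when the error is within 1 kcal/mol of the reference."""
    return energy_error_mha(e_computed, e_exact) <= CHEMICAL_ACCURACY_MHA

def noise_penalty_mha(e_noisy, e_noiseless, e_exact):
    """Increase in energy error caused by noise, relative to the noiseless run."""
    return energy_error_mha(e_noisy, e_exact) - energy_error_mha(e_noiseless, e_exact)

# Hypothetical energies in Hartree, chosen only to exercise the functions.
e_exact, e_noiseless, e_noisy = -1.1373, -1.1370, -1.1200
print(round(CHEMICAL_ACCURACY_MHA, 3))
print(is_chemically_accurate(e_noiseless, e_exact), is_chemically_accurate(e_noisy, e_exact))
print(round(noise_penalty_mha(e_noisy, e_noiseless, e_exact), 2))
```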

Table 1: Key Performance Metrics Across Ansatz Types

| Ansatz Type | Typical CNOT Count | Circuit Depth | Accuracy | Measurement Costs |
|---|---|---|---|---|
| UCCSD | (O(N^4\tau)) | (O(\tau N_{occ}^2 N_{vir}^2 N)) | Chemical accuracy (noiseless) | High |
| Hardware-Efficient | Significantly lower | Shallow | Variable, barren plateau concerns | Moderate |
| ADAPT-VQE | 30-50% of UCCSD | 50-70% reduction | Comparable to UCCSD | Very high (original), Low (improved) |
| Parallelized Givens | ~50% of UCCSD | 50-70% reduction | Comparable to UCCSD | Moderate |

Results: Comparative Performance of Ansatz Strategies

UCCSD Performance Profile

In noiseless simulations, UCCSD with a single Trotter step consistently delivers high accuracy, achieving chemical accuracy for many molecular systems including those with strong static correlation [13]. However, this accuracy comes at a substantial resource cost. For instance, implementation of UCCSD for simple molecules like H₂ and LiH on superconducting quantum hardware has reported energy errors of approximately 1 Hartree due to significant noise susceptibility [13]. The method's deep circuits and high two-qubit gate counts make it particularly vulnerable to the limited coherence times and gate fidelities of current NISQ devices.

Emerging Alternatives and Performance Benchmarks

Recent research has developed multiple strategies to address UCCSD's limitations while maintaining its accuracy advantages:

Parallelized Givens Rotations demonstrate particular promise, achieving ground-state energies comparable to UCCSD in noiseless simulations while reducing circuit depth by 50-70% and two-qubit gate counts by approximately 50% [13]. In noisy simulations, this approach reduces energy error rates by an order of magnitude compared to UCCSD, highlighting significantly improved noise resilience [13].

ADAPT-VQE variants with novel operator pools show dramatic resource reductions. The CEO-ADAPT-VQE* algorithm reduces CNOT counts by up to 88%, CNOT depth by up to 96%, and measurement costs by up to 99.6% compared to the original fermionic ADAPT-VQE while maintaining chemical accuracy [15]. These improvements substantially enhance practical implementability on current hardware.

Local unitary cluster Jastrow (LUCJ) approximations enable the treatment of larger systems, such as the 54-qubit methane dimer simulation, by significantly reducing circuit depth while maintaining accuracy sufficient for capturing dispersion interactions [14].

Table 2: Experimental Performance Data for Molecular Systems

| Molecule (Qubits) | Ansatz | CNOT Count | Circuit Depth | Energy Error | Measurement Costs |
|---|---|---|---|---|---|
| H₂/LiH (2-4 qubits) | UCCSD | High | Deep | ~1 Hartree (hardware) | Not reported |
| LiH (12 qubits) | CEO-ADAPT-VQE* | 12-27% of original ADAPT-VQE | 4-8% of original | Chemical accuracy | 0.4-2% of original |
| H₆ (12 qubits) | CEO-ADAPT-VQE* | 12-27% of original ADAPT-VQE | 4-8% of original | Chemical accuracy | 0.4-2% of original |
| BeH₂ (14 qubits) | CEO-ADAPT-VQE* | 12-27% of original ADAPT-VQE | 4-8% of original | Chemical accuracy | 0.4-2% of original |
| Methane dimer (36 qubits) | LUCJ | Significantly reduced vs UCCSD | Substantially shallower | Within 1 kcal/mol of CCSD(T) | Not reported |

Comparative Analysis Workflow for Quantum Ansatzes

The Scientist's Toolkit: Essential Research Components

Table 3: Research Reagent Solutions for Quantum Chemistry Experiments

| Component | Function | Examples/Alternatives |
|---|---|---|
| Operator Pools | Provide building blocks for adaptive ansatzes | Fermionic excitation operators, Qubit excitation operators, Coupled Exchange Operators (CEO) |
| Measurement Techniques | Reduce resource requirements for energy estimation | Classical shadows, Operator grouping, Quantum subspace expansion |
| Error Mitigation Strategies | Counteract hardware noise effects | Zero-noise extrapolation, Probabilistic error cancellation, Symmetry verification |
| Active Space Selection Methods | Reduce qubit requirements for large systems | AVAS, DMRG, CASSCF |
| Classical Optimizers | Parameter optimization in VQE | Gradient-based methods, Quantum natural gradient, SPSA |

Discussion: Towards Quantum Advantage in Chemical Simulations

The comparative analysis reveals a nuanced landscape for UCCSD deployment in NISQ-era quantum chemistry. While UCCSD remains valuable as a theoretically rigorous approach with well-understood correlation treatment, its practical implementation on current hardware is severely limited by resource constraints and noise susceptibility. The emerging alternatives demonstrate that strategic approximations and hardware-aware constructions can yield substantial improvements in implementability while maintaining accuracy.

For researchers and drug development professionals, the choice of ansatz involves careful consideration of the target application's accuracy requirements and available quantum resources. For rapid screening or noisy hardware scenarios, approaches like parallelized Givens rotations or CEO-ADAPT-VQE offer compelling advantages. When highest accuracy is paramount and sufficient quantum resources are available, UCCSD may still be preferable, particularly in noiseless simulations.

The integration of these algorithms with quantum-centric supercomputing (QCSC) workflows [14] and advanced error mitigation techniques represents a promising direction for extending the applicability of quantum computational chemistry to pharmaceutically relevant problems, including drug binding affinity prediction and materials design. As hardware continues to improve, the balance between circuit efficiency and chemical accuracy will evolve, potentially enabling quantum advantage for specific drug development applications in the future.

The Variational Quantum Eigensolver (VQE) has emerged as a leading algorithm for solving electronic structure problems on near-term quantum computers. Its performance critically depends on the choice of ansatz, a parameterized quantum circuit used to prepare trial wavefunctions. Ansatzes generally fall into two categories: chemically inspired approaches, such as the Unitary Coupled Cluster Singles and Doubles (UCCSD), and hardware-efficient ansatzes (HEA). The HEA is specifically designed for Noisy Intermediate-Scale Quantum (NISQ) devices by utilizing shallow circuits composed of native gates, offering distinct advantages in noise resilience and experimental feasibility, albeit with different expressibility properties compared to chemically motivated alternatives [16] [13].

This guide objectively compares the performance of the Hardware-Efficient Ansatz against alternative approaches, focusing on quantitative metrics from recent research and detailed experimental methodologies.

Theoretical Foundations and Comparative Framework

Ansatz Design Principles

The Hardware-Efficient Ansatz (HEA) employs a layered structure of alternating parameterized single-qubit rotations and two-qubit entangling gates that are native to the target quantum hardware. This design minimizes circuit depth and avoids the need for transpilation, which can introduce substantial overhead and errors [16] [13]. In contrast, the UCCSD ansatz is derived from classical quantum chemistry methods, implementing a unitary exponentiation of cluster operators through Trotterization, resulting in circuits with depth scaling as (O(\tau N_{occ}^2 N_{vir}^2 N)) and two-qubit gate counts of (O(N^4\tau)), where (N_{occ}), (N_{vir}), and (N) are the occupied, virtual, and total orbital counts and (\tau) is the number of Trotter steps [13].

Key Performance Trade-offs

The fundamental trade-off between these approaches balances representational power against hardware practicality. UCCSD inherently captures electron correlation effects and retains physically desirable properties such as size consistency, making it highly accurate in noiseless simulations. However, its deep circuits are particularly vulnerable to NISQ device noise. HEA sacrifices some chemical specificity for dramatically improved noise resilience, enabling more effective execution on current hardware despite potential limitations in describing strong correlation [16] [13].

Experimental Performance Data and Comparison

Quantitative Benchmarking Across Molecular Systems

Table 1: Performance Comparison of HEA vs. UCCSD Ansatzes

| Metric | Hardware-Efficient Ansatz (HEA) | UCCSD Ansatz |
|---|---|---|
| Circuit Depth Scaling | Constant or linear in layers [16] | (O(\tau N_{occ}^2 N_{vir}^2 N)) [13] |
| Two-Qubit Gate Count | Linear in system size and layers [13] | (O(N^4\tau)) [13] |
| Noise Resilience | High (shallow depth, native gates) [16] | Low (deep circuits, non-native gates) [13] |
| H₂O Benchmark | ~50-70% reduction in depth vs. UCCSD [13] | Reference accuracy in noiseless simulation [13] |
| N₂ Benchmark | Order of magnitude lower energy error in noisy simulation [13] | High sensitivity to noise [13] |
| Trainability Conditions | Trainable for area law entangled data [16] | Generally trainable but limited by noise [13] |
| Strong Correlation Handling | Limited without specialized design [16] | Accurate with exact formulation [13] |

Resource Requirements and Hardware Execution

Table 2: Resource Comparison for Molecular Simulations

| Resource Type | Parallelized Givens Ansatz | UCCSD | HEA |
|---|---|---|---|
| Qubit Requirements | Active space dependent [13] | Full system [13] | Active space dependent [16] |
| Circuit Depth | 50-70% reduction vs. UCCSD [13] | Deep [13] | Shallow [16] |
| Two-Qubit Gates | Significantly reduced [13] | (O(N^4\tau)) [13] | Minimal [16] |
| Error Mitigation Need | Moderate [13] | High [13] | Low-Moderate [16] |
| Hardware Execution | Feasible on NISQ [13] | Limited on current NISQ [13] | Well-suited for NISQ [16] |

Detailed Experimental Protocols

Protocol 1: HEA Trainability and Entanglement Analysis

Objective: Determine the trainability conditions for HEA with different input state entanglement properties [16].

Methodology:

- Input State Preparation: Generate quantum states satisfying either area law or volume law entanglement scaling.

- HEA Configuration: Construct a one-dimensional layered HEA with alternating single-qubit rotations and entangling gates native to the target hardware.

- Parameter Optimization: Employ gradient-based or gradient-free classical optimizers to minimize the energy expectation value.

- Convergence Analysis: Monitor the loss landscape for barren plateaus characterized by exponentially vanishing gradients.

- Sample Complexity: Measure the number of shots required to achieve target precision in energy estimation.

Key Findings: HEA exhibits trainability for Quantum Machine Learning (QML) tasks with input data following an area law of entanglement, avoiding barren plateaus. Conversely, it becomes untrainable for volume law entangled data due to barren plateaus [16].

Protocol 2: Noise Resilience Benchmarking

Objective: Quantify the performance of HEA versus UCCSD under realistic noise conditions [13] [5].

Methodology:

- Molecular Selection: Choose benchmark systems with varying correlation strength (H₂, H₂O, N₂, F₂).

- Ansatz Implementation: Implement HEA with hardware-native gates and UCCSD with Trotterized exponentials.

- Noise Modeling: Incorporate realistic noise channels (amplitude damping, depolarizing, phase damping) based on device calibration data.

- Error Mitigation: Apply readout error mitigation techniques like Twirled Readout Error Extinction (T-REx).

- Metric Collection: Measure energy errors, convergence rates, and optimized parameter quality across multiple trials.

Key Findings: A 5-qubit quantum processor with T-REx error mitigation achieved ground-state energy estimations an order of magnitude more accurate than a 156-qubit device without error mitigation. HEA demonstrated significantly lower energy errors in noisy simulations compared to UCCSD [5].
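The noise-modeling step of this protocol can be made concrete with the textbook Kraus representations of the three channels it names. The sketch below applies them to single-qubit density matrices using NumPy only; the function names and the noise strengths are illustrative assumptions, and real studies would derive the strengths from device calibration data rather than fix them by hand.

```python
import numpy as np

def amplitude_damping_kraus(gamma):
    """Energy relaxation: |1> decays to |0> with probability gamma."""
    k0 = np.array([[1, 0], [0, np.sqrt(1 - gamma)]], dtype=complex)
    k1 = np.array([[0, np.sqrt(gamma)], [0, 0]], dtype=complex)
    return [k0, k1]

def phase_damping_kraus(lam):
    """Pure dephasing: coherences shrink by sqrt(1 - lam), populations are unchanged."""
    k0 = np.array([[1, 0], [0, np.sqrt(1 - lam)]], dtype=complex)
    k1 = np.array([[0, 0], [0, np.sqrt(lam)]], dtype=complex)
    return [k0, k1]

def depolarizing_kraus(p):
    """Apply X, Y, or Z, each with probability p / 3."""
    i2 = np.eye(2, dtype=complex)
    x = np.array([[0, 1], [1, 0]], dtype=complex)
    y = np.array([[0, -1j], [1j, 0]], dtype=complex)
    z = np.array([[1, 0], [0, -1]], dtype=complex)
    return [np.sqrt(1 - p) * i2] + [np.sqrt(p / 3) * m for m in (x, y, z)]

def apply_channel(rho, kraus_ops):
    """Return sum_k K rho K^dagger for the given Kraus operators."""
    return sum(k @ rho @ k.conj().T for k in kraus_ops)

rho_one = np.array([[0, 0], [0, 1]], dtype=complex)           # |1><1|
rho_plus = 0.5 * np.array([[1, 1], [1, 1]], dtype=complex)    # |+><+|

print(apply_channel(rho_one, amplitude_damping_kraus(0.2)).real)
print(apply_channel(rho_plus, phase_damping_kraus(0.36)).real)
print(apply_channel(rho_plus, depolarizing_kraus(0.1)).real)
```

Applying a channel after every gate is one simple way to see why deeper circuits accumulate more error: each additional layer multiplies the surviving coherence by another factor below one.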

Protocol 3: Gate Count and Circuit Depth Analysis

Objective: Systematically compare quantum resource requirements between ansatz architectures [13].

Methodology:

- Active Space Selection: Define molecular active spaces retaining significant electron correlation.

- Circuit Construction: Implement UCCSD, HEA, and intermediate approaches like Parallelized Givens Ansatz.

- Gate Compilation: Translate all circuits to native gate sets using hardware-aware transpilation.

- Resource Counting: Tally total gates, two-qubit gates, circuit depth, and parameter counts.

- Accuracy Validation: Compute ground-state energies for each approach using noiseless simulators as reference.

Key Findings: The Parallelized Givens Ansatz achieved ground-state energies comparable to UCCSD while reducing circuit depth by 50-70% and two-qubit gate counts significantly [13].

Decision Framework and Visual Guide

Diagram 1: Ansatz Selection Decision Framework - This workflow guides researchers in selecting the optimal ansatz based on molecular properties and hardware constraints.

Essential Research Reagents and Computational Tools

Table 3: Key Research Tools for HEA Experiments

| Tool Category | Specific Examples | Function/Purpose |
|---|---|---|
| Quantum Hardware | IBMQ processors (e.g., 5-qubit, 156-qubit) [5] | Execution of variational quantum algorithms |
| Error Mitigation | T-REx (Twirled Readout Error Extinction) [5], MREM (Multireference Error Mitigation) [17] | Correct measurement and state preparation errors |
| Classical Optimizers | SPSA (Simultaneous Perturbation Stochastic Approximation) [5] | Noise-resilient parameter optimization |
| Quantum Simulators | Braket LocalSimulator, PennyLane lightning.qubit [18] | Noiseless simulation for benchmarking |
| Chemistry Packages | OpenFermion, Qiskit Nature [13] | Molecular Hamiltonian preparation and mapping |
| Noise Modeling | AmplitudeDamping, Depolarizing, PhaseDamping [18] | Realistic simulation of NISQ device errors |

The Hardware-Efficient Ansatz represents a pragmatic approach to quantum chemistry on NISQ devices, offering superior noise resilience through shallow circuits and native gate sets. Quantitative benchmarks demonstrate significant advantages in circuit depth (50-70% reduction) and noisy simulation performance (order of magnitude lower errors) compared to UCCSD for systems with weak to moderate correlation. However, chemically inspired ansatzes maintain advantages for strongly correlated systems where accurate description of electron correlation outweighs hardware constraints.

Future research directions include developing more physically informed hardware-efficient ansatzes, advancing error mitigation techniques like MREM for multireference systems, and creating hybrid approaches that balance chemical accuracy with hardware practicality. As quantum hardware continues to evolve with improved coherence times and gate fidelities, the strict trade-offs between these approaches may relax, enabling more accurate quantum simulations of complex molecular systems.

In the pursuit of quantum advantage for chemical simulations using noisy intermediate-scale quantum (NISQ) devices, the variational quantum eigensolver (VQE) has emerged as a leading algorithm. At the heart of every VQE calculation lies the ansatz—a parameterized quantum circuit responsible for preparing trial wavefunctions. The central challenge in ansatz design is navigating a fundamental trade-off: maximizing expressibility to capture complex electron correlations while maintaining noise resilience against the inherent errors of current quantum hardware. This guide objectively compares the two predominant ansatz families in quantum chemistry: the chemically inspired Unitary Coupled Cluster Singles and Doubles (UCCSD) and the pragmatically designed Hardware-Efficient Ansatz (HEA).

The performance divergence between these ansatzes becomes critically apparent under realistic experimental conditions. As this guide will demonstrate through aggregated experimental data and detailed methodologies, UCCSD generally achieves superior accuracy in noiseless simulations, whereas HEA exhibits greater robustness on physical quantum processors. This comparison provides researchers and drug development professionals with the evidence needed to make informed decisions tailored to their specific computational resources and accuracy requirements.

Ansatz Fundamentals and the Expressibility vs. Noise Dilemma

Unitary Coupled Cluster (UCCSD) Ansatz

The UCCSD ansatz is directly inspired by a successful classical computational chemistry method. It uses the Hartree-Fock (HF) state as a reference and applies a unitary transformation generated by excitation operators [13]:

[ |\Psi_{\text{UCCSD}}\rangle = e^{T - T^{\dagger}} |\Psi_{\text{HF}}\rangle ]

where ( T = \sum_{i,\alpha} t_{i}^{\alpha} a_{\alpha}^{\dagger} a_{i} + \sum_{i,j,\alpha,\beta} t_{ij}^{\alpha\beta} a_{\alpha}^{\dagger} a_{\beta}^{\dagger} a_{i} a_{j} ) is the cluster operator encompassing single and double excitations [13]. The UCCSD ansatz possesses several advantageous properties: it is size-consistent, size-extensive, and variational [13]. Even with a single Trotter step to approximate the exponential, VQE simulations with UCCSD can deliver highly accurate energies for systems with strong static correlation in ideal, noiseless conditions [13].
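As a small worked instance of the exponential above, consider a two-state model containing only the Hartree-Fock determinant and one doubly excited determinant, so that the cluster operator has a single term with amplitude θ. In that restricted space the unitary reduces to a plane rotation that mixes the two determinants. The sketch below checks this numerically; the two-state restriction, the basis ordering, and the helper names are assumptions made purely for illustration.

```python
import numpy as np

def anti_hermitian_exp(a):
    """exp(a) for anti-Hermitian a, via eigendecomposition of the Hermitian matrix 1j * a."""
    w, v = np.linalg.eigh(1j * a)
    return v @ np.diag(np.exp(-1j * w)) @ v.conj().T

# Basis ordering (assumed): index 0 = |HF>, index 1 = |D>, the doubly excited determinant.
T_UNIT = np.array([[0.0, 0.0], [1.0, 0.0]])  # |D><HF|, i.e. T with unit amplitude

def ucc_unitary(theta):
    """exp(theta * (T - T^dagger)) restricted to the two-determinant space."""
    generator = theta * (T_UNIT - T_UNIT.conj().T)
    return anti_hermitian_exp(generator)

theta = 0.3
hf = np.array([1.0, 0.0])
psi = ucc_unitary(theta) @ hf
print(psi.real)  # cos(theta) on |HF>, sin(theta) on |D>
```

Because the generator is anti-Hermitian, the resulting operator is unitary for every θ, which is the property that distinguishes UCC from the classical, non-unitary coupled cluster exponential.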

Hardware-Efficient Ansatz (HEA)

In contrast, the Hardware-Efficient Ansatz prioritizes experimental feasibility. HEA typically consists of alternating layers of parameterized single-qubit rotations and two-qubit entangling gates that are specifically tailored to the native gate set and connectivity of the target quantum hardware [13]. This design deliberately sacrifices explicit chemical structure in favor of reduced circuit depth and improved executability on NISQ devices.

The Core Trade-off: Theoretical Perspective

The fundamental tension arises from the conflicting demands of chemical accuracy and hardware limitations. UCCSD's strength lies in its systematic improvability and firm theoretical foundation; its circuit construction is guided by the structure of the molecular Hamiltonian. However, this comes at a significant cost: the two-qubit gate count of a Trotterized UCCSD ansatz scales as ( O(N^4\tau) ) and its circuit depth as ( O(\tau N_{occ}^2 N_{vir}^2 N) ), where ( N ), ( N_{occ} ), and ( N_{vir} ) are the total, occupied, and virtual orbital counts and ( \tau ) is the number of Trotter steps, leading to deep circuits that are highly susceptible to noise [13].

Conversely, HEA employs a heuristic approach that accepts a reduced expressibility within the chemically relevant subspace of the full Hilbert space. This compromise enables the implementation of shallower circuits that are less affected by decoherence and gate errors, albeit with no guarantee of encompassing the true ground state.

Comparative Performance Analysis

The theoretical trade-off manifests clearly in experimental and simulation results. The table below summarizes key performance metrics for the UCCSD and HEA ansatzes.

Table 1: Performance Comparison of UCCSD vs. Hardware-Efficient Ansatz

| Performance Metric | UCCSD Ansatz | Hardware-Efficient Ansatz (HEA) |
|---|---|---|
| Circuit Depth Scaling | ( O(\tau N_{occ}^2 N_{vir}^2 N) ) [13] | Shallow, hardware-tailored [13] |
| Noiseless Simulation Accuracy | High (often chemically accurate) [19] | Variable, can reach chemical accuracy [19] |
| Noisy Simulation Performance | Significant degradation; deep circuits accumulate error [13] | More robust; lower error rates [13] |
| Performance on Real Hardware | Challenging for >2 qubits without error mitigation [13] | More practical implementation [19] |
| Optimization Landscape | Can be complex [20] | Prone to barren plateaus [20] |
| Resource Requirements | High two-qubit gate count [13] | Reduced two-qubit gate count [13] |

Recent studies on molecules such as BeH₂ confirm this dichotomy. UCCSD demonstrates high reliability in noiseless, state-vector simulations, often achieving chemical accuracy. However, HEA shows greater resilience to hardware noise, making it a more practical choice for executions on real quantum processors like IBM Fez [19].

Furthermore, numerical evidence indicates that HEA can achieve energy error rates an order of magnitude lower than UCCSD in the presence of noise [13]. This stark difference in noise resilience underscores the practical challenges of implementing deep, chemically inspired circuits on current hardware.

Experimental Protocols and Methodologies

Standard VQE Experimental Workflow

A typical VQE experiment, whether employing UCCSD or HEA, follows a structured workflow. The diagram below illustrates the key stages, highlighting where ansatz-specific considerations come into play.
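The stages of this workflow (prepare a parameterized state, estimate the energy, update the parameters classically, repeat) can be sketched end to end on a one-qubit toy problem. Everything specific here is an assumption for illustration: the Hamiltonian coefficients, the single R_y rotation standing in for the ansatz, exact expectation values in place of sampled measurements, and plain gradient descent with the parameter-shift rule as the optimizer.

```python
import numpy as np

Z = np.array([[1.0, 0.0], [0.0, -1.0]])
X = np.array([[0.0, 1.0], [1.0, 0.0]])
H = 0.5 * Z + 0.3 * X  # hypothetical toy Hamiltonian, not a molecular one

def ansatz_state(theta):
    """R_y(theta)|0> = [cos(theta/2), sin(theta/2)]."""
    return np.array([np.cos(theta / 2), np.sin(theta / 2)])

def energy(theta):
    """Exact expectation value <psi(theta)|H|psi(theta)>."""
    psi = ansatz_state(theta)
    return float(psi @ H @ psi)

def parameter_shift_gradient(theta):
    """Gradient from two shifted energy evaluations (exact for a single R_y rotation)."""
    return 0.5 * (energy(theta + np.pi / 2) - energy(theta - np.pi / 2))

def run_vqe(theta0=0.1, learning_rate=0.4, steps=200):
    """Classical outer loop: plain gradient descent on the single parameter."""
    theta = theta0
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta, energy(theta)

theta_opt, e_vqe = run_vqe()
e_exact = float(np.linalg.eigvalsh(H)[0])
print(e_vqe, e_exact)
```

For UCCSD or HEA on a molecule, only `ansatz_state` and `H` change in principle; the surrounding loop keeps the same shape, which is why the choice of ansatz dominates circuit cost.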

Ansatz-Specific Methodologies

The core difference in experimental protocols lies in the implementation of the quantum circuit and the initialization strategies.

UCCSD Protocol: The UCCSD ansatz requires compiling the exponential of fermionic excitation operators into native quantum gates. This is typically done using Trotter-Suzuki decomposition, often with a single Trotter step. The initial parameters, ( \theta ), are frequently set to zero or initialized using classical methods like Møller–Plesset perturbation theory to start near a good solution [20]. The circuit involves parameter-sharing gates, where a single parameter ( \theta_j ) appears in multiple rotation gates within the compiled circuit for a UCC factor ( e^{\theta_j \hat{G}_j} ) [20].

HEA Protocol: The HEA circuit is constructed from layers of fixed, hardware-native entangling gates (e.g., CNOTs or CZs) interleaved with parameterized single-qubit rotations (e.g., ( R_x, R_y, R_z )). A critical methodological step is the initialization strategy. Techniques like Identity Block Initialization can help mitigate the barren plateau problem, a common challenge for HEAs where gradients vanish exponentially with system size [19]. The optimization of these parameters is often more challenging than for UCCSD and requires noise-resilient classical optimizers.
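The layered construction described for HEA can be sketched as a small state-vector simulation. The version below assumes one particular layout, R_y rotations on every qubit followed by a linear chain of CNOTs, which is only one of many hardware-efficient patterns; the function names are hypothetical and the code does not implement Identity Block Initialization.

```python
import numpy as np

def ry(theta):
    """Single-qubit R_y rotation matrix."""
    c, s = np.cos(theta / 2), np.sin(theta / 2)
    return np.array([[c, -s], [s, c]])

def apply_single_qubit(state, gate, qubit, n_qubits):
    """Apply a 2x2 gate to one qubit of an n-qubit state vector."""
    psi = state.reshape([2] * n_qubits)
    psi = np.tensordot(gate, psi, axes=([1], [qubit]))
    return np.moveaxis(psi, 0, qubit).reshape(-1)

def apply_cnot(state, control, target, n_qubits):
    """Flip the target qubit on the part of the state where the control is 1."""
    psi = state.reshape([2] * n_qubits).copy()
    idx = [slice(None)] * n_qubits
    idx[control] = 1
    # Fixing the control axis removes it, so later axes shift down by one.
    target_axis = target - 1 if target > control else target
    psi[tuple(idx)] = np.flip(psi[tuple(idx)], axis=target_axis).copy()
    return psi.reshape(-1)

def hea_state(params):
    """params has shape (n_layers, n_qubits): one R_y angle per qubit per layer."""
    n_layers, n_qubits = params.shape
    state = np.zeros(2**n_qubits)
    state[0] = 1.0
    for layer in params:
        for q, theta in enumerate(layer):
            state = apply_single_qubit(state, ry(theta), q, n_qubits)
        for q in range(n_qubits - 1):  # linear entangling chain
            state = apply_cnot(state, q, q + 1, n_qubits)
    return state

rng = np.random.default_rng(0)
params = rng.uniform(-np.pi, np.pi, size=(2, 3))  # 2 layers, 3 qubits
print(np.linalg.norm(hea_state(params)))
```

In this layout the two-qubit gate count is `n_layers * (n_qubits - 1)`, linear in both the number of layers and the number of qubits, in contrast to the quartic growth quoted for UCCSD.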

Specialized Optimizers for Ansatz Types

The choice of classical optimizer is crucial and can be ansatz-dependent. For UCCSD, the Sequential Optimization with Approximate Parabola (SOAP) algorithm has been proposed as a tailored solution. SOAP is a sequential line-search procedure that fits an approximate parabola to find the minimum for each parameter, requiring only 2-4 energy evaluations per parameter. It leverages the fact that the initial guess for UCCSD is typically close to the minimum, making the local quadratic approximation valid [20].
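The idea of a sequential parabola-fitting line search can be illustrated with a simplified stand-in. The sketch below is not the published SOAP algorithm: it merely samples three energies per parameter, fits a parabola through them, and jumps to the vertex when the fit is convex. The step size, sweep count, toy energy function, and all names are assumptions.

```python
import numpy as np

def parabola_vertex(x, y):
    """Minimizer of the parabola through three points, or None if the fit is not convex."""
    a, b, _ = np.polyfit(x, y, 2)
    if a <= 1e-12:
        return None
    return -b / (2 * a)

def sequential_parabola_search(energy_fn, theta0, step=0.1, sweeps=3):
    """Update one parameter at a time using three energy evaluations per update."""
    theta = np.array(theta0, dtype=float)
    offsets = np.array([-step, 0.0, step])
    for _ in range(sweeps):
        for i in range(theta.size):
            values = []
            for d in offsets:
                trial = theta.copy()
                trial[i] += d
                values.append(energy_fn(trial))
            vertex = parabola_vertex(theta[i] + offsets, np.array(values))
            if vertex is None:
                # Fall back to the best sampled point when the fit is flat or concave.
                vertex = theta[i] + offsets[int(np.argmin(values))]
            theta[i] = vertex
    return theta

def toy_energy(theta):
    """Hypothetical smooth landscape with its minimum at (0.3, -0.1)."""
    return -np.cos(theta[0] - 0.3) - 0.5 * np.cos(theta[1] + 0.1)

theta_opt = sequential_parabola_search(toy_energy, [0.0, 0.0])
print(theta_opt)
```

Starting from zero, as is common for UCCSD amplitudes, the local quadratic model is a good approximation, so a handful of sweeps at three evaluations per parameter is enough on this toy landscape.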

Table 2: Key Research Reagents and Computational Tools

| Item / Protocol | Function in Ansatz Comparison |
|---|---|
| State-Vector Simulator (SVS) | Provides noiseless benchmark for evaluating intrinsic ansatz accuracy [19]. |
| Noise Models | Simulates specific quantum hardware (e.g., IBM Fez) to predict real-world performance [19]. |
| SOAP Optimizer | Efficient, noise-resilient parameter optimizer designed for UCC-type ansatzes [20]. |
| Givens Rotations | Circuit primitive for preparing symmetry-preserving multireference states [13] [21]. |
| Zero-Noise Extrapolation (ZNE) | Error mitigation technique used to improve energy estimates from noisy hardware [19]. |

Mitigation Strategies and Future Pathways

Bridging the Gap: Intermediate Ansatz Designs

Recent research focuses on developing ansatzes that bridge the gap between UCCSD and HEA. One promising approach is the Parallelized Givens Ansatz, which uses Givens rotations to recover substantial correlation energy while drastically reducing circuit depth and two-qubit gate counts compared to UCCSD [13]. This ansatz has demonstrated a 50–70% reduction in circuit depth while maintaining energy accuracy comparable to UCCSD in noiseless simulations [13].

Advanced Error Mitigation

Error mitigation is essential for extracting meaningful results, especially for noise-sensitive ansatzes like UCCSD. Techniques such as Reference-State Error Mitigation (REM) and its extension, Multireference-State Error Mitigation (MREM), leverage classical computational chemistry insights. REM uses a classically computable reference state (like Hartree-Fock) to calibrate and remove systematic errors from the measured energy [21]. MREM generalizes this concept to multireference states, making it effective for strongly correlated systems where a single determinant is insufficient [21].
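In its simplest form, the reference-state correction described here is a single subtraction: the energy error observed on the classically solvable reference state is assumed to carry over to the target state and is removed from it. The sketch below encodes that assumption; the function name and all energies are hypothetical placeholders, and the transferability of the error is itself an approximation rather than a guarantee.

```python
def rem_corrected_energy(e_noisy_target, e_noisy_reference, e_exact_reference):
    """Subtract the error measured on the reference state from the noisy target energy."""
    reference_error = e_noisy_reference - e_exact_reference
    return e_noisy_target - reference_error

# Hypothetical energies in Hartree.
e_exact_hf = -1.117   # reference energy computed classically
e_noisy_hf = -1.080   # same reference state measured on noisy hardware
e_noisy_vqe = -1.100  # noisy energy of the optimized ansatz state

print(rem_corrected_energy(e_noisy_vqe, e_noisy_hf, e_exact_hf))
```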

Another advanced strategy involves characterizing and exploiting the metastability of hardware noise. If the noise exhibits long-lived intermediate states, algorithms can be designed to be intrinsically resilient by aligning computations with these metastable dynamics [11].

Measurement Strategies

The overhead of measuring the energy expectation value is a major bottleneck. The Basis Rotation Grouping strategy, based on a low-rank factorization of the two-electron integral tensor, can reduce the number of distinct measurements required. This approach transforms the measurement problem into estimating one- and two-particle correlation functions in rotated bases, leading to a linear number of term groupings in the number of qubits [10].

The choice between UCCSD and Hardware-Efficient Ansatzes is a direct manifestation of the core trade-off between expressibility and noise resilience in NISQ-era quantum chemistry. The experimental data and methodologies presented in this guide lead to a clear, evidence-based conclusion:

- For ideal (noiseless) simulations or systems where strong correlation is the dominant challenge, the UCCSD ansatz remains the gold standard due to its high expressibility and systematic approach to capturing electron correlation.

- For executions on real quantum hardware or noisy simulations where decoherence and gate errors are the primary limitation, the Hardware-Efficient Ansatz offers a more pragmatic and often more successful path to obtaining results, albeit with less guarantee of chemical accuracy.

The future of ansatz design lies not in choosing one over the other, but in the continued development of hybrid approaches that incorporate physical insight while respecting hardware constraints. Techniques such as the Parallelized Givens Ansatz, advanced error mitigation like MREM, and noise-aware optimizers like SOAP are actively blurring the lines between these two paradigms. For researchers in drug development and materials science, this evolving landscape promises increasingly powerful tools for molecular simulation, provided they carefully match their ansatz selection to their specific problem, computational resources, and required precision.

Implementing Ansatze for Molecular Systems: Methodologies and Practical Applications

Benchmarking quantum computational methods for ground state energy calculations is a critical step toward practical quantum advantage in chemistry. Simple molecular systems such as H₂, H₃⁺, and HeH⁺ serve as the fundamental testbeds for validating the performance of variational quantum algorithms and ansätze [22]. These molecules, with their minimal number of electrons and nuclei, provide the ideal proving ground for evaluating the noise resilience and computational efficiency of different approaches on Noisy Intermediate-Scale Quantum (NISQ) hardware.

The significance of these benchmark molecules stems from their foundational role in quantum chemistry. H₂ represents the simplest neutral molecule, while HeH⁺ stands as the simplest heteronuclear ion [22]. H₃⁺, being the simplest polyatomic molecule, serves as "the benchmark for rigorous ab initio theory" [22]. Its rovibrational spectral lines provide critical data for testing theoretical predictions, with research progressing to the point where observed spectra agree with ab initio calculations "mostly within 1 cm⁻¹" [22]. This level of precision makes these systems perfect for assessing quantum computational methods.

Within this context, this review focuses on the comparative analysis between two predominant types of variational ansätze: the physically-motivated Unitary Coupled Cluster (UCC) ansatz and hardware-efficient approaches. We examine their performance in calculating ground state energies, with particular emphasis on their resilience to quantum noise, resource requirements, and scalability prospects.

Methodological Approaches: UCCSD vs. Hardware-Efficient Ansätze

The core of Variational Quantum Eigensolver (VQE) algorithms lies in the choice of ansatz, which determines the quantum circuit's structure and significantly impacts performance on NISQ devices. The two primary categories are chemistry-inspired ansätze like UCCSD and hardware-efficient ansätze (HEA), each with distinct advantages and limitations [1].

Unitary Coupled Cluster (UCCSD) Ansatz

The UCCSD ansatz is a direct adaptation of the successful classical coupled cluster method to quantum circuits. It applies an exponential of fermionic excitation operators ((T - T^\dagger)) to a reference state (typically Hartree-Fock), where (T) encompasses single ((T_1)) and double ((T_2)) excitation operators [23] [1]. This approach inherently preserves physical symmetries such as particle number and spin, which is crucial for generating chemically meaningful results. The UCCSD ansatz has demonstrated remarkable accuracy, often achieving chemical accuracy (1.6 mHa or 1 kcal/mol) for small molecules [23]. However, this accuracy comes at a significant cost: the circuit depth scales as (O(N^4)) with the number of qubits (N), resulting in substantial CNOT gate counts that often exceed the capabilities of current NISQ devices [23] [15].

Hardware-Efficient Ansätze (HEA)

HEAs prioritize practical implementability on quantum hardware by constructing parameterized circuits from native gate sets and qubit connectivity patterns [23] [1]. Common implementations include the RyRz Linear Ansatz (RLA) and Symmetry-Preserving Ansatz (SPA) [23]. Unlike UCCSD, HEAs are not derived from physical principles but are designed to maximize expressibility within hardware constraints. The SPA variant specifically incorporates symmetry constraints to preserve physical properties like particle number [23]. The primary advantage of HEAs is their significantly lower circuit depth and reduced gate count compared to UCCSD, making them more amenable to execution on noisy hardware [23]. However, they face challenges including the barren plateau phenomenon, where gradients vanish exponentially with system size, and potential convergence to unphysical states [23] [1].

Adaptive Approaches (ADAPT-VQE)

Adaptive algorithms like ADAPT-VQE represent a promising middle ground, dynamically constructing ansätze by iteratively adding operators from a predefined pool based on energy gradient information [15]. Recent developments, such as the Coupled Exchange Operator (CEO) pool, have demonstrated dramatic resource reductions of up to 88% in CNOT count, 96% in CNOT depth, and 99.6% in measurement costs compared to early ADAPT-VQE versions [15]. These approaches combine the physical relevance of problem-tailored ansätze with the hardware efficiency of adaptive construction.
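The operator-selection step at the heart of this iterative construction can be sketched with dense matrices. For an anti-Hermitian pool operator A, the energy gradient with respect to its newly added parameter, evaluated at zero, is the expectation value of the commutator [H, A] in the current state. The two-qubit Hamiltonian and the three-operator pool below are hypothetical toys, not the fermionic or CEO pools of the cited work.

```python
import numpy as np

I2 = np.eye(2, dtype=complex)
X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)

# Hypothetical two-qubit Hamiltonian; |00> plays the role of the reference state.
H = -np.kron(Z, I2) - np.kron(I2, Z) + 0.5 * np.kron(X, X)

# Hypothetical pool of anti-Hermitian generators.
pool = {
    "iY0": np.kron(1j * Y, I2),
    "iY1": np.kron(I2, 1j * Y),
    "iY0X1": np.kron(1j * Y, X),
}

def pool_gradients(state, hamiltonian, operators):
    """d/d(theta) of <psi| exp(-theta A) H exp(theta A) |psi> at theta = 0, i.e. <[H, A]>."""
    grads = {}
    for name, a in operators.items():
        commutator = hamiltonian @ a - a @ hamiltonian
        grads[name] = float(np.real(state.conj() @ commutator @ state))
    return grads

def select_operator(state, hamiltonian, operators):
    """Pick the pool operator with the largest gradient magnitude."""
    grads = pool_gradients(state, hamiltonian, operators)
    best = max(grads, key=lambda name: abs(grads[name]))
    return best, grads[best]

reference = np.array([1, 0, 0, 0], dtype=complex)  # |00>
print(pool_gradients(reference, H, pool))
print(select_operator(reference, H, pool))
```

On hardware each of these gradients must be estimated from measurements, which is why the size and structure of the pool drive the measurement cost of adaptive methods.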

Table 1: Comparison of Ansatz Methodologies for Ground State Calculations

| Ansatz Type | Key Features | Advantages | Limitations | Representative Molecules Tested |
|---|---|---|---|---|
| UCCSD | Chemistry-inspired, exponential of excitation operators | High physical accuracy, preserves symmetries | High circuit depth ((O(N^4))), high CNOT count | H₂, LiH, H₂O, BeH₂ [23] [1] |
| Hardware-Efficient (HEA) | Device-tailored gates and connectivity | Low depth, practical for NISQ devices | Barren plateaus, may break symmetries | LiH, BeH₂, H₂O, CH₄, N₂ [23] |
| Symmetry-Preserving (SPA) | Hardware-efficient with physical constraints | Balance of efficiency and physical meaning | Still requires careful optimization | LiH, BeH₂, H₂O, CH₄, N₂ [23] |
| ADAPT-VQE | Dynamically constructed based on gradients | Resource-efficient, high accuracy | Optimization overhead | LiH, H₆, BeH₂ [15] |

Experimental Protocols for Benchmarking Studies

Rigorous benchmarking of quantum computational methods requires standardized experimental protocols and metrics to ensure fair comparison across different approaches and hardware platforms.

Performance Metrics and Targets

The primary metric for evaluating ground state calculations is the energy error, typically measured against full configuration interaction (FCI) or experimental values. The gold standard is chemical accuracy, defined as an error within 1.6 mHa or 1 kcal/mol [23] [24]. For the H₃⁺ molecule, high-precision spectroscopic measurements provide benchmark data with exceptional precision, with theoretical calculations achieving agreement with experimental rovibrational spectra within 1 cm⁻¹ [22].
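
As a small worked example of the chemical-accuracy criterion, the sketch below converts an energy error to mHa and kcal/mol and checks it against the 1.6 mHa threshold. The conversion factor is the standard approximate value of 627.509 kcal/mol per Hartree. The energies in the usage lines are illustrative placeholders, not results from the cited studies, and the function names are hypothetical.

```python
HARTREE_TO_KCAL_PER_MOL = 627.509  # approximate conversion factor


def energy_error_mha(energy_ha: float, reference_ha: float) -> float:
    """Absolute energy error in milli-Hartree."""
    return abs(energy_ha - reference_ha) * 1000.0


def mha_to_kcal_per_mol(error_mha: float) -> float:
    """Convert an error from milli-Hartree to kcal/mol."""
    return error_mha / 1000.0 * HARTREE_TO_KCAL_PER_MOL


def within_chemical_accuracy(energy_ha: float,
                             reference_ha: float,
                             threshold_mha: float = 1.6) -> bool:
    """True if the error against the reference is at most the threshold."""
    return energy_error_mha(energy_ha, reference_ha) <= threshold_mha


# Placeholder energies in Hartree (assumed values for illustration only).
reference = -1.1373
for estimate in (-1.1362, -1.1350):
    err = energy_error_mha(estimate, reference)
    print(f"{err:.2f} mHa, {mha_to_kcal_per_mol(err):.2f} kcal/mol, "
          f"chemically accurate: {within_chemical_accuracy(estimate, reference)}")
```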

Beyond energy accuracy, key computational metrics include:

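- Circuit depth: Number of sequential gate layers (the longest dependency path through the circuit), critical for NISQ devices with limited coherence times

- CNOT count: Number of two-qubit CNOT gates, typically the primary contributor to error rates in superconducting qubits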

- Parameter count: Number of variational parameters to optimize

- Measurement costs: Number of circuit executions required for energy estimation [15] [24]
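
The first three metrics can be read directly off a circuit description. The sketch below computes them for a circuit given as a plain list of `(name, qubits)` tuples. This representation and the gate names are assumptions for illustration. Quantum SDKs generally offer their own depth and gate-count utilities, which should be preferred in practice. Measurement cost depends on the Hamiltonian and shot budget and is not covered here.

```python
from typing import Sequence, Tuple

Gate = Tuple[str, Tuple[int, ...]]

PARAMETERIZED = {"rx", "ry", "rz"}  # assumed names of rotation gates


def circuit_depth(gates: Sequence[Gate], n_qubits: int, only: str = "") -> int:
    """Number of gate layers. If `only` is set, count layers of that gate alone."""
    frontier = [0] * n_qubits  # last occupied layer on each qubit
    for name, qubits in gates:
        if only and name != only:
            continue
        # A gate goes one layer after the latest gate on any qubit it touches.
        layer = max(frontier[q] for q in qubits) + 1
        for q in qubits:
            frontier[q] = layer
    return max(frontier, default=0)


def gate_count(gates: Sequence[Gate], name: str) -> int:
    """Number of gates with the given name."""
    return sum(1 for g, _ in gates if g == name)


def parameter_count(gates: Sequence[Gate]) -> int:
    """Number of variational parameters, assuming one per rotation gate."""
    return sum(1 for g, _ in gates if g in PARAMETERIZED)


# Hypothetical 3-qubit circuit.
circuit = [
    ("ry", (0,)), ("ry", (1,)), ("ry", (2,)),
    ("cx", (0, 1)), ("cx", (1, 2)),
    ("rz", (2,)),
]
print("depth:", circuit_depth(circuit, 3))
print("CNOT count:", gate_count(circuit, "cx"))
print("CNOT depth:", circuit_depth(circuit, 3, only="cx"))
print("parameters:", parameter_count(circuit))
```

Passing `only="cx"` ignores single-qubit gates, which gives the CNOT depth: the longest chain of CNOTs that must run one after another.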

Error Mitigation Techniques

Given the inherent noise in NISQ devices, sophisticated error mitigation strategies are essential for obtaining meaningful results:

- Readout Error Mitigation: Techniques like Twirled Readout Error Extinction (T-REx) can improve VQE accuracy by an order of magnitude, enabling an older 5-qubit processor with T-REx to outperform a more advanced 156-qubit device run without error mitigation [5].

- Quantum Detector Tomography (QDT): Implementing repeated settings with parallel QDT can reduce measurement errors from 1-5% to 0.16%, approaching chemical precision requirements [24].

- Locally Biased Random Measurements: This technique reduces shot overhead by prioritizing measurement settings with greater impact on energy estimation [24].

- Blended Scheduling: Mitigates time-dependent noise by interleaving different circuit types during execution [24].
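
To illustrate the basic idea behind readout error mitigation, the sketch below inverts a single-qubit confusion matrix. This is a generic textbook-style correction, not an implementation of T-REx or of the QDT protocol in [24]. The error rates `p01` (reading 1 when 0 was prepared) and `p10` (reading 0 when 1 was prepared) are assumed to come from a separate calibration step, and all numbers are made up.

```python
def apply_readout_noise(p1_true: float, p01: float, p10: float) -> float:
    """Forward model: observed probability of reading 1."""
    return (1.0 - p1_true) * p01 + p1_true * (1.0 - p10)


def mitigate_p1(p1_observed: float, p01: float, p10: float) -> float:
    """Invert the 2x2 confusion matrix to estimate the true P(1)."""
    denom = 1.0 - p01 - p10
    if denom <= 0.0:
        raise ValueError("readout errors too large to invert")
    p1 = (p1_observed - p01) / denom
    # Finite sampling can push the estimate outside [0, 1]; clip it.
    return min(1.0, max(0.0, p1))


def z_expectation(p1: float) -> float:
    """<Z> for a single qubit from the probability of outcome 1."""
    return 1.0 - 2.0 * p1


# Made-up calibration values and true state for illustration.
p01, p10 = 0.02, 0.08
p1_true = 0.30
p1_obs = apply_readout_noise(p1_true, p01, p10)
p1_fix = mitigate_p1(p1_obs, p01, p10)
print("raw <Z>:", z_expectation(p1_obs))
print("mitigated <Z>:", z_expectation(p1_fix))
```

With exact probabilities the inversion recovers the true value. With a finite number of shots the correction amplifies statistical noise by roughly `1 / (1 - p01 - p10)`, which is one reason more refined schemes exist.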

Figure 1: Generalized Workflow for Molecular Benchmarking Studies

Quantitative Performance Comparison

Recent studies provide comprehensive quantitative data on the performance of different ansätze across various molecular systems, enabling direct comparison of their efficiency and accuracy.

Convergence and Accuracy Metrics

For the BeH₂ molecule, representative studies demonstrate distinct performance patterns between ansatz types. UCCSD typically achieves chemical accuracy but requires substantial resources, with one study reporting 94 CNOT gates and measurement costs of approximately 1.5×10⁶ [15]. In contrast, CEO-ADAPT-VQE reaches chemical accuracy with significantly reduced resources: 76 CNOT gates and measurement costs of just 4.8×10³, a reduction of more than 99.6% in measurement overhead [15].

The H₆ molecule shows similar trends, with fermionic ADAPT-VQE requiring 330 CNOT gates and 2.4×10⁶ measurements to achieve chemical accuracy, while CEO-ADAPT-VQE accomplishes the same target with only 41 CNOT gates and 1.0×10⁴ measurements [15]. This pattern of adaptive methods achieving comparable accuracy with substantially reduced resources holds across multiple molecular systems.
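
The percentage reductions implied by these figures follow from simple arithmetic, sketched below using only the BeH₂ and H₆ numbers quoted above. The helper name `percent_reduction` is hypothetical. Note that the BeH₂ baseline is UCCSD while the H₆ baseline is fermionic ADAPT-VQE, so the two rows are not directly comparable.

```python
def percent_reduction(baseline: float, improved: float) -> float:
    """Relative reduction of `improved` versus `baseline`, in percent."""
    return 100.0 * (1.0 - improved / baseline)


# (baseline, CEO-ADAPT-VQE) pairs taken from the figures quoted in the text.
reported = {
    "BeH2 CNOT count": (94, 76),
    "BeH2 measurement cost": (1.5e6, 4.8e3),
    "H6 CNOT count": (330, 41),
    "H6 measurement cost": (2.4e6, 1.0e4),
}

for label, (baseline, improved) in reported.items():
    print(f"{label}: {percent_reduction(baseline, improved):.2f}% reduction")
```

The measurement-cost savings (about 99.7% and 99.6%) are far larger than the CNOT savings, which range from roughly 19% for BeH₂ to roughly 88% for H₆.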

Table 2: Resource Requirements for Chemical Accuracy Across Molecular Systems

| Molecule | Ansatz Type | CNOT Count | Measurement Costs | Circuit Depth |
|---|---|---|---|---|
| BeH₂ | UCCSD | 94 | ~1.5×10⁶ | High |
| BeH₂ | CEO-ADAPT-VQE | 76 | 4.8×10³ | Medium |
| H₆ | Fermionic ADAPT | 330 | 2.4×10⁶ | High |
| H₆ | CEO-ADAPT-VQE | 41 | 1.0×10⁴ | Low |
| LiH | UCCSD | 82 | ~1.2×10⁶ | High |
| LiH | CEO-ADAPT-VQE | 15 | 5.0×10³ | Low |

Noise Resilience Comparison

The critical trade-off between UCCSD and HEA becomes most apparent under noisy conditions. Hardware-efficient approaches demonstrate superior practical performance on current quantum devices due to their lower circuit depths and reduced gate counts [23]. For instance, symmetry-preserving ansätze (SPA) can maintain CCSD-level accuracy while remaining easier to execute on NISQ hardware [23].

However, UCCSD maintains advantages in certain scenarios, particularly for modeling strong electron correlation during bond dissociation. While classical methods like CCSD struggle with such cases, both UCCSD and high-depth symmetry-preserving HEAs can accurately capture static correlation effects [23]. This makes UCCSD valuable for applications requiring high accuracy across potential energy surfaces, despite its higher resource demands.

Error mitigation plays a crucial role in both approaches. Studies show that with optimized Twirled Readout Error Extinction (T-REx), even older-generation 5-qubit processors can achieve ground-state energy estimations an order of magnitude more accurate than those from more advanced 156-qubit devices without error mitigation [5].

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful benchmarking studies require both computational tools and theoretical frameworks. The following table summarizes key resources for researchers conducting ground state calculations.

Table 3: Essential Research Toolkit for Molecular Ground State Calculations

| Tool/Technique | Category | Function/Purpose | Example Applications |
|---|---|---|---|
| UCCSD Ansatz | Algorithmic | Physics-inspired high-accuracy reference | Benchmarking, small molecules |
| Symmetry-Preserving Ansatz (SPA) | Algorithmic | Hardware-efficient with physical constraints | NISQ device implementations |
| ADAPT-VQE | Algorithmic | Resource-adaptive ansatz construction | Medium-sized molecules |
| T-REx | Error Mitigation | Readout error mitigation | Improving VQE parameter quality [5] |
| Quantum Detector Tomography | Error Mitigation | Measurement error characterization | High-precision measurements [24] |
| Locally Biased Measurements | Measurement | Reduced shot overhead | Efficient energy estimation [24] |
| Bravyi-Kitaev Mapping | Encoding | Fermion-to-qubit transformation | Reducing qubit requirements |
| VQE with SPSA Optimizer | Optimization | Noise-resilient parameter optimization | Hardware experiments |

Figure 2: Noise Resilience Strategies for Quantum Molecular Calculations

The benchmarking of H₂, H₃⁺, and HeH⁺ ground state calculations reveals a complex landscape where no single approach dominates all metrics. UCCSD remains valuable for high-accuracy studies where circuit depth is less critical, while hardware-efficient ansätze offer more practical implementability on current devices. The emerging class of adaptive algorithms like CEO-ADAPT-VQE shows promise for balancing these competing demands with dramatically reduced resource requirements [15].

For near-term applications, symmetry-preserving hardware-efficient ansätze present a compelling option, achieving CCSD-level accuracy with significantly fewer resources than UCCSD [23]. As quantum hardware continues to improve with better coherence times and gate fidelities, the balance may shift toward more physically-motivated ansätze like UCCSD that can leverage increased computational resources for higher accuracy.

The progress in benchmark molecular calculations mirrors historical developments in computational chemistry. The current state of H₃⁺ calculations, where theory and experiment agree within 1 cm⁻¹, recalls the situation with H₂ in 1975 when the theory of Kołos and Wolniewicz and Herzberg's experiment agreed within 1 cm⁻¹ [22]. This steady progression from two-proton to three-proton systems over three decades, enabled by advances in both computational methods and hardware, provides a promising trajectory for the future of quantum computational chemistry.

Future research directions should focus on developing more noise-resilient ansätze, improving error mitigation strategies, and establishing standardized benchmarking protocols across different hardware platforms. As these efforts advance, the lessons learned from simple benchmark molecules will directly inform the study of more complex systems with practical applications in drug development and materials science.

In the Noisy Intermediate-Scale Quantum (NISQ) era, the Variational Quantum Eigensolver (VQE) has emerged as a leading algorithm for molecular simulations, promising to tackle electronic structure problems that challenge classical computational methods [13] [20]. The core pursuit in this field revolves around developing ansätze that balance chemical accuracy with resilience to hardware noise. This guide objectively compares the performance of two dominant approaches: the chemically inspired Unitary Coupled Cluster Singles and Doubles (UCCSD) ansatz and various hardware-efficient strategies, providing a detailed analysis of their implementation from Hamiltonian encoding to parameter optimization, contextualized within noise resilience research.

Performance Comparison: UCCSD vs. Hardware-Efficient Ansätze

The choice of ansatz critically determines the quantum resource requirements, optimization efficiency, and ultimate noise resilience of a VQE simulation. The table below provides a quantitative performance comparison between UCCSD and several hardware-efficient or modified ansätze, drawing from recent research findings.

Table 1: Performance and Resource Comparison of VQE Ansätze

| Algorithm / Ansatz | Reported Accuracy | Circuit Depth / CNOT Count | Measurement & Optimization Efficiency | Key Noise Resilience Features |
|---|---|---|---|---|
| UCCSD (Trotterized) | Comparable to FCI for small systems in noiseless simulations [13] | Scaling: \(O(N^4\tau)\) two-qubit gates [13]; High depth often impractical on NISQ devices [13] [20] | High number of measurements [15]; Optimization hindered by parameter correlation [20] | Significant errors (∼1 Hartree) reported on hardware without mitigation [13] |
| Parallelized Givens Ansatz | Ground-state energies comparable to UCCSD [13] | 50-70% reduction in circuit depth vs. UCCSD [13] | Not explicitly reported | Order of magnitude lower energy error rate than UCCSD in noisy simulations [13] |
| CEO-ADAPT-VQE* | Outperforms UCCSD [15] | Up to 88% reduction in CNOT count vs. early ADAPT-VQE [15] | Five orders of magnitude decrease in measurement costs vs. static ansätze [15] | Dynamical construction avoids Barren Plateaus (empirically suggested) [15] |
| Hardware-Efficient Ansatz (HEA) | Accuracy challenges for larger systems [20] | Shallow, device-tailored depth [20] | Suffers from Barren Plateaus, making optimization difficult [15] | Tailored to hardware connectivity, but trainability is a key challenge [15] |

Experimental Protocols and Methodologies

A critical assessment of algorithm performance requires a clear understanding of the experimental protocols used to generate the cited data. This section details the key methodologies.

Protocol: Noisy Simulation for Energy Error Benchmarking

This protocol was used to demonstrate the superior noise resilience of the Parallelized Givens Ansatz compared to UCCSD [13].

- Ansatz Implementation: The UCCSD ansatz is implemented using a single Trotter step. The Parallelized Givens Ansatz is constructed from low-depth circuits based on parallelized Givens rotations for an arbitrary active space.

- Noise Simulation: The energy expectation value of each ansatz is computed using a quantum circuit simulator that incorporates a realistic hardware noise model. This model typically includes parameters for qubit decoherence times (\(T_1\), \(T_2\)), gate infidelities (especially for two-qubit gates), and readout errors.

- Energy Calculation: The ground-state energy for a target molecule (e.g., water, nitrogen, oxygen) is calculated for both ansätze under the noisy simulation.

- Error Metric: The energy error is defined as the absolute difference between the energy obtained from the noisy simulation and the exact or noiseless simulated energy. The Parallelized Givens Ansatz demonstrated an order of magnitude lower error than UCCSD [13].
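
The link between gate count and energy error in this protocol can be illustrated with a deliberately simplified model. The sketch below assumes a global depolarizing channel after every gate, so the ideal state survives with probability \(f = (1-p_{1q})^{n_{1q}}(1-p_{2q})^{n_{2q}}\) and the measured energy is \(E_{\text{noisy}} = f\,E_{\text{ideal}} + (1-f)\,E_{\text{mixed}}\), where \(E_{\text{mixed}}\) is the energy of the maximally mixed state. This is not the noise model of [13], which also includes \(T_1\)/\(T_2\) decoherence and readout errors. All gate counts, error rates, and energies below are made-up illustrative values.

```python
def surviving_fraction(n_1q: int, n_2q: int, p_1q: float, p_2q: float) -> float:
    """Probability that no depolarizing event occurred in the whole circuit."""
    return (1.0 - p_1q) ** n_1q * (1.0 - p_2q) ** n_2q


def noisy_energy(e_ideal: float, e_mixed: float, fraction: float) -> float:
    """Energy under the toy global-depolarizing model."""
    return fraction * e_ideal + (1.0 - fraction) * e_mixed


def energy_error(e_ideal: float, e_mixed: float, fraction: float) -> float:
    """Error metric: |noisy energy - noiseless energy|."""
    return abs(noisy_energy(e_ideal, e_mixed, fraction) - e_ideal)


# Illustrative assumptions: energies in Hartree, per-gate error rates.
E_IDEAL, E_MIXED = -1.0, 0.0
P_1Q, P_2Q = 0.001, 0.01

# Hypothetical deep and shallow circuits: (single-qubit gates, CNOTs).
circuits = {"deep": (200, 94), "shallow": (40, 15)}

for label, (n_1q, n_2q) in circuits.items():
    f = surviving_fraction(n_1q, n_2q, P_1Q, P_2Q)
    print(f"{label}: surviving fraction {f:.3f}, "
          f"energy error {energy_error(E_IDEAL, E_MIXED, f):.3f} Ha")
```

Under this model the error grows roughly exponentially with the number of two-qubit gates, which is consistent with the qualitative finding that lower-depth ansätze degrade less under noise.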

Protocol: Resource Count and Measurement Cost Analysis

This methodology quantifies the quantum computational resources required by different algorithms, as seen in the comparisons involving CEO-ADAPT-VQE* and UCCSD [15].

- System Selection: Molecular systems such as LiH, H₆, and BeH₂, represented using 12 to 14 qubits, are selected as benchmarks.

- Algorithm Execution: Different VQE algorithms (e.g., UCCSD, fermionic ADAPT-VQE, CEO-ADAPT-VQE*) are run in noiseless simulations until they reach chemical accuracy (typically 1.6 mHa or ~1 kcal/mol).

- Resource Tally: For each algorithm at the point of convergence, the following are recorded:

- CNOT Count: The total number of CNOT gates in the quantum circuit.

- CNOT Depth: The longest path of consecutive CNOT gates, determining the minimum execution time.

- Parameter Count: The number of variational parameters in the ansatz.