Pioneering the Quantum Realm: Revisiting Hans Hellmann's 1937 Seminal Work in Quantum Chemistry

Abstract

This article provides a comprehensive analysis of Hans Hellmann's 1937 textbook, 'Einführung in die Quantenchemie,' recognized as the first quantum chemistry book. Tailored for researchers, scientists, and drug development professionals, it explores the foundational concepts Hellmann introduced, including the Hellmann-Feynman theorem and pseudopotentials. The article details the methodological applications of these principles in modern computational chemistry, examines historical and contemporary challenges in the field that Hellmann's work helps address, and validates his contributions through comparative analysis with his contemporaries. By synthesizing these perspectives, the article underscores the enduring impact of Hellmann's pioneering work on today's molecular modeling and drug discovery efforts.

The Genesis of a New Discipline: Hellmann's 1937 Quantum Chemistry Textbook

The 1930s represented a decade of profound transformation in both political structures and scientific thought. This period of economic crisis and intellectual ferment created the unique conditions that enabled groundbreaking interdisciplinary work, particularly in the emerging field of quantum chemistry. The decade's instability catalyzed a rethinking of fundamental principles across multiple domains—from economic systems to the very understanding of matter at the atomic level. Within this context, scientists like Hans Hellmann made pioneering contributions, with his 1937 textbook providing one of the first comprehensive treatments of quantum chemistry. This paper examines the intricate connections between the period's political upheavals, scientific networks, and theoretical breakthroughs that collectively shaped Hellmann's work and the broader development of quantum theory applied to chemical systems.

The Political and Economic Landscape of the 1930s

The 1930s were fundamentally defined by global economic collapse and the political responses it provoked. Understanding this backdrop is essential for contextualizing the environment in which scientific research was conducted.

The Great Depression and Policy Responses

The Great Depression began with the Stock Market Crash of October 1929 and resulted in devastating unemployment rates, which peaked at approximately 25% nationally in the United States, with African Americans and other minority groups facing even higher joblessness exceeding 50% [1]. This economic catastrophe prompted dramatic policy shifts, most notably Franklin D. Roosevelt's New Deal implemented after his 1932 election [2] [1]. These programs significantly expanded government's role in the economy through job creation initiatives like the Civilian Conservation Corps (CCC) and Civil Works Administration (CWA), and established foundational social safety nets including the Social Security Act [1].

Table: Key Economic Indicators and Policy Responses in the 1930s

| Indicator/Policy | Description | Impact |
|---|---|---|
| Unemployment Rate | Peaked at ~25% nationally (1933); ~50% for African Americans [1] | Widespread poverty, migration, social unrest |
| Dust Bowl | Ecological crisis in Great Plains due to soil erosion and drought [1] | Agricultural collapse, mass migration westward |
| New Deal Programs | Series of federal projects and financial reforms (1933-1939) [1] | Expanded government role, created social safety net |
| Shift from Gold Standard | Emergency Banking Act (1933) removed dollar from gold standard [1] | Increased monetary flexibility, expanded paper currency |

The economic distress fueled political polarization and the rise of authoritarian regimes, creating what one analysis characterizes as a "nationalistic, populist and angry" political environment across many countries [3]. This political climate had direct consequences for scientific communities, particularly in Europe.

Impact on Scientific Communities

The political turmoil of the 1930s had profound effects on scientific institutions and individual researchers. In Germany, the Nazi ascent to power in 1933 initiated the dismissal of Jewish and dissident scientists from university positions [4]. This forced migration, while devastating for individuals, inadvertently facilitated the transfer of quantum theoretical frameworks to other countries, particularly the United States and Britain [4] [5]. The University of Vienna became a stronghold of political reaction and antisemitism, with right-wing groups like the "Bear's Lair" secretly controlling academic appointments and rejecting qualified Jewish and left-wing scholars [4].

This politically charged atmosphere stood in stark contrast to the ideal of "pure science" advocated by some American institutions like Abraham Flexner's Institute for Advanced Study in New Jersey, founded in 1930 to pursue research for its own sake [4]. Central European scientists who witnessed how political reaction co-opted science and technology developed a different perspective, arguing that the integrity of science depended not on isolation from the public but on enriching and deepening its connection to society [4].

The Evolution of Quantum Theory: From Physics to Chemistry

While political systems were being reconfigured, a parallel revolution was occurring in the physical sciences. Quantum mechanics, born in the first quarter of the 20th century, reached maturity and began transforming chemistry during the 1930s.

Theoretical Foundations and Key Developments

Quantum theory developed through a process of reinterpretation of classical physics concepts within a new framework, rather than a simple replacement of existing knowledge [6]. The "old quantum theory" began around 1900 with Max Planck's introduction of energy quanta to explain black-body radiation, followed by Albert Einstein's 1905 proposal of light quanta to explain the photoelectric effect [7]. The modern era of quantum mechanics began around 1925 with the invention of wave mechanics by Erwin Schrödinger and matrix mechanics by Werner Heisenberg [7].

Table: Major Theoretical Developments in Quantum Chemistry (1920s-1930s)

| Year | Development | Key Contributors | Significance |
|---|---|---|---|
| 1927 | Quantum treatment of hydrogen molecule | Walter Heitler, Fritz London [8] [9] | First demonstration of covalent bond quantum origin |
| 1929 | Molecular orbital theory | Friedrich Hund, Robert S. Mulliken [8] [9] | Electrons described via delocalized molecular orbitals |
| 1930s | Valence bond theory development | Linus Pauling, John C. Slater [8] | Integrated quantum mechanics with chemical bonding concepts |
| 1937 | First quantum chemistry textbook | Hans Hellmann [10] [8] | Systematized knowledge for chemists |

The 1927 paper by Walter Heitler and Fritz London on the hydrogen molecule is often recognized as the first milestone in quantum chemistry, providing the first quantum-mechanical explanation of the chemical bond [8] [9]. This work was extended throughout the 1930s by researchers including Linus Pauling, who integrated various theoretical approaches into a coherent framework known as valence bond theory [8].

Institutional Networks and Scientific Cooperation

The development of quantum theory was fundamentally a collaborative endeavor centered around key institutional hubs. A central finding of the "Networks of Early Quantum Theory" project is that success depended on integrating local institutions into a broader network of quantum research [6]. Major centers included:

- Munich: Arnold Sommerfeld's institute served as a "nursery of theoretical physics," with Sommerfeld's exceptional talent as a charismatic teacher and networker making Munich a central node [6].

- Göttingen: Despite resource limitations, this center leveraged its strong mathematics department under David Hilbert and Felix Klein, with Max Born returning in 1921 to establish another quantum physics hub [6].

- Copenhagen: Niels Bohr's institute benefited from Denmark's neutrality, allowing it to serve as an international meeting point during politically turbulent times [6].

These centers maintained intensive exchanges that enabled the rapid development and dissemination of quantum theoretical approaches. The collaborative nature of this enterprise stands in stark contrast to the nationalist political trends of the period.

Hans Hellmann's Contribution in Context

Against this backdrop of political upheaval and scientific transformation, Hans Hellmann made his pioneering contributions to quantum chemistry. His work exemplifies both the interdisciplinary nature of the field and the impact of political forces on scientific dissemination.

Hellmann's 1937 Textbook and Scientific Contributions

Hans Gustav Adolf Hellmann (1903-1938) was a co-founder of quantum chemistry who investigated quantum chemical approximation methods and descriptive approaches to characterize electron bonding in chemical systems [10]. His most significant contribution was the 1937 publication "Einführung in die Quantenchemie" (Introduction to Quantum Chemistry), one of the first textbooks explicitly devoted to quantum chemistry [10] [8] [9]. This work appeared in both Russian and German editions in 1937, reflecting the international character of scientific exchange despite political tensions [10].

Hellmann developed the foundations of the pseudopotential method for molecules and solids under the name "combined approximation method," which has become one of the most important methods in quantum chemistry for calculating compounds of heavy atoms [10]. He also published articles on quantum chemistry for chemists and laypeople interested in science, indicating a commitment to broader scientific communication [10].

Political Circumstances and Scientific Legacy

Tragically, Hellmann's career was cut short by the political circumstances of his time. He was arrested and executed in Moscow in 1938 during Stalin's purges [10]. His legacy, however, endured through his textbook and theoretical contributions. The biennial Hans Hellmann Lecture, awarded by the Department of Chemistry at Philipps University of Marburg to leading researchers in quantum chemistry, continues to honor his contributions to the field [10].

Hellmann's work emerged at the intersection of multiple scientific traditions, reflecting the collaborative, international network of quantum researchers. His approach combined physical rigor with chemical intuition, helping to bridge the conceptual gap between these disciplines during a period of rapid theoretical development.

Methodology: Computational Approaches in 1930s Quantum Chemistry

The quantum chemistry of the 1930s relied on increasingly sophisticated computational approaches to solve the Schrödinger equation for chemical systems. These methodologies formed the "research reagents" that enabled theoretical advances.

Core Theoretical and Computational Framework

The fundamental challenge of quantum chemistry was (and remains) solving the Schrödinger equation for many-electron systems. The core equation for electronic structure is:

$$\hat{H}_{\mathrm{el}}\,\Psi = E\,\Psi$$

where $\hat{H}_{\mathrm{el}}$ is the electronic Hamiltonian, $\Psi$ is the electronic wave function, and $E$ is the energy [9]. Exact analytical solutions exist only for one-electron systems such as the hydrogen atom, so molecular systems require approximations [8] [9].
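To make the need for approximations concrete, the following minimal sketch applies the variational principle to the hydrogen atom with a deliberately imperfect trial function. It assumes a single normalized Gaussian, exp(-alpha r^2), in atomic units; the function names and the golden-section minimizer are illustrative choices, not anything taken from Hellmann's text. For this trial function the kinetic and potential expectation values have the closed forms 3·alpha/2 and -2·sqrt(2·alpha/pi).

```python
import math


def gaussian_trial_energy(alpha: float) -> float:
    """Energy (hartree) of the H atom for the normalized trial function exp(-alpha r^2)."""
    kinetic = 1.5 * alpha                                   # <T> for the Gaussian
    potential = -2.0 * math.sqrt(2.0 * alpha / math.pi)     # <V> = -<1/r>
    return kinetic + potential


def golden_section_min(f, lo: float, hi: float, tol: float = 1e-10) -> float:
    """Locate the minimum of a unimodal function on [lo, hi] by golden-section search."""
    inv_phi = (math.sqrt(5.0) - 1.0) / 2.0
    a, b = lo, hi
    c = b - inv_phi * (b - a)
    d = a + inv_phi * (b - a)
    while abs(b - a) > tol:
        if f(c) < f(d):
            b = d          # minimum lies in [a, d]
        else:
            a = c          # minimum lies in [c, b]
        c = b - inv_phi * (b - a)
        d = a + inv_phi * (b - a)
    return 0.5 * (a + b)


# Search range for alpha is an arbitrary illustrative choice.
alpha_best = golden_section_min(gaussian_trial_energy, 0.01, 2.0)
e_best = gaussian_trial_energy(alpha_best)

print(f"optimal alpha = {alpha_best:.5f}")
print(f"variational energy = {e_best:.5f} hartree (exact ground state: -0.5 hartree)")
```

The optimized energy equals -4/(3π) hartree, which lies above the exact value of -0.5 hartree, as the variational principle requires. The gap reflects the poor shape of a single Gaussian near the nucleus, which is why practical calculations combine many basis functions.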

The primary methodological frameworks developed during this period included:

- Valence Bond (VB) Theory: Extended from the Heitler-London approach, emphasizing pairwise interactions between atoms and localized electron-pair bonds, incorporating concepts of orbital hybridization and resonance [8] [9].

- Molecular Orbital (MO) Theory: Developed by Hund and Mulliken, describing electrons in mathematical functions delocalized over entire molecules, forming the basis for the Hartree-Fock method and self-consistent field (SCF) iterations [8] [9].

- Precursors of Density Functional Theory (DFT): The 1927 Thomas-Fermi model used the electronic density rather than wave functions as the fundamental variable, though this early version was too crude for molecular applications [8]. Modern DFT was formulated only decades later.

The Scientist's Toolkit: Key Research Reagents

Quantum chemists in the 1930s worked with both conceptual and mathematical tools that constituted the essential "research reagents" of their field.

Table: Essential Research Reagents in 1930s Quantum Chemistry

| Research Reagent | Function | Theoretical Basis |
|---|---|---|
| Born-Oppenheimer Approximation | Separates electronic and nuclear motion by treating nuclei as fixed [8] [9] | Allows solution of electronic Schrödinger equation for fixed nuclear coordinates |
| Wave Function (Ψ) | Describes quantum state of electrons; contains all information about system [9] | Solution to Schrödinger equation; used to calculate observable properties |
| Hamiltonian Operator (Ĥ) | Mathematical representation of total energy of quantum system [9] | Sum of kinetic energy and potential energy terms for all particles |
| Self-Consistent Field (SCF) | Iterative computational procedure to solve Hartree-Fock equations [9] | Each electron moves in average field of others until consistency achieved |
| Linear Combination of Atomic Orbitals (LCAO) | Constructs molecular orbitals as sums of atomic orbitals [9] | Basis for molecular orbital theory calculations |
| Electron Density (ρ) | Probability distribution of electrons in space [8] | Fundamental variable in density functional theory |

These methodological approaches enabled the first quantum mechanical calculations of molecular properties, laying the groundwork for the computational chemistry methods that would develop in subsequent decades.
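As an illustration of the LCAO entry in the table above, the sketch below solves the smallest possible molecular orbital problem: the hydrogen molecular ion H2+ in a basis of two hydrogen 1s orbitals. It assumes atomic units, orbital exponent 1, and the standard textbook closed-form integrals for that basis; the function name `h2_plus_lcao` and the chosen bond length are hypothetical illustration choices. The generalized eigenvalue problem HC = SCE is solved with NumPy via symmetric (Löwdin) orthogonalization.

```python
import numpy as np


def h2_plus_lcao(R: float):
    """Minimal-basis LCAO treatment of H2+ at internuclear distance R (bohr).

    Returns (energies, coefficients, (H_aa, H_ab, S)) in atomic units.
    """
    S = np.exp(-R) * (1.0 + R + R**2 / 3.0)              # overlap <a|b>
    J = 1.0 / R - np.exp(-2.0 * R) * (1.0 + 1.0 / R)     # <a|1/r_b|a>
    K = np.exp(-R) * (1.0 + R)                           # <a|1/r_a|b>
    e_1s = -0.5                                          # hydrogen 1s energy

    H_aa = e_1s - J + 1.0 / R
    H_ab = (e_1s + 1.0 / R) * S - K

    H = np.array([[H_aa, H_ab], [H_ab, H_aa]])
    S_mat = np.array([[1.0, S], [S, 1.0]])

    # Symmetric orthogonalization: X = S^(-1/2), then a standard eigenproblem.
    s_vals, s_vecs = np.linalg.eigh(S_mat)
    X = s_vecs @ np.diag(s_vals ** -0.5) @ s_vecs.T
    energies, c_prime = np.linalg.eigh(X @ H @ X)
    coefficients = X @ c_prime                           # back to the atomic-orbital basis
    return energies, coefficients, (H_aa, H_ab, S)


energies, coefficients, (H_aa, H_ab, S) = h2_plus_lcao(R=2.0)
print("bonding energy     :", energies[0])
print("antibonding energy :", energies[1])
print("bonding MO coefficients:", coefficients[:, 0])
```

Because the two atoms are equivalent, the numerical eigenvalues should reproduce the closed-form results (H_aa + H_ab)/(1 + S) and (H_aa - H_ab)/(1 - S). The bonding level falls below the energy of a separated hydrogen atom and proton (-0.5 hartree), which is the LCAO picture of the covalent bond.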

The 1930s represented a critical period of convergence between political transformation and scientific revolution. The economic collapse of the Great Depression and the resulting political realignments created both barriers and opportunities for scientific advancement. Against this backdrop, quantum chemistry emerged as a distinct discipline through the collaborative efforts of an international network of researchers, including Hans Hellmann, whose 1937 textbook helped systematize and disseminate the new methodology. Hellmann's work, and that of his contemporaries, established the theoretical and computational foundations that would enable the profound advances in molecular science throughout the remainder of the 20th century. The development of quantum chemistry during this period exemplifies how scientific progress can occur even amidst political and economic turmoil, driven by interdisciplinary collaboration and theoretical innovation.

Hans Gustav Adolf Hellmann (1903–1938) was a pioneering theoretical physicist and one of the founding fathers of quantum chemistry. His scientific career, marked by profound theoretical contributions, was tragically cut short by political persecution. This whitepaper details Hellmann's academic journey, his groundbreaking work—including the Hellmann-Feynman theorem and the development of pseudopotentials—and his forced exile from Nazi Germany to the Soviet Union, where he authored the first quantum chemistry textbook in 1937. Framed within a broader thesis on his seminal 1937 book, this analysis examines the interplay between his scientific innovations and the tumultuous socio-political forces that shaped his life and legacy. The content is structured to provide researchers, scientists, and drug development professionals with a comprehensive understanding of Hellmann's methodological approaches and their enduring impact on modern computational chemistry.

Hans Gustav Adolf Hellmann stands as a monumental figure in the foundation of quantum chemistry, a field dedicated to applying quantum mechanics to chemical systems [8]. His most enduring legacy, the 1937 textbook "Einführung in die Quantenchemie" ("Introduction to Quantum Chemistry"), was the first of its kind, predating other seminal works and establishing a comprehensive framework for the discipline [10] [11]. Hellmann's career was characterized by the development of foundational theoretical tools, such as the Hellmann-Feynman theorem and the pseudopotential approach, which remain critical in contemporary electronic structure calculations for molecules and materials [12] [13]. However, his scientific journey was inextricably linked to the political upheavals of early 20th-century Europe. As a German scientist with a Jewish wife, Hellmann was declared 'undesirable' and dismissed from his academic post following the Nazi rise to power in 1933 [14] [13]. His subsequent emigration to the Soviet Union, intended as an escape from persecution, ultimately led to his arrest and execution in 1938 during Stalin's Great Purge [12] [14]. This whitepaper chronicles Hellmann's academic path, his forced exile, and the profound scientific contributions made under duress, contextualizing his 1937 monograph as a cornerstone of theoretical chemistry whose relevance persists in modern drug development and materials science.

Academic Formation and Early Scientific Contributions

Hans Hellmann's academic journey began in the vibrant scientific landscape of post-World War I Germany. Born on 14 October 1903 in Wilhelmshaven, he initially enrolled at the Institute of Technology in Stuttgart to study electrical engineering but swiftly shifted his focus to physics [14] [13]. His early training exposed him to an exceptional cohort of mentors, including Otto Hahn and Lise Meitner at the Kaiser Wilhelm Institute for Chemistry in Berlin, who supervised his master's thesis on preparing radioactive samples [14] [13]. He completed his doctoral degree (Dr.-Ing.) in 1929 under Professor Erich Regener at the University of Stuttgart, with an experimental investigation into the decomposition of ozone and the ionization of the stratosphere [12] [15]. It was in Regener's household that Hellmann met his future wife, Victoria Bernstein, the foster daughter of Regener and a woman of Jewish-Ukrainian origin; they married in 1929 and had a son, Hans Hellmann Jr., later that year [14] [16].

In 1929, Hellmann began an assistantship at the Leibniz University Hannover, where he was immersed in a stimulating environment of chemists and physicists [12] [13]. Influenced by lectures from Walther Kossel on valence theory and the proximity to Göttingen—a world center for quantum mechanics—Hellmann pivoted his research to the nascent field of quantum chemistry [14] [13]. His work sought to decode the physical nature of chemical bonding using the tools of quantum mechanics.

During this fertile period in Hannover (1930-1934), Hellmann produced his most impactful theoretical contributions, detailed in his publications in Zeitschrift für Physik [16] [15]. The following table summarizes his key early scientific achievements:

Table 1: Key Early Scientific Contributions of Hans Hellmann (1930-1934)

| Year | Contribution | Core Concept | Modern Application |
|---|---|---|---|
| 1933 | Molecular Virial Theorem [13] | Relates the total kinetic energy of a molecule to the expectation value of the forces acting on it [14]. | Fundamental for validating the accuracy of quantum chemical computations. |
| 1933 | Hellmann-Feynman Theorem [14] [13] | Demonstrates that the force on a nucleus in a molecule can be calculated classically from the quantum-mechanical electron charge distribution. | Essential for calculating molecular forces, geometries, and vibrational frequencies efficiently. |
| 1934 | Pseudopotential Method [10] [13] | Consolidates the effect of the nucleus and core electrons into an effective potential, allowing focus on valence electrons. | Enables quantum calculations for heavy atoms and large systems, including materials and biomolecules. |
| 1934 | Diatomics-in-Molecules (DIM) Approach [13] | A method for constructing potential energy surfaces for polyatomic molecules from diatomic fragments. | Used in the study of reaction dynamics and potential energy surfaces for chemical reactions. |
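The use of the virial theorem as an accuracy check, listed in Table 1, can be illustrated with a simple atomic case. The sketch below assumes a hydrogen atom described by a scaled 1s trial orbital proportional to exp(-zeta r) in atomic units, for which the kinetic and potential expectation values are zeta²/2 and -zeta. The function names are hypothetical. For a Coulombic system with no net forces on the nuclei, the theorem requires 2⟨T⟩ + ⟨V⟩ = 0, so the ratio -⟨V⟩/⟨T⟩ should equal 2.

```python
def scaled_1s_expectations(zeta: float):
    """Return (<T>, <V>) in hartree for the H atom with trial orbital exp(-zeta r)."""
    kinetic = 0.5 * zeta**2
    potential = -zeta
    return kinetic, potential


def virial_ratio(kinetic: float, potential: float) -> float:
    """Return -<V>/<T>; a value of 2 means 2<T> + <V> = 0 is satisfied."""
    return -potential / kinetic


# Exponents 0.8 and 1.2 are arbitrary "wrong" guesses; 1.0 is the exact orbital.
for zeta in (0.8, 1.0, 1.2):
    t, v = scaled_1s_expectations(zeta)
    print(f"zeta = {zeta:.1f}  E = {t + v:+.4f}  -<V>/<T> = {virial_ratio(t, v):.4f}")
```

Only the exponent that minimizes the energy (zeta = 1) satisfies the virial condition; the other two trial orbitals give ratios above or below 2, flagging them as poorly scaled wave functions.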

These pioneering works provided the foundation for his planned Habilitation (a post-doctoral qualification in Germany). However, with the Nazi seizure of power in 1933 and the enactment of the "Law for the Restoration of the Professional Civil Service," Hellmann's academic trajectory was violently interrupted. As his wife was Jewish, he was deemed 'undesirable' and was officially dismissed from his position on 24 December 1933 [14] [16].

Forced Exile and the Moscow Period

Compelled to leave Nazi Germany, Hellmann, like many of his colleagues, had to seek a position abroad. He received multiple job offers, including one from an American university, but ultimately accepted a position as the head of the theory group in the Department of the Structure of Matter at the Karpov Institute of Physical Chemistry in Moscow [14] [17]. This decision was influenced by his wife's Ukrainian origins, his own socialist political leanings, and an attractive offer from the well-funded Soviet institute [16]. In May 1934, Hellmann, along with his wife and son, relocated to the Soviet Union [14].

Initially, Hellmann thrived in his new environment. He was awarded the Russian equivalent of a full professorship in 1935, received significant salary increases, and was appointed a "Leading Scientist" in 1937 [14] [16]. He continued his prolific research output, mentoring a cohort of young PhD students and postdocs [16]. It was during this period that he achieved a long-standing goal: the publication of his quantum chemistry textbook.

However, the political climate in the Soviet Union deteriorated dramatically with the onset of Stalin's Great Terror in 1937-38 [14] [17]. Foreigners, even those who had fled fascism, became targets of suspicion. Hellmann's privileged status and success likely fueled jealousy and denunciation. In late 1937, he wrote to his mother, "I am really afraid to engage in too much correspondence with other countries... In fact, the wall between us gets higher every day" [14]. On the night of 9 March 1938, Soviet secret police (NKVD) broke into the family apartment, arrested Hellmann for "espionage," and imprisoned him [14] [18]. After a brief and undoubtedly unjust trial, Hans Hellmann was executed on 29 May 1938, at the age of 34 [12] [14]. His family would not learn his fate for over fifty years [14].

Table 2: Chronology of Hans Hellmann's Exile and Persecution

| Date | Event | Contextual Significance |
|---|---|---|
| 24 Dec 1933 | Dismissed from Hannover position [16] | Enforcement of Nazi racial laws against civil servants. |
| May 1934 | Emigrates to USSR, joins Karpov Institute [14] | Part of a wave of German scientists fleeing persecution. |
| 1935-1937 | Successful scientific career in Moscow [16] | Period of high productivity, including textbook publication. |
| June 1936 | Becomes a Soviet citizen [16] | An attempt to secure his position in a tightening political system. |
| Early 1937 | Publishes "Kvantovaya Khimiya" (Russian) [16] | First quantum chemistry textbook, aimed at a broad audience. |
| Late 1937 | Publishes "Einführung in die Quantenchemie" (German) [10] | Revised German edition, published in Vienna. |
| 9 Mar 1938 | Arrested by the NKVD [14] | Targeted as a foreigner during Stalin's Great Purge. |
| 29 May 1938 | Executed by firing squad [12] [14] | One of hundreds of thousands of victims of the Purge. |

Hellmann's 1937 Masterwork: "Einführung in die Quantenchemie"

Hellmann's 1937 textbook, "Einführung in die Quantenchemie" ("Introduction to Quantum Chemistry"), represents the crystallization of his scientific thought and pedagogical vision. The book was the product of years of development; Hellmann had begun the manuscript while still in Germany, but was unable to find a publisher after Hitler's rise to power [14] [16]. Upon his arrival in Moscow, he refined the material through a lecture course at the Karpov Institute, with his students sometimes assisting him in finding the appropriate Russian terminology for new concepts [16].

Two versions of the textbook were published in 1937:

- "Kvantovaya Khimiya": The Russian-language version was published first in Moscow. It was comprehensive (546 pages) and written to be accessible to a largely unprepared reader [16] [18].

- "Einführung in die Quantenchemie": The German edition was a revised and more compact version (350 pages), published by Franz Deuticke in Leipzig and Vienna. This version placed higher demands on the reader's preparation [10] [16].

The textbook synthesized and explained the key quantum mechanical principles underlying chemical phenomena. Notably, it served as a vehicle for Hellmann to elaborate on his own recent innovations, ensuring their preservation and dissemination. The text covered the physical nature of the covalent bond, the virial and Hellmann-Feynman theorems, and the pseudopotential approach [11]. Unlike other contemporary works, Hellmann's book was not merely a presentation of quantum mechanics for chemists; it was a foundational work that helped define the very field of quantum chemistry [11]. Tragically, the political circumstances surrounding its author—his arrest just months after publication and the subsequent onset of World War II—prevented the book from achieving immediate widespread influence, particularly the German edition [16]. For decades, its significance was overshadowed by Hellmann's tragic fate.

The Scientist's Toolkit: Key Methodologies and Conceptual Frameworks

Hellmann's research provided a suite of powerful conceptual and mathematical tools that have become indispensable in computational chemistry. The following table details these key "reagent solutions" and their functions in the quantum chemist's toolkit.

Table 3: Research Reagent Solutions: Core Methodologies Pioneered by Hans Hellmann

| Concept/Method | Function in Quantum Chemistry | Role in Modern Computation |
|---|---|---|
| Hellmann-Feynman Theorem [14] [13] | States that the force acting on a nucleus in a molecule is the classical electrostatic force exerted by the electron charge distribution and other nuclei. | Provides an efficient way to compute forces on nuclei directly from the electron density, crucial for geometry optimization and molecular dynamics simulations. |
| Pseudopotentials (Combined Approximation) [10] [13] | Replaces the complex, strongly interacting core electrons and nucleus with an effective potential that acts on the valence electrons. | Dramatically reduces computational cost by allowing calculations to focus on chemically active valence electrons, enabling studies of heavy elements and large systems. |
| Molecular Virial Theorem [13] | Establishes a rigorous relationship between the kinetic (T) and potential (V) energies of a molecule: 2⟨T⟩ + ⟨V⟩ = 0 for a system at its equilibrium geometry. | Serves as a critical check for the quality and validity of approximate wavefunctions in electronic structure calculations. |
| Diatomics-in-Molecules (DIM) [13] | A method to construct the potential energy surface of a polyatomic molecule from the known potential energy curves of its constituent diatomic molecules and the atomic energies. | Used for constructing global potential energy surfaces to model chemical reaction pathways and dynamics. |
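The Hellmann-Feynman entry in Table 3 can be checked numerically on a toy problem. In its general form the theorem says that, for an exact eigenstate, the derivative of the energy with respect to a Hamiltonian parameter equals the expectation value of the derivative of the Hamiltonian. The sketch below assumes a hypothetical 3×3 model Hamiltonian H(λ) = H0 + λV with made-up matrix elements; it is not a molecular calculation, only a demonstration that the finite-difference slope of the ground-state energy matches ⟨ψ|V|ψ⟩.

```python
import numpy as np

# Hypothetical model matrices (symmetric, arbitrary illustrative numbers).
H0 = np.array([[0.0, 0.3, 0.0],
               [0.3, 1.0, 0.2],
               [0.0, 0.2, 2.5]])
V = np.array([[1.0, 0.5, 0.0],
              [0.5, -0.5, 0.1],
              [0.0, 0.1, 0.2]])


def hamiltonian(lam: float) -> np.ndarray:
    """H(lambda) = H0 + lambda * V, so dH/dlambda = V."""
    return H0 + lam * V


def ground_state(H: np.ndarray):
    """Return the lowest eigenvalue and its normalized eigenvector."""
    vals, vecs = np.linalg.eigh(H)
    return vals[0], vecs[:, 0]


lam, step = 0.4, 1e-5

# Left-hand side: dE/dlambda by central finite differences.
e_plus, _ = ground_state(hamiltonian(lam + step))
e_minus, _ = ground_state(hamiltonian(lam - step))
dE_numeric = (e_plus - e_minus) / (2.0 * step)

# Right-hand side: expectation value of dH/dlambda in the eigenstate.
_, psi = ground_state(hamiltonian(lam))
dE_hellmann_feynman = psi @ V @ psi

print("finite-difference dE/dlambda :", dE_numeric)
print("<psi| dH/dlambda |psi>       :", dE_hellmann_feynman)
```

The practical appeal is visible even here: the right-hand side needs a single diagonalization, whereas the finite-difference estimate needs two additional ones per parameter. In a molecule the parameters are the nuclear coordinates, so the same idea yields forces on all nuclei from one electronic structure calculation.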

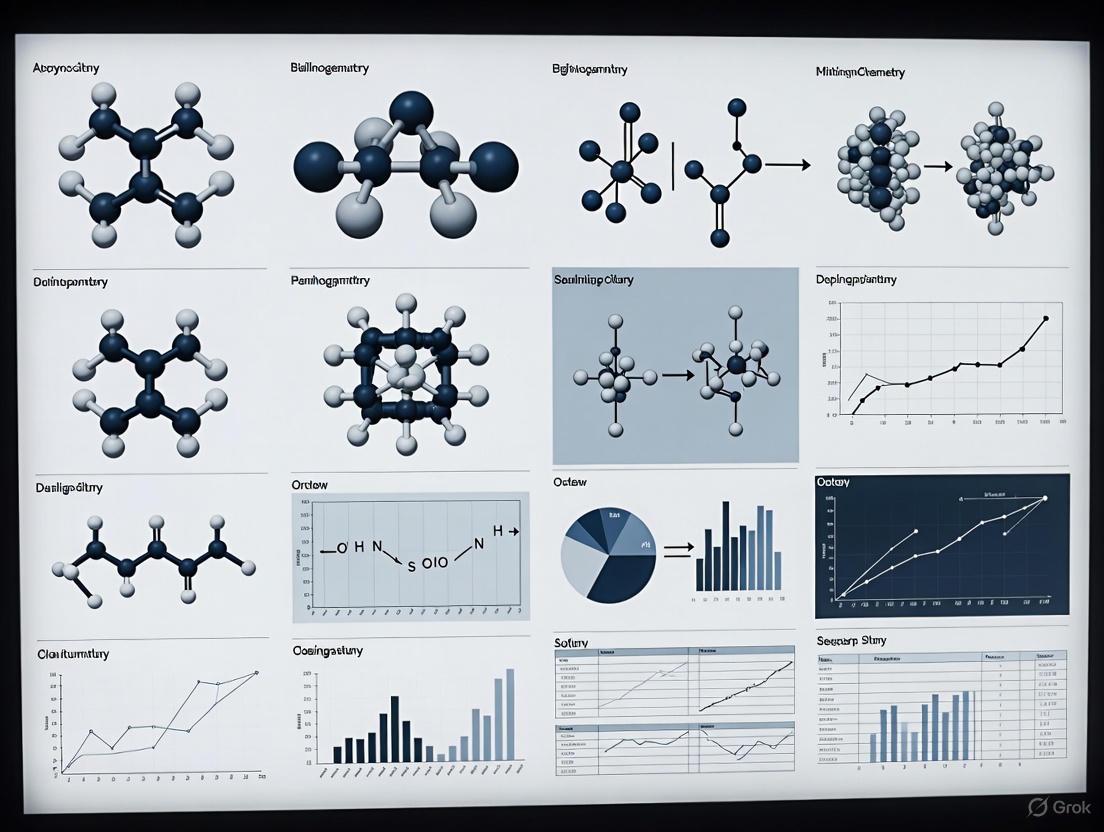

The logical relationships and workflow between Hellmann's key theoretical contributions and their application in solving quantum chemical problems can be visualized as a coherent computational pathway. The following diagram maps this logical structure:

Diagram 1: Logical workflow of Hellmann's key contributions and their applications in quantum chemistry.

Legacy and Impact on Modern Science

Despite his tragic and premature death, Hans Hellmann's scientific legacy endures. For decades, his story was largely unknown, and his name was often merely associated with the Hellmann-Feynman theorem alongside Richard Feynman, who independently derived it later [16] [13]. However, due to the determined efforts of his son, Hans Hellmann Jr., who emigrated from the USSR to Germany in 1991, and dedicated historians and scientists like Eugen Schwarz, a comprehensive biography was eventually published, restoring Hellmann's rightful place in the history of science [14] [13].

His methodological innovations form the bedrock of modern computational chemistry. The pseudopotential method is a standard technique in the calculation of electronic structures for molecules containing heavy atoms, such as catalysts and metalloproteins relevant to drug design [10] [13]. The Hellmann-Feynman theorem is fundamental to all calculations of molecular forces, vibrations, and geometries in software packages used widely in academic and industrial research [14] [11]. Furthermore, his 1937 textbook has been posthumously recognized as a landmark achievement. A new edition of "Einführung in die Quantenchemie" was published in 2015, containing biographical notes and a full list of his publications, finally granting his work the enduring recognition it deserved [11].

Hellmann's life and work stand as a powerful testament to scientific ingenuity in the face of immense adversity. His contributions continue to enable the prediction of spectroscopic data, reaction pathways, and electronic properties of molecules—tools that are indispensable for researchers and drug development professionals working to understand molecular interactions and design new therapeutics.

Historical and Biographical Context

Hans Hellmann: A Scientific Pioneer

Hans Hellmann was a German theoretical physicist who became one of the co-founders of quantum chemistry [10]. As early as the 1930s, he investigated quantum chemical approximation methods and developed descriptive approaches to characterize electron bonding in chemical systems [10]. His significant contributions, achieved in a short professional lifespan, include:

- Elucidating the nature of the covalent chemical bond (1933)

- Formulating the molecular virial theorem (1933)

- Developing the quantal force theorem (1933, 1936/1937), now known as the Hellmann-Feynman theorem

- Creating the pseudopotential method (1934), originally termed the "combined approximation method"

- Pioneering the theory of diabatic and adiabatic elementary reactions (1935), later expanded by Born and Huang [19]

Despite these groundbreaking achievements, Hellmann's legacy was overshadowed by his tragic personal circumstances. Due to his wife's Jewish ancestry, he faced increasing persecution in Nazi Germany and eventually emigrated to the Soviet Union in 1934, where he continued his research at the Karpov Institute of Physical Chemistry [20]. His textbook content was delivered as lectures at this institute during 1935/1936 before publication [20]. Tragically, he fell victim to Stalin's Great Purge and was executed in Moscow in 1938 [10].

The Dual Publication Circumstances

The parallel publication of Hellmann's work in Russian and German reflects both the international nature of science and the personal circumstances of the author. The Russian edition, "Kvantovaya Khimiya," was published in Moscow in 1937, based on lectures Hellmann delivered at the Karpov Institute [20]. The German edition, "Einführung in die Quantenchemie," was published the same year by Franz Deuticke in Leipzig and Vienna, described as a "shortened and partially reworked version" of the Russian original [20]. This dual publication strategy ensured the dissemination of his work across both linguistic and political divides during a period of increasing scientific isolationism.

Table: Bibliographic Details of Hellmann's Dual First Editions

| Characteristic | Russian Edition ('Kvantovaya Khimiya') | German Edition ('Einführung in die Quantenchemie') |
|---|---|---|
| Publication Year | 1937 | 1937 |
| Publisher | Not specified in sources | Franz Deuticke, Leipzig and Vienna |
| Language | Russian | German |
| Relationship | Original work | Shortened and partially reworked version |
| Lecture Basis | Delivered at Karpov Institute (1935/1936) | Not delivered as lectures |
| 2015 Reprint | Not indicated | Springer Spektrum, edited by Dirk Andrae |

Technical Content and Scientific Contributions

Foundational Quantum Chemistry Concepts

Hellmann's textbook integrated several pioneering theoretical frameworks that would become fundamental to quantum chemistry. His work provided one of the first systematic treatments applying quantum mechanics to chemical systems, bridging the gap between theoretical physics and practical chemistry. The text covered several key areas:

Electronic Structure and Chemical Bonding: Hellmann explicated the quantum-mechanical nature of chemical bonds, building upon the work of Heitler and London's 1927 quantum mechanical treatment of the hydrogen molecule [8]. His approach helped establish the theoretical basis for understanding how atomic orbitals combine to form molecular bonds.

Computational Methodologies: The textbook introduced what would later become essential computational techniques in quantum chemistry, most notably the pseudopotential method [10]. This "combined approximation method" provided a practical approach for dealing with the complex electron interactions in molecules and solids, particularly for systems containing heavy atoms where relativistic effects become significant.

The Hellmann-Feynman Theorem

One of the most enduring contributions detailed in Hellmann's work was the Hellmann-Feynman theorem [19]. This theorem establishes a fundamental relationship between changes in the energy of a quantum system and changes in parameters within the system's Hamiltonian. Mathematically, the theorem states that for a normalized eigenstate ψ with energy E and a parameter λ in the Hamiltonian H:

dE/dλ = ⟨ψ| ∂H/∂λ |ψ⟩

This theorem provides profound physical insight. When the parameter λ is a nuclear coordinate, it shows that the force on that nucleus is the classical electrostatic force exerted by the other nuclei and by the electronic charge distribution. This offers a powerful conceptual and computational tool for analyzing molecular structures and reactions.
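The relation dE/dλ = ⟨ψ| ∂H/∂λ |ψ⟩ can be checked numerically on a small model. The sketch below is only an illustration under stated assumptions: the 2×2 Hamiltonian H(λ) = H0 + λV, its matrix elements, and the function names are arbitrary choices made here, not anything taken from Hellmann's text. It compares the expectation value of ∂H/∂λ with a finite-difference derivative of the ground-state energy.

```python
import math

def ground_state(lam):
    """Lowest eigenpair of the toy model H(lam) = H0 + lam * V (real symmetric 2x2).

    H0 = [[0, 0.5], [0.5, 1]] and V = diag(1, -1) are arbitrary illustrative choices.
    """
    a = 0.0 + lam   # H[0][0]
    d = 1.0 - lam   # H[1][1]
    b = 0.5         # H[0][1] = H[1][0]
    energy = 0.5 * (a + d) - math.hypot(0.5 * (a - d), b)
    # Unnormalized eigenvector belonging to `energy`: (b, energy - a)
    v0, v1 = b, energy - a
    norm = math.hypot(v0, v1)
    return energy, (v0 / norm, v1 / norm)

def hellmann_feynman_derivative(lam):
    """<psi| dH/dlam |psi> with dH/dlam = V = diag(1, -1)."""
    _, (c0, c1) = ground_state(lam)
    return c0 * c0 - c1 * c1

def finite_difference_derivative(lam, h=1e-5):
    """Central-difference estimate of dE/dlam."""
    e_plus, _ = ground_state(lam + h)
    e_minus, _ = ground_state(lam - h)
    return (e_plus - e_minus) / (2.0 * h)

lam = 0.3
hf = hellmann_feynman_derivative(lam)
fd = finite_difference_derivative(lam)
print(f"Hellmann-Feynman:  {hf:.8f}")
print(f"Finite difference: {fd:.8f}")
```

The two numbers agree to within the finite-difference error because the state used is an exact eigenstate of the model Hamiltonian; for approximate wavefunctions the equality generally holds only approximately.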

Computational Framework and Approximation Methods

Hellmann's text established a systematic approach to tackling the quantum many-body problem in chemical systems. His methodology centered on making the Schrödinger equation computationally tractable for molecular systems through strategic approximations:

Born-Oppenheimer Approximation: Although not Hellmann's original contribution, his textbook emphasized the importance of this approximation, which allows the separation of electronic and nuclear motions due to their significant mass difference [8]. This foundation enables the concept of potential energy surfaces, crucial for understanding molecular structure and dynamics.

Pseudopotential Method: Hellmann's development of the pseudopotential approach (termed "combined approximation method") represented a breakthrough in simplifying electronic structure calculations [10]. This method effectively replaces the complex effects of core electrons with an effective potential, dramatically reducing computational complexity while maintaining accuracy for valence electron behavior.

Diagram: Conceptual workflow of Hellmann's pseudopotential method, transforming a computationally intensive quantum problem into a tractable model system through effective potentials.
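To make the idea of an effective potential concrete, the sketch below evaluates a functional form often referred to as the Hellmann potential: a Coulomb attraction plus a short-range, exponentially screened repulsion that stands in for the core electrons. The form V(r) = -1/r + A·exp(-2κr)/r (atomic units) is stated here as an assumption, not quoted from the sources above, and the parameter values A = 2.0 and κ = 0.5 are purely illustrative and not fitted to any atom.

```python
import math

def hellmann_potential(r, a=2.0, kappa=0.5):
    """Model valence-electron potential: Coulomb attraction plus screened core repulsion.

    Atomic units; `a` and `kappa` are illustrative parameters, not fitted values.
    """
    return -1.0 / r + a * math.exp(-2.0 * kappa * r) / r

def crossover_radius(a=2.0, kappa=0.5):
    """Radius at which the repulsive and attractive terms cancel (requires a > 1)."""
    return math.log(a) / (2.0 * kappa)

for r in (0.1, 0.5, crossover_radius(), 2.0, 10.0):
    print(f"r = {r:6.3f}  V(r) = {hellmann_potential(r):+.5f}")
```

Inside the crossover radius the potential is repulsive, which keeps the valence electron out of the core region; far from the nucleus it approaches the bare -1/r attraction.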

Methodological Framework for Quantum Chemical Analysis

Theoretical Foundations of Quantum Chemistry

Hellmann's textbook established a rigorous methodological framework for applying quantum mechanics to chemical problems. His approach systematically addressed the fundamental challenge of quantum chemistry: obtaining approximate solutions to the Schrödinger equation for many-electron systems, for which closed-form solutions are not available [8]. The methodological progression can be summarized as:

Wavefunction Formulation: The foundation begins with the time-independent Schrödinger equation Hψ = Eψ, where H represents the molecular Hamiltonian, ψ is the wavefunction describing the quantum state, and E is the corresponding energy eigenvalue. For molecular systems, the Hamiltonian incorporates kinetic energy terms for all electrons and nuclei plus potential energy terms for all Coulomb interactions between these particles.

Approximation Hierarchy: Hellmann organized quantum chemical methods into a hierarchy of approximations, balancing computational feasibility against physical accuracy. This included the development of semi-empirical methods that incorporate experimental data to simplify computations, a pragmatic approach essential in an era before electronic computers.
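As a worked form of the molecular Hamiltonian described under Wavefunction Formulation, one common way to write it (non-relativistic, in atomic units) is shown below. The index conventions are notation chosen here for illustration rather than taken from Hellmann's text: i, j label electrons, and A, B label nuclei with charges Z_A and masses M_A.

```latex
\hat{H} = -\sum_{i} \frac{1}{2}\nabla_i^2
          - \sum_{A} \frac{1}{2M_A}\nabla_A^2
          - \sum_{i,A} \frac{Z_A}{r_{iA}}
          + \sum_{i<j} \frac{1}{r_{ij}}
          + \sum_{A<B} \frac{Z_A Z_B}{R_{AB}}
```

The first two terms are the electronic and nuclear kinetic energies; the remaining three are the electron-nucleus attraction, the electron-electron repulsion, and the nucleus-nucleus repulsion.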

Experimental and Computational Protocols

While quantum chemistry is fundamentally theoretical, Hellmann emphasized the critical connection between computation and experimental validation. His text established protocols for comparing theoretical predictions with experimental observables:

Spectroscopic Prediction and Validation: Quantum chemistry aims to predict and verify spectroscopic data including IR, NMR, and UV-Vis spectra [8]. The protocol involves: (1) solving the electronic Schrödinger equation for ground and excited states, (2) calculating transition energies and probabilities between states, (3) comparing predicted spectra with experimental measurements, and (4) refining theoretical models based on discrepancies.

Molecular Structure Determination: A key application involves predicting equilibrium molecular geometries by finding nuclear arrangements that minimize the total energy. The Hellmann-Feynman theorem provides an efficient method for computing the forces on nuclei, enabling geometry optimization through iterative steps of: (1) energy computation for a trial geometry, (2) force calculation on all nuclei, (3) displacement of the nuclei along the forces, and (4) repetition until the forces vanish within a tolerance, which marks convergence to a minimum-energy structure.
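The force-driven optimization loop can be sketched on a one-dimensional toy problem. The example below is a minimal illustration, assuming a Morse-type bond potential with made-up parameters and a plain steepest-descent update; production codes use quantum mechanical energies and forces and more sophisticated (quasi-Newton) steps.

```python
import math

# Toy Morse parameters in arbitrary units (illustrative, not fitted to any molecule).
WELL_DEPTH = 0.17
WIDTH = 1.0
R_EQ = 1.4

def energy(r):
    """Morse energy, zero at the equilibrium bond length R_EQ."""
    x = 1.0 - math.exp(-WIDTH * (r - R_EQ))
    return WELL_DEPTH * x * x

def force(r):
    """Force along the bond, F = -dE/dr."""
    e = math.exp(-WIDTH * (r - R_EQ))
    return -2.0 * WELL_DEPTH * WIDTH * (1.0 - e) * e

def optimize(r_start, step=1.0, force_tol=1e-8, max_iter=500):
    """Steepest-descent geometry optimization for a single bond length."""
    r = r_start
    for iteration in range(1, max_iter + 1):
        e = energy(r)              # (1) energy for the trial geometry
        f = force(r)               # (2) force on the nuclei
        if abs(f) < force_tol:     # (4) converged: force has vanished
            return r, e, iteration
        r += step * f              # (3) displace along the force
    raise RuntimeError("geometry optimization did not converge")

r_opt, e_opt, n_iter = optimize(2.0)
print(f"optimized bond length: {r_opt:.6f} after {n_iter} iterations")
```

The fixed step size is a simplification chosen for readability; it is stable for this starting point but would need a line search or Hessian information in general.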

Table: Key Quantum Chemical Methods Described in Hellmann's Work

| Method Category | Theoretical Basis | Applications | Limitations |
|---|---|---|---|
| Valence Bond (VB) Theory | Electron pairing and atomic orbital hybridization | Qualitative understanding of chemical bonding, resonance structures | Computational complexity for many-electron systems |
| Molecular Orbital (MO) Theory | Delocalized one-electron wavefunctions | Prediction of spectroscopic properties, molecular symmetry | Less intuitive connection to classical bond concepts |
| Pseudopotential Method | Effective core potentials | Heavy element compounds, computational efficiency | Parameterization requirements for accuracy |
| Perturbation Theory | Systematic corrections to reference system | Electron correlation effects, property calculations | Convergence issues for strongly correlated systems |

Contemporary researchers extending Hellmann's foundational work rely on a sophisticated toolkit of computational methods and resources:

Table: Essential Computational Methods in Quantum Chemistry

| Method | Theoretical Foundation | Typical Applications | Scaling Complexity |
|---|---|---|---|
| Hartree-Fock (HF) | Self-consistent field approximation | Molecular orbitals, initial wavefunctions | N⁴ (with N basis functions) |
| Density Functional Theory (DFT) | Electron density as fundamental variable | Ground-state properties of molecules and materials | N³ to N⁴ |
| Coupled Cluster (CC) | Exponential wavefunction ansatz | High-accuracy energy calculations | N⁷ for CCSD(T) |
| Quantum Monte Carlo | Statistical sampling of wavefunction | Benchmark calculations, small systems | N³ to N⁴ |
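The practical meaning of the scaling column can be illustrated with simple arithmetic. The sketch below assumes the formal exponents from the table (taking the upper bound where a range is given) and ignores prefactors, integral screening, and other implementation details, so it indicates trends rather than actual run times.

```python
# Formal scaling exponents from the table above; upper bound used for ranges.
SCALING_EXPONENT = {
    "HF": 4,
    "DFT": 4,
    "CCSD(T)": 7,
}

def cost_ratio(method, size_factor):
    """Relative cost increase when the system size N grows by `size_factor`."""
    return size_factor ** SCALING_EXPONENT[method]

for method in SCALING_EXPONENT:
    print(f"{method:8s} doubling N multiplies the formal cost by {cost_ratio(method, 2)}")
```

Doubling the system size therefore raises the formal cost of Hartree-Fock by a factor of 16 but that of CCSD(T) by a factor of 128, which is why high-accuracy methods are usually reserved for small systems.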

Specialized Research Areas and Techniques

Building upon Hellmann's pseudopotential foundation, several specialized research areas have developed:

Relativistic Quantum Chemistry: For heavy elements, relativistic effects become significant, requiring extension of standard quantum chemical methods [21]. The Dirac equation replaces the Schrödinger equation as the fundamental description, and relativistic pseudopotentials (effective core potentials) efficiently capture these effects while maintaining computational feasibility.

Non-Adiabatic Dynamics: Beyond the Born-Oppenheimer approximation, Hellmann's work on diabatical and adiabatical reactions has evolved into sophisticated methods for modeling processes involving multiple electronic states [8]. These approaches are essential for understanding photochemical reactions, electron transfer processes, and other phenomena where electronic and nuclear motion are strongly coupled.

Legacy and Contemporary Relevance

Historical Recognition and Modern Tributes

Despite his tragic personal fate, Hellmann's scientific legacy has endured and gained increasing recognition. The Hans Hellmann Lecture, awarded biennially by the Department of Chemistry at Philipps University of Marburg, honors leading researchers in quantum chemistry [10]. This prestigious lecture series, initiated in 2013, celebrates Hellmann's pioneering contributions and ensures his name remains associated with cutting-edge research in the field he helped establish.

Recent award recipients and their lecture topics demonstrate the vibrant evolution of quantum chemistry from Hellmann's foundational work:

- 2013: Klaus Ruedenberg (Iowa State University) - "Three Millennia of Atoms, Molecules and Bonds"

- 2015: Pekka Pyykkö (University of Helsinki) - "Relativistic Effects in Heavy-Element Chemistry"

- 2017: Evert Jan Baerends (Vrije Universiteit Amsterdam) - "On the Length and Character of Chemical Bonds"

- 2023: Anna Krylov (University of Southern California) - "Molecular Orbitals: Physical Reality or Mathematical Construct"

- 2025: Benedetta Mennucci (University of Pisa) - "How Proteins Sense and Use Light" [10]

Impact on Modern Computational Chemistry

Hellmann's pioneering work, particularly his development of the pseudopotential method, has found extensive applications in contemporary computational chemistry and materials science. His approaches form the foundation for:

Materials Design and Discovery: The pseudopotential method enables accurate calculations of materials properties for systems containing heavy elements, facilitating the design of novel materials with tailored electronic, optical, and mechanical properties [10]. This capability is particularly valuable in the search for high-entropy alloys with ultra-large lattice distortions and other advanced materials [22].

Drug Discovery and Biomolecular Simulation: Quantum chemical methods derived from Hellmann's foundational work provide insights into molecular recognition, reaction mechanisms, and spectroscopic properties of biological molecules. These applications enable more rational drug design and the understanding of complex biochemical processes at the atomic level.

The 2015 reissue of "Einführung in die Quantenchemie" by Springer Spektrum, edited by Dirk Andrae, has made Hellmann's original text accessible to contemporary researchers, allowing direct engagement with the historical foundations of their field [19] [23]. This republication underscores the enduring value of Hellmann's systematic approach to quantum chemistry and enables modern scientists to appreciate the conceptual breakthroughs that established their discipline.

The quest to understand the forces that bind atoms into molecules found its first comprehensive mathematical foundation in the work of Hans Hellmann. His 1937 textbook, Einführung in die Quantenchemie, stands as a landmark publication, establishing quantum chemistry as a distinct discipline and offering chemists a systematic framework to apply quantum mechanics to chemical problems [8] [16]. Hellmann's work was pioneering; he not only authored the first quantum chemistry book but also formulated the fundamental Hellmann-Feynman theorem, which provides a powerful method for calculating forces on nuclei in molecules directly from the electronic wavefunction [18] [16]. His philosophical approach was rooted in translating the abstract formalism of quantum physics into concepts usable for understanding chemical bonding.

Despite these early advances, a universally agreed-upon quantum mechanical definition of the chemical bond that captures all its facets across diverse molecular systems has remained elusive. Traditional models—including Lewis structures, Valence Bond theory, Molecular Orbital theory, and the Quantum Theory of Atoms in Molecules (QTAIM)—each offer valuable but often complementary or conflicting perspectives [24]. This article explores the modern philosophical and technical framework that builds upon Hellmann's legacy, integrating quantum mechanics, electron density topology, and quantum information theory to achieve a unified understanding of chemical bonding. This synthesis bridges fundamental quantum mechanics with observable chemical behavior, offering new pathways for understanding complex bonding phenomena in everything from simple diatomic molecules to biological systems and advanced materials [24].

Historical Foundation: Hellmann's Pioneering Role

Hans Gustav Adolf Hellmann (1903–1938) was a visionary scientist whose work laid the groundwork for modern quantum chemistry. Forced to flee Nazi Germany in 1934 due to his wife's Jewish heritage, he continued his research at the Karpov Institute in Moscow [18] [16]. It was during this period that he authored his seminal work, Einführung in die Quantenchemie (Introduction to Quantum Chemistry), published in German in 1937 after a Russian version appeared earlier the same year [16] [25].

Hellmann's textbook was revolutionary for its time. While Dirac had famously stated that the underlying physical laws for most of physics and all of chemistry were completely known, the practical application of these laws to chemical problems remained formidably difficult [25]. Hellmann's work addressed this very challenge by making quantum mechanics accessible and applicable to chemical bonding problems. His research provided critical insights, including highlighting the role of kinetic energy of electrons in the formation of covalent bonds and formulating the virial theorem, which relates the kinetic and potential energy in quantum systems [16].

The Hellmann-Feynman theorem, formulated by Hellmann in 1933 and independently derived by Richard Feynman in 1939, remains one of his most enduring contributions [18] [16]. This theorem establishes that once the electronic distribution in a molecule is known, the forces on the nuclei can be calculated classically from electrostatic principles. This provides a profound conceptual bridge between quantum mechanics and classical electrostatics, demonstrating that chemical bonds arise from the redistribution of electron density according to quantum mechanical laws. Hellmann's philosophical framework emphasized that chemical bonding could be understood through the rigorous application of quantum mechanics, setting the stage for nearly a century of continued theoretical development.

The Modern Unified Mathematical Framework

Recent advances have produced a comprehensive mathematical framework that unifies previous approaches by integrating quantum mechanical wave function analysis, electron density topology, and quantum information theory [24]. This framework addresses the limitations of traditional models by proposing a global bonding descriptor function, F_bond, that synthesizes orbital-based descriptors with entanglement measures derived from the electronic wave function [24].

Theoretical Components of the F_bond Descriptor

The F_bond descriptor represents a significant philosophical shift from viewing chemical bonds purely through an energetic or electron-density perspective to understanding them as manifestations of quantum correlational structures. The descriptor is constructed from three fundamental components:

Orbital-Based Energetics (O_MOS): This component typically incorporates molecular orbital energies, such as the HOMO-LUMO gap, which captures the traditional energetic stability associated with bond formation [24].

Entanglement Entropy (S_E,max): Derived from single-qubit reduced density matrices, this measures the quantum correlations between different parts of a molecular system. Maximum entanglement entropy identifies the strength of non-classical correlations inherent in chemical bonds [24].

Genuine Multipartite Entanglement (GME): This advanced measure quantifies the quantum correlations shared among multiple components of a molecular system, essential for understanding multicenter bonding [24].

The F_bond function synthesizes these elements through the relationship: F_bond = N × O_MOS × S_E,max, where N is a normalization factor [24]. This formulation captures both the energetic stability (through orbital energies) and the quantum correlational structure (through entanglement measures) of bonding, providing a more complete description than either aspect alone.
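For reference, the entanglement component can be written out explicitly. The block below is a sketch under stated assumptions: it takes S_E to be the von Neumann entropy of each single-qubit reduced density matrix ρ_k, with eigenvalues p_k and 1 − p_k, and uses base-2 logarithms. The logarithm base and the qubit index k are notational choices made here; the source does not specify them.

```latex
S_E^{(k)} = -\operatorname{Tr}\left(\rho_k \log_2 \rho_k\right)
          = -p_k \log_2 p_k - (1 - p_k)\log_2(1 - p_k),
\qquad
S_{E,\max} = \max_k S_E^{(k)},
\qquad
F_{\text{bond}} = N \times O_{\text{MOS}} \times S_{E,\max}
```

With this convention each S_E^{(k)} lies between 0 (qubit unentangled with the rest) and 1 (maximally mixed single-qubit state).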

Application and Validation Across Molecular Systems

The framework has been validated through computational implementation using the Variational Quantum Eigensolver (VQE) for several molecular systems, including hydrogen (H₂), ammonia (NH₃), and water (H₂O) [24]. The results demonstrate that F_bond successfully discriminates across different bonding regimes, spanning a 60–80-fold range in values [24].

Table 1: F_bond Descriptor Values for Different Molecular Systems

| Molecule | Basis Set | F_bond Value | Bonding Character |
|---|---|---|---|
| H₂ | STO-3G | 0.0425 | Highly correlated bonding |
| H₂ | 6-31G | 0.0314 | Highly correlated bonding |
| NH₃ | STO-3G | 5.22 × 10⁻⁴ | Mean-field bonding |
| H₂O | 6-31G | ~9.61 × 10⁻⁴ | Intermediate character |

The decrease in F_bond for H₂ when moving from the minimal STO-3G basis set to the larger 6-31G basis set (about 26%) shows that the descriptor depends on basis set quality, while the qualitative discrimination between bonding regimes is preserved [24]. The descriptor is therefore robust in how it ranks bonding regimes, yet remains sensitive to both the electronic structure method and the basis set used in calculations.
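The percentages and fold ranges quoted above follow directly from the tabulated values. The short check below uses only the numbers from Table 1; the variable names are chosen here for illustration.

```python
# F_bond values as listed in Table 1.
f_bond = {
    ("H2", "STO-3G"): 0.0425,
    ("H2", "6-31G"): 0.0314,
    ("NH3", "STO-3G"): 5.22e-4,
    ("H2O", "6-31G"): 9.61e-4,
}

h2_small = f_bond[("H2", "STO-3G")]
h2_large = f_bond[("H2", "6-31G")]
nh3 = f_bond[("NH3", "STO-3G")]

# Relative decrease for H2 on enlarging the basis set.
relative_decrease = (h2_small - h2_large) / h2_small

# Ratio of the H2 values to the smallest tabulated value (NH3).
fold_low = h2_large / nh3
fold_high = h2_small / nh3

print(f"H2 basis-set decrease: {100 * relative_decrease:.1f}%")
print(f"H2 / NH3 ratio: {fold_low:.1f} to {fold_high:.1f}")
```

The ratios come out at roughly 60 and 81, consistent with the quoted 60–80-fold range.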

Experimental and Computational Methodologies

Quantum Computational Workflow

The implementation of the unified bonding framework follows a detailed computational workflow that bridges traditional quantum chemistry with modern quantum information science. The methodology can be broken down into several key stages:

Hartree-Fock Reference Calculation: The process begins with a conventional Hartree-Fock computation using electronic structure packages like PySCF to obtain molecular orbital energies and an initial wavefunction guess [24].

Qubit Mapping: The electronic Hamiltonian is transformed from fermionic to qubit representation using mapping techniques such as the Jordan-Wigner transformation, making it compatible with quantum algorithms [24].

VQE-UCCSD Optimization: The Variational Quantum Eigensolver (VQE) with a Unitary Coupled Cluster Singles and Doubles (UCCSD) ansatz is employed to obtain the ground state wavefunction. This hybrid quantum-classical approach is among the most widely used methods for quantum chemistry simulations on near-term quantum devices [24].

Entanglement Analysis: Single-qubit reduced density matrices are constructed from the optimized wavefunction to extract the maximum entanglement entropy (S_E,max) [24].

Descriptor Computation: The F_bond descriptor is finally computed by combining the entanglement measures with the orbital energy information (O_MOS) obtained from the initial Hartree-Fock calculation [24].

Diagram 1: Computational workflow for the F_bond bonding descriptor calculation. The process integrates classical and quantum computational methods to derive a unified bonding descriptor from molecular structure.
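The entanglement-analysis and descriptor steps of this workflow can be sketched without any quantum hardware once a state vector is available. The example below is a minimal illustration, assuming the state is given as a list of 2^n amplitudes, that bit k of the basis-state index labels qubit k, and that entropies are measured in bits. All function names are hypothetical and are not taken from the cited implementation, and the example states are textbook two-qubit states rather than molecular wavefunctions.

```python
import math

def single_qubit_rdm(state, k):
    """2x2 reduced density matrix of qubit k (bit k of the basis-state index)."""
    rho = [[0j, 0j], [0j, 0j]]
    mask = 1 << k
    for i in range(len(state)):
        if i & mask:
            continue                  # handle each (bit k = 0, bit k = 1) pair once
        a0 = complex(state[i])        # amplitude with qubit k in |0>
        a1 = complex(state[i | mask]) # same configuration with qubit k in |1>
        rho[0][0] += a0 * a0.conjugate()
        rho[0][1] += a0 * a1.conjugate()
        rho[1][0] += a1 * a0.conjugate()
        rho[1][1] += a1 * a1.conjugate()
    return rho

def entropy_bits(rho):
    """Von Neumann entropy (base 2) of a normalized 2x2 density matrix."""
    diff = (rho[0][0] - rho[1][1]).real
    radius = min(1.0, math.sqrt(diff * diff + 4.0 * abs(rho[0][1]) ** 2))
    entropy = 0.0
    for p in (0.5 * (1.0 + radius), 0.5 * (1.0 - radius)):
        if p > 1e-15:
            entropy -= p * math.log2(p)
    return entropy

def max_single_qubit_entropy(state, n_qubits):
    """S_E,max: the largest single-qubit entanglement entropy in the register."""
    if len(state) != 2 ** n_qubits:
        raise ValueError("state length must equal 2 ** n_qubits")
    return max(entropy_bits(single_qubit_rdm(state, k)) for k in range(n_qubits))

def f_bond(normalization, o_mos, s_e_max):
    """F_bond = N x O_MOS x S_E,max, with N and O_MOS supplied by the caller."""
    return normalization * o_mos * s_e_max

s = 1.0 / math.sqrt(2.0)
product_state = [1.0, 0.0, 0.0, 0.0]   # |00>, no entanglement
bell_state = [s, 0.0, 0.0, s]          # (|00> + |11>) / sqrt(2)

print("product state:", max_single_qubit_entropy(product_state, 2))
print("Bell state:   ", max_single_qubit_entropy(bell_state, 2))
```

In an actual calculation the state vector would come from the optimized VQE-UCCSD wavefunction, and the normalization factor and O_MOS would be taken from the definitions in [24]; neither is specified here.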

The Scientist's Toolkit: Essential Research Reagents

Implementing this unified framework requires both computational tools and theoretical constructs. The following table details the essential "research reagents" necessary for applying this approach to chemical bonding analysis.

Table 2: Essential Research Reagents for Quantum Bonding Analysis

| Research Reagent | Function/Description | Implementation Example |
|---|---|---|
| Variational Quantum Eigensolver (VQE) | Hybrid quantum-classical algorithm for finding molecular ground states | Implemented via Qiskit Nature for wavefunction optimization [24] |
| UCCSD Ansatz | Parametrized wavefunction form that captures electron correlation | Used as the variational form in VQE calculations [24] |
| Maximally Entangled Atomic Orbitals (MEAOs) | Orbital basis that maximizes quantum entanglement between atomic centers | Identifies bonding patterns from correlation analysis [24] |
| Genuine Multipartite Entanglement (GME) | Measure of quantum correlations shared among multiple system components | Quantifies entanglement in multicenter bonds [24] |
| Basis Sets | Mathematical sets of functions used to represent molecular orbitals | STO-3G, 6-31G, cc-pVDZ, cc-pVTZ for systematic convergence [24] |
| Hirshfeld Atom Refinement (HAR) | Quantum crystallographic technique for accurate structure determination | Refines X-ray diffraction data using quantum mechanical electron densities [26] |
| Quantum Theory of Atoms in Molecules (QTAIM) | Framework for analyzing chemical bonding via electron density topology | Identifies bond critical points and analyzes bond paths [26] |

Applications Across Chemical Systems

Bond Discrimination and Classification

The unified framework enables precise discrimination between different types of chemical bonds based on their quantum correlational signatures. The F_bond descriptor successfully distinguishes the highly correlated bonding in H₂ (F_bond = 0.0314–0.0425) from the more mean-field character in NH₃ (F_bond = 5.22 × 10⁻⁴) and the intermediate case of H₂O (F_bond ≈ 9.61 × 10⁻⁴) [24]. This quantitative classification system moves beyond simplistic bond-type categories (covalent, ionic, metallic) to a continuous spectrum characterized by specific quantum mechanical properties.

Attochemistry and Ultrafast Processes

Building on the fundamental understanding of bonding, modern research explores how electrons move within molecules at attosecond timescales (1 attosecond = 10⁻¹⁸ seconds) [27]. This field, known as attochemistry, uses advanced laser pulses to observe and potentially control electron behavior in real time, creating "molecular movies" of chemical reactions [27]. Professor Henrik Larsson's work at UC Merced, supported by a DOE Early Career Award, focuses on simulating how electrons interact with each other and with atomic nuclei when exposed to these laser pulses [27]. This research provides insights into fundamental processes like charge migration, where the removal of an electron creates a positive charge vacancy that moves within a molecule [27]. Understanding these ultrafast dynamics is crucial for controlling chemical reactions, potentially allowing scientists to break specific molecular bonds and create new compounds.

Quantum Crystallography

The integration of quantum mechanics with crystallography has created the burgeoning field of quantum crystallography (QCr), which promises to bridge the gap between theory and experiment in understanding matter at the atomic level [26]. Techniques like Hirshfeld Atom Refinement (HAR) use quantum-mechanically derived electron densities to refine crystal structures from X-ray diffraction data, moving beyond the traditional Independent Atom Model (IAM) [26]. These methods enable the determination of accurate charge and spin electron density distributions, providing parameters that characterize the nature of chemical bonding [26]. For drug development professionals, these advances offer more precise structural information for ligand-target interactions, potentially improving rational drug design strategies.

Future Directions and Philosophical Implications

The unified framework bridging quantum mechanics and chemical bonding points toward several promising research directions that build upon Hellmann's original vision:

Strongly Correlated Systems: The information-theoretic approach offers new tools for understanding bonding in systems with strong electron correlation, such as transition metal complexes and high-temperature superconductors, which have traditionally challenged conventional computational methods [24].

Machine Learning Integration: Combining quantum information measures with machine-learned force fields (like FFLUX) enables accurate prediction of molecular properties and dynamics, potentially revolutionizing materials design and drug discovery [26].

Quantum Control of Reactions: As attochemistry advances, the potential for using laser pulses to selectively break and form specific bonds in complex molecules moves closer to reality, opening possibilities for precision synthesis of novel compounds [27].

The philosophical implications of this unified framework are profound. It suggests a shift from viewing chemical bonds as static entities to understanding them as dynamic manifestations of quantum correlations. This perspective reconciles the particle and wave nature of electrons in the context of bonding, demonstrating that the "chemical bond" is not a fundamental quantum mechanical entity but rather an emergent phenomenon arising from the complex interplay of kinetic energy, electrostatic interactions, and quantum entanglement. Hellmann's early recognition that kinetic energy plays a crucial role in covalent bond formation finds its full expression in this modern synthesis, which continues to evolve a century after the development of quantum mechanics [16] [26].

The publication of Hans Hellmann's 1937 textbook, Einführung in die Quantenchemie, stands as a seminal moment in the formalization of quantum chemistry as a distinct scientific discipline [18]. As the first dedicated quantum chemistry textbook, it represented an initial, crucial pedagogical effort to structure and communicate the principles of a field that was, at the time, a rapidly evolving confluence of physics and chemistry. Hellmann was a pioneer not only in theoretical concepts, such as the famous Hellmann-Feynman theorem crucial for force calculations in molecular systems, but also in the pedagogy required to nurture the field's first practitioners [28] [18]. His work established a foundational paradigm for educating scientists who could bridge the gap between abstract quantum theory and concrete chemical problems. This article analyzes the target audience and pedagogical approach inherent in Hellmann's pioneering work and extends these principles to frame modern educational strategies for researchers, scientists, and drug development professionals. The core challenge, then and now, is to render the computationally intensive and mathematically complex formalism of quantum mechanics both accessible and applicable to those investigating molecular phenomena [8] [9].

Historical Context: Hellmann's Pioneering Role

Hans Hellmann authored the first quantum chemistry textbook in 1937. It appeared in both German, as Einführung in die Quantenchemie, and Russian, as Kvantovaya Khimiya [18]. This groundbreaking work provided a structured introduction to the application of quantum mechanics in chemistry, aiming to equip early researchers with the necessary theoretical tools. His contributions were not merely academic; as an antifascist who left Germany for the Soviet Union, his life and work were deeply intertwined with the political turmoil of his time [18]. Tragically, he was arrested by Soviet authorities and executed in 1938, an event that abruptly ended his direct influence on the field [18].

Hellmann's legacy is permanently embedded in the field through the Hellmann-Feynman theorem [28] [18]. This theorem is a fundamental result that simplifies the calculation of forces on nuclei in a molecule. It states that for the exact wave function, the derivative of the total energy with respect to a nuclear coordinate is equal to the expectation value of the derivative of the Hamiltonian. In essence, the forces on the nuclei can be calculated as classical electrostatic forces once the electronic charge distribution is known [28]. This theorem remains a cornerstone for modern computational methods, including ab initio molecular dynamics and geometry optimizations performed within frameworks like density functional theory [28]. The following diagram illustrates the key milestones in the early development of quantum chemistry, a period profoundly shaped by Hellmann's work.

Core Quantum Chemistry Methodologies: A Comparative Framework

The field of quantum chemistry has developed several computational methodologies to solve the electronic structure problem, each with distinct strengths, limitations, and pedagogical considerations. These methods represent the core "toolkit" that students and researchers must master.

Table 1: Core Methodologies in Quantum Chemistry

| Methodology | Theoretical Basis | Key Concepts | Computational Scaling | Primary Applications |
|---|---|---|---|---|
| Valence Bond (VB) Theory | Direct extension of Heitler-London approach [8] [9] | Pairwise interactions, orbital hybridization, resonance [9] | High | Qualitative bonding analysis, organic chemistry reaction mechanisms [9] |
| Molecular Orbital (MO) Theory | Hund-Mulliken approach; electrons in delocalized orbitals [8] [9] | Linear Combination of Atomic Orbitals (LCAO), Self-Consistent Field (SCF) iteration [9] | HF: N³–N⁴ | Spectroscopy, prediction of molecular properties, excited states [8] [9] |
| Density Functional Theory (DFT) | Hohenberg-Kohn theorems, Kohn-Sham equations [8] [9] | Electron density as fundamental variable, exchange-correlation functional [8] [9] | N³–N⁴ | Large molecules, materials science, catalysis, ground-state properties [8] [9] |
| Post-Hartree-Fock Methods | Systematic improvement upon HF [9] | Electron correlation, configuration interaction (CI), coupled-cluster (e.g., CCSD(T)) [9] | N⁵–N⁷ (MP2–CCSD(T)) | High-accuracy energy calculations, reaction barrier heights, non-covalent interactions [9] |

The Modern "Scientist's Toolkit": Essential Research Reagents and Materials

The practical application of quantum chemistry relies on a suite of computational "research reagents" and software tools. For today's drug development professionals and researchers, understanding this toolkit is as crucial as knowing laboratory equipment.

Table 2: Essential Computational Tools for Quantum Chemistry

| Tool / Resource | Type | Primary Function | Example Use-Case |
|---|---|---|---|
| Basis Sets (e.g., STO-3G, 6-31G(d), def2-TZVPP) [29] | Mathematical Set | Represent atomic orbitals; balance between accuracy and computational cost [29] | Using a polarized basis set like 6-31G(d) to model electron distribution changes during a reaction. |
| Exchange-Correlation Functionals (e.g., B3LYP, PBE) [9] [29] | Algorithmic Component | Define the treatment of quantum effects in DFT; critical for accuracy [9] | Employing the B3LYP functional for geometry optimization and energy calculation of a drug-like molecule. |
| Pseudopotentials (or Effective Core Potentials) [28] | Numerical Approximation | Replace core electrons with a potential to reduce computational cost [28] | Modeling systems with heavy atoms (e.g., transition metal catalysts) without explicitly calculating all core electrons. |
| Quantum Chemistry Software (e.g., ORCA, Gaussian, PySCF, Psi4) [29] | Computational Platform | Implement theoretical methods to perform calculations [29] | Running a conformational search of a flexible ligand using molecular mechanics, followed by DFT refinement. |
| Programming Libraries (e.g., NumPy) [29] | Development Environment | Enable custom algorithm development and data analysis [29] | Writing a script to parse multiple output files and calculate average properties across a set of molecules. |

Experimental and Computational Protocols

Detailed Protocol: Geometry Optimization and Harmonic Frequency Analysis

A foundational task in quantum chemistry is determining the most stable structure of a molecule and confirming it is a true minimum on the potential energy surface. This protocol is essential for validating synthesized compounds or proposed structures in drug design.

- Initial Coordinate Generation: Obtain a 3D molecular structure from a database (e.g., PubChem) or generate it using a molecular builder tool. File formats like XYZ or MOL are standard.

- Methodology and Basis Set Selection: Choose an appropriate level of theory. For organic molecules, DFT with a functional like B3LYP and a basis set like 6-31G(d) is a common starting point [29].

- Input File Preparation: Create an input file for the chosen computational software (e.g., Gaussian, ORCA). This file specifies:

- The calculation type (`Opt` for optimization).

- The theoretical method and basis set.

- The molecular charge and multiplicity.

- The initial molecular coordinates.

- Job Execution and Monitoring: Submit the calculation to a computer or computing cluster. The software iteratively adjusts the nuclear coordinates to lower the total energy, following the forces on the nuclei obtained via the Hellmann-Feynman theorem [28]. Convergence is typically judged by the maximum force and displacement falling below predefined thresholds.

- Transition State Optimization (If Applicable): For locating transition states (saddle points on the energy surface), the input specifies a `TS` (Berny) optimization instead of `Opt`. This requires a better initial guess for the transition state geometry and often uses algorithms like the Synchronous Transit-guided Quasi-Newton method.

- Frequency Analysis: Upon successful optimization, a subsequent single-point calculation is run on the optimized geometry. The input file specifies `Freq` as the calculation type. This job:

- Calculates the second derivatives of the energy with respect to nuclear coordinates (the Hessian matrix).

- Determines the vibrational frequencies and normal modes.

- Confirms the nature of the stationary point: all real frequencies for a minimum; one imaginary frequency for a transition state.

- Provides thermodynamic corrections for enthalpy and Gibbs free energy.
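The optimize-then-verify logic of this protocol can be sketched on a toy problem. The example below is not a quantum chemical calculation: it assumes a one-dimensional Morse potential for a diatomic bond, with hypothetical parameters `D_E`, `ALPHA`, `R_E`, and `MU` chosen only for illustration. It relaxes the bond length until the force falls below a threshold, then checks the sign of the second derivative, which is the one-dimensional analogue of inspecting the Hessian eigenvalues.

```python
import numpy as np

# Hypothetical Morse parameters and reduced mass (arbitrary units),
# chosen only for illustration; they do not describe a real molecule.
D_E, ALPHA, R_E, MU = 0.17, 1.0, 1.4, 1.0


def morse_energy(r):
    """Morse potential energy for bond length r."""
    return D_E * (1.0 - np.exp(-ALPHA * (r - R_E))) ** 2


def morse_force(r):
    """Analytic force F = -dE/dr."""
    x = np.exp(-ALPHA * (r - R_E))
    return -2.0 * D_E * ALPHA * x * (1.0 - x)


def optimize_bond(r0, step=1.0, f_tol=1e-8, max_iter=500):
    """Steepest-descent relaxation; stops when |force| drops below f_tol."""
    r = r0
    for _ in range(max_iter):
        f = morse_force(r)
        if abs(f) < f_tol:
            return r
        r += step * f  # move along the force, i.e. downhill in energy
    raise RuntimeError("optimization did not converge")


def curvature(r, h=1e-4):
    """Second derivative of the energy by central finite differences."""
    return (morse_energy(r + h) - 2.0 * morse_energy(r) + morse_energy(r - h)) / h**2


r_opt = optimize_bond(r0=2.0)
k = curvature(r_opt)            # 1D stand-in for the Hessian
is_minimum = k > 0.0            # positive curvature: real frequency, true minimum
omega = np.sqrt(k / MU) if is_minimum else None  # harmonic angular frequency
```

Production codes use quasi-Newton optimizers and full Hessians rather than fixed-step steepest descent, but the convergence test on the force and the curvature check follow the same pattern.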

The workflow for this protocol, from initial setup to final validation, is outlined below.

Detailed Protocol: Energy Profile Calculation for a Reaction Pathway

Mapping the energy profile of a chemical reaction is critical for understanding reaction kinetics and mechanisms in pharmaceutical chemistry, such as enzyme catalysis or prodrug activation.

- Reactant and Product Identification: Clearly define the initial reactants and final products of the elementary reaction step under investigation.

- Geometry Optimization of Stationary Points:

- Perform a full geometry optimization and frequency calculation (as in the geometry optimization and frequency protocol above) for the reactant(s) and product(s) to confirm they are local minima.

- Perform a transition state optimization and frequency calculation to confirm it has one imaginary frequency. The vibrational mode corresponding to this imaginary frequency should visually correspond to the motion along the reaction coordinate.

- Intrinsic Reaction Coordinate (IRC) Analysis:

- Once a transition state is confirmed, an IRC calculation is performed. This follows the path of steepest descent in mass-weighted coordinates from the transition state down to the corresponding minima.

- The purpose is to verify that the located transition state correctly connects the intended reactant and product structures.

- Single-Point Energy Refinement (Optional but Recommended):

- The energies obtained at the optimization level of theory (e.g., B3LYP/6-31G(d)) can be improved.

- Perform a more accurate single-point energy calculation (e.g., using a higher-level method like CCSD(T) or a larger basis set) on the optimized B3LYP/6-31G(d) geometries. This provides a more reliable energy profile.

- Energy Profile Construction and Analysis:

- Calculate the relative energies of the reactant, transition state, and product. The activation energy (Eₐ) is the energy difference between the transition state and the reactant.

- Plot the relative energy versus the reaction coordinate. Include the IRC path points to visualize the energy landscape between the stationary points.

- Analyze electronic structure changes (e.g., via Natural Population Analysis or Molecular Orbital diagrams) at key points along the IRC to elucidate the reaction mechanism.
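The energy profile construction step reduces to simple arithmetic once the stationary-point energies are available. The sketch below assumes three hypothetical total energies in hartree (placeholders, not results from any calculation) and converts them to relative energies in kcal/mol; the dictionary keys and function name are illustrative only.

```python
# Conversion factor from hartree to kcal/mol.
HARTREE_TO_KCAL_PER_MOL = 627.509474

# Hypothetical total energies in hartree; placeholders, not computed results.
energies_hartree = {
    "reactant": -154.000000,
    "transition_state": -153.960000,
    "product": -154.020000,
}


def relative_energies(energies, reference="reactant"):
    """Return energies relative to the reference species, in kcal/mol."""
    ref = energies[reference]
    return {
        name: (value - ref) * HARTREE_TO_KCAL_PER_MOL
        for name, value in energies.items()
    }


profile = relative_energies(energies_hartree)

# Activation energy: transition state minus reactant.
activation_energy = profile["transition_state"] - profile["reactant"]
# Reaction energy: product minus reactant (negative means exothermic).
reaction_energy = profile["product"] - profile["reactant"]
```

With these placeholder values the barrier is about 25.1 kcal/mol and the reaction energy about -12.6 kcal/mol. In practice the same subtraction is applied to the refined single-point energies, usually with zero-point or thermal corrections from the frequency calculations added first.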

Hans Hellmann's foundational work established a template for educating quantum chemists by building a bridge from abstract theory to chemical application. The modern extension of this pedagogical approach requires equipping researchers and drug development professionals with a deep understanding of both the conceptual framework and the practical computational tools. This includes knowing the strengths and limitations of different methodologies, mastering essential protocols like geometry optimization and reaction pathway analysis, and leveraging the Hellmann-Feynman theorem for efficient dynamics and structure validation. By integrating this knowledge, today's scientists can harness the full predictive power of quantum chemistry to solve complex challenges, from materials design to rational drug discovery, thereby fulfilling the pedagogical mission initiated by Hellmann's pioneering 1937 text.

From 1937 Theory to Modern Practice: Hellmann's Enduring Methodological Legacy

The Hellmann-Feynman theorem is a fundamental principle in quantum mechanics that connects the derivative of the total energy of a system with respect to a parameter to the expectation value of the derivative of the Hamiltonian with respect to that same parameter. This theorem was developed independently in the 1930s by Hans Hellmann (1937) and Richard Feynman (1939), with Paul Güttinger (1932) and Wolfgang Pauli (1933) also making early contributions [30]. The theorem's emergence coincided with a pivotal period in quantum chemistry, closely following the publication of Hans Hellmann's 1937 textbook, Einführung in die Quantenchemie (Introduction to Quantum Chemistry), recognized as the first book in the field [10] [18] [8]. Hellmann was not only a pioneer in quantum chemistry but also developed the foundations of the pseudopotential method. Tragically, his career was cut short when he was arrested and perished in the Soviet Union in 1938 [18]. His seminal work established a foundation that enables chemists to calculate forces in molecular systems using classical electrostatics once the spatial electron distribution is known from solving the Schrödinger equation, thus providing a powerful bridge between quantum mechanics and classical chemical intuition [30].

In modern computational chemistry and drug development, the Hellmann-Feynman theorem provides the theoretical underpinning for efficient calculation of key molecular properties. For researchers in drug development, this enables precise determination of molecular forces, equilibrium geometries, and reaction pathways—critical factors in understanding drug-target interactions, prodrug activation mechanisms, and covalent inhibition processes [31] [32]. The theorem's utility extends across multiple computational methods, including ab initio quantum chemistry, density functional theory (DFT), and emerging hybrid quantum-classical computational pipelines, making it an indispensable tool in the molecular modeler's toolkit [31] [32].

Theoretical Foundation

Mathematical Formulation

The Hellmann-Feynman theorem states that for a quantum system with a Hamiltonian ( \hat{H}_\lambda ) that depends on a parameter ( \lambda ), the derivative of the energy ( E_\lambda ) with respect to ( \lambda ) equals the expectation value of the derivative of the Hamiltonian:

$$ \frac{dE_\lambda}{d\lambda} = \left\langle \psi_\lambda \left| \frac{d\hat{H}_\lambda}{d\lambda} \right| \psi_\lambda \right\rangle $$

Here, ( |\psi_\lambda\rangle ) is an eigenvector of ( \hat{H}_\lambda ) with eigenvalue ( E_\lambda ), satisfying ( \hat{H}_\lambda |\psi_\lambda\rangle = E_\lambda |\psi_\lambda\rangle ) [30]. This relationship holds for exact eigenfunctions of the Hamiltonian and also for approximate wavefunctions that derive from a variational principle, such as Hartree-Fock wavefunctions [30] [33].
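The statement can be checked numerically on a small model. The sketch below assumes a hypothetical 3×3 real symmetric Hamiltonian that is linear in the parameter, so that the Hamiltonian derivative is simply the matrix `V`; the matrices `H0` and `V` are arbitrary illustrative choices. It compares a finite-difference derivative of the lowest eigenvalue with the expectation value of `V` in the corresponding eigenvector.

```python
import numpy as np

# Hypothetical model Hamiltonian H(lam) = H0 + lam * V (real symmetric).
H0 = np.diag([0.0, 1.0, 2.5])
V = np.array([[0.2, 0.5, 0.0],
              [0.5, -0.1, 0.3],
              [0.0, 0.3, 0.4]])


def hamiltonian(lam):
    """Parameter-dependent Hamiltonian matrix."""
    return H0 + lam * V


def ground_state(lam):
    """Lowest eigenvalue and its normalized eigenvector."""
    energies, vectors = np.linalg.eigh(hamiltonian(lam))
    return energies[0], vectors[:, 0]


lam, h = 0.7, 1e-5

# Left-hand side: dE/dlam from central finite differences of the energy.
dE_numeric = (ground_state(lam + h)[0] - ground_state(lam - h)[0]) / (2.0 * h)

# Right-hand side: <psi| dH/dlam |psi>, where dH/dlam = V for this model.
_, psi = ground_state(lam)
dE_hellmann_feynman = psi @ V @ psi
```

The two numbers agree to within the finite-difference error. The right-hand side needs only one diagonalization, whereas the finite-difference estimate needs two, which is the practical appeal of the theorem.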

Table: Key Components of the Hellmann-Feynman Theorem

| Symbol | Description | Role in the Theorem |

|---|---|---|

| ( \lambda ) | Parameter in Hamiltonian | Independent variable representing physical quantity (e.g., nuclear coordinate, electric field) |

| ( \hat{H}_\lambda ) | Parameter-dependent Hamiltonian | Operator describing total energy of quantum system |

| ( \psi_\lambda ) | Eigenfunction of Hamiltonian | Quantum state of the system (exact or variational) |

| ( E_\lambda ) | Eigenvalue of Hamiltonian | Total energy of the system in state ( \psi_\lambda ) |

| ( \frac{d\hat{H}_\lambda}{d\lambda} ) | Hamiltonian derivative | Operator representing sensitivity of Hamiltonian to parameter change |

Proof and Interpretation

The standard proof of the theorem follows from differentiating the expectation value of the energy and applying the conditions that ( |\psi_\lambda\rangle ) is an eigenfunction and normalized [30]:

\begin{align}
\frac{dE_\lambda}{d\lambda} &= \frac{d}{d\lambda} \langle \psi_\lambda | \hat{H}_\lambda | \psi_\lambda \rangle \\
&= \left\langle \frac{d\psi_\lambda}{d\lambda} \middle| \hat{H}_\lambda \middle| \psi_\lambda \right\rangle + \left\langle \psi_\lambda \middle| \hat{H}_\lambda \middle| \frac{d\psi_\lambda}{d\lambda} \right\rangle + \left\langle \psi_\lambda \middle| \frac{d\hat{H}_\lambda}{d\lambda} \middle| \psi_\lambda \right\rangle \\
&= E_\lambda \left\langle \frac{d\psi_\lambda}{d\lambda} \middle| \psi_\lambda \right\rangle + E_\lambda \left\langle \psi_\lambda \middle| \frac{d\psi_\lambda}{d\lambda} \right\rangle + \left\langle \psi_\lambda \middle| \frac{d\hat{H}_\lambda}{d\lambda} \middle| \psi_\lambda \right\rangle \\
&= E_\lambda \frac{d}{d\lambda} \langle \psi_\lambda | \psi_\lambda \rangle + \left\langle \psi_\lambda \middle| \frac{d\hat{H}_\lambda}{d\lambda} \middle| \psi_\lambda \right\rangle \\
&= \left\langle \psi_\lambda \middle| \frac{d\hat{H}_\lambda}{d\lambda} \middle| \psi_\lambda \right\rangle
\end{align}

The critical step occurs in the final line where the term ( E_\lambda \frac{d}{d\lambda} \langle \psi_\lambda | \psi_\lambda \rangle ) vanishes due to the normalization condition ( \langle \psi_\lambda | \psi_\lambda \rangle = 1 ) [30]. An alternative proof derives the theorem from the variational principle, showing that it holds for any wavefunction that makes the Schrödinger functional stationary [30].

Figure 1: Logical workflow illustrating the foundation of the Hellmann-Feynman theorem, showing the relationship between parameterized Hamiltonian, wavefunction solution, and the final theorem.
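As a brief worked illustration (a standard textbook case, not drawn from the sources cited here), consider the one-dimensional harmonic oscillator with the angular frequency ( \omega ) playing the role of the parameter ( \lambda ). The Hamiltonian and its known eigenvalues are:

$$ \hat{H}_\omega = \frac{\hat{p}^2}{2m} + \frac{1}{2} m \omega^2 \hat{x}^2, \qquad E_n = \hbar\omega\left(n + \tfrac{1}{2}\right) $$

Differentiating both with respect to ( \omega ) gives:

$$ \frac{dE_n}{d\omega} = \hbar\left(n + \tfrac{1}{2}\right), \qquad \frac{d\hat{H}_\omega}{d\omega} = m \omega \hat{x}^2 $$

Equating the energy derivative with the expectation value of the Hamiltonian derivative then yields the mean-square displacement without evaluating any integral over the wavefunction:

$$ \left\langle \psi_n \left| \hat{x}^2 \right| \psi_n \right\rangle = \frac{\hbar}{m\omega}\left(n + \tfrac{1}{2}\right) $$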

Computational Implementation

Molecular Force Calculations

The most prominent application of the Hellmann-Feynman theorem is calculating intramolecular forces to determine equilibrium molecular geometries [30]. For a system with N electrons and M nuclei, the Hamiltonian contains terms for electron kinetic energy, electron-electron repulsion, electron-nuclear attraction, and nuclear-nuclear repulsion. The force on a particular nucleus ( \gamma ) in the x-direction is given by:

$$ F_{X_\gamma} = -\frac{\partial E}{\partial X_\gamma} = -\left\langle \psi \left| \frac{\partial \hat{H}}{\partial X_\gamma} \right| \psi \right\rangle $$

Only the electron-nucleus and nucleus-nucleus terms of the Hamiltonian contribute to this derivative [30]. This approach enables efficient geometry optimization by calculating analytical forces instead of relying on numerical differentiation of energies.
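Because only the electron-nucleus and nucleus-nucleus terms survive, the force on a nucleus reduces to a classical electrostatic sum once the electron density is known. The sketch below illustrates this in atomic units under strong simplifying assumptions: the density is supplied as electron counts on a set of quadrature points (`weighted_density`, a hypothetical input), and the function name and argument layout are illustrative rather than any package's API.

```python
import numpy as np


def hellmann_feynman_force(index, positions, charges, grid, weighted_density):
    """Electrostatic force on nucleus `index`, in atomic units.

    positions: (M, 3) nuclear coordinates; charges: (M,) nuclear charges Z.
    grid: (G, 3) quadrature points; weighted_density: (G,) values of
    rho(r_i) * w_i, i.e. the number of electrons assigned to each point.
    Grid points must not coincide with the nucleus (division by zero).
    """
    R = positions[index]
    Z = charges[index]

    # Attraction toward the electrons: Z * sum_i q_i (r_i - R) / |r_i - R|^3
    d_el = grid - R
    dist_el = np.linalg.norm(d_el, axis=1)
    f_el = Z * np.sum(weighted_density[:, None] * d_el / dist_el[:, None] ** 3, axis=0)

    # Repulsion from other nuclei: Z * sum_b Z_b (R - R_b) / |R - R_b|^3
    others = np.arange(len(charges)) != index
    d_nuc = R - positions[others]
    dist_nuc = np.linalg.norm(d_nuc, axis=1)
    f_nuc = Z * np.sum(charges[others][:, None] * d_nuc / dist_nuc[:, None] ** 3, axis=0)

    return f_el + f_nuc


# Toy case: two protons at x = -1 and x = +1, with one electron lumped
# onto a single grid point at the midpoint (a crude stand-in for a density).
positions = np.array([[-1.0, 0.0, 0.0], [1.0, 0.0, 0.0]])
charges = np.array([1.0, 1.0])
grid = np.array([[0.0, 0.0, 0.0]])
weighted_density = np.array([1.0])

force = hellmann_feynman_force(1, positions, charges, grid, weighted_density)
# force is [-0.75, 0, 0]: the attraction (-1.0) outweighs the repulsion (+0.25)
```

In this toy case the net force pulls the proton toward the bond midpoint, which is the electrostatic picture of bonding the theorem supports. Calculations with atom-centered basis sets generally add correction terms (Pulay forces) because the basis functions move with the nuclei.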

Advanced Computational Methods

Recent methodological advances have extended Hellmann-Feynman theorem applications to excited-state forces within the GW-Bethe-Salpeter equation (GW-BSE) framework [31]. This approach allows calculation of atomic forces for molecules in excited states, which is crucial for understanding photochemical processes and relaxation pathways. The derivative of the excitonic Hamiltonian can be obtained through finite differences and applied to excited states using appropriate projectors [31].

Table: Computational Methods Utilizing the Hellmann-Feynman Theorem

| Method | Application Domain | Hellmann-Feynman Implementation |

|---|---|---|

| Hartree-Fock | Ground state electronic structure | Exact for self-consistent solutions [30] |

| Density Functional Theory | Ground state of molecules and materials | Works within variational formulation [30] |

| GW-BSE | Excited state properties and forces | Requires derivative of excitonic Hamiltonian [31] |

| Variational Quantum Eigensolver | Quantum computing applications | Applicable through variational principle [32] |

| Quantum Chemistry Methods | Molecular structure optimization | Force calculation for geometry relaxation [30] |

For covalent bond cleavage studies in prodrug activation—a crucial application in pharmaceutical research—the Hellmann-Feynman theorem enables precise calculation of energy barriers and reaction pathways [32]. In these simulations, researchers implement a pipeline for quantum computing of solvation energy based on the polarizable continuum model (PCM), with the theorem providing efficient force calculations [32].

Workflow for Excited-State Force Calculations

A modern implementation for calculating excited-state forces using the Hellmann-Feynman theorem with GW-BSE methodology follows this protocol [31]:

Ground State Calculation: Perform DFT calculation with appropriate exchange-correlation functional (e.g., PBE) and pseudopotentials to obtain ground state wavefunctions.