Quantization in Chemical Systems: From Ab Initio Methods to AI-Driven Drug Discovery

Abstract

This article provides a comprehensive comparison of quantization approaches across diverse chemical systems, exploring their foundational principles and methodological applications. It delves into advanced techniques, from neural network wavefunctions for solids to machine learning corrections for density functional theory, highlighting their role in achieving quantum chemical accuracy. The content further addresses critical troubleshooting and optimization strategies for managing computational errors and system complexities. Finally, it offers a rigorous validation of these methods against experimental data and other computational benchmarks, underscoring their transformative impact on accelerating drug discovery and materials design for researchers and development professionals.

The Quantum Foundation: Core Principles of Quantization in Chemistry

Computational quantum chemistry provides indispensable tools for researchers investigating molecular systems, from drug discovery to materials design. The field is largely built upon two foundational formalisms: Wavefunction Theory (WFT) and Density Functional Theory (DFT). While both aim to solve the electronic Schrödinger equation, they approach this challenge through fundamentally different philosophies. WFT explicitly treats the many-electron wavefunction and systematically improves approximations, whereas DFT uses the electron density as its central variable, offering a different balance of computational cost and accuracy.

This guide provides an objective comparison of these methodologies, highlighting their respective strengths, limitations, and optimal application domains through recent benchmarking studies and experimental validations. Understanding this "formalism gap" empowers scientists to select the most appropriate tool for specific challenges in chemical research.

Theoretical Foundations and Computational Protocols

Density Functional Theory: A Practical Workhorse

DFT bypasses the complex N-electron wavefunction by using the electron density, \(\rho(\mathbf{r})\), a function of only three spatial coordinates, as the fundamental variable for calculating ground-state energies and properties. This conceptual leap is founded on the Hohenberg-Kohn theorems [1]. The practical implementation of DFT uses the Kohn-Sham scheme, where a system of non-interacting electrons is constructed to have the same density as the real, interacting system. The total energy is expressed as:

\[ E[\rho] = T_s[\rho] + V_{\text{ext}}[\rho] + J[\rho] + E_{\text{xc}}[\rho] \]

Here, \(T_s\) is the kinetic energy of the non-interacting electrons, \(V_{\text{ext}}\) is the external potential energy, \(J\) is the classical Coulomb repulsion, and \(E_{\text{xc}}\) is the exchange-correlation energy, which encapsulates all quantum many-body effects [1]. The accuracy of DFT hinges on the approximation used for \(E_{\text{xc}}\), leading to a wide variety of functionals.

Table 1: The "Charlotte's Web" of Common Density Functionals [1]

| Type | Description | Key Ingredients | Example Functionals |
|---|---|---|---|
| LDA | Local Density Approximation | \(\rho\) | SVWN |
| GGA | Generalized Gradient Approximation | \(\rho, \nabla\rho\) | BLYP, PBE, BP86 |
| mGGA | meta-GGA | \(\rho, \nabla\rho, \tau\) | TPSS, M06-L, B97M, r²SCAN |
| Hybrid | Mixes DFT exchange with HF exchange | GGA/mGGA + HF exchange | B3LYP, PBE0, TPSSh |
| Range-Separated Hybrid | Distance-dependent HF/DFT mixing | GGA/mGGA + HF exchange | CAM-B3LYP, ωB97X, ωB97M |
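To make the LDA row of the table concrete, the sketch below evaluates the Dirac (Slater) exchange functional, E_x = -C_x ∫ ρ^(4/3) d³r with C_x = (3/4)(3/π)^(1/3), for the exact hydrogen-atom density. It covers only the exchange part of an LDA functional, in its spin-unpolarized form. The function names and grid settings are illustrative choices, not taken from any particular package.

```python
import math

# Dirac/Slater exchange constant C_x = (3/4) * (3/pi)^(1/3), atomic units
C_X = 0.75 * (3.0 / math.pi) ** (1.0 / 3.0)


def hydrogen_density(r):
    """Exact ground-state electron density of the hydrogen atom (atomic units)."""
    return math.exp(-2.0 * r) / math.pi


def lda_exchange_energy(density, r_max=30.0, n=20000):
    """Spin-unpolarized LDA exchange energy of a spherical density.

    Integrates rho^(4/3) over all space on a radial grid (midpoint rule).
    """
    h = r_max / n
    total = 0.0
    for i in range(n):
        r = (i + 0.5) * h
        total += 4.0 * math.pi * r * r * density(r) ** (4.0 / 3.0) * h
    return -C_X * total


e_x_numeric = lda_exchange_energy(hydrogen_density)

# Closed form of the same integral for rho = exp(-2r)/pi:
# 4*pi * pi^(-4/3) * integral r^2 exp(-8r/3) dr = 4 * pi^(-1/3) * 2 / (8/3)^3
e_x_closed = -C_X * 4.0 * math.pi ** (-1.0 / 3.0) * 2.0 / (8.0 / 3.0) ** 3

print(f"LDA exchange (numeric): {e_x_numeric:.5f} Ha")
print(f"LDA exchange (closed):  {e_x_closed:.5f} Ha")
print(f"Exact exchange of H:    {-5.0 / 16.0:.5f} Ha")
```

In this form the local functional recovers roughly two thirds of the exact exchange energy of the hydrogen atom (-5/16 Ha), one simple illustration of why the higher rows of the table add gradient and Hartree-Fock exchange ingredients.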

Wavefunction Theory: A Systematic Hierarchy

In contrast, Wavefunction Theory (WFT) methods deal directly with the many-electron wavefunction, \(\Psi\), which depends on the coordinates of all N electrons. These methods are built upon the Hartree-Fock (HF) method, a mean-field approximation that does not account for electron correlation. Post-Hartree-Fock methods add this crucial correlation energy systematically [2]:

- Complete Active Space Self-Consistent Field (CASSCF): A multiconfigurational method that handles static correlation by performing a full configuration interaction (FCI) within a carefully selected active space of orbitals and electrons.

- Multireference Perturbation Theory (e.g., NEVPT2): Adds dynamic correlation on top of a CASSCF reference wavefunction, providing highly accurate energies for systems with strong multireference character.

- Coupled Cluster (CC): A single-reference method that, at the CCSD(T) level, is considered the "gold standard" for molecules where the HF reference is adequate.

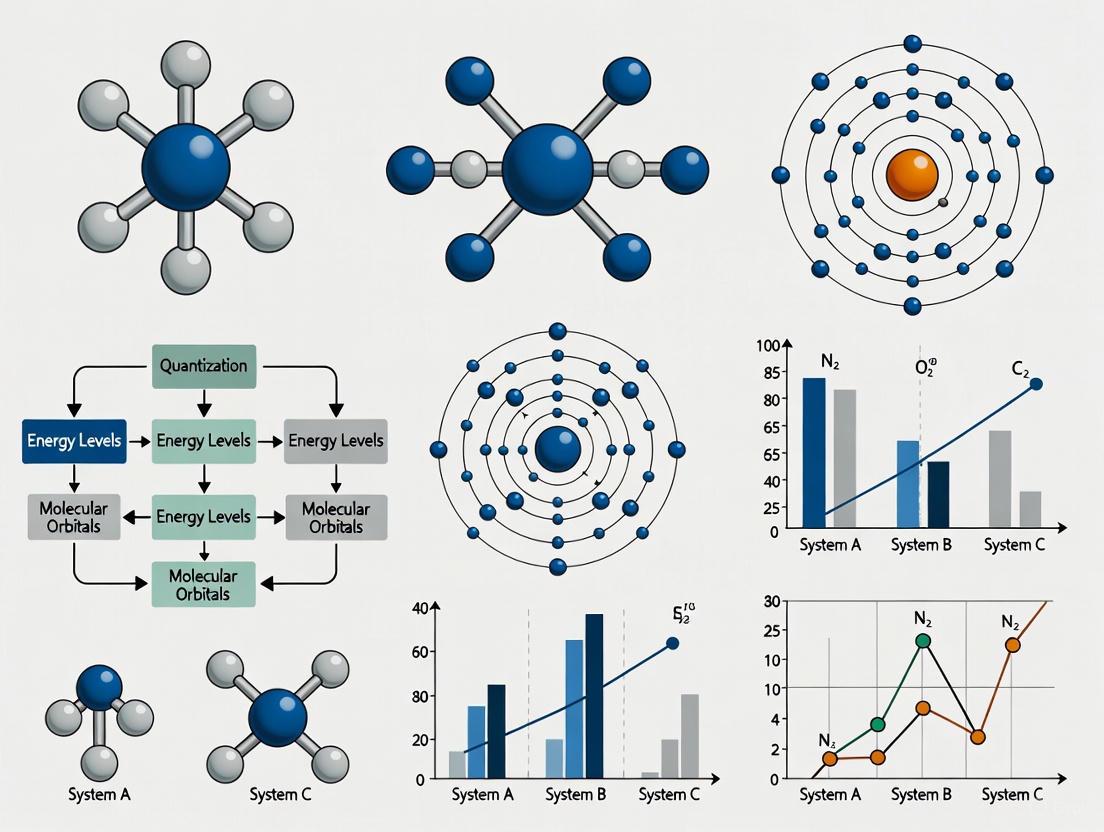

Visualization of Method Selection and Application Workflow

The following diagram illustrates a typical workflow for selecting and applying these computational protocols, particularly for complex systems like color centers in solids, integrating both DFT and wavefunction-based approaches [2].

Performance Benchmarking Across Chemical Systems

Charge Transfer and Redox Properties

Accurate prediction of reduction potentials and electron affinities is crucial in electrochemistry and drug metabolism studies. These properties are sensitive tests for a method's ability to handle changes in molecular charge and spin state. A 2025 benchmark study compared OMol25-trained neural network potentials (NNPs), DFT, and semiempirical methods against experimental data [3].

Table 2: Performance in Predicting Reduction Potentials (Mean Absolute Error, V) [3]

| Method | Type | Main-Group (OROP) MAE (V) | Organometallic (OMROP) MAE (V) |
|---|---|---|---|
| B97-3c | DFT (Composite) | 0.260 | 0.414 |
| GFN2-xTB | SQM | 0.303 | 0.733 |
| UMA-S (NNP) | Machine Learning | 0.261 | 0.262 |
| eSEN-S (NNP) | Machine Learning | 0.505 | 0.312 |

The study showed that the best modern NNPs can match or exceed the accuracy of DFT. UMA-S essentially ties B97-3c for main-group molecules (0.261 V vs. 0.260 V) and clearly outperforms it for organometallic species (0.262 V vs. 0.414 V). The picture is not uniform, however: eSEN-S is markedly less accurate than B97-3c on the main-group set (0.505 V). In a specialized study on [FeFe]-hydrogenase-inspired catalysts, the pure GGA functional PBE demonstrated exceptional accuracy for predicting redox potentials (R² = 0.95) and molecular geometries [4], outperforming hybrid functionals like B3LYP and PBE0 for this specific organometallic system.
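The comparison in Table 2 can be checked mechanically. The short sketch below copies the four MAE pairs from the table and ranks the methods separately for each subset; the dictionary layout and helper name are illustrative.

```python
# MAE values in volts, copied from Table 2: (main-group, organometallic)
MAE_V = {
    "B97-3c": (0.260, 0.414),
    "GFN2-xTB": (0.303, 0.733),
    "UMA-S": (0.261, 0.262),
    "eSEN-S": (0.505, 0.312),
}


def rank_methods(column):
    """Return method names sorted from lowest to highest MAE for one column."""
    return sorted(MAE_V, key=lambda name: MAE_V[name][column])


main_group_ranking = rank_methods(0)
organometallic_ranking = rank_methods(1)

print("Main-group:    ", " < ".join(main_group_ranking))
print("Organometallic:", " < ".join(organometallic_ranking))
```

The ranking changes between the two subsets, which is why a single overall accuracy figure would hide the method-dependent behavior reported in the benchmark.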

Multireference and Strongly Correlated Systems

Systems with strong static correlation, such as open-shell transition metal complexes, diradicals, and point defects in solids, present a major challenge for conventional DFT. The NV⁻ center in diamond is a classic example of a multireference system critical for quantum technologies. A 2025 study highlighted the limitations of single-determinant DFT for such systems and demonstrated the success of an advanced WFT protocol [2].

The protocol combined CASSCF(6e,4o) to describe the strongly correlated defect orbitals with NEVPT2 to include dynamic correlation from the surrounding lattice. This approach successfully computed the fine structure of electronic states, Jahn-Teller distortions, and zero-phonon lines with high accuracy, properties that are difficult to obtain reliably with standard DFT [2].
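A quick way to see why a CASSCF(6e,4o) space is cheap while larger active spaces are not is to count Slater determinants. The sketch below uses the standard binomial count for a fixed spin projection; the function name and the `two_ms` argument (twice the M_S value) are illustrative conventions, not part of the cited protocol.

```python
from math import comb


def cas_determinants(n_electrons, n_orbitals, two_ms=0):
    """Number of Slater determinants in a CAS(n_electrons, n_orbitals) space
    with spin projection M_S = two_ms / 2."""
    if (n_electrons + two_ms) % 2 != 0:
        raise ValueError("n_electrons and two_ms must have the same parity")
    n_alpha = (n_electrons + two_ms) // 2
    n_beta = n_electrons - n_alpha
    if not (0 <= n_alpha <= n_orbitals and 0 <= n_beta <= n_orbitals):
        return 0
    # Alpha and beta electrons are distributed over the orbitals independently.
    return comb(n_orbitals, n_alpha) * comb(n_orbitals, n_beta)


small_ms0 = cas_determinants(6, 4, two_ms=0)   # active space quoted in the text
small_ms1 = cas_determinants(6, 4, two_ms=2)
large_ms0 = cas_determinants(14, 14, two_ms=0)  # a larger space, for contrast

print(small_ms0, small_ms1, large_ms0)
```

The (6e,4o) space contains only a handful of determinants, so the full CI inside it is trivial; the cost of the protocol is dominated by the NEVPT2 step and the size of the embedding cluster rather than by the active space itself.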

Reaction Mechanism Elucidation

Combining DFT with wavefunction analysis has proven powerful for elucidating complex reaction mechanisms at the molecular level. A study on the ozonation of polystyrene microplastics (PSMPs) used DFT calculations at the M06-2X/6-311+G(d,p) level to optimize reactant, intermediate, and transition state geometries [5]. This was complemented by wavefunction analysis (e.g., Fukui functions) to identify reactive sites and map the potential energy surface.

This integrated computational approach identified the key elementary reactions and the dominant pathway, which were subsequently validated experimentally using techniques like LC-MS and HPLC. The synergy between DFT and wavefunction-based analysis provided atomic-level insights that were inaccessible through experimentation alone [5].
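As a rough illustration of how Fukui functions flag reactive sites, the sketch below computes condensed (atom-resolved) Fukui indices from atomic partial charges of the N-electron, (N-1)-electron, and (N+1)-electron species, using the usual finite-difference definitions f⁻ = q(N-1) - q(N) and f⁺ = q(N) - q(N+1). The atom labels and charge values are invented for illustration only; they are not results from the polystyrene study [5].

```python
# Hypothetical atomic partial charges (in e) for three atoms of a fragment.
# These numbers are made up for illustration, not computed data.
charges_neutral = {"C1": -0.20, "C2": 0.05, "C3": 0.15}   # N electrons
charges_cation = {"C1": 0.30, "C2": 0.25, "C3": 0.45}     # N - 1 electrons
charges_anion = {"C1": -0.60, "C2": -0.15, "C3": -0.25}   # N + 1 electrons


def condensed_fukui(q_n, q_n_minus_1, q_n_plus_1):
    """Return (f_minus, f_plus) dictionaries of condensed Fukui indices."""
    f_minus = {a: q_n_minus_1[a] - q_n[a] for a in q_n}   # electrophilic attack
    f_plus = {a: q_n[a] - q_n_plus_1[a] for a in q_n}     # nucleophilic attack
    return f_minus, f_plus


f_minus, f_plus = condensed_fukui(charges_neutral, charges_cation, charges_anion)

# Ozone acts as an electrophile, so the site with the largest f_minus is the
# most likely point of initial attack in this toy example.
most_reactive = max(f_minus, key=f_minus.get)
print("f-:", f_minus)
print("f+:", f_plus)
print("Most susceptible to electrophilic attack:", most_reactive)
```

Each set of indices sums to one because exactly one electron is removed or added, which is a useful sanity check on the underlying charge analysis.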

The Scientist's Toolkit: Essential Computational Reagents

Table 3: Key Software and Computational "Reagents" for Electronic Structure Studies

| Tool/Solution | Function | Example Uses |

|---|---|---|

| Quantum ESPRESSO | Plane-wave DFT code for periodic systems | Calculating mechanical, thermal properties of solids (e.g., CdS, CdSe) [6] |

| Gaussian 16 | Molecular quantum chemistry package | Geometry optimization, frequency, reaction pathway calculation [5] |

| Psi4 | Open-source quantum chemistry package | High-accuracy energy calculations with various DFT/WFT methods [3] |

| geomeTRIC | Geometry optimization library | Optimizing structures with NNPs or DFT [3] |

| Projector Augmented-Wave (PAW) | Pseudopotential method | Treating core-valence electron interaction in periodic DFT [6] |

| Def2-TZVPD Basis Set | High-quality Gaussian basis set | Accurate molecular calculations (e.g., ωB97M-V in OMol25) [3] |

DFT and WFT are not mutually exclusive but are complementary tools in the computational chemist's arsenal. DFT, particularly hybrid and range-separated functionals, offers the best balance of accuracy and computational cost for most ground-state applications involving main-group molecules, including geometry optimization and reaction mechanism studies [5] [1]. WFT methods are indispensable for tackling problems with significant multireference character, such as excited states, bond breaking, and spin qubits in materials, providing systematically improvable, high-accuracy solutions [2].

The future lies in the intelligent integration of these methods. Protocols that use DFT for initial screening and geometry sampling, followed by high-level WFT for final energetics, are becoming the standard for challenging problems. Furthermore, the emergence of quantum computing holds the potential to revolutionize the field by efficiently simulating strongly correlated systems that are intractable for classical computers [7] [8]. As both formalisms continue to evolve, they will collectively bridge the gap between computational prediction and experimental reality, accelerating discovery across chemistry and materials science.

A quiet revolution is underway in computational chemistry and materials science. For decades, density functional theory (DFT) has served as the primary workhorse for simulating solid-state systems, offering a favorable scaling of O(n³) with system size that enables practical calculations of real materials [9]. However, this utility comes at a cost: the choice of exchange-correlation functional represents an uncontrolled approximation that sometimes yields qualitatively incorrect results for strongly correlated materials [9]. Meanwhile, highly accurate quantum chemistry methods like coupled-cluster theory face prohibitive computational costs when applied to extended systems [10].

This methodological gap has driven the development of deep-learning-based variational Monte Carlo (DL-VMC) approaches, which combine the expressivity of neural networks with the theoretical rigor of the variational principle [9]. Initially demonstrating remarkable success for small molecules, these methods faced significant challenges when applied to periodic solids, where calculations require numerous similar but distinct computations across different supercell sizes, boundary conditions, and geometries [9]. Recent advances in transferable neural wavefunctions and specialized architectures have begun to overcome these limitations, potentially offering a new paradigm for accurate ab initio simulation of solids.

Methodological Approaches: From Molecules to Solids

Neural Network Ansatze for Periodic Systems

Extending neural network wavefunctions from molecules to periodic solids requires incorporating two fundamental properties: anti-symmetry and periodicity. The FermiNet architecture, which uses Slater determinants of neural network-generated orbitals, successfully preserves the anti-symmetry requirement for fermionic systems [10]. For periodic systems, researchers have developed specialized approaches to maintain translational symmetry:

Periodic Feature Construction: Whitehead et al. developed a method to construct periodic distance features using lattice vectors in real and reciprocal space [10]. These features behave like ordinary distances at short range and become periodic at long range, while preserving the asymptotic cusp form and continuity requirements.

Transferable Wavefunctions: Recent work by Scherbela et al. enables optimization of a single neural network wavefunction across multiple system variations, including geometry, boundary conditions, and supercell sizes [9]. This approach maps computationally cheap, uncorrelated mean-field orbitals to expressive neural network orbitals that depend on the positions of all electrons.

Self-Attention Mechanisms: The attention mechanism, transformative in artificial intelligence, has been adapted to capture electron correlations by quantifying how electrons influence each other [11]. This approach constructs neural network wavefunctions from Slater determinants of generalized orbitals that depend on the configuration of all electrons.
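The common thread in the three approaches above is a Slater determinant whose orbitals depend on the positions of all electrons in a permutation-equivariant way. The toy sketch below, for three electrons in one dimension, shows why this keeps the wavefunction antisymmetric: exchanging two electrons exchanges two rows of the orbital matrix, which flips the sign of the determinant. The orbital form and the correlation feature `h_i` are hypothetical stand-ins for a neural network, not the FermiNet or attention architectures themselves.

```python
import math


def det(m):
    """Determinant by Laplace expansion along the first row (fine for small n)."""
    n = len(m)
    if n == 1:
        return m[0][0]
    total = 0.0
    for col in range(n):
        minor = [row[:col] + row[col + 1:] for row in m[1:]]
        total += (-1) ** col * m[0][col] * det(minor)
    return total


def generalized_orbitals(x):
    """Orbital matrix Phi[i][j] = phi_j(x_i; all electrons).

    h_i is a symmetric function of the other electrons' positions, so
    relabeling the electrons only permutes the rows of the matrix.
    """
    n = len(x)
    rows = []
    for i in range(n):
        h_i = sum(math.exp(-(x[i] - x[k]) ** 2) for k in range(n) if k != i)
        envelope = math.exp(-0.5 * x[i] ** 2)
        rows.append([x[i] ** j * envelope * (1.0 + 0.3 * (j + 1) * h_i)
                     for j in range(n)])
    return rows


def psi(x):
    """Toy wavefunction: determinant of the generalized orbital matrix."""
    return det(generalized_orbitals(x))


positions = [0.3, -1.1, 0.8]
swapped = [-1.1, 0.3, 0.8]       # electrons 0 and 1 exchanged
coincident = [0.5, 0.5, 0.8]     # two electrons at the same point

print(psi(positions), psi(swapped), psi(coincident))
```

The same argument holds for any orbital network with this equivariance property, which is why these architectures can be made far more expressive than mean-field orbitals without giving up the Pauli principle.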

Table 1: Key Neural Network Architectures for Solid-State Simulation

| Architecture | Key Features | Applicable Systems | Scalability |
|---|---|---|---|
| Transferable Neural Wavefunctions | Single model optimized across multiple system variations; transfer learning from small to large supercells | Real solids with different geometries, boundary conditions, and supercell sizes | Reduces optimization steps by 50x for larger systems [9] |
| Periodic Neural Network Ansatz | Incorporates periodic distance features; complex-valued wavefunctions; Bloch function-like orbitals | 1D chains, 2D materials (graphene), 3D crystals (LiH), homogeneous electron gas [10] | O(nₑₗ⁴) scaling with electron number [9] |
| Self-Attention Ansatz | Captures electron correlations via attention mechanism; unifying architecture for diverse systems | Atoms, molecules, electron gas, moiré materials [11] | Parameter scaling as Nₚₐᵣ ∝ N² with electron number [11] |

Quantum Computing Approaches

In parallel to classical neural network methods, quantum computing has emerged as a promising approach for quantum chemistry simulations, with distinct methodological frameworks:

First Quantization Methods: Recent work has developed qubitization-based quantum phase estimation (QPE) implementations of quantum chemistry Hamiltonians in first quantization with arbitrary basis sets [12]. This approach achieves asymptotic speedup for molecular orbitals and orders of magnitude improvement using dual plane waves compared to second quantization counterparts.

Second Quantization Methods: The more commonly studied approach in quantum computation, second quantization encodes anti-symmetry into creation and annihilation operators, mapping directly to qubit representations [12]. While more established, this approach typically requires more qubits than first quantization methods.

Diagram 1: Computational workflows for neural network and quantum approaches to solid-state simulation.

Performance Benchmarks and Comparative Analysis

Accuracy Across Material Systems

Neural network ansatze have demonstrated competitive accuracy across diverse material systems, from simple model systems to real solids:

Hydrogen Chains: For one-dimensional hydrogen chains, transferable neural wavefunctions achieve slightly lower (more accurate) energies than previous DeepSolid results across all chain lengths [9]. Using twist-averaged boundary conditions, these methods achieve an extrapolated thermodynamic limit energy of -565.24(2) mHa, 0.2-0.5 mHa lower than lattice-regularized diffusion Monte Carlo and DeepSolid [9].

Graphene: For the cohesive energy of graphene, periodic neural network ansatze reach within 0.1 eV/atom of experimental reference values, significantly outperforming Γ-point-only calculations that deviate by 1.25 eV/atom [10].

Lithium Hydride Crystals: Neural network calculations of LiH crystal equation of state yield excellent agreement with experimental data for cohesive energy, bulk modulus, and equilibrium lattice constant [10]. Transfer learning approaches demonstrate particularly impressive efficiency, enabling accurate simulation of 108-electron systems with 50x fewer optimization steps than previous approaches [9].
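The benefit of twist-averaged boundary conditions can be seen in a model far simpler than the neural network calculations cited above. The sketch below uses a non-interacting, half-filled tight-binding ring (a deliberate substitute for the real hydrogen chain), where the exact thermodynamic-limit energy per site is -4t/π. The function names, the hopping parameter `t`, and the uniform Γ-centered twist grid are assumptions made for this illustration.

```python
import math


def energy_per_site(n_sites, theta, t=1.0):
    """Ground-state energy per site of a half-filled tight-binding ring
    with n_sites sites and boundary twist angle theta."""
    levels = sorted(
        -2.0 * t * math.cos((2.0 * math.pi * n + theta) / n_sites)
        for n in range(n_sites)
    )
    n_occupied = n_sites // 2          # occupied levels per spin channel
    return 2.0 * sum(levels[:n_occupied]) / n_sites


def twist_averaged_energy(n_sites, n_twists, t=1.0):
    """Average the energy over a uniform, Gamma-centered grid of twists."""
    thetas = [2.0 * math.pi * m / n_twists for m in range(n_twists)]
    return sum(energy_per_site(n_sites, th, t) for th in thetas) / n_twists


exact_tdl = -4.0 / math.pi                  # thermodynamic limit for t = 1
gamma_only = energy_per_site(4, 0.0)        # single boundary condition
tabc = twist_averaged_energy(4, 8)          # same 4-site ring, 8 twists

print(f"Gamma only: {gamma_only:.4f}  error {abs(gamma_only - exact_tdl):.4f}")
print(f"TABC:       {tabc:.4f}  error {abs(tabc - exact_tdl):.4f}")
```

Even for a four-site ring, averaging over twists brings the energy much closer to the thermodynamic limit than the Γ-point result. In an interacting calculation each twist requires its own wavefunction, which is where a single transferable model across twists becomes attractive.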

Table 2: Performance Comparison Across Computational Methods for Solid-State Systems

| Method | Computational Scaling | Accuracy | Key Limitations | Representative Systems |
|---|---|---|---|---|
| Density Functional Theory | O(nₑₗ³) or better [9] | Often sufficient but can be qualitatively wrong for correlated systems [9] | Uncontrolled approximation in exchange-correlation functional | Universal application to solids |
| Transferable Neural Wavefunctions | O(nₑₗ⁴) [9] | Reaches chemical accuracy for benchmark systems [9] [10] | High computational cost for initial training; specialized expertise required | LiH, hydrogen chains [9] |
| Periodic Neural Network Ansatz | O(nₑₗ⁴) [9] | Competitive with LR-DMC, AFQMC [10] | Complex implementation; significant computational resources | Hydrogen chains, graphene, LiH, electron gas [10] |
| Quantum Phase Estimation (QPE) | Varies by implementation; first quantization offers exponential qubit improvement [12] | Potentially exact in fault-tolerant regime [13] | Requires fault-tolerant quantum computers not yet available | Molecular orbitals, dual plane waves [12] |

Quantum Resource Requirements

For quantum computing approaches, the resource requirements vary significantly between different representations:

First vs. Second Quantization: First quantization requires Nlog₂(2D) qubits for N electrons and D basis functions, offering exponential improvement in qubit scaling with respect to orbital number compared to second quantization [12]. The sparse implementation in first quantization also provides polynomial speedup in Toffoli gate count [12].

Basis Set Dependence: For molecular orbitals, first quantization with qubitization shows polynomial speedup in Toffoli count [12]. For dual plane waves, the approach demonstrates orders of magnitude improvement in both logical qubit count and Toffoli gates over second quantization counterparts [12].
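The qubit counts quoted above are easy to tabulate. The sketch below compares N log₂(2D) system qubits for first quantization with 2D for second quantization, where N is the number of electrons and D the number of spatial basis functions. Rounding the logarithm up to a whole number of qubits per electron register is an assumption made here, and ancilla qubits needed by qubitization and phase estimation are ignored.

```python
def first_quantized_qubits(n_electrons, n_basis):
    """System qubits in first quantization: one register per electron,
    each wide enough to index the 2 * n_basis spin orbitals."""
    n_spin_orbitals = 2 * n_basis
    bits_per_electron = (n_spin_orbitals - 1).bit_length()  # ceil(log2(2D))
    return n_electrons * bits_per_electron


def second_quantized_qubits(n_basis):
    """System qubits in second quantization: one per spin orbital."""
    return 2 * n_basis


for n_electrons, n_basis in [(10, 8), (10, 100), (10, 1000), (50, 10000)]:
    fq = first_quantized_qubits(n_electrons, n_basis)
    sq = second_quantized_qubits(n_basis)
    print(f"N={n_electrons:3d} D={n_basis:6d}  first: {fq:5d}  second: {sq:6d}")
```

The advantage of first quantization appears only when the basis is much larger than the electron count. For a compact basis such as N = 10, D = 8 the second-quantized encoding needs fewer qubits, consistent with the use cases listed for each representation.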

Table 3: Quantum Resource Estimates for Fault-Tolerant Quantum Simulation

| Method | Qubit Requirements | Toffoli Gate Count | Key Advantages | Optimal Use Cases |
|---|---|---|---|---|
| First Quantization (Sparse) | Nlog₂(2D) qubits [12] | Polynomial speedup vs. second quantization [12] | Exponential qubit scaling improvement; lower subnormalization factor | Active space calculations with molecular orbitals [12] |
| First Quantization (Dual Plane Waves) | Significant reduction vs. second quantization [12] | Orders of magnitude improvement [12] | Massive resource reduction; competitive with plane waves without data loading | Electron gas, materials in physically relevant regimes [12] |
| Second Quantization (Sparse) | 2D qubits for 2D spin orbitals [12] | Higher than first quantization approaches [12] | Well-established; direct occupation number mapping | Small molecules with compact basis sets [14] |
| QPE with Qubitization | Varies by encoding method [13] | Most efficient for fault-tolerant chemistry [13] | Lowest known quantum resources for chemistry problems | Large molecules in fault-tolerant regime [13] |

The Scientist's Toolkit: Essential Research Reagents

Table 4: Key Computational Tools and Frameworks for Neural Network Quantum Chemistry

| Tool/Component | Function | Implementation Example |
|---|---|---|
| Periodic Distance Features | Regulates ordinary distances to satisfy periodicity requirements while maintaining cusp conditions | Matrix construction using lattice vectors: d(r) = √(AMAᵀ)/2π with Mᵢⱼ = f²(ωᵢ)δᵢⱼ + g(ωᵢ)g(ωⱼ)(1-δᵢⱼ) [10] |
| Transferable Wavefunction Framework | Enables single neural network to represent multiple system variations (geometry, boundary conditions, supercell size) | System parameters (e.g., geometry, boundary twist) provided as additional input to neural network [9] |
| Self-Attention Mechanism | Captures complex electron correlations by quantifying inter-electron influences | Attention weights computed between electron pairs to generate orbital functions [11] |
| Kronecker-Factored Curvature Estimator | Efficient neural network optimizer outperforming traditional energy minimization algorithms | Modified DeepMind implementation for variational Monte Carlo optimization [10] |
| Twist-Averaged Boundary Conditions | Reduces finite-size errors by averaging over different boundary condition twists | Γ-centered Monkhorst-Pack grid sampling with appropriate weights [9] |

Diagram 2: Architecture of a neural network ansatz for solid-state systems, showing key components from input to wavefunction evaluation.

The extension of neural network ansatze from molecular simulations to periodic solids represents a significant advancement in computational materials science. While traditional DFT remains indispensable for its balance of efficiency and accuracy, neural network approaches now offer a path to higher accuracy for strongly correlated systems where DFT fails. The development of transferable wavefunctions that can be pretrained on small systems and efficiently fine-tuned for larger systems addresses a critical scalability limitation [9].

Meanwhile, quantum computing approaches continue to advance, with first quantization methods in particular offering promising resource reductions for fault-tolerant quantum simulation of solids [12]. The recent integration of attention mechanisms provides a potentially unifying architecture that has demonstrated success across diverse electronic systems [11].

As both classical neural network and quantum approaches mature, their complementary strengths suggest a future where multiscale modeling combines efficient DFT screening with targeted high-accuracy neural network or quantum simulations for critical regions where correlation effects dominate. This methodological ecosystem promises to accelerate the discovery and understanding of novel quantum materials and catalytic systems by providing more reliable computational tools to researchers across chemistry, materials science, and drug development.

Accurate ab initio calculation of electronic structures is a cornerstone of modern materials science and quantum chemistry. The pursuit of higher precision in predicting properties like cohesive energy, correlation energy, and dissociation curves drives methodological innovation. Benchmark systems—carefully selected for their computational tractability and representative physical phenomena—provide the essential proving grounds for new electronic structure methods. This guide objectively compares the performance of cutting-edge computational approaches, including neural network (NN) wavefunction ansatz and augmented density matrix renormalization group (DMRG), against traditional quantum chemistry methods and experimental data. We focus on three foundational benchmark systems: the one-dimensional periodic hydrogen chain, two-dimensional graphene, and three-dimensional lithium hydride (LiH) crystals. These systems span a wide range of material dimensions, bonding types (covalent, metallic, ionic), and electronic behaviors (metallic to insulating), offering a comprehensive framework for evaluating methodological accuracy across diverse chemical environments.

Benchmark Systems and Methodologies

The selected benchmark systems each present unique challenges and opportunities for quantifying the accuracy of electronic structure methods.

One-Dimensional Hydrogen Chain

The periodic hydrogen chain serves as a fundamental model for studying strong electron correlations and quantum confinement effects. Despite its simple chemical composition, it exhibits complex behavior such as a transition from an atomic insulating state to a metallic state at equilibrium bond lengths, making it a stringent test for correlation methods [10]. Its quasi-one-dimensional nature also allows for the application of powerful tensor network methods.

Two-Dimensional Graphene

Graphene, a two-dimensional carbon allotrope with a honeycomb lattice, represents systems with Dirac-like electronic spectra and topological characteristics [10]. Its accurate simulation requires methods capable of handling weak long-range dispersion interactions and subtle correlation effects that influence cohesive energy predictions [10]. The system tests a method's ability to describe covalent bonding in extended π-conjugated systems.

Three-Dimensional Lithium Hydride (LiH) Crystal

The LiH crystal, with its rock-salt structure, embodies a strongly ionic bonding character interspersed with covalent contributions [10]. This system challenges computational methods to accurately describe charge transfer, long-range electrostatic interactions, and the interplay between ionic and covalent bonding components across different lattice constants. It provides critical benchmarks for thermodynamic properties including cohesive energy, bulk modulus, and equilibrium lattice parameters [10].

Performance Comparison of Computational Methods

Methodological Approaches

Table 1: Overview of Computational Methods for Benchmark Systems

| Method Category | Specific Methods | Key Features | Applicable Systems |
|---|---|---|---|
| Neural Network Quantum State | Periodic Neural Network Ansatz [10] | Combines periodic boundary conditions with FermiNet architecture; uses VMC optimization with KFAC | H-chain, Graphene, LiH, HEG |
| Augmented Tensor Networks | MCA-MPS (Matchgate & Clifford Augmented MPS) [15] | Enhances MPS with classically simulatable quantum circuits; optimized via modified DMRG | H-chain, Quantum Many-Body Systems |
| Quantum Monte Carlo | LR-DMC [10], AFQMC [10] | Stochastic approaches; LR-DMC uses lattice regularization | H-chain, LiH |
| Traditional Ab Initio | HF [10], DFT [10] | Well-established; DFT accuracy depends on functional choice | All Systems |
| Experimental Reference | N/A | Provides ground-truth validation where available | All Systems |

Quantitative Performance Metrics

Table 2: Accuracy Comparison Across Methods and Systems

| System | Property | Neural Network Ansatz [10] | MCA-MPS [15] | LR-DMC [10] | AFQMC [10] | Traditional VMC [10] | Experimental Reference [10] |
|---|---|---|---|---|---|---|---|
| H-Chain | Dissociation Curve | Near-exact match with LR-DMC | Several orders improvement over MPS | Reference Accuracy | Reference Accuracy at TDL | Significant deviation | N/A |
| H-Chain (TDL) | Correlation Energy | Comparable to AFQMC/LR-DMC | N/A | Reference Accuracy | Reference Accuracy | N/A | N/A |
| Graphene | Cohesive Energy (eV/atom) | Within 0.1 eV/atom | N/A | N/A | N/A | N/A | 7.6 (Experimental) |
| LiH Crystal | Cohesive Energy | Excellent agreement | N/A | N/A | N/A | N/A | Excellent agreement |
| LiH Crystal | Bulk Modulus | Excellent agreement | N/A | N/A | N/A | N/A | Excellent agreement |
| LiH Crystal | Equilibrium Lattice Constant | Excellent agreement | N/A | N/A | N/A | N/A | Excellent agreement |

Method-Specific Performance Analysis

The neural network ansatz demonstrates remarkable versatility across all three benchmark systems. For the hydrogen chain, it achieves near-exact agreement with lattice-regularized diffusion Monte Carlo (LR-DMC) results, significantly outperforming traditional variational Monte Carlo (VMC) approaches [10]. The method's accuracy extends to two-dimensional systems, yielding a cohesive energy for graphene within 0.1 eV/atom of experimental values when twist-averaged boundary conditions (TABC) are combined with a structure factor correction [10]. For three-dimensional LiH crystals, the neural network approach reproduces multiple thermodynamic properties, including cohesive energy, bulk modulus, and equilibrium lattice constant, in excellent agreement with experimental data [10].

MCA-MPS (Matchgate & Clifford Augmented Matrix Product States) shows exceptional performance for one-dimensional quantum systems like hydrogen chains, improving ground-state energy accuracy by several orders of magnitude compared to standard MPS with identical bond dimensions [15]. This augmented approach synergistically combines the strengths of three distinct representations: MPS for low-entanglement states, Matchgates (Gaussian transformations) for fermionic Gaussian states, and Clifford circuits for stabilizer states [15]. The method optimization integrates seamlessly with DMRG algorithms, making it particularly valuable for strongly correlated quasi-one-dimensional systems [15].

Experimental Protocols and Workflows

Neural Network Ansatz for Solids

The neural network approach employs a sophisticated workflow combining periodicity-aware feature engineering with quantum Monte Carlo optimization.

Figure 1: Workflow for neural network ansatz applied to solid systems, showing the integration of periodic boundary conditions with neural network optimization [10].

Protocol Details:

- Periodic Feature Construction: The method transforms ordinary distance metrics into periodic equivalents using lattice vectors in real and reciprocal space, ensuring proper periodicity while maintaining asymptotic cusp form and continuity [10].

- Wavefunction Ansatz: The wavefunction is approximated by two Slater determinants (spin-up/spin-down) with periodic orbitals combining plane-wave phase factors \(e^{i\mathbf{k}\cdot\mathbf{r}}\) and collective molecular orbitals \(u_{\text{mol}}\) that incorporate electron-electron interactions [10].

- Optimization Framework: Variational Monte Carlo (VMC) sampling combined with Kronecker-factored curvature estimator (KFAC) optimization enables efficient training of the neural network parameters [10].

- Finite-Size Corrections: Twist-averaged boundary conditions (TABC) and a structure factor S(k) correction are employed to reduce finite-size errors in extended systems [10].
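The periodic feature construction in the first step of this protocol can be sketched with the formula quoted earlier in this article, d(r) = √(AMAᵀ)/2π with Mᵢⱼ = f²(ωᵢ)δᵢⱼ + g(ωᵢ)g(ωⱼ)(1-δᵢⱼ). The sketch assumes that ωᵢ = r·bᵢ with reciprocal vectors bᵢ, that ωᵢ is wrapped into [-π, π), and that √(AMAᵀ) means the square root of Σᵢⱼ (aᵢ·aⱼ)Mᵢⱼ. The polynomial forms of `f` and `g` below are one choice that is periodic, smooth at the cell boundary, and linear near the origin; they are assumptions of this example and should be checked against [10] before use.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def cross(u, v):
    return (u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0])


def reciprocal_vectors(a):
    """Reciprocal lattice vectors b_i with a_i . b_j = 2*pi*delta_ij."""
    volume = dot(a[0], cross(a[1], a[2]))
    return [tuple(2.0 * math.pi * c / volume
                  for c in cross(a[(i + 1) % 3], a[(i + 2) % 3]))
            for i in range(3)]


def wrap(w):
    """Map a phase into the interval [-pi, pi)."""
    return (w + math.pi) % (2.0 * math.pi) - math.pi


def f(w):   # assumed form: even, ~|w| near 0, zero slope at w = +-pi
    return abs(w) * (1.0 - abs(w / math.pi) ** 3 / 4.0)


def g(w):   # assumed form: odd, ~w near 0, vanishes at w = +-pi
    x = abs(w / math.pi)
    return w * (1.0 - 1.5 * x + 0.5 * x * x)


def periodic_distance(r, a):
    """Periodic generalization of |r| for lattice vectors a = [a1, a2, a3]."""
    b = reciprocal_vectors(a)
    w = [wrap(dot(r, bi)) for bi in b]
    total = 0.0
    for i in range(3):
        for j in range(3):
            m_ij = f(w[i]) ** 2 if i == j else g(w[i]) * g(w[j])
            total += dot(a[i], a[j]) * m_ij
    return math.sqrt(max(total, 0.0)) / (2.0 * math.pi)


lattice = [(3.0, 0.0, 0.0), (1.0, 3.0, 0.0), (0.0, 1.0, 4.0)]
r_small = (1e-4, -2e-4, 1.5e-4)
r_far = (0.7, -0.3, 1.1)
# Translate r_far by the lattice vector a1 - 2*a3
r_shifted = tuple(r_far[k] + lattice[0][k] - 2.0 * lattice[2][k] for k in range(3))

d_small = periodic_distance(r_small, lattice)
d_far = periodic_distance(r_far, lattice)
d_shifted = periodic_distance(r_shifted, lattice)
print(d_small, d_far, d_shifted)
```

Near the origin the feature reduces to the ordinary Euclidean distance, which preserves the electron-nucleus and electron-electron cusp behavior, while translations by any lattice vector leave it unchanged.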

MCA-MPS Optimization Framework

The MCA-MPS approach enhances traditional DMRG through synergistic integration of classically simulatable quantum circuits.

Figure 2: MCA-MPS optimization protocol showing sequential enhancement of matrix product states with Matchgate and Clifford circuits [15].

Protocol Details:

- Sequential Optimization: The method begins with standard MPS initialization and DMRG optimization, followed by sequential enhancement with Matchgate and Clifford circuits [15].

- Matchgate Application: Matchgate circuits implement SO(2N) transformations in Majorana fermion representation, enabling efficient description of Gaussian states and reduction of entanglement entropy [15].

- Clifford Enhancement: Clifford circuits apply Pauli-basis transformations to further reduce energy and entanglement, optimized within the DMRG framework before truncation via singular value decomposition [15].

- Hamiltonian Transformation: The combined transformation yields a Hamiltonian expressed as a combination of Pauli strings, \(H_{\text{MCA-MPS}} = \sum_i a_i P_i\), facilitating efficient computation [15].
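The reason a Clifford-transformed Hamiltonian stays a sum of Pauli strings is that conjugation by a Clifford gate maps each Pauli operator to another Pauli operator, up to a sign. The sketch below verifies this for the single-qubit Hadamard and phase gates with explicit 2×2 matrices, then applies the Hadamard rule to a small Pauli-sum dictionary. The dictionary format and the helper names are illustrative and are not the data structures used in [15].

```python
def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def dagger(a):
    return [[complex(a[j][i]).conjugate() for j in range(2)] for i in range(2)]


def conjugate_by(u, p):
    """Return U P U^dagger."""
    return matmul(matmul(u, p), dagger(u))


def is_close(a, b, sign=1.0, tol=1e-12):
    return all(abs(a[i][j] - sign * b[i][j]) < tol
               for i in range(2) for j in range(2))


X = [[0, 1], [1, 0]]
Y = [[0, -1j], [1j, 0]]
Z = [[1, 0], [0, -1]]
s = 2 ** -0.5
H = [[s, s], [s, -s]]       # Hadamard gate
S = [[1, 0], [0, 1j]]       # phase gate

# Hadamard conjugation: X -> Z, Y -> -Y, Z -> X
HADAMARD_RULE = {"I": ("I", 1), "X": ("Z", 1), "Y": ("Y", -1), "Z": ("X", 1)}


def apply_hadamard(hamiltonian, qubit):
    """Conjugate every Pauli string in {string: coefficient} by H on one qubit."""
    transformed = {}
    for pauli_string, coeff in hamiltonian.items():
        new_letter, sign = HADAMARD_RULE[pauli_string[qubit]]
        new_string = pauli_string[:qubit] + new_letter + pauli_string[qubit + 1:]
        transformed[new_string] = transformed.get(new_string, 0.0) + sign * coeff
    return transformed


toy_hamiltonian = {"XZ": 0.5, "YY": -0.2, "ZI": 1.0}   # made-up coefficients
rotated = apply_hadamard(toy_hamiltonian, qubit=0)
print(rotated)
```

The number of terms is unchanged by the transformation, so the cost of evaluating the Hamiltonian in the DMRG sweep does not grow when Clifford layers are added.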

Table 3: Essential Computational Tools for Quantization Accuracy Research

| Tool/Category | Specific Implementation | Function/Purpose | System Applicability |
|---|---|---|---|
| Neural Network Ansatz | Periodic Neural Network [10] | High-accuracy wavefunction approximation for solids | H-chain, Graphene, LiH |
| Tensor Networks | MCA-MPS [15] | Enhanced correlation handling for 1D systems | H-chain, Quasi-1D Systems |
| Quantum Monte Carlo | VMC with KFAC [10] | Neural network parameter optimization | All Systems |
| Periodic Boundary Treatment | TABC with S(k) correction [10] | Finite-size error reduction | Graphene, LiH, H-chain |
| Hamiltonian Transformation | Pauli String Representation [15] | Efficient computation of transformed Hamiltonians | H-chain, Fermionic Systems |
| Classical Simulation | Matchgate & Clifford Circuits [15] | Enhanced representation within DMRG | H-chain, Quantum Many-Body |

This comparison guide demonstrates that recently developed neural network and augmented tensor network methods achieve remarkable accuracy across diverse benchmark systems, often surpassing traditional ab initio approaches and closely matching experimental references where available. The neural network ansatz shows particular promise as a versatile approach: it handles systems of every dimensionality, from one-dimensional hydrogen chains to three-dimensional LiH crystals, while maintaining high accuracy. For one-dimensional systems specifically, MCA-MPS improves ground-state energy accuracy by several orders of magnitude over standard tensor network methods. These advanced methods address fundamental challenges in quantum materials simulation, including electron correlation handling, finite-size error correction, and balanced treatment of diverse bonding types. As these methodologies continue to mature, they set new standards for accuracy in computational materials science and quantum chemistry, enabling more reliable prediction of material properties and guiding the exploration of novel quantum phenomena.

In the pursuit of simulating nature at the quantum level, researchers are presented with a fundamental choice: how to represent molecules and materials in a form a quantum computer can process. This decision, centered on the method of quantization, directly dictates the computational resources required and the complexity of the problems that can be tackled. As quantum hardware advances, understanding the trade-offs between first and second quantization, as well as the emergence of hybrid quantum-classical algorithms, is crucial for navigating the path toward quantum utility in chemistry and materials science.

Mapping Matter to Qubits: A Tale of Two Quantization Methods

The formalism used to encode a chemical system into a quantum algorithm sets the stage for all subsequent computations. The two primary approaches, first and second quantization, have distinct strengths and resource profiles, making them suitable for different classes of problems.

Second Quantization: The Established Framework

In second quantization, the electronic wavefunction is represented using creation and annihilation operators that act on occupation number states (e.g., whether a specific spin orbital is occupied or unoccupied). This formalism naturally encodes the antisymmetry of electrons [12]. A key advantage is its compatibility with sophisticated, compact quantum chemistry basis sets, such as Gaussian-type orbitals, which are the standard in classical computational chemistry [12]. This allows for the accurate simulation of active spaces in molecules, a common task in the field [12].
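A small numerical check makes the antisymmetry statement concrete. The sketch below builds annihilation operators with the Jordan-Wigner mapping (one common qubit encoding, chosen here as an assumption; the text does not name a specific mapping) and exposes an anticommutator helper for verifying the fermionic relations.

```python
import numpy as np
from functools import reduce

I2 = np.eye(2)
Z = np.diag([1.0, -1.0])
LOWER = np.array([[0.0, 1.0],
                  [0.0, 0.0]])  # |0><1|: empties an occupied spin orbital

def annihilation(p, n_orbitals):
    """Jordan-Wigner matrix for a_p on n_orbitals spin orbitals (one qubit each)."""
    factors = [Z] * p + [LOWER] + [I2] * (n_orbitals - p - 1)
    return reduce(np.kron, factors)

def anticommutator(a, b):
    return a @ b + b @ a

if __name__ == "__main__":
    n = 3
    ops = [annihilation(p, n) for p in range(n)]
    identity = np.eye(2**n)
    for p in range(n):
        for q in range(n):
            # {a_p, a_q^dagger} = delta_pq and {a_p, a_q} = 0
            expected = identity if p == q else np.zeros_like(identity)
            assert np.allclose(anticommutator(ops[p], ops[q].T), expected)
            assert np.allclose(anticommutator(ops[p], ops[q]), 0.0)
    print("anticommutation relations hold for", n, "spin orbitals")
```

The string of Z factors in front of each lowering operator is what carries the sign changes that make the operators anticommute, so the antisymmetry is built into the operators rather than into the stored state.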

The resource requirements in second quantization scale with the number of orbitals, \(D\). The number of qubits required is \(O(D)\), while the computational cost (measured in Toffoli gates for fault-tolerant algorithms) depends on the specific linear-combination-of-unitaries (LCU) decomposition used, such as sparse, double factorization, or tensor hypercontraction [12].

First Quantization: A Scalable Alternative

First quantization takes a different approach, representing the system by storing the coordinates of each of the \(N\) electrons in a discrete basis [12]. The number of qubits required is \(O(N \log D)\), so the qubit count grows logarithmically rather than linearly with the number of orbitals, an exponential improvement in that dependence [12]. This makes first quantization exceptionally powerful for high-accuracy simulations that need a large number of orbitals to approach the continuum limit, such as modeling materials with plane-wave basis sets [12].

Recent algorithmic breakthroughs have extended first quantization beyond simple plane waves to work with any basis set, including molecular orbitals [12]. For molecular orbitals, this can lead to a polynomial speedup in Toffoli count. For dual plane waves (DPW), the resource savings can be dramatic, reaching orders of magnitude improvement in both logical qubit and Toffoli counts over second quantization counterparts [12].
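The two scaling laws are easy to compare with a back-of-the-envelope calculation. The sketch below counts only the system register at leading order (one qubit per orbital versus one ceil(log2 D)-qubit register per electron); ancilla qubits and algorithm-specific overheads are ignored, and the function names and example sizes are illustrative assumptions rather than figures from [12].

```python
def qubits_second_quantization(n_orbitals):
    """One qubit per spin orbital: O(D)."""
    return n_orbitals

def qubits_first_quantization(n_electrons, n_orbitals):
    """One register of ceil(log2 D) qubits per electron: O(N log D)."""
    bits_per_electron = (n_orbitals - 1).bit_length()  # integer ceil(log2 D)
    return n_electrons * bits_per_electron

if __name__ == "__main__":
    for n_electrons, n_orbitals in [(10, 16), (10, 1024), (50, 10**6)]:
        print(n_electrons, n_orbitals,
              qubits_second_quantization(n_orbitals),
              qubits_first_quantization(n_electrons, n_orbitals))
```

For a compact active space (10 electrons in 16 orbitals) second quantization needs fewer system qubits, while for a large basis (10 electrons in 1024 orbitals) first quantization does, which matches the division of labor described above.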

Table 1: Comparison of Quantization Methods for Quantum Simulation

| Feature | Second Quantization | First Quantization |

|---|---|---|

| Qubit Scaling | \(O(D)\) [12] | \(O(N \log D)\) [12] |

| Strengths | Compatible with standard quantum chemistry basis sets (e.g., GTO); ideal for active space calculations [12] | Excellent for large systems with many orbitals; efficient for plane-wave and dual plane-wave basis sets [12] |

| Key Algorithms | QPE with qubitization using sparse, double factorization, or tensor hypercontraction LCU [12] | QPE with qubitization using a sparse LCU decomposition [12] |

| Basis Set Flexibility | High (any basis function) [12] | High (new methods work with any basis set) [12] |

Beyond Fault-Tolerance: Hybrid Algorithms for Today's Quantum Hardware

While fault-tolerant quantum algorithms like Quantum Phase Estimation (QPE) offer a long-term target, the current era of Noisy Intermediate-Scale Quantum (NISQ) hardware has spurred the development of hybrid quantum-classical algorithms. These methods delegate the most computationally demanding tasks to the quantum processor while using classical computers for optimization and control.

The VQE Family for Electronic Structure

The Variational Quantum Eigensolver (VQE) and its adaptive variant, ADAPT-VQE, are cornerstone hybrid algorithms for finding ground-state energies of molecular systems [16]. They operate by preparing a parameterized quantum circuit (ansatz) on the quantum computer, measuring the expectation value of the Hamiltonian, and using a classical optimizer to minimize this energy.
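The prepare, measure, and optimize loop can be sketched with a classically simulated single qubit. Everything here is a toy assumption: the Hamiltonian Z + 0.5 X, the one-parameter Ry ansatz, and gradient descent with the parameter-shift rule as the classical optimizer. On hardware, `energy` would be estimated from repeated measurements instead of a matrix product.

```python
import numpy as np

X = np.array([[0.0, 1.0], [1.0, 0.0]])
Z = np.array([[1.0, 0.0], [0.0, -1.0]])
HAMILTONIAN = Z + 0.5 * X  # toy one-qubit Hamiltonian (illustrative)

def ansatz(theta):
    """State Ry(theta)|0> = [cos(theta/2), sin(theta/2)]."""
    return np.array([np.cos(theta / 2.0), np.sin(theta / 2.0)])

def energy(theta):
    """Expectation value <psi(theta)|H|psi(theta)>."""
    psi = ansatz(theta)
    return float(psi @ HAMILTONIAN @ psi)

def parameter_shift_gradient(theta):
    """Exact gradient for a single Pauli-rotation parameter."""
    return 0.5 * (energy(theta + np.pi / 2.0) - energy(theta - np.pi / 2.0))

def run_vqe(theta=0.1, lr=0.4, n_iter=200):
    """Classical outer loop: gradient descent on the measured energy."""
    for _ in range(n_iter):
        theta -= lr * parameter_shift_gradient(theta)
    return theta, energy(theta)

if __name__ == "__main__":
    theta_opt, e_vqe = run_vqe()
    e_exact = np.linalg.eigvalsh(HAMILTONIAN)[0]
    print(theta_opt, e_vqe, e_exact)
```

ADAPT-VQE differs in that the ansatz itself grows during the optimization, one operator at a time; the fixed ansatz above shows only the basic variational loop.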

A key innovation for improving accuracy without increasing quantum resource demands is the integration of methods like double unitary coupled cluster (DUCC) theory. DUCC simplifies the Hamiltonian representation, enabling quantum simulations to recover dynamical correlation energy outside a defined active space, which is particularly valuable for systems with strongly correlated electrons [16].

Driving Molecular Dynamics with Quantum Computers

Quantum computers are not limited to static energy calculations. A rapidly growing application is their use in nonadiabatic molecular dynamics (NAMD), which simulates excited-state processes critical to photochemistry and photobiology [17].

In hybrid quantum-classical NAMD, the quantum computer's role is to compute key electronic properties—energies, energy gradients, and nonadiabatic couplings—at each step of a classical nuclear trajectory [17]. These properties are then fed into classical dynamics frameworks, such as trajectory surface hopping (TSH) or Ehrenfest dynamics [17].

Proof-of-concept demonstrations have shown the viability of this approach. For instance:

- A VQE-based framework coupled with the SHARC molecular dynamics package has successfully simulated the photoisomerization of methanimine and excited-state relaxation in ethylene [17].

- Another study used quantum subspace expansion and quantum equation-of-motion algorithms within a TSH framework to model the H + H₂ collision system [17].

These algorithms provide a distinct advantage: direct access to CI-type wavefunctions. This allows for efficient calculation of wavefunction overlaps between time steps, a requirement for propagating electronic coefficients in many dynamics schemes, which is less reliable with machine-learning techniques that lack explicit wavefunctions [17].

Figure 1: Workflow of a hybrid quantum-classical algorithm for nonadiabatic molecular dynamics. The quantum computer computes electronic properties at each nuclear geometry, which drive the classical trajectory.

The Researcher's Toolkit: Essential Methods for Quantum Chemistry

The field leverages a suite of advanced algorithms and computational techniques, each designed to address specific challenges in quantum simulation.

Table 2: Key Algorithms and Their Functions in Quantum Chemistry

| Algorithm/Method | Primary Function | Key Feature |

|---|---|---|

| Quantum Phase Estimation (QPE) [12] | Estimates molecular energies to near-exact accuracy on fault-tolerant quantum computers. | Considered a leading approach for its resource efficiency in fault-tolerant settings [12]. |

| Variational Quantum Eigensolver (VQE) [16] [17] | Finds approximate ground-state energies on NISQ devices. | Hybrid quantum-classical approach; resilient to some noise [16] [17]. |

| ADAPT-VQE [16] | Dynamically constructs an ansatz for VQE. | Problem-tailored; improves convergence and accuracy compared to fixed ansatzes [16]. |

| Double Unitary Coupled Cluster (DUCC) [16] | Creates effective Hamiltonians for quantum simulations. | Increases accuracy without significantly increasing quantum processor load; improves treatment of electron correlation [16]. |

| Quantum Subspace Expansion (QSE) [17] | Computes excited-state properties and wavefunction overlaps. | Used to provide inputs for nonadiabatic molecular dynamics simulations [17]. |

Experimental Protocols: Benchmarking Quantum Simulations

Demonstrating the accuracy and utility of quantum simulations requires rigorous benchmarking against classical methods and experimental data.

Protocol: Quantum Simulation of Ground States with DUCC and ADAPT-VQE

This protocol, as implemented by researchers at PNNL, is designed for accurate simulation of strongly correlated molecular systems [16].

- System Definition: Define the molecular system and its active space.

- Hamiltonian Downfolding: Apply DUCC theory to the system to construct an effective Hamiltonian that incorporates dynamical correlations from outside the active space.

- Ansatz Preparation: Use the ADAPT-VQE algorithm to prepare a problem-tailored ansatz wavefunction on the quantum processor.

- Measurement & Optimization: Measure the expectation value of the DUCC effective Hamiltonian and use a classical optimizer to variationally minimize the energy.

- Benchmarking: Compare the computed ground-state energy and properties against results from full configuration interaction (FCI) or other high-accuracy classical methods to assess the accuracy recovery from the DUCC downfolding [16].

Protocol: Hybrid Quantum-Classical Nonadiabatic Molecular Dynamics

This protocol outlines the steps for simulating light-induced molecular processes, such as photoisomerization [17].

1. Initialization: Generate initial nuclear geometries and velocities, typically sampled from the ground-state distribution, and place the system on the excited electronic state reached by photoexcitation.

2. Quantum Electronic Structure: For the current nuclear geometry \(R(t)\), use a hybrid algorithm (e.g., VQE/VQD or QSE) on the quantum computer to compute:

   - The energies \(E_m(R)\) of the relevant electronic states.

   - The gradients of these energies, \(\nabla E_m(R)\).

   - The nonadiabatic coupling vectors (NACVs) \(d_{mn}(R)\) between states.

3. Classical Propagation:

   - Nuclear Motion: Integrate Newton's equations of motion for the nuclei using the computed forces (taken from a single active surface in TSH or from a state average in Ehrenfest dynamics) [17].

   - Electronic Propagation: Propagate the coefficients of the electronic wavefunction according to the time-dependent Schrödinger equation, using the computed energies and NACVs.

4. Stochastic Hopping (for TSH): In trajectory surface hopping, decide whether a stochastic hop between electronic states occurs, using hopping probabilities computed from the electronic coefficients and the NACVs.

5. Iteration: Repeat steps 2-4 for the duration of the dynamics simulation to generate a trajectory.

Figure 2: Detailed workflow for a single time step in Trajectory Surface Hopping (TSH) dynamics, driven by electronic properties from a quantum computer.
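A single surface-hopping time step can be sketched as follows, in atomic units with ħ = 1. This is a simplified illustration under several assumptions: `electronic_structure` is a hypothetical callback standing in for the quantum-computer evaluation of energies, gradients, and NACVs; hops use the fewest-switches probability; velocities are rescaled uniformly after a hop rather than along the coupling vector; and decoherence corrections are omitted.

```python
import numpy as np

def propagate_coefficients(c, energies, nac, velocity, dt):
    """Advance electronic coefficients by dt.

    nac[k, j] is the coupling vector d_kj (antisymmetric in k and j),
    so nac has shape (n_states, n_states, n_dof).
    """
    coupling = nac @ velocity                   # v . d_kj, real antisymmetric
    h_eff = np.diag(energies) - 1j * coupling   # Hermitian effective Hamiltonian
    w, u = np.linalg.eigh(h_eff)
    return u @ (np.exp(-1j * w * dt) * (u.conj().T @ c))

def hop_probabilities(c, nac, velocity, active, dt):
    """Fewest-switches probabilities of hopping from `active` to each state."""
    coupling = nac @ velocity
    flux = -2.0 * np.real(np.conj(c) * c[active] * coupling[:, active])
    population = max(abs(c[active]) ** 2, 1e-12)
    g = np.clip(dt * flux / population, 0.0, 1.0)
    g[active] = 0.0
    return g

def tsh_step(x, v, c, active, electronic_structure, mass, dt, rng):
    """One step: velocity Verlet, coefficient propagation, stochastic hop."""
    # A production code would cache this call from the previous step.
    _, grads, _ = electronic_structure(x)
    v_half = v - 0.5 * dt * grads[active] / mass
    x_new = x + dt * v_half
    energies, grads, nac = electronic_structure(x_new)
    v_new = v_half - 0.5 * dt * grads[active] / mass

    c_new = propagate_coefficients(c, energies, nac, v_new, dt)
    g = hop_probabilities(c_new, nac, v_new, active, dt)
    target = int(np.searchsorted(np.cumsum(g), rng.random(), side="right"))
    if target < len(g) and target != active:
        kinetic = 0.5 * np.sum(mass * v_new**2)
        gap = energies[target] - energies[active]
        if kinetic > 0.0 and kinetic > gap:      # otherwise the hop is frustrated
            v_new = v_new * np.sqrt(1.0 - gap / kinetic)  # conserve total energy
            active = target
    return x_new, v_new, c_new, active

def toy_model(x):
    """Two states, one coordinate, constant energies and coupling (illustrative)."""
    energies = np.array([-0.01, 0.01])
    grads = np.zeros((2, 1))
    nac = np.zeros((2, 2, 1))
    nac[0, 1, 0], nac[1, 0, 0] = 0.5, -0.5
    return energies, grads, nac
```

A trajectory is obtained by calling `tsh_step` repeatedly, for example with `x = np.array([0.0])`, `v = np.array([0.01])`, `c = np.array([1, 0], dtype=complex)`, `active = 0`, and `rng = np.random.default_rng(0)`.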

Performance and Resource Analysis

The choice of algorithm and quantization method has a profound impact on the computational resources required, a critical consideration for both near-term and fault-tolerant applications.

Algorithmic Performance and Hardware Progress

Recent studies highlight the tangible progress in quantum simulation:

- IonQ Collaboration: A study on protonated 2,2'-bipyridine used an ion-trap quantum computer to emulate quantum nuclear wavepacket dynamics. The extracted vibrational eigenenergies agreed with classical results "within a fraction of a kcal/mol," suggesting chemical accuracy was achieved [18].

- IonQ's QC-AFQMC: In collaboration with an automotive manufacturer, IonQ demonstrated accurate computation of atomic-level forces using a quantum-classical algorithm, reporting results more accurate than those from classical methods for modeling carbon capture materials [7].

- IBM's Hardware Roadmap: IBM's 120-qubit Nighthawk processor, designed for quantum advantage, is projected to execute circuits with 5,000 two-qubit gates by the end of 2025, a 30% increase in complexity over previous processors. Future iterations aim for up to 15,000 gates by 2028 [19]. Advances in dynamic circuits have already shown a 24% increase in accuracy at the 100+ qubit scale [19].

Resource Estimates for Fault-Tolerant Applications

For fault-tolerant quantum computing, resources are often measured in the number of logical qubits and the number of non-Clifford gates (e.g., Toffoli gates).

- First vs. Second Quantization: For molecular orbital calculations, the first quantization approach can achieve a polynomial speedup in Toffoli count with respect to the number of basis functions, \(D\) [12]. It also naturally provides an exponential improvement in how the qubit count scales with \(D\).

- Dual Plane Waves: When using dual plane wave basis sets in first quantization, the resource savings become massive, with orders of magnitude improvement in both logical qubit and Toffoli counts compared to the best-known second quantization counterparts [12]. In some instances, this new first quantization approach can even match or beat the resource requirements of earlier first quantization plane wave algorithms that avoided classical data loading [12].

The quest for quantum accuracy in simulating chemical systems is a journey of navigating complexity through innovative algorithms. Second quantization offers a direct path to studying active spaces with sophisticated chemical basis sets. In contrast, first quantization provides a scalable pathway to high-accuracy simulations requiring a large number of orbitals, with recent advances dramatically reducing resource overhead. Meanwhile, hybrid quantum-classical methods offer an immediate, practical bridge to tackle complex problems like nonadiabatic dynamics on today's quantum hardware. The continuous improvement in both algorithms and hardware fidelity signals a rapidly closing gap between theoretical potential and practical quantum advantage in computational chemistry and materials science.

Computational Arsenal: Methodologies and Their Real-World Applications

Computational predictions of molecular and material properties are fundamental to advancements in drug design and materials science. The central challenge in this field lies in balancing computational cost with quantum mechanical accuracy. Density Functional Theory (DFT) has emerged as the workhorse of computational chemistry due to its favorable balance of efficiency and accuracy for large systems, modeling properties using electron density rather than complex many-electron wavefunctions [20] [21]. However, its accuracy is intrinsically limited by the approximate nature of the exchange-correlation functional, which describes electron-electron interactions [20]. For many chemical applications, particularly those involving weak intermolecular interactions, transition states, or excited states, these limitations can lead to qualitatively incorrect predictions [20].

In pursuit of higher accuracy, coupled-cluster theory, especially the CCSD(T) method often called the "gold standard of quantum chemistry," provides systematically improvable solutions to the electronic Schrödinger equation [22]. Unfortunately, its computational cost, which scales steeply with system size (approximately as the seventh power of the number of basis functions for CCSD(T)), renders it prohibitive for many realistic systems encountered in drug development [23]. This creates a persistent accuracy-efficiency gap that hinders predictive computational science.
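To see how quickly seventh-power scaling becomes prohibitive, consider the cost ratio when the number of basis functions \(N\) is doubled, assuming the leading-order \(N^7\) term dominates and prefactors are unchanged:

```latex
\frac{t(2N)}{t(N)} \approx \frac{(2N)^7}{N^7} = 2^7 = 128,
\qquad
\frac{t(10N)}{t(N)} \approx 10^7 .
```

Under that assumption, a calculation that takes one hour would take more than five days after doubling the basis, and a tenfold larger system is out of reach by seven orders of magnitude.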

The emergence of Δ-machine learning (Δ-ML) presents a transformative solution to this long-standing problem. This approach leverages machine learning to learn the difference ("Δ") between high-level (coupled-cluster) and low-level (DFT) calculations, effectively correcting DFT potential energy surfaces to near-coupled-cluster quality at a fraction of the computational cost [23] [24]. This article provides a comprehensive comparison of this methodology against traditional computational approaches, detailing experimental protocols, performance metrics, and practical implementation for research scientists.

Theoretical Foundation: From DFT to Δ-Machine Learning

Density Functional Theory: The Efficient Workhorse

DFT revolutionized computational chemistry by simplifying the many-electron problem from a complex wavefunction dependent on 3N spatial coordinates to a tractable problem dependent on just three spatial coordinates through the electron density, n(r) [20]. This theoretical foundation rests on the Hohenberg-Kohn theorems, which establish that the ground-state electron density uniquely determines all molecular properties [20] [21]. In practice, DFT is implemented through the Kohn-Sham equations, which replace the interacting electron system with a fictitious non-interacting system moving in an effective potential [20].
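For reference, the Kohn-Sham equations mentioned above take the following standard textbook form (atomic units; the orbital symbols \(\varphi_i\) and potential labels are notation introduced here, not taken from [20]):

```latex
\left[-\tfrac{1}{2}\nabla^2 + v_{\text{eff}}(\mathbf{r})\right]\varphi_i(\mathbf{r})
  = \varepsilon_i\,\varphi_i(\mathbf{r}),
\qquad
n(\mathbf{r}) = \sum_{i}^{\text{occ}} \left|\varphi_i(\mathbf{r})\right|^2,

v_{\text{eff}}(\mathbf{r}) = v_{\text{ext}}(\mathbf{r})
  + \int \frac{n(\mathbf{r}')}{|\mathbf{r}-\mathbf{r}'|}\,d\mathbf{r}'
  + \frac{\delta E_{\text{xc}}[n]}{\delta n(\mathbf{r})} .
```

Because \(v_{\text{eff}}\) depends on the density that the orbitals themselves produce, the equations are solved self-consistently. The only term not known exactly is the exchange-correlation functional \(E_{\text{xc}}[n]\).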

The critical limitation of DFT stems from the exchange-correlation functional, which must be approximated as its exact form remains unknown [20]. Different functionals—including Local Density Approximation (LDA), Generalized Gradient Approximation (GGA), and hybrid functionals like B3LYP and PBE0—offer different trade-offs between accuracy, computational cost, and applicability to specific chemical systems [20] [23]. Despite remarkable success across numerous applications, standard DFT formulations often struggle with van der Waals interactions, charge transfer excitations, and strongly correlated systems [20] [21].

Coupled-Cluster Theory: The Quantum Chemical Gold Standard

The coupled-cluster hierarchy provides a systematic approach to the exact many-body solution of the electronic Schrödinger equation, producing size-extensive energies that converge rapidly with increasing excitation levels [22]. CCSD(T) specifically incorporates single and double excitations with a perturbative treatment of triple excitations, achieving chemical accuracy (within 1 kcal/mol) for many systems where dynamic correlation dominates [22].

As a wavefunction-based method, coupled-cluster theory provides not only highly accurate energies but also superior electron densities and other molecular properties compared to approximate DFT functionals [22]. The method's principal disadvantage remains its computational expense, which limits application to systems of approximately 50-100 atoms with current computing resources, creating the need for innovative approaches like Δ-ML for larger, pharmacologically relevant molecules.

Δ-Machine Learning: The Synthesis

The Δ-machine learning approach synthesizes the efficiency of DFT with the accuracy of coupled-cluster theory through a simple yet powerful equation:

\[ V_{LL \to CC} = V_{LL} + \Delta V_{CC-LL} \]

where \(V_{LL}\) represents the potential energy surface from low-level (DFT) calculations, \(\Delta V_{CC-LL}\) is the correction potential learned from high-level coupled-cluster data, and \(V_{LL \to CC}\) is the final corrected potential approaching coupled-cluster accuracy [23]. This approach is computationally efficient because the correction term \(\Delta V_{CC-LL}\) is typically more smoothly varying than the original PES, requiring a less complex machine learning model [23]. The method can be applied to correct not only energies but also atomic forces, enabling accurate molecular dynamics simulations [24].
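The correction scheme can be illustrated on a one-dimensional toy problem. In the sketch below, two slightly different Morse curves stand in for the low-level and high-level surfaces, and a degree-5 polynomial fitted to ten points plays the role of the machine learning model. All parameters and function names are invented for illustration and do not come from [23] or [24].

```python
import numpy as np

def v_low(r):
    """Toy low-level PES (stand-in for DFT)."""
    return 0.16 * (1.0 - np.exp(-1.00 * (r - 1.40))) ** 2

def v_high(r):
    """Toy high-level PES (stand-in for coupled cluster)."""
    return 0.17 * (1.0 - np.exp(-1.05 * (r - 1.42))) ** 2

def fit_delta(r_train, degree=5):
    """Fit the smooth difference high - low on a small training set."""
    delta = v_high(r_train) - v_low(r_train)
    return np.polynomial.Polynomial.fit(r_train, delta, degree)

def v_corrected(r, delta_model):
    """Corrected surface: low-level PES plus learned correction."""
    return v_low(r) + delta_model(r)

def rmse(a, b):
    return float(np.sqrt(np.mean((a - b) ** 2)))

if __name__ == "__main__":
    r_train = np.linspace(1.0, 3.0, 10)    # few expensive high-level points
    r_test = np.linspace(1.0, 3.0, 200)    # dense grid for evaluation
    model = fit_delta(r_train)
    print("base RMSE     :", rmse(v_low(r_test), v_high(r_test)))
    print("corrected RMSE:", rmse(v_corrected(r_test, model), v_high(r_test)))
```

The design point is that only ten high-level evaluations are needed, because the model has to learn the small, smooth difference rather than the full surface.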

Performance Comparison: Quantitative Assessment Across Chemical Systems

Molecular Systems: Ethanol as a Benchmark Case

Recent investigations have systematically evaluated the Δ-ML approach across multiple DFT functionals using ethanol as a benchmark molecule. The performance was assessed using root-mean-square error (RMSE) analysis for training and test datasets, along with fidelity tests including energetics of stationary points, normal-mode frequencies, and torsional potentials [23].

Table 1: Performance of Δ-ML Approach for Ethanol Across Different Functionals

| Functional | Base DFT RMSE (kcal/mol) | Δ-ML Corrected RMSE (kcal/mol) | Improvement Factor |

|---|---|---|---|

| B3LYP | 1.85 | 0.15 | 12.3x |

| PBE | 2.37 | 0.21 | 11.3x |

| M06 | 1.92 | 0.18 | 10.7x |

| M06-2X | 1.64 | 0.14 | 11.7x |

| PBE0+MBD | 1.71 | 0.16 | 10.7x |

The results demonstrate that Δ-ML produces similarly dramatic improvements across all tested functionals, reducing errors to approximately 0.14-0.21 kcal/mol, well within the 1 kcal/mol chemical accuracy threshold [23]. This consistency highlights the method's robustness across different theoretical starting points. Interestingly, significant improvement over DFT gradients was achieved even when coupled-cluster gradients were not used to correct the low-level potential energy surface [23].
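The improvement factors in the table are simply the ratio of base to corrected RMSE. The short sketch below recomputes them from the tabulated values; the dictionary layout and function name are illustrative.

```python
# (base DFT RMSE, Delta-ML corrected RMSE) in kcal/mol, copied from the table above
RMSE = {
    "B3LYP": (1.85, 0.15),
    "PBE": (2.37, 0.21),
    "M06": (1.92, 0.18),
    "M06-2X": (1.64, 0.14),
    "PBE0+MBD": (1.71, 0.16),
}

def improvement_factor(base, corrected):
    """Ratio of base to corrected error."""
    return base / corrected

if __name__ == "__main__":
    for functional, (base, corrected) in RMSE.items():
        print(f"{functional:9s} {improvement_factor(base, corrected):.1f}x")
```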

Solid-State Systems: Lattice Dynamics Applications

The Δ-ML approach has been successfully extended to solid-state systems, demonstrating particular value for predicting lattice dynamics with coupled-cluster accuracy. For carbon diamond and lithium hydride solids, machine-learned force fields (MLFFs) trained on coupled-cluster theory through delta-learning produced phonon dispersions and vibrational densities of states that showed superior agreement with experiment compared to pure DFT calculations [24].

Table 2: Lattice Dynamics Performance for Solid-State Systems

| System | Method | Optical Phonon Frequency Accuracy | Anharmonic Effects |

|---|---|---|---|

| Carbon Diamond | DFT-PBE | Underestimates experimental values | Limited treatment |

| Carbon Diamond | Δ-ML-CC | Agreement with experiment | Improved description |

| Lithium Hydride | DFT-PBE | Underestimates experimental values | Limited treatment |

| Lithium Hydride | Δ-ML-CC | Agreement with experiment | Accurate CC-level estimation |

Compared to DFT, MLFFs trained on coupled-cluster theory yield higher vibrational frequencies for optical modes, agreeing better with experimental measurements [24]. Furthermore, these machine-learned force fields successfully capture anharmonic effects on the vibrational density of states of lithium hydride at the level of coupled-cluster theory [24].

Limitations and Boundary Conditions

While Δ-ML demonstrates remarkable performance across diverse systems, its accuracy depends on several critical factors. The quality of the coupled-cluster reference data remains paramount, requiring careful attention to basis set completeness and the treatment of core electron correlations [22]. Additionally, the smoothness of the difference potential ΔVCC–LL determines the efficiency of the machine learning representation—systems with strong static correlation or multireference character may present challenges where the difference potential varies rapidly [22].

For molecular systems where coupled-cluster calculations are prohibitively expensive, the hierarchical nature of coupled-cluster theory provides a systematic convergence pathway. Studies indicate that the electron density converges rapidly when ascending the coupled-cluster ladder, though less rapidly than the energy itself since energy errors are second-order in the wavefunction while density errors are first-order [22].

Experimental Protocols and Implementation

Computational Workflow for Δ-ML Potential Development

Implementing the Δ-ML approach requires a structured workflow that integrates quantum chemistry calculations with machine learning techniques. The process begins with generating a diverse set of molecular configurations that adequately sample the relevant regions of configuration space, particularly around minima and transition states [23].

For each configuration, low-level DFT single-point energy and gradient calculations are performed, followed by high-level coupled-cluster calculations on a strategically chosen subset of configurations [23]. The critical difference values \(\Delta = E_{\text{CC}} - E_{\text{DFT}}\) are computed and used to train a machine learning model. Permutationally invariant polynomials (PIPs) have proven particularly effective for this purpose, as they naturally incorporate molecular symmetry and demonstrate excellent data efficiency [23]. The final potential combines the base DFT potential with the machine-learned correction.

Research Reagent Solutions: Essential Computational Tools

Table 3: Essential Software and Methods for Δ-ML Implementation

| Tool Category | Specific Examples | Function | Application Context |

|---|---|---|---|

| Quantum Chemistry Packages | Gaussian, VASP, Quantum ESPRESSO | Perform DFT and coupled-cluster calculations | Generate low and high-level reference data |

| Machine Learning Potentials | Permutationally Invariant Polynomials (PIPs), Neural Networks | Represent the Δ correction potential | Create accurate, efficient corrections |

| Δ-ML Software | Custom codes, ROBOSURFER | Automate the correction process | Enable high-throughput PES development |

| Validation Tools | Phonon dispersion analysis, vibrational spectra comparison | Assess quality of corrected potentials | Verify experimental agreement |

The Permutationally Invariant Polynomials (PIPs) approach deserves special emphasis as it represents a particularly efficient linear regression method that incorporates molecular symmetry by construction [23]. The potential is represented as \(V = \sum_i c_i\, p_i(\mathbf{y})\), where \(c_i\) are linear coefficients, \(p_i\) are permutationally invariant polynomials, and \(\mathbf{y}\) are Morse variables (e.g., \(y_{\alpha\beta} = \exp(-r_{\alpha\beta}/\lambda)\)) [23]. This approach has demonstrated performance competitive with more complex neural network methods while offering substantially faster evaluation speeds [23].
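A minimal sketch of the PIP idea for an A2B molecule such as water is shown below. It builds Morse variables from the three internuclear distances, forms all polynomials up to degree 2 that are invariant under exchange of the two identical atoms, and fits the linear coefficients by least squares. The range parameter value, the hand-written basis, and the function names are assumptions for illustration; production PIP codes generate much larger bases automatically.

```python
import numpy as np

LAMBDA = 2.0  # Morse range parameter in bohr (assumed value)

def morse_variables(coords):
    """coords: (3, 3) array for atoms [H1, H2, O]; returns (y_HH, y_OH1, y_OH2)."""
    h1, h2, o = coords
    r = np.array([np.linalg.norm(h1 - h2),
                  np.linalg.norm(o - h1),
                  np.linalg.norm(o - h2)])
    return np.exp(-r / LAMBDA)

def pip_basis(coords):
    """Polynomials up to degree 2, invariant under swapping the two H atoms."""
    y_hh, y_a, y_b = morse_variables(coords)
    s, p = y_a + y_b, y_a * y_b   # symmetric functions of the two O-H variables
    return np.array([1.0, y_hh, s, y_hh**2, y_hh * s, s**2, p])

def fit_pip(geometries, energies):
    """Linear least squares for the coefficients c_i in V = sum_i c_i p_i(y)."""
    design = np.array([pip_basis(g) for g in geometries])
    coeffs, *_ = np.linalg.lstsq(design, energies, rcond=None)
    return coeffs

def evaluate(coeffs, coords):
    return float(pip_basis(coords) @ coeffs)

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    geom = rng.normal(size=(3, 3))
    swapped = geom[[1, 0, 2]]     # exchange the two hydrogens
    print(np.allclose(pip_basis(geom), pip_basis(swapped)))
```

Since the fit is linear in the coefficients, training reduces to a single least-squares solve, which is one reason PIP models are fast to build and evaluate.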

The Δ-machine learning approach represents a paradigm shift in computational chemistry, effectively bridging the decades-old gap between computational efficiency and quantum mechanical accuracy. By leveraging machine learning to capture the difference between approximate DFT and high-level coupled-cluster theories, this methodology enables coupled-cluster quality predictions for molecular systems that were previously computationally prohibitive.

The consistently dramatic improvements across diverse chemical systems—from small organic molecules like ethanol to solid-state materials like diamond and lithium hydride—demonstrate the robustness and transferability of this approach [23] [24]. The achievement of chemical accuracy (errors < 1 kcal/mol) across multiple functionals underscores how Δ-ML compensates for the limitations of approximate exchange-correlation functionals in DFT.

Looking forward, the integration of Δ-ML with emerging computational technologies presents exciting opportunities. The approach naturally complements high-throughput screening platforms, enabling rapid evaluation of catalyst libraries or drug candidates with coupled-cluster quality accuracy [21]. Similarly, synergies with artificial intelligence are rapidly expanding, with machine learning both enhancing DFT functionals and leveraging Δ-corrected datasets for property prediction [21]. As quantum computing platforms mature, they may provide even more accurate reference data for training Δ-ML models, creating a virtuous cycle of improving computational accuracy.

For researchers in drug development and materials science, these advances translate to significantly enhanced predictive capabilities for molecular properties, reaction mechanisms, and spectroscopic signatures. By providing practical pathways to coupled-cluster accuracy for molecular systems of relevant size and complexity, Δ-machine learning represents not just a theoretical advancement but an immediately useful tool for accelerating scientific discovery and innovation.

Multiscale modeling enables researchers to simulate complex chemical systems by integrating multiple computational methods across different scales of resolution. The core philosophy of these frameworks is the "divide and conquer" (DC) approach, in which a complex problem is partitioned into simpler sub-problems until each becomes tractable [25]. This methodology is particularly valuable for simulating realistic material and (bio)chemical systems that involve complex environments such as surfaces, interfaces, and enzymatic active sites, where the Schrödinger equation is too complex to solve directly [25].

The integration of Quantum Mechanics/Molecular Mechanics (QM/MM) with advanced quantization techniques represents a cutting-edge development in this field. QM/MM methods, first proposed by Warshel and Levitt, provide a multiscale computational tool that allows reliable quantum mechanical calculations on active sites with realistic modeling of complex environments [26]. These approaches strike a balance between computational accuracy and efficiency by describing the chemically active region using quantum mechanics while treating the surrounding environment with molecular mechanics. The recent incorporation of machine learning techniques, particularly neural networks, has further enhanced these methods by enabling direct molecular dynamics simulations on neural network-predicted potential energy surfaces that approximate ab initio QM/MM molecular dynamics [26].

Within the broader context of quantization in chemical systems research, these multiscale frameworks address a fundamental challenge: the exponential complexity of exact quantum mechanical solutions. By strategically applying high-level quantum methods only where necessary and supplementing with classical approaches, researchers can achieve accurate simulations of systems comprising hundreds of orbitals with reasonable computational costs [25]. This review provides a comprehensive comparison of current multiscale frameworks, their performance characteristics, implementation protocols, and applications across different chemical systems.

Comparative Analysis of Multiscale Frameworks

Performance Metrics Across Frameworks

Table 1: Comparative Performance of Multiscale Computational Frameworks

| Framework | System Size Capability | Accuracy Level | Computational Efficiency | Key Limitations |

|---|---|---|---|---|

| Traditional QM/MM | Medium (Tens of QM atoms) | High (Ab initio QM) | Low (Direct MD expensive) | Limited sampling, high computational cost for ab initio QM [26] |

| Semiempirical QM/MM | Large (Hundreds of atoms) | Medium (Parametrized) | High (Fast MD possible) | Accuracy depends on parameterization, less reliable for some systems [26] |

| Multiscale Quantum Computing | Large (Hundreds of orbitals) | High (Near-exact for active space) | Medium (Quantum advantage potential) | Limited by current quantum hardware, NISQ constraints [25] |

| QM/MM-NN MD | Large (Full enzymatic systems) | High (Ab initio accuracy) | High (100x cost reduction) | Requires initial training, potential instability on rough PES [26] |

| MBE Fragmentation Approach | Very Large (Complex biomolecules) | High (Systematically improvable) | Medium (Depends on fragment size) | Accuracy depends on fragmentation level and many-body terms [25] |

Table 2: Quantitative Accuracy and Efficiency Metrics

| Method | Energy Error (kcal/mol) | Speedup vs Traditional QM/MM | Configuration Sampling Efficiency | Dynamic Correlation Treatment |
|---|---|---|---|---|
| Traditional QM/MM | Reference | 1x | Limited to ps-ns scale | Direct in QM region |
| Semiempirical QM/MM | 5-15 (system dependent) | 100-1000x | Extensive (ns-μs possible) | Approximate via parameters |
| Multiscale Quantum Computing | 1-3 (for active space) | Not yet quantified | Limited by quantum simulations | Via perturbation theory [25] |
| QM/MM-NN MD | 1-2 | ~100x | Extensive with ab initio accuracy | Direct in QM region [26] |
| MBE Fragmentation | 2-5 (depends on expansion order) | 10-100x | Limited by fragment calculations | Varies with fragment method [25] |

Framework-Specific Capabilities and Applications

Traditional QM/MM methods remain the gold standard for accuracy but suffer from severe computational limitations. The requirement for electronic structure calculations at each MD step restricts simulations to small QM regions and short timescales, typically picoseconds to nanoseconds [26]. This restriction limits their usefulness for processes with slow dynamics or those that require extensive statistical sampling.

Semiempirical QM/MM approaches significantly reduce computational cost through parametrized quantum methods such as AM1 and SCC-DFTB, enabling nanosecond-scale simulations of large systems [26]. However, accuracy is compromised, particularly for systems where parametrization is inadequate or electronic correlation effects are crucial. These methods serve as important starting points for more sophisticated multiscale approaches but lack the reliability needed for quantitative predictions in novel chemical systems.

Multiscale Quantum Computing represents an emerging paradigm that leverages quantum processors for the most computationally demanding components of quantum chemistry calculations. This framework employs fragmentation approaches like many-body expansion (MBE) to decompose large QM systems into smaller fragments amenable to quantum processing [25]. The quantum computer solves the complete active space (CAS) problems for each fragment, while classical processors handle the integration of fragment solutions and environmental effects. This approach is particularly promising for treating strong correlation effects that challenge classical computational methods.

QM/MM-Neural Network Molecular Dynamics combines the efficiency of semiempirical methods with the accuracy of ab initio approaches through machine learning. The neural network predicts the potential energy difference between semiempirical and ab initio QM/MM methods, enabling direct MD simulations at near-ab initio accuracy with approximately 100-fold computational cost reduction [26]. An adaptive implementation of this method, which updates the neural network during MD simulations when novel configurations are encountered, improves robustness and transferability across configuration space.

Many-Body Expansion Fragmentation approaches systematically decompose large QM systems into subsystems whose solutions are combined to approximate the total energy and properties [25]. The accuracy of MBE can be systematically improved by including higher-order many-body corrections, providing a controlled approximation to the full system solution. This method is particularly effective for systems with localized interactions and can be integrated with various electronic structure methods at different levels of theory.
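
To make the idea of a systematically improvable expansion concrete, the following is a minimal Python sketch of a truncated many-body expansion. It is an illustration under stated assumptions, not the implementation used in [25]: the function `mbe_energy`, the toy energy model `toy_energy`, and all numerical values are hypothetical and chosen only so that the behavior at each truncation order is easy to follow.

```python
from itertools import combinations

# Hypothetical toy model (assumption): three fragments with made-up
# monomer energies, pair interactions, and one three-body term.
MONOMER = [-1.0, -2.0, -1.5]
PAIR = {(0, 1): -0.10, (0, 2): -0.05, (1, 2): -0.20}
TRIPLE = {(0, 1, 2): 0.01}


def toy_energy(subset):
    """Energy of the subsystem built from the fragments in `subset`."""
    members = set(subset)
    energy = sum(MONOMER[i] for i in subset)
    energy += sum(v for pair, v in PAIR.items() if set(pair) <= members)
    energy += sum(v for tri, v in TRIPLE.items() if set(tri) <= members)
    return energy


def mbe_energy(n_fragments, energy_of, max_order):
    """Many-body expansion truncated at `max_order`.

    Each correction is the subsystem energy minus every lower-order
    correction contained in that subsystem.
    """
    corrections = {}
    total = 0.0
    for order in range(1, max_order + 1):
        for subset in combinations(range(n_fragments), order):
            delta = energy_of(subset)
            for k in range(1, order):
                for sub in combinations(subset, k):
                    delta -= corrections[sub]
            corrections[subset] = delta
            total += delta
    return total


if __name__ == "__main__":
    for order in (1, 2, 3):
        print(order, mbe_energy(3, toy_energy, order))
```

In this toy model the expansion recovers the full-system energy once the order equals the number of fragments. In a real calculation `energy_of` would call an electronic structure method on each subsystem, and the number of such calls grows combinatorially with the truncation order.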

Experimental Protocols and Methodologies

QM/MM-NN Molecular Dynamics Protocol

The QM/MM-NN MD protocol represents a sophisticated integration of machine learning with multiscale simulations to achieve ab initio accuracy at significantly reduced computational cost [26]. The methodology involves an iterative cycle of neural network training and molecular dynamics simulation, gradually improving the accuracy and transferability of the potential energy surface.

Step 1: Initial Configuration Sampling. The process begins with semiempirical QM/MM MD simulations (e.g., using SCC-DFTB or AM1) to generate an initial ensemble of configurations representative of the system's relevant phase space. This sampling typically covers several picoseconds to nanoseconds, depending on system size and the processes of interest. Configurations are saved at regular intervals (e.g., every 10-100 fs) to capture the structural diversity.

Step 2: Ab Initio QM/MM Single-Point Calculations. A subset of configurations (typically hundreds to thousands) is selected from the semiempirical trajectory for high-level ab initio QM/MM single-point energy and force calculations. Selection strategies may include random sampling, geometric criteria, or energy-based criteria to ensure representation of diverse configurations.

Step 3: Neural Network Training. A neural network is trained to predict the potential energy difference ($\Delta E = E_{\text{ab initio}} - E_{\text{semiempirical}}$) between the semiempirical and ab initio QM/MM potential energies. The input features typically include descriptors of the local chemical environment, such as atom-centered symmetry functions, bond distances, angles, or dihedrals. The network architecture (number of layers, nodes, activation functions) is optimized for the specific system.

Step 4: NN-Driven MD Simulations. Direct MD simulations are performed on the NN-corrected potential energy surface. At each MD step, the semiempirical QM/MM energy and forces are computed, then corrected by the neural network prediction. This enables dynamics that approximate ab initio QM/MM accuracy at a computational cost only slightly higher than semiempirical QM/MM.

Step 5: Adaptive Database Expansion. During NN-driven MD, new configurations that exhibit high prediction uncertainty or diverge from expected behavior are identified using criteria such as the committee disagreement in ensemble neural networks or extrapolation indicators. These configurations are added to the training database, and high-level ab initio calculations are performed for these new points.

Step 6: Iterative Refinement. Steps 3-5 are repeated, typically for 2-4 cycles, until convergence is achieved, as indicated by stable energy distributions, minimal neural network prediction uncertainties, and consistent thermodynamic properties across iterations.
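
The correction and uncertainty logic of Steps 3-5 can be sketched on a one-dimensional toy problem. The example below is a simplified illustration, not the protocol of [26]: the functions `e_low` and `e_high` are made-up stand-ins for the semiempirical and ab initio energies, and a committee of bootstrap-trained polynomial regressors stands in for the ensemble of neural networks. All names and parameter values are assumptions.

```python
import numpy as np


def e_low(x):
    """Stand-in for the cheap (semiempirical) energy; an assumption."""
    return x**2


def e_high(x):
    """Stand-in for the expensive (ab initio) energy; an assumption."""
    return x**2 + 0.3 * np.sin(3.0 * x)


def train_committee(x, delta, n_members=8, degree=5, seed=0):
    """Fit each committee member to a bootstrap resample of (x, delta)."""
    rng = np.random.default_rng(seed)
    members = []
    for _ in range(n_members):
        idx = rng.integers(0, len(x), size=len(x))
        members.append(np.polyfit(x[idx], delta[idx], degree))
    return members


def predict(members, x):
    """Committee mean and standard deviation of the predicted correction."""
    preds = np.array([np.polyval(coeffs, x) for coeffs in members])
    return preds.mean(axis=0), preds.std(axis=0)


# Steps 1-3: sample configurations and learn the energy difference.
x_train = np.linspace(-1.0, 1.0, 40)
committee = train_committee(x_train, e_high(x_train) - e_low(x_train))


def corrected_energy(x):
    """Step 4: low-level energy plus the learned correction."""
    mean, std = predict(committee, x)
    return e_low(x) + mean, std


def needs_reference_calculation(x, threshold):
    """Step 5: flag configurations where the committee disagrees."""
    _, std = corrected_energy(x)
    return std > threshold
```

Configurations flagged by `needs_reference_calculation` would be sent for high-level single-point calculations and added to the training set before retraining. The threshold is system dependent and is not specified in the text, so it is left as a parameter here.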

Multiscale Quantum Computing Workflow

The multiscale quantum computing framework integrates classical computational methods with quantum processing to solve electronic structure problems beyond the reach of purely classical approaches [25]. This protocol is particularly designed for near-term noisy intermediate-scale quantum (NISQ) devices with limited qubit counts and coherence times.

Step 1: System Decomposition. The target system is partitioned into fragments using energy-based fragmentation approaches such as many-body expansion (MBE). For a system divided into N fragments, the total energy is expressed as:

$$E_{\text{total}} = \sum_{i} E_i + \sum_{i < j} \Delta E_{ij} + \sum_{i < j < k} \Delta E_{ijk} + \cdots$$

where $E_i$ represents the energy of fragment $i$, $\Delta E_{ij}$ represents the two-body interaction correction, and higher-order terms capture increasingly complex many-body interactions.

Step 2: Active Space Selection. For each fragment, the orbital space is divided into active and frozen spaces. The active space contains orbitals essential for describing static correlation effects, typically including frontier orbitals and those involved in bond formation/breaking. The frozen space consists of core orbitals and high-energy virtual orbitals that contribute less to correlation effects.

Step 3: Quantum Computation of Fragment Hamiltonians. The electronic structure problem for each fragment's active space is mapped to a qubit representation using transformations such as Jordan-Wigner or Bravyi-Kitaev. The quantum computer solves the complete active space configuration interaction (CASCI) problem for each fragment using variational quantum eigensolver (VQE) or similar NISQ-friendly algorithms.

Step 4: Dynamic Correlation Recovery. The dynamic correlation energy, which is essential for quantitative accuracy but challenging for current quantum hardware, is recovered using classical perturbation theory methods such as second-order Møller-Plesset perturbation theory (MP2) [25]. This hybrid approach leverages the complementary strengths of quantum processing for strong correlation and classical methods for dynamic correlation.

Step 5: Energy Assembly and Environmental Effects. The fragment energies and corrections are combined according to the MBE formula. Environmental effects from the molecular mechanics region are incorporated through QM/MM coupling terms, including electrostatic embedding and van der Waals interactions.
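
The qubit mapping mentioned in Step 3 can be checked numerically for a few modes. The sketch below builds Jordan-Wigner annihilation operators as dense matrices with NumPy and verifies that they obey the fermionic anticommutation relations. It is a small illustration only: the helper names are hypothetical, and dense matrices scale exponentially with the number of modes, so this is not how a fragment Hamiltonian would be prepared for hardware.

```python
from functools import reduce

import numpy as np

I2 = np.eye(2)
Z = np.diag([1.0, -1.0])
# Single-qubit lowering operator |0><1|, equal to (X + iY) / 2.
LOWER = np.array([[0.0, 1.0], [0.0, 0.0]])


def jw_annihilation(j, n_modes):
    """Jordan-Wigner image of the annihilation operator for mode j.

    A string of Z operators on modes 0..j-1 supplies the fermionic sign.
    """
    ops = [Z] * j + [LOWER] + [I2] * (n_modes - j - 1)
    return reduce(np.kron, ops)


def anticommutator(a, b):
    return a @ b + b @ a


def satisfies_car(n_modes):
    """Check {a_i, a_j^dagger} = delta_ij and {a_i, a_j} = 0."""
    dim = 2**n_modes
    a = [jw_annihilation(j, n_modes) for j in range(n_modes)]
    for i in range(n_modes):
        for j in range(n_modes):
            expected = np.eye(dim) if i == j else np.zeros((dim, dim))
            if not np.allclose(anticommutator(a[i], a[j].conj().T), expected):
                return False
            if not np.allclose(anticommutator(a[i], a[j]), 0.0):
                return False
    return True


if __name__ == "__main__":
    print(satisfies_car(3))
```

The Bravyi-Kitaev transformation named in the same step encodes the same algebra with shorter operator strings; it is not shown here.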

Table 3: Computational Tools and Resources for Multiscale Simulations

| Tool/Resource | Function | Implementation Considerations |
|---|---|---|
| Quantum Processing Units (QPUs) | Hardware for solving fragment CASCI problems | Limited qubit counts (50-100+), gate fidelities, coherence times on current hardware [25] |
| High-Dimensional Neural Networks | Predict potential energy differences between computational levels | Requires careful feature design, training database construction, and validation [26] |
| Hybrid Quantum-Classical Algorithms | Variational Quantum Eigensolver (VQE) for electronic structure | Ansatz selection, parameter optimization strategies, measurement reduction techniques |
| Ab Initio Quantum Chemistry Codes | Reference calculations for neural network training | Software such as Gaussian, ORCA, Q-Chem for high-level single-point calculations [26] |
| Semiempirical Quantum Codes | Efficient QM region sampling | AM1, PM3, SCC-DFTB methods for initial configuration sampling [26] |
| Molecular Dynamics Engines | Configuration sampling and dynamics propagation | Software such as AMBER, GROMACS, NAMD with QM/MM capabilities |
| Fragmentation Algorithms | System decomposition into manageable fragments | Many-body expansion, density matrix embedding, or fragment molecular orbital approaches [25] |

The comparative analysis presented in this review demonstrates that multiscale frameworks integrating QM/MM molecular dynamics with advanced quantization techniques represent a powerful paradigm for computational chemistry. Each framework offers distinct advantages: traditional QM/MM provides benchmark accuracy for small systems, semiempirical QM/MM enables extensive sampling of large systems, multiscale quantum computing offers a path to quantum advantage for strongly correlated systems, QM/MM-NN MD delivers ab initio accuracy at significantly reduced cost, and MBE fragmentation approaches enable systematic treatment of large systems.

The experimental protocols detailed herein provide actionable methodologies for implementing these frameworks in practical research settings. The QM/MM-NN MD approach, with its adaptive learning cycle, offers particularly promising performance for free energy calculations and reaction dynamics characterization in complex chemical and biochemical environments. Meanwhile, the multiscale quantum computing framework, though still limited by current quantum hardware, represents a forward-looking approach that may unlock new capabilities as quantum technology advances.

Future developments in this field will likely focus on several key areas: improved integration between computational levels, enhanced sampling techniques for rare events, more efficient neural network architectures and training strategies, and tighter coupling between quantum and classical processing elements. As these methodologies mature, they will increasingly enable first-principles predictions of complex chemical phenomena across materials science, catalysis, and drug discovery, fundamentally advancing our ability to design and optimize molecular systems from quantum mechanics.

Quantum Phase Estimation (QPE) stands as a foundational algorithm in quantum computing, promising exponential speedups for determining the eigenvalues of unitary operators, with profound implications for quantum chemistry and materials science [27]. As research moves from theoretical promise to practical application, the choice of implementation method, particularly the quantization formalism and the basis set, critically impacts the feasibility and resource requirements of quantum computations [12]. This analysis provides a comprehensive resource comparison of QPE implementations across different chemical systems, offering researchers in chemistry and drug development critical insights for planning quantum computing experiments in the current era of rapid technological advancement [28].

The quantum computing landscape has witnessed remarkable progress in 2025, with hardware breakthroughs pushing error rates to record lows and error correction demonstrating exponential improvement as qubit counts increase [28]. These advancements are accelerating the timeline for practical quantum advantage in chemical simulation, making rigorous resource analysis increasingly vital for research planning and implementation.

Performance Comparison of QPE Implementations

The computational resources required for QPE vary dramatically based on the choice of quantization formalism (first or second quantization) and the specific basis set employed. These choices create distinct trade-offs between qubit counts, gate requirements, and algorithmic efficiency that must be carefully balanced for specific chemical applications.
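
The qubit-count side of this trade-off can be illustrated with a short calculation. The sketch below assumes that N is the number of electrons and D the number of spatial orbitals (so 2D spin orbitals), which the text does not state explicitly. It compares the first-quantized register size N log2(2D), rounded up to whole qubits per electron, with the one-qubit-per-spin-orbital count of a second-quantized Jordan-Wigner encoding. Ancilla qubits and gate counts are deliberately left out, and the function names are hypothetical.

```python
import math


def first_quantized_qubits(n_electrons, n_spatial_orbitals):
    """System qubits when each electron stores its spin-orbital index."""
    bits_per_electron = math.ceil(math.log2(2 * n_spatial_orbitals))
    return n_electrons * bits_per_electron


def second_quantized_qubits(n_spatial_orbitals):
    """System qubits with one qubit per spin orbital (Jordan-Wigner)."""
    return 2 * n_spatial_orbitals


if __name__ == "__main__":
    for n, d in [(10, 8), (10, 64), (10, 1024)]:
        print(n, d, first_quantized_qubits(n, d), second_quantized_qubits(d))
```

Under these assumptions the first-quantized register grows only logarithmically with the basis size, so it becomes the smaller of the two once the number of orbitals is large compared with the number of electrons; for small active spaces the second-quantized encoding can need fewer qubits.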

Table 1: Resource Requirements for Different QPE Implementations in Chemical Systems

| Implementation Method | System Qubits | Toffoli Gates | Algorithmic Features | Optimal Use Cases |
|---|---|---|---|---|
| First Quantization with Molecular Orbitals | $N \log_2(2D)$ | Polynomial speedup with respect to D | Sparse LCU decomposition; Advanced QROAM primitive | Active space calculations; Systems with fixed electron count |
| First Quantization with Dual Plane Waves (DPW) | $N \log_2(2D)$ | Orders of magnitude improvement | Asymptotic speedup for molecular orbitals | Electron gas systems; Bulk materials simulation |