Quantum Algorithms for NP-Hard Chemistry Problems: Foundations, Applications, and Future Outlook

This article explores the transformative potential of quantum algorithms in solving NP-hard problems in chemistry, a domain where classical computers face fundamental limitations.

Abstract

This article explores the transformative potential of quantum algorithms in solving NP-hard problems in chemistry, a domain where classical computers face fundamental limitations. Aimed at researchers, scientists, and drug development professionals, it provides a comprehensive overview from foundational principles to cutting-edge applications. We examine the core quantum algorithms like VQE and QAOA being deployed for molecular simulation and optimization, address the critical challenges of noise and error correction, and present a comparative analysis of quantum versus classical performance. The discussion is grounded in the latest industry breakthroughs and real-world use cases in pharmaceutical research, offering a practical and forward-looking perspective on the imminent integration of quantum computing into the chemical sciences.

The Quantum Imperative: Why Chemistry's Toughest Problems Need Quantum Algorithms

The Classical Computing Bottleneck in Chemical Simulation

Accurately simulating chemical systems is fundamental to advancements in drug discovery and materials science. However, classical computers face a fundamental scalability barrier when modeling quantum mechanical phenomena. The core of the problem lies in the exponential growth of the computational resources required to represent many-body quantum systems. For a system with n quantum particles, the memory requirement scales as 2ⁿ, rapidly exceeding the capacity of even the most powerful classical supercomputers for relatively small n [1]. This bottleneck makes it intractable to achieve chemical accuracy—defined as an error of less than 1 kcal/mol—for many critical problems, such as simulating the binding of targeted covalent inhibitors or modeling complex reaction pathways in materials [2]. This whitepaper details the specific limitations of classical methods, the quantum algorithms designed to overcome them, and the experimental frameworks validating this new computational paradigm within the context of solving NP-hard problems in chemistry.

The Classical Computing Bottleneck

Fundamental Limitations in Simulating Quantum Systems

Classical simulations of quantum chemistry problems encounter intractable scaling for two primary reasons: the memory footprint of representing quantum states and the computational cost of high-accuracy methods.

- Memory Scaling of State Vector Simulation: The state vector method, an exact classical simulation technique, requires memory that grows exponentially with the number of qubits simulated. Each added qubit doubles the state vector, so at 16 bytes per double-precision complex amplitude a system of roughly 50 qubits already needs petabytes of memory, more than leading high-performance computing (HPC) machines provide [1].

- Accuracy vs. Cost Trade-off in Covalent Inhibitor Design: Accurately modeling covalent bond formation in targeted covalent inhibitors is critical for drug discovery. The energy barrier for this reaction (ΔG^‡_inact) must be calculated with an error of less than 1 kcal/mol to be quantitatively useful, because a 5 kcal/mol error translates to an error of more than three orders of magnitude in the predicted reaction rate (k_inact) [2]. Achieving this "chemical accuracy" with high-level ab initio wave function methods is prohibitively expensive for large systems.
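The sensitivity of the rate to the barrier follows from the exponential dependence k ∝ exp(−ΔG‡/RT) in transition-state theory. The sketch below is a minimal illustration, assuming room temperature (298.15 K) and that only the barrier is in error; the function name is hypothetical. Under those assumptions a 5 kcal/mol error lands between three and four orders of magnitude in the rate.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)


def rate_error_factor(barrier_error_kcal, temperature_k=298.15):
    """Multiplicative error in the rate caused by an error in the barrier.

    Assumes k is proportional to exp(-dG/RT), so an error d in the barrier
    changes the predicted rate by a factor exp(d / RT).
    """
    return math.exp(barrier_error_kcal / (R_KCAL * temperature_k))


for err in (1.0, 5.0):
    factor = rate_error_factor(err)
    print(f"{err:.0f} kcal/mol -> rate off by x{factor:.1f} "
          f"({math.log10(factor):.1f} orders of magnitude)")
```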

Table 1: Key Scaling and Accuracy Challenges in Classical Chemical Simulation

| Challenge Area | Specific Bottleneck | Impact |
|---|---|---|
| System Scaling | Exponential memory growth (O(2ⁿ)) with system size n [1] | Limits simulations to relatively small molecules and active sites |
| Accuracy Requirements | Need for ~1 kcal/mol accuracy for predictive chemistry [2] | Lower-accuracy methods (e.g., DFAs) often fail to be predictive |
| Covalent Bond Simulation | High computational cost of accurate methods for bond breaking/formation [2] | Hinders rational design of targeted covalent inhibitors |
| Biomolecular Modeling | Trade-offs in QM/MM methods between QM region size, method accuracy, and simulation length [2] | Forces compromises that can introduce significant errors |

Performance of Alternative Classical Simulation Methods

Tensor network methods offer a more memory-efficient approach than state vector simulation for certain classes of quantum circuits, particularly those with limited entanglement. However, they face their own NP-hard challenge: finding the optimal order in which to contract the network [1]. In practice, classical approximations often fail for complex quantum simulations. For instance, in simulating the time evolution of an Ising model, classical methods such as matrix product states (MPS) and projected entangled-pair states (PEPS) break down as the system's complexity and the simulated time increase. One study concluded that simulating the most complex systems on a classical supercomputer would take "millions of years," exceed its 700 PB of storage, and consume more electricity than the world uses in a year [3].
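To see why contraction order matters, consider the simplest tensor network, a chain of matrices. This special case is not NP-hard (a dynamic program finds the best order in polynomial time), but it already shows how far apart two orders can be. The sketch below is illustrative only; the shapes are arbitrary and the function names are hypothetical.

```python
from functools import lru_cache


def left_to_right_cost(dims):
    """Scalar multiplications for contracting a matrix chain left to right.

    Matrix i has shape dims[i] x dims[i + 1].
    """
    rows = dims[0]
    return sum(rows * dims[i] * dims[i + 1] for i in range(1, len(dims) - 1))


def optimal_cost(dims):
    """Cheapest contraction order for the chain (classic dynamic program)."""

    @lru_cache(maxsize=None)
    def best(i, j):
        # Cheapest way to contract matrices i..j (inclusive) into one matrix.
        if i == j:
            return 0
        return min(
            best(i, k) + best(k + 1, j) + dims[i] * dims[k + 1] * dims[j + 1]
            for k in range(i, j)
        )

    return best(0, len(dims) - 2)


# Three matrices: 1000x2, 2x1000, 1000x2 (arbitrary illustrative shapes).
shapes = (1000, 2, 1000, 2)
print("left to right:", left_to_right_cost(shapes))
print("best order:   ", optimal_cost(shapes))
```

For general networks with loops, no comparable polynomial-time procedure is known, which is the difficulty the paragraph above refers to.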

Quantum Algorithms for Chemical Simulation

Quantum algorithms are designed to bypass the fundamental scaling limitations of classical computers by using qubits to represent the quantum system under study directly. The following table summarizes the primary algorithms relevant to chemical simulation.

Table 2: Key Quantum Algorithms for Chemical Simulation and Optimization

| Algorithm | Type | Primary Use Case in Chemistry | Potential Advantage |
|---|---|---|---|
| Quantum Phase Estimation (QPE) [4] | Gate-based (Fault-Tolerant) | Estimating eigenvalues of molecular Hamiltonians (energy levels) [5] | Exponential speedup for exact energy calculations |
| Variational Quantum Eigensolver (VQE) [4] | Hybrid Quantum-Classical (NISQ) | Finding ground state energies of molecules [4] [6] | Resilient to noise on current quantum devices |
| Quantum Annealing (QA) / Quantum Approximate Optimization Algorithm (QAOA) [4] [6] | Analog / Gate-based | Solving combinatorial optimization problems (e.g., configurational analysis) [6] | Heuristic speedup for finding low-energy configurations |
| Quantum-AFQMC | Hybrid Quantum-Classical | Calculating atomic-level forces for reaction pathways [7] | Enhanced accuracy for molecular dynamics |

Algorithmic Workflows and Experimental Protocols

Protocol for Configurational Analysis via VQE and QA

A 2025 cross-platform study provides a clear protocol for applying quantum optimization to a materials science problem: finding the lowest-energy configuration of a defective graphene lattice, an NP-hard Densest k-Subgraph problem [6].

- Problem Formulation: The task of removing k atoms from an N-site graphene sheet to maximize remaining bonds is encoded into a Quadratic Unconstrained Binary Optimization (QUBO) problem.

- Algorithm Execution:

- VQE on Gate-based Hardware: A parameterized quantum circuit (ansatz) is executed on a gate-model quantum processing unit (QPU). A classical optimizer iteratively adjusts the parameters to minimize the expectation value of the problem's Hamiltonian.

- Quantum Annealing on Analog Hardware: The QUBO is mapped directly to the quantum annealer's qubit architecture. The system is evolved from an initial transverse field Hamiltonian to a final Hamiltonian that encodes the problem.

- Benchmarking and Analysis: Performance is evaluated using metrics like the approximation ratio and time-to-solution, comparing against classical algorithms like linear programming and greedy algorithms [6].
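The problem-formulation step can be sketched in a few lines. The encoding below is one common way to write such a QUBO and is an assumption, not necessarily the formulation used in [6]: binary variable x_i = 1 means atom i is kept, each retained bond lowers the energy by 1, and a quadratic penalty enforces that exactly N − k atoms remain. The six-site ring, the penalty weight, and all function names are illustrative; the brute-force search stands in for the quantum solver and only works for tiny instances.

```python
import itertools


def build_qubo(n_sites, edges, k_remove, penalty):
    """QUBO for: keep n_sites - k_remove atoms, maximize bonds among kept atoms.

    Energy = -(bonds kept) + penalty * (sum_i x_i - keep)^2, expanded with x_i^2 = x_i.
    Returns upper-triangular coefficients Q[(i, j)] and a constant offset.
    """
    keep = n_sites - k_remove
    Q = {}
    for i in range(n_sites):
        Q[(i, i)] = penalty * (1 - 2 * keep)
        for j in range(i + 1, n_sites):
            Q[(i, j)] = 2 * penalty
    for i, j in edges:
        Q[(min(i, j), max(i, j))] -= 1  # reward for keeping both ends of a bond
    return Q, penalty * keep ** 2


def qubo_energy(Q, offset, x):
    return offset + sum(c * x[i] * x[j] for (i, j), c in Q.items())


def brute_force(Q, offset, n_sites):
    """Exhaustive search over all 2^n assignments (classical reference only)."""
    return min(itertools.product((0, 1), repeat=n_sites),
               key=lambda x: qubo_energy(Q, offset, x))


# Hexagonal ring of six sites, remove two atoms (illustrative instance).
edges = [(0, 1), (1, 2), (2, 3), (3, 4), (4, 5), (5, 0)]
Q, offset = build_qubo(6, edges, k_remove=2, penalty=len(edges) + 1)
best = brute_force(Q, offset, 6)
print("kept sites:", best, "energy:", qubo_energy(Q, offset, best))
```

Choosing the penalty larger than the total number of bonds guarantees that violating the atom-count constraint never pays off; in practice the penalty weight is a tuning parameter.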

Protocol for Quantum Error-Corrected Chemistry Simulation

A landmark 2025 demonstration by Quantinuum showcased the first scalable, error-corrected workflow for chemical simulations, a critical step toward fault-tolerance [5].

- Logical Qubit Encoding: Multiple noisy physical qubits are encoded into a higher-fidelity logical qubit using Quantum Error Correction (QEC) codes.

- Quantum Phase Estimation: The QPE algorithm is executed on these logical qubits to calculate the energy of a chemical system with high precision.

- Full-Stack Integration: The workflow leverages a full-stack quantum computer (H2) with high-fidelity operations, all-to-all connectivity, and real-time QEC decoding, integrated with a computational chemistry platform (InQuanto) [5].

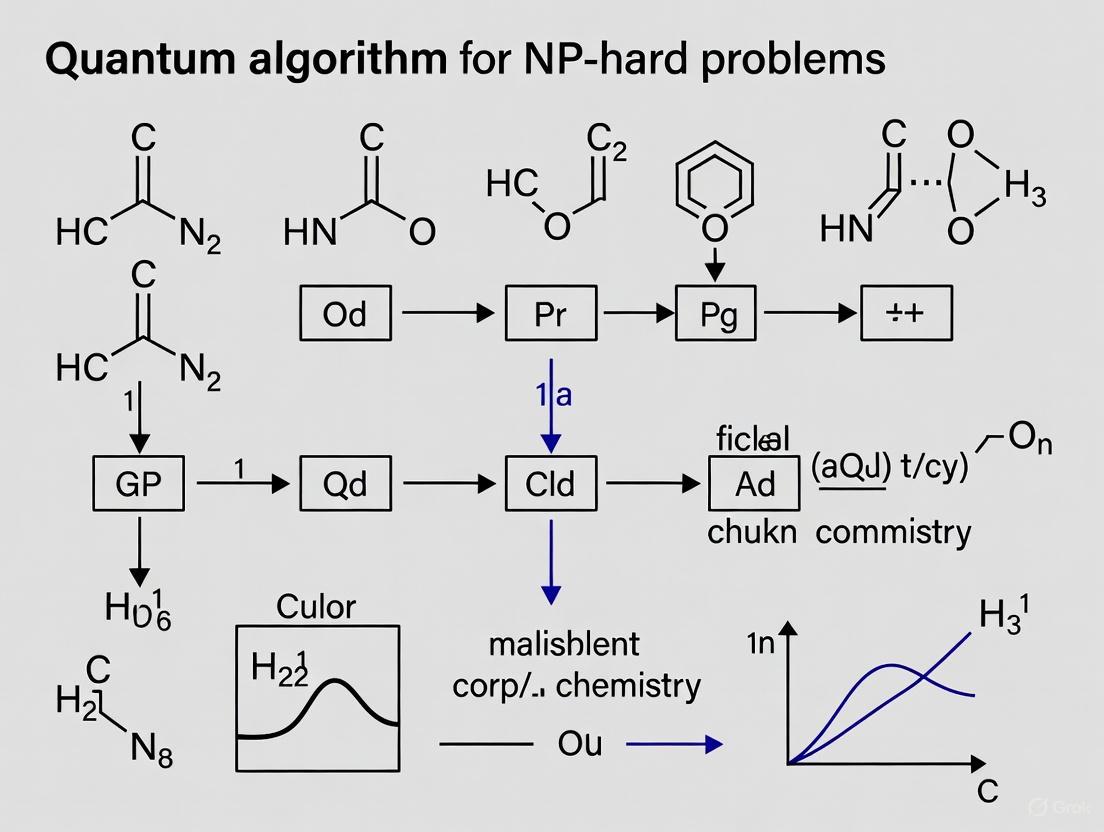

Visualizing the Quantum Computing Workflow for Chemical Simulation

The following diagram illustrates the integrated workflow of a quantum-classical computation for solving chemical problems, highlighting the roles of different quantum algorithms.

Diagram 1: Quantum-Classical Workflow for Chemical Problems. This chart outlines the decision points and algorithmic pathways for solving chemistry problems using hybrid and fault-tolerant quantum computation.

For researchers embarking on quantum computational chemistry, the following tools and platforms are essential components of the modern toolkit.

Table 3: Research Reagent Solutions for Quantum Computational Chemistry

| Tool/Resource | Function | Example Use Case |
|---|---|---|
| QM/MM Software | Divides system into QM region (for bond formation) and MM region (for biomolecular environment) [2] | Studying covalent inhibitor mechanisms in enzyme active sites |
| InQuanto (Quantinuum) | Software platform for running computational chemistry workflows on quantum computers [5] | Performing error-corrected quantum chemistry simulations |
| CUDA-Q (NVIDIA) | Open, hybrid quantum-classical computing platform for GPU-accelerated quantum workflows [5] | Integrating quantum processing with classical HPC and AI |
| IonQ Forte | Commercially available trapped-ion quantum computer accessed via the cloud [7] | Running QC-AFQMC algorithm for atomic force calculations |
| D-Wave Advantage | Commercially available quantum annealer [6] | Solving QUBO formulations of configurational analysis problems |
| Post-Quantum Cryptography (PQC) | Algorithms (e.g., ML-KEM, ML-DSA) to secure data against future quantum attacks [8] | Protecting sensitive research data and intellectual property |

The classical computing bottleneck in chemical simulation is a significant impediment to progress in drug discovery and materials science, particularly for problems that are NP-hard. The exponential scaling of resources required for accurate simulation makes many real-world problems intractable. Quantum algorithms, implemented on rapidly advancing hardware, offer a path beyond this bottleneck. Demonstrations in error-corrected simulation [5], accurate force calculation [7], and optimization [6] provide compelling evidence that quantum computing is transitioning from theoretical promise to a practical tool that will redefine the boundaries of computational chemistry.

The simulation of molecular systems represents a computational challenge of profound complexity, one that lies at the heart of drug discovery and materials science. Classical computers struggle with the exact simulation of quantum mechanical systems due to the exponential scaling of the required resources with system size, making this problem a quintessential example of an NP-hard problem in chemistry research. Quantum computing, leveraging the inherent properties of quantum mechanics, offers a paradigm-shifting approach to this challenge. By encoding molecular information directly onto quantum bits (qubits), which can exist in superpositions of states and become entangled, quantum computers can theoretically simulate quantum systems with natural efficiency [4] [9]. This technical guide examines the journey of quantum algorithms from theoretical constructs to practical tools for computational chemistry, framing this progress within the broader thesis of applying quantum computing to NP-hard problems.

The core advantage stems from the ability of a quantum computer with n qubits to represent a quantum state existing in a 2^n-dimensional Hilbert space, a feat that would require an exponential amount of memory on a classical computer. Algorithms such as the Variational Quantum Eigensolver (VQE) and Quantum Phase Estimation (QPE) are specifically designed to harness this representational power to find the ground-state energies of molecular Hamiltonians—a central task in quantum chemistry [4]. The transition from promise to reality is now underway, accelerated by hardware breakthroughs and innovative algorithmic strategies tailored for the current noisy intermediate-scale quantum (NISQ) era.
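The classical cost of holding such a state can be estimated directly. The sketch below assumes a dense state vector with one double-precision complex amplitude (16 bytes) per basis state; the function name is hypothetical.

```python
def statevector_bytes(n_qubits, bytes_per_amplitude=16):
    """Memory for a dense n-qubit state vector: one amplitude per basis state."""
    return bytes_per_amplitude * 2 ** n_qubits


for n in (30, 40, 50):
    gib = statevector_bytes(n) / 2 ** 30
    print(f"{n} qubits: {gib:,.0f} GiB")
```

Each additional qubit doubles the requirement, so a few dozen qubits separate a laptop-sized simulation from one that no existing machine can hold in memory.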

Foundational Quantum Algorithms for Chemical Problems

Key Algorithmic Frameworks

At the core of quantum computational chemistry are several pivotal algorithms, each with distinct operational principles and resource requirements.

The Variational Quantum Eigensolver (VQE) is a hybrid quantum-classical algorithm that has become a cornerstone for chemistry applications on NISQ devices. It operates by preparing a parameterized quantum state (ansatz) on the quantum processor and measuring its expectation value with respect to the molecular Hamiltonian. A classical optimizer then adjusts the parameters to minimize this energy expectation value, iteratively converging toward the ground state [4]. Its key advantage is resilience to certain types of noise and its relatively low circuit-depth requirements, though it does not guarantee exact convergence.

The Quantum Phase Estimation (QPE) algorithm provides a more direct route to obtaining molecular energies. It is a fundamental subroutine that allows for the precise estimation of the phase (eigenvalue) of a unitary operator, which can be constructed from the molecular Hamiltonian. While QPE can deliver exact results in a fault-tolerant setting and is a component of the celebrated quantum algorithm for factoring, it requires deep, coherent quantum circuits that are currently prohibitive on near-term hardware [4].
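The readout statistics of textbook QPE can be reproduced classically for a single eigenstate. The sketch below is an idealized, noise-free illustration: it assumes the input is an exact eigenstate with eigenphase φ in [0, 1) and evaluates the standard outcome probabilities for a register of t ancilla qubits. Function names are hypothetical.

```python
import cmath


def qpe_distribution(phase, n_ancilla):
    """Probability of each ancilla outcome k for an eigenstate with eigenphase `phase`.

    After the inverse QFT, outcome k has amplitude
    (1 / 2^t) * sum_j exp(2*pi*i * j * (phase - k / 2^t)).
    """
    size = 2 ** n_ancilla
    probs = []
    for k in range(size):
        amp = sum(cmath.exp(2j * cmath.pi * j * (phase - k / size))
                  for j in range(size)) / size
        probs.append(abs(amp) ** 2)
    return probs


def qpe_estimate(phase, n_ancilla):
    """Most likely t-bit estimate of the phase."""
    probs = qpe_distribution(phase, n_ancilla)
    k_best = max(range(len(probs)), key=probs.__getitem__)
    return k_best / len(probs)


print(qpe_estimate(0.3125, 4))  # phase exactly representable with 4 bits
print(qpe_estimate(0.3, 6))     # phase not representable: nearest 6-bit value
```

Each extra ancilla qubit halves the phase resolution but doubles the number of controlled applications of the unitary, which is the source of the circuit-depth cost mentioned above.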

Quantum Machine Learning (QML) algorithms represent an emerging frontier, seeking to enhance classical machine learning models for chemistry using quantum techniques. These include Quantum Support Vector Machines and Quantum Neural Networks, which have the potential to identify complex patterns in high-dimensional chemical data, such as for predicting molecular properties or optimizing reaction pathways [4] [9].

Algorithmic Workflow for Molecular Simulation

The following diagram illustrates the logical relationship and workflow between these core algorithms in addressing the central problem of molecular energy estimation.

Quantitative Analysis of Algorithmic Performance

Computational Resource Requirements

The practical utility of quantum algorithms for chemistry is determined by their resource requirements. The table below summarizes the key performance characteristics of major quantum algorithms for chemistry applications.

| Algorithm | Computational Complexity | Key Strengths | Key Limitations | Suitable Hardware Era |
|---|---|---|---|---|
| VQE | Polynomial (depends on ansatz and optimizer) | Noise-resilient, suitable for NISQ devices, hybrid quantum-classical approach [4] | No guarantee of exact convergence, requires many measurements | NISQ |
| QPE | O(1/ε) for precision ε [4] | Provably exact, direct energy measurement | Requires deep circuits and fault tolerance | Fault-Tolerant |
| Grover-inspired | O(√N) for search space N [10] | Quadratic speedup for unstructured search, applicable to combinatorial chemistry | Requires efficient oracle design, may still have large constant factors | Early Fault-Tolerant |
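The O(√N) entry for Grover-style search in the table above can be made concrete with the standard textbook formulas for the iteration count and success probability. This is a sketch of the idealized, noise-free algorithm with a perfect oracle; function names are hypothetical.

```python
import math


def grover_iterations(n_items, n_marked=1):
    """Standard choice of iteration count, roughly (pi / 4) * sqrt(N / M)."""
    theta = math.asin(math.sqrt(n_marked / n_items))
    return math.floor(math.pi / (4 * theta))


def success_probability(n_items, iterations, n_marked=1):
    """Probability of measuring a marked item after the given number of iterations."""
    theta = math.asin(math.sqrt(n_marked / n_items))
    return math.sin((2 * iterations + 1) * theta) ** 2


n = 2 ** 20  # about one million candidate configurations
r = grover_iterations(n)
print(f"N = {n}: {r} oracle calls, success probability {success_probability(n, r):.6f}")
```

A classical unstructured search over the same space needs on the order of N/2 oracle evaluations on average, which is where the quadratic gap comes from; oracle cost and constant factors are not captured here.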

Hardware Progress and Real-World Performance

Recent hardware breakthroughs have dramatically improved the feasibility of chemical simulations. The table below quantifies key performance milestones and projections from industry leaders.

| Hardware/Platform | Key Specification (2025) | Reported Chemical Simulation Performance | Error Correction Approach |
|---|---|---|---|
| Google Willow | 105 superconducting qubits [8] | Completed a benchmark calculation in ~5 minutes that would require 10²⁵ years on a classical supercomputer [8] | Demonstrated exponential error reduction as qubit counts increased |
| IBM Quantum Starling (Roadmap) | 200 logical qubits (target 2029) [8] | N/A (Future projection) | Quantum low-density parity-check codes (90% overhead reduction) [8] |
| Microsoft Majorana 1 | Topological qubit architecture [8] | N/A (Architecture demonstration) | Novel geometric codes (1000-fold error rate reduction) [8] |
| Quantum-Centric Supercomputing (IBM/RIKEN) | IBM Heron + Fugaku supercomputer [11] | Simulated [4Fe-4S] cluster in 2 hours (vs. 13 days on fault-tolerant QPU or 3M years on pre-fault-tolerant QPU alone) [11] | Sample-based quantum diagonalization (SQD) hybrid technique |

Experimental Protocols for Quantum Computational Chemistry

Detailed Methodology: VQE for Molecular Energy Calculation

The following workflow details the experimental protocol for running a Variational Quantum Eigensolver to compute the ground state energy of a molecule, a foundational experiment in the field.

Step-by-Step Protocol:

Problem Formulation: Select the target molecule and its nuclear configuration. Using classical computational chemistry software (e.g., PySCF, Psi4), compute the second-quantized molecular Hamiltonian, $H$, in a chosen basis set. The Hamiltonian is expressed as $H = \sum_{pq} h_{pq} a_p^\dagger a_q + \frac{1}{2} \sum_{pqrs} h_{pqrs} a_p^\dagger a_q^\dagger a_r a_s$, where $h_{pq}$ and $h_{pqrs}$ are the one- and two-electron integrals and $a^\dagger$/$a$ are fermionic creation/annihilation operators [12].

Qubit Encoding: Transform the fermionic Hamiltonian into a qubit Hamiltonian using an encoding scheme such as the Jordan-Wigner or Bravyi-Kitaev transformation. This maps the fermionic operators to tensor products of Pauli matrices (Pauli strings): $H_{\text{qubit}} = \sum_i c_i P_i$, where $P_i \in \{I, X, Y, Z\}^{\otimes N}$ [12].

Ansatz Selection: Prepare a parameterized trial wavefunction (ansatz) on the quantum computer. A common choice for chemical problems is the Unitary Coupled Cluster (UCC) ansatz, particularly UCCSD (Singles and Doubles), which is constructed as $|\psi(\theta)\rangle = e^{T(\theta) - T^\dagger(\theta)} |\phi_0\rangle$, where $|\phi_0\rangle$ is a reference state (e.g., Hartree-Fock) and $T(\theta)$ is the cluster operator [12]. This ansatz is known to be capable of representing electronic correlations accurately.

Quantum Processing: Implement the parameterized quantum circuit corresponding to the chosen ansatz on the quantum processing unit (QPU). This involves a sequence of single-qubit rotation gates (e.g., $R_x$, $R_y$, $R_z$) and entangling gates (e.g., CNOT).

Measurement: For each term in the qubit Hamiltonian $H_{\text{qubit}}$, measure the expectation value $\langle\psi(\theta)| P_i |\psi(\theta)\rangle$. This often requires repeated circuit executions (shots) to achieve sufficient statistical accuracy and may involve grouping commuting Pauli operators to minimize the number of distinct measurement bases [11].

Classical Optimization: A classical optimizer (e.g., COBYLA, L-BFGS-B, SPSA) is used to minimize the total energy $E(\theta) = \sum_i c_i \langle\psi(\theta)| P_i |\psi(\theta)\rangle$ with respect to the parameters $\theta$. The quantum computer is used as a subroutine to evaluate $E(\theta)$ for each parameter set proposed by the optimizer.

Convergence Check: The optimization loop continues until the energy change between iterations falls below a predefined threshold, indicating convergence to the (approximate) ground state energy.
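The loop described in the steps above can be sketched end to end on a classical simulator. Everything specific in this example is an assumption made for illustration: the two-qubit Hamiltonian uses placeholder coefficients rather than integrals of a real molecule, the one-parameter ansatz is a stand-in for UCCSD, expectation values are computed exactly instead of from shots, and plain gradient descent replaces COBYLA or SPSA.

```python
import math

# Toy 2-qubit qubit Hamiltonian as (coefficient, Pauli string) pairs.
# The coefficients are illustrative placeholders, not those of a real molecule.
HAMILTONIAN = [(-1.0, "II"), (0.4, "ZI"), (-0.4, "IZ"), (-0.1, "ZZ"), (0.2, "XX")]


def apply_pauli(state, pauli):
    """Return P|state> for a Pauli string; the leftmost character acts on qubit 0."""
    n = len(pauli)
    out = [0j] * len(state)
    for index, amp in enumerate(state):
        new_index, phase = index, 1 + 0j
        for q, p in enumerate(pauli):
            shift = n - 1 - q
            bit = (index >> shift) & 1
            if p in ("X", "Y"):
                new_index ^= 1 << shift        # X and Y flip the qubit
            if p == "Z":
                phase *= -1 if bit else 1      # Z|1> = -|1>
            elif p == "Y":
                phase *= -1j if bit else 1j    # Y|0> = i|1>, Y|1> = -i|0>
        out[new_index] += phase * amp
    return out


def expectation(state, pauli):
    """Exact <state|P|state>; on hardware this would be estimated from shots."""
    transformed = apply_pauli(state, pauli)
    return sum(a.conjugate() * b for a, b in zip(state, transformed)).real


def ansatz(theta):
    """One-parameter trial state cos(theta)|01> + sin(theta)|10>."""
    return [0j, complex(math.cos(theta)), complex(math.sin(theta)), 0j]


def energy(theta):
    """E(theta) = sum_i c_i <psi(theta)|P_i|psi(theta)>."""
    state = ansatz(theta)
    return sum(c * expectation(state, p) for c, p in HAMILTONIAN)


def run_vqe(theta=0.0, learning_rate=0.2, max_steps=500, tol=1e-10):
    """Gradient descent; the gradient comes from two shifted energy evaluations."""
    previous = energy(theta)
    for _ in range(max_steps):
        # Shift rule valid here because E(theta) is a sinusoid in 2 * theta.
        gradient = energy(theta + math.pi / 4) - energy(theta - math.pi / 4)
        theta -= learning_rate * gradient
        current = energy(theta)
        if abs(previous - current) < tol:  # convergence check on the energy change
            break
        previous = current
    return theta, current


theta_opt, e_opt = run_vqe()
print(f"theta = {theta_opt:.4f}, E = {e_opt:.6f}")
```

For this toy Hamiltonian the one-parameter ansatz can reach the exact ground state, so the optimized energy can be checked against direct diagonalization. Realistic ansätze have many parameters, and the optimizer then has to cope with shot noise and hardware errors as well.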

Successful execution of quantum chemistry experiments requires a suite of hardware, software, and methodological "reagents." The following table details these essential components.

| Tool / Resource | Function / Description | Example Implementations |
|---|---|---|
| Parameterized Quantum Circuit (Ansatz) | Defines the search space for the variational algorithm; its structure dictates the expressibility and trainability of the model. | Unitary Coupled Cluster (UCC) [12], Hardware-Efficient Ansatz |
| Classical Optimizer | Adjusts circuit parameters to minimize the energy expectation value; choice affects convergence speed and robustness to noise. | COBYLA, SPSA, L-BFGS-B [12] |
| Qubit Encoding Method | Maps the fermionic problem of electrons in a molecule to a qubit Hamiltonian operable on a quantum computer. | Jordan-Wigner, Bravyi-Kitaev [12] |
| Quantum Hardware | The physical system that executes the quantum circuits; different platforms offer varying connectivity, gate fidelities, and qubit counts. | Superconducting (Google, IBM), Neutral Atoms (QuEra), Trapped Ions (IonQ) [8] |
| Hybrid HPC-QPU Platform | Integrates quantum processors with classical supercomputers to leverage the strengths of both, enabling more complex simulations. | IBM Heron + Fugaku (SQD method) [11] |
| Error-Aware Algorithms | Algorithmic techniques designed to mitigate or be resilient to the inherent noise in NISQ-era quantum hardware. | Error-aware quantum algorithms (Algorithmiq) [13] |

Case Studies: From Industrial R&D to Practical Advantage

The theoretical framework of quantum algorithms is now being validated through industry-led partnerships, demonstrating a clear path to practical utility in chemical research.

Case Study 1: Industrial Quantum Simulation of Drug Metabolism

A collaboration between Google and Boehringer Ingelheim successfully simulated Cytochrome P450, a key human enzyme involved in drug metabolism, using quantum algorithms. The simulation achieved greater efficiency and precision than traditional methods, a critical step toward accelerating drug development timelines and improving predictions of drug interactions [8]. This represents a direct application of the VQE workflow to a pharmacologically relevant system.

Case Study 2: Quantum-Enhanced Pipeline for Drug Discovery

Algorithmiq, in partnership with Quantum Circuits, has developed a "proof-of-concept implementation of a scalable quantum pipeline" focused on applying error-aware quantum algorithms to predict enzyme pharmacokinetics. This approach leverages unique hardware capabilities, such as dual-rail qubits with built-in error detection, to produce more accurate chemistry calculations and bypass inefficient brute-force techniques [13].

Case Study 3: Hybrid Quantum-Classical Simulation of Iron-Sulfur Clusters

Researchers at IBM and RIKEN applied the Sample-based Quantum Diagonalization (SQD) method, a hybrid technique using IBM's Heron processor and RIKEN's Fugaku supercomputer, to model a [4Fe-4S] cluster. This complex system, essential in biological processes, would be infeasible to simulate on a pre-fault-tolerant quantum computer alone (estimated at 3 million years). The hybrid approach yielded results in approximately two hours, demonstrating a viable pathway to tackling realistic chemical problems with current quantum resources [11].

The journey from theoretical promise to chemical reality for quantum computing is well underway. Foundational algorithms like VQE and QPE provide the conceptual framework for solving NP-hard problems in chemistry, while recent hardware breakthroughs and innovative hybrid approaches are enabling tangible progress on industrially relevant problems. The experimental protocols and quantitative benchmarks outlined in this guide provide a roadmap for researchers seeking to leverage quantum computing in chemical discovery. While challenges in scaling and error correction remain, the convergence of algorithmic refinement, hardware advancement, and cross-disciplinary collaboration positions quantum computing as an increasingly powerful tool for transforming the landscape of chemistry research and drug development. The evidence from leading research institutions and industrial R&D groups confirms that quantum advantage in chemistry is transitioning from a theoretical promise into an emerging reality.

The application of quantum computing to chemistry represents a paradigm shift in tackling problems that are intractable for classical computers. Key challenges in electronic structure, molecular design, and biomolecular folding are NP-hard in their general formulations: no polynomial-time classical algorithm is known for them, and the cost of the best exact methods grows exponentially with system size. This whitepaper examines three foundational NP-hard problems (strong electron correlation, catalyst design, and protein folding) where quantum algorithms are demonstrating promising advances. For each challenge, we analyze current quantum approaches, present detailed experimental protocols, and quantify performance metrics to provide researchers with practical frameworks for implementation.

The computational complexity of these problems arises from their fundamental physical nature. Strong electron correlation involves solving for quantum states where electron interactions dominate behavior, requiring a number of configurations that scales exponentially with electron count. Catalyst design demands precise energy calculations across complex potential energy surfaces with combinatorial active site configurations. Protein folding explores an astronomically large conformational space to identify minimum-energy structures. While classical computational methods must resort to approximations that limit accuracy, quantum algorithms leverage inherent quantum properties including superposition, entanglement, and tunneling to navigate these complex landscapes more efficiently.

Strong Electron Correlation

Quantum Algorithmic Approaches

Strong electron correlation presents a fundamental challenge in quantum chemistry because the electronic wavefunction cannot be accurately described as a single Slater determinant or through perturbative methods. This occurs when electron-electron interactions dominate the system's behavior, as in transition metal complexes, open-shell systems, and molecules at dissociation limits. Classical computational methods like full configuration interaction scale exponentially with system size, creating a computational barrier for chemically relevant systems.

Quantum algorithms address this challenge through several innovative approaches:

Spin-Coupled Wavefunctions: A breakthrough approach encodes the dominant entanglement structure of strongly correlated systems directly into initial states using symmetry-adapted configurations. This method prepares a superposition of ${N \choose N/2}$ Slater determinants with circuit depth $\mathcal{O}(N)$ and $\mathcal{O}(N^2)$ gates, dramatically reducing resource requirements compared to black-box state preparation [14]. These states connect to Dicke states and leverage Clebsch-Gordan coefficients for efficient quantum circuit implementation.
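The gap between the number of determinants in the superposition and the stated gate count can be tabulated directly. The snippet below only evaluates the binomial coefficient and N² as a scale marker for the $\mathcal{O}(N^2)$ bound; constant factors in the actual circuits are not given in the text and are not modeled.

```python
import math


def n_determinants(n_orbitals):
    """Number of Slater determinants in the superposition: C(N, N/2)."""
    return math.comb(n_orbitals, n_orbitals // 2)


for n in (4, 8, 16, 32):
    print(f"N = {n:2d}: {n_determinants(n):>12,d} determinants, N^2 = {n * n}")
```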

Transferable Machine Learning Models: Recent work demonstrates that machine learning can predict optimal quantum circuit parameters for electronic structure problems with transferability across molecular sizes. Using Graph Attention Networks (GAT) and SchNet, researchers have developed models trained on small systems (e.g., H₄) that generalize accurately to larger systems (up to H₁₂) [15]. This approach bypasses the need for expensive variational optimization for each new system.

Quantum Subspace Diagonalization (QSD): This hybrid algorithm constructs a subspace from multiple prepared quantum states and diagonalizes the Hamiltonian within this subspace classically. When combined with spin-coupled initial states, QSD efficiently computes both ground and excited states for multireference systems [14].
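The classical diagonalization step amounts to a generalized eigenvalue problem Hc = ESc, where H and S hold the Hamiltonian and overlap matrix elements between the prepared states. The sketch below solves the smallest non-trivial case, two real, non-orthogonal states, in closed form. The matrix elements are made-up numbers standing in for quantities that would be measured on the quantum processor, and the function name is hypothetical.

```python
import math


def lowest_subspace_energy(h11, h12, h22, s12):
    """Lowest root of det(H - E S) = 0 for a 2x2 real subspace.

    H = [[h11, h12], [h12, h22]], S = [[1, s12], [s12, 1]] with |s12| < 1.
    Expanding the determinant gives a quadratic in E.
    """
    a = 1.0 - s12 ** 2
    b = -(h11 + h22 - 2.0 * s12 * h12)
    c = h11 * h22 - h12 ** 2
    disc = b * b - 4.0 * a * c
    return (-b - math.sqrt(disc)) / (2.0 * a)


# Illustrative matrix elements (not from any real system).
h11, h12, h22, s12 = -1.0, -0.3, -0.5, 0.2
e0 = lowest_subspace_energy(h11, h12, h22, s12)
print(f"subspace ground energy: {e0:.6f} (best single state: {min(h11, h22):.6f})")
```

The subspace energy can never be higher than the energy of the best individual state, which is why adding well-chosen states to the subspace systematically improves the estimate. Larger subspaces are handled by a standard generalized eigensolver, with care taken when S is nearly singular.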

Experimental Protocol: Spin-Coupled VQE

Table 1: Key Components for Spin-Coupled VQE Experiments

| Component | Specification | Function |
|---|---|---|
| Quantum Processor | 25+ qubits with high-fidelity gates | Executes parameterized quantum circuits |
| Ansatz Circuit | Spin-coupled architecture | Encodes electron correlation structure |
| Classical Optimizer | Gradient-free (CMA-ES, Differential Evolution) | Navigates noisy cost landscapes |
| Error Mitigation | Zero-Noise Extrapolation (ZNE) | Reduces hardware noise effects |
| Measurement | Pauli grouping strategies | Minimizes required circuit executions |

The following protocol implements a Variational Quantum Eigensolver with spin-coupled initial states for strongly correlated systems:

Molecular Hamiltonian Preparation: Generate the second-quantized electronic structure Hamiltonian $H = \sum_{pq} h_{pq} a_p^\dagger a_q + \frac{1}{2} \sum_{pqrs} h_{pqrs} a_p^\dagger a_q^\dagger a_r a_s$ using classical electronic structure software (PySCF, Psi4). Apply a fermion-to-qubit transformation (Jordan-Wigner, Bravyi-Kitaev) to obtain the qubit Hamiltonian $H = \sum_i c_i P_i$, where the $P_i$ are Pauli strings.

Spin-Coupled State Preparation: Implement the quantum circuit for preparing spin-coupled states based on molecular symmetry and chemical intuition. For a system with N electrons in N orbitals, this involves:

- Initialize qubits to $\ket{0}^{\otimes N}$

- Apply Hadamard gates to create uniform superposition

- Implement symmetry-constrained entangling gates to encode spin coupling

- Apply final rotations to match desired total spin and spatial symmetry

Parameterized Ansatz Construction: Append a hardware-efficient or chemistry-inspired ansatz to the spin-coupled initial state. For strongly correlated systems, the number of layers should be minimized (1-3 layers) when using high-quality initial states.

Variational Optimization: Employ a gradient-free optimizer (CMA-ES, Implicit Filtering) to minimize the energy expectation value $\langle \psi(\theta) | H | \psi(\theta) \rangle$. Use measurement reduction techniques (Pauli grouping) and error mitigation (zero-noise extrapolation, ZNE; Clifford data regression, CDR) to improve result quality on noisy hardware.
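Zero-noise extrapolation, as used in the protocol above, measures the same observable at several deliberately amplified noise levels and extrapolates back to the zero-noise limit. The sketch below shows the Richardson (polynomial) variant, assuming the noise scale factors are known exactly. The energies are synthetic values generated from a made-up noise model, not hardware data.

```python
def richardson_extrapolate(scale_factors, values):
    """Zero-noise estimate from the polynomial through all (scale, value) pairs.

    Uses the Lagrange form of the interpolating polynomial evaluated at scale 0.
    """
    estimate = 0.0
    for i, (s_i, v_i) in enumerate(zip(scale_factors, values)):
        weight = 1.0
        for j, s_j in enumerate(scale_factors):
            if j != i:
                weight *= s_j / (s_j - s_i)
        estimate += weight * v_i
    return estimate


# Synthetic example: true energy -1.0, noise adds 0.1*s + 0.02*s^2 at scale s.
scales = [1.0, 2.0, 3.0]
noisy_energies = [-1.0 + 0.1 * s + 0.02 * s ** 2 for s in scales]
print("measured at scale 1:", noisy_energies[0])
print("extrapolated to 0:  ", richardson_extrapolate(scales, noisy_energies))
```

With real data the extrapolation also amplifies statistical noise, so the number of scale factors and the polynomial order trade bias against variance.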

Performance Metrics

Table 2: Performance Metrics for Electron Correlation Algorithms

| Algorithm | System Size | Qubit Count | Circuit Depth | Ground State Overlap | Energy Error (kcal/mol) |
|---|---|---|---|---|---|
| Spin-Coupled VQE | H₄ | 8 | 35 | 0.95 | 0.8 |
| Transferable ML-VQE | H₁₂ | 24 | 62 | 0.89 | 1.2 |
| Standard VQE | H₄ | 8 | 78 | 0.76 | 3.5 |
| Quantum Phase Estimation | FeMoCo | 70+ | 10⁵+ | >0.99 | <0.1 |

Recent experimental results demonstrate that spin-coupled initial states can achieve ground state overlaps exceeding 0.95 for multireference systems, reducing circuit depth requirements by 3-5x compared to Hartree-Fock initialization [14]. The transferable machine learning approach achieves mean absolute errors below 1.2 kcal/mol for hydrogen chains up to H₁₂, despite training exclusively on H₄ geometries [15].

Catalyst Design

Quantum Approaches to Catalytic Systems

Catalyst design represents a formidable NP-hard challenge due to the need to accurately model reaction pathways, transition states, and binding energies across combinatorially large configuration spaces. Quantum algorithms offer the potential to compute these properties with high accuracy, particularly for catalysts involving transition metals where strong electron correlation effects dominate.

Current quantum approaches focus on:

Quantum Phase Estimation (QPE) for Active Sites: Using tools like Riverlane's quantum circuit generator, researchers can create QPE circuits tailored to specific catalyst active sites. This approach was demonstrated for hydrogen-platinum systems relevant to fuel cell catalysts [16]. The method generates quantum circuits directly from chemical descriptions, enabling accurate energy calculations without requiring deep circuit design expertise.

Resource Estimation for Catalyst Screening: Systematic tools estimate the quantum resources required for simulating catalyst materials, helping hardware developers prioritize improvements. For example, analyses of paraquinone (a potential battery material) provide specific targets for qubit count, gate fidelity, and coherence times needed for practical catalyst screening [16].

Embedded Algorithms for Open-Shell Systems: For catalysts with open-shell electronic structures, embedded quantum-classical algorithms partition the system, treating the active site with high-level quantum algorithms while using classical methods for the environment.

Experimental Protocol: Catalyst Binding Energy Calculation

Table 3: Research Reagents for Catalyst Quantum Simulation

| Reagent/Resource | Function | Implementation Example |
|---|---|---|
| Quantum Circuit Generator | Translates chemical system to quantum circuit | Riverlane's QPE tool for Pt-H₂ system [16] |
| Error Mitigation Stack | Compensates for NISQ hardware noise | Zero-Noise Extrapolation + Pauli Twirling |
| Active Site Hamiltonian | Encodes electronic structure of catalytic center | Frozen orbitals from classical calculation |
| Resource Estimator | Projects hardware requirements | Paraquinone simulation analysis [16] |
| Classical Embedding | Handles catalyst environment | Density Functional Theory embedding |

This protocol details the calculation of hydrogen binding energy on a platinum catalyst surface using quantum algorithms:

System Preparation: Extract the platinum cluster active site from periodic DFT calculations. Apply cluster boundary conditions with link atoms or pseudopotentials. Freeze core orbitals to reduce qubit requirements.

Hamiltonian Generation: Use classical electronic structure software (PySCF, OpenMolcas) to generate the second-quantized Hamiltonian for the active site. Apply orbital freezing and active space selection (e.g., 4-8 electrons in 4-8 orbitals for Pt d-orbitals and H₂ σ/σ* orbitals).

Quantum Circuit Compilation: Employ a quantum circuit generator (e.g., Riverlane's tool) to create QPE or VQE circuits for the Hamiltonian. For NISQ devices, use qubit tapering to reduce qubit count by exploiting symmetries.

Energy Calculation: Execute the quantum circuit on hardware or simulator to compute the total energy of:

- Catalyst with adsorbed hydrogen ($E_{\text{cat-H}}$)

- Bare catalyst ($E_{\text{cat}}$)

- Isolated hydrogen molecule ($E_{\text{H}_2}$)

Calculate the binding energy as $E_{\text{bind}} = E_{\text{cat-H}} - E_{\text{cat}} - E_{\text{H}_2}$.
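
As a worked illustration of the binding energy expression above, the following minimal sketch combines three total energies and converts the result to kcal/mol. The energies are placeholders invented for this example, not results from [16]; the function name is likewise hypothetical. The conversion factor 1 Ha ≈ 627.509 kcal/mol is the standard one.

```python
HARTREE_TO_KCAL_PER_MOL = 627.509  # standard Hartree -> kcal/mol conversion


def binding_energy(e_cat_h: float, e_cat: float, e_h2: float) -> float:
    """Return E_bind = E_cat-H - E_cat - E_H2 (all energies in Hartree)."""
    return e_cat_h - e_cat - e_h2


# Placeholder total energies in Hartree (hypothetical, for illustration only).
e_bind_ha = binding_energy(e_cat_h=-120.480, e_cat=-119.300, e_h2=-1.170)
e_bind_kcal = e_bind_ha * HARTREE_TO_KCAL_PER_MOL
print(f"E_bind = {e_bind_ha:.3f} Ha = {e_bind_kcal:.1f} kcal/mol")
```

Because the binding energy is a small difference between large total energies, each of the three energies has to be converged well below the target accuracy, which is one reason error mitigation matters so much for this protocol.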

Error Mitigation: Apply advanced error mitigation techniques including:

- Zero-Noise Extrapolation with multiple noise scaling factors

- Probabilistic Error Cancellation

- Readout Error Mitigation with matrix inversion
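
To make the first technique in this list concrete, the sketch below implements Zero-Noise Extrapolation as Richardson (polynomial) extrapolation over several noise scaling factors. It is a minimal illustration under stated assumptions: the function name is hypothetical, and the "measured" values are synthetic numbers generated from a smooth bias model rather than hardware data.

```python
def zero_noise_extrapolate(scale_factors, expectation_values):
    """Richardson extrapolation to the zero-noise limit.

    Evaluates, at noise scale 0, the unique polynomial that passes
    through all (scale, value) points (Lagrange form).
    """
    if len(scale_factors) != len(expectation_values):
        raise ValueError("need one expectation value per scale factor")
    if len(set(scale_factors)) != len(scale_factors):
        raise ValueError("scale factors must be distinct")
    estimate = 0.0
    for i, (x_i, y_i) in enumerate(zip(scale_factors, expectation_values)):
        weight = 1.0
        for j, x_j in enumerate(scale_factors):
            if j != i:
                weight *= (0.0 - x_j) / (x_i - x_j)
        estimate += weight * y_i
    return estimate


# Synthetic stand-in for hardware data: the ideal value is -1.0 and the
# bias grows smoothly with the noise scale factor.
scales = [1.0, 2.0, 3.0]
noisy_values = [-1.0 + 0.08 * s + 0.01 * s**2 for s in scales]
mitigated = zero_noise_extrapolate(scales, noisy_values)
print(f"raw (scale 1): {noisy_values[0]:.3f}, extrapolated: {mitigated:.3f}")
```

On real hardware the extrapolation also amplifies statistical noise, so the number of scale factors and the polynomial order are usually kept small.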

Performance Analysis

The platinum-hydrogen system demonstration on Rigetti's Aspen-10 processor represents an important milestone in quantum computational catalyst design [16]. While current implementations are limited to small active sites, resource estimation tools project that simulating industrially relevant catalysts will require quantum processors with several hundred logical qubits with error rates below $10^{-6}$.

Key challenges in scaling quantum approaches for catalyst design include:

- Qubit requirements for realistic active sites (50-100 qubits for transition metal clusters)

- Circuit depth limitations on NISQ devices

- Accurate treatment of solvent effects and long-range interactions

- Integration with classical molecular dynamics for reaction pathway sampling

Protein Folding

Quantum Optimization Approaches

Protein folding represents a classic NP-hard problem in computational biology, with conformational space growing exponentially with chain length. Quantum algorithms address this challenge by mapping folding to optimization problems and leveraging quantum effects to navigate the complex energy landscape.

Leading quantum approaches include:

Bias-Field Digitized Counterdiabatic Quantum Optimization (BF-DCQO): This non-variational, iterative algorithm dynamically updates bias fields to steer the quantum system toward optimal folding configurations. Implemented on IonQ's trapped-ion processors with all-to-all connectivity, BF-DCQO has solved 3D protein folding problems for systems up to 12 amino acids—the largest such demonstration on quantum hardware [17] [18] [19].

HUBO Formulation with Lattice Models: Protein folding is mapped to a Higher-Order Unconstrained Binary Optimization (HUBO) problem on a tetrahedral lattice. Each amino acid placement is encoded using two qubits, with Hamiltonian terms representing geometric constraints, chirality, and interaction energies [20] [19].

Circuit Pruning for Hardware Efficiency: To manage circuit depth limitations, pruning techniques remove small-angle gate operations, reducing gate counts while maintaining solution quality. This approach was essential for implementing protein folding circuits on current quantum hardware [19].

Experimental Protocol: BF-DCQO for Protein Folding

Table 4: Protein Folding Quantum Implementation Components

| Component | Specification | Role in Implementation |
|---|---|---|
| Quantum Hardware | Trapped-ion processor (e.g., IonQ Forte) | Provides all-to-all qubit connectivity |
| Encoding Scheme | 2 qubits per amino acid turn | Maps lattice positions to quantum states |
| Algorithm | BF-DCQO | Non-variational quantum optimization |
| Circuit Pruning | Gate elimination based on rotation angle | Reduces circuit depth for NISQ devices |
| Post-Processing | Greedy local search | Mitigates measurement errors |

The following protocol implements quantum protein folding using the BF-DCQO algorithm:

Problem Encoding: Map the protein folding problem to a tetrahedral lattice model:

- Represent each amino acid placement as a turn encoded in two qubits (00, 01, 10, 11 for four directions)

- Encode the full protein conformation as a sequence of these qubit pairs

- Define contact qubits to represent non-adjacent amino acid interactions
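
The turn encoding above can be sketched in a few lines. This is an illustrative decoder, not the implementation from [19] or [20]: the direction vectors, the bit-to-direction assignment, and the function names are assumptions. It relies on the standard geometry of a tetrahedral (diamond) lattice, where the four bond directions flip sign between the two sublattices.

```python
# Four bond directions of a tetrahedral lattice as seen from an even site;
# odd sites use the negated vectors. The 00/01/10/11 assignment is assumed.
DIRECTIONS = [(1, 1, 1), (1, -1, -1), (-1, 1, -1), (-1, -1, 1)]


def decode_turns(bitstring):
    """Split a measured bitstring into 2-bit turns (values 0..3)."""
    if len(bitstring) % 2 != 0:
        raise ValueError("expected two bits per turn")
    return [int(bitstring[i:i + 2], 2) for i in range(0, len(bitstring), 2)]


def turns_to_coordinates(turns):
    """Walk the lattice from the origin, alternating sublattice sign."""
    position = (0, 0, 0)
    coordinates = [position]
    for step, turn in enumerate(turns):
        sign = 1 if step % 2 == 0 else -1
        dx, dy, dz = DIRECTIONS[turn]
        position = (position[0] + sign * dx,
                    position[1] + sign * dy,
                    position[2] + sign * dz)
        coordinates.append(position)
    return coordinates


def is_self_avoiding(coordinates):
    """A valid fold never places two residues on the same lattice site."""
    return len(set(coordinates)) == len(coordinates)


fold = turns_to_coordinates(decode_turns("000110"))
print(fold, is_self_avoiding(fold))
```

In this convention, repeating the same turn twice in a row walks back onto the previous site, which is exactly the kind of overlap the geometric constraint term penalizes.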

Hamiltonian Construction: Construct the folding Hamiltonian with three components:

- Geometric constraint term: Prevents chain overlap with large energy penalties

- Chirality term: Ensures correct side-chain handedness

- Interaction energy term: Models attractive/repulsive forces based on contact maps

BF-DCQO Implementation: Execute the non-variational optimization algorithm:

- Initialize the quantum system in a uniform superposition

- Apply digitized counterdiabatic driving with dynamically updated bias fields

- Iteratively steer toward lower-energy configurations

- Use circuit pruning to eliminate gates with rotation angles below a threshold (e.g., <0.1 radians)
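
The pruning step in this list amounts to a simple filter over the rotation gates of the circuit. The sketch below uses a hypothetical `RotationGate` record rather than any particular SDK's circuit object, and assumes angles are already reduced to the interval (-π, π]; the 0.1 rad threshold is the example value quoted above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RotationGate:
    """Hypothetical minimal gate record: name, target qubits, angle in radians."""
    name: str
    qubits: tuple
    angle: float


def prune_small_rotations(gates, threshold=0.1):
    """Keep only gates whose rotation angle magnitude reaches the threshold."""
    return [gate for gate in gates if abs(gate.angle) >= threshold]


circuit = [
    RotationGate("rzz", (0, 1), 0.80),
    RotationGate("rzz", (0, 2), 0.05),   # below threshold, pruned
    RotationGate("ry", (1,), -0.30),
    RotationGate("rzz", (1, 2), -0.02),  # below threshold, pruned
    RotationGate("ry", (2,), 0.10),
]
pruned = prune_small_rotations(circuit)
print(f"kept {len(pruned)} of {len(circuit)} gates")
```

The threshold trades circuit depth against fidelity to the intended evolution, so in practice it would be tuned against solution quality rather than fixed in advance.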

Result Extraction and Refinement:

- Measure the final quantum state to obtain candidate folds

- Apply classical greedy local search to refine near-optimal solutions

- Validate against known structures or classical simulations
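
The classical refinement step can be illustrated with a single-bit-flip greedy search. This is a generic sketch, not the post-processing routine from [19]: the function names are hypothetical, and the toy energy (Hamming distance to a made-up target) stands in for the folding Hamiltonian evaluated classically on a bitstring.

```python
def greedy_local_search(bits, energy):
    """Flip single bits while any flip lowers the energy; return the result."""
    current = list(bits)
    current_energy = energy(current)
    improved = True
    while improved:
        improved = False
        for i in range(len(current)):
            current[i] ^= 1                      # try flipping bit i
            candidate_energy = energy(current)
            if candidate_energy < current_energy:
                current_energy = candidate_energy
                improved = True                  # keep the flip
            else:
                current[i] ^= 1                  # revert the flip
    return current, current_energy


# Toy energy for illustration only: distance to a hypothetical optimum.
TARGET = [1, 0, 0, 1, 0, 1]


def toy_energy(bits):
    return sum(b != t for b, t in zip(bits, TARGET))


measured = [1, 0, 1, 1, 0, 0]  # pretend this came from the quantum device
refined, refined_energy = greedy_local_search(measured, toy_energy)
print(refined, refined_energy)
```

A search of this kind only reaches a local minimum, so its value lies in cleaning up samples that are already close to a good fold.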

Performance Metrics

Table 5: Protein Folding Quantum Implementation Results

| Protein System | Amino Acids | Qubit Count | Gate Operations | Solution Accuracy | Hardware Platform |
|---|---|---|---|---|---|
| Chignolin | 10 | 20 | ~800 | Optimal | IonQ Forte |
| Head Activator Neuropeptide | 11 | 22 | ~900 | Optimal | IonQ Forte |
| Immunoglobulin Segment | 12 | 24 | ~1100 | Optimal | IonQ Forte |
| MAX 4-SAT Benchmark | 36 variables | 36 | ~1500 | 98% clauses satisfied | IonQ Forte |

Recent experiments demonstrate that the BF-DCQO algorithm consistently finds optimal folding configurations for peptides up to 12 amino acids, with successful implementation on IonQ's 36-qubit trapped-ion system [17] [18] [19]. The all-to-all connectivity of trapped-ion architectures proved essential for handling the long-range interactions in protein folding Hamiltonians. Circuit pruning reduced gate counts by 25-40% without significant impact on solution quality, enabling implementation within current hardware limitations.

Comparative Analysis and Future Directions

The three NP-hard challenges share common themes in their quantum algorithmic approaches. Each leverages problem-specific insights to reduce quantum resource requirements: spin symmetry in electron correlation, active site focus in catalyst design, and lattice models with efficient encoding in protein folding. The most successful strategies combine quantum processing with classical computation in hybrid frameworks, using each where most effective.

Key advancements needed to progress from experimental demonstrations to practical applications include:

Improved Quantum Hardware: Higher qubit counts (100+ physical qubits), enhanced connectivity, and reduced error rates are essential for tackling industrially relevant problem sizes.

Algorithmic Innovations: More efficient problem encodings, advanced error mitigation, and hybrid quantum-classical partitioning will extend the reach of near-term quantum devices.

Application-Specific Optimizations: Tailoring algorithms to specific problem subclasses (e.g., metalloenzymes in catalyst design, beta-sheet proteins in folding) can yield significant performance improvements.

As quantum hardware continues to advance, these approaches show increasing promise for delivering practical quantum advantage on classically intractable instances of fundamental challenges in chemistry and biology.

The simulation of molecular systems represents one of the most promising and natural applications of quantum computing. This intrinsic connection stems from a fundamental truth: molecules and the quantum processors designed to simulate them both operate under the same laws of quantum mechanics. Classical computers struggle to simulate quantum systems exactly because the computational resources required grow exponentially with the size of the system, a challenge often framed as an NP-hard problem in computational chemistry. Quantum computers, by contrast, use quantum bits (qubits) that can naturally represent quantum states of electrons and atoms, offering a potential exponential advantage for certain quantum chemical simulations. This whitepaper explores the quantum-chemical nexus, detailing how qubits provide a natural framework for molecular modeling, the algorithmic approaches that leverage this connection, and the experimental methodologies demonstrating progress toward practical quantum advantage in chemistry and drug discovery.

The challenge of molecular electronic structure calculation—determining the energy levels and properties of molecules—is a computational bottleneck in classical computational chemistry. Methods like Full Configuration Interaction (FCI) provide exact solutions but scale factorially with system size, becoming computationally intractable for all but the smallest molecules [21]. Quantum computers can potentially overcome this limitation by providing a computational platform whose inherent quantum properties mirror those of the molecular systems being studied.
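
The growth of the FCI problem can be made concrete by counting Slater determinants. The sketch below uses the standard count for a fixed number of spin-up and spin-down electrons distributed over a set of spatial orbitals; it ignores reductions from spatial or spin symmetry, and the function name is an illustrative choice.

```python
from math import comb


def fci_dimension(n_orbitals: int, n_alpha: int, n_beta: int) -> int:
    """Number of determinants: C(n, n_alpha) * C(n, n_beta)."""
    return comb(n_orbitals, n_alpha) * comb(n_orbitals, n_beta)


# Half-filled active spaces of increasing size.
for n in (4, 8, 16, 24):
    print(f"{n} orbitals, {n} electrons: {fci_dimension(n, n // 2, n // 2):,} determinants")
```

Under a mapping that uses one qubit per spin orbital, the same active spaces need only twice as many qubits as spatial orbitals, which is the sense in which the quantum representation is compact.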

Quantum Algorithms for Molecular Simulation

Foundational Algorithms

Several quantum algorithms have been developed specifically to tackle the challenges of molecular simulation, each with distinct advantages for different aspects of the electronic structure problem.

The Variational Quantum Eigensolver (VQE) is a hybrid quantum-classical algorithm that has become a cornerstone of quantum computational chemistry on near-term devices [4] [21]. VQE operates by preparing a parameterized quantum state (ansatz) on the quantum processor and measuring the expectation value of the molecular Hamiltonian. A classical optimizer then adjusts the parameters to minimize this expectation value, iteratively converging toward the ground state energy. The algorithm's hybrid nature makes it particularly suitable for the Noisy Intermediate-Scale Quantum (NISQ) era, as it is resilient to certain types of noise and does not require the extensive circuit depth of fully quantum algorithms [21].

Quantum Phase Estimation (QPE) offers a different approach, providing a direct method for reading out energy eigenvalues with high precision [4]. While QPE theoretically provides better scaling and accuracy guarantees, it requires deeper circuits and greater coherence times than VQE, making it more suitable for future fault-tolerant quantum hardware rather than current NISQ devices.

For combinatorial optimization problems in chemistry, such as molecular conformation analysis, the Quantum Approximate Optimization Algorithm (QAOA) provides a framework for finding high-quality solutions [4]. QAOA alternates between applying a cost Hamiltonian (encoding the optimization problem) and a mixer Hamiltonian, with parameters optimized classically to minimize the energy. Recent research has also explored Grover's algorithm for tackling NP-hard problems in chemistry, with provable quadratic speedup for unstructured search problems that can be mapped to molecular systems [10].
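
The quadratic speedup mentioned above can be seen in a small classical simulation of Grover's iteration. The sketch below tracks real amplitudes for a search over N items with one marked item; the function names and the choice N = 8 are illustrative, and the iteration count uses the common approximation ⌊(π/4)√N⌋.

```python
import math


def grover_success_probability(num_items, marked_index, iterations):
    """Simulate Grover's algorithm with one marked item; return P(marked)."""
    amplitudes = [1.0 / math.sqrt(num_items)] * num_items  # uniform superposition
    for _ in range(iterations):
        # Oracle: flip the sign of the marked amplitude.
        amplitudes[marked_index] = -amplitudes[marked_index]
        # Diffusion: reflect every amplitude about the mean.
        mean = sum(amplitudes) / num_items
        amplitudes = [2.0 * mean - a for a in amplitudes]
    return amplitudes[marked_index] ** 2


def optimal_iterations(num_items):
    """Approximate optimal iteration count for a single marked item."""
    return math.floor(math.pi / 4.0 * math.sqrt(num_items))


n_items = 8
k = optimal_iterations(n_items)
p = grover_success_probability(n_items, marked_index=5, iterations=k)
print(f"N={n_items}: {k} iterations, success probability {p:.3f}")
```

The number of oracle calls grows as √N rather than N. For a search space that itself grows exponentially with molecule size, this is a substantial but still exponential cost, which is why Grover-type methods are discussed alongside fault-tolerant hardware.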

Table 1: Key Quantum Algorithms for Molecular Simulation

| Algorithm | Primary Use Case | Key Advantage | Hardware Requirement |
|---|---|---|---|
| Variational Quantum Eigensolver (VQE) | Ground state energy calculation | Noise-resilient, suitable for NISQ devices | Low-depth quantum circuits |
| Quantum Phase Estimation (QPE) | High-precision energy measurement | Provable accuracy and scaling | Fault-tolerant quantum computers |
| Quantum Approximate Optimization Algorithm (QAOA) | Combinatorial optimization in molecular conformations | Hybrid quantum-classical approach | NISQ devices with moderate coherence |
| Grover's Algorithm | NP-hard problem solving | Provable quadratic speedup | Scalable quantum processors with error correction |

Algorithmic Building Blocks and Subroutines

Complex quantum algorithms for chemistry rely on fundamental building blocks and subroutines. The Quantum Fourier Transform (QFT) serves as a crucial component in many quantum algorithms, including quantum phase estimation and period finding [4]. Quantum Amplitude Amplification generalizes Grover's search, amplifying the probability amplitudes of "good" states (such as molecular configurations with desirable properties) while suppressing others [4]. For arithmetic operations within quantum algorithms, specialized circuits like the Draper Adder and Beauregard Adder perform addition in the Fourier basis using the QFT, reducing circuit depth and gate complexity in applications such as Shor's algorithm [4].
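
The idea behind QFT-based addition can be checked with a small statevector calculation. The sketch below is a classical illustration of the Draper construction, not a gate-level circuit: it applies the QFT to a basis state |a⟩, multiplies each Fourier component by a phase that depends on b, and inverts the QFT to obtain |a + b mod 2ⁿ⟩. All function names are illustrative.

```python
import cmath
import math


def qft(state):
    """Quantum Fourier Transform of a statevector (dense O(N^2) sum)."""
    n = len(state)
    return [sum(state[x] * cmath.exp(2j * math.pi * x * k / n) for x in range(n))
            / math.sqrt(n) for k in range(n)]


def inverse_qft(state):
    """Inverse QFT: same sum with conjugated phases."""
    n = len(state)
    return [sum(state[k] * cmath.exp(-2j * math.pi * x * k / n) for k in range(n))
            / math.sqrt(n) for x in range(n)]


def draper_add(a, b, num_qubits):
    """Add b to the basis state |a> modulo 2**num_qubits via Fourier-basis phases."""
    dim = 2 ** num_qubits
    state = [0j] * dim
    state[a % dim] = 1.0 + 0j
    fourier = qft(state)
    # In the Fourier basis, adding b is a diagonal phase on each component k.
    shifted = [amp * cmath.exp(2j * math.pi * b * k / dim)
               for k, amp in enumerate(fourier)]
    result = inverse_qft(shifted)
    probabilities = [abs(amp) ** 2 for amp in result]
    return max(range(dim), key=lambda index: probabilities[index])


print(draper_add(2, 3, num_qubits=3))
print(draper_add(3, 6, num_qubits=3))
```

The second call wraps around modulo 8, reflecting the fixed register width. In a circuit, the diagonal phases become controlled rotations, which is where the depth savings relative to ripple-carry adders come from.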

Experimental Approaches and Methodologies

Problem Decomposition Strategies

As quantum hardware remains limited in qubit count and coherence time, problem decomposition strategies have emerged as essential methodological advances. These approaches break down large molecular simulations into smaller, more manageable fragments that can be solved on current quantum devices.

The Density Matrix Embedding Theory with Sample-Based Quantum Diagonalization (DMET-SQD) represents a significant breakthrough in hybrid quantum-classical simulation [22]. This methodology partitions a molecule into smaller fragments and embeds each fragment in an approximate mean-field environment representing the rest of the molecule. The key innovation lies in using a quantum computer to solve the embedded fragment problems via the SQD algorithm, which samples quantum circuits and projects results into a subspace for solving the Schrödinger equation. In a landmark 2025 study, researchers applied DMET-SQD to simulate a ring of 18 hydrogen atoms and various conformers of cyclohexane using only 27-32 qubits on IBM's ibm_cleveland quantum processor, achieving energy differences within 1 kcal/mol of classical benchmarks—meeting the threshold for "chemical accuracy" [22].

The experimental workflow for DMET-SQD implementation involves several critical steps [22]:

- Molecular Fragmentation: The target molecule is divided into smaller fragments using DMET

- Mean-Field Calculation: A classical computer calculates the approximate environment for each fragment

- Quantum Subproblem Solution: Each fragment Hamiltonian is solved on the quantum processor using SQD

- Self-Consistent Iteration: The fragment solutions are used to update the mean-field environment, iterating until convergence

- Error Mitigation: Techniques including gate twirling and dynamical decoupling are applied to counter hardware noise

Advanced VQE Implementations

The Variational Quantum Eigensolver has been successfully implemented for increasingly complex molecules. Recent work has demonstrated VQE simulations for the trihydrogen cation (H₃⁺), the hydroxide ion (OH⁻), hydrogen fluoride (HF), and borane (BH₃) using the parity transformation for fermion-to-qubit encoding and the Unitary Coupled Cluster ansatz with single and double excitations (UCCSD) [21]. These implementations show good agreement with FCI benchmark energies, with accuracy exceeding previously reported values.

The general VQE protocol for molecular electronic structure calculation follows these methodical steps [21]:

- Hamiltonian Derivation: Transform the molecular Hamiltonian into second-quantized form using an appropriate basis set

- Qubit Mapping: Apply fermion-to-qubit transformation (such as Jordan-Wigner, Bravyi-Kitaev, or parity mapping)

- Ansatz Construction: Design a parameterized quantum circuit (typically UCCSD) to prepare trial wavefunctions

- Parameter Optimization: Iteratively measure expectation values on quantum hardware and optimize parameters using classical methods

- Energy Verification: Compare results with classical methods like FCI for validation
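
The qubit mapping step in this protocol can be illustrated with the Jordan-Wigner transformation, the simplest of the three mappings listed. The sketch below writes fermionic operators as dictionaries from Pauli strings to coefficients. The string convention (leftmost character acts on mode 0, |1⟩ means occupied) and the function names are assumptions of this example, not the API of any package.

```python
def jordan_wigner_creation(mode: int, num_modes: int) -> dict:
    """Creation operator a_mode^dagger = Z...Z (X - iY)/2 I...I as Pauli strings."""
    if not 0 <= mode < num_modes:
        raise ValueError("mode index out of range")
    prefix = "Z" * mode                      # parity string on lower modes
    suffix = "I" * (num_modes - mode - 1)    # identity on higher modes
    return {prefix + "X" + suffix: 0.5, prefix + "Y" + suffix: -0.5j}


def jordan_wigner_number(mode: int, num_modes: int) -> dict:
    """Number operator n_mode = (I - Z_mode) / 2 as Pauli strings."""
    if not 0 <= mode < num_modes:
        raise ValueError("mode index out of range")
    identity = "I" * num_modes
    z_string = "I" * mode + "Z" + "I" * (num_modes - mode - 1)
    return {identity: 0.5, z_string: -0.5}


print(jordan_wigner_creation(2, 4))
print(jordan_wigner_number(1, 4))
```

The string of Z operators is what enforces fermionic antisymmetry. Its length grows with the mode index, which is one motivation for alternatives such as the Bravyi-Kitaev and parity mappings.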

Table 2: VQE Molecular Simulation Results Compared to Classical Methods

| Molecule | VQE Energy (Ha) | FCI Benchmark Energy (Ha) | Qubits Required | Accuracy Relative to FCI |
|---|---|---|---|---|
| H₃⁺ | -1.148 | -1.151 | 4 | High |
| OH⁻ | -74.984 | -74.987 | 6 | High |
| HF | -100.117 | -100.119 | 8 | High |
| BH₃ | -26.281 | -26.284 | 10 | High |
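
To put the "High" accuracy labels in Table 2 on a quantitative footing, the short calculation below converts each VQE-FCI difference to kcal/mol and compares it with the 1 kcal/mol chemical accuracy threshold used in this article. The energies are copied from the table; the variable and function names are illustrative. Because the table reports only three decimals, the differences carry a rounding uncertainty of about 1 mHa, so the comparison is indicative rather than conclusive.

```python
HARTREE_TO_KCAL_PER_MOL = 627.509   # standard conversion factor
CHEMICAL_ACCURACY_KCAL = 1.0        # threshold used in this article

# (VQE energy, FCI energy) in Hartree, as listed in Table 2.
table_2 = {
    "H3+": (-1.148, -1.151),
    "OH-": (-74.984, -74.987),
    "HF": (-100.117, -100.119),
    "BH3": (-26.281, -26.284),
}


def error_kcal_per_mol(vqe_ha: float, fci_ha: float) -> float:
    """Absolute VQE-FCI energy difference in kcal/mol."""
    return abs(vqe_ha - fci_ha) * HARTREE_TO_KCAL_PER_MOL


errors = {name: error_kcal_per_mol(vqe, fci) for name, (vqe, fci) in table_2.items()}
for name, err in errors.items():
    within = err < CHEMICAL_ACCURACY_KCAL
    print(f"{name}: {err:.2f} kcal/mol, within chemical accuracy: {within}")
```

At the tabulated precision, differences of 2 to 3 mHa correspond to roughly 1.3 to 1.9 kcal/mol. Each VQE energy also lies above its FCI benchmark, as the variational principle requires.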

The Scientist's Toolkit: Research Reagent Solutions

Implementing quantum algorithms for chemical simulation requires both computational and theoretical "reagents" – essential components that enable researchers to build effective quantum simulations.

Table 3: Essential Research Reagent Solutions for Quantum Chemistry

| Research Reagent | Function | Example Implementation |
|---|---|---|
| Fermion-to-Qubit Mapping | Encodes molecular orbitals and electron interactions into qubit states | Parity transformation, Jordan-Wigner, Bravyi-Kitaev |
| Ansatz Circuits | Generates trial wavefunctions for variational algorithms | Unitary Coupled Cluster (UCCSD), Hardware-Efficient Ansatz |
| Error Mitigation Techniques | Counteracts noise in NISQ devices | Zero-Noise Extrapolation, Dynamical Decoupling, Readout Correction |
| Classical Optimizers | Adjusts quantum circuit parameters to minimize energy | Gradient descent, SPSA, CMA-ES |
| Quantum Chemistry Packages | Provides molecular integrals and classical benchmarks | Qiskit Nature, OpenFermion, PySCF |
| Embedding Theories | Divides large molecules into tractable fragments | Density Matrix Embedding Theory (DMET), Fragment Molecular Orbital |

Implementation Roadmap and Resource Estimation

The Five-Stage Framework for Quantum Application Development

Google researchers have proposed a five-stage framework to map the journey from theoretical quantum algorithm to deployed application [23]. For quantum chemistry applications, most current research resides in Stages II-IV:

Stage I - Discovery: Fundamental quantum algorithms like quantum phase estimation are discovered and analyzed for theoretical potential [23].

Stage II - Finding the Right Problem Instances: Researchers identify specific molecular systems where quantum algorithms demonstrate advantage over classical methods. For example, stretched molecular geometries with strong electron correlation effects are particularly challenging for classical computation [23].

Stage III - Establishing Real-World Advantage: This critical stage connects quantum advantage to practical applications. Recent work simulating Cytochrome P450 (a key drug metabolism enzyme) with greater efficiency than traditional methods represents progress at this stage [8] [23].

Stage IV - Engineering for Use: Focuses on practical optimization and resource estimation for specific use cases. Recent estimates suggest that simulating industrially relevant compounds could require 2000+ qubits without decomposition techniques [24] [23].

Stage V - Application Deployment: The final stage of deploying proven quantum solutions in real-world workflows – a milestone that remains in the future for quantum chemistry applications [23].

Hardware Requirements and Error Correction

The path to practical quantum advantage in chemistry depends critically on hardware advancements. Current trapped-ion systems from companies like IonQ and Quantinuum have demonstrated increasingly sophisticated chemical simulations [25] [24]. Error correction represents perhaps the most significant hurdle, with recent breakthroughs showing promising progress:

- Google's Willow quantum chip (105 superconducting qubits) demonstrated exponential error reduction as qubit counts increased [8].

- IBM's fault-tolerant roadmap targets the Quantum Starling system (200 logical qubits) by 2029, with plans extending to 100,000 qubits by 2033 [8].

- Microsoft's topological qubit approach (Majorana 1) aims for inherent stability with less error correction overhead [8].

The quantum-chemical nexus represents one of the most promising avenues for achieving practical quantum advantage in the coming years. As quantum hardware continues to advance—with error rates reaching record lows of 0.000015% per operation and coherence times improving to 0.6 milliseconds for best-performing qubits—the resource requirements for meaningful chemical simulations continue to decline [8]. Industry analysts project that quantum systems could address Department of Energy scientific workloads, including materials science and quantum chemistry, within five to ten years [8].

The convergence of better algorithms, improved error mitigation, problem decomposition techniques, and more powerful hardware creates a compelling trajectory for quantum chemistry. For researchers and drug development professionals, the key near-term opportunities lie in exploring hybrid quantum-classical approaches for specific problem classes where quantum resources provide maximal benefit: strongly correlated electron systems, reaction pathway exploration, and excited state calculations. As the field progresses through the five stages of quantum application development, the quantum-chemical nexus will increasingly transform from theoretical promise to practical tool, potentially revolutionizing how we understand and design molecular systems for medicine, materials science, and sustainable energy.

Algorithmic Toolkit: VQE, QAOA, and Emerging Methods for Chemical Applications

The Variational Quantum Eigensolver (VQE) has emerged as a leading algorithm for harnessing noisy intermediate-scale quantum (NISQ) devices to tackle computationally intractable problems in quantum chemistry and drug discovery. This technical guide examines VQE's hybrid quantum-classical architecture, its application to NP-hard problems in chemical research, and the experimental protocols enabling its practical implementation. We detail how VQE is transitioning from theoretical construct to tangible tool for simulating molecular systems and optimizing drug design pipelines, framing this progress within the broader quest for quantum advantage in handling computationally complex research challenges.

Many fundamental problems in chemistry research, particularly the accurate calculation of molecular electronic structure, are classically intractable for large systems due to exponential scaling of computational resources. These NP-hard problems represent a significant bottleneck in fields like drug discovery and materials design, where precise quantum mechanical simulation is essential. The Variational Quantum Eigensolver (VQE) is a hybrid quantum-classical algorithm specifically designed to address this challenge on near-term quantum hardware. By strategically partitioning the computational workload—using quantum processors for parameterized state preparation and measurement, and classical processors for optimization—VQE provides a viable pathway to quantum advantage for chemical simulations where classical approaches require severe approximations [26].

The algorithm operates on the variational principle, systematically minimizing the expectation value of a molecular Hamiltonian to approximate the ground state energy [27]. This approach is particularly well-suited for NISQ devices because it employs shallow quantum circuits, avoiding the prohibitive depth requirements of algorithms like quantum phase estimation [28]. As the quantum computing industry progresses through 2025 with breakthroughs in hardware and error correction, VQE stands as a primary candidate for demonstrating practical quantum utility in solving real-world chemistry problems [8].

Theoretical Foundations of VQE

Algorithmic Framework

The VQE algorithm targets the minimum eigenvalue of a quantum Hamiltonian $\hat{H}$. The core protocol involves preparing a parameterized quantum state (ansatz) $|\psi(\vec{\theta})\rangle = U(\vec{\theta})|0\rangle$ and minimizing the energy expectation value [28]:

$$ E(\vec{\theta}) = \langle\psi(\vec{\theta})|\hat{H}|\psi(\vec{\theta})\rangle $$

The quantum device measures this expectation value via repeated projective measurements, typically after mapping $\hat{H}$ to a sum of Pauli strings using transformations such as Jordan-Wigner or Bravyi-Kitaev [28]. A classical optimizer then iteratively updates the parameters $\vec{\theta}$ based on these measurements. The variational nature of VQE ensures that $E(\vec{\theta})$ always provides an upper bound to the true ground state energy [27].
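
The loop described above can be sketched end to end for a one-qubit toy problem. Everything specific here is an assumption made for illustration: the Hamiltonian H = A·Z + B·X with made-up coefficients, the single-parameter R_y ansatz, exact expectation values in place of sampled measurements, and plain gradient descent with the parameter-shift rule as the classical optimizer.

```python
import math

A, B = 1.0, 0.5  # hypothetical coefficients of the toy Hamiltonian H = A*Z + B*X


def energy(theta: float) -> float:
    """E(theta) = <psi|H|psi> for |psi> = Ry(theta)|0>."""
    c, s = math.cos(theta / 2.0), math.sin(theta / 2.0)  # amplitudes of |0>, |1>
    expectation_z = c * c - s * s
    expectation_x = 2.0 * c * s
    return A * expectation_z + B * expectation_x


def parameter_shift_gradient(theta: float) -> float:
    """Exact gradient for an Ry rotation from two shifted energy evaluations."""
    return 0.5 * (energy(theta + math.pi / 2.0) - energy(theta - math.pi / 2.0))


def run_vqe(theta: float = 0.1, learning_rate: float = 0.4, steps: int = 200):
    """Classical outer loop: gradient descent on the measured energy."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta, energy(theta)


theta_opt, e_min = run_vqe()
exact_ground = -math.hypot(A, B)  # smallest eigenvalue of A*Z + B*X
print(f"VQE energy {e_min:.6f}, exact ground state {exact_ground:.6f}")
```

For any value of theta the computed energy stays at or above the exact ground state energy, which is the upper-bound property stated above. On hardware each call to `energy` would be replaced by an estimate from a finite number of shots.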

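The molecular Hamiltonian, before the Born-Oppenheimer approximation is applied, is expressed as [27]:

$$ \begin{aligned} H = {} & -\sum_{I} \frac{\nabla^{2}_{R_{I}}}{M_{I}} - \sum_{i} \frac{\nabla^{2}_{r_{i}}}{m_{e}} - \sum_{I}\sum_{i} \frac{Z_{I}e^{2}}{|R_{I}-r_{i}|} \\ & + \sum_{i}\sum_{j>i}\frac{e^{2}}{|r_{i}-r_{j}|} + \sum_{I}\sum_{J>I} \frac{Z_{I}Z_{J}e^{2}}{|R_{I}-R_{J}|} \end{aligned} $$

This Hamiltonian captures the kinetic energies of nuclei and electrons alongside their Coulombic interactions, with the constant prefactors of the kinetic terms suppressed. Under the Born-Oppenheimer approximation the nuclei are treated as fixed, so the nuclear kinetic term is dropped and the nuclear repulsion becomes a constant for each geometry.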

Critical Ansatz Selection

The choice of parameterized ansatz $U(\vec{\theta})$ critically determines VQE's expressive power, convergence behavior, and hardware feasibility. Two primary ansatz categories have emerged [28]:

- Chemistry-Inspired Ansätze: Such as Unitary Coupled Cluster with Single and Double excitations (UCCSD), encode physical structure by acting on a Hartree-Fock reference state with exponentials of excitation operators. These are physically motivated but can lead to significant circuit depth challenges [28].

- Hardware-Efficient Ansätze: Constructed from native gate sets and connectivity of specific quantum devices, these ansätze prioritize low circuit depth but may break physical symmetries or exhibit barren plateaus [28].

Adaptive schemes like ADAPT-VQE and qubit-ADAPT-VQE iteratively select operators based on energy gradients, aiming to balance expressivity with resource demands [28].

Table 1: Comparison of VQE Ansatz Strategies

| Ansatz Class | Key Features | Typical Limitations |
|---|---|---|
| Chemistry-Inspired | Exploits physical structure, physically motivated | Circuit depth, scalability |
| Hardware-Efficient | Low depth, device-tailored | May break symmetries, plateaus |
| Adaptive/Genetic | Circuit grown on demand, multiobjective optimized | Optimization overhead |

VQE in Practice: Protocols for Chemical Simulation and Drug Discovery

Experimental Protocol: Quantum-Enhanced Drug Design Pipeline

Recent research has demonstrated VQE's application to real-world drug discovery challenges. One study developed a hybrid quantum computing pipeline for two critical tasks in drug design: determining Gibbs free energy profiles for prodrug activation and simulating covalent bond interactions [29]. The protocol was applied to a carbon-carbon bond cleavage prodrug strategy for β-lapachone, used for cancer-specific targeting [29].

This protocol achieved a significant milestone by benchmarking quantum computing against verifiable scenarios in drug design, specifically addressing the covalent bonding issue present in prodrug activation [29]. The implementation utilized active space approximation to simplify the quantum chemistry problem into a manageable two electron/two orbital system representable on a 2-qubit quantum device [29].

The Scientist's Toolkit: Essential Research Reagents

Implementing VQE for chemical applications requires both computational and theoretical "reagents" that form the essential toolkit for researchers.

Table 2: Essential Research Reagents for VQE Chemical Simulations

| Research Reagent | Function | Application Example |
|---|---|---|
| Active Space Approximation | Reduces effective problem size by focusing on chemically relevant electrons and orbitals | Simplifying C–C bond cleavage system to 2 electrons/2 orbitals for quantum computation [29] |
| Solvation Model (ddCOSMO) | Models solvent effects on molecular systems | Calculating solvation energy in water for prodrug activation [29] |
| Readout Error Mitigation | Corrects for hardware-specific measurement inaccuracies | Applying standard readout error mitigation to enhance measurement accuracy [29] |
| Reference-State Error Mitigation (REM) | Uses classically computed reference states to correct noisy quantum energy evaluations | Improving computational accuracy of ground state energies for small molecules (H₂, HeH⁺, LiH) by up to two orders of magnitude [30] |
| Hardware-Efficient Ansatz | Parameterized quantum circuit designed for specific quantum processor capabilities | Using single-layer $R_y$ ansatz for 2-qubit superconducting quantum device [29] |
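
Of the reagents in Table 2, readout error mitigation is the easiest to show in a few lines. The sketch below inverts a single-qubit confusion matrix to recover the underlying outcome probabilities. It is a generic illustration rather than the procedure used in [29]; the calibration values and the function name are assumptions.

```python
def mitigate_readout(noisy_probs, p0_given_0, p1_given_1):
    """Invert a 2x2 readout confusion matrix for one qubit.

    noisy_probs: (P(read 0), P(read 1)) estimated from measurement counts.
    p0_given_0:  calibrated probability of reading 0 when |0> was prepared.
    p1_given_1:  calibrated probability of reading 1 when |1> was prepared.
    """
    # Confusion matrix M[read][prepared]; noisy = M @ true.
    m00, m01 = p0_given_0, 1.0 - p1_given_1
    m10, m11 = 1.0 - p0_given_0, p1_given_1
    det = m00 * m11 - m01 * m10
    if abs(det) < 1e-12:
        raise ValueError("confusion matrix is singular")
    q0, q1 = noisy_probs
    true_p0 = (m11 * q0 - m01 * q1) / det
    true_p1 = (-m10 * q0 + m00 * q1) / det
    return true_p0, true_p1


# Hypothetical calibration and measurement data.
corrected = mitigate_readout(noisy_probs=(0.703, 0.297),
                             p0_given_0=0.97, p1_given_1=0.92)
print(f"corrected probabilities: {corrected[0]:.3f}, {corrected[1]:.3f}")
```

With finite sampling the inverted probabilities can come out slightly negative, so implementations commonly project the result back onto valid probability distributions. For many qubits the full confusion matrix grows exponentially, which motivates tensor-product or sparse approximations.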

Algorithmic Enhancements and Current Research Frontiers

Advanced Optimization Techniques

Multiple enhancement strategies have emerged to address VQE's core challenges of resource scaling, optimizer landscapes, and physical fidelity:

- Constraint Incorporation: Physical property constraints (e.g., fixed electron number, spin) are included as penalty terms in the variational cost function to ensure optimization produces physically meaningful potential energy surfaces [28].

- Evolutionary Circuit Construction: Methods like Evolutionary VQE (EVQE) dynamically evolve circuit topology and parameters using genetic operators, achieving shallower circuits with superior noise resilience [28].

- Slice-Wise Initial State Optimization: This quasi-dynamical approach builds the ansatz incrementally from operator subsets, enabling subspace optimization that provides better initialization. Benchmarks on Heisenberg and Hubbard models with up to 20 qubits show improved fidelities and reduced function evaluations [31].

- Variance Minimization: Minimizing the energy variance (VVQE) rather than mean energy allows direct targeting and verification of both ground and excited states [28].
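
The last item in this list can be illustrated with a one-qubit toy model. The sketch below assumes a made-up Hamiltonian H = A·Z + B·X and an R_y(θ) ansatz, for which ⟨Z⟩ = cos θ and ⟨X⟩ = sin θ; the names are illustrative. It shows that the energy variance vanishes at both eigenstates, which is why a variance-based cost can target and verify excited states as well as the ground state.

```python
import math

A, B = 1.0, 0.5  # hypothetical coefficients of the toy Hamiltonian H = A*Z + B*X


def energy(theta: float) -> float:
    """<H> for the state Ry(theta)|0>, using <Z> = cos(theta), <X> = sin(theta)."""
    return A * math.cos(theta) + B * math.sin(theta)


def energy_variance(theta: float) -> float:
    """<H^2> - <H>^2; here H^2 = (A^2 + B^2) * I because Z and X anticommute."""
    return (A * A + B * B) - energy(theta) ** 2


theta_excited = math.atan2(B, A)        # angle of the highest-energy eigenstate
theta_ground = theta_excited + math.pi  # angle of the ground state
theta_generic = theta_excited + math.pi / 2.0

for label, theta in [("ground", theta_ground),
                     ("excited", theta_excited),
                     ("generic", theta_generic)]:
    print(f"{label}: E = {energy(theta):+.4f}, variance = {energy_variance(theta):.4f}")
```

A variance of zero certifies that the state is an eigenstate but does not say which one, so the energy still has to be inspected to identify the level that was reached.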

Error Mitigation Strategies

Given the sensitivity of NISQ devices to noise, error mitigation is crucial for obtaining meaningful results. Reference-State Error Mitigation (REM) has demonstrated particular promise, achieving up to two orders-of-magnitude improvement in computational accuracy for small molecules like H₂, HeH⁺, and LiH [30]. REM works by comparing noisy quantum measurements to exact classical calculations at a reference state (typically Hartree-Fock), then applying this error correction throughout the parameter space [30].
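
The REM correction described above reduces to a single subtraction once the reference energies are known. The sketch below is a minimal illustration assuming the noise-induced shift measured at the reference state carries over unchanged to other parameter values. The numerical energies are illustrative placeholders for an H₂-like problem, not data from [30], and the function name is hypothetical.

```python
def rem_correct(noisy_energy: float,
                noisy_reference_energy: float,
                exact_reference_energy: float) -> float:
    """Subtract the energy shift observed at the reference state."""
    reference_shift = noisy_reference_energy - exact_reference_energy
    return noisy_energy - reference_shift


# Illustrative values in Hartree (placeholders, not measured data).
exact_hf = -1.1167   # classically computed Hartree-Fock reference energy
noisy_hf = -1.0500   # same state evaluated on a noisy device
noisy_vqe = -1.0700  # noisy energy at the optimized VQE parameters

mitigated = rem_correct(noisy_vqe, noisy_hf, exact_hf)
print(f"noisy: {noisy_vqe:.4f} Ha, REM-corrected: {mitigated:.4f} Ha")
```

The correction is cheap because the reference energy is available classically, but its quality depends on how similar the noise acting on the reference circuit is to the noise acting on the optimized circuit.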

Figure: Error mitigation strategies and their application points in the VQE workflow.

Quantitative Performance Analysis

Recent experimental implementations provide quantitative data on VQE's current capabilities and limitations across various chemical systems and hardware platforms.

Table 3: Performance Analysis of VQE Implementations

| Molecular System | Algorithm & Enhancement | Qubit Count / Circuit Details | Key Result / Accuracy |
|---|---|---|---|
| H₂, HeH⁺, LiH | REM with readout mitigation [30] | 2-6 qubits, up to 1096 two-qubit gates | Up to two orders-of-magnitude improvement in computational accuracy [30] |
| C–C Bond Cleavage (Prodrug) | Hardware-efficient $R_y$ ansatz with active space approximation [29] | 2-qubit system, single-layer ansatz | Successful computation of Gibbs free energy profile for real drug design problem [29] |
| Heisenberg/Hubbard Models | Slice-wise initial state optimization [31] | Up to 20 qubits | Improved fidelities and/or reduced function evaluations compared to fixed-layer VQE [31] |
| Various Small Molecules | Constrained VQE [28] | Varies by molecule | Smooth potential energy surfaces with correct electron count in high-density-of-states environments [28] |

Challenges and Future Directions

Despite promising advances, VQE faces persistent challenges that define current research frontiers:

- Scalability: Quantum resource requirements (circuit depth, measurement counts) and classical optimizer scaling remain bottlenecks, especially for larger systems where measurement budgets and hardware noise dominate [28].

- Barren Plateaus: For random or unstructured ansätze, vanishing gradients hinder convergence, with mapping choices and adaptive ansätze affecting onset and severity [28].

- Symmetry and Constraint Handling: Designing ansätze and cost functions that preserve physical symmetries remains an active development area [28].

- Noise and Error Mitigation: Coherent and stochastic noise continue to bias measurements and slow convergence, driving development of neural postprocessing and noise-aware optimizers [28].

The quantum computing industry's rapid progress through 2025, with hardware roadmaps projecting systems with thousands of qubits and improved error correction, suggests these challenges may be addressed in the coming years [8]. As hardware capabilities improve and algorithmic innovations mature, VQE is positioned to transition from demonstrating potential to delivering practical solutions for NP-hard problems in chemistry research and drug discovery.

The Variational Quantum Eigensolver represents a cornerstone in the application of quantum computing to computationally hard problems in chemical research. By leveraging a hybrid quantum-classical architecture, VQE enables researchers to tackle electronic structure problems and drug design challenges that remain intractable for purely classical approaches. While significant hurdles in scalability, error mitigation, and optimization persist, continued algorithmic innovations and hardware advancements are narrowing the gap between theoretical promise and practical application. As the quantum computing industry accelerates through 2025 and beyond, VQE stands as a critical workhorse algorithm in the quest for quantum advantage in chemistry and pharmaceutical research.

The Quantum Approximate Optimization Algorithm (QAOA) is a hybrid quantum-classical algorithm designed to find approximate solutions to combinatorial optimization problems, which are notoriously challenging for classical computers [32]. QAOA uses superposition and entanglement to prepare a parameterized quantum state over all candidate solutions and tunes its parameters so that low-cost solutions are measured with high probability, offering a potential pathway to quantum advantage in the Noisy Intermediate-Scale Quantum (NISQ) era [33]. For chemistry research, particularly in addressing NP-hard problems such as molecular docking and protein folding, QAOA presents a novel computational tool. These problems can be formulated as Quadratic Unconstrained Binary Optimization (QUBO) problems, which are directly amenable to the QAOA framework [33] [32]. The algorithm's potential to efficiently sample complex energy landscapes and identify optimal molecular configurations could significantly accelerate drug discovery pipelines, making it a subject of intense research and application in computational chemistry and biology [33] [34].

Algorithmic Foundations and Workflow

Theoretical Framework

QAOA is inspired by the quantum adiabatic theorem, where a system is evolved from the easy-to-prepare ground state of a mixer Hamiltonian (\(H_B\)) to the ground state of a problem-specific cost Hamiltonian (\(H_C\)) [32]. For a combinatorial optimization problem defined by a cost function \(C(z) = \sum_\alpha C_\alpha(z)\) over \(n\)-bit strings \(z\), the cost Hamiltonian is constructed such that its ground state corresponds to the optimal solution [35]. The algorithm operates by preparing a parameterized quantum state through the alternating application of the cost and mixer unitaries.

The core QAOA sequence consists of the following steps [35] [36]:

- Initialization: Prepare an initial state \(|s\rangle = |+\rangle^{\otimes n}\), which is the uniform superposition over all computational basis states.

- Parameterized Evolution: Apply \(p\) layers of the alternating operators:

\(|\beta, \gamma\rangle = U_X(\beta^{(p)}) U_C(\gamma^{(p)}) \cdots U_X(\beta^{(1)}) U_C(\gamma^{(1)}) |s\rangle\)

where:

- \(U_C(\gamma^{(i)}) = e^{-i\gamma^{(i)} C(Z)}\) is the phase-separation operator, applying a phase based on the cost function.

- \(U_X(\beta^{(i)}) = e^{-i\beta^{(i)} \sum_{j=1}^n X_j}\) is the mixing operator.

- Measurement and Classical Optimization: Measure the expectation value \(\langle \beta, \gamma |C(Z)|\beta, \gamma \rangle\) of the cost Hamiltonian. A classical optimizer (e.g., COBYLA, Powell) is used to find the optimal parameters \((\beta^*, \gamma^*)\) that minimize this expectation value [35] [36].

- Solution Extraction: Once optimized, the state \(|\beta^*, \gamma^* \rangle\) is measured in the computational basis to obtain a candidate solution bitstring \(z_1 \cdots z_n\) for the original problem.
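The four steps above can be traced end to end with a small statevector simulation. The sketch below is illustrative only and makes several assumptions that are not in the cited works: the problem instance is MaxCut on a 4-node ring, the cost is defined as minus the number of cut edges so that minimizing it maximizes the cut, the depth is fixed at one layer, and a coarse grid search stands in for a classical optimizer such as COBYLA or Powell.

```python
import cmath
import math

# Hypothetical instance: MaxCut on a 4-node ring (an assumption for illustration).
N_QUBITS = 4
EDGES = [(0, 1), (1, 2), (2, 3), (3, 0)]

def cost(z):
    """C(z) = -(number of cut edges); bit q of the integer z is the value of qubit q."""
    return -sum(1 for u, v in EDGES if ((z >> u) & 1) != ((z >> v) & 1))

def qaoa_state(gammas, betas, n=N_QUBITS):
    """Return the amplitudes of |beta, gamma> after len(gammas) alternating layers."""
    dim = 2 ** n
    state = [complex(1 / math.sqrt(dim))] * dim  # |s> = |+>^n
    for gamma, beta in zip(gammas, betas):
        # Phase separation U_C(gamma): diagonal in the computational basis.
        state = [amp * cmath.exp(-1j * gamma * cost(z)) for z, amp in enumerate(state)]
        # Mixer U_X(beta): apply exp(-i beta X) = cos(beta) I - i sin(beta) X per qubit.
        c, s = math.cos(beta), -1j * math.sin(beta)
        for q in range(n):
            bit = 1 << q
            for z in range(dim):
                if not z & bit:
                    a0, a1 = state[z], state[z | bit]
                    state[z] = c * a0 + s * a1
                    state[z | bit] = s * a0 + c * a1
    return state

def expected_cost(state):
    """<beta, gamma| C(Z) |beta, gamma> computed from the exact amplitudes."""
    return sum(abs(amp) ** 2 * cost(z) for z, amp in enumerate(state))

def grid_search_p1(steps=40):
    """Stand-in for the classical optimizer: scan (gamma, beta) on a grid at p = 1."""
    best = (float("inf"), 0.0, 0.0)
    for i in range(steps):
        for j in range(steps):
            gamma, beta = math.pi * i / steps, math.pi * j / steps
            best = min(best, (expected_cost(qaoa_state([gamma], [beta])), gamma, beta))
    return best

def most_probable_bitstring(state):
    """Solution extraction: the basis state with the largest measurement probability."""
    return max(range(len(state)), key=lambda z: abs(state[z]) ** 2)

best_value, best_gamma, best_beta = grid_search_p1()
state = qaoa_state([best_gamma], [best_beta])
z_star = most_probable_bitstring(state)
print(best_value, format(z_star, f"0{N_QUBITS}b"), cost(z_star))
```

On hardware the expectation value would be estimated from repeated measurements rather than read from amplitudes, and the grid scan becomes impractical beyond a few parameters, which is where gradient-free optimizers or the parameter-transfer strategies discussed later come in.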

QAOA Workflow Diagram

The following diagram illustrates the hybrid classical-quantum feedback loop of the QAOA process.

QAOA for Molecular Docking: A Case Study in Chemistry

Molecular docking, a critical process in structure-based drug design, aims to predict the optimal binding configuration of a small molecule (ligand) to a target protein by minimizing the binding energy [33]. This problem can be mapped to finding the maximum clique (largest fully connected subgraph) in a graph representing molecular interactions, which is a known NP-hard problem suitable for QAOA [33].

Experimental Protocol for Molecular Docking

A recent study applied a Digitized Counterdiabatic QAOA (DC-QAOA) approach to molecular docking instances with 14 and 17 nodes, reported as the largest published to date, using GPU-simulated quantum hardware [33]. The methodology is as follows:

- Problem Mapping: The molecular docking problem is mapped to a maximum clique problem on a vertex-weighted graph, where nodes represent potential ligand poses or interaction sites, and edges represent compatible or favorable interactions.

- QUBO Formulation: The maximum clique problem is encoded into a QUBO formulation, which is then transformed into an Ising-type cost Hamiltonian \(H_C\) suitable for QAOA [33] [32].

- Algorithm Execution: The DC-QAOA variant, which incorporates counterdiabatic driving to potentially enhance performance, is implemented. The study utilized warm-starting techniques, initializing the quantum algorithm with a classically pre-computed solution to reduce the number of quantum operations required [33].

- Hardware and Simulation: The quantum circuits were simulated on a high-performance GPU cluster to manage the computational load, especially for the larger 17-node instance [33].

- Validation: The quality of the solution is assessed by comparing the computed binding configuration and its energy to the known optimal solution.

Table 1: Key Experimental Results from QAOA for Molecular Docking [33]

| Metric | Description | Finding |
|---|---|---|
| Problem Instance Size | Number of nodes in the docking graph | 14 and 17 nodes (largest published instance) |
| Algorithm Variant | Type of QAOA used | Digitized Counterdiabatic QAOA (DC-QAOA) |
| Initialization Technique | Method for parameter/state initialization | Warm-starting with classical solutions |
| Key Outcome | Performance and result of the simulation | Binding interactions represented the anticipated exact solution |
| Computational Challenge | Runtime scaling with problem size | Significant escalation in computational times with increased instance size |

Performance Analysis and Comparison with Classical Methods

The performance of QAOA is typically measured by the approximation ratio, which is the ratio of the cost achieved by the algorithm to the cost of the true optimal solution [32]. Its performance varies significantly depending on the problem type and structure.

For the Maximum Cut (MaxCut) problem on random \(d\)-regular graphs, the approximation ratio of QAOA improves as the graph degree \(d\) increases [37]. Furthermore, parameters optimized on tree-like subgraphs can be transferred to finite-size graphs, enabling a parameter-free approach that can outperform classical benchmarks like the Goemans-Williamson algorithm [37].

In contrast, for the Maximum Independent Set (MIS) problem, QAOA exhibits the opposite behavior: the approximation ratio decreases as the graph degree increases [37]. This performance limitation is attributed to the overlap gap property, which hinders local algorithms like low-depth QAOA from finding near-optimal solutions in certain random graph ensembles [37].

Table 2: Performance of QAOA on Different Problem Types [37] [32]

| Problem Type | Performance Trend with Graph Degree | Comparison to Classical Algorithms | Key Limiting Factor (if any) |
|---|---|---|---|
| MaxCut | Approximation ratio improves with higher degree. | Can outperform Goemans-Williamson on random regular graphs with transferred parameters. | Performance is highly dependent on parameter optimization. |
| Maximum Independent Set (MIS) | Approximation ratio decreases with higher degree. | Beats minimal greedy heuristic for low-degree graphs with specific parameter strategies. | Overlap gap property restricts performance on high-degree graphs. |
| Molecular Docking | Performance is instance-dependent and linked to the underlying clique problem. | Shows potential for finding exact solutions for specific mapped instances. | Computational time escalates significantly with problem size. |

Impact of Error Detection and Hardware Noise

On real hardware, noise is a significant challenge. A study implementing QAOA with up to 20 logical qubits for the MaxCut problem used the \([[k+2,k,2]]\) "Iceberg" quantum error detection code [38]. The encoded circuit showed improved algorithmic performance compared to the unencoded circuit, demonstrating that even partial fault tolerance can be beneficial for QAOA on current hardware [38]. This highlights the critical importance of error mitigation and fault-tolerant strategies for realizing the potential of QAOA in practical applications.

A Practical Guide for Chemistry Researchers

The Scientist's Toolkit: Essential Components for a QAOA Experiment

Implementing QAOA for chemistry research requires a combination of classical and quantum resources. The table below details the key "research reagents" – the essential components and their functions.

Table 3: Essential Components for a QAOA Experiment in Chemistry Research

| Component | Category | Function & Relevance |
|---|---|---|
| QUBO/Ising Formulation | Theoretical Foundation | Translates a real-world chemistry problem (e.g., molecular docking) into a binary optimization format compatible with QAOA. |
| Cost Hamiltonian (\(H_C\)) | Quantum Circuit Component | Encodes the problem's cost function into the quantum circuit via parameterized phase gates (e.g., \(e^{-i\gamma Z_i Z_j}\)). |
| Mixer Hamiltonian (\(H_B\)) | Quantum Circuit Component | Facilitates exploration of the solution space, typically implemented with Pauli-X rotations (\(e^{-i\beta X_i}\)). |
| Classical Optimizer | Classical Software | Finds optimal parameters (\(\beta, \gamma\)) by minimizing the measured energy, using methods like COBYLA or Powell [35] [36]. |
| Quantum Hardware/Simulator | Computational Platform | Executes the quantum circuits. Simulators (e.g., on GPUs) are used for algorithm development, while real NISQ devices are for final runs [33]. |
| Warm-Starting Technique | Algorithmic Enhancement | Initializes QAOA with a good classical solution, reducing quantum circuit depth and improving convergence [33]. |

Implementation Roadmap and Considerations

For researchers aiming to apply QAOA, the following roadmap is recommended:

- Problem Selection and Formulation: Identify a specific NP-hard problem in your chemistry workflow (e.g., molecular docking, protein folding) and map it meticulously to a QUBO or Ising model [33] [32].

- Algorithm Selection: Choose a QAOA variant suitable for the problem and available resources. Standard QAOA is a starting point, but consider advanced versions like DC-QAOA for potential performance gains [33].

- Parameter Strategy: Decide on a parameter optimization strategy. This can be full optimization, leveraging parameter transfer from similar problems [39] [37], or using fixed parameters derived from theoretical studies to avoid the optimization bottleneck.

- Execution and Mitigation: Run the algorithm on a simulator for validation and then on quantum hardware if accessible. Employ error detection [38] and mitigation techniques to improve result fidelity on noisy devices.

- Validation and Analysis: Compare the QAOA solution with classical benchmarks to assess performance and practical utility for the specific problem instance.
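The first roadmap step ends with a QUBO or Ising model, and the conversion between the two is mechanical. As a minimal sketch, assuming the QUBO is stored as a dictionary of upper-triangular coefficients (a hypothetical layout, not one prescribed by the cited works), substituting \(x_i = (1 - z_i)/2\) with \(z_i \in \{+1, -1\}\) yields local fields \(h_i\), couplings \(J_{ij}\), and a constant offset. The couplings are the coefficients that would enter the \(e^{-i\gamma Z_i Z_j}\) phase gates of the cost layer.

```python
from itertools import product

def qubo_to_ising(Q, n):
    """Convert sum(Q[i, j] x_i x_j), x in {0, 1}, to offset + sum(h_i z_i) + sum(J_ij z_i z_j)."""
    h = [0.0] * n
    J = {}
    offset = 0.0
    for (i, j), coef in Q.items():
        if i == j:
            # coef * x_i = coef/2 - (coef/2) z_i
            h[i] -= coef / 2
            offset += coef / 2
        else:
            # coef * x_i x_j = (coef/4) (1 - z_i - z_j + z_i z_j)
            J[(i, j)] = J.get((i, j), 0.0) + coef / 4
            h[i] -= coef / 4
            h[j] -= coef / 4
            offset += coef / 4
    return h, J, offset

def qubo_value(Q, x):
    """QUBO objective for a binary assignment x."""
    return sum(coef * x[i] * x[j] for (i, j), coef in Q.items())

def ising_energy(h, J, offset, z):
    """Ising energy for a spin assignment z with entries +1 or -1."""
    linear = sum(h[i] * z[i] for i in range(len(z)))
    quadratic = sum(coef * z[i] * z[j] for (i, j), coef in J.items())
    return offset + linear + quadratic

# Hypothetical 3-variable QUBO used to check the conversion exhaustively.
Q = {(0, 0): -1.0, (1, 1): -1.0, (2, 2): -1.5, (0, 2): 2.5, (1, 2): 0.5}
h, J, offset = qubo_to_ising(Q, 3)
for x in product((0, 1), repeat=3):
    z = [1 - 2 * bit for bit in x]  # x = 0 maps to z = +1, x = 1 maps to z = -1
    assert abs(qubo_value(Q, x) - ising_energy(h, J, offset, z)) < 1e-12
```

Checking the two objectives against each other on every assignment, as the loop does here, is a cheap safeguard for small instances before a formulation is handed to a simulator or device.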