Quantum Chemical Prediction of Spectroscopic Data: From Theory to Biomedical Applications

Abstract

This article provides a comprehensive overview of the rapidly evolving field of quantum chemical (QC) prediction of spectroscopic data, tailored for researchers, scientists, and drug development professionals. It explores the foundational principles that link electronic structure to spectral properties, details cutting-edge methodological advances including machine learning-accelerated computations, and offers practical guidance for troubleshooting and optimizing calculations for accuracy and efficiency. Through a critical examination of validation protocols and comparative analyses of different computational methods, the article serves as a strategic guide for integrating reliable QC predictions into the drug discovery pipeline, from target identification to candidate validation, thereby reducing reliance on costly and time-consuming experimental trials.

The Quantum Foundation: Linking Electronic Structure to Spectral Signatures

Ab initio quantum chemistry methods are computational techniques designed to solve the electronic Schrödinger equation using only fundamental physical constants, the positions of the nuclei, and the number of electrons in the system as input [1]. The term "ab initio" means "from the beginning" or "from first principles," indicating that these methods rely solely on quantum mechanics without empirical parameters [1]. This approach provides a fundamental framework for predicting molecular properties, enabling researchers to explore chemical systems with high accuracy and transferability. For drug development professionals, these methods offer powerful tools for predicting molecular behavior, spectroscopic properties, and reactivity patterns, which are crucial for rational drug design.

The accuracy of these computational predictions is paramount in spectroscopic data research, where subtle electronic and vibrational features must be correctly interpreted to understand molecular structure and function. This application note details the core principles, protocols, and computational tools that enable the quantum chemical prediction of molecular properties from first principles.

Theoretical Foundations

The Fundamental Equation

At the heart of ab initio quantum chemistry lies the time-independent, non-relativistic electronic Schrödinger equation within the Born-Oppenheimer approximation [1]:

ĤΨ = EΨ

Where Ĥ is the electronic Hamiltonian operator, Ψ is the many-electron wavefunction, and E is the total electronic energy. Solving this equation provides access to the electronic energy and wavefunction, from which all molecular properties can be derived [1]. The challenge arises from the electron-electron repulsion terms in the Hamiltonian, which make the equation analytically unsolvable for systems with more than one electron, necessitating approximate computational methods.

Hierarchy of Computational Methods

Ab initio methods form a systematic hierarchy that enables researchers to balance computational cost with desired accuracy:

Hartree-Fock (HF) Theory provides the simplest wavefunction approximation but does not explicitly include electron correlation effects, considering only the average electron-electron repulsion [1]. Its nominal computational cost scales as N⁴, where N represents system size [1].

Post-Hartree-Fock Methods introduce electron correlation through various approaches. Møller-Plesset perturbation theory (MP2, MP3, MP4) provides an increasingly accurate treatment of electron correlation, with formal scaling that rises from N⁵ for conventional MP2 to N⁷ for MP4 [1]. Coupled cluster methods (CCSD, CCSD(T)) offer higher accuracy with N⁶ to N⁷ scaling [1]. For systems where a single-determinant reference is inadequate, such as bond breaking, multi-reference methods like multi-configurational self-consistent field (MCSCF) are employed [1].

Density Functional Theory (DFT) approaches the electronic structure problem through the electron density rather than the wavefunction, often providing favorable accuracy-to-cost ratios, though traditional DFT is not strictly considered ab initio due to potential empirical parameterization.

Composite Methods such as Gaussian-n theories (G1, G2, G3, G4) combine multiple calculations at different levels of theory and basis sets to achieve high accuracy, typically targeting chemical accuracy of 1 kcal/mol [2]. These methods systematically approach the exact solution by combining various corrections.
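To make the practical meaning of these scaling exponents concrete, the short sketch below computes how much more expensive a calculation becomes when the system size grows by a given factor. It uses only the formal exponents quoted above for HF, CCSD, and CCSD(T); the function name `cost_ratio` is illustrative, and real timings depend on prefactors, integral screening, and implementation details that this estimate ignores.

```python
# Formal scaling exponents quoted in the text (cost ~ N**p).
SCALING_EXPONENTS = {"HF": 4, "CCSD": 6, "CCSD(T)": 7}


def cost_ratio(exponent: int, size_factor: float) -> float:
    """Relative cost increase when the system size grows by size_factor."""
    return size_factor ** exponent


if __name__ == "__main__":
    for method, p in SCALING_EXPONENTS.items():
        ratio = cost_ratio(p, 2.0)
        print(f"{method:8s} doubling the system size costs ~{ratio:.0f}x more")
```

Under these formal exponents, doubling the system size multiplies the cost by 16 for HF but by 128 for CCSD(T), which is why the highest rungs of the hierarchy are usually reserved for small molecules or benchmark calculations.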

Key Methodologies and Protocols

Hartree-Fock Protocol

The Hartree-Fock method provides the foundational wavefunction for most correlated ab initio calculations. The standard protocol involves:

- Molecular Geometry Input: Provide initial nuclear coordinates and atomic numbers

- Basis Set Selection: Choose an appropriate Gaussian-type orbital basis set (e.g., 6-31G(d), cc-pVDZ)

- SCF Calculation: Solve the Roothaan-Hall equations self-consistently:

  - Form the initial Fock matrix using guess orbitals

  - Diagonalize the Fock matrix to obtain new orbitals

  - Form a new Fock matrix using the new orbitals

  - Repeat until energy and density matrix convergence (typically 10⁻⁶ to 10⁻⁸ a.u.)

- Property Calculation: Compute desired properties from the converged wavefunction
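The self-consistent cycle in the protocol above can be sketched on a deliberately tiny model. The example below is not a molecular Hartree-Fock code: it assumes a hypothetical two-site, two-electron closed-shell model in an orthonormal basis, with site energies `eps`, hopping `t`, and a mean-field on-site repulsion `u`, all chosen for illustration. What it shares with a real SCF procedure is the loop structure: build a Fock matrix from the current density, diagonalize it, rebuild the density, and stop when the energy and density stop changing.

```python
import math


def lowest_eigenpair(a, b, d):
    """Lowest eigenvalue and unit eigenvector of [[a, b], [b, d]] (b != 0)."""
    lam = 0.5 * (a + d) - math.sqrt((0.5 * (a - d)) ** 2 + b * b)
    vx, vy = b, lam - a
    norm = math.hypot(vx, vy)
    return lam, (vx / norm, vy / norm)


def toy_scf(eps=(0.0, 0.5), t=1.0, u=1.0, e_tol=1e-8, p_tol=1e-6, max_iter=200):
    """Fixed-point SCF loop for a hypothetical two-site, two-electron model."""
    h = [[eps[0], -t], [-t, eps[1]]]      # core (one-electron) Hamiltonian
    p = [[0.0, 0.0], [0.0, 0.0]]          # zero density: first Fock matrix = core guess
    e_old = None
    for iteration in range(1, max_iter + 1):
        # Build the Fock matrix: core term plus mean-field on-site repulsion.
        f00 = h[0][0] + 0.5 * u * p[0][0]
        f11 = h[1][1] + 0.5 * u * p[1][1]
        # "Diagonalize" and doubly occupy the lowest orbital.
        _, (c0, c1) = lowest_eigenpair(f00, h[0][1], f11)
        p_new = [[2 * c0 * c0, 2 * c0 * c1], [2 * c0 * c1, 2 * c1 * c1]]
        # Total energy of the model for the new density.
        one_electron = sum(p_new[i][j] * h[i][j] for i in range(2) for j in range(2))
        energy = one_electron + 0.25 * u * (p_new[0][0] ** 2 + p_new[1][1] ** 2)
        dp = max(abs(p_new[i][j] - p[i][j]) for i in range(2) for j in range(2))
        p = p_new
        if e_old is not None and abs(energy - e_old) < e_tol and dp < p_tol:
            return energy, p, iteration, True
        e_old = energy
    return e_old, p, max_iter, False


if __name__ == "__main__":
    energy, density, n_iter, converged = toy_scf()
    print(f"converged={converged} after {n_iter} iterations, E={energy:.8f}")
```

Production codes add convergence accelerators such as damping or DIIS on top of this plain iteration; the bare loop is shown here only because it mirrors the listed steps one to one.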

Table 1: Common Basis Sets for Ab Initio Calculations

| Basis Set | Description | Applications |
|---|---|---|
| 6-31G(d) | Valence double-zeta with polarization functions | Geometry optimizations, frequency calculations |
| cc-pVDZ | Correlation-consistent valence double-zeta | Correlated calculations, property prediction |
| aug-cc-pVQZ | Augmented correlation-consistent valence quadruple-zeta | High-accuracy energy calculations, spectroscopy |
| def2-TZVPD | Triple-zeta valence plus polarization and diffuse functions | High-level DFT calculations, non-covalent interactions |

Coupled-Cluster Singles and Doubles with Perturbative Triples (CCSD(T)) Protocol

The CCSD(T) method is often considered the "gold standard" for single-reference quantum chemistry due to its excellent balance of accuracy and computational cost. The detailed protocol includes:

- Reference Wavefunction: Perform a Hartree-Fock calculation to obtain the reference wavefunction

- CCSD Calculation: Solve the coupled-cluster equations for singles and doubles excitations:

  - Form the similarity-transformed Hamiltonian e^(−T̂)Ĥe^(T̂)

  - Solve the coupled-cluster amplitude equations iteratively

  - Check for convergence of the correlation energy (typically 10⁻⁶ to 10⁻⁸ a.u.)

- Triples Correction: Compute the non-iterative perturbative triples correction (T)

- Property Evaluation: Calculate molecular properties from the coupled-cluster wavefunction

The computational cost of CCSD(T) scales as N⁷, making it prohibitive for large systems, though local correlation approximations can reduce this scaling [1].

Composite Method Protocol (Gaussian-4 Theory)

Composite methods like G4 provide a recipe for achieving high accuracy without the prohibitive cost of directly computing at the target level. The G4 protocol [2]:

- Geometry Optimization: Optimize molecular structure at B3LYP/6-31G(2df,p) level

- Zero-Point Energy Calculation: Compute harmonic frequencies at B3LYP/6-31G(2df,p) level and scale ZPVE by an empirical factor (0.9854)

- Single-Point Energy Calculations:

  - Compute CCSD(T)/6-31G(d) energy

  - Compute MP2/GTMP2Large energy with all electrons correlated

  - Compute HF/G4Large energy and extrapolate to the complete basis set limit

- Higher-Level Corrections: Add spin-orbit correction for heavy elements and empirical higher-level correction based on number of valence electrons and unpaired electrons

- Final Energy Combination: Combine all components to obtain the final G4 energy

This approach typically achieves chemical accuracy (within 1 kcal/mol) for thermochemical properties [2].
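As a small worked example of the zero-point energy step, the sketch below converts a list of harmonic frequencies into a scaled zero-point vibrational energy using ZPVE = scale × ½ Σ νᵢ and the 0.9854 factor quoted in the protocol. The frequencies are hypothetical placeholder values rather than computed B3LYP results, and the function name `scaled_zpve` is illustrative.

```python
CM1_PER_KCAL_PER_MOL = 349.755   # 1 kcal/mol corresponds to about 349.755 cm^-1
G4_ZPVE_SCALE = 0.9854           # empirical scale factor quoted in the protocol


def scaled_zpve(frequencies_cm1, scale=G4_ZPVE_SCALE):
    """Scaled harmonic zero-point energy in kcal/mol: scale * 1/2 * sum(nu_i)."""
    # Imaginary modes are conventionally reported as negative numbers; skip them.
    real_modes = [nu for nu in frequencies_cm1 if nu > 0.0]
    return scale * 0.5 * sum(real_modes) / CM1_PER_KCAL_PER_MOL


if __name__ == "__main__":
    # Hypothetical harmonic frequencies (cm^-1) for a triatomic molecule.
    example_frequencies = [1600.0, 3700.0, 3800.0]
    print(f"Scaled ZPVE: {scaled_zpve(example_frequencies):.2f} kcal/mol")
```

Because the scale factor multiplies the whole sum, a 1.5% rescaling of a zero-point energy of this size shifts the result by roughly 0.2 kcal/mol, which is not negligible against a 1 kcal/mol accuracy target.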

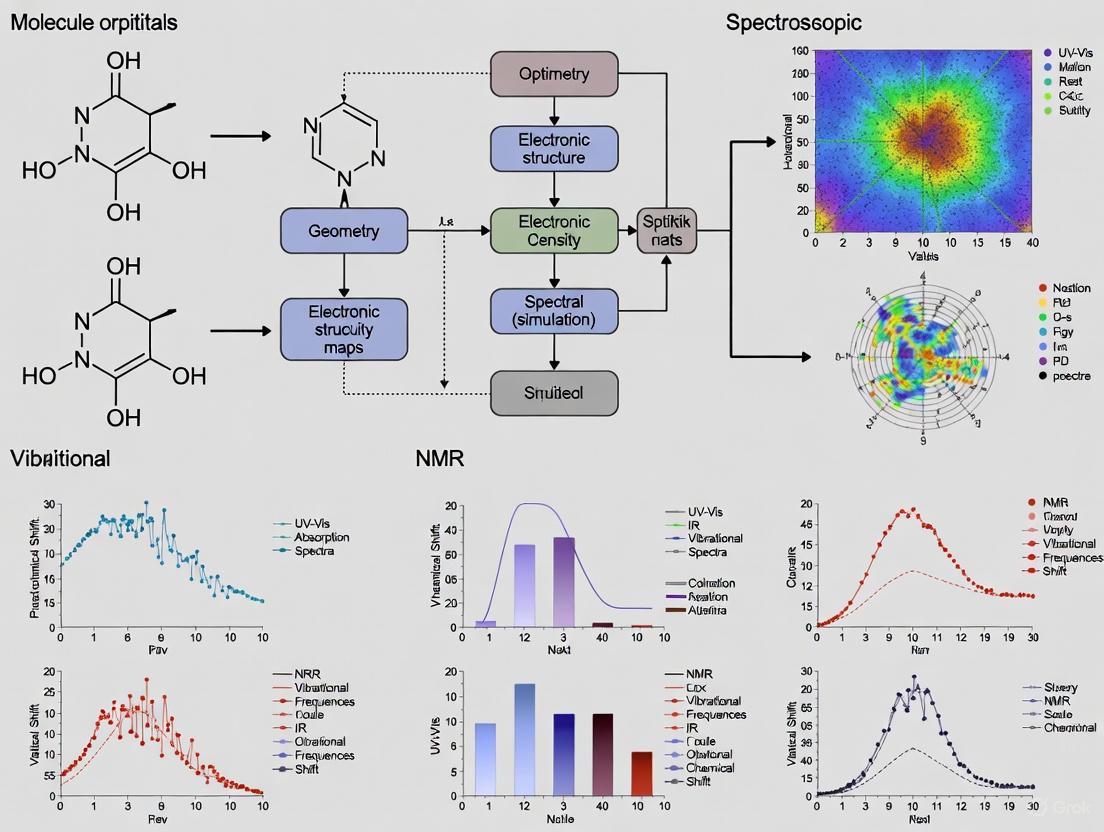

Computational Workflows

The logical flow for calculating molecular properties from first principles follows a systematic path from basic input to sophisticated prediction.

Figure: Computational Workflow for Ab Initio Quantum Chemistry

The major classes of computational methods and their respective domains of applicability follow a specific hierarchy.

Figure: Method Hierarchy and Application Domains

Advanced Applications in Spectroscopy

Machine Learning Enhancement

Machine learning has revolutionized computational spectroscopy by enabling rapid prediction of spectroscopic properties with quantum mechanical accuracy [3]. ML models can learn different aspects of quantum chemical calculations:

- Primary Outputs: Learning the electronic wavefunction itself, though this remains challenging [3]

- Secondary Outputs: Predicting properties directly from the Schrödinger equation (energies, dipole moments) [3]

- Tertiary Outputs: Direct prediction of spectra through convolution of learned properties [3]

These approaches have been successfully applied to various spectroscopic techniques, including UV-vis, IR, NMR, and X-ray spectroscopy [3]. For drug development, ML-accelerated quantum chemistry enables high-throughput screening of molecular properties and spectroscopic signatures without sacrificing accuracy.
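The "tertiary output" idea, turning predicted line positions and intensities into a continuous spectrum by convolution, can be illustrated in a few lines. The sketch below broadens a stick spectrum with area-normalized Lorentzian line shapes. The peak list, the 10 cm⁻¹ width, and the grid are hypothetical choices for illustration; in a real workflow the sticks would come from a quantum chemical calculation or an ML model.

```python
import math


def lorentzian(x, x0, fwhm):
    """Area-normalized Lorentzian line shape centred at x0."""
    hwhm = 0.5 * fwhm
    return (hwhm / math.pi) / ((x - x0) ** 2 + hwhm ** 2)


def broaden(sticks, grid, fwhm=10.0):
    """Convolve (position, intensity) sticks with Lorentzians on a grid."""
    return [
        sum(intensity * lorentzian(x, position, fwhm) for position, intensity in sticks)
        for x in grid
    ]


# Hypothetical stick spectrum: (wavenumber in cm^-1, relative intensity).
sticks = [(1700.0, 1.0), (2950.0, 0.4)]
grid = [1500.0 + i for i in range(1701)]   # 1500 to 3200 cm^-1 in 1 cm^-1 steps
spectrum = broaden(sticks, grid)

if __name__ == "__main__":
    peak_position = grid[spectrum.index(max(spectrum))]
    print(f"Strongest band at {peak_position:.0f} cm^-1")
```

A Gaussian or Voigt profile can be substituted for the Lorentzian without changing the structure; the appropriate choice depends on the broadening mechanism being modeled.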

Modern Datasets and Benchmarks

The development of large-scale quantum chemical datasets has been crucial for advancing and benchmarking ab initio methods:

Table 2: Key Quantum Chemistry Datasets for Method Development

| Dataset | Size | Content | Applications |
|---|---|---|---|
| QM7/QM9 | 7,165-134,000 molecules | Small organic molecules (up to 9 heavy atoms) with geometries and properties [4] | Method benchmarking, ML model training |
| OMol25 | 100M+ calculations | Diverse biomolecules, electrolytes, metal complexes at ωB97M-V/def2-TZVPD level [5] | Training neural network potentials, biomolecular simulation |
| GMTKN55 | 55 benchmark sets | Diverse thermochemical and kinetic data | Comprehensive method evaluation |

The recent OMol25 dataset from Meta's FAIR team represents a significant advancement, containing over 100 million calculations on diverse chemical systems including biomolecules, electrolytes, and metal complexes, all computed at the consistently high ωB97M-V/def2-TZVPD level of theory [5]. This dataset enables training of universal neural network potentials that approach the accuracy of high-level DFT at a fraction of the computational cost [5].

The Scientist's Toolkit

Table 3: Essential Computational Resources for Ab Initio Calculations

| Resource | Type | Function | Examples |
|---|---|---|---|
| Basis Sets | Mathematical functions | Represent atomic orbitals | Pople-style (6-31G*), Dunning (cc-pVXZ) |
| Electronic Structure Codes | Software packages | Implement quantum chemistry methods | Gaussian, GAMESS, Psi4, ORCA, Q-Chem |
| Force Fields | Parametrized potentials | Molecular mechanics description | UFF, GAFF for initial geometry generation |
| Visualization Tools | Analysis software | Molecular structure and property analysis | GaussView, Avogadro, VMD |
| High-Performance Computing | Computational infrastructure | Enable calculations on large systems | Computer clusters, cloud computing resources |

Ab initio quantum chemistry provides a fundamental framework for calculating molecular properties from first principles, with applications spanning from fundamental chemical research to drug development. The hierarchical nature of quantum chemical methods enables researchers to select the appropriate level of theory for their specific accuracy requirements and computational resources. Recent advances in machine learning and the development of large-scale datasets like OMol25 are accelerating the application of these methods to biologically relevant systems, promising to make high-accuracy quantum chemical predictions more accessible to drug development professionals. As these methods continue to evolve, they will further enhance our ability to predict and interpret spectroscopic data, enabling more efficient and rational molecular design.

The integration of computational chemistry with spectroscopic techniques like Nuclear Magnetic Resonance (NMR), Mass Spectrometry (MS), and Infrared (IR) spectroscopy has fundamentally transformed molecular analysis. This synergy enables the accurate prediction of spectroscopic properties, facilitates the elucidation of complex molecular structures, and accelerates the discovery of new materials and pharmaceuticals. Where traditional analytical workflows often relied heavily on experimental trial-and-error, computational prediction now provides a powerful complementary approach, offering atomic-level insights and reducing dependency on extensive laboratory work.

Machine learning (ML) has further revolutionized this field by enabling computationally efficient predictions of electronic properties, expanding libraries of synthetic data, and facilitating high-throughput screening [3]. While computational theoretical spectroscopy has been significantly strengthened by ML, its full potential in processing experimental data remains an area of active development [3]. This article presents application notes and detailed protocols for leveraging computational approaches across major spectroscopic techniques, framed within the context of quantum chemical prediction of spectroscopic data.

Application Notes

Computational Nuclear Magnetic Resonance (NMR)

The application of Density Functional Theory (DFT) has established NMR as a uniquely computable analytical technique. Unlike other methods, NMR parameters like chemical shifts and J-couplings are directly derivable from a molecule's electronic structure, enabling full spectral simulation from first principles [6]. This theoretical completeness allows for direct comparison between computed and experimental data, making computational NMR indispensable for structural verification.

Table 1: Performance of DFT Functionals and Basis Sets for NMR Calculation of Polyarsenicals [7]

| Functional | Basis Set | Method | Mean Absolute Error (1H, ppm) | Mean Absolute Error (13C, ppm) |
|---|---|---|---|---|
| WP04 | 6-311+G(2d,p) | GIAO | 0.15 | 1.8 |
| B97-2 | 6-311+G(2d,p) | GIAO | 0.16 | 2.1 |
| B3LYP | 6-311+G(2d,p) | GIAO | 0.17 | 2.3 |
| PBE0 | 6-311+G(2d,p) | GIAO | 0.18 | 2.5 |

Recent research demonstrates the predictive power of NMR-DFT calculations for structural elucidation of challenging systems. A comprehensive study on polyarsenical compounds with adamantane-like structures highlighted specific functional/basis set combinations that achieve exceptional accuracy, with mean absolute errors as low as 0.15 ppm for 1H chemical shifts [7]. The gauge-including atomic orbital (GIAO) method consistently outperformed other approaches, particularly when paired with the WP04 functional and 6-311+G(2d,p) basis set [7].

The integration of machine learning with quantum chemical methods addresses the substantial computational costs associated with pure QM calculations, especially for large or conformationally diverse molecules [6]. ML models trained on extensive compound databases can automate peak assignments in small-molecule characterization and predict quantum-level chemical shifts with reduced computational effort [6]. Deep learning further enhances nonlinear modeling between molecular structures and spectra, improving both speed and accuracy [6].

Computational Mass Spectrometry

Quantum chemistry electron ionization mass spectrometry (QCxMS) has emerged as a powerful approach for predicting electron ionization mass spectra (EIMS), particularly for hazardous compounds where experimental analysis presents significant challenges. Studies on Novichok agents demonstrate how systematic comparison of experimental and predicted spectra enables validation of computational approaches [8].

Fragmentation patterns in mass spectrometry depend on context-dependent kinetic pathways, often involving rearrangements, neutral losses, or charge migration [6]. Systematic studies have examined how adding polarization functions and expanding the valence space of the basis set influence prediction accuracy [8]. These studies show that more complete basis sets yield significantly improved matching scores while the functional settings used for the ionization potential calculations are kept consistent [8].

The identification of characteristic patterns in both high and low m/z regions that correspond to specific structural features enables development of a systematic framework for spectral interpretation [8]. This understanding of fragmentation mechanisms allows for prediction of mass spectra for compounds with varying structural complexity, providing a promising tool for rapid identification of new chemical agents without extensive experimental analysis [8].

Computational Infrared Spectroscopy

Machine learning has dramatically accelerated IR spectral predictions by enabling computationally efficient modeling of vibrational properties. ML algorithms can learn complex relationships within massive amounts of data that are difficult for humans to interpret visually [3], making them particularly valuable for predicting IR spectra from molecular structures.

Quantile Regression Forest (QRF) represents a significant advancement for spectroscopic analysis by providing both accurate predictions and sample-specific uncertainty estimates [9]. This machine learning technique, based on random forest, retains the distribution of responses within decision trees, enabling calculation of prediction intervals alongside each prediction [9]. Applied to infrared spectroscopic measurements of soil properties and agricultural produce, QRF models produced highly accurate predictions with intervals that reflected varying confidence levels depending on sample characteristics [9].

The creation of large-scale multimodal computational spectra datasets is accelerating development in this field. Recent resources include IR spectra for 177,461 molecules derived from long-timescale molecular dynamics simulations with ML-accelerated dipole moment predictions, providing valuable resources for benchmarking computational methodologies and developing artificial intelligence models for molecular property prediction [10].

Multi-Technique Data Integration

Data fusion approaches represent the cutting edge of computational spectroscopy, enabling more accurate predictions by integrating complementary information from multiple spectroscopic techniques. Complex-level ensemble fusion (CLF) is a two-layer chemometric algorithm that jointly selects variables from concatenated mid-infrared (MIR) and Raman spectra with a genetic algorithm, projects them with partial least squares, and stacks the latent variables into an XGBoost regressor [11].

When benchmarked against single-source models and classical fusion schemes, the CLF technique consistently demonstrated significantly improved predictive accuracy on paired MIR and Raman datasets from industrial lubricant additives and RRUFF minerals [11]. This approach effectively leverages complementary spectral information, capturing feature- and model-level complementarities in a single workflow [11].

The integration of computational approaches with experimental NMR enables comprehensive study of complex systems like ionic liquids. Molecular dynamics simulations can predict how additives affect dynamics, with experimental NMR measurements validating these predictions, demonstrating how computation and spectroscopy together provide a detailed, quantitative picture of molecular behavior [12].

Protocols

Protocol 1: DFT-Based NMR Chemical Shift Prediction

Objective: To predict 1H and 13C NMR chemical shifts for organic molecules using density functional theory.

Step-by-Step Workflow:

Molecular Structure Optimization

- Generate initial 3D molecular structure from SMILES string or 2D representation.

- Perform geometry optimization using B1B95 functional with 6-311+G(3df,2pd) basis set.

- Confirm convergence to true minimum by verifying no imaginary vibrational frequencies.

- Use the Conductor-like Polarizable Continuum Model (C-PCM) to account for solvent effects (e.g., chloroform) [7].

NMR Parameter Calculation

- Select GIAO (Gauge-Including Atomic Orbital) method for nuclear magnetic shielding tensor calculation [7].

- Choose appropriate functional (WP04 or B97-2 recommended) with 6-311+G(2d,p) basis set [7].

- Perform a single-point NMR shielding calculation on the optimized geometry.

- Extract isotropic shielding values for all nuclei of interest.

Chemical Shift Referencing

- Calculate the shielding tensor for the reference compound (TMS for 1H/13C) at the same level of theory as the sample.

- Convert absolute shielding to chemical shifts (δ) using the formula δ = σ_ref − σ_sample.

- Apply linear regression correction if systematic deviations are observed.

Validation and Analysis

- Compare predicted chemical shifts with experimental data.

- Calculate mean absolute error (MAE) to quantify accuracy.

- Identify outliers that may indicate incorrect structural assignments.
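The referencing, regression, and validation steps of Protocol 1 reduce to a few lines of arithmetic, sketched below. All shielding and experimental shift values are hypothetical placeholders, not results from the cited study, and the helper names (`shielding_to_shift`, `linear_fit`, `mean_absolute_error`) are illustrative. The linear correction is an ordinary least-squares fit of experimental shifts against raw computed shifts.

```python
def shielding_to_shift(sigma_sample, sigma_ref):
    """Chemical shift from isotropic shieldings: delta = sigma_ref - sigma_sample."""
    return sigma_ref - sigma_sample


def linear_fit(x, y):
    """Ordinary least-squares slope and intercept for y ~ slope * x + intercept."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def mean_absolute_error(predicted, reference):
    """Mean absolute deviation between two equally long sequences."""
    return sum(abs(p - r) for p, r in zip(predicted, reference)) / len(reference)


# Hypothetical 1H isotropic shieldings (ppm) and experimental shifts (ppm).
sigma_tms = 31.0
sigma_calc = [23.8, 27.1, 29.6]
delta_exp = [7.26, 3.90, 1.25]

delta_raw = [shielding_to_shift(s, sigma_tms) for s in sigma_calc]
slope, intercept = linear_fit(delta_raw, delta_exp)
delta_scaled = [slope * d + intercept for d in delta_raw]

if __name__ == "__main__":
    print(f"MAE before correction: {mean_absolute_error(delta_raw, delta_exp):.3f} ppm")
    print(f"MAE after correction:  {mean_absolute_error(delta_scaled, delta_exp):.3f} ppm")
```

Fitting and evaluating on the same handful of nuclei, as done here for brevity, overstates the benefit of the correction; in practice the regression parameters are usually derived from a separate calibration set.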

Protocol 2: Quantum Chemical Mass Spectral Prediction

Objective: To predict electron ionization mass spectra using quantum chemical calculations.

Step-by-Step Workflow:

Molecular System Preparation

- Generate 3D molecular structure and optimize geometry using appropriate functional (e.g., ωB97M-V) with def2-TZVPD basis set [5].

- Confirm structure represents global minimum through conformational analysis.

- Calculate ionization potential using high-level method (e.g., CCSD(T) with large basis set).

Fragmentation Pathway Exploration

- Identify potential bond cleavages based on bond dissociation energies.

- Locate transition states for rearrangement reactions using nudged elastic band (NEB) method.

- Calculate relative energies of fragmentation pathways at consistent theory level.

Spectral Simulation

- Compute relative abundances of fragments using Rice-Ramsperger-Kassel-Marcus (RRKM) theory.

- Apply appropriate broadening to match experimental resolution.

- Scale peak intensities based on Boltzmann distribution at ionization temperature.

Experimental Validation

- Compare predicted spectrum with experimental data using similarity scoring.

- Optimize basis set selection (ma-def2-tzvp recommended) to improve matching [8].

- Analyze characteristic fragmentation patterns for structural verification.
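The similarity scoring in the validation step can take several forms. As one common and simple choice, the sketch below bins two peak lists to unit m/z resolution and computes their cosine similarity. This is an assumed scoring scheme for illustration and not necessarily the matching score used in the cited work, which may weight peaks by m/z or intensity; the peak lists are hypothetical.

```python
import math


def bin_spectrum(peaks, max_mz):
    """Sum (m/z, intensity) peaks into unit-mass bins from 0 to max_mz."""
    bins = [0.0] * (max_mz + 1)
    for mz, intensity in peaks:
        index = int(round(mz))
        if 0 <= index <= max_mz:
            bins[index] += intensity
    return bins


def cosine_score(predicted, experimental, max_mz=500):
    """Cosine similarity (0 to 1) between two unit-mass-binned spectra."""
    a = bin_spectrum(predicted, max_mz)
    b = bin_spectrum(experimental, max_mz)
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


if __name__ == "__main__":
    # Hypothetical peak lists: (m/z, relative intensity).
    predicted = [(43.0, 100.0), (58.0, 35.0), (71.0, 10.0)]
    experimental = [(43.0, 100.0), (58.0, 28.0), (85.0, 5.0)]
    print(f"Cosine score: {cosine_score(predicted, experimental):.3f}")
```

Because the score is normalized, it is insensitive to the absolute intensity scale of either spectrum, which makes it convenient for comparing simulated abundances with measured ion counts.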

Protocol 3: Machine Learning-Enhanced IR Spectroscopy with Uncertainty Quantification

Objective: To predict IR spectra and quantify prediction uncertainty using Quantile Regression Forest.

Step-by-Step Workflow:

Data Preparation and Preprocessing

- Compile dataset of IR spectra with corresponding molecular structures or descriptors.

- Apply standard normal variate (SNV) or multiplicative scatter correction to minimize scattering effects.

- Split data into training (70%), validation (15%), and test (15%) sets.

Model Training

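- Implement the Quantile Regression Forest (QRF) algorithm using a scikit-learn-compatible implementation (scikit-learn itself does not ship a QRF estimator; the quantile-forest package is one option) or a specialized chemometrics package.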

- Set number of trees in ensemble (typically 500-1000).

- Configure parameters to retain full distribution of responses within leaf nodes [9].

Prediction and Uncertainty Estimation

- Generate spectral predictions for test set molecules.

- Calculate prediction intervals (e.g., 90% interval) for each wavelength.

- Identify spectral regions with highest uncertainty for targeted improvement.

Model Validation

- Assess prediction accuracy using root mean square error of prediction (RMSEP).

- Validate uncertainty estimates by checking coverage of prediction intervals.

- Compare performance against traditional methods like PLS regression.
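The preprocessing and validation steps that surround the QRF model are simple enough to sketch with the standard library. The example below shows standard normal variate (SNV) scaling of a single spectrum, the RMSEP metric, and the empirical coverage of prediction intervals. The forest itself is not implemented here: the lower and upper bounds are assumed to come from an already fitted quantile model, and all numbers are hypothetical.

```python
import math


def snv(spectrum):
    """Standard normal variate: centre and scale one spectrum by its own mean and std."""
    n = len(spectrum)
    mean = sum(spectrum) / n
    std = math.sqrt(sum((v - mean) ** 2 for v in spectrum) / (n - 1))
    return [(v - mean) / std for v in spectrum]


def rmsep(y_true, y_pred):
    """Root mean square error of prediction."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def interval_coverage(y_true, lower, upper):
    """Fraction of reference values that fall inside their prediction interval."""
    inside = sum(1 for t, lo, hi in zip(y_true, lower, upper) if lo <= t <= hi)
    return inside / len(y_true)


if __name__ == "__main__":
    # Hypothetical test-set values; the bounds stand in for QRF 5% and 95% quantiles.
    y_true = [1.2, 2.5, 3.1, 4.8]
    y_pred = [1.0, 2.7, 3.0, 4.1]
    lower = [0.6, 2.0, 2.5, 3.5]
    upper = [1.5, 3.2, 3.6, 4.6]
    print(f"RMSEP: {rmsep(y_true, y_pred):.3f}")
    print(f"Coverage of nominal 90% intervals: {interval_coverage(y_true, lower, upper):.2f}")
```

If the empirical coverage on the test set falls well below the nominal level, the intervals are too narrow and the uncertainty estimates should not be trusted for decision making.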

The Scientist's Toolkit

Table 2: Essential Computational Resources for Spectroscopic Prediction

| Resource Category | Specific Tools/Frameworks | Key Function | Application Examples |
|---|---|---|---|
| Quantum Chemistry Software | Gaussian, ORCA, Psi4 | Perform DFT and ab initio calculations of molecular properties | NMR chemical shifts, MS fragmentation pathways, IR vibrational frequencies [7] |
| Machine Learning Libraries | scikit-learn, PyTorch, TensorFlow | Implement ML models for spectral prediction and analysis | Quantile Regression Forest for IR spectra, neural network potentials [9] |
| Spectral Databases | OMol25, IR–NMR Multimodal Dataset | Provide training data and benchmarks for computational models | Pre-computed ωB97M-V/def2-TZVPD results for 100M+ configurations [5] |
| Neural Network Potentials | eSEN, UMA Models | Accelerate molecular dynamics and property prediction | High-accuracy energy calculations for large systems [5] |
| Data Fusion Frameworks | Complex-Level Fusion (CLF) | Integrate complementary information from multiple spectroscopic techniques | Combined MIR and Raman analysis for lubricant additives [11] |

The computational spectroscopy landscape has evolved from specialized applications to an indispensable framework that complements and enhances experimental approaches. The integration of quantum chemical methods with machine learning has created powerful tools for predicting NMR, MS, and IR spectra with remarkable accuracy. Recent advances in datasets like OMol25, algorithmic developments such as Quantile Regression Forest for uncertainty quantification, and multi-technique fusion approaches demonstrate the rapidly growing capabilities in this field.

For researchers in drug development and materials science, these computational approaches offer transformative potential—enabling rapid screening of compound libraries, elucidating structures of complex natural products, and characterizing reactive intermediates that defy isolation. As quantum chemical methods continue to advance alongside machine learning architectures, the integration of computational prediction with experimental spectroscopy will undoubtedly deepen, opening new frontiers in molecular design and discovery.

Density Functional Theory (DFT) has established itself as a cornerstone of modern computational chemistry, physics, and materials science, accounting for approximately 90% of all quantum chemical calculations performed today [13]. Its exceptional balance between computational cost and accuracy makes it particularly valuable for predicting spectroscopic properties across diverse chemical systems, from drug-like molecules to metalloproteins. This overview details the fundamental principles of DFT and basis sets, with a specific focus on their practical application in spectroscopic prediction. We provide structured protocols and best-practice recommendations to guide researchers in making informed methodological choices, enabling reliable prediction of spectroscopic data for applications in drug development and materials design.

Theoretical Foundations

Density Functional Theory Fundamentals

Density Functional Theory is a computational quantum mechanical modelling method used to investigate the electronic structure of many-body systems. Its foundational principle is that all ground-state properties of a many-electron system are uniquely determined by its electron density, $n(\mathbf{r})$, a function of only three spatial coordinates [14]. This stands in contrast to wavefunction-based methods, which depend on 3N variables for N electrons.

The formal groundwork for DFT was established by the Hohenberg-Kohn theorems [14]. The first theorem proves the one-to-one correspondence between the external potential acting on a system and its ground-state electron density. The second theorem defines an energy functional, $E[n]$, for which the ground-state density is the minimizer. The practical application of these theorems was realized by Kohn and Sham, who introduced the concept of a fictitious system of non-interacting electrons that has the same ground-state density as the real, interacting system [14]. This leads to the Kohn-Sham equations:

$$ \hat{H}_{\mathrm{KS}} \psi_i(\mathbf{r}) = \left[ -\frac{1}{2} \nabla^2 + V_{\mathrm{eff}}(\mathbf{r}) \right] \psi_i(\mathbf{r}) = \epsilon_i \psi_i(\mathbf{r}) $$

where $V_{\mathrm{eff}}(\mathbf{r}) = V_{\mathrm{ext}}(\mathbf{r}) + V_{\mathrm{Coulomb}}(\mathbf{r}) + V_{\mathrm{XC}}(\mathbf{r})$ is the effective potential, and $V_{\mathrm{XC}}(\mathbf{r})$ is the exchange-correlation potential [14] [13]. The total energy can then be expressed as:

$$ E[n] = T_s[n] + \int V_{\mathrm{ext}}(\mathbf{r}) \, n(\mathbf{r}) \, d\mathbf{r} + E_{\mathrm{Coulomb}}[n] + E_{\mathrm{XC}}[n] $$

where $T_s[n]$ is the kinetic energy of the non-interacting system, and $E_{\mathrm{XC}}[n]$ is the exchange-correlation energy, which encompasses all many-body effects [13]. The central challenge in DFT is finding accurate approximations for $E_{\mathrm{XC}}[n]$, as the exact functional form remains unknown.
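To show what "approximating the exchange-correlation energy" means in practice, the sketch below evaluates the simplest such approximation, the local density approximation for exchange, on a density that is known analytically. It assumes the spin-unpolarized Dirac exchange formula, E_x = −C_x ∫ n(r)^(4/3) d³r with C_x = (3/4)(3/π)^(1/3) in atomic units, and uses the hydrogen 1s density n(r) = e^(−2r)/π purely as a convenient test case. The radial grid and function names are illustrative choices.

```python
import math

# Dirac exchange constant in atomic units (spin-unpolarized LDA exchange).
C_X = 0.75 * (3.0 / math.pi) ** (1.0 / 3.0)


def hydrogen_1s_density(r):
    """Electron density of the hydrogen 1s orbital, n(r) = exp(-2r) / pi."""
    return math.exp(-2.0 * r) / math.pi


def lda_exchange_energy(density, r_max=30.0, n_points=30000):
    """E_x = -C_X * integral of n^(4/3) over space, for a spherical density.

    Uses the trapezoid rule on a uniform radial grid with volume element 4*pi*r^2.
    """
    step = r_max / n_points
    total = 0.0
    for i in range(n_points + 1):
        r = i * step
        weight = 0.5 if i in (0, n_points) else 1.0
        total += weight * 4.0 * math.pi * r * r * density(r) ** (4.0 / 3.0)
    return -C_X * total * step


if __name__ == "__main__":
    e_x = lda_exchange_energy(hydrogen_1s_density)
    print(f"LDA exchange energy for the H 1s density: {e_x:.4f} hartree")
```

The functional takes nothing but the density as input and returns an energy, which is the defining feature of the lowest rung of approximations; higher rungs add the density gradient, the kinetic energy density, or orbital-dependent terms to the integrand.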

Basis Sets in Quantum Chemistry

A basis set is a set of mathematical functions used to represent the molecular orbitals of a system, transforming the differential Kohn-Sham equations into algebraic equations suitable for computer implementation [15]. The most common choice in molecular quantum chemistry is to use Atomic Orbital (AO) basis sets, composed of functions centered on each atomic nucleus, leading to the Linear Combination of Atomic Orbitals (LCAO) approach:

$$ \psi_i(\mathbf{r}) \approx \sum_{\mu} c_{\mu i} \, \phi_{\mu}(\mathbf{r}) $$

where $\phi_{\mu}$ are the basis functions (atomic orbitals) and $c_{\mu i}$ are the molecular orbital coefficients [15].

Table: Common Types of Basis Sets and Their Characteristics

| Basis Set Type | Description | Common Examples | Typical Applications |
|---|---|---|---|
| Minimal | One basis function per core and valence orbital. | STO-3G [15] | Quick, preliminary calculations on large systems. |
| Split-Valence | Multiple functions to describe each valence orbital, allowing the valence orbitals to expand or contract in response to the molecular environment. | 3-21G, 6-31G [15] | Standard for geometry optimizations and frequency calculations. |
| Polarized | Adds functions with higher angular momentum (e.g., d-functions on carbon, p-functions on hydrogen), allowing the electron density to polarize. | 6-31G*, cc-pVDZ [15] | Essential for accurate thermochemistry and reaction barriers. |
| Diffuse | Adds functions with a small exponent, describing the "tail" of the electron density far from the nucleus. | 6-31+G, aug-cc-pVDZ [15] | Critical for anions, excited states, weak interactions, and spectroscopic properties. |
| Correlation-Consistent | Systematically designed to converge to the complete basis set (CBS) limit for correlated methods. | cc-pVXZ (X=D,T,Q,5,6) [15] | High-accuracy energy calculations and wavefunction-based correlation. |

The two primary types of functions used are Slater-Type Orbitals (STOs), which are physically motivated but computationally costly, and Gaussian-Type Orbitals (GTOs), which are computationally efficient because the product of two GTOs is another GTO [15]. Modern basis sets like Pople-style (e.g., 6-31G*) and Dunning's correlation-consistent (cc-pVXZ) series use contracted GTOs, which are linear combinations of primitive Gaussian functions, to approximate STOs [15].
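The idea of a contracted GTO can be made concrete with the hydrogen 1s function of the STO-3G basis, which is a fixed linear combination of three primitive Gaussians. The sketch below uses the widely tabulated STO-3G exponents and contraction coefficients for hydrogen and checks that the contracted function is normalized, using the closed-form overlap between two s-type Gaussians on the same centre. The function names are illustrative.

```python
import math

# Widely tabulated STO-3G parameters for the hydrogen 1s function.
STO3G_H_EXPONENTS = (3.42525091, 0.62391373, 0.16885540)
STO3G_H_COEFFS = (0.15432897, 0.53532814, 0.44463454)


def primitive_norm(alpha):
    """Normalization constant of an s-type primitive Gaussian exp(-alpha * r^2)."""
    return (2.0 * alpha / math.pi) ** 0.75


def contracted_value(r, exponents=STO3G_H_EXPONENTS, coeffs=STO3G_H_COEFFS):
    """Value of the contracted GTO at distance r (bohr) from the nucleus."""
    return sum(
        c * primitive_norm(a) * math.exp(-a * r * r)
        for a, c in zip(exponents, coeffs)
    )


def contracted_self_overlap(exponents=STO3G_H_EXPONENTS, coeffs=STO3G_H_COEFFS):
    """Integral of the squared contracted function over all space."""
    total = 0.0
    for a_i, c_i in zip(exponents, coeffs):
        for a_j, c_j in zip(exponents, coeffs):
            # Overlap of two same-centre s Gaussians: (pi / (a_i + a_j))^(3/2).
            overlap = (math.pi / (a_i + a_j)) ** 1.5
            total += c_i * c_j * primitive_norm(a_i) * primitive_norm(a_j) * overlap
    return total


if __name__ == "__main__":
    print(f"Self-overlap of the contracted function: {contracted_self_overlap():.6f}")
    print(f"Value at the nucleus: {contracted_value(0.0):.4f}")
```

The tight primitive (largest exponent) shapes the region near the nucleus, while the most diffuse one carries the tail, which is how three Gaussians together mimic the cusp and exponential decay of a single Slater function.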

DFT Methodologies for Spectroscopic Prediction

The Jacob's Ladder of Density Functionals

The accuracy of a DFT calculation hinges on the chosen approximation for the exchange-correlation functional. These functionals are often categorized by a hierarchy of increasing complexity and accuracy, known as "Jacob's Ladder" [13].

Table: Rungs of Jacob's Ladder for Exchange-Correlation Functionals

| Rung | Functional Type | Description | Key Characteristics | Example Functionals |
|---|---|---|---|---|
| 1 | Local Spin Density Approximation (LSDA) | Depends only on the local electron density. | Inaccurate for molecular bond energies; underpredicts bond lengths. | SVWN [13] |
| 2 | Generalized Gradient Approximation (GGA) | Depends on the density and its gradient. | Improved molecular structures and energies over LSDA. | PBE, BLYP [13] |
| 3 | Meta-GGA | Depends on density, its gradient, and the kinetic energy density. | Better thermochemistry and reaction barriers than GGA. | TPSS, SCAN [13] |
| 4 | Hybrid | Mixes a portion of exact Hartree-Fock exchange with GGA/meta-GGA exchange. | Significantly improved accuracy for thermochemistry. | B3LYP, PBE0 [13] |
| 5 | Double-Hybrid | Incorporates both exact exchange and a perturbative correlation component. | Highest accuracy for energies, approaching wavefunction methods. | B2PLYP [13] |

For general-purpose quantum chemical calculations, including the prediction of many spectroscopic properties, hybrid functionals like B3LYP and PBE0 are a robust and widely used choice. However, best-practice guidance recommends moving beyond outdated combinations like B3LYP/6-31G*, which suffers from inherent errors such as missing dispersion interactions [16]. Modern alternatives such as B3LYP-3c or r2SCAN-3c offer superior accuracy and robustness at a similar or lower computational cost [16].

Application to Spectroscopic Properties

DFT is a versatile tool for predicting a wide array of spectroscopic observables by calculating the underlying electronic structure and molecular properties.

EPR Spectroscopy: DFT can predict spin-Hamiltonian parameters such as g-tensors and hyperfine coupling constants [17]. The g-tensor, which reflects the interaction of the molecular magnetic dipole moment with an external magnetic field, is sensitive to the electronic structure, particularly for transition metal complexes [17]. The accuracy of the calculation depends critically on the functional's ability to describe spin density distribution and the inclusion of relativistic effects (e.g., spin-orbit coupling).

Mössbauer Spectroscopy: For ⁵⁷Fe Mössbauer spectroscopy, DFT calculates the isomer shift (IS), which varies linearly with the total electron density at the iron nucleus, and the quadrupole splitting (QS), which reports on the electric field gradient at the nucleus [17]. These parameters provide deep insight into the oxidation and spin state of the iron center, as well as the geometry of its ligand field.

Vibrational Spectroscopy (IR, Raman): The second derivatives of the energy with respect to nuclear coordinates (the Hessian matrix) provide the vibrational frequencies and normal modes. This allows for the direct simulation of IR and Raman spectra. The choice of functional and basis set is crucial; a polarized triple-zeta basis set (e.g., def2-TZVP) and a hybrid functional are typically recommended for good accuracy [16].
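For a diatomic molecule the Hessian reduces to a single force constant, which makes the link between second derivatives and vibrational wavenumbers easy to see. The following is a minimal sketch under the harmonic approximation; the force constant used for carbon monoxide is an illustrative input, not a value computed in this article.

```python
import math

SPEED_OF_LIGHT_CM_S = 2.99792458e10  # speed of light in cm/s
AMU_TO_KG = 1.66053906660e-27        # kilograms per atomic mass unit


def reduced_mass(m1_amu, m2_amu):
    """Reduced mass of a diatomic in atomic mass units."""
    return m1_amu * m2_amu / (m1_amu + m2_amu)


def harmonic_wavenumber(force_constant_n_per_m, mu_amu):
    """Harmonic wavenumber (cm^-1) from a force constant (N/m),
    i.e. the 1D analogue of diagonalizing the mass-weighted Hessian."""
    omega = math.sqrt(force_constant_n_per_m / (mu_amu * AMU_TO_KG))  # rad/s
    return omega / (2.0 * math.pi * SPEED_OF_LIGHT_CM_S)


# Illustrative input: a CO-like force constant of 1900 N/m (assumed value).
mu_co = reduced_mass(12.000, 15.995)
print(f"harmonic wavenumber: {harmonic_wavenumber(1900.0, mu_co):.0f} cm^-1")
```

For polyatomic molecules the same relation holds mode by mode after the mass-weighted Hessian is diagonalized, which is what a frequency calculation in a quantum chemistry package does internally.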

Terahertz (THz) Spectroscopy: Low-frequency vibrational (phonon) modes in the THz region probe large-scale conformational changes and collective nuclear motions in biomolecules [18]. Temperature-dependent THz studies can quantify the anharmonicity of hydrogen-bonding networks, providing a stringent test for the underlying computational models, including classical force fields and DFT [18].

Protocols and Best Practices

Decision Workflow for Spectroscopic Studies

The following diagram outlines a systematic workflow for selecting appropriate computational methods for spectroscopic studies, from defining the chemical problem to selecting the final protocol.

Recommended Computational Protocols

The following protocols provide specific, actionable methodologies for calculating different spectroscopic properties. They emphasize a multi-level approach to balance accuracy and computational cost [16].

Protocol 1: Calculation of EPR Parameters (g-Tensor, A-Tensor) for a Metalloprotein Active Site

- Model Preparation: Extract the metal-containing active site from the protein crystal structure. Saturate dangling bonds with hydrogen atoms. For open-shell systems, define the correct multiplicity (e.g., doublet, quartet).

- Initial Geometry Optimization:

  - Functional: Use a GGA functional (e.g., BP86) with an appropriate basis set (e.g., def2-SVP).

  - Method: Employ the broken-symmetry DFT approach if the system is antiferromagnetically coupled [17].

  - Solvation: Include an implicit solvation model (e.g., COSMO, SMD) to mimic the protein environment.

- Single-Point Property Calculation:

  - Functional: Use a hybrid functional (e.g., B3LYP, PBE0). The amount of exact exchange can significantly impact results and may need calibration [17].

  - Basis Set: Use a polarized triple-zeta basis set (e.g., def2-TZVP) on all atoms. For hyperfine calculations, core-property basis sets (e.g., EPR-II, EPR-III) are recommended for atoms with significant spin density.

  - Keywords: Enable the calculation of spin-properties, including the g-tensor and hyperfine couplings. Ensure the inclusion of spin-orbit coupling operators, which are essential for accurate g-tensors [17].

Protocol 2: Prediction of FT-IR Spectra for an Organic Drug Molecule

- Conformer Search: Perform a thorough conformational search (e.g., using molecular mechanics or meta-dynamics) to identify low-energy conformers.

- Geometry Optimization and Frequency Calculation:

  - Functional: Use a hybrid-GGA functional like B3LYP-D3 or PBE0-D3. The empirical dispersion correction (-D3) is crucial for capturing intramolecular non-covalent interactions [16].

  - Basis Set: A polarized double-zeta basis set like def2-SVP is typically sufficient.

  - Validation: Confirm that the optimized geometry is a true minimum (no imaginary frequencies).

- IR Spectrum Generation:

  - Refinement (Optional): For higher accuracy, re-optimize the geometry and recompute the frequencies with a larger basis set (e.g., def2-TZVP) and the same hybrid functional. Harmonic frequencies are only meaningful at a stationary point of the level of theory used for the Hessian, so a single-point energy on the smaller-basis geometry does not by itself improve them.

  - Scaling: Apply a standard scaling factor (specific to the functional/basis set combination) to the calculated harmonic frequencies to account for known systematic errors (anharmonicity, incomplete basis set). Plot the scaled frequencies with appropriate peak broadening.
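The scaling and broadening step at the end of Protocol 2 can be sketched as follows. This is a minimal illustration, assuming Lorentzian line shapes; the scaling factor, line width, grid, and the three example modes are hypothetical placeholders, and the appropriate scaling factor must be looked up for the actual functional/basis set combination.

```python
import math


def lorentzian(x, x0, fwhm):
    """Area-normalized Lorentzian centered at x0 with the given FWHM."""
    hwhm = fwhm / 2.0
    return (hwhm / math.pi) / ((x - x0) ** 2 + hwhm ** 2)


def simulate_ir_spectrum(frequencies, intensities, scale=0.97, fwhm=10.0,
                         start=400.0, stop=4000.0, step=1.0):
    """Scale harmonic frequencies (cm^-1) and broaden the stick spectrum.

    `scale` and `fwhm` are illustrative defaults, not recommended values.
    Returns (grid, spectrum) as two lists of equal length.
    """
    n_points = int(round((stop - start) / step)) + 1
    grid = [start + i * step for i in range(n_points)]
    scaled = [scale * f for f in frequencies]
    spectrum = [
        sum(inten * lorentzian(x, f0, fwhm)
            for f0, inten in zip(scaled, intensities))
        for x in grid
    ]
    return grid, spectrum


# Hypothetical harmonic modes (cm^-1) and IR intensities (km/mol).
grid, spectrum = simulate_ir_spectrum([1210.0, 1785.0, 3120.0],
                                      [45.0, 310.0, 12.0])
peak_index = max(range(len(spectrum)), key=spectrum.__getitem__)
print(f"strongest band near {grid[peak_index]:.0f} cm^-1")
```

A Gaussian or Voigt profile can be substituted for the Lorentzian without changing the rest of the function.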

The Scientist's Toolkit: Essential Research Reagents

Table: Key Computational "Reagents" for DFT-Based Spectroscopy

| Item / Software | Function / Role | Example Use Case |

|---|---|---|

| Quantum Chemistry Code | Software package that implements DFT and other quantum mechanical methods. | Q-Chem [13], Gaussian, ORCA, Turbomole. |

| Density Functional | The approximation that defines the exchange-correlation energy, determining accuracy. | B3LYP-D3 (general organics), PBE0 (solid-state), TPSSh (metals) [16] [13]. |

| Atomic Basis Set | The set of mathematical functions used to expand the molecular orbitals. | 6-31G (initial optimizations), def2-TZVP (property calc.), cc-pVQZ (high-accuracy) [15]. |

| Dispersion Correction | An additive term to account for long-range van der Waals interactions. | Grimme's D3 correction with Becke-Johnson damping (D3(BJ)) [16]. |

| Implicit Solvation Model | A continuum model to approximate the effects of a solvent environment. | SMD (for solvation energies) or COSMO (for relative energies in solution) [16]. |

| Property Calculation Module | Specialized code for calculating specific spectroscopic parameters. | EPR module for g-tensors [17], NMR module for shielding constants. |

| Visualization Software | Tool for analyzing molecular structures, orbitals, and vibrational modes. | GaussView, ChemCraft, VMD. |

Density Functional Theory, when combined with appropriate basis sets and well-defined protocols, provides a powerful and efficient framework for predicting a wide range of spectroscopic properties. Success relies on a careful balance of methodological choices: selecting a robust, modern functional; employing a basis set with sufficient flexibility for the target property; and accurately modeling the chemical environment. By adhering to the best-practice recommendations and protocols outlined in this document, researchers in drug development and materials science can leverage DFT as a reliable tool to interpret complex experimental data, validate structural hypotheses, and gain deep atomic-level insight into molecular structure and reactivity. The continued development of more accurate density functionals and efficient computational algorithms promises to further expand the frontiers of spectroscopic prediction.

The Critical Role of 3D Molecular Conformation in Accurate Spectral Prediction

The accurate prediction of molecular properties is a cornerstone of computational chemistry, with profound implications for drug discovery and materials science. While traditional machine learning models have relied on one-dimensional (1D) string representations or two-dimensional (2D) molecular graphs, emerging evidence demonstrates that these approaches are fundamentally limited because most quantum chemical properties are intrinsically dependent on refined three-dimensional (3D) equilibrium conformations [19]. This technical review examines the critical importance of 3D molecular conformation in spectral and quantum chemical property prediction, providing experimental validation, detailed methodologies, and practical resources for researchers implementing 3D-aware computational approaches.

The fundamental limitation of 1D/2D representations stems from their inability to capture the spatial arrangements of atoms that dictate molecular behavior in physical systems. As molecules exist as dynamic ensembles of conformers in solution, property prediction requires explicit consideration of 3D geometry [20]. This article documents the paradigm shift toward 3D-enhanced machine learning, demonstrating how methods that incorporate spatial structural information significantly outperform traditional approaches across diverse molecular classes and target properties.

Results & Discussion

Performance Benchmarking of 3D-Enhanced Models

Table 1: Performance comparison of molecular property prediction models on benchmark datasets

| Model | Representation | PCQM4MV2 (HOMO-LUMO gap MAE) | OC20 IS2RE (Energy MAE) | Cyclic Molecules (R²) |

|---|---|---|---|---|

| Uni-Mol+ [19] | 3D Conformations | 0.0079 (relative 11.4% improvement) | Not specified | - |

| 3DMSE [21] | 3D Geometries | - | - | - |

| AIMNet2 [22] | 3D-enhanced | - | - | >0.95 (Electronic properties) |

| Traditional 2D ECFP [23] | 2D Fingerprints | Higher MAE | Higher MAE | ~0.6-0.8 (Electronic properties) |

| Graph Neural Networks [23] | 2D Graphs | Moderate accuracy | Moderate accuracy | ~0.8-0.9 (Electronic properties) |

The benchmarking data reveals a consistent advantage for 3D-enhanced approaches across diverse molecular systems. On the PCQM4MV2 dataset, which contains approximately 4 million molecules and targets the HOMO-LUMO gap property, Uni-Mol+ achieves a substantial improvement over previous state-of-the-art methods, with a relative improvement of 11.4% on validation data for single-model performance [19]. This improvement stems from the model's ability to iteratively refine raw 3D conformations toward DFT equilibrium structures before property prediction.

For cyclic organic molecules, which play crucial roles in bioactive compounds and organic electronics, the 3D-enhanced AIMNet2 model demonstrates exceptional performance, achieving R² values exceeding 0.95 for key electronic properties including HOMO-LUMO gap, ionization potential, and redox potentials [22]. This represents a significant advancement over 2D-based models, with mean absolute errors reduced by over 30%, enabling high-throughput screening for functional molecule discovery.

Systematic comparisons between 2D and 3D descriptors reveal that while traditional 2D extended connectivity fingerprints (ECFPs) show reasonable performance, they are consistently outperformed by 3D-based approaches, particularly for conformation-sensitive properties [23]. The Uni-Mol model, which utilizes atomic coordinates and elements combined with ground-truth conformation, significantly surpasses both traditional 2D and 3D descriptors, though its accuracy decreases when suboptimal conformers are used as input [23].

Conformational Ensembles in Property Prediction

Molecular properties under experimental conditions represent statistical averages across all accessible conformers at finite temperature [20]. This fundamental principle necessitates consideration of conformational ensembles rather than single structures for accurate property prediction. The GEOM dataset addresses this need by providing 37 million molecular conformations for over 450,000 molecules, enabling the development of models that predict properties from conformer ensembles [20].

The critical importance of ensemble-based approaches is particularly evident for thermodynamic properties and biological activities where molecular flexibility plays a decisive role. Studies comparing aggregation methods for conformer ensembles have demonstrated that using all available conformers as simple data augmentation consistently achieves high prediction accuracy, followed by mean aggregation approaches [23]. Multi-instance learning methods, particularly neural network-based approaches with self-attention mechanisms, show promise for automatically extracting important conformers for target properties without manual weighting schemes.

Experimental Protocols

Protocol 1: 3D Conformation Generation and Refinement for Quantum Chemical Property Prediction

This protocol describes the Uni-Mol+ framework for accurate quantum chemical property prediction through 3D conformation refinement, achieving state-of-the-art performance on benchmark datasets [19].

Materials and Reagents

- Computational Resources: High-performance computing cluster with CPU and GPU nodes

- Software Dependencies: RDKit (v2020.09.1 or later), PyTorch (v1.9.0 or later), OpenBabel (v3.0.0 or later)

- Reference Data: PCQM4MV2 dataset or OC20 dataset for training and validation

Procedure

Initial Conformation Generation:

- Input SMILES strings or 2D molecular graphs

- Generate 8 initial 3D conformations per molecule using RDKit's ETKDG method

- Apply MMFF94 force field optimization for initial refinement

- For molecules where 3D generation fails, generate 2D conformations with flat z-axis using AllChem.Compute2DCoords

- Time requirement: Approximately 0.01 seconds per molecule

Model Architecture Configuration:

- Implement two-track transformer backbone with atom and pair representation tracks

- Enhance pair representation via outer product of atom representation (OuterProduct)

- Incorporate triangular operator for 3D geometric information (TriangularUpdate)

- Set conformation optimization rounds (R) based on dataset complexity

Training Strategy Implementation:

- Sample conformations from pseudo trajectory between RDKit-generated and DFT equilibrium conformations

- Employ mixed sampling strategy using Bernoulli and Uniform distributions

- Bernoulli distribution addresses distributional shift and enhances equilibrium mapping

- Uniform distribution generates intermediate states for data augmentation
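The mixed sampling strategy above can be sketched in a few lines. This is not the Uni-Mol+ implementation: it assumes the pseudo trajectory is a linear interpolation between the raw and equilibrium coordinates, and the mixing probabilities `p_uniform` and `p_equilibrium` are hypothetical parameters chosen only for illustration.

```python
import random


def sample_interpolation_weight(rng, p_uniform=0.5, p_equilibrium=0.5):
    """Draw q in [0, 1], where q = 0 is the raw (RDKit-like) conformation
    and q = 1 is the DFT equilibrium conformation.

    Uniform branch: an intermediate state used as data augmentation.
    Bernoulli branch: one of the two endpoints.
    """
    if rng.random() < p_uniform:
        return rng.random()
    return 1.0 if rng.random() < p_equilibrium else 0.0


def sample_training_conformation(raw_coords, eq_coords, rng, **kwargs):
    """Linearly interpolate atom positions along the assumed pseudo trajectory."""
    q = sample_interpolation_weight(rng, **kwargs)
    coords = [
        tuple((1.0 - q) * r + q * e for r, e in zip(raw_atom, eq_atom))
        for raw_atom, eq_atom in zip(raw_coords, eq_coords)
    ]
    return q, coords


# Toy two-atom example with made-up coordinates (angstrom).
rng = random.Random(0)
raw = [(0.0, 0.0, 0.0), (1.00, 0.0, 0.0)]
eq = [(0.0, 0.0, 0.0), (1.20, 0.0, 0.0)]
q, coords = sample_training_conformation(raw, eq, rng)
print(f"q = {q:.3f}, second atom x = {coords[1][0]:.3f}")
```

Keeping the endpoint draws separate from the uniform draws makes it easy to tune how often the model sees exactly the kind of input it will receive at inference time.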

Model Training and Inference:

- During training: Randomly sample 1 conformation per epoch as input

- During inference: Generate predictions from 8 conformations and compute average

- Training duration: 24-72 hours on 8 NVIDIA V100 GPUs for PCQM4MV2 dataset

Validation and Testing:

- Evaluate model performance on validation and test sets using mean absolute error

- Compare against baseline models (Graph Networks, GCN, GIN, GAT) for benchmarking

Troubleshooting

- Poor Convergence: Adjust learning rate schedule and increase batch size

- Memory Limitations: Reduce number of conformations per molecule or model dimensions

- Overfitting: Implement early stopping with patience of 10-15 epochs

Protocol 2: Conformer Ensemble Generation for Experimental Property Prediction

This protocol describes the generation of conformer-rotamer ensembles (CREs) using the CREST software, as implemented for the GEOM dataset, suitable for predicting experimental properties including biological activity and physicochemical characteristics [20].

Materials and Reagents

- Computational Resources: Linux cluster with 40+ CPU cores per calculation

- Software: CREST (v2.10 or later) with GFN2-xTB method

- Optional: DFT software (Gaussian16, ORCA) for higher-level refinement

Procedure

Input Preparation:

- Prepare molecular structures in SDF or XYZ format

- For molecules with undefined stereocenters, enumerate stereoisomers

- Time requirement: Variable, depending on molecular complexity

CREST Conformer Sampling:

- Execute CREST with GFN2-xTB Hamiltonian for conformer search

- Use metadynamics sampling for exhaustive conformational exploration

- Set appropriate temperature parameter (default: 298 K)

- Typical runtime: Several hours to days per molecule on 40 CPU cores

Conformer Probability Assignment:

- Calculate conformer probabilities using Boltzmann distribution: [ p_i^{\text{CREST}} = \frac{d_i \exp(-E_i / k_B T)}{\sum_j d_j \exp(-E_j / k_B T)} ]

- Where (d_i) represents the degeneracy, (E_i) the conformer energy, (k_B) the Boltzmann constant, and (T) the temperature
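The Boltzmann expression above translates directly into code. The sketch below assumes energies in kcal/mol and uses hypothetical conformer energies, degeneracies, and property values; it also shows the Boltzmann-weighted average used later for ensemble property prediction.

```python
import math

K_B_KCAL_PER_MOL_K = 0.0019872041  # Boltzmann constant in kcal/(mol K)


def boltzmann_weights(energies, degeneracies=None, temperature=298.15):
    """Conformer probabilities p_i = d_i exp(-E_i/kT) / sum_j d_j exp(-E_j/kT).

    Energies are in kcal/mol; they are shifted by the minimum for
    numerical stability, which leaves the probabilities unchanged.
    """
    if degeneracies is None:
        degeneracies = [1] * len(energies)
    e_min = min(energies)
    kt = K_B_KCAL_PER_MOL_K * temperature
    factors = [d * math.exp(-(e - e_min) / kt)
               for e, d in zip(energies, degeneracies)]
    total = sum(factors)
    return [f / total for f in factors]


def ensemble_average(values, weights):
    """Boltzmann-weighted average of a per-conformer property."""
    return sum(v * w for v, w in zip(values, weights))


# Hypothetical three-conformer ensemble.
energies = [0.0, 0.6, 1.5]        # relative energies, kcal/mol
degeneracies = [1, 2, 1]
dipoles = [2.1, 3.4, 1.2]         # made-up per-conformer property
weights = boltzmann_weights(energies, degeneracies)
print([round(w, 3) for w in weights])
print(round(ensemble_average(dipoles, weights), 3))
```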

Optional DFT Refinement:

- Select subset of low-energy conformers for DFT optimization

- Use hybrid functional (B3LYP) with dispersion correction and triple-zeta basis set

- Calculate single-point energies at higher theory level for improved accuracy

Ensemble Property Prediction:

- Aggregate properties across conformer ensemble using Boltzmann weights

- Implement multi-instance learning for direct ensemble-to-property mapping

Troubleshooting

- Incomplete Sampling: Increase metadynamics simulation time or use multiple initial guesses

- Force Field Failures: Switch to semi-empirical quantum mechanical methods

- High Computational Demand: Implement conformer pre-screening with faster methods

The Scientist's Toolkit

Table 2: Essential computational tools for 3D molecular property prediction

| Tool Name | Type | Primary Function | Application Context |

|---|---|---|---|

| Uni-Mol+ [19] | Deep Learning Framework | 3D conformation refinement and property prediction | Quantum chemical property prediction for small molecules and catalyst systems |

| CREST [20] | Conformer Sampling | Comprehensive conformer generation using metadynamics | Creating conformer ensembles for flexible drug-like molecules |

| GEOM Dataset [20] | Reference Data | 37 million molecular conformations for 450,000+ molecules | Training and benchmarking conformer-aware machine learning models |

| RDKit [19] | Cheminformatics | Initial 3D conformation generation from SMILES/2D | Preprocessing for 3D deep learning models |

| Balloon [24] | Conformer Generation | 3D structure generation from 2D inputs via genetic algorithm | Building initial conformers for quantum chemical calculations |

| MOPAC2012 [24] | Semi-empirical QM | Fast quantum chemical energy calculations | Conformer ranking and pre-optimization before DFT |

| MolViewSpec [25] | Visualization | Standardized 3D molecular visualization specification | Communicating and sharing molecular scenes and conformations |

| Multimodal Spectroscopic Dataset [26] | Reference Data | Simulated NMR, IR, and MS spectra for 790k molecules | Developing foundation models for spectroscopic prediction |

Workflow Diagram

3D Molecular Property Prediction Workflow

The workflow illustrates the sequential process for 3D-enhanced property prediction, beginning with molecular input and progressing through conformation generation, refinement, and final prediction stages.

Conformer Ensemble Generation Process

This complementary workflow details the process for generating conformer ensembles, a critical prerequisite for accurate 3D-based property prediction, showing both standard and comprehensive sampling approaches.

Stereoelectronic effects, which describe the dependence of electronic interactions and properties on the spatial arrangement of atoms and orbitals, are fundamental determinants of molecular structure, stability, and reactivity. These quantum-mechanical phenomena, including hyperconjugation, anomeric effects, and n→π* interactions, directly influence molecular spectra by altering electron density distributions, vibrational frequencies, and magnetic shielding environments. In the quantum chemical prediction of spectroscopic data, accurately modeling these effects is crucial for bridging the gap between computed results and experimental observations. This Application Note provides detailed protocols for capturing stereoelectronic effects in spectroscopic predictions, enabling researchers to decode the sophisticated electronic information embedded in molecular spectra.

Fundamental Stereoelectronic Interactions and Spectral Manifestations

Key Stereoelectronic Effects

Stereoelectronic effects arise from through-space and through-bond interactions between filled and empty orbitals, leading to stabilization that influences both molecular structure and spectral properties.

- Hyperconjugation: An interaction of filled σ, π, or lone-pair (n) orbitals with adjacent antibonding orbitals (σ→σ*, n→σ*), leading to bond length alterations and charge transfer. This effect is quantified through Natural Bond Orbital (NBO) analysis, with stabilization energies typically ranging from 4-20 kJ/mol [27] [28].

- n→π* Interactions: Delocalization of lone pair electrons (n) into adjacent antibonding π* orbitals, commonly observed in systems with carbonyl groups and amide bonds. These interactions provide stabilization energies of approximately 0.5-2.0 kcal/mol (about 2-8 kJ/mol) and significantly influence torsional angles and peptide bond isomerization [29].

- Anomeric and Homoanomeric Effects: Special categories of hyperconjugation occurring in heterocyclic systems where lone pair electrons on heteroatoms interact with antibonding σ* orbitals of adjacent C-X bonds, preferentially stabilizing axial conformations in six-membered rings [27].

Spectral Impact of Stereoelectronic Effects

Table 1: Spectral Manifestations of Key Stereoelectronic Effects

| Stereoelectronic Effect | NMR Impact | Vibrational Spectral Impact | Typical Spectral Shifts |

|---|---|---|---|

| n→σ* Hyperconjugation | Altered ( ^1J_{C-H} ) coupling constants; ( ^1J_{C-H_{ax}} < ^1J_{C-H_{eq}} ) by ~4 Hz in saturated N-heterocycles [28] | Modified C-H stretching frequencies | Δν ~5-15 cm⁻¹ |

| n→π* Interactions | Deshielding of carbonyl carbon chemical shifts; altered ( ^3J_{H-H} ) coupling constants | Carbonyl stretching frequency reduction; altered amide III band intensities | Δδ(C) ~1-3 ppm; Δν(C=O) ~10-20 cm⁻¹ [29] |

| σ→σ* Hyperconjugation | Increased ( ^1J_{C-H} ) for equatorial vs axial protons in β-position to heteroatoms | Weakened bond stretching forces; reduced vibrational frequencies | Δν ~5-10 cm⁻¹ [28] |

Application Notes: Spectroscopic Analysis of Stereoelectronic Effects

NMR Analysis of Hyperconjugation in Saturated Heterocycles

Background: In nitrogen-containing saturated heterocycles, the interaction between nitrogen lone pair electrons and antibonding σ* orbitals of adjacent C-H bonds (n_N→σ*_{C-H}) produces characteristic changes in NMR parameters that serve as experimental evidence for hyperconjugative effects [27] [28].

Key Observables:

- One-bond C-H coupling constants (( ^1J_{C-H} )) show distinct differences between axial and equatorial positions

- ( ^1J_{C-H_{ax}} ) values are typically lower than ( ^1J_{C-H_{eq}} ) for carbons α to nitrogen

- Chemical shifts of protons involved in hyperconjugative interactions show characteristic upfield or downfield displacements

Table 2: Experimental NMR Parameters for Stereoelectronic Analysis in Piperidones

| Compound | Position | ( ^1J_{C-H} ) (Hz) | ( ^1H ) Chemical Shift (δ, ppm) | Observed Stereoelectronic Effect |

|---|---|---|---|---|

| Piperidone 4 | H(4)ax | - | 4.40 | n_N→σ*_{C-H} hyperconjugation |

| Piperidone 4 | H(5)eq | - | 2.57 | σ_{C-H}→σ*_{C-N} stabilization |

| Piperidone 4 | H(2)ax | - | 3.95 | Through-bond electronic effects |

| Imidazolidines | C-Hax | 138-142 | - | n_N→σ*_{C-H_{ax}} interaction [28] |

| Imidazolidines | C-Heq | 142-146 | - | Reduced hyperconjugation |

| Hexahydropyrimidines | C-Hax | 136-140 | - | n_N→σ*_{C-H} stabilization [28] |

Vibrational Spectroscopy for n→π* Interaction Analysis

Background: n→π* interactions in collagen-like peptides involving prolyl-4-hydroxylation influence peptide backbone stability through electronic delocalization, which manifests as specific alterations in vibrational frequencies and intensities [29].

Key Observables:

- Shifts in carbonyl stretching frequencies due to electron density changes

- Altered amide band intensities resulting from changes in peptide bond isomerization

- Characteristic C-O and O-H stretching modifications from hydroxyl group interactions

Quantitative Impact: 4(R)-hydroxylation in proline residues promotes exo ring pucker, optimizing main-chain torsional angles for stable trans peptide bonds and maximizing n→π* interactions with stabilization energies (E_{n→π*}) of approximately 0.9 kcal/mol. It also enhances σ→σ* interactions between axial C-H σ-electrons and the C-OH σ* orbitals of the pyrrolidine ring [29].

Experimental and Computational Protocols

Protocol 1: NMR Analysis of Hyperconjugation in Heterocycles

Objective: Experimentally characterize n→σ* hyperconjugative interactions in saturated N-heterocycles using NMR coupling constants and chemical shifts.

Materials and Methods:

- Sample Preparation: Dissolve 10-20 mg of heterocyclic compound (e.g., piperidone, imidazolidine, hexahydropyrimidine) in 0.6 mL of appropriate deuterated solvent (CDCl₃, DMSO-d₆)

- NMR Acquisition:

- Acquire ( ^1H ) NMR spectrum with sufficient digital resolution (0.1-0.3 Hz/pt)

- Record ( ^1H )-( ^{13}C ) HSQC with J-refocusing to accurately measure one-bond coupling constants

- Collect ( ^1H )-( ^{13}C ) HMBC to confirm connectivity assignments

- Perform t-ROESY experiments to determine conformational preferences (mixing time 200-400 ms)

- Data Analysis:

- Extract ( ^1J_{C-H} ) values from ( ^{13}C ) satellites in ( ^1H ) NMR or directly from HSQC cross-peaks

- Compare ( ^1J_{C-H} ) values for axial versus equatorial protons

- Correlate reduced ( ^1J_{C-H_{ax}} ) values with n_N→σ*_{C-H_{ax}} hyperconjugation

- Confirm through-space interactions via ROESY correlations
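The axial versus equatorial comparison in the data analysis steps can be automated with a small helper. This is a minimal sketch: the 2 Hz decision threshold is an assumption introduced here for illustration, and the example couplings are hypothetical values chosen inside the imidazolidine ranges quoted in Table 2.

```python
def axial_equatorial_difference(j_axial_hz, j_equatorial_hz):
    """Difference 1J(C-H_eq) - 1J(C-H_ax) in Hz; positive values mean the
    axial coupling is reduced relative to the equatorial one."""
    return j_equatorial_hz - j_axial_hz


def suggests_hyperconjugation(j_axial_hz, j_equatorial_hz,
                              min_difference_hz=2.0):
    """Flag a CH2 group whose axial coupling is lower than the equatorial
    one by at least `min_difference_hz` (an assumed, adjustable threshold)."""
    return axial_equatorial_difference(j_axial_hz, j_equatorial_hz) >= min_difference_hz


# Hypothetical measurements for one methylene group alpha to nitrogen.
j_ax, j_eq = 140.0, 144.0
print(axial_equatorial_difference(j_ax, j_eq))
print(suggests_hyperconjugation(j_ax, j_eq))
```

A flag from this helper is only a screening hint; the assignment should still be confirmed by the ROESY data and the NBO analysis described in this protocol.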

Computational Validation:

- Optimize molecular geometry using DFT method (ωB97XD/6-311++G(d,p) recommended)

- Perform NBO analysis to quantify n_N→σ*_{C-H} stabilization energies (typically 18-19.2 kJ/mol)

- Calculate NMR parameters (chemical shifts and J-couplings) for comparison with experimental values [27] [28]
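NBO stabilization energies are conventionally obtained from second-order perturbation theory, E(2) = q_i F(i,j)² / (ε_j − ε_i), where q_i is the donor occupancy, F(i,j) the off-diagonal Fock matrix element, and ε_i, ε_j the donor and acceptor orbital energies. The sketch below evaluates this expression and converts units; all numerical inputs are hypothetical values chosen so that the result falls in the range quoted above, not output from an actual NBO run.

```python
HARTREE_TO_KJ_PER_MOL = 2625.4996
HARTREE_TO_KCAL_PER_MOL = 627.5095


def second_order_stabilization(occupancy, fock_element,
                               donor_energy, acceptor_energy):
    """E(2) = q * F^2 / (eps_acceptor - eps_donor), all inputs in hartree.

    Returns the stabilization as a positive magnitude in hartree.
    """
    gap = acceptor_energy - donor_energy
    if gap <= 0.0:
        raise ValueError("acceptor orbital must lie above the donor orbital")
    return occupancy * fock_element ** 2 / gap


# Hypothetical n_N -> sigma*(C-H) interaction (assumed values, hartree).
e2 = second_order_stabilization(occupancy=2.0, fock_element=0.05,
                                donor_energy=-0.30, acceptor_energy=0.40)
print(f"E(2) = {e2 * HARTREE_TO_KJ_PER_MOL:.1f} kJ/mol "
      f"= {e2 * HARTREE_TO_KCAL_PER_MOL:.1f} kcal/mol")
```

Reporting both units is useful here because the hyperconjugation literature cited in this section uses kJ/mol while the n→π* literature uses kcal/mol.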

NMR Hyperconjugation Analysis Workflow: Experimental and computational steps for characterizing n→σ* interactions.

Protocol 2: Quantum Chemical Prediction of Vibrational Spectra with Stereoelectronic Corrections

Objective: Accurately predict IR and Raman spectra while accounting for stereoelectronic effects that influence vibrational frequencies and intensities.

Materials and Methods:

- Software Requirements: Gaussian09 or later, with support for frequency calculations and anharmonic corrections

- Computational Workflow:

  - Geometry Optimization:

    - Method: PBEPBE/6-31G (balanced for accuracy/efficiency) [30]

    - Convergence criteria: Tight optimization (maximum force < 0.000015 a.u., RMS force < 0.000010 a.u.)

    - Ensure real frequencies only (no imaginary frequencies)

  - Frequency Calculation:

    - Use same method/basis set as optimization

    - Calculate Raman activities and IR intensities

    - Apply anharmonic corrections for improved experimental matching

  - Scaling Procedure:

    - Apply uniform scaling factor (0.96-0.98 for PBEPBE/6-31G)

    - Use dual scaling for high-frequency (>2000 cm⁻¹) and low-frequency regions

    - Implement mode-specific scaling for systems with strong stereoelectronic effects


Data Interpretation:

- Identify frequency regions most affected by stereoelectronic interactions (C=O stretches, C-H bends)

- Correlate frequency shifts with NBO-derived stabilization energies

- Analyze Raman intensity changes resulting from polarizability alterations

- Compare scaled computational results with experimental reference spectra

Vibrational Spectra Prediction Workflow: Computational steps for predicting IR and Raman spectra with stereoelectronic corrections.
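The dual and mode-specific scaling steps of the protocol can be sketched as a single helper. This is a minimal illustration: the two scaling factors and the per-mode override are hypothetical numbers, and the 2000 cm⁻¹ threshold is taken from the scaling procedure described above.

```python
def scale_frequencies(frequencies, low_factor, high_factor,
                      threshold=2000.0, mode_factors=None):
    """Scale harmonic frequencies (cm^-1) with separate factors below and
    above `threshold`. `mode_factors` optionally maps a mode index to its
    own factor, overriding the region default (mode-specific scaling)."""
    mode_factors = mode_factors or {}
    scaled = []
    for index, freq in enumerate(frequencies):
        default = high_factor if freq > threshold else low_factor
        scaled.append(freq * mode_factors.get(index, default))
    return scaled


# Hypothetical harmonic frequencies and assumed scaling factors.
harmonic = [1050.0, 1740.0, 3050.0]
scaled = scale_frequencies(harmonic, low_factor=0.98, high_factor=0.96,
                           mode_factors={1: 0.97})  # C=O stretch override
print([round(f, 1) for f in scaled])
```

Keeping the override separate from the region defaults makes it explicit which modes were treated specially, which helps when the scaled spectrum is compared with NBO-derived stabilization energies.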

Protocol 3: Machine Learning-Enhanced NMR Prediction with IMPRESSION-G2

Objective: Utilize neural network models for rapid, accurate prediction of NMR parameters with DFT-level accuracy while capturing stereoelectronic influences.

Materials and Methods:

- Software Requirements: IMPRESSION-G2 model, GFN2-xTB for geometry optimization

- Workflow:

  - 3D Structure Generation:

    - Optimize molecular geometry using GFN2-xTB method (few seconds for ~50 atoms)

    - Confirm conformational preferences through relaxed potential energy surface scans

  - NMR Prediction:

    - Input optimized 3D structure into IMPRESSION-G2 transformer model

    - Simultaneously predict all NMR parameters (<50 ms per molecule):

      - Chemical shifts (¹H, ¹³C, ¹⁵N, ¹⁹F)

      - Scalar couplings (¹J, ²J, ³J, ⁴J) for H, C, N, F nuclei

  - Stereoelectronic Analysis:

    - Identify outliers in predicted vs experimental values as potential stereoelectronic indicators

    - Correlate J-coupling variations with dihedral angles and heteroatom influences


Performance Metrics:

- Mean Absolute Deviations: ¹H shifts ~0.07 ppm, ¹³C shifts ~0.8 ppm, ³JHH ~0.15 Hz

- Speed enhancement: 10³-10⁴ times faster than full DFT workflows [31]
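The deviation metric and the outlier screen from the stereoelectronic analysis step can be computed as below. This sketch does not call IMPRESSION-G2; it assumes the predicted and experimental values are already available as plain lists, and the shifts and the 0.2 ppm threshold are hypothetical.

```python
def mean_absolute_deviation(predicted, experimental):
    """Mean of |predicted - experimental| over paired values."""
    if len(predicted) != len(experimental) or not predicted:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(p - e) for p, e in zip(predicted, experimental)) / len(predicted)


def flag_outliers(predicted, experimental, threshold):
    """Indices whose absolute deviation exceeds `threshold`; candidates
    for closer inspection of stereoelectronic effects."""
    return [i for i, (p, e) in enumerate(zip(predicted, experimental))
            if abs(p - e) > threshold]


# Hypothetical 1H shifts in ppm (not real IMPRESSION-G2 output).
predicted_shifts = [1.25, 2.31, 3.64, 4.10]
experimental_shifts = [1.22, 2.38, 3.60, 4.45]
print(round(mean_absolute_deviation(predicted_shifts, experimental_shifts), 3))
print(flag_outliers(predicted_shifts, experimental_shifts, threshold=0.2))
```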

Table 3: Essential Resources for Stereoelectronic Effects Analysis

| Resource | Specification/Function | Application Context |

|---|---|---|

| Computational Software | | |

| Gaussian09/16 | Quantum chemical calculations with frequency analysis | Geometry optimization, vibrational frequency calculation, NBO analysis [30] |

| IMPRESSION-G2 | Transformer neural network for NMR prediction | Rapid prediction of chemical shifts and J-couplings from 3D structures [31] |

| NBO 7.0 | Natural Bond Orbital analysis | Quantification of hyperconjugative interactions and stabilization energies [27] [28] |

| Experimental Resources | | |

| Deuterated Solvents | CDCl₃, DMSO-d₆, etc. for NMR spectroscopy | Sample preparation for conformational analysis in solution [27] |

| Chiral Ligands | (R)-5,5′,6,6′,7,7′,8,8′-octafluoro-BINAS | Probing stereoelectronic effects in ligand exchange reactions [32] |

| Computational Methods | | |

| ωB97XD/6-311++G(d,p) | Density functional theory with dispersion correction | Accurate geometry optimization for stereoelectronic analysis [27] |

| PBEPBE/6-31G | Balanced DFT functional for large systems | High-throughput vibrational frequency calculations [30] |

| Databases | | |

| Cambridge Structural Database | Experimental crystal structures | Source of training data and structural validation [31] |

| ChEMBL | Bioactive molecule database | Source of drug-like molecules for spectral calculations [30] [31] |

Stereoelectronic effects represent a critical frontier in the quantum chemical prediction of spectroscopic data, providing the conceptual bridge between orbital-level interactions and experimental observables. The protocols outlined herein enable researchers to systematically investigate these effects through complementary experimental and computational approaches. As machine learning methods like IMPRESSION-G2 continue to advance, incorporating explicit stereoelectronic descriptors—such as those in stereoelectronics-infused molecular graphs (SIMGs)—will further enhance our ability to predict and interpret molecular spectra [33]. This integrative approach promises to accelerate research in drug discovery, materials science, and catalyst design by providing deeper insights into the relationship between electronic structure, molecular conformation, and spectroscopic signatures.

Advanced Methods and Real-World Biomedical Applications

Quantum chemistry provides the fundamental framework for predicting molecular properties and spectroscopic data by solving the electronic Schrödinger equation. However, the high computational cost of accurate quantum chemical methods, such as coupled cluster theory, presents a significant bottleneck for research in drug development and materials science. These calculations can require days or even weeks for moderately sized molecules, severely limiting high-throughput screening and the exploration of complex chemical systems [34].

The integration of machine learning (ML) with quantum chemistry has emerged as a transformative solution to this challenge. By learning from existing quantum chemical data, ML models can predict electronic structures and molecular properties with near-quantum accuracy at a fraction of the computational cost, accelerating calculations by up to 1,000 times [34]. This paradigm shift not only accelerates computations but also opens new avenues for inverse molecular design and the efficient prediction of complex spectroscopic properties, thereby enhancing the capabilities of researchers and scientists in spectroscopic data analysis.

Machine Learning Approaches to Quantum Chemical Calculations

Machine learning models circumvent the need for explicit, costly quantum chemical calculations by learning the underlying mathematical mapping between molecular structure and electronic properties from reference data. These approaches can be categorized by the level of quantum mechanical information they predict.

Learning the Electronic Wavefunction

The most fundamental approach involves directly predicting the quantum mechanical wavefunction in a local basis of atomic orbitals. The SchNOrb (SchNet for Orbitals) framework exemplifies this strategy. It uses a deep neural network to predict the Hamiltonian matrix in an atomic orbital basis, from which molecular orbitals, eigenvalues (such as orbital energies), and all other ground-state properties can be derived [35].

- Architecture: SchNOrb extends an atomistic neural network (SchNet) by constructing symmetry-adapted pairwise features \( \mathbf{\Omega}_{ij}^l \) to represent the Hamiltonian matrix block for each atom pair \( (i, j) \). These features ensure the model respects the rotational symmetries of atomic orbitals [35].

- Input and Output: The model takes 3D atomic coordinates and nuclear charges as input. It outputs the Hamiltonian and overlap matrices, which are then diagonalized to obtain molecular orbitals and orbital energies [35].

- Significance: This provides full access to the electronic structure via the wavefunction at "force-field-like efficiency" and offers an analytically differentiable representation of quantum mechanics, which is crucial for property optimization [35].
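The diagonalization step mentioned above can be illustrated with a minimal NumPy sketch. It assumes a predicted Hamiltonian matrix `H` and overlap matrix `S` are already available as symmetric arrays (the 2×2 values below are invented toy numbers, not SchNOrb output) and solves the generalized eigenvalue problem \( HC = SC\varepsilon \) by symmetric (Löwdin) orthogonalization. The function name `solve_generalized_eigenproblem` is hypothetical and is not part of any SchNOrb API.

```python
import numpy as np

def solve_generalized_eigenproblem(H, S):
    """Solve H C = S C diag(eps) for symmetric H and positive-definite S."""
    # Build S^(-1/2) from the eigendecomposition of the overlap matrix.
    s_vals, s_vecs = np.linalg.eigh(S)
    S_inv_sqrt = s_vecs @ np.diag(s_vals ** -0.5) @ s_vecs.T
    # Transform H into the orthonormalized basis and diagonalize it there.
    H_ortho = S_inv_sqrt @ H @ S_inv_sqrt
    eps, C_ortho = np.linalg.eigh(H_ortho)
    # Back-transform the coefficients to the original atomic orbital basis.
    C = S_inv_sqrt @ C_ortho
    return eps, C

# Toy two-orbital system (illustrative values only).
H = np.array([[-1.0, -0.5], [-0.5, -1.0]])
S = np.array([[1.0, 0.25], [0.25, 1.0]])
eps, C = solve_generalized_eigenproblem(H, S)
print("orbital energies:", eps)
```

The columns of `C` are the molecular orbital coefficients, normalized so that `C.T @ S @ C` is the identity matrix.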

Learning from Orbital Representations

Other models leverage orbital information as a feature set to improve predictions. OrbNet, for instance, uses a graph neural network where the nodes represent electron orbitals and the edges represent interactions between them. This architecture is inherently more aligned with the Schrödinger equation than graphs based solely on atoms and bonds, enabling accurate predictions for molecules much larger than those in its training data [34].

A related approach introduces Stereoelectronics-Infused Molecular Graphs (SIMGs), which enrich standard molecular graphs with quantum-chemical information about natural bond orbitals and their interactions. This explicitly encodes stereoelectronic effects that influence molecular reactivity and stability. A key advantage is a dedicated model that can rapidly generate these SIMGs from standard molecular graphs in seconds, making this quantum-chemical insight accessible for large systems like peptides and proteins where direct calculations are intractable [33].

Learning Molecular Properties for Spectroscopy

Most current ML models in spectroscopy do not predict the wavefunction itself, the primary output of a quantum chemical calculation, but rather its secondary or tertiary outputs [3].

- Secondary Outputs: These are properties computed directly from the Schrödinger equation, such as electronic energies, dipole moments, or coupling constants. Learning these allows for the subsequent computation of various spectroscopic properties [3].

- Tertiary Outputs: These are the final spectra themselves. While faster, this approach can sacrifice physical interpretability regarding the electronic structure origins of spectral features [3].

Table 1: Categorization of Machine Learning Models in Quantum Chemistry and Spectroscopy

| Model Type | Target Output | Key Example(s) | Advantages | Limitations |
|---|---|---|---|---|
| Wavefunction Models | Hamiltonian matrix; Molecular orbitals | SchNOrb [35] | Provides access to all ground-state properties; Analytically differentiable | High complexity; Requires sophisticated architecture |
| Orbital-Feature Models | Molecular properties | OrbNet [34], SIMGs [33] | Strong performance and transferability; Incorporates key quantum insights | Relies on accurate orbital features from initial calculation |
| Property Prediction Models | Specific spectroscopic properties (e.g., energies, spectra) | Various supervised ML models [3] | Computationally efficient; Directly applicable for spectral prediction | Limited transferability to properties not included in training |

Application Notes: Machine Learning for Spectroscopic Predictions

The application of these ML methods has demonstrated significant success across various spectroscopic domains, enabling rapid and accurate predictions that were previously infeasible.

Performance and Validation

ML models have achieved accuracy close to "chemical accuracy" (~0.04 eV) for properties like orbital energies [35]. In real-world applications:

- OrbNet performs quantum-chemistry calculations up to 1,000 times faster than conventional methods, enabling interactive computational work [34].

- SchNOrb has been used to perform ML-driven molecular dynamics simulations, such as simulating the evolution of the electronic structure during a proton transfer in malondialdehyde, reducing computational cost by 2–3 orders of magnitude [35].

- In mass spectrometry, the QCEIMS (Quantum Chemistry Electron Ionization Mass Spectrometry) method uses quantum chemical molecular dynamics to predict EI mass spectra for compounds not found in experimental libraries, such as trimethylsilyl (TMS) derivatives. For a set of 816 TMS compounds, in silico spectra showed a weighted dot score similarity of 635 (out of 1000) compared to experimental library spectra, demonstrating substantial predictive power for complex fragmentation processes [36].
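To make the idea of a weighted dot score on a 0 to 1000 scale concrete, the following sketch computes one common form of such a similarity between two centroided mass spectra. The function name, the squared-cosine form, and the weighting exponents (`mz_power`, `int_power`) are illustrative assumptions; they are not taken from [36], and the peak lists are invented.

```python
import numpy as np

def weighted_dot_score(spec_a, spec_b, mz_power=1.0, int_power=0.5):
    """Weighted dot-product similarity on a 0-1000 scale.

    Each spectrum is a dict mapping integer m/z to intensity.
    The exponents are assumed defaults, chosen only for illustration.
    """
    mzs = sorted(set(spec_a) | set(spec_b))

    def weights(spec):
        # Peaks missing from a spectrum contribute zero weight.
        return np.array(
            [(mz ** mz_power) * (spec.get(mz, 0.0) ** int_power) for mz in mzs]
        )

    wa, wb = weights(spec_a), weights(spec_b)
    denom = np.dot(wa, wa) * np.dot(wb, wb)
    if denom == 0.0:
        return 0.0
    return 1000.0 * np.dot(wa, wb) ** 2 / denom

# Invented peak lists for illustration.
predicted = {73: 100.0, 147: 40.0, 207: 10.0}
reference = {73: 100.0, 147: 25.0, 221: 15.0}
score = weighted_dot_score(predicted, reference)
print(f"similarity: {score:.0f} / 1000")
```

Identical spectra score 1000 and spectra with no shared peaks score 0, so an average of 635 indicates substantial but imperfect overlap between predicted and experimental fragment patterns.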

Table 2: Quantitative Performance of Selected ML-QC Models

| Model / Method | Key Performance Metric | Computational Speed-up | Validated On / Application |
|---|---|---|---|
| SchNOrb [35] | Near "chemical accuracy" (~0.04 eV) for properties | 100 to 1000x | Organic molecule dynamics; HOMO-LUMO gap optimization |
| OrbNet [34] | Accurate property predictions for molecules 10x larger than training data | 1000x | Drug candidate properties; Solubility; Protein binding |
| QCEIMS [36] | Average spectral similarity score of 635/1000 for TMS derivatives | N/A (Enables in silico prediction) | Prediction of electron ionization mass spectra for derivatized metabolites |

Inverse Design and Optimization

A powerful application of ML-quantum chemistry models is inverse design, where molecular structures are optimized for target electronic properties. Because models like SchNOrb provide an analytically differentiable representation of quantum mechanics, they allow for efficient gradient-based optimization. For example, researchers can directly optimize a molecular structure to achieve a specific HOMO-LUMO gap, a critical property in photochemistry and materials science [35].
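The principle of gradient-based optimization toward a target gap can be sketched on a deliberately simple toy model. The example below assumes a two-orbital Hamiltonian whose coupling decays exponentially with a single geometric parameter `x`, and it uses a central finite-difference gradient as a stand-in for the analytic gradients a differentiable model would supply. The model, the function names, and all parameter values are hypothetical and unrelated to SchNOrb itself.

```python
import numpy as np

def homo_lumo_gap(x):
    """Gap of a toy two-orbital Hamiltonian with distance-dependent coupling."""
    t = np.exp(-x)  # hypothetical coupling that decays with the coordinate x
    H = np.array([[0.0, -t], [-t, 0.0]])
    eps = np.linalg.eigvalsh(H)  # eigenvalues in ascending order
    return eps[1] - eps[0]

def optimize_gap(target, x0=0.0, lr=0.1, steps=200, h=1e-5):
    """Gradient descent on the squared deviation of the gap from the target."""
    def loss(x):
        return (homo_lumo_gap(x) - target) ** 2

    x = x0
    for _ in range(steps):
        # Central finite difference replaces an analytic gradient here.
        grad = (loss(x + h) - loss(x - h)) / (2.0 * h)
        x -= lr * grad
    return x

x_opt = optimize_gap(target=1.0)
print(f"optimized coordinate: {x_opt:.4f}, gap: {homo_lumo_gap(x_opt):.4f}")
```

In this toy model the gap equals \( 2e^{-x} \), so the optimizer should approach \( x = \ln 2 \) for a target gap of 1.0. For a real molecule, the single coordinate is replaced by all nuclear positions and the gradient comes from the model.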

Protocols for Key Experiments

Protocol: ML-Assisted Prediction of Optical Absorption Spectra

This protocol outlines the process of using a machine learning model to predict the UV-visible absorption spectrum of a novel organic molecule.

1. Research Objective: To rapidly predict the UV-vis absorption spectrum of a candidate drug molecule to assess its photophysical properties prior to synthesis.

2. Background: Traditional time-dependent density functional theory (TD-DFT) calculations for excited states are computationally demanding. ML models trained on TD-DFT data can predict spectra within seconds [3].

3. Materials and Data Requirements:

- Software: An ML spectroscopy platform (e.g., SpectrumLab, SpectraML [37]) or a pre-trained model like OrbNet [34].

- Input: 3D molecular structure of the candidate molecule in a standard format (e.g., SDF, XYZ).

- Training Data: The protocol assumes the ML model has been pre-trained on a large dataset of quantum chemical calculations (e.g., excitation energies and oscillator strengths from TD-DFT).

4. Procedure:

   1. Structure Preparation: Generate a reasonable 3D conformation of the candidate molecule. This can be done using molecular mechanics or a fast semi-empirical quantum method.
   2. Model Input: Submit the 3D structure file to the ML prediction software.
   3. Prediction Execution: The ML model will infer the key spectroscopic properties. For models predicting secondary outputs, this includes [3]:
      - Excitation energies \( \Delta E \)
      - Oscillator strengths \( f \)
      - Transition dipole moment vectors
   4. Spectrum Generation: Convolve the discrete transitions (excitation energies and oscillator strengths) with a line shape function (e.g., Gaussian or Lorentzian) to produce a continuous absorption spectrum.
   5. Validation (Critical): If possible, compare the ML-predicted spectrum for a known reference compound against its experimental spectrum to benchmark accuracy.
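The spectrum generation step can be sketched as follows, assuming the model has already returned excitation energies in eV and dimensionless oscillator strengths. The transition values, the 0.3 eV full width at half maximum, and the function name `broaden_spectrum` are illustrative assumptions. Each Gaussian is area-normalized, so the resulting intensities are in arbitrary units proportional to oscillator strength.

```python
import numpy as np

def broaden_spectrum(energies_ev, osc_strengths, grid_ev, fwhm_ev=0.3):
    """Sum of area-normalized Gaussians, one per transition, on an energy grid."""
    # Convert full width at half maximum to the Gaussian standard deviation.
    sigma = fwhm_ev / (2.0 * np.sqrt(2.0 * np.log(2.0)))
    centers = np.asarray(energies_ev, dtype=float)[:, None]
    strengths = np.asarray(osc_strengths, dtype=float)[:, None]
    gauss = np.exp(-0.5 * ((grid_ev[None, :] - centers) / sigma) ** 2)
    gauss /= sigma * np.sqrt(2.0 * np.pi)
    # Weight each line shape by its oscillator strength and sum over transitions.
    return (strengths * gauss).sum(axis=0)

# Hypothetical transitions: two excited states at 3.5 eV and 4.8 eV.
grid = np.linspace(2.0, 6.0, 801)
spectrum = broaden_spectrum([3.5, 4.8], [0.60, 0.15], grid)
print(f"absorption maximum near {grid[np.argmax(spectrum)]:.2f} eV")
```

The line width is an empirical choice. In practice it is usually tuned so that the reference compound used in the validation step reproduces the experimental band shape.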

The following workflow diagram visualizes the protocol for predicting optical absorption spectra using machine learning:

Protocol: Enhancing Self-Consistent Field (SCF) Convergence with ML

This protocol uses a predicted wavefunction from an ML model to accelerate the convergence of a traditional quantum chemistry calculation.

1. Research Objective: To reduce the number of SCF iterations required to reach a converged result in a density functional theory (DFT) calculation, saving computational time.

2. Background: The SCF procedure is iterative and can stagnate or diverge, especially for molecules with complex electronic structures. Using a good initial guess for the molecular orbitals is crucial [35].

3. Materials:

- Software: A quantum chemistry package (e.g., PySCF, Gaussian, ORCA) and an ML model capable of predicting wavefunctions or orbital coefficients (e.g., SchNOrb [35]).

- Input: 3D molecular structure of the study system.

4. Procedure:

   1. ML Prediction: For the target molecule, use SchNOrb (or an equivalent model) to predict the Hamiltonian matrix and, subsequently, the initial molecular orbital coefficients.
   2. Wavefunction Restart: In the quantum chemistry software, input these ML-predicted orbitals as the initial guess for the SCF procedure, instead of using the default guess (e.g., superposition of atomic densities).
   3. Run SCF: Proceed with the standard SCF calculation. The improved initial guess should lead to a significant reduction in the number of iterations required to achieve convergence.
   4. Result Analysis: Confirm that the final, converged result (energy, properties) is consistent with expectations, verifying that the ML guess did not bias the calculation.
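Many quantum chemistry packages accept an initial guess as a density matrix rather than as orbital coefficients. The sketch below shows how a closed-shell density matrix could be assembled from predicted coefficients, assuming the orbitals are ordered by energy and normalized with respect to the overlap matrix. The toy matrices and the function name `density_from_orbitals` are hypothetical. In PySCF, for instance, such a matrix can be supplied through the `dm0` argument of the SCF object's `kernel` method; other packages use their own restart mechanisms.

```python
import numpy as np

def density_from_orbitals(C, n_electrons):
    """Closed-shell density matrix D = 2 * C_occ @ C_occ.T.

    C holds molecular orbital coefficients column by column, lowest energy first.
    """
    n_occ = n_electrons // 2  # doubly occupied orbitals
    C_occ = C[:, :n_occ]
    return 2.0 * C_occ @ C_occ.T

# Toy two-orbital system: bonding and antibonding combinations (illustrative).
s = 0.25
S = np.array([[1.0, s], [s, 1.0]])
C = np.column_stack([
    np.array([1.0, 1.0]) / np.sqrt(2.0 * (1.0 + s)),   # bonding orbital
    np.array([1.0, -1.0]) / np.sqrt(2.0 * (1.0 - s)),  # antibonding orbital
])
D = density_from_orbitals(C, n_electrons=2)

# Sanity check before handing D to an SCF code: trace(D S) equals the electron count.
print("electrons from trace(D S):", np.trace(D @ S))
```

Checking the electron count this way is a cheap safeguard against an ML prediction that is badly normalized or has the wrong orbital ordering.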

The Scientist's Toolkit: Essential Research Reagents and Software

The following table details key software and computational tools that form the essential "reagent solutions" for researchers in this field.

Table 3: Key Research Reagent Solutions for ML-Enhanced Quantum Chemistry

| Tool Name | Type | Primary Function | Relevance to Spectroscopy |
|---|---|---|---|
| SchNOrb [35] | Deep Neural Network | Predicts molecular wavefunctions and Hamiltonian matrices in an atomic orbital basis. | Provides full electronic structure for property derivation; Enables inverse design of molecules with target electronic properties. |
| OrbNet [34] | Graph Neural Network | Predicts molecular properties using symmetry-adapted atomic-orbital features as input. | Rapidly predicts properties (e.g., energies, dipole moments) for large molecules relevant to drug discovery. |
| QCEIMS [36] | Quantum Chemical MD Software | Predicts electron ionization (EI) mass spectra from first principles via molecular dynamics trajectories. | Generates in silico mass spectral libraries for compounds lacking experimental reference data (e.g., TMS derivatives). |
| SpectraML [37] | AI Platform | Standardized deep learning platform for spectroscopic data analysis and prediction. | Offers benchmarks and pre-trained models for various spectroscopic techniques, promoting reproducibility. |
| SIMG Generator [33] | ML Model / Web Tool | Generates stereoelectronics-infused molecular graphs from standard molecular graphs. | Encodes quantum-chemical orbital interactions to improve predictive models for reactivity and spectroscopy. |