Quantum Computing for Complex Biomolecular Problems: From Theory to Clinical Impact

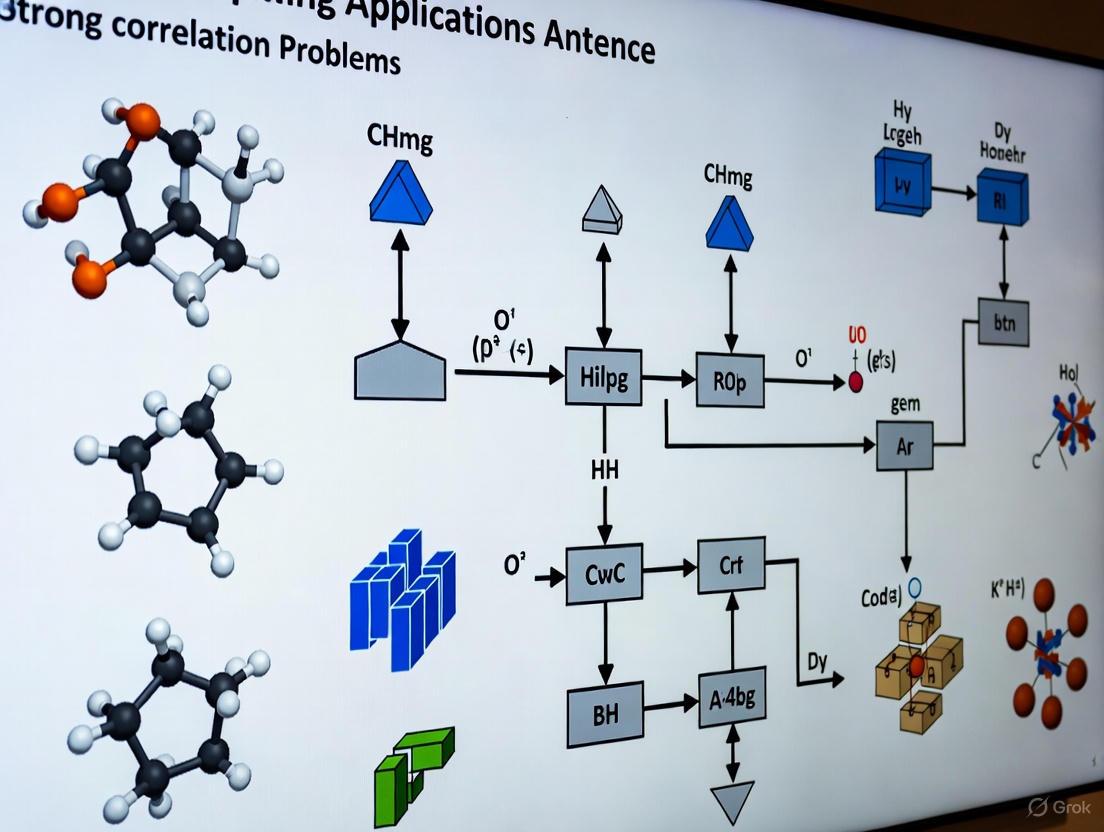

Abstract

This article explores the transformative role of quantum computing in solving strong correlation problems, a long-standing challenge in computational chemistry and drug discovery. Aimed at researchers, scientists, and drug development professionals, it provides a comprehensive analysis of how quantum mechanics is being harnessed to accurately simulate molecular systems where classical computers fail. We cover the foundational principles, detail current methodological applications in pharmaceutical R&D, address critical challenges like error correction, and validate progress through comparative case studies. The synthesis demonstrates that quantum computing is rapidly transitioning from a theoretical promise to a practical tool with the potential to revolutionize the speed and precision of biomedical research.

The Strong Correlation Problem: Why Classical Computing Falls Short in Molecular Simulation

Defining Strongly Correlated Electrons in Drug-Relevant Molecules

In quantum chemistry and drug discovery, strongly correlated electrons are both a fundamental challenge and an opportunity for improving computational precision. Strong electron correlation occurs when the motion of one electron depends strongly on the positions of the other electrons. Such behavior cannot be described accurately by single-reference methods such as Hartree-Fock (HF) or by standard approximate density functional theory (DFT) [1]. The phenomenon is particularly prevalent in drug-relevant molecules containing transition metals, in open-shell systems and biradicals, and in processes involving covalent bond formation or cleavage [2] [1].

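The distinction between strongly and weakly correlated systems can be understood by comparing the Heitler-London and molecular orbital descriptions of the H₂ molecule [3] [4]. In the strongly correlated (Heitler-London) limit, ionic configurations are suppressed to minimize Coulomb repulsion. The molecular orbital approach, in contrast, weights ionic and covalent (non-ionic) configurations equally. A quantitative measure of interatomic correlation strength is the relative reduction of the charge fluctuations in a localized orbital $i$:

$$ \Sigma(i) = \frac{\langle \Phi_{\mathrm{SCF}} | (\Delta n_i)^2 | \Phi_{\mathrm{SCF}} \rangle - \langle \psi_0 | (\Delta n_i)^2 | \psi_0 \rangle}{\langle \Phi_{\mathrm{SCF}} | (\Delta n_i)^2 | \Phi_{\mathrm{SCF}} \rangle} $$

Here $\Delta n_i$ is the fluctuation of the electron number $n_i$ in orbital $i$ about its mean, $|\Phi_{\mathrm{SCF}}\rangle$ is the self-consistent-field (Hartree-Fock) ground state, and $|\psi_0\rangle$ is the exact correlated ground state. $\Sigma(i) = 0$ indicates no correlation, and $\Sigma(i) \to 1$ indicates strong correlation with the fluctuations completely suppressed [3] [4]. In drug discovery, these correlations significantly affect the accuracy of predicted binding energies, reaction barriers, and electronic properties of covalent inhibitors [2] [5].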
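The measure can be evaluated exactly for the simplest model of the H₂ bond: a two-site Hubbard model with hopping $t$ and on-site Coulomb repulsion $U$. This model is our illustrative choice; the bond values in Table 1 are taken from [3]. The sketch below diagonalizes the 2×2 singlet block and applies the definition above. The result reduces to $\Sigma = U/\sqrt{U^2 + 16t^2}$, which interpolates between the molecular orbital limit ($U = 0$, $\Sigma = 0$) and the Heitler-London limit ($U \gg t$, $\Sigma \to 1$).

```python
import math


def correlation_strength(U, t=1.0):
    """Sigma for one site of a two-site Hubbard model of H2 (hopping t, on-site repulsion U).

    The singlet ground state is a|covalent> + b|ionic>, with H = [[0, -2t], [-2t, U]]
    in that basis. The charge fluctuation <(Delta n_i)^2> equals the ionic weight b^2,
    which is 1/2 in the restricted Hartree-Fock (molecular orbital) state.
    """
    e0 = 0.5 * (U - math.sqrt(U * U + 16.0 * t * t))  # lowest singlet eigenvalue
    # First row of (H - e0) v = 0:  -e0 * a - 2t * b = 0  ->  b / a = -e0 / (2t)
    ionic_weight = e0 * e0 / (4.0 * t * t + e0 * e0)  # b^2 / (a^2 + b^2)
    fluct_scf = 0.5            # MO picture: ionic and covalent terms weighted equally
    fluct_exact = ionic_weight
    return (fluct_scf - fluct_exact) / fluct_scf


for u in (0.0, 1.0, 4.0, 16.0):
    print(f"U/t = {u:5.1f}  Sigma = {correlation_strength(u):.3f}")
```

The model is deliberately minimal. It shows the trend in Σ, not the 0.30-0.35 and ~0.50 values reported for real σ and π bonds.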

Quantitative Characterization in Pharmaceutical Systems

Table 1: Correlation Strength Metrics in Drug-Relevant Molecular Systems

| System Category | Correlation Measure | Typical Value | Computational Challenge |
|---|---|---|---|
| C–C or N–N σ bond | Electron fluctuation reduction (Σ) | 0.30-0.35 [3] | Bond cleavage energetics in prodrugs [2] |
| C=C or N=N π bond | Electron fluctuation reduction (Σ) | ~0.50 [3] | Resonance energy accuracy |
| Transition metal complexes (e.g., Fe in CYPs) | Active space orbitals required | ~50 orbitals [5] | Metal-ligand bonding accuracy |
| KRAS covalent inhibitors | Near-degeneracy correlation | Multireference [2] | Covalent bond formation energetics |
| Biradicals/Bond dissociation | Static correlation error | >5 kcal/mol in DFT [1] | Reaction barrier prediction |

Table 2: Energetic Comparison for β-lapachone Prodrug C–C Bond Cleavage

| Computational Method | Basis Set | Solvation Model | Reaction Barrier Result | Correlation Treatment |
|---|---|---|---|---|
| DFT (M06-2X) [2] | Not specified | Implicit | Consistent with experiment [2] | Approximate density functional |
| Hartree-Fock [2] | 6-311G(d,p) | ddCOSMO | Reference value | No electron correlation |
| CASCI [2] | 6-311G(d,p) | ddCOSMO | Reference value | Exact within active space |
| VQE (Quantum) [2] | 6-311G(d,p) | ddCOSMO | Comparable to CASCI | Quantum circuit approximation |

Experimental and Computational Protocols

Protocol: Quantum Computation of Gibbs Energy Profiles for Prodrug Activation

Objective: Precisely determine Gibbs free energy profiles for covalent bond cleavage in prodrug activation using hybrid quantum-classical computational approaches [2].

Methodology:

System Preparation:

- Select key molecular structures along the reaction coordinate for C–C bond cleavage

- Perform conformational optimization using classical methods

- Define active space (typically 2 electrons in 2 orbitals for minimal representation) [2]

Hamiltonian Construction:

- Generate molecular Hamiltonian in fermionic form

- Apply parity transformation to convert to qubit Hamiltonian

- Utilize 6-311G(d,p) basis set for consistent comparison [2]

Quantum Circuit Execution:

- Implement hardware-efficient $R_y$ ansatz with single layer

- Employ Variational Quantum Eigensolver (VQE) framework

- Measure energy expectation values using quantum processor

- Apply readout error mitigation techniques [2]

Solvation and Thermodynamics:

- Incorporate solvation effects via ddCOSMO polarizable continuum model

- Calculate thermal Gibbs corrections at HF/6-311G(d,p) level

- Combine electronic energies with thermal corrections for Gibbs free energy [2]
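The last step of this stage is simple bookkeeping. The sketch below makes three assumptions: the electronic energy of each structure (from VQE or CASCI) already includes the ddCOSMO solvation contribution, the thermal Gibbs correction comes from an HF/6-311G(d,p) frequency calculation, and the structure energies are hypothetical placeholders, not values from [2].

```python
HARTREE_TO_KCAL_PER_MOL = 627.5095  # 1 Hartree expressed in kcal/mol


def gibbs_free_energy(e_electronic, g_thermal):
    """G = E_elec + G_corr (Hartree).

    Assumes e_electronic already contains the implicit-solvation (ddCOSMO) contribution
    and g_thermal is the thermal Gibbs correction from a frequency calculation.
    """
    return e_electronic + g_thermal


def gibbs_barrier_kcal(reactant, transition_state):
    """Delta G (kcal/mol) between two (E_elec, G_corr) pairs given in Hartree."""
    delta_hartree = gibbs_free_energy(*transition_state) - gibbs_free_energy(*reactant)
    return delta_hartree * HARTREE_TO_KCAL_PER_MOL


# Hypothetical structures along the C-C cleavage coordinate (Hartree)
reactant = (-1000.000, 0.250)
transition_state = (-999.960, 0.245)
print(f"Delta G = {gibbs_barrier_kcal(reactant, transition_state):.1f} kcal/mol")
```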

Validation:

- Compare with classical reference methods (HF, CASCI)

- Benchmark against experimental reaction feasibility [2]
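To make the VQE step concrete, here is a minimal noise-free statevector sketch in plain Python. It assumes a generic two-qubit Hamiltonian of the form obtained for a 2-electron, 2-orbital active space after a parity mapping with two-qubit reduction. The coefficients in `G` are hypothetical placeholders, roughly the size of those for H₂ in a minimal basis, and are not the β-lapachone Hamiltonian of [2]. The optimizer is a Rotosolve-style coordinate update. We chose it because the energy is an exact sinusoid in every $R_y$ angle; it is not necessarily the optimizer used in [2].

```python
import math

# Hypothetical H = II*I + Z0*Z_0 + Z1*Z_1 + ZZ*Z_0 Z_1 + XX*X_0 X_1 (Hartree; placeholders)
G = {"II": -1.0524, "Z0": 0.3979, "Z1": -0.3979, "ZZ": -0.0113, "XX": 0.1809}


def diag_element(b0, b1, g=G):
    """<b0 b1|H|b0 b1> for the computational basis state with qubit bits b0, b1."""
    z0, z1 = 1 - 2 * b0, 1 - 2 * b1
    return g["II"] + g["Z0"] * z0 + g["Z1"] * z1 + g["ZZ"] * z0 * z1


def ansatz_state(theta):
    """Single-layer R_y ansatz on |00>: RY(theta[0]) on q0, RY(theta[1]) on q1, CNOT(q0 -> q1)."""
    c0, s0 = math.cos(theta[0] / 2), math.sin(theta[0] / 2)
    c1, s1 = math.cos(theta[1] / 2), math.sin(theta[1] / 2)
    amp = {(0, 0): c0 * c1, (0, 1): c0 * s1, (1, 0): s0 * c1, (1, 1): s0 * s1}
    amp[(1, 0)], amp[(1, 1)] = amp[(1, 1)], amp[(1, 0)]  # CNOT flips q1 when q0 = 1
    return amp


def energy(theta, g=G):
    """Expectation value <psi(theta)|H|psi(theta)> for the real-amplitude ansatz state."""
    amp = ansatz_state(theta)
    e = sum(diag_element(b0, b1, g) * a * a for (b0, b1), a in amp.items())
    # X0 X1 flips both bits: <XX> = 2 * (a00*a11 + a01*a10)
    e += g["XX"] * 2 * (amp[(0, 0)] * amp[(1, 1)] + amp[(0, 1)] * amp[(1, 0)])
    return e


def exact_ground_energy(g=G):
    """XX couples only |00>-|11> and |01>-|10>, so H splits into two 2x2 blocks."""
    best = math.inf
    for p, q in (((0, 0), (1, 1)), ((0, 1), (1, 0))):
        dp, dq = diag_element(*p, g=g), diag_element(*q, g=g)
        best = min(best, 0.5 * (dp + dq) - math.hypot(0.5 * (dp - dq), g["XX"]))
    return best


def rotosolve(theta, sweeps=10):
    """Coordinate-wise exact minimisation: E(theta_k) = A cos(theta_k) + B sin(theta_k) + C."""
    theta = list(theta)
    for _ in range(sweeps):
        for k in range(len(theta)):
            phi = theta[k]
            values = []
            for shift in (0.0, math.pi / 2, -math.pi / 2):
                trial = list(theta)
                trial[k] = phi + shift
                values.append(energy(trial))
            e0, ep, em = values
            theta[k] = phi + math.pi - math.atan2(em - ep, 2 * e0 - ep - em)
    return theta, energy(theta)


hf_theta = [math.pi, math.pi]  # prepares |b0=1, b1=0>, the lowest diagonal (HF-like) state
theta_opt, e_vqe = rotosolve(hf_theta)
print(f"E_HF = {energy(hf_theta):.6f}  E_VQE = {e_vqe:.6f}  exact = {exact_ground_energy():.6f}")
```

The starting point matters. Beginning at the Hartree-Fock-like parameters keeps the optimizer in the symmetry sector that contains the ground state. With these coefficients, a start at θ = (0, 0) stalls near −1.2446 Hartree in the other sector, a small-scale example of why VQE initialization is important.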

Protocol: Covalent Inhibitor Binding Analysis via Quantum Fingerprints

Objective: Characterize covalent inhibitor interactions with protein targets (e.g., KRAS G12C) using quantum-derived features for binding affinity prediction [5] [6].

Methodology:

Warhead Electronic Structure Calculation:

- Isolate covalent warhead moiety from inhibitor structure

- Perform classical density matrix embedding theory (DMET) calculations

- Generate initial quantum state for time evolution [5]

Quantum Time Evolution:

- Implement quantum algorithms for real-time dynamics simulation

- Extract time-evolved quantum states

- Calculate quantum features ("quantum fingerprints") [5]

Feature Extraction and Machine Learning:

- Compute highest occupied molecular orbital (HOMO) and lowest unoccupied molecular orbital (LUMO) levels

- Use quantum features as input for machine learning models

- Predict pocket-specific reactivities versus intrinsic warhead properties [5]
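The protocol does not specify how the quantum features in [5] are computed, so the following only illustrates the general idea under a stated assumption: a "fingerprint" is taken to be a vector of expectation values ⟨Z⟩(t) sampled along a first-order Trotterized real-time evolution. The one-qubit Hamiltonian H = aZ + bX and its parameters are hypothetical stand-ins for a warhead model Hamiltonian. The closed-form `exact_z` is included to check the Trotter error.

```python
import cmath
import math


def step_z(psi, angle):
    """Apply exp(-i*angle*Z) to a single-qubit state [amp0, amp1]."""
    return [cmath.exp(-1j * angle) * psi[0], cmath.exp(1j * angle) * psi[1]]


def step_x(psi, angle):
    """Apply exp(-i*angle*X) = cos(angle) I - i sin(angle) X."""
    c, s = math.cos(angle), math.sin(angle)
    return [c * psi[0] - 1j * s * psi[1], c * psi[1] - 1j * s * psi[0]]


def trotter_fingerprint(a, b, times, steps_per_unit_time=200):
    """Hypothetical feature vector: <Z>(t) for H = a Z + b X from |0>, first-order Trotter."""
    features = []
    for t in times:
        n_steps = max(1, round(steps_per_unit_time * t))
        dt = t / n_steps
        psi = [1 + 0j, 0j]
        for _ in range(n_steps):
            psi = step_x(step_z(psi, a * dt), b * dt)
        features.append(abs(psi[0]) ** 2 - abs(psi[1]) ** 2)
    return features


def exact_z(a, b, t):
    """Closed form <Z>(t) = n_z^2 + (1 - n_z^2) cos(2 w t), with w = sqrt(a^2 + b^2)."""
    w = math.hypot(a, b)
    nz = a / w
    return nz ** 2 + (1 - nz ** 2) * math.cos(2 * w * t)


times = [0.0, 0.5, 1.0, 1.5, 2.0]
fp = trotter_fingerprint(1.0, 0.5, times)
print([round(f, 3) for f in fp])
```

A vector such as `fp` would then serve as one input to the machine learning model. Smaller Trotter steps trade circuit depth for accuracy.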

Binding Validation:

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Strong Correlation Analysis

| Tool/Resource | Function | Application Context |
|---|---|---|
| TenCirChem Package [2] | Quantum chemistry workflow implementation | VQE execution for prodrug activation energy profiles |
| Variational Quantum Eigensolver (VQE) [2] | Hybrid quantum-classical algorithm | Ground state energy calculation for molecular systems |
| Density Matrix Embedding Theory (DMET) [5] | Classical embedding approach | Initial state preparation for quantum time evolution |
| Multiconfiguration Pair-Density Functional Theory (MC-PDFT) [1] | Combined wavefunction-DFT method | Strong correlation treatment for transition metal systems |
| Polarizable Continuum Model (PCM) [2] | Implicit solvation method | Physiological environment simulation for drug molecules |
| Hardware-efficient $R_y$ Ansatz [2] | Parameterized quantum circuit | Near-term quantum processor compatibility |
| Symmetry-Adapted Perturbation Theory (SAPT) [5] | Non-covalent interaction analysis | Drug-target binding energy decomposition |

Quantum Computing Integration and Advantages

Quantum computing offers transformative potential for strongly correlated electron systems in drug discovery because qubits provide a native quantum mechanical representation of electronic states [7]. The cost of exact classical simulation grows exponentially with the number of correlated orbitals. For specific problems, quantum algorithms are expected to scale polynomially, and this difference is the fundamental source of potential advantage [7] [5]. Key integration points include:

Active Space Treatment: Quantum computers are expected to handle active spaces beyond the roughly 50-orbital limit at which classical computation becomes prohibitive [5]. This is particularly relevant for cytochrome P450 enzymes and other metalloenzymes crucial in drug metabolism.

Correlation Beyond Mean Field: Hybrid quantum-classical algorithms such as VQE offer a way to systematically improve upon mean-field (Hartree-Fock) solutions. Within the chosen active space, they capture the electron correlation effects that are essential for accurate energetics of drug-relevant molecules [2] [7].

Quantum Machine Learning: Quantum-derived features ("quantum fingerprints") enable enhanced predictive models for covalent inhibitor design, potentially unlocking previously "undruggable" targets like KRAS [5] [6].
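The exponential-versus-polynomial argument can be made concrete by counting. The sketch below counts the $S_z = 0$ Slater determinants in a CAS(N, N) active space, which is what an exact classical solver must handle. It compares this with the number of qubits a Jordan-Wigner encoding needs, one per spin orbital. Spatial and spin symmetry are ignored; they reduce the determinant count without changing its exponential growth.

```python
from math import comb


def n_determinants(n_orbitals, n_electrons):
    """Slater determinants in CAS(n_electrons, n_orbitals) with S_z = 0 (S_z = 1/2 if odd)."""
    n_alpha = n_electrons // 2
    n_beta = n_electrons - n_alpha
    return comb(n_orbitals, n_alpha) * comb(n_orbitals, n_beta)


def n_qubits_jordan_wigner(n_orbitals):
    """Jordan-Wigner encoding: one qubit per spin orbital."""
    return 2 * n_orbitals


for n in (2, 10, 20, 50):
    print(f"CAS({n},{n}): {n_determinants(n, n):.3e} determinants, "
          f"{n_qubits_jordan_wigner(n)} qubits")
```

CAS(50,50) already exceeds 10²⁸ determinants, while the corresponding Jordan-Wigner register has 100 qubits.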

The convergence of improved quantum hardware, advanced algorithms, and drug discovery applications positions strongly correlated electron analysis as a prime candidate for achieving practical quantum advantage in pharmaceutical research.

Limitations of Classical Computational Methods (Density Functional Theory)

Density Functional Theory (DFT) stands as a cornerstone of computational quantum chemistry and materials science, enabling the study of electronic structure in atoms, molecules, and solids. Its success hinges on a pragmatic compromise: substituting the intractable many-electron wavefunction with the more manageable electron density as the fundamental variable. While DFT has proven immensely successful for a wide range of applications, its limitations become critically apparent when confronting systems with strong electron correlation. As research pivots towards leveraging quantum computing to solve such classically challenging problems, a precise understanding of where and why DFT fails is essential. This Application Note details the specific limitations of DFT, provides protocols for diagnosing these failures, and contextualizes them within the emerging paradigm of quantum computational solutions.

Core Limitations of Density Functional Theory

The theoretical foundation of DFT is exact, but in practice, all implementations require approximations for the exchange-correlation functional. This is the primary source of its limitations, which are particularly severe in systems relevant to drug development, such as transition metal complexes and conjugated organic molecules.

Table 1: Key Limitations of Density Functional Theory and Their Implications

| Limitation | Primary Cause | Impact on Calculation | Commonly Affected Systems |
|---|---|---|---|
| Self-Interaction Error (SIE) | Inadequate cancellation of an electron's interaction with itself in the approximate functional [8]. | Over-delocalization of electrons; underestimation of band gaps and reaction barriers; poor description of charge-transfer states [9] [8]. | Transition metal oxides, charge-transfer complexes, long-chain molecules. |
| Static Correlation Error | Inability of standard functionals to describe near-degeneracy situations [9]. | Catastrophic failure for bond dissociation; incorrect prediction of electronic ground states in multi-reference systems [9]. | Dissociating bonds, diradicals, antiferromagnetic states, many transition-metal complexes. |
| Delocalization Error | The many-electron manifestation of SIE; tendency to over-stabilize delocalized (fractional-charge) densities [9]. | Underestimation of energy barriers in chemical reactions; incorrect electronic densities in extended systems [9]. | Reaction transition states, semiconductor nanocrystals, conjugated polymers. |
| Poor Treatment of Non-Covalent Interactions | Standard (semi-)local functionals do not capture long-range van der Waals (dispersion) forces [9]. | Significant underestimation of binding energies in π-π stacking, hydrogen bonding, and noble gas dimers [9]. | Protein-ligand binding, supramolecular assemblies, molecular crystals. |
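The self-interaction error in the first row of the table above can be stated as an exact condition. For any one-electron density $\rho$, the Hartree self-repulsion must be cancelled exactly by the exchange-correlation energy:

$$ E_{\mathrm{H}}[\rho] + E_{\mathrm{xc}}[\rho] = 0, \qquad E_{\mathrm{H}}[\rho] = \frac{1}{2} \iint \frac{\rho(\mathbf{r})\,\rho(\mathbf{r}')}{|\mathbf{r} - \mathbf{r}'|}\, d\mathbf{r}\, d\mathbf{r}' $$

For the hydrogen-atom 1s density, $E_{\mathrm{H}} = 5/16 \approx 0.3125$ Hartree, about 196 kcal/mol. Approximate (semi-)local functionals cancel this term only partially. Even a small fractional mismatch therefore leaves a spurious self-repulsion that is large on the chemical energy scale.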

The "Charlotte's Web" of Functional Approximations

Efforts to improve upon the Local Density Approximation (LDA) have produced a complex web of exchange-correlation functionals, each aiming to address specific shortcomings [8]. This hierarchy is often visualized as "Jacob's Ladder." It climbs from the LDA through GGAs, meta-GGAs, and hybrids (including range-separated hybrids) to double hybrids. This diversity offers a toolbox for different problems, but it also creates a significant challenge for practitioners: the best choice of functional is often system-dependent and non-transferable. A functional that excels for main-group thermochemistry may fail catastrophically for transition metal catalysis, leaving persistent uncertainty in predictive calculations [10] [8].

Quantitative Benchmarking of DFT Performance

Systematic benchmarking against highly accurate wavefunction theory or experimental data is crucial for assessing the performance of DFT functionals. The following table summarizes typical errors for various properties across different rungs of Jacob's Ladder.

Table 2: Typical Errors of DFT Functional Classes for Key Properties (Representative Values)

| Functional Class | Example | Bond Energy (kcal/mol) | Reaction Barrier (kcal/mol) | Band Gap | Non-Covalent Interactions |
|---|---|---|---|---|---|
| GGA | PBE, BLYP | 10-20 | >10 | Severe Underestimation | Very Poor |
| meta-GGA | SCAN, M06-L | 5-10 | 5-10 | Underestimation | Poor to Moderate |
| Global Hybrid | B3LYP, PBE0 | 3-7 | 3-7 | Moderate Underestimation | Moderate |
| Range-Separated Hybrid | ωB97X-V, CAM-B3LYP | 2-5 | 2-5 | Improved but Underestimated | Good |
| Double-Hybrid | DSD-BLYP | < 3 | < 3 | Good for Molecules | Excellent |

Experimental Protocols for Diagnosing DFT Failures

Before embarking on computationally intensive quantum simulations, researchers should employ these protocols to diagnose potential DFT failures in their systems.

Protocol 4.1: Diagnosing Strong Correlation and Multi-Reference Character

Objective: To determine if a molecular system has significant multi-reference character, rendering standard DFT approximations unreliable.

Workflow:

- Initial DFT Calculation: Perform a geometry optimization and frequency calculation using a standard global hybrid functional (e.g., B3LYP) and a medium-sized basis set (e.g., 6-31G(d)) to confirm a stable minimum.

- Wavefunction Stability Analysis: Check for the existence of lower-energy, broken-symmetry solutions. An unstable wavefunction is a strong indicator of multi-reference character.

- Occupancy Analysis: a. Define an active space encompassing the relevant frontier orbitals (e.g., metal d-orbitals and ligand orbitals). b. Perform a Complete Active Space Self-Consistent Field (CASSCF) calculation in that active space. c. Analyze the natural orbital occupancies. Significant deviation from 2.0 or 0.0 (e.g., occupancies between 0.8 and 1.2) indicates strong static correlation.

- $T_1$ Diagnostic: Carry out a coupled-cluster singles and doubles (CCSD) calculation on the DFT-optimized geometry. A $T_1$ diagnostic value greater than 0.02 for closed-shell molecules signals multi-reference character.

Interpretation: A positive result in any of Steps 2, 3, or 4 suggests that the system is a poor candidate for standard DFT and a prime target for quantum computing algorithms.
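Steps 2 to 4 reduce to simple numerical checks once the underlying calculations are done. The sketch below assumes that the CCSD singles amplitudes $t_i^a$, the CASSCF natural-orbital occupation numbers, and the outcome of the stability analysis have already been extracted from a quantum chemistry package. Extraction is package-specific and not shown. The sketch computes the Lee-Taylor diagnostic $T_1 = \lVert t_1 \rVert / \sqrt{N_{\mathrm{corr}}}$ and applies the thresholds quoted above. The amplitudes and occupations in the example are hypothetical.

```python
import math


def t1_diagnostic(t1_amplitudes, n_correlated_electrons):
    """Lee-Taylor T1 = ||t1|| / sqrt(N_corr) for closed-shell CCSD singles amplitudes t_i^a."""
    norm_sq = sum(t * t for row in t1_amplitudes for t in row)
    return math.sqrt(norm_sq / n_correlated_electrons)


def multireference_flags(reference_is_stable, noons, t1, noon_window=0.8, t1_threshold=0.02):
    """Combine Steps 2-4 of Protocol 4.1; any positive flag marks multi-reference suspicion."""
    fractional = [n for n in noons if min(n, 2.0 - n) >= noon_window]
    flags = {
        "unstable_reference": not reference_is_stable,  # Step 2
        "fractional_noons": fractional,                 # Step 3 (occupancies ~0.8-1.2)
        "t1_exceeds_threshold": t1 > t1_threshold,      # Step 4
    }
    flags["multireference_suspected"] = (
        flags["unstable_reference"] or bool(fractional) or flags["t1_exceeds_threshold"]
    )
    return flags


# Hypothetical amplitudes (occupied x virtual) and natural-orbital occupations
t1 = t1_diagnostic([[0.03, 0.01], [0.02, 0.04]], n_correlated_electrons=4)
flags = multireference_flags(True, [1.97, 1.10, 0.90, 0.03], t1)
print(round(t1, 4), flags)
```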

Protocol 4.2: Assessing Charge Transfer Inaccuracies

Objective: To evaluate the performance of DFT for systems with potential charge-transfer excitations, which are critical in photochemistry and photovoltaic materials.

Workflow:

- System Preparation: Construct a model donor-acceptor complex (e.g., a tetrathiafulvalene-tetracyanoquinodimethane complex).

- Reference Data Generation: Calculate the first charge-transfer excitation energy using high-level wavefunction methods (e.g., EOM-CCSD) or obtain reliable experimental UV-Vis data.

- Time-Dependent DFT (TD-DFT) Benchmarking: a. Run TD-DFT calculations with a range of functionals: a GGA (e.g., PBE), a global hybrid (e.g., B3LYP), and a range-separated hybrid (e.g., CAM-B3LYP or ωB97X-D). b. Compute the first few excitation energies and their character.

- Analysis: Compare the TD-DFT results to the reference data. Standard GGAs and global hybrids will typically severely underestimate charge-transfer excitation energies, while range-separated hybrids should provide markedly improved results.
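The failure seen in the Analysis step has a simple asymptotic explanation. Consider a donor D and an acceptor A at separation $R$ with negligible orbital overlap. In atomic units, the exact lowest charge-transfer excitation energy behaves as

$$ \omega_{\mathrm{CT}}(R) \approx \mathrm{IP}_{D} - \mathrm{EA}_{A} - \frac{1}{R} $$

TD-DFT with a semi-local exchange-correlation kernel instead reduces to the Kohn-Sham orbital-energy difference

$$ \omega_{\mathrm{CT}}^{\text{semi-local}} \approx \varepsilon_{\mathrm{LUMO}}^{A} - \varepsilon_{\mathrm{HOMO}}^{D} $$

This expression has no $-1/R$ term and inherits the too-small Kohn-Sham gap. Range-separated hybrids with full long-range exact exchange restore the $-1/R$ behavior, which is why they perform markedly better.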

Diagram 1: Diagnostic protocol for strong correlation.

The Quantum Computing Pathway for Strong Correlation

Quantum computing offers a fundamentally different approach to the electronic structure problem, potentially overcoming the core limitations of DFT. Quantum algorithms, such as the Variational Quantum Eigensolver (VQE) and Quantum Phase Estimation (QPE), prepare and manipulate multi-electron wavefunctions directly, thereby explicitly capturing strong correlation without relying on approximate density functionals [11] [12].
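The idealized behavior of QPE can be simulated classically for a single eigenstate. The sketch below assumes a perfect eigenstate, noise-free gates, and a hypothetical evolution time τ chosen so that the energy satisfies −2π/τ < E ≤ 0. It computes the textbook outcome distribution of an n-bit phase register and returns the most likely energy estimate. That estimate comes from the outcome nearest the true phase, so its error is at most 2π/(τ·2ⁿ⁺¹).

```python
import cmath
import math


def qpe_distribution(phi, n_bits):
    """Ideal outcome probabilities P(m) of textbook QPE for eigenphase phi in [0, 1)."""
    size = 2 ** n_bits
    probs = []
    for m in range(size):
        amp = sum(cmath.exp(2j * math.pi * k * (phi - m / size)) for k in range(size)) / size
        probs.append(abs(amp) ** 2)
    return probs


def qpe_energy_estimate(energy, tau, n_bits):
    """Most likely QPE energy for U = exp(-i H tau), assuming -2*pi/tau < energy <= 0."""
    phi = (-energy * tau / (2 * math.pi)) % 1.0  # U|psi> = exp(2*pi*i*phi)|psi>
    probs = qpe_distribution(phi, n_bits)
    m_best = max(range(len(probs)), key=probs.__getitem__)
    return -2 * math.pi * (m_best / 2 ** n_bits) / tau


e_true = -1.1372  # hypothetical eigenvalue (Hartree)
for n in (4, 6, 8):
    print(n, round(qpe_energy_estimate(e_true, tau=1.0, n_bits=n), 5))
```

Each extra phase bit halves the worst-case error but doubles the longest controlled evolution, which is one reason QPE is considered a fault-tolerant-era algorithm.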

The community is progressing through a staged framework for developing quantum applications [12]:

- Stage I-II (Discovery & Problem Instances): Identifying molecules (like the FeMoco nitrogenase cofactor) where quantum computers theoretically surpass classical ones.

- Stage III (Real-World Advantage): Connecting these problem instances to tangible applications, such as designing novel catalysts or drugs.

- Stage IV-V (Engineering & Deployment): Optimizing resource requirements (logical qubits, gate counts) and integrating quantum solutions into real-world workflows.

Recent analyses indicate rapid progress. Resource estimates for simulating molecules have dropped by factors of hundreds to thousands, while hardware roadmaps project the necessary qubit counts and fidelities could be available within the next 5-10 years [13]. The key metric is shifting from simple qubit count to Sustained Quantum System Performance (SQSP), which measures practical scientific throughput [13].

Diagram 2: Classical failure vs. quantum solution pathway.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Strong Correlation Research

| Tool / Resource | Type | Function in Research | Relevance to Quantum Transition |
|---|---|---|---|
| CASSCF/CASPT2 | Wavefunction Method | Provides high-accuracy benchmarks for diagnosing DFT failures and training machine learning potentials [10]. | Gold standard for validating early quantum computer results on small molecules [14]. |
| DMRG/CCSD(T) | Wavefunction Method | Handles strong correlation in larger 1D systems or provides near-exact benchmarks for medium systems. | A performance target for quantum algorithms; used in hybrid quantum-classical workflows. |
| Quantum Cloud Services | Hardware Platform | Provides remote access to prototype quantum processors (e.g., via Google Quantum AI, IBM Quantum). | Essential for running quantum algorithm experiments and assessing current hardware performance [12]. |
| Quantum Algorithm Libraries | Software | Libraries (e.g., Google's Cirq, IBM's Qiskit) provide implementations of VQE and error mitigation techniques. | Used to develop and compile quantum circuits for specific chemistry problems [12]. |
| Post-Quantum Cryptography | Cybersecurity | Upgrades IT infrastructure to be secure against future quantum attacks on encrypted data. | Critical for protecting sensitive intellectual property (e.g., drug designs) in a future quantum era [15]. |

Quantum computing represents a paradigm shift in computational chemistry, offering a natural framework for solving problems involving strong electron correlation. Classical computers struggle with the exponential scaling of quantum mechanical systems. Quantum processors, in contrast, exploit the same principles that govern molecular behavior, superposition and entanglement [11]. This intrinsic compatibility positions quantum computing as a transformative tool for simulating chemical dynamics and electronic structure, particularly for problems intractable to classical methods [16] [17].

The field is transitioning from theoretical promise to tangible capability. In 2025, the industry has reached an inflection point, marked by hardware breakthroughs and the first documented cases of quantum advantage in real-world applications, such as medical device simulations [18]. This progress is fueled by unprecedented investment, with the global quantum computing market reaching an estimated $1.8 billion to $3.5 billion in 2025 and projected to grow at a compound annual growth rate (CAGR) of over 30% [18]. This report details the protocols, resource requirements, and experimental methodologies enabling this transition, providing a roadmap for researchers aiming to leverage quantum computing for complex chemical problems.

State of the Field: Hardware and Algorithmic Breakthroughs

The resources required for quantum chemical calculations vary significantly based on the algorithm and target hardware. The following table synthesizes key resource estimations to aid in project planning. These figures represent physical qubit counts and runtimes for various algorithms processed under different hardware configurations [19].

Table 1: Quantum Computing Resource Estimator Guide

| Algorithm Class | Physical Qubits (Range) | Runtime (Range) | Key Hardware Considerations |
|---|---|---|---|
| Variational Quantum Eigensolver (VQE) | 0-0.5 Million | 2 hours - 1 month | Suitable for Near-term, Noisy Devices |
| Quantum Approximate Optimization Algorithm (QAOA) | 0.5-1 Million | 1 month - 5 years | Requires Moderate Error Rates |
| Quantum Phase Estimation (QPE) | 1-10 Million | 100 - 1000 Million | Requires High-Fidelity, Fault-Tolerant Logic |
| Fault-Tolerant Quantum Simulation | 10-100 Million | 0.5 - 1000 Million | Dependent on Quantum Error-Correction Overhead |

Key Hardware Performance Metrics

Rapid hardware progress is making these resource estimates increasingly feasible. Breakthroughs in 2025 have directly addressed the critical barrier of quantum error correction:

- Qubit Performance: Google's 105-qubit "Willow" chip demonstrated exponential suppression of logical errors as the surface-code size, and hence the number of physical qubits per logical qubit, increased. This regime is known as operating "below threshold." The chip also completed a random-circuit-sampling benchmark in minutes that would take a classical supercomputer an estimated $10^{25}$ years [18].

- Error Rates: Recent breakthroughs have pushed error rates to record lows of 0.000015% per operation [18].

- Coherence Times: Research through the NIST SQMS Nanofabrication Taskforce achieved coherence times of up to 0.6 milliseconds for the best-performing superconducting qubits [18].

- Logical Qubit Progress: Microsoft, in collaboration with Atom Computing, demonstrated 24 entangled logical qubits, the highest number on record, utilizing novel geometric codes that reduce error rates 1,000-fold [18].
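What "below threshold" means can be illustrated with the widely used heuristic for surface-code logical error rates, $\varepsilon_L \approx A\,(p/p_{\mathrm{th}})^{(d+1)/2}$. Here $p$ is the physical error rate, $p_{\mathrm{th}}$ the threshold, and $d$ the odd code distance. The prefactor A = 0.1 and threshold p_th = 1% below are assumed round numbers, not Willow's measured values. The qubit count uses the standard rotated surface-code layout.

```python
def logical_error_rate(p, d, p_th=0.01, prefactor=0.1):
    """Heuristic eps_L ~ A * (p / p_th) ** ((d + 1) / 2) for odd distance d (assumed A, p_th)."""
    return prefactor * (p / p_th) ** ((d + 1) // 2)


def physical_qubits_rotated_surface_code(d):
    """d*d data qubits plus d*d - 1 measurement qubits per logical qubit."""
    return 2 * d * d - 1


for p in (0.001, 0.015):  # one rate below and one above the assumed 1% threshold
    for d in (3, 5, 7):
        print(f"p={p:g} d={d} qubits={physical_qubits_rotated_surface_code(d)} "
              f"eps_L={logical_error_rate(p, d):.1e}")
```

Below threshold, each step d → d + 2 divides the logical error rate by p_th/p, here a factor of 10, at the cost of more physical qubits. Above threshold, adding qubits makes the logical qubit worse.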

Application Protocols: Simulating Chemical Dynamics

Protocol 1: MQB Simulation of Non-Adiabatic Chemical Dynamics

This protocol details the methodology for simulating non-adiabatic photochemical dynamics using a Mixed-Qudit-Boson (MQB) approach on a trapped-ion quantum simulator, as demonstrated in recent experimental work [16]. This method is hardware-efficient, using both qubits and bosonic degrees of freedom to encode molecular information.

Research Reagent Solutions

Table 2: Essential Materials for MQB Quantum Simulation

| Item | Function | Experimental Realization |
|---|---|---|
| Trapped-Ion Qudit | Encodes molecular electronic states | A trapped-ion system (e.g., Yb+ or Sr+) with multiple accessible electronic levels. |
| Bosonic Motional Modes | Encodes nuclear vibrational degrees of freedom | The collective vibrational modes of the ion crystal within the trapping potential. |
| Vibronic Coupling (VC) Hamiltonian | Represents molecular potential energy surfaces and their couplings | Hamiltonian parameters obtained from electronic-structure theory (e.g., DFT). |
| Laser-Ion Interaction System | Drives the evolution of the simulator | Precisely controlled laser pulses with specific frequencies and intensities to reproduce the molecular VC Hamiltonian. |

Step-by-Step Experimental Procedure

Wavefunction Preparation

- Qudit Initialization: Prepare the trapped-ion qudit in a specific electronic state corresponding to the initial molecular electronic state (e.g., the ground or photoexcited state).

- Motional Mode Displacement: Displace the relevant motional modes (bosonic modes) of the trapped ion using laser pulses to prepare the initial nuclear wavepacket.

System Evolution

- Hamiltonian Engineering: Apply laser-ion interactions with frequencies and intensities precisely calibrated to reproduce the target molecular Vibronic Coupling Hamiltonian.

- Temporal Scaling: Evolve the simulator for a specific duration. Note that the molecular dynamics are rescaled from femtoseconds to milliseconds (a factor of approximately $10^{11}$) to match the trapped-ion system's accessible timescales.

Observable Measurement

- Repeated Sampling: Measure the desired observables (e.g., electronic state population).

- Dynamics Reconstruction: Repeat the preparation and evolution process for varying evolution durations to reconstruct the time-dependence of the observables and capture the full dynamics.

The programmability of this protocol allows for the simulation of diverse molecules, such as the allene cation, butatriene cation, and pyrazine, by simply adjusting the parameters of the VC Hamiltonian [16].
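For small instances, the output of this protocol can be reproduced classically, which is the idea behind classical validation. The sketch below assumes a simplified two-state, one-mode linear vibronic coupling model. A single mode plays both the tuning and coupling roles, whereas the cited experiment uses more modes. All parameter values are hypothetical, in units with ħ = 1. The sketch prepares an electronic state times a (possibly displaced) vibrational wavepacket and integrates the Schrödinger equation with RK4 in a truncated Fock basis. It then samples the excited-state population at a series of times, mirroring the repeated preparation-evolution-measurement loop.

```python
import math


def lvc_hamiltonian(n_fock, omega, energies, kappas, lam):
    """Real-symmetric matrix of a two-state, one-mode linear vibronic coupling model.

    H = omega a^dag a + sum_s (E_s + kappa_s q)|s><s| + lam q (|0><1| + |1><0|),
    with q = (a + a^dag) / sqrt(2); basis index = s * n_fock + n (Fock states n < n_fock).
    """
    dim = 2 * n_fock
    H = [[0.0] * dim for _ in range(dim)]
    for s in (0, 1):
        for n in range(n_fock):
            i = s * n_fock + n
            H[i][i] = energies[s] + omega * n
            if n + 1 < n_fock:
                q = math.sqrt((n + 1) / 2)    # <n|q|n+1>
                j = s * n_fock + n + 1        # same electronic state: tuning term
                k = (1 - s) * n_fock + n + 1  # other electronic state: coupling term
                H[i][j] = H[j][i] = kappas[s] * q
                H[i][k] = H[k][i] = lam * q
    return H


def initial_state(n_fock, alpha=0.0, state=1):
    """|state> times a truncated, renormalised coherent state |alpha> (alpha real)."""
    vib = [alpha ** n / math.sqrt(math.factorial(n)) for n in range(n_fock)]
    norm = math.sqrt(sum(v * v for v in vib))
    psi = [0j] * (2 * n_fock)
    for n, v in enumerate(vib):
        psi[state * n_fock + n] = complex(v / norm)
    return psi


def rk4_evolve(H, psi, dt, n_steps):
    """Integrate i d(psi)/dt = H psi (hbar = 1) with the classical fourth-order Runge-Kutta method."""
    def deriv(v):
        return [-1j * sum(h * x for h, x in zip(row, v)) for row in H]

    for _ in range(n_steps):
        k1 = deriv(psi)
        k2 = deriv([p + 0.5 * dt * d for p, d in zip(psi, k1)])
        k3 = deriv([p + 0.5 * dt * d for p, d in zip(psi, k2)])
        k4 = deriv([p + dt * d for p, d in zip(psi, k3)])
        psi = [p + dt / 6 * (a + 2 * b + 2 * c + d)
               for p, a, b, c, d in zip(psi, k1, k2, k3, k4)]
    return psi


def population(psi, n_fock, state=1):
    """Electronic-state population: sum of |amplitude|^2 over that state's Fock block."""
    return sum(abs(x) ** 2 for x in psi[state * n_fock:(state + 1) * n_fock])


def population_trace(lam, n_fock=10, dt=0.01, steps_per_sample=50, n_samples=8):
    """Excited-state population at t = 0, 0.5, ..., 4.0 for hypothetical model parameters."""
    H = lvc_hamiltonian(n_fock, omega=1.0, energies=(0.0, 1.0), kappas=(-0.2, 0.2), lam=lam)
    psi = initial_state(n_fock, alpha=0.0, state=1)
    trace = [population(psi, n_fock)]
    for _ in range(n_samples):
        psi = rk4_evolve(H, psi, dt, steps_per_sample)
        trace.append(population(psi, n_fock))
    return trace, psi


trace, psi_final = population_trace(lam=0.2)
print([round(p, 3) for p in trace])
```

Changing molecules amounts to changing the arguments of `lvc_hamiltonian`, the classical analogue of reprogramming the laser parameters. The Fock cutoff must be checked for convergence when displacements or couplings are larger.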

Diagram 1: MQB simulation workflow

Protocol 2: Verifiable Quantum Advantage in Chemical Simulation

This protocol outlines the co-design approach for achieving verifiable quantum advantage in chemical simulations, a critical focus for industry and academia [18] [17]. The emphasis is on generating solutions that are not only faster but also verifiable, ensuring practical utility.

The Five-Stage Application Development Framework

Developing a practical quantum application is a multi-stage process, as outlined by the Google Quantum AI team [17]. Understanding this framework is essential for structuring a research program.

Table 3: The Five Stages of Quantum Application Research

| Stage | Core Objective | Key Activities | Exit Criteria |
|---|---|---|---|
| 1. Discovery | Find new quantum algorithms in an abstract setting. | Theoretical research, complexity analysis. | A novel algorithm with a proven quantum speedup is proposed. |
| 2. Instance Identification | Identify concrete problem instances exhibiting quantum advantage. | Benchmarking against classical methods, identifying "quantumly-easy" yet "classically-hard" instances. | A method to generate hard instances with a proven quantum advantage is established. |
| 3. Application Connection | Connect advantageous problems to real-world use cases. | Collaboration with domain specialists, mapping industrial problems to quantum-solvable structures. | A real-world problem is identified where the quantum advantage is expected to hold under practical constraints. |
| 4. Resource Estimation | Optimize and estimate resources for the use case. | Logical circuit compilation, error-correction overhead calculation, runtime estimation. | A full resource estimate for the application on a target architecture is completed. |
| 5. Deployment | Execute the application on fault-tolerant hardware. | Running the computation, verifying results, delivering solutions. | The computation is successfully run and its output is validated. |

Step-by-Step Procedure for Verifiable Dynamics Simulation

Problem Formulation & Hamiltonian Sourcing

- Select a target chemical dynamics problem, such as electron transfer or photochemical reaction involving conical intersections.

- Source or derive a Vibronic Coupling Hamiltonian for the system using electronic structure calculations (e.g., CASSCF, DFT).

Algorithm Selection and Co-Design

- Choose an algorithm suited for dynamics, such as product-formula (Trotter) Hamiltonian simulation or variational quantum simulation, based on the problem and available hardware.

- Engage in hardware-software co-design, optimizing the algorithm for the specific error model and architecture of the target quantum processor (e.g., superconducting, trapped-ion).

Execution with Classical Hybridization

- Use a classical computer to handle the overall workflow and control.

- Offload the core quantum dynamics simulation to the quantum co-processor.

Verification and Validation

- Cross-Verification: Run the simulation on two different quantum devices and compare results [17].

- Classical Validation: For smaller problem instances tractable on classical hardware (e.g., via MCTDH calculations), validate the quantum simulator's output [16].

- Experimental Benchmarking: Compare predictions against experimental data, such as spectroscopic measurements or kinetic data (e.g., Hammett parameters for substituent effects) [20].

Diagram 2: Solution verification pathways

The Scientist's Toolkit: Essential Research Reagents

Beyond physical materials, the "reagents" for quantum chemical research include computational tools, algorithms, and hardware platforms. The following table details key components of a modern quantum chemistry research stack.

Table 4: The Quantum Chemist's Research Toolkit

| Tool Category | Specific Example | Function & Application |
|---|---|---|
| Quantum Hardware Platforms | Superconducting Qubits (Google, IBM), Trapped Ions (IonQ), Neutral Atoms (Atom Computing) | Physical systems for running quantum algorithms; each platform offers different trade-offs in connectivity, coherence times, and gate fidelities. |
| Quantum Algorithms | VQE, QPE, Quantum Dynamical Simulations | Core software routines for solving specific problem classes like electronic ground state energy (VQE) or real-time chemical dynamics. |
| Chemical Descriptors & Benchmarks | Hammett σ Parameters, Q Descriptor [20] | Experimental and theoretical benchmarks for validating quantum simulation results and connecting them to established chemical knowledge. |
| Error Mitigation Techniques | Zero-Noise Extrapolation, Probabilistic Error Cancellation | Software methods to improve the quality of results from noisy quantum processors before full fault-tolerance is achieved. |
| Quantum-Classical Hybrid Frameworks | QaaS (IBM, Microsoft), Custom Hybrid Algorithms | Enables the integration of quantum subroutines with classical high-performance computing (HPC) workflows. |
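Zero-noise extrapolation can be sketched in a few lines. The same circuit is run at amplified noise levels, for example by gate folding (not shown here), and the measured expectation values are extrapolated back to zero noise. The sketch below uses Richardson extrapolation: it evaluates at λ = 0 the Lagrange polynomial through all measured points. The scale factors and energies in the example are hypothetical.

```python
def richardson_zero_noise(scale_factors, values):
    """Evaluate at lambda = 0 the Lagrange polynomial through the (lambda_i, value_i) points."""
    if len(set(scale_factors)) != len(scale_factors):
        raise ValueError("noise scale factors must be distinct")
    estimate = 0.0
    for i, (lam_i, e_i) in enumerate(zip(scale_factors, values)):
        weight = 1.0
        for j, lam_j in enumerate(scale_factors):
            if j != i:
                weight *= lam_j / (lam_j - lam_i)  # L_i(0) = prod_j (0 - lam_j) / (lam_i - lam_j)
        estimate += weight * e_i
    return estimate


# Hypothetical energies (Hartree) measured at noise scale factors 1, 2, 3
scales = [1.0, 2.0, 3.0]
measured = [-0.88, -0.72, -0.52]
print(richardson_zero_noise(scales, measured))
```

These made-up values lie exactly on a quadratic in λ, so the extrapolation recovers its intercept of about −1.0. With real, noisy data, higher-order fits amplify shot noise, so the number of scale factors is a trade-off.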

The natural synergy between quantum computing and chemical simulation is now yielding experimentally validated results. The protocols and tools detailed here provide a concrete foundation for researchers to explore complex chemical phenomena, from non-adiabatic dynamics to strongly correlated electronic structures. As hardware continues to scale and algorithms are refined, quantum computing is evolving from a theoretical framework into a practical tool in computational chemistry and drug discovery. Ongoing breakthroughs in error correction and the strategic focus on verifiable, application-specific algorithms point to a clear path toward solving some of the most persistent challenges in molecular simulation.

The study of metalloproteins, catalysts, and novel materials represents a critical frontier in computational biochemistry and materials science. These systems are often characterized by strong electron correlation, which makes them notoriously difficult to model accurately with classical computational methods. Quantum computing offers a promising pathway to overcome these limitations by directly simulating quantum mechanical phenomena. This application note details protocols for investigating these targets, with a specific focus on addressing the strong correlation problem through hybrid quantum-classical computational workflows. Integrating quantum computational techniques could enable more accurate modeling of electronic interactions in metalloenzyme active sites and catalytic materials, potentially accelerating discoveries in drug development and energy technologies.

The challenge of strong electronic correlations is particularly pronounced in systems containing transition metal ions, where closely spaced energy levels and complex electron interactions lead to quantum behaviors that exceed the capabilities of conventional density functional theory (DFT). This limitation has driven the development of new quantum algorithms and experimental protocols that can more accurately capture the electronic structure of these systems. By framing these approaches within the context of quantum computing applications, this document provides researchers with practical methodologies to advance the understanding of biologically and industrially relevant molecular systems.

Metalloproteins: Structure, Function, and Quantum Modeling

Fundamental Characteristics of Metalloproteins

Metalloproteins constitute a broad class of proteins that incorporate metal ion cofactors essential for their biological functions. It is estimated that approximately half of all known proteins bind metal ions or metal-containing cofactors [21]. These proteins perform critical roles in numerous biological processes, including oxygen transport, electron transfer, enzymatic catalysis, and gene regulation [22]. The metal centers in these proteins, often featuring iron, zinc, copper, or manganese, confer specific chemical reactivity that underpins their biological function. For example, iron in hemoglobin facilitates oxygen binding and release, while zinc in metalloproteases is vital for catalytic activity [22].

The functional diversity of metalloproteins stems from the intricate interplay between the protein scaffold and the metal cofactor. The protein environment precisely positions ligand residues to control the coordination geometry and electronic properties of the metal center, tuning its reactivity for specific biological functions. This precise control enables metalloproteins to catalyze chemically challenging reactions under mild physiological conditions, making them subjects of intense interest for both basic research and biomedical applications. Understanding the relationship between structure and function in these systems requires detailed knowledge of their metal-binding sites and electronic structures—a challenge that quantum computational methods are particularly well-suited to address.

Quantum Computational Approaches for Metalloprotein Modeling

Modeling metalloproteins presents significant challenges due to the strongly correlated electronic structures often found at their metal centers. These systems frequently require multi-reference quantum chemical methods for accurate description, which are computationally prohibitive for large systems on classical computers. Quantum computing offers promising alternatives through algorithms such as the Variational Quantum Eigensolver (VQE) and Quantum Phase Estimation (QPE) [23] [24].

Recent research has demonstrated the feasibility of hybrid quantum-classical workflows for studying metalloprotein-relevant systems. These approaches employ embedding schemes that partition the system into a strongly correlated fragment treated quantum mechanically and an environment handled with classical methods [23]. The atomic valence active space/regional embedding (AVAS/RE) approach generates highly localized molecular orbitals and selects the most strongly correlated and chemically relevant orbitals for treatment on the quantum processor [23]. This strategy exploits the localized nature of interactions between substrates and metal centers, allowing researchers to focus quantum computational resources where they are most needed.

For current noisy intermediate-scale quantum (NISQ) devices, active spaces are typically limited to 2 electrons in 3 orbitals (requiring 6 qubits) or 4 electrons in 4 orbitals (requiring 8 qubits) [23]. The ADAPT-VQE algorithm has shown particular promise for these systems, as it progressively constructs ansätze by sequentially incorporating operators that most significantly lower the energy, ensuring moderate circuit depths compatible with current hardware limitations [23]. These quantum algorithms represent a significant advancement over conventional computational methods, potentially providing more accurate insights into the electronic structure and reactivity of metalloproteins.
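The ADAPT-VQE loop described above can be illustrated on a toy problem. The sketch below is a minimal statevector illustration, not the workflow of [23]: it assumes a hypothetical two-qubit Hamiltonian H = 0.5 Z⊗I + 0.2 X⊗X, a small pool of real anti-Hermitian generators built from Pauli strings containing one Y, and |00⟩ as the reference state. At each step it scores every pool operator A by the energy gradient ⟨ψ|[H, A]|ψ⟩, appends the operator with the largest magnitude, and re-optimizes all angles with SciPy's BFGS optimizer.

```python
import numpy as np
from scipy.linalg import expm
from scipy.optimize import minimize

# Single-qubit operators; i*Y is real, so i*(string with one Y) is a real antisymmetric generator
I2 = np.eye(2)
X = np.array([[0.0, 1.0], [1.0, 0.0]])
Z = np.array([[1.0, 0.0], [0.0, -1.0]])
iY = np.array([[0.0, 1.0], [-1.0, 0.0]])  # i * Pauli-Y

# Hypothetical toy Hamiltonian (illustrative coefficients, not from [23])
H = 0.5 * np.kron(Z, I2) + 0.2 * np.kron(X, X)

# Operator pool of anti-Hermitian generators A_k; the label "YX" means (iY) tensor X, etc.
POOL = {
    "YX": np.kron(iY, X),
    "XY": np.kron(X, iY),
    "YI": np.kron(iY, I2),
    "IY": np.kron(I2, iY),
}

def prepare(thetas, ops, ref):
    """Apply exp(theta_k * A_k) for each selected operator, in order, to the reference."""
    psi = ref.copy()
    for theta, name in zip(thetas, ops):
        psi = expm(theta * POOL[name]) @ psi
    return psi

def energy(thetas, ops, ref):
    psi = prepare(thetas, ops, ref)
    return float(psi @ H @ psi)

def adapt_vqe(ref, grad_tol=1e-4, max_iter=10):
    ops, thetas = [], []
    for _ in range(max_iter):
        psi = prepare(thetas, ops, ref)
        # dE/dtheta at theta = 0 for appending exp(theta*A) equals <psi|[H, A]|psi>
        grads = {name: float(psi @ (H @ A - A @ H) @ psi) for name, A in POOL.items()}
        best = max(grads, key=lambda n: abs(grads[n]))
        if abs(grads[best]) < grad_tol:
            break  # no pool operator can lower the energy to first order
        ops.append(best)
        res = minimize(energy, x0=np.array(thetas + [0.0]), args=(ops, ref), method="BFGS")
        thetas = list(res.x)  # re-optimize all angles, not just the new one
    return energy(thetas, ops, ref), ops

ref = np.zeros(4)
ref[0] = 1.0  # |00>, playing the role of the Hartree-Fock reference
e_adapt, ansatz = adapt_vqe(ref)
e_exact = np.linalg.eigvalsh(H)[0]
print(ansatz, e_adapt, e_exact)
```

For this toy Hamiltonian a single operator suffices to reach the exact ground-state energy, -√0.29 ≈ -0.5385; realistic molecular Hamiltonians need many more iterations and a fermionic or qubit-excitation operator pool.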

Table 1: Quantum Computational Methods for Strongly Correlated Systems

| Method | Key Features | Qubit Requirements | Application Examples |
|---|---|---|---|
| ADAPT-VQE | Progressive ansatz construction, reduced circuit depth | 6-8 qubits for active spaces | Metalloprotein active sites [23] |
| Quantum Phase Estimation (QPE) | High-precision eigenvalue estimation, requires fault-tolerant hardware | Higher qubit counts | Molecular energy spectra [24] |
| DMET + VQE | Density matrix embedding theory combined with VQE | Varies with fragment size | Nickel oxide magnetic ordering [23] |
| k-UpCCGSD | Product of k unitary pair coupled cluster layers with generalized singles and paired doubles | Moderate qubit requirements | Platinum-cobalt catalysts [23] |

Catalysts for Energy Applications: Oxygen Reduction Reaction

The Oxygen Reduction Challenge

The oxygen reduction reaction (ORR) is a critical process in hydrogen fuel cell technology, which has emerged as a promising alternative to hydrocarbon-based energy systems for low-carbon mobility applications [23]. In proton-exchange membrane fuel cells (PEMFCs), molecular hydrogen is oxidized at the anode, producing protons that migrate through a membrane to the cathode, where oxygen is reduced on a catalyst surface. The interaction of protons with reduced oxygen atoms leads to the formation of water as the primary product [23].

Despite its conceptual simplicity, ORR presents significant kinetic challenges that limit fuel cell efficiency. Thermodynamically, the four-proton, four-electron reduction of molecular oxygen to water has an equilibrium potential of 1.23 V versus the reversible hydrogen electrode (RHE) in acidic media. In practice, however, the observed cell voltage is below 0.9 V at any usable current because of kinetic overpotentials [23]. The complex nature of ORR, involving multiple possible reaction pathways and strong electronic correlations in catalyst materials, has made it notoriously difficult to model accurately using classical computational methods. This challenge is particularly pronounced for the reductive and dissociative adsorption of oxygen (O₂ → 2O), which is a rate-determining step in the four-electron pathway [23].
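The thermodynamic figures in this paragraph can be checked with ΔG = -nFE. The sketch below assumes n = 4 electrons per O₂, Faraday's constant F = 96485.33212 C mol⁻¹, and treats the 0.9 V figure from the text as the operating cell voltage; `orr_energetics` is an illustrative helper, not part of any library.

```python
FARADAY = 96485.33212  # C/mol (CODATA 2018)

def orr_energetics(e_eq=1.23, e_cell=0.9, n_electrons=4):
    """Return (Delta G in kJ per mol O2, voltage lost to overpotential in V, voltage efficiency)."""
    delta_g_kj = -n_electrons * FARADAY * e_eq / 1000.0  # Delta G = -nFE, converted J -> kJ
    overpotential_loss = e_eq - e_cell                  # voltage forfeited at the operating point
    voltage_efficiency = e_cell / e_eq                  # fraction of thermodynamic voltage retained
    return delta_g_kj, overpotential_loss, voltage_efficiency

dg, loss, eff = orr_energetics()
print(f"dG = {dg:.1f} kJ/mol, loss = {loss:.2f} V, efficiency = {eff:.0%}")
```

With these inputs the reaction releases about 475 kJ per mole of O₂, and operating at 0.9 V forfeits at least 0.33 V, roughly 27% of the thermodynamic voltage.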

Quantum Computing for Catalytic Mechanism Elucidation

Quantum computing offers novel approaches to overcome the limitations of classical methods for studying ORR mechanisms. Recent work has demonstrated a hybrid quantum-classical workflow that combines the strengths of both computing paradigms to model ORR on platinum-based surfaces [23]. This approach uses an embedding method that couples the relevant electronic degrees of freedom of a representative subsystem, described with ADAPT-VQE, to an environment treated with mean-field theory, and uses second-order N-electron valence state perturbation theory (NEVPT2) to account for dynamic electron correlation [23].

This methodology has been applied to study ORR on both pure platinum and platinum-capped cobalt (Pt/Co) catalysts. For pure platinum, researchers used an active space of 2 electrons in 3 orbitals (2e,3o), while for Pt/Co, they employed a (4e,4o) active space [23]. The increased active space requirement for Pt/Co reflects the more strongly correlated nature of this system, highlighting how quantum computers can potentially handle complex electronic structures that challenge classical computational methods. The implementation of this workflow on the H1-series trapped-ion quantum computer represents a significant step toward practical quantum computational chemistry applications in catalysis [23].

The following diagram illustrates the hybrid quantum-classical workflow for studying oxygen reduction reaction mechanisms:

Table 2: Catalyst Performance in Oxygen Reduction Reaction

| Catalyst Type | Active Space | Qubit Requirements | Key Findings |
|---|---|---|---|
| Pure Platinum (Pt) | 2 electrons, 3 orbitals | 6 qubits | Standard performance, well-characterized [23] |
| Platinum/Cobalt (Pt/Co) | 4 electrons, 4 orbitals | 8 qubits | Enhanced performance, stronger correlations [23] |
| Platinum/Nickel | Not specified in results | Similar to Pt/Co | Promising alternative to cobalt systems [23] |

Novel Materials for Quantum Computing Hardware

Materials with Diffuse Electrons for Qubit Applications

Beyond computational applications, novel materials are being developed specifically for quantum computing hardware. Recent research has investigated materials featuring diffuse electrons whose spin states can function as qubits [25]. These materials consist of diamond-like grids of Li+ centers bridged by diamine chains (H₂N(CH₂)ₙNH₂) of varying carbon chain lengths. In this structure, each tetracoordinated lithium-amine center is surrounded by one diffuse electron solvated by the N-H bonds [25].

The electronic properties of these materials can be tuned by modifying the carbon chain length: shorter chains produce metallic behavior, whereas longer chains yield semiconducting properties [25]. This tunability offers potential for designing quantum materials with tailored electronic characteristics. Density functional theory-based ab initio molecular dynamics simulations indicate that these materials remain thermally stable up to 100 to 220 K, depending primarily on the carbon chain length [25]. Critically, these stability thresholds are well above the cryogenic temperatures at which quantum computers typically operate, making the materials potentially suitable for practical implementations.

Metallated Carbon Nanowires for Quantum Devices

Another promising class of materials for quantum applications is metallated carbon nanowires. Specifically, researchers have explored metallated carbyne nanowires for their potential to host Majorana zero modes (MZMs) [26], exotic quantum states that are fundamental to topological quantum computing. Majorana zero modes obey non-Abelian exchange statistics, so braiding them could enable fault-tolerant quantum computation through topological protection.

Studies have optimized various types of metallated carbyne, achieving average magnetic moments above 1 μB for Mo-, Tc-, and Ru-metallated carbyne, with local moments exceeding 2 μB [26]. Ru-metallated carbyne exhibits particularly promising characteristics, including periodic variations in magnetism with increasing carbyne length and a strong average spin-orbit coupling of approximately 140 meV [26]. When ferromagnetic Ru-metallated carbyne is coupled to a superconducting Ru substrate, band inversions occur at both the Γ point and the M point, where spin-orbit coupling drives transitions between band inversion and Dirac gap formation [26]. These properties suggest that carbon-based materials may be capable of hosting Majorana zero modes, presenting an opportunity for developing novel quantum computing hardware.

Experimental Protocols and Methodologies

Protocol 1: Hybrid Quantum-Classical Study of ORR Mechanisms

Objective: To investigate the kinetic and thermodynamic aspects of the reductive and dissociative adsorption reaction (O₂ → 2O) on platinum-based catalyst surfaces using a hybrid quantum-classical computational workflow.

Materials and Computational Resources:

- Quantum processor (e.g., H1-series trapped-ion quantum computer) or quantum simulator

- Classical computing cluster for molecular mechanics and DFT calculations

- Quantum chemistry software packages (e.g., PySCF, Q-Chem)

- Quantum algorithm development frameworks (e.g., Qiskit, Cirq)

Procedure:

System Preparation:

- Construct atomic models of the catalyst surface with adsorbed oxygen species

- Perform geometry optimization using density functional theory (DFT) with appropriate functionals

System Partitioning:

- Apply the atomic valence active space/regional embedding (AVAS/RE) approach [23]

- Generate highly localized molecular orbitals

- Select the most strongly correlated orbitals for quantum treatment (2e,3o for Pt; 4e,4o for Pt/Co)

Quantum Computational Setup:

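- Occupy the lowest-energy spin orbitals with the active electrons to define the Hartree-Fock reference

- Prepare this occupation-number (Fock) state on the quantum processor, for example by applying X gates to the qubits of the occupied spin orbitals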

- Construct the wavefunction ansatz using k-UpCCGSD with k = 1 (unitary pair coupled cluster with generalized singles and doubles) [23]

- Apply the ADAPT-VQE algorithm to progressively build the ansatz with reduced circuit depth
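Under a Jordan-Wigner mapping, each spatial orbital in the active space contributes two qubits (one per spin orbital), which is where the 6- and 8-qubit figures for (2e,3o) and (4e,4o) come from. The sketch below assumes interleaved alpha/beta spin-orbital ordering (software such as Qiskit Nature may instead place all alpha orbitals first, which changes which qubits are flipped), a closed-shell reference, and an Sz = 0 determinant count; `active_space_summary` is a hypothetical helper, not part of PySCF or Qiskit.

```python
from math import comb

def active_space_summary(n_elec, n_orb):
    """Qubit count, Hartree-Fock occupation, X-gate targets and Sz = 0 determinant count.

    Assumptions (illustrative, not from [23]): Jordan-Wigner mapping with one qubit per
    spin orbital, alpha/beta spin orbitals interleaved in order of increasing orbital
    energy, and a closed-shell reference with equal numbers of alpha and beta electrons.
    """
    if n_elec % 2 or not 0 < n_elec <= 2 * n_orb:
        raise ValueError("expected a positive, even electron count that fits in the active space")
    n_qubits = 2 * n_orb  # two spin orbitals per spatial orbital
    occupation = [1] * n_elec + [0] * (n_qubits - n_elec)  # lowest spin orbitals filled
    x_targets = [q for q, occ in enumerate(occupation) if occ]  # X gates turn |0...0> into |HF>
    n_half = n_elec // 2
    n_dets = comb(n_orb, n_half) ** 2  # alpha choices times beta choices
    return n_qubits, occupation, x_targets, n_dets

for label, (ne, no) in {"Pt": (2, 3), "Pt/Co": (4, 4)}.items():
    print(label, active_space_summary(ne, no))
```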

Energy Evaluation:

- Execute the variational quantum eigensolver (VQE) to measure the expectation value of the electronic Hamiltonian

- Combine quantum results with classical mean-field and NEVPT2 treatments of the environment

- Optimize parameters classically to minimize the energy expectation value
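Measuring the Hamiltonian expectation value on hardware means estimating each Pauli term from repeated shots. The sketch below covers only terms made of I and Z, which can be read directly from computational-basis counts; X and Y terms need basis-change rotations before measurement, and the combination with the mean-field and NEVPT2 environment energies used in [23] is not shown. The bitstring convention (character k refers to qubit k), the helper names, and the sample counts are assumptions.

```python
def pauli_z_expectation(counts, pauli):
    """Estimate <P> for an I/Z Pauli string from computational-basis measurement counts.

    Convention (assumption): character k of each bitstring and of `pauli` refers to qubit k.
    Qiskit reports bitstrings with qubit 0 as the rightmost character, so reverse its keys first.
    """
    if set(pauli) - {"I", "Z"}:
        raise ValueError("only I/Z strings are diagonal in the computational basis")
    shots = sum(counts.values())
    total = 0
    for bits, n in counts.items():
        if len(bits) != len(pauli):
            raise ValueError("bitstring and Pauli string lengths differ")
        # Z eigenvalue is +1 for |0> and -1 for |1>; the product over Z positions is (-1)^parity
        parity = sum(int(b) for b, p in zip(bits, pauli) if p == "Z") % 2
        total += n * (1 - 2 * parity)
    return total / shots

def energy_from_counts(counts, hamiltonian):
    """Sum of coefficient * <P> over a dict {pauli_string: coefficient} of I/Z terms."""
    return sum(coeff * pauli_z_expectation(counts, p) for p, coeff in hamiltonian.items())

counts = {"00": 600, "11": 400}  # made-up measurement record, 1000 shots
print(energy_from_counts(counts, {"ZI": 0.5, "IZ": -0.3, "ZZ": 0.1}))
```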

Data Analysis:

- Calculate reaction energy profiles for O₂ dissociation

- Compare kinetic and thermodynamic parameters across different catalyst compositions

- Benchmark results against classical computational methods and experimental data
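For the data-analysis step, the reaction energy and barrier follow directly from the energies computed along the O₂ → 2O path. A minimal sketch, assuming energies in hartree ordered from reactant to product and taking the highest point on the path as the transition-state estimate; `profile_summary` is a hypothetical helper and the example energies are invented for illustration.

```python
HARTREE_TO_EV = 27.211386245988  # CODATA 2018

def profile_summary(energies_hartree):
    """Barrier and reaction energy (eV) relative to the first point of a reaction path."""
    rel = [e - energies_hartree[0] for e in energies_hartree]
    i_ts = max(range(len(rel)), key=lambda i: rel[i])  # highest point taken as the TS estimate
    return {
        "ts_index": i_ts,
        "barrier_eV": rel[i_ts] * HARTREE_TO_EV,
        "reaction_energy_eV": rel[-1] * HARTREE_TO_EV,
    }

print(profile_summary([-10.0, -9.98, -10.01]))  # hypothetical three-point path
```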

Troubleshooting Tips:

- For noisy quantum devices, employ error mitigation techniques such as zero-noise extrapolation

- If active space selection proves challenging, systematically test different orbital combinations

- For convergence issues in VQE, adjust optimization algorithms or ansatz parameters
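Zero-noise extrapolation runs the same circuit at several amplified noise levels (for example by unitary folding, which replaces a gate G with G G† G to roughly triple its noise) and extrapolates the measured expectation values back to zero noise. The sketch assumes the noise-scaled energies have already been measured; the scale factors and values are made-up numbers, and `zero_noise_extrapolate` is a hypothetical helper (libraries such as Mitiq provide maintained implementations).

```python
import numpy as np

def zero_noise_extrapolate(scale_factors, values, degree=1):
    """Fit values(scale) with a polynomial of the given degree and evaluate it at scale = 0.

    degree=1 is linear extrapolation; degree=len(scale_factors)-1 reproduces Richardson
    extrapolation through all points.
    """
    if degree >= len(scale_factors):
        raise ValueError("need more noise-scaled points than the polynomial degree")
    coeffs = np.polyfit(scale_factors, values, deg=degree)
    return float(np.polyval(coeffs, 0.0))

scales = [1.0, 3.0, 5.0]          # 1 = original circuit, 3 = gates folded once, 5 = folded twice
energies = [-0.94, -0.76, -0.50]  # made-up noisy energy estimates (hartree)
print(zero_noise_extrapolate(scales, energies, degree=1))
print(zero_noise_extrapolate(scales, energies, degree=2))
```

Higher-degree fits remove more of the noise bias when the model is right but amplify shot noise, so the degree is usually kept low.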

Protocol 2: NMR Structure Determination of Metalloproteins with MD Refinement

Objective: To determine the solution structure of a designed metalloprotein using NMR spectroscopy, with subsequent refinement through molecular dynamics to accurately characterize the metal-binding site.

Materials:

- Purified metalloprotein sample (e.g., di-Zn(II) DFsc)

- NMR buffer (e.g., 20 mM phosphate buffer, pH 6.5, 100 mM NaCl)

- Deuterated solvent for locking (e.g., D₂O)

- NMR tube appropriate for the spectrometer

- High-field NMR spectrometer (e.g., 600 MHz or higher)

- High-performance computing cluster for molecular dynamics simulations

Procedure:

Sample Preparation:

- Prepare 0.5-1.0 mM protein solution in NMR buffer with 5-10% D₂O

- Add sodium 2,2-dimethyl-2-silapentane-5-sulfonate (DSS) as internal chemical shift reference

NMR Data Collection:

- Acquire 2D NOESY spectrum with 100-150 ms mixing time

- Collect TOCSY spectrum with 70-80 ms spin-lock time

- Obtain ¹H-¹⁵N and ¹H-¹³C HSQC spectra (for ¹⁵N- and/or ¹³C-labeled samples)

- Measure residual dipolar couplings in aligned media if possible

Structure Calculation:

- Assign NMR signals using standard sequential assignment strategies

- Generate distance restraints from NOE cross-peak intensities (typically 1700-2400 restraints)

- Include dihedral angle restraints from chemical shift analysis

- Add hydrogen bond restraints for slowly-exchanging amide protons

- Calculate initial structure ensemble using programs such as CNS or XPLOR with metal-ligand bonding restraints
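NOE distance restraints are commonly derived with the isolated spin-pair approximation, in which cross-peak intensity scales as r⁻⁶, so r = r_ref (I_ref / I)^(1/6) for a calibration pair of known distance. The sketch below assumes that approximation (it ignores spin diffusion) and an illustrative strong/medium/weak binning with 2.7, 3.3, and 5.0 Å upper bounds; actual calibration and bin limits vary between studies and programs, and both helpers are hypothetical.

```python
def ispa_distance(intensity, ref_intensity, ref_distance):
    """Distance (same units as ref_distance) from an NOE intensity, assuming I is proportional to r**-6."""
    if intensity <= 0 or ref_intensity <= 0:
        raise ValueError("NOE intensities must be positive")
    return ref_distance * (ref_intensity / intensity) ** (1.0 / 6.0)

# Illustrative upper-bound bins in angstroms (conventions differ between studies)
BINS = [(2.7, "strong"), (3.3, "medium"), (5.0, "weak")]

def classify_restraint(distance):
    """Return (upper_bound, label) for the tightest bin containing the estimated distance."""
    for upper, label in BINS:
        if distance <= upper:
            return upper, label
    return None  # too weak to use as a restraint under this scheme

d = ispa_distance(1 / 64, 1.0, 1.8)  # a peak 64x weaker than a 1.8 A calibration pair
print(round(d, 3), classify_restraint(d))
```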

Molecular Dynamics Refinement:

- Solvate the NMR structure in a water box using molecular dynamics software

- Perform 10 ns classical MD simulation using a non-bonded force field for the metal ions [21]

- Conduct 5 ps of Car-Parrinello hybrid QM/MM dynamics with the metal site treated at the DFT-BLYP level [21]

- Extract the final refined structure from the equilibrated trajectory

Validation:

- Check for NOE violations in the MD model

- Compare experimental and calculated B factors to assess dynamic consistency

- Validate metal-ligand geometry against known coordination chemistry principles
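Two of the validation checks above reduce to short calculations. The sketch assumes that ensemble-averaged model distances have already been computed for each restraint, uses an illustrative 0.5 Å violation threshold, and converts per-atom RMSF from the trajectory to B factors with the standard isotropic relation B = (8π²/3)⟨Δr²⟩ before correlating them with reference B factors. The helper names are hypothetical.

```python
import numpy as np

def noe_violations(model_distances, upper_bounds, threshold=0.5):
    """Indices of restraints exceeding their upper bound by more than `threshold` (angstrom), plus all excesses."""
    excess = np.asarray(model_distances, float) - np.asarray(upper_bounds, float)
    return np.flatnonzero(excess > threshold), np.maximum(excess, 0.0)

def b_from_rmsf(rmsf):
    """Isotropic B factor (A^2) from per-atom RMSF (A): B = (8 pi^2 / 3) * RMSF^2."""
    return 8.0 * np.pi ** 2 / 3.0 * np.asarray(rmsf, float) ** 2

def b_factor_correlation(b_reference, rmsf):
    """Pearson correlation between reference B factors and MD-derived B factors."""
    return float(np.corrcoef(b_reference, b_from_rmsf(rmsf))[0, 1])

idx, excess = noe_violations([3.0, 5.8, 4.2], [5.0, 5.0, 4.0])  # made-up distances
print(idx, excess)
```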

Troubleshooting Tips:

- If metal-ligand geometry appears distorted in initial NMR structure, increase MD simulation time

- For poor NOE assignments, collect additional spectra with different mixing times

- If protein stability is a concern, collect data at a lower temperature

The following diagram illustrates the integrated NMR and molecular dynamics workflow for metalloprotein structure determination:

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Materials and Computational Tools

| Reagent/Resource | Function/Application | Specific Examples/Notes |
|---|---|---|
| Designed Metalloprotein Scaffolds | Provide tunable systems for studying metal-protein interactions | DFsc (due ferri single chain) with EXXH metal-binding motifs [21] |
| Quantum Computing Hardware | Execute quantum algorithms for strongly correlated systems | H1-series trapped-ion quantum computer; IBM superconducting qubit processors [23] [24] |
| Quantum Chemistry Software | Implement quantum and classical computational methods | PySCF, Q-Chem for electronic structure; Qiskit, Cirq for quantum algorithms [23] |
| NMR Spectroscopy Suite | Determine solution structures of metalloproteins | High-field NMR with cryoprobes; NOESY, TOCSY, HSQC experiments [21] |
| Molecular Dynamics Packages | Refine structures and simulate dynamics | GROMACS, AMBER, NAMD with specialized metal force fields [21] |
| Platinum-Based Catalysts | Study oxygen reduction reaction mechanisms | Pure Pt surfaces; Pt/Co and Pt/Ni bimetallic systems [23] |
| Metallated Carbon Nanowires | Investigate materials for quantum hardware | Ru-metallated carbyne for Majorana zero mode studies [26] |
| Li-Amine Materials | Develop qubits from diffuse electrons | Diamond-like Li+ grids with diamine bridges [25] |

The integration of quantum computational approaches with experimental methodologies provides powerful tools for investigating key biomolecular targets including metalloproteins, catalysts, and novel materials. These approaches are particularly valuable for addressing the strong correlation problem that has long challenged conventional computational methods. As quantum hardware continues to advance, with increasing qubit counts and improved fidelity, the applications to biological and materials systems will expand significantly.

Future developments will likely focus on several key areas: (1) increasing the size of treatable active spaces in metalloprotein simulations, (2) improving embedding techniques to more seamlessly integrate quantum and classical computational regions, (3) developing more efficient quantum algorithms with reduced circuit depths, and (4) creating specialized quantum materials with enhanced properties for quantum computing hardware. The continued collaboration between quantum information scientists, computational chemists, and experimental researchers will be essential to fully realize the potential of these technologies for advancing our understanding of complex biomolecular systems.

Quantum Algorithms in Action: Methodologies for Drug Discovery and Development

Quantum-Enhanced Protein Folding and Docking Simulations

The application of quantum computing to biological simulations represents a paradigm shift in computational biology and drug discovery. Classical methods face significant challenges with problems such as protein folding and molecular docking, because the conformational search space grows exponentially with system size and the underlying interactions are quantum mechanical in origin. These challenges are particularly pronounced in strong correlation problems, where electron interactions create complex quantum states that classical computers struggle to simulate efficiently. Quantum computing, leveraging the principles of superposition and entanglement, offers a fundamentally different approach that can potentially overcome these limitations [27] [28].

Protein folding and docking are central problems in structural biology and pharmaceutical research. The protein folding problem involves predicting the three-dimensional native structure of a protein from its amino acid sequence, which is essential for understanding biological function and malfunction in diseases. Similarly, protein-ligand docking aims to predict how small molecules bind to target proteins, which is crucial for drug design and development. Both processes are governed by quantum mechanical interactions at the molecular level, making them prime candidates for quantum computational approaches [29] [28].

This application note explores recent breakthroughs in quantum-enhanced simulations of protein folding and docking, with particular focus on methodologies, protocols, and their application to strong correlation problems in computational biophysics. We present detailed experimental frameworks and quantitative comparisons to guide researchers in implementing these cutting-edge approaches.

Quantum-Enhanced Protein Folding

Recent Advances and Performance Benchmarks

Recent experimental demonstrations have established new milestones in applying quantum computing to protein folding problems. A collaboration between Kipu Quantum and IonQ reported solving what it described as the most complex protein folding problem yet tackled on quantum hardware, involving peptides of 10 to 12 amino acids on a 36-qubit trapped-ion quantum computer [30] [31]. At the time of the report, this was the largest such demonstration on real quantum hardware, and it highlights the promise of quantum systems for complex biological computations.

Table 1: Quantum Protein Folding Performance Benchmarks

| Metric | Kipu Quantum & IonQ (2025) | IBM Quantum (2021) | Classical HPC Reference |
|---|---|---|---|
| System Size | 12 amino acids | 7-10 amino acids | Varies by method |
| Qubits Required | 33-36 qubits | 9-22 qubits | Not applicable |
| Hardware Platform | Trapped-ion (IonQ Forte) | Superconducting (IBM) | CPU/GPU clusters |
| Algorithm | BF-DCQO | Variational Quantum Algorithm | Molecular Dynamics |
| Key Achievement | Optimal/near-optimal folding configurations for biologically relevant peptides | Folding of 7-amino acid neuropeptide on real quantum processor | Varies by protein size |

The breakthrough was achieved using a non-variational quantum optimization method called Bias-Field Digitized Counterdiabatic Quantum Optimization (BF-DCQO). This approach reframes protein folding as a higher-order unconstrained binary optimization (HUBO) problem: the chain is mapped onto a lattice, and its energy is expressed as a polynomial cost function with higher-order terms that is difficult to minimize using classical methods [30]. With each iteration, BF-DCQO updates bias fields that steer the quantum system toward lower-energy states, drawing on principles of adiabatic evolution and counterdiabatic control while remaining compatible with current noisy quantum hardware [30].

Experimental Protocol: BF-DCQO for Protein Folding

Materials and Requirements:

- Quantum Hardware: Trapped-ion quantum computer with all-to-all connectivity (e.g., IonQ Forte-generation system)

- Classical Computing Resources: For pre-processing and post-processing steps

- Software Stack: Quantum programming framework (e.g., Qiskit, Cirq) with BF-DCQO implementation

Step-by-Step Workflow:

Problem Formulation and Encoding:

- Map the protein folding problem onto a 3D lattice model

- Encode each amino acid turn in the protein chain using two qubits

- Model interactions between non-consecutive amino acids using known contact energies (e.g., Miyazawa-Jernigan matrix)

- Formulate the problem as a HUBO cost function representing the energy landscape
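The encoding step can be made concrete with a classical brute-force evaluation of a lattice-folding cost function, the kind of objective BF-DCQO is asked to minimize. To keep it small, the sketch swaps in simplifying assumptions: a 2D square lattice instead of a 3D lattice, a hypothetical mapping of each 2-bit turn code to one of four directions, the hydrophobic-polar (HP) contact model in place of the Miyazawa-Jernigan matrix, and a fixed penalty for residues that occupy the same site. Expanded as a polynomial in the bits, the contact and overlap terms contain products of many bits, which is why such cost functions are HUBO rather than quadratic (QUBO) problems.

```python
from itertools import product

# Hypothetical 2-bit turn code on a 2D square lattice (the cited work uses a 3D lattice)
MOVES = {(0, 0): (1, 0), (0, 1): (0, 1), (1, 0): (-1, 0), (1, 1): (0, -1)}

def decode(bits):
    """Turn a flat bit tuple (2 bits per turn) into lattice coordinates, starting at the origin."""
    coords = [(0, 0)]
    for k in range(0, len(bits), 2):
        dx, dy = MOVES[(bits[k], bits[k + 1])]
        x, y = coords[-1]
        coords.append((x + dx, y + dy))
    return coords

def folding_cost(bits, sequence, contact, overlap_penalty=10.0):
    """Contact energy of non-bonded lattice neighbours plus a penalty for each doubly occupied site."""
    coords = decode(bits)
    energy = overlap_penalty * (len(coords) - len(set(coords)))
    for i in range(len(coords)):
        for j in range(i + 2, len(coords)):  # skip chain-bonded neighbours (j = i + 1)
            (xi, yi), (xj, yj) = coords[i], coords[j]
            if abs(xi - xj) + abs(yi - yj) == 1:
                pair = (sequence[i], sequence[j])
                energy += contact.get(pair, contact.get(pair[::-1], 0.0))
    return energy

def brute_force_fold(sequence, contact):
    """Exhaustively evaluate all 4**(n-1) bitstrings and return the lowest-cost one."""
    n_bits = 2 * (len(sequence) - 1)
    best = min(product((0, 1), repeat=n_bits), key=lambda b: folding_cost(b, sequence, contact))
    return best, folding_cost(best, sequence, contact)

HP_CONTACT = {("H", "H"): -1.0}  # HP model: only hydrophobic-hydrophobic contacts score
best_bits, best_energy = brute_force_fold("HPPH", HP_CONTACT)
print(best_bits, best_energy, decode(best_bits))
```

Brute force grows as 4^(n-1) in the number of residues n, which is the exponential scaling that motivates quantum and heuristic optimizers.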

Circuit Implementation:

- Implement the BF-DCQO algorithm with parametrized quantum circuits

- Apply circuit pruning techniques to reduce quantum gate counts by eliminating small-angle gate operations

- Configure iterative bias-field updates to steer the system toward lower energy states

Execution and Optimization:

- Execute the quantum circuit on trapped-ion hardware

- Perform multiple iterations with updated parameters based on measurement outcomes

- Utilize the all-to-all connectivity of trapped-ion qubits to efficiently model long-range interactions in the protein chain

Post-Processing:

- Apply greedy local search algorithms to refine near-optimal quantum results

- Mitigate potential bit-flip and measurement errors through classical correction

- Validate obtained structures against known biological constraints
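The greedy post-processing step can be sketched as a steepest-descent bit-flip search: starting from a bitstring returned by the quantum device, repeatedly flip the single bit that lowers the cost most until no flip helps. The helper `greedy_bitflip` and the toy Hamming-distance cost in the usage example are illustrative assumptions; any cost function over bit lists, such as a lattice-folding energy, can be passed in.

```python
def greedy_bitflip(bits, cost_fn):
    """Steepest-descent local search over single bit flips; stops at a local minimum."""
    bits = list(bits)
    current = cost_fn(bits)
    while True:
        best_k, best_cost = None, current
        for k in range(len(bits)):
            bits[k] ^= 1            # trial flip
            c = cost_fn(bits)
            bits[k] ^= 1            # undo
            if c < best_cost:
                best_k, best_cost = k, c
        if best_k is None:          # no single flip improves the cost
            return bits, current
        bits[best_k] ^= 1
        current = best_cost

target = [1, 0, 1, 1]  # hypothetical optimum for the toy cost below
hamming = lambda b: sum(x != t for x, t in zip(b, target))
print(greedy_bitflip([0, 0, 0, 0], hamming))
```

On a rugged cost landscape this search only reaches a local minimum, which is why it is applied to near-optimal quantum samples rather than used on its own.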

Diagram 1: Quantum Protein Folding Workflow using BF-DCQO Algorithm. The process shows the integration of quantum and classical steps for structure prediction.

Quantum-Enhanced Protein-Ligand Docking

Quantum Docking Algorithms and Performance

Protein-ligand docking is a fundamental technique in structure-based drug design that predicts how small molecules interact with target proteins. Quantum algorithms offer novel approaches to overcome limitations of classical docking methods, particularly for simulating quantum mechanical processes such as covalent binding, metal coordination, and polarization effects [27] [32].

A novel quantum algorithm for protein-ligand docking site identification extends the protein lattice model to include protein-ligand interactions. This approach introduces quantum state labelling for interaction sites and implements an extended and modified Grover quantum search algorithm to search for docking sites [27]. The algorithm has been tested on both quantum simulators and real quantum computers, demonstrating effective identification of docking sites with high scalability for larger proteins as qubit availability increases [27].

Table 2: Quantum Docking Methodologies and Applications

| Method | Key Innovation | Interactions Modeled | Hardware Compatibility |
|---|---|---|---|
| Quantum Search Algorithm [27] | Extended Grover search with quantum state labeling | Hydrophobic, Hydrogen bonding | Current NISQ devices |
| Hybrid QM/MM with Attracting Cavities [32] | Multi-level quantum mechanical/molecular mechanical | Covalent binding, Metal coordination | Classical HPC with QM calculations |
| Folding-Docking-Affinity (FDA) Framework [33] | Integration of quantum folding with docking | General protein-ligand interactions | Hybrid quantum-classical |

The quantum docking algorithm represents protein and ligand interaction sites using quantum registers with one qubit for each type of interaction. For the most frequently used interactions—hydrophobic interactions and hydrogen bonding—each interaction site is represented by a tensor product of two qubits. The complete protein quantum state is given by the tensor product of its interaction sites, enabling comprehensive representation of potential binding configurations [27].
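A minimal sketch of this register layout, assuming one qubit flags hydrophobic character and the other flags hydrogen-bonding capability for each site, with the first site's qubits as the most significant bits. The helpers `site_register` and `protein_state` are hypothetical and build the basis state as an explicit vector with NumPy's `kron`, which is practical only for a handful of sites.

```python
import numpy as np

KET = {0: np.array([1.0, 0.0]), 1: np.array([0.0, 1.0])}  # |0> and |1>

def site_register(hydrophobic, hbond):
    """Two-qubit basis state |hydrophobic, hbond> for one interaction site."""
    return np.kron(KET[hydrophobic], KET[hbond])

def protein_state(sites):
    """Tensor product |p_1> (x) |p_2> (x) ... (x) |p_M> over (hydrophobic, hbond) flag pairs."""
    state = np.array([1.0])
    for hydrophobic, hbond in sites:
        state = np.kron(state, site_register(hydrophobic, hbond))
    return state

psi = protein_state([(1, 0), (0, 1)])  # hydrophobic site, then hydrogen-bonding site
print(psi.shape, np.argmax(psi))       # 2 sites -> 4 qubits -> 16 amplitudes
```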

Experimental Protocol: Quantum Search for Docking Sites

Materials and Requirements:

- Quantum Processing Unit: Quantum simulator or real quantum computer

- Classical Pre-processing: Protein and ligand structure preparation tools

- Interaction Parameters: Hydrophobic and hydrogen bonding potential definitions

Step-by-Step Workflow:

System Preparation and Quantum State Initialization:

- Prepare the protein structure and extend the protein lattice model to include protein interaction sites

- Represent each amino acid with a set of interaction sites forming an inner lattice

- Encode the positions of interaction sites using turn-based encoding, requiring two qubits per turn

- Initialize the protein quantum state as the tensor product of its interaction sites: $|P\rangle = |p_1\rangle \otimes |p_2\rangle \otimes \cdots \otimes |p_M\rangle$

- Initialize the ligand quantum state similarly: $|L\rangle = |l_1\rangle \otimes |l_2\rangle \otimes \cdots \otimes |l_N\rangle$

Superposition State Preparation:

- Transform the protein state to a protein superposition state according to the ligand size

- Segment the protein into parts comparable to the ligand and set protein interaction sites in superposition

- For the search, consider only the latest occurrence of each protein interaction site with similar properties to avoid duplicates

Quantum Search Implementation:

- Implement the extended Grover quantum search algorithm to identify potential docking sites

- Utilize quantum amplitude amplification to enhance the probability of measuring correct docking configurations

- Apply multiple iterations of the Grover operator based on the size of the search space
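The amplitude-amplification step can be illustrated with textbook Grover search on a simulated statevector. This is a minimal sketch, not the extended and modified algorithm of [27]: the oracle simply phase-flips a given set of marked basis states (in the docking setting these would be the encodings of compatible interaction-site matches), the diffusion operator reflects every amplitude about the mean, and the number of iterations defaults to ⌊(π/4)√(N/M)⌋ for N states and M marked states.

```python
import numpy as np

def grover_search(n_qubits, marked, n_iter=None):
    """Return measurement probabilities after Grover iterations on a uniform superposition."""
    N = 2 ** n_qubits
    psi = np.full(N, 1.0 / np.sqrt(N))  # Hadamards on every qubit
    idx = sorted(marked)
    if n_iter is None:
        n_iter = int(np.floor(np.pi / 4 * np.sqrt(N / len(idx))))
    for _ in range(n_iter):
        psi[idx] *= -1                  # oracle: phase flip on marked states
        psi = 2 * psi.mean() - psi      # diffusion: (2|s><s| - I) = inversion about the mean
    return np.abs(psi) ** 2

probs = grover_search(4, {5})
print(np.argmax(probs), round(probs[5], 4))
```

For 4 qubits and one marked state, three iterations raise the probability of measuring the marked state from 1/16 to about 0.96; running more iterations than the optimum rotates the state past the target and lowers it again.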

Measurement and Validation:

- Measure the quantum state to obtain probable docking configurations

- Calculate classical metrics such as root-mean-square deviation (RMSD) to validate predictions against experimental structures

- Analyze interaction energies for the identified binding poses
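For validation, docking poses are commonly compared with experimental ligand coordinates by RMSD computed in the receptor frame, without re-superposition, and a pose within about 2 Å is often counted as correct. The sketch assumes both coordinate arrays list the same atoms in the same order and does not handle symmetry-equivalent atoms; `pose_rmsd` is a hypothetical helper.

```python
import numpy as np

def pose_rmsd(pose, reference):
    """RMSD (same units as input) between matched atom coordinates, shape (n_atoms, 3), no fitting."""
    pose = np.asarray(pose, float)
    reference = np.asarray(reference, float)
    if pose.shape != reference.shape:
        raise ValueError("pose and reference must list the same atoms")
    return float(np.sqrt(np.mean(np.sum((pose - reference) ** 2, axis=1))))

ref_xyz = np.zeros((3, 3))                  # made-up reference ligand coordinates
shifted = ref_xyz + np.array([1.0, 0.0, 0.0])  # same pose translated by 1 A along x
print(pose_rmsd(shifted, ref_xyz))
```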

Diagram 2: Quantum Docking Workflow using Grover Search Algorithm. The process illustrates the quantum-enhanced identification of protein-ligand binding sites.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools for Quantum-Enhanced Simulations

| Tool/Reagent | Function/Purpose | Example Vendors/Platforms |
|---|---|---|
| Trapped-Ion Quantum Computers | Hardware platform with all-to-all connectivity ideal for protein folding problems | IonQ Forte, AQT |
| Superconducting Quantum Processors | Gate-based quantum computers for algorithm testing and development | IBM Quantum, Google Sycamore |
| Quantum Programming Frameworks | Software development kits for implementing quantum algorithms | Qiskit (IBM), Cirq (Google), PennyLane |
| Hybrid QM/MM Software | Classical software enabling quantum mechanical calculations for specific interactions | CHARMM with Gaussian interface, Attracting Cavities |
| Quantum Simulators | Classical emulation of quantum computers for algorithm validation | NVIDIA cuQuantum, Amazon Braket |
| Lattice Model Libraries | Pre-defined lattice structures for protein representation | Custom implementations (varies by research group) |
| Interaction Energy Parameters | Empirical potentials for amino acid and ligand interactions | Miyazawa-Jernigan matrix, AMBER force field |

Application to Strong Correlation Problems

Strong correlation problems in biological systems present particular challenges for classical computational methods. These problems arise when electrons in a molecular system exhibit strongly correlated behavior, making mean-field approximations ineffective and requiring exponentially scaling resources for exact solutions [28]. Quantum computers offer a natural platform for addressing these challenges through direct mapping of electronic structures to qubit systems.

In lattice models of protein folding, the analogous difficulty is strong coupling between residues: the energy of each contact depends on the positions of many other residues, and folding transitions are cooperative. The quantum folding algorithms described above are designed to navigate the resulting rugged energy landscape of protein folding funnels, where classical heuristics can become trapped in local minima [28]. The BF-DCQO algorithm's ability to handle higher-order binary optimization problems makes it particularly suited to these strongly correlated problems.

For protein-ligand docking, strong correlation effects are crucial in metal-binding sites, charge transfer complexes, and covalent inhibition mechanisms. The hybrid QM/MM approach with Attracting Cavities has demonstrated significant improvements over classical methods for metalloproteins, where accurate description of metal-ligand interactions requires proper treatment of strong electron correlations [32]. By applying density functional theory or semi-empirical quantum methods to the active site while treating the remainder of the protein with molecular mechanics, this approach achieves a balance between accuracy and computational feasibility.

Recent research has applied quantum computing to study the catalytic loop of the Zika virus NS3 helicase, demonstrating the potential for addressing biologically relevant strong correlation problems [28]. As quantum hardware continues to improve in qubit count, coherence times, and gate fidelities, these applications will expand to larger and more complex biological systems, potentially enabling breakthroughs in understanding enzymatic mechanisms and designing targeted therapies.

Quantum-enhanced protein folding and docking simulations have transitioned from theoretical concepts to experimentally demonstrated capabilities with tangible results. The recent successful folding of 12-amino-acid peptides on quantum hardware [30] [31] and the development of efficient quantum docking algorithms [27] mark significant milestones in the field. These advances demonstrate the potential of quantum computing to address the exponential complexity of biological structure prediction that has limited classical computational methods.

The integration of these quantum approaches with classical computational methods creates a powerful hybrid framework that leverages the strengths of both paradigms. As quantum hardware continues to scale—with roadmaps projecting 1,000+ qubit systems within the next few years [18]—and algorithmic efficiency improves, we anticipate rapid advancement in the size and complexity of biological problems that can be addressed. This progress will be particularly impactful for strong correlation problems in drug discovery, including metalloenzyme inhibition, covalent drug design, and allosteric modulation.

For researchers and drug development professionals, early engagement with quantum computational methods is advisable to build expertise and identify the most promising application areas within their domains. Strategic partnerships with quantum hardware and software providers can facilitate access to these rapidly evolving technologies. As the field progresses, quantum-enhanced simulations are poised to significantly accelerate drug discovery pipelines, reduce development costs, and enable the targeting of previously intractable biological systems.

Precise Electronic Structure Calculations for Drug-Target Interactions

The accurate prediction of drug-target interactions (DTIs) is a cornerstone of modern pharmaceutical research. Yet it remains a formidable challenge due to the quantum mechanical complexity of molecular systems. Precise electronic structure calculations are critical for understanding the interaction energies, binding affinities, and reaction pathways that govern drug efficacy at the atomic level. Traditional computational methods, including density functional theory (DFT) with standard approximate functionals, often struggle with strong electron correlation. This problem is prevalent in transition metal complexes, extended conjugated systems, and radical intermediates frequently encountered in pharmacological compounds. Strong correlation arises when the motion of one electron depends strongly on the positions of other electrons. In that regime, single-determinant descriptions such as Hartree-Fock or Kohn-Sham DFT with approximate functionals are insufficient for many biologically relevant systems.

Quantum computing offers a paradigm shift for tackling these challenges. Because qubits are themselves quantum systems, quantum processors can in principle represent molecular wavefunctions whose exact classical simulation is computationally intractable. The variational quantum eigensolver (VQE) and related algorithms have emerged as promising approaches for finding ground-state energies of molecular systems on noisy intermediate-scale quantum (NISQ) devices. This application note details protocols for leveraging quantum computational methods to advance electronic structure calculations for drug-target interactions, framed within the broader research context of solving strong correlation problems.

Background and Significance

The Strong Correlation Problem in Drug Discovery

Electron correlation is a fundamental phenomenon that serves as nature's "chemical glue," determining the structural and functional properties of molecular and solid-state systems [34]. The correlation energy is defined as the difference between the exact (nonrelativistic) energy of a system and its Hartree-Fock energy, E_corr = E_exact - E_HF. It is the error introduced when each electron is treated as moving in the average field of the others rather than responding to their instantaneous positions. In pharmaceutical contexts, strong electron correlations are particularly prevalent in:

- Metalloenzymes: Drug targets containing transition metal ions (e.g., zinc hydrolases, iron-containing cytochrome P450 enzymes).

- Extended conjugated systems: Aromatic rings and delocalized electron systems in many small-molecule drugs.

- Radical intermediates: Reactive species involved in drug metabolism and catalytic processes.

The rule of thumb is that the larger the correlation energy, the more likely quantum computers will provide a computational advantage over classical methods. Current research suggests that strong correlation effects are more likely in solids than in molecules, though significant correlation challenges exist in both domains [34].
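A standard toy model illustrates this rule of thumb: the half-filled two-site Hubbard model, with hopping t and on-site repulsion U. Both its exact ground-state energy and its restricted Hartree-Fock (RHF) energy are available in closed form, so E_corr = E_exact - E_HF can be computed directly. The sketch assumes the usual basis of the doubly occupied bonding and antibonding orbitals. It illustrates how correlation grows with U/t and is not a model of any drug-relevant molecule.

```python
import math

def two_site_hubbard(t: float, U: float):
    """Return (E_exact, E_RHF, E_corr) for the half-filled two-site Hubbard model."""
    # Ground-state block in the basis {|bonding^2>, |antibonding^2>}:
    #   [[-2t + U/2, U/2], [U/2, 2t + U/2]]
    a, d, b = -2 * t + U / 2, 2 * t + U / 2, U / 2
    e_exact = (a + d) / 2 - math.sqrt(((a - d) / 2) ** 2 + b ** 2)  # lower eigenvalue
    e_rhf = a  # restricted HF: both electrons in the bonding orbital
    return e_exact, e_rhf, e_exact - e_rhf

for u in (0.0, 2.0, 4.0, 8.0):
    e_exact, e_rhf, e_corr = two_site_hubbard(t=1.0, U=u)
    print(f"U/t = {u}: E_exact = {e_exact:+.4f}, E_RHF = {e_rhf:+.4f}, E_corr = {e_corr:+.4f}")
```

|E_corr| rises from 0 at U = 0 to roughly 0.83t at U = 4t and 2.47t at U = 8t. The reason is that the single-determinant RHF wavefunction cannot suppress double occupancy of a site, while the exact wavefunction can.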

Quantum Computing Approaches

Quantum computers leverage qubits, which exploit superposition and entanglement to represent and process quantum information in ways fundamentally different from classical bits [35]. This capability allows quantum computers to naturally simulate quantum mechanical systems. Research groups are actively exploring methods for computing electron correlation energy within the framework of density functional theory by mapping the problem onto quantum computing architectures [36]. The goal is to achieve improved accuracy, convergence, and scaling for large quantum systems relevant to pharmaceutical applications, such as predicting drug-target binding affinities.

Quantitative Data on Computational Methods

The table below summarizes key electronic structure methods, their applicability to correlated systems, and their computational scaling, highlighting where quantum computing can provide advantages.

Table 1: Comparison of Electronic Structure Methods for Drug-Target Interactions

| Method | Strong Correlation Capability | Classical Computational Scaling | Quantum Algorithm | Key Limitations |
|---|---|---|---|---|
| Density Functional Theory (DFT) | Poor with standard functionals | O(N³) | Quantum DFT | Inaccurate for strongly correlated systems; functional approximation error |
| Coupled Cluster (CCSD(T)) | Moderate | O(N⁷) | Quantum Phase Estimation | Prohibitive for large systems; "gold standard" for weakly correlated systems but expensive |
| Full Configuration Interaction (FCI) | Excellent | O(e^N) | Variational Quantum Eigensolver (VQE) | Only feasible for small molecules on classical computers |
| Quantum Monte Carlo | Good | O(N³)-O(N⁴) | - | Fermionic sign problem; statistical error |
| Hybrid Quantum-Classical (VQE) | Excellent (theoretical) | Depends on ansatz | VQE with UCCSD ansatz | Hardware noise limits circuit depth; available qubit count limits active-space size |

For drug-target interactions, binding energies typically range from 5-15 kcal/mol (∼0.22-0.65 eV), so predictive calculations require chemical accuracy (1 kcal/mol, or ∼0.043 eV). Strong electron correlation can contribute significantly to these interaction energies, particularly when transition metals or charge-transfer complexes are involved.
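The unit conversions above can be checked in a few lines, along with the reason a 1 kcal/mol threshold matters. The conversion constants are standard CODATA-derived values. The affinity estimate assumes that an energy error carries over directly into the binding free energy, with ΔG = RT ln K_d at 298.15 K.

```python
import math

HARTREE_TO_KCAL_PER_MOL = 627.5095  # 1 Hartree in kcal/mol
HARTREE_TO_EV = 27.211386           # 1 Hartree in eV
R_KCAL = 1.987204e-3                # gas constant, kcal/(mol K)

def kcal_to_ev(e_kcal: float) -> float:
    return e_kcal * HARTREE_TO_EV / HARTREE_TO_KCAL_PER_MOL

def kcal_to_hartree(e_kcal: float) -> float:
    return e_kcal / HARTREE_TO_KCAL_PER_MOL

def kd_fold_error(error_kcal: float, temperature_k: float = 298.15) -> float:
    """Multiplicative error in K_d implied by an error in ΔG, since K_d = exp(ΔG / RT)."""
    return math.exp(error_kcal / (R_KCAL * temperature_k))

print(f"5-15 kcal/mol = {kcal_to_ev(5):.3f}-{kcal_to_ev(15):.3f} eV")
print(f"1 kcal/mol = {kcal_to_ev(1):.4f} eV = {kcal_to_hartree(1) * 1000:.2f} mHartree")
print(f"1 kcal/mol error -> K_d off by a factor of ~{kd_fold_error(1):.1f}")
```

An error of 1 kcal/mol already shifts a predicted dissociation constant by roughly a factor of five. This is why chemical accuracy is the usual target.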

Table 2: Representative Molecular Systems in Drug Discovery with Correlation Challenges

| System Type | Example Target or System | Correlation Significance | Classical Computational Challenge |
|---|---|---|---|
| Transition Metal Complex | Zinc metalloproteases, HIV-1 integrase | Multireference character of d-electron systems | Standard DFT fails to predict correct spin states and binding energies |
| Conjugated Organic Molecules | Kinase inhibitors (e.g., imatinib) | Delocalized π-electron systems | Accurate correlation treatment needed for redox properties and excitation energies |
| Radical Enzymes | Cytochrome P450, ribonucleotide reductase | Open-shell electronic structures | Multiconfigurational character requires sophisticated wavefunction methods |
| Solid-State Formulations | Drug polymorphs, cocrystals | Periodic boundary conditions with correlation | Band gaps and cohesive energies poorly described by standard DFT |

Experimental Protocols

Protocol 1: VQE for Binding Energy Calculation

This protocol describes how to calculate the binding energy between a drug candidate and its protein target using a hybrid quantum-classical computational approach.

Materials and Setup

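- Quantum Processing Unit (QPU) or noisy quantum simulator with at least 12 qubits (under the Jordan-Wigner mapping, a 6e/6o active space needs 12 qubits and a 12e/12o space needs 24)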

- Classical optimizer (COBYLA, SPSA, or BFGS)

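- Quantum computing software with chemistry support (e.g., Qiskit Nature, PennyLane, or OpenFermion), paired with a classical quantum chemistry package such as PySCF for molecular integrals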

- Molecular structure files of drug and target binding pocket

Step-by-Step Procedure

Active Space Selection:

- Identify the key molecular orbitals involved in the drug-target interaction.

- For a typical binding site, select 6-12 electrons in 6-12 orbitals (6e/6o to 12e/12o active space).

- This selection reduces the problem size while retaining essential correlation effects.
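The qubit and configuration counts behind this choice can be estimated directly. The sketch assumes a closed-shell active space (equal numbers of alpha and beta electrons) and the Jordan-Wigner convention of one qubit per spin-orbital. `active_space_cost` is a hypothetical helper, not a library function.

```python
from math import comb

def active_space_cost(n_electrons: int, n_orbitals: int):
    """Hypothetical helper: (qubits under Jordan-Wigner, M_s = 0 determinants) for a
    closed-shell active space of n_electrons in n_orbitals spatial orbitals."""
    if n_electrons % 2 or n_electrons > 2 * n_orbitals:
        raise ValueError("sketch assumes an even electron count that fits the orbitals")
    n_alpha = n_beta = n_electrons // 2
    qubits = 2 * n_orbitals  # one qubit per spin-orbital
    determinants = comb(n_orbitals, n_alpha) * comb(n_orbitals, n_beta)
    return qubits, determinants

for ne, no in [(6, 6), (8, 8), (10, 10), (12, 12)]:
    q, d = active_space_cost(ne, no)
    print(f"{ne}e/{no}o: {q} qubits, {d:,} determinants")
# 6e/6o: 12 qubits, 400 determinants
# 8e/8o: 16 qubits, 4,900 determinants
# 10e/10o: 20 qubits, 63,504 determinants
# 12e/12o: 24 qubits, 853,776 determinants
```

The qubit count grows linearly with the number of active orbitals, while the determinant count grows combinatorially. Active-space selection is therefore the main lever on problem size.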

Qubit Mapping:

- Transform the electronic Hamiltonian to qubit representation using Jordan-Wigner or Bravyi-Kitaev transformation.

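- The Bravyi-Kitaev transformation maps each fermionic operator to Pauli strings acting on O(log N) qubits, versus O(N) for Jordan-Wigner. This can reduce circuit depth for larger molecular systems.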
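The Jordan-Wigner rule can be sketched in plain Python. Under it, a_j† maps to Z on every qubit below j, (X - iY)/2 on qubit j, and identity on the qubits above. Operators are stored as dictionaries from Pauli strings (qubit 0 leftmost) to complex coefficients, with |1> denoting an occupied spin-orbital. These conventions are assumptions of the sketch. Production code would use a library routine such as OpenFermion's `jordan_wigner` or `bravyi_kitaev`.

```python
# Product table for single-qubit Paulis: (left, right) -> (phase, result)
PAULI_MUL = {
    ("I", "I"): (1, "I"), ("I", "X"): (1, "X"), ("I", "Y"): (1, "Y"), ("I", "Z"): (1, "Z"),
    ("X", "I"): (1, "X"), ("X", "X"): (1, "I"), ("X", "Y"): (1j, "Z"), ("X", "Z"): (-1j, "Y"),
    ("Y", "I"): (1, "Y"), ("Y", "X"): (-1j, "Z"), ("Y", "Y"): (1, "I"), ("Y", "Z"): (1j, "X"),
    ("Z", "I"): (1, "Z"), ("Z", "X"): (1j, "Y"), ("Z", "Y"): (-1j, "X"), ("Z", "Z"): (1, "I"),
}

def _clean(op, tol=1e-12):
    """Drop terms whose coefficients cancelled to (numerically) zero."""
    return {s: c for s, c in op.items() if abs(c) > tol}

def mul_ops(a, b):
    """Product of two operators given as {pauli_string: coefficient}."""
    out = {}
    for sa, ca in a.items():
        for sb, cb in b.items():
            phase, chars = 1, []
            for pa, pb in zip(sa, sb):
                ph, r = PAULI_MUL[(pa, pb)]
                phase *= ph
                chars.append(r)
            key = "".join(chars)
            out[key] = out.get(key, 0) + phase * ca * cb
    return _clean(out)

def add_ops(a, b):
    out = dict(a)
    for s, c in b.items():
        out[s] = out.get(s, 0) + c
    return _clean(out)

def jw_ladder(j, n, dagger):
    """Jordan-Wigner image of a_j^dagger (dagger=True) or a_j (dagger=False) on n qubits."""
    z_string, tail = "Z" * j, "I" * (n - j - 1)
    y_coeff = -0.5j if dagger else 0.5j  # (X - iY)/2 creates, (X + iY)/2 annihilates
    return {z_string + "X" + tail: 0.5, z_string + "Y" + tail: y_coeff}

# Number operator n_1 = a_1^dagger a_1 on 3 qubits
number_op = mul_ops(jw_ladder(1, 3, True), jw_ladder(1, 3, False))
# Hopping term a_0^dagger a_1 + a_1^dagger a_0 on 2 qubits
hopping = add_ops(
    mul_ops(jw_ladder(0, 2, True), jw_ladder(1, 2, False)),
    mul_ops(jw_ladder(1, 2, True), jw_ladder(0, 2, False)),
)
print(number_op)
print(hopping)
```

The first product reduces to (I - Z_1)/2, the occupation-number operator. The second reduces to (X_0X_1 + Y_0Y_1)/2, the familiar qubit form of a hopping term. Both are the building blocks of the qubit Hamiltonian that VQE measures.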

Ansatz Preparation:

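- Prepare the Unitary Coupled Cluster Singles and Doubles (UCCSD) ansatz:

$$U(\boldsymbol{\theta}) = \exp\left(\hat{T}(\boldsymbol{\theta}) - \hat{T}^{\dagger}(\boldsymbol{\theta})\right), \qquad |\psi(\boldsymbol{\theta})\rangle = U(\boldsymbol{\theta})\,|\Phi_{\mathrm{HF}}\rangle$$

where $\hat{T} = \hat{T}_1 + \hat{T}_2$ is the cluster operator containing single and double excitations, $\boldsymbol{\theta}$ are the variational parameters (cluster amplitudes), and $|\Phi_{\mathrm{HF}}\rangle$ is the Hartree-Fock reference state.

- For hardware-efficient approaches, use a layered circuit ansatz with alternating rotation and entanglement blocks.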

Variational Optimization:

- Execute the parameterized quantum circuit on the QPU to generate the trial wavefunction.

- Measure the expectation value of the qubit-mapped Hamiltonian.

- Use the classical optimizer to minimize the energy with respect to the parameters θ.

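- Repeat until the energy converges (typically 100-500 iterations). In the absence of noise, the variational principle guarantees that the converged value is an upper bound on the exact ground-state energy within the chosen active space and basis.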
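The loop above can be emulated classically for a toy problem to show its moving parts. This sketch makes several assumptions that differ from a hardware run:

- The Hamiltonian is the 2x2 ground-state block of the half-filled two-site Hubbard model, in the basis {doubly occupied bonding orbital (the HF reference), doubly occupied antibonding orbital}.
- The one-parameter state cos θ|Φ_HF> + sin θ|Φ_D> stands in for a single UCC double excitation.
- Expectation values are computed exactly instead of being estimated from measurement shots.
- Plain gradient descent with parameter-shift gradients stands in for COBYLA, SPSA, or BFGS.

```python
import math

def hamiltonian(t: float, U: float):
    """2x2 ground-state block of the half-filled two-site Hubbard model
    in the basis {|HF> = bonding^2, |D> = antibonding^2} (toy stand-in)."""
    return [[-2 * t + U / 2, U / 2],
            [U / 2, 2 * t + U / 2]]

def energy(theta: float, h) -> float:
    """<psi(theta)|H|psi(theta)> for psi(theta) = cos(theta)|HF> + sin(theta)|D>."""
    c, s = math.cos(theta), math.sin(theta)
    return h[0][0] * c * c + 2 * h[0][1] * c * s + h[1][1] * s * s

def gradient(theta: float, h) -> float:
    """Parameter-shift gradient: E(theta) = A + B cos(2 theta) + C sin(2 theta),
    so dE/dtheta = E(theta + pi/4) - E(theta - pi/4) exactly."""
    return energy(theta + math.pi / 4, h) - energy(theta - math.pi / 4, h)

def vqe(h, theta=0.0, lr=0.05, tol=1e-12, max_iter=1000):
    """Minimize the energy starting from the HF reference (theta = 0)."""
    e_old = energy(theta, h)
    for iteration in range(1, max_iter + 1):
        theta -= lr * gradient(theta, h)   # classical optimizer update
        e_new = energy(theta, h)           # on hardware: estimated from measurements
        if abs(e_new - e_old) < tol:
            break
        e_old = e_new
    return e_new, theta, iteration

h = hamiltonian(t=1.0, U=4.0)
e_vqe, theta_opt, n_iter = vqe(h)
e_exact = (4.0 - math.sqrt(4.0 ** 2 + 16 * 1.0 ** 2)) / 2  # closed-form ground state
print(f"VQE: {e_vqe:.6f} after {n_iter} iterations, exact: {e_exact:.6f}")
```

With noise-free energies, the loop recovers the exact value because this ansatz spans the whole two-determinant space. On hardware, shot noise and gate errors make each energy a statistical estimate. This is why noise-robust optimizers such as SPSA are often preferred there.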

Binding Energy Calculation:

- Calculate the total electronic energy for the drug-target complex (E_complex).

- Calculate energies for the drug (E_drug) and target (E_target) separately.

- Compute the binding energy: ΔE_bind = E_complex - (E_drug + E_target).

- Apply counterpoise correction to account for basis set superposition error.
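The binding-energy arithmetic, including the Boys-Bernardi counterpoise correction, is sketched below. In the counterpoise scheme, each fragment is recomputed at its in-complex geometry using the full complex basis, with ghost functions placed on the partner's atoms. The correction therefore requires two extra energy evaluations. `binding_energies` is a hypothetical helper. The energies in the usage lines are hypothetical placeholders in Hartree, not computed results.

```python
HARTREE_TO_KCAL_PER_MOL = 627.5095

def binding_energies(e_complex, e_drug, e_target, e_drug_ghost, e_target_ghost):
    """Return (uncorrected, counterpoise-corrected, BSSE estimate) in Hartree.

    e_drug, e_target:             fragments at their in-complex geometry, own basis sets
    e_drug_ghost, e_target_ghost: same geometries, full complex basis (ghost atoms on partner)
    """
    de_raw = e_complex - (e_drug + e_target)
    de_cp = e_complex - (e_drug_ghost + e_target_ghost)
    return de_raw, de_cp, de_cp - de_raw

# Hypothetical placeholder energies (Hartree), for illustration only
raw, cp, bsse = binding_energies(-1.0200, -0.5050, -0.5000, -0.5060, -0.5005)
for label, value in (("uncorrected", raw), ("counterpoise", cp), ("BSSE", bsse)):
    print(f"{label:>12}: {value * HARTREE_TO_KCAL_PER_MOL:+.2f} kcal/mol")
```

Because ghost functions can only lower each fragment's energy, the BSSE estimate is normally positive. The counterpoise-corrected binding energy is therefore less negative than the uncorrected one.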

Error Mitigation:

- Implement readout error mitigation using matrix inversion techniques.

- Use zero-noise extrapolation to estimate the energy in the absence of hardware noise.
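Both mitigation steps reduce to small linear-algebra operations, sketched below for the simplest cases. The readout part assumes a single qubit with independently calibrated flip probabilities `p10` (prepared 0, read 1) and `p01` (prepared 1, read 0). The extrapolation part uses Richardson (polynomial) extrapolation through energies measured at amplified noise scale factors. The numbers in the usage lines are synthetic. Libraries such as Mitiq provide tested implementations of zero-noise extrapolation.

```python
def mitigate_readout(p_measured, p10, p01):
    """Invert the 2x2 confusion matrix M, where p_measured = M @ p_true and
    M = [[1 - p10, p01], [p10, 1 - p01]] (columns: prepared 0, prepared 1)."""
    det = (1 - p10) * (1 - p01) - p01 * p10
    m0, m1 = p_measured
    p0 = ((1 - p01) * m0 - p01 * m1) / det
    p1 = (-p10 * m0 + (1 - p10) * m1) / det
    return p0, p1  # can go slightly negative with finite shots; clip or use least squares

def richardson_zero_noise(scales, energies):
    """Evaluate the interpolating polynomial through (scale, energy) points at scale = 0."""
    total = 0.0
    for k, (lam_k, e_k) in enumerate(zip(scales, energies)):
        weight = 1.0
        for j, lam_j in enumerate(scales):
            if j != k:
                weight *= lam_j / (lam_j - lam_k)  # Lagrange basis polynomial at 0
        total += weight * e_k
    return total

# Synthetic readout example: true distribution (0.7, 0.3) seen through the confusion matrix
print(mitigate_readout((0.689, 0.311), p10=0.05, p01=0.08))
# Synthetic energies following E(lam) = -1.0 + 0.2*lam - 0.01*lam**2 at lam = 1, 2, 3
print(richardson_zero_noise([1.0, 2.0, 3.0], [-0.81, -0.64, -0.49]))
```

Three scale factors fit a quadratic, so the synthetic example extrapolates back to -1.0 exactly. Real data are noisier, and higher-order fits amplify statistical error, so the number of scale factors is a trade-off.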

Protocol 2: Quantum Machine Learning for DTI Prediction

This protocol combines quantum computing with machine learning to predict drug-target interactions based on electronic structure features.

Materials and Setup

- Hybrid quantum-classical neural network framework

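- Dataset of known drug-target pairs with binding affinities (or binary interaction labels derived from them, for the classification metrics used in evaluation)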

- Molecular featurization tools (RDKit or similar)

- Quantum simulator with at least 8 qubits

Step-by-Step Procedure

Data Preparation:

- Encode molecular structures of drugs and targets into feature vectors.

- Use molecular fingerprints for drugs and position-specific scoring matrices (PSSM) for targets.

- Split data into training, validation, and test sets (70/15/15 ratio).

Quantum Circuit Design:

- Design a parameterized quantum circuit (PQC) as a feature map or classification layer.

- Use a hardware-efficient ansatz with rotation gates and entangling layers.
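A parameterized circuit of this kind can be emulated with a few lines of statevector code. The sketch makes several assumptions:

- Angle encoding uses one RY(x) per feature, with features already scaled to radians.
- The hardware-efficient ansatz consists only of RY rotations and a linear chain of CNOTs.
- The model score is <Z> measured on qubit 0.

All function names are hypothetical. Frameworks such as PennyLane or Qiskit would replace the hand-written simulator.

```python
import math

def apply_ry(state, qubit, theta, n):
    """Apply RY(theta) to `qubit` (qubit 0 = most significant bit) of a real statevector."""
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    bit = 1 << (n - 1 - qubit)
    new = state[:]
    for i in range(len(state)):
        if not i & bit:
            j = i | bit
            new[i] = c * state[i] - s * state[j]
            new[j] = s * state[i] + c * state[j]
    return new

def apply_cnot(state, control, target, n):
    cbit, tbit = 1 << (n - 1 - control), 1 << (n - 1 - target)
    new = state[:]
    for i in range(len(state)):
        if i & cbit and not i & tbit:  # swap amplitudes where control = 1
            new[i], new[i | tbit] = state[i | tbit], state[i]
    return new

def expval_z(state, qubit, n):
    # RY and CNOT keep amplitudes real, so a * a is the probability
    bit = 1 << (n - 1 - qubit)
    return sum((-1.0 if i & bit else 1.0) * a * a for i, a in enumerate(state))

def pqc_score(features, weights):
    """Angle-encode `features`, apply len(weights) layers of RY + CNOT chain, return <Z_0>."""
    n = len(features)
    state = [1.0] + [0.0] * (2 ** n - 1)       # |0...0>
    for q, x in enumerate(features):            # feature map
        state = apply_ry(state, q, x, n)
    for layer in weights:                       # trainable layers, one angle per qubit
        for q, w in enumerate(layer):
            state = apply_ry(state, q, w, n)
        for q in range(n - 1):
            state = apply_cnot(state, q, q + 1, n)
    return expval_z(state, 0, n)

score = pqc_score([0.3, 1.1, 2.0], [[0.2, -0.4, 0.1], [0.5, 0.0, -0.3]])
print(f"<Z_0> = {score:.4f}, P(qubit 0 = 1) = {(1 - score) / 2:.4f}")
```

Reading the probability of measuring qubit 0 in |1> as the predicted interaction probability is one simple convention. A trainable classical output layer is a common alternative.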

Hybrid Model Training:

- Train the model using stochastic gradient descent or quantum natural gradient.

- Employ mini-batching for large datasets to manage computational cost.

- Monitor validation loss to prevent overfitting.
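The training step can be illustrated with a deliberately tiny model, so that each piece is visible: parameter-shift gradients, mini-batch updates, and early stopping on validation loss. Everything here is an assumption made for illustration:

- The model is a one-qubit circuit RY(x) followed by RY(w). Its expectation <Z> = cos(x + w) is evaluated analytically rather than on a simulator or QPU.
- The dataset is synthetic, with labels of ±1.
- The loss is mean squared error.

```python
import math
import random

def model(x, w):
    """<Z> after RY(x) then RY(w) on |0>, which equals cos(x + w)."""
    return math.cos(x + w)

def shift_grad(x, w):
    """Parameter-shift rule for an RY gate: (f(w + pi/2) - f(w - pi/2)) / 2."""
    return (model(x, w + math.pi / 2) - model(x, w - math.pi / 2)) / 2

def mse(data, w):
    return sum((model(x, w) - y) ** 2 for x, y in data) / len(data)

def train(train_set, val_set, w=0.0, lr=0.05, batch_size=8, max_epochs=100, patience=5, seed=0):
    rng, data = random.Random(seed), list(train_set)
    best_w, best_val, stale = w, mse(val_set, w), 0
    for _ in range(max_epochs):
        rng.shuffle(data)
        for i in range(0, len(data), batch_size):
            batch = data[i:i + batch_size]
            # Chain rule: d/dw (f - y)^2 = 2 (f - y) df/dw, with df/dw from the shift rule
            g = sum(2 * (model(x, w) - y) * shift_grad(x, w) for x, y in batch) / len(batch)
            w -= lr * g
        val = mse(val_set, w)
        if val < best_val - 1e-9:
            best_w, best_val, stale = w, val, 0
        else:
            stale += 1
            if stale >= patience:  # stop when validation loss stops improving
                break
    return best_w, best_val

# Synthetic data: label +1 where cos(x + 0.5) >= 0, else -1
xs = [2 * math.pi * k / 120 for k in range(120)]
labelled = [(x, 1.0 if math.cos(x + 0.5) >= 0 else -1.0) for x in xs]
train_set = [p for k, p in enumerate(labelled) if k % 4]
val_set = [p for k, p in enumerate(labelled) if k % 4 == 0]
w_fit, val_loss = train(train_set, val_set)
print(f"w = {w_fit:.3f}, validation MSE = {val_loss:.3f} (initial {mse(val_set, 0.0):.3f})")
```

The fitted weight should land near 0.5, the shift used to generate the labels. On hardware, each `model` call would become a batch of circuit executions, which is why mini-batching matters for cost.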

Model Evaluation:

- Evaluate performance on the test set using AUC-ROC, precision-recall curves, and the Matthews correlation coefficient.

- Compare against classical baseline models (random forests, neural networks).
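For reference, the Matthews correlation coefficient and ROC AUC can be computed from first principles as below. This is useful when checking a hybrid model's reported scores. The sketch assumes binary labels (1 = interacting, 0 = not). It computes AUC as the probability that a randomly chosen positive outranks a randomly chosen negative. The labels and scores in the usage lines are toy values. In practice, `sklearn.metrics.matthews_corrcoef` and `sklearn.metrics.roc_auc_score` provide the same quantities.

```python
import math

def mcc(y_true, y_pred):
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == 1 and p == 1 for t, p in pairs)
    tn = sum(t == 0 and p == 0 for t, p in pairs)
    fp = sum(t == 0 and p == 1 for t, p in pairs)
    fn = sum(t == 1 and p == 0 for t, p in pairs)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return (tp * tn - fp * fn) / denom if denom else 0.0  # 0 by convention if undefined

def roc_auc(y_true, scores):
    """P(score of random positive > score of random negative), ties counted as 1/2."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg)
    return wins / (len(pos) * len(neg))

# Toy labels and model scores, for illustration only
y_true = [1, 1, 0, 0, 1, 0]
scores = [0.9, 0.4, 0.6, 0.2, 0.8, 0.1]
y_pred = [int(s >= 0.5) for s in scores]  # threshold scores at 0.5
print(mcc(y_true, y_pred), roc_auc(y_true, scores))
```

MCC uses all four confusion-matrix cells, so it stays informative when interacting pairs are rare, which is typical of DTI datasets. AUC, by contrast, is threshold-free.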

Visualization of Workflows

The following diagrams illustrate key experimental workflows and logical relationships in quantum electronic structure calculations for drug-target interactions.

Quantum DTI Calculation Workflow

Diagram 1: Quantum DTI Calculation Workflow. This diagram illustrates the complete workflow for calculating drug-target binding energies using quantum algorithms.

VQE Optimization Process

Diagram 2: VQE Optimization Process. This diagram shows the hybrid quantum-classical feedback loop in the Variational Quantum Eigensolver algorithm.

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools for Quantum Electronic Structure Calculations

| Item | Function | Example Tools/Platforms |
|---|---|---|
| Quantum Computing Frameworks | Provides tools for quantum algorithm development and execution | Qiskit (IBM), Cirq (Google), PennyLane (Xanadu) [37] [35] |
| Quantum Chemistry Packages | Performs electronic structure calculations and active space selection | PySCF, OpenFermion, Psi4, Gaussian |
| Classical Ab Initio Codes | Generates reference data for benchmarking quantum algorithm performance | NWChem, ORCA, Molpro, VASP |
| Molecular Visualization Software | Prepares and analyzes molecular structures for quantum calculation | PyMOL, VMD, Chimera, RDKit |
| Error Mitigation Tools | Reduces the impact of noise on current quantum hardware | Mitiq, Qiskit Ignis (now deprecated), zero-noise extrapolation (technique) |
| Quantum Simulators | Emulates quantum computers for algorithm development and testing | Qiskit Aer, BlueQubit emulators [35], QuEST |