Quantum Computing for Molecular Ground States: From Theory to Drug Discovery Applications

Abstract

This article provides a comprehensive overview of how quantum computing is revolutionizing the search for molecular Hamiltonian ground states, a cornerstone of accurate quantum chemistry. Aimed at researchers, scientists, and drug development professionals, it explores the foundational principles that give quantum computers a potential edge, details cutting-edge hybrid algorithms already in use on current hardware, and discusses strategies to overcome noise and errors. Furthermore, it examines the emerging evidence of verifiable quantum advantage and compares quantum approaches with classical methods, concluding with an analysis of the transformative future impact on biomedical research and therapeutic discovery.

The Quantum Promise: Why Qubits Can Solve Intractable Chemistry Problems

The determination of the molecular ground state—the lowest energy state of a molecule's electrons—represents one of the most fundamental challenges in quantum chemistry and materials science. This ground state energy and its associated wavefunction govern molecular structure, stability, reactivity, and physical properties, making its accurate computation essential for drug discovery, materials design, and chemical reaction modeling [1]. The molecular Hamiltonian, which encapsulates the total energy of the electrons and nuclei within a molecule, serves as the starting point for this quantum mechanical description [1].

In the context of quantum computing, the molecular Hamiltonian ground state problem has emerged as a potential benchmark application where quantum algorithms may demonstrate significant advantage over classical computational methods. The search for quantum advantage in this domain represents an active frontier of research, bridging quantum chemistry, computational physics, and quantum information science [2]. This technical guide examines the core challenge from both theoretical and computational perspectives, with particular emphasis on emerging quantum approaches.

Theoretical Foundation: The Molecular Hamiltonian

Components of the Coulomb Hamiltonian

The full molecular Hamiltonian, often referred to as the Coulomb Hamiltonian, comprises five distinct physical contributions that collectively describe the energy landscape of the molecular system [1]:

- Nuclear kinetic energy: ( \hat{T}_n = -\sum_i \frac{\hbar^2}{2M_i}\nabla_{\mathbf{R}_i}^2 )

- Electronic kinetic energy: ( \hat{T}_e = -\sum_i \frac{\hbar^2}{2m_e}\nabla_{\mathbf{r}_i}^2 )

- Electron-nucleus attraction: ( \hat{U}_{en} = -\sum_i \sum_j \frac{Z_i e^2}{4\pi \varepsilon_0 |\mathbf{R}_i - \mathbf{r}_j|} )

- Electron-electron repulsion: ( \hat{U}_{ee} = \frac{1}{2}\sum_i \sum_{j \neq i} \frac{e^2}{4\pi \varepsilon_0 |\mathbf{r}_i - \mathbf{r}_j|} )

- Nuclear-nuclear repulsion: ( \hat{U}_{nn} = \frac{1}{2}\sum_i \sum_{j \neq i} \frac{Z_i Z_j e^2}{4\pi \varepsilon_0 |\mathbf{R}_i - \mathbf{R}_j|} )

The complexity arises from the coupled nature of these terms, particularly the electron-electron repulsion that creates a many-body problem requiring approximate solutions for all but the smallest molecular systems.

The Born-Oppenheimer Approximation

Practical computation almost universally employs the Born-Oppenheimer approximation, which separates nuclear and electronic motion based on the large mass disparity between nuclei and electrons [1]. This simplification allows chemists to focus on the electronic Hamiltonian with fixed nuclear positions, generating potential energy surfaces that govern nuclear motion. The electronic Hamiltonian within this framework becomes:

( \hat{H}_{\text{electronic}} = \hat{T}_e + \hat{U}_{en} + \hat{U}_{ee} )

where the nuclear-nuclear repulsion term ( \hat{U}_{nn} ) adds a constant energy offset for fixed nuclear configurations.
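As a small worked illustration of that constant offset, the sketch below evaluates the nuclear-nuclear repulsion for fixed point charges in atomic units, where the prefactor ( e^2 / 4\pi\varepsilon_0 ) equals 1. The function name and the H₂ geometry (1.4 bohr) are assumptions chosen for illustration, not values taken from the article.

```python
import math

def nuclear_repulsion(charges, positions):
    """U_nn in Hartree for point nuclei, using atomic units (e = 4*pi*eps0 = 1)."""
    energy = 0.0
    for i in range(len(charges)):
        # Summing over j > i counts each pair once, which replaces the 1/2 prefactor.
        for j in range(i + 1, len(charges)):
            distance = math.dist(positions[i], positions[j])
            energy += charges[i] * charges[j] / distance
    return energy

# Assumed geometry: H2 with the two protons 1.4 bohr apart.
h2_charges = [1, 1]
h2_positions = [(0.0, 0.0, 0.0), (0.0, 0.0, 1.4)]
e_nn = nuclear_repulsion(h2_charges, h2_positions)
print(f"U_nn(H2, R = 1.4 bohr) = {e_nn:.6f} Ha")
```

Because the nuclei are frozen, this number is computed once per geometry and simply added to the electronic energy.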

Classical Computational Approaches

Hierarchy of Methods

Classical computational chemistry employs a spectrum of methods balancing accuracy and computational cost, as summarized in Table 1.

Table 1: Classical Computational Methods for Molecular Ground State Determination

| Method | Key Approach | Scaling Complexity | Key Limitations |
|---|---|---|---|
| Hartree-Fock (HF) | Mean-field approximation | ( \mathcal{O}(N^4) ) | Neglects electron correlation |
| Configuration Interaction (CI) | Linear expansion in Slater determinants | ( \mathcal{O}(N^{6-10}) ) | Size inconsistency; factorial scaling |
| Coupled Cluster (CC) | Exponential ansatz (e.g., CCSD(T)) | ( \mathcal{O}(N^7) ) for CCSD(T) | High polynomial scaling |
| Full CI (FCI) | Exact solution within basis set | Factorial in electrons/orbitals | Limited to very small systems |
| Density Functional Theory (DFT) | Electron density functional | ( \mathcal{O}(N^3) ) | Functional approximation error |
| Quantum Monte Carlo (QMC) | Stochastic sampling | ( \mathcal{O}(N^{3-4}) ) | Fermionic sign problem |
| DMRG/MPS | Tensor network compression | ( \mathcal{O}(N) ) for 1D systems | Accuracy depends on entanglement |

The Full Configuration Interaction Limit

Full Configuration Interaction (FCI) represents the exact numerical solution of the electronic Schrödinger equation within a given one-electron basis set [3]. The FCI wavefunction is expressed as a linear combination of all possible Slater determinants:

( \Psi_{\text{FCI}} = c_0 |\Psi_0\rangle + \sum_{i,a} c_i^a |\Psi_i^a\rangle + \sum_{i>j,a>b} c_{ij}^{ab} |\Psi_{ij}^{ab}\rangle + \cdots )

where ( |\Psi_0\rangle ) is the Hartree-Fock determinant, ( |\Psi_i^a\rangle ) are singly-excited determinants, and higher terms include up to N-tuple excitations for an N-electron system [3]. While FCI provides the benchmark against which all approximate methods are judged, its factorial scaling with system size limits practical application to small molecules with minimal basis sets.
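To make the growth of the expansion concrete, the sketch below counts determinants by excitation level for N electrons in M spin orbitals. It assumes every way of moving k electrons from occupied to virtual spin orbitals is allowed, ignoring spin and spatial symmetry, so the counts are an upper bound for illustration rather than the size of a symmetry-adapted calculation.

```python
from math import comb

def excitation_counts(n_electrons, n_spin_orbitals):
    """Number of k-fold excited determinants for k = 0 .. max excitation level."""
    n_virtual = n_spin_orbitals - n_electrons
    max_level = min(n_electrons, n_virtual)
    # Choose k occupied spin orbitals to empty and k virtual ones to fill.
    return [comb(n_electrons, k) * comb(n_virtual, k) for k in range(max_level + 1)]

counts = excitation_counts(n_electrons=4, n_spin_orbitals=8)
total = sum(counts)
print("per excitation level:", counts)
print("all determinants:", total)

# The total equals the binomial coefficient C(M, N), which grows combinatorially.
for n, m in [(4, 8), (10, 20), (20, 40), (30, 60)]:
    print(f"N={n:2d}, M={m:2d}: {comb(m, n):.3e} determinants")
```

Truncating the list after the first three entries corresponds to a singles-and-doubles expansion, which is why truncated CI is so much cheaper than FCI.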

The Challenge of Strong Correlation

Particularly challenging cases for classical methods include systems with strong electron correlation, such as open-shell molecules, transition metal complexes (e.g., iron-sulfur clusters in nitrogenase), and bond dissociation processes [3] [2]. In these scenarios, single-reference methods like Hartree-Fock and standard coupled cluster approximations break down, requiring more sophisticated multi-configurational approaches such as Complete Active Space Self-Consistent Field (CASSCF) methods.

Quantum Computing Approaches

Quantum Algorithm Frameworks

Quantum computing offers potentially transformative approaches to the molecular ground state problem, with several algorithmic frameworks currently under development, as summarized in Table 2.

Table 2: Quantum Algorithms for Molecular Ground State Determination

| Algorithm | Key Principle | Resource Requirements | Current Status |
|---|---|---|---|
| Variational Quantum Eigensolver (VQE) | Hybrid quantum-classical optimization | Shallow circuits; noise-resistant | Demonstrated on small molecules [4] [5] [6] |
| Quantum Phase Estimation (QPE) | Coherent energy measurement | Deep circuits; error correction | Fault-tolerant requirement |
| Quantum Imaginary Time Evolution (QITE) | Non-unitary evolution via parameterization | Moderate circuit depth | Experimental demonstrations [7] [8] |
| Dissipative Lindblad Dynamics | Open quantum systems approach | State preparation via dissipation | Theoretical proposal [9] |
| Quantum Annealing | Adiabatic ground state preparation | Specialized hardware | Hamiltonian reduction applications [3] |

The VQE Framework and Enhancements

The Variational Quantum Eigensolver (VQE) has emerged as a leading algorithm for the Noisy Intermediate-Scale Quantum (NISQ) era due to its relative resilience to noise and minimal quantum resource requirements [4] [6]. VQE employs a hybrid quantum-classical approach where:

- A parameterized quantum circuit (ansatz) prepares trial wavefunctions on a quantum processor

- The expectation value of the molecular Hamiltonian is measured

- A classical optimizer adjusts circuit parameters to minimize the energy
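The three steps above can be sketched on a single simulated qubit. Everything here is a toy assumption: the Hamiltonian H = 1.0·Z + 0.5·X, the one-parameter ansatz Ry(θ)|0⟩, the step size, and the use of exact expectation values instead of sampled measurements. The gradient uses the parameter-shift rule, which is exact for this ansatz.

```python
import math

COEFFS = {"Z": 1.0, "X": 0.5}  # hypothetical toy Hamiltonian H = 1.0*Z + 0.5*X

def prepare_state(theta):
    """Ansatz Ry(theta)|0> = cos(theta/2)|0> + sin(theta/2)|1> (real amplitudes)."""
    return math.cos(theta / 2), math.sin(theta / 2)

def pauli_expectation(state, pauli):
    a, b = state
    if pauli == "Z":
        return a * a - b * b
    if pauli == "X":
        return 2 * a * b
    raise ValueError(f"unsupported Pauli: {pauli}")

def energy(theta):
    """Weighted sum of Pauli expectation values, as a processor would report it."""
    state = prepare_state(theta)
    return sum(c * pauli_expectation(state, p) for p, c in COEFFS.items())

def parameter_shift_gradient(theta):
    return 0.5 * (energy(theta + math.pi / 2) - energy(theta - math.pi / 2))

theta = 0.1            # arbitrary starting parameter
learning_rate = 0.4    # assumed step size for plain gradient descent
for _ in range(200):
    theta -= learning_rate * parameter_shift_gradient(theta)

vqe_energy = energy(theta)
exact_energy = -math.sqrt(1.0**2 + 0.5**2)  # lowest eigenvalue of the 2x2 matrix
print(f"VQE: {vqe_energy:.6f}  exact: {exact_energy:.6f}")
```

On hardware, each call to `energy` would be replaced by repeated circuit executions, so the estimate would carry shot noise that the optimizer has to tolerate.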

Recent enhancements include adaptive ansatz construction protocols like ADAPT-VQE and K-ADAPT-VQE, which dynamically build problem-specific circuits by selecting operators from a predefined pool based on their gradient contributions [4]. The K-ADAPT variant adds operators in chunks of size K, significantly reducing the total number of quantum measurements and classical optimization cycles required to achieve chemical accuracy (1.6 mHa or 1 kcal/mol).

Hamiltonian Transformation and Qubit Reduction

The fermionic Hamiltonian of quantum chemistry must be mapped to qubit operators for implementation on quantum processors. Common techniques include the Jordan-Wigner and Bravyi-Kitaev transformations [4] [6]. Additionally, qubit tapering approaches exploit molecular symmetries to reduce the number of qubits required, while quantum community detection algorithms running on quantum annealers can identify relevant Slater determinant clusters to reduce Hamiltonian complexity [3].
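As a hedged illustration of what a fermion-to-qubit mapping must preserve, the sketch below builds Jordan-Wigner annihilation operators as explicit matrices and checks the canonical anticommutation relations. It assumes NumPy is available and adopts the convention that |0⟩ means "unoccupied"; the helper names are hypothetical and this is not how packages such as OpenFermion represent operators internally.

```python
from functools import reduce
import numpy as np

I2 = np.eye(2)
Z = np.diag([1.0, -1.0])
LOWER = np.array([[0.0, 1.0], [0.0, 0.0]])  # (X + iY)/2 = |0><1|, empties a mode

def jw_annihilation(mode, n_modes):
    """a_mode = Z (x) ... (x) Z (x) LOWER (x) I (x) ... (x) I under Jordan-Wigner."""
    factors = [Z] * mode + [LOWER] + [I2] * (n_modes - mode - 1)
    return reduce(np.kron, factors)

def anticommutator(a, b):
    return a @ b + b @ a

def check_anticommutation(n_modes):
    ops = [jw_annihilation(j, n_modes) for j in range(n_modes)]
    dim = 2 ** n_modes
    for p in range(n_modes):
        for q in range(n_modes):
            delta = np.eye(dim) if p == q else np.zeros((dim, dim))
            # The matrices are real, so the adjoint is the transpose.
            if not np.allclose(anticommutator(ops[p], ops[q].T), delta):
                return False
            if not np.allclose(anticommutator(ops[p], ops[q]), 0.0):
                return False
    return True

relations_hold = check_anticommutation(n_modes=3)
print("Anticommutation relations satisfied:", relations_hold)
```

The string of Z operators is what enforces the fermionic sign; dropping it would give operators that commute on different modes instead of anticommuting.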

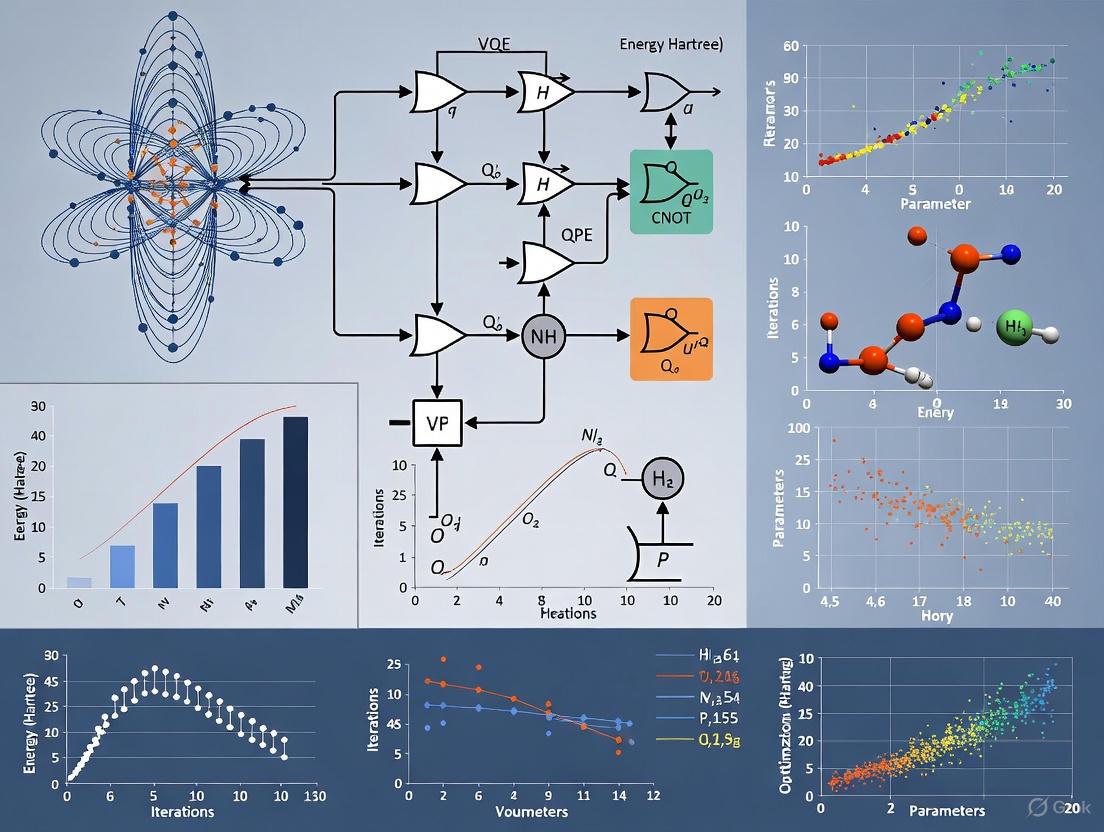

The following diagram illustrates the complete workflow for molecular ground state determination using quantum algorithms:

Experimental Protocols and Methodologies

VQE Implementation Protocol

A standardized protocol for VQE implementation encompasses the following steps:

Molecular Hamiltonian Preparation

- Perform Hartree-Fock calculation using quantum chemistry packages (e.g., PySCF)

- Extract one-electron (( h_{pq} )) and two-electron (( h_{pqrs} )) integrals

- Apply active space approximation if necessary to reduce problem size

Qubit Hamiltonian Construction

- Transform fermionic operators to qubit operators using Jordan-Wigner or Bravyi-Kitaev transformation

- Apply symmetry-based tapering to reduce qubit count

- Express Hamiltonian as a linear combination of Pauli terms: ( H = \sum_i c_i P_i )

Ansatz Selection and Initialization

- Choose ansatz architecture: UCCSD, hardware-efficient, or adaptive construction

- Initialize parameters using classical approximations or heuristic methods

- Prepare reference state (typically Hartree-Fock) on quantum processor

Measurement and Optimization Loop

- Measure expectation values of Hamiltonian terms

- Compute total energy: ( E(\theta) = \langle \psi(\theta) | H | \psi(\theta) \rangle )

- Update parameters using classical optimizers (COBYLA, BFGS, or CMA-ES)

- Iterate until convergence to chemical accuracy
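The measurement step of this protocol can be sketched as follows: given shot counts in the computational basis, each Pauli term's expectation value is a signed average, and the energy is their weighted sum. The coefficients and counts below are made-up illustrative numbers, and the helper handles only terms built from I and Z; terms containing X or Y would need a basis rotation before measurement.

```python
def pauli_z_expectation(counts, pauli_string):
    """Estimate <P> for a string of I/Z operators from computational-basis counts."""
    shots = sum(counts.values())
    total = 0
    for bitstring, n in counts.items():
        # Each Z contributes a factor -1 when its qubit was measured as 1.
        parity = sum(int(bit) for bit, op in zip(bitstring, pauli_string) if op == "Z")
        total += n * (-1) ** parity
    return total / shots

def estimate_energy(hamiltonian, counts):
    """E = sum_i c_i <P_i>, with every <P_i> estimated from the same counts."""
    return sum(c * pauli_z_expectation(counts, p) for p, c in hamiltonian.items())

# Hypothetical two-qubit Hamiltonian and hypothetical counts from 1000 shots.
toy_hamiltonian = {"II": -1.0, "ZI": 0.4, "IZ": 0.4, "ZZ": 0.2}
toy_counts = {"00": 700, "01": 100, "10": 150, "11": 50}

energy_estimate = estimate_energy(toy_hamiltonian, toy_counts)
print(f"Estimated energy: {energy_estimate:.3f}")
```

Terms that can be measured in the same basis, as here, share one set of shots, which is one reason grouping Pauli terms reduces the measurement cost.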

Resource Estimation for Fault-Tolerant Algorithms

For fault-tolerant quantum computing, the quantum phase estimation algorithm requires careful resource estimation [2]. The total cost to obtain energy E with precision ε is given by:

( \text{Cost}_{\text{QPE}} = \text{poly}(1/S) \times [\text{poly}(L)\text{poly}(1/\epsilon) + C] )

where S is the initial state overlap with the true ground state, L is the system size, and C represents state preparation cost. This highlights the critical importance of high-overlap initial states for practical quantum advantage.

Computational Tools and Frameworks

Table 3: Essential Computational Tools for Molecular Ground State Research

| Tool/Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| PySCF [4] | Classical quantum chemistry package | Hartree-Fock, integral computation | Hamiltonian preparation |
| OpenFermion [4] | Quantum chemistry library | Fermion-to-qubit mapping | Quantum algorithm input |
| PennyLane [6] | Quantum machine learning | Differentiable quantum computing | VQE implementation |
| QChem [6] | Software module | Molecular Hamiltonian construction | End-to-end quantum chemistry |
| D-Wave Ocean [3] | Quantum annealing SDK | QUBO problem formulation | Hamiltonian reduction |

Key Mathematical Constructs

The Unitary Coupled Cluster with Singles and Doubles (UCCSD) ansatz serves as a cornerstone for many quantum approaches, with the wavefunction form:

( |\Psi_{\text{UCCSD}}\rangle = e^{T - T^\dagger} |\Psi_{\text{HF}}\rangle )

where ( T = T_1 + T_2 ) with ( T_1 = \sum_{i,j} \theta_{ij} \hat{a}_i^\dagger \hat{a}_j ) and ( T_2 = \sum_{i,j,k,l} \theta_{ij}^{kl} \hat{a}_i^\dagger \hat{a}_j^\dagger \hat{a}_k \hat{a}_l ) [4].
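A minimal sketch of why the exponent is taken as T − T†: for one electron in two orbitals and a single excitation amplitude θ, T − T† is a real antisymmetric matrix, so its exponential is a rotation and the state stays normalized. The two-orbital restriction and the Taylor-series exponential are simplifying assumptions for illustration only.

```python
import math

def matmul2(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)] for i in range(2)]

def expm2(m, terms=30):
    """Matrix exponential of a 2x2 matrix via its truncated Taylor series."""
    result = [[1.0, 0.0], [0.0, 1.0]]
    term = [[1.0, 0.0], [0.0, 1.0]]
    for n in range(1, terms):
        term = [[x / n for x in row] for row in matmul2(term, m)]  # m^n / n!
        result = [[result[i][j] + term[i][j] for j in range(2)] for i in range(2)]
    return result

theta = 0.3
# Basis: (electron in occupied orbital, electron in virtual orbital).
# T moves the electron up with amplitude theta; T - T^dagger is antisymmetric.
generator = [[0.0, -theta], [theta, 0.0]]
unitary = expm2(generator)

# Apply to the reference state (1, 0): the result is the first column.
amp_reference, amp_excited = unitary[0][0], unitary[1][0]
norm = amp_reference**2 + amp_excited**2
print(f"amplitudes: {amp_reference:.6f}, {amp_excited:.6f}  norm: {norm:.6f}")
```

The amplitudes come out as cos θ and sin θ, so in this restricted setting a single excitation is a rotation between the reference and the excited configuration.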

For dissipative approaches [9], the Lindblad master equation:

( \frac{d}{dt}\rho = \mathcal{L}[\rho] = -i[\hat{H},\rho] + \sum_k \left( \hat{K}_k \rho \hat{K}_k^\dagger - \frac{1}{2} \{\hat{K}_k^\dagger \hat{K}_k, \rho\} \right) )

provides the theoretical framework for ground state preparation through engineered dissipation, with Type-I and Type-II jump operators enabling different convergence properties.
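The idea of dissipative preparation can be sketched on one qubit by integrating the master equation above with a single jump operator that pumps population into the ground state. This assumes NumPy and uses a hand-picked jump operator that requires knowing the eigenstates in advance; it is not the Type-I or Type-II construction of [9], and the rate, step size, and explicit Euler integrator are illustrative choices.

```python
import numpy as np

def lindblad_rhs(rho, hamiltonian, jumps):
    """Right-hand side of the Lindblad master equation (hbar = 1)."""
    drho = -1j * (hamiltonian @ rho - rho @ hamiltonian)
    for k in jumps:
        kdk = k.conj().T @ k
        drho += k @ rho @ k.conj().T - 0.5 * (kdk @ rho + rho @ kdk)
    return drho

# Toy system: H = Z/2, so |1> is the ground state (energy -1/2).
hamiltonian = 0.5 * np.diag([1.0, -1.0]).astype(complex)
gamma = 1.0  # assumed dissipation rate
jump = np.sqrt(gamma) * np.array([[0, 0], [1, 0]], dtype=complex)  # |1><0|

rho = np.array([[1, 0], [0, 0]], dtype=complex)  # start in the excited state |0>
dt, n_steps = 1e-3, 10_000                        # total time 10 / gamma
for _ in range(n_steps):
    rho = rho + dt * lindblad_rhs(rho, hamiltonian, [jump])

ground_population = rho[1, 1].real
trace = np.trace(rho).real
print(f"ground-state population: {ground_population:.5f}, trace: {trace:.5f}")
```

The ground state is the unique steady state here because the jump operator annihilates it while moving all other population toward it.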

Current Challenges and Research Frontiers

The Quest for Quantum Advantage

The evidence for exponential quantum advantage in ground state quantum chemistry remains uncertain [2]. Key challenges include:

- Initial state preparation: The overlap between easily preparable states (e.g., Hartree-Fock) and the true ground state may decrease exponentially with system size due to the orthogonality catastrophe

- Classical heuristic power: Advanced classical methods (DMRG, AFQMC, neural network states) often achieve polynomial scaling for realistic chemical systems

- Error scaling: The precise relationship between system size and computational cost for fixed accuracy remains poorly characterized for both classical and quantum approaches
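The first challenge can be illustrated with a deliberately simple model: if a system consists of independent fragments and the trial state overlaps each fragment's ground state with probability s, the total overlap is s raised to the number of fragments. Both the product-state model and the reading of the overlap as a success probability, so that roughly 1/S repetitions are needed, are assumptions made for illustration.

```python
def total_overlap(per_fragment_overlap, n_fragments):
    """Overlap of a product trial state with a product ground state."""
    return per_fragment_overlap ** n_fragments

def expected_repetitions(success_probability):
    """Mean number of attempts until one projection onto the ground state succeeds."""
    return 1.0 / success_probability

per_fragment = 0.99  # assumed, optimistic per-fragment overlap
for n in (10, 50, 100, 500):
    s = total_overlap(per_fragment, n)
    print(f"fragments={n:4d}  overlap={s:.3e}  repetitions~{expected_repetitions(s):.1f}")
```

Even a 99% per-fragment overlap decays to under 1% by 500 fragments in this model, which is the kind of behaviour that motivates better initial states.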

Emerging Approaches

Promising research directions include:

- Stabilizer ground states for initializing and analyzing quantum many-body systems [10]

- Broken-symmetry wavefunctions for improving initial state overlap in open-shell systems [7]

- Quantum-inspired classical algorithms leveraging insights from quantum information science

- Hierarchical multilevel approaches combining classical and quantum computations

The following diagram illustrates the key considerations in the quantum-classical comparison for molecular ground state problems:

The determination of molecular Hamiltonian ground states remains a vibrant research area at the intersection of quantum chemistry, computational physics, and quantum information science. While quantum computing offers promising long-term potential, particularly for strongly correlated systems that challenge classical methods, rigorous demonstration of exponential quantum advantage awaits both theoretical advancement and hardware development. The most productive near-term trajectory appears to involve co-design of quantum-classical hybrid algorithms that leverage the respective strengths of both computational paradigms, with careful attention to resource scaling, error management, and application to chemically relevant problems.

In quantum chemistry, determining the exact ground state of a molecular Hamiltonian is fundamental to predicting a molecule's properties and behavior. For systems where electrons are strongly correlated—such as transition metal complexes, catalysts, and high-temperature superconductors—this task becomes profoundly difficult. Classical computational methods face an exponential wall, a problem where the computational resources required to find an exact solution grow exponentially with the size of the system [11]. This scaling relationship poses a fundamental limit to what is computationally tractable, even on the most powerful classical supercomputers.

This article explores the nature of this exponential wall, details the limitations of current classical algorithms, and examines how quantum computing offers a promising pathway to overcome these barriers, ultimately enabling the accurate simulation of complex molecular systems that are critical for advancements in drug discovery and materials science [12] [13].

The Exponential Wall in Classical Computational Chemistry

The Source of the Exponential Scaling

The exponential wall arises from the fundamental nature of quantum mechanics. A multi-electron wavefunction, which describes the state of a system, can be represented as a linear combination of Slater determinants. Each determinant represents a possible electronic configuration. For an exact solution—a method known as Full Configuration Interaction (FCI)—one must consider all possible excitations of electrons across the available orbitals [11].

The number of these determinants does not grow linearly or polynomially with system size; it scales combinatorially. For even modestly sized molecules (e.g., more than four heavy atoms) and basis sets, the number of determinants becomes astronomically large. Consequently, solving for the coefficients in the wavefunction expansion requires diagonalizing a Hamiltonian matrix whose dimension is this vast number of determinants, an operation whose cost scales as the cube of the matrix dimension. This combinatorial explosion of problem size, combined with the polynomial cost of linear algebra, creates the intractable "exponential wall" [11].

Limitations of Approximate Classical Methods

To make simulations feasible, quantum chemists have developed approximate methods that avoid the full FCI solution. Table 1 summarizes the key classical approaches and their limitations when dealing with strongly correlated systems.

Table 1: Classical Computational Methods for Ground-State Quantum Chemistry

| Method | Key Principle | Scaling with System Size | Limitations with Strong Correlation |
|---|---|---|---|
| Density Functional Theory (DFT) | Uses electron density instead of wavefunction; relies on approximate functionals. | Polynomial [13] | Standard functionals fail for strongly correlated electrons; inaccurate for bond breaking, transition metals, and catalytic sites [12] [13]. |
| Coupled Cluster (CC) | Uses an exponential ansatz of excitation operators. | High-order polynomial (e.g., CCSD(T) scales as ~N⁷) | Fails when the reference wavefunction (often from Hartree-Fock) is a poor starting point, as in multi-reference systems [11]. |
| Complete Active Space SCF (CASSCF) | Performs FCI within a selected "active space" of important orbitals. | Exponentially scaling with active space size [11] | The exponential wall reappears as the active space grows to capture more correlation; tractable only for small active spaces. |
| Density Matrix Renormalization Group (DMRG) | Matrix Product State ansatz; iterative variational optimization. | Polynomial for weakly correlated 1D systems [11] | For strongly correlated systems, the scaling becomes unfavorable, and the computational cost increases dramatically [11]. |

As the table indicates, each classical method involves a trade-off between computational cost and accuracy. Strongly correlated systems, which are central to many important chemical problems like nitrogen fixation [13] and enzymatic catalysis [14], often fall into the gap where approximations fail and exact methods are too costly.

Quantum Computing: A Path Beyond the Exponential Wall

The Quantum Approach to Electronic Structure

Quantum computers offer a way around the storage side of the exponential wall by leveraging the principles of superposition and entanglement. While a classical computer must store and process each of the exponentially many configurations of a quantum state explicitly, a quantum computer encodes this information in the amplitudes of a quantum state of just n qubits, whose state vector spans 2ⁿ basis states [12] [13]. A measurement reveals only a small part of that information per run, so algorithms must be designed to extract quantities such as the energy efficiently.

The Quantum Phase Estimation (QPE) algorithm can, in principle, project onto the exact ground state of a Hamiltonian and determine its energy with high precision. For the Noisy Intermediate-Scale Quantum (NISQ) era, hybrid classical-quantum algorithms like the Variational Quantum Eigensolver (VQE) have been developed. In VQE, a quantum processor prepares and measures a parameterized trial wavefunction (ansatz), while a classical optimizer adjusts the parameters to minimize the expectation value of the energy [12]. This approach trades circuit depth for increased measurements and is more resilient to noise.

Documented Quantum Speedups and Current Capabilities

While fault-tolerant quantum computers capable of solving large chemistry problems are still under development, progress is accelerating. Recent industry roadmaps project systems with 1,000+ physical qubits in the near term, with plans for error-corrected systems featuring hundreds of logical qubits by the end of the decade [15]. Table 2 quantifies the requirements and recent demonstrations of quantum chemistry simulations.

Table 2: Quantum Computing for Molecular Hamiltonians: Requirements and Demonstrations

| Metric / Molecule | Classical FCI Requirement | Quantum Computing Demonstration / Requirement | Key Finding / Implication |
|---|---|---|---|
| General Qubit Requirement | N/A | Problem encoded onto ~Polynomial(n) qubits [12] | Exponential compression of the problem representation. |
| FeMoco (Nitrogenase) | Intractable for FCI [13] | Estimated at ~2.7 million physical qubits (pre-error correction) [13] | Highlights the qubit count challenge; recent advances in error correction and algorithms are reducing this figure. |
| Cytochrome P450 | Intractable for FCI [13] | Similar scale to FeMoco [13] | A key industrial target for drug metabolism simulation. |
| Small Molecules (H₂, LiH) | Tractable, but limited | VQE demonstrated on NISQ devices [13] | Proof-of-principle for hybrid quantum-classical algorithms. |
| Water Molecule | Tractable | Ground state simulated via adiabatic state preparation [16] | Demonstrates advanced state preparation methods on quantum simulators. |
| Quantum Error Correction | N/A | Error rates reduced to ~0.000015%; algorithmic fault tolerance reduces overhead 100x [15] | Critical for making large-scale quantum chemistry simulations feasible. |

These developments indicate that quantum computing is steadily progressing toward solving chemically relevant problems. For instance, Google's Quantum Echoes algorithm is reported to have demonstrated a verifiable quantum advantage, running 13,000 times faster than on classical supercomputers, and a collaboration between IonQ and Ansys reported a quantum simulation outperforming classical high-performance computing by 12% in a medical device simulation [15].

Experimental Protocols for Quantum Ground-State Simulation

This section outlines two primary methodological frameworks for finding molecular ground states on quantum computers.

The Variational Quantum Eigensolver (VQE) Protocol

The VQE is a hybrid protocol designed for today's noisy quantum processors [13]. The workflow, as shown in Diagram 1, involves iterative communication between a quantum and a classical computer.

Diagram 1: Variational Quantum Eigensolver (VQE) Workflow

Key Steps in the VQE Protocol:

- Problem Mapping: The second-quantized molecular electronic Hamiltonian is encoded into qubits using a transformation such as the Jordan-Wigner or Bravyi-Kitaev transformation [12].

- Ansatz Preparation: A parameterized quantum circuit (ansatz) is chosen. Common choices include the Unitary Coupled Cluster (UCC) ansatz or hardware-efficient ansatzes.

- Quantum Execution: The quantum processor prepares the state |ψ(θ)⟩ and performs measurements to estimate the expectation value of the Hamiltonian, ⟨ψ(θ)|H|ψ(θ)⟩. This often requires measuring the expectation values of each Pauli term in the qubit Hamiltonian.

- Classical Optimization: A classical optimizer (e.g., gradient descent) uses the energy reported from the quantum computer to update the parameters θ for the next iteration. This loop continues until the energy converges to a minimum.

Adiabatic State Preparation (ASP) Protocol

ASP is an alternative method that leverages the quantum adiabatic theorem. The protocol, detailed in Diagram 2, involves evolving the system from a simple, easy-to-prepare ground state to the complex ground state of the target molecular Hamiltonian [16].

Diagram 2: Adiabatic State Preparation (ASP) Workflow

Key Steps in the ASP Protocol:

- Define Hamiltonians: Identify an initial Hamiltonian H₀ whose ground state is known and easy to prepare on the quantum computer (e.g., the ground state of a non-interacting system). Define the target molecular Hamiltonian Hₜ.

- Prepare Initial State: Initialize the quantum computer into the ground state of H₀.

- Adiabatic Evolution: Slowly evolve the system's Hamiltonian from H₀ to Hₜ over a total time T, typically following a path H(s) = (1-s)H₀ + sHₜ, where s goes from 0 to 1. The evolution must be slow enough to satisfy the adiabatic condition and prevent transitions to excited states.

- Final Measurement: After the evolution, the system will reside in the ground state of Hₜ, and its properties can be measured directly.
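The protocol can be sketched numerically for a single qubit, with H₀ = −X (ground state |+⟩) standing in for the easy Hamiltonian and Hₜ = −Z (ground state |0⟩) standing in for the target. This assumes NumPy; the Hamiltonians, total times, and piecewise-constant time stepping are illustrative choices, not the molecular setup of [16].

```python
import numpy as np

X = np.array([[0, 1], [1, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)
H0 = -X   # easy Hamiltonian, ground state |+>
HT = -Z   # stand-in target Hamiltonian, ground state |0>

def evolve_adiabatically(total_time, n_steps=2000):
    """Evolve |+> under H(s) = (1 - s) H0 + s HT with s = t / total_time."""
    state = np.array([1, 1], dtype=complex) / np.sqrt(2)
    dt = total_time / n_steps
    for step in range(n_steps):
        s = (step + 0.5) / n_steps          # midpoint of the current time slice
        h = (1 - s) * H0 + s * HT
        evals, evecs = np.linalg.eigh(h)    # exact propagator for this slice
        propagator = evecs @ np.diag(np.exp(-1j * evals * dt)) @ evecs.conj().T
        state = propagator @ state
    return state

def ground_fidelity(state):
    """Probability of finding the final state in |0>, the ground state of HT."""
    return abs(state[0]) ** 2

fidelity_slow = ground_fidelity(evolve_adiabatically(total_time=20.0))
fidelity_fast = ground_fidelity(evolve_adiabatically(total_time=0.1))
print(f"slow sweep: {fidelity_slow:.4f}   fast sweep: {fidelity_fast:.4f}")
```

The slow sweep tracks the instantaneous ground state, while the fast sweep leaves the state close to |+⟩, whose overlap with the target ground state is only about one half.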

A recent advancement, as demonstrated for molecules like water and methylene, involves constructing an optimal adiabatic path starting from an approximate initial wavefunction (e.g., from a classical computation), rather than a trivial H₀ [16]. This heuristic method can significantly improve the efficiency of ASP.

Table 3 catalogs key algorithmic and hardware "reagent solutions" essential for conducting ground-state searches for molecular Hamiltonians.

Table 3: Essential "Research Reagents" for Quantum Computational Chemistry

| Category / Item | Function | Application in Ground-State Search |
|---|---|---|
| Algorithmic Reagents | | |
| VQE (Variational Quantum Eigensolver) | Hybrid quantum-classical algorithm for finding ground states on NISQ devices. | Robust to noise; used to simulate small molecules like H₂ and LiH [13]. |
| QPE (Quantum Phase Estimation) | Coherent algorithm for high-precision energy measurement. | Provides exact energy; requires fault-tolerant hardware [12]. |
| Quantum Krylov Methods | Projects the Hamiltonian into a subspace generated by real-time evolution. | An alternative to VQE for obtaining ground and excited states [12]. |
| Hardware Reagents | | |
| Superconducting Qubits | Quantum processors based on superconducting circuits. | Used by Google, IBM; dominant platform for current experiments [15]. |
| Trapped Ions | Quantum processors using trapped atomic ions as qubits. | Used by IonQ; known for high fidelity and long coherence [12] [15]. |
| Neutral Atoms | Quantum processors using neutral atoms in optical tweezers. | Platform used by companies like Atom Computing; offers scalability potential [15]. |
| Enabling Software | | |
| Jordan-Wigner / Bravyi-Kitaev | Fermion-to-qubit mappings that transform electronic Hamiltonians into qubit operators. | Encodes the molecular Hamiltonian of fermionic systems into a form a quantum computer can process [12]. |
| UCC Ansatz | A chemically inspired ansatz for the VQE algorithm. | Generates trial wavefunctions based on electronic excitations [13]. |

The exponential wall presents a fundamental barrier to the classical simulation of strongly correlated molecular systems, hindering progress in drug discovery and materials science. While classical methods provide approximate solutions, they often fail for critical industrial problems like modeling catalytic active sites and metalloenzymes. Quantum computing offers a potential pathway to representing and evolving such states with resources that scale polynomially rather than exponentially with system size. Although significant challenges in qubit count and error correction remain, rapid hardware advancements and algorithmic innovations are steadily moving this promise toward practical use. The transition from simulating simple diatomic molecules to complex biomolecules is underway, marking the beginning of a new era for computational quantum chemistry.

The search for the ground state energy of molecular Hamiltonians represents a cornerstone problem in computational chemistry and drug discovery, with direct applications in predicting molecular reactivity, stability, and properties. Classical computational methods, particularly for strongly correlated molecules, require expensive wave function methods that become prohibitively costly even for few-atom systems [17]. Quantum computing emerges as a transformative solution to this enduring challenge by harnessing the distinct properties of quantum mechanics—superposition and entanglement—to process information in ways fundamentally inaccessible to classical computers.

This technical guide examines the pathway from classical bits to quantum bits (qubits), focusing on how these quantum properties enable computational advantage in molecular ground state searches. Unlike classical bits that are confined to definite states of 0 or 1, qubits can exist in a coherent superposition of both states simultaneously [18] [19]. Furthermore, through entanglement, qubits become intrinsically linked, creating correlations that persist even when physically separated [20]. When combined, these phenomena enable a quantum computer to represent and manipulate molecular wavefunctions in an exponentially large computational space, offering a potentially powerful alternative to classical computational chemistry methods for tackling the electronic structure problem [21] [22].

Theoretical Foundations: From Bits to Qubits

The Classical Bit: A Binary Foundation

The classical bit is the fundamental unit of information in traditional computing. It is a binary system that can exist in only one of two mutually exclusive states, physically represented by a high or low voltage in a circuit, and logically interpreted as 0 or 1 [19]. This deterministic nature means that a system of n classical bits can be in exactly one of 2^n possible configurations at any given time. While this paradigm has powered decades of computational advancement, its sequential processing nature creates a fundamental bottleneck for simulating quantum mechanical systems, which do not abide by these classical constraints.

The Quantum Bit: A Superposition of Possibilities

The qubit, the quantum analog of the classical bit, leverages the principles of quantum mechanics to transcend binary limitations. Like a classical bit, a qubit can be measured in the basis states |0⟩ or |1⟩. However, before measurement, it can exist in any coherent superposition of these states, represented as |ψ⟩ = α|0⟩ + β|1⟩, where α and β are complex probability amplitudes such that |α|^2 + |β|^2 = 1 [18] [22]. Upon measurement, the qubit's state collapses to either |0⟩ or |1⟩, with probabilities |α|^2 and |β|^2 respectively.
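The measurement rule can be sketched in a few lines: normalize the amplitudes, read off the two probabilities, and sample outcomes. The specific amplitudes (1 and 2i before normalization), the shot count, and the helper names are arbitrary choices for illustration.

```python
import math
import random

def normalize(alpha, beta):
    """Rescale amplitudes so that |alpha|^2 + |beta|^2 = 1."""
    norm = math.sqrt(abs(alpha) ** 2 + abs(beta) ** 2)
    return alpha / norm, beta / norm

def measurement_probabilities(alpha, beta):
    return abs(alpha) ** 2, abs(beta) ** 2

def measure(alpha, beta, shots, seed=0):
    """Simulate repeated preparation and measurement of the same state."""
    rng = random.Random(seed)
    p0, _ = measurement_probabilities(alpha, beta)
    return [0 if rng.random() < p0 else 1 for _ in range(shots)]

alpha, beta = normalize(1.0, 2.0j)   # complex amplitudes are allowed
p0, p1 = measurement_probabilities(alpha, beta)
outcomes = measure(alpha, beta, shots=10_000)
frequency_of_one = sum(outcomes) / len(outcomes)
print(f"P(0) = {p0:.2f}, P(1) = {p1:.2f}, observed frequency of 1 = {frequency_of_one:.3f}")
```

Each individual measurement yields a single bit; the amplitudes only become visible through the statistics of many repetitions.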

The dimension of the state space available to a quantum computer scales exponentially with the number of qubits. While n classical bits can represent only one of 2^n states at a time, n qubits can exist in a superposition of all 2^n basis states simultaneously [20]. This exponential scaling provides the theoretical foundation for quantum advantage. A measurement still returns only a single n-bit outcome, so quantum algorithms rely on interference to concentrate probability on useful answers rather than reading out every amplitude.
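A quick way to see what 2^n means in practice is to estimate the memory a classical simulator would need to store every amplitude of an n-qubit state. The sketch assumes one double-precision complex number (16 bytes) per amplitude and a dense state vector with no compression.

```python
def statevector_bytes(n_qubits, bytes_per_amplitude=16):
    """Memory for a dense state vector of 2**n_qubits complex amplitudes."""
    return bytes_per_amplitude * 2 ** n_qubits

def human_readable(n_bytes):
    units = ["B", "KiB", "MiB", "GiB", "TiB", "PiB", "EiB"]
    value = float(n_bytes)
    for unit in units:
        if value < 1024 or unit == units[-1]:
            return f"{value:.0f} {unit}"
        value /= 1024

for n in (10, 20, 30, 40, 50):
    print(f"{n} qubits -> {human_readable(statevector_bytes(n))}")
```

Each additional qubit doubles the requirement, which is why dense classical simulation stalls at a few tens of qubits while the quantum register itself grows only linearly.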

Quantum Entanglement: Generating Non-Classical Correlations

Entanglement is a uniquely quantum phenomenon where the quantum states of two or more qubits become inextricably linked, such that the state of one cannot be described independently of the others [18]. Measurement outcomes on entangled qubits are correlated regardless of the physical distance between them [20], although these correlations cannot be used to send information faster than light. This "spooky action at a distance," as Einstein termed it, creates powerful non-classical correlations that are essential for quantum computation and communication. Entanglement enables operations on one qubit to have a cascading effect on many others, allowing for a high degree of coordination and information encoding that is impossible for classical systems [20].
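These correlations can be sketched with the simplest entangled state, the Bell state (|00⟩ + |11⟩)/√2. The example samples joint measurement outcomes and computes the marginal distribution of the second qubit; the dictionary representation and helper names are illustrative assumptions.

```python
import math
import random

# Amplitudes of the Bell state (|00> + |11>) / sqrt(2), keyed by bitstring.
BELL_STATE = {"00": 1 / math.sqrt(2), "01": 0.0, "10": 0.0, "11": 1 / math.sqrt(2)}

def sample(state, shots, seed=0):
    """Draw joint measurement outcomes with probabilities |amplitude|^2."""
    rng = random.Random(seed)
    labels = list(state)
    weights = [abs(a) ** 2 for a in state.values()]
    return rng.choices(labels, weights=weights, k=shots)

def marginal_probability_of_one(state, qubit):
    """Probability that the given qubit reads 1, ignoring the other qubit."""
    return sum(abs(a) ** 2 for label, a in state.items() if label[qubit] == "1")

samples = sample(BELL_STATE, shots=1000)
always_equal = all(s[0] == s[1] for s in samples)
p_b_is_one = marginal_probability_of_one(BELL_STATE, qubit=1)
print("outcomes always agree:", always_equal)
print("P(second qubit = 1) on its own:", round(p_b_is_one, 3))
```

The two outcomes always agree, yet each qubit on its own looks like a fair coin, so the correlation carries no usable signal until the results are compared.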

Table 1: Fundamental Properties of Classical Bits vs. Quantum Bits

| Property | Classical Bit | Quantum Bit (Qubit) |
|---|---|---|
| State | Definitively 0 or 1 | Superposition of \|0⟩ and \|1⟩ (α\|0⟩ + β\|1⟩) |
| Information Capacity | 1 bit per bit | Continuous amplitudes (theoretically infinite before measurement); at most 1 classical bit per measurement |
| System of n Units | Can represent one of 2^n states | Can represent a superposition of all 2^n states |
| Inter-Particle Correlation | Independent | Can be entangled, creating non-classical correlations |
| Governing Physics | Classical Mechanics | Quantum Mechanics |

The Molecular Hamiltonian and the Ground State Problem

Defining the Electronic Hamiltonian

In computational chemistry, the goal is to solve the electronic Schrödinger equation to determine a molecule's properties. Within the Born-Oppenheimer approximation, which treats nuclei as fixed point charges, the electronic Hamiltonian H_e captures the energy contributions from electron kinetic energy and all Coulomb interactions (electron-nucleus attraction, electron-electron repulsion, and nucleus-nucleus repulsion) [21]. The total electronic energy E and the wave function Ψ are obtained by solving H_e Ψ = E Ψ. The lowest eigenvalue E_g is the ground state energy, and its corresponding eigenvector Ψ_g is the ground state wave function.

The Challenge of Strong Correlation

Classical algorithms for predicting the equilibrium geometry of strongly correlated molecules require expensive wave function methods that become impractical already for few-atom systems [17]. The computational cost of exact methods scales exponentially with the number of electrons, making them intractable for all but the smallest molecules. While approximation methods exist, they often trade accuracy for feasibility, particularly for systems where electron correlation is strong, such as in transition metal complexes or reaction transition states, which are critical in pharmaceutical research.

Second Quantization and Qubit Mapping

To simulate molecules on a quantum computer, the electronic Hamiltonian must be translated into a form that operates on qubits. This is typically done using the second quantization formalism. In this representation, the Hamiltonian H is expressed in terms of creation (c_p^†) and annihilation (c_q) operators as [21]:

H = Σ_{p,q} h_{pq} c_p^† c_q + (1/2) Σ_{p,q,r,s} h_{pqrs} c_p^† c_q^† c_r c_s

The coefficients h_{pq} and h_{pqrs} are one- and two-electron integrals computed using a chosen basis set of molecular orbitals.

The next step is to map these fermionic operators to qubit operators. This is achieved using techniques like the Jordan-Wigner or Bravyi-Kitaev transformations, which encode the occupation number of each molecular orbital into the state of a qubit [21]. These transformations express the Hamiltonian as a linear combination of tensor products of Pauli operators (I, X, Y, Z):

H = Σ_i c_i P_i

Here each P_i is a Pauli string and each c_i is a real coefficient. In this representation the energy can be estimated on a quantum processor by measuring the expectation value of each Pauli string.
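The Jordan-Wigner mapping can be checked numerically on a small register. The sketch below builds the qubit image of each annihilation operator as an explicit matrix and verifies that the fermionic anticommutation relations survive the mapping. It assumes the common convention that |1⟩ means "orbital occupied" and that the Z string runs over the modes with lower index; the helper names are illustrative.

```python
import numpy as np
from functools import reduce

I2 = np.eye(2, dtype=complex)
X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)


def kron_all(ops):
    """Tensor product of a list of single-qubit operators."""
    return reduce(np.kron, ops)


def jw_annihilation(p, n_modes):
    """Jordan-Wigner image of c_p: Z on modes below p, (X + iY)/2 on mode p."""
    lower = (X + 1j * Y) / 2          # |0><1|, empties an occupied orbital
    return kron_all([Z] * p + [lower] + [I2] * (n_modes - p - 1))


def anticommutator(a, b):
    return a @ b + b @ a


n_modes = 3
c = [jw_annihilation(p, n_modes) for p in range(n_modes)]
identity = np.eye(2 ** n_modes)

for p in range(n_modes):
    for q in range(n_modes):
        # {c_p, c_q^dagger} = delta_pq and {c_p, c_q} = 0
        expected = identity if p == q else np.zeros_like(identity)
        assert np.allclose(anticommutator(c[p], c[q].conj().T), expected)
        assert np.allclose(anticommutator(c[p], c[q]), 0)

# The number operator c_1^dagger c_1 becomes the Pauli expression (I - Z_1) / 2.
number_1 = c[1].conj().T @ c[1]
z_1 = kron_all([I2, Z, I2])
print(np.allclose(number_1, (identity - z_1) / 2))
```

The Z strings are what carry the fermionic sign; without them, operators on different modes would commute rather than anticommute.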

Leveraging Quantum Properties for Ground State Search

The Role of Superposition in State Preparation

Superposition is instrumental in preparing an initial ansatz for the molecular wavefunction. A quantum computer can efficiently generate a trial state that is a superposition of many different electronic configurations simultaneously. For instance, starting all qubits in the |0⟩ state and applying Hadamard gates creates a uniform superposition of all possible computational basis states. This state can then be evolved or parameterized to approach the true, complex ground state of the target molecular Hamiltonian. This ability to compactly represent a vast set of configurations is a direct advantage over classical computers, which must often enumerate configurations individually.

The Role of Entanglement in Capturing Electron Correlation

Entanglement is the quantum resource that directly encodes electron correlations. In a molecular system, the motion of electrons is correlated; the position of one electron provides information about the likely positions of others. Classical methods struggle to represent these complex, multi-electron correlations efficiently. On a quantum computer, entangling gates (such as CNOT gates) applied between qubits create these necessary correlations in the wavefunction ansatz [23]. The degree and structure of entanglement available in a variational quantum algorithm significantly impact its convergence and ability to accurately approximate the ground state, particularly for strongly correlated systems [23].

Interference and Amplitude Amplification

While not a core pillar like superposition and entanglement, quantum interference is the process that amplifies correct answers and suppresses incorrect ones. After preparing a superposed and entangled state, a quantum algorithm manipulates the probability amplitudes (α, β) of different states such that those corresponding to the ground state energy constructively interfere, while others destructively interfere. This process effectively "rotates" the quantum state in the high-dimensional Hilbert space toward the desired solution. When the system is finally measured, the probability of obtaining an outcome corresponding to the ground state energy is maximized.

Algorithmic Frameworks and Experimental Protocols

The Variational Quantum Eigensolver (VQE) Protocol

The VQE algorithm is a leading hybrid quantum-classical approach for ground state finding in the NISQ era. It combines a quantum computer's ability to prepare and measure complex states with a classical computer's power to optimize parameters.

Detailed Methodology:

1. Problem Specification: Define the target molecule (atomic symbols and nuclear coordinates) and a basis set [21].

2. Hamiltonian Generation: Use a classical computer to compute the one- and two-electron integrals (h_{pq}, h_{pqrs}) and transform the electronic Hamiltonian into a qubit-representable form (a sum of Pauli strings) [21].

3. Ansatz Preparation: On the quantum computer, prepare a parameterized trial wavefunction (ansatz) |ψ(θ)⟩ = U(θ)|ψ_0⟩, where U(θ) is a parameterized quantum circuit and |ψ_0⟩ is a simple reference state (e.g., the Hartree-Fock state) [17].

4. Expectation Value Measurement: For each Pauli term P_i in the Hamiltonian H = Σ_i c_i P_i, measure the expectation value ⟨ψ(θ)|P_i|ψ(θ)⟩ on the quantum processor. The total energy estimate is E(θ) = Σ_i c_i ⟨ψ(θ)|P_i|ψ(θ)⟩.

5. Classical Optimization: A classical optimizer (e.g., gradient descent) uses the energy E(θ) to propose new parameters θ_new to minimize the energy.

6. Iteration: Steps 3-5 are repeated until convergence, at which point E(θ*) is the estimated ground state energy.
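The loop above can be sketched end to end with a classical state-vector simulation. Everything specific in this example is an assumption made for illustration: the two-qubit Hamiltonian coefficients are hypothetical and do not describe a real molecule, the one-parameter ansatz is chosen by hand, and expectation values are computed exactly rather than estimated from measurement shots.

```python
import numpy as np

PAULI = {
    "I": np.eye(2, dtype=complex),
    "X": np.array([[0, 1], [1, 0]], dtype=complex),
    "Y": np.array([[0, -1j], [1j, 0]], dtype=complex),
    "Z": np.array([[1, 0], [0, -1]], dtype=complex),
}

# Hypothetical coefficients c_i for H = sum_i c_i P_i (toy model, not a real molecule).
HAMILTONIAN = {"ZI": 0.4, "IZ": -0.4, "ZZ": 0.2, "XX": 0.1, "YY": 0.1}


def pauli_matrix(label):
    """Matrix of a two-qubit Pauli string such as 'ZI'."""
    return np.kron(PAULI[label[0]], PAULI[label[1]])


def ansatz(theta):
    """|psi(theta)> = cos(theta/2)|10> + sin(theta/2)|01>, with |10> as reference."""
    psi = np.zeros(4, dtype=complex)
    psi[0b10] = np.cos(theta / 2)
    psi[0b01] = np.sin(theta / 2)
    return psi


def energy(theta):
    """E(theta) = sum_i c_i <psi|P_i|psi>, one expectation value per Pauli term."""
    psi = ansatz(theta)
    return sum(c * np.vdot(psi, pauli_matrix(p) @ psi).real
               for p, c in HAMILTONIAN.items())


def vqe(theta=0.0, learning_rate=0.4, steps=200):
    """Gradient descent; the parameter-shift formula is exact for this ansatz
    because E(theta) is a sinusoid with unit frequency."""
    for _ in range(steps):
        grad = 0.5 * (energy(theta + np.pi / 2) - energy(theta - np.pi / 2))
        theta -= learning_rate * grad
    return theta, energy(theta)


theta_opt, e_vqe = vqe()
h_full = sum(c * pauli_matrix(p) for p, c in HAMILTONIAN.items())
e_exact = np.linalg.eigvalsh(h_full)[0]
print(f"VQE energy {e_vqe:.6f}, exact ground energy {e_exact:.6f}")
```

The comparison with exact diagonalization is only possible because the toy problem is tiny. On hardware, step 4 would replace the exact inner products with averages over repeated measurements, which adds statistical noise to every energy evaluation.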

Diagram Title: VQE Workflow for Molecular Ground State Search

Advanced Protocol: Qubit Configuration Optimization for Neutral Atoms

For specific hardware platforms like neutral atom tweezers, where qubit interactions are determined by their physical positions, advanced configuration optimization can dramatically improve VQE performance.

Detailed Methodology (Consensus-Based Optimization) [23]:

1. Initialization: Multiple "agents" (each a set of qubit positions X^(k)) are randomly initialized in the configuration space 𝒳.

2. Partial Pulse Optimization: For each agent's configuration X^(k), a partial optimization of the control pulses z^(k) is performed to get an initial indication of the cost landscape J(X, z).

3. Consensus Update: The agents' cost function values and positions are shared. A weighted consensus point is computed, favoring agents with lower costs.

4. Exploration and Diffusion: Agents are updated by moving toward the consensus point while adding noise for exploration and a diffusion term to avoid premature convergence.

5. Iteration and Selection: Steps 2-4 are repeated for several iterations. The positions converge to an optimized configuration, which is then used for a full VQE run. This method avoids the pitfalls of gradient-based position optimization, which fails due to the divergent R^(-6) nature of Rydberg interactions [23].
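The consensus and diffusion steps can be sketched generically as follows. This is not the implementation from [23]: the cost function is a hypothetical two-dimensional stand-in for the partially optimized J(X, z), and the update rule is the standard consensus-based optimization form (drift toward a Boltzmann-weighted mean plus noise scaled by the distance to it), with all parameter values chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
target = np.array([1.0, -2.0])     # hypothetical best configuration of the toy cost


def cost(x):
    """Toy stand-in for J(X, z); x has shape (..., 2)."""
    return np.sum((x - target) ** 2, axis=-1)


def consensus_point(agents, beta):
    """Weighted mean of agent positions, favoring agents with lower cost."""
    costs = cost(agents)
    weights = np.exp(-beta * (costs - costs.min()))   # shifted for numerical stability
    return weights @ agents / weights.sum()


def cbo(n_agents=100, n_steps=300, beta=30.0, drift=1.0, sigma=0.7, dt=0.1):
    agents = rng.uniform(-5.0, 5.0, size=(n_agents, 2))        # initialization
    for _ in range(n_steps):
        center = consensus_point(agents, beta)                 # consensus update
        diff = agents - center
        dist = np.linalg.norm(diff, axis=1, keepdims=True)
        noise = rng.standard_normal(agents.shape)
        # Drift toward the consensus point plus distance-scaled diffusion.
        agents = agents - drift * dt * diff + sigma * np.sqrt(dt) * dist * noise
    return consensus_point(agents, beta)


best = cbo()
print(best, cost(best))
```

No gradient of the cost is ever evaluated, which is the property that matters when the landscape is too irregular for gradient-based position updates. Because the noise term is proportional to the distance from the consensus point, exploration shrinks automatically as the agents agree.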

Diagram Title: CBO for Qubit Configuration Optimization

Quantitative Performance and Resource Analysis

The practical utility of quantum algorithms depends on their performance relative to resource constraints, particularly in the NISQ era. Recent research demonstrates significant progress in making Hamiltonian simulation more feasible.

Table 2: Algorithmic Performance and Resource Reduction in Hamiltonian Simulation

| Metric | Classical / Baseline Method | Quantum-Enhanced Approach | Improvement Factor |

|---|---|---|---|

| Circuit Depth (All-to-All connectivity) | Baseline | Hamiltonian truncation + Clifford Decomposition (CDAT) [24] | 28.5-fold reduction |

| Circuit Depth (IBMQ Heron) | Baseline | Hamiltonian truncation + Clifford Decomposition (CDAT) [24] | 15.5-fold reduction |

| Convergence Acceleration | Standard fixed configuration VQE | VQE with Consensus-Based qubit configuration optimization [23] | Significant acceleration and lower error |

| Mitigation of Barren Plateaus | Standard ansatz initialization | Problem-inspired ansatz & optimized qubit positions [23] | Helps avoid flat, untrainable regions |

A 2024 study on simulating pharmaceutically relevant molecules with sulfonyl fluoride warheads reduced the circuit depth to 1330 gates for an 8-qubit Hamiltonian. Using middleware decomposition, the authors executed sub-circuits with depths of 371 gates (containing 216 two-qubit gates) on current hardware, representing one of the largest electronic structure dynamics calculations implemented to date [24].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Quantum Computational Chemistry

| Resource / "Reagent" | Type | Function / Application | Example Platforms / Libraries |

|---|---|---|---|

| Superconducting Qubits | Hardware | Fast, controllable physical qubits; common in commercial processors. | IBM Condor, Google Willow |

| Trapped Ion Qubits | Hardware | High-fidelity qubits with long coherence times; well-suited for quantum simulations. | IonQ |

| Neutral Atom Arrays | Hardware | Configurable qubit interactions; enables position optimization for specific problems [23]. | Cold atom platforms |

| PennyLane | Software | A cross-platform Python library for differentiable programming of quantum computers; facilitates quantum chemistry simulations [21]. | Xanadu |

| Qiskit | Software | An open-source SDK for working with quantum computers at the level of circuits, pulses, and algorithms [22]. | IBM |

| Molecular Hamiltonian | Input Data | The target operator encoding the molecular energy; the core object of the simulation. | Generated via qchem.molecular_hamiltonian() [21] |

| Variational Quantum Eigensolver (VQE) | Algorithm | A hybrid algorithm for finding ground states, robust to NISQ-era noise. | Standard in QC libraries |

| Consensus-Based Optimization (CBO) | Algorithm | A gradient-free method for optimizing qubit configurations in neutral atom systems [23]. | Custom implementation per research |

| Problem-Inspired Ansatz | Algorithm | An ansatz (e.g., UCCSD, ADAPT-VQE) tailored to the problem, reducing circuit depth vs. universal ansatzes [23]. | Common in quantum chemistry |

The transition from bits to qubits, powered by the fundamental principles of superposition and entanglement, marks a paradigm shift in computational science with profound implications for molecular research. By providing a natural framework for representing and manipulating complex quantum systems, quantum computing offers a viable path to solving the electronic structure problem for molecules that are currently beyond the reach of classical computers. While significant challenges remain—including qubit decoherence, noise, and the need for robust error correction [25] [19]—the methodological advances in variational algorithms, qubit configuration optimization, and circuit depth reduction are steadily paving the way for quantum utility in drug development and materials discovery. The ongoing symbiosis of algorithmic innovation and hardware progress promises to unlock new frontiers in understanding and designing molecular systems.

The quest for quantum utility—the point at which quantum computers solve practically valuable problems beyond the reach of classical systems—represents a pivotal challenge in computational science. For researchers focused on molecular Hamiltonian ground state searches, understanding the fundamental relationships between quantum and classical complexity classes is essential for navigating this rapidly evolving landscape. The complexity classes BQP (Bounded-error Quantum Polynomial time) and BPP (Bounded-error Probabilistic Polynomial time) serve as critical landmarks in this exploration, defining the boundaries of efficient computation on quantum and classical devices, respectively [26] [27].

Within computational chemistry, the ground state energy estimation problem provides a compelling case study for examining these complexity relationships. While classical methods like Density Matrix Renormalization Group (DMRG) and tensor networks have demonstrated considerable success, they face exponential scaling walls for strongly correlated systems prevalent in catalytic and biomolecular applications [28] [12]. Quantum computing offers a potential pathway to polynomial-time solutions for instances where an initial state with sufficient overlap with the ground state can be prepared efficiently, making ground state estimation a candidate for a problem that lies in BQP but not in BPP [12] [29].

This technical analysis examines the current understanding of the BQP-BPP relationship through the lens of molecular Hamiltonian problems, surveys established and emerging quantum algorithmic approaches, and presents recent experimental evidence suggesting the imminent realization of quantum utility in computational chemistry.

The Computational Complexity Landscape

Defining the Complexity Classes

The complexity classes BPP and BQP define the sets of decision problems solvable by probabilistic classical and quantum computers, respectively, with bounded error probability in polynomial time [26] [27].

- BPP: A decision problem is in BPP if a probabilistic Turing machine can solve it in polynomial time with an error probability of at most 1/3 for all instances. The error bound can be made exponentially small through repetition [26].

- BQP: Similarly, BQP contains decision problems solvable by a quantum computer in polynomial time with error probability at most 1/3. The quantum circuit model serves as the formal model of computation for BQP [27].
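The claim that the 1/3 error bound can be driven down by repetition is easy to check numerically. The sketch below assumes independent runs combined by majority vote, which is the standard amplification argument; the function name is illustrative.

```python
from math import comb


def majority_error(runs: int, p_err: float = 1 / 3) -> float:
    """Probability that a majority vote over an odd number of independent runs is wrong."""
    if runs % 2 == 0:
        raise ValueError("use an odd number of runs so the vote cannot tie")
    return sum(
        comb(runs, k) * p_err**k * (1 - p_err) ** (runs - k)
        for k in range(runs // 2 + 1, runs + 1)
    )


for runs in (1, 3, 11, 51, 101):
    print(runs, majority_error(runs))
```

The error falls off exponentially in the number of repetitions, so the particular constant 1/3 in the definitions is a convention: any bound strictly below 1/2 defines the same classes.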

The table below summarizes the fundamental relationships between key complexity classes relevant to quantum chemistry applications:

Table 1: Computational Complexity Class Relationships

| Complexity Class | Computational Model | Error Bound | Inclusion Relationships |

|---|---|---|---|

| P | Deterministic Turing machine | None | P ⊆ BPP [26] |

| BPP | Probabilistic Turing machine | ≤ 1/3 | BPP ⊆ BQP [26] [27] |

| BQP | Quantum computer | ≤ 1/3 | BQP ⊆ PP ⊆ PSPACE [26] [27] |

| NP | Non-deterministic Turing machine | None | BQP relationship with NP unknown [27] |

| PSPACE | Deterministic Turing machine with polynomial space | None | BQP ⊆ PSPACE [27] |

The Fundamental Question: BQP vs. BPP

The central open question in quantum complexity theory relevant to quantum utility is whether BPP ⊊ BQP, that is, whether quantum computers can efficiently solve problems that classical computers cannot [26] [27]. It is known that BPP ⊆ BQP, meaning every problem that is efficiently solvable on a classical probabilistic computer is also efficiently solvable on a quantum computer, but it remains unproven that this containment is strict [26].

For practical quantum chemistry applications, this theoretical question translates to whether quantum algorithms can provide superpolynomial speedups for real molecular systems. The existence of such speedups remains actively debated, with evidence suggesting that quantum advantage may be most pronounced for dynamics simulations and strongly correlated systems [12].

Quantum Algorithms for Molecular Ground State Problems

Algorithmic Approaches and Their Complexities

Multiple quantum algorithmic frameworks have been developed for ground state energy estimation, each with distinct resource requirements and complexity profiles:

Table 2: Quantum Algorithms for Ground State Energy Estimation

| Algorithm | Key Principle | Theoretical Complexity | Fault-Tolerance Requirement | Application Scale Demonstrated |

|---|---|---|---|---|

| Variational Quantum Eigensolver (VQE) [12] | Hybrid quantum-classical optimization | Heuristic; depends on ansatz | No (NISQ-compatible) | 12+ qubits [28] |

| Quantum Phase Estimation (QPE) [29] | Quantum Fourier transform of phase | O(1/ϵ) depth for precision ϵ | Yes | Small-scale fault-tolerant simulations |

| Quantum Selected CI (QSCI) [28] | Quantum-assisted configuration interaction | Beyond classical exact diagonalization | Partial (with error mitigation) | Up to 77 qubits [28] |

| CDF-Based Methods [29] | Cumulative distribution function analysis | O(polylog(n)) for sparse systems | Early fault-tolerant | 26-qubit simulations [29] |

The Role of Hybrid Quantum-Classical Approaches

Hybrid quantum-classical algorithms represent a pragmatic approach to leveraging current quantum hardware within the broader context of computational chemistry workflows. The quantum-selected configuration interaction (QSCI) method demonstrates how quantum processors can be deployed for specific, computationally challenging subroutines within larger classical computational frameworks [28]. This approach aligns with the increasingly common integration of Quantum Processing Units (QPUs) with conventional High-Performance Computing (HPC) platforms, creating heterogeneous systems capable of addressing complex multiscale simulations [28].

For molecular systems, these hybrid approaches often employ embedding techniques such as projection-based embedding (PBE) and density matrix embedding theory (DMET) to partition the computational problem into quantum and classical tractable subdomains [28]. This strategic partitioning enables the application of quantum resources to the most electronically correlated regions of large molecular systems while treating the broader environmental effects through classical molecular mechanics or density functional theory.

Experimental Evidence: Toward Quantum Utility

Recent Experimental Demonstrations

Recent experiments on noisy intermediate-scale quantum (NISQ) devices have provided preliminary evidence for the utility of quantum computing before full fault tolerance. A landmark 2023 study on a 127-qubit superconducting processor demonstrated the measurement of accurate expectation values for circuit volumes beyond brute-force classical computation [30]. This experiment implemented Trotterized time evolution of a 2D transverse-field Ising model, with results in the strong entanglement regime where classical tensor network methods break down [30].

Crucially, these experiments leveraged advanced error mitigation techniques, including zero-noise extrapolation (ZNE) and probabilistic error cancellation (PEC), to extract accurate expectation values from noisy quantum circuits [30]. The successful application of these techniques to circuits with up to 2,880 CNOT gates suggests a viable path toward quantum utility for chemical applications in the pre-fault-tolerant era.
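The idea behind zero-noise extrapolation can be sketched in a few lines: measure the same observable at several deliberately amplified noise levels, fit a curve, and evaluate it at zero noise. The exponential decay used below is a hypothetical noise model invented for this example, and the quadratic fit is one common choice among several; neither is taken from [30].

```python
import numpy as np


def noisy_expectation(scale, ideal=1.0, decay=0.1):
    """Hypothetical noise model: the signal decays exponentially with the noise scale."""
    return ideal * np.exp(-decay * scale)


scales = np.array([1.0, 2.0, 3.0])          # noise amplification factors
values = noisy_expectation(scales)          # what the device would report at each scale

# Fit a quadratic through the three points and evaluate it at zero noise.
coeffs = np.polyfit(scales, values, deg=2)
zne_estimate = np.polyval(coeffs, 0.0)
raw_estimate = values[0]                    # unmitigated result at the native noise level

print(f"raw {raw_estimate:.4f}, extrapolated {zne_estimate:.4f}, ideal 1.0000")
```

In this toy setting the extrapolated value lies much closer to the ideal one than the raw value does. On real hardware each point also carries statistical error, which extrapolation amplifies, so the gain in bias comes at the cost of more measurement shots.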

Experimental Protocol: Quantum Utility Demonstration

The experimental methodology for demonstrating quantum utility in chemical systems typically follows this structured protocol:

System Selection: Identify a molecular system with strong electron correlation effects that challenge classical methods (e.g., multimetallic catalysts or conjugated polymers) [28] [12].

Problem Decomposition: Apply embedding techniques to partition the system into active quantum and environmental classical regions using methods like QM/MM or DMET [28].

Algorithm Implementation: Execute hybrid quantum-classical algorithms (VQE, QSCI) on integrated HPC-QPU platforms, employing qubit subspace techniques to reduce quantum resource requirements [28].

Error Mitigation: Apply advanced error mitigation protocols (ZNE, PEC) to extract accurate expectation values from noisy quantum computations [30].

Classical Verification: Compare results with exactly solvable test cases and state-of-the-art classical methods to verify accuracy where possible [30].

The workflow diagram below illustrates this multi-scale computational approach:

The Scientist's Toolkit: Research Reagent Solutions

For researchers implementing quantum computational methods for molecular Hamiltonian problems, the following "research reagents" represent essential components of the experimental framework:

Table 3: Essential Research Reagents for Quantum Computational Chemistry

| Research Reagent | Function | Implementation Examples |

|---|---|---|

| Error Mitigation Protocols [30] | Reduce noise impact in NISQ era | Zero-noise extrapolation (ZNE), Probabilistic error cancellation (PEC) |

| Embedding Techniques [28] | Partition large systems into quantum/classical regions | Projection-based embedding (PBE), Density matrix embedding theory (DMET) |

| Qubit Subspace Methods [28] | Reduce quantum resource requirements | Qubit tapering, Contextual subspace VQE |

| Classical-Quantum Hybridizers [28] [29] | Interface quantum and classical resources | QM/MM frameworks, HPC-QPU integration platforms |

| State Preparation Protocols [29] | Initialize quantum states for computation | ADAPT-VQE, Quantum Krylov methods, DMRG-inspired initial states |

The path to demonstrable quantum utility in molecular Hamiltonian ground state calculations requires continued progress along multiple fronts: improvement in quantum hardware coherence and scalability, development of more efficient quantum algorithms with proven speedups, and refinement of error mitigation techniques that bridge the NISQ and fault-tolerant eras. The current evidence suggests that hybrid quantum-classical approaches employing strategic embedding and resource reduction techniques offer the most viable near-term pathway [28].

For computational chemists and drug development professionals, the practical implication is that quantum computational methods are transitioning from theoretical constructs to potentially valuable tools for specific, classically challenging problems in molecular systems. The complexity landscape defined by BQP and BPP provides both a target for algorithmic development and a framework for assessing progress toward genuine quantum utility in real-world chemical applications.

From Algorithms to Action: Practical Methods for Ground State Search on Quantum Hardware

The accurate calculation of ground state energies of molecular systems is a cornerstone problem in quantum chemistry and drug discovery. This challenge, however, is classically intractable for large systems due to the exponential scaling of the Hilbert space. Quantum computing offers a promising path forward, with three primary algorithmic paradigms emerging for the molecular ground state search: the Variational Quantum Eigensolver (VQE), Quantum Krylov Subspace Methods, and Quantum Phase Estimation (QPE). This whitepaper provides an in-depth technical analysis of these core algorithms, comparing their theoretical foundations, resource requirements, and practical implementation for molecular Hamiltonian problems relevant to pharmaceutical research.

Algorithmic Foundations & Comparative Analysis

Variational Quantum Eigensolver (VQE)

VQE is a hybrid quantum-classical algorithm that applies the variational principle to find the ground state energy of a Hamiltonian [31] [32]. The algorithm prepares a parameterized trial state (ansatz) |ψ(θ⃗)⟩ on a quantum computer and measures the expectation value ⟨H⟩ = ⟨ψ(θ⃗)|H|ψ(θ⃗)⟩. A classical optimizer then adjusts the parameters θ⃗ to minimize this expectation value [33].

The molecular Hamiltonian H must first be mapped to qubit operators, typically via the Jordan-Wigner or Bravyi-Kitaev transformation, expressing H as a sum of Pauli strings: H = Σᵢ αᵢPᵢ, where Pᵢ are tensor products of Pauli operators [32]. Each term's expectation value is measured separately, and the results are combined classically. Common ansätze include the Unitary Coupled Cluster (UCC) and hardware-efficient ansätze [33] [34].

Quantum Krylov Subspace Methods

Quantum Krylov algorithms diagonalize the Hamiltonian within a subspace spanned by quantum states {|ψ⟩, U|ψ⟩, U²|ψ⟩, ..., U^(k-1)|ψ⟩}, where U is typically the time-evolution operator e^(-iHt) [35] [36]. The ground state energy is estimated by solving a generalized eigenvalue problem in this subspace: Hc = ESc, where Hᵢⱼ = ⟨ψᵢ|H|ψⱼ⟩ and Sᵢⱼ = ⟨ψᵢ|ψⱼ⟩ are measured on the quantum computer [36].

Recent advances like Mirror Subspace Diagonalization (MSD) address the significant sampling cost challenge by expressing the Hamiltonian as a linear combination of time-evolution unitaries with symmetrically shifted timesteps, approaching the theoretical lower bound of sampling cost for quantum Krylov algorithms [35]. These methods are particularly effective when the spectral norm of the Hamiltonian is significantly smaller than its 1-norm [35].

Quantum Phase Estimation (QPE)

QPE is a fundamental quantum algorithm that estimates the phase ϕ of an eigenvalue e^(iϕ) of a unitary operator U, where U|ψ⟩ = e^(iϕ)|ψ⟩ for an eigenstate |ψ⟩ [37]. For Hamiltonian simulation, U is typically chosen as the time-evolution operator e^(-iHt), so an eigenstate with energy E has eigenvalue e^(-iEt) and the phase is related to the energy by ϕ = -Et (mod 2π) [37].

The algorithm uses two registers: an estimation register with n qubits and a state register initially containing |ψ⟩. After applying Hadamard gates to the estimation register, controlled-U^(2^k) operations are applied for k = 0 to n-1, followed by an inverse Quantum Fourier Transform to extract the phase estimate [37] [38]. The precision is set by the number of estimation qubits: each additional qubit halves the phase error, so the error decreases exponentially in n, but it also doubles the largest power of U that must be applied. The circuit depth therefore grows exponentially in n, or equivalently as O(1/ε) in the target precision ε [37].

Filtered Quantum Phase Estimation (FQPE) has recently been developed to mitigate the unfavorable dependence on initial state overlap present in standard QPE [39]. This approach uses spectral filtering to enhance the overlap of the input state with the desired eigenstate, with numerical experiments on Fermi-Hubbard models showing runtime reductions of more than two orders of magnitude in the high-precision regime [39].

Comparative Performance Analysis

Table 1: Comparative Analysis of Quantum Ground State Algorithms

| Algorithm | Theoretical Foundation | Circuit Depth | Sampling Complexity | Classical Co-processing | Current Hardware Suitability |

|---|---|---|---|---|---|

| VQE | Variational principle [31] [32] | Low (NISQ-friendly) | O(\|γ₀\|⁻⁴ ΔE₀ ε⁻²) for QCELS variant [39] | Extensive (parameter optimization) | High (runs on NISQ devices) [32] |

| Quantum Krylov | Subspace diagonalization [36] | Moderate | Near-optimal up to logarithmic factor [35] | Moderate (subspace eigenvalue problem) | Medium (early fault-tolerant) |

| QPE | Quantum Fourier transform [37] | High (exponential in the number of precision bits) | Õ(ε⁻¹ + \|γ₀\|⁻²ΔE₀⁻¹) for FQPE variant [39] | Minimal (classical post-processing) | Low (requires fault-tolerance) [38] |

Table 2: Algorithmic Resource Requirements and Performance Characteristics

| Algorithm | Initial Overlap Dependence | Convergence Guarantees | Measurement Cost | Key Limitations |

|---|---|---|---|---|

| VQE | Moderate | No guarantee (barren plateaus) [32] | High (many Pauli terms) | Ansatz design, optimization challenges [32] |

| Quantum Krylov | Moderate to high | Provable convergence under conditions [36] | Medium to high (matrix elements) | Ill-conditioned overlap matrices [36] |

| Standard QPE | Strong (success probability ∝ \|γ₀\|²) [39] | Deterministic with perfect circuits | | Exponential circuit depth [38] |

| FQPE | Reduced via filtering [39] | Deterministic with perfect circuits | Similar to QPE with improved success | Filter implementation complexity |

Experimental Protocols & Methodologies

VQE Implementation for H₂ Molecule

The VQE protocol for the hydrogen molecule demonstrates the standard experimental workflow [33]:

Hamiltonian Preparation: The molecular Hamiltonian for H₂ is constructed in the STO-3G basis set using the Jordan-Wigner transformation, resulting in a 4-qubit Hamiltonian with 15 Pauli terms [33].

Ansatz Circuit: The quantum circuit is initialized to the Hartree-Fock state |1100⟩, followed by a DoubleExcitation operation (Givens rotation) that couples the |1100⟩ and |0011⟩ states with a single parameter θ [33].

Measurement Protocol: The expectation value of each Pauli term is measured individually, requiring separate basis rotations for non-Z measurements. The results are combined with appropriate coefficients to compute the total energy [33].

Classical Optimization: A classical optimizer (e.g., SGD, SLSQP, or ADAM) adjusts the parameter θ to minimize the energy expectation value, typically requiring 10-100 iterations for convergence [33] [34].
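The ansatz step can be made concrete without any quantum library. The sketch below writes the Givens rotation as an explicit 16 × 16 matrix that mixes only |1100⟩ and |0011⟩ and applies it to the Hartree-Fock state. The sign convention and the bit ordering (leftmost bit is qubit 0) are assumptions of this example, chosen to mirror the usual description of a double-excitation gate; it does not call PennyLane.

```python
import numpy as np

HF_INDEX = 0b1100        # Hartree-Fock state |1100>: two lowest spin orbitals occupied
EXCITED_INDEX = 0b0011   # doubly excited state |0011>


def double_excitation_matrix(theta):
    """Givens rotation acting in span{|0011>, |1100>}, identity elsewhere."""
    g = np.eye(16)
    c, s = np.cos(theta / 2), np.sin(theta / 2)
    g[EXCITED_INDEX, EXCITED_INDEX] = c
    g[HF_INDEX, HF_INDEX] = c
    g[EXCITED_INDEX, HF_INDEX] = -s    # |1100> -> cos|1100> - sin|0011>
    g[HF_INDEX, EXCITED_INDEX] = s     # |0011> -> cos|0011> + sin|1100>
    return g


theta = 0.2
hartree_fock = np.zeros(16)
hartree_fock[HF_INDEX] = 1.0

gate = double_excitation_matrix(theta)
state = gate @ hartree_fock

print("amplitude on |1100>:", state[HF_INDEX])
print("amplitude on |0011>:", state[EXCITED_INDEX])
```

A single angle suffices here because particle-number and spin symmetry leave only these two configurations available to mix in the minimal-basis H₂ problem, so the optimizer searches over a one-dimensional family of states.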

Quantum Krylov Subspace Diagonalization

The experimental protocol for quantum Krylov methods involves [36]:

Krylov Basis Generation: Generate basis states by applying powers of the time-evolution operator U = e^(-iHτ) with carefully chosen time steps τ to an initial state |ψ₀⟩.

Overlap and Hamiltonian Matrix Construction: Measure all matrix elements Hᵢⱼ = ⟨ψᵢ|H|ψⱼ⟩ and Sᵢⱼ = ⟨ψᵢ|ψⱼ⟩ through quantum measurements. Recent MSD approaches reduce this measurement cost by expressing the Hamiltonian as a linear combination of time-evolution unitaries [35].

Generalized Eigenvalue Solution: Solve the generalized eigenvalue problem Hc = ESc classically to obtain energy estimates and state approximations within the Krylov subspace.

Error Mitigation: Apply regularization techniques to address ill-conditioned overlap matrices and statistical error analysis to account for measurement noise [36].
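The four steps can be emulated classically on a toy problem. In the sketch below the two-qubit Hamiltonian is hypothetical, the matrix elements are computed exactly instead of being measured on a device, and the regularization is a simple eigenvalue threshold on the overlap matrix, which is one common option rather than the specific scheme of [36].

```python
import numpy as np

I2 = np.eye(2, dtype=complex)
X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)

# Hypothetical two-qubit Hamiltonian (toy model, not a real molecule).
H = (0.4 * np.kron(Z, I2) - 0.4 * np.kron(I2, Z) + 0.2 * np.kron(Z, Z)
     + 0.1 * np.kron(X, X) + 0.1 * np.kron(Y, Y))

# Time-evolution operator U = exp(-i H tau), built from the eigendecomposition of H.
tau = 1.0
evals, evecs = np.linalg.eigh(H)
U = evecs @ np.diag(np.exp(-1j * evals * tau)) @ evecs.conj().T

# Step 1: Krylov basis {psi0, U psi0, U^2 psi0} starting from |10>.
psi0 = np.zeros(4, dtype=complex)
psi0[0b10] = 1.0
basis = [psi0]
for _ in range(2):
    basis.append(U @ basis[-1])
V = np.column_stack(basis)

# Step 2: overlap and Hamiltonian matrices in the (non-orthogonal) Krylov basis.
S = V.conj().T @ V
H_k = V.conj().T @ H @ V

# Steps 3-4: regularize by discarding near-null directions of S, then solve
# the generalized eigenvalue problem H_k c = E S c in the retained subspace.
s_vals, s_vecs = np.linalg.eigh(S)
keep = s_vals > 1e-10
T = s_vecs[:, keep] / np.sqrt(s_vals[keep])
e_krylov = np.linalg.eigvalsh(T.conj().T @ H_k @ T)[0]

print(f"retained dimension {int(keep.sum())}, Krylov energy {e_krylov:.6f}, "
      f"exact {evals[0]:.6f}")
```

The starting state only overlaps with two eigenstates of this toy Hamiltonian, so the three Krylov vectors are linearly dependent and S is singular. Thresholding removes the redundant direction, after which the reduced problem reproduces the exact ground energy. With measured matrix elements the same threshold also guards against noise in S being amplified during inversion.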

Quantum Phase Estimation Protocol

The standard QPE experimental procedure comprises [37]:

Register Initialization: Prepare the estimation register with n qubits in |0⟩ state and the state register with the initial state |ψ⟩, ideally with high overlap with the target eigenstate.

Superposition Creation: Apply Hadamard gates to all estimation qubits to create a uniform superposition.

Controlled-Unitary Operations: Apply controlled-U^(2^k) operations for k = 0 to n-1, where the unitary is typically U = e^(-iHt) for a suitably chosen t.

Inverse Fourier Transform: Apply the inverse Quantum Fourier Transform to the estimation register.

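Measurement and Interpretation: Measure the estimation register in the computational basis and convert the binary result to a phase estimate θ = 0.θ₁θ₂...θₙ, a binary fraction in [0, 1). For U = e^(-iHt) the eigenvalue is e^(-iEt) = e^(2πiθ), so the energy is E = -2πθ/t, determined modulo 2π/t.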

For the Filtered QPE variant, an additional spectral filtering step is applied to the initial state to enhance overlap with the target eigenstate before the standard QPE procedure [39].
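The standard procedure can be simulated directly when the state register holds an exact eigenstate, because the estimation register then evolves independently of it. The sketch below makes that assumption, uses a hypothetical energy whose phase is exactly representable with three bits, and relies on the fact that the inverse QFT acts on amplitudes as a normalized forward discrete Fourier transform.

```python
import numpy as np


def qpe_distribution(phase, n_bits):
    """Outcome probabilities of textbook QPE for an exact eigenstate of U
    with eigenvalue exp(2*pi*i*phase)."""
    size = 2 ** n_bits
    x = np.arange(size)
    # After the Hadamards and the controlled-U^(2^k) gates, the estimation
    # register holds (1/sqrt(size)) * sum_x exp(2*pi*i*phase*x) |x>.
    amplitudes = np.exp(2j * np.pi * phase * x) / np.sqrt(size)
    # Inverse QFT: |x> -> (1/sqrt(size)) * sum_y exp(-2*pi*i*x*y/size) |y>.
    output = np.fft.fft(amplitudes) / np.sqrt(size)
    return np.abs(output) ** 2


n_bits = 3
t = 1.0
energy = -2 * np.pi * 0.625 / t                 # hypothetical eigenvalue of H

# U = exp(-iHt) has eigenvalue exp(-i*energy*t) = exp(2*pi*i*phase).
phase = (-energy * t / (2 * np.pi)) % 1.0
probs = qpe_distribution(phase, n_bits)

outcome = int(np.argmax(probs))                 # most likely register value
theta = outcome / 2 ** n_bits                   # binary fraction 0.101 = 0.625
energy_estimate = -2 * np.pi * theta / t

print(outcome, theta, energy_estimate)
```

Here the register returns 5 (binary 101) with essentially unit probability. For phases that are not exact n-bit fractions the distribution spreads over neighboring outcomes, and for an input that is not an eigenstate each eigenvalue is sampled with probability given by its squared overlap, which is the overlap dependence that the filtered variant targets.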

Visualization of Algorithmic Workflows

Diagram Title: VQE Algorithmic Flow

Diagram Title: Quantum Krylov Subspace Method

Diagram Title: Quantum Phase Estimation Circuit

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Computational Tools for Quantum Ground State Calculations

| Tool/Component | Function | Example Implementations |

|---|---|---|

| Molecular Hamiltonians | Encodes electronic structure problem | PySCF [34], PennyLane qchem [33] |

| Qubit Mappers | Fermionic-to-qubit transformation | Jordan-Wigner [33], Bravyi-Kitaev [32], ParityMapper [34] |

| Ansatz Circuits | Parameterized trial wavefunctions | UCCSD [34], Hardware-Efficient [32], DoubleExcitation [33] |

| Classical Optimizers | Hybrid algorithm parameter optimization | SLSQP [34], SPSA [34], ADAM [34], Gradient Descent [33] |

| Quantum Simulators | Algorithm testing and validation | PennyLane [33] [37], Qiskit Aer [34], Cirq |

| Measurement Tools | Expectation value estimation | Estimator [34], Shot-based sampling [32] |

The three algorithmic paradigms—VQE, Quantum Krylov, and QPE—offer complementary approaches to the molecular ground state problem with distinct trade-offs. VQE provides immediate utility on current NISQ devices but faces challenges with optimization and convergence. Quantum Krylov methods offer improved convergence guarantees with moderate quantum resources, making them promising for early fault-tolerant devices. QPE remains the asymptotically optimal approach for fault-tolerant quantum computers, with recent FQPE variants addressing critical overlap limitations. For pharmaceutical researchers, the optimal algorithm choice depends on available quantum hardware, molecular system size, and required precision, with hybrid approaches potentially offering the most practical near-term path toward quantum utility in drug development.

The accurate computation of molecular ground-state energies is a fundamental challenge in quantum chemistry, with profound implications for drug design and materials science. Classical computational methods often struggle with the exponential scaling required to simulate large, strongly correlated quantum systems. Within the framework of quantum computing for molecular Hamiltonian ground state search, hybrid quantum-classical algorithms emerge as a pivotal strategy for the current noisy intermediate-scale quantum (NISQ) era. Among these, the integration of Density Matrix Embedding Theory (DMET) and Sample-Based Quantum Diagonalization (SQD) represents a significant advance, enabling accurate simulations of large molecules by strategically distributing the computational workload between quantum and classical processors [40] [41]. This guide provides an in-depth technical examination of the DMET-SQD methodology, its experimental implementation, and its application to problems of biological relevance.

Theoretical Foundations

Density Matrix Embedding Theory (DMET)

DMET is a quantum embedding technique that allows a large, intractable molecular system to be solved by dividing it into smaller, manageable fragments coupled to a mean-field environment [42] [41].

- Core Principle: The formally exact Schmidt decomposition allows the ground state of a full system to be expressed through an impurity model containing only 2N degrees of freedom for a fragment of size N, significantly reducing the problem's complexity [42].

- Self-Consistent Loop: An effective local potential (U) couples the fragment to its environment. This potential is optimized self-consistently by matching the density matrix of the impurity model and the effective lattice model projected onto the fragment [42].

- Role in Hybrid Computing: Within a quantum-centric supercomputing (QCSC) architecture, DMET provides the overarching framework for fragmenting the molecule, allowing the quantum processor to focus computational resources on the chemically relevant subsystem where electron correlation is strongest [40] [41].
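The 2N bound in the core principle can be illustrated numerically. The sketch below is a toy example, not the implementation used in [40] [41]: it assumes a hypothetical 10-site hopping ring as the "molecule", builds its mean-field one-particle density matrix, and extracts bath orbitals from a singular value decomposition of the environment-fragment block. The function names `mean_field_rdm` and `bath_orbitals` are illustrative only.

```python
import numpy as np


def mean_field_rdm(n_sites: int, n_occ: int) -> np.ndarray:
    """One-particle density matrix of a nearest-neighbour hopping ring (toy model)."""
    h = np.zeros((n_sites, n_sites))
    for i in range(n_sites):
        j = (i + 1) % n_sites
        h[i, j] = h[j, i] = -1.0
    _, orbitals = np.linalg.eigh(h)
    occupied = orbitals[:, :n_occ]  # fill the lowest n_occ orbitals
    return occupied @ occupied.T


def bath_orbitals(rdm: np.ndarray, fragment, tol: float = 1e-8) -> np.ndarray:
    """Bath orbitals from the SVD of the environment-fragment density block."""
    fragment = list(fragment)
    environment = [i for i in range(rdm.shape[0]) if i not in fragment]
    coupling = rdm[np.ix_(environment, fragment)]
    u, s, _ = np.linalg.svd(coupling, full_matrices=False)
    # Only directions with non-zero singular values are entangled with the fragment.
    return u[:, s > tol]


rdm = mean_field_rdm(n_sites=10, n_occ=5)
fragment = [0, 1]
bath = bath_orbitals(rdm, fragment)
impurity_size = len(fragment) + bath.shape[1]
print(f"fragment: {len(fragment)}, bath: {bath.shape[1]}, impurity: {impurity_size}")
```

Because the coupling block has only as many columns as the fragment has orbitals, the number of bath orbitals can never exceed the fragment size, which is where the 2N impurity dimension comes from.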

Sample-Based Quantum Diagonalization (SQD)

SQD is a quantum diagonalization algorithm designed to approximate ground-state energies on near-term quantum devices without requiring deep parameter optimization [43] [44].

- Core Principle: A quantum device samples electronic configurations (bitstrings) from a prepared ansatz state. Classical high-performance computing (HPC) resources then post-process these samples to reconstruct a set of dominant configurations [44].

- Classical Diagonalization: The Hamiltonian is diagonalized within the subspace spanned by the recovered configurations, effectively solving the Schrödinger equation for a drastically reduced Hilbert space [44].

- Noise Resilience: As a sample-based method, SQD is inherently tolerant to the noise present on current quantum hardware, and its accuracy can be systematically improved by increasing the number of sampled configurations [43] [44].
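The sample-then-diagonalize idea can be sketched on a small dense matrix. This is a minimal illustration under strong assumptions: a random symmetric 16x16 matrix stands in for the Hamiltonian, integer indices stand in for bitstrings, and samples are drawn from the exact ground state (which a real run would not have). Production SQD codes work with Slater determinants and never form the full matrix. The helper `subspace_energy` is a hypothetical name.

```python
import numpy as np


def subspace_energy(hamiltonian: np.ndarray, configurations) -> float:
    """Lowest eigenvalue of H restricted to the sampled configurations."""
    idx = sorted({int(c) for c in configurations})
    projected = hamiltonian[np.ix_(idx, idx)]
    return float(np.linalg.eigvalsh(projected)[0])


rng = np.random.default_rng(seed=7)
dim = 2 ** 4  # 4 "qubits", so 16 basis configurations
a = rng.normal(size=(dim, dim))
hamiltonian = (a + a.T) / 2  # toy symmetric Hamiltonian

eigenvalues, eigenvectors = np.linalg.eigh(hamiltonian)
exact = float(eigenvalues[0])

# Stand-in for QPU sampling: draw configurations with ground-state probabilities.
probabilities = eigenvectors[:, 0] ** 2
probabilities /= probabilities.sum()
samples = rng.choice(dim, size=400, p=probabilities)

# Nested sample sets give nested subspaces, so the estimate can only improve.
energies = [subspace_energy(hamiltonian, samples[:n]) for n in (5, 50, 400)]
for n, e in zip((5, 50, 400), energies):
    print(f"{n:4d} samples: E = {e:.6f} (exact {exact:.6f})")
```

Each subspace energy is a variational upper bound on the exact value, and enlarging the configuration set never raises it, which is the sense in which accuracy improves systematically with more samples.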

Methodological Integration: The DMET-SQD Workflow

The integration of DMET and SQD creates a powerful synergy for simulating large molecules. The workflow below illustrates the self-consistent interaction between the classical DMET framework and the quantum SQD subroutine.

DMET-SQD Self-Consistent Workflow: The process initiates with a mean-field calculation for the full molecule. A fragment is selected, and its entangled quantum bath is constructed via Schmidt decomposition. The embedded Hamiltonian for this fragment-plus-bath system is formed and passed to the SQD subroutine on the quantum computer. The resulting fragment density matrix is fed back to the classical computer. The process iterates until self-consistency is achieved between the fragment density and the global mean-field potential [40] [41].

The SQD Subroutine

The quantum SQD subroutine, which solves the embedded Hamiltonian, follows a detailed sequence of steps as shown in the following workflow.

SQD Subroutine Detail: The embedded Hamiltonian is used to prepare an ansatz state (e.g., the Local Unitary Cluster Jastrow, or LUCJ, ansatz) on the quantum processing unit (QPU). The QPU then samples electronic configurations from this state. These noisy samples are processed classically by the S-CORE algorithm to mitigate errors and recover the most important configurations [44]. The Hamiltonian and overlap matrices are built within this subspace and diagonalized on a classical HPC system to obtain the ground-state energy and density matrix for the fragment [43] [44].
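To make the recovery step concrete, the sketch below shows one simplified way a noisy bitstring could be repaired. It is not the S-CORE algorithm itself: it assumes that noise shows up as a wrong electron count, that reference orbital occupancies are available from a previous iteration, and that a deterministic repair is acceptable, whereas published recovery schemes are typically probabilistic and iterated. The function name and the occupancy values are hypothetical.

```python
from typing import Sequence, Tuple


def recover_configuration(
    bits: Sequence[int], n_electrons: int, occupancies: Sequence[float]
) -> Tuple[int, ...]:
    """Flip the least plausible bits until the bitstring holds n_electrons ones."""
    repaired = list(bits)
    excess = sum(repaired) - n_electrons
    if excess > 0:
        # Too many electrons: clear set bits on the orbitals least likely to be occupied.
        candidates = sorted(
            (i for i, b in enumerate(repaired) if b == 1),
            key=lambda i: occupancies[i],
        )
    else:
        # Too few electrons: set empty bits on the orbitals most likely to be occupied.
        candidates = sorted(
            (i for i, b in enumerate(repaired) if b == 0),
            key=lambda i: -occupancies[i],
        )
    for i in candidates[: abs(excess)]:
        repaired[i] ^= 1
    return tuple(repaired)


# Hypothetical reference occupancies for four spin orbitals and a two-electron target.
reference = [0.9, 0.8, 0.1, 0.2]
for noisy in [(1, 1, 1, 0), (1, 0, 0, 0), (1, 1, 0, 0)]:
    print(noisy, "->", recover_configuration(noisy, 2, reference))
```

Repaired bitstrings all have the correct particle number, so they can be pooled with the valid samples before the subspace is built.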

Experimental Protocols & Validation

The DMET-SQD methodology has been experimentally validated on quantum hardware for specific molecular systems, demonstrating its potential for biomedical applications [40].

Key Experimental Demonstrations

Table 1: Summary of DMET-SQD Experimental Validations

| Molecular System | Qubits Used (Full / Fragment) | Quantum Hardware | Key Result | Classical Benchmark |

|---|---|---|---|---|

| Hydrogen Ring (18 atoms) | 41 / 27 | ibm_cleveland (Eagle processor) | Agreement with HCI benchmarks [40] [41] | Heat-Bath CI (HCI) |

| Cyclohexane Conformers | 89 / 32 | ibm_cleveland (Eagle processor) | Energy differences within 1 kcal/mol of CCSD(T) [40] [41] | CCSD(T) |

Detailed Protocol: Cyclohexane Conformer Calculation

The following workflow and protocol outline the steps for calculating relative conformer energies, a critical test for drug development where molecular conformation dictates biological activity.

- System Preparation: The molecular geometry of each cyclohexane conformer (chair, boat, twist-boat, half-chair) is first optimized using classical computational methods [40].

- Active Space Selection: For each conformer, a chemically relevant fragment (e.g., a set of carbon atoms and their associated electrons) is selected. The DMET procedure maps the full 89-qubit problem to a 32-qubit embedded Hamiltonian [41].

- Quantum Execution: The SQD subroutine is executed on the ibm_cleveland quantum processor for the 32-qubit fragment. This involves the ansatz preparation, configuration sampling, and configuration recovery steps described for the SQD subroutine above.

- Classical Post-Processing: The sampled configurations are used to construct the generalized eigenvalue problem, which is then solved on a classical HPC cluster.

- Self-Consistency: The fragment density matrix is fed back into the DMET loop until convergence is achieved for the total energy.

- Validation: The relative energies between conformers computed via DMET-SQD are compared to established classical methods like CCSD(T) and HCI, with successful results falling within the threshold of chemical accuracy (1 kcal/mol) [40].
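The validation step amounts to converting total energies into relative conformer energies and comparing them with a benchmark at the 1 kcal/mol threshold. The sketch below shows that arithmetic only. All energy values are made-up placeholders chosen for easy checking, not results from [40], and the function names are hypothetical.

```python
HARTREE_TO_KCAL_PER_MOL = 627.5095  # standard unit conversion factor


def relative_energies_kcal(energies_hartree: dict, reference: str) -> dict:
    """Energies relative to the reference conformer, converted to kcal/mol."""
    ref = energies_hartree[reference]
    return {
        name: (energy - ref) * HARTREE_TO_KCAL_PER_MOL
        for name, energy in energies_hartree.items()
    }


def within_chemical_accuracy(computed: dict, benchmark: dict, threshold: float = 1.0) -> dict:
    """Flag each conformer whose relative energy matches the benchmark within threshold."""
    return {name: abs(computed[name] - benchmark[name]) <= threshold for name in computed}


# Placeholder total energies in Hartree (illustrative only, not measured data).
quantum = {"chair": 0.0000, "boat": 0.0100, "twist-boat": 0.0085}
classical = {"chair": 0.0000, "boat": 0.0105, "twist-boat": 0.0120}

rel_quantum = relative_energies_kcal(quantum, reference="chair")
rel_classical = relative_energies_kcal(classical, reference="chair")
verdict = within_chemical_accuracy(rel_quantum, rel_classical)
for name in quantum:
    print(f"{name:11s} {rel_quantum[name]:7.3f} vs {rel_classical[name]:7.3f} kcal/mol, ok={verdict[name]}")
```

Comparing relative rather than total energies matters here, because a constant offset shared by all conformers cancels out of the quantity that determines conformational preference.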

The Scientist's Toolkit: Research Reagent Solutions

Implementing the DMET-SQD workflow requires a suite of software and hardware components.

Table 2: Essential Research Tools for DMET-SQD Simulations

| Tool Name | Type | Primary Function | Application in DMET-SQD |

|---|---|---|---|

| Tangelo [40] | Software Library | Quantum Chemistry Toolkit | Provides the DMET framework and interfaces with quantum software. |

| Qiskit / SQD Addon [44] | Software Library | Quantum Algorithm Development | Implements the SQD algorithm, including configuration sampling and recovery. |

| PySCF [44] | Software Library | Classical Electronic Structure | Performs initial mean-field calculations and integral transformation. |

| AVAS [44] | Software Tool | Automated Active Space Selection | Identifies optimal molecular orbitals for the fragment, streamlining setup. |

| IBM Quantum Hardware (e.g., ibm_cleveland) | Quantum Hardware | Quantum Processing Unit (QPU) | Executes the quantum circuits for the SQD subroutine [40] [41]. |

| Classical HPC Cluster | Classical Hardware | High-Performance Computing | Handles classical pre- and post-processing, including diagonalization of large subspaces [44]. |

Applications and Future Outlook

The integration of DMET and SQD marks a tangible step toward practical quantum-centric simulations for drug discovery. By accurately computing the relative energies of molecular conformers and simulating non-covalent interactions, this approach can impact the early stages of drug design, where understanding protein-ligand binding and molecular stability is critical [40] [44].

Future work will focus on refining the method to overcome current limitations, such as the use of minimal basis sets and the accuracy of sampling in strongly correlated systems [40]. As quantum hardware improves with lower error rates and higher qubit counts, DMET-SQD is poised to simulate increasingly complex biological systems, such as peptides and proteins, potentially unlocking new paths to medical advances [41].

In the pursuit of simulating quantum molecular systems to accelerate drug discovery and materials design, finding the ground state energy of a molecular Hamiltonian is a fundamental challenge. Classical computational methods, such as those based on coupled cluster theory, struggle with systems exhibiting strong electron correlation, where the exponential growth of the Hilbert space defeats the truncations these methods rely on. Quantum computing offers a promising pathway to overcome this hurdle. This technical guide details two core procedural pillars essential for quantum algorithms targeting molecular ground states: the preparation of problem-specific ansätze and the decomposition of the molecular Hamiltonian into measurable Pauli operators. Framed within the context of variational quantum algorithms, these techniques enable the use of both near-term noisy intermediate-scale quantum (NISQ) devices and future fault-tolerant quantum computers for quantum chemistry problems [4] [45].

Matrix (Ansatz) Preparation Techniques

The preparation of a parameterized quantum state, or ansatz, is a critical step in variational algorithms like the Variational Quantum Eigensolver (VQE). The choice of ansatz significantly influences the convergence, accuracy, and resource requirements of the ground state search.

Adaptive Ansatz Construction: ADAPT-VQE and Beyond

Adaptive algorithms dynamically build a problem-specific ansatz, offering a compact and expressive alternative to fixed-ansatz approaches.

- ADAPT-VQE: The standard ADAPT-VQE algorithm iteratively grows an ansatz by selecting operators from a predefined pool (e.g., based on Unitary Coupled-Cluster single and double excitations, UCCSD). At each iteration, it calculates the energy gradient with respect to each operator in the pool and selects the one with the largest gradient magnitude for inclusion in the circuit. This process continues until the energy converges to a desired accuracy, such as chemical accuracy [4].

- K-ADAPT-VQE: An enhancement to ADAPT-VQE, this method improves efficiency by adding multiple (K) operators to the ansatz in each iteration, a process referred to as "chunking." This strategy substantially reduces the total number of circuit evaluations and optimization iterations required to achieve chemical accuracy, as demonstrated in simulations of small molecules like BeH₂, LiH, and N₂ [4].

- ADAPT-AQC: This algorithm uses an adaptive-ansatz approach for the related task of preparing a specific target state, such as a Matrix Product State (MPS). It dynamically constructs a circuit by iteratively placing two-qubit unitaries based on a selection criterion, followed by parameter optimization. This method has been shown to prepare complex states like molecular ground states and the ground state of a 50-site Heisenberg model with high fidelity [46].
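The gradient-based selection rule shared by ADAPT-VQE and K-ADAPT-VQE can be sketched with dense matrices. This is a toy illustration under stated assumptions: the Hamiltonian is a hand-written 4x4 symmetric matrix, the pool consists of three generic anti-symmetric rotation generators rather than fermionic excitation operators, and the gradient of the energy with respect to a new parameter at zero is taken as the commutator expectation ⟨ψ|[H, A]|ψ⟩. The names `pool_gradients` and `select_operators` are hypothetical.

```python
import numpy as np


def pool_gradients(hamiltonian: np.ndarray, generators, state: np.ndarray) -> np.ndarray:
    """Energy gradient <psi|[H, A]|psi> for each anti-Hermitian generator A."""
    gradients = []
    for a in generators:
        commutator = hamiltonian @ a - a @ hamiltonian
        gradients.append(float(np.real(np.vdot(state, commutator @ state))))
    return np.array(gradients)


def select_operators(gradients: np.ndarray, k: int = 1) -> list:
    """Indices of the k pool operators with the largest gradient magnitude."""
    order = np.argsort(-np.abs(gradients))
    return [int(i) for i in order[:k]]


hamiltonian = np.array([
    [-1.0, 0.1, 0.5, 0.0],
    [0.1, 0.0, 0.0, 0.2],
    [0.5, 0.0, 0.5, 0.0],
    [0.0, 0.2, 0.0, 1.0],
])

# Toy pool: rotations mixing the reference state |0> with basis state |j>.
generators = []
for j in (1, 2, 3):
    a = np.zeros((4, 4))
    a[j, 0], a[0, j] = 1.0, -1.0
    generators.append(a)

reference_state = np.array([1.0, 0.0, 0.0, 0.0])
gradients = pool_gradients(hamiltonian, generators, reference_state)

print("gradients:", gradients)
print("ADAPT-VQE pick (K=1):", select_operators(gradients, k=1))
print("K-ADAPT-VQE chunk (K=2):", select_operators(gradients, k=2))
```

Setting `k=1` reproduces the one-operator-per-iteration rule, while a larger `k` adds a chunk of operators from a single round of gradient evaluations, which is the source of the savings in circuit evaluations.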

Matrix Product State (MPS) Based Preparation

Matrix Product States provide a structured, efficient representation for quantum states with limited entanglement, making them suitable for both classical simulation and quantum state preparation.

- Efficient Representation: An MPS represents an n-qubit state using n connected tensors. The maximum dimension of the connections, known as the bond dimension (χ), controls the amount of entanglement the MPS can capture. States with low entanglement can be accurately represented with a small χ, leading to an efficient classical description [47].

- State Preparation via Compression: Any quantum state can be decomposed into an MPS using a sequence of Singular Value Decompositions (SVDs). This process involves iteratively reshaping the state vector into a matrix, performing an SVD, and retaining only the largest χ singular values and corresponding vectors. This truncation compresses the state, and the resulting components form the MPS tensors [47]. The resulting MPS can then be compiled into a quantum circuit.

- Application to Smooth Distributions: Probability distributions that are smooth and differentiable can be efficiently approximated by an MPS with low bond dimension, as their entanglement grows slowly with system size. This property is leveraged to prepare quantum states that encode normal distributions, which are useful in quantum algorithms like Monte-Carlo methods [48].
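The compression procedure can be sketched as a sweep of reshapes and truncated SVDs. The code below is a minimal illustration, assuming a dense state vector small enough to hold in memory and a hypothetical tensor layout of (left bond, physical index, right bond). The function names are illustrative, and the truncated state is not renormalized.

```python
import numpy as np


def state_to_mps(state, n_qubits: int, chi: int) -> list:
    """Split a state vector into n tensors, keeping at most chi singular values per bond."""
    tensors = []
    remainder = np.asarray(state, dtype=complex).reshape(1, -1)
    for _ in range(n_qubits - 1):
        left_bond = remainder.shape[0]
        matrix = remainder.reshape(left_bond * 2, -1)  # split off one qubit
        u, s, vh = np.linalg.svd(matrix, full_matrices=False)
        keep = min(chi, len(s))  # truncate to the bond dimension
        tensors.append(u[:, :keep].reshape(left_bond, 2, keep))
        remainder = s[:keep, None] * vh[:keep, :]
    tensors.append(remainder.reshape(remainder.shape[0], 2, 1))
    return tensors


def mps_to_state(tensors: list) -> np.ndarray:
    """Contract the MPS tensors back into a dense state vector."""
    result = np.ones((1, 1), dtype=complex)
    for tensor in tensors:
        result = np.tensordot(result, tensor, axes=([-1], [0]))
        result = result.reshape(-1, tensor.shape[2])
    return result.reshape(-1)


n_qubits = 4
ghz = np.zeros(2 ** n_qubits)
ghz[0] = ghz[-1] = 1 / np.sqrt(2)  # (|0000> + |1111>) / sqrt(2)

exact_mps = state_to_mps(ghz, n_qubits, chi=2)
truncated_mps = state_to_mps(ghz, n_qubits, chi=1)

print("bond dimensions (chi=2):", [t.shape[2] for t in exact_mps[:-1]])
print("overlap with chi=2:", abs(np.vdot(ghz, mps_to_state(exact_mps))))
print("overlap with chi=1:", abs(np.vdot(ghz, mps_to_state(truncated_mps))))
```

The GHZ state needs only χ = 2 to be reproduced exactly, while χ = 1 discards half of its weight, which shows how the bond dimension limits the entanglement an MPS can carry.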

Compiled and Variational Preparation