Quantum Computing in Electronic Structure Calculations: Revolutionizing Drug Discovery and Materials Science

This article explores the transformative role of quantum computing in performing electronic structure calculations, a cornerstone of modern chemistry and drug discovery.

Abstract

This article explores the transformative role of quantum computing in performing electronic structure calculations, a cornerstone of modern chemistry and drug discovery. Aimed at researchers and development professionals, it provides a comprehensive overview of the foundational principles of quantum simulations, detailing current hybrid methodological approaches like VQE and quantum subspace diagonalization. It addresses the critical challenges of noise and optimization in today's NISQ-era devices and offers a comparative analysis against classical methods like Density Functional Theory. By presenting recent validation case studies, such as targeting the KRAS protein in cancer, the article synthesizes the present capabilities and future roadmap for achieving quantum advantage in modeling complex molecular systems, promising to accelerate the development of new therapeutics and materials.

The Quantum Leap: Why Classical Computers Fail at Molecular Simulation

The electronic structure problem represents a fundamental computational challenge across chemistry, materials science, and drug discovery. This problem originates from the quantum mechanical nature of electrons within molecular systems, where the wavefunction of an N-electron system depends on 3N spatial coordinates, leading to exponential scaling of computational complexity with system size [1]. As famously stated by Paul A. M. Dirac in 1929, "The underlying physical laws necessary for the mathematical theory of a large part of physics and the whole of chemistry are thus completely known, and the difficulty is only that the exact application of these laws leads to equations much too complicated to be soluble" [1]. Despite decades of algorithmic improvements, accurately solving the electronic Schrödinger equation for biologically relevant systems remains prohibitively expensive, creating a significant bottleneck in structure-based drug design and materials discovery [2] [3].

The core of this challenge lies in electron correlation effects. While the Hartree-Fock method provides a foundational approach through the Roothaan-Hall equations, it treats opposite-spin electrons as uncorrelated, necessitating more computationally intensive post-Hartree-Fock methods such as coupled-cluster theory for chemical accuracy [1] [4]. For drug design applications, where understanding precise protein-ligand interactions is crucial, this computational limitation directly impacts the accuracy of binding affinity predictions, tautomer analysis, and lead optimization workflows [5].

Current Computational Frameworks and Methodologies

Traditional Electronic Structure Methods

Traditional computational chemistry approaches to the electronic structure problem encompass a hierarchy of methods with varying trade-offs between accuracy and computational cost, summarized in Table 1.

Table 1: Traditional Electronic Structure Methods and Their Computational Scaling

| Method | Theoretical Foundation | Computational Scaling | Key Limitations |
|---|---|---|---|
| Hartree-Fock (HF) | Single Slater determinant | O(N³–N⁴) | Neglects electron correlation [1] |
| Density Functional Theory (DFT) | Electron density functional | O(N³) | Accuracy depends on exchange-correlation functional [3] |
| Coupled-Cluster CCSD(T) | Exponential cluster operator | O(N⁷) | "Gold standard" but prohibitively expensive for large systems [3] |
| Full Configuration Interaction (FCI) | Linear combination of all determinants | Combinatorial | Exact within the chosen basis set, but feasible only for very small systems [1] |

The steep computational scaling of accurate methods like CCSD(T) has traditionally restricted their application to small molecules of approximately 10 atoms, creating a significant gap between computationally accessible systems and biologically relevant drug targets [3].
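To make the scaling column of Table 1 concrete, the short sketch below computes how much more each method costs when the system size doubles. It assumes that the number of basis functions N grows linearly with the number of atoms and ignores prefactors, so the ratios are order-of-magnitude estimates only.

```python
# Rough cost-growth factors implied by the formal scalings in Table 1.
# Assumption: N (number of basis functions) grows linearly with the number of atoms,
# and prefactors are ignored, so these are order-of-magnitude ratios only.

SCALING_EXPONENTS = {
    "HF (upper bound)": 4,
    "DFT": 3,
    "CCSD(T)": 7,
}


def cost_ratio(exponent: int, size_factor: float) -> float:
    """Factor by which cost grows when the system size is multiplied by size_factor."""
    return size_factor ** exponent


for method, p in SCALING_EXPONENTS.items():
    # Doubling the system, e.g. from ~10 to ~20 atoms
    print(f"{method:>16}: x{cost_ratio(p, 2.0):.0f}")
```

Under the same assumptions, extending a 10-atom CCSD(T) calculation to 100 atoms multiplies the formal cost by 10⁷, which illustrates why the gold-standard method rarely reaches drug-sized systems.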

Emerging Machine Learning Approaches

Recent advances in machine learning have created new paradigms for addressing the electronic structure bottleneck:

MEHnet (Multi-task Electronic Hamiltonian network), developed by MIT researchers, uses a CCSD(T)-trained E(3)-equivariant graph neural network to predict multiple electronic properties simultaneously, including dipole moments, electronic polarizability, and optical excitation gaps, at CCSD(T)-level accuracy but with dramatically reduced computational cost [3].

NextHAM framework introduces a neural E(3)-symmetry architecture with expressive correction for materials Hamiltonian prediction. This approach uses zeroth-step Hamiltonians constructed from initial electron density as physical descriptors, significantly simplifying the input-output mapping and enabling generalization across diverse material systems [6]. On the Materials-HAM-SOC benchmark comprising 17,000 material structures, NextHAM achieved prediction errors of 1.417 meV across full Hamiltonian matrices while offering substantial computational advantages over traditional DFT [6].

Quantum Subspace Methods represent another emerging approach, with theoretical frameworks establishing rigorous complexity bounds for molecular electronic structure calculations. These methods enable quantum computers to efficiently explore molecular potential energy surfaces through iterative subspace construction, with proven exponential reduction in required measurements for transition-state mapping of chemical reactions [7].

Application Notes for Drug Design Workflows

Quantum-Mechanical Refinement of Experimental Structures

Accurate protein-ligand structure determination is critical for structure-based drug discovery (SBDD). QuantumBio has integrated the DivCon semiempirical quantum mechanics engine into crystallographic refinement to enable automated, density-driven structure preparation and QM/MM refinement [5]. This protocol addresses key limitations of traditional refinement methods:

- Protonation state determination: XModeScore with SE-QM refinement determines protonation states and stereoisomers even at medium resolutions where experimental methods struggle [5].

- Ligand strain reduction: QM/MM refinement improves agreement with experimental data while yielding chemically accurate models with reduced ligand strain [5].

- Interaction clarification: Provides more accurate modeling of protein-ligand interactions including hydrogen bonding, polarization, and charge transfer effects [5].

This approach produces structurally precise, chemically robust models that enhance the quality of AI/ML training datasets and support more reliable computer-aided drug design workflows [5].

Quantum Computing for Molecular Simulation

Quantum computing represents a transformative approach to the electronic structure problem, with potential value creation of $200-500 billion in pharmaceuticals by 2035 [8]. Key applications under development include:

- Precision protein simulation: Quantum computers are expected to model protein folding geometries, including solvent environment effects, more accurately. This is vital for understanding protein behavior and identifying drug targets, especially for orphan proteins with limited experimental data [8].

- Enhanced electronic structure simulations: Quantum computing could provide more detailed electronic structure calculations for complex systems such as metalloenzymes, which are critical for drug metabolism studies [8].

- Improved binding affinity predictions: Quantum-enhanced docking and structure-activity relationship analysis aim to provide more reliable predictions of drug-target binding strengths [8].

Leading pharmaceutical companies including AstraZeneca, Boehringer Ingelheim, and Amgen are actively exploring these applications through collaborations with quantum technology providers [8].

Experimental Protocols

Protocol: Neural Network Prediction of Electronic Hamiltonians

This protocol outlines the methodology for utilizing deep learning models to predict electronic Hamiltonians, based on the NextHAM framework [6].

Research Reagent Solutions:

Table 2: Essential Computational Tools for Electronic Structure Prediction

| Research Reagent | Function/Application |
|---|---|
| MEHnet Architecture | E(3)-equivariant graph neural network for multi-property prediction [3] |
| NextHAM Framework | Neural E(3)-symmetry model with expressive correction for Hamiltonian prediction [6] |
| DivCon SE-QM Engine | Semiempirical QM engine for crystallographic refinement [5] |
| Quantum Subspace Algorithms | Quantum algorithms for molecular electronic structure with proven complexity bounds [7] |
| Materials-HAM-SOC Dataset | Benchmark dataset with 17,000 material structures for training Hamiltonian prediction models [6] |

Step-by-Step Methodology:

Input Feature Preparation:

- Generate zeroth-step Hamiltonians (H⁽⁰⁾) from initial electron density ρ⁽⁰⁾(r) calculated as the sum of isolated atomic charge densities

- Construct atomic coordinate representations with strict E(3)-symmetry preservation

- Encode elemental information spanning a broad range of the periodic table
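The superposition-of-atomic-densities guess in the first step can be sketched as follows. This is not NextHAM's code: as an assumption, each atom is modelled by a normalised hydrogen-like 1s density, whereas practical workflows use tabulated neutral-atom densities for each element. The integral check confirms that ρ⁽⁰⁾ carries the expected number of electrons.

```python
import numpy as np

# Minimal sketch of a superposition-of-atomic-densities guess rho0(r) = sum_A rho_A(|r - R_A|).
# Assumption: each atom is modelled by a hydrogen-like 1s density zeta^3/pi * exp(-2 zeta r),
# normalised to one electron; real workflows use tabulated neutral-atom densities.


def atomic_1s_density(r, zeta=1.0):
    """Hydrogen-like 1s density with exponent zeta, integrating to one electron."""
    return zeta**3 / np.pi * np.exp(-2.0 * zeta * r)


def superposed_density(grid_points, atom_positions, zetas):
    """rho0 evaluated on grid_points (M, 3) as a sum of isolated atomic densities."""
    rho = np.zeros(len(grid_points))
    for pos, zeta in zip(atom_positions, zetas):
        r = np.linalg.norm(grid_points - pos, axis=1)
        rho += atomic_1s_density(r, zeta)
    return rho


# H2 with a 1.4 bohr bond on a uniform cubic grid (all lengths in bohr)
h = 0.2
axis = np.arange(-7.0, 7.0 + h / 2, h)
X, Y, Z = np.meshgrid(axis, axis, axis, indexing="ij")
grid = np.stack([X.ravel(), Y.ravel(), Z.ravel()], axis=1)
atoms = np.array([[0.0, 0.0, -0.7], [0.0, 0.0, 0.7]])

rho0 = superposed_density(grid, atoms, zetas=[1.0, 1.0])
n_electrons = rho0.sum() * h**3  # simple Riemann-sum integral over the grid
print(f"Integrated electrons: {n_electrons:.3f}")
```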

Network Architecture and Training:

- Implement E(3)-equivariant Transformer architecture with non-linear expressiveness

- Set regression target as ΔH = H⁽ᵀ⁾ - H⁽⁰⁾ rather than full H⁽ᵀ⁾ to reduce output space complexity

- Apply joint optimization framework simultaneously refining real-space and reciprocal-space Hamiltonians

- Use a loss function designed to prevent "ghost states" caused by error amplification through the large condition number of the overlap matrix

Validation and Analysis:

- Evaluate Hamiltonian prediction accuracy in real space (target: <1.5 meV error)

- Validate band structures derived from k-space Hamiltonians against DFT references

- Assess spin-off-diagonal block accuracy (target: sub-μeV scale for SOC effects)
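The band-structure validation step relies on turning real-space Hamiltonian and overlap blocks into k-space matrices and solving a generalized eigenvalue problem. The sketch below shows that step for a hypothetical one-orbital 1D chain (on-site energy `e0`, hopping `t`, overlap `s`, all illustrative values) and compares the numerical band with the closed-form result for this model.

```python
import numpy as np

# Minimal sketch of how real-space blocks H(R), S(R) become bands:
#   H(k) = sum_R e^{i k R} H(R),  S(k) = sum_R e^{i k R} S(R),  H(k) c = eps S(k) c.
# Assumption: a hypothetical 1D chain, one orbital per cell, lattice constant 1,
# on-site energy e0, nearest-neighbour hopping t and overlap s (illustrative values).


def bloch_sum(blocks, k):
    """blocks: dict mapping integer lattice vector R -> (n, n) real-space matrix."""
    n = next(iter(blocks.values())).shape[0]
    mk = np.zeros((n, n), dtype=complex)
    for R, block in blocks.items():
        mk += np.exp(1j * k * R) * block
    return mk


def generalized_eigvals(Hk, Sk):
    """Solve H c = eps S c via Cholesky: S = L L^H, then diagonalize L^-1 H L^-H."""
    L = np.linalg.cholesky(Sk)
    Linv = np.linalg.inv(L)
    return np.linalg.eigvalsh(Linv @ Hk @ Linv.conj().T)


e0, t, s = -1.0, -0.5, 0.1
H_R = {0: np.array([[e0]]), 1: np.array([[t]]), -1: np.array([[t]])}
S_R = {0: np.array([[1.0]]), 1: np.array([[s]]), -1: np.array([[s]])}

ks = np.linspace(-np.pi, np.pi, 101)
bands = np.array([generalized_eigvals(bloch_sum(H_R, k), bloch_sum(S_R, k))[0] for k in ks])

# Closed-form band for this model: (e0 + 2 t cos k) / (1 + 2 s cos k)
analytic = (e0 + 2 * t * np.cos(ks)) / (1 + 2 * s * np.cos(ks))
print(np.max(np.abs(bands - analytic)))
```

When the overlap matrix is nearly singular (here, when 1 + 2s cos k approaches zero), small errors in H(k) are divided by a small number, which illustrates the error amplification referred to in the loss-function step.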

Protocol: Quantum-Classical Hybrid Workflow for Drug Discovery

This protocol outlines the integration of quantum computational methods into classical drug discovery pipelines, based on industry implementations [8].

Step-by-Step Methodology:

Target Identification and System Preparation:

- Select protein targets with limited structural data or complex electronic properties

- Prepare molecular systems including protein active site, ligands, and solvent environment

- Define electronic structure problem parameters (basis sets, active space, convergence criteria)

Quantum-Accelerated Calculation:

- Implement quantum subspace diagonalization for ground and excited state calculations

- Apply error mitigation strategies for near-term quantum hardware limitations

- Utilize quantum machine learning for molecular descriptor analysis and activity prediction

Classical Integration and Validation:

- Incorporate quantum-derived electronic structure information into molecular dynamics simulations

- Validate predictions against experimental data for binding affinities and spectroscopic properties

- Refine force field parameters based on quantum-mechanical potential energy surfaces

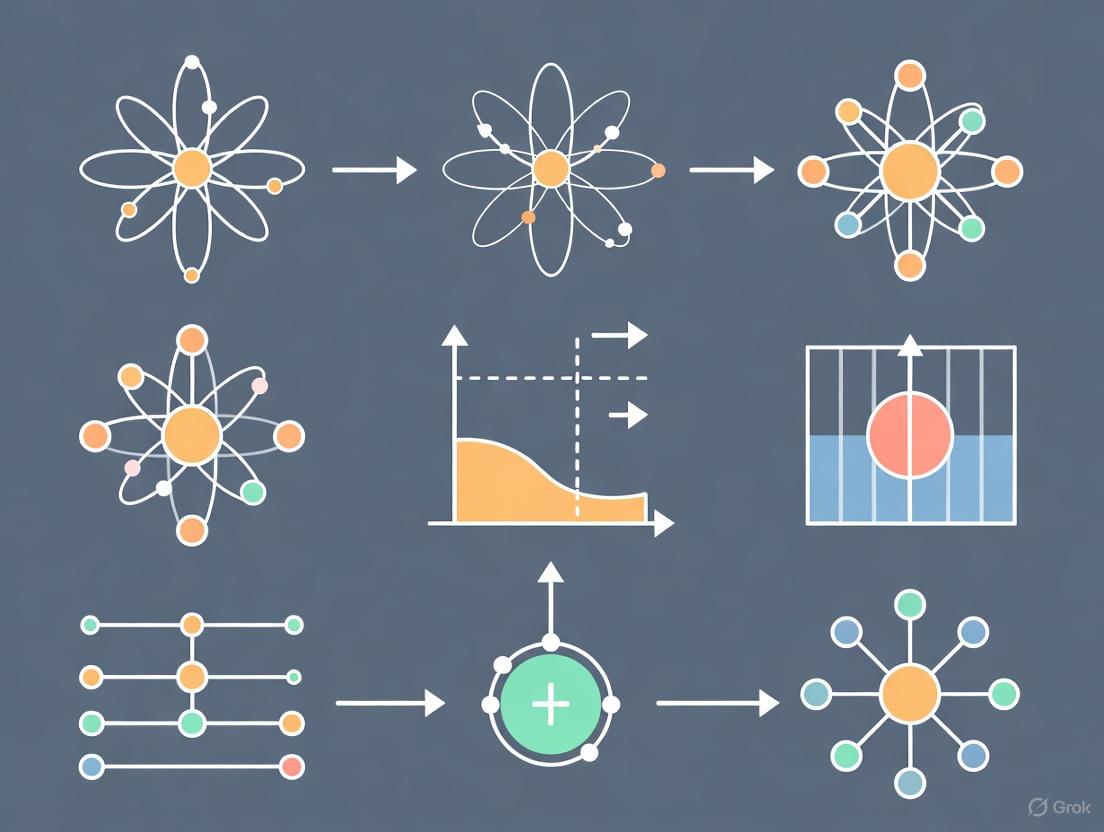

The workflow for this protocol can be visualized as follows:

Data Presentation and Analysis

Performance Metrics of Advanced Electronic Structure Methods

Table 3: Quantitative Performance of Next-Generation Electronic Structure Methods

| Method | System Size | Accuracy Metrics | Computational Efficiency | Application Scope |
|---|---|---|---|---|
| NextHAM [6] | 17,000 materials across 68 elements | 1.417 meV Hamiltonian error; SOC blocks: sub-μeV scale | DFT-level precision with dramatically improved computational efficiency | Universal materials Hamiltonian prediction |
| MEHnet [3] | Thousands of atoms (eventually tens of thousands) | CCSD(T)-level accuracy for multiple electronic properties | Faster than DFT, which scales poorly with system size; enables high-throughput screening | Organic molecules, heavier elements including Pt |
| Quantum Subspace Methods [7] | Chemical reaction transition states | Chemical accuracy with polynomial advantage | Exponential measurement reduction for transition states | Battery electrolytes, drug discovery, materials design |
| QM/MM Refinement [5] | Protein-ligand complexes | Improved agreement with experimental data; reduced ligand strain | Automated density-driven structure preparation | X-ray & Cryo-EM refinement, tautomer analysis |

The electronic structure problem continues to represent a significant computational bottleneck in chemistry and drug design, but emerging methodologies are progressively overcoming these limitations. Machine learning approaches like MEHnet and NextHAM demonstrate that neural networks can achieve high accuracy while dramatically reducing computational costs [6] [3]. Meanwhile, quantum computing developments promise to fundamentally transform electronic structure calculations through first-principles simulations of unprecedented accuracy [8].

The integration of these technologies into established drug discovery workflows is already enhancing structure-based design through improved refinement protocols [5] and more reliable protein-ligand interaction models [2]. As these methods mature and scale to larger biological systems, they could progressively remove the electronic structure bottleneck, enabling more predictive in silico drug discovery and materials design.

The simulation of molecular electronic structure is a problem of fundamental importance in chemistry and drug discovery, yet it remains notoriously challenging for classical computers. This challenge arises because molecular systems are governed by quantum mechanics, and the computational resources required for exact simulations scale exponentially with system size on classical hardware. Quantum computation offers a paradigm shift by harnessing the very quantum phenomena that make these simulations difficult, using them as computational resources [9].

The core quantum phenomena of superposition, entanglement, and interference form the foundation for this approach. Superposition allows a quantum computer to represent exponentially many quantum states simultaneously. Entanglement creates powerful correlations between quantum bits (qubits) that enable the compact representation of correlated molecular wavefunctions. Interference allows quantum algorithms to amplify computational pathways leading to correct solutions while canceling out those that do not [9]. This application note details the practical harnessing of these phenomena for electronic structure simulations, providing protocols and resources for researchers.

Superposition: Beyond Binary States

Superposition is the fundamental property that allows a quantum system to exist in multiple states at once. In quantum computing, the basic unit of information is the qubit. Unlike a classical bit, which can be only 0 or 1, a qubit can be in a state described by \(|\psi\rangle = c_0|0\rangle + c_1|1\rangle\), where \(c_0\) and \(c_1\) are complex probability amplitudes satisfying \(|c_0|^2 + |c_1|^2 = 1\) [9]. This state can be visualized as a point on the surface of the Bloch sphere. For \(n\) qubits, the system can be in a superposition of all \(2^n\) possible basis states simultaneously. This exponential scaling is what allows quantum computers to naturally represent molecular wavefunctions, which are superpositions of many electronic configurations.
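The state-vector picture above can be made concrete with a few lines of NumPy. The sketch builds a normalised single-qubit state from illustrative amplitudes and then a 10-qubit register of equal superpositions, whose classical description already needs 2¹⁰ complex numbers; this exponential storage cost is exactly what makes exact classical simulation infeasible for large systems.

```python
import numpy as np

# Minimal sketch: a single-qubit state c0|0> + c1|1> and an n-qubit product of
# equal superpositions, showing the 2**n-dimensional state vector.

ket0 = np.array([1.0, 0.0], dtype=complex)
ket1 = np.array([0.0, 1.0], dtype=complex)

# Illustrative amplitudes chosen so that |c0|^2 + |c1|^2 = 0.36 + 0.64 = 1
c0, c1 = 0.6, 0.8j
psi = c0 * ket0 + c1 * ket1
print(np.isclose(np.vdot(psi, psi).real, 1.0))

plus = (ket0 + ket1) / np.sqrt(2)  # equal superposition of |0> and |1>
n = 10
state = plus
for _ in range(n - 1):
    state = np.kron(state, plus)  # tensor product builds the n-qubit register

print(state.shape)  # one amplitude per basis state
print(np.allclose(np.abs(state) ** 2, 1 / 2**n))  # every basis state equally likely
```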

Entanglement: Encoding Correlated Wavefunctions

Entanglement is a uniquely quantum correlation between qubits. When qubits are entangled, the state of the entire system cannot be described as a product of individual qubit states; they form a single, indivisible quantum entity. This "spooky action at a distance," as Einstein called it, is crucial for efficiently representing the strongly correlated electron behavior found in molecular bonds, transition metal complexes, and "strange metals" [10] [11]. In electronic structure calculations, entanglement is the resource that allows a quantum computer to model the complex, multi-electron interactions that are computationally expensive for classical methods.
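Whether a two-qubit state can be written as a product of individual qubit states can be checked numerically with a Schmidt (singular value) decomposition. In the sketch below, a Schmidt rank of 1 means the state is a product state, while a rank of 2 means it is entangled; the entanglement entropy is computed from the squared singular values. The two test states are standard textbook examples.

```python
import numpy as np

# Minimal sketch: reshape a two-qubit state's amplitudes into a 2x2 matrix and count
# the nonzero singular values (Schmidt rank). Rank 1 -> product state, rank 2 -> entangled.


def schmidt_analysis(state, tol=1e-12):
    """Return (Schmidt rank, entanglement entropy in bits) of a two-qubit state vector."""
    singular_values = np.linalg.svd(np.reshape(state, (2, 2)), compute_uv=False)
    weights = singular_values[singular_values > tol] ** 2
    entropy = max(0.0, float(-np.sum(weights * np.log2(weights))))
    return len(weights), entropy


product = np.kron([1, 0], [1, 1]) / np.sqrt(2)  # |0> (x) |+>
bell = np.array([1, 0, 0, 1]) / np.sqrt(2)      # (|00> + |11>)/sqrt(2)

print(schmidt_analysis(product))  # rank 1, entropy ~0
print(schmidt_analysis(bell))     # rank 2, entropy 1 bit
```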

Interference: Directing Probability Amplitudes

Interference is the phenomenon where the probability amplitudes of different quantum states combine. Like waves, these amplitudes can interfere constructively (increasing the probability of a desired outcome) or destructively (decreasing the probability of an incorrect outcome) [9]. Quantum algorithms are carefully designed sequences of operations that choreograph this interference. The goal is to manipulate the quantum state so that measurements at the end of the computation yield the correct answer—such as the ground state energy of a molecule—with high probability.
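The simplest illustration of interference is applying a Hadamard gate twice. After the first gate, both outcomes are equally likely; after the second, the two paths leading to |1⟩ cancel and the two leading to |0⟩ reinforce. Inserting a phase flip between the gates reverses which outcome survives, showing how relative phases steer the final measurement.

```python
import numpy as np

# Minimal sketch of interference with single-qubit gates.

H = np.array([[1, 1], [1, -1]]) / np.sqrt(2)  # Hadamard
Z = np.diag([1.0, -1.0])                      # phase flip
ket0 = np.array([1.0, 0.0])

after_one = H @ ket0
after_two = H @ after_one

print(np.abs(after_one) ** 2)  # [0.5 0.5]: equal superposition
print(np.abs(after_two) ** 2)  # ~[1 0]: paths into |1> cancel (destructive interference)

# A relative phase between the paths redirects the interference to |1>
print(np.abs(H @ Z @ H @ ket0) ** 2)  # ~[0 1]
```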

Table 1: Core Quantum Phenomena and Their Computational Roles

| Quantum Phenomenon | Computational Role | Relevance to Electronic Structure |
|---|---|---|
| Superposition | Gives n qubits a state space of dimension 2ⁿ | Simultaneously represents multiple electronic configurations |
| Entanglement | Creates correlations between qubits to represent complex systems | Models electron-electron interactions beyond mean-field theory |
| Interference | Combines computational paths to amplify correct answers | Extracts physically meaningful energies and properties from simulations |

Quantitative Performance of Quantum Simulation Methods

Recent large-scale experiments have demonstrated the practical application of these principles. The following table summarizes key quantitative results from a state-of-the-art hybrid quantum-classical simulation of iron-sulfur clusters, which are biologically essential but classically challenging systems [12].

Table 2: Performance Data from a Large-Scale Electronic Structure Calculation

| Parameter | [2Fe-2S] Cluster | [4Fe-4S] Cluster | Methodology Notes |
|---|---|---|---|
| Problem Qubits | 72 qubits | 72 qubits | Jordan-Wigner mapping of active space |
| Active Space | 50e⁻ in 36 orbitals | 54e⁻ in 36 orbitals | Chemically realistic active space |
| Hilbert Space Size | \(3.61 \times 10^{17}\) | \(8.86 \times 10^{15}\) | Beyond exact diagonalization limits |
| Classical Compute Nodes | 152,064 nodes (Fugaku) | 152,064 nodes (Fugaku) | Quantum-centric supercomputing |
| Energy Accuracy (vs. DMRG) | Approached DMRG accuracy | Between RHF and CISD | SQD method with LUCJ ansatz |
| Key Algorithm | Sample-based Quantum Diagonalization (SQD) | Sample-based Quantum Diagonalization (SQD) | Quantum sampling + classical diagonalization |
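The Hilbert space sizes in Table 2 follow directly from the active-space definitions. The sketch below reproduces them, assuming equal numbers of spin-up and spin-down electrons (S_z = 0), so that the number of determinants is the product of two binomial coefficients; the qubit count under Jordan-Wigner is two qubits per spatial orbital.

```python
from math import comb

# Minimal sketch: size of the determinant space for N electrons in M spatial orbitals,
# assuming equal numbers of alpha and beta electrons (S_z = 0): C(M, N/2)^2.
# Jordan-Wigner uses one qubit per spin-orbital, i.e. 2M qubits.


def determinant_space(n_electrons: int, n_orbitals: int) -> int:
    n_alpha = n_beta = n_electrons // 2
    return comb(n_orbitals, n_alpha) * comb(n_orbitals, n_beta)


for label, ne, no in [("50e in 36 orbitals", 50, 36), ("54e in 36 orbitals", 54, 36)]:
    print(f"{label}: {determinant_space(ne, no):.2e} determinants, {2 * no} qubits")
# 50e in 36 orbitals: 3.61e+17 determinants, 72 qubits
# 54e in 36 orbitals: 8.86e+15 determinants, 72 qubits
```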

Experimental Protocol: Quantum Simulation of Electronic Structure

This protocol details the hybrid quantum-classical workflow for computing the ground-state energy of a molecule, such as an iron-sulfur cluster, using the Sample-based Quantum Diagonalization (SQD) method [12].

Pre-Computation: Hamiltonian Generation (Classical)

- Molecular Geometry Input: Begin with the optimized molecular geometry of the target system (e.g., [Fe₂S₂(SCH₃)₄]²⁻).

- Active Space Selection: Select a chemically relevant active space (e.g., 54 electrons in 36 orbitals for a [4Fe-4S] cluster). This step is critical and requires chemical intuition.

- Integral Calculation: Use a classical quantum chemistry package (e.g., PySCF) to compute the one- and two-electron integrals (\(h_{pr}\) and \((pr|qs)\)) in the chosen basis set.

- Qubit Hamiltonian Mapping: Map the electronic Hamiltonian (Eq. 1) to a qubit operator using a fermion-to-qubit transformation (e.g., Jordan-Wigner or Bravyi-Kitaev). This yields a Pauli string representation of the Hamiltonian, \(\hat{H} = \sum_i c_i P_i\), where \(P_i\) are tensor products of Pauli matrices (I, X, Y, Z).

Ansatz Preparation and Sampling (Quantum)

- Ansatz Preparation: Prepare a parameterized wavefunction ansatz \(|\Psi(\vec{\theta})\rangle\) on the quantum processor. For the SQD method, the Local Unitary Cluster Jastrow (LUCJ) ansatz is used, with parameters often initialized from a classical CCSD calculation [12].

- Quantum Sampling: Perform repeated measurements of the prepared quantum state in the computational basis to obtain a set of sampled electronic configurations (bitstrings), denoted \(\mathcal{S}\). The number of samples must be sufficient to accurately represent the support of the ground state.

Post-Processing and Diagonalization (Classical)

- Configuration Recovery: Process the raw quantum samples \(\mathcal{S}\) to recover a list of electronic determinants.

- Projected Hamiltonian Construction: Construct the Hamiltonian matrix \(\hat{H}_{\mathcal{S}}\) by projecting the full qubit Hamiltonian into the subspace spanned by the sampled configurations \(\mathcal{S}\) (Eq. 2).

- Classical Diagonalization: Diagonalize the matrix \(\hat{H}_{\mathcal{S}}\) on a classical supercomputer to obtain the ground-state energy and wavefunction within the sampled subspace. Because \(\hat{H}_{\mathcal{S}}\) is a projection of the full Hamiltonian, its lowest eigenvalue is an upper bound on the exact ground-state energy within the active space.
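The projection and diagonalization steps can be illustrated on a toy problem. The sketch below is not the SQD implementation used in [12]: it assumes a hypothetical 6-qubit transverse-field Ising Hamiltonian in place of the molecular qubit Hamiltonian, draws "quantum samples" classically from the exact ground-state distribution, and omits configuration recovery. It shows the core idea that the sampled bitstrings define a subspace in which the Hamiltonian is projected and diagonalized, yielding a variational upper bound.

```python
import numpy as np

# Minimal sketch of the subspace-projection step behind sample-based diagonalization.
# Assumptions: a hypothetical 6-qubit transverse-field Ising Hamiltonian stands in for
# the molecular qubit Hamiltonian; samples are drawn classically from the exact
# ground-state distribution; configuration recovery is omitted.

I2 = np.eye(2)
X = np.array([[0.0, 1.0], [1.0, 0.0]])
Z = np.diag([1.0, -1.0])


def op_on(site_ops, n):
    """Tensor product placing the given single-qubit operators on their sites."""
    out = np.array([[1.0]])
    for q in range(n):
        out = np.kron(out, site_ops.get(q, I2))
    return out


n, J, g = 6, 1.0, 0.7
H = sum(-J * op_on({q: Z, q + 1: Z}, n) for q in range(n - 1))
H = H + sum(-g * op_on({q: X}, n) for q in range(n))

evals, evecs = np.linalg.eigh(H)
e_exact, psi0 = evals[0], evecs[:, 0]

rng = np.random.default_rng(seed=7)
probs = np.abs(psi0) ** 2
samples = rng.choice(len(probs), size=200, p=probs / probs.sum())
subspace = np.unique(samples)  # sampled configurations S, as basis-state indices

# Project H onto span{|x> : x in S} and diagonalize the small matrix classically
H_S = H[np.ix_(subspace, subspace)]
e_sqd = np.linalg.eigvalsh(H_S)[0]

print(f"|S| = {len(subspace)} of {2**n}; E_S = {e_sqd:.6f}, exact = {e_exact:.6f}")
```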

The following workflow diagram illustrates this integrated process:

The Scientist's Toolkit: Essential Research Reagents & Materials

This table catalogs the key hardware, software, and methodological "reagents" required for advanced quantum simulation experiments in electronic structure.

Table 3: Essential Research Reagents and Materials for Quantum Simulation

| Research Reagent | Function/Description | Example Use-Case |
|---|---|---|
| Superconducting Qubits (Heron) | Physical qubits with fast gate times; used in hybrid algorithms [12]. | Scalable, fast-cycle computation in hybrid quantum-classical workflows. |
| Molecular Beam Epitaxy (MBE) | A "3D-printing" nanofabrication technique that builds crystals layer-by-layer for high purity [13]. | Creating high-coherence rare-earth-doped crystals (e.g., erbium) for quantum memories and networks. |
| Local Unitary Cluster Jastrow (LUCJ) | A specific, hardware-efficient parameterized quantum circuit (ansatz) [12]. | Preparing an initial trial wavefunction that approximates the support of the true molecular ground state. |
| Sample-based Quantum Diagonalization (SQD) | A quantum-centric algorithm that uses quantum samples to build a classically diagonalizable subspace [12]. | Solving for ground states of large, correlated molecules (e.g., 72-qubit iron-sulfur clusters). |
| Quantum Fisher Information (QFI) | A metric from quantum information science used to quantify entanglement [11]. | Probing entanglement structure and identifying quantum critical points in materials like "strange metals". |
| Density Functional Theory (DFT) | A classical computational method that uses functionals of the electron density [14]. | Providing initial guesses for molecular orbitals and geometries for the active space selection. |

Visualization of Key Quantum-Classical Data Flow

The successful execution of these protocols relies on a tightly integrated data flow between quantum and classical resources, as demonstrated in recent landmark experiments. The following diagram details this orchestration.

Quantum computational chemistry is an emerging field that exploits quantum computing to simulate chemical systems, addressing a challenge that has been intractable for classical computers. As noted by Dirac in 1929, the fundamental quantum mechanical equations governing molecular behavior are inherently complex and difficult to solve using classical computation [15]. This complexity arises because the size of a quantum system's wave function grows exponentially with each added particle, making exact simulations on classical computers inefficient for all but the simplest systems [15].

The molecular Hamiltonian, which represents the total energy of the electrons and nuclei in a molecule, serves as the central component in these calculations [16]. In atomic units, the full molecular Hamiltonian can be represented as [17]:

\[ H = -\sum_i \frac{\nabla^2_{\mathbf{R}_i}}{2M_i} - \sum_i \frac{\nabla^2_{\mathbf{r}_i}}{2} - \sum_{i,j} \frac{Z_i}{|\mathbf{R}_i - \mathbf{r}_j|} + \sum_{i,j>i} \frac{Z_i Z_j}{|\mathbf{R}_i - \mathbf{R}_j|} + \sum_{i,j>i} \frac{1}{|\mathbf{r}_i - \mathbf{r}_j|} \]

where \(\mathbf{R}_i\), \(M_i\), and \(Z_i\) are the position, mass, and charge of the nuclei, respectively, and \(\mathbf{r}_i\) denotes the position of the electrons (in the electron-nucleus term, \(i\) runs over nuclei and \(j\) over electrons) [17]. Solving the associated Schrödinger equation for this Hamiltonian allows researchers to predict molecular properties including stable structures, reactivity, and spectroscopic behavior [17] [16].
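As a small worked example of one term in this Hamiltonian, the sketch below evaluates the nuclear-repulsion energy \(\sum_{i<j} Z_i Z_j / |\mathbf{R}_i - \mathbf{R}_j|\) in atomic units. Within the Born-Oppenheimer approximation this term is a constant for a fixed geometry and is simply added to the electronic energy. The H₂ bond length used here is illustrative.

```python
import numpy as np

# Minimal sketch of the nuclear-repulsion term sum_{i<j} Z_i Z_j / |R_i - R_j|
# in atomic units (bohr, hartree).


def nuclear_repulsion(charges, positions):
    charges = np.asarray(charges, dtype=float)
    positions = np.asarray(positions, dtype=float)
    energy = 0.0
    for i in range(len(charges)):
        for j in range(i + 1, len(charges)):
            energy += charges[i] * charges[j] / np.linalg.norm(positions[i] - positions[j])
    return energy


# H2 with an illustrative bond length of 1.4 bohr: E_nn = 1/1.4 hartree
print(nuclear_repulsion([1, 1], [[0, 0, 0], [0, 0, 1.4]]))
```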

Theoretical Foundation: From Electrons to Qubits

The Second-Quantized Formalism

In practical quantum chemistry simulations, the molecular Hamiltonian is typically transformed into the second-quantized formalism, which provides a more tractable framework for computation [18]. In this representation, the electronic Hamiltonian takes the form:

\[ H = \sum_{p,q} h_{pq} c_p^\dagger c_q + \frac{1}{2} \sum_{p,q,r,s} h_{pqrs} c_p^\dagger c_q^\dagger c_r c_s \]

where \(c^\dagger\) and \(c\) are the electron creation and annihilation operators, respectively, and the coefficients \(h_{pq}\) and \(h_{pqrs}\) are the one-electron integrals (kinetic energy and electron-nuclear attraction) and the two-electron (electron-electron repulsion) integrals, evaluated over Hartree-Fock orbitals [18]. This representation requires choosing a basis set of molecular orbitals, which are typically constructed as linear combinations of atomic orbitals [18].

Fermion-to-Qubit Mapping Methods

The crucial step in adapting quantum chemistry problems for quantum computers involves mapping fermionic operations to qubit operations. This process must preserve the antisymmetric nature of fermionic wavefunctions, which is essential for capturing the Pauli exclusion principle. Three primary mapping approaches have been developed:

Table 1: Comparison of Fermion-to-Qubit Mapping Methods

| Mapping Method | Key Transformation | Advantages | Limitations |
|---|---|---|---|
| Jordan-Wigner [19] [15] | \(a_j^\dagger = \frac{1}{2}(X_j - iY_j) \bigotimes_{k<j} Z_k\) | Intuitive occupation number representation; Simple implementation | Non-local string operators increase resource requirements |
| Parity [19] | \(a_j^\dagger = \frac{1}{2}(Z_{j-1} \otimes X_j - iY_j) \bigotimes_{k>j} X_k\) | Enables qubit tapering using symmetries; Reduces qubit count | Replaces Z strings with X strings; Still non-local |
| Bravyi-Kitaev [19] | Hybrid representation storing both occupation and parity information | Operator weight grows logarithmically with the number of modes; More local operators | More complex implementation; Less intuitive |

The Jordan-Wigner transformation provides the most intuitive approach by directly storing fermionic occupation numbers in qubit states [19]. However, it requires strings of Pauli Z operators, whose length grows linearly with the number of modes, to maintain the correct anticommutation relations, which increases the computational resources needed [19]. The Parity mapping instead stores the parity information (sum modulo 2 of occupation numbers) locally while occupation information becomes non-local [19]. The Bravyi-Kitaev mapping offers a balanced approach by storing both occupation and parity information non-locally, which reduces the Pauli weight of mapped fermionic operators from O(N) to O(log N) for N modes [19].
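The Jordan-Wigner rule in the table can be checked directly with dense matrices. The sketch below builds \(a_j^\dagger\) for four modes, with qubit 0 as the leftmost tensor factor and |1⟩ meaning "occupied" (both conventions are assumptions of this sketch), verifies the canonical anticommutation relations, and confirms that \(\sum_j a_j^\dagger a_j\) counts the occupied modes. Dense matrices are only practical for a handful of modes; production codes work with sparse Pauli-string representations instead.

```python
import numpy as np

# Minimal sketch of the Jordan-Wigner transformation with dense matrices:
#   a_j^dagger = (X_j - i Y_j)/2 (x) Z_k for all k < j.
# Conventions (assumed here): qubit 0 is the leftmost tensor factor, |1> = occupied.

I2 = np.eye(2)
X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]], dtype=complex)
Z = np.diag([1.0, -1.0]).astype(complex)


def creation(j, n_modes):
    """Dense Jordan-Wigner matrix for a_j^dagger on n_modes qubits."""
    factors = [Z] * j + [(X - 1j * Y) / 2] + [I2] * (n_modes - j - 1)
    out = np.array([[1.0 + 0j]])
    for f in factors:
        out = np.kron(out, f)
    return out


n = 4
a_dag = [creation(j, n) for j in range(n)]
a = [m.conj().T for m in a_dag]

# Canonical anticommutation relations: {a_i, a_j^dagger} = delta_ij, {a_i, a_j} = 0
ok = all(
    np.allclose(a[i] @ a_dag[j] + a_dag[j] @ a[i], np.eye(2**n) * (i == j))
    and np.allclose(a[i] @ a[j] + a[j] @ a[i], 0)
    for i in range(n)
    for j in range(n)
)
print(ok)

# Number operator: the Z strings cancel in a_j^dagger a_j, leaving |1><1| on qubit j
N_op = sum(a_dag[j] @ a[j] for j in range(n))
print(np.real(np.diag(N_op)))  # number of 1s in each bitstring 0000 ... 1111
```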

Quantum Algorithms for Electronic Structure Calculation

Variational Quantum Eigensolver (VQE)

The Variational Quantum Eigensolver (VQE) has emerged as a leading algorithm for molecular simulations on noisy intermediate-scale quantum (NISQ) devices [17] [15]. First proposed by Peruzzo et al. in 2014, VQE is a hybrid quantum-classical algorithm that combines quantum state preparation and measurement with classical optimization [17] [15].

The algorithm operates on the variational principle, which guarantees that the expectation value of the Hamiltonian for any parameterized trial wave function will be at least the lowest energy eigenvalue of that Hamiltonian [15]. Mathematically, this is expressed as:

\[ E(\theta) = \frac{\langle \psi(\theta) | H | \psi(\theta) \rangle}{\langle \psi(\theta) | \psi(\theta) \rangle} \geq E_0 \]

where \(E_0\) is the true ground state energy [15]. In the Hamiltonian variational ansatz, the initial state \(|\psi_0\rangle\) is evolved using parameterized exponentials of the Hamiltonian terms [15]:

\[ |\psi(\theta)\rangle = \prod_d \prod_j e^{i\theta_{d,j} H_j} |\psi_0\rangle \]

where \(\theta_{d,j}\) are the variational parameters, \(d\) indexes the ansatz layers, and \(H_j\) are the individual terms of the Hamiltonian [15].

The VQE algorithm follows these key steps [17]:

Ansatz Preparation: A parameterized trial wave function (ansatz) is prepared on the quantum computer, typically using the Unitary Coupled Cluster for Single and Double excitations (UCCSD) approach [17].

Expectation Measurement: The expectation value of the Hamiltonian is measured on the quantum computer [17].

Classical Optimization: Parameters are optimized iteratively on a classical computer to minimize the energy expectation value [17].
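The three steps above can be sketched end to end on a single qubit. The sketch assumes a hypothetical Hamiltonian H = 0.5 Z + 0.3 X (not a molecular Hamiltonian), the one-parameter ansatz R_y(θ)|0⟩, exact expectation values in place of shot-based measurement, and plain gradient descent with parameter-shift gradients in place of a production optimizer such as COBYLA or L-BFGS-B. The converged energy is compared against exact diagonalization.

```python
import numpy as np

# Minimal VQE sketch on one qubit.
# Assumptions: hypothetical H = 0.5 Z + 0.3 X, ansatz |psi(theta)> = Ry(theta)|0>,
# exact expectation values, gradient descent with parameter-shift gradients.

X = np.array([[0.0, 1.0], [1.0, 0.0]])
Z = np.diag([1.0, -1.0])
H = 0.5 * Z + 0.3 * X


def ansatz(theta):
    """Step 1: Ry(theta)|0> = cos(theta/2)|0> + sin(theta/2)|1>."""
    return np.array([np.cos(theta / 2), np.sin(theta / 2)])


def energy(theta):
    """Step 2: expectation value <psi|H|psi> (real state vector)."""
    psi = ansatz(theta)
    return psi @ H @ psi


def parameter_shift_grad(theta):
    """Exact gradient for a Ry rotation via the parameter-shift rule."""
    return 0.5 * (energy(theta + np.pi / 2) - energy(theta - np.pi / 2))


# Step 3: classical optimization loop
theta, lr = 0.1, 0.5
for _ in range(200):
    theta -= lr * parameter_shift_grad(theta)

exact = np.linalg.eigvalsh(H)[0]
print(f"VQE energy {energy(theta):.6f}, exact ground energy {exact:.6f}")
```

The parameter-shift rule is used here because it gives exact gradients from two extra energy evaluations, the same kind of quantity a quantum device can measure.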

The number of measurements required for VQE scales as \(N_m = \left(\sum_i |h_i|\right)^2/\epsilon^2\), where \(h_i\) are the Hamiltonian coefficients and \(\epsilon\) is the desired precision [15]. For molecular systems, this typically scales as \(O(M^4/\epsilon^2)\) to \(O(M^6/\epsilon^2)\), where \(M\) is the number of molecular orbitals [15].
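A worked instance of this estimate, using hypothetical coefficients: with \(\sum_i |h_i| = 1\) and \(\epsilon = 1.6 \times 10^{-3}\) hartree (roughly the conventional 1 kcal/mol "chemical accuracy"), the bound gives 625² = 390,625 measurements.

```python
import numpy as np

# Minimal sketch of the VQE shot-count estimate N_m = (sum_i |h_i|)^2 / eps^2.
# The coefficients are hypothetical; eps = 1.6e-3 hartree is roughly 1 kcal/mol.


def vqe_shot_estimate(coefficients, eps):
    return (np.sum(np.abs(coefficients)) / eps) ** 2


h = [0.5, -0.3, 0.2]  # sum of |h_i| = 1.0
print(f"{vqe_shot_estimate(h, 1.6e-3):,.0f}")  # 390,625 measurements
```

Because the precision enters squared, halving \(\epsilon\) quadruples the number of measurements.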

Quantum Phase Estimation (QPE)

Quantum Phase Estimation (QPE) represents another major algorithmic approach for quantum chemistry simulations, with particular importance for fault-tolerant quantum computing [15]. First proposed by Kitaev in 1996, QPE estimates an energy eigenvalue from the phase the system accumulates under time evolution; it returns the ground-state energy with a probability equal to the squared overlap between the initial state and the ground state [15].

The QPE algorithm requires \(\omega = n + \lceil \log_2(2 + \frac{1}{2p}) \rceil\) ancilla qubits, where \(n\) is the number of bits of precision required in the phase estimate and \(p\) is the maximum acceptable probability of failure [15]. The total coherent time evolution required is \(T = 2^{\omega + 1}\pi\), with phase estimation error \(\varepsilon_{PE} = \frac{1}{2^n}\) [15].
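Reading the ancilla formula in its standard textbook sense (an estimate accurate to n bits, succeeding with probability at least 1 − p), the register size can be computed as below. The example values are illustrative: 10 bits of precision with a 1% failure probability needs 10 + ⌈log₂ 52⌉ = 16 ancilla qubits.

```python
import math

# Minimal sketch of the phase-estimation register size
#   omega = n + ceil(log2(2 + 1/(2p)))
# for an n-bit phase estimate (resolution 1/2**n) with failure probability at most p.


def qpe_ancillas(n_bits: int, p_fail: float) -> int:
    return n_bits + math.ceil(math.log2(2 + 1 / (2 * p_fail)))


print(qpe_ancillas(10, 0.01))  # 10 + ceil(log2(52)) = 16
print(1 / 2**10)               # phase resolution for n = 10
```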

The algorithm proceeds through several key stages [15]:

Initialization: The qubit register is initialized in a state with nonzero overlap with the target Full Configuration Interaction (FCI) eigenstate.

Hadamard Application: Each ancilla qubit undergoes a Hadamard gate application, placing the ancilla register in a superposed state.

Controlled Unitaries: Controlled gates modify the superposed state based on the system Hamiltonian.

Inverse Quantum Fourier Transform: This transform reveals the phase information encoding the energy eigenvalues.

Measurement: The ancilla qubits are measured, collapsing the main register into the corresponding energy eigenstate.
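The stages above can be simulated with a small state vector. The sketch assumes ideal gates and a system register already in an eigenstate of U with eigenvalue \(e^{2\pi i \phi}\); the Hadamard and controlled-unitary stages are written directly as the phase kickback they produce on the ancilla register, followed by a dense inverse QFT. For \(U = e^{-iH\tau}\), the measured phase maps to an energy through \(E = -2\pi\phi/\tau\) (modulo \(2\pi/\tau\)).

```python
import numpy as np

# Minimal state-vector sketch of textbook QPE with t ancilla qubits.
# Assumptions: ideal gates; system register in an eigenstate of U with
# eigenvalue exp(2*pi*i*phi); controlled-U^(2^k) stage written as phase kickback.


def qpe_distribution(phi, t):
    N = 2**t
    k = np.arange(N)
    # After Hadamards and controlled powers of U: (1/sqrt(N)) sum_k exp(2 pi i phi k)|k>
    ancilla = np.exp(2j * np.pi * phi * k) / np.sqrt(N)
    # Inverse quantum Fourier transform as a dense N x N matrix
    inverse_qft = np.exp(-2j * np.pi * np.outer(k, k) / N) / np.sqrt(N)
    return np.abs(inverse_qft @ ancilla) ** 2  # measurement probabilities


probs = qpe_distribution(phi=0.25, t=3)
m = int(np.argmax(probs))
print(m, m / 2**3, round(probs[m], 6))  # outcome 2 -> phase 0.25 with probability 1.0

probs = qpe_distribution(phi=0.3, t=3)  # phase not exactly representable with 3 bits
print(int(np.argmax(probs)) / 2**3)     # 0.25, the closest 3-bit value
```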

While QPE provides more precise energy estimates than VQE, it requires deeper circuits and greater coherence times, making it more suitable for future fault-tolerant quantum hardware [15].

Experimental Protocols and Implementation

VQE Protocol for Molecular Ground State Energy

Objective: Calculate the ground state energy of a target molecule using the VQE algorithm.

Materials and Software Requirements:

- Quantum computing framework (PennyLane, Qiskit, or similar)

- Classical computational chemistry software for integral calculation

- Classical optimizer (COBYLA, L-BFGS-B, or similar)

Procedure:

Molecular Structure Definition:

- Specify atomic symbols and nuclear coordinates in atomic units

- Example for water molecule [18]:
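A hypothetical input for this step is sketched below: a water geometry in bohr (illustrative coordinates, not necessarily those used in [18]). Checking the bond lengths and angle before the Hartree-Fock step is a cheap way to catch unit mix-ups between ångström and bohr.

```python
import numpy as np

# Hypothetical water geometry in bohr (illustrative coordinates).
# Sanity-checking bond lengths and angle catches angstrom/bohr unit mix-ups.

BOHR_TO_ANGSTROM = 0.529177

symbols = ["H", "O", "H"]
coordinates = np.array([
    [-0.0399, -0.0038, 0.0],
    [1.5780, 0.8540, 0.0],
    [2.7909, -0.5159, 0.0],
])

o = coordinates[1]
v1, v2 = coordinates[0] - o, coordinates[2] - o
r1, r2 = np.linalg.norm(v1), np.linalg.norm(v2)
angle = np.degrees(np.arccos(v1 @ v2 / (r1 * r2)))

print(f"O-H bonds: {r1:.3f}, {r2:.3f} bohr ({r1 * BOHR_TO_ANGSTROM:.3f} angstrom)")
print(f"H-O-H angle: {angle:.1f} degrees")
```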

Hartree-Fock Calculation:

- Compute molecular orbitals using the Hartree-Fock method

- Generate one- and two-electron integrals in the molecular orbital basis

Hamiltonian Construction:

- Build the second-quantized electronic Hamiltonian

- Apply fermion-to-qubit mapping (Jordan-Wigner, Parity, or Bravyi-Kitaev)

- Example using PennyLane [18]:
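Whatever framework produces the qubit Hamiltonian, the result is a weighted sum of Pauli strings, \(H = \sum_i c_i P_i\). The framework-independent sketch below stores such a sum as a dictionary and converts it to a dense matrix for small-system checks. The coefficients are hypothetical; in practice a chemistry package (for example, PennyLane's qchem module) generates the terms from the integrals and the chosen mapping.

```python
from functools import reduce

import numpy as np

# Framework-independent sketch: a qubit Hamiltonian stored as {Pauli string: coefficient}
# and converted to a dense matrix. Coefficients are hypothetical.

PAULI = {
    "I": np.eye(2),
    "X": np.array([[0, 1], [1, 0]], dtype=complex),
    "Y": np.array([[0, -1j], [1j, 0]], dtype=complex),
    "Z": np.diag([1.0, -1.0]),
}


def pauli_sum_matrix(terms):
    """terms: dict mapping Pauli strings such as 'XZ' (qubit 0 first) to real coefficients."""
    n = len(next(iter(terms)))
    H = np.zeros((2**n, 2**n), dtype=complex)
    for string, coeff in terms.items():
        H += coeff * reduce(np.kron, [PAULI[p] for p in string])
    return H


terms = {"ZI": 0.5, "IZ": 0.5, "XX": 0.2}
H = pauli_sum_matrix(terms)
print(np.allclose(H, H.conj().T))  # Hermitian
print(np.linalg.eigvalsh(H)[0])    # ground energy, -sqrt(1.04)
```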

Ansatz Preparation:

- Initialize the quantum circuit in the Hartree-Fock state

- Apply UCCSD or other parameterized ansatz

- For UCCSD, the ansatz takes the form \(|\psi(\theta)\rangle = e^{T(\theta) - T^\dagger(\theta)}|HF\rangle\), where \(T(\theta)\) contains the parameterized single and double excitation operators
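The structure of this ansatz can be seen in the smallest possible case: one single excitation, \(T(\theta) = \theta\, a_1^\dagger a_0\), acting on two spin-orbitals that hold one electron. The sketch assumes Jordan-Wigner encoding (qubit 0 leftmost, |1⟩ occupied) and evaluates the matrix exponential by diagonalizing the Hermitian matrix \(i(T - T^\dagger)\). The result is the rotation \(\cos\theta\,|10\rangle + \sin\theta\,|01\rangle\) away from the Hartree-Fock state.

```python
import numpy as np

# Minimal sketch of a UCC-type ansatz with one single excitation, e^{T - T^dagger}|HF>,
# where T(theta) = theta * a_1^dagger a_0 on two spin-orbitals holding one electron.
# Assumptions: Jordan-Wigner encoding (qubit 0 leftmost, |1> = occupied), dense matrices.

X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]], dtype=complex)
Z = np.diag([1.0, -1.0]).astype(complex)
I2 = np.eye(2, dtype=complex)
create = (X - 1j * Y) / 2  # maps |0> to |1>

a0_dag = np.kron(create, I2)
a1_dag = np.kron(Z, create)  # Jordan-Wigner Z string on qubit 0
a0 = a0_dag.conj().T


def ucc_single(theta):
    T = theta * a1_dag @ a0
    K = 1j * (T - T.conj().T)  # Hermitian, so exp(T - T^dagger) = exp(-iK)
    w, V = np.linalg.eigh(K)
    U = V @ np.diag(np.exp(-1j * w)) @ V.conj().T
    hf = np.zeros(4, dtype=complex)
    hf[0b10] = 1.0  # Hartree-Fock reference: orbital 0 occupied, |10>
    return U @ hf


psi = ucc_single(0.3)
print(np.round(psi.real, 6))  # sin(0.3) on |01>, cos(0.3) on |10>
```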

Parameter Optimization Loop:

- Measure the energy expectation value \(\langle H \rangle\) on quantum hardware/simulator

- Update parameters using classical optimization algorithm

- Check convergence criteria (energy change < threshold or maximum iterations reached)

Result Validation:

- Compare with classical methods (Full CI, CCSD(T))

- Calculate error metrics and perform statistical analysis
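The optimization loop and the validation step can be illustrated end to end on a toy problem. The sketch below is a minimal NumPy example under stated assumptions: a hypothetical two-qubit Hamiltonian \( H = -1.0\,Z_0 - 0.5\,Z_1 + 0.3\,X_0X_1 \) (not a real molecule), a one-parameter ansatz (an \( R_Y(\theta) \) rotation on qubit 0 followed by a CNOT, acting on the reference state \( |00\rangle \)), exact statevector expectation values instead of shot-based measurements, and plain gradient descent with the parameter-shift rule in place of COBYLA or L-BFGS-B. Exact diagonalization plays the role that Full CI plays for a real molecule.

```python
import numpy as np

I2 = np.eye(2)
X = np.array([[0.0, 1.0], [1.0, 0.0]])
Z = np.diag([1.0, -1.0])
CNOT = np.array([[1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 0, 1], [0, 0, 1, 0]], dtype=float)  # control: qubit 0

# Hypothetical toy Hamiltonian (qubit 0 is the left factor of each Kronecker product).
H = -1.0 * np.kron(Z, I2) - 0.5 * np.kron(I2, Z) + 0.3 * np.kron(X, X)

def ansatz_state(theta):
    """RY(theta) on qubit 0, then CNOT(0 -> 1), applied to the reference state |00>."""
    ry = np.array([[np.cos(theta / 2), -np.sin(theta / 2)],
                   [np.sin(theta / 2), np.cos(theta / 2)]])
    ref = np.array([1.0, 0.0, 0.0, 0.0])
    return CNOT @ np.kron(ry, I2) @ ref

def energy(theta):
    psi = ansatz_state(theta)
    return float(psi @ H @ psi)  # <psi|H|psi>; all amplitudes are real here

def vqe(theta=0.0, lr=0.4, tol=1e-10, max_iter=500):
    """Gradient descent; stops when the energy change falls below tol or max_iter is reached."""
    e_old = energy(theta)
    for it in range(1, max_iter + 1):
        # Parameter-shift rule for a gate exp(-i*theta*Y/2): exact gradient from two energies.
        grad = (energy(theta + np.pi / 2) - energy(theta - np.pi / 2)) / 2
        theta -= lr * grad
        e_new = energy(theta)
        if abs(e_new - e_old) < tol:
            return theta, e_new, it
        e_old = e_new
    return theta, e_old, max_iter

theta_opt, e_vqe, n_iter = vqe()
e_exact = np.linalg.eigvalsh(H)[0]  # exact diagonalization as the "Full CI" reference
print(f"VQE: {e_vqe:.8f}  exact: {e_exact:.8f}  "
      f"error: {abs(e_vqe - e_exact):.1e}  iterations: {n_iter}")
```

On hardware, `energy` would be replaced by a shot-based estimate of each Pauli term, which makes the gradient noisy; this is one reason the troubleshooting tips recommend trying different optimizers and applying error mitigation.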

Troubleshooting Tips:

- For convergence issues, try different initial parameters or optimizers

- If energy is significantly higher than expected, check ansatz expressibility

- For noisy hardware results, implement error mitigation techniques

Application to Molecular Systems

Quantum chemistry simulations have been demonstrated for various small molecules, providing proof-of-principle validation of the approach. Recent implementations have calculated ground state energies for H₃⁺, OH⁻, HF, and BH₃, which require progressively more qubits [17]. The ground state energies obtained with VQE agree well with Full Configuration Interaction (FCI) benchmarks [17].

Table 2: Representative Molecules for Quantum Simulation Studies

| Molecule | Chemical Significance | Qubit Requirements | Simulation Accuracy |
|---|---|---|---|
| H₃⁺ | Among the most abundant ions in the universe; simplest triatomic molecule | Moderate | High accuracy vs. FCI benchmark [17] |
| OH⁻ | Base, ligand, and catalyst in chemical reactions | Moderate | Good agreement with classical methods [17] |
| HF | Precursor to pharmaceutical compounds | Higher | Accurate bond dissociation energies [17] |
| BH₃ | Strong Lewis acid | Higher | Demonstrates electron correlation effects [17] |

Table 3: Key Research Reagent Solutions for Quantum Chemistry Simulations

| Tool/Platform | Type | Primary Function | Application Example |
|---|---|---|---|
| PennyLane [19] [18] | Software framework | Quantum machine learning and quantum chemistry | Differentiable molecular Hamiltonian construction [18] |
| Qiskit [17] [20] | Quantum computing SDK | Quantum circuit design and execution | VQE implementation for molecular systems [17] |
| InQuanto [21] | Computational chemistry platform | Quantum computational chemistry workflows | Error-corrected chemistry simulations [21] |
| UCCSD Ansatz | Algorithmic component | Parameterized wavefunction ansatz | Electron correlation treatment in VQE [17] |
| Jordan-Wigner Transform | Mapping method | Fermion-to-qubit encoding | Molecular Hamiltonian representation [19] |
| Parity Mapping | Mapping method | Fermion-to-qubit encoding with symmetry reduction | Qubit count reduction via tapering [19] |

Advanced Applications and Future Directions

Error Correction and Fault Tolerance

Recent advances have demonstrated the first scalable, error-corrected, end-to-end computational chemistry workflows [21]. These implementations combine quantum phase estimation with logical qubits for molecular energy calculations, representing an essential step toward fault-tolerant quantum simulations [21]. The QCCD (Quantum Charge-Coupled Device) architecture with all-to-all connectivity, mid-circuit measurements, and conditional logic enables more complex quantum computing simulations than previously possible [21].

Scalability and Hardware Considerations

Modular quantum architectures have emerged as a promising approach for scaling quantum computing systems by connecting multiple Quantum Processing Units (QPUs) [20]. However, this approach introduces challenges due to costly inter-core operations between chips and quantum state transfers, which contribute to noise and quantum decoherence [20]. Advanced compilation techniques using attention-based deep reinforcement learning can optimize qubit allocation and routing to minimize inter-core communications [20].

Mid-circuit measurement and reset capabilities enable qubit reuse during computation, potentially reducing qubit requirements and significantly reducing inter-core communication [20]. This functionality allows a single physical qubit to host several of the circuit's qubits at different points during execution, which can dramatically reduce resource requirements for algorithms with a sequential structure [20].

The mapping of molecules to qubits represents a fundamental transformation in computational chemistry, opening a route to applying quantum computers to electronic structure problems that are intractable for classical computers. The development of efficient fermion-to-qubit mapping techniques, combined with hybrid quantum-classical algorithms like VQE, has established a viable pathway toward quantum advantage in chemical simulation.

As quantum hardware continues to advance with improved error correction, higher qubit counts, and enhanced connectivity, these mapping techniques will enable increasingly complex and accurate simulations of molecular systems. The integration of quantum computing with high-performance classical computing and artificial intelligence promises to create powerful synergistic approaches for tackling challenging problems in drug discovery, materials design, and fundamental chemical research.

The workflow from molecular structure to qubit representation to quantum algorithm execution has now been demonstrated for a growing range of chemical systems, establishing a foundation for the continued expansion of quantum computational chemistry into new domains of scientific and industrial relevance.

In the field of computational chemistry and materials science, accurately solving the electronic structure of molecules and materials is foundational for predicting properties, guiding experiments, and designing new compounds. Classical computational methods, primarily Density Functional Theory (DFT) and Hartree-Fock (HF), have long been the workhorses for these calculations. However, despite their widespread use, these methods face significant and inherent limitations when applied to complex systems. Framed within a broader thesis on the applications of quantum theory in electronic structure research, this application note details the specific points of failure for DFT and HF, supported by quantitative benchmarking. It further explores emerging methodologies, including advanced machine learning and quantum computing approaches, that are being developed to overcome these challenges, providing detailed protocols for practitioners.

Key Limitations of Classical Methods: A Quantitative Analysis

The limitations of HF and DFT can be broadly categorized into challenges related to accuracy and computational cost. The following sections and summary table provide a detailed comparison.

Accuracy and Applicability Limits

- Excited-State and Electronically Complex Systems: Standard ground-state DFT, while adequate for many ground-state properties, is poorly suited to modeling excited-state properties, such as how materials respond to light or conduct electricity [22]. This restricts its utility for predicting optoelectronic behavior and excited-state dynamics.

- Systematic Errors in Molecular Properties: Benchmarking studies reveal large errors in predicting key molecular properties. For instance, as shown in Table 1, even the best-performing method for a set of push-pull chromophores, HF/3-21G, has a Mean Absolute Percentage Error (MAPE) of 45.5% for the first hyperpolarizability (\( \beta \)), a key nonlinear optical property [23].

- Inability to Capture Strong Correlation: Both HF and DFT struggle with systems exhibiting strong electron correlation, such as transition metal complexes, bond-breaking processes, and frustrated magnetic systems. Their approximations often fail to describe the multi-configurational nature of these systems accurately.

Computational Cost and Scalability

- Steep Scaling with System Size: Conventional methods for calculating excited-state properties, such as the GW approximation built on top of DFT, scale steeply (polynomially, but with a high power) with system size and can be prohibitively expensive. For a system with just three atoms, such calculations can require between 100,000 and one million CPU hours [22]. This severe computational scaling renders them infeasible for large or complex systems.

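- Accuracy-Speed Trade-offs: As benchmarked in Table 1, there is a persistent trade-off between accuracy and computational cost. Larger basis sets and more advanced functionals can improve accuracy (CAM-B3LYP/3-21G: 47.8% vs. B3LYP/3-21G: 50.1%), but the cost rises steeply and the returns are small or even negative: moving from HF/3-21G to HF/6-31G(d,p) roughly triples the time (7.4 to 22.0 min/molecule) while the MAPE increases from 45.5% to 50.4% [23].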

Table 1: Benchmarking HF and DFT for Molecular Hyperpolarizability (Adapted from [23])

| Method | Mean Absolute Percentage Error (MAPE, %) | Computational Time (min/molecule) | Pairwise Rank Agreement |
|---|---|---|---|
| HF/STO-3G | 60.5 | 2.7 | Perfect (10/10 pairs) |
| HF/3-21G | 45.5 | 7.4 | Perfect (10/10 pairs) |
| HF/6-31G(d,p) | 50.4 | 22.0 | Perfect (10/10 pairs) |
| B3LYP/3-21G | 50.1 | 14.9 | Perfect (10/10 pairs) |
| CAM-B3LYP/3-21G | 47.8 | 28.1 | Perfect (10/10 pairs) |
| M06-2X/3-21G | 48.4 | 35.0 | Perfect (10/10 pairs) |
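The accuracy-speed trade-off in Table 1 can be made explicit by extracting the Pareto front: the methods for which no other entry is at least as accurate and at least as fast while being strictly better on one of the two. The sketch below uses only the values in Table 1; the function names are ours.

```python
# (method, MAPE in %, time in min/molecule), copied from Table 1
results = [
    ("HF/STO-3G", 60.5, 2.7),
    ("HF/3-21G", 45.5, 7.4),
    ("HF/6-31G(d,p)", 50.4, 22.0),
    ("B3LYP/3-21G", 50.1, 14.9),
    ("CAM-B3LYP/3-21G", 47.8, 28.1),
    ("M06-2X/3-21G", 48.4, 35.0),
]

def dominates(a, b):
    """a dominates b if it is no worse on error and time, and strictly better on at least one."""
    return a[1] <= b[1] and a[2] <= b[2] and (a[1] < b[1] or a[2] < b[2])

def pareto_front(rows):
    """Keep the rows that no other row dominates."""
    return [r for r in rows if not any(dominates(o, r) for o in rows if o is not r)]

for name, mape, minutes in pareto_front(results):
    print(f"{name}: MAPE {mape}%, {minutes} min/molecule")
```

With these numbers only HF/STO-3G (fastest) and HF/3-21G (most accurate) are Pareto-optimal; every other entry is both slower and less accurate than HF/3-21G.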

Detailed Protocols for Assessing Method Limitations

To systematically evaluate the performance of electronic structure methods, researchers can follow specific benchmarking and analysis protocols.

Protocol 1: Benchmarking Hyperpolarizability with HF/DFT

Application Note: This protocol is designed to assess the accuracy and efficiency of HF and DFT functionals in predicting the first hyperpolarizability (\( \beta \)) of push-pull organic chromophores, which is critical for nonlinear optical material design [23].

Materials & Computational Setup:

- Software: PySCF quantum chemistry package.

- Hardware: Standard computational node (e.g., Intel i7-12700K, 32 GB RAM).

- Molecule Set: Five prototypical push-pull chromophores with experimentally known \( \beta \) values (e.g., para-nitroaniline, Disperse Red 1 analog).

- Method Combinations: 5 methods (HF and the functionals PBE0, B3LYP, CAM-B3LYP, M06-2X) × 6 basis sets (STO-3G, 3-21G, 6-31G, 6-311G, 6-31G(d,p), 6-311G(d)).

Procedure:

- Molecular Geometry Optimization: Obtain and verify stable ground-state geometries for all test molecules.

- Hyperpolarizability Calculation: Compute the static hyperpolarizability tensor components using the finite field method.

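- Apply finite electric fields of strength \( \pm h \) (e.g., \( h = 0.001 \) a.u.) along the relevant Cartesian axes.

- Numerically differentiate the field-dependent dipole moments twice with respect to the field (central differences) to obtain \( \beta \).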

- Performance Evaluation:

- Calculate the Mean Absolute Percentage Error (MAPE) for each method combination against experimental data.

- Assess Pairwise Rank Agreement: For every pair of molecules, check whether the calculation orders the pair the same way as experiment, i.e., correctly identifies which molecule has the larger \( \beta \).

- Record the wall-clock computational time for each calculation.

- Data Analysis:

- Identify Pareto-optimal methods that balance accuracy and speed.

- Analyze the effect of basis set size and functional type on error and cost.
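The finite-field and evaluation steps can be sketched in a few lines. This is a minimal illustration under stated assumptions: it uses the Taylor expansion \( \mu(F) = \mu_0 + \alpha F + \tfrac{1}{2}\beta F^2 + \tfrac{1}{6}\gamma F^3 \) (sign and prefactor conventions for \( \beta \) differ between codes), a hypothetical analytic `dipole` function standing in for the PySCF dipole calculation at each field, and invented \( \beta \) values in place of experimental data.

```python
import itertools
import numpy as np

def beta_finite_field(dipole, h=1e-3):
    """Central second difference of the dipole along one field axis: beta ~ d^2 mu / dF^2."""
    return (dipole(h) - 2.0 * dipole(0.0) + dipole(-h)) / h**2

def dipole(F):
    """Hypothetical dipole response (a.u.) standing in for a field-dependent SCF calculation."""
    mu0, alpha, beta, gamma = 2.5, 80.0, 1500.0, 4.0e4
    return mu0 + alpha * F + 0.5 * beta * F**2 + gamma / 6.0 * F**3

def mape(pred, ref):
    """Mean absolute percentage error of pred against ref."""
    pred, ref = np.asarray(pred, dtype=float), np.asarray(ref, dtype=float)
    return 100.0 * np.mean(np.abs(pred - ref) / np.abs(ref))

def pairwise_rank_agreement(pred, ref):
    """Number of molecule pairs ordered the same way by pred and ref, and the total pair count."""
    pairs = list(itertools.combinations(range(len(ref)), 2))
    agree = sum((pred[i] - pred[j]) * (ref[i] - ref[j]) > 0 for i, j in pairs)
    return agree, len(pairs)

beta_est = beta_finite_field(dipole)

# Hypothetical beta values (arbitrary units) for five chromophores; not experimental data.
beta_ref = [10.0, 25.0, 40.0, 60.0, 90.0]
beta_calc = [6.0, 14.0, 21.0, 33.0, 48.0]

print(f"beta from finite field: {beta_est:.3f} (input value 1500)")
print(f"MAPE: {mape(beta_calc, beta_ref):.1f}%")
print("rank agreement: %d/%d pairs" % pairwise_rank_agreement(beta_calc, beta_ref))
```

The odd-order terms cancel in the central difference, so the sketch recovers \( \beta \) up to round-off; in a real calculation, \( h \) must balance truncation error against SCF convergence noise. The invented values give a MAPE of about 44.6% with 10/10 rank agreement, the same qualitative pattern as Table 1: large absolute errors, correct ordering.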

Protocol 2: AI-Accelerated Excited-State Calculation

Application Note: This protocol uses a variational autoencoder (VAE) to dramatically accelerate the calculation of quasiparticle energies (band structures), a task that is traditionally computationally prohibitive with DFT-based methods [22].

Materials & Computational Setup:

- Software: Custom code incorporating a VAE and a second neural network.

- Initial Data: Wave functions from DFT calculations, represented as high-resolution images in space (~100 GB of data initially).

- AI Model: Unsupervised variational autoencoder (VAE) for dimensionality reduction, followed by a predictive neural network.

Procedure:

- Data Generation and Preparation: Perform initial DFT calculations on target materials (e.g., 2D systems) to generate electron wave functions.

- Wave Function Representation: Plot the wave function as a probability distribution over space, treating it as an image.

- Dimensionality Reduction with VAE:

- Train the VAE in an unsupervised manner to compress the wave function image data from ~100 GB into a compact, latent representation of approximately 30 numbers.

- Property Prediction:

- Use the 30-number latent representation as input to a second, supervised neural network.

- Train this network to predict the excited-state properties of interest, such as band structures.

- Validation: Compare the AI-predicted results with those from high-accuracy, computationally expensive reference calculations where feasible.
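The two-stage structure of this protocol (compress, then regress on the compact representation) can be sketched without a deep learning framework. The example below deliberately swaps in simpler techniques: principal component analysis via SVD stands in for the VAE encoder, and ridge regression stands in for the second neural network. The data are synthetic random "images" with a planted low-dimensional signal, not DFT wave functions, so the sketch shows only the data flow (high-dimensional input, about 30 latent numbers, predicted property), not the accuracy of the method in [22].

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic stand-in data: 400 "wave-function images" of 64x64 = 4096 pixels each,
# generated from 30 hidden factors plus noise; the target depends linearly on the factors.
n_samples, n_pixels, n_latent = 400, 64 * 64, 30
factors = rng.normal(size=(n_samples, n_latent))
mixing = rng.normal(size=(n_latent, n_pixels))
images = factors @ mixing + 0.01 * rng.normal(size=(n_samples, n_pixels))
target = factors @ rng.normal(size=n_latent)  # a scalar property, e.g. band-gap-like

train, test = slice(0, 300), slice(300, None)

# Stage 1 (stand-in for the VAE encoder): PCA to 30 numbers, fitted on training images only.
mean = images[train].mean(axis=0)
_, _, vt = np.linalg.svd(images[train] - mean, full_matrices=False)

def encode(x):
    """Project centered images onto the top n_latent principal directions."""
    return (x - mean) @ vt[:n_latent].T

z_train, z_test = encode(images[train]), encode(images[test])

# Stage 2 (stand-in for the predictive network): ridge regression with an intercept column.
lam = 1e-3
A = np.hstack([z_train, np.ones((z_train.shape[0], 1))])
w = np.linalg.solve(A.T @ A + lam * np.eye(A.shape[1]), A.T @ target[train])
pred = np.hstack([z_test, np.ones((z_test.shape[0], 1))]) @ w

rmse = np.sqrt(np.mean((pred - target[test]) ** 2))
print(f"latent size {z_train.shape[1]}, test RMSE {rmse:.4f} (target std {target.std():.2f})")
```

The predictive stage only sees 30 numbers per sample instead of thousands of pixels; a VAE would replace the linear projection with a learned, nonlinear, probabilistic encoder.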

Emerging Solutions to Classical Limitations

The limitations of DFT and HF have spurred the development of next-generation computational strategies.

AI and Machine Learning Approaches

- Deep Active Optimization: Frameworks like DANTE integrate deep neural networks as surrogate models with a tree search algorithm to efficiently explore high-dimensional (up to 2,000 dimensions) search spaces with limited data. This is particularly valuable for optimizing complex systems where the objective function is nonconvex and nondifferentiable [24].

- Data Pruning to Counter Spurious Correlations: A novel technique addresses the problem of AI models latching onto spurious correlations in training data. By identifying and removing a small subset of the most "difficult" training samples, this method severs misleading correlations without requiring prior knowledge of what those correlations are, leading to more robust models [25].

Quantum Computing Approaches

- Hybrid Quantum-Classical Algorithms: Researchers are developing iterative hybrid simulations using current noisy intermediate-scale quantum (NISQ) computers. In this approach, a small number of qubits (e.g., 4-6) perform part of the calculation, and the results are processed by a classical computer. This method, combined with new error mitigation techniques, has been used to solve the electronic structures of spin defects in solids, paving the way for tackling problems beyond the reach of classical computers as hardware scales up [26].

- Variational Quantum Eigensolver (VQE): VQE is a leading algorithm for finding ground state energies on quantum hardware. It uses a parameterized quantum circuit (ansatz), such as the unitary coupled cluster (UCC) ansatz, and a classical optimizer. Techniques like active-space reduction and density matrix purification are employed to mitigate noise and reduce circuit depth for modern quantum processors [27].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Electronic Structure Research

| Tool / Reagent | Function/Brief Explanation | Example Use-Case |
|---|---|---|
| Density Functional Theory (DFT) | A classical computational method for approximating ground-state electronic structure; balances cost and accuracy. | Calculating formation energies, bond lengths, and ground-state vibrational modes of molecules and solids. |
| Hartree-Fock (HF) | A foundational mean-field quantum chemical method that treats exchange exactly but neglects electron correlation. | Serves as a starting point for more accurate correlated methods; benchmarking. |
| Variational Autoencoder (VAE) | An AI model for unsupervised dimensionality reduction of complex data. | Compressing multi-gigabyte wave functions into a minimal latent representation for fast property prediction [22]. |
| Deep Active Optimization (DANTE) | An AI pipeline for finding optimal solutions in high-dimensional spaces with limited data. | Designing complex alloys, architected materials, or peptide binders where traditional optimization fails [24]. |
| Variational Quantum Eigensolver (VQE) | A hybrid quantum-classical algorithm for finding ground state energies on quantum computers. | Solving electronic structure problems for small molecules and solid-state defects on near-term quantum hardware [27] [26]. |
| Unitary Coupled Cluster (UCC) Ansatz | A specific form of trial wavefunction for VQE that conserves electron number. | Preparing physically meaningful, correlated quantum states for energy calculation [27]. |
| Error Mitigation Techniques | A suite of methods to reduce the impact of noise in quantum computations. | Purifying noisy density matrices (e.g., McWeeny purification) to improve the accuracy of computed energies [27]. |
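McWeeny purification, listed in the table, iterates \( P \leftarrow 3P^2 - 2P^3 \). For a symmetric matrix this keeps the eigenvectors and pushes eigenvalues near 0 or 1 to exactly 0 or 1, restoring idempotency (\( P^2 = P \)) of a noisy one-particle density matrix. The sketch below applies it to a synthetic noisy projector in NumPy; the matrix size, noise level, and iteration count are illustrative assumptions, and this is not the specific error-mitigation pipeline of [27].

```python
import numpy as np

def mcweeny_purify(P, n_iter=10):
    """Apply P <- 3P^2 - 2P^3 repeatedly; eigenvalues near 0 or 1 converge quadratically to 0 or 1."""
    for _ in range(n_iter):
        P2 = P @ P
        P = 3.0 * P2 - 2.0 * P2 @ P
    return P

rng = np.random.default_rng(1)
n_orb, n_occ = 6, 2

# Ideal density matrix: projector onto n_occ random orthonormal orbitals.
Q, _ = np.linalg.qr(rng.normal(size=(n_orb, n_orb)))
P_ideal = Q[:, :n_occ] @ Q[:, :n_occ].T

# Synthetic "measurement noise": a small symmetric perturbation.
noise = 0.03 * rng.normal(size=(n_orb, n_orb))
P_noisy = P_ideal + (noise + noise.T) / 2

P_pure = mcweeny_purify(P_noisy)
print("idempotency error before:", np.linalg.norm(P_noisy @ P_noisy - P_noisy))
print("idempotency error after: ", np.linalg.norm(P_pure @ P_pure - P_pure))
print("trace (electron count) after:", np.trace(P_pure))
```

Purification fixes the eigenvalues but keeps the noisy eigenvectors, so it removes only the part of the error that violates idempotency; the distance to the ideal projector shrinks but does not vanish.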

Workflow Diagram: AI-Accelerated Electronic Structure Calculation

The following diagram illustrates the integrated workflow of the AI-accelerated protocol for calculating excited-state properties, demonstrating how it overcomes the computational bottleneck of traditional methods.

AI-Accelerated Electronic Structure Workflow

Hybrid Algorithms and Practical Pipelines: Quantum Computing Methods in Action

The field of electronic structure calculations, pivotal for materials science and drug discovery, is confronting the inherent limitations of classical computational methods, particularly for simulating strongly correlated quantum systems. The integration of quantum computing with classical high-performance computing (HPC) represents a transformative approach to overcoming these barriers. Hybrid quantum-classical paradigms leverage the complementary strengths of both technologies: quantum processors (QPUs) manage the exponentially complex aspects of quantum simulations, while classical supercomputers handle traditional computational workloads, data-intensive processing, and system orchestration. This collaboration is accelerating the path toward practical quantum advantage in scientific domains, especially in quantum chemistry and molecular dynamics, where accurate simulations can revolutionize the design of new materials and pharmaceuticals [28] [8]. The emergence of software frameworks and hardware architectures specifically designed for this integration marks a significant milestone in computational science, moving hybrid models from theoretical concepts to practical tools for research.

Fundamental Concepts and Architectural Frameworks

The Layered Architecture for Hybrid Systems

A successful hybrid quantum-classical supercomputing architecture operates on a layered principle, where classical resources are categorized based on their required latency and functional role. This hierarchical integration is crucial for managing the real-time demands of quantum processing.

- Ultra-low latency control (Quantum Runtime Network): This layer involves the quantum system controller (e.g., Quantum Machines' OPX1000) directly connected to the QPU. It performs real-time orchestration, mid-circuit measurement, and quantum feedback with latencies in the hundreds of nanoseconds, which is essential for operating within qubit coherence times. This layer transforms physical qubits into a functional Quantum Processing Unit (QPU) [29].

- Low-latency acceleration (Quantum Error Correction Network): The middle layer connects quantum controllers to dedicated acceleration servers, typically equipped with GPUs and CPUs. This layer handles computationally intensive tasks like quantum error correction (QEC) decoding, pulse calibration, and optimization policies. With round-trip latencies demonstrated below 4 microseconds, this layer is responsible for creating a logical QPU from a physical one [29].

- High-performance orchestration (HPC Network): The outermost layer involves the classical HPC cluster, which manages circuit compilation, large-scale calibration campaigns, data management, and the scheduling of hybrid applications. At this level, with latencies in the millisecond range, QPU jobs are submitted to a workload manager like Slurm, treating the quantum computer as just another accelerator in a heterogeneous computing ecosystem [30] [29].

Software Frameworks and Workflow Management

Enabling these layered architectures requires sophisticated software that can orchestrate workflows across quantum and classical boundaries. Frameworks like NVIDIA's CUDA-Q provide a unified, open-source programming model for co-programming CPUs, GPUs, and QPUs [31]. This allows researchers to integrate quantum kernels—specific circuits designed for a sub-task like energy estimation—seamlessly into larger classical applications.

The Quantum Framework (QFw), as demonstrated by Oak Ridge National Laboratory, provides a backend-agnostic orchestration layer. It allows hybrid applications to be reproducible and portable across different quantum simulators (e.g., Qiskit Aer, NWQ-Sim) and hardware providers (e.g., IonQ), ensuring that the best available resource is used for a specific task without rewriting application code [30]. Workflow Management Systems (WMS) like Pegasus are also being extended to manage the execution of tasks in these hybrid ecosystems, identifying which parts of a classical scientific workflow are candidates for quantum acceleration [28].

Applications in Electronic Structure Calculations

Hybrid paradigms are finding impactful applications in areas where classical methods struggle, particularly in calculating the electronic properties of molecules and materials. These applications align directly with the goal of achieving more accurate and predictive in silico research.

- Periodic Material Band Gaps: Researchers at IBM have demonstrated a hybrid workflow for investigating the band gap of periodic materials using an Extended Hubbard Model (EHM). The methodology involves classically computing the EHM's hopping and Coulomb interaction parameters from first principles using Density Functional Theory (DFT). A hybrid quantum-classical algorithm, specifically the Sample-based Quantum Diagonalization (SQD) framework with a Local Unitary Cluster Jastrow (LUCJ) ansatz, then solves the resulting electronic Hamiltonian to compute key electronic properties like band gaps, a critical parameter in materials science [32].

- Molecular Dynamics and Quantum Chemistry: Quantum computers are expected to model the electronic structure of molecules at a level of detail beyond the reach of classical methods. This is crucial for predicting drug-molecule interactions, protein folding in solvent environments, and the activity of metalloenzymes. Companies like Boehringer Ingelheim and AstraZeneca are actively collaborating with quantum hardware firms to explore these applications [8]. Hybrid quantum-classical workflows have been demonstrated for calculating molecular electronic structure properties and form the core workflow behind several commercial chemistry applications [31].

- Drug Discovery and Optimization: The life sciences industry represents a major application domain, with an estimated value creation of $200-$500 billion by 2035. Quantum computing enables first-principles calculation of molecular properties, which can dramatically reduce the need for wet-lab experiments. Applications include precise protein simulation, improved docking and structure-activity relationship analysis, and the prediction of off-target effects for drug candidates early in the development pipeline [8].

Table 1: Key Application Areas and Demonstrated Workflows

| Application Area | Specific Problem | Hybrid Approach | Key Outcome/Potential |
|---|---|---|---|
| Materials Science | Band gap calculation of periodic materials | DFT (classical) + Sample-based Quantum Diagonalization (quantum) [32] | Accurate modeling of strongly correlated systems for material design. |
| Quantum Chemistry | Molecular electronic structure | Classical computation of integrals + Variational Quantum Eigensolver (VQE) [28] | More precise prediction of molecular properties and reaction paths. |
| Pharmaceutical R&D | Drug candidate screening & toxicity | Quantum-generated training data for AI / Quantum simulation of protein-ligand binding [8] | Accelerated drug discovery and reduced clinical trial failures. |

Experimental Protocols and Methodologies

Protocol: Hybrid Workflow for Molecular Property Calculation

This protocol outlines the steps for a typical hybrid quantum-classical computation of a molecule's electronic properties, as demonstrated in recent industry applications [31].

- Problem Formulation: Define the target molecule and the specific electronic property to be calculated (e.g., ground state energy, charge density, orbital energies).

- Hamiltonian Generation (Classical):

- Input: Molecular geometry (atomic types and positions).

- Method: Use classical computational chemistry software (e.g., based on Hartree-Fock or DFT approximations) to compute the one-body and two-body integrals of the electronic Hamiltonian.

- Output: A second-quantized fermionic Hamiltonian representing the system.

- QPU Preparation:

- Qubit Mapping: Transform the fermionic Hamiltonian into a qubit Hamiltonian (a weighted sum of Pauli strings) suitable for the QPU using techniques like the Jordan-Wigner or Bravyi-Kitaev transformation.

- Ansatz Selection and Circuit Compilation: Choose a parameterized quantum circuit (ansatz), such as the Unitary Coupled Cluster (UCC) or a hardware-efficient ansatz. Compile the circuit into the native gate set of the target QPU (e.g., IonQ Forte).

- Hybrid Execution Loop (Variational Algorithm):

- The classical optimizer (e.g., running on an NVIDIA A100 GPU) sets initial parameters for the quantum circuit.

- The parameterized circuit is executed on the QPU for multiple "shots" to gather measurement statistics.

- The measurement results are sent back to the classical optimizer, which calculates the expectation value of the Hamiltonian (the energy).

- The optimizer updates the circuit parameters to minimize the energy.

- The execution, measurement, and parameter-update steps are repeated until convergence is reached.

- Result Analysis (Classical): The converged energy and corresponding wavefunction are analyzed to extract the desired molecular properties. The entire workflow is coordinated by a hybrid platform like NVIDIA CUDA-Q [31].

Protocol: Distributed Quantum-Classical Simulation on an HPC Cluster

This protocol describes a backend-agnostic approach for running hybrid workloads on a supercomputer, as benchmarked on the Frontier cluster [30].

- Workload Submission: A user submits a hybrid application (e.g., a Quantum Approximate Optimization Algorithm (QAOA) workload) written in a framework like Qiskit or PennyLane.

- Orchestration by QFw: The Quantum Framework (QFw), integrated with the HPC's resource manager (SLURM), receives the application.

- Resource Allocation and Task Distribution: QFw uses the Process Management Interface for Exascale (PMIx) to spawn distributed quantum tasks across multiple compute nodes. It analyzes the workload and dispatches quantum circuits to the most suitable backend based on the problem structure:

- Highly entangled states → NWQ-Sim.

- Large Ising models → Qiskit Aer with matrix product state method.

- Real quantum hardware → Cloud-based QPUs (e.g., IonQ) via REST API.

- Parallel Execution: For variational algorithms like QAOA, the framework can partition the problem and execute many sub-problems simultaneously across different backends (CPUs, GPUs, simulators, QPUs).

- Result Aggregation and Return: The classical optimizer node collects results from all distributed tasks, computes the overall cost function, and determines the new parameters for the next iteration. The final result is returned to the user.
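The routing rules in the resource-allocation step can be expressed as a small dispatch function. This is a hypothetical sketch of the policy as described above, not QFw's actual interface: the `CircuitJob` fields, the entanglement score, and both thresholds are assumptions chosen for illustration, and the returned strings are labels rather than API calls.

```python
from dataclasses import dataclass

@dataclass
class CircuitJob:
    """Hypothetical job descriptor; these fields are illustrative, not QFw's schema."""
    name: str
    n_qubits: int
    entanglement: float  # assumed pre-computed score in [0, 1]
    is_ising: bool       # cost Hamiltonian is an Ising model (e.g. QAOA)
    on_hardware: bool    # user requested a real QPU

def select_backend(job: CircuitJob, high_entanglement: float = 0.5, large_ising: int = 30) -> str:
    """Encode the routing rules listed in the protocol; both thresholds are assumptions."""
    if job.on_hardware:
        return "cloud QPU (e.g. IonQ) via REST API"
    if job.entanglement >= high_entanglement:
        return "NWQ-Sim"
    if job.is_ising and job.n_qubits >= large_ising:
        return "Qiskit Aer (matrix product state)"
    return "NWQ-Sim"  # fallback for cases the listed rules do not cover (assumption)

jobs = [
    CircuitJob("qaoa-maxcut-200", 200, 0.1, True, False),
    CircuitJob("ghz-prep-30", 30, 0.9, False, False),
    CircuitJob("vqe-h2-hw", 4, 0.3, False, True),
]
for job in jobs:
    print(f"{job.name:16s} -> {select_backend(job)}")
```

Checking the entanglement score before the Ising rule reflects the reasoning behind the listed choices: matrix product state simulation is efficient only while entanglement stays low.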

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Resources for Hybrid Quantum-Classical Experimental Research

| Category | Item / Tool | Function / Description |
|---|---|---|
| Software & Frameworks | NVIDIA CUDA-Q | Unified, open-source platform for co-programming CPU, GPU, and QPU in a single workflow [29] [31]. |
| | Qiskit / PennyLane | Quantum software development kits for building and simulating quantum circuits and hybrid algorithms. |
| Quantum Hardware Access | IonQ Forte | Trapped-ion quantum computer (36 algorithmic qubits) accessible via cloud for running hybrid workflows [31]. |
| | IBM Quantum Systems | Superconducting qubit processors accessible via cloud, integrated within IBM's quantum-centric supercomputing roadmap [33]. |
| Classical HPC & Control | Quantum Machines OPX1000 | Quantum system controller providing ultra-low latency (nanoseconds) real-time control and feedback for QPUs [29]. |
| | SLURM Workload Manager | Open-source job scheduler for managing and allocating resources in a classical HPC cluster for hybrid workflows [30]. |
| Algorithmic & Modeling | Variational Quantum Eigensolver (VQE) | A hybrid algorithm for finding the ground state energy of a molecular system, central to quantum chemistry [28]. |
| | Extended Hubbard Model (EHM) | A lattice Hamiltonian used to represent the phenomenological behavior of periodic materials for quantum simulation [32]. |

Technical Diagrams and Workflow Visualization

System Architecture Diagram

The following diagram illustrates the three-layer classical integration blueprint for a hybrid quantum-classical supercomputer, showing the flow of information and control across different latency domains.

Quantum computing holds transformative potential for electronic structure calculations, a domain where the cost of exact classical methods grows exponentially with system size. This challenge is central to many fields, including drug discovery, where accurately modeling molecular interactions is critical [8]. Among the most promising approaches for tackling the electronic Schrödinger equation are the Variational Quantum Eigensolver (VQE) and Quantum Phase Estimation (QPE). VQE is a hybrid quantum-classical algorithm designed for today's Noisy Intermediate-Scale Quantum (NISQ) devices: it keeps circuits shallow enough to tolerate noise, at the cost of many repeated measurements and a classical optimization loop. In contrast, QPE is a fully quantum algorithm with provable efficiency, targeting future fault-tolerant hardware for high-precision solutions [34]. These frameworks are pivotal for achieving quantum advantage in predicting molecular properties, enabling breakthroughs in the design of new pharmaceuticals and materials [8] [35].

Variational Quantum Eigensolver (VQE)

Theoretical Foundation

The VQE algorithm is grounded in the Rayleigh-Ritz variational principle, which states that for any parameterized wavefunction \( |\Psi(\theta)\rangle \), the expectation value of the Hamiltonian \( \langle H \rangle \) provides an upper bound to the true ground state energy \( E_0 \):

\[ E_0 \leq \frac{\langle \Psi(\theta) | H | \Psi(\theta) \rangle}{\langle \Psi(\theta) | \Psi(\theta) \rangle} \]

The core objective is to variationally minimize this expectation value to approximate \( E_0 \) [36].
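The variational bound is easy to check numerically: for any trial state, the Rayleigh quotient is never below the lowest eigenvalue. The sketch below samples random trial vectors for a random Hermitian matrix that stands in for a molecular Hamiltonian, an assumption made purely for illustration.

```python
import numpy as np

rng = np.random.default_rng(42)
dim = 16  # e.g. a 4-qubit Hilbert space

# Random Hermitian matrix as a stand-in Hamiltonian.
A = rng.normal(size=(dim, dim)) + 1j * rng.normal(size=(dim, dim))
H = (A + A.conj().T) / 2
E0 = np.linalg.eigvalsh(H)[0]

def rayleigh_quotient(H, psi):
    """<psi|H|psi> / <psi|psi>; real for Hermitian H (np.vdot conjugates its first argument)."""
    return np.real(np.vdot(psi, H @ psi) / np.vdot(psi, psi))

trial_energies = [
    rayleigh_quotient(H, rng.normal(size=dim) + 1j * rng.normal(size=dim))
    for _ in range(10_000)
]
print(f"E0 = {E0:.4f}, lowest trial energy = {min(trial_energies):.4f}")
```

Equality holds only when the trial state is the ground state itself, which is why minimizing over \( \theta \) approaches \( E_0 \) from above.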

The molecular Hamiltonian in second-quantized form is expressed as:

\[ H = \sum_{pq} h_{pq} a_p^\dagger a_q + \frac{1}{2} \sum_{pqrs} h_{pqrs} a_p^\dagger a_q^\dagger a_r a_s \]

where \( h_{pq} \) and \( h_{pqrs} \) are one- and two-electron integrals, and \( a^\dagger \) and \( a \) are fermionic creation and annihilation operators [34]. To execute this on a quantum computer, the fermionic Hamiltonian must be mapped to a qubit Hamiltonian using a transformation such as the Jordan-Wigner or parity encoding, resulting in a sum of Pauli strings:

\[ H = \sum_i c_i P_i \]

where \( P_i \) are tensor products of Pauli operators and the coefficients \( c_i \) are real, because \( H \) is Hermitian [34] [36].
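The Pauli-sum form can be illustrated by decomposing a small Hermitian matrix with \( c_i = \operatorname{Tr}(P_i H)/2^n \), which follows from the orthogonality relation \( \operatorname{Tr}(P_i P_j) = 2^n \delta_{ij} \). The sketch brute-forces all \( 4^n \) strings, so it is only for a few qubits; the helper name `pauli_decompose` is ours (PennyLane and Qiskit ship their own utilities for this), and the test matrix is random rather than molecular.

```python
import itertools
from functools import reduce
import numpy as np

PAULIS = {
    "I": np.eye(2, dtype=complex),
    "X": np.array([[0, 1], [1, 0]], dtype=complex),
    "Y": np.array([[0, -1j], [1j, 0]], dtype=complex),
    "Z": np.array([[1, 0], [0, -1]], dtype=complex),
}

def pauli_matrix(label):
    """Kronecker product for a Pauli string such as 'XZ' (qubit 0 leftmost)."""
    return reduce(np.kron, (PAULIS[p] for p in label))

def pauli_decompose(H, tol=1e-12):
    """Return {pauli_string: c} with H = sum_i c_i P_i and c_i = Tr(P_i H) / 2^n."""
    n = int(np.log2(H.shape[0]))
    terms = {}
    for labels in itertools.product("IXYZ", repeat=n):
        label = "".join(labels)
        c = np.trace(pauli_matrix(label) @ H) / 2 ** n
        if abs(c) > tol:
            terms[label] = c
    return terms

rng = np.random.default_rng(7)
A = rng.normal(size=(4, 4)) + 1j * rng.normal(size=(4, 4))
H = (A + A.conj().T) / 2  # Hermitian 2-qubit "Hamiltonian"

terms = pauli_decompose(H)
max_imag = max(abs(c.imag) for c in terms.values())
print(f"{len(terms)} Pauli terms, largest imaginary part of any coefficient: {max_imag:.1e}")

# Rebuild H from the real parts of the coefficients alone.
H_rebuilt = sum(c.real * pauli_matrix(s) for s, c in terms.items())
print(np.allclose(H, H_rebuilt))
```

The imaginary parts come out at round-off level, which is the numerical face of the statement that Hermitian operators have real Pauli coefficients.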

Algorithmic Workflow and Protocol

The VQE protocol is an iterative hybrid quantum-classical loop. The following workflow details the steps for calculating the ground state energy of a molecule.

Figure 1: VQE algorithm workflow for molecular ground state energy calculation.

Experimental Protocol for Molecular Ground State Energy Calculation using VQE

Step 1: Hamiltonian Construction

Step 2: Fermion-to-Qubit Mapping

- Method: Transform the fermionic Hamiltonian to a qubit Hamiltonian using a mapping such as Jordan-Wigner or parity transformation. This step encodes fermionic operators into tensor products of Pauli matrices (I, X, Y, Z) [34].

Step 3: Ansatz Preparation

- Method: Prepare a parameterized trial wavefunction (ansatz) on the quantum processor. A common approach is the Unitary Coupled Cluster with Singles and Doubles (UCCSD) ansatz.

- Circuit: The UCCSD ansatz is constructed as \( U(\theta) = e^{T(\theta) - T^\dagger(\theta)} \), where \( T(\theta) \) is the cluster operator. In practice the exponential is decomposed (typically by Trotterization) into a series of parameterized single- and two-qubit gates [34].

Step 4: Measurement and Expectation Estimation

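- Method: Estimate the expectation value \( \langle H \rangle = \sum_i c_i \langle \Psi(\theta) | P_i | \Psi(\theta) \rangle \) by measuring each Pauli term \( P_i \), either individually or in groups of commuting terms that can be measured together. Because each term is estimated from a finite number of shots, this can require a large number of circuit executions for statistical accuracy [36].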
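Because each Pauli expectation value is estimated from a finite number of shots, the statistical error of \( \langle H \rangle \) shrinks only as \( 1/\sqrt{N_{\text{shots}}} \). The sketch below simulates this for a single Pauli term measured in its eigenbasis, where each shot returns \( \pm 1 \) with probabilities \( (1 \pm \langle P \rangle)/2 \); the exact value \( \langle P \rangle = 0.6 \) and the number of repeats are arbitrary assumptions.

```python
import numpy as np

rng = np.random.default_rng(3)
exact = 0.6  # assumed exact <P> for one Pauli term

def estimate_pauli(exact, shots, n_repeats, rng):
    """Each repeat: count +1 outcomes among `shots` draws and return the sample means."""
    n_plus = rng.binomial(shots, (1 + exact) / 2, size=n_repeats)
    return (2 * n_plus - shots) / shots

results = {}
for shots in (100, 1_000, 10_000, 100_000):
    est = estimate_pauli(exact, shots, 2_000, rng)
    predicted = np.sqrt((1 - exact**2) / shots)  # standard error of the mean of a +/-1 variable
    results[shots] = (est.std(), predicted)
    print(f"shots={shots:>7}: empirical std {est.std():.4f}, predicted {predicted:.4f}")
```

For this term, reaching chemical accuracy (about 1.6 mHa, with \( |c_i| \approx 1 \)) would need roughly \( (0.8/0.0016)^2 = 2.5 \times 10^5 \) shots on its own, before summing over all terms in \( H \).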

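Step 5: Classical Optimization

- Method: Pass the measured energy to a classical optimizer such as BFGS or COBYLA, which updates the ansatz parameters ( \theta ) to minimize ( \langle H \rangle ). Steps 3 to 5 are repeated until the energy converges, and the converged value is taken as the VQE estimate of the ground state energy [34] [36].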
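The complete hybrid loop can be emulated classically for a toy problem. In this Python sketch, a hypothetical two-qubit Pauli Hamiltonian (illustrative coefficients, not a real molecule) is paired with the one-parameter state cos θ |10⟩ + sin θ |01⟩, which is what a single-excitation UCC unitary produces from |10⟩ under Jordan-Wigner encoding. Energies are evaluated exactly rather than from shots, and SciPy's `minimize` with BFGS plays the role of the classical optimizer. On hardware each energy evaluation would be a noisy shot-based estimate, which is one reason gradient-free optimizers such as COBYLA are also often used.

```python
from functools import reduce

import numpy as np
from scipy.optimize import minimize

PAULI = {
    "I": np.eye(2, dtype=complex),
    "X": np.array([[0, 1], [1, 0]], dtype=complex),
    "Y": np.array([[0, -1j], [1j, 0]], dtype=complex),
    "Z": np.diag([1, -1]).astype(complex),
}
# Hypothetical two-qubit Hamiltonian; the coefficients are illustrative, not molecular data.
TERMS = {"II": -0.5, "ZI": 0.3, "IZ": -0.3, "XX": 0.2, "YY": 0.2}
H = sum(c * reduce(np.kron, (PAULI[ch] for ch in label)) for label, c in TERMS.items())


def ansatz(theta: float) -> np.ndarray:
    """One-parameter state cos(theta)|10> + sin(theta)|01> (qubit 0 leftmost)."""
    return np.array([0.0, np.sin(theta), np.cos(theta), 0.0], dtype=complex)


def energy(params: np.ndarray) -> float:
    """Cost function <psi(theta)|H|psi(theta)>, evaluated exactly (no shot noise)."""
    psi = ansatz(params[0])
    return float(np.real(psi.conj() @ H @ psi))


# Classical outer loop: the optimizer proposes parameters, the "quantum" step returns energies.
result = minimize(energy, x0=np.array([0.0]), method="BFGS")
exact = np.linalg.eigvalsh(H)[0]
print(f"VQE energy {result.fun:.6f}, exact {exact:.6f}, iterations {result.nit}")
```

Because the energies here are exact expectation values, the optimized value can never fall below the true ground state energy; with shot noise on real hardware that guarantee holds only on average.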

Performance and Applications

VQE has been successfully applied to small molecules, serving as a proof of concept for quantum chemistry on NISQ devices. The table below summarizes key results from recent studies.

Table 1: VQE Performance in Molecular Ground State Energy Calculations

| Molecule | Number of Qubits | Ansatz Type | Accuracy vs. FCI | Key Findings |
|---|---|---|---|---|
| H₂ | 4 | UCCSD | Chemically Accurate | Standard benchmark; successfully demonstrates the VQE principle [36]. |
| H₃⁺ | Varies | UCCSD | Good Agreement | The simplest triatomic ion; VQE results agree well with the FCI benchmark [34]. |
| OH⁻ | Varies | UCCSD | Good Agreement | Important base and catalyst; VQE provides an accurate ground state energy [34]. |
| HF | 12 (spin orbitals) | UCCSD | Good Agreement | Common molecule in pharmaceuticals; VQE accuracy higher than prior reports [34]. |
| BH₃ | Varies | UCCSD | Good Agreement | Strong Lewis acid; demonstrates VQE for systems with increasing qubit count [34]. |

Beyond these canonical examples, VQE's utility extends to industry research. For instance, AstraZeneca has explored quantum-accelerated workflows for chemical reactions relevant to small-molecule drug synthesis [8].

Quantum Phase Estimation (QPE)

Theoretical Foundation

Quantum Phase Estimation is a cornerstone quantum algorithm for determining the eigenphase of a unitary operator. For quantum chemistry, the goal is to find the ground state energy ( E_0 ) of a molecular Hamiltonian ( H ). This is achieved by applying QPE to the time-evolution operator ( U = e^{iHt} ). If the system is prepared in an initial state ( |\psi\rangle ) with non-zero overlap with the true ground state ( |\phi_0\rangle ), the algorithm probabilistically yields the energy ( E_0 ) via the measured phase ( \phi ), where ( E_0 = 2\pi \phi / t ) [37]. The probability of this outcome is the squared overlap ( |\langle \phi_0 | \psi \rangle|^2 ).
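The energy formula follows in one line from the eigenvalue equation of ( U ). The derivation below uses only the definitions in the paragraph above, with ( m ) introduced here to denote the number of ancilla qubits. It also makes explicit that a phase is defined only modulo 1, so ( t ) must be chosen (or ( H ) shifted by a constant) so that the energies of interest fit within one period.

```latex
U\,|\phi_0\rangle = e^{iHt}\,|\phi_0\rangle = e^{iE_0 t}\,|\phi_0\rangle \equiv e^{2\pi i \phi}\,|\phi_0\rangle
\;\Longrightarrow\;
\phi = \frac{E_0\, t}{2\pi} \bmod 1,
\qquad
E_0 = \frac{2\pi\phi}{t} \quad \text{valid when } 0 \le E_0\, t < 2\pi .

% With m ancilla qubits the phase is read out as an m-bit fraction k / 2^m,
% so the energy resolution is
\Delta E \approx \frac{2\pi}{2^m\, t}
```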

Unlike VQE, QPE is not a variational algorithm. It relies on superposition and quantum interference to project the initial state onto an energy eigenstate and read out its phase directly. Given enough ancilla qubits and coherent evolution time, this allows it to reach chemical accuracy (about 1 kcal/mol, roughly 1.6 mHa), the precision needed for predictive reaction energetics, without relying on classical optimization loops [37] [38].

Algorithmic Workflow and Protocol

The standard QPE algorithm requires a large number of reliable qubits and deep circuits, making it challenging for current hardware. The protocol below outlines the steps for a fault-tolerant QPE computation, while also introducing a resource-optimized variant.

Figure 2: Standard QPE workflow for direct phase estimation.

Experimental Protocol for Ground State Energy via QPE/QPDE

Step 1: Hamiltonian Preparation and Block Encoding

- Method: The molecular Hamiltonian ( H ) must be block-encoded into a unitary operator ( U ), that is, the rescaled operator ( H/\lambda ) is embedded as a block of a larger unitary. This is a non-trivial step that dominates the algorithm's resource requirements. The 1-norm of the Hamiltonian, ( \lambda = \sum_i |c_i| ), is a key parameter determining the cost [37].
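The 1-norm is easy to compute once the Pauli coefficients are known, and it bounds the spectrum: every Pauli string has operator norm 1, so the triangle inequality gives ( \|H\| \le \lambda ), which is why ( H/\lambda ) can fit inside a unitary. The minimal Python check below uses hypothetical coefficients chosen for illustration, not values from [37], and includes every term (identity included) in ( \lambda ).

```python
from functools import reduce

import numpy as np

PAULI = {
    "I": np.eye(2, dtype=complex),
    "X": np.array([[0, 1], [1, 0]], dtype=complex),
    "Y": np.array([[0, -1j], [1j, 0]], dtype=complex),
    "Z": np.diag([1, -1]).astype(complex),
}


def one_norm(terms: dict[str, float]) -> float:
    """lambda = sum_i |c_i| over the Pauli decomposition H = sum_i c_i P_i."""
    return sum(abs(c) for c in terms.values())


# Hypothetical two-qubit coefficients (illustrative only).
terms = {"II": -0.5, "ZI": 0.3, "IZ": -0.3, "XX": 0.2, "YY": 0.2}
H = sum(c * reduce(np.kron, (PAULI[ch] for ch in p)) for p, c in terms.items())
lam = one_norm(terms)
spectral_norm = np.linalg.norm(H, ord=2)  # largest singular value = max |eigenvalue|
print(f"lambda = {lam:.2f}, ||H|| = {spectral_norm:.4f}")
assert spectral_norm <= lam + 1e-12  # so all eigenvalues of H / lambda lie in [-1, 1]
```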

Step 2: Initial State Preparation

- Method: Prepare an initial state ( |\psi\rangle ) on the target register. The fidelity of this state with the true ground state ( |\phi_0\rangle ) directly impacts the success probability. States are often prepared using classically efficient methods like Hartree-Fock [37].
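Because QPE projects the input onto an eigenstate, the chance of reading out the ground-state phase equals the squared overlap ( |\langle \phi_0 | \psi \rangle|^2 ). This Python sketch computes that quantity for a Hartree-Fock-like basis state and the exact ground state of a hypothetical two-qubit Hamiltonian (illustrative coefficients only). For large molecules the overlap cannot be obtained by exact diagonalization like this, and its decrease with system size is a recognized concern for QPE.

```python
from functools import reduce

import numpy as np

PAULI = {
    "I": np.eye(2, dtype=complex),
    "X": np.array([[0, 1], [1, 0]], dtype=complex),
    "Y": np.array([[0, -1j], [1j, 0]], dtype=complex),
    "Z": np.diag([1, -1]).astype(complex),
}
# Hypothetical Hamiltonian coefficients (illustrative only).
TERMS = {"II": -0.5, "ZI": 0.3, "IZ": -0.3, "XX": 0.2, "YY": 0.2}
H = sum(c * reduce(np.kron, (PAULI[ch] for ch in p)) for p, c in TERMS.items())

eigenvalues, eigenvectors = np.linalg.eigh(H)  # columns are eigenstates, ascending energy

hf_state = np.zeros(4, dtype=complex)
hf_state[0b10] = 1.0  # reference determinant |10>

# |<phi_j|psi>|^2 for every eigenstate; QPE returns phase j with exactly this probability.
overlaps = np.abs(eigenvectors.conj().T @ hf_state) ** 2
p_ground = overlaps[0]
print(f"P(ground) = {p_ground:.4f}, sum over eigenstates = {overlaps.sum():.4f}")
```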

Step 3: Quantum Phase (Difference) Estimation Circuit

- Standard QPE: This involves a series of controlled applications of the unitary ( U ) raised to increasing powers of two, followed by an inverse Quantum Fourier Transform on the ancilla register to extract the phase [38].

- Advanced Variant (QPDE): A recent innovation is the Quantum Phase Difference Estimation (QPDE) algorithm. Instead of estimating a single phase, QPDE uses tensor network-based compression to directly estimate the energy difference between a reference state and the target state. This significantly reduces gate complexity [38].
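For the standard QPE variant, an exact eigenstate input makes the controlled powers ( U^{2^k} ) kick the phase back onto the ancillas, leaving them in ( \frac{1}{\sqrt{M}} \sum_j e^{2\pi i \phi j} |j\rangle ) with ( M = 2^m ); the inverse QFT then concentrates the amplitude near the integer closest to ( \phi M ). The Python sketch below simulates only the ancilla register under that idealization (noiseless, exact eigenstate, dense inverse-QFT matrix). The function name `qpe_distribution` and the example phase are hypothetical, and the sketch does not model QPDE.

```python
import numpy as np


def qpe_distribution(phase: float, m: int) -> np.ndarray:
    """Outcome probabilities of ideal m-ancilla QPE for an eigenstate with eigenphase `phase`."""
    M = 2**m
    j = np.arange(M)
    # Ancilla register after the controlled-U^(2^k) gates: (1/sqrt(M)) sum_j e^{2 pi i phase j} |j>.
    register = np.exp(2j * np.pi * phase * j) / np.sqrt(M)
    # Inverse QFT matrix: (F^dagger)_{kj} = e^{-2 pi i j k / M} / sqrt(M).
    inverse_qft = np.exp(-2j * np.pi * np.outer(j, j) / M) / np.sqrt(M)
    amplitudes = inverse_qft @ register
    return np.abs(amplitudes) ** 2


phase, m = 0.3141, 6
probs = qpe_distribution(phase, m)
k_best = int(np.argmax(probs))
print(f"most likely outcome k={k_best} -> phase estimate {k_best / 2**m:.4f}, P={probs[k_best]:.3f}")
```

When ( \phi ) is exactly an ( m )-bit fraction, the distribution collapses onto a single outcome; otherwise the nearest outcome still occurs with probability at least ( 4/\pi^2 \approx 0.41 ).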

Step 4: Measurement and Energy Calculation

- Method: Measure the ancilla qubits to obtain a bit string representing a phase estimate ( \tilde{\phi} ). The energy is then calculated as ( E = 2\pi\tilde{\phi} / t ) [37].
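Turning the ancilla bitstring into an energy needs one convention beyond ( E = 2\pi\tilde{\phi}/t ): the readout is an ( m )-bit fraction in [0, 1), so negative energies appear as phases close to 1. A common choice, assumed in the Python sketch below, is to interpret ( \tilde{\phi} \ge 1/2 ) as ( \tilde{\phi} - 1 ), which makes the recoverable energy window ( [-\pi/t, \pi/t) ). The function names, the most-significant-bit-first ordering, and the example energy are hypothetical.

```python
import math


def bits_to_energy(bitstring: str, t: float) -> float:
    """Map an m-bit ancilla readout (most significant bit first) to an energy estimate."""
    m = len(bitstring)
    phase = int(bitstring, 2) / 2**m  # phase estimate in [0, 1)
    if phase >= 0.5:
        phase -= 1.0  # treat as a negative phase so that E lies in [-pi/t, pi/t)
    return 2 * math.pi * phase / t


def energy_to_bits(energy: float, t: float, m: int) -> str:
    """Ideal noiseless readout: nearest m-bit fraction to the wrapped phase E t / (2 pi)."""
    k = round((energy * t / (2 * math.pi)) % 1.0 * 2**m) % 2**m
    return format(k, f"0{m}b")


t, m = 1.0, 10
true_energy = -1.137  # hypothetical energy in hartree
readout = energy_to_bits(true_energy, t, m)
estimate = bits_to_energy(readout, t)
resolution = 2 * math.pi / (t * 2**m)
print(readout, f"{estimate:.5f}", f"|error| <= {resolution / 2:.5f}")
```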

Performance and Resource Optimization

QPE can in principle compute the ground state energy to arbitrary precision within the chosen basis set, but it is currently limited by resource constraints. Recent research focuses on reducing these resource requirements to bring practical applications closer to reality.

Table 2: QPE Resource Requirements and Optimization Strategies

| Aspect | Standard QPE Challenge | Proposed Optimization Strategy | Reported Improvement |
|---|---|---|---|
| Hamiltonian 1-norm (λ) | Scales quadratically with orbitals, dominating cost [37]. | Frozen Natural Orbitals (FNO): Use large-basis-set FNOs to create a compact, high-quality active space [37]. | Up to 80% reduction in λ and 55% reduction in orbital count [37]. |
| Gate Complexity/Depth | High gate overhead, infeasible on NISQ devices [38]. | Tensor-based QPDE: A new algorithm that compresses unitaries to estimate energy differences [38]. | 90% reduction in CZ gates (from 7,242 to 794) in a 33-qubit demo [38]. |
| Basis Set Quality | The large basis sets needed for accuracy sharply increase resource cost [37]. | Basis Set Optimization: Adjusting Gaussian basis function coefficients to minimize λ while preserving accuracy [37]. | System-dependent reduction in λ (up to 10%) [37]. |
| Computational Capacity | Limited by noise and circuit width/depth [38]. | Performance Management Software: Use of tools like Fire Opal for noise-aware circuit compilation and optimization [38]. | 5x increase in achievable circuit width over previous QPE studies [38]. |

A landmark study involving Mitsubishi Chemical Group demonstrated the QPDE algorithm on an IBM quantum device, setting a record for the largest QPE demonstration and highlighting a viable path toward large-scale quantum chemistry simulations on evolving hardware [38].

The Scientist's Toolkit: Research Reagents & Computational Materials

Executing VQE and QPE experiments requires a suite of software and hardware "research reagents." The following table details essential components for quantum computational chemistry.

Table 3: Essential Research Reagents for Quantum Computational Chemistry

| Category | Item | Function & Purpose |
|---|---|---|
| Software & Libraries | Qiskit, PennyLane | Open-source frameworks for quantum algorithm design, simulation, and execution [34]. |
| | Psi4, PySCF | Classical computational chemistry packages for calculating molecular integrals and reference energies [34]. |
| | Fire Opal (Q-CTRL) | Performance management software that automates error suppression and circuit optimization for hardware execution [38]. |
| Algorithmic Components | Unitary Coupled Cluster (UCCSD) | A chemically motivated ansatz for preparing trial wavefunctions in VQE simulations [34]. |
| | Frozen Natural Orbitals (FNO) | A method to generate compact, correlated active spaces from large basis sets, drastically reducing qubit requirements for QPE [37]. |
| Hardware & Simulators | State Vector Simulators | Classical HPC tools that emulate an ideal quantum computer, used for algorithm development and validation [36]. |
| | NISQ Hardware | Current-generation quantum processors (e.g., superconducting, ion trap) for running small-scale experiments and benchmarking [8] [39]. |
| Classical Optimizers | BFGS, COBYLA | Classical optimization algorithms used in the VQE loop to minimize the energy with respect to the ansatz parameters [34] [36]. |

The accurate prediction of molecular properties, such as binding free energies, is a cornerstone of rational drug design and biochemical research. However, computational methods face a fundamental trade-off: high-accuracy quantum chemical methods are computationally prohibitive for large systems, while scalable classical force fields often lack the fidelity to capture critical quantum interactions [40]. This is particularly true for systems involving transition metals, open-shell electronic structures, or multiconfigurational character, which are common in pharmaceutical compounds [41].

Modular computational pipelines address this challenge through a hybrid, "quantum-ready" architecture. These frameworks, exemplified by the FreeQuantum pipeline, strategically integrate classical simulation, machine learning, and quantum computational methods in a modular fashion [40] [41]. Their core innovation lies in using precise, computationally expensive quantum methods only where absolutely necessary, on small, chemically critical "quantum cores" of the molecular system, and then propagating this accuracy to the larger system via machine-learned potentials [41]. This provides a realistic and incremental pathway to quantum advantage in biochemistry, in which future quantum computers can be plugged in to solve the most classically intractable subproblems [42].

The FreeQuantum pipeline is a three-layer hybrid model designed for calculating biomolecular free energies. Its modular architecture allows for the flexible integration of classical and quantum computational resources, focusing high-accuracy methods on the subproblems where they are most needed [40].

Core Modules and Data Flow

The pipeline's operation can be visualized through its primary data flow and modular components. The following diagram illustrates the high-level workflow and the relationship between its classical and quantum components.

Key Modules and Their Functions

- Classical Sampling Module: Utilizes classical molecular dynamics (MD) with standard force fields to extensively sample the conformational space of the biomolecular complex. This is computationally efficient but lacks quantum-level accuracy [41].

- Configuration Selection: Identifies a representative subset of molecular structures from the classical MD trajectory for subsequent high-accuracy processing. This step is critical for managing computational cost [40].

- Quantum Core Processing: This is the most critical and computationally intensive module, which performs electronic structure calculations on small, chemically relevant fragments of the larger system. The pipeline is designed to execute this module either on classical high-performance computers using methods like coupled cluster theory or, in the future, on fault-tolerant quantum computers using algorithms like Quantum Phase Estimation (QPE) [40] [41].

- Machine Learning Bridge: A machine learning model (a neural network potential) is trained to predict the potential energy surface of the entire system using the high-accuracy energy data from the quantum core calculations. This creates a link between local quantum accuracy and global molecular behavior [41].

- Free Energy Calculation: The trained machine-learned potential is used to perform free energy simulations, yielding a quantitatively accurate prediction of the binding affinity [40].
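The modular design described above can be expressed as interchangeable interfaces, so that the quantum-core step could be served by a classical coupled cluster code today and by a QPE backend later without touching the other stages. The Python sketch below is a hypothetical illustration of that idea only: every class and function name is invented, the "energy" formula is a toy placeholder, a 1-nearest-neighbour lookup stands in for the neural network potential, and the free energy stage is omitted. It is not the FreeQuantum code described in [40] [41].

```python
from dataclasses import dataclass
from typing import Callable, Protocol, Sequence

Configuration = Sequence[float]  # placeholder for the coordinates of one quantum-core snapshot
Model = Callable[[Configuration], float]


class CoreEnergySolver(Protocol):
    """Interface for any backend that returns the electronic energy of one quantum core."""

    def energy(self, config: Configuration) -> float: ...


@dataclass
class ClassicalReferenceSolver:
    """Hypothetical stand-in for a classical high-accuracy method; the formula is a toy."""

    scale: float = 1.0

    def energy(self, config: Configuration) -> float:
        return self.scale * sum(x * x for x in config)  # placeholder, not real chemistry


def select_configurations(trajectory: Sequence[Configuration], n: int) -> list[Configuration]:
    """Configuration selection: keep n evenly spaced snapshots of the classical MD run."""
    step = max(1, len(trajectory) // n)
    return list(trajectory[::step][:n])


def nearest_neighbor_model(xs: list[Configuration], ys: list[float]) -> Model:
    """Placeholder for the ML bridge: 1-nearest-neighbour regression, not a neural network."""

    def predict(config: Configuration) -> float:
        distances = [sum((a - b) ** 2 for a, b in zip(config, x)) for x in xs]
        return ys[distances.index(min(distances))]

    return predict


def run_pipeline(
    trajectory: Sequence[Configuration],
    solver: CoreEnergySolver,
    train: Callable[[list[Configuration], list[float]], Model],
    n_cores: int,
) -> Model:
    """Sampling -> selection -> quantum-core energies -> learned potential (FE step omitted)."""
    selected = select_configurations(trajectory, n_cores)
    labels = [solver.energy(c) for c in selected]  # the step a quantum backend would replace
    return train(selected, labels)


trajectory = [(0.1 * i, 0.0) for i in range(100)]  # synthetic stand-in for an MD trajectory
potential = run_pipeline(trajectory, ClassicalReferenceSolver(), nearest_neighbor_model, n_cores=10)
print(potential((1.2, 0.0)))
```

Swapping in a different core solver only requires another object with an `energy` method; the selection, training, and downstream stages stay unchanged, which is the property the modular architecture is meant to provide.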

Experimental Validation: The NKP-1339/GRP78 Case Study

The FreeQuantum pipeline was validated by modeling the binding of a ruthenium-based anticancer drug, NKP-1339, to its protein target, GRP78 [40] [42]. This system represents a "worst-case scenario" for classical force fields due to the complex, open-shell electronic structure of the ruthenium transition metal center [41].

Detailed Experimental Protocol

The following workflow details the specific experimental steps undertaken in the NKP-1339/GRP78 binding free energy calculation.

Step-by-Step Methodology:

- System Preparation and Classical Sampling: The three-dimensional structure of the GRP78 protein in complex with the NKP-1339 drug was prepared and solvated in an explicit water model. Extensive classical molecular dynamics (MD) simulations were performed using standard molecular mechanics force fields to sample the thermodynamic ensemble of the bound and unbound states [41].

- Configuration Sampling and Quantum Core Definition: A diverse set of approximately 4,000 molecular configurations was extracted from the MD trajectories [40]. For each configuration, a quantum core was defined, focusing on the ruthenium atom and its immediate chemical environment within the drug and the protein binding pocket.

- High-Accuracy Energy Calculations: The electronic energy for each quantum core was calculated using a hierarchy of increasingly accurate quantum chemical methods. This progression included:

- Machine Learning Potential Training: The high-accuracy energy data from the quantum core calculations were used to train two levels of machine-learning potentials (labeled ML1 and ML2). These potentials learned to predict the system's potential energy surface based on the atomic coordinates, effectively generalizing the quantum-mechanical accuracy from the small core to the entire biomolecular complex [41].

- Free Energy Perturbation/Calculation: The trained ML potential was then employed in free energy calculation techniques (e.g., free energy perturbation or thermodynamic integration) to compute the binding free energy difference between the bound and unbound states [40].
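Free energy perturbation rests on the Zwanzig relation ( \Delta A = -k_B T \ln \langle e^{-\Delta U / k_B T} \rangle_0 ), where ( \Delta U ) is the energy difference between the target and reference Hamiltonians evaluated on configurations sampled from the reference state. The Python sketch below implements that estimator with a log-sum-exp for numerical stability and checks it on synthetic Gaussian energy differences, for which the exact answer is ( \mu - \sigma^2 / (2 k_B T) ). The units (kcal/mol), temperature, and synthetic data are assumptions for illustration; this is a generic FEP estimator, not necessarily the exact protocol used in [40].

```python
import numpy as np

K_B = 0.001987204  # Boltzmann constant in kcal/(mol K)


def fep_free_energy(delta_u: np.ndarray, temperature: float) -> float:
    """Zwanzig estimator dA = -kT ln < exp(-dU / kT) >_0, using log-sum-exp for stability."""
    kt = K_B * temperature
    x = -np.asarray(delta_u) / kt
    x_max = x.max()
    log_mean_exp = x_max + np.log(np.mean(np.exp(x - x_max)))
    return float(-kt * log_mean_exp)


rng = np.random.default_rng(seed=1)
temperature = 300.0
mu, sigma = 2.0, 0.3  # synthetic Gaussian dU in kcal/mol (hypothetical data)
samples = rng.normal(mu, sigma, size=200_000)
estimate = fep_free_energy(samples, temperature)
exact = mu - sigma**2 / (2 * K_B * temperature)
print(f"FEP estimate {estimate:.4f} kcal/mol vs Gaussian exact {exact:.4f} kcal/mol")
```

The estimator converges quickly here because ( \sigma ) is small compared with ( k_B T ); for broad ( \Delta U ) distributions the exponential average is dominated by rare samples, which is why practical protocols split the transformation into intermediate windows or use thermodynamic integration.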

Quantitative Results and Performance Data

The following table summarizes the key quantitative findings from the NKP-1339/GRP78 case study, highlighting the significant impact of high-accuracy quantum methods on the predicted binding affinity.