Quantum Monte Carlo in Electronic Structure: From Fundamental Theory to Breakthroughs in Drug Discovery

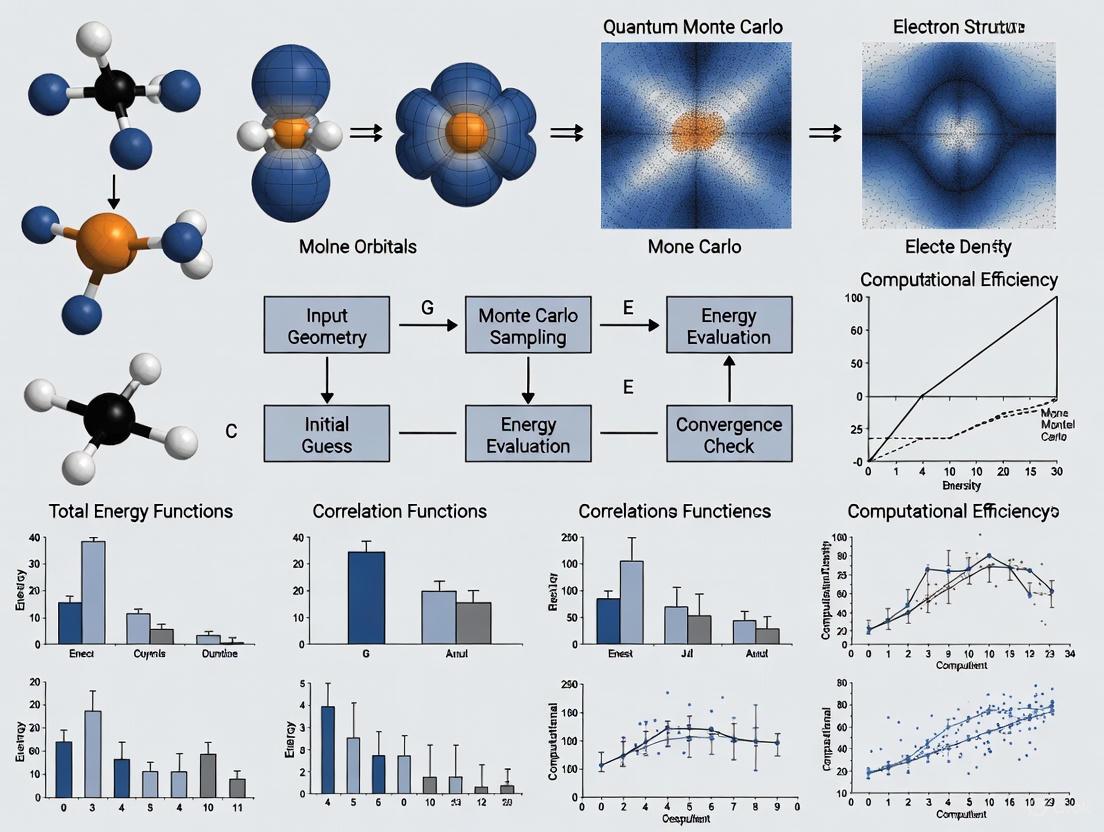

Abstract

This article provides a comprehensive exploration of Quantum Monte Carlo (QMC) methods for electronic structure calculations, targeting researchers and professionals in computational chemistry and drug development. It covers foundational QMC principles, practical methodologies, and optimization strategies using leading software like QMCPACK. The scope includes real-world applications in molecular and solid-state systems, troubleshooting computational challenges, and validation through modern benchmarking techniques like the V-score. A key focus is on QMC's transformative potential in drug discovery for accurately predicting molecular properties, simulating protein-ligand interactions, and accelerating the design of novel pharmaceuticals.

Quantum Monte Carlo Fundamentals: Mastering Core Principles for Accurate Electronic Structure

The quantum many-body problem represents one of the most fundamental challenges in computational physics and chemistry, pertaining to the properties of microscopic systems composed of multiple interacting particles. In quantum mechanics, "many" can refer to anywhere from three to infinity (in the case of practically infinite, homogeneous systems like crystals), with systems of three to four particles sometimes separately classified as few-body systems that can be treated by specific mathematical approaches [1].

The core complexity arises from the quantum correlations and entanglement that develop through repeated interactions between particles. As a result, the wave function of the system becomes a complicated object holding massive amounts of information, making exact or analytical calculations generally impractical or impossible [1]. This challenge becomes evident when comparing quantum to classical mechanics: while a classical system of n particles can be described with k·n parameters (where k=6 for position and momentum in three dimensions), a quantum many-body system exists in a superposition of combinations of single particle states, leading to kⁿ possible combinations [1].
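The contrast between linear and exponential growth can be made concrete with a few lines of Python. This is only an illustrative sketch: the function names are hypothetical, and the quantum count assumes each particle has k single-particle states (k = 2 for a spin-1/2 degree of freedom), which is a simplification of the general many-body Hilbert space.

```python
def classical_parameter_count(n_particles, k=6):
    """Numbers needed for a classical state: k values (position and momentum) per particle."""
    return k * n_particles


def quantum_amplitude_count(n_particles, k=2):
    """Amplitudes in a general superposition when each particle has k single-particle states."""
    return k ** n_particles


for n in (10, 30, 50):
    amplitudes = quantum_amplitude_count(n)
    # 16 bytes per amplitude assumes double-precision complex numbers.
    print(n, classical_parameter_count(n), amplitudes, amplitudes * 16, "bytes")
```

Under these assumptions, storing every amplitude for 50 two-state particles already requires on the order of 10^16 bytes, which is why stochastic sampling is attractive.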

The exponential scaling of computational resources with system size makes simulating the dynamics of more than a handful of quantum-mechanical particles infeasible for many physical systems, ranking among the most computationally intensive fields of science [1]. These challenges are particularly prominent in condensed matter physics and quantum chemistry, where accurate descriptions of electron correlation are essential for predicting material properties and chemical behavior [2].

Quantum Monte Carlo Fundamentals

Quantum Monte Carlo (QMC) methods represent a family of computational approaches that circumvent the memory limitations of exact quantum chemical methods through statistical sampling of wavefunctions. Unlike full configuration interaction (FCI) methods, which have memory requirements scaling exponentially with system size, QMC methods achieve polynomial scaling by leveraging stochastic processes [3].

QMC methods are among the most accurate electronic structure techniques available, providing benchmark-level accuracy for many-body calculations across diverse systems ranging from weakly bound molecules to strongly correlated solids [4]. These methods enable researchers to directly access many-body properties, validate more approximate electronic structure methods, and generate reference data for machine learning and artificial intelligence applications [4].

Table: Comparison of Electronic Structure Methods

| Method | Computational Scaling | Key Strengths | Key Limitations |
|---|---|---|---|
| Quantum Monte Carlo | Polynomial with system size | High accuracy for correlated systems; direct access to many-body properties | Statistical uncertainty; fermionic sign problem |
| Hartree-Fock | N³-N⁴ | Computationally efficient; good starting point | Neglects electron correlation |
| Density Functional Theory | N³-N⁴ | Balance of accuracy and efficiency | Functional approximation limitations |
| Coupled-Cluster | N⁶ for CCSD, N⁷ for CCSD(T) | High accuracy for small systems | Steep polynomial scaling limits system size |
| Full CI | Exponential | Exact solution for given basis set | Only feasible for very small systems |

Key QMC Variants and Applications

Auxiliary-Field Quantum Monte Carlo (AFQMC) has emerged as a particularly powerful QMC approach. AFQMC represents the target wavefunction as a linear combination of Slater determinant wavefunctions (anti-symmetrized product states of occupied spin-orbitals), referred to as "walker states." These walker states remain Slater determinants throughout the stochastic evolution process, with their linear combination approximating the ground state wavefunction [3].

The accuracy of AFQMC critically depends on the quality of the trial wavefunction |ψ_T⟩ used for importance sampling. This involves computing overlaps ⟨φ_l|ψ_T⟩ and local energies ⟨φ_l|H|ψ_T⟩ between the trial wavefunction and walker states at each algorithm step [3]. Classical computers can only efficiently evaluate these quantities using a restricted class of trial states, which has motivated the development of hybrid quantum-classical approaches.
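For the simplest classical case, where both the walker and the trial state are single Slater determinants, the overlap reduces to the determinant of the matrix of orbital overlaps, det(Φᵀ Ψ). The sketch below illustrates that standard identity. It assumes real orbital coefficients in an orthonormal basis, stored as n_basis × n_electrons lists of rows; the function names are hypothetical and not taken from any AFQMC package.

```python
import math


def transpose_times(a, b):
    """Return a^T b for real matrices stored as lists of rows."""
    n_rows = len(a)
    return [[sum(a[k][i] * b[k][j] for k in range(n_rows))
             for j in range(len(b[0]))]
            for i in range(len(a[0]))]


def determinant(matrix):
    """Determinant by Gaussian elimination with partial pivoting."""
    m = [row[:] for row in matrix]
    n = len(m)
    det = 1.0
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-14:
            return 0.0
        if pivot != col:
            m[col], m[pivot] = m[pivot], m[col]
            det = -det  # a row swap flips the sign
        det *= m[col][col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n):
                m[r][c] -= factor * m[col][c]
    return det


def slater_overlap(walker, trial):
    """Overlap of two Slater determinants built from occupied-orbital columns."""
    return determinant(transpose_times(walker, trial))


# Three basis functions, two electrons.
trial = [[1.0, 0.0], [0.0, 1.0], [0.0, 0.0]]
swapped = [[0.0, 1.0], [1.0, 0.0], [0.0, 0.0]]      # same orbitals, exchanged
theta = 0.3
rotated = [[1.0, 0.0], [0.0, math.cos(theta)], [0.0, math.sin(theta)]]

print(slater_overlap(trial, trial))
print(slater_overlap(swapped, trial))
print(slater_overlap(rotated, trial))
```

Exchanging two occupied orbitals flips the sign of the overlap, which reflects the antisymmetry of the determinant, while rotating one orbital partly out of the occupied space reduces the overlap magnitude.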

QMC methods have demonstrated particular strength for challenging systems including van der Waals heterostructures, strongly correlated materials, and high-accuracy molecular calculations [4]. Recent advances have expanded applications to geometry relaxation of molecules and solids, further broadening the method's utility in materials design and drug discovery [4].

QMC Protocols and Workflows

Molecular System Setup Protocol

Objective: Prepare molecular system for QMC calculation

Prerequisites: Molecular geometry, basis set selection, trial wavefunction choice

Molecular Specification

Implementation note: Molecular coordinates should be provided in angstroms with proper atomic symbols and positions.

Hartree-Fock Reference Calculation

The HF calculation provides the initial wavefunction guess and molecular orbitals.

Trial Wavefunction Preparation

Protocol note: The trial wavefunction significantly impacts QMC efficiency and accuracy.

Chemical Properties Computation

AFQMC Execution Workflow

Objective: Perform AFQMC calculation to obtain ground state energy

Input: Prepared molecular system, trial wavefunction, computational parameters

AFQMC Computational Workflow

The AFQMC workflow involves these critical steps:

- Walker Initialization: Initialize walker states using the trial wavefunction

- Quantum Evaluation: Compute overlaps and local energies between walker states and trial wavefunction

- Stochastic Propagation: Update walker states using AFQMC update rules based on measured quantities

- Convergence Check: Monitor energy convergence across iterations

Quantum-Enhanced AFQMC Protocol

The emergence of hybrid quantum-classical QMC methods (qAFQMC) leverages quantum processing units (QPUs) to construct and sample trial wavefunctions that would be intractable to represent classically [3].

Quantum Circuit Implementation:

Parallelization Strategy:

Implementation note: The "max_pool" parameter controls computational core utilization, while "quantum_evaluations_every_n_steps" balances quantum resource usage with classical computation.

Research Toolkit

Table: Research Reagent Solutions for QMC Calculations

| Tool Category | Specific Software/Package | Function/Role | System Requirements |
|---|---|---|---|
| QMC Engines | QMCPACK | Main QMC simulation platform | CPU/GPU clusters, MPI parallelization |
| Electronic Structure | Quantum ESPRESSO, PySCF | Initial wavefunction generation | Moderate CPU resources |
| Quantum Computing | Amazon Braket, PennyLane | Hybrid quantum-classical QMC | QPU access or quantum simulators |
| Visualization & Analysis | VESTA, matplotlib | Result visualization and plotting | Standard workstations |
| Workflow Management | AiiDA, signac | Computational workflow organization | Database servers |

Methodological Comparison Framework

QMC Methodological Evolution

The researcher's toolkit should encompass this methodological spectrum:

- Classical QMC: Efficient treatment of Slater determinants on classical hardware [3]

- Hybrid QMC: Quantum trial states with classical walker updates [3]

- Full Quantum QMC: Complete quantum implementation for maximum accuracy

Applications in Drug Discovery

Quantum Monte Carlo methods have emerged as valuable tools in computational drug discovery, particularly in scenarios requiring high accuracy for molecular systems where traditional density functional theory (DFT) methods struggle. QMC can resolve electronic-structure details that lower-cost approximate methods miss, enabling researchers to model electronic structures, binding affinities, and reaction mechanisms with benchmark accuracy [5].

Drug Discovery Applications

Protein-Ligand Binding Affinity Prediction: QMC methods can accurately characterize weak interactions crucial in drug binding, including van der Waals forces, dispersion interactions, and hydrogen bonding networks. These capabilities are particularly valuable for:

- Kinase inhibitors: Small molecule therapeutics targeting protein kinases

- Metalloenzyme inhibitors: Compounds targeting metal-containing enzyme active sites

- Covalent inhibitors: Drugs forming covalent bonds with their targets

- Fragment-based leads: Small molecular fragments optimized for binding [5]

Challenge Mitigation: QMC addresses several limitations of conventional quantum chemical methods in drug discovery:

- Electron correlation effects: Properly captures correlation energies in molecular systems

- Strongly correlated systems: Handles transition metal complexes and radical species

- Non-covalent interactions: Accurately describes dispersion forces and weak interactions

- Binding energy benchmarks: Provides reference data for validating faster methods [6]

Validation Protocols

Experimental Correlation Framework:

Integration Workflow:

- QMC Calculation: Perform high-accuracy QMC simulation of drug candidate systems

- Experimental Measurement: Obtain experimental data using techniques above

- Machine Learning Bridge: Train models connecting QMC results to experimental outcomes

- Iterative Refinement: Use discrepancies to improve computational protocols

- Prediction Generation: Apply validated models to novel compound spaces

This integrated approach significantly accelerates the discovery of new materials and molecules, leading to breakthroughs in medicine and energy research [6]. The combination of HPC-enabled QMC simulations with machine learning and experimental validation creates a powerful pipeline for pharmaceutical development.

Future Perspectives

The integration of quantum computing with QMC methodologies represents a promising frontier for tackling currently intractable many-body problems in electronic structure research. Hybrid quantum-classical QMC approaches, such as quantum-AFQMC (qAFQMC), leverage quantum processors to construct and sample trial wavefunctions that are challenging to represent classically [3].

While quantum computation provides new routes to addressing the electronic structure problem, it remains an active research direction to identify specific systems and trial states for which quantum computers provide clear advantages over purely classical approaches. The potential benefits include reduced bias and variance in energy estimates, albeit with the introduction of measurement noise from statistical sampling of the QPU [3].

The continued development of QMC methods, including advances in trial wavefunctions, optimization algorithms, and hardware acceleration, promises to further expand the applicability of these powerful computational tools across chemistry, materials science, and pharmaceutical research.

Quantum Monte Carlo (QMC) methods represent a suite of powerful computational techniques for solving the Schrödinger equation for many-body quantum systems. Within the landscape of electronic structure research, two algorithms stand as cornerstones: Variational Monte Carlo (VMC) and diffusion Monte Carlo (DMC). These stochastic approaches provide a route to accurate solutions for molecular systems where traditional methods, such as density functional theory or post-Hartree-Fock approaches, struggle with accuracy or computational cost [7]. VMC leverages the variational principle to optimize a trial wavefunction, while DMC employs a projective technique to approach the exact ground state. Within the context of drug development, these methods offer the potential for sub-chemical accuracy in modeling molecular interactions, such as hydrogen bonding and adsorption phenomena, which are critical for understanding drug-receptor binding and material design [7]. This document provides a detailed exposition of these algorithms, their protocols, and their application in modern computational research.

Theoretical Foundations

The Variational Principle

The foundation of the VMC method is the variational principle, which states that for a trial wave function \Psi_T(\boldsymbol{R}, \boldsymbol{\alpha}), the expectation value of the Hamiltonian provides an upper bound to the true ground state energy E_0:

\[ E[H] = \langle H \rangle = \frac{\int d\boldsymbol{R}\, \Psi_T^{\ast}(\boldsymbol{R})\, H(\boldsymbol{R})\, \Psi_T(\boldsymbol{R})}{\int d\boldsymbol{R}\, \Psi_T^{\ast}(\boldsymbol{R})\, \Psi_T(\boldsymbol{R})} \ge E_0 \]

Here, \boldsymbol{R} represents the coordinates of all particles, and \boldsymbol{\alpha} denotes variational parameters [8]. The trial wave function can be expanded in the complete set of eigenstates of the Hamiltonian. The accuracy of the VMC calculation hinges on the quality of the trial wave function and the optimization of its parameters.
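The eigenstate expansion makes the bound explicit. The short derivation below assumes a discrete, orthonormal set of eigenstates; the symbols c_n and \phi_n are introduced here only for illustration.

```latex
\Psi_T(\boldsymbol{R}) = \sum_n c_n \phi_n(\boldsymbol{R}), \qquad
H \phi_n = E_n \phi_n, \qquad E_0 \le E_1 \le E_2 \le \dots

\langle H \rangle
  = \frac{\sum_n |c_n|^2 E_n}{\sum_n |c_n|^2}
  = E_0 + \frac{\sum_n |c_n|^2 \,(E_n - E_0)}{\sum_n |c_n|^2}
  \ge E_0
```

Every term in the last sum is non-negative, so equality holds only when the trial wave function has no component outside the ground-state eigenspace.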

From VMC to DMC

While VMC is powerful, its accuracy is limited by the ansatz of the trial wave function. DMC overcomes this limitation through an imaginary-time evolution process. The core idea is to project out the ground state from a trial wave function by applying the operator e^{-(H - E_T)\tau}, where \tau is imaginary time and E_T is an energy offset [9]. This projection is stochastically simulated using a combination of diffusion and branching processes.
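Why the projection works can be seen by expanding the trial wave function in eigenstates of H. The sketch below assumes a discrete spectrum with a non-degenerate ground state and a nonzero ground-state coefficient c_0; the symbols c_n and \phi_n are illustrative notation.

```latex
e^{-(H - E_T)\tau}\, \Psi_T
  = \sum_n c_n\, e^{-(E_n - E_T)\tau}\, \phi_n
  = e^{-(E_0 - E_T)\tau}
    \left[ c_0 \phi_0 + \sum_{n \ge 1} c_n\, e^{-(E_n - E_0)\tau}\, \phi_n \right]
```

Each excited-state component decays as e^{-(E_n - E_0)\tau} relative to the ground state, so only \phi_0 survives at large \tau. Choosing E_T close to E_0 keeps the overall prefactor, and therefore the walker population in a stochastic simulation, approximately constant.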

The infamous fermionic sign problem arises because electronic wave functions must be antisymmetric, so they take both positive and negative values and cannot be sampled directly as a probability density. This is typically circumvented by the fixed-node approximation, which forces the projected wave function to share the nodal surface of the trial wave function (where Ψ_T(\boldsymbol{R})=0): walkers evolve only within regions where Ψ_T does not change sign [9] [7]. Consequently, the accuracy of fixed-node DMC is ultimately governed by the quality of the nodal surface of the trial wave function.

The Variational Monte Carlo (VMC) Method

VMC evaluates the multi-dimensional integral for the expectation value ⟨H⟩ by stochastically sampling configurations of the system according to the probability distribution P(\boldsymbol{R}) = |\psi_T(\boldsymbol{R})|^2 / \int |\psi_T(\boldsymbol{R})|^2 d\boldsymbol{R} [8]. A key concept is the local energy, E_L(\boldsymbol{R},\boldsymbol{\alpha}) = \frac{1}{\psi_T(\boldsymbol{R},\boldsymbol{\alpha})}H\psi_T(\boldsymbol{R},\boldsymbol{\alpha}). The expectation value of the Hamiltonian is then approximated as the average over N sampled configurations:

\[ E[H(\boldsymbol{\alpha})] \approx \frac{1}{N}\sum_{i=1}^N E_L(\boldsymbol{R}_i,\boldsymbol{\alpha}) \]

The following diagram illustrates the core workflow of a VMC calculation:

Detailed Experimental Protocol

The VMC algorithm, as outlined in the workflow, can be broken down into the following detailed steps [8]:

Initialization:

- Fix the total number of Monte Carlo steps (e.g., 10^5 to 10^7).

- Choose an initial configuration of the system, \boldsymbol{R}.

- Choose an initial set of variational parameters \boldsymbol{\alpha} for the trial wave function.

- Calculate the initial probability density |\psi_T^{\alpha}(\boldsymbol{R})|^2.

Monte Carlo Cycle:

- Propose a trial move: \boldsymbol{R}_p = \boldsymbol{R} + r \cdot \text{step}, where r is a random number uniformly distributed in [-1/2, 1/2], so that moves in either direction are equally likely and the plain Metropolis ratio applies.

- Use the Metropolis-Hastings algorithm to accept or reject the move:

- Calculate the ratio w = P(\boldsymbol{R}_p)/P(\boldsymbol{R}).

- Accept the move if w \geq \xi, where \xi is a random number in [0,1]. Otherwise, reject it.

- If the move is accepted, update the system configuration: \boldsymbol{R} = \boldsymbol{R}_p.

- For the current configuration (whether new or old), calculate and accumulate the local energy E_L(\boldsymbol{R}, \boldsymbol{\alpha}) and other observables of interest.

Averaging and Optimization:

- After a sufficient number of Monte Carlo steps, compute the averages of the accumulated quantities. The expectation value of the energy is ⟨H⟩ ≈ (1/N) \sum_{i=1}^N E_L(\boldsymbol{R}_i).

- Vary the parameters \boldsymbol{\alpha} to minimize the expectation value ⟨H⟩ (or a combination of energy and variance) using a minimization algorithm (e.g., the stochastic reconfiguration method [10]).

- Repeat the entire process until the energy converges to a minimum.

Example Application: Hydrogen Atom

As a pedagogical example, consider the hydrogen atom. The radial Schrödinger equation can be written in dimensionless form with the Hamiltonian H=-\frac{1}{2}\frac{\partial^2 }{\partial \rho^2}- \frac{1}{\rho}+\frac{l(l+1)}{2\rho^2}. A suitable trial wave function for the l = 0 ground state is u_T^{\alpha}(\rho)=\alpha\rho e^{-\alpha\rho}, where \alpha is the variational parameter [8]. The local energy for this wave function is E_L(\rho)=-\frac{1}{\rho}- \frac{\alpha}{2}\left(\alpha-\frac{2}{\rho}\right). The results of a VMC calculation for different values of \alpha are summarized in Table 1.

Table 1: VMC Results for the Hydrogen Atom with a Trial Wave Function u_T^α(ρ)=αρe^{-αρ} [8]

| Variational Parameter (α) | ⟨H⟩ (a.u.) | Variance (σ²) | Standard Error (σ/√N) |
|---|---|---|---|
| 0.7 | -0.457759 | 0.045120 | 6.71715e-04 |
| 0.8 | -0.481461 | 0.030574 | 5.52934e-04 |
| 0.9 | -0.495899 | 0.008205 | 2.86443e-04 |
| 1.0 | -0.500000 | 0.000000 | 0.000000 |
| 1.1 | -0.493738 | 0.011699 | 3.42036e-04 |
| 1.2 | -0.475563 | 0.088590 | 9.41222e-04 |
| 1.3 | -0.454341 | 0.145171 | 1.20487e-03 |

The key observation is that at α = 1.0, which corresponds to the exact ground state wave function, the energy is exact and the variance is zero. This is a manifestation of the zero-variance property: if the trial wave function is an exact eigenstate, the local energy is constant everywhere, leading to zero variance [8].
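The hydrogen example is small enough to reproduce with a short script. The following is a minimal sketch of the Metropolis procedure for this trial function, not the code used to produce Table 1. The function names, the step length, the step counts, and the symmetric proposal in [-step/2, step/2] are assumptions of this sketch. For this trial function the exact expectation value is ⟨H⟩ = α²/2 − α, which gives a reference for checking the sampled energies.

```python
import math
import random


def local_energy(rho, alpha):
    """E_L(rho) for u_T = alpha * rho * exp(-alpha * rho) with l = 0."""
    return -1.0 / rho - 0.5 * alpha * (alpha - 2.0 / rho)


def log_density(rho, alpha):
    """log |u_T(rho)|^2 up to an additive constant; valid for rho > 0."""
    return 2.0 * (math.log(rho) - alpha * rho)


def vmc_hydrogen(alpha, n_steps=100_000, n_equil=5_000, step=2.0, seed=0):
    """Return (mean energy, variance, naive standard error) from Metropolis sampling."""
    rng = random.Random(seed)
    rho = 1.0
    e_sum = 0.0
    e2_sum = 0.0
    for i in range(n_equil + n_steps):
        # Symmetric trial move; moves to rho <= 0 have zero density and are rejected.
        trial = rho + step * (rng.random() - 0.5)
        if trial > 0.0:
            ratio = math.exp(log_density(trial, alpha) - log_density(rho, alpha))
            if ratio >= rng.random():
                rho = trial
        # Accumulate for the current configuration, whether the move was accepted or not.
        if i >= n_equil:
            e = local_energy(rho, alpha)
            e_sum += e
            e2_sum += e * e
    mean = e_sum / n_steps
    variance = e2_sum / n_steps - mean * mean
    std_error = math.sqrt(max(variance, 0.0) / n_steps)
    return mean, variance, std_error
```

Calling `vmc_hydrogen(alpha)` for α between 0.7 and 1.3 should show the same qualitative pattern as Table 1: the energy is lowest and the variance vanishes at α = 1. The standard error returned here ignores autocorrelation between successive samples, so it underestimates the true uncertainty for α ≠ 1.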

The Diffusion Monte Carlo (DMC) Method

DMC refines the VMC result by acting on the trial wave function with the projection operator e^{-(H - E_T)\tau} to filter out the ground state component. Under the fixed-node approximation, the fermionic sign problem is handled by fixing the nodes of the wave function to be the same as those of the initial trial wave function. The algorithm involves a population of walkers that diffuse in configuration space, representing the wave function.

The following diagram illustrates the core workflow of a fixed-node DMC calculation:

Detailed Experimental Protocol

A typical fixed-node DMC simulation involves these key steps [9] [7]:

Initialization:

- Generate an initial ensemble of walkers sampled from the distribution |Ψ_T(\boldsymbol{R})|^2, typically obtained from a preliminary VMC run.

- Set the reference energy E_T and the time step Δτ.

DMC Iteration (for each time step):

- Diffusion: For each walker, propose a move: \boldsymbol{R'} = \boldsymbol{R} + \chi + (\nabla \Psi_T / \Psi_T) Δτ, where \chi is a random displacement drawn from a Gaussian distribution with mean zero and variance Δτ per coordinate (in atomic units, where the kinetic operator -\frac{1}{2}\nabla^2 corresponds to a diffusion constant of 1/2). The term \nabla \Psi_T / \Psi_T is the drift velocity, often written as half the quantum force F = 2\nabla \Psi_T / \Psi_T, and it provides importance sampling.

- Node Checking: If the proposed move \boldsymbol{R'} crosses the nodal surface of Ψ_T (i.e., Ψ_T changes sign), the move is rejected.

- Branching/Death: Calculate the branching weight associated with the walker: w = \exp\{-[(E_L(\boldsymbol{R}) + E_L(\boldsymbol{R'}))/2 - E_T] Δτ\}. The walker is then replicated (or killed) with a probability proportional to w. This step amplifies walkers in regions of low local energy and removes them from regions of high local energy.

- Population Control: The total number of walkers is monitored. The reference energy E_T is periodically adjusted to maintain a stable population.

Averaging:

- After an equilibration period, the local energy of each walker is accumulated to compute the DMC energy. The final DMC energy is the average over all walkers and all subsequent time steps.
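The protocol above can be sketched on a one-dimensional toy problem where the fixed-node answer is known. The example below assumes a harmonic oscillator, H = -½ d²/dx² + ½ x², with the trial function ψ_T(x) = x·exp(-a x²/2). Its node at x = 0 is exact by symmetry, so fixed-node DMC should approach the first excited-state energy 1.5 even for a poor width parameter a. The function names, the crude drift cap, the simple population-control rule, and all parameter values are assumptions of this sketch; production codes add refinements such as a Metropolis accept/reject step.

```python
import math
import random


def local_energy(x, a):
    """E_L = (H psi_T) / psi_T for psi_T(x) = x * exp(-a * x**2 / 2)."""
    return 1.5 * a + 0.5 * (1.0 - a * a) * x * x


def drift_velocity(x, a):
    """grad(psi_T) / psi_T; it pushes walkers away from the node at x = 0."""
    return 1.0 / x - a * x


def fixed_node_dmc(a=0.5, n_target=200, n_steps=2000, n_equil=400,
                   dt=0.01, seed=1):
    """Return the mixed-estimator energy averaged after equilibration."""
    rng = random.Random(seed)
    # Start walkers on both sides of the node; equilibration does the rest.
    walkers = [1.0 if i % 2 == 0 else -1.0 for i in range(n_target)]
    e_ref = local_energy(1.0, a)
    sigma = math.sqrt(dt)  # diffusion constant 1/2 gives variance dt per step
    energy_sum = 0.0
    n_samples = 0

    for step in range(n_steps):
        survivors = []
        for x in walkers:
            # Diffusion with drift; the cap (an assumption of this sketch)
            # tames the diverging drift very close to the node.
            shift = max(-1.0, min(1.0, drift_velocity(x, a) * dt))
            x_new = x + shift + rng.gauss(0.0, sigma)
            # Node check: reject any move that changes the sign of psi_T.
            if x_new * x <= 0.0:
                x_new = x
            # Branching: integer number of copies with expectation equal to the weight.
            e_avg = 0.5 * (local_energy(x, a) + local_energy(x_new, a))
            weight = math.exp(-(e_avg - e_ref) * dt)
            copies = int(weight + rng.random())
            survivors.extend([x_new] * copies)
        walkers = survivors
        e_mean = sum(local_energy(x, a) for x in walkers) / len(walkers)
        # Population control: nudge the reference energy toward the target size.
        e_ref = e_mean - math.log(len(walkers) / n_target)
        if step >= n_equil:
            energy_sum += e_mean * len(walkers)
            n_samples += len(walkers)
    return energy_sum / n_samples
```

With a = 0.5 the variational energy of this trial function is 1.875, so an estimate near 1.5 from `fixed_node_dmc()` shows the projection correcting the shape of the wave function while the node stays fixed. The remaining deviation comes from statistical noise, the finite time step, and population control.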

Advanced Integration: Neural Network Wavefunctions

A recent breakthrough is the integration of neural networks as trial wave functions. The FermiNet architecture, for example, is a powerful neural network ansatz that represents the electronic wavefunction of molecules and is trained variationally rather than fitted to reference data [7]. FermiNet-DMC combines the expressive power of neural networks with the projective accuracy of DMC.

Studies show that FermiNet-DMC achieves superior accuracy and efficiency compared to neural network-based VMC (FermiNet-VMC). For the beryllium atom, even when starting from an undertrained neural network wavefunction, DMC can recover energies within 1 millihartree of the reference value, whereas VMC alone fails to reach chemical accuracy with the same network [7]. This is because DMC is primarily sensitive to the nodal structure of the trial wavefunction, which often converges faster in the neural network training than the overall wavefunction shape. This allows for significantly shorter training times while maintaining high accuracy.

Comparative Analysis and Research Applications

Algorithm Comparison

Table 2: Comparison of VMC and DMC Methods

| Feature | Variational Monte Carlo (VMC) | Diffusion Monte Carlo (DMC) |
|---|---|---|
| Theoretical Basis | Variational Principle | Projection in Imaginary Time |
| Accuracy | Limited by trial wavefunction ansatz | Exact within Fixed-Node approximation |
| Sampled Distribution | Samples \|Ψ_T\|² | Samples Ψ_T Φ, where Φ is the FN ground state |
| Nodal Dependence | Energy depends on overall shape of Ψ_T | Energy depends only on the nodal surface of Ψ_T |
| Output | ⟨H⟩ ≥ E₀ | E₀ ≤ E_FN ≤ ⟨H⟩ |
| Variance | Finite, but can be large for poor Ψ_T | Generally lower than VMC for the same Ψ_T |
| Computational Cost | Lower | Higher (due to branching and smaller time steps) |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Components for a QMC Simulation

| Component | Function and Description | Example/Note |
|---|---|---|
| Trial Wave Function | Encodes the physical ansatz for the quantum state; critical for both VMC efficiency and DMC nodal accuracy. | Jastrow-Slater type [10], neural network (FermiNet) [7]. |
| Jastrow Factor | Correlates particles (e-e, e-nucleus) to improve description of Coulomb interactions and reduce variance [10]. | Often includes electron-electron and electron-nucleus terms. |
| Determinantal Expansion | Describes the antisymmetric fermionic part of the wave function. | Hartree-Fock, CIS, CAS, CIPSI [10]. |
| Optimization Method | Algorithm for minimizing the energy or variance with respect to wave function parameters. | Stochastic Reconfiguration [10], LM/CG [11]. |
| Pseudopotentials | Replace core electrons to reduce computational cost and mitigate core-valence oscillations. | Not explicitly covered in results, but often essential for heavy elements. |
| Regularized Estimators | Enable calculation of finite-variance derivatives for wave function optimization and force calculations. | Pathak-Wagner (PW) [11], "warp" transformation [11]. |

VMC and DMC are complementary pillars of modern quantum Monte Carlo. VMC provides a robust, variational framework for optimizing sophisticated trial wavefunctions, while DMC projects out the ground state within the fixed-node constraint, often delivering benchmark accuracy for molecular systems. The ongoing integration of machine learning, particularly through neural network wavefunctions like FermiNet, is pushing the boundaries of these methods, enabling accurate treatment of a broader range of molecules and materials. For researchers in drug development and chemical physics, this combined VMC/DMC approach offers a powerful path toward sub-chemical accuracy in understanding molecular interactions, paving the way for more reliable predictions in structure-based drug design and material science.

Quantum Monte Carlo (QMC) methods represent a family of stochastic approaches for solving the quantum many-body problem that provide benchmark accuracy beyond the limitations of Density Functional Theory (DFT). While DFT has served as the workhorse of computational materials science and chemistry for decades, its approximate exchange-correlation functionals introduce significant uncertainties, particularly for systems with strong electron correlations, van der Waals bonding, and transition metal compounds where accurate prediction of absolute energies is crucial [12]. QMC methods overcome these limitations by offering a more direct approach to the many-body Schrödinger equation with explicitly correlated wavefunctions, enabling computational predictions with chemical accuracy – a level of precision sufficient to guide experimental synthesis and characterization without empirical parameterization.

The theoretical foundation of QMC's advantage lies in its treatment of electron correlation. Whereas DFT approximates the exchange-correlation functional and conventional quantum chemistry methods face scaling bottlenecks, QMC techniques such as Diffusion Monte Carlo (DMC) employ stochastic integration in high-dimensional spaces to compute expectation values directly. This approach avoids the functional-dependent errors that plague DFT, providing reference-quality data for validating more approximate methods and supplying training data for machine learning interatomic potentials [4]. For researchers in drug development and materials science, this benchmark accuracy enables reliable prediction of formation enthalpies, binding energies, and electronic properties that are essential for rational design of functional materials and pharmaceutical compounds.

Theoretical Foundation: How QMC Surpasses DFT Limitations

Fundamental Limitations of Density Functional Theory

Density Functional Theory's computational efficiency has made it ubiquitous across chemistry and materials science, but its approximations introduce critical limitations for predictive science. The central challenge lies in the unknown exact exchange-correlation functional, which must be approximated in all practical calculations. For strongly correlated systems such as transition metal oxides or magnetic materials, standard functionals (LDA, GGA, hybrids) often fail qualitatively, incorrectly predicting metallic versus insulating behavior or dramatically misrepresenting reaction barriers [12]. Similarly, dispersion interactions crucial to molecular crystals, supramolecular chemistry, and physisorption processes are not naturally captured by most functionals without empirical corrections. As noted in benchmark studies, "DFT can often be reliable for predicting trends in the energetics of materials, it can be sometimes in error when used to obtain absolute energies" – a critical limitation when formation enthalpies or reaction energies are sought [12].

QMC's Stochastic Approach to Electron Correlation

Quantum Monte Carlo methods transcend these limitations through fundamentally different theoretical foundations. Rather than relying on an approximate density functional, QMC methods work with explicitly correlated many-body wavefunctions, treating electron-electron interactions directly through stochastic sampling. The key variants include:

- Variational Monte Carlo (VMC): Evaluates expectation values with sophisticated trial wavefunctions containing explicit electron correlation terms, providing an upper bound to the true ground state energy.

- Diffusion Monte Carlo (DMC): Projects out the ground state from an initial trial wavefunction by simulating imaginary time evolution, effectively solving the many-body Schrödinger equation within the fixed-node approximation.

- Linear Method: Optimizes many-parameter wavefunctions through a generalized eigenvalue approach within a stochastic framework, systematically improving the nodal structure critical for DMC accuracy [13].

This multi-level approach enables QMC to achieve high accuracy with a computational cost that scales as O(N³–N⁴) with system size, notably gentler than the O(N⁷) scaling of CCSD(T) coupled-cluster theory or the exponential scaling of full configuration interaction. The fixed-node approximation, which represents the primary source of systematic error in DMC, can be reduced through better trial wavefunctions, making QMC an increasingly accurate and controllable approach for predictive electronic structure calculations.

Quantitative Accuracy Comparison: QMC vs DFT

Benchmark Case Study: Magnesium Hydride (MgH₂)

The superior accuracy of QMC is quantitatively demonstrated in benchmark studies of magnesium hydride (MgH₂), a promising hydrogen storage material. Theoretical prediction of its formation enthalpy presents a significant challenge for electronic structure methods due to the delicate energy balance between solid MgH₂ and its constituent elements. As shown in the table below, QMC achieves remarkable agreement with experimental values:

Table 1: Accuracy comparison for MgH₂ formation enthalpy prediction

| Computational Method | Formation Enthalpy (kJ/mol) | Error vs Experiment | Computational Cost |
|---|---|---|---|
| Density Functional Theory | Varies significantly with functional | >5-15 kJ/mol | Low |
| Diffusion Monte Carlo (Periodic) | 82.0 | ~5.0 kJ/mol | High |
| DMC (Cluster Extrapolation) | 78.8 | 1.0 kJ/mol | Medium |
| Experimental Value | 77.8 ± 2.0 | - | - |

In this study, researchers implemented an innovative cluster approach to eliminate finite-size errors that typically plague periodic QMC calculations. By extrapolating from increasingly large clusters (Mg₄₈H₉₆ to Mg₁₂₅H₂₅₀), they obtained a formation enthalpy differing by merely 1.0 kJ/mol from experiment – well within chemical accuracy (1 kcal/mol or 4.184 kJ/mol) [12]. This precision is particularly notable given that "the result has reached the experimental accuracy" and provides a reliable benchmark for assessing more approximate methods [12].
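The comparison against chemical accuracy is simple arithmetic, sketched below with the central values from the table. The helper name is hypothetical, and the check ignores the ±2.0 kJ/mol experimental uncertainty.

```python
KJ_PER_KCAL = 4.184  # 1 kcal/mol expressed in kJ/mol


def within_chemical_accuracy(predicted_kj, reference_kj, threshold_kcal=1.0):
    """True if the absolute error is at most the threshold (default 1 kcal/mol)."""
    return abs(predicted_kj - reference_kj) <= threshold_kcal * KJ_PER_KCAL


experiment = 77.8  # kJ/mol, central experimental value from the table
for label, value in [("DMC (periodic)", 82.0), ("DMC (cluster extrapolation)", 78.8)]:
    error = abs(value - experiment)
    print(label, round(error, 1), within_chemical_accuracy(value, experiment))
```

With these numbers the cluster-extrapolated result sits well inside the 4.184 kJ/mol window, while the periodic result lies just outside it.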

Performance Across Material Classes

QMC's advantage extends across diverse material systems where DFT faces challenges:

- Strongly correlated materials: QMC accurately describes electronic localization and magnetic interactions without resorting to system-specific DFT+U parameters.

- Molecular crystals: QMC naturally captures van der Waals interactions and hydrogen bonding without empirical corrections.

- Surface adsorption: QMC provides reliable adsorption energies critical for catalysis research.

- Defect formation energies: QMC offers predictive capability for point defects in semiconductors and insulators.

For pharmaceutical and materials researchers, this translates to reliable prediction of binding energies, reaction barriers, and thermodynamic stability across diverse chemical spaces, something DFT cannot guarantee because its results depend on the chosen functional.

QMC Methodologies and Experimental Protocols

Core QMC Algorithms and Workflows

Modern QMC simulations employ a sophisticated multi-step workflow to achieve high accuracy efficiently. The standard protocol involves:

Initial Wavefunction Generation: High-quality trial wavefunctions are prepared using conventional methods (DFT or quantum chemistry). QMCPACK integrates with Quantum ESPRESSO, PySCF, and other electronic structure codes to generate initial orbitals [4].

Wavefunction Optimization: The Linear Method optimizes Jastrow factors and orbital parameters within VMC to reduce variance and improve nodal surfaces [13].

Projection to Ground State: DMC propagates the optimized wavefunction in imaginary time to project out the true ground state within the fixed-node approximation.

Statistical Analysis: Block averaging and autocorrelation analysis ensure proper error estimation for all measured observables.
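The block-averaging step can be illustrated with a short sketch. It is not QMCPACK's own analysis tooling; the synthetic AR(1) series, the function names, and the block count are assumptions chosen only to show why correlated samples need blocking before an error bar is quoted.

```python
import numpy as np

def block_average(samples, n_blocks):
    """Return (mean, standard error) estimated from non-overlapping block means."""
    samples = np.asarray(samples, dtype=float)
    block_len = len(samples) // n_blocks
    if block_len < 1:
        raise ValueError("need at least one sample per block")
    trimmed = samples[: block_len * n_blocks]
    block_means = trimmed.reshape(n_blocks, block_len).mean(axis=1)
    mean = block_means.mean()
    stderr = block_means.std(ddof=1) / np.sqrt(n_blocks)
    return mean, stderr

def correlated_series(n, rho, seed=0):
    """Synthetic AR(1) series standing in for serially correlated local energies."""
    rng = np.random.default_rng(seed)
    noise = rng.normal(size=n)
    x = np.empty(n)
    x[0] = noise[0]
    for i in range(1, n):
        x[i] = rho * x[i - 1] + np.sqrt(1.0 - rho**2) * noise[i]
    return x

# Hypothetical data: mean energy -1.0 with strongly correlated fluctuations.
energies = -1.0 + 0.1 * correlated_series(20000, rho=0.9)
naive_err = energies.std(ddof=1) / np.sqrt(len(energies))
mean, blocked_err = block_average(energies, n_blocks=50)
print(f"mean = {mean:.4f}, naive error = {naive_err:.5f}, blocked error = {blocked_err:.5f}")
```

The naive estimate treats every sample as independent and therefore understates the error. The blocked estimate is only trustworthy once each block is much longer than the autocorrelation time.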

Table 2: Key QMC Methods and Their Applications in Materials Research

| Method | Theoretical Basis | Primary Applications | Accuracy Limitations |

|---|---|---|---|

| Variational Monte Carlo (VMC) | Stochastic integration of parameterized trial wavefunction | Initial wavefunction preparation, property evaluation with good nodes | Depends entirely on trial wavefunction quality |

| Diffusion Monte Carlo (DMC) | Imaginary time projection to ground state | Benchmark energy calculations, binding energies, defect formation energies | Fixed-node approximation, locality approximation for nonlocal pseudopotentials |

| Linear Optimization Method | Energy minimization in parameter space | Wavefunction optimization for improved nodes and reduced variance | Computational cost increases with parameters |

| Reptation Monte Carlo (RMC) | Path integral representation in imaginary time | Calculation of excited states, exact expectation values without mixed estimator | Higher computational cost than DMC |
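To make the VMC row concrete, the following toy sketch applies Metropolis sampling to a one-dimensional harmonic oscillator with the trial wavefunction ψ(x) = exp(−αx²). The model system, the function names, and all numerical settings are illustrative assumptions and are unrelated to any production code; the point is only that the variational energy and its variance depend on the trial-wavefunction parameter.

```python
import numpy as np

def local_energy(x, alpha):
    """Local energy of psi(x) = exp(-alpha x^2) for H = -1/2 d^2/dx^2 + x^2/2."""
    return alpha + x**2 * (0.5 - 2.0 * alpha**2)

def vmc_energy(alpha, n_steps=20000, step_size=1.0, n_warmup=1000, seed=1):
    """Metropolis sampling of |psi|^2; returns mean and variance of the local energy."""
    rng = np.random.default_rng(seed)
    x = 0.0
    samples = []
    for step in range(n_steps + n_warmup):
        x_new = x + step_size * rng.uniform(-1.0, 1.0)
        # Acceptance ratio |psi(x_new)|^2 / |psi(x)|^2
        ratio = np.exp(-2.0 * alpha * (x_new**2 - x**2))
        if rng.uniform() < ratio:
            x = x_new
        if step >= n_warmup:
            samples.append(local_energy(x, alpha))
    samples = np.array(samples)
    return samples.mean(), samples.var()

for alpha in (0.4, 0.5, 0.6):
    e, var = vmc_energy(alpha)
    print(f"alpha = {alpha:.1f}: E = {e:.4f}, variance = {var:.2e}")
```

At α = 0.5 the trial function is the exact ground state, so the local energy is constant (0.5 in these units) and the variance vanishes. This zero-variance property is what wavefunction optimization exploits.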

Advanced Protocol: Bell Sampling QMC for Entanglement Characterization

A recent innovation expanding QMC's capabilities is Bell-QMC, which leverages Bell sampling – a two-copy measurement protocol in the transversal Bell basis – to enable efficient estimation of previously challenging quantities like off-diagonal operators and entanglement metrics [14]. This protocol, implemented within stochastic series expansion (SSE) frameworks, enables:

- Simultaneous entanglement measurement across all system partitions in a single simulation

- Unbiased estimation of Rényi entropies and entanglement spectra

- Access to universal quantum features using only simple diagonal measurements

The Bell-QMC workflow employs an efficient update scheme for sampling configurations in the Bell basis, dramatically expanding the quantum properties accessible to QMC simulation [14]. For pharmaceutical researchers, this enables characterization of electronic entanglement in molecular systems and complex materials – information crucial for understanding charge transfer processes and designing quantum-inspired materials.

Technical Implementation and Computational Infrastructure

Performance-Optimized QMC with Batched Drivers

Modern high-performance QMC implementations like QMCPACK employ sophisticated "batched drivers" to maximize computational efficiency on CPU and GPU architectures. These implementations introduce the concept of "crowds" – subsets of walkers processed simultaneously to enable lock-step execution optimal for GPU parallelism [13]. Key performance considerations include:

- Walker Management: Optimal performance requires balancing the `walkers_per_rank` and `total_walkers` parameters to maximize GPU utilization without exceeding memory limits. For typical GPU runs, "hundreds of walkers_per_rank, or the largest number that will fit in GPU memory" yields optimal throughput [13].

- Crowd Configuration: The `crowds` parameter controls walker subdivision within MPI processes, with multiple crowds per GPU (typically 2, 4, or 8) often optimal for large electron counts or many walkers.

- Statistical Parameters: Proper configuration of `blocks`, `steps`, and `substeps` ensures sufficient statistical sampling while managing I/O overhead.

Table 3: Essential Research Reagents & Computational Tools for QMC

| Tool/Category | Specific Implementation | Research Function | Access Method |

|---|---|---|---|

| QMC Software | QMCPACK | Production QMC simulations (VMC, DMC, RMC) | GitHub repository, virtual machines |

| Electronic Structure Prep | Quantum ESPRESSO, PySCF | Initial wavefunction generation, orbital preparation | Open-source packages |

| Pseudopotentials | Burkatzki-Filippi-Dolg, ccECP | Coulomb interaction regularization, core electron treatment | Standard libraries, tabulated data |

| Workflow Management | Nexus, AFlow++ | Automated calculation chaining, high-throughput screening | Custom scripts, published frameworks |

| Performance Portability | Batched Drivers, OpenMP/GPU | Cross-architecture optimization (CPU/GPU) | QMCPACK build options |

Finite-Size Error Correction Protocols

A critical challenge in periodic QMC calculations is the elimination of finite-size errors arising from simulation cells containing typically 10²-10³ electrons rather than the thermodynamic limit. The benchmark MgH₂ study demonstrates an effective protocol:

Cluster Approximation: Model the solid as increasingly large finite clusters (Mg₄₈H₉₆ → Mg₁₂₅H₂₅₀) to eliminate periodic image effects [12].

Extrapolation to Infinite Size: Systematically extrapolate properties to the infinite-cluster limit using well-defined scaling relationships.

Twist Averaging: Implement k-point sampling through twisted boundary conditions to reduce single-particle finite-size errors.

This approach enabled the MgH₂ benchmark to achieve chemical accuracy while using "much less consumed [computation resource] than that of DMC under periodic boundary condition" [12].
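The extrapolation step can be sketched as a least-squares fit. The source does not state which scaling relation was used, so the form E(N) = E∞ + a/N, the intermediate cluster sizes, and the energies below are assumptions for illustration only and are not data from the MgH₂ study.

```python
import numpy as np

def extrapolate_to_infinite_size(n_units, energies):
    """Fit E(N) = E_inf + a / N and return (E_inf, a)."""
    inv_n = 1.0 / np.asarray(n_units, dtype=float)
    slope, intercept = np.polyfit(inv_n, np.asarray(energies, dtype=float), 1)
    return intercept, slope

# Hypothetical per-formula-unit energies (arbitrary units), generated from
# E_inf = -1.0 and a = 2.0 purely to exercise the fit.
n_units = np.array([48, 64, 96, 125])
energies = -1.0 + 2.0 / n_units

e_inf, a = extrapolate_to_infinite_size(n_units, energies)
print(f"E_inf = {e_inf:.6f}, a = {a:.6f}")
```

With real DMC data each point carries a statistical error bar, so a weighted fit and a check of the assumed scaling form against the residuals would be needed.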

Applications in Pharmaceutical and Materials Research

Molecular and Solid-State Systems

QMC's benchmark accuracy enables transformative applications across pharmaceutical and materials research domains:

- Molecular Energetics: High-accuracy prediction of binding affinities, conformational energies, and reaction barriers for drug design.

- Solid-State Properties: Reliable calculation of formation enthalpies, phase stability, and defect properties for materials discovery.

- Strongly Correlated Systems: First-principles characterization of transition metal complexes, magnetic materials, and high-temperature superconductors.

- Surface Chemistry: Accurate adsorption energies for catalyst screening and design.

The QMC summer school curriculum emphasizes "hands-on examples for both molecular and solid-state systems" and "real-world examples from recent research calculations" to equip researchers with practical skills for these applications [4].

Emerging Method: Geometry Relaxation with QMC

Traditional QMC calculations typically employ geometries optimized at lower levels of theory (DFT or quantum chemistry), potentially introducing systematic errors. Recent advances now enable "geometry relaxation of molecules and solids with QMC" – a capability that eliminates this approximation and provides fully consistent QMC-optimized structures [4]. This development is particularly valuable for:

- Molecular crystals where dispersion interactions dominate packing arrangements

- Systems with strong correlation effects that DFT misrepresents

- Defect structures where local bonding environments differ substantially from bulk

- Pharmaceutical compounds requiring accurate conformational analysis

Quantum Monte Carlo methods provide a transformative approach for achieving benchmark accuracy in electronic structure calculations, overcoming fundamental limitations of Density Functional Theory while remaining computationally feasible for systems of practical interest. As QMC algorithms, software implementations, and computational hardware continue advancing, these methods are positioned to become the gold standard for predictive materials and pharmaceutical research – particularly for challenging systems where quantitative accuracy is essential for design decisions.

The demonstrated capability to achieve chemical accuracy in benchmark systems like MgH₂, combined with emerging methodologies for entanglement characterization and geometry optimization, establishes QMC as a versatile and powerful framework for addressing the most challenging problems in computational chemistry and materials physics. For research professionals, mastering QMC methodologies provides access to a tool capable of generating reference data for machine learning applications, validating approximate methods, and directly predicting properties with sufficient reliability to guide experimental synthesis and characterization.

In modern electronic structure research, the integration of complementary computational tools enables a comprehensive strategy for tackling complex many-body problems in quantum chemistry and materials science. This ecosystem typically leverages Density Functional Theory (DFT) for initial system characterization, advanced post-Hartree-Fock wavefunction methods for capturing strong electron correlation, and finally Quantum Monte Carlo (QMC) methods for benchmark-quality results. Within this framework, three software packages have emerged as essential tools: Quantum ESPRESSO for plane-wave DFT, PySCF for general-purpose quantum chemistry, and QMCPACK for high-accuracy QMC calculations. Together they provide a complete workflow from initial structure characterization to high-accuracy benchmarking, each excelling in its own domain while becoming increasingly interoperable. This article details their capabilities and performance characteristics and provides explicit protocols for their application in electronic structure research, particularly within drug development and materials science contexts.

Software Capabilities and Characteristics

The complementary nature of these tools stems from their specialized numerical approaches and methodological focus. The table below summarizes their core capabilities.

Table 1: Core Capabilities of Quantum ESPRESSO, PySCF, and QMCPACK

| Feature | Quantum ESPRESSO | PySCF | QMCPACK |

|---|---|---|---|

| Primary Method | Plane-wave DFT | Gaussian-basis quantum chemistry | Quantum Monte Carlo |

| Periodic Systems | Excellent (native) | Good (with k-point sampling) | Excellent (native) |

| Molecular Systems | Good (with supercells) | Excellent (native) | Excellent (native) |

| Wavefunction Methods | Limited (mostly DFT) | Extensive (CC, CAS, MP2, etc.) | QMC (DMC, VMC, RMC) |

| Relativistic Effects | Scalar relativistic, SOC | X2C, 4-component DHF, ECP-SOC | ECPs, spin-orbit coupling |

| GPU Acceleration | Emerging | Extensive (via GPU4PySCF) | Extensive & unified (v4.0.0+) |

Quantum ESPRESSO serves as the foundation of this computational workflow, specializing in plane-wave density functional theory for both extended and molecular systems. Its robust implementation of pseudopotentials and periodic boundary conditions makes it ideal for obtaining initial electronic structures and geometries for solid-state systems and molecules in solution. Recent versions have introduced new modules like turboMagnon for spin-wave spectra simulations and expanded support for Hubbard and Koopmans functionals [15] [16].

PySCF provides exceptional methodological breadth through its Python-based architecture, implementing an extensive range of wavefunction-based theories including coupled-cluster, complete active space (CAS), and many-body perturbation theory. This makes it indispensable for studying strongly correlated molecular systems and for generating high-quality trial wavefunctions for subsequent QMC calculations. Its recent development of GPU4PySCF provides significant acceleration for DFT and TDDFT calculations, with demonstrated speedups of 13-30x for molecules of drug-like complexity [17].

QMCPACK delivers benchmark accuracy through its implementation of high-performance Quantum Monte Carlo methods, particularly diffusion Monte Carlo (DMC). Its recently released version 4.0.0 features a major architectural update with unified GPU support across NVIDIA, AMD, and Intel hardware, significantly expanding its accessibility and performance [18] [19]. Key advancements include fully GPU-accelerated LCAO/Gaussian-basis set wavefunctions, a fast spin-orbit implementation, and improved wavefunction optimizers, making it particularly valuable for validating more approximate methods and providing reference data for machine-learning applications [4].

Performance Considerations and Hardware Support

Computational performance and hardware support critically influence software selection for research projects. The three packages exhibit distinct performance characteristics and hardware optimization strategies.

Table 2: Performance Characteristics and Hardware Support

| Aspect | Quantum ESPRESSO | PySCF | QMCPACK |

|---|---|---|---|

| Primary Parallelization | MPI + OpenMP | MPI (large systems), Serial | MPI + OpenMP/GPU |

| GPU Support | Emerging | Extensive (GPU4PySCF) | Mature (v4.0.0+) |

| GPU Portability | - | CUDA | CUDA, HIP, SYCL |

| Max System Size | ~1000s atoms | ~100s atoms (accurate methods) | ~1000s electrons |

| Typical Use Case | Geometry optimization, DOS | Correlation energy, Excitations | Benchmark accuracy |

Quantum ESPRESSO traditionally excels on CPU-based clusters, leveraging massive MPI parallelization for large-scale plane-wave calculations. Its GPU support is developing progressively but is not yet as comprehensive as that of the other two packages. PySCF's performance profile is method-dependent: standard calculations run efficiently on workstations, while the GPU4PySCF extension dramatically accelerates specific tasks like DFT, gradients, and Hessians on NVIDIA GPUs, showing particular advantage for molecules with 20-100 atoms [17].

QMCPACK demonstrates exceptional performance portability across diverse architectures. Version 4.0.0 introduced a simplified QMC_GPU CMake option that replaces previous hardware-specific flags, allowing consistent builds for NVIDIA ("openmp;cuda"), AMD ("openmp;hip"), or Intel ("openmp;sycl") GPUs [18]. The new batched drivers now default in both QMCPACK and its NEXUS workflow manager, providing performance-portable CPU and GPU support while implementing more rigorous input validation [18] [19]. Recent optimizations have specifically improved AMD GPU performance through better memory handling [19].

Interoperability and Workflow Integration

The power of these tools emerges most significantly when combined in integrated workflows. The following diagram illustrates a typical research pipeline connecting all three packages:

The NEXUS workflow tool seamlessly connects these packages, automating multi-step computational processes. Recent versions support grand-canonical twist averaging (GCTA), stochastic reconfiguration, and orbital optimization specifically for QMCPACK inputs [19]. For wavefunction conversion, QMCPACK includes a converter for determinants from PySCF CAS-CI or CAS-SCF calculations, enabling sophisticated multi-reference wavefunctions for QMC [18]. Quantum ESPRESSO 7.4.1 enhances interoperability through support for HDF5 charge density formats and improved Hubbard U+V parameters compatible with workflows feeding into PySCF and QMCPACK [15] [16].

Experimental Protocols

Protocol 1: Molecular Energy Benchmarking

Application: Validating computational methods for molecular systems, particularly relevant for drug development where accurate binding energies are crucial.

Step-by-Step Procedure:

- Geometry Optimization: Use Quantum ESPRESSO's `pw.x` to optimize the molecular geometry in a simulation box with sufficient vacuum (≥10 Å) to prevent spurious interactions. Employ a hybrid functional such as PBE0 for improved accuracy.

- Wavefunction Generation: Convert the optimized structure to PySCF format. Perform a CAS-SCF or CCSD(T) calculation in PySCF to generate a high-quality trial wavefunction. For the CAS-SCF calculation, ensure appropriate active space selection (e.g., 6 electrons in 6 orbitals for benzene).

- Wavefunction Conversion: Use QMCPACK's `convert4qmc` utility with the `-pyscf` flag to convert the PySCF wavefunction to QMCPACK's format. For example: `convert4qmc -pyscf -prefix benzene pyscf_output.h5`.

- QMC Calculation: In QMCPACK, run a two-stage calculation:

  - VMC Optimization: Optimize the Jastrow factor parameters using the linear method or stochastic reconfiguration [18].

  - DMC Production: Run a DMC calculation with a timestep of 0.01-0.02 a.u., employing the T-move scheme for non-local pseudopotential consistency. Use the `driver_version = "batched"` parameter for optimal GPU performance [18].

- Statistical Analysis: Extract the total energy with error bars from the DMC calculation. Compare with PySCF CCSD(T) and Quantum ESPRESSO DFT results to assess convergence and method agreement.

Protocol 2: Solid-State Defect Formation Energy

Application: Determining accurate formation energies for point defects in semiconductors, crucial for materials in electronic devices.

Step-by-Step Procedure:

- Bulk Calculation: Using Quantum ESPRESSO, perform a converged k-point calculation for the pristine bulk supercell. Obtain the total energy and electron density.

- Defect Structure: Create a supercell (typically 64-216 atoms) with the point defect. Re-optimize the ionic positions using DFT to relax strain effects.

- Wavefunction Preparation: Use PySCF with k-point sampling or Quantum ESPRESSO to generate a single-reference trial wavefunction. For transition metal defects, consider using PySCF for a CAS-CI calculation on the defect state.

- QMC Setup: Use NEXUS to automate the workflow from Quantum ESPRESSO output to QMCPACK input. The workflow should include:

- QMC Execution: Run DMC calculations for both bulk and defect supercells using consistent computational parameters (timestep, twist sampling). For charged defects, employ the same charge corrections applied in DFT.

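- Energy Difference: Calculate the defect formation energy as $\Delta E = E_{\mathrm{defect}} - E_{\mathrm{bulk}} \pm \mu + qE_F$, where $E_{\mathrm{defect}}$ and $E_{\mathrm{bulk}}$ are the DMC total energies of the defect and pristine supercells, $\mu$ is the chemical potential of each atom removed (+) or added (−) to form the defect, $q$ is the defect charge, and $E_F$ is the Fermi level.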
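The formation-energy expression in the last step can be evaluated with a small helper. This is a sketch under stated assumptions: the function name, the sign convention (atom counts positive when added, negative when removed), and every number below are hypothetical, and finite-size corrections for charged defects are deliberately left out.

```python
import math

def defect_formation_energy(e_defect, e_bulk, delta_n, mu, charge=0,
                            fermi_level=0.0, err_defect=0.0, err_bulk=0.0):
    """Return (E_f, error) with E_f = E_defect - E_bulk - sum_i n_i mu_i + q E_F.

    delta_n maps species to atoms added (+) or removed (-); mu maps species to
    chemical potentials. The two DMC error bars are combined in quadrature.
    """
    exchange = sum(delta_n[s] * mu[s] for s in delta_n)
    e_form = e_defect - e_bulk - exchange + charge * fermi_level
    err = math.sqrt(err_defect**2 + err_bulk**2)
    return e_form, err

# Hypothetical neutral oxygen vacancy (all energies in eV, invented values).
e_form, err = defect_formation_energy(
    e_defect=-565.0, e_bulk=-1000.0,
    delta_n={"O": -1}, mu={"O": -432.0},
    err_defect=0.03, err_bulk=0.04,
)
print(f"E_f = {e_form:.2f} +/- {err:.2f} eV")
```

Because the formation energy is a small difference between two large total energies, the statistical error of each DMC run has to be well below the target accuracy of the final number.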

Essential Research Reagents and Computational Tools

The computational research workflow relies on several essential "reagent" solutions that enable specific capabilities.

Table 3: Essential Computational Tools and Resources

| Tool/Resource | Function | Availability |

|---|---|---|

| NEXUS Workflow Manager | Automates multi-step QMC workflows between codes | Included with QMCPACK |

| GPU4PySCF Plugin | Accelerates PySCF calculations on NVIDIA GPUs | https://github.com/pyscf/gpu4pyscf |

| Pseudopotential Library | Provides correlation-consistent ECPs for QMC | https://pseudopotentiallibrary.org/ |

| convert4qmc Utility | Converts wavefunctions from various formats to QMCPACK input | Included with QMCPACK |

| Build Scripts for HPC | Pre-configured compilation recipes for supercomputers | Included with QMCPACK |

QMCPACK, Quantum ESPRESSO, and PySCF represent a powerful, interoperable toolkit for advancing electronic structure research. Quantum ESPRESSO provides robust foundations in solid-state DFT, PySCF offers unparalleled methodological breadth for molecular quantum chemistry, and QMCPACK delivers benchmark accuracy through modern, performance-portable QMC implementations. Their continued development—particularly in GPU acceleration, interoperability, and workflow automation—ensures they remain essential for researchers tackling challenging problems in materials design and drug development where quantitative accuracy is paramount. The upcoming QMC summer school in July 2025 [4] and ongoing development of correlation-consistent pseudopotentials [20] will further lower barriers to adoption, expanding the impact of these high-accuracy computational methods.

QMC in Action: Practical Methodologies for Molecules, Materials, and Drug Discovery

Within electronic structure research, Quantum Monte Carlo (QMC) methods have emerged as a powerful tool for achieving benchmark accuracy in many-body quantum systems, offering a compelling alternative for problems where traditional methods like Density Functional Theory (DFT) or coupled cluster struggle, particularly in strongly correlated systems [4]. The application of QMC in domains such as drug development is increasingly relevant for modeling challenging catalytic systems, including transition metal complexes involved in cross-coupling reactions like Suzuki–Miyaura, which are pivotal in pharmaceutical synthesis [21]. The reliability of any QMC study, however, is fundamentally tied to a meticulously designed workflow. This protocol details the end-to-end process, from generating initial orbitals to executing production-level Diffusion Monte Carlo (DMC) calculations, providing researchers with a structured framework for obtaining high-fidelity results.

The journey to a production QMC calculation is a multi-stage process, where the output of each step forms the essential input for the next. The overarching goal is to construct and refine a many-body wavefunction that accurately represents the system's electronic structure, ultimately enabling the precise estimation of properties like total energies and reaction barriers through the DMC method. The following diagram delineates this structured pathway.

Figure 1: A sequential workflow for Quantum Monte Carlo calculations, outlining the key stages from initial system setup to the final production run.

Detailed Stage Protocols and Data

Stage 1: Pseudopotential Preparation and Testing

Objective: To obtain and validate a high-quality pseudopotential for the element(s) of interest, ensuring its accuracy and compatibility with the QMC workflow.

Detailed Protocol:

- Acquisition: Download the pseudopotential from a reputable database, such as the Burkatzki-Filippi-Dolg (BFD) database. For an oxygen atom, this involves selecting the appropriate element and retrieving the potential in a format like Gamess [22].

- Conversion: Use the `ppconvert` tool to transform the pseudopotential into the formats required by different components of the workflow.

  - For Quantum ESPRESSO (QE), convert to the UPF format.

  - For QMCPACK, convert to the FSATOM XML format.

- Validation: Conduct a DMC time-step study on an isolated atom (e.g., neutral oxygen) to verify the pseudopotential's performance and establish the magnitude of time-step errors for the production system [22].

Table 1: Pseudopotential File Formats and Their Uses in the QMC Workflow.

| File Format | Primary Use | Tool |

|---|---|---|

| Gamess (.gamess) | Source format; obtained from database | BFD Database |

| UPF (.upf) | Plane-wave DFT calculation | Quantum ESPRESSO |

| FSATOM XML (.xml) | QMC calculation | QMCPACK |

Stage 2: Orbital Generation with Plane-Wave DFT

Objective: To generate a high-quality single-particle orbital file that will form the determinantal part of the QMC trial wavefunction.

Detailed Protocol:

- DFT Input Preparation: Create an input file for Quantum ESPRESSO's `pw.x`. Key parameters must be carefully set [22]:

  - `calculation = 'scf'` for a single-point self-consistent field calculation.

  - `wf_collect = .true.` to ensure orbitals are written to disk.

  - A high plane-wave energy cutoff (`ecutwfc`). A value of 300 Ry is recommended for oxygen, but this should be converged for your specific system to ensure orbital quality [23] [22].

  - Use the converted UPF pseudopotential in the `ATOMIC_SPECIES` section.

- Execution: Run the DFT calculation.

- Output Verification: Confirm the calculation completed successfully and note the total energy. The orbitals are written to a directory (e.g., `O.q0.save/`) [22].
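The input settings listed above can be assembled programmatically. The sketch below is a minimal template, not the input file from the cited tutorial: the pseudopotential file name, box size, convergence threshold, and the helper name are placeholders, and spin-polarization settings that an isolated atom would normally need are omitted.

```python
def make_pwscf_input(prefix, pseudo_file, ecutwfc=300.0, box_bohr=20.0):
    """Return a pw.x SCF input for one oxygen atom in a cubic box (illustrative values)."""
    return f"""&CONTROL
  calculation = 'scf'
  prefix = '{prefix}'
  pseudo_dir = './'
  outdir = './'
  wf_collect = .true.
/
&SYSTEM
  ibrav = 1
  celldm(1) = {box_bohr}
  nat = 1
  ntyp = 1
  ecutwfc = {ecutwfc}
/
&ELECTRONS
  conv_thr = 1.0d-8
/
ATOMIC_SPECIES
  O  15.999  {pseudo_file}
ATOMIC_POSITIONS alat
  O  0.0  0.0  0.0
K_POINTS gamma
"""

# "O.BFD.upf" is a hypothetical name for the converted UPF pseudopotential.
text = make_pwscf_input("O.q0", "O.BFD.upf")
print(text)
```

Generating the file from a function makes a cutoff convergence scan straightforward: call it with several `ecutwfc` values and compare the resulting total energies.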

Stage 3: Orbital Conversion to ESHDF Format

Objective: To convert the orbitals from the native Quantum ESPRESSO format to the ESHDF (Electron Structure Hierarchy Data Format) file that QMCPACK reads.

Detailed Protocol:

- Input for Converter: Prepare a simple input file (e.g., `O.q0.p2q.in`) for the `pw2qmcpack.x` converter tool [22].

- Execution: Run the converter.

- Outputs: This process generates the critical `O.q0.pwscf.h5` file containing the orbitals, along with template XML files for the particle set and wavefunction [22].

Stage 4: Wavefunction Optimization - Jastrow Factor

Objective: To optimize the parameters of the Jastrow correlation factor, which captures electron-electron and electron-ion correlations, thereby reducing the variance of the local energy and improving the efficiency and accuracy of the subsequent DMC calculation [23].

Detailed Protocol:

- Input Preparation: Create a QMCPACK input file (e.g., `O.q0.opt.in.xml`) using the `method="linear"` driver for wavefunction optimization [13].

- Wavefunction Definition: In the input file, define the trial wavefunction as the product of the converted Slater determinants and a Jastrow factor. The Jastrow typically includes 1-body (electron-ion), 2-body (electron-electron), and sometimes 3-body terms.

- Optimization Parameters: The optimization uses the linear method to minimize the energy variance or total energy. A sufficient number of samples (e.g., ~20,000 for initial iterations, increasing for later ones) must be used to converge the parameters reliably [23].

- Execution: Run the optimization in QMCPACK.

Stage 5: Production DMC and Time-Step Extrapolation

Objective: To perform a statistically rigorous DMC calculation that projects out the ground state energy from the optimized trial wavefunction, while systematically removing time-step bias.

Detailed Protocol:

- Input Preparation: Create a DMC input section in the QMCPACK XML file. Use the `method="dmc"` driver and the optimized wavefunction from the previous stage [13].

- Population Control: Specify the total walker population using `total_walkers` or `walkers_per_rank`. For GPU efficiency, hundreds of walkers per rank may be required to maximize throughput [13].

- Time-Step Study: Run a series of DMC calculations at different time steps (e.g., 0.02, 0.01, 0.005 a.u.). The DMC energy will approach the true ground state energy as the time step approaches zero [22].

- Extrapolation: Fit the obtained energies versus time step to a linear or quadratic function and extrapolate to zero time step to eliminate time-step bias.

- Statistical Analysis: Ensure a sufficient number of blocks are collected to reduce the statistical error bar on the final energy to an acceptable level (error ∝ 1/√Number of Samples) [23].
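The time-step study and extrapolation steps can be sketched as a weighted polynomial fit. The energies and error bars below are invented for illustration (generated from a straight line with intercept −15.9), and the function name is hypothetical; real DMC output would be substituted for them.

```python
import numpy as np

def extrapolate_timestep(timesteps, energies, errors, degree=1):
    """Weighted polynomial fit of E(tau); returns the tau -> 0 energy and its error."""
    tau = np.asarray(timesteps, dtype=float)
    e = np.asarray(energies, dtype=float)
    weights = 1.0 / np.asarray(errors, dtype=float)
    # cov="unscaled" treats the weights as 1/sigma, so the covariance matrix
    # reflects the supplied error bars directly.
    coeffs, cov = np.polyfit(tau, e, degree, w=weights, cov="unscaled")
    return coeffs[-1], np.sqrt(cov[-1, -1])

# Hypothetical DMC energies (Ha) at the three time steps named in the protocol.
timesteps = [0.02, 0.01, 0.005]
energies = [-15.9 + 0.5 * t for t in timesteps]
errors = [0.0005, 0.0005, 0.0005]

e0, e0_err = extrapolate_timestep(timesteps, energies, errors, degree=1)
print(f"E(tau -> 0) = {e0:.5f} +/- {e0_err:.5f} Ha")
```

A quadratic fit (`degree=2`) needs at least one more time step than shown here to leave any freedom for judging fit quality, so the linear form is the safer default for a three-point study.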

Table 2: Key Parameters for a Production Batched DMC Calculation in QMCPACK.

| Parameter | Datatype | Description & Purpose | Typical Value/Range |

|---|---|---|---|

| `timestep` | Real | Imaginary time step for electron propagation. Key for time-step study. | 0.001 - 0.02 a.u. |

| `blocks` | Integer | Number of statistical data collection blocks. | 100 - 1000+ |

| `steps` | Integer | Number of electron move steps per block. | 1 - 100+ |

| `total_walkers` | Integer | Total number of walkers in the simulation. | System-dependent |

| `walkers_per_rank` | Integer | Number of walkers per MPI rank. Tuned for GPU performance. | Hundreds for GPUs |

| `warmupsteps` | Integer | Steps for equilibration; data not collected. | 10 - 100 |
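As an illustration of how the parameters in this table fit together, the sketch below builds a DMC `<qmc>` section with Python's standard XML library. The parameter values are placeholders within the ranges above, the helper name is hypothetical, and the `move="pbyp"` (particle-by-particle) attribute is an addition not discussed in the text; a complete QMCPACK input also needs project, system, wavefunction, and Hamiltonian sections that are not shown.

```python
import xml.etree.ElementTree as ET

def make_dmc_section(timestep, blocks, steps, warmupsteps, walkers_per_rank):
    """Build a <qmc method="dmc"> element holding the parameters from the table."""
    qmc = ET.Element("qmc", {"method": "dmc", "move": "pbyp"})
    params = {
        "walkers_per_rank": walkers_per_rank,
        "warmupsteps": warmupsteps,
        "blocks": blocks,
        "steps": steps,
        "timestep": timestep,
    }
    for name, value in params.items():
        parameter = ET.SubElement(qmc, "parameter", {"name": name})
        parameter.text = str(value)
    return ET.tostring(qmc, encoding="unicode")

# Placeholder values; tune them for the actual system and hardware.
section = make_dmc_section(timestep=0.01, blocks=200, steps=10,
                           warmupsteps=50, walkers_per_rank=256)
print(section)
```

Calling the helper once per time step gives a consistent series of DMC sections for a time-step study.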

The Scientist's Toolkit: Essential Research Reagents

This section catalogues the critical software and data components, or "research reagents," required to execute the QMC workflow.

Table 3: Essential Software and Data Components for QMC Calculations.

| Research Reagent | Function in the Workflow |

|---|---|

| Pseudopotential (e.g., BFD) | Replaces core electrons with an effective potential, drastically reducing the number of explicitly treated electrons and the computational cost [22]. |

| Orbital File (ESHDF .h5) | Contains the single-particle orbitals forming the antisymmetric (Slater determinant) part of the trial wavefunction, typically generated by a preceding DFT or HF calculation [22]. |

| Optimized Jastrow Factor | A symmetric function that multiplies the determinant, explicitly encoding electron correlations. This drastically reduces the variance of the energy, improving statistical efficiency [23] [24]. |

| Batched DMC Driver | The modern, performance-portable QMCPACK driver for DMC. It organizes walkers into "crowds" for efficient, lock-step computation on GPU architectures [13]. |

The pathway from initial orbitals to production QMC calculations is a rigorous but structured process. Each stage—from pseudopotential selection and meticulous orbital generation to Jastrow optimization and controlled DMC projection—builds upon the previous one to ensure the final results are both accurate and meaningful. Adherence to the detailed protocols outlined in this document, with particular attention to the convergence of key parameters such as plane-wave cutoff, Jastrow optimization samples, and DMC time step, will equip computational researchers with a robust framework for leveraging the power of Quantum Monte Carlo. This is especially critical in fields like drug development, where accurately modeling the electronic structure of complex, strongly correlated molecular systems can provide decisive insights.

The multiple-minima problem is a fundamental challenge in computational chemistry, where a molecule can exist in a vast number of local energy minima on its potential energy surface (PES). The identification of low-energy conformers—spatially distinct structures—is crucial for accurately predicting molecular properties, reactivity, and biological activity [25]. Within the broader context of quantum Monte Carlo (QMC) methods in electronic structure research, the Multiple-Minimum Monte Carlo (MMMC) method emerges as a powerful sampling approach to navigate this complex conformational space. By leveraging stochastic sampling combined with local minimization, MMMC efficiently locates low-energy conformers, providing a robust solution for molecular geometry relaxation and conformer search, which is especially vital for flexible systems in drug development and materials science [26] [27].

Underlying Principles and Methodologies

The core of the MMMC method is a Monte Carlo-minimization procedure designed to overcome the multiple-minima problem [27]. This hybrid technique combines the global sampling power of Monte Carlo with the local refinement of energy minimization.

The algorithm operates through an iterative cycle, as visually summarized in the workflow below:

Key Algorithmic Features:

- Usage-Directed Sampling: The input conformation for each iteration is sampled from the existing ensemble, prioritizing structures that have been used the least. This promotes comprehensive exploration of the conformational space [26].

- Energetic and Geometric Criteria: Newly found minima are accepted based on two criteria:

- Their energy must lie within a specified window (e.g., 10 kcal/mol) above the current global minimum energy.

- They must be geometrically distinct from all accepted conformers, as determined by a root-mean-square deviation (RMSD) threshold [26].

- Steric Screening: Before minimization, random dihedral angle modifications are first subjected to a quick steric test to immediately reject unphysical configurations, saving computational resources [26].
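The features above can be combined into a toy Monte Carlo-minimization loop. This sketch is not the cited package: the torsional energy function, the "steric" rule, the gradient-descent minimizer, and all thresholds are hypothetical stand-ins, chosen so the example runs with the standard library alone.

```python
import math
import random

def energy(phi):
    """Toy torsional energy (kcal/mol); each dihedral has one anti and two gauche wells."""
    return sum(1.5 * (1 + math.cos(3 * p)) + 0.5 * (1 + math.cos(p)) for p in phi)

def minimize(phi, step=0.05, n_steps=300):
    """Local minimization by plain gradient descent on the analytic gradient."""
    phi = list(phi)
    for _ in range(n_steps):
        phi = [p + step * (4.5 * math.sin(3 * p) + 0.5 * math.sin(p)) for p in phi]
    return [(p + math.pi) % (2 * math.pi) - math.pi for p in phi]

def angular_rmsd(a, b):
    """RMS difference between two dihedral vectors, respecting 2*pi periodicity."""
    diffs = [(x - y + math.pi) % (2 * math.pi) - math.pi for x, y in zip(a, b)]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))

def passes_steric_test(phi, clash=0.2):
    """Hypothetical quick screen: reject near-eclipsed dihedrals (|phi| < clash rad)."""
    return all(abs(p) >= clash for p in phi)

def mmmc_search(n_dihedrals=3, n_iter=500, window=1.0, rmsd_tol=0.3, seed=0):
    rng = random.Random(seed)
    start = minimize([rng.uniform(-math.pi, math.pi) for _ in range(n_dihedrals)])
    ensemble = [{"phi": start, "energy": energy(start), "used": 0}]
    for _ in range(n_iter):
        # Usage-directed sampling: start from the least-used conformer.
        parent = min(ensemble, key=lambda c: c["used"])
        parent["used"] += 1
        trial = list(parent["phi"])
        for i in rng.sample(range(n_dihedrals), rng.randint(1, n_dihedrals)):
            trial[i] = rng.uniform(-math.pi, math.pi)
        # Steric screening happens before the (more expensive) minimization.
        if not passes_steric_test(trial):
            continue
        trial = minimize(trial)
        e_trial = energy(trial)
        e_min = min(c["energy"] for c in ensemble)
        # Energetic criterion, then geometric distinctness from all accepted conformers.
        if e_trial > e_min + window:
            continue
        if all(angular_rmsd(trial, c["phi"]) > rmsd_tol for c in ensemble):
            ensemble.append({"phi": trial, "energy": e_trial, "used": 0})
    # Drop conformers that fell outside the window once a lower minimum was found.
    e_min = min(c["energy"] for c in ensemble)
    kept = [c for c in ensemble if c["energy"] <= e_min + window]
    return sorted(kept, key=lambda c: c["energy"])

conformers = mmmc_search()
print(f"{len(conformers)} conformers, lowest energy {conformers[0]['energy']:.3f} kcal/mol")
```

In a real search the energy and minimizer would come from the chosen calculator, and the distinctness test would use Cartesian RMSD after alignment rather than a dihedral-space distance.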

This method stands in contrast to other conformational search strategies. For instance, while molecular dynamics (MD) simulations excel at sampling typical fluctuations, they can struggle to cross high energy barriers and access rare conformations. The MMMC method, with its stochastic jumps in dihedral space, is particularly adept at overcoming these barriers [26]. Similarly, a comparison between Monte Carlo and Low Mode (LM) search methods found that for a medium-sized cyclic molecule with 14 rotatable bonds, both pure MC and pure LM methods performed equally well. However, for a very large macrocycle (39 rotatable bonds), a hybrid MC:LM method proved to be the most efficient search strategy [28].

Performance Data and Comparative Analysis

The MMMC method demonstrates significant performance advantages, particularly for large, flexible molecular systems where other methods may fail to locate the global minimum or achieve broad conformational coverage.

Table 1: Comparative Performance of MMMC Against Metadynamics (iMTD) on a Flexible Dimeric Catalyst [26]

| Method | Starting Conformation | Number of Iterations | Lowest Energy Found (relative) | Conformational Diversity |
|---|---|---|---|---|
| MMMC | Extended (RDKit) | 250 | 0.0 kcal/mol (reference) | Significantly larger space explored |
| iMTD (CREST) | Extended (RDKit) | N/A | > +8.0 kcal/mol | Limited in comparison |

Table 2: Search Method Efficiency for Molecules of Different Sizes [28]

| Molecule Size | Rotatable Bonds | Most Efficient Method | Key Finding |
|---|---|---|---|
| Small | 6 | Pure MC, Pure LM, or Hybrid | All methods found the same ensemble of 13 unique structures with equal efficiency. |
| Medium (Cyclic) | 14 | Pure MC, Pure LM, or Hybrid | All methods were equally efficient at searching the conformational space. |
| Large (Macrocycle) | 39 | Hybrid MC:LM (50:50) | The hybrid method was most efficient for searching the high-dimensional space. |

The data in Table 1 highlight MMMC's ability to find significantly lower-energy structures and a more diverse set of conformers than a metadynamics-based approach (iMTD in CREST) for a challenging flexible catalyst [26]. Table 2 shows that pure MC performs as well as the alternatives for small and medium systems, while combining it with LM pays off for the most complex, high-dimensional problems [28].

Experimental and Computational Protocols

Protocol: Multiple-Minimum Monte Carlo Conformer Search

This protocol outlines the steps for performing a conformer search using the MMMC method, suitable for implementation with software packages like the multiple-minimum-monte-carlo package that supports the ASE (Atomic Simulation Environment) calculator interface [26].

I. Initial Setup

- Define the Molecular System: Obtain an initial 3D conformation of the molecule, typically generated by a tool like RDKit.

- Select Energy Calculator: Choose an appropriate energy calculator (e.g., a quantum mechanics method, machine-learned interatomic potential, or molecular mechanics force field) via the ASE interface.

- Set Algorithm Parameters:

  - Energy window: Set the acceptance energy threshold (e.g., 10 kcal/mol above the current global minimum).

  - RMSD threshold: Define the cutoff for considering two structures unique (e.g., 0.5 Å).

  - Maximum iterations: Set the total number of MMMC steps to perform.

  - Sampling strategy: Select "usage-directed" sampling, which uses the least-sampled conformer from the ensemble as the next starting point.

II. Execution Loop

- Sample Input Conformer: From the current ensemble of accepted conformers, select one based on the usage-directed strategy.

- Perturb Geometry: Randomly modify the dihedral angles of the selected input conformer.

- Steric Clash Check: Perform a quick steric test on the perturbed structure. If unphysical clashes are detected, reject the structure and return to the Sample Input Conformer step.

- Local Minimization: Subject the sterically-viable structure to a local energy minimization using the chosen calculator, moving it to the nearest local minimum on the PES.

- Evaluation and Acceptance:

  - Check if the energy of the minimized structure is within the defined energy window.

  - If it passes, calculate the RMSD between the new minimum and all previously accepted conformers.

  - If the RMSD is above the threshold for all accepted conformers, add this new, unique, low-energy conformer to the ensemble.

III. Analysis and Validation

- Convergence Check: Monitor the discovery rate of new unique minima. The search can be considered converged when no new minima are found over a large number of consecutive iterations.

- Post-Processing: Analyze the final ensemble of conformers, including their energy distribution, geometric diversity, and relevance to the property of interest (e.g., identifying substrate-binding modes).
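The protocol above can be illustrated end to end on a toy problem. The sketch below is not the multiple-minimum-monte-carlo package and does not use ASE; it assumes a hypothetical "molecule" described only by a list of dihedral angles (in radians), an illustrative threefold torsional potential, plain gradient descent as the local minimizer, and a stand-in steric test that rejects near-eclipsed dihedrals. All function names, constants, and default parameters are assumptions made for illustration.

```python
import math
import random

K1, K3 = 1.0, 1.5  # toy torsional constants (kcal/mol), illustrative only


def energy(phi):
    """Toy torsional energy: anti (180 deg) and gauche minima per dihedral."""
    return sum(K1 * (1 + math.cos(p)) + K3 * (1 + math.cos(3 * p)) for p in phi)


def minimize(phi, step=0.05, n_steps=500):
    """Gradient descent to the nearest local minimum of the toy surface."""
    phi = list(phi)
    for _ in range(n_steps):
        # dE/dp = -K1 sin(p) - 3 K3 sin(3p); move against the gradient
        phi = [p + step * (K1 * math.sin(p) + 3 * K3 * math.sin(3 * p)) for p in phi]
    return phi


def wrap(x):
    """Map an angle difference into [-pi, pi)."""
    return (x + math.pi) % (2 * math.pi) - math.pi


def angular_rmsd(a, b):
    return math.sqrt(sum(wrap(p - q) ** 2 for p, q in zip(a, b)) / len(a))


def passes_steric_test(phi, cutoff=math.radians(10)):
    """Stand-in for a clash check: reject near-eclipsed (cis) dihedrals."""
    return all(abs(wrap(p)) > cutoff for p in phi)


def mmmc_search(start, window=10.0, rmsd_cut=0.3, max_iter=2000,
                patience=300, seed=0):
    rng = random.Random(seed)
    start = minimize(start)
    ensemble = [{"phi": start, "energy": energy(start), "used": 0}]
    since_last_new = 0
    for _ in range(max_iter):
        parent = min(ensemble, key=lambda c: c["used"])  # usage-directed sampling
        parent["used"] += 1
        # Randomly reassign roughly half of the dihedrals
        trial = [rng.uniform(-math.pi, math.pi) if rng.random() < 0.5 else p
                 for p in parent["phi"]]
        since_last_new += 1
        if not passes_steric_test(trial):
            continue  # cheap rejection before any minimization
        trial = minimize(trial)
        e = energy(trial)
        e_min = min(c["energy"] for c in ensemble)
        if e <= e_min + window and all(
                angular_rmsd(trial, c["phi"]) > rmsd_cut for c in ensemble):
            ensemble.append({"phi": trial, "energy": e, "used": 0})
            since_last_new = 0
        if since_last_new >= patience:
            break  # no new minima for many consecutive iterations
    return sorted(ensemble, key=lambda c: c["energy"])
```

A call such as `mmmc_search([0.3, -2.0, 1.0])` returns the accepted conformers sorted by energy. With three dihedrals and three minima each, this toy surface has at most 27 distinct minima, so the `patience` counter gives a simple version of the convergence check described in the Analysis and Validation step.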

Advanced Modifications

- Parallelization: The MMMC process can be accelerated by running multiple iterations or minimizations in parallel [26].

- Constrained Searches: Specific atoms can be frozen during the search to model surface adsorption or to focus sampling on a particular region of the molecule [26].

- Integration with Machine Learning: For extremely high-dimensional spaces, consider using a generative model like a Variational Autoencoder (VAE) to search in a reduced latent space biased towards low-energy regions, which can improve sampling efficiency [29].

The Scientist's Toolkit

Successful application of the MMMC method and related conformational search techniques relies on a suite of computational tools and reagents.

Table 3: Essential Research Reagent Solutions for Conformer Search

| Tool / Reagent | Function | Example Use Case |
|---|---|---|
| ASE (Atomic Simulation Environment) | A Python framework for defining and interacting with atoms, molecules, and calculators. | Provides a common interface to set up the molecular system and connect it to various energy calculators [26]. |
| MLIPs (Machine-Learned Interatomic Potentials) | Fast, near-quantum accuracy potentials for energy and force evaluation. | Enables rapid energy minimization steps during the MMMC loop, making quantum-level conformer searches feasible [26]. |
| RDKit | Open-source cheminformatics toolkit. | Generates reasonable initial 3D molecular conformations to seed the MMMC search [26]. |
| Variational Autoencoder (VAE) | A generative model that learns a compressed, low-dimensional representation (latent space) of molecular conformations. | Biases conformational sampling towards low-energy regions, increasing search efficiency in high-dimensional spaces [29]. |
| Gaussian Process (GP) Regression | A non-parametric Bayesian model used to create a surrogate (approximate) model of the potential energy surface. | After data generation, a GP can be used to identify local minima for final refinement with more accurate methods [29]. |

Integration with Electronic Structure Research

The MMMC method finds a natural and powerful synergy with quantum Monte Carlo (QMC) in electronic structure research. While MMMC efficiently samples the conformational space defined by nuclear coordinates, QMC provides highly accurate energies and forces for a given nuclear geometry, serving as a premium "calculator" within the MMMC framework.

- High-Accuracy Force Calculations: QMC methods, particularly diffusion Monte Carlo (DMC) with the variational-drift-diffusion approximation, can provide forces that are as accurate as those from the coupled-cluster (CC) "gold standard," but with more favorable scaling for larger systems [30]. These accurate forces are critical for correct local minimization within the MMMC algorithm.

- Machine-Learned Force Fields (MLFFs): The high computational cost of QMC can be mitigated by using it to generate reference data for training MLFFs [30]. An MMMC search can then be performed using a QMC-trained MLFF, combining the sampling power of MMMC with the accuracy and speed of the MLFF for high-throughput discovery of low-energy conformers at near-QMC accuracy.

- Beyond Organic Molecules: This combined approach is not limited to drug-like molecules. The principles of MMMC sampling with accurate electronic structure calculators can be applied to materials science problems, such as the relaxation of crystal structures [31] or the study of supramolecular assemblages [32].

In conclusion, the Multiple-Minimum Monte Carlo method represents a robust and efficient strategy for tackling the pervasive multiple-minima problem in computational chemistry. Its integration with high-accuracy electronic structure methods like quantum Monte Carlo paves the way for reliable predictions of molecular structure, dynamics, and function across chemistry and materials science.

Quantum Monte Carlo (QMC) methods are a class of high-accuracy, many-body computational techniques for solving the electronic Schrödinger equation. Although traditionally applied to problems in materials science and condensed matter physics, such as strongly correlated solids, QMC's benchmark-quality accuracy is finding new applications in biomedical research [4]. Because these methods compute molecular interactions from first principles, they offer a powerful tool for investigating biological systems. This application note details how QMC and related Monte Carlo (MC) techniques are advancing two critical areas in drug development: the precise prediction of protein-ligand binding affinity and the reliable assessment of molecular toxicity. By moving beyond more approximate methods, these approaches provide a more fundamental understanding of electronic interactions in biological processes, supporting more effective and safer therapeutic design.

Quantum Monte Carlo in Electronic Structure and Biomedicine

QMC Fundamentals and Biomedical Relevance

Quantum Monte Carlo encompasses several stochastic methods for investigating the electronic structure of quantum systems. A key advantage is its capability to directly handle many-body wavefunctions, providing solutions with accuracy that often serves as a benchmark for other electronic structure methods [4]. The core principle involves using random walks to sample the probability distribution of electrons, so that expectation values are computed directly from the many-body wavefunction with fewer simplifying approximations than most other computational approaches.
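The random-walk idea can be made concrete with the simplest possible case. The sketch below is a minimal variational Monte Carlo (VMC) calculation for a single hydrogen atom in atomic units, assuming the trial wavefunction psi(r) = exp(-alpha r); it is a one-electron teaching example, not a production QMC workflow, and the function names, step size, and sample counts are illustrative choices. A Metropolis walk samples |psi|^2, and the energy is the average of the local energy E_L = (H psi)/psi = -alpha^2/2 + (alpha - 1)/r.

```python
import math
import random


def local_energy(r, alpha):
    """E_L = (H psi)/psi for psi = exp(-alpha r), in Hartree atomic units."""
    return -0.5 * alpha ** 2 + (alpha - 1.0) / r


def vmc_hydrogen(alpha, n_steps=20000, n_equil=2000, step=1.0, seed=0):
    """Return (mean, variance) of the local energy sampled from |psi|^2."""
    rng = random.Random(seed)
    pos = [0.5, 0.5, 0.5]
    r = math.sqrt(sum(x * x for x in pos))
    total, total_sq = 0.0, 0.0
    for i in range(n_steps + n_equil):
        # Propose a uniform random displacement of the electron
        trial = [x + step * (rng.random() - 0.5) for x in pos]
        r_trial = math.sqrt(sum(x * x for x in trial))
        # Metropolis test with ratio |psi(trial)|^2 / |psi(pos)|^2
        if rng.random() < math.exp(-2.0 * alpha * (r_trial - r)):
            pos, r = trial, r_trial
        if i >= n_equil:  # discard equilibration steps
            e = local_energy(r, alpha)
            total += e
            total_sq += e * e
    mean = total / n_steps
    return mean, total_sq / n_steps - mean ** 2
```

With `alpha = 1.0` the trial function is the exact ground state, so every sample of the local energy equals -0.5 Hartree and the variance vanishes. For any other `alpha` the estimate carries statistical noise and its expectation value, alpha^2/2 - alpha, lies above -0.5, which is the variational principle that wavefunction optimization in QMC exploits.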

In the context of biomedicine, this translates to an ability to model molecular interactions with high fidelity. For instance, the binding between a drug candidate (ligand) and its protein target is governed by a complex interplay of electrostatic, van der Waals, and hydrophobic interactions, all of which are electronic in origin. QMC methods can provide a detailed picture of these interactions by accurately modeling the electron correlation effects that are often poorly described by more approximate methods like density functional theory (DFT) with standard exchange-correlation functionals [4]. This makes QMC particularly suited for challenging systems, including those involving van der Waals forces or transition metal centers often found in biomolecules.

Connecting Electronic Structure to Binding and Toxicity

The accuracy of QMC in describing electronic structure is the foundation for its predictive power in biomedical applications:

- Binding Affinity: The binding free energy of a protein-ligand complex is a central quantity in drug discovery. It can be rigorously computed from first principles by considering the energy differences along the binding pathway. QMC can provide highly accurate interaction energies for these calculations, which are critical for distinguishing between strong and weak binders [33].

- Toxicity Prediction: Toxicity often arises from undesired interactions between a molecule and off-target proteins or from the molecule's inherent chemical reactivity. Both can be probed through electronic structure calculations. Accurate prediction of frontier orbital energies (HOMO/LUMO) and excitation energies via QMC can inform on a molecule's reactivity, while interaction energy calculations can identify potential off-target binding [34].

Predicting Protein-Ligand Binding Affinity

Accurate evaluation of ligand binding free energies remains a central challenge in computational biophysics. Alchemical free energy perturbation (FEP) methods, which compute the free energy difference after a chemical change, are a particularly attractive option due to their rigorous theoretical foundation [35]. These methods can be implemented with either Molecular Dynamics (MD) or Monte Carlo (MC) sampling, and their predictive power is increasingly being enhanced by QMC-derived reference data.

Free Energy Perturbation (FEP) Foundations

FEP is based on the Zwanzig relationship, which provides a way to compute the free energy difference between two states (e.g., a ligand A and a slightly modified ligand B) by gradually transforming one into the other via a series of non-physical intermediates. This is controlled by an alchemical parameter, λ, which varies from 0 (initial state) to 1 (final state) [35]. The sampling at each λ-window can be performed using MC or MD, and the free energy difference is typically calculated using methods like the Bennett acceptance ratio (BAR) [35]. A major challenge is achieving adequate sampling of all relevant conformations. Enhanced sampling protocols, such as Replica Exchange with Solute Tempering (REST), are often employed, where a selected region (e.g., the ligand) is effectively "heated" to promote barrier crossing while maintaining rigorous Boltzmann sampling [35].
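As a sketch of the estimator behind this description, the Zwanzig relationship gives the free energy change between adjacent states as ΔF = -kT ln ⟨exp(-ΔU/kT)⟩, where ΔU is the energy difference between the two states and the average runs over configurations sampled in the starting state. The Python below applies this exponential averaging to one λ-window and sums over windows. The function names and the input layout (a list of ΔU samples per window, in kcal/mol) are assumptions for illustration; a production workflow would more likely use BAR, as noted above.

```python
import math

KB = 0.0019872041  # Boltzmann constant in kcal/(mol K)


def zwanzig_delta_f(delta_u, temperature=298.15):
    """Exponential averaging: dF = -kT ln < exp(-dU/kT) > over sampled dU values."""
    kt = KB * temperature
    x = [-du / kt for du in delta_u]
    x_max = max(x)  # log-sum-exp trick to avoid overflow
    log_mean = x_max + math.log(sum(math.exp(v - x_max) for v in x) / len(x))
    return -kt * log_mean


def total_delta_f(windows, temperature=298.15):
    """Sum per-window free energy changes along the lambda schedule (0 to 1)."""
    return sum(zwanzig_delta_f(w, temperature) for w in windows)
```

Because the exponential average is dominated by rare low-ΔU samples, the estimate from a single window is never larger than the plain mean of ΔU and converges slowly when neighboring states overlap poorly. This is one reason the transformation is split into many small λ steps.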

QMC-Accelerated FEP Protocol

The following protocol outlines how high-accuracy QMC calculations can be integrated into an FEP workflow to improve binding affinity predictions.

Detailed Protocol: QMC-Enhanced FEP for Binding Affinity

- Objective: To compute the relative binding free energy between two similar ligands for a protein target with high accuracy, using QMC to refine key energy terms.

- Prerequisites: A high-resolution structure of the protein-ligand complex (e.g., from X-ray crystallography or cryo-EM) and parameterized force fields for the protein and ligands.

System Preparation: