Quantum Optimization Ansätze for Molecular Systems: A Guide for Computational Chemistry and Drug Discovery


Abstract

This article provides a comprehensive overview of quantum optimization ansätze for simulating molecular systems, a critical technology for computational chemistry and drug discovery. We explore the foundational principles of variational quantum eigensolvers (VQE) and popular ansätze like the Unitary Vibrational Coupled Cluster (UVCC) and hardware-efficient Compact Heuristic Circuit (CHC). The content covers methodological applications in calculating ground and excited vibrational states, advanced optimization techniques like ExcitationSolve for efficient parameter tuning, and strategies for mitigating noise on current quantum hardware. Finally, we present a rigorous framework for benchmarking ansatz performance against classical methods, synthesizing key insights to highlight the transformative potential of quantum computing for accelerating pharmaceutical R&D.

Quantum Ansatz Fundamentals: From Molecular Hamiltonians to Variational Circuits

The Role of Ansätze in the Variational Quantum Eigensolver (VQE) Algorithm for Molecular Systems

The Variational Quantum Eigensolver (VQE) has emerged as a cornerstone algorithm for quantum chemistry on noisy intermediate-scale quantum (NISQ) devices, offering a practical approach to solving electronic structure problems. As a hybrid quantum-classical algorithm, VQE leverages quantum computers to prepare and measure parameterized quantum states while employing classical optimizers to find the ground state energy of molecular systems [1] [2]. At the heart of this algorithm lies the ansatz—a parameterized quantum circuit that serves as a trial wavefunction whose structure critically determines the algorithm's efficiency, accuracy, and convergence properties [3].

Selecting and designing an appropriate ansatz is a fundamental challenge that bridges physical intuition, mathematical formulation, and computational implementation. This technical guide examines the role of ansätze in VQE applications, giving researchers and drug development professionals a framework for ansatz selection, optimization, and innovation in computational chemistry.

Fundamental Principles of VQE

Algorithmic Framework

The VQE algorithm operates on the variational principle of quantum mechanics, which establishes that for any trial wavefunction |ψ(θ)⟩, the expectation value of the Hamiltonian H provides an upper bound to the true ground state energy E₀ [1]:

E₀ ≤ ⟨ψ(θ)|H|ψ(θ)⟩ [4]

The molecular Hamiltonian H is typically expressed in second quantization and transformed into a qubit representation using mappings such as Jordan-Wigner or Bravyi-Kitaev, resulting in a weighted sum of Pauli strings [1]:

H = Σⱼ αⱼPⱼ

where each Pⱼ is a tensor product of Pauli operators (I, X, Y, Z) and αⱼ represents the corresponding coefficient [5].
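As a minimal sketch of the two formulas above, the following NumPy snippet assembles a qubit Hamiltonian from Pauli strings and checks the variational bound for a random trial state. The two-qubit coefficients in `toy_terms` are hypothetical values chosen for illustration, not a real molecular Hamiltonian, and the helper names are not taken from any library.

```python
import numpy as np

# Single-qubit Pauli matrices.
PAULI = {
    "I": np.eye(2, dtype=complex),
    "X": np.array([[0, 1], [1, 0]], dtype=complex),
    "Y": np.array([[0, -1j], [1j, 0]], dtype=complex),
    "Z": np.array([[1, 0], [0, -1]], dtype=complex),
}

def pauli_string_matrix(label):
    """Tensor product of single-qubit Paulis, e.g. 'XZ' -> X (x) Z."""
    mat = np.array([[1.0 + 0j]])
    for ch in label:
        mat = np.kron(mat, PAULI[ch])
    return mat

def hamiltonian_matrix(terms):
    """Build H = sum_j alpha_j P_j from a {label: coefficient} mapping."""
    return sum(coeff * pauli_string_matrix(label) for label, coeff in terms.items())

def expectation(state, operator):
    """Return <state|operator|state> for a normalized state vector."""
    return float(np.real(np.vdot(state, operator @ state)))

# Hypothetical two-qubit coefficients, chosen only for illustration.
toy_terms = {"II": -0.5, "ZI": 0.3, "IZ": 0.3, "ZZ": 0.2, "XX": 0.1}
H = hamiltonian_matrix(toy_terms)
ground_energy = float(np.linalg.eigvalsh(H)[0])

# Any normalized trial state gives an energy at or above the ground energy.
rng = np.random.default_rng(0)
trial = rng.normal(size=4) + 1j * rng.normal(size=4)
trial /= np.linalg.norm(trial)
trial_energy = expectation(trial, H)
```

On hardware the expectation value would be estimated term by term from measurement statistics; the dense matrix here only serves to make the bound easy to verify.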

The VQE Workflow

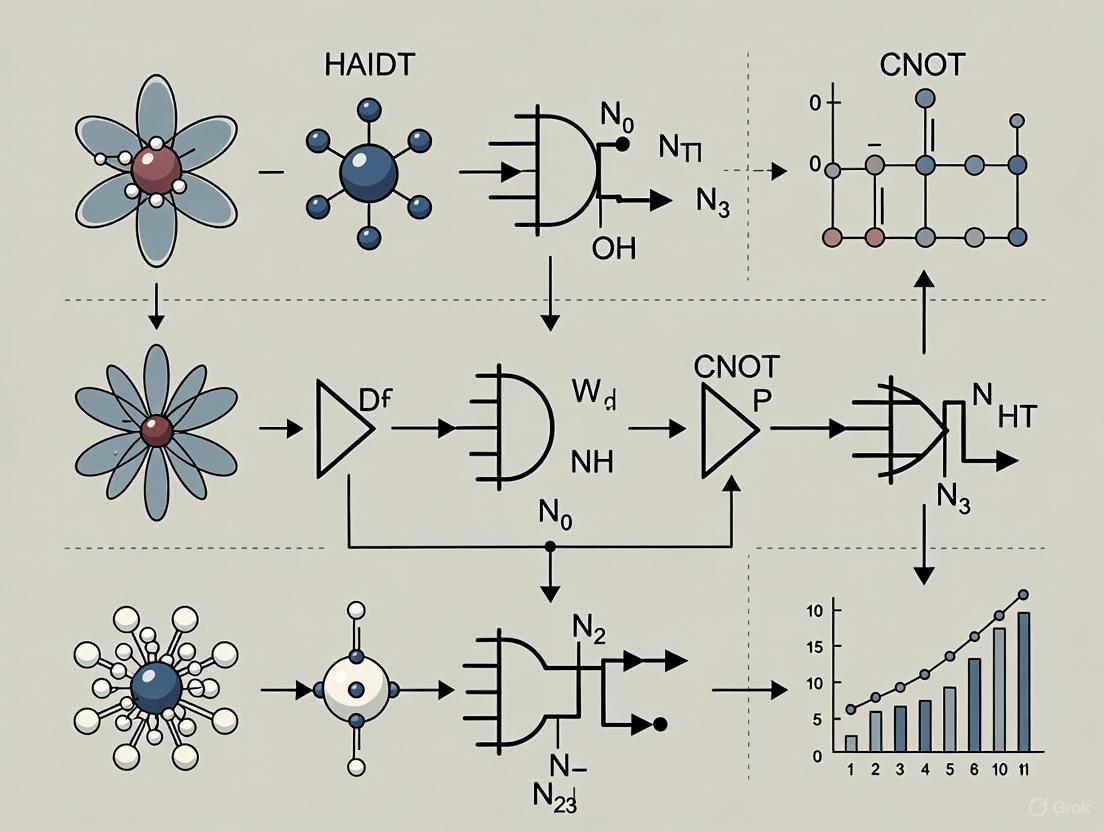

The VQE process follows an iterative hybrid workflow as illustrated below:

Figure 1: The VQE hybrid quantum-classical workflow. The algorithm iterates between quantum measurement and classical optimization until convergence criteria are met.

This workflow highlights the interdependent relationship between quantum state preparation (governed by the ansatz) and classical optimization. The quantum processor prepares the parameterized ansatz state |ψ(θ)⟩ = U(θ)|ψ₀⟩, where U(θ) represents the parameterized quantum circuit and |ψ₀⟩ is typically chosen as the Hartree-Fock initial state [5]. The system then measures the expectation values of the Hamiltonian terms, which are combined to compute the total energy. A classical optimizer uses this energy evaluation to update the parameters θ, and the process repeats until convergence [1] [4].
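The loop described above can be sketched on a single simulated qubit. Everything in this example is an assumption made for illustration: the Hamiltonian `H_TOY`, the one-parameter Ry ansatz, and plain gradient descent with the parameter-shift rule standing in for the classical optimizer. A real calculation would replace `energy` with measurements on a quantum device.

```python
import numpy as np

X = np.array([[0.0, 1.0], [1.0, 0.0]])
Z = np.array([[1.0, 0.0], [0.0, -1.0]])
H_TOY = Z + 0.5 * X  # hypothetical one-qubit Hamiltonian

def ansatz_state(theta):
    """|psi(theta)> = Ry(theta)|0> = [cos(theta/2), sin(theta/2)]."""
    return np.array([np.cos(theta / 2), np.sin(theta / 2)])

def energy(theta):
    """Stand-in for the quantum step: prepare the state and evaluate <H>."""
    psi = ansatz_state(theta)
    return float(psi @ H_TOY @ psi)

def parameter_shift_gradient(theta):
    """Gradient of a rotation-gate parameter from two shifted energy evaluations."""
    return 0.5 * (energy(theta + np.pi / 2) - energy(theta - np.pi / 2))

def run_vqe(theta0=0.1, learning_rate=0.4, max_iter=200, tol=1e-10):
    """Classical step: gradient descent until the energy stops changing."""
    theta, previous = theta0, energy(theta0)
    current = previous
    for _ in range(max_iter):
        theta -= learning_rate * parameter_shift_gradient(theta)
        current = energy(theta)
        if abs(previous - current) < tol:
            break
        previous = current
    return theta, current

theta_opt, vqe_energy = run_vqe()
exact_energy = float(np.linalg.eigvalsh(H_TOY)[0])
```

For this toy problem the ansatz can represent the exact ground state, so `vqe_energy` converges to `exact_energy`; with a less expressive ansatz the optimized energy would remain an upper bound.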

Taxonomy and Formulation of Ansätze

Ansatz Classification

Ansätze in VQE can be categorized based on their design principles and relationship to the target problem. The table below summarizes the fundamental types of ansätze used in molecular simulations:

Table 1: Classification of Ansatz Types for Molecular VQE Simulations

| Ansatz Type | Design Principle | Key Features | Mathematical Form | Applications |
|---|---|---|---|---|
| Physically-Motivated (e.g., UCCSD) | Based on quantum chemistry principles [6] | Respects physical symmetries (particle number, spin) [6] | U(θ) = exp(Σₖ θₖ(τₖ - τₖ†)) where τₖ are excitation operators [4] | Molecular ground state estimation [7] |
| Hardware-Efficient | Tailored to device connectivity and gate set [1] | Shallow depth, may not preserve physical symmetries [2] | Layered structure with single-qubit rotations and entangling gates [1] | NISQ-era applications with limited qubits [2] |
| Problem-Inspired (e.g., SPA) | Incorporates problem-specific knowledge [8] | Balance between chemical accuracy and circuit efficiency [8] | Graph-based construction reflecting molecular structure [8] | Hydrogen chain simulations [8] |
| Adaptive (e.g., ADAPT-VQE) | Iteratively builds ansatz based on gradients [6] | Circuit growth determined by energy gradient significance [6] | U(θ) = Πⱼ exp(-iθⱼGⱼ) with operators selected iteratively [6] | Complex molecular systems with strong correlations |

Mathematical Formulation

The unitary coupled cluster with singles and doubles (UCCSD) ansatz represents one of the most chemically accurate approaches for molecular systems. Its mathematical formulation begins with the coupled cluster wavefunction ansatz:

|ψ⟩ = e^(T - T†)|ψ₀⟩

where T = T₁ + T₂ represents the cluster operator consisting of single (T₁) and double (T₂) excitation operators [4]. For a system with N spin orbitals, these operators are defined as:

T₁ = Σ_{i,a} θ_i^a a_a† a_i

T₂ = Σ_{i<j, a<b} θ_{ij}^{ab} a_a† a_b† a_j a_i

where i,j denote occupied orbitals and a,b denote virtual orbitals in the reference state |ψ₀⟩, typically chosen as the Hartree-Fock state [4]. The parameters θ represent the amplitudes of the various excitations that are optimized during the VQE procedure.
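To give a feel for how the parameter count grows, the sketch below counts UCCSD amplitudes in a spin-orbital basis, taking one single per occupied-virtual pair and one double per unordered occupied pair and unordered virtual pair. This is an upper count under that assumption: it ignores spin and point-group symmetry, which remove many amplitudes in practice. The function name is illustrative.

```python
from math import comb

def uccsd_amplitude_count(n_spin_orbitals, n_electrons):
    """Count single and double excitation amplitudes without symmetry filtering."""
    n_occ = n_electrons
    n_virt = n_spin_orbitals - n_electrons
    singles = n_occ * n_virt                      # one theta_i^a per (i, a)
    doubles = comb(n_occ, 2) * comb(n_virt, 2)    # one theta_ij^ab per i<j, a<b
    return singles, doubles

# H2 in a minimal basis: 4 spin orbitals, 2 electrons.
h2_counts = uccsd_amplitude_count(4, 2)
# A hypothetical 12-spin-orbital, 6-electron active space.
larger_counts = uccsd_amplitude_count(12, 6)
```

The doubles term dominates quickly, which is the origin of the quartic scaling usually quoted for UCCSD.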

The hardware-efficient ansatz adopts a significantly different structure, composed of alternating layers of single-qubit rotations and entangling gates:

U(θ) = Π_{l=1}^{L} [U_ent Π_{i=1}^{N} R(θ_{l,i})]

where L denotes the number of layers, N the number of qubits, R(θ_{l,i}) represents parameterized single-qubit rotations, and U_ent is an entangling gate block, typically composed of CNOT or CZ gates arranged according to the hardware connectivity [1].
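The layered structure can be made concrete with a small dense-matrix sketch. The choices here are assumptions for illustration only: Ry is used for the single-qubit rotation R, and the entangling block is a linear chain of CNOTs, which is one common pattern but not the only one.

```python
import numpy as np

def ry(theta):
    """Single-qubit rotation about the y axis."""
    c, s = np.cos(theta / 2), np.sin(theta / 2)
    return np.array([[c, -s], [s, c]])

def rotation_layer(thetas):
    """Tensor product of Ry(theta_i) over all qubits (qubit 0 is leftmost)."""
    layer = np.array([[1.0]])
    for theta in thetas:
        layer = np.kron(layer, ry(theta))
    return layer

def cnot(n_qubits, control, target):
    """Permutation matrix for a CNOT acting on an n-qubit register."""
    dim = 2 ** n_qubits
    mat = np.zeros((dim, dim))
    for basis in range(dim):
        bits = [(basis >> (n_qubits - 1 - q)) & 1 for q in range(n_qubits)]
        if bits[control]:
            bits[target] ^= 1
        out = sum(bit << (n_qubits - 1 - q) for q, bit in enumerate(bits))
        mat[out, basis] = 1.0
    return mat

def entangling_block(n_qubits):
    """U_ent as a linear chain of CNOTs between neighbouring qubits."""
    block = np.eye(2 ** n_qubits)
    for q in range(n_qubits - 1):
        block = cnot(n_qubits, q, q + 1) @ block
    return block

def hardware_efficient_unitary(params):
    """Apply L layers of (rotations, then U_ent); params has shape (L, N)."""
    n_layers, n_qubits = params.shape
    u_ent = entangling_block(n_qubits)
    unitary = np.eye(2 ** n_qubits)
    for layer in range(n_layers):
        unitary = u_ent @ rotation_layer(params[layer]) @ unitary
    return unitary

rng = np.random.default_rng(1)
params = rng.uniform(0, 2 * np.pi, size=(2, 3))  # L = 2 layers, N = 3 qubits
U = hardware_efficient_unitary(params)
state = U[:, 0]  # the ansatz state U(theta)|000>
```

The parameter count is simply L times N, independent of the molecule, which is what makes this family shallow but also chemically agnostic.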

Ansatz Selection and Implementation

Decision Framework for Ansatz Selection

Selecting an appropriate ansatz requires balancing multiple competing factors including chemical accuracy, circuit depth, parameter count, and hardware constraints. The following decision framework provides guidance for researchers:

Figure 2: Decision framework for ansatz selection in molecular VQE simulations, highlighting key considerations and appropriate ansatz choices for different scenarios.

Research Reagent Solutions

The experimental implementation of VQE for molecular systems requires both computational and quantum resources. The table below details essential "research reagents" for conducting VQE experiments:

Table 2: Essential Research Reagents for VQE Molecular Simulations

| Reagent Category | Specific Examples | Function/Role | Implementation Notes |
|---|---|---|---|
| Molecular Input | Geometry coordinates, Basis sets (STO-3G, cc-pVDZ) [9] | Defines molecular structure and orbital basis for Hamiltonian construction [9] | Standardized formats (.xyz); minimal basis sets reduce qubit requirements |
| Qubit Mapping | Jordan-Wigner, Bravyi-Kitaev, Parity [1] [4] | Encodes fermionic Hamiltonians into qubit representations [1] | Bravyi-Kitaev typically offers better scaling for molecular systems |
| Quantum Simulators | Qiskit, PennyLane, Tequila [9] [8] | Emulates quantum hardware for algorithm development and testing [9] | Critical for protocol validation before quantum hardware deployment |
| Classical Optimizers | Gradient-based (SLSQP, Adam), Gradient-free (COBYLA, SPSA), Quantum-aware (Rotosolve, ExcitationSolve) [4] [6] | Adjusts ansatz parameters to minimize energy expectation value [4] | Choice depends on parameter count, noise sensitivity, and computational budget |
| Error Mitigation | Zero-noise extrapolation, Measurement error mitigation [1] | Counteracts hardware noise to improve result accuracy [1] | Essential for meaningful results on current quantum devices |

Advanced Research and Optimization Techniques

Machine Learning for Ansatz Development

Recent research has explored machine learning approaches to address ansatz selection and parameter optimization challenges. Diffusion models have been employed to generate novel ansatz structures by learning from existing high-performing circuits [7]. In this approach, a dataset of effective UCC ansatzes is used to train a diffusion model that can generate new quantum circuits with similar structural properties but enhanced expressibility [7].

Transfer learning represents another promising direction, where models trained on smaller molecular systems predict optimal parameters for larger systems. As demonstrated in recent work, graph neural networks and SchNet architectures can learn parameter relationships across different molecular sizes, enabling transferable VQE models that generalize from H₄ to H₁₂ systems without requiring complete reoptimization [8].

Quantum-Aware Optimization Methods

Specialized optimizers that leverage the mathematical structure of quantum circuits have shown significant improvements over general-purpose optimization algorithms. The ExcitationSolve algorithm extends Rotosolve-type optimizers to handle excitation operators with generators G satisfying G³ = G, which includes UCCSD generators [6].

The algorithm exploits the analytical form of the energy landscape when varying a single parameter θ_j:

f_θ(θ_j) = a₁cos(θ_j) + a₂cos(2θ_j) + b₁sin(θ_j) + b₂sin(2θ_j) + c [6]

By determining the five coefficients through energy evaluations at five different parameter values, ExcitationSolve can reconstruct the exact energy landscape and directly identify the global minimum along that parameter direction, typically converging faster than black-box optimizers and achieving chemical accuracy with fewer quantum evaluations [6].
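The single-parameter update can be sketched as follows: sample the energy at five angles, solve a 5×5 linear system for (a₁, a₂, b₁, b₂, c), then minimize the reconstructed curve classically. The evenly spaced sample angles and the grid-plus-Newton search are simple stand-ins chosen for this illustration, not the routine from [6], and `toy_energy` uses hypothetical coefficients in place of a quantum evaluation.

```python
import numpy as np

def fourier_features(theta):
    """Rows [cos t, cos 2t, sin t, sin 2t, 1] for each angle t."""
    theta = np.atleast_1d(np.asarray(theta, dtype=float))
    return np.stack(
        [np.cos(theta), np.cos(2 * theta), np.sin(theta), np.sin(2 * theta),
         np.ones_like(theta)],
        axis=1,
    )

def reconstruct_coefficients(energy_fn):
    """Recover (a1, a2, b1, b2, c) from five evenly spaced energy evaluations."""
    sample_angles = 2 * np.pi * np.arange(5) / 5
    energies = np.array([energy_fn(t) for t in sample_angles])
    return np.linalg.solve(fourier_features(sample_angles), energies)

def global_minimum(coeffs, n_grid=2001, newton_steps=20):
    """Minimize the reconstructed curve without further energy evaluations."""
    a1, a2, b1, b2, _ = coeffs
    grid = np.linspace(-np.pi, np.pi, n_grid)
    best = grid[np.argmin(fourier_features(grid) @ coeffs)]
    theta = best
    for _ in range(newton_steps):
        d1 = (-a1 * np.sin(theta) - 2 * a2 * np.sin(2 * theta)
              + b1 * np.cos(theta) + 2 * b2 * np.cos(2 * theta))
        d2 = (-a1 * np.cos(theta) - 4 * a2 * np.cos(2 * theta)
              - b1 * np.sin(theta) - 4 * b2 * np.sin(2 * theta))
        if d2 <= 0:
            break  # not in a convex region; keep the grid estimate
        theta -= d1 / d2
    candidates = np.array([best, theta])
    values = fourier_features(candidates) @ coeffs
    k = int(np.argmin(values))
    return float(candidates[k]), float(values[k])

# Hypothetical landscape standing in for energies measured on a device.
true_coeffs = np.array([0.3, -0.2, 0.5, 0.1, -1.0])

def toy_energy(theta):
    return float((fourier_features(theta) @ true_coeffs)[0])

fitted = reconstruct_coefficients(toy_energy)
theta_min, energy_min = global_minimum(fitted)
```

Because the landscape is known exactly after five evaluations, the update jumps to the best value along that parameter direction instead of taking a local gradient step.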

Experimental Protocols for Ansatz Evaluation

Protocol 1: Separable Pair Ansatz for Hydrogen Chains

System Preparation: Generate molecular geometries for linear Hₙ chains with randomized atomic positions constrained by minimum (0.5 Å) and maximum (2.5 Å) separation distances [8].

Graph Construction: Determine the optimal chemical graph (Lewis structure) using scaled Euclidean distances as edge weights, followed by heuristic identification of a perfect matching graph G with minimal edge weight [8].

Ansatz Implementation: Construct the separable pair ansatz (SPA) using the graph G and compute the corresponding orbital-optimized Hamiltonian H_opt [8].

Parameter Optimization: Minimize the expectation value ⟨U_SPA|H_opt|U_SPA⟩ using a VQE algorithm with a classical optimizer to obtain the optimal parameters θ and corresponding energy E_SPA [8].

Validation: Compare against exact diagonalization results or high-level classical computational chemistry methods to assess accuracy.

Protocol 2: ADAPT-VQE for Strongly Correlated Systems

1. Initialization: Begin with a reference state, typically Hartree-Fock, an empty ansatz, and an operator pool composed of fermionic or qubit excitation operators [6].

2. Gradient Evaluation: For each operator in the pool, compute the energy gradient with respect to adding that operator to the ansatz.

3. Operator Selection: Identify the operator with the largest gradient magnitude and add it to the ansatz with its parameter initialized to zero.

4. Parameter Optimization: Optimize all parameters in the current ansatz using a quantum-aware optimizer like ExcitationSolve.

5. Iteration: Repeat steps 2-4 until the magnitude of the largest gradient falls below a predetermined threshold, indicating convergence.
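The gradient-evaluation and operator-selection steps can be sketched on two simulated qubits. For a candidate unitary exp(-iθG) appended to the current state, the energy gradient at θ = 0 is i⟨ψ|[G, H]|ψ⟩. The Hamiltonian, the Pauli-string pool, and the |00⟩ reference below are hypothetical choices for illustration; a chemistry calculation would use fermionic or qubit excitation operators as described above.

```python
from functools import reduce
import numpy as np

I2 = np.eye(2, dtype=complex)
X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)

def kron(*ops):
    """Tensor product of the given operators, leftmost qubit first."""
    return reduce(np.kron, ops)

# Hypothetical two-qubit Hamiltonian and operator pool (illustration only).
H = 0.5 * kron(Z, I2) + 0.3 * kron(I2, Z) + 0.2 * kron(X, X) + 0.1 * kron(X, I2)
POOL = {"YX": kron(Y, X), "YI": kron(Y, I2), "IY": kron(I2, Y)}
reference = np.array([1, 0, 0, 0], dtype=complex)  # |00>

def energy(state):
    """Expectation value <state|H|state>."""
    return float(np.real(np.vdot(state, H @ state)))

def apply_generator(state, generator, theta):
    """exp(-i theta G)|state> for a Pauli-string generator (G @ G = identity)."""
    return np.cos(theta) * state - 1j * np.sin(theta) * (generator @ state)

def pool_gradients(state):
    """dE/dtheta at theta = 0 for each candidate: i <state|[G, H]|state>."""
    gradients = {}
    for name, generator in POOL.items():
        commutator = generator @ H - H @ generator
        gradients[name] = float(np.real(1j * np.vdot(state, commutator @ state)))
    return gradients

def select_operator(gradients):
    """Pick the pool operator with the largest gradient magnitude."""
    return max(gradients, key=lambda name: abs(gradients[name]))

gradients = pool_gradients(reference)
chosen = select_operator(gradients)
```

A full ADAPT-VQE run would append `POOL[chosen]` to the ansatz, re-optimize all parameters, recompute the gradients on the new state, and stop once the largest magnitude drops below the chosen threshold.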

The role of ansätze in the Variational Quantum Eigensolver represents both a fundamental challenge and tremendous opportunity in quantum computational chemistry. As this technical guide has elaborated, ansatz selection critically influences every aspect of VQE performance—from accuracy and convergence to practical implementability on current quantum hardware. The ongoing research into physically-motivated, hardware-efficient, problem-inspired, and adaptive ansätze reflects a maturation of the field toward practical applications in molecular systems and drug development.

For research professionals pursuing quantum-accelerated molecular design, the emerging methodologies of machine learning-assisted ansatz generation, quantum-aware optimization, and transferable parameter prediction offer promising pathways to overcome current limitations. As quantum hardware continues to evolve, the synergistic development of advanced ansätze tailored to specific molecular classes will undoubtedly play a pivotal role in realizing the potential of quantum computing in pharmaceutical research and materials science.

In the pursuit of quantum advantage for molecular systems, the design of parameterized quantum circuits, or ansätze, presents a fundamental trade-off. An ideal ansatz must be gate-efficient for execution on noisy hardware while preserving the physical symmetries inherent to molecular Hamiltonians—chiefly, electron number and spin symmetry [10]. Neglecting these symmetries leads to unphysical states, computational overhead, and results divorced from chemical reality. This technical guide examines the foundational principles, cutting-edge methodologies, and validation techniques for constructing symmetry-preserving ansätze, framed within the broader research objective of developing reliable quantum optimization ansätze for molecular systems.

The electronic structure problem requires finding the ground state energy of a molecular Hamiltonian, a task for which quantum computers are naturally suited [8]. On near-term devices, the Variational Quantum Eigensolver (VQE) has emerged as a leading algorithm, hybridizing quantum state preparation with classical optimization [8] [11]. Its success critically depends on the ansatz, the quantum circuit that prepares a trial wavefunction. Hardware-efficient ansätze offer shallow circuits but often violate physical symmetries, while fermionic ansätze like unitary coupled cluster (UCC) preserve symmetries but require deep circuits that are vulnerable to noise [10]. This guide details how to move beyond this trade-off, providing researchers and drug development professionals with the tools to implement chemically meaningful and hardware-feasible simulations.

Theoretical Foundations: Symmetries in Electronic Structure

Fundamental Physical Symmetries and Their Consequences

The electronic molecular Hamiltonian exhibits several symmetries that must be reflected in its wavefunction solutions for physically meaningful results.

- Particle Number Conservation (N): The total number of electrons in the system is fixed. An ansatz that does not conserve particle number explores regions of the Hilbert space that are unphysical for the problem at hand.

- Spin Symmetry (S² and S_z): The total spin angular momentum (S²) and its z-component (S_z) are good quantum numbers. A correct eigenfunction of the molecular Hamiltonian must also be an eigenfunction of the S² and S_z operators. For example, a singlet state must have ⟨S²⟩ = 0.

- Pauli Antisymmetry: The wavefunction must be antisymmetric with respect to the exchange of any two electrons. This is often built into the qubit mapping (e.g., Jordan-Wigner, Bravyi-Kitaev) and the choice of fermionic excitation operators.

Violating these symmetries leads to a phenomenon known as symmetry breaking, where the variational algorithm converges to a state that is not an eigenfunction of S². This state may have a lower energy than the true physical ground state, but it is an artifact of the broken symmetry and does not represent a physically realizable electronic state [10]. Preserving symmetries is therefore not merely a formal requirement but a prerequisite for predictive accuracy in areas like drug discovery, where property predictions depend on correct electronic state characterization.
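A small numerical check makes the particle-number point concrete. Under the Jordan-Wigner mapping the number operator counts qubits in |1⟩. In this sketch, a single-qubit Ry rotation (typical of hardware-efficient layers) spreads a one-particle state over different particle numbers, while a Givens rotation that only mixes |01⟩ and |10⟩ keeps the count fixed. The gate choices and the angle 0.7 are illustrative assumptions.

```python
import numpy as np

def number_operator(n_qubits):
    """N = sum_q (I - Z_q)/2: diagonal, counting the qubits in |1>."""
    dim = 2 ** n_qubits
    return np.diag([float(bin(b).count("1")) for b in range(dim)])

def ry(theta):
    """Single-qubit rotation about the y axis."""
    c, s = np.cos(theta / 2), np.sin(theta / 2)
    return np.array([[c, -s], [s, c]])

def givens(theta):
    """Two-qubit rotation mixing only |01> and |10>; |00> and |11> are fixed."""
    c, s = np.cos(theta), np.sin(theta)
    gate = np.eye(4)
    gate[1:3, 1:3] = [[c, -s], [s, c]]
    return gate

def number_mean_and_variance(state, n_op):
    """Return (<N>, <N^2> - <N>^2) for a real normalized state."""
    mean = state @ n_op @ state
    variance = state @ n_op @ n_op @ state - mean ** 2
    return float(mean), float(variance)

N_OP = number_operator(2)
reference = np.array([0.0, 1.0, 0.0, 0.0])  # |01>: exactly one particle

# Ry on qubit 0 mixes |01> with |11>, so the particle number is no longer sharp.
hea_state = np.kron(ry(0.7), np.eye(2)) @ reference
hea_mean, hea_variance = number_mean_and_variance(hea_state, N_OP)

# The Givens rotation commutes with N, so the state stays in the one-particle sector.
givens_state = givens(0.7) @ reference
givens_mean, givens_variance = number_mean_and_variance(givens_state, N_OP)
```

A nonzero variance of N is a direct diagnostic that a circuit has leaked out of the physical sector.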

The Trade-off: Gate Efficiency vs. Symmetry Preservation

The central challenge in NISQ-era ansatz design is balancing expressibility and hardware feasibility.

- Hardware-Efficient Ansätze: Prioritize shallow depth using native gate sets but typically lack built-in symmetry preservation, requiring penalty terms or post-processing [10].

- Fermionic Ansätze (e.g., UCC): Explicitly preserve symmetries by construction but often involve deep circuits arising from the Trotter approximation, with correspondingly high gate counts [10].

Recent advances aim to reconcile this conflict through disentangled UCC theory and symmetry-preserving gate fabrics [10]. These approaches maintain physical constraints while achieving superior gate efficiency, a necessity for practical application on current hardware.

Advanced Methodologies for Symmetry-Preserving Ansätze

The Tiled Unitary Product State (tUPS) Ansatz

Building on disentangled UCC theory, the tUPS approximation constructs an arbitrary wavefunction from a product of exponential fermionic operators [10]. It uses spin-adapted one-body (κ̂_pq^(1)) and paired two-body (κ̂_pq^(2)) operators to preserve spin symmetry [10]. These operators are expressed in terms of the singlet excitation operator Ê_pq = p̂†q̂ + p̄̂†q̄̂, which ensures spin adaptation. The "tiled" structure maximizes parallelization and minimizes circuit depth, while orbital optimization and a valence bond-inspired initial state enhance accuracy.

Key Advantages:

- Systematically preserves spin and particle number symmetries

- Achieves chemical accuracy (within 1.59 mE_h) with up to 84% fewer two-qubit gates compared to state-of-the-art adaptive methods [10]

- Provides a fixed, non-adaptive circuit structure, reducing measurement costs and improving noise resilience compared to adaptive protocols like ADAPT-VQE

Quantum-Neural Hybrid Approaches

Hybrid frameworks integrate quantum circuits with classical neural networks to enhance expressiveness while maintaining physical constraints. The pUNN (paired UCC with Neural Networks) method employs a shallow pUCCD circuit for the seniority-zero subspace, augmented by a neural network that accounts for unpaired configurations [11].

A critical innovation in pUNN is enforcing particle number conservation through a neural network mask m(k,j) [11]. The mask ensures that only electron-number-conserving configurations contribute to the wavefunction. The method maintains low qubit counts and shallow circuit depth while achieving accuracy comparable to high-level classical methods like CCSD(T) [11].

The Quantum Number Preserving (QNP) Gate Fabric

The QNP gate fabric is a hardware-aware strategy that combines symmetry preservation with local qubit connectivity [10]. By designing fundamental gate operations that inherently respect particle number and spin symmetries, this approach ensures that any circuit built from these components will automatically preserve these physical constraints, regardless of circuit depth or parameter values.

Experimental Protocols and Validation

Workflow for Symmetry-Preserving VQE Simulation

The following diagram illustrates the integrated workflow for conducting a symmetry-preserving VQE simulation, from molecule input to final validation.

Key Validation Metrics and Protocols

1. Spin Symmetry Measurement (⟨S²⟩)

Directly measuring the expectation value of the S² operator is crucial for validating spin symmetry. For a spin-adapted ansatz such as tUPS, ⟨S²⟩ is exactly zero for singlet states by construction, so no explicit measurement is required. For other ansätze, ⟨S²⟩ must be measured to confirm that the prepared state is physical.
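As a sketch of what the S² check computes, the snippet below builds S² = S₋S₊ + S_z + S_z² from Jordan-Wigner annihilation operators for two spatial orbitals (four qubits) and evaluates it on three basis states. The spin-orbital ordering (0α, 0β, 1α, 1β) and the helper names are assumptions made for this example. On hardware the same operator would be measured as a sum of Pauli strings.

```python
from functools import reduce
import numpy as np

I2 = np.eye(2)
Z = np.diag([1.0, -1.0])
LOWER = np.array([[0.0, 1.0], [0.0, 0.0]])  # |0><1|: empties an occupied qubit

def annihilation(j, n_qubits):
    """Jordan-Wigner annihilation operator a_j with a Z string on qubits < j."""
    return reduce(np.kron, [Z] * j + [LOWER] + [I2] * (n_qubits - j - 1))

def spin_squared(n_spatial):
    """S^2 = S_- S_+ + S_z + S_z^2 for spin orbitals ordered (0a, 0b, 1a, 1b, ...)."""
    n_qubits = 2 * n_spatial
    a = [annihilation(j, n_qubits) for j in range(n_qubits)]
    dim = 2 ** n_qubits
    s_z = np.zeros((dim, dim))
    s_plus = np.zeros((dim, dim))
    for p in range(n_spatial):
        alpha, beta = a[2 * p], a[2 * p + 1]
        s_z += 0.5 * (alpha.T @ alpha - beta.T @ beta)
        s_plus += alpha.T @ beta  # flips a beta electron to alpha in orbital p
    return s_plus.T @ s_plus + s_z + s_z @ s_z

def occupation_state(occupations):
    """Basis state; occupations[q] = 1 means spin orbital q is filled."""
    index = int("".join(str(bit) for bit in occupations), 2)
    state = np.zeros(2 ** len(occupations))
    state[index] = 1.0
    return state

def expectation(state, operator):
    """Return <state|operator|state> for a real state vector."""
    return float(state @ operator @ state)

S2 = spin_squared(n_spatial=2)
closed_shell = expectation(occupation_state([1, 1, 0, 0]), S2)  # singlet: 0
triplet = expectation(occupation_state([1, 0, 1, 0]), S2)       # two alpha electrons: 2
mixed = expectation(occupation_state([1, 0, 0, 1]), S2)         # open-shell determinant: 1
```

The last case is the instructive one: a single open-shell determinant is an equal mixture of singlet and triplet, so ⟨S²⟩ = 1 flags spin contamination even though particle number and S_z are both correct.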

2. Electron Density as a Fidelity Witness

The electron density ρ(r) provides an information-rich experimental observable for validation [12]. It can be reconstructed from the one-particle reduced density matrix (1-RDM):

ρ(r) = Σ_{pq} D_{pq} φ_p*(r) φ_q(r)

where φ_p(r) are the spatial molecular orbitals and D_{pq} = Σ_σ ⟨Ψ|a†_{pσ}a_{qσ}|Ψ⟩ are the spin-summed 1-RDM elements measured from the quantum circuit [12]. Topological analysis of ρ(r) using QTAIM reveals critical points (e.g., bond-critical points) that serve as potent fidelity witnesses [12].

3. Constrained 1-RDM for Noise Mitigation

To combat noise in 1-RDM measurement, enforce N-representability constraints (e.g., trace condition, positive semidefiniteness) to produce more physical electron densities from noisy quantum hardware [12].
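One simple way to impose these constraints is sketched below: symmetrize the measured matrix, diagonalize it, and project the occupation numbers onto the set with 0 ≤ nᵢ ≤ n_max and Σnᵢ = N by shifting and clipping. This is a generic heuristic chosen for illustration and is not necessarily the scheme used in [12]. The noisy input is synthetic, and `max_occupation=1.0` assumes a spin-orbital 1-RDM (use 2.0 for a spin-summed one).

```python
import numpy as np

def project_occupations(occupations, n_electrons, max_occupation=1.0):
    """Shift-and-clip projection onto 0 <= n_i <= max_occupation, sum(n_i) = N."""
    lo = occupations.min() - max_occupation  # shift at which every n_i saturates
    hi = occupations.max()                   # shift at which every n_i is zero
    for _ in range(100):                     # bisection on the common shift
        shift = 0.5 * (lo + hi)
        if np.clip(occupations - shift, 0.0, max_occupation).sum() > n_electrons:
            lo = shift
        else:
            hi = shift
    return np.clip(occupations - 0.5 * (lo + hi), 0.0, max_occupation)

def constrain_one_rdm(noisy_rdm, n_electrons, max_occupation=1.0):
    """Return a Hermitian 1-RDM with valid occupations and the correct trace."""
    hermitian = 0.5 * (noisy_rdm + noisy_rdm.conj().T)
    occupations, orbitals = np.linalg.eigh(hermitian)
    fixed = project_occupations(occupations, n_electrons, max_occupation)
    return (orbitals * fixed) @ orbitals.conj().T

# Synthetic example: an exact two-electron 1-RDM plus random measurement noise.
rng = np.random.default_rng(7)
exact = np.diag([1.0, 1.0, 0.0, 0.0])
noisy = exact + 0.05 * rng.normal(size=(4, 4))
cleaned = constrain_one_rdm(noisy, n_electrons=2)
```

The natural orbitals of the noisy matrix are kept and only the occupations are repaired, so the correction is cheap and entirely classical.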

Research Reagent Solutions: Essential Tools for Quantum Computational Chemistry

Table 1: Key Software and Tools for Symmetry-Preserving Ansatz Development

| Tool Name | Type | Primary Function in Ansatz Research | Key Symmetry Features |
|---|---|---|---|
| Qiskit [13] | Quantum SDK (Python) | Quantum circuit design, simulation, and execution. | Supports fermionic gate construction for symmetry preservation. |
| PennyLane [14] | Quantum ML Library (Python) | Hybrid quantum-classical optimization; quantum chemistry. | Built-in templates for molecular Hamiltonians and symmetries. |
| tequila [8] | Quantum Computing SDK | Variational hybrid algorithms for quantum chemistry. | Used in generating datasets for symmetry-adapted ansätze like SPA [8]. |
| quanti-gin [8] | Data Generation Tool | Generates molecular geometries, Hamiltonians, and optimized circuit parameters. | Implements separable pair ansatz (SPA) with inherent symmetry properties [8]. |
| Q-Chem [15] | Quantum Chemistry Software | Classical electronic structure calculations for benchmarking. | Provides high-accuracy reference data for energy and electron density. |

Performance Benchmarking and Applications

Quantitative Comparison of Ansatz Performance

Table 2: Performance Benchmarking of Symmetry-Preserving Ansätze for Molecular Systems

| Molecule | Ansatz | Qubits | 2-Qubit Gate Count | Energy Error (mE_h) | ⟨S²⟩ | Reference |
|---|---|---|---|---|---|---|
| N₂ | tUPS | 12 | ~200 | < 1.59 | 0.0 | [10] |
| N₂ | ADAPT-VQE | 12 | ~1,250 | < 1.59 | 0.0 | [10] |
| H₂O | tUPS | 14 | ~250 | < 1.59 | 0.0 | [10] |
| Benzene | tUPS | 36 | ~500 | < 1.59 | 0.0 | [10] |
| Cyclobutadiene | pUNN (Hybrid) | 24* | N/R | Comparable to CCSD(T) | N/R | [11] |
| H₄ | SPA (Machine Learning) | 8 | N/R | N/R | Preserved | [8] |

Performance data extracted from research results; gate counts and errors are approximate representations from cited studies. N/R: Not explicitly reported in the available source text.
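As a quick arithmetic check, the approximate N₂ gate counts in the table are consistent with the reduction of up to 84% quoted for tUPS. The snippet uses only the two tabulated values, which are themselves approximate.

```python
def relative_reduction(baseline, improved):
    """Fraction of the baseline cost that is saved."""
    return (baseline - improved) / baseline

# Approximate two-qubit gate counts for N2 from the table (ADAPT-VQE vs tUPS).
n2_reduction = relative_reduction(baseline=1250, improved=200)
n2_reduction_percent = round(100 * n2_reduction)
```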

Application to Drug Discovery and Molecular Design

Symmetry-preserving ansätze enable reliable quantum computations for pharmaceutical applications:

- Molecular Property Prediction: Accurate ground and excited state calculations predict reactivity, spectra, and binding affinities [16].

- Reaction Pathway Modeling: Methods like tUPS accurately model complex processes like the isomerization of cyclobutadiene, demonstrating transferability across potential energy surfaces [10] [11].

- Transition Metal Complexes: Strong electron correlation in catalytic systems requires symmetry-preserving approaches for meaningful results.

Preserving electron number and spin symmetry is not optional but essential for quantum computational chemistry with predictive power. Advanced ansätze like tUPS and hybrid quantum-neural approaches demonstrate that chemical accuracy can be achieved without sacrificing physical constraints or gate efficiency. The experimental protocols and validation metrics outlined provide a roadmap for researchers to implement these methods effectively.

Future research will focus on enhancing transferability across molecular structures [8], further reducing gate counts, developing more sophisticated error mitigation techniques, and tighter integration of machine learning with symmetry-preserving quantum circuits. As quantum hardware advances, these symmetry-aware design principles will form the foundation for accurate quantum simulations of complex molecular systems in drug development and materials science.

The accurate simulation of molecular quantum systems represents a fundamental challenge in computational chemistry, with profound implications for drug discovery and materials science. The variational quantum eigensolver (VQE) has emerged as a leading algorithm for solving electronic structure problems on noisy intermediate-scale quantum (NISQ) devices. At the heart of every VQE calculation lies the ansatz—a parameterized quantum circuit that prepares trial wavefunctions for approximating molecular energies. The choice of ansatz critically determines the balance between physical accuracy, computational efficiency, and hardware feasibility, creating a fundamental tension in algorithm design for near-term quantum applications. This technical analysis examines the two dominant paradigms in ansatz construction: physically-motivated approaches rooted in quantum chemistry principles, and hardware-efficient strategies optimized for contemporary quantum processor constraints. By synthesizing recent advances and empirical findings, this review provides a framework for selecting and optimizing ansatzes to accelerate molecular simulations in pharmaceutical research and development.

Theoretical Foundations of Ansatz Design

The Variational Quantum Eigensolver Framework

The VQE algorithm operates through a hybrid quantum-classical workflow where a parameterized quantum circuit (ansatz) prepares trial states on a quantum processor, while a classical optimizer adjusts parameters to minimize the energy expectation value. The performance of this approach hinges on the ansatz's ability to accurately represent the target molecular state while respecting the severe constraints of NISQ hardware, including limited qubit coherence times, gate fidelities, and qubit connectivity. The central challenge lies in navigating the trade-offs between physical expressivity, which typically requires deeper quantum circuits with complex entanglement structures, and hardware efficiency, which favors shallower circuits with native connectivity patterns.

Classification of Ansatz Paradigms

Ansatzes for quantum chemistry simulations generally fall into two categories with distinct design philosophies:

Physically-Motivated Ansatzes: These approaches incorporate domain knowledge from quantum chemistry, typically through unitary coupled cluster (UCC) inspired constructions that preserve physical symmetries such as particle number and spin conservation. Examples include the unitary vibrational coupled cluster (UVCC) for molecular vibrations and the qubit unitary coupled cluster (qUCC) for electronic structure problems. These ansatzes provide chemically meaningful parameterizations with well-defined excitation hierarchies but often require deep quantum circuits that challenge current hardware capabilities [17] [18].

Hardware-Efficient Ansatzes: Designed specifically to accommodate hardware limitations, these ansatzes employ shallow circuits with connectivity patterns aligned to the quantum processor's native geometry and gate sets. While offering practical advantages for implementation on current devices, they may violate physical symmetries and lack systematic improvability, potentially yielding unphysical states and energies [6] [18].

Physically-Motivated Ansatzes: Theory and Implementation

Unitary Coupled Cluster Formulations

The unitary coupled cluster with singles and doubles (UCCSD) represents a gold standard among physically-motivated ansatzes, defined by the exponential parameterization:

|ψ⟩ = e^{T−T^†}|Φ₀⟩

where |Φ₀⟩ is a reference state (typically Hartree-Fock) and T = T₁ + T₂ represents single and double excitation operators. This formulation preserves essential physical properties including size consistency, size extensivity, and invariance to orbital rotations within occupied and virtual spaces [19]. For molecular vibrational structure calculations, the bosonic unitary vibrational coupled cluster (UVCC) provides an analogous framework adapted for nuclear Schrödinger equations, enabling the treatment of anharmonic potentials through excitations between vibrational modals [18].

Advanced Physically-Motivated Formulations

Recent research has developed more sophisticated physically-motivated ansatzes that retain chemical intuition while improving computational efficiency:

Local Unitary Cluster Jastrow (LUCJ) Ansatz: This approach incorporates insights from Hubbard model physics by maintaining only on-site, opposite-spin and nearest-neighbor, same-spin number-number terms. The LUCJ ansatz demonstrates particular effectiveness for strongly correlated electronic states found in bond breaking and transition metal compounds, where traditional single-reference methods fail. By eliminating the need for SWAP gates and tailoring the circuit to specific qubit topologies (square, hex, heavy-hex), the LUCJ achieves reduced quantum resource requirements while preserving physical transparency [19].

Unitary Cluster Jastrow (UCJ) Ansatz: Implemented as an L-fold product of layers, the UCJ ansatz employs anti-Hermitian operators K^μ and symmetric real matrices J^μ to capture correlation effects. Under specific symmetry conditions (J_{pq,αα}^μ = J_{pq,ββ}^μ and J_{pq,αβ}^μ = J_{pq,βα}^μ), the UCJ ansatz commutes with the total spin operator S_z, maintaining spin symmetry in the wavefunction [19].

Experimental Protocol for UVCC Implementation

The standard methodology for implementing unitary vibrational coupled cluster calculations involves these key procedures:

Hamiltonian Preparation: Represent the molecular vibrational Hamiltonian using an n-mode expansion of the potential energy surface (PES), typically truncated at 4-body terms for practical calculations while maintaining accuracy of 1-2 cm⁻¹ [18].

Qubit Encoding: Employ the generalized second quantization representation to map vibrational states to qubits. This can be achieved through compact mapping techniques that efficiently represent bosonic excitations within the qubit register [18].

Wavefunction Parametrization: Construct the UVCC ansatz using exponential operators of excitation terms between vibrational modals, typically derived from vibrational self-consistent field (VSCF) calculations for anharmonic systems [18].

Energy Evaluation and Optimization: Measure the energy expectation value using quantum circuits and optimize parameters through hybrid quantum-classical loops, potentially employing specialized optimizers like ExcitationSolve for efficient convergence [6].

The diagram below illustrates the logical workflow and decision points in the UVCC experimental protocol:

Hardware-Efficient Ansatzes: Architectures and Applications

Compact Heuristic Circuits

Hardware-efficient ansatzes prioritize practical implementation on NISQ devices through simplified circuit architectures. The compact heuristic circuit (CHC) represents a prominent example, specifically designed to reduce circuit complexity without sacrificing accuracy for molecular vibrational energy calculations. Empirical studies demonstrate that the CHC ansatz significantly decreases quantum circuit depth compared to UVCC approaches while maintaining comparable fidelity in ground state energy determination [17]. This characteristic makes the CHC ansatz particularly suitable for the NISQ era, where limited coherence times constrain maximum circuit depth. For excited state calculations, the CHC ansatz can be effectively combined with the variational quantum deflation (VQD) algorithm, providing results that benchmark favorably against the quantum equation of motion (qEOM) method [17].
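The VQD step mentioned above can be sketched on one simulated qubit. VQD finds an excited state by minimizing the energy plus a penalty β|⟨ψ(θ)|ψ₀⟩|² on overlap with the previously found state, where β must exceed the energy gap. The toy Hamiltonian, the one-parameter Ry circuit standing in for a CHC ansatz, the grid search, and β = 3 are all assumptions made for this illustration.

```python
import numpy as np

Z = np.diag([1.0, -1.0])  # toy Hamiltonian: eigenvalues +1 (|0>) and -1 (|1>)

def ansatz_state(theta):
    """Stand-in ansatz Ry(theta)|0> = [cos(theta/2), sin(theta/2)]."""
    return np.array([np.cos(theta / 2), np.sin(theta / 2)])

def energy(theta):
    """Expectation value of the toy Hamiltonian in the ansatz state."""
    psi = ansatz_state(theta)
    return float(psi @ Z @ psi)

def vqd_cost(theta, found_states, beta):
    """Energy plus beta times the squared overlap with each state already found."""
    psi = ansatz_state(theta)
    penalty = sum(abs(np.vdot(prev, psi)) ** 2 for prev in found_states)
    return energy(theta) + beta * penalty

def minimize_on_grid(cost, n_grid=3601):
    """Brute-force minimizer, adequate for a single parameter."""
    grid = np.linspace(0.0, 2 * np.pi, n_grid)
    values = np.array([cost(t) for t in grid])
    k = int(np.argmin(values))
    return float(grid[k]), float(values[k])

# Step 1: ordinary VQE for the ground state.
theta_ground, ground_energy = minimize_on_grid(energy)
ground_state = ansatz_state(theta_ground)

# Step 2: VQD for the first excited state; beta = 3 exceeds the gap of 2.
theta_excited, _ = minimize_on_grid(lambda t: vqd_cost(t, [ground_state], beta=3.0))
excited_energy = energy(theta_excited)
```

If β were smaller than the gap, the penalized minimum would remain at the ground state, so the penalty weight is a parameter that has to be chosen with some estimate of the spectrum in mind.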

Qubit excitation-based (QEB) ansatzes represent another hardware-efficient approach that maintains number conservation while optimizing for quantum hardware constraints. The qubit coupled cluster singles doubles (QCCSD) ansatz operates directly in the qubit space using number-conserving excitations, avoiding the overhead of mapping fermionic operators to qubits [6]. This strategy reduces circuit depth and gate counts compared to traditional UCCSD implementations, though it may sacrifice some physical interpretability in the process.

Tailored Hardware Implementations

Advanced hardware-efficient ansatzes can be specifically designed to leverage the architectural features of contemporary quantum processors:

Topology-Adapted Circuits: By aligning entangling gate patterns with the native connectivity of target hardware (e.g., square, hex, or heavy-hex lattices), these ansatzes minimize the need for SWAP gates that increase circuit depth and error susceptibility [19].

Gate Set Optimization: Utilizing native gate operations available on specific hardware platforms (such as fSim gates on superconducting processors with tunable couplers) further enhances circuit efficiency and fidelity [19].

Dynamic Circuit Construction: Adaptive approaches like ADAPT-VQE iteratively build the ansatz based on gradient criteria, potentially reducing parameter counts and circuit depths compared to fixed-ansatz strategies [6].
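The gradient criterion used by adaptive constructions can be illustrated with small matrices. For an anti-Hermitian pool operator A appended as exp(θA), the energy derivative at θ = 0 is ⟨ψ|[H, A]|ψ⟩, and the operator with the largest magnitude is added next. The sketch below is a toy illustration with a hypothetical three-level Hamiltonian and a two-operator pool; it is not the implementation used in [6].

```python
import numpy as np


def pool_gradients(hamiltonian, pool, psi):
    """Return d/dtheta <psi| e^{-theta A} H e^{theta A} |psi> at theta = 0 for each A.

    For anti-Hermitian A this derivative equals <psi|[H, A]|psi>.
    """
    return np.array([
        np.real(np.vdot(psi, (hamiltonian @ op - op @ hamiltonian) @ psi))
        for op in pool
    ])


def select_operator(hamiltonian, pool, psi):
    """Pick the pool index with the largest gradient magnitude."""
    grads = pool_gradients(hamiltonian, pool, psi)
    return int(np.argmax(np.abs(grads))), grads


def excitation(i, j, dim):
    """Anti-Hermitian generator |j><i| - |i><j| (toy stand-in for an excitation)."""
    op = np.zeros((dim, dim))
    op[j, i] = 1.0
    op[i, j] = -1.0
    return op


# Hypothetical Hamiltonian: level 0 couples weakly to level 1, strongly to level 2.
H_toy = np.array([[0.0, 0.1, 0.5],
                  [0.1, 1.0, 0.0],
                  [0.5, 0.0, 2.0]])
reference = np.array([1.0, 0.0, 0.0])
pool = [excitation(0, 1, 3), excitation(0, 2, 3)]

chosen, grads = select_operator(H_toy, pool, reference)
```

Here the second operator is selected because its gradient is five times larger, which is the sense in which the ansatz grows only where the energy is most sensitive.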

Performance Comparison and Benchmarking

Quantitative Metrics for Ansatz Evaluation

The table below summarizes key performance characteristics for prominent ansatz types based on recent empirical studies:

Table 1: Comparative Performance of Ansatz Paradigms for Molecular Simulations

| Ansatz Type | Circuit Depth | Parameter Count | Physical Symmetries | Hardware Efficiency | Target Applications |
|---|---|---|---|---|---|
| qUCCSD | O(N^4 N_t) | O(N_o^2 N_v^2) | Preserved | Low | Electronic ground states, single-reference systems |
| UVCC | High (exponential scaling) | O(M^2 L^2) | Preserved | Low | Molecular vibrational energies |
| LUCJ | Moderate (polynomial scaling) | Reduced compared to qUCCSD | Partially preserved | High (no SWAP gates needed) | Strongly correlated electrons, open-shell systems |
| CHC | Significantly reduced | Compact parameterization | May be violated | High | Vibrational ground and excited states |
| QEB/QCCSD | Reduced compared to UCCSD | Similar to UCCSD | Number conservation preserved | Moderate | Electronic structure with reduced gate counts |

Note: N represents the number of spin orbitals; N_o and N_v denote occupied and virtual orbitals; L represents molecular modes; M indicates modal basis size; N_t refers to Trotter steps [17] [19] [6].

Accuracy Benchmarks for Molecular Systems

Empirical evaluations across diverse molecular systems provide critical insights into the accuracy trade-offs between ansatz paradigms:

Vibrational Energy Calculations: Comparative studies between UVCC and CHC ansatzes for molecular vibrational ground states show that the CHC approach achieves comparable accuracy to UVCC with significantly reduced circuit complexity. For excited states, the CHC ansatz combined with VQD delivers results within chemical accuracy thresholds (1 kcal/mol) of classical benchmarks for small molecules [17].

Strong Correlation Challenges: For strongly correlated electronic systems such as stretched H₂ and Cu₂O₂ complexes, the LUCJ ansatz demonstrates superior performance compared to qUCCSD, correctly capturing diradical character and antiferromagnetic coupling with reduced quantum resource requirements [19].

Noise Resilience: Under realistic hardware noise conditions, compact heuristic ansatzes like CHC maintain better performance than their physically-motivated counterparts due to reduced circuit depths and gate counts, highlighting their practical advantage on current NISQ devices [17].

The diagram below illustrates the performance trade-offs between major ansatz types across key evaluation dimensions:

Optimization Strategies for Ansatz Performance

Specialized Quantum-Aware Optimizers

The optimization landscape for VQE parameters presents significant challenges due to the high-dimensional, non-convex nature of the energy function. Recently developed quantum-aware optimizers leverage problem-specific knowledge to improve convergence efficiency:

ExcitationSolve: This gradient-free, hyperparameter-free optimizer extends Rotosolve-type approaches to excitation operators with generators G satisfying G³ = G (characteristic of UCC and related ansatzes). ExcitationSolve reconstructs the energy landscape for each parameter using only five energy evaluations, then classically computes the global optimum along that parameter, significantly accelerating convergence for physically-motivated ansatzes [6].

Resource Efficiency: Compared to general-purpose optimizers like COBYLA, ExcitationSolve reduces the number of quantum measurements required for convergence by analytically determining optimal parameter updates based on the known trigonometric structure of the energy landscape [6].
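The five-evaluation reconstruction can be sketched numerically. Assuming the gate convention U(θ) = exp(-iθG) with G³ = G, the energy along one parameter is a second-order Fourier series with five coefficients, so five evaluations determine it exactly. In the sketch below, the three-level generator, the random Hamiltonian, and the dense grid used to locate the minimum are illustrative assumptions; the grid is a simple stand-in for the classical global-optimum step described in [6], not that method itself.

```python
import numpy as np

# Eigenvalues of a toy generator with G^3 = G (G is diagonal in this basis).
G_EIGS = np.array([1.0, -1.0, 0.0])


def make_energy_fn(hamiltonian, psi):
    """Energy of exp(-i * theta * G)|psi> as a function of theta (exact, no shot noise)."""
    def energy(theta):
        phi = np.exp(-1j * theta * G_EIGS) * psi
        return float(np.real(np.vdot(phi, hamiltonian @ phi)))
    return energy


def fit_second_order_fourier(energy_fn):
    """Solve for (c, a1, b1, a2, b2) in
    f(t) = c + a1 cos t + b1 sin t + a2 cos 2t + b2 sin 2t
    from five equidistant energy evaluations."""
    thetas = 2 * np.pi * np.arange(5) / 5
    values = np.array([energy_fn(t) for t in thetas])
    design = np.column_stack([
        np.ones(5), np.cos(thetas), np.sin(thetas),
        np.cos(2 * thetas), np.sin(2 * thetas),
    ])
    return np.linalg.solve(design, values)


def fourier_value(coeffs, t):
    c, a1, b1, a2, b2 = coeffs
    return c + a1 * np.cos(t) + b1 * np.sin(t) + a2 * np.cos(2 * t) + b2 * np.sin(2 * t)


def minimize_reconstruction(coeffs, grid_points=20001):
    """Locate the global minimum of the reconstructed landscape on a dense grid."""
    ts = np.linspace(-np.pi, np.pi, grid_points)
    vals = fourier_value(coeffs, ts)
    k = int(np.argmin(vals))
    return float(ts[k]), float(vals[k])


rng = np.random.default_rng(1)
M = rng.normal(size=(3, 3))
H_toy = (M + M.T) / 2                      # hypothetical real symmetric Hamiltonian
psi0 = rng.normal(size=3)
psi0 /= np.linalg.norm(psi0)

energy_fn = make_energy_fn(H_toy, psi0)
coeffs = fit_second_order_fourier(energy_fn)
theta_opt, e_opt = minimize_reconstruction(coeffs)
```

Once the coefficients are known, the minimization costs no further quantum evaluations, which is where the measurement savings relative to a general-purpose optimizer come from.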

Noise Mitigation and Error Resilience

Contemporary quantum hardware limitations necessitate specialized strategies for maintaining ansatz performance under realistic noise conditions:

Error-Aware Compilation: Tailoring ansatz implementation to hardware-specific error profiles and native gate sets can substantially improve result fidelity. For example, leveraging continuous gate sets available on superconducting processors with tunable couplers enhances the efficiency of LUCJ ansatz execution [19].

Embedding Techniques: Hybrid quantum-classical approaches like density matrix embedding theory (DMET) combined with sample-based quantum diagonalization (SQD) enable the simulation of molecular fragments using limited qubit counts (27-32 qubits), effectively reducing the circuit depth required for accurate energy calculations [20].

Symmetry Verification: For ansatzes that preserve physical symmetries, measuring symmetry operators provides a mechanism for detecting and mitigating errors that drive the quantum state outside the physical subspace [21].
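Symmetry verification is often applied as post-selection on measured bitstrings. The sketch below assumes an encoding in which the particle number equals the number of 1s in a bitstring (as in a Jordan-Wigner-style mapping) and discards shots that violate it. The counts dictionary is hypothetical data, and the function name is illustrative.

```python
def postselect_by_particle_number(counts, n_particles):
    """Keep only bitstrings whose Hamming weight matches the expected particle number.

    Returns the filtered counts and the fraction of shots that were kept.
    """
    kept = {bits: c for bits, c in counts.items() if bits.count("1") == n_particles}
    total = sum(counts.values())
    kept_total = sum(kept.values())
    fraction = kept_total / total if total else 0.0
    return kept, fraction


# Hypothetical measurement record for a 4-qubit, 2-particle state.
raw_counts = {"0101": 480, "1010": 450, "0111": 40, "0000": 30}
clean_counts, kept_fraction = postselect_by_particle_number(raw_counts, n_particles=2)
```

The kept fraction doubles as a rough noise diagnostic: the further it falls below 1, the more shots left the physical subspace.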

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Computational Components for Ansatz Implementation in Molecular Simulations

| Research Component | Function | Example Implementations |
|---|---|---|
| VQE Framework | Hybrid quantum-classical optimization loop | Qiskit, Tangelo, PennyLane |
| Ansatz Libraries | Pre-constructed parameterized circuits | UVCC, CHC, LUCJ, QCCSD |
| Quantum Optimizers | Parameter optimization for VQE | ExcitationSolve, Rotosolve, COBYLA |
| Qubit Mapping | Encode molecular orbitals to qubits | Jordan-Wigner, Bravyi-Kitaev, Compact encoding |
| Error Mitigation | Reduce hardware noise impact | Zero-noise extrapolation, dynamical decoupling |
| Classical Embedding | Fragment large molecules | Density Matrix Embedding Theory (DMET) |
| Hardware Interfaces | Execute circuits on quantum devices | IBM Quantum, IonQ, Amazon Braket |

Future Directions and Research Opportunities

The ongoing development of ansatz methodologies continues to address critical challenges in quantum computational chemistry:

Hardware-Co-Design: Next-generation ansatzes will increasingly incorporate specific hardware capabilities as fundamental design constraints, potentially leveraging emerging technologies like tunable couplers and dynamic circuit capabilities to enhance performance [19] [22].

Machine Learning Enhancement: Integrating machine learning techniques with traditional ansatz constructions shows promise for automatically generating efficient circuit architectures tailored to specific molecular systems and hardware platforms [21].

Multi-Level Methods: Hierarchical approaches combining different ansatz types at various scales offer a promising path for simulating large molecular systems, with compact ansatzes describing short-range correlations and more expressive constructions capturing long-range interactions [20].

Error-Resilient Formulations: Developing inherently noise-robust ansatzes through symmetry preservation and error-detection capabilities represents an active research frontier aimed at extending the practical applicability of NISQ-era quantum chemistry simulations [23] [21].

As quantum hardware continues to evolve with improving qubit counts, gate fidelities, and coherence times, the distinction between physically-motivated and hardware-efficient ansatzes is likely to blur, giving rise to hybrid approaches that optimally balance physical rigor with implementation efficiency. This convergence will progressively enable the accurate simulation of increasingly complex molecular targets relevant to pharmaceutical development and drug discovery programs.

The accurate computation of molecular vibrational energies is a cornerstone of chemical research, with critical implications for understanding reaction dynamics, spectroscopic properties, and drug design. While classical computational methods face exponential scaling challenges for complex molecular systems, quantum computing offers a promising alternative for handling the intrinsic quantum nature of molecular vibrations [24]. Within this emerging paradigm, the Unitary Vibrational Coupled Cluster (UVCC) method has established itself as a leading ansatz for quantum simulations of vibrational structure problems [25] [26].

This technical guide examines UVCC within the broader context of understanding quantum optimization ansätze for molecular systems research. We present a comprehensive analysis of UVCC's theoretical foundations, implementation methodologies, and performance characteristics relative to competing approaches, providing researchers and drug development professionals with the essential knowledge for practical application and critical evaluation of this technique in computational chemistry workflows.

Theoretical Foundation of UVCC

Conceptual Framework

The Unitary Vibrational Coupled Cluster (UVCC) method adapts the well-established unitary coupled cluster framework from electronic structure theory to the domain of molecular vibrations [25]. This adaptation addresses the unique challenges of vibrational problems, where the accurate description of anharmonic effects and mode couplings is essential for predictive accuracy.

UVCC operates by constructing a parameterized wavefunction through exponential excitation operators acting on a reference state, typically the vibrational self-consistent field (VSCF) state [27]. The ansatz takes the general form:

|ψ(θ)⟩ = e^(T(θ) - T†(θ)) |ϕ_ref⟩

where T(θ) represents the cluster operator comprising excitation operators, θ denotes the variational parameters, and |ϕ_ref⟩ is the reference state [25]. This unitary formulation ensures that the wavefunction remains normalized throughout the optimization process, a significant advantage for quantum implementations.
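The normalization property follows from T − T† being anti-Hermitian, which makes its exponential unitary. The sketch below checks this numerically with a generic matrix standing in for the cluster operator; the strictly lower-triangular toy T and the four-dimensional space are illustrative assumptions, not an actual vibrational operator.

```python
import numpy as np


def unitary_from_cluster_operator(T):
    """Return exp(T - T^dagger) via an eigendecomposition.

    A = T - T^dagger is anti-Hermitian, so 1j * A is Hermitian with real
    eigenvalues w and eigenvectors V, giving exp(A) = V diag(exp(-1j * w)) V^dagger.
    """
    A = T - T.conj().T
    w, V = np.linalg.eigh(1j * A)
    return (V * np.exp(-1j * w)) @ V.conj().T


rng = np.random.default_rng(0)
# Toy "excitation" amplitudes: strictly lower-triangular entries move
# population from lower-indexed to higher-indexed basis states.
T_toy = np.tril(rng.normal(size=(4, 4)), k=-1)

U = unitary_from_cluster_operator(T_toy)
reference = np.array([1.0, 0.0, 0.0, 0.0])
psi = U @ reference
norm = float(np.linalg.norm(psi))
```

By contrast, the non-unitary exp(T) of traditional coupled cluster does not preserve the norm, which is why the unitary form maps more naturally onto quantum gates.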

Mathematical Structure

The cluster operator T in UVCC is typically truncated at a specific excitation level (singles, doubles, etc.), with the excitation list defining which specific excitations are included in the ansatz [27]. The accuracy of UVCC systematically improves as the excitation rank increases, converging toward the full vibrational configuration interaction (FVCI) limit [25] [26]. Research demonstrates that UVCC exhibits comparable accuracy and convergence rates to traditional vibrational coupled cluster theory while offering advantages for quantum computational implementations due to its inherent unitarity [26].

UVCC in the Quantum Computing Paradigm

Algorithmic Integration

UVCC finds its primary quantum computing application within the Variational Quantum Eigensolver (VQE) framework, a hybrid quantum-classical algorithm particularly suited for noisy intermediate-scale quantum (NISQ) devices [17]. In this implementation:

- The UVCC ansatz is compiled into a parameterized quantum circuit

- The quantum computer prepares the UVCC state and measures the energy expectation value

- A classical optimizer adjusts the UVCC parameters to minimize the energy [28]

This approach has been successfully extended to excited state calculations through integration with the Variational Quantum Deflation (VQD) algorithm, providing a pathway for determining excited vibrational state energies beyond ground-state properties [17].

Comparative Analysis of Ansätze

UVCC operates within a diverse ecosystem of quantum ansätze, each with distinct characteristics and trade-offs. The table below provides a systematic comparison of UVCC against other prominent approaches:

Table 1: Comparative Analysis of Quantum Ansätze for Molecular Vibrations

| Ansatz Type | Theoretical Foundation | Key Advantages | Key Limitations | Implementation Complexity |
|---|---|---|---|---|
| UVCC | Unitary coupled cluster theory | Systematic improvability; known accuracy and convergence toward FVCI limit [25] [26] | Deep circuit depths; significant entangling gate counts [29] | High |
| Compact Heuristic Circuit (CHC) | Hardware-efficient design | Reduced circuit complexity; noise resilience [17] | Less systematic; limited chemical intuition | Moderate |
| Hardware-Efficient Ansatz (HEA) | Minimal gate construction | Shallow depth; easy implementation on NISQ devices [30] | Limited expressibility; optimization challenges [30] | Low |
| Symmetry-Preserving Ansatz (SPA) | Hardware-efficient with constraints | Preserves physical symmetries; achieves chemical accuracy with sufficient layers [30] | Requires more gates than basic HEA | Moderate |

Computational Workflow

The end-to-end process for computing molecular vibrational energies using UVCC integrates multiple quantum and classical components, as illustrated in the following workflow:

Performance Analysis and Benchmarking

Accuracy Assessment

UVCC demonstrates methodical convergence toward the full vibrational configuration interaction (FVCI) limit, with benchmark studies showing comparable accuracy to traditional vibrational coupled cluster theory [25] [26]. The truncation level of the excitation operator significantly influences both accuracy and computational requirements:

Table 2: UVCC Performance Characteristics by Excitation Level

| Excitation Level | Theoretical Accuracy | Circuit Depth | Qubit Requirements | Typical Applications |
|---|---|---|---|---|
| Singles (S) | Moderate | Low | N qubits | Initial screening; small molecules |
| Doubles (SD) | High | Moderate | N qubits | Most ground-state applications |
| Triples (SDT) | Very High | High | N qubits | High-accuracy requirements |
| Quadruples (SDTQ) | Near-FVCI | Very High | N qubits | Benchmark calculations |

Hardware Implementation and Noise Resilience

Practical implementation of UVCC on current quantum hardware faces significant challenges related to circuit depth and noise susceptibility. Research indicates that while UVCC provides excellent theoretical accuracy, its practical performance on NISQ devices can be limited by accumulated errors from deep circuits [17].

Recent innovations have addressed these limitations through hardware-oriented optimizations:

- Gate Reduction Techniques: Utilizing redundancies in qubit Hilbert space can reduce entangling gate counts by up to 50% theoretically, with experimental demonstrations showing 28% practical reductions [29]

- Error Mitigation Strategies: Advanced error mitigation techniques combined with circuit optimization enable higher-fidelity UVCC state preparation on current hardware [29]

- Hybrid Quantum-Neural Approaches: Combining UVCC with neural networks creates noise-resilient frameworks that maintain accuracy while reducing quantum resource requirements [11]

Advanced Methodologies and Protocols

Experimental Implementation Protocol

For researchers implementing UVCC calculations, the following step-by-step protocol ensures proper configuration and execution:

System Setup

- Define the number of modals per mode (num_modals list)

- Select excitations using string format ('s', 'd', 't', 'q') or custom callable

- Choose qubit mapper (e.g., JordanWignerMapper) [27]

Circuit Initialization

- Prepare VSCF reference state as initial state

- Configure UVCC ansatz with specified excitations and repetitions

- Set up VSCFInitialPoint for parameter initialization [27]

Optimization Configuration

- Implement SOAP optimizer for efficient parameter optimization

- Configure measurement protocols for energy expectation values

- Set convergence thresholds and maximum iteration counts [28]

Execution and Analysis

- Run VQE algorithm with UVCC ansatz

- Validate results against classical methods where possible

- Perform error analysis and uncertainty quantification

The Scientist's Toolkit: Essential Research Components

Table 3: Essential Components for UVCC Research Implementation

| Component | Function | Implementation Examples |
|---|---|---|
| Qubit Mapper | Maps vibrational operators to qubit representations | JordanWignerMapper, Bravyi-Kitaev mapper [27] |
| Reference State | Provides starting point for UVCC ansatz | VSCF reference state [27] |
| Excitation Generator | Defines excitation operations included in ansatz | generate_vibration_excitations(), custom callables [27] |
| Quantum Simulator | Models UVCC circuit behavior | Qiskit Nature, statevector simulators [27] |
| Classical Optimizer | Adjusts UVCC parameters to minimize energy | SOAP algorithm, L-BFGS-B, COBYLA [28] |
| Error Mitigator | Reduces impact of hardware noise | Zero-noise extrapolation, measurement error mitigation [29] |

Current Research Frontiers and Future Directions

Scalability Enhancements

Significant research focuses on improving UVCC scalability for larger molecular systems:

- Active Space Approaches: Embedding techniques that treat strongly correlated modes on quantum hardware while handling the remaining system classically [31]

- Qubit-Efficient Mappings: Novel mapping strategies that reduce qubit requirements by exploiting molecular symmetries and mode structures [29]

- Dynamic Ansätze: Adaptive ansatz construction methods that build excitation operators iteratively based on chemical significance [28]

Hybrid Quantum-Classical Algorithms

The integration of UVCC with machine learning techniques represents a promising frontier:

- Quantum-Neural Hybrids: Frameworks combining UVCC with neural networks demonstrate enhanced noise resilience while maintaining accuracy [11]

- Transfer Learning Approaches: Using classically pre-trained neural networks to initialize UVCC parameters, reducing quantum resource requirements [11]

- Embedding Techniques: Multi-scale approaches that combine quantum-computed active spaces with classically treated environments [31]

Pathway to Fault-Tolerant Implementation

As quantum hardware advances toward fault tolerance, UVCC research is increasingly focused on optimization for error-corrected architectures:

Recent research indicates that early fault-tolerant quantum computers with 25-100 logical qubits could enable impactful UVCC simulations of chemically significant systems [31]. Strategic optimizations, including the reduction of multi-controlled operations and efficient decomposition methods, will be essential for maximizing performance within these constrained resource environments [29].

Unitary Vibrational Coupled Cluster represents a sophisticated approach for computing molecular vibrational energies within the quantum computing paradigm. While challenges remain in practical implementation on current hardware, ongoing research in circuit optimization, hybrid algorithms, and error mitigation continues to enhance UVCC's applicability and performance. For researchers and drug development professionals, UVCC offers a systematically improvable, theoretically grounded framework for investigating molecular vibrations—a capability with profound implications for understanding molecular behavior, reaction dynamics, and drug design at the quantum level.

As quantum hardware continues to evolve, UVCC is positioned to transition from a theoretical curiosity to a practical tool for computational chemistry, potentially enabling accurate simulations of molecular systems that are currently intractable using classical computational methods alone.

In the Noisy Intermediate-Scale Quantum (NISQ) era, quantum algorithms for computational chemistry face a critical challenge: extracting meaningful results before quantum decoherence and hardware noise dominate the computation. The choice of parameterized quantum circuits, or ansatzes, represents a fundamental trade-off between representational power and computational feasibility. Within this context, the Compact Heuristic Circuit (CHC) ansatz has emerged as a promising solution specifically designed to reduce circuit complexity without sacrificing the fidelity of computational results for molecular systems [17].

This technical guide provides a comprehensive examination of the CHC ansatz, detailing its theoretical foundation, methodological implementation, and experimental performance against established alternatives like the Unitary Vibrational Coupled Cluster (UVCC) approach. By offering a systematic reduction in quantum resource requirements, CHC demonstrates significant potential for enabling scalable quantum simulations of molecular vibrations and electronic structures on current-generation quantum hardware, with direct implications for pharmaceutical research and materials science.

Theoretical Foundation: Ansatzes in Quantum Computational Chemistry

The Role of Ansatzes in Variational Quantum Algorithms

Variational quantum algorithms, particularly the Variational Quantum Eigensolver (VQE), have become the leading paradigm for quantum computational chemistry on NISQ devices. These hybrid quantum-classical algorithms delegate the task of preparing quantum states to a quantum co-processor while utilizing classical optimizers to adjust circuit parameters. The ansatz defines the circuit architecture that generates these parameterized quantum states, effectively constraining the algorithm to search within a specific subspace of the full Hilbert space.

The efficiency of this search depends critically on two competing ansatz characteristics: expressibility (the ability to represent states of interest) and efficiency (the depth and gate count required). Highly expressive ansatzes like UVCC can accurately represent target states but typically require deep circuits with many entangling gates, making them vulnerable to noise on current hardware. In contrast, heuristic approaches like CHC strategically limit expressibility to maintain practical feasibility while still achieving chemically accurate results for target applications [17].

Comparative Analysis of Ansatz Approaches

Two principal ansatz categories, with distinct architectural philosophies, are compared below:

Table 1: Comparative Analysis of Quantum Ansatzes for Molecular Simulations

| Feature | Unitary Vibrational Coupled Cluster (UVCC) | Compact Heuristic Circuit (CHC) |
|---|---|---|
| Theoretical Basis | Derived from coupled cluster theory in quantum chemistry | Heuristically designed with empirical efficiency |
| Circuit Depth | Typically deep due to trotterization of exponential operators | Significantly reduced through strategic design |
| Gate Complexity | High, with numerous entangling gates | Minimized without sacrificing key correlations |
| Noise Resilience | Limited due to extended circuit depth | Enhanced through compact structure |
| Scalability | Challenging for larger molecules on current hardware | Promising for near-term applications |
| Implementation | Requires mapping of cluster operators to quantum gates | Designed with hardware constraints in mind |

Methodological Implementation of CHC

Architectural Principles for Circuit Compactness

The CHC ansatz achieves circuit reduction through several key design principles. Unlike physically-inspired ansatzes that maintain a direct correspondence with theoretical chemistry frameworks, CHC employs a hardware-aware architecture that prioritizes operational efficiency. The design incorporates two primary strategies for complexity reduction:

First, entanglement structuring creates the minimal necessary correlations between vibrational or electronic degrees of freedom, avoiding the all-to-all coupling often present in theoretical approaches. Second, parameter reuse allows a limited set of quantum gates to be applied iteratively with shared parameters across multiple circuit sections, substantially reducing the classical optimization overhead [17].

These principles enable CHC to maintain representative power for target molecular states while dramatically decreasing both quantum gate count and classical parameter optimization requirements compared to UVCC implementations.

Detailed Protocol for CHC Implementation

The experimental implementation of CHC follows a systematic workflow that integrates both quantum and classical computational resources:

Problem Encoding: Map the molecular Hamiltonian to qubit representations using either Jordan-Wigner or Bravyi-Kitaev transformations for electronic structure problems, or direct encoding schemes for vibrational problems.

Circuit Initialization: Prepare the reference state, typically the Hartree-Fock solution for electronic problems or the VSCF state for vibrational problems, as the initial quantum state |ψ₀⟩.

CHC Parameterization: Apply the Compact Heuristic Circuit with initial parameters {θᵢ} randomly initialized or pre-trained using classical methods:

- Implement single-qubit rotational gates (R_x, R_y, R_z) with independent parameters

- Apply structured entangling gates (typically CNOT or CZ) following a modular pattern

- Repeat the fundamental building block with parameter sharing across layers

Energy Evaluation: Measure the expectation value ⟨ψ(θ)|H|ψ(θ)⟩ through quantum sampling.

Classical Optimization: Update parameters {θᵢ} using classical optimizers (e.g., gradient descent, SPSA) to minimize the energy expectation value.

Convergence Check: Repeat the parameterization, energy evaluation, and classical optimization steps until the energy convergence criteria are satisfied or a maximum number of iterations is reached.
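The protocol above can be sketched as a small statevector simulation. This is a toy illustration rather than the CHC circuit from [17]: it assumes a two-qubit layer of R_y rotations followed by a CNOT, reuses the same two angles in every layer to mimic parameter sharing, evaluates the energy exactly instead of by sampling, and uses finite-difference gradient descent. The Hamiltonian coefficients and all function names are hypothetical.

```python
import numpy as np

# CNOT with the first qubit as control, in the basis |00>, |01>, |10>, |11>.
CNOT = np.array([[1, 0, 0, 0],
                 [0, 1, 0, 0],
                 [0, 0, 0, 1],
                 [0, 0, 1, 0]], dtype=float)


def ry(theta):
    c, s = np.cos(theta / 2), np.sin(theta / 2)
    return np.array([[c, -s], [s, c]])


def prepare_state(params, n_layers=2):
    """Apply n_layers identical blocks (shared parameters) to the |00> reference."""
    state = np.array([1.0, 0.0, 0.0, 0.0])
    layer = CNOT @ np.kron(ry(params[0]), ry(params[1]))
    for _ in range(n_layers):
        state = layer @ state
    return state


def energy(params, hamiltonian, n_layers=2):
    psi = prepare_state(params, n_layers)
    return float(psi @ hamiltonian @ psi)


def run_vqe(hamiltonian, n_layers=2, max_steps=200, lr=0.1, eps=1e-4, tol=1e-10, seed=0):
    """Gradient descent with central finite differences; returns the best point seen."""
    rng = np.random.default_rng(seed)
    params = rng.uniform(-np.pi, np.pi, size=2)
    current = energy(params, hamiltonian, n_layers)
    best_params, best_energy, start_energy = params.copy(), current, current
    for _ in range(max_steps):
        grad = np.array([
            (energy(params + eps * d, hamiltonian, n_layers)
             - energy(params - eps * d, hamiltonian, n_layers)) / (2 * eps)
            for d in np.eye(2)
        ])
        params = params - lr * grad
        previous, current = current, energy(params, hamiltonian, n_layers)
        if current < best_energy:
            best_params, best_energy = params.copy(), current
        if abs(previous - current) < tol:  # convergence check
            break
    return best_params, best_energy, start_energy


X = np.array([[0.0, 1.0], [1.0, 0.0]])
Z = np.array([[1.0, 0.0], [0.0, -1.0]])
I2 = np.eye(2)
H_toy = 0.5 * np.kron(Z, I2) + 0.3 * np.kron(I2, Z) + 0.2 * np.kron(X, X)

theta_opt, e_vqe, e_start = run_vqe(H_toy)
e_exact = float(np.linalg.eigvalsh(H_toy)[0])
```

With only two shared parameters the circuit may not reach the exact ground energy; comparing `e_vqe` against `e_exact` shows how much accuracy the compact parameterization gives up on a given problem.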

For excited state calculations, the same framework extends to the Variational Quantum Deflation (VQD) algorithm, which introduces penalty terms to ensure orthogonalization with lower-energy states [17].

Experimental Validation and Performance Metrics

Quantitative Performance Analysis

Experimental results from comprehensive studies provide quantitative evidence of CHC's performance advantages. In direct comparisons between UVCC and CHC for calculating vibrational ground state energies of small molecules, both ansatzes achieved similar accuracy when benchmarked against classical computational methods. However, the resource efficiency differed dramatically [17].

Table 2: Quantitative Performance Comparison of UVCC vs. CHC for Molecular Vibrational Energy Calculations

| Metric | UVCC Performance | CHC Performance | Improvement Factor |
|---|---|---|---|
| Circuit Depth | O(n²) to O(n³) for n qubits | O(n) to O(n²) | 40-60% reduction |
| Two-Qubit Gate Count | High (exact number molecule-dependent) | Significantly reduced | ~50% reduction |
| Noise Resilience | Rapid degradation with circuit depth | Maintains accuracy under noise | >2x improvement in noisy simulations |
| Classical Optimization | Challenging due to large parameter space | Efficient convergence | ~30% faster convergence |
| Excited State Transferability | Requires qEOM approach | Compatible with VQD algorithm | Comparable accuracy with simpler implementation |

The data clearly demonstrates that CHC maintains accuracy comparable to UVCC while offering substantial improvements in circuit efficiency and noise resilience. This performance profile makes CHC particularly valuable for real-world applications on current quantum hardware, where decoherence times and gate fidelity remain limiting factors.

Extension to Excited States with VQD

Beyond ground state calculations, the research results confirm CHC's effectiveness for determining excited vibrational state energies when combined with the Variational Quantum Deflation (VQD) algorithm. The VQD approach introduces a series of constrained optimizations, where each successive calculation seeks the lowest energy state orthogonal to previously determined states through the addition of penalty terms [17].
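The penalty construction can be made concrete with a small example. The VQD cost for the next state adds β|⟨ψ|ψ_k⟩|² for each previously found state ψ_k to the energy, so that with β larger than the relevant energy gap the previous states are no longer minimizers. The sketch below uses a hypothetical diagonal three-level Hamiltonian and a one-parameter family of trial states; the names and numbers are illustrative only.

```python
import numpy as np


def vqd_cost(psi, hamiltonian, lower_states, beta):
    """Energy plus overlap penalties against previously determined states."""
    e = np.real(np.vdot(psi, hamiltonian @ psi))
    penalty = sum(abs(np.vdot(phi, psi)) ** 2 for phi in lower_states)
    return float(e + beta * penalty)


# Hypothetical spectrum: E0 = 0, E1 = 1, E2 = 3.
H_toy = np.diag([0.0, 1.0, 3.0])
ground = np.array([1.0, 0.0, 0.0])
beta = 2.0  # chosen larger than the gap E1 - E0 = 1


def trial_state(t):
    """One-parameter family mixing the ground and first excited basis states."""
    return np.array([np.cos(t), np.sin(t), 0.0])


ts = np.linspace(0.0, np.pi, 1001)
costs = np.array([vqd_cost(trial_state(t), H_toy, [ground], beta) for t in ts])
t_best = float(ts[int(np.argmin(costs))])
first_excited_energy = float(costs.min())
```

Along this family the cost is sin²t + 2cos²t, which is minimized at t = π/2, where the trial state is orthogonal to the ground state and the cost equals the first excited energy.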

The comparative analysis between CHC+VQD and the quantum Equation of Motion (qEOM) method demonstrates that the heuristic approach maintains accuracy while benefiting from the same circuit efficiency advantages observed in ground state calculations. This capability significantly expands the practical utility of CHC for pharmaceutical applications, where excited state dynamics often play crucial roles in molecular reactivity and binding interactions.

Integrated Workflow: From Circuit Design to Experimental Validation

The diagram below illustrates the complete experimental workflow for molecular energy calculation using the CHC ansatz, integrating both quantum and classical computational resources:

Successful implementation of CHC-based quantum computational chemistry requires specialized tools and resources spanning both quantum hardware and classical computational infrastructure:

Table 3: Essential Research Reagents and Computational Resources for CHC Implementation

| Resource Category | Specific Tools/Platforms | Function in CHC Research |
|---|---|---|
| Quantum Hardware | Quantinuum H-Series [32] | Provides physical quantum processing with all-to-all connectivity and high-fidelity gates |
| Classical Computation | NVIDIA GPU Clusters [32] | Accelerates classical optimization and simulation components of hybrid algorithms |
| Algorithm Frameworks | Variational Quantum Eigensolver (VQE) [17] | Hybrid quantum-classical framework for ground state energy calculation |
| Excited State Methods | Variational Quantum Deflation (VQD) [17] | Extension of VQE for calculating excited state energies |
| Chemistry Platforms | InQuanto, Chemistry42 [33] | Specialized software for quantum computational chemistry and molecule validation |
| Error Mitigation | Quantum Error Correction Codes [32] | Techniques to enhance algorithmic resilience to hardware noise |

The Compact Heuristic Circuit ansatz represents a significant advancement in practical quantum computational chemistry, offering a favorable balance between computational accuracy and resource efficiency. By strategically reducing circuit complexity compared to physically motivated approaches like UVCC, CHC enables more effective utilization of current-generation quantum hardware while maintaining the fidelity required for meaningful chemical insights [17].

As quantum hardware continues to evolve with improvements in qubit count, connectivity, and gate fidelity—exemplified by systems like Quantinuum's H2 processor [32]—the principles underlying CHC design will remain relevant for scaling quantum computational methods to larger molecular systems. The successful integration of CHC with emerging quantum machine learning approaches for drug discovery [33] further underscores its potential as a foundational element in the ongoing development of practical quantum applications for pharmaceutical research and materials science.

The continued refinement of heuristic ansatzes, informed by both theoretical principles and empirical performance, will play a crucial role in bridging the gap between experimental quantum computing capabilities and the demanding requirements of industrial molecular design workflows.

The application of quantum computing to molecular systems represents a frontier in computational chemistry and drug discovery. Central to this endeavor is the challenge of solving the electronic structure problem, which involves finding the lowest-energy configuration of electrons in a molecule. This ground state energy is a key determinant of a molecule's chemical properties and reactivity. Quantum optimization ansätze provide a framework for tackling this problem on both classical and quantum hardware by mapping the inherently quantum-mechanical molecular Hamiltonian onto more computationally tractable models. The Quadratic Unconstrained Binary Optimization (QUBO) and Ising models serve as a crucial bridge in this process, enabling the use of specialized optimization algorithms, including quantum annealers and Ising machines, for molecular simulation.

This technical guide details the core methodologies for transforming molecular Hamiltonians into QUBO and Ising formulations. It provides researchers with the theoretical foundations, practical encoding techniques, and experimental protocols necessary to apply these powerful optimization paradigms to problems in molecular research.

Theoretical Foundations: From Molecules to Spins

The Electronic Structure Hamiltonian

The electronic structure problem is defined by the molecular Hamiltonian, which, in the second quantization formalism, is expressed as:

[ \hat{H} = \sum_{p,q} h_{pq} \hat{E}_{q}^{p} + \sum_{p,q,r,s} g_{pqrs} \hat{E}_{q}^{p} \hat{E}_{s}^{r} ]

Here, (h_{pq}) and (g_{pqrs}) are the one- and two-electron integrals, respectively, and (\hat{E}_{q}^{p} = \hat{a}^{\dagger}_{p\alpha}\hat{a}_{q\alpha} + \hat{a}^{\dagger}_{p\beta}\hat{a}_{q\beta}) are the singlet excitation operators [34]. The goal is to find the eigenstate of this Hamiltonian with the lowest energy.

The Ising and QUBO Formalism

The Ising model describes a system of interacting spins. For a set of spins (s_i \in \{-1, +1\}), its Hamiltonian is:

[ H_{\text{Ising}} = \sum_{i} h_{i} s_{i} + \sum_{i<j} J_{ij} s_{i} s_{j} ]

where (h_i) represents the local field on spin (i), and (J_{ij}) is the coupling strength between spins (i) and (j) [35]. The QUBO model is mathematically equivalent, using binary variables (x_i \in \{0, 1\}) and a Hamiltonian of the form (H_{\text{QUBO}} = \sum_{i} Q_{ii} x_i + \sum_{i<j} Q_{ij} x_i x_j).
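The equivalence between the two models can be made concrete with the standard substitution x_i = (1 + s_i)/2. The sketch below is a minimal illustration under that substitution: the function names and the three-variable QUBO weights are hypothetical, chosen only for the example, and the check is a brute-force comparison over all assignments rather than anything taken from the cited work.

```python
from itertools import product

def qubo_to_ising(Q):
    """Convert a QUBO given as {(i, j): weight} with i <= j into Ising
    fields h, couplings J and a constant offset, using x_i = (1 + s_i) / 2."""
    h, J, offset = {}, {}, 0.0
    for (i, j), w in Q.items():
        if i == j:
            # Q_ii x_i = Q_ii / 2 + (Q_ii / 2) s_i
            h[i] = h.get(i, 0.0) + w / 2
            offset += w / 2
        else:
            # Q_ij x_i x_j = (Q_ij / 4) (1 + s_i + s_j + s_i s_j)
            J[(i, j)] = J.get((i, j), 0.0) + w / 4
            h[i] = h.get(i, 0.0) + w / 4
            h[j] = h.get(j, 0.0) + w / 4
            offset += w / 4
    return h, J, offset

def qubo_energy(Q, x):
    # For binary x, x_i * x_i == x_i, so diagonal entries act as linear terms.
    return sum(w * x[i] * x[j] for (i, j), w in Q.items())

def ising_energy(h, J, s):
    return (sum(hi * s[i] for i, hi in h.items())
            + sum(Jij * s[i] * s[j] for (i, j), Jij in J.items()))

# Hypothetical example weights (not from any molecular system).
Q = {(0, 0): -1.0, (1, 1): 2.0, (2, 2): -3.0, (0, 1): 4.0, (1, 2): -2.0}
h, J, offset = qubo_to_ising(Q)

# Brute-force check: both models assign the same energy to every configuration.
for x in product([0, 1], repeat=3):
    s = [2 * xi - 1 for xi in x]
    assert abs(qubo_energy(Q, x) - (ising_energy(h, J, s) + offset)) < 1e-12
```

The constant offset does not change which configuration is optimal, which is why the two formulations are usually treated as interchangeable by solvers.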

The connection to molecular systems is established by the observation that finding the ground state of a molecular Hamiltonian is an energy minimization task, analogous to finding the lowest-energy configuration of an Ising or QUBO system [35] [36]. This analogy allows the tools developed for statistical physics and combinatorial optimization to be brought to bear on quantum chemistry.

Encoding Strategies and Methodologies

Direct Mappings for Specific Problems

Problem-specific encodings directly map the physical constraints of a system into the optimization model. A prominent example in drug discovery is lattice-based peptide docking. In this approach, a peptide and its target protein are coarse-grained onto a lattice (e.g., a tetrahedral lattice), and the goal is to find the peptide conformation that minimizes the interaction energy (e.g., modeled by Miyazawa-Jernigan potentials) while satisfying steric and cyclization constraints [36].

- QUBO Formulation for Docking: The total Hamiltonian for this problem can be constructed as: [ H = H_{\text{comb}} + H_{\text{back}} + H_{\text{cyclization}} + H_{\text{docking}} ] Here, (H_{\text{comb}}) and (H_{\text{back}}) enforce the peptide's structural integrity and prevent overlap. (H_{\text{cyclization}}) encodes the constraints for peptide cyclization, and (H_{\text{docking}}) captures the interaction energy between the peptide and the target protein. Higher-order terms generated by these constraints can be reduced to quadratic QUBO form using techniques like locality reduction [36].
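To illustrate what reducing a higher-order term to quadratic form involves, the sketch below applies the textbook Rosenberg substitution to a single cubic term c·x1·x2·x3. This is an assumption made for illustration: the source does not state which reduction scheme [36] uses, and the coefficient and penalty weight here are hypothetical. An auxiliary bit y stands in for the product x1·x2, and a penalty that vanishes only when y = x1·x2 enforces consistency.

```python
from itertools import product

def cubic_term(c, x1, x2, x3):
    """Original higher-order term c * x1 * x2 * x3."""
    return c * x1 * x2 * x3

def quadratized_term(c, M, x1, x2, x3, y):
    """Quadratic replacement: y substitutes for x1 * x2, and the Rosenberg
    penalty is 0 when y == x1 * x2 and at least M otherwise."""
    penalty = x1 * x2 - 2 * x1 * y - 2 * x2 * y + 3 * y
    return c * y * x3 + M * penalty

c = -2.0            # hypothetical coefficient of the cubic term
M = abs(c) + 1.0    # penalty weight; must exceed |c| for the reduction to be exact

# Minimizing over the auxiliary bit reproduces the cubic term for every input.
for x1, x2, x3 in product([0, 1], repeat=3):
    best = min(quadratized_term(c, M, x1, x2, x3, y) for y in (0, 1))
    assert best == cubic_term(c, x1, x2, x3)
```

The cost of the reduction is one extra binary variable per substituted product, which is one reason the variable count of such encodings grows faster than the number of physical degrees of freedom.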

The Variational Quantum Eigensolver (VQE) Pathway

The VQE algorithm is a leading hybrid quantum-classical approach for finding molecular ground states. Its "quantum optimization ansatz" relies on a parameterized quantum circuit to prepare a trial wavefunction, whose energy is measured and then minimized by a classical optimizer [8] [11]. The connection to Ising/QUBO models emerges in two ways:

- Problem Reduction: For certain molecular models or after specific approximations, the electronic Hamiltonian itself can be directly mapped to an Ising form.

- Solution of the Ansatz: The classical optimization loop within VQE—tuning parameters to minimize energy—can itself be formulated as a QUBO problem or solved using Ising-machine-inspired techniques.

Advanced ansätze, such as the Separable Pair Approximation (SPA), simplify this process by using the molecular structure (e.g., a perfect matching graph of atomic coordinates) to construct efficient circuits, making the parameter optimization more tractable [8].

A Physical Analogy: Logical Ordering as Physical Cooling

A profound physical analogy exists between finding satisfiable logical configurations and the physical process of molecular ordering upon cooling. In this analogy, variables in a Boolean satisfiability (SAT) problem are treated as spins ((s_i \in \{-1,+1\})), and each clause generates local fields (h_i) and couplings (J_{ij}) that enforce its logical constraints [35].

Using simulated annealing (an algorithmic analog of physical cooling) on the resulting Ising system drives it from high-entropy, random assignments to low-entropy, ordered ground states. The cited study reports a strong negative correlation between system energy and magnetization ((\rho_{E,|M|} = -0.63)), indicating a rapid "logical crystallization" into the satisfiable configuration when it exists. This provides a unified thermodynamic view of computational coherence and complexity [35].
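A minimal sketch of this clause-to-spin picture follows. It makes several simplifying assumptions that are not from [35]: clauses have two literals (so the penalty is exactly quadratic), the five-clause instance is made up, and the annealing schedule and function names are illustrative. With s = +1 meaning True, a clause is violated only when both literal spins are -1, so its penalty (1 - σ_a)(1 - σ_b)/4 expands into a constant, two local fields and one coupling.

```python
import math
import random
from itertools import product

def clauses_to_ising(clauses, n):
    """Each clause is ((var_a, sign_a), (var_b, sign_b)); sign = -1 negates the variable.
    The resulting Ising energy equals the number of violated clauses."""
    h, J, offset = [0.0] * n, {}, 0.0
    for (a, sa), (b, sb) in clauses:
        offset += 0.25
        h[a] -= sa / 4
        h[b] -= sb / 4
        key = (min(a, b), max(a, b))
        J[key] = J.get(key, 0.0) + sa * sb / 4
    return h, J, offset

def energy(h, J, offset, s):
    e = offset + sum(hi * si for hi, si in zip(h, s))
    return e + sum(Jij * s[i] * s[j] for (i, j), Jij in J.items())

def anneal(h, J, offset, n, sweeps=200, t_hot=2.0, t_cold=0.05, seed=0):
    """Single-spin-flip Metropolis annealing with a geometric cooling schedule."""
    rng = random.Random(seed)
    s = [rng.choice([-1, 1]) for _ in range(n)]
    e = energy(h, J, offset, s)
    best_s, best_e = list(s), e
    for k in range(sweeps):
        t = t_hot * (t_cold / t_hot) ** (k / (sweeps - 1))
        for i in range(n):
            s[i] = -s[i]                      # propose a flip
            e_new = energy(h, J, offset, s)
            if e_new <= e or rng.random() < math.exp((e - e_new) / t):
                e = e_new                     # accept
                if e < best_e:
                    best_s, best_e = list(s), e
            else:
                s[i] = -s[i]                  # reject: undo the flip
    return best_s, best_e

# Hypothetical satisfiable instance on 4 variables:
# (x0 or x1), (not x0 or x2), (not x1 or not x2), (x1 or x3), (not x3 or x0)
N = 4
CLAUSES = [((0, 1), (1, 1)), ((0, -1), (2, 1)), ((1, -1), (2, -1)),
           ((1, 1), (3, 1)), ((3, -1), (0, 1))]
h, J, offset = clauses_to_ising(CLAUSES, N)
best_s, best_e = anneal(h, J, offset, N)
print("lowest energy found (violated clauses):", best_e)
```

An energy of zero corresponds to a satisfying assignment. Clauses with three literals, as in the UF20-91 set, produce cubic spin terms and would additionally need a reduction step or a solver that handles higher-order interactions.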

The workflow below illustrates the transformation of a molecular problem into an Ising model and its subsequent solution via annealing.

Experimental Protocols and Benchmarking

Protocol: VQE with a Hardware-Efficient Ansatz

This protocol outlines the steps for finding a molecular ground state using VQE, a common method where the optimization can leverage QUBO/Ising solvers.

- Step 1: Define the Molecular Hamiltonian: The process begins by defining the molecule (e.g., H₂ at a specific bond length) and generating its electronic Hamiltonian in the qubit basis (e.g., via the Jordan-Wigner or Bravyi-Kitaev transformation) [37] [17].

- Step 2: Construct the Ansatz Circuit: A parameterized quantum circuit, the ansatz, is chosen. For NISQ devices, a "hardware-efficient" ansatz comprising layers of single-qubit rotations ((R_y, R_z)) and entangling gates (e.g., CZ) is often used [37].

- Step 3: Implement the Classical Optimization Loop:

- The quantum computer prepares the state (|\psi(\vec{\theta})\rangle) with initial parameters (\vec{\theta}).

- It measures the expectation value (\langle \psi(\vec{\theta}) | \hat{H} | \psi(\vec{\theta}) \rangle).

- A classical optimizer (e.g., COBYLA, SPSA) processes this energy value and proposes new parameters (\vec{\theta}_{\text{new}}) to lower the energy.

- The three sub-steps above repeat until the energy converges to a minimum.

- Step 4: Employ Error Mitigation: Techniques like Zero-Noise Extrapolation (ZNE) are critical. This involves intentionally scaling the circuit's noise level (e.g., by gate folding) and extrapolating the results back to the zero-noise limit to obtain a more accurate energy estimate [37].
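The loop in Steps 1 to 3 can be sketched with a noiseless two-qubit simulation in plain Python. Everything specific here is an assumption for illustration: the Hamiltonian H = -X⊗X - Z⊗Z is a toy model with exact ground energy -2 (it is not the H₂ Hamiltonian), the ansatz uses only R_y rotations and one CZ gate, and plain gradient descent with the parameter-shift rule stands in for optimizers such as COBYLA or SPSA. Error mitigation (Step 4) is not modelled.

```python
import math

# Toy Hamiltonian H = -X(x)X - Z(x)Z in the basis |00>, |01>, |10>, |11>.
H = [[-1.0, 0.0, 0.0, -1.0],
     [0.0, 1.0, -1.0, 0.0],
     [0.0, -1.0, 1.0, 0.0],
     [-1.0, 0.0, 0.0, -1.0]]
EXACT_GROUND_ENERGY = -2.0  # eigenvalues of this matrix are -2, 0, 0, 2

def apply_ry(state, qubit, theta):
    """Apply RY(theta) to one qubit (0 = most significant bit) of a real 2-qubit state."""
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    out = list(state)
    stride = 2 if qubit == 0 else 1
    for idx in range(4):
        if (idx // stride) % 2 == 0:          # this qubit is 0 in basis state idx
            a0, a1 = state[idx], state[idx + stride]
            out[idx] = c * a0 - s * a1
            out[idx + stride] = s * a0 + c * a1
    return out

def apply_cz(state):
    """CZ flips the sign of the |11> amplitude."""
    return [state[0], state[1], state[2], -state[3]]

def ansatz_state(theta):
    """RY layer, CZ entangler, RY layer, applied to |00>."""
    state = [1.0, 0.0, 0.0, 0.0]
    state = apply_ry(state, 0, theta[0])
    state = apply_ry(state, 1, theta[1])
    state = apply_cz(state)
    state = apply_ry(state, 0, theta[2])
    state = apply_ry(state, 1, theta[3])
    return state

def energy(theta):
    """Expectation value <psi(theta)| H |psi(theta)>."""
    psi = ansatz_state(theta)
    return sum(psi[i] * H[i][j] * psi[j] for i in range(4) for j in range(4))

def parameter_shift_gradient(theta):
    """Exact gradient for RY gates: dE/dtheta_k = [E(+pi/2) - E(-pi/2)] / 2."""
    grad = []
    for k in range(len(theta)):
        plus, minus = list(theta), list(theta)
        plus[k] += math.pi / 2
        minus[k] -= math.pi / 2
        grad.append(0.5 * (energy(plus) - energy(minus)))
    return grad

def run_vqe(theta0, learning_rate=0.1, steps=500):
    theta = list(theta0)
    for _ in range(steps):
        grad = parameter_shift_gradient(theta)
        theta = [t - learning_rate * g for t, g in zip(theta, grad)]
    return theta, energy(theta)

theta0 = [0.3, -0.2, 0.5, 0.1]   # arbitrary starting parameters
theta_opt, e_opt = run_vqe(theta0)
print(f"VQE energy: {e_opt:.6f}  (exact ground energy: {EXACT_GROUND_ENERGY})")
```

By the variational principle the reported energy can never fall below the exact ground energy, so the gap between the two numbers is a direct measure of how well the ansatz and optimizer performed. On hardware, each call to `energy` would be replaced by repeated circuit executions and measurement of the Pauli terms.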

Protocol: Studying Partition Function Zeros on Quantum Hardware

Another protocol uses a quantum computer to study the thermodynamic properties of an Ising system, which can be related to a molecular model, by detecting its Fisher zeros.

- Step 1: Map Partition Function to Evolution: For an Ising Hamiltonian (H), the partition function at a purely imaginary inverse temperature (\beta = i\alpha) becomes a unitary evolution operator: (Z(i\alpha) = \text{Tr}[e^{-i\alpha H}]). For an initial state (|\mathbf{0}\rangle), this can be rewritten as (Z(i\alpha) = 2^N \langle \mathbf{0} | e^{-i\alpha H_{\text{XX}}} | \mathbf{0} \rangle), where (H_{\text{XX}}) is derived from the original Ising model [38].

- Step 2: Implement the Quantum Circuit:

- Prepare all qubits in the (|0\rangle) state.

- Apply a layer of Hadamard gates to all qubits.

- Apply the unitary (e^{-i\alpha H_{\text{XX}}}), which is constructed from native quantum gates.

- Apply another layer of Hadamard gates.

- Step 3: Measure and Analyze: The probability of measuring the all-zeros outcome, (P(|\mathbf{0}\rangle)), is proportional to (|Z(i\alpha)|^2). The values of (\alpha) where this probability drops to zero correspond to the purely imaginary Fisher zeros of the original Ising system, revealing information about its phase transitions [38].
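The relation between the all-zeros probability and the partition function can be checked numerically on a small open Ising chain. The sketch below is a classical simulation under stated assumptions: the chain model, its parameters and the function names are illustrative, and the evolution is written as a diagonal Z-basis operator between two Hadamard layers, which realizes the overlap in Step 1 if H_XX is read as the same model with Z replaced by X (that reading is an assumption, not a statement from [38]).

```python
import cmath
import math
from itertools import product

def ising_energy(s, J=1.0, h=0.0):
    """Open chain: J * sum_i s_i s_{i+1} + h * sum_i s_i."""
    return J * sum(s[i] * s[i + 1] for i in range(len(s) - 1)) + h * sum(s)

def partition_function(alpha, n, J=1.0, h=0.0):
    """Z(i*alpha) = Tr exp(-i*alpha*H), summed over all 2^n spin configurations."""
    return sum(cmath.exp(-1j * alpha * ising_energy(s, J, h))
               for s in product([1, -1], repeat=n))

def all_zeros_probability(alpha, n, J=1.0, h=0.0):
    """P(0...0) after: Hadamards, diagonal evolution exp(-i*alpha*H), Hadamards."""
    dim = 2 ** n
    # First Hadamard layer turns |0...0> into the uniform superposition.
    uniform = 1.0 / math.sqrt(dim)
    # The diagonal evolution multiplies each basis amplitude by a phase.
    state = [uniform * cmath.exp(-1j * alpha * ising_energy(s, J, h))
             for s in product([1, -1], repeat=n)]
    # After the second Hadamard layer, the |0...0> amplitude is the normalized sum.
    amp0 = sum(state) / math.sqrt(dim)
    return abs(amp0) ** 2

n = 3
for alpha in (0.0, 0.5, 1.0, math.pi / 2):
    z = partition_function(alpha, n)
    p0 = all_zeros_probability(alpha, n)
    # P(0...0) = |Z(i*alpha)|^2 / 4^n
    assert abs(p0 - abs(z) ** 2 / 4 ** n) < 1e-12
    print(f"alpha = {alpha:.4f}   |Z| = {abs(z):.6f}   P(0...0) = {p0:.6f}")
```

For the field-free open chain the sum evaluates to Z(iα) = 2(2 cos αJ)^(N-1), so the probability vanishes at αJ = π/2, which is where the scan above reaches zero.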

Benchmarking with Planted Solutions

A significant challenge in benchmarking new algorithms is the lack of known ground truths for large, complex systems. Planted solutions address this by constructing non-trivial Hamiltonians with known, embedded ground states.

- Method: A solvable Hamiltonian ( \hat{H}_{\text{sol}} ) is first generated. It is then "obscured" by conjugating it with random orbital rotations (U), resulting in a final Hamiltonian ( \hat{H} = U^\dagger \hat{H}_{\text{sol}} U ) that retains the known ground state energy but appears as a generic, hard electronic structure problem [34].

- Application: These planted Hamiltonians are used to rigorously test the performance and convergence behavior of classical and quantum solvers, including DMRG and VQE, providing a controlled environment to evaluate their efficacy on industrially relevant problem sizes [34].
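The key property of a planted solution, that conjugation by a unitary hides the structure without changing the spectrum, can be seen in a small matrix-level toy. The sketch below is not the construction of [34]: it uses a 4×4 diagonal matrix with made-up energy levels as the "solvable" Hamiltonian and a random real rotation built from Givens rotations in place of an orbital rotation acting on a many-electron Hamiltonian.

```python
import math
import random

def identity(n):
    return [[float(i == j) for j in range(n)] for i in range(n)]

def matmul(A, B):
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]

def transpose(A):
    return [list(row) for row in zip(*A)]

def random_rotation(n, rng):
    """Random real orthogonal matrix as a product of Givens rotations."""
    U = identity(n)
    for p in range(n):
        for q in range(p + 1, n):
            angle = rng.uniform(0.0, 2.0 * math.pi)
            G = identity(n)
            G[p][p] = G[q][q] = math.cos(angle)
            G[p][q] = -math.sin(angle)
            G[q][p] = math.sin(angle)
            U = matmul(G, U)
    return U

rng = random.Random(1)
levels = [-1.5, -0.2, 0.4, 1.1]          # hypothetical spectrum; ground energy -1.5
n = len(levels)
H_sol = [[levels[i] if i == j else 0.0 for j in range(n)] for i in range(n)]

U = random_rotation(n, rng)
H = matmul(transpose(U), matmul(H_sol, U))   # H = U^T H_sol U (U is real, so U^dagger = U^T)

# The planted ground state is U^T e_0, i.e. the first row of U.
v = list(U[0])
Hv = [sum(H[i][j] * v[j] for j in range(n)) for i in range(n)]
residual = max(abs(Hv[i] - levels[0] * v[i]) for i in range(n))
print("residual of H v - E0 v:", residual)
```

A solver under test sees only `H`; its reported ground energy can then be scored against `levels[0]`, which is known by construction.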

The table below summarizes key reagents and computational tools essential for experiments in this field.

Table 1: Essential Research Reagents and Computational Tools

| Item/Tool Name | Function in Research |
|---|---|
| Miyazawa-Jernigan (MJ) Potentials | A statistical potential used to model the interaction energy between amino acid residues in coarse-grained peptide docking problems [36]. |
| Planted Solution Hamiltonians | Artificially generated Hamiltonians with known ground states, used to rigorously benchmark the accuracy and performance of electronic structure methods [34]. |
| Separable Pair Ansatz (SPA) | A specific, system-adapted quantum circuit design that leverages molecular geometry to prepare initial states for VQE, reducing classical optimization overhead [8]. |
| Zero-Noise Extrapolation (ZNE) | An error mitigation technique that improves the accuracy of energy estimates from noisy quantum computers by extrapolating results from different noise levels to the zero-noise limit [37]. |
| Orbital Rotation Unitaries | Operations used to obscure the structure of planted-solution Hamiltonians, increasing their perceived difficulty while preserving the known ground state energy [34]. |

Performance Analysis and Discussion

The practical utility of QUBO and Ising encodings is determined by their performance on real problems. The table below summarizes quantitative results from various applications as reported in the literature.

Table 2: Performance Metrics of QUBO/Ising Approaches on Benchmark Problems

| Application / System | Problem Size / Instance | Encoding Method | Solver | Key Result / Performance |
|---|---|---|---|---|
| Peptide-Protein Docking [36] | 6 peptide residues, 34 protein residues (PDB 3WNE, 5LSO) | Resource-efficient turn encoding on tetrahedral lattice | Classical Simulated Annealing | Found feasible conformations; scaling difficulties beyond this size. |
| Peptide-Protein Docking [36] | 11 peptide residues, 49 protein residues (PDB 2F58) | Constraint Programming (CP) model | CP Solver | Solved largest instance optimally; outperformed QUBO/SA. |
| Logical Crystallization (SAT) [35] | UF20–91 test set | Clause-to-Ising mapping | Simulated Annealing | Found strong negative energy-magnetization correlation (ρ = -0.63). |
| VQE Parameter Prediction [8] | H4 to H12 hydrogen systems | Graph Attention Network (GAT) & SchNet | Classical ML | Demonstrated transferability of optimal VQE parameters to larger molecules. |

The data indicates that while QUBO/Ising approaches are highly flexible, their performance is context-dependent. For problems like peptide docking, classical methods like Constraint Programming can currently outperform QUBO-based simulated annealing on larger instances [36]. However, the strong physical analogy between logical satisfaction and spin ordering provides a robust foundation for using these models [35]. Future advancements likely lie in hybrid methods, such as using machine learning to predict good initial parameters for VQE, thereby reducing the difficult optimization burden [8].

The transformation of molecular Hamiltonians into QUBO and Ising models is a critical enabling technology for applying quantum and quantum-inspired optimization to molecular systems research. This guide has detailed the core methodologies, from direct problem encoding and the VQE framework to advanced benchmarking with planted solutions.

As the field progresses, the integration of machine learning for ansatz design and parameter prediction, coupled with more powerful Ising machines and quantum hardware, will be crucial for overcoming current scalability limitations. These techniques hold the promise of tackling complex molecular problems in drug design and materials science that are currently beyond the reach of classical computation alone. By providing a unified view of computational complexity and physical ordering, the QUBO and Ising formalisms will continue to be a cornerstone of quantum optimization ansätze for molecular systems.