Quantum Principles in Chemical Kinetics: From Theory to Drug Discovery Applications

Abstract

This article explores the transformative integration of quantization principles and quantum computing methodologies into chemical kinetics, a frontier poised to redefine computational chemistry and drug discovery. We first establish the foundational quantum mechanical concepts governing molecular dynamics and reaction pathways. The discussion then progresses to cutting-edge methodological applications, including data assimilation techniques for kinetic parameter estimation and novel quantum algorithms for simulating reaction dynamics. A dedicated section addresses the significant challenges and optimization strategies in implementing these quantum-based approaches. Finally, we present a comparative analysis of the validation frameworks and performance benchmarks of these emerging methods against established classical techniques. This comprehensive review is tailored for researchers, scientists, and drug development professionals seeking to leverage quantum advancements for more accurate and efficient kinetic modeling.

Quantum Foundations: Core Principles Governing Molecular Dynamics and Reactivity

The Schrödinger Equation and Potential Energy Surfaces

The fundamental challenge in predicting the rates and outcomes of chemical reactions lies in accurately describing how molecular systems evolve from reactants to products. This process is governed by the Schrödinger equation, with the potential energy surface (PES) serving as the central quantitative descriptor that determines reaction pathways and kinetics. The PES represents the energy of a molecular system as a function of its nuclear coordinates, creating a multidimensional landscape upon which chemical dynamics unfold [1]. Within the Born-Oppenheimer approximation, the molecular Hamiltonian separates into kinetic and potential energy components, \(\textbf{H} = \textbf{K}(\hat{p}) + \textbf{V}(\hat{x})\), where the potential operator \(\textbf{V}(\hat{x})\) becomes diagonal in coordinate space [2]. This quantized representation of molecular energy landscapes provides the foundation for modern chemical kinetics research, enabling researchers to move beyond phenomenological models toward first-principles predictions of reaction behavior.

Recent advances in computational quantum chemistry and the emergence of quantum computing have revolutionized our approach to these surfaces, particularly through novel encoding strategies that address the exponential scaling problems inherent in classical simulations of quantum systems [2]. The spatial grid method, representing a first quantization approach, has shown particular promise for quantum computing applications as it naturally avoids the basis-set incompleteness problems of second quantization methods while offering favorable linear-scaling properties for extensive systems [2]. These developments have created new opportunities for applying quantization principles directly to chemical kinetics research, particularly in the simulation of nonadiabatic processes where the Born-Oppenheimer approximation breaks down.

Computational Protocols: From Theory to Implementation

Protocol: Grid-Based Potential Energy Surface Construction

Principle: Discretization of molecular coordinates creates a computational grid for numerical solution of the Schrödinger equation, facilitating implementation on digital and quantum computing architectures.

Materials and Setup:

- Coordinate System: Mass-weighted normal mode coordinates separating translational, rotational, and vibrational degrees of freedom

- Grid Parameters: Spatial resolution (Δx), number of grid points (N = 2ⁿ), and domain boundaries

- Software Tools: Schrödinger Materials Science Suite for molecular modeling [3]

- Computational Platform: Quantum computing simulators or hardware (e.g., IBM Quantum) for Hamiltonian simulation [2]

Procedure:

- System Preparation: Define nuclear coordinates and generate initial molecular geometry

- Coordinate Transformation: Convert Cartesian coordinates to internal coordinates, separating vibrations from rotations/translations

- Grid Initialization: Establish spatial grid with sufficient resolution to capture wavefunction features

- Potential Operator Construction: Compute potential energy values V(xᵢ) at each grid point xᵢ

- Hamiltonian Assembly: Combine potential operator with discretized kinetic energy operator

- Wavefunction Propagation: Solve time-dependent Schrödinger equation using appropriate numerical methods

Technical Notes: For quantum computing implementations, the potential energy operator must be encoded as a diagonal unitary, with recent polynomial encoding algorithms reducing gate complexity from \(\mathcal{O}(2^n)\) to \(\mathcal{O}\left(\sum_{i=1}^{r} {}^{n}C_{r}\right)\) for r ≪ n [2].
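The grid-based procedure above can be sketched classically with a split-operator propagator. This is a minimal illustration, not the quantum-hardware algorithm of [2]: it assumes dimensionless units (ħ = m = 1), a harmonic test potential, and a displaced Gaussian initial state, all of which are illustrative choices. The function names `split_operator_step` and `propagate` are hypothetical.

```python
import numpy as np

def split_operator_step(psi, v_grid, k_grid, dt, mass=1.0, hbar=1.0):
    """One symmetric split-operator step: half V, full T, half V."""
    half_v = np.exp(-0.5j * v_grid * dt / hbar)             # diagonal in position space
    kinetic = np.exp(-0.5j * hbar * k_grid**2 * dt / mass)  # diagonal in momentum space
    psi = half_v * psi
    psi = np.fft.ifft(kinetic * np.fft.fft(psi))
    return half_v * psi

def propagate(psi, v_grid, k_grid, dt, n_steps, mass=1.0, hbar=1.0):
    """Apply n_steps split-operator steps to the wavefunction."""
    for _ in range(n_steps):
        psi = split_operator_step(psi, v_grid, k_grid, dt, mass, hbar)
    return psi

# Grid with N = 2^n points (n would be the qubit count on a quantum device).
n_qubits = 8
n_points = 2**n_qubits
x = np.linspace(-10.0, 10.0, n_points, endpoint=False)
dx = x[1] - x[0]
k = 2.0 * np.pi * np.fft.fftfreq(n_points, d=dx)

# Assumed test case: harmonic potential, Gaussian displaced to x = 1.
v = 0.5 * x**2
psi0 = np.exp(-0.5 * (x - 1.0)**2).astype(complex)
psi0 /= np.sqrt(np.sum(np.abs(psi0)**2) * dx)

# Propagate for half a vibrational period (t = pi in these units).
psi_t = propagate(psi0, v, k, dt=np.pi / 1000, n_steps=1000)
norm = np.sum(np.abs(psi_t)**2) * dx
mean_x = np.sum(x * np.abs(psi_t)**2) * dx
print(f"norm = {norm:.6f}, <x> = {mean_x:.4f}")
```

For this harmonic test case the wave packet should swing to the opposite turning point after half a period, which gives a quick sanity check on grid resolution and time step before moving to a realistic PES.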

Protocol: Pre-Born-Oppenheimer Molecular Dynamics Simulation

Principle: Direct simulation of coupled electron-nuclear dynamics without separation of timescales, providing exact treatment of nonadiabatic effects critical for photochemical processes.

Materials and Setup:

- Wavefunction Ansatz: Second quantized representation with position-dependent spin orbitals

- Mapping Scheme: Fermion-qubit mapping for electrons, bosonic modes for nuclear vibrations

- Hardware Platform: Trapped-ion devices or other analog quantum simulators [4]

Procedure:

- Hamiltonian Formulation: Express molecular vibronic Hamiltonian in second quantization [4]: \[ \textbf{H} = \sum_{pq} h_{pq} a_p^\dagger a_q + \sum_{\nu} \omega_\nu b_\nu^\dagger b_\nu + \sum_{\nu,pq} d_{\nu,pq} a_p^\dagger a_q (b_\nu^\dagger + b_\nu) + \cdots \]

- Active Space Selection: Choose relevant electronic orbitals and vibrational modes

- Wavefunction Initialization: Prepare initial state as superposition of electronic and vibrational occupation number vectors

- Time Evolution: Implement unitary propagation \(e^{-i\hat{H}t/\hbar}\) using device-native operations

- Measurement: Extract population dynamics and correlation functions

Technical Notes: This approach provides exponential savings in computational resources compared to equivalent classical algorithms and naturally includes nonadiabatic coupling effects without requiring pre-calculation of electronic states [4].
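To make the structure of the vibronic Hamiltonian concrete, the sketch below builds it as a dense matrix for a toy system and propagates it classically. It assumes a single electron in two orbitals (so that a_p† a_q reduces to |p⟩⟨q|), one vibrational mode truncated to a finite Fock space, ħ = 1, and arbitrary illustrative parameter values; none of these come from [4], and the function names are hypothetical.

```python
import numpy as np

def vibronic_hamiltonian(h_el, omega, d, n_fock):
    """H = h (x) 1 + omega * 1 (x) b^dag b + d (x) (b^dag + b) for one mode."""
    n_el = h_el.shape[0]
    b = np.diag(np.sqrt(np.arange(1, n_fock)), k=1)  # truncated annihilation operator
    id_el, id_vib = np.eye(n_el), np.eye(n_fock)
    return (np.kron(h_el, id_vib)
            + omega * np.kron(id_el, b.T @ b)
            + np.kron(d, b.T + b))

def electronic_populations(h, psi0, times, n_el, n_fock):
    """Propagate psi0 under exp(-iHt) and trace out the vibrational mode."""
    evals, evecs = np.linalg.eigh(h)
    coeffs = evecs.conj().T @ psi0
    pops = []
    for t in times:
        psi_t = evecs @ (np.exp(-1j * evals * t) * coeffs)
        pops.append(np.sum(np.abs(psi_t.reshape(n_el, n_fock))**2, axis=1))
    return np.array(pops)

# Illustrative (assumed) parameters in arbitrary energy units.
h_el = np.array([[0.0, 0.1], [0.1, 0.5]])     # one-electron matrix h_pq
d = np.array([[0.2, 0.05], [0.05, -0.2]])     # vibronic couplings d_pq
omega, n_fock = 1.0, 20

h = vibronic_hamiltonian(h_el, omega, d, n_fock)
psi0 = np.zeros(2 * n_fock, dtype=complex)
psi0[0] = 1.0                                  # orbital 0, vibrational ground state
times = np.linspace(0.0, 10.0, 51)
pops = electronic_populations(h, psi0, times, n_el=2, n_fock=n_fock)
print(pops[-1])
```

Exact diagonalization like this scales exponentially with the number of modes and orbitals, which is the cost that the analog quantum simulation in the protocol aims to avoid.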

Table 1: Computational Methods for Potential Energy Surface Generation

| Method | Theoretical Basis | System Size | Accuracy Considerations | Implementation Platform |
|---|---|---|---|---|
| Grid-Based First Quantization | Spatial discretization of molecular coordinates | Large systems with linear scaling | Grid resolution dependence; polynomial encoding reduces error | Quantum hardware (IBM Quantum) [2] |
| Pre-Born-Oppenheimer Dynamics | Coupled electron-nuclear wavefunction | Small to medium molecules | Exact treatment of nonadiabatic effects; active space selection critical | Analog quantum simulators (trapped ions) [4] |
| Walsh Series Approximation | Hadamard basis expansion | Medium systems | Basis set truncation error; suitable for near-term devices | Quantum simulators with Z/I gates [2] |
| Multi-Configurational Time-Dependent Hartree | Time-dependent variational principle | Small molecules | Configuration selection; suitable for bosonic systems | Classical HPC systems [4] |

Experimental Kinetics: Bridging Theory and Measurement

Protocol: Optical Kinetics Monitoring for Reaction Rate Determination

Principle: Exploit the proportionality between light absorption and species concentration (Beer's Law) to track reaction progress in real-time.

Materials and Setup:

- Light Source: Tungsten lamp (colorimeter) or monochromatic source (spectrophotometer)

- Detection System: Photodetector with linear response to light intensity

- Cuvette: Fixed path length cell (typically 1.0 cm)

- Thermostat: Temperature control system (±0.1°C)

- Data Acquisition: Computer interface for continuous monitoring

Procedure:

- Instrument Calibration:
  - Block light path and set instrument zero
  - Insert reference blank (unreactive solution) and set 100% transmission
  - Select appropriate wavelength filter matching analyte absorption maximum

- Reaction Initiation:
  - Prepare reactant solutions at precise concentrations
  - Thermostat all solutions to target temperature
  - Mix reactants rapidly and transfer to measurement cuvette

- Data Collection:
  - Record transmission (I/I₀) or absorbance (−log₁₀(I/I₀)) at timed intervals
  - Ensure measurement time ≪ reaction half-life
  - Continue until reaction completion or sufficient data acquired

- Data Analysis:
  - Convert absorbance to concentration using Beer's Law: A = ε·c·l
  - Plot concentration versus time for kinetic modeling
  - Determine reaction order and rate constant via fitting procedures

Technical Notes: For colored species, select complementary filter colors (e.g., blue filter for yellow solutions). Modern spectrophotometers enable UV-vis monitoring with dual-beam referencing for enhanced stability [5].
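The data-analysis steps can be sketched as follows. The example assumes a first-order decay of the absorbing species, noise-free synthetic data, and illustrative values for the molar absorptivity, initial concentration, and rate constant; real data would need reaction-order checks and error estimates. The helper names are hypothetical.

```python
import numpy as np

def absorbance_to_concentration(absorbance, epsilon, path_cm=1.0):
    """Invert Beer's Law, A = epsilon * c * l, for the concentration c."""
    return np.asarray(absorbance, dtype=float) / (epsilon * path_cm)

def fit_first_order(times, conc):
    """Fit ln c = ln c0 - k t by linear least squares; return (k, c0)."""
    slope, intercept = np.polyfit(times, np.log(conc), 1)
    return -slope, np.exp(intercept)

# Synthetic (assumed) experiment: epsilon in L mol^-1 cm^-1, k in s^-1.
epsilon = 1.5e4
k_true, c0_true = 0.02, 5.0e-5
times = np.arange(0.0, 201.0, 10.0)                       # seconds
absorbance = epsilon * 1.0 * c0_true * np.exp(-k_true * times)

conc = absorbance_to_concentration(absorbance, epsilon, path_cm=1.0)
k_fit, c0_fit = fit_first_order(times, conc)
print(f"k = {k_fit:.4f} s^-1, c0 = {c0_fit:.2e} mol/L")
```

A linear plot of ln c against t supports first-order kinetics; if 1/c against t is linear instead, a second-order model is the better candidate.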

Protocol: Numerical Compass for Optimal Experiment Design

Principle: Utilize computational models and ensemble methods to identify experimental conditions that maximize parameter constraint potential.

Materials and Setup:

- Kinetic Model: Mechanism-based computational model (e.g., KM-SUB for aerosols)

- Fit Ensemble: Collection of parameter sets reproducing existing data

- Surrogate Model: Neural network approximating kinetic model

- Experimental Parameter Space: Ranges of feasible conditions (T, [X]₀, etc.)

Procedure:

- Ensemble Generation:
  - Acquire fit ensemble through global optimization against existing data
  - Define acceptance threshold for sufficient agreement
  - Validate ensemble diversity and representation

- Constraint Potential Assessment:
  - Evaluate model variance across experimental parameter space
  - Calculate ensemble variance at each candidate condition
  - Identify conditions with maximum discrimination potential

- Experiment Prioritization:
  - Rank experimental conditions by constraint potential
  - Select conditions with highest ensemble variance
  - Design minimal set of maximally informative experiments

Technical Notes: This approach implicitly handles experimental uncertainty through acceptance thresholds and requires only single model evaluation per fit-condition combination, offering computational efficiency over traditional optimality criteria methods [6].
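The ranking step can be illustrated with a toy ensemble. The sketch below is not the implementation of [6]: it assumes a hypothetical Arrhenius-type model, an ensemble of parameter sets constructed to agree exactly at 300 K (standing in for "fits that reproduce existing data"), and temperature as the only experimental variable.

```python
import numpy as np

R_GAS = 8.314462618  # gas constant, J mol^-1 K^-1

def arrhenius_model(params, temperature):
    """Hypothetical kinetic model: k = A * exp(-Ea / (R T))."""
    pre_factor, e_act = params
    return pre_factor * np.exp(-e_act / (R_GAS * temperature))

def rank_by_ensemble_variance(ensemble, conditions, model):
    """Return conditions sorted by descending ensemble variance, plus the variances."""
    outputs = np.array([[model(p, c) for c in conditions] for p in ensemble])
    variance = outputs.var(axis=0)
    order = np.argsort(variance)[::-1]
    return conditions[order], variance

# Fit ensemble: every member reproduces k = 1 at 300 K, the "existing data".
e_acts = np.linspace(40e3, 60e3, 9)                          # J/mol
ensemble = [(np.exp(e / (R_GAS * 300.0)), e) for e in e_acts]

temperatures = np.linspace(250.0, 350.0, 11)                 # candidate conditions, K
ranked, variance = rank_by_ensemble_variance(ensemble, temperatures, arrhenius_model)
print("most informative temperature:", ranked[0])
```

The ensemble variance vanishes where data already exist and grows where the fits disagree, so a new measurement at the top-ranked condition would discriminate best among the remaining parameter sets.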

Table 2: Experimental Techniques for Chemical Kinetics Research

| Technique | Measured Property | Time Resolution | Applications | Key Considerations |
|---|---|---|---|---|
| Absorption Spectroscopy | Light transmission/absorption | Milliseconds to seconds | Reactions involving colored compounds | Requires chromophore; follows Beer's Law [5] |
| Light Scattering (Nephelometry) | Turbidity/precipitate formation | Seconds to minutes | Precipitation reactions, aggregation kinetics | Qualitative unless calibrated; simple implementation [5] |
| Stopped-Flow with Optical Detection | Rapid mixing with fast spectroscopy | Microseconds to milliseconds | Fast reactions in solution | Dead time considerations; specialized equipment needed |
| Numerical Compass Optimization | Model ensemble variance | Pre-experiment design phase | Optimal condition selection for parameter estimation | Requires existing data and model; reduces experimental effort [6] |

Advanced Applications: Quantum Computing and Machine Learning

Case Study: Sodium Iodide Potential Energy Surface Simulation on Quantum Hardware

Background: The NaI molecule exhibits complex ionic-covalent crossing in its excited state potential energy curve, making it an ideal test case for quantum simulation algorithms.

Implementation:

- Potential Energy Operator Encoding:
  - Represent exponential functional form of NaI potential using diagonal unitaries
  - Apply polynomial encoding algorithm to reduce gate complexity
  - Implement on IBM quantum simulator and hardware

- Time Evolution Simulation:
  - Construct time evolution operator \(e^{-i\hat{V}t/\hbar}\) for potential component
  - Measure fidelity of simulation on quantum hardware
  - Reconstruct potential energy curve from quantum simulation

Results: The polynomial encoding method demonstrated significant resource optimization while maintaining chemical accuracy, with gate complexity reduction enabling feasible implementation on near-term devices [2].
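As a classical illustration of how a diagonal potential operator decomposes into Z/I gate strings, the sketch below computes a Walsh (Hadamard-basis) expansion of a potential sampled on 2ⁿ grid points and truncates it. This is the Walsh series idea listed in Table 1, not the polynomial encoding algorithm of [2]; the exponential potential, its parameters, and the grid range are placeholders rather than NaI data.

```python
import numpy as np

def hadamard_matrix(n_qubits):
    """Unnormalized 2^n x 2^n Hadamard matrix; entry [j, x] is (-1)^(j.x)."""
    h1 = np.array([[1.0, 1.0], [1.0, -1.0]])
    h = np.array([[1.0]])
    for _ in range(n_qubits):
        h = np.kron(h, h1)
    return h

def walsh_coefficients(v):
    """Coefficients a_j with v[x] = sum_j a_j (-1)^(j.x); each j labels a Z/I string."""
    n_qubits = len(v).bit_length() - 1
    return hadamard_matrix(n_qubits) @ v / len(v)

def truncate(coeffs, n_terms):
    """Keep only the n_terms largest-magnitude coefficients."""
    kept = np.zeros_like(coeffs)
    idx = np.argsort(np.abs(coeffs))[-n_terms:]
    kept[idx] = coeffs[idx]
    return kept

def reconstruct(coeffs):
    """Rebuild the diagonal of the operator from its Walsh coefficients."""
    n_qubits = len(coeffs).bit_length() - 1
    return hadamard_matrix(n_qubits) @ coeffs

# Placeholder exponential potential on a 6-qubit grid (arbitrary units).
n_qubits = 6
r = np.linspace(2.0, 10.0, 2**n_qubits)
v = 3.0 * np.exp(-r / 1.5)

coeffs = walsh_coefficients(v)
err_8 = np.linalg.norm(reconstruct(truncate(coeffs, 8)) - v)
err_32 = np.linalg.norm(reconstruct(truncate(coeffs, 32)) - v)
phases = np.exp(-1j * v * 0.1)   # diagonal of exp(-i V t) for t = 0.1, hbar = 1
print(f"L2 error with 8 terms: {err_8:.3e}, with 32 terms: {err_32:.3e}")
```

Each retained coefficient corresponds to one multi-qubit Z rotation in the circuit, so the truncation level trades gate count against accuracy of the encoded potential.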

Protocol: Machine Learning-Enhanced Kinetic Parameter Estimation

Principle: Combine neural network surrogate models with global optimization for efficient parameter space exploration.

Materials and Setup:

- Template Model: Full kinetic model (e.g., multi-layer aerosol chemistry)

- Training Data: Input-output pairs from template model evaluations

- Network Architecture: Siamese neural networks for uncertainty quantification

Procedure:

- Surrogate Model Training:
  - Generate diverse training set through Latin hypercube sampling
  - Train neural network to emulate template model behavior
  - Validate prediction accuracy on test dataset

- Ensemble-Based Inference:
  - Utilize surrogate model for rapid parameter space exploration
  - Acquire fit ensemble using global optimization
  - Quantify parametric uncertainty through ensemble variance

- Active Learning Extension:
  - Identify high-uncertainty regions in molecular space
  - Select informative training molecules for QSAR model improvement
  - Iteratively refine model through targeted data acquisition

Technical Notes: Surrogate models dramatically reduce computational cost of ensemble generation, though model uncertainty must be carefully monitored to ensure reliability [6].
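The surrogate-training step can be sketched in a few lines. For brevity this example swaps the neural network for a quadratic least-squares surrogate and uses a hypothetical two-parameter template model that happens to be quadratic, so the fit is essentially exact; a real kinetic model would need a more flexible surrogate and a nonzero error budget. Only the Latin hypercube sampling follows the protocol directly.

```python
import numpy as np

def latin_hypercube(n_samples, bounds, rng):
    """Draw n_samples points with exactly one point per stratum in every dimension."""
    bounds = np.asarray(bounds, dtype=float)
    n_dim = bounds.shape[0]
    strata = np.column_stack([rng.permutation(n_samples) for _ in range(n_dim)])
    unit = (strata + rng.random((n_samples, n_dim))) / n_samples
    return bounds[:, 0] + unit * (bounds[:, 1] - bounds[:, 0])

def template_model(p):
    """Hypothetical stand-in for an expensive kinetic model."""
    return 1.0 + 2.0 * p[:, 0] - 0.5 * p[:, 1] + 0.3 * p[:, 0] * p[:, 1] + 0.1 * p[:, 1]**2

def quadratic_features(p):
    """Feature matrix for a full quadratic surrogate in two parameters."""
    p0, p1 = p[:, 0], p[:, 1]
    return np.column_stack([np.ones_like(p0), p0, p1, p0 * p1, p0**2, p1**2])

rng = np.random.default_rng(0)
bounds = [(0.0, 1.0), (-2.0, 2.0)]

# Train the surrogate on Latin hypercube samples of the template model.
train = latin_hypercube(50, bounds, rng)
weights, *_ = np.linalg.lstsq(quadratic_features(train), template_model(train), rcond=None)

# Validate on an independent hold-out sample.
holdout = latin_hypercube(20, bounds, rng)
max_err = np.max(np.abs(quadratic_features(holdout) @ weights - template_model(holdout)))
print(f"max hold-out error: {max_err:.2e}")
```

Tracking the hold-out error as the training set grows is one simple way to monitor the surrogate reliability that the technical note calls for.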

Table 3: Key Research Reagent Solutions for Kinetic Studies

| Reagent/Resource | Function/Application | Specification Guidelines | Example Use Cases |
|---|---|---|---|
| Absorption Spectrophotometer | Quantitative concentration monitoring | Wavelength range 200-800 nm; dual-beam design | Reaction kinetics of colored compounds [5] |
| Temperature-Controlled Cuvette Holder | Maintain constant reaction temperature | Stability ±0.1°C; rapid thermal equilibration | Temperature-dependent rate studies |
| Quantum Chemistry Software Suite | Electronic structure calculations | Schrödinger Materials Science Suite; Gaussian | Potential energy surface generation [3] |
| Quantum Computing Simulators | Algorithm development and testing | Qiskit (IBM); virtual computer access | Hamiltonian simulation testing [2] |
| Kinetic Modeling Frameworks | Mechanism validation and parameter estimation | KM-SUB for multiphase chemistry; custom codes | Aerosol chemistry optimization [6] |
| Global Optimization Algorithms | Parameter space exploration | Evolutionary algorithms; Bayesian optimization | Fit ensemble acquisition [6] |

Workflow Visualization: Integrating Theory and Experiment

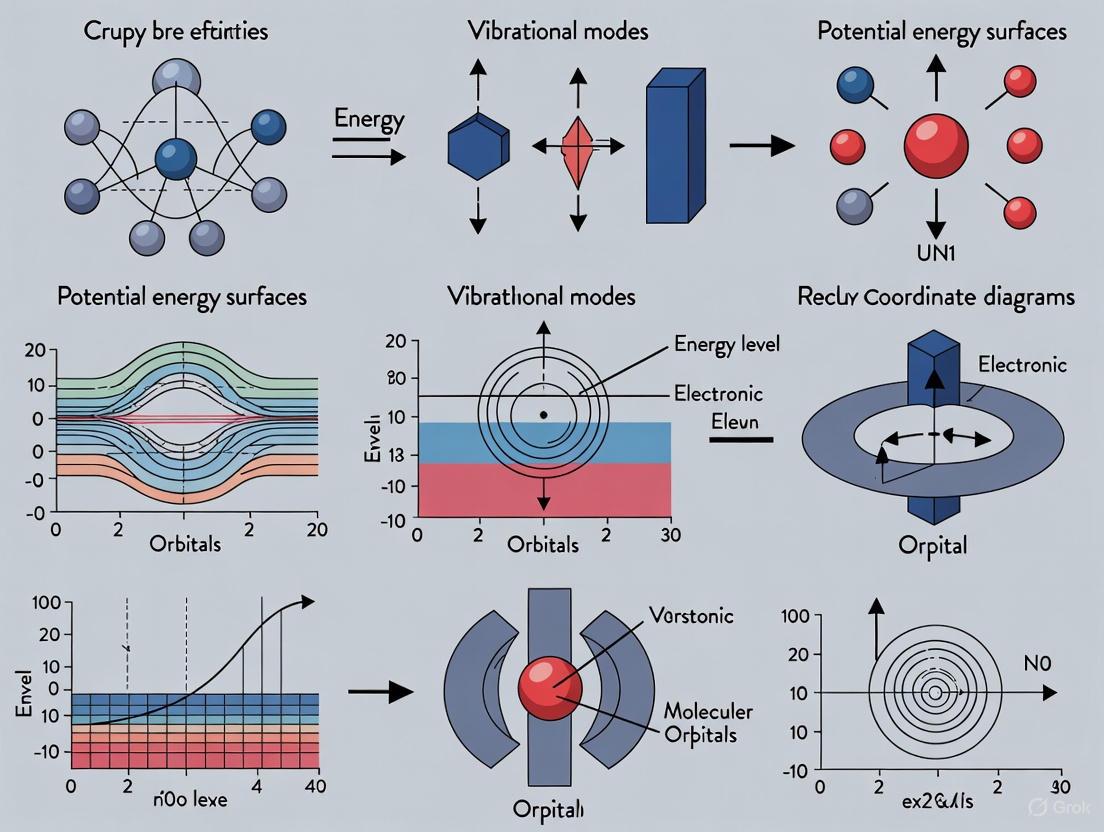

Diagram 1: Integrated workflow for chemical kinetics research, showing the cyclic relationship between potential energy surface calculation, kinetics simulation, and experimental validation with iterative refinement.

The integration of potential energy surfaces derived from the Schrödinger equation with advanced experimental and computational techniques represents a powerful framework for advancing chemical kinetics research. The quantization principles underlying both the fundamental physics and emerging computational approaches—particularly quantum computing algorithms—offer transformative potential for predicting reaction dynamics from first principles. Protocols such as grid-based surface construction, pre-Born-Oppenheimer dynamics simulation, optical kinetics monitoring, and numerical compass experiment design provide researchers with a comprehensive toolkit for tackling complex kinetic problems across diverse domains from drug development to materials science. As quantum hardware continues to advance and algorithmic innovations reduce resource requirements, the explicit treatment of quantized energy landscapes will increasingly enable truly predictive chemical kinetics without empirical parameterization.

Zero-Point Energy and Quantum Tunneling in Reaction Rates

The integration of quantization principles into chemical kinetics research has fundamentally altered our understanding of reaction dynamics, moving beyond classical transition state theory to account for non-classical phenomena that dominate at molecular scales. This paradigm shift recognizes that energy is quantized, molecular systems possess zero-point energy (ZPE) even at absolute zero, and particles can tunnel through energy barriers rather than exclusively passing over them. Zero-point energy, defined as the lowest possible energy a quantum mechanical system may possess, is a direct consequence of the Heisenberg uncertainty principle, which prevents particles from coming to complete rest [7] [8]. Quantum tunneling represents a fundamentally quantum mechanical phenomenon where particles penetrate energy barriers that would be insurmountable according to classical physics [9]. Together, these quantum effects significantly influence reaction rates, kinetic isotope effects, and temperature dependencies across diverse chemical and biological systems, from enzymatic catalysis to atmospheric chemistry [10] [9]. This application note provides a structured framework for investigating these quantum phenomena, offering standardized protocols, quantitative benchmarks, and visualization tools to advance research at the quantum-classical interface in chemical kinetics.

Quantitative Data on Quantum Effects in Chemical Reactions

The magnitude of quantum effects varies significantly across chemical systems, with measurable impacts on reaction probabilities, rate constants, and kinetic isotope effects. Table 1 summarizes key quantitative data from rigorous theoretical and experimental studies, providing benchmarks for researchers evaluating quantum contributions in their systems.

Table 1: Quantitative Data on Quantum Tunneling and Zero-Point Energy Effects

| System/Parameter | Quantitative Value | Significance/Context |
|---|---|---|
| N + O₂ Reaction (Theoretical) [10] | | |
| ⋄ Tunneling threshold | < 0.334 eV | Reactivity only possible via tunneling below this energy |
| ⋄ Classical barrier height | 0.299 eV | Minimum classical energy required to overcome barrier |
| ⋄ Relevant temperature range (rate constant) | 200–500 K | Temperature range where quantum effects significantly enhance rates |
| Heavy vs. Light Atom Tunneling [10] | Barrier width outweighs barrier height and mass | Explains non-negligible tunneling in heavy atom systems |
| Enzymatic Catalysis Rate Enhancement [9] | 50–100 times | Rate enhancement factors compared to conventional catalysts |
| Deuterium Isotope Effect (E2 Reaction) [8] | Slower rate for C-D vs. C-H | Direct evidence of ZPE role in reaction rates |
| Zero-Point Energy Difference (C-H vs. C-D) [11] | E⁰(C-D) < E⁰(C-H) | Heavier isotopes have lower ZPE, higher activation energy |

The data reveal that quantum tunneling is not restricted to light atoms but plays a measurable role even in heavy-atom reactions like N + O₂, where it enables reactivity at collision energies below the classical barrier [10]. The rate enhancements reported for enzymatic systems (50–100 fold) point to the biological importance of these quantum effects [9]. The consistent observation of kinetic isotope effects, particularly for hydrogen/deuterium systems, provides direct experimental evidence for the role of ZPE in chemical kinetics: because a C-D bond has a lower ZPE than a C-H bond, the deuterated species faces a higher effective activation barrier [11] [8].
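How strongly tunneling depends on mass and barrier width can be seen with the textbook rectangular-barrier formula, T = [1 + V₀² sinh²(κa) / (4E(V₀ − E))]⁻¹ with κ = √(2m(V₀ − E))/ħ. The sketch below is a one-dimensional model only, not the CC-TDRWP calculation of [10]; the barrier height is taken from the table, while the collision energy, widths, and masses are illustrative assumptions.

```python
import numpy as np

HBAR = 1.054571817e-34      # J s
EV = 1.602176634e-19        # J per eV
AMU = 1.66053906660e-27     # kg per atomic mass unit
ANGSTROM = 1.0e-10          # m

def rectangular_barrier_transmission(energy_ev, barrier_ev, width_angstrom, mass_amu):
    """Exact transmission probability through a rectangular barrier for E < V0."""
    if not 0.0 < energy_ev < barrier_ev:
        raise ValueError("formula used here requires 0 < E < V0")
    kappa = np.sqrt(2.0 * mass_amu * AMU * (barrier_ev - energy_ev) * EV) / HBAR
    s = np.sinh(kappa * width_angstrom * ANGSTROM)
    return 1.0 / (1.0 + barrier_ev**2 * s**2 / (4.0 * energy_ev * (barrier_ev - energy_ev)))

barrier = 0.299   # eV, classical barrier height quoted for N + O2
energy = 0.25     # eV, assumed collision energy below the barrier

t_h = rectangular_barrier_transmission(energy, barrier, 0.3, mass_amu=1.0)
t_n = rectangular_barrier_transmission(energy, barrier, 0.3, mass_amu=14.0)
t_n_narrow = rectangular_barrier_transmission(energy, barrier, 0.15, mass_amu=14.0)
print(f"H, 0.30 A: {t_h:.3e}")
print(f"N, 0.30 A: {t_n:.3e}")
print(f"N, 0.15 A: {t_n_narrow:.3e}")
```

Because κa enters through sinh², halving the width recovers much of the transmission lost to the heavier mass, consistent with the table's point that barrier width matters more than height or mass.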

Experimental and Computational Protocols

Protocol 1: Theoretical Assessment of Heavy-Atom Tunneling in Bimolecular Reactions

This protocol provides a rigorous methodology for quantifying quantum tunneling contributions in elementary gas-phase reactions involving heavy atoms, based on close-coupling time-dependent real wave packet (CC-TDRWP) methods applied to the N + O₂ system [10].

1. Potential Energy Surface Development

- Obtain an accurate ground-state potential energy surface (PES) using high-level ab initio methods (e.g., MRCI, CCSD(T)) with extensive atomic basis sets [10].

- Ensure proper characterization of the transition state geometry, reaction enthalpy, and classical barrier height (0.299 eV for N + O₂).

- Validate the PES against experimental data for reaction enthalpies and known spectroscopic parameters.

2. Quantum Dynamics Calculations

- Implement the close-coupling time-dependent real wave packet (CC-TDRWP) method to simulate the reaction dynamics [10].

- Initialize the wave packet with O₂ in the lowest vibro-rotational state (v₀ = 0, j₀ = 1).

- Propagate the wave packet across the collision energy range of interest (0.200–0.651 eV for N + O₂).

- Calculate the reaction probability as a function of collision energy, identifying the tunneling regime (<0.334 eV for N + O₂).

3. Quasi-classical Trajectory (QCT) Calculations

- Perform QCT calculations on the same PES as a classical benchmark [10].

- Use identical initial conditions (v₀ = 0, j₀ = 1) for direct comparison with quantum results.

- Calculate classical reaction probabilities across the same energy range.

4. Data Analysis and Tunneling Quantification

- Compute the reaction cross-section from both quantum and classical probabilities.

- Calculate thermal rate constants for temperatures from 200–1000 K using both methods.

- Quantify tunneling contributions by comparing quantum and classical results:

- Below-barrier reaction probabilities indicate pure tunneling.

- Enhanced reaction probabilities in the quantum case near the barrier indicate tunneling contributions.

- Rate constant enhancements at low temperatures (200–500 K) demonstrate thermal tunneling effects.

Protocol 2: Multidimensional Tunneling Corrections for Enzymatic Reactions

This protocol validates a computational strategy for incorporating multidimensional tunneling corrections in enzyme reactions using variational transition state theory (VTST) with small-curvature tunneling (SCT) corrections, specifically developed for QM(DFT)/MM calculations [12].

1. System Preparation and QM(DFT)/MM Setup

- Construct the enzyme-substrate complex using crystal structure data or homology modeling.

- Employ electrostatic embedding QM/MM partitioning with the reactive region treated with DFT (e.g., B3LYP, M06-2X) and the protein environment with a molecular mechanics force field.

- Ensure proper treatment of the active site residues, cofactors, and solvent molecules in the MM region.

2. Reaction Path Calculation

- Calculate the minimum energy path (MEP) using a small step size (≤0.1 a₀) to ensure accurate characterization of the barrier region [12].

- Avoid distinguished-coordinate paths (DCPs), which are inadequate for tunneling calculations in biological systems [12].

- Perform enough gradient and Hessian calculations along the MEP to cover the entire tunneling region and obtain converged adiabatic potential energy profiles.

3. Multidimensional Tunneling Corrections

- Implement small-curvature tunneling (SCT) corrections within the VTST framework [12].

- Calculate the ground-state tunneling transmission coefficient (κ) at the temperature of interest.

- For ensemble-averaged VTST, average the transmission coefficient over multiple enzyme configurations to account for protein dynamics.

4. Kinetic Isotope Effect (KIE) Calculation

- Repeat the MEP and SCT calculations for deuterated and tritiated substrates.

- Compute KIEs from the ratios of rate constants (e.g., k_H/k_D for deuterium, k_H/k_T for tritium).

- Compare calculated KIEs with experimental values to validate the tunneling model.

- Anomalously high H/D KIEs (above the semiclassical limit of ~7 near room temperature) indicate substantial tunneling contributions.

Visualization of Concepts and Workflows

Zero-Point Energy and Kinetic Isotope Effects

This diagram illustrates the relationship between zero-point energy (ZPE) and kinetic isotope effects. The Morse potential curve shows that heavier isotopes (e.g., deuterium) have a lower ZPE than lighter isotopes (e.g., hydrogen) because their vibrational frequencies are smaller [11] [13]. At the transition state the bond being broken is weakened, so this ZPE difference is largely lost; the deuterated compound, which starts from the lower level, therefore faces a higher effective activation barrier (E_a,D > E_a,H), resulting in slower reaction rates and measurable kinetic isotope effects [11] [8]. The quantum tunneling path shows how particles can penetrate the classical energy barrier, further contributing to reaction rates, particularly for light atoms.
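A rough estimate of the ZPE contribution to a primary H/D isotope effect follows from the harmonic relations ZPE = ½hcν̃ and ν̃ ∝ 1/√μ. The sketch below assumes a typical C-H stretch near 2900 cm⁻¹, treats C-H and C-D as diatomic oscillators, and assumes the stretch ZPE is lost completely at the transition state; these are simplifying assumptions, so the result is an upper-bound style estimate without tunneling.

```python
import math

C2_CM_K = 1.438776877  # second radiation constant hc/k_B, in cm K

def reduced_mass(m1, m2):
    """Reduced mass of a diatomic oscillator (same units as the inputs)."""
    return m1 * m2 / (m1 + m2)

def isotope_shifted_wavenumber(nu_light, mu_light, mu_heavy):
    """Harmonic scaling: wavenumber is proportional to 1/sqrt(reduced mass)."""
    return nu_light * math.sqrt(mu_light / mu_heavy)

def zero_point_energy_cm(nu):
    """Harmonic zero-point energy expressed in cm^-1."""
    return 0.5 * nu

def semiclassical_kie(nu_h, nu_d, temperature):
    """k_H/k_D assuming the stretch ZPE difference vanishes at the transition state."""
    delta_zpe = zero_point_energy_cm(nu_h) - zero_point_energy_cm(nu_d)
    return math.exp(C2_CM_K * delta_zpe / temperature)

M_C, M_H, M_D = 12.000, 1.00783, 2.01410   # atomic masses in u
nu_ch = 2900.0                              # assumed C-H stretch, cm^-1
nu_cd = isotope_shifted_wavenumber(nu_ch, reduced_mass(M_C, M_H), reduced_mass(M_C, M_D))
kie = semiclassical_kie(nu_ch, nu_cd, temperature=298.15)
print(f"C-D stretch = {nu_cd:.0f} cm^-1, ZPE-only KIE = {kie:.1f}")
```

Measured H/D effects well above an estimate of this kind are the usual signal that tunneling, and not ZPE alone, contributes to the rate.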

Workflow for Quantum Tunneling Analysis in Enzymes

This workflow outlines the protocol for quantifying quantum tunneling contributions in enzymatic reactions using QM(DFT)/MM calculations with multidimensional tunneling corrections [12]. The process begins with system preparation and proceeds through MEP calculation with careful attention to step size, followed by computation of small-curvature tunneling corrections and kinetic isotope effects. Ensemble averaging accounts for protein dynamics, and final validation against experimental data ensures computational reliability.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Essential Research Reagents and Computational Tools

| Category/Item | Specification/Purpose | Application Context |
|---|---|---|
| Computational Software | | |
| ⋄ Quantum Chemistry Packages | Gaussian, ORCA, Q-Chem | Ab initio PES development, frequency calculations |
| ⋄ Dynamics Software | Custom CC-TDRWP, QCT codes | Quantum and classical reaction dynamics [10] |
| ⋄ QM/MM Packages | CHARMM, AMBER, GROMACS with QM interfaces | Enzymatic tunneling calculations [12] |
| Theoretical Methods | | |
| ⋄ Close-Coupling TDRWP | Time-dependent real wave packet method | Rigorous quantum dynamics with Coriolis coupling [10] |
| ⋄ QM(DFT)/MM | Hybrid quantum-mechanical/molecular-mechanical | Enzymatic reaction modeling with electronic structure [12] |
| ⋄ VTST with SCT | Variational transition state theory with small-curvature tunneling | Multidimensional tunneling corrections [12] |
| Isotopically Labeled Compounds | | |
| ⋄ Deuterated Substrates | >99% D purity, specific labeling sites | Kinetic isotope effect measurements [11] [8] |
| ⋄ ¹³C, ¹⁵N Labeled Compounds | >99% isotopic purity | Heavy atom KIE studies |
| Experimental Characterization | | |
| ⋄ Kinetic Isotope Effect Measurement | Temperature-controlled reactors with analytical detection | Quantification of ZPE and tunneling contributions [11] |
| ⋄ Transition State Analogs | Stable compounds mimicking TS geometry | Experimental probing of TS structure |

The integration of quantization principles through zero-point energy and quantum tunneling concepts has fundamentally enriched chemical kinetics research, providing both explanatory power and predictive capability for reaction rates that deviate from classical expectations. The protocols and data presented here establish standardized methodologies for quantifying these quantum effects across diverse systems, from atmospheric heavy-atom reactions to biologically significant enzymatic processes. As research in this domain advances, the interplay between theoretical development, computational implementation, and experimental validation will continue to refine our understanding of quantum phenomena in chemical reactivity. The tools and frameworks provided in this application note serve as essential resources for researchers exploring the quantum-classical interface in chemical kinetics, with significant implications for catalyst design, drug development, and materials science.

The Born-Oppenheimer (BO) approximation is a fundamental concept in quantum chemistry that enables the separation of electronic and nuclear motions within molecules, thereby simplifying the complex many-body quantum mechanical problem. Proposed in 1927 by Max Born and J. Robert Oppenheimer, this approximation recognizes the significant mass disparity between electrons and atomic nuclei, which results in their motion occurring on drastically different timescales [14] [15]. Electrons, being much lighter, move and respond to forces far more rapidly than nuclei, allowing researchers to treat nuclear positions as fixed parameters when solving for electronic wavefunctions [16] [17].

This conceptual separation forms the cornerstone of modern computational chemistry, making the quantum mechanical treatment of molecules computationally tractable. The approximation effectively decouples the molecular Schrödinger equation into two more manageable parts: one describing electron motion around fixed nuclei, and another describing nuclear motion on a potential energy surface generated by the electrons [18]. This hierarchical approach enables the prediction of molecular structure, reactivity, and various spectroscopic properties that are essential for research in chemical kinetics and drug development.

Theoretical Foundation and Physical Basis

Mass Disparity and Timescale Separation

The physical basis of the Born-Oppenheimer approximation rests on the large mass difference between electrons and nuclei. A proton is approximately 1836 times heavier than an electron, and this mass ratio directly affects their relative velocities and response times [15]. When equal momentum is imparted to both particles, the electron moves nearly 2000 times faster than the proton, since v = p/m [17]. Because of this velocity difference, electrons adjust almost instantaneously to any change in nuclear positions, while nuclei experience the electrons as a rapidly averaged field [19].

This separation of timescales is mathematically expressed through the molecular Hamiltonian. The full Hamiltonian incorporates terms for both electronic and nuclear kinetic energies, along with all potential energy contributions from electron-electron, electron-nuclear, and nuclear-nuclear interactions [14] [20]. The BO approximation allows this complex Hamiltonian to be separated into electronic and nuclear components, significantly reducing computational complexity while maintaining physical relevance for most ground-state molecular systems [14].

Mathematical Formulation

The Born-Oppenheimer approximation begins with the complete molecular Hamiltonian:

\[ \hat{H}_{\text{total}} = \hat{T}_n + \hat{T}_e + V_{ee} + V_{en} + V_{nn} \]

Where:

- \(\hat{T}_n\) represents nuclear kinetic energy

- \(\hat{T}_e\) represents electronic kinetic energy

- \(V_{ee}\) represents electron-electron repulsion

- \(V_{en}\) represents electron-nuclear attraction

- \(V_{nn}\) represents nuclear-nuclear repulsion

The approximation assumes nuclear kinetic energy can be neglected when solving the electronic Schrödinger equation, leading to the electronic Hamiltonian:

\[ \hat{H}_{\text{elec}} = \hat{T}_e + V_{ee} + V_{en} + V_{nn} \]

This simplification allows the molecular wavefunction to be expressed as a product of electronic and nuclear wavefunctions:

\[ \Psi_{\text{total}}(\mathbf{r}, \mathbf{R}) = \psi_{\text{electronic}}(\mathbf{r}; \mathbf{R}) \times \phi_{\text{nuclear}}(\mathbf{R}) \]

Here, the electronic wavefunction \(\psi_{\text{electronic}}(\mathbf{r}; \mathbf{R})\) depends parametrically on the nuclear coordinates \(\mathbf{R}\), meaning it is solved for fixed nuclear positions, while the nuclear wavefunction \(\phi_{\text{nuclear}}(\mathbf{R})\) describes the motion of nuclei on the resulting potential energy surface [14] [18].

Computational Implementation and Protocols

Standard Implementation Workflow

The practical implementation of the Born-Oppenheimer approximation in computational chemistry follows a systematic workflow that enables the prediction of molecular properties with high accuracy for most ground-state systems. The process begins with molecular structure input, where initial nuclear coordinates are specified, either from experimental data or preliminary calculations [18].

For each fixed nuclear configuration, the electronic Schrödinger equation is solved numerically using methods such as Hartree-Fock, Density Functional Theory (DFT), or more advanced post-Hartree-Fock approaches [18]. This electronic structure calculation yields the electronic energy (E_{elec}(\mathbf{R})) and wavefunction for that specific geometry. By repeating this procedure for various nuclear arrangements, researchers map out a potential energy surface (PES) that represents the electronic energy as a function of nuclear coordinates [20] [18].

The nuclear Schrödinger equation is then solved using this PES as the effective potential, producing vibrational and rotational energy levels that characterize the nuclear motion [14] [19]. Finally, the resulting wavefunctions and energies enable the prediction of observable molecular properties, including geometries, vibrational frequencies, and reaction pathways [18].
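The workflow above can be sketched end to end for a diatomic molecule. In the minimal Python sketch below, a Morse curve stands in for the electronic-structure step (no Hartree-Fock or DFT calculation is run), and the nuclear Schrödinger equation is then solved on that curve by finite differences. The Morse parameters are rough, H₂-like values chosen for illustration, and all function names are hypothetical.

```python
import numpy as np

# Hartree atomic units throughout. The Morse parameters are rough, H2-like
# illustrative values (assumptions), not fitted results.
D_E = 0.1745          # well depth (hartree)
A_MORSE = 1.027       # range parameter (1/bohr)
R_E = 1.40            # equilibrium bond length (bohr)
MU = 0.5 * 1836.15    # reduced mass of two protons (electron masses)
HARTREE_TO_CM1 = 219474.63


def electronic_energy(r):
    """Stand-in for the electronic step: E_elec(R) at clamped nuclei.

    A real workflow would call an electronic structure code here."""
    return D_E * (1.0 - np.exp(-A_MORSE * (r - R_E))) ** 2


def nuclear_levels(r_grid, pes, mu, n_levels=3):
    """Solve the 1D nuclear Schrodinger equation on the PES using a
    second-order finite-difference kinetic energy operator."""
    dr = r_grid[1] - r_grid[0]
    diagonal = 1.0 / (mu * dr**2) + pes
    off_diagonal = np.full(r_grid.size - 1, -0.5 / (mu * dr**2))
    hamiltonian = (np.diag(diagonal)
                   + np.diag(off_diagonal, 1)
                   + np.diag(off_diagonal, -1))
    return np.linalg.eigvalsh(hamiltonian)[:n_levels]


# Map the PES on a grid of fixed nuclear separations, then solve for the nuclei.
r_grid = np.linspace(0.4, 6.0, 800)
pes = electronic_energy(r_grid)
levels = nuclear_levels(r_grid, pes, MU)

# Closed-form Morse levels, used only as a convergence check.
omega = A_MORSE * np.sqrt(2.0 * D_E / MU)
v = np.arange(3)
morse_exact = omega * (v + 0.5) - (omega * (v + 0.5)) ** 2 / (4.0 * D_E)

fundamental_cm1 = (levels[1] - levels[0]) * HARTREE_TO_CM1
print("vibrational levels (hartree):", levels)
print("fundamental transition (cm^-1):", fundamental_cm1)
```

Comparing `levels` with `morse_exact` gives a quick check on grid convergence. In a production calculation the `electronic_energy` function would be replaced by single-point energies computed at each geometry.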

Quantitative Performance Data

Table 1: Accuracy of the Born-Oppenheimer Approximation Across Molecular Systems

| Molecular System | Mass Ratio (Nucleus:Electron) | Typical Error | Applicability |

|---|---|---|---|

| H₂⁺ | 1836:1 | ~1% | Good, with minor corrections needed |

| C₂ | ~22000:1 | <0.1% | Excellent |

| Typical organic molecules | >12000:1 | <0.1% | Excellent for ground states |

| Systems with conical intersections | N/A | Complete breakdown | Poor - requires beyond-BO methods |

The accuracy of the BO approximation improves significantly with increasing nuclear mass [15]. For the H₂⁺ system, the simplest molecular ion, the error introduced by the approximation is approximately 1% compared to experimental values. For carbon-containing molecules, where the mass ratio exceeds 12,000:1, the error decreases to less than 0.1%, making the approximation highly reliable for most applications in organic chemistry and drug design [15].

Research Reagent Solutions

Table 2: Essential Computational Tools for Born-Oppenheimer-Based Calculations

| Research Tool | Function | Application Context |

|---|---|---|

| Gaussian Suite | Electronic structure calculation | Molecular geometry optimization, frequency analysis, reaction pathway mapping |

| ORCA | Density Functional Theory | Large system calculations, spectroscopic property prediction |

| NWChem | Parallel computational chemistry | High-performance computing for complex molecular systems |

| Monte Carlo Methods | Non-BO calculations | Ab initio molecular dynamics without BO approximation [15] |

| Surface Hopping Algorithms | Non-adiabatic dynamics | Modeling transitions between electronic states |

Applications in Chemical Research

Molecular Structure and Dynamics

The Born-Oppenheimer approximation enables the computational determination of molecular equilibrium geometries by identifying minima on the potential energy surface [18]. This capability is fundamental to rational drug design, where the three-dimensional arrangement of atoms directly influences biological activity and binding affinity. By analyzing the curvature of the PES around these minima, researchers can predict vibrational frequencies that correspond to IR and Raman spectroscopic signals, providing crucial fingerprints for molecular identification [19] [18].

The approximation also facilitates the mapping of reaction coordinates, allowing researchers to locate transition states and calculate activation barriers [18]. This application is particularly valuable in chemical kinetics research, where understanding the energy landscape of reactions enables the prediction of reaction rates and mechanisms. For drug development professionals, this translates to the ability to model metabolic pathways and predict reaction products of pharmaceutical compounds.

Spectroscopy and Energy Decomposition

Within the BO framework, molecular energy can be decomposed into independent contributions:

[ E_{\text{total}} = E_{\text{electronic}} + E_{\text{vibrational}} + E_{\text{rotational}} ]

This separation enables the interpretation of complex spectroscopic data by assigning features to specific types of molecular motion [14]. Electronic transitions typically occur in the visible or ultraviolet range, vibrational transitions in the infrared, and rotational transitions in the microwave region of the electromagnetic spectrum. This hierarchical understanding of molecular energy states facilitates the design of spectroscopic experiments and the interpretation of resulting data for molecular characterization in pharmaceutical analysis.
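To make the hierarchy of energy scales concrete, the short sketch below converts one electronic, one vibrational, and one rotational transition into wavelengths and assigns each to a spectral region. The CO transition wavenumbers are approximate, illustrative values, and the region boundaries are conventional choices; both are assumptions of this example.

```python
def wavelength_m(wavenumber_cm1):
    """Convert a transition wavenumber (cm^-1) to a vacuum wavelength (m)."""
    return 1.0e-2 / wavenumber_cm1


def spectral_region(wavelength):
    """Coarse region labels; the boundary values are conventional choices."""
    if wavelength < 400e-9:
        return "ultraviolet"
    if wavelength < 700e-9:
        return "visible"
    if wavelength < 1.0e-3:
        return "infrared"
    return "microwave"


# Approximate, illustrative transition wavenumbers for CO in cm^-1 (assumed).
CO_TRANSITIONS = {
    "electronic": 65000.0,      # lowest strong singlet absorption
    "vibrational": 2170.0,      # harmonic stretching quantum
    "rotational": 2 * 1.93,     # J = 0 -> 1 at 2B for a rigid rotor
}

regions = {name: spectral_region(wavelength_m(nu))
           for name, nu in CO_TRANSITIONS.items()}

for name, nu in CO_TRANSITIONS.items():
    print(f"{name:12s} {wavelength_m(nu):.3e} m  {regions[name]}")
```

The three transitions differ by roughly two orders of magnitude from one level of the hierarchy to the next, which is why they can usually be assigned separately.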

Limitations and Breakdown Scenarios

When the Approximation Fails

Despite its widespread success, the Born-Oppenheimer approximation has well-defined limitations. It breaks down in situations involving non-adiabatic processes, where electronic and nuclear motions become strongly coupled [21] [18]. This typically occurs when potential energy surfaces approach or cross each other, creating regions where the assumption of separable motion becomes invalid [18].

Specific scenarios where the BO approximation fails include:

- Conical intersections: Points where electronic states become degenerate, enabling rapid transitions between states [18]

- Electron transfer reactions: Processes where electronic configuration changes significantly [18]

- Photoexcited dynamics: Systems involving excited electronic states and their interconversions [18]

- Reactions involving light atoms: Systems where quantum nuclear effects become significant [21]

- Jahn-Teller systems: Molecules with electronically degenerate states that couple strongly to nuclear vibrations

Advanced Protocols for Non-Adiabatic Systems

When the BO approximation breaks down, researchers employ specialized computational protocols that explicitly account for couplings between electronic and nuclear motions. The Born-Huang representation extends the basic BO framework by including off-diagonal elements that capture interactions between different electronic states [15]. These non-adiabatic coupling terms become significant when potential energy surfaces approach each other, facilitating transitions between electronic states driven by nuclear motion [18].

For modeling photochemical processes and electron transfer reactions, trajectory surface hopping methods provide a practical approach by simulating transitions between adiabatic states during molecular dynamics simulations [18]. Multi-configurational time-dependent Hartree (MCTDH) methods offer a more rigorous quantum dynamical treatment for small systems, fully capturing quantum effects in nuclear motion [18]. Close-coupling methods have also been successfully employed to study reactions where non-Born-Oppenheimer effects are significant, such as in the Cl + D₂ reaction system [21].
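The situation in which two potential energy surfaces approach each other can be illustrated with a two-state model. The sketch below diagonalizes a 2×2 diabatic Hamiltonian along one coordinate and locates the avoided crossing; the linear diabatic curves, the constant coupling, and all parameter values are hypothetical and chosen only for illustration.

```python
import numpy as np

SLOPE = 0.05       # hypothetical slope of the diabatic curves (hartree/bohr)
COUPLING = 0.002   # hypothetical constant diabatic coupling (hartree)


def adiabatic_energies(x):
    """Eigenvalues of the two-state diabatic Hamiltonian at coordinate x."""
    h_diabatic = np.array([[SLOPE * x, COUPLING],
                           [COUPLING, -SLOPE * x]])
    return np.linalg.eigvalsh(h_diabatic)   # sorted: lower, upper


xs = np.linspace(-1.0, 1.0, 201)
surfaces = np.array([adiabatic_energies(x) for x in xs])
gaps = surfaces[:, 1] - surfaces[:, 0]

min_gap = gaps.min()
x_at_min = xs[gaps.argmin()]
print(f"minimum adiabatic gap {min_gap:.4f} hartree at x = {x_at_min:.2f}")
```

In this model the gap never closes: its minimum equals twice the coupling and sits where the diabatic curves cross. The region around that point is where the single-surface picture is least reliable and where surface hopping or a fully quantum treatment becomes relevant.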

Applications in Chemical Kinetics and Drug Development

Enabling Quantitative Chemical Kinetics

The Born-Oppenheimer approximation provides the theoretical foundation for calculating potential energy surfaces that are essential for understanding reaction kinetics at the quantum level [18]. By mapping the energy landscape along reaction coordinates, researchers can identify transition states and calculate activation barriers that determine reaction rates [18]. This capability is crucial for predicting temperature-dependent kinetic parameters and isotope effects, supporting the development of detailed microkinetic models for complex reaction networks.

For pharmaceutical researchers, this translates to the ability to model metabolic pathways and predict reaction products of drug candidates. The BO approximation enables computational studies of enzyme-catalyzed reactions by providing reliable potential energy surfaces for quantum mechanical/molecular mechanical (QM/MM) simulations, bridging the gap between electronic structure calculations and biological complexity.
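The isotope effects mentioned above can be estimated with a simple zero-point-energy argument. The sketch below is a semiclassical upper-bound estimate, not a full TST calculation: it assumes the C-H stretch is completely lost at the transition state, ignores tunneling, uses integer atomic masses, and takes 2900 cm⁻¹ as a typical C-H stretching wavenumber. All of these are assumptions of the example.

```python
import math

HC_OVER_KB = 1.438777  # second radiation constant hc/k_B (cm K)


def reduced_mass(m1, m2):
    """Reduced mass of a two-body oscillator (atomic mass units)."""
    return m1 * m2 / (m1 + m2)


def semiclassical_kie(wavenumber_h, temperature, m_heavy=12.0):
    """Estimate k_H/k_D from the zero-point energy difference of one stretch.

    Assumes the same force constant for C-H and C-D, so the wavenumbers
    scale with the inverse square root of the reduced mass."""
    mu_h = reduced_mass(m_heavy, 1.0)
    mu_d = reduced_mass(m_heavy, 2.0)
    wavenumber_d = wavenumber_h * math.sqrt(mu_h / mu_d)
    delta_zpe = 0.5 * (wavenumber_h - wavenumber_d)   # cm^-1
    return math.exp(HC_OVER_KB * delta_zpe / temperature)


kie_298 = semiclassical_kie(2900.0, 298.15)
kie_400 = semiclassical_kie(2900.0, 400.0)
print(f"k_H/k_D at 298 K: {kie_298:.2f}; at 400 K: {kie_400:.2f}")
```

The estimate shrinks as temperature rises, because the fixed zero-point energy difference matters less relative to thermal energy.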

Recent Advances and Future Directions

Recent research has pushed beyond the traditional limitations of the Born-Oppenheimer approximation. A group in Norway has successfully recovered the structure of the D₃⁺ molecule using a completely ab initio Monte Carlo approach without applying the BO approximation [15]. Other advances include the exact calculation of the dipole moment of the LiH molecule using the full Coulombic Hamiltonian, demonstrating that molecular properties can be recovered without relying on the clamped-nuclei assumption [15].

The development of efficient non-adiabatic dynamics methods continues to expand the range of systems accessible to accurate simulation. These advances are particularly relevant for photopharmacology, where light-activated drugs undergo electronic transitions that involve non-adiabatic processes. Similarly, the design of molecular materials for organic photovoltaics and photocatalysis benefits from methods that can accurately describe charge and energy transfer processes involving multiple electronic states [18].

Quantized Energy Levels and Their Role in Transition State Theory

Quantized Energy Levels

A quantum mechanical system or particle that is bound—that is, confined spatially—can only take on certain discrete values of energy, called energy levels. This contrasts with classical particles, which can have any amount of energy. The term is commonly used for the energy levels of electrons in atoms, ions, or molecules, which are bound by the electric field of the nucleus, but can also refer to energy levels of nuclei or vibrational or rotational energy levels in molecules [22].

When the potential energy is set to zero at infinite distance from the atomic nucleus, the usual convention, bound electron states have negative potential energy. The state with the lowest possible energy is called the ground state. Any higher energy levels are called excited states [23] [22].

Transition State Theory

In chemistry, transition state theory (TST) explains the reaction rates of elementary chemical reactions. The theory assumes a special type of chemical equilibrium (quasi-equilibrium) between reactants and activated transition state complexes [24]. The transition state itself is a first-order saddle point on the potential energy surface (PES) and is characterized by a vanishing gradient combined with a Hessian that has one and only one negative eigenvalue [25].

A transition state is a very short-lived configuration of atoms at a local energy maximum along the reaction coordinate of a reaction-energy diagram. It has partial bonds, an extremely short lifetime (measured in femtoseconds), and cannot be isolated [26]. This contrasts with a reactive intermediate, which exists at a local energy minimum and is, in theory, isolable.

Quantitative Foundations

Mathematics of Quantized Energy Levels

The energy levels for a hydrogen-like atom (one electron around a nucleus) are given by the following fundamental equations [27] [22].

Table 1: Energy Level Equations for Hydrogen-like Atoms

| Concept | Formula | Variables and Constants |

|---|---|---|

| Bohr Model Energy | ( E_n = -\dfrac{R_H}{n^2} ) | ( R_H ): Rydberg constant for H ((2.180 \times 10^{-18} \text{J})), ( n ): principal quantum number |

| General One-Electron System | ( E_n = -\dfrac{2 \pi^2 m e^4 Z^2}{n^2 h^2} ) | ( m ): electron mass, ( e ): electron charge, ( Z ): atomic number, ( h ): Planck's constant |

| Rydberg Formula for Wavelength | ( \dfrac{1}{\lambda} = R Z^2 \left( \dfrac{1}{n_1^2} - \dfrac{1}{n_2^2} \right) ) | ( \lambda ): photon wavelength, ( R ): Rydberg constant, ( n_1, n_2 ): quantum numbers (( n_2 > n_1 )) |

For multi-electron atoms, electron-electron interactions raise the energy levels. This is often accounted for by using an effective nuclear charge, ( Z_{\text{eff}} ), resulting in the modified formula: ( E_{n,\ell} = -hcR_{\infty} \dfrac{Z_{\text{eff}}^2}{n^2} ), where the orbital type (determined by the azimuthal quantum number ℓ) also influences the energy [22].
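As a worked instance of the formulas in Table 1, the sketch below evaluates hydrogen-like energy levels and the wavelength of the photon emitted in an n = 3 → 2 transition. It uses the energy-unit Rydberg constant quoted in the table; the function names are illustrative.

```python
R_H = 2.180e-18               # Rydberg constant in energy units (J), as in Table 1
PLANCK = 6.62607015e-34       # Planck constant (J s)
LIGHT_SPEED = 2.99792458e8    # speed of light (m/s)


def energy_level(n, z=1):
    """Energy of level n for a one-electron atom with nuclear charge z (J)."""
    return -R_H * z**2 / n**2


def transition_wavelength(n_lower, n_upper, z=1):
    """Wavelength (m) of the photon emitted when n_upper decays to n_lower."""
    photon_energy = energy_level(n_upper, z) - energy_level(n_lower, z)
    return PLANCK * LIGHT_SPEED / photon_energy


balmer_alpha_nm = transition_wavelength(2, 3) * 1e9
print(f"H, n = 3 -> 2: {balmer_alpha_nm:.1f} nm")
```

For hydrogen this lands at about 656 nm, the red Balmer line; setting `z=2` gives the corresponding He⁺ levels, which are four times deeper.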

Mathematics of Transition State Theory

The key equations in transition state theory connect molecular-level features of the transition state to the macroscopic reaction rate.

Table 2: Key Equations in Transition State Theory

| Concept | Formula | Variables and Constants |

|---|---|---|

| Eyring Equation | ( k = \dfrac{k_B T}{h} \exp \left( -\frac{\Delta^{\ddagger} G^{\ominus}}{RT} \right) ) | ( k ): rate constant, ( k_B ): Boltzmann's constant, ( h ): Planck's constant, ( R ): gas constant, ( T ): temperature, ( \Delta^{\ddagger} G^{\ominus} ): standard Gibbs energy of activation |

| Activation Parameters | ( k \propto \exp \left( \frac{\Delta^{\ddagger} S^{\ominus}}{R} \right) \exp \left( -\frac{\Delta^{\ddagger} H^{\ominus}}{RT} \right) ) | ( \Delta^{\ddagger} S^{\ominus} ): standard entropy of activation, ( \Delta^{\ddagger} H^{\ominus} ): standard enthalpy of activation |

| Arrhenius Equation (Empirical) | ( k = A e^{-E_a / RT} ) | ( A ): pre-exponential factor, ( E_a ): empirical activation energy |

The energy difference between the reactants and the transition state is the activation energy ((E_a)) [24] [26]. The overall energy change for the reaction is the difference between the energy of the products and the energy of the reactants [26].
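The Eyring equation from Table 2 is easy to evaluate numerically. The sketch below computes a first-order rate constant from a Gibbs energy of activation, and shows that the enthalpy/entropy form gives the same number. The transmission coefficient is taken as 1, as in the table, and the example barrier of 20 kcal mol⁻¹ and the activation parameters are hypothetical inputs.

```python
import math

K_B = 1.380649e-23       # Boltzmann constant (J/K)
PLANCK = 6.62607015e-34  # Planck constant (J s)
R_GAS = 8.314462618      # gas constant (J/(mol K))
KCAL_TO_J = 4184.0


def eyring_rate(delta_g, temperature):
    """Rate constant (s^-1) from a Gibbs energy of activation in J/mol."""
    prefactor = K_B * temperature / PLANCK
    return prefactor * math.exp(-delta_g / (R_GAS * temperature))


def eyring_rate_from_h_s(delta_h, delta_s, temperature):
    """Same rate constant from activation enthalpy (J/mol) and entropy (J/(mol K))."""
    prefactor = K_B * temperature / PLANCK
    return (prefactor
            * math.exp(delta_s / R_GAS)
            * math.exp(-delta_h / (R_GAS * temperature)))


T = 298.15
k_20 = eyring_rate(20.0 * KCAL_TO_J, T)

# Hypothetical activation parameters that combine to a Gibbs energy at T.
dH, dS = 90_000.0, 20.0
k_from_g = eyring_rate(dH - T * dS, T)
k_from_hs = eyring_rate_from_h_s(dH, dS, T)
print(f"k(20 kcal/mol, 298 K) = {k_20:.3e} s^-1")
```

Because the barrier sits in an exponent, an error of about 1.4 kcal mol⁻¹ in the activation energy changes the predicted rate by roughly a factor of ten at room temperature.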

The Conceptual and Energetic Link

The connection between quantized energy levels and transition state theory lies in the molecular energy landscape. The total energy of a molecule can be considered a sum of its components [22]: ( E = E_{\text{electronic}} + E_{\text{vibrational}} + E_{\text{rotational}} + E_{\text{nuclear}} + E_{\text{translational}} )

With the exception of translational energy, these components are quantized. During a chemical reaction, as bonds break and form, the electronic, vibrational, and rotational energy levels of the system are perturbed and reconfigured. The transition state represents the specific molecular configuration at the saddle point of this multidimensional potential energy surface, where one vibrational mode (the reaction coordinate) has an imaginary frequency [25].

The diagram below illustrates the energetic relationship between quantized reactant/product states and the transition state in a chemical reaction.

Diagram 1: Energetic relationship between quantized states and the transition state.

Application Notes: Computational Protocol for TS Optimization

The following protocol details a standard computational workflow for locating and characterizing a transition state, integrating the principles of quantized molecular energy levels.

Protocol: Transition State Search via Initial Guess and Optimization

Principle: Systematically locate the first-order saddle point on the potential energy surface (PES) that corresponds to the transition state of an elementary reaction [25].

Materials and Software:

- Quantum Chemistry Software (e.g., Gaussian, ORCA, Q-Chem)

- High-Performance Computing (HPC) Cluster

- Molecular Visualization Software (e.g., GaussView, Avogadro)

Procedure:

1. Reactants and Products Optimization:

- Geometry optimization of the reactant and product molecules must be performed first. This finds the local energy minimum on the PES for each species, corresponding to its stable equilibrium structure.

- Level of Theory: Start with a cost-effective method like Density Functional Theory (DFT) with a medium-sized basis set (e.g., B3LYP/6-31G*).

- Frequency Calculation: Confirm a local minimum by performing a frequency calculation. All vibrational frequencies must be real, meaning that every Hessian eigenvalue (second derivative of the energy) associated with a vibrational mode is positive.

2. Initial Transition State Guess:

- Method A (Coordinate Scan): Perform a relaxed potential energy surface scan by incrementally changing a key internal coordinate (e.g., a bond length or angle involved in the reaction). The structure at the energy maximum of this scan serves as the initial guess [25].

- Method B (Interpolation): Use the optimized reactant and product geometries. A linear or quadratic interpolation scheme (e.g., Synchronous Transit) can generate a structure partway along the reaction path as an initial guess. Modern machine learning approaches like React-OT likewise start from paired reactant and product geometries [28].

3. Transition State Optimization:

- Using the initial guess from Step 2, launch a transition state optimization calculation.

- Level of Theory: Use a more robust DFT functional (e.g., ωB97X-D) and a polarized basis set (e.g., 6-31G(d), which is another name for 6-31G*) [28].

- Algorithm: Specify a quasi-Newton optimizer (e.g., Berny algorithm) that is designed to converge to a saddle point. The calculation will use the Hessian (matrix of second derivatives) to maximize energy along one coordinate (the reaction path) while minimizing it in all others [25].

4. Transition State Verification:

- Frequency Calculation: A critical step. The optimized structure must have one, and only one, imaginary vibrational frequency, corresponding to a single negative eigenvalue of the Hessian.

- Intrinsic Reaction Coordinate (IRC): Follow the path of steepest descent from the transition state in both directions. A successful IRC calculation must connect your optimized transition state back to the previously optimized reactant and product structures, confirming it is the correct saddle point for the intended reaction.

The workflow for this protocol is summarized in the diagram below.

Diagram 2: Workflow for computational transition state search and verification.
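The logic of this protocol can be tried out on a toy problem before committing cluster time. The sketch below runs the same four stages on a hypothetical two-dimensional model surface. Plain Newton-Raphson stands in for the quasi-Newton (Berny-type) optimizer, and fixed-step steepest descent in unweighted coordinates stands in for a true IRC. The surface, step sizes, and function names are all assumptions of this example.

```python
import numpy as np


def energy(p):
    """Hypothetical asymmetric double-well model surface."""
    x, y = p
    return (x**2 - 1.0) ** 2 + 0.3 * x + 2.0 * (y - 0.5 * x**2) ** 2


def gradient(p):
    x, y = p
    u = y - 0.5 * x**2
    return np.array([4.0 * x * (x**2 - 1.0) + 0.3 - 4.0 * x * u, 4.0 * u])


def hessian(p):
    x, y = p
    return np.array([[18.0 * x**2 - 4.0 - 4.0 * y, -4.0 * x],
                     [-4.0 * x, 4.0]])


def newton_stationary_point(start, tol=1e-10, max_iter=100):
    """Newton-Raphson on the gradient; converges to the nearby stationary
    point, which may be a minimum or a saddle depending on the start."""
    p = np.array(start, dtype=float)
    for _ in range(max_iter):
        g = gradient(p)
        if np.linalg.norm(g) < tol:
            break
        p = p - np.linalg.solve(hessian(p), g)
    return p


def steepest_descent(start, step=0.01, n_steps=5000):
    """Crude stand-in for an IRC: follow the negative gradient downhill."""
    p = np.array(start, dtype=float)
    for _ in range(n_steps):
        p = p - step * gradient(p)
    return p


# Step 1: optimize reactant and product and confirm they are minima.
reactant = newton_stationary_point((-1.0, 0.5))
product = newton_stationary_point((1.0, 0.5))
assert np.all(np.linalg.eigvalsh(hessian(reactant)) > 0)
assert np.all(np.linalg.eigvalsh(hessian(product)) > 0)

# Step 2: initial guess by linear interpolation (Method B).
ts_guess = 0.5 * (reactant + product)

# Step 3: saddle-point optimization from the guess.
ts = newton_stationary_point(ts_guess)

# Step 4: verification. Exactly one negative Hessian eigenvalue, then
# follow the corresponding mode downhill in both directions.
eigenvalues, eigenvectors = np.linalg.eigh(hessian(ts))
n_negative = int(np.sum(eigenvalues < 0))
mode = eigenvectors[:, 0]
endpoints = [steepest_descent(ts + 0.01 * mode),
             steepest_descent(ts - 0.01 * mode)]
endpoints.sort(key=lambda p: p[0])

barrier = energy(ts) - energy(reactant)
print("TS:", ts, "negative eigenvalues:", n_negative, "barrier:", barrier)
```

On this surface the interpolated guess is noticeably displaced from the saddle point, yet the optimization still converges, and the two descent paths end at the previously optimized minima. For real molecules the same checks apply, but each energy, gradient, and Hessian evaluation is an electronic structure calculation.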

Advanced Applications and Current Research

Machine Learning for Accelerated TS Discovery

A significant advancement is the use of machine learning (ML) to predict transition state structures with high accuracy and minimal computational cost. React-OT, an optimal transport approach, can generate highly accurate TS structures from reactant and product geometries in about 0.4 seconds per reaction, achieving a median structural root-mean-square deviation (RMSD) of 0.053 Å and a median barrier height error of 1.06 kcal mol⁻¹ [28]. This is a powerful demonstration of integrating physical principles (the unique TS structure given paired reactants and products) with data-driven models to overcome the high computational cost of traditional DFT-driven TS searches.

Table 3: Comparison of TS Search Methods

| Method | Principle | Typical Workflow | Relative Cost | Key Metrics |

|---|---|---|---|---|

| Traditional DFT Optimization | Locate saddle point on DFT PES using gradient/Hessian | Initial Guess → DFT TS Opt → Verification | High (Hours/Days) | Requires 1 imaginary frequency; IRC confirmation |

| Machine Learning (e.g., React-OT) | Learn mapping from Reactants/Products to TS | Input R/P → ML Model → Predicted TS | Very Low (<1 second) | Structural RMSD (~0.05 Å); Barrier Height Error (~1 kcal/mol) [28] |

Uncertainty Quantification in Kinetic Parameter Estimation

Robust estimation of kinetic parameters and their uncertainty is essential for validating models and for rational design in catalysis and drug development. Bayesian inference software like the Chemical Kinetics Bayesian Inference Toolbox (CKBIT) provides a framework for this [29]. CKBIT uses experimental data to estimate probability distributions for parameters like activation energy ((E_a)) and pre-exponential factors ((A)), rather than single-point estimates. This allows for meaningful comparison between experimental results and theoretical predictions, explicitly accounting for uncertainty in both.
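The idea of reporting distributions rather than point estimates can be illustrated without any specialized package. The sketch below is not CKBIT's API. It is a minimal NumPy example that fits the linearized Arrhenius equation, ln k = ln A − E_a/(RT), to synthetic data, assuming Gaussian noise of known size on ln k and a flat prior, in which case the posterior is itself Gaussian. The "true" parameters and noise level are hypothetical.

```python
import numpy as np

R_KJ = 8.314462618e-3   # gas constant (kJ/(mol K))
rng = np.random.default_rng(0)

# Hypothetical ground truth, used only to generate synthetic observations.
LN_A_TRUE, EA_TRUE = np.log(1.0e13), 80.0   # A in s^-1, Ea in kJ/mol
NOISE_STD = 0.05                            # assumed known noise on ln k

temperatures = np.linspace(300.0, 400.0, 11)
ln_k_obs = (LN_A_TRUE - EA_TRUE / (R_KJ * temperatures)
            + rng.normal(0.0, NOISE_STD, temperatures.size))

# Linear model: ln k = [1, -1/(R T)] . [ln A, Ea]
design = np.column_stack([np.ones_like(temperatures),
                          -1.0 / (R_KJ * temperatures)])

# Flat prior + Gaussian likelihood -> Gaussian posterior.
precision = design.T @ design / NOISE_STD**2
post_cov = np.linalg.inv(precision)
post_mean = post_cov @ design.T @ ln_k_obs / NOISE_STD**2

ln_a_mean, ea_mean = post_mean
ln_a_std, ea_std = np.sqrt(np.diag(post_cov))

# Draw posterior samples to form a 95% credible interval for Ea.
samples = rng.multivariate_normal(post_mean, post_cov, size=5000)
ea_interval = np.percentile(samples[:, 1], [2.5, 97.5])

print(f"Ea = {ea_mean:.1f} +/- {ea_std:.1f} kJ/mol, 95% interval {ea_interval}")
print("correlation(ln A, Ea):", post_cov[0, 1] / (ln_a_std * ea_std))
```

The strong posterior correlation between ln A and E_a is a generic feature of Arrhenius fits over a narrow temperature window, and is one reason joint distributions are more informative than separate error bars.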

The Scientist's Toolkit

Table 4: Essential Research Reagents and Computational Tools

| Tool / Reagent | Category | Function / Application | Example / Note |

|---|---|---|---|

| Density Functional Theory (DFT) | Computational Method | Models electronic structure; used for optimizing geometries and calculating energies on the PES. | Functionals: ωB97x, B3LYP. Basis Sets: 6-31G(d) [28]. |

| Nudged Elastic Band (NEB) | Computational Algorithm | Finds minimum energy path and approximate transition state between reactants and products. | Often used to generate an initial guess for a precise TS optimization [28]. |

| Quantum Chemistry Software | Software | Suite of programs to perform electronic structure calculations. | Gaussian, ORCA, Q-Chem, GAMESS. |

| Bayesian Inference Software | Software/Statistical Tool | Quantifies uncertainty in kinetic parameters (e.g., (E_a)) estimated from experimental data. | CKBIT (Chemical Kinetics Bayesian Inference Toolbox) [29]. |

| Machine Learning Models (e.g., React-OT) | Computational Tool | Rapidly and accurately generates transition state structures from reactant and product geometries. | Can reduce computational cost by a factor of 7 in high-throughput workflows [28]. |

| High-Performance Computing (HPC) Cluster | Hardware | Provides the substantial processing power required for quantum chemistry calculations. | Essential for scanning reactions or building large reaction networks. |

From Wave-Particle Duality to Molecular Orbital Theory

The development of modern chemistry is deeply rooted in the fundamental principles of quantum mechanics, beginning with the revolutionary concept of wave-particle duality. This principle dictates that entities at the atomic and subatomic scale, such as electrons and photons, exhibit both wave-like and particle-like properties depending on the experimental context [30]. The profound implications of this duality form the cornerstone for understanding electronic structure in molecules and materials, ultimately enabling the prediction of chemical behavior, reactivity, and kinetics.

The transition from classical to quantum descriptions of matter was necessitated by experimental observations that could not be explained by Newtonian physics. Landmark experiments, such as the double-slit experiment with electrons, demonstrated unequivocally that matter possesses wave-like characteristics, including interference patterns previously associated only with light [31]. This led to the development of quantum mechanics, which provides the mathematical framework for describing the behavior of electrons in atoms and molecules. Within this framework, Molecular Orbital Theory (MOT) emerged as a powerful method for describing the electronic structure of molecules using quantum mechanics, treating electrons as delocalized wavefunctions extending over multiple atomic nuclei [32] [33].

The integration of these quantum principles is particularly transformative in the field of chemical kinetics research. By providing a detailed understanding of electron density distributions, bonding interactions, and orbital symmetries, MOT enables researchers to predict reaction pathways, transition states, and activation energies with remarkable accuracy. Recent advances in computational quantum chemistry and experimental techniques, such as single-molecule imaging, now allow for the direct observation and quantification of kinetic parameters based on first principles, moving beyond empirical models to fundamentally grounded predictions of chemical behavior [34].

Foundational Quantum Concepts

Wave-Particle Duality and the Double-Slit Experiment

Wave-particle duality represents one of the most profound departures from classical physics, fundamentally altering our understanding of matter and energy. The double-slit experiment provides the most compelling demonstration of this principle. When a coherent beam of electrons (or photons) is directed at a barrier with two parallel slits, the resulting pattern on the detection screen is not two bright lines corresponding to particle trajectories, but an interference pattern of alternating bright and dark bands characteristic of wave behavior [31].

This phenomenon persists even when particles are sent through the apparatus one at a time, with the interference pattern emerging gradually as the cumulative detections build up. This indicates that each individual particle behaves as a wave passing through both slits simultaneously, interfering with itself before being detected as a discrete particle at a specific point on the screen. As physicist Richard Feynman famously noted, this phenomenon "contains the only mystery of quantum mechanics" and is impossible to explain in any classical way [31].

The theoretical implications of wave-particle duality are formalized in several core quantum principles:

- Heisenberg Uncertainty Principle: This principle states that it is impossible to simultaneously determine the exact position and exact momentum of a quantum particle [30]. This inherent uncertainty is not a limitation of measurement technology but a fundamental property of quantum systems.

- Quantum Superposition: A quantum system can exist in a linear combination of several possible states at once, and a measurement yields only one of the corresponding outcomes. The state is described mathematically by a wavefunction ψ.

- Probability Interpretation: The squared modulus of the wavefunction, |ψ|², gives the probability density of finding the particle at a specific location [30].
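The uncertainty principle and the probability interpretation can be checked numerically for a simple case. The sketch below builds a Gaussian wavepacket on a grid, normalizes its probability density, and evaluates the product of the position and momentum spreads, which for a Gaussian should equal the lower bound ħ/2. Units with ħ = 1 and the packet width are arbitrary choices for illustration.

```python
import numpy as np

HBAR = 1.0     # natural units (assumption for this sketch)
WIDTH = 1.3    # arbitrary Gaussian width parameter

x = np.linspace(-20.0, 20.0, 8001)
dx = x[1] - x[0]

# Real Gaussian wavepacket, normalized so that the density integrates to 1.
psi = np.exp(-x**2 / (4.0 * WIDTH**2))
psi /= np.sqrt(np.sum(np.abs(psi) ** 2) * dx)
density = np.abs(psi) ** 2
total_probability = np.sum(density) * dx

# Position spread from the probability density.
mean_x = np.sum(x * density) * dx
delta_x = np.sqrt(np.sum((x - mean_x) ** 2 * density) * dx)

# Momentum spread: <p> = 0 for a real wavefunction, and
# <p^2> = hbar^2 * integral |dpsi/dx|^2 dx (integration by parts).
dpsi_dx = np.gradient(psi, dx)
delta_p = np.sqrt(HBAR**2 * np.sum(np.abs(dpsi_dx) ** 2) * dx)

uncertainty_product = delta_x * delta_p
print(f"delta_x * delta_p = {uncertainty_product:.5f} (bound: {HBAR / 2})")
```

Narrowing the packet by reducing `WIDTH` lowers `delta_x` and raises `delta_p` by the same factor, leaving the product unchanged.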

Mathematical Description of Molecular Orbitals

Molecular Orbital Theory provides a quantitative framework for applying these quantum principles to chemical systems. In MOT, electrons are described by molecular orbitals – wavefunctions that extend over the entire molecule. These molecular orbitals are constructed as Linear Combinations of Atomic Orbitals (LCAO), where the molecular wavefunction ψⱼ is formed from a weighted sum of atomic orbitals χᵢ [32]:

[ \psi_j = \sum_{i=1}^{n} c_{ij} \chi_i ]

The coefficients cᵢⱼ are determined by solving the Schrödinger equation for the molecular system, typically using computational methods such as Hartree-Fock or Density Functional Theory [32]. The resulting molecular orbitals can be classified as:

- Bonding orbitals: Formed by constructive interference of atomic orbitals, characterized by enhanced electron density between nuclei that stabilizes the molecule.

- Antibonding orbitals: Formed by destructive interference, characterized by reduced electron density between nuclei and a nodal plane that destabilizes the molecule.

- Non-bonding orbitals: Molecular orbitals with energy similar to the original atomic orbitals, neither contributing to nor detracting from bond strength.

The physical requirements for effective atomic orbital combination include symmetry compatibility, significant spatial overlap, and comparable energy levels between the interacting orbitals [32].

Figure 1: Molecular Orbital Formation Pathway. This diagram illustrates the quantum mechanical process through which atomic orbitals combine to form molecular orbitals, ultimately determining molecular properties.
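For two identical atomic orbitals, the LCAO coefficients and orbital energies follow from a 2×2 generalized eigenvalue problem, Hc = ESc. The sketch below solves it with symmetric (Löwdin) orthogonalization using NumPy only. The Coulomb integral, resonance integral, and overlap are hypothetical numbers picked for illustration, not computed integrals.

```python
import numpy as np

ALPHA = -0.5    # hypothetical Coulomb integral (hartree)
BETA = -0.4     # hypothetical resonance integral (hartree)
OVERLAP = 0.6   # hypothetical overlap between the two atomic orbitals

h_matrix = np.array([[ALPHA, BETA], [BETA, ALPHA]])
s_matrix = np.array([[1.0, OVERLAP], [OVERLAP, 1.0]])

# Symmetric orthogonalization: transform H c = E S c into an ordinary
# eigenvalue problem using S^(-1/2).
s_values, s_vectors = np.linalg.eigh(s_matrix)
s_inv_sqrt = s_vectors @ np.diag(s_values ** -0.5) @ s_vectors.T

energies, c_orthogonal = np.linalg.eigh(s_inv_sqrt @ h_matrix @ s_inv_sqrt)
coefficients = s_inv_sqrt @ c_orthogonal   # columns are the MO coefficients c_ij

bonding, antibonding = coefficients[:, 0], coefficients[:, 1]
print("orbital energies:", energies)
print("bonding MO coefficients:", bonding)
print("antibonding MO coefficients:", antibonding)
```

The lower-energy solution has coefficients of the same sign (constructive interference), while the higher one has opposite signs and a node between the nuclei. With nonzero overlap the antibonding orbital is raised by more than the bonding orbital is lowered.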

Molecular Orbital Theory: Principles and Applications

Bond Order and Molecular Stability

A key predictive capability of Molecular Orbital Theory is the calculation of bond order, which quantifies the number of chemical bonds between a pair of atoms and correlates with bond strength and molecular stability. The bond order is calculated as [32] [35]:

[ \text{Bond order} = \frac{1}{2} \times (\text{Number of bonding electrons} - \text{Number of antibonding electrons}) ]

This quantitative approach successfully explains the stability and properties of diatomic molecules:

Table 1: Bond Order and Stability of Selected Diatomic Molecules

| Molecule | Total Electrons | Bonding Electrons | Antibonding Electrons | Bond Order | Stability |

|---|---|---|---|---|---|

| H₂⁺ | 1 | 1 | 0 | 0.5 | Stable |

| H₂ | 2 | 2 | 0 | 1 | Stable |

| He₂⁺ | 3 | 2 | 1 | 0.5 | Stable |

| He₂ | 4 | 2 | 2 | 0 | Not stable |

| O₂ | 16 (12 valence) | 8 | 4 | 2 | Stable (paramagnetic) |

The bond order concept successfully predicts that He₂ is not stable (bond order = 0), while He₂⁺ has a fractional bond order (0.5) and can exist, consistent with experimental observations [35]. Furthermore, MOT correctly predicts the paramagnetism of oxygen molecules (O₂), which have two unpaired electrons in degenerate π* antibonding orbitals – a phenomenon that valence bond theory cannot adequately explain [32].
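The bond-order formula can be applied directly to the occupations listed in Table 1. The short sketch below does so; the dictionary simply restates the table's bonding and antibonding electron counts (valence electrons only for O₂), and the function name is illustrative.

```python
def bond_order(n_bonding, n_antibonding):
    """Bond order = (bonding electrons - antibonding electrons) / 2."""
    return 0.5 * (n_bonding - n_antibonding)


# (bonding, antibonding) electron counts taken from Table 1.
OCCUPATIONS = {
    "H2+": (1, 0),
    "H2": (2, 0),
    "He2+": (2, 1),
    "He2": (2, 2),
    "O2": (8, 4),   # valence electrons only
}

bond_orders = {name: bond_order(*counts) for name, counts in OCCUPATIONS.items()}

for name, order in bond_orders.items():
    verdict = "bound" if order > 0 else "not bound"
    print(f"{name:5s} bond order {order:.1f} ({verdict})")
```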

Molecular Orbital Diagrams for Homonuclear Diatomics

The relative ordering of molecular orbital energies follows predictable patterns for homonuclear diatomic molecules. For second-period elements, two distinct ordering patterns emerge:

- For B₂, C₂, and N₂: The σ₂p orbital is higher in energy than the π₂p orbitals

- For O₂, F₂, and beyond: The σ₂p orbital is lower in energy than the π₂p orbitals [35]

These orbital configurations directly influence magnetic properties: molecules with unpaired electrons (paramagnetic) are attracted to magnetic fields, while those with all electrons paired (diamagnetic) are weakly repelled [32] [35].

Experimental Protocols in Quantum-Informed Chemical Research

Protocol: Single-Molecule Atomic-Resolution Real-Time Electron Microscopy (SMART-EM)

SMART-EM represents a groundbreaking approach for directly observing molecular reactions and conformational changes at atomic resolution, enabling the study of chemical kinetics through visual analysis of individual reaction events [34].

Table 2: Research Reagent Solutions for SMART-EM Imaging

| Reagent/Material | Function | Specifications |

|---|---|---|

| Single-walled Carbon Nanotubes (CNTs) | 1D nanoscale reaction container | 1-2 nm diameter, functionalized as needed |

| Target Molecules | Specimen for imaging and reaction studies | Purified, e.g., [60]fullerene derivatives |

| Transmission Electron Microscope | Imaging instrument | 120-kV acceleration, complementary metal oxide semiconductor detector |

| Molecular Trapping Components | "Eel trap" or "fish hook" strategies | For immobilizing and positioning molecules |

Procedure:

Sample Preparation:

- Prepare single-walled carbon nanotubes (1-2 nm diameter) as confinement vessels.

- Introduce target molecules (e.g., [60]fullerene derivatives) into nanotubes using appropriate chemical functionalization or solution methods.

- Confirm successful incorporation of molecules into nanotubes using preliminary TEM imaging.

Instrument Setup:

- Configure transmission electron microscope with 120-kV acceleration voltage.

- Ensure use of complementary metal oxide semiconductor detector for continuous imaging capability.

- Calibrate imaging parameters for optimal contrast and resolution.

Data Acquisition:

- Position sample in electron beam path.

- Record real-time movies of molecular behavior with sub-millisecond temporal resolution.

- Maintain constant temperature conditions using variable-temperature stage.

- Collect data over multiple regions and time periods to ensure statistical significance.

Kinetic Analysis:

- Identify individual reaction events from movie frames.

- Track time evolution of molecular structures.

- Calculate reaction rates by analyzing event frequencies across multiple molecules.

- Determine temperature dependence of rates through variable-temperature experiments.

This protocol enabled the first experimental validation of quantum mechanical transition state theory, demonstrating that isolated molecules behave as if all their accessible states are occupied in random order, consistent with quantum predictions rather than classical "average molecule" behavior [34].

Figure 2: SMART-EM Experimental Workflow. This diagram outlines the key steps in single-molecule atomic-resolution real-time electron microscopy for studying chemical kinetics.
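The kinetic analysis stage of the protocol reduces to statistics on individual event times. The sketch below shows one way this could look, using synthetic data only: waiting times for a first-order process are drawn from an exponential distribution at several temperatures, the rate constant at each temperature is estimated as the inverse mean waiting time, and an Arrhenius fit recovers the activation energy. The "true" parameters, temperatures, and event counts are hypothetical and are not taken from the SMART-EM study.

```python
import numpy as np

R_GAS = 8.314462618   # gas constant (J/(mol K))
rng = np.random.default_rng(1)

# Hypothetical Arrhenius parameters, used only to generate synthetic events.
A_TRUE, EA_TRUE = 1.0e9, 50_000.0      # s^-1, J/mol
temperatures = np.array([300.0, 320.0, 340.0, 360.0])
N_EVENTS = 5000                         # events observed per temperature


def simulate_waiting_times(rate, n_events):
    """Synthetic single-molecule waiting times for a first-order process."""
    return rng.exponential(1.0 / rate, size=n_events)


def estimate_rate(waiting_times):
    """Maximum-likelihood rate constant: inverse of the mean waiting time."""
    return 1.0 / np.mean(waiting_times)


k_true = A_TRUE * np.exp(-EA_TRUE / (R_GAS * temperatures))
k_hat = np.array([estimate_rate(simulate_waiting_times(k, N_EVENTS))
                  for k in k_true])

# Arrhenius analysis: ln k is linear in 1/T with slope -Ea/R.
slope, intercept = np.polyfit(1.0 / temperatures, np.log(k_hat), 1)
ea_hat = -slope * R_GAS

print("estimated rate constants (s^-1):", k_hat)
print(f"estimated Ea: {ea_hat / 1000:.1f} kJ/mol")
```

The relative uncertainty of each rate estimate scales as one over the square root of the number of observed events, which is why the protocol calls for data from many molecules and regions.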

Protocol: Quantum Cheshire Cat Experiment for Attribute Separation

Recent advances in quantum measurement techniques have enabled the experimental separation of wave and particle attributes of single photons, demonstrating a phenomenon analogous to the quantum Cheshire cat, where physical properties can be separated from their carriers [36].

Materials and Equipment:

- Quantum optics setup with single-photon source

- Mach-Zehnder interferometer configuration

- Beam splitters and phase shifters

- Single-photon detectors

- Weak measurement apparatus

Procedure:

State Preparation:

- Generate single photons with superposition of wave and particle attributes: |ψ⟩ = cosα|Particle⟩ + sinα|Wave⟩

- Pass photons through first beam splitter to create path superposition: |ψᵢ⟩ = (|L⟩ + |R⟩)(cosα|Particle⟩ + sinα|Wave⟩)/√2

Weak Measurement Implementation:

- Apply minimal disturbance to system evolution using imaginary-time evolution technique

- Extract weak values without collapsing quantum state

- Use formula: ⟨Â⟩_w = ⟨ψ_f|Â|ψ_i⟩/⟨ψ_f|ψ_i⟩

Post-selection:

- Configure second beam splitter with operation U for mutual transformation between wave and particle states

- Implement operator X to separate |Wave⟩ and |Particle⟩ to different detectors

- Post-select state: |ψ_f⟩ = (|L⟩|Wave⟩ + |R⟩|Particle⟩)/√2
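The weak-value formula can be checked numerically for the pre- and post-selected states given above. The following is a minimal sketch, assuming a four-dimensional path ⊗ attribute space and using joint projectors such as |L⟩⟨L| ⊗ |Wave⟩⟨Wave| as the measured operators. The basis ordering, the choice of projectors, the value of α, and the helper names are illustrative assumptions rather than the operators used in the experiment [36].

```python
import numpy as np

# Basis ordering (an arbitrary choice): path {L, R} tensor attribute {Particle, Wave}.
L, R = np.array([1.0, 0.0]), np.array([0.0, 1.0])
P, W = np.array([1.0, 0.0]), np.array([0.0, 1.0])

def weak_value(op, psi_i, psi_f):
    """<A>_w = <psi_f|A|psi_i> / <psi_f|psi_i>."""
    return np.vdot(psi_f, op @ psi_i) / np.vdot(psi_f, psi_i)

def projector(path, attribute):
    """Projector onto the joint state |path>|attribute>."""
    ket = np.kron(path, attribute)
    return np.outer(ket, ket.conj())

alpha = np.pi / 6  # hypothetical mixing angle
psi_i = np.kron((L + R) / np.sqrt(2), np.cos(alpha) * P + np.sin(alpha) * W)
psi_f = (np.kron(L, W) + np.kron(R, P)) / np.sqrt(2)

wave_left = weak_value(projector(L, W), psi_i, psi_f)
wave_right = weak_value(projector(R, W), psi_i, psi_f)
particle_left = weak_value(projector(L, P), psi_i, psi_f)
particle_right = weak_value(projector(R, P), psi_i, psi_f)

print(f"wave:     left = {wave_left:.3f}, right = {wave_right:.3f}")
print(f"particle: left = {particle_left:.3f}, right = {particle_right:.3f}")
```

Under these assumptions the wave projector has a nonzero weak value only in the left arm and the particle projector only in the right arm, which is the separation the protocol sets out to verify.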

Verification:

- Confirm spatial separation of wave and particle attributes through correlation measurements

- Calculate normalized incidence rate N = N(U)/N₀

- Extract weak value: ⟨Â⟩_w = -(∂N/∂t)/2

This protocol successfully demonstrated the counterintuitive quantum phenomenon where the "wave attribute" and "particle attribute" of a single photon travel through different paths of an interferometer, providing new insights into fundamental quantum mechanics and potential applications in quantum information processing [36].

Advanced Applications in Chemical Kinetics Research

Data Assimilation in Kinetic Parameter Estimation

The Augmented Ensemble Kalman Filter (AEnKF) represents a powerful data assimilation approach for estimating kinetic parameters in complex reaction systems. This method integrates experimental data with computational models to enhance predictive accuracy while maintaining physical consistency [37].

Application to Ammonia Oxidation:

- System: Ammonia oxidation kinetics in shock tube experiments

- Parameters: Four rate-equation parameters for key reaction steps

- Methodology: Simultaneous estimation of state variables and model parameters through ensemble of stochastic simulations

- Results: Improved model accuracy across varied conditions compared to baseline parameters, and revealed an intrinsic temperature dependence of the reaction parameters [37]

This approach effectively handles the inherent nonlinearities of chemical kinetics while retaining physical meaning throughout the parameter estimation process, providing a robust framework for developing advanced combustion kinetic models.

Quantum Computation for Chemical Systems

Recent advances in first-quantization quantum algorithms enable more efficient simulation of molecular systems on emerging quantum computing hardware. This approach requires N log₂(2D) qubits to represent the wavefunction, where N is the number of electrons and D is the number of basis functions. At fixed electron count, the qubit requirement therefore grows only logarithmically with basis-set size, an exponential improvement in scaling over second-quantization methods, whose qubit count grows linearly with D [38].
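To make the scaling concrete, the short sketch below compares the two qubit counts for a fixed number of electrons. It assumes the logarithm is rounded up to a whole number of qubits per electron register and that second quantization uses one qubit per spin orbital (2D in total, as in a Jordan-Wigner-style encoding). Ancilla qubits are ignored, and the function names and example sizes are hypothetical.

```python
import math

def first_quantized_qubits(n_electrons, n_basis):
    """N * ceil(log2(2D)): one register of ceil(log2(2D)) qubits per electron."""
    return n_electrons * math.ceil(math.log2(2 * n_basis))

def second_quantized_qubits(n_basis):
    """2D: one qubit per spin orbital (assumed Jordan-Wigner-style encoding)."""
    return 2 * n_basis

# Fixed electron count, growing basis (illustrative sizes only).
for d in (100, 1_000, 10_000):
    print(d, first_quantized_qubits(10, d), second_quantized_qubits(d))
```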

Key Developments:

- Implementation of arbitrary basis sets in first quantization, including molecular orbitals and dual plane waves

- Asymptotic speedup in Toffoli count for molecular orbital calculations

- Orders of magnitude improvement in resource requirements using dual plane waves compared to second quantization counterparts

- Application to active space calculations in computational quantum chemistry [38]

These methodological advances promise to extend the boundaries of quantum chemistry calculations, potentially enabling high-accuracy predictions of reaction pathways and kinetic parameters for complex molecular systems that are currently intractable with classical computational approaches.

Integration with Drug Development Research

The principles of wave-particle duality and Molecular Orbital Theory provide fundamental insights that inform multiple aspects of pharmaceutical research and development:

Molecular Recognition and Drug-Target Interactions: Understanding the electronic structure of drug molecules and their protein targets through MOT enables rational design of compounds with optimized binding affinity and selectivity.

Reaction Mechanism Elucidation: Quantum-informed kinetic studies facilitate the identification of reaction intermediates and transition states in synthetic pathways for active pharmaceutical ingredients, enabling optimization of reaction conditions and impurity control.

Metabolic Pathway Prediction: Analysis of frontier molecular orbitals (HOMO-LUMO interactions) helps predict sites of metabolic transformation and potential reactive metabolite formation.

Photophysical Properties Optimization: For photodynamic therapy agents or fluorescent tags, wave-particle principles guide the design of molecules with tailored excitation and emission characteristics.

The integration of advanced experimental techniques like SMART-EM imaging and quantum computational methods continues to expand the capabilities of drug development researchers, providing unprecedented atomic-level insights into the molecular processes underlying biological activity and therapeutic efficacy.

Quantum Methods in Action: Advanced Algorithms and Kinetic Parameter Estimation

Data Assimilation with Ensemble Kalman Filters for Kinetic Parameter Recovery

The accurate recovery of kinetic parameters is a fundamental challenge in chemical research and drug development. This application note explores the integration of data assimilation principles, specifically the Ensemble Kalman Filter (EnKF), with the core concepts of energy quantization to address the inverse problem in chemical kinetics. We present a detailed protocol demonstrating how sparse, noisy experimental data can be systematically combined with computational models to achieve robust estimates of rate constants and reaction orders, effectively quantizing the solution space to a finite set of physically plausible parameters. The methodologies outlined herein provide researchers with a powerful framework for optimizing reaction pathways and accelerating the characterization of molecular dynamics.

In chemical kinetics, the relationship between reactant concentrations and reaction rates is governed by rate laws containing kinetic parameters, such as the rate constant ( k ) and reaction orders [39]. Determining these parameters experimentally is often constrained by limited data, measurement noise, and model simplifications. This creates an inverse problem where the underlying parameters must be inferred from indirect observations.

The concept of quantization, foundational to quantum mechanics, describes how physical systems, such as molecular rotors, can only occupy discrete energy states [40]. This principle can be extended to the conceptual framework of kinetic analysis, where the goal is to identify a discrete set of valid kinetic parameters from a continuous, and often infinite, possibility space. Data assimilation (DA) provides the mathematical tools for this "quantization" of the parameter space.

DA offers a systematic approach to combining incomplete observational data with physics-based models to produce more accurate estimates of a system's state [41]. The Ensemble Kalman Filter (EnKF), a Monte Carlo variant of the classic Kalman filter, is particularly suited for nonlinear systems like chemical reactions [42] [41]. It uses an ensemble of model states to represent the probability distribution of the system, allowing for the simultaneous estimation of both the system state and its underlying parameters [43].

Theoretical Background

Principles of Quantization in Chemical Systems

Quantization, in its physical context, dictates that systems like a quantum mechanical rigid rotor can only possess specific, discrete energy levels, with angular momentum governed by ( L = \hbar \sqrt{n(n+1)} ), where ( n ) is a quantum number [40]. The corresponding rotational energy levels are given by ( E_n = \frac{\hbar^2}{2I}n(n+1) ), where ( I ) is the moment of inertia. This discreteness is what gives rise to sharp spectral lines in rotational spectroscopy [40]. While the kinetic energy of a free-moving particle is not quantized [44], the energy states of bound systems, which are critical to understanding activation barriers and reaction pathways, are subject to quantization. This principle underlies the discrete nature of the parameter sets we seek to identify through data assimilation.
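A small sketch of the rigid-rotor formula shows where the regular line spacing comes from. Energies are expressed in units of ( \hbar^2 / 2I ) so that no molecular data are needed; the function name and the unit convention are assumptions made for this illustration.

```python
def rotor_energy(n, hbar2_over_2I=1.0):
    """E_n = (hbar^2 / 2I) * n(n+1); the default unit is hbar^2 / (2I)."""
    return hbar2_over_2I * n * (n + 1)

levels = [rotor_energy(n) for n in range(5)]
spacings = [hi - lo for lo, hi in zip(levels, levels[1:])]

print(levels)    # energies of n = 0..4
print(spacings)  # gaps between adjacent levels
```

The gap between adjacent levels grows linearly with ( n ), so transitions between neighboring levels fall at evenly spaced energies.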

Fundamentals of Chemical Kinetics

The rate law for a reaction expresses the rate as a function of reactant concentrations. For a reaction with reactants ( A ) and ( B ), the rate law is: [ \text{Rate} = k[A]^x[B]^y ] where ( k ) is the rate constant, and ( x ) and ( y ) are the reaction orders with respect to ( A ) and ( B ), respectively [39] [45]. The sum ( x+y ) is the overall reaction order. These parameters must be determined experimentally. The following table summarizes common rate laws.

Table 1: Common Reaction Orders and Their Rate Laws

| Reaction Order | Rate Law | Description |
|---|---|---|
| Zero-Order | ( \text{Rate} = k ) | The rate is constant and independent of reactant concentrations. |
| First-Order | ( \text{Rate} = k[A] ) | The rate is directly proportional to the concentration of one reactant. |
| Second-Order | ( \text{Rate} = k[A]^2 ) or ( \text{Rate} = k[A][B] ) | The rate is proportional to the square of a single reactant or the product of two reactant concentrations. |

The rate constant ( k ) is temperature-dependent, commonly described by the Arrhenius equation: [ k = A e^{-E_a/(RT)} ] where ( A ) is the frequency factor, ( E_a ) is the activation energy, ( R ) is the gas constant, and ( T ) is the temperature in Kelvin [45].
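The temperature sensitivity implied by the Arrhenius equation can be illustrated with a few lines of code. The frequency factor and activation energy below are hypothetical round numbers chosen only to show the calculation; they do not describe any reaction discussed in this article.

```python
import math

R_GAS = 8.314462618  # gas constant, J mol^-1 K^-1

def arrhenius(prefactor, activation_energy, temperature):
    """k = A * exp(-E_a / (R T)), with E_a in J/mol and T in K."""
    return prefactor * math.exp(-activation_energy / (R_GAS * temperature))

# Hypothetical parameters, for illustration only.
A_FACTOR = 1.0e13   # s^-1
E_A = 50_000.0      # J/mol

ratio = arrhenius(A_FACTOR, E_A, 308.15) / arrhenius(A_FACTOR, E_A, 298.15)
print(f"k(308.15 K) / k(298.15 K) = {ratio:.2f}")
```

With these assumed values, a 10 K increase near room temperature roughly doubles the rate constant, and the ratio does not depend on the frequency factor.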

Data Assimilation and the Ensemble Kalman Filter

Data assimilation provides a Bayesian framework for updating the belief about a system's state by combining model forecasts with new observations. The Ensemble Kalman Filter (EnKF) is a powerful DA method that represents the state distribution using a collection of state vectors, or an ensemble [41] [42].

For parameter recovery, the system's state vector is augmented to include the kinetic parameters themselves (e.g., ( k ), ( x ), ( y )) as quantities to be estimated [43]. The EnKF procedure operates in a repeating forecast-analysis cycle:

- Forecast Step: Each ensemble member is propagated forward in time using the kinetic model (e.g., integrating the rate laws).

- Analysis Step: As new experimental data becomes available, each ensemble member is updated. This update is a weighted average of the model forecast and the observation, where the weight (Kalman gain) is determined by the relative uncertainty of the forecast and the observation.

A key advantage of the EnKF is its ability to handle non-linear models and to provide uncertainty estimates from the spread of the ensemble.
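The analysis step can be sketched as follows. This is a minimal illustration of the stochastic (perturbed-observation) form of the EnKF, which is one common variant and not necessarily the one used in the cited work. The function name `enkf_analysis`, the toy two-component state `[concentration, k]`, and all numerical values are assumptions made for the example.

```python
import numpy as np

def enkf_analysis(ensemble, y_obs, obs_var, H, rng):
    """Stochastic EnKF update.

    ensemble: (n_members, n_state) forecast states
    y_obs:    (n_obs,) observation vector
    obs_var:  scalar observation-error variance
    H:        (n_obs, n_state) linear observation operator
    """
    n_members = ensemble.shape[0]
    anomalies = ensemble - ensemble.mean(axis=0)
    P_f = anomalies.T @ anomalies / (n_members - 1)   # forecast covariance
    R = obs_var * np.eye(H.shape[0])                  # observation covariance
    K = P_f @ H.T @ np.linalg.inv(H @ P_f @ H.T + R)  # Kalman gain
    # Each member sees its own noisy copy of the observation.
    noise = rng.normal(0.0, np.sqrt(obs_var), size=(n_members, H.shape[0]))
    innovations = (y_obs + noise) - ensemble @ H.T
    return ensemble + innovations @ K.T

rng = np.random.default_rng(0)
# Toy forecast ensemble: first-order decay from 0.005 M evaluated at t = 50 s.
k_prior = rng.uniform(0.005, 0.02, size=200)
conc = 0.005 * np.exp(-k_prior * 50.0)
forecast = np.column_stack([conc, k_prior])

H = np.array([[1.0, 0.0]])  # only the concentration is observed
analysis = enkf_analysis(forecast, np.array([0.0030]), (1e-4) ** 2, H, rng)

print("mean k before:", forecast[:, 1].mean(), "after:", analysis[:, 1].mean())
```

Because the forecast ensemble correlates concentration with ( k ), an observation of concentration alone also shifts the unobserved parameter. The spread of the updated ensemble provides the uncertainty estimate.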

Application Note: Recovering Rate Law Parameters from Concentration Data

Problem Formulation

Consider a reaction ( aA + bB \rightarrow \text{products} ), with an unknown rate law ( \text{Rate} = k[A]^x[B]^y ). The goal is to use time-series concentration data of ( A ) and ( B ) to recover the parameters ( k ), ( x ), and ( y ). The state vector for the EnKF is defined as: [ \mathbf{v} = [[A], [B], k, x, y]^T ] This approach treats the parameters as additional state variables with trivial dynamics (they are typically held constant during the forecast step and adjusted only in the analysis step), allowing the filter to pull them toward values consistent with the data as observations accumulate.

Experimental Data for Protocol Demonstration

The following table contains idealized experimental data for the reaction between phenolphthalein and excess base, which exhibits first-order kinetics with respect to phenolphthalein [39].

Table 2: Experimental Data for Phenolphthalein Reaction with Excess Base

| Time (s) | [Phenolphthalein] (M) |
|---|---|
| 0.0 | 0.0050 |
| 10.5 | 0.0045 |
| 22.3 | 0.0040 |
| 35.7 | 0.0035 |
| 51.1 | 0.0030 |
| 69.3 | 0.0025 |
| 91.6 | 0.0020 |
| 120.4 | 0.0015 |
| 160.9 | 0.0010 |
| 230.3 | 0.00050 |
| 299.6 | 0.00025 |
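Before running a filter, it can help to confirm the first-order behavior of the data in Table 2 with a conventional fit. The sketch below regresses ( \ln[A] ) on time using ordinary least squares; for first-order decay the slope equals ( -k ). The helper `fit_line` is a hypothetical utility written for this example.

```python
import math

# Data transcribed from Table 2.
times = [0.0, 10.5, 22.3, 35.7, 51.1, 69.3, 91.6, 120.4, 160.9, 230.3, 299.6]
conc = [0.0050, 0.0045, 0.0040, 0.0035, 0.0030, 0.0025,
        0.0020, 0.0015, 0.0010, 0.00050, 0.00025]

def fit_line(xs, ys):
    """Ordinary least-squares slope and intercept of y against x."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

slope, intercept = fit_line(times, [math.log(c) for c in conc])
k_fit = -slope                      # first-order rate constant, s^-1
half_life = math.log(2) / k_fit     # s

print(f"k = {k_fit:.4f} s^-1, half-life = {half_life:.1f} s")
```

The fitted value gives a reference point against which the parameters recovered by the EnKF can be compared.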

Detailed EnKF Protocol for Kinetic Parameter Recovery

Objective: Recover the rate constant ( k ) and reaction order ( x ) for phenolphthalein.

Preparatory Steps:

- Model Selection: Choose a candidate rate law. For this protocol, we assume a general form: ( \frac{d[A]}{dt} = -k[A]^x ).

- State Vector Definition: Define the state vector as ( \mathbf{v} = [[A], k, x]^T ).

- Observation Operator: Define the operator ( H ) that maps the state to the observable. Here, ( H ) simply extracts the concentration ( [A] ) from the state vector, so ( H = [1, 0, 0] ).

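- Ensemble Initialization: Generate an initial ensemble of ( N ) state vectors (e.g., ( N=100 )). The initial concentrations can be set to the first measurement, and the initial parameters ( k ) and ( x ) are drawn from a prior distribution that brackets the expected values (e.g., ( k \sim U(10^{-3}, 10^{-1}) ), ( x \sim U(0, 2) )), where ( U ) denotes a uniform distribution. The half-life of roughly 69 s in Table 2 implies ( k \approx 10^{-2} \, \text{s}^{-1} ) for first-order decay, so the prior on ( k ) should include that value.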
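The preparatory steps can be written down compactly. The following is a sketch under stated assumptions: the ensemble is stored as an array with one row per member and columns `[[A], k, x]`, and the bounds on ( k ) are an assumption chosen to bracket the value near ( 10^{-2} \, \text{s}^{-1} ) implied by the roughly 69 s half-life in Table 2. The function name `init_ensemble` and the random seed are hypothetical.

```python
import numpy as np

N_MEMBERS = 100
A0 = 0.0050  # M, first measurement in Table 2

def init_ensemble(n_members, a0, k_bounds, x_bounds, rng):
    """Return an (n_members, 3) array with columns [[A], k, x]."""
    k = rng.uniform(*k_bounds, size=n_members)   # uniform prior on k
    x = rng.uniform(*x_bounds, size=n_members)   # uniform prior on x
    return np.column_stack([np.full(n_members, a0), k, x])

rng = np.random.default_rng(42)
# Prior bounds are assumptions for this illustration.
ensemble = init_ensemble(N_MEMBERS, A0, (1e-3, 1e-1), (0.0, 2.0), rng)

# Observation operator: picks the concentration out of each state vector.
H = np.array([[1.0, 0.0, 0.0]])
predicted_obs = ensemble @ H.T   # shape (N_MEMBERS, 1)

print(ensemble[:3])
```

Storing members as rows keeps the forecast step a simple loop over rows and lets the observation operator be applied to the whole ensemble in one matrix product.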

Cyclical Execution: For each new measurement of ( [A]_{\text{obs}}(t_i) ):

- Forecast: Propagate each ensemble member from time ( t_{i-1} ) to ( t_i ) by numerically integrating the differential equation ( \frac{d[A]}{dt} = -k[A]^x ). This yields a forecasted ensemble of states, ( \{ \mathbf{v}^f_j \} ).

- Calculate Ensemble Statistics: Compute the mean forecast state ( \bar{\mathbf{v}}^f ) and the forecast error covariance matrix ( P^f ) from the spread of the forecasted ensemble.