Quantum Protocols for Material Science: From Foundational Theory to Biomedical Applications

Abstract

This article synthesizes the latest advancements in quantum computing protocols and their transformative impact on material science research. Tailored for researchers, scientists, and drug development professionals, it provides a comprehensive roadmap from foundational quantum principles to practical methodologies for designing novel materials. We explore core protocols for material simulation and discovery, tackle key optimization challenges in the NISQ era, and present rigorous validation frameworks. The review highlights immediate applications in drug development, from simulating molecular interactions for targeted therapies to designing porous materials for drug delivery and carbon capture, offering a critical resource for integrating quantum tools into the biomedical research pipeline.

Quantum Foundations: Core Principles for Material Discovery

This document provides a standardized set of application notes and experimental protocols for the synthesis and characterization of advanced quantum materials. The procedures are framed within the broader thesis that quantum theory provides the fundamental protocols for understanding and engineering material properties from the atomic scale up. These methodologies are designed for researchers investigating correlated electron systems, superconductivity, and quantum-enabled drug discovery platforms.

Experimental Protocols & Methodologies

Protocol 2.1: Nanoscale Magnetic Fluctuation Sensing with Entangled Nitrogen-Vacancy Centers

2.1.1 Objective: To directly measure local magnetic fields and their fluctuations at the nanoscale in quantum materials using a pair of entangled Nitrogen-Vacancy (NV) centers in diamond.

2.1.2 Principle: Two NV centers, implanted nanometers apart and prepared in an entangled quantum state, act as a correlated sensor pair. This entanglement provides a quantum advantage, allowing the system to detect magnetic field correlations and triangulate their source with high sensitivity, revealing phenomena invisible to single-point sensors [1].

2.1.3 Materials & Reagents:

- Substrate: High-purity, lab-grown single-crystal diamond.

- NV Center Creation: Nitrogen gas source.

- Measurement Apparatus: Confocal microscope with laser excitation (typically green laser), microwave antenna for quantum state control, and photon detection system [1].

2.1.4 Step-by-Step Procedure:

- NV Pair Creation: Implant nitrogen molecules into the diamond substrate at a controlled speed of ~30,000 feet/second (roughly 9 km/s). The speed is tuned so that the molecules dissociate upon impact, embedding two nitrogen atoms approximately 10 nm apart and 20 nm below the surface [1].

- Sensor Characterization: Confirm the presence and properties of the NV pair via photoluminescence measurements under laser excitation.

- Quantum State Initialization: Illuminate the NV pair with a laser to initialize them into a specific quantum spin state.

- Entanglement Generation: Apply a precise sequence of microwave pulses to the initialized NV pair to entangle their electronic spins, creating the quantum correlation popularly called "spooky action" [1].

- Sample Proximity: Place the quantum material of interest (e.g., a 2D material flake) in close proximity (~nanometers) to the diamond sensor surface.

- Measurement Sequence:

- Expose the entangled NV centers to the local magnetic environment of the sample.

- Apply a second microwave pulse sequence to manipulate the quantum state based on magnetic field exposure.

- Read out the final quantum state via laser-induced fluorescence.

- Data Acquisition: The fluorescence signal reveals the joint quantum state of the NV pair, from which correlated magnetic noise and local field structures can be reconstructed in a single, highly sensitive measurement [1].
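
To illustrate why a sensor pair can reveal correlated magnetic noise that a single sensor cannot, the following is a minimal classical toy model, not a simulation of the entangled readout described in [1]. It assumes each sensor records a shared field fluctuation plus its own independent local noise; the function names and noise amplitudes are hypothetical choices made for this sketch.

```python
import math
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / math.sqrt(vx * vy)


def simulate_pair(n_shots, shared_sigma, local_sigma, seed=0):
    """Toy model (assumption): each sensor sees a shared field plus local noise."""
    rng = random.Random(seed)
    sensor_a, sensor_b = [], []
    for _ in range(n_shots):
        shared = rng.gauss(0.0, shared_sigma)  # field common to both sensors
        sensor_a.append(shared + rng.gauss(0.0, local_sigma))
        sensor_b.append(shared + rng.gauss(0.0, local_sigma))
    return sensor_a, sensor_b


if __name__ == "__main__":
    a, b = simulate_pair(5000, shared_sigma=1.0, local_sigma=0.5)
    print("correlation with shared field:", round(pearson(a, b), 3))
    a, b = simulate_pair(5000, shared_sigma=0.0, local_sigma=0.5)
    print("correlation without shared field:", round(pearson(a, b), 3))
```

In this toy model the correlation coefficient approaches shared variance divided by total variance, so a nonzero value signals a common source even when each individual trace looks like featureless noise.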

Protocol 2.2: Probing Unconventional Superconductivity in Magic-Angle Twisted Trilayer Graphene

2.2.1 Objective: To characterize the superconducting state and measure the superconducting gap structure in magic-angle twisted trilayer graphene (MATTG) to confirm unconventional superconductivity [2].

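2.2.2 Principle: Electron tunneling spectroscopy and electrical transport measurements are performed in the same device, so that a gap in the tunneling spectrum is attributed to superconductivity only when the device simultaneously shows zero electrical resistance. This removes the ambiguity of identifying the superconducting gap from tunneling data alone [2].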

2.2.3 Materials & Reagents:

- Material Stack: Trilayer graphene, hexagonal boron nitride (hBN) crystals for encapsulation.

- Substrate: Si/SiO₂ wafer.

- Fabrication: Electron-beam lithography system, metal (e.g., Cr/Au) for electrode deposition.

- Measurement: Cryogenic system with temperature control (<1K) and high magnetic field.

2.2.4 Step-by-Step Procedure:

- Material Fabrication (Twistronics):

- Mechanically exfoliate graphene and hBN flakes.

- Use a precision transfer stage to stack three graphene layers with a deliberate "magic angle" twist (typically ~1.6 degrees) and encapsulate the stack in hBN to preserve quality [2].

- Device Fabrication: Pattern electrical contacts (source, drain, gate) onto the MATTG heterostructure using electron-beam lithography and metal deposition.

- Cooling: Load the device into a cryostat and cool to millikelvin temperatures, below the superconducting transition.

- Simultaneous Transport & Tunneling Measurement:

- Step A (Transport): Apply a small current through the device and measure electrical resistance. Confirm the superconducting state by observing a drop to zero resistance.

- Step B (Tunneling): Simultaneously, use a separate circuit to measure the tunneling current between the MATTG and a separate electrode. This current is proportional to the density of electronic states.

- Gap Mapping: Record the tunneling conductance as a function of bias voltage while the material is in the superconducting state (confirmed by zero resistance).

- Analysis: The resulting conductance spectrum directly maps the superconducting gap. A distinct V-shaped gap profile is a key signature of unconventional superconductivity, differing from the U-shaped gap of conventional superconductors [2].
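
The difference between a U-shaped and a V-shaped spectrum can be sketched with a textbook model of the density of states. The code below assumes a Dynes-broadened BCS form, an isotropic gap for the conventional case, and a nodal gap with a cos(2θ) angular dependence for the unconventional case. The cos(2θ) form, the broadening value, and all function names are assumptions for illustration only and are not taken from [2].

```python
import cmath
import math


def dynes_dos(energy, gap, gamma):
    """Normalized BCS density of states with Dynes broadening gamma > 0."""
    e = complex(energy, -gamma)
    return abs((e / cmath.sqrt(e * e - gap * gap)).real)


def dos_isotropic(energy, gap=1.0, gamma=0.02):
    """Conventional case: the same gap in every direction (U-shaped)."""
    return dynes_dos(energy, gap, gamma)


def dos_nodal(energy, gap=1.0, gamma=0.02, n_angles=4000):
    """Assumed nodal gap, gap * cos(2 * theta), averaged over angle (V-shaped)."""
    total = 0.0
    for k in range(n_angles):
        theta = 2.0 * math.pi * (k + 0.5) / n_angles
        total += dynes_dos(energy, gap * math.cos(2.0 * theta), gamma)
    return total / n_angles


if __name__ == "__main__":
    # Energies are in units of the maximum gap.
    for energy in (0.0, 0.2, 0.4, 0.6, 0.8):
        print(energy, round(dos_isotropic(energy), 3), round(dos_nodal(energy), 3))
```

Inside the gap, the isotropic curve stays close to zero until the gap edge, while the nodal curve rises roughly linearly with energy, which is the qualitative V-shape used as a signature in the analysis step.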

Protocol 2.3: Quantum-Integrated Chemistry for Drug Discovery Platforms

2.3.1 Objective: To utilize a hybrid quantum-classical computing platform for high-precision analysis of protein-ligand binding interactions, including the critical role of hydration water molecules [3] [4].

2.3.2 Principle: A classical computer handles initial molecular simulations, while a quantum computer leverages superposition and entanglement to solve the complex problem of optimally placing water molecules in protein binding pockets and modeling electronic interactions with high accuracy [3] [4].

2.3.3 Materials & Reagents:

- Software Platform: Quantum-Integrated Discovery Orchestrator (QIDO) or equivalent, integrating classical (e.g., QSP Reaction) and quantum (e.g., InQuanto) software [3].

- Computational Resources: Access to high-performance computing (HPC) cluster and a quantum computer (e.g., neutral-atom quantum computer like "Orion") [3] [4].

- Input Data: 3D structural data of the target protein (e.g., from protein data bank).

2.3.4 Step-by-Step Procedure:

- System Setup: Input the 3D atomic coordinates of the protein and candidate ligand into the QIDO platform.

- Classical Pre-processing: Run molecular dynamics or density functional theory (DFT) calculations on the classical HPC cluster to generate initial electron density data and protein flexibility profiles [3].

- Quantum Task Formulation: The platform automatically translates the key electronic structure problem—such as determining the optimal configuration of water molecules in a buried protein pocket or calculating the binding affinity—into a format suitable for quantum processing [3].

- Quantum Processing: Execute the algorithm on the quantum computer. The quantum processor prepares a superposition over many candidate molecular configurations and is driven toward the lowest-energy, most stable state, which is then read out by measurement [4].

- Result Post-processing: The output from the quantum computer is returned to the classical platform for analysis and conversion into actionable data, such as a precise binding energy value or a 3D visualization of the hydrated binding site.

- Validation: Compare the quantum-computed results with classical simulation results and available experimental data to validate the model's improved accuracy.
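
The water-placement step can be pictured as a small binary optimization problem: each candidate hydration site is either occupied or empty, each occupied site contributes an energy, and pairs of sites that are too close incur a penalty. The sketch below solves such a problem by exhaustive classical search. The energy model, the penalty value, and the function names are hypothetical; the formulation actually used by the platforms in [3] [4] is not specified in this document.

```python
from itertools import product


def placement_energy(bits, site_energy, clash_pairs, clash_penalty):
    """Energy of one occupancy pattern: site terms plus clash penalties."""
    energy = sum(e for b, e in zip(bits, site_energy) if b)
    energy += sum(clash_penalty for i, j in clash_pairs if bits[i] and bits[j])
    return energy


def best_placement(site_energy, clash_pairs, clash_penalty=10.0):
    """Exhaustively search all 2**n occupancy patterns (feasible for small n only)."""
    n = len(site_energy)
    return min(
        product((0, 1), repeat=n),
        key=lambda bits: placement_energy(bits, site_energy, clash_pairs, clash_penalty),
    )


if __name__ == "__main__":
    # Hypothetical site energies (arbitrary units) and steric clashes.
    energies = [-1.0, -0.8, -0.5, 0.3]
    clashes = [(0, 1), (1, 2)]
    print(best_placement(energies, clashes))
```

The search space doubles with every additional site, which is why the protocol hands this step to specialized hardware once the number of candidate sites grows beyond what brute force can cover.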

Data Presentation & Analysis

Table 1: Quantitative Metrics for Quantum Sensing and Superconductivity Protocols

| Protocol | Key Measurable | Typical Value / Signature | Significance / Interpretation |

|---|---|---|---|

| 2.1: NV Center Sensing | Sensor Spatial Resolution [1] | ~20 nm depth, ~10 nm pair separation | Probes the mesoscale regime between atomic and optical scales. |

| | Sensitivity Gain [1] | ~40x greater than previous techniques | Enables detection of previously invisible magnetic fluctuations. |

| 2.2: MATTG Superconductivity | Superconducting Gap Structure [2] | V-shaped profile | Key evidence of unconventional superconductivity, distinct from conventional U-shaped gap. |

| | Critical Temperature (T_c) | ~3 K (example) | The temperature below which superconductivity occurs. |

| 2.3: Quantum Chemistry | Calculation Type | Protein hydration analysis, Binding affinity [4] | Provides atomistic insight critical for drug design. |

| Computational Approach | Hybrid quantum-classical (e.g., QIDO platform) [3] | Makes quantum computing accessible for non-specialists in research environments. |

Table 2: The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Solution | Function / Application | Protocol |

|---|---|---|

| Lab-Grown Diamond with NV Centers | Serves as the solid-state host for quantum sensors that detect magnetic fields. | 2.1 |

| Twisted van der Waals Heterostructures | Engineered materials (e.g., MATTG) that exhibit exotic quantum phenomena like unconventional superconductivity. | 2.2 |

| Hexagonal Boron Nitride (hBN) | An insulating crystal used to encapsulate and protect sensitive 2D materials from disorder. | 2.2 |

| Quantum Chemistry Software (InQuanto) | Software that translates chemical problems into algorithms executable on quantum computers. | 2.3 |

| Neutral-Atom Quantum Computer | A type of quantum hardware used to run complex molecular simulations efficiently. | 2.3 |

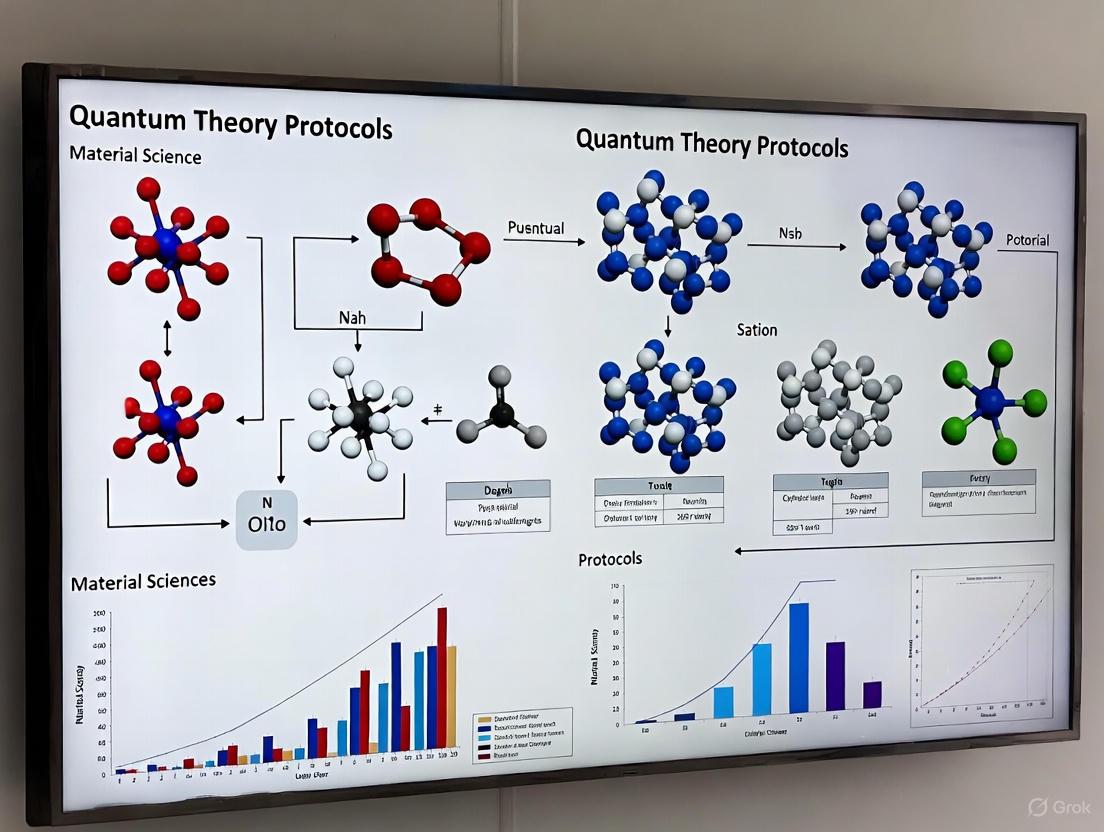

Workflow & System Visualization

Diagram 1: Quantum Sensor Fabrication & Measurement

Diagram 2: Superconductivity Analysis Workflow

Diagram 3: Quantum-Integrated Drug Discovery Platform

Quantum mechanics has revolutionized materials science by providing a fundamental framework for understanding and predicting the behavior of matter at the atomic and subatomic levels. This shift from classical physics enables researchers to describe and manipulate electronic structure, energy levels, bonding, and optical and magnetic properties with unprecedented precision [5]. The core principles of quantum theory—particularly entanglement and superposition—have evolved from theoretical curiosities to practical design tools, guiding the development of advanced materials with tailored properties [6]. The emerging "second quantum revolution" leverages these phenomena to create next-generation technologies, from fault-tolerant quantum computers to novel pharmaceuticals and highly efficient energy materials [6] [7]. This document outlines specific experimental protocols and applications for harnessing entanglement and superposition in materials research, providing a practical toolkit for scientists and drug development professionals.

Fundamental Concepts: Entanglement and Superposition

Quantum Superposition

Superposition is the fundamental principle that a quantum system can exist in a combination of multiple states simultaneously until a measurement is performed [6]. For example, an electron within an atom does not occupy a single fixed position but rather exists as a "cloud" of probabilities, representing a range of possible positions and energies at once [6]. This phenomenon is mathematically described by the wavefunction (ψ), which encapsulates the probability amplitudes for all possible states of the system [5]. When a measurement occurs, this probabilistic cloud "collapses" to a single definite state [6]. In materials design, this property is exploited in quantum bits (qubits), the fundamental units of quantum information, which can represent 0, 1, or any superposition of the two. Quantum algorithms use such superpositions, together with interference, to simulate molecular structures and material properties that are costly to model classically [6].
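
As a concrete illustration, a single qubit can be written as |ψ⟩ = α|0⟩ + β|1⟩, and the probability of each measurement outcome is the squared magnitude of its amplitude (the Born rule). The sketch below normalizes a pair of amplitudes, computes the outcome probabilities, and simulates repeated measurements. The function names are chosen for this example and do not correspond to any particular quantum library.

```python
import math
import random


def normalize(alpha, beta):
    """Scale the amplitudes so that |alpha|^2 + |beta|^2 = 1."""
    norm = math.sqrt(abs(alpha) ** 2 + abs(beta) ** 2)
    return alpha / norm, beta / norm


def probabilities(alpha, beta):
    """Born rule: probability of reading 0 and of reading 1."""
    alpha, beta = normalize(alpha, beta)
    return abs(alpha) ** 2, abs(beta) ** 2


def measure(alpha, beta, shots, seed=0):
    """Simulate repeated measurements; returns counts of (zeros, ones)."""
    p0, _ = probabilities(alpha, beta)
    rng = random.Random(seed)
    zeros = sum(1 for _ in range(shots) if rng.random() < p0)
    return zeros, shots - zeros


if __name__ == "__main__":
    print(probabilities(1, 1j))       # equal superposition with a relative phase
    print(measure(3, 4, shots=1000))  # unnormalized input is rescaled first
```

Each simulated shot returns a single definite outcome, mirroring the "collapse" described above; the superposition is visible only in the statistics over many shots.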

Quantum Entanglement

Entanglement is a quantum mechanical phenomenon in which two or more particles become correlated in such a way that the quantum state of one particle cannot be described independently of the others, regardless of the physical distance separating them [5] [6]. This "spooky action at a distance," as Einstein termed it, creates non-local correlations that are crucial for quantum communication, ultra-precise sensors, and understanding correlated electron systems in materials like high-temperature superconductors [6] [8]. Measuring one entangled particle immediately fixes the correlated outcome for its partner, although this cannot be used to transmit information faster than light; the property is harnessed in quantum cryptography and quantum networking [6].
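
One standard way to quantify these correlations is the CHSH test: any local classical model satisfies |S| ≤ 2, whereas an entangled pair can reach 2√2. The sketch below evaluates S for a two-qubit state with real amplitudes, using spin measurements in the X-Z plane. The measurement angles are the usual textbook choice, and the helper names are assumptions made for this example.

```python
import math


def observable(angle):
    """Spin measurement along `angle` in the X-Z plane: cos(a) Z + sin(a) X."""
    c, s = math.cos(angle), math.sin(angle)
    return [[c, s], [s, -c]]


def kron(a, b):
    """Kronecker product of two 2x2 matrices, returned as a 4x4 matrix."""
    return [
        [a[i][j] * b[k][l] for j in range(2) for l in range(2)]
        for i in range(2)
        for k in range(2)
    ]


def expectation(state, matrix):
    """<state| matrix |state> for a real-amplitude state vector."""
    n = len(state)
    return sum(state[r] * matrix[r][c] * state[c] for r in range(n) for c in range(n))


# Bell state (|00> + |11>) / sqrt(2) in the basis |00>, |01>, |10>, |11>.
BELL = [1 / math.sqrt(2), 0.0, 0.0, 1 / math.sqrt(2)]


def correlation(a, b, state=BELL):
    """Correlation of the two outcomes for measurement angles a and b."""
    return expectation(state, kron(observable(a), observable(b)))


def chsh(state=BELL):
    """CHSH combination S for the standard set of four angle pairs."""
    a, a2 = 0.0, math.pi / 2
    b, b2 = math.pi / 4, -math.pi / 4
    return (
        correlation(a, b, state)
        + correlation(a, b2, state)
        + correlation(a2, b, state)
        - correlation(a2, b2, state)
    )


if __name__ == "__main__":
    print("entangled pair:", chsh())
    print("product state |00>:", chsh([1.0, 0.0, 0.0, 0.0]))
```

For the Bell state the correlation reduces to cos(a - b), so S evaluates to 2√2, while the unentangled product state stays below the classical bound of 2.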

Table 1: Key Characteristics of Quantum Phenomena in Materials

| Phenomenon | Fundamental Principle | Key Implication for Material Design |

|---|---|---|

| Superposition | Ability to exist in multiple states simultaneously [6] | Enables quantum computing for material simulation; allows electrons to occupy multiple energy states [6] |

| Entanglement | Non-local correlation between particles [5] [6] | Facilitates development of ultra-precise quantum sensors and understanding of superconductivity [6] [8] |

Experimental Protocols and Methodologies

Protocol 1: Investigating Spin Coherence in Molecular Systems for Quantum Information Science

1.1. Objective: To measure and extend electron spin coherence lifetimes in molecular systems by suppressing molecular vibrations, a critical requirement for functional quantum bits in computing and sensitive quantum sensors [6].

1.2. Background: Electron "spin coherence" refers to the ability of an electron's quantum spin state to retain information over time. This coherence rapidly decays due to environmental interactions, particularly molecular vibrations [6]. This protocol addresses the chemistry challenge of designing materials that protect this quantum state.

1.3. Materials and Equipment:

- Molecular Beam Epitaxy (MBE) System: For atomically precise growth of magnetically doped topological insulator thin films and heterostructures [6].

- Ultra-fast Lasers: For imaging materials under magnetic fields and initiating spin coherence [6].

- Advanced Solvents and Ligands: Specifically chosen to create a more rigid molecular structure to suppress natural atomic oscillations [6].

1.4. Procedure:

- Material Synthesis: Use molecular beam epitaxy (MBE) to grow high-purity, magnetically doped topological insulator films [6].

- Sample Preparation: Embed the synthesized material into a matrix of rigidifying solvents or ligands designed to dampen molecular vibrations [6].

- Laser Excitation: Apply ultra-fast laser pulses in the presence of a magnetic field to initialize a coherent electron spin state in the material [6].

- Imaging and Measurement: Use a state-of-the-art laser imaging technique (e.g., time-resolved magneto-optic imaging) to track the evolution and decay of the spin state over time.

- Vibrational Coupling Analysis: Analyze how the electron spin couples with the remaining molecular vibrations to understand the dominant decay pathways [6].

- Iterative Design: Use the results to inform the synthesis of new materials with optimized ligands and structures for longer coherence times.

1.5. Data Analysis:

- The primary metric is the spin coherence lifetime (T₂), measured from the laser experiment's decay signal.

- Compare T₂ values between materials with different rigidifying ligands to quantify the effectiveness of vibrational suppression [6].
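
A minimal sketch of the T₂ extraction follows. It assumes the decay signal is a single exponential, S(t) = A·exp(-t/T₂), with strictly positive values, so a straight-line fit to ln S recovers T₂. Real coherence decays are often not single-exponential, and the function name and synthetic data are illustrative only.

```python
import math


def fit_t2(times, signal):
    """Least-squares fit of ln(signal) = ln(A) - t / T2; returns (A, T2).

    Assumes a single-exponential decay and strictly positive signal values.
    """
    logs = [math.log(s) for s in signal]
    n = len(times)
    mean_t = sum(times) / n
    mean_l = sum(logs) / n
    slope = sum((t - mean_t) * (l - mean_l) for t, l in zip(times, logs)) / sum(
        (t - mean_t) ** 2 for t in times
    )
    intercept = mean_l - slope * mean_t
    return math.exp(intercept), -1.0 / slope


if __name__ == "__main__":
    # Synthetic, noise-free decay with T2 = 5 (arbitrary time units).
    times = [0.5 * i for i in range(20)]
    signal = [2.0 * math.exp(-t / 5.0) for t in times]
    amplitude, t2 = fit_t2(times, signal)
    print(amplitude, t2)
```

Running the same fit on samples prepared with different rigidifying ligands gives directly comparable T₂ values, which is the comparison the second bullet calls for.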

Protocol 2: Probing Quantum Effects in Biological Systems (Quantum Neuroscience)

2.1. Objective: To experimentally demonstrate the existence of non-classical quantum effects (e.g., entanglement, superposition) within neural systems and their potential influence on brain function, such as signaling or cognition [7].

2.2. Background: The warm, wet, and noisy environment of biological systems was historically considered hostile to fragile quantum states. However, evidence of quantum effects in photosynthesis and bird navigation has spurred investigation into similar phenomena in the brain [7]. Google's Quantum Neuroscience Research Challenge is a prime example of the institutional push for this high-risk, high-reward research [7].

2.3. Materials and Equipment:

- Quantum Sensors: Nano-scale NMR, electron spin resonance, or advanced magnetometers to detect subtle quantum signals in biological tissue [7].

- 2D Electronic Spectroscopy Setup: To probe quantum coherence in biological molecules [7].

- Quantum Computing Hardware: For modeling how quantum mechanics might influence biological molecules involved in cognition [7].

2.4. Procedure:

- Hypothesis Formulation: Define a specific quantum effect to test (e.g., entanglement between nuclear spins in neural proteins).

- Sample Preparation: Isolate specific neural components (e.g., microtubules, ion channels) or use in-vitro cultured neuronal networks.

- Quantum Sensing: Deploy quantum sensors (e.g., nano-NMR) to monitor neural activity or specific molecules for signatures of quantum coherence or entanglement [7].

- Spectroscopic Analysis: Use techniques like 2D electronic spectroscopy to search for coherent energy transfer in neural components [7].

- Computational Modeling: Leverage quantum computers to simulate and model how quantum effects could functionally influence the target biological molecules [7].

- Behavioral Correlation: If possible, correlate the observed quantum signals with organism-level behavior or cognitive tasks.

2.5. Data Analysis:

- Analyze sensor data for statistical signatures of non-classical correlations, such as a violation of a Bell inequality, which no local classical model can reproduce [7].

- Computational models should generate testable predictions for subsequent experimental rounds.

Table 2: Experimental Approaches for Probing Quantum Biology

| Methodology | Application | Key Measurable Output |

|---|---|---|

| Nano-NMR / Electron Spin Resonance [7] | Detecting quantum spin states in neural proteins or tissues. | Signature of spin coherence/entanglement. |

| 2D Electronic Spectroscopy [7] | Probing coherent energy transfer in biomolecules. | Presence and lifetime of quantum coherence. |

| Quantum Computing Simulations [7] | Modeling quantum effects in cognitive molecules. | Predictions of functional impact on neural signaling. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Reagents for Quantum Material Experiments

| Research Reagent / Material | Function and Application |

|---|---|

| Magnetically Doped Topological Insulator Thin Films [6] | Serve as the platform for observing the Quantum Anomalous Hall (QAH) effect, a key phenomenon for topological quantum computing. |

| Rigidifying Solvents and Ligands [6] | Suppress molecular vibrations to protect electron spin coherence, extending quantum information lifetime in molecular qubits. |

| Atomically Precise Nanomaterials [6] | Provide a defined and controllable system for studying electron spin behavior and spin-vibration coupling. |

| Quantum Sensors (e.g., Nano-NMR) [7] | Enable high-precision detection of quantum signals (e.g., spin, magnetic fields) in biological and material samples. |

Application Notes: From Theory to Tangible Devices

Topological Quantum Computing

Conventional quantum computers, such as those based on superconducting qubits, are highly susceptible to environmental noise and decoherence. Topological quantum computing presents a more robust alternative by encoding information not in local states but in the global topology of a system [6]. This is analogous to a carpet's overall pattern remaining intact even if individual threads are pulled. The core material enabling this is the topological insulator, which is insulating in its bulk but hosts conducting surface states protected by topology [6]. The Quantum Anomalous Hall (QAH) effect, realized in magnetically doped topological insulators and characterized by dissipationless edge conduction, is a primary platform for this technology [6]. The current research challenge is to engineer these materials to maintain their quantum properties at higher temperatures for practical application [6].

Quantum Anomalies in Condensed Matter

Quantum anomalies arise when a symmetry that holds in the classical theory fails to survive quantization because of quantum fluctuations [8]. Once purely theoretical constructs, these anomalies are now becoming tangible in condensed matter experiments. For instance, the scale anomaly is a prediction that can be tested in certain quantum materials [8]. The practical implication is that these theoretical peculiarities can be leveraged as new design principles for next-generation quantum technologies and devices. The key is using tools like materials informatics and AI to identify compounds where these anomaly-related signals are strong enough to be functionally useful [8].

Benchmarking Quantum vs. Classical Approaches for Molecular Simulation

Molecular simulation is a cornerstone of modern scientific research, enabling the prediction of chemical properties, reaction mechanisms, and material behaviors from first principles. For decades, classical computational methods have been the primary tool for these simulations, but they face significant challenges in accurately modeling large quantum systems due to the exponential scaling of computational resources required. The emergence of quantum computing offers a paradigm shift, potentially providing exponential speedups for simulating quantum mechanical systems. This application note provides a structured comparison of current quantum and classical approaches for molecular simulation, detailing quantitative benchmarks, experimental protocols, and essential research tools to guide researchers in selecting appropriate methodologies for their specific applications in material science and drug development.
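
The exponential scaling mentioned above can be made concrete by counting the memory a classical computer needs to store a full n-qubit state vector. The sketch assumes one double-precision complex number (16 bytes) per amplitude; the function names are chosen for this example.

```python
def statevector_bytes(n_qubits, bytes_per_amplitude=16):
    """Memory needed to store all 2**n complex amplitudes of an n-qubit state."""
    return (2 ** n_qubits) * bytes_per_amplitude


def statevector_gib(n_qubits, bytes_per_amplitude=16):
    """Same quantity expressed in gibibytes (2**30 bytes)."""
    return statevector_bytes(n_qubits, bytes_per_amplitude) / 2 ** 30


if __name__ == "__main__":
    for n in (20, 30, 40, 50):
        print(n, "qubits:", statevector_gib(n), "GiB")
```

Every added qubit doubles the requirement, so 30 qubits fit in 16 GiB while 50 qubits already need about 16 million GiB. Approximate classical methods avoid storing the full vector, which is why benchmarks compare against those methods rather than against brute-force simulation.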

Quantitative Performance Benchmarking

Accuracy and Performance Metrics

Table 1: Comparative Accuracy of Quantum vs. Classical Simulation Methods

| Method | System Tested | Accuracy Metric | Result | Qubits/Computational Resources |

|---|---|---|---|---|

| DMET-SQD (Hybrid Quantum-Classical) [9] | 18-hydrogen ring, cyclohexane conformers | Energy difference vs. classical benchmarks | Within 1 kcal/mol (chemical accuracy) | 27-32 qubits on IBM ibm_cleveland |

| DMET-SQD (Hybrid Quantum-Classical) [9] | Cyclohexane conformers | Relative energy ordering | Correct ordering preserved | 27-32 qubits on IBM ibm_cleveland |

| QC-AFQMC (IonQ) [10] | Complex chemical systems (carbon capture) | Atomic force calculations | More accurate than classical methods | Not specified |

| Quantum Annealing (D-Wave Advantage2) [11] | Quantum dynamics (8 models) | Success probability | Lower than classical VeloxQ | Thousands of physical qubits |

| Classical VeloxQ [11] | Quantum dynamics (8 models) | Success probability and time to solution | Superior to quantum annealers | GPU-accelerated classical solver |

Table 2: Hardware and Error Mitigation Comparison

| Platform/Method | Key Hardware Features | Error Mitigation Techniques | Connectivity/Topology |

|---|---|---|---|

| IBM Quantum [9] | Eagle processor, 27-32 qubits used | Gate twirling, dynamical decoupling | Not specified |

| D-Wave Advantage2 [11] | Thousands of physical qubits, analog operation | Native noise tolerance of quantum annealing | Zephyr topology (20 connections/qubit) |

| D-Wave Advantage [11] | Thousands of physical qubits, analog operation | Native noise tolerance of quantum annealing | Pegasus topology (15 connections/qubit) |

| IonQ [10] | Not specified | Algorithm-level error resilience | Not specified |

| Classical HCI [9] | High-performance computing | Not applicable | Not applicable |

Detailed Experimental Protocols

Hybrid Quantum-Classical DMET-SQD Protocol

Application: Simulation of complex molecular systems (hydrogen rings, cyclohexane conformers) [9]

Step-by-Step Workflow:

System Fragmentation:

- Partition the target molecule into smaller, chemically relevant fragments using Density Matrix Embedding Theory (DMET)

- This reduces the quantum resource requirements from thousands of qubits to tractable 27-32 qubit subsystems

Classical Pre-processing:

- Perform initial Hartree-Fock calculations to generate starting molecular orbitals

- Define the embedding problem and construct the impurity Hamiltonian for each fragment

Quantum Subsystem Resolution:

- Employ Sample-Based Quantum Diagonalization (SQD) on quantum hardware:

- Encode fragment configurations derived from Hartree-Fock calculations

- Execute quantum circuits on IBM's Eagle processor (ibm_cleveland)

- Use S-CORE iterative refinement to maintain correct particle number and spin characteristics

- Apply error mitigation techniques including gate twirling and dynamical decoupling

Classical Post-processing:

- Project quantum results into subspace for solving the Schrödinger equation

- Reconstruct full molecular properties from fragment solutions

- Optimize the embedding potential self-consistently

Validation and Benchmarking:

- Compare energy differences against classical Coupled Cluster [CCSD(T)] and Heat-Bath Configuration Interaction (HCI) benchmarks

- Validate relative conformational energies (e.g., chair, boat, half-chair, twist-boat cyclohexane conformers)

- Assess convergence with increasing quantum samples (8,000-10,000 configurations recommended)
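
The validation step can be sketched as two checks: whether each computed energy lies within 1 kcal/mol of its reference, and whether the conformers come out in the same energetic order. The energies below are hypothetical placeholders in Hartree, not results from [9], and the function names are chosen for this example.

```python
HARTREE_TO_KCAL_PER_MOL = 627.509  # standard conversion factor


def within_chemical_accuracy(e_test, e_ref, threshold_kcal=1.0):
    """True if two energies (in Hartree) differ by at most threshold_kcal kcal/mol."""
    return abs(e_test - e_ref) * HARTREE_TO_KCAL_PER_MOL <= threshold_kcal


def same_ordering(test_energies, ref_energies):
    """True if both methods rank the conformers in the same energetic order."""
    rank_test = sorted(test_energies, key=test_energies.get)
    rank_ref = sorted(ref_energies, key=ref_energies.get)
    return rank_test == rank_ref


if __name__ == "__main__":
    # Hypothetical placeholder energies in Hartree, for illustration only.
    reference = {"chair": -234.000, "twist-boat": -233.991,
                 "boat": -233.989, "half-chair": -233.983}
    computed = {name: energy + 0.0005 for name, energy in reference.items()}
    for name in reference:
        print(name, within_chemical_accuracy(computed[name], reference[name]))
    print("ordering preserved:", same_ordering(computed, reference))
```

Checking the ordering separately matters because two conformers that differ by only a few kcal/mol can swap places even when each individual energy is within the accuracy threshold.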

Quantum Annealing for Quantum Dynamics Protocol

Application: Solving quantum-inspired dynamics, simulating quantum gates and non-Hermitian systems [11]

Step-by-Step Workflow:

Problem Formulation:

- Encode the real-time propagator of an n-qubit Hamiltonian into a Quadratic Unconstrained Binary Optimization (QUBO) problem using parallel-in-time encoding

- Select from eight representative models: single-qubit rotations, multi-qubit entangling gates (Bell, GHZ, cluster), and PT-symmetric non-Hermitian generators

Hardware Embedding:

- For D-Wave quantum annealers: map QUBO problem to hardware topology using minor embedding

- Use D-Wave's heuristic minorminer tool for Pegasus (Advantage) or Zephyr (Advantage2) topology matching

- Account for hardware constraints including sparse connectivity and analog control errors

Execution:

- Quantum Annealing: Execute on D-Wave Advantage or Advantage2 systems with the annealing schedule defined by the functions $A(s)$ and $B(s)$ in $H_{\mathrm{Ising}} = \frac{A(s)}{2} H_D + \frac{B(s)}{2} H_P$

- Classical Comparison: Execute identical QUBO instances on VeloxQ (GPU-accelerated) and Simulated Annealing solvers

Performance Metrics:

- Track success probability (likelihood of finding ground state)

- Measure time to solution (TTS) for direct quantum-classical comparison

- Analyze scaling behavior with problem size

Error Analysis:

- Characterize impact of thermal noise, control errors, and embedding overhead

- Compare performance across hardware generations (Advantage vs. Advantage2)
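
Time to solution is commonly defined as the run time multiplied by the number of repetitions needed to observe the ground state at least once with a target confidence, usually 99%. The sketch below implements that common definition; the exact convention used in [11] is not stated in this document, so the 99% target and the function name are assumptions.

```python
import math


def time_to_solution(run_time, success_probability, target=0.99):
    """Expected total time to see the ground state at least once with
    probability `target`, given the per-run success probability."""
    if success_probability <= 0.0:
        return math.inf  # the solver never succeeds
    if success_probability >= target:
        return run_time  # a single run already meets the target
    repeats = math.log(1.0 - target) / math.log(1.0 - success_probability)
    return run_time * repeats


if __name__ == "__main__":
    # Hypothetical run times (seconds) and success probabilities.
    print(time_to_solution(run_time=1.0, success_probability=0.5))
    print(time_to_solution(run_time=0.1, success_probability=0.05))
```

Because this metric folds run time and success probability into one number, a solver with a lower success probability can still win if each run is fast enough, which is why both quantities are tracked in the protocol.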

Research Reagents and Computational Tools

Table 3: Essential Research Toolkit for Quantum Molecular Simulation

| Tool/Platform | Type | Primary Function | Key Features |

|---|---|---|---|

| IBM Quantum Systems [9] | Quantum Hardware | Execution of quantum circuits | Eagle processor, 27-32 qubit capacity, gate-based |

| D-Wave Advantage/Advantage2 [11] | Quantum Annealer | Solving QUBO problems | Thousands of physical qubits, Pegasus/Zephyr topology |

| IonQ Forte [10] | Quantum Hardware | Quantum chemistry simulations | QC-AFQMC algorithm implementation |

| VeloxQ [11] | Classical Solver | Solving QUBO problems | GPU-accelerated, physics-inspired heuristic |

| Qiskit [9] | Software Library | Quantum circuit design and execution | SQD algorithm implementation, error mitigation |

| Tangelo [9] | Software Library | DMET framework implementation | Quantum-classical hybrid algorithm support |

Workflow Visualization: Quantum Annealing for Dynamics

Critical Analysis and Research Implications

The benchmarking data reveals that hybrid quantum-classical approaches currently represent the most promising near-term application of quantum computing to molecular simulation. The DMET-SQD method demonstrates that chemical accuracy (within 1 kcal/mol) can be achieved with as few as 27-32 qubits by strategically dividing the computational workload between quantum and classical resources [9]. This approach effectively circumvents current hardware limitations while providing a pathway to practical quantum advantage as devices improve.

Quantum annealing platforms show rapid performance improvements between hardware generations, with D-Wave Advantage2 delivering an order of magnitude higher success probability than its predecessor [11]. However, specialized classical solvers like VeloxQ currently maintain superior performance on the same problem instances, highlighting both the maturity of classical optimization algorithms and the remaining challenges for quantum hardware. The establishment of standardized benchmarking suites for quantum dynamics simulation enables objective tracking of hardware progress.

For research applications in drug discovery and materials science, these developments suggest a strategic approach: quantum computing is ready for exploration in specific subproblems where classical methods face fundamental limitations, particularly in strongly correlated electron systems and quantum dynamics. The integration of quantum simulations into established workflows—such as using quantum-computed force calculations to enhance classical molecular dynamics simulations—represents a practical near-term application [10]. As quantum hardware continues to advance with improving error rates, qubit counts, and connectivity, these benchmarking protocols provide essential metrics for evaluating progress toward unambiguous quantum advantage in molecular simulation.

The global pursuit of quantum technologies represents a paradigm shift in computational science and materials research. By 2025, worldwide government investments in quantum technologies have exceeded $55.7 billion, with the global quantum technology market projected to reach $106 billion by 2040 [12]. This substantial financial commitment underscores the recognition that quantum computing promises revolutionary capabilities for simulating complex material systems, optimizing molecular structures, and accelerating the discovery of novel compounds with tailored properties. The International Quantum Science Initiative for 2025 establishes a coordinated framework to leverage these emerging capabilities toward addressing critical challenges in materials science and pharmaceutical development, positioning quantum technologies as essential tools for next-generation scientific discovery.

The strategic integration of quantum computing into materials science enables researchers to overcome fundamental limitations of classical computational methods. Quantum systems can efficiently simulate quantum mechanical phenomena—a task that remains intractable for even the most powerful supercomputers when dealing with complex molecular structures. This capability is particularly valuable for modeling electron correlations, predicting reaction pathways, and understanding emergent properties in condensed matter systems. As nations worldwide escalate their quantum investments, the 2025 initiative establishes standardized protocols and benchmarks to ensure that these diverse international efforts converge toward complementary research objectives with shared methodologies for validation and knowledge transfer.

Global Quantum Investment and Strategic Priorities

International commitment to quantum technology development has accelerated dramatically, with numerous nations establishing ambitious roadmaps and funding mechanisms. The following quantitative analysis summarizes the global investment landscape for quantum initiatives in 2025, providing context for the resource allocation supporting the experimental protocols detailed in subsequent sections.

Table 1: National Quantum Initiative Funding and Strategic Focus Areas (2025)

| Country/Region | Total Investment | Primary Research Focus | Key Institutions/Initiatives |

|---|---|---|---|

| European Union | €1 billion+ (over 10 years) [12] | Quantum computing, Quantum communication infrastructure | Quantum Flagship, EuroHPC, EuroQCI |

| China | ~$15 billion (estimated) [12] | Quantum communications, Quantum computing prototypes | National venture capital fund ($138B for AI, quantum, hydrogen) [12] |

| France | €1.8 billion (5-year plan) [12] | Quantum technologies | National quantum strategy |

| Canada | CA$1 billion+ (past decade) + CA$360M National Quantum Strategy [12] | Photonic quantum computing, Commercialization | National Quantum Strategy, Xanadu, Quantum Algorithms Institute |

| Australia | AU$893 million (public investment) [12] | Quantum computation, Communication | CQC2T, EQUS, National Quantum Strategy |

| Denmark | 1 billion DKK (5 years) [12] | Quantum computer development | Quantum Innovation Centre (Qubiz), ATOM Computing collaboration |

| Finland | €70 million (2024-2027) [12] | Quantum processor development | VTT, IQM (300-qubit target) |

| Austria | €107 million [12] | Quantum research and technology | Quantum Austria (NextGenerationEU) |

| Brazil | BRL60 million (initial) + BRL31 million [12] | Quantum technologies competence center | Embrapii, São Paulo Research Foundation |

The tabulated data reveals distinct strategic priorities across the global research ecosystem. European initiatives emphasize infrastructure development through the EuroQCI for secure communications and the EuroHPC consortium for deploying quantum computers across member states [12]. North American efforts, particularly in Canada, highlight public-private partnerships to commercialize technologies, exemplified by the CA$40 million investment in Xanadu to develop photonic-based quantum computers [12]. Meanwhile, China's approach combines substantial state funding with recently announced venture capital mechanisms to mobilize additional private investment [12].

These strategic priorities directly influence the direction of materials science research, with different nations leveraging their specialized capabilities. Nations with strengths in quantum hardware, such as Finland's collaboration with IQM for 300-qubit systems [12], facilitate research requiring increasingly complex quantum simulations. Countries emphasizing quantum communication infrastructure, like Austria through its Quantum Austria initiative [12], enable secure distributed quantum computing applications that may eventually allow materials researchers to access specialized quantum resources across national boundaries.

Quantum Data Encoding Protocols for Materials Simulation

The translation of classical materials data into quantum-representable formats constitutes the foundational step in quantum-enhanced materials research. Various encoding techniques transform classical information—such as molecular structures, electron densities, or material properties—into quantum states that can be processed efficiently [13]. The selection of appropriate encoding methods directly impacts the accuracy, resource requirements, and computational efficiency of quantum simulations for materials science applications.

Comparative Analysis of Encoding Methodologies

Table 2: Classical-to-Quantum Data Encoding Techniques for Materials Science Applications

| Encoding Method | Technical Principle | Materials Science Applications | Resource Requirements | Performance Considerations |

|---|---|---|---|---|

| Basis Encoding | Direct binary mapping to computational basis states | Crystalline structures, lattice configurations | Qubits scale linearly with input size | Limited representation efficiency for continuous variables |

| Angle Encoding | Classical values stored in qubit rotation angles | Molecular torsion angles, phonon spectra | Constant qubit count with circuit depth increase | Suitable for continuous material properties |

| Amplitude Encoding | Classical data stored in state vector amplitudes | Electron wavefunctions, density matrices | Exponential compression of data representation | Preparation circuits can be computationally expensive |

| Quantum Feature Maps | Kernel-based transformation to quantum Hilbert space | Material classification, property prediction | Varies with kernel complexity | Enables quantum machine learning applications |

Basis encoding represents the most straightforward approach, mapping classical binary strings directly to quantum basis states. For materials science applications, this technique proves valuable for representing discrete configurations, such as atomic positions in crystal lattices or spin arrangements in magnetic materials [13]. Angle encoding (also known as qubit encoding) provides a more efficient approach for continuous material properties by storing classical data values in the rotation angles of qubits, making it particularly suitable for representing spectroscopic data, stress-strain relationships, or thermodynamic variables [13]. The most compact representation comes from amplitude encoding, which stores a normalized classical vector in the state amplitudes, enabling an exponential reduction in qubit requirements—highly valuable for representing complex electron wavefunctions or material response functions [13].
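
The qubit-count trade-off between basis and amplitude encoding can be illustrated with a minimal classical sketch. The function names `basis_encode` and `amplitude_encode` and the sample inputs are illustrative assumptions, not taken from any cited library, and the state-preparation circuit that amplitude encoding would need on hardware is not shown.

```python
import math


def basis_encode(bitstring):
    """Map a classical bitstring such as '101' to a one-hot state vector.

    Uses one qubit per bit, so the vector has 2**n entries with a single 1.
    """
    n_qubits = len(bitstring)
    state = [0.0] * (2 ** n_qubits)
    state[int(bitstring, 2)] = 1.0
    return state


def amplitude_encode(values):
    """Store a classical vector in the amplitudes of about log2(N) qubits.

    The vector is zero-padded to the next power of two and normalized,
    because quantum state amplitudes must have unit Euclidean norm.
    """
    if not any(values):
        raise ValueError("cannot encode the zero vector")
    n_qubits = max(1, (len(values) - 1).bit_length())
    padded = list(values) + [0.0] * (2 ** n_qubits - len(values))
    norm = math.sqrt(sum(v * v for v in padded))
    return [v / norm for v in padded], n_qubits


# Illustrative inputs (hypothetical values, not from the source).
lattice_occupancy = "10110010"                 # 8 sites -> 8 qubits
orbital_coefficients = [0.1, 0.4, 0.2, 0.7, 0.3, 0.1, 0.4, 0.2]

basis_state = basis_encode(lattice_occupancy)
amplitudes, n_amp_qubits = amplitude_encode(orbital_coefficients)
print(len(lattice_occupancy), "qubits for basis encoding")
print(n_amp_qubits, "qubits for amplitude encoding of 8 values")
```

In this sketch, eight classical values fit into three qubits under amplitude encoding, whereas basis encoding of an eight-bit string needs eight qubits.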

Experimental Protocol: Angle Encoding for Molecular Geometries

Objective: Encode molecular structural parameters into quantum states for subsequent energy calculation via variational quantum algorithms.

Materials and Equipment:

- Classical preprocessing unit

- Quantum processing unit (QPU) with parameterized gate operations

- State tomography or measurement apparatus

Procedure:

Molecular Parameter Extraction:

- From the target molecular structure (e.g., drug candidate molecule), identify key structural parameters including bond lengths (r), bond angles (θ), and torsion angles (φ).

- Normalize parameters to the range [0, π] for rotation angle compatibility.

Qubit Initialization:

- Initialize n qubits in the |0⟩^⊗n state, where n corresponds to the number of structural parameters to encode.

- Apply Hadamard gates to each qubit to create uniform superposition states: H|0⟩ = (|0⟩ + |1⟩)/√2.

Parameterized Rotation:

- For each structural parameter $x_i$, apply a Y-rotation gate to the corresponding qubit: $R_y(x_i) = e^{-i (x_i/2) Y}$.

- For multi-dimensional correlations, apply controlled rotation gates to entangle parameters representing spatially proximate molecular features.

Verification and Validation:

- Perform quantum state tomography on a subset of executions to verify correct encoding.

- Compare classical reconstruction with input parameters to validate encoding fidelity.

- For large-scale molecules, implement segmentation protocol with multiple quantum registers.

Technical Notes: With one qubit per parameter, the number of qubits and rotation gates scales linearly with the number of parameters, while the single-qubit rotations act on different qubits and can run in parallel, which keeps the encoding layer shallow and suitable for near-term quantum devices. For molecular systems with symmetry constraints, incorporate appropriate rotation symmetries to reduce parameter counts. Error mitigation techniques should be employed to address coherent errors in rotation gates.
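
The encoding and verification steps of this protocol can be sketched on a classical simulator, one qubit per parameter and without the optional entangling gates. The parameter ranges, sample values, and function names below are hypothetical. One detail worth noting: because the Hadamard gate is applied first, the probability of measuring |1⟩ is (1 + sin θ)/2, which is not one-to-one on [0, π], so this sketch reads the angle back from ⟨X⟩ = cos θ instead.

```python
import math


def normalize_to_angle(value, lower, upper):
    """Linearly rescale a structural parameter from [lower, upper] to [0, pi]."""
    if not lower <= value <= upper:
        raise ValueError("value outside the assumed parameter range")
    return math.pi * (value - lower) / (upper - lower)


def encode_qubit(theta):
    """Return the real amplitudes (a, b) of Ry(theta) H |0> for one qubit."""
    plus = 1.0 / math.sqrt(2.0)          # H|0> = (|0> + |1>)/sqrt(2)
    c, s = math.cos(theta / 2.0), math.sin(theta / 2.0)
    # Ry(theta) = [[c, -s], [s, c]] acting on the vector (plus, plus).
    return (c * plus - s * plus, s * plus + c * plus)


def decode_qubit(amplitudes):
    """Recover theta in [0, pi] from <X> = 2ab = cos(theta)."""
    a, b = amplitudes
    x_expectation = max(-1.0, min(1.0, 2.0 * a * b))
    return math.acos(x_expectation)


def angle_encode(parameters, bounds):
    """Encode one parameter per qubit; the register is a product state."""
    return [encode_qubit(normalize_to_angle(p, lo, hi))
            for p, (lo, hi) in zip(parameters, bounds)]


# Hypothetical parameters: bond length (angstrom), bond angle and torsion (degrees).
parameters = [1.09, 109.5, 60.0]
bounds = [(0.9, 1.6), (0.0, 180.0), (-180.0, 180.0)]

register = angle_encode(parameters, bounds)
for value, (lo, hi), qubit in zip(parameters, bounds, register):
    recovered = lo + (hi - lo) * decode_qubit(qubit) / math.pi
    print(f"input {value:8.3f}  reconstructed {recovered:8.3f}")
```

On hardware, ⟨X⟩ would be estimated from repeated measurements in the X basis rather than computed from exact amplitudes, so the reconstruction would carry shot noise.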

Quantum Algorithmic Frameworks for Materials Discovery

Quantum algorithms offer transformative potential for materials science by enabling efficient simulation of quantum mechanical phenomena and accelerating the discovery of novel materials. Several algorithmic frameworks have emerged as particularly promising for addressing core challenges in computational materials science and drug development.

Quantum Approximate Optimization Algorithm (QAOA) for Molecular Conformation

The Quantum Approximate Optimization Algorithm has demonstrated significant potential for solving complex optimization problems relevant to molecular conformation prediction and protein folding landscapes. Recent benchmarking studies have evaluated QPU performance on QAOA implementations, with Quantinuum systems showing superior performance metrics, including all-to-all qubit connectivity crucial for complex molecular graphs [14].

Experimental Protocol: Molecular Conformation Optimization

Objective: Determine the lowest-energy conformation of a molecular system using QAOA.

Problem Mapping:

- Represent molecular structure as a graph G = (V, E) where vertices V correspond to atoms and edges E represent bonds or spatial interactions.

- Encode conformational degrees of freedom (torsion angles) as binary variables.

- Formulate cost Hamiltonian H_C representing molecular energy function with terms for:

- Bond stretching energy

- Angle bending energy

- Torsional energy

- Van der Waals interactions

- Electrostatic interactions

Circuit Implementation:

- Prepare initial state |ψ₀⟩ = |+⟩^⊗n using Hadamard gates on all qubits.

- Apply p alternating layers of cost and mixer unitaries:

- Cost unitary: $U_C(\gamma) = e^{-i\gamma H_C}$

- Mixer unitary: $U_M(\beta) = e^{-i\beta H_M}$ with $H_M = \sum_j X_j$

- Optimize parameters (γ, β) using classical optimizers (gradient-based or gradient-free).

- Measure expectation value ⟨ψ|H_C|ψ⟩ to evaluate solution quality.

Technical Considerations: Performance varies significantly across QPU architectures. Recent benchmarking shows Quantinuum systems performing best where full qubit connectivity is required, a critical feature for molecular optimization problems [14]. For near-term applications, limit problem sizes to 10-20 qubits with shallow circuits (p < 10 layers) so that the computation completes within the device coherence time.
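
The circuit steps above can be sketched as a small state-vector simulation. This is a minimal illustration under stated assumptions: the three-bit energy model (`LOCAL`, `PAIR`) is hypothetical, each torsion is reduced to a single binary choice, and a coarse grid search over (γ, β) stands in for the classical optimizer.

```python
import cmath
import math

# Hypothetical toy energy model: one bit per torsion choice.
LOCAL = [-1.0, 0.5, -0.5]              # single-torsion energies
PAIR = {(0, 1): 1.0, (1, 2): 0.8}      # pairwise clash penalties


def cost_table(n_qubits, local, pair):
    """Diagonal of H_C: one classical energy per computational basis state."""
    table = []
    for z in range(2 ** n_qubits):
        bits = [(z >> q) & 1 for q in range(n_qubits)]
        energy = sum(local[q] * bits[q] for q in range(n_qubits))
        energy += sum(w * bits[i] * bits[j] for (i, j), w in pair.items())
        table.append(energy)
    return table


def qaoa_state(costs, n_qubits, gammas, betas):
    """Apply p layers of exp(-i*gamma*H_C) and exp(-i*beta*sum_j X_j) to |+>^n."""
    dim = 2 ** n_qubits
    state = [complex(1.0 / math.sqrt(dim))] * dim
    for gamma, beta in zip(gammas, betas):
        # Cost unitary: H_C is diagonal, so it only adds phases.
        state = [amp * cmath.exp(-1j * gamma * costs[z])
                 for z, amp in enumerate(state)]
        # Mixer: exp(-i*beta*X) = cos(beta) I - i sin(beta) X on every qubit.
        c, s = math.cos(beta), math.sin(beta)
        for q in range(n_qubits):
            mask = 1 << q
            for z in range(dim):
                if not z & mask:
                    a0, a1 = state[z], state[z | mask]
                    state[z] = c * a0 - 1j * s * a1
                    state[z | mask] = -1j * s * a0 + c * a1
    return state


def qaoa_expectation(costs, n_qubits, gammas, betas):
    """Expectation value of H_C in the QAOA state."""
    state = qaoa_state(costs, n_qubits, gammas, betas)
    return sum(abs(amp) ** 2 * costs[z] for z, amp in enumerate(state))


def grid_search_p1(costs, n_qubits, steps=24):
    """Coarse grid search over (gamma, beta) for a single layer (p = 1)."""
    best = (float("inf"), 0.0, 0.0)
    for i in range(steps):
        for j in range(steps):
            gamma, beta = 2 * math.pi * i / steps, math.pi * j / steps
            energy = qaoa_expectation(costs, n_qubits, [gamma], [beta])
            if energy < best[0]:
                best = (energy, gamma, beta)
    return best


costs = cost_table(3, LOCAL, PAIR)
best_energy, best_gamma, best_beta = grid_search_p1(costs, 3)
print("uniform-superposition energy:", sum(costs) / len(costs))
print("best p=1 energy on the grid:", best_energy)
```

A realistic molecular cost function would need several bits per torsion angle plus penalty terms for invalid encodings, which this sketch leaves out.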

Variational Quantum Eigensolver (VQE) for Electronic Structure

The Variational Quantum Eigensolver has become the leading algorithm for determining ground-state energies of molecular systems, with direct applications to drug candidate evaluation and catalytic material design.

Experimental Protocol: Electronic Structure Calculation

Objective: Estimate ground-state energy of molecular systems for drug binding affinity prediction.

Procedure:

Hamiltonian Formulation:

- Express the molecular Hamiltonian in second quantization: $H = \sum_{pq} h_{pq}\, a_p^\dagger a_q + \frac{1}{2} \sum_{pqrs} h_{pqrs}\, a_p^\dagger a_q^\dagger a_r a_s$

- Apply the Jordan-Wigner or Bravyi-Kitaev transformation to map to qubit operators: $H = \sum_j c_j P_j$, where the $P_j$ are Pauli strings.

Ansatz Selection:

- For near-term devices, employ hardware-efficient ansatzes with layered rotation and entanglement blocks.

- For higher accuracy, use chemically inspired ansatzes (UCCSD) with adaptive variants to reduce circuit depth.

Measurement Protocol:

- Decompose expectation value calculation into separate measurements of Pauli terms.

- Utilize qubit-wise commuting groups to reduce measurement overhead.

- Implement measurement error mitigation techniques.

Validation Metrics:

- Compare computed dissociation curves with classical reference data (CCSD(T)).

- Calculate drug-receptor binding energies for known pharmaceutical compounds.

- Evaluate computational cost scaling with system size.
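
The measurement-grouping step can be sketched as follows. The term list is assumed to be the Pauli-string pattern commonly reported for H₂ in a minimal basis under the Jordan-Wigner mapping; coefficients are omitted because they depend on geometry and basis set. Greedy first-fit grouping is one simple heuristic and does not necessarily give the smallest number of groups.

```python
def qubitwise_commute(p, q):
    """Two Pauli strings qubit-wise commute if, on every qubit,
    the letters are equal or at least one of them is the identity."""
    return all(a == b or a == "I" or b == "I" for a, b in zip(p, q))


def group_qubitwise_commuting(pauli_strings):
    """Greedy first-fit grouping: each group can share one measurement setting."""
    groups = []
    for pauli in pauli_strings:
        for group in groups:
            if all(qubitwise_commute(pauli, member) for member in group):
                group.append(pauli)
                break
        else:
            groups.append([pauli])
    return groups


# Assumed term pattern for a 4-qubit H2 Hamiltonian (coefficients omitted).
H2_TERMS = [
    "IIII",
    "ZIII", "IZII", "IIZI", "IIIZ",
    "ZZII", "ZIZI", "ZIIZ", "IZZI", "IZIZ", "IIZZ",
    "XXYY", "XYYX", "YXXY", "YYXX",
]

groups = group_qubitwise_commuting(H2_TERMS)
print(len(H2_TERMS), "Pauli terms ->", len(groups), "measurement settings")
for group in groups:
    print(group)
```

The energy is then reassembled as the coefficient-weighted sum of the measured expectation values, one basis setting per group.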

Visualization of Quantum Materials Research Workflows

The integration of quantum computational methods into materials science requires well-defined workflows that leverage the respective strengths of classical and quantum processing units. The following diagrams illustrate standardized protocols for quantum-enhanced materials discovery.

[Figure: Quantum Materials Simulation Workflow]

[Figure: Quantum-Enhanced Drug Discovery Pipeline]

Research Reagent Solutions for Quantum Experiments

The experimental implementation of quantum protocols for materials science requires specialized resources and computational tools. The following table details essential components of the research infrastructure supporting quantum materials investigations.

Table 3: Essential Research Reagents and Resources for Quantum Materials Experiments

| Resource Category | Specific Examples | Function in Quantum Experiments | Implementation Considerations |

|---|---|---|---|

| Quantum Processing Units (QPUs) | Quantinuum H-series [14], IBM Quantum Systems [14] | Execution of quantum circuits for material simulation | Variable qubit counts (20-100+), connectivity architectures, gate fidelities (>99.9% for high-precision) |

| Quantum Data Encoding Libraries | Basis, Angle, Amplitude encoding modules [13] | Transformation of material data to quantum states | Encoding efficiency, qubit requirements, circuit depth constraints |

| Error Mitigation Tools | Zero-noise extrapolation, measurement error mitigation [14] | Enhancement of result accuracy under noisy conditions | Resource overhead, scalability to larger systems |

| Material-Specific Ansatzes | Hardware-efficient, UCCSD, QAOA parameterized circuits [14] | Problem-specific wavefunction approximation | Balance between expressibility and trainability |

| Classical-Quantum Hybrid Controllers | Custom compilation stacks, quantum-classical interfaces | Management of variational algorithm execution | Communication latency, parameter optimization efficiency |

The selection of appropriate QPUs represents a critical consideration, with performance varying significantly across platforms. Recent independent benchmarking studies evaluating 19 different QPUs on the Quantum Approximate Optimization Algorithm identified Quantinuum systems as delivering superior performance, particularly where full qubit connectivity is required, as in complex materials simulations [14]. The Quantinuum H-series achieves two-qubit gate fidelities exceeding 99.9%, enabling more complex quantum simulations of material properties with reduced error accumulation [14].

Quantum data encoding libraries provide the essential interface between classical material descriptors and quantum-representable formats. The selection of encoding strategy involves trade-offs between qubit efficiency, circuit complexity, and expressibility [13]. Basis encoding offers conceptual simplicity but limited efficiency for continuous material properties, while amplitude encoding provides exponential compression at the cost of more complex state preparation circuits [13]. For near-term applications on limited-qubit devices, angle encoding often provides the most practical balance for representing continuous material parameters such as bond lengths, torsion angles, or spectroscopic features.

Implementation Challenges and Future Directions

Despite significant progress, the practical application of quantum computing to materials science faces several substantial challenges that guide research priorities for the 2025 initiative. Qubit coherence times, gate fidelities, and error rates remain primary concerns for achieving quantum advantage in materials simulation [14]. Current state-of-the-art systems achieve two-qubit gate fidelities exceeding 99.9% [14], yet these levels must be further improved to execute the deep circuits required for complex molecular simulations.

Scalability represents another critical challenge, as the number of qubits required for practical materials problems often exceeds current hardware capabilities. The 2025 quantum initiatives address this through parallel development paths, including Quantinuum's plan to deploy a 100-logical-qubit system by 2027 [14] and Finland's target to scale quantum computers to 300 qubits [12]. These hardware advancements must be accompanied by improved algorithmic efficiency through better ansatz design, measurement reduction techniques, and error mitigation strategies tailored to materials science applications.

The integration of quantum computing with artificial intelligence represents a particularly promising direction for materials research. Quantum machine learning models can potentially identify complex patterns in material property databases, predict synthesis pathways, and guide quantum simulations toward the most promising regions of chemical space [13]. As quantum hardware continues to mature, the 2025 initiative prioritizes the development of hybrid quantum-classical frameworks that leverage the respective strengths of both paradigms for accelerated materials discovery.

The International Quantum Science Initiative for 2025 establishes a comprehensive framework for leveraging quantum technologies to advance materials science and pharmaceutical development. Through standardized protocols for quantum data encoding, algorithmic implementation, and validation metrics, the initiative enables researchers worldwide to contribute to a collective knowledge base while utilizing diverse hardware platforms. The substantial global investments in quantum technologies—exceeding $55.7 billion in public funding [12]—reflect the recognized potential of these approaches to transform materials discovery and optimization.

As quantum hardware continues to evolve toward greater qubit counts, improved connectivity, and enhanced fidelity, the protocols outlined in this document provide a foundation for incremental advancement toward increasingly complex materials simulations. The integration of these quantum tools with classical computational methods, experimental validation, and machine learning approaches creates a multidisciplinary ecosystem poised to address critical challenges in energy storage, drug development, and advanced material design. Through coordinated international effort and shared methodological standards, the quantum materials science community is positioned to translate these emerging capabilities into practical advances with significant scientific and societal impact.

Quantum Toolbox: Protocols for Simulating and Designing Materials

The discovery and development of novel materials are fundamental to technological progress, from creating more efficient energy storage systems to designing new pharmaceuticals. However, the computational simulation of quantum mechanical systems, which is central to understanding material properties, remains a formidable challenge for classical computers due to the exponential scaling of resources required with system size [15]. Quantum computing offers a paradigm shift by providing a native environment for simulating quantum phenomena. Within the Noisy Intermediate-Scale Quantum (NISQ) era, characterized by quantum processors with limited qubit counts and error-prone operations, specific algorithms have emerged as particularly promising for material science applications [16]. This application note details the implementation, protocols, and practical considerations for three leading quantum algorithms: the Variational Quantum Eigensolver (VQE), the Quantum Approximate Optimization Algorithm (QAOA), and Quantum Annealing.

Algorithmic Foundations and Comparative Analysis

Core Algorithm Principles

Variational Quantum Eigensolver (VQE) is a hybrid quantum-classical algorithm designed to find the ground state (lowest energy) of a quantum system, such as a molecule or material. Its operation is based on the variational principle, where a parameterized quantum circuit (ansatz) prepares a trial wavefunction. A classical optimizer iteratively adjusts these parameters to minimize the expectation value of the system's Hamiltonian, which corresponds to the ground state energy [17] [16]. VQE is particularly well-suited for NISQ devices as it can be resilient to certain types of noise and does not require deep quantum circuits [18].

Quantum Approximate Optimization Algorithm (QAOA) is another hybrid algorithm that tackles combinatorial optimization problems by encoding them into a cost Hamiltonian. The algorithm alternates between applying a phase separation operator based on this cost Hamiltonian and a mixing operator. A classical optimizer tunes the parameters controlling the application time of these operators to minimize the expected energy of the cost Hamiltonian [19] [20]. In material science, QAOA can be applied to problems like determining the optimal configuration of atoms in a complex material or solving multi-objective optimization problems in material design [19].

Quantum Annealing (QA) is a metaheuristic quantum algorithm inspired by classical simulated annealing. It leverages quantum fluctuations, particularly quantum tunneling, to navigate the energy landscape of an optimization problem. The system is initialized in a simple ground state of a known Hamiltonian and is slowly evolved to the problem Hamiltonian, whose ground state encodes the solution to the optimization problem [21] [22]. Quantum annealers, such as those built by D-Wave, are specialized hardware devices that execute this process and are naturally applied to problems formulated as Quadratic Unconstrained Binary Optimization (QUBO) [21].

Comparative Performance and Application Scope

Table 1: Comparative analysis of VQE, QAOA, and Quantum Annealing for material science applications.

| Feature | VQE | QAOA | Quantum Annealing |

|---|---|---|---|

| Primary Use Case | Ground state energy estimation, quantum chemistry [17] [16] | Combinatorial optimization, approximate solutions [19] [20] | Combinatorial optimization, sampling energy landscapes [21] [22] |

| Computational Paradigm | Hybrid quantum-classical [16] | Hybrid quantum-classical [20] | Primarily quantum, with classical pre/post-processing [21] |

| Typical Problem Formulation | Molecular Hamiltonian [15] | QUBO/Ising Model [19] | QUBO/Ising Model [21] |

| Hardware Suitability | Gate-based NISQ devices [16] | Gate-based NISQ devices [19] | Specialized annealing processors (e.g., D-Wave) [22] |

| Key Strength | High accuracy for small molecules, noise-resilient [18] [16] | Flexibility in problem mapping, theoretical performance guarantees [19] | Rapid sampling for specific problem classes, demonstrated scalability [21] [22] |

| Reported Performance Example | Achieved energy minima near -8.0 for model systems [23] | Converged in 19 iterations to Hamiltonian min of -4.3 for MAXCUT [23] | Demonstrated quantum supremacy for a materials simulation, solving in minutes a task estimated to take a supercomputer a million years [21] [22] |

Table 2: Summary of classical optimizer performance with quantum algorithms, based on a renewable energy system study [23].

| Classical Optimizer | Associated Quantum Algorithm | Performance Notes |

|---|---|---|

| NELDER-MEAD | VQE | Attained minima near -8.0 in 125 iterations [23] |

| SLSQP | QAOA | Converged in 19 iterations to a Hamiltonian minimum of -4.3 [23] |

| AQGD | QAOA | Reached convergence in just 3 iterations at -1.0 [23] |

Experimental Protocols

Protocol for Molecular Ground State Energy Calculation using VQE

Application Note: This protocol is designed for calculating the ground state energy of a molecule, a critical step in predicting chemical reactivity and stability in material science and drug discovery [15] [16].

Required Research Reagents & Solutions:

- Quantum Hardware/Simulator: A gate-based quantum computer or simulator (e.g., via IBM Quantum, Amazon Braket, or Azure Quantum).

- Classical Optimizer: A classical optimization routine (e.g., NELDER-MEAD, SLSQP, or COBYLA) [23].

- Quantum Chemistry Package: Software like PySCF or OpenFermion to generate the molecular Hamiltonian.

- Parameterized Quantum Circuit (Ansatz): A pre-defined circuit architecture, such as the Unitary Coupled Cluster (UCC) ansatz or a hardware-efficient ansatz.

Procedure:

- Problem Formulation: Begin by selecting a target molecule (e.g., H₂ or LiH) and defining its geometry and basis set. Use the quantum chemistry package to compute the second-quantized molecular Hamiltonian.

- Qubit Mapping: Transform the fermionic Hamiltonian into a qubit Hamiltonian using a mapping technique such as the Jordan-Wigner or Bravyi-Kitaev transformation.

- Ansatz Selection and Initialization: Choose an appropriate ansatz and initialize its parameters, either randomly or based on a heuristic.

- Hybrid Loop Execution:

- a. Quantum Execution: Prepare the trial state by running the parameterized quantum circuit on the quantum processor.

- b. Expectation Value Estimation: Measure the quantum state repeatedly to estimate the expectation value of the Hamiltonian.

- c. Classical Optimization: Feed the estimated energy to the classical optimizer. The optimizer calculates a new set of parameters to minimize the energy.

- d. Iteration: Repeat steps (a) through (c) until the energy converges to a minimum within a predefined tolerance.
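
The hybrid loop can be sketched on a deliberately small toy problem. Everything here is an assumption made for illustration: a one-qubit Hamiltonian H = c_z Z + c_x X with hypothetical coefficients replaces the molecular Hamiltonian, the ansatz is a single Ry rotation, expectation values are computed exactly instead of from shots, and plain gradient descent with the parameter-shift rule stands in for optimizers such as NELDER-MEAD, SLSQP, or COBYLA.

```python
import math


def prepare_state(theta):
    """Step (a): trial state Ry(theta)|0> = (cos(theta/2), sin(theta/2))."""
    return (math.cos(theta / 2.0), math.sin(theta / 2.0))


def energy(theta, coeff_z, coeff_x):
    """Step (b): <H> for H = coeff_z * Z + coeff_x * X (exact, no shot noise)."""
    a, b = prepare_state(theta)
    exp_z = a * a - b * b        # <Z> for real amplitudes
    exp_x = 2.0 * a * b          # <X> for real amplitudes
    return coeff_z * exp_z + coeff_x * exp_x


def run_vqe(coeff_z, coeff_x, theta=0.1, learning_rate=0.4,
            tolerance=1e-10, max_iterations=500):
    """Steps (c) and (d): update theta until the energy stops changing."""
    previous = energy(theta, coeff_z, coeff_x)
    for iteration in range(1, max_iterations + 1):
        # Parameter-shift rule for a rotation gate:
        # dE/dtheta = [E(theta + pi/2) - E(theta - pi/2)] / 2
        gradient = 0.5 * (energy(theta + math.pi / 2, coeff_z, coeff_x)
                          - energy(theta - math.pi / 2, coeff_z, coeff_x))
        theta -= learning_rate * gradient
        current = energy(theta, coeff_z, coeff_x)
        if abs(previous - current) < tolerance:
            return current, theta, iteration
        previous = current
    return previous, theta, max_iterations


# Hypothetical coefficients; the exact ground energy is -sqrt(cz^2 + cx^2).
vqe_energy, vqe_theta, n_iterations = run_vqe(0.5, 0.3)
print("VQE energy  :", vqe_energy)
print("exact energy:", -math.sqrt(0.5 ** 2 + 0.3 ** 2))
print("iterations  :", n_iterations)
```

For a real molecule, `energy` would be replaced by circuit executions on a simulator or QPU, with the qubit Hamiltonian produced by the quantum chemistry package and the chosen fermion-to-qubit mapping.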

The following workflow diagram illustrates the hybrid nature of the VQE process:

Protocol for Material Design Optimization using QAOA

Application Note: This protocol applies QAOA to a multi-objective optimization problem relevant to material design, such as finding a Pareto-optimal set of configurations that balance competing properties like strength and weight [19].

Required Research Reagents & Solutions:

- Quantum Hardware/Simulator: A gate-based quantum computer supporting the necessary connectivity.

- Classical Optimizer: An optimizer suitable for QAOA parameter landscapes (e.g., SLSQP, BFGS) [23] [19].

- Problem Instance: A well-defined multi-objective combinatorial problem, such as a weighted MAX-CUT problem on a graph representing atomic interactions.

Procedure:

- Problem Encoding: Formulate the multi-objective material design problem as a QUBO or a Hamiltonian. For multiple objectives, a common approach is to use a weighted sum or a randomized scalarization technique to combine them into a single cost function [19].

- Circuit Construction: Construct the QAOA circuit with a specified number of layers (p). Each layer consists of the problem unitary (phase separator) and the mixer unitary.

- Parameter Training & Transfer:

- a. Optimize the QAOA parameters (γ and β) for a smaller instance of the problem using a classical simulator and a basin-hopping optimization algorithm [19].

- b. Transfer the optimized parameters to a larger problem instance to avoid the computational bottleneck of training on quantum hardware [19].

- Execution and Sampling: Run the QAOA circuit with the transferred parameters on the quantum computer. Sample from the output state multiple times to obtain a distribution of candidate solutions.

- Pareto Front Approximation: Analyze the sampled solutions to identify non-dominated points and approximate the Pareto front, which describes the optimal trade-offs between the competing objectives.
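
The scalarization and Pareto-filtering steps are purely classical and can be sketched briefly. The objective tuples below are hypothetical sampled values, both objectives are assumed to be minimized, and the function names are illustrative.

```python
def weighted_sum(objectives, weights):
    """Combine several objectives into one scalar cost (weighted-sum scalarization)."""
    return sum(w * f for w, f in zip(weights, objectives))


def dominates(a, b):
    """a dominates b if it is no worse in every objective and better in at least one."""
    return (all(x <= y for x, y in zip(a, b))
            and any(x < y for x, y in zip(a, b)))


def pareto_front(points):
    """Return the sorted non-dominated points among the sampled solutions."""
    unique = set(points)
    return sorted(p for p in unique
                  if not any(dominates(q, p) for q in unique))


# Hypothetical (mass, compliance) values decoded from sampled bitstrings.
samples = [(1.0, 5.0), (2.0, 3.0), (3.0, 4.0), (4.0, 1.0), (2.0, 3.0)]

print("Pareto front:", pareto_front(samples))
print("scalarized costs:", [weighted_sum(p, (0.5, 0.5)) for p in samples])
```

Repeating the QAOA run with different weight vectors yields different sampled solutions, and the non-dominated filter is then applied to their union.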

Protocol for Energy Landscape Sampling using Quantum Annealing

Application Note: This protocol uses quantum annealing to sample low-energy configurations of a material system, such as finding stable states of a spin glass model or low-energy conformations of a molecular lattice [21] [22].

Required Research Reagents & Solutions:

- Quantum Annealer: Access to a quantum annealing processor (e.g., D-Wave system).

- Minor-Embedding Tool: Software to map the problem graph onto the physical qubit topology of the annealer.

- QUBO Formulation: The material science problem defined as a QUBO or Ising model.

Procedure:

- QUBO Formulation: Define the energy function of the material system as a QUBO problem. For example, in a spin glass, the interactions between spins can be directly mapped to the QUBO coefficients [21].

- Minor-Embedding: Map the logical QUBO problem onto the physical qubits of the annealing hardware. This step is non-trivial and addresses the limited connectivity between qubits on the processor [21].

- Annealing Cycle: Program the embedded problem into the annealer and execute the annealing process. The system evolves from an initial quantum superposition to a final state that, ideally, represents a low-energy configuration of the problem Hamiltonian.

- Resampling: Repeat the anneal-readout cycle many times (e.g., thousands of times) to collect a statistical sample of solutions due to the probabilistic nature of the process [21].

- Solution Analysis: Read out the classical states of the qubits and analyze the distribution of solutions to identify the lowest energy states and understand the structure of the energy landscape.
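
The formulation, resampling, and analysis steps can be sketched without annealing hardware. This is a minimal illustration under stated assumptions: the four-spin ring with hypothetical fields and couplings is a toy model, classical simulated annealing stands in for the quantum annealer, and minor-embedding is skipped because the classical stand-in has no connectivity limits.

```python
import itertools
import math
import random
from collections import Counter


def ising_to_qubo(h, J):
    """Convert E(s) = sum h_i s_i + sum J_ij s_i s_j (s = +/-1) using s = 2x - 1."""
    Q, offset = {}, 0.0
    for i, hi in h.items():
        Q[(i, i)] = Q.get((i, i), 0.0) + 2.0 * hi
        offset -= hi
    for (i, j), Jij in J.items():
        Q[(i, j)] = Q.get((i, j), 0.0) + 4.0 * Jij
        Q[(i, i)] = Q.get((i, i), 0.0) - 2.0 * Jij
        Q[(j, j)] = Q.get((j, j), 0.0) - 2.0 * Jij
        offset += Jij
    return Q, offset


def qubo_energy(x, Q):
    """QUBO energy for binary x (the constant offset is not included)."""
    return sum(c * x[i] * x[j] for (i, j), c in Q.items())


def ising_energy(s, h, J):
    """Ising energy for spins s in {-1, +1}."""
    return (sum(hi * s[i] for i, hi in h.items())
            + sum(Jij * s[i] * s[j] for (i, j), Jij in J.items()))


def brute_force(Q, n):
    """Exact reference for small n: lowest-energy bitstring and its energy."""
    return min(((x, qubo_energy(x, Q))
                for x in itertools.product((0, 1), repeat=n)),
               key=lambda pair: pair[1])


def anneal_once(Q, n, rng, sweeps=200, t_start=2.0, t_end=0.05):
    """One classical simulated-annealing read (stand-in for one anneal-readout cycle)."""
    x = [rng.randint(0, 1) for _ in range(n)]
    e = qubo_energy(x, Q)
    for k in range(sweeps):
        t = t_start * (t_end / t_start) ** (k / max(1, sweeps - 1))
        i = rng.randrange(n)
        x[i] ^= 1                                  # propose a single bit flip
        e_new = qubo_energy(x, Q)
        if e_new <= e or rng.random() < math.exp((e - e_new) / t):
            e = e_new                              # accept the move
        else:
            x[i] ^= 1                              # reject: undo the flip
    return tuple(x), e


def sample(Q, n, reads=200, seed=0):
    """Repeat the read many times and tally the (bitstring, energy) results."""
    rng = random.Random(seed)
    return Counter(anneal_once(Q, n, rng) for _ in range(reads))


# Hypothetical 4-spin antiferromagnetic ring with a small field on spin 0.
fields = {0: 0.5, 1: 0.0, 2: 0.0, 3: 0.0}
couplings = {(0, 1): 1.0, (1, 2): 1.0, (2, 3): 1.0, (0, 3): 1.0}

Q, offset = ising_to_qubo(fields, couplings)
counts = sample(Q, 4)
for (bits, e), count in counts.most_common(3):
    print(bits, "energy", e + offset, "seen", count, "times")
print("exact ground state:", brute_force(Q, 4)[0])
```

On an actual annealer, the QUBO dictionary would be embedded onto the hardware graph before sampling, and the exhaustive reference is only feasible for small validation instances.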

The following workflow summarizes the end-to-end process for solving a problem on a quantum annealer:

The Scientist's Toolkit

Table 3: Essential resources and software for implementing quantum algorithms in material science research.

| Tool Category | Example Tools | Function in Research |

|---|---|---|

| Quantum Cloud Platforms | IBM Quantum, Amazon Braket, Microsoft Azure Quantum, D-Wave Leap [16] | Provides cloud-based access to real quantum devices and high-performance simulators for protocol execution. |

| Quantum SDKs & Libraries | Qiskit, Cirq, PennyLane, D-Wave Ocean [16] | Offers pre-built functions for algorithm construction, circuit compilation, and result analysis. |

| Classical Optimizers | COBYLA, SLSQP, NELDER-MEAD, BFGS [23] | The classical component in VQE and QAOA that adjusts parameters to minimize the cost function. |

| Quantum Chemistry Packages | PySCF, OpenFermion, Psi4 | Generates the molecular Hamiltonians and performs reference calculations for validation in VQE experiments. |

| Problem Formulation Tools | D-Wave Ocean, Qiskit Optimization | Aids in converting real-world material science problems into QUBO or Ising model formulations. |

The design of multivariate (MTV) porous materials represents a significant frontier in materials science, offering the potential for synergistic functionalities that exceed the sum of their individual components. These materials incorporate multiple distinct chemical building units within the same framework, creating unique structural complexities based on diverse spatial arrangements of multiple building block combinations [24]. However, the exponentially increasing design complexity of these materials poses substantial challenges for accurate ground-state configuration prediction and design using classical computational methods. With increasing numbers of metal nodes and linkers, structural complexity scales exponentially, making it impossible to predesign MTV porous materials for large numbers of building blocks [24]. For instance, in an hcb topology containing 32 linker sites, the inclusion of eight distinct MTV linkers at a fixed ratio leads to approximately 7.8 quadrillion unique combinatorial structures [24]. This extensive configuration space makes exploration with existing classical methods intractable, necessitating novel computational approaches.

Quantum computing offers a promising approach to this combinatorial optimization challenge. Unlike classical computers, quantum computers leverage quantum bits (qubits) that possess unique properties such as superposition and entanglement, which quantum algorithms exploit to represent and process many candidate configurations within a single quantum state [24]. This capability makes quantum computing a promising candidate for tackling NP-hard combinatorial optimization problems, including the design of MTV porous materials where the number of possible configurations grows exponentially with increasing building blocks and topological sites [24]. This case study presents a comprehensive framework for applying quantum computing to MTV porous material design, providing detailed protocols, validation methodologies, and implementation guidelines for researchers pursuing quantum-enabled materials discovery.

Theoretical Framework and Quantum Hamiltonian Design

Qubit Representation for MTV Porous Materials

To effectively utilize quantum computers for navigating the vast material space of MTV porous frameworks, the reticular nature of the porous material must be mapped into qubit representations. In the developed encoding scheme, the number of qubits (nqubits) is determined by the product of (1) the number of linker types (|t|) and (2) the number of linker sites in a defined unit cell (Ni), such that nqubits = |t| × Ni [24].

Each qubit represents whether a specific linker type occupies a particular linker site and is labeled as q_i^t, where the subscript i indicates the linker site and the superscript t denotes the type of linker [24]. As a test case, the encoding method was applied to a Cu-THQ-HHTP MOF system, a two-dimensional MOF containing eight linker sites and two linker types (THQ and HHTP), requiring a total allocation of 16 qubits labeled as q_0^THQ, q_0^HHTP, ..., q_7^THQ, q_7^HHTP [24]. A qubit state of 1 (e.g., q_0^THQ = 1) indicates the presence of a THQ linker at site 0, while a state of 0 means that THQ is absent from that site. Every possible configuration of MTV linkers within the unit cell therefore corresponds to a unique qubit state [24].
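The encoding above can be sketched in a few lines of Python. This is a minimal illustration, not the implementation used in [24]: the function names, the site-by-site qubit ordering, the alternating example arrangement, and the rule that a site decodes only when exactly one of its qubits is 1 are all assumptions made for the example.

```python
def qubit_labels(linker_types, n_sites):
    """Return labels q_i^t ordered site by site (ordering is an assumption)."""
    return [f"q_{i}^{t}" for i in range(n_sites) for t in linker_types]


def encode(configuration, linker_types):
    """Map a per-site list of linker names to bits (1 = linker t occupies site i)."""
    return [1 if site_linker == t else 0
            for site_linker in configuration
            for t in linker_types]


def decode(bits, linker_types):
    """Inverse of encode; a site decodes to None unless exactly one qubit is 1."""
    k = len(linker_types)
    configuration = []
    for start in range(0, len(bits), k):
        block = bits[start:start + k]
        if sum(block) == 1:
            configuration.append(linker_types[block.index(1)])
        else:
            configuration.append(None)
    return configuration


# Cu-THQ-HHTP test case from the text: 8 linker sites, 2 linker types.
linker_types = ["THQ", "HHTP"]
n_sites = 8
labels = qubit_labels(linker_types, n_sites)   # n_qubits = |t| * N_i = 16

# Hypothetical arrangement used only to exercise the encoding.
example = ["THQ", "HHTP"] * 4
bits = encode(example, linker_types)
print(len(labels), labels[:2], bits)
```

Bit patterns that place zero or two linkers on one site are representable in this register but are not valid structures, which is one reason constraints have to be added to the cost function.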

Graph-Based Framework Representation

The interactions between qubits are described by a graph-based framework representation, denoted as G(i, j, w_i,j), with G symbolizing the connectivity of the MOF framework [24]. Indices i and j represent distinct linker sites within a unit cell, with each ordered pair (i, j) defining an edge. An edge represents either a direct topological connection (linker sites that are directly linked in the framework topology) or a spatial adjacency (linker sites that are not directly bonded but sit as next-nearest neighbors), so that indirect interactions can also be included [24].

The distinction between topological connection and spatial adjacency is captured by a connection weight, w_i,j, defined as w_i,j = d_i,j^α, where d_i,j denotes the spatial distance (in Ångstroms) between nodes i and j, and the sensitivity parameter α depends on the type of connection [24]. Specifically, α = 1 for a topological connection (first-nearest neighbor) and 0 ≤ α < 1 for a spatial adjacency (second-nearest neighbor) [24]. Because α < 1 damps the contribution of distance, a spatial adjacency receives a smaller weight than a topological connection at the same distance (for d_i,j > 1 Å), reflecting its weaker physical relevance [24].
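As a worked example of the weight definition, the sketch below evaluates w_i,j = d_i,j^α for one topological connection and one spatial adjacency. The edge list, the distances, and the default α = 0.5 for spatial adjacency are hypothetical values chosen for illustration; only the formula and the allowed ranges of α come from the text.

```python
def connection_weight(distance, topological, alpha_adjacent=0.5):
    """Return w_ij = d_ij ** alpha.

    alpha = 1 for a topological connection; for a spatial adjacency
    alpha = alpha_adjacent, which must satisfy 0 <= alpha_adjacent < 1.
    """
    if not 0 <= alpha_adjacent < 1:
        raise ValueError("alpha for spatial adjacency must satisfy 0 <= alpha < 1")
    alpha = 1.0 if topological else alpha_adjacent
    return distance ** alpha


# Hypothetical edges: (site i, site j, distance in angstroms, is_topological)
edges = [
    (0, 1, 4.0, True),    # first-nearest neighbor
    (0, 2, 9.0, False),   # second-nearest neighbor
]
weights = {(i, j): connection_weight(d, topo) for i, j, d, topo in edges}
print(weights)
```

With these inputs the more distant spatial adjacency ends up with the smaller weight, because the exponent below 1 compresses the distance.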

Hamiltonian Formulation

A Hamiltonian cost function was developed specifically for optimizing MTV porous material configurations, with compositional, structural, and balance constraints embedded directly as terms of the Hamiltonian [24]. Combined with the graph-based representation of the framework topology, this lets the quantum algorithm search for MTV porous material configurations that satisfy all predefined design requirements [24].

The Hamiltonian incorporates:

- Compositional constraints: Ensuring the correct number and type of linkers are present

- Structural constraints: Maintaining proper spatial relationships between building blocks

- Balance constraints: Achieving energetically favorable distributions of components

This approach allows the quantum encoding of a vast linker design space, enabling representation of exponentially many configurations with linearly scaling qubit resources, and facilitating efficient search for optimal structures based on predefined design variables [24].

Experimental Protocols and Methodologies

Quantum Computational Workflow

[Diagram: complete workflow for quantum computing-based design of MTV porous materials, from problem formulation to experimental validation.]

Detailed Protocol: Variational Quantum Eigensolver Implementation

Quantum Circuit Configuration

- Algorithm Selection: Implement the Sampling Variational Quantum Eigensolver (VQE) algorithm using the IBM Qiskit framework [24]

- Ansatz Design: Construct a variational quantum circuit with a hardware-efficient ansatz appropriate for the target quantum processor

- Parameter Optimization: Utilize classical optimizers (e.g., COBYLA, SPSA) for parameter tuning to minimize the Hamiltonian expectation value

- Execution: Run quantum circuits with sufficient shots (typically 10,000-100,000) to obtain statistically significant results
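The role of the shot count in the steps above can be illustrated with the classical post-processing that a sampling-based VQE performs. The sketch assumes the cost Hamiltonian is diagonal in the computational basis, so each measured bitstring has a definite cost; the `toy_cost` function and the `counts` dictionary are made-up stand-ins for a real cost function and real measurement results, and no quantum SDK is called.

```python
def expectation_from_counts(counts, cost):
    """Estimate <H> as the shot-weighted average cost of the sampled bitstrings."""
    shots = sum(counts.values())
    return sum(n * cost(bitstring) for bitstring, n in counts.items()) / shots


def best_sample(counts, cost):
    """Return the lowest-cost bitstring that was actually observed."""
    return min(counts, key=cost)


def toy_cost(bitstring):
    """Hypothetical diagonal cost: the number of qubits measured as 1."""
    return bitstring.count("1")


# Hypothetical outcome of 10,000 shots on a 2-qubit circuit.
counts = {"00": 6000, "01": 3000, "11": 1000}

energy = expectation_from_counts(counts, toy_cost)
candidate = best_sample(counts, toy_cost)
print(energy, candidate)
```

A classical optimizer such as COBYLA or SPSA would call a routine like `expectation_from_counts` once per parameter update, while `best_sample` extracts the candidate configuration at the end of the run.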

Constraint Integration Protocol

- Compositional Constraints: Implement as penalty terms in the Hamiltonian that increase energy when linker counts deviate from target ratios

- Spatial Constraints: Encode through the graph-based representation weights w_i,j that penalize unfavorable linker proximities

- Balance Constraints: Incorporate as equality constraints ensuring uniform distribution of linker types across the framework
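One way to picture how such constraints become penalty terms is a classical cost function over the qubit bits, minimized here by brute force on a toy system. The functional form below is an assumption for illustration, not the Hamiltonian of [24]: it uses a quadratic one-linker-per-site penalty, a quadratic penalty on deviations from target linker counts, and a weighted penalty on same-type neighbors. The four-site ring, the linker names A and B, and the coefficients are likewise hypothetical.

```python
from itertools import product


def cost(bits, n_sites, n_types, targets, edges, a=10.0, b=10.0, c=1.0):
    """Illustrative penalty cost; x[i][t] = 1 if linker type t sits on site i."""
    x = [[bits[i * n_types + t] for t in range(n_types)] for i in range(n_sites)]
    # Penalize sites that hold zero or more than one linker.
    one_hot = sum((sum(x[i]) - 1) ** 2 for i in range(n_sites))
    # Penalize deviation of each linker count from its target.
    composition = sum(
        (sum(x[i][t] for i in range(n_sites)) - targets[t]) ** 2
        for t in range(n_types)
    )
    # Penalize identical linker types on connected sites, scaled by w_ij.
    proximity = sum(w * x[i][t] * x[j][t] for i, j, w in edges for t in range(n_types))
    return a * one_hot + b * composition + c * proximity


types = ["A", "B"]          # hypothetical linker names
n_sites = 4                 # hypothetical four-site ring
targets = [2, 2]            # fixed 1:1 ratio
edges = [(0, 1, 1.0), (1, 2, 1.0), (2, 3, 1.0), (3, 0, 1.0)]


def to_string(bits):
    """Decode a valid (one linker per site) bit tuple into a string such as 'ABAB'."""
    k = len(types)
    return "".join(types[bits[i * k:(i + 1) * k].index(1)] for i in range(n_sites))


all_bits = list(product([0, 1], repeat=n_sites * len(types)))
costs = {bits: cost(bits, n_sites, len(types), targets, edges) for bits in all_bits}
min_cost = min(costs.values())
ground_states = sorted(to_string(bits) for bits, value in costs.items() if value == min_cost)
print(min_cost, ground_states)
```

Brute force works here only because the toy register has 8 qubits (256 states); the variational algorithm is meant to replace this enumeration when the register is too large to scan.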

Error Mitigation Strategies

- Readout Error Correction: Implement measurement error mitigation using matrix inversion techniques

- Noise-Aware Compilation: Optimize quantum circuits for specific hardware architectures to minimize gate errors

- Zero-Noise Extrapolation: Employ error extrapolation techniques to estimate noise-free results from noisy quantum computations
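Two of these strategies reduce to short numerical recipes, sketched below in plain Python. The single-qubit confusion matrix, the error rates, the noise scale factors, and the measured energies are all hypothetical; real workflows calibrate these quantities on the device, often use multi-qubit calibration matrices, and may use higher-order extrapolation.

```python
def mitigate_readout(p_measured, e0, e1):
    """Invert a single-qubit confusion matrix.

    e0 = P(read 1 | prepared 0), e1 = P(read 0 | prepared 1).
    Model: p_measured = M @ p_true with M = [[1 - e0, e1], [e0, 1 - e1]].
    """
    m00, m01 = 1 - e0, e1
    m10, m11 = e0, 1 - e1
    det = m00 * m11 - m01 * m10
    p0 = (m11 * p_measured[0] - m01 * p_measured[1]) / det
    p1 = (-m10 * p_measured[0] + m00 * p_measured[1]) / det
    return p0, p1


def zero_noise_extrapolate(scales, values):
    """Least-squares line through (noise scale, value), evaluated at scale 0."""
    n = len(scales)
    mean_s = sum(scales) / n
    mean_v = sum(values) / n
    slope = (
        sum((s - mean_s) * (v - mean_v) for s, v in zip(scales, values))
        / sum((s - mean_s) ** 2 for s in scales)
    )
    return mean_v - slope * mean_s


# Hypothetical measured distribution for a qubit with 2% and 5% readout errors.
corrected = mitigate_readout((0.794, 0.206), e0=0.02, e1=0.05)

# Hypothetical energies measured at noise scale factors 1, 2, and 3.
estimate = zero_noise_extrapolate([1.0, 2.0, 3.0], [-0.9, -0.8, -0.7])
print(corrected, estimate)
```

Matrix inversion can return slightly negative probabilities when the measured data are noisy, so practical implementations usually project the result back onto a valid distribution.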

Material System Validation Protocol

The quantum computational framework was validated using experimentally known MTV porous materials through the following protocol:

1. System Selection: Identify well-characterized MTV systems with known ground-state configurations:

   - Cu-THQ-HHTP (copper metal with THQ and HHTP linkers)

   - Py-MV-DBA-COF

   - MUF-7 series MTV-MOFs

   - SIOC-COF2 [24]

2. Qubit Allocation: Determine the required number of qubits based on linker sites and types in the unit cell

3. Hamiltonian Construction: Build the specific Hamiltonian for each material system incorporating its unique topological constraints

4. Quantum Simulation: Execute VQE algorithms on quantum simulators and hardware to obtain predicted ground-state configurations

5. Result Comparison: Compare computationally obtained configurations with experimentally determined structures to validate the model

Key Research Reagent Solutions

Table 1: Essential Research Reagents and Computational Resources for Quantum-Enabled MTV Material Design

| Category | Specific Item | Function/Application | Implementation Details |
|---|---|---|---|
| Quantum Hardware | IBM 127-qubit quantum processors | Execution of variational quantum algorithms for configuration optimization | Real hardware validation of VQE calculations [24] |
| Quantum Software | IBM Qiskit framework | Implementation of quantum circuits, VQE algorithm, and classical optimization | Variational quantum circuit construction and execution [24] |
| Material Systems | Cu-THQ-HHTP MOF | Validation system with 8 linker sites and 2 linker types (16-qubit representation) | 2D MOF with modulated conductivity and high porosity [24] |
| Algorithmic Components | Sampling Variational Quantum Eigensolver (VQE) | Hybrid quantum-classical algorithm for ground-state energy estimation | Efficient search for optimal MTV configurations [24] |
| Topological Frameworks | hcb topology with 32 linker sites | Test case for combinatorial complexity assessment | Represents 7.8 quadrillion possible configurations with 8 linkers [24] |
| Constraint Formulation | Compositional, structural, and balance constraints | Ensures physically realistic and synthetically accessible configurations | Directly embedded into Hamiltonian formulation [24] |

Results and Validation Data

Computational Validation Results

The quantum computational framework was rigorously validated through simulations and hardware execution, demonstrating its effectiveness for MTV porous material design.

Table 2: Validation Results for Quantum Computing-Based MTV Material Design

| Material System | Qubit Count | Simulation Result | Hardware Validation | Key Performance Metrics |
|---|---|---|---|---|
| Cu-THQ-HHTP MOF | 16 qubits | Successful reproduction of ground-state configuration | Performed on IBM 127-qubit hardware | Accurate prediction of linker arrangement [24] |
| Py-MV-DBA-COF | System-dependent | Successful reproduction of ground-state configuration | Validation completed | Balanced linker arrangement prediction [24] |
| MUF-7 Series | System-dependent | Successful reproduction of ground-state configuration | Validation completed | Pore distribution and catalytic capability alignment [24] |
| SIOC-COF2 | System-dependent | Successful reproduction of ground-state configuration | Validation completed | Structural parameter accuracy [24] |

Performance and Scalability Analysis

The developed framework demonstrates significant advantages for MTV porous material design:

- Exponential Search Space Efficiency: The quantum encoding enables representation of exponentially many configurations (e.g., 7.8 quadrillion for hcb topology with 8 linkers) with linearly scaling qubit resources [24]

- Hardware Validation: Successful execution on real IBM 127-qubit quantum hardware signals a crucial step toward practical quantum algorithms for rational design of porous materials [24]

- Constraint Integration: Direct embedding of compositional, structural, and balance constraints into the Hamiltonian ensures physically realistic configurations while maintaining computational efficiency [24]

Implementation Diagram: Qubit Encoding and Hamiltonian Construction

[Diagram: qubit encoding strategy and Hamiltonian construction process for MTV porous materials.]

Discussion and Future Perspectives

The development of quantum computing-based approaches for MTV porous material design marks a notable shift in computational materials science. The successful validation of this framework on experimentally known systems demonstrates its potential to address the combinatorial explosion that makes exhaustive classical search intractable for complex multi-component systems [24]. As quantum hardware continues to advance in scale and fidelity, with ongoing research focused on scaling quantum computers from hundreds to millions of superconducting qubits [25], the practical applicability of this approach is expected to expand.

Future developments in this field will likely focus on several key areas:

- Increased Material Complexity: Extension to systems with larger numbers of linker types and more complex topological arrangements

- Advanced Algorithm Development: Implementation of more sophisticated quantum algorithms as hardware capabilities improve

- Experimental Integration: Closer coupling between computational prediction and synthetic validation in laboratory settings

- Industrial Application: Translation of quantum-designed materials to practical applications in catalysis, gas storage, and separation technologies

This quantum computing framework for MTV porous material design establishes a foundation for addressing exponentially complex combinatorial problems in materials science, providing a powerful tool for the rational design of next-generation functional materials beyond the reach of classical computational methods.

Quantum sensing represents a frontier in measurement science, leveraging the principles of quantum mechanics to detect physical quantities with unprecedented precision. This document focuses on protocols for probing magnetic phenomena at the nanoscale, a critical capability for advancing material science research. Traditional measurement techniques struggle to resolve magnetic behaviors at length scales between atomic dimensions and the wavelength of visible light, precisely where many intriguing quantum material properties emerge [1]. The development of quantum sensing protocols based on solid-state spin systems has enabled researchers to overcome these limitations, providing a window into previously inaccessible quantum phenomena.

Recent theoretical and experimental breakthroughs have established new paradigms for quantum sensing and communication systems that capitalize on the unique properties of non-Gaussian quantum states, which can overcome limitations inherent in conventional Gaussian-state-based systems [26]. Simultaneously, advances in entangled sensor systems have demonstrated significant enhancements in both sensitivity and spatial resolution compared to single-sensor approaches [1] [27]. These developments are particularly relevant for characterizing quantum materials such as high-temperature superconductors, graphene, and twisted van der Waals magnets, where understanding magnetic correlations and fluctuations is essential for unlocking their technological potential [1] [28].

The following sections detail specific experimental protocols, quantitative performance metrics, and implementation methodologies that enable researchers to leverage these quantum sensing advances for material science investigations.

Theoretical Foundation & Sensing Mechanisms

Quantum Sensing Principles