Quantum Reservoir Computing: Optimizing Molecular Registers for Drug Discovery

Abstract

This article explores Quantum Reservoir Computing (QRC) as a transformative approach for molecular property prediction, particularly in data-scarce drug discovery scenarios. It details the foundational principles of using neutral-atom quantum registers as computational reservoirs, outlines methodological workflows for implementation, and addresses key optimization challenges like noise tolerance. Through comparative analysis with classical machine learning, the article validates QRC's superior performance on small datasets and discusses its future potential to accelerate biomedical research and clinical trial predictions.

The Quantum Reservoir Advantage: Foundations of Molecular Feature Extraction

Defining Quantum Reservoir Computing (QRC) and Molecular Quantum Registers

Frequently Asked Questions

Q: What is the fundamental principle behind Quantum Reservoir Computing? A: Quantum Reservoir Computing (QRC) is a computational paradigm that leverages the high-dimensional, nonlinear dynamics of a quantum system (the "reservoir") to process information. Unlike fully programmable quantum computers, only a simple classical output layer is trained; the complex quantum system itself remains fixed. This makes it particularly suitable for processing time-dependent signals and performing machine learning tasks like time-series prediction [1] [2].

Q: How does a molecular quantum register differ from other qubit architectures? A: A molecular quantum register uses the inherent spins of atoms within a molecule or a solid-state system (like a quantum dot) as qubits. A key advancement is the creation of a "dark state"—a collective, entangled state of thousands of nuclear spins that is less susceptible to environmental noise. This makes the register more robust and scalable for quantum networks and memories [3].

Q: We are experiencing rapid information loss in our quantum reservoir. What could be the cause? A: This is likely due to an imbalance in the reservoir's fading-memory property. In a Bose-Einstein Condensate (BEC)-based QRC, this is controlled by damping.

- No damping (γ = 0): The reservoir remembers the entire input history, leading to information overload and poor performance.

- Excessive damping (γ too high): Information is erased too quickly, degrading short-term memory and accuracy.

The optimal performance is achieved at a balanced damping rate (e.g., γ ∼ 10⁻³), which selectively retains relevant historical data [2].

Q: Our neutral atom register suffers from atomic losses over time, limiting experiment duration. Are there solutions? A: Yes. A technique known as real-time reloading can solve this. Researchers have demonstrated a system where a register of 1,200 atoms is maintained by successively adding new atoms (e.g., ~130 atoms every 3.5 seconds) to replace those that are lost. This principle allows for continuous operation of the quantum register for extended periods, a crucial step toward practical quantum computation [4].

Q: What is a key challenge in scaling up quantum optimization, and how is it being addressed? A: A primary challenge is hardware limitation and noise. Current quantum processors have a limited number of qubits and are sensitive to external interference ("noise"), which disrupts calculations. Research is focused on developing robust error-correction methods and hybrid quantum-classical approaches. Rigorous benchmarking against classical algorithms is also essential to identify problems where quantum optimization can offer a real advantage [5] [6].

Troubleshooting Guides

Problem: Low Predictive Accuracy on Temporal Tasks in QRC Your Quantum Reservoir Computer performs poorly on tasks like the NARMA-10 time-series prediction.

- Potential Cause 1: Incorrectly Tuned Reservoir Parameters The performance of a QRC is highly sensitive to its physical parameters. Refer to the following table for guidance [2]:

| Parameter | Role/Effect | Optimal Regime / Troubleshooting Tip |

|---|---|---|

| Damping rate (γ) | Sets the memory window; prevents information overload. | Balance is key. Tune to match the required memory length of your task (e.g., γ ∼ 10⁻³). |

| Nonlinearity (g) | Enables complex, nonlinear mapping of input data. | Avoid values that are too large, as they can cause mode-broadening and degrade performance. |

| Particle Number | Maintains stationary reservoir dynamics. | Implement active particle number compensation to prevent drift and transience from atom loss. |

| Observation Window | Defines the accessible feature space. | Ensure it covers the active region of the reservoir's dynamics. |

- Potential Cause 2: Improper Input Encoding The method of feeding data into the reservoir is critical. Ensure that input signals are correctly mapped onto the quantum system via a well-defined encoding strategy, such as applying a temporally and spatially localized potential "kick" to a BEC [2].

Problem: Short Coherence Time in Molecular Quantum Register The quantum information in your register degrades too quickly.

- Potential Cause 1: Uncontrolled Nuclear Magnetic Interactions In quantum dot registers, uncontrolled interactions between nuclear spins cause noise. Solution: Apply advanced quantum feedback techniques to polarize the nuclear spins, creating a low-noise environment. Using highly uniform materials like gallium arsenide (GaAs) quantum dots can also help overcome this challenge [3].

- Potential Cause 2: Fabrication-Induced Defects The method used to create the crystal hosting the qubits can introduce impurities. Solution: Utilize advanced fabrication techniques like Molecular-Beam Epitaxy (MBE). Unlike traditional melting-pot methods, MBE builds the crystal layer-by-layer ("3D printing"), resulting in a material of much higher purity and superb quantum coherence properties [7].

The Scientist's Toolkit: Essential Research Reagents & Materials

| Item | Function in Experiment |

|---|---|

| Strontium Atoms (Sr) | Serves as a robust qubit platform for neutral atom-based quantum registers, offering stable energy levels for trapping and manipulation [4]. |

| Gallium Arsenide (GaAs) Quantum Dots | Acts as a nanoscale host for creating a many-body quantum register. Its uniformity is key for creating stable, collective spin states [3]. |

| Erbium-Doped Crystals | Functions as a spin-photon interface in quantum networking. The erbium ions are the qubits, and their coherence time limits the distance over which a quantum link can operate [7]. |

| Nitrogen-Vacancy (NV) Center Diamond | Provides a stable, room-temperature qubit system for instructional labs and fundamental experiments on spin dynamics [8]. |

| Bose-Einstein Condensate (BEC) | Serves as the high-dimensional, nonlinear physical substrate for a quantum reservoir in machine learning applications [2]. |

Experimental Protocols & Data

Protocol 1: Benchmarking a Quantum Reservoir with NARMA-10 This is a standard method for evaluating the performance of a reservoir computing system on a task that requires both nonlinearity and memory [2].

- Reservoir System: Implement a quantum reservoir, such as a dissipative Bose-Einstein Condensate.

- Input Encoding: Map the input time-series {u(n)} onto the BEC at each discrete timestep n using a potential kick, V_encode(x,t;n).

- Reservoir Evolution: Let the BEC evolve according to the damped Gross–Pitaevskii equation.

- State Sampling: Sample the spatial density |ψ(x,t)|² at multiple time points during the timestep to create a high-dimensional feature vector Φ_n.

- Readout and Training: Train a classical linear model (ŷ(n+1) = wᵀΦ_n + b) to predict the target output. Only the weights w and bias b are trained.

- Performance Metric: Calculate the Normalized Mean-Square Error (NMSE) to quantify predictive accuracy.
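The classical half of Protocol 1 can be sketched in a few lines of NumPy. This is a minimal illustration, not the implementation from [2]. The NARMA-10 recurrence is the standard textbook form, the NMSE is assumed to be normalized by the target variance, and `lagged_features` is a hypothetical stand-in for the BEC density samples Φ_n, which would come from the experiment or a Gross–Pitaevskii simulation.

```python
import numpy as np

def narma10(u):
    """Standard NARMA-10 target series for an input u with values in [0, 0.5]."""
    y = np.zeros(len(u))
    for n in range(9, len(u) - 1):
        y[n + 1] = (0.3 * y[n]
                    + 0.05 * y[n] * np.sum(y[n - 9:n + 1])
                    + 1.5 * u[n - 9] * u[n]
                    + 0.1)
    return y

def fit_readout(features, targets, ridge=1e-6):
    """Ridge-regularized least squares for the linear readout y = w^T Phi + b."""
    design = np.hstack([features, np.ones((len(features), 1))])  # bias column
    gram = design.T @ design + ridge * np.eye(design.shape[1])
    coef = np.linalg.solve(gram, design.T @ targets)
    return coef[:-1], coef[-1]  # weights w, bias b

def nmse(y_true, y_pred):
    """Mean-square error normalized by the variance of the target (assumed convention)."""
    return np.mean((y_true - y_pred) ** 2) / np.var(y_true)

def lagged_features(u):
    """Hypothetical placeholder for Phi_n: the last 10 inputs plus u(n) * u(n-9)."""
    rows = []
    for n in range(9, len(u)):
        window = u[n - 9:n + 1]
        rows.append(np.append(window, window[-1] * window[0]))
    return np.array(rows)

def run_benchmark(seed=0, length=2000, n_train=1500):
    """Train the readout on the first n_train steps and report the test NMSE."""
    rng = np.random.default_rng(seed)
    u = rng.uniform(0.0, 0.5, length)
    y = narma10(u)
    phi = lagged_features(u)           # row k corresponds to timestep n = k + 9
    phi_n, target = phi[:-1], y[10:]   # predict y(n+1) from Phi_n
    w, b = fit_readout(phi_n[:n_train], target[:n_train])
    prediction = phi_n[n_train:] @ w + b
    return nmse(target[n_train:], prediction)
```

Calling `run_benchmark()` gives a classical reference NMSE. Swapping `lagged_features` for real reservoir measurements leaves the readout and metric unchanged, which is the point of training only w and b.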

Protocol 2: Operating a Neutral Atom Quantum Register This protocol outlines the steps for running a scalable register with neutral atoms [4].

- Atom Trapping: Trap individual strontium atoms in an optical lattice formed by interfering laser beams.

- Register Initialization: Initialize the register, where each trapped atom represents a qubit.

- Real-Time Reloading: Continuously monitor the register for atom loss. Use a dedicated reloading zone to add new atoms to the array (e.g., ~130 atoms every 3.5 seconds) to maintain register size.

- Qubit Control: Control the electronic state of individual atoms using optical tweezers to define qubit states.

- Entanglement Generation: Introduce controlled interactions between nearby atoms to generate quantum entanglement, the resource for computation.
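The reloading figures quoted in this protocol imply a simple balance condition, sketched below as back-of-envelope arithmetic. It assumes a steady state in which every reloaded atom is retained and losses are spread uniformly over the register; neither assumption comes from [4], so treat the result as an order-of-magnitude guide only.

```python
# Figures quoted in the protocol above.
register_size = 1200      # atoms held in the register
atoms_per_reload = 130    # atoms added per reload cycle
reload_period_s = 3.5     # seconds between reload cycles

# Average rate at which new atoms arrive.
reload_rate = atoms_per_reload / reload_period_s        # atoms per second

# In steady state, losses cannot exceed the reload rate, which caps the
# tolerable per-atom loss rate and sets a minimum effective atom lifetime.
max_loss_rate_per_atom = reload_rate / register_size    # 1 / s
min_effective_lifetime_s = 1.0 / max_loss_rate_per_atom

# Fraction of the register that is refreshed in each cycle.
fraction_per_cycle = atoms_per_reload / register_size

print(f"reload rate: {reload_rate:.1f} atoms/s")
print(f"minimum effective lifetime: {min_effective_lifetime_s:.1f} s")
print(f"fraction replaced per cycle: {fraction_per_cycle:.1%}")
```

Under these assumptions the register size stays constant only if the effective per-atom lifetime is roughly half a minute or longer.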

Quantitative Advances in Quantum Registers

| Platform | Key Metric | Achievement | Significance |

|---|---|---|---|

| Neutral Atoms (Strontium) [4] | Register Size & Duration | 1,200 atoms for >1 hour | Enables large-scale, sustained quantum simulations and calculations. |

| Quantum Dot Nuclear Spins [3] | Number of Entangled Qubits / Coherence Time | 13,000 nuclei / >130 µs | Creates a robust, scalable quantum memory for networks. |

| Erbium-Doped Crystals [7] | Coherence Time / Theoretical Range | 24 ms / 4,000 km | Dramatically extends the potential distance for quantum internet links. |

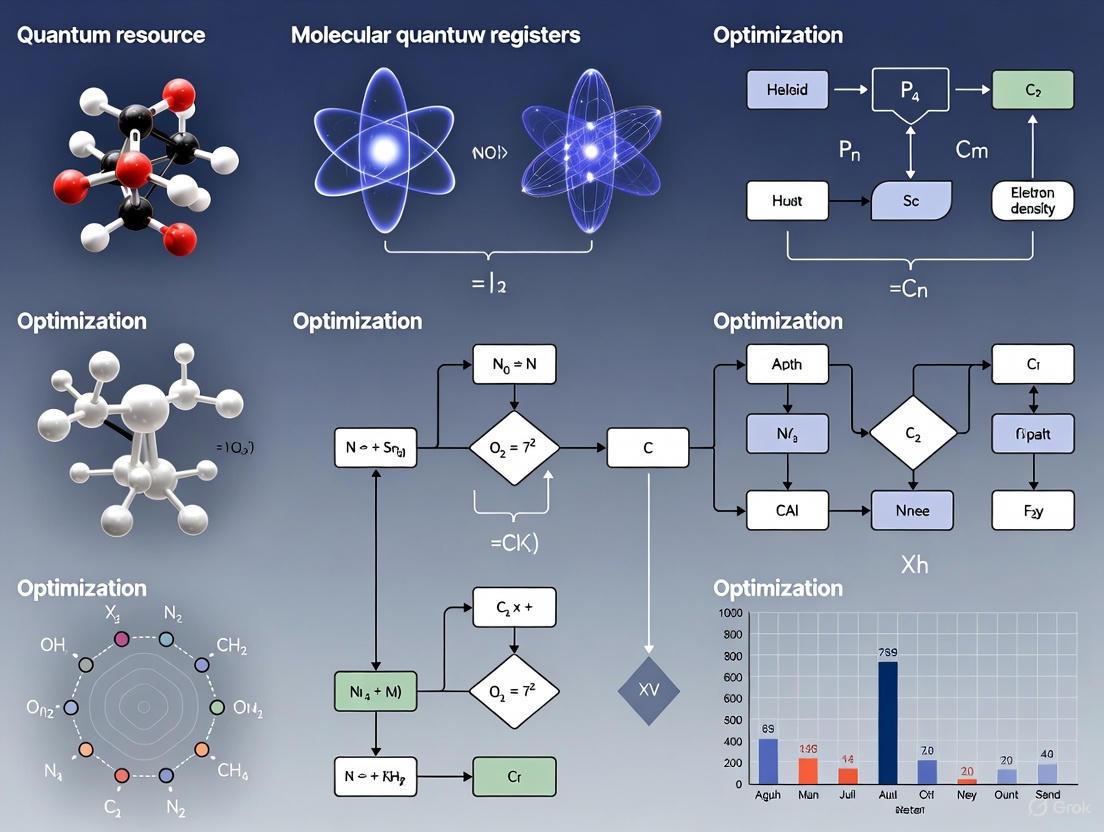

Workflow Visualization

Why Small Data is a Big Problem in Drug Discovery and Clinical Trials

FAQs: Understanding the Small Data Problem

What is the "Small Data" problem in pharmaceutical research?

The "Small Data" problem refers to the challenges that arise from limited, low-quality, or inaccessible datasets in drug discovery and clinical trials. The industry is increasingly driven by artificial intelligence (AI) and machine learning, and these models require massive, high-quality datasets to produce accurate and reliable results. Key aspects of the problem include:

- Limited Data Availability: For novel drug targets or rare diseases, the amount of available biological, chemical, and clinical data is inherently small.

- Data That is Not FAIR: A recurring theme in the industry is the slow progress in making data Findable, Accessible, Interoperable, and Reusable. Despite familiar diagrams and intent, implementation often lags, with each new assay type requiring bespoke data engineering [9].

- Poor Data Quality: As noted in expert predictions for 2025, there is a significant industry pullback from using synthetic data for AI model training due to limitations and potential risks. The focus is shifting back to high-quality, real-world patient data to build more reliable and clinically validated models [10]. The fundamental rule of "garbage in, garbage out" is acutely relevant, as the data defines the solution space and boundaries of what a model can predict [11].

How does small data impact AI-driven drug discovery?

Small data severely constrains the effectiveness of AI, which is the cornerstone of modern drug discovery innovation. Its impact is multifaceted:

- Limits Model Accuracy and Generalizability: AI models trained on small or biased datasets fail to learn the complex patterns in biology and chemistry, leading to poor predictive performance for drug efficacy, toxicity, and synthesizability [11] [12].

- Hinders Exploration of Chemical Space: The number of potentially pharmacologically active compounds is estimated to exceed 10⁶⁰. Small data makes it impossible to efficiently navigate this vast space to identify novel drug candidates [11].

- Reduces Clinical Trial Efficiency: Small data complicates patient recruitment and cohort identification. In 2025, over half of new trials are expected to use AI-driven protocol optimization to address these hurdles. Without sufficient data, these optimizations are less effective [10].

What role can quantum computing play in mitigating small data problems?

Quantum computing offers a paradigm shift by simulating molecular interactions at a fundamental level, reducing the dependency on large, pre-existing experimental datasets.

- First-Principles Simulation: Quantum computers can model complex molecular interactions, such as protein-ligand binding and the role of water molecules in binding pockets, from the ground up. This provides high-fidelity data that is computationally infeasible to generate with classical computers alone [13].

- Accelerating Data Generation: A collaboration between IonQ, AstraZeneca, AWS, and NVIDIA demonstrated a quantum-accelerated workflow that slashed the simulation time for a key pharmaceutical reaction (Suzuki-Miyaura) by over 20-fold, turning projected runtimes from months into days [14].

- Enhancing AI Models: The high-accuracy data generated from quantum simulations can be used to refine and train AI models for drug discovery, making them more accurate and reliable even in low-data regimes [13].

What are the practical data strategies for researchers today?

Researchers are adopting several key strategies to overcome data limitations:

- Prioritize Real-World Data (RWD): The trend is moving towards using high-quality, real-world patient data for AI training to create more reliable discovery processes [10].

- Iterate Between Wet and Dry Labs: Robust, rapid iteration between computational (dry) and experimental (wet) labs is critical. This helps identify data biases early and allows for quick model tuning, which is more effective than years of optimizing towards the wrong target [11].

- Leverage Specialized Foundation Models: Using pre-trained foundation models for biology (e.g., for protein sequences or structures) significantly lowers computational costs. Companies can then fine-tune these models on their smaller, proprietary datasets, maximizing the impact of their limited data [11].

- Build a "Mixture of Experts": Instead of relying on one large AI model, using multiple smaller sub-models, each trained on specific tasks or data types, can improve outcomes when comprehensive data is unavailable [12].

Troubleshooting Guides

Problem: Poor AI Model Performance Due to Limited or Low-Quality Datasets

Symptoms:

- The model performs well on training data but poorly on new, unseen data (overfitting).

- Inability to generate chemically feasible or synthesizable drug candidates.

- Predictions of drug properties (e.g., toxicity, binding affinity) are inaccurate.

Solution: A Hybrid Quantum-Classical Data Generation Workflow

This methodology uses quantum computing to generate high-fidelity molecular data, which is then used to augment classical AI training.

Experimental Protocol:

- Target Identification: Select a specific molecular target for study (e.g., a protein pocket for ligand binding).

- Problem Formulation: Define the specific molecular interaction to simulate, such as a ligand-protein binding energy calculation or a catalytic reaction step.

- Hybrid Workflow Configuration:

  - Classical Pre-/Post-Processing: Use high-performance computing (HPC) resources to set up the simulation parameters and process the quantum processor's output.

  - Quantum Processing: Offload the core, computationally intensive simulation task to a quantum processor. For example, use a variational quantum algorithm to compute the ground state energy of the molecular system.

- Data Integration: Use the results from the quantum simulation (e.g., precise energy values, molecular configurations) to create a curated dataset.

- AI Model Retraining: Augment your existing training dataset with the new quantum-generated data and retrain your AI models.
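To make the variational step in the Quantum Processing item concrete, the toy example below minimizes the energy of a single-qubit Hamiltonian H = Z + 0.5 X over the one-parameter ansatz Ry(θ)|0⟩. The Hamiltonian, the ansatz, and the grid search are illustrative assumptions and are not the workflow reported in [14]. The expectation value is evaluated classically here; on hardware it would be estimated from repeated measurements.

```python
import math

def energy(theta, hz=1.0, hx=0.5):
    """Expectation <psi|H|psi> for H = hz*Z + hx*X with |psi> = Ry(theta)|0>."""
    a = math.cos(theta / 2)   # amplitude of |0>
    b = math.sin(theta / 2)   # amplitude of |1>
    exp_z = a * a - b * b     # <Z>
    exp_x = 2 * a * b         # <X> for real amplitudes
    return hz * exp_z + hx * exp_x

def grid_minimize(fn, lo=0.0, hi=2 * math.pi, n_grid=2001):
    """Classical outer loop: scan the ansatz parameter and keep the lowest energy."""
    thetas = [lo + (hi - lo) * k / (n_grid - 1) for k in range(n_grid)]
    best = min(thetas, key=fn)
    return best, fn(best)

theta_opt, e_min = grid_minimize(energy)
e_exact = -math.sqrt(1.0 ** 2 + 0.5 ** 2)   # exact ground energy of hz*Z + hx*X
print(f"variational energy: {e_min:.6f}, exact: {e_exact:.6f}")
```

For a molecular system the ansatz has many parameters and a gradient-based or gradient-free optimizer replaces the grid scan, but the division of labor is the same: the quantum processor evaluates energies, and the classical side chooses the next parameters.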

The following workflow diagram illustrates this hybrid approach, which was used to achieve a 20-fold improvement in simulation time for a key drug development reaction [14].

Diagram: Hybrid quantum-classical workflow for data generation.

Problem: Inefficient Clinical Trial Enrollment and Design

Symptoms:

- Slow patient recruitment leading to trial delays.

- Inability to identify the right patient cohorts for targeted therapies.

- High costs associated with prolonged trial timelines.

Solution: Leveraging AI and Real-World Data for Trial Optimization

Experimental Protocol:

- Data Aggregation: Securely aggregate real-world data from electronic health records (EHRs), medical device data, and genomic databases. Tools with natural language processing can extract insights from unstructured clinical notes [10].

- Predictive Analytics: Implement machine learning models to analyze this data for precise cohort identification, following precedents set in oncology [10].

- Protocol Optimization: Use AI-driven tools to design more efficient trial protocols. In 2025, more than half of new trials are expected to incorporate such optimization to address recruitment and engagement hurdles [10].

- Adopt a Hybrid Trial Model: Implement a hybrid trial design that combines traditional site-based visits with decentralized approaches. Use predictive analytics to personalize and improve patient engagement [10].

The Scientist's Toolkit: Research Reagent Solutions

The following table details key resources and their functions for implementing the advanced data strategies discussed.

| Research Reagent / Resource | Function in Context of Small Data |

|---|---|

| FAIR Data Infrastructure | A systematic framework to make data Findable, Accessible, Interoperable, and Reusable. It is foundational for breaking down data silos and maximizing the utility of existing datasets, though its implementation remains a challenge [9]. |

| Quantum Computing Cloud Services (e.g., Amazon Braket) | Provides cloud-based access to quantum processors, enabling researchers to run quantum-enhanced molecular simulations without owning the hardware. This democratizes access to quantum-generated data [14]. |

| AI-Powered Clinical Data Abstraction Tools | These tools, often used with clinical experts "in the loop," extract and structure valuable data from unstructured clinical notes in EHRs, turning hidden data into a usable resource for trials [10]. |

| Biological Foundation Models (e.g., AMPLIFY) | Open-source, pre-trained protein language models. They provide a powerful starting point for researchers to fine-tune on their specific, smaller datasets, accelerating tasks like protein sequence prediction and function annotation [11]. |

| Hybrid Trial Platforms | Integrated software platforms that support the execution of hybrid clinical trials, facilitating remote data collection, patient engagement, and the integration of real-world evidence into the trial data stream [10]. |

This technical support center provides guidance for researchers implementing Digitized Counterdiabatic Quantum Feature Extraction, a method that leverages untrained quantum dynamics to generate informative features for machine learning tasks. This approach is situated within the broader research objectives of quantum resource optimization and the development of molecular quantum registers, offering a pathway to quantum utility on near-term devices [15]. The following sections offer detailed experimental protocols, troubleshooting guides, and FAQs to support your experiments.

Experimental Protocols & Methodologies

Core Workflow for Quantum Feature Extraction

The fundamental methodology involves transforming raw data into features using the dynamics of a quantum system without traditional training of the quantum circuit parameters [15].

Detailed Protocol: Molecular Toxicity Classification

This protocol details the application of quantum feature extraction for predicting molecular toxicity, a use case demonstrating real-world impact [15].

- Data Preprocessing: Prepare molecular structures from the UCI Toxicity Dataset [15]. Convert molecular data into a format suitable for quantum processing, focusing on statistical correlations between atomic properties.

- Hamiltonian Encoding: Map the preprocessed molecular data onto a spin-glass Hamiltonian. This involves:

  - Encoding individual molecular properties onto local magnetic fields (h_i) of qubits.

  - Encoding statistical correlations between properties onto coupling strengths (J_ij, J_ijk) between qubits [15].

- Quantum Dynamics Evolution: Allow the system to evolve under a digitized counterdiabatic quantum drive in the impulse regime. This evolution occurs on a quantum processor (e.g., IBM's 156-qubit Heron r2, ibm_kingston) [15].

- Feature Measurement & Extraction: Perform measurements in the Z-basis at the end of the dynamics evolution. The extracted features are:

  - Local magnetizations (⟨Z_i⟩) from individual qubits.

  - Higher-order correlations (⟨Z_i Z_j⟩, ⟨Z_i Z_j Z_k⟩) from multi-qubit interactions [15].

- Model Training & Validation: Feed the extracted quantum features, potentially combined with classical features, into a classical Gradient Boosting model. Use k-fold cross-validation and SHAP analysis to evaluate performance and feature importance [15].
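The encoding and measurement steps of this protocol can be sketched in plain Python. This is an illustration under stated assumptions, not the procedure from [15]: `encode_fields_and_couplings` is a hypothetical encoding that uses standardized property values as local fields h_i and Pearson correlations as two-body couplings J_ij (three-body terms are omitted), and `z_features` assumes that character i of each measured bitstring belongs to qubit i, which may need reversing for a given SDK.

```python
from itertools import combinations
from statistics import mean, pstdev

def encode_fields_and_couplings(samples):
    """Hypothetical encoding: per-sample fields h and dataset-level couplings J."""
    n_feat = len(samples[0])
    cols = [[row[i] for row in samples] for i in range(n_feat)]
    mu = [mean(c) for c in cols]
    sd = [pstdev(c) or 1.0 for c in cols]   # guard against constant columns
    # Standardized property values: one local-field vector per molecule.
    h = [[(row[i] - mu[i]) / sd[i] for i in range(n_feat)] for row in samples]
    # Pearson correlation between properties i and j across the dataset.
    J = {}
    for i, j in combinations(range(n_feat), 2):
        J[(i, j)] = mean([r[i] * r[j] for r in h])
    return h, J

def z_features(counts, n_qubits, max_order=3):
    """Estimate <Z_i>, <Z_i Z_j>, <Z_i Z_j Z_k> from Z-basis measurement counts."""
    shots = sum(counts.values())
    feats = {}
    for order in range(1, max_order + 1):
        for idx in combinations(range(n_qubits), order):
            total = 0
            for bits, c in counts.items():
                # A Z-string has eigenvalue +1 for even parity, -1 for odd parity.
                parity = sum(bits[i] == "1" for i in idx) % 2
                total += c * (1 - 2 * parity)
            feats[idx] = total / shots
    return feats

# Usage with made-up counts: a perfectly correlated two-outcome distribution.
example = z_features({"000": 50, "111": 50}, n_qubits=3)
print(example[(0,)], example[(0, 1)], example[(0, 1, 2)])
```

The dictionary values, flattened in a fixed key order, form the quantum feature vector that is passed to the classical Gradient Boosting model.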

Detailed Protocol: Breast Tumor Detection

This protocol adapts the core principle for medical image analysis [15].

- Classical Feature Preprocessing: Start with 224x224 ultrasound images (e.g., from Breast MNIST). Extract initial classical features using standard techniques like Fast Fourier Transform (FFT) and Gabor filters [15].

- Hybrid Feature Encoding: Encode a subset of the most salient classical features or their representations into the coupling parameters of the quantum Hamiltonian.

- Quantum Feature Generation: Follow the same core workflow of Hamiltonian evolution and measurement as in the molecular protocol to generate a set of quantum features.

- Feature Fusion & Selection: Combine the newly generated quantum features with the original classical feature set. Use SHAP analysis to select the most informative features from this combined pool for the final model [15].

- Model Benchmarking: Train a classical Support Vector Classifier (SVC) on the SHAP-selected features. Benchmark its performance against established deep learning baselines (e.g., ResNet-18, ResNet-50, Google AutoML Vision) [15].

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: What is the fundamental advantage of using untrained quantum dynamics over a trained Variational Quantum Circuit (VQC) for feature generation?

A1: The key advantage is resource optimization. Trained VQCs face challenges like barren plateaus and require extensive, noisy parameter optimization cycles, which consume significant quantum resources [16] [17]. Untrained dynamics bypass this by using a fixed, physically motivated evolution (counterdiabatic driving) to generate complex features. This makes the process faster and avoids the classical optimization overhead, which is critical in the NISQ era [15] [17].

Q2: Our extracted quantum features sometimes show poor performance. How can we diagnose if the issue is with the encoding or the dynamics?

A2: Follow this diagnostic workflow:

- Verify Encoding: Check if the classical data's correlation structure is correctly mapped to the Hamiltonian couplings. Simplify your data and use a known, verifiable input to test the encoding step in isolation.

- Benchmark Dynamics: Run your encoding protocol with a trivial (e.g., very slow) dynamics evolution. The output features should be predictable. Then, introduce the counterdiabatic drive and observe the change.

- Circuit Depth Check: Check whether the runtime of your digitized dynamics circuit, which grows with its depth, exceeds the coherence time of the hardware. Use simulator results with and without noise models to isolate hardware errors [18] [17].

Q3: How do we effectively combine quantum features with classical features without causing overfitting?

A3: Feature selection is crucial. Use model-agnostic tools like SHAP (SHapley Additive exPlanations) analysis to identify which features—classical, quantum, or a combination—contribute most to the model's predictions. As demonstrated in the research, a model using SHAP-selected hybrid features can outperform models using either set alone or established deep learning baselines [15].

Q4: What are the most critical hardware limitations we should consider when designing our experiments?

A4: The primary constraints are:

- Coherence Time: Limits the depth of the quantum circuit you can reliably run [17].

- Qubit Connectivity: Affects the fidelity of simulating complex correlation terms (e.g., three-body interactions J_ijk) [15].

- Readout Fidelity: Directly impacts the accuracy of the extracted local magnetizations and correlations [18].

Always design your experiment within the "volumetric" bounds (qubit count × circuit depth) your hardware can support.

Troubleshooting Common Problems

| Problem | Symptoms | Potential Causes & Solutions |

|---|---|---|

| Low Feature Variance | Extracted features from different data samples are nearly identical. | - Encoding Mismatch: The data's information is not being mapped effectively to the Hamiltonian. Review and adjust the encoding strategy. - Insufficient Dynamics: The counterdiabatic evolution might be too weak or short. Adjust the protocol's impulse strength or duration. |

| High Results Variance | Significant fluctuation in extracted features between runs on the same data. | - Insufficient Measurement Shots: Statistical noise is dominating. Increase the number of measurement shots per circuit execution [17]. - Hardware Noise: Circuit depth may be pushing hardware limits. Test on a simulator with a realistic noise model to confirm. Simplify the circuit if necessary [18]. |

| Circuit Execution Failures | Quantum processor returns errors or fails to execute. | - Circuit Too Deep: Decompose the circuit into native gates and check its depth against hardware limits. Optimize or simplify the design [18]. - Unsupported Gates: Ensure all gates in your digitized dynamics are part of the hardware's native gate set. |

Research Reagent Solutions & Materials

The following table details the key "research reagents"—the essential materials, algorithms, and software—required to implement quantum feature extraction.

| Item / Solution | Function / Explanation | Example / Specification |

|---|---|---|

| Quantum Hardware | Executes the digitized counterdiabatic dynamics. Requires sufficient qubits and connectivity. | IBM Heron r2 156-qubit processor (ibm_kingston) [15]. |

| Spin-Glass Hamiltonian | The core "substrate" that encodes the data. Its couplings (J_ij, J_ijk) hold the statistical structure of the input data [15]. | Parameterized Hamiltonian with 2-body and 3-body interaction terms. |

| Counterdiabatic Driving Protocol | A rapid, controlled evolution that generates complex features from the encoded data without traditional training [15]. | Digitized quantum dynamics in the "impulse" regime. |

| Classical ML Model | Consumes the extracted quantum features to perform final classification or regression tasks. | Gradient Boosting Classifier, Support Vector Classifier (SVC) [15]. |

| Feature Analysis Tool (SHAP) | Identifies and validates the importance of quantum features, enabling effective hybrid model building [15]. | SHapley Additive exPlanations (SHAP) library. |

Performance Data & Benchmarking

The efficacy of this method is demonstrated by quantitative results from real-world applications.

Table 1: Performance Improvement with Quantum Features

| Task | Model & Feature Set | Key Performance Metric | Performance with Classical Features Only | Performance with Hybrid (Classical + Quantum) Features |

|---|---|---|---|---|

| Molecular Toxicity Classification [15] | Gradient Boosting | Precision | Baseline (X) | 121% Increase |

| Breast Tumor Detection [15] | SVC (SHAP-selected) | AUC (Area Under Curve) | 0.887 | 0.937 |

| Breast Tumor Detection [15] | SVC (SHAP-selected) | Accuracy | 0.830 | 0.876 |

Experimental Setup & Hardware Specifications

The following table summarizes the key parameters from the cited experiments, which can serve as a reference for your own resource planning.

| Experimental Parameter | Molecular Toxicity Task | Breast Tumor Detection Task |

|---|---|---|

| Quantum Processor | IBM Heron r2 (ibm_kingston) [15] | IBM Heron r2 (ibm_kingston) [15] |

| Qubit Usage / Topology | Circuits with 2- and 3-body interactions [15] | Information Not Explicitly Stated |

| Feature Vector Size | 156 features [15] | 156 features (post SHAP selection) [15] |

| Classical Model | Gradient Boosting [15] | Support Vector Classifier (SVC) [15] |

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: In what scenarios does QRC with neutral atoms provide the most significant advantage over classical machine learning? Quantum Reservoir Computing (QRC) demonstrates its most significant advantages when working with small, expensive-to-obtain datasets, particularly those with only 100-200 training records [19]. This is common in early-stage pharmaceutical development and rare-disease research. The performance advantage typically disappears with larger datasets (e.g., 800+ records), where classical methods perform equally well [19].

Q2: What type of hardware noise is QRC most sensitive to? While QRC is generally tolerant to many hardware imperfections found in neutral-atom systems, it is most sensitive to sampling noise [19]. This refers to the statistical uncertainty that arises from making a finite number of measurements on the quantum system to estimate its state.

Q3: Can I use QRC for molecular property prediction tasks? Yes. Research has successfully applied QRC using simulated neutral-atom arrays to predict molecular properties from datasets like the Merck Molecular Activity Challenge [19]. The quantum system transforms molecular descriptors into higher-dimensional features that often improve prediction accuracy for small datasets.

Q4: How does QRC performance compare to a classical reservoir computer? Evidence suggests that QRC often outperforms its classical reservoir computing counterpart [19]. This performance gap hints that quantum correlations and entanglement within the neutral-atom system contribute significantly to the enhanced data transformation capabilities.

Troubleshooting Common Experimental Issues

Issue 1: Poor Model Performance or Low Prediction Accuracy

| Possible Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|

| Excessive Sampling Noise | Check the variance in output features across multiple measurement rounds. | Increase the number of measurements (shots) on the quantum system to reduce statistical uncertainty [19]. |

| Insufficient Dataset Size | Evaluate performance against a baseline classical model (e.g., Random Forest). | Leverage QRC specifically for small-data scenarios (N<200). For larger data, classical methods may be more efficient [19]. |

| Suboptimal Feature Selection | Use tools like SHAP to analyze the importance of input molecular descriptors [19]. | Re-run the feature selection process to ensure the most relevant 15-20 molecular descriptors are used as input for the QRC [19]. |
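The sampling-noise row above can be made quantitative with standard binomial statistics. The sketch below treats each measured observable as the mean of independent ±1 outcomes, which is a general property of projective measurements and is not a result taken from [19]. The function names are illustrative.

```python
import math

def z_standard_error(expectation, shots):
    """Standard error of a +/-1 observable's mean estimated from `shots` samples."""
    return math.sqrt((1.0 - expectation ** 2) / shots)

def shots_for_error(expectation, target_error):
    """Shots needed so the standard error drops to `target_error` or below."""
    return math.ceil((1.0 - expectation ** 2) / target_error ** 2)

# Worst case is an expectation value near zero, where the variance is largest.
for shots in (100, 1_000, 10_000):
    print(shots, round(z_standard_error(0.0, shots), 4))
```

Because the error shrinks only as one over the square root of the shot count, halving the noise on a feature costs four times as many measurements, which is worth weighing against simply using fewer, better-estimated features.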

Issue 2: Challenges in System Calibration and Operation

| Possible Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|

| Hardware Imperfections | Characterize qubit coherence times and gate fidelities using standard benchmarking. | Calibrate laser systems for trapping and manipulation; ensure stable control electronics [20]. |

| Complex Control Workflows | Audit the time and expertise required to run a basic qubit characterization experiment. | Utilize specialized quantum control hardware (e.g., Quantum Orchestration Platforms) to simplify and accelerate experimental sequences [20]. |

Experimental Protocols and Data

QRC for Molecular Property Prediction: A Detailed Workflow

This protocol is adapted from a study published in the Journal of Chemical Information and Modeling that utilized simulated neutral-atom arrays [19].

1. Data Preprocessing and Feature Selection

- Input: Acquire a dataset linking molecular descriptors to a target property (e.g., biological activity). The Merck Molecular Activity Challenge is a standard benchmark [19].

- Feature Selection: Employ SHAP (Shapley Additive Explanations) to identify and select the top 18 most relevant molecular descriptors for the prediction task. This reduces input dimensionality [19].

2. Quantum Reservoir Computing Phase

- Encoding: Map the selected 18 molecular descriptors into the parameters of a simulated neutral-atom quantum system. This system acts as the "reservoir" [19].

- Evolution: Let the quantum system evolve freely according to its natural dynamics. The input data nonlinearly transforms through this complex evolution.

- Measurement: Measure simple local observables (e.g., local magnetizations) from the evolved quantum state. These measurements form a new, rich set of features (embeddings) for the classical model [19].

3. Classical Machine Learning Phase

- Model Training: Use the new QRC-generated features to train a classical machine learning model, such as a Random Forest classifier.

- Prediction & Validation: The trained classical model makes the final property predictions. Validate performance on a held-out test set and compare against a purely classical workflow [19].

Quantitative Performance Data

The table below summarizes key performance findings from the QRC study on molecular datasets [19].

| Metric | Finding | Experimental Context |

|---|---|---|

| Performance at Small Data Size | QRC often matched or outperformed classical ML. | Consistent results were observed at a training size of 100 records. |

| Performance at Large Data Size | QRC advantage disappeared; performance was similar to classical ML. | Observations were made at a training size of 800 records. |

| Data Clustering | QRC features showed clearer cluster separation in low-dimensional projections (UMAP). | This was compared to clusters formed from the original molecular descriptors. |

| Robustness to Noise | Performance was fairly tolerant to hardware noise but sensitive to sampling noise. | The study was conducted using simulations with realistic noise models. |

The Scientist's Toolkit

Key Research Reagent Solutions

The following table details essential components for implementing a neutral-atom QRC research program.

| Item | Function in Experiment |

|---|---|

| Neutral-Atom Quantum Processor | The core physical platform. It uses optical traps (tweezers or lattices) to hold individual atoms (e.g., rubidium, strontium) that serve as qubits [21] [22]. |

| Quantum Control System | Dedicated hardware (e.g., Quantum Orchestration Platforms) to generate precise, synchronized laser pulses for qubit initialization, manipulation, and readout [20]. |

| High-NA Objective Lens | A critical optical component for tightly focusing laser beams to create optical tweezers that trap individual atoms with high fidelity [21]. |

| Rydberg Excitation Lasers | Lasers tuned to excite atoms from their ground state to a high-energy Rydberg state, which enables strong, long-range interactions between qubits for quantum operations [21]. |

| Molecular Activity Dataset | A curated dataset, such as from the Merck Molecular Activity Challenge, which provides molecular descriptors and associated biological activity values for model training and validation [19]. |

| Classical ML Software Stack | Standard machine learning libraries (e.g., scikit-learn) for implementing the final-stage Random Forest or other classical models that use the QRC-generated features [19]. |

Workflow and System Diagrams

[Diagram: QRC Experimental Workflow]

[Diagram: Neutral-Atom Qubit Control Logic]

Troubleshooting Guides

Data Preprocessing and Feature Selection

Problem: High-Dimensional Classical Data Causing Computational Bottlenecks

- Symptoms: Long processing times for embedding generation; memory errors during quantum circuit simulation.

- Solutions:

- Implement Feature Selection: Use classical methods like SHAP (SHapley Additive exPlanations) to identify and retain only the top molecular descriptors (e.g., the top 18 features as used in the Merck Molecular Activity Challenge) without significant performance loss [23].

- Dimensionality Reduction: Apply Principal Component Analysis (PCA) to reduce feature space while preserving variance before feeding data into the quantum reservoir [24].

- Data Subsampling: For initial pipeline testing and hyperparameter tuning, use clustering-based sampling to create smaller, representative subsamples (e.g., 100, 200 records) [23].
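The feature selection step can be sketched without any dependencies once per-sample SHAP values are available. The example below assumes those values have already been computed elsewhere (for instance by a SHAP library run on a baseline model). The descriptor names, numbers, and function names are hypothetical.

```python
from typing import List, Sequence


def mean_abs_importance(shap_values: Sequence[Sequence[float]]) -> List[float]:
    """Average |SHAP value| per feature over all samples (rows are samples)."""
    n_samples = len(shap_values)
    n_features = len(shap_values[0])
    return [
        sum(abs(row[j]) for row in shap_values) / n_samples
        for j in range(n_features)
    ]


def select_top_k(feature_names: Sequence[str],
                 shap_values: Sequence[Sequence[float]],
                 k: int) -> List[str]:
    """Return the k feature names with the largest mean |SHAP| value."""
    importance = mean_abs_importance(shap_values)
    ranked = sorted(range(len(feature_names)), key=lambda j: importance[j], reverse=True)
    return [feature_names[j] for j in ranked[:k]]


# Hypothetical SHAP values for 3 molecules and 4 descriptors.
names = ["D_1", "D_2", "D_3", "D_4"]
values = [
    [0.10, -0.80, 0.05, 0.30],
    [-0.20, 0.60, 0.00, -0.40],
    [0.15, -0.70, 0.10, 0.35],
]
top2 = select_top_k(names, values, k=2)
print(top2)
```

In the workflow described here, `k` would be set to 18 and the retained columns passed on to the reservoir encoding step.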

Problem: Inconsistent Molecular Descriptor Formats

- Symptoms: Scripts fail to parse input files; errors in data loading and normalization.

- Solutions:

- Standardize Input: Ensure all molecular descriptor data is in a consistent, machine-readable format (e.g., CSV). The pipeline for the Merck dataset expects files named as `ACT{number}_competition_training.csv` [24].

- Validate Data Cleaning: Run the data preparation script (`qrc-dataprep.py`) to automate data cleaning, outlier detection, and feature scaling, ensuring a uniform input for the quantum reservoir [24].
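A quick filename check can catch format problems before any data is loaded. The sketch below validates the `ACT{number}_competition_training.csv` naming pattern mentioned above. The helper name `parse_activity_id` is hypothetical and is not part of the referenced pipeline.

```python
import re
from typing import Optional

# Naming convention quoted in the text: ACT{number}_competition_training.csv
_FILENAME_RE = re.compile(r"^ACT(\d+)_competition_training\.csv$")


def parse_activity_id(filename: str) -> Optional[int]:
    """Return the dataset number from a training filename, or None if it does not match."""
    match = _FILENAME_RE.match(filename)
    return int(match.group(1)) if match else None


print(parse_activity_id("ACT4_competition_training.csv"))
print(parse_activity_id("act4_training.csv"))
```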

Quantum Reservoir Computing (QRC) Embedding Generation

Problem: Poor Model Performance with QRC Embeddings

- Symptoms: Classical models trained on QRC embeddings show high mean-squared error (MSE) compared to those trained on raw features.

- Solutions:

- Check Reservoir Dynamics: Verify the parameters of the quantum reservoir, such as the Rydberg Hamiltonian used in neutral atom arrays. The entangled quantum dynamics are crucial for creating informative embeddings [23].

- Adjust Embedding Type: Explore different types of observables. The pipeline can generate both "one-body" and "two-body" embeddings (quantum observables). Two-body embeddings may capture more complex interactions but are computationally more intensive [24].

- Analyze Embedding Structure: Use the Uniform Manifold Approximation and Projection (UMAP) technique to project high-dimensional QRC embeddings into 2D/3D space. This helps visualize whether the quantum dynamics introduce more interpretable structure to the data, a reported advantage of QRC [23].
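The difference between one-body and two-body embeddings can be made concrete with measured bitstrings. This is a minimal sketch, assuming each shot is a list of 0/1 outcomes per atom (1 meaning the atom was found in the Rydberg state) and that features are plain shot averages. The function names are illustrative, and the referenced pipeline may define its observables differently.

```python
from itertools import combinations
from typing import List, Sequence


def one_body(shots: Sequence[Sequence[int]]) -> List[float]:
    """Average occupation <n_i> of each atom over all shots."""
    n_atoms = len(shots[0])
    return [sum(s[i] for s in shots) / len(shots) for i in range(n_atoms)]


def two_body(shots: Sequence[Sequence[int]]) -> List[float]:
    """Average pair correlator <n_i n_j> for every pair i < j."""
    n_atoms = len(shots[0])
    return [
        sum(s[i] * s[j] for s in shots) / len(shots)
        for i, j in combinations(range(n_atoms), 2)
    ]


def embedding(shots: Sequence[Sequence[int]], include_two_body: bool = True) -> List[float]:
    """Concatenate one-body and (optionally) two-body features."""
    feats = one_body(shots)
    if include_two_body:
        feats += two_body(shots)
    return feats


# Four hypothetical shots on a 3-atom register.
shots = [[1, 0, 1], [0, 0, 1], [1, 0, 0], [1, 1, 1]]
print(embedding(shots, include_two_body=False))
print(embedding(shots))
```

For N atoms the one-body embedding has N entries while the two-body part adds N(N-1)/2 more, which is one reason the two-body variant costs more to compute and store.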

Problem: Quantum Simulation is Too Slow or Resource-Intensive

- Symptoms: The script `qrc_regression_merck.jl` takes excessively long to generate embeddings.

- Solutions:

- Reduce Feature Count: The computational complexity of the QRC simulation is highly sensitive to the number of input features (`nfeats`). Revisit the feature selection step to reduce this number [24].

- Leverage Classical Reservoir Computing (CRC): As a benchmark or alternative, generate CRC embeddings using `crc_randforest_embeddingonly.jl`, which simulates the spin vector limit of the Rydberg Hamiltonian and is computationally less demanding [24].

- Optimize Computational Resources: Ensure the job is scheduled on a high-performance computing (HPC) cluster using a workload manager like SLURM, as recommended in computational resource workshops [25].
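A rough memory estimate shows why the feature count matters so much. The sketch below assumes one atom per input feature and a dense state-vector simulation with 16-byte complex amplitudes. Both are assumptions made for illustration. The referenced scripts may use a different encoding or a reduced basis, and the helper name is hypothetical.

```python
def statevector_bytes(n_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Memory for a dense state vector: 2**n amplitudes at 16 bytes each (complex128)."""
    return (2 ** n_qubits) * bytes_per_amplitude


for n in (8, 12, 18, 24):
    mib = statevector_bytes(n) / 2 ** 20
    print(f"{n} qubits: {2 ** n} amplitudes, {mib:.3f} MiB")
```

Each additional feature doubles the memory under these assumptions, and operator-based simulation methods typically scale worse than the state vector alone.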

Model Training and Evaluation

Problem: Large Variance in Model Performance Across Data Subsamples

- Symptoms: Regression metrics (e.g., MSE) fluctuate significantly when models are trained on different random subsamples of the dataset.

- Solutions:

- Increase Cross-Validation: Use the provided pipeline to run 25 subsamples of 100 records for robust cross-validation, which helps in reliably estimating model performance and variance [24].

- Check for Dataset Bias: Perform the subsampling analysis on multiple different molecular activity datasets (e.g., MMACD 4 and MMACD 14) to ensure the robustness of the QRC approach is not dataset-specific [23].

- Ensemble Models: Utilize ensemble regression algorithms like Random Forest Regressor, which was reported to perform consistently well across both classical and QRC-embedded features [23].
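Reporting both the mean and the spread of the error across subsamples makes performance variance explicit. The sketch below is a dependency-free illustration. The five MSE scores are invented for the example and do not come from the cited study.

```python
import statistics
from typing import Sequence, Tuple


def mse(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Mean squared error between targets and predictions."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def summarize(scores: Sequence[float]) -> Tuple[float, float]:
    """Mean and sample standard deviation of per-subsample scores."""
    return statistics.mean(scores), statistics.stdev(scores)


# Hypothetical MSE values from five subsamples of the same size.
subsample_mse = [0.42, 0.38, 0.45, 0.40, 0.35]
mean_mse, std_mse = summarize(subsample_mse)
print(f"MSE = {mean_mse:.3f} +/- {std_mse:.3f}")
```

Applying the same summary to raw-feature, QRC, and CRC runs allows the variability of each approach to be compared alongside its average error.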

Frequently Asked Questions (FAQs)

Q1: What are the key advantages of using Quantum Reservoir Computing over Variational Quantum Algorithms for molecular property prediction?

A1: QRC offers two primary advantages:

- Mitigation of Barren Plateaus: Unlike variational quantum models that require gradient estimation on quantum hardware—a process susceptible to vanishing gradients (barren plateaus) due to noise and entanglement—QRC offloads all training to classical post-processing. This leads to a more trainable and robust model [23].

- Performance on Small Datasets: QRC performance has been shown to degrade more slowly than that of classical models as the size of the training dataset decreases. This is particularly valuable for pharmaceutical research, where high-quality experimental data can be limited and costly to produce [23].

Q2: My background is in classical machine learning for drug discovery. What are the essential components I need to set up a QRC pipeline?

A2: You will need to configure the following core components, often available in open-source implementations [24]:

- Classical Preprocessing Layer: For data cleaning, feature scaling, and dimensionality reduction (e.g., using Scikit-learn).

- Quantum Reservoir Layer: A simulator (or quantum hardware) that evolves the classical input data using parameterized quantum dynamics, such as a Rydberg Hamiltonian on neutral atom arrays.

- Readout Layer: A classical machine learning model (e.g., Random Forest, Linear Regression) that is trained on the measured observables from the quantum reservoir to make the final prediction.

Q3: How is the "quantum embedding" different from the classical molecular descriptors I start with?

A3: Classical molecular descriptors (e.g., physiological properties, molecular fingerprints) are hand-crafted features representing the molecule's structure [23]. A quantum embedding is a high-dimensional representation created by processing these classical descriptors through the complex, entangled dynamics of a quantum system (the reservoir). This process can uncover non-linear relationships and patterns in the data that are not easily accessible to classical methods, potentially leading to more interpretable and powerful features for prediction [23].

Q4: What does the typical computational workflow look like, from raw data to a trained model?

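A4: The end-to-end workflow proceeds in three stages: data preparation (cleaning, SHAP-based feature selection, and sub-sampling), embedding generation (QRC, with CRC as a comparison), and classical model training and evaluation. The protocol below details each stage.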

Experimental Protocols & Data

Detailed Methodology: QRC for Molecular Activity Prediction

This protocol is adapted from the study applying QRC to the Merck Molecular Activity Challenge dataset [23] [24].

Data Preparation:

- Dataset: Obtain the Merck Molecular Activity Challenge (MMACD) dataset. Use the provided Python script (`qrc-dataprep.py`) to load the data (e.g., `ACT4_competition_training.csv`).

- Cleaning & Imputation: Handle missing values using a data standardization procedure. Detect and process outliers.

- Exploratory Analysis: Conduct initial analysis to understand data distribution and salient features.

- Feature Selection: Use the SHAP method on a baseline classical model (e.g., Random Forest) to select the top `k` most important molecular descriptors (e.g., `k=18`). This step reduces the problem dimensionality for the quantum simulator.

- Subsampling: Use clustering-based sampling to create multiple stratified subsamples of sizes 100, 200, and 800 records for robust evaluation.

Embedding Generation:

- Quantum Reservoir Computing (QRC):

- Run the Julia script `qrc_regression_merck.jl`.

- The script encodes the selected classical features into a quantum state via detuning layers.

- It simulates the evolution of this state under a Rydberg Hamiltonian.

- Finally, it extracts quantum observables (e.g., one-body and two-body correlation functions) to form the final QRC embeddings.

- Classical Reservoir Computing (CRC) (For Comparison):

- Run the Julia script `crc_randforest_embeddingonly.jl`.

- This simulates the classical vector-spin limit of the same Rydberg Hamiltonian to generate CRC embeddings.
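For reference, the Rydberg Hamiltonian commonly used to describe neutral-atom arrays can be written as follows. This is the standard textbook form with ħ = 1. How the cited pipeline scales molecular descriptors into the site-dependent detunings Δ_j is not specified in this article, so that part should be read as an assumption.

```latex
H(t) = \sum_{j} \frac{\Omega(t)}{2}
       \left( |g_j\rangle\langle r_j| + |r_j\rangle\langle g_j| \right)
     - \sum_{j} \Delta_j(t)\, n_j
     + \sum_{j<k} \frac{C_6}{|\mathbf{r}_j - \mathbf{r}_k|^{6}}\, n_j n_k ,
\qquad n_j = |r_j\rangle\langle r_j| .
```

Here Ω is the Rabi frequency of the drive, Δ_j is the detuning on atom j (the natural place to encode input features), and the last term is the van der Waals interaction between atoms that are both in the Rydberg state.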

Model Training & Evaluation:

- Inputs: Train a suite of classical regression models (e.g., Random Forest, SVM, Linear Regression) on three different data types: a) raw classical features, b) QRC embeddings, and c) CRC embeddings.

- Evaluation: Use the Python script `qrc_runalgos_alltypes.py` to train models and evaluate their performance on a held-out test set. The primary metric is Mean Squared Error (MSE).

- Analysis: Use UMAP to project the high-dimensional embeddings (both classical and quantum) into 2D space to visually compare the structure and separability of the data representations.

Key Research Reagent Solutions

The following table details the essential computational "reagents" required to implement the QRC workflow for molecular property prediction.

Table 1: Essential Research Reagents for the QRC Workflow

| Item Name | Function / Definition | Example / Note |

|---|---|---|

| Molecular Descriptors | Numerical representations of molecular structures and properties used as input features [23]. | Physiological properties, biochemical properties, or molecular fingerprints from the Merck Molecular Activity Challenge [23]. |

| Quantum Reservoir | A fixed, complex quantum system that processes input data through its natural dynamics to create a rich feature set [23]. | A simulated system of neutral atoms evolved under a Rydberg Hamiltonian, which generates entanglement [23]. |

| Rydberg Hamiltonian | The governing equation for the quantum reservoir dynamics, describing atom interactions in the Rydberg state [23]. | Key for creating the entangled quantum dynamics that provide the computational power in neutral-atom-based QRC [23]. |

| SHAP (SHapley Additive exPlanations) | A method from cooperative game theory used to explain the output of machine learning models and select the most important input features [23]. | Used to reduce the number of molecular descriptors to a manageable size (e.g., 18) for the quantum reservoir without significant performance loss [23]. |

| UMAP (Uniform Manifold Approximation and Projection) | A dimensionality reduction technique for visualizing high-dimensional data in lower dimensions [23]. | Used to project and analyze the structure of QRC embeddings, often revealing more interpretable clusters compared to classical features [23]. |

The table below summarizes key quantitative findings from the referenced QRC study, providing benchmarks for expected performance [23].

Table 2: Key Performance Findings from QRC Molecular Prediction Study

| Metric / Observation | Details | Implication |

|---|---|---|

| Robustness on Small Data | QRC models showed slower performance decay compared to standard classical models as training dataset size decreased. | QRC is a promising approach for pharmaceutical datasets which are often of limited size [23]. |

| Feature Dimension Reduction | Using only the top 18 molecular descriptors (via SHAP) resulted in a performance difference of less than 1% compared to using all predictors for the MMACD4 dataset. | Justifies aggressive feature selection to make quantum simulation computationally feasible without major accuracy loss [23]. |

| Model Performance | The Random Forest Regressor consistently performed the best across different sample sizes and embedding types (classical vs. QRC). | Recommends Random Forest as a strong baseline and primary model for benchmarking in this pipeline [23]. |

| Interpretability | UMAP analysis showed that quantum reservoir embeddings appeared to be more interpretable in lower dimensions than classical features. | Suggests QRC not only aids in prediction but may also provide more insightful data representations [23]. |

From Theory to Pipeline: Implementing QRC for Molecular Prediction

Frequently Asked Questions (FAQs)

Q1: Why is traditional data preprocessing often unsuitable for quantum machine learning (QML) models?

Classical preprocessing methods often fail for QML due to fundamental constraints of quantum hardware. Unlike classical models that can handle hundreds of features, QML faces a qubit bottleneck, where each feature typically maps to one or more qubits. Current Noisy Intermediate-Scale Quantum (NISQ) devices limit practical implementations to between 4 and 8 features. Furthermore, data scaling for classical models (e.g., using StandardScaler) produces outputs that cannot be directly encoded into quantum states. Quantum circuits require features to be scaled to a specific range, such as [0, 2π] for angle encoding, to function properly with rotation gates [26].

Q2: What is the primary advantage of using Quantum Reservoir Computing (QRC) for small-data scenarios in drug discovery?

QRC demonstrates more robust performance as dataset size decreases, a critical quality for pharmaceutical research involving rare diseases or early-stage clinical trials where samples are limited. In proof-of-concept studies, QRC outperformed classical models on small subsets of 100-200 samples, delivering higher predictive accuracy and significantly lower prediction variability. This advantage diminishes with larger datasets (≥800 samples), highlighting QRC's core strength in low-data regimes [27] [23].

Q3: How do I choose between PCA and LDA for dimensionality reduction before quantum encoding?

The choice depends on whether your data is labeled. Principal Component Analysis (PCA) is an unsupervised linear transformation technique that finds orthogonal axes of maximum variance without considering class labels. In contrast, Linear Discriminant Analysis (LDA) is a supervised method that explicitly uses class labels to find a feature subspace that optimizes class separability. Studies have shown that using LDA during the preprocessing step can lead to better classical encoding and performance for quantum classifiers like Variational Quantum Algorithms (VQA) [28].

Q4: What is a practical workflow for creating representative sub-samples from a larger dataset?

A robust, clustering-based sub-sampling workflow ensures that small datasets preserve the underlying distribution of the original, larger dataset [27] [23]:

- Data Preparation and Exploratory Analysis: Clean the data and handle missing values.

- Cluster Assignment: Assign data points to clusters. This step ensures the subsample is representative of the entire data distribution.

- Sub-sample Creation: From the clustered data, create random subsamples of the desired sizes (e.g., 100, 200, and 800 records). The clustering step prevents biased sampling.
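The sub-sample creation step can be sketched as proportional sampling from each cluster. The example assumes cluster labels have already been assigned (for instance by k-means) and uses largest-remainder rounding so that the quotas add up exactly. The function name and the cluster sizes are illustrative and are not taken from the cited study.

```python
import random
from collections import defaultdict
from typing import Dict, List, Sequence


def cluster_subsample(labels: Sequence[int], size: int, seed: int = 0) -> List[int]:
    """Pick `size` record indices so each cluster keeps roughly its original share."""
    if size > len(labels):
        raise ValueError("size cannot exceed the number of records")
    rng = random.Random(seed)
    by_cluster: Dict[int, List[int]] = defaultdict(list)
    for idx, lab in enumerate(labels):
        by_cluster[lab].append(idx)

    # Largest-remainder allocation: floor each share, then hand out the leftovers.
    exact = {c: size * len(m) / len(labels) for c, m in by_cluster.items()}
    quota = {c: int(e) for c, e in exact.items()}
    leftover = size - sum(quota.values())
    for c in sorted(exact, key=lambda c: exact[c] - quota[c], reverse=True)[:leftover]:
        quota[c] += 1

    chosen: List[int] = []
    for c, members in by_cluster.items():
        chosen.extend(rng.sample(members, quota[c]))
    return sorted(chosen)


# Hypothetical dataset of 1000 records in three clusters (60% / 30% / 10%).
labels = [0] * 600 + [1] * 300 + [2] * 100
picked = cluster_subsample(labels, size=100)
print(len(picked), [sum(1 for i in picked if labels[i] == c) for c in (0, 1, 2)])
```

Changing `seed` yields a different subsample with the same cluster proportions, which is useful when many repeated subsamples of one size are needed.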

The diagram below illustrates this sub-sampling and QRC workflow.

Troubleshooting Guides

Problem: Quantum model performance is poor after preprocessing with PCA.

- Potential Cause: PCA constructs components that maximize variance, which may not coincide with the directions that matter for class separation in the quantum model.

- Solution:

- Try LDA: If your data is labeled, use LDA for preprocessing, as it optimizes for class separability, which can be more beneficial for the subsequent quantum classifier [28].

- Use Feature Importance: Employ tree-based models (Random Forest, XGBoost) to rank features by predictive power and select the top N features for quantum encoding [26].

- Validate with Statistical Selection: Use `SelectKBest` with statistical tests like the ANOVA F-test to identify features with the strongest relationships to the target variable [26].
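To show what an ANOVA F-test ranks, the sketch below computes the one-way F-statistic for each feature by hand and orders features by it. It is a dependency-free illustration for classification labels, not a replacement for a library implementation. The function names and the toy data are hypothetical.

```python
from collections import defaultdict
from typing import Hashable, List, Sequence


def anova_f(values: Sequence[float], labels: Sequence[Hashable]) -> float:
    """One-way ANOVA F: between-class variance divided by within-class variance."""
    groups = defaultdict(list)
    for v, lab in zip(values, labels):
        groups[lab].append(v)
    n_total = len(values)
    grand_mean = sum(values) / n_total
    between = sum(len(g) * (sum(g) / len(g) - grand_mean) ** 2 for g in groups.values())
    within = sum((v - sum(g) / len(g)) ** 2 for g in groups.values() for v in g)
    if within == 0:
        return float("inf")  # classes are perfectly separated on this feature
    return (between / (len(groups) - 1)) / (within / (n_total - len(groups)))


def rank_features(columns: Sequence[Sequence[float]], labels: Sequence[Hashable]) -> List[int]:
    """Feature indices ordered from highest to lowest F-statistic."""
    scores = [anova_f(col, labels) for col in columns]
    return sorted(range(len(columns)), key=lambda j: scores[j], reverse=True)


# Two toy features for six samples in two classes.
classes = ["a", "a", "a", "b", "b", "b"]
features = [
    [1, 2, 3, 4, 5, 6],  # class means differ strongly
    [1, 5, 3, 2, 4, 3],  # class means are identical
]
print([anova_f(col, classes) for col in features])
print(rank_features(features, classes))
```

A feature whose class means differ strongly relative to its within-class scatter receives a high F-value, which is the ordering used to keep the top features.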

Problem: Training a quantum model is slow, and simulation requires excessive memory.

- Potential Cause: The number of features (qubits) is too high, leading to exponential scaling of the quantum state space.

- Solution:

- Aggressively Reduce Features: Limit the number of features to a strict maximum (e.g., 4-8) using the methods above. With one feature per qubit, a 20-feature dataset requires 20 qubits, whose state vector has 2^20 (over one million) complex amplitudes. That count doubles with each additional qubit, which makes simulation increasingly costly [26].

- Check Feature Scaling: Ensure features are scaled to the [0, 2π] range for angle encoding. Incorrect scaling can lead to inefficient use of the quantum state space and longer convergence times. Use `MinMaxScaler(feature_range=(0, 2 * np.pi))` for this purpose [26].
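The scaling step amounts to a per-feature min-max transform. The sketch below is a dependency-free version that maps one feature column onto [0, 2π]. The function name is hypothetical, and mapping a constant column to the lower bound is a choice made for this illustration.

```python
import math
from typing import List, Sequence


def scale_to_angle(column: Sequence[float],
                   low: float = 0.0,
                   high: float = 2 * math.pi) -> List[float]:
    """Min-max scale one feature column into [low, high] for angle encoding."""
    lo, hi = min(column), max(column)
    if hi == lo:
        # A constant feature carries no information; map it to the lower bound.
        return [low for _ in column]
    return [low + (x - lo) * (high - low) / (hi - lo) for x in column]


angles = scale_to_angle([1.0, 2.0, 3.0])
print([round(a, 4) for a in angles])
```

Because 0 and 2π describe the same rotation up to a global phase, the smallest and largest feature values become indistinguishable after encoding with a single rotation gate. Passing a narrower range such as `high=math.pi` avoids this, although that choice goes beyond what the cited source specifies.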

Experimental Protocols & Data

Protocol: Quantum Reservoir Computing (QRC) for Molecular Property Prediction

This protocol is based on a consortium study involving Merck, Amgen, and Deloitte [27] [23].

- Data Source: Utilize a molecular dataset, such as those from the Merck Molecular Activity Challenge (MMACD), which contains biological activity prediction problems.

- Feature Selection:

- Perform initial modeling on the full dataset to select a baseline classical model (e.g., Random Forest Regressor).

- Use the SHAP (SHapley Additive exPlanations) method on the best-performing model to select the top N most important features (e.g., 18 features) to reduce dimensionality for the quantum circuit.

- Sub-sampling: Apply the clustering-based sub-sampling method to create smaller, representative datasets of sizes 100, 200, and 800 records.

- Quantum Embedding:

- Encode the classical molecular features (e.g., the top 18 features) into control parameters of a neutral-atom quantum system.

- Let the system evolve under Rydberg interactions. The measurements from this evolution will produce high-dimensional "quantum embeddings."

- Crucially, only the classical readout layer is trained; the quantum reservoir itself is fixed and requires no training.

- Classical Modeling & Analysis:

- Feed the raw classical features and the new QRC embeddings into the same classical machine learning model (e.g., Random Forest).

- Compare the performance (e.g., Mean Squared Error) across different dataset sizes and feature types.

Quantitative Performance Comparison

The table below summarizes the typical performance of QRC versus classical models on different dataset sizes, as observed in the case study [27].

| Dataset Size | Classical Model Performance | QRC Model Performance | Key Observation |

|---|---|---|---|

| 100-200 samples | Lower predictive accuracy, higher prediction variability | Higher predictive accuracy, significantly lower variability | QRC demonstrates superior robustness in small-data regimes. |

| ≥800 samples | Performance improves, matching or nearing QRC | Good performance, but advantage over classical methods diminishes | Classical methods catch up as data becomes more abundant. |

Research Reagent Solutions

The table below lists key computational and hardware "reagents" essential for experiments in quantum reservoir computing for molecular property prediction.

| Item Name | Function / Explanation |

|---|---|

| MMACD Datasets | A public benchmark dataset containing molecular structures and associated biological activities, used for training and validating predictive models [23]. |

| SHAP (SHapley Additive exPlanations) | A method for interpreting model predictions and determining the importance of each input feature, crucial for feature reduction before quantum processing [23]. |

| QuEra Neutral-Atom QPU | A type of quantum processing unit (QPU) that uses arrays of neutral atoms. It serves as the physical "reservoir" in QRC, transforming inputs into rich, high-dimensional quantum embeddings [27]. |

| UMAP (Uniform Manifold Approximation and Projection) | A dimensionality reduction technique used for visualization and analysis. It helps reveal whether quantum embeddings structure data more distinctly than classical features [27] [23]. |

| Scikit-learn Regression Ensemble | A suite of classical regression algorithms (e.g., Random Forest, SVR, Gradient Boosting) used as the trainable readout layer to make final predictions from quantum reservoir embeddings [23]. |

QRC System Architecture and Data Flow

The following diagram illustrates the architecture of a Quantum Reservoir Computing system and the flow of data from classical preparation to final prediction, as implemented in the featured protocol.

Encoding Molecular Features into Neutral-Atom Quantum Registers

Frequently Asked Questions

FAQ: What are the most common sources of error when encoding molecular data onto a neutral-atom register?

The primary challenges are atom loss, control inaccuracies, and decoherence. Atom loss occurs when qubits escape their optical traps, erasing the information they carry [29]. Control inaccuracies arise from imperfect laser pulses used to manipulate atomic states, leading to errors in quantum gate operations [30]. Decoherence causes the quantum state to deteriorate over time due to interactions with the environment [30]. Mitigation strategies include dynamic reloading of atoms to counter atom loss and robust calibration of laser parameters to minimize control errors [31].

FAQ: My quantum embeddings show high variability. Is this a problem with the hardware or the encoding scheme?

High variability can stem from both sources. On the hardware side, instability in Rydberg laser systems or fluctuating local electric fields can be culprits. From an encoding perspective, the chosen method for mapping molecular features to quantum parameters (like detuning) might be suboptimal. It is recommended to first verify the stability of classical control systems. Then, systematically test different encoding schemes, for instance, comparing one-body against two-body interaction terms, as the latter often provide richer, more stable embeddings [27].

FAQ: How does the choice of Rydberg blockade radius influence the representation of molecular connectivity?

The Rydberg blockade radius is fundamental for representing molecular structure. It determines the distance within which two atoms cannot both be excited to the Rydberg state, thereby enforcing a constraint that can mimic bonded or non-bonded interactions in a molecule [32]. If the radius is too small, intended correlations between different parts of the molecule will be lost. If too large, it might restrict the system's ability to explore valid configurations. Optimization techniques like GRAPHINE can be used to find the ideal blockade radius for a given molecular graph and its associated connectivity [32].
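The blockade radius follows from equating the van der Waals interaction C6/R^6 with the Rabi frequency Ω, which gives R_b = (C6/Ω)^(1/6). The sketch below evaluates that expression. The numerical value of C6 is an illustrative figure of the order often quoted for a rubidium 70S Rydberg state and should be treated as an assumption, as should the chosen Ω and the function names.

```python
import math


def blockade_radius(c6: float, rabi: float) -> float:
    """R_b = (C6 / Omega)^(1/6), with C6 and Omega in matching angular-frequency units."""
    return (c6 / rabi) ** (1.0 / 6.0)


def is_blockaded(distance: float, c6: float, rabi: float) -> bool:
    """True if two atoms at `distance` cannot both be excited (distance < R_b)."""
    return distance < blockade_radius(c6, rabi)


# Illustrative values (assumptions): C6 in rad*MHz*um^6, Omega in rad*MHz.
C6 = 2 * math.pi * 862690.0
OMEGA = 2 * math.pi * 4.0

r_b = blockade_radius(C6, OMEGA)
print(f"blockade radius: {r_b:.2f} um")
print(is_blockaded(5.0, C6, OMEGA), is_blockaded(10.0, C6, OMEGA))
```

Because of the sixth root, the radius changes slowly with Ω: doubling the Rabi frequency shrinks R_b by only about 11 percent, so atom spacing is usually the more effective tuning knob.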

Troubleshooting Guide: Resolving Low-Fidelity Quantum Embeddings

| Observed Issue | Potential Root Cause | Recommended Diagnostic Steps | Solution |

|---|---|---|---|

| Low Fidelity Quantum Embeddings | Atom loss during circuit evolution [29]. | Check vacuum pressure and laser trap stability. Use high-fidelity state-selective readout to identify loss locations [33]. | Implement atom reloading protocols without disrupting the entire computation [31]. |

| | Excessive decoherence [30]. | Characterize qubit coherence times (T1, T2) and compare against circuit duration. | Simplify the circuit to reduce execution time or use dynamical decoupling pulses. |

| | Imperfect Rydberg gates [32]. | Perform quantum process tomography on two-qubit gates to measure fidelity. | Re-calibrate Rydberg laser parameters (Ω, δ) and check for phase noise [32]. |

Troubleshooting Guide: Addressing Inefficient Molecular Representation

| Observed Issue | Potential Root Cause | Recommended Diagnostic Steps | Solution |

|---|---|---|---|

| Inefficient Molecular Representation | Suboptimal register mapping [32]. | Analyze the molecular graph and the qubit connectivity graph for mismatches. | Use a register mapping optimizer (e.g., GEYSER, GRAPHINE) to tailor the atom positions to the problem [32]. |

| | Weak nonlinear interactions in the quantum system [27]. | Compare results from embeddings using only one-body terms versus those including two-body terms. | Configure the quantum system to leverage richer two-body quantum interactions for more expressive embeddings [27]. |

The Scientist's Toolkit: Research Reagent Solutions

Table: Key Experimental Components for Neutral-Atom Molecular Encoding

| Item | Function in the Experiment |

|---|---|

| Alkali Atoms (e.g., Rubidium-85) | The physical qubits. Their electronic energy levels (ground and Rydberg states) are used to encode quantum information [32] [33]. |

| Optical Tweezers | Highly focused laser beams that trap and arrange individual atoms into a desired register configuration [32] [31]. |

| Rydberg Excitation Lasers | Laser systems with tunable Rabi frequency (Ω) and detuning (δ) used to drive atomic transitions to Rydberg states and execute quantum gates [32]. |

| Spatial Light Modulator (SLM) | A device that shapes laser light to dynamically reconfigure the positions of optical tweezers, allowing for flexible register geometry [33]. |

Table: Performance Metrics from a Quantum Reservoir Computing (QRC) Case Study on Molecular Data [27]

| Metric | Small Data (100-200 samples) | Larger Data (≥800 samples) | Notes / Implication |

|---|---|---|---|

| Predictive Accuracy | QRC outperformed classical methods. | Classical methods caught up with QRC. | QRC is particularly advantageous in low-data regimes common in early-stage trials. |

| Prediction Variability | QRC showed significantly lower variability. | Variability between methods became comparable. | QRC provides more robust and reliable predictions when data is scarce. |

| Impact of Nonlinearity | Embeddings using two-body interactions yielded stronger performance gains. | Not explicitly reported. | Leveraging richer quantum interactions is key to the enhanced performance. |

Detailed Experimental Protocol: Quantum Reservoir Computing for Molecular Property Prediction

This protocol is based on a collaborative case study by QuEra, Merck, Amgen, and Deloitte [27].

1. Data Preparation and Sub-sampling

- Objective: Simulate small-data scenarios typical in clinical trials or rare-disease research.

- Method: Begin with a larger molecular dataset. Use clustering techniques (e.g., k-means) to create representative subsets of varying sizes (e.g., 100, 200, 800 samples). This ensures the underlying data distribution is preserved even in small samples.

2. Quantum Embedding Generation

- Objective: Encode classical molecular features into a high-dimensional quantum state space.

- Hardware Setup: Utilize a neutral-atom Quantum Processing Unit (QPU) like those from QuEra, with atoms arranged in a programmable array.

- Encoding: Map the classical molecular features (e.g., atom types, bond lengths, energies) into quantum control parameters. This includes:

- Atomic Detunings (δ): Encoding features into the energy levels of individual atoms.

- Atom Arrangements: Using the spatial configuration of the atoms in the register to reflect the molecular graph.

- System Evolution: Let the quantum system evolve under the native Rydberg Hamiltonian. The strong Rydberg interactions between atoms process the input information.

- Measurement: After evolution, measure the final state of the system (e.g., via fluorescence imaging [33]) to obtain a classical snapshot. This measurement outcome is the high-dimensional "quantum embedding."
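How a detuning-encoded feature becomes a nonlinear measured quantity can be seen in the simplest possible case. The sketch below uses the textbook result for a single, non-interacting atom driven with constant Rabi frequency Ω and detuning Δ, starting in the ground state. It deliberately ignores Rydberg interactions, so it illustrates the encoding idea only and does not model the multi-atom reservoir used in the case study. The function name and the numbers are hypothetical.

```python
import math


def rydberg_population(rabi: float, detuning: float, t: float) -> float:
    """P_r(t) = Omega^2 / (Omega^2 + Delta^2) * sin^2(sqrt(Omega^2 + Delta^2) * t / 2)."""
    effective = math.hypot(rabi, detuning)  # generalized Rabi frequency
    if effective == 0.0:
        return 0.0  # no drive and no detuning: the atom stays in the ground state
    return (rabi / effective) ** 2 * math.sin(effective * t / 2.0) ** 2


# Feature values (already scaled) used directly as detunings, with Omega = 1 and t = pi.
for feature in (0.0, 0.5, 1.0, 2.0):
    print(feature, round(rydberg_population(1.0, feature, math.pi), 4))
```

The measured population is a non-monotonic, nonlinear function of the input feature even in this one-atom picture. Interactions between atoms add correlations on top of it.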

3. Classical Modeling and Comparison

- Objective: Use the quantum embeddings for a supervised learning task and benchmark performance.

- Readout Model: Train a classical machine learning model (e.g., a Random Forest classifier/regressor) on the quantum embeddings. Only this readout layer is trained.

- Control Experiments: Compare against:

- A: The same classical model trained on the raw molecular features.

- B: The same classical model trained on classical kernel-based embeddings (e.g., from a Gaussian Radial Basis Function).

Advanced Technical Guide: Optimizing Register Mapping

For complex molecules, the spatial arrangement of atoms in the quantum register is critical. A poor mapping can lead to excessive gate operations and reduced fidelity. Below is a structured methodology for optimizing this process, based on techniques like GRAPHINE [32].

Optimization Workflow:

- Graph Formation: Represent the target molecule as a graph where atoms are nodes and bonds (or desired quantum interactions) are edges. Assign edge weights based on the required interaction strength or the number of quantum operations needed between two "atomic" qubits.

- Qubit Position Optimization: Construct a 2D layout for the physical qubits (the neutral atoms). The goal is to place pairs of qubits with high edge weights closer together, ideally within the Rydberg blockade radius to enable direct interaction.

- Blockade Radius Calibration: Determine the optimal Rydberg blockade radius for the specific molecular problem. This ensures that all necessary qubit pairs are connected while minimizing unwanted crosstalk between distant pairs.
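The blockade-radius calibration step can be sketched as a simple scan over candidate radii. This is not the GRAPHINE algorithm itself; the scoring rule (wanted pairs inside the radius minus unwanted pairs inside it) and the names `score_radius` and `calibrate_radius` are assumptions chosen for illustration.

```python
import itertools
import math


def score_radius(positions, wanted_edges, radius):
    """Return (wanted pairs inside the radius, unwanted pairs inside the radius)."""
    wanted = {frozenset(e) for e in wanted_edges}
    connected = crosstalk = 0
    for i, j in itertools.combinations(range(len(positions)), 2):
        if math.dist(positions[i], positions[j]) <= radius:
            if frozenset((i, j)) in wanted:
                connected += 1
            else:
                crosstalk += 1
    return connected, crosstalk


def calibrate_radius(positions, wanted_edges, candidates):
    """Pick the radius maximizing connected - crosstalk; ties go to the smaller radius."""
    def key(radius):
        connected, crosstalk = score_radius(positions, wanted_edges, radius)
        return (-(connected - crosstalk), radius)
    return min(candidates, key=key)


# Four atoms on a line with unit spacing, representing a chain-like molecular graph.
chain_positions = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 0.0)]
chain_edges = [(0, 1), (1, 2), (2, 3)]
best_radius = calibrate_radius(chain_positions, chain_edges, [0.5, 1.2, 2.2, 3.2])
```

In this toy layout a radius of 1.2 connects all three bonded pairs without capturing any next-nearest neighbours, whereas 2.2 would add two unwanted pairs. A weighted variant could multiply each wanted pair by its edge weight from the graph-formation step.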

Harnessing Rydberg Interactions and Quantum Evolution

Frequently Asked Questions (FAQs)

Q1: Our controlled-phase gate fidelity is degraded by residual thermal motion of atoms. How can this be mitigated? Atomic motion within traps introduces Doppler shifts and dephasing. Actively monitor trap frequencies and depths to ensure tight confinement. Implement sideband cooling techniques prior to gate operation to initialize atoms in their motional ground state, minimizing motion-induced phase errors.

Q2: We observe an unexpected population in Rydberg states after gate operations. What could be the cause? This is typically caused by inadequate Rydberg state decay or improper pulse sequencing. Ensure your Rydberg laser detuning and Rabi frequency (Ω) are optimally chosen for your target Rydberg interaction strength, V. Utilize Floquet frequency modulation (FFM) to enhance the Rydberg anti-blockade condition, which provides more precise control and can suppress unwanted excitations [34].

Q3: What is the primary advantage of using Floquet frequency modulation in Rydberg gates? FFM provides a robust method to realize Rydberg anti-blockade dynamics, independent of the precise strength of the Rydberg-Rydberg interaction (RRI) [34]. This relaxes constraints on atomic separations and removes the need for individual laser addressing of atoms, which simplifies the experimental setup [34].

Q4: How can we optimize our system for implementing quantum walks on complex spatial networks? For quantum walks, encode the walker position in the excitation state of your atom array. Utilize the native multi-qubit gates (e.g., C^(s-1)Z gates) available in Rydberg platforms to efficiently implement the reflection operators required for staggered quantum walks. A classical pre-processing step to find a tessellation cover of your target graph is essential [35].

Troubleshooting Guides

Low Gate Fidelity in Controlled-Phase Gates

Symptoms:

- Measured gate fidelity significantly below theoretical model.

- High population loss or decoherence during gate operation.

- Inconsistent gate performance across multiple runs.

Possible Causes and Solutions:

| Cause | Diagnostic Steps | Solution |

|---|---|---|

| Fluctuating RRI Strength | Measure atom positions with high-resolution imaging; characterize RRI via spectroscopy. | Improve initial atom rearrangement; use FFM to make the gate robust to variations in RRI [34]. |

| Laser Phase Noise | Analyze laser linewidth with a heterodyne detection setup. | Implement noise-eater systems; use phase-locking techniques for Rydberg excitation lasers. |

| Incorrect Pulse Shape | Measure the actual temporal profile of your laser pulses at the experiment. | Apply soft quantum control strategies, such as Gaussian-shaped pulses, to suppress non-adiabatic transitions and high-frequency oscillations [34]. |

Inefficient Spatial Search in Quantum Walk Algorithms

Symptoms:

- Quantum walk fails to find the marked node with the expected success probability.

- The search time does not show the expected quadratic speedup.

Possible Causes and Solutions:

| Cause | Diagnostic Steps | Solution |

|---|---|---|

| Imperfect Tessellation Cover | Classically compute the tessellation cover of your graph and verify all edges are included. | Use an efficient algorithm to construct a minimal tessellation cover; ensure cliques are mapped correctly to atomic positions [35]. |

| Faulty W-State Generation | Perform quantum state tomography on the qubits within a single clique. | Calibrate the unitary operation \( U_{\alpha_k} \) that creates the W-state; its circuit requires O(s) two-qubit gates for a clique of size s [35]. |

| Decoherence during Walk | Measure single and two-qubit coherence times (T2) and compare them to the total walk time. | Optimize the walk evolution time to be less than the coherence time; use dynamical decoupling pulses during idle periods. |
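The diagnostic for an imperfect tessellation cover can be run classically before any atoms are loaded. The sketch below assumes vertices are labelled 0 to N-1 and that each tessellation is given as a list of cliques; the function names are hypothetical.

```python
import itertools


def is_clique(vertices, edges):
    """True if every pair of the given vertices is joined by an edge."""
    return all(frozenset(p) in edges for p in itertools.combinations(vertices, 2))


def check_tessellation_cover(n_vertices, edge_list, tessellations):
    """Return (ok, missing_edges); raise ValueError if a tessellation is malformed."""
    edges = {frozenset(e) for e in edge_list}
    covered = set()
    for tess in tessellations:
        seen = sorted(v for clique in tess for v in clique)
        # Each tessellation must partition the vertex set into disjoint cliques.
        if seen != list(range(n_vertices)):
            raise ValueError("tessellation is not a partition of the vertices")
        for clique in tess:
            if not is_clique(clique, edges):
                raise ValueError(f"{sorted(clique)} is not a clique of the graph")
            covered.update(frozenset(p) for p in itertools.combinations(clique, 2))
    missing = edges - covered
    return not missing, missing


# A 4-cycle needs two tessellations to cover all four edges.
cycle_edges = [(0, 1), (1, 2), (2, 3), (3, 0)]
alpha = [[0, 1], [2, 3]]
beta = [[1, 2], [3, 0]]
ok_full, missing_full = check_tessellation_cover(4, cycle_edges, [alpha, beta])
ok_half, missing_half = check_tessellation_cover(4, cycle_edges, [alpha])
```

With only tessellation `alpha`, the check reports the two uncovered edges, which is the kind of failure that would prevent the walker from ever moving along those edges.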

Experimental Protocols & Methodologies

Protocol: Realizing a Controlled-Phase Gate via Floquet Frequency Modulation

This protocol details the implementation of a robust controlled-phase (C-Phase) gate between two Rydberg atoms using Floquet frequency modulation, based on the methodology outlined in [34].

1. Principle: The gate operates by tailoring the system dynamics to achieve a Rydberg anti-blockade condition through periodic modulation of the laser detuning. This allows the |11⟩ state to undergo a closed evolution path, acquiring a non-trivial phase of π, while other computational states remain unaffected.

2. Initialization:

- Prepare two neutral atoms in their hyperfine ground states, initialized to the |11⟩ state.

- Ensure the atoms are trapped at a distance where the Rydberg-Rydberg interaction (RRI) strength is V.

- Apply sideband cooling to minimize motional errors.

3. Laser Excitation and Modulation:

- Apply a global Rydberg excitation laser with a time-dependent Rabi frequency Ω(t) and phase ϕ(t).

- Critically, modulate the laser detuning sinusoidally: Δ(t) = δ sin(ω₀t), where δ is the modulation amplitude and ω₀ is the modulation frequency.

- The modulation index is defined as α = δ/ω₀. The values of δ and ω₀ must be chosen judiciously to satisfy the desired anti-blockade condition for the given V.

4. Gate Operation:

- The system evolves under the modified Hamiltonian for a specific duration τ until the |11⟩ state acquires a π-phase shift.

- The gate time and fidelity can be optimized by integrating this approach with Gaussian soft quantum control, which smooths the pulse shapes [34].

5. Verification:

- Perform quantum process tomography (QPT) to fully characterize the gate.

- Alternatively, use benchmarking sequences like randomized benchmarking (RB) to estimate the average gate fidelity.
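The role of the modulation index α in step 3 can be made more explicit with the standard Floquet sideband picture. The following is a textbook-style sketch under assumed sign conventions, not a reproduction of the derivation in [34]; the symbol Ω_eff^(m) is introduced here for illustration.

```latex
% Accumulated phase from the sinusoidal detuning \Delta(t) = \delta \sin(\omega_0 t):
\int_0^t \Delta(t')\,dt' = \alpha\,\bigl[1 - \cos(\omega_0 t)\bigr],
\qquad \alpha = \frac{\delta}{\omega_0}

% Jacobi-Anger expansion of the resulting phase factor on the laser coupling:
e^{\,i\alpha\cos(\omega_0 t)} = \sum_{m=-\infty}^{\infty} i^{m} J_m(\alpha)\, e^{\,i m \omega_0 t}

% The drive therefore acquires sidebands spaced by \omega_0, each with effective strength
\Omega_{\mathrm{eff}}^{(m)} = \Omega\,\lvert J_m(\alpha)\rvert
```

In this picture, a transition that is shifted off resonance by an energy close to mω₀ (for example, by the interaction V) can be driven by the m-th sideband, while other sidebands are suppressed by choosing α near a zero of the corresponding Bessel function J_m. The precise anti-blockade condition used in [34] may be stated differently.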

Protocol: Implementing a Staggered Quantum Walk for Spatial Search

This protocol describes how to implement a staggered quantum walk on an arbitrary spatial network using an array of Rydberg atoms [35].

1. Principle: A staggered quantum walk uses reflections over graph cliques (tessellations) instead of a coin. The walker's position is encoded as a single Rydberg excitation among N atoms.

2. Graph Encoding and Pre-processing:

- Encode the Graph: Map each vertex of your target graph to a single atom in the array. The state |i⟩ = |0...1ᵢ...0⟩ represents the walker at vertex i.

- Find a Tessellation Cover: Run a classical algorithm to partition the graph's vertices into cliques (tessellation α). You may need multiple tessellations (α, β, ...) to cover all graph edges. This is a crucial pre-processing step [35].

3. Implementing the Walk Operator: For each tessellation α, the reflection operator is \( W_\alpha = 1 - 2\sum_k |\alpha_k\rangle\langle\alpha_k| \), where |αₖ⟩ is the uniform superposition of vertices in the k-th clique.

- For each clique αₖ in the tessellation:

- Apply the unitary \( U_{\alpha_k} \) that maps the state |1...1ₛ⟩ to the W-state |αₖ⟩. This can be done with a quantum circuit of O(s) two-qubit gates [35].

- Apply a multi-controlled Z-gate (C^(s-1)Z) on all s qubits in the clique. Rydberg platforms offer this as a native gate due to the strong RRI [35].

- Apply \( U_{\alpha_k}^\dagger \) to revert the basis.

4. Spatial Search: To search for a marked vertex |m⟩:

- Apply the walk operator U = W_α W_β ... interleaved with a query operator R_m = 1 - (1 - e^(iπ))|m⟩⟨m|.

- After O(√N) steps, measure the atom array. The excitation will be found at the marked node |m⟩ with high probability, demonstrating a quadratic speedup [35].
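The search loop can be checked numerically in the single-excitation subspace, where the walker state is just a length-N amplitude vector. The sketch below is an assumption-laden toy: it uses the complete graph on 16 vertices, for which a single clique covers every edge and the walk reduces to a Grover-type iteration, and the names `reflect`, `query`, and `search` are hypothetical.

```python
import math


def reflect(state, tessellation):
    """Apply W = 1 - 2 * sum_k |a_k><a_k| for the uniform clique states |a_k>."""
    out = list(state)
    for clique in tessellation:
        norm = math.sqrt(len(clique))
        overlap = sum(state[v] for v in clique) / norm  # <a_k|psi>
        for v in clique:
            out[v] -= 2 * overlap / norm
    return out


def query(state, marked):
    """Apply R_m = 1 - 2|m><m|, i.e. flip the sign of the marked amplitude."""
    out = list(state)
    out[marked] = -out[marked]
    return out


def search(n, tessellations, marked, steps):
    """Start from the uniform state; return the probability of finding the marked vertex."""
    state = [1 / math.sqrt(n)] * n
    for _ in range(steps):
        state = query(state, marked)
        for tess in tessellations:
            state = reflect(state, tess)
    return state[marked] ** 2  # amplitudes stay real in this toy model


n_vertices = 16
complete_graph_tessellation = [list(range(n_vertices))]  # one clique covers all edges
n_steps = math.floor(math.pi / 4 * math.sqrt(n_vertices))
success = search(n_vertices, [complete_graph_tessellation], marked=5, steps=n_steps)
```

For N = 16 the loop runs three steps, compared with an average of about N/2 classical queries. Sparser graphs need several tessellations per step and generally a graph-dependent number of steps, so this example only illustrates the operator structure.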

Research Reagent Solutions

Table: Essential Materials for Rydberg-Based Quantum Experiments

| Item | Function | Specification / Notes |

|---|---|---|

| Neutral Atoms (e.g., Rb, Cs) | Qubit physical platform; quantum information is encoded in ground and Rydberg states. | Long coherence times; strong, tunable RRI when excited to Rydberg states. |

| Rydberg Excitation Laser | Drives transitions from the ground state to Rydberg states. | |

| Optical Tweezers | Traps and rearranges individual atoms into desired arrays (e.g., for spatial networks). | High numerical aperture (NA) objective for tight focusing. |

| Arbitrary Waveform Generator (AWG) | Generates the precise voltage signals to control AOMs/RF drives for laser modulation. | Critical for implementing FFM and complex pulse shapes (Gaussian, etc.). |

| Acousto-Optic Modulator (AOM) | Modulates the amplitude, frequency, and phase of the Rydberg laser beam. | Used to apply the Floquet frequency modulation Δ(t) = δ sin(ω₀t) [34]. |

Experimental Workflow and System Diagrams

[Diagram: Experimental workflow for a staggered quantum walk]

[Diagram: FFM-enhanced C-phase gate setup]

Measuring and Interpreting the High-Dimensional Quantum Embeddings

High-dimensional quantum embedding is a technique for encoding complex, high-dimensional classical data into the state of a quantum processor. This process addresses the "dimensionality gap"—the challenge that most near-term quantum devices have limited qubit counts and cannot natively handle datasets with hundreds or thousands of features [36].

The core innovation involves mapping classical data into a richer quantum state representation, often using a form of Projected Quantum Kernel (PQK) [36]. This mapping allows quantum computers to process information in a high-dimensional Hilbert space, which is a key source of potential quantum advantage. In one demonstration, researchers successfully loaded over 500 features into quantum circuits using only 128 qubits, with methods claiming to scale to problems with tens of thousands of features on near-term hardware [36].

These techniques are particularly relevant for applications in financial modeling, predictive maintenance, and health diagnostics, where they have been shown to improve anomaly detection (e.g., reaching an F1 score of 0.96) even on noisy hardware [36].

Troubleshooting Guide & FAQs

This section addresses common practical challenges researchers face when working with high-dimensional quantum embeddings.

FAQ 1: My quantum model's performance has suddenly degraded. How can I determine if the issue is a Barren Plateau?

A Barren Plateau is a region in the optimization landscape where the gradients of the cost function vanish exponentially with the number of qubits, making training impossible [37].

Diagnosis Steps:

- Monitor Gradient Magnitudes: During training, track the norms of the gradients. If you observe an exponential decay of the gradient magnitudes as your circuit depth or qubit count increases, this is a strong indicator of a Barren Plateau.

- Check Parameter Updates: Observe the changes in your circuit parameters per optimization step. Progress that halts entirely, with near-zero parameter updates, suggests a Barren Plateau.

- Run a Local Analysis: For a small subset of parameters, manually perturb their values and compute the change in the cost function. A consistently negligible change confirms the problem.

Solutions & Mitigations:

- Use Identity-Initialized Layers: Initialize parameterized quantum gates close to the identity transformation to start with a simpler circuit.

- Adopt Local Cost Functions: Design a cost function that depends on a local subset of qubits rather than a global observable on all qubits.

- Switch to Layerwise Training: Train one layer of the quantum circuit to convergence before adding and training the next layer.

- Re-examine Feature Mapping: The choice of how classical data is embedded into the quantum state (the feature map) can induce Barren Plateaus. Consider using a problem-specific embedding strategy [38].
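The gradient-monitoring diagnostic and the local-cost mitigation can both be illustrated on a toy model. The sketch assumes a product circuit of single-qubit RY rotations on |0...0⟩, for which ⟨Z⟩ on each qubit equals cos θ; this is far simpler than a real variational circuit and is used only because its gradient statistics can be computed without a simulator. All function names are hypothetical.

```python
import math
import random


def global_cost(thetas):
    """<Z x ... x Z> after RY(theta_i) on each qubit of |0...0>: a product of cosines."""
    return math.prod(math.cos(t) for t in thetas)


def local_cost(thetas):
    """Average of the single-qubit <Z_i> values: a mean of cosines."""
    return sum(math.cos(t) for t in thetas) / len(thetas)


def grad_variance(cost, n_qubits, samples=2000, eps=1e-4, seed=0):
    """Variance of the finite-difference gradient w.r.t. theta_0 over random parameters."""
    rng = random.Random(seed)
    grads = []
    for _ in range(samples):
        thetas = [rng.uniform(0, 2 * math.pi) for _ in range(n_qubits)]
        shifted = [thetas[0] + eps] + thetas[1:]  # perturb a single parameter
        grads.append((cost(shifted) - cost(thetas)) / eps)
    mean = sum(grads) / samples
    return sum((g - mean) ** 2 for g in grads) / samples


var_global_small = grad_variance(global_cost, 2)
var_global_large = grad_variance(global_cost, 10)
var_local_large = grad_variance(local_cost, 10)
```

In this model the gradient variance of the global cost falls as (1/2)^n, while that of the local cost falls only as 1/(2n²). Tracking the same statistic as qubits or layers are added to a real circuit gives an empirical version of the first diagnosis step.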

FAQ 2: The results from my quantum embedding experiment are too noisy. What error mitigation strategies can I apply?

Noise from gate errors, decoherence, and imprecise readouts is a fundamental challenge on NISQ devices [37].

Diagnosis Steps:

- Run Characterization Benchmarks: Use built-in device characterization tools (e.g., randomized benchmarking) to understand the baseline gate and readout error rates of the quantum processor you are using.