Quantum Theory in Chemical Research: Principles and Applications for Drug Discovery

Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive guide to the fundamental principles of quantum theory and their practical applications in chemical research. It covers foundational concepts like wavefunctions and the Schrödinger equation, explores methodological applications in quantum chemistry and molecular modeling, addresses common computational challenges and optimization strategies, and validates these approaches through comparative analysis with classical methods. The content is designed to bridge the gap between abstract quantum theory and its critical role in advancing modern computational chemistry and pharmaceutical development.

Quantum Fundamentals: From Wave-Particle Duality to Molecular Orbitals

The advent of quantum mechanics in the early 20th century marked a revolutionary departure from classical physics, which proved fundamentally inadequate for describing phenomena at the atomic and molecular scale. While classical mechanics successfully predicts the behavior of macroscopic objects, it fails to explain key chemical phenomena including atomic stability, chemical bonding, discrete atomic spectra, and molecular reactivity. Quantum mechanics resolves these failures by introducing a fundamental reimagining of how matter and energy behave at the subatomic level. This paradigm shift forms the essential theoretical foundation for modern chemical research, from drug design to materials science, enabling researchers to predict and manipulate molecular behavior with unprecedented accuracy. The following sections detail the specific failures of classical physics and establish the core quantum mechanical principles that provide the definitive explanations for chemical behavior.

Core Failures of Classical Mechanics in Chemistry

Classical physics, built upon Newtonian mechanics and Maxwell's electromagnetic theory, operates on the principle of determinism—where particles have definite positions and momenta, and energy changes continuously. At the molecular scale, these principles break down catastrophically, as illustrated by the following critical failures:

- Atomic Stability: According to classical electromagnetism, an electron orbiting a nucleus should continuously emit radiation, lose energy, and spiral into the nucleus in roughly 10⁻¹¹ s. Classical theory therefore predicts that all atoms should collapse almost instantly, contradicting the observed stability of matter [1] [2].

- Discrete Atomic Spectra: Classical theory predicts that atoms can emit or absorb light at any frequency, producing a continuous spectrum. Experimentally, atoms emit and absorb light only at specific, discrete frequencies, producing line spectra that classical physics cannot explain [2] [3].

- Chemical Bonding and Molecular Structure: Classical physics offers no explanation for why atoms form stable bonds to create molecules, why these molecules exhibit specific, rigid geometries, or why certain combinations of atoms are stable while others are not [4] [5].

- The Photoelectric Effect: Classical wave theory predicts that the kinetic energy of electrons ejected from a metal by light should depend on the light's intensity, not its frequency. Experiments show that a threshold frequency exists, below which no electrons are emitted regardless of intensity—a phenomenon only explainable by quantum theory [2].

Table 1: Key Failures of Classical Physics at the Molecular Scale

| Phenomenon | Classical Prediction | Experimental Observation | Implication for Chemistry |
|---|---|---|---|
| Atomic Stability | Electrons spiral into nucleus; atoms collapse. | Atoms are stable with well-defined sizes. | Matter is stable, enabling molecular existence. |
| Atomic Spectra | Continuous emission/absorption spectra. | Discrete line spectra (e.g., Balmer series). | Unique spectral fingerprints for element identification. |
| Chemical Bonding | No mechanism for directed, stable bonds. | Atoms form molecules with specific geometries. | Predicts molecular structure and reactivity. |
| Wave-Particle Duality | Particles are particles; waves are waves. | Electrons show both particle and wave properties. | Explains electron diffraction and orbital theory. |

The Quantum Mechanical Framework

Quantum mechanics replaces the deterministic framework of classical physics with a probabilistic one, governed by a set of core principles that successfully describe atomic and molecular behavior.

Wave-Particle Duality and the Wave Function

Louis de Broglie proposed that all matter exhibits wave-like properties, with a wavelength given by λ = h/p, where h is Planck's constant and p is momentum [3]. This wave-particle duality means that entities like electrons are not localized particles but are described by a wave function, Ψ. The square of the wave function's amplitude, |Ψ|², provides the probability density of finding a particle at a specific location in space [2] [3]. This directly contradicts the classical concept of a defined trajectory.
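To make the relation λ = h/p concrete, the following minimal sketch computes the de Broglie wavelength of an electron from its kinetic energy. It assumes non-relativistic motion (p = √(2mE)); the function name and the 100 eV example energy are illustrative choices, not taken from the cited sources.

```python
import math

H = 6.62607015e-34      # Planck constant, J*s
M_E = 9.1093837015e-31  # electron rest mass, kg
EV = 1.602176634e-19    # joules per electronvolt


def de_broglie_wavelength(mass_kg: float, kinetic_energy_j: float) -> float:
    """Non-relativistic de Broglie wavelength: lambda = h / p with p = sqrt(2 m E)."""
    momentum = math.sqrt(2.0 * mass_kg * kinetic_energy_j)
    return H / momentum


# Illustrative case: an electron with 100 eV of kinetic energy.
wavelength_m = de_broglie_wavelength(M_E, 100.0 * EV)
print(f"{wavelength_m * 1e10:.3f} angstrom")
```

The result is on the order of 1 Å, comparable to interatomic spacings, which is why electrons of this energy diffract from crystals.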

The Schrödinger Equation

The time-independent Schrödinger equation, ĤΨ = EΨ, is the fundamental equation of quantum mechanics for stationary states [3] [5]. Here, Ĥ is the Hamiltonian operator (representing the total energy of the system), Ψ is the wave function, and E is the total energy of the state. Solving this equation for a given system (e.g., an electron in an atom) yields two key pieces of information:

- The allowed quantized energy states (E).

- The corresponding wave functions (Ψ) for those states, which define the electron orbitals and their probability distributions.

Quantization of Energy

The solutions to the Schrödinger equation naturally lead to energy quantization. Unlike classical systems where energy can vary continuously, quantum systems can only possess specific, discrete energy values. For a particle confined in a one-dimensional box of length L, the allowed energies are: Eₙ = (n²h²)/(8mL²), where n = 1, 2, 3,... is the quantum number [3]. This quantized energy level structure explains the discrete lines observed in atomic and molecular spectra.
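As a quick numerical illustration of Eₙ = n²h²/(8mL²), the sketch below evaluates the first three levels for an electron in a box. The 1 nm box length is an assumed example value, and the helper name is hypothetical.

```python
H = 6.62607015e-34      # Planck constant, J*s
M_E = 9.1093837015e-31  # electron mass, kg
EV = 1.602176634e-19    # joules per electronvolt


def box_energy(n: int, mass_kg: float, length_m: float) -> float:
    """Energy of level n (n = 1, 2, 3, ...) for a particle in a 1D box, in joules."""
    if n < 1:
        raise ValueError("quantum number n must be a positive integer")
    return n**2 * H**2 / (8.0 * mass_kg * length_m**2)


# Assumed example: an electron confined to a 1 nm box.
levels_ev = [box_energy(n, M_E, 1e-9) / EV for n in (1, 2, 3)]
for n, energy in zip((1, 2, 3), levels_ev):
    print(f"n = {n}: {energy:.3f} eV")
```

The levels scale as n², so the spacing between adjacent levels grows with n, and shrinking the box raises every level as 1/L².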

The Uncertainty Principle

Formulated by Werner Heisenberg, this principle states that there is a fundamental limit to the precision with which certain pairs of physical properties, such as position (x) and momentum (p), can be known simultaneously [2]. The principle is quantitatively expressed as ΔxΔp ≥ ℏ/2, where ℏ is the reduced Planck's constant. This inherent uncertainty prohibits the classical concept of a well-defined electron path around a nucleus.

Quantum Explanations for Chemical Phenomena

Quantum mechanics provides direct, quantitative explanations for the very phenomena that stymie classical physics.

Atomic Structure and the Chemical Bond

The quantum mechanical model of the hydrogen atom, obtained by solving the Schrödinger equation with a Coulomb potential, yields wave functions (orbitals) characterized by three quantum numbers: n (principal), l (angular momentum), and mₗ (magnetic) [3]. The spatial distribution and energy of these orbitals, along with the Pauli exclusion principle, form the basis for understanding multi-electron atoms and the periodic table [4].

Chemical bonds are a quantum mechanical phenomenon. The covalent bond, for instance, is explained by the linear combination of atomic orbitals to form molecular orbitals. In the H₂ molecule, the electron density is enhanced between the two nuclei, leading to a stable bond. This model accurately predicts bond energies, lengths, and magnetic properties [4] [5].
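The linear combination of two identical atomic orbitals can be sketched with the standard two-orbital secular result E± = (α ± β)/(1 ± S), where α is the on-site energy, β the resonance integral, and S the overlap. The numerical values below are illustrative placeholders chosen only to show the splitting; they are not computed integrals for H₂.

```python
def lcao_two_orbital(alpha: float, beta: float, overlap: float) -> tuple[float, float]:
    """Energies of the symmetric and antisymmetric combinations of two identical orbitals.

    alpha: on-site (Coulomb) integral, beta: resonance integral, overlap: S.
    The symmetric combination is the bonding orbital when beta < alpha * overlap.
    """
    if not 0.0 <= overlap < 1.0:
        raise ValueError("overlap must satisfy 0 <= S < 1")
    e_symmetric = (alpha + beta) / (1.0 + overlap)
    e_antisymmetric = (alpha - beta) / (1.0 - overlap)
    return e_symmetric, e_antisymmetric


# Placeholder parameters in arbitrary energy units (assumptions, not H2 data).
e_bonding, e_antibonding = lcao_two_orbital(alpha=-0.5, beta=-0.4, overlap=0.5)
print(e_bonding, e_antibonding)
```

With these placeholder inputs the symmetric orbital lies below α and the antisymmetric one above it, and the antibonding orbital is destabilized more than the bonding orbital is stabilized, a general consequence of nonzero overlap.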

Molecular Spectroscopy

The quantization of energy provides the foundation for all spectroscopic techniques. A molecule can absorb a photon of electromagnetic radiation only if the photon's energy (hν) exactly matches the energy difference between two of its quantized states: ΔE = hν [3].

- Electronic Spectroscopy (UV-Vis): Probes transitions between electronic energy levels.

- Vibrational Spectroscopy (IR): Probes transitions between quantized vibrational levels, described by the quantum harmonic oscillator model: Eᵥ = ℏω(v + 1/2), where v is the vibrational quantum number [3].

- Rotational Spectroscopy (Microwave): Probes transitions between quantized rotational levels, described by the rigid rotor model: E_J = BJ(J+1), where J is the rotational quantum number [3].
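The harmonic oscillator and rigid rotor expressions above translate directly into predicted line positions. The sketch below works in wavenumber units (E/hc, in cm⁻¹) and uses approximate constants for carbon monoxide (about 2170 cm⁻¹ and 1.93 cm⁻¹) as assumed example inputs; the function names are hypothetical.

```python
def vibrational_level(v: int, omega_cm1: float) -> float:
    """Harmonic oscillator level in cm^-1: omega * (v + 1/2)."""
    return omega_cm1 * (v + 0.5)


def rotational_level(j: int, b_cm1: float) -> float:
    """Rigid rotor level in cm^-1: B * J * (J + 1)."""
    return b_cm1 * j * (j + 1)


# Approximate constants for CO, used here only as example inputs.
OMEGA_CO = 2170.0  # harmonic wavenumber, cm^-1
B_CO = 1.93        # rotational constant, cm^-1

# Vibrational fundamental (v = 0 -> 1) and the first four pure rotational lines (J -> J + 1).
fundamental = vibrational_level(1, OMEGA_CO) - vibrational_level(0, OMEGA_CO)
rotational_lines = [
    rotational_level(j + 1, B_CO) - rotational_level(j, B_CO) for j in range(4)
]
print(fundamental, rotational_lines)
```

In this idealized model the fundamental equals ω and the rotational lines fall at 2B(J + 1), so adjacent lines are evenly spaced by 2B. Real spectra deviate through anharmonicity and centrifugal distortion.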

Nuclear Quantum Effects

Classical treatments often assume nuclei behave as classical particles. However, quantum effects such as tunneling and zero-point energy are critical, especially for light atoms. Zero-point energy, E_ZPE = (1/2)ℏω, is the residual energy a quantum harmonic oscillator possesses even at absolute zero, a consequence of the uncertainty principle [6] [3]. This energy differs for isotopes, leading to kinetic isotope effects that can be used to elucidate reaction mechanisms. Quantum tunneling allows particles to penetrate energy barriers, significantly impacting reaction rates for processes like proton transfer [2] [3].
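The isotope dependence of zero-point energy can be estimated with a simple diatomic model in which the force constant is unchanged and ω scales as 1/√μ, where μ is the reduced mass. The sketch below treats a C–H stretch as an isolated two-body oscillator with an assumed wavenumber of 2900 cm⁻¹; both the model and the input value are simplifying assumptions.

```python
import math

KJ_PER_MOL_PER_CM1 = 0.011963  # 1 cm^-1 expressed in kJ/mol

# Approximate atomic masses in atomic mass units.
M_C, M_H, M_D = 12.000, 1.008, 2.014


def reduced_mass(m1: float, m2: float) -> float:
    """Reduced mass of a two-body oscillator."""
    return m1 * m2 / (m1 + m2)


omega_ch = 2900.0  # assumed C-H stretching wavenumber, cm^-1

# Same force constant, heavier isotope: omega scales with 1 / sqrt(reduced mass).
ratio = math.sqrt(reduced_mass(M_C, M_H) / reduced_mass(M_C, M_D))
omega_cd = omega_ch * ratio

# Zero-point energy is half the harmonic wavenumber for each oscillator.
zpe_difference_cm1 = 0.5 * (omega_ch - omega_cd)
zpe_difference_kj = zpe_difference_cm1 * KJ_PER_MOL_PER_CM1
print(f"omega(C-D) ~ {omega_cd:.0f} cm^-1, ZPE difference ~ {zpe_difference_kj:.1f} kJ/mol")
```

Under these assumptions the C–D bond sits several kJ/mol lower in zero-point energy than C–H, which is the origin of primary kinetic isotope effects when that bond is broken in the rate-limiting step.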

Methodologies and Experimental Probes

Validating quantum mechanical predictions requires sophisticated experimental and computational techniques.

Key Experimental Techniques

- Scanning Tunneling Microscopy (STM): Relies directly on quantum tunneling to image surfaces at the atomic level [4] [2].

- Spectroscopy: Techniques like NMR, IR, and UV-Vis are used to measure the quantized energy levels of molecules, providing data on structure, bonding, and dynamics [5].

- X-ray Crystallography: While not a quantum technique per se, it provides experimental electron density maps that validate the probability distributions predicted by quantum-derived wave functions [5].

Computational Quantum Chemistry

Computational methods translate the principles of quantum mechanics into practical tools for predicting molecular properties [5] [7].

Table 2: Research Reagent Solutions for Computational Quantum Chemistry

| Computational 'Reagent' | Function & Purpose | Key Consideration |
|---|---|---|
| Basis Sets | Mathematical functions that describe atomic orbitals. The "building blocks" for molecular orbitals. | Larger basis sets increase accuracy but also computational cost. |
| Pseudopotentials | Model the core electrons in heavy atoms, reducing the number of electrons to be calculated explicitly. | Essential for systems with heavy atoms (e.g., transition metals). |
| Density Functionals (for DFT) | Approximate the complex electron-electron interaction term. | The choice of functional (e.g., B3LYP, PBE) critically impacts accuracy. |
| Solvation Models | Implicitly model the effects of a solvent environment on the molecule of interest. | Crucial for simulating reactions in solution, as in biological systems. |

Protocol: Quantum Chemical Calculation of a Reaction Pathway

Objective: To determine the energy profile and transition state for a simple chemical reaction, such as the SN2 reaction of Cl⁻ + CH₃Br → ClCH₃ + Br⁻.

Geometry Optimization:

- Use a quantum chemistry software package (e.g., Gaussian, ORCA, Q-Chem).

- Input the initial guessed structures for the reactants, products, and a guessed transition state.

- Select an electronic structure method (e.g., DFT with the B3LYP functional) and a basis set (e.g., 6-31+G*).

- Run a geometry optimization calculation for each structure to find the local energy minimum (for reactants/products) or first-order saddle point (for the transition state) on the potential energy surface.

Frequency Analysis:

- Perform a frequency calculation on each optimized structure.

- Validation: The reactants and products should have no imaginary frequencies. The transition state must have exactly one imaginary frequency, whose vibrational mode corresponds to the motion along the reaction coordinate.

Intrinsic Reaction Coordinate (IRC) Calculation:

- Starting from the verified transition state, run an IRC calculation to confirm it connects the correct reactants and products.

Energy Calculation:

- Perform a single-point energy calculation at a higher level of theory (e.g., CCSD(T)) on the optimized geometries to obtain a more accurate reaction energy and barrier height.
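The validation step of the protocol can be automated with a small post-processing helper. The sketch below is not tied to any particular package: it assumes the frequencies have already been parsed into a list of wavenumbers and that imaginary modes are reported as negative numbers, a common output convention. The function names and all numerical inputs are hypothetical.

```python
from typing import Sequence

HARTREE_TO_KJ_PER_MOL = 2625.4996


def classify_stationary_point(frequencies_cm1: Sequence[float]) -> str:
    """Classify an optimized structure by its number of imaginary (negative) frequencies."""
    n_imaginary = sum(1 for f in frequencies_cm1 if f < 0.0)
    if n_imaginary == 0:
        return "minimum"
    if n_imaginary == 1:
        return "transition state"
    return "higher-order saddle point"


def barrier_kj_per_mol(e_reactant_hartree: float, e_ts_hartree: float) -> float:
    """Electronic barrier height from two single-point energies given in hartree."""
    return (e_ts_hartree - e_reactant_hartree) * HARTREE_TO_KJ_PER_MOL


# Made-up frequency lists and energies, used only to exercise the helpers.
print(classify_stationary_point([120.5, 640.2, 1450.8]))   # all real
print(classify_stationary_point([-410.3, 95.1, 1020.7]))   # one imaginary mode
print(f"{barrier_kj_per_mol(-1.000, -0.990):.1f} kJ/mol")
```

A structure flagged as a transition state by this check still needs the IRC step, since a single imaginary frequency does not guarantee that the saddle point connects the intended reactants and products.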

Current Research and Future Outlook

The application of quantum mechanics in chemistry continues to evolve rapidly, pushing the boundaries of what is possible.

- Quantum Computing for Chemistry: Quantum computers are being developed to solve electronic structure problems that are intractable for classical computers, such as simulating complex catalytic processes or large biomolecules [4] [8] [9]. IBM has projected that this may revolutionize fields like drug discovery and materials science [4].

- Quantum Effects in Biology: Research is uncovering the role of quantum effects, such as coherence and entanglement, in biological processes like photosynthesis and enzyme catalysis [4] [6].

- Advanced Materials Design: Quantum mechanical simulations are indispensable for the de novo design of novel materials, such as organic photovoltaics and high-temperature superconductors, by predicting their electronic and optical properties before synthesis [7].

- Macroscopic Quantum Systems: The 2025 Nobel Prize in Physics recognized the demonstration of macroscopic quantum tunneling in superconducting circuits [9]. This bridges the conceptual gap between the quantum and classical worlds and underpins the technology of superconducting qubits used in quantum computers.

Classical physics is fundamentally incapable of describing the behavior of matter at the atomic and molecular scale. Its failures regarding atomic stability, discrete spectra, and chemical bonding are profound and irreconcilable within its own framework. Quantum mechanics, with its core principles of wave-particle duality, quantization, and probability, provides the essential and powerful theoretical foundation for all modern chemistry. From explaining the structure of the periodic table to enabling the computational design of new drugs and materials, quantum mechanics is not merely an alternative to classical physics but the correct and necessary language for chemical research. Its continued development promises to further deepen our understanding and control of the molecular world.

Quantum mechanics provides the fundamental framework for understanding chemical bonding, a reality that becomes evident when classical physics fails to explain atomic and molecular stability. At the heart of this quantum description lie two foundational concepts: wave-particle duality and the Heisenberg Uncertainty Principle. These principles are not merely philosophical curiosities but form the mathematical and conceptual bedrock upon which modern computational chemistry and molecular design rest. For researchers in chemical research and drug development, a rigorous understanding of these quantum phenomena is essential for advancing predictive methodologies in molecular design, reaction optimization, and materials science. This whitepaper examines the operational principles of quantum duality and uncertainty, their mathematical formalisms, and their direct implications for chemical bonding and property prediction.

Core Conceptual Framework

Wave-Particle Duality

Wave-particle duality represents a fundamental departure from classical mechanics, asserting that all physical entities exhibit both wave-like and particle-like properties, with the observable behavior depending on the experimental context [10]. This duality is quintessentially quantum mechanical, as these two aspects are never simultaneously manifest in their entirety within a single measurement arrangement [11].

Historical Experimental Evidence: The photoelectric effect demonstrates light's particle-like nature, where light interacts with matter in discrete energy packets (photons) satisfying E = hν [10]. Conversely, the double-slit experiment reveals wave-like behavior through interference patterns, which emerge even when particles are sent through the apparatus one at a time [12] [10]. This phenomenon is not restricted to photons but extends to material particles such as electrons, as confirmed by the Davisson-Germer experiment which showed electron diffraction patterns [10].

The de Broglie Hypothesis: Louis de Broglie postulated that any particle with momentum p possesses a wavelength given by λ = h/p, now known as the de Broglie wavelength [13] [14]. This hypothesis mathematically formalizes the connection between a particle's dynamical properties and its wave-like characteristics, establishing that wave-particle duality is a universal property of matter.

Complementarity Principle: Developed by Niels Bohr, this principle states that the wave and particle natures of a quantum system are mutually exclusive yet complementary aspects of its description [14]. The choice of measurement apparatus determines which property is manifested, fundamentally linking the nature of reality to the process of observation.

Heisenberg's Uncertainty Principle

The Heisenberg Uncertainty Principle (HUP) establishes a fundamental limit on the precision with which certain pairs of physical properties (canonically conjugate variables) can be simultaneously known [13] [15].

Mathematical Formulation: For position x and momentum p, the uncertainty principle states that the product of their uncertainties has a lower bound: ΔxΔp ≥ ℏ/2, where ℏ = h/2π is the reduced Planck constant [13] [15] [14]. A mathematically analogous relationship exists for energy and time: ΔEΔt ≥ ℏ/2 [15].
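The energy-time relation has a direct spectroscopic reading. For an excited state that decays exponentially with lifetime τ, the emission line is a Lorentzian whose full width at half maximum is ℏ/τ in energy, or 1/(2πτ) in frequency, which respects the bound above. The sketch below evaluates this width; the 10 ns lifetime is an assumed example value and the function names are hypothetical.

```python
import math

HBAR = 1.054571817e-34  # reduced Planck constant, J*s
EV = 1.602176634e-19    # joules per electronvolt


def natural_linewidth_hz(lifetime_s: float) -> float:
    """Lorentzian FWHM in frequency for an exponentially decaying state."""
    return 1.0 / (2.0 * math.pi * lifetime_s)


def natural_linewidth_ev(lifetime_s: float) -> float:
    """The same width expressed as an energy: hbar / tau, in electronvolts."""
    return HBAR / lifetime_s / EV


# Assumed example: an excited state with a 10 ns lifetime.
width_hz = natural_linewidth_hz(10e-9)
width_ev = natural_linewidth_ev(10e-9)
print(f"{width_hz / 1e6:.1f} MHz, {width_ev:.2e} eV")
```

Shorter-lived states give broader lines, which is why lifetime broadening sets a floor on spectral resolution even in the absence of Doppler or collisional effects.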

Conceptual Interpretation: This limitation is not due to experimental imperfections but arises from the fundamental wave-like nature of matter [15] [14]. A pure sinusoidal wave has precisely defined wavelength (and thus momentum) but is completely delocalized in space. Conversely, a wave packet localized in position must be composed of multiple wavelengths, introducing momentum uncertainty [13]. This trade-off is mathematically inherent in Fourier analysis and manifests in quantum systems through the wave function description.

Physical Consequences: The uncertainty principle explains numerous quantum phenomena absent from classical physics, including atomic stability (preventing electron collapse into the nucleus), zero-point energy, and quantum tunneling [15] [14].

Table 1: Key Quantitative Relationships in Quantum Foundations

| Concept | Mathematical Relation | Physical Significance | Experimental Manifestation |
|---|---|---|---|
| de Broglie Relation | λ = h/p | Connects particle momentum to wave character | Electron diffraction patterns [10] |
| Position-Momentum Uncertainty | ΔxΔp ≥ ℏ/2 | Limits simultaneous localization in position and momentum space | Spectral line widths, molecular vibration energies [13] [15] |
| Energy-Time Uncertainty | ΔEΔt ≥ ℏ/2 | Relates state lifetime to energy precision | Natural linewidths in spectroscopy [15] |
| Wave-Particle Complementarity | V² + P² ≤ 1 (older form) | Quantitative trade-off between wave and particle behavior [11] | Which-path information in interferometers [12] [11] |

Quantitative Formalisms and Relationships

Mathematical Expression of Uncertainty

The uncertainty principle finds rigorous formulation through the standard deviations of quantum operators. For any wavefunction ψ(x), the standard deviation of position is defined as Δx = √(⟨x²⟩ − ⟨x⟩²), with a similar expression for momentum [13]. Working in one dimension for a particle constrained to move along a line, the product ΔxΔp has a precise minimum value. This formulation can be derived without explicit recourse to operator commutation relations through the Fourier transform relationship between position-space and momentum-space wavefunctions [16]. The wave function in momentum space φ(p) is the Fourier transform of its position-space counterpart ψ(x), mathematically ensuring that localization in one domain necessitates delocalization in the other [13].
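The minimum of the product can be checked numerically for a Gaussian wave packet, the state that saturates the bound. The sketch below works in units where ℏ = 1 and evaluates Δp in position space through ⟨p²⟩ = ℏ²∫|dψ/dx|² dx, which is equivalent to integrating p²|φ(p)|² over the Fourier-transformed wavefunction. The grid size and width parameter are arbitrary choices.

```python
import math

HBAR = 1.0  # natural units


def gaussian_uncertainty_product(sigma: float, n_points: int = 4001,
                                 half_width: float = 10.0) -> float:
    """Return delta_x * delta_p for a normalized real Gaussian of position width sigma."""
    x_max = half_width * sigma
    dx = 2.0 * x_max / (n_points - 1)
    xs = [-x_max + i * dx for i in range(n_points)]
    norm = (2.0 * math.pi * sigma**2) ** -0.25
    psi = [norm * math.exp(-x * x / (4.0 * sigma**2)) for x in xs]

    # Position moments from the probability density |psi|^2.
    mean_x = sum(x * p * p for x, p in zip(xs, psi)) * dx
    mean_x2 = sum(x * x * p * p for x, p in zip(xs, psi)) * dx
    delta_x = math.sqrt(mean_x2 - mean_x**2)

    # For a real wavefunction <p> = 0, so delta_p^2 = hbar^2 * integral of (dpsi/dx)^2.
    dpsi = [(psi[i + 1] - psi[i - 1]) / (2.0 * dx) for i in range(1, n_points - 1)]
    delta_p = HBAR * math.sqrt(sum(d * d for d in dpsi) * dx)
    return delta_x * delta_p


print(gaussian_uncertainty_product(0.7))
print(gaussian_uncertainty_product(2.0))
```

Changing sigma trades position spread for momentum spread, but the product stays at ℏ/2 to within the discretization error.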

Modern Quantification of Duality

Recent theoretical advances have produced a more complete quantitative framework for wave-particle duality. Researchers at Stevens Institute of Technology have derived a precise closed mathematical relationship showing that, when accounting for quantum coherence, "wave-ness" and "particle-ness" can be expressed in a relationship that sums exactly to one, rather than the previous inequality formulation (V² + P² ≤ 1) [11]. For a perfectly coherent system, this relationship plots as a quarter-circle on a graph of wave-ness versus particle-ness, deforming to a flatter ellipse as coherence decreases. This refined understanding enables more precise quantification of complementary behaviors and has direct applications in quantum imaging techniques that exploit these relationships for information extraction [11].

Table 2: Research Reagent Solutions for Quantum Chemical Investigations

| Research Tool | Function/Description | Relevance to Quantum Principles |
|---|---|---|
| Ultracold Atom Arrays | Atoms cooled to microkelvin temperatures and arranged in optical lattices [12] | Provides idealized quantum systems for testing foundational principles like double-slit interference with single atoms as slits |
| Electron Diffraction Apparatus | Measures interference patterns from electron scattering off crystalline materials [10] | Demonstrates wave-like behavior of matter (electrons) via de Broglie wavelength |
| Quantum Imaging with Undetected Photons (QIUP) | Technique using entangled photon pairs to image objects [11] | Applies wave-particle duality relationships practically; measures coherence changes to map object structures |
| First-Principles Electronic Structure Codes | Computational methods solving fundamental quantum equations for molecular systems [7] | Applies uncertainty principle and wave nature to predict chemical bonding, excitation energies, and reaction pathways |
| Supercomputing Infrastructure | High-performance computing systems with parallel processing capabilities [7] | Enables practical quantum chemical calculations on complex systems (e.g., DNA with >10,000 electrons) incorporating quantum principles |

Experimental Methodologies and Protocols

The Double-Slit Experiment: An Idealized Protocol

The double-slit experiment remains the paradigmatic demonstration of wave-particle duality. Recent work at MIT has realized an exceptionally idealized version with atomic-level precision [12].

Experimental Setup: Researchers used a lattice of over 10,000 atoms, cooled to microkelvin temperatures and arranged in a uniform crystal-like configuration using an array of laser beams. Each atom in this ultracold lattice functions as an identical, isolated slit [12].

Measurement Procedure: A weak beam of light is shone through two adjacent atoms, ensuring that each atom scatters at most one photon. The pattern of scattered light is detected using ultrasensitive equipment. By repeating the experiment numerous times, researchers build statistics on whether the light behaves as a wave (showing interference) or particle (showing which-path information) [12].

Quantum Control: The "fuzziness" or spatial uncertainty of the atomic slits is tuned by adjusting the laser confinement. Looser confinement increases position uncertainty, enhancing the particle-like behavior of the photons (by providing which-path information via atomic recoil) while diminishing wave-like interference [12].

Quantum Imaging with Undetected Photons (QIUP)

This innovative protocol applies wave-particle duality relationships for practical imaging applications [11].

Photon Entanglement: A pair of entangled photons is generated. One photon (the signal) is directed toward the object to be imaged, while its partner (the idler) travels a separate path.

Coherence Monitoring: If the signal photon passes unimpeded through the object aperture, the quantum coherence remains high. If it collides with the aperture walls, coherence decreases sharply.

Wave-Particle Measurement: The wave-ness and particle-ness of the idler photon are measured, allowing researchers to deduce the coherence level of the signal photon and thereby reconstruct the object's shape without directly detecting the photons that interacted with the object.

Robustness to Decoherence: External factors like temperature or vibration degrade overall coherence but affect both high and low coherence scenarios similarly, preserving the differential signal needed for imaging [11].

Implications for Chemical Bonding and Molecular Science

Quantum Foundations of Chemical Bonds

The formation and stability of chemical bonds are direct manifestations of quantum principles. Wave-particle duality, particularly the wave nature of electrons, enables the molecular orbital descriptions that underpin modern chemistry [1] [7].

Electron Delocalization and Bonding: The wave-like character of electrons allows them to delocalize across multiple nuclei, forming bonding orbitals with lower energy than isolated atomic orbitals. This delocalization is mathematically described by wavefunctions that extend over molecular dimensions, with the square of the wavefunction amplitude giving the probability density of finding the electron in a particular region [1].

Uncertainty Principle and Bond Stability: The position-momentum uncertainty relationship ΔxΔp ≥ ℏ/2 prevents electrons from collapsing into the nucleus, as confinement to a smaller volume (decreased Δx) would necessitate increased momentum uncertainty (increased Δp), raising kinetic energy [15] [14]. The stable bond length represents a compromise between electrostatic attraction and this uncertainty-driven kinetic energy increase.
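The balance between confinement kinetic energy and electrostatic attraction can be illustrated with the textbook order-of-magnitude estimate for the hydrogen atom. The sketch below assumes the heuristic p ≈ ℏ/r for an electron confined within radius r (a simplification of the formal inequality) and scans E(r) = ℏ²/(2mr²) − e²/(4πε₀r) for its minimum. The scan range and step are arbitrary choices.

```python
import math

HBAR = 1.054571817e-34     # reduced Planck constant, J*s
M_E = 9.1093837015e-31     # electron mass, kg
E_CHARGE = 1.602176634e-19 # elementary charge, C
EPS0 = 8.8541878128e-12    # vacuum permittivity, F/m


def confined_energy(r_m: float) -> float:
    """Heuristic total energy: confinement kinetic term plus Coulomb attraction, in joules."""
    kinetic = HBAR**2 / (2.0 * M_E * r_m**2)
    potential = -E_CHARGE**2 / (4.0 * math.pi * EPS0 * r_m)
    return kinetic + potential


# Scan radii from 0.1 to 3.0 angstrom in steps of 0.001 angstrom.
radii = [i * 1e-13 for i in range(100, 3001)]
r_best = min(radii, key=confined_energy)
e_best_ev = confined_energy(r_best) / E_CHARGE
print(f"r ~ {r_best * 1e10:.3f} angstrom, E ~ {e_best_ev:.2f} eV")
```

With this particular choice of p ≈ ℏ/r the minimum lands at the Bohr radius with an energy near −13.6 eV. The exact agreement is a feature of the heuristic rather than a rigorous derivation, but it shows how squeezing the electron closer to the nucleus costs more kinetic energy than the Coulomb attraction returns.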

Kinetic Energy in Bond Formation: Contrary to classical intuition where bonding is often viewed as primarily electrostatic, quantum mechanics reveals the crucial role of kinetic energy in bond formation. As Klaus Ruedenberg's work showed, kinetic energy changes play a fundamental role in the quantum mechanical explanation of chemical bonding [1].

Computational Quantum Chemistry

First-principles computational methods in chemistry directly implement the mathematical formalisms of wave-particle duality and uncertainty [7].

Electronic Structure Theory: Quantum chemistry uses the laws of quantum mechanics to understand electron and nuclear behavior in molecules, with electrons treated as quantum waves described by wavefunctions rather than classical particles with definite trajectories [7].

Methodology Development: Creating computational methods involves formulating quantum mechanical equations to be programmable on supercomputers, requiring expertise in mathematics, physics, chemistry, and computer science [7]. These methods must account for the inherent uncertainties and probabilistic nature of quantum systems.

Application Examples: Quantum simulations provide molecular-level insights difficult to obtain experimentally, such as femtosecond-scale energy transfer in DNA during proton beam cancer therapy, revealing that energy preferentially transfers to side chains with exposed electrons rather than DNA base pairs [7].

Advanced Applications in Chemical Research

The quantum principles discussed enable sophisticated applications with direct relevance to pharmaceutical and materials research.

Reaction Energy Calculations: Quantum chemistry can reliably predict reaction energies and some optical excitation properties, though accuracy varies across molecular classes [7]. For organic molecules, optical excitation properties can be modeled reliably, while transition metal molecules present greater challenges due to relativistic effects [7].

DNA Damage Mechanisms: Quantum simulations of proton beam therapy reveal energy transfer preferences to DNA side chains rather than base pairs, explaining why proton therapy effectively induces difficult-to-repair DNA damage in cancer cells [7]. This understanding could guide development of improved ion beam therapies using carbon ions or alpha particles [7].

Molecular-Level Insights: Quantum simulations provide attosecond-resolution understanding of energy transfer processes that are experimentally inaccessible, such as identifying that exposed electrons on DNA side chains under physiological conditions are responsible for high energy transfer from proton beams [7].

Wave-particle duality and the Heisenberg Uncertainty Principle are not abstract philosophical concepts but practical foundations upon which modern chemical research is built. These quantum principles provide the essential framework for understanding chemical bonding, molecular stability, and electronic behavior at the most fundamental level. For researchers in drug development and materials science, familiarity with these concepts enables more sophisticated use of computational tools and interpretation of experimental results. As quantum chemical methodologies continue advancing alongside computational power, their ability to predict and explain complex chemical phenomena will only increase, further cementing the central role of these foundational quantum principles in chemical research and innovation.

Quantum chemistry applies the principles of quantum mechanics to understand and predict the behavior of atoms and molecules, and it forms the theoretical basis for modern chemical research. Theoretical chemistry emerged in the early 1900s around the idea that mathematics and physics could elucidate molecular behavior under different conditions without exclusive reliance on experiments. Quantum chemistry rests on the recognition that, at the scale of atoms and molecules, electrons and atomic nuclei do not behave like classical particles but instead follow quantum laws [7]. The Schrödinger equation, formulated by Erwin Schrödinger in 1925 and published in 1926, is the cornerstone of quantum mechanics and provides the mathematical framework for describing these quantum systems [17] [18]. The work earned Schrödinger the Nobel Prize in Physics in 1933, and the equation plays the role in quantum mechanics that Newton's second law plays in classical mechanics [17]. The equation and its solutions, the wavefunctions, are the essential tools for describing electronic structure in atoms and molecules, enabling breakthroughs in drug design, materials science, and the understanding of chemical bonding.

Mathematical Foundation of the Schrödinger Equation

The Time-Dependent and Time-Independent Forms

The Schrödinger equation exists in two primary forms: the time-dependent and time-independent equations. The time-dependent Schrödinger equation is a partial differential equation that governs the evolution of a quantum system over time. It is expressed as [17] [18]:

iħ ∂Ψ/∂t = ĤΨ

In this fundamental equation, i represents the imaginary unit, Ψ symbolizes the wavefunction of the quantum system, ħ denotes the reduced Planck's constant, ∂Ψ/∂t represents the rate of change of the quantum state with respect to time, and Ĥ is the Hamiltonian operator, which encapsulates the total energy information of the system [18].

For many physical systems where the Hamiltonian does not explicitly depend on time, we can employ the time-independent Schrödinger equation [17] [18]:

ĤΨ = EΨ

Here, E represents the energy eigenvalue corresponding to the quantum state Ψ. Solving this equation enables the determination of allowed energy levels and corresponding stationary states of the system, which are of paramount importance in quantum chemistry for understanding molecular structure and stability [18].
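As a worked instance of how the time-independent equation yields discrete energy levels, the sketch below evaluates the textbook particle-in-a-box result Eₙ = n²h²/(8mL²). The 1 nm box length and the function name `box_energy_ev` are illustrative assumptions, not taken from the cited sources.

```python
# Energy levels of a particle in a 1D infinite square well (textbook model).
H_PLANCK = 6.62607015e-34      # Planck constant, J s
M_ELECTRON = 9.1093837015e-31  # electron mass, kg
EV = 1.602176634e-19           # joules per electronvolt


def box_energy_ev(n, length_m, mass_kg=M_ELECTRON):
    """Return E_n = n^2 h^2 / (8 m L^2) in electronvolts."""
    if n < 1:
        raise ValueError("quantum number n must be a positive integer")
    return n**2 * H_PLANCK**2 / (8.0 * mass_kg * length_m**2) / EV


if __name__ == "__main__":
    # Assumed box length: 1 nm, roughly the size of a small conjugated molecule.
    for n in (1, 2, 3):
        print(n, round(box_energy_ev(n, 1e-9), 3))
```

The levels grow as n², so the spacing between adjacent states widens with increasing n, and shrinking the box raises every level.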

Table 1: Components of the Schrödinger Equation

| Symbol | Name | Physical Significance | Mathematical Properties |
|---|---|---|---|
| Ĥ | Hamiltonian operator | Encodes the total energy of the system | Hermitian operator, acts on wavefunction |
| Ψ | Wavefunction | Describes the quantum state of the system | Complex-valued, square-integrable function |
| E | Energy eigenvalue | Allowed energy values of the system | Real-valued, often quantized |
| ħ | Reduced Planck's constant | Fundamental quantum of action | ħ = h/2π, where h is Planck's constant |
| i | Imaginary unit | Provides phase information | i = √(−1), enables wave behavior |

The Hamiltonian Operator

The Hamiltonian operator is at the core of quantum dynamics, encoding the energy information of a quantum system and driving its time evolution [18]. For a single particle with mass m moving in a potential V(r) in three dimensions, the Hamiltonian operator takes the form [18]:

Ĥ = - (ħ²/2m)∇² + V(r)

This operator consists of two distinct parts: the kinetic energy operator (-ħ²/2m)∇², which describes the energy associated with the particle's motion, and the potential energy operator V(r), which accounts for interactions within the system, such as electrostatic attractions and repulsions [18]. The Laplace operator ∇² (also known as the Laplacian) involves second derivatives with respect to spatial coordinates and embodies the wave-like properties of quantum particles [19].
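To make the roles of the kinetic and potential terms concrete, here is a minimal sketch that solves ĤΨ = EΨ numerically in one dimension with a shooting method. It assumes atomic units (ħ = m = 1) and an illustrative harmonic potential V(x) = x²/2, whose exact lowest levels are 0.5 and 1.5; the function names, grid range, and step count are hypothetical choices for this example.

```python
def harmonic(x):
    """Illustrative potential V(x) = x^2 / 2 in atomic units."""
    return 0.5 * x * x


def psi_at_right_edge(energy, potential, x_min=-6.0, x_max=6.0, steps=2000):
    """Integrate psi'' = 2 (V - E) psi from x_min to x_max and return psi(x_max)."""
    h = (x_max - x_min) / steps
    psi_prev, psi = 0.0, 1e-6          # psi(x_min) = 0 with a small initial slope
    for i in range(1, steps):
        x = x_min + i * h
        second_derivative = 2.0 * (potential(x) - energy) * psi
        # Central-difference update: psi_{i+1} = 2 psi_i - psi_{i-1} + h^2 psi''_i
        psi_prev, psi = psi, 2.0 * psi - psi_prev + h * h * second_derivative
    return psi


def find_eigenvalue(potential, e_low, e_high, iterations=60):
    """Bisect on E until psi(x_max) = 0, i.e. the boundary condition is met."""
    f_low = psi_at_right_edge(e_low, potential)
    if f_low * psi_at_right_edge(e_high, potential) > 0:
        raise ValueError("bracket does not contain an eigenvalue")
    for _ in range(iterations):
        e_mid = 0.5 * (e_low + e_high)
        f_mid = psi_at_right_edge(e_mid, potential)
        if f_low * f_mid <= 0:
            e_high = e_mid
        else:
            e_low, f_low = e_mid, f_mid
    return 0.5 * (e_low + e_high)


if __name__ == "__main__":
    print(find_eigenvalue(harmonic, 0.0, 1.0))  # ground state
    print(find_eigenvalue(harmonic, 1.0, 2.0))  # first excited state
```

Only energies for which the wavefunction vanishes at both edges survive, which is how the boundary conditions produce quantization. Swapping `harmonic` for another potential function changes the spectrum without touching the solver.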

Wavefunctions: Interpretation and Properties

Physical Interpretation of the Wavefunction

The wavefunction Ψ provides a complete description of a quantum system. For a single particle, the wavefunction is a mathematical function that relates the location of an electron to its energy, typically expressed using three spatial variables (x, y, z) and time [20]. While the wavefunction itself is a complex-valued function and not directly measurable, its squared magnitude |Ψ(x,t)|² represents the probability density of finding the particle at a particular position in space [17] [21] [20].

For a wavefunction in position space Ψ(x,t), the probability density Pr(x,t) of finding the particle at position x at time t is given by [17]:

Pr(x,t) = |Ψ(x,t)|²

This probabilistic interpretation, known as the Born rule after Max Born who proposed it in 1926, forms the foundation for the statistical nature of quantum mechanics [21]. The wavefunction must be normalized such that the integral of |Ψ|² over all space equals 1, ensuring the total probability of finding the particle somewhere is unity [21].
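The normalization condition and the Born rule can be checked numerically. The sketch below normalizes an illustrative Gaussian wavefunction exp(−x²/2) with the trapezoidal rule and then integrates |Ψ|² over the interval [−1, 1]; the choice of wavefunction, integration limits, and helper names are assumptions made for this example.

```python
import math


def trapezoid(f, a, b, n=4000):
    """Composite trapezoidal rule for the integral of f over [a, b]."""
    h = (b - a) / n
    total = 0.5 * (f(a) + f(b))
    for i in range(1, n):
        total += f(a + i * h)
    return total * h


def unnormalized_psi(x):
    """Illustrative real Gaussian wavefunction (not yet normalized)."""
    return math.exp(-0.5 * x * x)


# The tails beyond |x| = 10 are negligible for this Gaussian.
norm_sq = trapezoid(lambda x: unnormalized_psi(x) ** 2, -10.0, 10.0)
norm_const = 1.0 / math.sqrt(norm_sq)


def density(x):
    """Born-rule probability density |Psi(x)|^2 of the normalized state."""
    return (norm_const * unnormalized_psi(x)) ** 2


total_probability = trapezoid(density, -10.0, 10.0)
prob_central = trapezoid(density, -1.0, 1.0)

if __name__ == "__main__":
    print(total_probability, prob_central)
```

For this particular Gaussian the probability of finding the particle in [−1, 1] equals erf(1), which gives an independent check on the numerical integration.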

Key Mathematical Properties

Wavefunctions possess several essential mathematical properties that define their physical significance:

- Square-integrability: Wavefunctions belong to the space of square-integrable functions, meaning ∫|Ψ(x)|²dx < ∞ over all space [17] [21]. This ensures that probabilities are well-defined and finite.

- Continuity: Wavefunctions must be continuous everywhere, and their spatial derivatives must be continuous wherever the potential is finite, including at finite potential steps [17].

- Single-valuedness: The wavefunction must yield a unique value at each point in space to provide unambiguous probability densities [17].

- Linearity: The Schrödinger equation is linear, meaning that if Ψ₁ and Ψ₂ are solutions, then any linear combination aΨ₁ + bΨ₂ is also a solution [17]. This property gives rise to the superposition principle, which allows quantum systems to exist in multiple states simultaneously [17] [22].

Table 2: Wavefunction Properties and Their Significance in Quantum Chemistry

| Property | Mathematical Expression | Chemical Significance |
|---|---|---|
| Probability Density | ρ(x) = \|Ψ(x)\|² | Electron density distribution in atoms and molecules |
| Normalization | ∫\|Ψ(x)\|²dx = 1 | Conservation of probability, total electron count |
| Phase Factor | Ψ = \|Ψ\|e^(iφ) | Chemical bonding interference effects |
| Orthogonality | ∫Ψₘ*Ψₙdx = δₘₙ | Independent molecular orbitals |
| Boundary Conditions | Ψ → 0 as x → ±∞ | Localized electrons in atoms and molecules |

Computational Methodologies in Quantum Chemistry

Solving the Schrödinger Equation for Chemical Systems

The application of the Schrödinger equation in quantum chemistry involves solving for the wavefunctions and energies of electrons in atoms and molecules. For multi-electron systems, the Hamiltonian includes additional terms accounting for electron-electron repulsions:

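Ĥ = -∑ᵢ (ħ²/2mₑ)∇ᵢ² - ∑ᵢ∑ₐ (Zₐe²)/(4πε₀rᵢₐ) + ∑_(i<j) (e²)/(4πε₀rᵢⱼ)

Here i and j index electrons and a indexes nuclei. The three terms represent, in order, electron kinetic energy, electron-nucleus attraction, and electron-electron repulsion, with each electron pair counted once. The electron-electron term couples the motion of all electrons, which rules out closed-form solutions for many-electron systems and necessitates computational approaches [7].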

The development of electronic computers in the 1950s enabled practical application of quantum mechanics to study molecules [7]. With advances in computing technology over the past 70 years, quantum chemistry has seen remarkable developments, allowing researchers to model systems with increasing accuracy [7]. For organic molecules, quantum mechanical calculations can reliably predict properties such as reaction energies and optical excitation energies, though challenges remain for systems containing heavy elements where relativistic effects become important [7].

The Hartree-Fock Method and Beyond

In 1927, Hartree made the first significant attempt to solve the N-body wavefunction problem by developing a self-consistency cycle: an iterative algorithm to approximate the solution [21]. In 1930, Fock and Slater independently pointed out that Hartree's approach did not respect the antisymmetry of fermionic wavefunctions. The resulting Hartree-Fock method uses the Slater determinant (developed by John C. Slater) to enforce this antisymmetry, providing a fundamental methodology for approximating multi-electron wavefunctions [21].

Modern quantum chemistry employs increasingly sophisticated methods that build upon the Hartree-Fock foundation, including:

- Density Functional Theory (DFT): Uses electron density rather than wavefunctions as the fundamental variable

- Post-Hartree-Fock Methods: Include electron correlation effects through configuration interaction, coupled cluster theory, and perturbation methods

- Quantum Monte Carlo: Uses stochastic approaches to solve the Schrödinger equation

These computational methodologies allow quantum chemists to predict molecular structures, binding energies, reaction pathways, and spectroscopic properties with remarkable accuracy, forming the basis for computer-aided drug design and materials discovery [7].

Research Reagents and Computational Tools

Table 3: Essential Computational "Reagents" in Quantum Chemistry Research

| Tool/Method | Function | Application in Quantum Chemistry |

|---|---|---|

| Basis Sets | Mathematical functions to represent molecular orbitals | Expand wavefunctions in calculations; determine accuracy |

| Pseudopotentials | Approximate core electron interactions | Reduce computational cost for heavy elements |

| Density Functionals | Approximate electron exchange and correlation | Calculate electron interactions in DFT |

| Quantum Chemistry Software | Implement numerical algorithms | Solve Schrödinger equation for molecules (e.g., Gaussian, GAMESS) |

| High-Performance Computing | Provide computational resources | Enable calculations for large molecular systems |

Experimental Protocols: Quantum Chemistry Workflow

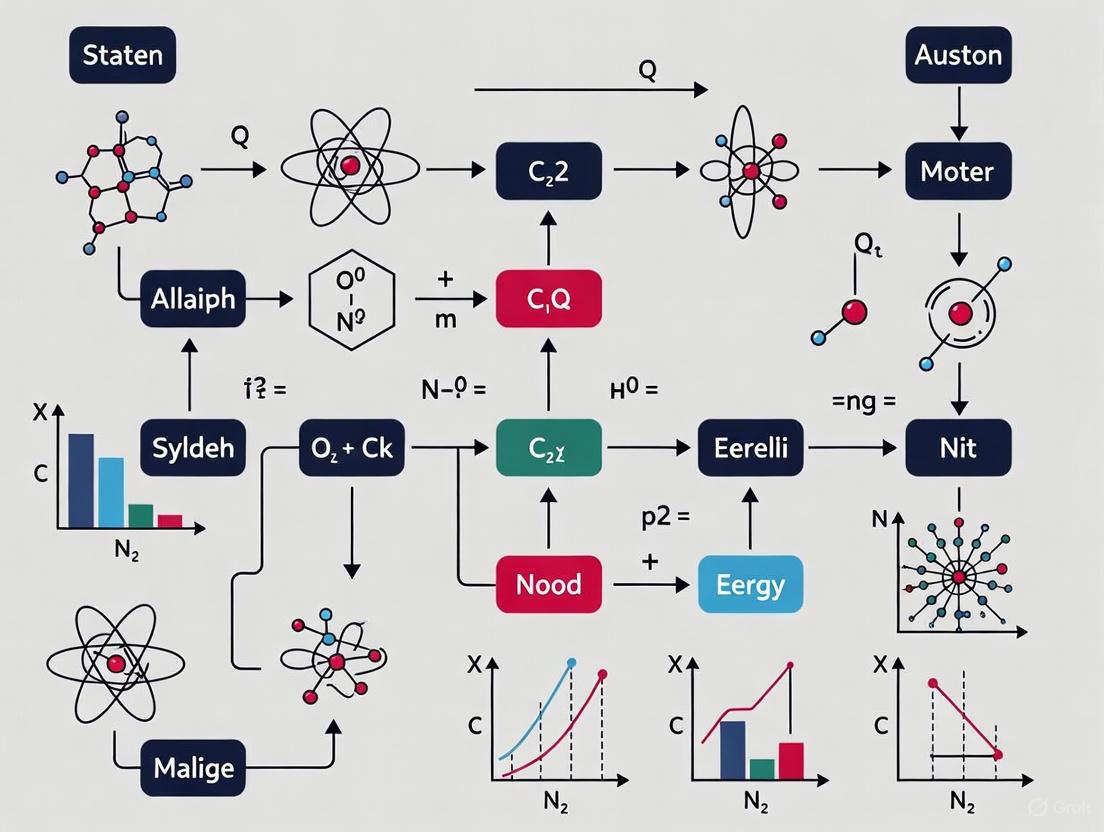

The following diagram illustrates the standard workflow for performing quantum chemical calculations to determine molecular properties:

Quantum Chemistry Computational Workflow

Molecular Structure Input and Geometry Optimization

The computational protocol begins with molecular structure specification, where the researcher defines the atomic composition and initial spatial arrangement of atoms in the molecule. This includes atomic numbers, Cartesian coordinates, and molecular connectivity [7]. The subsequent geometry optimization step involves iterative calculations that adjust nuclear coordinates to locate minima on the potential energy surface, corresponding to stable molecular conformations. This process utilizes algorithms such as steepest descent, conjugate gradient, or Newton-Raphson methods to minimize the energy with respect to nuclear coordinates, yielding equilibrium geometries that correspond to stable molecular structures [7].
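The idea of walking downhill on a potential energy surface can be illustrated in one dimension. The sketch below applies steepest descent to a Morse potential for a single bond length; the Morse form, its parameters (`D_E`, `A`, `R_E`), the fixed step size, and the starting geometry are all hypothetical choices for illustration, not values from the cited work or from any production optimizer.

```python
import math

# Hypothetical Morse parameters: well depth, width, equilibrium bond length.
D_E, A, R_E = 0.17, 1.0, 1.4


def morse_energy(r):
    """Model potential energy curve V(r) = D (1 - exp(-a (r - r_e)))^2."""
    return D_E * (1.0 - math.exp(-A * (r - R_E))) ** 2


def morse_gradient(r):
    """Analytic derivative dV/dr of the Morse curve."""
    decay = math.exp(-A * (r - R_E))
    return 2.0 * D_E * A * (1.0 - decay) * decay


def steepest_descent(r, step=1.0, tol=1e-10, max_iter=10_000):
    """Follow the negative gradient until its magnitude drops below tol."""
    for iteration in range(max_iter):
        g = morse_gradient(r)
        if abs(g) < tol:
            return r, iteration
        r -= step * g
    raise RuntimeError("optimization did not converge")


r_opt, n_steps = steepest_descent(2.0)   # start from a stretched bond

if __name__ == "__main__":
    print(r_opt, n_steps, morse_energy(r_opt))
```

Real geometry optimizations work in many dimensions and usually rely on quasi-Newton updates with approximate Hessians, but the convergence test on the gradient is the same in spirit.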

Quantum Chemical Method Selection and Energy Calculation

The choice of computational method represents a critical decision point that balances accuracy and computational cost. For preliminary studies on medium-sized organic molecules, density functional theory (DFT) with hybrid functionals such as B3LYP often provides satisfactory results [7]. For higher accuracy, especially in spectroscopic property prediction, post-Hartree-Fock methods such as coupled-cluster theory (CCSD(T)) may be employed despite their greater computational demands [7]. The basis set selection must align with the chosen method, with polarized triple-zeta basis sets (e.g., 6-311G(d,p)) often providing a good compromise between accuracy and computational efficiency for organic molecules [7].

Once the method is selected, single-point energy calculations determine the total energy of the optimized molecular structure. This energy value serves as the foundation for predicting thermodynamic properties, relative stabilities of isomers, and reaction energies [7]. Subsequent molecular properties calculation extracts chemically relevant information from the wavefunction, including:

- Molecular orbitals and energy levels

- Electron density distributions

- Electrostatic potentials

- Vibrational frequencies

- NMR chemical shifts

- Electronic excitation energies

These properties enable researchers to connect quantum mechanical calculations with experimental observables [7].

Applications in Chemical Research and Drug Development

Quantum Chemistry in Pharmaceutical Research

Quantum chemical principles provide the foundation for understanding molecular interactions central to drug discovery and development. The application of Schrödinger's equation enables researchers to predict binding affinities between drug candidates and their target proteins, model reaction mechanisms of metabolic processes, and optimize molecular structures for enhanced efficacy and reduced side effects [7]. For example, quantum mechanical calculations can elucidate proton transfer mechanisms in enzyme active sites, predict the reactivity of functional groups in drug molecules, and model the electronic excitations responsible for photochemical degradation of pharmaceuticals [7].

Advanced quantum simulations can provide insights difficult to obtain experimentally. Recent work simulating DNA interactions with high-energy protons revealed that energy transfer preferentially targets DNA side chains rather than base pairs, especially under physiological conditions where side chains have exposed electrons [7]. This understanding has implications for proton beam cancer therapy, as damage to side chains is more difficult for cellular repair mechanisms to fix, potentially leading to more effective cancer treatments [7].

Chemical Bonding and Reactivity

The fundamental understanding of chemical bonding emerges directly from solutions to the Schrödinger equation for molecular systems. Quantum mechanics reveals that bonding involves not just energy minimization but also changes in electron kinetic energy and distribution [17]. Early studies applying the virial theorem to chemical bonds demonstrated the crucial role that electron kinetic energy plays in bond formation, completing our understanding of the quantum mechanical origin of chemical bonding [17].

Modern quantum chemistry enables the prediction of reaction pathways and activation energies for chemical transformations, providing insights that guide synthetic chemistry in pharmaceutical development. Computational modeling of reaction mechanisms allows researchers to explore hypothetical transformations without conducting extensive laboratory experiments, accelerating the discovery of efficient synthetic routes to drug candidates [7].

Visualization of Wavefunctions and Molecular Orbitals

Representing Complex Wavefunctions

Wavefunctions are complex-valued mathematical objects that can be challenging to visualize effectively. The following diagram illustrates the relationship between different representations of a Gaussian wave packet, a common type of wavefunction for localized particles:

Wavefunction Representation Relationships

Three primary techniques exist for visualizing wavefunctions [23]:

- Component Plotting: Displaying the real part Re[Ψ(x)] and imaginary part Im[Ψ(x)] as separate curves, often in red and blue respectively [23]

- Amplitude-Phase Representation: Plotting the magnitude |Ψ(x)| as a curve with color indicating the phase arg[Ψ(x)] [23]

- Probability Density Visualization: Showing |Ψ(x)|² using grayscale shading where darker regions correspond to higher probability density [23]

For molecular systems, wavefunctions are typically represented as molecular orbitals plotted as three-dimensional isosurfaces of constant electron probability density. These visualizations provide intuitive understanding of bonding interactions, lone pairs, and reactive sites within molecules [20].

Time Evolution of Quantum Systems

The time-dependent Schrödinger equation governs how wavefunctions evolve, with the formal solution given by [17]:

|Ψ(t)⟩ = e^(-iĤt/ħ)|Ψ(0)⟩

Here e^(-iĤt/ħ) is the time evolution operator for a time-independent Hamiltonian. For a free wave packet with well-defined momentum, this evolution corresponds to movement at nearly constant speed with gradual spreading [23]. Because the time evolution operator is unitary, the norm of the wavefunction remains constant over time and probability is conserved [17].
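In the energy eigenbasis the time evolution operator reduces to a phase factor on each coefficient, which makes norm conservation easy to verify. The following sketch assumes a two-level system with illustrative energies 0.5 and 1.5 in units where ħ = 1; the function names are hypothetical.

```python
import cmath
import math


def evolve(coefficients, energies, t, hbar=1.0):
    """Apply exp(-i E_n t / hbar) to each energy-eigenbasis coefficient."""
    return [c * cmath.exp(-1j * e * t / hbar)
            for c, e in zip(coefficients, energies)]


def norm_squared(coefficients):
    """Total probability, sum of |c_n|^2."""
    return sum(abs(c) ** 2 for c in coefficients)


# Equal superposition of two eigenstates with assumed energies 0.5 and 1.5.
initial = [1 / math.sqrt(2), 1 / math.sqrt(2)]
energies = [0.5, 1.5]
evolved = evolve(initial, energies, t=2.0)
relative_phase = cmath.phase(evolved[1] / evolved[0])

if __name__ == "__main__":
    print(norm_squared(evolved), relative_phase)
```

The populations |cₙ|² never change; only the relative phase advances, here by −(E₂ − E₁)t/ħ = −2 rad, and that phase drives the oscillation of any observable that mixes the two states.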

Schrödinger's equation and the wavefunction concept provide the essential mathematical foundation for quantum chemistry, enabling researchers to understand and predict molecular behavior from first principles. The integration of these quantum mechanical tools with modern computational resources has created a powerful framework for addressing challenges in drug discovery, materials design, and fundamental chemical research. As computational methodologies continue to advance and computing power grows, quantum chemical approaches will play an increasingly vital role in chemical research, providing insights that complement and extend experimental investigations. The ongoing development of more accurate and efficient computational methods ensures that quantum chemistry will remain at the forefront of innovation in the chemical sciences, driving advances that benefit pharmaceutical development, materials science, and our fundamental understanding of molecular phenomena.

In the realm of quantum chemistry and drug development, predicting and visualizing the behavior of electrons is paramount. The electron density, ρ(r), is a fundamental quantum mechanical observable that uniquely defines the ground state properties of electronic systems, as established by the Hohenberg-Kohn theorem [24]. This function describes the distribution of electronic charge in position space around atomic nuclei and serves as the foundation for understanding chemical bonding, molecular reactivity, and intermolecular interactions—all critical considerations in rational drug design [25]. For research scientists, the ability to accurately compute, analyze, and visualize this electron density provides indispensable insights for predicting molecular properties and reaction pathways without resorting to exhaustive experimental screening.

Electrons exhibit both wave-like and particle-like properties, existing not in discrete orbits but within atomic orbitals—mathematical functions describing their wave-like behavior and location probability in atoms [26]. When atoms combine to form molecules, their atomic orbitals combine to form molecular orbitals, which extend across multiple atoms and provide a visual representation of electron location and movement in molecules [27]. This technical guide explores the core principles, computational methodologies, and visualization techniques for atomic orbitals and electron density, providing researchers with the foundational knowledge necessary to leverage these concepts in advanced chemical research and pharmaceutical development.

Theoretical Foundations: From Atomic Orbitals to Electron Density Distributions

Quantum Mechanical Definition of Atomic and Molecular Orbitals

In quantum mechanics, an atomic orbital is formally defined as a one-electron wave function, typically denoted as ψ, which is an approximate solution to the Schrödinger equation for electrons bound to an atom's nucleus [26]. These orbitals are characterized by a set of three quantum numbers: the principal quantum number n (energy level), the azimuthal quantum number l (orbital shape), and the magnetic quantum number m_l (orbital orientation). The square of the wave function, |ψ(r)|², gives the electron probability density—the probability of finding an electron in a specific region around the nucleus [26]. Each orbital can be occupied by a maximum of two electrons with opposite spins, in accordance with the Pauli exclusion principle.

Molecular orbitals are constructed through linear combinations of atomic orbitals (LCAO), forming delocalized wave functions that describe electron behavior across entire molecules. These can be classified as bonding orbitals (electron density concentrated between nuclei, stabilizing the molecule), anti-bonding orbitals (nodal planes between nuclei, destabilizing), or non-bonding orbitals (localized on single atoms) [27]. The spatial components of these one-electron functions provide the framework for understanding electron distribution in molecular systems, though it's crucial to recognize that this independent-particle model represents an approximation, as electron motion is actually correlated [26].

Electron Density and Its Topological Features

The one-electron density for a system of N electrons can be expressed within the Born-Oppenheimer approximation as follows [24]:

ρ(r) = N ∫…∫ |Ψ_el(r, r₂, …, r_N)|² dr₂ … dr_N

where r, r₂, …, r_N are the electronic coordinates and Ψ_el denotes the electronic wave function, which depends on the nuclear coordinates R only as fixed parameters. This electron density is a real-valued scalar field that lends itself to topological analysis through the Quantum Theory of Atoms in Molecules (QTAIM) framework [24].

Topological analysis of electron density involves identifying critical points (CPs) where the gradient of the density vanishes (∇ρ = 0) [24]. These critical points are characterized by their signature, κ, which is the sum of the signs of the three eigenvalues of the Hessian matrix of second derivatives. Key critical points include:

- Nuclear critical points (κ = -3): Local maxima located at nuclear positions

- Bond critical points (κ = -1): Saddle points typically found between chemically bonded atoms

- Ring critical points (κ = +1): Located within ring structures

- Cage critical points (κ = +3): Found in enclosed cage-like molecules

Table 1: Classification and Properties of Critical Points in Electron Density Topology

| Critical Point Type | Signature (κ) | Electron Density Curvature | Typical Location |

|---|---|---|---|

| Nuclear Attractor | -3 | All curvatures negative | Atomic nuclei positions |

| Bond Critical Point (BCP) | -1 | Two negative, one positive curvature | Between bonded atoms |

| Ring Critical Point | +1 | Two positive, one negative curvature | Center of ring structures |

| Cage Critical Point | +3 | All curvatures positive | Center of cage molecules |

At bond critical points, the sign of the Laplacian of the electron density (∇²ρ) provides crucial information about bond character: a negative ∇²ρ indicates local concentration of electron density characteristic of covalent bonds, while a positive ∇²ρ suggests closed-shell interactions typical of ionic bonds or intermolecular interactions [24].
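The classification rules above translate directly into a small routine. The sketch below takes the three eigenvalues of the density Hessian at a critical point, computes the signature, and uses the Laplacian (the sum of the eigenvalues) to label bond character; the function names and the example eigenvalues are hypothetical, and the routine assumes all three eigenvalues are non-zero.

```python
CRITICAL_POINT_NAMES = {
    -3: "nuclear attractor",
    -1: "bond critical point",
    1: "ring critical point",
    3: "cage critical point",
}


def classify_critical_point(eigenvalues):
    """Return (name, signature, laplacian) from three Hessian eigenvalues."""
    if len(eigenvalues) != 3 or any(v == 0 for v in eigenvalues):
        raise ValueError("expected three non-zero Hessian eigenvalues")
    signature = sum(1 if v > 0 else -1 for v in eigenvalues)
    laplacian = sum(eigenvalues)       # trace of the Hessian
    return CRITICAL_POINT_NAMES[signature], signature, laplacian


def bond_character(laplacian):
    """Interpret the sign of the Laplacian at a bond critical point."""
    if laplacian < 0:
        return "shared interaction (covalent-like)"
    return "closed-shell interaction"


if __name__ == "__main__":
    # Hypothetical curvatures: two negative, one positive.
    name, signature, laplacian = classify_critical_point([-0.7, -0.7, 0.3])
    print(name, signature, laplacian, bond_character(laplacian))
```

A production QTAIM code would first locate the points where ∇ρ = 0 on a computed density; this routine covers only the labeling step that follows.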

Computational Methodologies and Protocols

Quantum Computation of Electron Densities

For quantum computation of molecular systems, the electronic structure problem is formulated within second quantization, where the electron density is obtained from the one-particle reduced density matrix (1-RDM) [24]. The electron density can be defined as:

ρ(r) = ∑_(p,q,σ) φ*_pσ(r) φ_qσ(r) D_pq

where φ_pσ(r) are spin orbitals and D_pq are the elements of the 1-RDM, which can be expressed as:

D_pq = ⟨a†_pσ a_qσ⟩ = ⟨Ψ|a†_pσ a_qσ|Ψ⟩

In this formalism, a†_pσ and a_qσ are fermionic creation and annihilation operators, and |Ψ⟩ represents the wave function expressed as a linear combination of Slater determinants [24]. The 1-RDM is constructed following measurements of parametrized quantum circuits (ansätze) representing molecular ground states. Because the 1-RDM has O(n²) elements for n orbitals, this approach scales as O(n²), making density-based fidelity witness approaches potentially efficient for validating quantum computations [24].
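The contraction of orbitals with the 1-RDM can be sketched on a toy model. The example below assumes two real, orthonormal particle-in-a-box functions on [0, 1] as stand-ins for molecular orbitals and a hypothetical spin-summed 2×2 1-RDM with trace 2 (two electrons). None of these values come from a real calculation or a quantum device.

```python
import math


def box_orbital(p, x):
    """Orthonormal particle-in-a-box function on [0, 1], p = 1, 2, ..."""
    return math.sqrt(2.0) * math.sin(p * math.pi * x)


def density(x, rdm):
    """rho(x) = sum_pq phi_p(x) phi_q(x) D_pq for real orbitals."""
    n = len(rdm)
    return sum(rdm[p][q] * box_orbital(p + 1, x) * box_orbital(q + 1, x)
               for p in range(n) for q in range(n))


# Hypothetical spin-summed 1-RDM; its trace is the electron count.
rdm = [[1.5, 0.5],
       [0.5, 0.5]]

# Midpoint-rule integration of the density over the box.
points = 2000
h = 1.0 / points
electron_count = sum(density((i + 0.5) * h, rdm) for i in range(points)) * h

if __name__ == "__main__":
    print(electron_count)
```

Because the orbitals are orthonormal, the off-diagonal elements reshape the density without changing its integral, so the result equals the trace of the matrix.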

Figure 1: Workflow for Electron Density Analysis via Quantum Computation

Machine Learning Approaches for Electron Density Prediction

Recent advances have demonstrated the effectiveness of machine learning models for predicting electron densities, drawing inspiration from image super-resolution techniques in computer vision [28]. In this approach, the electron density is treated as a 3D grayscale image, and a convolutional residual network (ResNet) transforms a crude initial guess of the molecular density into an accurate ground-state quantum mechanical density.

The protocol involves:

- Input Generation: Creating a superposition of atomic densities (SAD) as the initial guess

- Feature Extraction: Representing the input superposed atomic density on a coarse grid

- Spatial Upscaling: Using the model to predict accurate molecular electron density on a high-resolution grid (typically with upscaling factors of 2-4× along each axis)

- Property Calculation: Deriving energies and orbitals through a single diagonalization of the Kohn-Sham Hamiltonian based on the predicted density

This approach has demonstrated superior accuracy compared to traditional density fitting methods, achieving chemical accuracy (1 kcal/mol ≈ 43 meV) for total electronic energies [28].

Experimental Electron Density Determination from Diffraction

Experimentally, electron densities can be reconstructed through refinement of X-ray diffraction data using multipolar models, X-ray constrained wave functions, or the maximum entropy method [24]. The fundamental relationship is:

ρ(r) = (1/V) ∑_(hkl) F_hkl exp[-2πi(hx + ky + lz)]

where F_hkl are the structure factors obtained from diffraction experiments, V is the unit-cell volume, and (x, y, z) are the fractional coordinates of the point r [25].
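A one-dimensional analogue shows how Fourier synthesis recovers atomic positions from structure factors. The sketch below assumes a unit cell of length 1 containing a single atom at fractional coordinate 0.25, with a hypothetical Gaussian fall-off standing in for the atomic form factor; `h_max` plays the role of the experimental resolution limit.

```python
import cmath
import math


def structure_factor(h, atoms, b_factor=0.05):
    """F_h = sum_j Z_j exp(-B h^2) exp(+2 pi i h x_j); atoms = [(Z_j, x_j), ...]."""
    return sum(z * math.exp(-b_factor * h * h) * cmath.exp(2j * math.pi * h * x)
               for z, x in atoms)


def density(x, atoms, h_max=20, cell_length=1.0):
    """rho(x) = (1/L) sum_h F_h exp(-2 pi i h x), truncated at |h| <= h_max."""
    total = sum(structure_factor(h, atoms) * cmath.exp(-2j * math.pi * h * x)
                for h in range(-h_max, h_max + 1))
    return total / cell_length


atoms = [(6.0, 0.25)]                 # hypothetical atom: weight 6 at x = 0.25
grid = [i / 100 for i in range(100)]
rho = [density(x, atoms) for x in grid]
peak_position = grid[max(range(len(grid)), key=lambda i: rho[i].real)]

if __name__ == "__main__":
    print(peak_position, max(abs(value.imag) for value in rho))
```

In this sketch the phases of F_h are known because they are computed from the model. In an experiment only the magnitudes |F_hkl| are measured, which is the phase problem discussed in this section.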

However, practical limitations include:

- Resolution truncation effects from limited experimental resolution

- The phase problem in structure factor determination

- Thermal motion effects, as experiments measure thermally averaged electron densities

To address these challenges, modeling is necessary to obtain static electron density distributions. The multipolar expansion model parameterizes electron density around atoms using:

ρ_atom(r) = ρ_core(r) + P_v κ³ ρ_val(κr) + ∑_(l=0)^(l_max) κ'³ R_l(κ'r) ∑_(m=0)^(l) P_lm± y_lm±(θ,φ)

where P_v and P_lm± are population parameters, and κ, κ' are contraction/expansion coefficients [25]. This model can be refined against experimental diffraction data to obtain accurate electron density distributions.

Table 2: Comparison of Electron Density Determination Methods

| Method | Key Principle | Accuracy Metrics | Computational/Experimental Cost |

|---|---|---|---|

| Quantum Computation | Measurement of 1-RDM from parametrized quantum circuits | Comparison to full CI or CCSD references | Currently limited by quantum hardware fidelity and scale |

| Machine Learning (Image Super-Resolution) | Convolutional ResNet transformation of atomic density guess | MAE ~0.16% on QM9 dataset [28] | Low inference cost after training; requires extensive training data |

| Experimental X-ray Diffraction | Fourier synthesis of structure factors with multipolar modeling | Resolves sub-atomic features (e.g., bonding density) | Requires high-quality crystals and extensive measurement time |

| Conventional Quantum Chemistry (DFT, HF, CCSD) | Numerical solution of Schrödinger equation with varying approximations | Systematic improvement with method level | Scales from O(N³) to O(e^N) with system size and accuracy |

Visualization Techniques and Analytical Applications

Visualizing Electron Density and Molecular Orbitals

Electron density distributions are commonly visualized using isosurfaces—three-dimensional surfaces connecting points of identical electron density value [27]. For molecular shape representation, isosurfaces with cutoffs in the range of 0.01–0.05 e/Bohr³ are typically used, while higher cutoffs (0.1–0.15 e/Bohr³) reveal bond density, with higher volumes corresponding to higher bond orders [27].

Atomic orbitals such as the 2p orbitals display characteristic lobed structures with nodal planes where electron density drops to zero. For example, the 2p_x orbital has two lobes aligned along the x-axis with a yz nodal plane passing through the nucleus [29]. The "surface" of three-dimensional orbital representations corresponds to an isosurface of constant electron density value, with size changing according to the selected density value [29].

Molecular orbitals provide critical insights into conjugation and reactivity. Key features include:

- Bonding orbitals: Electron density concentrated between nuclei, strengthening bonds

- Anti-bonding orbitals: Nodal planes between nuclei, weakening bonds when occupied

- Non-bonding orbitals: Localized on single atoms, with minimal effect on bonding

Analyzing Electron Density Changes in Chemical Reactions

Visualizing electron density changes (EDC) during chemical reactions provides mechanistic insights, particularly for drug development where reaction pathways influence synthetic feasibility. A robust method for EDC visualization involves mapping rectangular grid points from a reference structure to distorted positions around atoms of another structure along a reaction pathway [30].

The transformation is implemented as:

G_k^(distorted)(s+Δs) = G_k^(distorted)(s) + ∑_A w_A,k(s) [R_A(s+Δs) - R_A(s)]

where w_A,k(s) are atomic weights for each grid point based on Hirshfeld partitioning:

w_A,k(s) = ρ_A^(free)(|G_k(s) - R_A(s)|) / ∑_B ρ_B^(free)(|G_k(s) - R_B(s)|)

The electron density change is then computed as:

Δρ(G_k^(0)) = ρ^(1)(G_k^(1,distorted)) - ρ^(0)(G_k^(0))

This approach reveals expected electron density reductions around severed bonds and density increases around newly formed bonds, correlating with chemical intuition [30].
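The weighting and grid-displacement steps can be sketched as follows. The example assumes a hypothetical exponential free-atom density in place of tabulated atomic densities, and a two-atom system in which one nucleus moves along the x-axis; the function names are illustrative and do not come from the cited implementation.

```python
import math


def free_atom_density(distance, exponent=2.0):
    """Hypothetical spherical free-atom density, rho(d) = exp(-exponent * d)."""
    return math.exp(-exponent * distance)


def hirshfeld_weights(grid_point, nuclei):
    """w_A = rho_A_free(|G - R_A|) / sum_B rho_B_free(|G - R_B|)."""
    densities = [free_atom_density(math.dist(grid_point, r_a)) for r_a in nuclei]
    total = sum(densities)
    return [d / total for d in densities]


def displace_grid_point(grid_point, nuclei_old, nuclei_new):
    """Move a grid point by the weighted sum of the nuclear displacements."""
    weights = hirshfeld_weights(grid_point, nuclei_old)
    return tuple(
        g + sum(w * (new[i] - old[i])
                for w, old, new in zip(weights, nuclei_old, nuclei_new))
        for i, g in enumerate(grid_point)
    )


nuclei_old = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
nuclei_new = [(0.0, 0.0, 0.0), (3.0, 0.0, 0.0)]   # second atom moves by +1 in x
midpoint = (1.0, 0.0, 0.0)
moved_midpoint = displace_grid_point(midpoint, nuclei_old, nuclei_new)

if __name__ == "__main__":
    print(hirshfeld_weights(midpoint, nuclei_old), moved_midpoint)
```

A grid point midway between two identical atoms follows each nucleus with weight one half, while points close to a nucleus move almost rigidly with it. This keeps the distorted grid attached to the atoms along the reaction path.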

Figure 2: Workflow for Visualizing Electron Density Changes in Reactions

Advanced Analysis: Quantum Topology and Chemical Descriptors

Beyond visualization, electron density analysis provides quantitative descriptors for chemical research:

- Bond orders: Wiberg and Mayer bond orders derived from density matrices characterize bond strength and aromaticity [27]

- Atomic charges: Multipole-derived charges (from Bader's QTAIM or Hirshfeld partitioning) describe charge distribution [30]

- Electrostatic potential: Surfaces mapping electrostatic potential identify nucleophilic/electrophilic sites for reactivity prediction [27]

- Fukui functions: Electron density differences between neutral and charged systems identify sites prone to nucleophilic or electrophilic attack [30]

In pharmaceutical research, these descriptors help predict drug-receptor interactions, solubility, reactivity, and spectroscopic properties, enabling rational design without exhaustive experimental testing.

Research Reagents and Computational Tools

Table 3: Essential Computational Tools and Theoretical "Reagents" for Electron Density Analysis

| Tool/Resource | Type | Primary Function | Application in Research |

|---|---|---|---|

| Multipolar Model | Theoretical Framework | Parameterizes electron density around atoms using spherical and angular functions | Experimental charge density refinement from diffraction data [25] |

| Hirshfeld Partitioning | Computational Method | Divides molecular electron density into atomic contributions | Weighting schemes for grid mapping in EDC visualization [30] |

| Quantum Theory of Atoms in Molecules (QTAIM) | Analytical Framework | Topological analysis of electron density critical points | Bond characterization, atomic property definition [24] |

| Superposition of Atomic Densities (SAD) | Initial Guess | Crude approximation of molecular electron density from isolated atoms | Starting point for machine learning density prediction [28] |

| Convolutional Residual Network (ResNet) | Machine Learning Architecture | Image super-resolution for 3D electron density prediction | Accurate density prediction from crude initial guesses [28] |

| One-Particle Reduced Density Matrix (1-RDM) | Quantum Mechanical Construct | Contains all one-electron information including electron density | Quantum computation of molecular properties [24] |

Atomic orbitals and electron density representations provide the fundamental framework for understanding electronic structure in chemical systems. The visualization and topological analysis of electron density offer powerful methods for characterizing chemical bonding, predicting reactivity, and rationalizing molecular properties—all essential capabilities in pharmaceutical research and development. As computational methods advance, particularly in quantum computation and machine learning approaches inspired by image processing, researchers gain increasingly powerful tools for accurate electron density prediction and analysis. These techniques continue to bridge the gap between theoretical quantum mechanics and practical chemical research, enabling more efficient drug discovery and materials design through deep electronic-level understanding.

Quantum superposition and entanglement are not merely abstract mathematical concepts but are fundamental physical phenomena that underpin the behavior and properties of matter at the molecular scale. The principle of superposition states that any two or more quantum states can be added together, or "superposed," and the result will be another valid quantum state [31]. This principle arises directly from the linearity of the Schrödinger equation, which is a foundational postulate of quantum mechanics [31]. Concurrently, quantum entanglement describes the phenomenon where the quantum states of two or more particles become inextricably linked, such that the quantum state of each particle cannot be described independently of the state of the others, even when separated by large distances [32]. The interplay between these phenomena enables the rich complexity of molecular structures and their chemical behaviors, forming the basis for emerging quantum technologies in chemistry and materials science.

For chemical research, these principles provide the theoretical framework necessary to understand and predict molecular behavior that defies classical explanation. The non-classical correlations produced by entanglement and the simultaneous existence in multiple states afforded by superposition directly impact electronic configurations, bonding characteristics, and energy transfer processes in molecular systems [33]. Recent experimental advances have transformed these once-theoretical concepts into tangible resources that can be harnessed for quantum simulation, sensing, and computation, offering potential pathways to overcome current limitations in drug discovery and materials design [34] [35].

Theoretical Foundations

Mathematical Framework of Superposition

The mathematical description of superposition originates from the linear nature of the Schrödinger equation. For a quantum system, if ψ₁ and ψ₂ are valid solutions to the Schrödinger equation, then any linear combination Ψ = c₁ψ₁ + c₂ψ₂ also constitutes a valid solution, where c₁ and c₂ are complex numbers [31]. This mathematical property enables physical systems to exist in superpositions of multiple states simultaneously.
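The linearity argument can be written out explicitly. The following is a short sketch for the time-dependent Schrödinger equation, assuming a linear Hamiltonian operator \hat{H} and time-independent complex constants c₁ and c₂ (the operator symbol is notation introduced here for illustration):

```latex
\begin{aligned}
i\hbar\,\frac{\partial}{\partial t}\bigl(c_1\psi_1 + c_2\psi_2\bigr)
  &= c_1\, i\hbar\,\frac{\partial \psi_1}{\partial t}
   + c_2\, i\hbar\,\frac{\partial \psi_2}{\partial t} \\
  &= c_1\,\hat{H}\psi_1 + c_2\,\hat{H}\psi_2 \\
  &= \hat{H}\bigl(c_1\psi_1 + c_2\psi_2\bigr)
\end{aligned}
```

The first step uses the linearity of the time derivative, the second uses the fact that ψ₁ and ψ₂ each satisfy the equation, and the third uses the linearity of \hat{H}. Note that a combination of stationary states with different energies solves the time-dependent equation but is not itself a stationary state.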

In Dirac's bra-ket notation, a quantum state of a system is represented as |Ψ⟩. For a simple two-level system, such as a qubit, this can be expressed as |Ψ⟩ = c₀|0⟩ + c₁|1⟩, where |0⟩ and |1⟩ represent the basis states, and c₀ and c₁ are complex probability amplitudes [31]. The squares of the absolute values of these coefficients (|c₀|² and |c₁|²) give the probabilities of finding the system in the corresponding basis states upon measurement, with the constraint that |c₀|² + |c₁|² = 1, ensuring total probability conservation [31].
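As a minimal sketch of the normalization constraint and the resulting measurement probabilities, the snippet below uses only the Python standard library. The function names and the amplitude values are illustrative assumptions, not part of any cited method.

```python
import math


def normalize(amplitudes):
    """Rescale complex amplitudes so their squared magnitudes sum to 1."""
    norm = math.sqrt(sum(abs(c) ** 2 for c in amplitudes))
    if norm == 0:
        raise ValueError("the zero vector is not a valid quantum state")
    return [c / norm for c in amplitudes]


def born_probabilities(amplitudes):
    """Return |c_i|^2 for each amplitude of a normalized state."""
    return [abs(c) ** 2 for c in amplitudes]


# Hypothetical unnormalized amplitudes c0 = 1 and c1 = i (illustrative only).
state = normalize([1 + 0j, 1j])
probs = born_probabilities(state)

print(probs)                          # two probabilities, each close to 0.5
print(math.isclose(sum(probs), 1.0))  # total probability is conserved
```

The relative phase between c₀ and c₁ (here a factor of i) does not change these probabilities, although it does affect measurements made in other bases.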

For molecular systems, the superposition principle extends to more complex scenarios in which molecules exist in superpositions of different rotational, vibrational, or electronic states. A general state can be expanded as |Ψ⟩ = ∑ᵢ cᵢ|ψᵢ⟩, where the |ψᵢ⟩ are the eigenstates of the Hamiltonian governing the system and the coefficients satisfy ∑ᵢ |cᵢ|² = 1 [31]. This mathematical description enables the modeling of molecular behavior that transcends classical limitations, including quantum coherence in photosynthetic energy transfer and the simultaneous exploration of multiple reaction pathways in chemical reactions [33].

Quantum Entanglement in Composite Systems

Quantum entanglement represents a profound departure from classical physics, exhibiting correlations between measurement outcomes that cannot be replicated by any local hidden variable theory [32]. Mathematically, a system is entangled if its quantum state cannot be factored as a simple product of states of its local constituents; instead, the particles form an inseparable whole [32]. For a bipartite system consisting of particles A and B, entanglement occurs when the total state vector cannot be written as a single product |Ψ⟩ = |ψ⟩_A ⊗ |φ⟩_B and instead requires a sum of such product states.

The singlet state of two spin-½ particles provides a canonical example of entanglement: |Ψ⟩ = (1/√2)(|↑↓⟩ - |↓↑⟩). In this state, neither particle possesses a definite spin state individually, yet their spins are perfectly anti-correlated along any measurement axis [32]. Measuring the spin of one particle immediately fixes the outcome that a measurement along the same axis will give for the other, regardless of the distance separating them, a phenomenon that Einstein famously described as "spooky action at a distance" [32].
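The factorization criterion can be checked numerically for two-qubit pure states. The sketch below uses the concurrence, a standard entanglement measure that equals 0 for product states and 1 for maximally entangled states. The function name and the mapping of spin up and spin down to 0 and 1 are assumptions made for illustration.

```python
import math


def concurrence(a00, a01, a10, a11):
    """Concurrence of a normalized two-qubit pure state.

    The state a00|00> + a01|01> + a10|10> + a11|11> factorizes into a
    product state exactly when a00*a11 - a01*a10 == 0.
    """
    return 2 * abs(a00 * a11 - a01 * a10)


s = 1 / math.sqrt(2)

# Singlet (|01> - |10>)/sqrt(2), taking |0> as spin up and |1> as spin down.
singlet = (0.0, s, -s, 0.0)

# Product state |0> (x) (|0> + |1>)/sqrt(2), which carries no entanglement.
product = (s, s, 0.0, 0.0)

print(concurrence(*singlet))  # close to 1: maximally entangled
print(concurrence(*product))  # 0: the state factorizes
```

This closed form applies only to pure states of two two-level systems; mixed states and larger systems need other measures.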

In molecular contexts, entanglement can manifest between different quantum degrees of freedom, including electronic spins, molecular vibrations, and rotational states. Compared to atoms, molecules offer additional degrees of freedom that can become entangled, providing richer possibilities for quantum information processing and simulation of complex materials [35]. For instance, a molecule can vibrate and rotate in multiple modes, and these modes can be used to encode quantum information, with polar molecules interacting even when spatially separated [35].

Table 1: Key Mathematical Properties of Quantum Superposition and Entanglement

| Property | Mathematical Description | Physical Significance |
|---|---|---|
| Linear Superposition | \(\lvert\Psi\rangle = c_1\lvert\psi_1\rangle + c_2\lvert\psi_2\rangle\) | Allows simultaneous existence in multiple states |
| Probability Amplitude | \(P(i) = \lvert\langle\psi_i\vert\Psi\rangle\rvert^2\) | Born rule for measurement probabilities |
| Entanglement Criterion | \(\lvert\Psi\rangle_{AB} \neq \lvert\psi\rangle_A \otimes \lvert\phi\rangle_B\) | Defines non-separability of composite systems |
| Bell State | \(\lvert\Phi^+\rangle = \frac{1}{\sqrt{2}}\left(\lvert 0\rangle_A\lvert 0\rangle_B + \lvert 1\rangle_A\lvert 1\rangle_B\right)\) | Maximally entangled two-qubit state |
| Density Matrix | \(\rho = \sum_i p_i \lvert\psi_i\rangle\langle\psi_i\rvert\) | Statistical representation of quantum states |

Decoherence and the Quantum-Classical Transition

The transition from quantum to classical behavior occurs through decoherence, a process where a quantum system loses its coherent quantum properties through interaction with its environment [36]. When a quantum object interacts with its surroundings—such as air molecules, dust particles, or photons—it becomes entangled with the environment, causing the rapid deterioration of superposition states [36]. The more complex the object and the greater its interactions with the environment, the faster this decoherence process occurs.

For molecular systems, decoherence determines the timescales over which quantum effects persist. As a reference point for how fast the process can be, a dust grain in the vacuum of space would lose a superposition of positions separated by its own width in less than a second due to interactions with stray photons and cosmic background radiation [36]. In chemical applications, understanding and controlling decoherence is essential for harnessing quantum effects in molecular systems, whether for quantum computing, quantum sensing, or exploiting quantum effects in biological processes such as photosynthesis [34].
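A simple way to picture decoherence is a pure-dephasing model for a two-level system, in which the populations stay fixed while the off-diagonal element of the density matrix decays exponentially. The sketch below assumes this exponential form; the coherence time `T2` is a hypothetical value chosen for illustration, not a measured quantity from the cited work.

```python
import math


def dephased_coherence(rho01, t, t2):
    """Off-diagonal element after time t under pure exponential dephasing."""
    return rho01 * math.exp(-t / t2)


def purity(p0, rho01):
    """Tr(rho^2) for the qubit density matrix [[p0, rho01], [rho01*, 1 - p0]]."""
    return p0 ** 2 + (1 - p0) ** 2 + 2 * abs(rho01) ** 2


T2 = 1.0e-3  # hypothetical coherence time in seconds (assumption)

# Start from an equal superposition: populations 0.5 and coherence 0.5.
for t in (0.0, T2, 10 * T2):
    c = dephased_coherence(0.5, t, T2)
    print(f"t = {t:.1e} s  |rho01| = {abs(c):.4f}  purity = {purity(0.5, c):.4f}")
```

The purity falls from 1 for the initial superposition toward 0.5, the value for an equal classical mixture of the two states. Real environments can also cause population relaxation, which this sketch leaves out.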

Experimental Advances and Methodologies

Controlled Entanglement of Individual Molecules

A groundbreaking experimental achievement in quantum molecular science came in 2023 when Princeton physicists successfully entangled individual molecules using a reconfigurable optical tweezer array [35]. This methodology enabled unprecedented control over molecular quantum states, establishing molecules as a viable platform for quantum science applications.

The experimental apparatus employed tightly focused laser beams ("optical tweezers") to trap and manipulate individual molecules with high precision [35]. This approach allowed researchers to position molecules in desired configurations and engineer specific quantum states through precise control of external electromagnetic fields. The tweezer arrays provided the isolation necessary to maintain quantum coherence while allowing controlled interactions between molecules to generate entanglement.

This demonstration was particularly significant because molecules offer more quantum degrees of freedom compared to atoms, including vibrational and rotational modes that can be exploited for encoding quantum information [35]. For chemical applications, this means that entangled molecules could serve as building blocks for quantum simulators capable of modeling complex quantum materials whose behaviors are difficult to simulate with classical computers, as well as for quantum sensors with enhanced sensitivity and quantum computers with novel approaches to information processing [35].

Figure 1: Experimental workflow for generating and verifying molecular entanglement using optical tweezer arrays.

Entanglement-Enhanced Superradiance

Recent research from the University of Warsaw and collaborating institutions has revealed how direct atom-atom interactions can amplify superradiance, a quantum phenomenon where atoms emit light in perfect synchronization, creating a collective burst of light with intensity far greater than the sum of individual emissions [34]. This work, published in 2025, incorporated quantum entanglement into models of light-matter interactions, demonstrating that these interactions can enhance energy transfer efficiency.

The researchers developed a computational method that explicitly represents entanglement, allowing them to track correlations within and between atomic and photonic subsystems [34]. Their findings showed that direct interactions between neighboring atoms can lower the threshold for superradiance to occur and even reveal previously unknown ordered phases with distinctive properties. This research highlights that including entanglement in theoretical models is essential for accurately describing the full range of light-matter behaviors, particularly in systems where atoms are closely spaced and interact through short-range dipole-dipole forces [34].

From a chemical perspective, this entanglement-enhanced superradiance has profound implications for understanding energy transfer in molecular aggregates and designing novel quantum-enhanced devices. The principles demonstrated could inform the development of quantum batteries—conceptual energy storage units that could charge and discharge much faster by exploiting collective quantum effects [34]. By adjusting the strength and nature of atom-atom interactions, scientists can tune the conditions required for superradiance and control how energy moves through molecular systems, turning "a many-body effect into a practical design rule" for quantum technologies [34].

Table 2: Experimental Parameters for Quantum Molecular Phenomena

| Experimental Parameter | Molecular Entanglement [35] | Superradiance Enhancement [34] |
|---|---|---|
| System Type | Individual molecules in optical tweezers | Atoms in an optical cavity |
| Key Interaction | Direct dipole-dipole coupling | Photon-mediated and direct atom-atom |
| Entanglement Metric | Bell inequality violation | Quantum correlations in light emission |
| Temperature Requirements | Ultra-cold (near absolute zero) | Cryogenic to room temperature |
| Coherence Timescale | Millisecond range | Microsecond to millisecond |
| Primary Detection Method | Quantum state tomography | Photon statistics and intensity |
| Technological Applications | Quantum simulators, molecular qubits | Quantum batteries, enhanced sensors |

Research Reagent Solutions and Essential Materials

The experimental investigation of quantum effects in molecular systems requires specialized materials and instrumentation designed to maintain quantum coherence and enable precise manipulation at the molecular scale.

Table 3: Essential Research Reagents and Materials for Quantum Molecular Experiments

| Item | Function | Specific Application Example |
|---|---|---|
| Optical Tweezer Arrays | Trapping and positioning individual molecules | Isolation of molecules for entanglement generation [35] |
| Ultra-high Vacuum Chambers | Minimize environmental interactions | Reduce decoherence from gas collisions [35] |
| Cryogenic Systems | Reduce thermal noise | Extend quantum coherence times [35] |
| High-Finesse Optical Cavities | Enhance light-matter interactions | Superradiance studies and enhancement [34] |
| Polar Molecular Species | Provide strong, long-range dipolar interactions | Quantum simulation of many-body systems [35] |
| Quantum State-Targeted Lasers | Precise quantum state manipulation | Preparation and measurement of superposition states [35] |
| Single-Photon Detectors | Measure quantum light statistics | Verification of superradiant emission [34] |
| Arbitrary Waveform Generators | Control interaction sequences | Precisely timed entanglement operations [35] |

Implications for Molecular Structure and Chemical Properties

Electronic Structure and Bonding

The principles of superposition and entanglement provide profound insights into chemical bonding and molecular electronic structure that transcend classical descriptions. Quantum superposition allows electrons in molecules to exist in delocalized states spread across multiple atomic centers, fundamentally explaining the nature of chemical bonds [33]. Molecular orbital theory, a cornerstone of modern chemistry, inherently relies on the superposition principle, with molecular orbitals constructed as linear combinations of atomic orbitals [37].
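The linear-combination picture can be made concrete for the simplest case of two identical atomic orbitals on neighboring centers. The sketch below solves the standard 2×2 secular problem in closed form. The parameter names (`alpha` for the Coulomb integral, `beta` for the resonance integral, `overlap` for the overlap integral) and their numerical values are illustrative assumptions and are not fitted to any real molecule.

```python
import math


def two_center_lcao(alpha, beta, overlap):
    """Energies and normalized coefficients for two identical atomic orbitals.

    Solves the 2x2 secular problem with Coulomb integral alpha, resonance
    integral beta, and overlap integral S between the two orbitals.
    """
    e_plus = (alpha + beta) / (1 + overlap)   # in-phase combination
    e_minus = (alpha - beta) / (1 - overlap)  # out-of-phase combination
    c_plus = 1 / math.sqrt(2 * (1 + overlap))
    c_minus = 1 / math.sqrt(2 * (1 - overlap))
    return (e_plus, (c_plus, c_plus)), (e_minus, (c_minus, -c_minus))


# Illustrative parameters in hartree (assumed values, beta < 0).
bonding, antibonding = two_center_lcao(alpha=-0.5, beta=-0.3, overlap=0.4)

print("bonding:    ", bonding)
print("antibonding:", antibonding)
```

With a negative resonance integral, the in-phase superposition lies lower in energy than either atomic orbital alone, which is the stabilization associated with bond formation in this model.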

Entanglement plays an equally crucial role in molecular electronic structure. The electrons in a molecule become strongly entangled, particularly through Coulombic interactions, with the entanglement patterns directly influencing bond strengths, reaction barriers, and spectroscopic properties [32]. Recent research suggests that the degree of entanglement between different parts of a molecular system correlates with important chemical properties, including aromaticity, bond order, and reactivity patterns [33]. This perspective enables a more fundamental understanding of molecular behavior than possible with conventional approaches that treat electrons as independent particles.

Energy Transfer and Storage

Quantum superposition and entanglement offer novel mechanisms for energy transfer and storage in molecular systems. The recently discovered role of entanglement in enhancing superradiance suggests potential pathways for highly efficient energy transfer in molecular aggregates [34]. In natural systems, evidence indicates that photosynthetic organisms may exploit quantum superposition to achieve greater efficiency in transporting energy, allowing pigment proteins to be spaced further apart than would otherwise be possible [31] [34].

The concept of quantum batteries represents another promising application, where entangled molecular states could enable energy storage devices with dramatically faster charging and discharging characteristics [34]. By engineering molecular systems with specific entanglement patterns, researchers may design materials with tailored energy transfer properties, potentially revolutionizing approaches to solar energy conversion, quantum-enhanced catalysis, and efficient illumination technologies.

Figure 2: Logical diagram showing how entanglement-enhanced superradiance enables advanced quantum applications.

Chemical Reactivity and Dynamics

Quantum superposition enables molecules to simultaneously explore multiple reaction pathways and transition states, fundamentally influencing chemical reactivity and reaction dynamics [33]. This simultaneous exploration can lead to quantum interference effects, in which the amplitudes of different pathways add constructively or destructively, altering reaction rates and product distributions in ways that classical theories cannot predict.
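The interference between pathways can be illustrated with a two-pathway sketch. The amplitudes A₁ and A₂ and their phases φ₁ and φ₂ are notation introduced here for illustration, assuming both pathways lead to the same final product state:

```latex
\begin{aligned}
A_k &= \lvert A_k\rvert\, e^{i\varphi_k}, \qquad k = 1, 2 \\
P_{\text{quantum}} &= \lvert A_1 + A_2\rvert^2
  = \lvert A_1\rvert^2 + \lvert A_2\rvert^2
  + 2\,\lvert A_1\rvert\,\lvert A_2\rvert \cos(\varphi_1 - \varphi_2) \\
P_{\text{classical}} &= \lvert A_1\rvert^2 + \lvert A_2\rvert^2
\end{aligned}
```

For equal magnitudes |A₁| = |A₂| = a, the quantum probability ranges from 0 (fully destructive, phase difference π) to 4a² (fully constructive, phase difference 0), whereas adding the pathway probabilities classically always gives 2a². The cross term is what decoherence suppresses.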