Self-Consistent Field Methods: From Quantum Foundations to Drug Discovery Applications

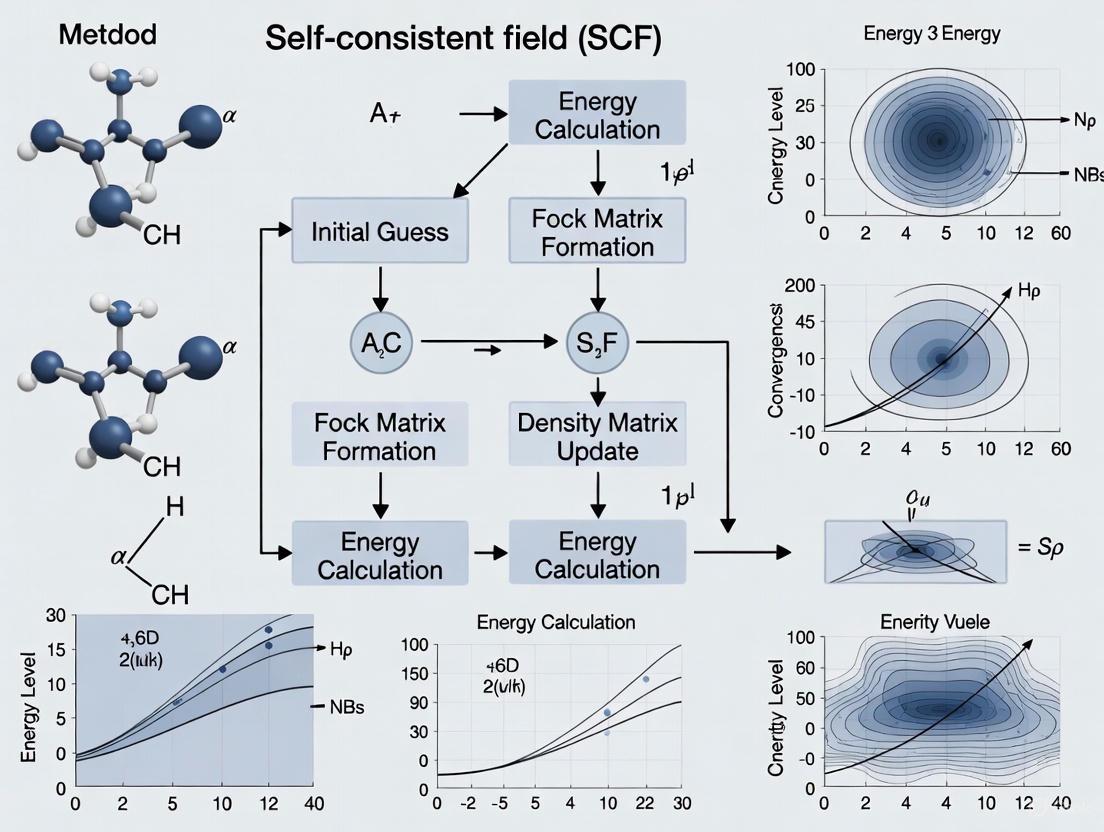

This comprehensive review explores self-consistent field (SCF) methods, fundamental computational techniques in quantum chemistry that iteratively solve the electronic structure of molecules.

Self-Consistent Field Methods: From Quantum Foundations to Drug Discovery Applications

Abstract

This comprehensive review explores self-consistent field (SCF) methods, fundamental computational techniques in quantum chemistry that iteratively solve the electronic structure of molecules. Tailored for researchers and drug development professionals, the article covers foundational SCF theory through Hartree-Fock and Density Functional Theory, practical applications in drug design for modeling protein-ligand interactions and reaction mechanisms, strategies for overcoming convergence challenges, and comparative analysis with other computational methods. By synthesizing current methodologies with emerging trends like quantum computing integration, this resource provides both theoretical understanding and practical implementation guidance for leveraging SCF in pharmaceutical research and development.

Quantum Foundations: Understanding the Core Principles of SCF Theory

The Schrödinger Equation and the Birth of Quantum Chemistry

The Schrödinger equation, formulated by Erwin Schrödinger in 1926, represents the quantum counterpart to Newton's second law in classical mechanics [1]. This partial differential equation governs the wave function of a non-relativistic quantum-mechanical system, enabling predictions about a system's behavior at the submicroscopic scale with remarkable accuracy [1] [2]. Its discovery was a landmark achievement that formed the foundational pillar for the entire field of quantum chemistry, providing the necessary theoretical framework to move beyond descriptive chemistry to a predictive, first-principles science of atoms and molecules [3].

This equation's significance extends beyond theory: it is the starting point for practical computational chemistry. The Schrödinger equation provides the mathematical basis for self-consistent field (SCF) methods, which include both Hartree-Fock theory and Kohn-Sham density functional theory [4] [5]. These methods are the simplest, most affordable, and most widely used electronic structure techniques, forming the computational backbone of research across chemistry, materials science, and drug development [6].

Historical Foundation: From Quantum Theory to Chemical Application

The origins of quantum chemistry date to a pivotal calculation performed by Walter Heitler and Fritz London in 1927 [3]. Through months of intensive pencil-and-paper mathematics, they successfully applied the newly formulated Schrödinger equation to describe the bonding between two hydrogen atoms, marking the first application of quantum mechanics to explain the enigmatic phenomenon of chemical bonding [3]. This breakthrough demonstrated that quantum theory could quantitatively address chemical problems, birthing an entirely new field.

Despite this promising start, the field immediately encountered a significant barrier. As Paul Dirac famously noted, quantum mechanics provided all that was necessary to explain problems in chemistry, but at a huge computational cost that made practical ab initio calculations for molecular systems nearly impossible to perform by hand [3]. This limitation persisted until the advent of digital computers, which eventually transformed quantum chemistry from a theoretical exercise into a practical tool.

A major milestone occurred in 1955 when C.W. Scherr, a graduate student of future Nobel laureate Robert S. Mulliken, performed the first-ever all-electron ab initio calculation of a molecule larger than hydrogen [3]. This calculation, which took two years to complete for a nitrogen molecule (comprising just two nuclei and fourteen electrons), demonstrated that quantum chemistry could yield accurate predictions for molecular systems beyond the simplest cases [3]. This breakthrough marked the beginning of quantum chemistry's evolution into an indispensable tool for modern chemical research.

Mathematical Foundation: The Schrödinger Equation and Its Formulations

Fundamental Equations

The Schrödinger equation exists in two primary forms essential for computational quantum chemistry. The time-dependent Schrödinger equation describes how quantum systems evolve:

$$i\hbar\frac{\partial}{\partial t}|\Psi(t)\rangle = \hat{H}|\Psi(t)\rangle$$

where $i$ is the imaginary unit, $\hbar$ is the reduced Planck constant, $\frac{\partial}{\partial t}$ is the partial derivative with respect to time, $|\Psi(t)\rangle$ is the quantum state vector, and $\hat{H}$ is the Hamiltonian operator [1].

For stationary states and most chemical applications, the time-independent Schrödinger equation is used:

$$\hat{H}|\Psi\rangle = E|\Psi\rangle$$

where $E$ represents the energy eigenvalues corresponding to the stationary states of the system [1]. This eigenvalue equation determines the allowed energy levels and electron distributions in atoms and molecules.

In the position-space representation for a single non-relativistic particle in one dimension, the equation becomes:

$$i\hbar\frac{\partial}{\partial t}\Psi(x,t) = \left[-\frac{\hbar^2}{2m}\frac{\partial^2}{\partial x^2} + V(x,t)\right]\Psi(x,t)$$

where $\Psi(x,t)$ is the wave function, $m$ is the particle mass, and $V(x,t)$ represents the potential energy [1].
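As a minimal sketch of how the time-independent equation becomes a matrix eigenvalue problem on a computer, the snippet below discretizes the one-dimensional Hamiltonian for a particle in a box of length $L$ (atomic units, $\hbar = m = 1$, $V = 0$ inside and $\Psi = 0$ at the walls) with a three-point finite-difference second derivative. The box model, the helper name `box_levels`, and the grid size are illustrative assumptions, not taken from the cited sources; the lowest eigenvalues should approach the analytic levels $E_n = n^2\pi^2/(2L^2)$.

```python
import numpy as np


def box_levels(length=1.0, n_points=500, n_levels=3):
    """Lowest eigenvalues of H = -1/2 d^2/dx^2 on [0, length] with psi = 0 at the walls.

    Hypothetical helper: hbar = m = 1 and V = 0 inside the box.
    """
    h = length / (n_points + 1)                 # spacing of the interior grid points
    diag = np.full(n_points, 1.0 / h**2)        # -1/2 * (-2 / h^2)
    off = np.full(n_points - 1, -0.5 / h**2)    # -1/2 * (+1 / h^2)
    H = np.diag(diag) + np.diag(off, 1) + np.diag(off, -1)
    return np.linalg.eigvalsh(H)[:n_levels]     # eigvalsh returns eigenvalues in ascending order


numeric = box_levels()
exact = np.array([(n * np.pi) ** 2 / 2.0 for n in (1, 2, 3)])  # E_n = n^2 pi^2 / (2 L^2), L = 1
print(numeric)
print(exact)
```

With a three-point stencil the discretization error shrinks roughly as $h^2$ when the grid is refined.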

Table 1: Key Mathematical Operators in the Schrödinger Equation

| Operator/Term | Mathematical Expression | Physical Significance |
|---|---|---|
| Hamiltonian ($\hat{H}$) | $\hat{H} = \hat{T} + \hat{V}$ | Total energy operator of the system |
| Kinetic Energy ($\hat{T}$) | $-\frac{\hbar^2}{2m}\nabla^2$ | Energy due to electron motion |
| Potential Energy ($\hat{V}$) | $V(x,t)$ | Energy from external potentials and electron interactions |
| Wave Function ($\Psi$) | $\Psi(x,t)$ | Probability amplitude containing all system information |

Physical Interpretation and Properties

The wave function solutions to the Schrödinger equation contain complete information about a quantum system. The square of the absolute value of the wave function at each point defines a probability density function [1]:

$$\Pr(x,t) = |\Psi(x,t)|^2$$

This probabilistic interpretation connects the abstract mathematics to physically observable phenomena, enabling the prediction of electron distributions, molecular properties, and spectroscopic behavior.

Two crucial mathematical properties make the Schrödinger equation particularly suitable for computational implementation:

Linearity: If $|\psi_1\rangle$ and $|\psi_2\rangle$ are solutions of the time-dependent equation, then any linear combination $a|\psi_1\rangle + b|\psi_2\rangle$ is also a solution [1]. This superposition principle, which characterizes quantum systems, permits the construction of complex wave functions from simpler components.

Unitarity: For a time-independent Hamiltonian, the time-evolution operator $\hat{U}(t) = e^{-i\hat{H}t/\hbar}$ is unitary, preserving the normalization of the wave function over time [1]. This ensures conservation of probability in isolated quantum systems.
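Both properties can be checked numerically on a small model. The sketch below assumes a hypothetical two-level Hamiltonian in atomic units ($\hbar = 1$) and builds $\hat{U}(t)$ from the eigendecomposition of $\hat{H}$ (the helper name `propagator` is illustrative); it then confirms that $\hat{U}^\dagger\hat{U} = \mathbf{1}$, that the norm of a superposition is conserved, and that evolving a superposition equals superposing the evolved states.

```python
import numpy as np


def propagator(H, t, hbar=1.0):
    """U(t) = exp(-i H t / hbar) for a time-independent Hermitian H (hypothetical helper)."""
    energies, V = np.linalg.eigh(H)                       # H = V diag(E) V^dagger
    return V @ np.diag(np.exp(-1j * energies * t / hbar)) @ V.conj().T


H = np.array([[0.0, 0.2], [0.2, 1.0]])   # illustrative two-level Hamiltonian (hartree)
U = propagator(H, t=3.7)

psi1 = np.array([1.0, 0.0], dtype=complex)
psi2 = np.array([0.0, 1.0], dtype=complex)
a, b = 0.6, 0.8j                          # |a|^2 + |b|^2 = 1, so psi0 is normalized
psi0 = a * psi1 + b * psi2

print(np.allclose(U.conj().T @ U, np.eye(2)))                   # unitarity: True
print(np.isclose(np.linalg.norm(U @ psi0), 1.0))                # norm conserved: True
print(np.allclose(U @ psi0, a * (U @ psi1) + b * (U @ psi2)))   # linearity: True
```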

From Equation to Computation: The Self-Consistent Field Framework

Basis Set Expansion and the LCAO Approach

Practical application of the Schrödinger equation to molecular systems requires expanding molecular orbitals (MOs) as a linear combination of atomic orbitals (LCAO) [5]. In this approach, the molecular orbitals $\varphi_i(\vec{r})$ are expressed as:

$$\varphi_i(\vec{r}) = \sum_{\mu=1}^M C_{\mu i} \chi_\mu(\vec{r})$$

where $C_{\mu i}$ are the molecular orbital coefficients, $\chi_\mu(\vec{r})$ are atomic orbital basis functions, and $M$ is the number of basis functions [5]. The atomic orbitals are typically chosen as Gaussian-type orbitals (GTOs), Slater-type orbitals (STOs), or numerical atomic orbitals (NAOs) [5].

The electron density, which plays a pivotal role in both Hartree-Fock and density functional theory, can be evaluated from the density matrix $P_{\mu\nu}$ (assuming real basis functions):

$$\rho(\vec{r}) = \sum_{\mu\nu} P_{\mu\nu} \chi_\mu(\vec{r}) \chi_\nu(\vec{r})$$

where the density matrix is constructed from the molecular orbital coefficients:

$$P_{\mu\nu} = \sum_{i=1}^{N} C_{\mu i} C_{\nu i}$$

with $N$ representing the number of occupied orbitals [5]. For a restricted closed-shell system, each spatial orbital holds two electrons, so the total density matrix carries an additional factor of 2.
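A short numerical check makes the bookkeeping concrete: because $\int \rho(\vec{r})\,d\vec{r} = \sum_{\mu\nu} P_{\mu\nu} S_{\mu\nu} = \mathrm{Tr}(\mathbf{PS})$, contracting the density matrix with the overlap matrix counts the occupied orbitals (or, with the closed-shell factor of 2, the electrons). The two-function basis, the overlap value $S_{12} = 0.66$, and the single bonding orbital below are illustrative assumptions, not data from the cited work.

```python
import numpy as np

S = np.array([[1.0, 0.66],
              [0.66, 1.0]])                            # illustrative overlap matrix
c_bond = np.array([1.0, 1.0]) / np.sqrt(2.0 * (1.0 + S[0, 1]))   # normalized: c^T S c = 1
C_occ = c_bond[:, np.newaxis]                          # M x N coefficient matrix (N = 1 occupied)

P = C_occ @ C_occ.T                                    # P_mu_nu = sum_i C_mu_i C_nu_i
P_closed = 2.0 * P                                     # closed shell: two electrons per orbital

print(np.trace(P @ S))                                 # number of occupied orbitals
print(np.trace(P_closed @ S))                          # number of electrons
```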

The Self-Consistent Field Equations

Minimization of the total electronic energy with respect to the molecular orbital coefficients within a finite basis set leads to the Roothaan-Hall equations for restricted closed-shell systems [5]:

$$\mathbf{FC} = \mathbf{SCE}$$

where $\mathbf{F}$ is the Fock matrix, $\mathbf{C}$ is the matrix of molecular orbital coefficients, $\mathbf{S}$ is the atomic orbital overlap matrix, and $\mathbf{E}$ is a diagonal matrix of orbital energies [4] [5].

The Fock matrix $\mathbf{F}$ is defined as:

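$$\mathbf{F} = \mathbf{T} + \mathbf{V} + \mathbf{J} - \mathbf{K}$$

where $\mathbf{T}$ is the kinetic energy matrix, $\mathbf{V}$ is the external potential, $\mathbf{J}$ is the Coulomb matrix, and $\mathbf{K}$ is the exchange matrix [4]. Both $\mathbf{J}$ and $\mathbf{K}$ are built from the density matrix, which is what makes the equations nonlinear; exchange enters with a negative sign, and when $\mathbf{K}$ is built from a closed-shell density counting two electrons per orbital it carries an additional factor of $\tfrac{1}{2}$. In Kohn-Sham density functional theory, the exchange term is replaced by the exchange-correlation potential (hybrid functionals retain a fraction of exact exchange).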
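These equations can be turned into a working SCF loop for the smallest nontrivial case. The sketch below assumes the rounded STO-3G integrals for H$_2$ at $R = 1.4$ bohr tabulated in Szabo and Ostlund's *Modern Quantum Chemistry* (a production code would compute integrals with a library), starts from the core-Hamiltonian guess, and solves $\mathbf{FC} = \mathbf{SCE}$ through symmetric orthogonalization $\mathbf{X} = \mathbf{S}^{-1/2}$. It uses the closed-shell density $\mathbf{P} = 2\mathbf{C}_{\text{occ}}\mathbf{C}_{\text{occ}}^{T}$, hence the factor $\tfrac{1}{2}$ on the exchange matrix. The names `build_eri` and `rhf` are hypothetical.

```python
import numpy as np

# STO-3G integrals for H2 at R = 1.4 bohr (Szabo & Ostlund, rounded to 4 decimals)
S = np.array([[1.0, 0.6593], [0.6593, 1.0]])            # overlap matrix
H_CORE = np.array([[-1.1204, -0.9584],
                   [-0.9584, -1.1204]])                 # T + V (one-electron part)
E_NUC = 1.0 / 1.4                                       # nuclear repulsion Z_A Z_B / R


def build_eri():
    """Two-electron integrals (mu nu|lam sig), chemists' notation, filled by 8-fold symmetry."""
    unique = {(0, 0, 0, 0): 0.7746, (1, 1, 1, 1): 0.7746,
              (0, 0, 1, 1): 0.5697,
              (1, 0, 0, 0): 0.4441, (1, 1, 1, 0): 0.4441,
              (1, 0, 1, 0): 0.2970}
    eri = np.zeros((2, 2, 2, 2))
    for (p, q, r, s), value in unique.items():
        for a, b, c, d in [(p, q, r, s), (q, p, r, s), (p, q, s, r), (q, p, s, r),
                           (r, s, p, q), (s, r, p, q), (r, s, q, p), (s, r, q, p)]:
            eri[a, b, c, d] = value
    return eri


def rhf(S, H, eri, n_occ, tol=1e-10, max_iter=50):
    """Closed-shell Roothaan-Hall SCF; returns (E_elec, orbital energies, C)."""
    s_val, s_vec = np.linalg.eigh(S)
    X = s_vec @ np.diag(s_val ** -0.5) @ s_vec.T        # symmetric orthogonalization S^(-1/2)
    P = np.zeros_like(S)                                # P = 0 makes the first F the core guess
    e_old = np.inf
    for _ in range(max_iter):
        J = np.einsum("pqrs,rs->pq", eri, P)             # Coulomb matrix
        K = np.einsum("prqs,rs->pq", eri, P)             # exchange matrix
        F = H + J - 0.5 * K                              # closed-shell Fock matrix
        e_elec = 0.5 * np.sum(P * (H + F))               # energy of the density that built F
        eps, C_prime = np.linalg.eigh(X.T @ F @ X)       # FC = SCE in the orthogonal basis
        C = X @ C_prime
        P = 2.0 * C[:, :n_occ] @ C[:, :n_occ].T          # new closed-shell density matrix
        if abs(e_elec - e_old) < tol:
            break
        e_old = e_elec
    return e_elec, eps, C


e_elec, eps, C = rhf(S, H_CORE, build_eri(), n_occ=1)
print(f"E_total = {e_elec + E_NUC:.4f} hartree, orbital energies = {eps.round(4)}")
```

With these integrals the loop should land near the textbook total energy of about -1.117 hartree and an occupied orbital energy of about -0.578 hartree. For H$_2$ in a minimal basis, symmetry fixes the orbital shapes, so convergence takes only a couple of iterations; larger systems need the acceleration techniques described next.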

Table 2: Computational Methods Derived from the Schrödinger Equation

| Method | Theoretical Foundation | Key Features | Formal Scaling ($N$ = system size) |
|---|---|---|---|
| Hartree-Fock (HF) | Wavefunction theory | Mean-field approximation; exact exchange | $\mathcal{O}(N^4)$ |
| Density Functional Theory (DFT) | Hohenberg-Kohn theorems | Electron density as basic variable; approximate exchange-correlation | $\mathcal{O}(N^3)$ |
| Second-Order SCF (SOSCF) | Newton-Raphson method | Quadratic convergence; improved stability | $\mathcal{O}(N^3)$ |
| Linear-Scaling SCF | Sparse matrix algebra | Reduced computational cost for large systems | $\mathcal{O}(N)$ |

SCF Convergence Methods

The nonlinear nature of the SCF equations necessitates iterative solution strategies. Two primary approaches are implemented in modern quantum chemistry packages:

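DIIS (Direct Inversion in the Iterative Subspace): Extrapolates a new Fock matrix as a linear combination of Fock matrices from previous iterations, choosing the coefficients to minimize the norm of the corresponding error vectors. The error is the commutator of $\mathbf{F}$ and $\mathbf{P}$ generalized to a nonorthogonal basis, $\mathbf{FPS} - \mathbf{SPF}$, where $\mathbf{P}$ is the density matrix; it vanishes at self-consistency [4]. Variants include EDIIS and ADIIS for improved convergence in challenging cases.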

SOSCF (Second-Order SCF): Implements a Newton-Raphson approach using the orbital Hessian to achieve quadratic convergence [4]. This method, particularly the co-iterative augmented Hessian (CIAH) implementation, can converge difficult cases more reliably than DIIS.

Additional techniques to facilitate SCF convergence include damping (mixing old and new density matrices), level shifting (increasing the energy gap between occupied and virtual orbitals), and fractional occupation schemes (smearing orbital occupations) [4].
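These ingredients can each be sketched in a few lines. The helper names `diis_error`, `diis_extrapolate`, `damp`, and `level_shift` below are hypothetical, not the PySCF API. DIIS solves Pulay's bordered linear system so that the coefficients sum to one, damping mixes consecutive density matrices, and level shifting adds $b(\mathbf{S} - \tfrac{1}{2}\mathbf{SPS})$, the atomic-orbital representation of the virtual-space projector for a closed-shell density $\mathbf{P} = 2\mathbf{C}_{\text{occ}}\mathbf{C}_{\text{occ}}^{T}$.

```python
import numpy as np


def diis_error(F, P, S):
    """Pulay error FPS - SPF; it vanishes when F and P are self-consistent."""
    return F @ P @ S - S @ P @ F


def diis_extrapolate(focks, errors):
    """Return sum_i c_i F_i with c minimizing |sum_i c_i e_i|^2 subject to sum_i c_i = 1."""
    n = len(focks)
    B = -np.ones((n + 1, n + 1))       # bordered matrix; last row/column enforce sum(c) = 1
    B[n, n] = 0.0
    for i in range(n):
        for j in range(n):
            B[i, j] = np.vdot(errors[i], errors[j]).real   # overlap of flattened error vectors
    rhs = np.zeros(n + 1)
    rhs[n] = -1.0
    coeffs = np.linalg.solve(B, rhs)[:n]                  # drop the Lagrange multiplier
    return sum(c * F for c, F in zip(coeffs, focks)), coeffs


def damp(P_old, P_new, alpha):
    """Keep a fraction alpha of the previous density to suppress oscillations."""
    return alpha * P_old + (1.0 - alpha) * P_new


def level_shift(F, S, P, shift):
    """Raise virtual-orbital energies by `shift` for a closed-shell density P."""
    return F + shift * (S - 0.5 * S @ P @ S)


# Toy usage in an orthonormal basis (S = I) with one doubly occupied orbital
S = np.eye(2)
P = np.diag([2.0, 0.0])
F = np.diag([-1.0, 1.0])
print(diis_error(F, P, S))              # zero matrix: F and P commute
print(level_shift(F, S, P, 0.5))        # virtual level raised from 1.0 to 1.5
F_new, c = diis_extrapolate([np.diag([1.0, 2.0]), np.diag([3.0, 4.0])],
                            [np.array([[1.0, 0.0], [0.0, 0.0]]),
                             np.array([[-1.0, 0.0], [0.0, 0.0]])])
print(c)                                # equal weights cancel the opposite errors
```

In a real SCF loop, the stored Fock matrices and error vectors would come from the last few iterations, and the extrapolated Fock matrix would be diagonalized in place of the latest one.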

Practical Implementation: Methodologies for Computational Research

Initial Guess Strategies

The choice of initial guess significantly impacts SCF convergence behavior. Several approaches are commonly implemented:

Superposition of Atomic Densities: Constructs the initial guess density by combining precomputed atomic densities or potentials [4]. This includes the 'minao', 'atom', and 'vsap' options in PySCF, with the latter using pretabulated atomic potentials to build the guess potential on a DFT quadrature grid.

Core Hamiltonian Guess: Diagonalizes the one-electron core Hamiltonian $\mathbf{H}_0 = \mathbf{T} + \mathbf{V}$, ignoring electron-electron interactions [4]. While simple, this approach performs poorly for molecular systems.

Checkpoint File Restart: Reads molecular orbitals from a previous calculation, potentially for a different molecular system or basis set [4]. This approach enables project-based workflows in which simpler calculations bootstrap more complex ones.

Stability Analysis and Wavefunction Validation

Even converged SCF solutions may not represent the true ground state. Stability analysis detects whether a solution corresponds to a local minimum or a saddle point on the energy hypersurface [4]. Two types of instabilities are recognized:

Internal Instabilities: The solution represents an excited state rather than the ground state within the same variational space.

External Instabilities: The energy can be lowered by relaxing constraints on the wave function, such as transitioning from restricted to unrestricted Hartree-Fock [4].

Systematic stability analysis is essential for verifying that computed solutions represent physically meaningful states rather than mathematical artifacts.

Multiscale Coupling and Advanced Methodologies

Modern implementations extend beyond isolated molecules through multiscale methods that couple SCF quantum chemistry with coarser-grained models [7]. This framework self-consistently couples quantum mechanics with molecular mechanics (QM/MM) and even mesoscale simulations, enabling accurate modeling of complex environments such as solutions, proteins, and materials [7].

The Research Toolkit: Essential Components for SCF Calculations

Table 3: Essential Computational Research Reagents for SCF Methods

| Component | Function | Implementation Examples |
|---|---|---|
| Basis Sets | Atomic orbital expansions for molecular orbitals | cc-pVTZ, cc-pVQZ, minimal basis, effective core potentials |
| Exchange-Correlation Functionals | Electron interaction modeling in DFT | LDA, GGA (PBE, BLYP), meta-GGA, hybrid (B3LYP) |
| Integration Grids | Numerical integration for DFT functionals | Lebedev (angular) and Euler-Maclaurin (radial) quadratures |
| SCF Convergers | Algorithms for iterative solution | DIIS, SOSCF, damping, level shifting, fractional occupations |
| Initial Guess Generators | Starting points for SCF iterations | Superposition of atomic densities, core Hamiltonian, Hückel guess |
| Molecular Integrals | Fundamental interactions between basis functions | Electron repulsion integrals (ERIs), overlap, kinetic, nuclear attraction |

The Schrödinger equation's transformation from abstract mathematical formulation to practical computational tool represents one of the most significant developments in theoretical chemistry. Through the development of self-consistent field methods, this fundamental equation has become the cornerstone of modern computational chemistry, enabling in silico prediction of molecular structures, properties, and reactivities with remarkable accuracy.

The ongoing evolution of SCF methodologies—including linear-scaling algorithms, improved density functionals, robust convergence techniques, and multiscale coupling approaches—ensures that Schrödinger's equation remains as relevant today as when it was first derived. For researchers in drug development and materials design, these methods provide powerful tools for understanding and predicting molecular behavior, reducing reliance on trial-and-error experimentation, and accelerating the discovery of new therapeutic agents and functional materials.

As quantum computing emerges on the horizon, the Schrödinger equation may find new computational implementations that overcome current limitations, potentially enabling accurate quantum chemical calculations for systems that remain computationally intractable with classical computers. Regardless of the computational platform, the Schrödinger equation will continue to serve as the fundamental principle guiding our understanding and prediction of chemical phenomena.

The Born-Oppenheimer approximation represents one of the most fundamental concepts in quantum chemistry and molecular physics, enabling the practical solution of the Schrödinger equation for molecular systems. This approximation leverages the significant mass disparity between atomic nuclei and electrons to separate their motions, forming the foundational framework for nearly all computational chemistry methods. Within the context of self-consistent field (SCF) approaches, including Hartree-Fock and Kohn-Sham density functional theory, the Born-Oppenheimer approximation provides the critical starting point that makes these computational techniques feasible. This whitepaper provides an in-depth technical examination of the approximation's physical basis, mathematical formulation, and its indispensable role in modern computational chemistry, particularly for applications in drug development and molecular design where accurate electronic structure calculations are paramount.

In quantum chemistry, the fundamental goal is to solve the time-independent Schrödinger equation for molecular systems. However, the exact solution of this equation for systems with more than two particles presents formidable challenges due to the complex interactions between all electrons and nuclei. The Hamiltonian operator for a molecule containing M nuclei and N electrons includes kinetic energy terms for all particles and potential energy terms for all pairwise Coulomb interactions [8] [9]. The complexity of this many-body problem makes well-chosen approximations necessary to render the calculations computationally tractable.

The Born-Oppenheimer approximation, proposed in 1927 by J. Robert Oppenheimer, then a 23-year-old graduate student working with Max Born, addresses this challenge by exploiting the significant mass difference between atomic nuclei and electrons [10]. This mass disparity, typically exceeding a factor of 1000, means that nuclei move much more slowly than electrons, allowing for a separation of their motions in the quantum mechanical treatment of molecules. The approximation has become so deeply embedded in quantum chemistry that it now serves as the starting point for virtually all electronic structure methods, forming the essential foundation upon which self-consistent field methods are built.

Physical Basis of the Approximation

Mass Disparity and Timescale Separation

The physical foundation of the Born-Oppenheimer approximation rests on the substantial mass difference between atomic nuclei and electrons. Even the lightest nucleus, the proton, possesses a mass approximately 1836 times greater than that of an electron [11] [12]. This mass disparity has direct implications for the dynamics of these particles through Newton's second law (F = ma). When subjected to the same mutual attractive Coulomb force (Ze²/r²), the acceleration of electrons is consequently much greater than that of nuclei [11].

This differential in acceleration leads to a natural separation of timescales for nuclear and electronic motion. Electrons adjust effectively instantaneously to any movement of the much heavier, slower nuclei. As a physical analogy, one might imagine a 100-yard dash between two individuals, one of whom can accelerate 2000 times faster than the other; the faster runner could literally run circles around the slower one [12]. In quantum mechanical terms, this separation means that for any fixed nuclear configuration, the electrons rapidly settle into their stationary states, effectively "experiencing" the nuclei as fixed sources of potential energy.

Mechanical Model Illustration

A simple mechanical model helps illustrate this concept: consider two particles of dissimilar masses (m₁ >> m₂) connected by a spring to each other and with the heavier particle also attached to a fixed wall by another spring [12]. The motion of this coupled system will be dominated by the heavy particle, with the light particle essentially following the heavy one while executing rapid oscillations around it. Similarly, in molecules, electrons move rapidly within the average field created by the slowly moving nuclei, justifying their treatment as moving in the static field of fixed nuclear positions.
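The mechanical model can be analyzed with a standard normal-mode calculation. In the sketch below the masses ($m_1 = 1836$, roughly a proton, and $m_2 = 1$, an electron) and the unit spring constants are illustrative assumptions; the squared normal-mode frequencies are the eigenvalues of $\mathbf{M}^{-1/2}\mathbf{K}\mathbf{M}^{-1/2}$. The result splits into a fast mode in which essentially only the light particle oscillates and a slow mode in which the light particle moves along with the heavy one.

```python
import numpy as np

m1, m2 = 1836.0, 1.0          # heavy and light masses (illustrative)
k_wall, k_pair = 1.0, 1.0     # wall-to-heavy spring and heavy-to-light spring

# Equations of motion M x'' = -K x for displacements x = (x1, x2)
K = np.array([[k_wall + k_pair, -k_pair],
              [-k_pair, k_pair]])
M_inv_sqrt = np.diag([m1 ** -0.5, m2 ** -0.5])

w2, Y = np.linalg.eigh(M_inv_sqrt @ K @ M_inv_sqrt)   # eigenvalues are omega^2, ascending
omega_slow, omega_fast = np.sqrt(w2)
X = M_inv_sqrt @ Y                                     # back to physical displacements

slow_ratio = X[1, 0] / X[0, 0]    # x2/x1 in the slow mode: near 1, light follows heavy
fast_ratio = X[0, 1] / X[1, 1]    # x1/x2 in the fast mode: near 0, heavy barely moves
print(f"omega_fast / omega_slow = {omega_fast / omega_slow:.1f}")
print(f"slow mode x2/x1 = {slow_ratio:.4f}, fast mode x1/x2 = {fast_ratio:.2e}")
```

For these parameters the frequency ratio comes out close to $\sqrt{m_1/m_2} \approx 43$, a classical analogue of the timescale separation exploited by the Born-Oppenheimer approximation.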

Mathematical Formulation

Molecular Hamiltonian and the Electronic Schrödinger Equation

The complete molecular Hamiltonian operator for a system with M nuclei and N electrons is given in atomic units as:

$$\hat{H} = -\frac{1}{2}\sum_{i=1}^{N}\nabla_i^2 - \frac{1}{2}\sum_{A=1}^{M}\frac{1}{M_A}\nabla_A^2 - \sum_{i=1}^{N}\sum_{A=1}^{M}\frac{Z_A}{r_{iA}} + \sum_{i=1}^{N}\sum_{j>i}^{N}\frac{1}{r_{ij}} + \sum_{A=1}^{M}\sum_{B>A}^{M}\frac{Z_A Z_B}{R_{AB}}$$

where $M_A$ is the mass of nucleus $A$ in units of the electron mass and $Z_A$ is its atomic number. The Born-Oppenheimer approximation begins by neglecting the nuclear kinetic energy terms (the second term in the equation above), which corresponds to treating the nuclei as fixed in space [10] [11]. For fixed nuclei, the last term (the nuclear-nuclear repulsion) is a constant that can be set aside and added back later. This simplification yields the electronic Hamiltonian:

$$\hat{H}_{\text{elec}} = -\frac{1}{2}\sum_{i=1}^{N}\nabla_i^2 - \sum_{i=1}^{N}\sum_{A=1}^{M}\frac{Z_A}{r_{iA}} + \sum_{i=1}^{N}\sum_{j>i}^{N}\frac{1}{r_{ij}}$$

The resulting electronic Schrödinger equation is then solved:

$$\hat{H}_{\text{elec}}\Psi_{\text{elec}} = E_{\text{elec}}\Psi_{\text{elec}}$$

where the electronic energy $E_{\text{elec}}$ depends parametrically on the nuclear coordinates $\mathbf{R}$ [10] [8]. Computing the electronic energy for each arrangement of the nuclei generates a potential energy surface (PES) on which the nuclei move.

Nuclear Schrödinger Equation and Potential Energy Surfaces

After solving the electronic problem, the total energy of the molecule is obtained by adding the nuclear-nuclear repulsion energy to the electronic energy:

$$E_{\text{total}} = E_{\text{elec}} + E_{\text{nuc}} = E_{\text{elec}} + \sum_{A=1}^{M}\sum_{B>A}^{M}\frac{Z_A Z_B}{R_{AB}}$$

This total energy becomes the effective potential in the Schrödinger equation for nuclear motion:

$$\left[\hat{T}_n + E_{\text{total}}(\mathbf{R})\right]\phi(\mathbf{R}) = E\,\phi(\mathbf{R})$$

where $\hat{T}_n$ is the nuclear kinetic energy operator [10]. The solution of this equation provides the vibrational, rotational, and translational states of the molecule.
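The two-step structure can be sketched for a diatomic molecule, where $\mathbf{R}$ reduces to the bond length. Instead of computing $E_{\text{total}}(R)$ point by point with an electronic structure code, the example below assumes a Morse curve with illustrative parameters roughly resembling H$_2$ ($D_e = 0.1745$ hartree, $a = 1.0282$ bohr$^{-1}$, $R_e = 1.4$ bohr) and a reduced mass of half the proton mass. It then solves the rotationless ($J = 0$) nuclear equation on a grid and compares the lowest vibrational levels with the analytic Morse result $E_v = \omega(v + \tfrac{1}{2}) - [\omega(v + \tfrac{1}{2})]^2/(4D_e)$, where $\omega = a\sqrt{2D_e/\mu}$ in atomic units.

```python
import numpy as np

D_E, A_MORSE, R_E = 0.1745, 1.0282, 1.4   # illustrative Morse parameters (hartree, 1/bohr, bohr)
MU = 1836.15267 / 2.0                      # reduced mass of H2, about half the proton mass (m_e units)


def pes(R):
    """Model E_total(R) with its minimum shifted to zero; stands in for electronic-structure data."""
    return D_E * (1.0 - np.exp(-A_MORSE * (R - R_E))) ** 2


def vibrational_levels(r_min=0.5, r_max=4.0, n=1000, n_levels=3):
    """Solve [T_n + E_total(R)] phi = E phi (J = 0) with phi = 0 beyond the grid ends."""
    R = np.linspace(r_min, r_max, n)
    h = R[1] - R[0]
    t = 1.0 / (2.0 * MU * h**2)                   # finite-difference kinetic prefactor
    H = np.diag(2.0 * t + pes(R)) - t * np.eye(n, k=1) - t * np.eye(n, k=-1)
    return np.linalg.eigvalsh(H)[:n_levels]


omega = A_MORSE * np.sqrt(2.0 * D_E / MU)          # harmonic frequency at the minimum
exact = np.array([omega * (v + 0.5) - (omega * (v + 0.5)) ** 2 / (4.0 * D_E) for v in range(3)])
numeric = vibrational_levels()
print(numeric)
print(exact)
```

The function names and parameter values are assumptions for illustration; in practice `pes` would be replaced by interpolated SCF (or higher-level) energies computed at fixed nuclear geometries.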

Table 1: Key Mathematical Components in the Born-Oppenheimer Approximation

| Component | Mathematical Expression | Physical Significance |
|---|---|---|
| Electronic Hamiltonian | $\hat{H}_{\text{elec}} = -\frac{1}{2}\sum_i\nabla_i^2 - \sum_i\sum_A\frac{Z_A}{r_{iA}} + \sum_i\sum_{j>i}\frac{1}{r_{ij}}$ | Determines electronic structure for fixed nuclear positions |
| Nuclear-Nuclear Repulsion | $E_{\text{nuc}} = \sum_A\sum_{B>A}\frac{Z_A Z_B}{R_{AB}}$ | Classical electrostatic repulsion between nuclei |
| Total Molecular Energy | $E_{\text{total}} = E_{\text{elec}} + E_{\text{nuc}}$ | Forms potential energy surface for nuclear motion |
| Nuclear Wavefunction | $[\hat{T}_n + E_{\text{total}}(\mathbf{R})]\phi(\mathbf{R}) = E\phi(\mathbf{R})$ | Describes vibrational, rotational, and translational states |

Wavefunction Factorization

In the Born-Oppenheimer approximation, the total wavefunction of the molecule is approximated as a product:

$$\Psi_{\text{total}} \approx \psi_{\text{electronic}}(\mathbf{r},\mathbf{R})\,\psi_{\text{nuclear}}(\mathbf{R})$$

where $\mathbf{r}$ represents all electronic coordinates and $\mathbf{R}$ represents all nuclear coordinates [10]. This factorization signifies that the electronic wavefunction depends parametrically on the nuclear coordinates: it changes with the nuclear arrangement but does not explicitly depend on nuclear momentum.

Computational Implementation

Workflow and Connection to Self-Consistent Field Methods

The practical implementation of the Born-Oppenheimer approximation in computational chemistry follows a well-defined workflow that enables the application of self-consistent field methods:

Diagram 1: Born-Oppenheimer and SCF Computational Workflow

Self-Consistent Field Methods Built on BO Approximation

Self-consistent field methods, including Hartree-Fock and Kohn-Sham density functional theory, fundamentally rely on the Born-Oppenheimer approximation [4] [9]. These methods describe the electrons (in Kohn-Sham theory, a fictitious non-interacting reference system) with a single Slater determinant of molecular orbitals, which are themselves linear combinations of atomic orbitals (LCAO) [9]:

$$\psi_i = \sum_{\mu} c_{\mu i} \phi_{\mu}$$

The optimization of these molecular orbitals leads to the Roothaan-Hall matrix equation [9]:

$$\mathbf{FC} = \mathbf{SC}\boldsymbol{\varepsilon}$$

where F is the Fock matrix, C is the matrix of molecular orbital coefficients, S is the overlap matrix, and ε is a diagonal matrix of orbital energies. The Fock operator itself is a one-electron effective Hamiltonian that incorporates the average field of all other electrons [13]:

$$\hat{F}(i) = -\frac{1}{2}\nabla_i^2 - \sum_{A=1}^{M}\frac{Z_A}{r_{iA}} + \sum_{j=1}^{N/2} \left(2\hat{J}_j - \hat{K}_j\right)$$

Here, $\hat{J}_j$ and $\hat{K}_j$ are the Coulomb and exchange operators, respectively, representing the electron-electron interactions in this mean-field approach [13]; for a closed-shell system of $N$ electrons, the sum runs over the $N/2$ doubly occupied spatial orbitals.

Table 2: Computational Methods Relying on the Born-Oppenheimer Approximation

| Method | Key Equations | Approximation Level | Typical Applications |
|---|---|---|---|
| Hartree-Fock (HF) | $\hat{F}\varphi_i = \varepsilon_i\varphi_i$ | Mean-field, neglects electron correlation | Initial calculations, molecular properties |
| Density Functional Theory (DFT) | $\hat{F}_{\text{DFT}} = -\frac{1}{2}\nabla^2 + \nu_{\text{eff}}$ | Includes approximate correlation | Ground state properties, reaction mechanisms |
| Post-HF Methods | Build on HF reference | Include electron correlation | Accurate thermochemistry, spectroscopy |

Complexity Reduction and Computational Efficiency

The computational advantage afforded by the Born-Oppenheimer approximation is substantial. Consider the benzene molecule (C₆H₆) with 12 nuclei and 42 electrons [10]. The full Schrödinger equation requires solving a partial differential equation in 162 variables (3×12 + 3×42). Using the Born-Oppenheimer approximation, one instead solves:

- An electronic problem in 126 variables for multiple fixed nuclear configurations

- A nuclear problem in 36 variables on the resulting potential energy surface

The computational complexity for solving eigenvalue equations increases faster than the square of the number of coordinates, making this separation enormously beneficial for practical computations [10].

Applications in Pharmaceutical Research

Molecular Property Prediction for Drug Development

The Born-Oppenheimer approximation enables the computational prediction of molecular properties essential to pharmaceutical research. By providing access to electronic wavefunctions and energies for fixed nuclear configurations, researchers can compute:

- Molecular electrostatic potentials crucial for understanding drug-receptor interactions

- Orbital energies relevant to reactivity and metabolism studies

- Vibrational frequencies used in spectroscopic characterization

- Solvation properties important for bioavailability prediction

Advanced applications include the high-fidelity prediction of drug solubility in supercritical CO₂ for pharmaceutical processing, where machine learning models trained on quantum chemical descriptors can achieve remarkable accuracy (R² = 0.9920) [14] [15]. Such predictions facilitate the development of green technologies in drug formulation and separation processes.

Potential Energy Surfaces and Reaction Pathways

In drug metabolism studies, the Born-Oppenheimer approximation enables the mapping of potential energy surfaces along reaction coordinates, providing insights into:

- Metabolic transformation pathways of pharmaceutical compounds

- Activation energies for enzymatic reactions

- Transition state structures for drug degradation pathways

- Conformational energy landscapes of flexible drug molecules

These applications rely critically on the separation of electronic and nuclear motion, allowing researchers to compute electronic energies at specific nuclear configurations along a reaction path.

Limitations and Breakdown of the Approximation

When the Approximation Fails

Despite its widespread success, the Born-Oppenheimer approximation has limitations and can break down in certain important scenarios:

- Conical intersections and avoided crossings: Geometries where different electronic potential energy surfaces become degenerate (conical intersections) or nearly degenerate (avoided crossings), particularly important in photochemistry

- Non-adiabatic processes: Reactions where electronic and nuclear motions become strongly coupled

- Light nuclei systems: Systems containing hydrogen or helium where the mass ratio is less extreme

- Charge transfer processes: Electron transfer reactions where electronic and nuclear timescales become comparable

In such cases, the approximation that electrons instantaneously adjust to nuclear motion becomes invalid, and more sophisticated treatments are necessary.
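A minimal two-state model shows why near-degeneracies are problematic. The sketch assumes two hypothetical diabatic states whose energies cross linearly along a nuclear coordinate $x$ with slope $s$ and are coupled by a constant $V_{12}$. Diagonalizing the $2 \times 2$ electronic Hamiltonian at each fixed $x$ gives adiabatic surfaces separated by at least $2|V_{12}|$, while the rate at which the electronic states change character, $|d\theta/dx|$ for the mixing angle $\theta$, peaks at $|s|/(2|V_{12}|)$. A small gap therefore implies a large coupling between electronic and nuclear motion, which is exactly what the Born-Oppenheimer approximation neglects.

```python
import numpy as np

slope, v12 = 1.0, 0.05                      # hypothetical diabatic slope and coupling (hartree)
x = np.linspace(-1.0, 1.0, 2001)            # nuclear coordinate (bohr)

lower, upper, theta = [], [], []
for xi in x:
    H = np.array([[slope * xi, v12],
                  [v12, -slope * xi]])       # electronic Hamiltonian at this fixed geometry
    energies = np.linalg.eigvalsh(H)
    lower.append(energies[0])
    upper.append(energies[1])
    theta.append(0.5 * np.arctan2(v12, slope * xi))   # mixing angle: tan(2 theta) = v12 / (slope x)

gap = np.array(upper) - np.array(lower)
coupling = np.abs(np.gradient(np.array(theta), x))    # |d theta / dx|, the derivative coupling
print(f"minimum gap {gap.min():.4f} at x = {x[gap.argmin()]:.3f}")
print(f"peak |dtheta/dx| = {coupling.max():.2f} (analytic {slope / (2 * v12):.2f})")
```

Halving `v12` halves the minimum gap and doubles the peak coupling, which is why surface-hopping and related non-adiabatic methods become necessary near such points.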

Beyond Born-Oppenheimer: Non-Adiabatic Dynamics

When the Born-Oppenheimer approximation breaks down, computational chemists employ non-adiabatic dynamics methods that explicitly account for couplings between electronic and nuclear motion. These methods include:

- Surface hopping approaches

- Multiple spawning techniques

- Exact factorization methods

- Mixed quantum-classical dynamics

These advanced techniques remain active areas of research and development in theoretical chemistry.

Research Reagents and Computational Tools

Table 3: Essential Computational Tools for Born-Oppenheimer-Based Calculations

| Tool Category | Specific Examples | Function in Research |
|---|---|---|
| Quantum Chemistry Software | PySCF [4], Q-Chem [9] | Solve electronic structure equations using SCF methods |
| Basis Sets | cc-pVTZ, 6-31G*, minimal basis [4] | Mathematical functions to represent molecular orbitals |
| Initial Guess Algorithms | 'minao', 'atom', 'huckel' [4] | Generate starting electron density for SCF procedure |
| SCF Convergence Accelerators | DIIS, SOSCF, damping, level shifting [4] | Ensure robust convergence of self-consistent field equations |
| Property Analysis Tools | SHAP analysis, sensitivity methods [14] | Interpret computational results and identify key determinants |

The Born-Oppenheimer approximation remains a cornerstone of modern computational chemistry, providing the fundamental framework that enables practical electronic structure calculations for molecules of relevance to pharmaceutical research and drug development. By separating the complex many-body problem into more tractable electronic and nuclear components, this approximation permits the application of self-consistent field methods that yield invaluable insights into molecular structure, properties, and reactivity.

While the approximation has known limitations, particularly in non-adiabatic processes, its proper implementation and understanding continue to drive advances in computational drug design, materials science, and molecular engineering. As computational power increases and algorithms become more sophisticated, the Born-Oppenheimer approximation will undoubtedly remain an essential component of the theoretical chemist's toolkit, continuing its nearly century-long legacy of enabling molecular quantum mechanics.

The Hartree-Fock (HF) method stands as a cornerstone in computational physics and chemistry, providing the fundamental framework for approximating the wave functions and energies of quantum many-body systems. This whitepaper traces the theoretical evolution from the initial Hartree method to the sophisticated Hartree-Fock formalism, framing this development within the broader context of self-consistent field (SCF) methods in computational chemistry research. We examine the core approximations, mathematical foundations, and algorithmic implementation of HF theory, highlighting its position as a specific instance of mean-field theory (MFT) where complex many-electron interactions are replaced by an effective one-body problem. The document further details practical methodologies, visualizes computational workflows, and discusses both the limitations of the standard HF approach and modern extensions that address electron correlation, including connections to dynamical mean-field theory (DMFT) and emerging quantum computing implementations. Designed for researchers, scientists, and drug development professionals, this guide provides both theoretical depth and practical insights essential for understanding electronic structure calculations.

The fundamental challenge in theoretical chemistry and computational materials science is solving the time-independent Schrödinger equation for many-electron systems. For a system comprising multiple nuclei and electrons, the non-relativistic electronic Hamiltonian (in atomic units) is expressed as [16]:

$$ \hat{H} = -\frac{1}{2}\sum_i \nabla_i^2 - \sum_{i,I}\frac{Z_I}{|\mathbf{r}_i - \mathbf{R}_I|} + \sum_{i<j}\frac{1}{|\mathbf{r}_i - \mathbf{r}_j|} $$

The terms represent, respectively, the kinetic energy of the electrons, the Coulomb attraction between electrons and nuclei, and the electron-electron repulsion. The Born-Oppenheimer approximation, which treats the much heavier nuclei as fixed point charges, allows this electronic Hamiltonian to be separated from the nuclear motion; the constant nuclear-nuclear repulsion is added to the electronic energy afterwards [16]. While exact solutions exist for one-electron systems such as the hydrogen atom, the electron-electron repulsion term makes the Schrödinger equation analytically unsolvable for general multi-electron systems [17]. This complexity necessitates approximate methods, with mean-field approaches providing the most fundamental starting point.

Historical Foundation: From Hartree to Slater-Determinants

The Hartree Method and Self-Consistent Field Concept

In 1927, Douglas Hartree introduced a procedure he called the self-consistent field (SCF) method to calculate approximate wave functions and energies for atoms and ions [18]. His approach addressed the many-electron problem by decomposing it into effective one-electron equations. The initial Hartree method employed a simple approximation: the multi-electron wave function was written as a product of single-electron wave functions (orbitals), known as the Hartree product [18] [17]:

$$ \Psi(\mathbf{r}_1, \mathbf{r}_2, \ldots, \mathbf{r}_N) \approx \psi_1(\mathbf{r}_1)\,\psi_2(\mathbf{r}_2) \cdots \psi_N(\mathbf{r}_N) $$

This ansatz assumes electrons are independent, interacting only via an average field. Each electron thus moves in the electrostatic field created by the nucleus and the average charge distribution of all other electrons. Hartree's key insight was that the final field computed from the charge distribution must be consistent with the assumed initial field—hence "self-consistent" [18]. The solution proceeded iteratively: an initial guess for the orbitals was used to calculate the potential, which was then used to solve for new orbitals, repeating until convergence [17].

The Fock Improvement: Incorporating Antisymmetry

Despite its conceptual breakthrough, the original Hartree method had a fundamental flaw: it failed to account for the quantum mechanical principle that electronic wave functions must be antisymmetric—their sign must change upon exchange of any two electrons, as required by the Pauli exclusion principle [18] [16].

In 1930, Slater and Fock independently identified this limitation and proposed the solution: replacing the simple Hartree product with a Slater determinant [18]. For an N-electron system, the Slater determinant is constructed from spin orbitals as [16]:

$$ \Psi(\mathbf{x}_1, \mathbf{x}_2, \ldots, \mathbf{x}_N) = \frac{1}{\sqrt{N!}} \begin{vmatrix} \chi_1(\mathbf{x}_1) & \chi_2(\mathbf{x}_1) & \cdots & \chi_N(\mathbf{x}_1) \\ \chi_1(\mathbf{x}_2) & \chi_2(\mathbf{x}_2) & \cdots & \chi_N(\mathbf{x}_2) \\ \vdots & \vdots & \ddots & \vdots \\ \chi_1(\mathbf{x}_N) & \chi_2(\mathbf{x}_N) & \cdots & \chi_N(\mathbf{x}_N) \end{vmatrix} $$

This determinant automatically satisfies the antisymmetry requirement: exchanging two electrons interchanges two rows and flips the sign, and if two electrons occupy the same spin orbital, two columns become identical, making the determinant zero and thus enforcing the Pauli exclusion principle [16]. The combination of Hartree's SCF approach with the Slater determinant wave function became known as the Hartree-Fock method.

Table 1: Key Historical Developments in Early Mean-Field Theory

| Year | Researcher(s) | Contribution | Key Innovation |
|---|---|---|---|
| 1927 | Douglas Hartree | Hartree Method | Introduced self-consistent field concept; used product wavefunction |
| 1930 | Slater and Fock | Hartree-Fock Method | Incorporated antisymmetry via Slater determinant |
| 1935 | Hartree | Reformulation | Made theory more accessible for practical calculations [18] |
| 1950s | Various | Computational Implementation | Enabled by advent of electronic computers [18] |

Theoretical Framework: Hartree-Fock as Mean-Field Theory

Mean-Field Theory Foundations

Mean-field theory (MFT) is an approximation method for studying high-dimensional random (stochastic) models in which all interactions acting on any one body are replaced by an average or effective interaction [19]. This approach transforms complex many-body problems into more tractable effective one-body problems by averaging over degrees of freedom [19]. In the context of electronic structure, MFT replaces the instantaneous Coulomb repulsion between electrons with an average potential, so that each electron experiences the mean field created by all other electrons [18].

The formal basis for MFT is the Bogoliubov inequality, which provides an upper bound for the free energy of a system [19]. By minimizing this upper bound with respect to single-degree-of-freedom probabilities, one obtains effective one-body equations that form the foundation of mean-field approximations.

The Hartree-Fock Equations

Applying the variational principle to the Slater determinant ansatz yields the Hartree-Fock equations [18] [20]. For a closed-shell system, these take the form of one-electron eigenvalue equations:

$$ \hat{f}(\mathbf{r}_1)\,\chi_i(\mathbf{r}_1) = \varepsilon_i\,\chi_i(\mathbf{r}_1) $$

where $\hat{f}$ is the Fock operator, $\chi_i$ are the spin orbitals, and $\varepsilon_i$ are the orbital energies [18]. The Fock operator consists of [20]:

$$ \hat{f} = -\frac{1}{2}\nabla^2 - \sum_I \frac{Z_I}{|\mathbf{r} - \mathbf{R}_I|} + \hat{V}_{\text{HF}} $$

Here, the first term is the kinetic energy operator, the second represents electron-nucleus attraction, and $\hat{V}_{\text{HF}}$ is the Hartree-Fock potential, which captures the average electron-electron interactions.

The Hartree-Fock potential contains two distinct terms [20]:

- Hartree Term (Coulomb operator): The classical electrostatic repulsion between electrons, representing the potential from the average charge distribution of all other electrons.

- Fock Term (Exchange operator): A non-local operator arising from the antisymmetric nature of the Slater determinant, accounting for the exchange interaction between electrons of parallel spin.

The exchange term eliminates the unphysical self-interaction contained in the Hartree term and creates an "exchange hole" around each electron—a region where same-spin electrons avoid each other due to the Pauli exclusion principle [20].
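Written out with the Coulomb and exchange operators defined in Table 2 of this section, the Hartree-Fock potential makes the self-interaction cancellation explicit. The following is a short worked check using only those standard definitions, with the sum running over occupied spin orbitals:

$$ \hat{V}_{\text{HF}}\,\chi_i(1) = \sum_{j}^{\text{occ}} \left[ \hat{J}_j(1) - \hat{K}_j(1) \right] \chi_i(1) $$

$$ \hat{K}_i(1)\,\chi_i(1) = \left[ \int \frac{\chi_i^*(2)\,\chi_i(2)}{r_{12}}\, d\mathbf{x}_2 \right] \chi_i(1) = \left[ \int \frac{|\chi_i(2)|^2}{r_{12}}\, d\mathbf{x}_2 \right] \chi_i(1) = \hat{J}_i(1)\,\chi_i(1) $$

Hence $\left[\hat{J}_i - \hat{K}_i\right]\chi_i = 0$: the $j = i$ term drops out, so an electron feels no repulsion from its own charge distribution. For $j \neq i$, the exchange term is nonzero only when $\chi_i$ and $\chi_j$ have the same spin, because the spin integration inside $\hat{K}_j$ vanishes otherwise.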

The Self-Consistent Field Procedure

The nonlinear nature of the Hartree-Fock equations (since the Fock operator depends on its own solutions) necessitates an iterative solution algorithm [18]:

Diagram 1: The Hartree-Fock self-consistent field (SCF) iterative procedure

This SCF cycle continues until the change in total electronic energy or density matrix between iterations falls below a predefined threshold, indicating convergence to a self-consistent solution [18].
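To make the cycle concrete, here is a minimal closed-shell sketch in Python/NumPy for H2 in a two-function minimal basis. Nothing in it computes integrals: the overlap, core-Hamiltonian, and two-electron values are assumed inputs, namely the rounded STO-3G numbers for H2 at R = 1.4 bohr used in the worked example of Szabo and Ostlund's *Modern Quantum Chemistry*. With them, the converged total energy should land near the textbook value of about -1.117 hartree. The function names `build_eri` and `rhf_scf` are illustrative, not any package's API.

```python
import numpy as np

# Assumed inputs: rounded STO-3G integrals for H2 at R = 1.4 bohr (two 1s functions),
# as tabulated in textbook worked examples. Nothing here computes integrals.
S = np.array([[1.0, 0.6593],
              [0.6593, 1.0]])                       # overlap matrix
H_CORE = np.array([[-1.1204, -0.9584],
                   [-0.9584, -1.1204]])             # kinetic + nuclear attraction
E_NUC = 1.0 / 1.4                                   # nuclear repulsion, hartree


def build_eri():
    """Expand unique (mu nu|lam sig) integrals using the 8-fold symmetry of real orbitals."""
    unique = {(0, 0, 0, 0): 0.7746, (1, 1, 1, 1): 0.7746, (0, 0, 1, 1): 0.5697,
              (1, 0, 0, 0): 0.4441, (1, 1, 1, 0): 0.4441, (1, 0, 1, 0): 0.2970}
    eri = np.zeros((2, 2, 2, 2))
    for (p, q, r, s), value in unique.items():
        for a, b, c, d in [(p, q, r, s), (q, p, r, s), (p, q, s, r), (q, p, s, r),
                           (r, s, p, q), (s, r, p, q), (r, s, q, p), (s, r, q, p)]:
            eri[a, b, c, d] = value
    return eri


def rhf_scf(S, h, eri, n_occ, max_iter=50, tol=1e-8):
    """Closed-shell SCF: build F from P, solve F C = S C eps, rebuild P, repeat."""
    s_val, s_vec = np.linalg.eigh(S)
    X = s_vec @ np.diag(s_val ** -0.5) @ s_vec.T      # symmetric orthogonalizer S^(-1/2)
    P = np.zeros_like(S)                              # core-Hamiltonian guess (no e-e field)
    e_old = 0.0
    for iteration in range(1, max_iter + 1):
        J = np.einsum("mnls,ls->mn", eri, P)          # Coulomb term
        K = np.einsum("mlsn,ls->mn", eri, P)          # exchange term
        F = h + J - 0.5 * K                           # closed-shell Fock matrix
        e_elec = 0.5 * np.sum(P * (h + F))            # electronic energy of current P
        eps, C_prime = np.linalg.eigh(X.T @ F @ X)    # orthogonalized eigenproblem
        C = X @ C_prime                               # back-transform MO coefficients
        P_new = 2.0 * C[:, :n_occ] @ C[:, :n_occ].T   # doubly occupied orbitals
        if abs(e_elec - e_old) < tol and np.max(np.abs(P_new - P)) < tol:
            return e_elec, eps, iteration
        P, e_old = P_new, e_elec
    raise RuntimeError("SCF did not converge")


e_elec, eps, n_iter = rhf_scf(S, H_CORE, build_eri(), n_occ=1)
print(f"E_total = {e_elec + E_NUC:.4f} hartree after {n_iter} iterations")
```

Convergence is tested on both the energy change and the density-matrix change, mirroring the criteria named above. In this symmetric two-function case the occupied orbital is fixed by symmetry, so the loop settles within a few iterations; production codes add DIIS, integral screening, and better initial guesses.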

Methodological Implementation and Computational Considerations

Basis Set Expansion

In practical implementations, the molecular orbitals are expanded as linear combinations of basis functions, typically atomic orbitals (LCAO - Linear Combination of Atomic Orbitals) [18] [20]:

$$ \psi_i(\mathbf{r}) = \sum_{\mu} c_{\mu i}\, \phi_\mu(\mathbf{r}) $$
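Substituting this expansion into the Hartree-Fock equations and projecting onto each basis function $\phi_\mu$ turns the integro-differential problem into a generalized matrix eigenvalue problem, the Roothaan-Hall equations. They are shown here in their standard closed-shell form as a worked step:

$$ \mathbf{F}\mathbf{C} = \mathbf{S}\mathbf{C}\boldsymbol{\varepsilon}, \qquad F_{\mu\nu} = \int \phi_\mu^*(\mathbf{r})\, \hat{f}\, \phi_\nu(\mathbf{r})\, d\mathbf{r}, \qquad S_{\mu\nu} = \int \phi_\mu^*(\mathbf{r})\, \phi_\nu(\mathbf{r})\, d\mathbf{r} $$

Because $\mathbf{F}$ depends on the coefficients through the density matrix $P_{\mu\nu} = 2\sum_{i}^{\text{occ}} c_{\mu i}\, c_{\nu i}^*$, these equations are still nonlinear and are solved by the same iterative SCF cycle.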

The choice of basis set is critical for computational accuracy and efficiency. Two common approaches are:

- Localized Gaussian functions: Predominantly used for molecular systems, allowing efficient integral computation [20].

- Plane waves: Typically employed for periodic solid-state systems [20].

The basis set must be sufficiently complete to accurately represent the molecular orbitals, and convergence with respect to basis set size must be verified in practical applications [20].
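Part of the reason Gaussian functions dominate molecular calculations is that their integrals have closed forms. As a small illustration, assuming normalized s-type primitives (the simplest case), the overlap of two Gaussians with exponents α and β on centers A and B follows from the Gaussian product theorem as S = (4αβ/(α+β)²)^(3/4) exp(-αβ|A-B|²/(α+β)). The function name below is hypothetical.

```python
import numpy as np


def s_type_overlap(alpha, beta, center_a, center_b):
    """Overlap of two normalized s-type primitive Gaussians (exponents alpha, beta).

    The product of two Gaussians is another Gaussian (Gaussian product theorem),
    so the integral has a closed form and needs no numerical quadrature.
    """
    a = np.asarray(center_a, dtype=float)
    b = np.asarray(center_b, dtype=float)
    p = alpha + beta                    # exponent of the product Gaussian
    mu = alpha * beta / p               # reduced exponent
    r2 = float(np.dot(a - b, a - b))    # squared distance between centers
    return (4.0 * alpha * beta / p**2) ** 0.75 * np.exp(-mu * r2)


print(s_type_overlap(0.5, 1.2, [0.0, 0.0, 0.0], [0.8, 0.0, 0.0]))
```

Higher angular momentum functions and the two-electron integrals need recurrence schemes on top of this, but they rest on the same product theorem.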

Key Mathematical Formulations

Table 2: Core Equations in Hartree-Fock Theory

| Concept | Mathematical Expression | Physical Significance |
|---|---|---|
| Slater Determinant | $\Psi = \frac{1}{\sqrt{N!}} \det[\chi_i(\mathbf{x}_j)]$ | Ensures antisymmetry; encodes Pauli exclusion principle |
| Fock Operator | $\hat{f} = -\frac{1}{2}\nabla^2 - \sum_I \frac{Z_I}{\lvert\mathbf{r}-\mathbf{R}_I\rvert} + \hat{J} - \hat{K}$ | Effective one-electron Hamiltonian |
| Coulomb Operator | $\hat{J}_j(1)\chi_i(1) = \left[\int \frac{\lvert\chi_j(2)\rvert^2}{r_{12}}\, d\mathbf{x}_2\right] \chi_i(1)$ | Classical electrostatic repulsion |
| Exchange Operator | $\hat{K}_j(1)\chi_i(1) = \left[\int \frac{\chi_j^*(2)\chi_i(2)}{r_{12}}\, d\mathbf{x}_2\right] \chi_j(1)$ | Quantum mechanical exchange interaction |

The Scientist's Toolkit: Essential Computational Components

Table 3: Key Computational Components in Hartree-Fock Calculations

| Component | Function/Role | Implementation Notes |
|---|---|---|
| Basis Functions | Expand molecular orbitals; determine flexibility of representation | Gaussian-type orbitals (molecules) or plane waves (solids) |
| Initial Guess Generator | Provide starting orbitals for SCF procedure | From superposition of atomic densities or minimal basis calculation |
| Integral Evaluator | Compute one- and two-electron integrals | Most computationally demanding step; efficient algorithms critical |
| DIIS Extrapolator | Accelerate SCF convergence | Reduces number of iterations; essential for difficult cases |
| Density Fitter | Approximate products of basis functions (orbital-pair densities) with an auxiliary basis | Used in resolution-of-identity techniques to speed up two-electron integral evaluation |
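The DIIS extrapolator listed in Table 3 can be sketched in a few lines. This version assumes Pulay's commutator FPS - SPF as the error vector (a common choice, not the only one) and solves the constrained least-squares problem for the mixing coefficients. The function names are hypothetical and do not correspond to any package's API.

```python
import numpy as np


def scf_error(F, P, S):
    """Pulay's commutator error vector FPS - SPF; it vanishes at self-consistency."""
    return F @ P @ S - S @ P @ F


def diis_extrapolate(focks, errors):
    """Mix stored Fock matrices so that the combined error has minimal norm.

    Solves min |sum_i c_i e_i|^2 subject to sum_i c_i = 1 with a Lagrange multiplier.
    """
    n = len(focks)
    B = np.zeros((n + 1, n + 1))
    for i in range(n):
        for j in range(n):
            B[i, j] = np.vdot(errors[i], errors[j]).real   # error-vector overlaps
    B[:n, n] = -1.0                                         # constraint column
    B[n, :n] = -1.0                                         # constraint row
    rhs = np.zeros(n + 1)
    rhs[n] = -1.0
    coeffs = np.linalg.solve(B, rhs)[:n]
    F_mixed = sum(c * F for c, F in zip(coeffs, focks))
    return F_mixed, coeffs


# Toy usage with hypothetical 1x1 error vectors of opposite sign:
F_mix, c = diis_extrapolate([np.eye(2), 3.0 * np.eye(2)],
                            [np.array([[1.0]]), np.array([[-1.0]])])
print(c, F_mix)
```

In an SCF loop one would store the last few (Fock matrix, error) pairs, call `diis_extrapolate` before each diagonalization, and drop the oldest pair once a chosen subspace size is reached; that subspace size is a tuning choice, not something fixed by the method.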

Limitations and Advanced Methodologies

Electron Correlation: The Fundamental Limitation

While Hartree-Fock theory captures the exchange (Fermi) correlation between same-spin electrons through the antisymmetric wave function, it neglects Coulomb correlation, the tendency of electrons to avoid each other because of their mutual repulsion, regardless of spin [18] [20]. This limitation has several consequences:

- Systematic overestimation of total energies: The HF method yields total energies that lie above the exact non-relativistic values [18].

- Inability to describe London dispersion forces: These weak intermolecular forces arise entirely from electron correlation effects [18].

- Poor description of bond breaking: Correlation becomes increasingly important as bonds stretch [20].

- Inaccurate band gaps in solids: HF often overestimates band gaps in insulating materials.

The Hartree-Fock energy forms an upper bound to the true ground-state energy (by the variational principle), with the difference from the exact solution termed the correlation energy [18].

Post-Hartree-Fock Methods

To address the correlation problem, numerous post-Hartree-Fock methods have been developed, which can be broadly categorized as [18]:

- Configuration Interaction (CI): Includes excited Slater determinants in the wave function.

- Coupled Cluster (CC): Uses exponential ansatz to incorporate excitations.

- Møller-Plesset Perturbation Theory: Treats correlation as a perturbation to the HF solution.

- Multiconfigurational SCF (MCSCF): Uses multiple determinants in the variational optimization.

These methods progressively improve accuracy but at significantly increased computational cost.

Connection to Dynamical Mean-Field Theory

For strongly correlated materials (e.g., transition metal oxides), where electron localization effects dominate, neither standard HF nor DFT with semi-local functionals provides adequate descriptions [21]. Dynamical Mean-Field Theory (DMFT) has emerged as a powerful approach that extends the static mean-field concept of HF to include energy-dependent (dynamical) correlations [21].

DMFT maps a lattice model onto an effective impurity model embedded in a self-consistent, frequency-dependent mean field [21]. When combined with DFT (DFT+DMFT), this approach has successfully described the electronic structure of various strongly correlated materials, including high-temperature superconductors [21]. Recent research has explored implementing DMFT impurity solvers on quantum computers, potentially offering more efficient solutions for large systems where classical methods struggle [21] [22].

Diagram 2: Methodological evolution from Hartree-Fock to modern approaches

The evolution from Hartree's initial self-consistent field concept to the sophisticated Hartree-Fock formalism represents a foundational development in computational quantum chemistry. As a specific implementation of mean-field theory, HF provides the conceptual framework for understanding electrons as moving in an average field while properly accounting for quantum statistics through the antisymmetric wave function. Despite its limitation in neglecting electron correlation, HF remains the starting point for most accurate electronic structure methods and continues to provide conceptual insights into chemical bonding and electronic behavior.

The self-consistent field paradigm established by Hartree and refined by Fock has proven remarkably enduring, extending beyond quantum chemistry to nuclear physics, condensed matter theory, and emerging applications in quantum computing. For researchers and drug development professionals, understanding the approximations, capabilities, and limitations of Hartree-Fock theory is essential for judicious application of computational methods and meaningful interpretation of their results. As computational power grows and methodological innovations continue, the HF foundation will undoubtedly remain central to addressing ever more challenging problems in molecular and materials design.

Slater Determinants and the Pauli Exclusion Principle

In computational chemistry, the accurate prediction of molecular structure and properties hinges on solving the many-electron Schrödinger equation. For multi-electron systems, a fundamental challenge arises from the indistinguishable nature of fermions and the constraints imposed by the Pauli Exclusion Principle. This principle, which states that no two fermions can occupy the same quantum state, is elegantly and efficiently embedded in quantum chemical calculations through the use of Slater determinants. Within the broader context of self-consistent field (SCF) methods, the Slater determinant provides the essential wavefunction ansatz that ensures physically meaningful solutions while facilitating computationally tractable models.

This article provides an in-depth examination of Slater determinants, detailing their mathematical formulation, role in enforcing antisymmetry, and central function in modern computational workflows like the Hartree-Fock-SCF method. We will explore how this foundational concept enables the treatment of complex molecular systems in research and drug development.

Theoretical Foundation

The Pauli Exclusion Principle and Wavefunction Antisymmetry

The Pauli Exclusion Principle is a key quantum mechanical principle that has profound implications for the electronic structure of atoms and molecules. For a wavefunction describing a system of multiple fermions, it requires that the wavefunction must be antisymmetric with respect to the exchange of any two identical fermions [23]. This means that if the coordinates (both spatial and spin) of two electrons are swapped, the total wavefunction must change sign [24]:

$$ \Psi(\mathbf{x}_1, \mathbf{x}_2, \ldots, \mathbf{x}_i, \ldots, \mathbf{x}_j, \ldots, \mathbf{x}_N) = -\Psi(\mathbf{x}_1, \mathbf{x}_2, \ldots, \mathbf{x}_j, \ldots, \mathbf{x}_i, \ldots, \mathbf{x}_N) $$

This antisymmetry property naturally enforces the Pauli principle: if two electrons were to occupy the exact same spin-orbital, the wavefunction would vanish, indicating such a configuration is impossible [23]. This fundamental symmetry requirement is the foundation upon which Slater determinants are built.

From Hartree Products to Slater Determinants

A naive first attempt at constructing a many-electron wavefunction is to use a simple product of one-electron spin-orbitals, known as a Hartree product [23]:

$$ \Psi_{\text{Hartree}}(\mathbf{x}_1, \mathbf{x}_2) = \chi_1(\mathbf{x}_1)\,\chi_2(\mathbf{x}_2) $$

However, this simple product is unsatisfactory for fermions because it is not antisymmetric under particle exchange [23]. The solution is to take a linear combination of all possible permutations of the electrons among the spin-orbitals, with the appropriate sign for each permutation. For a two-electron system, this leads to [23]:

$$ \Psi(\mathbf{x}_1, \mathbf{x}_2) = \frac{1}{\sqrt{2}}\left[\chi_1(\mathbf{x}_1)\chi_2(\mathbf{x}_2) - \chi_1(\mathbf{x}_2)\chi_2(\mathbf{x}_1)\right] $$

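This expression is exactly the $2 \times 2$ determinant $\frac{1}{\sqrt{2}}\begin{vmatrix} \chi_1(\mathbf{x}_1) & \chi_2(\mathbf{x}_1) \\ \chi_1(\mathbf{x}_2) & \chi_2(\mathbf{x}_2) \end{vmatrix}$ [23], and the same construction generalizes to N electrons.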

Formal Definition of the Slater Determinant

For an N-electron system, the Slater determinant is defined as [23]:

$$ \Psi(\mathbf{x}_1, \mathbf{x}_2, \ldots, \mathbf{x}_N) = \frac{1}{\sqrt{N!}} \begin{vmatrix} \chi_1(\mathbf{x}_1) & \chi_2(\mathbf{x}_1) & \cdots & \chi_N(\mathbf{x}_1) \\ \chi_1(\mathbf{x}_2) & \chi_2(\mathbf{x}_2) & \cdots & \chi_N(\mathbf{x}_2) \\ \vdots & \vdots & \ddots & \vdots \\ \chi_1(\mathbf{x}_N) & \chi_2(\mathbf{x}_N) & \cdots & \chi_N(\mathbf{x}_N) \end{vmatrix} $$

This determinant formulation automatically ensures the required antisymmetry under particle exchange, as swapping the coordinates of two electrons corresponds to interchanging two rows of the determinant, which reverses its sign [23]. The factor of $1/\sqrt{N!}$ ensures proper normalization.
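Both properties (a sign change when two electrons are exchanged, and a vanishing wavefunction when two electrons share a spin-orbital) can be checked numerically. The sketch below assumes hypothetical one-dimensional particle-in-a-box orbitals of a single spin as stand-ins for real spin-orbitals and evaluates the determinant directly with NumPy.

```python
import math

import numpy as np


def box_orbital(k, x):
    """Hypothetical toy spin-orbital: particle-in-a-box state k on [0, 1], one fixed spin."""
    return math.sqrt(2.0) * np.sin(k * math.pi * x)


def slater_psi(orbitals, coords):
    """Evaluate det[chi_j(x_i)] / sqrt(N!): row i is electron i, column j is spin-orbital j."""
    n = len(orbitals)
    matrix = np.array([[box_orbital(k, x) for k in orbitals] for x in coords])
    return np.linalg.det(matrix) / math.sqrt(math.factorial(n))


coords = [0.10, 0.35, 0.70]
psi = slater_psi([1, 2, 3], coords)
psi_swapped = slater_psi([1, 2, 3], [0.35, 0.10, 0.70])   # electrons 1 and 2 exchanged
psi_pauli = slater_psi([1, 1, 3], coords)                 # two electrons in orbital 1
print(psi, psi_swapped, psi_pauli)
```

`psi_swapped` should equal `-psi` and `psi_pauli` should vanish, both up to floating-point rounding. Placing two electrons at the same coordinate makes two rows identical and likewise gives zero.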

Table 1: Key Properties of Slater Determinants

| Property | Mathematical Expression | Physical Significance |
|---|---|---|
| Antisymmetry | Swapping $\mathbf{x}_i \leftrightarrow \mathbf{x}_j$ changes the sign of $\Psi$ | Enforces the Pauli Exclusion Principle |
| Normalization | $\langle \Psi \vert \Psi \rangle = 1$ with the $1/\sqrt{N!}$ factor (for orthonormal spin-orbitals) | Probabilities sum to one |
| Vanishing | $\Psi = 0$ if $\chi_i = \chi_j$ for any $i \neq j$ | No two electrons in same quantum state |
| Indistinguishability | Probability density $\lvert\Psi\rvert^2$ invariant to particle labels | Electrons are fundamentally identical |

Slater Determinants in Self-Consistent Field Methods

The Hartree-Fock-SCF Framework

The Slater determinant forms the cornerstone of the Hartree-Fock (HF) method, one of the most fundamental ab initio quantum chemical approaches [25]. In the HF approximation, the many-electron wavefunction is represented by a single Slater determinant composed of the best possible set of spin-orbitals, which is found through a self-consistent field (SCF) procedure [26].

The goal is to find the set of molecular orbitals that minimizes the energy of a single Slater determinant wavefunction for a given system [26]. The SCF procedure is meant to locate the ground state as the global minimum of the one-determinant approximation of the electronic energy [26], although in practice it converges to a stationary point that is not guaranteed to be the global minimum. Recent methodological innovations have explored replacing the traditional SCF optimization with algebraic geometry approaches, which can locate not only the ground state but also low-lying excited states within the one-determinant framework [26].

Matrix Elements and the Electronic Energy

The expectation value of the electronic energy for a Slater determinant wavefunction reveals important physical insights. The electronic Hamiltonian can be separated into one-electron and two-electron components [23]:

$$ \hat{H}_e = \sum_{i}^{N} \hat{h}(\mathbf{r}_i) + \frac{1}{2} \sum_{i \neq j}^{N} \frac{e^2}{r_{ij}} $$

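The expectation value of the one-electron part, $\hat{G}_1 = \sum_i \hat{h}(\mathbf{r}_i)$, for a Slater determinant wavefunction is simply the sum of the one-electron integrals over the occupied spin-orbitals [23]; these are not the orbital energies $\varepsilon_i$, which also contain Coulomb and exchange contributions:

$$ \langle \Psi_0 | \hat{G}_1 | \Psi_0 \rangle = \sum_i \langle \chi_i | \hat{h} | \chi_i \rangle $$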

However, the two-electron electron-electron repulsion term introduces characteristic Coulomb and exchange contributions. The exchange term, which arises directly from the antisymmetric nature of the Slater determinant, has no classical analog and lowers the total energy by reducing electron-electron repulsion for electrons of parallel spin.
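For reference, the complete single-determinant energy separates into exactly these pieces. This is the standard textbook result, written in atomic units ($e = 1$) with chemists' notation for the two-electron integrals:

$$ E_0 = \sum_{i}^{N} \langle \chi_i | \hat{h} | \chi_i \rangle + \frac{1}{2} \sum_{i}^{N} \sum_{j}^{N} \left( [ii|jj] - [ij|ji] \right), \qquad [ij|kl] = \int \chi_i^*(\mathbf{x}_1)\,\chi_j(\mathbf{x}_1)\, \frac{1}{r_{12}}\, \chi_k^*(\mathbf{x}_2)\,\chi_l(\mathbf{x}_2)\, d\mathbf{x}_1\, d\mathbf{x}_2 $$

The Coulomb integrals $[ii|jj]$ are the classical repulsions between orbital charge densities; the exchange integrals $[ij|ji]$ vanish unless $\chi_i$ and $\chi_j$ have the same spin, and the $i = j$ terms cancel between the two sums.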

Table 2: Computational Methods Building on Slater Determinants

| Method | Wavefunction Ansatz | Treatment of Electron Correlation | Computational Scaling |
|---|---|---|---|
| Hartree-Fock (HF) | Single Slater determinant | None | $O(N^4)$ |
| Density Functional Theory (DFT) | Effective single determinant | Approximate, via functional | $O(N^3)$ |
| Post-Hartree-Fock Methods: | | | |
| Møller-Plesset (MP2) | Single determinant reference | Perturbative | $O(N^5)$ |
| Coupled Cluster (CCSD(T)) | Exponential ansatz on reference | High accuracy, "gold standard" | $O(N^7)$ |
| Multi-Reference Methods | Multiple determinants | Strong correlation | High, up to $O(e^N)$ |

Advanced Applications and Methodological Extensions

Beyond Hartree-Fock: Electron Correlation

While the single Slater determinant of Hartree-Fock theory often provides a qualitatively correct description of molecules near their equilibrium geometries, it fails to capture electron correlation, the correlated motion by which electrons avoid one another [25]. This limitation has driven the development of post-Hartree-Fock methods that build upon the HF foundation:

- Configuration Interaction (CI): Constructs the wavefunction as a linear combination of Slater determinants representing excited electron configurations [25]

- Coupled Cluster (CC): Uses an exponential ansatz acting on the HF reference to generate a more sophisticated wavefunction [25]

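- Perturbation Theory (MP2, MP4): Treats the difference between the exact electronic Hamiltonian and the sum of Fock operators as a perturbation, adding correlation order by order to the HF reference [25]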

Among these, the Coupled Cluster with Single, Double, and perturbative Triple excitations (CCSD(T)) method is widely regarded as the "gold standard" for quantum chemical accuracy, though its high computational cost limits application to small and medium-sized molecules [25].

Integration with Machine Learning and Quantum Computing

Recent advances are reshaping how Slater determinants and SCF methods integrate into the broader computational landscape:

Machine Learning in Quantum Chemistry: The integration of machine learning with quantum chemical methods has enabled the development of data-driven tools that can identify molecular features correlated with target properties [25]. These approaches are being used to create more accurate and efficient potentials, and to accelerate the exploration of chemical space in drug discovery and materials design [25].

Quantum Computing: Algorithms such as the Variational Quantum Eigensolver (VQE) are being developed with the aim of solving electronic structure problems more efficiently than is possible on classical computers [25]. These approaches typically prepare and measure multi-qubit states that represent Slater determinants or their correlated extensions. While current hardware limitations restrict applications to small molecules, ongoing advances in quantum hardware are progressively expanding these capabilities [25].

Experimental Protocols and Computational Workflows

Standard Hartree-Fock-SCF Protocol

The following workflow outlines the key steps in a typical Hartree-Fock calculation using Slater determinants:

Research Reagent Solutions: Computational Tools

Table 3: Essential Computational Tools for SCF Calculations

| Tool Category | Specific Examples | Function in SCF Methodology |
|---|---|---|
| Basis Sets | Pople-style (6-31G*), Dunning (cc-pVDZ), Karlsruhe (def2-SVP) | Provide mathematical functions for expanding molecular orbitals |
| Integral Packages | Libint, ERD (libraries); McMurchie-Davidson (integral algorithm) | Compute electron repulsion integrals efficiently |
| SCF Solvers | DIIS, energy-based direct minimization | Accelerate convergence of self-consistent field procedure |
| Geometry Optimizers | Berny, Baker, eigenvector-following | Locate minima and transition states on potential energy surface |
| Quantum Chemistry Packages | Gaussian, GAMESS, ORCA, PySCF, Psi4 | Implement complete HF-SCF workflow with post-HF extensions |

Slater determinants provide the fundamental mathematical structure that enforces the Pauli Exclusion Principle in multi-electron quantum mechanical calculations. Their integration into the Hartree-Fock-SCF framework establishes the foundation for nearly all ab initio computational chemistry methods, from basic molecular orbital theory to sophisticated correlated approaches. As computational methodologies continue to evolve through integration with machine learning and quantum computing, the Slater determinant remains an essential conceptual and practical tool for researchers investigating molecular structure, reactivity, and properties in fields ranging from fundamental chemical physics to drug design and materials science. The ongoing refinement of SCF methodologies ensures that this cornerstone of computational quantum chemistry will continue to enable new discoveries across the molecular sciences.

The variational principle represents a fundamental mathematical concept that enables the solution of complex physical problems by finding functions that optimize the values of quantities dependent on those functions [27]. In computational chemistry, this principle provides the rigorous foundation for determining molecular properties by optimizing electronic wavefunctions. Its application is particularly crucial for self-consistent field (SCF) methods, which form the computational bedrock for predicting molecular structure, reactivity, and electronic properties in chemical systems.

This section examines the mathematical basis of variational principles and their role as the optimization engine behind computational methods. We explore how these principles drive the convergence of wavefunctions to energy minima, enable the calculation of molecular properties, and facilitate the treatment of both ground and excited states. By understanding these fundamental mathematical underpinnings, researchers can better leverage computational tools for drug design and materials development.

Mathematical Foundations of the Variational Principle

Core Theoretical Framework

The variational principle states that for any trial wavefunction Ψ, the expectation value of the Hamiltonian operator Ĥ provides an upper bound to the true ground state energy E₀:

<Ψ|Ĥ|Ψ> / <Ψ|Ψ> ≥ E₀

This inequality provides the mathematical basis for systematic improvement of wavefunction approximations [28]. The quality of the energy estimate depends entirely on the choice of trial wavefunction, with more flexible parameterizations capable of closer approach to the true ground state.
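A quick numerical illustration of the bound, assuming a small random real symmetric matrix stands in for the Hamiltonian (a finite-basis model, not a molecular calculation): every trial vector gives a Rayleigh quotient at or above the lowest eigenvalue, and only the exact ground-state vector attains it.

```python
import numpy as np


def rayleigh_quotient(H, psi):
    """<psi|H|psi> / <psi|psi> for a real symmetric matrix H and real vector psi."""
    return float(psi @ H @ psi) / float(psi @ psi)


rng = np.random.default_rng(seed=0)
A = rng.normal(size=(6, 6))
H = 0.5 * (A + A.T)                 # hypothetical model Hamiltonian (any symmetric matrix)
e0 = np.linalg.eigvalsh(H)[0]       # exact lowest eigenvalue, E0

trial_energies = [rayleigh_quotient(H, rng.normal(size=6)) for _ in range(1000)]
print(f"E0 = {e0:.4f}, best random trial = {min(trial_energies):.4f}")
```

Random trial vectors are a deliberately poor parameterization; a more flexible ansatz optimized systematically approaches E0 from above, which is the property the text relies on.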

The fundamental optimization problem in variational quantum chemistry can be expressed as:

E = min_Ψ <Ψ|Ĥ|Ψ> subject to <Ψ|Ψ> = 1

This constrained optimization is typically solved using Lagrange multipliers, leading to the Hartree-Fock equations for mean-field theories or more complex eigenvalue problems for correlated methods [27].
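For a single Slater determinant the constraint is the orthonormality of the occupied spin-orbitals, and the Lagrange-multiplier step can be written out in its standard textbook form, where $E_0$ is the single-determinant energy and $\varepsilon_{ji}$ are the multipliers:

$$ \mathcal{L}[\{\chi_i\}] = E_0[\{\chi_i\}] - \sum_{i,j} \varepsilon_{ji} \left( \langle \chi_i | \chi_j \rangle - \delta_{ij} \right), \qquad \delta\mathcal{L} = 0 \;\Rightarrow\; \hat{f}\,\chi_i = \sum_j \varepsilon_{ji}\, \chi_j $$

A unitary rotation among the occupied orbitals leaves the determinant unchanged up to a phase and can be chosen to diagonalize the Hermitian matrix of multipliers, which yields the canonical Hartree-Fock equations $\hat{f}\,\chi_i = \varepsilon_i\,\chi_i$.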

Extensions to Excited States

While the fundamental variational principle applies specifically to ground states, several extensions enable the treatment of excited states:

- State-specific optimization: Targeting higher-energy roots of the secular equation

- State-average optimization: Minimizing average energy of multiple states simultaneously

- Variance minimization: Seeking wavefunctions that minimize the energy variance

Each approach presents distinct advantages and challenges for computational chemistry applications [29].

Variational Principles in Self-Consistent Field Methods

Hartree-Fock Theory as a Variational Method

The Hartree-Fock method represents the paradigmatic application of variational principles in computational chemistry. The approach seeks the single Slater determinant that minimizes the electronic energy, resulting in the self-consistent field equations:

Fψᵢ = εᵢψᵢ

where F is the Fock operator, ψᵢ are molecular orbitals, and εᵢ are orbital energies [26]. The SCF procedure alternates between building the Fock matrix from current orbitals and diagonalizing to obtain improved orbitals until self-consistency is achieved.

Recent methodological innovations have explored replacing the conventional SCF convergence with alternative optimization schemes. Algebraic geometry optimization coupled with multivariable Newton methods has demonstrated potential for locating both ground and excited states within the one-determinant approximation [26].

Beyond Single Reference: Multiconfigurational Methods

For systems with strong static correlation, single-determinant approaches prove inadequate. The Complete Active Space Self-Consistent Field (CASSCF) method extends the variational approach to multiconfigurational wavefunctions:

|Ψᴹᶜˢᶜᶠ〉 = Σᵢ Cᵢ|Φᵢ〉

where the Cᵢ coefficients and molecular orbitals are variationally optimized [30]. The CASSCF method partitions orbitals into inactive, active, and virtual spaces, with a full configuration interaction expansion within the active space. This approach maintains the variational property while providing a more balanced treatment of electron correlation.

Table 1: Comparison of Variational Methods in Computational Chemistry

| Method | Wavefunction Form | Variational Parameters | Strengths | Limitations |
|---|---|---|---|---|
| Hartree-Fock | Single determinant | Molecular orbitals | Size consistent, computationally efficient | Neglects electron correlation |
| CASSCF | Multiconfigurational | CI coefficients and orbitals | Handles static correlation, multireference systems | Exponential scaling with active space size |
| VMC | Jastrow-Slater | Jastrow, CI, and orbital parameters | Explicit correlation, favorable scaling | Stochastic optimization challenges |
| VQE | Parameterized quantum circuit | Quantum gate parameters | Suitable for NISQ devices, quantum advantage potential | Current hardware limitations, noise sensitivity |

Computational Implementation and Protocols

Energy Minimization Techniques

Energy minimization forms the core computational approach for variational methods. The stochastic reconfiguration method provides an efficient approach for optimizing complex wavefunctions in quantum Monte Carlo (QMC) applications [29]. The parameter variations Δp are computed according to:

Δp = -τS̅⁻¹g

where τ is a positive step size, S̅ is the overlap (covariance) matrix of the logarithmic derivatives of the wavefunction with respect to its parameters, and g is the energy gradient. This approach enables stable optimization of thousands of parameters in Jastrow-Slater wavefunctions.
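A minimal sketch of one stochastic-reconfiguration step follows. It assumes the usual Monte Carlo estimators for a real wavefunction: S̅ as the covariance of the log-derivatives O_k = ∂ln Ψ/∂p_k, and g_k = 2(⟨E_L O_k⟩ - ⟨E_L⟩⟨O_k⟩) with local energy E_L. The small diagonal shift is an added regularization, a common practical choice rather than part of the formula above; function names and sample data are hypothetical and synthetic.

```python
import numpy as np


def sr_estimates(O, E_loc):
    """Monte Carlo estimates of S_bar and g for a real wavefunction.

    O:     (n_samples, n_params) log-derivatives d ln|Psi| / d p_k at each sample
    E_loc: (n_samples,) local energies (H Psi)(R) / Psi(R) at each sample
    """
    n = len(E_loc)
    O_c = O - O.mean(axis=0)                 # centred log-derivatives
    E_c = E_loc - E_loc.mean()               # centred local energies
    S_bar = O_c.T @ O_c / n                  # covariance of the log-derivatives
    g = 2.0 * O_c.T @ E_c / n                # energy gradient dE/dp_k
    return S_bar, g


def sr_update(S_bar, g, tau=0.05, diag_shift=1e-4):
    """Parameter change delta_p = -tau * S_bar^{-1} g, with an assumed diagonal shift."""
    S_reg = S_bar + diag_shift * np.eye(len(g))
    return -tau * np.linalg.solve(S_reg, g)


# Synthetic samples: the local energy is correlated with the first log-derivative only.
rng = np.random.default_rng(seed=1)
O = rng.normal(size=(5000, 3))
E_loc = -1.0 + 0.3 * O[:, 0] + 0.1 * rng.normal(size=5000)
S_bar, g = sr_estimates(O, E_loc)
delta_p = sr_update(S_bar, g)
print(delta_p)
```

Solving with S̅ rather than the identity rescales the plain gradient step according to how strongly each parameter actually changes the wavefunction, which is what makes the method stable for large parameter sets.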

For state-average optimization required when treating states of the same symmetry, the minimization target becomes a weighted average:

Eˢᵃ = Σᵢ wᵢEᵢ with Σᵢ wᵢ = 1

This approach ensures balanced description of multiple states and prevents collapse to lower states during optimization [29].

Wavefunction Optimization Workflow

The following diagram illustrates the complete variational optimization workflow for molecular systems:

Advanced Optimization: Variance Minimization

As an alternative to direct energy minimization, variance minimization approaches can be employed, particularly for excited state targeting. The variance functional:

σ² = <Ψ|(Ĥ - E)²|Ψ> / <Ψ|Ψ>

possesses its minimum value of zero for exact eigenstates [29]. Modified functionals such as:

σ²(ω) = <Ψ|(Ĥ - ω)²|Ψ> / <Ψ|Ψ>

with ω fixed during optimization steps, can improve stability. However, recent QMC studies have identified challenges with variance minimization, including state drifting during optimization and convergence to incorrect states [29].
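For a finite-dimensional model both functionals are easy to evaluate, since <Ψ|(Ĥ - ω)²|Ψ> equals the squared norm of (Ĥ - ω)Ψ for a Hermitian Ĥ and normalized Ψ. The sketch below uses hypothetical names and a random symmetric matrix as the model Hamiltonian. It checks that the variance vanishes at an excited eigenvector and that a fixed shift ω only adds (E - ω)² to the ordinary variance.

```python
import numpy as np


def energy_and_variance(H, psi, omega=None):
    """Return <H> and <(H - omega)^2> for a real trial vector psi.

    With omega=None the shift is <H> itself, giving the ordinary energy variance.
    """
    psi = psi / np.linalg.norm(psi)
    energy = float(psi @ H @ psi)
    shift = energy if omega is None else omega
    residual = H @ psi - shift * psi           # (H - omega)|psi>
    return energy, float(residual @ residual)  # <psi|(H - omega)^2|psi> for symmetric H


rng = np.random.default_rng(seed=2)
A = rng.normal(size=(5, 5))
H = 0.5 * (A + A.T)                          # hypothetical model Hamiltonian
eigvals, eigvecs = np.linalg.eigh(H)
print(energy_and_variance(H, eigvecs[:, 1]))        # excited eigenstate: variance ~ 0
print(energy_and_variance(H, rng.normal(size=5)))   # generic trial vector: variance > 0
```

That additive (E - ω)² term is why minimizing the shifted functional with a fixed ω biases the optimization toward the eigenstate whose energy lies closest to ω.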

Table 2: Optimization Methods in Variational Quantum Chemistry

| Method | Target Functional | Strengths | Weaknesses | Convergence Behavior |
|---|---|---|---|---|
| Energy Minimization | E[Ψ] = <Ψ\|Ĥ\|Ψ> | Variational guarantee, stable | May converge slowly, state collapse | Monotonic energy decrease |
| Variance Minimization | σ²[Ψ] = <Ψ\|(Ĥ-E)²\|Ψ> | Can target excited states | May drift from target state | Can exhibit state switching |
| State-Average Optimization | Eˢᵃ = ΣwᵢEᵢ | Balanced states, avoids collapse | Not fully variational for individual states | Simultaneous improvement of multiple states |
| Newton Method | E[Ψ] with exact Hessian | Quadratic convergence | Computationally expensive | Very fast near minimum |

Applications in Electronic Structure Theory

Ground State Properties

Variational principles enable the calculation of fundamental molecular properties including:

- Equilibrium geometries through energy minimization with respect to nuclear coordinates

- Vibrational frequencies via second derivatives of the variational energy

- Electronic properties including multipole moments and polarizabilities

- Reaction energies and barrier heights through energy differences

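The variational principle bounds the energy from above. Enlarging the wavefunction ansatz in a nested fashion can therefore only lower (improve) the energy. Properties derived from that energy usually improve as well, although not necessarily monotonically.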
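The first two items in the list can be illustrated with a one-dimensional model. In the sketch below, a Morse curve with hypothetical parameters stands in for a variational energy surface. The equilibrium bond length comes from a derivative-free golden-section minimization. The harmonic force constant comes from a central finite-difference second derivative, which for a Morse potential should approach 2DA². In a real calculation, each energy evaluation would be a full electronic structure calculation, and most codes use analytic gradients and Hessians instead.

```python
import math

# Hypothetical Morse parameters (atomic units): well depth, range, equilibrium distance.
D, A, R_E = 0.17, 1.0, 1.4


def morse(r):
    """Model potential-energy curve V(r) = D (1 - exp(-A (r - R_E)))^2."""
    return D * (1.0 - math.exp(-A * (r - R_E))) ** 2


def golden_section_min(f, lo, hi, tol=1e-10):
    """Locate the minimum of a unimodal function on [lo, hi] without derivatives."""
    inv_phi = (math.sqrt(5.0) - 1.0) / 2.0
    x1, x2 = hi - inv_phi * (hi - lo), lo + inv_phi * (hi - lo)
    f1, f2 = f(x1), f(x2)
    while hi - lo > tol:
        if f1 < f2:                       # minimum lies in [lo, x2]
            hi, x2, f2 = x2, x1, f1
            x1 = hi - inv_phi * (hi - lo)
            f1 = f(x1)
        else:                             # minimum lies in [x1, hi]
            lo, x1, f1 = x1, x2, f2
            x2 = lo + inv_phi * (hi - lo)
            f2 = f(x2)
    return 0.5 * (lo + hi)


def second_derivative(f, x, h=1e-4):
    """Central finite-difference estimate of f''(x)."""
    return (f(x + h) - 2.0 * f(x) + f(x - h)) / (h * h)


r_min = golden_section_min(morse, 0.8, 3.0)   # equilibrium geometry
k = second_derivative(morse, r_min)            # harmonic force constant
print(r_min, k, 2.0 * D * A ** 2)              # k should approach 2 D A^2 = 0.34
```

For a diatomic molecule, the harmonic vibrational frequency would then follow from ω = √(k/μ), where μ is the reduced mass.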

Excited State Methodologies

Treating excited states within variational frameworks requires specialized approaches:

State-Specific Optimization: Direct targeting of excited states through root-homing algorithms or initial guesses biased toward specific excitations.

State-Average Methods: Optimization of an average energy for multiple states ensures orthogonality and balanced description [29].

Time-Dependent Extensions: Linear response theory within variational frameworks enables efficient computation of excitation spectra.

[Diagram: excited state treatment workflow]

Research Reagent Solutions

Table 3: Essential Computational Tools for Variational Methods

| Tool Category | Specific Examples | Function | Application Context |
|---|---|---|---|
| Electronic Structure Packages | PySCF, CFOUR, Molpro | Implement SCF, CASSCF, CC algorithms | General quantum chemistry, method development |
| QMC Software | QMCPACK, CHAMP | Variational and diffusion Monte Carlo | High-accuracy correlation, solids, excited states |
| Quantum Computing Frameworks | Qiskit, Cirq, PennyLane | VQE algorithm implementation | NISQ device algorithms, quantum advantage |
| Optimization Libraries | SciPy, NLopt, OPT++ | General-purpose optimizers | Custom wavefunction optimization |
| Basis Set Libraries | Basis Set Exchange | Standardized Gaussian basis sets | Consistent calculations across methods |

Emerging Directions and Challenges

Quantum Computing and Hybrid Algorithms

The variational principle finds new expression in quantum computing through the Variational Quantum Eigensolver (VQE) algorithm [28]. This hybrid quantum-classical approach prepares trial states with parameterized quantum circuits and optimizes the circuit parameters θ classically. For normalized trial states, the variational principle gives:

E(θ) = 〈ψ(θ)|Ĥ|ψ(θ)〉 ≥ E₀

Minimizing E(θ) over θ therefore yields an upper bound to the exact ground-state energy E₀. This bound serves as the theoretical foundation for VQE and related algorithms [28]. Current challenges include barren plateaus in optimization landscapes and hardware noise effects. Active research seeks to address these through adaptive ansatzes and error mitigation.
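A classically simulated, single-qubit sketch of the VQE loop is shown below. It assumes a hypothetical Hamiltonian Ĥ = c_z Z + c_x X and an R_y(θ) ansatz. An exact statevector replaces quantum hardware, so there is no shot noise or hardware noise. Gradients use the parameter-shift rule, which is exact for gates generated by a single Pauli operator. The function names and learning rate are illustrative.

```python
import math


def prepare_state(theta):
    """R_y(theta)|0> = (cos(theta/2), sin(theta/2)); an exact simulator stands in for hardware."""
    return math.cos(theta / 2.0), math.sin(theta / 2.0)


def expectation(theta, c_z, c_x):
    """E(theta) = <psi(theta)| c_z Z + c_x X |psi(theta)>."""
    a, b = prepare_state(theta)
    z_exp = a * a - b * b        # <Z>
    x_exp = 2.0 * a * b          # <X> for real amplitudes
    return c_z * z_exp + c_x * x_exp


def parameter_shift_grad(theta, c_z, c_x):
    """Gradient from two shifted circuit evaluations (exact for Pauli-rotation gates)."""
    shift = math.pi / 2.0
    return 0.5 * (expectation(theta + shift, c_z, c_x) - expectation(theta - shift, c_z, c_x))


def vqe(c_z, c_x, theta=0.1, lr=0.3, steps=200):
    """Classical outer loop: gradient descent on the circuit parameter."""
    for _ in range(steps):
        theta -= lr * parameter_shift_grad(theta, c_z, c_x)
    return theta, expectation(theta, c_z, c_x)


theta_opt, e_vqe = vqe(1.0, 0.5)
print(e_vqe, -math.hypot(1.0, 0.5))  # VQE estimate vs exact ground-state energy
```

〈ψ(θ)|Ĥ|ψ(θ)〉 can never fall below the lowest eigenvalue, here −√(c_z² + c_x²). The estimate therefore approaches the exact ground-state energy from above.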

Methodological Innovations

Recent advances in variational methods include:

- Adaptive wavefunction approaches that dynamically expand the variational space (for example, by growing the ansatz or the set of determinants)

- Machine learning-inspired parameterizations using neural network quantum states

- Embedding techniques that combine high-level variational treatments with lower-level methods

- QED-extended variational methods for strong light-matter coupling environments [30]

These developments expand the applicability of variational principles to increasingly complex systems while maintaining the rigorous theoretical foundation that ensures reliability.

The variational principle provides the fundamental mathematical basis for energy optimization in computational chemistry, serving as the theoretical foundation for self-consistent field methods and beyond. Its rigorous formulation guarantees systematic improvability and connects electronic structure theory with practical computation. As methodological developments continue to expand the reach of variational approaches—from quantum Monte Carlo to quantum computing—this principle maintains its central role in enabling accurate predictions of molecular properties and behaviors. For researchers in drug development and materials design, understanding these foundations empowers more effective application of computational tools and critical evaluation of computational results.

Linear Combination of Atomic Orbitals (LCAO) and Basis Sets

The Linear Combination of Atomic Orbitals (LCAO) is a fundamental approximation in quantum chemistry that forms the cornerstone of modern computational electronic structure calculations. Introduced in 1929 by Sir John Lennard-Jones, this technique provides a framework for calculating molecular orbitals by representing them as quantum superpositions of atomic orbitals [31]. Within the context of self-consistent field (SCF) methods, the LCAO approach transforms the complex differential equations of quantum mechanics into tractable matrix equations that can be solved iteratively [9]. This methodology is indispensable for researchers investigating molecular properties, reaction energetics, and electronic behaviors in systems ranging from pharmaceutical compounds to advanced materials.

The LCAO approximation bridges the conceptual gap between atomic and molecular quantum states, allowing scientists to predict electronic distributions, bond formation, and spectroscopic properties. For drug development professionals, these computational approaches provide critical insights into molecular reactivity, stability, and intermolecular interactions without exclusive reliance on experimental screening. The effectiveness of any LCAO-based calculation depends critically on the choice of basis set—the collection of mathematical functions used to represent atomic orbitals—making understanding basis set selection crucial for obtaining accurate computational results in research applications [32].

Theoretical Foundation of LCAO

Mathematical Formalism

The fundamental principle of the LCAO method states that molecular orbitals can be expressed as linear combinations of atomic orbitals. Mathematically, the i-th molecular orbital, φᵢ, is constructed from n atomic orbitals, χᵣ:

\[ \phi_i = c_{1i}\chi_1 + c_{2i}\chi_2 + c_{3i}\chi_3 + \cdots + c_{ni}\chi_n = \sum_{r=1}^{n} c_{ri}\chi_r \]

where cᵣᵢ are the expansion coefficients that determine the contribution of each atomic orbital to the molecular orbital [31]. The coefficients are determined variationally. Minimizing the energy with respect to them, subject to orbital orthonormality, yields the best wavefunction of this form that the given basis can represent.

In the SCF approximation, this approach leads to the Roothaan-Hall matrix equation:

\[ \mathbf{FC} = \mathbf{SC}\boldsymbol{\varepsilon} \]

where F is the Fock matrix representing the effective one-electron Hamiltonian and C is the matrix of molecular orbital coefficients. ε is a diagonal matrix of orbital energies, and S is the overlap matrix of the basis functions, with elements Sμν = ⟨χμ|χν⟩ [9]. Because F itself depends on the orbitals through the electron density, the equation is nonlinear and must be solved self-consistently. The converged solution provides both the molecular orbital coefficients and the associated energies that define the electronic structure of the system.
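For a two-function basis, the Roothaan-Hall equation can be solved in closed form, because det(F − εS) = 0 is a quadratic in ε. The sketch below does this for a fixed, hypothetical Fock matrix. In a real SCF calculation, F would be rebuilt from C at every iteration. The parameters α, β, and s are illustrative values for a symmetric two-center model loosely resembling H₂ in a minimal basis. For this model, the result should reproduce ε = (α ± β)/(1 ± s).

```python
import math


def solve_roothaan_2x2(F, S):
    """Solve F C = S C eps for symmetric 2x2 F and S.

    Returns [(eps, (c1, c2)), ...] sorted by orbital energy, with each
    coefficient vector normalized so that c^T S c = 1.
    """
    (f11, f12), (_, f22) = F
    (s11, s12), (_, s22) = S
    # det(F - eps S) = 0 expands to the quadratic a*eps^2 + b*eps + c = 0.
    a = s11 * s22 - s12 * s12
    b = -(f11 * s22 + f22 * s11) + 2.0 * f12 * s12
    c = f11 * f22 - f12 * f12
    root = math.sqrt(b * b - 4.0 * a * c)
    results = []
    for eps in sorted(((-b - root) / (2.0 * a), (-b + root) / (2.0 * a))):
        # First row of (F - eps S) c = 0 gives c proportional to (-(f12 - eps s12), f11 - eps s11).
        v1, v2 = -(f12 - eps * s12), f11 - eps * s11
        if abs(v1) < 1e-14 and abs(v2) < 1e-14:   # fall back to the second row
            v1, v2 = f22 - eps * s22, -(f12 - eps * s12)
        norm = math.sqrt(v1 * v1 * s11 + 2.0 * v1 * v2 * s12 + v2 * v2 * s22)
        results.append((eps, (v1 / norm, v2 / norm)))
    return results


# Hypothetical two-center model loosely resembling H2 in a minimal basis (hartree).
alpha, beta, s = -0.5, -0.4, 0.6
F = [[alpha, beta], [beta, alpha]]
S = [[1.0, s], [s, 1.0]]
for eps, coeffs in solve_roothaan_2x2(F, S):
    print(f"eps = {eps:.4f}, C = ({coeffs[0]:.4f}, {coeffs[1]:.4f})")
# Analytically: (alpha + beta)/(1 + s) = -0.5625 and (alpha - beta)/(1 - s) = -0.25
```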

Connection to Self-Consistent Field Methods

The LCAO method is intrinsically linked to SCF methodologies, which iteratively solve the quantum mechanical equations for molecular systems. The SCF process begins with an initial guess of the molecular orbitals, constructs the Fock operator based on this guess, solves the Roothaan-Hall equations for new orbitals, and repeats until convergence is achieved [9]. This procedure ensures that the calculated electronic distribution is consistent with the potential field it generates.
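The loop can be sketched on a deliberately small toy problem rather than an ab initio Hamiltonian. The example assumes a two-site, Hubbard-like model in an orthonormal basis (S = I) with two electrons in one doubly occupied orbital. Its mean-field Fock matrix has a diagonal that depends on the site occupations D_ii. The model, its parameters, and the function names are hypothetical. The sketch follows the steps above: a core-Hamiltonian guess, a Fock build, diagonalization, a new density, and a convergence check. It also adds simple density damping.

```python
import math


def lowest_orbital(f11, f12, f22):
    """Lowest eigenpair of a real symmetric 2x2 Fock matrix in an orthonormal basis (S = I)."""
    eps = 0.5 * (f11 + f22) - math.hypot(0.5 * (f11 - f22), f12)
    if abs(f12) < 1e-14:                      # matrix is already diagonal
        return eps, ((1.0, 0.0) if f11 <= f22 else (0.0, 1.0))
    v1, v2 = f12, eps - f11                   # first row of (F - eps) c = 0
    norm = math.hypot(v1, v2)
    return eps, (v1 / norm, v2 / norm)


def density(c):
    """Closed-shell density matrix elements (D11, D12, D22) with two electrons in orbital c."""
    return [2.0 * c[0] * c[0], 2.0 * c[0] * c[1], 2.0 * c[1] * c[1]]


def scf_two_site(e1, e2, t, u, damping=0.5, tol=1e-10, max_iter=200):
    """Toy restricted mean-field SCF: F_ii = e_i + u * D_ii / 2, F_12 = -t."""
    _, c = lowest_orbital(e1, -t, e2)         # initial guess from the core Hamiltonian
    d = density(c)
    for iteration in range(1, max_iter + 1):
        fock = (e1 + 0.5 * u * d[0], -t, e2 + 0.5 * u * d[2])  # build F from the current density
        eps, c = lowest_orbital(*fock)                          # diagonalize F
        d_new = density(c)
        change = max(abs(x - y) for x, y in zip(d_new, d))
        # Damping: keep a fraction of the old density to suppress oscillations.
        d = [damping * old + (1.0 - damping) * new for old, new in zip(d, d_new)]
        if change < tol:
            return d, eps, iteration
    raise RuntimeError("SCF did not converge")


dens, orbital_energy, n_iter = scf_two_site(e1=-0.5, e2=0.5, t=1.0, u=2.0)
print(n_iter, dens, orbital_energy)
```

In this model, setting damping=0.0 lets each new density overshoot. The occupations then oscillate about the solution, and convergence becomes much slower.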

For molecular systems, the core Hamiltonian is constructed using the LCAO approach, where matrix elements are computed between basis functions centered on different atoms. The quality of the final SCF solution depends critically on the completeness and quality of the underlying atomic basis set, making basis set selection a crucial step in computational modeling [33].

Basis Sets in Computational Chemistry

Basis Set Fundamentals and Types

Basis sets are collections of mathematical functions used to represent atomic orbitals in electronic structure calculations [32]. In Hartree-Fock theory and related SCF methods, each molecular orbital is expressed as a linear combination of these basis functions, with coefficients determined iteratively during the calculation [32]. A complete (infinite) basis would eliminate basis set incompleteness error and give the exact result for the chosen method (for Hartree-Fock, the Hartree-Fock limit). Practical computations, however, require finite approximations that balance accuracy and computational efficiency [32].

The most frequently used basis functions in modern computational chemistry take the form of contracted Gaussians, which offer computational advantages in evaluating the multi-center integrals required in molecular calculations [32] [33]. From a chemical perspective, it is advantageous to treat valence orbitals differently from core orbitals since valence orbitals vary significantly with chemical bonding while core orbitals remain relatively unchanged [32].
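The overlap integral makes the computational advantage of Gaussians concrete. By the Gaussian product theorem, the product of two s-type Gaussians on different centers is a single Gaussian. Their overlap therefore has the closed form used in `primitive_overlap` below. The exponents and contraction coefficients are the standard STO-3G values for hydrogen 1s as tabulated, for example, in the Basis Set Exchange; treat them as values to check against that source. The function names are illustrative.

```python
import math

# STO-3G hydrogen 1s contraction (exponents in bohr^-2, coefficients for normalized primitives).
EXPONENTS = (3.42525091, 0.62391373, 0.16885540)
COEFFS = (0.15432897, 0.53532814, 0.44463454)


def primitive_overlap(a, b, r2):
    """Overlap of two normalized s-type Gaussians whose centers are r2 = |A - B|^2 apart.

    The product of the two Gaussians is another Gaussian (Gaussian product theorem),
    so the two-center integral reduces to this closed form.
    """
    p = a + b
    return (2.0 * math.sqrt(a * b) / p) ** 1.5 * math.exp(-a * b / p * r2)


def contracted_overlap(r, exps=EXPONENTS, coeffs=COEFFS):
    """<chi_A|chi_B> for two identical contracted s functions a distance r (bohr) apart."""
    return sum(ci * cj * primitive_overlap(ai, aj, r * r)
               for ai, ci in zip(exps, coeffs)
               for aj, cj in zip(exps, coeffs))


print(round(contracted_overlap(0.0), 4))  # self-overlap, close to 1 (normalized contraction)
print(round(contracted_overlap(1.4), 4))  # two H 1s functions 1.4 bohr apart, about 0.659
```

The self-overlap is essentially 1, which confirms that the contraction is normalized. At R = 1.4 bohr, close to the H₂ equilibrium distance, the two-center overlap is about 0.659.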

Table 1: Common Basis Set Types in Computational Chemistry

| Basis Set Type | Description | Common Examples | Typical Applications |
|---|---|---|---|
| Minimal Basis | One basis function per atomic orbital of the occupied shells | STO-3G | Initial calculations, very large systems |
| Split-Valence | More functions for valence orbitals than for core orbitals | 3-21G, 6-31G | Standard molecular calculations [32] |
| Polarized | Adds higher angular momentum functions | 6-31G(d), 6-31G* | Systems with polar bonds, accurate geometries |
| Diffuse | Includes spatially extended functions | 6-31+G, aug-cc-pVDZ | Anions, excited states, weak interactions [32] |
| High-Precision | Correlation-consistent sets with multiple polarization functions | cc-pVQZ, aug-cc-pV5Z | Benchmark calculations, spectroscopy |

Basis Set Selection and Optimization

Selecting an appropriate basis set requires careful consideration of the chemical system and properties of interest. For routine calculations on neutral organic molecules, split-valence basis sets like 6-31G provide a good balance between accuracy and computational cost [32]. When studying polar bonds or molecular geometries, adding polarization functions (e.g., 6-31G(d)) becomes necessary to accurately describe electron distribution deformations during bond formation [32].

For specific electronic states such as anions, highly excited states, or weakly bound complexes, diffuse functions are essential to properly describe the spatially extended electron densities [32]. Without sufficient flexibility for weakly bound electrons to localize far from the molecular framework, significant errors in energies and molecular properties can occur [32].

Basis set convergence is usually checked systematically. Researchers often begin with a minimal basis set (e.g., STO-3G) and progressively move to larger split-valence basis sets (e.g., 3-21G followed by 6-31G) until the results of interest stop changing appreciably [32]. This stepwise approach exposes convergence problems early while keeping computational costs under control.

Zhan et al. demonstrated that the 6-31+G(d) basis set is generally sufficient for calculating highest occupied molecular orbital (HOMO) and lowest unoccupied molecular orbital (LUMO) energies when the LUMO energies are negative. This choice may not be economical, however, for screening large datasets or large systems [32]. For reaction energetics and molecular properties, combinations such as mPW1PW91/MIDIX+ offer an economical and reasonably accurate alternative [32].

Experimental and Computational Methodologies

SCF Convergence Protocols

Achieving convergence in SCF calculations remains a significant challenge in computational chemistry. The SCF process iteratively updates the electron density until the input and output densities agree within a specified threshold [9]. Convergence difficulties frequently arise from poor initial guesses, near-degenerate orbital energies, or complex electronic structures. They can cause calculations to fail outright or to converge to higher-energy solutions rather than the intended state [34].

Advanced convergence acceleration techniques include:

- Improved Initial Guesses: Moving beyond the core Hamiltonian guess to methods like superposition of atomic densities (SAD), extended Hückel, or fragment orbitals provides better starting points [34].

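- Damping and Level Shifting: Damping mixes part of the previous density (or Fock) matrix into the new one to suppress oscillations between iterations. Level shifting raises the energies of the virtual orbitals to reduce occupied-virtual mixing, which stabilizes convergence at the cost of slower progress.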

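- Direct Inversion in the Iterative Subspace (DIIS): This method builds the next Fock (or density) matrix as a linear combination of those from previous iterations. It chooses the coefficients that minimize the norm of the corresponding error vectors (commonly the commutator FDS − SDF), which accelerates convergence.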

- Second-Order Methods: Algorithms like quadratically convergent SCF provide robust convergence at increased computational cost per iteration.
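A minimal Pulay-style DIIS sketch for a generic fixed-point problem x = g(x) is shown below. In an SCF code, x would be the flattened Fock (or density) matrix, and the error vector would typically be FDS − SDF. Here, as an assumption suited to a generic map, the error is g(x) − x, and the next input is the same linear combination of previous outputs. The test map, history length, and function names are hypothetical.

```python
def dot(u, v):
    """Euclidean inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def solve_linear(mat, rhs):
    """Gaussian elimination with partial pivoting for a small dense system."""
    n = len(rhs)
    a = [list(row) + [r] for row, r in zip(mat, rhs)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(a[r][col]))
        if abs(a[piv][col]) < 1e-12:
            raise ZeroDivisionError("singular DIIS system")
        a[col], a[piv] = a[piv], a[col]
        for r in range(col + 1, n):
            f = a[r][col] / a[col][col]
            for k in range(col, n + 1):
                a[r][k] -= f * a[col][k]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (a[i][n] - sum(a[i][k] * x[k] for k in range(i + 1, n))) / a[i][i]
    return x


def diis(g, x0, tol=1e-10, max_iter=100, max_hist=6):
    """Pulay-style DIIS extrapolation for the fixed-point problem x = g(x)."""
    x = list(x0)
    outputs, errors = [], []
    for iteration in range(1, max_iter + 1):
        gx = g(x)
        err = [gi - xi for gi, xi in zip(gx, x)]
        if max(abs(e) for e in err) < tol:
            return x, iteration
        outputs.append(gx)
        errors.append(err)
        if len(errors) > max_hist:               # keep a bounded history
            outputs.pop(0)
            errors.pop(0)
        m = len(errors)
        gram = [[dot(errors[i], errors[j]) for j in range(m)] for i in range(m)]
        scale = max(gram[i][i] for i in range(m))  # rescaling leaves the coefficients unchanged
        # Augmented system: minimize |sum c_i e_i|^2 subject to sum c_i = 1.
        lhs = [[gram[i][j] / scale for j in range(m)] + [-1.0] for i in range(m)]
        lhs.append([-1.0] * m + [0.0])
        try:
            coeffs = solve_linear(lhs, [0.0] * m + [-1.0])[:m]
        except ZeroDivisionError:
            outputs, errors, coeffs = [gx], [err], [1.0]   # restart the history
        x = [sum(c * out[k] for c, out in zip(coeffs, outputs)) for k in range(len(x))]
    raise RuntimeError("DIIS did not converge")


def linear_map(x):
    """Hypothetical slowly convergent fixed-point map x -> A x + b (spectral radius 0.9)."""
    return [0.9 * x[0] + 0.05 * x[1] + 1.0, 0.8 * x[1] + 1.0]


x_star, n_iter = diis(linear_map, [0.0, 0.0])
print(n_iter, x_star)   # fixed point is (12.5, 5.0)
```

On this linear map, plain iteration x ← g(x) shrinks the error by only about a factor of 0.9 per step. It would need on the order of two hundred iterations to reach the same tolerance. DIIS finishes in a handful of iterations, because the stored error vectors span the two-dimensional space after a few steps.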

For excited-state calculations, specialized methods like ΔSCF (delta SCF) converge to saddle points of the electronic energy with respect to orbital rotations. This lets them model low-lying excitations, charge-transfer states, or core-hole excitations where time-dependent DFT often fails [34]. Implementing excited-state-aware SCF convergence with the Maximum Overlap Method (MOM), constrained DFT, or projection-based methods requires careful algorithmic design to ensure convergence to the desired electronic state [34].
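The occupation step at the heart of MOM can be sketched as follows. Rather than filling the lowest-energy orbitals (aufbau), each iteration occupies the new orbitals that overlap most with the previous occupied set. This sketch assumes an orthonormal basis (S = I). It scores each new orbital by its squared projection onto the old occupied space, a score that is insensitive to orbital signs. Published variants differ in the exact score, and some compare against the initial guess orbitals instead (initial MOM). The function name and the example vectors are illustrative.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def mom_occupied(old_occ: Sequence[Vector], new_orbs: Sequence[Vector]) -> List[int]:
    """Pick which new orbitals to occupy by maximum overlap with the previous occupied set.

    Assumes an orthonormal basis (S = I), so O_ij = <old_i|new_j> is a plain dot product,
    and scores new orbital j by p_j = sum_i O_ij^2 (its squared projection onto the old space).
    """
    proj = [sum(sum(a * b for a, b in zip(old, new)) ** 2 for old in old_occ)
            for new in new_orbs]
    ranked = sorted(range(len(new_orbs)), key=lambda j: proj[j], reverse=True)
    return sorted(ranked[: len(old_occ)])


new_orbitals = [(1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)]   # in energy order
old_occupied = [(1.0, 0.0, 0.0), (0.0, 0.1, math.sqrt(0.99))]        # an excited determinant
print(mom_occupied(old_occupied, new_orbitals))   # [0, 2]; aufbau would pick [0, 1]
```

Here aufbau would occupy orbitals 0 and 1, collapsing back toward the ground state. The overlap criterion instead keeps the excited configuration with orbital 2 occupied.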

Basis Set Implementation in DFT Calculations

In density functional theory calculations using the LCAO approach (DFT-LCAO), the key parameter is the density matrix, which defines the electron density [35]. For closed or periodic systems, this matrix is calculated by diagonalization of the Kohn-Sham Hamiltonian [35]. The electron density n(r) is constructed from the occupied eigenstates of the Kohn-Sham Hamiltonian: