Solving the Electron Correlation Problem: From Quantum Materials to Drug Discovery

Abstract

Accurately solving the many-electron Schrödinger equation remains a central challenge across physical sciences and drug development. This article explores the complexity of electron correlation, a key driver behind high-temperature superconductivity, quantum spin liquids, and the electronic properties of biomolecules. We detail foundational concepts and examine cutting-edge solutions, from transformative neural network quantum states and attention mechanisms to efficient, parameter-free methods like Correlation Matrix Renormalization. The article provides a critical comparison of these methodologies, discusses common optimization challenges, and validates performance against established benchmarks. Finally, we synthesize key takeaways and outline future implications for accurately modeling complex electronic structures in biomedical research and drug design.

The Core Challenge of Electron Correlation: From Quantum Materials to Complex Molecules

Defining Strongly Correlated Electron Systems and the 'Anna Karenina Principle'

Frequently Asked Questions (FAQs)

What is a strongly correlated electron system? A strongly correlated material is one where the behavior of electrons cannot be effectively described by models that treat them as non-interacting, independent particles [1]. In these systems, electron-electron interactions are so strong that they fundamentally alter the electronic properties, leading to phenomena that single-electron theories like standard density-functional theory (DFT) fail to explain, even qualitatively [1] [2].

What is the 'Anna Karenina Principle' in this context? This principle, inspired by Leo Tolstoy's novel, suggests that "all non-interacting systems are alike; each strongly correlated system is strongly correlated in its own way" [3]. While systems with weak electron correlations can be uniformly understood and described by a common set of theoretical tools, systems with strong correlations exhibit a vast diversity of exotic behaviors, with each one presenting a unique set of challenges and requiring a potentially unique approach for understanding [3].

Why is it so difficult to predict the properties of correlated materials? Our predictive power for strongly correlated systems is currently lacking because their physics emerges from the complex interplay of many competing interactions [3] [2]. Unlike weakly correlated systems, they cannot be adiabatically connected to a non-interacting model, and there is no single, unified theoretical framework that can describe all of them [3].

What are common experimental signatures of strong correlations? Key experimental indicators include [1] [2]:

- Enhanced effective mass: A large specific heat coefficient (γ) and magnetic susceptibility at low temperatures.

- Non-Fermi liquid behavior: Resistivity that deviates from the standard T² dependence, e.g., linear-T resistivity.

- Anomalous optical spectra: Spectral features that do not match the one-electron density of states predictions.

- Metal-insulator transitions: Unexplained transitions from a conducting to an insulating state, as seen in NiO or VO₂ [1].

What is the difference between strong and weak correlation? The distinction often comes down to the ratio between the electron interaction energy (e.g., Coulomb repulsion) and the kinetic energy. In a strongly correlated system, interaction energy dominates, making charge fluctuations costly and leading to phenomena like Mott insulation [4]. In a weakly correlated system, kinetic energy dominates, and electrons can be treated as nearly independent particles moving freely [4].
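To make the interaction-to-kinetic-energy ratio concrete, the sketch below uses the half-filled two-site Hubbard model. This toy model is not discussed in the source and is assumed here purely for illustration; the hopping `t` and on-site repulsion `U` are hypothetical parameters. Its singlet ground-state energy is E0 = (U - sqrt(U^2 + 16 t^2))/2, and the double occupancy follows from the derivative dE0/dU.

```python
import math

def two_site_hubbard(U, t=1.0):
    """Exact singlet ground state of the half-filled two-site Hubbard model.

    Returns (ground-state energy, double occupancy summed over both sites).
    U and t are illustrative model parameters, not values from the article.
    """
    root = math.sqrt(U * U + 16.0 * t * t)
    energy = 0.5 * (U - root)
    # Hellmann-Feynman theorem: total double occupancy D = dE0/dU
    double_occ = 0.5 * (1.0 - U / root)
    return energy, double_occ

for U in (0.0, 1.0, 4.0, 16.0):
    e0, d = two_site_hubbard(U)
    print(f"U/t = {U:5.1f}  E0/t = {e0:8.4f}  double occupancy = {d:.4f}")
```

As U/t grows, the double occupancy falls from its non-interacting value toward zero, which is the suppression of charge fluctuations described above.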

Troubleshooting Guide: Common Experimental Challenges

| Symptom | Possible Cause | Diagnostic Steps | Potential Solutions |

|---|---|---|---|

| Irreproducible transport measurements | Sample quality, surface degradation, poor electrical contacts. | Image surface with atomic force microscopy (AFM); perform energy-dispersive X-ray spectroscopy (EDX) for stoichiometry; measure multiple contact configurations. | Improve sample growth/synthesis conditions; prepare contacts in inert atmosphere or ultra-high vacuum. |

| Inconsistent spectroscopic results | Surface contamination, poor cleaving, final state effects. | Compare data from multiple sample cleaves; use low-energy ion scattering (LEIS) to check surface purity; cross-reference with bulk-sensitive techniques (e.g., neutron scattering). | Introduce in-situ cleaving capabilities; correlate with surface-sensitive techniques (e.g., STM). |

| Failure to observe predicted phase transition | The material is stuck in a metastable state, or the energy landscape is too flat. | Perform specific heat measurements to check for hidden transitions; use neutron scattering to probe for short-range magnetic order; apply external tuning parameters (stress, magnetic field). | Explore different annealing protocols; tune the system towards quantum criticality with pressure or doping [2]. |

| Theoretical model fails to fit data | The model neglects a key interaction (e.g., spin-orbit coupling, electron-phonon coupling) or is in the wrong universality class. | Check if the model captures the correct low-energy scales and symmetries; compare fits from multiple competing models (e.g., DMFT vs. HF); look for signatures of "hidden order" [3]. | Use more advanced theoretical methods (e.g., LDA+DMFT, neural network quantum states) [1] [5]. |

Key Experimental Protocols

1. Protocol for Diagnosing Non-Fermi Liquid Behavior via Resistivity

Objective: To identify deviations from standard Fermi liquid theory, where resistivity follows ρ(T) = ρ₀ + AT². A linear-T resistivity is a common signature of non-Fermi liquid behavior near a quantum critical point [2].

Methodology:

- Sample Preparation: Mount a single-crystal or high-quality polycrystalline sample in a cryostat with a well-calibrated thermometer.

- Measurement: Measure the electrical resistivity (ρ) as a function of temperature (T) over a continuous range, typically from sub-Kelvin to room temperature.

- Data Analysis:

- Plot ρ vs. T.

- Attempt to fit the low-temperature data to the form ρ(T) = ρ₀ + AT².

- If the fit is poor, fit to a power law ρ(T) = ρ₀ + CTⁿ and determine the exponent n. A value of n ≈ 1 is indicative of non-Fermi liquid behavior.
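The data-analysis steps above can be sketched in a few lines. This is a minimal illustration, not the procedure of any cited reference: the function name `fit_power_law`, the exponent grid, and the synthetic noise-free data are all assumptions. For each trial exponent n, the model ρ₀ + CTⁿ is linear in (ρ₀, C), so an ordinary least-squares fit is solved in closed form and the exponent with the smallest residual is kept.

```python
def fit_power_law(temps, rhos, n_min=0.5, n_max=3.0, n_step=0.01):
    """Fit rho(T) = rho0 + C * T**n by scanning n and solving for (rho0, C)
    with ordinary linear least squares at each trial exponent."""
    best = None
    m = len(temps)
    y_mean = sum(rhos) / m
    steps = int(round((n_max - n_min) / n_step))
    for k in range(steps + 1):
        n = n_min + k * n_step
        xs = [t ** n for t in temps]
        x_mean = sum(xs) / m
        sxx = sum((x - x_mean) ** 2 for x in xs)
        sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, rhos))
        c = sxy / sxx
        rho0 = y_mean - c * x_mean
        sse = sum((rho0 + c * x - y) ** 2 for x, y in zip(xs, rhos))
        if best is None or sse < best[0]:
            best = (sse, n, rho0, c)
    _, n, rho0, c = best
    return n, rho0, c

# Synthetic, noise-free curves (illustrative only, not measurements)
temps = [0.5 * i for i in range(1, 41)]            # 0.5 K to 20 K
linear_t = [2.0 + 0.3 * t for t in temps]          # strange-metal-like
fermi_liquid = [2.0 + 0.01 * t ** 2 for t in temps]

n_lin, _, _ = fit_power_law(temps, linear_t)
n_fl, _, _ = fit_power_law(temps, fermi_liquid)
print(f"linear-T data: best-fit n = {n_lin:.2f}")
print(f"T^2 data:      best-fit n = {n_fl:.2f}")
```

With real data, the fitted exponent depends on the temperature window, so it is worth repeating the fit over several low-temperature ranges before drawing conclusions.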

2. Protocol for Probing Topology in Correlated Insulators via Green's Function

Objective: To diagnose topological order in a strongly correlated insulator, such as a Mott insulator, where standard band topology methods fail [6].

Methodology:

- Experimental Setup: Use momentum-resolved techniques like angle-resolved photoemission spectroscopy (ARPES) or, more effectively, resonant inelastic X-ray scattering (RIXS) [1].

- Data Collection: Map the electronic spectral function over the Brillouin zone. Identify contours where the Green's function vanishes (known as "zeros").

- Topological Diagnosis:

- Calculate the frequency-dependent Green's function Berry curvature from the experimental data or complementary theoretical calculations.

- Integrate the Berry flux around the Green's function zeros.

- A quantized Berry flux is a direct probe of the system's non-trivial topology, surviving even in the presence of strong correlations [6].

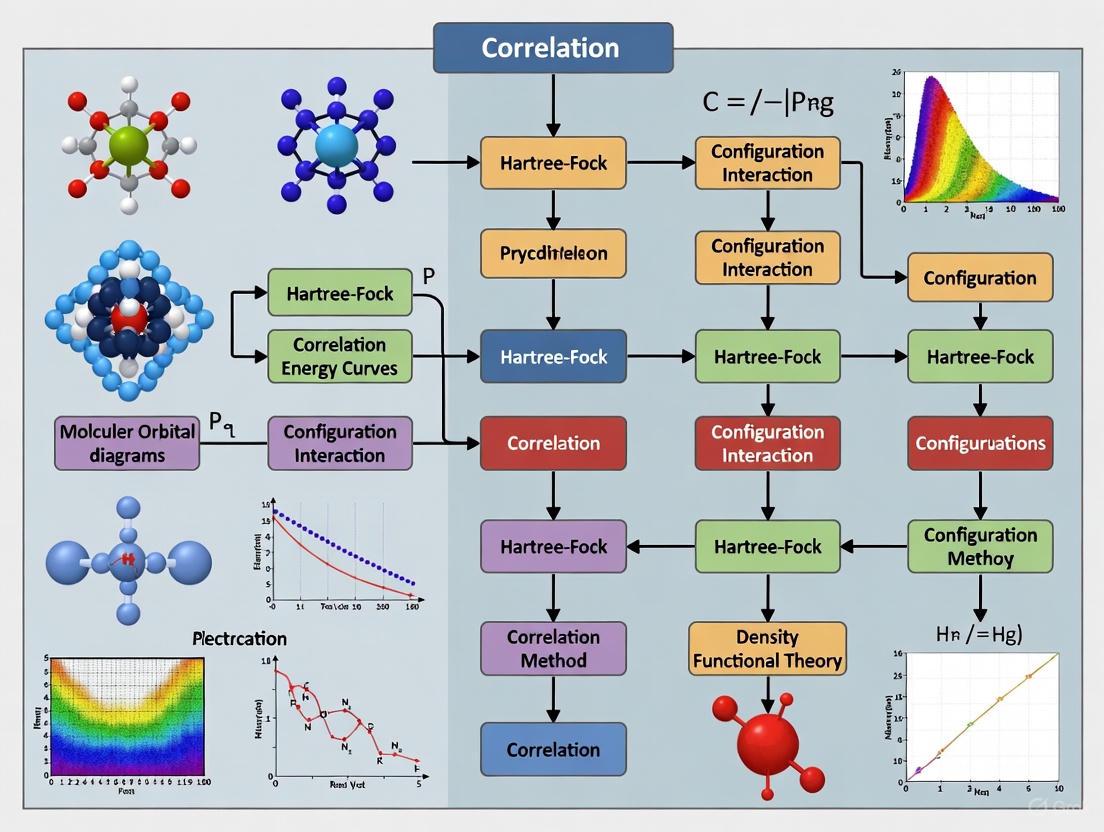

The following workflow visualizes the diagnostic process for a strongly correlated material, integrating both theoretical and experimental approaches:

The Scientist's Toolkit: Key Research Reagents & Materials

The following table lists essential "research reagents"—both theoretical and experimental—used to investigate strongly correlated electron systems.

| Tool / Material | Function / Role |

|---|---|

| Transition Metal Oxides (e.g., Cuprates, Ruthenates) | Prototypical platforms for studying high-temperature superconductivity, Mott insulation, and unconventional magnetism [1]. |

| Heavy Fermion Compounds (e.g., CeCu₂Si₂, YbCu₄Ag) | Materials where strong correlations lead to quasiparticles with extremely large effective masses, often hosting quantum criticality [2]. |

| Dynamical Mean-Field Theory (DMFT) | A computational method that maps a lattice model onto an impurity model, successfully capturing local correlation effects beyond LDA [1]. |

| Neural Network Variational Monte Carlo (NN-VMC) | An emerging approach using self-attention neural networks as wavefunction ansatzes to solve the many-electron problem with high accuracy and favorable scaling [5]. |

| Resonant Inelastic X-ray Scattering (RIXS) | A powerful spectroscopic technique to probe elementary excitations (spin, charge, orbital) in correlated materials, crucial for diagnosing topology via Green's function [1] [6]. |

| Hydrostatic Pressure Cells | A key tuning parameter to reversibly change the interatomic distance, thereby controlling electron correlation strength and driving systems across quantum phase transitions [2]. |

Frequently Asked Questions (FAQ)

Q1: What is the fundamental difference between conventional and unconventional superconductors? In conventional superconductors, electron pairing is mediated by lattice vibrations (phonons), and the superconducting energy gap is typically uniform. In unconventional superconductors, such as magic-angle graphene or cuprates, pairing is driven by strong electron correlations, leading to a non-uniform, V-shaped superconducting gap. This indicates a different, non-phononic pairing mechanism [7].

Q2: My high-Tc material does not show zero resistance. What could be the issue? Even when a material enters a superconducting phase, defects can prevent the realization of true zero resistance. For instance, in stabilized nickelate thin films, superconductivity was observed at temperatures up to -231°C (about 42 K), but zero resistance was only achieved at a much lower temperature of -271°C (about 2 K) due to material imperfections and variations in oxygen stoichiometry [8].

Q3: What experimental evidence confirms strong electron correlations in cuprates? The coexistence of short-range magnetic order and superconductivity in the ground state is a key signature. Muon-spin-relaxation (μSR) measurements on T'-structure cuprates have directly revealed this coexistence, confirming their nature as strongly correlated electron systems [9].

Q4: What are "strange metals," and why are they important for superconductivity? Strange metals are materials whose electrons violate the conventional rules of metallic conduction: their electrical resistance scales linearly with temperature instead of following the T² law of a Fermi liquid. This phase often competes with and underlies high-temperature superconductivity, so understanding it is thought to be essential for understanding the superconductivity itself [10].

Q5: How can I stabilize a high-pressure superconductor at ambient pressure? Epitaxial strain can substitute for externally applied pressure. For nickelate superconductors, using a supporting substrate that imposes lateral compression during thin-film growth has successfully stabilized the superconducting state at ambient pressure, enabling easier study [8].

Troubleshooting Guides

Issue: Inability to Distinguish Unconventional Superconductivity

- Problem: Standard tunneling spectroscopy signals are ambiguous and cannot definitively confirm unconventional superconductivity.

- Solution: Implement a combined measurement platform that integrates electron tunneling with electrical transport.

- Procedure:

- Fabricate a device that allows for simultaneous tunneling and transport measurements on the same sample [7].

- Continuously monitor electrical resistance while performing tunneling spectroscopy. A genuine superconducting gap will appear only when the resistance is zero [7].

- Analyze the shape of the superconducting gap. A V-shaped profile is a key signature of unconventional superconductivity, as observed in magic-angle trilayer graphene, distinct from the flat gap of conventional superconductors [7].

- Underlying Principle: This method directly links the electronic density of states (from tunneling) to the hallmark of superconductivity, zero resistance (from transport), providing unambiguous evidence.

Issue: Material Requires Impractically High Pressure for Superconductivity

- Problem: Superconductivity in promising materials (e.g., nickelates, hydrides) only occurs under extreme pressures, limiting study and application.

- Solution: Utilize epitaxial strain from a matched substrate to simulate high pressure.

- Procedure:

- Select a substrate with a smaller lattice constant than your superconducting material.

- Grow a thin film of the superconductor on this substrate. The crystal structure of the film will compress laterally to match the substrate, creating an internal strain [8].

- Optimize the level of compressive strain by using different substrates to maximize the superconducting transition temperature at ambient pressure [8].

Issue: Difficulty Probing the "Strange Metal" Phase

- Problem: The strange metal phase exhibits mysterious properties that are difficult to quantify and understand.

- Solution: Employ quantum information theory metrics, specifically Quantum Fisher Information (QFI).

- Procedure:

- Use techniques like inelastic neutron scattering to probe the material's atomic-level behavior [11].

- Apply the theoretical framework of QFI to the experimental data. QFI measures the degree of quantum entanglement in a system [11].

- Identify the quantum critical point, where entanglement is expected to peak and quasiparticles break down. This provides a direct measure of the strong correlations driving the strange metal behavior [11].

Table 1: Key Characteristics of Correlated Superconductors

| Material Class | Example Material | Max Tc (or range) | Pressure / Stabilization Method | Key Evidence of Correlation |

|---|---|---|---|---|

| Hydrogen-rich Hydrides | LaH₁₀, H₃S | 250-287 K | High Pressure (180-274 GPa) | Tc divergence; enhanced effective mass (Brinkman-Rice picture) [12] |

| Nickelates | NdNiO₂ thin film | 26-42 K (-247°C to -231°C) | Epitaxial strain (ambient pressure) | Cuprate-like electronic structure [8] |

| Cuprates | T'-Pr₁.₃₋ₓLa₀.₇CeₓCuO₄ | 15-27 K | Ambient (after chemical reduction) | Coexistence of superconductivity & short-range magnetic order (μSR) [9] |

| Magic-Angle Graphene | Twisted Trilayer Graphene | Not Specified | Moiré potential (ambient) | V-shaped superconducting gap (tunneling/transport) [7] |

Table 2: Diagnostic Signatures of Quantum Phases

| Phase | Diagnostic Measurement | Key Signature / Quantitative Limit |

|---|---|---|

| Strange Metal | Electrical Resistivity vs. Temperature | Linear dependence (ρ ∝ T); scattering rate reaches the Planckian limit: 1/τ ≈ k_B T / ħ [10] |

| Unconventional Superconductor | Combined Tunneling & Transport Spectroscopy | V-shaped superconducting gap that appears concurrently with zero resistance [7] |

| Quantum Critical Point | Quantum Fisher Information (QFI) | Peak in electron entanglement, measured via QFI analysis of neutron scattering data [11] |
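To give a sense of scale for the Planckian limit listed in Table 2, the short sketch below evaluates 1/τ ≈ k_B T / ħ at a few temperatures. The function name and the chosen temperatures are illustrative assumptions; the constants are the SI values of the Boltzmann constant and the reduced Planck constant.

```python
K_B = 1.380649e-23       # Boltzmann constant in J/K (exact SI value)
HBAR = 1.054571817e-34   # reduced Planck constant in J*s

def planckian_rate(temperature_k):
    """Planckian scattering rate 1/tau = k_B * T / hbar, in 1/s."""
    return K_B * temperature_k / HBAR

for t in (10.0, 100.0, 300.0):
    rate = planckian_rate(t)
    print(f"T = {t:6.1f} K   1/tau = {rate:.3e} 1/s   tau = {1.0 / rate:.3e} s")
```

At 100 K the rate is of order 10¹³ per second, so a measured scattering time can be compared directly against this bound.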

Detailed Experimental Protocols

Protocol 1: Stabilizing Superconductivity in Nickelates at Ambient Pressure

This protocol is based on the methodology from Stanford and SLAC [8].

- Substrate Selection and Preparation: Choose a perovskite substrate (e.g., LSAT, (LaAlO₃)₀.₃(Sr₂TaAlO₆)₀.₇) with a lattice constant smaller than that of the target nickelate film. Clean the substrate surface using standard techniques to achieve an atomically smooth, single-crystalline surface.

- Thin-Film Deposition: Deposit a thin film of the nickelate material (e.g., NdNiO₂) onto the substrate using pulsed laser deposition (PLD) or molecular beam epitaxy (MBE). Precisely control the growth temperature and oxygen partial pressure to achieve the correct crystalline phase.

- Strain Engineering: The in-plane lattice mismatch between the substrate and the film will impose a compressive strain on the nickelate layer during growth. This lateral compression mimics the effects of hydrostatic pressure, stabilizing the superconducting phase.

- Post-Processing (Reduction): After deposition, perform a soft-annealing process in a reducing atmosphere to remove excess oxygen and achieve the optimal oxygen stoichiometry for superconductivity.

- Validation: Confirm superconductivity via electrical transport measurements (4-probe method) showing a drop in resistance to zero, and characterize the crystal structure using X-ray diffraction at a synchrotron facility like SSRL [8].
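The lateral compression described in the Strain Engineering step is usually quantified by the nominal lattice mismatch between substrate and film. The sketch below is a minimal illustration that assumes a fully coherent (unrelaxed) film; the function name and the lattice constants are hypothetical placeholders, not values from [8].

```python
def in_plane_strain(a_substrate, a_film_bulk):
    """Nominal in-plane strain (percent) of a coherently strained film.

    Negative values mean the film is compressed to match the substrate.
    """
    return 100.0 * (a_substrate - a_film_bulk) / a_film_bulk

# Hypothetical lattice constants in angstroms, for illustration only
a_film = 3.92
for name, a_sub in (("substrate A", 3.87), ("substrate B", 3.79)):
    strain = in_plane_strain(a_sub, a_film)
    print(f"{name}: in-plane strain = {strain:+.2f} %")
```

In practice thicker films relax part of this strain, so the actual value should be confirmed from the measured in-plane lattice constant (for example by X-ray diffraction).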

Protocol 2: Measuring the Unconventional Superconducting Gap in Magic-Angle Graphene

This protocol is adapted from the MIT experiment [7].

- Device Fabrication: Create a heterostructure of magic-angle twisted trilayer graphene (MATTG) and hexagonal boron nitride (hBN) using the "tear-and-stack" method. Precisely control the twist angle to the "magic angle" (~1.6°) where correlated effects emerge.

- Set Up Combined Measurement Platform: Fabricate side gates and contacts to the MATTG device to allow for simultaneous electrical transport and tunneling spectroscopy measurements.

- Transport Measurement: Cool the device to millikelvin temperatures. Apply a DC current and measure the longitudinal resistance as a function of gate voltage and temperature to identify the superconducting dome.

- Tunneling Spectroscopy: At points within the superconducting dome (where resistance is zero), perform tunneling spectroscopy. This is done by applying a bias voltage and measuring the differential conductance (dI/dV), which is proportional to the density of states.

- Gap Analysis: Plot the differential conductance as a function of bias voltage. An unconventional superconductor will show a V-shaped gap structure, as opposed to the U-shaped gap of a conventional s-wave superconductor. Correlate the appearance of this gap directly with the zero-resistance state [7].
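The difference between a U-shaped and a V-shaped gap in the Gap Analysis step can be illustrated with two simple model densities of states. This sketch is an assumption-laden illustration, not the analysis used in [7]: it takes a Dynes-broadened BCS form for the fully gapped case and an angular average over a nodal gap Δcos(2θ) as one common model that produces a V shape. The gap, broadening `gamma`, and function names are all hypothetical.

```python
import cmath
import math

def dynes_dos(energy, gap, gamma):
    """Dynes-broadened BCS density of states, normalized to the normal state."""
    z = complex(energy, -gamma)
    return abs((z / cmath.sqrt(z * z - gap * gap)).real)

def s_wave_dos(energy, gap=1.0, gamma=0.02):
    """Isotropic (fully gapped) case: U-shaped dI/dV."""
    return dynes_dos(energy, gap, gamma)

def nodal_dos(energy, gap=1.0, gamma=0.02, n_theta=4000):
    """Angular average over a nodal gap gap*cos(2*theta): V-shaped dI/dV."""
    total = 0.0
    for k in range(n_theta):
        theta = 2.0 * math.pi * (k + 0.5) / n_theta
        total += dynes_dos(energy, gap * math.cos(2.0 * theta), gamma)
    return total / n_theta

# Energies in units of the gap maximum (illustrative)
for e in (0.0, 0.2, 0.4, 0.6, 0.8):
    print(f"E = {e:.1f}   s-wave: {s_wave_dos(e):.3f}   nodal: {nodal_dos(e):.3f}")
```

Inside the gap the fully gapped model stays close to zero, while the nodal model rises roughly linearly with energy, which is the V-shaped profile to look for in the measured differential conductance.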

The Scientist's Toolkit

Essential Materials and Reagents

| Item | Function in Experiment |

|---|---|

| Diamond Anvil Cell (DAC) | Applies extreme hydrostatic pressure (hundreds of GPa) to materials like hydrides to induce or enhance superconductivity [12]. |

| Matched Single-Crystal Substrates (e.g., LSAT, LAO) | Provides epitaxial strain to stabilize high-pressure phases of superconductors (e.g., nickelates) at ambient conditions during thin-film growth [8]. |

| Quantum Fisher Information (QFI) | A theoretical tool from quantum information science used to quantify electron entanglement from experimental data (e.g., neutron scattering) in strange metals [11]. |

| Self-Attention Neural Network (NN) Ansatz | A powerful computational wavefunction used in Variational Monte Carlo (VMC) simulations to solve the many-electron Schrödinger equation in strongly correlated systems with high accuracy [5]. |

Experimental Workflow Visualization

Diagram 1: Correlated Material Research Workflow

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental cause of the exponential growth of the Hilbert space in electron correlation calculations?

The exponential growth arises from the combinatorial nature of constructing multi-configurational wavefunctions. To accurately describe electron correlation, the wavefunction is typically expressed as a sum of multiple Configuration State Functions (CSFs), as (\psi = \sum_I C_I \Phi_I) [13]. The number of possible CSF configurations scales factorially with the number of electrons and orbitals in the system [14]. For a molecule with N electrons, the number of electron pairs is (X = \dfrac{N(N-1)}{2}), and the number of terms in a full Configuration Interaction (CI) wavefunction can scale as (2^X) [13]. For a system with just ten electrons, (X = 45) and the number of terms can reach (2^{45} \approx 3.5 \times 10^{13}), making full CI calculations impractical for all but the smallest molecules [13].
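The estimate above can be checked with a few lines of arithmetic. The sketch below simply evaluates the pair count N(N-1)/2 and the resulting 2^X bound quoted from [13]; the function names are illustrative.

```python
def electron_pairs(n_electrons):
    """Number of distinct electron pairs, N(N-1)/2."""
    return n_electrons * (n_electrons - 1) // 2

def full_ci_terms_estimate(n_electrons):
    """Upper estimate 2**X for the number of full-CI terms, with X the pair count."""
    return 2 ** electron_pairs(n_electrons)

for n in (2, 4, 6, 8, 10):
    x = electron_pairs(n)
    print(f"N = {n:2d}   pairs X = {x:2d}   2^X = {full_ci_terms_estimate(n):.3e}")
```

Each additional electron adds N new pairs, so the estimate is multiplied by 2^N at every step, which is why the count becomes unmanageable so quickly.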

FAQ 2: What is the practical impact of this scaling on my research simulations?

This scaling directly translates to massive computational demands, primarily in two areas:

- Memory and Storage: The Hamiltonian matrix (\mathbf{H}), with elements (H_{I,J} = \langle \Phi_I | H | \Phi_J \rangle), that must be constructed and diagonalized becomes intractably large [13].

- Processing Time: The transformation of two-electron integrals from the atomic orbital basis to the molecular orbital basis is a critical pre-step for forming (\mathbf{H}). This transformation alone requires computer resources proportional to the fifth power of the number of basis functions ((M^5)) [13], creating a severe bottleneck before the CI calculation even begins.
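The (M^5) cost quoted above follows from how the transformation is usually organized. The notation below is assumed for illustration and is not taken from the source: (C_{\mu p}) are real MO coefficients, Greek indices label AOs, and Latin indices label MOs. Transforming all four indices in a single step would cost (M^8); performing four successive one-index ("quarter") transformations brings each step down to (M^5).

```latex
% Naive one-shot transformation: M^4 output integrals, each a sum over M^4 terms
(pq|rs) = \sum_{\mu\nu\lambda\sigma}
          C_{\mu p}\, C_{\nu q}\, C_{\lambda r}\, C_{\sigma s}\,
          (\mu\nu|\lambda\sigma)
          \qquad \text{cost} \sim M^8

% Quarter transformations: M^4 outputs per step, each a sum over M terms
(p\nu|\lambda\sigma) = \sum_{\mu}     C_{\mu p}\,     (\mu\nu|\lambda\sigma) \\
(pq|\lambda\sigma)   = \sum_{\nu}     C_{\nu q}\,     (p\nu|\lambda\sigma)   \\
(pq|r\sigma)         = \sum_{\lambda} C_{\lambda r}\, (pq|\lambda\sigma)     \\
(pq|rs)              = \sum_{\sigma}  C_{\sigma s}\,  (pq|r\sigma)
          \qquad \text{cost per step} \sim M^5
```

The intermediate arrays in each step still hold on the order of (M^4) numbers, which is why this stage stresses memory and disk as well as processing time.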

FAQ 3: What are the main strategies to circumvent this computational hurdle?

Researchers have developed several strategies to manage this complexity, which can be broadly categorized as shown in the diagram below.

Troubleshooting Guides

Issue 1: Abrupt Calculation Termination During Integral Transformation

Problem: Your calculation fails with memory-related errors during the step that transforms two-electron integrals from the atomic orbital (AO) basis to the molecular orbital (MO) basis.

Explanation: This step is a known bottleneck in correlated calculations, as its computational cost scales as (M^5), where M is the number of basis functions [13]. The process requires storing a large number of intermediate integrals in memory or on disk.

Solution:

Troubleshooting Steps:

- Check Basis Set Size: Reduce the size of your AO basis set. Consider using a double-zeta basis before moving to larger triple-zeta or quadruple-zeta sets.

- Utilize Molecular Symmetry: If your molecule has high symmetry, ensure your computational code is configured to use point group symmetry. This can dramatically reduce the number of unique integrals that need to be computed and stored.

- Increase System Resources: If possible, allocate more memory (RAM) to your calculation and ensure sufficient disk space is available for scratch files.

- Employ Density Fitting: Use the "density fitting" (DF) or "resolution of the identity" (RI) approximation for two-electron integrals. This technique reduces the formal scaling and storage requirements of the integral transformation.

Preventative Measures:

- Always start with a smaller basis set to test the feasibility of your chosen correlation method.

- Consult your software documentation for specific keywords to enable density fitting or symmetry exploitation.
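Before launching a job, a rough storage estimate helps decide whether a smaller basis or density fitting is needed. The sketch below is a back-of-the-envelope calculation under stated assumptions: double-precision values, 8-fold permutational symmetry for real orbitals, and a three-index density-fitting tensor whose auxiliary basis size `n_aux` is supplied by the user (the factor of 3 used in the loop is only a rough placeholder). Actual codes use their own storage schemes.

```python
def integral_memory_gb(n_basis, n_aux=None, bytes_per_value=8):
    """Rough storage (GB) for two-electron integrals over n_basis functions."""
    pairs = n_basis * (n_basis + 1) // 2        # unique index pairs (pq)
    packed = pairs * (pairs + 1) // 2           # unique (pq|rs) with 8-fold symmetry
    sizes = {
        "full M^4": n_basis ** 4 * bytes_per_value / 1e9,
        "8-fold packed": packed * bytes_per_value / 1e9,
    }
    if n_aux is not None:
        # Three-index tensor (P|pq) used in density fitting / RI
        sizes["density fitting"] = n_aux * pairs * bytes_per_value / 1e9
    return sizes

for m in (100, 500, 1000):
    # n_aux = 3 * m is an assumed, order-of-magnitude auxiliary basis size
    sizes = integral_memory_gb(m, n_aux=3 * m)
    summary = ", ".join(f"{k}: {v:,.2f} GB" for k, v in sizes.items())
    print(f"M = {m:4d}  ->  {summary}")
```

Comparing the three numbers against the memory and scratch space actually available gives a quick feasibility check before any expensive step is attempted.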

Issue 2: Inaccurate Dissociation Energies or Reaction Barriers

Problem: Your calculated potential energy surfaces are qualitatively wrong, such as failing to correctly describe bond dissociation or giving inaccurate reaction barrier heights.

Explanation: This is a classic symptom of inadequate treatment of static electron correlation [14] [15]. Single-reference methods, such as Hartree-Fock (HF), standard Density Functional Theory (DFT), or configuration interaction limited to single and double excitations from one reference (CISD), fail where multiple electronic configurations become important.

Solution:

Diagnosis:

- Perform a stability analysis on your HF or DFT wavefunction. An unstable solution indicates that a multi-reference approach is needed.

- Check the weights of the configurations in your CI expansion. If one or more additional configurations have weights nearly as large as the reference configuration, static correlation is significant.
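The second diagnostic can be automated once the CI coefficients are available. The sketch below is a minimal illustration: the coefficient lists are made-up examples, and the ratio threshold of 0.5 between the second-largest and largest weight is a hypothetical rule of thumb, not a value from the cited references.

```python
def configuration_weights(coefficients):
    """Normalized weights C_I**2 of a CI expansion, largest first."""
    norm = sum(c * c for c in coefficients)
    return sorted((c * c / norm for c in coefficients), reverse=True)

def looks_multireference(coefficients, ratio_threshold=0.5):
    """Flag expansions whose second configuration rivals the leading one.

    ratio_threshold is an assumed cutoff chosen for illustration only.
    """
    weights = configuration_weights(coefficients)
    return len(weights) > 1 and weights[1] / weights[0] >= ratio_threshold

# Made-up coefficient sets for illustration
near_equilibrium = [0.98, -0.12, 0.08, 0.05]
stretched_bond = [0.72, -0.68, 0.10, 0.05]

for label, coeffs in (("near equilibrium", near_equilibrium),
                      ("stretched bond", stretched_bond)):
    w = configuration_weights(coeffs)
    print(f"{label}: leading weights = {w[0]:.3f}, {w[1]:.3f}; "
          f"multi-reference? {looks_multireference(coeffs)}")
```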

Resolution:

- Adopt a Multi-Reference Method: Switch to a method capable of handling static correlation, such as Complete Active Space Self-Consistent Field (CASSCF) [15].

- Choose an Appropriate Active Space: Select which molecular orbitals and electrons (the "active space") are most relevant to the process you are studying (e.g., bonding and antibonding orbitals for a breaking bond). The workflow for this approach is detailed below.

- Add Dynamic Correlation: Follow the CASSCF calculation with a method that incorporates dynamic correlation from the external space, such as Multi-Reference Configuration Interaction (MRCI) or Multi-Reference Perturbation Theory (e.g., CASPT2) [15].
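When choosing an active space, it helps to check how large the resulting expansion will be. The sketch below counts Slater determinants for a CAS(n electrons, m orbitals) space at fixed spin projection; it counts determinants rather than CSFs, and the function name and the example active spaces are illustrative choices.

```python
from math import comb

def cas_determinants(n_electrons, n_orbitals, two_ms=0):
    """Number of Slater determinants in CAS(n_electrons, n_orbitals).

    two_ms is twice the spin projection M_s (0 for a closed-shell singlet).
    """
    n_alpha = (n_electrons + two_ms) // 2
    n_beta = n_electrons - n_alpha
    return comb(n_orbitals, n_alpha) * comb(n_orbitals, n_beta)

for n, m in ((2, 2), (6, 6), (10, 10), (14, 14), (18, 18)):
    print(f"CAS({n},{m}): {cas_determinants(n, m):,} determinants")
```

The count grows combinatorially with the active space, so the smallest set of orbitals that captures the bond-breaking or near-degeneracy of interest is usually the practical choice.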

Experimental Protocols & Data

Protocol: Configuration Interaction (CI) for Electron Correlation

Methodology Summary: This protocol outlines the steps for a Configuration Interaction (CI) calculation, a foundational ab initio method for including electron correlation by constructing the wavefunction as a linear combination of multiple electronic configurations (CSFs) [13].

Step-by-Step Workflow:

- Reference Wavefunction: Perform a Hartree-Fock (HF) calculation on the system to obtain an initial set of molecular orbitals (MOs) and a reference wavefunction (a single CSF) [13].

- Integral Generation: Compute all one- and two-electron integrals over the atomic orbital (AO) basis set.

- Integral Transformation: Transform the two-electron integrals from the AO basis to the MO basis obtained in Step 1. This is a critical and resource-intensive step with (M^5) scaling [13].

- Generate Configuration State Functions (CSFs): Create a set of CSFs ((\Phi_I, \Phi_J, \ldots)) by promoting electrons from occupied orbitals in the reference CSF to virtual orbitals. Common levels of excitation are:

- CISD: Singles and Doubles.

- CISDT: Singles, Doubles, and Triples.

- Full CI: All possible excitations (computationally prohibitive).

- Construct Hamiltonian Matrix: Build the CI matrix (\mathbf{H}), where each element is (H_{I,J} = \langle \Phi_I | H | \Phi_J \rangle) [13].

- Diagonalize Hamiltonian Matrix: Solve the matrix eigenvalue equation (\sum_J H_{I,J} C_J = E C_I) to obtain the CI energy ((E)) and the coefficients ((C_I)) for each CSF in the final wavefunction [13].
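The final two steps of the workflow can be illustrated on the smallest possible case, a CI expansion over two configurations, where the eigenvalue problem has a closed-form solution. The matrix elements below are hypothetical numbers chosen for illustration, and the function name is an assumption; production codes use iterative diagonalizers for the much larger matrices that arise in practice.

```python
import math

def two_configuration_ci(h11, h22, h12):
    """Lowest eigenpair of the real symmetric 2x2 CI matrix [[h11, h12], [h12, h22]]."""
    mean = 0.5 * (h11 + h22)
    radius = math.hypot(0.5 * (h22 - h11), h12)
    e0 = mean - radius
    if h12 == 0.0:
        # Uncoupled configurations: the lower diagonal element is the ground state
        c1, c2 = (1.0, 0.0) if h11 <= h22 else (0.0, 1.0)
    else:
        # From the first row of (H - E) C = 0: (h11 - e0) c1 + h12 c2 = 0
        c1, c2 = h12, e0 - h11
        norm = math.hypot(c1, c2)
        c1, c2 = c1 / norm, c2 / norm
    return e0, (c1, c2)

# Hypothetical matrix elements in hartree, for illustration only
h11, h22, h12 = -1.80, -0.60, 0.18
energy, (c1, c2) = two_configuration_ci(h11, h22, h12)
print(f"reference energy H11  = {h11:.5f}")
print(f"CI energy E           = {energy:.5f}")
print(f"correlation energy    = {energy - h11:.5f}")
print(f"weights C1^2, C2^2    = {c1 * c1:.4f}, {c2 * c2:.4f}")
```

The difference between the CI energy and the reference element H11 is the correlation energy recovered by mixing in the second configuration.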

Quantitative Data on Method Scaling

Table 1: Computational Scaling of Various Electron Correlation Methods. This table summarizes the formal computational cost scaling of different methods, where N is the number of correlated electrons and M is the number of basis functions. The scalings indicate how steeply resource requirements increase with system size.

| Method Category | Specific Method | Formal Scaling | Key Limitation |

|---|---|---|---|

| Hartree-Fock | HF | (M^3) to (M^4) | Neglects all electron correlation [14]. |

| Density Functional Theory | DFT | ~(M^3) to (M^4) | Accuracy depends on the (unknown) exact functional [14]. |

| Single-Reference CI | CISD | (M^6) | Not size-consistent; limited to dynamic correlation [13]. |

| Full Configuration Interaction | Full CI | Factorial in N | Computationally prohibitive for >10 electrons [13]. |

| Integral Transformation | (Pre-step for CI) | (M^5) | Becomes a primary bottleneck for large calculations [13]. |

Table 2: Performance Comparison of Example Exchange-Correlation (XC) Functionals for Core-Ionization Energies (as of 2025). Specialized functionals like cQTP25 are being developed to target specific properties accurately, offering an alternative to expensive wavefunction-based methods [16].

| XC Functional | Jacob's Ladder Rung | Key Feature | Reported Performance (XPS) |

|---|---|---|---|

| cQTP25 | N/A (Meta-GGA/Hybrid) | Optimized for core-level 1s electrons [16]. | Best performance in benchmark studies [16]. |

| QTP00 | N/A | Predecessor to cQTP25 [16]. | Close performance to cQTP25 [16]. |

| QTP17 | N/A | Predecessor to cQTP25 [16]. | Good performance, behind QTP00 and cQTP25 [16]. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational "Reagents" for Electron Correlation Studies. In computational chemistry, software, algorithms, and basis sets are the essential reagents for successful experiments.

| Item / "Reagent" | Function / Purpose | Example(s) |

|---|---|---|

| Atomic Orbital Basis Sets | The set of functions used to expand molecular orbitals. | Pople-style (e.g., 6-31G*), Correlation-consistent (e.g., cc-pVDZ, cc-pVTZ). |

| Electronic Structure Codes | Software packages that implement quantum chemical methods. | Molpro, ORCA, PySCF, Q-Chem, Gaussian, GAMESS. |

| Hartree-Fock Solver | Provides the initial reference wavefunction and orbitals for most correlated calculations [13]. | Built-in module in all major electronic structure codes. |

| CI & CASSCF Solvers | Algorithms to solve for the coefficients and energy in multi-configurational wavefunctions [13] [15]. | Configuration Interaction (CI), Complete Active Space SCF (CASSCF). |

| Perturbation Theory Modules | Provides a computationally efficient way to add dynamic electron correlation to a reference wavefunction [15]. | Møller-Plesset 2nd Order (MP2), CASPT2. |

| Density Fitting (RI) Libraries | A numerical approximation that significantly speeds up the calculation of two-electron integrals, reducing the (M^5) bottleneck [13]. | Auxiliary basis sets (e.g., cc-pVDZ-RI). |

A central challenge in modern condensed matter physics and quantum chemistry is the understanding of materials and molecules with strong electron correlations. In many systems, the effects of electron-electron interactions can be approximated by neglecting correlations or by treating them as a perturbation. In strongly correlated electron systems, however, the interactions are so strong that no adiabatic connection to a non-interacting system is possible. These systems host fascinating macroscopic phenomena including high-temperature superconductivity, quantum spin liquids, fractionalized topological phases, and strange metals. Despite decades of intensive research, the essential physics of many such systems remains poorly understood, and predictive power for these materials is notably lacking [3].

The exponential growth of the Hilbert space dimension with system size makes solving the many-electron Schrödinger equation for solids exceptionally difficult. While traditional quantum chemistry methods like configuration interaction (CI) can be accurate, they become computationally prohibitive for larger systems. Conversely, density functional theory (DFT), while efficient, often fails for strongly correlated electrons. This accuracy-versus-efficiency trade-off has driven research into novel computational approaches, including machine learning and neural network-based methods [5] [17].

Current Methodologies and Benchmarking Approaches

Traditional Electronic Structure Methods

Several established methods form the foundation for electronic structure calculations, each with distinct advantages and limitations concerning electron correlation.

- Hartree-Fock (HF) Method: This method uses a single Slater determinant and captures approximately 99% of the total energy but misses crucial electron correlation effects. It serves as a starting point for more accurate post-Hartree-Fock methods [5] [18].

- Post-Hartree-Fock Methods: Techniques like Møller-Plesset perturbation theory (MP2) and coupled cluster theory (CCSD, CCSD(T)) add corrections to the HF energy to account for electron correlation. While they can achieve high accuracy, their computational cost rises steeply with system size (canonical CCSD(T), for example, scales formally as the seventh power of the system size), making them intractable for very large systems [18].

- Density Functional Theory (DFT): DFT is highly successful for many materials but faces well-known challenges for strongly correlated electrons, where standard exchange-correlation functionals are inadequate [17].

Emerging Approaches for Correlated Systems

Table 1: Modern Computational Methods for Electron Correlation

| Method | Key Principle | Strengths | Limitations |

|---|---|---|---|

| Correlation Matrix Renormalization (CMR) [17] | Extends the Gutzwiller approximation to evaluate two-particle operators; uses variational wavefunctions. | No adjustable Coulomb parameters; correct atomic limit; good for bonding/dissociation. | Residual correlation energy requires fitting; computational cost scales with basis set. |

| Neural Network Variational Monte Carlo (NN-VMC) [5] | Uses neural network wavefunctions (e.g., self-attention) as ansatz; optimized via variational Monte Carlo. | High accuracy; massive representational power; promising scaling with system size. | Requires significant computational resources for training and optimization. |

| Information-Theoretic Approach (ITA) [18] | Uses density-based descriptors (e.g., Shannon entropy, Fisher information) to predict correlation energies. | Low cost (uses HF results); physically interpretable descriptors; good for large systems. | Accuracy can vary; may struggle with highly delocalized or 3D metallic systems. |

Neural Network Variational Monte Carlo (NN-VMC) has recently emerged as a powerful tool. This approach uses neural networks to construct trial wavefunctions, which are optimized by minimizing the energy using Monte Carlo techniques. Recent work explores using a self-attention mechanism—the cornerstone of modern large language models—to learn how electrons influence each other. This approach has demonstrated high accuracy in systems ranging from atoms and molecules to moiré quantum materials [5].
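The variational Monte Carlo loop described above can be illustrated on a problem small enough to check by hand. The sketch below is not a neural network ansatz: it assumes a one-dimensional harmonic oscillator and a single-parameter Gaussian trial wavefunction, chosen here purely for illustration, and uses plain Metropolis sampling. The function names and step size are hypothetical.

```python
import math
import random

def local_energy(x, alpha):
    """Local energy of psi(x) = exp(-alpha * x^2) for H = -1/2 d^2/dx^2 + 1/2 x^2."""
    return alpha + x * x * (0.5 - 2.0 * alpha * alpha)

def vmc_energy(alpha, n_samples=20000, step=1.0, seed=0):
    """Metropolis-sample |psi|^2 and return (mean, variance) of the local energy."""
    rng = random.Random(seed)
    x = 0.0
    energies = []
    for _ in range(n_samples):
        x_new = x + rng.uniform(-step, step)
        # Acceptance ratio |psi(x_new)|^2 / |psi(x)|^2 for the Gaussian trial state
        ratio = math.exp(-2.0 * alpha * (x_new * x_new - x * x))
        if rng.random() < ratio:
            x = x_new
        energies.append(local_energy(x, alpha))
    mean = sum(energies) / n_samples
    var = sum((e - mean) ** 2 for e in energies) / n_samples
    return mean, var

# Scan the single variational parameter; the exact ground state is alpha = 0.5.
for alpha in (0.3, 0.4, 0.5, 0.6):
    energy, variance = vmc_energy(alpha)
    print(f"alpha={alpha:.1f}  E={energy:.4f}  var={variance:.4f}")
```

At the exact ground state the local energy is constant, so its variance vanishes. This is why monitoring both energy and variance is a useful convergence diagnostic in real NN-VMC runs, where a gradient-based optimizer replaces the parameter scan.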

The Information-Theoretic Approach (ITA) offers a different strategy. It uses information-theoretic quantities derived from the Hartree-Fock electron density, such as Shannon entropy and Fisher information, to predict post-Hartree-Fock correlation energies via linear regression. This method can predict correlation energies at the cost of a HF calculation, offering a potentially efficient path for complex systems like molecular clusters and polymers [18].
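The regression step can be sketched as an ordinary least-squares fit of one descriptor against reference correlation energies. This is a minimal illustration under stated assumptions: a single descriptor, a hand-written fit, and placeholder numbers that are not taken from [18]. The function names are hypothetical.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def predict(slope, intercept, descriptor):
    """Predicted correlation energy for a new system's descriptor value."""
    return slope * descriptor + intercept

# Placeholder numbers for illustration only, not values from [18].
shannon_entropy = [1.0, 2.0, 3.0, 4.0]        # descriptor from the HF density
reference_ecorr = [-0.15, -0.25, -0.35, -0.45]  # reference energies (Ha)

slope, intercept = fit_line(shannon_entropy, reference_ecorr)
print(predict(slope, intercept, 5.0))
```

Once the fit exists, a prediction for a new system needs only its Hartree-Fock density, which is where the cost advantage comes from.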

Correlation Matrix Renormalization (CMR) theory is another efficient method that extends the Gutzwiller approximation. It is free of adjustable Coulomb parameters and has been shown to accurately describe the bonding and dissociation behaviors of hydrogen and nitrogen clusters, problems that are particularly challenging for DFT [17].

Troubleshooting Guides for Computational Experiments

Guide 1: Addressing Convergence Failures in NN-VMC Calculations

Problem: The variational Monte Carlo calculation fails to converge or converges to an energy that is too high.

- Checkpoint 1: Neural Network Architecture and Initialization

- Issue: Poorly chosen network architecture or initial parameters.

- Solution: For correlated electron systems in solids, consider a self-attention-based ansatz, which has shown promising results [5]. Ensure the number of variational parameters is sufficient; studies suggest the required number may scale with the square of the number of electrons (~N²) [5]. Use standard, well-tested initialization schemes for network weights.

- Checkpoint 2: Optimization Procedure

- Issue: The optimization is unstable or becomes trapped in local minima.

- Solution: Use an adaptive learning-rate optimizer (e.g., Adam). Monitor the energy and variance during optimization. Consider a hybrid approach that initializes the NN wavefunction from a mean-field solution (e.g., Hartree-Fock) to provide a good starting point.

- Checkpoint 3: Monte Carlo Sampling

- Issue: Inadequate sampling of the configuration space.

- Solution: Increase the number of Monte Carlo samples. Check for ergodicity issues. For lattice systems, ensure your sampler can properly explore different spin and charge configurations.
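One way to test whether the sampling in Checkpoint 3 is adequate is a blocking analysis of the local-energy series. The sketch below is a generic diagnostic, not a procedure taken from [5]; the function names are hypothetical. If the blocked error is much larger than the naive one, successive samples are correlated and the naive error bar is too optimistic.

```python
import math

def standard_error(values):
    """Naive standard error of the mean, valid only for uncorrelated samples."""
    n = len(values)
    mean = sum(values) / n
    var = sum((v - mean) ** 2 for v in values) / (n - 1)
    return math.sqrt(var / n)

def blocked_standard_error(values, block_size):
    """Standard error computed from the means of consecutive blocks."""
    n_blocks = len(values) // block_size
    block_means = [
        sum(values[i * block_size:(i + 1) * block_size]) / block_size
        for i in range(n_blocks)
    ]
    return standard_error(block_means)

# A deliberately correlated series: the sampler sits in one state, then another.
series = [0.0] * 50 + [1.0] * 50
print(standard_error(series), blocked_standard_error(series, 50))
```

In practice the block size is increased until the blocked error stops growing; that plateau is the error estimate to report.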

Guide 2: Correcting Systematic Errors in Correlation Energy Predictions

Problem: Predicted correlation energies show large deviations from benchmark values (e.g., from high-level quantum chemistry calculations).

- Checkpoint 1: Method Transferability

- Issue: The method (e.g., a fitted functional in CMR or a trained ITA model) does not transfer well to the new system.

- Solution: For CMR, ensure the renormalization functional f(z) was fitted on a reference system (like H₂ or N₂) that is chemically similar to your target system [17]. For ITA, verify that the linear regression model was trained on systems with similar bonding patterns (e.g., don't use a model trained on alkanes for metallic clusters) [18].

- Checkpoint 2: Basis Set Incompleteness

- Issue: The basis set used is too small to accurately represent the electron correlation.

- Solution: Conduct a basis set convergence study. If using a minimal basis, be aware that it may not capture all correlation effects, even with advanced methods [17].

- Checkpoint 3: Strong Correlation Effects

- Issue: In systems with very strong correlations (e.g., near a Mott transition), single-reference methods may fail.

- Solution: For NN-VMC, ensure the neural network ansatz is expressive enough to capture multi-reference character. For other methods, verify their applicability for strongly correlated regimes.
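For the basis set convergence study in Checkpoint 2, a common companion is a two-point extrapolation to the complete-basis-set (CBS) limit. The sketch below assumes the widely used inverse-cubic form E(X) = E_CBS + A / X^3 for correlation energies in correlation-consistent basis sets with cardinal number X. This technique is not described in [17], and the function name is hypothetical.

```python
def cbs_two_point(e_small, x_small, e_large, x_large):
    """Two-point CBS estimate assuming E(X) = E_CBS + A / X**3."""
    weight_large = x_large ** 3
    weight_small = x_small ** 3
    return (weight_large * e_large - weight_small * e_small) / (weight_large - weight_small)

# Synthetic check: energies built from a known limit must extrapolate back to it.
e_cbs_true, a = -1.0, 0.5
e_tz = e_cbs_true + a / 3 ** 3   # cardinal number X = 3
e_qz = e_cbs_true + a / 4 ** 3   # cardinal number X = 4
print(cbs_two_point(e_tz, 3, e_qz, 4))
```

The extrapolation only removes the leading basis-set error. It does not compensate for a method that fails in the strongly correlated regime, which is the separate concern of Checkpoint 3.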

Frequently Asked Questions (FAQs)

FAQ 1: What defines a "strongly correlated electron system," and why is it problematic?

A strongly correlated electron system is one where the electron-electron interactions are so dominant that the system's properties cannot be understood by starting from a picture of non-interacting electrons. It is not possible, or not useful, to adiabatically connect such a system to an interaction-free one. These systems are problematic because they exhibit complex phenomena like high-temperature superconductivity and strange metal behavior that defy explanation by standard theoretical tools, and our ability to predict their properties from first principles is severely limited [3].

FAQ 2: My DFT calculations are failing for my correlated transition metal oxide. What are my most efficient options?

You have several paths, each with a different balance of cost and accuracy:

- Information-Theoretic Approach (ITA): If you have benchmark data for similar materials, you can use ITA quantities to predict correlation energies at a low computational cost [18].

- Correlation Matrix Renormalization (CMR): This method is parameter-free for Coulomb interactions and has a computational workload similar to Hartree-Fock, but can deliver accuracy comparable to high-level quantum chemistry calculations [17].

- Neural Network VMC: For ultimate accuracy, if resources allow, NN-VMC with a self-attention ansatz is a promising but computationally intensive option [5].

FAQ 3: How can I rigorously benchmark the accuracy of my new method for predicting electron correlation energies?

Rigorous benchmarking should involve:

- Diverse Test Sets: Use a variety of molecules and materials with known high-quality reference data (e.g., from CCSD(T) or quantum Monte Carlo). The RDB7 dataset is used for reaction barriers, and clusters like (C₆H₆)ₙ are used for extended systems [19] [18].

- Out-of-Distribution Testing: Test your model's performance on systems that are not represented in your training set to evaluate its generalizability. Performance often drops sharply under these conditions [19].

- Standardized Frameworks: Use community-developed software frameworks like ChemTorch for chemical reactions, which provide built-in data splitters and benchmarking pipelines to ensure fair comparisons and reproducibility [19].
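The core error metrics behind such a benchmark are simple to compute by hand. The sketch below is a minimal, framework-independent illustration and does not use the ChemTorch API; the function names and the example energies are hypothetical.

```python
HARTREE_TO_KCAL_PER_MOL = 627.509  # unit conversion factor

def benchmark_errors(predicted_ha, reference_ha):
    """Return (mean absolute error, maximum absolute error) in kcal/mol."""
    errors = [
        abs(p - r) * HARTREE_TO_KCAL_PER_MOL
        for p, r in zip(predicted_ha, reference_ha)
    ]
    return sum(errors) / len(errors), max(errors)

def within_chemical_accuracy(mae_kcal, threshold=1.0):
    """Chemical accuracy is conventionally taken as 1 kcal/mol."""
    return mae_kcal <= threshold

# Hypothetical energies in Hartree, for illustration only.
predicted = [-1.000, -2.000]
reference = [-1.001, -2.000]
mae, worst = benchmark_errors(predicted, reference)
print(mae, worst, within_chemical_accuracy(mae))
```

Reporting these numbers separately for in-distribution and out-of-distribution test sets makes the generalization gap visible.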

FAQ 4: Are there any upcoming events to learn about the latest advances in this field?

Yes, the field is very active. Key conferences include the International Conference on Strongly Correlated Electron Systems (SCES 2025), which will be held in Montréal, Canada, from July 6-11, 2025 [20]. There are also educational schools like the Boulder Summer School in Condensed Matter and Materials Physics (scheduled for June 30-July 25, 2025), which in 2025 focuses on the dynamics of strongly correlated electrons [21].

Essential Research Reagents & Computational Tools

Table 2: Key Research "Reagents" and Resources for Correlated Electron Studies

| Category | Item / Software / Resource | Primary Function in Research |

|---|---|---|

| Software & Frameworks | ChemTorch [19] | A deep learning framework for benchmarking and developing chemical reaction property prediction models, ensuring reproducibility. |

| Software & Frameworks | NN-VMC Codes (e.g., custom) [5] | Implements neural network variational Monte Carlo with architectures like self-attention for solving many-electron problems. |

| Benchmark Datasets | RDB7 Dataset [19] | A standard dataset for benchmarking chemical reaction barrier height predictions. |

| Benchmark Datasets | Molecular Clusters (e.g., (H₂O)ₙ, (C₆H₆)ₙ) [18] | Used to test the scalability and accuracy of methods for predicting electron correlation energies in extended systems. |

| Model Systems | Hydrogen & Nitrogen Clusters [17] | Well-understood test systems for validating a method's description of bonding and dissociation under changing correlation strength. |

| Model Systems | Moiré Heterobilayers (e.g., WSe₂/WS₂) [5] | A modern, tunable materials platform for studying correlated electron phases like Mott insulators and Wigner crystals. |

Workflow and Pathway Visualizations

Diagram Title: Decision Workflow for Selecting a Correlation Method

Diagram Title: Parameter Transfer Validation Protocol

Modern Computational Arsenal: From AI-Enhanced to Parameter-Free Methods

Solving the many-electron Schrödinger equation is one of the most enduring challenges in the physical sciences and computational chemistry. The exponential complexity of the quantum many-body problem, often called the "exponential wall," has limited traditional methods like Full Configuration Interaction (FCI) to small molecular systems. In recent years, a new approach has emerged: neural network quantum states (NNQS) parameterized by Transformer architectures that approximate many-body wavefunctions. These methods, including QiankunNet and various self-attention ansatzes, rely on the ability of attention mechanisms to capture complex, long-range correlations, which is what describing intricate electron interactions in molecules and materials requires. By framing electronic configurations as sequences and applying language model architectures, researchers aim to tackle the electron correlation problem with near-FCI accuracy at tractable cost.

Core Concepts: Transformer-Based Wavefunctions

Fundamental Architecture and Components

Transformer-based wavefunctions adapt the architecture that revolutionized natural language processing to the domain of quantum mechanics. The fundamental insight treats electronic configurations—represented as sequences of occupation numbers (0s and 1s) in second quantization—as "sentences" to be processed by attention mechanisms [22]. This approach has been implemented in several variants:

QiankunNet: A comprehensive framework combining Transformer decoders for amplitude prediction with multi-layer perceptrons (MLPs) for phase prediction [23] [22]. The architecture processes configuration strings autoregressively and incorporates physics-informed initialization using truncated configuration interaction solutions.

Vision Transformer (ViT) Wavefunctions: Adapts the Vision Transformer architecture for quantum spin systems by splitting spin configurations into patches, embedding them, and processing through transformer encoders [24].

Self-Attention Ansatzes: Employ attention mechanisms to construct Slater determinants from generalized orbitals that depend on the configuration of all electrons, effectively creating context-aware orbital representations [25] [26].

Key Technical Innovations

Table: Key Technical Innovations in Transformer-Based Wavefunctions

| Innovation | Description | Benefit |

|---|---|---|

| Autoregressive Sampling | Uses Monte Carlo Tree Search (MCTS) with hybrid BFS/DFS strategy to generate electron configurations [23] | Eliminates Markov Chain Monte Carlo correlations, conserves electron number |

| Neural Network Backflow | Transformer generates configuration-dependent orbitals fed into Slater determinants [27] | Captures complex correlation patterns beyond fixed orbital bases |

| Factored Attention | Attention weights depend only on positions, not values [24] | Reduces computational cost while maintaining performance for quantum systems |

| Physics-Informed Initialization | Uses truncated configuration interaction solutions as starting points [23] [22] | Accelerates convergence and improves stability |

Troubleshooting Guide: Common Implementation Challenges

Convergence and Optimization Issues

Problem: Poor convergence during variational optimization

- Root Cause: Rugged loss landscape common in neural quantum states; inappropriate learning rates; inadequate sampling.

- Solution: Implement hybrid optimization with data-driven pretraining using numerical or experimental data followed by Hamiltonian-driven optimization [28]. Use physics-informed initialization with truncated configuration interaction solutions [23].

- Advanced Tip: For strongly correlated systems, employ transfer learning from smaller systems or similar chemical environments to initialize parameters.

Problem: Energy estimates fluctuating excessively during training

- Root Cause: Insufficient sample size for local energy evaluation; high variance of gradient estimates.

- Solution: Increase batch size systematically; implement variance reduction techniques like control variates; use efficient Hamiltonian representation to reduce memory requirements [23] [22].

- Verification: Monitor both energy and variance metrics throughout training; ensure stable decrease in both quantities.

Sampling and Memory Challenges

Problem: Inefficient sampling of relevant configurations

- Root Cause: Standard Markov Chain Monte Carlo (MCMC) gets trapped in metastable states; low acceptance rates.

- Solution: Implement autoregressive sampling with Monte Carlo Tree Search (MCTS) as in QiankunNet [23]. Use electron number conservation to prune the sampling space [23].

- Performance Tip: Leverage the batched implementation with explicit multi-process parallelization for distributed sampling across multiple GPUs.

Problem: Memory constraints for large active spaces

- Root Cause: Exponential growth of configuration space; large transformer parameter count.

- Solution: Use factored attention mechanisms [24]; implement efficient KV caching during autoregressive generation [23]; employ model parallelism for very large networks.

- Configuration: For systems beyond 30 spin orbitals, consider patching strategies that process the configuration in segments [24] [28].

Accuracy and Performance Problems

Problem: Failure to achieve chemical accuracy (1 kcal/mol)

- Root Cause: Insufficient model expressivity; inadequate treatment of electron correlations; inappropriate orbital basis.

- Solution: Increase transformer depth strategically; incorporate neural backflow transformations to create configuration-dependent orbitals [27]; ensure adequate active space selection.

- Benchmarking: Compare with classical methods like DMRG and CCSD(T) on smaller systems where available [23] [27].

Problem: Inaccurate prediction of magnetic properties

- Root Cause: Failure to capture multi-reference character; inadequate treatment of spin correlations.

- Solution: Use multiple determinant extensions in backflow transformations [27]; ensure wavefunction ansatz preserves physical symmetries; incorporate explicit spin constraints in architecture.

Experimental Protocols and Methodologies

Standard Implementation Workflow

Diagram Title: Core Workflow for Implementing Transformer-Based Wavefunction Methods

QiankunNet Specific Protocol

For implementing QiankunNet specifically, follow this detailed workflow:

System Preparation

- Define molecular geometry and basis set (e.g., STO-3G, cc-pVDZ)

- Generate second-quantized Hamiltonian using Jordan-Wigner or similar transformation [23]

- Select active space considering computational constraints and correlation effects
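In the occupation-number picture, the Jordan-Wigner transformation stores each spin orbital's occupation in one bit and encodes the fermionic sign as the parity of the occupied orbitals that precede the one being acted on. The sketch below illustrates only that sign bookkeeping, under an assumed orbital ordering from left to right. It is not code from QiankunNet [23], and the function names are hypothetical.

```python
def annihilate(state, q):
    """Apply a_q to an occupation tuple; return (sign, new_state) or None if it vanishes."""
    if state[q] == 0:
        return None
    # Parity of occupied orbitals before q (the Jordan-Wigner Z-string)
    sign = -1 if sum(state[:q]) % 2 else 1
    return sign, state[:q] + (0,) + state[q + 1:]

def create(state, p):
    """Apply a_p^dagger to an occupation tuple; return (sign, new_state) or None."""
    if state[p] == 1:
        return None
    sign = -1 if sum(state[:p]) % 2 else 1
    return sign, state[:p] + (1,) + state[p + 1:]

def hop(state, p, q):
    """Apply the one-body excitation a_p^dagger a_q."""
    removed = annihilate(state, q)
    if removed is None:
        return None
    sign_q, intermediate = removed
    added = create(intermediate, p)
    if added is None:
        return None
    sign_p, final = added
    return sign_q * sign_p, final

print(hop((1, 1, 0, 0), 3, 0))  # moves an electron past one occupied orbital
```

Each term of the second-quantized Hamiltonian acts on a sampled configuration in this way, which is what a local energy evaluation has to enumerate.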

Network Architecture Configuration

Sampling Strategy Implementation

- Implement autoregressive sampling with Monte Carlo Tree Search (MCTS)

- Configure hybrid BFS/DFS strategy with tunable exploration parameter [23]

- Set up electron number conservation constraints

- Implement parallel sampling across multiple processes
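The electron number constraint can be illustrated with plain ancestral sampling, leaving out the MCTS and the BFS/DFS batching of [23]. In the sketch below, `toy_conditional` is a hypothetical stand-in for the network's conditional probability, and the masking rule is one simple way to enforce a fixed electron count.

```python
import itertools
import math
import random

def toy_conditional(prefix):
    """Hypothetical stand-in for a network: P(next orbital occupied | prefix)."""
    score = 0.3 * len(prefix) - 0.5 * sum(prefix)
    return 1.0 / (1.0 + math.exp(-score))

def masked_conditional(prefix, n_orb, n_elec):
    """Force the conditional to 0 or 1 when only one choice can still reach n_elec."""
    needed = n_elec - sum(prefix)
    remaining = n_orb - len(prefix)
    if needed == 0:
        return 0.0
    if needed == remaining:
        return 1.0
    return toy_conditional(prefix)

def config_probability(config, n_elec):
    """Probability of a full configuration as a product of masked conditionals."""
    prob = 1.0
    for i, bit in enumerate(config):
        p_one = masked_conditional(config[:i], len(config), n_elec)
        prob *= p_one if bit else 1.0 - p_one
    return prob

def sample_config(n_orb, n_elec, rng):
    """Draw one configuration orbital by orbital; every draw is independent of the last."""
    config = ()
    for _ in range(n_orb):
        p_one = masked_conditional(config, n_orb, n_elec)
        config += (1 if rng.random() < p_one else 0,)
    return config

rng = random.Random(0)
print([sample_config(6, 3, rng) for _ in range(3)])
# The masked distribution stays normalized over all 2^6 bit strings.
print(sum(config_probability(c, 3) for c in itertools.product((0, 1), repeat=6)))
```

Because each sample is drawn from scratch, there is no Markov chain and no autocorrelation between samples, and configurations with the wrong electron count have exactly zero probability.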

Optimization Procedure

- Use variational Monte Carlo (VMC) with stochastic gradient descent

- Employ efficient local energy evaluation with compressed Hamiltonian representation [23]

- Monitor both energy and variance metrics

- Implement early stopping based on energy convergence (typically 10^-5 Ha tolerance)
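The early-stopping rule in the last step can be sketched as a check on a sliding window of recent energies. The 10^-5 Ha tolerance comes from the protocol above; the window length and the function name are assumptions made for this illustration.

```python
def has_converged(energies, tol=1e-5, window=5):
    """True when the last `window` energies all lie within `tol` Ha of each other."""
    if len(energies) < window:
        return False
    recent = energies[-window:]
    return max(recent) - min(recent) < tol

# Hypothetical energy trace (Ha): still descending, then flat.
trace = [-1.00, -1.05, -1.08, -1.09, -1.095]
print(has_converged(trace))
trace += [-1.1 - 1e-7 * i for i in range(5)]
print(has_converged(trace))
```

VMC energies are stochastic, so in practice this test is better applied to averages over several iterations. Otherwise statistical noise larger than the tolerance would prevent the criterion from ever triggering.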

Research Reagent Solutions: Essential Computational Tools

Table: Essential Computational Components for Transformer-Based Wavefunction Methods

| Component | Function | Implementation Examples |

|---|---|---|

| Transformer Encoder/Decoder | Captures long-range electron correlations via attention mechanisms | QiankunNet's amplitude network [23], ViT wavefunction encoder [24] |

| Autoregressive Sampler | Generates valid electron configurations with conserved particle number | MCTS with BFS/DFS hybrid [23], NAQS-inspired approaches [23] |

| Neural Backflow | Creates configuration-dependent orbitals for enhanced correlation | Transformer-based orbital generator [27] |

| Variational Monte Carlo Engine | Optimizes wavefunction parameters to minimize energy | VMC with stochastic gradient descent [23] [25] |

| Hamiltonian Compressor | Reduces memory footprint of second-quantized Hamiltonian | Sparse representation, symmetry exploitation [23] |

Frequently Asked Questions (FAQs)

Q: How does the scaling of Transformer-based wavefunctions compare to traditional quantum chemistry methods? A: Traditional methods like FCI scale exponentially with system size. Coupled cluster methods (e.g., CCSD(T)) typically scale as N^7. Transformer-based approaches show promising scaling—empirical studies suggest the number of parameters scales roughly as N^2 with the number of electrons [25] [26], though computational cost depends on specific implementation and sampling requirements.
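The exponential scaling of FCI can be made concrete by counting determinants. The sketch below assumes a fixed number of spin-up and spin-down electrons and ignores any reduction from spatial symmetry; the function name is hypothetical.

```python
from math import comb

def fci_dimension(n_orbitals, n_alpha, n_beta):
    """Number of Slater determinants with fixed spin-up and spin-down electron counts."""
    return comb(n_orbitals, n_alpha) * comb(n_orbitals, n_beta)

# Half-filled active spaces of increasing size
for n in (4, 8, 12, 16, 20):
    print(n, fci_dimension(n, n // 2, n // 2))
```

The count grows by more than two orders of magnitude for every four orbitals added in this range. This growth is the "exponential wall" that sampling-based ansatzes try to sidestep by never enumerating the full space.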

Q: Can these methods handle strongly correlated systems where traditional methods fail? A: Yes, this is a key advantage. QiankunNet has successfully handled challenging systems like the Fenton reaction mechanism with CAS(46e,26o) active space [23] and iron-sulfur clusters [27], where multi-reference character causes traditional methods to fail. The attention mechanism naturally captures complex correlation patterns without pre-defined reference configurations.

Q: What computational resources are required for typical applications? A: Resource requirements vary significantly with system size:

- Small molecules (up to 30 spin orbitals): Can often run on a single GPU with 8-16GB memory

- Medium systems (30-50 spin orbitals): Typically require multiple GPUs or high-memory nodes

- Large systems (50+ spin orbitals): Require distributed computing and model parallelism

The memory-efficient sampling strategies in QiankunNet help manage larger systems [23].

Q: How is fermionic antisymmetry enforced in these wavefunctions? A: Different approaches exist:

- QiankunNet combines its autoregressive structure with Slater determinants in backflow approaches [27]

- Some implementations use antisymmetric layers or explicit antisymmetrization

- The neural backflow approach naturally maintains antisymmetry through the use of determinants [27]

Q: What is the role of pre-training in these models? A: Pre-training plays a crucial role in stabilization and convergence acceleration. Common strategies include:

- Physics-informed initialization using truncated configuration interaction solutions [23] [22]

- Data-driven pretraining with numerical or experimental data [28]

- Transfer learning from smaller similar systems

Pre-training helps navigate the challenging optimization landscape of neural quantum states.

Advanced Technical Reference

Performance Benchmarks

Table: Performance Benchmarks of Transformer-Based Wavefunction Methods

| System | Method | Reported Accuracy | Key Achievement |

|---|---|---|---|

| Small Molecules (up to 30 spin orbitals) | QiankunNet | 99.9% FCI [23] | Chemical accuracy across benchmark set |

| N₂ molecule (STO-3G) | QiankunNet | >99.9% FCI [23] | Two orders of magnitude improvement over MADE |

| [2Fe-2S] cluster | QiankunNet with backflow | Chemical accuracy vs DMRG [27] | Accurate magnetic coupling constants |

| Moiré quantum materials | Self-attention ansatz | Beyond Hartree-Fock and ED [25] | Unbiased solution for solid-state systems |

| Fenton reaction CAS(46e,26o) | QiankunNet | Accurate description [23] | Large active space handling |

Architectural Decision Guide

When designing your Transformer-based wavefunction implementation, consider these key architectural choices:

- For molecules with strong static correlation: Prioritize neural backflow approaches with multiple determinants [27]

- For large systems with limited resources: Use factored attention mechanisms [24] and efficient sampling strategies [23]

- For properties beyond ground state energy: Ensure architecture preserves physical symmetries relevant to target properties

- For rapid prototyping: Start with Vision Transformer adaptations and established libraries like NetKet [24]

The field of Transformer-based wavefunctions continues to evolve rapidly, with new architectures and optimization strategies emerging regularly. The frameworks established by QiankunNet and self-attention ansatzes provide a powerful foundation for tackling the electron correlation problem across diverse chemical systems, from drug molecules to quantum materials.

Efficient Autoregressive Sampling with Monte Carlo Tree Search (MCTS)

Core Concepts and Definitions

What is the fundamental role of MCTS in enhancing autoregressive sampling for scientific problems?

Monte Carlo Tree Search (MCTS) provides a structured planning framework to guide autoregressive generative models. Unlike standard autoregressive sampling that proceeds sequentially without lookahead, MCTS explores a tree of possible future actions (e.g., next atom in a molecule, next token in a sequence). It balances exploring new possibilities (exploration) and refining known promising paths (exploitation). This is crucial in scientific domains like quantum chemistry and drug discovery, where the goal is to find sequences (molecular structures, electron configurations) that optimize complex, expensive-to-evaluate properties. MCTS uses stochastic simulations to estimate the potential of partial sequences, allowing for more informed and efficient generation compared to greedy or random sampling [23] [29] [30].

How does "autoregressive sampling" differ from other generation methods in this context?

Autoregressive sampling generates a solution (e.g., a molecule, a quantum state configuration) step-by-step, where each new step is conditioned on all previous steps. This is analogous to how one writes a sentence one word at a time. In contrast, one-shot or all-at-once methods generate the entire solution in a single step. The key advantage of the autoregressive approach is its compatibility with MCTS, as the tree can be built by considering each step as a new decision node. This combination allows the model to "plan ahead" and backtrack from poor decisions, which is not possible with standard one-shot generation [31].

Frequently Asked Questions (FAQs)

FAQ 1: My MCTS simulation is getting stuck in a local optimum and failing to discover diverse solutions. What could be wrong?

This is often a result of an imbalanced exploration/exploitation trade-off. The Upper Confidence Bound for Trees (UCT) formula is central to this balance.

- Problem: The exploration constant in the UCT formula might be set too low, causing the search to over-exploit known good paths and miss better alternatives.

- Solution: Systematically increase the exploration constant to encourage visiting less-explored nodes. Additionally, consider implementing advanced selection rules. For example, the ParetoPUCT scheme was designed for multi-objective optimization to better navigate trade-offs between different goals [29]. Another approach is Pℋ-UCT-ME, which uses predictive entropy and multiple experts to guide exploration, making it particularly effective in vast search spaces like protein design [30].
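The effect of the exploration constant is easiest to see in the common single-objective form of the UCT score: the mean value of a child plus c times sqrt(ln(parent visits) / child visits). The sketch below uses that textbook form, not the ParetoPUCT or Pℋ-UCT-ME rules of [29] and [30]; the function names and node statistics are hypothetical.

```python
import math

def uct_score(total_value, visits, parent_visits, c):
    """Mean value plus an exploration bonus that shrinks as a node is visited more."""
    if visits == 0:
        return math.inf  # unvisited children are always tried first
    return total_value / visits + c * math.sqrt(math.log(parent_visits) / visits)

def select_child(children, parent_visits, c):
    """children: list of (total_value, visits); returns the index with the highest score."""
    scores = [uct_score(value, visits, parent_visits, c) for value, visits in children]
    return scores.index(max(scores))

# Child 0 is well explored with a high mean; child 1 is barely explored.
children = [(9.0, 10), (1.0, 2)]
print(select_child(children, 12, c=0.1))  # small c exploits child 0
print(select_child(children, 12, c=2.0))  # large c explores child 1
```

Sweeping c over a few values on a small test problem is a cheap way to find where the search stops collapsing onto a single branch.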

FAQ 2: The computational cost of MCTS is too high for my large-scale problem. How can I improve efficiency?

The memory and time complexity of MCTS can become prohibitive for large systems. Several strategies can mitigate this:

- Hybrid Search Strategy: Implement a hybrid Breadth-First/Depth-First Search (BFS/DFS). Use BFS to accumulate a batch of promising starting points, then perform batched DFS. This strategy significantly reduces memory usage [23].

- Search Space Pruning: Introduce domain-specific constraints to prune irrelevant branches. A highly effective method in quantum chemistry is to enforce electron number conservation during tree traversal, which immediately eliminates physically invalid configurations and drastically shrinks the search space [23].

- Parallelization: Move beyond single-process computation. Design your sampling algorithm for multi-process parallelization, allowing unique sample generation to be distributed across multiple CPUs or GPUs [23].

- Model Caching: For Transformer-based models, use Key-Value (KV) caching during autoregressive generation. This avoids redundant computation of attention keys and values for previously generated tokens, providing substantial speedups [23].
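The key-value caching idea can be shown with a single attention head in pure Python. The sketch below is a toy, not QiankunNet's implementation [23]: the 2x2 projection matrices and the token vectors are arbitrary values chosen for illustration.

```python
import math

def matvec(matrix, vec):
    """Multiply a small dense matrix by a vector."""
    return [sum(w * x for w, x in zip(row, vec)) for row in matrix]

def attend(query, keys, values):
    """Softmax attention of one query over all stored keys and values."""
    scale = math.sqrt(len(query))
    logits = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    peak = max(logits)
    weights = [math.exp(logit - peak) for logit in logits]
    norm = sum(weights)
    return [
        sum(w * v[d] for w, v in zip(weights, values)) / norm
        for d in range(len(values[0]))
    ]

# Arbitrary toy projection matrices (hypothetical values)
W_Q = [[1.0, 0.5], [0.0, 1.0]]
W_K = [[0.5, -1.0], [1.0, 0.5]]
W_V = [[1.0, 0.0], [0.5, 1.0]]

def last_output_no_cache(tokens):
    """Recompute every key and value from scratch for the newest token's query."""
    keys = [matvec(W_K, t) for t in tokens]
    values = [matvec(W_V, t) for t in tokens]
    return attend(matvec(W_Q, tokens[-1]), keys, values)

class KVCache:
    """Stores keys and values of earlier tokens so each step projects only the new one."""
    def __init__(self):
        self.keys, self.values = [], []

    def step(self, token):
        self.keys.append(matvec(W_K, token))
        self.values.append(matvec(W_V, token))
        return attend(matvec(W_Q, token), self.keys, self.values)

tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
cache = KVCache()
cached_outputs = [cache.step(t) for t in tokens]
print(cached_outputs[-1], last_output_no_cache(tokens))
```

Both paths give the same output. The cached path performs one key and one value projection per generated token instead of reprojecting the whole prefix at every step.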

FAQ 3: How can I integrate prior knowledge or physical constraints into the MCTS process?

Integrating domain knowledge is key to making MCTS efficient and physically meaningful.

- Physics-Informed Initialization: Instead of starting from a random state, initialize the search from a principled starting point. For instance, QiankunNet uses truncated configuration interaction solutions to provide a physically reasonable initial state for variational optimization of quantum systems, which accelerates convergence [23] [32].

- Biophysical Fidelity in Rollouts: Use a rollout policy that incorporates domain expertise. In protein design, using a biophysical-fidelity-enhanced diffusion model for rollouts, guided by metrics like pLDDT (predicted local-distance difference test), helps focus edits on structurally uncertain regions and ensures generated sequences are physically plausible [30].

- Reward Shaping: Incorporate physical constraints directly into the reward function or the tree expansion rules. For example, the VGAE-MCTS model uses a "steric strain filter" and a filter to discourage large ring structures to generate more realistic and stable molecules [33].

Troubleshooting Common Experimental Issues

Issue: Poor Convergence or Inaccurate Results in Quantum System Calculations

- Symptoms: The computed energy of a molecular system fails to converge to the benchmark Full Configuration Interaction (FCI) value, or the convergence is unacceptably slow.

- Investigation Protocol:

- Verify Wave Function Ansatz: Ensure the expressive capacity of the neural network is sufficient. Transformer-based architectures are often superior for capturing complex quantum correlations compared to simpler Multi-Layer Perceptrons (MLPs) [23].

- Check Sampling Procedure: Confirm that the autoregressive sampling with MCTS is generating uncorrelated samples. A key advantage of this approach is circumventing the slow mixing and correlated samples of Markov Chain Monte Carlo (MCMC) [34]. Validate that your sampling is truly direct and uncorrelated.

- Validate Reward Signal: In variational optimization, the local energy is the primary reward. Use a compressed Hamiltonian representation and parallel local energy evaluation to ensure this calculation is both memory-efficient and computationally fast [23].

- Review Initialization: Check your physics-informed initialization. The model should not start from a random state. Using a truncated configuration interaction solution as a starting point is critical for rapid and accurate convergence [23].

- Resolution Workflow:

- Begin with a small, well-understood system (e.g., a diatomic molecule) to establish a performance baseline.

- Upgrade the neural network ansatz to a more expressive model (e.g., a Transformer) if using an MLP.

- Increase the MCTS search budget (number of simulations) to allow for more thorough exploration of the configuration space.

- Re-initialize the run using an improved, principled starting point from a truncated CI calculation.

Issue: Failure to Generate Molecules with Multiple Target Properties

- Symptoms: The generated molecules show good performance on one objective (e.g., binding affinity) but poor scores on other critical properties (e.g., drug-likeness QED, synthetic accessibility SA).

- Investigation Protocol:

- Analyze the MCTS Selection Policy: The standard UCT might be overly favoring a single objective. Check if you are using a multi-objective MCTS variant like ParetoPUCT [29].

- Inspect the Pareto Front Pool: Algorithms like ParetoDrug maintain a global pool of Pareto-optimal molecules. Verify that this pool is being updated correctly and contains diverse candidates that represent different trade-offs between the objectives [29].

- Evaluate the Guidance Model: Assess the quality of the pre-trained autoregressive generative model that provides the initial policy. If this model is not properly conditioned on the target protein, the search will be inefficient [29].

- Resolution Workflow:

- Switch from a single-objective UCT policy to a dedicated multi-objective MCTS algorithm.

- Adjust the relative weights of different properties in the combined reward function or Pareto ranking.

- Ensure the pre-trained guidance model is robust and was trained on relevant data (e.g., protein-ligand complexes for target-aware generation).

Quantitative Performance Data

The following tables summarize key performance metrics for MCTS-enhanced autoregressive sampling from recent literature.

Table 1: Performance in Quantum Chemistry Applications (QiankunNet)

| Molecular System | Metric | QiankunNet Performance | Reference or Baseline |

|---|---|---|---|

| Systems up to 30 spin orbitals | Correlation Energy Recovery | 99.9% of FCI | 100% [23] |

| N₂ molecule (STO-3G basis) | Accuracy vs NAQS | Two orders of magnitude higher accuracy | NAQS fails chemical accuracy [23] |

| Fenton reaction (CAS(46e,26o)) | Active Space Size Handled | Successfully described electronic evolution | Previously intractable [23] [32] |

Table 2: Performance in Multi-Objective Drug Discovery (ParetoDrug)

| Evaluation Metric | Description | ParetoDrug Performance Note |

|---|---|---|

| Docking Score | Measures binding affinity to target protein | Optimized synchronously with other drug-like properties [29] |

| QED | Quantitative Estimate of Drug-likeness (0 to 1) | Optimized for values closer to 1 [29] [33] |

| SA Score | Synthetic Accessibility Score | Optimized for easier synthesis (lower score) [29] |

| Uniqueness | Sensitivity to different target proteins | High uniqueness, generating diverse molecules per target [29] |

Experimental Protocols

Protocol 1: Solving the Many-Electron Schrödinger Equation with MCTS Sampling

This protocol outlines the methodology for the QiankunNet framework [23] [32].

- System Setup: Map the electronic Hamiltonian to a spin Hamiltonian using a transformation like Jordan-Wigner.

- Wave Function Ansatz: Employ a Transformer-based neural network to represent the quantum wave function. Its attention mechanism is key for capturing complex correlations.

- Physics-Informed Initialization: Initialize the network parameters using a truncated configuration interaction solution to provide a principled starting point.

- Autoregressive Sampling with MCTS:

- Use a layer-wise MCTS to autoregressively sample electron configurations (orbital occupations).

- The MCTS policy dynamically allocates samples, maximizing the probability of selecting the best action at the root.

- Enforce electron number conservation as a hard constraint during tree expansion to prune invalid states.

- Variational Optimization: Optimize the network parameters (wave function) using the variational Monte Carlo (VMC) method, where the energy expectation value is estimated from the samples generated in the autoregressive sampling step above.
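The hard electron-number constraint in the sampling step can be illustrated with a minimal sketch. It uses plain ancestral (orbital-by-orbital) sampling rather than the layer-wise MCTS of the protocol, and `cond_prob` is a hypothetical stand-in for the network's conditional occupation probability; neither the function names nor the interface come from QiankunNet.

```python
import random

def sample_configuration(n_orbitals, n_electrons, cond_prob, rng):
    """Sample one occupation string, one spin orbital at a time.

    cond_prob(prefix) is a hypothetical stand-in for the network: it returns
    the model's probability that the next spin orbital is occupied.
    Branches that cannot end with exactly n_electrons are pruned.
    """
    occ = []
    for i in range(n_orbitals):
        remaining_orbitals = n_orbitals - i
        remaining_electrons = n_electrons - sum(occ)
        if remaining_electrons == 0:
            p = 0.0  # all electrons placed: the orbital must stay empty
        elif remaining_electrons == remaining_orbitals:
            p = 1.0  # every remaining orbital must be filled
        else:
            p = cond_prob(tuple(occ))
        occ.append(1 if rng.random() < p else 0)
    return tuple(occ)

# Toy usage: an uninformed "model" that always answers 0.5.
rng = random.Random(0)
uniform_model = lambda prefix: 0.5
samples = [sample_configuration(8, 4, uniform_model, rng) for _ in range(1000)]
```

Because invalid branches get probability zero before the draw, every sample has the right particle number and no samples are wasted on rejection.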

Protocol 2: Multi-Objective Molecule Generation with Pareto MCTS

This protocol is based on the ParetoDrug framework for drug discovery [29].

- Objective Definition: Define the set of target properties to optimize (e.g., Docking Score, QED, SA Score).

- Guidance Model: Load a pre-trained, target-aware, autoregressive generative model (e.g., a Transformer conditioned on protein structure).

- Pareto MCTS Search:

- Initialize a global pool to track Pareto-optimal molecules.

- For a given number of iterations, run MCTS. In the selection phase, use the ParetoPUCT rule to balance exploration and exploitation across multiple objectives.

- During expansion and simulation, use the pre-trained generative model to propose and evaluate candidate atom additions.

- Update the Pareto front pool with any new molecule that is not dominated by an existing member, and remove any existing members that the new molecule dominates.

- Output: Return the set of molecules in the final Pareto front, representing the best trade-offs between the desired properties.
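The Pareto pool update in this protocol can be sketched as follows. This is a generic non-dominated-set update, not code from ParetoDrug; the function names and the toy molecules and scores are illustrative assumptions. All objectives are oriented so that larger is better, so quantities such as docking score and SA score are negated before being passed in.

```python
def dominates(a, b):
    """True if score vector a Pareto-dominates b (all objectives maximized)."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

def update_pareto_front(front, candidate):
    """Return the front after offering candidate = (molecule, scores)."""
    _, cand_scores = candidate
    for _, scores in front:
        # Reject candidates that are dominated or duplicate an existing point.
        if dominates(scores, cand_scores) or scores == cand_scores:
            return front
    # Keep only members the candidate does not dominate, then add it.
    kept = [(m, s) for m, s in front if not dominates(cand_scores, s)]
    kept.append(candidate)
    return kept

# Toy scores: (-docking score, QED, -SA score); names and values are made up.
front = []
front = update_pareto_front(front, ("mol_A", (7.0, 0.60, -3.0)))
front = update_pareto_front(front, ("mol_B", (8.0, 0.50, -2.5)))  # trade-off with A
front = update_pareto_front(front, ("mol_C", (6.5, 0.55, -3.5)))  # dominated by A
names_before = [m for m, _ in front]
front = update_pareto_front(front, ("mol_D", (8.5, 0.70, -2.0)))  # dominates A and B
names_after = [m for m, _ in front]
```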

Workflow and System Diagrams

MCTS Autoregressive Sampling Core Workflow

System Architecture for Quantum Chemistry

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Frameworks

| Tool/Component | Function | Example Use-Case |

|---|---|---|

| Transformer Architecture | A highly expressive neural network using attention mechanisms to model complex, long-range dependencies in sequential data. | Serves as the core wave function ansatz in QiankunNet to capture quantum correlations [23]. |

| Variational Graph Autoencoder (VGAE) | A deep learning model that learns latent representations of graph-structured data (e.g., molecules). | Used in VGAE-MCTS to generate molecular feature maps that guide the MCTS search process [33]. |

| Pre-trained Autoregressive Model | A generative model trained on a large dataset to predict the next component in a sequence (atoms, tokens). | Provides a prior policy and rollout guidance for MCTS in frameworks like ParetoDrug [29]. |

| Discrete Diffusion Model | A generative model that adds and removes noise in discrete steps, capable of revising multiple positions in a sequence simultaneously. | Used as a planning and rollout engine in MCTD-ME for protein design, enabling more flexible revisions than autoregressive models [30]. |

| Compressed Hamiltonian | A memory-efficient representation of the quantum mechanical operator that defines a system's energy. | Critical for enabling the efficient, parallel evaluation of local energies in large quantum systems [23]. |

Frequently Asked Questions

Q1: What is the primary purpose of using a truncated Configuration Interaction (CI) solution for physics-informed initialization? The primary purpose is to provide a principled, physically motivated starting point for the subsequent variational optimization of a neural network quantum state (NNQS). This initialization places the initial model parameters closer to the true solution, which accelerates convergence and helps avoid poor local minima. In the QiankunNet framework, this strategy is reported to improve performance, contributing to the recovery of 99.9% of the full configuration interaction (FCI) correlation energy for systems of up to 30 spin orbitals [23].

Q2: My model is failing to converge after initialization. What could be wrong? This issue can stem from several factors. First, ensure that the fidelity of the initial CI solution is sufficient; a truncation that is too severe may not provide a useful starting point. Second, verify the mapping of the CI state to the neural network parameters: the initial neural network state must accurately represent the quantum state from the CI calculation. Third, check for implementation errors in the orbital configurations used to generate the truncated CI solution, since configurations that violate electron number conservation correspond to unphysical states [23].

Q3: Does physics-informed initialization limit the model's ability to find solutions beyond the initial CI guess? No, when implemented correctly, it does not. The neural network wave function ansatz, particularly a highly expressive one like a Transformer, possesses the capacity to refine and correct the initial state. The initialization serves as a guide, but the variational optimization process can subsequently discover more accurate wave functions and lower energies than the initial CI starting point [23].

Q4: How do I choose the appropriate level of truncation for the CI initialization? The choice involves a trade-off between computational cost and the quality of the initial guess. Including higher excitations in the truncated CI calculation (e.g., CISD rather than CIS) provides a better initial state but requires more pre-computation. Note that with a Hartree-Fock reference, single excitations alone do not lower the ground-state energy (Brillouin's theorem), so CISD is typically the lowest truncation level that adds correlation to the initial state. It is recommended to start with a level of truncation that is computationally feasible for your system and then empirically validate that it provides a convergence benefit over a random initialization [23].

Q5: Can this initialization strategy be applied to other NNQS architectures beyond Transformers? Yes, the general principle is architecture-agnostic. The method of using a pre-computed classical quantum chemistry solution to initialize a neural network wave function can be applied to other NNQS ansatzes, such as multilayer perceptrons (MLPs) or convolutional neural networks, provided there is a method to map the classical state onto the network's initial parameters [23].

Troubleshooting Guide

| Problem | Possible Causes | Suggested Solutions |

|---|---|---|

| Slow Convergence | Low-quality initial CI guess; Poor hyperparameter tuning. | Include higher excitations in the truncated CI (e.g., CISD instead of CIS); Adjust learning rate and optimizer settings. |

| Training Instability | Incorrect state mapping; High-variance energy gradients. | Verify the parameter initialization mapping; Use gradient clipping; Tune the batch size in autoregressive sampling [23]. |

| Unphysical Results | Violation of particle number; Incorrect orbital active space. | Implement sampling constraints to conserve electron number; Re-check the active space selection for the CI calculation [23]. |

| High Memory Usage | Large CI vector; Overly expressive neural network. | Use a more aggressive CI truncation; Consider a smaller neural network width before scaling up. |
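As an illustration of the gradient clipping suggested for training instability, here is a minimal, framework-free sketch of clipping by global norm. It assumes the gradient is available as a flat list of floats; the function name is illustrative, and in practice the equivalent utility of your deep learning framework would normally be used instead.

```python
import math

def clip_by_global_norm(grads, max_norm):
    """Rescale grads so their Euclidean norm does not exceed max_norm.

    Returns the (possibly rescaled) gradient and the original norm, which is
    worth logging: frequent clipping points to high-variance energy gradients.
    """
    norm = math.sqrt(sum(g * g for g in grads))
    if norm <= max_norm:
        return list(grads), norm
    scale = max_norm / norm
    return [g * scale for g in grads], norm

clipped, original_norm = clip_by_global_norm([3.0, 4.0], max_norm=1.0)
untouched, _ = clip_by_global_norm([0.3, 0.4], max_norm=1.0)
```

Clipping changes only the step length, not its direction, so it limits the damage from occasional outlier samples without biasing the update direction.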

Experimental Protocols and Data

Protocol 1: Generating the Truncated CI Initial State

- Define the Molecular System: Specify the molecular geometry, basis set (e.g., STO-3G), and active space (e.g., CAS(n electrons, m orbitals)).

- Perform Hartree-Fock Calculation: Obtain a mean-field reference state.

- Run Truncated CI: Execute a Configuration Interaction calculation with single and double excitations (CISD) or a selected higher truncation level. This generates a state vector of coefficients for electronic configurations.

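- Map to Neural Network Parameters: Use the CI state vector to initialize the weights of the neural network wave function ansatz. This may involve pre-training the network so that its log-amplitude output matches the logarithm of the CI coefficient magnitudes [23]; the sign (or phase) of each coefficient has to be represented separately, since the logarithm of a negative coefficient is not real.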
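The mapping step can be sketched as the construction of pre-training targets from a CI vector. This sketch assumes a network with separate log-amplitude and sign outputs, which is an assumption about the ansatz rather than a documented detail of QiankunNet; the function name, the `floor` parameter, and the toy coefficients are illustrative.

```python
import math

def ci_to_pretraining_targets(ci_coeffs, floor=1e-12):
    """Convert real CI coefficients into (log-amplitude, sign) targets.

    ci_coeffs maps occupation tuples to real coefficients. The vector is
    normalized first; `floor` guards against log(0) for vanishing entries.
    """
    norm = math.sqrt(sum(c * c for c in ci_coeffs.values()))
    targets = {}
    for config, c in ci_coeffs.items():
        amplitude = abs(c) / norm
        sign = 1 if c >= 0 else -1
        targets[config] = (math.log(max(amplitude, floor)), sign)
    return targets

# Toy two-determinant state on four spin orbitals (made-up coefficients).
toy_ci = {(1, 1, 0, 0): 0.6, (0, 0, 1, 1): -0.8}
targets = ci_to_pretraining_targets(toy_ci)
total_probability = sum(math.exp(2.0 * log_amp) for log_amp, _ in targets.values())
```

A supervised pre-training loop would then fit the network's outputs to these targets on the CI configurations before switching to VMC optimization.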

Protocol 2: Benchmarking Performance

To quantitatively evaluate the effectiveness of physics-informed initialization, compare the following metrics against training from a random initialization:

- Time (or optimization steps) to reach chemical accuracy (an energy error of about 1.6 mHa, i.e., 1 kcal/mol, relative to FCI).

- Final achieved correlation energy relative to the FCI benchmark.

- Training stability (e.g., variance of loss over multiple runs).
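The three metrics above can be computed from logged energies with a few helper functions. This is a minimal sketch: the function names are illustrative and the energies in the usage example are toy values, not results from any calculation.

```python
import statistics

CHEMICAL_ACCURACY_HA = 1.6e-3  # about 1 kcal/mol, in Hartree

def steps_to_chemical_accuracy(energies, e_fci, tol=CHEMICAL_ACCURACY_HA):
    """Index of the first step whose error vs. FCI is within tol, else None."""
    for step, energy in enumerate(energies):
        if abs(energy - e_fci) <= tol:
            return step
    return None

def correlation_energy_recovered(e_final, e_hf, e_fci):
    """Percentage of the FCI correlation energy (E_FCI - E_HF) recovered."""
    return 100.0 * (e_final - e_hf) / (e_fci - e_hf)

def run_to_run_variance(final_energies):
    """Population variance of final energies over repeated training runs."""
    return statistics.pvariance(final_energies)

# Toy trajectory in Hartree (illustrative numbers only).
e_hf, e_fci = -1.0, -1.1
trajectory = [-1.00, -1.05, -1.09, -1.099, -1.0999]
first_step = steps_to_chemical_accuracy(trajectory, e_fci)
recovered = correlation_energy_recovered(trajectory[-1], e_hf, e_fci)
```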

The table below summarizes hypothetical benchmarking data illustrating the expected performance gain:

Table 1: Comparative Performance of Initialization Methods on a Model System

| Initialization Method | Steps to Chemical Accuracy | Final Correlation Energy (% of FCI) | Stability (Loss Variance) |

|---|---|---|---|

| Random | 50,000 | 99.5% | High |

| Truncated CI (CIS) | 25,000 | 99.7% | Medium |

| Truncated CI (CISD) | 10,000 | 99.9% | Low |

Workflow Visualization

The following diagram illustrates the complete workflow for implementing physics-informed initialization with a truncated CI solution, integrating into the broader neural network quantum state training procedure.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools and Their Functions

| Item | Function in Research |

|---|---|

| Classical Electronic Structure Package (e.g., PySCF, Molpro) | Computes the initial Hartree-Fock and truncated CI wave functions, which serve as the physics-informed guess [23]. |

| Neural Network Quantum State (NNQS) Framework | Provides the architecture (e.g., Transformer, MLP) to parameterize the wave function and perform variational Monte Carlo (VMC) optimization [23]. |

| Autoregressive Sampler with MCTS | Efficiently generates uncorrelated samples of electron configurations, enforcing physical constraints like particle number conservation [23]. |

| Compressed Hamiltonian Representation | Reduces memory and computational cost during the local energy evaluation, which is critical for scaling to larger systems [23]. |

Frequently Asked Questions (FAQs)

Q1: What is the core advantage of CMR theory over other methods like DFT+U or DMFT? CMR is a parameter-free, ab initio method that requires no adjustable Coulomb parameters (like the U parameter) and avoids double-counting issues of electron correlation energy, which are common challenges in LDA+U and DFT+DMFT approaches [17] [35]. It provides the correct atomic limit and handles electron correlations from the weak to strong regime efficiently [17].

Q2: My CMR calculation for a molecule at dissociation yields poor total energy. What could be wrong? This is likely related to the treatment of the residual correlation energy, E_c. The renormalization z-factor might require modification via a functional, f(z). Ensure that f(z) has been properly determined for your system. For minimal basis sets, f(z) can be derived analytically by fitting to exact Configuration Interaction (CI) results for a dimer of the same element. For larger basis sets, a numerical fit is required [17].

Q3: How does the computational cost of CMR scale, and what are the limiting factors? The computational workload for evaluating the non-local part of the energy is similar to the Hartree-Fock approach, scaling as O(N⁴) with the number of basis functions, N [17]. The most demanding part is the optimization of local configuration weights, which scales linearly with the number of inequivalent correlated atoms but exponentially with the number of local correlated orbitals per atom [17].
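To make the scaling statement concrete, the following rough cost model counts local Fock states, assuming each correlated spatial orbital can be empty, singly occupied with either spin, or doubly occupied (4 states per orbital). The function names and the simple product with the number of inequivalent atoms are illustrative assumptions, not the actual CMR implementation, which may reduce the count further through symmetry.

```python
def local_configurations(n_local_orbitals):
    """Number of Fock states on n spatial orbitals: 4 per orbital."""
    return 4 ** n_local_orbitals

def relative_optimization_cost(n_inequivalent_atoms, n_local_orbitals):
    """Crude cost proxy: linear in atoms, exponential in local orbitals."""
    return n_inequivalent_atoms * local_configurations(n_local_orbitals)

# One s orbital per atom versus an s + p valence shell (4 orbitals) per atom.
cost_s_only = relative_optimization_cost(n_inequivalent_atoms=2, n_local_orbitals=1)
cost_sp_shell = relative_optimization_cost(n_inequivalent_atoms=2, n_local_orbitals=4)
```

Under this model, doubling the number of inequivalent atoms doubles the cost, while adding a single correlated orbital per atom multiplies it by four, which is why the choice of correlated orbitals matters more than system size.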

Q4: Can CMR be applied to periodic solid-state systems? Yes. The CMR theory has been formulated and implemented for multi-band periodic lattice systems. This implementation has been benchmarked on materials with s and p orbitals, showing good performance for properties like equilibrium lattice constant, cohesive energy, and bulk modulus [35].

Troubleshooting Guides

Issue: Poor Description of Bond Dissociation

| # | Possible Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|---|

| 1 | Incorrect or missing residual correlation functional, f(z). | Check if the system under study is similar to the reference dimer used to fit f(z). Compare local double occupancy probabilities with reference data. | Determine f(z) by matching CMR total energy and local configuration weights to exact CI or high-level MCSCF results for a reference dimer of the same element [17]. |

| 2 | Insufficient treatment of local orbitals. | Verify that all relevant valence orbitals (e.g., 2s and 2p for nitrogen) are included as correlated orbitals. | For atoms with multiple orbitals, use separate functionals f_s(z_s) and f_p(z_p) for different orbital types, fitted against a dimer [17]. |

Issue: Slow Convergence or High Computational Cost

| # | Possible Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|---|

| 1 | Too many correlated orbitals per atom. | Review the number of local correlated orbitals selected for each atom. | Carefully choose the minimal set of chemically relevant orbitals to capture the essential correlation effects, as the cost scales exponentially with this number [17]. |

| 2 | Large number of inequivalent correlated atoms. | Analyze the system's symmetry to identify equivalent atoms. | Exploit the system's symmetry. The optimization cost scales linearly with the number of inequivalent atoms, so reducing this number through symmetry identification lowers the cost [17]. |

Experimental Protocols & Methodologies

Protocol: Determining the Residual Correlation Functional f(z)

Objective: To empirically derive the functional f(z) that corrects the residual correlation energy in CMR calculations for a specific element and basis set [17].

Procedure:

- Select a Reference Dimer: Choose a homonuclear diatomic molecule (e.g., H₂ or N₂) as the reference system.