Strategies for Improving Computational Efficiency in Large-Scale Biomedical Calculations

Abstract

This article provides a comprehensive overview of advanced strategies for enhancing computational efficiency in large-scale biomedical calculations, crucial for researchers and drug development professionals. It explores the foundational challenges of resource-intensive simulations, details cutting-edge methodological advances in AI model optimization and equivariant architectures, and offers practical troubleshooting guidance for balancing performance trade-offs. By validating these techniques through real-world case studies in molecular dynamics and drug discovery, the article serves as an essential guide for accelerating biomedical research, reducing computational costs, and enabling previously infeasible large-system simulations.

The Computational Bottleneck: Understanding Efficiency Challenges in Biomedical Simulations

Defining Computational Efficiency in Large-Scale Biomedical Calculations

Frequently Asked Questions (FAQs)

FAQ 1: What are the most common bottlenecks affecting computational efficiency in biomedical AI? Common bottlenecks include insufficient access to high-performance computing (HPC) resources like GPUs, inefficient data management strategies for large genomic datasets, and suboptimal configuration of AI model training parameters. The exponential growth in AI compute demand is rapidly outpacing the available infrastructure supply [1].

FAQ 2: How can I determine if my research workload is suitable for cloud computing? Cloud computing is ideal for projects requiring scalable resources, such as training large neural networks or processing multi-omics data. It provides on-demand access to specialized hardware like GPUs and avoids the capital expense of building in-house clusters. However, you must consider data privacy regulations like HIPAA and ensure your cloud provider complies with security standards for handling sensitive medical data [2].

FAQ 3: What is Hyperdimensional Computing (HDC) and how can it improve efficiency? Hyperdimensional Computing (HDC) is an emerging computational paradigm that represents data as points in a high-dimensional space (typically thousands of dimensions). Its key advantages for biomedical applications include:

- Robustness to noise and errors due to distributed, holographic data representation [3].

- High computational efficiency from simple vector operations, leading to fast and energy-efficient processing, which is crucial for real-time applications [3].

- Data agnosticism, allowing it to be applied to diverse data types, from biomedical signals to text [3].

FAQ 4: What are the best practices for managing computational costs in the cloud? Match the pricing model to the workload. Pay-as-you-go pricing suits variable or bursty jobs, because you pay only for the resources you consume, while reserved instances suit predictable, long-running workloads. Either choice can significantly reduce expenses compared to maintaining local workstations with comparable power [2].

FAQ 5: Why is data interoperability a challenge for computational efficiency? The healthcare and biotechnology sectors generate vast amounts of data in diverse and often incompatible formats. A lack of standardization makes data integration and analysis computationally expensive. Initiatives like the Fast Healthcare Interoperability Resources (FHIR) standard are crucial for creating a more efficient platform for data analysis [2].

Troubleshooting Guides

Issue 1: Slow Model Training Times

Problem: AI model training is taking significantly longer than expected, delaying research progress.

Possible Causes & Solutions:

| Cause | Diagnostic Steps | Solution |

|---|---|---|

| Insufficient GPU Resources | Monitor GPU utilization (e.g., using nvidia-smi). Check if memory is maxed out. | Scale up GPU resources via cloud platforms (e.g., access to NVIDIA H100 or A100 clusters) or utilize institutional HPC resources like the Frontera supercomputer [1] [4]. |

| Inefficient Data Pipeline | Check if CPU is at 100% while GPU utilization is low, indicating a data loading bottleneck. | Optimize data loading by using efficient formats (e.g., TFRecords), implementing prefetching, and ensuring data is stored on high-speed storage (e.g., SSDs). |

| Suboptimal Hyperparameters | Review training configuration. Is the model larger than necessary for the task? | Perform hyperparameter tuning (e.g., adjusting batch size, learning rate) and consider using a simpler model architecture or transfer learning. |

Issue 2: High Cloud Computing Costs

Problem: The cost of running computations in the cloud is exceeding the project's budget.

Possible Causes & Solutions:

| Cause | Diagnostic Steps | Solution |

|---|---|---|

| Unoptimized Resource Allocation | Analyze cloud provider's cost management dashboard to identify underutilized or over-provisioned resources. | Switch to a pay-as-you-go model for variable workloads or purchase reserved instances for predictable, long-running workloads to reduce costs [2]. |

| Inefficient Code or Algorithms | Profile code to identify sections consuming the most compute cycles. | Refactor code for efficiency and explore alternative, less computationally intensive algorithms like Hyperdimensional Computing (HDC) where applicable [3]. |

| Data Egress Fees | Review bills for costs associated with moving data out of the cloud network. | Plan workflows to keep data processing and storage within the same cloud ecosystem to minimize egress fees. |

Issue 3: Integration of AI Models into Clinical Workflows

Problem: A successfully trained and efficient AI model fails to be adopted in a real-world clinical setting.

Possible Causes & Solutions:

| Cause | Diagnostic Steps | Solution |

|---|---|---|

| Poor Usability and Integration | Get feedback from clinicians. Is the model's output easy to access and interpret within their existing systems? | Design AI tools to fit seamlessly into clinical workflows, involving clinicians and patients in the design process to ensure practicality [2]. |

| Lack of Trust and Transparency | Evaluate if the model's decision-making process is a "black box" to the end-user. | Employ explainable AI (XAI) techniques to make the model's predictions more interpretable and transparent for healthcare professionals. |

| Regulatory and Validation Hurdles | Check if the model meets regulatory standards for medical devices (e.g., FDA approvals). | Engage with regulatory experts early in the development process to ensure the model and its computational pipeline meet all necessary compliance and validation requirements [1]. |

Quantitative Data on Compute Demand

The table below summarizes key statistics highlighting the scale of current and projected computational demands in AI, which directly impacts biomedical research.

| Metric | Value | Source/Projection |

|---|---|---|

| Global AI Data Center Power Demand (Projected 2030) | 200 gigawatts | Bain & Company [1] |

| Cumulative AI Infrastructure Spending (Projected 2029) | $2.8 trillion | Citigroup [1] |

| U.S. Data-Center Electricity Use (Projected 2028) | Nearly triple current levels | Industry Forecast [1] |

| NVIDIA Data Center GPU Sales (Q2 2025) | $41.1 Billion (Quarterly, +56% YoY) | NVIDIA Financial Report [1] |

Experimental Protocols for Efficiency

Protocol 1: Benchmarking Computational Efficiency for a Protein Folding Workflow

This protocol outlines steps to measure and optimize the performance of a structure prediction pipeline, using tools like AlphaFold.

1. Objective: To quantitatively assess and improve the computational speed and resource utilization of a protein structure prediction experiment.

2. Materials & Computational Environment:

- HPC/Cloud Cluster: Access to a system with multiple GPUs (e.g., NVIDIA A100/V100).

- Software: AlphaFold2/3 installation via Docker or Singularity.

- Input Data: Protein sequence(s) in FASTA format.

- Monitoring Tools: `nvidia-smi` for GPU monitoring, `htop` for CPU/RAM, and custom timing scripts.

3. Methodology:

- Step 1 - Baseline Measurement: Run the prediction for a standard protein sequence (e.g., 250 residues) with default settings. Record the total wall-clock time, peak GPU memory usage, and average GPU utilization.

- Step 2 - Resource Variation: Repeat the experiment while varying the number of GPUs (1, 2, 4). Record the execution time for each configuration to identify scaling efficiency.

- Step 3 - Data Pipeline Optimization: If GPU utilization is low, investigate the data input pipeline. Implement data prefetching and ensure databases are stored on fast local storage. Rerun and measure performance.

- Step 4 - Analysis: Plot the speedup versus the number of GPUs. Calculate the parallel efficiency. The optimal configuration is the one that delivers the best trade-off between speed and resource cost.

4. Expected Output: A performance profile that identifies the most computationally efficient resource configuration for your specific hardware setup.
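The analysis in Step 4 can be sketched in a few lines of Python. This is a minimal illustration, assuming you have already recorded one wall-clock time per GPU count; the function name `scaling_report` and the timings below are hypothetical placeholders, not measurements from any real AlphaFold run. Speedup is taken relative to the smallest configuration, and parallel efficiency is speedup divided by the relative increase in GPUs.

```python
def scaling_report(wall_times):
    """Compute speedup and parallel efficiency per GPU count.

    wall_times: dict mapping number of GPUs -> wall-clock seconds.
    Returns a dict mapping number of GPUs -> (speedup, efficiency).
    """
    base_gpus = min(wall_times)
    base_time = wall_times[base_gpus]
    report = {}
    for n_gpus in sorted(wall_times):
        speedup = base_time / wall_times[n_gpus]
        efficiency = speedup / (n_gpus / base_gpus)
        report[n_gpus] = (speedup, efficiency)
    return report


# Hypothetical timings in seconds, for illustration only.
timings = {1: 1200.0, 2: 660.0, 4: 400.0}
for n_gpus, (speedup, efficiency) in scaling_report(timings).items():
    print(f"{n_gpus} GPU(s): speedup {speedup:.2f}x, efficiency {efficiency:.0%}")
```

An efficiency that falls well below 100% as GPUs are added suggests that the extra hardware is not paying for itself for that input size.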

Protocol 2: Implementing a Hyperdimensional Computing (HDC) Model for Biomedical Data Classification

This protocol provides a high-level methodology for applying HDC to a classification task, such as patient stratification based on medical records.

1. Objective: To create and evaluate an HDC model for classifying biomedical data, leveraging its computational efficiency and noise robustness.

2. Materials:

- Dataset: A labeled biomedical dataset (e.g., clinical features, gene expressions).

- Programming Language: Python with libraries like `numpy`.

- HDC Framework: A custom or open-source HDC library (e.g., `hdcpy`).

3. Methodology:

- Step 1 - Encoding: Map each data point (feature vector) to a high-dimensional space (e.g., D=10,000 dimensions). This involves creating a base hypervector for each feature and using HDC operations (binding ⊗, bundling ⊕) to form a single hypervector representing the sample [3].

- Step 2 - Training: Aggregate the hypervectors of all training samples belonging to the same class into a single "prototype" hypervector per class using the bundling operation [3].

- Step 3 - Inference: For a test sample, encode it into a query hypervector. Compare this query to all class prototype hypervectors using a similarity measure (e.g., cosine similarity). Assign the class of the most similar prototype.

- Step 4 - Validation: Evaluate the model's accuracy, precision, and recall on a held-out test set. Compare its training and inference speed, as well as energy consumption, against a traditional ML model (e.g., a neural network) on the same task.

4. Expected Output: A trained HDC classifier with performance metrics and a comparative analysis of its computational efficiency versus conventional methods.
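The encoding, training, and inference steps above can be sketched with `numpy` alone. This is a simplified illustration under several assumptions, not a reference implementation: features are assumed to be pre-quantized into a small number of integer levels, base hypervectors are random bipolar (+1/-1) vectors, binding is an elementwise product, bundling is an elementwise sum, and the data is synthetic. All names (`encode`, `train`, `predict`, `make_samples`) are hypothetical.

```python
import numpy as np

D = 10_000                     # hypervector dimensionality (Step 1)
N_FEATURES, N_LEVELS = 8, 4    # assumed: 8 features, each quantized to 4 levels
rng = np.random.default_rng(0)

# Random bipolar base hypervectors: one per feature and one per level.
feature_hvs = rng.choice([-1, 1], size=(N_FEATURES, D))
level_hvs = rng.choice([-1, 1], size=(N_LEVELS, D))


def encode(levels):
    """Bind each feature with its level (elementwise product), then bundle (sum)."""
    return (feature_hvs * level_hvs[levels]).sum(axis=0)


def train(X, y):
    """Bundle all encoded samples of a class into one prototype per class."""
    classes = np.unique(y)
    prototypes = np.stack(
        [np.sum([encode(x) for x in X[y == c]], axis=0) for c in classes]
    )
    return classes, prototypes


def predict(x, classes, prototypes):
    """Return the class whose prototype has the highest cosine similarity."""
    query = encode(x)
    sims = (prototypes @ query) / (
        np.linalg.norm(prototypes, axis=1) * np.linalg.norm(query)
    )
    return classes[np.argmax(sims)]


def make_samples(pattern, n, noise=0.2):
    """Synthetic data: copies of a level pattern with some entries randomized."""
    X = np.tile(pattern, (n, 1))
    flip = rng.random(X.shape) < noise
    X[flip] = rng.integers(0, N_LEVELS, size=int(flip.sum()))
    return X


pattern_a = np.array([0, 1, 2, 3, 0, 1, 2, 3])
pattern_b = np.array([3, 2, 1, 0, 3, 2, 1, 0])
X_train = np.vstack([make_samples(pattern_a, 50), make_samples(pattern_b, 50)])
y_train = np.array([0] * 50 + [1] * 50)
X_test = np.vstack([make_samples(pattern_a, 20), make_samples(pattern_b, 20)])
y_test = np.array([0] * 20 + [1] * 20)

classes, prototypes = train(X_train, y_train)
y_pred = np.array([predict(x, classes, prototypes) for x in X_test])
accuracy = float((y_pred == y_test).mean())
print(f"held-out accuracy: {accuracy:.2f}")
```

Training here is a single pass of additions, which is where the efficiency claim comes from. Real datasets would also need a quantization step and, often, level hypervectors that preserve ordering between neighboring levels.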

Workflow and Relationship Diagrams

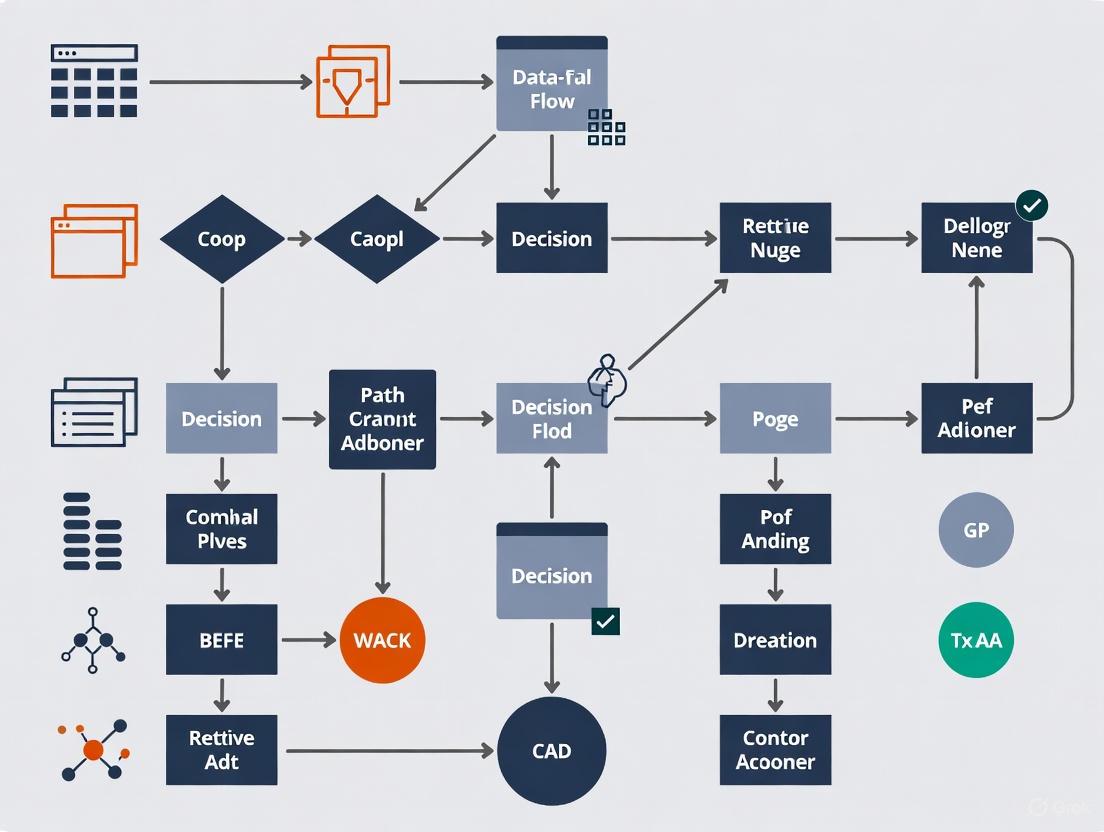

[Figure: AI Drug Discovery Pipeline (AI-driven drug discovery workflow)]

[Figure: HDC Data Encoding and Classification (HDC encoding logic)]

The Scientist's Toolkit: Essential Research Reagents & Solutions

The table below lists key computational tools and resources essential for conducting efficient large-scale biomedical calculations.

| Item Name | Function/Benefit | Example Use-Case |

|---|---|---|

| GPU-Accelerated Cloud Platforms (AWS, GCP, Azure) | Provides scalable, on-demand access to high-performance computing resources like NVIDIA GPUs, avoiding upfront hardware costs [2]. | Training large deep learning models for drug-target interaction prediction. |

| High-Performance Computing (HPC) Clusters | Offers massive parallel processing power for extremely demanding tasks, often available through national research institutions or universities [1] [4]. | Running large-scale molecular dynamics simulations or genome-wide association studies (GWAS). |

| Hyperdimensional Computing (HDC) Libraries | Enables the development of fast, energy-efficient, and noise-robust models for classification and pattern recognition tasks on biomedical data [3]. | Real-time classification of electroencephalography (EEG) signals or medical sensor data at the edge. |

| FHIR (Fast Healthcare Interoperability Resources) | A standard for exchanging healthcare information electronically, crucial for overcoming data interoperability challenges and streamlining data pipelines [2]. | Integrating and harmonizing electronic health record (EHR) data from multiple hospital systems for a unified analysis. |

| Containerization Software (Docker, Singularity) | Ensures computational reproducibility and simplifies software deployment by packaging code, dependencies, and environment into a portable container [1]. | Reproducing a complex AlphaFold protein structure prediction analysis across different computing environments. |

Troubleshooting Guides and FAQs

FAQ: Why does my computational model run slowly and produce inaccurate results when I try to increase its resolution?

This is a classic manifestation of the trade-off between processing speed, memory utilization, and accuracy. Higher-resolution models require significantly more memory to store complex data and more processing power for calculations, which can slow down simulations. If the system runs out of physical memory (RAM), it may use slower disk-based virtual memory, drastically reducing speed. Furthermore, with fixed computational resources, pushing for higher resolution can force compromises, like reducing the number of simulation iterations or using less accurate numerical methods, which harms the final result [5] [6]. To manage this, consider using surrogate modeling or adaptive mesh refinement, which increases resolution only in critical areas to maintain accuracy while conserving memory and computation time [7] [6].

FAQ: How can I accelerate my virtual screening process in drug discovery without missing promising compounds?

Ultra-large virtual screening of billions of compounds is computationally intensive. To improve speed without sacrificing accuracy, employ a multi-stage filtering approach. The first stage uses fast, less computationally expensive methods (like machine learning-based pre-screening or pharmacophore searches) to quickly narrow the candidate pool. Subsequent stages then apply more accurate, but slower, methods like molecular docking with high-quality scoring functions only to the top candidates [8]. This strategy effectively manages the speed-accuracy trade-off by ensuring that computational resources are allocated efficiently. Techniques like this have enabled screens of over 11 billion compounds [8]. Leveraging GPU accelerators can also provide a massive speedup for these parallelizable tasks [9].
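The multi-stage idea can be illustrated with a toy funnel. This is a sketch on synthetic numbers, not a docking pipeline: each "compound" is reduced to a hidden quality value, the cheap scorer is that value plus heavy noise, and the expensive scorer is that value plus light noise. The function `multistage_screen` and both scorers are hypothetical stand-ins for a real pre-filter and a real docking program.

```python
import numpy as np


def multistage_screen(library, cheap_score, expensive_score,
                      keep_fraction=0.01, n_hits=10):
    """Two-stage funnel: rank everything cheaply, re-score only the shortlist."""
    cheap = cheap_score(library)
    n_keep = max(n_hits, int(round(len(library) * keep_fraction)))
    shortlist = np.argsort(cheap)[-n_keep:]          # indices with best cheap scores
    refined = expensive_score(library[shortlist])    # costly step on a small input
    order = np.argsort(refined)[::-1][:n_hits]
    return shortlist[order], n_keep


rng = np.random.default_rng(0)
# Hypothetical library: one hidden "true quality" number per compound.
true_quality = rng.normal(size=100_000)


def cheap(q):
    """Fast but noisy stand-in for an ML or pharmacophore pre-screen."""
    return q + rng.normal(scale=1.0, size=q.shape)


def expensive(q):
    """Slow but accurate stand-in for docking with a good scoring function."""
    return q + rng.normal(scale=0.05, size=q.shape)


hits, n_expensive_calls = multistage_screen(true_quality, cheap, expensive)
print(f"expensive evaluations: {n_expensive_calls} of {len(true_quality)}")
print(f"mean true quality of hits: {true_quality[hits].mean():.2f}")
```

The `keep_fraction` parameter is the main trade-off knob: a smaller value saves more compute but raises the chance that a good compound is discarded by the noisy first stage.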

FAQ: My simulation fails on a high-performance computing (HPC) cluster with a "memory allocation" error. What steps should I take?

This error typically indicates that your job needs more memory than is available on the compute node, or more than was requested in the job script. Follow this troubleshooting protocol:

- Profile Memory Usage: Run your application on a small-scale test problem locally while using profiling tools to identify memory bottlenecks and measure baseline memory consumption per process.

- Check Job Script Parameters: Verify the memory specifications in your job submission script. Ensure you have not requested an unrealistic amount of memory per node or per CPU.

- Optimize Your Code:

- Memory Efficiency: Check for and eliminate memory leaks. Use data structures that are appropriate for your problem size.

- Data Distribution: In distributed-memory parallel computing (using MPI), ensure the data and workload are evenly balanced across all processes to prevent a single node from being overloaded [9].

- High-Performance Algorithms: Implement algorithms optimized for sparse data if applicable to reduce memory footprint [9].
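For Python workloads, the profiling step can be sketched with the standard-library `tracemalloc` module. The helper `peak_memory_mib` and the toy workload `build_pairwise_table` are hypothetical names used only for illustration. Note that `tracemalloc` tracks allocations made through Python's memory allocator, so memory allocated directly by native libraries may not be counted.

```python
import tracemalloc


def peak_memory_mib(func, *args, **kwargs):
    """Run func and return (result, peak traced memory in MiB)."""
    tracemalloc.start()
    try:
        result = func(*args, **kwargs)
        _, peak_bytes = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()
    return result, peak_bytes / 2**20


def build_pairwise_table(n):
    """Toy workload whose memory grows quadratically with problem size."""
    return [[float(i - j) for j in range(n)] for i in range(n)]


# Measure a few small problem sizes to see how memory scales before
# choosing the memory request for the full-size job.
for n in (100, 200, 400):
    _, peak = peak_memory_mib(build_pairwise_table, n)
    print(f"n={n:4d}  peak={peak:7.2f} MiB")
```

Fitting the measured peaks against problem size gives a rough estimate of what the production run will need, which can then be compared with the per-node memory of the cluster.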

FAQ: What are the best practices for balancing speed and accuracy in a mechanistic pharmacological model?

Mechanistic models that incorporate detailed biological pathways can become computationally prohibitive. The key is to find the right level of model abstraction.

- Start Simple: Begin with a coarse-grained model and progressively add mechanistic detail only where it is necessary to capture the essential biology relevant to your research question [10].

- Use Surrogate Models: For tasks like parameter optimization or uncertainty quantification that require thousands of model runs, replace the high-fidelity model with a fast, data-driven surrogate model (e.g., a neural network) trained on the input-output behavior of the full model [7] [6].

- Sensitivity Analysis: Perform a global sensitivity analysis to identify the model parameters to which the output is most sensitive. You can then fix non-influential parameters to their nominal values, reducing the computational cost of subsequent analyses without impacting output accuracy [10].
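The surrogate idea can be shown with a deliberately small example. Assumptions: the "full model" below is a hypothetical stand-in (explicit-Euler integration of first-order elimination, dC/dt = -k·C), and the surrogate is a quadratic polynomial fitted to the logarithm of its output rather than the neural network mentioned above. The point is the workflow, which is to run the expensive model a few times, fit a cheap approximation, and then query the approximation instead.

```python
import numpy as np


def full_model(k_elim, dose=100.0, t_end=12.0, n_steps=20_000):
    """Stand-in for an expensive simulator: concentration at t_end."""
    dt = t_end / n_steps
    conc = dose
    for _ in range(n_steps):
        conc -= k_elim * conc * dt   # explicit Euler step of dC/dt = -k*C
    return conc


# 1) Run the expensive model at a handful of design points.
train_k = np.linspace(0.05, 0.5, 8)
train_out = np.array([full_model(k) for k in train_k])

# 2) Fit a cheap surrogate (polynomial in log space, an assumed choice).
coeffs = np.polyfit(train_k, np.log(train_out), deg=2)


def surrogate(k_elim):
    """Fast approximation of full_model, valid inside the training range."""
    return np.exp(np.polyval(coeffs, k_elim))


# 3) Check the surrogate against the full model at an unseen point.
test_k = 0.23
rel_err = abs(surrogate(test_k) - full_model(test_k)) / full_model(test_k)
print(f"relative error at k={test_k}: {rel_err:.2e}")
```

As with any surrogate, the approximation should only be trusted inside the parameter range it was trained on (here k between 0.05 and 0.5).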

Experimental Protocols for Benchmarking Performance

Protocol 1: Quantifying the Speed-Accuracy Trade-off in a Decision-Making Model

This protocol is based on established practices in neuroscience and psychology for studying the Speed-Accuracy Tradeoff (SAT) [5].

- Objective: To empirically measure how changes in decision thresholds affect the reaction time and accuracy of a computational model.

- Experimental Setup:

- Model: Implement a sequential sampling model (e.g., a Drift Diffusion Model or a Random Walk model) [5].

- Task: The model performs a two-choice discrimination task (e.g., identifying a signal from noise).

- Manipulation: Systematically vary the decision threshold, which is the amount of evidence required to make a choice.

- High Threshold: A conservative setting demanding more evidence.

- Low Threshold: A liberal setting demanding less evidence.

- Data Collection: For each threshold level, run a large number of simulated trials and record:

- Mean Reaction Time (RT)

- Percentage of Correct Choices (Accuracy)

- Analysis: Plot accuracy against mean reaction time. The resulting curve is the characteristic SAT curve for the model. A higher threshold will yield higher accuracy but longer RT, and vice versa [5].
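A minimal simulation of this protocol might look as follows. It assumes a basic two-boundary drift diffusion process with symmetric thresholds at ±threshold, where hitting the upper boundary counts as a correct choice; the drift, noise, and step-size values are arbitrary illustrative choices, and `simulate_ddm` is a hypothetical helper.

```python
import numpy as np


def simulate_ddm(threshold, drift=0.5, noise=1.0, dt=0.005,
                 n_trials=2000, max_time=20.0, seed=0):
    """Simulate a drift diffusion model; return (mean RT, accuracy)."""
    rng = np.random.default_rng(seed)
    evidence = np.zeros(n_trials)
    rt = np.full(n_trials, np.nan)
    correct = np.zeros(n_trials, dtype=bool)
    active = np.ones(n_trials, dtype=bool)
    for step in range(1, int(max_time / dt) + 1):
        n_active = int(active.sum())
        if n_active == 0:
            break
        # Euler-Maruyama update for the trials that are still undecided.
        evidence[active] += (drift * dt
                             + noise * np.sqrt(dt) * rng.standard_normal(n_active))
        hit_upper = active & (evidence >= threshold)
        hit_lower = active & (evidence <= -threshold)
        done = hit_upper | hit_lower
        rt[done] = step * dt
        correct[hit_upper] = True
        active &= ~done
    finished = ~active
    return float(np.nanmean(rt)), float(correct[finished].mean())


for threshold in (0.5, 1.0, 2.0):
    mean_rt, accuracy = simulate_ddm(threshold)
    print(f"threshold={threshold:.1f}  mean RT={mean_rt:.2f}  accuracy={accuracy:.2f}")
```

Plotting the printed accuracy values against the mean reaction times gives the SAT curve described in the analysis step.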

Protocol 2: Benchmarking Memory and Speed for Molecular Dynamics Simulations

This protocol outlines a standard method for evaluating computational performance in molecular modeling [11].

- Objective: To determine the optimal system size and time step for a Molecular Dynamics (MD) simulation that balances computational efficiency with physical accuracy.

- System Preparation: Prepare a model of a protein-ligand complex in a solvated box. Create multiple systems of increasing size (e.g., by varying the dimensions of the water box or using different protein complexes).

- Benchmarking Run:

- Software: Use a common MD package (e.g., GROMACS, NAMD).

- Hardware: Perform all runs on identical hardware (e.g., the same node of an HPC cluster).

- Parameters: For each system size, run a short simulation (e.g., 1 ns) using different time steps (e.g., 1 fs, 2 fs).

- Metrics Collection: For each run, log:

- Wall-clock Time: Total time to complete the simulation.

- Memory Usage: Peak memory consumed by the process.

- Performance: Simulation speed in nanoseconds-per-day.

- Accuracy/Stability: Check if the simulation remained stable (did not crash) and monitor conservation of energy.

- Analysis: Create plots of memory usage and simulation speed versus system size. This will show how resource demands scale. The largest stable time step that does not compromise energy conservation provides the best speed for a given accuracy.
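The two headline metrics can be computed from logged values with a couple of helper functions. This is a sketch with hypothetical names and made-up numbers; most MD packages also report ns/day themselves in their log output, so this is mainly useful for custom timing scripts. Energy drift is estimated here as the slope of a straight-line fit to total energy over time, which is one simple convention among several.

```python
import numpy as np


def ns_per_day(n_steps, timestep_fs, wall_seconds):
    """Simulation throughput in nanoseconds of simulated time per day."""
    simulated_ns = n_steps * timestep_fs * 1e-6   # 1 fs = 1e-6 ns
    return simulated_ns / wall_seconds * 86_400


def energy_drift(times_ns, total_energies):
    """Slope of a linear fit to total energy vs. time (energy units per ns)."""
    slope, _intercept = np.polyfit(times_ns, total_energies, 1)
    return float(slope)


# Hypothetical run: 500,000 steps at 2 fs completed in one hour of wall time.
print(f"{ns_per_day(500_000, 2.0, 3600.0):.1f} ns/day")

# Hypothetical energy log (arbitrary units) sampled every 0.25 ns.
times = np.array([0.0, 0.25, 0.5, 0.75, 1.0])
energies = np.array([-1000.0, -999.9, -1000.1, -999.8, -999.9])
print(f"drift: {energy_drift(times, energies):.3f} energy units per ns")
```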

Data Presentation

Table 1: Performance Trade-offs in Common Computational Methods

| Computational Method | Typical Processing Speed | Memory Utilization | Typical Accuracy | Best Use Case |

|---|---|---|---|---|

| Machine Learning (Trained Model) | Very Fast (for inference) | Low to Moderate | High (for in-domain data) | Rapid prediction and classification on large datasets [7]. |

| Molecular Docking | Moderate to Fast | Low | Moderate | Initial, high-throughput virtual screening of compound libraries [8] [11]. |

| Molecular Dynamics (MD) | Slow | High | High | Detailed study of atomistic interactions and pathways over time [11]. |

| Finite Element Analysis (FEA) | Slow | High | High | Simulating physical stresses and fluid dynamics in complex geometries [7] [6]. |

| Surrogate Modeling | Very Fast | Very Low | Variable (Good within training domain) | Optimization and uncertainty quantification when full-model runs are too costly [6]. |

Table 2: Impact of HPC Techniques on Performance Metrics

| HPC Technique | Effect on Processing Speed | Effect on Memory Utilization | Impact on Accuracy |

|---|---|---|---|

| Parallel Computing (MPI/OpenMP) | Significant Increase | Increase (due to data replication) | No Direct Impact (preserves model fidelity) [9]. |

| GPU Acceleration | Massive Increase for parallel tasks | Moderate Increase | No Direct Impact (preserves model fidelity) [8] [9]. |

| Adaptive Mesh Refinement | Significant Increase | Significant Decrease | Minimal Loss (resolution is high only where needed) [6]. |

| Mixed-Precision Arithmetic | Moderate Increase | Decrease | Potential Minor Loss (from reduced numerical precision) [9]. |
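The memory and accuracy effects in the last row can be checked directly on a small example. The sketch below compares a matrix product in 64-bit and 32-bit floating point using `numpy`. Strictly speaking this demonstrates reduced precision rather than a full mixed-precision scheme, which would typically keep selected accumulations or master copies in higher precision; the function name and matrix size are arbitrary.

```python
import numpy as np


def precision_tradeoff(n=512, seed=0):
    """Return (memory ratio, relative error) of float32 vs. float64 matmul."""
    rng = np.random.default_rng(seed)
    a = rng.random((n, n))
    b = rng.random((n, n))
    reference = a @ b                              # float64 result
    a32, b32 = a.astype(np.float32), b.astype(np.float32)
    low = (a32 @ b32).astype(np.float64)           # float32 result
    rel_err = np.linalg.norm(low - reference) / np.linalg.norm(reference)
    mem_ratio = a32.nbytes / a.nbytes
    return mem_ratio, float(rel_err)


mem_ratio, rel_err = precision_tradeoff()
print(f"memory ratio (float32/float64): {mem_ratio:.2f}")
print(f"relative error of float32 product: {rel_err:.2e}")
```

Whether an error of this size is acceptable depends on the application; long simulations can accumulate rounding error in ways a single product does not show.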

Visualizations

[Figure: Trade-off Relationships]

[Figure: Multi-Stage Screening Workflow]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Efficient Research

| Tool / Solution | Function in Research |

|---|---|

| Sequential Sampling Models (e.g., DDM) | Provides a mathematical framework to quantitatively model and understand the speed-accuracy trade-off in decision-making processes [5]. |

| Surrogate Models (Reduced-Order Models) | Acts as a fast, approximate substitute for a high-fidelity simulator, enabling rapid exploration of parameter spaces and optimization when the full model is too costly [7] [6]. |

| Adaptive Mesh Refinement (AMR) | Dynamically adjusts the computational grid resolution, concentrating resources where needed most. This "reagent" optimizes memory and CPU cycles for a given level of accuracy [6]. |

| GPU-Accelerated Libraries (e.g., CUDA) | Provides a massive boost in processing speed for parallelizable tasks like molecular docking, deep learning, and certain numerical simulations [8] [9]. |

| Message Passing Interface (MPI) | A communication "reagent" that enables distributed-memory parallel computing, allowing a single problem to be solved across multiple nodes of an HPC cluster [9]. |

| Ultra-Large Virtual Compound Libraries | Large-scale collections of synthesizable molecules (billions to tens of billions) that serve as the input material for virtual screening campaigns in drug discovery [8]. |

Common Bottlenecks in Molecular Dynamics and Drug Discovery Workflows

Troubleshooting Guides

Sampling Limitations and Inefficient Conformational Exploration

Problem: My MD simulation is not efficiently crossing energy barriers or sampling biologically relevant states within a practical simulation timeframe.

Solution: Implement enhanced sampling methods to accelerate the exploration of conformational space.

Detailed Methodology:

- Diagnose the Barrier: Identify the slow conformational degree of freedom (e.g., a dihedral rotation, protein domain motion).

- Select an Enhanced Sampling Method:

- Accelerated Molecular Dynamics (aMD): Apply a boost potential to the system's dihedral and/or total potential energy. This method decreases energy barriers, allowing more frequent transitions between low-energy states without requiring pre-defined reaction coordinates [12]. Key parameters to set are the threshold energy (E) and the acceleration factor (α).

- Metadynamics: Use a history-dependent bias potential in a pre-defined collective variable (CV) space to push the system away from already-visited states. This helps map free energy landscapes.

- Run and Analyze: Perform multiple, independent aMD or metadynamics simulations. Analyze the combined trajectories to identify metastable states and calculate free energies.
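To make the role of the two aMD parameters concrete, the sketch below evaluates the boost potential in its commonly used form, ΔV = (E - V)² / (α + E - V) when V < E and ΔV = 0 otherwise. This is an illustration assuming that standard functional form; the function name and the numbers are hypothetical, and a production simulation would use the aMD implementation of the chosen MD package rather than code like this.

```python
import numpy as np


def amd_boost(potential, e_threshold, alpha):
    """aMD boost energy for each potential-energy value.

    potential   : potential energy V (scalar or array)
    e_threshold : threshold energy E; no boost is applied where V >= E
    alpha       : acceleration factor (> 0); smaller values flatten the
                  landscape below E more aggressively
    """
    v = np.asarray(potential, dtype=float)
    gap = np.maximum(e_threshold - v, 0.0)    # zero wherever V >= E
    return gap**2 / (alpha + gap)


# Hypothetical energies (arbitrary units): deep wells receive the largest boost.
energies = np.array([-10.0, -5.0, 0.0, 2.0, 5.0])
boost = amd_boost(energies, e_threshold=2.0, alpha=2.0)
for v, dv in zip(energies, boost):
    print(f"V={v:6.1f}  boost={dv:5.2f}  modified V={v + dv:6.2f}")
```

Because the boost changes the sampled distribution, free energies recovered from aMD trajectories need to be reweighted by the recorded boost values.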

Performance Metrics for Enhanced Sampling Protocols

| Method | Key Parameter | Typical Simulation Length | Primary Use Case |

|---|---|---|---|

| Accelerated MD (aMD) | Dihedral/Torsional Boost Potential | 100 ns - 1 μs | Exploring large-scale conformational changes, cryptic pockets [12] |

| Metadynamics | Collective Variable (CV) Definition | 50 - 500 ns | Calculating free energy landscapes, protein-ligand binding |

| Conventional MD | N/A | 1 μs - 1 ms+ | Studying rapid, local dynamics and equilibrium fluctuations [13] |

Inaccurate Force Fields and Protein-Ligand Binding Affinities

Problem: My simulation results do not agree with experimental data for ligand binding affinity or protein dynamics, suggesting potential force field inaccuracies.

Solution: Utilize a multi-scale approach that combines quantum mechanics (QM) with molecular mechanics (MM) and leverage free energy perturbation (FEP) methods for more accurate binding affinity predictions.

Detailed Methodology:

- System Preparation: Parameterize the ligand using a QM method (e.g., HF/6-31G*) to derive accurate partial charges and torsion potentials. Use a modern biomolecular force field (e.g., AMBER, CHARMM) for the protein.

- QM/MM Simulation: For critical interactions (e.g., metal ion coordination, covalent binding), set up a QM/MM simulation where the ligand and key protein residues are treated with QM, and the rest of the system with MM.

- Free Energy Calculation: Use Free Energy Perturbation (FEP) or Thermodynamic Integration (TI) to compute relative binding free energies. This involves running a series of simulations where one ligand is alchemically transformed into another within the binding site [13].

- Validation: Compare the calculated binding free energies and protein-ligand interaction geometries with available experimental data (e.g., IC₅₀, Kᵢ, crystallographic structures).
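The free energy step can be illustrated with the exponential-averaging (Zwanzig) estimator, ΔG = -kT ln⟨exp(-ΔU/kT)⟩, and the thermodynamic cycle that turns per-window results into a relative binding free energy. This is a sketch under the assumption that the per-frame energy differences ΔU (in kcal/mol) have already been extracted from the simulations; the function names and the sample numbers are hypothetical, and production work usually relies on more robust estimators provided by dedicated analysis tools.

```python
import numpy as np

KB_KCAL = 0.0019872041  # Boltzmann constant in kcal/(mol*K)


def fep_delta_g(delta_u, temperature=298.15):
    """Zwanzig estimator: dG = -kT * ln < exp(-dU / kT) >.

    delta_u: energy differences U_target - U_reference (kcal/mol),
             sampled from the reference state.
    """
    beta = 1.0 / (KB_KCAL * temperature)
    x = -beta * np.asarray(delta_u, dtype=float)
    x_max = x.max()                      # shift for numerical stability
    log_mean = x_max + np.log(np.mean(np.exp(x - x_max)))
    return -log_mean / beta


def relative_binding_free_energy(dg_complex_windows, dg_solvent_windows):
    """ddG_bind = dG(A->B in complex) - dG(A->B in solvent)."""
    return sum(dg_complex_windows) - sum(dg_solvent_windows)


# Hypothetical energy differences for two windows in each leg of the cycle.
rng = np.random.default_rng(0)
complex_legs = [fep_delta_g(rng.normal(0.8, 0.3, 500)) for _ in range(2)]
solvent_legs = [fep_delta_g(rng.normal(0.5, 0.3, 500)) for _ in range(2)]
ddg = relative_binding_free_energy(complex_legs, solvent_legs)
print(f"relative binding free energy: {ddg:.2f} kcal/mol")
```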

High Computational Cost for Large Systems

Problem: Simulations of large biological systems (e.g., ribosomes, viral capsids) are prohibitively slow, even on high-performance computing (HPC) resources.

Solution: Optimize your workflow using large-scale optimization techniques and efficient hardware utilization.

Detailed Methodology:

- Software and Hardware Optimization:

- Use MD software (e.g., GROMACS, NAMD, OpenMM) optimized for GPU acceleration.

- Leverage distributed computing resources or cloud computing (e.g., AWS ParallelCluster) for massive parallelism [14].

- Algorithmic Optimization: For problems like virtual screening, employ large-scale optimization algorithms to manage computational complexity:

- Linear Programming (LP) Solvers: Use advanced solvers like PDLP, which can solve problems with 100 billion variables using first-order methods and avoid memory bottlenecks of traditional solvers [15].

- Composable Coresets: For massive datasets, partition data among machines, compute small summaries (sketches) on each, and then solve the optimization problem on the combined sketch [15].

- System Reduction: When possible, simulate only the relevant functional subunit of a large complex to reduce the number of atoms.
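The composable-coreset pattern described above (partition, summarize each part, solve on the combined summaries) can be sketched for one concrete problem. As an assumed example, the code below picks a diverse subset of points, standing in for compound descriptors, using greedy farthest-point selection both as the per-partition summary and as the final solver. The problem choice, the function names, and the random data are illustrative assumptions, not the specific method of [15].

```python
import numpy as np


def farthest_point_subset(points, k):
    """Greedy farthest-point selection; returns k indices into points."""
    chosen = [0]
    dist = np.linalg.norm(points - points[0], axis=1)
    for _ in range(1, min(k, len(points))):
        nxt = int(np.argmax(dist))           # point farthest from all chosen
        chosen.append(nxt)
        dist = np.minimum(dist, np.linalg.norm(points - points[nxt], axis=1))
    return np.array(chosen)


def coreset_select(points, k, n_partitions):
    """Select k diverse points via per-partition summaries."""
    parts = np.array_split(np.arange(len(points)), n_partitions)
    # Each partition could be processed on a different machine.
    summary_idx = np.concatenate(
        [part[farthest_point_subset(points[part], k)] for part in parts]
    )
    # Solve the same problem on the much smaller union of summaries.
    final_local = farthest_point_subset(points[summary_idx], k)
    return summary_idx[final_local], len(summary_idx)


rng = np.random.default_rng(0)
descriptors = rng.normal(size=(10_000, 16))   # hypothetical compound descriptors
selected, n_summary = coreset_select(descriptors, k=10, n_partitions=8)
print(f"final solve used {n_summary} of {len(descriptors)} points")
print("selected indices:", selected.tolist())
```

The final step only ever sees `k * n_partitions` points, which is what keeps memory use bounded regardless of the full dataset size.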

Data Management and Analysis Bottlenecks

Problem: The volume of trajectory data (terabytes) is overwhelming, and analysis is time-consuming, hindering insight generation.

Solution: Implement a "Lab in a Loop" paradigm with automated, FAIR (Findable, Accessible, Interoperable, Reusable) data management [14].

Detailed Methodology:

- Automate Data Pipelines: Use workflow management tools (e.g., Nextflow, Snakemake) to chain simulation setup, execution, and analysis.

- Adopt FAIR Data Principles: Store trajectories and metadata in a structured, cloud-native database (e.g., using Amazon S3, Amazon DataZone) to ensure data is Findable, Accessible, Interoperable, and Reusable [14].

- Integrate AI-Assisted Analysis: Deploy AI tools to sift through large datasets. For instance, use an AI research agent to automatically analyze trajectories for specific conformational events or to cross-reference results with scientific literature, saving thousands of manual hours [14].

Frequently Asked Questions (FAQs)

Q1: What is the biggest remaining challenge in structure-based drug discovery, and how can MD help? The primary challenge is target flexibility and the existence of cryptic pockets. Proteins and ligands are highly flexible, and most molecular docking tools keep the protein fixed or allow only limited flexibility. This limits the ability to discover novel allosteric sites. MD simulations address this by modeling full conformational changes. The Relaxed Complex Method is a key solution, where multiple target conformations (snapshots) from an MD trajectory are used for docking, increasing the chance of finding hits that bind to transient pockets [12].

Q2: How can I make my virtual screening of ultra-large libraries (billions of compounds) computationally feasible? This requires a multi-pronged approach leveraging modern computing resources and algorithms:

- Cloud and GPU Computing: Utilize scalable cloud computing (e.g., AWS) and GPU-accelerated docking software to process millions of compounds per day [12].

- Advanced Optimization: Employ large-scale optimization techniques. For example, column generation reformulates the problem into a manageable master problem and subproblems, drastically reducing computational complexity [16]. Linear programming solvers like PDLP can handle problems on a 100-billion-variable scale [15].

Q3: Our experimental and clinical data are siloed. How can we integrate them for better AI models without compromising security? Federated learning is an advanced technique designed for this exact problem. It allows multiple institutions to collaboratively train an AI model without sharing or moving the underlying raw data. Each party trains the model on its local data, and only the model updates (e.g., weights, gradients) are securely aggregated. This protects intellectual property and patient privacy while leveraging diverse datasets to build more robust and accurate models for tasks like predicting protein-ligand interactions [14].
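The aggregation step can be sketched with a toy version of federated averaging. Assumptions: the "model" is a plain linear regression, each client holds synthetic data, and the server simply takes a sample-size-weighted average of client weights. Secure aggregation, communication, and privacy mechanisms are deliberately left out, and all names are hypothetical.

```python
import numpy as np


def local_update(weights, X, y, lr=0.1, epochs=5):
    """A few gradient steps of least-squares regression on one client's data."""
    w = weights.copy()
    for _ in range(epochs):
        grad = 2.0 * X.T @ (X @ w - y) / len(y)
        w -= lr * grad
    return w


def federated_average(client_weights, client_sizes):
    """Server step: average client weights, weighted by local sample count."""
    return np.average(np.stack(client_weights), axis=0,
                      weights=np.asarray(client_sizes, dtype=float))


rng = np.random.default_rng(0)
true_w = np.array([1.5, -2.0, 0.5])

# Three hypothetical institutions with differently sized private datasets.
clients = []
for n_samples in (50, 120, 200):
    X = rng.normal(size=(n_samples, 3))
    y = X @ true_w + rng.normal(scale=0.01, size=n_samples)
    clients.append((X, y))

global_w = np.zeros(3)
for _ in range(20):
    # Only the updated weights leave each client; raw X and y never do.
    updates = [local_update(global_w, X, y) for X, y in clients]
    global_w = federated_average(updates, [len(y) for _, y in clients])

print("learned weights:", np.round(global_w, 3))
```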

Q4: Are AI-predicted protein structures (like from AlphaFold) reliable for MD simulations and drug discovery? Yes, but with considerations. AlphaFold has provided over 214 million predicted protein structures, offering unprecedented opportunities for targets without experimental structures [12]. These models are excellent starting points for:

- Identifying binding sites.

- Structure-based virtual screening.

However, they typically represent a single, static conformation. For MD, it is crucial to run an initial equilibration simulation to relax the structure into a more physiologically realistic state, as AI models may contain local steric clashes or strained loops.

Essential Computational Tools for Modern Drug Discovery

| Resource/Solution | Type | Primary Function |

|---|---|---|

| REAL Database (Enamine) | Compound Library | An ultra-large, commercially available library of >6.7 billion make-on-demand compounds for virtual screening [12]. |

| AlphaFold Protein Structure Database | Structural Resource | Provides over 214 million predicted protein structures for targets lacking experimental data, enabling SBDD for novel targets [12]. |

| PDLP Solver (Google OR-Tools) | Optimization Algorithm | A large-scale linear programming solver capable of handling problems with 100 billion variables, useful for complex optimization in workflow management [15]. |

| eProtein Discovery System (Nuclera) | Automated Workstation | Automates protein expression and purification, moving from DNA to purified protein in under 48 hours to streamline upstream protein production for structural studies [17]. |

| Biological Foundation Models (e.g., ESM-2) | AI Model | Pre-trained deep learning models that generate informative representations (embeddings) of protein sequences, used to predict function, structure, and druggability [14]. |

The Impact of Model Architecture on Computational Resource Demands

For researchers in computational fields, selecting the right model architecture is a critical decision that directly impacts resource consumption, experimental feasibility, and time-to-results. This guide provides practical troubleshooting advice and methodologies to help you navigate the trade-offs between different deep learning architectures, optimize them for efficiency, and deploy them successfully in resource-constrained environments.

Core Concepts: Architectural Trade-Offs

The choice between popular architectures like Convolutional Neural Networks (CNNs), Vision Transformers (ViTs), and Recurrent Neural Networks (RNNs) involves fundamental trade-offs between accuracy, computational cost, and data efficiency.

Table 1: Comparison of Deep Learning Model Architectures

| Architecture | Computational Demand | Typical Memory Footprint | Data Efficiency | Key Strengths |

|---|---|---|---|---|

| Convolutional Neural Networks (CNNs) [18] [19] | Moderate to High | Moderate | High (good with smaller datasets) [18] | Capturing local patterns, spatial hierarchies; ideal for image data [19] |

| Vision Transformers (ViTs) [18] [19] [20] | Very High (due to self-attention) | High (can be lower during training) [20] | Low (requires large datasets) [19] | Capturing global dependencies and long-range interactions in data [20] |

| Recurrent Neural Networks (RNNs/LSTMs) [18] | Low (during inference) | Low | Moderate | Real-time sequential data processing on limited resources [18] |

| Diffusion Models [18] | Very High | Very High | Low | High-quality, diverse generative outputs (images, video) [18] |

Optimization Methodologies and Experimental Protocols

Post-Training Optimization (PTO) Workflow

For researchers with a pre-trained model, Post-Training Optimization offers a pathway to drastically reduce deployment overhead without retraining. The following protocol, adapted from studies on medical imaging AI, provides a systematic approach [21].

Figure 1: A systematic workflow for optimizing pre-trained models using Post-Training Optimization (PTO) techniques.

Experimental Protocol:

- Baseline Establishment: Run your pre-trained model on a validation dataset to establish baseline performance metrics (e.g., accuracy, Dice score) and baseline runtime metrics (latency, peak memory usage) [21].

- Graph Optimization (GO):

- Objective: To simplify the model's computational graph for more efficient execution.

- Method: Use frameworks like TensorFlow or OpenVINO to apply techniques such as node merging, kernel optimization, and stride optimizations [21].

- Validation: Run the optimized model on the same validation set. If the performance drop is less than 2%, proceed. Otherwise, revert [21].

- Post-Training Quantization (PTQ):

- Objective: To reduce the numerical precision of the model's weights, decreasing memory footprint and speeding up computation.

- Method: Convert model parameters from 32-bit floating-point (FP32) to 8-bit integers (INT8). This can reduce model size by up to 75% [22] [21].

- Validation: Again, validate that the performance drop remains within an acceptable threshold (e.g., <2%) [21].
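To make the FP32-to-INT8 step concrete, the sketch below applies symmetric per-tensor quantization to a short list of weights and measures the round-trip error. It is an illustration under simplifying assumptions, not the procedure used in [21]: production toolkits also calibrate activations and often quantize per channel, and the function names and sample weights here are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float values from the integer representation."""
    return [q * scale for q in quantized]


# Hypothetical FP32 weights from one layer.
weights = [0.50, -1.27, 0.03, 0.90]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Rounding error is bounded by half a quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))

# Storing 8 bits instead of 32 bits per weight removes 75% of the size.
size_reduction = 1 - 8 / 32
print(q, max_error, size_reduction)
```

The 75% figure follows directly from the bit widths; the accuracy impact does not, which is why the validation step against the baseline remains necessary.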

Comparative Analysis Protocol: CNN vs. ViT

To empirically determine the best architecture for a specific task, such as image-based prediction, a structured comparative experiment is essential. The following protocol is based on benchmark studies from face recognition and wildfire prediction research [20] [23].

Experimental Protocol:

- Model Selection & Setup:

- Select one CNN model (e.g., ResNet, EfficientNet) and one ViT model (e.g., ViT-Base).

- Use standard hyperparameters for a fair comparison: image size (e.g., 224x224), batch size (e.g., 256), optimizer (e.g., Adam), and learning rate (e.g., 0.0001) [20].

- Training & Evaluation:

- Train both models on the same dataset. If data is limited, leverage transfer learning by starting with a model pre-trained on a large, generic dataset (like ImageNet), which is especially critical for ViTs [19].

- Evaluate models on a held-out test set. Use Explainable AI (XAI) techniques like SHAP or Grad-CAM to interpret which features each model prioritizes, adding a layer of scientific insight beyond mere accuracy [23].

- Resource Profiling:

- During inference, measure key metrics for both models: Latency (time per prediction), Peak Memory Usage, and Throughput (predictions per second) [21].

- Use profiling tools to track hardware utilization, such as GPU/CPU usage and energy consumption.
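The three inference metrics can be collected with a small harness such as the one below. It is a sketch using only the Python standard library: `profile_inference` and `toy_model` are hypothetical names, and `tracemalloc` only sees Python heap allocations, so GPU memory must be measured with the profiling tools of your deep learning framework instead.

```python
import time
import tracemalloc
from statistics import mean
from typing import Callable, Dict, Sequence


def profile_inference(predict: Callable, inputs: Sequence,
                      warmup: int = 2) -> Dict[str, float]:
    """Measure mean latency, throughput, and peak Python heap usage."""
    for x in inputs[:warmup]:
        predict(x)  # warm-up calls are excluded from the timings

    tracemalloc.start()
    latencies = []
    for x in inputs:
        start = time.perf_counter()
        predict(x)
        latencies.append(time.perf_counter() - start)
    _, peak_bytes = tracemalloc.get_traced_memory()
    tracemalloc.stop()

    total_seconds = max(sum(latencies), 1e-12)  # guard against a zero timer
    return {
        "latency_ms": 1000 * mean(latencies),
        "throughput_per_s": len(inputs) / total_seconds,
        "peak_memory_mb": peak_bytes / 1e6,
    }


def toy_model(x):
    """Hypothetical stand-in for a model's predict function."""
    return sum(v * v for v in x)


stats = profile_inference(toy_model, [list(range(1000))] * 20)
print(stats)
```

Run the same harness, with the same inputs and hardware, for both the CNN and the ViT so that the numbers are directly comparable.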

Table 2: Sample Experimental Results - ViT vs. CNN on Face Recognition

| Model | Top-1 Accuracy (%) | Inference Speed (ms) | Peak Memory (MB) | Robustness to Occlusions |

|---|---|---|---|---|

| Vision Transformer (ViT) | 98.5 | 45 | 1,450 | High [20] |

| EfficientNet (CNN) | 97.1 | 32 | 1,210 | Medium [20] |

| ResNet-50 (CNN) | 96.8 | 38 | 1,680 | Low [20] |

Troubleshooting FAQs

FAQ 1: My model's inference is too slow for our real-time analysis. What are my options?

- Problem: High latency during model prediction.

- Solution:

- Apply Quantization: Convert your model from FP32 to a lower precision like INT8. This can significantly speed up inference, especially on hardware with dedicated INT8 processing units [22] [21].

- Use a Simpler Architecture: For real-time tasks, a well-optimized CNN is often faster than a ViT because convolutions process local neighborhoods and avoid the cost of global self-attention, which grows quadratically with the number of image patches [18] [24].

- Leverage Hardware-Specific Optimization: Use toolkits like NVIDIA's TensorRT or Intel's OpenVINO, which apply graph optimizations and leverage hardware-specific libraries to accelerate inference [22] [25].

FAQ 2: I keep running out of GPU memory during training. How can I reduce memory pressure?

- Problem: GPU memory exhaustion prevents model training.

- Solution:

- Reduce Batch Size: This is the most straightforward way to lower memory usage, though it may affect training stability.

- Use Gradient Accumulation: Simulate a larger batch size by accumulating gradients over several smaller batches before updating weights.

- Consider Model Architecture: Be aware that ViTs have a high memory footprint due to their self-attention mechanism. For very large models or images, a CNN or a hybrid architecture might be more feasible [19].

- Apply Mixed Precision Training: Use 16-bit floating-point numbers (FP16) for selected operations. Tensors stored in FP16 take half the memory of their FP32 equivalents, and the technique is supported by modern frameworks and hardware [22].
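Gradient accumulation works because the mean gradient over a large batch equals the size-weighted sum of the mean gradients over its micro-batches. The sketch below checks this for a one-parameter linear model in plain Python; the data, model, and function names are hypothetical, and a deep learning framework would perform the same accumulation on tensors.

```python
from typing import List, Tuple

Sample = Tuple[float, float]  # (input x, target y)


def mse_gradient(w: float, batch: List[Sample]) -> float:
    """Gradient of the mean squared error for the model y_hat = w * x."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)


def accumulated_gradient(w: float, data: List[Sample],
                         micro_batch_size: int) -> float:
    """Accumulate gradients over micro-batches before a single update."""
    grad = 0.0
    for start in range(0, len(data), micro_batch_size):
        micro = data[start:start + micro_batch_size]
        # Scale each micro-batch so the sum equals the full-batch mean.
        grad += mse_gradient(w, micro) * len(micro) / len(data)
    return grad


# Hypothetical data: only two samples need to be "in memory" at a time.
data = [(1.0, 2.0), (2.0, 4.1), (3.0, 5.9), (4.0, 8.2)]
w = 0.5
full = mse_gradient(w, data)
accum = accumulated_gradient(w, data, micro_batch_size=2)

# One weight update after all micro-batches, as with the large batch.
w_updated = w - 0.01 * accum
print(full, accum, w_updated)
```

The equivalence holds exactly for plain gradient descent; layers that depend on batch statistics, such as batch normalization, still see the smaller micro-batch.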

FAQ 3: When should I choose a Vision Transformer over a CNN for my research?

- Problem: Uncertainty in architectural choice for a new project.

- Solution: The choice hinges on data, resources, and task requirements.

- Choose a ViT if:

- Your task relies on understanding global context and long-range dependencies within the data (e.g., analyzing complex cellular structures across a whole slide image) [20] [23].

- You have access to very large datasets (millions of samples) or can use a model pre-trained on such a dataset [19].

- You have ample computational resources for training and inference [18].

- Choose a CNN if:

- Your dataset is of small to medium size [18].

- You need a model for deployment on edge devices or in resource-constrained environments due to their smaller size and higher inference speed [19] [24].

- Your task is based on recognizing local features and patterns (e.g., detecting specific morphological features in a cell) [19].


The Scientist's Toolkit: Key Research Reagents

Table 3: Essential Software Tools for Model Optimization and Evaluation

| Tool / "Reagent" | Function | Use Case in Computational Research |

|---|---|---|

| TensorRT-LLM / OpenVINO | Hardware-specific optimization | Significantly reduces energy consumption and latency during inference on NVIDIA or Intel hardware, respectively [25]. |

| Optuna / Ray Tune | Hyperparameter Optimization | Automates the search for optimal model training settings, balancing performance and resource use [22]. |

| XAI Libraries (SHAP, Grad-CAM) | Model Interpretation | Provides visual explanations and feature importance scores, critical for validating model decisions in scientific contexts [23]. |

| ONNX Runtime | Model Interoperability | Provides a standardized format for running models across different frameworks and hardware platforms, simplifying deployment [22]. |

High-Performance Computing (HPC) Infrastructure for Large-System Calculations

Technical Support Center

Troubleshooting Guides

This section addresses common issues encountered when running large-scale calculations on HPC clusters.

Problem: Job Fails to Start or is Immediately Killed

- Symptoms: Job exits with an error message about memory, or does not appear in the job queue.

- Diagnosis: This is often due to requesting more resources per node than are available.

- Resolution:

- Check the specification of the compute nodes (e.g., cores per node, memory per node) in the system documentation [26].

- Modify your job submission script to ensure your request for `--ntasks-per-node`, `--cpus-per-task`, and `--mem` does not exceed the physical limits of a single node.

- For memory-intensive tasks, consider spreading the workload across more nodes.
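The per-node check described above can be automated before a job is submitted. The following sketch assumes a hypothetical node with 64 cores and 256 GB of memory; `NodeSpec` and `check_request` are illustrative names and not part of any scheduler, and the real limits must come from your system documentation.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class NodeSpec:
    """Physical limits of one compute node (hypothetical values below)."""
    cores: int
    memory_gb: int


def check_request(node: NodeSpec, ntasks_per_node: int,
                  cpus_per_task: int, mem_gb: int) -> List[str]:
    """Return a list of problems; an empty list means the request fits."""
    problems = []
    cores_needed = ntasks_per_node * cpus_per_task
    if cores_needed > node.cores:
        problems.append(
            f"{cores_needed} cores requested per node, only {node.cores} available"
        )
    if mem_gb > node.memory_gb:
        problems.append(
            f"{mem_gb} GB requested per node, only {node.memory_gb} GB available"
        )
    return problems


node = NodeSpec(cores=64, memory_gb=256)  # assumed node type

# 4 tasks x 16 CPUs = 64 cores and 200 GB: fits on one node.
print(check_request(node, ntasks_per_node=4, cpus_per_task=16, mem_gb=200))

# 8 tasks x 16 CPUs = 128 cores and 512 GB: exceeds both limits.
print(check_request(node, ntasks_per_node=8, cpus_per_task=16, mem_gb=512))
```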

Problem: Job Runs Successfully but Takes Excessively Long

- Symptoms: Job is running but does not complete in the expected time; system monitoring tools show low CPU utilization.

- Diagnosis: The application may not be fully utilizing the parallel architecture of the cluster, often due to insufficient parallelization or I/O bottlenecks [27] [28].

- Resolution:

- Profile your code to identify serial bottlenecks.

- Ensure you are using optimized, parallel libraries (e.g., for linear algebra).

- Check if your job is spending significant time reading from or writing to the shared storage system. If possible, leverage node-local storage for temporary files.

Problem: Network Communication Errors in Parallel Jobs

- Symptoms: Job fails with errors like "connection timed out" or "message queue full," especially in multi-node applications using MPI.

- Diagnosis: The application may be overloading the high-speed interconnect (e.g., InfiniBand) with too many simultaneous communications [28].

- Resolution:

- Review the communication patterns in your code. Optimize to reduce the frequency of small messages.

- Check that the MPI library and its settings are appropriate for the HPC system's network.

- If using a hybrid MPI+OpenMP model, increase the number of OpenMP threads per process to reduce the total number of MPI processes and thus network pressure.

Problem: Inefficient Energy Consumption and Node Overheating

- Symptoms: System logs show nodes throttling performance or shutting down due to overheating; overall energy consumption is high [26].

- Diagnosis: Computational workload is not balanced, causing some nodes to work harder and generate more heat than others.

- Resolution:

- Implement load-balancing algorithms in your application to distribute work evenly.

- Consult the data center's digital twin or monitoring dashboard, if available, to identify nodes running hotter than others [26].

- Schedule less critical, lower-intensity jobs during peak energy demand periods.

Frequently Asked Questions (FAQs)

Q1: What is the fundamental architecture of an HPC system? A1: An HPC system is a cluster of interconnected compute servers (nodes). The main elements are compute (nodes with multiple processors/cores), network (a high-speed interconnect like InfiniBand), and storage (high-performance parallel file systems) [27] [28]. These nodes work in parallel to solve large problems by breaking them into smaller, simultaneous tasks.

Q2: How does parallel processing in HPC accelerate my research simulations? A2: Parallel processing allows a large problem to be divided into many smaller tasks, which are then processed simultaneously across thousands of compute cores [27] [29]. This drastically reduces the time to solution compared to running on a single desktop computer, enabling larger, more complex simulations and the analysis of massive datasets that would otherwise be infeasible.

Q3: My application ran on a previous cluster. Why is it performing poorly on this new system? A3: Different HPC clusters have different architectures (e.g., CPU types, GPU accelerators, network interconnects). Code that is not optimized for a specific architecture may not perform well. You may need to recompile your application with architecture-specific flags and use optimized numerical libraries provided by the HPC support team.

Q4: What are the most critical factors for improving the computational efficiency of my large-system calculations? A4: Key factors include:

- Algorithm Choice: Using scalable, parallel algorithms.

- Code Optimization: Profiling and optimizing bottlenecks.

- Efficient Resource Request: Requesting the optimal number of nodes and cores for your job in the scheduler.

- I/O Optimization: Minimizing read/write operations to shared storage by batching data.

- Energy Awareness: Monitoring power consumption can lead to practices that reduce operational costs and environmental impact [26].

Q5: How can containers help with the reproducibility of my computational experiments? A5: Containers (e.g., Docker, Podman) package your application code, libraries, and dependencies into a single, portable unit [27]. This ensures your application runs consistently across different HPC environments—from your laptop to a national supercomputer—significantly enhancing reproducibility and simplifying the sharing of your research workflows.

Experimental Protocols for Computational Efficiency

Protocol 1: Benchmarking and Profiling HPC Applications Objective: To identify performance bottlenecks and establish a baseline for optimization.

- Select a Representative Dataset: Use an input dataset that reflects the typical size and complexity of your research problem.

- Run with Profiling Tools: Execute your application using profiling tools (e.g., `gprof`, `perf`, `VTune`) on a small number of nodes.

- Analyze Output: Identify functions or code sections consuming the most CPU time and memory.

- Vary Core Count: Run the same benchmark while varying the number of cores/nodes to analyze scaling behavior and identify the point of diminishing returns.
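The scaling analysis in the last step reduces to two numbers per run: speedup relative to the smallest run and parallel efficiency (speedup divided by the increase in core count). The sketch below computes both from measured wall-clock times; the timings and the 70% efficiency threshold are hypothetical and should be replaced with your own measurements and tolerance.

```python
from typing import Dict, List, Tuple


def scaling_table(runtimes: Dict[int, float]) -> List[Tuple[int, float, float]]:
    """Return (cores, speedup, parallel efficiency) for each measured run."""
    base_cores = min(runtimes)
    base_time = runtimes[base_cores]
    rows = []
    for cores in sorted(runtimes):
        speedup = base_time / runtimes[cores]
        efficiency = speedup / (cores / base_cores)
        rows.append((cores, speedup, efficiency))
    return rows


def largest_efficient_run(rows: List[Tuple[int, float, float]],
                          min_efficiency: float = 0.7) -> int:
    """Largest core count whose efficiency is still at or above the threshold."""
    return max(cores for cores, _, eff in rows if eff >= min_efficiency)


# Hypothetical wall-clock times in seconds for the same benchmark.
runtimes = {1: 1000.0, 2: 520.0, 4: 280.0, 8: 170.0, 16: 130.0}
rows = scaling_table(runtimes)
for cores, speedup, eff in rows:
    print(f"{cores:>3} cores  speedup {speedup:5.2f}  efficiency {eff:4.0%}")

print("point of diminishing returns after", largest_efficient_run(rows), "cores")
```

Requesting cores beyond this point mostly lengthens queue time and consumes allocation without shortening the time to solution by much.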

Protocol 2: Measuring Energy Efficiency of Computational Workloads Objective: To correlate computational output with energy consumption, supporting sustainable HPC research [26].

- Integrate with Monitoring Systems: Configure your job scheduler to interface with the data center's power monitoring sensors or digital twin dashboard [26].

- Run Standardized Workload: Execute a standard, well-defined computational task (e.g., a single iteration of your primary simulation).

- Record Metrics: Log the total energy consumed (in kWh) by the compute nodes during the job execution, along with the job's runtime and the number of cores used.

- Calculate Efficiency Metric: Compute a metric like performance-per-watt (e.g., simulation steps per kWh) to quantify efficiency gains from code optimizations.
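The efficiency metric in the final step is simple arithmetic once the energy and runtime have been logged. The sketch below compares a baseline and an optimized run; all numbers are hypothetical, and `steps_per_kwh` and `average_power_kw` are illustrative helper names.

```python
def steps_per_kwh(simulation_steps: int, energy_kwh: float) -> float:
    """Useful work delivered per unit of energy."""
    return simulation_steps / energy_kwh


def average_power_kw(energy_kwh: float, runtime_hours: float) -> float:
    """Mean power draw of the job over its runtime."""
    return energy_kwh / runtime_hours


# Hypothetical measurements for the same workload before and after optimization.
baseline = {"steps": 1_000_000, "energy_kwh": 12.5, "runtime_h": 2.0}
optimized = {"steps": 1_000_000, "energy_kwh": 8.0, "runtime_h": 1.6}

base_eff = steps_per_kwh(baseline["steps"], baseline["energy_kwh"])
opt_eff = steps_per_kwh(optimized["steps"], optimized["energy_kwh"])
gain = opt_eff / base_eff  # a value above 1 means more work per kWh

print(f"baseline:  {base_eff:.0f} steps/kWh")
print(f"optimized: {opt_eff:.0f} steps/kWh ({gain:.2f}x)")
print(f"average power: {average_power_kw(8.0, 1.6):.2f} kW")
```

Reporting energy per unit of work, rather than average power alone, avoids rewarding a run that draws less power only because it runs longer.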

HPC System Visualization

The following diagram illustrates the typical workflow for a researcher to submit and run a computational job on an HPC cluster, from problem formulation to result analysis.

This diagram outlines the logical architecture of a high-performance computing cluster, showing the interconnection between its core components: login nodes, compute nodes, high-speed networks, and storage systems.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key software and hardware "reagents" essential for conducting computational experiments on HPC infrastructure.

| Item | Type | Function in Computational Experiments |

|---|---|---|

| Job Scheduler (Slurm/PBS) | Software | Manages and allocates cluster resources, queues user jobs, and ensures fair sharing of compute nodes among all researchers [28]. |

| MPI (Message Passing Interface) | Software Library | Enables communication and data exchange between parallel processes running on different compute nodes, essential for multi-node simulations [28]. |

| OpenMP | Software API | Simplifies parallel programming on a single compute node by allowing multiple threads to execute different parts of the code on shared memory [28]. |

| Optimized Math Kernels (e.g., Intel MKL, BLAS) | Software Library | Provides highly optimized, parallel implementations of common mathematical operations (linear algebra, FFT), drastically accelerating core numerical computations. |

| Container Technology (e.g., Podman) | Software | Packages an application and its entire environment, ensuring reproducibility and portability across different HPC platforms [27]. |

| High-Speed Interconnect (e.g., InfiniBand) | Hardware | The network backbone of the cluster. Provides low-latency, high-bandwidth communication between nodes, which is critical for parallel application performance [28] [26]. |

| Parallel File System (e.g., Lustre, GPFS) | Hardware/Software | A storage system that allows all compute nodes to read from and write to a shared storage resource simultaneously, handling the massive I/O demands of large-scale simulations [27]. |

| GPU Accelerators | Hardware | Specialized processors that handle thousands of parallel threads simultaneously, offering tremendous speedups for specific workloads like machine learning and molecular dynamics [29]. |

Performance and Efficiency Metrics

The table below summarizes key quantitative data relevant to HPC system performance and efficiency, providing benchmarks for researchers.

| Metric | Typical Value/Specification | Relevance to Research Efficiency |

|---|---|---|

| HPC Cluster Scale | 100,000+ cores is common [29] | Determines the maximum problem size and parallelism achievable for a single simulation. |

| Network Bandwidth | >100 Gb/s (e.g., InfiniBand) [29] | Limits the speed of data exchange between nodes; critical for tightly coupled parallel applications. |

| Power Consumption | 20-30 MW for a typical HPC data center [26] | Highlights the operational cost and environmental impact, driving the need for energy-efficient algorithms. |

| Power Usage Effectiveness (PUE) | ~1.2 (closer to 1.0 is better) [26] | Measures data center infrastructure efficiency; a lower PUE means less energy is wasted on cooling. |

| Global Data Center Energy Use | Projected to be ~3% of global electricity by 2030 [26] | Contextualizes the importance of energy-efficient computing for sustainable research. |

Advanced Techniques for Accelerating Large-System Biomedical Calculations

Core Concept FAQs

What is the primary goal of model optimization in computational research? The primary goal is to improve how artificial intelligence models work by making them faster, smaller, and more resource-efficient without significantly sacrificing their accuracy or ability to perform tasks. This is crucial for deploying models in resource-constrained environments and for reducing computational costs in large-scale calculations [22].

How does Pruning enhance model efficiency? Pruning removes unnecessary parameters (weights, neurons, or even layers) from a trained neural network. This leverages the common over-parameterization of networks, eliminating connections that contribute minimally to the final predictions. The result is a more compact model with accelerated inference speeds and lower computational cost [22] [30] [31].

- Structured Pruning: Removes entire components like neurons, filters, or layers. This approach directly reduces computational complexity and is hardware-friendly [30] [31] [32].

- Unstructured Pruning: Targets individual weights regardless of their position in the network. It can achieve high sparsity but requires specialized hardware or software to realize performance benefits [30] [32].

What is Quantization and how does it reduce resource consumption? Quantization reduces the numerical precision of the model's parameters and activations. It typically involves converting 32-bit floating-point numbers into lower-precision formats like 16-bit floats or 8-bit integers. This significantly cuts the model's memory footprint and enables faster computation on hardware optimized for lower-precision arithmetic [22] [32].

- Post-training Quantization: Applied after a model is trained. It is a quick compression method but may lead to some accuracy loss [22] [32].

- Quantization-Aware Training (QAT): Integrates the quantization simulation directly into the training process, allowing the model to adapt and typically preserving higher accuracy [30] [32].

Can you explain Knowledge Distillation in simple terms? Knowledge distillation is a process of transferring knowledge from a large, complex model (the "teacher") to a smaller, more efficient model (the "student"). Instead of training the small model on raw data alone, it is trained to mimic the teacher's behavior and outputs, often capturing richer information and relationships. This allows the compact student model to retain much of the teacher's performance at a fraction of the computational cost [30] [31].

Table 1: Performance Benchmarks of Optimization Techniques

| Technique | Reported Size or Speed Gain | Reported Performance Retention | Key Benefit |

|---|---|---|---|

| Pruning | 40% faster inference with 2% accuracy loss [32] | Up to 97% accuracy maintained [32] | Lower computational cost & faster inference [31] |

| Quantization | 75% smaller model [32] | 97% accuracy maintained [32] | Drastically reduced memory & power use [32] |

| Knowledge Distillation | Model size reduced to 1.1% of teacher's size [30] | Retains 90% of teacher's performance [30] | Enables compact models with high performance [30] |

| Hybrid (Pruning + Quantization) | 75% reduction in model size, 50% lower power [32] | Maintains 97% accuracy [32] | Combined benefits for maximum efficiency [32] |

Troubleshooting Common Experimental Issues

Issue: My model's accuracy drops significantly after aggressive pruning. Diagnosis: This is a common problem when the pruning process removes too many critical parameters or does not allow the model to recover. Solution:

- Adopt Iterative Pruning: Do not remove a large percentage of weights at once. Instead, use an iterative process: prune a small percentage (e.g., 10-20%), then fine-tune the model, and repeat. This allows the network to adapt gradually [30].

- Use a Calibration Dataset: Employ a small, representative calibration dataset to better assess the importance of weights during pruning, rather than relying solely on magnitude [31].

- Fine-Tune After Pruning: Pruning must be followed by a fine-tuning or retraining phase on your original training data to recover any lost accuracy [31].

Issue: My quantized model exhibits unstable behavior and poor performance. Diagnosis: Post-training quantization can be too coarse for sensitive models, and the precision loss disproportionately affects certain layers. Solution:

- Switch to Quantization-Aware Training (QAT): Incorporate fake quantization operations into your training loop. This allows the model to learn parameters that are robust to lower precision, leading to much better accuracy [32].

- Use Mixed-Precision Quantization: Avoid quantizing the entire model with the same bit-width. Use higher precision (e.g., 16-bit) for sensitive layers and lower precision (e.g., 8-bit) for others. Analyze layer sensitivity to find the optimal balance [32].

Issue: The distilled student model fails to learn effectively from the teacher. Diagnosis: The knowledge transfer may be ineffective due to a mismatch in capacity, poor choice of distillation loss, or issues with the teacher's soft labels. Solution:

- Adjust the Distillation Loss: Combine the standard cross-entropy loss with the distillation loss (e.g., KL Divergence). Experiment with the weight (alpha) given to each term to balance learning from hard labels and the teacher's soft targets [31].

- Employ Feature-Based Distillation: Instead of just matching the teacher's final output (logits), try to align the intermediate feature representations or attention maps between the teacher and student models. This provides a richer learning signal [30].

- Verify Teacher Model Quality: Ensure your teacher model is well-calibrated and produces high-quality soft labels. A poor teacher will lead to a poor student.

Detailed Experimental Protocols

Protocol 1: Iterative Magnitude Pruning for a Deep Learning Model

This protocol outlines a standard iterative pruning workflow to compress a model while aiming to preserve its accuracy.

Objective: To reduce the number of parameters in a trained neural network via iterative magnitude-based pruning.


Methodology:

- Load Pre-trained Model: Begin with a fully trained, accurate model [31].

- Prune a Small Percentage: Identify and remove (set to zero) the weights with the smallest absolute values. A common starting point is 10-20% of the remaining weights [30].

- Fine-Tune the Pruned Model: Retrain the sparsified model for a few epochs on the original training data. This allows the remaining weights to compensate for the removed ones [31].

- Evaluate Performance: Assess the pruned and fine-tuned model on a validation set to monitor accuracy drop.

- Check Sparsity Goal: If the target model size or sparsity level has not been met, return to Step 2. Repeat this cycle until the goal is achieved [30].
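The prune, fine-tune, and check loop above can be sketched on a flat list of weights. This is a toy illustration under stated assumptions: the weights are hypothetical, `fine_tune` defaults to a no-op placeholder, and in real training the zeroed weights must be masked so that fine-tuning does not revive them.

```python
from typing import Callable, List, Tuple


def sparsity(weights: List[float]) -> float:
    """Fraction of weights that are exactly zero."""
    return sum(1 for w in weights if w == 0.0) / len(weights)


def prune_smallest(weights: List[float], fraction: float) -> List[float]:
    """Zero out `fraction` of the remaining non-zero weights with the smallest magnitude."""
    remaining = sorted((abs(w), i) for i, w in enumerate(weights) if w != 0.0)
    n_prune = max(1, int(len(remaining) * fraction))
    pruned = list(weights)
    for _, index in remaining[:n_prune]:
        pruned[index] = 0.0
    return pruned


def iterative_prune(weights: List[float], fraction: float, target_sparsity: float,
                    fine_tune: Callable[[List[float]], List[float]] = lambda w: w,
                    max_rounds: int = 50) -> Tuple[List[float], int]:
    """Repeat prune -> fine-tune until the sparsity goal is met."""
    rounds = 0
    while sparsity(weights) < target_sparsity and rounds < max_rounds:
        weights = prune_smallest(weights, fraction)
        weights = fine_tune(weights)  # placeholder: retrain for a few epochs here
        rounds += 1
    return weights, rounds


# Hypothetical weights; prune 20% of the remaining weights per round.
initial = [0.9, -0.05, 0.4, 0.01, -0.7, 0.2, -0.03, 0.6, 0.1, -0.3]
final, rounds = iterative_prune(initial, fraction=0.2, target_sparsity=0.5)
print(final, rounds, sparsity(final))
```

Because each round prunes a fraction of the weights that remain, the number removed per round shrinks as the model gets sparser, which gives the network progressively gentler steps to adapt to.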

Protocol 2: Knowledge Distillation for a Classification Model

This protocol describes how to train a compact student model using knowledge transferred from a large teacher model.

Objective: To train a small student model to mimic the predictions and internal representations of a larger, pre-trained teacher model.


Methodology:

- Obtain Teacher Predictions: Pass the training data through the pre-trained teacher model to generate "soft targets" – the probability distribution over classes output by the final softmax layer [30] [31].

- Train Student with Combined Loss:

- Pass the same training data through the untrained student model.

- Calculate a composite loss function:

- Distillation Loss (`L_distill`): Measures the difference (e.g., using KL Divergence) between the student's output and the teacher's soft targets.

- Student Loss (`L_student`): The standard cross-entropy loss between the student's output and the true hard labels.

- The total loss is a weighted sum: `L_total = α * L_distill + (1 - α) * L_student`. The hyperparameter `α` controls the influence of the teacher's knowledge [31].

- Update Student Model: Use backpropagation on the total loss to update the weights of the student model.

- Iterate: Repeat steps 2-3 until the student model converges.
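The composite loss can be written out for a single training example as follows. This is a sketch in plain Python, not the implementation from [30] or [31]: the logits are hypothetical, and the softmax `temperature` is an added assumption that is common in distillation practice but not specified in the protocol above (some formulations also multiply the distillation term by the squared temperature, which is omitted here).

```python
import math
from typing import List


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Numerically stable softmax; a higher temperature gives softer targets."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p: List[float], q: List[float]) -> float:
    """KL(p || q) for two discrete probability distributions."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def cross_entropy(probs: List[float], true_class: int) -> float:
    """Standard cross-entropy against a hard label."""
    return -math.log(probs[true_class])


def total_loss(student_logits: List[float], teacher_logits: List[float],
               true_class: int, alpha: float = 0.5,
               temperature: float = 2.0) -> float:
    """L_total = alpha * L_distill + (1 - alpha) * L_student."""
    teacher_soft = softmax(teacher_logits, temperature)
    student_soft = softmax(student_logits, temperature)
    l_distill = kl_divergence(teacher_soft, student_soft)
    l_student = cross_entropy(softmax(student_logits), true_class)
    return alpha * l_distill + (1 - alpha) * l_student


# Hypothetical logits for a three-class problem; the true class is index 0.
student = [2.0, 1.0, 0.1]
teacher = [5.0, 1.0, 0.1]
print(total_loss(student, teacher, true_class=0, alpha=0.5))
```

Setting `alpha` to 0 recovers ordinary supervised training, and setting it to 1 trains the student on the teacher's soft targets alone, which makes the two extremes a useful sanity check when tuning.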

The Scientist's Toolkit: Essential Research Reagents & Software

Table 2: Key Tools and Frameworks for AI Model Optimization

| Tool / Framework Name | Type | Primary Function in Optimization |

|---|---|---|

| TensorRT Model Optimizer (NVIDIA) [31] | Software Library | Provides a streamlined pipeline for applying pruning and knowledge distillation to large language models. |

| LoRA (Low-Rank Adaptation) [33] [30] | Fine-tuning Method | A Parameter-Efficient Fine-Tuning (PEFT) technique that adapts large models for specific tasks by updating a very small number of parameters. |

| Optuna [22] | Hyperparameter Framework | Automates the search for optimal hyperparameters (e.g., learning rate, pruning sparsity), which is critical for effective optimization. |

| OpenVINO Toolkit (Intel) [22] | Software Toolkit | Optimizes and deploys models for Intel hardware, including quantization and pruning functionalities. |

| NeMo Framework (NVIDIA) [31] | Training Framework | An end-to-end framework for building, training, and optimizing large language models, with built-in support for distillation. |

| XGBoost [22] | ML Library | An efficient gradient-boosting library that includes built-in regularization and tree pruning capabilities. |

Equivariant Graph Neural Networks for Efficient Molecular Modeling

Technical Support Center

Troubleshooting Common Experimental Issues

Issue 1: High Memory Consumption During Training on Large Molecular Structures

- Problem Description: Training runs fail due to GPU memory exhaustion, especially with structures exceeding a few hundred atoms or when using a large cutoff radius (rcut > 10 Å), which creates densely connected graphs [34].

- Diagnosis Steps:

- Solutions:

- Distributed Training: Implement a distributed eGNN that leverages direct GPU communication. Use a graph partitioning strategy to split the input graph across multiple GPUs, reducing the memory footprint per device [34].

- Efficient Representations: Consider models that use a scalar-vector dual representation (e.g., E2GNN, PaiNN) instead of higher-order spherical harmonics, as they are less memory-intensive [35].

- Adjust Hyperparameters: If distributed computing is unavailable, reduce the `rcut` value or the batch size, bearing in mind that a smaller `rcut` may affect model accuracy by truncating long-range interactions [34].
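The memory pressure comes largely from the number of edges, which grows quickly with the cutoff radius: in a roughly uniform system, the number of neighbours per atom scales approximately with the cube of the cutoff. The sketch below counts edges for a hypothetical cubic lattice of 125 atoms with 2 Å spacing; `count_edges` is an illustrative brute-force helper, and production code would use a cell list or the neighbour search of the modeling framework.

```python
import itertools
import math
from typing import List, Tuple

Position = Tuple[float, float, float]


def count_edges(positions: List[Position], r_cut: float) -> int:
    """Number of undirected edges between atoms closer than r_cut (in Å)."""
    return sum(
        1
        for a, b in itertools.combinations(positions, 2)
        if math.dist(a, b) < r_cut
    )


# Hypothetical system: 5 x 5 x 5 atoms on a cubic lattice with 2 Å spacing.
lattice = [
    (2.0 * i, 2.0 * j, 2.0 * k)
    for i in range(5)
    for j in range(5)
    for k in range(5)
]

for r_cut in (3.0, 5.0, 10.0):
    edges = count_edges(lattice, r_cut)
    print(f"rcut = {r_cut:4.1f} Å -> {edges} edges, "
          f"{2 * edges / len(lattice):.1f} neighbours per atom on average")
```

Running this kind of count on a representative structure before training gives a quick estimate of how much a change in `rcut` will cost in message-passing memory.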

Issue 2: Model Performance Degradation with Increased Network Depth (Oversmoothing)

- Problem Description: As the number of layers in the eGNN increases, the model's performance on the validation set decreases. Node features become indistinguishable, failing to capture hierarchical information [36].

- Diagnosis Steps:

- Track the Dirichlet energy of node features across layers; a rapid decrease indicates oversmoothing [36].

- Visualize node embeddings from different layers; overlapping clusters suggest a loss of discriminative power.

- Solutions:

- Regularization Techniques: Apply methods like `PairReg`, which uses a regularization term on equivariant messages (e.g., coordinates) to mitigate oversmoothing while preserving equivariance [36].

- Advanced Residual Connections: Use residual connections that incorporate the initial node features or coordinates, as seen in GCNII or specialized EGNN frameworks [36].
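The Dirichlet energy diagnostic mentioned under Diagnosis Steps can be computed directly from node features and the edge list. The sketch below uses an unnormalised variant (the sum of squared feature differences over edges; normalised definitions also exist) and a toy neighbour-averaging step as a stand-in for a message-passing layer. The graph, features, and function names are hypothetical.

```python
from typing import List, Tuple

Features = List[List[float]]
Edges = List[Tuple[int, int]]


def dirichlet_energy(features: Features, edges: Edges) -> float:
    """Sum of squared feature differences over undirected edges."""
    return sum(
        sum((a - b) ** 2 for a, b in zip(features[i], features[j]))
        for i, j in edges
    )


def mean_aggregate(features: Features, edges: Edges) -> Features:
    """Toy layer: replace each node feature by the mean over itself and its neighbours."""
    neighbours = {i: [i] for i in range(len(features))}
    for i, j in edges:
        neighbours[i].append(j)
        neighbours[j].append(i)
    dim = len(features[0])
    return [
        [sum(features[n][k] for n in neighbours[i]) / len(neighbours[i])
         for k in range(dim)]
        for i in range(len(features))
    ]


# Hypothetical path graph 0-1-2-3 with one scalar feature per node.
edges = [(0, 1), (1, 2), (2, 3)]
features = [[0.0], [1.0], [2.0], [3.0]]

energies = [dirichlet_energy(features, edges)]
for _ in range(3):
    features = mean_aggregate(features, edges)
    energies.append(dirichlet_energy(features, edges))

# A steadily shrinking energy means node features are converging.
print(energies)
```

In a real eGNN, log this quantity on the invariant (scalar) node features after every layer; a collapse toward zero within the first few layers is the signature of oversmoothing.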

Issue 3: Poor Generalization and Data Scarcity

- Problem Description: The model shows high error on test data or new molecular scaffolds, particularly when labeled training data is limited [37].

- Diagnosis Steps:

- Perform a learning curve analysis by training on subsets of the data.

- Check for significant distribution shifts between training and test sets.

- Solutions:

- Leverage Pre-trained Models: Utilize pre-trained E(3)-equivariant networks (e.g., EnviroDetaNet). These models, pre-trained on large molecular datasets, can be fine-tuned with limited data, improving stability and accuracy [37].

- Incorporate Molecular Environment Information: Enhance atomic representations by integrating global molecular environment information, which has been shown to maintain high accuracy even with a 50% reduction in training data [37].

Issue 4: Maintaining Equivariance in Custom Model Architectures

- Problem Description: A custom-built GNN fails to produce rotationally equivariant outputs, breaking a fundamental physical symmetry.

- Diagnosis Steps:

- Test the model: input a rotated molecular structure and check if the outputs transform correctly (e.g., forces rotate with the structure, energies remain invariant).

- Audit the operations in each layer. Only certain mathematical operations preserve equivariance.

- Solutions:

- Use Equivariant Operations: Ensure all feature transformations use equivariant operations like tensor products (with Clebsch-Gordan coefficients), spherical harmonic projections, or scalar-vector interactions [38] [35].

- Leverage Established Frameworks: Build models using proven architectures like the Equivariant Spherical Transformer (EST) [38], SEGNN [36], or the Equivariant Transformer from TorchMD-NET [39] as a reference.

Issue 5: Long-Range Interactions are Not Captured

- Problem Description: The model's predictive accuracy suffers for molecular properties that depend on interactions between distant atoms.

- Diagnosis Steps:

- Analyze model performance on specific tasks where long-range interactions are critical, such as electronic structure prediction [34].

- Check if the chosen cutoff radius (rcut) is large enough to encompass the relevant physical interactions.

- Solutions:

- Increase Cutoff Radius: Increase rcut, but be aware of the associated computational cost [34].

- Global Interaction Modules: Employ models with a dedicated global message-passing mechanism. For example, E2GNN uses global scalar and vector features to facilitate long-range information exchange across the entire graph [35].

- Hierarchical Methods: For extremely large systems, consider a multi-scale approach that first processes local neighborhoods and then integrates information at a coarser granularity.
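
To get a feel for the cost of a larger cutoff, the sketch below counts graph edges for several values of rcut. At uniform density the number of neighbors per atom grows roughly with the cube of rcut, and message-passing cost scales with the edge count. The setup is an assumption for illustration: random points in a non-periodic box, a brute-force distance matrix, and a hypothetical helper `count_edges`.

```python
import numpy as np

def count_edges(positions, rcut):
    """Number of directed edges (i -> j, i != j) with |r_i - r_j| < rcut."""
    delta = positions[:, None, :] - positions[None, :, :]
    dist = np.linalg.norm(delta, axis=-1)
    return int(np.sum(dist < rcut)) - len(positions)   # drop the i == j pairs

rng = np.random.default_rng(0)
# Toy system: 1000 points in a 30 x 30 x 30 angstrom box, no periodic images.
positions = rng.uniform(0.0, 30.0, size=(1000, 3))

for rcut in (4.0, 6.0, 8.0):
    n_edges = count_edges(positions, rcut)
    print(f"rcut={rcut:.1f}  edges={n_edges}  "
          f"mean neighbors={n_edges / len(positions):.1f}")
```

For production-size systems a cell list or a library neighbor search would replace the O(N^2) distance matrix used here.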

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between invariant and equivariant GNNs, and why does it matter for molecular modeling? A1: Invariant GNNs produce the same output (e.g., a scalar energy) regardless of how the input molecule is rotated or translated. Equivariant GNNs, however, ensure that their outputs transform predictably with the inputs. For example, if the input structure is rotated, vector outputs like forces or dipole moments rotate accordingly [35]. This built-in geometric awareness is a powerful physical inductive bias that improves data efficiency, generalization, and prediction accuracy for direction-dependent properties [34] [35].

Q2: My research requires predicting both scalar (e.g., energy) and vector/tensor (e.g., forces, polarizability) properties. Which model architecture is most suitable? A2: You should use an equivariant model that natively handles both scalars and vectors. Architectures like E2GNN [35] and PaiNN [37] [35] use a scalar-vector dual representation, making them efficient and well-suited for this task. They can simultaneously predict invariant energies and equivariant forces with high accuracy, which is essential for molecular dynamics simulations.

Q3: How can I validate that my model is truly equivariant? A3: Perform a simple rotation test. Follow this protocol:

- Take a molecular structure R and its rotated version R'.

- Pass both through your model to get predictions P and P'.

- Apply the same rotation used on R to the prediction P. For invariant outputs such as energies, leave P unchanged.

- Compare the rotated P with P'. For a perfectly equivariant model, they should be identical (within numerical precision). A significant difference indicates a break in equivariance [35] [39].
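
The rotation test can be sketched as follows. Everything model-specific here is an assumption: `toy_model` is a harmonic pair potential with analytic forces that stands in for a trained eGNN, and `broken_model` adds a deliberately non-invariant term so the check has something to catch. Positions are stored as rows, so a rotation matrix `rot` is applied as `pos @ rot.T`.

```python
import numpy as np

def random_rotation(rng):
    """Random proper rotation (det = +1) from the QR factor of a Gaussian matrix."""
    q, _ = np.linalg.qr(rng.normal(size=(3, 3)))
    if np.linalg.det(q) < 0:
        q[:, 0] = -q[:, 0]                         # flip one axis to remove a reflection
    return q

def toy_model(pos):
    """Stand-in for an eGNN: harmonic pair energy and its analytic forces."""
    delta = pos[:, None, :] - pos[None, :, :]      # r_i - r_j
    dist = np.linalg.norm(delta, axis=-1)
    np.fill_diagonal(dist, 1.0)                    # diagonal terms then contribute zero
    energy = 0.25 * np.sum((dist - 1.0) ** 2)      # each pair is counted twice
    forces = -np.sum(((dist - 1.0) / dist)[:, :, None] * delta, axis=1)
    return energy, forces

def broken_model(pos):
    """Same model plus a term that depends on absolute x coordinates."""
    energy, forces = toy_model(pos)
    return energy + pos[:, 0].sum(), forces

def check_equivariance(model, pos, rot, atol=1e-8):
    energy, forces = model(pos)
    energy_rot, forces_rot = model(pos @ rot.T)
    energy_err = float(abs(energy_rot - energy))                      # invariant output
    force_err = float(np.max(np.abs(forces_rot - forces @ rot.T)))    # equivariant output
    return bool(energy_err < atol and force_err < atol), energy_err, force_err

rng = np.random.default_rng(0)
pos = rng.normal(size=(8, 3))
rot = random_rotation(rng)
print("toy model:   ", check_equivariance(toy_model, pos, rot))
print("broken model:", check_equivariance(broken_model, pos, rot))
```

For a neural network in single precision, a looser tolerance than the `1e-8` assumed here is usually needed. Running the check over several random rotations and structures gives a more reliable verdict than a single case.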

Q4: Are there specific eGNNs that are more efficient for large-scale simulations? A4: Yes. Models that avoid computationally expensive higher-order tensor products can offer significant speedups.

- E2GNN is designed for efficiency, using scalar-vector interactions and achieving strong performance on large-scale datasets [35].

- Equivariant Spherical Transformer (EST) is reported to achieve higher expressiveness with greater efficiency than models relying on Clebsch-Gordan tensor products [38].

- For system sizes beyond a single GPU's memory, a distributed eGNN implementation is necessary, as described in the large-scale electronic structure prediction work [34].

Experimental Protocols & Performance Data

Table 1: Benchmarking eGNN Performance on Molecular Property Prediction (QM9 Dataset)

| Model | Architecture Type | Dipole Moment (MAE) | Polarizability (MAE) | Computational Efficiency (Relative) |

|---|---|---|---|---|

| EnviroDetaNet [37] | E(3)-equivariant MPNN | 0.033 | 0.023 | Baseline (1x) |

| DetaNet [37] | E(3)-equivariant | 0.061 | 0.048 | ~1.2x |

| E2GNN [35] | Scalar-Vector Equivariant | Outperforms baselines [35] | Outperforms baselines [35] | High |

| Equivariant Spherical Transformer (EST) [38] | Spherical Fourier Transform | State-of-the-art on OC20 & QM9 [38] | State-of-the-art on OC20 & QM9 [38] | More efficient than tensor product models [38] |

MAE: Mean Absolute Error. Lower is better. Data synthesized from [38] [37] [35].

Table 2: Scalability of Distributed eGNN for Electronic Structure Prediction

| System Size (Atoms) | Number of GPUs | Parallel Efficiency | Key Enabling Technology |

|---|---|---|---|

| 3,000 | 128 | Strong Scaling Demonstrated | Distributed eGNN with graph partitioning [34] |

| 190,000 | 512 | 87% | Direct GPU communication & optimized partitioning [34] |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Datasets and Models for eGNN Research

| Item Name | Type | Function & Application | Source / Reference |

|---|---|---|---|

| QM9 Dataset | Molecular Dataset | Benchmark dataset for validating model performance on quantum chemical properties like dipole moment and polarizability [36] [37]. | https://qm9.github.io/ |

| OC20 Dataset | Catalyst Dataset | Challenging benchmark for evaluating models on complex molecular systems like catalysts [38]. | https://open-catalyst.github.io/ |

| rMD17 Dataset | Molecular Dynamics | Used for ablation studies and testing model robustness for molecular dynamics simulations [36]. | https://arxiv.org/abs/2007.09577 |

| TorchMD-NET | Software Framework | Provides pre-trained equivariant transformer (ET) models, suitable for transfer learning on tasks like toxicity prediction [39]. | https://github.com/torchmd/torchmd-net |

| EnviroDetaNet Model | Pre-trained Model | An E(3)-equivariant network that integrates molecular environment information, demonstrating strong generalization with limited data [37]. | [37] |

Workflow for Large-Scale eGNN Experimentation

The following diagram outlines a systematic workflow for setting up and troubleshooting large-scale eGNN experiments, integrating solutions to the common issues detailed above.

Structure-Preserving Integrators for Long-Timescale Molecular Dynamics

Frequently Asked Questions

Q: What are structure-preserving integrators, and why are they important for long-time-step molecular dynamics?

Structure-preserving integrators are numerical methods that respect the fundamental geometric properties and physical invariants (like energy and momentum) of the dynamical systems they simulate [40]. For long-time-step Molecular Dynamics (MD), they are crucial because they prevent nonphysical behavior and simulation artifacts that plague non-structure-preserving methods, enabling both computational efficiency and numerical stability [41] [40].

Q: My long-time-step simulation with a machine-learned integrator shows poor energy conservation. What could be wrong?

This is a common pitfall. Standard machine-learned predictors can introduce artifacts such as lack of energy conservation. The solution is to use a structure-preserving, data-driven map. These are equivalent to learning the mechanical action of the system and have been shown to eliminate this pathological behavior while still allowing for a greatly extended integration time step [41].

Q: I am using the Hydrogen Mass Repartitioning (HMR) method with a 4 fs time step to simulate protein-ligand binding, but the process seems artificially slow. Is this expected?

Yes, this is a documented caveat. While HMR allows for a larger time step, it can sometimes slow down the simulated biomolecular recognition process. This occurs because the mass repartitioning can lead to faster ligand diffusion, which reduces the stability of key on-pathway intermediate states. The binding event then takes longer in simulated time, which can negate the performance gain because more simulation steps are needed to observe it [42]. For binding to buried cavities, a careful assessment of this effect is necessary.

Q: For a new system, how do I choose between a symplectic integrator and an energy-momentum scheme?

The choice depends on your priority between accuracy and stability.

- Symplectic Integrators are excellent for long-time-scale behavior as they preserve the symplectic structure of phase space, leading to near-conservation of energy over exponentially long times. They are often highly accurate for benchmarking [40] [43].

- Energy-Momentum Integrators are designed to exactly conserve energy and momentum at each time step. This makes them very robust and stable, which can be advantageous for systems with strong nonlinearities [40].
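
The long-time energy behavior of a symplectic scheme can be illustrated on a one-dimensional harmonic oscillator. The sketch below compares velocity Verlet (symplectic and time-reversible) with explicit Euler (neither). It assumes unit mass and unit frequency, and it does not implement an energy-momentum scheme.

```python
def energy(q, p):
    """Total energy of a unit-mass, unit-frequency harmonic oscillator."""
    return 0.5 * p * p + 0.5 * q * q

def euler_step(q, p, dt):
    """Explicit Euler: not symplectic, energy grows every step."""
    return q + dt * p, p - dt * q

def verlet_step(q, p, dt):
    """Velocity Verlet: symplectic and time-reversible."""
    p_half = p - 0.5 * dt * q          # half kick (force = -q)
    q_new = q + dt * p_half            # drift
    p_new = p_half - 0.5 * dt * q_new  # half kick with the updated force
    return q_new, p_new

def max_relative_energy_error(step, dt=0.05, n_steps=20000):
    """Largest relative deviation from the initial energy along the trajectory."""
    q, p = 1.0, 0.0
    e0 = energy(q, p)
    worst = 0.0
    for _ in range(n_steps):
        q, p = step(q, p, dt)
        worst = max(worst, abs(energy(q, p) - e0) / e0)
    return worst

print("explicit Euler :", max_relative_energy_error(euler_step))
print("velocity Verlet:", max_relative_energy_error(verlet_step))
```

The Verlet error stays bounded and oscillates at a level set by the square of the time step, whereas the Euler energy grows without bound over the same number of steps.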

Troubleshooting Guides

Problem: Energy Drift in Long-Time-Scale Simulations

Issue: The total energy of the system drifts significantly over time, indicating a non-physical simulation.

Diagnosis and Solutions:

| Potential Cause | Diagnostic Check | Recommended Solution |

|---|---|---|

| Non-structure-preserving algorithm | Verify if the integrator is symplectic or energy-conserving. | Switch to a structure-preserving method like a variational integrator or symplectic scheme [41] [40]. |

| Time step is too large | Check if the highest frequency motions (e.g., bond vibrations) are stable. | Reduce the time step, or use Hydrogen Mass Repartitioning (HMR) to keep a larger time step stable, but be aware of its potential impact on kinetics [42]. |

| Incorrect force evaluation | Validate force calculations and cut-off methods. | Ensure the use of proper filtering for short-range force computations to avoid superfluous particle-pair calculations [44]. |

Problem: Inaccurate Kinetics in Biomolecular Recognition

Issue: While thermodynamics seem correct, the rates of processes like protein-ligand binding are inaccurate when using long-time-step methods.

Diagnosis and Solutions:

| Potential Cause | Diagnostic Check | Recommended Solution |

|---|---|---|

| HMR-induced faster diffusion | Compare ligand diffusion coefficients in HMR vs. regular simulations. | For accurate binding kinetics, revert to a standard 2 fs time step without HMR, or use a structure-preserving ML integrator that does not alter atomic masses [42]. |

| Loss of metastable intermediates | Analyze survival probabilities of encounter complexes. | Use a method that preserves the geometric structure of the dynamics, which can better capture the correct pathway statistics [41] [40]. |
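
The diffusion-coefficient comparison in the table above can be made with a mean squared displacement (MSD) fit, using the three-dimensional Einstein relation MSD(t) = 6 D t. The sketch below is a minimal illustration: it assumes unwrapped coordinates, and it checks the estimator on synthetic Brownian trajectories with a known D rather than on real MD output. The function name and array layout are hypothetical.

```python
import numpy as np

def diffusion_coefficient(traj, dt, max_lag):
    """Estimate D from the slope of the MSD, using MSD(t) = 6 D t in 3D.

    traj: (n_frames, n_particles, 3) unwrapped positions
    dt:   time between saved frames
    """
    lags = np.arange(1, max_lag + 1)
    msd = np.array([
        np.mean(np.sum((traj[lag:] - traj[:-lag]) ** 2, axis=-1))
        for lag in lags
    ])
    slope = np.polyfit(lags * dt, msd, 1)[0]
    return slope / 6.0

# Synthetic Brownian motion with a known diffusion coefficient (arbitrary units).
rng = np.random.default_rng(0)
d_true, dt = 0.5, 1.0
steps = rng.normal(scale=np.sqrt(2.0 * d_true * dt), size=(2000, 50, 3))
traj = np.cumsum(steps, axis=0)

d_est = diffusion_coefficient(traj, dt, max_lag=20)
print(f"true D = {d_true}, estimated D = {d_est:.3f}")
```

Applying the same function to the ligand's center-of-mass trajectory from the HMR and control runs gives the two values to compare. In a periodic box the coordinates must be unwrapped first.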

Experimental Protocols & Data

Protocol: Implementing a Structure-Preserving Machine Learning Integrator

This protocol is based on the method of learning the mechanical action for long-time-step simulations [41].

- Data Generation: Run a short, high-resolution (small time step) reference simulation of your system using a conventional, accurate integrator.

- Training Set Creation: From the reference trajectory, generate a set of state transitions (q_t, p_t) -> (q_{t+∆T}, p_{t+∆T}), where ∆T is the desired large time step.

- Model Training: Train a machine learning model (e.g., a neural network) to learn the map between the initial and final states. Crucially, the architecture of the model must be constrained to be symplectic and time-reversible.

- Validation: Validate the trained model by checking its conservation of energy and other invariants over long simulation times compared to a non-structure-preserving ML model.

- Production Simulation: Use the trained model to propagate the system dynamics over long time steps.
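
The data generation and training set creation steps can be sketched as follows. This is an illustration only, not the pipeline from [41]: the reference system is a one-dimensional harmonic oscillator integrated with velocity Verlet, and `stride` (a hypothetical parameter) is the number of fine steps that make up one large step ∆T. The structure-preserving model itself is not shown.

```python
import math
import numpy as np

def reference_trajectory(q0, p0, dt, n_steps):
    """Fine-step velocity Verlet trajectory of a unit-mass harmonic oscillator."""
    qs, ps = [q0], [p0]
    q, p = q0, p0
    for _ in range(n_steps):
        p_half = p - 0.5 * dt * q
        q = q + dt * p_half
        p = p_half - 0.5 * dt * q
        qs.append(q)
        ps.append(p)
    return np.array(qs), np.array(ps)

def make_transition_pairs(qs, ps, stride):
    """Pair each state (q_t, p_t) with the state `stride` fine steps later."""
    states = np.stack([qs, ps], axis=1)            # shape (n_frames, 2)
    return states[:-stride], states[stride:]

dt_fine = 0.01
stride = 50                                        # large step = stride * dt_fine = 0.5
qs, ps = reference_trajectory(1.0, 0.0, dt_fine, n_steps=1000)
inputs, targets = make_transition_pairs(qs, ps, stride)
print(inputs.shape, targets.shape)
```

Each row of `inputs` and the matching row of `targets` form one training example for the large-step map. Overlapping windows, as used here, extract the most pairs from a short reference run, at the price of strongly correlated samples.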

Protocol: Assessing HMR for a Protein-Ligand System

This protocol helps evaluate the trade-offs of the HMR method [42].

- System Preparation: Prepare your protein-ligand system using standard procedures. Create two versions: one with standard masses (control) and one with masses repartitioned using HMR (typically setting hydrogen masses to 3.024 amu and reducing the mass of each bonded heavy atom by the same amount, so that the total mass is unchanged).

- Simulation Setup: Run multiple independent, unbiased MD simulations for both systems. Use a 2 fs time step for the control system and a 4 fs time step for the HMR system.

- Binding Event Detection: For each trajectory, monitor and record the time taken for the ligand to find and stably bind to the native protein cavity.

- Analysis:

- Calculate the mean first passage time for binding in both conditions.

- Compute the ligand's diffusion coefficient in the solvent for both systems.

- Analyze the formation and survival probability of key metastable intermediates along the binding pathway.

- Interpretation: If binding is significantly slower in HMR simulations despite the larger time step, the method may not provide a net performance benefit for your specific system.
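
Two parts of this protocol lend themselves to a short sketch: the mass repartitioning itself and the final cost comparison. The code below is a minimal illustration on a toy fragment, not a replacement for the repartitioning tools shipped with MD packages. The function names are hypothetical, and the mean first passage times in the usage lines are placeholder numbers chosen for illustration, not results from [42].

```python
def repartition_hydrogen_mass(masses, elements, bonds, h_mass=3.024):
    """Set each hydrogen to h_mass (amu) and subtract the added mass from the
    heavy atom it is bonded to, so the total mass is unchanged."""
    new = list(masses)
    for i, j in bonds:
        if elements[i] == "H" and elements[j] != "H":
            h, heavy = i, j
        elif elements[j] == "H" and elements[i] != "H":
            h, heavy = j, i
        else:
            continue                       # heavy-heavy bond: nothing to do
        new[heavy] -= h_mass - masses[h]
        new[h] = h_mass
    return new

def steps_to_bind(mfpt_ns, dt_fs):
    """Integration steps needed to cover one mean first passage time."""
    return mfpt_ns * 1.0e6 / dt_fs         # 1 ns = 1e6 fs

# Toy fragment: a carbon (index 0) bonded to a methyl carbon (index 1) with 3 H.
elements = ["C", "C", "H", "H", "H"]
masses = [12.011, 12.011, 1.008, 1.008, 1.008]
bonds = [(0, 1), (1, 2), (1, 3), (1, 4)]
hmr_masses = repartition_hydrogen_mass(masses, elements, bonds)
print(hmr_masses)

# Placeholder MFPT values (illustrative only): control at 2 fs, HMR at 4 fs.
control_steps = steps_to_bind(mfpt_ns=100.0, dt_fs=2.0)
hmr_steps = steps_to_bind(mfpt_ns=250.0, dt_fs=4.0)
print(f"control: {control_steps:.3g} steps, HMR: {hmr_steps:.3g} steps")
```

With these placeholder numbers the HMR run needs more steps per binding event than the control despite the doubled time step, which is the situation the interpretation step warns about. HMR pays off only if its mean first passage time is less than twice that of the control.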

Key Quantitative Comparisons