Systematic Benchmarking of DFT Methods for Heats of Formation: A Guide for Computational Chemists and Drug Developers


Abstract

Accurately predicting heats of formation is critical for computational chemistry, materials science, and drug development, yet selecting the right Density Functional Theory (DFT) method remains a challenge. This article provides a systematic benchmark and practical guide, exploring the foundational principles of DFT benchmarking, methodological approaches for different chemical systems, strategies for troubleshooting and uncertainty quantification, and a comparative validation of popular functionals using the latest gold-standard data. It equips researchers with the knowledge to optimize their computational workflows for reliable thermochemical predictions in biomedical and energy research.

The Critical Challenge: Why Benchmarking DFT for Heats of Formation is Non-Trivial

The Fundamental Importance of Accurate ΔfH° in Drug Design and Materials Science

The standard enthalpy of formation (ΔfH°) is a fundamental thermodynamic property that quantifies the heat released or absorbed when a substance is formed from its constituent elements in their standard states. In both drug design and materials science, accurate prediction of ΔfH° provides crucial insights into compound stability, synthetic feasibility, and performance characteristics. For pharmaceuticals, formation enthalpies help predict binding affinities and metabolic stability, while in materials science, they inform the synthesis pathways and thermodynamic stability of new compounds. The development of computational methods to predict this property with high accuracy has therefore become an essential pursuit, enabling researchers to screen candidate molecules efficiently before undertaking costly synthetic efforts.

Computational Methodologies for Predicting ΔfH°

Density Functional Theory (DFT) Approaches

Density Functional Theory has become a cornerstone for calculating formation enthalpies due to its favorable balance between computational cost and accuracy. Different functionals and approaches have been developed, each with distinct strengths and limitations for specific chemical systems.

Table 1: Performance of Selected DFT Functionals for ΔfH° Prediction

| Functional | Method Class | Reported Error (Property) | Optimal Use Cases | Key Limitations |
|---|---|---|---|---|
| PBE0 | Hybrid GGA | 1.1 kcal/mol (activation energies) [1] | Main-group thermochemistry, transition metal catalysis | Systematic underestimation for some systems [2] |
| B3LYP | Hybrid GGA | 1.9 kcal/mol (activation energies) [1] | Organic molecules, energetic materials [3] | Underestimates excitation energies [2] |
| M06-2X | Hybrid meta-GGA | 0.26-0.30 eV (excitation energies) [2] | Excited states, charge transfer systems | Overestimates vertical excitation energies (VEEs); less accurate for barriers (6.3 kcal/mol) [1] |
| CAM-B3LYP | Range-separated | 0.32 eV (excitation energies) [2] | Charge-transfer excitations, biochromophores | Systematic overestimation of VEEs (0.25-0.31 eV) [2] |
| ωB97X-D | Range-separated + dispersion | 0.30 eV (excitation energies) [2] | Systems requiring dispersion correction | Performance varies with system type |

The performance of these functionals varies significantly across different chemical systems. For instance, the PBE0 functional with D3 dispersion correction has demonstrated exceptional performance for activation energies of covalent main-group single bonds with an MAD of 1.1 kcal/mol relative to CCSD(T)/CBS reference data [1]. In contrast, range-separated functionals like CAM-B3LYP tend to systematically overestimate vertical excitation energies by 0.2-0.3 eV for biochromophores, prompting the development of empirically adjusted versions like CAMh-B3LYP to reduce this error [2].
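The error measures quoted in this kind of comparison (mean absolute deviation, root-mean-square deviation, and the mean signed error that reveals systematic over- or underestimation) can be computed as in the following minimal sketch. The function name and the barrier heights are hypothetical and serve only to illustrate the arithmetic; they are not data from the cited benchmarks.

```python
import math

def error_stats(predicted, reference):
    """Return mean signed error, mean absolute deviation (MAD), and RMSD."""
    if len(predicted) != len(reference) or not predicted:
        raise ValueError("inputs must be non-empty and of equal length")
    errors = [p - r for p, r in zip(predicted, reference)]
    n = len(errors)
    return {
        "mean_signed": sum(errors) / n,               # sign shows systematic bias
        "mad": sum(abs(e) for e in errors) / n,       # average error magnitude
        "rmsd": math.sqrt(sum(e * e for e in errors) / n),  # penalizes outliers
    }

# Hypothetical barrier heights in kcal/mol (illustrative numbers only).
dft_values = [10.2, 15.1, 22.8, 30.5]
reference_values = [11.0, 14.0, 24.0, 30.0]
stats = error_stats(dft_values, reference_values)
print(stats)
```

The RMSD is never smaller than the MAD, so the two should not be compared directly across studies that report different measures.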

Beyond DFT: Emerging Machine Learning Approaches

Recent advances in machine learning have introduced powerful alternatives to traditional quantum mechanical methods for property prediction:

- Neural Network Potentials (NNPs): Models like EMFF-2025 achieve DFT-level accuracy for predicting structures, mechanical properties, and decomposition characteristics of high-energy materials while being significantly more computationally efficient [4].

- Graph Neural Networks (GNNs): These approaches incorporate elemental features and physical symmetries to improve generalization capabilities, even for compounds containing elements not present in the training data [5].

- Transfer Learning: Strategies that leverage pre-trained models like DP-CHNO-2024 enable accurate predictions with minimal new training data, dramatically reducing computational costs for new systems [4].

Experimental Protocols for Validation

Calorimetric Measurement Techniques

Experimental validation of computed ΔfH° values relies primarily on calorimetric methods, which measure heat changes during chemical reactions:

[Figure: Experimental workflow for calorimetric ΔfH° determination]

Bomb Calorimetry involves combusting a sample in a sealed, oxygen-filled vessel surrounded by a known volume of water, allowing complete combustion and accurate measurement of heat release [6]. The calibration factor (CF) is determined using a substance with known enthalpy change: \[ CF = \frac{E}{\Delta T} \] where \(E\) is the energy released and \(\Delta T\) is the temperature change. For electrical calibration: \[ E = VIt \] where \(V\) is voltage, \(I\) is current, and \(t\) is time [6].
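As a worked illustration of the two calibration relations, the sketch below computes the electrical energy, the calibration factor, and the heat released by a subsequent sample. The function names and all numerical inputs are hypothetical.

```python
def electrical_energy_j(voltage_v, current_a, time_s):
    """E = V * I * t, in joules."""
    return voltage_v * current_a * time_s

def calibration_factor(energy_j, delta_t_k):
    """CF = E / delta_T, in joules per kelvin."""
    return energy_j / delta_t_k

def heat_released_j(cf_j_per_k, delta_t_k):
    """Heat released by a sample that raises the calorimeter by delta_T."""
    return cf_j_per_k * delta_t_k

# Hypothetical calibration run: 12 V and 2 A applied for 300 s raise T by 2.0 K.
energy = electrical_energy_j(12.0, 2.0, 300.0)   # 7200 J
cf = calibration_factor(energy, 2.0)             # 3600 J/K
# Hypothetical combustion run in the same calorimeter: T rises by 2.5 K.
q_sample = heat_released_j(cf, 2.5)              # 9000 J
print(energy, cf, q_sample)
```

Dividing the sample heat by the amount of substance burned then gives a molar energy of combustion, which is the quantity that enters the thermochemical cycle for ΔfH°.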

Solution Calorimetry measures enthalpy changes of reactions in solution using insulated vessels to minimize heat loss. This method is particularly valuable for pharmaceutical compounds where solution-phase properties are relevant to biological activity [7] [6].

Experimental determinations face several challenges that must be addressed for accurate results:

- Heat Loss Compensation: Extrapolating cooling curves back to the time of mixing provides better estimates of maximum temperature in the absence of heat loss [7].

- System Calibration: Using the same amount of water during both calibration and measurement minimizes errors [6].

- Accounting for All Components: The heat capacity of all system components (not just the solvent) must be considered for accurate measurements [7].
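The heat-loss compensation described in the first point can be sketched as a straight-line fit to the cooling part of the temperature trace, extrapolated back to the time of mixing. This is a minimal illustration that assumes linear cooling; the function names and the temperature readings are hypothetical.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def corrected_delta_t(times_s, temps_c, t_mix_s, fit_start_s, t_initial_c):
    """Extrapolate the cooling curve back to the mixing time.

    Only points recorded at or after fit_start_s (the cooling region)
    are used for the fit.
    """
    cooling = [(t, temp) for t, temp in zip(times_s, temps_c) if t >= fit_start_s]
    slope, intercept = fit_line(*zip(*cooling))
    t_max_estimate = slope * t_mix_s + intercept
    return t_max_estimate - t_initial_c

# Hypothetical trace: reagents mixed at 60 s, steady cooling from 120 s onward.
times = [0, 30, 60, 90, 120, 150, 180, 210, 240]
temps = [20.0, 20.0, 20.0, 27.5, 29.7, 29.55, 29.4, 29.25, 29.1]
delta_t = corrected_delta_t(times, temps, t_mix_s=60, fit_start_s=120, t_initial_c=20.0)
print(round(delta_t, 3))
```

In this synthetic trace the highest observed reading gives a rise of 9.7 K, whereas the extrapolation recovers 10.0 K, showing how uncorrected heat loss biases the measured enthalpy change toward smaller magnitudes.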

Specialized Applications Across Disciplines

Energetic Materials Design

In the development of high-energy materials (HEMs), accurate ΔfH° predictions are crucial for determining performance characteristics and stability. Quantum mechanical calculations using atom-equivalent methods can predict gas-phase heats of formation with root mean square deviations of approximately 3.1 kcal/mol from experimental values [3]. The atom-equivalent approach represents the heat of formation as: \[ \Delta H_i = E_i - \sum_j n_j \epsilon_j \] where \(E_i\) is the energy of molecule \(i\), \(n_j\) is the number of atoms of type \(j\) in the molecule, and \(\epsilon_j\) is the atom-equivalent correction determined through least-squares fitting to experimental data [3]. For condensed-phase HEMs, neural network potentials like EMFF-2025 now enable large-scale molecular dynamics simulations with DFT-level accuracy, predicting both mechanical properties and thermal decomposition behaviors [4].
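The least-squares determination of the atom equivalents can be sketched as follows. Rearranging the atom-equivalent expression gives a linear system in the unknown corrections, which is solved here through the normal equations with a small Gaussian-elimination helper. All function names are hypothetical, and the "computed" energies are synthetic values constructed for the demonstration (all quantities in kcal/mol); the reference heats of formation are approximate illustrative values, not data from [3].

```python
def solve_linear(a, b):
    """Solve a small dense system by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        tail = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - tail) / m[r][r]
    return x

def fit_atom_equivalents(counts, energies, dhf_reference):
    """Least-squares fit of eps_j in  dHf_i = E_i - sum_j n_ij * eps_j."""
    k = len(counts[0])
    targets = [e - h for e, h in zip(energies, dhf_reference)]  # sum_j n_ij eps_j
    ata = [[sum(row[p] * row[q] for row in counts) for q in range(k)] for p in range(k)]
    aty = [sum(row[p] * y for row, y in zip(counts, targets)) for p in range(k)]
    return solve_linear(ata, aty)

def predict_dhf(count_row, energy, eps):
    """Apply the fitted atom equivalents to one molecule."""
    return energy - sum(n * e for n, e in zip(count_row, eps))

# Atom counts (C, H) for CH4, C2H6, C2H4, C3H8.
counts = [[1, 4], [2, 6], [2, 4], [3, 8]]
dhf_ref = [-17.8, -20.0, 12.5, -25.0]          # approximate, illustrative
true_eps = [-23900.0, -360.0]                   # hypothetical atom equivalents
# Synthetic "computed" energies built to be consistent with true_eps.
energies = [h + sum(n * e for n, e in zip(row, true_eps))
            for row, h in zip(counts, dhf_ref)]

eps = fit_atom_equivalents(counts, energies, dhf_ref)
predictions = [predict_dhf(row, e, eps) for row, e in zip(counts, energies)]
print(eps, predictions)
```

With real computed energies the fit would not be exact, and the residuals of the training set would give the kind of RMS deviation quoted for the method.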

Hydrogen Storage Materials

For hydrogen storage applications, DFT calculations help screen potential materials by predicting formation energies and hydrogen storage capacities. Recent studies on Fe-based perovskite hydrides (XFeH₃, where X = Y, Zr) reveal negative formation energies confirming thermodynamic stability, with hydrogen storage capacities of 2.05 wt% and 2.02 wt%, respectively [8]. Such predictions guide experimental efforts toward the most promising candidates.
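The gravimetric capacities quoted above can be approximated from the stoichiometry alone. The sketch below assumes the capacity is defined as the hydrogen mass divided by the total mass of the hydride, which is a common convention but is not spelled out in the text; the function name is hypothetical and the atomic masses are standard values rounded to three decimals.

```python
# Standard atomic masses in g/mol (rounded).
ATOMIC_MASS = {"H": 1.008, "Fe": 55.845, "Y": 88.906, "Zr": 91.224}

def gravimetric_capacity_wt_percent(formula):
    """Hydrogen mass fraction of a hydride, in wt%.

    `formula` maps element symbols to stoichiometric counts.
    """
    total_mass = sum(ATOMIC_MASS[el] * n for el, n in formula.items())
    hydrogen_mass = ATOMIC_MASS["H"] * formula.get("H", 0)
    return 100.0 * hydrogen_mass / total_mass

yfeh3 = gravimetric_capacity_wt_percent({"Y": 1, "Fe": 1, "H": 3})
zrfeh3 = gravimetric_capacity_wt_percent({"Zr": 1, "Fe": 1, "H": 3})
print(round(yfeh3, 2), round(zrfeh3, 2))
```

Under this assumed definition the results land within a few hundredths of a percent of the reported 2.05 wt% and 2.02 wt%; small differences can arise from the atomic masses or the exact definition used in [8].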

Pharmaceutical and Biological Systems

In drug design, ΔfH° values inform compound stability and reactivity. Time-dependent DFT (TDDFT) calculations help elucidate light-capturing mechanisms in photobiological systems, though careful functional selection is crucial. For biochromophores, range-separated functionals with adjusted HF exchange (e.g., CAMh-B3LYP, ωhPBE0) significantly reduce systematic errors in excitation energy predictions compared to CC2 reference data [2].

Table 2: Key Computational and Experimental Resources for ΔfH° Research

| Resource Category | Specific Tools | Primary Function | Application Context |
|---|---|---|---|
| Computational Software | Wien2k, TURBOMOLE, ORCA | DFT calculation implementation | Materials screening, reaction modeling |
| Force Fields | EMFF-2025, DP-CHNO-2024 | Neural network potentials | Large-scale MD simulations of HEMs |
| Machine Learning Frameworks | SchNet, MACE | Graph neural network training | Formation energy prediction with elemental features |
| Experimental Instruments | Bomb calorimeter, Solution calorimeter | Direct ΔH measurement | Experimental validation of predictions |
| Benchmark Databases | NIST Chemistry WebBook, Matbench | Reference data sources | Method validation and calibration |

Performance Benchmarking Across Methods

Accuracy Comparison

Table 3: Comprehensive Accuracy Assessment of ΔfH° Prediction Methods

| Methodology | Representative Examples | Reported Accuracy | Computational Cost | System Limitations |
|---|---|---|---|---|
| Semiempirical | NDDO methods | Lower accuracy vs. DFT [9] | Low | Limited transferability |
| Standard DFT | B3LYP, PBE0 | 1.1-1.9 kcal/mol (activation energies) [1] | Medium | Charge transfer states, multireference systems |
| Range-Separated DFT | CAM-B3LYP, ωB97X-D | 0.16-0.31 eV (excitation energies) [2] | Medium-High | Systematic over/under-estimation trends |
| Double-Hybrid DFT | PWPB95-D3, B2PLYP | ~1.9 kcal/mol (barriers) [1] | High | Multireference character challenges |
| Neural Network Potentials | EMFF-2025 | DFT-level accuracy [4] | Low (after training) | Training data requirements |
| Graph Neural Networks | SchNet, MACE | Improved out-of-distribution (OoD) generalization [5] | Variable | Representation space coverage critical |

Guidelines for Method Selection

Choosing the appropriate computational method depends on the specific research requirements:

- For high-throughput screening of organic molecules, B3LYP or PBE0 provide reasonable accuracy with manageable computational cost [1] [3].

- For systems with charge-transfer character or excited states, range-separated functionals like CAM-B3LYP or ωB97X-D are preferable despite systematic errors [2].

- When studying reaction dynamics or large systems, neural network potentials like EMFF-2025 offer near-DFT accuracy with significantly lower computational expense [4].

- For elements outside training distributions, GNNs with elemental features provide the best generalization capabilities [5].

Accurate prediction of ΔfH° remains essential for advances in both drug design and materials science. While DFT methods continue to evolve with improved functionals and corrections, machine learning approaches present a promising path forward, particularly through their ability to capture complex relationships while dramatically reducing computational costs. The integration of physical knowledge into machine learning architectures, as demonstrated by element-aware GNNs and physically-informed NNPs, will likely drive further improvements in predictive accuracy and transferability across the chemical space. As these computational tools become more sophisticated and accessible, they will increasingly enable researchers to navigate complex molecular landscapes with greater confidence, accelerating the discovery and development of novel pharmaceuticals and advanced materials.

Density Functional Theory (DFT) has become an indispensable computational tool across chemistry and materials science, yet its systematic errors present particular challenges when moving beyond simple organic molecules to complex systems such as boron hydrides and inorganic clusters. While DFT provides reasonable accuracy for many organic systems, its performance becomes more variable and method-dependent when confronting the electron-deficient bonding environments in boranes, the strong correlation effects in transition metal complexes, and the delocalized electronic structures in nanomaterials. Understanding these limitations is crucial for researchers relying on computational predictions for drug development, materials design, and catalytic processes.

The inherent approximations in exchange-correlation functionals manifest differently across chemical space, with boron-containing compounds presenting unique challenges due to their propensity for multicenter bonding and electron delocalization. As research expands into increasingly complex systems, a thorough understanding of these systematic errors becomes essential for accurate prediction of molecular properties, reaction energies, and spectroscopic characteristics. This review systematically benchmarks DFT performance across diverse chemical systems, providing researchers with practical guidance for functional selection and error anticipation in computational investigations.

Benchmarking DFT Performance: Quantitative Error Analysis

Comprehensive benchmarking studies reveal substantial variations in DFT performance across different chemical systems. A large-scale assessment of 2648 gas-phase reactions demonstrated that modern functionals can correctly predict temperature-independent equilibrium compositions in over 90% of cases when using appropriate basis sets [10]. However, the errors are not uniformly distributed across chemical space, with particular challenges emerging for specific element combinations and bonding environments.

Table 1: DFT Performance Across Chemical Systems and Properties

| System Category | Representative Error Range | Best-Performing Functionals | Key Challenges |
|---|---|---|---|
| Small Organic Molecules | 2-10 kcal/mol for reaction enthalpies [10] | PBE0, B3-LYP, ωB97X [10] [11] | van der Waals interactions, dispersion forces |
| Inorganic Compounds | Up to 40 kcal/mol for reaction energies [10] | PBE0, TPSS [10] | Strong correlation, metal-ligand bonding |
| Boron Clusters | Significant delocalization errors [12] | PBE0, ωB97X [13] | Electron-deficient bonding, multicenter interactions |
| Transition Metal Complexes | Variable bond dissociation energies [14] | PBE0, B3-LYP [14] | d-electron correlation, spin states |
| Charge-Transfer Systems | Incorrect excitation energies [11] | Range-separated hybrids [11] | Long-range exchange, excited states |

The performance of DFT depends significantly on both the functional choice and basis set quality. For general applications, triple-zeta basis sets (TZVP) typically provide the best balance between accuracy and computational cost, with minimal improvement when moving to quadruple-zeta basis sets (QZVPP) [10]. Hybrid functionals like PBE0 and B3-LYP generally outperform their pure GGA counterparts, though the margin is small (under one percentage point between PBE and PBE0 in the reaction-energy benchmark below) and varies across chemical systems; the non-hybrid meta-GGA TPSS is competitive with the hybrids at the TZVP level.

Table 2: Functional Performance in Reaction Energy Prediction (Percentage Correct) [10]

| Functional | SVP Basis Set | TZVP Basis Set | QZVPP Basis Set |
|---|---|---|---|
| PWLDA | 88.7% | 90.5% | 90.7% |
| PBE | 92.1% | 94.2% | 94.6% |
| B3-LYP | 92.7% | 94.7% | 94.8% |
| PBE0 | 92.8% | 94.8% | 95.2% |
| M06 | 92.6% | 94.4% | 94.5% |
| TPSS | 91.5% | 95.1% | 94.9% |
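The basis-set trend in this table can be made explicit with a few lines of arithmetic. The sketch below uses only the percentages listed in the table and computes the gain from each basis-set step; the variable names are illustrative.

```python
# Percentage of correctly predicted reactions: (SVP, TZVP, QZVPP), from the table above.
percent_correct = {
    "PWLDA": (88.7, 90.5, 90.7),
    "PBE": (92.1, 94.2, 94.6),
    "B3-LYP": (92.7, 94.7, 94.8),
    "PBE0": (92.8, 94.8, 95.2),
    "M06": (92.6, 94.4, 94.5),
    "TPSS": (91.5, 95.1, 94.9),
}

# Gain in percentage points for each basis-set upgrade.
gain_svp_to_tzvp = {f: tz - sv for f, (sv, tz, qz) in percent_correct.items()}
gain_tzvp_to_qzvpp = {f: qz - tz for f, (sv, tz, qz) in percent_correct.items()}

mean_gain_tz = sum(gain_svp_to_tzvp.values()) / len(percent_correct)
mean_gain_qz = sum(gain_tzvp_to_qzvpp.values()) / len(percent_correct)

for functional in percent_correct:
    print(f"{functional:7s} SVP->TZVP {gain_svp_to_tzvp[functional]:+.1f}  "
          f"TZVP->QZVPP {gain_tzvp_to_qzvpp[functional]:+.1f}")
print(f"mean gains: {mean_gain_tz:.2f} and {mean_gain_qz:.2f} percentage points")
```

The average gain from SVP to TZVP is roughly two percentage points, while the step to QZVPP adds only a fraction of a point on average and is slightly negative for TPSS.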

Systematic errors in DFT originate from both the electronic ground state energy and the treatment of nuclear motions. The harmonic approximation for vibrational modes introduces temperature-dependent errors that range from -45.53 to 60.53 kJ/mol at 400 K, expanding to -312.72 to 297.78 kJ/mol at 1500 K [10]. These errors become particularly significant when calculating temperature-dependent equilibrium compositions and thermodynamic properties.
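To see why errors from the harmonic treatment grow with temperature, it helps to write out the harmonic-oscillator expressions for the zero-point energy and the thermal vibrational enthalpy. The sketch below is a generic textbook implementation, not the procedure of [10]; the function names, the single 1000 cm⁻¹ mode, and the 50 cm⁻¹ frequency error are hypothetical choices for illustration.

```python
import math

H_PLANCK = 6.62607015e-34      # J s
C_LIGHT_CM = 2.99792458e10     # cm / s
K_BOLTZMANN = 1.380649e-23     # J / K
N_AVOGADRO = 6.02214076e23     # 1 / mol

def zpve_kj_mol(wavenumbers_cm):
    """Harmonic zero-point energy: sum over modes of h*c*nu/2."""
    return sum(0.5 * H_PLANCK * C_LIGHT_CM * w for w in wavenumbers_cm) * N_AVOGADRO / 1000.0

def thermal_vib_enthalpy_kj_mol(wavenumbers_cm, temperature_k):
    """Thermal vibrational term: sum of h*c*nu / (exp(h*c*nu / kT) - 1)."""
    total = 0.0
    for w in wavenumbers_cm:
        quantum = H_PLANCK * C_LIGHT_CM * w
        total += quantum / math.expm1(quantum / (K_BOLTZMANN * temperature_k))
    return total * N_AVOGADRO / 1000.0

def sensitivity_kj_mol(temperature_k, wavenumber_cm=1000.0, error_cm=50.0):
    """Change in the thermal term when one frequency is lowered by error_cm."""
    shifted = thermal_vib_enthalpy_kj_mol([wavenumber_cm - error_cm], temperature_k)
    nominal = thermal_vib_enthalpy_kj_mol([wavenumber_cm], temperature_k)
    return shifted - nominal

zpve = zpve_kj_mol([1000.0])
h_400 = thermal_vib_enthalpy_kj_mol([1000.0], 400.0)
h_1500 = thermal_vib_enthalpy_kj_mol([1000.0], 1500.0)
print(round(zpve, 3), round(h_400, 3), round(h_1500, 3))
print(round(sensitivity_kj_mol(400.0), 3), round(sensitivity_kj_mol(1500.0), 3))
```

For this single mode, the same frequency error changes the thermal term several times more at 1500 K than at 400 K, and the effect accumulates over all modes and all species in a reaction.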

Special Challenges in Boron Chemistry and Complex Systems

Electron-Deficient Bonding in Boron Hydrides

Boron-containing compounds present exceptional challenges for DFT due to their electron-deficient nature and propensity for unconventional bonding patterns. The intrinsic electron deficiency in elemental boron networks initially posed significant obstacles to computational characterization [15]. Boron atoms possess only three valence electrons but four valence orbitals, creating unique bonding scenarios that defy traditional two-center, two-electron bond descriptions [15].

The structural diversity of borophene polymorphs exemplifies these challenges, with different lattice configurations and defect patterns dramatically influencing stability [15]. DFT computations have revealed that introducing atomic vacancies plays an essential role in stabilizing borophene structures [15]. For borane clusters like B₅H₁₁, the delocalized nature of electrons in the boron cage limits the efficiency of local correlation methods, requiring careful treatment of electron correlation [12]. These systems often feature three-center, two-electron bonds that test the limits of standard density functionals.

Metal-Doped Boron Clusters

The incorporation of transition metals into boron clusters creates additional complexity, as demonstrated in studies of B₈Cu₃⁻ clusters [13]. DFT investigations reveal that the lowest-energy structure features a vertical Cu₃ triangle supported on a B₈ wheel geometry, with the horizontal Cu₃ configuration lying 3.0 kcal/mol higher in energy at the PBE0 level [13]. Electron localization function (ELF) and Mulliken population analyses indicate strong Cu-B interactions and significant electron delocalization in the most stable isomer [13].

These metal-doped systems exhibit complex bonding patterns with multicenter interactions that challenge standard computational approaches. The accurate description of these clusters requires careful attention to functional selection, with double-hybrid and range-separated functionals often providing improved performance for these electronically complex systems.

Methodological Approaches for Error Mitigation

Advanced Electron Correlation Methods

For systems where standard DFT approaches prove inadequate, advanced electron correlation methods offer improved accuracy at increased computational cost. The incremental scheme provides a systematic approach to calculating correlation energies for large systems by expanding the correlation energy as a sum over contributions from individual domains and their interactions [12].

This method groups localized Hartree-Fock orbitals into domains and calculates correlation energy through a series of calculations on single domains, pairs, triples, etc., until correction terms fall below a specified threshold [12]. Error analysis demonstrates that the propagation of errors in this incremental expansion can be controlled systematically, with total correlation energy errors kept below 1 kcal/mol with respect to canonical coupled cluster singles and doubles (CCSD) calculations [12].
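The structure of the incremental expansion can be sketched in a few lines: each increment is the energy of a group of domains minus all lower-order increments it contains, and the expansion is truncated at a chosen order. In this minimal illustration the function `toy_domain_energy` is a hypothetical stand-in for an actual correlation-energy calculation on a set of domains, and its one-, two-, and three-body terms are invented numbers.

```python
from itertools import combinations

def toy_domain_energy(domains):
    """Hypothetical stand-in for a correlation-energy calculation on `domains`."""
    d = sorted(domains)
    one_body = -1.0 * len(d)
    two_body = sum(-0.1 / abs(i - j) for i, j in combinations(d, 2))
    three_body = -0.01 * sum(1 for _ in combinations(d, 3))
    return one_body + two_body + three_body

def incremental_energy(domains, domain_energy, max_order):
    """Sum one-domain, pair, triple, ... increments up to max_order."""
    increments = {}
    total = 0.0
    for order in range(1, max_order + 1):
        for subset in combinations(domains, order):
            key = frozenset(subset)
            # Remove every lower-order increment contained in this group
            # (`k < key` tests for a proper subset).
            contained = sum(inc for k, inc in increments.items() if k < key)
            increments[key] = domain_energy(key) - contained
            total += increments[key]
    return total

domains = [0, 1, 2, 3]
exact = toy_domain_energy(frozenset(domains))
by_order = {n: incremental_energy(domains, toy_domain_energy, n) for n in (1, 2, 3, 4)}
for n, value in by_order.items():
    print(f"order {n}: {value:.5f}  (truncation error {value - exact:+.5f})")
```

In a production calculation the expansion would be truncated once the increments fall below the chosen threshold, which is what keeps the total error controllable.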

[Figure: Incremental scheme workflow for correlation energy calculation [12]]

Charge-Transfer Descriptors and Excited States

The accurate description of charge-transfer excitations presents particular challenges for DFT-based methods. Benchmarking studies have identified several reliable approaches for characterizing these states, with density-based descriptors providing crucial insights into charge-transfer character [11].

The DCT descriptor, based on the variation of electron density upon excitation, has emerged as a particularly valuable tool for identifying charge-transfer states [11]. This approach calculates the distance between centers of charge accumulation and depletion upon excitation, providing a quantitative measure of charge-transfer extent [11]. For supramolecular systems and molecular dimers, orbital-optimized DFT methods (OO-DFT) and time-dependent DFT (TD-DFT) with range-separated hybrid functionals have demonstrated improved performance for charge-transfer excitations [11].
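The barycenter idea behind the DCT descriptor can be illustrated on a discretized density difference. The sketch below assumes the density change is available on a uniform grid (so the volume element cancels) and uses a hypothetical four-point one-dimensional example; the function name is illustrative and not taken from any analysis package.

```python
import math

def charge_transfer_distance(points, delta_rho):
    """Distance between barycenters of density gain and density loss.

    `points` are (x, y, z) grid coordinates and `delta_rho` the change in
    electron density upon excitation at each point (uniform grid assumed).
    """
    gain = [(p, d) for p, d in zip(points, delta_rho) if d > 0]
    loss = [(p, -d) for p, d in zip(points, delta_rho) if d < 0]

    def barycenter(weighted_points):
        weight = sum(w for _, w in weighted_points)
        return tuple(sum(p[i] * w for p, w in weighted_points) / weight
                     for i in range(3))

    return math.dist(barycenter(gain), barycenter(loss))

# Hypothetical example: density depleted near x = 0-1, accumulated near x = 4-5.
grid = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (4.0, 0.0, 0.0), (5.0, 0.0, 0.0)]
density_change = [-0.3, -0.1, 0.1, 0.3]
d_ct = charge_transfer_distance(grid, density_change)
print(d_ct)
```

A small distance indicates a local excitation, while a value comparable to the donor-acceptor separation signals pronounced charge-transfer character.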

Research Reagent Solutions: Computational Tools for DFT Benchmarking

Table 3: Essential Computational Tools for DFT Benchmarking Studies

| Tool Category | Specific Examples | Primary Function | Application Context |
|---|---|---|---|
| Quantum Chemistry Packages | ORCA [13], Jaguar [14], TURBOMOLE [12] | DFT calculations, geometry optimization, property prediction | General computational chemistry |
| Post-Processing Analysis | Multiwfn [13], TheoDORE [11] | Electron localization analysis, charge-transfer descriptors | Bonding analysis, excited state characterization |
| Wavefunction Analysis | QTAIM, NBO, ELF [16] [13] | Bonding analysis, electron distribution | Understanding bonding patterns in complex systems |
| Reference Databases | CCCBDB [10], NIST Chemistry WebBook [10] | Experimental reference data for benchmarking | Method validation and error assessment |
| Local Correlation Methods | LMP2, LCCSD, LCCSD(T) [12] | Accurate correlation energies for large systems | Systems where conventional coupled cluster is prohibitive |

These computational tools enable researchers to implement the methodologies discussed throughout this review, from initial structure optimization to sophisticated bonding analysis. The combination of robust quantum chemistry packages with specialized analysis tools provides a comprehensive toolkit for assessing and mitigating systematic errors in DFT calculations.

The systematic errors in DFT calculations present significant but manageable challenges when moving from organic molecules to boron hydrides and complex systems. Through careful functional selection, method benchmarking, and the application of specialized approaches for specific chemical challenges, researchers can navigate these limitations while maintaining predictive accuracy.

Future developments in functional design, particularly in range-separated hybrids and double-hybrid functionals, show promise for addressing current limitations in charge-transfer and strongly correlated systems. Similarly, the integration of machine learning approaches with traditional quantum chemistry methods may provide pathways to improved accuracy without prohibitive computational cost. As these methods continue to evolve, systematic benchmarking across diverse chemical spaces will remain essential for guiding method selection and application in both academic and industrial research contexts.

Computational chemistry provides an indispensable toolkit for modern scientific discovery, from designing new drugs to developing high-energy materials. However, the reliability of these computational predictions hinges entirely on the accuracy of the methods employed. A rigorous, multi-layered benchmarking hierarchy has thus emerged, stretching from the theoretical "gold standards" of quantum chemistry to the ultimate arbiter of experimental data. This hierarchy balances a critical trade-off: achieving chemical accuracy often requires immense computational resources, creating a persistent tension between feasibility and precision. This guide systematically compares the performance of prevalent computational methods, from Coupled Cluster theory to various Density Functional Theory (DFT) functionals and modern machine-learning potentials, using heats of formation and related properties as key metrics. The analysis is framed within a broader thesis on the systematic benchmarking of DFT methods, providing researchers and drug development professionals with a clear, data-driven framework for selecting the appropriate tool for their specific challenge.

The Benchmarking Hierarchy Explained

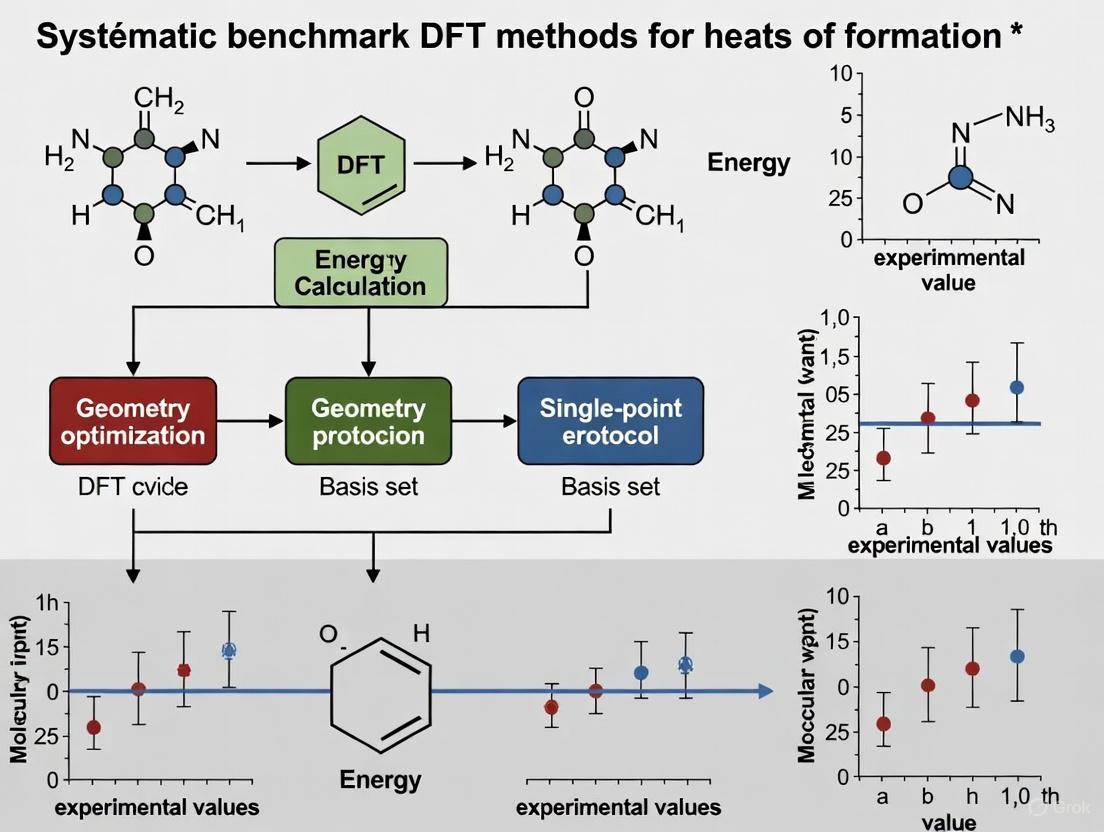

The benchmarking of computational chemistry methods is not a linear path but a structured ecosystem where high-level theories validate more approximate methods, which in turn are judged against physical reality. The following diagram illustrates the logical relationships and validation pathways within this hierarchy.

This hierarchy functions as follows: Coupled Cluster (CCSD(T)) and Quantum Monte Carlo (QMC) are considered theoretical "gold standards" for solving the Schrödinger equation [17] [18]. Their results are used to validate and calibrate the more approximate Density Functional Theory (DFT) methods. In a modern paradigm, both CCSD(T) and DFT data are also used to train Machine Learning Potentials, such as Neural Network Potentials (NNPs), which aim to achieve near-quantum accuracy at a fraction of the computational cost [4] [17]. Ultimately, the predictions of all these methods—from high-level quantum chemistry to force fields—are benchmarked against real Experimental Data, such as experimentally measured heats of formation, binding energies, or crystal structures [19] [20]. This creates a robust framework for establishing the accuracy and limitations of each computational approach.

Quantitative Performance Comparison of Computational Methods

The true test of any computational method lies in its quantitative performance against reliable benchmarks. The following tables summarize the accuracy of various methods for predicting heats of formation and interaction energies, key properties in material and drug design.

Table 1: Performance of Methods on Heats of Formation (ΔfH°) for Boron Hydrides [19]

| Method | MAE for Boron Hydrides (kcal mol⁻¹) | Key Limitation / Note |
|---|---|---|
| G4 | 3.6 | High computational cost |
| CCSD(T) | 6.6 | Requires specialized basis set for core-valence correlation |
| ωB97X-D3 | ~10.0 (est.) | Much larger error than for hydrocarbons (MAE: 3.2 kcal mol⁻¹) |
| B3LYP-D3 | ~13.0 (est.) | Much larger error than for hydrocarbons (MAE: 6.0 kcal mol⁻¹) |
| B3LYP-D2 | 13.3 | Empirical dispersion can overcorrect |

Table 2: Performance on Organic/Biological Molecule Benchmarks

| Method / Benchmark | Mean Absolute Error (MAE) / Performance | Application Context |
|---|---|---|
| ANI-1ccx (NNP) | ~1.6 kcal mol⁻¹ (GDB-10to13 relative energies) [17] | General-purpose for organic molecules |
| DLPNO-CCSD(T) | 1.6 kcal mol⁻¹ (ΔfH° for organic molecules) [19] | Approximate CCSD(T) for larger systems |
| DFT (PBE0+MBD) | Accurate structural prediction for ligand-pocket motifs [18] | Non-covalent interactions in drug discovery |
| Hartree-Fock | Outperformed DFT in crystal structure refinement of amino acids [20] | Hirshfeld Atom Refinement (HAR) |

The data reveals critical trends. For heats of formation, high-level methods like G4 and CCSD(T) offer the best accuracy but are computationally demanding [19]. Standard DFT functionals like B3LYP and ωB97X, while excellent for many properties, show significant errors for systems with complex bonding, such as the multicenter bonds in boron hydrides, though they perform much better for typical organic molecules [19]. This highlights the importance of context in benchmarking; a method's performance is system-dependent. Furthermore, modern Neural Network Potentials (NNPs) like ANI-1ccx can approach coupled-cluster accuracy at speeds billions of times faster, bridging the gap between cost and accuracy for specific chemical spaces [17].

Detailed Experimental Protocols

To ensure the reproducibility of benchmark studies, it is essential to outline standard protocols for data generation and validation.

Protocol 1: Coupled Cluster Data Generation for Machine Learning Potentials

This protocol was used to create the data for training the ANI-1ccx potential [17].

- Step 1: Generate Conformational Ensemble. A vast set of diverse molecular conformations (e.g., 5 million from the ANI-1x dataset) is generated, often through active learning or molecular dynamics.

- Step 2: Preliminary DFT Calculation. Single-point energy and force calculations are performed on these conformations using a robust DFT functional (e.g., ωB97X) with a moderate basis set (e.g., 6-31G*).

- Step 3: Intelligent Data Selection. A smaller subset (e.g., ~500,000 conformations) is intelligently selected from the larger DFT set to optimally span the chemical space of interest.

- Step 4: High-Level CC Calculation. Single-point energy calculations are performed on the selected subset at the CCSD(T)*/CBS level, a highly accurate extrapolation to the complete basis set limit.

- Step 5: Transfer Learning. A neural network potential is first pre-trained on the large DFT dataset and then refined (transfer learning) on the high-quality CCSD(T) dataset to create the final potential (e.g., ANI-1ccx).

Protocol 2: Atomization Energy Approach for Heats of Formation

This protocol is a standard method for computationally determining gas-phase enthalpies of formation (ΔfH°), as applied in the study of boron hydrides [19].

- Step 1: Geometry Optimization. The molecule of interest is optimized at a reliable level of theory (e.g., B3LYP/DZVP-DFT or MP2/cc-pVDZ) using strict convergence criteria for energy and gradient.

- Step 2: Frequency Calculation. A harmonic vibrational frequency calculation is performed at the same level of theory as the optimization. This provides the Zero-Point Vibrational Energy (ZPVE) and other thermodynamic corrections (enthalpy, entropy) needed to convert electronic energies into thermodynamic properties at a specific temperature.

- Step 3: High-Level Single-Point Energy Calculation. The total electronic energy of the optimized molecule is computed at a higher level of theory (e.g., CCSD(T) or DFT with a large basis set like def2-QZVP).

- Step 4: Atom Energy Calculation. The energies of the constituent atoms as isolated gas-phase species in their electronic ground states (e.g., C(g), H(g), etc.) are calculated at the same high level of theory as in Step 3.

- Step 5: Compute Atomization Energy and ΔfH°. The atomization energy is calculated as the difference between the sum of the atomic energies and the molecular energy. The heat of formation is then derived using this atomization energy and the known experimental heats of formation of the atoms.
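Steps 3 to 5 of this protocol reduce to simple bookkeeping once the energies are available. The sketch below computes a 0 K atomization energy and the corresponding 0 K heat of formation; the conversion to 298 K using the thermal corrections from Step 2 is omitted. The function names and all electronic energies are hypothetical placeholders, and the atomic heats of formation (169.98 kcal/mol for C and 51.63 kcal/mol for H at 0 K) are commonly tabulated values that should be checked against a current reference before use.

```python
HARTREE_TO_KCAL_MOL = 627.5095

def atomization_energy_0k(e_molecule, zpve, atom_energies, composition):
    """Sum of atomic energies minus (molecular energy + ZPVE), in kcal/mol.

    Energies are given in hartree; `composition` maps element -> count.
    """
    e_atoms = sum(atom_energies[el] * n for el, n in composition.items())
    return (e_atoms - (e_molecule + zpve)) * HARTREE_TO_KCAL_MOL

def heat_of_formation_0k(atomization_kcal, atom_dhf_0k, composition):
    """dHf(0 K) = sum of atomic dHf(0 K) minus the atomization energy."""
    return sum(atom_dhf_0k[el] * n for el, n in composition.items()) - atomization_kcal

# Hypothetical methane-like inputs (hartree); not results of a real calculation.
composition = {"C": 1, "H": 4}
atom_energies = {"C": -37.8000, "H": -0.5000}
e_molecule = -40.4700
zpve = 0.0440

# Commonly tabulated experimental atomic heats of formation at 0 K (kcal/mol).
atom_dhf_0k = {"C": 169.98, "H": 51.63}

d0 = atomization_energy_0k(e_molecule, zpve, atom_energies, composition)
dhf_0k = heat_of_formation_0k(d0, atom_dhf_0k, composition)
print(round(d0, 2), round(dhf_0k, 2))
```

Because the atomization energy is a difference of large numbers, any error in the molecular or atomic energies carries over in full to the heat of formation, which is why this route demands a high level of theory.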

Protocol 3: Validating Ligand-Pocket Interactions (QUID Framework)

The QUID framework provides a robust protocol for benchmarking methods on systems relevant to drug discovery [18].

- Step 1: Dimer Selection. Select large, flexible, drug-like molecules (host) and small, representative ligands (e.g., benzene, imidazole).

- Step 2: Equilibrium Dimer Generation. Manually position the ligand near a binding site on the host molecule (e.g., an aromatic ring at ~3.55 Å) and perform a geometry optimization (e.g., at PBE0+MBD level) to generate an equilibrium dimer structure.

- Step 3: Non-Equilibrium Conformation Sampling. For a subset of equilibrium dimers, generate non-equilibrium conformations by displacing the ligand along the dissociation coordinate (e.g., using a scaling factor q from 0.90 to 2.00 of the equilibrium distance), with heavy atoms frozen.

- Step 4: "Platinum Standard" Energy Calculation. Calculate the interaction energy (E_int) for all dimers using two fundamentally different high-level methods, specifically Localized Natural Orbital Coupled Cluster (LNO-CCSD(T)) and Fixed-Node Diffusion Monte Carlo (FN-DMC). The benchmark "platinum standard" value is established where these two methods converge (e.g., within 0.5 kcal/mol).

- Step 5: Benchmark Approximate Methods. Compute E_int for all dimers using various DFT functionals, semi-empirical methods, and force fields. Their performance is assessed by comparing their predictions against the established "platinum standard" energies.
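Steps 4 and 5 of this protocol can be sketched as follows: an interaction energy is the dimer energy minus the monomer energies, a reference value is accepted only when the two high-level methods agree within a tolerance, and approximate methods are scored against the accepted references. Taking the average of the two high-level values as the reference is an assumption made for this illustration, and all function names and energies (in kcal/mol) are hypothetical.

```python
def interaction_energy(e_dimer, e_host, e_ligand):
    """E_int = E(dimer) - E(host) - E(ligand)."""
    return e_dimer - e_host - e_ligand

def consensus_reference(e_int_cc, e_int_qmc, tolerance=0.5):
    """Return a reference value if both methods agree within `tolerance`.

    Averaging the two values is an assumption of this sketch; returns None
    when the methods disagree.
    """
    if abs(e_int_cc - e_int_qmc) <= tolerance:
        return 0.5 * (e_int_cc + e_int_qmc)
    return None

def mean_absolute_error(predictions, references):
    """MAE over the dimers that have an accepted reference value."""
    errors = [abs(predictions[name] - ref)
              for name, ref in references.items() if ref is not None]
    return sum(errors) / len(errors)

# Hypothetical interaction energies: (coupled-cluster value, QMC value).
high_level = {"dimer_1": (-5.0, -5.2), "dimer_2": (-10.0, -11.0)}
references = {name: consensus_reference(cc, qmc) for name, (cc, qmc) in high_level.items()}

# Hypothetical predictions from an approximate method.
approx_method = {"dimer_1": -4.6, "dimer_2": -9.0}
mae = mean_absolute_error(approx_method, references)
print(references, round(mae, 3))
```

Here the second dimer is excluded because the two reference methods differ by more than the tolerance, so only dimers with a trustworthy reference contribute to the score.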

The Scientist's Toolkit: Essential Research Reagents

This section details key computational "reagents" and resources essential for conducting rigorous benchmark studies in computational chemistry.

Table 3: Key Research Reagents and Computational Resources

| Item Name | Function / Application | Example Use Case |
|---|---|---|
| Coupled Cluster Theory | Provides theoretical gold-standard energies for small systems; used to train/validate faster methods. | Generating benchmark data for reaction energies and non-covalent interactions [17] [18]. |
| Density Functional Theory (DFT) | Balanced speed/accuracy method for geometry optimization and property prediction of medium-sized systems. | Optimizing molecular structures and predicting spectroscopic parameters [21] [19]. |
| Neural Network Potentials (NNPs) | Surrogate models trained on QM data to achieve near-QM accuracy at million-fold speed increases. | Large-scale molecular dynamics simulations of energetic materials [4] [17]. |
| def2 Basis Set Series | Standardized, size-consistent Gaussian basis sets for accurate QM calculations across the periodic table. | Standard choice for molecular DFT calculations and Hirshfeld Atom Refinement (HAR) [19] [20]. |
| PubChemQCR Dataset | Large-scale dataset of DFT relaxation trajectories for small organic molecules; used for training MLIPs. | Benchmarking the generalizability of machine learning interatomic potentials [22]. |
| Hirshfeld Atom Refinement (HAR) | QM-based method for refining crystal structures against XRD data, providing highly accurate H-atom positions. | Determining precise molecular geometries in the solid state for use as experimental benchmarks [20]. |

The benchmarking hierarchy from Coupled Cluster to experimental data provides an essential, systematic framework for validating computational methods. The quantitative comparisons presented in this guide clearly demonstrate that there is no single "best" method for all scenarios. While CCSD(T) remains the gold standard for accuracy, its cost is prohibitive for most practical applications. DFT offers a versatile balance but requires careful functional selection, particularly for challenging systems like boron hydrides or non-covalent interactions in drug discovery.

The most promising future direction lies in the integration of machine learning with traditional quantum chemistry. Transfer learning, where models pre-trained on massive DFT datasets are fine-tuned on high-quality CC data, has proven capable of producing potentials like ANI-1ccx and EMFF-2025 that approach quantum-mechanical benchmark accuracy at a fraction of the cost [4] [17]. As these methods mature and are validated against robust experimental and theoretical benchmarks, they are poised to redefine the boundaries of what is computationally feasible, ultimately accelerating discovery across chemistry, materials science, and drug development.

Calculating accurate thermochemical properties, such as enthalpies of formation, is a fundamental test for electronic structure methods. Density Functional Theory (DFT) is widely used due to its favorable balance of cost and accuracy. However, its performance is not universal; certain chemical systems expose significant limitations of approximate exchange-correlation functionals. This guide objectively compares the performance of various computational methods when applied to two particularly challenging classes of systems: molecules with multicenter bonding and transition metal complexes.

Benchmark studies reveal that these systems are challenging because their electronic structure requires a delicate treatment of electron correlation effects that are often poorly described by standard DFT functionals. For researchers in drug development and materials science, where such motifs are common, selecting an inappropriate computational method can lead to large errors in predicted energies, stability, and reactivity.

Multicenter Bonding: The Case of Boron Hydrides

The Computational Challenge

Boron hydrides feature three-center two-electron (3c-2e) bonds and three-dimensional aromaticity, leading to structures that differ dramatically from traditional hydrocarbons with their two-center two-electron bonds [19]. This unique bonding presents a severe test for computational methods. The difficulty arises from the complex electron correlation effects inherent in multicenter bonding, which are not captured accurately by many standard functionals [19].

Table 1: Performance of Computational Methods for Boron Hydride Heats of Formation (kcal/mol)

| Method | Mean Absolute Error (MAE) | Key Finding |
|---|---|---|
| G4 | 3.6 | Best performer for boron hydrides [19] |
| CCSD(T) | 6.6 | Requires specialized basis sets for accuracy [19] |
| ωB97X-D3 | >3.2 | Much larger errors vs. organic molecules (MAE 3.2 for organics) [19] |
| B3LYP-D3 | >6.0 | Much larger errors vs. organic molecules (MAE 6.0 for organics) [19] |

Performance Data and Analysis

The data shows a significant accuracy gap between the best methods and commonly used DFT functionals. The G4 composite method achieves a high level of accuracy (MAE 3.6 kcal/mol), while even high-level methods like CCSD(T) show compromised accuracy (MAE 6.6 kcal/mol) unless combined with a basis set optimized for core-valence correlation effects [19]. The empirical dispersion correction D3, when combined with the BJ damping function, was found to provide excessively large intramolecular dispersion energies for boron hydrides, thereby compromising the accuracy of DFT-D3 methods [19].

Recommended Experimental Protocols

For reliable results on boron clusters, the following protocol is recommended based on benchmark studies [19]:

- Geometry Optimization: Use B3LYP/DZVP-DFT or MP2/cc-pVDZ levels with strict convergence criteria.

- Single-Point Energy Calculation: Employ high-level methods like CCSD(T) with basis sets specifically designed for accurate description of core-valence correlation. The atomization energy approach is used to compute ΔfH°.

- Bonding Analysis: Apply the intrinsic bond orbital (IBO) method to identify complex bonding, such as the five-center two-electron (5c-2e) bond in B5H9.

Diagram 1: Computational workflow for boron hydrides.

Transition Metal Complexes: Spin States and Binding Energies

The Computational Challenge

Transition metal (TM) complexes, such as metalloporphyrins found in hemoglobin and cytochrome P450 enzymes, are ubiquitous in biology and catalysis. A primary challenge is accurately calculating spin-state energetics—the energy differences between low-spin (LS) and high-spin (HS) states. This is critical for characterizing spin-crossover materials and modeling open-shell reaction mechanisms [23]. The accuracy of DFT for these systems is heavily influenced by the admixture of exact exchange (EE); higher EE typically stabilizes the HS state [23].

Performance Data and Analysis

Benchmarking against the Por21 database reveals that most functionals fail to achieve "chemical accuracy" (1.0 kcal/mol) for spin-state energetics and binding energies in metalloporphyrins [24].

Table 2: Performance of Select DFT Functionals for Transition Metal Porphyrins (Por21 Database)

| Functional | Type | Mean Unsigned Error (MUE, kcal/mol) | Remarks |
|---|---|---|---|
| GAM | GGA | <15.0 | Best overall performer [24] |
| r2SCANh | Hybrid Meta-GGA | 10.8 | Top performer among commonly suggested functionals [24] |
| HCTH | GGA | ~15.0 | Class A performer [24] |
| revM06-L | Meta-GGA | ~15.0 | Class A performer [24] |
| B3LYP | Hybrid GGA | >23.0 | Failing grade; representative of many popular hybrids [24] |

The errors for most functionals are at least twice the best-case MUE, with many suffering from catastrophic failures [24]. Range-separated and double-hybrid functionals with high percentages of exact exchange are often particularly problematic. Furthermore, many functionals incorrectly predict the ground state of iron(III) and cobalt(II) porphyrins, casting doubt on computed reaction mechanisms [24].

Recommended Experimental Protocols

For reliable computation of TM complex properties [24] [23]:

- Functional Selection: Use local functionals (GGAs or meta-GGAs) or global hybrids with a low percentage of exact exchange (e.g., r2SCANh, revM06-L).

- Geometry Optimization: Perform with a selected functional, noting that dispersion corrections have minimal effect on spin-state energy differences.

- Reference Data: When possible, benchmark against experiment-derived spin-state gaps, which may require correcting for vibrational and environmental (solvent, crystal) effects [23].

- Wavefunction Methods: For highest accuracy, use correlated wavefunction methods like DLPNO-CCSD(T) or CASPT2 on top of DFT-optimized geometries, though their computational cost is high [23].
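When screening functionals with this protocol, a useful first check is whether each one even predicts the correct ground spin state. The sketch below illustrates that check; the energies, the functional labels, and the reference gap are hypothetical placeholders rather than Por21 data, and the sign convention (gap = E(HS) - E(LS)) is an assumption stated in the code.

```python
HARTREE_TO_KCAL = 627.5095  # kcal/mol per hartree

def spin_state_gap(e_high_spin, e_low_spin):
    """Return E(HS) - E(LS) in kcal/mol from total energies in hartree."""
    return (e_high_spin - e_low_spin) * HARTREE_TO_KCAL

def ground_state(gap):
    """With this convention, a positive gap means the low-spin state is lower."""
    return "LS" if gap > 0 else "HS"

# Hypothetical reference gap in kcal/mol (e.g., from a correlated method).
reference_gap = 4.0

# Hypothetical total energies in hartree, given as (E_HS, E_LS).
functionals = {
    "low_exact_exchange": (-2245.306000, -2245.312400),
    "high_exact_exchange": (-2245.310000, -2245.305000),
}

report = {}
for name, (e_hs, e_ls) in functionals.items():
    gap = spin_state_gap(e_hs, e_ls)
    report[name] = {
        "gap": gap,
        "error": gap - reference_gap,
        "ground_state_ok": ground_state(gap) == ground_state(reference_gap),
    }

for name, row in report.items():
    print(name, round(row["gap"], 2), round(row["error"], 2), row["ground_state_ok"])
```

A functional that fails the ground-state check is a poor basis for mechanistic conclusions on that system, even if its average error over a benchmark set looks acceptable.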

Diagram 2: Computational workflow for transition metal complexes.

The Scientist's Toolkit: Key Research Reagents and Methods

This table details essential computational "reagents" and their functions for tackling these challenging systems.

Table 3: Essential Computational Tools for Challenging Chemical Systems

| Tool / Method | Function | Application Notes |
|---|---|---|
| CCSD(T) | High-level wavefunction method for accurate energies | Requires specialized basis for boron; high cost for TMs [19] |
| G4 | High-accuracy composite method | Best for boron hydride ΔfH° [19] |
| r2SCANh / revM06-L | Density Functional Approximations | Recommended for TM spin-state and binding energies [24] |
| DLPNO-CCSD(T) | Approximate coupled cluster method | Balances accuracy and cost for larger TM complexes [23] |
| CASPT2 | Multireference perturbation theory | For systems with strong static correlation [24] |
| Intrinsic Bond Orbital (IBO) | Bonding analysis | Visualizes complex bonding (e.g., 5c-2e bonds) [19] |
| Atomization Energy Approach | Calculates gas-phase enthalpy of formation | Used for boron hydride benchmarks [19] |

Systematic benchmarking reveals a clear hierarchy of method performance for challenging chemical systems. For multicenter-bonded boron hydrides, high-level composite methods (G4) or specially-tailored CCSD(T) calculations are necessary for accurate thermochemistry. For transition metal complexes, the choice of DFT functional is critical; local functionals and those with low exact exchange (like r2SCANh and revM06-L) generally provide the most balanced description of spin-state energetics, while popular hybrids like B3LYP often fail.

The pursuit of universal, chemically accurate functionals continues. Until then, researchers and drug development professionals must rely on method-specific benchmarks and the protocols outlined here to ensure computational predictions are both reliable and meaningful.

Computational Approaches: From Atomization Energy to Advanced Error-Correction Schemes

The accurate prediction of thermochemical properties, such as enthalpy of formation (ΔHf), is a cornerstone of computational chemistry with critical applications in the development of energetic materials, pharmaceuticals, and novel chemical processes [25]. The performance of quantum chemical methods in calculating these properties varies significantly based on the chosen computational protocol. This guide provides an objective comparison of three core calculation strategies—the atomization energy method, the isodesmic reaction approach, and the novel isocoordinated reaction method—within the context of systematically benchmarking Density Functional Theory (DFT) for heats of formation research. We summarize quantitative performance data, detail experimental protocols, and provide essential resources to guide researchers in selecting the appropriate method for their specific needs.

The three methods differ fundamentally in their computational design, balancing the trade-off between theoretical rigor and practical error cancellation. The following diagram illustrates the core workflow and logical relationship between these approaches.

Performance Benchmarking and Quantitative Comparison

The choice of method profoundly impacts the accuracy and computational cost of the resulting thermochemical predictions. The following tables summarize key performance metrics across the different approaches, providing a quantitative basis for comparison.

Table 1: Comparative Accuracy of Calculation Methods

| Calculation Method | Mean Absolute Error (MAE) | Key Advantages | Key Limitations |
|---|---|---|---|
| Atomization Energy [26] | Varies by DFT functional: best (M06-2X) 1.8 kcal mol⁻¹; worst (B97-D) 10.0 kcal mol⁻¹ | Theoretically direct; no need for reference reactions | No error cancellation; extremely sensitive to method quality |
| Isodesmic Reaction [27] | Can be < 1.0 kcal mol⁻¹ with low-level theory (e.g., HF/STO-3G) | Excellent error cancellation; accurate with inexpensive methods | Requires known ΔHf of references; limited to gas phase |
| Isocoordinated Reaction [25] | 9.3 kcal mol⁻¹ (39 kJ mol⁻¹) for over 150 solid energetic materials | Direct solid-phase ΔHf calculation; no experimental input or fitting | Higher MAE than best isodesmic cases; newer, less validated method |

Table 2: DFT Functional Performance for Challenging Thermochemistry (TAE Benchmark) [26]

| Functional Type | Example Functional | Mean Absolute Deviation (MAD) for TAEs (kcal mol⁻¹) |
|---|---|---|
| Pure-GGA | B97-D | 10.0 |
| Meta-GGA | B97M-V | 2.9 |
| Hybrid-GGA | CAM-B3LYP-D4 | 4.0 |
| Hybrid-Meta-GGA | M06-2X | 1.8 |

Detailed Experimental Protocols

Atomization Energy Method

The Total Atomization Energy (TAE) approach is considered the most demanding test for electronic structure methods because it involves breaking all bonds in a molecule without any error cancellation [26].

- Geometry Optimization: Fully optimize the molecular structure of the target compound using a chosen DFT functional and basis set [26].

- Frequency Calculation: Perform a frequency calculation at the same level of theory to confirm a true minimum (no imaginary frequencies) and to obtain the zero-point vibrational energy (ZPVE) [26].

- Single Point Energy Calculation: Calculate the electronic energy of the optimized target molecule.

- Atomic Energy Calculations: Calculate the electronic energies of the individual constituent atoms (e.g., C, H, O, N) in their ground states, using the same method and basis set.

- TAE Calculation: The TAE is computed as the difference between the sum of the energies of the isolated atoms and the energy of the molecule: TAE = ΣE(atoms) - E(molecule).

- Enthalpy of Formation: The gas-phase enthalpy of formation is derived from the TAE using known enthalpies of formation of the atoms [26].
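The arithmetic of the last two steps can be sketched as follows. This is a minimal illustration at 0 K only: the thermal corrections needed to reach 298 K are deliberately omitted, the molecular and atomic electronic energies are hypothetical placeholders, and the atomic enthalpies of formation are commonly tabulated values quoted here for illustration that should be checked against a curated source before use.

```python
HARTREE_TO_KCAL = 627.5095  # kcal/mol per hartree

# Atomic enthalpies of formation at 0 K in kcal/mol (commonly tabulated
# values; treat as assumptions and verify before production use).
ATOM_DFH_0K = {"H": 51.63, "C": 169.98, "N": 112.53, "O": 58.99}

def total_atomization_energy(e_molecule, atom_energies, composition):
    """TAE = sum of E(atoms) - E(molecule); inputs in hartree, result in kcal/mol."""
    e_atoms = sum(n * atom_energies[el] for el, n in composition.items())
    return (e_atoms - e_molecule) * HARTREE_TO_KCAL

def enthalpy_of_formation_0k(tae, zpve, composition):
    """Delta_f H(0 K) = sum n * Delta_f H(atom, 0 K) - (TAE - ZPVE), in kcal/mol."""
    atoms_term = sum(n * ATOM_DFH_0K[el] for el, n in composition.items())
    return atoms_term - (tae - zpve)

# Hypothetical electronic energies in hartree for a methane-like example.
composition = {"C": 1, "H": 4}
atom_energies = {"C": -37.80, "H": -0.50}
e_molecule = -40.47
zpve = 27.7  # kcal/mol, hypothetical zero-point vibrational energy

tae = total_atomization_energy(e_molecule, atom_energies, composition)
dfh0 = enthalpy_of_formation_0k(tae, zpve, composition)
print(round(tae, 2), round(dfh0, 2))
```

Because the atomic terms enter with the full stoichiometric weight and nothing cancels, any systematic error in the computed TAE carries over directly into the predicted enthalpy of formation.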

Isodesmic Reaction Method

This method uses a balanced hypothetical reaction where the number and formal types of bonds remain constant, leading to significant error cancellation [28] [27].

- Reaction Design: Construct a balanced chemical reaction for the target molecule where the types and number of chemical bonds are conserved on both sides [27]. For example, to find the ΔHf of ethanol, one might use: CH₃CH₂OH + CH₄ → CH₃CH₃ + CH₃OH [27].

- Reference Selection: Select reference molecules (e.g., CH₄, CH₃CH₃, CH₃OH) for which highly accurate experimental or theoretical enthalpies of formation are known [27].

- Geometry Optimization & Energy Calculation: Optimize the geometries and calculate electronic energies for all species in the reaction (target and references) at a consistent level of theory.

- Reaction Energy Calculation: Compute the energy of the isodesmic reaction (ΔE_rxn) from the electronic energies.

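- Target ΔHf Calculation: Use Hess's Law to calculate the unknown enthalpy of formation of the target molecule. With the target on the reactant side, the reaction enthalpy (approximated by ΔE_rxn once zero-point and thermal corrections are included) equals Σ ΔH_f(products) - Σ ΔH_f(reactants). Rearranging gives ΔH_f(target) = Σ ΔH_f(products) - Σ ΔH_f(reference reactants) - ΔE_rxn, where the sums are over the stoichiometrically weighted known ΔHf values [27].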
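The Hess's Law step can be checked numerically for the ethanol example. In the sketch below the target sits on the reactant side, so the reaction enthalpy is the sum over products minus the sum over reactants, and the target value follows by rearrangement. The reference enthalpies are approximate gas-phase literature values quoted for illustration, the reaction enthalpy is a hypothetical placeholder for a computed result, and all stoichiometric coefficients are assumed to be 1.

```python
def target_enthalpy_of_formation(dh_rxn, products, reactant_refs):
    """Solve Hess's law for the target in: target + reactant_refs -> products.

    dH_rxn = sum dHf(products) - dHf(target) - sum dHf(reactant_refs),
    so dHf(target) = sum dHf(products) - sum dHf(reactant_refs) - dH_rxn.
    Assumes unit stoichiometric coefficients; all values in kcal/mol.
    """
    return sum(products.values()) - sum(reactant_refs.values()) - dh_rxn

# CH3CH2OH + CH4 -> CH3CH3 + CH3OH
# Approximate gas-phase dHf(298 K) values in kcal/mol (illustrative only).
products = {"CH3CH3": -20.0, "CH3OH": -48.0}
reactant_refs = {"CH4": -17.8}

# Hypothetical computed reaction enthalpy in kcal/mol.
dh_rxn = 6.0

dhf_ethanol = target_enthalpy_of_formation(dh_rxn, products, reactant_refs)
print(round(dhf_ethanol, 1))
```

Since only the small reaction enthalpy comes from the calculation, an error of 1 kcal/mol in the computed value shifts the prediction by the same 1 kcal/mol, which is why inexpensive methods can perform well here.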

Isocoordinated Reaction Method

This recently developed method extends the error-cancellation principle to the direct calculation of solid-phase enthalpies of formation by conserving the coordination environment of each atom [25].

- Crystal Structure Preparation: Obtain the experimental crystal structure of the solid energetic material from a database like the Cambridge Structural Database (CSD) [25].

- DFT Structural Relaxation: Perform DFT structural relaxation of the crystal, including dispersion corrections (e.g., DFT-D3), to optimize lattice parameters [25].

- Reference State Assignment: For each atom in the material, identify its coordination number. The constituent elements are then represented by small reference molecules where the central atom has the same coordination number [25]:

  - H (CN = 1): H₂

  - O (CN = 1): O₂

  - O (CN = 2): H₂O

  - N (CN = 1): N₂

  - N (CN = 2): N₂H₂

  - N (CN = 3): NH₃

- Energy Calculation: Compute the enthalpy difference between the solid-phase energetic material and a "reaction" with its constituent elements in these reference states [25].

- ΔHf, solid Calculation: The standard solid-phase enthalpy of formation is obtained directly from this first-principles calculation, without using experimental gas-phase ΔHf or sublimation enthalpy data [25].
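The reference state assignment step amounts to a lookup from (element, coordination number) to a small molecule. The sketch below encodes only the pairs listed above; the function name and the example atom list are hypothetical, and the returned tallies count atoms per reference environment rather than balanced stoichiometric coefficients, which the full method in [25] would still need to derive.

```python
from collections import Counter

# (element, coordination number) -> reference molecule, as listed in the text.
REFERENCE_STATE = {
    ("H", 1): "H2",
    ("O", 1): "O2",
    ("O", 2): "H2O",
    ("N", 1): "N2",
    ("N", 2): "N2H2",
    ("N", 3): "NH3",
}

def assign_reference_states(atoms):
    """Tally how many atoms map to each reference molecule.

    atoms: iterable of (element, coordination_number) pairs.
    Raises KeyError for environments not covered by the table above.
    """
    counts = Counter()
    for element, cn in atoms:
        key = (element, cn)
        if key not in REFERENCE_STATE:
            raise KeyError(f"No reference state listed for {element} with CN={cn}")
        counts[REFERENCE_STATE[key]] += 1
    return counts

# Hypothetical example: a hydrazine-like unit with two 3-coordinate N atoms
# and four 1-coordinate H atoms.
atoms = [("N", 3)] * 2 + [("H", 1)] * 4
counts = assign_reference_states(atoms)
print(dict(counts))
```

Environments not listed in the text, such as any carbon coordination, raise an error instead of being guessed.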

The Scientist's Toolkit: Essential Research Reagents & Databases

Table 3: Key Computational Resources for Thermochemical Benchmarking

| Resource Name | Type | Primary Function | Relevance |
|---|---|---|---|
| GDB9-W1-F12 Database [26] | Thermochemical Database | Provides 3,366 highly accurate CCSD(T)/CBS Total Atomization Energies for organic molecules up to 8 non-H atoms. | Benchmarking DFT functional performance. |
| MSR-ACC/TAE25 Dataset [29] [30] | Thermochemical Database | A massive dataset of 76,879 TAEs at the CCSD(T)/CBS level, broadly covering chemical space for elements up to argon. | Training and validating data-driven models. |
| Cambridge Structural Database (CSD) [25] | Structural Database | A repository of experimentally determined crystal structures. | Source of initial geometries for solid-state calculations. |
| CCCBDB (NIST) [10] | Thermochemical Database | A collection of experimental thermochemical data for molecules. | Source of reference ΔHf values and Gibbs free energies for method validation. |
| DFT-D3 [25] | Computational Method | An empirical dispersion correction for DFT. | Critical for accurately modeling intermolecular interactions in solids and molecular crystals. |

Leveraging Massive Datasets (OMol25) for Training and Validation

The emergence of massive, curated datasets represents a paradigm shift in computational chemistry, enabling the development and validation of more accurate and transferable models for predicting critical molecular properties. In the specific context of benchmarking Density Functional Theory (DFT) methods for heats of formation research, these large-scale datasets provide the essential foundation for systematic comparison and methodological improvement. The OMol25 dataset, alongside other contemporary data resources, allows researchers to move beyond isolated validation on small, homogeneous molecular sets to robust evaluation across diverse chemical spaces. This comprehensive approach is crucial for identifying methodological strengths and weaknesses, ultimately guiding the selection and development of computational strategies with proven predictive power for thermochemical properties. The integration of machine learning with traditional quantum chemical approaches further leverages these datasets, creating powerful hybrid frameworks that achieve chemical accuracy while managing computational expense [4] [31].

Comparative Performance of Quantum Chemistry Methods

Benchmarking Density Functionals for Enthalpy of Formation

Table 1: Performance of Select Minnesota Functionals for ΔHf° Prediction (Hydrocarbons)

| Density Functional | Mean Absolute Error (MAE) (kcal/mol) | Key Characteristics | Sensitivity to ZPE Corrections |
|---|---|---|---|
| MN15 | 1.70 (with ZPE) | Highest accuracy for diverse hydrocarbons; recommended for general use | Moderate |
| M06-2X | Not tabulated here (see [31]) | Good overall performance | Moderate |
| MN12-SX | Not tabulated here (see [31]) | Competitive for some applications | High (limiting factor for reliability) |

Note: Benchmarks performed with Gaussian 16, cc-pVTZ basis set, using the atom equivalent method. ZPE = Zero-Point Energy [31].

The accurate prediction of standard enthalpy of formation (ΔHf°) is a critical test for quantum chemical methods. Recent benchmarking of three Minnesota density functionals (M06-2X, MN12-SX, and MN15) on a diverse set of hydrocarbons identified MN15 as the most accurate functional when zero-point energy corrections are included, achieving a mean absolute error of 1.70 kcal/mol [31]. This level of accuracy approaches chemical accuracy (1 kcal/mol), making it suitable for predictive studies where experimental data is unavailable. In contrast, the MN12-SX functional exhibited significant sensitivity to ZPE corrections, limiting its reliability for thermochemical predictions. These findings highlight the importance of systematic benchmarking on large, diverse datasets like OMol25 to identify functionals with consistent performance across different molecular classes.

Cross-Functional Transferability Challenges in Foundation Models

Table 2: Comparison of DFT Methodologies for Foundation Potentials

| Methodological Approach | Typical Formation Energy MAE | Strengths | Limitations |
|---|---|---|---|
| GGA/GGA+U (PBE) | ~194 meV/atom (compounds) | Computationally efficient; extensive datasets available | Large errors in oxides and strongly bound systems |
| Meta-GGA (SCAN) | ~84 meV/atom (compounds) | Improved accuracy for formation energies, volumes, band gaps | Higher computational cost; smaller datasets |
| r2SCAN | Similar to SCAN | Balanced accuracy and numerical stability | Limited large-scale datasets; transfer learning required |

Note: Data compiled from large-scale tests on formation energies of solid-state compounds [32].

The development of foundation machine learning interatomic potentials (MLIPs) faces significant challenges regarding cross-functional transferability. Current foundation potentials predominantly trained on Generalized Gradient Approximation (GGA) and GGA+U calculations inherit the limitations of these functionals, including mean absolute errors of approximately 194 meV/atom for formation energy prediction across diverse compounds [32]. The transition to higher-fidelity functionals like the meta-GGA r2SCAN, which demonstrates improved accuracy with MAEs of approximately 84 meV/atom, is complicated by significant energy scale shifts and poor correlation between different functional levels. This creates fundamental challenges for transfer learning approaches, where models pre-trained on lower-fidelity data struggle to adapt to higher-fidelity datasets, often requiring specialized techniques like elemental energy referencing to achieve satisfactory performance [32].

Machine Learning Potentials: A New Frontier

Performance of Emerging Neural Network Potentials

Table 3: Machine Learning Potentials for Molecular Property Prediction

| Model/Approach | Key Features | Demonstrated Applications | Reported Performance |
|---|---|---|---|
| EMFF-2025 | General NNP for C,H,N,O systems; Transfer learning | Structure, mechanical properties, decomposition of 20 HEMs | MAE: ~0.1 eV/atom (energy), ~2 eV/Å (force) |
| MACE-OFF | Short-range transferable FF; 10 organic elements | Molecular crystals, liquids, proteins, torsion scans | Accurate lattice parameters, energies, dynamics |
| ANI-2x | Classical ML force field; Broad organic coverage | Molecular simulations; Hybrid ML/MM | Widely adopted benchmark |
| CHGNet | Foundation potential with charge information | Crystal properties, dynamics | Challenges in cross-functional transfer |

HEMs = High-Energy Materials [4] [32] [33].

Machine learning potentials have emerged as powerful surrogates for direct quantum mechanical calculations, achieving near-DFT accuracy with significantly reduced computational cost. The EMFF-2025 model, a general neural network potential for C, H, N, O-based systems, demonstrates the effectiveness of transfer learning approaches, with reported mean absolute errors within about 0.1 eV/atom for energies and about 2 eV/Å for forces across 20 high-energy materials [4]. Similarly, the MACE-OFF framework produces accurate dihedral torsion scans for unseen organic molecules, gives reliable descriptions of molecular crystals and liquids, and enables nanosecond-scale simulations of fully solvated proteins [33]. These advances highlight how massive datasets enable the development of ML potentials that transfer well across chemical space.

The Out-of-Distribution Generalization Challenge

Despite these advances, systematic benchmarking through initiatives like BOOM (Benchmarking Out-Of-distribution Molecular property predictions) reveals fundamental limitations in current ML models. Across 10 diverse molecular property tasks and 12 ML models, no existing approach achieved strong out-of-distribution generalization across all tasks, with the top-performing model exhibiting an average OOD error approximately 3 times larger than in-distribution error [34]. This performance gap presents a critical challenge for molecular discovery campaigns, which inherently require extrapolation beyond known chemical space. The findings underscore the need for more sophisticated architectures, training strategies, and benchmarking practices that specifically address OOD generalization rather than merely optimizing in-distribution performance [34].

Experimental Protocols and Methodologies

Quantum Chemical Benchmarking Workflow

The reliable benchmarking of computational methods requires standardized, rigorous protocols. For enthalpy of formation predictions, the atom equivalent method provides a robust approach, where carbon and hydrogen energy equivalents are obtained via least-squares fitting to experimental data [31]. Calculations typically employ high-level theory such as the MN15 functional with the correlation-consistent polarized valence triple-zeta (cc-pVTZ) basis set, incorporating vibrational frequency calculations for zero-point energy corrections. Single-point electronic energies should be computed with tight convergence criteria and ultrafine integration grids to minimize numerical errors, with the Gaussian 16 software package commonly employed for such studies [31] [35].
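The least-squares step of the atom equivalent method can be sketched for hydrocarbons. The model assumed here is one common form, ΔHf° ≈ E_calc - n_C·ε_C - n_H·ε_H, and may differ in detail from the scheme used in [31]; the training data are synthetic placeholders constructed so that the fit is easy to verify by hand. The two-parameter normal equations are solved directly, so no external libraries are needed.

```python
def fit_atom_equivalents(samples):
    """Least-squares fit of carbon and hydrogen energy equivalents.

    samples: list of (n_C, n_H, y) with y = E_calc - dHf_exp in one energy unit.
    Solves the 2x2 normal equations for y ~ n_C * eps_C + n_H * eps_H.
    """
    s_cc = sum(c * c for c, h, y in samples)
    s_ch = sum(c * h for c, h, y in samples)
    s_hh = sum(h * h for c, h, y in samples)
    s_cy = sum(c * y for c, h, y in samples)
    s_hy = sum(h * y for c, h, y in samples)
    det = s_cc * s_hh - s_ch * s_ch
    if det == 0:
        raise ValueError("Training set does not determine both equivalents")
    eps_c = (s_cy * s_hh - s_ch * s_hy) / det
    eps_h = (s_cc * s_hy - s_ch * s_cy) / det
    return eps_c, eps_h

def predict_dhf(e_calc, n_c, n_h, eps_c, eps_h):
    """Predicted enthalpy of formation from a computed energy."""
    return e_calc - n_c * eps_c - n_h * eps_h

# Synthetic training data (n_C, n_H, E_calc - dHf_exp), generated from
# eps_C = -38.0 and eps_H = -0.6 purely for illustration.
training = [
    (1, 4, -40.4),   # CH4-like
    (2, 6, -79.6),   # C2H6-like
    (2, 4, -78.4),   # C2H4-like
    (3, 8, -118.8),  # C3H8-like
]

eps_c, eps_h = fit_atom_equivalents(training)
print(round(eps_c, 6), round(eps_h, 6))
```

With real data the residuals of this fit, not the fitted equivalents themselves, are what a benchmark reports as the functional's error.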

Machine Learning Potential Development Pipeline

The development of generalizable neural network potentials like EMFF-2025 follows a sophisticated pipeline beginning with the creation of diverse training datasets from first-principles calculations. The Deep Potential Generator (DP-GEN) framework provides an active learning approach for efficiently sampling the configuration space, while transfer learning strategies leverage pre-trained models on larger, lower-fidelity datasets to accelerate learning on smaller, higher-fidelity datasets [4]. Training typically employs deep neural networks with physical constraints such as translational, rotational, and periodic invariance, with models validated against both quantum mechanical references and experimental data for properties including crystal parameters, mechanical properties, and thermal decomposition behavior [4] [32].

Diagram 1: Workflow for computational method benchmarking using massive datasets, showing the iterative cycle of quantum chemical calculation, machine learning, and multi-level validation.

Research Reagent Solutions: Essential Computational Tools

Table 4: Essential Software and Computational Tools for Molecular Property Prediction

| Tool Name | Category | Primary Function | Application in Heats of Formation Research |
|---|---|---|---|
| Gaussian 16 | Quantum Chemistry | Electronic structure calculations | Reference DFT calculations with various functionals |
| ADF | Quantum Chemistry | DFT with relativistic methods | Heavy element systems; QM/MM approaches |
| DP-GEN | Machine Learning | Active learning for ML potentials | Automated configuration space sampling |
| CHGNet | Foundation Potential | Pre-trained MLIP | Transfer learning starting point |
| MACE-OFF | Machine Learning | Organic molecule force field | Biomolecular system simulations |
| BerkeleyGW | Many-Body Perturbation | Excited state calculations | Beyond-DFT accuracy benchmarks |
| ASE | Atomistic Simulation | Workflow automation | High-throughput calculation management |

Note: MLIP = Machine Learning Interatomic Potential [36] [32] [37].

The computational chemist's toolkit for leveraging massive datasets encompasses both traditional quantum chemistry packages and emerging machine learning frameworks. Established quantum chemistry software like Gaussian 16 and ADF provide the reference calculations essential for training and validation, offering a range of density functionals, basis sets, and solvation models [36] [31] [35]. Specialized tools like the Atomic Simulation Environment (ASE) enable workflow automation and high-throughput computation management, while machine learning frameworks such as DP-GEN facilitate the active learning cycles necessary for developing robust neural network potentials [4] [36]. The emerging category of foundation potentials, including CHGNet and MACE-OFF, offers pre-trained models that can be fine-tuned for specific applications, significantly reducing the computational cost of property prediction [32] [33].

The systematic benchmarking of computational methods using massive datasets like OMol25 represents a critical advancement in the pursuit of predictive thermochemical calculations. The evaluation of density functionals reveals significant performance differences: functionals beyond the GGA level, such as the hybrid meta-functional MN15 and the meta-GGA r2SCAN, generally outperform GGA approaches for formation enthalpy prediction, though at higher computational cost. Machine learning potentials show considerable promise as accurate, efficient surrogates for quantum mechanical calculations, though challenges remain in out-of-distribution generalization and cross-functional transferability. Integrating these approaches, with massive datasets serving both traditional quantum chemical benchmarking and machine learning model development, provides a powerful pathway toward more accurate, efficient, and reliable prediction of molecular properties essential for materials design and drug development. Future progress will depend on continued expansion of high-fidelity datasets, development of more sophisticated transfer learning approaches, and systematic attention to out-of-distribution generalization in model evaluation.

Density Functional Theory (DFT) is a cornerstone of computational chemistry and materials science, enabling the prediction of molecular and solid-state properties by solving the Kohn-Sham equations to reconstruct electron density distributions [38]. The accuracy of these predictions, however, critically depends on the approximations made for the exchange-correlation energy (E_xc), a term that encompasses all quantum mechanical effects not covered by the simple non-interacting kinetic and electrostatic energy treatments [39] [40]. With hundreds of available functionals, selecting the appropriate one for a specific system or property remains a significant challenge for researchers [40].

This guide provides a systematic framework for functional and basis set selection, particularly within the context of benchmarking DFT methods for calculating properties like heats of formation. We present an objective comparison of functional performance across different chemical systems, supported by experimental and high-level theoretical data, to equip researchers with practical decision-making tools for their computational investigations.

Theoretical Foundation of DFT and the Exchange-Correlation Problem

In the Kohn-Sham formulation of DFT, the total electronic energy is expressed as a sum of several components: the non-interacting kinetic energy of a fictitious reference system, the electrostatic interactions (electron-nuclei attraction and classical electron-electron repulsion), and the exchange-correlation energy [40]. The precise mathematical formulation is:

$$E\textrm{electronic} = T\textrm{non-int.} + E\textrm{estat} + E\textrm{xc}$$ [39]

The exchange-correlation energy (E_xc) and its associated potential (v_xc = δE_xc/δn, where n is the electron density) are the only terms that lack an exact, universally applicable form [39] [40]. The development of increasingly sophisticated approximations for E_xc gives rise to the various families of functionals, each with distinct computational costs, strengths, and limitations [38].

Table 1: Hierarchy of Common Exchange-Correlation Functional Types

| Functional Type | Dependence | Key Features | Computational Cost | Example Functionals |
|---|---|---|---|---|
| LDA (Local Density Approximation) | Local electron density (n) [40] | Nearly exact for homogeneous electron gas; inaccurate for most real systems [40] [38] | Lowest | SPW92, SVWN5 [41] |
| GGA (Generalized Gradient Approximation) | Electron density and its gradient (n, ∇n) [40] | Improved over LDA for molecular properties and hydrogen bonding [38] | Low | PBE, BLYP, revPBE [41] |
| meta-GGA | Electron density, its gradient, and kinetic energy density (n, ∇n, τ) [40] | Can detect bonding character; better for reaction energies and lattice properties [39] | Moderate | SCAN, M06-L, TPSS [41] |
| Hybrid | Mix of Hartree-Fock (HF) and semilocal exchange [40] | Often more accurate for reaction mechanisms and molecular spectroscopy [38] | High | B3LYP, PBE0, HSE06 [42] [40] |
| Dispersion-corrected (vdW) | Includes non-local correlation for dispersion [39] | Crucial for systems dominated by weak interactions (e.g., physisorption) [39] | High | B97M-V, B97-D, VV10 [41] |
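
As a compact reminder of what the "Dependence" column means, the rungs can be written schematically as below. This is a standard textbook sketch, not a formula quoted from the cited sources: n is the electron density, τ the kinetic energy density, ε_xc an exchange-correlation energy per particle, "DFA" any semilocal approximation, and a the fraction of exact (Hartree-Fock) exchange in a one-parameter global hybrid (a = 0.25 in PBE0; B3LYP uses a three-parameter mixing instead).

```latex
\begin{align}
E_\textrm{xc}^\textrm{LDA} &= \int n(\mathbf{r})\,
  \epsilon_\textrm{xc}\big(n(\mathbf{r})\big)\, d\mathbf{r} \\
E_\textrm{xc}^\textrm{GGA} &= \int n(\mathbf{r})\,
  \epsilon_\textrm{xc}\big(n(\mathbf{r}), \nabla n(\mathbf{r})\big)\, d\mathbf{r} \\
E_\textrm{xc}^\textrm{meta-GGA} &= \int n(\mathbf{r})\,
  \epsilon_\textrm{xc}\big(n(\mathbf{r}), \nabla n(\mathbf{r}), \tau(\mathbf{r})\big)\, d\mathbf{r} \\
E_\textrm{xc}^\textrm{hybrid} &= a\, E_\textrm{x}^\textrm{HF}
  + (1 - a)\, E_\textrm{x}^\textrm{DFA} + E_\textrm{c}^\textrm{DFA}
\end{align}
```

Each rung adds one ingredient to the previous one, which is why cost and flexibility grow together down the table.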

A Systematic Guide to Functional Selection

Choosing the right functional requires balancing computational cost, system characteristics, and the target properties. The following workflow and detailed breakdown provide a structured approach to this selection process.

Diagram 1: A practical workflow for selecting a density functional.

Selection by System Composition and Properties

Solids, Surfaces, and Bulk Materials

For periodic systems like solids and surfaces, GGAs like PBE are widely used and perform well for structural properties and initial geometry optimizations [40]. The PBE functional offers a good balance of reliability and computational efficiency for cohesive and elastic properties [39]. For more accurate energetics, including surface chemistry and bulk properties simultaneously, modern meta-GGAs like SCAN are recommended, as they provide a better description of different bonding types [39] [40]. When dispersion forces are critical, as in physisorption or layered materials, functionals with non-local van der Waals corrections, such as rVV10 or BEEF-vdW, are essential [39] [41]. For accurate electronic band structures, especially band gaps, hybrid functionals like HSE06 are superior to semi-local functionals, though at a significantly higher computational cost [39] [40].

Molecular Systems and Reaction Mechanisms

For gas-phase molecular calculations, including reaction energies and barrier heights, hybrid functionals often show superior performance. B3LYP and PBE0 are well-established for studying reaction mechanisms and molecular spectroscopy [38]. The M06 suite of functionals is also a popular choice for main-group thermochemistry and kinetics [42]. Double-hybrid functionals, which incorporate a second-order perturbation theory correction, can provide even higher accuracy for excited-state energies and reaction barriers but are computationally very intensive [38].

Transition Metal Complexes and Strongly Correlated Systems

Systems containing transition metals pose a significant challenge for standard DFT due to localized d- or f-electrons, which can lead to self-interaction errors [39]. For such systems, a GGA+U or meta-GGA+U approach, which adds an on-site Coulomb repulsion term (Hubbard U), can often be as reliable as the much more costly hybrid functionals [39] [40]. Studies on complexes containing first-row transition metals have shown that PBE0 and M06 perform similarly to B3LYP, but their accuracy can be substantially improved with localized orbital correction approaches like DBLOC [42]. For strongly correlated materials like certain transition metal oxides, a beyond-DFT treatment is often necessary [39].
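
For orientation, one widely used form of the Hubbard correction is the simplified rotationally invariant scheme usually attributed to Dudarev and co-workers. The cited sources do not say which variant they mean, so the expression below is an assumption about the typical case: n^σ is the occupation matrix of the localized d or f orbitals for spin σ, and U and J are the on-site Coulomb and exchange parameters.

```latex
\begin{equation}
E_\textrm{DFT+U} = E_\textrm{DFT}
  + \frac{U - J}{2} \sum_{\sigma}
    \mathrm{Tr}\!\left[\mathbf{n}^{\sigma}
    \left(\mathbf{1} - \mathbf{n}^{\sigma}\right)\right]
\end{equation}
```

The penalty vanishes when every localized orbital is either fully occupied or empty and is largest at half occupation, which is how the term counteracts the spurious delocalization caused by self-interaction error.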

Pharmaceutical and Biomolecular Applications

In drug formulation design, where understanding API-excipient interactions is key, functionals that accurately describe weak interactions are vital. GGA functionals are a preferred choice for biomolecular systems and hydrogen bonding [38]. For quantifying van der Waals interactions and π-π stacking, which are critical in nanodelivery systems and drug-target binding, long-range corrected (LC-)DFT or empirically-corrected functionals like B97-D are highly effective [38] [41].
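
The selection rules in this section can be condensed into a small lookup helper. The sketch below is only a hedged illustration: `SystemProfile` and `suggest_functionals` are hypothetical names, the categories are a simplification of the prose above, and "PBE" stands in for "a GGA" in the biomolecular branch because the text does not name a specific functional there.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class SystemProfile:
    """Hypothetical description of the system to be modelled."""
    kind: str  # "solid", "molecule", "transition_metal" or "biomolecular"
    dispersion_dominated: bool = False
    needs_band_gap: bool = False


def suggest_functionals(profile: SystemProfile) -> List[str]:
    """Return candidate functionals in the order the guide introduces them."""
    if profile.kind == "solid":
        # PBE for structures, SCAN for more accurate energetics.
        picks = ["PBE", "SCAN"]
        if profile.dispersion_dominated:
            picks += ["rVV10", "BEEF-vdW"]
        if profile.needs_band_gap:
            picks.append("HSE06")
    elif profile.kind == "molecule":
        picks = ["B3LYP", "PBE0", "M06"]
    elif profile.kind == "transition_metal":
        # Hubbard-U approaches first, then orbital-corrected hybrids.
        picks = ["GGA+U", "meta-GGA+U", "B3LYP-DBLOC", "PBE0-DBLOC"]
    elif profile.kind == "biomolecular":
        # Assumption: PBE is used here as a representative GGA.
        picks = ["PBE"]
        if profile.dispersion_dominated:
            picks.append("B97-D")
    else:
        raise ValueError(f"unknown system kind: {profile.kind!r}")
    return picks


print(suggest_functionals(SystemProfile("solid", needs_band_gap=True)))
# -> ['PBE', 'SCAN', 'HSE06']
```

A helper like this is a starting shortlist, not a verdict; the candidates it returns still need to be benchmarked against reference data for the property of interest.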

Performance Benchmarking and Experimental Data

The true test of a functional's utility lies in its performance against reliable experimental or high-level theoretical benchmark data. The following table and case studies illustrate this comparative performance.

Table 2: Quantitative Performance Comparison of Select Functionals Across Systems

| Functional (Type) | Transition Metal Redox Potentials (MUE) | Surface Chemisorption Energy (MAE) | Band Gap of Silicon (eV) | D₂ Sticking on Cu(111) |
|---|---|---|---|---|
| PBE (GGA) | ~0.3 V [42] | Moderate [39] | ~0.6 (Underestimated) [39] | Poor agreement with experiment [39] |
| PBE0 (Hybrid) | ~0.3 V (Improved with DBLOC) [42] | - | - | - |
| M06 (Hybrid meta-GGA) | ~0.3 V (Improved with DBLOC) [42] | - | - | - |
| MCML (meta-GGA) | - | Low (~0.1 eV) [39] | - | - |
| MS-B86bl (meta-GGA) | - | - | - | Excellent agreement [39] |
| HSE06 (Hybrid) | - | - | >1.0 (Improved) [39] | - |
| Experiment / Benchmark | Reference Data [42] | Benchmark Experiments [39] | 1.1 eV [39] | Experimental Curve [39] |

Case Study: Surface Chemistry and Adsorption

The performance of a functional can be system-dependent even within the same domain. For predicting binding energies on transition metal surfaces, the machine-learned MCML meta-GGA functional demonstrates a lower mean absolute error for both chemisorption and physisorption energies compared to other GGAs and meta-GGAs [39]. In dynamics studies, such as the sticking probability of D₂ on Cu(111), the MS-B86bl meta-GGA functional shows particularly good agreement with experiment, whereas other functionals like PBE and RPBE deviate significantly [39].

Case Study: Band Gaps and Electronic Structure

Semi-local functionals like PBE are known to underestimate band gaps, as seen in the case of silicon where PBE predicts a gap of about 0.6 eV, much lower than the experimental 1.1 eV [39]. Machine-learning functionals like DM21, trained on molecular quantum chemistry data, can perform even worse and produce spurious band structure oscillations if not properly constrained. However, a modified version, DM21mu, which incorporates the homogeneous electron gas as a physical constraint, predicts a more reasonable band gap of about 1.0 eV for silicon and reduces the overall bandwidth, demonstrating the importance of physical constraints in functional design [39].

Case Study: Transition Metal-Containing Systems

A benchmark study evaluating B3LYP, PBE0, and M06 for calculating redox potentials and spin splittings in octahedral transition metal complexes found that all three functionals had similar mean unsigned errors (MUEs) [42]. The study further showed that applying a localized orbital correction (DBLOC) substantially improved the results, with PBE0-DBLOC and B3LYP-DBLOC showing remarkably similar and high accuracy. This indicates that the deviations between hybrid DFT and experiment for transition metal systems exhibit regularities that can be systematically corrected [42].

Essential Research Reagents: Computational Tools

The following table details key "research reagent" solutions and materials essential for conducting benchmark DFT studies.

Table 3: Essential Computational Tools for Benchmark DFT Studies

| Tool / Reagent | Function / Purpose | Example Use-Case |
|---|---|---|
| DFT Software (VASP, Gaussian, Q-Chem) | Platform for performing self-consistent DFT calculations using various functionals and basis sets. | Geometry optimization of a drug molecule; calculating the band structure of a semiconductor [40] [43]. |
| Pseudopotentials / Basis Sets | Represent the core electrons and define the spatial functions for valence electrons, balancing cost and accuracy. | Using a plane-wave basis with PAW pseudopotentials in VASP for solids; using the 6-31G* basis set in Gaussian for molecules [38]. |
| Solvation Models (e.g., COSMO) | Simulate the effects of a solvent environment (polarity) on molecular structure and reactivity. | Calculating the solvation free energy (ΔG) of a drug molecule to predict its release kinetics [38]. |
| DFT+U / DBLOC Corrections | Apply corrective terms for systems with strongly correlated electrons (localized d/f orbitals). | Improving the description of electronic properties in a transition metal oxide like Fe₂O₃ [42] [44]. |
| van der Waals Corrections (DFT-D, VV10) | Account for weak, non-covalent dispersion interactions not captured by standard semi-local functionals. | Modeling the physisorption of graphene on a Ni(111) surface or π-π stacking in a molecular crystal [39] [41]. |
| High-Performance Computing (HPC) & GPUs | Provide the necessary computational power for expensive calculations (hybrid functionals, large systems). | Running a frequency calculation for a 50-atom system using DFT with a hybrid functional in Gaussian [45] [43]. |

Practical Protocols for Functional Benchmarking

To ensure reliable and reproducible results, a structured benchmarking protocol is essential. The following workflow outlines key steps from system preparation to result validation.

Diagram 2: A proposed workflow for systematic benchmarking of DFT methods.

Protocol for Benchmarking Heats of Formation

System Preparation and Geometry Optimization:

- Begin with well-defined initial molecular or crystal structures from databases or experimental crystallography data.

- Perform an initial geometry optimization using a computationally efficient GGA like PBE to relax the structure to its nearest local minimum [40]. This serves as a common starting point for higher-level methods.

Single-Point Energy Refinement:

- Using the PBE-optimized geometry, perform a series of single-point energy calculations with a panel of more advanced functionals. This panel should include:

  - A meta-GGA (e.g., SCAN)

  - A global hybrid (e.g., PBE0 or B3LYP for molecules)

  - A range-separated hybrid (e.g., HSE06 for solids)

  - A dispersion-corrected functional (e.g., B97M-V) [41]

- This approach isolates the effect of the functional on the electronic energy without the confounding variable of slight geometric differences.

Frequency Analysis and Thermodynamic Correction:

- For molecular systems, perform a frequency calculation on the optimized structure, at the same level of theory used for the optimization, to confirm it is a true minimum (no imaginary frequencies) and to obtain the zero-point energy and the vibrational contributions to the internal energy and enthalpy.

- Apply the appropriate thermal corrections to the electronic energy to compute the standard enthalpy (heat) of formation at the desired temperature (typically 298 K).
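
One common way to turn these quantities into a gas-phase heat of formation is the atomization-energy scheme. The text above does not prescribe a specific route, so the sketch below is an assumption about how the step is typically done for molecules. The names `AtomRef` and `heat_of_formation_298` are hypothetical, and all atomic reference values (experimental atomic ΔfH° at 0 K and elemental enthalpy increments) must be supplied by the user from a trusted thermochemical table.

```python
from dataclasses import dataclass
from typing import Dict

HARTREE_TO_KCAL = 627.5095  # kcal/mol per hartree


@dataclass(frozen=True)
class AtomRef:
    """Per-element reference data (hypothetical container)."""
    e_atom: float  # computed atomic energy, same method as molecule (hartree)
    dhf_0k: float  # experimental gas-phase atomic heat of formation, 0 K (kcal/mol)
    h_incr: float  # H(298 K) - H(0 K) of the element's standard state (kcal/mol)


def heat_of_formation_298(
    e_mol: float,       # molecular electronic energy (hartree)
    zpe_mol: float,     # molecular zero-point energy (hartree)
    h_incr_mol: float,  # molecular H(298 K) - H(0 K) from frequencies (hartree)
    composition: Dict[str, int],
    atoms: Dict[str, AtomRef],
) -> float:
    """Return the heat of formation at 298 K in kcal/mol (atomization scheme)."""
    # Atomization energy at 0 K: sum of atomic energies minus (E_mol + ZPE).
    e_atoms = sum(n * atoms[el].e_atom for el, n in composition.items())
    sum_d0 = (e_atoms - (e_mol + zpe_mol)) * HARTREE_TO_KCAL

    # Heat of formation at 0 K from experimental atomic values.
    dhf_0k = sum(n * atoms[el].dhf_0k for el, n in composition.items()) - sum_d0

    # Shift to 298 K: add the molecular increment, subtract the elemental ones.
    elem_incr = sum(n * atoms[el].h_incr for el, n in composition.items())
    return dhf_0k + h_incr_mol * HARTREE_TO_KCAL - elem_incr
```

Because the computed atomic and molecular energies enter as a difference, errors that a functional makes for isolated atoms propagate directly into the result; this is one reason some workflows prefer isodesmic or other reaction-based schemes.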

Validation and Error Analysis:

- Compare the computed heats of formation against a reliable experimental benchmark dataset.

- Calculate statistical error metrics such as Mean Unsigned Error (MUE) and Mean Signed Error (MSE) for each functional to quantitatively assess its accuracy and systematic bias (over- or under-binding) [39] [42].
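
The two error metrics named in the last step are simple to compute. The following is a minimal sketch; the function name `error_metrics` and the sign convention (computed minus reference) are assumptions chosen for illustration.

```python
from typing import Dict, Sequence


def error_metrics(computed: Sequence[float], reference: Sequence[float]) -> Dict[str, float]:
    """Return mean signed error (MSE) and mean unsigned error (MUE).

    Errors are defined as computed - reference, so a negative MSE means
    the method underestimates the reference values on average.
    """
    if len(computed) != len(reference) or len(computed) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    errors = [c - r for c, r in zip(computed, reference)]
    n = len(errors)
    return {
        "MSE": sum(errors) / n,
        "MUE": sum(abs(e) for e in errors) / n,
    }
```

Reporting both numbers matters: an MSE near zero alongside a large MUE indicates scattered errors that cancel on average, whereas an MSE close to the MUE in magnitude points to a systematic bias that a simple correction might remove.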

Technical Considerations for Accurate Calculations

- Basis Set Selection: The choice of basis set is critical. For molecules, polarized triple-zeta basis sets (e.g., cc-pVTZ) are often a good starting point for property prediction. For periodic systems, a high plane-wave energy cutoff must be selected to ensure convergence [38].

- Numerical Convergence: Tight convergence criteria for the self-consistent field (SCF) cycle and geometry optimization are necessary to obtain precise energies; an SCF energy threshold of 10⁻⁶ a.u. or tighter is common [38].

- Integration Grids: Use finer integration grids (e.g., "UltraFine" in Gaussian) for final energy calculations, especially when using meta-GGAs or for systems with transition metals, to avoid numerical noise.

- Exploiting Hardware: For large systems, using GPUs can significantly speed up DFT calculations, particularly for energies, gradients, and frequencies with hybrid functionals. Note that each GPU requires a dedicated CPU core for control, and sufficient memory must be allocated for both [43].
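
Convergence with respect to a numerical setting such as the plane-wave cutoff is usually checked by scanning the setting and watching the energy change. The helper below is a hedged sketch of that test: `first_converged` is a hypothetical name, the energies are assumed to come from otherwise identical calculations, and the default tolerance of 1e-3 (in whatever energy unit is supplied) is an illustrative choice rather than a recommendation from the sources.

```python
from typing import Optional, Sequence


def first_converged(
    settings: Sequence[float],
    energies: Sequence[float],
    tol: float = 1e-3,
) -> Optional[float]:
    """Return the first setting whose energy changed by less than tol
    relative to the previous (coarser) setting, or None if none did.

    settings must be ordered from coarsest to finest, e.g. increasing
    plane-wave cutoffs, with energies given in the same order.
    """
    if len(settings) != len(energies):
        raise ValueError("settings and energies must have equal length")
    for k in range(1, len(settings)):
        if abs(energies[k] - energies[k - 1]) < tol:
            return settings[k]
    return None
```

For heats of formation it is the convergence of energy differences, not of absolute energies, that matters, so the same scan is best applied to the reaction or formation energy itself once all species have been computed.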

Density Functional Theory (DFT) is a cornerstone of computational chemistry, but standard functionals often fail to accurately describe two critical areas: non-covalent interactions and solid-state enthalpies of formation. London dispersion forces, a type of weak, attractive non-covalent interaction, are notoriously poorly described by conventional DFT [46] [47]. Similarly, predicting the solid-phase enthalpy of formation (∆Hf, solid) is challenging due to the difficulty of accurately computing the energy difference between a crystalline solid and its constituent elements in their reference states [25] [48]. These limitations are particularly consequential in fields like drug development and materials science, where reliable predictions of binding affinity and thermodynamic stability are essential.