Validating Chemical Methods with Quantum Information Theory: From Foundations to Drug Discovery

Abstract

This article explores the transformative intersection of quantum information theory (QIT) and chemical computation, providing researchers and drug development professionals with a roadmap for validating and enhancing computational methods. We first establish the foundational principles of QIT, including entropy and mutual information, and their role in analyzing electronic structure. The review then details emerging quantum-informed algorithms and hybrid quantum-classical methods that reduce circuit complexity and improve accuracy for simulating molecular systems. Next, it discusses how error mitigation and novel optimization techniques can help overcome hardware limitations. Finally, the article provides a rigorous framework for the comparative validation of these quantum-enhanced methods against classical benchmarks, highlighting groundbreaking applications in drug discovery for previously 'undruggable' targets such as KRAS.

Theoretical Convergence: How Quantum Information Theory is Redefining Quantum Chemistry

Core Conceptual Definitions and Mathematical Formulations

In the field of quantum information theory applied to chemical methods, concepts from classical information theory provide powerful tools for analyzing molecular electronic structure and guiding quantum computations. This section defines the core concepts and their mathematical foundations.

Table 1: Core Information Theory Concepts and Formulations

| Concept | Mathematical Definition | Key Interpretation |
|---|---|---|
| Shannon Entropy | $H(X) = -\sum_{x \in \mathcal{X}} p(x) \log p(x)$ [1] [2] | Measures the average uncertainty or information in a random variable [1]. |
| Kullback-Leibler (KL) Divergence | $D_{\mathrm{KL}}(P \parallel Q) = \sum_{x} P(x) \log \frac{P(x)}{Q(x)}$ [3] [4] | Quantifies the information loss when distribution Q is used to approximate true distribution P [3] [4]. |
| Mutual Information | $I(X;Y) = \sum_{x,y} p(x,y) \log \frac{p(x,y)}{p(x)p(y)}$ [2] | Measures the amount of information one variable contains about another [2]. |

Shannon entropy serves as a foundational concept, measuring the uncertainty or average level of "surprise" inherent in a random variable's possible outcomes [1]. In chemical systems, this translates to quantifying the information content in various representations of molecular structure.

KL Divergence, while not a true metric due to its asymmetry and failure to satisfy the triangle inequality, provides a crucial measure for comparing distributions [3] [2]. This is particularly valuable for assessing approximations common in computational chemistry.

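Mutual information extends these ideas to capture the correlation and shared information between two random variables, such as different parts of a molecular system [2]. It can also be written as a KL divergence, $I(X;Y) = D_{\mathrm{KL}}\big(p(x,y) \parallel p(x)p(y)\big)$, which links the three concepts: it measures how far the joint distribution is from the product of its marginals.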

Application in Chemical and Quantum Chemical Research

Information-theoretic concepts have diverse applications in chemistry, from analyzing topological molecular structures to quantifying electron distributions.

Analyzing Molecular Graphs and Topology

The discrete information entropy approach quantifies molecular complexity by treating molecules as graphs where atoms represent vertices and bonds represent edges. The information content of a molecular graph ( G ) is calculated as:

$$ I(G, \alpha) = -\sum_{i=1}^{n} \frac{|X_i|}{|X|} \log_2 \frac{|X_i|}{|X|} $$

where $|X_i|$ is the cardinality of the $i$-th subset of equivalent graph elements (atoms or bonds), $|X|$ is the total number of elements, and $\alpha$ is the equivalence criterion used for partitioning [5]. This measure characterizes structural complexity: it is influenced by elemental diversity, tends to increase with molecular size, and decreases with symmetry, because symmetry merges elements into fewer, larger equivalence classes [5].
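
To make the formula concrete, here is a minimal Python sketch. It assumes the equivalence criterion $\alpha$ is simply the element type, which is only one of several possible partitions; the function name `information_content` and the methane example are illustrative and not taken from [5]. A fully symmetric partition (a single class) gives 0 bits, and $n$ distinct classes give $\log_2 n$ bits, matching the symmetry and diversity trends described above.

```python
import math
from collections import Counter


def information_content(labels):
    """Discrete information content I(G, alpha) in bits.

    `labels` assigns each graph element (atom or bond) to its equivalence
    class under the chosen criterion alpha. The class sizes |X_i| are counted
    and I = -sum_i (|X_i|/|X|) * log2(|X_i|/|X|).
    """
    counts = Counter(labels)
    total = sum(counts.values())
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


# Hypothetical example: partition the atoms of methane (CH4) by element type.
methane_atoms = ["C", "H", "H", "H", "H"]
print(round(information_content(methane_atoms), 4))  # 0.7219
```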

Information-Theoretic Approach (ITA) in Quantum Chemistry

In quantum chemistry, information theory analyzes electronic structure by treating properly normalized electron density distributions and eigenvalues of the reduced density matrix as probability distributions [2]. The information-theoretic approach (ITA) leverages classical information theory with electron density and pair density as information carriers, while quantum information theory (QIT) employs quantum entropy measures like von Neumann entropy to analyze entanglement in quantum many-body systems [2].

Table 2: Experimental Protocols for Information-Theoretic Analysis in Chemistry

| Application Area | Experimental Protocol | Key Measures |
|---|---|---|
| Molecular Structure Analysis | 1. Represent the molecule as a molecular graph. 2. Partition graph elements using an equivalence criterion. 3. Calculate the probability of each equivalence class. 4. Compute the information entropy using the discrete formula. | Information content $I(G, \alpha)$, structural complexity [5] |
| Electron Density Analysis | 1. Compute the electron density $\rho(\mathbf{r})$ from a quantum calculation. 2. Normalize the density to a probability distribution. 3. Apply the continuous entropy formula with the Lebesgue reference measure. | Shannon entropy of the electron density, information gain in reactions [5] [2] |
| Quantum Chemistry (ITA) | 1. Obtain the reduced density matrix from the wavefunction. 2. Convert it to the electron density or pair density. 3. Analyze using classical information measures. 4. Interpret the results in chemical context. | Electron density entropy, molecular similarity [2] |
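
As a sketch of the electron-density protocol in the second row, the snippet below assumes a spherically symmetric density sampled on a radial grid and normalizes it to the shape function $\sigma = \rho/N$. It uses the hydrogen 1s density in atomic units as a check: there $-\ln\sigma = \ln\pi + 2r$ and $\langle r \rangle = 3/2$, so $S = 3 + \ln\pi$. The helper names are hypothetical.

```python
import numpy as np


def radial_integral(r, f):
    """Trapezoidal rule for the integral of 4*pi*r^2*f(r) dr (spherical symmetry)."""
    g = 4.0 * np.pi * r**2 * f
    return float(np.sum(0.5 * (g[1:] + g[:-1]) * np.diff(r)))


def density_shannon_entropy(r, rho, n_electrons):
    """S = -integral of sigma*ln(sigma) d^3r for the shape function sigma = rho/N."""
    sigma = rho / n_electrons  # Step 2: normalize the density to unit integral
    return radial_integral(r, -sigma * np.log(sigma))


# Hydrogen 1s density in atomic units: rho(r) = exp(-2r)/pi, with N = 1.
r = np.linspace(0.0, 40.0, 400_001)
rho_1s = np.exp(-2.0 * r) / np.pi
norm = radial_integral(r, rho_1s)
entropy = density_shannon_entropy(r, rho_1s, n_electrons=1)
print(norm, entropy, 3.0 + np.log(np.pi))  # entropy should match 3 + ln(pi), about 4.1447
```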

Comparative Analysis and Benchmarking

KL Divergence for Distribution Comparison

KL Divergence provides a quantitative measure for comparing probability distributions, such as assessing how well a simplified model approximates complex observed data. In model evaluation, one computes the divergence from the observed distribution to each candidate model and prefers the model with the smallest value.

In a practical example comparing worm tooth distributions, the KL divergence from the observed distribution to a uniform distribution was 0.338, while to a binomial distribution it was 0.477, indicating that the uniform distribution was the better approximation in this specific case [4]. Although this example is not chemical, the same procedure can objectively guide model selection in chemical data analysis.
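
The selection rule can be sketched in a few lines of Python. The observed distribution below is hypothetical (it is not the worm-tooth data of [4]), and the helper `kl_divergence` is our own; it returns infinity when a model assigns zero probability to an observed outcome.

```python
import math

import numpy as np


def kl_divergence(p, q):
    """D_KL(P || Q) in nats for discrete distributions on the same support."""
    p = np.asarray(p, dtype=float)
    q = np.asarray(q, dtype=float)
    mask = p > 0  # outcomes with p(x) = 0 contribute nothing
    if np.any(q[mask] == 0):
        return math.inf  # Q rules out an outcome that P allows
    return float(np.sum(p[mask] * np.log(p[mask] / q[mask])))


# Hypothetical observed distribution over outcomes 0..4.
observed = [0.10, 0.20, 0.40, 0.20, 0.10]
uniform = [0.2] * 5
binomial = [math.comb(4, k) * 0.5**4 for k in range(5)]

scores = {
    "uniform": kl_divergence(observed, uniform),
    "binomial": kl_divergence(observed, binomial),
}
best = min(scores, key=scores.get)  # smallest divergence = best approximation
print(scores, best)  # the binomial model wins for this data
```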

Symmetry and Metric Properties

A critical distinction between these measures lies in their mathematical properties, which influences their application:

- Shannon Entropy: Single-distribution measure [1]

- Mutual Information: Symmetric, $I(X;Y) = I(Y;X)$ [2]

- KL Divergence: Asymmetric, $D_{\mathrm{KL}}(P \parallel Q) \neq D_{\mathrm{KL}}(Q \parallel P)$ in general, and not a metric [3] [2] [6]
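
These properties are easy to check numerically. The sketch below, using small hypothetical distributions, computes mutual information as the KL divergence between the joint distribution and the product of its marginals, which makes its symmetry visible, and then evaluates KL divergence in both directions.

```python
import numpy as np


def kl(p, q):
    """D_KL(P || Q) in nats; assumes q > 0 wherever p > 0."""
    p = np.asarray(p, dtype=float).ravel()
    q = np.asarray(q, dtype=float).ravel()
    m = p > 0
    return float(np.sum(p[m] * np.log(p[m] / q[m])))


def mutual_information(joint):
    """I(X;Y) = D_KL(p(x,y) || p(x) p(y)) for a joint probability table."""
    joint = np.asarray(joint, dtype=float)
    px = joint.sum(axis=1, keepdims=True)  # marginal of X (rows)
    py = joint.sum(axis=0, keepdims=True)  # marginal of Y (columns)
    return kl(joint, px * py)


# Hypothetical joint distribution of X (rows) and Y (columns).
joint = np.array([[0.3, 0.2],
                  [0.1, 0.4]])
p, q = [0.5, 0.5], [0.9, 0.1]
print(mutual_information(joint), mutual_information(joint.T))  # equal: I(X;Y) = I(Y;X)
print(kl(p, q), kl(q, p))  # different: KL divergence is asymmetric
```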

The asymmetry of KL divergence means it matters which distribution is considered the reference, making it crucial for applications like variational autoencoders where the direction of approximation is important [4].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Information-Theoretic Analysis in Quantum Chemistry

| Tool/Resource | Function in Research | Application Context |
|---|---|---|
| Reduced Density Matrix (RDM) | Simplifies N-electron system to subsystem; provides essential information for entropy calculations [2]. | Quantum many-body theory, Entanglement analysis [2] |
| Electron Density $\rho(\mathbf{r})$ | Acts as information carrier for classical information theory approach; fundamental variable in DFT [2]. | Density Functional Theory, Molecular similarity [2] |
| Molecular Graph Representation | Abstract representation of molecular structure for topological analysis [5]. | Topological complexity analysis, QSAR/QSPR studies [5] |
| Quantum Computing Testbeds | Hardware platforms for testing quantum algorithms for chemical systems [7]. | Quantum algorithm validation, Pre-logical qubit experiments [7] |
| High-Performance Computing (HPC) | Handles classically tractable portions of hybrid quantum-classical workflows [8]. | Quantum-classical hybrid algorithms, Pre-/post-processing [8] |

Future Directions in Quantum Information for Chemistry

The integration of quantum information theory with chemical methods is accelerating with advances in quantum computing hardware and algorithms. Research centers like the Quantum Systems Accelerator (QSA) are working to achieve 1,000-fold performance gains in quantum computational power by 2030 across various qubit platforms [7]. The concept of "quantum utility" in chemistry focuses on identifying problems where quantum computers offer an exponential advantage over classical methods, particularly in simulating complex chemical systems relevant to energy applications [8]. Success in this field hinges on co-design between quantum algorithm developers, chemistry domain experts, and hardware engineers [8].

The Reduced Density Matrix (RDM) as a Bridge Between Quantum States and Information

Reduced Density Matrices (RDMs) are foundational tools in quantum mechanics, serving as a critical link between the full description of a quantum system and the accessible information about its subsystems. In the context of quantum information theory and its validation of chemical methods, RDMs enable the calculation of observable properties and the quantification of quantum entanglement, even when the complete quantum state is too complex to handle. This guide compares the performance of contemporary computational strategies that leverage RDMs, from classical simulations to emerging quantum algorithms, providing researchers with a clear overview of the current technological landscape.

Theoretical Foundation of Reduced Density Matrices

The reduced density matrix formalizes the concept of focusing on a subsystem within a larger quantum system. For a composite system divided into parts A and B, the total system's density matrix is ρ. The RDM for subsystem A is obtained by partially tracing over the degrees of freedom of subsystem B: ρ_A = Tr_B(ρ) [9]. This mathematical operation ensures that all predictions made by ρ_A for measurements performed solely on A match those of the full state ρ.
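
A minimal NumPy sketch of the partial trace follows, assuming a finite-dimensional bipartite space ordered as A ⊗ B; `partial_trace_B` is a hypothetical helper. It checks the defining property stated above: an observable acting only on A has the same expectation value in ρ_A as in the full state ρ.

```python
import numpy as np


def partial_trace_B(rho, dim_a, dim_b):
    """Return rho_A = Tr_B(rho) for a density matrix on H_A (x) H_B (A is the left factor)."""
    r = rho.reshape(dim_a, dim_b, dim_a, dim_b)  # r[a, b, a', b']
    return np.einsum("ijkj->ik", r)  # sum over b = b'


rng = np.random.default_rng(0)
dim_a, dim_b = 2, 3
psi = rng.normal(size=dim_a * dim_b) + 1j * rng.normal(size=dim_a * dim_b)
psi /= np.linalg.norm(psi)
rho = np.outer(psi, psi.conj())
rho_a = partial_trace_B(rho, dim_a, dim_b)

# An observable acting only on A (Pauli X), embedded as X (x) I_B on the full space.
obs_a = np.array([[0, 1], [1, 0]], dtype=complex)
full = np.trace(rho @ np.kron(obs_a, np.eye(dim_b))).real
reduced = np.trace(rho_a @ obs_a).real
print(np.isclose(full, reduced))  # True
```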

The RDM is more than a computational convenience; it is the generator of almost all physical quantities related to the subsystem's degrees of freedom [10] [11]. The negative logarithms of its eigenvalues form the entanglement spectrum (ξ_i = -ln(λ_i), where λ_i are the eigenvalues of ρ_A), which provides deep insight into quantum correlations and is a more fundamental characteristic than the entanglement entropy alone [10] [11]. The RDM can also be used to define an entanglement Hamiltonian ℋ_E through ρ_A = exp(-ℋ_E), so that the ξ_i are the eigenvalues of ℋ_E; this offers a bridge to interpreting emergent thermodynamic behavior at the subsystem level [10].
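
For a two-qubit example, the sketch below (an illustration, not code from [10] or [11]) takes the state cos θ|00⟩ + sin θ|11⟩, forms ρ_A, and computes the entanglement spectrum, the entanglement Hamiltonian ℋ_E = -ln ρ_A, and the entanglement entropy as the λ-weighted average of the ξ_i. It assumes ρ_A has full rank so that the logarithm is defined.

```python
import numpy as np

theta = np.pi / 6
# |psi> = cos(theta)|00> + sin(theta)|11>, stored as coefficients c[a, b]
c = np.array([[np.cos(theta), 0.0],
              [0.0, np.sin(theta)]])
rho_a = c @ c.conj().T  # Tr_B |psi><psi| = c c^dagger
lam, vecs = np.linalg.eigh(rho_a)  # assumes every lambda_i > 0 (full rank)
xi = -np.log(lam)  # entanglement spectrum
h_ent = vecs @ np.diag(xi) @ vecs.conj().T  # entanglement Hamiltonian H_E

# Rebuild exp(-H_E) independently to confirm rho_A = exp(-H_E).
w, v = np.linalg.eigh(h_ent)
rho_back = v @ np.diag(np.exp(-w)) @ v.conj().T
entropy = float(np.sum(lam * xi))  # S(rho_A) = sum_i lambda_i * xi_i
print(xi, entropy)
```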

Comparative Analysis of RDM-Driven Methodologies

The following table compares the core operational principles, strengths, and limitations of different RDM-based approaches used in computational chemistry and physics.

| Methodology | Core Principle | Key Strength | Primary Limitation | Representative Tool/Platform |
|---|---|---|---|---|
| Reduced Density Matrix Formulation of Quantum Linear Response (qLR) [12] | Uses an RDM-driven approach to predict spectral properties (e.g., absorption spectra) within a hybrid quantum-classical framework. | Reduces classical computational cost, enabling studies of molecules (e.g., benzene) with large basis sets (cc-pVTZ). | Performance is sensitive to quantum shot noise when used with near-term quantum hardware. | Custom quantum-classical hybrid algorithms |
| Quantum Monte Carlo (QMC) Sampling of RDM [10] [11] | A path-integral based Monte Carlo scheme that directly samples elements of the RDM by opening the imaginary time boundary in the subsystem. | Enables precise extraction of fine levels of the entanglement spectrum for large systems and long entangled boundaries, previously intractable. | Requires combining with exact diagonalization for the subsystem, which can become costly for very large subsystems. | Custom QMC+ED simulation codes |
| Classical Shadow Tomography with N-Representability [13] | Uses classical shadows from a quantum computer to estimate the 2-RDM, then variationally enforces physical constraints (N-representability conditions). | Can reduce the required measurement shots (shot budget) by up to a factor of 15 compared to unoptimized shadow estimation. | Optimization is complex; improvement is not guaranteed in all shot-noise regimes. | Quantum Lab (Boehringer Ingelheim) software stack |
| Hybrid Quantum-Classical SAPT [14] | A quantum algorithm estimates the 1-RDM of a monomer; this is classically combined with another monomer's data to find electrostatic interaction energies. | Demonstrated on a trapped-ion quantum computer (AQT) for a biochemical problem (enzyme catalysis), yielding results within chemical accuracy (1 kcal/mol). | Active space is severely limited by the number of available qubits on current hardware. | AQT trapped-ion quantum computer / QC Ware software |

Performance and Application Analysis

System Scale and Precision

The QMC sampling approach has demonstrated unprecedented capability in simulating large-scale quantum many-body systems. For example, it has been used to compute the entanglement spectrum of a 2D Heisenberg model, revealing a tower of states (TOS) structure that signals continuous symmetry breaking, a task difficult for other methods [10] [11]. This method's power is further shown in studies of Heisenberg ladders with long entangled boundaries, clarifying previous misunderstandings that arose from smaller-scale simulations [10].

Measurement Efficiency and Accuracy

The classical shadow protocol addresses the challenge of efficiently learning quantum states from a limited number of measurements. The core task is to estimate the expectation value of observables, including the 2-RDM elements ⟨a_p^† a_q^† a_s a_r⟩, from an unknown quantum state ρ prepared on a quantum computer [13]. Recent work shows that by using an improved estimator and rephrasing the optimization constraints within a semidefinite program (a method known as variational 2-RDM or v2RDM), the shot budget for accurate estimation can be drastically reduced [13]. Numerical studies indicate potential savings of up to a factor of 15 in the number of shots required compared to the standard unbiased estimator [13].

Chemical Relevance and Hardware Implementation

The hybrid quantum-classical SAPT (Symmetry-Adapted Perturbation Theory) algorithm represents a tangible step toward industrial application. In a collaborative experiment, researchers used a trapped-ion quantum computer to estimate the electrostatic interaction energy for a model of nitric oxide reductase (NOR), a biologically relevant enzyme [14]. The results were significantly better than those from classical Hartree-Fock theory and were within the threshold of chemical accuracy (1 kcal/mol) of the exact classical reference calculation (CASCI) for the model system [14]. This demonstrates that even today's noisy quantum processors can generate useful RDMs (specifically the 1-RDM) for quantum chemistry when embedded in a carefully designed hybrid framework.

Detailed Experimental Protocols

Protocol: Quantum Monte Carlo Sampling for Entanglement Spectrum

This protocol enables the extraction of the full entanglement spectrum from the RDM of a subsystem in a large many-body system [10] [11].

- Step 1: System and Subdivision. Define the total quantum system (e.g., a Heisenberg ladder or 2D lattice) and partition it into the subsystem of interest A and the environment B.

- Step 2: Path Integral Setup with Open Boundary Condition. In the Stochastic Series Expansion (SSE) QMC framework, configure the path integral with a special boundary condition. The imaginary time boundary is kept periodic for the environment B but is opened for the subsystem A. This allows the configurations at the start and end of the worldline in A (C_A and C_A') to differ.

- Step 3: Sampling RDM Elements. The matrix elements of the RDM are proportional to the frequency with which the specific configuration pair (C_A, C_A') is sampled during the QMC process: (ρ_A)_{C_A, C_A'} ∝ N_{C_A, C_A'} / N_total [10].

- Step 4: Exact Diagonalization. After collecting enough samples to build the numerical RDM, the final step is to perform exact diagonalization on this matrix. The negative logarithms of its eigenvalues yield the entanglement spectrum: ES_i = -ln(λ_i) [10] [11].
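
Steps 3 and 4 can be sketched as follows, assuming the QMC run has already produced a histogram of sampled configuration pairs. The count matrix is a hypothetical placeholder, not QMC output, and the code assumes a real, symmetric RDM, as for sign-problem-free models. Normalizing by the trace fixes the proportionality constant in Step 3, since a valid RDM must satisfy Tr(ρ_A) = 1.

```python
import numpy as np


def entanglement_spectrum_from_counts(counts, cutoff=1e-12):
    """Build rho_A from sampled pair counts N[C_A, C_A'] and return -ln(lambda_i)."""
    n = np.asarray(counts, dtype=float)
    rho_a = 0.5 * (n + n.T)  # symmetrize (assumes a real, symmetric RDM)
    rho_a /= np.trace(rho_a)  # fix the proportionality constant: Tr(rho_A) = 1
    lam = np.linalg.eigvalsh(rho_a)  # exact diagonalization of the sampled RDM
    lam = lam[lam > cutoff]  # discard zero or noise-negative eigenvalues
    return np.sort(-np.log(lam))


# Hypothetical counts for a one-spin subsystem with configurations (up, down).
counts = [[30, 10],
          [10, 30]]
es = entanglement_spectrum_from_counts(counts)
print(es)
```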

Protocol: Classical Shadow Tomography for 2-RDM with N-Representability

This protocol uses a quantum computer to estimate the 2-RDM more efficiently by enforcing physical constraints [13].

- Step 1: Prepare Quantum State. Prepare multiple copies of the target quantum state ρ on the quantum processor (e.g., the ground state from a VQE algorithm).

- Step 2: Random Measurement. For each copy, apply a random unitary U drawn from an ensemble 𝒰 (e.g., single-particle basis rotations that conserve particle number and spin). Then, measure in the computational basis, obtaining a bitstring b.

- Step 3: Construct Classical Shadow. For each measurement (U, b), apply the inverse of the measurement channel to build an unbiased estimator of the state: ρ̂ = ℳ⁻¹(U† |b⟩⟨b| U). From these snapshots, construct the unbiased estimator of the 2-RDM, $^{2}_{S}\mathbf{D}^{pqrs}$ [13].

- Step 4: Constrained Optimization. Use the raw shadow estimator $^{2}_{S}\mathbf{D}$ as input to a semidefinite program. The program variationally finds a physically valid 2-RDM that is consistent with the shadow data while satisfying the N-representability constraints (conditions that ensure the 2-RDM could have come from a physical N-electron wavefunction) [13]. This constrained optimization reduces the noise and shot requirements of the final 2-RDM estimate.
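
A single-qubit toy version of this protocol is sketched below. It makes two explicit substitutions: the fermionic orbital-rotation ensemble of [13] is replaced by the standard random-Pauli ensemble, whose snapshot is 3U†|b⟩⟨b|U − I, and the N-representability semidefinite program is replaced by the simplest physical constraint, projection onto the set of density matrices (positive semidefinite, unit trace). Because that set is convex and contains the true state, the projection can never increase the Frobenius distance to it.

```python
import numpy as np

I2 = np.eye(2, dtype=complex)
HADAMARD = np.array([[1, 1], [1, -1]], dtype=complex) / np.sqrt(2)
S_DAG = np.diag([1, -1j])
# Rotations mapping the X, Y and Z eigenbases onto the computational basis.
BASIS_ROTATIONS = [HADAMARD, HADAMARD @ S_DAG, I2]


def shadow_estimate(rho, n_shots, rng):
    """Average of single-qubit snapshots 3 U^dag |b><b| U - I (random Pauli ensemble)."""
    total = np.zeros((2, 2), dtype=complex)
    for _ in range(n_shots):
        u = BASIS_ROTATIONS[rng.integers(3)]
        p0 = float(np.real((u @ rho @ u.conj().T)[0, 0]))  # Born probability of b = 0
        b = 0 if rng.random() < p0 else 1
        ket = np.zeros((2, 1), dtype=complex)
        ket[b, 0] = 1.0
        total += 3.0 * (u.conj().T @ ket @ ket.conj().T @ u) - I2  # inverse channel
    return total / n_shots


def project_to_density_matrix(m):
    """Frobenius-nearest density matrix: keep eigenvectors, project eigenvalues onto the simplex."""
    herm = 0.5 * (m + m.conj().T)
    evals, evecs = np.linalg.eigh(herm)
    u = np.sort(evals)[::-1]
    css = np.cumsum(u) - 1.0
    idx = np.arange(1, u.size + 1)
    k = idx[u - css / idx > 0][-1]
    w = np.maximum(evals - css[k - 1] / k, 0.0)
    return (evecs * w) @ evecs.conj().T


rng = np.random.default_rng(7)
theta = 1.0
psi = np.array([np.cos(theta / 2), np.sin(theta / 2)], dtype=complex)
rho_true = np.outer(psi, psi.conj())
raw = shadow_estimate(rho_true, 20000, rng)
physical = project_to_density_matrix(raw)
print(np.linalg.eigvalsh(raw))       # may contain a small negative eigenvalue
print(np.linalg.eigvalsh(physical))  # non-negative and summing to 1
```

In [13] the analogous constraint step is a semidefinite program over 2-RDMs with N-representability conditions, which this toy does not attempt.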

The Scientist's Toolkit: Essential Research Reagents & Solutions

The following table details key computational "reagents" and their functions in RDM-based research.

| Tool / Resource | Function in RDM Research |
|---|---|
| Stochastic Series Expansion (SSE) QMC [10] | A specific, efficient QMC algorithm that can be adapted with open boundary conditions to directly sample the elements of the RDM. |
| N-Representability Conditions (e.g., PQG conditions) [13] | A set of mathematical constraints (often formulated as semidefinite programs) that ensure a computed 2-RDM corresponds to a physically valid N-electron wavefunction. |
| Classical Shadow Tomography [13] | A protocol that uses randomized measurements on a quantum computer to construct a compact, classical representation of a quantum state, from which properties like the RDM can be estimated. |
| Fermionic Orbital Rotation Ensemble [13] | A specific ensemble of random unitaries used in shadow tomography for quantum chemistry. It preserves particle number and spin, allowing for efficient and targeted estimation of fermionic RDMs. |
| Zero-Noise Extrapolation (ZNE) [14] | An error mitigation technique for noisy quantum hardware. It deliberately amplifies the circuit noise to several known levels and extrapolates the measured results to the zero-noise limit, improving the quality of the measured 1-RDM. |
| Quantum Chemistry Toolbox for Maple (RDMChem) [15] | A commercial software package that provides implementations of RDM-based methods for electronic structure calculations, useful for education and exploratory research on strongly correlated systems. |
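
Zero-noise extrapolation reduces to a small curve fit. The sketch below assumes the noise has already been amplified to known scale factors (in practice by techniques such as gate folding or pulse stretching) and uses synthetic data from an assumed quadratic noise model, not results from [14]. For a 1-RDM, the same fit would be repeated for each matrix element.

```python
import numpy as np


def zero_noise_extrapolate(scale_factors, values, degree=1):
    """Fit E(lambda) with a polynomial in the noise scale factor lambda and return E(0)."""
    coeffs = np.polyfit(scale_factors, values, degree)
    return float(np.polyval(coeffs, 0.0))


# Noise scale factors (lambda = 1 is the unmodified circuit).
scales = np.array([1.0, 2.0, 3.0])
# Synthetic "measurements" from an assumed model E(lambda) = -1 + 0.12*lambda - 0.01*lambda**2.
measured = -1.0 + 0.12 * scales - 0.01 * scales**2

quad_estimate = zero_noise_extrapolate(scales, measured, degree=2)    # recovers -1.0 here
linear_estimate = zero_noise_extrapolate(scales, measured, degree=1)  # biased, about -0.967
print(quad_estimate, linear_estimate)
```

The choice of fit model matters: a linear fit to curved data leaves a bias, while higher-degree fits amplify shot noise in real measurements.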

The reduced density matrix solidifies its role as a fundamental bridge connecting the formal description of quantum states to practically accessible information. As the comparative data shows, classical methods like QMC continue to push the boundaries of what is simulable for entanglement properties, while quantum algorithms, though still in their infancy, are already demonstrating tangible value in calculating chemically relevant properties. The ongoing refinement of protocols like classical shadow tomography, enhanced by physical constraints, is steadily improving the measurement efficiency of RDMs on quantum devices. For researchers in drug development and materials science, this evolving toolkit promises increasingly powerful ways to probe quantum interactions, with the RDM serving as the central, unifying mathematical object.

The transition from classical to quantum information theory represents a fundamental shift in how we process and understand data. While classical information theory relies on the Shannon entropy to quantify uncertainty, quantum information theory requires a more nuanced approach due to superpositions and entanglement. The von Neumann entropy serves as the quantum counterpart to Shannon entropy, providing a fundamental measure of uncertainty and quantum correlations within physical systems [16] [17]. For researchers in chemical methods and drug development, these quantum measures offer unprecedented capabilities for simulating molecular systems and quantum processes that defy classical computational approaches [18].

This comparison guide examines how von Neumann entropy serves as both a theoretical foundation and practical tool for characterizing quantum systems, with particular emphasis on its relationship with quantum entanglement and its applications in advancing quantum simulation and molecular modeling for scientific research.

Theoretical Foundations: From Classical to Quantum Measures

Classical Shannon Entropy

In classical information theory, the Shannon entropy measures the uncertainty associated with a random variable. For a probability distribution $(p_1, p_2, \ldots, p_n)$, the Shannon entropy is defined as $H = -\sum_i p_i \log p_i$. This foundational concept underpins all classical information processing, from data compression to communication protocols [17].

Quantum Von Neumann Entropy

The von Neumann entropy extends this concept to quantum systems described by a density matrix $\rho$. The entropy is defined as $S(\rho) = -\operatorname{tr}(\rho \ln \rho)$ [16]. When $\rho$ is expressed in its diagonal basis, $\rho = \sum_j \eta_j |j\rangle\langle j|$, this expression simplifies to $S(\rho) = -\sum_j \eta_j \ln \eta_j$, directly mirroring the form of Shannon entropy but applied to the eigenvalues of the quantum state [16].
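
The eigenvalue form translates directly into code. In this sketch (hypothetical helper names), `von_neumann_entropy` evaluates $S(\rho)$ as the Shannon entropy of the eigenvalues $\eta_j$. The example also shows that the Shannon entropy of the diagonal in a basis that is not the eigenbasis differs from $S(\rho)$ and can only be larger.

```python
import numpy as np


def shannon_entropy(p):
    """H = -sum_i p_i ln p_i (natural log, to match S(rho) below)."""
    p = np.asarray(p, dtype=float)
    p = p[p > 0]
    return float(-np.sum(p * np.log(p)))


def von_neumann_entropy(rho, cutoff=1e-12):
    """S(rho) = -tr(rho ln rho), evaluated as the Shannon entropy of the eigenvalues eta_j."""
    eta = np.linalg.eigvalsh(rho)
    return shannon_entropy(eta[eta > cutoff])  # 0 ln 0 = 0; drops round-off negatives


# A mixed qubit state that is not diagonal in the computational basis.
X = np.array([[0.0, 1.0], [1.0, 0.0]])
rho = 0.5 * (np.eye(2) + 0.6 * X)  # eigenvalues 0.8 and 0.2
print(von_neumann_entropy(rho))  # equals shannon_entropy([0.8, 0.2]), about 0.5004
print(shannon_entropy(np.diag(rho)))  # ln 2: the diagonal in the wrong basis overstates it
```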

Table 1: Key Properties of Von Neumann Entropy

| Property | Mathematical Expression | Physical Significance |
|---|---|---|
| Zero for pure states | $S(\rho) = 0$ iff $\rho$ is pure | Pure states carry no statistical uncertainty (the state is completely known) |
| Maximal for maximally mixed states | $S(\rho) = \ln N$ for $\rho = I/N$ on an $N$-dimensional space | Maximally mixed states have maximal uncertainty |
| Invariance under unitary transformations | $S(\rho) = S(U\rho U^\dagger)$ | Entropy is basis-independent |
| Additivity | $S(\rho_A \otimes \rho_B) = S(\rho_A) + S(\rho_B)$ | Entropy is extensive for independent systems |
| Subadditivity | $S(\rho_{AB}) \leq S(\rho_A) + S(\rho_B)$ | Correlations can only lower the joint entropy relative to the sum of the parts |
| Strong subadditivity | $S(\rho_{ABC}) + S(\rho_B) \leq S(\rho_{AB}) + S(\rho_{BC})$ | Fundamental inequality for quantum systems |
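
The properties in Table 1 can be spot-checked numerically. This sketch draws a random three-qubit mixed state (seeded for reproducibility) and verifies each row; `partial_trace` and `random_density_matrix` are illustrative helpers, and a check on one random state is of course not a proof.

```python
import numpy as np


def vn_entropy(rho, cutoff=1e-12):
    """Von Neumann entropy in nats from the eigenvalues of rho."""
    eta = np.linalg.eigvalsh(rho)
    eta = eta[eta > cutoff]
    return float(-np.sum(eta * np.log(eta)))


def partial_trace(rho, dims, keep):
    """Reduced density matrix on the subsystems listed in `keep` (dims = local dimensions)."""
    dims = list(dims)
    t = rho.reshape(dims + dims)
    # Trace out subsystems from the highest index down so lower axis indices stay valid.
    for i in sorted(set(range(len(dims))) - set(keep), reverse=True):
        m = t.ndim // 2
        t = np.trace(t, axis1=i, axis2=i + m)
    d = int(np.prod([dims[k] for k in keep]))
    return t.reshape(d, d)


def random_density_matrix(d, rng):
    g = rng.normal(size=(d, d)) + 1j * rng.normal(size=(d, d))
    rho = g @ g.conj().T  # positive semidefinite by construction
    return rho / np.trace(rho).real


rng = np.random.default_rng(1)
dims = [2, 2, 2]  # qubits A, B, C
rho_abc = random_density_matrix(8, rng)
rho_ab = partial_trace(rho_abc, dims, [0, 1])
rho_bc = partial_trace(rho_abc, dims, [1, 2])
rho_a = partial_trace(rho_abc, dims, [0])
rho_b = partial_trace(rho_abc, dims, [1])
q, _ = np.linalg.qr(rng.normal(size=(8, 8)) + 1j * rng.normal(size=(8, 8)))  # random unitary

S = vn_entropy
checks = {
    "pure state": abs(S(np.diag([1.0, 0.0, 0.0, 0.0]))) < 1e-12,
    "maximally mixed": abs(S(np.eye(4) / 4) - np.log(4)) < 1e-12,
    "unitary invariance": abs(S(rho_abc) - S(q @ rho_abc @ q.conj().T)) < 1e-9,
    "additivity": abs(S(np.kron(rho_a, rho_b)) - S(rho_a) - S(rho_b)) < 1e-9,
    "subadditivity": S(rho_ab) <= S(rho_a) + S(rho_b) + 1e-12,
    "strong subadditivity": S(rho_abc) + S(rho_b) <= S(rho_ab) + S(rho_bc) + 1e-12,
}
print(checks)
```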

Quantum Entanglement as a Resource

Quantum entanglement creates correlations between particles that cannot be explained by classical physics: measurement outcomes on one particle are correlated with those on another regardless of the distance between them, although these correlations cannot be used to send signals [19]. This "spooky action at a distance," as Einstein famously described it, has been rigorously validated through experimental tests of Bell's inequalities, work recognized by the 2022 Nobel Prize in Physics for Alain Aspect, John Clauser, and Anton Zeilinger [19].

Unlike classical correlation, which can always be explained by shared information from the past, quantum entanglement involves non-local correlations that violate classical probability constraints [19]. For quantum computing and simulation, entanglement is not merely a curiosity but a fundamental computational resource that can be harnessed to achieve performance advantages [20].

Comparative Analysis: Entropy and Entanglement in Quantum Systems

Relating Von Neumann Entropy and Entanglement

Von Neumann entropy provides a direct quantitative measure of entanglement for bipartite pure states. For a system in a pure state $\rho_{AB}$ divided into subsystems A and B, the entanglement entropy $S(\rho_A) = S(\rho_B)$ quantifies the entanglement between the subsystems, where $\rho_A = \operatorname{tr}_B(\rho_{AB})$ [16]. This relationship makes von Neumann entropy indispensable for characterizing and quantifying entanglement resources in quantum technologies.

The distinction between classical and quantum correlation becomes evident in their entropy behavior. Classical and quantum joint entropies both obey subadditivity, but a classical joint entropy is never smaller than either marginal entropy, whereas a quantum joint entropy can be: an entangled pure state has $S(\rho_{AB}) = 0$ while $S(\rho_A) > 0$. The quantum constraint is instead the Araki–Lieb (triangle) inequality, $\left|S(\rho_A) - S(\rho_B)\right| \leq S(\rho_{AB})$ [16] [17].
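
The pure-state case can be checked with a Schmidt decomposition. In the sketch below, a random pure state on a 2 × 3 system is written as a coefficient matrix c, so that ρ_A = c c† and ρ_B = cᵀ c*. Both reduced entropies equal the Shannon entropy of the squared singular values of c, while the joint entropy is zero, which a classical joint distribution could never show.

```python
import numpy as np


def entropy_of(probs, cutoff=1e-12):
    """Shannon/von Neumann entropy (nats) of a spectrum, ignoring zero weights."""
    p = np.asarray(probs, dtype=float)
    p = p[p > cutoff]
    return float(-np.sum(p * np.log(p)))


rng = np.random.default_rng(3)
d_a, d_b = 2, 3
c = rng.normal(size=(d_a, d_b)) + 1j * rng.normal(size=(d_a, d_b))
c /= np.linalg.norm(c)  # |psi> = sum_ab c[a, b] |a>|b>, normalized
psi = c.reshape(-1)

s_ab = entropy_of(np.linalg.eigvalsh(np.outer(psi, psi.conj())))  # joint state is pure
s_a = entropy_of(np.linalg.eigvalsh(c @ c.conj().T))  # rho_A = c c^dagger
s_b = entropy_of(np.linalg.eigvalsh(c.T @ c.conj()))  # rho_B = c^T c^*
s_schmidt = entropy_of(np.linalg.svd(c, compute_uv=False) ** 2)  # Schmidt weights
print(s_ab, s_a, s_b, s_schmidt)
```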

Quantum Advantages in Simulation

Recent research reveals that entanglement, once viewed as a computational obstacle, actually provides significant advantages in quantum simulation. A 2025 study published in Nature Physics demonstrated that as a quantum system becomes more entangled, simulation errors decrease and computational efficiency improves [20].

Table 2: Quantum vs. Classical Simulation Performance

| Metric | Classical Simulation | Quantum Simulation |
|---|---|---|
| Scaling with system size | $O(Nt/\varepsilon)$ | $O(\sqrt{N}t/\varepsilon)$ for highly entangled states |
| Error trend with entanglement | Increases | Decreases |
| Handling strongly correlated electrons | Approximations required (e.g., DFT) | Exact treatment possible in principle, given sufficient fault-tolerant hardware |
| Simulation of chemical dynamics | Limited for complex systems | Demonstrated for small molecules |

For chemical systems, this entanglement advantage is particularly significant. In principle, a sufficiently large, error-corrected quantum computer could determine the exact quantum state of all electrons and compute molecular energies and structures without the approximations required by classical methods, enabling accurate modeling of catalysis and chemical reactions that challenge classical approaches [18].

Experimental Protocols and Validation Methods

Quantum State Tomography (QST)

Quantum state tomography aims to reconstruct the complete density matrix of a quantum state through experimental measurements. The standard approach requires measuring $4^n - 1$ observables for an $n$-qubit system, which grows exponentially with system size [21]. This exponential scaling makes full tomography impractical for large systems, necessitating more efficient approaches.

Threshold Quantum State Tomography (tQST)

Threshold quantum state tomography (tQST) provides an optimized protocol that reduces measurement requirements by leveraging the structural properties of density matrices [21]. The method follows a systematic procedure:

- Measure diagonal elements: Project onto computational basis states to obtain the diagonal elements $\{\rho_{ii}\}$ of the density matrix

- Apply threshold: Select a threshold parameter $t$ and keep only the off-diagonal elements satisfying $\sqrt{\rho_{ii}\rho_{jj}} \geq t$; because positivity of $\rho$ implies $|\rho_{ij}| \leq \sqrt{\rho_{ii}\rho_{jj}}$, every discarded element has magnitude below $t$

- Measure significant elements: Construct and perform only measurements corresponding to these selected off-diagonal elements

- Reconstruct density matrix: Process the reduced dataset using statistical inference techniques

Experimental validation of tQST on a fully reconfigurable photonic integrated circuit with states of up to 4 qubits has demonstrated consistent reductions in measurement requirements with minimal information loss [21]. The threshold $t$ can be determined by applying the Gini index to the diagonal elements, $t = \|\rho\|_1 \frac{\mathrm{GI}(\rho)}{2^n - 1}$, which provides a systematic way to balance measurement effort against reconstruction accuracy [21].
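
The selection step can be sketched as follows. The Gini-index formula used here (the Hurley–Rickard form) and the reading of ‖ρ‖₁ as the sum of the measured diagonal elements are assumptions about [21], and the helper names are hypothetical. For a GHZ-like diagonal only the single coherence between |00⟩ and |11⟩ survives, whereas full tomography of two qubits needs 4² − 1 = 15 observables.

```python
import numpy as np


def gini_index(c):
    """Gini index of a non-negative vector (Hurley-Rickard form): 0 if uniform, near 1 if sparse."""
    c = np.sort(np.asarray(c, dtype=float))  # ascending order
    n = c.size
    k = np.arange(1, n + 1)
    return float(1.0 - 2.0 * np.sum((c / c.sum()) * (n - k + 0.5) / n))


def tqst_selection(diag, n_qubits):
    """Threshold t and the off-diagonal pairs (i, j), i < j, with sqrt(rho_ii * rho_jj) >= t."""
    diag = np.asarray(diag, dtype=float)
    t = diag.sum() * gini_index(diag) / (2**n_qubits - 1)  # ||rho||_1 read as the diagonal sum
    pairs = [(i, j)
             for i in range(diag.size) for j in range(i + 1, diag.size)
             if np.sqrt(diag[i] * diag[j]) >= t]
    return t, pairs


# GHZ-like two-qubit state: measured diagonal over |00>, |01>, |10>, |11>.
t_ghz, pairs_ghz = tqst_selection([0.5, 0.0, 0.0, 0.5], n_qubits=2)
print(t_ghz, pairs_ghz, "vs full tomography:", 4**2 - 1, "observables")
```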

Entanglement-Enhanced Quantum Simulation

The 2025 China-U.S. study established new error bounds for quantum simulations that directly incorporate entanglement entropy [20]. The research team developed an adaptive simulation algorithm that introduces periodic checkpoints to measure system entanglement during evolution, allowing dynamic adjustment of simulation steps. This approach leverages the observation that as entanglement entropy increases, Trotter errors (approximation artifacts) decrease significantly [20].

The experimental protocol involves:

- Initialize quantum system: Prepare the initial state of the quantum processor

- Time evolution with checkpoints: Implement Trotter decomposition with periodic entanglement measurements

- Estimate entanglement entropy: Use limited measurements on subsystems to quantify entanglement

- Adjust step size dynamically: Use fewer, larger Trotter steps when high entanglement is detected

- Verify results: Compare with classical simulations where feasible

This protocol was validated through simulations of a 12-qubit quantum Ising spin model, confirming that rapidly entangled systems exhibited markedly lower errors [20].
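
The ingredients of this protocol can be illustrated classically on a small chain. The sketch below is not the adaptive algorithm of [20]: it runs first-order Trotter evolution of an open transverse-field Ising chain (six qubits, with assumed couplings J = h = 1) and adds periodic checkpoints that record the half-chain entanglement entropy and, because the chain is small enough, the exact Trotter error. On hardware the entropy would instead be estimated from limited subsystem measurements, and a step-size rule would act on these checkpoint values.

```python
import numpy as np

X = np.array([[0, 1], [1, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)


def op_on(site_op, site, n):
    """Embed a single-qubit operator at position `site` of an n-qubit chain."""
    out = np.array([[1.0 + 0.0j]])
    for k in range(n):
        out = np.kron(out, site_op if k == site else np.eye(2))
    return out


def ising_parts(n, coupling=1.0, field=1.0):
    """Split an open transverse-field Ising chain as H = H_zz + H_x."""
    h_zz = sum(-coupling * op_on(Z, k, n) @ op_on(Z, k + 1, n) for k in range(n - 1))
    h_x = sum(-field * op_on(X, k, n) for k in range(n))
    return h_zz, h_x


def evolution(h, dt):
    """exp(-i h dt) for Hermitian h, via its eigendecomposition."""
    w, v = np.linalg.eigh(h)
    return (v * np.exp(-1j * w * dt)) @ v.conj().T


def half_chain_entropy(psi, n):
    """Von Neumann entropy of the left half of the chain, from the Schmidt weights."""
    s = np.linalg.svd(psi.reshape(2 ** (n // 2), -1), compute_uv=False) ** 2
    s = s[s > 1e-12]
    return float(-np.sum(s * np.log(s)))


def trotter_with_checkpoints(n, total_time, steps, n_checkpoints=4):
    h_zz, h_x = ising_parts(n)
    dt = total_time / steps
    trotter_step = evolution(h_x, dt) @ evolution(h_zz, dt)  # first-order Lie-Trotter step
    exact_step = evolution(h_zz + h_x, dt)
    psi = np.zeros(2 ** n, dtype=complex)
    psi[0] = 1.0  # initial product state |00...0>
    exact = psi.copy()
    log = []
    every = max(1, steps // n_checkpoints)
    for s in range(1, steps + 1):
        psi = trotter_step @ psi
        exact = exact_step @ exact
        if s % every == 0:  # checkpoint: entanglement and Trotter error
            err = float(np.linalg.norm(psi - exact))
            log.append((s * dt, half_chain_entropy(psi, n), err))
    return log


coarse = trotter_with_checkpoints(n=6, total_time=2.0, steps=20)
fine = trotter_with_checkpoints(n=6, total_time=2.0, steps=200)
for t, ent, err in coarse:
    print(f"t={t:.2f}  S_half={ent:.3f}  trotter_error={err:.2e}")
```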

Chemical Research Applications

Molecular Simulation and Drug Discovery

Quantum computers demonstrate particular promise for chemical problems because molecules are inherently quantum systems [18]. The variational quantum eigensolver (VQE) algorithm has been successfully used to model small molecules including helium hydride ions, hydrogen molecules, lithium hydride, and beryllium hydride [18]. More advanced applications include:

- IBM's hybrid approach applying classical-quantum algorithms to estimate energy of iron-sulfur clusters

- Protein folding simulations with a 16-qubit computer identifying potential KRAS inhibitors for cancer treatment

- Quantum simulation of chemical dynamics modeling molecular structure evolution over time

In such studies, von Neumann entropies computed from reduced density matrices can provide insight into electron correlation and bonding patterns that are essential for predictive molecular modeling.

Quantum-Enhanced Spectroscopy

Research from the Hammes-Schiffer Group at Princeton demonstrates how first-principles simulations of molecular polaritons can detect quantum entanglement between photons and molecules [22]. By treating electromagnetic fields quantum mechanically rather than classically, researchers identified unique behaviors manifesting as light-matter entanglement, detectable through real-time dynamics simulations [22].

This approach combines:

- Time-dependent Density Functional Theory (TD-DFT) in conventional and Nuclear-Electronic Orbital (NEO) forms

- Semiclassical, mean-field-quantum, and full-quantum approaches to simulate polariton dynamics

- Quantification of light-matter entanglement to determine when quantum treatment of light is necessary

Challenges and Current Limitations

Despite promising advances, practical quantum advantage in chemical applications requires overcoming significant hurdles:

- Qubit requirements: Modeling industrially relevant systems like cytochrome P450 enzymes or iron-molybdenum cofactor (FeMoco) may require millions of physical qubits [18]

- Qubit stability: Quantum states are fragile and easily decohere, limiting computational time windows

- Algorithm development: Only a few hundred quantum algorithms exist, with even fewer tested on actual quantum hardware [18]

Current research focuses on developing "quantum-inspired" algorithms that adapt quantum techniques for classical computers, providing intermediate benefits while hardware development continues [18].

The Scientist's Toolkit: Research Reagents and Materials

Table 3: Essential Research Materials for Quantum Entropy and Entanglement Studies

| Material/Platform | Function | Research Application |
|---|---|---|
| Superconducting qubits | Basic units of quantum information | Quantum processing and state manipulation |
| Photonic integrated circuits | Manipulate quantum states of light | Quantum state tomography and communication |
| Quantum dot single-photon sources | Generate high-quality single photons | Photonic quantum information processing |
| Atomic clock networks | Ultra-precise time measurement | Testing gravitational effects on quantum systems |
| Optical cavities | Create strong light-matter coupling | Polariton formation and cavity quantum electrodynamics |
| Reconfigurable Beam Splitters (RBS) | Dynamically control photonic interference | Programmable quantum information processing |

Future Directions and Research Opportunities

The integration of Von Neumann entropy measures into chemical research methodologies continues to evolve, with several promising directions emerging:

Quantum Network Applications

Research from the University of Illinois demonstrates how distributed quantum networks with optical atomic clocks can probe the effects of general relativity on quantum systems [23]. By placing quantum computers at elevations that differ by as little as 1 kilometer, researchers can measure how Earth's gravitational field affects shared quantum states, potentially revealing deviations from standard quantum theory that general relativity predicts [23].

Entanglement as a Computational Resource

The finding that entanglement can reduce, rather than increase, simulation errors suggests a shift in how quantum algorithms are designed [20]. Future research may identify similar advantages for other quantum resources, such as coherence and "magic states", potentially leading to unified resource theories that optimize several quantum properties simultaneously [20].

Chemical Reaction Control

The demonstration of light-matter entanglement in polariton dynamics suggests new pathways for controlling chemical reactions using electromagnetic fields [22]. Future research may enable selective enhancement or suppression of specific reaction pathways through quantum interference effects, potentially revolutionizing catalyst design and reaction optimization.

Von Neumann entropy and quantum entanglement represent fundamental concepts distinguishing quantum from classical information theory. While Von Neumann entropy extends Shannon's classical uncertainty measure to quantum systems, entanglement enables uniquely quantum correlations that can be harnessed as computational resources. For chemical researchers and drug development professionals, these quantum measures offer new methodologies for simulating molecular systems, designing materials, and understanding chemical processes at fundamental levels. As quantum hardware continues to advance and algorithmic efficiency improves, the integration of quantum information concepts into chemical research methodologies promises to accelerate discovery across pharmaceutical and materials science domains.

Information-Theoretic Measures for Analyzing Electron Correlation and System Complexity

The accurate quantification of electron correlation is a central challenge in quantum chemistry and materials science, directly impacting the predictive accuracy of computational models in fields like drug discovery and catalyst design. Traditional post-Hartree-Fock methods, while accurate, suffer from computational costs that skyrocket with system size, creating a pressing need for efficient alternatives [24]. In this context, the information-theoretic approach (ITA) has emerged as a powerful framework for understanding and predicting electron correlation energy by treating electron density as a probability distribution and applying information-theoretic descriptors [24].

This guide provides a comparative analysis of information-theoretic measures for analyzing electron correlation, evaluating their performance across diverse molecular systems from simple isomers to complex clusters. We present standardized methodologies, quantitative performance data, and practical workflows to enable researchers to select and implement these approaches effectively within quantum information validation frameworks.

Theoretical Framework of Information-Theoretic Measures

Information-theoretic measures quantify electron correlation by analyzing the electron density distribution with concepts from information theory. These approaches rest on the recognition that the electron density encodes information about all monoelectronic properties of a system [25]. The target quantity is Löwdin's correlation energy, defined as the difference between the exact eigenvalue of the Hamiltonian and its expectation value in the Hartree-Fock approximation, E_corr = E_exact − E_HF [25].

Key information-theoretic quantities include Shannon entropy, which characterizes the global delocalization of electron density; Fisher information, which quantifies local inhomogeneity and density sharpness; and Onicescu's informational energy, which measures the concentration of the electron distribution [24] [25]. These descriptors are inherently basis-agnostic and physically interpretable, providing a robust framework for correlation analysis across diverse chemical systems [24].

Table 1: Core Information-Theoretic Quantities for Electron Correlation Analysis

| Quantity | Mathematical Definition | Physical Interpretation | Computational Cost |
|---|---|---|---|
| Shannon Entropy (S_S) | −∫ρ(r) ln ρ(r) dr | Global delocalization of electron density | Low (HF level) |
| Fisher Information (I_F) | ∫[∇ρ(r)]²/ρ(r) dr | Local inhomogeneity of density | Low (HF level) |
| Ghosh-Berkowitz-Parr Entropy (S_GBP) | Specific functional of ρ(r) | Correlation entropy | Low (HF level) |
| Onicescu Information Energy (E_2, E_3) | ∑ᵢ pᵢ² or ∑ᵢ pᵢ³ | Concentration of distribution | Low (HF level) |
| Relative Rényi Entropy (R_2^r, R_3^r) | Specific divergence measures | Distinguishability between densities | Low (HF level) |
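The definitions in Table 1 can be made concrete with a small numerical sketch. It evaluates S_S and I_F for a one-dimensional Gaussian density on a uniform grid, where the analytic values are known (S_S = ½ ln(2πeσ²), I_F = 1/σ²), and E_2, E_3 for a discrete distribution. The 1D test density, grid size, and function names are illustrative assumptions; actual ITA calculations integrate the three-dimensional Hartree-Fock electron density.

```python
import math


def shannon_entropy(rho, dx):
    """S_S = -integral(rho ln rho) dx as a Riemann sum (rho ln rho -> 0 where rho = 0)."""
    return -sum(r * math.log(r) for r in rho if r > 0.0) * dx


def fisher_information(rho, dx):
    """I_F = integral((d rho/dx)^2 / rho) dx, with central differences at interior points."""
    total = 0.0
    for k in range(1, len(rho) - 1):
        if rho[k] > 0.0:
            grad = (rho[k + 1] - rho[k - 1]) / (2.0 * dx)
            total += grad * grad / rho[k]
    return total * dx


def onicescu_energy(p, order=2):
    """E_n = sum_i p_i^n for a normalized discrete distribution p."""
    return sum(pi ** order for pi in p)


def gaussian_density(sigma=1.0, half_width=10.0, n_points=20001):
    """Normalized 1D Gaussian sampled on [-half_width, half_width]; returns (rho, dx)."""
    dx = 2.0 * half_width / (n_points - 1)
    norm = 1.0 / (sigma * math.sqrt(2.0 * math.pi))
    rho = [norm * math.exp(-((-half_width + k * dx) ** 2) / (2.0 * sigma ** 2))
           for k in range(n_points)]
    return rho, dx


if __name__ == "__main__":
    for sigma in (1.0, 0.5):
        rho, dx = gaussian_density(sigma)
        # Analytic check: S_S = 0.5*ln(2*pi*e*sigma^2), I_F = 1/sigma^2.
        print(sigma, shannon_entropy(rho, dx), fisher_information(rho, dx))
    print(onicescu_energy([0.25] * 4, 2), onicescu_energy([0.25] * 4, 3))
```

Halving σ makes the density sharper, so I_F grows (to 4) while S_S drops, which illustrates the complementary roles of the two descriptors described above.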

The relationship between these measures and electron correlation stems from their ability to capture different aspects of the electron distribution. For instance, the Hartree-Fock first-order density matrix is idempotent (Γ_HF² = Γ_HF), whereas a correlated one is not, so the deviation from idempotency between the correlated and Hartree-Fock density matrices provides a direct link to correlation effects. This forms the basis for correlation measures such as I_corr = Tr(Γ_CISD²) − Tr(Γ_HF²) [25].
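Because Γ is diagonal in its natural-orbital basis, Tr(Γ²) is simply the sum of squared natural occupation numbers, so the idempotency measure can be evaluated from occupations alone. The sketch assumes spin-orbital occupations between 0 and 1 (so Tr(Γ_HF²) = N) and uses made-up CISD occupations; the function names are illustrative, not taken from the cited work.

```python
def trace_gamma_squared(occupations):
    """Tr(Gamma^2) = sum_i n_i^2 in the natural-orbital basis, where Gamma is diagonal."""
    return sum(n * n for n in occupations)


def idempotency_correlation(correlated_occ, n_electrons):
    """I_corr = Tr(Gamma_corr^2) - Tr(Gamma_HF^2) from natural spin-orbital occupations.

    HF occupations are exactly 0 or 1, so Tr(Gamma_HF^2) = N. Fractional correlated
    occupations summing to N give Tr(Gamma_corr^2) <= N, hence I_corr <= 0.
    """
    hf_occ = [1.0] * n_electrons
    return trace_gamma_squared(correlated_occ) - trace_gamma_squared(hf_occ)


# Hypothetical CISD natural spin-orbital occupations for a two-electron system.
cisd_occ = [0.98, 0.98, 0.02, 0.02]
print(idempotency_correlation(cisd_occ, n_electrons=2))  # approximately -0.0784
```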

Comparative Performance Across Molecular Systems

Organic Molecules and Polymers

For organic systems with localized electronic structures, information-theoretic measures predict electron correlation energies with high accuracy. In a benchmark study of 24 octane isomers, linear regression models using ITA quantities achieved strong correlations (R² > 0.990) with post-Hartree-Fock correlation energies, with Fisher information (I_F) outperforming Shannon entropy owing to the highly localized electron density in alkanes [24].

The root mean square deviations (RMSDs) between LR(ITA)-predicted and calculated correlation energies were below 2.0 mH for MP2, CCSD, and CCSD(T), approaching chemical accuracy (1 kcal/mol ≈ 1.6 mH) at Hartree-Fock computational cost [24]. For linear polymeric systems including polyyne, polyene, all-trans-polymethineimine, and acene, most ITA quantities maintained near-perfect correlations (R² ≈ 1.000), with prediction errors ranging from ~1.5 mH for polyyne to ~10-11 mH for the more challenging acenes, whose electronic structures are delocalized [24].

Table 2: Performance of ITA Measures Across System Types

| System Category | Example Systems | Best-Performing ITA Measures | Prediction RMSD | Correlation (R²) |
|---|---|---|---|---|
| Organic Isomers | 24 octane isomers | Fisher Information (I_F) | < 2.0 mH | > 0.990 |
| Linear Polymers | Polyyne, polyene | Multiple (E_2, E_3, R_2^r, R_3^r) | 1.5-4.0 mH | ≈ 1.000 |
| Acenes | Linear acenes | S_GBP, I_F | 10-11 mH | ≈ 1.000 |
| Metallic Clusters | Beₙ, Mgₙ | All 11 ITA quantities | 17-37 mH | > 0.990 |
| Covalent Clusters | Sₙ | All 11 ITA quantities | 26-42 mH | > 0.990 |
| Hydrogen-Bonded Clusters | H⁺(H₂O)ₙ | E_2, E_3 | 2.1 mH | 1.000 |

Molecular Clusters and Complex Systems

For three-dimensional molecular clusters, information-theoretic measures maintain strong linear correlations but show larger absolute errors in predicted correlation energies. Metallic clusters (Beₙ, Mgₙ) and covalent clusters (Sₙ) exhibited excellent correlation (R² > 0.990) between ITA quantities and MP2 correlation energies, but with substantially higher RMSDs of 17-37 mH and 26-42 mH, respectively [24]. This suggests that a single ITA quantity captures the extensivity of the correlation energy but carries too little information for quantitative predictions in these cluster systems.

In contrast, hydrogen-bonded systems such as protonated water clusters H⁺(H₂O)ₙ performed exceptionally well: 8 of the 11 ITA quantities reached R² = 1.000 (to three decimal places), and the Onicescu information energies (E_2 and E_3) gave RMSDs as low as 2.1 mH [24]. Similarly, for dispersion-bound clusters such as (CO₂)ₙ and (C₆H₆)ₙ, the LR(ITA) method achieved accuracy comparable to the linear-scaling generalized energy-based fragmentation (GEBF) method, highlighting its utility for large molecular clusters [24].

Experimental Protocols and Methodologies

Standard Computational Protocol

The LR(ITA) protocol for predicting electron correlation energies follows a standardized workflow:

1. System Preparation: Generate molecular structures with computational chemistry packages or retrieve them from databases such as CCCBDB [26]. For clusters and polymers, ensure a systematic size progression so that transferability can be analyzed.

2. Wavefunction Calculation: Perform Hartree-Fock calculations with a standardized basis set (e.g., the recommended 6-311++G(d,p)). Once the regression is calibrated, this step is the main computational cost of predicting correlation energies for new systems [24].

3. ITA Quantity Computation: Calculate all 11 information-theoretic quantities from the Hartree-Fock electron density: S_S, I_F, S_GBP, E_2, E_3, R_2^r, R_3^r, I_G, G_1, G_2, and G_3 [24].

4. Reference Correlation Energy Calculation: Compute reference post-Hartree-Fock correlation energies (MP2, CCSD, or CCSD(T)) for a subset of systems using the same basis set [24].

5. Linear Regression Modeling: Fit linear relationships between each ITA quantity and the reference correlation energies, E_corr = a × ITA + b [24].

6. Prediction and Validation: Use the regression equations to predict correlation energies for the remaining systems and validate the predictions against calculated values using RMSD.
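Steps 5 and 6 amount to ordinary least squares plus an error metric. The following dependency-free sketch fits E_corr = a × ITA + b on a calibration set, predicts held-out systems, and reports the RMSD in mH and kcal/mol (1 Hartree ≈ 627.509 kcal/mol). All descriptor values and energies are hypothetical numbers chosen for illustration, and `fit_linear`, `rmsd`, and `r_squared` are illustrative helpers rather than part of any published LR(ITA) code.

```python
import math

HARTREE_TO_KCAL_PER_MOL = 627.509  # 1 Hartree is about 627.509 kcal/mol


def fit_linear(x, y):
    """Ordinary least squares for y = a * x + b; returns (a, b)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    a = sxy / sxx
    return a, mean_y - a * mean_x


def rmsd(predicted, reference):
    """Root mean square deviation between predicted and reference values."""
    return math.sqrt(sum((p - r) ** 2 for p, r in zip(predicted, reference)) / len(reference))


def r_squared(predicted, reference):
    """Coefficient of determination of the predictions against the reference values."""
    mean_r = sum(reference) / len(reference)
    ss_res = sum((r - p) ** 2 for p, r in zip(predicted, reference))
    ss_tot = sum((r - mean_r) ** 2 for r in reference)
    return 1.0 - ss_res / ss_tot


if __name__ == "__main__":
    # Hypothetical calibration set: ITA descriptor (arbitrary units) vs. E_corr in mH.
    train_ita = [1.0, 2.0, 3.0, 4.0]
    train_ecorr = [-31.0, -59.0, -91.0, -119.0]
    a, b = fit_linear(train_ita, train_ecorr)

    # Hypothetical held-out systems with their computed reference values (mH).
    test_ita, test_ecorr = [5.0, 6.0], [-150.0, -178.0]
    predicted = [a * x + b for x in test_ita]
    err_mh = rmsd(predicted, test_ecorr)
    print(f"a = {a:.2f}, b = {b:.2f} mH, RMSD = {err_mh:.2f} mH "
          f"({err_mh * HARTREE_TO_KCAL_PER_MOL / 1000:.2f} kcal/mol)")
```

In a real study one regression would be fitted per ITA quantity and per reference method, and R² would be reported alongside the RMSD.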

Active Space Selection for Strong Correlation

For systems with strong electron correlation, the LR(ITA) protocol can be integrated with active space methods:

1. Orbital Analysis: Use packages such as PySCF to analyze molecular orbitals and determine appropriate active spaces [26].

2. Active Space Transformation: Employ Active Space Transformers (e.g., in Qiskit Nature) to focus the quantum computation on strongly correlated regions [26].

3. Dynamic Correlation Treatment: Apply information-theoretic measures to capture dynamic correlation effects beyond the active space, addressing one of the fundamental challenges in multi-reference calculations [27].
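For the orbital-analysis step, one widely used heuristic is to select orbitals whose natural occupation numbers are clearly fractional. The sketch below applies that rule to spatial-orbital occupations (range 0 to 2); the 0.02/1.98 thresholds, the occupation values, and the helper name are assumptions for illustration. In practice the occupations would come from a correlated calculation, for example one run in PySCF.

```python
def select_active_space(occupations, lower=0.02, upper=1.98):
    """Return (active orbital indices, active electron count) from natural occupations.

    Spatial-orbital occupations lie in [0, 2]. Orbitals that are neither nearly
    doubly occupied (> upper) nor nearly empty (< lower) are treated as strongly
    correlated and placed in the active space.
    """
    active = [i for i, n in enumerate(occupations) if lower <= n <= upper]
    n_active_electrons = round(sum(occupations[i] for i in active))
    return active, n_active_electrons


# Hypothetical natural occupations, ordered from most to least occupied.
occ = [2.00, 1.99, 1.95, 1.10, 0.90, 0.04, 0.01]
print(select_active_space(occ))  # ([2, 3, 4, 5], 4)
```

The returned orbital indices and electron count are the kind of information an active-space transformer takes as input; the thresholds are a tunable choice rather than a fixed rule.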

This hybrid approach is particularly valuable for molecular systems with competing electronic states, such as transition metal complexes or open-shell systems, where static correlation dominates.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for ITA-Based Correlation Analysis

| Tool/Category | Specific Examples | Primary Function | Application Context |
|---|---|---|---|
| Quantum Chemistry Packages | Gaussian 09, PySCF | Wavefunction calculation, ITA computation | Core electronic structure calculations [25] [26] |
| Basis Sets | 6-311++G(d,p), cc-pVTZ, STO-3G | One-electron basis functions | Balancing accuracy and computational cost [24] [26] |
| Natural Population Analysis | NBO 5.0, NBO 6.0 | Occupation number analysis | Required for occupation-based ITA measures [25] |
| Quantum Computing Frameworks | Qiskit, OpenFermion | Active space calculations, VQE integration | Hybrid quantum-classical workflows [26] |
| Linear Scaling Methods | GEBF, DC, FMO | Fragmentation of large systems | Enabling application to molecular clusters [24] |
| Benchmark Databases | GMTKN30, CCCBDB | Reference data for validation | Method benchmarking and accuracy assessment [28] [26] |

Integration with Quantum Information Validation

Information-theoretic measures provide a crucial bridge between traditional quantum chemistry and emerging quantum computation approaches. The LR(ITA) protocol offers a standardized framework for validating quantum algorithms such as the Variational Quantum Eigensolver (VQE) by providing classically derived reference data for electron correlation [26].

In quantum-DFT embedding frameworks, where the system is divided into classical and quantum regions, information-theoretic measures can guide active space selection and validate quantum subsystem treatments [26]. This integration is particularly valuable for pharmaceutical applications, where accurately predicting molecular interactions is essential for drug discovery but remains challenging for current quantum hardware [29] [30].

The quantitative relationships between ITA descriptors and correlation energies enable the generation of high-quality training data for quantum machine learning models, addressing data scarcity issues in chemical applications [29]. Furthermore, as quantum hardware advances, these measures provide scalable validation metrics for increasingly complex molecular simulations.

Information-theoretic measures represent a powerful toolkit for analyzing electron correlation across diverse molecular systems, offering a favorable balance between computational efficiency and predictive accuracy. The LR(ITA) protocol predicts post-Hartree-Fock correlation energies at Hartree-Fock cost, with errors approaching chemical accuracy for many system types.

Performance varies systematically with system complexity, with excellent results for organic molecules and hydrogen-bonded clusters, good performance for polymers, and reduced quantitative accuracy but maintained transferability for metallic and covalent clusters. Integration of these measures with emerging quantum computational methods provides a robust validation framework, positioning information-theoretic approaches as essential tools for next-generation computational chemistry and drug discovery pipelines.

As quantum computing hardware advances, these classically derived information-theoretic benchmarks will play an increasingly important role in validating quantum algorithms and ensuring their reliability for pharmaceutical applications, where accurate molecular simulation is critical.

Quantum-Informed Algorithms for Practical Chemical Simulation

The pursuit of practical quantum advantage in computational chemistry faces significant challenges on noisy intermediate-scale quantum (NISQ) devices, where limitations in qubit counts and circuit depths impede applications to realistic molecular systems [31]. Within this context, quantum information-informed algorithms represent a promising frontier, leveraging insights from quantum information theory to enhance the efficiency and feasibility of variational quantum eigensolver (VQE) simulations [32]. These approaches strategically utilize quantum information measures, such as entanglement and correlation, to optimize how quantum circuits are structured and executed, thereby mitigating the stringent resource requirements of NISQ hardware.

The PermVQE and ClusterVQE algorithms exemplify this methodology, both aiming to reduce quantum circuit complexity—a critical barrier to practical quantum chemistry simulations [31] [32]. While they share the common goal of making quantum simulations more tractable, they employ distinct strategies: PermVQE focuses on relocating correlations through qubit permutation to minimize circuit depth, whereas ClusterVQE partitions the problem into smaller, manageable clusters that can be solved with fewer qubits and shallower circuits [32] [33]. By transforming the computational problem to align with hardware constraints, these algorithms enable more accurate and efficient simulations of molecular electronic structure, potentially accelerating discoveries in drug development and materials science.

In-Depth Algorithm Analysis: Core Principles and Methodologies

PermVQE: Qubit Permutation for Correlation Localization

PermVQE addresses a fundamental challenge in quantum simulations: the efficient representation of electronic correlations within the constraints of quantum hardware connectivity [32]. The algorithm is predicated on the observation that the physical layout of qubits on a processor (the hardware topology) and the inherent entanglement patterns of the target molecular system (the correlation topology) are often misaligned. This misalignment forces the use of numerous SWAP gates to enable interactions between non-adjacent qubits, significantly increasing circuit depth and susceptibility to noise.

The core innovation of PermVQE is its use of quantum information measures, specifically entanglement metrics, to determine an optimal permutation of the qubits [32]. This process involves:

- Correlation Mapping: Initially, the entanglement structure between spin-orbitals in the target molecule is characterized, identifying which qubits need to interact most frequently.

- Topology Analysis: The hardware connectivity graph of the target quantum processor is analyzed.

- Permutation Optimization: A classical optimization routine finds a mapping between the logical qubits (representing spin-orbitals) and the physical qubits of the device. The goal is to place highly correlated logical qubits as close as possible on the hardware graph, thereby minimizing the distance and the number of SWAP gates required for their interaction.
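The permutation-optimization step can be sketched as a small combinatorial search. Here the cost of a placement is assumed to be the sum, over pairs of logical qubits, of their correlation weight times the hop distance between their physical qubits; this objective, the exhaustive search, and the toy correlation matrix are illustrative assumptions, not the published PermVQE optimizer.

```python
from collections import deque
from itertools import permutations


def hardware_distances(n_qubits, edges):
    """Hop distances between physical qubits on a connected coupling graph (BFS)."""
    adjacency = {q: [] for q in range(n_qubits)}
    for u, v in edges:
        adjacency[u].append(v)
        adjacency[v].append(u)
    dist = []
    for source in range(n_qubits):
        d = [None] * n_qubits
        d[source] = 0
        queue = deque([source])
        while queue:
            u = queue.popleft()
            for v in adjacency[u]:
                if d[v] is None:
                    d[v] = d[u] + 1
                    queue.append(v)
        dist.append(d)
    return dist


def placement_cost(perm, correlation, dist):
    """Sum over logical pairs (i, j) of correlation[i][j] times the hop distance
    between their physical qubits perm[i] and perm[j]."""
    n = len(perm)
    return sum(correlation[i][j] * dist[perm[i]][perm[j]]
               for i in range(n) for j in range(i + 1, n))


def best_permutation(correlation, dist):
    """Exhaustive search over all n! placements; returns (cost, perm). Small n only."""
    n = len(correlation)
    return min(((placement_cost(p, correlation, dist), p) for p in permutations(range(n))),
               key=lambda item: item[0])


if __name__ == "__main__":
    # Hypothetical correlations: logical qubits 0-2 and 1-3 are strongly correlated.
    corr = [[0.0, 0.1, 1.0, 0.1],
            [0.1, 0.0, 0.1, 1.0],
            [1.0, 0.1, 0.0, 0.1],
            [0.1, 1.0, 0.1, 0.0]]
    line = hardware_distances(4, [(0, 1), (1, 2), (2, 3)])  # linear-chain device
    print("identity placement cost:", placement_cost((0, 1, 2, 3), corr, line))
    print("best (cost, placement):", best_permutation(corr, line))
```

Exhaustive search scales as n!, so a practical implementation would substitute a heuristic or approximate solver for larger registers.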

By systematically reducing the overhead of SWAP operations, PermVQE achieves substantial reductions in circuit depth. This leads to shorter execution times and higher fidelity results, as deeper circuits are more prone to decoherence and cumulative gate errors. This approach is particularly powerful for hardware-efficient ansatzes, making it a vital tool for enhancing the noise resilience of VQE simulations on existing devices [32].

ClusterVQE: Problem Decomposition via Entangled Clusters

ClusterVQE adopts a divide-and-conquer strategy, tackling the dual challenges of limited qubit numbers and shallow circuit depths simultaneously [31] [33]. It is designed to simulate large molecules by decomposing the problem into smaller, exactly solvable fragments, which are then reconciled to recover the full system's solution.

The algorithm's workflow is methodical and consists of several key stages. The process begins with Qubit Clustering, where the complete set of qubits is partitioned into smaller clusters. This partitioning is not arbitrary; it is guided by maximizing intra-cluster entanglement, quantified using mutual information between spin-orbitals [31] [34]. This ensures that the most strongly correlated qubits are grouped, minimizing the correlation between different clusters. The clusters are then distributed to individual, shallower quantum circuits, each requiring fewer qubits.
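The clustering stage can be illustrated with an exhaustive two-way split that maximizes the mutual information kept inside clusters, which is equivalent to minimizing the mutual information cut between them. The restriction to two clusters of fixed size, the brute-force search, and the toy mutual-information matrix are assumptions; ClusterVQE itself relies on graph-partitioning algorithms that scale to more qubits and clusters.

```python
from itertools import combinations


def intra_cluster_mi(mi, cluster):
    """Total mutual information between all qubit pairs inside one cluster."""
    return sum(mi[i][j] for i, j in combinations(cluster, 2))


def best_bipartition(mi, size_a):
    """Split qubits into clusters of size size_a and n - size_a, maximizing the
    total intra-cluster mutual information; returns (score, cluster_a, cluster_b)."""
    n = len(mi)
    best = None
    for cluster_a in combinations(range(n), size_a):
        cluster_b = tuple(q for q in range(n) if q not in cluster_a)
        score = intra_cluster_mi(mi, cluster_a) + intra_cluster_mi(mi, cluster_b)
        if best is None or score > best[0]:
            best = (score, list(cluster_a), list(cluster_b))
    return best


if __name__ == "__main__":
    # Hypothetical six-qubit MI matrix with two strongly entangled groups.
    groups = [{0, 1, 4}, {2, 3, 5}]
    mi = [[0.0 if i == j else (0.5 if any(i in g and j in g for g in groups) else 0.05)
           for j in range(6)] for i in range(6)]
    score, a, b = best_bipartition(mi, 3)
    print(a, b)  # [0, 1, 4] [2, 3, 5]
```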

To account for interactions between the separated clusters, ClusterVQE employs a Dressed Hamiltonian technique [31]. The original molecular Hamiltonian is transformed to incorporate the effects of inter-cluster correlations. This "dressing" is an iterative process carried out on a classical computer, effectively downfolding the correlation from one cluster into the effective Hamiltonian of another. Finally, in the Parallelized VQE Execution stage, each cluster is simulated independently on a quantum device with a standard VQE algorithm. Because each sub-circuit is shallower and uses fewer qubits, it is more resilient to noise. The results of these independent simulations are combined classically through the dressed Hamiltonian to reconstruct the energy and properties of the full molecular system [31] [33]. This makes ClusterVQE a "quantum parallel" scheme, enabling the simulation of large molecules across multiple smaller quantum devices.

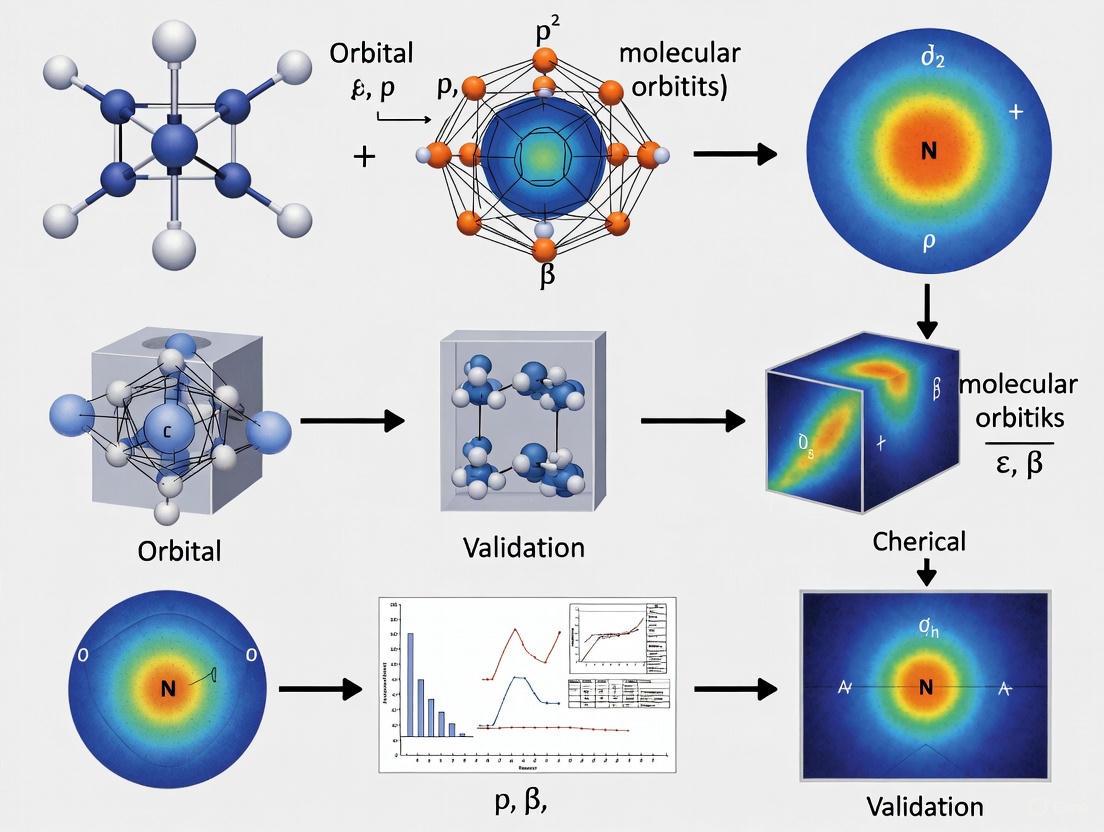

Figure 1: The ClusterVQE workflow decomposes a large molecular problem into smaller clusters processed in parallel, with correlations handled by a classically constructed dressed Hamiltonian.

Comparative Performance Analysis

Algorithmic Features and Target Applications

The distinct mechanistic approaches of PermVQE and ClusterVQE make them suitable for different scenarios within the quantum computational chemist's toolkit. The table below summarizes their core characteristics.

Table 1: Fundamental characteristics of PermVQE and ClusterVQE

| Feature | PermVQE | ClusterVQE |
|---|---|---|
| Primary Innovation | Qubit reordering via permutation [32] | Problem decomposition into clusters [31] |
| Main Resource Reduction | Circuit depth [32] | Circuit depth and qubit count (width) [33] |
| Quantum Information Basis | Quantum correlation localization [32] | Mutual information for clustering [31] [34] |
| Inter-Subsystem Correlation | Handled natively by circuit | Treated via dressed Hamiltonian [31] |
| Classical Overhead | Low (optimizing permutation) | High (building dressed Hamiltonian) [31] |
| Ideal Use Case | Single, moderate-sized molecules on one device [32] | Large molecules, distributed quantum computing [33] |

Experimental Protocols and Performance Benchmarks

Experimental validations of these algorithms, particularly ClusterVQE, have demonstrated their effectiveness on both simulators and actual IBM quantum devices [31]. A common benchmark is the ground-state energy of lithium hydride (LiH) at various bond lengths, computed in the minimal STO-3G basis set.

ClusterVQE Experimental Protocol:

- Molecular System Preparation: The geometry of the LiH molecule is defined (e.g., equilibrium bond distance of 1.547 Å). The electronic structure problem is translated into a qubit Hamiltonian using the Jordan-Wigner transformation [31].

- Mutual Information Clustering: The mutual information between all pairs of spin-orbitals is computed. A graph is constructed in which vertices represent qubits and weighted edges represent their mutual information. Graph partitioning algorithms then identify clusters that maximize intra-cluster entanglement and minimize inter-cluster correlation [31]. For LiH, this produced clusters such as [0, 1, 4, 5, 6, 9] and [2, 3, 7, 8] [31].

- Hamiltonian Dressing: The initial Hamiltonian is dressed to incorporate the dominant correlations between the identified clusters. This step is iterative and performed classically [31].

- Cluster Simulation: Each cluster is processed using a VQE algorithm with a QUCCSD (Qubit Unitary Coupled-Cluster Singles and Doubles) ansatz on a quantum device or simulator. The L-BFGS-B classical optimizer is typically used for parameter tuning [31].

- Energy Reconciliation: The energies obtained from the individual cluster simulations are combined via the classically constructed dressed Hamiltonian to produce the total ground-state energy of the molecule [31].
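The Jordan-Wigner step of this protocol can be illustrated with a dependency-free sketch that stores operators as dictionaries mapping Pauli strings to coefficients. It assumes the convention a_p† = Z_0 ⋯ Z_{p−1} (X_p − iY_p)/2, with qubit state |1⟩ meaning "occupied" and qubit 0 as the leftmost character; the helper names are hypothetical, and production work would use a library such as Qiskit Nature or OpenFermion.

```python
# Single-qubit Pauli products: (left, right) -> (phase, result), i.e. left*right = phase*result.
_PAULI_PRODUCT = {
    ("I", "I"): (1, "I"), ("I", "X"): (1, "X"), ("I", "Y"): (1, "Y"), ("I", "Z"): (1, "Z"),
    ("X", "I"): (1, "X"), ("X", "X"): (1, "I"), ("X", "Y"): (1j, "Z"), ("X", "Z"): (-1j, "Y"),
    ("Y", "I"): (1, "Y"), ("Y", "X"): (-1j, "Z"), ("Y", "Y"): (1, "I"), ("Y", "Z"): (1j, "X"),
    ("Z", "I"): (1, "Z"), ("Z", "X"): (1j, "Y"), ("Z", "Y"): (-1j, "X"), ("Z", "Z"): (1, "I"),
}


def add(op_a, op_b, tol=1e-12):
    """Sum of two operators stored as {pauli_string: coefficient}."""
    result = dict(op_a)
    for key, coeff in op_b.items():
        result[key] = result.get(key, 0) + coeff
    return {k: v for k, v in result.items() if abs(v) > tol}


def multiply(op_a, op_b, tol=1e-12):
    """Product op_a * op_b, multiplying Pauli strings qubit by qubit."""
    result = {}
    for s_a, c_a in op_a.items():
        for s_b, c_b in op_b.items():
            phase, chars = 1, []
            for p, q in zip(s_a, s_b):
                ph, r = _PAULI_PRODUCT[(p, q)]
                phase *= ph
                chars.append(r)
            key = "".join(chars)
            result[key] = result.get(key, 0) + phase * c_a * c_b
    return {k: v for k, v in result.items() if abs(v) > tol}


def jw_ladder(p, n_qubits, dagger):
    """Jordan-Wigner image of a_p^dagger (dagger=True) or a_p (dagger=False).

    a_p^dagger = Z_0 ... Z_{p-1} (X_p - i Y_p) / 2; a_p uses +i Y_p instead.
    """
    prefix, suffix = "Z" * p, "I" * (n_qubits - p - 1)
    y_coeff = -0.5j if dagger else 0.5j
    return {prefix + "X" + suffix: 0.5, prefix + "Y" + suffix: y_coeff}


if __name__ == "__main__":
    # Number operator n_1 = a_1^dagger a_1 on three spin-orbitals -> (I - Z_1) / 2.
    print(multiply(jw_ladder(1, 3, True), jw_ladder(1, 3, False)))
    # Anticommutator {a_0, a_1^dagger} should vanish (empty dict).
    a0, a1_dag = jw_ladder(0, 2, False), jw_ladder(1, 2, True)
    print(add(multiply(a0, a1_dag), multiply(a1_dag, a0)))
```

The number operator comes out as (I − Z_p)/2 and the anticommutator {a_0, a_1†} cancels, which are quick sanity checks that the Z strings enforce the fermionic sign rules.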

Performance Data: The algorithms are benchmarked against other state-of-the-art VQE improvements, such as qubit-ADAPT-VQE and iQCC (iterative Qubit Coupled Cluster). The performance is evaluated based on the number of iterations or optimization cycles required to achieve convergence (e.g., to within 10⁻⁴ of the exact energy) and the resultant circuit depths [31].

Table 2: Performance comparison for simulating the LiH molecule

| Algorithm | Key Metric | Performance at Equilibrium Geometry | Performance at Stretched Bond (2.4 Å) |
|---|---|---|---|
| ClusterVQE | Energy Convergence | Accurate simulation with shallower circuits [31] | Maintains accuracy with reduced qubit count [31] |
| ClusterVQE | Qubit Requirements | Reduced vs. full VQE [33] | Reduced vs. full VQE [33] |
| Qubit-ADAPT-VQE | Optimization Cycles | More cycles required [31] | More cycles required [31] |
| iQCC | Optimization Cycles | Fewer cycles per iteration [31] | Fewer cycles per iteration [31] |

The data shows that ClusterVQE's primary strength lies in its ability to simultaneously reduce circuit depth and width, enabling the simulation of problems that would otherwise exceed available quantum resources [33]. While iQCC can require fewer optimization cycles because it fixes parameters in previous iterations, ClusterVQE and qubit-ADAPT-VQE typically involve optimizing a larger set of parameters concurrently [31].

Successfully implementing PermVQE, ClusterVQE, and related quantum chemistry simulations requires a suite of software tools and computational resources. The following table details key components of the modern quantum computational chemist's toolkit.

Table 3: Essential research reagents and computational resources for quantum chemistry simulations

| Tool/Resource | Type | Primary Function |
|---|---|---|
| Quantum Mutual Information | Quantum Information Metric | Measures correlation between spin-orbitals to guide qubit clustering in ClusterVQE [31] [34]. |
| Von Neumann Entropy | Quantum Information Metric | Quantifies entanglement; used in ansatz design and analysis [34]. |
| Jordan-Wigner Transformation | Encoding Method | Maps fermionic creation/annihilation operators to Pauli spin operators for quantum computation [31]. |
| QUCCSD Ansatz | Parametrized Quantum Circuit | A chemically inspired circuit structure for preparing trial molecular wave functions [31]. |
| L-BFGS-B Optimizer | Classical Optimizer | A gradient-based algorithm for optimizing variational parameters in VQE to minimize energy [31]. |
| NWQSim | Simulation Tool | A classical simulator for noisy quantum systems, used to validate algorithms and model noise effects [35]. |
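The mutual-information and von Neumann entropy entries in the table are linked by I(i:j) = S_i + S_j − S_ij. The sketch below evaluates this for a two-qubit pure state (where S_ij = 0) using closed-form 2×2 eigenvalues, in natural-log units. It is a minimal illustration with hypothetical helper names; orbital mutual information in ClusterVQE would instead be computed from reduced density matrices of the molecular wavefunction.

```python
import math


def reduced_density_matrices(c):
    """Single-qubit reduced density matrices of a two-qubit pure state.

    c[a][b] is the amplitude of basis state |ab>; rho_A = C C^dagger and
    rho_B[b][b'] = sum_a c[a][b] * conj(c[a][b']).
    """
    rho_a = [[sum(c[a][b] * c[a2][b].conjugate() for b in range(2)) for a2 in range(2)]
             for a in range(2)]
    rho_b = [[sum(c[a][b] * c[a][b2].conjugate() for a in range(2)) for b2 in range(2)]
             for b in range(2)]
    return rho_a, rho_b


def von_neumann_entropy_2x2(rho):
    """S = -Tr(rho ln rho) from the closed-form eigenvalues of a 2x2 Hermitian matrix."""
    p, q = rho[0][0].real, rho[1][1].real
    off = abs(rho[0][1])
    disc = math.sqrt((p - q) ** 2 + 4.0 * off ** 2)
    eigenvalues = ((p + q + disc) / 2.0, (p + q - disc) / 2.0)
    return -sum(lam * math.log(lam) for lam in eigenvalues if lam > 1e-12)


def mutual_information_pure(c):
    """I(A:B) = S_A + S_B - S_AB, with S_AB = 0 because the joint state is pure."""
    rho_a, rho_b = reduced_density_matrices(c)
    return von_neumann_entropy_2x2(rho_a) + von_neumann_entropy_2x2(rho_b)


if __name__ == "__main__":
    s = 1.0 / math.sqrt(2.0)
    bell = [[s, 0.0], [0.0, s]]         # (|00> + |11>)/sqrt(2): maximally entangled
    product = [[1.0, 0.0], [0.0, 0.0]]  # |00>: uncorrelated
    print(mutual_information_pure(bell), 2 * math.log(2))  # the two values agree
    print(mutual_information_pure(product))
```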

Figure 2: The PermVQE algorithm finds an optimal qubit permutation that minimizes the circuit depth by aligning the molecular correlation pattern with hardware connectivity.

PermVQE and ClusterVQE represent a significant paradigm shift in quantum algorithm design, moving from hardware-agnostic approaches to methods that are informed by quantum information theory to co-design solutions around hardware limitations [32]. PermVQE enhances the feasibility of simulating moderate-sized molecules on a single device by making circuits shallower and more robust. In contrast, ClusterVQE provides a more radical, "quantum parallel" path to simulating large molecules that would be otherwise intractable, achieving reductions in both circuit depth and qubit count at the cost of greater classical computation [31] [33].

The integration of these algorithms into the broader computational workflow is crucial for achieving practical quantum utility. As noted in a recent international workshop, success in this era hinges on co-design between algorithm developers, chemistry domain experts, and hardware engineers [8]. Furthermore, quantum computers will not operate in isolation; they will be integrated into hybrid workflows that leverage high-performance computing (HPC) for pre- and post-processing, and artificial intelligence (AI) for tasks like error mitigation and parameter optimization [8]. Within this tiered workflow, algorithms like ClusterVQE are poised to act as a critical bridge, allowing classical HPC resources to manage inter-cluster correlations while delegating the computationally challenging, high-precision simulation of strongly correlated clusters to smaller, more reliable quantum devices. As quantum hardware continues to mature, these quantum information-informed algorithms will play a pivotal role in unlocking the first real-world applications of quantum computing in drug discovery and materials science [36].

The field of computational quantum chemistry is poised for transformation through hybrid quantum-classical computing. These architectures aim to surpass the limitations of both purely classical methods and nascent quantum algorithms by leveraging their complementary strengths. A particularly promising direction is the integration of expressive classical deep neural networks (DNNs) with quantum circuits based on the paired Unitary Coupled-Cluster with Double Excitations (pUCCD) ansatz. This guide provides a comparative analysis of this emerging paradigm, evaluating its performance against established classical and quantum computational chemistry methods. The content is framed within the broader thesis of validating quantum information theory through practical chemical methods research, offering scientists a detailed overview of protocols, performance data, and essential resources.

Performance Comparison of Computational Chemistry Methods

The table below summarizes a comparative analysis of key performance metrics for the hybrid pUCCD-DNN model against other prominent classical and quantum computational methods.

Table 1: Performance Comparison of Computational Chemistry Methods

| Method | Computational Scaling | Key Applications | Reported Accuracy (Mean Absolute Error) | Notable Strengths | Inherent Limitations |
|---|---|---|---|---|---|
| pUCCD-DNN (Hybrid) [37] [38] | Favorable scaling; reduced quantum hardware calls | Molecular energy calculation, reaction barrier prediction (e.g., cyclobutadiene isomerization) | MAE reduced by two orders of magnitude vs. pUCCD [37] | Noise resilience; high accuracy with shallow circuits; data re-use [37] [38] | Integration complexity; classical neural network training overhead |
| Classical CCSD(T) [39] | O(N⁷) [39] | High-accuracy molecular energy benchmark | Near-chemical accuracy (reference) | Considered the "gold standard" for many chemical problems [38] | Extremely high computational cost for large systems |
| Classical DFT [37] | Variable, typically O(N³) | Large system simulation; materials science | Less accurate (higher MAE) than pUCCD-DNN [37] | Good balance of speed/accuracy for large systems [37] | Accuracy limited by approximate functionals [37] |
| VQE with UCCSD [39] | Circuit depth: O(N⁴) [39] | Near-term quantum simulation of small molecules | Chemical accuracy difficult to achieve | Designed for NISQ devices [39] | High circuit depth; vulnerable to noise; "barren plateaus" [38] |
| pUCCD (Quantum-Only) [37] [39] | Circuit depth: O(N) [39] | Quantum simulation with restricted Hilbert space | Less accurate (higher MAE) than hybrid pUCCD-DNN [37] | More hardware-efficient than UCCSD [39] | Limited accuracy due to restricted ansatz [37] |

Experimental Protocols and Workflows

Core pUCCD-DNN Methodology

The hybrid pUCCD-DNN framework, also referred to as pUNN (paired unitary coupled-cluster with neural networks), integrates a parameterized quantum circuit with a classical deep neural network to represent the molecular wavefunction. The protocol is designed for high accuracy and resilience to quantum hardware noise [38].

1. Wavefunction Ansatz Initialization:

- The molecular wavefunction is first prepared with the pUCCD ansatz on a quantum processor. This ansatz is restricted to the seniority-zero subspace, meaning it includes only configurations in which every spatial orbital is either doubly occupied or empty (all electrons remain paired). Each spatial orbital can therefore be represented by a single qubit, which significantly reduces the required qubit resources and circuit depth [37] [39].

- The pUCCD state, denoted |ψ⟩, is generated by a parameterized quantum circuit whose depth scales linearly, O(N), with the number of orbitals, a marked improvement over the O(N⁴) depth of UCCSD [39].

2. Hilbert Space Expansion and Neural Network Processing:

- The pUCCD state is then expanded into a larger Hilbert space using N ancilla qubits and an entanglement circuit Ê, which is typically composed of parallel CNOT gates. This creates an expanded state |Φ⟩ = Ê(|ψ⟩ ⊗ |0⟩) [38].

- A deep neural network is then applied as a non-unitary post-processing operator on this expanded state. The neural network, with a tailored architecture, modulates the wavefunction coefficients to account for electron correlation outside the seniority-zero subspace, which the pUCCD ansatz alone cannot capture. Writing N̂ for the operator represented by the neural network, the final, refined wavefunction is |Ψ⟩ = N̂|Φ⟩ = N̂ Ê(|ψ⟩ ⊗ |0⟩) [38].

3. Energy Expectation Measurement:

- The energy expectation value is calculated without resorting to computationally expensive quantum state tomography. The pUNN framework uses an efficient algorithm to compute the Hamiltonian expectation value, ⟨Ψ|Ĥ|Ψ⟩ / ⟨Ψ|Ψ⟩, directly from the combined quantum-neural state [38]. The denominator is needed because the neural-network operator is non-unitary and does not preserve the norm of the state.
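The expansion and normalization steps above can be illustrated on a toy state vector. The sketch below is a minimal NumPy illustration, not the pUNN implementation, and rests on three assumptions:

- Ê is taken to be a layer of CNOTs, each running from a system qubit to its own ancilla.
- The neural network is replaced by a hypothetical diagonal reweighting of basis amplitudes.
- A random symmetric matrix stands in for the molecular qubit Hamiltonian.

The sketch shows why the energy must be evaluated as a ratio once a non-unitary operator has been applied. Function names are hypothetical. A diagonal reweighting alone leaves the state supported only on the copied |x, x⟩ configurations. The sketch does not reproduce the actual network architecture, nor how it reaches configurations outside the seniority-zero subspace.

```python
import numpy as np


def expand_with_cnots(psi: np.ndarray, n: int) -> np.ndarray:
    """Return E(|psi> ⊗ |0...0>), with E = CNOT(system i -> ancilla i) for every i.

    Parallel CNOTs map |x>|0> to |x>|x>, so each amplitude psi[x] moves to the
    basis index x * 2**n + x of the 2n-qubit register (system register first).
    """
    dim = 2 ** n
    phi = np.zeros(dim * dim, dtype=complex)
    for x in range(dim):
        phi[x * dim + x] = psi[x]
    return phi


def normalized_energy(state: np.ndarray, hamiltonian: np.ndarray) -> float:
    """Rayleigh quotient <Psi|H|Psi> / <Psi|Psi>, valid for unnormalized states."""
    numerator = np.vdot(state, hamiltonian @ state)
    denominator = np.vdot(state, state)
    return float((numerator / denominator).real)


rng = np.random.default_rng(0)
n = 2  # toy number of system qubits (hypothetical)
psi = rng.normal(size=2 ** n) + 1j * rng.normal(size=2 ** n)
psi /= np.linalg.norm(psi)

phi = expand_with_cnots(psi, n)

# Hypothetical stand-in for the neural network: a diagonal, non-unitary
# reweighting of each basis amplitude.
weights = rng.uniform(0.5, 1.5, size=phi.size)
big_psi = weights * phi

# Random real symmetric matrix standing in for the 2n-qubit Hamiltonian.
a = rng.normal(size=(phi.size, phi.size))
hamiltonian = (a + a.T) / 2

print("norm after reweighting:", np.linalg.norm(big_psi))
print("normalized energy:", normalized_energy(big_psi, hamiltonian))
```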

Benchmarking and Validation Protocols

Isomerization of Cyclobutadiene:

- Objective: To validate the method on a challenging, multi-reference chemical reaction that is difficult for classical methods to model accurately [37] [38].

- Procedure: The reaction barrier for the isomerization is computed using the pUCCD-DNN model. The results are benchmarked against high-level classical methods like Full Configuration Interaction (FCI) and CCSD(T), as well as the quantum-only pUCCD approach [37].

- Outcome: The pUCCD-DNN model demonstrated a significant improvement over classical Hartree-Fock and perturbation theory, closely matching the predictions of FCI calculations [37].

Molecular Energy Calculations on Diatomic and Polyatomic Molecules:

- Objective: To perform numerical benchmarking of the pUCCD-DNN approach on standard test systems like N₂ and CH₄ [38].

- Procedure: The calculated molecular energies are compared against advanced quantum (e.g., UCCSD) and classical (e.g., CCSD(T)) techniques to assess whether the method reaches "near-chemical accuracy" [38]. Chemical accuracy conventionally means errors within 1 kcal/mol, or about 1.6 mHa.

- Outcome: The hybrid model achieved accuracy comparable to these high-level methods while retaining the low qubit count and shallow circuit depth of the pUCCD approach [38].
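The "near-chemical accuracy" criterion used in these benchmarks reduces to a unit conversion and a threshold test. The sketch below is minimal and assumes the conventional 1 kcal/mol threshold and the CODATA Hartree-to-kcal/mol factor. The function name is hypothetical, and the energies in the usage lines are placeholders rather than values from [37] or [38].

```python
HARTREE_TO_KCAL_PER_MOL = 627.509474  # CODATA conversion factor
CHEMICAL_ACCURACY_KCAL_PER_MOL = 1.0  # conventional threshold


def error_report(e_method_ha: float, e_reference_ha: float) -> dict:
    """Signed error of a method energy against a reference, in mHa and kcal/mol."""
    error_ha = e_method_ha - e_reference_ha
    error_kcal = error_ha * HARTREE_TO_KCAL_PER_MOL
    return {
        "error_mHa": 1000.0 * error_ha,
        "error_kcal_per_mol": error_kcal,
        "chemically_accurate": abs(error_kcal) <= CHEMICAL_ACCURACY_KCAL_PER_MOL,
    }


threshold_mha = 1000.0 * CHEMICAL_ACCURACY_KCAL_PER_MOL / HARTREE_TO_KCAL_PER_MOL
print(f"chemical accuracy threshold: {threshold_mha:.2f} mHa")

# Placeholder energies (hypothetical, not results from the cited studies).
reference = -100.0
for offset_mha in (1.0, 3.0):
    print(offset_mha, error_report(reference + offset_mha / 1000.0, reference))
```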

Workflow and System Architecture Diagrams

pUCCD-DNN Hybrid Algorithm Workflow

The following diagram illustrates the step-by-step workflow of the hybrid pUCCD-DNN algorithm for computing molecular energies.

Diagram 1: Workflow of the pUCCD-DNN hybrid algorithm for molecular energy computation.

High-Level System Architecture

This diagram outlines the high-level system architecture of a hybrid quantum-classical computer, showing the flow of information between classical and quantum processing units.

Diagram 2: High-level system architecture of a hybrid quantum-classical computer.

The Scientist's Toolkit: Essential Research Reagents and Solutions

For researchers aiming to implement or study hybrid quantum-classical models like pUCCD-DNN, the following tools and platforms are particularly relevant.

Table 2: Essential Research Tools and Platforms for Hybrid Quantum-Classical Research

| Tool/Solution | Type | Primary Function | Key Feature |
|---|---|---|---|
| Amazon Braket [40] | Cloud Service | Managed access to quantum hardware and simulators | Integrates quantum resources with classical AWS services (e.g., Batch, ParallelCluster) for hybrid workflows [40]. |
| Q-CTRL Fire Opal [40] | Software Tool | Quantum circuit optimization | Improves algorithm performance on real hardware by mitigating noise; demonstrated use in finance and cybersecurity [40]. |
| pUCCD Ansatz [37] [39] | Algorithmic Component | Seniority-zero (electron-pair) wavefunction ansatz | Linear circuit depth, O(N), which lowers coherence requirements and makes simulation feasible on NISQ devices [39]. |
| Deep Neural Network (DNN) [37] [38] | Algorithmic Component | Wavefunction post-processing | Corrects amplitudes from the quantum circuit; learns from past optimizations, reducing quantum hardware calls [37]. |
| Variational Quantum Eigensolver (VQE) [38] [39] | Algorithmic Framework | Hybrid quantum-classical optimization | The overarching framework for optimizing the parameters of a quantum circuit (such as pUCCD) to find a molecular ground state [38]. |
| NVIDIA CUDA-Q [40] | Platform | Hybrid quantum-classical workflow orchestration | Provides patterns for integrating GPUs and QPUs in a single workflow for performance and reliability [40]. |
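The VQE entry in Table 2 describes a loop in which a classical optimizer updates circuit parameters using energies measured on the quantum device. The sketch below is minimal and rests on several assumptions:

- A hypothetical one-qubit Hamiltonian, H = Z + 0.5X, is used.
- A single RY rotation stands in for pUCCD.
- Energies are computed exactly from a state vector rather than estimated from shots.
- Plain gradient descent with the parameter-shift rule replaces the optimizer a production workflow would use.

All names are illustrative.

```python
import numpy as np

PAULI_X = np.array([[0.0, 1.0], [1.0, 0.0]])
PAULI_Z = np.array([[1.0, 0.0], [0.0, -1.0]])
HAMILTONIAN = PAULI_Z + 0.5 * PAULI_X  # hypothetical one-qubit Hamiltonian


def ansatz_state(theta: float) -> np.ndarray:
    """RY(theta)|0>, the 'quantum' step, simulated here as a state vector."""
    return np.array([np.cos(theta / 2), np.sin(theta / 2)])


def energy(theta: float) -> float:
    """Expectation value <psi(theta)|H|psi(theta)> (exact, no shot noise)."""
    psi = ansatz_state(theta)
    return float(psi @ HAMILTONIAN @ psi)


def parameter_shift_gradient(theta: float) -> float:
    """Exact gradient for a gate exp(-i theta P / 2) from two shifted energies."""
    return 0.5 * (energy(theta + np.pi / 2) - energy(theta - np.pi / 2))


def run_vqe(theta0: float = 0.1, learning_rate: float = 0.2, steps: int = 300) -> tuple:
    """Classical outer loop: plain gradient descent on the circuit parameter."""
    theta = theta0
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta, energy(theta)


theta_opt, e_opt = run_vqe()
exact = np.linalg.eigvalsh(HAMILTONIAN)[0]
print(f"VQE energy: {e_opt:.6f}   exact ground state: {exact:.6f}")
```

The parameter-shift rule is used here because it obtains gradients from the same kind of energy evaluations the quantum device already performs. Gradient-free optimizers are an equally common choice.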

Sample-Based Quantum Diagonalization (SQD) for Open-Shell Molecules and Transition Metals

The accurate simulation of open-shell molecules and transition metal complexes is one of the most persistent challenges in computational chemistry. These systems, characterized by unpaired electrons and strong electron correlation, play crucial roles in catalysis, combustion, energy storage, and materials science. Widely used classical methods, including density functional theory (DFT) and Hartree-Fock, often fail to describe them reliably, particularly when electron correlation effects are strong [41] [42]. The most accurate classical techniques, such as full configuration interaction (FCI) and selected configuration interaction (SCI), give far more accurate solutions. However, they become computationally prohibitive for all but the smallest molecules, because their cost grows exponentially with the number of interacting electrons [43] [41].

Quantum computing offers a promising alternative by enabling direct simulation of many-body electronic structure on qubit-based devices. Among emerging quantum algorithms, Sample-Based Quantum Diagonalization (SQD) has recently emerged as a leading candidate for near-term demonstrations of quantum advantage in chemical simulation [43]. This section compares SQD's performance with that of alternative quantum approaches, focusing on open-shell systems and transition metal complexes, within the broader context of validating chemical methods with quantum information theory.

Sample-Based Quantum Diagonalization (SQD) Fundamentals

Sample-Based Quantum Diagonalization (SQD) is a hybrid quantum-classical algorithm that operates within IBM's quantum-centric supercomputing framework, which tightly couples quantum processors with classical compute resources [43]. A quantum circuit is sampled to obtain bit strings, each encoding an electronic configuration. The molecular Hamiltonian is then projected onto the subspace spanned by these configurations and diagonalized on classical hardware to reconstruct the wavefunction and energy. This division of labor lets researchers combine high-performance quantum computers with high-performance classical computers to tackle complex simulation problems [43] [41].
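The sample-project-diagonalize idea can be sketched with dense linear algebra. This is a minimal illustration under strong assumptions. A random real symmetric matrix stands in for the Hamiltonian in a determinant basis. Samples are drawn from the exact ground-state amplitudes, standing in for measurements of an LUCJ circuit on hardware. The helper name is hypothetical, and no IBM software is used.

```python
import numpy as np


def subspace_ground_energy(hamiltonian: np.ndarray, sampled_indices) -> float:
    """Project H onto the span of the sampled basis states; return the lowest eigenvalue."""
    subspace = np.unique(np.asarray(sampled_indices))  # repeated samples add no new directions
    h_sub = hamiltonian[np.ix_(subspace, subspace)]
    return float(np.linalg.eigvalsh(h_sub)[0])


rng = np.random.default_rng(1)
dim = 64  # hypothetical toy space of 64 determinants
a = rng.normal(size=(dim, dim))
hamiltonian = (a + a.T) / 2  # random real symmetric stand-in for a molecular Hamiltonian

evals, evecs = np.linalg.eigh(hamiltonian)
# Stand-in for measuring a quantum circuit: draw basis states with probability
# |c_i|^2 from the exact ground state (a device would sample an approximate state).
probabilities = evecs[:, 0] ** 2
samples = rng.choice(dim, size=40, p=probabilities)

e_sub = subspace_ground_energy(hamiltonian, samples)
print(f"{np.unique(samples).size} unique configurations: "
      f"E_sub = {e_sub:.4f}, exact = {evals[0]:.4f}")
```

Because the subspace energy comes from a projection of H, it can never fall below the exact ground-state energy. It approaches that energy as the sampled configurations cover the important part of the wavefunction.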

In practice, SQD uses a compact quantum chemistry model, typically a Local Unitary Cluster Jastrow (LUCJ) ansatz, to generate initial guesses for molecular wavefunctions [41]. The calculations involve substantial quantum resources: recent implementations used 52 qubits of an IBM quantum processor and executed up to 3,000 two-qubit gates per experiment [43]. Post-processing employs a self-consistent configuration recovery procedure. This procedure mitigates quantum noise and restores particle-number conservation in the sampled configurations, which is crucial for obtaining chemically accurate results on current noisy intermediate-scale quantum (NISQ) hardware [41].
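Particle-number conservation can be checked directly on measured bit strings. The sketch below performs simple postselection, discarding strings with the wrong number of alpha or beta electrons. SQD's self-consistent recovery procedure goes further by correcting such strings rather than discarding them; that step is not reproduced here. The alpha-then-beta bit layout, the function name, and the shot record are all hypothetical.

```python
from collections import Counter


def filter_by_particle_number(bitstrings, n_orbitals: int, n_alpha: int, n_beta: int) -> list:
    """Keep bit strings whose alpha and beta halves hold the target electron counts.

    Hypothetical layout: the first n_orbitals characters are alpha spin orbitals,
    the last n_orbitals are beta spin orbitals, and '1' means occupied.
    """
    kept = []
    for bits in bitstrings:
        alpha, beta = bits[:n_orbitals], bits[n_orbitals:]
        if alpha.count("1") == n_alpha and beta.count("1") == n_beta:
            kept.append(bits)
    return kept


# Made-up measurement record: 3 spatial orbitals, 1 alpha and 1 beta electron.
shots = ["100100", "100100", "010100", "110100", "100000", "001001", "011011"]
valid = filter_by_particle_number(shots, n_orbitals=3, n_alpha=1, n_beta=1)
print(Counter(valid))
print(f"kept {len(valid)} of {len(shots)} shots")
```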

Alternative Quantum Algorithms for Chemical Simulation

For comparative analysis, researchers currently employ several alternative quantum algorithms for chemical simulations:

Variational Quantum Eigensolver (VQE): A hybrid algorithm that uses a quantum processor to prepare and measure trial states while a classical optimizer adjusts the circuit parameters to minimize the energy [44]. VQE is considered resource-efficient for NISQ devices. However, it can suffer from optimization difficulties, such as barren plateaus, and from the circuit-depth limits imposed by hardware noise.

VQE with quantum Equation of Motion (qEOM): An extension of VQE that obtains excited-state energies from the optimized ground state. It does so by measuring additional matrix elements and solving a small eigenvalue problem classically, which makes it suitable for calculating excitation energies and reaction pathways [44]. Together, VQE and qEOM give access to both ground- and excited-state properties.

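Quantum Phase Estimation (QPE): An algorithm that can, in principle, measure energy eigenvalues to arbitrary precision, provided the input state overlaps sufficiently with the target eigenstate. Its deep circuits, however, require fault-tolerant quantum computers with substantial quantum resources, making it impractical on current NISQ devices [44].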
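The precision argument for QPE can be illustrated with the ideal readout distribution of textbook QPE. With t ancilla qubits, the eigenphase (and hence the energy) is read out as a t-bit binary fraction. The sketch below is minimal and assumes a noiseless circuit, an input register that is an exact eigenstate, and an eigenphase φ in [0, 1) of the unitary U (for example U = exp(-iHτ)). The function name is hypothetical. With an approximate input state, each eigenphase's distribution is additionally weighted by the squared overlap of the input with that eigenstate.

```python
import numpy as np


def qpe_outcome_probabilities(phase: float, n_ancilla: int) -> np.ndarray:
    """Ideal QPE readout distribution for an eigenstate with eigenphase `phase`.

    P(m) = |(1/2^t) * sum_k exp(2*pi*i*k*(phase - m/2^t))|^2 for m = 0..2^t - 1.
    """
    size = 2 ** n_ancilla
    k = np.arange(size)
    m = np.arange(size)
    # Rows index the readout m, columns the ancilla-controlled power k.
    amplitudes = np.exp(2j * np.pi * np.outer(phase - m / size, k)).sum(axis=1) / size
    return np.abs(amplitudes) ** 2


# Each extra ancilla qubit adds one binary digit of phase precision.
for t in (3, 6, 9):
    probs = qpe_outcome_probabilities(0.3, t)
    best = int(np.argmax(probs))
    print(f"t={t}: estimate {best / 2 ** t:.4f}, P(best) = {probs[best]:.3f}")
```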

Table 1: Comparison of Quantum Algorithm Methodologies for Chemical Simulation

| Algorithm | Key Principle | Resource Requirements | Primary Applications | NISQ Viability |
|---|---|---|---|---|
| SQD | Samples bit strings from a quantum circuit, then diagonalizes the Hamiltonian classically in the sampled subspace | 52 qubits and up to ~3,000 two-qubit gates in the CH₂ study | Open-shell systems, excited states, strong correlation | High |
| VQE | Variational principle with classical optimization | Moderate qubit counts, shallow circuits | Ground-state energies, molecular properties | High |
| VQE-qEOM | VQE extension for excited states | Similar to VQE, with additional measurements | Excitation energies, vertical transitions | Moderate |
| QPE | Phase readout via the inverse quantum Fourier transform | High qubit counts, deep circuits, error correction | High-precision eigenvalues on future fault-tolerant devices | Low |

Comparative Performance Analysis: SQD vs. Alternative Methods

Case Study: Methylene (CH₂) Singlet-Triplet Energy Gap

A study conducted jointly by IBM and Lockheed Martin researchers provides key performance data for SQD applied to open-shell systems [43] [41] [42]. The team simulated the methylene (CH₂) molecule, a prototypical open-shell system relevant to combustion and atmospheric chemistry. Methylene is particularly challenging because of its unusual electronic structure: its ground state is a triplet that lies below the lowest singlet state [43].

The researchers employed SQD to calculate the potential energy surfaces for both singlet and triplet states across a range of C–H bond lengths, with the specific aim of determining the singlet-triplet energy gap—a crucial parameter for understanding the molecule's chemical reactivity [41]. The quantum computations modeled CH₂ as a six-electron system across 23 orbitals, encoded using 52 qubits on IBM's ibm_nazca processor [41].
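The size of this problem can be gauged by counting the Slater determinants available to six electrons in 23 spatial orbitals. The sketch below is minimal and counts all determinants in two spin sectors. Singlet states lie in the M_S = 0 sector, with three alpha and three beta electrons. The triplet's M_S = +1 component has four alpha and two beta electrons. The helper name is hypothetical.

```python
from math import comb

N_ORBITALS = 23  # spatial orbitals in the CH2 model described above


def n_determinants(n_orbitals: int, n_alpha: int, n_beta: int) -> int:
    """Number of Slater determinants with fixed alpha and beta electron counts."""
    return comb(n_orbitals, n_alpha) * comb(n_orbitals, n_beta)


singlet_space = n_determinants(N_ORBITALS, 3, 3)  # M_S = 0 sector
triplet_space = n_determinants(N_ORBITALS, 4, 2)  # M_S = +1 component of the triplet
print(f"M_S = 0:  {singlet_space:,} determinants")
print(f"M_S = +1: {triplet_space:,} determinants")
```

Both spaces contain a few million determinants, a size still within reach of classical configuration-interaction methods. This is what allows SCI to act as the reference in Table 2.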

Table 2: Performance Comparison for Methylene (CH₂) Singlet-Triplet Energy Gap Calculation

| Method | Singlet Energy Error (mHa) | Triplet Energy Error (mHa) | Singlet-Triplet Gap (mHa) | Deviation from Experiment (mHa) |
|---|---|---|---|---|
| SQD (Quantum) | Minimal deviation from SCI reference | ~7 near equilibrium | 19 | 5 |
| Selected CI (Classical) | Reference value | Reference value | 24 | 10 |
| Traditional DFT (Classical) | Varies significantly | Varies significantly | 30-100+ | 16-86+ |
| VQE-qEOM (Quantum) | Not reported | Not reported | Not reported | Not reported |

The results showed that SQD agreed well with high-accuracy classical methods: the singlet dissociation energy was within a few milli-Hartrees of the Selected Configuration Interaction (SCI) reference [43]. Most notably, the singlet-triplet gap calculated with SQD (19 mHa) was closer to the experimental value (14 mHa) than the conventional SCI prediction (24 mHa) [41]. This suggests that the quantum approach captures the relevant physics well. The comparison should nonetheless be read with care. SCI serves as the near-exact reference for the same active-space Hamiltonian, so SQD's closer agreement with experiment may partly reflect error cancellation.
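The milli-Hartree figures in Table 2 can be put into more familiar units. The sketch below is minimal and uses the CODATA conversion factors (1 Ha ≈ 627.5095 kcal/mol ≈ 27.2114 eV) together with the gap values quoted above. Variable names are illustrative.

```python
HARTREE_TO_KCAL_PER_MOL = 627.509474
HARTREE_TO_EV = 27.211386

EXPERIMENT_GAP_MHA = 14.0  # experimental singlet-triplet gap quoted in the text
computed_gaps_mha = {"SQD": 19.0, "SCI": 24.0}  # gaps from Table 2

deviations = {}
for method, gap_mha in computed_gaps_mha.items():
    dev_ha = (gap_mha - EXPERIMENT_GAP_MHA) / 1000.0
    deviations[method] = {
        "mHa": dev_ha * 1000.0,
        "kcal_per_mol": dev_ha * HARTREE_TO_KCAL_PER_MOL,
        "eV": dev_ha * HARTREE_TO_EV,
    }
    d = deviations[method]
    print(f"{method}: {d['mHa']:+.1f} mHa, {d['kcal_per_mol']:+.2f} kcal/mol, {d['eV']:+.3f} eV")
```

The SQD deviation of 5 mHa corresponds to roughly 3.1 kcal/mol (about 0.14 eV). It therefore still exceeds the conventional 1 kcal/mol chemical-accuracy threshold, even though it is half the SCI deviation.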

Case Study: Battery Electrolyte Salts with SQD and VQE-qEOM

Recent research has applied both SQD and VQE-qEOM to study excited states in battery electrolytes, providing a direct performance comparison between these quantum approaches [44]. The study investigated LiPF₆, NaPF₆, LiFSI, and NaFSI salts—systems relevant to energy storage technologies where understanding excited-state properties is crucial for photostability, oxidative stability, and degradation pathways.

The study used VQE with qEOM to compute vertical singlet excitations within compact active spaces built from frontier orbitals. These active spaces were mapped to qubits and reduced in size via symmetry tapering. The resulting terms were measured in commuting groups to lower the sampling cost [44]. Within ~10-qubit models, VQE-qEOM agreed closely with exact diagonalization of the same Hamiltonians, while SQD, applied in larger active spaces, recovered near-exact (subspace-FCI) energies [44].
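Commuting-group measurement exploits the fact that qubit-wise commuting Pauli terms can be estimated from the same measurement settings. This reduces the number of distinct circuits that must be sampled. The sketch below is a minimal greedy first-fit grouping by qubit-wise commutation, one common heuristic. The grouping scheme actually used in [44] is not specified here, the Pauli strings are hypothetical, and symmetry tapering is not shown.

```python
def qubitwise_commute(p: str, q: str) -> bool:
    """True if, on every qubit, the two Pauli letters match or one of them is I."""
    return all(a == b or a == "I" or b == "I" for a, b in zip(p, q))


def greedy_qwc_groups(paulis) -> list:
    """Place each Pauli string in the first group it qubit-wise commutes with entirely."""
    groups = []
    for p in paulis:
        for group in groups:
            if all(qubitwise_commute(p, q) for q in group):
                group.append(p)
                break
        else:  # no compatible group found: open a new measurement setting
            groups.append([p])
    return groups


# Hypothetical 4-qubit Hamiltonian terms (coefficients omitted).
terms = ["ZIII", "IZII", "ZZII", "XXII", "YYII", "IIZZ", "IIXX", "XIII"]
groups = greedy_qwc_groups(terms)
print(f"{len(terms)} terms measured with {len(groups)} settings:")
for group in groups:
    print(group)
```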

The spectra revealed clear anion and cation trends. PF₆ salts exhibited higher first-excitation energies (e.g., LiPF₆ ∼13.2 eV), with a compact three-state cluster at 12–13 eV. FSI salts showed substantially lower onsets (≈8–9 eV), with a near-degenerate (S₁,S₂) pair followed by S₃ ≈1.3 eV higher [44]. Substituting Li⁺ with Na⁺ lowered the excitation gap by ~0.4–0.8 eV within each anion family. These results indicate that both SQD and VQE-qEOM can deliver chemically meaningful excitation and binding trends for realistic electrolyte motifs, providing quantitative baselines to guide electrolyte screening and design [44].

Table 3: Battery Electrolyte Salts Excitation Energy Comparison Between Methods

| Electrolyte Salt | First Excitation Energy (eV) | VQE-qEOM Accuracy | SQD Accuracy | Classical Methods Limitations |
|---|---|---|---|---|
| LiPF₆ | ~13.2 | Close to exact diagonalization | Near-exact (subspace-FCI) | Struggle with charge-transfer states |
| NaPF₆ | ~12.4 | Close to exact diagonalization | Near-exact (subspace-FCI) | Struggle with charge-transfer states |
| LiFSI | ~8.5-9.0 | Close to exact diagonalization | Near-exact (subspace-FCI) | Inaccurate for degenerate excited states |
| NaFSI | ~8.3-8.8 | Close to exact diagonalization | Near-exact (subspace-FCI) | Inaccurate for degenerate excited states |

Limitations and Performance Boundaries

While demonstrating promising results, the IBM and Lockheed Martin study also identified specific limitations in SQD's current performance. The research highlighted performance degradation in modeling the triplet state at larger bond distances, where the electronic wavefunction becomes more dispersed and harder to capture with current quantum sampling strategies [41]. The accuracy of SQD hinges on effective bit-string recovery and the representational capacity of the quantum ansatz, both of which are strained in regions of strong static correlation [41].