Validating Density Functionals for Biological Molecules: A Comprehensive Guide for Drug Development and Biomedical Research

Abstract

The accuracy of Density Functional Theory (DFT) is paramount for reliable predictions in drug discovery and biomolecular modeling. This article provides a comprehensive framework for the validation of density functionals specifically for biological molecules. It covers foundational principles, practical methodological applications, troubleshooting of common computational errors, and robust comparative validation strategies. Aimed at researchers and drug development professionals, this guide synthesizes current best practices to enhance the predictive power of DFT calculations for pharmaceuticals, biomolecular condensates, and drug-target interactions, thereby accelerating robust and data-driven research.

Theoretical Foundations and Key Challenges of DFT in Biological Systems

Theoretical Foundation: The Hohenberg-Kohn Theorems

Density Functional Theory (DFT) is a quantum-mechanical method used to calculate the electronic structure of atoms, molecules, and solids [1]. Its theoretical foundation rests on the Hohenberg-Kohn (H-K) theorems, which legitimized the use of electron density as the fundamental variable for describing electronic ground states [2].

The first Hohenberg-Kohn theorem establishes a one-to-one correspondence (up to an additive constant in the potential) between the external potential ( v(\mathbf{r}) ) acting on a system of N electrons and its ground-state electron density ( n_0(\mathbf{r}) ) [3] [2]. The ground-state density therefore uniquely determines all properties of the system, including the total energy, which can be expressed as a functional of the density:

[ E_0 = E[n_0(\mathbf{r})] = F_{\mathrm{HK}}[n_0(\mathbf{r})] + V[n_0(\mathbf{r})] ]

where ( V[n] = \int v(\mathbf{r}) n(\mathbf{r}) d^3\mathbf{r} ) is the system-dependent external potential energy, and ( F_{\mathrm{HK}}[n] ) is the universal Hohenberg-Kohn functional, containing the kinetic energy (( T[n] )) and electron-electron repulsion energy (( U[n] )) components [2].

The second Hohenberg-Kohn theorem provides a variational principle [3] [2]. It states that for any trial electron density ( \tilde{n}_0(\mathbf{r}) ) that is ( v )-representable (meaning it corresponds to some external potential) and satisfies ( \int \tilde{n}_0(\mathbf{r}) d^3\mathbf{r} = N ) and ( \tilde{n}_0(\mathbf{r}) \geq 0 ), the following holds:

[ E_0 \leq E[\tilde{n}_0(\mathbf{r})] ]

This offers a direct method for finding the true ground-state density: minimize the energy functional with respect to the density. In practice, the strict requirement of ( v )-representability is challenging. The theory was later reformulated for the wider class of N-representable densities (densities derivable from an antisymmetric wave function) using the Levy constrained search approach, making the variational principle practically applicable [2].
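
For reference, the Levy constrained-search form mentioned above can be written compactly. This is the standard textbook statement rather than anything specific to the cited sources; ( \hat{T} ) and ( \hat{U} ) denote the kinetic and electron-electron repulsion operators, and ( \Psi \to n ) means that the antisymmetric wave function ( \Psi ) yields the density ( n ):

[ F[n] = \min_{\Psi \to n} \langle \Psi | \hat{T} + \hat{U} | \Psi \rangle, \qquad E_0 = \min_{n} \left\{ F[n] + \int v(\mathbf{r}) n(\mathbf{r}) d^3\mathbf{r} \right\} ]

The inner minimization runs over all wave functions that reproduce a given density, and the outer one over all N-representable densities, so no reference to ( v )-representability is needed.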

Table 1: Key Concepts of the Hohenberg-Kohn Theorems

| Concept | Description | Implication |
|---|---|---|
| First H-K Theorem | One-to-one mapping between external potential ( v(\mathbf{r}) ) and ground-state density ( n_0(\mathbf{r}) ) [2] | Electron density uniquely determines all system properties; energy is a density functional. |
| Second H-K Theorem | Variational principle for the energy functional [2] | Provides a method to find the ground state by minimizing ( E[n] ). |
| v-representability | Density must correspond to a ground-state wave function under some external potential [2] | Original theorem requirement; difficult to enforce in practice. |
| N-representability | Density must be derivable from an antisymmetric wave function [2] | Less restrictive; enables practical computation via Levy constrained search. |
| HK Functional ( F_{\mathrm{HK}}[n] ) | Universal functional of density: ( T[n] + U[n] ) [2] | Captures kinetic and electron-electron repulsion energies, independent of the specific system. |

The Kohn-Sham Equations: A Practical Framework

While the H-K theorems are foundational, they do not provide a practical computational scheme, because the exact form of the universal functional, and in particular of the kinetic energy functional ( T[n] ), is unknown. To address this, Walter Kohn and Lu Jeu Sham introduced the Kohn-Sham equations in 1965 [4]. Their key insight was to replace the real, interacting system with a fictitious system of non-interacting electrons constructed to yield the same ground-state density [4].

In this auxiliary system, the electrons move in an effective local potential ( v_{\text{eff}}(\mathbf{r}) ), known as the Kohn-Sham potential. The many-body problem is thus reduced to solving a set of single-particle equations, the Kohn-Sham equations:

[ \left(- \frac{\hbar^2}{2m} \nabla^2 + v_{\text{eff}}(\mathbf{r}) \right) \varphi_i(\mathbf{r}) = \varepsilon_i \varphi_i(\mathbf{r}) ]

Here, ( \varphi_i(\mathbf{r}) ) are the Kohn-Sham orbitals, and ( \varepsilon_i ) are their corresponding orbital energies [4]. The electron density of the real, interacting system is then constructed from these orbitals:

[ \rho(\mathbf{r}) = \sum_{i=1}^{N} |\varphi_i(\mathbf{r})|^2 ]

The magic of the Kohn-Sham approach is embedded in the effective potential, which is given by:

[ v_{\text{eff}}(\mathbf{r}) = v_{\text{ext}}(\mathbf{r}) + e^2 \int \frac{\rho(\mathbf{r}')}{|\mathbf{r} - \mathbf{r}'|} d\mathbf{r}' + v_{\text{xc}}(\mathbf{r}) ]

This potential includes the external potential (e.g., electron-nuclei attraction), the Hartree (or Coulomb) potential describing classical electron-electron repulsion, and the exchange-correlation (XC) potential, ( v_{\text{xc}} ), defined as the functional derivative of the exchange-correlation energy: ( v_{\text{xc}}(\mathbf{r}) \equiv \frac{\delta E_{\text{xc}}[\rho]}{\delta \rho(\mathbf{r})} ) [4]. The XC potential encapsulates all the complex, many-body quantum effects not captured by the other terms.

The total energy functional in Kohn-Sham DFT is expressed as:

[ E[\rho] = T_s[\rho] + \int d\mathbf{r} \, v_{\text{ext}}(\mathbf{r}) \rho(\mathbf{r}) + E_{\text{H}}[\rho] + E_{\text{xc}}[\rho] ]

Here, ( T_s[\rho] ) is the kinetic energy of the non-interacting Kohn-Sham system, a known functional of the orbitals. The challenge of the many-body problem is now transferred to finding a good approximation for the unknown exchange-correlation energy functional ( E_{\text{xc}}[\rho] ). The accuracy of a DFT calculation critically depends on this choice [4] [5].
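
To make the single-particle step concrete, the sketch below solves a Kohn-Sham-like equation for a fixed effective potential in one dimension and builds the density from the lowest orbitals. It is a toy illustration under stated assumptions, not a DFT code: it uses atomic units (( \hbar = m = 1 )), a finite-difference grid, spinless electrons with one electron per orbital, and a harmonic well as a stand-in for ( v_{\text{eff}} ). The function names are hypothetical.

```python
import numpy as np

def solve_single_particle(v_eff, dx):
    """Diagonalize -1/2 d^2/dx^2 + v_eff(x) on a uniform 1D grid (atomic units)."""
    n = v_eff.size
    # Three-point finite-difference Laplacian; the wave function is zero outside the grid.
    diagonal = 1.0 / dx**2 + v_eff
    off_diagonal = np.full(n - 1, -0.5 / dx**2)
    hamiltonian = np.diag(diagonal) + np.diag(off_diagonal, 1) + np.diag(off_diagonal, -1)
    energies, orbitals = np.linalg.eigh(hamiltonian)
    # eigh returns unit-norm vectors; rescale so that sum(|phi|^2) * dx = 1.
    orbitals = orbitals / np.sqrt(dx)
    return energies, orbitals

def density_from_orbitals(orbitals, n_occupied):
    """rho(x) = sum over the lowest n_occupied orbitals of |phi_i(x)|^2."""
    return np.sum(np.abs(orbitals[:, :n_occupied]) ** 2, axis=1)

# Assumed test potential: a harmonic well standing in for v_eff.
x = np.linspace(-8.0, 8.0, 801)
dx = x[1] - x[0]
v_eff = 0.5 * x**2
energies, orbitals = solve_single_particle(v_eff, dx)
rho = density_from_orbitals(orbitals, n_occupied=2)
```

In a full Kohn-Sham calculation this step sits inside a self-consistency loop: the density is used to rebuild the Hartree and XC potentials, and the cycle repeats until the density stops changing.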

Exchange-Correlation Functionals: The Key Challenge and Advances

The accuracy of Kohn-Sham DFT hinges entirely on the approximation used for the exchange-correlation (XC) energy functional, ( E_{\text{xc}}[\rho] ) [5]. This functional must account for all quantum mechanical effects not described by the other terms, including electron exchange (from the Pauli exclusion principle) and electron correlation (from Coulomb repulsion). The development of better XC functionals is a central and active research field [1] [5].

These functionals are traditionally organized into a hierarchy of increasing complexity, accuracy, and computational cost, often called "Jacob's Ladder" [5]. The main types of functionals are:

- Local Density Approximation (LDA): Assumes the XC energy at a point depends only on the electron density at that point. It is simple and robust but often lacks accuracy in molecular systems [6].

- Generalized Gradient Approximation (GGA): Improves on LDA by including the gradient of the density ( \nabla\rho ), accounting for its inhomogeneity. Examples include the PBE (Perdew-Burke-Ernzerhof) functional [5] [6].

- Meta-GGA Functionals: Incorporate additional information like the kinetic energy density ( \tau ) or the Laplacian of the density ( \nabla^2\rho ), offering better accuracy for properties like atomization energies [5] [6].

- Hybrid Functionals: Mix a portion of exact Hartree-Fock exchange with GGA or meta-GGA exchange. A famous example is B3LYP (Becke, 3-parameter, Lee-Yang-Parr), which is extremely popular in quantum chemistry [5] [7].

- Double-Hybrid Functionals and Beyond: Include a perturbative correlation contribution in addition to hybrid exchange, representing the highest and most accurate rungs on the ladder [5].

Recent research focuses on designing functionals with "more flexibility and more ingredients" to be "simultaneously accurate for as many properties as possible," aiming for broad applicability across chemistry and physics [5]. The "Minnesota" family of functionals developed by Truhlar's group is a prominent example of this effort [1] [5]. However, no single functional is universally superior, and the choice often depends on the specific system and property being studied [5].
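
As a concrete illustration of what mixing a portion of exact exchange means, the simplest one-parameter global hybrid can be written as follows. The mixing fraction ( a ) and the labels HF and DFA (density functional approximation) are notation introduced here for illustration:

[ E_{\text{xc}}^{\text{hybrid}} = a E_{\text{x}}^{\text{HF}} + (1 - a) E_{\text{x}}^{\text{DFA}} + E_{\text{c}}^{\text{DFA}} ]

PBE0, for example, corresponds to ( a = 1/4 ) with PBE exchange and correlation. B3LYP uses a three-parameter variant of the same idea, with 20% exact exchange.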

Table 2: Hierarchy and Characteristics of Common Density Functionals

| Functional Type | Key Ingredients | Example Functionals | Typical Use Cases & Notes |
|---|---|---|---|
| Local Density Approximation (LDA) | Local electron density ( \rho(\mathbf{r}) ) | SVWN | Solid-state physics; often overbinds. |
| Generalized Gradient Approximation (GGA) | Density ( \rho ) and its gradient ( \nabla\rho ) | PBE, BLYP | General-purpose; improved over LDA. |
| Meta-GGA | Density ( \rho ), gradient ( \nabla\rho ), kinetic energy density ( \tau ) | SCAN, M06-L | Good for solid-state and molecular properties. |
| Hybrid GGA | GGA ingredients + exact Hartree-Fock exchange | B3LYP, PBE0 | Mainstream quantum chemistry; good for main-group thermochemistry. |
| Hybrid Meta-GGA | Meta-GGA ingredients + exact exchange | M06, M08, ωB97M-V | Challenging systems, non-covalent interactions, transition metals. |
| Double-Hybrid | Hybrid ingredients + second-order perturbative correlation | DSD-BLYP | High-accuracy thermochemistry and kinetics. |

Validation in Biological Molecules and Drug Discovery

DFT's favorable price-to-performance ratio makes it uniquely suited for studying large and relevant biological systems, expanding the predictive power of electronic structure theory into the realm of biochemistry and drug design [1]. Its application in these fields often involves a close interplay between computational prediction and experimental validation.

A prime example is in COVID-19 drug discovery, where DFT has been used to study interactions between potential drug molecules and viral protein targets. For instance, DFT calculations have been performed to examine the inhibitory mechanisms of drugs like remdesivir (which targets the RNA-dependent RNA polymerase, RdRp) and other small molecules that target the SARS-CoV-2 main protease (Mpro) [6]. These studies can elucidate reaction mechanisms at the enzyme's active site—a task for which quantum mechanics is essential because bonds are broken and formed [6].

Another application involves using DFT to compute the thermodynamic and electronic properties of chemotherapy drugs, which are then used in Quantitative Structure-Property Relationship (QSPR) models. In one study, DFT was used to calculate properties like the energies of the highest occupied and lowest unoccupied molecular orbitals (HOMO and LUMO), polarizability, and dipole moment for drugs such as Gemcitabine and Capecitabine [7]. These DFT-derived descriptors were correlated with topological indices from the drugs' molecular structures via curvilinear regression models to predict biological activity and key properties, aiding in the optimization of safer and more effective drugs [7].
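
The regression step described above can be sketched as a quadratic (curvilinear) least-squares fit. This is a minimal illustration, assuming a topological index as the predictor and a DFT-derived descriptor as the response; the cited study's actual model form, indices, and data are not reproduced here, and the numbers below are synthetic placeholders. The function names are hypothetical.

```python
import numpy as np

def homo_lumo_gap(e_homo, e_lumo):
    """Return the HOMO-LUMO gap in the same energy unit as the inputs."""
    return e_lumo - e_homo

def fit_curvilinear(x, y, degree=2):
    """Least-squares polynomial fit y ~ p(x); returns coefficients and R^2."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    coeffs = np.polyfit(x, y, degree)
    residuals = y - np.polyval(coeffs, x)
    ss_res = np.sum(residuals**2)
    ss_tot = np.sum((y - y.mean()) ** 2)
    return coeffs, 1.0 - ss_res / ss_tot

# Synthetic placeholder data (NOT taken from the cited study).
topological_index = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0])
descriptor = 0.5 * topological_index**2 - 2.0 * topological_index + 3.0
coeffs, r_squared = fit_curvilinear(topological_index, descriptor)
```

With real data, the coefficient of determination should be judged on held-out compounds rather than on the training set, since a polynomial can fit a handful of points closely without being predictive.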

Furthermore, DFT-based molecular dynamics (MD) methods, such as Car-Parrinello MD, allow for a realistic description of molecular systems and chemical processes at a finite temperature, mimicking experimental conditions. This is crucial for studying biomolecules in solution, such as enzymes immersed in solvents [1].

Experimental Protocols and Computational Methodologies

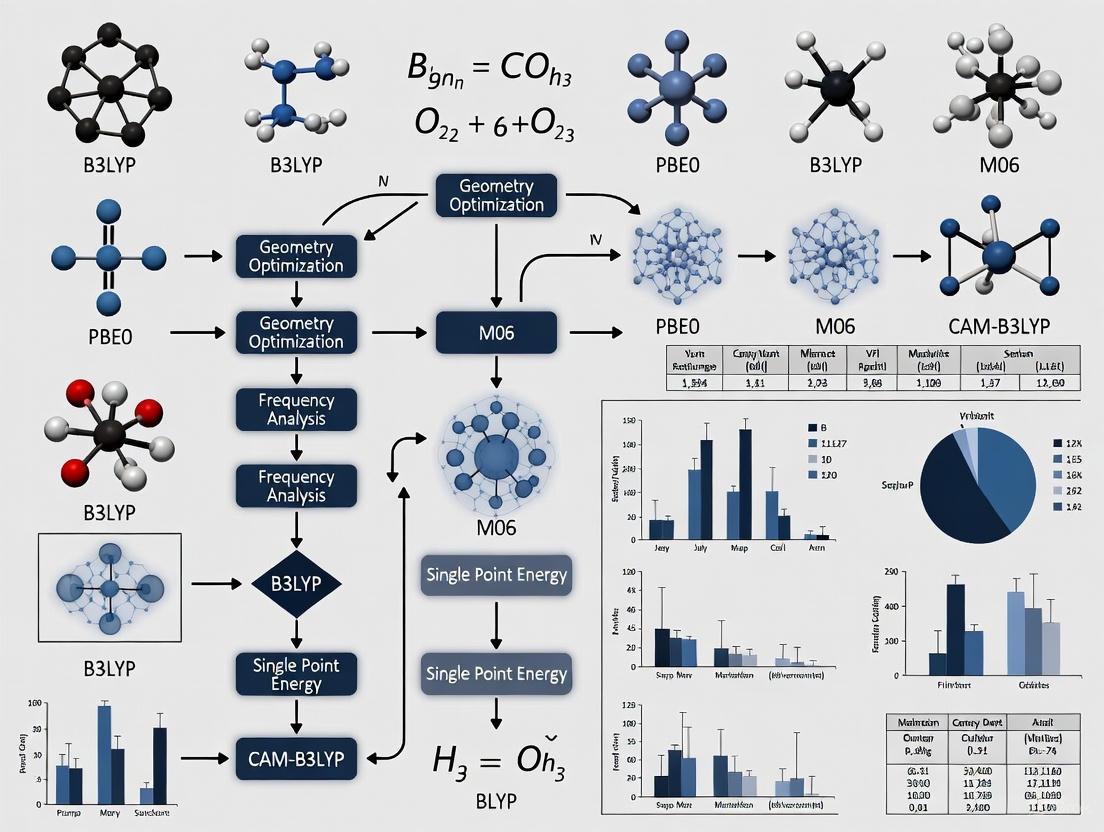

The practical application of DFT in research, particularly in biological validation studies, follows well-established computational protocols. The following methodology outlines a typical integrated computational and experimental approach, as used in studies of magnetic materials and drug design [8] [6] [7].

DFT Computational Protocol

- System Preparation: The initial geometry of the molecule, cluster, or periodic structure is constructed. For biological molecules like drug candidates, this might involve isolating the active moiety or docking it into the protein's active site.

- Software and Functional Selection: Calculations are performed using electronic structure software packages (e.g., Quantum ESPRESSO, Materials Studio's DMol3). A density functional is chosen based on the system and property of interest (e.g., B3LYP/6-31G(d,p) for organic molecules, PBE for solids) [8] [7].

- Geometry Optimization: The structure is relaxed until the forces on atoms are minimized, and the total energy is converged, yielding the most stable configuration.

- Property Calculation: Using the optimized geometry, the electronic properties are computed. Key analyses include:

  - Density of States (DOS) and Projected DOS (PDOS): To understand the electronic structure and contributions from specific atoms or orbitals [8].

  - Bader Charge Analysis: To calculate atomic charges and study charge transfer [8].

  - Electrostatic Potential (ESP) Mapping: To visualize reactive sites and intermolecular interactions [7].

- Validation with Experimental Data: Computational results are directly compared with experimental measurements to validate the methodology. For example, DFT-calculated magnetic moments are compared with values obtained from Vibrating Sample Magnetometry (VSM), or predicted binding energies are correlated with inhibitory assays [8].
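
The geometry optimization step in the protocol above follows a simple pattern: compute forces, move the atoms, and stop when the forces fall below a threshold. The sketch below shows that loop on a toy problem, assuming a Lennard-Jones pair potential in reduced units as a stand-in for a DFT energy surface and plain steepest descent as the optimizer. Production codes use more efficient algorithms (for example quasi-Newton methods); the function names, step size, and tolerance here are hypothetical choices.

```python
def lj_energy(r, epsilon=1.0, sigma=1.0):
    """Lennard-Jones pair energy, a toy stand-in for a DFT total energy."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 * sr6 - sr6)

def lj_force(r, epsilon=1.0, sigma=1.0):
    """Force along r, i.e. minus the derivative of lj_energy."""
    sr6 = (sigma / r) ** 6
    return 24.0 * epsilon * (2.0 * sr6 * sr6 - sr6) / r

def relax(r_start, step=0.01, force_tol=1e-8, max_iter=10000):
    """Steepest descent: move along the force until it falls below force_tol."""
    r = r_start
    for iteration in range(max_iter):
        force = lj_force(r)
        if abs(force) < force_tol:
            return r, iteration
        r += step * force
    raise RuntimeError("geometry optimization did not converge")

# Start from a stretched dimer and relax to the energy minimum.
r_opt, n_steps = relax(1.5)
```

For this potential the minimum lies at ( r = 2^{1/6}\sigma ), which gives a convenient check that the convergence criterion is doing its job.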

Example: Validation Study of Mn-substituted Co-Zn Ferrites

This study exemplifies the integrated approach [8]:

- Experimental: Pure-phase Mn-substituted Co-Zn ferrites were synthesized via the auto-combustion method. Their magnetic properties (saturation magnetization, coercivity) were characterized using VSM.

- Computational: DFT calculations (using Quantum ESPRESSO with GGA+U) were performed to compute the density of states (DOS), band structure, and Bader charges.

- Correlation: The computational results explained the non-monotonic variation in saturation magnetization observed experimentally by revealing how Mn substitution modifies local electronic environments and spin polarization. This synergy provided a comprehensive understanding of the material's properties for hyperthermia applications [8].

Table 3: Key Computational Tools and Resources for DFT Research

| Tool / Resource | Category | Primary Function | Example Software / Functional |
|---|---|---|---|
| Quantum Chemistry Packages | Software | Platform for performing DFT calculations | Quantum ESPRESSO [8], DMol3 [7] |
| Exchange-Correlation Functionals | Method | Approximate the quantum many-body effects | LDA, GGA (PBE) [8] [6], Hybrid (B3LYP) [7] |
| Pseudopotentials / Basis Sets | Method | Represent atomic cores and electron orbitals | Plane-waves + pseudopotentials [8], Gaussian-type orbitals (6-31G(d,p)) [7] |
| Analysis & Visualization Tools | Software | Analyze results (DOS, charge density) and visualize structures | Various codes for Bader analysis [8], DOS plotting [7] |
| Benchmark Databases | Data | Test and validate the accuracy of density functionals | GMTKN55 [5], Minnesota Databases [5] |

The pursuit of accurately describing biological molecules—encompassing proteins, nucleic acids, and their complexes—represents a frontier in modern molecular research. These systems exhibit behaviors that are difficult to capture with traditional experimental and computational models, primarily due to three interconnected challenges: the pervasive role of weak interactions, the critical influence of solvation, and the intrinsic conformational flexibility of biomolecules. These features are not merely academic curiosities; they are fundamental to biological function, enabling self-organization, dynamic responsiveness to environmental changes, and precise molecular recognition [9]. Understanding these properties is crucial for advancing fields like drug development, where the efficacy of a small molecule often depends on its ability to navigate a landscape of transient, weak forces and adapt to a moving target.

Framing this discussion within the context of validating density functionals is particularly apt. Density Functional Theory (DFT) provides a powerful computational framework for predicting electronic structure and energetics, but its accuracy is heavily dependent on the chosen functional. For biological systems, the challenge is magnified. The standard of "chemical accuracy" (1.0 kcal/mol) remains a distant target for transition metal-containing biological complexes: in a benchmark on metalloporphyrins, even the best-performing functionals reached mean unsigned errors of just under 15 kcal/mol, and most functionals had errors at least twice as large [10]. This performance gap underscores the need for rigorous validation studies that specifically benchmark density functionals against the nuanced physical forces governing biological molecules. This article will objectively compare the performance of different methodological approaches in tackling these challenges, providing a guide for researchers navigating this complex landscape.

The Pervasive Role of Weak Interactions

Weak, non-covalent interactions, often termed "biological glue," are the dominant force organizing the cellular interior. With energies ranging from fractions to a few kilocalories per mole—on the order of van der Waals interactions—these forces are individually transient but collectively dictate the spatial and temporal distribution of macromolecules [9]. McConkey coined the term "quinary interactions" to describe the set of evolutionarily selected weak interactions that maintain the functional organization of macromolecules in living cells [9]. Their delicate nature means that even gentle separation methods can disrupt them, posing a significant challenge for experimental interrogation.

Types and Energetics of Weak Biochemical Bonds

The following table summarizes the key weak interactions and their typical energy ranges and lengths, which are fundamental to biomolecular structure and function [11].

Table 1: Characteristics of Major Biochemical Bonds and Interactions

| Bond/Interaction | Approximate Energy (kJ/mol) | Typical Length |
|---|---|---|
| Covalent Bond | 350 - 450 | 0.1 - 0.15 nm |
| Ionic Bond | ~500 | Not specified |
| Salt Bridge | ~30 | Not specified |
| Hydrogen Bond (H-bond) | 12 - 30 | 0.2 - 0.27 nm |
| Van der Waals | 0.08 (for single atoms) | 0.1 - 0.17 nm |
| Hydrophobic Interaction | ~0.01 (per Å²) | Not specified |
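
Because this guide quotes energies in both kJ/mol and kcal/mol, a small conversion helper is useful when comparing table entries with benchmark errors. The sketch below also expresses each energy as a multiple of the thermal energy RT at room temperature, which shows why individual weak interactions are transient. The function names are hypothetical; the input energies are the table values, using the lower bound where a range is given.

```python
KJ_PER_KCAL = 4.184  # thermochemical calorie, exact by definition
GAS_CONSTANT_KJ = 8.314462618e-3  # molar gas constant in kJ/(mol K)

def kj_to_kcal(energy_kj_per_mol):
    """Convert an energy from kJ/mol to kcal/mol."""
    return energy_kj_per_mol / KJ_PER_KCAL

def in_units_of_rt(energy_kj_per_mol, temperature_k=298.15):
    """Express an energy as a multiple of the thermal energy RT."""
    return energy_kj_per_mol / (GAS_CONSTANT_KJ * temperature_k)

# Table entries in kJ/mol (lower bound of each quoted range).
interactions = {"hydrogen bond": 12.0, "salt bridge": 30.0, "covalent bond": 350.0}
for name, energy in interactions.items():
    print(f"{name}: {kj_to_kcal(energy):.1f} kcal/mol, {in_units_of_rt(energy):.0f} RT")
```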

Functional Consequences of Weak Interactions

The biological implications of weak interactions are profound. They enable key cellular phenomena:

- Cytoplasmic Fluidization: The bacterial cytosol contains staggering macromolecule concentrations (~400 mg/ml), creating a crowded environment prone to a glassy, immobile state. Energy-dependent processes, fueled by ATP, actively break weak "quinary" interactions to maintain fluidity. Under ATP-limiting conditions, these weak interactions accrue, leading to a dramatic reduction in molecular motion [9].

- Subcellular Phase Transitions: Multivalent displays of weakly interacting domains can drive liquid-liquid phase separations, leading to the formation of membrane-less organelles like RNA granules. These assemblies create functional subcellular compartments that control processes like translation [9].

- Transient Complex Assembly: Weak interactions allow for the formation of fleeting but functional complexes. For example, in bacterial cell wall synthesis, a cross-linking enzyme with highly dynamic interactions can efficiently service multiple biosynthesis platforms, ensuring robust growth even with low enzyme copy numbers [9].

The Critical Influence of Solvation and the Aqueous Environment

Biological molecules operate in an aqueous milieu, making solvation a non-negotiable factor in any accurate model. Water is not a passive spectator; it actively participates in and modulates biomolecular interactions. The hydrophobic effect, a major driver of protein folding and membrane assembly, arises from the ordering of water molecules around non-polar surfaces. Furthermore, water molecules often form integral parts of binding interfaces, mediating interactions through coordinated hydrogen-bonding networks.

Salt bridges—the weak interactions between fully charged ions in solution—exemplify the dramatic effect of the solvent. While the interaction between ions in a vacuum is extremely strong, the water environment shields these charges, reducing their interaction energy to a much weaker ~30 kJ/mol [11]. This shielding is critical for the function of many proteins, allowing for interactions that are specific but reversible. Computational studies of adsorption in aqueous solutions, such as dyes onto cellulose, must explicitly account for the competitive and mediating role of water to accurately predict binding configurations and energies [12].
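
The direction and rough scale of this screening can be illustrated with Coulomb's law in a uniform dielectric. The sketch below is only a back-of-the-envelope model under stated assumptions: point charges, a hypothetical 3 Å separation, and a bulk relative permittivity of about 78 for water at room temperature. The function name is hypothetical.

```python
COULOMB_KJ_ANGSTROM = 1389.35  # e^2 / (4 pi eps0) in kJ mol^-1 Angstrom

def coulomb_energy(q1, q2, distance_angstrom, dielectric=1.0):
    """Coulomb energy (kJ/mol) of two point charges in a uniform dielectric."""
    return COULOMB_KJ_ANGSTROM * q1 * q2 / (dielectric * distance_angstrom)

# Assumed ion pair: unit charges of opposite sign, 3 Angstrom apart.
e_vacuum = coulomb_energy(+1, -1, 3.0)
e_water = coulomb_energy(+1, -1, 3.0, dielectric=78.4)
```

A uniform bulk dielectric is a deliberately crude model: it ignores discrete water molecules, desolvation costs, and the weaker effective screening near a protein surface, so it should not be expected to reproduce the ~30 kJ/mol figure quoted above. It only shows that the solvent reduces the bare interaction by well over an order of magnitude.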

The Challenge of Conformational Flexibility

The traditional view of a protein existing in a single, stable conformation has been largely supplanted by a dynamic paradigm. A significant portion of the proteome exhibits substantial flexibility, existing as ensembles of interconverting conformations rather than static structures [13]. This conformational ambiguity can be localized to loops and linkers or encompass entire proteins, as in the case of intrinsically disordered proteins (IDPs).
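
The ensemble picture has a direct consequence for functional validation: conformer populations depend exponentially on relative energies, so small energy errors translate into large population errors. The sketch below computes Boltzmann populations for a set of relative conformer energies. It is a minimal illustration that assumes energies in kcal/mol and ignores entropy and degeneracy; the function name and the two-conformer example are hypothetical.

```python
import math

GAS_CONSTANT_KCAL = 1.987204e-3  # molar gas constant in kcal/(mol K)

def boltzmann_populations(relative_energies_kcal, temperature_k=298.15):
    """Fractional populations p_i = exp(-E_i/RT) / sum_j exp(-E_j/RT)."""
    rt = GAS_CONSTANT_KCAL * temperature_k
    lowest = min(relative_energies_kcal)  # shift for numerical stability
    weights = [math.exp(-(e - lowest) / rt) for e in relative_energies_kcal]
    total = sum(weights)
    return [w / total for w in weights]

# Hypothetical two-conformer system separated by 1 kcal/mol.
populations = boltzmann_populations([0.0, 1.0])
```

At room temperature a 1 kcal/mol gap corresponds to roughly an 84:16 split, so a functional that misjudges a relative conformer energy by the same amount can noticeably distort, or even invert, the predicted ensemble.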

Categorizing Conformational Behavior

This dynamic behavior can be delineated into three classes:

- Order: Regions with well-defined, stable coordinates.

- Disorder: Regions lacking a fixed tertiary structure.

- Ambiguity: Regions that display conditional or context-dependent folding, such as those that fold upon binding to a partner [13].

This probabilistic view complicates structural analysis. Experimental techniques like X-ray crystallography may trap specific conformers, but solution-based techniques like NMR spectroscopy are better suited for capturing dynamics [13]. The recent success of AlphaFold2 in predicting unique protein folds marks a watershed moment, but the focus is now shifting to understanding alternative conformations, dynamics, and the interactions between proteins [13].

Experimental and Computational Tools for Studying Flexibility

A range of methods is employed to investigate protein dynamics, each with its own strengths and limitations.

Table 2: Methods for Investigating Protein Conformation and Dynamics

| Method Category | Examples | Key Application | Considerations |
|---|---|---|---|
| Experimental (Solution) | NMR, CD, EPR, SAXS/SANS, FRET, in-cell NMR | Studying dynamics and flexibility in solution or near-native conditions. | Can capture ensembles; some methods have low throughput or face technical challenges. |
| Experimental (Mass Spec) | XL-MS, HDX-MS | Informing on protein states and interactions. | Increasingly informative; useful for folding and in-cell studies. |
| Computational Simulation | Molecular Dynamics (MD), Monte Carlo (MC) | Atomistic exploration of dynamics and conformational landscapes. | High computational cost; accuracy depends on force fields. |
| Computational Prediction | IUPred, Disopred, DynaMine, PSIPRED | Fast, sequence-based prediction of disorder, dynamics, and structure. | Enables proteome-wide analysis but is indirect. |

[Figure: workflow integrating computational and experimental data to study conformational behavior.]

Validation Studies of Density Functionals for Biological Systems

Given the challenges above, the choice of computational method is critical. For quantum chemical calculations, DFT is a workhorse, but its performance must be validated for biologically relevant systems.

Performance Benchmarking on Metalloporphyrins

A 2023 benchmark study analyzed 250 electronic structure methods on iron, manganese, and cobalt porphyrins—challenging systems due to nearly degenerate spin states. The results are sobering: no functional achieved chemical accuracy (1.0 kcal/mol). The best-performing methods, such as GAM, revM06-L, M06-L, and r2SCAN, achieved mean unsigned errors (MUEs) below 15.0 kcal/mol, but most functionals had errors at least twice as large [10].

The study assigned grades based on percentile ranking, providing a clear comparison for researchers. Key findings include:

- Local Functionals (GGAs and meta-GGAs) and global hybrids with low exact exchange generally performed best for spin state energetics.

- High Exact Exchange Functionals, including range-separated and double hybrids, often led to "catastrophic failures" [10].

- Modern functionals typically outperformed older ones, and revisions like r2SCAN showed >50% error reduction over the original SCAN functional [10].

Table 3: Select Density Functional Performance on Por21 Metalloporphyrin Database [10]

| Functional Name | Type | Grade | Key Characteristics |
|---|---|---|---|
| GAM | GGA | A | Overall best performer for Por21 database. |
| revM06-L | Meta-GGA | A | Local Minnesota functional; top compromise for general and porphyrin chemistry. |
| r2SCAN | Meta-GGA | A | Revision of SCAN; significantly improved accuracy. |
| r2SCANh | Hybrid | A | Low exact exchange hybrid; ranks highly. |
| HCTH | GGA | A | All four parameterizations received grade A. |
| B3LYP | Hybrid | C | Widely used but only moderately accurate for this challenge. |
| SCAN | Meta-GGA | D | Original functional, outperformed by its revisions. |
| B2PLYP | Double Hybrid | F | Representative of poor-performing high-exact-exchange functionals. |

Adsorption Energetics on Surfaces

Another validation study compared DFT functionals for describing adsorption on Ni(111), a process governed by weak interactions relevant to catalysis. The results highlight a critical point: accuracy is system-dependent. For each adsorption process (e.g., CH₃I, CH₃, I), at least one functional performed quantitatively within experimental error. However, no single functional was accurate for all processes studied. Only PBE-D3 and RPBE-D3 were found to be broadly accurate across all systems when assuming an additional ±20 kJ/mol error margin [14]. This underscores that a functional validated for one type of interaction may fail for another, complicating the selection for complex biological environments.
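
The comparison described above can be sketched in two steps: compute each adsorption energy from total energies, then check which functionals stay within a tolerance for every process. The adsorption energy definition used here is the conventional one; the sign convention, function names, and all numbers are assumptions for illustration and are not values from the cited study.

```python
def adsorption_energy(e_complex, e_surface, e_adsorbate):
    """E_ads = E(surface + adsorbate) - E(surface) - E(adsorbate)."""
    return e_complex - e_surface - e_adsorbate

def broadly_accurate(computed, reference, tolerance):
    """Names of functionals whose error is within tolerance for every process."""
    passing = []
    for functional, values in computed.items():
        if all(abs(values[p] - reference[p]) <= tolerance for p in reference):
            passing.append(functional)
    return passing

# Hypothetical adsorption energies in kJ/mol (NOT values from the cited study).
reference = {"CH3I": -90.0, "CH3": -200.0, "I": -250.0}
computed = {
    "functional_A": {"CH3I": -85.0, "CH3": -215.0, "I": -262.0},
    "functional_B": {"CH3I": -92.0, "CH3": -260.0, "I": -251.0},
}
accurate = broadly_accurate(computed, reference, tolerance=20.0)
```

In this made-up example, functional_B is the most accurate for two of the three processes yet fails the all-process test, which mirrors the point that per-system accuracy does not guarantee transferability.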

Detailed Protocol: DFT Benchmarking for Spin State Energetics

To ensure reproducibility, the following protocol outlines key steps for benchmarking density functionals, based on methodologies from the cited studies [10] [14].

- System Selection: Choose a benchmark set of biologically relevant molecules with high-level reference data. The Por21 database, featuring iron, manganese, and cobalt porphyrins with CASPT2 reference energies, is an excellent example [10].

- Property Calculation: Calculate the target properties (e.g., spin state energy differences, binding energies) for all molecules in the set using a wide range of density functionals.

- Error Analysis: For each functional, compute the error relative to the reference data. Common metrics include Mean Unsigned Error (MUE) and Root Mean Square Error (RMSE).

- Statistical Ranking: Rank the functionals based on their accuracy (e.g., MUE). Assigning grades based on percentile performance, as in [10], provides a clear, at-a-glance comparison for the scientific community.

- Guideline Formulation: Provide clear suggestions for functional selection based on the benchmark results, noting trends related to functional type (e.g., local vs. hybrid) and specific chemical challenges (e.g., spin states).
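
The error analysis and ranking steps above can be sketched in a few lines. This is a minimal illustration: the functional names and energies are hypothetical placeholders, not Por21 data, and the percentile cutoffs for letter grades are assumed for the example, since the exact thresholds used in [10] are not given here.

```python
import math

def mean_unsigned_error(computed, reference):
    """Average absolute deviation from the reference values."""
    return sum(abs(c - r) for c, r in zip(computed, reference)) / len(reference)

def root_mean_square_error(computed, reference):
    """Square root of the mean squared deviation from the reference values."""
    return math.sqrt(sum((c - r) ** 2 for c, r in zip(computed, reference)) / len(reference))

def rank_by_mue(results, reference):
    """Return (functional, MUE) pairs sorted from most to least accurate."""
    scored = [(name, mean_unsigned_error(values, reference)) for name, values in results.items()]
    return sorted(scored, key=lambda pair: pair[1])

def percentile_grade(position, total, cutoffs=((0.2, "A"), (0.4, "B"), (0.6, "C"), (0.8, "D"))):
    """Map a 0-based rank to a letter grade; the cutoffs here are hypothetical."""
    fraction = (position + 1) / total
    for limit, grade in cutoffs:
        if fraction <= limit:
            return grade
    return "F"

# Hypothetical spin-state energy differences in kcal/mol (NOT Por21 data).
reference = [10.0, -5.0, 20.0]
results = {
    "functional_A": [12.0, -4.0, 17.0],
    "functional_B": [25.0, 5.0, 40.0],
}
ranking = rank_by_mue(results, reference)
```

Reporting both MUE and RMSE is worthwhile because RMSE penalizes outliers more heavily; a functional with a modest MUE but a large RMSE has a few severe failures hidden in its average.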

The Scientist's Toolkit: Essential Research Reagents and Materials

Advancing the field requires a suite of specialized tools, both computational and experimental.

Table 4: Key Research Reagent Solutions for Biomolecular Challenges

| Tool Name / Type | Primary Function | Relevance to Challenges |
|---|---|---|
| Open Molecules 2025 (OMol25) | A large, diverse DFT dataset for biomolecules and metal complexes. | Provides high-accuracy quantum chemistry data for validating functionals and force fields. [15] |
| Universal Model for Atoms (UMA) | A machine learning interatomic potential trained on billions of atoms. | Aims to provide faster, more accurate predictions of molecular behavior. [15] |
| CHARMM36m, Amber ff19SB | Balanced molecular dynamics force fields. | Enable realistic simulation of both structured and flexible/disordered proteins. [13] |
| IUPred2A, Disopred3 | Intrinsic disorder predictors. | Identify conformationally ambiguous protein regions from sequence alone. [13] |
| NMR Spectroscopy | Determine structure and dynamics in solution. | Gold standard for experimentally characterizing conformational ensembles and weak interactions. [13] |
| Single-Crystal Adsorption Microcalorimetry | Measure heats of adsorption on surfaces. | Provides experimental benchmark data for validating computational adsorption energetics. [14] |
| Cross-linking MS (XL-MS) | Identify proximal amino acids in protein complexes. | Informs on protein-protein interactions and the topology of dynamic complexes. [13] |

The challenges posed by weak interactions, solvation, and conformational flexibility are intrinsic to the very nature of biological molecules. Overcoming them requires a multifaceted approach that integrates advanced experimental techniques with robust computational models. Validation studies consistently show that the performance of widely used methods like DFT is highly functional-dependent, and achieving chemical accuracy for biological systems remains a significant hurdle. The path forward lies in the continued development of benchmark datasets like OMol25 [15], the prudent selection of computational methods informed by rigorous benchmarks [10], and the strategic integration of experimental data to constrain and validate dynamic models [13]. By objectively comparing the capabilities and limitations of current tools, as outlined in this guide, researchers can make more informed choices, ultimately accelerating progress in drug development and molecular biosciences.

Critical Assessment of Common Density Functionals (LDA, GGA, meta-GGA, Hybrid) for Biomolecules

Density Functional Theory (DFT) has become a cornerstone of computational research in chemistry, materials science, and biochemistry due to its favorable balance between computational cost and accuracy [16] [17]. For researchers studying biological molecules, from small drug-like compounds to large proteins and nucleic acids, selecting an appropriate exchange-correlation functional is paramount to obtaining reliable results. This guide provides an objective comparison of the four primary classes of density functionals—LDA, GGA, meta-GGA, and Hybrid—evaluating their performance for properties critical to biomolecular research, supported by experimental data and detailed methodologies.

The development of density functionals is often conceptualized through "Jacob's Ladder," representing a systematic approach to improvement where each rung incorporates more complex ingredients to achieve better accuracy [18]. This progression begins with the Local Density Approximation (LDA) on the lowest rung, ascends to Generalized Gradient Approximations (GGA), then to meta-GGAs, and further to hybrid functionals which mix DFT with Hartree-Fock exchange [18] [19].

Theoretical Framework and Functional Classes

The Hierarchy of Density Functionals

DFT approximations are categorized based on the physical ingredients they incorporate. Understanding this hierarchy is essential for selecting the appropriate functional for a given biomolecular application.

Figure 1. The "Jacob's Ladder" hierarchy of density functional approximations, showing the increasing complexity of ingredients incorporated at each level to improve accuracy.

LDA (Local Density Approximation): The simplest approximation, LDA uses only the local electron density at each point in space, based on the uniform electron gas model [16] [20]. While computationally efficient, it suffers from systematic overbinding and poor performance for molecular systems [16].

GGA (Generalized Gradient Approximation): GGA functionals incorporate both the electron density and its gradient, significantly improving upon LDA for molecular geometries and energies [16] [21]. Popular GGA functionals include PBE, BP86, and BLYP [20] [21].

meta-GGA: These functionals add a dependency on the kinetic energy density, providing more flexibility and often better accuracy for diverse chemical systems [20] [22]. Examples include TPSS, SCAN, and M06-L [20] [21].

Hybrid Functionals: Hybrids incorporate a portion of exact Hartree-Fock exchange into the DFT exchange-correlation energy, improving performance for properties like band gaps and reaction barriers [18] [21]. B3LYP and PBE0 are widely used hybrids [18].
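The ingredients added at each rung can be summarized schematically. The expressions below are a standard textbook summary, not formulas taken from the cited benchmarks: f stands for a generic, functional-specific integrand, τ is the kinetic energy density built from the occupied Kohn-Sham orbitals, and a is the fraction of exact exchange in a one-parameter global hybrid (for example, a = 0.25 in PBE0; B3LYP uses a three-parameter variant of the same idea).

```latex
\begin{align}
E_{xc}^{\mathrm{LDA}}[n] &= \int n(\mathbf{r})\,
    \varepsilon_{xc}^{\mathrm{unif}}\big(n(\mathbf{r})\big)\,d\mathbf{r} \\
E_{xc}^{\mathrm{GGA}}[n] &= \int f\big(n(\mathbf{r}),\,\nabla n(\mathbf{r})\big)\,d\mathbf{r} \\
E_{xc}^{\mathrm{mGGA}}[n] &= \int f\big(n(\mathbf{r}),\,\nabla n(\mathbf{r}),\,\tau(\mathbf{r})\big)\,d\mathbf{r},
    \qquad \tau(\mathbf{r}) = \tfrac{1}{2}\sum_{i}^{\mathrm{occ}} \left|\nabla \phi_i(\mathbf{r})\right|^2 \\
E_{xc}^{\mathrm{hyb}} &= a\,E_{x}^{\mathrm{HF}} + (1-a)\,E_{x}^{\mathrm{DFA}} + E_{c}^{\mathrm{DFA}}
\end{align}
```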

Performance Comparison Across Biomolecular Properties

Quantitative Assessment of Functional Performance

Table 1. Performance comparison of density functional classes for key biomolecular properties. Error metrics represent mean absolute errors from benchmark studies [18] [22].

| Molecular Property | LDA | GGA | meta-GGA | Hybrid | Experimental Reference |
|---|---|---|---|---|---|
| Bond Lengths (Å) | 0.022 | 0.016 | 0.010 | 0.012 | X-ray/neutron diffraction |
| Bond Angles (°) | 1.8 | 1.2 | 0.9 | 1.0 | Gas electron diffraction |
| Vibrational Frequencies (cm⁻¹) | 45 | 35 | 28 | 25 | IR/Raman spectroscopy |
| Hydrogen Bond Energy (kcal/mol) | 3.5 | 1.8 | 1.2 | 1.0 | Calorimetry/spectroscopy |
| Conformational Energy (kcal/mol) | 4.2 | 1.5 | 0.8 | 0.6 | NMR spectroscopy |
| Atomization Energy (kcal/mol) | 31.4 | 7.9 | 5.2 | 4.1 | Thermochemical experiments |

Table 2. Performance of representative functionals from each class for biological system components. Error scores are relative rankings (1=best, 4=worst) based on comprehensive assessments [18].

| Functional (Class) | Peptide Bonds | Nucleic Acid Bases | Lipid Headgroups | Drug-like Molecules | Overall Score |
|---|---|---|---|---|---|
| VWN (LDA) | 4 | 4 | 4 | 4 | 4.0 |
| PBE (GGA) | 3 | 3 | 3 | 3 | 3.0 |
| BLYP (GGA) | 2 | 2 | 3 | 2 | 2.3 |
| TPSS (meta-GGA) | 2 | 2 | 2 | 2 | 2.0 |
| B3LYP (Hybrid) | 1 | 1 | 2 | 1 | 1.3 |
| PBE0 (Hybrid) | 1 | 1 | 1 | 1 | 1.0 |
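The Overall Score column in Table 2 is consistent with a plain average of the four per-category rankings, rounded half-up to one decimal. The source does not state the aggregation rule, so the sketch below treats that as an assumption; the function name `overall_score` is illustrative only.

```python
from decimal import Decimal, ROUND_HALF_UP

# Per-category rankings copied from Table 2 (1 = best, 4 = worst).
RANKINGS = {
    "VWN (LDA)": [4, 4, 4, 4],
    "PBE (GGA)": [3, 3, 3, 3],
    "BLYP (GGA)": [2, 2, 3, 2],
    "TPSS (meta-GGA)": [2, 2, 2, 2],
    "B3LYP (Hybrid)": [1, 1, 2, 1],
    "PBE0 (Hybrid)": [1, 1, 1, 1],
}


def overall_score(ranks):
    """Mean rank, rounded half-up to one decimal (assumed aggregation rule)."""
    mean = Decimal(sum(ranks)) / Decimal(len(ranks))
    return float(mean.quantize(Decimal("0.1"), rounding=ROUND_HALF_UP))


scores = {name: overall_score(ranks) for name, ranks in RANKINGS.items()}

for name, score in scores.items():
    print(f"{name:16s} {score:.1f}")
```

Decimal arithmetic is used because Python's built-in `round` applies round-half-to-even, which would turn the BLYP mean of 2.25 into 2.2 rather than the tabulated 2.3.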

Critical Analysis of Functional Limitations

While higher-rung functionals generally provide better accuracy, each class has specific limitations that researchers must consider:

LDA's Systematic Errors: LDA consistently overbinds molecules, predicting shortened bond lengths and exaggerated binding energies [16]. This makes it particularly unsuitable for studying weakly bound biomolecular complexes and hydrogen-bonded systems.

GGA's Hydrogen Bonding Limitations: While GGAs significantly improve upon LDA, they still struggle with accurate description of weak interactions, including hydrogen bonding and van der Waals forces, which are crucial in biomolecular recognition [17]. Dispersion corrections are often necessary for quantitative accuracy [17].

Spin-State Energetics Challenges: DFT methods often show unsystematic errors in predicting spin-state energetics, which is particularly relevant for metalloenzymes and transition metal-containing drug candidates [17]. The relative energies of different spin states can be functional-dependent, requiring careful validation.

Delocalization Error: Conventional semilocal and hybrid functionals exhibit delocalization error, which affects systems with fractional charges and can lead to incorrect predictions of charge transfer processes in biological systems [17].
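The dispersion corrections mentioned above are usually applied as an additive, atom-pairwise term on top of the self-consistent DFT energy. As a sketch, the two-body form used in Grimme's D3 scheme is shown below; the source does not spell out a formula, so this is supplied for orientation. Here A and B index atoms, R_AB is their distance, C_n^{AB} are pair dispersion coefficients, s_n are functional-specific scaling factors, and f_damp is a damping function that switches the correction off at short range.

```latex
\begin{align}
E_{\mathrm{DFT\text{-}D}} &= E_{\mathrm{DFT}} + E_{\mathrm{disp}} \\
E_{\mathrm{disp}} &= -\sum_{A<B}\;\sum_{n=6,8} s_n\,
    \frac{C_n^{AB}}{R_{AB}^{\,n}}\; f_{\mathrm{damp},n}(R_{AB})
\end{align}
```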

Experimental Protocols and Validation Methodologies

Standard Assessment Protocols for Biomolecular Functionals

Protocol 1: Comprehensive Geometry and Energy Assessment

Adapted from the methodology used in [18]

Test Set Construction: Compile a diverse set of 44 molecules containing C, H, N, O, S, and P atoms—elements commonly found in biomolecules [18]. Include small organic molecules, hydrogen-bonded complexes, and conformational isomers relevant to biological systems.

Reference Data Collection: Obtain experimental reference data from high-resolution techniques: X-ray crystallography for bond lengths and angles, gas electron diffraction for isolated molecules, and spectroscopic methods for vibrational frequencies [18].

Computational Procedure:

- Perform geometry optimizations with each functional using polarized basis sets (e.g., the double-zeta 6-31G* or the triple-zeta cc-pVTZ)

- Calculate harmonic vibrational frequencies to confirm true minima (no imaginary frequencies)

- Compute binding energies for hydrogen-bonded complexes and conformational energy differences
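The last step of the procedure reduces to simple energy differences once the total energies are available. The following is a minimal sketch, assuming total electronic energies in Hartree taken from any quantum chemistry package; the function names and the numerical energies are hypothetical, and no counterpoise (basis set superposition) correction is applied.

```python
HARTREE_TO_KCAL_PER_MOL = 627.5095  # standard unit conversion factor


def binding_energy_kcal(e_complex, e_monomers):
    """Supermolecular binding energy in kcal/mol.

    E_bind = E(complex) - sum of E(isolated monomers); inputs in Hartree.
    A negative value means the complex is bound.
    """
    return (e_complex - sum(e_monomers)) * HARTREE_TO_KCAL_PER_MOL


def conformational_energy_kcal(e_conformer, e_reference):
    """Energy of a conformer relative to a reference conformer, in kcal/mol."""
    return (e_conformer - e_reference) * HARTREE_TO_KCAL_PER_MOL


# Hypothetical total energies (Hartree) for a hydrogen-bonded dimer
# and its two identical monomers; illustrative numbers only.
e_dimer = -152.8700
e_monomer = -76.4310

de_bind = binding_energy_kcal(e_dimer, [e_monomer, e_monomer])
print(f"Binding energy: {de_bind:.2f} kcal/mol")
```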

Error Metrics: Calculate mean absolute errors (MAE) and root-mean-square deviations (RMSD) for each functional relative to experimental references [18].
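Both error metrics are straightforward to compute from paired lists of calculated and reference values. The sketch below uses plain Python; the bond lengths are hypothetical placeholders rather than data from the cited benchmark.

```python
import math


def mean_absolute_error(calculated, reference):
    """MAE: average of |calculated - reference| over all data points."""
    if len(calculated) != len(reference) or not calculated:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(c - r) for c, r in zip(calculated, reference)) / len(calculated)


def root_mean_square_deviation(calculated, reference):
    """RMSD: square root of the mean squared deviation."""
    if len(calculated) != len(reference) or not calculated:
        raise ValueError("inputs must be non-empty and of equal length")
    squared = sum((c - r) ** 2 for c, r in zip(calculated, reference))
    return math.sqrt(squared / len(calculated))


# Hypothetical bond lengths in angstroms (illustrative values only).
calc_bonds = [1.530, 1.340, 1.210]
ref_bonds = [1.520, 1.330, 1.230]

mae = mean_absolute_error(calc_bonds, ref_bonds)
rmsd = root_mean_square_deviation(calc_bonds, ref_bonds)
print(f"MAE = {mae:.4f} A, RMSD = {rmsd:.4f} A")
```

Because RMSD weights large deviations more heavily than MAE, reporting both helps distinguish a functional with uniformly small errors from one with a few large outliers.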

Protocol 2: Performance Assessment for Transition Metal Systems

Based on the SCAN+U methodology for transition metal compounds [22]

System Selection: Choose transition metal compounds found in metalloenzymes and metal-containing drugs, including Fe, Cu, Mn, and Zn complexes.

Electronic Structure Analysis:

- Employ linear response theory to determine Hubbard U parameters for SCAN+U and PBE+U calculations [22]

- Compare predicted band gaps with experimental optical spectra

- Assess spin density distributions for open-shell systems
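For orientation, the +U correction and its linear-response determination can be written compactly. The source does not specify which DFT+U variant is used, so the expressions below assume the simplified rotationally invariant form with a single effective parameter U_eff per site, and the common linear-response definition in which U is obtained from the bare (χ₀) and self-consistent (χ) responses of the site occupations n_I to a perturbing potential shift α_J.

```latex
\begin{align}
E_{\mathrm{DFT}+U} &= E_{\mathrm{DFT}} + \frac{U_{\mathrm{eff}}}{2}
    \sum_{I,\sigma} \mathrm{Tr}\!\left[\mathbf{n}^{I\sigma}\left(\mathbf{1} - \mathbf{n}^{I\sigma}\right)\right] \\
U^{I} &= \left(\chi_0^{-1} - \chi^{-1}\right)_{II},
    \qquad \chi_{IJ} = \frac{\partial n_I}{\partial \alpha_J}
\end{align}
```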

Structural Validation: Compare optimized crystal structures with experimental X-ray data, focusing on metal-ligand bond lengths and coordination geometries [22].

Accuracy Metrics: Compute mean absolute percentage errors (MAPE) for volumes and band gaps relative to experimental values [22].
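MAPE normalizes each error by the reference value, which makes volumes and band gaps with different magnitudes comparable. A minimal sketch follows; the band gap values are hypothetical and serve only to show the calculation.

```python
def mean_absolute_percentage_error(calculated, reference):
    """MAPE in percent: mean of |calculated - reference| / |reference| * 100."""
    if len(calculated) != len(reference) or not calculated:
        raise ValueError("inputs must be non-empty and of equal length")
    if any(r == 0 for r in reference):
        raise ValueError("reference values must be non-zero")
    relative = [abs((c - r) / r) for c, r in zip(calculated, reference)]
    return 100.0 * sum(relative) / len(relative)


# Hypothetical band gaps in eV (illustrative values only).
calc_gaps = [3.6, 2.0]
exp_gaps = [4.0, 2.5]

mape = mean_absolute_percentage_error(calc_gaps, exp_gaps)
print(f"MAPE = {mape:.1f}%")
```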

Workflow for Functional Validation in Biomolecular Applications

Figure 2. Systematic workflow for validating density functional performance for specific biomolecular applications.

Table 3. Key research reagent solutions for biomolecular DFT calculations.

| Resource Type | Specific Examples | Function in Biomolecular DFT | Performance Considerations |
|---|---|---|---|
| LDA Functionals | VWN, Xα | Baseline calculations; computationally efficient but limited accuracy | Systematic overbinding limits biological applications [16] |
| GGA Functionals | PBE, BLYP, BP86 | Standard workhorses for geometry optimization of biomolecules | Good cost-accuracy balance; require dispersion corrections [18] [20] |
| meta-GGA Functionals | TPSS, SCAN, M06-L | Improved accuracy for diverse bonding environments | Better for transition metals and non-covalent interactions [22] |
| Hybrid Functionals | B3LYP, PBE0 (keyword PBE1PBE in Gaussian) | Properties requiring accurate electronic structure | Improved band gaps and reaction barriers; higher computational cost [18] |
| Dispersion Corrections | Grimme D3, D4(EEQ) | Account for weak intermolecular forces | Essential for realistic biomolecular simulations [20] [17] |
| Basis Sets | 6-31G*, cc-pVnZ, def2 series | Mathematical functions used to represent molecular orbitals | Polarized triple-zeta recommended for accuracy [18] |

Based on the comprehensive assessment of density functional performance for biomolecular applications:

For routine geometry optimizations of medium-sized biomolecules, GGA functionals like BLYP or PBE with dispersion corrections offer the best balance of accuracy and computational efficiency [18].

For electronic properties, reaction barriers, and systems requiring high accuracy, hybrid functionals like PBE0 or B3LYP are recommended despite their higher computational cost [18] [17].

For transition metal systems found in metalloenzymes, meta-GGA functionals like SCAN, potentially with +U corrections for strongly correlated systems, provide superior performance [22].

LDA functionals are generally not recommended for biomolecular applications due to systematic errors, though they remain useful for certain solid-state properties [16].

The choice of functional should always be validated against available experimental data or high-level wavefunction theory calculations for the specific system under investigation. No single functional excels for all biomolecular properties, but understanding the systematic performance trends across functional classes enables researchers to make informed selections for their specific applications.

The Critical Role of the Exchange-Correlation Functional and Basis Sets

The accurate computational modeling of biological molecules is a cornerstone of modern scientific research, particularly in the field of drug development. At the heart of these simulations lies Density Functional Theory (DFT), a quantum-mechanical method used to investigate the electronic structure of many-body systems. The predictive power of DFT hinges critically on two components: the exchange-correlation (XC) functional, which approximates the non-classical electron interactions, and the basis set, which defines the mathematical functions used to represent electron orbitals. The selection of these components presents a critical trade-off between computational cost and accuracy, a challenge that is particularly acute when studying large, complex biological systems where high accuracy is paramount. This guide provides an objective comparison of the performance of various XC functionals and basis sets, drawing on the latest validation studies to inform researchers in their selection process.

Comparative Performance of XC Functionals & Basis Sets

The performance of an XC functional is not universal; it varies significantly depending on the chemical system and property under investigation. The table below summarizes the performance of several common functionals and basis sets as validated by recent studies focused on biologically relevant molecules.

Table 1: Performance Comparison of XC Functionals and Basis Sets in Biological Molecule Research

| Functional/Basis Set | Category/Type | Key Applications & Performance | Validation Study Highlights |

|---|---|---|---|

| ωB97M-V [23] | Range-separated hybrid meta-GGA | Broad applicability in biomolecules, electrolytes, metal complexes; avoids band-gap collapse and problematic SCF convergence [23]. | Used for the Open Molecules 2025 (OMol25) dataset; state-of-the-art for diverse chemistry [23]. |

| PBE0 [24] [25] | Hybrid GGA (Parameter-free) | Magnetic properties, EPR/NMR parameters, organic radicals; competes with heavily parameterized functionals [24]. | Outperforms PBE and BP86 for conformational distributions of hydrated polyglycine [26]. Superior to tested DFT in Hirshfeld atom refinement (HAR) for polar-organic molecules [25]. |

| B3LYP [26] [25] | Hybrid GGA (Empirical) | Conformational distributions, crystal structure refinement (HAR); a frequently used benchmark [26] [25]. | Better than BP86 and PBE for predicting conformational distributions of hydrated glycine peptide [26]. Popular but can perform poorly in certain quantum chemistry applications [25]. |

| PBE [26] | GGA (Parameter-free) | General purpose DFT calculations; serves as a baseline for more advanced functionals [26]. | Less accurate than B3LYP for conformational distributions of hydrated polyglycine [26]. |

| BP86 [26] | GGA | General purpose DFT calculations; often used in earlier studies [26]. | Less accurate than B3LYP for conformational distributions of hydrated polyglycine [26]. |

| def2-TZVPD [23] | Triple-zeta basis set + diffuse functions | High-accuracy energy and gradient calculations for non-covalent interactions [23]. | Used with ωB97M-V for the massive OMol25 dataset to ensure high accuracy [23]. |

| def2-TZVP [26] | Triple-zeta basis set | Balanced accuracy and cost for property prediction in medium-sized systems [26]. | Provided better agreement with experiment than a trimmed aug-cc-pVDZ basis set for hydrated glycine peptide [26]. |

Experimental Protocols for Validation

To ensure the reliability of computational methods, researchers subject them to rigorous validation against experimental data or higher-level theoretical benchmarks. The following section details the methodologies of two key types of validation studies.

Protocol 1: Validating against Experimental Conformational Distributions

This protocol, as employed in a study on hydrated polyglycine, validates the free energy profiles generated by DFT, rather than just the potential energy [26].

1. System Preparation: A hydrated glycine peptide serves as a model system representing a fragment of a larger biological molecule [26].

2. Conformational Sampling: The conformational distributions of the peptide are generated [26].

3. Free Energy Calculation: Free energy profiles are derived from the conformational distributions for each DFT functional and basis set combination [26].

4. Experimental Comparison: The computed free energy profiles and resulting conformational distributions are compared directly against experimental data. The model that provides the best agreement with experimental J-coupling constants is deemed the most accurate [26].
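The step from a conformational distribution to a free energy profile is commonly done by Boltzmann inversion, F_i = -k_B T ln p_i. The sketch below assumes this relation and a histogram of sampled conformations (for example, binned backbone dihedral angles); the cited study may use a different estimator, and the bin counts are hypothetical.

```python
import math

K_B_KCAL = 0.0019872041  # Boltzmann constant in kcal/(mol*K)


def free_energy_profile(populations, temperature=300.0):
    """Boltzmann inversion of a histogram of conformational populations.

    Returns free energies in kcal/mol, shifted so the most populated bin
    is at zero. Empty bins are assigned infinite free energy.
    """
    total = sum(populations)
    if total <= 0:
        raise ValueError("populations must sum to a positive value")
    kt = K_B_KCAL * temperature
    free = [
        -kt * math.log(p / total) if p > 0 else math.inf
        for p in populations
    ]
    f_min = min(free)
    return [f - f_min for f in free]


# Hypothetical bin counts from conformational sampling.
counts = [50, 25, 25, 0]
profile = free_energy_profile(counts, temperature=300.0)
print([round(f, 3) for f in profile])
```

A bin with half the population of the most populated one sits k_B T ln 2 higher in free energy, roughly 0.41 kcal/mol at 300 K, which illustrates how small the energy differences are that a functional must resolve.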

Protocol 2: Establishing a "Platinum Standard" for Non-Covalent Interactions

For systems where clean experimental data is difficult to obtain, a robust theoretical benchmark can be established. The QUID (QUantum Interacting Dimer) framework for ligand-pocket interactions uses this approach [27].

1. Benchmark System Creation: A dataset of 170 molecular dimers (42 equilibrium, 128 non-equilibrium) is created to model ligand-pocket binding motifs. These dimers include chemically diverse drug-like molecules and sample various non-covalent interactions (e.g., H-bonding, π-stacking) and dissociation pathways [27].

2. High-Level Energy Calculation: Interaction energies (E_int) for the dimers are computed using two completely different "gold standard" quantum-mechanical methods: Coupled Cluster (LNO-CCSD(T)) and Quantum Monte Carlo (FN-DMC) [27].

3. Benchmark Definition: A "platinum standard" is defined where the two high-level methods achieve tight agreement (e.g., 0.5 kcal/mol), thereby reducing uncertainty [27].

4. Functional Assessment: The performance of various density functional approximations is assessed by comparing their predicted interaction energies against this established platinum standard [27].
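Steps 3 and 4 can be sketched as a simple filter-and-compare routine. The version below is an illustration under stated assumptions, not the QUID authors' implementation: it assumes the reference for a dimer is the mean of the two high-level energies, that dimers whose two values differ by more than the tolerance are set aside, and that functional performance is summarized as a mean absolute error. All names and energies are hypothetical.

```python
def platinum_reference(e_cc, e_dmc, tol=0.5):
    """Return the mean of two reference energies if they agree within tol.

    Energies in kcal/mol. Returns None when the two methods disagree
    by more than the tolerance.
    """
    if abs(e_cc - e_dmc) <= tol:
        return 0.5 * (e_cc + e_dmc)
    return None


def assess_functional(references, dfa_energies, tol=0.5):
    """MAE of a functional against dimers with a valid platinum reference.

    references: {dimer: (E_int from coupled cluster, E_int from QMC)}
    dfa_energies: {dimer: E_int from the density functional}
    Returns (mae, number_of_dimers_used).
    """
    errors = []
    for name, (e_cc, e_dmc) in references.items():
        ref = platinum_reference(e_cc, e_dmc, tol)
        if ref is None:
            continue  # methods disagree; no tight reference for this dimer
        errors.append(abs(dfa_energies[name] - ref))
    if not errors:
        raise ValueError("no dimers satisfy the agreement tolerance")
    return sum(errors) / len(errors), len(errors)


# Hypothetical interaction energies in kcal/mol (illustrative only).
references = {
    "dimer_a": (-5.0, -5.2),
    "dimer_b": (-10.0, -11.0),  # disagreement of 1.0 exceeds the tolerance
    "dimer_c": (-2.0, -2.1),
}
dfa = {"dimer_a": -5.6, "dimer_b": -10.2, "dimer_c": -1.55}

mae, n_used = assess_functional(references, dfa)
print(f"MAE = {mae:.2f} kcal/mol over {n_used} dimers")
```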

Workflow for Computational Validation in Drug Discovery

The process of selecting and validating computational methods for studying biological molecules involves several key stages, from system setup to final assessment. The diagram below maps out this logical workflow.

Modern computational chemistry relies on a combination of advanced software, curated datasets, and powerful hardware. The following table lists key resources that facilitate research in this field.

Table 2: Key Research Reagents and Resources for Computational Studies

| Resource Name | Type | Function & Application |

|---|---|---|

| Open Molecules 2025 (OMol25) [23] [28] | Dataset | A massive, high-accuracy dataset of over 100 million molecular calculations at the ωB97M-V/def2-TZVPD level for training and benchmarking ML potentials and DFT methods [23] [28]. |

| QUID Benchmark [27] | Dataset | The "QUantum Interacting Dimer" framework provides robust benchmark interaction energies for ligand-pocket systems, establishing a "platinum standard" via CC and QMC methods [27]. |

| Neural Network Potentials (NNPs) [23] | AI Model | Machine-learning models (e.g., eSEN, UMA) trained on OMol25; provide DFT-level accuracy at speeds ~10,000x faster, enabling simulations of large biological systems [23] [28]. |

| Hirshfeld Atom Refinement (HAR) [25] | Software/Method | A crystallographic refinement method that uses quantum-mechanically derived aspherical scattering factors, providing more accurate hydrogen atom positions and structural parameters in crystals [25]. |

| ωB97M-V/def2-TZVPD [23] | Computational Method | A high-level DFT methodology combining a state-of-the-art functional with a robust basis set, recommended for accurate calculations across diverse chemical spaces, including biomolecules [23]. |

| High-Performance Computing (HPC) | Infrastructure | Essential for performing large-scale DFT calculations (e.g., the OMol25 dataset required 6 billion CPU-hours) and training large AI models [23] [28]. |

The selection of the exchange-correlation functional and basis set is a foundational decision that directly determines the validity of computational research in biology and drug development. Validation studies consistently show that no single functional is universally superior; however, modern functionals such as ωB97M-V and PBE0 demonstrate robust performance across a wide range of biological applications, from predicting conformational landscapes to modeling non-covalent interactions in ligand binding. The emergence of massive, high-quality datasets like OMol25 and rigorous benchmarks like QUID now provides an unprecedented foundation for the continued development and validation of computational methods. By leveraging these resources and adhering to systematic validation protocols, researchers can make informed choices, ultimately accelerating the discovery of new therapeutics through reliable and predictive computational modeling.

Biomolecular condensates are membrane-less organelles that form through a process of phase separation, enabling cells to compartmentalize biochemical reactions without lipid barriers. These dynamic assemblies concentrate specific proteins and nucleic acids, functioning as critical regulators of gene expression, signal transduction, and cellular stress response [29]. The study of biomolecular condensates represents a paradigm shift in our understanding of cellular organization, fundamentally changing how biologists investigate physiological and pathological states [30]. As research in this field accelerates, the integration of advanced experimental biophysics with sophisticated computational modeling has become essential for deciphering the mechanisms underlying condensate formation, regulation, and function, particularly in the context of drug discovery and therapeutic development [31] [32].

Fundamental Principles of Biomolecular Condensates

Formation and Physical Nature

Biomolecular condensates form primarily through liquid-liquid phase separation (LLPS), a demixing process where specific biomolecules segregate into dense and dilute phases [31]. Unlike membrane-bound organelles, condensates are dynamic structures that rapidly assemble and disassemble in response to cellular conditions [29]. The term "biomolecular condensate" encompasses non-stoichiometric assemblies composed of multiple types of macromolecules that form through phase transitions and can be investigated using concepts from soft matter physics [30]. These condensates exhibit tunable emergent properties including interfaces, interfacial tension, viscoelasticity, network structure, dielectric permittivity, and sometimes interphase pH gradients and electric potentials [30].

The material states of condensates exist on a spectrum from purely liquid to gel-like or semi-solid states, with their physical properties determined by the network structure of the condensate, transport properties within it, and the timescales of molecular contact formation and dissolution [30]. Of particular biological significance is the phenomenon of liquid-to-solid transitions (LSTs), where dynamic liquid-like condensates can irreversibly evolve into arrested states, a process implicated in various neurodegenerative diseases [31].

Key Driving Forces and Molecular Interactions

The formation of biomolecular condensates is driven primarily by multivalent interactions between biomolecules [29]. These interactions include:

- π-π stacking and cation-π interactions between aromatic residues and positively charged molecules

- Electrostatic interactions between cations and anions

- Dipole-dipole interactions and hydrophobic effects

- Hydrogen bonding networks that facilitate molecular clustering

In proteins, multivalent interactions are often mediated by intrinsically disordered regions (IDRs) or low-complexity domains (LCDs) that lack stable tertiary structures but provide multiple interaction sites [29]. A "stickers and spacers" model has been proposed, where interaction-promoting residues or domains ("stickers") are spaced throughout strings of flexible disorder-promoting residues ("spacers"), allowing substantial orientational freedom while maintaining quaternary interactions necessary for condensation [31].

Table 1: Key Molecular Components in Biomolecular Condensate Assembly

| Component Type | Description | Biological Examples |

|---|---|---|

| Scaffolds | Molecules that initiate condensate nucleation | G3BP1 in stress granules, NEAT1 lncRNA in paraspeckles [29] |

| Clients | Co-phase-separating molecules recruited to condensates | Various proteins and nucleic acids that partition into existing condensates [29] |

| Intrinsically Disordered Proteins/Regions (IDPs/IDRs) | Proteins or regions lacking stable 3D structure that facilitate multivalent interactions | TDP-43, FUS, RNA-binding proteins with prion-like domains [31] [29] |

| Structured Domains | Folded protein domains that contribute specific binding interactions | Various protein domains with defined tertiary structures [31] |

Experimental Methodologies for Condensate Research

Core Techniques for Cellular Study

Characterizing biomolecular condensates in their native cellular environment requires specialized experimental approaches. Live-cell imaging is recommended whenever possible to avoid artifacts associated with fixation, with technique selection guided by condensate size [30]. For large condensates (>300 nanometers), wide-field or confocal microscopy provides sufficient resolution, while smaller condensates or clusters (20-300 nanometers) require super-resolution techniques such as Airyscan, structured illumination microscopy (SIM), photo-activated localization microscopy (PALM), or stimulated emission depletion (STED) microscopy [30].

Fluorescence recovery after photobleaching (FRAP) enables quantification of molecular transport dynamics and material properties within condensates by measuring the rate at which fluorescent molecules repopulate a bleached region [30]. Single-particle tracking provides complementary data on protein localization and diffusion characteristics, offering insights into the dynamic organization of condensates [30]. To map condensate composition in cells, researchers employ crosslinking experiments, immunoprecipitation, or proximity labeling approaches followed by mass spectrometry [30].
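Two quantities commonly extracted from a FRAP trace are the mobile fraction and the recovery half-time. The sketch below is a simple model-free estimate and rests on several assumptions not stated in the source: intensities are already background-corrected and normalized, the first point is the first post-bleach frame, and the last point approximates the recovery plateau. The function name and the trace values are hypothetical.

```python
def frap_summary(times, intensities, pre_bleach):
    """Estimate mobile fraction and half-time from a FRAP recovery trace.

    times, intensities: post-bleach samples, starting at the bleach frame.
    pre_bleach: mean intensity before bleaching (same normalization).
    Returns (mobile_fraction, half_time), with half_time measured from
    the first post-bleach frame.
    """
    if len(times) != len(intensities) or len(times) < 2:
        raise ValueError("need at least two matching time/intensity samples")
    i0 = intensities[0]
    plateau = intensities[-1]  # assumption: trace has reached its plateau
    if pre_bleach <= i0:
        raise ValueError("pre-bleach intensity must exceed post-bleach value")
    mobile_fraction = (plateau - i0) / (pre_bleach - i0)

    # Locate the half-recovery level by linear interpolation between frames.
    half_level = i0 + 0.5 * (plateau - i0)
    for k in range(1, len(times)):
        lo, hi = intensities[k - 1], intensities[k]
        if lo < half_level <= hi:
            frac = (half_level - lo) / (hi - lo)
            t_half = times[k - 1] + frac * (times[k] - times[k - 1])
            return mobile_fraction, t_half - times[0]
    raise ValueError("trace never crosses the half-recovery level")


# Hypothetical normalized trace (time in seconds; illustrative only).
t = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
signal = [0.20, 0.35, 0.45, 0.53, 0.58, 0.60]

mobile, t_half = frap_summary(t, signal, pre_bleach=1.0)
print(f"mobile fraction = {mobile:.2f}, half-time = {t_half:.2f} s")
```

A short half-time with a high mobile fraction is what a liquid-like condensate would be expected to show, whereas slow or incomplete recovery points toward gel-like or arrested states.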

Advanced Assay Development

More complex physiological relevance can be incorporated through advanced assay systems that better recapitulate the tumor microenvironment. These include:

- Antibody-dependent cellular cytotoxicity (ADCC) assays via live cell imaging that assess both immune-mediated and payload-mediated cell killing in real time using systems like the Incucyte S3 Live-Cell Analysis System [33]

- Spheroid ADCC assays utilizing 3D tumor spheroids to model solid tumor microenvironments and evaluate drug penetration through dense cellular structures [33]

- Bystander ADCC assays in co-cultures that assess bystander effects in mixed populations of HER2-positive and HER2-negative cells in the presence and absence of immune cells [33]

These advanced models address critical aspects of condensate function in physiological contexts, including heterogeneity tolerance, matrix penetration, and immune cooperation [33].

Research Workflow for Biomolecular Condensate Characterization

Computational and Theoretical Approaches

Multi-Scale Modeling Strategies

Computational approaches to biomolecular condensates span multiple scales, from atomistic details to cellular-level organization. Multi-scale modeling integrates different levels of biological organization through hierarchical modeling (developing models at different scales with each building upon the previous), hybrid modeling (combining deterministic and stochastic approaches), and multi-resolution modeling (using varying levels of resolution to capture cellular dynamics) [34]. These approaches enable researchers to connect molecular interactions to protein complex formation, signaling pathways, and ultimately cellular behavior [34].

All-atom (AA) molecular dynamics provides detailed insights at atomistic resolution but remains limited by computational constraints, capturing only short timescales and small conformational changes [35]. In contrast, coarse-grained (CG) models extend simulations to biologically relevant time and length scales by reducing molecular complexity, though they sacrifice atomic-level accuracy [35]. Recent advancements in machine learning (ML)-driven biomolecular simulations include the development of ML potentials with quantum-mechanical accuracy and ML-assisted backmapping strategies from CG to AA resolutions [35].

Density Functional Theory in Biological Context

Density functional theory (DFT) has become a popular method for calculating molecular properties for systems ranging from small organic molecules to large biological compounds such as proteins [18]. DFT methods scale favorably with molecular size compared to Hartree-Fock and post-Hartree-Fock methods and, unlike the Hartree-Fock method, describe electron correlation effects [18]. For biological applications, functionals can be categorized into several classes: local spin density approximation (LSDA), generalized gradient approximation (GGA), meta-GGA, hybrid-GGA, and hybrid-meta-GGA, with the latter typically demonstrating the highest accuracy for molecular properties relevant to biological systems [18].

Table 2: Comparison of Computational Methods for Biomolecular Condensate Research

| Method Category | Key Advantages | Limitations | Representative Applications |

|---|---|---|---|

| All-Atom Molecular Dynamics | Atomistic resolution; Detailed interaction analysis | Computationally expensive; Limited to short timescales | Studying molecular interactions within condensates [35] |

| Coarse-Grained Models | Extended timescales; Larger system sizes | Loss of atomic detail; Parameterization challenges | Modeling condensate formation and large-scale dynamics [35] |

| Machine Learning Potentials | Quantum-mechanical accuracy; Improved efficiency | Training data requirements; Transferability concerns | Accurate force field development [35] |

| Density Functional Theory | Electron correlation effects; Favorable scaling | Accuracy depends on the chosen functional; System size constraints | Electronic properties of condensate components [18] |

Biological Functions and Pathological Implications

Physiological Roles in Cellular Processes

Biomolecular condensates regulate fundamental cellular processes through their ability to concentrate specific biomolecules while excluding others. In the nucleus, condensates such as the nucleolus, nuclear speckles, and Cajal bodies organize transcription, splicing fidelity, and ribosomal biogenesis [29]. The nucleolus represents a multiphase liquid condensate that concentrates ribosomal proteins and RNA to enhance assembly efficiency while preventing non-specific interactions [31]. In the cytoplasm, condensates including stress granules, processing bodies (P-bodies), RNA transport granules, U-bodies, and Balbiani bodies mediate translational arrest, mRNA storage and decay, and signal transduction regulation [29].

Condensates can accelerate or suppress biochemical reactions, aid in the storage or sequestration of molecules, patch damaged membranes, and generate mechanical capillary forces [30]. Cells utilize phase separation to sense and respond to environmental changes or to buffer against concentration fluctuations in the cytosol or nucleoplasm [30]. This functional versatility stems from the dynamic nature of condensates, which allows rapid reorganization of cellular composition in response to internal and external cues.

Dysregulation in Disease Processes

Aberrant formation or regulation of biomolecular condensates has been implicated in the pathogenesis of several diseases. In neurodegenerative disorders such as amyotrophic lateral sclerosis (ALS) and frontotemporal dementia (FTD), pathogenic solidification of condensates through liquid-to-solid transitions impairs neuronal resilience [31] [29]. Disease-associated mutations in key residues of proteins like TDP-43, FUS, and alpha-synuclein often promote pathological phase separation, contributing to condensate dysregulation [29] [32].

In cancer cells, altered condensate dynamics may promote stress tolerance, apoptotic resistance, and immune evasion [29] [32]. During viral infections, host condensates can be subverted to facilitate viral replication or block antiviral responses [29]. The dysregulation of condensation processes can lead to a gain of function that drives specific disease pathologies, making condensate modulation a promising therapeutic frontier [30] [32].

Experimental Data and Comparison

Quantitative Assessment of Methodologies

The study of biomolecular condensates requires validation of both experimental and computational approaches. For computational methods, assessment typically involves comparing calculated molecular properties with experimentally determined values for bond lengths, bond angles, ground state vibrational frequencies, hydrogen bond interaction energies, conformational energies, and reaction barrier heights [18]. Basis sets such as 6-31G* and 6-31+G* often provide accuracies similar to more computationally expensive Dunning-type basis sets for biological applications [18].

In experimental settings, comparative studies enable researchers to evaluate how distinct design parameters influence functional outcomes. For example, studies comparing HER2-targeting ADCs Kadcyla and Enhertu have demonstrated how linker stability and payload characteristics affect efficacy in complex tumor environments [33]. While Kadcyla showed cell killing due to its potent DM1 payload, Enhertu leveraged its cleavable linker to induce bystander killing in mixed cell populations, extending its reach beyond HER2-positive targets [33].

Integrated Workflows for Condensate Analysis

The most powerful approaches combine multiple methodologies to overcome individual limitations. Integrated workflows might begin with coarse-grained simulations to identify potential phase separation conditions, followed by all-atom molecular dynamics to elucidate detailed interaction mechanisms, and finally experimental validation using live-cell imaging and biophysical characterization [31]. Such integrated strategies narrow the gap between computation and experimentation, enabling researchers to elucidate molecular mechanisms governing biomolecular condensates that would remain inaccessible through single-method approaches [31].

Multi-Scale Research Approach for Biomolecular Condensates

Research Reagent Solutions and Essential Materials

Table 3: Essential Research Tools for Biomolecular Condensate Studies

| Reagent/Platform | Category | Primary Function | Application Examples |
|---|---|---|---|
| Incucyte S3 Live-Cell Analysis System | Live-cell imaging | Real-time monitoring of cell death kinetics and dynamic cellular processes | ADCC assays; Spheroid analysis [33] |
| Cytoscape | Computational platform | Network analysis and visualization of complex molecular interactions | Protein-protein interaction networks in crowding [34] |
| CellProfiler | Image analysis software | Automated image analysis and quantification of cellular structures | High-throughput condensate characterization [34] |
| Super-resolution microscopy (STED, PALM, STORM) | Imaging technology | Visualization of sub-diffraction limit structures (20-300 nm) | Small condensate and cluster imaging [30] |
| FRAP (Fluorescence Recovery After Photobleaching) | Biophysical technique | Measurement of molecular dynamics and material properties within condensates | Quantifying condensate fluidity and exchange rates [30] |
| 3D Tumor Spheroids | Biological model system | Recreation of tumor microenvironment with heterogeneous cell populations | Studying drug penetration and bystander effects [33] |
| Organoids/Tumoroids | Advanced biological models | Physiologically relevant tissue mimics with cellular heterogeneity | Assessing ADC efficacy in near-physiological contexts [33] |

Future Perspectives and Concluding Remarks

The field of biomolecular condensates continues to evolve rapidly, with emerging technologies enabling increasingly sophisticated investigations. CRISPR/Cas-based imaging, optogenetic manipulation, and AI-driven phase separation prediction tools represent cutting-edge approaches that allow real-time monitoring and precision targeting of condensate dynamics [29]. These technologies underscore the emerging potential of biomolecular condensates as both biomarkers and therapeutic targets, paving the way for precision medicine approaches in condensate-associated diseases [29].

The integration of computational and experimental methods will be essential for advancing our understanding of condensate biology. Machine learning approaches that predict phase separation propensity from protein sequence alone, combined with advanced molecular dynamics simulations that account for cellular crowding effects, will enhance our ability to link molecular features to condensate behavior [36] [35]. As these methodologies mature, researchers will be better equipped to develop therapeutic strategies that selectively modulate condensate formation and function in disease contexts, potentially leading to novel treatments for cancer, neurodegenerative disorders, and other conditions linked to condensate dysregulation [31] [29] [32].

Practical Applications: From Drug Design to Biomolecular Interaction Analysis

Decoding Drug-Excipient Interactions and Co-Crystallization in Solid Dosage Forms

The development of effective solid oral dosage forms represents a critical challenge in pharmaceutical sciences, particularly for Active Pharmaceutical Ingredients (APIs) with poor aqueous solubility. More than 40% of marketed drugs and 90% of new chemical entities fall into Biopharmaceutics Classification System (BCS) Classes II and IV, characterized by low solubility and/or permeability that severely limits their bioavailability [37]. Within solid dosage forms, drug-excipient interactions and co-crystallization approaches have emerged as pivotal strategies to modulate pharmaceutical performance. These molecular-level interactions fundamentally govern critical properties including solubility, stability, dissolution rates, and ultimately, therapeutic efficacy.

The paradigm of formulation science is shifting from empirical approaches toward precision molecular design, driven by advances in computational chemistry. Density Functional Theory (DFT) has driven much of this transition by providing quantum mechanical insights into the electronic structures governing API-excipient interactions [38] [39]. By solving the Kohn-Sham equations, with a reported precision of up to 0.1 kcal/mol that in practice depends on the chosen functional and basis set, DFT enables reconstruction of molecular orbital interactions, offering theoretical guidance for optimizing drug-excipient composite systems and accelerating the development of robust pharmaceutical formulations.

Theoretical Framework: DFT in Pharmaceutical System Analysis

Fundamental Principles of Density Functional Theory

DFT is a computational quantum mechanical method that describes multi-electron systems through the electron density rather than the many-electron wavefunction, which substantially reduces computational cost while maintaining useful accuracy. The theoretical foundation rests on the Hohenberg-Kohn theorems, which establish that all ground-state properties of a system are uniquely determined by its electron density distribution [39]. This principle enables the practical application of DFT to complex pharmaceutical systems through the Kohn-Sham equations, which map the interacting multi-electron problem onto a tractable set of single-electron equations.
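For reference, the Kohn-Sham equations mentioned above can be written in their standard textbook form (atomic units). This is a generic statement rather than a formulation taken from the cited sources; the symbols \phi_i (Kohn-Sham orbitals), \varepsilon_i (orbital energies), v_{\mathrm{eff}} (effective potential), and v_{\mathrm{xc}} (exchange-correlation potential) are introduced here, while V(\mathbf{r}) and n(\mathbf{r}) denote the external potential and the electron density as elsewhere in this guide.

```latex
\[
\left[ -\tfrac{1}{2}\nabla^{2} + v_{\mathrm{eff}}(\mathbf{r}) \right] \phi_i(\mathbf{r})
  = \varepsilon_i \, \phi_i(\mathbf{r}),
\qquad
n(\mathbf{r}) = \sum_{i}^{\mathrm{occ}} \left| \phi_i(\mathbf{r}) \right|^{2}
\]
\[
v_{\mathrm{eff}}(\mathbf{r}) = V(\mathbf{r})
  + \int \frac{n(\mathbf{r}')}{\left| \mathbf{r} - \mathbf{r}' \right|} \, d\mathbf{r}'
  + v_{\mathrm{xc}}(\mathbf{r})
\]
```

Because v_{\mathrm{eff}} depends on the density that the orbitals themselves generate, the equations are solved self-consistently, and the only term that must be approximated is v_{\mathrm{xc}}.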

The accuracy of DFT calculations depends critically on selecting appropriate exchange-correlation functionals, which approximate quantum effects not captured in the basic equations. For pharmaceutical applications, generalized gradient approximation (GGA) functionals excel at describing hydrogen bonding systems, while hybrid functionals (e.g., B3LYP, PBE0) provide superior accuracy for reaction mechanisms and molecular spectroscopy [39]. Recent innovations include double hybrid functionals that incorporate second-order perturbation theory corrections, substantially improving the accuracy of excited-state energies and reaction barrier calculations relevant to drug stability.

DFT Applications in Drug-Excipient Interaction Analysis

DFT calculations provide multidimensional insights into drug-excipient interactions through several key applications:

Reaction Site Identification: Molecular Electrostatic Potential (MEP) maps and Average Local Ionization Energy (ALIE) analyses identify electron-rich (nucleophilic) and electron-deficient (electrophilic) regions on drug molecules, predicting susceptible sites for interactions with excipients [39].

Binding Energy Calculations: DFT quantifies intermolecular binding energies between API and excipient molecules, enabling rational selection of compatible excipient combinations. For example, stronger excipient-API interactions can potentially disrupt cocrystal stability during processing [40].

Solvation Modeling: Combined with continuum solvation models (e.g., COSMO), DFT quantitatively evaluates polar environmental effects on drug release kinetics, providing critical thermodynamic parameters (e.g., ΔG) for controlled-release formulation development [38].
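The binding-energy and free-energy quantities in the list above can be illustrated with a minimal Python sketch. It assumes the simple supermolecular definition (energy of the complex minus the energies of the isolated API and excipient) and omits corrections such as basis-set superposition error or dispersion, which a real study would need to consider. The function names and the numerical energies are hypothetical and are not taken from the cited work.

```python
import math

HARTREE_TO_KCAL_PER_MOL = 627.5095  # standard unit conversion factor
R_KCAL_PER_MOL_K = 1.987204e-3      # gas constant in kcal/(mol*K)


def binding_energy_kcal(e_complex, e_api, e_excipient):
    """Supermolecular binding energy in kcal/mol from total energies in hartree.

    A negative value means the complex lies below the separated fragments.
    """
    return (e_complex - e_api - e_excipient) * HARTREE_TO_KCAL_PER_MOL


def equilibrium_constant(delta_g_kcal, temperature_k=298.15):
    """Convert a free-energy change (kcal/mol) to K = exp(-dG / RT)."""
    return math.exp(-delta_g_kcal / (R_KCAL_PER_MOL_K * temperature_k))


# Hypothetical total energies in hartree, for illustration only.
e_bind = binding_energy_kcal(-1500.020, -1000.000, -500.010)
print(f"binding energy: {e_bind:.2f} kcal/mol")
print(f"K for dG = -1.0 kcal/mol: {equilibrium_constant(-1.0):.2f}")
```

Comparing such values across candidate excipients gives a relative ranking; the absolute numbers remain sensitive to the functional, basis set, and solvation model used.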

The integration of DFT with multiscale computational paradigms represents a significant advancement. The ONIOM framework employs DFT for high-precision calculations of drug molecule core regions while using molecular mechanics force fields to model excipient environments, achieving an optimal balance between accuracy and computational efficiency [39].
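As a point of reference, the two-layer ONIOM energy is usually written as a subtractive expression. The form below is the generic scheme, not one reproduced from the cited source; the labels "model" (here the drug core region) and "real" (the full drug-excipient system) are the conventional ONIOM terms, with DFT as the high level and a molecular mechanics force field (MM) as the low level.

```latex
\[
E_{\mathrm{ONIOM}} = E_{\mathrm{DFT}}(\mathrm{model})
  + E_{\mathrm{MM}}(\mathrm{real})
  - E_{\mathrm{MM}}(\mathrm{model})
\]
```

The subtraction removes the force-field description of the core region so that it is counted only once, at the DFT level.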

Figure 1: DFT Application Workflow in Pharmaceutical Formulation Design

Pharmaceutical Co-crystallization: Principles and Methodologies

Cocrystal Fundamentals and Regulatory Landscape

Pharmaceutical cocrystals are defined as crystalline materials composed of two or more different molecules in a definite stoichiometric ratio within the same crystal lattice, associated by nonionic and noncovalent bonds, where at least one component is an API [41]. Unlike salts, which require ionizable functional groups, cocrystals can form with virtually any API regardless of its ionization potential, significantly expanding their application scope.

The regulatory framework for pharmaceutical cocrystals has evolved substantially. The U.S. Food and Drug Administration (FDA) currently defines cocrystals as "crystalline materials composed of two or more different molecules within the same crystal lattice associated by nonionic and noncovalent bonds" [41]. The European Medicines Agency (EMA) similarly describes cocrystals as a viable alternative to salts of the same API and regards them as equivalent to the API, except where they show distinct pharmacokinetic properties [41].

Cocrystal Engineering and Supramolecular Chemistry

Cocrystal formation is governed by supramolecular synthons, which are specific patterns of intermolecular interactions that direct crystal packing. These include:

Supramolecular Homosynthons: Formed by self-complementary functional groups, such as carboxylic acid dimers or amide dimers [41].

Supramolecular Heterosynthons: Organized by different but complementary functional groups, such as carboxylic acid-pyridine and alcohol-aromatic nitrogen hydrogen bonding [41].

Common functional groups particularly amenable to cocrystal formation include carboxylic acids, amides, and alcohols, which provide reliable interaction motifs for crystal engineering [41].

Experimental Methodologies for Cocrystal Preparation

Cocrystal preparation methods are broadly classified into solution-based and solid-based techniques, each with distinct advantages and limitations:

Solution-Based Methods include solvent evaporation, antisolvent addition, cooling crystallization, and slurry conversion. The solvent evaporation method involves completely dissolving cocrystal constituents in a suitable solvent at appropriate stoichiometric ratios, then evaporating the solvent to obtain cocrystals. This method is particularly valuable for producing high-quality single crystals suitable for structural analysis by single-crystal X-ray diffraction [41]. For example, a 1:1 febuxostat-piroxicam cocrystal with improved solubility and tabletability was formed by slow evaporation of acetonitrile at room temperature over 3-5 days [41].
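The stoichiometric weighing step in solvent evaporation can be sketched as a short calculation. This is a minimal illustration: the helper name `coformer_mass_mg` is hypothetical, and the molar masses used in the example (about 316.4 g/mol for febuxostat and 331.3 g/mol for piroxicam) are approximate values supplied here, not figures from the cited study, and should be checked against a reference before use.

```python
def coformer_mass_mg(api_mass_mg, api_molar_mass, coformer_molar_mass,
                     api_equiv=1, coformer_equiv=1):
    """Mass of coformer (mg) matching a given API mass at a molar ratio.

    Molar masses are in g/mol; the ratio is api_equiv:coformer_equiv.
    """
    if api_molar_mass <= 0 or coformer_molar_mass <= 0:
        raise ValueError("molar masses must be positive")
    api_mmol = api_mass_mg / api_molar_mass            # mg / (g/mol) = mmol
    coformer_mmol = api_mmol * coformer_equiv / api_equiv
    return coformer_mmol * coformer_molar_mass         # mmol * g/mol = mg


# Illustrative 1:1 case with approximate molar masses (assumed values).
mass = coformer_mass_mg(100.0, api_molar_mass=316.4, coformer_molar_mass=331.3)
print(f"coformer needed for 100 mg API at 1:1: {mass:.1f} mg")
```

With these assumed molar masses, 100 mg of febuxostat corresponds to roughly 105 mg of piroxicam at a 1:1 molar ratio.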

The antisolvent method enables control over cocrystal quality and particle size through semibatch or continuous manufacturing processes. Chun et al. demonstrated this approach by preparing indomethacin-saccharin cocrystals through addition of water (antisolvent) to a methanol solution containing both API and coformer [41].

Solid-Based Methods include neat grinding, liquid-assisted grinding, and melting crystallization. These techniques eliminate or minimize solvent use, offering environmental and processing advantages. Liquid-assisted grinding typically involves adding catalytic amounts of solvent to enhance molecular mobility and reaction kinetics during grinding [41].

Table 1: Comparison of Cocrystal Preparation Methods

| Method | Principle | Advantages | Limitations | Quality Indicators |
|---|---|---|---|---|
| Solvent Evaporation | Solubility-based crystallization through solvent removal | High-quality crystals, suitable for structural analysis | High solvent consumption, potential polymorphism | Single crystal formation, defined morphology |
| Antisolvent Crystallization | Reduced solubility through antisolvent addition | Particle size control, continuous processing possible | Solvent/antisolvent selection critical | Consistent particle size distribution |
| Neat Grinding | Mechanochemical activation through milling | Solvent-free, simple operation | Possible amorphous formation, energy intensive | Phase purity by PXRD |
| Liquid-Assisted Grinding | Mechanochemistry with catalytic solvent | Faster kinetics, higher yields | Small solvent amounts required | Uniform phase formation |
| Slurry Conversion | Suspension in saturated solution | Scalable, controls polymorphic form | Long processing times possible | Conversion completeness by DSC |

Analytical Techniques for Characterizing Drug-Excipient Interactions

Comprehensive Compatibility Screening Protocols

Robust evaluation of drug-excipient compatibility follows standardized protocols involving binary physical mixtures (typically 1:1 w/w ratio) of API or cocrystal with excipients, followed by stress testing under accelerated conditions (40°C/75% relative humidity) and comprehensive characterization using orthogonal analytical techniques [42].

Differential Scanning Calorimetry (DSC) provides initial rapid screening by detecting thermal events indicative of interactions. Changes in melting endotherms, peak broadening, or appearance of new thermal events suggest potential incompatibilities. In Ketoconazole-Adipic Acid cocrystal compatibility studies, DSC revealed altered thermal behavior with magnesium stearate, suggesting eutectic formation, while other excipients showed compatibility [42].

Thermogravimetric Analysis (TGA) complements DSC by monitoring mass changes associated with decomposition, dehydration, or desorption processes. The stability of decomposition profiles in binary mixtures indicates absence of chemical interactions [42].

Powder X-Ray Diffraction (PXRD) detects crystalline phase changes, including polymorphic transformations, cocrystal dissociation, or amorphous formation. Maintenance of characteristic cocrystal diffraction patterns in binary mixtures confirms physical stability [40] [42].

Fourier-Transform Infrared Spectroscopy (FT-IR) identifies chemical interactions through shifts in characteristic absorption bands, particularly in functional groups involved in hydrogen bonding or other molecular interactions [42].
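The PXRD criterion described above (retention of the characteristic cocrystal diffraction pattern in a binary mixture) can be expressed as a simple peak-matching check. The sketch below is an illustration under stated assumptions: peak positions are given as lists in degrees 2θ, the 0.2° matching tolerance is an assumed default rather than a value from the cited protocol, and the function names and peak lists are hypothetical.

```python
def retained_peak_fraction(reference_peaks, observed_peaks, tolerance=0.2):
    """Fraction of reference peaks (degrees 2-theta) that have a match in
    the observed pattern within the given tolerance."""
    if not reference_peaks:
        raise ValueError("reference_peaks must not be empty")
    matched = sum(
        any(abs(ref - obs) <= tolerance for obs in observed_peaks)
        for ref in reference_peaks
    )
    return matched / len(reference_peaks)


def unmatched_peaks(reference_peaks, observed_peaks, tolerance=0.2):
    """Observed peaks with no counterpart in the reference list; these may
    indicate a new phase or simply reflections of the excipient."""
    return [
        obs for obs in observed_peaks
        if not any(abs(obs - ref) <= tolerance for ref in reference_peaks)
    ]


# Hypothetical peak positions, for illustration only.
cocrystal = [10.0, 15.0, 20.0, 25.0]
stressed_mixture = [10.1, 15.05, 22.0, 25.0]
print(retained_peak_fraction(cocrystal, stressed_mixture))
print(unmatched_peaks(cocrystal, stressed_mixture))
```

In a real binary mixture the excipient contributes its own reflections, so the reference list would normally combine cocrystal and excipient peaks before unmatched peaks are interpreted as a new phase. Intensities and peak widths, which this sketch ignores, also carry information about amorphization.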

Advanced Computational and Experimental Integration

DFT calculations strengthen experimental characterization by providing quantum mechanical insights into observed interactions. For example, DFT calculations of intermolecular binding energies between cocrystal constituents and excipients rationalized the experimental observation that certain excipients (PEG, HPMC, lactose) yielded purer cocrystals during milling, while others (PVP, MCC), which had stronger calculated interactions, potentially compromised cocrystal integrity [40].

Figure 2: Drug-Excipient Compatibility Screening Workflow

Comparative Performance Analysis: Cocrystals vs. Alternative Formulation Strategies

Property Modification Through Cocrystal Engineering

Cocrystals provide versatile platforms for modulating critical pharmaceutical properties without covalent modification of the API:

Solubility and Dissolution Enhancement: The Ketoconazole-Adipic Acid cocrystal demonstrated approximately 100-fold aqueous solubility improvement over pure Ketoconazole, directly addressing the bioavailability limitations of this BCS Class II drug [42].