Validating Quantum Effects in Chemical Environments: From Theory to Drug Discovery Applications

Abstract

This article explores the critical challenge of validating quantum mechanical effects within diverse chemical environments, a frontier for accelerating drug discovery and materials science. It examines the foundational limitations of classical computational methods, details emerging hybrid quantum-classical methodologies and their practical applications, addresses key hurdles in optimization and scalability, and establishes a framework for validating these approaches against classical benchmarks and experimental data. Aimed at researchers and drug development professionals, this review synthesizes current progress and outlines the path toward achieving reliable, quantum-validated simulations for biologically relevant systems.

The Quantum Imperative: Why Chemical Environments Challenge Classical Simulation

Density Functional Theory (DFT) stands as a cornerstone of modern computational chemistry and materials science, providing indispensable insights into electronic structures and enabling the prediction of material properties from first principles. By solving the Kohn-Sham equations with quantum mechanical precision, DFT reconstructs molecular orbital interactions and facilitates a systematic understanding of complex behaviors in diverse systems, from drug-excipient composites to heterogeneous catalysts [1]. Its computational efficiency compared to higher-level quantum methods has made it the workhorse for electronic structure calculations across scientific disciplines. However, despite its widespread adoption and numerous successes, DFT possesses fundamental limitations that constrain its predictive accuracy for specific critical properties and systems. These limitations stem from approximations inherent in the exchange-correlation functionals, which can introduce systematic errors in total energy calculations [2]. This analysis examines the specific domains where DFT falls short, quantifies these limitations with experimental data, outlines methodologies for error identification, and explores emerging solutions that combine DFT with machine learning to overcome its constraints.

Quantitative Analysis of DFT Limitations: Performance Data

The limitations of DFT become particularly evident when comparing its predictions with experimental measurements across various material classes and properties. The following tables summarize systematic errors observed in DFT calculations.

Table 1: DFT Performance Across Material Classes and Properties

| Material Class | Property | Common DFT Error | Primary Source of Error | Experimental Comparison |
|---|---|---|---|---|
| Strongly Correlated Systems (e.g., Metal Oxides) | Band Gap | Underestimates by 30-100% (e.g., predicts metals instead of semiconductors) [3] | Self-interaction error, inadequate treatment of localized d/f electrons [3] | TiO₂ (rutile): Exp. ~3.0 eV, PBE: ~1.8 eV, PBE+U: ~3.0 eV [3] |
| Magnetic Transition Metals (Fe, Co, Ni) | Adsorption Energy/Reaction Barrier | Significant errors in binding energies and activation barriers [4] | Omission of spin polarization effects in large-scale datasets [4] | Spin-polarized calculations are required but often omitted due to computational cost [4] |
| Binary/Ternary Alloys | Formation Enthalpy ($H_f$) | Intrinsic energy resolution errors limit predictive capability for phase stability [2] | Limitations of exchange-correlation functionals [2] | Errors large enough to incorrectly predict stable phases in ternary diagrams [2] |
| General Molecular Systems | Free Energy | Variations up to 5 kcal/mol due to grid sensitivity [5] | Numerical integration grids not rotationally invariant [5] | Free energies depend on molecular orientation unless a dense integration grid, such as (99,590) (99 radial shells with 590 angular points each), is used [5] |

Table 2: Comparative Accuracy of Computational Methods

| Method | Computational Cost | Typical System Size (Atoms) | Key Limitations | Representative Accuracy |
|---|---|---|---|---|
| DFT (GGA/PBE) | Medium | 100-1000 | Systematically underestimates band gaps; poor for strongly correlated electrons [3] | Mean Absolute Error (MAE) of several eV for band gaps in metal oxides [3] |
| DFT+U | Medium-High | 100-1000 | Requires empirical U parameter; not ab initio [3] | Band gap MAE can be reduced to ~0.1 eV with optimal U [3] |
| Hybrid DFT (e.g., B3LYP) | High | 50-500 | Computationally intensive (orders of magnitude more expensive than GGA-level DFT) [3] | Improved band gaps but often still underestimated [3] |
| Machine Learning Interatomic Potentials (MLIPs) | Low | 10,000+ | Require extensive DFT training data; limited transferability [4] [6] | Near-DFT accuracy for energies and forces at ~0.01% cost [4] |
| Classical Force Fields | Very Low | 1,000,000+ | Cannot describe bond breaking/formation (non-reactive) [6] | Low accuracy for chemical reactions; parameter-dependent [6] |

Experimental Protocols for Identifying and Quantifying DFT Errors

Benchmarking Band Gaps in Strongly Correlated Systems

Protocol Objective: To quantify the band gap underestimation error in metal oxides and determine optimal Hubbard U correction parameters [3].

Methodology:

- System Selection: Choose metal oxides with known experimental band gaps (e.g., rutile/anatase TiO₂, ZnO, CeO₂, ZrO₂).

- DFT+U Calculations: Perform structural optimization and electronic structure calculations using DFT+U with varying ($U_p$, $U_{d/f}$) parameter pairs. $U_p$ applies to oxygen 2p orbitals, while $U_{d/f}$ applies to the metal d or f orbitals (e.g., 3d or 4f).

- Data Collection: For each ($U_p$, $U_{d/f}$) pair, compute the lattice parameters and band gap.

- Error Quantification: Calculate the deviation of calculated properties from experimental values: $\Delta E_g = |E_{g,\mathrm{calc}} - E_{g,\mathrm{exp}}|$ and $\Delta a = |a_{\mathrm{calc}} - a_{\mathrm{exp}}|$.

- Optimal Parameter Identification: Identify the ($U_p$, $U_{d/f}$) pair that minimizes the combined error in band gap and lattice parameters [3].

Key Findings: Incorporating $U_p$ for oxygen 2p orbitals alongside the traditional $U_{d/f}$ for metal orbitals significantly enhances prediction accuracy for both lattice parameters and band gaps in metal oxides [3].
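The parameter search in this protocol can be sketched as a small grid scan. The example below is only an illustration: the source does not define how the two errors are combined, so the sum of relative errors used here is an assumption, and the scan values are hypothetical placeholders rather than computed results.

```python
from math import inf


def best_u_pair(results, gap_exp, a_exp):
    """Return the (U_p, U_d/f) pair with the smallest combined relative error.

    results maps (U_p, U_df) in eV to (band gap in eV, lattice parameter in Å).
    The combined error is the sum of the two relative errors (an assumption).
    """
    best_pair, best_err = None, inf
    for pair, (gap_calc, a_calc) in results.items():
        gap_err = abs(gap_calc - gap_exp) / gap_exp
        lattice_err = abs(a_calc - a_exp) / a_exp
        combined = gap_err + lattice_err
        if combined < best_err:
            best_pair, best_err = pair, combined
    return best_pair, best_err


# Hypothetical scan results for illustration only (not real DFT+U output).
scan = {
    (0.0, 0.0): (1.8, 4.65),
    (4.0, 6.0): (2.6, 4.62),
    (8.0, 8.0): (3.0, 4.60),
}
pair, err = best_u_pair(scan, gap_exp=3.0, a_exp=4.59)
print(pair, round(err, 4))
```

Using relative rather than absolute errors keeps the band gap (in eV) and the lattice parameter (in Å) on a comparable scale; a weighted sum would be an equally reasonable choice if one property matters more.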

Correcting Formation Enthalpies in Alloy Systems

Protocol Objective: To improve the accuracy of DFT-predicted formation enthalpies ($H_f$) for phase stability calculations in ternary alloys using machine learning [2].

Methodology:

- Reference Data Curation: Compile a dataset of experimentally measured formation enthalpies for binary and ternary alloys (e.g., Al-Ni-Pd, Al-Ni-Ti systems). Filter out unreliable or missing values.

- DFT Calculations: Compute DFT formation enthalpies for the same compounds using standard functionals (e.g., PBE).

- Feature Engineering: Characterize each material with a feature set including elemental concentrations, atomic numbers, and interaction terms: $\mathbf{x} = [x_A, x_B, x_C, \ldots]$ and $\mathbf{z} = [x_A Z_A, x_B Z_B, x_C Z_C, \ldots]$, where $x_i$ is the atomic fraction and $Z_i$ the atomic number of element $i$ [2].

- Model Training: Train a neural network (e.g., Multi-Layer Perceptron) to predict the discrepancy $\Delta H_f = H_{f,\mathrm{exp}} - H_{f,\mathrm{DFT}}$ using the feature set as input.

- Validation: Apply the trained model to predict corrections for DFT-calculated $H_f$ values in new ternary compositions and validate against experimental phase diagrams [2].

Key Findings: ML corrections significantly enhance predictive accuracy for phase stability, enabling more reliable determination of stable phases in ternary systems where uncorrected DFT fails [2].
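The feature construction and correction target described in this protocol can be sketched in a few lines. This is a minimal illustration, not the cited authors' code: the function names are hypothetical, the neural network itself is omitted, and only the inputs and the target it would be trained on are shown.

```python
# Atomic numbers of the elements named in the protocol's example systems.
ATOMIC_NUMBER = {"Al": 13, "Ni": 28, "Pd": 46, "Ti": 22}


def build_features(composition, elements):
    """Concatenate x = [x_A, ...] and z = [x_A * Z_A, ...] in a fixed element order."""
    x = [composition.get(el, 0.0) for el in elements]
    if abs(sum(x) - 1.0) > 1e-6:
        raise ValueError("atomic fractions must sum to 1")
    z = [frac * ATOMIC_NUMBER[el] for frac, el in zip(x, elements)]
    return x + z


def correction_target(h_exp, h_dft):
    """Training target: the discrepancy Delta H_f = H_f,exp - H_f,DFT."""
    return h_exp - h_dft


def corrected_enthalpy(h_dft, predicted_delta):
    """Apply a model-predicted correction to a DFT formation enthalpy."""
    return h_dft + predicted_delta


elements = ["Al", "Ni", "Pd"]
features = build_features({"Al": 0.5, "Ni": 0.5}, elements)
print(features)
```

Fixing the element order up front means every compound in a ternary system maps to a feature vector of the same length, with zeros for absent elements.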

Visualization of Workflows and Method Comparisons

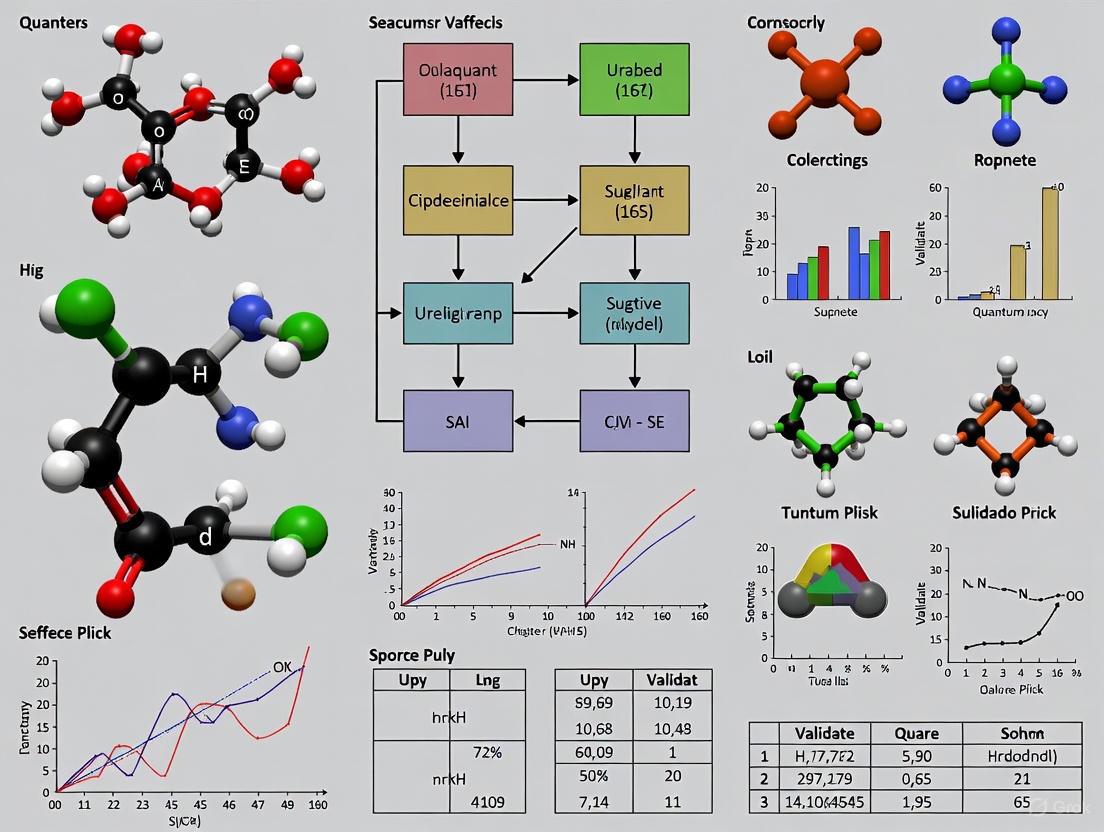

Diagram 1: Workflow for Machine Learning Correction of DFT Enthalpy Errors

Diagram 2: Computational Method Trade-Offs: Accuracy vs. System Size

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Computational Tools for Addressing DFT Limitations

| Tool / Resource | Category | Primary Function | Application Context |
|---|---|---|---|
| VASP [4] [3] | DFT Software | Performs ab initio quantum mechanical calculations using DFT and DFT+U. | Core platform for energy, force, and electronic structure calculations on periodic systems. |
| Machine Learning Interatomic Potentials (MLIPs) [7] [4] [6] | Machine Learning Force Fields | Surrogate models trained on DFT data to predict energies/forces at low cost. | Molecular dynamics and structure optimization at scales inaccessible to direct DFT. |
| Open Catalyst (OC20) Dataset [4] | Training Dataset | Large-scale public database of adsorbate-surface DFT calculations. | Training and benchmarking MLIPs for heterogeneous catalysis applications. |
| Universal Model for Atoms (UMA) [4] | Foundational ML Model | Graph neural network trained on diverse chemical domains (molecules, materials). | Multi-task surrogate for atomistic systems, improving transferability. |
| AQCat25 Dataset [4] | High-Fidelity Dataset | Spin-polarized DFT dataset for magnetic catalytic systems. | Training MLIPs for systems where spin effects are critical (e.g., Fe, Co, Ni). |
| DFT+U Methodology [3] | Theoretical Correction | Adds Hubbard U correction to treat strongly correlated electrons. | Improving band gap and lattice parameter predictions in metal oxides. |
| Linear Response Method [3] | Parameterization Tool | Computes Hubbard U parameter via ab initio linear response. | System-specific determination of U values, reducing empiricism in DFT+U. |
| MedeA [3] | Materials Informatics Platform | Integrated environment for DFT calculations and materials property prediction. | Streamlines computational workflows and data management. |

Emerging Solutions: Integrating DFT with Machine Learning

The limitations of DFT have catalyzed the development of hybrid approaches that leverage machine learning to correct systematic errors and extend the reach of quantum simulations. Two promising directions are:

ML-Corrected Thermodynamics: As detailed in the experimental protocol, neural networks can be trained to predict the discrepancy between DFT-calculated and experimentally measured formation enthalpies. This approach utilizes physically meaningful descriptors (elemental concentrations, atomic numbers) to learn and correct DFT's intrinsic energy inaccuracies, particularly for phase stability predictions in complex multi-component systems [2].

Machine Learning Interatomic Potentials (MLIPs): MLIPs are trained on large DFT datasets to achieve near-DFT accuracy for energies and forces at a fraction of the computational cost, enabling molecular dynamics simulations and structure optimizations for thousands of atoms over nanosecond timescales [7] [4] [6]. Foundational models like the Universal Model for Atoms (UMA), trained on hundreds of millions of structures, demonstrate remarkable generalizability across diverse chemical domains [4]. For systems where standard DFT data is insufficient, such as magnetic catalysts, specialized high-fidelity datasets (e.g., AQCat25 with explicit spin polarization) are being created to train more robust MLIPs [4].

These hybrid DFT+ML approaches mark a shift in how DFT is used, turning it from a standalone tool with known limitations into a core component of a more accurate and scalable computational framework for materials discovery and catalyst design [7] [2].

The traditional view of solvents as inert mediums is fundamentally incomplete. In both biological systems and industrial processes, the solvent forms a dynamic, active environment that critically influences molecular stability, reaction pathways, and ultimate outcomes. This guide objectively compares the roles and performance of different solvent classes—from water in biology to deep eutectic solvents in preservation and high-purity grades in manufacturing—framed within an emerging paradigm that recognizes the solvent as a dynamic field. This perspective is crucial for validating quantum effects, as these phenomena are not intrinsic to a molecule alone but are modulated by the chemical environment, a principle moving from theoretical concept to measurable reality in modern chemistry [8].

Solvent Roles in Biological Systems

In biology, water is the universal solvent, and its properties are foundational to life. Its effectiveness stems from its polar nature and ability to form extensive hydrogen-bonding networks.

Water as the Biological Solvent

Water's polar molecular structure, with regions of partial positive (hydrogen) and negative (oxygen) charge, enables it to act as a superb solvent for other polar and ionic compounds, which are termed hydrophilic [9]. This polarity allows water molecules to form hydration shells around dissolved ions and molecules, stabilizing them in solution. This is essential for the transport of nutrients like glucose and amino acids in the bloodstream and plant sap [9].

Key Functions in Metabolism and Structure

- Medium for Metabolic Reactions: Nearly all biochemical reactions occur in the aqueous cytosol of cells. Water ensures that reactants, enzymes, and products are optimally dispersed and can interact freely [9].

- Direct Participation in Reactions: Water is not a passive bystander. It is a direct participant in key metabolic reactions, notably hydrolysis, where water molecules are used to break down complex polymers like proteins and starch into their monomeric subunits [9].

- Thermoregulation: Water’s high heat capacity allows it to absorb and distribute metabolic heat efficiently, preventing damaging temperature fluctuations and maintaining the optimal environment for enzyme function [9].

- Structural Role: Water creates turgor pressure in plant cells by osmotic uptake, providing structural support and rigidity. The balance of water inside and outside animal cells is also crucial for maintaining cell volume and shape [9].

Solvent Applications in Industrial Processes

Industrial applications demand solvents with specific properties, driving a diverse and evolving market focused on purity, performance, and sustainability.

Table 1: Global Markets for Key Industrial Solvent Classes

| Solvent Class | Market Size (2024/2025) | Projected Market Size (2030/2035) | CAGR | Dominant End-User Sectors |
|---|---|---|---|---|
| High-Purity Solvents | $30.8 Billion (2024) [10] | $45.0 Billion (2030) [10] | 6.6% [10] | Pharmaceuticals, Biotechnology, Electronics/Semiconductors [10] |
| Green/Bio-Solvents | $2.2 Billion (2024) [11] | $5.51 Billion (2035) [11] | 8.7% [11] | Paints & Coatings, Cleaning Products, Adhesives [11] |
| General Industrial Solvents | $38.6 Billion (2024) [12] | - | ~6.1% (to 2032) [12] | Paints, Coatings, Adhesives, Plastics [12] |
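As a quick consistency check on the table, the compound annual growth rate can be recomputed from the start and end market sizes. The sketch below assumes the standard CAGR definition and that the growth period runs from the 2024 base year to the projection year; the helper name is illustrative.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Market sizes in billions of USD, taken from the table above.
high_purity = cagr(30.8, 45.0, 2030 - 2024)
green = cagr(2.2, 5.51, 2035 - 2024)

print(f"High-purity solvents: {high_purity:.1%}")
print(f"Green/bio-solvents:   {green:.1%}")
```

Under these assumptions the green/bio-solvent figure reproduces the reported 8.7%, while the high-purity figure comes out slightly below the reported 6.6%, a gap small enough to be explained by rounding or a different base period in the source report.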

Driving Forces and Performance Requirements

- High-Purity Solvents: Characterized by meticulous refinement to eliminate trace impurities, these solvents are critical in sectors where contamination can compromise product safety or process precision. The growth is driven by the complexity of modern pharmaceuticals (e.g., APIs, biologics) and the miniaturization in electronics [10].

- Green/Bio-Solvents: Derived from renewable resources like corn, sugarcane, and cellulose, these solvents address environmental and regulatory pressures by reducing toxicity and VOC emissions. Their adoption is accelerating in industrial cleaning, paints and coatings, and personal care products, though challenges remain regarding performance in some demanding applications and higher production costs [11] [13].

- Industrial Cleaning and Degreasing: Solvents are essential for removing oils, greases, and residues, especially in sectors like electronics manufacturing, where precision cleaning of circuit boards is required without damaging components [14].

Comparative Analysis: Solvent Performance and Experimental Data

Different solvents create distinct chemical environments, leading to varied outcomes in stabilizing biological materials, facilitating reactions, and enabling quantum mechanical investigations.

Stabilization of Biological Materials

Table 2: Performance Comparison of Solvents in Biological Preservation

| Preservation Method / Medium | Core Mechanism | Key Performance Metrics | Reported Limitations |
|---|---|---|---|
| Water (in vivo/standard refrigeration) | Slows enzymatic activity and metabolism [9] [15] | Short-term stabilization; viable for days [15] | Rapid degradation; not for long-term storage [15] |
| Cryopreservation (with DMSO) | Halts metabolic activity at cryogenic temps [15] | Long-term preservation of viable cells [15] | Cytotoxicity of DMSO; ice crystal damage [15] |
| Deep Eutectic Solvents (DESs) | Extensive H-bonding network suppresses degradation [15] | Enhances stability & longevity of cells, proteins, DNA [15] | Efficacy varies with DES composition and biological material [15] |

Experimental Protocol: Evaluating DES Preservation Efficacy

- DES Formulation: Prepare a common DES, for example, by mixing choline chloride (hydrogen bond acceptor) with urea or glycerol (hydrogen bond donor) at a specific molar ratio under gentle heating until a clear, homogeneous liquid forms [15].

- Sample Preparation: Suspend the target biological material (e.g., a specific enzyme, microbial cells, or DNA) in the DES and in a control solution (e.g., standard buffer).

- Stability Testing: Store both samples under identical, accelerated stress conditions (e.g., elevated temperature of 40°C for several weeks).

- Activity/Integrity Assay: At regular intervals, withdraw aliquots. For enzymes, measure residual catalytic activity via a spectrophotometric assay. For DNA, use gel electrophoresis to assess structural integrity. For cells, perform viability counts.

- Data Analysis: Compare the rate of activity loss or degradation between the DES and control samples. Effective DESs will show significantly higher retention of activity and integrity over time [15].
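The data-analysis step can be sketched as a comparison of decay rate constants. This example assumes first-order (exponential) loss of activity, which the protocol does not specify, and uses synthetic data generated from known rate constants purely for illustration.

```python
from math import exp, log


def first_order_rate(times, activities):
    """Estimate k in A(t) = A0 * exp(-k * t) by least squares on ln(A) versus t."""
    ys = [log(a) for a in activities]
    n = len(times)
    t_mean = sum(times) / n
    y_mean = sum(ys) / n
    sxy = sum((t - t_mean) * (y - y_mean) for t, y in zip(times, ys))
    sxx = sum((t - t_mean) ** 2 for t in times)
    return -sxy / sxx


def half_life(k):
    """Time for activity to fall to 50% under first-order decay."""
    return log(2) / k


# Synthetic residual activity (% of initial) sampled weekly; not measured data.
days = [0, 7, 14, 21, 28]
des_activity = [100 * exp(-0.01 * t) for t in days]
buffer_activity = [100 * exp(-0.05 * t) for t in days]

k_des = first_order_rate(days, des_activity)
k_buffer = first_order_rate(days, buffer_activity)
print(f"DES:    k = {k_des:.3f} per day, half-life = {half_life(k_des):.0f} days")
print(f"Buffer: k = {k_buffer:.3f} per day, half-life = {half_life(k_buffer):.0f} days")
```

Reporting the ratio of the two rate constants gives a single number for how much the DES slows degradation relative to the control, provided both data sets are reasonably linear on a log scale.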

A New Theoretical Framework: Dynamic Solvation Fields

Traditional solvent descriptors like dielectric constant are static averages. The emerging "dynamic solvation fields" paradigm treats solvents as fluctuating environments with evolving local structures and electric fields. This framework is essential for understanding and predicting solvent effects on chemical reactivity, including processes influenced by quantum effects. It moves beyond continuum models to account for the active role of the solvent in modulating transition state stabilization and steering non-equilibrium reactivity [8].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Solvent-Focused Research

| Reagent/Material | Function in Experimental Context |
|---|---|
| High-Purity HPLC Solvents | Mobile phase for chromatographic analysis of reaction mixtures or purified compounds, where impurities can cause baseline noise and inaccurate results [10]. |
| Deuterated Solvents (e.g., D₂O, CDCl₃) | NMR spectroscopy for determining molecular structure and monitoring reaction progress in a non-interfering, spectroscopically suitable medium. |
| Deep Eutectic Solvents (DESs) | Biocompatible medium for preserving biomolecules [15] or as a green reaction solvent for synthesis, leveraging their tunable properties. |
| Choline Chloride | A common, low-cost, and biodegradable Hydrogen Bond Acceptor (HBA) for formulating a wide range of DESs [15]. |
| Glycerol | A non-toxic, renewable Hydrogen Bond Donor (HBD) for DES formulation [15]; also used as a cryoprotectant. |
| Dimethyl Sulfoxide (DMSO) | A polar aprotic solvent and traditional cryoprotectant; serves as a benchmark for comparing new solvent performance but has known cytotoxicity [15]. |

Visualizing Concepts and Workflows

Biological Hydration Shell Formation

The following diagram illustrates the formation of a hydration shell, a key to water's solvent properties in biology.

Diagram 1: Solute Hydration in Aqueous Solution. A hydrophilic solute organizes surrounding water molecules into a structured hydration shell via strong ion-dipole forces or hydrogen bonding (red arrows). This shell is dynamically maintained, with hydrogen bonds (green arrows) continuously breaking and reforming with the bulk phase [9].

DES Preservation Workflow

This flowchart outlines a general experimental protocol for testing the efficacy of Deep Eutectic Solvents in preserving biological materials.

Diagram 2: DES Preservation Assay Workflow. The protocol involves creating the DES, introducing the biological material, and performing stability tests under stress conditions compared to a control to quantitatively measure preservation efficacy [15].

The critical role of solvents extends far beyond merely dissolving reactants. In biology, water's unique properties create the essential conditions for life, from metabolic pathways to cellular structure. In industry, the drive is toward solvents that offer not only performance but also ultra-purity and environmental sustainability. The emerging "dynamic solvation fields" paradigm provides a more profound, unified framework for understanding these roles, emphasizing the solvent's active participation in chemical processes. For researchers validating quantum effects in chemical environments, this integrated view is paramount. The solvent is not a passive container but a dynamic field that can stabilize transition states, preserve biomolecular integrity, and ultimately modulate the quantum mechanical phenomena that underpin all chemical reactivity.

Stereoelectronic effects represent a fundamental class of quantum mechanical interactions that arise from the precise spatial alignment of atomic orbitals and their resulting electronic interactions. These effects, which include phenomena such as hyperconjugation, charge-transfer interactions, and orbital orientation effects, serve as an invisible hand that dictates molecular stability, reactivity, and conformation across diverse chemical environments. Despite their critical importance, stereoelectronic effects have often been overlooked in traditional chemical analyses due to the challenges associated with their direct experimental observation and computational characterization.

The validation of these quantum effects in different chemical environments constitutes a frontier of modern chemical research, bridging the gap between theoretical prediction and experimental observation. This guide provides a comparative analysis of contemporary research methodologies and their performance in quantifying, visualizing, and applying stereoelectronic interactions across biological, materials, and synthetic chemical systems. By examining cutting-edge experimental protocols and computational approaches, we aim to equip researchers with the tools necessary to harness these subtle yet powerful interactions in drug development, materials design, and catalyst optimization.

Fundamental Principles and Key Experimental Evidence

Stereoelectronic effects originate from the quantum mechanical principle that favorable orbital overlap, determined by specific molecular geometries, leads to stabilizing interactions that influence molecular behavior. These effects operate through several distinct mechanisms: hyperconjugation involves the donation of electron density from filled σ-orbitals or lone pairs into adjacent empty or antibonding orbitals; n→π* interactions occur when lone pair electrons (n) donate into antibonding π* orbitals of carbonyl groups or other electron-deficient systems; and σ→σ* interactions represent electron delocalization from bonding σ orbitals to antibonding σ* orbitals [16] [17].

The biological significance of these interactions is strikingly demonstrated in collagen stability. Research has revealed that prolyl-4-hydroxylation, an evolutionarily conserved post-translational modification, stabilizes collagen's triple helix through an interplay of stereoelectronic effects. Specifically, 4(R)-hydroxylation promotes an exo ring pucker in the pyrrolidine ring, which optimizes main-chain torsional angles for stable trans peptide bonds and maximizes both n→π* interactions ($E_{n\to\pi^*}$ = 0.9 kcal/mol) and σ→σ* interactions between axial C-H σ-electrons and $\sigma^*_{\mathrm{C-OH}}$ antibonding orbitals [16] [18]. This precise orbital alignment provides approximately 0.6-1.7 kcal/mol of stabilization energy per residue, which accumulates significantly across the entire collagen structure and is essential for the structural integrity of vertebrate connective tissues [16].

In synthetic systems, hyperconjugative stereoelectronic effects markedly influence molecular stability and reactivity. Studies of alkyl-substituted borazines have demonstrated that hyperconjugative interactions between $\sigma_{\mathrm{C-H}}$/$\sigma_{\mathrm{C-C}}$ orbitals and the π* system of the borazine ring lower the electrophilicity of boron atoms, thereby enhancing moisture stability, a property crucial for materials science applications. Natural Bond Orbital (NBO) analyses quantify these interactions, revealing second-order stabilization energies, E(2), of up to 6.5 kcal/mol for $\sigma_{\mathrm{C-H}} \to \pi^*_{\mathrm{BN}}$ interactions when C-H bonds are oriented perpendicular to the borazine ring plane [17].

Table 1: Quantitative Stabilization Energies of Stereoelectronic Effects in Different Chemical Systems

| Chemical System | Stereoelectronic Interaction Type | Stabilization Energy (kcal/mol) | Quantification Method | Primary Functional Impact |
|---|---|---|---|---|
| Collagen PO4G Triplet | n→π* interaction | 0.9 | DFT/DLPNO-CCSD(T) | Peptide backbone stabilization |
| Collagen PO4G Triplet | σ→σ* interaction | 0.6-1.7 | DFT/DLPNO-CCSD(T) | Pyrrolidine ring pucker stabilization |
| B-alkyl Borazines | $\sigma_{\mathrm{C-H}} \to \pi^*_{\mathrm{BN}}$ hyperconjugation | 6.5 | NBO Analysis | Enhanced hydrolytic stability |
| B-alkyl Borazines | $\sigma_{\mathrm{C-H}} \to \sigma^*_{\mathrm{BN}}$ hyperconjugation | 3.5 | NBO Analysis | Additional ring stabilization |
| Galactosyl Donors | Dioxolenium ion stabilization (O2 participation) | 21.6 | DFT (PBE0+D3/6-311+G(d,p)) | Reaction intermediate stabilization |
| Galactosyl Donors | Dioxolenium ion stabilization (O4 participation) | 9.5 | DFT (PBE0+D3/6-311+G(d,p)) | Moderate intermediate stabilization |

Comparative Analysis of Research Methodologies

Computational Approaches: Performance Benchmarks

Computational chemistry provides the foundation for quantifying and visualizing stereoelectronic effects, with different methods offering varying balances of accuracy and computational efficiency. Density Functional Theory (DFT) represents the workhorse approach for studying these effects in moderately-sized systems, but requires careful calibration to achieve chemical accuracy, particularly for small energy differences in the 1-2 kcal/mol range that characterize many stereoelectronic interactions [16].
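To see why errors of 1-2 kcal/mol matter at this scale, it helps to convert an energy difference into an equilibrium population ratio with the Boltzmann factor. The sketch below assumes two states of equal degeneracy at 300 K; the function name is illustrative.

```python
from math import exp

R_KCAL = 0.0019872  # gas constant in kcal/(mol*K)


def population_ratio(delta_e_kcal, temperature_k=300.0):
    """Population of the lower-energy state relative to the higher one.

    Uses the Boltzmann factor exp(dE / RT) for two states of equal degeneracy.
    """
    return exp(delta_e_kcal / (R_KCAL * temperature_k))


for delta_e in (0.6, 0.9, 1.7):
    print(f"dE = {delta_e:.1f} kcal/mol -> ratio {population_ratio(delta_e):.1f} : 1")
```

At 300 K, RT is about 0.6 kcal/mol, so a 0.9 kcal/mol preference already shifts the populations to roughly 4.5 to 1. A method whose error is comparable to the effect being measured can therefore predict the wrong dominant conformer.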

High-level ab initio methods, particularly DLPNO-CCSD(T), serve as gold standards for quantifying subtle stereoelectronic effects. In collagen studies, these methods have been used to calibrate DFT functionals, revealing that even modern DFT requires rigorous benchmarking to achieve sufficient accuracy for quantifying n→π* and σ→σ* interactions [16] [18]. The computational cost of these high-level methods makes them prohibitive for large systems, but essential for developing parameterized force fields and machine learning approaches.

Emerging machine learning representations, particularly Stereoelectronics-Infused Molecular Graphs (SIMGs), demonstrate remarkable performance improvements over traditional computational methods. By explicitly incorporating orbital interactions into molecular representations, SIMGs achieve substantial accuracy enhancements while reducing computational requirements by orders of magnitude compared to traditional DFT-NBO calculations [19] [20]. This approach enables the prediction of orbital interactions in macromolecular systems like proteins, where traditional quantum chemical calculations are computationally prohibitive.

Table 2: Performance Comparison of Methods for Studying Stereoelectronic Effects

| Methodology | System Size Limit | Accuracy Range | Computational Time | Key Advantages | Principal Limitations |
|---|---|---|---|---|---|
| DLPNO-CCSD(T) | Small molecules (<100 atoms) | ~1 kcal/mol | Days to weeks | Gold standard accuracy | Prohibitive for large systems |
| DFT (calibrated) | Medium molecules (<500 atoms) | 1-3 kcal/mol | Hours to days | Balance of accuracy and speed | Requires careful functional selection |
| Molecular Mechanics | No practical limit | >5 kcal/mol | Seconds to minutes | Suitable for macromolecules | Poor for electronic properties |
| SIMG (Machine Learning) | Proteins and macromolecules | Comparable to DFT | Seconds | Rapid prediction on large systems | Training data dependent |
| H-SPOC (3D Descriptors) | Drug-like molecules | High for pKa | Minutes | Captures conformational flexibility | Specialized for pKa prediction |

Experimental Characterization Techniques

Experimental validation of stereoelectronic effects relies on spectroscopic and analytical methods that can probe electronic structure and molecular conformation. Nuclear Magnetic Resonance (NMR) spectroscopy serves as a powerful experimental probe, with one-bond coupling constants (¹JCH) providing direct evidence of hyperconjugative interactions. In alkyl-substituted borazines, ¹JCH coupling constants for CH groups adjacent to boron atoms are significantly reduced (112-118 Hz compared to typical values of ~125 Hz). This reduction, analogous to the Perlin effect, provides experimental validation of σ→π* hyperconjugation [17].

X-ray diffraction studies offer complementary structural evidence for stereoelectronic effects. Analyses of borazine derivatives reveal characteristic structural signatures, including B-C bond lengths of approximately 1.575 Å and torsional angles ∠(N-B-C1-H/C2/Si) of ~90°, indicating perpendicular arrangements consistent with optimal hyperconjugative interactions [17]. These structural data provide crucial validation for computational predictions of stereoelectronically-driven molecular geometries.
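A torsional angle such as ∠(N-B-C1-H) can be computed directly from four atomic positions taken from a crystal structure. The sketch below uses the standard atan2 formulation of the dihedral angle in plain Python; the coordinates are idealized test points, not borazine data, and the sign of the result depends on the atom ordering convention.

```python
from math import atan2, degrees, sqrt


def _sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def _cross(a, b):
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


def dihedral(p0, p1, p2, p3):
    """Signed dihedral angle in degrees for the atom sequence p0-p1-p2-p3."""
    b1, b2, b3 = _sub(p1, p0), _sub(p2, p1), _sub(p3, p2)
    n1, n2 = _cross(b1, b2), _cross(b2, b3)  # normals of the two planes
    b2_len = sqrt(_dot(b2, b2))
    b2_hat = (b2[0] / b2_len, b2[1] / b2_len, b2[2] / b2_len)
    x = _dot(n1, n2)
    y = _dot(b2_hat, _cross(n1, n2))
    return degrees(atan2(y, x))


# Idealized geometry: the last atom is rotated a quarter turn about the central bond.
angle = dihedral((1.0, 0.0, 0.0), (0.0, 0.0, 0.0), (0.0, 0.0, 1.0), (0.0, 1.0, 1.0))
print(abs(angle))
```

For the perpendicular arrangement discussed above, the magnitude of the angle is the quantity of interest, so the absolute value is compared with 90°.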

In glycosylation chemistry, infrared spectroscopy combined with density functional theory calculations has elucidated how stereoelectronic properties of protecting groups influence reaction pathways. Systematic DFT investigations demonstrate that electron-donating groups stabilize dioxolenium-type intermediates by up to 10 kcal/mol relative to oxocarbenium ions, with the stabilization magnitude dependent on protecting group position (O2 > O4 > O6) [21]. This computational insight explains the stereochemical outcomes of glycosylation reactions and enables the design of custom protecting groups for synthetic applications.

Detailed Experimental Protocols

Protocol 1: Quantifying Stereoelectronic Effects in Collagen Stability

Objective: Determine the stabilization energy contributions of n→π* and σ→σ* interactions in collagen triple helix formation using calibrated computational methods.

Methodology:

- System Selection: Construct a physiologically relevant collagenous peptide model, specifically the Pro-4-Hyp-Gly (PO4G) triplet, representing the most abundant sequence in type I collagen [16] [18].

- Conformational Analysis: Generate all four possible conformers of 4-hydroxyproline: 4(R)-Hyp-exo, 4(R)-Hyp-endo, 4(S)-Hyp-exo, and 4(S)-Hyp-endo to evaluate ring pucker preferences.

- Quantum Chemical Calculations:

  - Perform initial geometry optimizations using density functional theory with dispersion-corrected functionals.

  - Calibrate DFT methods against gold-standard DLPNO-CCSD(T) calculations on model systems to ensure chemical accuracy (within ~1 kcal/mol).

  - Screen 24 different DFT functionals during calibration to identify the best-performing one [16].

- Energy Decomposition:

  - Calculate relative energies between endo and exo ring puckers ($\Delta E_{\mathrm{endo-exo}}$).

  - Quantify n→π* interaction energies ($E_{n\to\pi^*}$) by evaluating orbital overlap between carbonyl oxygen lone pairs and the adjacent carbonyl π* orbitals along the Bürgi-Dunitz trajectory (distance ∼3.2 Å, angle ∼109°).

  - Determine σ→σ* stabilization energies through natural bond orbital analysis.

- Validation: Compare computational predictions with experimental structural data from crystallography studies and thermal stability measurements.

Key Parameters:

- Software: ORCA (DLPNO-CCSD(T)), Gaussian (DFT)

- Basis Set: 6-311+G(d,p) or def2-TZVP

- Solvation Model: Implicit solvation for aqueous environment

- Temperature: 300 K
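The calibration step of Protocol 1 can be sketched as follows. This is a minimal illustration, assuming each functional is scored by its mean absolute error against the DLPNO-CCSD(T) reference; the functional names and energies are hypothetical placeholders, not values from [16].

```python
from statistics import mean

# Hypothetical relative energies (kcal/mol) for three model conformer pairs.
# Placeholder numbers for illustration only; they are not results from [16].
reference = [1.20, -0.45, 2.10]  # stands in for DLPNO-CCSD(T) values

dft_results = {
    "functional_A": [1.35, -0.30, 2.40],
    "functional_B": [2.60, 0.90, 3.80],
    "functional_C": [1.10, -0.50, 1.95],
}

CHEMICAL_ACCURACY = 1.0  # kcal/mol threshold quoted in the protocol


def mean_absolute_error(predicted, ref):
    """Mean absolute deviation between predicted and reference energies."""
    return mean(abs(p - r) for p, r in zip(predicted, ref))


def rank_functionals(results, ref):
    """Return (name, MAE) pairs sorted from most to least accurate."""
    scored = [(name, mean_absolute_error(vals, ref)) for name, vals in results.items()]
    return sorted(scored, key=lambda item: item[1])


ranking = rank_functionals(dft_results, reference)
accepted = [name for name, mae in ranking if mae <= CHEMICAL_ACCURACY]

for name, mae in ranking:
    print(f"{name}: MAE = {mae:.2f} kcal/mol")
```

In a real calibration the reference set would contain many more model systems, and the maximum error is often reported alongside the mean.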

Protocol 2: Experimental Measurement of Hyperconjugation in Borazines

Objective: Experimentally characterize and quantify hyperconjugative interactions in alkyl-substituted borazines using NMR spectroscopy and X-ray diffraction.

Methodology:

- Synthesis:

  - Prepare the trichloroborazole intermediate (BNCl) by refluxing aniline with BCl₃ in toluene.

  - React BNCl with alkyllithium or Grignard reagents in THF to yield the final borazine products (61-74% yield) [17].

- X-ray Crystallography:

  - Grow single crystals of representative borazine derivatives via slow evaporation.

  - Collect diffraction data using Mo Kα radiation (λ = 0.71073 Å).

  - Precisely measure B-C bond lengths, torsional angles ∠(N-B-C1-H/C2/Si), and bond angles ∠(B-C1-C2).

- NMR Spectroscopy:

  - Acquire ¹H and ¹³C NMR spectra in CDCl₃ or DMSO-d6.

  - Measure one-bond ¹JCH coupling constants for protons adjacent to boron atoms using:

    - Carbon satellite signals in ¹H NMR spectra, or

    - Proton-coupled HSQC experiments for enhanced accuracy.

  - Compare ¹JCH values with typical coupling constants (∼125 Hz) to quantify the reduction due to hyperconjugation.

- Computational Validation:

  - Perform DFT calculations on experimental geometries.

  - Conduct Natural Bond Orbital analysis to quantify hyperconjugative interactions (E2 stabilization energies).

  - Calculate potential energy surface scans by rotating substituents about B-C bonds to determine the orientation dependence of hyperconjugation.

Key Parameters:

- NMR Experiments: ¹H (500 MHz), ¹³C (125 MHz), proton-coupled HSQC

- X-ray: Mo Kα radiation, 100(2) K temperature

- Computational: B3LYP/6-311+G(d,p) level, NBO version 3.1
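The comparison of measured ¹JCH values against the typical ~125 Hz coupling in Protocol 2 amounts to simple arithmetic. The sketch below assumes the reduction is reported both in Hz and as a fraction of the reference; the substituent labels and measured couplings are hypothetical.

```python
TYPICAL_1JCH_HZ = 125.0  # typical one-bond C-H coupling quoted in the protocol


def coupling_reduction(measured_hz, reference_hz=TYPICAL_1JCH_HZ):
    """Return (absolute reduction in Hz, fractional reduction)."""
    delta = reference_hz - measured_hz
    return delta, delta / reference_hz


# Hypothetical measured couplings (Hz), for illustration only
measured = {"B-CH3": 115.0, "B-CH2CH3": 112.5}
reductions = {label: coupling_reduction(j) for label, j in measured.items()}

for label, (delta, fraction) in reductions.items():
    print(f"{label}: reduced by {delta:.1f} Hz ({fraction:.1%})")
```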

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Essential Research Tools for Investigating Stereoelectronic Effects

| Tool/Reagent | Function | Specific Application Example | Key Providers/Vendors |

|---|---|---|---|

| DLPNO-CCSD(T) | Gold-standard quantum chemical method | Calibrating DFT functionals for accurate energy differences | ORCA |

| DFT Software | Quantum chemical calculations | Geometry optimization and electronic structure analysis | Gaussian, ORCA, Q-Chem |

| NBO Analysis | Quantum chemical analysis | Quantifying hyperconjugative interactions | NBO 3.1 (embedded in Gaussian) |

| SIMG Web Application | Rapid prediction of orbital interactions | Analyzing stereoelectronic effects in macromolecules | Gomes Group (CMU) |

| High-Field NMR | Measuring coupling constants | Detecting Perlin effect via ¹JCH measurements | Bruker, JEOL |

| X-ray Diffractometer | Determining molecular geometry | Measuring bond lengths and angles indicative of hyperconjugation | Rigaku, Bruker |

| Alkyl Borazines | Model compounds for hyperconjugation studies | Experimental validation of σ→π* interactions | Custom synthesis [17] |

| 4-Hydroxyproline Peptides | Collagen model systems | Studying biological stereoelectronic effects | Commercial suppliers |

Visualization of Stereoelectronic Concepts and Workflows

[Figure: Orbital Interaction Diagram]

[Figure: Integrated Research Workflow for Stereoelectronic Analysis]

The systematic investigation of stereoelectronic effects has transitioned from theoretical curiosity to practical research tool, with validated methodologies now available for quantifying these quantum interactions across diverse chemical environments. The comparative analysis presented in this guide demonstrates that integrated approaches—combining computational prediction with experimental validation—provide the most robust framework for exploiting stereoelectronic effects in molecular design.

For drug development professionals, these insights offer new opportunities for rational design of therapeutic agents with optimized binding properties and metabolic stability. Materials scientists can leverage stereoelectronic principles to engineer molecular assemblies with enhanced stability and electronic properties. Synthetic chemists can exploit these effects to control reaction pathways and stereochemical outcomes with unprecedented precision.

As methodology continues to advance—particularly through machine learning approaches like SIMGs that bridge quantum accuracy with macromolecular scalability—stereoelectronic effects will increasingly shift from overlooked phenomena to central design principles governing molecular behavior across the chemical sciences.

In fields like drug discovery and materials science, researchers frequently face a significant and often limiting constraint: small chemical datasets. The process of synthesizing novel compounds and experimentally measuring their properties is both time-consuming and expensive. Consequently, the resulting datasets used to train predictive models are often limited in size, hindering the accuracy and generalizability of classical machine learning (ML) approaches [19]. This "small-data problem" is a critical bottleneck in computational chemistry.

Quantum computing and quantum-chemistry-informed representations offer a promising route around this limitation. By exploiting superposition and entanglement, quantum processors may be able to represent and explore chemical spaces in ways that are difficult for classical computers, while orbital-level descriptors give classical models more information per training example. This article compares three emerging quantum-inspired approaches designed to extract meaningful insights from limited chemical data, providing a performance comparison and detailed experimental protocols for researchers.

Performance Comparison of Quantum-Inspired Approaches

The following table summarizes the core performance metrics of three distinct quantum-inspired approaches applied to the problem of small chemical datasets.

Table 1: Performance Comparison of Quantum-Inspired Approaches for Small Chemical Datasets

| Approach | Key Mechanism | Reported Performance Advantage | Dataset Size | Key Metrics |

|---|---|---|---|---|

| Stereoelectronics-Infused Molecular Graphs (SIMGs) [19] | Incorporates quantum-chemical orbital interactions into molecular graph representations. | Outperforms standard molecular graphs; achieves high accuracy with limited data by using more explicit molecular information. | Small-scale chemistry datasets | Model accuracy, data efficiency |

| Quantum Reservoir Computing (QRC) [22] | Uses a quantum system to transform input data into a richer feature set for a classical model. | Matched or outperformed classical ML (e.g., Random Forests) on small datasets (~100 records); advantage diminished with ~800 records. | Merck Molecular Activity Challenge (subsets of 100-800 records) | Prediction accuracy, stability with small data |

| Hybrid Quantum-Classical Drug Screening [23] | Uses quantum computers to generate probable molecular patterns, which are refined classically. | Identified two promising KRAS-inhibiting candidates from ~1.1 million initial molecules; entire workflow accelerated. | Training set of ~1.1 million molecules | Successful identification of hit candidates, workflow speed |

Experimental Protocols & Workflows

This section details the experimental methodologies for the approaches compared in Table 1, providing a reproducible framework for scientific validation.

Protocol A: Generating Stereoelectronics-Infused Molecular Graphs (SIMGs)

Objective: To create an interpretable molecular representation that explicitly includes quantum-mechanical orbital interactions, improving predictive performance on small datasets [19].

- Orbital Calculation: For a given molecule, perform quantum chemistry calculations (e.g., Density Functional Theory) to determine the locations and behaviors of electrons, specifically calculating Natural Bond Orbitals (NBOs).

- Interaction Mapping: Map the spatial and electronic interactions between the calculated orbitals. These stereoelectronic effects influence molecular geometry, reactivity, and stability.

- Graph Extension: Extend a standard molecular graph (where nodes are atoms and edges are bonds) by incorporating the orbital interaction data as additional features or nodes.

- Model Training: Use the resulting SIMGs to train machine learning models for property prediction. The enhanced representation provides the model with critical quantum-mechanical insights without requiring a larger dataset.
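The graph-extension step of Protocol A can be pictured with a small data structure. This is a sketch under stated assumptions: the class, field names, and the interaction energy are hypothetical and do not reproduce the actual SIMG schema from [19]; they only show how orbital nodes and donor-acceptor edges can sit alongside the usual atoms and bonds.

```python
from dataclasses import dataclass, field


@dataclass
class MolecularGraph:
    atoms: list                                        # atom nodes, e.g. ["N", "C", "O"]
    bonds: list                                        # (atom_index, atom_index) pairs
    orbitals: list = field(default_factory=list)       # added orbital nodes
    interactions: list = field(default_factory=list)   # donor-acceptor edges


def add_orbital(graph, kind, atom_indices):
    """Attach an orbital node (e.g. a lone pair or antibond) to its atoms."""
    graph.orbitals.append({"kind": kind, "atoms": tuple(atom_indices)})
    return len(graph.orbitals) - 1  # index of the new orbital node


def add_interaction(graph, donor, acceptor, e2_kcal):
    """Add a donor -> acceptor edge carrying an NBO-style E2 energy."""
    graph.interactions.append({"donor": donor, "acceptor": acceptor, "e2_kcal": e2_kcal})


# Amide-like N-C=O fragment: nitrogen lone pair donating into the C=O pi*
graph = MolecularGraph(atoms=["N", "C", "O"], bonds=[(0, 1), (1, 2)])
lone_pair = add_orbital(graph, "LP", [0])
pi_star = add_orbital(graph, "BD*(pi)", [1, 2])
add_interaction(graph, lone_pair, pi_star, e2_kcal=50.0)  # hypothetical energy
```

A graph neural network would then consume both node types, so the model sees the orbital interactions directly rather than having to infer them from connectivity.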

Protocol B: Quantum Reservoir Computing (QRC) for Molecular Property Prediction

Objective: To leverage a quantum system as a feature extraction tool, enhancing the stability and accuracy of predictions when training data is limited [22].

- Feature Selection: Begin with a set of molecular descriptors (numerical fingerprints of molecules). Use a method like SHapley Additive exPlanations (SHAP) to select the most relevant descriptors for the target property.

- Quantum Encoding: Encode the selected classical molecular descriptors into the parameters of a quantum system, such as a simulated neutral-atom array.

- Quantum Evolution: Let the quantum system evolve in time according to its natural, untrained dynamics. The quantum entanglement and interactions transform the input data.

- Measurement & Feature Extraction: Measure simple local properties from the evolved quantum state. These measurements form a new, quantum-enhanced set of features.

- Classical Prediction: Feed the new features into a classical machine learning model (e.g., a Random Forest) for the final property prediction task.
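The encode, evolve, and measure steps of Protocol B can be illustrated with a small statevector simulation. This is a toy sketch, not the neutral-atom reservoir of [22]: it assumes descriptors scaled to [0, 1], uses a gate-based stand-in for the analog dynamics (a fixed Ising phase followed by fixed X rotations), and the angles and step count are arbitrary choices.

```python
import cmath
import math


def apply_single_qubit(state, qubit, gate):
    """Apply a 2x2 gate ((a, b), (c, d)) to one qubit of a statevector."""
    new_state = list(state)
    mask = 1 << qubit
    for index in range(len(state)):
        if index & mask == 0:
            lo, hi = state[index], state[index | mask]
            new_state[index] = gate[0][0] * lo + gate[0][1] * hi
            new_state[index | mask] = gate[1][0] * lo + gate[1][1] * hi
    return new_state


def ry(angle):
    c, s = math.cos(angle / 2), math.sin(angle / 2)
    return ((c, -s), (s, c))


def rx(angle):
    c, s = math.cos(angle / 2), math.sin(angle / 2)
    return ((c, -1j * s), (-1j * s, c))


def z_value(index, qubit):
    """Eigenvalue of Z on `qubit` for computational basis state `index`."""
    return 1 - 2 * ((index >> qubit) & 1)


def apply_zz_chain(state, n_qubits, angle):
    """Nearest-neighbour Ising phase exp(-i * angle * sum_q Z_q Z_{q+1})."""
    out = []
    for index, amp in enumerate(state):
        energy = sum(z_value(index, q) * z_value(index, q + 1)
                     for q in range(n_qubits - 1))
        out.append(amp * cmath.exp(-1j * angle * energy))
    return out


def reservoir_features(descriptors, steps=3, zz_angle=0.7, rx_angle=0.9):
    """Map descriptors in [0, 1] to <Z_q> expectation values."""
    n = len(descriptors)
    state = [0j] * (2 ** n)
    state[0] = 1 + 0j
    for q, x in enumerate(descriptors):      # encoding: one qubit per descriptor
        state = apply_single_qubit(state, q, ry(math.pi * x))
    for _ in range(steps):                   # fixed, untrained dynamics
        state = apply_zz_chain(state, n, zz_angle)
        for q in range(n):
            state = apply_single_qubit(state, q, rx(rx_angle))
    probs = [abs(a) ** 2 for a in state]
    return [sum(p * z_value(i, q) for i, p in enumerate(probs)) for q in range(n)]


features = reservoir_features([0.1, 0.5, 0.9])
```

The returned list would be passed to a classical regressor or classifier such as a Random Forest; only that classical model is trained, while the reservoir parameters stay fixed.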

Protocol C: Hybrid Quantum-Classical Workflow for Drug Candidate Generation

Objective: To rapidly explore a vast chemical space and identify viable drug candidates by combining quantum-generated patterns with classical AI refinement [23].

- Dataset Assembly: Compile a large training set of known active and inactive molecules from literature, molecule libraries, and algorithm-generated variants.

- Quantum Model Training: Train a quantum model on the assembled dataset to learn the chemical features associated with promising inhibitors.

- Quantum Pattern Generation: Use the trained quantum model to generate thousands of probable molecular patterns that fit the target (e.g., a protein's binding pocket).

- Classical Structure Refinement: Employ a classical AI to refine these quantum-generated patterns into valid, synthesizable molecular structures.

- Iterative Ranking & Selection: Rank the generated molecules based on metrics like binding affinity, potential toxicity, and ease of synthesis. Feed the top-ranked candidates back into the model to improve it over multiple rounds.

- Laboratory Validation: Synthesize and test the top candidates in cell-based assays to validate their biological activity.

Workflow Visualization

The following diagrams illustrate the logical workflows for the key experimental protocols described above.

[Figure: SIMG Creation and QRC Prediction Workflow]

[Figure: Hybrid Quantum-Classical Drug Discovery]

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Computational Tools and Datasets for Quantum-Informed Chemical Research

| Item | Function & Application |

|---|---|

| High-Accuracy Dataset (e.g., QDπ) [24] | Provides a large, diverse set of molecular structures with energies and forces calculated at a high quantum level of theory (ωB97M-D3(BJ)/def2-TZVPPD) for training universal ML potentials. |

| Active Learning Software (e.g., DP-GEN) [25] [24] | Automates the process of identifying and adding the most informative new data points to a training set, maximizing model performance while minimizing expensive quantum calculations. |

| Semiempirical Quantum Mechanical (SQM)/Δ MLP Model [24] | A hybrid model that uses a fast SQM method for baseline calculations and a machine learning potential to correct the difference between SQM and high-accuracy results, balancing speed and precision. |

| Web Application for Stereoelectronic Analysis [19] | Makes advanced quantum-chemical representations (like SIMGs) accessible, allowing researchers to quickly analyze orbital interactions without deep computational expertise. |

| Implicit Solvent Model (e.g., IEF-PCM) [26] | A classical method that treats the solvent as a continuous medium, allowing quantum simulations to model molecules in a solution environment, which is critical for biological relevance. |

| Quantum Hardware Cloud Access (e.g., IBM, Quantinuum) [27] [26] | Provides remote access to real quantum processors for running and testing quantum algorithms, moving beyond pure simulation. |

Hybrid Tools in Action: Methodologies for Simulating Real-World Chemical Environments

The emergence of hybrid quantum-classical models represents a transformative approach to computational science, strategically leveraging the complementary strengths of classical and quantum processors. In the context of validating quantum effects in chemical environments, these hybrid architectures enable researchers to navigate the limitations of current noisy intermediate-scale quantum (NISQ) hardware while still exploiting quantum mechanical advantages for specific subproblems [28] [29]. This integration is particularly valuable in computational chemistry and drug development, where accurately simulating molecular behavior in realistic solvated environments has remained a formidable challenge for purely classical methods [26].

The fundamental rationale behind hybrid approaches lies in their division of labor: quantum processors handle tasks naturally suited to quantum systems—such as generating trial wavefunctions and exploring configuration spaces—while classical computers manage data-intensive preprocessing, optimization routines, and overall algorithm orchestration [28] [29]. This synergy has demonstrated practical utility across multiple domains, from molecular simulation to machine learning, often achieving enhanced accuracy with reduced parameter counts compared to purely classical alternatives [30] [31] [26].

Performance Comparison: Hybrid vs. Classical vs. Quantum Models

Quantitative Performance Metrics Across Domains

Experimental evaluations across multiple domains consistently demonstrate that hybrid quantum-classical models can achieve competitive or superior performance compared to purely classical or quantum approaches, often with greater parameter efficiency.

Table 1: Performance Comparison of Computational Models Across Domains

| Application Domain | Model Type | Key Performance Metrics | Parameter Efficiency | Reference Dataset/System |

|---|---|---|---|---|

| Differential Equation Solving [30] | Classical Neural Network | Baseline accuracy | Reference parameter count | Damped harmonic oscillator, Einstein field equations, Schrödinger equation |

| Differential Equation Solving [30] | Quantum Neural Network (QNN) | Highest accuracy for damped harmonic oscillator; high performance on Schrödinger equation | Fewer parameters than classical | Damped harmonic oscillator, Einstein field equations, Schrödinger equation |

| Differential Equation Solving [30] | Hybrid Quantum-Classical Network | Higher accuracy than classical in most cases | Fewer parameters than classical; faster convergence | Damped harmonic oscillator, Einstein field equations, Schrödinger equation |

| Image Classification [32] | Classical CNN | 98.21% (MNIST), 32.25% (CIFAR100), 63.76% (STL10) | Reference parameter count | MNIST, CIFAR100, STL10 datasets |

| Image Classification [32] | Hybrid Quantum-Classical CNN | 99.38% (MNIST), 41.69% (CIFAR100), 74.05% (STL10) | 6-32% fewer parameters; 5-12× faster training | MNIST, CIFAR100, STL10 datasets |

| Reinforcement Learning [33] | Classical Model | Successful learning benchmark (mean reward 160) with 86 parameters | Reference parameter count | CartPole environment |

| Reinforcement Learning [33] | Hybrid Quantum-Classical Model | Achieved same benchmark with 50 parameters | ~42% fewer parameters | CartPole environment |

| Solvated Molecule Simulation [26] | Classical CASCI-IEF-PCM | Reference solvation energy values | Computationally expensive | Water, methanol, ethanol, methylamine |

| Solvated Molecule Simulation [26] | SQD-IEF-PCM Hybrid | Solvation energies within 1 kcal/mol of classical references; <0.2 kcal/mol for methanol | Reduced computational cost; scalable | Water, methanol, ethanol, methylamine |

Advantages in Chemical System Modeling

In chemical research, particularly for drug development applications, hybrid models have demonstrated remarkable effectiveness in simulating solvated molecular systems—a crucial capability for predicting drug behavior in biological environments. The SQD-IEF-PCM (Sample-based Quantum Diagonalization with Integral Equation Formalism Polarizable Continuum Model) approach represents a significant advancement, enabling quantum simulations of molecules in solution with an accuracy matching classical benchmarks [26]. For methanol solvation, this hybrid method achieved energy calculations within 0.2 kcal/mol of classical references, well within the threshold of chemical accuracy essential for predictive drug design [26].

Similarly, in quantum chemistry calculations, the pUCCD-DNN approach (paired Unitary Coupled-Cluster with Double Excitations, optimized with deep neural networks) has improved both accuracy and efficiency, reducing the mean absolute error of calculated energies by two orders of magnitude compared to non-DNN pUCCD methods [29]. The architecture compensates for quantum hardware limitations by letting classical neural networks train on system data and learn from past optimizations, which minimizes the number of quantum hardware calls while maintaining accuracy for complex chemical simulations such as the isomerization of cyclobutadiene [29].

Experimental Protocols and Methodologies

Quantum Neural Networks for Scientific Computing

The development of hybrid models for solving differential equations in scientific computing involves carefully structured quantum and classical components:

Quantum Feature Maps: Input data $X$ is embedded into quantum gates using trainable parameters according to $|\phi(X, \hat{\theta})\rangle = U(X, \hat{\theta})\,|0\rangle^{\otimes n}$, where the operator $U$ represents quantum gates applied to all $n$ qubits initialized in the $|0\rangle$ state [30]. The selection of the feature map form $U(X, \hat{\theta})$ is guided by physical properties of the problem, using gates like $R_y(\theta X)$ for oscillatory behavior or $\exp(\theta\, X\, \hat{Z})$ for exponential decay/growth [30].

Variational Quantum Circuits: These circuits apply transformations to encoded states through the operation $|\phi(X, \hat{\theta}_1, \hat{\theta}_2)\rangle = W(\hat{\theta}_2)\,U(X, \hat{\theta}_1)\,|0\rangle^{\otimes n}$, where $W$ is the variational quantum circuit [30]. This structure allows the model to adapt to the problem's physical constraints while maintaining hardware efficiency.

Quantum Measurement and Classical Integration: The final stage involves computing expectation values of quantum operators, typically the Pauli $\hat{Z}$ operator: $\langle \phi(X, \hat{\theta}_1, \hat{\theta}_2)|\,\theta_3\, \hat{Z}^{\otimes n}\,|\phi(X, \hat{\theta}_1, \hat{\theta}_2)\rangle$, where the trainable parameter $\theta_3$ scales the neural network's output [30]. This quantum-processed information is then integrated with classical processing streams for final optimization.
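The three stages above (feature map, variational circuit, scaled Pauli-Z measurement) can be traced on a single qubit. The sketch below is an illustration only, assuming an R_y feature map and an R_y variational layer; the function names and parameter values are hypothetical and not taken from [30]. For this choice the output has the closed form θ₃·cos(θ₁X + θ₂), which the simulation reproduces.

```python
import math


def ry_apply(angle, state):
    """Apply R_y(angle) to a single-qubit state with real amplitudes (a, b)."""
    c, s = math.cos(angle / 2), math.sin(angle / 2)
    a, b = state
    return (c * a - s * b, s * a + c * b)


def qnn_output(x, theta1, theta2, theta3):
    state = (1.0, 0.0)                     # |0>
    state = ry_apply(theta1 * x, state)    # feature map U(X, theta1) = R_y(theta1 * X)
    state = ry_apply(theta2, state)        # variational circuit W(theta2) = R_y(theta2)
    expectation_z = state[0] ** 2 - state[1] ** 2   # <Z> = P(0) - P(1)
    return theta3 * expectation_z          # trainable output scale


value = qnn_output(x=0.3, theta1=2.0, theta2=0.5, theta3=1.5)
closed_form = 1.5 * math.cos(2.0 * 0.3 + 0.5)
```

In a differential-equation solver, `qnn_output` would serve as the trial solution, and a classical optimizer such as Adam would adjust the three parameters to minimize the equation residual.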

Table 2: Research Reagent Solutions for Hybrid Model Implementation

| Research Tool | Type/Function | Specific Implementation Examples |

|---|---|---|

| Parameterized Quantum Circuits (PQCs) | Encode trial wavefunctions; Perform quantum transformations | Unitary Coupled-Cluster (UCC) ansatz; Paired UCC with Double Excitations (pUCCD) [29] |

| Quantum Feature Maps | Encode classical data into quantum states | Physics-informed quantum feature maps; RY(θ), RX(θ), RZ(θ) rotation gates [30] [34] |

| Variational Quantum Circuits (VQCs) | Hybrid quantum-classical optimization | RealAmplitudes; ZZFeatureMaps [34] |

| Classical Optimization Frameworks | Train hybrid models; Optimize quantum parameters | Adam optimizer (β₁=0.9, β₂=0.99, ε=10⁻⁸) [30]; Deep Neural Networks (DNNs) [29] |

| Quantum Simulation Libraries | Implement and simulate quantum algorithms | PennyLane [30]; PyTorch [30] |

| Solvent Models | Incorporate environmental effects in molecular simulations | Integral Equation Formalism Polarizable Continuum Model (IEF-PCM) [26] |

Hybrid Workflow for Solvated Molecular Systems

The experimental protocol for simulating solvated molecules using hybrid quantum-classical methods involves a multi-stage process that iterates between quantum and classical subsystems:

Figure 1: Workflow for Hybrid Quantum-Classical Simulation of Solvated Molecules. This diagram illustrates the iterative process combining quantum sampling with classical solvent modeling for molecular simulations [26].

The SQD-IEF-PCM method begins by generating electronic configurations from a molecule's wavefunction using quantum hardware [26]. These samples, affected by hardware noise, are corrected through a self-consistent process (S-CORE) that restores key physical properties like electron number and spin [26]. The corrected configurations build a smaller subspace of the full molecular problem, manageable for classical computation. The IEF-PCM solvent model is incorporated as a perturbation to the molecule's Hamiltonian, with the process iterating until the molecular wavefunction and solvent environment reach mutual consistency [26].

Residual Hybrid Architecture for Enhanced Information Transfer

A significant innovation in hybrid model design addresses the "measurement bottleneck" in quantum machine learning through residual connections:

Figure 2: Residual Hybrid Quantum-Classical Model Architecture. This bypass connection combines raw inputs with quantum features to overcome measurement bottlenecks [31].

This residual hybrid architecture bypasses the quantum measurement bottleneck by combining the original input data with quantum-transformed features before classification [31]. The classifier sees both the raw input and the quantum-enhanced features, and the underlying quantum circuit is left unchanged, which allows more efficient information transfer from the quantum to the classical processing stage [31]. Experiments report accuracy improvements of up to 55% over quantum baselines, together with enhanced privacy guarantees and reduced communication overhead in federated learning scenarios [31].
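The bypass connection reduces to a concatenation of raw and quantum-derived features. The sketch below is a minimal illustration with hypothetical function names; the "quantum layer" is a classical cosine placeholder standing in for measured circuit outputs, not the circuit used in [31].

```python
import math


def placeholder_quantum_layer(x):
    """Classical stand-in for expectation values measured from a quantum circuit."""
    return [math.cos(v) for v in x]


def residual_hybrid_features(raw_input, quantum_layer):
    """Concatenate the raw inputs with the quantum-transformed features."""
    quantum_features = quantum_layer(raw_input)
    return list(raw_input) + list(quantum_features)


combined = residual_hybrid_features([0.0, math.pi], placeholder_quantum_layer)
```

The classifier then receives a vector twice as long as the input, so information lost in measurement can still reach it through the raw half.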

Hybrid quantum-classical models have substantively bridged the current computational divide, enabling researchers to validate quantum effects in chemically relevant environments with impressive accuracy. The experimental evidence across multiple domains confirms that these hybrid approaches can outperform purely classical methods while mitigating the limitations of NISQ-era quantum hardware. For drug development professionals, the demonstrated ability to simulate solvated molecules with chemical accuracy using methods like SQD-IEF-PCM represents a particularly significant advancement, opening new possibilities for understanding drug behavior in biological environments [26].

The continued evolution of hybrid architectures—including physics-informed quantum feature maps, residual bypass connections, and deep neural network optimizers for quantum chemistry—promises further enhancements to computational efficiency and accuracy. As quantum hardware matures, these hybrid frameworks provide a flexible foundation for progressively increasing quantum workloads while maintaining robust classical oversight, offering a practical pathway toward full quantum advantage in computational chemistry and drug development.

The emerging class of solvent-ready quantum algorithms represents a significant advancement in simulating realistic chemical environments on quantum hardware. By integrating well-established implicit solvent models, such as the Integral Equation Formalism Polarizable Continuum Model (IEF-PCM), with quantum computational workflows, researchers are now overcoming a fundamental limitation in quantum chemistry simulations: the inability to accurately model solute-solvent interactions. This integration provides a critical framework for validating quantum effects across different chemical environments, moving beyond gas-phase approximations to address biologically and industrially relevant problems in drug design and materials science [26].

These hybrid quantum-classical approaches are particularly valuable for simulating chemical phenomena where solvent environment dramatically influences molecular behavior, including protein folding, drug binding, and catalytic reactions. The incorporation of IEF-PCM and similar continuum models enables quantum simulations to account for electrostatic screening and solvation effects without the prohibitive computational cost of explicit solvent molecules, creating a pathway toward practical quantum advantage in chemical simulation [26] [35].

Methodological Framework: Integrating IEF-PCM with Quantum Hardware

Theoretical Foundations of Implicit Solvation Models

Implicit solvent models, particularly IEF-PCM, treat the solvent as a continuous dielectric medium characterized by its dielectric constant (ε ≈ 78.4 for water at 298 K), rather than modeling individual solvent molecules. In this approach, the solute occupies a molecular-shaped cavity within this continuum, and the electrostatic interaction between the solute and solvent is described through the generation of apparent surface charges (ASC) at the cavity boundary [35] [36].

The IEF-PCM method is a sophisticated formulation of this approach. It solves the electrostatic solvation problem at the quantum mechanical level using integral operators that were new to the chemical community when the method was introduced. The formalism can treat linear isotropic solvent models, anisotropic liquid crystals, and ionic solutions within a unified theoretical framework [35]. For quantum chemical applications, IEF-PCM introduces a reaction field term into the molecular Hamiltonian that depends self-consistently on the solute electron density, effectively modeling how the solvent environment polarizes the electronic structure of the solute molecule [36].
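IEF-PCM itself requires a boundary-element solver over a molecular cavity, but the way the dielectric constant enters a continuum model can be seen in the simplest closed-form case. The sketch below uses the Born model for a spherical ion, which is a swapped-in textbook model and not IEF-PCM:

$$\Delta G_{\text{Born}} = -\frac{q^2}{8\pi\varepsilon_0 a}\left(1 - \frac{1}{\varepsilon}\right)$$

Here $q$ is the ion charge, $a$ the cavity radius, and $\varepsilon$ the solvent dielectric constant. The 2.0 Å radius in the example is an arbitrary illustrative choice.

```python
# Coulomb constant e^2 / (4 * pi * eps0) expressed in kcal * Angstrom / mol
COULOMB_KCAL_ANGSTROM = 332.06371


def born_solvation_energy(charge, radius_angstrom, dielectric):
    """Born solvation free energy (kcal/mol) of a spherical ion in a continuum."""
    prefactor = -0.5 * COULOMB_KCAL_ANGSTROM * charge ** 2 / radius_angstrom
    return prefactor * (1.0 - 1.0 / dielectric)


# Monovalent ion, hypothetical 2.0 Angstrom cavity, water at 298.15 K
energy_water = born_solvation_energy(charge=1, radius_angstrom=2.0, dielectric=78.36)
energy_vacuum = born_solvation_energy(charge=1, radius_angstrom=2.0, dielectric=1.0)
print(f"Born solvation energy in water: {energy_water:.2f} kcal/mol")
```

Because the factor (1 − 1/ε) saturates quickly, small changes in ε near 78 have little effect on the result, whereas the cavity definition matters a great deal.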

Hybrid Quantum-Classical Implementation

The integration of IEF-PCM with quantum hardware follows a hybrid computational strategy that distributes tasks according to their computational requirements:

Table: Division of Labor in Hybrid Quantum-Classical Workflow

| Computational Task | Processing Unit | Function in Solvent-Ready Algorithm |

|---|---|---|

| Wavefunction Sampling | Quantum Processor | Generates electronic configurations from molecular wavefunction |

| Noise Mitigation | Quantum-Classical Interface | Applies S-CORE correction to restore physical properties |

| Solvent Field Computation | Classical Processor | Calculates IEF-PCM reaction field using apparent surface charges |

| Hamiltonian Construction | Classical Processor | Integrates solvent perturbation into molecular Hamiltonian |

| Subspace Diagonalization | Classical Processor | Solves reduced electronic structure problem |

Recent implementations, such as the Sample-based Quantum Diagonalization (SQD) method extended with IEF-PCM capabilities, begin by generating electronic configurations from a molecule's wavefunction using quantum hardware. These samples, affected by inherent hardware noise, are corrected through a self-consistent process (S-CORE) that restores key physical properties like electron number and spin [26].

The IEF-PCM solvent model is incorporated as a perturbation to the molecule's Hamiltonian—the quantum operator describing the system's total energy. This creates an iterative workflow where the molecular wavefunction and solvent reaction field are updated until solute and solvent reach mutual consistency. This approach was successfully tested on IBM quantum computers with 27 to 52 qubits, demonstrating that despite current hardware limitations, chemically accurate simulations of solvated systems are achievable [26].
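The mutual-consistency loop between solute and solvent can be illustrated with a deliberately simple model. The sketch below assumes a polarizable point dipole in a linear reaction field (an Onsager-style toy with arbitrary units and hypothetical parameter values), not the SQD-IEF-PCM procedure of [26]; it only shows the alternating update pattern and the convergence test.

```python
def self_consistent_reaction_field(mu0, alpha, g, tol=1e-10, max_iter=200):
    """Iterate mu = mu0 + alpha * R with R = g * mu until mu stops changing.

    mu0   : dipole of the isolated solute
    alpha : solute polarizability
    g     : reaction-field factor of the continuum
    """
    mu = mu0
    for iteration in range(1, max_iter + 1):
        field = g * mu                  # solvent step: field from current solute state
        new_mu = mu0 + alpha * field    # solute step: solute responds to that field
        if abs(new_mu - mu) < tol:
            return new_mu, iteration
        mu = new_mu
    raise RuntimeError("reaction field did not converge")


mu, n_iter = self_consistent_reaction_field(mu0=1.85, alpha=1.0, g=0.2)
analytic = 1.85 / (1.0 - 1.0 * 0.2)   # fixed point mu0 / (1 - alpha * g)
```

In the real workflow the "solute step" is the subspace diagonalization and the "solvent step" is the apparent-surface-charge calculation, but the loop structure is the same.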

Performance Comparison: Solvent-Ready Quantum Algorithms vs. Classical Approaches

Quantitative Assessment of Computational Accuracy

Recent experimental studies provide compelling data on the performance of solvent-ready quantum algorithms compared to established classical computational methods. A 2025 study by Cleveland Clinic researchers implemented the SQD-IEF-PCM method on IBM quantum hardware for calculating solvation free energies of common polar molecules in biochemistry, with results benchmarked against high-accuracy classical methods and experimental data [26].

Table: Performance Comparison of SQD-IEF-PCM vs. Classical Methods

| Molecule | SQD-IEF-PCM Result (kcal/mol) | Classical CASCI Reference (kcal/mol) | Experimental Value (kcal/mol) | Deviation from Experiment (kcal/mol) |

|---|---|---|---|---|

| Water | -6.32 | -6.41 | -6.32 | 0.00 |

| Methanol | -5.12 | -5.30 | -5.11 | 0.01 |

| Ethanol | -5.08 | -5.22 | -5.00 | 0.08 |

| Methylamine | -4.51 | -4.60 | -4.50 | 0.01 |

The SQD-IEF-PCM method achieved chemical accuracy (defined as error < 1 kcal/mol) for all tested molecules, with the solvation energy of methanol differing by less than 0.2 kcal/mol between quantum and classical approaches. The accuracy improved with increasing sample size, demonstrating the scalability of the approach even for complex molecules like ethanol, where the full quantum configuration space is enormous [26].
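The deviations quoted above follow directly from the tabulated values. The short check below transcribes the table and recomputes the absolute differences against both experiment and the CASCI reference; the dictionary layout and variable names are illustrative choices.

```python
# Solvation free energies (kcal/mol) transcribed from the table above
results = {
    "water":       {"sqd": -6.32, "casci": -6.41, "expt": -6.32},
    "methanol":    {"sqd": -5.12, "casci": -5.30, "expt": -5.11},
    "ethanol":     {"sqd": -5.08, "casci": -5.22, "expt": -5.00},
    "methylamine": {"sqd": -4.51, "casci": -4.60, "expt": -4.50},
}

CHEMICAL_ACCURACY = 1.0  # kcal/mol


def deviations(entry):
    """Absolute SQD-IEF-PCM deviations from experiment and from CASCI."""
    return {
        "vs_experiment": round(abs(entry["sqd"] - entry["expt"]), 2),
        "vs_casci": round(abs(entry["sqd"] - entry["casci"]), 2),
    }


summary = {name: deviations(entry) for name, entry in results.items()}
all_accurate = all(
    d["vs_experiment"] < CHEMICAL_ACCURACY and d["vs_casci"] < CHEMICAL_ACCURACY
    for d in summary.values()
)

for name, d in summary.items():
    print(f"{name}: {d['vs_experiment']:.2f} vs experiment, {d['vs_casci']:.2f} vs CASCI")
```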

Assessment Against Alternative Solvent Models

While IEF-PCM has shown promising results in quantum implementations, it is valuable to contextualize its performance against other implicit solvent models used in classical computational chemistry. A comprehensive 2016 comparison study evaluated several common implicit solvent models for their accuracy in estimating solvation energies [37].

Table: Accuracy Comparison of Implicit Solvent Models for Small Molecules

| Solvent Model | Correlation with Experimental Data (R²) | Computational Cost Relative to Explicit Solvent | Key Strengths |

|---|---|---|---|

| IEF-PCM | 0.87-0.93 | ~10⁻⁴ | High numerical accuracy, rigorous theoretical foundation |

| COSMO | 0.87-0.93 | ~10⁻⁴ | Conductor-like screening approximation |

| Generalized Born (GB) | 0.87-0.93 | ~10⁻⁵ | Speed, reasonable accuracy for molecular dynamics |

| Poisson-Boltzmann (PB) | 0.87-0.93 | ~10⁻³ | Considered gold standard for electrostatic calculations |

For small molecules, all tested implicit solvent models showed high correlation coefficients (0.87-0.93) between calculated solvation energies and experimental hydration energies. However, the performance diverged significantly for protein solvation energies and protein-ligand binding desolvation energies, where substantial discrepancies (up to 10 kcal/mol) with explicit solvent references were observed [37].

Experimental Protocols and Validation Frameworks

Detailed Methodology for SQD-IEF-PCM Implementation

The experimental protocol for implementing solvent-ready algorithms with IEF-PCM on quantum hardware involves a multi-stage process with specific procedures at each phase:

- System Preparation and Cavity Definition

  - Define the molecular structure using Cartesian coordinates

  - Construct the molecular cavity based on the united atom topological model (UATM) employing modified Bondi radii

  - Apply a solvent-excluded surface (SES) algorithm with 0.3 Å triangulation resolution

  - Define dielectric properties: ε = 78.36 for water at 298.15 K [26] [36]

- Quantum Computational Phase

  - Initialize the quantum circuit with a hardware-efficient ansatz

  - Generate electronic configurations through repeated wavefunction sampling

  - Apply the S-CORE (self-consistent configuration recovery) correction to mitigate hardware noise effects

  - Restore physical constraints including electron number and spin multiplicity [26]

- Classical Processing and Iteration

  - Construct the reduced subspace Hamiltonian using corrected quantum samples

  - Compute IEF-PCM apparent surface charges using the boundary element method

  - Incorporate the solvent reaction field as a perturbation to the molecular Hamiltonian

  - Iterate until the wavefunction and reaction field achieve self-consistency (ΔE < 0.001 kcal/mol) [26]

This protocol was validated using IBM quantum processors with 27-52 qubits, testing systems including water, methanol, ethanol, and methylamine in aqueous solution. The computational workflow maintained scalability and noise robustness while achieving chemical accuracy across all test cases [26].
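The iteration in the final stage of the protocol can be illustrated with a toy self-consistency loop. This is only a sketch under strong assumptions: `solve_solute` and `reaction_field_from` are hypothetical stand-ins for the quantum sampling plus subspace diagonalization step and for the IEF-PCM surface-charge solve, and the linear-response model with its parameters is invented for illustration. Only the stopping rule (energy change below 0.001) mirrors the criterion stated in the protocol.

```python
def run_self_consistent_cycle(solve_solute, reaction_field_from, tol=1e-3, max_iter=100):
    """Alternate solute and solvent updates until the energy change is below tol.

    solve_solute(field) -> (energy, response): hypothetical stand-in for the
        quantum sampling and subspace diagonalization step.
    reaction_field_from(response) -> field: hypothetical stand-in for the
        IEF-PCM surface-charge solve.
    """
    field = 0.0  # start from the gas-phase solute (no reaction field)
    previous_energy = None
    for iteration in range(1, max_iter + 1):
        energy, response = solve_solute(field)
        if previous_energy is not None and abs(energy - previous_energy) < tol:
            return energy, field, iteration
        field = reaction_field_from(response)
        previous_energy = energy
    raise RuntimeError("self-consistency not reached within max_iter")


# Toy linear-response model with made-up parameters (arbitrary units).
MU0, ALPHA, G, E0 = 2.0, 0.5, 0.4, -10.0


def toy_solute(field):
    dipole = MU0 + ALPHA * field        # solute polarizes in the field
    energy = E0 - 0.5 * dipole * field  # stabilization by the reaction field
    return energy, dipole


def toy_reaction_field(dipole):
    return G * dipole                   # solvent responds linearly to the solute


energy, field, n_iter = run_self_consistent_cycle(toy_solute, toy_reaction_field)
print(f"converged in {n_iter} iterations: E = {energy:.4f}, field = {field:.4f}")
```

In this toy model the loop converges because the product of the two response coefficients is below one. A real implementation would replace both callables with the corresponding quantum and boundary-element solvers.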

Verification Methodologies for Quantum Advantage Claims

Independent verification of quantum algorithm performance employs multiple validation strategies:

Cross-Platform Reproducibility: Implementing identical algorithms across different quantum hardware platforms to verify consistent results [38]

Classical Benchmarking: Comparison against high-accuracy classical methods, including complete active space configuration interaction (CASCI) and heat-bath configuration interaction (HCI) [26]

Experimental Validation: Correlation with empirical solvation free energies from databases such as MNSol [26]

Scalability Assessment: Evaluating performance maintenance with increasing system size and quantum circuit depth [26]

Google's Quantum Echoes algorithm, for instance, employs a "quantum verifiability" approach where results can be repeated on different quantum computers of the same caliber to confirm accuracy, establishing a framework for scalable verification of quantum advantage claims in chemical simulations [38].
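The classical-benchmarking and experimental-validation strategies listed above both reduce to comparing computed values against a trusted reference. The sketch below shows one way to do so, assuming the common 1 kcal/mol convention for chemical accuracy. The function name, the dictionary layout, and the numerical values are hypothetical and do not reproduce any result from the cited work.

```python
CHEMICAL_ACCURACY_KCAL = 1.0  # conventional threshold, in kcal/mol


def validate_against_reference(computed, reference, threshold=CHEMICAL_ACCURACY_KCAL):
    """Compare computed values with reference values, keyed by system name."""
    if not computed:
        raise ValueError("no computed values supplied")
    missing = sorted(set(computed) - set(reference))
    if missing:
        raise KeyError(f"no reference value for: {missing}")
    errors = {name: computed[name] - reference[name] for name in computed}
    mae = sum(abs(e) for e in errors.values()) / len(errors)
    outliers = sorted(name for name, e in errors.items() if abs(e) > threshold)
    return {
        "errors": errors,
        "mae": mae,
        "outliers": outliers,
        "all_within_threshold": not outliers,
    }


# Placeholder solvation free energies in kcal/mol (illustrative only).
computed = {"system_a": -6.1, "system_b": -4.7, "system_c": -3.2}
reference = {"system_a": -6.3, "system_b": -5.1, "system_c": -4.6}

report = validate_against_reference(computed, reference)
print(f"MAE = {report['mae']:.2f} kcal/mol, outliers = {report['outliers']}")
```

The same routine applies whether the reference is a classical CASCI or HCI energy or an experimental database entry; only the source of the `reference` dictionary changes.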

Implementation of solvent-ready quantum algorithms requires specialized computational tools and resources. The following table details essential components of the research infrastructure for this emerging field:

Table: Essential Research Reagents for Solvent-Ready Algorithm Implementation

| Resource Category | Specific Tools/Platforms | Function in Research Workflow |
|---|---|---|
| Quantum Hardware Platforms | IBM Quantum (27-52 qubit processors) | Execute quantum sampling phase of hybrid algorithms |
| Quantum Software Ecosystems | NVIDIA CUDA-Q, Qiskit, PennyLane | Develop and optimize quantum circuits; enable hybrid quantum-classical computation |
| Classical Computational Chemistry Suites | Q-Chem, DISOLV, MCBHSOLV, APBS | Implement IEF-PCM and other implicit solvent models; perform classical computational benchmarks |
| Specialized Solvation Algorithms | SQD-IEF-PCM, SS(V)PE, COSMO, Generalized Born | Provide specific methodological approaches for solvent modeling in quantum simulations |
| Validation Databases | MNSol Database, Catechol Benchmark | Supply experimental and computational reference data for algorithm validation |
| High-Performance Computing Resources | NVIDIA GH200/H200 Grace Hopper Superchips | Accelerate classical processing components; enable quantum circuit simulation |

Performance benchmarking demonstrates that specialized hardware can significantly accelerate development cycles. Recent tests of quantum AI algorithms on NVIDIA CUDA-Q with GH200 Grace Hopper Superchips showed 73× faster performance for forward propagation of 18-qubit quantum circuits compared to traditional CPU-based methods, with backward propagation accelerated by 41× [39]. This enhanced computational efficiency enables more rapid iteration and optimization of solvent-ready algorithm implementations.

Future Directions and Research Challenges

Current Limitations and Development Priorities

Despite promising advances, solvent-ready quantum algorithms face several significant limitations that define current research priorities:

System Charge Limitations: Current SQD-IEF-PCM implementations are most suitable for neutral molecules, with performance for charged systems requiring further assessment and potential methodological adaptation [26].

Solvent Model Completeness: While IEF-PCM effectively captures electrostatic interactions, it provides incomplete treatment of specific solute-solvent interactions such as hydrogen bonding, dispersion forces, and exchange-repulsion effects. These limitations necessitate future extensions incorporating explicit solvent molecules or more advanced hybrid models [26] [8].

Circuit Optimization Challenges: Current implementations highlight the need for better parameterization of quantum circuits to reduce the number of samples required for accurate results, potentially through optimized ansatz development [26].

Dynamic Solvation Effects: Traditional solvent descriptors reduce complex, fluctuating environments to static averages, failing to account for localized, time-resolved interactions that govern many chemical transformations. Emerging approaches propose treating solvents as dynamic solvation fields characterized by fluctuating local structure and evolving electric fields [8].

Emerging Paradigms and Research Trajectories

The field is rapidly evolving toward more sophisticated integration of quantum computing with solvent modeling:

Dynamic Solvation Fields: A paradigm shift from static continuum models to dynamic frameworks that capture how solvent fluctuations modulate transition state stabilization, steer nonequilibrium reactivity, and reshape interfacial chemical processes [8].

Machine Learning Enhancement: Integration of machine-learned potentials with quantum solvation algorithms to improve accuracy while maintaining computational efficiency, particularly for complex biomolecular systems [40].

Error Mitigation Advancements: Development of more sophisticated error mitigation and correction techniques specifically tailored to maintain accuracy in environmental simulations despite hardware noise and decoherence.

Expanded Validation Frameworks: Creation of specialized benchmarking datasets, such as the Catechol Benchmark for solvent selection, providing standardized testing grounds for algorithm performance across diverse chemical environments [40].

As quantum hardware continues to evolve with improving coherence times and gate fidelities, and as algorithmic approaches mature, solvent-ready quantum algorithms are positioned to enable previously intractable simulations of chemical processes in realistic environments, potentially transforming computational drug discovery and materials design. The integration of implicit solvent models like IEF-PCM represents a critical stepping stone toward the long-promised quantum advantage in computational chemistry [26] [41].

The accurate prediction of molecular behavior across diverse chemical environments represents a central challenge in modern computational chemistry. Traditional molecular machine learning (ML) models often rely on simplified representations, such as molecular graphs or fingerprints, which inherently lack the quantum-mechanical details essential for capturing properties like reactivity, stability, and binding affinity [19]. This limitation becomes particularly acute when attempting to validate and exploit subtle quantum effects, such as stereoelectronic interactions, which are highly dependent on a molecule's geometric and electronic structure.

The emerging field of quantum-infused machine learning seeks to bridge this gap by integrating explicit quantum-chemical information into ML models. This paradigm shift is crucial for a broader research thesis aimed at validating quantum effects across different chemical environments, from simple isolated molecules to complex biological systems in solution. By creating a more direct link between quantum physics and machine learning, these methods promise to enhance the predictive power of computational models, providing deeper chemical insight and accelerating discovery in drug development and materials science [19] [42].

This guide focuses on Stereoelectronics-Infused Molecular Graphs (SIMGs), a novel molecular representation that explicitly encodes orbital interactions and stereoelectronic effects. We will objectively compare its performance against traditional molecular representation methods, providing detailed experimental data and protocols to help researchers assess its utility for their specific chemical environment challenges.

Understanding Molecular Representations: From Simple Graphs to Quantum Infusion

The Limitations of Traditional Representations

Traditional molecular machine learning employs several standard representations, each with inherent limitations for capturing quantum effects:

- Simplified Molecular Graphs: These represent atoms as nodes and bonds as edges but lack electronic structure information, making them information-sparse for predicting quantum-influenced properties [43].

- SMILES Strings and Fingerprints: These textual or hashed representations capture molecular connectivity but discard three-dimensional and electronic information crucial for understanding stereoelectronic effects [44].

- Global Descriptors and 3D Coordinates: While sometimes including spatial information, these frequently overlook crucial quantum-mechanical details such as orbital interactions and electron densities [19].

As prediction tasks grow more complex—especially those involving reactivity, catalysis, or interaction specificity—these simplified representations become insufficient for accurately modeling quantum phenomena in varying chemical environments.

The SIMG Approach: Infusing Quantum-Chemical Insight

Stereoelectronics-Infused Molecular Graphs (SIMGs) address these limitations by augmenting standard molecular graphs with explicit quantum-chemical information derived from stereoelectronic effects [43]. Stereoelectronic effects are stabilizing electronic interactions that arise from the spatial arrangement and overlap of molecular orbitals. These effects directly influence molecular geometry, reactivity, and stability [19].

The SIMG framework incorporates key electronic features that are typically omitted in traditional representations:

- Natural Bond Orbitals (NBOs): Bonding and antibonding orbitals, together with their interactions [45].

- Lone Pairs: Explicit representation of non-bonding electrons [45].

- Donor-Acceptor Interactions: Charge transfer effects between electron-rich and electron-deficient orbitals [45].

- Orbital Energies and Occupancies: Quantitative electronic structure descriptors [43].

Table: Key Components of Stereoelectronics-Infused Molecular Graphs (SIMGs)

| Component | Description | Role in Molecular Representation |
|---|---|---|
| Atoms & Bonds | Standard molecular graph components | Provides basic molecular connectivity framework |
| Natural Bond Orbitals | Quantum-chemical orbitals describing electron pairs in bonds | Encodes bonding character and electron distribution |
| Orbital Interactions | Donor-acceptor interactions between filled & empty orbitals | Captures stereoelectronic effects influencing reactivity |
| Lone Pairs | Non-bonding electron pairs on atoms | Critical for understanding nucleophilicity and molecular polarity |
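To make the components above concrete, the following sketch shows one possible in-memory layout for such a graph. It is a hypothetical schema for illustration only: the class names, field names, and numerical values are assumptions, not the data format used by the SIMG authors.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

ORBITAL_KINDS = {"bonding", "antibonding", "lone_pair"}


@dataclass
class OrbitalNode:
    kind: str                # one of ORBITAL_KINDS
    atoms: Tuple[int, ...]   # indices of the atom(s) hosting the orbital
    energy: float            # orbital energy (placeholder value)
    occupancy: float         # electron occupancy (placeholder value)


@dataclass
class OrbitalInteraction:
    donor: int               # index of the filled (donor) orbital
    acceptor: int            # index of the empty (acceptor) orbital
    strength: float          # stabilization estimate (placeholder value)


@dataclass
class StereoelectronicGraph:
    atoms: List[str]
    bonds: List[Tuple[int, int]]
    orbitals: List[OrbitalNode] = field(default_factory=list)
    interactions: List[OrbitalInteraction] = field(default_factory=list)

    def add_orbital(self, kind, atoms, energy, occupancy):
        """Attach an orbital node to existing atoms and return its index."""
        if kind not in ORBITAL_KINDS:
            raise ValueError(f"unknown orbital kind: {kind}")
        if any(a < 0 or a >= len(self.atoms) for a in atoms):
            raise IndexError("orbital refers to an atom that does not exist")
        self.orbitals.append(OrbitalNode(kind, tuple(atoms), energy, occupancy))
        return len(self.orbitals) - 1

    def add_interaction(self, donor, acceptor, strength):
        """Link a donor orbital to an acceptor orbital."""
        for idx in (donor, acceptor):
            if idx < 0 or idx >= len(self.orbitals):
                raise IndexError("interaction refers to an unknown orbital")
        self.interactions.append(OrbitalInteraction(donor, acceptor, strength))


# O-C-F fragment: an oxygen lone pair donating into the C-F antibonding orbital.
graph = StereoelectronicGraph(atoms=["O", "C", "F"], bonds=[(0, 1), (1, 2)])
lone_pair = graph.add_orbital("lone_pair", [0], energy=-0.5, occupancy=1.9)
sigma_star = graph.add_orbital("antibonding", [1, 2], energy=0.3, occupancy=0.05)
graph.add_interaction(lone_pair, sigma_star, strength=10.0)
print(len(graph.orbitals), "orbitals,", len(graph.interactions), "interaction")
```

Keeping orbitals as separate nodes that point back to atom indices leaves the standard atom-and-bond graph intact, so the electronic layer can be added or dropped without changing the connectivity data.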

Performance Comparison: SIMG Against Traditional Molecular Representations

Quantitative Benchmarking Across Molecular Properties

Researchers from Carnegie Mellon University conducted comprehensive benchmarking to evaluate SIMG's performance against established molecular representation methods. The experiments assessed predictive accuracy for key quantum-chemical properties using the standard QM9 dataset, which contains approximately 134,000 small organic molecules [45].

Table: Performance Comparison of Molecular Representations on QM9 Benchmark Tasks (Lower values indicate better performance)

| Representation Method | Dipole Moment (MAE) | HOMO-LUMO Gap (MAE) | Atomization Energy (MAE) | Computational Speed |
|---|---|---|---|---|
| SIMG* | ~0.3 D | ~0.04 eV | ~0.03 eV | Seconds (approximation) |
| ChemProp | ~0.5 D | ~0.08 eV | ~0.05 eV | Seconds |
| SOAP | ~0.4 D | ~0.07 eV | ~0.04 eV | Minutes to hours |
| Coulomb Matrix | ~0.6 D | ~0.10 eV | ~0.07 eV | Seconds |

MAE = Mean Absolute Error; D = Debye; eV = electronvolt

The results demonstrate that SIMG* (the machine-learned approximation of SIMG) consistently outperforms traditional representations across all measured properties, achieving roughly a 50% reduction in error for HOMO-LUMO gap predictions compared to ChemProp (~0.04 eV versus ~0.08 eV) [45]. The HOMO-LUMO gap is particularly significant as it directly relates to molecular reactivity and optical properties, making SIMG especially valuable for research on quantum effects in different chemical environments.
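The error-reduction figure follows directly from the tabulated MAE values. As a short worked example, the sketch below defines the mean absolute error and the relative reduction between two methods. The helper names are illustrative, and the inputs are the approximate HOMO-LUMO gap values from the table, so the result is itself approximate.

```python
def mean_absolute_error(predicted, reference):
    """Average absolute deviation between predictions and reference values."""
    if len(predicted) != len(reference) or not predicted:
        raise ValueError("need two non-empty sequences of equal length")
    return sum(abs(p - r) for p, r in zip(predicted, reference)) / len(predicted)


def relative_error_reduction(baseline_mae, new_mae):
    """Fraction by which new_mae improves on baseline_mae."""
    if baseline_mae <= 0:
        raise ValueError("baseline MAE must be positive")
    return (baseline_mae - new_mae) / baseline_mae


# Approximate HOMO-LUMO gap MAEs in eV, as listed in the table above.
chemprop_mae = 0.08
simg_star_mae = 0.04
print(f"{relative_error_reduction(chemprop_mae, simg_star_mae):.0%}")  # prints 50%
```

Applying `relative_error_reduction` to the other rows gives the corresponding improvement over SOAP and the Coulomb matrix.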

Generalizability to Complex Chemical Environments

A critical test for any molecular representation is its ability to generalize beyond the training data to more complex chemical systems:

- Macromolecular Applications: Models trained on small molecules (QM9 dataset) successfully predicted orbital interactions in much larger systems, including entire proteins, where traditional quantum chemistry calculations become computationally prohibitive [43] [46].

- Speed Advantage: The SIMG* approach achieves orders of magnitude speed improvement over traditional Density Functional Theory (DFT) with Natural Bond Orbital (NBO) analysis, reducing computation time from hours/days to seconds [46].

- Chemical Interpretability: Unlike black-box models, SIMGs provide interpretable insights into specific orbital interactions that influence molecular properties and reactivity [19].

Experimental Protocols for SIMG Implementation

Data Preparation and Model Training