Validating Quantum Theory in Chemical Reactions: From Computational Design to Experimental Breakthroughs in Biomedicine

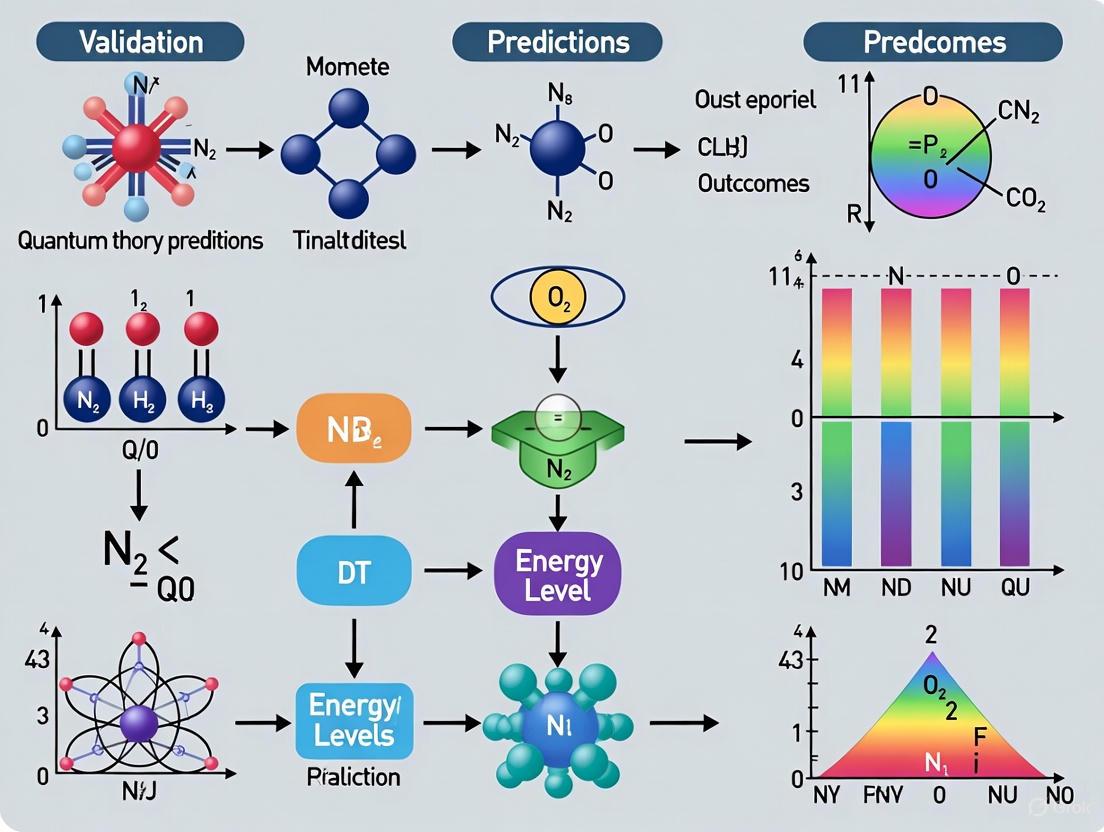


Abstract

This article explores the integrated workflow of using quantum chemical calculations to predict chemical behavior and subsequently validating these predictions with experimental data. It covers foundational quantum principles, modern computational methodologies like machine learning and hybrid QM/MM, strategies for troubleshooting computational limitations, and rigorous validation frameworks. Aimed at researchers and drug development professionals, it highlights how this synergy accelerates the design of novel materials, catalysts, and therapeutics with enhanced efficiency and reduced reliance on traditional trial-and-error, ultimately bridging the gap between theoretical prediction and practical application in biomedical research.

Quantum Chemistry Foundations: From Schrödinger's Equation to Predictive Modeling

The Schrödinger equation is the cornerstone of quantum mechanics, governing the behavior of electrons in molecules and materials [1]. Solving this equation for chemical systems allows researchers to predict reaction energies, molecular properties, and the fundamental pathways of chemical transformations [2]. However, the computational challenge is immense: the complexity of finding exact solutions grows exponentially with the number of electrons, a difficulty known as the "exponential wall problem" [3]. This guide provides an objective comparison of contemporary computational methods developed to solve the Schrödinger equation, evaluating their performance, accuracy, and applicability to real-world chemical problems faced by researchers and drug development professionals.
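To make the "exponential wall" concrete, the sketch below counts the Slater determinants in a full configuration interaction expansion as the orbital space grows. The function name `fci_determinants` is illustrative, and the count ignores any reduction from spatial or spin symmetry.

```python
from math import comb


def fci_determinants(n_orbitals: int, n_alpha: int, n_beta: int) -> int:
    """Number of determinants when n_alpha and n_beta electrons are
    distributed over n_orbitals spatial orbitals (no symmetry reduction)."""
    return comb(n_orbitals, n_alpha) * comb(n_orbitals, n_beta)


if __name__ == "__main__":
    # Half-filled closed-shell systems: n/2 alpha and n/2 beta electrons.
    for n in (4, 8, 16, 32):
        print(f"{n:>2} orbitals: {fci_determinants(n, n // 2, n // 2):,} determinants")
```

Going from 4 to 8 orbitals at half filling already raises the count from 36 to 4,900 determinants, which is why exact solutions are restricted to very small systems.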

Computational Methodologies: Approaches and Theoretical Foundations

Traditional Quantum Chemistry Methods

Traditional computational approaches balance approximations with computational cost through well-established theoretical frameworks [2]. The Hartree-Fock (HF) method uses a mean-field approximation where electrons move independently in an average field, serving as the starting point for more advanced methods. Coupled Cluster Theory (CCSD, CCSD(T)) incorporates electron correlation through excitation operators, with CCSD(T) often considered the "gold standard" for achieving chemical accuracy in small to medium-sized molecules. Density Functional Theory (DFT) bypasses the complex many-electron wavefunction by using electron density as the fundamental variable, offering a good balance of accuracy and computational efficiency for many systems. The Full Configuration Interaction (FCI) method provides the exact solution within a given basis set but is computationally feasible only for very small systems due to exponential scaling.

Emerging Algorithmic and Machine Learning Approaches

Recent advances introduce novel computational paradigms for solving quantum chemical problems [3] [4] [5]. Quantum Phase Estimation (QPE) algorithms, including iterative (IQPE) and approximate (AIPE) variants, leverage quantum computing principles to determine energy eigenvalues, though current implementations primarily run on classical simulators [3]. Neural Network Quantum States (NNQS) frameworks parameterize the wavefunction with neural networks, with Transformer-based architectures like QiankunNet demonstrating particular promise for capturing complex quantum correlations [4]. Generative AI approaches such as FlowER (Flow matching for Electron Redistribution) incorporate physical constraints like mass and electron conservation to predict reaction outcomes and mechanisms [5]. Specialized machine learning models including React-OT focus on specific chemical challenges like predicting transition state structures with high speed and accuracy [6].

Performance Comparison of Computational Methods

Accuracy Benchmarks Across Molecular Systems

Table 1: Accuracy comparison of computational methods for molecular energy calculations

| Method | Theoretical Foundation | Accuracy (% FCI correlation energy) | System Size Limitations | Computational Scaling |
|---|---|---|---|---|
| Hartree-Fock (HF) | Wavefunction theory | 0% by definition (correlation energy is measured relative to HF) | Limited by integral evaluation | N³-N⁴ |
| CCSD(T) | Wavefunction theory | 99.5-99.9% | ~50 atoms with moderate basis sets | N⁷ |
| DFT | Electron density | Varies by functional | ~1000s of atoms | N³-N⁴ |
| DMRG | Tensor networks | ~99.9% | Strongly correlated 1D systems | Polynomial |
| QiankunNet | Neural network quantum state | 99.9% (30 spin orbitals) | Tested on CAS(46e,26o) | Polynomial |
| IQPE/AIPE | Quantum algorithm | Varies by system (e.g., 98.5% for SWP) | Limited by qubit requirements | Polynomial |

The QiankunNet framework has demonstrated remarkable accuracy, achieving 99.9% of Full Configuration Interaction (FCI) correlation energy across a benchmark set of 16 molecules including F₂, HCl, and H₂O [4]. In the Fenton reaction mechanism—a fundamental process in biological oxidative stress—QiankunNet successfully handled a large CAS(46e,26o) active space, enabling accurate description of the complex electronic structure evolution during Fe(II) to Fe(III) oxidation [4]. Quantum algorithm approaches show variable performance depending on the system: for finite square well potentials, the Approximate Iterative Quantum Phase Estimation (AIPE) method outperformed traditional IQPE, while the reverse was true for harmonic oscillator systems [3].
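The "percent of FCI correlation energy" metric used in this comparison can be written as a one-line formula: the correlation energy a method recovers, divided by the exact (FCI) correlation energy in the same basis. The sketch below assumes all three energies come from the same geometry and basis set. The numbers in the usage example are hypothetical placeholders, not results from the cited studies.

```python
def correlation_recovered(e_method: float, e_hf: float, e_fci: float) -> float:
    """Percentage of the FCI correlation energy recovered by a method.

    The correlation energy is defined relative to Hartree-Fock:
    E_corr = E_FCI - E_HF (a negative number).
    """
    e_corr_exact = e_fci - e_hf
    if e_corr_exact == 0.0:
        raise ValueError("FCI and HF energies coincide; the ratio is undefined")
    return 100.0 * (e_method - e_hf) / e_corr_exact


if __name__ == "__main__":
    # Hypothetical energies in hartree, chosen only to illustrate the formula.
    print(correlation_recovered(e_method=-1.0999, e_hf=-1.0, e_fci=-1.1))
```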

Computational Efficiency and Transition State Prediction

Table 2: Computational efficiency and application scope comparison

| Method | Time Requirements | Key Strengths | Key Limitations |
|---|---|---|---|
| CCSD(T) | Hours to days (single point) | High accuracy for balanced correlations | Poor scaling for large systems |
| DFT | Minutes to hours (single point) | Good cost-accuracy balance | Functional dependence |
| React-OT | 0.4 seconds per transition state | Rapid transition state prediction | Limited element diversity in training |
| FlowER | Faster than traditional QM | Mass/electron conservation | Limited to trained reaction types |
| QiankunNet | Hours (training required) | High expressivity for correlations | Data and compute intensive training |

Machine learning approaches demonstrate exceptional efficiency gains for specific tasks. The React-OT model predicts transition state structures—critical points determining reaction rates—in approximately 0.4 seconds, a dramatic improvement over quantum chemistry methods that can require hours or days [6]. This model uses linear interpolation to generate intelligent initial guesses for atomic positions in transition states, requiring only about five optimization steps compared to dozens for previous approaches [6]. The FlowER system ensures physical realism in reaction prediction by explicitly conserving electrons and atoms through a bond-electron matrix representation, matching or outperforming existing approaches in identifying standard mechanistic pathways [5].
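The linear-interpolation initial guess mentioned above can be sketched in a few lines. This is not the React-OT code. It only illustrates the idea of placing each atom partway between its reactant and product positions, and it assumes the two geometries list the same atoms in the same order and are already aligned in a common frame.

```python
from typing import List, Tuple

Coordinate = Tuple[float, float, float]


def interpolate_geometry(
    reactant: List[Coordinate], product: List[Coordinate], t: float = 0.5
) -> List[Coordinate]:
    """Linearly interpolate atomic positions; t=0 is the reactant, t=1 the product."""
    if len(reactant) != len(product):
        raise ValueError("reactant and product must contain the same atoms")
    return [
        tuple((1.0 - t) * r + t * p for r, p in zip(r_atom, p_atom))
        for r_atom, p_atom in zip(reactant, product)
    ]


if __name__ == "__main__":
    # Toy diatomic stretched along x (coordinates in arbitrary units).
    start = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
    end = [(0.0, 0.0, 0.0), (3.0, 0.0, 0.0)]
    print(interpolate_geometry(start, end))
```

A guess of this kind is only a starting point. In the workflow described above, the model refines it in a handful of further steps.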

Experimental Protocols and Methodologies

Quantum Algorithm Implementation (IQPE/AIPE)

The implementation of iterative quantum phase estimation algorithms follows a structured protocol [3]:

- Circuit Construction: Quantum circuits are built using the Qiskit Python SDK, incorporating Hadamard gates for superposition, controlled unitary operations for time evolution, and measurement operations.

- Time Evolution Operator: The exponential of the Hamiltonian (U = e^{-iHt}) is decomposed into fundamental quantum gates, requiring Trotterization for many-body systems.

- Iterative Phase Extraction: The algorithm uses a single control qubit. Each iteration determines one successive bit of the phase, and each measurement outcome informs the phase adjustment applied in the following iterations.

- Validation: Results are validated against known theoretical values for systems like harmonic oscillators and finite square well potentials, with accuracy quantified by deviation from exact solutions.
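The iterative phase extraction step can be emulated classically for a known eigenphase, which helps show how the measured bits feed back into later iterations. The sketch below is not a Qiskit circuit and is not taken from the cited work. It evaluates the single-qubit measurement probability analytically and assumes the phase is exactly representable in `n_bits` binary digits, so every measurement is deterministic. The function names are illustrative.

```python
import math
from typing import List


def iqpe_bits(phase: float, n_bits: int) -> List[int]:
    """Recover the binary digits of `phase` (in [0, 1)), least significant first.

    Iteration k applies U^(2^(k-1)), which kicks back the phase
    2*pi*2^(k-1)*phase onto the control qubit. A feedback rotation removes
    the contribution of the already-measured lower bits before measurement.
    """
    bits = [0] * n_bits  # bits[0] is the most significant digit
    for k in range(n_bits, 0, -1):
        # Binary fraction 0.0 b_{k+1} b_{k+2} ... built from earlier outcomes.
        feedback = sum(bits[j - 1] / 2 ** (j - k + 1) for j in range(k + 1, n_bits + 1))
        angle = 2.0 * math.pi * ((2 ** (k - 1) * phase) % 1.0 - feedback)
        prob_one = math.sin(angle / 2.0) ** 2  # probability of measuring 1
        bits[k - 1] = 1 if prob_one > 0.5 else 0
    return bits


def bits_to_phase(bits: List[int]) -> float:
    """Convert binary digits (most significant first) back to a phase."""
    return sum(b / 2 ** (i + 1) for i, b in enumerate(bits))


if __name__ == "__main__":
    digits = iqpe_bits(0.625, 3)  # 0.625 = 0.101 in binary
    print(digits, bits_to_phase(digits))
```

For phases that are not exactly representable, the measurement becomes probabilistic and repeated shots are needed. That case is not covered by this deterministic sketch.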

Neural Network Quantum State (QiankunNet) Framework

The QiankunNet methodology employs a sophisticated neural network architecture [4]:

- Wavefunction Ansatz: A Transformer-based network parameterizes the quantum wavefunction, capturing complex electron correlations through attention mechanisms.

- Autoregressive Sampling: The framework uses layer-wise Monte Carlo tree search (MCTS) with a hybrid breadth-first/depth-first strategy to generate electron configurations while conserving electron number.

- Physics-Informed Initialization: The model is initialized with truncated configuration interaction solutions, providing a principled starting point for variational optimization.

- Variational Optimization: Parameters are optimized using the stochastic reweighted gradient descent approach to minimize the energy expectation value, computed through parallel local energy evaluation.
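The idea of autoregressive sampling with electron-number conservation can be illustrated with a much simpler sampler than the MCTS scheme described above. In the sketch below, a toy conditional probability stands in for the Transformer, and occupations are drawn one spin orbital at a time. A choice is forced whenever the remaining electrons must all be placed, or none are left. All names are illustrative assumptions, not part of QiankunNet.

```python
import random
from typing import Callable, List


def sample_configuration(
    n_orbitals: int,
    n_electrons: int,
    cond_prob: Callable[[List[int]], float],
    rng: random.Random,
) -> List[int]:
    """Sample an occupation string with exactly n_electrons ones.

    cond_prob(prefix) returns the model probability that the next spin
    orbital is occupied, given the occupations chosen so far.
    """
    if not 0 <= n_electrons <= n_orbitals:
        raise ValueError("electron count must fit in the orbital space")
    occupations: List[int] = []
    remaining = n_electrons
    for i in range(n_orbitals):
        slots_left = n_orbitals - i
        if remaining == 0:
            bit = 0  # no electrons left to place
        elif remaining == slots_left:
            bit = 1  # every remaining orbital must be occupied
        else:
            bit = 1 if rng.random() < cond_prob(occupations) else 0
        occupations.append(bit)
        remaining -= bit
    return occupations


if __name__ == "__main__":
    rng = random.Random(0)
    # Toy model: every orbital is occupied with probability 0.5.
    print(sample_configuration(8, 3, lambda prefix: 0.5, rng))
```

Because invalid branches are never generated, no samples are wasted on configurations with the wrong particle number. A production sampler would also renormalize the model probabilities to account for the forced choices.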

Machine Learning for Reaction Prediction (FlowER and React-OT)

These approaches combine physical principles with data-driven training [5] [6]:

- Data Preparation: Models are trained on quantum chemistry data from patent literature and established databases, containing reactant, product, and transition state structures.

- Physical Constraints: FlowER incorporates a bond-electron matrix based on Ugi's method to explicitly conserve electrons and atoms throughout reactions.

- Architecture Design: React-OT employs optimal transport theory to map reactants to products through physically realistic trajectories.

- Transfer Learning: Models pretrained on general chemical datasets are fine-tuned for specific reaction classes or elements.
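The bond-electron (BE) matrix constraint can be checked by hand on a small reaction. In a BE matrix, off-diagonal entries hold bond orders and diagonal entries hold non-bonded valence electrons, so the sum of all entries equals the total valence electron count. The sketch below uses H2 + Cl2 → 2 HCl as a worked example. It illustrates the representation only and is not FlowER's implementation.

```python
from typing import List

Matrix = List[List[int]]


def total_valence_electrons(be_matrix: Matrix) -> int:
    """Sum of all BE-matrix entries (each bond is counted from both atoms)."""
    return sum(sum(row) for row in be_matrix)


def reaction_matrix(reactant_be: Matrix, product_be: Matrix) -> Matrix:
    """Element-wise difference (product minus reactant): the electron redistribution."""
    return [
        [p - r for r, p in zip(r_row, p_row)]
        for r_row, p_row in zip(reactant_be, product_be)
    ]


# Atom order: H1, H2, Cl1, Cl2. Each Cl carries 6 non-bonded electrons.
REACTANTS: Matrix = [
    [0, 1, 0, 0],  # H1 bonded to H2
    [1, 0, 0, 0],
    [0, 0, 6, 1],  # Cl1 bonded to Cl2
    [0, 0, 1, 6],
]
PRODUCTS: Matrix = [
    [0, 0, 1, 0],  # H1 bonded to Cl1
    [0, 0, 0, 1],  # H2 bonded to Cl2
    [1, 0, 6, 0],
    [0, 1, 0, 6],
]

if __name__ == "__main__":
    delta = reaction_matrix(REACTANTS, PRODUCTS)
    print("electrons before:", total_valence_electrons(REACTANTS))
    print("electrons after: ", total_valence_electrons(PRODUCTS))
    print("net change:      ", total_valence_electrons(delta))
```

A model that predicts the redistribution matrix directly, instead of free-form product strings, cannot create or destroy electrons as long as that matrix sums to zero.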

Research Reagent Solutions: Essential Computational Tools

Table 3: Key software and computational tools for quantum chemical calculations

| Tool/Platform | Function | Application Context |
|---|---|---|
| Qiskit | Quantum circuit simulation | Quantum algorithm development and testing [3] |
| PySchrodinger | Schrödinger equation solver | 1D quantum system simulation [7] |
| Quantum Chemistry Packages (e.g., PySCF) | Electronic structure calculations | Traditional wavefunction and DFT methods [2] |
| React-OT App | Transition state prediction | Rapid reaction pathway analysis [6] |
| FlowER Model | Reaction outcome prediction | Mechanistic reaction prediction with physical constraints [5] |

Validation of Quantum Theory Predictions in Chemical Research

The validation of computational quantum chemistry methods occurs through multiple complementary approaches [8]:

- Comparison with Experimental Data: For smaller systems where exact quantum chemical calculations are feasible, researchers compare predicted energies, spectroscopic properties, and reaction barriers with experimental measurements.

- Cross-Method Validation: Different computational approaches with independent approximations are compared against each other, such as when neural network quantum states are validated against coupled cluster results [4].

- Progressive Testing: Methods are tested on increasingly complex systems where some higher-level reference data exists, building confidence for applications to truly unknown systems.

- Physical Consistency Checks: Predictions are evaluated for adherence to physical principles like size consistency, variational behavior, and known limiting cases.

The computational landscape for solving the Schrödinger equation has diversified dramatically, with traditional quantum chemistry methods now complemented by quantum-inspired algorithms, neural network wavefunctions, and physically constrained machine learning models. While established methods like CCSD(T) and DFT remain indispensable for their well-understood performance characteristics, emerging approaches offer exciting capabilities: QiankunNet demonstrates unprecedented accuracy for strongly correlated systems, React-OT provides remarkable speed in transition state prediction, and FlowER ensures physical realism in reaction outcome forecasting. The optimal method selection depends critically on the specific application—system size, required accuracy, available computational resources, and the particular chemical property of interest. Future advancements will likely focus on hybrid approaches that combine the physical rigor of traditional quantum chemistry with the scalability of machine learning, potentially enabling accurate simulation of increasingly complex chemical phenomena relevant to drug development, materials design, and fundamental chemical research.

The validation of quantum theory predictions in chemical research, particularly in fields like drug development, relies on a suite of computational quantum chemistry methods. These techniques, which solve the electronic Schrödinger equation from first principles (ab initio), enable researchers to predict molecular structures, energies, and reactivities with remarkable accuracy [9]. The three primary classes of methods, namely ab initio Hartree-Fock (HF), Density Functional Theory (DFT), and post-Hartree-Fock (post-HF) correlated methods, offer a spectrum of trade-offs between computational cost, accuracy, and applicability. This guide provides an objective comparison of these methodologies, framing them within the broader scientific thesis of validating and refining quantum mechanical predictions for complex chemical systems, including those relevant to pharmaceutical design and materials science. The ability to run these calculations has fundamentally transformed theoretical chemistry, a contribution recognized by the 1998 Nobel Prize in Chemistry, awarded to John Pople and Walter Kohn for their pioneering work [9] [10].

Table 1: Key Characteristics of Major Quantum Chemistry Methods

| Method | Theoretical Foundation | Handles Electron Correlation? | Typical Applications |
|---|---|---|---|
| Hartree-Fock (HF) | Approximates the many-electron wavefunction with a single Slater determinant [9]. | No; only includes an average effect (mean field) [9]. | Foundational calculation; starting point for post-HF methods [9]. |
| Density Functional Theory (DFT) | Uses the electron density, rather than the wavefunction, as the fundamental variable [11] [12]. | Yes, approximately via the exchange-correlation functional [11] [10]. | Geometry optimization, reaction mechanisms, materials science, large systems [11] [12]. |
| Post-Hartree-Fock (e.g., MP2, CCSD(T), CASSCF) | Builds on HF by introducing a more sophisticated, multi-determinant wavefunction [9] [13]. | Yes; aims to treat correlation explicitly and systematically [13] [10]. | High-accuracy thermochemistry, excited states, bond breaking, and multiconfigurational systems [14] [10]. |

Table 2: Computational Scaling and Benchmark Accuracy for Common Methods

| Method | Formal Computational Scaling | Benchmark Accuracy (Atomization Energies) |
|---|---|---|
| Hartree-Fock (HF) | N⁴ [9] | Not reliable (neglects correlation) [10] |
| MP2 | N⁵ [9] | ~0.3 kcal/mol (with extrapolation) [10] |
| CCSD(T) | N⁷ [9] | ~0.1 kcal/mol [10] |
| Hybrid DFT (B3LYP) | Similar to HF, but with a larger constant [9] | ~3.1 kcal/mol (G2 set) [10] |
| CASPT2 | Exponential with active space size [10] | Method of choice for multireference problems [10] |
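The practical meaning of the formal scaling exponents is easiest to see by asking how much more expensive a calculation becomes when the system size grows. The sketch below uses only the exponents quoted in the table. It ignores prefactors, integral screening, and local-correlation techniques, so it is a rough guide and not a timing prediction.

```python
# Formal scaling exponents as quoted in the table above.
SCALING_EXPONENTS = {"HF": 4, "MP2": 5, "CCSD(T)": 7}


def cost_growth(method: str, size_factor: float) -> float:
    """Factor by which the formal cost grows when system size grows by size_factor."""
    return size_factor ** SCALING_EXPONENTS[method]


if __name__ == "__main__":
    for name in SCALING_EXPONENTS:
        print(f"{name:8s} doubling the system costs {cost_growth(name, 2):.0f}x more")
```

Doubling the system size multiplies the formal cost by 16 for HF, 32 for MP2, and 128 for CCSD(T), which is why CCSD(T) is confined to small and medium-sized molecules.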

Detailed Examination of Computational Methods

Ab Initio Hartree-Fock Method

The Hartree-Fock (HF) method is the simplest ab initio electronic structure calculation, serving as the starting point for more advanced techniques [9]. It is a variational procedure, meaning the approximate energies obtained are always equal to or greater than the exact energy [9]. The key limitation of HF is that it does not specifically account for the instantaneous Coulombic electron-electron repulsion; only its average effect is included in the calculation [9]. This neglect of electron correlation means that while HF can produce reasonable molecular structures, it is often inadequate for predicting reaction energies, bond dissociation, or any property where electron correlation plays a significant role [10]. The HF method scales nominally as N⁴ with system size, making it computationally manageable for relatively large systems [9].

Density Functional Theory (DFT)

Density Functional Theory (DFT) has become one of the most popular and versatile methods in computational chemistry and materials science due to its favorable balance of cost and accuracy [11] [12]. Instead of the complex many-electron wavefunction, DFT uses the electron density—a function of only three spatial coordinates—as the fundamental variable [11]. This is based on the Hohenberg-Kohn theorems, which prove that the ground-state energy is a unique functional of the electron density [11]. In practice, the Kohn-Sham approach is used, which replaces the original problem with one of non-interacting electrons moving in an effective potential [11].

The main challenge in DFT is the exchange-correlation functional, which accounts for all quantum mechanical effects not captured by the simple electrostatic terms and must be approximated [11] [10]. The accuracy of a DFT calculation depends critically on the choice of functional. Common classes include:

- Local Density Approximation (LDA): Based on the uniform electron gas.

- Generalized Gradient Approximation (GGA): Adds dependence on the density gradient (e.g., BLYP).

- Hybrid Functionals: Incorporate a portion of exact Hartree-Fock exchange (e.g., B3LYP) [10].

While hybrid DFT methods like B3LYP offer good accuracy for atomization energies and excellent performance for equilibrium geometries, they are typically inferior to high-level post-HF methods for non-bonded interactions and conformational energetics [10]. DFT also has known limitations, including difficulties with van der Waals (dispersion) interactions, charge transfer excitations, and strongly correlated systems [11]. Extensions like time-dependent DFT (TD-DFT) allow for the study of excited states [12].
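For readers who want to see what "a portion of exact Hartree-Fock exchange" means in practice, the standard three-parameter form of B3LYP is given below. The symbols follow common usage and are not defined elsewhere in this article: the first subscript distinguishes exchange (x) from correlation (c), and B88, VWN, and LYP name the component functionals.

```latex
E_{xc}^{\mathrm{B3LYP}}
  = E_{x}^{\mathrm{LDA}}
  + a_0 \left( E_{x}^{\mathrm{HF}} - E_{x}^{\mathrm{LDA}} \right)
  + a_x \, \Delta E_{x}^{\mathrm{B88}}
  + E_{c}^{\mathrm{VWN}}
  + a_c \left( E_{c}^{\mathrm{LYP}} - E_{c}^{\mathrm{VWN}} \right),
\qquad a_0 = 0.20,\; a_x = 0.72,\; a_c = 0.81
```

The parameter a_0 = 0.20 is the 20% fraction of exact exchange. Setting a_0 to zero recovers a pure GGA-type functional.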

Post-Hartree-Fock Methods

Post-Hartree-Fock methods are designed to recover the electron correlation missing in the standard HF calculation. They can be broadly divided into single-reference and multi-reference methods, depending on whether they start from a single HF determinant or multiple determinants.

Single-Reference Methods

These methods assume the HF wavefunction is a good starting point.

- Møller-Plesset Perturbation Theory (MPn): This approach treats electron correlation as a perturbation to the HF Hamiltonian. The second-order correction (MP2) is widely used because it captures a considerable amount of dynamical correlation at a computational cost that scales as N⁵ [9] [13]. It is not significantly more expensive than HF and is often used for initial estimates of correlation effects. Higher orders (MP3, MP4) are more accurate but scale less favorably (N⁶, N⁷) [9].

- Coupled Cluster (CC): This family of methods uses an exponential ansatz to couple electron excitations. The CCSD(T) method—coupled cluster with singles, doubles, and a perturbative treatment of triples—is often called the "gold standard" of quantum chemistry for single-reference systems due to its high accuracy (within a few tenths of 1 kcal/mol for atomization energies) [10]. The computational cost of CCSD(T) scales as N⁷, limiting its application to small- to medium-sized molecules [10].

- Configuration Interaction (CI): The CI method constructs the wavefunction as a linear combination of the HF determinant and excited determinants (singles, doubles, etc.). While conceptually simple, full CI is computationally intractable for all but the smallest systems. Truncated versions like CISD are used but are not size-consistent [13].

Multi-Reference Methods

For systems where the electronic wavefunction is not well-described by a single determinant (e.g., bond breaking, diradicals, or some excited states), multi-reference methods are necessary.

- Complete Active Space SCF (CASSCF): This method performs a full CI calculation within a carefully selected set of orbitals (the active space). CASSCF is excellent for treating static (non-dynamical) correlation but is computationally demanding because the cost increases exponentially with the size of the active space [13] [10].

- Multireference Perturbation Theory (e.g., CASPT2, NEVPT2): These methods combine CASSCF with perturbation theory to add dynamical correlation, yielding highly accurate results for challenging systems [13] [14]. For example, a recent study of the NV⁻ center in diamond successfully used a CASSCF/NEVPT2 protocol to compute energy levels, Jahn-Teller distortions, and fine structures [14].

Experimental Protocols for Method Validation

Validating the predictions of quantum chemistry methods requires careful comparison with reliable experimental data or highly accurate theoretical benchmarks. The following protocols are commonly employed in the field.

Protocol for Thermochemical Benchmarking

Objective: To assess the accuracy of a computational method for predicting reaction energies and bond strengths.

- Select a Benchmark Set: Use a well-established set of small molecules with precisely known atomization energies or reaction enthalpies, such as the G2 set [10].

- Geometry Optimization: Optimize the molecular geometry of all species (reactants and products) at a consistent level of theory (often a lower-level method like DFT is sufficient for this step).

- Single-Point Energy Calculation: Perform a high-level energy calculation on the optimized geometry using the target method (e.g., MP2, CCSD(T), or a specific DFT functional).

- Basis Set Extrapolation: To approximate the complete basis set (CBS) limit, perform calculations with a sequence of basis sets of increasing quality (e.g., cc-pVDZ, cc-pVTZ, cc-pVQZ) and extrapolate the energy [15].

- Error Analysis: Calculate the mean absolute error (MAE) and root-mean-square error (RMSE) relative to the experimental or benchmark theoretical values. For example, CCSD(T) can achieve accuracy within ~0.1 kcal/mol, while the B3LYP functional has an average error of ~3.1 kcal/mol for the G2 set [10].
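The basis set extrapolation and error analysis steps of the protocol above can be sketched as follows. The two-point formula shown is the widely used inverse-cubic extrapolation, normally applied to the correlation energy. The protocol in this article does not specify which extrapolation scheme was used, so treat the choice as an assumption. The function names are illustrative.

```python
from math import sqrt
from typing import Sequence


def cbs_two_point(e_large: float, x_large: int, e_small: float, x_small: int) -> float:
    """Two-point X^-3 extrapolation to the complete basis set limit.

    x_large and x_small are the cardinal numbers of the basis sets
    (e.g. 4 for cc-pVQZ and 3 for cc-pVTZ).
    """
    return (x_large**3 * e_large - x_small**3 * e_small) / (x_large**3 - x_small**3)


def mean_absolute_error(predicted: Sequence[float], reference: Sequence[float]) -> float:
    """Average magnitude of the deviations from the reference values."""
    return sum(abs(p - r) for p, r in zip(predicted, reference)) / len(reference)


def root_mean_square_error(predicted: Sequence[float], reference: Sequence[float]) -> float:
    """Square root of the mean squared deviation; penalizes outliers more than MAE."""
    return sqrt(sum((p - r) ** 2 for p, r in zip(predicted, reference)) / len(reference))


if __name__ == "__main__":
    # Synthetic energies that follow E(X) = E_limit + A / X^3 exactly,
    # with E_limit = -1.0 and A = 0.27 (illustrative values only).
    e_tz, e_qz = -1.0 + 0.27 / 3**3, -1.0 + 0.27 / 4**3
    print("CBS estimate:", cbs_two_point(e_qz, 4, e_tz, 3))
```

Reporting both MAE and RMSE is useful because a large gap between them signals that a few outliers dominate the error.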

Protocol for Studying Complex Defect Centers (e.g., NV⁻ in Diamond)

Objective: To accurately model the electronic states and properties of solid-state color centers with strong multiconfigurational character, as described in a recent Nature study [14].

- Cluster Model Construction: Embed the point defect within a nanocluster of the host material (e.g., diamond), passivating the surface with hydrogen atoms. Systematically increase the cluster size to test for convergence of properties [14].

- Active Space Selection: Identify the defect-localized molecular orbitals relevant to the low-energy excitations. For the NV⁻ center, this results in a CASSCF(6 electrons, 4 orbitals) active space [14].

- State-Specific Geometry Optimization: Perform a CASSCF calculation to optimize the geometry for each electronic state of interest individually (e.g., the triplet ground state and relevant singlet excited states) [14].

- Dynamic Correlation Treatment: Perform a single-point energy calculation at the optimized geometry using a method that incorporates dynamic correlation, such as NEVPT2, on top of the CASSCF wavefunction [14].

- Property Calculation: Use the resulting CASSCF/NEVPT2 electronic structure to compute measurable properties like zero-phonon lines (ZPLs), fine structure, and Jahn-Teller parameters for comparison with experiment [14].

Method Selection Workflow

The following diagram illustrates the decision process for selecting an appropriate computational method based on the chemical problem and available resources.

Diagram 1: A workflow for selecting a quantum chemistry method based on system size, desired accuracy, and electronic structure considerations.
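The selection logic described in this guide can also be written as a small decision function. The sketch below is one possible reading of the guidance in the text, not a reconstruction of the original diagram. The 50-atom cutoff for CCSD(T) is taken from the approximate limit quoted earlier in this article, and the function name and return strings are illustrative.

```python
def suggest_method(n_atoms: int, multireference: bool, need_high_accuracy: bool) -> str:
    """Suggest a method class from system size and electronic structure.

    The cutoff below is a rough illustrative threshold, not a hard rule.
    """
    ccsdt_atom_limit = 50  # approximate CCSD(T) limit with moderate basis sets
    if multireference:
        # Bond breaking, diradicals, and similar cases need a multi-determinant reference.
        return "CASSCF with CASPT2 or NEVPT2"
    if need_high_accuracy and n_atoms <= ccsdt_atom_limit:
        return "CCSD(T)"
    if need_high_accuracy:
        return "DFT, benchmarked against CCSD(T) on a smaller model system"
    return "DFT"


if __name__ == "__main__":
    print(suggest_method(n_atoms=12, multireference=False, need_high_accuracy=True))
    print(suggest_method(n_atoms=400, multireference=False, need_high_accuracy=False))
    print(suggest_method(n_atoms=30, multireference=True, need_high_accuracy=True))
```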

Table 3: Key Computational "Reagents" and Tools in Quantum Chemistry

| Tool / Resource | Function / Purpose | Examples / Notes |
|---|---|---|
| Basis Sets | Sets of mathematical functions (atomic orbitals) used to expand molecular orbitals. | def2-series: Balanced for efficiency [15]. cc-pVXZ (Dunning): Systematic path to the complete basis set limit [15]. Choice depends on target property (energy differences, geometries) and system size [15]. |
| Exchange-Correlation Functionals (DFT) | Approximates quantum mechanical electron interactions in DFT. | LDA/GGA (e.g., BLYP): Efficient, less accurate [10]. Hybrid (e.g., B3LYP): Incorporates HF exchange, better for atomization energies [10]. |
| Active Space (Post-HF) | Selection of electrons and orbitals for multi-configurational calculations. | Critical for CASSCF/CASPT2 accuracy. Defined by number of electrons and orbitals (e.g., CASSCF(6e,4o) for NV⁻ center) [14]. Requires chemical insight. |
| Quantum Chemistry Software | Program packages that implement the algorithms for solving the electronic Schrödinger equation. | Gaussian, VASP, Quantum ESPRESSO, MOLFDIR, COLUMBUS [13] [12]. Enable calculations of energies, geometries, and spectroscopic properties. |
| Embedding Schemes | Methods to treat a small region of a large system at a high level of theory and the surroundings at a lower level. | QM/MM (Quantum Mechanics/Molecular Mechanics): For enzymes/solvents [10]. DMET (Density Matrix Embedding Theory): For solid-state defects [14]. |

The selection of a computational quantum chemistry method is a critical step in the validation of quantum theory predictions for chemical research. Each class of methods (HF, DFT, and the various post-HF approaches) occupies a specific niche defined by a trade-off between computational cost and physical accuracy. Density Functional Theory remains the workhorse for most applications involving large systems or where a good balance of speed and accuracy is needed, despite its known limitations with dispersion interactions and strongly correlated systems [11] [12]. Coupled Cluster theory, particularly CCSD(T), serves as the gold standard for high-accuracy thermochemistry on single-reference problems, but its steep computational scaling restricts its use to smaller molecules [10]. For the most challenging cases involving bond breaking or clearly multiconfigurational electronic structures, multi-reference methods like CASSCF and CASPT2 are indispensable, albeit at a high computational cost and with increased complexity in setup [14] [10]. The continued development of these methods, along with algorithmic improvements and the integration of new computational paradigms like machine learning, ensures that theoretical chemistry will maintain its vital role as a partner to experiment in explaining and predicting chemical phenomena [10] [12].

In the pursuit of predicting chemical reactions, researchers navigate a complex trade-off between computational accuracy and associated costs. This balance is particularly critical in fields like drug development and materials science, where reliable simulations can significantly accelerate discovery while reducing laboratory expenses. The validation of quantum theory predictions relies on multiple computational approaches, each with distinct strengths and limitations in this accuracy-cost continuum. Current methodologies span from highly accurate but resource-intensive quantum many-body calculations and emerging quantum computing techniques to more approximate yet efficient classical methods like Density Functional Theory (DFT) and machine learning (ML) models. As quantum computing advances from theoretical promise to practical application, understanding this landscape becomes essential for research scientists and drug development professionals selecting appropriate tools for their specific validation challenges. This guide objectively compares the performance of these computational approaches, providing experimental data and protocols to inform strategic decisions in computational chemistry workflows.

Comparative Analysis of Computational Methods

The table below summarizes the key computational methods used for chemical reaction prediction, highlighting their relative positioning in the accuracy-cost spectrum:

| Computational Method | Theoretical Accuracy | Computational Cost & Scalability | Key Applications in Reaction Prediction | Representative Experimental Performance |
|---|---|---|---|---|
| Quantum Many-Body Methods | High (Theoretical gold standard) | Very High (Resources scale exponentially with electron count); Limited to small molecules [16] | Benchmarking; Small system reference data | Exact solution for electron behavior; Limited to handful of electrons [16] |
| Quantum Computing (QC-AFQMC) | High (Accurate computation of atomic-level forces) | High (Runs on 36-qubit hardware; Requires quantum-classical hybrid approach) [17] | Atomic force calculations; Reaction pathways; Carbon capture material design | More accurate than classical methods for specific automotive manufacturer applications [17] |
| Density Functional Theory (DFT) | Medium (Approximates electron behavior) | Medium (Computing resources scale with number of electrons cubed) [16] | Molecular structure optimization; Reaction mechanism insight | Third-rung accuracy at second-rung cost with advanced ML-derived functionals [16] |
| Machine Learning (ML) from Quantum Chemistry | Medium-High (Matches COSMO-RS calculations) | Low (Instant predictions from SMILES strings) [18] | Kinetic solvent effect prediction; High-throughput screening | MAE of 0.71 kcal mol−1 for ΔΔG‡solv; Relative rate constants within factor of 4 of experiment [18] |
| Quantum Machine Learning (QML) | Theoretical Potential High | Evolving (Currently limited by NISQ hardware; Requires hybrid algorithms) [19] | Molecular property prediction; Drug-target binding affinity | Early-stage proof of concept; Potential for exponential speedup [19] |

Experimental Protocols for Method Validation

Quantum-Classical Hybrid Approach for Atomic Forces

Objective: To accurately compute nuclear forces at critical points where significant changes occur in chemical systems, enabling the tracing of reaction pathways and design of more efficient carbon capture materials [17].

Methodology:

- Algorithm: Quantum-Classical Auxiliary-Field Quantum Monte Carlo (QC-AFQMC)

- Hardware: IonQ's 36-qubit quantum computer

- Implementation:

  - Quantum computation focuses on accurate force calculations at critical points along reaction coordinates

  - Classical computational chemistry workflows integrate these forces to trace complete reaction pathways

  - Force calculations are fed into molecular dynamics simulations to improve estimated rates of change within systems

- Validation: Comparison of results against classical computational methods for accuracy benchmarking

- Application: Developed in collaboration with a Global 1000 automotive manufacturer for complex chemical system simulation
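The hand-off from force calculation to molecular dynamics can be illustrated with a velocity Verlet integrator, a standard scheme for propagating nuclei once forces are available. In the sketch below, a one-dimensional harmonic force stands in for the forces a QC-AFQMC calculation would supply. The force model, units, and parameter values are placeholders and are not taken from the cited work.

```python
from typing import Callable, Tuple


def velocity_verlet(
    x: float,
    v: float,
    force: Callable[[float], float],
    mass: float,
    dt: float,
    n_steps: int,
) -> Tuple[float, float]:
    """Propagate one coordinate with the velocity Verlet scheme.

    `force` is any callable returning the force at position x. In a hybrid
    workflow this is where externally computed forces would be supplied.
    """
    f = force(x)
    for _ in range(n_steps):
        v_half = v + 0.5 * dt * f / mass  # half-step velocity update
        x = x + dt * v_half               # full-step position update
        f = force(x)                      # new force at the new position
        v = v_half + 0.5 * dt * f / mass  # complete the velocity update
    return x, v


if __name__ == "__main__":
    spring_constant = 1.0  # placeholder harmonic force, arbitrary units
    x_end, v_end = velocity_verlet(
        x=1.0, v=0.0, force=lambda q: -spring_constant * q, mass=1.0, dt=0.01, n_steps=1000
    )
    energy = 0.5 * v_end**2 + 0.5 * spring_constant * x_end**2
    print(f"x={x_end:.4f} v={v_end:.4f} total energy={energy:.6f}")
```

Because each step needs one new force evaluation, the accuracy and cost of the force calculation directly determine the accuracy and cost of the whole trajectory.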

Machine Learning for Kinetic Solvent Effects

Objective: To predict solvation free energy and solvation enthalpy of activation (ΔΔG‡solv, ΔΔH‡solv) for solution phase reactions using only 2D molecular structures, enabling fast prediction of solvent effects on reaction rates [18].

Methodology:

- Data Generation:

  - Created dataset of over 28,000 neutral reactions and 295 solvents

  - Computed ΔΔG‡solv and ΔΔH‡solv using COSMO-RS calculations

  - Structures stored as atom-mapped reaction SMILES and solvent SMILES strings

- Model Architecture: Graph Convolutional Neural Network (GCNN) with separate GCNN layers for solvent molecular encoding

- Input Representation: Condensed Graph of Reaction (CGR) representation

- Training Approach:

  - Pre-training on large-scale COSMO-RS dataset

  - Fine-tuning on targeted reaction datasets

  - Transfer learning with additional features to improve generalizability

- Validation: Tested on unseen reactions and experimental data for relative rate constant prediction
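To show how a predicted ΔΔG‡solv translates into a relative rate constant, here is a short sketch based on transition state theory. It assumes that the gas-phase barrier and the transmission coefficient are the same in both solvents, so that k_A / k_B = exp(-(ΔΔG‡solv,A - ΔΔG‡solv,B) / RT). The function name and the numerical inputs are illustrative and are not taken from [18].

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)


def relative_rate(ddg_solv_a: float, ddg_solv_b: float, temperature: float = 298.15) -> float:
    """Return k_A / k_B from solvation free energies of activation (kcal/mol)."""
    return math.exp(-(ddg_solv_a - ddg_solv_b) / (R_KCAL * temperature))


# Illustrative (made-up) values: solvent A stabilises the transition state
# by 1.0 kcal/mol more than solvent B does.
ratio = relative_rate(ddg_solv_a=-1.5, ddg_solv_b=-0.5)
print(f"k_A / k_B = {ratio:.2f}")
```

At room temperature a 1 kcal/mol difference already corresponds to a rate ratio of roughly five, which is why sub-kcal/mol accuracy matters when such predictions are compared with measured relative rates.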

Machine Learning-Enhanced Density Functional Theory

Objective: To improve the accuracy of DFT calculations by developing a more universal exchange-correlation (XC) functional through machine learning, achieving higher accuracy at lower computational cost [16].

Methodology:

- Reference Data Generation:

  - Performed quantum many-body calculations on light atoms (lithium, carbon, nitrogen, oxygen, neon) and small molecules (dihydrogen, lithium hydride)

  - Created training dataset representing accurate electron behavior

- Machine Learning Approach:

  - Inverted the DFT problem: instead of using approximate XC functional to determine electron behavior, used known electron behavior from many-body theory to determine optimal XC functional

  - Trained ML model to identify XC functional that reproduces many-body results

  - Achieved third-rung DFT accuracy at second-rung computational cost

- Computational Resources: Utilized National Energy Research Scientific Computing Center and Oak Ridge National Laboratory supercomputers

Visualization of Computational Workflows

Quantum-Classical Force Calculation Workflow

ML-Solvent Effect Prediction Pipeline

Research Reagent Solutions: Computational Tools

The table below details key computational tools and algorithms used in advanced chemical reaction prediction:

| Tool/Algorithm | Type | Primary Function | Application in Reaction Validation |
|---|---|---|---|
| QC-AFQMC | Quantum-Classical Hybrid Algorithm | Accurate computation of atomic-level forces [17] | Tracing reaction pathways; Carbon capture material design |
| COSMO-RS | Solvation Model | Predicts solvation free energies and thermodynamic properties [18] | Generating training data for ML models; Solvent effect benchmarking |
| Density Functional Theory (DFT) | Quantum Mechanical Method | Calculates electronic structure using electron density [16] | Molecular structure optimization; Reaction mechanism insight |
| Graph Convolutional Neural Network (GCNN) | Machine Learning Architecture | Learns molecular representations from graph structures [18] | Predicting kinetic solvent effects from SMILES strings |
| Variational Quantum Eigensolver (VQE) | Quantum Algorithm | Estimates ground-state energy of molecules [20] | Molecular energy calculation on quantum hardware |
| Condensed Graph of Reaction (CGR) | Molecular Representation | Encodes reaction transformation patterns [18] | Input representation for reaction prediction ML models |

The validation of quantum theory predictions in chemical reactions requires careful consideration of the accuracy-cost balance across available computational methods. Quantum computing approaches like QC-AFQMC demonstrate promising accuracy for specific force calculations but remain resource-intensive and require hybrid classical integration. Machine learning methods offer compelling cost-efficiency for high-throughput screening while maintaining reasonable accuracy, particularly for solvent effect prediction. Traditional DFT continues to play a crucial role, especially when enhanced with machine learning-derived functionals that improve accuracy without proportionally increasing computational burden. For research scientists and drug development professionals, strategic implementation involves matching method selection to specific validation needs—using quantum methods for critical benchmark calculations, ML approaches for rapid screening, and DFT for balanced everyday applications. As quantum hardware continues to advance and algorithms mature, the accuracy-cost balance is expected to shift, potentially making quantum methods more accessible for routine validation workflows in the coming years.

The accurate prediction of chemical reactivity, from simple bimolecular reactions to complex synthetic pathways, represents a cornerstone of modern chemistry with profound implications for drug discovery, materials science, and catalyst design. For decades, the scientific community has relied on quantum mechanical (QM) theories to provide a fundamental understanding of reaction mechanisms. However, traditional QM methods, while accurate, are often computationally prohibitive for large systems or high-throughput screening. The emergence of machine learning (ML) has introduced a new paradigm, offering the potential for rapid predictions with varying degrees of inherent interpretability. This guide objectively compares the performance of contemporary predictive models—ranging from gold-standard quantum chemistry and novel theoretical frameworks to state-of-the-art machine learning approaches. Framed within the broader thesis of validating quantum theory predictions, we dissect the capabilities, limitations, and appropriate applications of each method based on current experimental and benchmarking data. The following sections provide a detailed comparison of quantitative performance, underlying methodologies, and the essential toolkit required for researchers to navigate this rapidly evolving field.

Comparative Analysis of Predictive Methodologies

The table below summarizes the key performance metrics and characteristics of major approaches for predicting chemical reactions.

Table 1: Comparison of Chemical Reaction Prediction Methodologies

| Methodology | Primary Application | Key Performance Metrics | Computational Cost | Key Advantages | Major Limitations |
|---|---|---|---|---|---|
| Graph Neural Networks (e.g., GraphRXN) [21] | Forward reaction prediction (e.g., yield) | R² = 0.712 on in-house HTE Buchwald-Hartwig data [21] | Moderate (requires training data) | Learns reaction features directly from 2D structures; integrable with robotic workflows [21] | Performance is dependent on quality and scope of training data |
| Transformer Models (e.g., Molecular Transformer) [22] | Product prediction from text-based inputs (SMILES) | 90% Top-1 accuracy on biased USPTO dataset; lower on debiased data [22] | Moderate (requires training data) | State-of-the-art for product prediction on established reactions [22] | Opaque "black-box" nature; predictions can be based on dataset biases rather than chemistry [22] |
| Mechanistic Models (e.g., FlowER) [5] | Reaction outcome prediction with mechanism | Matches/exceeds existing approaches in pathway identification; high validity and conservation [5] | High (for training) | Explicitly conserves mass and electrons; provides mechanistic insight [5] | Scope currently limited; less robust for certain metals and catalytic cycles [5] |
| Transition State Prediction (e.g., React-OT) [6] | Transition state geometry and energy | Predictions in <0.4 seconds; ~25% more accurate than prior model [6] | Low (after training) | Extremely fast; enables high-throughput screening of reaction barriers [6] | Accuracy is tied to the diversity of its training data (9,000 QM-calculated reactions) [6] |
| Gold-Standard QM (CCSD(T)/CBS) [23] | Benchmark interaction energies | Gold-standard for noncovalent interactions; used to train ML models like SNS-MP2 [23] | Very High (O(N⁷) scaling) | Highest possible accuracy; serves as the benchmark truth [23] | Computationally prohibitive for large systems or high-throughput tasks [23] |
| Novel Theoretical Frameworks (e.g., Independent Atom Reference) [24] | Reaction energetics and bond breaking | Reproduces bond lengths/energy curves of established methods at lower cost [24] | Lower than conventional DFT | More affordable than conventional QM; retains physical grounding [24] | New method; full scope and limitations under investigation [24] |

Experimental Protocols for Model Validation

A critical step in employing any predictive model is understanding and validating its performance against reliable benchmarks. The protocols below outline standard procedures for training and evaluating models, as well as for generating the high-quality data needed for validation.

Protocol 1: Training and Evaluating a Graph Neural Network for Yield Prediction

This protocol is based on the development of the GraphRXN model, which predicts reaction outcomes from 2D molecular graphs [21].

- Reaction Featurization: Represent each component of a chemical reaction (reactants, reagents) as a directed molecular graph \( \mathbf{G}(\mathbf{V}, \mathbf{E}) \), where vertices \( \mathbf{V} \) are atoms and edges \( \mathbf{E} \) are bonds [21].

- Message Passing: For each molecular graph, perform iterative message passing steps. For a node \( v \) at step \( k \), aggregate the hidden states of its neighboring edges to form an intermediate message vector \( m^k(v) \) [21].

- Information Updating: Update the hidden state of node \( v \) by concatenating \( m^k(v) \) with its previous hidden state and processing it through a learned communicative function (e.g., a Gated Recurrent Unit, GRU) to obtain \( h^k(v) \). Simultaneously, update edge states [21].

- Readout: After \( K \) iterations, aggregate the final node embeddings into a single, fixed-length molecular feature vector using a GRU as the readout operator [21].

- Reaction Vector Aggregation: Combine the molecular feature vectors of all reaction components into one reaction vector, either by summation or concatenation [21].

- Model Training & Validation: Train a dense layer neural network to correlate the reaction vector with the experimental output (e.g., yield). Validate the model on a held-out test set, such as a high-throughput experimentation (HTE) dataset, and report performance metrics like R² [21].
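The message passing, readout, and aggregation steps above can be illustrated with a deliberately simplified sketch. It is not the GraphRXN implementation: messages are passed between atoms rather than along directed edges, a fixed `tanh` update replaces the learned GRU, and a plain sum replaces the GRU readout. All function names and the toy atom features are assumptions made for illustration.

```python
import math
from typing import List

Matrix = List[List[float]]


def message_passing(node_feats: Matrix, neighbors: List[List[int]], n_iter: int = 2) -> Matrix:
    """Run n_iter rounds of neighbour aggregation on one molecular graph."""
    h = [list(f) for f in node_feats]
    for _ in range(n_iter):
        new_h = []
        for v, hv in enumerate(h):
            # Message m(v): element-wise sum of the neighbours' hidden states.
            msg = [sum(h[u][d] for u in neighbors[v]) for d in range(len(hv))]
            # Update h(v): fixed tanh update standing in for a learned GRU.
            new_h.append([math.tanh(a + b) for a, b in zip(hv, msg)])
        h = new_h
    return h


def readout(h: Matrix) -> List[float]:
    """Sum over atoms to obtain a fixed-length molecular vector."""
    return [sum(col) for col in zip(*h)]


def reaction_vector(mol_vectors: Matrix) -> List[float]:
    """Concatenate the molecular vectors of all reaction components."""
    return [x for vec in mol_vectors for x in vec]


# Toy C-C-O chain with one-hot features [is_carbon, is_oxygen].
feats = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
bonds = [[1], [0, 2], [1]]
mol_a = readout(message_passing(feats, bonds))

# Same molecule with atoms listed in reverse order (O-C-C).
feats_rev = [[0.0, 1.0], [1.0, 0.0], [1.0, 0.0]]
mol_b = readout(message_passing(feats_rev, bonds))

rxn_vec = reaction_vector([mol_a, mol_b])
```

The two molecular vectors agree up to floating-point rounding even though the atoms are listed in a different order, which is the property that makes a sum-type readout suitable for molecular graphs. In a trained model, `rxn_vec` would be passed to a dense network that regresses the experimental yield.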

Protocol 2: Generating a Gold-Standard Quantum Mechanical Benchmark

This protocol describes the generation of benchmark datasets like DES370K, which provides gold-standard interaction energies for validating more approximate methods [23].

- Monomer Selection and Preparation: Select a diverse set of closed-shell chemical species (neutral molecules and ions). Generate initial 3D conformations from SMILES strings and optimize using a molecular mechanics force field (e.g., OPLS_2005) [23].

- Quantum Chemical Refinement: Refine the force field-optimized monomer geometries using quantum mechanics. Perform geometry optimization at the MP2 level of theory with a triple-zeta basis set (aVTZ), applying constraints to hydrogen-containing bonds and angles for consistency with subsequent force field development [23].

- Dimer Construction and Sampling: Generate dimer geometries from the QM-optimized monomer structures. Create random relative positions and orientations, then optimize the dimer structures using a two-step QM procedure (DF-LMP2/aVDZ followed by DF-MP2/aVTZ) [23].

- Radial and Orientational Sampling: For each unique QM-optimized dimer geometry, perform one-dimensional radial scans along the intermolecular axis in fine steps (e.g., 0.1 Å) to sample both short-range and long-range interactions. To enhance diversity, also extract a large ensemble of dimer structures from molecular dynamics (MD) simulations [23].

- Gold-Standard Energy Calculation: Calculate the interaction energy for every dimer geometry in the dataset using the coupled-cluster method with single, double, and perturbative triple excitations [CCSD(T)] at the complete basis set (CBS) limit. This serves as the gold-standard benchmark [23].
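The last step combines two pieces of arithmetic that are easy to get wrong: the supermolecular interaction energy, E_int = E_AB - E_A - E_B, and the extrapolation to the complete basis set limit. The sketch below uses the common two-point X⁻³ extrapolation of correlation energies as an example. Whether [23] uses exactly this scheme is not stated here, so treat it as an assumption. Counterpoise corrections are omitted, and all energies in the example are made up.

```python
HARTREE_TO_KCAL = 627.5095  # conversion factor, kcal/mol per hartree


def cbs_two_point(e_small: float, e_large: float, x_small: int, x_large: int) -> float:
    """Two-point X**-3 extrapolation of correlation energies to the CBS limit."""
    a, b = x_large ** 3, x_small ** 3
    return (a * e_large - b * e_small) / (a - b)


def interaction_energy(e_dimer: float, e_monomer_a: float, e_monomer_b: float) -> float:
    """Supermolecular interaction energy in kcal/mol from energies in hartree."""
    return (e_dimer - e_monomer_a - e_monomer_b) * HARTREE_TO_KCAL


# Synthetic correlation energies that follow E_X = E_CBS + A / X**3 exactly,
# with E_CBS = -0.300 hartree, for triple-zeta (X=3) and quadruple-zeta (X=4).
e_tz = -0.300 + 0.05 / 3 ** 3
e_qz = -0.300 + 0.05 / 4 ** 3
e_cbs = cbs_two_point(e_tz, e_qz, x_small=3, x_large=4)

# Made-up total energies (hartree) for a dimer and its two monomers.
e_int = interaction_energy(-152.500, -76.245, -76.245)
```

For data that follow the assumed X⁻³ form, the extrapolation recovers the limit exactly. Real correlation energies only approximate that form, which is one reason benchmark sets document their extrapolation scheme explicitly.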

Workflow Visualization: From Molecules to Predictions

The following diagram illustrates the logical relationships and workflows between the different predictive methodologies discussed in this guide.

Diagram 1: A map of computational chemistry prediction workflows, showing how machine learning and theoretical methods use molecular structures and quantum mechanical data.

Successful deployment of predictive chemistry models relies on a suite of computational "reagents" and datasets.

Table 2: Essential Computational Tools and Datasets for Predictive Chemistry

| Resource Name | Type | Primary Function | Relevance to Validation |
|---|---|---|---|
| USPTO Dataset [25] [22] | Chemical Reaction Data | Large corpus of reactions mined from US patents; used for training ML models. | Standard benchmark for retrosynthesis and product prediction tasks. |
| DES370K / DES15K [23] | Quantum Chemical Benchmark | Gold-standard CCSD(T)/CBS interaction energies for ~3,700 dimer types. | Validates and trains force fields, density functionals, and ML models. |
| High-Throughput Experimentation (HTE) [21] | Experimental Data | Robotic platforms generating large, consistent datasets including successful and failed reactions. | Provides high-quality, unbiased data for training and validating forward prediction models. |
| RDKit [25] | Cheminformatics Software | Open-source toolkit for cheminformatics; used for molecule manipulation and fingerprint generation. | Core utility for processing molecular structures and generating descriptors for models. |
| Reaction Templates (SMARTS) [25] | Chemical Knowledge Encoding | Rules of chemistry codified using SMARTS patterns for template-based retrosynthesis. | Provides a chemically intuitive, rule-based baseline against which data-driven models are compared. |
| SNS-MP2 [23] | Machine-Learned Quantum Method | Neural network approach predicting CCSD(T)-level interaction energies at low cost. | Extends the reach of gold-standard accuracy to larger systems for more comprehensive validation. |

Computational Workflows in Action: Designing and Simulating Chemical Reactions

The accurate prediction of transition state structures represents a fundamental challenge in computational chemistry, serving as a critical testing ground for the validation of quantum theory predictions in chemical reactions. These fleeting molecular configurations, which exist at the energy barrier between reactants and products, typically last only femtoseconds, making them nearly impossible to isolate experimentally [26]. For decades, quantum chemistry methods have provided the primary framework for transition state modeling, yet these approaches remain computationally expensive and time-consuming, often requiring expert supervision and rational initial guesses [27]. The emergence of machine learning (ML) has introduced transformative methodologies that not only accelerate transition state discovery but also provide new avenues for testing quantum mechanical predictions against data-driven models. This comparison guide objectively evaluates the performance of these rapidly evolving ML approaches, examining their capabilities in reproducing and extending quantum chemical predictions while highlighting their distinct advantages and limitations for research applications in chemical discovery and drug development.

Traditional Computational Methods and the Quantum Chemistry Benchmark

Traditional computational approaches for transition state determination have relied exclusively on quantum chemistry methods, primarily employing density functional theory (DFT) to explore potential energy surfaces [28]. These methods can be broadly categorized into single-ended approaches (such as the dimer method) that search from a single starting structure, and double-ended methods (including nudged elastic band (NEB) and growing string method (GSM)) that utilize both reactant and product geometries as boundary constraints [28]. While these quantum chemical methods provide valuable insights and have served as the gold standard for transition state prediction, they face significant limitations in computational cost and scalability. A single transition state calculation using DFT can require hours or even days of computing time [26], creating a substantial bottleneck for reaction exploration and mechanistic studies, particularly in complex systems relevant to pharmaceutical development.

Table 1: Performance Comparison of Quantum Chemistry Methods for TS Optimization

| Computational Method | Success Rate | Mean Absolute Error | Computational Time | System Size Limitation |
|---|---|---|---|---|
| B3LYP/def2-SVP | Not Reported | Not Reported | Hours to Days | ~23 atoms [27] |
| ωB97X/pcseg-1 | Higher than B3LYP [27] | Not Reported | Hours to Days | Similar small systems [27] |
| M08-HX/pcseg-1 | Higher than B3LYP [27] | Not Reported | Hours to Days | Similar small systems [27] |
| Direct Quantum Calculations | Not Applicable | Reference Standard | Days [26] | Small systems [28] |

Machine Learning Approaches: Methodologies and Experimental Protocols

The limitations of traditional quantum chemistry methods have spurred the development of diverse machine learning approaches for transition state prediction. These can be broadly classified into three categories: generative models that directly produce transition state structures, representation-based models that predict activation barriers from molecular features, and potential-based methods that accelerate quantum mechanical calculations.

Generative AI Models

Generative approaches employ advanced neural network architectures to directly produce transition state structures. The MIT research team developed a diffusion model that learns the underlying distribution of how reactant, product, and transition state structures coexist [26]. Their training dataset comprised 9,000 chemical reactions calculated using quantum computational methods. During experimentation, the model generates multiple possible transition state solutions (typically 40 per reaction), with a confidence model then predicting which states are most likely to occur [26]. This approach incorporates rotational and translational invariance, allowing it to recognize reactants in any orientation as representing the same chemical reaction, significantly improving training efficiency and accuracy.

Representation-Based Machine Learning Models

Representation-based models employ various featurization strategies to represent chemical reactions for predicting activation barriers. These include:

- 2D Structure-Based Representations: Morgan Fingerprints (MFP) and Differential Reaction Fingerprints (DRFP) that identify circular substructures or take symmetric differences between reactants and products [29]

- 2D Graph-Based Models: Condensed Graph of Reaction (CGR) representations that use atom-mapped reaction SMILES to describe atomic centers transformed during a reaction [29]

- 3D Structure-Based Models: Thermochemistry-inspired representations like SLATMd and B2R2 that use molecular representations of reactants and products as proxies for transition state geometry [29]

- Equivariant Neural Networks: Models like EquiReact that utilize 3D structural information from reactants and products while incorporating physical symmetries [29]

The experimental protocol for these models typically involves dividing datasets into training, validation, and test sets, with careful attention to atom-mapping accuracy, which significantly impacts model performance [29].
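The symmetric-difference idea behind differential reaction fingerprints can be shown with a toy sketch. It is not the DRFP implementation: real fingerprints enumerate circular substructures with a cheminformatics toolkit, whereas here the substructure tokens are supplied by hand, and the function name, fingerprint length, and hashing choice are all assumptions made for illustration.

```python
import hashlib
from typing import Iterable, List


def reaction_fingerprint(
    reactant_subs: Iterable[str],
    product_subs: Iterable[str],
    n_bits: int = 64,
) -> List[int]:
    """Fold the substructures that differ between reactants and products into bits."""
    # Symmetric difference: tokens present on exactly one side of the reaction.
    changed = set(reactant_subs) ^ set(product_subs)
    bits = [0] * n_bits
    for token in changed:
        # A stable hash (unlike built-in hash(), which is salted per process).
        digest = hashlib.sha256(token.encode("utf-8")).digest()
        bits[int.from_bytes(digest[:4], "big") % n_bits] = 1
    return bits


# Hand-written, illustrative substructure tokens for an esterification-like change.
reactants = {"C(=O)O", "CO", "CC"}
products = {"C(=O)OC", "CC", "O"}
fp = reaction_fingerprint(reactants, products)

# A reaction whose two sides share every token yields an all-zero fingerprint.
fp_null = reaction_fingerprint(reactants, reactants)
```

Substructures that appear unchanged on both sides (here `"CC"`) drop out, so the fingerprint encodes only what the reaction transforms. This also explains why no atom mapping is needed for this family of representations, in contrast to the CGR approach.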

Machine Learning Potentials

ML potentials such as NequIP (Neural Equivariant Interatomic Potentials) represent a hybrid approach that combines the accuracy of quantum mechanics with the speed of machine learning. In experimental applications, these models are trained on quantum chemical data and then combined with traditional reaction path search methods like NEB and GSM [30]. The training process involves using the Transition1x dataset and selecting the most efficient model through performance comparison before application to transition state identification and exploration [30].

Figure 1: Workflow of Machine Learning Approaches for Transition State Prediction

Comparative Performance Analysis of ML Methods

Success Rates and Accuracy Metrics

Comprehensive benchmarking studies reveal significant variations in performance across different ML approaches. The bitmap-based convolutional neural network methodology with genetic algorithm developed for hydrogen abstraction reactions achieved verified success rates of 81.8% for hydrofluorocarbons and 80.9% for hydrofluoroethers [27]. The MIT generative AI model produced transition state solutions accurate to within 0.08 Å compared to quantum-generated structures [26], while NequIP combined with NEB methods demonstrated a remarkable 96.6% success rate with a mean absolute error of 0.32 kcal/mol for barrier prediction [30].

Table 2: Performance Comparison of Machine Learning Methods for TS Prediction

| ML Method | Architecture | Success Rate | Accuracy/MAE | Speed Advantage | Key Limitations |
|---|---|---|---|---|---|
| Bitmap CNN with Genetic Algorithm [27] | Convolutional Neural Network | 81.8% (HFC), 80.9% (HFE) | Not Reported | Not Reported | Limited to specific reaction types |
| Generative Diffusion Model [26] | Diffusion Model | Not Reported | 0.08 Å | Seconds vs. days with DFT | Primarily small molecules (~23 atoms) |
| NequIP with NEB [30] | Equivariant Neural Network | 96.6% | 0.32 kcal/mol | Significant acceleration | Requires training data |
| Condensed Graph of Reaction (CGR) [29] | Graph Neural Network | Varies by dataset | Competitive on barriers | Fast prediction | Sensitive to atom-mapping quality |
| EquiReact [29] | Equivariant Neural Network | Varies by dataset | Competitive performance | Fast prediction | Requires 3D structural input |
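To put barrier errors such as the 0.32 kcal/mol MAE in the table above into kinetic terms, the Eyring equation, k = κ (k_B T / h) exp(-ΔG‡ / RT), can be used. The sketch below assumes that the reported barrier error can be treated as an error in the free energy of activation, which is a simplification. The function names are illustrative.

```python
import math

K_B = 1.380649e-23    # Boltzmann constant, J/K
H = 6.62607015e-34    # Planck constant, J*s
R_KCAL = 1.987204e-3  # gas constant, kcal/(mol*K)


def eyring_rate(dg_act: float, temperature: float = 298.15, kappa: float = 1.0) -> float:
    """First-order rate constant (1/s) from a free energy of activation in kcal/mol."""
    return kappa * (K_B * temperature / H) * math.exp(-dg_act / (R_KCAL * temperature))


def rate_error_factor(barrier_error: float, temperature: float = 298.15) -> float:
    """Multiplicative uncertainty in the rate constant for a given barrier error."""
    return math.exp(abs(barrier_error) / (R_KCAL * temperature))


factor = rate_error_factor(0.32)
print(f"A 0.32 kcal/mol barrier error changes k by a factor of about {factor:.2f}")
```

Under these assumptions, an error of a few tenths of a kcal/mol keeps the predicted room-temperature rate within a factor of two, whereas an error of several kcal/mol would shift it by orders of magnitude.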

Benchmarking Across Chemical Spaces

Recent benchmarking efforts have evaluated various reaction representations across diverse datasets, including the general-scope GDB7-22-TS, single-reaction class dataset Cyclo-23-TS, and specific Proparg-21-TS dataset [29]. These studies demonstrate that 3D-structure-based models like EquiReact generally exhibit competitive performance, while 2D-graph-based approaches such as Chemprop with CGR representations show strong results when accurate atom-mapping is available [29]. The performance of fingerprint-based methods (MFP and DRFP) varies significantly across dataset types, with their effectiveness depending on the complexity and specificity of the chemical space being studied [29].

Research Reagent Solutions: Essential Tools for ML-Driven TS Prediction

Table 3: Key Research Reagent Solutions for ML-Based Transition State Prediction

| Tool/Category | Specific Examples | Function | Accessibility |
|---|---|---|---|
| Software Packages | Chemprop, OA-React-Diff, TSDiff, TSNet | Implements various ML architectures for TS prediction | Open-source (varies) |
| Atom-Mapping Tools | RXNMapper | Automates reaction atom-mapping for graph representations | Open-source [29] |
| ML Potential Implementations | NequIP, DeePMD, REANN | Provides neural network potentials for reaction path methods | Open-source [30] |
| Benchmark Datasets | GDB7-22-TS, Cyclo-23-TS, Proparg-21-TS, Transition1x | Standardized data for training and validation | Publicly available [29] [30] |
| Reaction Path Search Methods | Nudged Elastic Band (NEB), Growing String Method (GSM) | Locates transition states when combined with ML potentials | Widely implemented [30] |

Integration with Quantum Theory Validation

The relationship between machine learning transition state prediction and quantum theory validation is symbiotic rather than competitive. ML models depend on high-quality quantum chemical data for training, as evidenced by the use of 9,000 quantum-computed reactions for training generative models [26] and the Transition1x dataset for ML potentials [30]. Simultaneously, ML predictions provide a mechanism for extending quantum theoretical predictions across broader chemical spaces. The close agreement between ML-predicted transition states and those obtained through direct quantum calculation, such as the 0.08 Å accuracy achieved by generative models [26], shows that these models faithfully reproduce the quantum mechanical description of reaction pathways on which they were trained; validating that description itself still requires comparison with experimental data. Furthermore, ML models can identify areas where theoretical predictions diverge from data-driven patterns, potentially highlighting limitations in current quantum chemical methods or suggesting refinements to theoretical frameworks.

Figure 2: Integration Cycle Between ML Prediction and Quantum Theory Validation

Machine learning approaches have dramatically accelerated transition state prediction while maintaining remarkable accuracy compared to traditional quantum chemical methods. Generative AI models provide sub-angstrom structural accuracy in seconds rather than days, while specialized neural network potentials achieve success rates exceeding 96% when combined with traditional reaction path methods. Representation-based models offer diverse strategies for balancing accuracy with computational efficiency across different chemical spaces. Despite these advances, current ML methods face challenges in data scarcity for certain reaction types, generalization to complex systems involving metals and catalysts, and dependence on accurate atom-mapping for optimal performance. The continued development of comprehensive datasets, improved model architectures with stronger physical constraints, and standardized validation frameworks will further enhance the role of machine learning in transition state prediction. As these methods mature, they will increasingly serve not only as predictive tools but also as validation mechanisms for quantum theoretical predictions, creating a virtuous cycle of improvement in both data-driven and first-principles approaches to understanding chemical reactivity. For researchers in pharmaceutical development and chemical discovery, these advances promise accelerated reaction exploration and mechanistic understanding, ultimately enabling more efficient design of synthetic routes and catalysts for useful products.

The validation of quantum theory predictions in chemical research finds a powerful application in the study of biomolecular systems. For complex environments like enzymes, solvated proteins, or drug-target complexes, a full quantum mechanical (QM) treatment is often computationally prohibitive. Hybrid Quantum Mechanical/Molecular Mechanical (QM/MM) methods address this challenge by combining the accuracy of QM for describing chemical reactions, electronic polarization, and metal coordination with the efficiency of Molecular Mechanical (MM) force fields for modeling the surrounding biomolecular environment [31] [32]. This integrative approach has become a cornerstone for simulating chemical reactivity in complex systems, providing atomistic insights that are crucial for advancing fields like drug design and biocatalysis [31] [33]. The core premise of these methods rests on the Born-Oppenheimer approximation, which simplifies the molecular Schrödinger equation by separating electronic and nuclear motions, making computations for large systems feasible [32]. The continued development and benchmarking of QM/MM protocols are fundamental to strengthening the predictive power of computational quantum chemistry in biological contexts.

Performance Benchmarking: QM/MM vs. Classical Docking

The comparative performance of QM/MM and classical docking methods varies significantly depending on the nature of the ligand-protein complex. A recent benchmark study evaluated these approaches across three diverse datasets, revealing distinct strengths and limitations [33].

Table 1: Docking Success Rate Comparison (RMSD ≤ 2.0 Å)

| Benchmark Set (Complex Type) | Number of Complexes | Classical Docking Success Rate | QM/MM Docking Success Rate | Key Findings |
|---|---|---|---|---|
| HemeC70 (Metal-Binding) | 70 | Not Specified | Significant Improvement | QM/MM is especially advantageous for metal-binding complexes; PM7 semi-empirical method offers a major improvement [33]. |
| CSKDE56 (Covalent) | 56 | 78% | Comparable (~78%) | QM/MM performs similarly to optimized classical algorithms for covalent bonds; DFT-level description requires dispersion corrections for meaningful energies [33]. |
| Astex Diverse Set (Non-Covalent) | 85 | High Accuracy | Slightly Lower | QM/MM preserves high accuracy but may show marginally lower success rates for standard non-covalent drug-like complexes [33]. |

These data show that QM/MM docking is not a universally superior replacement but a specialized tool. Its primary advantage is in systems where classical force fields struggle, particularly those involving metal coordination, where electronic effects are critical [33]. For covalent complexes, both methods can achieve high success rates, while for standard non-covalent complexes, the added computational cost of QM/MM may not be justified.

Core Methodologies and Experimental Protocols

Fundamental QM/MM Workflow and System Partitioning

The execution of a QM/MM simulation follows a structured workflow, beginning with system preparation and culminating in the analysis of reaction mechanisms or binding poses.

Key Experimental Protocols in Practice

1. QM/MM Docking of Covalent and Metal-Binding Ligands: The Attracting Cavities (AC) docking algorithm extended for QM/MM calculations exemplifies a modern protocol [33]. The system is partitioned so that the ligand and key active site residues (e.g., a catalytic cysteine or metal ion) form the QM region. This region is treated with semi-empirical methods (like PM7) or Density Functional Theory (DFT), while the rest of the protein and solvent is handled by an MM force field (e.g., CHARMM or AMBER) [33]. An electrostatic embedding scheme is used, where the MM point charges polarize the QM electron density. The docking success is evaluated by the root-mean-square deviation (RMSD) of the predicted ligand pose from the crystallographic reference, with an RMSD ≤ 2.0 Å typically considered a successful docking [33].

2. Enhanced Sampling for Reaction Pathways: To overcome the timescale limitation of spontaneous reactive events, enhanced sampling techniques are employed [31]. Methods like umbrella sampling or metadynamics apply a bias potential to system coordinates (collective variables) that describe the reaction progress, such as bond distances or angles. This forces the system to sample high-energy states, such as transition states, allowing for the calculation of free energy profiles and reaction barriers [31]. These profiles are essential for validating quantum theory predictions against experimental kinetic data.

3. Multiple Time Step (MTS) Acceleration: This protocol addresses computational cost by using different time steps for different force calculations [31]. The faster-moving bonded interactions in the MM region are computed with a short time step (e.g., 0.5 fs), while the more expensive QM forces are updated less frequently with a longer time step (e.g., 2-4 fs). This can lead to a significant speedup without a substantial loss of accuracy [31].
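The success criterion in protocol 1 (RMSD ≤ 2.0 Å from the crystallographic pose) can be computed as sketched below. This is a minimal version under stated assumptions: both poses are given in the same receptor frame with atoms in matching order, no superposition is applied, and ligand symmetry is ignored. The function names and coordinates are illustrative.

```python
import math
from typing import List, Sequence

Coords = Sequence[Sequence[float]]


def pose_rmsd(pred: Coords, ref: Coords) -> float:
    """Heavy-atom RMSD (in the units of the input) between two matched poses."""
    if len(pred) != len(ref) or not ref:
        raise ValueError("poses must be non-empty and contain the same atoms")
    sq_sum = sum((p - r) ** 2 for a, b in zip(pred, ref) for p, r in zip(a, b))
    return math.sqrt(sq_sum / len(ref))


def success_rate(rmsds: List[float], threshold: float = 2.0) -> float:
    """Fraction of complexes whose predicted pose lies within the threshold."""
    return sum(r <= threshold for r in rmsds) / len(rmsds)


# Illustrative coordinates (Å): the predicted pose is shifted by 1 Å along x.
reference = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
predicted = [(1.0, 0.0, 0.0), (2.0, 0.0, 0.0), (1.0, 1.0, 0.0)]
rmsd = pose_rmsd(predicted, reference)

# Made-up RMSD values for four complexes in a benchmark set.
rate = success_rate([0.5, 1.9, 2.0, 3.1])
```

No alignment is performed because docking accuracy is judged relative to the binding site. Superimposing the ligand onto the reference first would hide translation and rotation errors within the pocket.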

The Scientist's Toolkit: Essential Research Reagents and Software

Successful QM/MM studies rely on a suite of specialized software tools and computational resources. The following table details key "research reagents" for the field.

Table 2: Essential Computational Tools for QM/MM Research

| Tool Name | Type | Primary Function in QM/MM |
|---|---|---|
| CHARMM [33] | Molecular Modeling Program | Acts as a primary simulation driver, handling MM calculations and system setup via its QM/MM interface. |
| Gaussian [33] [32] | Quantum Chemistry Software | Performs the QM energy and force calculations for the defined QM region at various levels of theory (e.g., DFT, HF). |
| GROMACS [31] | Molecular Dynamics Software | Specialized in high-performance MD simulations, often used for the MM part and overall system dynamics in QM/MM setups. |
| MiMiC [31] | Simulation Framework | Enables efficient, flexible QM/MM simulations across diverse computing architectures, leveraging multiple specialized codes. |
| Attracting Cavities (AC) [33] | Docking Algorithm | A classical docking algorithm that has been extended to perform on-the-fly QM/MM calculations for pose prediction. |

The integration of QM/MM methodologies provides a validated and powerful framework for applying quantum theory to the complexity of biomolecular systems. Benchmarking studies confirm that QM/MM approaches offer a critical advantage for modeling challenging targets such as metalloproteins and covalent inhibitors, where classical potentials are often inadequate [33]. Although the computational cost remains higher than that of purely classical methods, ongoing developments in enhanced sampling, multiple time step algorithms, and efficient software frameworks such as MiMiC are steadily increasing the scope and accuracy of these simulations [31]. As quantum computing emerges as a possible route to accelerating QM calculations, the role of hybrid QM/MM approaches is poised to expand, strengthening their position as a key tool for validating quantum mechanical predictions and driving innovation in chemical research and rational drug design [32].

The pursuit of validating quantum theory predictions in chemical reactions has driven the development of sophisticated high-throughput screening (HTS) methods for exploring chemical reaction networks. These automated approaches systematically map out potential reaction pathways, intermediates, and transition states, providing a rigorous framework for testing quantum mechanical predictions at scale. By combining first-principles quantum calculations with automated exploration algorithms, researchers can now generate extensive reaction networks that either confirm theoretical predictions or reveal unexpected reactivity, thereby refining our fundamental understanding of chemical behavior. This comparative guide examines the leading methodologies in this area, assessing their performance, applicability, and value in advancing chemical research.

Comparative Analysis of Automated Exploration Platforms

Performance Metrics and Key Differentiators

Automated reaction network exploration tools vary significantly in their computational approaches, target applications, and performance characteristics. The table below provides a systematic comparison of leading platforms and methodologies.

Table 1: Comparative Analysis of Automated Reaction Network Exploration Platforms

| Platform/Method | Core Approach | Target Applications | Key Advantages | Computational Demand | Validation Status |
|---|---|---|---|---|---|
| STEERING WHEEL with CHEMOTON [34] | Human-guided autonomous exploration with shell-based protocol | Transition metal catalysis, complex reaction mechanisms | Intuitive control via graphical interface (HERON), avoids combinatorial explosion | High, but managed through selective exploration | Demonstrated for catalytic cycle elucidation |
| ReNeGate [35] | Bias-free reactivity exploration | High-throughput screening of transition metal catalyst databases | Identifies reactivity patterns across catalyst families, automated analysis | High for extended databases | Applied to Mn(I) pincer complexes (preprint) |
| Hybrid Graph/Coordinate Model [36] | D-MPNN with on-the-fly transition state prediction | Reaction barrier height prediction for organic reactions | Only requires 2D graph input, leverages 3D information internally | Low after training, high for training | Validated on RDB7 and RGD1 datasets |
| Independent Atom Reference State [24] | Novel DFT reference state using atoms as fundamental units | Bond energy calculations, reaction energetics | More computationally affordable than traditional DFT, maintains accuracy | Lower than conventional DFT | Validated on small molecules (O₂, N₂, F₂) |
| Quantitative HTS (qHTS) [37] | Multi-concentration screening with Hill equation modeling | Drug discovery, toxicity testing | Lower false-positive/negative rates than traditional HTS | Moderate, depends on assay type | Statistical challenges in parameter estimation noted |

Quantitative Performance Assessment

The evaluation of computational efficiency and predictive accuracy provides critical insights for platform selection based on research requirements.

Table 2: Quantitative Performance Metrics for Reaction Screening Methods

| Method | Accuracy Metrics | Throughput Capacity | System Size Limitations | Experimental Validation |
|---|---|---|---|---|
| STEERING WHEEL [34] | Systematic exploration of relevant intermediates; reproducible cycle elucidation | Managed via selective steps; preview of calculation count available | No inherent size limits; combinatorial challenge managed by selection steps | Applied to well-studied transition metal catalytic systems |
| Traditional DFT [24] | Varies with functional; can fail for strong correlation and dispersion interactions | Computationally expensive for large systems | Limited by electron interaction complexity | Benchmark for new theoretical approaches |
| Independent Atom Method [24] | Accurate bond lengths/energy curves; performs well at atomic separation | More affordable than traditional DFT; less processing power | Potentially broader applicability than electron-focused methods | Reproduces results of highly accurate, expensive methods |
| Hybrid Graph/Coordinate [36] | Reduced error for RDB7 and RGD1 datasets | Fast prediction after training; generative TS geometry | Depends on training data diversity | Compared to quantum mechanical calculations |
| qHTS with Hill Equation [37] | Parameter estimate variability; poor fits for "flat" profiles | 10,000+ chemicals across 15 concentrations simultaneously | Limited by assay design and concentration range | Issues with false positives/negatives documented |
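
The Hill equation modeling listed for qHTS in the tables above can be illustrated with a short sketch. The four-parameter form used here is one common way of writing the Hill model; the parameter names (`e0`, `emax`, `ac50`, `hill`), the 15-point dilution series, and all numerical values are illustrative assumptions and are not taken from the cited study [37].

```python
def hill_response(conc, e0, emax, ac50, hill):
    """Four-parameter Hill model: response at concentration `conc`.

    e0   : baseline response at zero concentration
    emax : maximal response at saturating concentration
    ac50 : concentration giving the half-maximal response
    hill : Hill slope (steepness of the curve)
    """
    if conc <= 0:
        return e0
    return e0 + (emax - e0) / (1.0 + (ac50 / conc) ** hill)


def response_span(concs, e0, emax, ac50, hill):
    """Difference between the largest and smallest modeled response."""
    values = [hill_response(c, e0, emax, ac50, hill) for c in concs]
    return max(values) - min(values)


# Hypothetical 15-point series with 3-fold steps (arbitrary concentration units).
concentrations = [0.001 * 3 ** i for i in range(15)]

# Compound whose AC50 lies well inside the tested range.
active_span = response_span(concentrations, 0.0, 100.0, 1.0, 1.0)

# "Flat" profile: AC50 far above the highest tested concentration.
flat_span = response_span(concentrations, 0.0, 100.0, 1e6, 1.0)

print(f"active span: {active_span:.2f}, flat span: {flat_span:.2f}")
```

In the flat case the tested range never approaches the upper asymptote, so many combinations of `emax`, `ac50`, and `hill` would describe the data about equally well. This is one plausible reading of the parameter-estimate variability noted for qHTS in Table 2.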

Experimental Protocols for Automated Reaction Exploration

STEERING WHEEL Workflow Protocol

The STEERING WHEEL algorithm implements a structured approach to reaction network exploration through alternating phases of expansion and selection [34]:

Network Expansion Step:

- Reactive Site Identification: Define local sites in molecular structures using first-principles heuristics, graph-based rules, or electronegativity-based polarization rules

- Reaction Trial Generation: Push/pull potentially reactive sites together/apart to initiate reaction trials

- Transition State Location: Employ single-ended transition state search algorithms to locate elementary steps without product assumptions

- Batch Execution: Write calculation instructions to a database for execution on high-performance computing infrastructure

- Result Aggregation: Collect completed calculations back to the database for network construction

Selection Step:

- Structure/Compound Subsetting: Choose a targeted subset of structures or compounds from the emerging reaction network

- Reactive Site Filtering: Apply compound filters (e.g., Catalyst Filter) and reactive site filters to focus exploration

- Combinatorial Management: Reduce potential calculations from millions to manageable hundreds or dozens

- Protocol Adjustment: Dynamically adjust exploration strategy based on intermediate results

This protocol is implemented within the SCINE software package and integrated with the HERON graphical interface for intuitive human guidance of the autonomous exploration process [34].
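
The alternation of expansion and selection steps described above can be sketched as a toy loop. This is a minimal illustration under stated assumptions and not the SCINE, CHEMOTON, or HERON API: the functions `expand`, `select`, `explore`, `toy_trial`, and `has_catalyst` are hypothetical names, compounds are represented as plain strings, and the "chemistry" is a made-up rule that stands in for real transition state searches.

```python
from itertools import combinations


def expand(network, selected, run_trial):
    """Expansion step: run one reaction trial per pair of selected compounds."""
    trials = list(combinations(sorted(selected), 2))
    for a, b in trials:
        product = run_trial(a, b)  # placeholder for a transition state search
        if product is not None:
            network["compounds"].add(product)
            network["reactions"].add((a, b, product))
    return len(trials)


def select(network, compound_filter):
    """Selection step: keep only the compounds that pass the filter."""
    return {c for c in network["compounds"] if compound_filter(c)}


def explore(start, run_trial, compound_filter, n_cycles):
    """Alternate expansion and selection for a fixed number of cycles."""
    network = {"compounds": set(start), "reactions": set()}
    selected = set(start)
    trials_per_cycle = []
    for _ in range(n_cycles):
        trials_per_cycle.append(expand(network, selected, run_trial))
        selected = select(network, compound_filter)
    return network, trials_per_cycle


def toy_trial(a, b):
    """Hypothetical chemistry: two fragments merge if the result is small."""
    merged = a + b
    return merged if len(merged) <= 3 else None


def has_catalyst(compound):
    """Hypothetical stand-in for a catalyst filter: keep species containing 'M'."""
    return "M" in compound


start = {"M", "A", "B"}
guided, guided_counts = explore(start, toy_trial, has_catalyst, n_cycles=2)
unguided, unguided_counts = explore(start, toy_trial, lambda c: True, n_cycles=2)
print(guided_counts, unguided_counts)
```

In this toy run the filtered exploration issues 3 trials in its second cycle, whereas the unfiltered one issues 15. The same effect, at a far larger scale, is what the selection step is meant to achieve when it reduces millions of candidate calculations to a manageable number.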

Hybrid Graph/Coordinate Method Implementation

The graph-based prediction of reaction barrier heights incorporates both 2D structural information and 3D geometric insights through this multi-stage protocol [36]:

Feature Generation Phase:

- Molecular Graph Construction: Represent reactants and products as graphs with atoms as nodes and bonds as edges

- Atom/Bond Feature Calculation: Compute basic RDKit properties including atomic number, bond count, formal charge, hybridization, hydrogen count, aromaticity, and atomic mass

- Reaction Graph Formation: Superimpose reactant and product graphs into a Condensed Graph of Reaction (CGR)

- Transition State Geometry Prediction: Generate 3D TS coordinates using generative models (TSDiff or GoFlow) from 2D molecular graphs

Model Prediction Phase:

- Directed Message Passing: Process atom and bond features through a Directed Message Passing Neural Network (D-MPNN)

- Spatial Feature Integration: Encode 3D local environments around each atom into feature vectors

- Barrier Height Calculation: Predict activation energies from molecular embeddings using a feed-forward neural network

- Validation: Compare predicted barrier heights with quantum mechanical reference data from benchmark datasets

This approach uniquely combines the accessibility of 2D molecular representations with the chemical accuracy afforded by 3D structural information without requiring pre-computed quantum mechanical calculations during inference [36].
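
The reaction graph formation step can be illustrated with a plain-Python sketch of a Condensed Graph of Reaction. It assumes atom-mapped reactant and product graphs given as dictionaries from atom-index pairs to bond orders; the helper names `build_cgr` and `classify` are hypothetical, the example reaction (hydrogenation of a formaldehyde C=O unit, with the two C-H bonds omitted for brevity) is chosen only for illustration, and nothing here reproduces the RDKit-based featurization of the cited work [36].

```python
def build_cgr(reactant_bonds, product_bonds):
    """Superimpose two atom-mapped bond dictionaries into one CGR.

    Each CGR edge is labeled (bond order in reactants, bond order in products),
    with 0 meaning that the bond is absent on that side of the reaction.
    """
    cgr = {}
    for pair in set(reactant_bonds) | set(product_bonds):
        cgr[pair] = (reactant_bonds.get(pair, 0), product_bonds.get(pair, 0))
    return cgr


def classify(edge_label):
    """Describe how a bond changes over the course of the reaction."""
    before, after = edge_label
    if before == after:
        return "unchanged"
    if before == 0:
        return "formed"
    if after == 0:
        return "broken"
    return "order_changed"


# Atom map (illustrative): 1 = C, 2 = O, 3 = H, 4 = H.
# Keys are (lower index, higher index) pairs; values are bond orders.
reactants = {(1, 2): 2, (3, 4): 1}             # C=O and H-H
products = {(1, 2): 1, (1, 3): 1, (2, 4): 1}   # C-O, C-H, and O-H

cgr = build_cgr(reactants, products)
for pair in sorted(cgr):
    print(pair, cgr[pair], classify(cgr[pair]))
```

Because every edge carries both its reactant and product state, a single graph encodes the full bond rearrangement, which is what allows a message passing network to operate on one input per reaction.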

Figure 1: Automated Reaction Network Exploration Workflow

Research Reagent Solutions: Computational Tools for Reaction Screening

The experimental and computational tools required for implementing high-throughput reaction screening span software platforms, theoretical methods, and analysis frameworks.

Table 3: Essential Research Reagent Solutions for Reaction Network Exploration

| Tool/Method | Function | Implementation Requirements |
|---|---|---|
| SCINE CHEMOTON [34] | Automated reaction space exploration based on quantum mechanics | High-performance computing infrastructure |
| STEERING WHEEL Algorithm [34] | Human-guided autonomous exploration with shell-based protocol | Integration with HERON graphical interface |
| Independent Atom Reference State [24] | Computationally efficient prediction of reaction energetics | Density functional theory implementation |
| Condensed Graph of Reaction (CGR) [36] | Representation of reactions as single superimposed graphs | RDKit for feature calculation, D-MPNN architecture |
| Directed Message Passing Neural Network [36] | Prediction of reaction barrier heights from graph representations | Pretrained models, molecular feature sets |
| Generative TS Geometry Models (TSDiff, GoFlow) [36] | Prediction of transition state geometries from 2D structures | Equivariant neural networks, diffusion models |
| Hill Equation Modeling [37] | Analysis of quantitative HTS concentration-response data | Nonlinear parameter estimation methods |
| Distribution of Standard Deviations (DSD) [38] | Assessment of variability in high-throughput screening data | Large-scale replicate measurements |

Integration Pathways for Method Validation

Figure 2: Quantum Theory Validation through Automated Screening

The validation of quantum theory predictions relies on integrating multiple computational and experimental approaches. Automated exploration platforms like STEERING WHEEL generate comprehensive reaction networks that provide testable hypotheses for quantum mechanical accuracy assessment [34]. Machine learning methods, particularly those incorporating 3D structural information, offer efficient screening of quantum-derived reaction barriers against established benchmarks [36]. Experimental high-throughput screening serves as the ultimate validation pathway, with quantitative HTS providing concentration-response data that either confirms or challenges computational predictions [37]. This integrated framework creates a virtuous cycle where quantum theory informs exploration priorities, while experimental results refine theoretical models, progressively enhancing predictive accuracy in chemical reaction research.
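
Screening predicted reaction barriers against a benchmark usually comes down to a few summary error statistics. The sketch below computes the mean absolute error and root-mean-square error between predicted and reference barrier heights; the function names and the four barrier values (in kcal/mol) are made-up placeholders for illustration and do not come from any dataset cited here.

```python
import math


def mean_absolute_error(predicted, reference):
    """Average magnitude of the prediction errors."""
    return sum(abs(p - r) for p, r in zip(predicted, reference)) / len(reference)


def root_mean_square_error(predicted, reference):
    """Square root of the mean squared error; penalizes large outliers more."""
    squared = [(p - r) ** 2 for p, r in zip(predicted, reference)]
    return math.sqrt(sum(squared) / len(reference))


# Hypothetical barrier heights in kcal/mol (illustrative values only).
predicted_barriers = [10.0, 22.0, 35.0, 18.0]
reference_barriers = [12.0, 20.0, 35.0, 22.0]

mae = mean_absolute_error(predicted_barriers, reference_barriers)
rmse = root_mean_square_error(predicted_barriers, reference_barriers)
print(f"MAE = {mae:.2f} kcal/mol, RMSE = {rmse:.2f} kcal/mol")
```

Reporting both values is a common design choice: a gap between RMSE and MAE hints that a few reactions are predicted much worse than the rest, which may point to gaps in training data diversity.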

The development of high-performance energy storage systems increasingly relies on advanced materials that address multiple failure modes simultaneously. Traditional experimental methods for discovering these materials can be slow and costly. This case study examines the quantum chemical design and experimental validation of a multifunctional electrolyte additive for high-nickel lithium-ion batteries (LIBs), presenting a paradigm for computationally guided materials development. The research demonstrates how theoretical predictions can successfully guide the creation of functional materials that are later corroborated through experimental analysis, validating the role of quantum chemistry in modern chemical research [39] [40].

This work addresses critical challenges in high-nickel cathode batteries (such as LiNi₀.₈Co₀.₁Mn₀.₁O₂, or NCM811), where reactive species generated from LiPF₆ salt decomposition, particularly HF and PF₅, cause cathode corrosion, transition metal dissolution, and rapid capacity fade [39]. While various electrolyte additives have been proposed, their development has traditionally followed a trial-and-error approach. The study examined here reverses this workflow by using quantum chemical calculations as the primary design tool before any experimental validation is performed.

Computational Design Strategy

Molecular Design Rationale

The researchers designed N-Trimethylsilylimino Triphenylphosphorane (TMSiTPP) as a multifunctional additive through rational molecular engineering based on quantum chemical principles. The molecular structure incorporates two distinct functional groups bonded to a nitrogen atom, each serving a specific protective function [39]:

Trimethylsilyl (TMS) Group: This component contains an N-Si bond that is highly effective for scavenging HF, a destructive byproduct of LiPF₆ decomposition in battery electrolytes. The Si moiety reacts readily with HF, preventing it from corroding the electrode surfaces.

Triphenylphosphoranyl (TPP) Group: This component provides exceptional chemical stability under both oxidative and reductive conditions due to the electron-withdrawing characteristics of the three phenyl groups. The phosphorus atom also donates electron density to nitrogen, enhancing its ability to coordinate with Lewis acids.

The molecular design leverages the valency of nitrogen, where the double-bonded P=N functional group provides steric advantages for forming coordination bonds with PF₅ while maintaining molecular stability against nucleophilic attacks [39].

Quantum Chemical Calculations and Predictive Metrics

The team employed density functional theory (DFT) calculations to predict the electrochemical behavior and reactive properties of TMSiTPP before synthesis and testing. These computations focused on several key parameters [39]: