Variance-Based Shot Allocation: Maximizing Quantum Circuit Efficiency for Computational Drug Development



Abstract

This article explores variance-based shot allocation, a critical technique for optimizing quantum measurement resources in the Noisy Intermediate-Scale Quantum (NISQ) era. Aimed at researchers and drug development professionals, it provides a comprehensive guide from foundational principles to advanced applications. We detail how strategically distributing a finite number of quantum measurements (shots) based on operator variance significantly reduces the sampling overhead in algorithms like VQE and ADAPT-VQE, which are pivotal for molecular simulation. The content covers practical implementation methodologies, common troubleshooting pitfalls, and a comparative analysis of performance gains, concluding with the transformative potential of these methods for accelerating quantum-enabled drug discovery.

The Quantum Shot Problem: Why Measurement Efficiency is Critical for NISQ-Era Drug Discovery

In the Noisy Intermediate-Scale Quantum (NISQ) era, quantum computers have a limited number of qubits that are prone to errors and decoherence. Because measurement outcomes are probabilistic and the hardware is noisy, each quantum circuit must be run many times to obtain a statistically reliable result. A single execution of a circuit is called a shot, the fundamental unit of quantum measurement; the number of executions is the shot count [1].

The shot count represents a critical trade-off in quantum computation: more shots lead to greater precision in the result but incur higher computational cost and time. For variational quantum algorithms like the Variational Quantum Eigensolver (VQE) and its adaptive variants, the required number of shots can become a primary bottleneck, as these algorithms require extensive measurement for both parameter optimization and operator selection [2] [3]. This application note explores the role of shots in quantum measurement, framed within the context of variance-based shot allocation research, and provides detailed protocols for its implementation.

The Shot in Quantum Algorithm Execution

Fundamental Definition and Statistical Foundation

A shot refers to a single execution of a quantum circuit, from initial state preparation to final measurement, resulting in a single bitstring output. For meaningful results, especially when estimating the expectation value of an observable, many shots are required to build a probability distribution.

The expectation value of a measured quantity \( X \) is \( \mu = E[X] = \sum_{i} x_i p_i \), where \( p_i \) is the probability of outcome \( x_i \). As the number of shots \( n \to \infty \), the sample mean converges to the true expectation value \( \mu_0 \) [1]. The precision of this estimate is quantified by its variance, which for a noiseless circuit decreases as \( \frac{1}{n} \); equivalently, the standard error decreases as \( \frac{1}{\sqrt{n}} \), following the central limit theorem. This relationship makes the Relative Standard Deviation (RSD), defined as \( \sigma / \mu \), a key dimensionless metric for evaluating result quality.

Table: Key Statistical Metrics for Shot-Based Measurement

| Metric | Formula | Interpretation |
|---|---|---|
| Expectation Value | \( \mu = E[X] = \sum_{i} x_i p_i \) | Average result over many shots; the sample mean converges to the true value. |
| Variance | \( \sigma^2 = E[(X - \mu)^2] \) | Spread of the result distribution. |
| Relative Standard Deviation (RSD) | \( \text{RSD} = \sigma / \mu \) | Dimensionless measure of result precision. |
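The scaling of precision with shot count can be illustrated with a small simulation. The sketch below is not taken from the cited work: it assumes a noiseless measurement with outcomes ±1 and a hypothetical probability `p_plus` of observing +1, and it reports the sample mean together with its standard error for increasing shot counts.

```python
import numpy as np

def estimate_expectation(p_plus, n_shots, rng):
    """Simulate n_shots of a +/-1-valued measurement; return (mean, standard error)."""
    outcomes = rng.choice([1.0, -1.0], size=n_shots, p=[p_plus, 1.0 - p_plus])
    mean = outcomes.mean()
    # Standard error of the mean: sample standard deviation / sqrt(n)
    std_err = outcomes.std(ddof=1) / np.sqrt(n_shots)
    return mean, std_err

rng = np.random.default_rng(seed=0)
p_plus = 0.8  # hypothetical value; the true expectation is 2 * 0.8 - 1 = 0.6

results = {n: estimate_expectation(p_plus, n, rng) for n in (100, 10_000, 1_000_000)}
for n, (mean, std_err) in results.items():
    print(f"n={n:>9}: mean={mean:+.4f}, standard error={std_err:.5f}")
```

Each hundredfold increase in shots should shrink the standard error by roughly a factor of ten, which is the \( 1/\sqrt{n} \) behaviour described above.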

The Shot Overhead Challenge in Adaptive Algorithms

The Adaptive Derivative-Assembled Problem-Tailored VQE (ADAPT-VQE) is a promising algorithm for the NISQ era because it constructs efficient, problem-tailored ansatz circuits iteratively, reducing circuit depth and mitigating optimization challenges. However, a major limitation is its high quantum measurement overhead [2] [3].

This overhead arises because each iteration requires a large number of shots for two purposes: 1) optimizing the parameters of the current quantum circuit, and 2) selecting the next operator to add to the ansatz by measuring operator gradients. This dual measurement demand makes shot efficiency a critical research focus for scaling ADAPT-VQE to larger problems [2].

Variance-Based Shot Allocation: Principles and Applications

Variance-based shot allocation is a strategy that optimizes the distribution of a finite shot budget across different measurement tasks to minimize the total variance of the final result.

Core Principle

The theoretical foundation for this approach is that the number of shots allocated to a given term should be proportional to the product of the standard deviation of its measurement and the magnitude of its weight in the overall Hamiltonian [2]. Instead of uniformly distributing shots, this method prioritizes measurements that contribute most to the overall uncertainty. This is particularly powerful when combined with commutativity-based grouping of Hamiltonian terms or operator gradients, as it reduces redundant measurements [2].

Application in ADAPT-VQE

Research has demonstrated that applying variance-based shot allocation to both the Hamiltonian energy expectation and the gradient measurements for operator selection in ADAPT-VQE can lead to substantial reductions in the total shot count while maintaining chemical accuracy [2].

Table: Experimental Results of Shot Reduction Strategies

| System/Method | Shot Reduction (vs. Baseline) | Key Metric Maintained |
|---|---|---|
| Reused Pauli Measurements (with grouping) | 67.71% (average; shot usage reduced to 32.29% of baseline) | Chemical Accuracy |
| Variance-Based Shot Allocation (VPSR) on LiH | 51.23% | Chemical Accuracy |
| Variance-Based Shot Allocation (VPSR) on H₂ | 43.21% | Chemical Accuracy |
| Variance-Based Shot Allocation (VMSA) on LiH | 5.77% | Chemical Accuracy |

Integrated Protocols for Shot-Efficient ADAPT-VQE

The following protocols integrate two powerful strategies for reducing shot overhead: reusing Pauli measurements and variance-based shot allocation [2].

Protocol 1: Reuse of Pauli Measurements in ADAPT-VQE

This protocol reduces overhead by reusing quantum measurement outcomes obtained during the VQE parameter optimization phase in the subsequent operator selection step.


Step-by-Step Procedure

Initialization and VQE Execution:

- Begin a standard ADAPT-VQE iteration. Execute the VQE parameter optimization routine for the current ansatz state \( |\psi(\vec{\theta}) \rangle \).

- During this optimization, collect and store the results (expectation values and variances) of all Pauli measurements performed to compute the energy \( \langle H \rangle \). These measurements are typically grouped by commutativity (e.g., using Qubit-Wise Commutativity) to minimize circuit executions [2].

Data Storage:

- In a classical database, store the obtained expectation values \( \langle P_i \rangle \) and their associated empirical variances \( \sigma^2_{P_i} \) for each measured Pauli string \( P_i \). Metadata such as the circuit parameters \( \vec{\theta} \) and ansatz structure should also be recorded.

Operator Selection Analysis:

- Proceed to the operator selection step for the next ADAPT-VQE iteration. This requires evaluating the gradients of the energy with respect to a pool of operators, \( \{ A_i \} \), which involves measuring commutators \( \langle [H, A_i] \rangle \).

- Decompose the commutator \( [H, A_i] \) into its constituent Pauli strings. Let \( S_{\text{grad}} \) be the set of all unique Pauli strings required for all gradient estimations.

Similarity Check and Data Retrieval:

- For each Pauli string \( P_j \) in \( S_{\text{grad}} \), check if it is identical to any Pauli string \( P_k \) measured and stored in the Data Storage step.

- If a match is found and the ansatz state \( |\psi(\vec{\theta}) \rangle \) has not changed significantly for that specific operator, reuse the stored expectation value \( \langle P_k \rangle \) and variance \( \sigma^2_{P_k} \).

Gradient Calculation and Operator Choice:

- Compute the gradient \( \langle [H, A_i] \rangle \) using a combination of reused data and any necessary new measurements.

- Select the operator with the largest gradient magnitude to add to the ansatz.

Advantages: This protocol leverages the inherent overlap between the Pauli strings in the Hamiltonian and those in the commutators for gradient estimation. It directly reduces the number of new quantum measurements required, with minimal classical computational overhead for the similarity check [2].
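The storage and similarity-check steps can be sketched as a small classical cache. This is a minimal illustration, not the implementation from [2]: the `PauliCache` class, the `split_gradient_strings` helper, and the parameter tolerance `tol` are hypothetical names and choices introduced here, and "the state has not changed significantly" is approximated by comparing the stored parameter vector with the current one.

```python
from dataclasses import dataclass

@dataclass
class PauliRecord:
    expectation: float
    variance: float
    theta: tuple  # ansatz parameters at the time of measurement

class PauliCache:
    """Hypothetical store for Pauli measurement results, keyed by Pauli string."""

    def __init__(self):
        self._store = {}

    def store(self, pauli, expectation, variance, theta):
        self._store[pauli] = PauliRecord(expectation, variance, tuple(theta))

    def lookup(self, pauli, theta, tol=1e-8):
        """Return the stored record only if it was taken at (nearly) the same parameters."""
        rec = self._store.get(pauli)
        if rec is None or len(rec.theta) != len(theta):
            return None
        if any(abs(a - b) > tol for a, b in zip(rec.theta, theta)):
            return None
        return rec

def split_gradient_strings(cache, grad_strings, theta):
    """Separate gradient Pauli strings into reusable results and strings still to measure."""
    reused, to_measure = {}, []
    for pauli in grad_strings:
        rec = cache.lookup(pauli, theta)
        if rec is not None:
            reused[pauli] = rec
        else:
            to_measure.append(pauli)
    return reused, to_measure

# Example with made-up values: two Hamiltonian strings were measured during VQE.
theta = [0.10, 0.25]
cache = PauliCache()
cache.store("ZZII", 0.42, 1 - 0.42**2, theta)
cache.store("XXII", -0.10, 1 - 0.10**2, theta)

reused, to_measure = split_gradient_strings(cache, ["ZZII", "YXII", "XXII"], theta)
print(sorted(reused), to_measure)
```

Only the strings returned in `to_measure` would need new circuit executions; everything in `reused` comes from the classical store.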

Protocol 2: Variance-Based Shot Allocation

This protocol provides a detailed method for dynamically allocating shots based on variance to maximize the information gained per shot.


Step-by-Step Procedure

Term Grouping:

- Identify all terms \( \{ O_i \} \) to be measured. This could be the Hamiltonian \( H = \sum_i c_i O_i \) or the set of observables for gradient estimation \( \{ [H, A_i] \} \).

- Group these terms into mutually commuting sets \( \{ G_1, G_2, \ldots, G_m \} \) using a method like Qubit-Wise Commutativity (QWC) or more advanced grouping. This allows multiple terms within a group to be measured from the same circuit execution [2].

Initial Sampling and Variance Estimation:

- For each group \( G_j \), execute a fixed, small number of preliminary shots (e.g., \( n_{\text{init}} = 1000 \)) for the measurement circuit corresponding to that group.

- From this initial data, compute the empirical variance \( \hat{\sigma}^2_i \) for each individual term \( O_i \) within the group.

Shot Budget Calculation:

- Determine the total shot budget \( N_{\text{total}} \) available for this measurement round. This budget can be fixed or determined adaptively based on a target precision.

Optimal Shot Allocation:

- Allocate the total shot budget \( N_{\text{total}} \) across all terms \( \{ O_i \} \) in proportion to the product of each term's estimated standard deviation and its coefficient magnitude. A common optimal allocation rule is: \[ n_i \propto \frac{ |c_i| \hat{\sigma}_i }{ \sum_k |c_k| \hat{\sigma}_k } \times N_{\text{total}} \] where \( n_i \) is the number of shots allocated to term \( O_i \), and \( c_i \) is its coefficient in the observable [2].

- Since terms are measured in groups, the shots for a group are determined by the maximum of the allocated shots for its constituent terms.

Final Measurement and Result Computation:

- Execute the measurement circuit for each group \( G_j \) with the allocated number of shots \( n_{G_j} \).

- Compute the final expectation value of the total observable (e.g., \( \langle H \rangle \) or \( \langle [H, A_i] \rangle \)) as the weighted sum of the results from each term.

Advantages: This protocol minimizes the overall variance of the final estimated observable for a given total shot budget. It is particularly effective when the variances of different terms vary significantly, as it directs more resources to the noisiest or most uncertain components [2].
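The allocation and group-maximum steps can be sketched in a few lines of NumPy. The function names, the rounding to whole shots, and the uniform fallback for all-zero pilot variances are assumptions made for this illustration rather than details specified in [2]; the coefficients and standard deviations in the example are made up.

```python
import numpy as np

def empirical_sigma(pilot_outcomes):
    """Sample standard deviation of the pilot-shot outcomes for one term."""
    return float(np.std(pilot_outcomes, ddof=1))

def allocate_term_shots(coeffs, sigmas, n_total):
    """Shots per term, proportional to |c_i| * sigma_i, rounded to whole shots."""
    weights = np.abs(np.asarray(coeffs, dtype=float)) * np.asarray(sigmas, dtype=float)
    if weights.sum() == 0.0:
        # Degenerate pilot data (assumed handling): fall back to a uniform split.
        weights = np.ones_like(weights)
    return np.rint(n_total * weights / weights.sum()).astype(int)

def allocate_group_shots(term_shots, groups):
    """Each group runs as many shots as its most demanding term requires."""
    return [int(max(term_shots[i] for i in group)) for group in groups]

# Hypothetical three-term observable; terms 0 and 1 share a measurement circuit.
coeffs = [0.5, -0.3, 0.2]
sigmas = [0.9, 0.5, 0.1]   # e.g. obtained via empirical_sigma on pilot shots
groups = [[0, 1], [2]]

term_shots = allocate_term_shots(coeffs, sigmas, n_total=6200)
group_shots = allocate_group_shots(term_shots, groups)
print(term_shots, group_shots)
```

Because of the group-maximum rule, the number of circuit executions actually spent is the sum of the group shots, which generally differs from the sum of the per-term allocations.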

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Components for Shot-Efficient Quantum Measurement Research

| Item / Concept | Function / Description |
|---|---|
| Pauli Measurement | The process of measuring a quantum state in the eigenbasis of a Pauli operator (X, Y, Z). The fundamental building block for evaluating observables on quantum hardware. |
| Commutativity Grouping | A pre-processing technique that groups Hamiltonian terms or gradient observables into sets that can be measured simultaneously from a single circuit execution, drastically reducing the number of distinct circuits required. |
| Variance Estimation | The process of calculating the statistical variance of a measurement outcome. Serves as the critical input for determining optimal shot allocation. |
| ADAPT-VQE Operator Pool | A pre-defined set of operators (e.g., fermionic excitations) from which the algorithm adaptively selects to build the ansatz circuit. The composition of the pool influences the required gradient measurements. |
| Classical Shot Allocator | A classical software routine that implements the variance-based allocation algorithm. It takes variances and coefficients as input and outputs an optimal shot distribution. |

The Bottleneck of Sampling Overhead in VQE and ADAPT-VQE Algorithms

Variational Quantum Eigensolvers (VQE) and their adaptive counterpart, ADAPT-VQE, represent promising approaches for molecular simulation on Noisy Intermediate-Scale Quantum (NISQ) devices. These hybrid quantum-classical algorithms aim to determine ground state energies of molecular systems by combining quantum measurements with classical optimization. However, both algorithms face a critical bottleneck: prohibitively high sampling overhead, also known as shot requirements [4] [2]. This overhead arises from the need to perform numerous repeated measurements on quantum hardware to estimate expectation values and gradients with sufficient precision for chemical accuracy.

In the context of ADAPT-VQE, this challenge is particularly acute due to its iterative nature. At each iteration, the algorithm must evaluate energy gradients for all operators in a predefined pool to select the most promising one to add to the ansatz circuit [4]. This process requires decomposing commutators between the Hamiltonian and pool operators into measurable fragments, leading to a measurement cost that can scale as steeply as 𝒪(N⁸) with the number of spin-orbitals [5]. The combined requirements for operator selection and parameter optimization make measurement overhead the dominant bottleneck limiting the application of adaptive variational algorithms to larger molecular systems on near-term quantum devices [2].

Quantifying the Sampling Overhead Challenge

The sampling overhead in ADAPT-VQE originates from two primary sources:

Operator Selection: The original ADAPT-VQE protocol requires calculating the energy gradient for every operator in the pool using the formula \( g_i = \langle \psi_k \vert [\hat{H}, \hat{G}_i] \vert \psi_k \rangle \). This necessitates decomposing the commutator into measurable Pauli terms and estimating each term's expectation value [4] [5].

Parameter Optimization: After adding a new operator, all parameters in the ansatz must be re-optimized, requiring repeated estimation of the energy expectation value \(\langle \psi(\vec{\theta}) \vert \hat{H} \vert \psi(\vec{\theta}) \rangle\) throughout the optimization process [4].

Quantitative Analysis of Overhead

Table 1: Measurement Overhead Reduction in State-of-the-Art ADAPT-VQE Implementations

| Molecule | Qubit Count | Original ADAPT-VQE | CEO-ADAPT-VQE* | Reduction |
|---|---|---|---|---|
| LiH | 12 | Baseline | 0.4% of original | 99.6% |
| H₆ | 12 | Baseline | 2% of original | 98% |
| BeH₂ | 14 | Baseline | 1% of original | 99% |
| H₂ | 4 | Baseline | 32.29% with reuse | 67.71% |

Data adapted from studies comparing measurement costs across molecular systems [2] [6].

Table 2: Performance of Variance-Based Shot Allocation Methods

| Molecule | Method | Shot Reduction | Accuracy Maintained |
|---|---|---|---|
| H₂ | VMSA | 6.71% | Yes |
| H₂ | VPSR | 43.21% | Yes |
| LiH | VMSA | 5.77% | Yes |
| LiH | VPSR | 51.23% | Yes |

Results demonstrate that variance-based shot allocation significantly reduces measurement requirements while preserving chemical accuracy [2].

Variance-Based Shot Allocation: Theoretical Framework

Variance-based shot allocation operates on the principle that measurement resources should be distributed according to the statistical uncertainty associated with each observable rather than uniformly across all terms [2]. This approach minimizes the total variance in the energy estimate for a fixed measurement budget.

The theoretical foundation lies in the observation that the Hamiltonian \(\hat{H} = \sum_i c_i \hat{P}_i\) and gradient observables \([\hat{H}, \hat{G}_i]\) can be decomposed into Pauli terms with varying contributions to the total variance. For an observable \(O = \sum_{j=1}^{L} w_j O_j\), the theoretically optimal shot allocation derived in [2] assigns:

\[ S_j \propto \frac{\sqrt{w_j^2 \text{Var}(O_j)}}{\sum_k \sqrt{w_k^2 \text{Var}(O_k)}} \times S_{\text{total}} \]

where \(S_j\) is the number of shots allocated to term \(j\), \(\text{Var}(O_j)\) is the variance of the observable \(O_j\), \(w_j\) is its coefficient, and \(S_{\text{total}}\) is the total shot budget.
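For readers who want to see where this rule comes from, the following is a sketch of the standard Lagrange-multiplier argument. It assumes that each term is estimated from its own independent shots, so that covariances between terms can be neglected; that assumption is introduced here for the derivation and is not stated in the source.

```latex
% Variance of the estimator when term j receives S_j independent shots
\[ \text{Var}(\hat{O}) = \sum_{j=1}^{L} \frac{w_j^2 \, \text{Var}(O_j)}{S_j},
   \qquad \text{subject to} \quad \sum_{j=1}^{L} S_j = S_{\text{total}} \]

% Lagrangian and stationarity condition
\[ \mathcal{L} = \sum_{j} \frac{w_j^2 \, \text{Var}(O_j)}{S_j}
   + \lambda \Big( \sum_{j} S_j - S_{\text{total}} \Big),
   \qquad
   \frac{\partial \mathcal{L}}{\partial S_j}
   = -\frac{w_j^2 \, \text{Var}(O_j)}{S_j^2} + \lambda = 0 \]

% Solving for S_j and fixing lambda through the budget constraint
\[ S_j = \frac{\sqrt{w_j^2 \, \text{Var}(O_j)}}{\sqrt{\lambda}}
   \;\Longrightarrow\;
   S_j = \frac{\sqrt{w_j^2 \, \text{Var}(O_j)}}{\sum_k \sqrt{w_k^2 \, \text{Var}(O_k)}}
         \, S_{\text{total}} \]

% Resulting minimum variance
\[ \text{Var}(\hat{O})_{\min}
   = \frac{\Big( \sum_k \sqrt{w_k^2 \, \text{Var}(O_k)} \Big)^2}{S_{\text{total}}} \]
```

Under these assumptions the optimal allocation is proportional to \( |w_j| \sigma_j \), the coefficient magnitude times the standard deviation, and the achievable variance still falls as \( 1/S_{\text{total}} \).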

This approach has been extended beyond Hamiltonian measurement to include gradient measurements in ADAPT-VQE, making it specifically tailored for adaptive algorithms [2]. When combined with commutativity-based grouping (such as qubit-wise commutativity), variance-based shot allocation delivers substantial reductions in measurement overhead while maintaining accuracy.

Experimental Protocols for Shot-Efficient ADAPT-VQE

Protocol 1: Pauli Measurement Reuse

Principle: Leverage measurement outcomes from VQE parameter optimization in subsequent operator selection steps by identifying shared Pauli strings between the Hamiltonian and commutator observables [2].

Step-by-Step Procedure:

Initial Setup:

- Precompute Pauli string decompositions of the Hamiltonian \(\hat{H} = \sum_{\alpha} w_{\alpha} P_{\alpha}\) and all gradient observables \([\hat{H}, \hat{G}_i] = \sum_{\beta} v_{\beta} P_{\beta}\).

- Construct a mapping between Hamiltonian Pauli strings and those appearing in gradient observables.

VQE Execution:

- Perform shot allocation and measure expectation values of all Hamiltonian Pauli strings \(P_{\alpha}\) during parameter optimization.

- Store measurement outcomes (expectation values and variances) for all \(P_{\alpha}\).

Operator Selection:

- For each gradient observable \([\hat{H}, \hat{G}_i]\), identify Pauli strings \(P_{\beta}\) that also appear in the Hamiltonian decomposition.

- Reuse stored measurement outcomes for shared Pauli strings instead of remeasuring.

- Measure only the unique Pauli strings not present in the Hamiltonian.

Iterative Update:

- Update the stored measurement database with new Pauli strings measured during operator selection.

- Repeat the reuse protocol in subsequent ADAPT-VQE iterations.

Validation: This protocol has been tested on molecular systems from H₂ (4 qubits) to BeH₂ (14 qubits) and N₂H₄ (16 qubits), reducing average shot usage to 32.29% of the naive approach [2].

Protocol 2: Variance-Based Shot Allocation for ADAPT-VQE

Principle: Optimally distribute measurement resources based on empirical variances of Hamiltonian and gradient observables [2].

Step-by-Step Procedure:

Observable Decomposition:

- Decompose the Hamiltonian \(\hat{H} = \sum_{i=1}^{L_H} w_i H_i\) into Pauli terms \(H_i\).

- For each pool operator \(\hat{G}_j\), decompose the gradient observable \([\hat{H}, \hat{G}_j] = \sum_{k=1}^{L_j} v_k O_k\) into Pauli terms \(O_k\).

Grouping Phase:

- Apply qubit-wise commutativity (QWC) grouping to both Hamiltonian and gradient observable terms.

- Form mutually commuting sets that can be measured simultaneously.

Initial Variance Estimation:

- Perform an initial calibration round with a small shot budget (e.g., 1% of total) to estimate \(\text{Var}(H_i)\) and \(\text{Var}(O_k)\) for all terms.

- For terms with zero initial variance, assign a small nominal variance to avoid division by zero.

Shot Allocation:

- For Hamiltonian measurement: \[ S_i^{\hat{H}} = \frac{\sqrt{w_i^2 \text{Var}(H_i)}}{\sum_{m=1}^{L_H} \sqrt{w_m^2 \text{Var}(H_m)}} \times S_{\text{total}}^{\hat{H}} \]

- For gradient measurement: \[ S_k^{[\hat{H},\hat{G}_j]} = \frac{\sqrt{v_k^2 \text{Var}(O_k)}}{\sum_{n=1}^{L_j} \sqrt{v_n^2 \text{Var}(O_n)}} \times S_{\text{total}}^{[\hat{H},\hat{G}_j]} \]

Iterative Refinement:

- Update variance estimates after each measurement round.

- Adjust shot allocation accordingly for subsequent iterations.

Validation: Applied to H₂ and LiH molecules, this protocol achieves shot reductions of 43.21% and 51.23%, respectively, with the VPSR method while maintaining chemical accuracy [2].
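The grouping phase of this protocol can be illustrated with a simple greedy procedure. The sketch below uses a first-fit heuristic, which is one common way to form QWC groups and is an assumption of this example rather than the specific grouping routine used in [2]. Pauli strings are written as equal-length strings over `I`, `X`, `Y`, `Z`.

```python
def qubit_wise_commute(p, q):
    """Two Pauli strings qubit-wise commute if, on every qubit,
    the single-qubit operators are equal or at least one is the identity."""
    return all(a == b or a == "I" or b == "I" for a, b in zip(p, q))

def greedy_qwc_groups(paulis):
    """First-fit grouping: put each string into the first group whose members
    all qubit-wise commute with it, otherwise open a new group."""
    groups = []
    for p in paulis:
        for group in groups:
            if all(qubit_wise_commute(p, q) for q in group):
                group.append(p)
                break
        else:
            groups.append([p])
    return groups

# Hypothetical 4-qubit example
terms = ["ZZII", "ZIII", "IIXX", "XXII", "IZII", "YYII"]
groups = greedy_qwc_groups(terms)
for g in groups:
    print(g)
```

Each resulting group can be measured with a single circuit, so the six terms in this example need three distinct measurement circuits instead of six.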

Protocol 3: Best-Arm Identification for Generator Selection

Principle: Reformulate generator selection as a Best Arm Identification (BAI) problem and apply successive elimination to minimize measurements on unpromising candidates [5].

Step-by-Step Procedure:

Initialization:

- Begin with the quantum state \(\vert \psi_k \rangle\) from the last VQE optimization.

- Initialize the active set \(A_0 = \mathcal{A}\) containing all pool operators.

Adaptive Rounds:

- For each round \(r = 1\) to \(L\):

  a. Set precision level \(\epsilon_r = c_r \cdot \epsilon\) with \(c_r \geq 1\).

  b. For each generator \(\hat{G}_i \in A_r\), estimate gradient \(g_i\) with precision \(\epsilon_r\).

  c. Compute \(\mathcal{M} = \max_{i \in A_r} |g_i|\).

  d. Eliminate all generators \(\hat{G}_i\) satisfying \[ |g_i| + R_r < \mathcal{M} - R_r \] where \(R_r = d_r \cdot \epsilon_r\) is the confidence-interval half-width.

Final Selection:

- In the final round (\(r = L\)), set \(c_L = 1\) to estimate the selected gradient to target accuracy \(\epsilon\).

- Select the generator with the largest gradient magnitude from the remaining active set.

Validation: This approach has shown substantial reduction in the number of measurements required while preserving ground-state energy accuracy across molecular systems [5].
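The successive-elimination loop can be sketched as follows. This is an illustrative reading of the steps above, not the reference implementation from [5]: `estimate_gradient` is a hypothetical callback standing in for a hardware estimate at a requested precision, the factor `d` is held constant across rounds, and the simulated gradients are made-up numbers with errors bounded by the requested precision.

```python
import numpy as np

def select_generator(estimate_gradient, pool_size, eps, c_schedule, d=1.0):
    """Successive elimination over a generator pool.

    c_schedule lists the factors c_r (coarse to fine); the last entry should be 1
    so the final round reaches the target accuracy eps.
    """
    active = list(range(pool_size))
    estimates = {}
    for c in c_schedule:
        eps_r = c * eps
        # Only generators still in the active set are measured in this round.
        estimates = {i: estimate_gradient(i, eps_r) for i in active}
        best = max(abs(g) for g in estimates.values())
        radius = d * eps_r
        # Keep generator i unless |g_i| + R_r < M - R_r.
        active = [i for i in active if abs(estimates[i]) + radius >= best - radius]
    chosen = max(active, key=lambda i: abs(estimates[i]))
    return chosen, active

# Simulated pool (hypothetical true gradients) with bounded estimation error.
true_g = np.array([0.02, -0.50, 0.05, 0.31, -0.01])
rng = np.random.default_rng(1)

def noisy_gradient(i, eps_r):
    return true_g[i] + rng.uniform(-eps_r, eps_r)

chosen, survivors = select_generator(
    noisy_gradient, len(true_g), eps=0.01, c_schedule=(8, 4, 2, 1)
)
print(chosen, survivors)
```

In this toy setting the small-gradient generators are discarded after the first coarse round, so the expensive high-precision rounds are spent only on the leading candidate.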

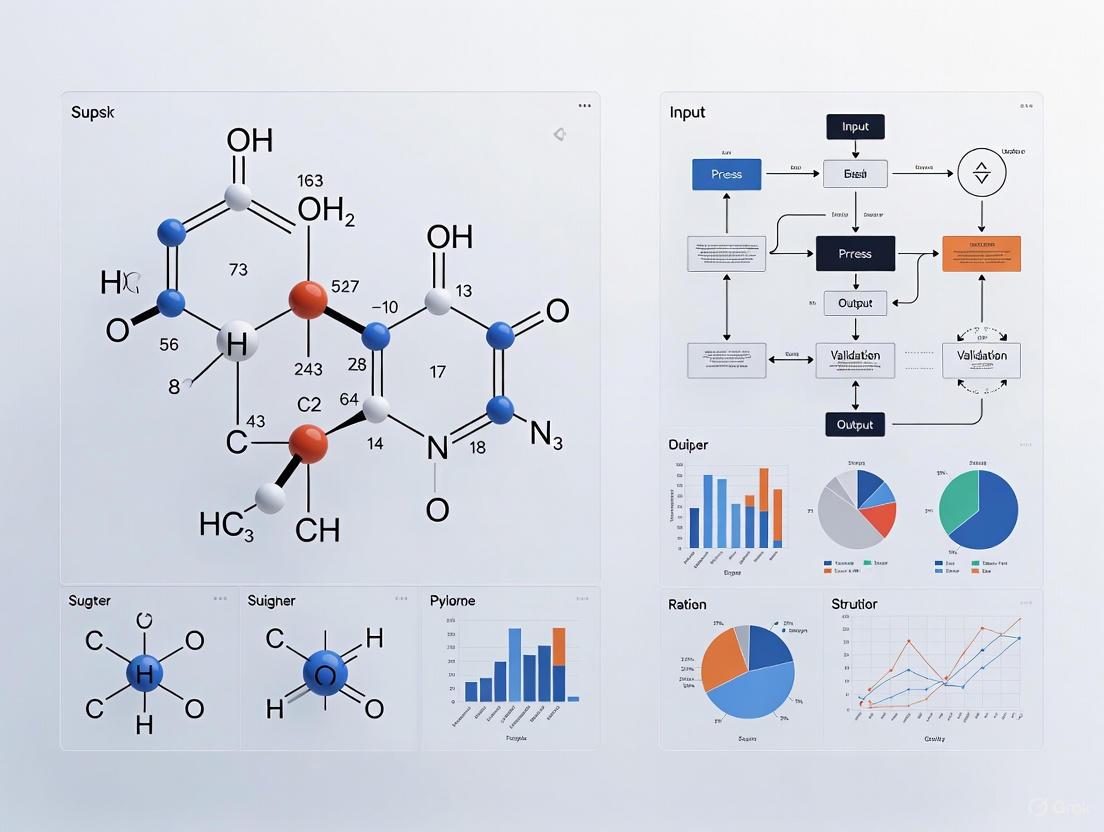

Workflow Visualization

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for Shot-Efficient ADAPT-VQE Implementation

| Component | Function | Implementation Notes |
|---|---|---|
| Qubit-Wise Commutativity (QWC) Grouping | Groups Pauli terms into mutually commuting sets for simultaneous measurement | Reduces number of distinct measurement circuits; compatible with variance-based allocation [2] |
| Coupled Exchange Operator (CEO) Pool | Compact operator pool specifically designed for reduced measurement requirements | Reduces CNOT count by up to 88% and measurement costs by up to 99.6% compared to original ADAPT-VQE [6] |
| Variance Monitoring System | Tracks empirical variances of Pauli terms for dynamic shot allocation | Enables optimal resource distribution based on statistical uncertainty [2] |
| Successive Elimination Framework | Implements Best-Arm Identification for generator selection | Progressively eliminates unpromising operators to focus measurements [5] |
| Measurement Reuse Database | Stores and retrieves Pauli measurement outcomes across algorithm iterations | Avoids redundant measurements of shared Pauli strings [2] |
| Error Mitigation Integration | Combines shot-reduction techniques with error mitigation methods | Enhances result quality under limited sampling budget [7] |

The integration of variance-based shot allocation with complementary techniques like Pauli measurement reuse and best-arm identification represents a significant advancement in making ADAPT-VQE practical for near-term quantum devices. The experimental protocols outlined in this document provide researchers with concrete methodologies for implementing these shot-efficient approaches.

These strategies collectively address the fundamental sampling overhead bottleneck that has limited the application of adaptive variational algorithms to larger molecular systems. When combined with improved operator pools such as the Coupled Exchange Operator pool, these techniques reduce measurement costs by up to 99.6% while maintaining chemical accuracy [6].

For drug development professionals and researchers investigating molecular systems, these protocols enable more efficient exploration of potential energy surfaces and reaction mechanisms on current quantum hardware. As quantum devices continue to improve in qubit count and fidelity, these shot-reduction techniques will become increasingly critical for bridging the gap between experimental demonstrations and practically useful quantum chemistry simulations.

In quantum computation, the inherent probabilistic nature of quantum mechanics means that running a quantum circuit once provides limited information. Running a circuit multiple independent times is referred to as taking multiple "shots" [8] [1]. The standard deviation of the outcomes across these shots quantifies their spread around the expected value and is a direct indicator of uncertainty; the variance (the square of the standard deviation) is the fundamental metric for quantifying this statistical uncertainty. It is crucial for researchers to understand that the variance of an estimate is not static: it shrinks as the number of shots grows and is inflated by hardware noise [1].

Effectively managing this variance is a primary challenge in the Noisy Intermediate-Scale Quantum (NISQ) era. For tasks requiring a specific precision—such as estimating the expectation value of a molecular Hamiltonian in drug development—predicting the required number of shots is essential for allocating computational resources efficiently [1]. Furthermore, the impact of noise means that more shots are required on noisy hardware to achieve the same level of precision possible on a noiseless simulator. This article details the core principles and practical protocols for leveraging variance to predict and control measurement uncertainty in quantum applications.

Quantitative Foundations of Variance

The Relationship Between Shots and Variance

The relationship between the number of shots and the resulting variance is a cornerstone of statistical analysis in quantum computing. For a noiseless quantum circuit, the Central Limit Theorem dictates that the variance of the estimated expectation value decreases inversely with the number of shots, n [1]. This principle provides a predictable foundation for shot allocation. However, in real-world scenarios involving NISQ devices, various noise sources disturb this ideal relationship. These noise effects act as additional random variables, increasing the overall variance beyond the fundamental quantum limit [1]. Consequently, for a desired level of precision (variance), more shots are required on a noisy quantum processor compared to an ideal, noiseless simulation.

Quantifying Noise Contributions to Variance

The total variance in a measurement outcome is an aggregate of contributions from independent noise processes. Research has focused on characterizing four primary, well-studied noise sources, treated as independent random variables [1]:

- SPAM noise: Errors occurring during state preparation and measurement (e.g., a 0 being misread as a 1).

- Amplitude damping (T1): Energy relaxation of the qubit from the excited state (1) to the ground state (0).

- Phase damping (T2): Loss of quantum phase coherence without energy loss.

- Gate noise: Imperfections in the application of quantum logic gates.

The following table summarizes the characteristics of these noise sources and their impact on variance.

Table 1: Primary Noise Sources and Their Impact on Variance

| Noise Source | Description | Effect on Variance |
|---|---|---|
| SPAM Noise | Asymmetric readout errors (e.g., p₀→₁ ≠ p₁→₀) | Shifts the expected value and increases variance [1]. |
| Amplitude Damping (T₁) | Qubit energy relaxation | Introduces bias and additional fluctuations in outcomes. |
| Phase Damping (T₂) | Loss of quantum coherence | Reduces measurement fidelity, increasing variance. |
| Gate Noise | Imperfect gate operations | Accumulates errors, leading to higher outcome uncertainty. |

Protocols for Variance Estimation and Management

Protocol 1: Estimating Variance for a Target Precision

This protocol provides a systematic method to estimate the number of shots required to achieve a desired variance for a specific quantum circuit on a given quantum processor.

Procedure:

1. Define Target Precision: Determine the maximum allowable variance (σ²_target) for the computation based on the application's precision requirements [1].

2. Characterize QPU Noise Profile: Before execution, calibrate the Quantum Processing Unit (QPU) to obtain current error rates for SPAM, T1, T2, and gate fidelities [1].

3. Initial Circuit Execution: Run the target quantum circuit on the characterized QPU with a preliminary, feasible number of shots (n_init), such as 1,000 or 10,000.

4. Variance Calculation: From the results, calculate the observed variance (σ²_obs) of the measured expectation value.

5. Decision Point: Compare σ²_obs with σ²_target. If σ²_obs is sufficiently small, proceed to step 7. If not, proceed to step 6.

6. Shot Estimation: Use a statistical model (e.g., based on the concept that variance scales inversely with the number of shots, adjusted for the characterized noise) to estimate the required number of shots, n_req, needed to achieve σ²_target. Return to step 3 with an updated shot count.

7. Final Execution: Execute the circuit with the determined n_req shots to obtain a result within the desired precision tolerance.
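The shot-estimation step can be sketched with the simplest possible statistical model. The function below assumes that the variance of the estimated expectation value scales as 1/n even in the presence of noise, so that noise only changes the proportionality constant captured by the observed variance; the function name and this modelling choice are assumptions of the sketch, and a model fitted to the characterized noise profile could replace it.

```python
import math

def required_shots(n_init, var_obs, var_target):
    """Estimate the shots needed to reach var_target, given that n_init shots
    produced an estimator variance of var_obs (assumes variance ~ 1/n)."""
    if var_target <= 0:
        raise ValueError("var_target must be positive")
    n_req = math.ceil(n_init * var_obs / var_target)
    # Never return fewer shots than were already taken.
    return max(n_init, n_req)

# Example with made-up numbers: the pilot run is four times too noisy.
n_req = required_shots(n_init=1000, var_obs=4e-4, var_target=1e-4)
print(n_req)
```

With an observed variance four times the target, the model asks for roughly four times the initial shot count before the final execution.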

Protocol 2: Distributed Shot Allocation Across Multiple QPUs

This protocol leverages the "shot-wise" framework to distribute a single quantum circuit's shots across multiple heterogeneous QPUs. This approach mitigates the variability and individual weaknesses of any single device, often leading to more stable and reliable results [8].

Procedure:

- QPU Reliability Assessment: Pre-evaluate the accuracy and reliability of each available QPU. This can be based on published fidelity metrics or bespoke benchmark circuits [8].

- Policy-Based Shot Splitting: Distribute the total shot budget (N_total) among the QPUs according to a predefined policy. Key policies include:

  - Uniform Policy: Shots are split equally across all available QPUs.

  - Reliability-Weighted Policy: Shots are allocated proportionally to the pre-assessed reliability of each QPU [8].

- Concurrent Circuit Execution: Execute the same quantum circuit on each QPU with its allocated number of shots.

- Result Merging: Collect the individual output distributions (histograms of measurement outcomes) from each QPU and merge them into a single, aggregated output distribution. The merge can be a simple weighted average based on the shot allocation [8].
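The splitting and merging steps can be sketched as two small functions. The QPU names, reliability scores, and counts below are hypothetical, and the handling of leftover shots after rounding is a choice made for this example; summing raw counts before normalizing is equivalent to a shot-weighted average of the per-device distributions.

```python
from collections import Counter

def split_shots(n_total, reliabilities):
    """Reliability-weighted split; equal reliabilities give the uniform policy."""
    total = sum(reliabilities.values())
    shares = {q: int(n_total * r / total) for q, r in reliabilities.items()}
    # Hand shots lost to rounding down to the most reliable devices first.
    leftover = n_total - sum(shares.values())
    ranked = sorted(reliabilities, key=reliabilities.get, reverse=True)
    for q in ranked[:leftover]:
        shares[q] += 1
    return shares

def merge_counts(counts_per_qpu):
    """Merge per-QPU histograms into one probability distribution."""
    merged = Counter()
    for counts in counts_per_qpu.values():
        merged.update(counts)
    total = sum(merged.values())
    return {bitstring: c / total for bitstring, c in merged.items()}

# Hypothetical reliability scores and measurement histograms
shares = split_shots(1000, {"qpu_a": 0.5, "qpu_b": 0.3, "qpu_c": 0.2})
merged = merge_counts({
    "qpu_a": {"00": 60, "11": 40},
    "qpu_b": {"00": 30, "11": 70},
})
print(shares, merged)
```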

Protocol 3: Variance Analysis in Variational Quantum Algorithms

Variational Quantum Algorithms (VQAs), like the Variational Quantum Eigensolver (VQE), are central to quantum chemistry and drug discovery. These algorithms use a classical optimizer to train a parameterized quantum circuit. The uncertainty in the energy measurement (the cost function) at each iteration, dictated by variance, directly impacts the optimizer's performance [9] [10].

Procedure:

- Circuit Execution and Sampling: For a given set of parameters θ, run the variational quantum circuit n times (shots) to estimate the expectation value of the molecular Hamiltonian, ⟨H(θ)⟩.

- Variance Tracking: At each optimization step, record the variance associated with the energy estimate ⟨H(θ)⟩. This variance is a function of both the circuit parameters and the number of shots.

- Adaptive Shot Allocation: Implement a strategy where the number of shots is dynamically adjusted during optimization. In early stages, use fewer shots to find a rough minimum quickly. As the optimization converges, increase the shot count to reduce variance and precisely pinpoint the minimum energy [9].

- Gradient-Free Optimization: To combat issues like barren plateaus where gradient information vanishes, use gradient-free classical optimizers (e.g., particle swarm optimization) that rely only on the function value and are robust to its inherent stochasticity (variance) [10].

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools

| Item Name | Function / Description | Application Note |

|---|---|---|

| Noisy Quantum Simulator | Software that emulates real quantum hardware by simulating effects of noise (SPAM, T1, T2). | Used for prototyping variance estimation protocols and testing shot-allocation strategies before running on expensive QPUs [1]. |

| Statistical Modeling Script | A custom script (e.g., in Python) implementing the relationship Variance ≈ f(noise_parameters) / n_shots. | Core to Protocol 1; used to predict the required number of shots to achieve a target variance for a specific circuit and QPU [1]. |

| Quantum Hardware Aggregator | A software framework (e.g., based on the "shot-wise" methodology) that manages distribution of shots across multiple QPUs from different providers. | Essential for executing Protocol 2; improves result stability and mitigates the risk of relying on a single noisy device [8]. |

| Gradient-Free Optimizer | A classical optimization algorithm (e.g., Particle Swarm Optimization) that does not require gradient information. | Critical for optimizing VQAs (Protocol 3) in the presence of high measurement variance and barren plateaus [10]. |

| Benchmarking Circuit Suite | A collection of simple, well-understood quantum circuits used to characterize QPU performance and reliability. | Used for the initial reliability assessment of QPUs in Protocol 2 [8]. |

In computational science, the principle of uniform resource allocation presents a significant and often unexamined inefficiency. In molecular simulations, particularly those enhanced by machine learning (ML) and quantum algorithms, uniformly distributing computational "shots" or cycles across all system components ignores the varying impact and uncertainty inherent in different parts of the system. This approach leads to substantial computational waste, slowing discovery in critical fields like drug development and materials science. This application note details these inefficiencies and provides protocols for implementing variance-based shot allocation, a strategy adapted from quantum circuit research that can dramatically improve the cost-effectiveness of molecular simulations. By focusing resources on the most uncertain or influential components, researchers can achieve higher accuracy with fewer computational resources, accelerating the pace of scientific discovery.

The Inefficiency of Uniform Allocation in Molecular Simulation

Molecular simulations, whether using classical Molecular Dynamics (MD) or quantum algorithms like the Variational Quantum Eigensolver (VQE), are computationally intensive. The traditional approach of uniform allocation—spending equal effort on every molecular interaction, system state, or Hamiltonian term—fails to account for the fact that some elements contribute more significantly to the overall uncertainty or final result.

In Classical MD and ML-Driven Simulations: High-throughput MD simulations generate extensive datasets for training ML models that predict material properties [11]. In this context, uniform sampling of the vast chemical space means that computational time is wasted on stable, predictable regions rather than being focused on complex molecular interactions that dominate emergent properties. For instance, simulating all solvent mixtures with equal computational effort ignores the fact that certain non-obvious intermolecular interactions are more challenging to model and thus require more sampling [11]. Enhanced ML molecular simulations used for optimizing processes like flotation selectivity similarly suffer if computational resources are not directed toward capturing crucial, hard-to-predict dynamical events at mineral-water interfaces [12].

In Quantum Computational Chemistry: The inefficiency is even more pronounced in quantum algorithms. The ADAPT-VQE algorithm, used for finding molecular ground states, suffers from a "high quantum measurement (shot) overhead" [2]. A "shot" refers to a single measurement of a quantum system. Naively, measuring all Pauli terms in the Hamiltonian with an equal number of shots is highly inefficient, as the variance—and thus the uncertainty—of these terms varies greatly. This uniform approach is a major bottleneck for scaling quantum computations to larger molecules [2] [3].

Table 1: Comparative Performance of Uniform vs. Optimized Shot Allocation

| Allocation Method | Key Principle | Reported Efficiency Gain | Application Context |

|---|---|---|---|

| Uniform Allocation | Equal shots per operator or simulation step | Baseline (0%) | Naive quantum simulation; Standard MD sampling |

| Variance-Based Shot Allocation (VPSR) | Shots allocated according to each term's estimated variance, so that higher-variance terms receive more shots | Up to 51.23% reduction in shots for LiH [2] | ADAPT-VQE for molecular energy calculation |

| Reused Pauli Measurements | Reusing measurement outcomes from previous optimization steps | ~32% reduction in average shot usage [2] | ADAPT-VQE for molecular energy calculation |

Protocol for Implementing Variance-Based Shot Allocation

This protocol adapts strategies from quantum computation [2] for use in broader molecular simulation contexts, focusing on identifying and reducing inefficiencies.

Experimental Setup and Workflow

The following diagram illustrates the core workflow for implementing a simulation with variance-based resource allocation, contrasting it with the inefficient uniform method.

Step-by-Step Procedures

Protocol 1: Identifying Inefficiencies in an Existing Simulation Pipeline

This protocol helps diagnose the cost of uniform allocation in a current workflow.

- System Component Identification: Enumerate all discrete elements (K) that consume computational resources during a single simulation cycle. In quantum chemistry, these are the Pauli terms of the Hamiltonian [2]. In classical MD or ML, this could be different force terms, conformational states, or specific molecular interactions within a mixture [11] [12].

- Baseline Resource Profiling: Run a short, representative simulation using your current uniform allocation method. Record the total computational cost (e.g., CPU-hours, wall time, or number of quantum shots).

- Variance Calculation: For each of the K components identified in Step 1, calculate the variance of its contribution to the final property of interest (e.g., energy, density, enthalpy of mixing) over the simulation run.

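- Inefficiency Metric: Compute the Inefficiency Factor (IF) using the data from Step 3: \[ \text{IF} = K \times \frac{\sum_{i=1}^{K} \sigma_i^2}{\left( \sum_{i=1}^{K} \sigma_i \right)^2} \] where \( \sigma_i^2 \) is the variance of component i and \( \sigma_i \) its standard deviation. The IF equals 1.0 when all components have the same variance and grows as the variances become more unequal. A higher IF indicates greater inefficiency and a larger potential gain from variance-based allocation. An IF significantly above 1.0 signals that resources are being wasted on components with low uncertainty.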
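As a rough illustration of the last step, the Inefficiency Factor can be computed directly from the component variances. The function name and the example variances below are hypothetical; the formula is read as K times the sum of variances divided by the square of the summed standard deviations.

```python
import math


def inefficiency_factor(variances):
    """IF = K * sum(var_i) / (sum(sigma_i))**2 for K components."""
    sigmas = [math.sqrt(v) for v in variances]
    total_sigma = sum(sigmas)
    if total_sigma == 0:
        raise ValueError("all variances are zero; IF is undefined")
    return len(variances) * sum(variances) / total_sigma ** 2


# Equal variances: uniform allocation is already optimal.
if_equal = inefficiency_factor([1.0, 1.0, 1.0, 1.0])
# One dominant component: uniform allocation wastes most of the budget.
if_skewed = inefficiency_factor([4.0, 0.0, 0.0, 0.0])
```

In the skewed example the factor equals the number of components, the worst case for uniform allocation: all uncertainty sits in one component while the budget is spread over four.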

Protocol 2: Implementing Variance-Based Shot Allocation for a Molecular Simulation

This protocol provides a concrete methodology for implementing an optimized simulation, inspired by shot-efficient quantum algorithms [2].

- Resource Definition: Define your total computational budget (B), which is the total number of shots, cycles, or samples available for a single simulation measurement. For example, B = 100,000 shots.

- Preliminary Sampling: Allocate a small fraction of the total budget (e.g., B_prelim = 10% of B) to take uniform measurements of all K components.

- Variance Estimation: From the preliminary data, calculate the estimated variance (σ²_i) for each component i = 1,..., K.

- Optimal Budget Allocation: Calculate the optimal number of resources (A_i) to allocate to each component i for the main production run using the formula: \[ A_i = \frac{\sigma_i}{\sum_{j=1}^{K} \sigma_j} \times (B - B_{\text{prelim}}) \] This allocates more resources to components with higher uncertainty (standard deviation).

- Production Simulation & Aggregation: Execute the main simulation, allocating A_i resources to each component. Aggregate the results from all components to compute the final property of interest.
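The preliminary-sampling and allocation steps of this protocol can be sketched as follows. The function name, the use of the sample standard deviation, and the rule of handing truncation leftovers to the most uncertain component are assumptions chosen for illustration.

```python
import statistics


def plan_production_run(prelim_samples, budget, prelim_budget):
    """Allocate the remaining budget in proportion to each component's
    standard deviation, estimated from its preliminary samples.

    prelim_samples: one list of preliminary measurements per component
    (each list needs at least two values).
    """
    sigmas = [statistics.stdev(samples) for samples in prelim_samples]
    total_sigma = sum(sigmas)
    if total_sigma == 0:
        raise ValueError("no spread observed; fall back to uniform allocation")
    remaining = budget - prelim_budget
    alloc = [int(remaining * s / total_sigma) for s in sigmas]
    # Truncation can leave a few units unassigned; give them to the
    # component with the largest estimated standard deviation.
    alloc[sigmas.index(max(sigmas))] += remaining - sum(alloc)
    return alloc


# Two components whose standard deviations differ by a factor of three.
allocation = plan_production_run([[0.0, 2.0], [0.0, 6.0]],
                                 budget=100_000, prelim_budget=10_000)
```

With B = 100,000 and a 10% preliminary run, the 90,000 remaining shots split roughly 1:3 between the two components, mirroring the ratio of their estimated standard deviations.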

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Optimized Simulations

| Tool / Resource | Function / Description | Relevance to Protocol |

|---|---|---|

| Molecular Dynamics Engine (e.g., GROMACS, NAMD, OpenMM) | Software to perform classical MD simulations, generating trajectories and property data [13]. | Provides the computational environment for running simulations and collecting variance data on force terms or molecular interactions. |

| Quantum Simulation Framework (e.g., Qiskit, Cirq, Pennylane) | Provides the environment to run VQE and ADAPT-VQE algorithms on simulators or quantum hardware [2]. | Essential for implementing variance-based shot allocation and Pauli measurement reuse in quantum chemistry calculations. |

| OPLS4 Force Field | A classical molecular mechanics force field parameterized to accurately predict properties like density and heat of vaporization [11]. | Used in high-throughput MD to generate consistent, reliable training data for ML models, forming the basis for variance analysis. |

| Variance Analysis Script | A custom script (e.g., in Python) to calculate component-wise variances and compute optimal resource allocation. | Core tool for implementing Protocol 2, Steps 3 and 4. Can be integrated into simulation workflows. |

| Commutativity Grouping Algorithm | An algorithm to group Hamiltonian terms (Pauli strings) that commute, allowing them to be measured simultaneously [2]. | Reduces quantum measurement overhead further when combined with variance-based allocation, a key step in shot-efficient ADAPT-VQE. |

The high cost of uniform allocation is a pervasive but solvable problem in computational molecular science. By identifying the variances in system components and strategically reallocating resources, researchers can achieve the same—or even higher—levels of accuracy at a significantly reduced computational cost. The protocols and tools outlined here, drawing from cutting-edge research in quantum circuit optimization, provide a practical roadmap for integrating variance-based shot allocation into both classical and quantum simulation workflows. Adopting these efficient practices is crucial for accelerating drug development and the design of novel materials.

Linking Shot Efficiency to Practical Drug Development Workflows

The integration of quantum computing into pharmaceutical research presents a transformative opportunity to accelerate critical path activities, most notably in the early stages of drug discovery and development. This document details the application of variance-based shot allocation—a technique for optimizing quantum circuit measurements—to enhance the efficiency of computational tasks foundational to modern drug development. By strategically reducing the quantum measurement (shot) overhead in the Adaptive Variational Quantum Eigensolver (ADAPT-VQE), these methods can make quantum-assisted molecular simulations more feasible and resource-effective within established R&D workflows [2] [3].

The high measurement costs associated with variational quantum algorithms have been a significant bottleneck for their practical application on current Noisy Intermediate-Scale Quantum (NISQ) hardware. This protocol outlines how integrating shot-efficient algorithms directly addresses this limitation, potentially reducing the computational resources required for high-accuracy simulations of molecular systems, a task central to target identification and lead compound optimization [2].

Application Notes

The implementation of shot-efficient algorithms provides a tangible bridge between abstract quantum computation and practical pharmaceutical challenges. The core value lies in making quantum chemical calculations more scalable and integrable into existing R&D pipelines, which are increasingly reliant on in silico methods and Model-Informed Drug Development (MIDD) approaches [14].

Table 1: Quantitative Impact of Shot Optimization Strategies

| Optimization Strategy | Average Reduction in Shot Usage | Key Application in Drug Development | Maintained Fidelity |

|---|---|---|---|

| Reused Pauli Measurements (with grouping) | 32.29% [3] | Molecular system simulation for target identification | Yes [2] [3] |

| Variance-Based Shot Allocation (VPSR for LiH) | 51.23% [2] | Lead compound optimization and toxicity prediction | Yes [2] [3] |

| Combined Strategy (Grouping & Reuse) | >30% [3] | High-accuracy simulation of complex molecular systems | Yes [3] |

The "fit-for-purpose" principle in MIDD emphasizes that modeling tools must be closely aligned with the specific Question of Interest (QOI) and Context of Use (COU) [14]. The shot-efficient ADAPT-VQE is particularly fit-for-purpose for:

- Target Identification and Validation: Accurately simulating molecular interactions and binding affinities for novel disease targets [14] [15].

- Lead Optimization: Predicting the electronic properties and reactivity of small molecules to guide the design of safer, more effective drug candidates with improved solubility, stability, and bioavailability [14] [15].

These applications directly support the industry's goal of reducing late-stage attrition by improving the prediction of pharmacokinetics (PK), pharmacodynamics (PD), and toxicity profiles earlier in the development process [16] [15].

Experimental Protocols

Protocol A: Implementing Shot-Efficient ADAPT-VQE for Molecular Simulation

This protocol describes the methodology for applying variance-based shot allocation and Pauli measurement reuse to simulate molecular systems relevant to drug discovery, such as small protein ligands or potential drug metabolites [2].

Objective: To determine the ground state energy of a target molecule (e.g., LiH) with chemical accuracy while minimizing the total number of quantum measurements required.

Materials:

- See "The Scientist's Toolkit" for essential research reagents and computational resources.

Procedure:

- System Hamiltonian Preparation:

  - Define the molecular system, including its geometry and active space.

  - Generate the fermionic Hamiltonian (H_f) under the Born-Oppenheimer approximation [2].

  - Transform the fermionic Hamiltonian into a qubit Hamiltonian using a suitable transformation (e.g., Jordan-Wigner or Bravyi-Kitaev), resulting in a sum of Pauli strings: H = Σ_i c_i P_i.

- Commutator Grouping for Gradient Measurement:

  - For the operator pool {A_i}, compute the commutator [H, A_i] for each operator.

  - The gradient component for operator selection is given by ∂⟨ψ(θ)|H|ψ(θ)⟩/∂θ_i = i⟨ψ|[H, A_i]|ψ⟩ [2].

  - Decompose the commutator [H, A_i] into a sum of Pauli terms.

  - Perform qubit-wise commutativity (QWC) grouping on the combined set of Pauli strings from the Hamiltonian H and all commutators [H, A_i] to minimize the number of distinct measurement circuits [2].

- Variance-Based Shot Allocation:

  - For a given iteration and parameter set θ, allocate the total shot budget across the grouped Pauli terms.

  - The number of shots S_i for a Pauli term P_i is proportional to the magnitude of its coefficient |c_i| multiplied by the square root of its variance Var[P_i], following the relation S_i ∝ |c_i| √(Var[P_i]) [2] [17].

  - This allocation strategy prioritizes shots for terms with higher uncertainty and computational weight, maximizing the information gained per shot.

- Pauli Measurement Reuse:

  - During the VQE parameter optimization step, execute the quantum circuits and store the outcomes (bitstrings) for all measured Pauli observables.

  - In the subsequent ADAPT-VQE iteration, for the operator selection step, reuse the stored measurement outcomes to compute the expectation values for any Pauli strings that are identical to those already measured [2] [3].

  - This avoids redundant state preparation and measurement, directly reducing shot overhead.

- Iterative ADAPT-VQE Execution:

  - Begin with a simple reference state (e.g., |ψ_0⟩ = |HF⟩).

  - For each iteration until energy convergence is achieved:

    a. Operator Selection: Calculate the gradients for all operators in the pool using the shot-optimized measurement protocol from steps 2-4. Select the operator with the largest |gradient|.

    b. Ansatz Growth: Append the selected operator (as a parameterized gate, e.g., exp(-iθ_i A_i)) to the quantum circuit.

    c. Parameter Optimization: Re-optimize all parameters θ in the expanded ansatz using a classical optimizer (e.g., iCANS [17]), employing the shot allocation strategy from step 3.
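The commutator-grouping step of the procedure above can be illustrated with a small sketch of qubit-wise commutativity grouping. It assumes Pauli strings are written as equal-length strings over the letters I, X, Y, Z, and it uses a greedy first-fit heuristic; the function names and the example terms are hypothetical, and [2] may use a different grouping procedure.

```python
def qubit_wise_commute(p: str, q: str) -> bool:
    """Two Pauli strings qubit-wise commute if, on every qubit, the
    single-qubit Paulis are equal or at least one is the identity."""
    return all(a == b or a == "I" or b == "I" for a, b in zip(p, q))


def group_qwc(paulis):
    """Greedy first-fit grouping: put each string into the first group
    whose members all qubit-wise commute with it."""
    groups = []
    for p in paulis:
        for group in groups:
            if all(qubit_wise_commute(p, q) for q in group):
                group.append(p)
                break
        else:
            groups.append([p])
    return groups


# Hypothetical Pauli strings from a Hamiltonian and from commutators.
hamiltonian_terms = ["ZZ", "ZI", "IZ", "XX"]
commutator_terms = ["XX", "XI", "YY"]
# Drop duplicates first so that shared strings are measured only once.
unique_terms = list(dict.fromkeys(hamiltonian_terms + commutator_terms))
groups = group_qwc(unique_terms)
```

Each resulting group can be measured with a single circuit, and deduplicating the combined list is the simplest form of the measurement reuse described in the protocol.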

The following workflow diagram illustrates the integrated, shot-efficient protocol:

Protocol B: Integration with a Broader Drug Discovery Pipeline

This protocol outlines how to embed the shot-efficient quantum simulation from Protocol A into a classical AI-driven drug discovery workflow, creating a hybrid pipeline for accelerated lead compound identification [18] [15].

Objective: To utilize a shot-efficient quantum simulation to provide high-fidelity data on molecular properties for a machine learning model tasked with predicting drug efficacy and toxicity.

Procedure:

- Target Identification: Use classical AI tools (e.g., NLP on biomedical literature, genomic data analysis) to identify and validate a novel disease target [15].

- Compound Library Generation: Employ generative AI or access existing chemical libraries to create a set of candidate molecules predicted to interact with the target [16].

- Classical AI Pre-screening: Use established ML models (e.g., QSAR, Random Forests) to perform initial, rapid screening of the candidate library. Filter out compounds with predicted poor pharmacokinetic (ADME) properties or high toxicity [15].

- Quantum Simulation of Shortlisted Candidates: For the top candidates (e.g., 5-10 compounds) from the pre-screening, perform detailed electronic structure calculations using Protocol A to compute precise molecular properties (e.g., binding affinity, reaction energy profiles) that are computationally prohibitive or less accurate with purely classical methods [2].

- Data Integration and Model Refinement: Feed the high-fidelity quantum simulation results back into the classical AI model. This can be used to retrain or validate the ML model, improving its predictive accuracy for future screening cycles [18].

- Lead Candidate Selection: Combine the results from classical AI and quantum simulation to select the most promising lead candidate for in vitro preclinical testing.

The following diagram illustrates this hybrid workflow:

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Shot-Efficient Quantum Drug Discovery

| Item Name | Function/Description | Relevance to Workflow |

|---|---|---|

| ADAPT-VQE Algorithm | A variational quantum algorithm that iteratively builds a problem-tailored ansatz circuit, reducing depth and improving trainability [2]. | Core computational framework for quantum simulation. |

| Qubit-Wise Commutativity (QWC) Grouping | A technique to group Hamiltonian Pauli terms and commutator terms that can be measured simultaneously, reducing circuit executions [2]. | Critical for minimizing measurement overhead in Protocols A & B. |

| Variance-Based Shot Allocation Scheduler | A classical software routine that dynamically allocates the quantum measurement budget based on the calculated variance of each observable [2] [17]. | Enables the shot-efficient core of the protocol. |

| iCANS Optimizer | An adaptive classical optimizer for variational algorithms that frugally selects the number of measurements for each gradient component [17]. | Efficiently handles parameter optimization in noisy, shot-limited environments. |

| Classical AI/ML Models (e.g., QSAR, CNN, RNN) | Machine learning models used for initial compound screening, property prediction, and target identification [15]. | Forms the classical pre-screening and data integration layer in Protocol B. |

| High-Throughput Computing Cluster | Classical computational resources for running ML models, managing data, and controlling quantum hardware interactions. | Supports the extensive classical computation and data management required. |

Implementing Strategic Shot Allocation: From Theory to Practice in Quantum Chemistry

In the Noisy Intermediate-Scale Quantum (NISQ) era, quantum computations are fundamentally statistical. A quantum circuit is executed multiple times (shots) to estimate the expectation value of an observable, a process critical for algorithms like the Variational Quantum Eigensolver (VQE). Given the constraints of noisy hardware and finite computational resources, a central challenge is determining how to optimally allocate these shots to minimize the statistical error, or variance, of the final result. This application note details the core principle of allocating shots proportional to the variance of individual operators within a Hamiltonian, a method grounded in classical statistics that directly minimizes the overall variance of the estimated energy. Framed within broader thesis research on variance-based shot allocation, this document provides the theoretical foundation, a practical experimental protocol, and supporting visualizations for implementing this strategy.

Theoretical Foundation and Motivation

The goal of many variational quantum algorithms is to estimate the expectation value of a Hamiltonian \( H \), which is typically decomposed into a sum of simpler operators: \( H = \sum_{i=1}^{L} c_i H_i \). The expectation value \( \langle H \rangle \) is then approximated by \( \sum_{i=1}^{L} c_i \langle H_i \rangle \).

Each term \( \langle H_i \rangle \) is estimated from a finite number of measurement shots, \( N_i \), and has an associated variance \( \text{Var}(\langle H_i \rangle) \). Assuming the individual terms are estimated independently, the total variance of the energy estimate is: \[ \text{Var}(\langle H \rangle) = \sum_{i=1}^{L} c_i^2 \, \text{Var}(\langle H_i \rangle) \] The variance of each term scales inversely with the number of shots allocated to it: \( \text{Var}(\langle H_i \rangle) \propto \sigma_i^2 / N_i \), where \( \sigma_i^2 \) is the intrinsic variance of the operator \( H_i \) for the given quantum state.

The core optimization problem is to distribute a fixed total number of shots \( N_{\text{total}} = \sum_{i=1}^{L} N_i \) in a way that minimizes \( \text{Var}(\langle H \rangle) \). The solution, derived using the method of Lagrange multipliers, is to allocate shots proportional to the product of the coefficient's magnitude and the operator's standard deviation: \[ N_i \propto |c_i| \sigma_i \] This allocation strategy ensures that more resources are directed towards measuring terms that contribute more significantly to the overall uncertainty, thereby minimizing the total variance most efficiently [1] [19].
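For readers who want the intermediate steps, the Lagrange-multiplier argument can be written out as below. The sketch treats the shot counts \( N_i \) as continuous variables and assumes \( \text{Var}(\langle H_i \rangle) = \sigma_i^2 / N_i \) exactly; the multiplier \( \lambda \) is notation introduced here.

```latex
\begin{aligned}
\mathcal{L}(N_1,\dots,N_L,\lambda)
  &= \sum_{i=1}^{L} \frac{c_i^2 \sigma_i^2}{N_i}
     + \lambda \left( \sum_{i=1}^{L} N_i - N_{\text{total}} \right) \\
\frac{\partial \mathcal{L}}{\partial N_i}
  &= -\frac{c_i^2 \sigma_i^2}{N_i^2} + \lambda = 0
  \;\Longrightarrow\; N_i = \frac{|c_i| \sigma_i}{\sqrt{\lambda}} \\
\sum_{i=1}^{L} N_i = N_{\text{total}}
  &\;\Longrightarrow\;
  N_i = N_{\text{total}} \, \frac{|c_i| \sigma_i}{\sum_{j=1}^{L} |c_j| \sigma_j} \\
\text{Var}(\langle H \rangle)_{\min}
  &= \frac{1}{N_{\text{total}}} \left( \sum_{i=1}^{L} |c_i| \sigma_i \right)^2
\end{aligned}
```

The last line shows the best achievable variance for a given budget; comparing it with the uniform-allocation value \( \frac{L}{N_{\text{total}}} \sum_i c_i^2 \sigma_i^2 \) quantifies the gain.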

Quantitative Comparison of Shot Allocation Strategies

The table below summarizes the key characteristics of different shot allocation strategies, highlighting the advantages of the variance-proportional approach.

Table 1: Comparison of Quantum Shot Allocation Strategies

| Strategy Name | Core Principle | Key Advantage | Reported Performance |

|---|---|---|---|

| Uniform Allocation | Distributes shots equally across all Hamiltonian terms: \( N_i = N_{\text{total}} / L \) | Simplicity of implementation | Serves as a baseline; often inefficient [19] |

| Coefficient-Proportional | Allocates shots based on the weight of the Hamiltonian coefficient: \( N_i \propto \lvert c_i \rvert \) | Accounts for term importance | Improved over uniform, but ignores quantum state information [19] |

| Variance-Proportional (This Protocol) | Allocates shots based on \( \lvert c_i \rvert \sigma_i \) | Minimizes total variance of the expectation value | Theoretically optimal for a fixed shot budget; foundational for advanced methods [1] |

| Distribution-Adaptive Dynamic Shot (DDS) | Dynamically adjusts shots per VQE iteration based on output distribution entropy | Reduces total shots by ~50% while maintaining accuracy vs. fixed-shot methods [19] | 60% higher accuracy than tiered allocation in noisy simulations [19] |

| Shot-Wise Distribution | Distributes a circuit's shots across multiple, heterogeneous QPUs | Improves result stability and robustness against individual QPU noise [20] [8] | Performance aligns with or exceeds average single-QPU outcomes [20] |

Experimental Protocol: Variance-Proportional Shot Allocation for VQE

This protocol provides a step-by-step methodology for implementing variance-proportional shot allocation in a VQE experiment aimed at finding the ground state energy of a molecular Hamiltonian.

Research Reagent Solutions

Table 2: Essential Computational Tools and Methods

| Item Name | Function/Description | Example/Note |

|---|---|---|

| Molecular Hamiltonian | The target operator for the VQE algorithm, defining the problem. | Generated via classical electronic structure packages (e.g., PSI4, PySCF). |

| Parameterized Quantum Circuit (PQC) | The ansatz that prepares the trial quantum state \( \lvert \psi(\vec{\theta}) \rangle \). | Hardware-Efficient Ansatz or Unitary Coupled Cluster (UCC) ansatz. |

| Classical Optimizer | Updates the parameters \( \vec{\theta} \) to minimize the estimated energy. | Gradient-free optimizers (e.g., COBYLA, SPSA) are often used. |

| Quantum Simulator / QPU | Executes the quantum circuits to obtain measurement statistics. | Can be a noisy simulator modeling real hardware or an actual QPU. |

| Variance Estimator | A subroutine to compute the intrinsic variances \( \sigma_i^2 \) of each operator \( H_i \). | Requires preliminary circuit executions to collect measurement data. |

Step-by-Step Procedure

- Problem Formulation and Initialization:

  a. Input: A Hamiltonian \( H = \sum_{i=1}^{L} c_i H_i \), a parameterized quantum circuit \( U(\vec{\theta}) \), and a total shot budget \( N_{\text{total}} \) for a single energy evaluation.

  b. Initialize the classical optimizer with a random set of parameters \( \vec{\theta}_0 \).

- Calibration and Initial Variance Estimation (at each optimization step \( k \)):

  a. Prepare the quantum state \( |\psi(\vec{\theta}_k)\rangle \) using the PQC.

  b. For each term \( H_i \) in the Hamiltonian, allocate a small, fixed number of calibration shots (e.g., \( N_{\text{cal}} = 1000 \)) to measure its expectation value \( \langle H_i \rangle \) and, crucially, its variance \( \sigma_i^2 \).

  c. The variance for a Pauli string operator \( H_i \) can be computed from the measurement counts of its eigenvalues (±1). If \( p_+ \) is the probability of measuring +1, then \( \langle H_i \rangle = 2p_+ - 1 \) and \( \sigma_i^2 = \langle H_i^2 \rangle - \langle H_i \rangle^2 = 1 - (2p_+ - 1)^2 \).

- Optimal Shot Allocation:

  a. Using the variances \( \sigma_i^2 \) estimated in Step 2, calculate the optimal number of shots for each term: \[ N_i = \frac{ |c_i| \sigma_i }{\sum_{j=1}^{L} |c_j| \sigma_j} \times N_{\text{total}} \]

  b. Round the \( N_i \) values to the nearest integers, ensuring \( \sum_{i} N_i = N_{\text{total}} \).

- Primary Measurement and Energy Estimation:

  a. For each term \( H_i \), execute the corresponding measurement circuit \( N_i \) times to obtain a refined estimate of \( \langle H_i \rangle \).

  b. Compute the total energy estimate: \( E(\vec{\theta}_k) = \sum_{i=1}^{L} c_i \langle H_i \rangle \).

- Classical Optimization Loop:

  a. Pass the energy estimate \( E(\vec{\theta}_k) \) to the classical optimizer.

  b. The optimizer proposes a new set of parameters \( \vec{\theta}_{k+1} \).

  c. Repeat Steps 2-5 until the optimization converges to a minimum energy.
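The calibration and allocation steps of this procedure can be sketched in a few lines. The function names, the example counts, and the largest-remainder rounding rule are assumptions for illustration; the protocol only requires that the rounded shot counts sum to the total budget.

```python
import math


def pauli_stats(n_plus: int, n_minus: int):
    """Mean and intrinsic variance of a Pauli observable from the
    number of +1 and -1 outcomes seen in the calibration shots."""
    p_plus = n_plus / (n_plus + n_minus)
    mean = 2 * p_plus - 1
    return mean, 1 - mean ** 2


def allocate_shots(coeffs, variances, n_total):
    """Integer shot counts with N_i proportional to |c_i| * sigma_i,
    rounded by the largest-remainder rule so they sum to n_total."""
    weights = [abs(c) * math.sqrt(v) for c, v in zip(coeffs, variances)]
    total = sum(weights)
    if total == 0:
        raise ValueError("all weights are zero; use uniform allocation")
    raw = [w / total * n_total for w in weights]
    shots = [math.floor(r) for r in raw]
    # Hand the shots lost to flooring to the largest fractional parts.
    order = sorted(range(len(raw)), key=lambda i: raw[i] - shots[i],
                   reverse=True)
    for i in order[: n_total - sum(shots)]:
        shots[i] += 1
    return shots


mean, variance = pauli_stats(n_plus=750, n_minus=250)
shots = allocate_shots(coeffs=[0.5, -0.2, 0.1],
                       variances=[1.0, 1.0, 0.0], n_total=1000)
```

A term whose estimated variance is zero receives no shots under this rule. Since calibration estimates are themselves noisy, a practical implementation would probably enforce a small minimum shot count per term.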

The following workflow diagram illustrates this protocol, with a focus on the quantum-classical feedback loop.

Advanced Integration and Error Suppression

The core principle of variance-proportional allocation can be integrated with other advanced compilation and error suppression techniques to further enhance performance on NISQ devices.

Integration with Circuit Ensembles for Error Suppression

A powerful synergy exists between dynamic shot allocation and the use of circuit ensembles. As detailed in [21], an input circuit can be partitioned into blocks, and each block can be compiled into an ensemble of approximate circuits. When the outputs of these ensemble members are averaged, the overall error in the final result can be quadratically suppressed (\( \epsilon \rightarrow \epsilon^2 \)).

Integrated Workflow:

- Partitioning: The target circuit is split into manageable subcircuits.

- Ensemble Synthesis & Diversification: For each subcircuit, a diverse ensemble of \( M^{(k)} \) circuits is generated, each approximating the target unitary to within a tolerance \( \epsilon \).

- Ensemble Optimization: A convex optimization determines weights \( p_i^{(k)} \) for each ensemble member to maximize accuracy.

- Execution with Dynamic Shot Allocation: The overall circuit is executed by sampling from these optimized ensembles. For each sampled circuit, the variance-proportional shot allocation protocol is applied to measure the final Hamiltonian. This combines the error suppression of ensemble averaging with the statistical efficiency of optimal shot allocation [21].

Logical Workflow for Integrated Strategy

The following diagram outlines the high-level integration of circuit ensembles with the measurement process.

In the Noisy Intermediate-Scale Quantum (NISQ) era, variational quantum algorithms (VQAs) have emerged as promising candidates for achieving practical quantum advantage. However, a significant bottleneck limiting their scalability and practical implementation is the immense measurement overhead—often requiring thousands of independent circuit executions, or "shots"—to obtain reliable results. This application note details the theoretical frameworks and experimental protocols for variance-based shot allocation, a strategy designed to derive the optimum shot budget for quantum computations. By dynamically distributing measurement resources based on the statistical variance of observables, researchers can achieve chemical accuracy in tasks like molecular simulation with dramatically reduced measurement costs [22] [2] [17]. This approach is particularly relevant for drug development professionals seeking to leverage quantum computing for efficient molecular modeling and energy calculations.

Theoretical Foundations of Shot Allocation

The core principle behind variance-based shot allocation is that not all measurements contribute equally to the precision of the final calculated expectation value. The optimal strategy minimizes the total number of shots required to achieve a desired accuracy by investing more resources in measuring terms with higher statistical variance.

Core Mathematical Principle

For a Hamiltonian decomposed into a sum of Pauli terms, \( \hat{H} = \sum_i c_i \hat{P}_i \), the total variance of the energy estimate is \( \sigma^2_{\text{total}} = \sum_i \frac{c_i^2 \sigma_i^2}{S_i} \), where \( \sigma_i^2 \) is the variance of Pauli term \( \hat{P}_i \), and \( S_i \) is the number of shots allocated to it. The optimal shot allocation, derived by minimizing the total variance under a fixed shot budget \( S_{\text{total}} = \sum_i S_i \), is given by: \[ S_i^* = S_{\text{total}} \, \frac{|c_i| \sigma_i}{\sum_j |c_j| \sigma_j} \] This framework ensures that shots are distributed preferentially to terms that are more difficult to measure precisely (those with larger coefficients and higher variances) [2] [17].
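A small numerical check makes the benefit concrete. The two-term Hamiltonian, its coefficients and standard deviations, and the function names are invented for illustration, and shot counts are left as real numbers to keep the comparison simple.

```python
def total_variance(coeffs, sigmas, shots):
    """Variance of the energy estimate: sum of c_i^2 * sigma_i^2 / S_i."""
    return sum((c * s) ** 2 / n for c, s, n in zip(coeffs, sigmas, shots))


def optimal_shots(coeffs, sigmas, budget):
    """Continuous allocation with S_i proportional to |c_i| * sigma_i."""
    weights = [abs(c) * s for c, s in zip(coeffs, sigmas)]
    norm = sum(weights)
    return [budget * w / norm for w in weights]


# Hypothetical two-term Hamiltonian with one dominant coefficient.
coeffs, sigmas, budget = [1.0, 0.1], [1.0, 1.0], 1000
uniform = [budget / len(coeffs)] * len(coeffs)

var_uniform = total_variance(coeffs, sigmas, uniform)
var_optimal = total_variance(coeffs, sigmas,
                             optimal_shots(coeffs, sigmas, budget))
```

In this example the uniform split gives a variance of about 2.02e-3, while the weighted split gives about 1.21e-3 for the same 1000 shots, a reduction of roughly 40%.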

Integration with Adaptive Algorithms

This shot allocation strategy can be seamlessly integrated into adaptive VQEs, such as the ADAPT-VQE algorithm. In ADAPT-VQE, the ansatz is built iteratively, and each iteration requires estimating the energy and calculating gradients with respect to the pool operators. Applying variance-based shot allocation to both the Hamiltonian energy measurement and the gradient measurements significantly reduces the total shot cost of the algorithm without compromising the fidelity of the result [2].

Diagram 1: Integrated shot-efficient ADAPT-VQE workflow, showcasing the synergy between measurement reuse and variance-based allocation.

Frameworks for Shot Budget Optimization

Two primary, complementary frameworks have been developed to tackle the shot budget problem: one that optimizes shots within a single algorithm on a single Quantum Processing Unit (QPU), and another that distributes shots for a single circuit across multiple, heterogeneous QPUs.

Table 1: Comparative Analysis of Shot Budget Optimization Frameworks

| Framework | Core Principle | Key Advantage | Reported Shot Reduction | Primary Application Context |
|---|---|---|---|---|
| Integrated Shot-Optimized ADAPT-VQE [22] [2] | Reuses Pauli measurements from VQE optimization in subsequent gradient steps and applies variance-based shot allocation. | Tightly integrated, algorithm-specific optimization; maintains high accuracy. | 32-51% compared to naive measurement schemes. | Molecular energy calculations (e.g., H₂, LiH, BeH₂). |
| Shot-Wise Distribution [20] [8] | Distributes the total shot budget for a single circuit across multiple, heterogeneous QPUs. | Enhanced fault tolerance, reduced waiting times, and robustness against individual QPU noise. | Improves result stability and often outperforms single QPU runs. | Executing quantum circuits in distributed, multi-device computing environments. |
| iCANS Optimizer [17] | An adaptive optimizer for stochastic gradient descent that frugally and independently selects the number of shots for each gradient component. | Reduces the number of shots required for convergence, especially effective in noisy environments. | Outperforms state-of-the-art optimizers in simulation, particularly with noise. | General variational quantum algorithms (VQEs, quantum compiling). |

The Shot-Wise Distribution Framework

This framework challenges the conventional view of a quantum circuit's execution as a monolithic unit. Instead, it proposes that the total number of shots for a single circuit can be "split" across multiple available QPUs based on customizable policies (e.g., equally, randomly, or proportionally to QPU reliability). The partial results (output distributions) from each QPU are then merged into a final, unified result [20] [8]. This approach turns the limitations of NISQ devices into an advantage, offering robustness and flexibility.
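The split-and-merge idea can be sketched in a few lines of Python. Everything here is an illustrative assumption: the function names, the reliability scores, the partial histograms, and the choice to merge by summing raw counts (the cited framework allows other customizable policies).

```python
from collections import Counter


def split_shots(total_shots, weights):
    """Split a shot budget across QPUs in proportion to weights.

    Uses the largest-remainder method so the parts sum exactly to total_shots.
    """
    norm = sum(weights)
    exact = [total_shots * w / norm for w in weights]
    shots = [int(x) for x in exact]
    leftover = total_shots - sum(shots)
    # Hand the remaining shots to the QPUs with the largest fractional parts.
    order = sorted(range(len(weights)),
                   key=lambda i: exact[i] - shots[i], reverse=True)
    for i in order[:leftover]:
        shots[i] += 1
    return shots


def merge_counts(partial_counts):
    """Merge per-QPU histograms into one normalized output distribution."""
    merged = Counter()
    for counts in partial_counts:
        merged.update(counts)
    total = sum(merged.values())
    return {bits: n / total for bits, n in merged.items()}


# Hypothetical reliability scores for three QPUs (not measured data).
reliability = [0.5, 0.3, 0.2]
plan = split_shots(1000, reliability)   # policy: proportional to reliability
equal = split_shots(1000, [1, 1, 1])    # policy: equal split

# Made-up partial results, one histogram per QPU, sized according to `plan`.
partials = [
    {"00": 260, "11": 240},
    {"00": 140, "11": 150, "01": 10},
    {"00": 95, "11": 100, "10": 5},
]
merged = merge_counts(partials)
print(plan, equal, merged)
```

Summing raw counts implicitly weights each QPU by the number of shots it executed, so a reliability-proportional split carries through to the merged distribution.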

Diagram 2: Logical workflow of the shot-wise distribution framework, splitting a single circuit's shot budget across multiple QPUs.

Experimental Protocols

This section provides detailed methodologies for implementing the shot-efficient ADAPT-VQE protocol, a leading approach for molecular simulations.

Protocol: Shot-Efficient ADAPT-VQE with Variance-Based Allocation

Objective: To compute the ground state energy of a molecule with chemical accuracy while minimizing the total number of quantum measurements required.

Pre-experiment Preparation:

- Molecular System Definition: Define the molecule, its atomic coordinates, and active space.

- Hamiltonian Formulation: Express the electronic Hamiltonian in the second quantized form, ( \hat{H}_f = \sum_{p,q} h_{pq} a_p^\dagger a_q + \frac{1}{2} \sum_{p,q,r,s} h_{pqrs} a_p^\dagger a_q^\dagger a_s a_r ), and map it to a qubit Hamiltonian, ( \hat{H} = \sum_i c_i \hat{P}_i ), where ( \hat{P}_i ) are Pauli strings [2].

- Operator Pool Definition: Select a pool of operators (e.g., fermionic excitations) from which the adaptive ansatz will be constructed.

Step-by-Step Procedure:

- Initialization: Start with a simple reference state (e.g., Hartree-Fock) as the initial ansatz.

- ADAPT-VQE Iteration Loop: For each iteration ( k ):

A. VQE Energy Optimization

- Group Commuting Terms: Group the Hamiltonian Pauli terms ( \hat{P}i ) into mutually commuting sets (e.g., using qubit-wise commutativity) to minimize the number of distinct circuit executions [2].

- Allocate Shots: For each group ( g ), calculate the variance ( \sigma_g^2 ) (estimated from a pre-allocation of shots or from previous iterations) and allocate shots ( S_g ) according to ( S_g \propto |c_g| \sigma_g ).

- Measure and Optimize: Execute the parameterized quantum circuit with the current ansatz for the allocated shots. Use a classical optimizer (e.g., SPSA) to minimize the energy expectation value ( E(\theta) = \langle \psi(\theta) | \hat{H} | \psi(\theta) \rangle ). Store all Pauli measurement outcomes.

- Termination: The loop continues until the energy convergence is below a predefined threshold (e.g., chemical accuracy of 1.6 mHa) or the gradient norms fall below a cutoff.
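The "Allocate Shots" step in the procedure above can be sketched as a two-stage scheme: a small pilot round estimates the spread of each group, and the remaining budget is then divided in proportion to it. This is a minimal sketch under stated assumptions, not the protocol's reference implementation: the function names, groups, and pilot outcomes are hypothetical, and the coefficients are folded into each group observable so that the sample standard deviation plays the role of ( |c_g| \sigma_g ).

```python
import statistics


def group_values(coeffs, shots):
    """Per-shot value of the group observable sum_i c_i P_i.

    Each shot is a tuple of +1/-1 eigenvalues, one per Pauli term in the group.
    """
    return [sum(c * p for c, p in zip(coeffs, shot)) for shot in shots]


def two_stage_allocation(groups, pilot_outcomes, total_shots):
    """Allocate the shots left after the pilot round in proportion to sigma_g."""
    pilot_used = sum(len(shots) for shots in pilot_outcomes)
    remaining = total_shots - pilot_used
    # Sample standard deviation of each group observable (coefficients included).
    stds = [statistics.stdev(group_values(c, s))
            for c, s in zip(groups, pilot_outcomes)]
    norm = sum(stds)
    if norm == 0:
        # The pilot saw no fluctuation anywhere: split the remainder evenly.
        return [remaining // len(groups)] * len(groups)
    return [round(remaining * s / norm) for s in stds]


# Hypothetical groups (coefficients only) and hand-written pilot outcomes.
groups = [[0.5, 0.2], [0.1]]
pilot = [
    [(1, 1), (-1, 1), (1, -1), (-1, -1)],   # group 0: two Pauli terms
    [(1,), (-1,), (1,), (-1,)],             # group 1: one Pauli term
]
allocation = two_stage_allocation(groups, pilot, total_shots=1008)
print(allocation)
```

In practice the pilot round would be far larger than four shots per group; a handful of samples gives a very noisy variance estimate, which is why variances carried over from previous iterations are an attractive alternative.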

Post-processing and Validation:

- Compare the final computed energy with classically computed full configuration interaction (FCI) results for validation.

- The shot reduction can be quantified as ( \text{Reduction} = \left(1 - \frac{S_{\text{optimized}}}{S_{\text{naive}}}\right) \times 100\% ), where ( S_{\text{naive}} ) is the number of shots required with a uniform shot distribution.
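As a worked illustration of the reduction metric, the sketch below compares the shots needed to reach a target standard error ( \epsilon ) under the two schemes. With a uniform split over ( N ) independently measured terms, the requirement is ( N \sum_i c_i^2 \sigma_i^2 / \epsilon^2 ); with the variance-based split it is ( (\sum_i |c_i| \sigma_i)^2 / \epsilon^2 ). The coefficients and standard deviations are hypothetical and are not intended to reproduce any reported result.

```python
def shots_uniform(coeffs, sigmas, eps):
    """Shots needed for standard error eps when every term gets S/N shots."""
    n = len(coeffs)
    return n * sum((c * s) ** 2 for c, s in zip(coeffs, sigmas)) / eps ** 2


def shots_optimal(coeffs, sigmas, eps):
    """Shots needed for standard error eps with S_i proportional to |c_i| * sigma_i."""
    return sum(abs(c) * s for c, s in zip(coeffs, sigmas)) ** 2 / eps ** 2


def reduction_percent(s_optimized, s_naive):
    """Reduction = (1 - S_optimized / S_naive) * 100%."""
    return (1 - s_optimized / s_naive) * 100


coeffs = [0.5, 0.25, 0.25]   # hypothetical coefficients
sigmas = [1.0, 0.4, 0.2]     # hypothetical standard deviations
eps = 1.6e-3                 # target standard error in Hartree

s_naive = shots_uniform(coeffs, sigmas, eps)
s_opt = shots_optimal(coeffs, sigmas, eps)
reduction = reduction_percent(s_opt, s_naive)
print(f"naive: {s_naive:.0f}, optimized: {s_opt:.0f}, reduction: {reduction:.2f}%")
```

By the Cauchy-Schwarz inequality the optimized count never exceeds the uniform one, and the two coincide only when every term has the same ( |c_i| \sigma_i ). The reduction also does not depend on ( \epsilon ), since both counts scale as ( 1/\epsilon^2 ).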

Table 2: Exemplar Experimental Results from Shot-Efficient ADAPT-VQE

| Molecular System | Qubits | Optimization Strategy | Reported Shot Reduction | Accuracy Achieved |
|---|---|---|---|---|
| H₂ [2] | 4 | Variance-Based Shot Allocation (VPSR) | 43.21% | Chemical Accuracy |
| LiH [2] | ~12 (approximated) | Variance-Based Shot Allocation (VPSR) | 51.23% | Chemical Accuracy |
| BeH₂ [2] | 14 | Pauli Measurement Reuse & Grouping | Average shot usage reduced to 32.29% of the naive scheme (with grouping & reuse) | Chemical Accuracy |

The Scientist's Toolkit

This section details key resources for implementing variance-based shot allocation protocols.

Table 3: Essential Research Reagent Solutions for Shot Budget Experiments

| Tool / Resource | Function / Description | Example Use Case |
|---|---|---|
| VQE/ADAPT-VQE Software Stack | A quantum computing software framework (e.g., Qiskit, PennyLane) that allows for the definition of molecular Hamiltonians, construction of adaptive ansatze, and calculation of gradients. | Core platform for implementing the shot-efficient ADAPT-VQE protocol. |
| Commutativity Grouping Algorithm | A classical algorithm to partition the Pauli terms of a Hamiltonian (or gradient commutator) into mutually commuting sets. Qubit-wise commutativity (QWC) is a common, efficient method. | Reduces the number of distinct circuit executions required per measurement round [2]. |
| Variance Estimator | A classical subroutine that estimates the variance of each Pauli term (or group) from a preliminary set of shots. This data drives the optimal shot allocation. | Essential for dynamically determining the shot budget ( S_i ) for each term in the Hamiltonian. |
| Cloud-Based QPU Access | Access to multiple, heterogeneous quantum devices (e.g., via IBM Cloud, Amazon Braket) for running variational algorithms and shot-wise distribution experiments. | Essential for experimental validation on real hardware with realistic noise profiles [23]. |
| Classical Optimizer (eCANS/iCANS) | An adaptive classical optimizer designed for VQAs that dynamically adjusts the number of shots per gradient component to minimize resource consumption [17]. | Can be used in conjunction with or as an alternative to the variance-based allocation for Hamiltonian terms. |

Theoretical frameworks for deriving the optimum shot budget, particularly variance-based shot allocation, are critical for unlocking the potential of NISQ-era quantum computers. By moving beyond uniform shot distribution and leveraging statistical principles and resource distribution across QPUs, these methods significantly reduce the quantum measurement overhead—a major bottleneck in variational algorithms. The detailed protocols and toolkits provided herein offer researchers and drug development professionals a practical pathway to implement these strategies, bringing us closer to efficient and accurate quantum simulations of complex molecular systems. Future work will focus on further integrating these techniques with advanced error mitigation and testing their performance on larger, real-world molecular systems using cloud-accessible quantum hardware.

Within the framework of variance-based shot allocation research, Grouping Commuting Pauli Terms stands as a foundational technique to minimize the quantum measurement overhead inherent in variational quantum algorithms like the Variational Quantum Eigensolver (VQE) and its adaptive variants. The "measurement problem" arises because the molecular Hamiltonian, expressed as a sum of numerous Pauli terms, requires a large number of individual expectation value measurements, which is a primary bottleneck on Noisy Intermediate-Scale Quantum (NISQ) devices [2] [24]. For instance, while an H₂ molecule Hamiltonian may have 15 terms, a water (H₂O) molecule Hamiltonian can have over 1,000 terms [24].

The core principle of Qubit-Wise Commutativity (QWC) grouping is to identify and batch together Pauli terms that can be measured simultaneously in a single quantum circuit execution, thereby drastically reducing the total number of circuit executions required [25]. This efficient grouping is a critical precursor to applying variance-based shot allocation, as it reduces the number of distinct measurement groups whose shot budgets need to be optimized.

Theoretical Foundation and Definitions

A Hamiltonian for a quantum chemical system is typically decomposed into a linear combination of Pauli terms: [ H = \sum_i c_i h_i ] where each ( h_i ) is a Pauli string (a tensor product of Pauli operators ( I, \sigma_x, \sigma_y, \sigma_z )) [24].

- Commutativity: Two operators (A) and (B) are compatible if they commute, i.e., ([A, B] = AB - BA = 0). This means they share a common set of eigenvectors and can be measured simultaneously, without one measurement disturbing the outcome of the other [25].

- Qubit-Wise Commutativity (QWC): A stricter and more hardware-friendly form of commutativity. Two Pauli terms are qubit-wise commuting if they commute on each qubit individually [26]. This avoids the need for complex, entangled basis transformation circuits required by Fully Commuting (FC) grouping strategies.
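The two definitions above can be checked directly on Pauli strings. The sketch below is illustrative: it assumes Pauli terms are written as strings such as "XIZ" (one character per qubit), and the function names are hypothetical rather than taken from any library.

```python
def qubit_wise_commute(p, q):
    """True if Pauli strings p and q commute on every qubit individually."""
    if len(p) != len(q):
        raise ValueError("Pauli strings must act on the same number of qubits")
    # On a single qubit, two Paulis commute if either is I or they are equal.
    return all(a == "I" or b == "I" or a == b for a, b in zip(p, q))


def fully_commute(p, q):
    """True if p and q commute as full operators.

    Two Pauli strings commute exactly when the number of qubits on which
    they hold different non-identity Paulis is even.
    """
    if len(p) != len(q):
        raise ValueError("Pauli strings must act on the same number of qubits")
    clashes = sum(1 for a, b in zip(p, q)
                  if a != "I" and b != "I" and a != b)
    return clashes % 2 == 0


for p, q in [("XI", "IZ"), ("XX", "YY"), ("XI", "ZI")]:
    print(p, q, "QWC:", qubit_wise_commute(p, q), "FC:", fully_commute(p, q))
```

The pair ("XX", "YY") shows why QWC is the stricter condition: the two operators commute overall, yet they clash on both qubits, so measuring them together would require an entangling basis change.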

Performance and Comparative Analysis

The following table summarizes the performance gains and characteristics of QWC grouping as demonstrated in recent research.

Table 1: Performance Metrics of QWC Grouping and Related Techniques

| Metric / Method | Reported Performance / Characteristic | Context & Notes |
|---|---|---|
| Shot Reduction (QWC Grouping) | Up to ~90% reduction in measurement circuits [24]. | Demonstrated for molecular Hamiltonians. |
| Shot Reduction (Grouping + Reuse) | Average shot usage reduced to 32.29% of original [2]. | In ADAPT-VQE, combining QWC grouping with Pauli measurement reuse. |
| Variance Reduction | GALIC (a hybrid method) lowers variance by ~20% avg. vs. QWC [26]. | Highlights the variance-performance trade-off between QWC and FC grouping. |
| Key Advantage | Requires no entangling operations for measurement [26]. | Results in low-depth, high-fidelity measurement circuits suitable for NISQ devices. |
| Key Trade-off | Higher estimator variance compared to Fully Commuting (FC) grouping [26]. | FC grouping uses fewer, larger groups but requires more complex circuits. |

Standardized Experimental Protocol

This protocol details the steps for implementing QWC grouping within a VQE experiment, for example, using the PennyLane library.

Table 2: Reagents and Computational Tools for QWC Grouping

| Item / Resource | Function / Description | Example / Implementation |
|---|---|---|
| Molecular Hamiltonian | The target observable for the VQE algorithm. | Generated via PennyLane's qml.data.load() for molecules like H₂ or H₂O [24]. |
| Grouping Strategy | The algorithm for identifying commuting observables. | "qwc" (Qubit-wise Commutativity) in PennyLane [25]. |
| Quantum Simulator/Device | Executes the parameterized quantum circuits. | qml.device("default.qubit") in PennyLane [24]. |
| Classical Optimizer | Minimizes the energy cost function. | Optimizers like NELDER-MEAD or MONTE CARLO [25]. |

Protocol Steps:

System Definition and Hamiltonian Generation:

- Define the molecular system (atoms, geometry, basis set).

- Generate the qubit Hamiltonian using a fermion-to-qubit mapping (e.g., Jordan-Wigner or Bravyi-Kitaev). The Hamiltonian will be a Sum object of Pauli terms.

Apply QWC Grouping:

- Use the library's grouping function to partition the Hamiltonian's Pauli terms into QWC groups.

- PennyLane Code Snippet:

- The output is a list of Hamiltonian subsets, where all terms within a subset are mutually qubit-wise commuting.
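To make the grouping step concrete without relying on any particular library call, here is a library-independent sketch of a greedy first-fit QWC partition in plain Python. It is not PennyLane's implementation; the function names are hypothetical, and the 15 Pauli strings are the set commonly quoted for minimal-basis H₂ under the Jordan-Wigner mapping, with coefficients omitted, used here only as an assumed example.

```python
def qwc(p, q):
    """Qubit-wise commutativity test for two equal-length Pauli strings."""
    return all(a == "I" or b == "I" or a == b for a, b in zip(p, q))


def group_qwc(paulis):
    """Greedy first-fit partition of Pauli strings into QWC groups."""
    groups = []
    for p in paulis:
        for group in groups:
            # Join the first group whose members all qubit-wise commute with p.
            if all(qwc(p, q) for q in group):
                group.append(p)
                break
        else:
            # No compatible group exists yet: open a new one.
            groups.append([p])
    return groups


# Assumed 4-qubit H2 Pauli strings (Jordan-Wigner, coefficients omitted).
h2_terms = [
    "IIII", "ZIII", "IZII", "IIZI", "IIIZ",
    "ZZII", "ZIZI", "ZIIZ", "IZZI", "IZIZ", "IIZZ",
    "XXYY", "XYYX", "YXXY", "YYXX",
]
groups = group_qwc(h2_terms)
for k, group in enumerate(groups):
    print(k, group)
```

For this input the greedy pass places all Z-type terms in one group and leaves each of the four X/Y strings in a group of its own, so 15 terms need 5 distinct measurement circuits. Greedy first-fit is order dependent and not guaranteed to find the minimum number of groups; graph-coloring heuristics are a common alternative.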

Circuit Execution and Expectation Value Calculation:

- For each group generated in Step 2, construct a single quantum circuit.

- Apply a basis-transforming gate sequence to rotate the entire group into the computational (Z) basis. For QWC groups, this typically involves only single-qubit gates (e.g., Hadamard for X, Rx(π/2) for Y).

- Execute the circuit for an allocated number of shots (n_shots), and collect the measurement outcomes.

- From the single result histogram, compute the expectation values for every Pauli term in the group through classical post-processing.
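The classical post-processing in the last step can be sketched as follows. After the basis rotation, every term in a QWC group acts as a product of Z operators on its support, so its expectation value is the average parity of the measured bits on those qubits. The function name and the histogram are hypothetical, and the sketch assumes that character k of each bitstring corresponds to qubit k (some frameworks use the reverse ordering).

```python
def expectation_from_counts(counts, pauli):
    """Estimate <P> from a histogram measured after rotating into the Z basis.

    counts maps bitstrings to occurrences; pauli is a string such as "ZIZ"
    whose non-identity positions define the support of the term.
    """
    support = [k for k, op in enumerate(pauli) if op != "I"]
    total = sum(counts.values())
    acc = 0
    for bits, n in counts.items():
        # Even parity on the support gives eigenvalue +1, odd parity gives -1.
        parity = sum(int(bits[k]) for k in support) % 2
        acc += n if parity == 0 else -n
    return acc / total


# Made-up histogram from one circuit execution of a 2-qubit QWC group.
counts = {"00": 480, "11": 470, "01": 30, "10": 20}
group = ["ZZ", "ZI", "IZ"]
estimates = {p: expectation_from_counts(counts, p) for p in group}
print(estimates)
```

A single histogram yields all three estimates, which is the source of the savings from grouping: the shot cost is paid once per group rather than once per term.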

Energy Estimation and Classical Optimization:

- Reconstruct the total Hamiltonian expectation value by summing the contributions from all terms across all groups: ( \text{cost}(\theta) = \sum_i c_i \langle h_i \rangle ).

- Feed this energy value to a classical optimizer, which updates the quantum circuit parameters θ for the next iteration.

The workflow from the original Hamiltonian to the final energy estimation, incorporating grouping, is visualized below.

Diagram 1: Workflow of QWC Grouping in VQE

Advanced Variations and Future Directions

The basic QWC technique serves as a starting point for more sophisticated grouping strategies that offer different trade-offs.